Journal Description

Remote Sensing is an international, peer-reviewed, open access journal about the science and application of remote sensing technology, published semimonthly online by MDPI. The Remote Sensing Society of Japan (RSSJ) and Japan Society of Photogrammetry and Remote Sensing (JSPRS) are affiliated with Remote Sensing and their members receive discounts on the article processing charge.

- Open Access: free for readers, with article processing charges (APC) paid by authors or their institutions.

- High Visibility: indexed within Scopus, SCIE (Web of Science), Ei Compendex, PubAg, GeoRef, Astrophysics Data System, Inspec, dblp, and other databases.

- Journal Rank: JCR - Q1 (Geosciences, Multidisciplinary) / CiteScore - Q1 (General Earth and Planetary Sciences)

- Rapid Publication: manuscripts are peer-reviewed and a first decision is provided to authors approximately 24.3 days after submission; acceptance to publication is undertaken in 2.6 days (median values for papers published in this journal in the second half of 2025).

- Recognition of Reviewers: reviewers who provide timely, thorough peer-review reports receive vouchers entitling them to a discount on the APC of their next publication in any MDPI journal, in appreciation of the work done.

- Companion journal: Geomatics.

- Journal Cluster of Geospatial and Earth Sciences: Remote Sensing, Geosciences, Quaternary, Earth, Geographies, Geomatics and Fossil Studies.

Impact Factor: 4.1 (2024); 5-Year Impact Factor: 4.8 (2024)

Latest Articles

Multi-Source Remote Sensing-Constrained Evaluation of CMAQ Aerosol Optical Depth over Major Urban Clusters in China

Remote Sens. 2026, 18(8), 1134; https://doi.org/10.3390/rs18081134 - 10 Apr 2026

Abstract

Aerosol optical depth (AOD) is a key indicator for quantifying aerosol radiative effects and evaluating air quality. However, atmospheric chemical transport models often exhibit systematic AOD biases, and model capability for column-integrated optical properties is not always consistent with that for near-surface particulate matter concentrations. Here, we evaluate AOD simulated by the Community Multiscale Air Quality (CMAQ) model over five major urban clusters in China, including the Beijing-Tianjin-Hebei (BTH) region, Fenwei Plain (FWP), Sichuan Basin (SCB), Yangtze River Delta (YRD), and Pearl River Delta (PRD), using satellite retrievals from the Moderate Resolution Imaging Spectroradiometer (MODIS), ground-based retrievals from the Aerosol Robotic Network (AERONET), and vertical extinction profiles from the Cloud–Aerosol Lidar and Infrared Pathfinder Satellite Observation (CALIPSO). CMAQ reproduces the major spatial patterns and exhibits relatively small biases in near-surface PM2.5. However, it persistently underestimates AOD relative to MODIS, with the largest negative bias occurring in April (i.e., a typical spring month). This contrast indicates a pronounced inconsistency between column-integrated aerosol amount and surface mass density. Relative to AERONET, CMAQ shows a negative bias (NMB = −38%), whereas MODIS shows a positive bias (NMB = 56%), suggesting that both model and retrieval uncertainties contribute to the CMAQ–MODIS disagreements. CALIPSO-constrained vertical analysis further suggests that insufficient extinction above the planetary boundary layer (PBL) is an important contributor to the negative AOD bias, although the relative roles of boundary-layer and upper-layer contributions vary across regions, underscoring the importance of accurately representing aerosol vertical transport and optical processes. 
These results indicate that evaluations based solely on surface observations may fail to fully capture the overall structure of AOD errors, particularly given the clear differences between near-surface mass concentrations and column optical properties, which vary across regions. This also highlights the importance of improving the representation of aerosol vertical transport and optical processes in chemical transport models.
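The normalized mean bias (NMB) quoted in this abstract is commonly defined as the summed model-minus-observation difference divided by the summed observations. The following is a minimal sketch under that assumption; the study's exact formula is not given here, and the function name and sample values are hypothetical.

```python
def normalized_mean_bias(model, obs):
    """Return NMB in percent: 100 * sum(model - obs) / sum(obs).

    Assumes the common definition of NMB; the study may define it differently.
    """
    if len(model) != len(obs) or not obs:
        raise ValueError("model and obs must be non-empty and equally long")
    total_obs = sum(obs)
    if total_obs == 0:
        raise ValueError("sum of observations is zero; NMB is undefined")
    return 100.0 * sum(m - o for m, o in zip(model, obs)) / total_obs


# Hypothetical paired AOD samples (illustrative values only, not from the study).
reference_aod = [0.50, 0.40, 0.60, 0.50]
simulated_aod = [0.40, 0.30, 0.45, 0.45]

nmb = normalized_mean_bias(simulated_aod, reference_aod)
print(f"NMB = {nmb:.1f}%")  # a negative value means the model underestimates
```

Because the denominator is the summed reference value, the sign of the NMB directly indicates over- or underestimation relative to whichever dataset is taken as the reference.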

(This article belongs to the Section Atmospheric Remote Sensing)

Open Access Article

Integrated Air–Ground Robotic System for Autonomous Post-Blast Operations in GNSS-Denied Tunnels

by

Goretti Arias-Ferreiro, Marco A. Montes-Grova, Francisco J. Pérez-Grau, Sergio Noriega-del-Rivero, Rafael Herguedas, María T. Lázaro, Amaia Castelruiz-Aguirre, José Carlos Jimenez Fernandez, Mustafa Karahan and Antonio Alonso-Cepeda

Remote Sens. 2026, 18(8), 1133; https://doi.org/10.3390/rs18081133 - 10 Apr 2026

Abstract

Post-blast operations in tunnel construction represent a critical bottleneck due to mandatory downtime and hazardous environmental conditions. This study addresses these challenges by developing and validating an integrated cyber–physical architecture that coordinates an autonomous Unmanned Aerial Vehicle (UAV) and an Autonomous Wheel Loader (AWL) under the supervision of a Digital Twin acting as central operational digital interface. Specifically, this technology was designed to access the tunnel, evaluate post-blasting conditions, and initiate operations during mandatory exclusion periods for personnel. The system was validated in a realistic, Global Navigation Satellite System (GNSS)-denied tunnel environment emulating post-detonation visibility constraints. The results demonstrate that the aerial agent successfully navigated and mapped the excavation front in less than 8 min, establishing a shared coordinate system for the ground machinery. Through this collaborative workflow, the autonomous deployment enabled operations to commence 50% to 80% earlier than conventional manual procedures. Furthermore, the system reduced daily operational time by approximately 8%, with an estimated return on financial investment between one and seven months. Overall, the proposed framework eliminates human exposure during high-risk inspections and transforms the fragmented excavation cycle into a continuous, data-driven process.

(This article belongs to the Special Issue Mobile Laser Scanning Systems for Underground Applications)

Open Access Article

Learning Domain-Invariant Prompts and Visual Representations for Cross-Domain Scene Classification

by

Weijie Hong and Chen Wu

Remote Sens. 2026, 18(8), 1132; https://doi.org/10.3390/rs18081132 - 10 Apr 2026

Abstract

Cross-domain scene classification aims to mitigate the distribution discrepancy between domains through domain adaptation techniques. With the rapid advancement of Vision–Language Models (VLMs), utilizing them for cross-domain scene classification has emerged as a promising research direction. Current methods utilize domain-specific prompts to facilitate domain adaptation through the CLIP model. However, for remote sensing images, the considerable differences in visual features across domains pose significant challenges for learning domain-specific prompts, leading to suboptimal cross-domain performance. In addition, they cannot reduce the domain shift that exists between the source domain and the target domain. To address the above challenges, we propose a novel cross-domain scene classification method, DIPVR (Domain-Invariant Prompts and Visual Representations), which enhances model performance by learning domain-invariant features for both prompts and visual representations. Specifically, we propose learning domain-invariant prompts and introducing prior knowledge to guide the prompt-learning process. To learn domain-invariant visual representations, we propose a Visual Invariant Learning module that adaptively extracts the shared features between the source and target domains. Finally, visual features are matched with context features to align the domain distributions between the source and target domains. The experimental results on the cross-domain scene classification datasets demonstrate that our proposed method outperforms the baseline methods, achieving optimal cross-domain transfer performance.

(This article belongs to the Special Issue Advances in Multi-Source Remote Sensing Data Fusion and Analysis)

Open Access Review

Applications of Deep Learning in UAV-Based Hyperspectral Remote Sensing: A Review

by

Yue Zhao and Yanchao Zhang

Remote Sens. 2026, 18(8), 1131; https://doi.org/10.3390/rs18081131 - 10 Apr 2026

Abstract

Unmanned aerial vehicle (UAV)-based hyperspectral imaging (HSI) has been increasingly utilized for fine-scale surface characterization and quantitative retrieval due to its capability of capturing dense spectral information at ultra-high spatial resolution. However, UAV-HSI analysis remains challenging due to high dimensionality, noise and within-class variability, as well as limited cross-flight consistency under varying acquisition conditions. Deep learning (DL) has therefore attracted growing attention by enabling spectral-spatial representation learning and more robust inference under residual degradations and domain shifts. This review summarizes DL approaches for UAV-HSI analytics and organizes the literature along a complete workflow, from imaging principles, preprocessing, and correction to DL architectures, core tasks, and representative applications, to provide guidance for future research and applications. The reviewed papers demonstrate that DL exhibits great potential and a promising future in UAV-HSI analysis.

(This article belongs to the Special Issue Recent Progress in Hyperspectral Remote Sensing Data Processing)

Open Access Article

DFDP-QuadDiff: A Dual-Frequency Dual-Polarization Quad-Differential Framework for Weak-Echo Ship Target Detection in GNSS-Based Bistatic Synthetic Aperture Radar

by

Gang Yang, Tianwen Zhang, Zhen Chen, Bingxiu Yao, Yucong He, Dunyun He, Tianyi Wei and Qinglin He

Remote Sens. 2026, 18(8), 1130; https://doi.org/10.3390/rs18081130 - 10 Apr 2026

Abstract

Weak-echo ship target detection in GNSS-based bistatic synthetic aperture radar is severely limited by the coupled effects of burst-type strong windows and polarization mismatch, cross-frequency mis-registration, and long-sequence chain drift in dual-frequency dual-polarization observations. To address these issues, this paper proposes DFDP-QuadDiff, a dual-frequency dual-polarization quad-differential framework for weak-echo ship target detection using B1/B3 × horizontal–horizontal (HH)/vertical–vertical (VV) four-channel complex range-time data. The proposed framework integrates polarization-consistency-driven strong-window suppression, intra-band adaptive polarimetric synthesis, joint delay–Doppler–phase cross-frequency registration, segment-wise Jones drift calibration, and quality-aware final fusion in a unified hierarchical processing chain. In this way, multi-source inconsistencies are progressively constrained and suppressed from the polarization level to the segment level before final accumulation and detection are performed. Experimental results on self-developed four-channel GNSS-S demonstrate that, relative to the best raw single-channel result, the proposed framework increases the median SCR from 6.51 dB to 9.04 dB (+2.53 dB), improves the P10 SCR from −1.76 dB to 3.05 dB (+4.81 dB), and raises the track continuity from 0.85 to 0.97. In addition, the standard deviation of segment-wise delay drift is reduced from 0.97 bin to 0.29 bin, and positive multi-scale accumulation gains are maintained up to the second-long integration range. 
These results indicate that the proposed framework not only substantially enhances the stability, continuity, and long-time integrability of weak-target responses under low-SNR maritime conditions, but also maintains robust gains under weak-visibility, interference-dominant, and mismatch-sensitive local conditions in the stratified evaluation, thereby establishing a physically interpretable and implementation-ready solution for collaborative weak-target detection in dual-band dual-polarization GNSS-S.

(This article belongs to the Special Issue Recent Advances in SAR Object Detection)

Open Access Article

A Lightweight and Real-Time Dual-Polarization Fusion Framework for SAR Ship Classification

by

Enrico Gărăiman and Anamaria Radoi

Remote Sens. 2026, 18(8), 1129; https://doi.org/10.3390/rs18081129 - 10 Apr 2026

Abstract

Synthetic Aperture Radar (SAR) ship classification plays a critical role in maritime surveillance, addressing challenges such as the similarity between ship categories, as well as scarcity of annotated datasets and data imbalance. In this paper, a lightweight and real-time dual-branch architecture is proposed to effectively address the SAR ship classification task. The proposed approach integrates dual-polarization data within a hybrid convolution-transformer framework to improve classification performance. The model fuses dual-polarization modes, combining convolutional layers for local feature extraction with transformer blocks for global contextual understanding. Evaluations on the OpenSARShip 2.0 dataset show that the proposed model achieves 97.50% accuracy in the 3-class configuration and 93.28% in the 6-class configuration. For the FUSAR-Ship dataset, which does not provide dual-polarization data for the same ship target, the single branch model achieved an accuracy of 94.92% for the 7-class configuration. Despite its dual-branch design, the model maintains computational efficiency, making it suitable for real-time maritime monitoring applications. The results demonstrate the effectiveness of polarization-aware hybrid models for scalable and robust SAR ship classification.

(This article belongs to the Special Issue Ship Imaging, Detection and Recognition for High-Resolution SAR)

Open Access Article

Deformation Characteristics of Lumei Landslide in the Tibetan Plateau Combined with PS-InSAR and SBAS-InSAR

by

Tao Wen, Xueqing Shi, Yankun Wang and Yunpeng Yang

Remote Sens. 2026, 18(8), 1128; https://doi.org/10.3390/rs18081128 - 10 Apr 2026

Abstract

Due to the highly complex geological environment of the Tibetan Plateau, landslides occur frequently, and signs of ancient landslide reactivation are widespread, posing significant threats to major infrastructure and local communities. Taking the Lumei landslide in Cuomei County as a case study, detailed field investigations were conducted, and Sentinel-1A SAR data (84 scenes from January 2017 to December 2023) were collected to characterize surface deformation. Both PS-InSAR and SBAS-InSAR methods were applied for long-term time-series monitoring, and the results of the two techniques were comparatively analyzed. Furthermore, the influencing factors of landslide deformation were explored on the basis of analyzing the deformation characteristics. The findings reveal that the surface deformation rate exhibits significant spatial heterogeneity, with deformation values decreasing progressively outward from the central region. The surface deformation rates obtained from PS-InSAR and SBAS-InSAR range from −36.55 to −21.81 mm/yr and from −30 to −10 mm/yr, respectively. Both methods indicate a general subsidence trend along the line-of-sight (LOS) direction and show strong spatial consistency and high correlation. By combining the high-precision point results obtained from PS-InSAR and the spatially continuous surface results derived from SBAS-InSAR, the fine spatial deformation characteristics of the Lumei landslide are revealed. The research results can provide an important reference for landslide monitoring, disaster prevention and mitigation in this region.

(This article belongs to the Special Issue Remote Sensing for Landslide Investigation: From Ground Deformation Mapping to Hazard Assessment)

Open Access Review

Remote Sensing of Water: The Observation-to-Inference Arc Across Six Decades and Toward an AI-Native Future

by

Daniel P. Ames

Remote Sens. 2026, 18(8), 1127; https://doi.org/10.3390/rs18081127 - 10 Apr 2026

Abstract

Over six decades, satellite remote sensing of water resources has evolved from manual interpretation of weather photographs to AI systems that learn hydrologic predictions directly from satellite imagery. This review traces that evolution through the observation-to-inference arc—a framework for the progressively tightening coupling between what satellites observe and what hydrologists infer. Using illustrative applications in precipitation, evapotranspiration, soil moisture, snow, surface water, and groundwater, we show how early observations (1960–1985) remained disconnected from operational hydrology; how calibrated retrieval algorithms (1985–2000) established a one-way pipeline from satellites to models; how operational infrastructure (2000–2015), anchored by MODIS, GRACE, GPM, and Sentinel, achieved assimilative coupling through computational feedback between models and observations; and how deep learning (2015–present) is beginning to collapse this pipeline. Multi-source data fusion has been a recurring enabler at each stage. We articulate a four-level AI vision and research trajectory, from AI-assisted interpretation through AI-native retrieval and AI-driven inference to autonomous Earth observation intelligence. Persistent challenges in mission continuity, calibration, equity of access, and translating satellite-derived information into operational water management decisions provide essential context for evaluating both the promise and limits of this trajectory.

(This article belongs to the Special Issue Mapping the Blue: Remote Sensing in Water Resource Management)

Open Access Article

Numerical Simulation of a Heavy Rainfall Event in Sichuan Using CMONOC Data Assimilation

by

Xu Tang, Cheng Zhang, Angdao Wu, Rui Sun and Jiayan Liu

Remote Sens. 2026, 18(8), 1126; https://doi.org/10.3390/rs18081126 - 10 Apr 2026

Abstract

This study evaluates the impact of assimilating the Crustal Movement Observation Network of China (CMONOC) global navigation satellite system (GNSS) tropospheric products on heavy-rainfall simulation over the complex terrain of the Sichuan Basin. Using the Weather Research and Forecasting model with the WRF Data Assimilation (WRF/WRFDA) three-dimensional variational (3DVar) system, we conducted a control (CTRL) experiment and a data-assimilation (DA) experiment for a primary heavy-rainfall event during 10–12 August 2020. The DA experiment applied 6 h cycling assimilation of station-based zenith total delay (ZTD) and precipitable water vapor (PWV). Compared with CTRL, DA improved the placement of the primary rainband and the depiction of peak rainfall. On 10 August, the observed rainfall core (~40 mm) over the northwestern basin was underestimated in CTRL (~15 mm) but was strengthened in DA (~25 mm). Hourly verification at a threshold of 2 mm h−1 showed a higher maximum Threat Score (TS) in DA (0.292) than in CTRL (0.250), and the largest instantaneous gain reached 0.061. For 72 h accumulated precipitation, TS was higher in DA across multiple thresholds (≥10, ≥25, ≥50, and ≥100 mm), with the most pronounced improvement for heavier rainfall categories. Diagnostic analysis indicates that GNSS assimilation introduces dynamically consistent low-level moistening and strengthened convergence at 850 hPa, together with a better-aligned vertical ascent structure during the key stage of the event. An additional heavy-rainfall event during 21–23 August 2021 was further examined as a compact robustness test, and the results showed a generally consistent improvement in precipitation distribution and TS after GNSS assimilation. Overall, the present results suggest that cycling assimilation of CMONOC GNSS ZTD/PWV products can provide effective moisture constraints and improve heavy-rainfall simulation over the Sichuan Basin in the examined cases.
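The Threat Score (TS) used for verification in this abstract is conventionally computed from a contingency table as hits / (hits + misses + false alarms). The sketch below assumes that standard definition and point-paired forecast and observed rainfall; the function name and sample values are hypothetical and not taken from the study.

```python
def threat_score(forecast, observed, threshold):
    """Threat Score (critical success index) for events >= threshold."""
    hits = misses = false_alarms = 0
    for f, o in zip(forecast, observed):
        forecast_yes = f >= threshold
        observed_yes = o >= threshold
        if forecast_yes and observed_yes:
            hits += 1
        elif observed_yes:
            misses += 1
        elif forecast_yes:
            false_alarms += 1
        # Correct negatives are ignored by the Threat Score.
    denominator = hits + misses + false_alarms
    return hits / denominator if denominator else float("nan")


# Hypothetical accumulated rainfall in mm at six stations (illustrative only).
observed_mm = [12, 30, 5, 0, 55, 8]
forecast_mm = [15, 20, 11, 0, 60, 3]

for threshold_mm in (10, 25):
    ts = threat_score(forecast_mm, observed_mm, threshold_mm)
    print(f"TS at >= {threshold_mm} mm: {ts:.2f}")
```

Since correct negatives do not enter the score, TS stays informative for rare heavy-rainfall categories where "no event" dominates the sample.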

Open Access Article

Spatial Heterogeneity and Drivers of Vertical Error in Global DEMs: An Explainable Machine Learning Approach in Complex Subtropical Coastal Zones

by

Junhui Chen, Fei Tang, Heshan Lin, Bo Huang and Xueping Lin

Remote Sens. 2026, 18(8), 1125; https://doi.org/10.3390/rs18081125 - 10 Apr 2026

Abstract

Digital elevation models (DEMs) are foundational for critical tasks such as flood inundation simulation, disaster risk assessment, and ecosystem monitoring in coastal zones, yet their vertical accuracy is significantly compromised by complex terrain and surface characteristics. This study quantitatively decomposes the vertical errors of three 30 m global DEMs (COP30, NASADEM, and AW3D30) across the subtropical coastal region of Southeast China using ICESat-2 ATL08 data as a reference. By integrating an eXtreme Gradient Boosting (XGBoost) model with SHapley Additive exPlanations (SHAP), we successfully decoupled systematic biases from random noise. The results show that NASADEM achieved the lowest RMSE (7.775 m), followed by COP30 and AW3D30. While the Terrain Ruggedness Index (TRI) and categorically encoded Land Cover were identified as the universally dominant error drivers across all datasets, explainable analysis revealed distinct secondary mechanisms: X-band COP30 is notably susceptible to canopy height, exhibiting significant positive bias in forests exceeding 15 m; C-band NASADEM shows a systematic bias related to topographic position, typically overestimating ridges and underestimating valleys; and optical AW3D30 is significantly affected by stereo-matching errors. Furthermore, the analysis quantified a systematic error component of ~40%. These findings provide a data-driven basis for DEM selection and highlight that accuracy improvements should prioritize vegetation removal for radar DEMs and enhanced stereo-matching for optical models.
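For readers unfamiliar with DEM accuracy metrics, the sketch below computes the mean error, RMSE, and standard deviation of DEM-minus-reference height differences, which satisfy RMSE² = ME² + SD². This is only a crude way to separate a constant bias from scatter; it is not the XGBoost and SHAP analysis described in the abstract, and the function name and sample heights are hypothetical.

```python
import math


def vertical_error_stats(dem_heights, ref_heights):
    """Return (mean error, RMSE, population std) of DEM minus reference heights."""
    if len(dem_heights) != len(ref_heights) or not dem_heights:
        raise ValueError("inputs must be non-empty and equally long")
    errors = [d - r for d, r in zip(dem_heights, ref_heights)]
    n = len(errors)
    mean_error = sum(errors) / n  # constant (systematic) offset
    rmse = math.sqrt(sum(e * e for e in errors) / n)
    std = math.sqrt(sum((e - mean_error) ** 2 for e in errors) / n)  # scatter
    return mean_error, rmse, std


# Hypothetical heights in metres (illustrative only, not from the study).
dem_heights = [12.0, 48.5, 103.0, 7.5]
reference_heights = [10.0, 45.0, 100.0, 9.0]

mean_error, rmse, std = vertical_error_stats(dem_heights, reference_heights)
print(f"ME = {mean_error:.2f} m, RMSE = {rmse:.2f} m, SD = {std:.2f} m")
```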

Open Access Article

TDA-DARKNet: A Deep Learning Model Based on Dual-Polarization Radar Data for Tornado Detection

by

Guoxiu Zhang, Qiangyu Zeng, Fugui Zhang, Hao Wang and Tiantian Yu

Remote Sens. 2026, 18(8), 1124; https://doi.org/10.3390/rs18081124 - 10 Apr 2026

Abstract

A tornado is a localized, small-scale severe convective weather phenomenon characterized by extreme destructiveness. Tornado detection and warning rely mainly on Doppler weather radar, which identifies and tracks tornadoes by recognizing the tornado vortex signature and supercells in radar data. Artificial intelligence has been applied to tornado recognition in recent years. However, existing monitoring methods, especially those using unsupervised learning algorithms, still offer limited recognition accuracy and warning timeliness, and usually struggle to balance detection accuracy against false alarm rate. A novel tornado detection algorithm, TDA-DARKNet, is proposed to address these issues. The algorithm integrates a dual attention mechanism, dense residual connections, and a Kolmogorov–Arnold network (KAN). A tornado dataset suitable for deep learning was built from radar features including radial velocity, reflectivity, velocity spectrum width, differential reflectivity, and correlation coefficient. TDA-DARKNet was trained and tested on this dataset and evaluated on tornado cases. The experimental results show that TDA-DARKNet improves the detection probability and extends the lead time to a maximum of 42 min in strong tornado situations, while achieving 97.11% accuracy and 95.08% precision, indicating strong overall identification performance. In addition, by directly leveraging radar-based data for tornado identification, the algorithm eliminates the need for manual feature engineering, simplifies data processing, reduces complexity, and further enhances detection effectiveness.

(This article belongs to the Topic AI for Natural Disasters Detection, Prediction and Modeling)

Open Access Article

A Hapke Physics-Guided Deep Autoencoder for Lunar Hyperspectral Unmixing

by

Qian Lin, Chengbao Liu, Dongxu Han, Wanyue Liu, Zheng Bo and Peng Zhang

Remote Sens. 2026, 18(8), 1123; https://doi.org/10.3390/rs18081123 - 10 Apr 2026

Abstract

Accurate mapping of lunar mineral distributions is essential for understanding the Moon’s origin and evolution and for enabling future in situ resource utilization (ISRU). Yet mineralogical inversion from orbital hyperspectral observations remains challenging due to limited spatial resolution, complex photometric conditions, and sparse returned samples. We present PGU-Net, a Hapke physics-guided deep autoencoder for nonlinear blind unmixing of lunar hyperspectral data. The encoder adopts a dual-attention design to enhance discriminative spectral features. The decoder performs linear mixing in the SSA domain and then reconstructs reflectance through a lightweight nonlinear module, while physics-consistent losses encourage radiative-transfer plausibility. Experiments on a synthetic lunar regolith dataset demonstrate that PGU-Net achieves consistently lower endmember SAD and abundance aRMSE than representative baselines across multiple noise levels. Additional validations on the terrestrial AVIRIS Cuprite benchmark and on Moon Mineralogy Mapper (

Open Access Article

Comprehensive Evaluation of Multi-Version Global Satellite Mapping of Precipitation (GSMaP) Products over the Qinghai–Tibetan Plateau

by

Haowen Li, Yunde Cao, Yinan Guo, Chun Zhou, Lingling Wu, Congxiang Fan, Chuanjie Yan and Li Zhou

Remote Sens. 2026, 18(8), 1122; https://doi.org/10.3390/rs18081122 - 10 Apr 2026

Abstract

The terrain and climate of the Qinghai–Tibetan Plateau make it hard to assess satellite precipitation. GSMaP (Global Satellite Mapping of Precipitation) is a widely used rainfall dataset, but direct comparisons of its versions and products over the Plateau are still limited. In this study, we evaluate four GSMaP products—Gauge, GNRT, MVK and NRT—across four versions (v05–v08) using daily station precipitation data from 2001 to 2022 as the reference. We assess both precipitation amount and precipitation event detection. The analysis is carried out at the station scale and then examined by month, season, year, rainfall intensity and space. We also compare regional patterns across the Plateau. The results show that GSMaP performance generally improves in later versions. Among them, v08 is usually more stable and more consistent, especially for gauge-corrected products. This improvement appears not only in better agreement with station data but also in smaller differences among stations for some products. Still, the size of the improvement is not the same for all products, seasons, rainfall classes and regions. The improvement is more clear in wetter areas and in warm seasons. By contrast, uncertainty is still relatively large in cold seasons, under strong rainfall and in the high-elevation interior of the Plateau. Non-gauge products also show wider variation than the Gauge product, which suggests that gauge correction still plays an important role in improving consistency. In general, version updates help improve GSMaP performance under some conditions, but the gains are not the same across different climate settings, rainfall intensities, or elevation zones. This study provides a systematic evaluation of GSMaP over the Qinghai–Tibetan Plateau for 2001–2022 and offers practical support for choosing and using GSMaP products in complex terrain.

Open Access Article

High-Resolution Mapping of Thermal Effluents in Inland Streams and Coastal Seas Using UAV-Based Thermal Infrared Imagery

by

Sunyang Baek, Junhyeok Jung and Hyung-Sup Jung

Remote Sens. 2026, 18(8), 1121; https://doi.org/10.3390/rs18081121 - 9 Apr 2026

Abstract

Monitoring thermal effluent is critical for assessing aquatic ecosystem health, yet traditional satellite remote sensing and in situ point measurements often fail to capture fine-scale thermal dynamics in narrow streams and complex coastal areas due to spatiotemporal resolution limitations. This study establishes a high-precision surface water temperature mapping protocol using a low-cost Unmanned Aerial Vehicle (UAV) equipped with an uncooled thermal infrared sensor (FLIR Vue Pro R) to overcome these observational gaps. We investigated two distinct hydrological environments (an inland stream and a coastal sea) to provide initial evidence for the applicability of an in situ-based linear regression calibration model across contrasting aquatic settings. The initial uncalibrated radiometric temperatures exhibited significant bias errors reaching up to 9.2 °C in the stream and 9.4 °C in the coastal area, primarily driven by atmospheric attenuation and environmental factors. However, the proposed calibration method dramatically reduced these discrepancies, achieving Root Mean Square Errors (RMSE) of 0.43 °C and 0.42 °C, respectively, with high determination coefficients (R² > 0.87). The derived high-resolution thermal maps successfully visualized the detailed diffusion patterns of thermal plumes, revealing a steep temperature gradient of approximately 13 °C in the stream discharge zone and a distinct 5 °C elevation in the coastal effluent area relative to the ambient water. These findings demonstrate that UAV-based thermal remote sensing, when coupled with a rigorous radiometric calibration strategy, can serve as a cost-effective and reliable tool for environmental monitoring, bridging the critical scale gap between local point measurements and regional satellite observations.
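As a rough illustration of the in situ-based linear regression calibration mentioned in this abstract, the sketch below fits a line that maps UAV radiometric temperatures to in situ temperatures and then reports RMSE and R². It assumes ordinary least squares with a single predictor. The function names and the sample values are hypothetical and are not taken from the article.

```python
from math import sqrt


def fit_linear_calibration(uav_temps, in_situ_temps):
    """Least-squares fit of in_situ ~ slope * uav + intercept."""
    n = len(uav_temps)
    if n < 2 or n != len(in_situ_temps):
        raise ValueError("need at least two paired samples")
    mean_x = sum(uav_temps) / n
    mean_y = sum(in_situ_temps) / n
    sxx = sum((x - mean_x) ** 2 for x in uav_temps)
    if sxx == 0:
        raise ValueError("UAV temperatures must not all be identical")
    sxy = sum((x - mean_x) * (y - mean_y)
              for x, y in zip(uav_temps, in_situ_temps))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


def rmse(predicted, observed):
    """Root mean square error between two equal-length sequences."""
    return sqrt(sum((p - o) ** 2 for p, o in zip(predicted, observed))
                / len(observed))


def r_squared(predicted, observed):
    """Coefficient of determination of the predictions."""
    mean_o = sum(observed) / len(observed)
    ss_res = sum((o - p) ** 2 for p, o in zip(predicted, observed))
    ss_tot = sum((o - mean_o) ** 2 for o in observed)
    return 1.0 - ss_res / ss_tot


# Hypothetical paired samples in degrees Celsius (not data from the study).
uav = [10.0, 12.0, 14.0, 16.0, 18.0]
in_situ = [18.0, 19.5, 21.0, 22.5, 24.0]

slope, intercept = fit_linear_calibration(uav, in_situ)
calibrated = [slope * t + intercept for t in uav]
print(slope, intercept, rmse(calibrated, in_situ), r_squared(calibrated, in_situ))
```

In practice the error metrics would be computed on in situ points withheld from the fit, which this toy example does not do.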

(This article belongs to the Section Engineering Remote Sensing)

Open Access Article

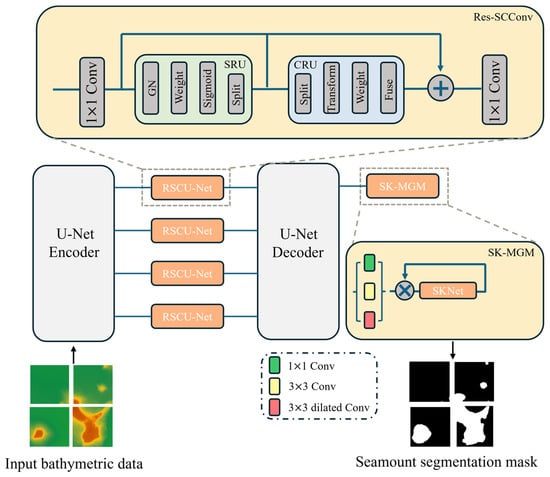

RSCU-Net: A Spatial–Channel Reconstruction U-Net for Seamount Segmentation Using GEBCO Bathymetry

by

Faran Lin, Qingsheng Guan, Tao Zhang and Hongqin Liu

Remote Sens. 2026, 18(8), 1120; https://doi.org/10.3390/rs18081120 - 9 Apr 2026

Abstract

Accurate seamount identification is important for understanding submarine tectonic and magmatic processes and for supporting deep-sea geomorphological analysis. However, seamount recognition faces a severe class imbalance as abyssal plains constitute the majority of deep-sea topography while seamounts occupy only a minimal portion, which makes accurate segmentation difficult. To address this issue, this study proposes an improved U-Net architecture, termed Spatial–Channel Reconstruction U-Net (RSCU-Net), built upon a Residual Spatial–Channel Reconstruction Convolution (Res-SCConv) module. The Res-SCConv module is embedded into each skip connection of the U-Net architecture. The model combines a Spatial Reconstruction Unit (SRU) and a Channel Reconstruction Unit (CRU) to suppress dominant background interference and reduce channel redundancy, and further introduces a Selective Kernel-based Multi-scale Gradient Module (SK-MGM) to improve boundary refinement. Experiments on the GEBCO 2023 bathymetric dataset, including 696 training samples and 88 independent test samples, show that RSCU-Net achieves an Accuracy of 0.938, Recall of 0.833, F1-score of 0.720, and IoU of 0.563. Compared with the baseline U-Net, Recall improves from 0.741 to 0.833 and IoU from 0.405 to 0.563. Additional validation on the Suda Seamount dataset yields an Accuracy of 0.987, F1-score of 0.958, and IoU of 0.920, demonstrating the robustness and generalization capability of the proposed method.
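For readers comparing the metrics quoted in this abstract, the sketch below computes Accuracy, Recall, Precision, F1-score, and IoU from pixel-level confusion counts, and applies the identity IoU = F1 / (2 − F1), which holds when both are computed for the same class from the same counts. The counts are hypothetical and only illustrate how a dominant background class can keep Accuracy high while IoU stays moderate; none of this is the authors' evaluation code.

```python
def segmentation_metrics(tp, fp, fn, tn):
    """Binary segmentation metrics from pixel-level confusion counts."""
    total = tp + fp + fn + tn
    return {
        "accuracy": (tp + tn) / total,
        "recall": tp / (tp + fn),
        "precision": tp / (tp + fp),
        "f1": 2 * tp / (2 * tp + fp + fn),
        "iou": tp / (tp + fp + fn),
    }


def iou_from_f1(f1):
    """IoU implied by an F1 (Dice) score for the same class and counts."""
    return f1 / (2.0 - f1)


# Hypothetical counts: the background (tn) dominates, as in class-imbalanced scenes.
counts = dict(tp=80, fp=40, fn=20, tn=860)
metrics = segmentation_metrics(**counts)
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
```

Applying `iou_from_f1` to the reported F1-scores of 0.720 and 0.958 gives about 0.5625 and 0.919, in line with the reported IoU values of 0.563 and 0.920 up to rounding.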

(This article belongs to the Special Issue Artificial Intelligence for Ocean Remote Sensing (Second Edition))

Open Access Article

EF-YOLO: Detecting Small Targets in Early-Stage Agricultural Fires via UAV-Based Remote Sensing

by

Jun Tao, Zhihan Wang, Jianqiu Wu, Yunqin Li, Tomohiro Fukuda and Jiaxin Zhang

Remote Sens. 2026, 18(8), 1119; https://doi.org/10.3390/rs18081119 - 9 Apr 2026

Abstract

Early detection of agricultural fires with Unmanned Aerial Vehicles (UAVs) is important for environmental safety, yet it remains difficult because ignition cues are extremely small, smoke patterns vary widely, and farmland scenes often contain strong background interference such as specular reflections. Model development is further constrained by the scarcity of data from the early ignition stage. To address these challenges, we propose a joint data and model optimization framework. We first build a hybrid dataset through an ROI-guided synthesis pipeline, in which latent diffusion models are used to insert high-fidelity, carefully screened fire samples into real farmland backgrounds. We then introduce EF-YOLO, a detector designed for high sensitivity to small targets. The network uses SPD-Conv to reduce feature loss during spatial downsampling and includes a high-resolution P2 head to improve the detection of minute objects. To reduce background clutter, a Dual-Path Frequency–Spatial Enhancement (DP-FSE) module serves as a lightweight statistical surrogate that extracts global contextual cues and local salient features in parallel, thereby suppressing high-frequency noise. Experimental results show that EF-YOLO achieves an APs of 40.2% on sub-pixel targets, exceeding the YOLOv8s baseline by 15.4 percentage points. With a recall of 88.7% and a real-time inference speed of 78 FPS, the proposed framework offers a strong balance between detection performance and efficiency, making it well suited for edge-deployed agricultural fire early-warning systems.
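SPD-Conv, as generally described, replaces strided downsampling with a space-to-depth rearrangement followed by a non-strided convolution, so that no pixels are discarded when resolution is halved. The sketch below shows only the rearrangement step, assuming a single-channel feature map stored as nested lists and a scale of 2. `space_to_depth` is an illustrative name and this is not the EF-YOLO implementation.

```python
def space_to_depth(feature_map, scale=2):
    """Split an H x W grid into scale*scale sub-maps of size (H/scale) x (W/scale).

    Sub-map (dy, dx) keeps the pixels at rows dy, dy+scale, ... and
    columns dx, dx+scale, ..., so every input value appears exactly once.
    """
    height = len(feature_map)
    width = len(feature_map[0])
    if height % scale or width % scale:
        raise ValueError("height and width must be divisible by scale")
    return [
        [row[dx::scale] for row in feature_map[dy::scale]]
        for dy in range(scale)
        for dx in range(scale)
    ]


# Hypothetical 4 x 4 feature map holding the values 0..15.
grid = [[r * 4 + c for c in range(4)] for r in range(4)]
channels = space_to_depth(grid)
for channel in channels:
    print(channel)
```

The four 2 × 2 outputs would be stacked along the channel axis before the convolution, which is why small targets covering only a few pixels are less likely to be lost than with a stride-2 layer.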

Open Access Article

An Improved Framework for Forest Fire Severity Assessment in Mountainous Areas Based on the dNBR Index: A Case Study from Central Yunnan, China

by

Li Han, Yun Liu, Qiuhua Wang, Tengteng Long, Ning Lu, Leiguang Wang and Weiheng Xu

Remote Sens. 2026, 18(8), 1118; https://doi.org/10.3390/rs18081118 - 9 Apr 2026

Abstract

Forest fires pose a considerable threat to the security of ecosystems and human society, rendering accurate assessments of fire severity critical for ecological recovery and effective fire management. The differenced Normalized Burn Ratio (dNBR) has been employed to evaluate forest fire severity; however, it presents notable uncertainties owing to variations in data sources, temporal phases, and environmental factors. To address these challenges, this study analyzed 10 forest fires occurring between 2006 and 2023 in central Yunnan Province, China. First, a rapid sampling method utilizing very high-resolution imagery was developed to assess the performance of dNBR classification under varying conditions. Second, the study identified the optimal post-fire observation window and compared classification thresholds and accuracy between Landsat and Sentinel-2 imagery in assessing fire severity. Finally, the research explored the impacts of topographic correction and pre-fire vegetation differences on classification outcomes. The findings revealed the following: (1) Imagery captured in the spring of the fire year, characterized by minimal vegetation interference, demonstrated the highest classification stability and superior capability for identifying high-severity burns. (2) Landsat outperformed Sentinel-2 in regional accuracy (0.92 vs. 0.87), and direct threshold transfer between sensors resulted in a 39% underestimation of high-severity areas, underscoring the necessity for sensor-specific calibration. (3) Topographic correction provided limited practical benefits, merely yielding a marginal improvement in accuracy (+1.44%) with the SCS+C model in steep terrain, and was generally unnecessary. (4) The influence of pre-fire vegetation was discovered to be threshold-dependent: dNBR performed reliably in forests with pre-fire NDVI > 0.5, while adjusted approaches were solely recommended for sparse or heterogeneous vegetation. 
Overall, this study establishes a systematic framework for optimizing dNBR-based severity assessment, enhancing its accuracy and operational utility in forest fire management.
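For context, the Normalized Burn Ratio is computed from near-infrared and shortwave-infrared reflectance as NBR = (NIR − SWIR2) / (NIR + SWIR2), and dNBR is the pre-fire NBR minus the post-fire NBR. The sketch below applies this per pixel and maps dNBR to severity classes. Because the abstract stresses that thresholds should be calibrated per sensor, the thresholds are passed in as a parameter; the values and class labels shown are placeholders and are not the thresholds derived in the study.

```python
from bisect import bisect_right


def nbr(nir, swir2):
    """Normalized Burn Ratio for one pixel of surface reflectance."""
    denom = nir + swir2
    return 0.0 if denom == 0 else (nir - swir2) / denom


def dnbr(pre_nir, pre_swir2, post_nir, post_swir2):
    """Differenced NBR: pre-fire NBR minus post-fire NBR."""
    return nbr(pre_nir, pre_swir2) - nbr(post_nir, post_swir2)


def classify_severity(value, thresholds, labels):
    """Return the label of the interval that contains value."""
    if len(labels) != len(thresholds) + 1:
        raise ValueError("need exactly one more label than thresholds")
    return labels[bisect_right(thresholds, value)]


# Placeholder breakpoints and labels; calibrate per sensor and region.
THRESHOLDS = [0.10, 0.27, 0.44, 0.66]
LABELS = ["unburned", "low", "moderate-low", "moderate-high", "high"]

# Hypothetical pixel: dense vegetation before the fire, charred surface after.
value = dnbr(pre_nir=0.40, pre_swir2=0.10, post_nir=0.15, post_swir2=0.30)
print(round(value, 3), classify_severity(value, THRESHOLDS, LABELS))
```

Keeping the thresholds outside the function makes it straightforward to swap in separate Landsat and Sentinel-2 breakpoints rather than transferring one set between sensors.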

(This article belongs to the Special Issue Forest Fire Monitoring Using Remotely Sensed Imagery)

Open Access Article

A Hyperspectral Simulation-Driven Framework for Sub-Pixel Impervious Surface Mapping: A Case Study Using Landsat Imagery

by

Chunxiang Wang, Ping Wang and Yanfang Ming

Remote Sens. 2026, 18(8), 1117; https://doi.org/10.3390/rs18081117 - 9 Apr 2026

Abstract

The rapid advancement of global urbanization has rendered Impervious Surface Area (ISA) a critical indicator for monitoring urban ecological and thermal environments. However, traditional sub-pixel ISA estimation methods, such as Spectral Mixture Analysis (SMA) and machine learning regression, are significantly constrained by spectral variability and a scarcity of high-quality training samples. To address these limitations, this study proposes a novel sub-pixel Impervious Surface Fraction (ISF) retrieval framework leveraging high-resolution airborne hyperspectral data. By simulating physically consistent multispectral reflectance and generating high-accuracy reference ISF via spatial aggregation, we construct a robust and noise-resistant training dataset. Experimental results on Landsat data demonstrate that this simulation-based approach effectively mitigates sample uncertainty, significantly enhances retrieval accuracy, and accurately preserves spatial details and boundary structures. Theoretically, the framework exhibits strong cross-sensor adaptability, as it allows for the generation of sensor-consistent training datasets for various medium-resolution satellite platforms by simply substituting the target sensor’s spectral response functions. Combined with this inherent scalability and the potential for cross-sensor model migration, this method provides a reliable and systematic paradigm for long-term, high-precision ISF mapping across multiple satellite constellations.

Open Access Article

GenGeo: Robust Cross-View Geo-Localization via Foundation Model and Dynamic Feature Aggregation

by

Rong Wang, Wen Yuan, Wu Yuan, Tong Liu, Xiao Xi and Yaokai Zhu

Remote Sens. 2026, 18(8), 1116; https://doi.org/10.3390/rs18081116 - 9 Apr 2026

Abstract

Cross-view geo-localization (CVGL) aims to match ground-level images with geo-tagged aerial imagery for precise localization, but remains challenging due to severe viewpoint discrepancies, partial correspondence, and significant domain shifts across geographic regions. While existing methods achieve high accuracy within specific datasets, their generalization ability to unseen environments is limited. In this paper, we propose GenGeo, a unified framework that integrates vision foundation model representations with a matching-aware aggregation mechanism to address these challenges. Specifically, we leverage DINOv2 to extract semantically rich and transferable features, and revisit the SALAD aggregation module in the context of CVGL. By employing a shared clustering strategy, the proposed framework projects cross-view features into a unified assignment space, enabling implicit semantic alignment across views, while the dustbin mechanism effectively filters unmatched and non-informative regions arising from partial correspondence. Extensive experiments on three large-scale benchmarks (CVUSA, CVACT, and VIGOR) demonstrate that GenGeo achieves state-of-the-art performance in cross-dataset generalization and consistently improves robustness under severe domain shifts and spatial misalignment. Notably, our method outperforms the baseline by 14.65% in Top-1 Recall on the CVUSA-to-CVACT transfer task. These results highlight the effectiveness of combining foundation model representations with matching-aware aggregation, and suggest that enforcing semantic consistency in a shared assignment space is a promising direction for generalizable cross-view geo-localization.

(This article belongs to the Section AI Remote Sensing)

Open Access Article

SGH-Net: An Efficient Hierarchical Fusion Network with Spectrally Guided Attention for Multi-Modal Landslide Segmentation

by

Jing Wang, Haiyang Li, Shuguang Wu, Yukui Yu, Guigen Nie and Zhaoquan Fan

Remote Sens. 2026, 18(8), 1115; https://doi.org/10.3390/rs18081115 - 9 Apr 2026

Abstract

Accurate landslide segmentation from remote sensing imagery is important for geohazard assessment and emergency response, yet it remains challenging because landslide regions are often spectrally confused with bare soil, riverbeds, shadows, and disturbed surfaces while also suffering from severe foreground–background imbalance. To address these issues, we propose an Efficient Spectrally Guided Hierarchical Fusion Network (SGH-Net) for multi-modal landslide segmentation. Instead of directly concatenating heterogeneous inputs at the image level, SGH-Net adopts an asymmetric encoder–decoder design in which a pretrained EfficientNet-B4 extracts RGB features, while two lightweight guidance encoders capture complementary multispectral band and DEM-derived terrain cues. These guidance features are progressively injected into the RGB backbone through multi-stage Guided Attention Blocks, enabling selective feature recalibration and reducing cross-modal interference. In addition, a hybrid Dice–Focal loss is used to alleviate class imbalance. Experiments on the Landslide4Sense dataset show that SGH-Net achieves the best overall performance among the compared methods under the adopted evaluation protocol, reaching 81.15% IoU and a 77.86% F1-score. Compared with representative multi-modal baselines, the proposed method delivers more accurate boundary delineation and fewer false alarms while maintaining favorable model complexity. These results indicate that modality-guided hierarchical fusion is an effective and efficient strategy for multi-modal landslide segmentation.
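To make the hybrid Dice–Focal loss concrete, the sketch below combines a soft Dice loss with a binary focal loss over flattened per-pixel probabilities. The abstract does not state how the two terms are combined, so the weighted sum with equal weights, and the values `gamma=2.0` and `alpha=0.25`, are assumptions chosen as common defaults rather than the settings used for SGH-Net.

```python
from math import log


def dice_loss(probs, targets, eps=1e-6):
    """Soft Dice loss: 1 - 2|P∩T| / (|P| + |T|), over flattened pixels."""
    intersection = sum(p * t for p, t in zip(probs, targets))
    denom = sum(probs) + sum(targets)
    return 1.0 - (2.0 * intersection + eps) / (denom + eps)


def focal_loss(probs, targets, gamma=2.0, alpha=0.25, eps=1e-7):
    """Binary focal loss; down-weights pixels that are already well classified."""
    total = 0.0
    for p, t in zip(probs, targets):
        p = min(max(p, eps), 1.0 - eps)          # avoid log(0)
        p_t = p if t == 1 else 1.0 - p           # probability of the true class
        alpha_t = alpha if t == 1 else 1.0 - alpha
        total += -alpha_t * (1.0 - p_t) ** gamma * log(p_t)
    return total / len(probs)


def dice_focal_loss(probs, targets, dice_weight=0.5):
    """Assumed combination: weighted sum of the Dice and focal terms."""
    return (dice_weight * dice_loss(probs, targets)
            + (1.0 - dice_weight) * focal_loss(probs, targets))


# Hypothetical four-pixel patch with one landslide pixel.
targets = [1, 0, 0, 0]
good = [0.9, 0.1, 0.2, 0.05]
bad = [0.2, 0.8, 0.6, 0.7]
print(dice_focal_loss(good, targets), dice_focal_loss(bad, targets))
```

The Dice term responds to overlap on the rare foreground class, while the focal term concentrates the per-pixel gradient on hard examples, which is why the two are often paired for imbalanced segmentation.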

Topics

Topic in

Land, Remote Sensing, Sensors, Applied Sciences

Recent Progress and Applications in Quantitative Remote Sensing

Topic Editors: Huawei Wan, Yu Wang, Hongmin Zhou, Longhui Lu

Deadline: 30 April 2026

Topic in

Applied Sciences, Architecture, Buildings, CivilEng, Energies, Materials, Remote Sensing, Sustainability

Recent Studies and Innovative Approaches to Sustainable Communities, Buildings, Cities and Infrastructure

Topic Editors: Samad Sepasgozar, Sara Shirowzhan, Mohammed Al-Mhdawi

Deadline: 20 May 2026

Topic in

Sustainability, Buildings, Sensors, Remote Sensing, Land, Climate, Atmosphere

Advances in Low-Carbon, Climate-Resilient, and Sustainable Built Environment

Topic Editors: Baojie He, Stephen Siu Yu Lau, Deshun Zhang, Andreas Matzarakis, Fei Guo

Deadline: 25 May 2026

Topic in

Atmosphere, Forests, Geosciences, Hydrology, Land, Remote Sensing, Sustainability

Karst Environment and Global Change—Second Edition

Topic Editors: Xiaoyong Bai, Yongjun Jiang, Jian Ni, Xubo Gao, Yuemin Yue, Jiangbo Gao, Junbing Pu, Hu Ding, Qiong Xiao, Zhicai Zhang

Deadline: 16 June 2026

Special Issues

Special Issue in

Remote Sensing

Quantifying Greenhouse Gases Emissions from Remote Sensing Perspective

Guest Editor: Christoffer Karoff

Deadline: 14 April 2026

Special Issue in

Remote Sensing

Advances in UAV-Based Remote Sensing for Climate-Smart Agriculture

Guest Editors: Hongquan Wang, Taifeng Dong, Liming He

Deadline: 14 April 2026

Special Issue in

Remote Sensing

Applications of Multimodal Remote Sensing Models in Ecological Environment Monitoring

Guest Editors: R. Douglas Ramsey, Jin Wu, Jing Wang

Deadline: 14 April 2026

Special Issue in

Remote Sensing

Coastal Dynamics Monitoring Using Remote Sensing Data

Guest Editors: Huaguo Zhang, Wenting Cao, Nurjannah Nurdin

Deadline: 14 April 2026

Topical Collections

Topical Collection in

Remote Sensing

The VIIRS Collection: Calibration, Validation, and Application

Collection Editors: Xi Shao, Xiaoxiong Xiong, Changyong Cao

Topical Collection in

Remote Sensing

Sentinel-2: Science and Applications

Collection Editors: Clement Atzberger, Jadu Dash, Olivier Hagolle, Jochem Verrelst, Quinten Vanhellemont, Jordi Inglada, Tuomas Häme

Topical Collection in

Remote Sensing

Discovering A More Diverse Remote Sensing Discipline

Collection Editors: Karen Joyce, Meghan Halabisky, Cristina Gómez, Michelle Kalamandeen, Gopika Suresh, Kate C. Fickas

Topical Collection in

Remote Sensing

Feature Paper Collection on Remote Sensing in Geology, Geomorphology and Hydrology Section

Collection Editors: Francesca Ardizzone, Giuseppe Casula