Design and Evaluation of a Serious Game Prototype to Stimulate Pre-Reading Fluency Processes in Paediatric Hospital Classrooms

Analyzing Player Behavior in a VR Game for Children Using Gameplay Telemetry

Assessment of the Validity and Reliability of Reaction Speed Measurements Using the Rezzil Player Application in Virtual Reality

Journal Description

Multimodal Technologies and Interaction is an international, peer-reviewed, open access journal on multimodal technologies and interaction published monthly online by MDPI.

- Open Access: free for readers, with article processing charges (APC) paid by authors or their institutions.

- High Visibility: indexed within Scopus, ESCI (Web of Science), Inspec, dblp Computer Science Bibliography, and other databases.

- Journal Rank: JCR - Q2 (Computer Science, Cybernetics) / CiteScore - Q1 (Neuroscience (miscellaneous))

- Rapid Publication: manuscripts are peer-reviewed and a first decision is provided to authors approximately 21.7 days after submission; acceptance to publication is undertaken in 4.6 days (median values for papers published in this journal in the second half of 2025).

- Recognition of Reviewers: reviewers who provide timely, thorough peer-review reports receive vouchers entitling them to a discount on the APC of their next publication in any MDPI journal, in appreciation of the work done.

- Journal Cluster of Artificial Intelligence: AI, AI in Medicine, Algorithms, BDCC, MAKE, MTI, Stats, Virtual Worlds and Computers.

Impact Factor: 2.4 (2024); 5-Year Impact Factor: 2.7 (2024)

Latest Articles

Augmented Reality’s Impact on Student Creativity in Design and Technology: An Immersive Learning Study

Multimodal Technol. Interact. 2026, 10(3), 25; https://doi.org/10.3390/mti10030025 - 4 Mar 2026

Abstract

This quasi-experimental study examined the effectiveness of Augmented Reality (AR)-enhanced instruction on creativity development in Malaysian Design and Technology education. Forty-six fifteen-year-old female students were assigned to AR-enhanced (n = 23) or traditional instruction (n = 23) groups for a four-week Mechatronic Design unit. Creativity was assessed using an adapted Torrance Tests of Creative Thinking-Figural (TTCT-F) instrument with expert validation and independent scoring by three raters. Bootstrapped ANCOVA (5000 iterations) controlling for pretest differences revealed significant improvements across all Guilford creativity components in the AR group: Elaboration (F = 27.093, p < 0.001, η² = 0.387), Originality (F = 20.445, p < 0.001, η² = 0.322), Fluency (F = 17.896, p < 0.001, η² = 0.294), and Flexibility (F = 7.593, p = 0.008, η² = 0.150). The differential effect pattern suggests AR operates through multiple mechanisms, primarily socio-constructivist collaborative scaffolding, followed by motivational enhancement and cognitive load reduction. These findings demonstrate AR’s substantial potential for creativity development in Design and Technology education, particularly for collaborative elaboration and generative ideation. However, single-gender sampling, brief intervention duration, and quasi-experimental design limit generalizability, warranting future research with diverse populations and extended interventions.
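
As a rough consistency check on the effect sizes quoted in this abstract, partial eta squared can be recovered from an F statistic and its degrees of freedom. The sketch below is illustrative only: it assumes a one-degree-of-freedom group effect and 43 error degrees of freedom (46 students, two groups, one pretest covariate). Neither value is stated in the abstract, and the function and variable names are hypothetical.

```python
def partial_eta_squared(f_value: float, df_effect: int, df_error: int) -> float:
    """Partial eta squared from an F statistic: (F * df1) / (F * df1 + df2)."""
    return (f_value * df_effect) / (f_value * df_effect + df_error)


# F values as quoted in the abstract.
reported_f = {
    "Elaboration": 27.093,
    "Originality": 20.445,
    "Fluency": 17.896,
    "Flexibility": 7.593,
}

# ASSUMPTION: degrees of freedom are not reported in the abstract.
ASSUMED_DF_EFFECT = 1   # two groups -> one degree of freedom for the group effect
ASSUMED_DF_ERROR = 43   # 46 participants - 2 groups - 1 covariate

effect_sizes = {
    name: round(partial_eta_squared(f, ASSUMED_DF_EFFECT, ASSUMED_DF_ERROR), 3)
    for name, f in reported_f.items()
}

for name, value in effect_sizes.items():
    print(f"{name}: {value:.3f}")
```

Under these assumed degrees of freedom, the computed values agree with the reported effect sizes to three decimals, which suggests the reported figures are partial eta squared.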

Open Access Systematic Review

From the Reality–Virtuality Continuum to the XR Ecosystem: A Systematic Literature Review of Definitions and Conceptual Models

by

Xiaoran Han, Teijo Lehtonen and Tuomas Mäkilä

Multimodal Technol. Interact. 2026, 10(3), 24; https://doi.org/10.3390/mti10030024 - 2 Mar 2026

Abstract

Extended Reality (XR) technologies are rapidly reshaping human–computer interaction; however, persistent ambiguity in the use of core terms (VR, AR, MR) hampers cumulative knowledge building, cross-study comparability, and technical standardisation. This review evaluates the XR conceptual landscape across four primary dimensions: the historical evolution of core definitions, the synthesis of contemporary theoretical frameworks, the critical extensions of the Reality–Virtuality (RV) Continuum, and the alignment between academic taxonomies and industry practices. To address this issue, we conducted a PRISMA-guided systematic literature review across four major databases (IEEE Xplore, ACM Digital Library, Scopus, and Web of Science), complemented by seminal and industry sources. Of the 173,677 retrieved records, 59 studies were included in the synthesis. Using thematic synthesis, we mapped the historical evolution of definitions and conceptual models and identified recurring analytical dimensions. The results indicate a clear paradigm shift from Milgram’s one-dimensional Reality–Virtuality continuum, originally grounded in visual display technology, towards a multidimensional conceptual space that integrates subjective user-experience constructs (e.g., coherence and plausibility) with objective system characteristics. The included studies cover 1968–2025, with marked acceleration in the 2020s: 2022 alone accounts for the highest annual count (9 studies), and nearly half of the corpus (47.5%) was published in 2021–2025. We further show that industry actors pragmatically re-bound these academic concepts for product and market positioning, leading to systematic divergences between academic and industrial definitions. By distilling key turning points and synthesising core analytical dimensions into a structured lens, this review provides a historically grounded, actionable understanding of the XR conceptual landscape to support terminological alignment across research and practice.

Open Access Article

Behavioral Engagement in VR-Based Sign Language Learning: Visual Attention as a Predictor of Performance and Temporal Dynamics

by

Davide Traini, José Manuel Alcalde-Llergo, Mariana Buenestado-Fernández, Domenico Ursino and Enrique Yeguas-Bolívar

Multimodal Technol. Interact. 2026, 10(3), 23; https://doi.org/10.3390/mti10030023 - 2 Mar 2026

Abstract

Understanding how learners engage with immersive sign language training environments is essential for advancing virtual reality-based education and inclusion. This study analyzes behavioral engagement in SONAR, a virtual reality application designed for sign language training and validation. We focus on three automatically derived engagement indicators (Visual Attention (VA), Video Replay Frequency (VRF), and Post-Playback Viewing Time (PPVT)) and examine their relationship with learning performance in a sample of 117 university students. Participants completed a self-paced Training phase with 12 sign language instructional videos, followed by a Validation quiz assessing retention. We employed Pearson correlation analysis to examine the relationships between engagement indicators and quiz performance, followed by binomial Generalized Linear Model (GLM) regression to assess their joint predictive contributions. Additionally, we conducted temporal analysis by aggregating moment-to-moment VA traces across all learners to characterize engagement dynamics during the learning session. Results show that VA exhibits a strong positive correlation with quiz performance (r = 0.76), followed by PPVT (r = 0.66), whereas VRF shows no meaningful association. A binomial GLM confirms that VA and PPVT are significant predictors of learning success, jointly explaining a substantial proportion of performance variance (

Open Access Article

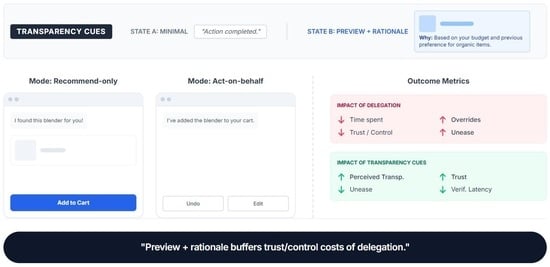

When Interfaces “Act for You”: An Eye-Tracking Experiment on Delegation, Transparency Cues, and Trust in Agentic Shopping Assistants

by

Stefanos Balaskas, Kyriakos Komis, Ioanna Yfantidou and Dimitra Skandali

Multimodal Technol. Interact. 2026, 10(3), 22; https://doi.org/10.3390/mti10030022 - 1 Mar 2026

Abstract

Agentic shopping assistants increasingly move beyond recommending products to executing actions in users’ workflows (e.g., adding items to cart, applying coupons, selecting shipping). This shift from advice to delegation raises questions about appropriate reliance, perceived control, and how interface cues support oversight when systems can act. We report a laboratory eye-tracking experiment using a chat-only e-commerce prototype in a mixed 2 × 2 design: action autonomy varied within participants (recommend-only vs. act-on-behalf, with undo/edit), and transparency cues varied between participants (minimal statements vs. preview + rationale describing what will happen and why). Three standardized shopping tasks were completed by 72 participants. Results included behavioral logs (task time, overrides), areas-of-interest (AOI)-based eye-tracking (chat attention and verification indicators), and post-task self-reports (trust, control, uneasiness, perceived transparency). Act-on-behalf autonomy reduced completion time, but it also increased unease, decreased trust and perceived control, and increased the likelihood of an override, suggesting a trade-off between efficiency and oversight. The autonomy-related penalties for trust and perceived control under act-on-behalf execution were lessened by preview + rationale transparency, which additionally enhanced perceived transparency, trust, and unease. This mechanism coincided with eye-tracking: transparency decreased verification latency during agent actions and redirected attention toward information supplied by assistants. Transparency did not reliably reduce overrides, suggesting that minimal effective transparency can streamline supervision and improve evaluations without eliminating corrective behavior.

Open Access Article

Agent-Based Paradigm for the Self-Configuration of a Conceptual Mechanical Assembly Modeling Application in Virtual Reality

by

Julian Conesa, Francisco José Mula and Manuel Contero

Multimodal Technol. Interact. 2026, 10(2), 21; https://doi.org/10.3390/mti10020021 - 22 Feb 2026

Abstract

The immersive, multisensory experiences offered by virtual reality have been transformative across multiple disciplines, enhancing practical and theoretical skills while increasing user motivation and learning. On the other hand, multi-agent systems have proven to be effective in facilitating the expansion and modularity of computer systems. This paper presents an application developed in a virtual reality environment based on multi-agent systems for the conceptual design of mechanical assemblies from primitives. As a main novelty, the primitives can be defined by the user of the application from a set of models and images, and an Excel document, without the need for programming knowledge, taking advantage of the possibilities offered by multi-agent systems. In addition, for each primitive, it is possible to define a set of geometric and dimensional modifications, as well as a set of position relations with respect to other primitives to generate mechanical assemblies.

(This article belongs to the Topic AI-Based Interactive and Immersive Systems)

Open Access Review

How Virtual Reality Design Reshapes Our Ecological Connection to Natural Systems

by

Ivonne Angelica Castiblanco Jimenez, Santiago Parra Barrios and Ana Maria Correa Jimenez

Multimodal Technol. Interact. 2026, 10(2), 20; https://doi.org/10.3390/mti10020020 - 20 Feb 2026

Abstract

This integrative literature review examines how virtual reality (VR) design can transform environmental understanding by changing users from passive observers to active participants in ecological systems. We aimed to analyze the interaction strategies through which VR enables environmental awareness and to identify the most effective approaches for fostering ecological connection. Through systematic analysis of studies published between 2015 and 2025, we found that effective VR implementations share three core design mechanisms: progressive engagement that builds connection over time, a careful balance between interaction and reflection, and multisensory integration that creates believable immersive experiences. These design mechanisms, in turn, build ecological connection through three fundamental pillars: perspective-taking that generates empathy, the creation of authentic sensory experiences, and the development of network thinking to understand complex interconnections. This review contributes to the field by mapping the development of environmental VR applications, identifying successful implementation strategies, and highlighting research gaps. Our analysis provides a comprehensive interaction framework for designing more effective environmental experiences and advancing this emerging field when innovative approaches are most needed.

(This article belongs to the Special Issue Intelligent Interaction Design: Innovative Models and the Future of Human–Computer Experience)

Open Access Article

MoodScape: Emotion-Informed Terrain Synthesis for Virtual Reality System

by

Rahul Kumar Rai, Reshu Bansal and Shashi Shekhar Jha

Multimodal Technol. Interact. 2026, 10(2), 19; https://doi.org/10.3390/mti10020019 - 11 Feb 2026

Abstract

(1) Background: Virtual environments (VEs) significantly influence human emotions through various elements such as lighting, color, and terrain. While the effects of lighting and color on emotions within VEs have been extensively studied, the impact of the terrain remains underexplored. This paper addresses this gap by investigating the correlation between terrain characteristics in VEs and users’ emotional states. (2) Methods: We conducted a user study in which participants were exposed to various 3D terrains and used the Self-Assessment Manikin (SAM) to rate their emotional responses (valence, arousal, and dominance). Building on these insights, we propose MoodScape, an automated framework for emotion-informed terrain generation that significantly reduces the need for extensive expertise and manual effort. In the current implementation, continuous SAM valence–arousal targets are discretised into four quadrant-based affect/terrain classes, and this discrete class label conditions DH-CVAE-GAN terrain synthesis. MoodScape designs a generative adversarial network (GAN) architecture called DH-CVAE-GAN, which integrates a dual-head conditional variational autoencoder as the generator alongside a discriminator network to ensure effective and realistic terrain generation. The DH-CVAE-GAN is trained on a satellite-derived digital elevation model (DEM) dataset, which helps the generated terrains reflect realistic geographic patterns. (3) Results: Quantitative and qualitative evaluations on our study sample suggest that MoodScape can generate terrains whose perceived affective tone is broadly consistent with the specified affect-class inputs, indicating potential applications in gaming and exploratory therapeutic Virtual Reality, while formal clinical efficacy remains in future work.

(This article belongs to the Topic AI-Based Interactive and Immersive Systems)

Open Access Review

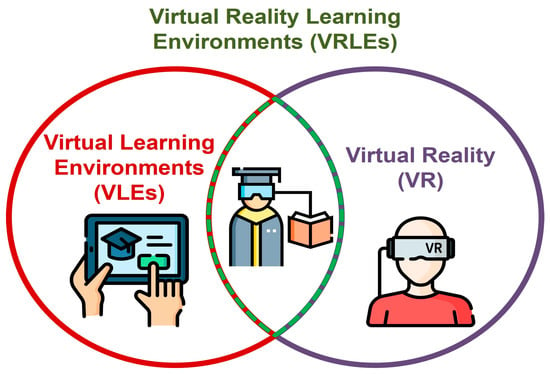

Virtual Reality Learning Environments: A Review of Support for Autonomous Learning Development

by

Pablo Fernández-Arias, Antonio del Bosque and Diego Vergara

Multimodal Technol. Interact. 2026, 10(2), 18; https://doi.org/10.3390/mti10020018 - 5 Feb 2026

Abstract

The rapid expansion of digital education in the 21st century has positioned Virtual Reality Learning Environments (VRLEs) as promising spaces for fostering greater learner autonomy. As immersive technologies become more accessible and pedagogically versatile, they offer students opportunities to regulate their learning processes, experiment in interactive scenarios, and progress at their own pace. This review examines how autonomous learning has been conceptualized and investigated within VRLE research through a comprehensive bibliometric analysis of studies published between 2000 and 2025. The results reveal a research field shaped by two major orientations: one focused on human and pedagogical dimensions (learner diversity, instructional design, and evidence-based strategies) and another on technological innovation (artificial intelligence, machine learning, and simulation-based systems). Topic analyses show that digital and immersive education dominate current scholarly production, while areas directly related to autonomy, personalized learning, and student-centered methodologies remain comparatively less developed. Accordingly, it is crucial to reinforce pedagogical structures that enable autonomous learning in VR environments and to integrate technological advancements in a manner that translates into tangible improvements in educational quality across different settings.

(This article belongs to the Special Issue Educational Virtual/Augmented Reality)

Open Access Article

Beyond the Classroom: Technology-Enabled Acceleration Models for Gifted Learners in the Digital Era

by

Yusra Zaki Aboud

Multimodal Technol. Interact. 2026, 10(2), 17; https://doi.org/10.3390/mti10020017 - 4 Feb 2026

Abstract

The digital era represents a paradigm shift in gifted education, moving at an accelerating pace away from traditional models toward flexible and personalized technology-based pathways. This study investigates the impact of a model implemented via the FutureX platform in Saudi Arabia on the autonomy and self-regulated learning (SRL) of 63 gifted high school students. Using a quasi-experimental design, the study integrated quantitative measures (paired t-tests) with phenomenological analysis of interviews. The quantitative results showed statistically significant improvements (p < 0.001) in the dimensions of autonomy and self-regulated learning, with large Cohen’s d effect sizes for planning (d = 1.05), monitoring (d = 1.05), and cognitive control (d = 1.30). These gains were supported by a pedagogical design intentionally embedded within the platform to scaffold self-regulation. These findings were reinforced by qualitative results, with 88% of gifted students reporting that the platform provided appropriately challenging content and promoted self-learning and goal-setting behaviors.

(This article belongs to the Special Issue Human-AI Collaborative Interaction Design: Rethinking Human-Computer Symbiosis in the Age of Intelligent Systems)

Open Access Article

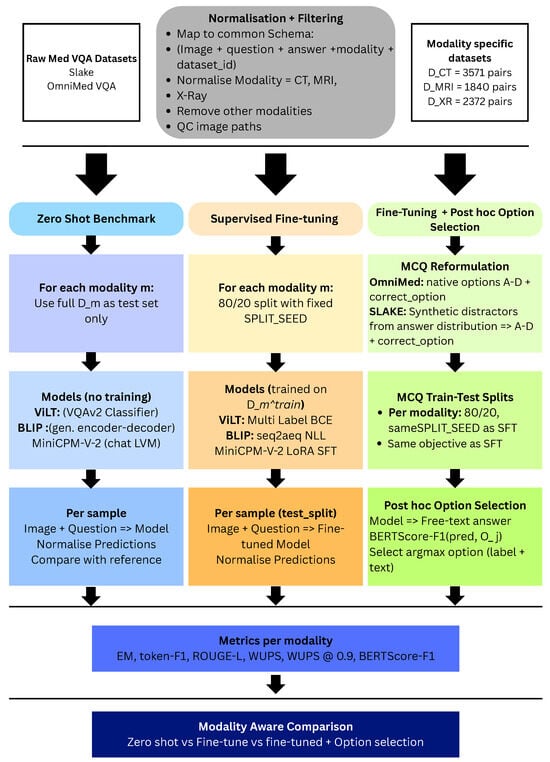

Systematic Analysis of Vision–Language Models for Medical Visual Question Answering

by

Muhammad Haseeb Shah and Heriberto Cuayáhuitl

Multimodal Technol. Interact. 2026, 10(2), 16; https://doi.org/10.3390/mti10020016 - 3 Feb 2026

Abstract

General-purpose vision–language models (VLMs) are increasingly applied to imaging tasks, yet their reliability on medical visual question answering (Med-VQA) remains unclear. We investigate how three state-of-the-art VLMs—ViLT, BLIP, and MiniCPM-V-2—perform on radiology-focused Med-VQA when evaluated in a modality-aware manner. Using SLAKE and OmniMedVQA-Mini, we construct harmonised subsets for computed tomography (CT), magnetic resonance imaging (MRI), and X-ray, standardising schema and answer processing. We first benchmark all models in a strict zero-shot setting, then perform supervised fine-tuning on modality-specific data splits, and finally add a post-hoc semantic option-selection layer that maps free-text predictions to multiple-choice answers. Zero-shot performance is modest (exact match ≈20% for ViLT/BLIP and 0% for MiniCPM-V-2), confirming that off-the-shelf deployment is inadequate. Fine-tuning substantially improves all models, with ViLT reaching ≈80% exact match and BLIP ≈50%, while MiniCPM-V-2 lags behind. When coupled with option selection, ViLT and BLIP achieve 90–93% exact match and F1 across all modalities, corresponding to 95–97% BERTScore-F1. Our novel results show that (i) modality-specific supervision is essential for Med-VQA, and (ii) post-hoc option selection can transform strong but imperfect generative predictions into highly reliable discrete decisions on harmonised radiology benchmarks. The latter is useful for medical VLMs that combine generative responses with option or sentence selection.
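
The post-hoc option-selection step mentioned in this abstract can be pictured as picking the multiple-choice answer closest to a model's free-text prediction. The abstract does not describe how the authors implement it, so the sketch below swaps in plain token overlap (Jaccard similarity) as a stand-in for semantic similarity; the function names and the example question are hypothetical.

```python
import re


def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into a set of alphanumeric tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def jaccard(a: set[str], b: set[str]) -> float:
    """Jaccard similarity between two token sets (0.0 when both are empty)."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def select_option(prediction: str, options: list[str]) -> str:
    """Map a free-text prediction to the option with the highest token overlap.

    ASSUMPTION: token overlap stands in for the semantic matching described
    in the abstract; ties resolve to the earliest option in the list.
    """
    predicted_tokens = tokenize(prediction)
    return max(options, key=lambda option: jaccard(predicted_tokens, tokenize(option)))


# Hypothetical example: a generative answer mapped onto four answer options.
options = ["Left lung", "Right lung", "Liver", "Heart"]
chosen = select_option("The scan shows the left lung", options)
print(chosen)  # Left lung
```

A real system would more plausibly compare sentence embeddings than raw tokens, but the control flow (score every option, return the best) stays the same.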

Open Access Article

A User-Centered Evaluation of a VR HMD-Based Harvester Training Simulator

by

Pranjali Barve and Raffaele De Amicis

Multimodal Technol. Interact. 2026, 10(2), 15; https://doi.org/10.3390/mti10020015 - 2 Feb 2026

Abstract

Skilled operation of forestry harvesters is essential for ensuring safety, efficiency, and sustainability in logging practices. However, conventional training methods are often prohibitively expensive and limited by access to specialized equipment. This study delivers one of the first user-centered validations of a low-cost, VR HMD-based forestry harvester simulator, directly addressing access and scalability barriers in training. With 26 participants, we quantify cognitive load, usability, user experience, and simulator sickness using established instruments. An increase in cognitive load was seen from baseline tutorial to each training module (NASA-TLX:

Open Access Article

An HCI-Centered Experiences of ICT Integration and Its Impact on Professional Competencies Supporting Formative Assessment in Higher Education e-Learning

by

Abdelaziz Boumahdi, Fadwa Ammari and Mohammed Ammari

Multimodal Technol. Interact. 2026, 10(2), 14; https://doi.org/10.3390/mti10020014 - 2 Feb 2026

Abstract

As universities expand their e-learning systems, it becomes increasingly important to understand how the use of information and communication technologies (ICTs) changes the skills needed for effective formative assessment. This study uses the principles of human–computer interaction (HCI) to create a framework for examining how digital tools, interfaces, and modes of interaction influence the way teachers assess students in higher education. The research relies on the information provided by 115 Mohammed V University teachers, who filled out a competency-based assessment grid regarding online assessment practices. The results remain exploratory and context-dependent and do not make claims of statistical representativeness beyond the studied institutional context. The findings attest to the virtues of digital technology in improving methodological and techno-pedagogical skills, without excluding the existence of serious shortcomings in semio-ethical and evaluative skills. It is certainly useful to leverage feedback to correct imperfections in evaluation practices and make them more responsive to digital interfaces. It is becoming imperative to rethink professional skills as the regulatory halo of the online formative assessment system, in order to evaluate a more synergistic framework that can give better visibility to virtual classrooms.

(This article belongs to the Special Issue Online Learning to Multimodal Era: Interfaces, Analytics and User Experiences)

Open Access Article

A Mixed Reality Tool with Automatic Speech Recognition for 3D CAD Based Visualization and Automatic Dimension Generation in the Industry 5.0 Shipyard

by

Aida Vidal-Balea, Antón Valladares-Poncela, Javier Vilar-Martínez, Tiago M. Fernández-Caramés and Paula Fraga-Lamas

Multimodal Technol. Interact. 2026, 10(2), 13; https://doi.org/10.3390/mti10020013 - 1 Feb 2026

Abstract

Industry 5.0 is composed of a variety of complex tasks and challenging processes requiring specialized labor and multidisciplinary coordination. Specifically, when it comes to shipbuilding, shipyards leverage advanced technologies, seeking to replace operations that continue to rely on traditional methods, such as 2D blueprints and paper-based documentation, which can lead to inefficiencies and alignment errors in precision-dependent tasks. For this reason, this article focuses on embracing Mixed Reality (MR) technologies to address these challenges in the context of electrical outfitting tasks. The design, development and evaluation of an MR application tailored for HoloLens 2 smart glasses aims to streamline the workflow for operators, reducing reliance on paper-based documentation and enhancing the precision of assembly processes. The proposed system allows for the precise positioning of 3D models in the real environment, ensuring accurate alignment during assembly. Additionally, it incorporates automatic dimension generation between objects in the scene. To further enhance usability, the application integrates a Galician on-device Automatic Speech Recognition (ASR) system, allowing operators to interact seamlessly with the MR interface using voice commands. The whole system has been exhaustively tested, both through usability and functionality evaluations, which validate MR as a viable tool for shipyard assembly and inspection tasks.

(This article belongs to the Special Issue Multimodal Interaction Design in Immersive Learning and Training Environments)

Open Access Article

Design and Prototype of a Chatbot for Public Participation in Major Infrastructure Projects

by

Jonathan Matthei, Johannes Maas, Maurice Wischum, Sven Mackenbach and Katharina Klemt-Albert

Multimodal Technol. Interact. 2026, 10(2), 12; https://doi.org/10.3390/mti10020012 - 30 Jan 2026

Abstract

Public participation is a central element of democratic decision-making processes, but it often faces challenges within planning approval procedures due to problems of understanding and accessibility. This paper aims to counteract these challenges through the conceptual development, prototypical implementation and validation of a chatbot. The chatbot is designed to facilitate access to planning documents and improve the participation process as a whole. After presenting the theoretical foundations of chatbots and large language models (LLMs), three central use cases are described. The main tasks of the chatbot are to simplify the language of complex planning documents, find documents and information, and answer frequently asked questions. The underlying architecture of the prototype is based on the concept of retrieval augmented generation (RAG) and uses a vector database in which the information is embedded and stored as vectors. To evaluate the developed prototype, four focus workshops were conducted with professionals affiliated with road and rail infrastructure administrations at both state and federal levels in Germany. During these workshops, participants tested the core functionalities and assessed the system using both quantitative and qualitative criteria. The results indicate a strong potential for improving the handling of standard inquiries. By improving access to complex planning documents, the system may also contribute to a reduction in objections. At the same time, the evaluation emphasizes the importance of limiting hallucinations through appropriate technical safeguards and clearly indicating the use of AI to users. The insights gained from this study will be incorporated into the prototype developed within the BIM4People research project, funded by the German Federal Ministry of Transport. The aim therefore is to implement additional use cases and continuously optimize the functionality of the system through an iterative development process.
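
The retrieval step of a retrieval augmented generation (RAG) pipeline, as outlined in this abstract, can be sketched as: embed the documents, store the vectors, and return the passage closest to the user's question before handing it to a language model. The example below is a toy under loud assumptions: bag-of-words counts stand in for a learned embedding model, an in-memory list stands in for the vector database, and the passages, function names, and prompt wording are all hypothetical rather than taken from the prototype.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding: bag-of-words term counts (ASSUMPTION: a real system
    would call a learned embedding model instead)."""
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[term] * b[term] for term in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, store: list[tuple[str, Counter]], k: int = 1) -> list[str]:
    """Return the k stored passages most similar to the query."""
    query_vector = embed(query)
    ranked = sorted(store, key=lambda item: cosine(query_vector, item[1]), reverse=True)
    return [text for text, _ in ranked[:k]]


def build_prompt(query: str, passages: list[str]) -> str:
    """Assemble the context-grounded prompt that would be sent to an LLM."""
    context = "\n".join(f"- {p}" for p in passages)
    return f"Answer using only the context below.\nContext:\n{context}\nQuestion: {query}"


# Hypothetical planning-document passages; the list plays the role of the vector database.
passages = [
    "Noise barriers are planned along the northern section of the railway line.",
    "Objections can be submitted in writing within the consultation period.",
    "The bridge construction requires a temporary road closure.",
]
store = [(text, embed(text)) for text in passages]

question = "How can I submit objections?"
top_passages = retrieve(question, store, k=1)
prompt = build_prompt(question, top_passages)
print(prompt)
```

Restricting the prompt to retrieved passages is one common way to limit hallucinations, which is the safeguard concern the evaluation raises; the generation call itself is left out here because the abstract does not name the model used.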

Open Access Article

Adaptive Realities: Human-in-the-Loop AI for Trustworthy XR Training in Safety-Critical Domains

by

Daniele Pretolesi, Georg Regal, Helmut Schrom-Feiertag and Manfred Tscheligi

Multimodal Technol. Interact. 2026, 10(1), 11; https://doi.org/10.3390/mti10010011 - 22 Jan 2026

Abstract

Extended Reality (XR) technologies have matured into powerful tools for training in high-stakes domains, from emergency response to search and rescue. Yet current systems often struggle to balance real-time AI-driven personalisation with the need for human oversight and calibrated trust. This article synthesizes the programmatic contributions of a multi-study doctoral project to advance a design-and-evaluation framework for trustworthy adaptive XR training. Across six studies, we explored (i) recommender-driven scenario adaptation based on multimodal performance and physiological signals, (ii) persuasive dashboards for trainers, (iii) architectures for AI-supported XR training in medical mass-casualty contexts, (iv) theoretical and practical integration of Human-in-the-Loop (HITL) supervision, (v) user trust and over-reliance in the face of misleading AI suggestions, and (vi) the role of interaction modality in shaping workload, explainability, and trust in human–robot collaboration. Together, these investigations show how adaptive policies, transparent explanation, and adjustable autonomy can be orchestrated into a single adaptation loop that maintains trainee engagement, improves learning outcomes, and preserves trainer agency. We conclude with design guidelines and a research agenda for extending trustworthy XR training into safety-critical environments.

Open Access Article

APAR: A Structural Design and Guidance Framework for Gamification in Education Based on Motivation Theories

by

J. Carlos López-Ardao, Miguel Rodríguez-Pérez, Sergio Herrería-Alonso, M. Estrella Sousa-Vieira, Alfonso Lago Ferreiro, Andrés Suárez-González and Raúl F. Rodríguez-Rubio

Multimodal Technol. Interact. 2026, 10(1), 10; https://doi.org/10.3390/mti10010010 - 10 Jan 2026

Abstract

Gamification is widely used to enhance student motivation, yet many educational design proposals remain conceptual and provide limited operational guidance for digital learning environments. This paper introduces APAR (Activities, Points, Achievements and Rewards), a content-independent structural framework for designing and implementing educational gamification in learning platforms. Grounded in motivation theories (including Self-Determination Theory and Relatedness–Autonomy–Mastery–Purpose) and reward taxonomies (Status, Access, Power and Stuff), APAR distinguishes high-level design constructs from concrete game elements (e.g., points, badges and leaderboards) and provides a systematic design loop linking learning activities, feedback, intermediate goals and reinforcement. The contribution includes (i) a mapping table relating each APAR construct to motivation models, supported dynamics and typical learning-platform implementations; (ii) an actionable design guide; and (iii) an empirical illustration implemented in Moodle in a higher-education Computer Networks course. In this setting, the proportion of enrolled students taking the final exam increased from 58% to 72% in the first year, and the proportion of enrolled students passing increased from 17% to 38%; in 2022–2023 these values were 70% and 39%, respectively (56% of exam takers passed). While the use case relies on quantitative course-level indicators and is observational, the findings support the potential of structural gamification as an integrated methodological tool and motivate further mixed-method validations.
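The figure of 56% of exam takers passing in 2022–2023 follows from the two enrolment-based proportions quoted above. The worked check below applies the same ratio to the other two periods; those two results are derived here for comparison and are not stated in the abstract.

```latex
\[
\text{pass rate among exam takers}
  = \frac{\text{share of enrolled students passing}}
         {\text{share of enrolled students taking the exam}}
\]
\[
\text{2022--2023: } \frac{0.39}{0.70} \approx 0.56,
\qquad
\text{first year: } \frac{0.38}{0.72} \approx 0.53,
\qquad
\text{before: } \frac{0.17}{0.58} \approx 0.29
\]
```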

Open Access Systematic Review

AI-Powered Procedural Haptics for Narrative VR: A Systematic Literature Review

by

Vimala Perumal and Zeeshan Jawed Shah

Multimodal Technol. Interact. 2026, 10(1), 9; https://doi.org/10.3390/mti10010009 - 9 Jan 2026

Abstract

Haptic feedback is important for narrative virtual reality (VR), yet authoring remains costly and difficult to scale due to device-specific tuning, placement constraints, and the need for semantically congruent timing. We systematically reviewed user studies on haptics in narrative VR to establish an empirical baseline and identify gaps for AI-powered procedural haptics. Following PRISMA 2020, we searched IEEE Xplore, ACM Digital Library, Scopus, Web of Science, PubMed, and PsycINFO (English; human participants; haptics synchronized to narrative events) and performed backward/forward citation chasing (final search: 31 July 2025). We also conducted a parallel scoping scan of grey literature (arXiv and CHI/SIGGRAPH workshops/demos), finalized on 7 September 2025; these records are summarized separately and were not included in the evidence synthesis. Of 493 records screened, 26 full texts were assessed, and 10 studies were included. Quantitatively, presence improved in 6 of the 8 studies that measured it and immersion improved in 3 of 3; sample sizes ranged from 8 to 108. Across varied modalities and placements, haptics improved presence and immersion and often enhanced affect; validated measures of narrative comprehension were rare. None of the included studies evaluated AI-generated procedural haptics in user studies. We conclude by proposing a structured, three-phase research roadmap designed to bridge this critical gap, moving the field from theoretical promise to the empirical validation of intelligent systems capable of making rich, adaptive, and scalable haptic narratives a reality.

(This article belongs to the Special Issue Multimodal User Interfaces and Experiences: Challenges, Applications, and Perspectives—2nd Edition)

►▼

Show Figures

Graphical abstract

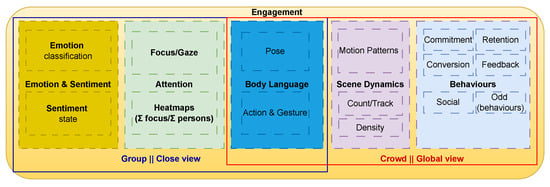

Open Access Review

From Cues to Engagement: A Comprehensive Survey and Holistic Architecture for Computer Vision-Based Audience Analysis in Live Events

by

Marco Lemos, Pedro J. S. Cardoso and João M. F. Rodrigues

Multimodal Technol. Interact. 2026, 10(1), 8; https://doi.org/10.3390/mti10010008 - 8 Jan 2026

Abstract

The accurate measurement of audience engagement in real-world live events remains a significant challenge, with the majority of existing research confined to controlled environments like classrooms. This paper presents a comprehensive survey of Computer Vision AI-driven methods for real-time audience engagement monitoring and proposes a novel, holistic architecture to address this gap, with this architecture being the main contribution of the paper. The paper identifies and defines five core constructs essential for a robust analysis: Attention, Emotion and Sentiment, Body Language, Scene Dynamics, and Behaviours. Through a selective review of state-of-the-art techniques for each construct, the necessity of a multimodal approach that surpasses the limitations of isolated indicators is highlighted. The work synthesises a fragmented field into a unified taxonomy and introduces a modular architecture that integrates these constructs with practical, business-oriented metrics such as Commitment, Conversion, and Retention. Finally, by integrating cognitive, affective, and behavioural signals, this work provides a roadmap for developing operational systems that can transform live event experience and management through data-driven, real-time analytics.

(This article belongs to the Special Issue Human-AI Collaborative Interaction Design: Rethinking Human-Computer Symbiosis in the Age of Intelligent Systems)

Open Access Article

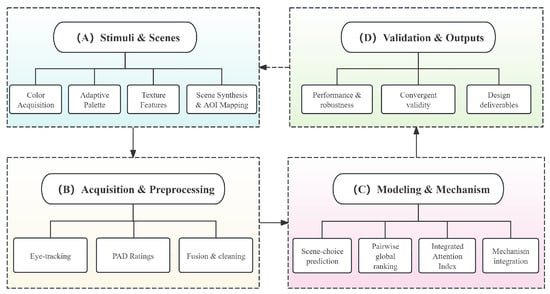

Eye-Tracking and Emotion-Based Evaluation of Wardrobe Front Colors and Textures in Bedroom Interiors

by

Yushu Chen, Wangyu Xu and Xinyu Ma

Multimodal Technol. Interact. 2026, 10(1), 7; https://doi.org/10.3390/mti10010007 - 6 Jan 2026

Abstract

Wardrobe fronts form a major visual element in bedroom interiors, yet material selection for their colors and textures often relies on intuition rather than evidence. This study develops a data-driven framework that links gaze behavior and affective responses to occupants’ preferences for wardrobe front materials. Forty adults evaluated color and texture swatches and rendered bedroom scenes while eye-tracking data capturing attraction, retention, and exploration were collected. Pairwise choices were modeled using a Bradley–Terry approach, and visual-attention features were integrated with emotion ratings to construct an interpretable attention index for predicting preferences. Results show that neutral light colors and structured wood-like textures consistently rank highest, with scene context reducing preference differences but not altering the order. Shorter time to first fixation and longer fixation duration were the strongest predictors of desirability, demonstrating the combined influence of rapid visual capture and sustained attention. Within the tested stimulus set and viewing conditions, the proposed pipeline yields consistent preference rankings and an interpretable attention-based score that supports evidence-informed shortlisting of wardrobe-front materials. The reported relationships between gaze, affect, and choice are associative and are intended to guide design decisions within the scope of the present experimental settings.
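To make the Bradley–Terry step concrete, the sketch below estimates item strengths from a matrix of pairwise choice counts using the standard minorization-maximization update. It is a minimal illustration under stated assumptions, not the authors' pipeline: the function name, the iteration count, and the example counts are hypothetical, and the attention index and emotion ratings described in the abstract are not modeled.

```python
def fit_bradley_terry(wins, iters=200):
    """Estimate Bradley-Terry strengths from pairwise choice counts.

    wins[i][j] is the number of times item i was chosen over item j.
    Returns strengths normalized to sum to 1 (higher means more preferred).
    Assumes every item took part in at least one comparison.
    """
    n = len(wins)
    p = [1.0 / n] * n  # start from equal strengths
    for _ in range(iters):
        updated = []
        for i in range(n):
            total_wins = sum(wins[i][j] for j in range(n) if j != i)
            # Each pair contributes (number of comparisons) / (p_i + p_j).
            denom = sum(
                (wins[i][j] + wins[j][i]) / (p[i] + p[j])
                for j in range(n)
                if j != i
            )
            updated.append(total_wins / denom)
        scale = sum(updated)
        p = [v / scale for v in updated]
    return p


# Hypothetical counts for three swatches (not data from the study).
example_wins = [
    [0, 8, 9],
    [2, 0, 7],
    [1, 3, 0],
]
strengths = fit_bradley_terry(example_wins)
ranking = sorted(range(len(strengths)), key=lambda i: strengths[i], reverse=True)
```

Under this model the probability that item i is chosen over item j is `strengths[i] / (strengths[i] + strengths[j])`, so sorting by strength gives the preference ranking.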

Open Access Article

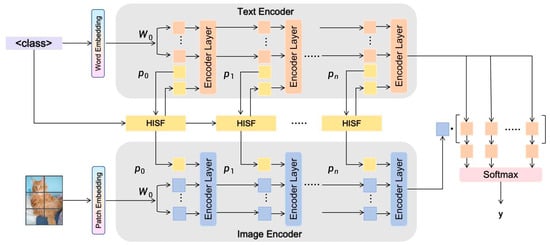

HISF: Hierarchical Interactive Semantic Fusion for Multimodal Prompt Learning

by

Haohan Feng and Chen Li

Multimodal Technol. Interact. 2026, 10(1), 6; https://doi.org/10.3390/mti10010006 - 6 Jan 2026

Abstract

Recent vision-language pre-training models, such as CLIP, have been shown to generalize well across a variety of multitask modalities. Nonetheless, their generalization to downstream tasks is limited. As a lightweight adaptation approach, prompt learning allows task transfer by optimizing only a few learnable vectors and is thus more flexible for pre-trained models. However, current methods mainly concentrate on the design of unimodal prompts and ignore effective means for multimodal semantic fusion and label alignment, which limits their representation power. To tackle these problems, this paper designs a Hierarchical Interactive Semantic Fusion (HISF) framework for multimodal prompt learning. On top of frozen CLIP backbones, HISF injects visual and textual signals simultaneously into intermediate layers of a Transformer through a cross-attention mechanism as well as fitting category embeddings. This architecture realizes hierarchical semantic fusion at the modality level while keeping structural consistency at each layer. In addition, a Label Embedding Constraint and a Semantic Alignment Loss are proposed to promote category consistency while alleviating semantic drift in training. Extensive experiments across 11 few-shot image classification benchmarks show that HISF improves the average accuracy by around 0.7% compared to state-of-the-art methods and has remarkable robustness in cross-domain transfer tasks. Ablation studies also verify the effectiveness of each proposed part and their combination: hierarchical structure, cross-modal attention, and semantic alignment collaborate to enrich representational capacity. In conclusion, the proposed HISF offers a new hierarchical view of multimodal prompt learning and provides a more lightweight and generalizable paradigm for adapting vision-language pre-trained models.

News

6 November 2025

MDPI Launches the Michele Parrinello Award for Pioneering Contributions in Computational Physical Science

9 October 2025

Meet Us at the 3rd International Conference on AI Sensors and Transducers, 2–7 August 2026, Jeju, South Korea

Topics

Topic in

Electronics, MTI, BDCC, AI, Virtual Worlds, Applied Sciences

AI-Based Interactive and Immersive Systems

Topic Editors: Sotiris Diplaris, Nefeli Georgakopoulou, Stefanos Vrochidis, Giuseppe Amato, Maurice Benayoun, Beatrice De Gelder

Deadline: 31 December 2026

Topic in

AI, Arts, Computers, MTI

Artificial Intelligence and the Future of Art

Topic Editors: Ahmed Elgammal, Marian Mazzone

Deadline: 31 October 2027

Special Issues

Special Issue in

MTI

Human-AI Collaborative Interaction Design: Rethinking Human-Computer Symbiosis in the Age of Intelligent Systems

Guest Editor: Qianling Jiang

Deadline: 20 April 2026

Special Issue in

MTI

Educational Virtual/Augmented Reality

Guest Editor: Arun Kulshreshth

Deadline: 30 April 2026

Special Issue in

MTI

Online Learning to Multimodal Era: Interfaces, Analytics and User Experiences

Guest Editors: Nikleia Eteokleous, Rita Panaoura

Deadline: 31 May 2026

Special Issue in

MTI

uHealth Interventions and Digital Therapeutics for Better Diseases Prevention and Patient Care

Guest Editor: Silvia Gabrielli

Deadline: 30 June 2026