Journal Description

Multimodal Technologies and Interaction

Multimodal Technologies and Interaction is an international, peer-reviewed, open access journal on multimodal technologies and interaction, published monthly online by MDPI.

- Open Access: free for readers, with article processing charges (APC) paid by authors or their institutions.

- High Visibility: indexed within Scopus, ESCI (Web of Science), Inspec, dblp Computer Science Bibliography, and other databases.

- Journal Rank: JCR - Q2 (Computer Science, Cybernetics) / CiteScore - Q1 (Neuroscience (miscellaneous))

- Rapid Publication: manuscripts are peer-reviewed and a first decision is provided to authors approximately 21.7 days after submission; acceptance to publication is undertaken in 4.6 days (median values for papers published in this journal in the second half of 2025).

- Recognition of Reviewers: reviewers who provide timely, thorough peer-review reports receive vouchers entitling them to a discount on the APC of their next publication in any MDPI journal, in appreciation of the work done.

- Journal Cluster of Artificial Intelligence: AI, AI in Medicine, Algorithms, BDCC, MAKE, MTI, Stats, Virtual Worlds and Computers.

Impact Factor: 2.4 (2024)

5-Year Impact Factor: 2.7 (2024)

Latest Articles

A Comprehensive Review of Deep Learning Approaches for Video-Based Sign Language Recognition: Datasets, Challenges and Insights

Multimodal Technol. Interact. 2026, 10(6), 58; https://doi.org/10.3390/mti10060058 - 22 May 2026

Abstract


This study presents a comprehensive review of more than 100 research papers on sign language recognition (SLR) published between 2020 and 2026. The analysis focuses on deep learning approaches applied to video-based SLR, including spatiotemporal feature extraction, temporal modeling, attention mechanisms, motion-based representations, hybrid frameworks, transfer learning, and other methods. Particular attention is given to how these methods model spatiotemporal dynamics and capture subtle gesture characteristics in sign language communication. The review highlights several recent developments, such as the introduction of specialized datasets, the emergence of real-time recognition systems, and the integration of multimodal fusion strategies. At the same time, persistent challenges remain, including data scarcity in low-resource sign languages, limited linguistic standardization of datasets, and insufficient model interpretability. The findings underline the importance of developing scalable and generalizable models capable of handling diverse datasets and user variability. The distinct contributions of this review are fourfold: (1) a synthesis of over 100 studies published between 2020 and 2026, covering the full spectrum of deep learning architectures for video-based SLR; (2) a structured six-category taxonomy enabling systematic cross-architectural comparison; (3) a dedicated focus on low-resource sign languages, which remain underrepresented in the existing literature; and (4) a critical analysis of the current benchmark landscape for low-resource sign languages, identifying key gaps and outlining strategic directions for future dataset development. These contributions are intended to guide further research toward more robust, inclusive, and universally applicable SLR systems.


Open AccessArticle

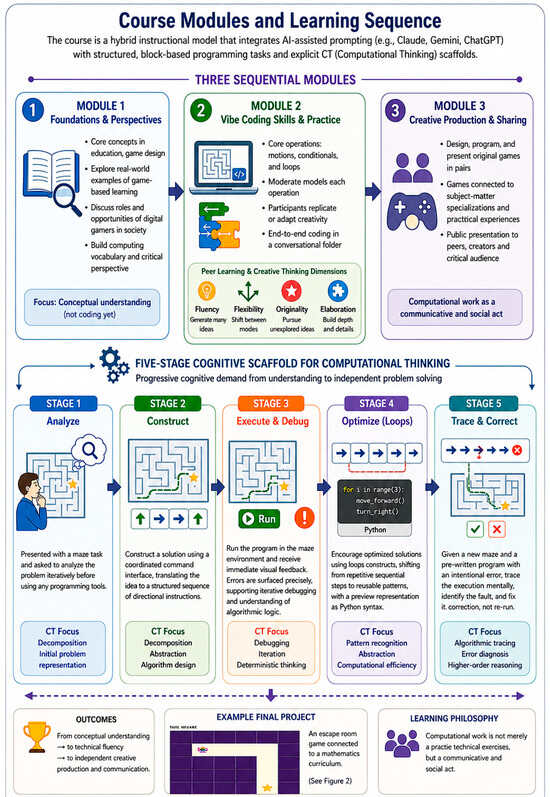

From Prompt to Play: Examining Computational Thinking Through Vibe Coding in Game Making for Pre-Service Teacher Education

by

Nikolaos Pellas

Multimodal Technol. Interact. 2026, 10(5), 57; https://doi.org/10.3390/mti10050057 - 21 May 2026

Abstract


Computational thinking (CT) is increasingly recognized as essential in education, yet teacher preparation programs struggle to develop both computational proficiency and pedagogical readiness in pre-service teachers (PSTs). This study examines an AI-mediated, game-making course grounded in the emerging “vibe coding” paradigm, where 24 novice PSTs iteratively constructed programs through natural language prompting. Adopting a mixed-methods design, the study drew on pre- and post-course attitude questionnaires, reflective accounts of prompting strategies, and open-ended responses. Results indicate that participants substantively engaged with core CT practices, particularly debugging, iterative refinement, and problem decomposition. Nonetheless, self-reported coding and teaching confidence declined; this downward recalibration represents a productive adjustment rather than a failure. Conversely, attitudes toward game-making improved significantly, with a statistically significant medium effect size for perceived instructional value (d = 0.51), the largest practical effect observed across dimensions. Most participants intended to integrate CT into future teaching. These findings suggest that prompt-driven learning environments support meaningful engagement with computational processes when carefully scaffolded, but do not inherently ensure pedagogical readiness, particularly for higher-order CT practices such as abstraction and pattern recognition. Unlike prior research that has examined game-making processes or PST attitudes toward CT in isolation, this study empirically integrates all three within a single scaffolded instructional design using vibe coding. This integration enables a process-level account of how CT is enacted, and how it develops, when code generation is partially delegated to AI systems.
Beyond documenting attitude shifts, the study introduces an analytical rubric for identifying CT engagement in AI-mediated prompting and derives evidence-based design principles that specify the pedagogical conditions under which vibe coding supports, rather than bypasses, computational reasoning.


(This article belongs to the Special Issue Technology-Enhanced Game-Based Approaches in Education: Learning, Emotions, and Motivation)


Open Access Article

Attention-Based Multimodal Fusion for Salience-Aware Blended Emotion Recognition

by José Salas-Cáceres, Modesto Castrillón-Santana, Oliverio J. Santana, Daniel Hernández-Sosa and Javier Lorenzo-Navarro

Multimodal Technol. Interact. 2026, 10(5), 56; https://doi.org/10.3390/mti10050056 - 20 May 2026

Abstract


Blended emotion recognition introduces the challenge of identifying not only which emotions are present in an expressive display but also their relative salience. The proposed methodology builds upon the pre-extracted features provided with the dataset and enhances performance through a combination of temporal modeling and multimodal fusion strategies. Unimodal experiments revealed that visual encoders consistently outperformed audio ones, with the multimodal HiCMAE encoder achieving the strongest single-encoder results with 34% presence accuracy and 18.23% salience accuracy. Multimodal fusion further improved performance, with the best validation results obtained using a combination of simple concatenation and attention-based fusion, reaching 47.86% in presence accuracy and 27.92% in salience accuracy. Overall, the proposed methodology surpasses the chosen baseline introduced in the original paper across a k-fold experiment, confirming the effectiveness of multimodal attention-based fusion for the accurate prediction of both emotion presence and salience in blended affective behaviour. The experimental results further indicate that multimodal expression recognition consistently outperforms unimodal approaches, highlighting the complementary nature of cross-modal information.


Open Access Article

MAVAGEN: Multimodal Avatar Generation Framework for Personalized Human–Computer Interaction

by Alexandr Axyonov, Elena Ryumina, Dmitry Ryumin and Alexey Karpov

Multimodal Technol. Interact. 2026, 10(5), 55; https://doi.org/10.3390/mti10050055 - 18 May 2026

Abstract


Digital-avatar systems still provide limited control over emotionally expressive behavior in human–computer interaction, especially in Large Language Model (LLM)-based chatbots and virtual assistants with personalized visual embodiments. To address this problem, we propose Multimodal Avatar Generation (MAVAGEN), a multimodal avatar generation framework for synthesizing upper-body digital avatars with personalized appearance and controllable emotional expression. The user specifies the desired gender and age and provides a short text input from which the target emotional state is inferred. MAVAGEN then retrieves an identity image from the HaGRIDv2-1M corpus and generates an avatar clip with synchronized facial expressions, hand gestures, and expressive speech. The framework uses six feature streams: textual features, emotion-distribution features, landmark-based pose features, depth-geometry features, RGB-appearance features, and acoustic features. In a quantitative evaluation against recent human animation methods, MAVAGEN achieves the best overall avatar quality, with FID 48.20, FVD 592.00, SSIM 0.741, Sync-C 7.40, HKC 0.929, HKV 25.30, CSIM 0.563, and EmoAcc 0.88. Ablation results show that emotion and acoustic features contribute most to emotional agreement, while landmark-based pose and depth features improve geometric and motion stability. These results support the practical use of MAVAGEN in personalized LLM-based assistants and other emotion-sensitive interactive systems.


Open Access Article

Haptic and Thermal Rendering of Astronomical Data: A Multimodal Approach to Inclusive Science Communication

by Beatriz García, Johanna Casado and Alexis Mancilla

Multimodal Technol. Interact. 2026, 10(5), 54; https://doi.org/10.3390/mti10050054 - 12 May 2026

Abstract


Universal accessibility in astronomy requires a paradigm shift from visual-centric communication to multisensory data interaction. Because astronomy communication relies inherently on high-resolution imagery and visual metaphors, it creates significant accessibility barriers for blind and low-vision (BLV) audiences. To address this, multimodal encoding offers a feasible and meaningful solution by redistributing information across alternative sensory channels, ensuring that the absence of sight does not preclude the comprehension of spatial data. This article explores the development and evaluation of a low-cost, multimodal tool designed to represent complex astronomical concepts (specifically stellar magnitude and color) through tactile and auditory stimuli. Unlike traditional methods, our approach focuses on the haptic-cognitive link, allowing users to “feel” data through physical relief models. We present a structured impact study involving a heterogeneous group of blind, low-vision, and sighted participants. The study followed a mixed-methods approach, including a participatory workshop with 20 individuals and a detailed usability assessment with a core group (n = 6) of blind and low-vision participants. Preliminary results from this pilot phase demonstrate that multimodal integration effectively reduces the perceived mental effort of comprehending complex spatial data. Quantitative and qualitative feedback suggests that tactile-auditory sensory substitution not only improves accessibility but also enhances engagement and information retention across all user groups. These findings highlight the potential of multimodal models in transforming public scientific environments, such as museums and observatories, into inclusive, interactive spaces.


Open Access Article

A Conceptual Framework for Mobile Augmented-Reality Storytelling to Support Collaborative Language Learning in Vocational Education and Training

by Eirini Maria Paraskevioti, Athanasios Christopoulos, Stylianos Mystakidis, Mikko-Jussi Laakso and Tapio Salakoski

Multimodal Technol. Interact. 2026, 10(5), 53; https://doi.org/10.3390/mti10050053 - 11 May 2026

Abstract


Augmented Reality (AR) has been found to produce significant effects on individual learning outcomes, but its impact in collaborative applications remains moderate. Existing AR frameworks emphasize individual instructional design, whereas frameworks for collaborative learning rarely engage with the spatial and device-mediated affordances of mobile AR. To address this gap in the literature, we introduce the Mobile Augmented-Reality Storytelling for Vocational Education and Training (MARS-VET) framework, a four-dimensional conceptual architecture that integrates Computer-Supported Collaborative Learning (CSCL) scripting principles with mobile AR affordances for collaborative English as a Foreign Language (EFL) writing in Vocational Education and Training (VET) settings. MARS-VET synthesizes theoretical perspectives across four dimensions: contextual anchoring, which embeds activities within authentic workplace scenarios; collaborative orchestration, which structures group interaction through macro- and micro-level scripts; competency cultivation, which sequences writing progression from model-based reproduction toward autonomous professional text production; and capacity building, which addresses the professional-development requirements of implementing educators. Content validity was established through expert panel evaluation involving international specialists (N = 11) who rated the framework against 36 items using a four-point relevance scale and provided additional qualitative feedback. The Scale-level Content Validity Index (S-CVI/Ave = 0.91) exceeded established thresholds, with all four dimensions achieving satisfactory item-level indices. Experts reached unanimous agreement on items addressing workplace scenario identification and co-located access to linguistic resources. Qualitative feedback led to terminology refinements and clarification of orchestration mechanisms.
The framework offers VET institutions and educators a reference for the design and evaluation of collaborative AR experiences in an area where integrative frameworks have so far been lacking.
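For readers unfamiliar with the S-CVI/Ave statistic reported above, the sketch below shows how it is conventionally computed: each item's I-CVI is the share of experts rating it 3 or 4 on a four-point relevance scale, and S-CVI/Ave is the mean of those I-CVIs (0.90 is a commonly cited threshold). This follows the standard convention and is assumed, not taken from the article; the ratings in the usage block are hypothetical and do not reproduce the panel's data.

```python
def item_cvi(ratings: list[int]) -> float:
    """I-CVI: share of experts rating an item 3 or 4 on a 1-4 relevance scale."""
    if not ratings:
        raise ValueError("an item needs at least one rating")
    if any(r not in (1, 2, 3, 4) for r in ratings):
        raise ValueError("ratings must be integers from 1 to 4")
    return sum(1 for r in ratings if r >= 3) / len(ratings)


def scale_cvi_ave(ratings_by_item: list[list[int]]) -> float:
    """S-CVI/Ave: the mean of the item-level CVIs across all items."""
    if not ratings_by_item:
        raise ValueError("need at least one item")
    icvis = [item_cvi(r) for r in ratings_by_item]
    return sum(icvis) / len(icvis)


if __name__ == "__main__":
    # Hypothetical ratings: 3 items x 11 experts (the study itself used 36 items).
    ratings = [
        [4, 4, 3, 4, 4, 3, 4, 4, 4, 3, 4],
        [4, 3, 2, 4, 4, 4, 3, 4, 2, 4, 4],
        [3, 4, 4, 4, 3, 4, 4, 4, 4, 4, 1],
    ]
    print(f"S-CVI/Ave = {scale_cvi_ave(ratings):.2f}")
```

Averaging I-CVIs is equivalent to averaging each expert's proportion of items rated relevant, which is why both descriptions appear in the literature.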


(This article belongs to the Special Issue Online Learning to Multimodal Era: Interfaces, Analytics and User Experiences)


Open AccessArticle

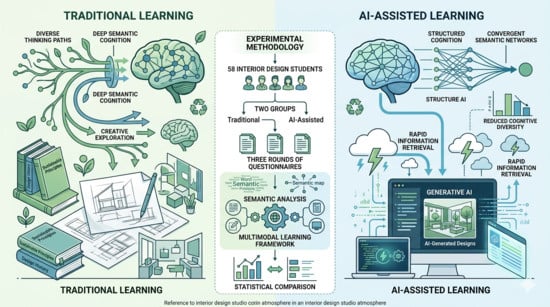

AI-Mediated Multimodal Learning and Its Impact on Sustainable Design Cognition: An Experimental Study with Interior Design Students

by

Yang Song and Shaochen Wang

Multimodal Technol. Interact. 2026, 10(5), 52; https://doi.org/10.3390/mti10050052 - 9 May 2026

Abstract


In recent years, artificial intelligence has become deeply involved in design practice and educational activities, and its impact on both has received widespread attention from the academic community. Through a controlled experiment, this study aimed to preliminarily explore the differences in the impact of generative artificial intelligence (AI) tools and traditional web/literature tools on the sustainable design learning outcomes of interior design students in a specific teaching context at a university in China. The study involved 58 third-year college students divided into an AI tool group (Class B) and a traditional tool group (Class A). Three semi-structured questionnaire surveys were conducted over two months to collect data on their understanding, attitudes, and practical applications of sustainable design, and quantitative statistics and text analysis methods were used for the comparison. The results showed that, under the specific experimental conditions, students who used AI tools showed a more significant improvement in their self-evaluated knowledge mastery, but their recognition of the knowledge's importance and their willingness to continue learning decreased. In subsequent design practice, students in the traditional tool group showed greater initiative in applying concepts and more diverse strategies. Text analysis further suggests that AI-assisted learning may be more conducive to the rapid, structured acquisition of knowledge, while traditional learning methods exhibit different characteristics in promoting deep semantic associations. These conclusions are based on short-term experimental observations of specific samples and toolsets; they reveal a possible tension between efficiency and depth when integrating AI tools into interior design education and provide a reference and basis for discussion in broader, longer-term teaching research.


Graphical abstract

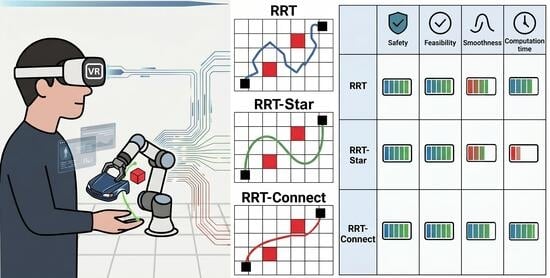

Open Access Article

Comparison of Path Planning Algorithms for Manipulator Robots in Collaborative Manufacturing Environments: An Immersive Virtual Reality-Based Approach

by Jonathan David Aguilar and Carlos Felipe Rengifo

Multimodal Technol. Interact. 2026, 10(5), 51; https://doi.org/10.3390/mti10050051 - 6 May 2026

Abstract


Trajectory planning algorithms are essential in human–robot collaboration (HRC), as they must generate efficient trajectories for seamless interaction. Given the risks and complexity of testing in real-world scenarios, a virtual environment was developed in Unity 3D, integrating a virtual model of the UR3 robot that delivers workpieces to a user equipped with a Meta Quest device. The RRT, RRT-Star (RRTS), and RRT-Connect (RRTC) algorithms were evaluated using ANOVA and Tukey post hoc tests, considering the following response variables: safety, feasibility, smoothness, and computation time across three experimental scenarios characterized by (i) low, (ii) medium, and (iii) high levels of movement of the participant’s left hand. The statistical results indicate that RRTC exhibited the best performance in terms of smoothness and computation time. Based on these findings, a multicriteria decision-making analysis was conducted using the Analytic Hierarchy Process (AHP), combining quantitative evidence derived from the statistical analysis with expert judgments supported by bibliographic references. This multicriteria analysis enabled the coherent integration of the different evaluation criteria and concluded that RRTC is the most suitable alternative for collaborative assembly tasks in HRC environments.
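The AHP step described above turns pairwise judgments between criteria into priority weights. The sketch below illustrates one standard way to do this: the row geometric-mean approximation of the priority vector plus Saaty's consistency ratio. The abstract does not state which AHP variant or which judgments were used, so the method choice and the example matrix are assumptions; the judgments shown are hypothetical.

```python
import math

# Saaty's random consistency index (RI) for matrix orders 1-10.
RANDOM_INDEX = {1: 0.0, 2: 0.0, 3: 0.58, 4: 0.90, 5: 1.12,
                6: 1.24, 7: 1.32, 8: 1.41, 9: 1.45, 10: 1.49}


def ahp_weights(matrix: list[list[float]]) -> list[float]:
    """Priority weights via the row geometric-mean approximation."""
    n = len(matrix)
    if n == 0 or any(len(row) != n for row in matrix):
        raise ValueError("pairwise comparison matrix must be square and non-empty")
    geo_means = [math.prod(row) ** (1 / n) for row in matrix]
    total = sum(geo_means)
    return [g / total for g in geo_means]


def consistency_ratio(matrix: list[list[float]], weights: list[float]) -> float:
    """CR = CI / RI, with CI = (lambda_max - n) / (n - 1); CR < 0.10 is the usual cut-off."""
    n = len(matrix)
    if n <= 2:
        return 0.0  # 1x1 and 2x2 reciprocal matrices are always consistent
    if n not in RANDOM_INDEX:
        raise ValueError("random index tabulated only up to n = 10")
    weighted = [sum(a * w for a, w in zip(row, weights)) for row in matrix]
    lambda_max = sum(aw / w for aw, w in zip(weighted, weights)) / n  # estimate of the principal eigenvalue
    ci = (lambda_max - n) / (n - 1)
    return ci / RANDOM_INDEX[n]


if __name__ == "__main__":
    criteria = ["safety", "feasibility", "smoothness", "computation time"]
    # Hypothetical Saaty-scale judgments, not the study's values.
    judgments = [
        [1, 3, 5, 7],
        [1 / 3, 1, 3, 5],
        [1 / 5, 1 / 3, 1, 3],
        [1 / 7, 1 / 5, 1 / 3, 1],
    ]
    w = ahp_weights(judgments)
    for name, weight in zip(criteria, w):
        print(f"{name}: {weight:.3f}")
    print(f"CR = {consistency_ratio(judgments, w):.3f}")
```

Saaty's original method uses the principal eigenvector instead; the geometric mean is a common, closed-form approximation that coincides with it for perfectly consistent matrices.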


Graphical abstract

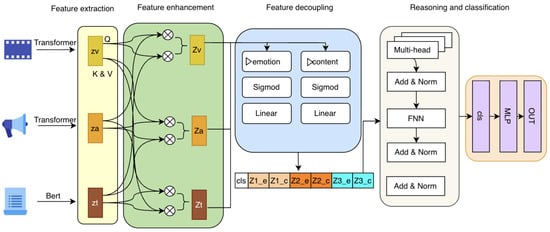

Open Access Article

Sentiment Analysis Based on Enhanced Feature Decoupling and Multimodal Logical Reasoning

by Hua Yang, Ming Zhao, Yuanhao Qiu, Yuanyuan Li, Junying Guo, Ziran Zhang, Baozhou Chen, Mingzhe He and Yu Hong

Multimodal Technol. Interact. 2026, 10(5), 50; https://doi.org/10.3390/mti10050050 - 3 May 2026

Abstract


Despite significant advances, multimodal sentiment analysis still faces critical challenges in modeling complex cross-modal interactions and extracting discriminative sentiment features. To address these limitations, this paper proposes a hierarchical multimodal sentiment analysis framework. Specifically, a cross-modal feature enhancement module is first introduced to capture deep correlations among textual, visual, and acoustic modalities via cross-attention mechanisms, thereby obtaining context-aware fused representations. Subsequently, an attention-gated feature disentanglement approach is employed to effectively separate sentiment-relevant information from content-specific features within the fused representations; an independence loss is further imposed to enforce orthogonality between these two feature subsets, thereby mitigating noise induced by repetitive visual frames and textual stop words. Finally, all disentangled features are integrated to facilitate high-level sentiment reasoning through a multimodal logical inference module, where supervised contrastive loss is incorporated to enhance the discriminability of sentiment expressions. Extensive experiments conducted on two public benchmarks, CMU-MOSI and CMU-MOSEI, demonstrate that the proposed framework achieves improvements of 2–6% across multiple evaluation metrics compared with state-of-the-art methods.
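The abstract mentions an independence loss that enforces orthogonality between sentiment-relevant and content-specific features but does not give its formula. As a hedged illustration only, the sketch below uses one common choice: the mean squared cosine similarity between paired feature vectors, which is zero when every pair is orthogonal and one when every pair is collinear. The function names are hypothetical, and a real training setup would compute this inside an autodiff framework rather than in plain Python.

```python
import math


def _unit(v: list[float]) -> list[float]:
    """Scale a vector to unit L2 norm."""
    norm = math.sqrt(sum(x * x for x in v))
    if norm == 0:
        raise ValueError("cannot normalize a zero vector")
    return [x / norm for x in v]


def independence_loss(sentiment_feats: list[list[float]],
                      content_feats: list[list[float]]) -> float:
    """Mean squared cosine similarity between paired sentiment and content vectors.

    Minimizing it pushes each sentiment vector toward orthogonality with
    its paired content vector (hypothetical formulation, see lead-in).
    """
    if not sentiment_feats or len(sentiment_feats) != len(content_feats):
        raise ValueError("need equally sized, non-empty batches")
    total = 0.0
    for s, c in zip(sentiment_feats, content_feats):
        cos = sum(a * b for a, b in zip(_unit(s), _unit(c)))
        total += cos ** 2  # squaring penalizes positive and negative correlation alike
    return total / len(sentiment_feats)
```

A frequently used alternative penalizes the squared Frobenius norm of the cross-correlation between the two feature matrices over a batch; both variants are zero exactly when the subspaces are orthogonal.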


Open Access Article

Empirical Validation of Fitts’ Law in Virtual Reality: Modeling, Prediction, and Modality Comparison

by Nikolina Rodin, Dario Ogrizović, Luka Batistić and Sandi Ljubic

Multimodal Technol. Interact. 2026, 10(5), 49; https://doi.org/10.3390/mti10050049 - 1 May 2026

Abstract


Fitts’ law is a foundational model for predicting pointing performance and has been increasingly explored in immersive virtual reality (VR) environments. This paper presents a controlled experimental framework for deriving modality-specific Fitts’ law models in VR and evaluating their predictive transfer to applied interaction tasks. The framework comprises two scenarios. The first replicates a standardized ISO 9241 pointing task in a 3D virtual environment to derive predictive movement time models by systematically varying target distance (20–50 cm), target size (2.5–5 cm), and spatial configuration (

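The Fitts’ law study above derives movement-time models from target distance and size. The sketch below assumes the widely used Shannon formulation, ID = log2(D/W + 1), with nominal rather than effective width, and fits MT = a + b·ID by ordinary least squares. The excerpt does not state which formulation the authors used, so treat this as an illustration under that assumption; the coefficients in any real use would come from measured trials.

```python
import math


def index_of_difficulty(distance: float, width: float) -> float:
    """Shannon formulation of Fitts' index of difficulty, ID = log2(D / W + 1), in bits."""
    if distance <= 0 or width <= 0:
        raise ValueError("distance and width must be positive")
    return math.log2(distance / width + 1)


def fit_fitts(ids: list[float], times: list[float]) -> tuple[float, float]:
    """Ordinary least-squares fit of MT = a + b * ID; returns (a, b)."""
    if len(ids) != len(times) or len(ids) < 2:
        raise ValueError("need at least two paired (ID, MT) observations")
    mean_id = sum(ids) / len(ids)
    mean_mt = sum(times) / len(times)
    sxx = sum((x - mean_id) ** 2 for x in ids)
    if sxx == 0:
        raise ValueError("IDs must not all be equal")
    sxy = sum((x - mean_id) * (y - mean_mt) for x, y in zip(ids, times))
    b = sxy / sxx              # slope, seconds per bit
    a = mean_mt - b * mean_id  # intercept, seconds
    return a, b


def predicted_movement_time(a: float, b: float, distance: float, width: float) -> float:
    """Predict movement time for a new target from a fitted (a, b) model."""
    return a + b * index_of_difficulty(distance, width)


if __name__ == "__main__":
    # Extremes of the nominal conditions quoted in the abstract (units cancel in D / W).
    for d, w in [(20, 5), (50, 2.5)]:
        print(f"D={d} cm, W={w} cm -> ID={index_of_difficulty(d, w):.2f} bits")
```

Under this formulation, the quoted ranges (20–50 cm distance, 2.5–5 cm size) span nominal IDs of about 2.32 bits (D = 20 cm, W = 5 cm) to 4.39 bits (D = 50 cm, W = 2.5 cm). ISO 9241-style analyses often replace W with an effective width computed from the observed endpoint spread.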

Open Access Article

FLAG: Fatty Liver Awareness Game for Liver Health Literacy in Last-Semester Software Engineering Students

by Franklin Parrales-Bravo, José Borbor-Albay, Janio Jadán-Guerrero and Leonel Vasquez-Cevallos

Multimodal Technol. Interact. 2026, 10(5), 48; https://doi.org/10.3390/mti10050048 - 1 May 2026

Abstract


Non-alcoholic fatty liver disease affects approximately thirty percent of the global population, yet public awareness remains dangerously low among young adults facing occupational risk factors. This study introduces the Fatty Liver Awareness Game (FLAG), an educational serious game designed to improve liver health literacy among software engineering students at the University of Guayaquil. While evaluated with this specific sample, FLAG is intended for the broader target population of young adults in developing nations who face occupational sedentary risk and limited access to preventive health education. Through a controlled experiment with fifty participants randomly assigned to game-based or traditional lecture instruction, the game demonstrated superior effectiveness, with a twenty-percentage-point advantage in post-test scores and a seventy-two percent reduction in incorrect responses compared to fifty percent in the lecture group. The large effect size (Cohen’s d = 1.43) and reduced performance variability among game participants indicate that interactive, feedback-rich learning environments can outperform passive instruction for this population and content domain. While the present design does not isolate the contribution of individual game elements—such as narrative framing, explanatory feedback, or mini-game interleaving—the results establish FLAG as a replicable model for digital health interventions targeting underserved populations at critical developmental junctures. Future component analyses are needed to determine which specific design features drive the observed advantages.
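The effect size reported above (Cohen’s d = 1.43) compares the game and lecture groups. The sketch below shows the usual independent-groups form of Cohen’s d, the mean difference divided by the pooled sample standard deviation. The abstract does not say which d variant was computed, so the pooled-SD form is an assumption, and the scores in the usage block are hypothetical rather than the study’s data.

```python
import math
from statistics import mean, variance


def cohens_d(group_a: list[float], group_b: list[float]) -> float:
    """Cohen's d for two independent groups, using the pooled standard deviation.

    Positive values mean group_a scored higher on average than group_b.
    """
    n_a, n_b = len(group_a), len(group_b)
    if n_a < 2 or n_b < 2:
        raise ValueError("each group needs at least two scores")
    # statistics.variance uses the sample (n - 1) denominator.
    pooled_var = ((n_a - 1) * variance(group_a) + (n_b - 1) * variance(group_b)) / (n_a + n_b - 2)
    if pooled_var == 0:
        raise ValueError("pooled standard deviation is zero")
    return (mean(group_a) - mean(group_b)) / math.sqrt(pooled_var)


if __name__ == "__main__":
    # Hypothetical post-test scores (percent correct), not data from the study.
    game = [82, 90, 88, 85, 91]
    lecture = [70, 65, 75, 68, 72]
    print(f"d = {cohens_d(game, lecture):.2f}")
```

By Cohen’s conventional benchmarks (0.2 small, 0.5 medium, 0.8 large), a value of 1.43 is well into the large range.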


Open Access Article

Parallel Bilingual Datasets: A Multimodal Deep Learning Framework for Proficiency and Style Classification

by Padmavathi Kesavan, Miranda Lakshmi Travis, Martin Aruldoss and Martin Wynn

Multimodal Technol. Interact. 2026, 10(5), 47; https://doi.org/10.3390/mti10050047 - 30 Apr 2026

Abstract


This study presents a multimodal deep learning framework for automatic proficiency and style classification of parallel bilingual Tamil–Hindi learner data. The proposed system employs a dual-headed neural architecture to simultaneously predict proficiency levels (Basic, Advanced) and stylistic categories (Formal, Literary) using shared feature representations. A curated dataset of bilingual text samples is utilized, along with synthetic speech generated through text-to-speech (TTS) to enable controlled multimodal experimentation. Five deep learning architectures are evaluated under text-only, audio-only, and learnable fusion settings. Experimental findings indicate that text-based models consistently achieve strong performance in both proficiency and style classification tasks. In contrast, the audio-only model demonstrates limited effectiveness, highlighting the constraints of synthetic acoustic features in capturing meaningful linguistic information. The fusion models provide only marginal improvements over text-based approaches, suggesting that textual representations play a dominant role in proficiency and stylistic classification within controlled datasets. These results emphasize the importance of linguistic features over acoustic signals for automated language assessment in low-resource settings. The proposed framework provides a scalable and reproducible approach and offers a foundation for future work incorporating real speech data and more diverse linguistic inputs.


Open Access Article

AI-Enhanced Motion Capture for Multimodal Interaction in Chinese Shadow Puppetry Heritage

by Gaihua Wang, Hengchao Yun, Lixin Yang, Qingyuan Zheng and Tianmuran Liu

Multimodal Technol. Interact. 2026, 10(5), 46; https://doi.org/10.3390/mti10050046 - 28 Apr 2026

Abstract


This study examines how AI-enhanced motion capture (AI-MoCap) mediates the preservation, transmission, and re-creation of Chinese shadow puppetry as performative intangible cultural heritage. Through a state-of-the-art review and comparative analysis of three representative application models—technology-driven, culturally integrated, and entertainment-oriented—the paper explores how AI-MoCap supports the digitization of performative techniques while reshaping modes of cultural presentation and interaction. Cross-case comparison highlights recurring tensions between technical standardization and cultural authenticity while also indicating possibilities for symbolic reconstruction, contextual continuity, and ethically grounded design. Based on this comparison, the paper develops a dual-channel inheritance framework—“perception–symbol” and “design–performance”—and treats cultural resolution and digital ethics as analytical and normative principles for resisting algorithmic homogenization. Rather than functioning only as a digitization tool, AI-MoCap can be understood as a mediating mechanism whose cultural value depends on how it remains embedded in community-based performative logics, symbolic systems, and ethical boundaries. The resulting framework offers transferable guidance for future research, curation, training, and policy discussion in the digital safeguarding of performance-based heritage.


(This article belongs to the Special Issue Human-AI Collaborative Interaction Design: Rethinking Human-Computer Symbiosis in the Age of Intelligent Systems)


Open Access Systematic Review

Interactive Narratives and Serious Games in Oncology and Grief Support: A Systematic Literature Review

by João Macieira, Marco Vale, Elena Vanica and Vitor Carvalho

Multimodal Technol. Interact. 2026, 10(5), 45; https://doi.org/10.3390/mti10050045 - 27 Apr 2026

Abstract


The impact of oncological diseases extends far beyond the clinical patient, profoundly affecting the mental health of caregivers, family members, and volunteers who navigate complex emotional landscapes of grief, anxiety, and trauma. While the domain of digital health has seen a proliferation of serious games aimed at pediatric patient education and treatment adherence, the specific perspective of the “second-order patient”, the caregiver or survivor, remains significantly under-explored. The primary objective of this study is to systematically review the current state of interactive narratives in oncology, palliative care, and grief support, identifying research gaps to inform the broader design space of empathy-driven serious games. Following the PRISMA guidelines, 31 articles were selected from an initial query of 116 records. Interventions were categorized into Serious Games, Games, and Gamification. The analysis reveals a critical thematic transition: early interventions relied heavily on biological “battle” metaphors to empower patients, whereas the current literature advocates for “thanatosensitive” designs that foster empathy. However, a distinct research gap persists regarding narratives that explore post-loss meaning reconstruction and the hospital volunteer experience. Synthesizing these findings, this paper establishes an evidence-based theoretical framework demonstrating a significant opportunity for games that prioritize dialogue and emotional processing over traditional winning conditions. As a practical application of these findings, we also briefly outline the conceptualization of a prototype simulating a widower’s experience volunteering in a palliative ward, shifting the ludic focus from defeating a disease to navigating loss.

Open Access Project Report

From Tradition to Technology: A Framework for Smart Pilgrim Management on the Camino de Santiago

by

Adriana Mar, Fernando Monteiro, Pedro Pereira, Jose Carlos García, João F. A. Martins and Daniel Basulto

Multimodal Technol. Interact. 2026, 10(5), 44; https://doi.org/10.3390/mti10050044 - 23 Apr 2026

Abstract

The Camino de Santiago, a UNESCO-listed pilgrimage route, has experienced sustained growth in visitor numbers, challenging municipalities to preserve cultural integrity while ensuring service quality. This study reviews people-counting technologies and proposes a smart pilgrim management framework grounded in flux measurement systems to support data-driven and sustainable decision-making. Drawing on the smart tourism literature, the conceptual framework integrates infrared counters, mobile tracking solutions, and GPS/Wi-Fi data to generate real-time insights into pilgrim flows. A pilot simulation illustrates how these data can inform operational and strategic planning. The framework enables local authorities to monitor pedestrian movements, anticipate service demands (sanitation, accommodation, and safety), and detect overcrowding in sensitive heritage areas. By incorporating technological solutions into traditionally low-tech pilgrimage settings, municipalities can transition from reactive to proactive management approaches. The paper contributes a scalable and ethically grounded framework tailored to heritage pilgrimage routes, advancing smart tourism applications in culturally significant contexts.
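The abstract does not describe the data model behind its pilot simulation. As a purely illustrative sketch, assuming a bidirectional infrared counter that reports entries and exits per interval at a single access point, the following Python shows how such counts could be turned into a running occupancy estimate and an overcrowding flag. `CounterReading`, `occupancy_series`, `overcrowded`, and the capacity value are hypothetical names and parameters, not part of the authors' framework.

```python
from dataclasses import dataclass


@dataclass
class CounterReading:
    """One reporting interval from a bidirectional people counter (hypothetical format)."""
    timestamp: str
    entries: int
    exits: int


def occupancy_series(readings: list[CounterReading], initial: int = 0) -> list[tuple[str, int]]:
    """Accumulate entries minus exits into an estimated occupancy per interval."""
    occupancy = initial
    series = []
    for r in readings:
        # Clamp at zero so miscounted exits cannot produce a negative occupancy.
        occupancy = max(0, occupancy + r.entries - r.exits)
        series.append((r.timestamp, occupancy))
    return series


def overcrowded(series: list[tuple[str, int]], capacity: int) -> list[str]:
    """Return the timestamps at which estimated occupancy exceeds capacity (hypothetical limit)."""
    return [t for t, occ in series if occ > capacity]


readings = [
    CounterReading("08:00", entries=40, exits=5),
    CounterReading("09:00", entries=60, exits=10),
    CounterReading("10:00", entries=5, exits=70),
]
series = occupancy_series(readings)
print(series)                    # [('08:00', 35), ('09:00', 85), ('10:00', 20)]
print(overcrowded(series, 80))   # ['09:00']
```

Clamping at zero is only one simple way to absorb counting errors; a deployed system might instead reconcile counter drift against other sources, such as the GPS/Wi-Fi data the framework also mentions.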

Open Access Systematic Review

Who, Where, What, and How to Nudge: A Systematic Review of Co-Designed Digital Nudges for Behavioral Interventions

by

Alaa Ziyud, Khaled Al-Thelaya and Jens Schneider

Multimodal Technol. Interact. 2026, 10(4), 43; https://doi.org/10.3390/mti10040043 - 21 Apr 2026

Abstract

Digital nudges refer to subtle modifications in digital choice architectures that are increasingly applied across domains such as healthcare, human–computer interaction, and behavioral science. However, existing approaches often overlook users’ needs, contextual factors, and ethical considerations related to transparency and autonomy. This systematic literature review, guided by PRISMA 2020, examines the integration of co-design methodologies in digital nudging across four dimensions: participants, application domains, nudge forms, and development methods. The findings show that co-design is primarily driven by end-users, supported by domain experts and technology specialists. Applications are concentrated in health-related contexts, particularly chronic disease management and mental health. The effectiveness of priming varied across studies, with some reporting short-term benefits and others indicating user fatigue, suggesting context-dependent impact and limited long-term effectiveness.

Open Access Article

From Prompts to High-Fidelity Prototypes: A Usability Evaluation of Generative AI-Driven Prototyping Tools for Smart Mobile App Design

by

John Bustamante-Orejuela, Xavier Quiñonez-Ku and Pablo Pico-Valencia

Multimodal Technol. Interact. 2026, 10(4), 42; https://doi.org/10.3390/mti10040042 - 17 Apr 2026

Abstract

The integration of Generative Artificial Intelligence (GAI) into software design tools has transformed the early stages of mobile application development, particularly prototype creation from natural-language prompts. This study evaluates the usability and effectiveness of GAI-assisted prototyping tools for generating high-fidelity mobile application prototypes. A controlled laboratory usability study was conducted in which undergraduate Information Technology Engineering students used and evaluated four widely adopted prototyping platforms: Figma, Uizard, Visily, and Stitch. Participants employed these tools to recreate mobile interfaces corresponding to the interaction model of the Duolingo application. The System Usability Scale (SUS) was used to assess perceived usability and effectiveness from the users’ perspective. The results indicate that all evaluated tools enabled rapid prototype generation; however, significant differences emerged in usability, structural fidelity, and perceived control. Figma and Stitch achieved the highest SUS scores (82.86 and 80.36, respectively) and demonstrated greater alignment with the reference prototype. Visily achieved a favorable SUS score (78.57), while Uizard obtained a moderate score (67.14). Although Uizard and Visily exhibited strong automation capabilities and faster initial generation, their outputs required additional manual refinement to achieve higher fidelity and customization. Participant feedback emphasized the importance of output quality, responsiveness, and foundational design knowledge in achieving satisfactory results. Overall, the findings suggest that current GAI-based prototyping tools are effective and valuable in real-world software development contexts. However, their effectiveness appears closely related to the degree of user control, responsiveness, and the ability to iteratively refine AI-generated interface components.
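SUS scores such as those reported in this abstract lie on a 0 to 100 scale. For readers unfamiliar with the instrument, here is a minimal Python sketch of the standard SUS scoring rule: each of the ten items is rated 1 to 5, odd-numbered (positively worded) items contribute the rating minus 1, even-numbered (negatively worded) items contribute 5 minus the rating, and the resulting 0 to 40 sum is multiplied by 2.5. The function names are illustrative, and averaging individual scores per tool is an assumption about how the reported figures were aggregated.

```python
from statistics import mean


def sus_score(responses: list[int]) -> float:
    """Score one completed SUS questionnaire (10 items, each rated 1 to 5)."""
    if len(responses) != 10:
        raise ValueError("SUS has exactly 10 items")
    if any(r < 1 or r > 5 for r in responses):
        raise ValueError("responses must be on a 1 to 5 scale")
    total = 0
    for item_number, r in enumerate(responses, start=1):
        # Odd items are positively worded, even items negatively worded.
        total += (r - 1) if item_number % 2 == 1 else (5 - r)
    return total * 2.5  # rescale the 0-40 raw sum to 0-100


def mean_sus(all_responses: list[list[int]]) -> float:
    """Average SUS across participants (assumed aggregation for a per-tool score)."""
    return mean(sus_score(r) for r in all_responses)


best = [5, 1] * 5      # strongest agreement on odd items, disagreement on even items
neutral = [3] * 10     # neutral answer on every item
print(sus_score(best))               # 100.0
print(sus_score(neutral))            # 50.0
print(mean_sus([best, neutral]))     # 75.0
```

Because even-numbered items are reverse-scored, a questionnaire answered "3" throughout lands at the scale midpoint of 50, not at 60.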

(This article belongs to the Special Issue Intelligent Interaction Design: Innovative Models and the Future of Human–Computer Experience)

Open Access Article

Introducing Brain–Computer Interfaces in Factories and Fabrication Lines for the Inclusion of Disabled Workers–Industry 5.0—A Modern Challenge and Opportunity

by

Marian-Silviu Poboroniuc, Zoltán Nochta, Martin Klepal, Nina Hunter, Danut-Constantin Irimia, Alina Georgiana Baciu, Kelaja Schert, Tim Piotrowski and Alexandru Mitocaru

Multimodal Technol. Interact. 2026, 10(4), 41; https://doi.org/10.3390/mti10040041 - 17 Apr 2026

Abstract

Flexible factories and adaptive fabrication lines offer a testbed for advanced multimodal interaction concepts that can support the inclusion of disabled workers in Industry 5.0 manufacturing systems. The study synthesizes interdisciplinary data from ergonomics, industrial automation, and EU regulatory frameworks to establish a conceptual model for human-machine interaction. Building on conceptual modeling and a structured literature analysis, the study proposes a six-step integration framework that links task demands, worker capabilities, and interaction modalities within human-in-the-loop manufacturing environments. Although no empirical case study was conducted in this phase, an exemplary application is presented for a semi-automated bicycle wheel manufacturing process. Detailed machine-based assembly line flows and simulated process data were utilized for illustrative purposes to depict the process and validate the proposed Capability–Task Matching Matrix. The results operationalize the human-centric vision of Industry 5.0 by providing a structured methodology for the inclusion of disabled workers within fabrication environments. The findings are organized into two primary components: the conceptual development of the Integration Approach and its practical application to a semi-automated industrial use-case. Finally, a particular focus is placed on Brain–Computer Interfaces (BCIs) as an emerging interaction channel that enables non-muscular control, attention monitoring, and neuroadaptive feedback, complementing conventional interfaces rather than replacing them. The framework is illustrated through application to the same semi-automated bicycle wheel assembly line, where BCI-supported interaction, augmented interfaces, and robotic assistance are mapped to specific production tasks and assessed in terms of feasibility and technological maturity.
Drawing on the paper’s results, an explanatory 10-year roadmap outlines the feasibility and phased deployment of BCI solutions. It aligns technological advances with European regulations and a vision for a fully inclusive manufacturing enterprise.
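The abstract names a Capability–Task Matching Matrix but does not give its internal structure. Purely as an illustration, assuming that task demands and worker capabilities are rated per dimension on a shared ordinal scale, and that an assistive channel (for example, robotic assistance or a BCI) can take over a specific demanded dimension for a given task, such a matrix could be computed as below. The dimension names, scale, task names, and the `assistance` mapping are all hypothetical and are not taken from the paper.

```python
# Hypothetical ordinal scale per dimension: 0 = none ... 3 = high.
Profile = dict[str, int]


def task_fits(demands: Profile, capabilities: Profile, covered: set[str]) -> bool:
    """A task fits a worker if each demanded level is met by the worker's
    own capability, or the dimension is covered by an assistive channel."""
    return all(
        capabilities.get(dim, 0) >= level or dim in covered
        for dim, level in demands.items()
    )


def matching_matrix(
    tasks: dict[str, Profile],
    workers: dict[str, Profile],
    assistance: dict[str, set[str]],
) -> dict[tuple[str, str], bool]:
    """Evaluate every (task, worker) pair; `assistance` maps a task to the
    dimensions an assistive interface can take over for that task."""
    return {
        (task, worker): task_fits(demands, caps, assistance.get(task, set()))
        for task, demands in tasks.items()
        for worker, caps in workers.items()
    }


# Hypothetical wheel-assembly tasks and one hypothetical worker profile.
tasks = {
    "spoke_insertion": {"fine_motor": 3, "vision": 2},
    "visual_inspection": {"vision": 3},
}
workers = {"W1": {"fine_motor": 1, "vision": 3}}

print(matching_matrix(tasks, workers, assistance={}))
# {('spoke_insertion', 'W1'): False, ('visual_inspection', 'W1'): True}
print(matching_matrix(tasks, workers, assistance={"spoke_insertion": {"fine_motor"}}))
# {('spoke_insertion', 'W1'): True, ('visual_inspection', 'W1'): True}
```

Treating assistance as covering whole dimensions is the simplest possible rule; a richer model might let an assistive channel lower a demand level rather than remove it.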

Open Access Article

The Discrimination Threshold on the Palm for Two Successive Rectangular Stimuli

by

Mayuka Kojima and Akio Yamamoto

Multimodal Technol. Interact. 2026, 10(4), 40; https://doi.org/10.3390/mti10040040 - 15 Apr 2026

Abstract

This study investigates tactile spatial resolution on the palm using two successive rectangular stimuli. Whereas classical tactile resolution studies have focused mainly on point or circular stimulation, less is known about how spatial resolution depends on the placement and geometry of rectangular, device-relevant stimuli. We measured the successive two-stimulus discrimination threshold using three rectangular stimulators across five palm areas aligned along the proximal–distal axis. Participants compared a fixed reference stimulus with a variable comparison stimulus, and the minimum separation at which the two stimuli were perceived as occurring at different locations was recorded as the threshold. The overall average threshold across all experimental conditions was approximately 5.2 mm. The threshold varied systematically across palm regions, being smallest around the palmar digital crease and the base of the fingers. In the central palm, threshold differences were more evident for changes in stimulator width than for changes in stimulator length. These results extend tactile spatial resolution research beyond point stimulation and provide design-relevant guidance for palm-based haptic devices.
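The threshold rule described in this abstract can be written down directly. The sketch below simplifies under stated assumptions: each condition (a stimulator and palm area combination) is represented by a list of hypothetical (separation in mm, judged different) trial records, the per-condition threshold is the smallest separation judged as a different location, and the overall figure is the plain mean of per-condition thresholds. The abstract does not specify the presentation sequence, number of repetitions, or how trials were aggregated, so the names and toy data are illustrative only.

```python
from statistics import mean
from typing import Optional


def threshold_mm(trials: list[tuple[float, bool]]) -> Optional[float]:
    """Smallest separation (mm) judged as a different location, or None if none was."""
    different = [sep for sep, judged_different in trials if judged_different]
    return min(different) if different else None


def overall_threshold(conditions: dict[str, list[tuple[float, bool]]]) -> float:
    """Mean of the per-condition thresholds, skipping conditions with no threshold."""
    values = [t for t in map(threshold_mm, conditions.values()) if t is not None]
    return mean(values)


# Hypothetical toy trials for two conditions (not data from the study).
conditions = {
    "condition_A": [(2.0, False), (4.0, False), (6.0, True), (8.0, True)],
    "condition_B": [(2.0, False), (4.0, True), (6.0, True)],
}
print(threshold_mm(conditions["condition_A"]))  # 6.0
print(overall_threshold(conditions))            # 5.0
```

Taking the minimum over all "different" responses is sensitive to a single lucky guess at a small separation; psychophysical procedures such as repeated ascending and descending series exist partly to reduce that sensitivity.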

Open Access Article

Multimodal Smart-Skin for Real-Time Sitting Posture Recognition with Cross-Session Validation

by

Giva Andriana Mutiara, Muhammad Rizqy Alfarisi, Paramita Mayadewi, Lisda Meisaroh and Periyadi

Multimodal Technol. Interact. 2026, 10(4), 39; https://doi.org/10.3390/mti10040039 - 9 Apr 2026

Abstract

Prolonged sitting with poor posture is associated with musculoskeletal disorders, reduced productivity, and long-term health risks. Many existing posture monitoring systems predominantly rely on single-modality sensing, such as pressure or vision-based approaches, limiting their ability to capture both static alignment and dynamic micro-movements. This study proposes a multimodal smart-skin system integrating pressure, temperature, and vibration sensors for sitting posture recognition. A total of 42 sensors distributed across 14 anatomical locations were deployed, generating 15,037 samples collected over three independent sessions to evaluate cross-session temporal generalization across nine posture classes under controlled experimental conditions. Two deep learning architectures, Temporal Convolutional Networks with Attention (TCN + Attn) and Convolutional Neural Network–Long Short-Term Memory (CNN-LSTM), were compared under Leave-One-Session-Out (LOSO) cross-validation. TCN + Attn achieved 85.23% LOSO accuracy, outperforming CNN-LSTM by 2.56 percentage points while reducing training time by 36.7% and inference latency by 33.9%. Ablation analysis revealed that temperature sensing was the most discriminative unimodal modality (71.5% accuracy), and full multimodal fusion improved LOSO accuracy by 22.93% compared to pressure-only configurations. These results demonstrate the feasibility of multimodal smart-skin sensing combined with temporal convolutional modeling for cross-session posture recognition and indicate potential for efficient real-time, privacy-preserving ergonomic monitoring. This study should be interpreted as a controlled, single-subject proof-of-concept, and further validation in multi-subject and real-world environments is required to establish broader generalizability.
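Leave-One-Session-Out cross-validation treats each recording session as one fold: a model is trained on the remaining sessions and tested on the held-out one, so test samples never share a session with training samples. A minimal, library-free Python sketch of the split logic follows, assuming each sample carries a session ID; scikit-learn's `LeaveOneGroupOut` produces equivalent splits when the session IDs are passed as `groups`. Model training is omitted, and the toy session IDs are illustrative.

```python
from collections.abc import Iterator, Sequence


def loso_splits(session_ids: Sequence[int]) -> Iterator[tuple[int, list[int], list[int]]]:
    """Yield (held_out_session, train_indices, test_indices), one fold per session."""
    for held_out in sorted(set(session_ids)):
        train = [i for i, s in enumerate(session_ids) if s != held_out]
        test = [i for i, s in enumerate(session_ids) if s == held_out]
        yield held_out, train, test


# Toy example: six samples recorded over three sessions.
session_ids = [1, 1, 2, 2, 2, 3]
for held_out, train, test in loso_splits(session_ids):
    print(held_out, train, test)
# 1 [2, 3, 4, 5] [0, 1]
# 2 [0, 1, 5] [2, 3, 4]
# 3 [0, 1, 2, 3, 4] [5]
```

With three sessions this yields three folds. A LOSO accuracy is commonly the mean of the per-fold test accuracies, although the abstract does not state whether its figure was averaged per fold or pooled across folds.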

Topics

Topic in

AI, Inventions, MTI, Robotics, Sci, Sensors, Standards, Technologies

Toward Trustworthy Human-AI Collaboration: From Interactive Intelligence to Collaborative Autonomy

Topic Editors: George Margetis, Helmut Degen, Stavroula Ntoa

Deadline: 30 November 2026

Topic in

Electronics, MTI, BDCC, AI, Virtual Worlds, Applied Sciences

AI-Based Interactive and Immersive Systems

Topic Editors: Sotiris Diplaris, Nefeli Georgakopoulou, Stefanos Vrochidis, Giuseppe Amato, Maurice Benayoun, Beatrice De Gelder

Deadline: 31 December 2026

Topic in

AI, Arts, Computers, MTI

Artificial Intelligence and the Future of Art

Topic Editors: Ahmed Elgammal, Marian Mazzone

Deadline: 31 October 2027

Special Issues

Special Issue in

MTI

Online Learning to Multimodal Era: Interfaces, Analytics and User Experiences

Guest Editors: Nikleia Eteokleous, Rita Panaoura

Deadline: 31 May 2026

Special Issue in

MTI

uHealth Interventions and Digital Therapeutics for Better Diseases Prevention and Patient Care

Guest Editor: Silvia Gabrielli

Deadline: 30 June 2026

Special Issue in

MTI

Behavioral Cybersecurity, Deception and Secure Design

Guest Editors: Derek L. Hansen, Ben Schooley

Deadline: 30 June 2026

Special Issue in

MTI

Intelligent Interaction Design: Innovative Models and the Future of Human–Computer Experience

Guest Editor: Peng-Wei Hsiao

Deadline: 30 June 2026