Journal Description

Algorithms is a peer-reviewed, open access journal that provides an advanced forum for studies related to algorithms and their applications. It is published monthly online by MDPI. The European Society for Fuzzy Logic and Technology (EUSFLAT) is affiliated with Algorithms, and its members receive discounts on the article processing charges.

- Open Access — free for readers, with article processing charges (APC) paid by authors or their institutions.

- High Visibility: indexed within Scopus, ESCI (Web of Science), Ei Compendex, and other databases.

- Journal Rank: JCR - Q2 (Computer Science, Theory and Methods) / CiteScore - Q1 (Numerical Analysis)

- Rapid Publication: manuscripts are peer-reviewed and a first decision is provided to authors approximately 19.2 days after submission; acceptance to publication is undertaken in 3.7 days (median values for papers published in this journal in the second half of 2025).

- Testimonials: See what our editors and authors say about Algorithms.

- Recognition of Reviewers: reviewers who provide timely, thorough peer-review reports receive vouchers entitling them to a discount on the APC of their next publication in any MDPI journal, in appreciation of the work done.

- Journal Cluster of Artificial Intelligence: AI, AI in Medicine, Algorithms, BDCC, MAKE, MTI, Stats, Virtual Worlds and Computers.

- Impact Factor: 2.1 (2024); 5-Year Impact Factor: 2.0 (2024)

Latest Articles

ID-MSNet: An Enhanced Multi-Scale Network with Convolutional Attention for Pixel-Level Steel Defect Segmentation

Algorithms 2026, 19(4), 294; https://doi.org/10.3390/a19040294 (registering DOI) - 9 Apr 2026

Abstract

►

Show Figures

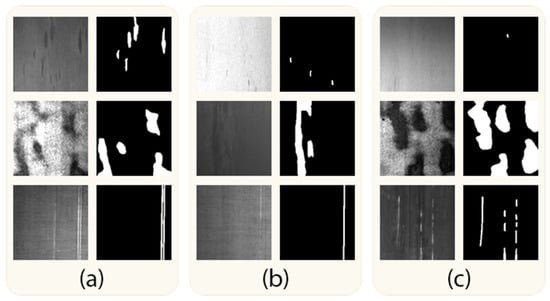

Automated pixel-level detection of steel surface defects is a critical challenge in manufacturing quality control, complicated by the variation in defect size and shape, low contrast with background textures, and the diversity of defect patterns. This paper proposes ID-MSNet, an enhanced version of

[...] Read more.

Automated pixel-level detection of steel surface defects is a critical challenge in manufacturing quality control, complicated by the variation in defect size and shape, low contrast with background textures, and the diversity of defect patterns. This paper proposes ID-MSNet, an enhanced version of the UNet3+ architecture, designed specifically for the segmentation of three common steel surface defect types: inclusions, patches, and scratches. The proposed architecture introduces three targeted modifications: (1) a multi-scale feature learning module (MSFLM) in the encoder that uses dilated convolutions at multiple rates to capture contextual features across different scales, combined with DropBlock regularization and batch normalization to improve generalization; (2) an improved down-sampling (IDS) module that replaces standard max-pooling with learnable strided convolutions fused via 1 × 1 convolution, preserving richer feature representations; and (3) a convolutional block attention module (CBAM) integrated into the skip connections to selectively focus the model on spatially and channel-wise relevant defect regions. Experiments on the publicly available SD-saliency-900 dataset demonstrate that ID-MSNet achieved an 86.19% mIoU, outperforming all compared state-of-the-art segmentation models while using only 6.7 million parameters—approximately 75% fewer than the original UNet3+. These results establish ID-MSNet as a strong and efficient baseline for steel surface defect segmentation, with potential applicability to automated quality inspection in broader manufacturing contexts.
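The multi-scale idea behind the MSFLM can be illustrated with a minimal one-dimensional sketch of dilated convolution. This is not the authors' implementation: the function names, the kernel, and the dilation rates (1, 2, 3) are assumptions chosen for illustration, and the operation is written as cross-correlation, as is usual in deep learning code.

```python
def dilated_conv1d(signal, kernel, dilation):
    """Valid (no padding) 1-D convolution whose taps are `dilation` samples apart."""
    # One output sample "sees" this many input samples (its receptive field).
    span = (len(kernel) - 1) * dilation + 1
    return [
        sum(w * signal[start + k * dilation] for k, w in enumerate(kernel))
        for start in range(len(signal) - span + 1)
    ]


def multi_scale_features(signal, kernel, rates=(1, 2, 3)):
    """Apply the same kernel at several dilation rates (hypothetical rates)."""
    return {rate: dilated_conv1d(signal, kernel, rate) for rate in rates}


# A linear ramp and a simple difference kernel: larger rates compare samples
# that are farther apart, so the response grows with the dilation rate.
row = [float(v) for v in range(10)]
features = multi_scale_features(row, kernel=[1.0, 0.0, -1.0])
```

With the same three-tap kernel, the receptive field grows from 3 samples at rate 1 to 7 samples at rate 3 without adding parameters, which is the property that makes dilated convolutions attractive for defects of varying size.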

Open Access Article

Hierarchical Continuous Monitoring and Resource Reallocation Under Resistance to Change: A Decision-Making Framework Balancing Skill Constraints and Managerial Capacity

by

Fotios Panagiotopoulos and Vassilios Chatzis

Algorithms 2026, 19(4), 293; https://doi.org/10.3390/a19040293 - 9 Apr 2026

Abstract

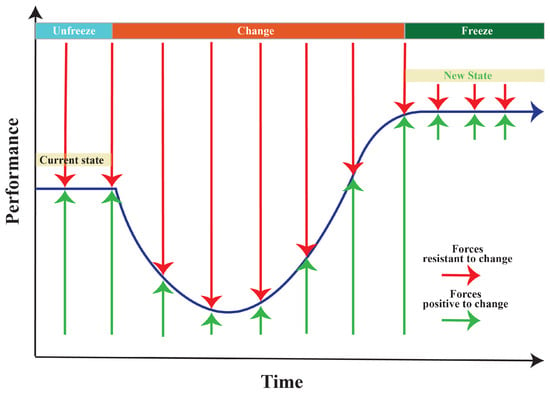

Organizational change is a complex process often accompanied by intense human reactions and increased uncertainty. Resistance to change (RtC) can cause critical performance declines during the organizational change period, which can delay implementation. The evolution of information systems and digital infrastructures provides immediate

[...] Read more.

Organizational change is a complex process often accompanied by intense human reactions and increased uncertainty. Resistance to change (RtC) can cause critical performance declines during the organizational change period, which can delay implementation. The evolution of information systems and digital infrastructures provides immediate access to operational data and analytical tools, making it possible to continuously monitor performance and adjust decisions in a timely manner during change. Although recent approaches attempt to minimize these impacts through continuous monitoring and resource reallocation, they typically view human resource allocation as a single-level problem. In hierarchical structures where work and decision-making are distributed across levels, RtC can increase backlogs, place an excessive amount of work on managers, and result in operational issues or the failure of the change. From an algorithmic perspective, the proposed method formulates a hierarchical dynamic optimization problem with two coupled assignment layers, in which the operational output of Level 1 dynamically determines the workload processed at Level 2. Both assignment problems are solved at each time step using the Hungarian algorithm, while RtC is modelled as a time-dependent stochastic process aligned with a reference change curve, allowing employee and managerial performance to be updated dynamically over the planning horizon. In large-scale experiments, the new approach increases total processed workload by approximately 20% relative to the static Classical Change Management Model (CCMM), and the improvement reaches 56.8% at the peak of resistance. At the same time, it substantially reduces backlog accumulation, maintaining very low backlog levels (18 versus 16,424 units) within the tested setting. Finally, by applying a 50% reallocation threshold, the organization maintains 98.5% of maximum performance while avoiding 45% of the reallocations. Overall, the proposed method provides a dynamic optimization framework that combines hierarchical organizational modeling with stochastic performance updates across organizational levels.
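For readers unfamiliar with the assignment step, the following is a minimal sketch of the Hungarian algorithm (the standard O(n³) potential-based form) for a single assignment layer. The function name `hungarian` and the cost matrix are hypothetical; the abstract does not state how costs are derived from employee performance, so the numbers here are placeholders only.

```python
def hungarian(cost):
    """Minimum-cost assignment of rows to columns (requires rows <= columns).

    Returns (assignment, total) where assignment[i] is the column given to row i.
    """
    n, m = len(cost), len(cost[0])
    INF = float("inf")
    u = [0.0] * (n + 1)   # row potentials
    v = [0.0] * (m + 1)   # column potentials
    p = [0] * (m + 1)     # p[j] = row currently matched to column j (1-based, 0 = free)
    way = [0] * (m + 1)   # back-pointers along the augmenting path
    for i in range(1, n + 1):
        p[0] = i
        j0 = 0
        minv = [INF] * (m + 1)
        used = [False] * (m + 1)
        while True:
            used[j0] = True
            i0, delta, j1 = p[j0], INF, 0
            for j in range(1, m + 1):
                if not used[j]:
                    cur = cost[i0 - 1][j - 1] - u[i0] - v[j]
                    if cur < minv[j]:
                        minv[j], way[j] = cur, j0
                    if minv[j] < delta:
                        delta, j1 = minv[j], j
            # Shift potentials so that at least one new zero-reduced-cost edge appears.
            for j in range(m + 1):
                if used[j]:
                    u[p[j]] += delta
                    v[j] -= delta
                else:
                    minv[j] -= delta
            j0 = j1
            if p[j0] == 0:
                break
        # Flip the matching along the augmenting path that was found.
        while j0 != 0:
            j1 = way[j0]
            p[j0] = p[j1]
            j0 = j1
    assignment = [0] * n
    for j in range(1, m + 1):
        if p[j] != 0:
            assignment[p[j] - 1] = j - 1
    total = sum(cost[i][assignment[i]] for i in range(n))
    return assignment, total


# Hypothetical costs: rows are employees, columns are tasks.
costs = [[4, 1, 3],
         [2, 0, 5],
         [3, 2, 2]]
assignment, total = hungarian(costs)
```

In a two-layer setting like the one described, a solver of this kind would presumably be called once per level at every time step, with the Level 2 cost matrix rebuilt from the Level 1 output.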

(This article belongs to the Special Issue Recent Advances in Numerical Algorithms and Their Applications)

Open Access Article

Hybrid Fault Prognosis Using Health Index Fusion

by

S. Mohsen Azizi and Faeze Ghofrani

Algorithms 2026, 19(4), 292; https://doi.org/10.3390/a19040292 - 9 Apr 2026

Abstract

►▼

Show Figures

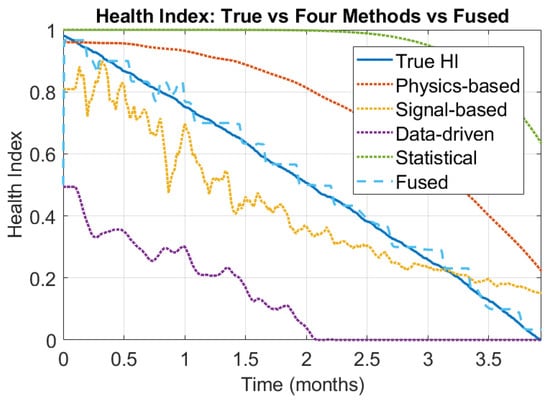

Fault prognosis is a key enabler of predictive maintenance in modern industrial systems, where heterogeneous sensing, modeling, and data analytics coexist under varying operating conditions. This paper proposes a reliability-aware health index fusion framework for hybrid fault prognosis that systematically integrates physics-based, signal-based,

[...] Read more.

Fault prognosis is a key enabler of predictive maintenance in modern industrial systems, where heterogeneous sensing, modeling, and data analytics coexist under varying operating conditions. This paper proposes a reliability-aware health index fusion framework for hybrid fault prognosis that systematically integrates physics-based, signal-based, data-driven, and statistical prognostic methods within a unified probabilistic formulation. Each prognostic output is mapped to a bounded health index, while method-specific, stage-dependent reliability is learned offline from run-to-failure data using confusion matrices over discretized health states. During online operation, health state estimates are fused using a Bayesian time-recursive framework that accounts for degradation dynamics and reliability variation. Simulation-based case studies on rotating machinery demonstrate that the proposed approach significantly improves health index estimation accuracy and reduces variance compared to individual prognostic methods, particularly near failure.
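One way to picture the fusion step is a discrete Bayes filter over health states. The sketch below is an illustration under stated assumptions, not the paper's formulation: it assumes three discretized health states, a fixed transition matrix for the degradation dynamics, one confusion matrix per prognostic method (rows are true states, columns are reported states), and conditional independence of the methods given the true state. All numbers are invented.

```python
def fuse_step(belief, transition, confusions, reports):
    """One predict/update cycle of a discrete Bayes filter.

    belief      -- current probability of each health state
    transition  -- transition[i][j] = P(next state j | current state i)
    confusions  -- per method: confusion[s][r] = P(method reports r | true state s)
    reports     -- the state index reported by each method at this time step
    """
    n = len(belief)
    # Predict: propagate the belief through the degradation dynamics.
    prior = [sum(belief[i] * transition[i][j] for i in range(n)) for j in range(n)]
    # Update: weight each state by how likely every method's report is under it.
    posterior = prior[:]
    for confusion, reported in zip(confusions, reports):
        posterior = [posterior[s] * confusion[s][reported] for s in range(n)]
    total = sum(posterior)
    if total == 0.0:
        raise ValueError("reports are impossible under the current belief")
    return [p / total for p in posterior]


# States: 0 = healthy, 1 = degraded, 2 = near failure (hypothetical discretization).
transition = [[0.9, 0.1, 0.0],
              [0.0, 0.9, 0.1],
              [0.0, 0.0, 1.0]]
method_a = [[0.80, 0.15, 0.05],   # a more reliable method
            [0.10, 0.80, 0.10],
            [0.05, 0.15, 0.80]]
method_b = [[0.6, 0.3, 0.1],      # a less reliable method
            [0.2, 0.6, 0.2],
            [0.1, 0.3, 0.6]]

belief = [1.0, 0.0, 0.0]
belief = fuse_step(belief, transition, [method_a, method_b], reports=[1, 1])
```

Because the confusion matrices enter as likelihoods, a method that is unreliable in a given state automatically contributes less to the posterior there, which is the intuition behind reliability-aware fusion.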

Open Access Article

An Improved H∞ Tracking Controller for Uncertain Systems Based on DDPG with Improved Exploration Strategy

by

Yujie Chen

Algorithms 2026, 19(4), 291; https://doi.org/10.3390/a19040291 - 9 Apr 2026

Abstract

►▼

Show Figures

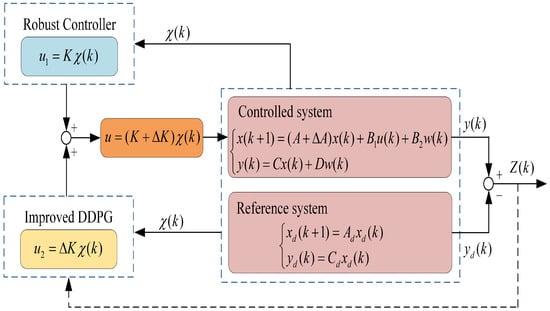

This paper proposes an integrated robust–learning control framework for uncertain systems with external disturbances. An

This paper proposes an integrated robust–learning control framework for uncertain systems with external disturbances. An

Figure 1

Open AccessArticle

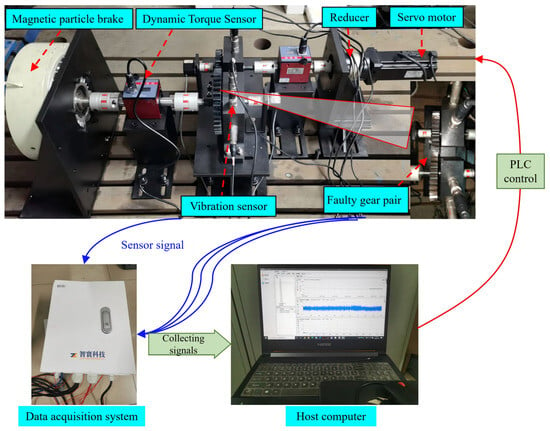

Feature Extraction of Gear Tooth Surface Fatigue Failure in Reducers Based on Vibration Signals

by

Zhenbang Cheng, Zhengyu Liu, Yu Zhou and Hongxin Wang

Algorithms 2026, 19(4), 290; https://doi.org/10.3390/a19040290 - 9 Apr 2026

Abstract


Extracting periodic fault pulses caused by gear surface fatigue in reducers is often hindered by transmission path interference and strong background noise. Moreover, the traditional Variational Mode Decomposition (VMD) and Maximum Correlation Kurtosis Decomposition (MCKD) methods rely on manual parameter selection, which limits their practicality. To address these issues, this paper proposes a parameter-adaptive VMD-MCKD method based on vibration signals for extracting gear surface fatigue fault features. Using the reciprocal of the peak indicator squared of decomposed signals as fitness functions, the method employs the global search capability of the Sparrow Search Algorithm to adaptively select optimal VMD-MCKD configurations. The optimized VMD-MCKD method is applied to decompose gear surface fatigue fault signals, effectively filtering out noise while highlighting periodic fault pulses caused by gear fatigue. Envelope demodulation is then performed to extract characteristic frequency components of gear surface fatigue faults. Experimental results demonstrate that the proposed method can adaptively extract periodic fault pulse components from strong noise environments, achieving a twofold improvement in signal kurtosis and enhanced robustness.
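Kurtosis, the metric quoted in the result above, measures how impulsive a signal is. The following sketch computes the standard (non-excess) kurtosis and compares a clean sinusoid with the same sinusoid carrying periodic impulses. The synthetic signals are assumptions for illustration and are unrelated to the paper's gear data.

```python
import math


def kurtosis(samples):
    """Fourth central moment divided by the squared variance (non-excess kurtosis)."""
    n = len(samples)
    mean = sum(samples) / n
    variance = sum((x - mean) ** 2 for x in samples) / n
    if variance == 0.0:
        raise ValueError("kurtosis is undefined for a constant signal")
    fourth_moment = sum((x - mean) ** 4 for x in samples) / n
    return fourth_moment / variance ** 2


# 10 full cycles of a sinusoid sampled at 1000 points.
clean = [math.sin(2 * math.pi * 10 * k / 1000) for k in range(1000)]

# The same signal with a sharp pulse every 100 samples, mimicking periodic fault impacts.
impulsive = [x + (5.0 if k % 100 == 0 else 0.0) for k, x in enumerate(clean)]

k_clean = kurtosis(clean)
k_impulsive = kurtosis(impulsive)
```

A pure sinusoid has a kurtosis of 1.5, while Gaussian noise sits near 3; values well above that indicate the kind of periodic impacts that fault-extraction methods try to expose.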

Open Access Article

Intelligent Scheduling of Rail-Guided Shuttle Cars via Deep Reinforcement Learning Integrating Dynamic Graph Neural Networks and Transformer Model

by

Fang Zhu and Shanshan Peng

Algorithms 2026, 19(4), 289; https://doi.org/10.3390/a19040289 - 8 Apr 2026

Abstract


With the rapid development of e-commerce and smart manufacturing, automated warehouse systems have become critical infrastructure for modern logistics. In China’s vast market, the dynamic scheduling of Rail-Guided Vehicles (RGVs) faces significant challenges due to complex task uncertainties, hierarchical supply chain structures, and real-time collision avoidance requirements. Traditional rule-based methods and static optimization models often fail to adapt to such dynamic environments. To address these issues, this paper proposes a novel hybrid deep reinforcement learning framework integrating a Dynamic Graph Neural Network (DGNN) and a Transformer model. The DGNN captures the spatiotemporal dependencies of the warehouse network topology, while the Transformer mechanism enhances long-range feature extraction for task prioritization. Furthermore, we design a centralized Deep Q-network (DQN) framework with parameterized action spaces to coordinate multiple RGVs collaboratively. While the system manages multiple physical vehicles, the learning architecture employs a single-agent global scheduler to avoid the non-stationarity issues inherent in multi-agent reinforcement learning. Experimental results based on real-world data from a large-scale electronics manufacturing warehouse demonstrate that our method reduces average task completion time by 18.5% and improves system throughput by 22.3% compared to state-of-the-art baselines. The proposed approach demonstrates potential for intelligent warehouse management in dynamic industrial scenarios.

Open Access Article

PLS-SEM Algorithmic Modeling of High-Tech and High-Touch Hospitality Experiences with Moderating Roles of Employee Presence and Technology Identity

by

Ibrahim A. Elshaer, Osman Elsawy, Alaa M. S. Azazz, Mohammed Ali R. Aldossary, Mahmoud Ahmed Salama and Sameh Fayyad

Algorithms 2026, 19(4), 288; https://doi.org/10.3390/a19040288 - 8 Apr 2026

Abstract


As tourism businesses increasingly integrate anthropomorphic and AI-empowered technologies into service functions, a key managerial and theoretical challenge is balancing high-tech performance with high-touch human involvement. Addressing this issue, this paper applied a PLS-SEM algorithmic modeling method to explore how anthropomorphic technological experiences shape guests’ experiential sharing intentions (ESIs) within hospitality service environments. Drawing on social response theory and service experience theory, this research developed and empirically evaluated a moderated–mediated model describing how anthropomorphic technological experiences can impact ESIs. Specifically, the model tested the direct and indirect impacts of anthropomorphic experience on ESI through affective experience (AF_EX) and perceived service innovation (PSI), while evaluating the moderating roles of employee presence and technology identity. The results offered strong evidence to support the developed framework. Anthropomorphic experience can positively impact guests’ affective experience, PSI, and ESI. Both AF_EX and PSI can act as significant predictors of ESI and can operate as complementary mediating mechanisms, implying that emotional involvement and innovation-signaling technologies reinforce guests’ advocacy through dual experiential pathways. Notably, the findings revealed a critical boundary condition. Technology identity can amplify the influence of anthropomorphic experience on both AF_EX and PSI, signaling that guests who view technology as part of their self-concept derive greater experiential value from human-like operations. By applying PLS-SEM algorithmic modeling to integrate anthropomorphism, perceived innovation, and experiential value within a moderated mediation framework, this paper advanced the theoretical understanding of high-tech–high-touch hospitality experiences and provided practical insights for developing synergistic technology-enabled service contexts.

Open Access Article

NAR–SPEI–NARX Hybrid Forecasting Model for Soil Moisture Index (SMI)

by

Miloš Todorov, Darjan Karabašević, Predrag M. Tekić, Dragana Dudić and Dejan Viduka

Algorithms 2026, 19(4), 287; https://doi.org/10.3390/a19040287 - 8 Apr 2026

Abstract


This paper introduces a new hybrid forecasting architecture that combines Nonlinear Autoregressive (NAR) models, the proxy Standardized Precipitation-Evapotranspiration Index (SPEI), and a Nonlinear Autoregressive with Exogenous Inputs (NARX) framework for Soil Moisture Index (SMI) prediction. The proposed methodology addresses the difficulty of incorporating future climatic information into soil moisture forecasting by using a cascaded approach. Stage 1 uses univariate NAR models to create multi-step-ahead predictions of precipitation and temperature. Stage 2 converts these forecasts into proxy SPEI values using a physically based water balance computation, and Stage 3 employs a NARX model that uses observed historical SMI and forecast-derived proxy SPEI as exogenous inputs. The framework is assessed using high-frequency observations from 2014 to 2020, with training data through 2019 and validation covering the whole 2020 horizon. Combining forecast-driven climatic indicators with autoregressive soil moisture dynamics yielded high prediction accuracy (R² = 0.9888, RMSE = 0.0827, MAE = 0.0567). In summary, the study presents a three-stage NAR–SPEI–NARX model for SMI forecasting in which NAR models forecast precipitation and temperature, and the forecasts are converted into a proxy SPEI that serves as the exogenous input to NARX.
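The three accuracy figures reported in this abstract follow standard definitions, sketched below for reference. The function name and the sample values are illustrative only and are not taken from the paper's data.

```python
import math


def regression_metrics(observed, predicted):
    """Return (r_squared, rmse, mae) for paired observations and predictions."""
    if len(observed) != len(predicted) or not observed:
        raise ValueError("inputs must be non-empty and of equal length")
    n = len(observed)
    errors = [o - p for o, p in zip(observed, predicted)]
    mean_observed = sum(observed) / n
    ss_res = sum(e * e for e in errors)                           # residual sum of squares
    ss_tot = sum((o - mean_observed) ** 2 for o in observed)      # total sum of squares
    r_squared = 1.0 - ss_res / ss_tot
    rmse = math.sqrt(ss_res / n)
    mae = sum(abs(e) for e in errors) / n
    return r_squared, rmse, mae


# Made-up values, for illustration only.
observed = [1.0, 2.0, 3.0, 4.0]
predicted = [1.1, 1.9, 3.2, 3.8]
r2, rmse, mae = regression_metrics(observed, predicted)
```

RMSE penalizes large errors more heavily than MAE, so reporting both, as the abstract does, gives a rough sense of whether errors are uniform or dominated by a few large misses.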

Open Access Article

An Edge-Enabled Predictive Maintenance Approach Based on Anomaly-Driven Health Indicators for Industrial Production Systems

by

Bouzidi Lamdjad and Adem Chaiter

Algorithms 2026, 19(4), 286; https://doi.org/10.3390/a19040286 - 8 Apr 2026

Abstract


This study develops a data-driven framework for predictive maintenance and prognostic health management in industrial systems using edge-enabled predictive algorithms. The objective is to support early identification of abnormal operating conditions and improve maintenance decision making in real production environments. The proposed approach combines edge-level monitoring, anomaly detection, and predictive modeling to analyze operational signals and estimate system health conditions from high-frequency industrial data. Empirical validation was conducted using operational datasets collected from two industrial production facilities between 2024 and 2025. The model evaluates patterns associated with operational instability and degradation-related anomalies and translates them into interpretable health indicators that can support proactive intervention. The empirical results show strong predictive performance, with R² reaching 0.989, a mean absolute percentage error of 3.67%, and a root mean square error of 0.79. In addition, the mitigation of early anomaly signals was associated with an observed improvement of approximately 3.99% in system stability. Unlike many existing studies that treat anomaly detection, predictive modeling, and prognostic analysis as separate tasks, the proposed framework connects these stages within a unified analytical structure designed for deployment in industrial environments. The findings indicate that edge-generated anomaly signals can provide meaningful early information about potential system deterioration and can assist in planning timely maintenance actions even when explicit failure labels are limited. The study contributes to the development of scalable predictive maintenance solutions that integrate artificial intelligence with edge-based industrial monitoring systems.

Full article

(This article belongs to the Special Issue AI-Powered Predictive Maintenance: Transforming Industrial Operations Through Intelligent Fault Diagnosis (2nd Edition))

►▼

Show Figures

Figure 1

Open AccessArticle

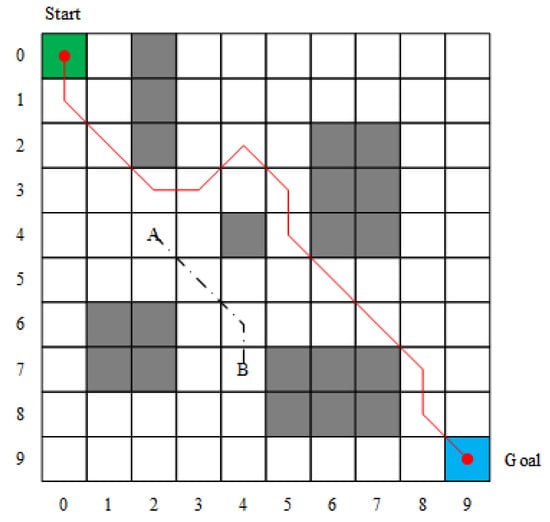

A Robot Path Planning Method Based on a Key Point Encoding Genetic Algorithm

by

Chuanyu Yang, Zhenxue He, Xiaojun Zhao, Yijin Wang and Xiaodan Zhang

Algorithms 2026, 19(4), 285; https://doi.org/10.3390/a19040285 - 7 Apr 2026

Abstract


Path planning is a key technology in robot navigation and has long attracted significant attention. However, in scenarios with high-density or unstructured obstacle distributions, path planning methods based on swarm intelligence optimization still face issues of low computational efficiency and poor path quality, limiting their performance in real-time applications. To address these challenges, this paper defines path key points and proposes a path planning method based on the Key-Points Encoding Genetic Algorithm (KEGA). First, an encoding scheme is designed to map key-point sequences into binary encodings, guiding the population to explore efficiently. Then, a new path generation module is integrated using target point direction, local environment, and historical path information to generate high-quality key-point sequences, thereby improving path quality. Additionally, by evaluating key-point sequences as a proxy for full path evaluation, only one precise path construction is required per iteration, significantly reducing computational overhead. Experiments were conducted on four simulated maps with diverse obstacle distribution characteristics and eight real-world street maps to validate the method’s robustness and generalizability. The results show that, compared with existing state-of-the-art robot path planning methods, the proposed method achieves an average runtime saving of 75.40%, a path length reduction of 35.65%, and a path smoothness improvement of 68%.

(This article belongs to the Section Evolutionary Algorithms and Machine Learning)

Open Access Article

Solving a Multi-Period Dynamic Pricing Problem Using Learning-Augmented Exact Methods and Learnheuristics

by

Angel A. Juan, Yangchongyi Men, Veronica Medina and Marc Escoto

Algorithms 2026, 19(4), 284; https://doi.org/10.3390/a19040284 - 7 Apr 2026

Abstract


This paper addresses a dynamic multi-period pricing problem that incorporates time-varying contextual information and inventory constraints. Sales are modeled as a function of both price and a multidimensional context vector, which may include factors such as customer location, income, loyalty, competitor prices, and promotional activity. This formulation captures complex market dynamics over a finite selling horizon. The problem is formulated as a quadratic programming model, and two alternative solution approaches are proposed. The first uses a multivariate regression model to approximate the sales function linearly, allowing an exact quadratic programming solution that serves as a benchmark. The second is a ‘learnheuristic’ algorithm that combines a nonlinear sales learning model with metaheuristic optimization to generate high-quality pricing strategies under realistic operational constraints. Computational experiments demonstrate the effectiveness of the proposed learnheuristic approach.

(This article belongs to the Special Issue Hybrid Intelligent Algorithms (2nd Edition))

Open Access Article

Optimizing the Permutation Flowshop Scheduling Problem with an Improved Sparrow Search Algorithm

by

Maria Tsiftsoglou, Yannis Marinakis and Magdalene Marinaki

Algorithms 2026, 19(4), 283; https://doi.org/10.3390/a19040283 - 6 Apr 2026

Abstract


The Sparrow Search Algorithm (SSA) is a novel optimization method inspired by sparrows’ foraging and anti-predator behavior. It mimics their exploration and exploitation strategies to find near-optimal solutions for various optimization problems. This paper presents the first application of SSA to the widely recognized Permutation Flowshop Scheduling Problem (PFSP) with the makespan criterion as the optimization target. Our study aims to assess the effectiveness and robustness of this cutting-edge metaheuristic through computational experiments and statistical analysis. The proposed SSA is a hybrid variant that incorporates the Variable Neighborhood Search (VNS) algorithm along with a Path Relinking Strategy. The effectiveness of the proposed method is evaluated through computational experiments on PFSP benchmark instances. The performance of the hybrid SSA is compared against several well-established swarm-intelligence metaheuristics, namely Grey Wolf Optimizer (GWO), Whale Optimization Algorithm (WOA), Tuna Swarm Optimization Algorithm (TSO), Particle Swarm Optimization Algorithm (PSO), Firefly Algorithm (FA), Bat Algorithm (BA), and the Artificial Bee Colony (ABC). To ensure fair comparison, all methods are implemented within the same computational framework as the hybrid SSA. The experimental results show that the proposed hybrid SSA achieves the lowest average mean error compared with the competing methods in solving the PFSP. The results were further validated through a comprehensive non-parametric statistical analysis using Friedman, Aligned Friedman, and Quade tests, followed by post-hoc analysis with p-adjusted values, as well as Kruskal–Wallis and Wilcoxon post-hoc tests.
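The makespan criterion that every PFSP metaheuristic evaluates can be computed with a short recurrence: a job starts on a machine only when both the machine and the job's previous operation are finished. The sketch below shows that objective function with a brute-force search over a tiny, made-up instance. It is not the hybrid SSA from the paper, and the processing times are hypothetical.

```python
from itertools import permutations


def makespan(processing_times, sequence):
    """Completion time of the last job on the last machine.

    processing_times[job][machine] is the time the job needs on that machine;
    every job visits the machines in the same order and the job order is `sequence`.
    """
    machines = len(processing_times[0])
    finish = [0] * machines  # finish[k] = time machine k becomes free
    for job in sequence:
        previous_stage_done = 0
        for k in range(machines):
            start = max(finish[k], previous_stage_done)
            finish[k] = start + processing_times[job][k]
            previous_stage_done = finish[k]
    return finish[-1]


# Hypothetical instance: 3 jobs, 2 machines.
times = [[3, 2],
         [1, 4],
         [2, 1]]

# Exhaustive search is only feasible for toy sizes; metaheuristics replace this step.
best_sequence = min(permutations(range(len(times))), key=lambda s: makespan(times, s))
best_value = makespan(times, best_sequence)
```

Since the number of permutations grows factorially with the number of jobs, exhaustive search is hopeless beyond a dozen or so jobs, which is why swarm-based methods such as those compared in this article are used on benchmark instances.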

(This article belongs to the Special Issue Swarm Intelligence and Evolutionary Algorithms for Real World Applications (3rd Edition))

Open Access Article

Hierarchical Reconciliation of Fifty-One Years of Highway–Rail Grade Crossing Data with Verified Multistage Inference

by

Raj Bridgelall

Algorithms 2026, 19(4), 282; https://doi.org/10.3390/a19040282 - 3 Apr 2026

Abstract


Highway–rail grade crossing (HRGC) safety research relies on federal incident and inventory datasets that span multiple decades. However, inconsistencies in geographic identifiers and incomplete reconstruction of crossing denominators can distort exposure-based rate metrics. This study develops, documents, and validates a transparent nine-stage reconciliation pipeline applied to 51 years (1975–2025) of national HRGC incident data from the Federal Railroad Administration Form 57 and Form 71 datasets. The hierarchical pipeline integrated deterministic alignment and multistage inference methods to produce an audited, geographically consistent dataset. The study formalizes four longitudinal county-level cumulative exposure indices that characterize spatiotemporal patterns of incident concentration relative to static population and infrastructure denominators. These metrics include accumulated incidents per million population (AIPM), accumulated incidents per crossing (AIPC), crossings per million population (CPM), and crossings per 100 square miles (CPHSM). All four metrics exhibited pronounced right-skewness: AIPM, CPM, and CPHSM approximated exponential forms, and AIPC approximated a log-normal form. Statistical tests detected statistically significant tail deviations in three metrics; CPM did not reject the exponential fit at conventional significance levels. Spatial analysis shows coherent regional concentration in incident rates in the Central Plains and lower Mississippi corridors. The national time series exhibits a late-1970s plateau, a sustained exponential decline beginning around 1980, and stabilized but persistent incident rates after 2001. Population-normalized AIPM remained statistically indistinguishable between the reconciled and record-dropped datasets; however, crossing-based metrics changed materially when reconstructing denominators from the reconciled crossing universe. Statistical comparisons confirmed that incident-only denominators introduced substantial measurement bias in local risk assessment. State-level rank reversals persisted even when omnibus distributional tests failed to reject equality. By formalizing multistage data cleaning and quantifying its analytical impact over an unprecedented longitudinal horizon, this study establishes denominator integrity and geographic reconciliation as prerequisites for valid HRGC exposure assessment and provides a framework for future predictive modeling.
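The four exposure indices named in this abstract are simple ratios, and writing them out shows why the choice of denominator matters. The sketch below follows the index names as expanded in the abstract. The `County` record, its field names, and the sample figures are hypothetical, and the paper's exact handling of edge cases (for example, counties with zero crossings) is not stated.

```python
from dataclasses import dataclass


@dataclass
class County:
    """Hypothetical county record; field names are illustrative."""
    incidents: int        # accumulated incidents over the study period
    crossings: int        # crossings in the reconciled inventory
    population: int       # static population denominator
    area_sq_mi: float     # land area in square miles


def exposure_indices(county):
    """Compute AIPM, AIPC, CPM, and CPHSM for one county."""
    return {
        "AIPM": 1_000_000 * county.incidents / county.population,  # incidents per million people
        "AIPC": county.incidents / county.crossings,               # incidents per crossing
        "CPM": 1_000_000 * county.crossings / county.population,   # crossings per million people
        "CPHSM": 100 * county.crossings / county.area_sq_mi,       # crossings per 100 sq mi
    }


# Made-up county, for illustration only.
example = County(incidents=50, crossings=200, population=250_000, area_sq_mi=800.0)
indices = exposure_indices(example)
```

Only AIPM is independent of the crossing count, which is consistent with the abstract's observation that AIPM was insensitive to reconciliation while the crossing-based metrics changed when the denominator was rebuilt.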

(This article belongs to the Special Issue Transportation and Traffic Engineering)

Open Access Article

T2C-DETR: A Transformer + Convolution Dual-Channel Backbone Network for Underwater Sonar Image Object Detection

by

Xiaobing Wu, Panlong Tan, Xiaoyu Zhang and Hao Sun

Algorithms 2026, 19(4), 281; https://doi.org/10.3390/a19040281 - 3 Apr 2026

Abstract


Underwater sonar object detection is challenging because targets are often small, boundaries are blurred, background clutter is strong, and labeled sonar data are limited. To address these issues, we propose T2C-DETR, a detector built on RT-DETR with three task-oriented improvements: (i) a Transformer–Convolution dual-channel backbone (TCDCNet) for complementary global-context and local-detail modeling, (ii) a Noise Filtering Module (NFM) inserted before neck fusion to suppress noise-dominated activations, and (iii) a stage-wise transfer-learning strategy tailored to small sonar datasets. We evaluate the method under three pre-training sources (COCO 2017, DOTA, and an infrared dataset) and then fine-tune on a self-built sonar dataset. Experimental results show that T2C-DETR achieves AP50 of 97.8%, 98.2%, and 98.5% at 72–73 FPS, consistently outperforming the RT-DETR baseline, YOLOv5-Imp, and MLFFNet in the accuracy–speed trade-off. These results indicate that combining global–local representation learning with targeted noise suppression is effective for practical real-time sonar detection.


Open Access Article

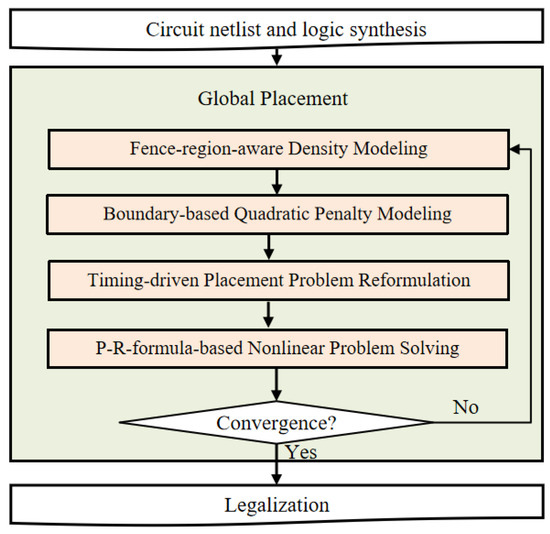

High-Performance Placement for VLSI Logic Synthesis

by

Zhifeng Lin, Yuhao Jiang, Zuodong Liu and Jiarui Chen

Algorithms 2026, 19(4), 280; https://doi.org/10.3390/a19040280 - 3 Apr 2026

Abstract


Logic synthesis is a critical stage in the VLSI design flow. Logic synthesis methods that ignore physical information produce inferior solutions with timing violations and fail to meet high-performance design requirements. In this paper, we present an analytical placement algorithm that generates timing-friendly physical information to promote high-performance logic synthesis solutions. To address the crucial congestion issue, we first propose a fence-region-aware density model. Then, a boundary-based quadratic penalty model is constructed to ensure that cells do not violate the legal boundaries. Finally, we develop a Polak–Ribière-based placement algorithm to guide cell movement while optimizing circuit timing. Compared with advanced placement work, experimental results on industrial benchmarks show that the proposed algorithm achieves a 7% improvement in worst negative slack (WNS) and a 12% improvement in total negative slack (TNS), with 3% better logic depth.
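To make the combination of a quadratic boundary penalty and a Polak–Ribière conjugate-gradient update more concrete, here is a toy one-dimensional sketch. It is not the authors' placer: the objective (each cell pulled toward a fixed pin), the penalty weight, the backtracking line search, and all function names are assumptions chosen for illustration, and the fence-region-aware density model and timing terms are left out entirely.

```python
def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def make_problem(pins, lo, hi, weight):
    """Toy objective: squared distance of each cell to its pin, plus a
    quadratic penalty that is zero inside [lo, hi] and grows outside it."""
    def f(xs):
        wl = sum((x - p) ** 2 for x, p in zip(xs, pins))
        pen = sum(max(0.0, x - hi) ** 2 + max(0.0, lo - x) ** 2 for x in xs)
        return wl + weight * pen

    def grad(xs):
        return [2.0 * (x - p)
                + weight * (2.0 * max(0.0, x - hi) - 2.0 * max(0.0, lo - x))
                for x, p in zip(xs, pins)]

    return f, grad


def polak_ribiere_minimize(f, grad, x0, iters=200, tol=1e-16):
    """Nonlinear conjugate gradient with the Polak-Ribiere(+) beta."""
    x = list(x0)
    g = grad(x)
    d = [-gi for gi in g]
    for _ in range(iters):
        gg = dot(g, g)
        if gg < tol:
            break
        if dot(g, d) >= 0.0:           # not a descent direction: restart
            d = [-gi for gi in g]
        slope, fx, step = dot(g, d), f(x), 1.0
        # Backtracking (Armijo) line search along d.
        while step > 1e-12 and \
                f([xi + step * di for xi, di in zip(x, d)]) > fx + 1e-4 * step * slope:
            step *= 0.5
        x = [xi + step * di for xi, di in zip(x, d)]
        g_new = grad(x)
        # Polak-Ribiere coefficient, clipped at zero (the "PR+" variant).
        beta = max(0.0, dot(g_new, [a - b for a, b in zip(g_new, g)]) / gg)
        d = [-gn + beta * di for gn, di in zip(g_new, d)]
        g = g_new
    return x


f, grad = make_problem(pins=[-2.0, 0.5, 3.0], lo=0.0, hi=1.0, weight=10.0)
cells = polak_ribiere_minimize(f, grad, [0.5, 0.5, 0.5])
```

With a finite penalty weight, cells whose pins lie outside the region settle slightly beyond the boundary; increasing the assumed `weight` pulls them closer to it, which is the usual trade-off of a penalty formulation.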


Open Access Article

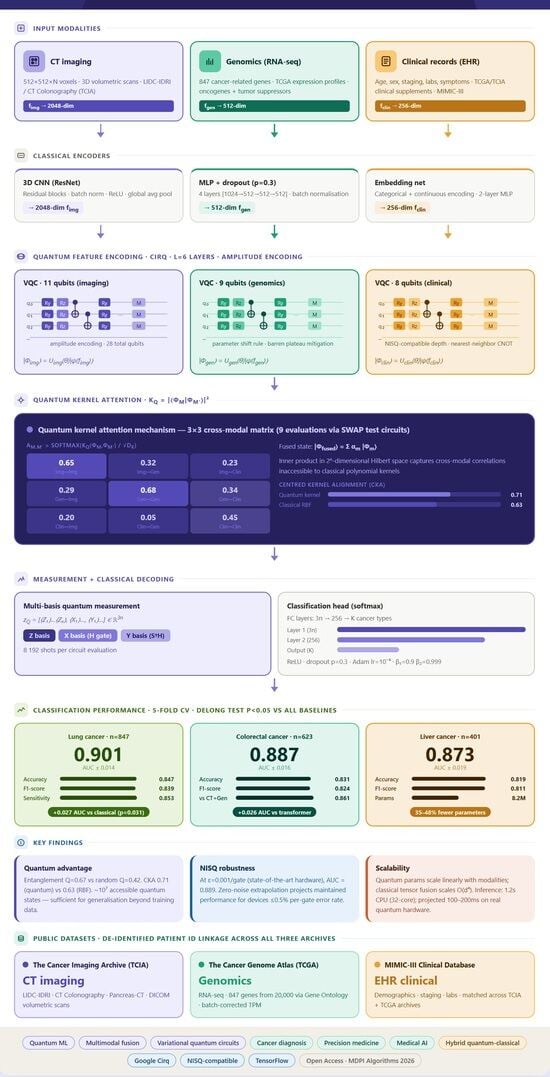

Quantum-Enhanced Multimodal Fusion Networks for Integrated Cancer Diagnosis: Combining CT, Genomics, and Clinical Records

by

Sandeep Gupta, Kanad Ray, Shamim Kaiser, Sazzad Hossain and Jocelyn Faubert

Algorithms 2026, 19(4), 279; https://doi.org/10.3390/a19040279 - 2 Apr 2026

Abstract


Cancer diagnosis is one of the hardest problems in modern medicine and requires integrating different data sources such as medical images, genomic profiles, and clinical records. Traditional machine learning methods have difficulty handling the high-dimensional, complexly correlated nature of multimodal medical data. We therefore propose a Quantum-Enhanced Multimodal Fusion Network (QEMFN) framework that moves beyond traditional image–text matching by applying quantum computing principles to combine CT imaging with genomic sequencing data and EHR information. Our approach uses variational quantum circuits for feature encoding, quantum kernel methods for cross-modal attention, and hybrid quantum–classical architectures for final classification. We implement the framework using the Google Cirq quantum computing library and validate it on publicly available datasets, including TCIA (The Cancer Imaging Archive), TCGA (The Cancer Genome Atlas), and the MIMIC-III clinical database. The matched multimodal cohort comprises 847 lung cancer patients, 623 colorectal cancer patients, and 401 liver cancer patients with complete imaging, genomic, and clinical records, assembled via de-identified patient ID linkage across the three archives. The experiment takes steps toward the realization of quantum-enhanced diagnostic systems and offers a path for subsequent experimental confirmation. We theoretically analyze the potential quantum advantage, present implementation details using Cirq, and describe a roadmap to clinical translation for quantum-enhanced diagnostic tools.


Open Access Review

A Comprehensive Review of Metaheuristics for the Modern Traveling Salesman Problem and Drone-Assisted Delivery

by

Alessio Mezzina and Mario Pavone

Algorithms 2026, 19(4), 278; https://doi.org/10.3390/a19040278 - 2 Apr 2026

Abstract


The Traveling Salesman Problem (TSP) is a fundamental challenge in combinatorial optimization, with wide-ranging applications in logistics, manufacturing, and network design. In addition to the classical formulation, recent years have witnessed the emergence of complex variants, such as the TSP with Drones (TSP-D), TSP with Time Windows, and Prize-Collecting TSP, that incorporate novel constraints reflecting real-world requirements like last-mile delivery and multimodal logistics. This review presents a comprehensive survey of metaheuristic approaches for solving both the classical TSP and its emerging extensions, with particular emphasis on hybrid methods and machine learning-enhanced strategies. Recent algorithmic developments, benchmark datasets, and evaluation metrics are examined, and critical challenges in drone coordination, synchronization, and uncertainty are identified. A bibliometric analysis is also provided to map research trends and the evolution of the field. By synthesizing foundational techniques and state-of-the-art innovations, this review outlines current progress and proposes future directions for metaheuristic optimization in increasingly dynamic and heterogeneous routing scenarios.

(This article belongs to the Special Issue Recent Advances in Artificial Intelligence and Metaheuristics Optimization)

Open Access Article

Robust Prediction of Compressive Strength of SCM Concrete with Nested Cross-Validation and Bayesian Optimization

by

Ümit Işıkdağ, Gebrail Bekdaş, Sinan Melih Nigdeli, Farnaz Ahadian and Zong Woo Geem

Algorithms 2026, 19(4), 277; https://doi.org/10.3390/a19040277 - 2 Apr 2026

Abstract

►▼

Show Figures

Concrete production is one of the main sources of CO2 emissions. The primary reason for this is the high clinker content of Portland cement. To mitigate this problem, supplementary cementitious materials (SCMs) such as fly ash, silica fume, Ground granulated blast furnace

[...] Read more.

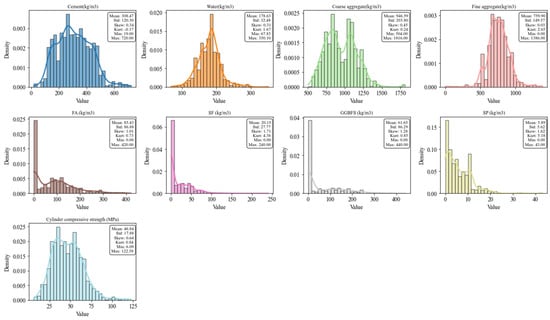

Concrete production is one of the main sources of CO₂ emissions, primarily because of the high clinker content of Portland cement. To mitigate this problem, supplementary cementitious materials (SCMs) such as fly ash, silica fume, ground granulated blast furnace slag (GGBFS), rice husk ash, and natural pozzolans are increasingly used as partial replacements for cement. SCMs not only reduce the environmental impact of concrete but can also improve its long-term mechanical and durability properties. The aim of this study is to develop a machine learning framework that can accurately predict the compressive strength of concrete containing SCMs. The framework includes the training and evaluation of several machine learning models. Nested cross-validation and Bayesian hyperparameter optimization were used to explore the full capacity of the models and ensure reliable evaluation. Permutation significance testing and learning curve analysis were applied to verify that the models learn meaningful patterns rather than memorize the data. Feature importance and SHapley Additive exPlanations (SHAP) analyses were also performed to identify the key variables that influence the prediction of the compressive strength of SCM concrete. The optimized XGBoost model achieved the best generalization performance, with a holdout R² of 0.8398. This result confirms the effectiveness of the proposed statistically rigorous machine learning framework for reliable compressive strength prediction of SCM-blended concrete.
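The structure of nested cross-validation can be sketched as follows: an inner loop selects hyperparameters using only the outer training data, and the outer loop scores the refitted model on data the selection never saw. This is a simplified illustration under stated assumptions, not the study's pipeline: it swaps Bayesian optimization for a plain grid search, uses a one-dimensional ridge regression through the origin instead of XGBoost, and runs on synthetic data; every name below is hypothetical.

```python
def k_fold_indices(n, k):
    """Split range(n) into k contiguous folds of near-equal size."""
    folds, start = [], 0
    for i in range(k):
        size = n // k + (1 if i < n % k else 0)
        folds.append(list(range(start, start + size)))
        start += size
    return folds


def fit_ridge(xs, ys, lam):
    """1-D ridge regression through the origin: w = sum(xy) / (sum(x^2) + lam)."""
    return sum(x * y for x, y in zip(xs, ys)) / (sum(x * x for x in xs) + lam)


def mse(w, xs, ys):
    return sum((w * x - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


def cross_val_mse(xs, ys, lam, k):
    """Inner loop: mean validation error of one hyperparameter value."""
    total = 0.0
    for held in k_fold_indices(len(xs), k):
        held_set = set(held)
        train = [i for i in range(len(xs)) if i not in held_set]
        w = fit_ridge([xs[i] for i in train], [ys[i] for i in train], lam)
        total += mse(w, [xs[i] for i in held], [ys[i] for i in held])
    return total / k


def nested_cv(xs, ys, grid, k_outer=5, k_inner=3):
    """Outer loop: tune on the outer-training part only, then score on the
    untouched outer-test fold. Returns (chosen_lambda, test_mse) per fold."""
    results = []
    for test in k_fold_indices(len(xs), k_outer):
        test_set = set(test)
        train = [i for i in range(len(xs)) if i not in test_set]
        tx, ty = [xs[i] for i in train], [ys[i] for i in train]
        best = min(grid, key=lambda lam: cross_val_mse(tx, ty, lam, k_inner))
        w = fit_ridge(tx, ty, best)
        results.append((best, mse(w, [xs[i] for i in test], [ys[i] for i in test])))
    return results


# Synthetic data (an assumption for illustration): y = 2x plus alternating noise.
xs = [i / 10 for i in range(1, 31)]
ys = [2 * x + (0.1 if i % 2 == 0 else -0.1) for i, x in enumerate(xs)]
grid = [0.0, 0.1, 1.0, 10.0]
scores = nested_cv(xs, ys, grid)
```

The per-fold test errors in `scores` estimate generalization without the optimistic bias that arises when the same folds are used both to tune and to evaluate.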


Open Access Article

MSA-Net: A Deep Learning Network with Multi-Axial Hadamard Attention and Pyramid Pooling for Stroke Microwave Imaging

by

Bo Han, Dongliang Li, Xuhui Zhu, Mingshuai Zhang and Peng Li

Algorithms 2026, 19(4), 276; https://doi.org/10.3390/a19040276 - 2 Apr 2026

Abstract


Microwave imaging is emerging as an alternative to conventional medical diagnostic techniques. Traditional analytical and numerical methods fail to adequately address its fundamental challenges: they often rely on strict linear approximations or simplified physical models, leading to low reconstruction accuracy, poor robustness, and limited generalization ability in complex clinical scenarios. As a result, they cannot meet the high-precision requirements of practical stroke microwave imaging. To further improve the accuracy of microwave imaging algorithms in recognizing stroke regions and solving the backscattering problem, this study combines microwave imaging with deep learning and presents the Multi-Scale Attention Network (MSA-Net). The network is based on the EGE-UNet structure with improved multi-axis Hadamard attention, and it incorporates null-space pyramid pooling and a deep supervision mechanism to further improve performance. First, a large amount of microwave data is simulated in HFSS using a human brain stroke model. Second, the simulated microwave data are converted into a tensor format. The tensor data are then input into MSA-Net, which generates a binary mask image that can be used to detect the size and location of the stroke. The microwave data are also sparsified so that the model converges faster, improving training efficiency. The method has been tested on simulation data, and comparison experiments with other networks show that MSA-Net is more accurate in detecting the location and size of the bleed. The proposed model achieves a 1.08 improvement in peak signal-to-noise ratio (PSNR) and a 0.017 reduction in learned perceptual image patch similarity (LPIPS), validating the effectiveness of the structural optimization strategy proposed in this paper.
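For readers unfamiliar with the first metric, peak signal-to-noise ratio is computed from the mean squared error between a reference image and a reconstruction. The sketch below uses the standard definition on flat lists of pixel values; the 8-bit peak value of 255 is an assumption, since the abstract does not state the value range used.

```python
import math


def psnr(reference, estimate, max_value=255.0):
    """Peak signal-to-noise ratio: 10 * log10(max_value^2 / MSE)."""
    if not reference or len(reference) != len(estimate):
        raise ValueError("inputs must be non-empty and of equal length")
    mse = sum((r - e) ** 2 for r, e in zip(reference, estimate)) / len(reference)
    if mse == 0.0:
        return math.inf  # identical images
    return 10.0 * math.log10(max_value ** 2 / mse)
```

A reported PSNR gain is the difference between the values obtained by two methods against the same reference, so a higher value indicates a closer reconstruction.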

(This article belongs to the Special Issue Algorithms for Computer Aided Diagnosis: 3rd Edition)

Open Access Article

The New Mushroom–Weed Hybrid Reproduction Optimization Algorithm and Its Application to Tourist Route Planning

by

Domagoj Palinic, Rea Aladrovic, Marina Ivasic-Kos and Jonatan Lerga

Algorithms 2026, 19(4), 275; https://doi.org/10.3390/a19040275 - 2 Apr 2026

Abstract


Nature-inspired metaheuristic algorithms are commonly applied to complex combinatorial optimization problems where exact methods are computationally impractical. Tourist route optimization is a representative multi-objective problem characterized by realistic constraints such as travel time, cost, opening hours, and transportation modes. Although Mushroom Reproduction Optimization is computationally efficient, it often experiences premature convergence in complex search spaces. This paper proposes a novel hybrid algorithm, Mushroom–Weed Hybrid Reproduction Optimization (MWHRO), which integrates the colony-based local search of the Mushroom Reproduction algorithm with the fitness-proportional reproduction and competitive elimination mechanisms of Invasive Weed Optimization. Hybridization enhances population diversity and global exploration while preserving fast convergence. The proposed algorithm is evaluated based on a realistic tourist route optimization problem using real-world data from Zagreb, Croatia, across multiple transportation modes and objective-weight scenarios. Performance is compared against Ant Colony Optimization, Invasive Weed Optimization, Particle Swarm Optimization, and standard Mushroom Reproduction Optimization under equal evaluation budgets. Experimental results demonstrate that the proposed MWHRO algorithm consistently achieves high-quality solutions with significantly lower execution times, particularly in constrained and multimodal scenarios. Statistical analysis confirms the robustness and practical suitability of the proposed approach for real-world route optimization.
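The two Invasive Weed Optimization mechanisms named above can be illustrated in a few lines: each individual produces a number of seeds that scales linearly with its fitness, and the merged population is then truncated to the best members. This is a generic sketch of standard IWO on a continuous one-dimensional toy cost, not the MWHRO algorithm or its route encoding; parameter values and function names are assumptions.

```python
import random


def seed_count(cost, best, worst, s_min=1, s_max=5):
    """Fitness-proportional reproduction (minimization): the best individual
    gets s_max seeds, the worst gets s_min, linear in between."""
    if best == worst:
        return s_max
    ratio = (worst - cost) / (worst - best)
    return s_min + int(round(ratio * (s_max - s_min)))


def iwo_generation(pop, cost_fn, sigma, max_pop, rng):
    """One generation: reproduce, disperse seeds, then eliminate the weakest."""
    costs = [cost_fn(x) for x in pop]
    best, worst = min(costs), max(costs)
    offspring = []
    for x, c in zip(pop, costs):
        for _ in range(seed_count(c, best, worst)):
            offspring.append(x + rng.gauss(0.0, sigma))  # normal dispersal
    # Competitive elimination: parents and seeds compete, best max_pop survive.
    return sorted(pop + offspring, key=cost_fn)[:max_pop]


def toy_cost(x):
    return (x - 1.0) ** 2


rng = random.Random(0)
population = [rng.uniform(-5.0, 5.0) for _ in range(5)]
history = [min(toy_cost(x) for x in population)]
for generation in range(30):
    sigma = 1.0 * (1.0 - generation / 30) + 0.01   # dispersal shrinks over time
    population = iwo_generation(population, toy_cost, sigma, max_pop=10, rng=rng)
    history.append(toy_cost(population[0]))
```

Because parents compete alongside their seeds, the best cost in `history` never increases from one generation to the next.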

(This article belongs to the Special Issue Machine Learning for Pattern Recognition (3rd Edition))


Topics

Topic in

Actuators, Algorithms, BDCC, Future Internet, JMMP, Machines, Robotics, Systems

Smart Product Design and Manufacturing on Industrial Internet

Topic Editors: Pingyu Jiang, Jihong Liu, Ying Liu, Jihong Yan

Deadline: 30 June 2026

Topic in

Algorithms, Data, Earth, Geosciences, Mathematics, Land, Water, IJGI

Applications of Algorithms in Risk Assessment and Evaluation

Topic Editors: Yiding Bao, Qiang Wei

Deadline: 31 July 2026

Topic in

Algorithms, Applied Sciences, Electronics, MAKE, AI, Software

Applications of NLP, AI, and ML in Software Engineering

Topic Editors: Affan Yasin, Javed Ali Khan, Lijie Wen

Deadline: 30 August 2026

Topic in

Agriculture, Energies, Vehicles, Sensors, Sustainability, Urban Science, Applied Sciences, Algorithms

Sustainable Energy Systems

Topic Editors: Luis Hernández-Callejo, Carlos Meza Benavides, Jesús Armando Aguilar Jiménez

Deadline: 31 October 2026


Special Issues

Special Issue in

Algorithms

Advances in Computer Vision: Emerging Trends and Applications

Guest Editors: Noman Khan, Samee Ullah Khan

Deadline: 30 April 2026

Special Issue in

Algorithms

Advances in Deep Learning-Based Data Analysis

Guest Editors: Lingyu Yan, Xing Tang

Deadline: 30 April 2026

Special Issue in

Algorithms

Machine Learning Algorithms for Signal Processing

Guest Editor: Fred Lacy

Deadline: 30 April 2026

Special Issue in

Algorithms

Advances in Parallel and Distributed AI Computing

Guest Editor: Stefan Bosse

Deadline: 30 April 2026

Topical Collections

Topical Collection in

Algorithms

Parallel and Distributed Computing: Algorithms and Applications

Collection Editors: Charalampos Konstantopoulos, Grammati Pantziou

Topical Collection in

Algorithms

Feature Papers in Algorithms and Mathematical Models for Computer-Assisted Diagnostic Systems

Collection Editor: Francesc Pozo

Topical Collection in

Algorithms

Algorithms for Games AI

Collection Editors: Wenxin Li, Haifeng Zhang

Topical Collection in

Algorithms

Feature Papers on Artificial Intelligence Algorithms and Their Applications

Collection Editors: Ulrich Kerzel, Mostafa Abbaszadeh, Andres Iglesias, Akemi Galvez Tomida