Characteristics of Eye Movements and Correlation to Cognitive Functions in Relation to the Location of Guide Signs and Driving Speed

Comparing Eye-Tracking Metrics with the Driver Activity Load Index

Simultaneous Analysis of Microsaccades and Pupil Size Variations in Age-Related Cognitive Impairment Using Eye-Tracking Technology

A Data-Driven Approach for Comparing Gaze Allocation Across Conditions

Journal Description

Journal of Eye Movement Research (JEMR) is an international, peer-reviewed, open access journal on all aspects of oculomotor functioning, including methodology of eye recording, neurophysiological and cognitive models, attention, and reading, as well as applications in neurology, ergonomics, media research, and other areas. It is published bimonthly online by MDPI (from Volume 18, Issue 1 - 2025).

- Open Access: free for readers, with article processing charges (APC) paid by authors or their institutions.

- High Visibility: indexed within Scopus, SCIE (Web of Science), PubMed, PMC, and other databases.

- Journal Rank: JCR - Q1 (Ophthalmology) / CiteScore - Q2 (Ophthalmology)

- Rapid Publication: manuscripts are peer-reviewed and a first decision is provided to authors approximately 29.4 days after submission; acceptance to publication is undertaken in 4.9 days (median values for papers published in this journal in the second half of 2025).

- Recognition of Reviewers: APC discount vouchers, optional signed peer review, and reviewer names published annually in the journal.

Impact Factor: 2.8 (2024); 5-Year Impact Factor: 2.8 (2024)

Latest Articles

Machine Learning Integration of Eye-Tracking and Cognitive Screening for Detecting Cognitive Impairment

J. Eye Mov. Res. 2026, 19(3), 57; https://doi.org/10.3390/jemr19030057 - 20 May 2026

Abstract

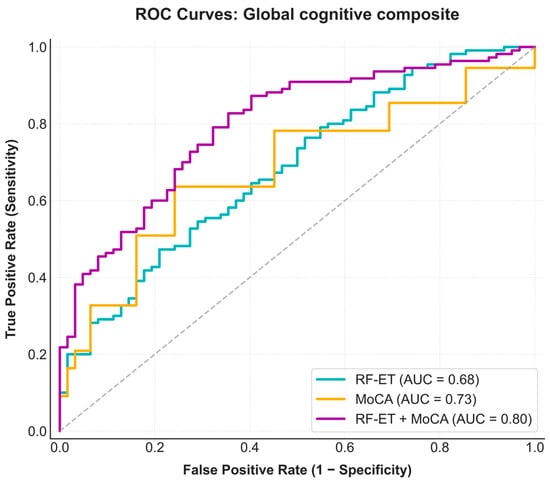

Cognitive impairment is common in Post-COVID-19 Condition (PCC), yet full neuropsychological testing remains resource-intensive. Because eye movements are known to be altered in certain cognitive disorders, Eye-Tracking (ET) offers a fast, non-invasive complementary approach for large-scale screening. This study aimed to predict neuropsychological test scores of participants with PCC from ET metrics using machine and deep learning models. ET data was collected from 172 participants performing a battery of visual tasks designed to elicit smooth pursuit and fixational eye movements, as well as pupil responses to light. Cognitive performance was assessed through established neuropsychological tests. We applied regression and classification models (e.g., Random Forest, XGBoost, and deep neural networks) to predict neuropsychological performance. Models were trained using ET data alone and in combination with the Montreal Cognitive Assessment (MoCA) scores, a widely used neuropsychological test for global cognitive screening. Although predicting individual test scores was challenging, combining them into a global composite measure improved performance. Model sensitivity and specificity reached 88% and 34% using ET data alone, and 87% and 60% when integrating ET with MoCA. This last trained model outperformed the conventional MoCA, highlighting the potential of ET as a rapid screening support tool for cognitive assessment.

(This article belongs to the Special Issue The Future Challenges of Eye Tracking Technologies)

Open Access Article

Ab Initio Binocular Formulation of Listing’s Law

by

Jacek Turski

J. Eye Mov. Res. 2026, 19(3), 56; https://doi.org/10.3390/jemr19030056 - 16 May 2026

Abstract

Human eyes do not have perfectly aligned optical components; the fovea is displaced from the posterior pole, and the crystalline lens is tilted away from the eye’s optical axis. Important in the study of vision quality, it is included here in binocular and oculomotor research. In the binocular system, with the eye’s optical asymmetry, all axes differ. The eye’s posture change is decomposed into the torsion-free part that gives the change in visual axis direction and the torsional part that best approximates the rotation about the lens’s optical axis. This geometric formulation, supported by computer simulations and modern ophthalmology studies, leads to binocular Listing’s law and the related half-angle rule, important for oculomotor control by constraining the eye’s redundant torsional degree of freedom. The eye’s primary position and the Listing plane, indispensable ingredients of Listing’s law, are replaced with the binocular eyes’ posture corresponding to the eye muscles’ natural tonus resting position, which serves as a zero-reference level for convergence effort. Further, the binocular constraints couple 3D changes in the torsional positions of the eyes within the ab initio formulation of Listing’s law here, which was previously proposed ad hoc. Finally, the noncommutativity rule underlying Listing’s law and the half-angle rule are discussed by specifying the configuration space of sequences of fixations of binocularly constrained eyes, which are visualized in 3D simulations. The results obtained in this study should be a part of the answers to the questions posted in the literature on the relevance of Listing’s law to clinical practices.

Open Access Article

Eye-Tracking Evidence That Verifiable Explanations Support Visual Evidence Checking in AI-Assisted Chest Radiograph Interpretation

by

Yong Han, Wumin Ouyang, Hemin Du, Mengyun Ma and Guanning Wang

J. Eye Mov. Res. 2026, 19(3), 55; https://doi.org/10.3390/jemr19030055 - 15 May 2026

Abstract

Evaluations of medical artificial intelligence (AI) explanations often rely on self-reported trust, perceived usefulness, acceptance, or final decision outcomes, while less directly characterizing whether users check evidence around AI outputs during decision making. In AI-assisted chest radiograph interpretation, a critical process-level question is whether users return from the AI output to the original image evidence when further scrutiny is needed. To address this question, we examined whether verifiable explanations—explanations designed to make AI recommendations checkable against the original image evidence—are associated with process markers of visual evidence checking in AI-assisted chest radiograph interpretation using eye-tracking and human-factors process measures. A 2 × 2 between-subjects experiment manipulated verifiable explanations (present vs. absent) and risk context (high vs. low), with AI recommendation correctness embedded at the trial level. Fifty-six clinically trained participants each completed 24 interpretation trials. Analyses focused primarily on gaze transitions between the AI output and the original image and dwell time on the original image, with response time and exploratory verification-related behavioral states used as auxiliary process measures. Verifiable explanations did not simply increase acceptance of AI recommendations. Instead, when AI recommendations were incorrect, they were most clearly associated with more frequent AI–image transitions and longer absolute dwell time on the original image evidence. Exploratory state-based analyses further suggested a lower tendency toward no-verify adopt under incorrect AI recommendations, but these findings were treated as complementary rather than primary evidence. Overall, the value of verifiable explanations lies not only in final decisions but in whether they make AI recommendations more inspectable against the original evidence. 
These findings provide eye-tracking evidence consistent with visual evidence checking in AI-assisted diagnostic interfaces and underscore the value of process-sensitive human-factors measures in medical AI evaluation.

Open Access Article

Cognitive Mechanisms of Predictive Processing in Chinese Reading: An Eye-Movement Analysis Based on the Ex-Gaussian Distribution

by

Wen Tong, Xiaojiao Li, Yingdi Liu and Zhifang Liu

J. Eye Mov. Res. 2026, 19(3), 54; https://doi.org/10.3390/jemr19030054 - 15 May 2026

Abstract

This study employed the Ex-Gaussian distribution model to analyse eye-tracking data, to elucidate the cognitive mechanisms underlying predictive processing during Chinese reading. Using a single-factor, two-level within-subjects design (contextual predictability: high vs. low), data from 32 adult readers were analysed across the pre-target and target word regions. The results revealed that predictive reading follows a three-stage cognitive model. In the expectation generation stage (pre-target region), a significant negative τ effect indicated resource pre-allocation driven by strong contextual constraints, thereby facilitating the construction of predictive lexical representations. In the verification and integration stage (target word region), a significant negative μ effect in the later measurement window indicated that successful prediction–input matching accelerated lexical identification and enhanced integration efficiency; the σ parameter did not reach significance in either measurement window. In the conflict resolution stage (pre-target and target word regions), a significant positive τ effect indicated that verification failure triggered lexical activation competition at the target word, driving regressive fixations to the pre-target region for contextual reanalysis; conflict resolution costs were markedly higher under the low-predictability condition, owing to the absence of a dominant activation anchor. These findings suggest that contextual predictability influences reading through a dual mechanism: the μ parameter modulates the automatic processing speed of lexical identification, whereas the τ parameter regulates the cognitive control processes underlying expectation generation and conflict resolution. Together, these results provide empirical support for the integration of predictive coding theory and cognitive control frameworks.
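
As background for the parameters named in this abstract: an ex-Gaussian variable is the sum of a Gaussian component (mean μ, standard deviation σ) and an independent exponential component (mean τ), so its mean is μ + τ and its variance is σ² + τ². The following minimal Python sketch simulates such a variable and compares sample moments with these formulas. The parameter values and the function name `sample_ex_gaussian` are hypothetical and chosen only for illustration; this is not the authors' fitting procedure or data.

```python
import random
import statistics


def sample_ex_gaussian(mu: float, sigma: float, tau: float, rng: random.Random) -> float:
    """Draw one value: Gaussian(mu, sigma) plus an independent exponential with mean tau."""
    return rng.gauss(mu, sigma) + rng.expovariate(1.0 / tau)


# Hypothetical parameter values (in milliseconds), for illustration only.
MU, SIGMA, TAU = 200.0, 30.0, 80.0

rng = random.Random(0)  # fixed seed so the run is repeatable
samples = [sample_ex_gaussian(MU, SIGMA, TAU, rng) for _ in range(20000)]

sample_mean = statistics.fmean(samples)
sample_var = statistics.pvariance(samples)

# Theoretical moments of the ex-Gaussian distribution.
expected_mean = MU + TAU
expected_var = SIGMA**2 + TAU**2

print(f"mean: sample={sample_mean:.1f}, theory={expected_mean:.1f}")
print(f"variance: sample={sample_var:.0f}, theory={expected_var:.0f}")
```

In this parameterization, a shift in μ moves the whole distribution, whereas a change in τ mainly stretches or shortens the right tail, which is why the two parameters can be interpreted separately.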

Open Access Article

Diagnostic Criteria for Convergence Excess: Diagnostic Validity of Clinical Signs Associated with Near Esophoria

by

Pilar Cacho-Martínez, Mario Cantó-Cerdán, Zaíra Cervera-Sánchez and Ángel García-Muñoz

J. Eye Mov. Res. 2026, 19(3), 53; https://doi.org/10.3390/jemr19030053 - 14 May 2026

Abstract

To propose which tests may be used for diagnosing convergence excess. A prospective study of a consecutive clinical sample was performed. Patients (18–35 years) attending optometric care underwent subjective refraction, cover test, and Symptom Questionnaire for Visual Dysfunctions (SQVD). Based on cover test and SQVD scores, two groups were recruited: 64 symptomatic subjects with near esophoria and 64 asymptomatic with normal binocular vision. Accommodative and binocular tests were assessed, identifying those with significant statistical differences between groups. Diagnostic validity was analysed using ROC curves, sensitivity, specificity, and likelihood ratios. A serial testing strategy combining tests was also evaluated. ROC analysis showed best diagnostic accuracy for binocular accommodative facility (BAF) failing with −2.00 D (area under the curve, AUC = 0.865) and vergence facility (VF) failing with base-in prisms (AUC = 0.864). Using cutoffs from ROC analysis (BAF: ≤8.25 cpm and VF ≤ 12.75 cpm), their combination showed best validity (S = 0.625, Sp = 0.938, LR+ = 10, LR− = 0.4). The combined AUC was 0.932. The proposal for diagnosing convergence excess is to use, in addition to near esophoria with normal distance heterophoria, the combination of failing BAF with negative lenses and failing vergence facility with base-in prisms.
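
The likelihood ratios reported in this abstract follow from the stated sensitivity and specificity through the standard definitions LR+ = S / (1 − Sp) and LR− = (1 − S) / Sp. The short Python sketch below reproduces that arithmetic; the function name `likelihood_ratios` is an illustrative assumption, and only the two input values come from the abstract.

```python
def likelihood_ratios(sensitivity: float, specificity: float) -> tuple:
    """Return (LR+, LR-) from sensitivity and specificity, both given as proportions."""
    if not 0.0 <= sensitivity <= 1.0:
        raise ValueError("sensitivity must lie in [0, 1]")
    if not 0.0 < specificity < 1.0:
        raise ValueError("specificity must lie strictly between 0 and 1")
    lr_pos = sensitivity / (1.0 - specificity)   # how much a positive result raises the odds
    lr_neg = (1.0 - sensitivity) / specificity   # how much a negative result lowers the odds
    return lr_pos, lr_neg


# Values reported in the abstract for the combined BAF + VF criterion.
lr_pos, lr_neg = likelihood_ratios(0.625, 0.938)
print(f"LR+ = {lr_pos:.1f}, LR- = {lr_neg:.1f}")
```

With these inputs the computation gives roughly 10.1 and 0.4, in line with the rounded values LR+ = 10 and LR− = 0.4 quoted in the abstract.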

Open Access Article

Effects of Word Frequency, Word Length, and Visual Complexity on Chinese Sentence Oral Reading: An Eye Movement Comparison Study Between Children and Adults

by

Kunyu Lian, Junhui Pei, Feifei Liang, Jie Ma, Rong Lian and Xuejun Bai

J. Eye Mov. Res. 2026, 19(3), 52; https://doi.org/10.3390/jemr19030052 - 13 May 2026

Abstract

This study investigated how word frequency, word length and visual complexity affect lexical processing during Chinese sentence oral reading, and whether these effects differ between developing and skilled readers. Third-grade children and adults read sentences aloud while their eye movements were recorded with an EyeLink 1000 Plus eye-tracker. Linear mixed-effects models revealed three main findings. First, children showed larger word-frequency and visual-complexity effects than adults, indicating less efficient lexical processing in developing readers. Second, word length moderated the effects of word frequency and visual complexity. Frequency effects were amplified for two-character words, whereas visual-complexity effects were stronger for single-character words on early measures and followed a different pattern on some late measures. Third, at the sentence level, children exhibited shorter forward saccades, more regressions and longer total reading times than adults. These findings provide developmental evidence for the visual and linguistic constraints hypothesis and show how visual recognition and overt phonological output jointly shape foveal lexical processing in Chinese oral reading.

(This article belongs to the Special Issue Eye Movements in Reading and Related Difficulties)

Open Access Article

Eye Movement Patterns as Robust Biomarkers for Schizophrenia Identification Using a Novel Data Transformation Approach

by

Lijin Huang, Senhao Li, Zhi Liu, Dan Zhang, Lihua Xu, Tianhong Zhang and Jijun Wang

J. Eye Mov. Res. 2026, 19(3), 51; https://doi.org/10.3390/jemr19030051 - 11 May 2026

Abstract

Although eye movement abnormalities are documented in schizophrenia (SZ), their translation into objective diagnostic biomarkers remains limited. In this study, we propose a novel identification framework that integrates a Sparsity-Scoring Kernel Entropy Component Analysis (SSKECA) algorithm with a multidimensional eye movement feature set. A total of 40 patients with SZ and 50 healthy controls (HC) completed a free-viewing task involving 100 distinct semantic images. The proposed SSKECA algorithm optimizes multidimensional feature representations to capture latent eye movement patterns characteristic of SZ. The SSKECA–AdaBoost model achieved competitive performance, with an accuracy of 0.933 and an area under the receiver operating characteristic curve (AUC) of 0.960. Notably, when restricted to only 25 highly discriminative images, the SSKECA–XGBoost model achieved an accuracy of 0.922. Feature ablation analyses not only reproduced previously reported eye movement findings but also highlighted additional atypical patterns. Misclassification analyses revealed more pronounced eye movement deficits in incorrectly classified SZ patients. Overall, the proposed framework translates complex eye movement patterns into robust indicators for subject-level identification, offering a practical and efficient tool to support objective assessment in SZ.

(This article belongs to the Special Issue Digital Advances in Binocular Vision and Eye Movement Assessment)

Open Access Article

Quantification of Cognitive States via Eye Tracking and Using Artificial Intelligence to Analyze Virtual Reality Learning Experiences

by

Haram Choi and Sanghun Nam

J. Eye Mov. Res. 2026, 19(3), 50; https://doi.org/10.3390/jemr19030050 - 5 May 2026

Abstract

Virtual reality (VR) technology provides a high sense of immersion and presence to users and can enhance the engagement and performance of learning. However, the VR learning environment introduces more complex audio–visual stimuli than the traditional multimedia learning environment. These excessive stimuli cause negative effects such as distraction and cognitive overload. To minimize these negative impacts and improve the learning environment, we must evaluate learners’ cognitive states under the VR environment. Cognitive states can be evaluated subjectively (e.g., through questionnaires) or objectively (e.g., using biometric signals). Subjective and objective methods must be used simultaneously, and correlations between them must be analyzed for quantifying objective measures. The accurate detection of cognitive states is challenging for traditional statistical analysis methods, necessitating the exploration of artificial intelligence (AI) techniques that can classify cognitive states. This study develops a VR learning experience evaluation system based on eye-tracking data. Cognitive states during VR learning are classified as cognitive overload, immersion, and distraction. Correlations between each cognitive state and eye-tracking metrics are evaluated, and the possibility of cognitive-state quantification is discussed. An LSTM-based model developed in this study classified cognitive states from eye-tracking data with moderate accuracy (75.60%) under a subject-independent validation setting.

Open Access Article

Oculomotor Vergence Eye Movement Endurance in Normal Vision via Virtual Reality-Integrated Eye Tracking

by

Fatema F. Hirani, Farzin Hajebrahimi and Tara L. Alvarez

J. Eye Mov. Res. 2026, 19(3), 49; https://doi.org/10.3390/jemr19030049 - 5 May 2026

Abstract

Modern societies are becoming increasingly dependent on electronics, leading to an increase in visual symptoms. Vergence endurance, the ability to sustain performance, may serve as a quantitative metric to complement symptom surveys to assess vergence performance during near visual tasks. To quantify vergence endurance, 48 participants, aged 15 to 23 years with normal binocular vision, completed a 15 min symmetrical disparity vergence step task to assess potential changes in peak vergence speed over the course of the experiment. Peak velocity, final amplitude, and the slope of the linear regression fit of the peak velocity as a function of stimulus recording were quantified for convergence and divergence responses using an eye tracker integrated in a virtual reality headset. Peak velocity was sustained by 63% and 69% of participants for convergence and divergence eye movements, respectively. Convergence and divergence responses were significantly different for peak velocity (p < 0.001) and vergence endurance (p < 0.03). The endurance metric tool has potential that may help shape future clinical applications for those with acquired brain injuries, including concussions or neurodegenerative diseases such as multiple sclerosis or Parkinson’s disease.

Open Access Article

Effects of Driving Task Demands and Information Load on AR-HUD Cognitive Efficiency: The Moderating Role of Working Memory Capacity in a VR-Based Simulated Driving Environment

by

Jing Li, Min Lin, Xinyu Feng, Hua Zhang, Chuchu Wang and Yulian Ma

J. Eye Mov. Res. 2026, 19(3), 48; https://doi.org/10.3390/jemr19030048 - 3 May 2026

Abstract

The driving scenario and information load jointly influence the cognitive efficiency of augmented reality head-up display (AR-HUD) interfaces. However, the moderating role of drivers’ working memory capacity (WMC) remains unclear. To investigate this mechanism, a simulated driving experiment with a mixed design was conducted in a low-immersivity desktop virtual reality (VR) environment. First, 40 volunteers were screened using an automated operation span task, yielding 16 high- and low-WMC participants. They then drove under three scenarios (urban intersection, expressway, construction zone) and six levels of AR-HUD visual information load. Generalized linear models were applied to the reaction time, fixation duration, and pupil diameter. The results revealed a significant three-way interaction among WMC, scenario, and information load. High-WMC drivers maintained faster responses and lower subjective loads up to Levels 4–6, adopting a deep processing strategy; low-WMC drivers already showed cognitive overload at Level 4 and above, requiring an optimal load range of Level 2–3. The construction zone induced the steepest increase in cognitive load, whereas the expressway markedly reduced sensitivity to additional visual information. Therefore, the optimal AR-HUD information load must be adapted to drivers’ WMC: high-WMC drivers can safely handle Levels 4–6 in low- or medium-demand scenarios, whereas low-WMC drivers require a minimalist presentation of Levels 2–3 in high-demand situations. This study provides quantitative, empirically grounded guidelines for designing cognitively adaptive AR-HUD interfaces.

Open Access Article

Differential Fixation and Eye Alignment Patterns in Strabismus with and Without Amblyopia Across Viewing Conditions

by

Archayeeta Rakshit, Ibrahim M. Quagraine, Gokce Busra Cakir, Aasef G. Shaikh and Fatema F. Ghasia

J. Eye Mov. Res. 2026, 19(3), 47; https://doi.org/10.3390/jemr19030047 - 3 May 2026

Abstract

Fixation instability (FI) and vergence instability (VI) in amblyopia and strabismus are associated with disrupted physiologic fixation eye movements (FEMs). This study examined how viewing conditions affect FEM patterns in strabismic subjects with and without amblyopia. FEMs of the non-dominant/amblyopic and dominant/fellow eyes were recorded using video-oculography during both-eye viewing (BEV), fellow/dominant-eye viewing (FEV/DEV), and amblyopic/non-dominant-eye viewing (AEV/NDEV) in strabismic subjects with amblyopia (SA, n = 56), without amblyopia (S, n = 19), and controls (C, n = 25). FI, VI, fast FEM amplitudes, slow FEM velocities, and time-based control of eye deviation were analyzed. The SA group showed the greatest FI in the amblyopic eye during AEV compared with the fellow eye during FEV, whereas minimal inter-ocular FI differences were observed in the S group and controls. Under monocular viewing, both SA and S groups exhibited increased FI in the non-viewing eye and higher VI than controls. Regression analyses indicated that visual acuity deficits primarily influenced viewing-eye FI and FEM dynamics, while strabismus mainly affected non-viewing-eye FI and slow FEMs. C and S groups showed the least eye deviation during BEV, whereas the SA group showed the least eye deviation—but the highest VI—during AEV, indicating a distinct pattern of incomitance. Distinct FEM patterns shaped by viewing conditions may reflect underlying visuomotor control mechanisms and serve as biomarkers for AI (artificial intelligence)-based classification.

Open Access Article

Oculometric Function More Strongly Predicts Working Memory than Stress in Military Officers

by

Mollie McGuire, Neda Bahrani, Quinn Kennedy and Dorion Liston

J. Eye Mov. Res. 2026, 19(3), 46; https://doi.org/10.3390/jemr19030046 - 2 May 2026

Abstract

Working memory, the capacity to store information for near-immediate use, and visual attention, the ability to focus on task-relevant information, are integral skills for military personnel. In civilian populations, stress is associated with worse skills. However, little is known about the relationship between stress, working memory, and visual attention in military officers, who are trained to handle acute stress and operate in high-stress environments. Thirty-three military officers completed a working memory test, a Perceived Stress Questionnaire (PSQ), and an oculometric assessment of visual tracking. The oculometric test was a modified step-ramp test that produces 10 z-scored metrics. Working memory and executive function were assessed via the n-back task. Oculometric performance and self-reported stress levels were independently associated with n-back accuracy, explaining 67% of the variance (adjusted R², n = 30). The association between oculometric performance and n-back accuracy was driven by directional anisotropy, directional noise and proportion of smooth pursuit. The association between oculometric performance and stress was complicated by sex differences. Results have important implications for the assessment of cognitive readiness in military populations. The strong relationship between oculometric performance and working memory suggests that eye-tracking-based metrics may serve as candidate indicators of cognitive function under operational demands.

Open Access Article

Effects of Cognitive, Simulator, and Real-World Training on Novice Driver Gaze Behaviour: A Pre–Post Study

by

Prem Sudhakar Lawrence and Aiswaryah Radhakrishnan

J. Eye Mov. Res. 2026, 19(3), 45; https://doi.org/10.3390/jemr19030045 - 30 Apr 2026

Abstract

Novice drivers demonstrate inefficient visual scanning and elevated crash risk relative to experienced drivers. Different training programmes may influence gaze behaviour and performance in distinct ways. This study compared the impact of cognitive, simulator-based, and real-world training on visual attention and driving-related outcomes in novice drivers. Thirty novice drivers (18–27 years; ≤1 year driving experience) were randomized into three training groups (n = 10 each): cognitive training (PsyToolkit, Version 3.7.0), game-based simulator training, and supervised real-world driving. Baseline and post-training assessments included visuomotor performance (Fitts’ Law), attentional cueing (valid/invalid reaction time), simulator-based driving errors, and eye-tracking measures of gaze behaviour. Eye-tracking outcomes included dwell-time percentage and first-fixation order across predefined areas of interest (AOIs). Participants completed 10 consecutive days of modality-specific training. Cognitive training improved visuomotor performance and increased forward road monitoring. Game-based simulator training yielded the largest reductions in simulator driving errors, particularly lane deviations (Z = −2.89, p = 0.004). Real-world driving altered visual scanning patterns, with significant differences in rear-view mirror prioritization (p = 0.024). Across groups, gaze shifted from dashboard view toward safety-relevant AOIs. Training modifies novice drivers’ gaze behaviour in modality-specific ways, suggesting that a multimodal training approach may enhance visual attention and driving safety.

Open Access Article

Validating Temporal Eye Tracking Metrics as Orthogonal Biomarkers for Aggressive Traits: A Mixed-Effects Analysis

by

Omar Alvarado-Cando, Oscar Casanova-Carvajal and José-Javier Serrano-Olmedo

J. Eye Mov. Res. 2026, 19(3), 44; https://doi.org/10.3390/jemr19030044 - 28 Apr 2026

Abstract

Atypical visual attention to aversive or threatening stimuli is a clinically relevant feature of aggressive behavior. However, the developmental dissociation between sustained visual allocation and early orienting remains unclear. This study examined the temporal dynamics of visual attentional biases in a sample of 119 children and adolescents (51 males, 68 females), clinically and behaviorally categorized into aggressive and non-aggressive cohorts. Using a free-viewing paradigm with standardized emotional stimulus pairs selected from the International Affective Picture System (IAPS), eye-tracking analysis focused on first-fixation direction and dwell time. Inferential analyses were conducted using Linear Mixed-Effect Models (LMM) and Generalized Linear Mixed-Effects Models (GLMM). The linear model revealed a significant main effect of behavioral condition: individuals with aggressive traits, regardless of their stage of development, showed greater sustained visual allocation toward negative stimuli. In contrast, the GLMM for first-fixation direction identified a significant age-by-condition interaction, indicating that early orienting differences were more clearly expressed in the aggressive adolescent cohort. These findings suggest that sustained visual preference for negative content may represent a relatively stable correlate of aggressive traits, whereas early orienting differences may vary across developmental stages. Together, these two temporal eye-tracking measures may provide complementary information for future computational approaches to aggression screening. In conclusion, these two temporal oculomotor dimensions may provide a useful feature space for future machine-learning pipelines and may serve as complementary candidate markers for comparing computational predictions against clinically established ground truth in aggression screening research.

Open Access Article

Reversible Orbital Apex Syndrome

by

Yakov Rabinovich, Inbal Man Peles, Zina Almer, Iris Ben Bassat-Mizrachi, Jonathan Sapir, Noa Hadar, Alon Zahavi and Nitza Goldenberg-Cohen

J. Eye Mov. Res. 2026, 19(3), 43; https://doi.org/10.3390/jemr19030043 - 27 Apr 2026

Abstract

Orbital apex syndrome (OAS) is characterized by optic neuropathy and ophthalmoplegia and is generally associated with poor visual prognosis. The aim of this study was to describe patients with acute OAS who demonstrated substantial recovery of visual function and ocular motility. We retrospectively reviewed the medical records of patients treated for OAS at a tertiary medical center between 2019 and 2024 whose condition ultimately proved reversible. Data on demographics, clinical findings, imaging, management, and follow-up were collected. Six patients (three female, three male; age range 14–87 years) were included and followed for a median of 7 months (range 2–31). All presented with reduced vision and ophthalmoplegia of varying severity. Underlying etiologies included inflammatory disease (n = 2), lymphoma, infection, blunt trauma, and post-surgical OAS of undetermined etiology (n = 1 each). Treatment was directed at the underlying cause. Visual acuity ranged from 20/30 to hand motion (HM) at presentation and from 20/15 to 20/60 at the final visit. Vision and ocular motility improved after a median of 2.37 months (range 0.25–5 months). Near-complete recovery of ocular motility was observed in all patients, with only one retaining mild abduction limitation. These findings highlight a subset of OAS cases with favorable outcomes and emphasize the importance of early diagnosis and etiology-directed management.

Open Access Review

Eye-Tracking-Based Interventions for School-Age Specific Learning Disorders: A Narrative Review of Functional Assessment and Gaze-Contingent Training

by

Pierluigi Diotaiuti, Francesco Di Siena, Salvatore Vitiello, Alessandra Zanon, Pio Alfredo Di Tore and Stefania Mancone

J. Eye Mov. Res. 2026, 19(3), 42; https://doi.org/10.3390/jemr19030042 - 24 Apr 2026

Abstract

Eye tracking (ET) provides process-level indices of how students sample task-relevant information during core academic activities. In school-age learners (6–18 years) with specific learning disorders (SLDs; dyslexia, dysgraphia, and dyscalculia), ET can complement behavioural assessment by quantifying oculomotor patterns linked to decoding, model–production coordination, and stepwise strategy execution. This narrative review synthesises ET findings in SLD across reading, handwriting/copying, and arithmetic and translates them into an applied framework for school-oriented use. We summarise key metrics and Areas of Interest (AOI)-based analyses, highlight technical and data-quality requirements for valid acquisition in educational settings, and outline compact functional assessment protocols integrated with standard academic and neuropsychological measures. Building on these foundations, we propose six hypothesis-driven gaze-contingent paradigms (H1–H6) as preliminary models for future experimental testing rather than as established interventions, and we map each to its current level of empirical support, specifying primary gaze outcomes and curriculum-relevant behavioural endpoints. We emphasise that eye-movement findings in specific learning disorders are heterogeneous and may vary as a function of age, task demands, and comorbidity. Accordingly, credible training effects require retention and transfer probes under standard, non-contingent display conditions, appropriate controls, and explicit developmental interpretation. Eye tracking is positioned as complementary functional evidence and as a platform for experimentally testable, mechanism-based interventions in school-age specific learning disorders.

(This article belongs to the Special Issue Eye Movements in Reading and Related Difficulties)

Open Access Review

Data-Driven Insights into E-Learning: A Comprehensive Review of Eye-Tracking Applications in Learning Systems

by

Safia Bendjebar, Yacine Lafifi, Rochdi Boudjehem and Aissa Laouissi

J. Eye Mov. Res. 2026, 19(2), 41; https://doi.org/10.3390/jemr19020041 - 17 Apr 2026

Abstract

In the last few years, universities have increasingly implemented online learning environments, allowing students to study at their own pace. These environments utilize technological tools and implement methods to support training, deliver content, and promote the acquisition of new knowledge and skills. As an example of these technologies, eye tracking has emerged as a powerful tool for studying visual attention, cognitive processes, and learning behaviors. The main aim of this study is to provide a scoping review of recent eye-tracking research across diverse learner populations, ranging from K-12 students to university-level learners and educators. The present study examined recent advances in eye-tracking technologies, focusing on their potential, especially when combined with artificial intelligence (AI) techniques such as machine learning. It analyzed 54 empirical studies in the last few years, highlighting their applicability, strengths, and limitations. The research findings highlight the promise of eye-tracking technology to transform educational practices by providing data-driven insights regarding student behavior and cognitive processes. Future research must address implementation and data-analysis challenges to maximize the educational benefits of eye tracking.

Open Access Review

Application of Eye-Tracking Technology in Assessing Binocular Vision Function in Paediatric Populations: A Scoping Review

by

Ong Huei Koon, Noor Ezailina Badarudin and Byoung-Sun Chu

J. Eye Mov. Res. 2026, 19(2), 40; https://doi.org/10.3390/jemr19020040 - 17 Apr 2026

Cited by 1

Abstract

Background: This review discusses the application of eye-tracking technology in the detection and monitoring of binocular vision anomalies among children. Methods: A scoping review using PRISMA guidelines was conducted through Scopus, ScienceDirect, and PubMed using the keywords “eye-tracking,” “binocular,” “vision,” “anomalies,” “paediatrics,” and “children” from 2015 to 2025. Studies excluded were not written in English, did not apply the eye tracker as a research tool, involved an ineligible population, or involved non-human subjects. Results: The search strategy identified 77 citations, yet only 14 studies met the inclusion criteria. This review revealed a variety of binocular vision anomalies detectable through eye-tracking systems, along with the specific models and parameters employed in these assessments. Application of eye-tracking technology in diagnosing conditions such as strabismus and amblyopia demonstrated potential for improved accuracy and early detection. Discussion: Eye-tracking technology demonstrates considerable potential for the detection and monitoring of binocular vision anomalies in children, particularly as a non-invasive method for early screening, thereby strengthening its clinical applicability. By assessing fixation stability, saccadic movements, and vergence responses, eye-tracking allows for the early detection of subtle visual anomalies, especially in the paediatric population. Conclusions: Eye-tracking technology represents a valuable advancement in paediatric vision care, enabling the more objective and earlier detection of binocular vision anomalies in the paediatric population.

(This article belongs to the Special Issue Digital Advances in Binocular Vision and Eye Movement Assessment)

Open Access Systematic Review

Virtual Reality Orthoptic Interventions for Binocular Vision Disorders: A Systematic Review and Meta-Analysis

by

Clara Martinez-Perez, Noelia Nores-Palmas, Jacobo Garcia-Queiruga, Maria J. Giraldez and Eva Yebra-Pimentel

J. Eye Mov. Res. 2026, 19(2), 39; https://doi.org/10.3390/jemr19020039 - 14 Apr 2026

Abstract

Purpose: To systematically review and meta-analyze randomized controlled trials (RCTs) evaluating digital orthoptic interventions, including virtual reality (VR)–based approaches, for convergence insufficiency and intermittent exotropia. Methods: This systematic review and meta-analysis followed PRISMA 2020 guidelines and AMSTAR-2 standards and was prospectively registered in PROSPERO. PubMed, Web of Science, and Scopus were searched up to December 2025. Eligible studies were RCTs comparing VR-based or digital orthoptic interventions with conventional therapy, placebo VR, or control conditions. Primary outcomes included near point of convergence, ocular deviation, fusional reserves, and stereopsis. Risk of bias was assessed using RoB 2 and certainty of evidence with GRADE. Results: Four RCTs (184 participants) were included. In convergence insufficiency, digital orthoptic interventions, including VR-based approaches, significantly reduced near heterophoria (mean difference [MD] −1.64 prism diopters; 95% CI −3.17 to −0.12), with no significant effects on near point of convergence or positive fusional reserves. In intermittent exotropia, VR-based interventions significantly improved near point of convergence (MD −1.60 cm; 95% CI −2.64 to −0.55), although this change did not reach the ≥4 cm threshold considered clinically meaningful according to the Convergence Insufficiency Treatment Trial. Improvements were also observed in stereopsis (MD −0.19 log units; 95% CI −0.33 to −0.04), while changes in near deviation were not significant. Evidence certainty ranged from low to moderate. Conclusions: VR-based and digital orthoptic interventions may offer modest, outcome-specific benefits as adjunctive treatments for selected binocular vision disorders. Larger, well-designed RCTs with standardized outcomes are needed.

(This article belongs to the Special Issue Digital Advances in Binocular Vision and Eye Movement Assessment)

Open Access Article

Eye-Tracked Visual Attention to Anthropomorphic Appearance and Empathic Responses in AI Medical Conversational Agents: Dissociating Trust Gains from Attentional Synergy

by

Wumin Ouyang, Hemin Du, Yong Han, Zihuan Wang and Yuyu He

J. Eye Mov. Res. 2026, 19(2), 38; https://doi.org/10.3390/jemr19020038 - 9 Apr 2026

Abstract

Understanding how users perceive and attend to the anthropomorphic appearance and empathic responses of artificial intelligence medical conversational agents (AIMCAs) can help reveal the key judgment cues underlying trust formation and use decisions, while also informing interface and dialog design. To this end, this study employs a 3 (appearance anthropomorphism: high, medium, low) × 2 (empathic response: present, absent) within-subject eye-tracking experiment, combined with subjective scales and brief post-task open-ended feedback. During a static prototype viewing task based on hypothetical consultation scenarios, we concurrently recorded trust, behavioral intention, and visual measures for key areas of interest (AOIs; appearance area, conversational content area, and overall interface area). Eye-tracking measures were normalized by AOI coverage proportion to improve cross-AOI comparability. The results show that both anthropomorphic appearance and empathic response significantly increased users’ trust in AIMCAs and their behavioral intention. An interaction between these two types of social cues was also observed, suggesting that when visual embodiment and linguistic style are aligned at the social level, users are more likely to form favorable overall judgments. At the level of visual processing, however, no interaction effect was found, and the eye-tracking measures showed only partial main effects, indicating that subjective synergy does not necessarily correspond to synergistic changes in attentional allocation. Overall, anthropomorphic appearance and empathic response exerted consistent facilitating effects on outcome variables, but displayed different patterns of attentional allocation and information prioritization at the visual level. 
Accordingly, AIMCA design should emphasize consistency between appearance cues and conversational strategies, optimize users’ initial judgments and interface comprehension, and use intention through verifiable information organization and clear boundary cues.

Topics

Topic in

Green Health, Healthcare, Sports, NeuroSci, JEMR

Perspectives in Motor Learning and Control: The Role of Neuroscience

Topic Editors: Gonçalo Dias, Mário José Martins da Silva Pereira

Deadline: 31 August 2026

Special Issues

Special Issue in

JEMR

Digital Advances in Binocular Vision and Eye Movement Assessment

Guest Editors: Clara Martinez-Perez, Jacobo Garcia-Queiruga

Deadline: 20 June 2026

Special Issue in

JEMR

Eye Movements in Reading and Related Difficulties

Guest Editors: Argyro Fella, Timothy C. Papadopoulos, Kevin B. Paterson, Daniela Zambarbieri

Deadline: 30 June 2026

Special Issue in

JEMR

Eye Movements and Reading Comprehension

Guest Editors: Tanya R. Beelders, Anne E. Cook

Deadline: 5 July 2026

Special Issue in

JEMR

Eye Tracking and Visualization

Guest Editor: Michael Burch

Deadline: 31 July 2026