- Article

Hardware Design Optimization of a Sparse Hyperdimensional Computing Accelerator for iEEG Seizure Detection

- Stef Cuyckens

- Ryan Antonio

- Marian Verhelst

- + 1 author

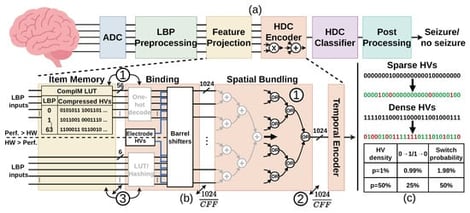

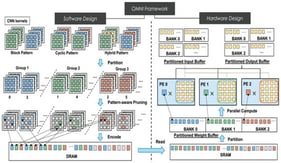

Hyperdimensional computing (HDC) is an efficient alternative to neural networks for intracranial electroencephalography (iEEG) seizure detection on edge devices with strict resource limits. Although sparse HDC can significantly reduce energy use, current hardware fails to capitalize on it for two reasons. First, existing designs do not optimize the encoding architecture specifically for sparse execution, leaving potential energy savings unrealized. Second, prior work often overlooks the “area” problem: high-dimensional vectors occupy a large physical area on a chip, and this area must be reduced before such devices are small enough for practical edge use. This work presents a sparse HDC accelerator that addresses both gaps through three contributions. First, we streamline the sparse encoding architecture to improve energy and area efficiency by integrating a compressed item memory (CompIM) and simplified spatial bundling. Second, to address the area bottleneck and enable edge deployment, we systematically explore area trade-offs via sequentialization techniques, evaluating both channel folding (CF) and vector folding (VF). Third, we improve efficiency further by proposing an item-memory-free (IM-free) architecture. By replacing the baseline segmented shift binding with a standard shift binding scheme and using raw local binary pattern (LBP) codes directly as shift amounts, we bypass the CompIM entirely, saving both area and energy. Because this optimization reduces detection accuracy, we present two tailored configurations. The energy-optimized IM-free design achieves a 5.55× area and 3.08× energy improvement over the sparse HDC baseline, alongside 8.20× and 13.37× improvements over the dense baseline. The balanced streamlined design prioritizes clinical performance: it uses a channel folding factor (CFF) of 4 to preserve higher accuracy and achieves a 5.97× area and a 4.66× energy improvement over the dense baseline, at the cost of a 4× latency increase.
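To make the IM-free idea concrete, the following is a minimal software sketch, not the accelerator's actual datapath. It assumes each channel owns a fixed sparse binary hypervector (stored as the set of its set-bit indices), that an LBP code is formed from the signs of consecutive sample differences, and that bitwise OR stands in for the simplified spatial bundling. The dimension, sparsity, and all function names (`lbp_code`, `shift_bind`, `spatial_bundle`, `encode_window`, `encode_window_folded`) are illustrative assumptions, not taken from the paper.

```python
import random

DIM = 1024   # hypervector dimension (illustrative, not from the paper)
ONES = 8     # set bits per channel vector (illustrative)


def lbp_code(samples):
    """Pack the signs of consecutive differences into an integer LBP code."""
    code = 0
    for prev, cur in zip(samples, samples[1:]):
        code = (code << 1) | (1 if cur > prev else 0)
    return code


def make_channel_vectors(n_channels, dim=DIM, ones=ONES, seed=0):
    """One random sparse vector per channel, stored as a set of set-bit indices."""
    rng = random.Random(seed)
    return [frozenset(rng.sample(range(dim), ones)) for _ in range(n_channels)]


def shift_bind(vec, code, dim=DIM):
    """Standard shift binding: rotate the vector circularly by the raw LBP code.

    No item-memory lookup is needed; the code itself is the shift amount.
    """
    return frozenset((i + code) % dim for i in vec)


def spatial_bundle(bound_vecs):
    """Assumed stand-in for spatial bundling: bitwise OR across channels."""
    out = set()
    for v in bound_vecs:
        out |= v
    return frozenset(out)


def encode_window(windows, channel_vecs, dim=DIM):
    """Bind each channel vector with its LBP code, then bundle all channels."""
    bound = [shift_bind(v, lbp_code(w), dim) for v, w in zip(channel_vecs, windows)]
    return spatial_bundle(bound)


def encode_window_folded(windows, channel_vecs, cff, dim=DIM):
    """Software analogy of channel folding: handle the channels in sequential
    passes (at most `cff`), accumulating the bundle. Returns (vector, passes)."""
    n = len(channel_vecs)
    per_pass = -(-n // cff)  # ceiling division: channels handled per pass
    acc = frozenset()
    passes = 0
    for start in range(0, n, per_pass):
        chunk = slice(start, start + per_pass)
        acc |= encode_window(windows[chunk], channel_vecs[chunk], dim)
        passes += 1
    return acc, passes
```

In this sketch the folded and unfolded encoders produce the same vector; folding only trades the number of channels processed at once for extra sequential passes, which mirrors the area-for-latency trade-off described above.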

23 April 2026