Abstract

Process monitoring methods play a crucial role in identifying equipment malfunctions and instrument failures, as well as in maintaining process safety and product quality. Selecting the right approach for fault detection and diagnosis is therefore vital. Several localization methods based on Kernel Principal Component Analysis (KPCA) exist, such as the partial localization approach, which is effective at detecting anomalies but does not always pinpoint faults precisely: it often identifies a suspicious area or group of variables without isolating the exact source of the fault. In complex systems such as chemical reactors, it can produce false positives or incorrect localizations if the data are noisy or if the fault affects multiple correlated variables. Conversely, the reconstruction-based contribution (RBC) approach, when integrated with KPCA, is both widely documented in the literature and highly effective for fault localization. This method first identifies anomalies using Hotelling’s $T^2$ statistic and the $Q$ (squared prediction error) statistic, then analyzes the contributions of individual variables to these indices in order to isolate the fault. However, the convergence of the optimization algorithm using the $T^2$ index is not guaranteed. To address this limitation, we introduce RBC-KGLRT, a novel localization framework that integrates reconstruction-based contribution with KPCA and the Generalized Likelihood Ratio Test in its kernel form (KGLRT) to improve both precision and reliability in localization tasks. This work extends the traditional KPCA and reduced-rank KPCA fault detection approaches, enhanced by the KGLRT index, into a fault localization solution through the RBC method. Its effectiveness is evaluated using the Tennessee Eastman Process (TEP), a widely recognized simulation benchmark in process control and chemical engineering.

1. Introduction

Real-time monitoring of industrial processes plays a key role in assessing operational performance and improving both product efficiency and quality [1]. Failures in sensors may significantly degrade process performance, cause unexpected shutdowns, or in extreme cases lead to serious accidents. Nevertheless, when other process elements such as controllers, actuators, and equipment operate correctly, the adverse effects of sensor malfunctions can be reduced through reliable fault detection [2,3] and diagnosis strategies.

Fault diagnosis [4] generally involves three fundamental steps:

- Detection: Identifying abnormal behavior in the process that may result from equipment faults, mechanical wear, or major disturbances during operation.

- Isolation: Determining the precise location or source of the detected fault within the system.

- Assessment: Evaluating the magnitude and consequences of the fault on the process performance.

Kernel Principal Component Analysis and several of its advanced variants [5,6] have shown strong capabilities in fault detection [7] and have been successfully applied to many industrial processes [8,9,10]. Various enhanced KPCA-based techniques [11] have been developed to further improve monitoring performance. For instance, the Feature Vector Selection-based KPCA (FVS-KPCA) approach [12] focuses on selecting the most relevant feature vectors to improve computational efficiency and detection accuracy. Another notable method combines KPCA with Kernel Density Estimation [13]. In this framework, the conventional Gaussian-based control limits are replaced with data-driven thresholds estimated using KDE, which significantly increases the reliability and accuracy of fault detection in nonlinear industrial processes [14].

In [15], the authors proposed a new fault detection strategy that integrates the Generalized Likelihood Ratio Test with the Exponentially Weighted Moving Average method. More recently, an advanced approach called RR-KGLRT was introduced in [16]. This technique combines the advantages of reduced-rank KPCA with the GLRT framework to further improve fault detection performance.

After a fault has been detected, determining which variables are associated with the abnormal behavior becomes a critical step. Fault localization, also referred to as fault identification, is a diagnostic system’s ability to determine the exact origin of a fault within a dynamic process. In most monitoring frameworks, the identification stage is closely linked to the detection method.

To determine faulty variables, several techniques based on Principal Component Analysis have been proposed in the literature. One notable approach relies on structured residuals for fault localization [17]. This method consists of generating a set of residual signals designed so that each residual responds to specific faults while remaining insensitive to others. Consequently, every fault can be associated with a theoretical signature, which facilitates identifying the variable responsible for the abnormal behavior. This strategy, commonly referred to as Partial PCA, has been successfully validated in previous studies [18].

To address nonlinear relationships between variables, researchers later introduced a kernel-based extension of PCA known as Kernel Principal Component Analysis, which has also demonstrated effective performance in fault identification tasks [19]. Subsequent research further improved these approaches by proposing Reduced KPCA and Online Reduced KPCA methods for fault isolation [20,21]. These monitoring techniques have been successfully applied to fault diagnosis in air quality monitoring networks, where they have shown strong performance in both detection and localization.

Despite their effectiveness, a major drawback of these approaches is the computational cost associated with generating partial models, which increases significantly as the number of system variables grows.

In system diagnostics, combining Kernel Principal Component Analysis with complementary analytical methods has enabled the development of advanced strategies for fault detection and isolation [22]. One notable example is the integration of KPCA with Fisher Discriminant Analysis [23], which forms an effective framework for fault identification and classification in complex systems. In this approach, KPCA first maps the original dataset into a high-dimensional feature space, allowing nonlinear relationships between variables to be captured more effectively than with linear techniques. Subsequently, FDA is applied to improve the separation between classes—such as normal and faulty operating conditions—by maximizing the ratio between inter-class variance and intra-class variance. Several studies have demonstrated that this hybrid strategy provides better diagnostic performance than using KPCA or FDA independently [24]. However, the computational burden associated with KPCA can become significant when dealing with large datasets or high-dimensional data, which may limit its practical implementation.

KPCA has also been combined with neural network-based models, including Artificial Neural Network architectures and Long Short-Term Memory networks [25]. In such hybrid frameworks, KPCA typically serves as a preprocessing step to reduce data dimensionality while preserving nonlinear patterns. The processed data are then used as inputs to neural networks that perform fault classification and identification. LSTM networks are particularly well-suited for analyzing sequential and time-dependent data, making them useful for applications involving real-time monitoring and predictive maintenance. These techniques are widely used in industrial environments, such as rotating machinery monitoring and manufacturing systems, where early detection of anomalies is essential. Nevertheless, both KPCA and LSTM models may require considerable computational resources. KPCA becomes computationally demanding for large datasets, while LSTM networks require extensive training data and significant processing power, which may limit their deployment in resource-constrained environments.

Another effective strategy involves combining KPCA with clustering algorithms [26], particularly K-means clustering. In this framework, clustering techniques are used to group similar observations, allowing the distinction between normal and faulty operating conditions without requiring labeled data. This approach is particularly useful for identifying hidden faults in industrial processes and mechanical systems characterized by nonlinear behavior [27].

In addition to detecting faults [28], system diagnosis also requires estimating the magnitude of these faults. However, such estimation cannot be performed directly in the KPCA-transformed feature space. It is therefore necessary to reconstruct the corresponding observations in the original data space. This challenge is known as the pre-image problem. Addressing this problem is essential for recovering the original variables associated with a detected fault. In practice, an exact solution may not always exist or may not be unique. The objective is therefore to find an observation in the original space whose mapped representation in the feature space is as close as possible to the considered point. Several techniques have been proposed to approximate solutions to this problem [29].

After addressing the pre-image problem, the next step in the diagnostic process is fault localization. In this context, Kernel Principal Component Analysis has been extensively studied as an effective monitoring method. For example, a reconstruction-based contribution approach, commonly referred to as RBC-KPCA, was introduced in [30] to improve fault localization accuracy. This method combines the principles of variable reconstruction and contribution analysis and relies on a fixed-point algorithm to estimate the contribution of each variable to the detected fault.

However, previous studies have indicated that reliable fault diagnosis cannot always be achieved when the monitoring scheme relies solely on Hotelling’s T-squared ($T^2$) statistic, because the convergence of the corresponding optimization algorithm is not always guaranteed [31]. For this reason, RBC-KPCA is often implemented using the squared prediction error ($Q$) index or a combination of multiple monitoring indices.

Another fault diagnosis strategy was proposed in [32], where the Q statistic is employed together with the reconstruction-based contribution principle and the reduced-rank KPCA algorithm. This approach improves the identification of faulty variables by exploiting dimensionality reduction while maintaining the nonlinear modeling capability of KPCA.

In this work, we propose a new diagnostic framework called RBC-KGLRT, which is built upon the KPCA methodology and is specifically designed for sensor fault diagnosis in chemical processes. The main contribution of this approach lies in extending the reduced-rank KPCA-based fault detection method by incorporating the Generalized Likelihood Ratio Test in its kernel formulation [16,33]. More precisely, the proposed strategy combines the Kernel Generalized Likelihood Ratio Test (KGLRT) for fault detection with the reconstruction-based contribution (RBC) technique for fault localization.

By integrating these two complementary mechanisms, the proposed method enhances the reliability of fault detection and the precision of fault localization. The KGLRT index provides a strong capability for identifying abnormal process behavior, while the RBC technique enables the identification of the specific variables responsible for the fault. As a result, the RBC-KGLRT framework reduces false alarms, improves the accuracy of fault identification, and reduces the average convergence time of the diagnostic procedure.

Unlike monitoring approaches that rely solely on global statistical indices, the proposed method can determine the exact sensors or process variables contributing to the detected fault. This capability facilitates targeted corrective actions, thereby reducing downtime and minimizing operational disruptions. Overall, the combination of KGLRT for detection and RBC for localization creates a complementary and efficient diagnostic framework that significantly improves the performance of industrial process monitoring systems.

The remainder of this article is organized as follows: Section 2 provides an overview of the Kernel Principal Component Analysis (KPCA) method, together with the reduced-rank KPCA (RR-KPCA) approach. Section 3 introduces the proposed RBC-KGLRT method, including its detailed formulation. Section 4 evaluates the performance of the fault detection and localization approach using a dataset derived from the Tennessee Eastman Process (TEP), under normal and faulty operating conditions. Finally, conclusions are presented in the last section of the article.

2. Review of KPCA and RR-KPCA

2.1. Principle of KPCA

Kernel Principal Component Analysis (KPCA) has emerged as an advanced technique for diagnosing nonlinear systems, addressing the limitations of traditional Principal Component Analysis (PCA). While PCA is effective for linear systems, many industrial processes exhibit nonlinear behaviors, which PCA may fail to fully capture. KPCA overcomes this challenge by leveraging kernel functions [34] to identify and exploit nonlinear relationships among variables.

The core principle of KPCA involves transforming input data—originally structured in a way that is linearly inseparable—into a higher-dimensional feature space. In this new space, the data can be linearly separated, enabling more accurate analysis and interpretation of complex, nonlinear system behaviors.

Given an observation $x \in \mathbb{R}^{m}$ (where $m$ is the number of process variables), its transformation into the feature space $\mathcal{F}$ is achieved through a nonlinear mapping function $\phi : \mathbb{R}^{m} \rightarrow \mathcal{F}$. This projection allows the data to be represented in a space where nonlinear relationships can be effectively analyzed.

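The input observation matrix is defined as follows:

$$X = \begin{bmatrix} x_1 & x_2 & \cdots & x_N \end{bmatrix}^{T} \in \mathbb{R}^{N \times m}$$

where $N$ denotes the total number of observations for each process variable. The transformed data in the feature space are represented by the following matrix of mapped observations:

$$\chi = \begin{bmatrix} \phi(x_1) & \phi(x_2) & \cdots & \phi(x_N) \end{bmatrix}^{T}$$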

To construct the implicit KPCA model [35], it is necessary to compute the eigenvalues of the covariance matrix within the transformed feature space, where the covariance matrix is expressed as follows:

$$C = \frac{1}{N} \sum_{i=1}^{N} \phi(x_i)\,\phi(x_i)^{T}$$

However, since the explicit form of the nonlinear mapping function $\phi$ is unknown, directly computing the covariance matrix becomes infeasible. To address this challenge, we employ the kernel trick, a technique initially introduced for Support Vector Machines (SVMs) [36]. This approach allows us to work with the inner product between transformed observations $\phi(x_i)$ and $\phi(x_j)$, which is defined as follows:

$$k(x_i, x_j) = \langle \phi(x_i), \phi(x_j) \rangle = \phi(x_i)^{T} \phi(x_j)$$

where $k$ represents the kernel function. Numerous kernel functions have been proposed in the literature [34]. In this work, we use the Gaussian kernel, also referred to as the Radial Basis Function (RBF) kernel, which is defined as follows:

$$k(x_i, x_j) = \exp\left(-\frac{\|x_i - x_j\|^{2}}{2\sigma^{2}}\right)$$

where $\sigma$ is the kernel width parameter.

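Using the kernel trick to compute the dot product $\phi(x_i)^{T}\phi(x_j)$, the KPCA model can be obtained by decomposing the kernel matrix given below into its eigenvalues and eigenvectors:

$$K = \begin{bmatrix} k(x_1, x_1) & \cdots & k(x_1, x_N) \\ \vdots & \ddots & \vdots \\ k(x_N, x_1) & \cdots & k(x_N, x_N) \end{bmatrix} \in \mathbb{R}^{N \times N}$$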

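The kernel matrix decomposition is equivalent to performing PCA in the feature space $\mathcal{F}$, as follows (for more details, see [31]):

$$K = V \Lambda V^{T}$$

The matrix of eigenvalues is $\Lambda = \mathrm{diag}(\lambda_1, \lambda_2, \ldots, \lambda_N)$. The eigenvalues are arranged in descending order, and $V = [v_1, v_2, \ldots, v_N]$ is the matrix of their corresponding eigenvectors.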
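The kernel matrix and its eigen-decomposition can be sketched in a few lines of NumPy. This is only an illustration: the function names, the toy data, and the parameterization $\exp(-\|x_i - x_j\|^2 / (2\sigma^2))$ of the Gaussian kernel are assumptions, not taken from the original implementation.

```python
import numpy as np

def gaussian_kernel_matrix(X, sigma):
    """Return the N x N matrix with entries exp(-||x_i - x_j||^2 / (2 sigma^2))."""
    X = np.asarray(X, dtype=float)
    sq_norms = np.sum(X**2, axis=1)
    sq_dists = sq_norms[:, None] + sq_norms[None, :] - 2.0 * X @ X.T
    sq_dists = np.maximum(sq_dists, 0.0)  # clip tiny negative round-off values
    return np.exp(-sq_dists / (2.0 * sigma**2))

def sorted_eigendecomposition(K):
    """Eigen-decompose a symmetric matrix with eigenvalues in descending order."""
    eigvals, eigvecs = np.linalg.eigh(K)  # eigh returns ascending eigenvalues
    order = np.argsort(eigvals)[::-1]
    return eigvals[order], eigvecs[:, order]

rng = np.random.default_rng(0)
X_train = rng.normal(size=(8, 3))  # toy data: 8 observations, 3 variables
K = gaussian_kernel_matrix(X_train, sigma=10.0)
eigvals, eigvecs = sorted_eigendecomposition(K)
```

`np.linalg.eigh` is used rather than a general eigen-solver because the kernel matrix is symmetric, which keeps the eigenvalues real and the eigenvectors orthonormal.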

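For simplicity, we have assumed that the data are centered in the feature space. If this is not the case, the transformed data must be centered, which leads to scaling the kernel matrix as follows:

$$\bar{K} = K - 1_N K - K 1_N + 1_N K 1_N$$

where $1_N = \frac{1}{N}\begin{bmatrix} 1 & \cdots & 1 \\ \vdots & \ddots & \vdots \\ 1 & \cdots & 1 \end{bmatrix} \in \mathbb{R}^{N \times N}$.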
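As a minimal sketch of the centering step, the snippet below applies the usual double-centering formula $K - 1_N K - K 1_N + 1_N K 1_N$ (with $1_N$ the $N \times N$ matrix whose entries all equal $1/N$). The function name is hypothetical, and a linear kernel is used only because it allows the result to be checked against explicitly centered data.

```python
import numpy as np

def center_kernel_matrix(K):
    """Return K - 1_N K - K 1_N + 1_N K 1_N, where 1_N is N x N with entries 1/N."""
    N = K.shape[0]
    one_N = np.full((N, N), 1.0 / N)
    return K - one_N @ K - K @ one_N + one_N @ K @ one_N

# Sanity check with a linear kernel (assumption for illustration only):
# centering K = X X^T must give the same result as centering X first.
rng = np.random.default_rng(1)
X = rng.normal(size=(6, 2))
K_centered = center_kernel_matrix(X @ X.T)
X_centered = X - X.mean(axis=0)
```

With a nonlinear kernel the mapped data are never formed explicitly, so this matrix formula is the only practical way to centre them.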

When a new observation $x_t$ is collected at instant $t$, its kernel vector can be computed from the training data as follows: $k_t = [k(x_1, x_t), k(x_2, x_t), \ldots, k(x_N, x_t)]^{T}$. The kernel vector must be scaled before the test vector is projected onto the retained, centered eigenvectors in the feature space. Then, we have the following equation:

$$\bar{k}_t = k_t - 1_N k_t - K 1_t + 1_N K 1_t$$

where $1_t = \frac{1}{N}[1, \ldots, 1]^{T} \in \mathbb{R}^{N}$.
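The scaling of a test kernel vector can be illustrated as follows. This is a sketch under stated assumptions: the helper names are hypothetical, the Gaussian kernel is parameterized as $\exp(-\|x - y\|^2 / (2\sigma^2))$, and a training point is reused as the "new" observation so that the result can be compared with the centered training kernel matrix.

```python
import numpy as np

def gaussian_kernel(A, B, sigma):
    """Pairwise Gaussian kernel between the rows of A and the rows of B."""
    sq = np.sum(A**2, axis=1)[:, None] + np.sum(B**2, axis=1)[None, :] - 2.0 * A @ B.T
    return np.exp(-np.maximum(sq, 0.0) / (2.0 * sigma**2))

def center_test_kernel_vector(k_t, K):
    """Return k_t - 1_N k_t - K 1_t + 1_N K 1_t for one new observation."""
    N = K.shape[0]
    one_N = np.full((N, N), 1.0 / N)  # N x N matrix with entries 1/N
    one_t = np.full(N, 1.0 / N)       # N-vector with entries 1/N
    return k_t - one_N @ k_t - K @ one_t + one_N @ K @ one_t

rng = np.random.default_rng(2)
X_train = rng.normal(size=(7, 3))  # toy training data
sigma = 10.0
K = gaussian_kernel(X_train, X_train, sigma)

x_new = X_train[4]  # reuse a training point so the result can be checked
k_t = gaussian_kernel(X_train, x_new[None, :], sigma).ravel()
k_t_centered = center_test_kernel_vector(k_t, K)
```

For a training observation, the centered kernel vector coincides with the matching column of the centered kernel matrix, which gives a convenient consistency check.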

The number of retained principal components (PCs), $\ell$, is determined using the cumulative percent variance (CPV) [37], which is defined as follows:

$$\mathrm{CPV}(\ell) = \frac{\sum_{j=1}^{\ell} \lambda_j}{\sum_{j=1}^{N} \lambda_j} \times 100$$
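The CPV criterion can be sketched as follows. The function name, the example eigenvalues, and the 90% threshold are illustrative assumptions; the text does not state which threshold is used.

```python
import numpy as np

def select_components_by_cpv(eigenvalues, threshold):
    """Smallest number of leading components whose CPV (in %) reaches `threshold`."""
    lam = np.sort(np.asarray(eigenvalues, dtype=float))[::-1]  # descending order
    cpv = 100.0 * np.cumsum(lam) / np.sum(lam)
    n_retained = int(np.searchsorted(cpv, threshold)) + 1
    return min(n_retained, lam.size), cpv

# Made-up eigenvalues: the cumulative percentages are 50, 80, 95, 99 and 100.
n_pc, cpv = select_components_by_cpv([5.0, 3.0, 1.5, 0.4, 0.1], threshold=90.0)
```

Here three components are retained, since the first two explain only 80% of the total variance.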

2.2. Principle of Reduced-Rank KPCA

The core concept of this method is to eliminate dependencies among variables in the feature space while retaining a reduced yet representative subset of the original dataset, $X_r \in \mathbb{R}^{r \times m}$, where $r$ is the number of retained observations. The RR-KPCA-based fault detection approach operates in two stages: offline model training and real-time fault detection. During the offline stage, the method constructs a compact reference model that captures the system’s normal behavior. This involves selecting observations that provide linearly independent representations in the transformed feature space, ensuring that only informative and system-relevant data are retained. In the online stage, the derived model is applied to monitor the system, enabling the detection of anomalies as they occur.

To build the RR-KPCA reference model, the most informative observations, in terms of system insight, are saved in a reduced training data matrix, denoted as $X_r$. We suppose that the system operates under normal conditions for $N$ time steps.

At the initial time step, the reduced initial data matrix is expressed as $X_r = x_1^{T}$.

At each time step $t$, a new observation $x_t$ is collected. Its kernel vector, $k_t$, is computed, and the kernel matrix is updated by adding a new row and column to the previous matrix, as follows [16]:

$$K_t = \begin{bmatrix} K_{t-1} & k_t \\ k_t^{T} & k(x_t, x_t) \end{bmatrix}$$

The rank of the updated kernel matrix is then calculated, giving rise to two possible scenarios:

- If the kernel matrix has full rank, indicating that the projected data in the feature space are linearly independent, the new observation is added to the reduced data matrix.

- If the kernel matrix does not have full rank, indicating linear dependencies in the projected data in the feature space, the reduced data matrix remains unchanged, and the kernel matrix is reverted to its previous state.

Once all observations have been evaluated, the reduced data matrix is obtained, and the reduced kernel matrix is constructed. Following this, the initial RR-KPCA model is estimated, including the computation of its eigenvalues and eigenvectors.
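The selection loop described above can be sketched as follows. This is a minimal illustration rather than the reference implementation of [16]: the function names, the toy data, and the numerical rank tolerance `rank_tol` are assumptions.

```python
import numpy as np

def gaussian_kernel(A, B, sigma):
    """Pairwise Gaussian kernel between the rows of A and the rows of B."""
    sq = np.sum(A**2, axis=1)[:, None] + np.sum(B**2, axis=1)[None, :] - 2.0 * A @ B.T
    return np.exp(-np.maximum(sq, 0.0) / (2.0 * sigma**2))

def select_reduced_set(X, sigma, rank_tol=1e-10):
    """Keep only the observations whose addition leaves the kernel matrix full rank."""
    X = np.asarray(X, dtype=float)
    kept = [0]                                 # start from the first observation
    K = gaussian_kernel(X[:1], X[:1], sigma)   # 1 x 1 initial kernel matrix
    for t in range(1, X.shape[0]):
        x_t = X[t:t + 1]
        k_t = gaussian_kernel(X[kept], x_t, sigma)         # kernel vector, shape (r, 1)
        k_tt = gaussian_kernel(x_t, x_t, sigma)            # shape (1, 1)
        K_candidate = np.block([[K, k_t], [k_t.T, k_tt]])  # add one row and one column
        if np.linalg.matrix_rank(K_candidate, tol=rank_tol) == K_candidate.shape[0]:
            kept.append(t)                     # full rank: retain the observation
            K = K_candidate
        # otherwise K stays as it was, i.e. the update is reverted
    return X[kept], K, kept

# Toy data in which rows 2 and 4 duplicate rows 0 and 1.
X = np.array([[0.0, 0.0], [1.0, 0.0], [0.0, 0.0], [0.0, 1.0], [1.0, 0.0]])
X_r, K_r, kept = select_reduced_set(X, sigma=1.0)
```

In exact arithmetic a Gaussian kernel matrix built from distinct points has full rank, so in practice the tolerance of the rank test decides how aggressively near-duplicate observations are discarded.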

2.3. Fault Detection Index

The KPCA-based monitoring framework, similar to other statistical process control approaches, operates in two distinct phases:

- Offline Phase: This stage focuses on building an implicit KPCA model of the process, as well as determining the detection index and its confidence threshold under normal operating conditions.

- Online Phase: During this stage, the detection index is computed for each new observation to assess whether the process is operating normally or if a fault has occurred.

Among the most commonly used detection indices in the residual space are the Quadratic Prediction Error (Q) and the Kernel Generalized Likelihood Ratio Test (KGLRT).

2.3.1. Quadratic Prediction Error (Q)

The Q statistic is widely used to identify anomalies in datasets [38]. In their work, the researchers in [39] introduced a straightforward formula for computing the Q index within the feature space, expressed as follows:

A faulty condition is declared if the $Q$ index exceeds its confidence limit at the instant $t$, as follows:

$$Q(t) > Q_{\alpha}$$

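$Q_{\alpha}$ is the confidence limit of the $Q$ index and can be calculated using the $\chi^{2}$ distribution, as given by the following:

$$Q_{\alpha} = g\,\chi^{2}_{h,\alpha}$$

with $h$ degrees of freedom, where $\alpha$ is the confidence level. The parameters $g$ and $h$ are determined as follows:

$$g = \frac{b}{2a}, \qquad h = \frac{2a^{2}}{b}$$

where $a$ and $b$ represent the mean and variance of the $Q$ index, respectively.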
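The offline and online computations for the $Q$ index can be sketched as below. Several points are assumptions made for illustration: the $Q$ index is computed in the commonly used form $\bar{k}(x,x) - \sum_j t_j^2$ (centered self-similarity minus the squared scores on the retained components), which may differ in notation from the expression of [39]; the Gaussian kernel is parameterized as $\exp(-\|x - y\|^2/(2\sigma^2))$; and all function names and data are hypothetical.

```python
import numpy as np

def gaussian_kernel(A, B, sigma):
    """Pairwise Gaussian kernel between the rows of A and the rows of B."""
    sq = np.sum(A**2, axis=1)[:, None] + np.sum(B**2, axis=1)[None, :] - 2.0 * A @ B.T
    return np.exp(-np.maximum(sq, 0.0) / (2.0 * sigma**2))

def fit_kpca(X, sigma, n_components):
    """Offline phase: centered kernel matrix and its leading eigenpairs."""
    X = np.asarray(X, dtype=float)
    K = gaussian_kernel(X, X, sigma)
    row_mean = K.mean(axis=1)
    K_bar = K - row_mean[:, None] - row_mean[None, :] + K.mean()
    lam, V = np.linalg.eigh(K_bar)  # ascending eigenvalues
    top = np.argsort(lam)[::-1][:n_components]
    return {"X": X, "K": K, "sigma": sigma, "lam": lam[top], "V": V[:, top]}

def q_index(model, x_new):
    """Online phase: squared residual of the centered feature vector (assumed form)."""
    X, K, sigma = model["X"], model["K"], model["sigma"]
    k_t = gaussian_kernel(X, np.atleast_2d(x_new), sigma).ravel()
    k_bar = k_t - k_t.mean() - K.mean(axis=1) + K.mean()  # centered kernel vector
    k_xx_bar = 1.0 - 2.0 * k_t.mean() + K.mean()          # k(x, x) = 1 for this kernel
    scores = model["V"].T @ k_bar / np.sqrt(model["lam"])
    return float(k_xx_bar - scores @ scores)

def chi2_scaling_parameters(index_values):
    """g = b / (2a) and h = 2a^2 / b, with a and b the sample mean and variance."""
    a = np.mean(index_values)
    b = np.var(index_values, ddof=1)
    return b / (2.0 * a), 2.0 * a**2 / b

rng = np.random.default_rng(3)
X_train = rng.normal(size=(30, 3))  # toy fault-free data
model = fit_kpca(X_train, sigma=2.0, n_components=3)
q_train = np.array([q_index(model, x) for x in X_train])
g, h = chi2_scaling_parameters(q_train)
q_far = q_index(model, np.array([15.0, 15.0, 15.0]))  # observation far from the training data
```

The control limit is then $g$ times the $\alpha$-quantile of a $\chi^2$ distribution with $h$ degrees of freedom; if SciPy is available, `scipy.stats.chi2.ppf(alpha, h)` can supply that quantile. The choice of $g$ and $h$ matches the first two moments of the index, since $g h$ equals the mean and $2 g^2 h$ equals the variance.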

2.3.2. Kernel Generalized Likelihood Ratio Test

The Generalized Likelihood Ratio Test (GLRT) is one of the most commonly used indices for fault detection [40] in linear [41] and nonlinear systems [9]. The kernel GLRT (KGLRT) index has been shown to detect faults efficiently, with a good detection rate and a low false alarm rate [16]. In [42], the authors proposed a simple expression for calculating the KGLRT index in the feature space at the instant $t$, which is represented as follows:

A faulty condition is declared if the KGLRT index exceeds its confidence limit at the instant $t$, as follows:

$$\mathrm{KGLRT}(t) > \mathrm{KGLRT}_{\alpha}$$

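$\mathrm{KGLRT}_{\alpha}$ is the confidence limit of the KGLRT index and can be calculated using the $\chi^{2}$ distribution, as given by the following:

$$\mathrm{KGLRT}_{\alpha} = g\,\chi^{2}_{h,\alpha}$$

with $h$ degrees of freedom, where $\alpha$ is the confidence level. The parameters $g$ and $h$ are determined as follows:

$$g = \frac{b}{2a}, \qquad h = \frac{2a^{2}}{b}$$

where $a$ and $b$ represent the mean and variance of the KGLRT index, respectively.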

3. Proposed RBC-KGLRT Method

This section presents the reconstruction-based contribution–Kernel Generalized Likelihood Ratio Test (RBC-KGLRT), a novel approach designed to enhance fault detection and localization in complex systems. By integrating reconstruction-based contributions (RBCs) with the Kernel Generalized Likelihood Ratio Test (KGLRT), this method aims to improve both the accuracy and efficiency of fault diagnosis.

The RBC-KGLRT approach leverages the strengths of kernel-based techniques to capture nonlinear relationships in process data, while the RBC framework enables precise localization of faults by identifying the most significant contributions to anomalies. The formulation of this method is detailed in the following subsections.

3.1. Principle

A recent method, known as reconstruction-based contribution for Kernel Principal Component Analysis (RBC-KPCA), was introduced in [30]. This approach integrates the principles of contribution and reconstruction to assess the influence of individual variables on detected anomalies. Specifically, the contribution of a variable is determined by measuring the reconstructed quantity of a detection index along the direction of that variable. Consequently, the variable with the highest contribution is typically identified as faulty. However, as demonstrated by Alcala (2011) [31], fault diagnosis using Hotelling’s $T^2$ index is not always reliable because the convergence of its associated optimization algorithm is not guaranteed. For this reason, the RBC-KPCA method is primarily applied using the Quadratic Prediction Error ($Q$) index and a combined index, rather than the $T^2$ index.

In this section, we propose an extension of this framework by combining KPCA and reduced-rank KPCA (RR-KPCA) fault detection techniques with the Kernel Generalized Likelihood Ratio Test (KGLRT) for fault localization. This approach leverages the strengths of RR-KPCA while incorporating the KGLRT detection index and reconstruction-based contribution (RBC) for localization. A key advantage of this method is its ability to compute contributions not only along the direction of a specific variable but also along arbitrary directions, providing enhanced flexibility.

The following subsection will present the mathematical formulation of the proposed RBC-KPCA method with the KGLRT detection index.

3.2. Mathematical Formulation

In the presence of a fault in sensor $i$, and for an observation $x$, the reconstructed observation vector $z_i$ can be written as follows:

$$z_i = x - \xi_i f_i$$

where $\xi_i$ is the direction of the fault and $f_i$ is its amplitude. The principle of the RBC approach is to determine the amplitude of such a fault, $f_i$, or its direction, $\xi_i$, such that the fault detection index of the reconstructed observation is minimal. To solve this optimization problem, we propose using the iterative fixed-point method. Implementing this approach requires selecting an initial point for the computation and defining a stopping criterion. The results can vary significantly depending on the chosen starting point. Additionally, the fixed-point method may exhibit instability, leading to local minima or even failure to converge. Numerical instability occurs when the denominator approaches zero.

This instability can be mitigated by using the point to be reconstructed as the initial guess. The stopping criterion is determined by the following condition: $e \leq \varepsilon$, where $\varepsilon$ is a predefined threshold and $e$ represents the estimation error between two consecutive iterations.

The fault amplitude is determined by the following optimization problem:

$$f_i = \arg\min_{f} \; \mathrm{KGLRT}(x - \xi_i f)$$

In the case of the KPCA method, the reconstruction-based contribution of the KGLRT index along the direction $\xi_i$ is determined by the difference between the detection indices of the faulty observation $x$ and the reconstructed observation $z_i$, as follows:

$$\mathrm{RBC}_{\xi_i}^{\mathrm{KGLRT}} = \mathrm{KGLRT}(x) - \mathrm{KGLRT}(z_i)$$
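The reconstruction principle itself (index of the faulty observation minus the smallest index reachable by moving along one variable direction) can be illustrated independently of the kernel machinery. The sketch below makes two deliberate substitutions, both assumptions for illustration: a simple quadratic index $x^{T} M x$ stands in for the KGLRT index, and a golden-section search stands in for the fixed-point iteration. All names and values are hypothetical.

```python
import math
import numpy as np

def golden_section_minimize(fun, lo, hi, tol=1e-10):
    """Minimize a unimodal scalar function on [lo, hi] by golden-section search."""
    inv_phi = (math.sqrt(5.0) - 1.0) / 2.0
    c = hi - inv_phi * (hi - lo)
    d = lo + inv_phi * (hi - lo)
    while abs(hi - lo) > tol:
        if fun(c) < fun(d):
            hi = d
        else:
            lo = c
        c = hi - inv_phi * (hi - lo)
        d = lo + inv_phi * (hi - lo)
    return 0.5 * (lo + hi)

def reconstruction_based_contribution(index_fn, x, i, search_radius=100.0):
    """Return (RBC, amplitude) for variable i: index(x) - min_f index(x - xi_i * f)."""
    xi = np.zeros_like(x, dtype=float)
    xi[i] = 1.0  # unit direction of variable i
    f_hat = golden_section_minimize(
        lambda f: index_fn(x - xi * f), -search_radius, search_radius
    )
    return index_fn(x) - index_fn(x - xi * f_hat), f_hat

# Stand-in quadratic detection index (NOT the KGLRT index).
M = np.array([[2.0, 0.5, 0.0], [0.5, 1.0, 0.2], [0.0, 0.2, 3.0]])

def index_fn(x):
    return float(x @ M @ x)

x_faulty = np.array([0.1, -0.2, 4.0])  # large deviation on the third variable
rbc = [reconstruction_based_contribution(index_fn, x_faulty, i)[0] for i in range(3)]
suspect = int(np.argmax(rbc))
```

For a quadratic index the minimization has the closed-form solution $(\xi_i^{T} M x)^2 / (\xi_i^{T} M \xi_i)$, which makes the numerical result easy to verify; kernel-based indices have no such closed form, which is why an iterative scheme is needed there.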

Using Equation (17), the KGLRT detection index of the reconstructed observation $z_i$ is defined by the following equation:

In order to compute the RBC value in a direction $\xi_i$ for the KGLRT index, where $\xi_i = [0 \; \cdots \; 1 \; \cdots \; 0]^{T}$ and the value one is placed in the $i$th position, we determine the amplitude $f_i$ that minimizes the index $\mathrm{KGLRT}(z_i)$.

Using relation (24), the derivative of with respect to is given by the following:

and are the first eigenvectors and eigenvalues of the reduced kernel matrix, respectively. Thus, the derivative of with respect to is defined by the following:

where

The derivative of with respect to can be expressed as follows:

where and .

The results of the derivative of the index with respect to can be defined as follows:

The derivative of the vector with respect to is given by the following expression:

where .

Then, the derivative of the KGLRT index with respect to $f_i$ can be rewritten as follows:

To find the amplitude $f_i$ that minimizes the detection index associated with the reconstructed measurement, we set the last equation equal to zero. The solution for $f_i$ is given as follows:

4. Application to the Tennessee Eastman Process (TEP)

Description of TEP

The Tennessee Eastman Process (TEP) is a well-established benchmark in chemical process control and fault detection research [43]. Introduced by Downs and Vogel in 1993, it simulates a realistic industrial chemical process and is commonly used [32,40] to evaluate and compare control strategies, fault diagnosis methods, and process monitoring techniques. Its widespread adoption stems from its ability to provide a practical and comprehensive testbed for these applications. The TEP [44] models a chemical manufacturing plant that synthesizes two products, labeled G and H, from four reactants: A, C, D, and E. The process consists of five key units:

- Reactor: The core unit where the chemical reactions take place.

- Condenser: Cools the effluent stream from the reactor.

- Separator: Separates the liquid and gas phases of the cooled effluent.

- Stripper: Purifies the liquid product by removing impurities.

- Recycle Compressor: Recycles unreacted gases back into the reactor for further processing.

The chemical reactions that take place in the reactor are as follows:

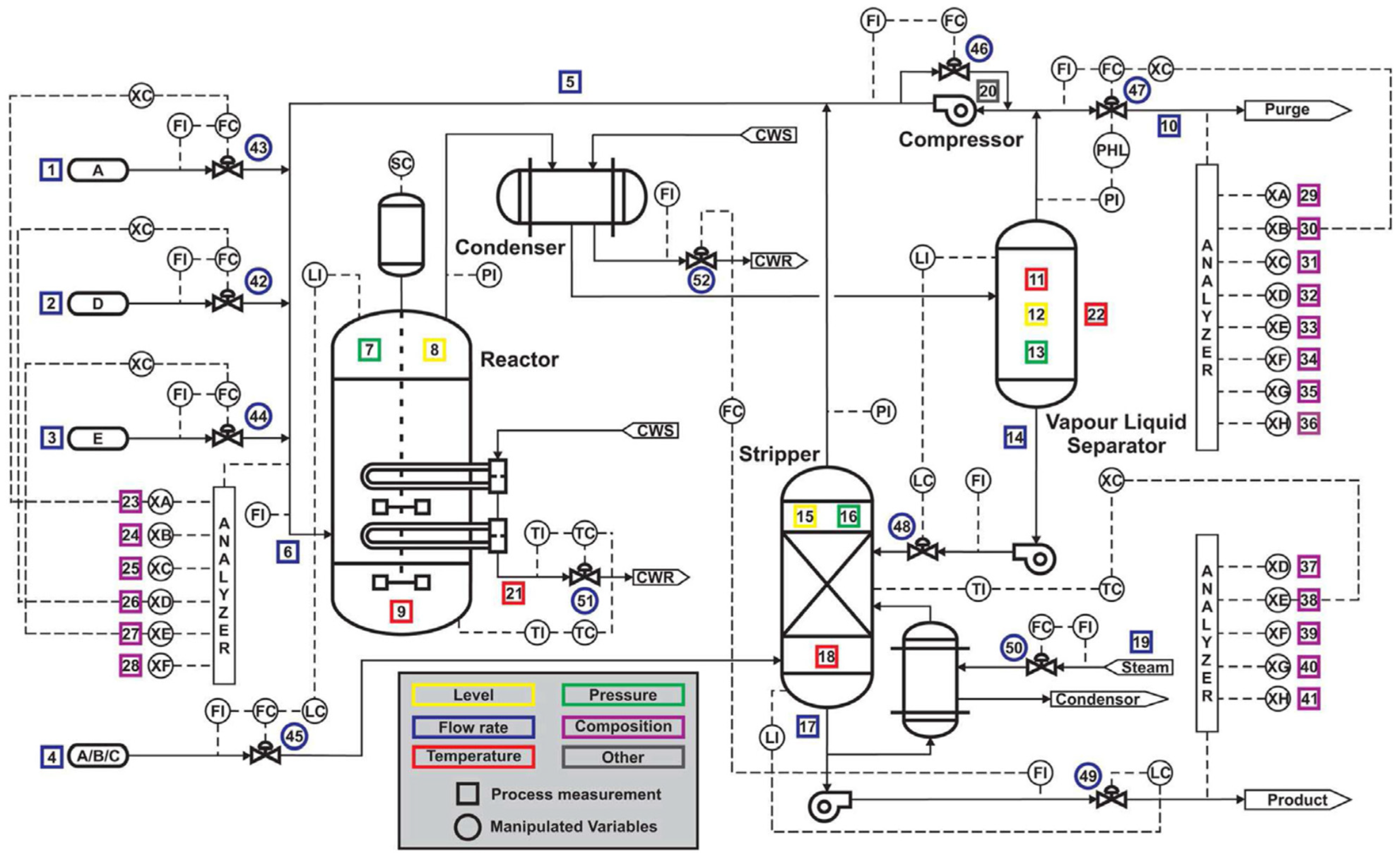

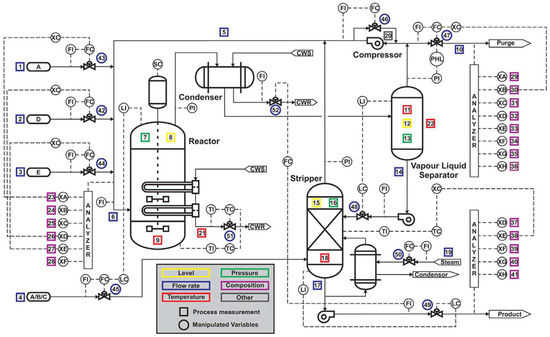

The TEP comprises a total of 53 variables [45]: 12 manipulated variables and 41 measured variables. Of the 41 measured variables, 22 are measured continuously and 19 are sampled measurements. A detailed diagram of the TEP is shown in Figure 1.

Figure 1. Diagram of the Tennessee Eastman Process.

In this work, the 22 continuously measured variables are used to construct the data matrix. These variables are presented in Table 1.

Table 1. Measured variables that are monitored in the TEP.

The observation vector comprises 22 measured variables, expressed as follows:

$$x = [x_1, x_2, \ldots, x_{22}]^{T}$$

Additionally, TEP data [46,47] are generated with 21 different faults. These faults are introduced after observation 224 and continue until the end of the dataset.

For the Tennessee Eastman Process (TEP), the formation of training and test datasets typically follows these principles:

- Training Data: The training set is usually composed of data collected during periods of normal operation (fault-free conditions). These data are used to characterize the nominal behavior of the process, allowing faults to be detected as deviations from this baseline. The training data are often generated from simulations running for a fixed duration, such as 25 h, with samples taken at regular intervals (every 3 min).

- Test Data: The test set includes both fault-free and faulty data, often generated from simulations running for a longer duration, such as 48 h. The faulty data represent various process faults introduced at specific times. The test data are kept completely separate from the training data.

In this work, there are three faults, which are presented in Table 2. The faults are used to evaluate the effectiveness of the proposed method.

Table 2. Process faults for TEP.

There are 1000 measurements of each variable under normal operation and 1000 measurements generated under the various faults. The kernel used to build the KPCA model is the Gaussian (RBF) kernel, whose parameter is set to 10.

The performance of RBC-KPCA and RBC-RRKPCA, evaluated using the Q index, is compared with that of the proposed RBC-KGLRT method developed under both the traditional KPCA and RR-KPCA frameworks. The comparative analysis is carried out on the TEP benchmark [48].

The performance metrics considered are as follows:

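- The false alarm rate (FAR), calculated as follows:

$$\mathrm{FAR} = \frac{\text{number of fault-free samples flagged as faulty}}{\text{total number of fault-free samples}} \times 100$$

- The good detection rate (GDR), calculated as follows:

$$\mathrm{GDR} = \frac{\text{number of faulty samples flagged as faulty}}{\text{total number of faulty samples}} \times 100$$

- The good localization rate (GLR), typically calculated using the following formula:

- The average convergence time (TC).

- The minimal computing time allowed for fault detection (CT).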
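The two detection metrics can be computed from a sequence of alarm decisions and the known fault labels, as in the sketch below. It assumes the usual definitions (FAR as the percentage of fault-free samples that raise an alarm, GDR as the percentage of faulty samples that raise an alarm); the function names and the data are illustrative.

```python
def false_alarm_rate(alarms, is_faulty):
    """Percentage of fault-free samples that raised an alarm."""
    normal_alarms = [a for a, f in zip(alarms, is_faulty) if not f]
    return 100.0 * sum(normal_alarms) / len(normal_alarms)

def good_detection_rate(alarms, is_faulty):
    """Percentage of faulty samples that raised an alarm."""
    faulty_alarms = [a for a, f in zip(alarms, is_faulty) if f]
    return 100.0 * sum(faulty_alarms) / len(faulty_alarms)

# Made-up example: 5 fault-free samples followed by 5 faulty samples.
is_faulty = [False] * 5 + [True] * 5
alarms = [False, True, False, False, False, True, True, False, True, True]

far = false_alarm_rate(alarms, is_faulty)
gdr = good_detection_rate(alarms, is_faulty)
```

In this example one of the five fault-free samples raises an alarm and four of the five faulty samples are detected.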

5. Discussion

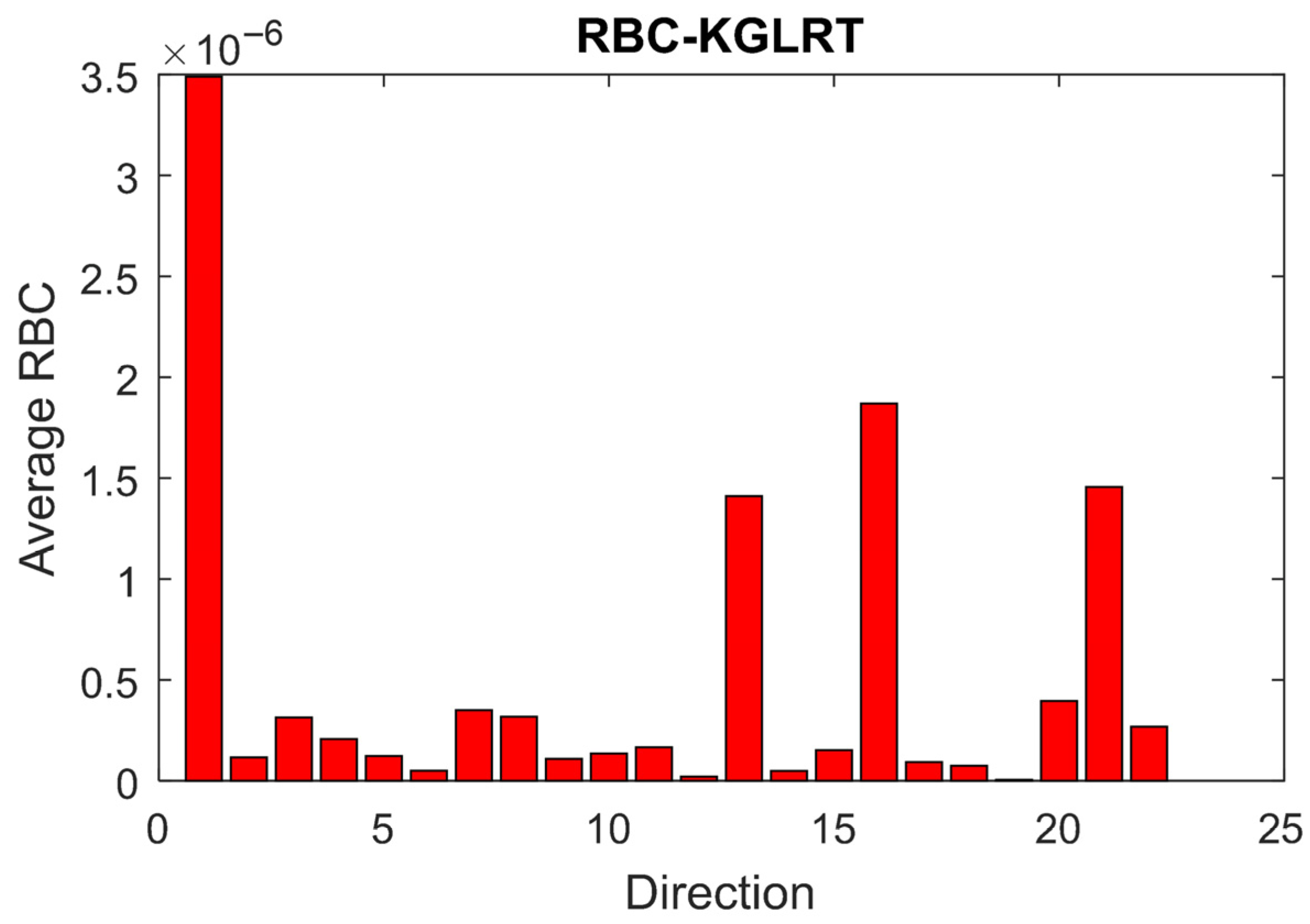

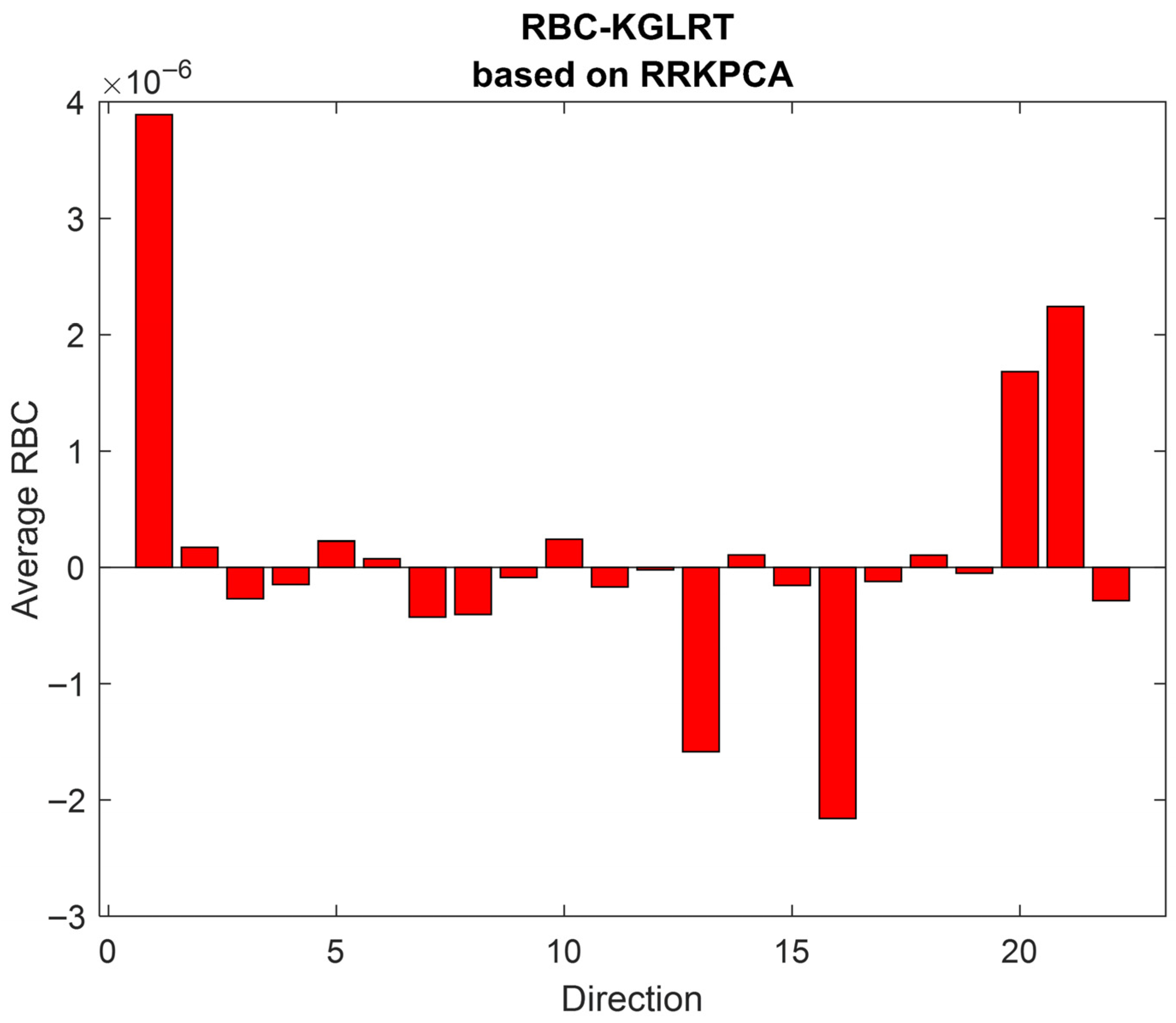

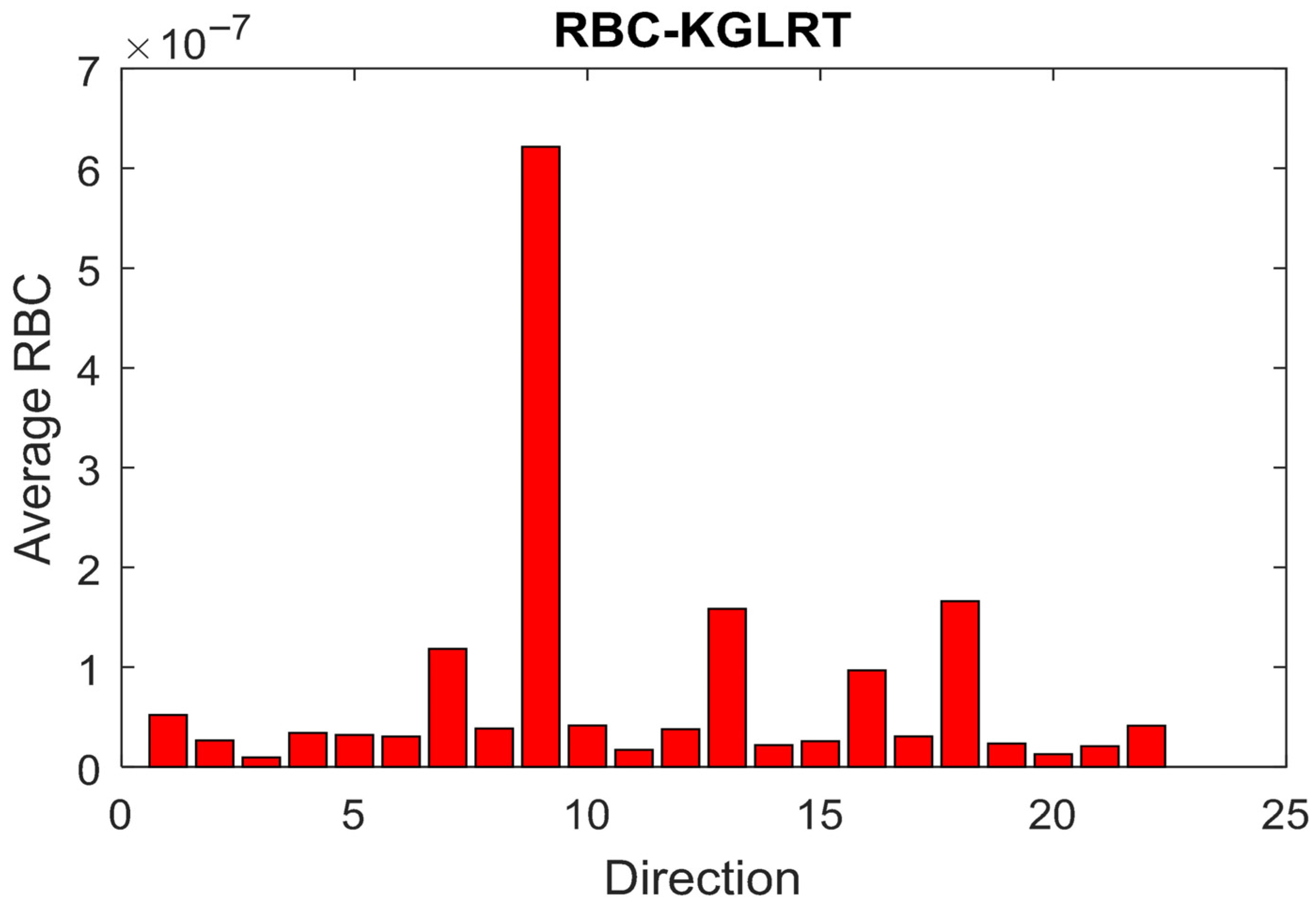

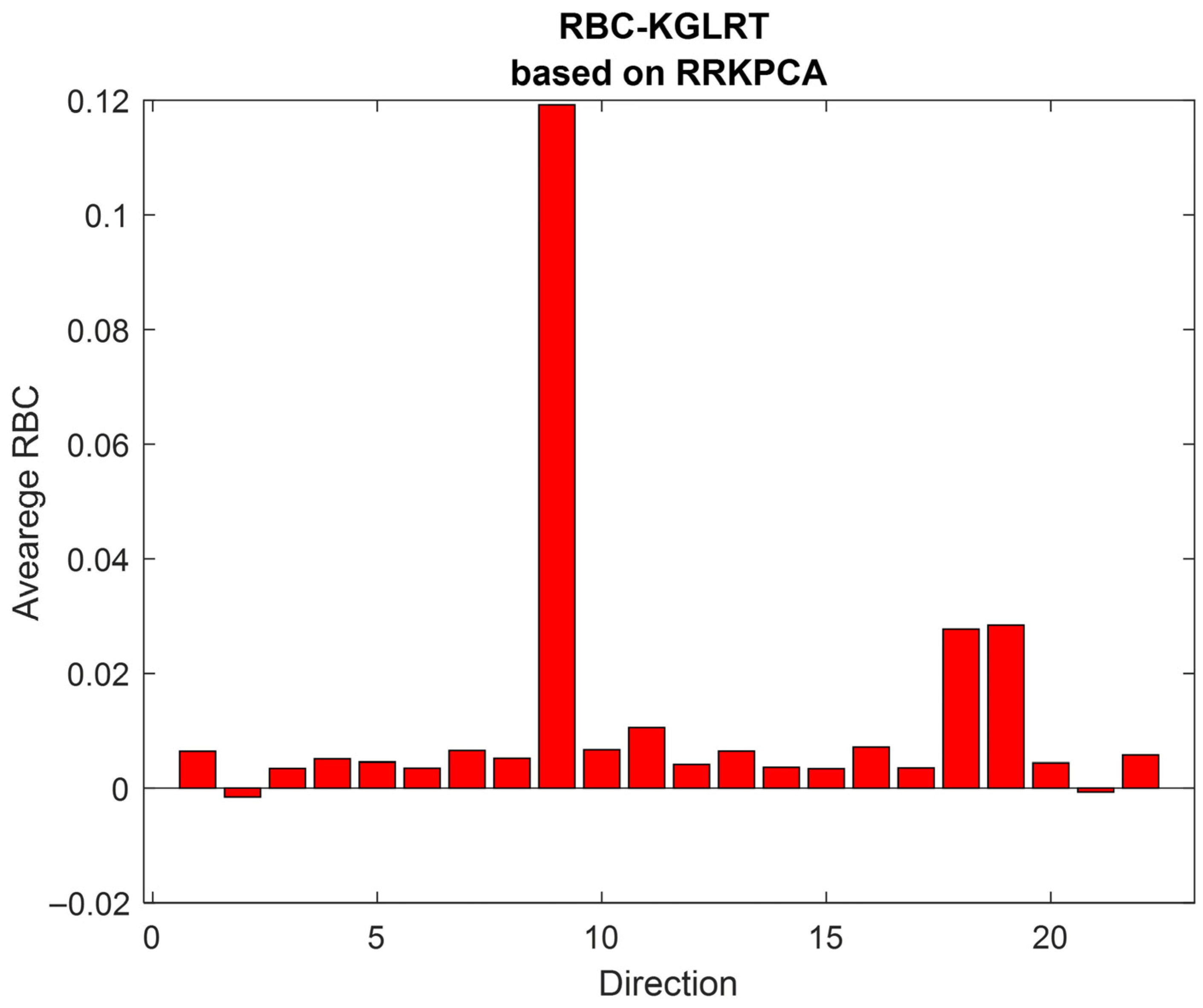

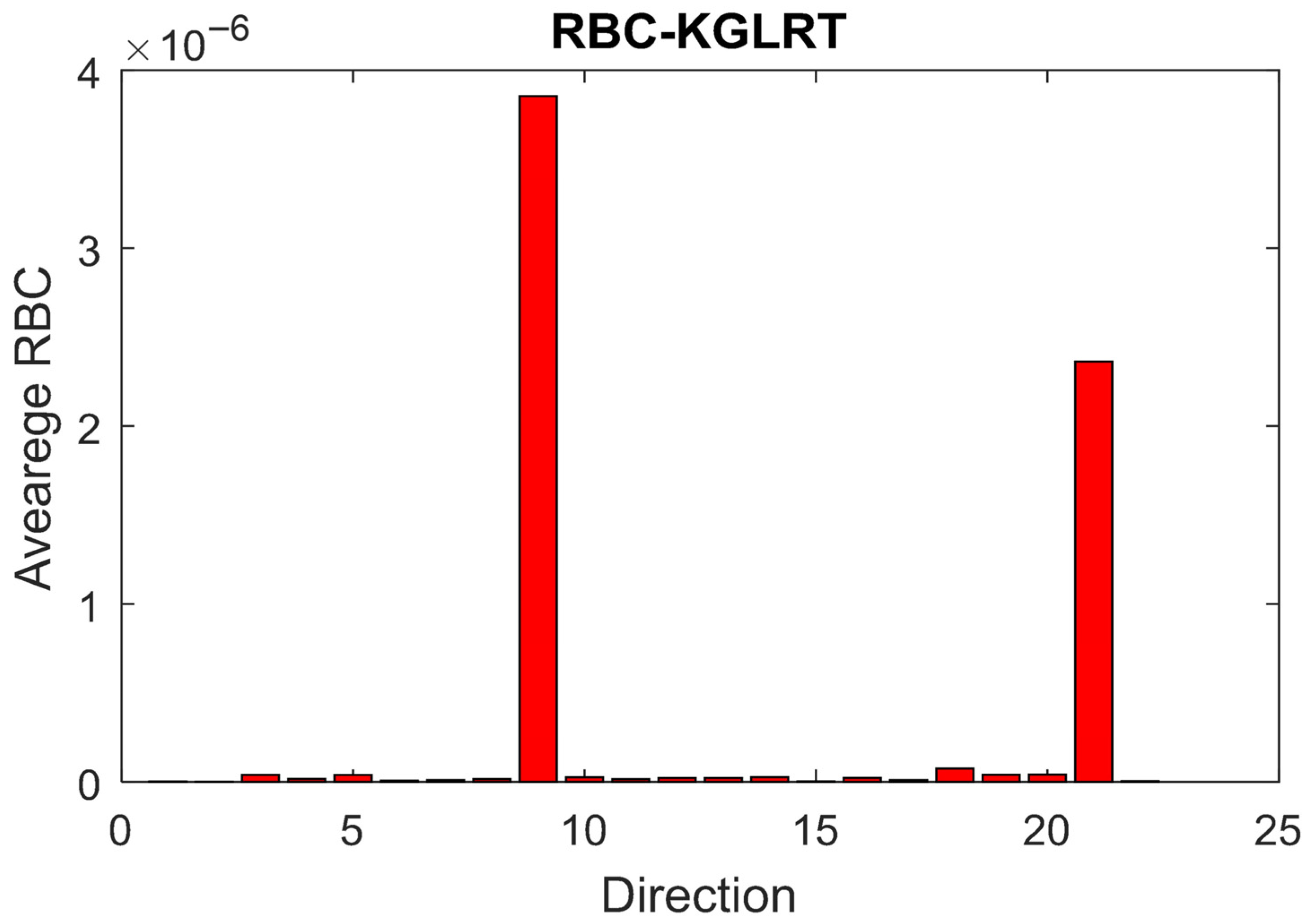

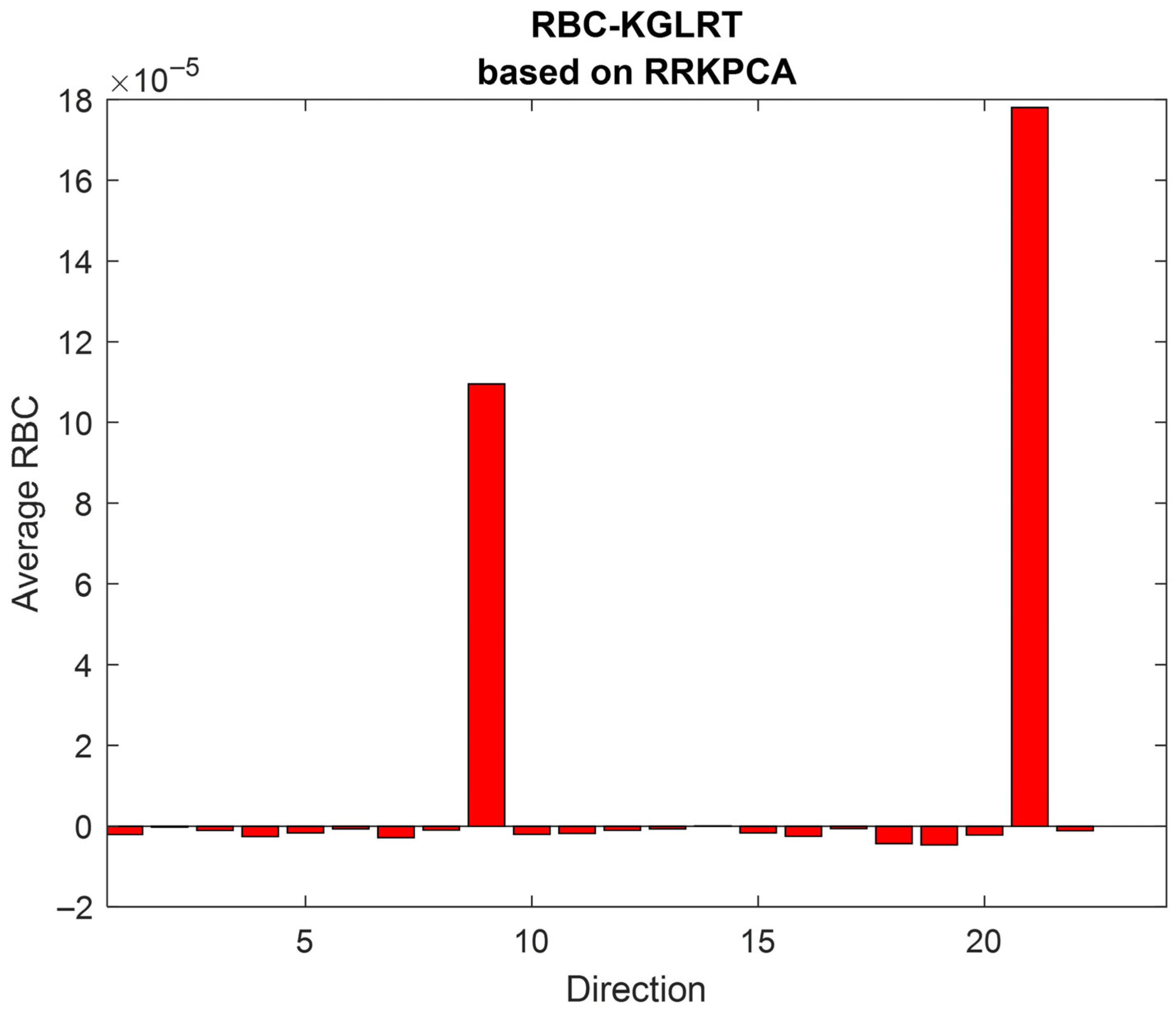

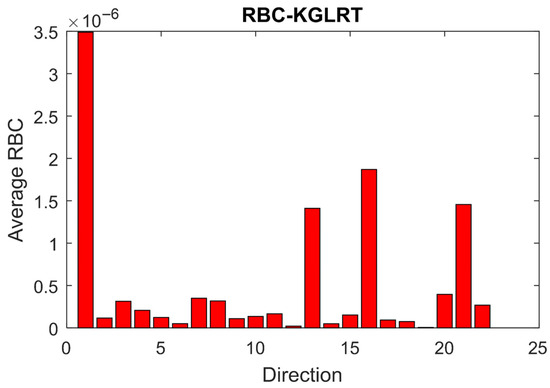

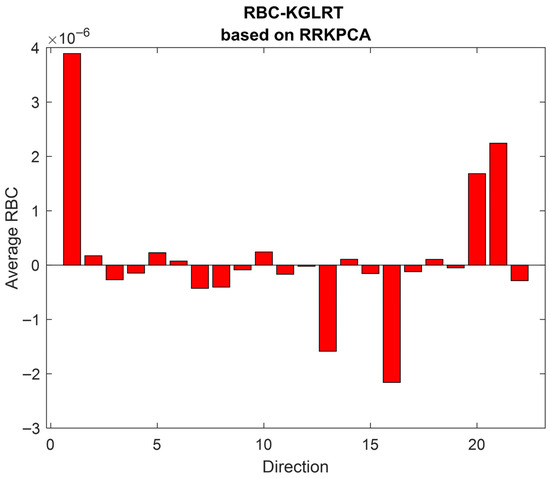

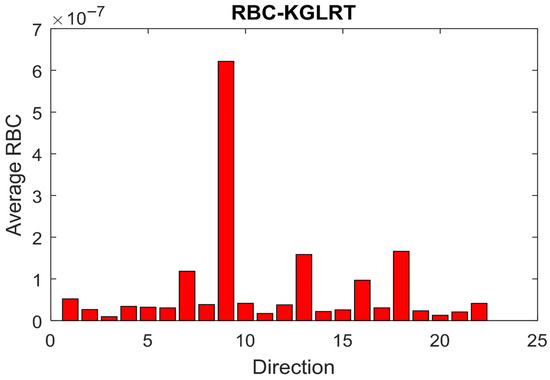

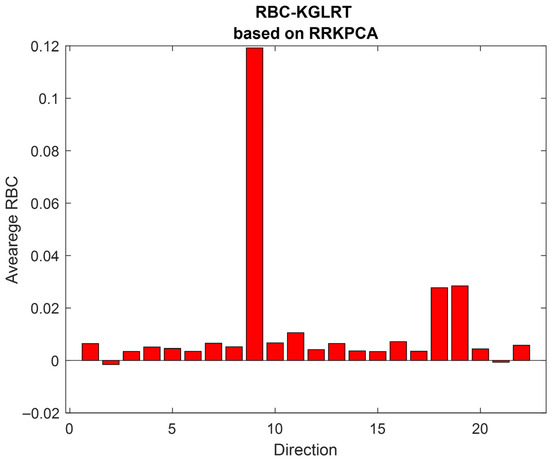

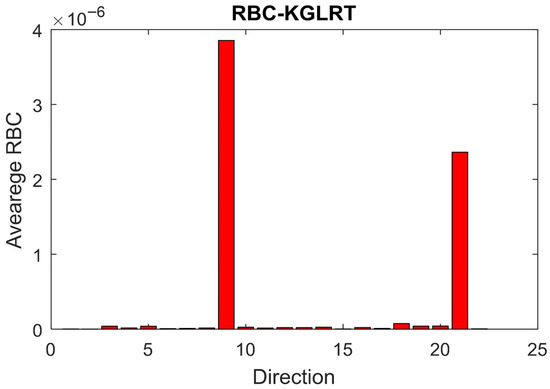

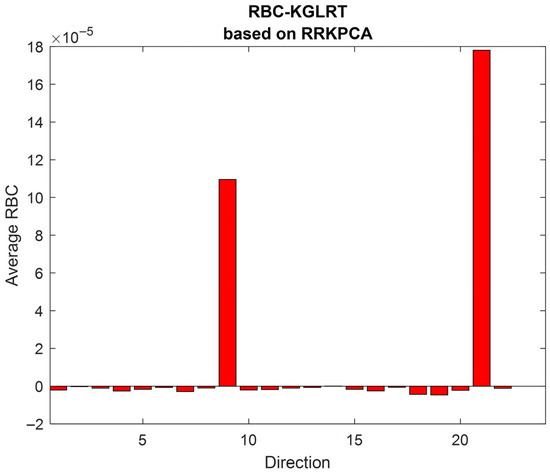

Figures 2 to 7 present the average RBC values obtained with the proposed RBC-KGLRT methods based on KPCA and RR-KPCA, computed for each variable under the three analyzed faults.

Figure 2. Fault localization for fault IDV (6) using RBC-KGLRT based on KPCA.

Figure 3. Fault localization for fault IDV (6) using RBC-KGLRT based on RRKPCA.

Figure 4. Fault localization for fault IDV (11) using RBC-KGLRT based on KPCA.

Figure 5. Fault localization for fault IDV (11) using RBC-KGLRT based on RRKPCA.

Figure 6. Fault localization for fault IDV (14) using RBC-KGLRT based on KPCA.

Figure 7. Fault localization for fault IDV (14) using RBC-KGLRT based on RRKPCA.

The first fault is IDV (6), which is a step change in the flow of feed A. The second fault is IDV (11), which is a random variation in the reactor cooling water inlet temperature that affects the reactor temperature. The third fault is IDV (14), which is the sticking of the reactor cooling water valve.

To demonstrate the advantages of the proposed fault diagnosis method over the RBC-KPCA and RBC-RRKPCA approaches based on the Q index, three representative fault scenarios—IDV (6), IDV (11), and IDV (14)—are selected for detailed analysis in this study.

Table 3 and Table 4 present the results related to fault detection and fault localization performance, respectively.

Table 3. Fault detection performances.

Table 4. Fault localization performances.

Table 3 summarizes the evaluated fault detection metrics used to compare the RBC-KPCA and RBC-RRKPCA techniques, both relying on the Q statistic, with the proposed RBC-KGLRT approach built on Kernel Principal Component Analysis and reduced-rank KPCA.

Table 4 summarizes the evaluated fault localization metrics used to compare the RBC-KPCA and RBC-RRKPCA techniques, both relying on the Q statistic, with the proposed RBC-KGLRT approach built on KPCA and reduced-rank KPCA. As indicated in the table, the developed RBC-KGLRT method based on RRKPCA ensures accurate fault localization while achieving a shorter average convergence time.

The results indicate that faults IDV (6) and IDV (14) are successfully detected by all considered methods. However, the RBC-KPCA and RBC-RRKPCA approaches show relatively high false alarm rates. Although the RBC-RRKPCA method reduces the computational time required for fault detection compared with the traditional RBC-KPCA approach, it still results in a significant number of false alarms.

In contrast, the proposed RBC-KGLRT strategy significantly decreases the false alarm rate while maintaining a high detection capability. Furthermore, the method demonstrates superior performance in detecting the IDV (11) fault scenario, achieving a detection rate of 95%, whereas the RBC-KPCA method achieves only 65.07%.

Overall, these results confirm the effectiveness of the proposed RBC-KGLRT framework in improving fault detection reliability.
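The two detection criteria discussed above can be illustrated with a minimal sketch. It assumes the usual definitions: the false alarm rate is the share of fault-free samples flagged as faulty, and the detection rate is the share of faulty samples flagged as faulty. The function name `detection_metrics`, the parameter `fault_start`, and the toy alarm sequence are hypothetical and are not taken from the study.

```python
import numpy as np

def detection_metrics(alarms, fault_start):
    """Return (false_alarm_rate, detection_rate) in percent.

    alarms      : boolean sequence, True where the monitoring index
                  exceeds its control limit
    fault_start : index of the first faulty sample (hypothetical parameter,
                  assumed to satisfy 0 < fault_start < len(alarms))
    """
    alarms = np.asarray(alarms, dtype=bool)
    normal, faulty = alarms[:fault_start], alarms[fault_start:]
    far = 100.0 * normal.mean()  # alarms raised while the process is healthy
    dr = 100.0 * faulty.mean()   # alarms raised while the fault is active
    return far, dr

# Toy data (not from the paper): 10 healthy samples with 1 spurious alarm,
# followed by 20 faulty samples of which 19 are flagged.
alarms = np.array([False] * 9 + [True] + [True] * 19 + [False])
far, dr = detection_metrics(alarms, fault_start=10)
print(f"FAR = {far:.2f}%, detection rate = {dr:.2f}%")
```

With this toy sequence the sketch reports a false alarm rate of 10% and a detection rate of 95%; the values are purely illustrative.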

The first fault scenario considered is IDV (6), which corresponds to a sudden reduction in the flow of stream A. This disturbance causes significant variations in several process variables, particularly the A feed flow (stream 1) and the total feed flow (stream 4). The diagnostic results presented in Figure 2 and Figure 3 indicate that the variable x1 exhibits the highest contribution among all monitored variables. This observation suggests that the measured variable associated with the A feed flow is the primary source of the detected fault.

The IDV (11) fault scenario corresponds to random fluctuations in the reactor cooling water inlet temperature, which consequently lead to an increase in the reactor temperature.

Figure 4 and Figure 5 present the average values of the reconstruction-based contributions (RBCs) obtained using the RBC-KGLRT method implemented with KPCA and with the RR-KPCA variant. Under the IDV (11) fault condition, the results show that the average RBC associated with the reactor temperature (direction 9) is considerably higher than those of the other monitored variables for both approaches. This observation indicates that the reactor temperature is the variable most strongly affected by this disturbance.

Fault IDV (14) corresponds to sticking of the reactor cooling water valve. This malfunction affects both the reactor temperature (direction 9) and the temperature of the reactor cooling water (direction 21). Its impact is evident in the diagnostic results illustrated in Figure 6 and Figure 7: the reactor temperature and the reactor cooling water temperature exhibit the highest average RBC values among the monitored variables.
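The localization rule used in these three scenarios (average the RBC of each variable over the faulty samples, then pick the variables with the largest averages) can be sketched as follows. This is only an illustration under stated assumptions: the RBC values are taken as already computed, the function name `rank_variables_by_mean_rbc` and the parameter `top_k` are hypothetical, and the numbers are synthetic rather than Tennessee Eastman results.

```python
import numpy as np

def rank_variables_by_mean_rbc(rbc, top_k=2):
    """Rank variables by their average reconstruction-based contribution.

    rbc   : array of shape (n_samples, n_variables); rbc[k, j] is the RBC of
            variable j at faulty sample k (assumed precomputed)
    top_k : number of candidate faulty variables to return (hypothetical)

    Returns a list of (variable_number, mean_rbc) with 1-based numbering.
    """
    rbc = np.asarray(rbc, dtype=float)
    mean_rbc = rbc.mean(axis=0)                  # average over faulty samples
    order = np.argsort(mean_rbc)[::-1][:top_k]   # largest averages first
    return [(int(j) + 1, float(mean_rbc[j])) for j in order]

# Synthetic RBC values for 3 samples and 4 variables (not from the paper)
rbc = np.array([
    [0.1, 0.2, 5.0, 0.3],
    [0.2, 0.1, 4.0, 0.3],
    [0.3, 0.3, 6.0, 0.3],
])
ranking = rank_variables_by_mean_rbc(rbc, top_k=2)
print(ranking)
```

In this synthetic case the third variable dominates, in the same way that direction 9 dominates in the IDV (11) scenario. Setting `top_k` above 1 is useful when a fault such as IDV (14) affects more than one variable.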

Taken together, these results show that the RBC-KGLRT framework reduces false alarms, improves the accuracy of fault identification, and shortens the average convergence time of the diagnostic procedure.

Future work may focus on extending the proposed framework to large-scale industrial datasets and real-time monitoring environments, as well as exploring its integration with other data-driven monitoring techniques.

6. Conclusions

In this study, a new fault diagnosis strategy, referred to as RBC-KGLRT, is proposed. This approach combines the reconstruction-based contribution (RBC) principle with a monitoring chart derived from the Generalized Likelihood Ratio Test in its kernel formulation. To evaluate the effectiveness of the proposed method, a case study based on the Tennessee Eastman Process (TEP) is conducted. The simulation results indicate that the proposed technique achieves a balanced trade-off among detection rate, false alarm rate, and average convergence time.

Fault diagnosis plays a fundamental role in ensuring the safe operation of industrial systems, involving the detection of abnormal behavior and the accurate localization of faults within the process. In practice, once a fault occurs, it must be identified and localized as quickly as possible to prevent performance degradation or potential safety issues.

The conventional RBC-KPCA approach, based on the Q (squared prediction error) index, often requires considerable computational time for both detection and localization. This limitation is mainly due to the large size of the kernel matrix in Kernel Principal Component Analysis, which increases the computational cost of monitoring. To address this issue, the RBC-RRKPCA method was proposed. By incorporating the reduced-rank KPCA framework, this technique decreases the average convergence time and enables faster detection and localization of faults. However, simulation results indicate that this method may suffer from a relatively high false alarm rate—for instance, approximately 32.14% under the IDV (6) fault scenario—compared with the traditional RBC-KPCA approach.

The proposed RBC-KGLRT method significantly reduces the false alarm rate, to approximately 0.44% under the IDV (6) fault scenario, compared with both RBC-KPCA and RBC-RRKPCA techniques relying on the Q statistic. In addition, the RBC-KGLRT method built on the reduced-rank KPCA framework achieves improved detection performance. For example, in the IDV (11) fault scenario, the detection rate reaches 95%, whereas the RBC-RRKPCA approach achieves approximately 89.17%.

By combining the strong anomaly detection capability of the Kernel Generalized Likelihood Ratio Test with the precise localization ability of the reconstruction-based contribution method, the proposed framework provides a more reliable and efficient fault diagnosis solution. This integration not only reduces false alarms but also improves the accuracy of identifying faulty variables.

Author Contributions

Conceptualization, I.H.; formal analysis, I.H. and H.L.; methodology, O.T., H.L. and A.A.; software, I.H.; validation, O.T., A.A. and H.L.; writing—original draft preparation, I.H.; writing—review and editing, H.L., E.A., and O.T.; visualization, I.H. and E.A.; supervision, O.T., A.A. and E.A.; project administration, O.T., A.A. and E.A. All authors have read and agreed to the published version of the manuscript.

Funding

This research received no external funding.

Institutional Review Board Statement

Not applicable.

Informed Consent Statement

Not applicable.

Data Availability Statement

The original contributions presented in this study are included in the article. Further inquiries can be directed to the corresponding author.

Conflicts of Interest

The authors declare no conflicts of interest regarding the present study.

Abbreviations

| Abbreviation | Definition |
| --- | --- |
| KPCA | Kernel Principal Component Analysis |
| RBC | Reconstruction-Based Contribution |
| KGLRT | Kernel Generalized Likelihood Ratio Test |
| RR-KPCA | Reduced-Rank KPCA |
| RR-KGLRT | Reduced-Rank KPCA based on Kernel Generalized Likelihood Ratio Test |
| Q | Squared Prediction Error |
| RBC-KGLRT | Reconstruction-Based Contribution based on Kernel Generalized Likelihood Ratio Test |
| RBC-RRKPCA | Reconstruction-Based Contribution based on the Q index combined with the RR-KPCA method |

References

- Babak, V.; Zaporozhets, A.; Zvaritch, V.; Scherbak, L.; Myslovych, M.; Kuts, Y. Models and measures in theory and practice of manufacturing processes. IFAC-Pap. 2022, 55, 1956–1961.

- Wang, J.; Zhou, Z.; Li, Z.; Du, S. A novel fault detection scheme based on mutual k-nearest neighbor method: Application on the industrial processes with outliers. Processes 2022, 10, 497.

- Ziane, A.; Mekhilef, S.; Dabou, R.; Sahouane, N.; Necaibia, A.; Rouabhia, A.; Izem, T.A. Online fault detection in grid-connected PV system using nonlinear multivariate statistical analysis. Electr. Eng. 2026, 108, 107.

- Gravanis, G.; Dragogias, I.; Papakiriakos, K.; Ziogou, C.; Diamantaras, K. Fault detection and diagnosis for non-linear processes empowered by dynamic neural networks. Comput. Chem. Eng. 2022, 156, 107531.

- Lahdhiri, H.; Elaissi, I.; Taouali, O.; Harakat, M.F.; Messaoud, H. Nonlinear process monitoring based on new reduced Rank-KPCA method. Stoch. Environ. Res. Risk Assess. 2017, 32, 1833–1848.

- Wang, Q.; Liu, Y.B.; He, X.; Liu, S.Y.; Liu, J.H. Fault diagnosis of bearing based on KPCA and KNN method. Adv. Mater. Res. 2014, 986, 1491–1496.

- Zhang, Y.; Ma, C. Fault diagnosis of nonlinear processes using multiscale KPCA and multiscale KPLS. Chem. Eng. Sci. 2011, 66, 64–72.

- Fazai, R.; Taouali, O.; Harkat, M.F.; Nasreddine, B. A new fault detection method for nonlinear process monitoring. Int. J. Adv. Manuf. Technol. 2016, 87, 3425–3436.

- Fezai, R.; Mansouri, M.; Trabelsi, M.; Hajji, M.; Nounou, H.; Nounou, M. Online reduced kernel GLRT technique for improved fault detection in photovoltaic systems. Energy 2019, 179, 1133–1154.

- Cho, J.-H.; Lee, J.-M.; Choi, S.W.; Lee, D.; Lee, I.-B. Fault identification for process monitoring using kernel principal component analysis. Chem. Eng. Sci. 2005, 60, 279–288.

- Shen, J.; Xu, F. Method of fault feature selection and fusion based on poll mode and optimized weighted KPCA for bearings. Measurement 2022, 194, 110950.

- Zhang, Q.; Li, P.; Lang, X.; Mia, A. Improved dynamic kernel principal component analysis for fault detection. Measurement 2020, 158, 107738.

- Lang, C.I.; Sun, F.K.; Lawler, B.; Dillon, J.; Al Dujaili, A.; Ruth, J.; Boning, D.S. One class process anomaly detection using kernel density estimation methods. IEEE Trans. Semicond. Manuf. 2022, 35, 457–469.

- Samuel, R.T.; Cao, Y. Nonlinear process fault detection and identification using kernel PCA and kernel density estimation. Syst. Sci. Control. Eng. 2016, 4, 165–174.

- Harkat, M.F.; Mansouri, M.; Nounou, M.; Nounou, H. Enhanced data validation strategy of air quality monitoring network. Environ. Res. 2018, 160, 183–194.

- Lahdhiri, H.; Taouali, O. Reduced Rank KPCA based on GLRT chart for sensor fault detection in nonlinear chemical process. Measurement 2021, 169, 108342.

- Gertler, J.; McAvoy, T. Principal Component Analysis and Parity Relations—A Strong Duality; IFAC Conference SAFEPROCESS: Hull, UK, 1997; pp. 837–842.

- Harkat, M.-F.; Mourot, G.; Ragot, J. An improved PCA scheme for sensor FDI: Application to an air quality monitoring network. J. Process Control 2006, 16, 625–634.

- Hamrouni, I.; Lahdhiri, H.; Abdellafou, K.B.; Aljuhani, A.; Taouali, O.; Bouzrara, K. Anomaly Detection and Localization for Process Security Based on the Multivariate Statistical Method. Math. Probl. Eng. 2022, 2022, 5580774.

- Fazai, R.; Abdellafou, K.B.; Said, M.; Taouali, O. Online fault detection and isolation of an AIR quality monitoring network based on machine learning and metaheuristic methods. Int. J. Adv. Manuf. Technol. 2018, 99, 2789–2802.

- Lahdhiri, H.; Said, M.; Abdellafou, K.B.; Taouali, O.; Harkat, M.F. Supervised process monitoring and fault diagnosis based on machine learning methods. Int. J. Adv. Manuf. Technol. 2019, 102, 2321–2337.

- Gharahbagheri, H.; Imtiaz, S.A.; Khan, F. Root cause diagnosis of process fault using KPCA and Bayesian network. Ind. Eng. Chem. Res. 2017, 56, 2054–2070.

- Cui, P.; Li, J.; Wang, G. Improved kernel principal component analysis for fault detection. Expert Syst. Appl. 2008, 34, 1210–1219.

- Yang, J.; Jin, Z.; Yang, J.-Y.; Zhang, D.; Frangi, A.F. Essence of kernel Fisher discriminant: KPCA plus LDA. Pattern Recognit. 2004, 37, 2097–2100.

- Jafari, N.; Lopes, A.M. Fault Detection and Identification with Kernel Principal Component Analysis and Long Short-Term Memory Artificial Neural Network Combined Method. Axioms 2023, 12, 583.

- Wang, H.; Peng, M.J.; Yu, Y.; Saeed, H.; Hao, C.M.; Liu, Y.K. Fault identification and diagnosis based on KPCA and similarity clustering for nuclear power plants. Ann. Nucl. Energy 2021, 150, 107786.

- Sinaga, K.P.; Hussain, I.; Yang, M.-S. Entropy K-means clustering with feature reduction under unknown number of clusters. IEEE Access 2021, 9, 67736–67751.

- Ni, J.; Zhang, C.; Yang, S.X. An adaptive approach based on KPCA and SVM for real-time fault diagnosis of HVCBs. IEEE Trans. Power Deliv. 2011, 26, 1960–1971.

- Honeine, P. Analyzing sparse dictionaries for online learning with kernels. IEEE Trans. Signal Process. 2015, 63, 6343–6353.

- Alcala, C.F.; Qin, S.J. Reconstruction-based contribution for process monitoring with kernel principal component analysis. Ind. Eng. Chem. Res. 2010, 49, 7849–7857.

- Alcala, C.F. Fault Diagnosis with Reconstruction Based Contributions for Statistical Process Monitoring. Ph.D. Thesis, University of Southern California, Los Angeles, CA, USA, 2011.

- Lahdhiri, H.; Aljuhani, A.; Abdellafou, K.B.; Taouali, O. An Improved Fault Diagnosis Strategy for Process Monitoring Using Reconstruction Based Contributions. IEEE Access 2021, 9, 79520–79533.

- Mansouri, M.; Nounou, M.; Nounou, H.; Karim, N. Kernel PCA-based GLRT for nonlinear fault detection of chemical processes. J. Loss Prev. Process Ind. 2016, 40, 334–347.

- Lee, J.M.; Yoo, C.; Lee, I.B. Fault detection of batch processes using multiway kernel principal component analysis. Comput. Chem. Eng. 2004, 28, 1837–1847.

- Hu, Q.; Qin, A.; Zhang, Q.; He, J.; Sun, G. Fault diagnosis based on weighted extreme learning machine with wavelet packet decomposition and KPCA. IEEE Sens. J. 2018, 18, 8472–8483.

- Chen, Z.; Zhao, W.; Shen, P.; Wang, C.; Jiang, Y. A fault diagnosis method for ultrasonic flow meters based on KPCA-CLSSA-SVM. Processes 2024, 12, 809.

- Valle, S.; Li, W.; Qin, S.J. Selection of the number of principal components: The variance of the reconstruction error criterion with a comparison to other methods. Ind. Eng. Chem. Res. 1999, 38, 4389–4401.

- Zeng, L.; Long, W.; Li, Y. A Novel Method for Gas Turbine Condition Monitoring Based on KPCA and Analysis of Statistics T2 and SPE. Processes 2019, 7, 124.

- Choi, S.; Changkyu, L.; Lee, J.; Park, L.; Lee, I. Fault detection and identification of nonlinear processes based on kernel PCA. Chemom. Intell. Lab. Syst. 2005, 75, 55–67.

- Sheriff, M.Z.; Mansouri, M.; Karim, M.N.; Nounou, H.; Nounou, M. Fault detection using multiscale PCA-based moving window GLRT. J. Process Control 2017, 54, 47–64.

- Harrou, F.; Nounou, M.N.; Nounou, H.N.; Madakyaru, M. Statistical fault detection using PCA-based GLR hypothesis testing. J. Loss Prev. Process Ind. 2013, 26, 129–139.

- Fezai, R.; Mansouri, M.; Abodayeh, K.; Nounou, H.; Nounou, M.; Messaoud, H. Partial kernel PCA-based GLRT for fault diagnosis of nonlinear processes. J. Intell. Fuzzy Syst. 2020, 38, 4829–4843.

- Zhu, J.; Shi, H.; Song, B.; Tan, S.; Tao, Y. Deep neural network based recursive feature learning for nonlinear dynamic process monitoring. Can. J. Chem. Eng. 2020, 98, 919–933.

- Lee, G.; Han, C.; Yoon, E.S. Multiple-fault diagnosis of the Tennessee Eastman process based on system decomposition and dynamic PLS. Ind. Eng. Chem. Res. 2004, 43, 8037–8048.

- Ma, L.; Dong, J.; Peng, K. A novel key performance indicator oriented hierarchical monitoring and propagation path identification framework for complex industrial processes. ISA Trans. 2020, 96, 1–13.

- Bolboacă, R.; Haller, P.; Genge, B. Feature analysis and ensemble-based fault detection techniques for nonlinear systems. Neural Comput. Appl. 2025, 37, 10465–10489.

- Kaib, M.T.H.; Kouadri, A.; Harkat, M.F.; Bensmail, A.; Mansouri, M. Improving kernel PCA-based algorithm for fault detection in nonlinear industrial process through fractal dimension. Process Saf. Environ. Prot. 2023, 179, 525–536.

- Shahzad, F.; Huang, Z.; Memon, W. Process Monitoring Using Kernel PCA and Kernel Density Estimation-Based SSGLR Method for Nonlinear Fault Detection. Appl. Sci. 2022, 12, 2981.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.