NightTrack: Joint Night-Time Image Enhancement and Object Tracking for UAVs

Highlights

- We propose NightTrack, a unified framework that jointly optimizes low-light enhancement and object tracking, outperforming state-of-the-art methods in night-time UAV scenarios.

- By introducing Pyramid Attention Modules (PAMs) and jointly estimating illumination and noise, the framework significantly enhances the discriminability of features in low-light conditions.

- The unified paradigm of integrating enhancement and tracking offers a more effective solution for tasks with competing objectives than traditional two-stage pipelines.

- The proposed framework substantially improves the robustness and precision of night-time UAV tracking and offers a new perspective on practical deployment in challenging low-light environments.

Abstract

1. Introduction

- (1) A novel end-to-end framework that jointly optimizes low-light image enhancement and object tracking, significantly improving tracking performance at night.

- (2) Pyramid Attention Modules (PAMs), each of which aggregates multi-scale contextual information, thereby substantially enhancing the underlying network’s representation capability and facilitating better detail restoration in night scenes.

- (3) An integration of Retinex theory-based illumination and noise curves within the enhancement stage, coupled with a no-reference loss function that enables unsupervised learning of the feature transformation without paired data, counteracting the degradation caused by low-light conditions.

- (4) A systematic experimental evaluation of the proposed joint optimization method, demonstrating SOTA performance on multiple night-time tracking benchmarks and consistent gains over baseline tracking networks.

2. Related Work

2.1. Low-Light Image Enhancement Methods

2.2. Image Enhancement for Downstream Vision Tasks

3. Methodology

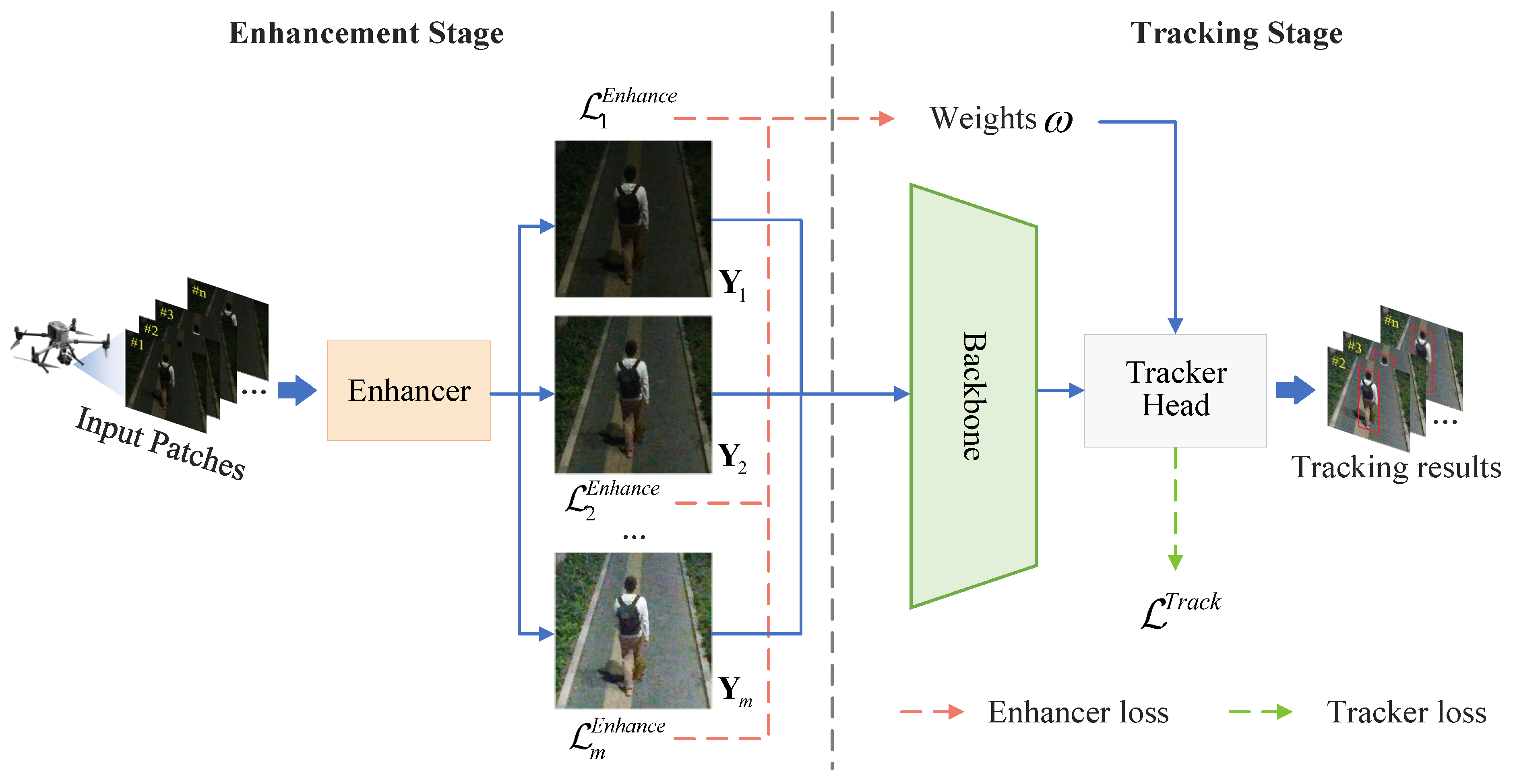

3.1. Overall Framework

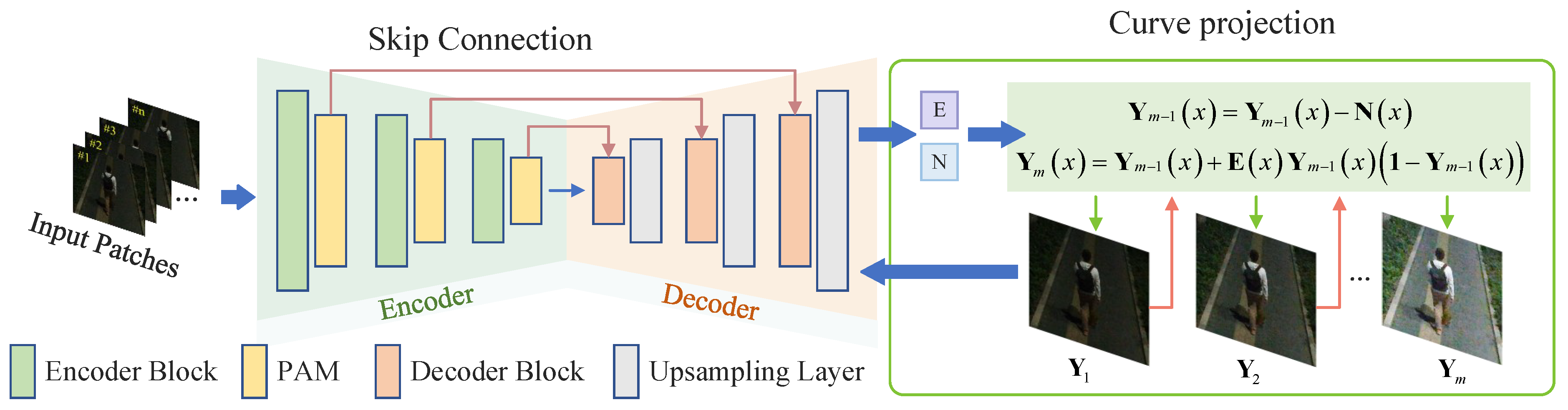

3.2. Enhancement Stage

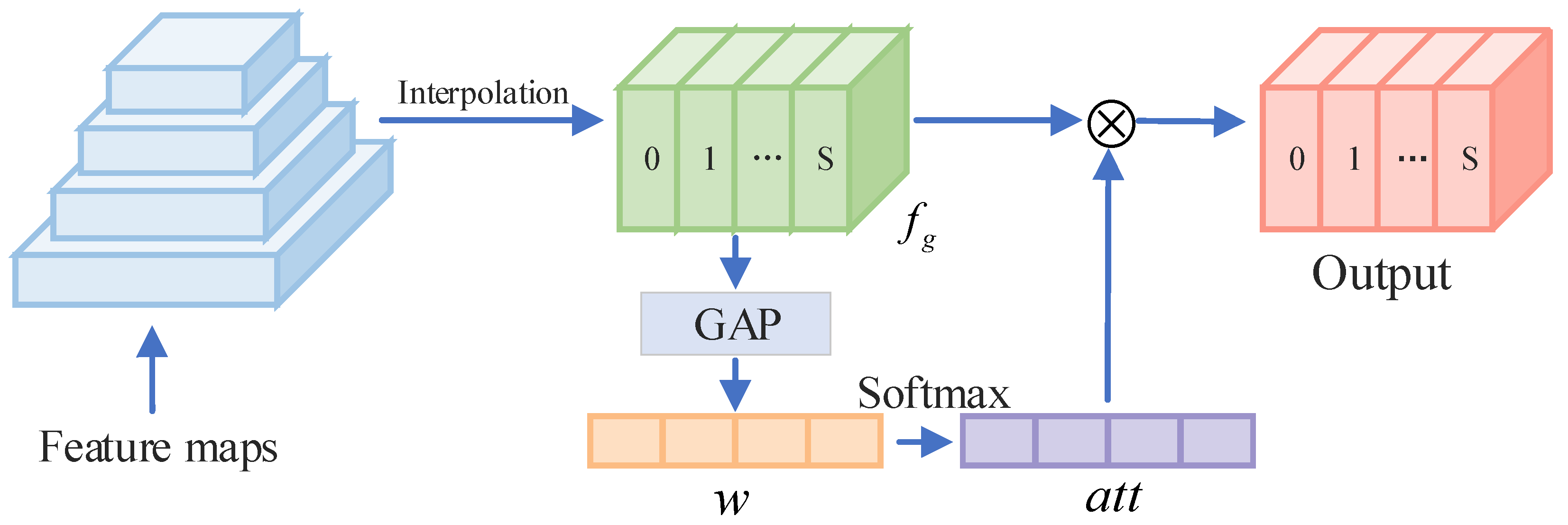

3.2.1. Pyramid Attention Module

| Algorithm 1 Pyramid Attention Module (PAM) algorithm |
|---|
| Input: Feature map |
| Parameter: Set the pooling sizes: Define is the size of , S is the number of sub-features |
| Output: The feature map of the K-th encoder |
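As a rough illustration of the quantities Algorithm 1 names (pooling sizes, S sub-features) and the attention terms listed in Appendix B (two fully connected layers, ReLU, Sigmoid, Softmax recalibration, Hadamard weighting), the NumPy sketch below shows one plausible forward pass. It is not the authors' implementation: the function names, the pool-then-upsample step, the weight shapes, and the requirement that every pooling size divides H and W are assumptions made for illustration.

```python
import numpy as np


def pool_and_upsample(x, p):
    """Average-pool x of shape (c, H, W) to a p x p grid, then repeat back to H x W."""
    c, h, w = x.shape
    assert h % p == 0 and w % p == 0, "sketch assumes p divides H and W"
    pooled = x.reshape(c, p, h // p, p, w // p).mean(axis=(2, 4))
    return pooled.repeat(h // p, axis=1).repeat(w // p, axis=2)


def pyramid_attention(feat, pool_sizes, w1, w2):
    """feat: (C, H, W); one pooling size per sub-feature, so S = len(pool_sizes).

    w1 has shape (C/S, r) and w2 has shape (r, C/S): the two fully connected
    layers, shared across sub-features (an assumption of this sketch).
    """
    s = len(pool_sizes)
    subs = np.split(feat, s, axis=0)                  # S sub-features of C/S channels
    ctx = [pool_and_upsample(x, p) for x, p in zip(subs, pool_sizes)]
    z = np.stack([x.mean(axis=(1, 2)) for x in ctx])  # channel descriptors, (S, C/S)
    hidden = np.maximum(z @ w1, 0.0)                  # first FC layer + ReLU
    a = 1.0 / (1.0 + np.exp(-(hidden @ w2)))          # second FC layer + Sigmoid
    e = np.exp(a - a.max(axis=0, keepdims=True))
    weights = e / e.sum(axis=0, keepdims=True)        # Softmax across the S sub-features
    out = [wi[:, None, None] * x for wi, x in zip(weights, ctx)]  # Hadamard weighting
    return np.concatenate(out, axis=0), weights


rng = np.random.default_rng(0)
feature = rng.random((8, 8, 8))                       # C = 8, H = W = 8
fc1, fc2 = rng.standard_normal((2, 4)), rng.standard_normal((4, 2))
output, attn = pyramid_attention(feature, (1, 2, 4, 8), fc1, fc2)
```

Here `output` keeps the input shape `(8, 8, 8)`, and for each channel position the Softmax weights in `attn` sum to one across the four sub-features.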

3.2.2. Curve Projection Based on Retinex Theory
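Appendix B describes a reflection component obtained by element-wise division (⊘), a learned parameter matrix per iteration that controls the mapping intensity, and M curve iterations. The sketch below illustrates those two ideas under stated assumptions. The Retinex relation (image = reflectance ⊙ illumination) is standard, but the quadratic curve used here is the Zero-DCE form, swapped in as a stand-in because the section's own equations are not reproduced in this text. The function names, the `eps` guard, and the omission of the noise term are likewise assumptions.

```python
import numpy as np


def reflectance(image, illumination, eps=1e-4):
    """Retinex: image = reflectance * illumination, so reflectance = image / illumination."""
    return image / np.maximum(illumination, eps)  # eps avoids division by zero


def iterate_curve(image, params):
    """Apply a quadratic brightening curve once per parameter map.

    Zero-DCE-style stand-in: x <- x + a * x * (1 - x). With x in [0, 1] and
    a in [-1, 1] the result stays in [0, 1]. Not the paper's own equations.
    """
    x = image
    outputs = []
    for a in params:
        x = x + a * x * (1.0 - x)
        outputs.append(x)
    return outputs


dark = np.full((3, 4, 4), 0.2)                    # a uniformly dark RGB image
steps = iterate_curve(dark, [np.full_like(dark, 0.5)] * 4)
```

With a constant parameter of 0.5, the first iteration lifts 0.2 to 0.28 and each later iteration brightens the image further without exceeding 1.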

3.2.3. Loss Functions
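The notation in Appendix B lists four no-reference terms: a center exposure intensity loss over non-overlapping patches with a target of 0.6, an illumination loss that enforces smoothness through a first-order gradient, a color balance loss over pairs of color channels, and a noise loss, combined with the coefficients λcen, λill, λcol and λnoi. The following sketch shows one way such terms could be written. The exact functional forms, the exponential center weight, the patch size, and the default coefficient values are assumptions for illustration and are not taken from the paper.

```python
import numpy as np


def center_exposure_loss(enhanced, patch=4, target=0.6):
    """Center-weighted distance between patch means and the target exposure."""
    gray = enhanced.mean(axis=0)                               # (H, W)
    rows, cols = gray.shape[0] // patch, gray.shape[1] // patch
    means = gray[:rows * patch, :cols * patch].reshape(rows, patch, cols, patch).mean(axis=(1, 3))
    r = (np.arange(rows)[:, None] - (rows - 1) / 2) / rows     # row offset from center
    c = (np.arange(cols)[None, :] - (cols - 1) / 2) / cols     # column offset from center
    weight = np.exp(-(r ** 2 + c ** 2))                        # assumed center-emphasis map
    return float((weight * np.abs(means - target)).mean())


def illumination_smoothness_loss(illumination):
    """Mean absolute first-order difference along both spatial axes."""
    dy = np.abs(np.diff(illumination, axis=-2)).mean()
    dx = np.abs(np.diff(illumination, axis=-1)).mean()
    return float(dx + dy)


def color_balance_loss(enhanced):
    """Squared differences between the mean intensities of each channel pair."""
    m = enhanced.mean(axis=(1, 2))
    return float(sum((m[p] - m[q]) ** 2 for p in range(3) for q in range(p + 1, 3)))


def noise_loss(noise):
    """Mean magnitude of the estimated noise map."""
    return float(np.abs(noise).mean())


def enhancement_loss(enhanced, illumination, noise,
                     lam_cen=1.0, lam_ill=1.0, lam_col=1.0, lam_noi=1.0):
    """Weighted sum of the four terms; the coefficient values are placeholders."""
    return (lam_cen * center_exposure_loss(enhanced)
            + lam_ill * illumination_smoothness_loss(illumination)
            + lam_col * color_balance_loss(enhanced)
            + lam_noi * noise_loss(noise))


well_exposed = np.full((3, 8, 8), 0.6)
tinted = np.stack([np.full((8, 8), v) for v in (0.2, 0.4, 0.6)])
```

A uniformly gray image at the target exposure, with constant illumination and zero noise, incurs essentially zero loss, while the tinted image is penalized by the color balance term.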

3.3. Tracking Stage
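Appendix B describes the tracking loss as a weighted sum of a GIoU component, an L1 component, and a location classification component. The sketch below computes the standard Generalized IoU for axis-aligned boxes and combines the three terms. The `(x1, y1, x2, y2)` box format, the function names, the averaging in the L1 term, and the default weights are assumptions for illustration. The location loss is passed in as a precomputed number because its form is not given here.

```python
def giou(box_a, box_b):
    """Generalized IoU for (x1, y1, x2, y2) boxes with positive area."""
    ax1, ay1, ax2, ay2 = box_a
    bx1, by1, bx2, by2 = box_b
    inter = (max(0.0, min(ax2, bx2) - max(ax1, bx1))
             * max(0.0, min(ay2, by2) - max(ay1, by1)))
    union = (ax2 - ax1) * (ay2 - ay1) + (bx2 - bx1) * (by2 - by1) - inter
    # Smallest axis-aligned box that encloses both inputs.
    hull = (max(ax2, bx2) - min(ax1, bx1)) * (max(ay2, by2) - min(ay1, by1))
    return inter / union - (hull - union) / hull


def tracking_loss(pred, target, location_loss, w_giou=1.0, w_l1=1.0, w_loc=1.0):
    """Weighted sum of GIoU, L1 and location terms; weights are placeholders."""
    l_giou = 1.0 - giou(pred, target)
    l_l1 = sum(abs(p - t) for p, t in zip(pred, target)) / 4.0
    return w_giou * l_giou + w_l1 * l_l1 + w_loc * location_loss
```

A perfect prediction gives a GIoU of 1 and a loss of 0. Two unit boxes separated by a one-unit gap give a GIoU of -1/3, so the GIoU term still supplies a training signal when the boxes do not overlap.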

4. Experimental Evaluation

4.1. Experimental Setup

4.2. Evaluation Metrics

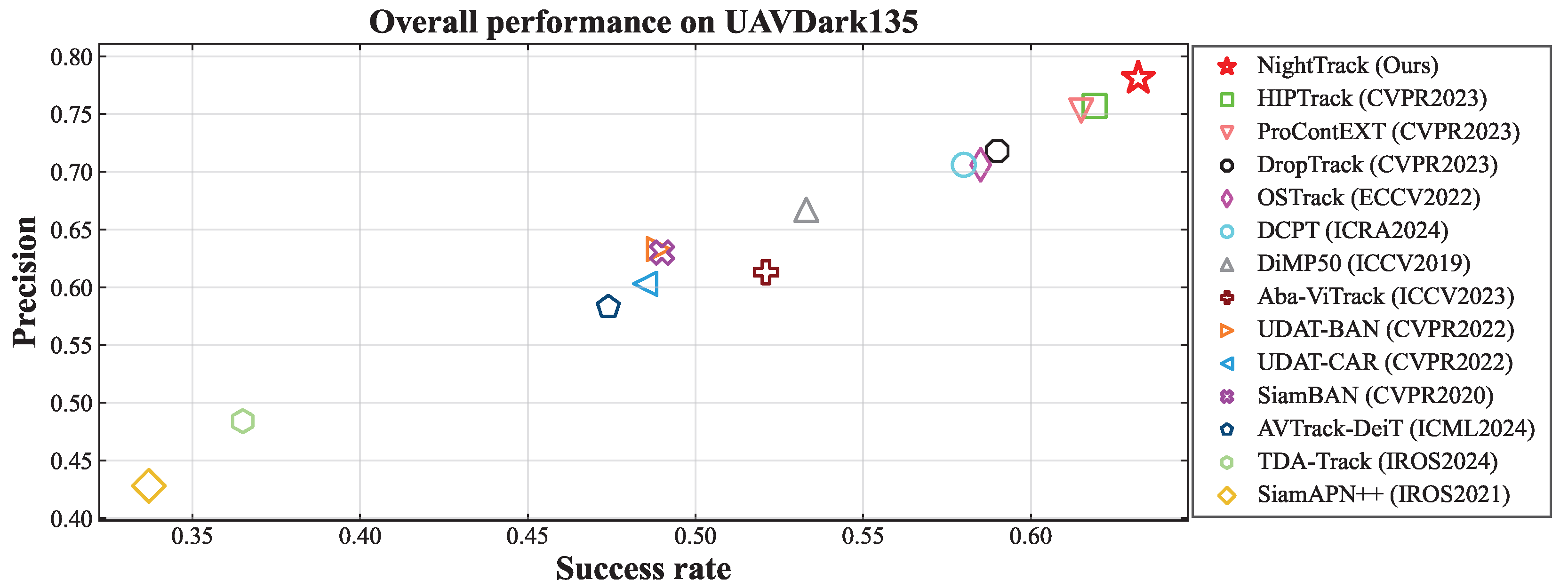

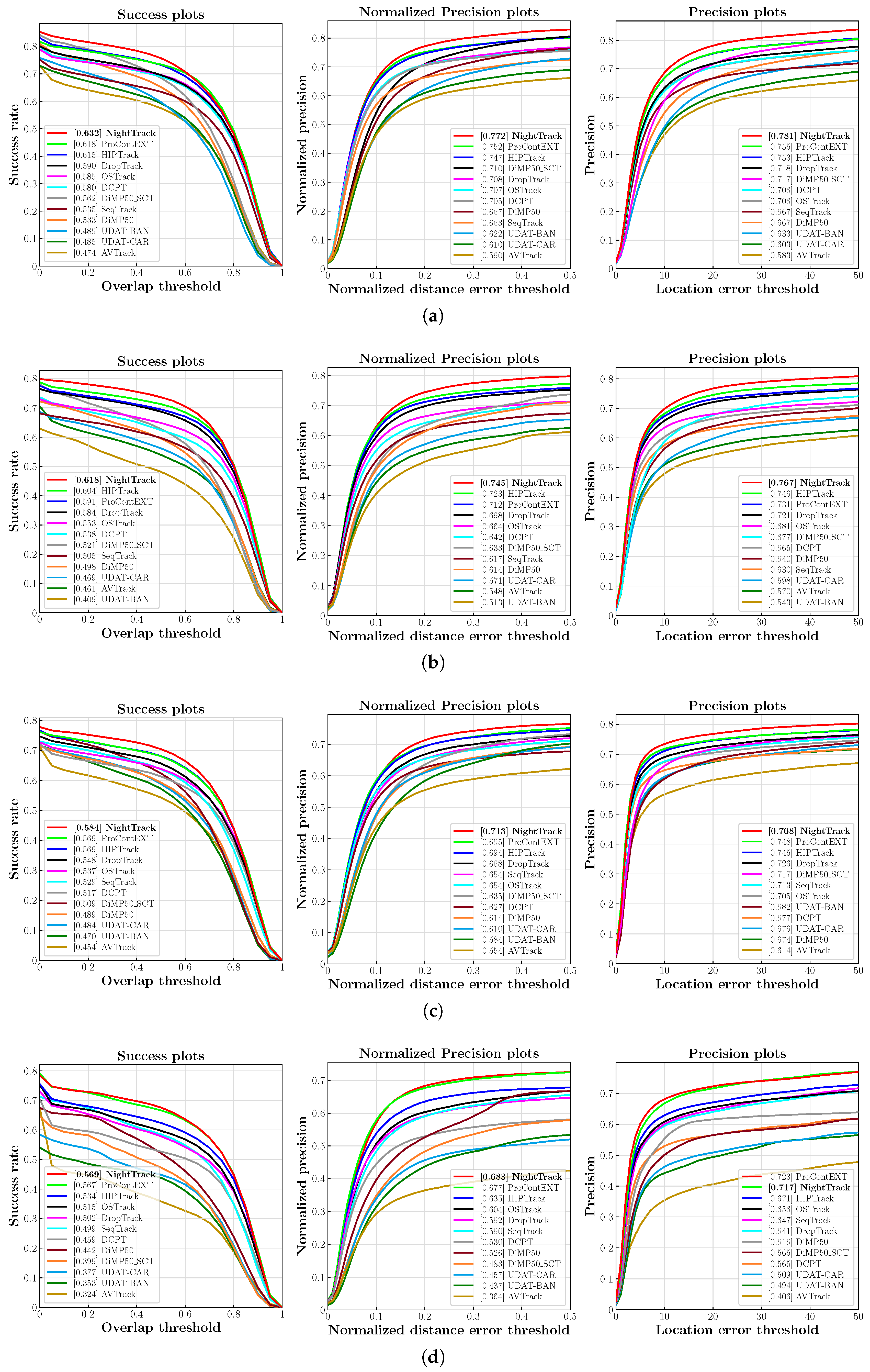

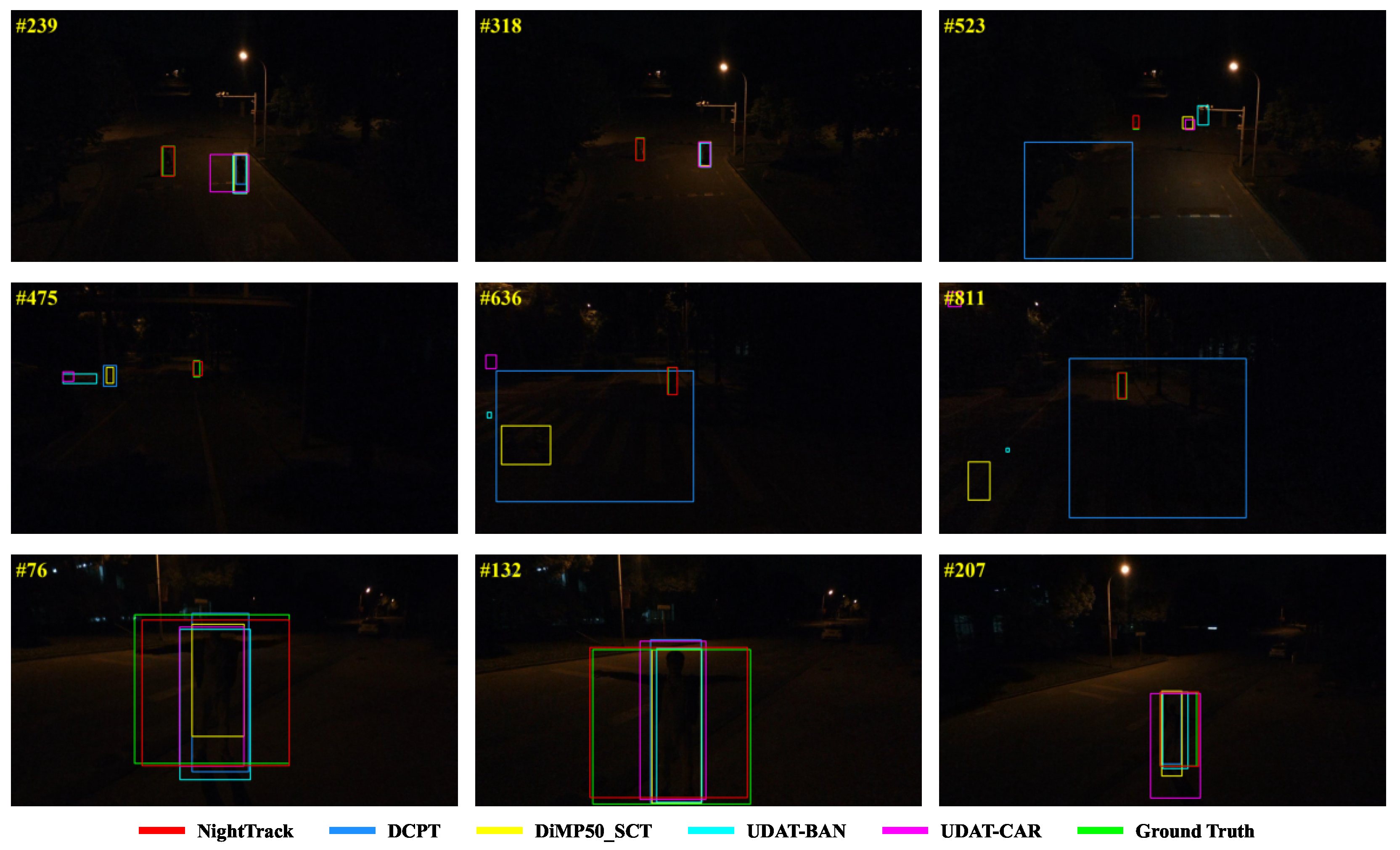
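The abbreviations list names One-Pass Evaluation (OPE), the Success Plot with its Area Under the Curve (AUC), and the Precision Plot based on Center Location Error (CLE). As a minimal sketch of how such scores are commonly computed in the OTB-style protocol, the functions below take per-frame IoU and CLE values. The 21 overlap thresholds and the 20-pixel precision threshold are the usual OTB defaults and are assumed here rather than taken from this paper.

```python
import math


def center_location_error(pred_center, gt_center):
    """Euclidean distance in pixels between predicted and ground-truth centers."""
    return math.hypot(pred_center[0] - gt_center[0], pred_center[1] - gt_center[1])


def success_auc(ious, num_thresholds=21):
    """Mean success rate over evenly spaced overlap thresholds in [0, 1]."""
    thresholds = [i / (num_thresholds - 1) for i in range(num_thresholds)]
    rates = [sum(iou > t for iou in ious) / len(ious) for t in thresholds]
    return sum(rates) / len(rates)


def precision_at(errors, threshold=20.0):
    """Fraction of frames whose CLE is within the pixel threshold."""
    return sum(e <= threshold for e in errors) / len(errors)
```

For example, `precision_at([5, 25, 20])` counts two of three frames as precise, and `success_auc` averages the fraction of frames whose overlap exceeds each threshold.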

4.3. Overall Performance

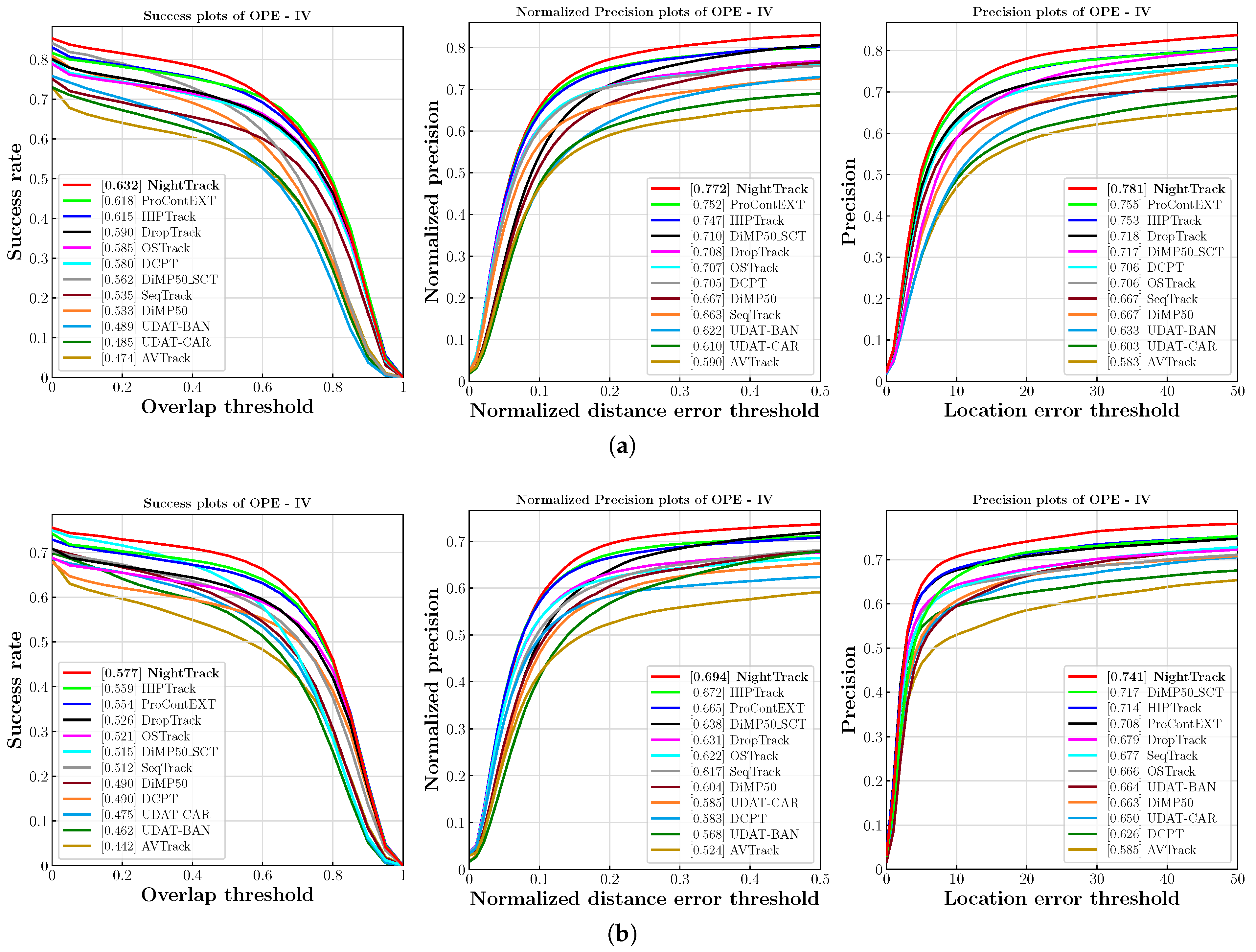

4.4. Illumination-Oriented Evaluation

4.5. Ablation Study

5. Discussion

6. Conclusions

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| UAV | Unmanned Aerial Vehicle |
| PAMs | Pyramid Attention Modules |
| SOTA | State-of-the-art |
| OPE | One-Pass Evaluation |
| SP | Success Plot |
| AUC | Area Under the Curve |
| CLE | Center Location Error |
| PP | Precision Plot |
| NAS | Neural Architecture Search |

Appendix A. Hyperparameter Selection

Appendix A.1. Primary Balancing Coefficient (γ)

Appendix A.2. Internal Balancing Coefficients (λcen, λill, λcol, λnoi)

Appendix B. Table of Notations

| Symbol | Description | Location |
|---|---|---|
| C | The total number of channels in the global feature. | Section 3.2.1 |
| | The two fully connected layers used to compute attention weights. | Section 3.2.1 (Equation (1)) |
| | The height and width of the input image. | Section 3.2 |
| K | The number of encoders (and decoders) in the U-Net backbone. | Section 3.2 |
| M | The total number of iterations in the curve mapping process. | Section 3.2.2 |
| S | The number of sub-features the global feature is partitioned into. | Section 3.2.1 |
| T | The number of non-overlapping patches the image is divided into. | Section 3.2.3 (Equation (13)) |
| e | Euler’s number, used in the calculation of the weight map. | Section 3.2.3 (Equation (13)) |
| | The height and width of the feature map after K downsampling operations. | Section 3.2 |
| | The spatial coordinates (row and column) of a patch. | Section 3.2.3 (Equation (13)) |
| | Indices for color channels, used in the color balance loss. | Section 3.2.3 (Equation (15)) |
| | The empirically set target average illumination value for the patches (0.6). | Section 3.2.3 (Equation (13)) |
| | A matrix of ones with the same dimensions as the input image. | Section 3.2.2 (Equation (8)) |
| | The learned parameter matrix for the m-th iteration, controlling the mapping intensity. | Section 3.2.2 (Equation (9)) |
| | The final, recalibrated attention weight after Softmax normalization. | Section 3.2.1 |
| | A matrix composed of the average intensity values of each patch. | Section 3.2.3 (Equation (13)) |
| | The reciprocal of the illumination map, defined as . | Section 3.2.2 (Equation (8)) |
| | The input low-light image, with dimensions . | Section 3.2 |
| | The estimated illumination map, with dimensions . | Section 3.2 |
| | The estimated noise map, with dimensions . | Section 3.2 |
| | The final weighted output feature for the i-th sub-feature. | Section 3.2.1 (Equation (4)) |
| | The reflection component, representing the intrinsic properties of objects. | Section 3.2.2 |
| | A spatial weight map that emphasizes the central area of an image. | Section 3.2.3 (Equation (13)) |
| | The enhanced image at the m-th iteration. | Section 3.2 |
| | The brightness response of the image at pixel x after the m-th iteration. | Section 3.2.2 |
| | The p-th color channel (e.g., R, G, B) of the enhanced image. | Section 3.2.3 (Equation (15)) |
| | The global context feature aggregated by the Pyramid Attention Module (PAM). | Section 3.2.1 |
| | The i-th sub-feature, with dimensions . | Section 3.2.1 |
| | The initial attention weight for the i-th sub-feature before normalization. | Section 3.2.1 |
| | The channel descriptor for the i-th sub-feature after global average pooling. | Section 3.2.1 |
| | The denoised version of the output from the -th iteration. | Section 3.2.2 (Equation (10)) |
| | The ReLU activation function. | Section 3.2.1 (Equation (1)) |
| γ | The primary balancing coefficient for the enhancement loss. | Section 3.2.2 (Equation (12)) |
| λcen | Internal balancing coefficient for the center exposure intensity loss. | Section 3.2.3 (Equation (17)) |
| λcol | Internal balancing coefficient for the color balance loss. | Section 3.2.3 (Equation (17)) |
| | Pre-defined hyperparameter to balance the GIoU loss. | Section 3.3 (Equation (19)) |
| λill | Internal balancing coefficient for the illumination estimation loss. | Section 3.2.3 (Equation (17)) |
| | Pre-defined hyperparameter to balance the L1 loss. | Section 3.3 (Equation (19)) |
| | Pre-defined hyperparameter to balance the location loss. | Section 3.3 (Equation (19)) |
| λnoi | Internal balancing coefficient for the noise estimation loss. | Section 3.2.3 (Equation (17)) |
| ∇ | The first-order differential operator (gradient). | Section 3.2.3 (Equation (14)) |
| ⊙ | The element-wise (Hadamard) multiplication operator. | Section 3.2.1 |
| | The weight for the original (non-enhanced) image, which is fixed to 1. | Section 3.3 (Equation (18)) |
| | The weight assigned to the tracking loss of the m-th iteration, computed from its enhancement loss. | Section 3.3 (Equation (18)) |
| ⊘ | The pixel-wise (element-wise) division operator. | Section 3.2.2 (Equation (7)) |
| | The Sigmoid activation function. | Section 3.2.1 (Equation (1)) |
| | The total loss for the enhancement stage, defined as the sum of all iterative losses. | Section 3.2.2 |
| | The total tracking loss from the HipTrack baseline, a weighted sum of its components. | Section 3.3 (Equation (19)) |
| | The Generalized Intersection over Union (GIoU) loss component. | Section 3.3 (Equation (19)) |
| | The L1 norm loss component. | Section 3.3 (Equation (19)) |
| | The location classification loss component. | Section 3.3 (Equation (19)) |
| | The total loss for the end-to-end joint optimization of the enhancer and tracker. | Section 3.3 (Equation (20)) |
| | The enhancement loss corresponding to the output of the m-th iteration. | Section 3.2.2 |
| | The center exposure intensity loss, focusing on the brightness of the central area. | Section 3.2.3 (Equation (13)) |
| | The color balance loss, minimizing intensity differences between color channels. | Section 3.2.3 (Equation (15)) |
| | The illumination estimation loss, enforcing the smoothness of the illumination map. | Section 3.2.3 (Equation (14)) |
| | The noise estimation loss, used to suppress the estimated noise component. | Section 3.2.3 (Equation (16)) |

| Method | UAVDark135 Success (↑) | UAVDark135 Precision (↑) | DarkTrack2021 Success (↑) | DarkTrack2021 Precision (↑) | NAT2021-test Success (↑) | NAT2021-test Precision (↑) | NAT2021-L-test Success (↑) | NAT2021-L-test Precision (↑) |
|---|---|---|---|---|---|---|---|---|
| Base | 61.5 | 75.3 | 60.4 | 74.6 | 56.9 | 74.5 | 53.4 | 67.1 |
| Base + PAM | 62.3 (0.8↑) | 76.7 (1.4↑) | 61.3 (0.9↑) | 75.9 (1.3↑) | 57.8 (0.9↑) | 76.0 (1.5↑) | 55.3 (1.9↑) | 69.2 (2.8↑) |
| Base + Noise | 62.1 (0.6↑) | 76.2 (0.9↑) | 61.2 (0.8↑) | 75.4 (0.8↑) | 57.5 (0.6↑) | 75.6 (1.1↑) | 54.3 (0.9↑) | 68.7 (1.6↑) |
| Base + Enhancer | 62.3 (0.8↑) | 75.9 (0.6↑) | 60.3 (0.1↓) | 74.9 (0.3↑) | 57.4 (0.5↑) | 75.5 (1.0↑) | 54.7 (1.3↑) | 69.0 (1.9↑) |
| Base + PAM + Noise | 63.2 (1.7↑) | 78.1 (2.8↑) | 61.8 (1.4↑) | 76.7 (2.1↑) | 58.4 (1.5↑) | 76.8 (2.3↑) | 56.9 (3.5↑) | 71.7 (4.6↑) |
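The bracketed gains in this table are differences from the Base row, so they can be checked with simple arithmetic. The short script below transcribes the printed values by hand and recomputes each gain. The variable and function names are illustrative only.

```python
DATASETS = ("UAVDark135", "DarkTrack2021", "NAT2021-test", "NAT2021-L-test")
COLUMNS = [f"{d} {m}" for d in DATASETS for m in ("Success", "Precision")]

BASE = [61.5, 75.3, 60.4, 74.6, 56.9, 74.5, 53.4, 67.1]

# Each entry: (scores as printed, bracketed gains as printed; a drop is negative).
ROWS = {
    "Base + PAM": ([62.3, 76.7, 61.3, 75.9, 57.8, 76.0, 55.3, 69.2],
                   [0.8, 1.4, 0.9, 1.3, 0.9, 1.5, 1.9, 2.8]),
    "Base + Noise": ([62.1, 76.2, 61.2, 75.4, 57.5, 75.6, 54.3, 68.7],
                     [0.6, 0.9, 0.8, 0.8, 0.6, 1.1, 0.9, 1.6]),
    "Base + Enhancer": ([62.3, 75.9, 60.3, 74.9, 57.4, 75.5, 54.7, 69.0],
                        [0.8, 0.6, -0.1, 0.3, 0.5, 1.0, 1.3, 1.9]),
    "Base + PAM + Noise": ([63.2, 78.1, 61.8, 76.7, 58.4, 76.8, 56.9, 71.7],
                           [1.7, 2.8, 1.4, 2.1, 1.5, 2.3, 3.5, 4.6]),
}


def find_mismatches():
    """Return (row, column, recomputed gain, printed gain) where the two differ."""
    mismatches = []
    for name, (scores, printed) in ROWS.items():
        for column, score, base, gain in zip(COLUMNS, scores, BASE, printed):
            recomputed = round(score - base, 1)
            if abs(recomputed - gain) > 1e-9:
                mismatches.append((name, column, recomputed, gain))
    return mismatches


print(find_mismatches())
```

On the values as printed, every bracketed gain agrees with the recomputed difference except Base + PAM on NAT2021-L-test Precision, where 69.2 - 67.1 gives 2.1 rather than the printed 2.8. Either the score or the gain in that cell appears to be mistyped.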

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Huang, X.; Bai, Y.; Ma, J.; Li, Y.; Shang, C.; Shen, Q. NightTrack: Joint Night-Time Image Enhancement and Object Tracking for UAVs. Drones 2025, 9, 824. https://doi.org/10.3390/drones9120824