Abstract

Memory-resident malware, particularly fileless and heavily obfuscated variants, remains a major problem for endpoint defense tools because it often slips past traditional signature-based detection. Deep learning has shown promise in identifying such activity, but its use in real Security Operations Centers (SOCs) is still limited because the internal reasoning of neural network models is difficult to interpret or verify. To address this, we present HAGEN, a hierarchical attention architecture designed to combine strong classification performance with explanations that security analysts can understand and trust. HAGEN processes memory artifacts through a series of attention layers that highlight important behavioral cues at different scales, while a gated mechanism controls how information flows through the network. This structure lets the system expose the basis of its decisions rather than simply output a label. To further support transparency, the final classification step is guided by representative prototypes, so predictions can be related back to class prototypes learned during training. When evaluated on the CIC-MalMem-2022 dataset, HAGEN achieved 99.99% accuracy in distinguishing benign programs from major malware classes such as spyware, ransomware, and trojans, with modest computational requirements suitable for live environments. Beyond accuracy, HAGEN produces visual and numeric explanations, such as attention maps and prototype distances, that help investigators understand which memory patterns contributed to each decision, making it a practical tool for both detection and forensic analysis.

1. Introduction

Over the last decade, cyber threats have undergone a marked transformation, with a noticeable rise in malware that operates directly within system memory. Unlike traditional malicious programs that rely on placing files on disk, these modern variants carry out their activities entirely in volatile memory, often by abusing trusted system utilities and legitimate processes [1]. Their ability to avoid leaving persistent traces has made them especially difficult for conventional antivirus tools to detect. Recent threat intelligence studies show that techniques associated with memory-resident or fileless attacks now appear in a large proportion of intrusion attempts, and their success rates are significantly higher than those of file-based methods [2]. As a result, approaches grounded in dynamic behavior and memory forensics have become essential, since they allow investigators to observe malicious activity that static methods fail to capture [3].

Deep learning has emerged as an influential direction for automating this type of malware analysis. Its capacity to learn structured representations directly from raw forensic data has enabled researchers to design systems that perform extremely well on a range of detection tasks, including the identification of previously unknown malware families [4]. Models based on convolutional, recurrent, and attention-driven architectures have shown strong performance in characterizing the behavioral signals present in memory dumps [5]. Nevertheless, practical deployment remains challenging. Many deep learning models consume significant computational resources, which complicates their use in constrained environments, and the rapid expansion of malware diversity creates pressure for systems that can process large volumes of data efficiently. In addition, concerns remain regarding their robustness against adversarial manipulation, since attackers increasingly attempt to exploit weaknesses in learned statistical patterns [6].

Despite these obstacles, the central issue limiting adoption in operational security settings is the difficulty in understanding how deep learning systems arrive at their classifications [7]. Security analysts require more than accurate labels; they need clear, defensible explanations that reveal why a model believes a sample is suspicious. In environments where time and accuracy are critical, an opaque detection system can cause serious problems: excessive false alarms overwhelm analysts, while undetected threats can lead to severe consequences [8]. Explainable Artificial Intelligence has emerged as a research discipline addressing this transparency deficit, yet existing approaches predominantly rely on post hoc interpretation methods such as SHAP and LIME that approximate model behavior rather than revealing intrinsic decision logic [7]. Attention mechanisms, on the other hand, offer a promising avenue for models that reveal their internal focus during decision making [9].

Memory forensics has established itself as an indispensable methodology for detecting obfuscated malware that deliberately evades conventional analysis pipelines [10]. The CIC-MalMem-2022 benchmark dataset, comprising 58,596 memory dump samples across Benign, Spyware, Ransomware, and Trojan categories, provides a standardized evaluation framework incorporating 55 behavioral features extracted via the Volatility forensics platform [10]. Prior studies using this collection have demonstrated that both traditional machine learning and deep learning architectures can achieve high detection rates when trained on memory-derived behavioral indicators [5].

This paper introduces the Hierarchical Attention-Gated Explainable Network (HAGEN), a deep learning architecture that combines strong malware classification performance with native, fine-grained interpretability for memory-based obfuscated malware detection. The proposed approach moves beyond conventional black-box detection by incorporating three complementary components: a Multi-Scale Feature Attention (MSFA) mechanism that captures feature interdependencies across multiple granularities through parallel attention heads operating at scales {1, 2, 4} with learnable fusion weights; Gated Residual Explanation Blocks (GREBs) that adaptively regulate information flow while exposing decision pathways through interpretable gate activations bounded within [0,1]; and a prototype-anchored classification head that forms predictions from Euclidean distances to learned class prototypes. Because the attention aggregation and gating mechanisms are intrinsically interpretable, the framework does not depend on post hoc explanation methods such as SHAP and LIME, which approximate model behavior rather than revealing the model's actual decision logic.

The hierarchical design enables security analysts to trace classification decisions from raw memory forensic features through attention-weighted representations to prototype-based predictions, with each architectural layer contributing visualizable explanatory artifacts including attention heatmaps, gate activation patterns, and prototype distance distributions. The methodology is comprehensively evaluated on the CIC-MalMem-2022 benchmark dataset comprising 58,596 memory dump samples across Benign, Spyware, Ransomware, and Trojan categories, demonstrating exceptional multi-class detection performance while maintaining a lightweight computational footprint suitable for real-time Security Operations Center deployment. The key contributions of this work include the development of multi-scale attention mechanisms specifically designed for memory forensic feature analysis, the introduction of gated residual blocks that simultaneously enhance representational capacity and decision transparency, and the demonstration that prototype-based classification provides intuitive nearest-neighbor explanations, enabling analysts to understand precisely which behavioral memory indicators drive malicious classifications.

The fundamental opacity associated with deep learning-based malware detectors significantly constrains their deployment in security-sensitive operational environments. This limitation necessitates the development of more interpretable models to enhance trust and reliability in critical applications. The main contributions of this study can be summarized as follows:

- Multi-Scale Feature Attention (MSFA): The model incorporates several attention branches that operate at different granularities, allowing it to highlight forensic memory features at scales of 1, 2, and 4. A learnable fusion process brings these streams together, enabling the system to capture relationships that span multiple levels of detail. This design helps the model concentrate more effectively on the most informative behavioral indicators.

- Gated Residual Explanation Blocks (GREBs): The GREB module introduces an adaptive mechanism for controlling how information is emphasized or suppressed during processing. Its gating signals, which fall within a bounded range, give analysts a direct view of how the network filters features while forming a decision. This built-in transparency eliminates the need for external explanation tools.

- Prototype-Anchored Prediction: Instead of relying purely on abstract feature vectors, the final classifier references learned prototypes representing each malware family. Predictions are formed by measuring the distance between an input sample and these representative examples. This nearest-prototype reasoning offers a straightforward and intuitive way for analysts to understand why a sample is assigned to a particular class.

- Native Interpretability Framework: The architecture naturally produces explanatory artifacts—including attention visualizations, gating behavior, and prototype distance profiles—that make the decision process observable at multiple stages. Because these explanations emerge from the model itself, they avoid the approximation errors common in post hoc tools such as SHAP and LIME.

Together, these components advance the development of interpretable deep learning models for memory-based malware detection and provide practical tools that can support decision making in security operation environments.

The novelty of this work lies in three contributions that collectively address the performance-interpretability dilemma in memory-based malware detection. First, unlike existing attention mechanisms that operate at a single scale, our Multi-Scale Feature Attention (MSFA) captures memory forensic patterns at scales {1, 2, 4} simultaneously with learnable fusion weights, enabling the model to recognize both fine-grained anomalies (individual process behaviors) and coarse-grained attack signatures (coordinated multi-process campaigns). Second, our Gated Residual Explanation Blocks (GREBs) are, to our knowledge, the first application of interpretable gating mechanisms designed specifically for malware detection, providing activation values bounded within [0,1] that directly quantify feature transformation decisions without post hoc approximation. Third, the prototype-anchored classification head introduces nearest-neighbor reasoning to memory malware detection, offering geometric interpretability through measurable Euclidean distances rather than opaque softmax probabilities. Critically, these mechanisms are not post-processing additions but integral architectural components that generate explanations natively during forward propagation, avoiding the computational overhead and approximation errors inherent in SHAP/LIME-based approaches [8,11].

The remainder of the paper is structured as follows: Section 2 reviews prior work on memory-based malware detection, explainable AI for security, and attention- and prototype-based models. Section 3 details the HAGEN architecture and the experimental design. Section 4 presents and discusses the evaluation results, and Section 5 concludes the paper and outlines potential directions for future research.

2. Related Works

Recent advances in deep learning have revolutionized malware detection capabilities. Maniriho et al. [12] proposed MeMalDet, employing deep autoencoders and stacked ensemble methods for memory-based malware detection, achieving robust temporal evaluation performance but requiring substantial computational resources. Kumar et al. [13] explored traditional machine learning models for malware classification, demonstrating that Random Forest and SVM deliver competitive accuracy with lower complexity compared to deep networks, though lacking the feature learning capabilities of neural architectures. These approaches highlight the accuracy-efficiency tradeoff inherent in contemporary detection systems.

Memory-based detection has emerged as crucial for identifying obfuscated threats. Mezina and Burget [14] introduced dilated convolutional networks for obfuscated malware detection on CIC-MalMem-2022, leveraging expanded receptive fields to capture long-range dependencies, yet suffering from increased parameter counts. Hasan and Dhakal [15] investigated real-world memory analysis scenarios, emphasizing dataset imbalance challenges and the necessity for robust preprocessing pipelines. Shafin et al. [6] addressed resource-constrained IoT environments, proposing lightweight architectures achieving 97% accuracy with reduced memory footprints, though compromising on detection granularity for deployment feasibility.

Hybrid approaches combining feature engineering with ensemble learning have demonstrated promising results. Roy et al. [16] developed MalHyStack, a stacked ensemble framework with sophisticated feature engineering schemes, achieving superior performance on obfuscated samples but requiring extensive domain expertise for feature design. Abualhaj et al. [17] proposed firefly algorithm-based feature selection with Random Forest classification, reducing dimensionality while maintaining accuracy, though the metaheuristic optimization introduces computational overhead. Sihwail et al. [18] enhanced Whale Optimization Algorithm with K-nearest neighbors, demonstrating effective feature subset selection, yet exhibiting sensitivity to hyperparameter configurations that limit generalizability.

Interpretability has gained critical importance in security applications. Zhang et al. [11] surveyed explainable AI applications in cybersecurity, identifying SHAP and LIME as predominant post hoc methods, but noting their approximation errors and computational expense. Iadarola et al. [19] developed Grad-CAM-based explanations for mobile malware detection, enabling visual interpretation of CNN decisions, though requiring additional inference passes. Capuano et al. [8] comprehensively reviewed XAI techniques in cybersecurity contexts, concluding that post hoc methods introduce interpretability-accuracy tradeoffs, advocating for intrinsically interpretable architectures. These works underscore the tension between model complexity and transparency in operational deployments.

Attention-based architectures have shown promise for selective feature emphasis. Tang et al. [20] introduced ConvProtoNet for few-shot malware classification, employing prototype induction to handle scarce samples, achieving competitive accuracy but requiring carefully tuned meta-learning procedures. Kim et al. [9] utilized Vision Transformer attention mechanisms for Android malware detection, extracting attention maps for interpretability, yet facing scalability challenges with high-dimensional feature spaces. Jurecek and Lorencz [21] applied distance metric learning for malware detection, demonstrating that learned embeddings improve classification separability, though necessitating extensive training data. These approaches indicate the potential of attention and metric learning for interpretable detection.

Existing methods exhibit several limitations: deep learning approaches achieve high accuracy but lack native interpretability and therefore rely on post hoc approximation methods [8,11]; lightweight models sacrifice detection capability for efficiency [6]; attention mechanisms remain underexplored for memory forensics [9]; and prototype-based explanations are absent from memory malware detection [20]. HAGEN addresses these limitations by integrating multi-scale attention designed for memory features, gated residual blocks that expose decision pathways, and prototype-anchored classification that provides intuitive explanations, all within a unified framework that attains high detection performance without the additional computation or approximation error of post hoc interpretation.

The imperative for interpretable AI extends beyond memory-based malware detection to encompass the entire cybersecurity domain, where automated threat detection systems increasingly confront sophisticated attack vectors across multiple domains. Recent work in network intrusion detection and phishing attack prevention demonstrates both the potential and limitations of deep learning approaches in security applications. Al-Mimi et al. [22] developed an improved intrusion detection system targeting DNS service attacks, while Mughaid et al. [23] proposed a deep learning-based phishing detection system achieving strong classification performance through neural network architectures.

The surveyed literature reveals a persistent dilemma: methods prioritizing interpretability (traditional ML with feature importance [13,17,18]) sacrifice detection performance and feature learning capabilities, while high-performance deep learning approaches [6,14,16] operate as black boxes requiring unreliable post hoc explanations [8,11]. Hybrid approaches [12,16] attempt to bridge this gap through ensemble stacking but inherit the interpretability limitations of their constituent models. Attention-based architectures [9,20] show promise for selective feature emphasis but remain underexplored for memory forensics specifically, and existing attention mechanisms lack the multi-scale temporal analysis critical for capturing diverse malware behavioral patterns spanning milliseconds (individual API calls) to minutes (infection progression). Furthermore, prototype-based classification—despite proven effectiveness in few-shot learning [20]—has never been applied to memory malware detection, representing a missed opportunity for intuitive nearest-neighbor explanations. HAGEN addresses these gaps by synthesizing multi-scale attention specifically architected for memory feature hierarchies (pslist → dlllist → malfind dependency chains), gated residual blocks providing transparent decision pathways unavailable in standard residual networks, and prototype anchoring enabling geometric interpretability—all within a unified framework achieving competitive performance without post hoc interpretation dependencies.

3. Materials and Methods

3.1. Overview

HAGEN is a deep learning architecture designed for memory-based obfuscated malware detection with native interpretability. The framework comprises three complementary components that collectively address the transparency limitations of conventional black-box detection systems. First, a Multi-Scale Feature Attention (MSFA) mechanism captures cross-granularity dependencies within the 55-dimensional CIC-MalMem-2022 memory forensic feature space through parallel attention heads operating at multiple scales. Second, Gated Residual Explanation Blocks (GREBs) adaptively regulate information flow while exposing decision pathways via interpretable gate activations bounded within [0,1]. Third, a prototype-anchored classification head forms predictions from Euclidean distances to learned class prototypes, providing intuitive nearest-neighbor explanations. This unified architecture removes the dependency on post hoc approximation methods while maintaining computational efficiency suitable for real-time Security Operations Center deployment. The subsequent subsections detail each architectural component, its mathematical formulation, and the training procedure.

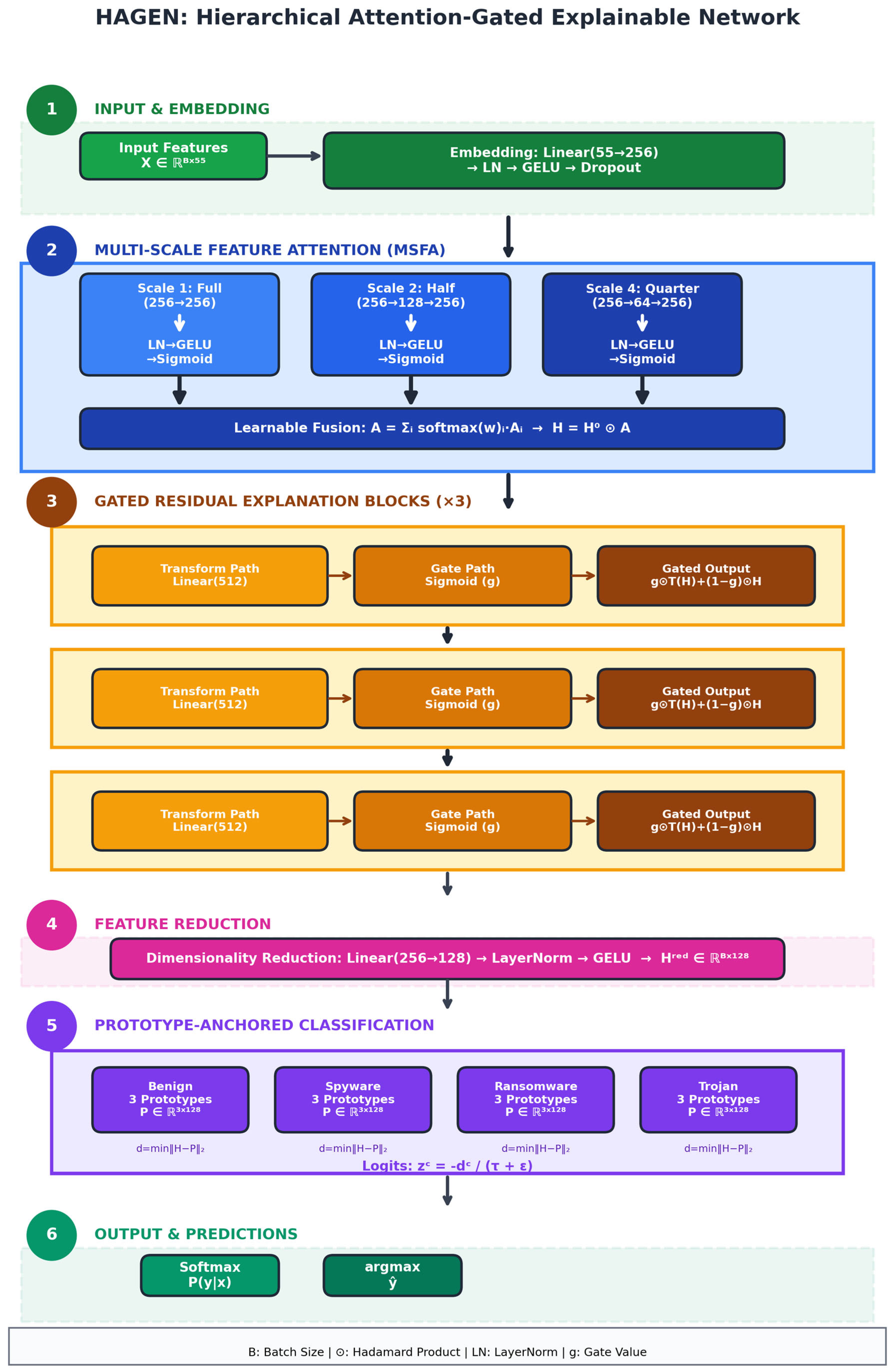

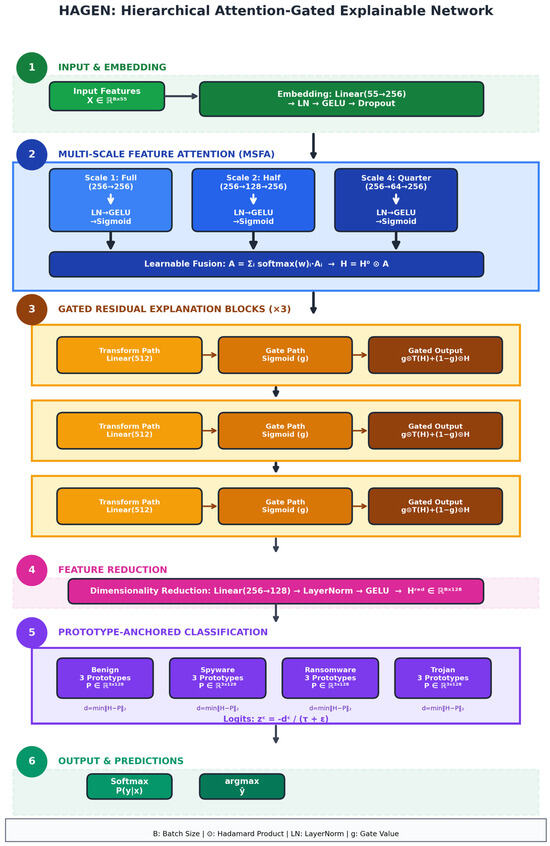

Figure 1 illustrates the complete HAGEN architecture comprising six sequential processing phases. Phase 1 transforms the 55-dimensional input feature vector x ∈ ℝ^(B × 55) through an embedding layer consisting of Linear(55 → 256), LayerNorm, GELU activation, and Dropout(0.2), producing the initial representation H0 ∈ ℝ^(B × 256). Phase 2 implements the Multi-Scale Feature Attention mechanism, where three parallel attention heads operate at full (256 → 256), half (256 → 128 → 256), and quarter (256 → 64 → 256) scales, capturing feature dependencies across multiple granularities. Each scale-specific head applies Linear → LayerNorm → GELU → Linear → Sigmoid transformations, generating attention weights that are fused through learnable softmax-normalized parameters w ∈ ℝ^3. The resulting attention-weighted representation H is computed as the element-wise product of the input embedding and the aggregated multi-scale attention, preserving dimensional consistency while emphasizing salient memory forensic features.

Figure 1. The proposed HAGEN architecture.

Phase 3 processes the attention-enhanced representation through three sequential Gated Residual Explanation Blocks, each containing parallel transform and gate pathways. The transform path applies Linear(256 → 512) → LayerNorm → GELU → Linear(512 → 256) to learn residual functions, while the gate path computes element-wise gating coefficients g ∈ [0,1]^256 through Linear(256 → 256) → Sigmoid, enabling adaptive information flow control. The gated residual output is formulated as g ⊙ f(H) + (1 − g) ⊙ H, followed by LayerNorm stabilization. Phase 4 reduces dimensionality through Linear(256 → 128) → LayerNorm → GELU, producing compact feature representations Hred ∈ ℝ^(B × 128). Phase 5 implements prototype-anchored classification, where each of the four classes (Benign, Spyware, Ransomware, Trojan) maintains three learned prototypes, P ∈ ℝ^(4 × 3 × 128). Classification logits are computed as −distances/(τ + ε), where distances are the minimum Euclidean distances between a feature representation and the prototypes of each class, and τ is a learnable temperature parameter, so that closer prototypes receive larger logits. Phase 6 applies softmax normalization to generate final predictions while simultaneously producing native explainability artifacts (attention heatmaps, gate activation patterns, and prototype distance distributions) without requiring post hoc interpretation methods.

3.2. Preliminaries and Problem Formulation

Dataset and Feature Space: Let $D = \{(x_i, y_i)\}_{i=1}^{N}$ denote the CIC-MalMem-2022 dataset comprising N = 58,596 memory dump samples, where $x_i \in \mathbb{R}^{d}$ represents the d = 55 dimensional feature vector extracted via the Volatility memory forensics framework, and $y_i \in \{0, 1, 2, 3\}$ denotes the class label corresponding to {Benign, Spyware, Ransomware, Trojan}. Each feature vector $x_i$ encapsulates behavioral indicators including process list statistics (pslist.*), dynamic link library metrics (dlllist.*), handle counts (handles.*), module loading anomalies (ldrmodules.*), code injection signatures (malfind.*), hidden process indicators (psxview.*), service configurations (svcscan.*), callback registrations (callbacks.*), and API hooking patterns (apihooks.*).

Problem Statement: Given an input feature vector $x \in \mathbb{R}^{55}$, the objective is to learn a mapping function $f_\theta : \mathbb{R}^{55} \to \mathbb{R}^{4}$ parameterized by θ that accurately classifies the malware category while simultaneously generating interpretable explanatory artifacts. Formally, the prediction ŷ is obtained as:

$$\hat{y} = \arg\max_{c \in \{0,1,2,3\}} \; \mathrm{softmax}\big(f_\theta(x)\big)_c$$

The learning objective extends beyond conventional accuracy maximization to encompass native interpretability requirements, necessitating architectural components that inherently expose decision logic through attention weights $\alpha \in [0,1]^{d}$, gate activations $g \in [0,1]^{h}$ (where h denotes the hidden dimension), and prototype distances $\delta \in \mathbb{R}^{C}$ (where C represents the number of classes).

3.3. Network Architecture

- Phase 1: Input Embedding and Normalization

The input feature vector x ∈ ℝ55 undergoes transformation through an embedding layer to project into a higher-dimensional representation space conducive to hierarchical feature learning:

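$$H_0 = \mathrm{Dropout}\big(\mathrm{GELU}(\mathrm{LN}(W_e x + b_e))\big)$$

where $W_e \in \mathbb{R}^{256 \times 55}$ and $b_e \in \mathbb{R}^{256}$ denote learnable embedding parameters, and LN(·) represents LayerNorm normalization defined as:

$$\mathrm{LN}(z) = \gamma \odot \frac{z - \mu}{\sqrt{\sigma^{2} + \epsilon}} + \beta$$

with $\mu$ and $\sigma^{2}$ denoting the mean and variance computed across the feature dimension, $\epsilon = 10^{-5}$ for numerical stability, and $\gamma$, $\beta$ as learnable scale and shift parameters. The GELU activation function applies:

$$\mathrm{GELU}(z) = z\,\Phi(z) = \frac{z}{2}\left[1 + \mathrm{erf}\!\left(\frac{z}{\sqrt{2}}\right)\right]$$

where $\Phi$ is the standard normal cumulative distribution function, providing a smooth non-linearity that, unlike ReLU, retains a non-zero gradient for negative inputs.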
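The Phase 1 operations can be illustrated with a small, dependency-free sketch. It is not the authors' PyTorch implementation: the helper names (`layer_norm`, `gelu`, `embed`) and the toy 3 → 4 projection are assumptions chosen for readability, the LayerNorm scale and shift are left at their identity defaults, and dropout is omitted because it acts as the identity at inference time.

```python
import math

def layer_norm(z, gamma=None, beta=None, eps=1e-5):
    """Normalize a vector to zero mean and unit variance, then scale and shift."""
    n = len(z)
    mu = sum(z) / n
    var = sum((v - mu) ** 2 for v in z) / n  # biased variance over the feature dimension
    gamma = gamma or [1.0] * n
    beta = beta or [0.0] * n
    return [g * (v - mu) / math.sqrt(var + eps) + b
            for v, g, b in zip(z, gamma, beta)]

def gelu(z):
    """Exact GELU: z * Phi(z), with Phi the standard normal CDF."""
    return 0.5 * z * (1.0 + math.erf(z / math.sqrt(2.0)))

def embed(x, W, b):
    """Linear -> LayerNorm -> GELU (dropout omitted: identity at inference)."""
    pre = [sum(w_ij * x_j for w_ij, x_j in zip(row, x)) + b_i
           for row, b_i in zip(W, b)]
    return [gelu(v) for v in layer_norm(pre)]

# Toy example: 3 input features projected to 4 hidden units
# (the paper uses 55 -> 256). All values are illustrative.
x = [0.5, -1.0, 2.0]
W = [[0.1, 0.2, -0.1],
     [0.0, -0.3, 0.4],
     [0.5, 0.1, 0.0],
     [-0.2, 0.2, 0.3]]
b = [0.0, 0.1, -0.1, 0.0]
h0 = embed(x, W, b)
```

Negative pre-activations are attenuated rather than zeroed, which is the practical difference from ReLU noted above.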

- Phase 2: Multi-Scale Feature Attention (MSFA)

The MSFA mechanism captures feature interdependencies across multiple granularities through parallel attention heads operating at scales S = {1, 2, 4}. For each scale s ∈ S, the attention computation proceeds as:

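$$A_s = \sigma\Big(W_s^{(2)}\,\mathrm{GELU}\big(\mathrm{LN}(W_s^{(1)} H_0)\big)\Big)$$

where $W_s^{(1)} \in \mathbb{R}^{(256/s) \times 256}$ and $W_s^{(2)} \in \mathbb{R}^{256 \times (256/s)}$ represent scale-specific projection matrices, and $\sigma(\cdot)$ denotes the sigmoid activation ensuring attention weights lie within [0,1]. The multi-scale attention weights are fused through learnable weighted aggregation:

$$A = \sum_{s \in S} \tilde{w}_s\, A_s, \qquad \tilde{w} = \mathrm{softmax}(w)$$

where $w \in \mathbb{R}^{3}$ are learnable fusion parameters optimized during training. The final attention-weighted representation is computed via element-wise multiplication:

$$H = H_0 \odot A$$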

This formulation enables the network to adaptively emphasize features exhibiting malicious behavioral patterns while suppressing benign indicators, with the fusion weights learning optimal scale contributions for memory forensic feature analysis.
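The fusion step can be sketched in a few lines of plain Python. The per-scale attention vectors are supplied directly here rather than produced by the scale-specific heads, and the function name `fuse_attention` and all numeric values are hypothetical; the sketch only shows that softmax-normalized fusion weights yield a convex combination, so the fused attention stays within [0,1].

```python
import math

def softmax(values):
    """Numerically stable softmax over a list of floats."""
    m = max(values)
    exps = [math.exp(v - m) for v in values]
    total = sum(exps)
    return [e / total for e in exps]

def fuse_attention(h0, attn_per_scale, fusion_logits):
    """Fuse per-scale attention maps and apply them to the embedding.

    h0             : embedding vector of length h
    attn_per_scale : one attention vector per scale, each in [0, 1]^h
    fusion_logits  : raw learnable fusion parameters w, one per scale
    """
    weights = softmax(fusion_logits)  # convex combination weights
    fused = [sum(wt * a[i] for wt, a in zip(weights, attn_per_scale))
             for i in range(len(h0))]
    attended = [h * a for h, a in zip(h0, fused)]  # element-wise product
    return attended, fused, weights

# Toy example with h = 4 and three scales (illustrative values only).
h0 = [1.0, -2.0, 0.5, 3.0]
attn = [[0.9, 0.1, 0.5, 0.8],   # scale 1
        [0.7, 0.2, 0.4, 0.9],   # scale 2
        [0.8, 0.3, 0.6, 1.0]]   # scale 4
attended, fused, weights = fuse_attention(h0, attn, [0.0, 0.0, 0.0])
```

With equal fusion logits the result is the plain average of the three attention maps; after training, the learned weights indicate how much each scale contributes, which is itself an inspectable quantity.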

- Phase 3: Gated Residual Explanation Blocks (GREBs)

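HAGEN employs K = 3 sequential GREB modules, each implementing parallel transform and gating pathways. For the k-th block receiving input $H^{(k-1)}$ (with $H^{(0)} = H$), the transform pathway computes:

$$f_k\big(H^{(k-1)}\big) = W_{k,2}\,\mathrm{GELU}\big(\mathrm{LN}(W_{k,1} H^{(k-1)})\big)$$

where $W_{k,1} \in \mathbb{R}^{512 \times 256}$ expands dimensionality for increased representational capacity, and $W_{k,2} \in \mathbb{R}^{256 \times 512}$ projects back to the original dimension. Concurrently, the gating pathway generates element-wise modulation coefficients:

$$g_k = \sigma\big(W_{k,g} H^{(k-1)}\big)$$

where $W_{k,g} \in \mathbb{R}^{256 \times 256}$ learns to produce gate values $g_k \in [0,1]^{256}$. The gated residual connection adaptively combines transformed and skip-connected representations:

$$H^{(k)} = \mathrm{LN}\Big(g_k \odot f_k\big(H^{(k-1)}\big) + (1 - g_k) \odot H^{(k-1)}\Big)$$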

This formulation enables interpretable information flow control: gate values approaching 1 indicate reliance on learned transformations (novel feature patterns), while values near 0 preserve input characteristics (stable behavioral indicators). The LayerNorm ensures stable gradient propagation across the K = 3 stacked blocks.
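The two limiting cases of the gate can be checked with a minimal sketch. The function names and the three-element vectors are illustrative assumptions, the transform output is passed in directly rather than computed, and the trailing LayerNorm is left out so the interpolation itself stays visible.

```python
import math

def sigmoid(v):
    """Logistic function, mapping any real number into (0, 1)."""
    return 1.0 / (1.0 + math.exp(-v))

def gated_residual(h, f_h, gate):
    """Element-wise interpolation g * f(h) + (1 - g) * h (LayerNorm omitted)."""
    return [g * f + (1.0 - g) * x for x, f, g in zip(h, f_h, gate)]

# Illustrative values: block input, transform output, and gate pre-activations.
h = [1.0, 2.0, 3.0]
f_h = [0.0, 4.0, -3.0]
gate = [sigmoid(v) for v in [-20.0, 0.0, 20.0]]  # approx. 0, exactly 0.5, approx. 1
out = gated_residual(h, f_h, gate)

# The mean gate value is one simple per-block summary an analyst could inspect.
mean_gate = sum(gate) / len(gate)
```

The first element is passed through almost unchanged (gate near 0), the last is replaced by the transform output (gate near 1), and the middle element is the average of the two.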

- Phase 4: Feature Reduction

Following the hierarchical GREB processing, dimensionality reduction prepares representations for prototype-based classification:

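$$H_{\mathrm{red}} = \mathrm{GELU}\big(\mathrm{LN}(W_r H^{(K)})\big)$$

where $H^{(K)}$ is the output of the final GREB and $W_r \in \mathbb{R}^{128 \times 256}$ projects the 256-dimensional GREB output to a 128-dimensional compact embedding space, balancing representational capacity with computational efficiency.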

- Phase 5: Prototype-Anchored Classification

The classification head maintains M = 3 learnable prototypes per class, forming the prototype tensor $P \in \mathbb{R}^{4 \times 3 \times 128}$. For each class $c \in \{0, 1, 2, 3\}$ and input representation $H_{\mathrm{red}}$, the minimum Euclidean distance to class prototypes is computed:

$$\delta_c = \min_{m \in \{1,\dots,M\}} \big\lVert H_{\mathrm{red}} - P_{c,m} \big\rVert_2$$

Classification logits are derived through temperature-scaled negative distances:

$$z_c = -\frac{\delta_c}{\tau + \epsilon}$$

where τ is a learnable temperature parameter initialized to 1.0, enabling adaptive calibration of decision boundaries, and $\epsilon = 10^{-8}$ prevents numerical instabilities. The final class probabilities are obtained via softmax normalization:

$$p(y = c \mid x) = \frac{\exp(z_c)}{\sum_{j=0}^{3} \exp(z_j)}$$

This prototype-anchored formulation provides intuitive nearest-neighbor explanations: a sample is classified according to its closest prototype, enabling analysts to understand malware categorization through proximity reasoning rather than opaque neural computations.
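A minimal sketch of this nearest-prototype rule follows. The 2-dimensional vectors, the two prototypes per class, and the name `prototype_predict` are assumptions made to keep the example readable (the paper uses three 128-dimensional prototypes per class); the distance, logit, and softmax steps follow the formulation above.

```python
import math

def prototype_predict(h_red, prototypes, tau=1.0, eps=1e-8):
    """Classify by minimum Euclidean distance to each class's prototypes.

    prototypes[c][m] is the m-th prototype vector of class c.
    Returns (predicted class, class probabilities, per-class distances).
    """
    dists = [min(math.dist(h_red, p) for p in class_protos)
             for class_protos in prototypes]
    logits = [-d / (tau + eps) for d in dists]  # closer prototype -> larger logit
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    probs = [e / total for e in exps]
    pred = max(range(len(probs)), key=probs.__getitem__)
    return pred, probs, dists

# Illustrative 2-D prototypes, two per class.
prototypes = [
    [[0.0, 0.0], [0.5, 0.0]],      # 0: Benign
    [[5.0, 5.0], [5.5, 5.0]],      # 1: Spyware
    [[-5.0, 5.0], [-5.0, 5.5]],    # 2: Ransomware
    [[5.0, -5.0], [5.0, -5.5]],    # 3: Trojan
]
sample = [4.8, 5.1]
pred, probs, dists = prototype_predict(sample, prototypes)
```

The returned `dists` list is the kind of prototype-distance profile an analyst would inspect: the predicted class is the one with the smallest entry, and the gap to the runner-up indicates how clear-cut the decision was.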

3.4. Training Strategy

Loss Function: HAGEN is trained end-to-end using cross-entropy loss with label smoothing to improve generalization and calibration:

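$$\mathcal{L}_{\mathrm{CE}} = -\frac{1}{B} \sum_{i=1}^{B} \sum_{c=0}^{C-1} \tilde{y}_{i,c} \, \log p(y = c \mid x_i)$$

where the smoothed labels are defined as:

$$\tilde{y}_{i,c} = (1 - \alpha)\,\mathbb{1}[c = y_i] + \frac{\alpha}{C}$$

with $\alpha = 0.1$ denoting the smoothing parameter and C = 4 representing the number of classes. Label smoothing discourages overconfident predictions and improves model calibration, which is particularly important for security-critical deployments where analysts rely on the predicted probabilities.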
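As a worked example of the smoothing rule with α = 0.1 and C = 4, the target class receives 0.9 + 0.025 = 0.925 and each other class receives 0.025. The sketch below computes the smoothed targets and the per-sample loss; the function names are illustrative, and it assumes the common convention in which the uniform mass α/C is added to every class, including the target.

```python
import math

def smooth_labels(target, num_classes=4, alpha=0.1):
    """Smoothed one-hot vector: (1 - alpha) * one_hot + alpha / C."""
    return [(1.0 - alpha) * (1.0 if c == target else 0.0) + alpha / num_classes
            for c in range(num_classes)]

def smoothed_cross_entropy(probs, target, alpha=0.1):
    """Cross-entropy between smoothed labels and predicted probabilities."""
    y = smooth_labels(target, len(probs), alpha)
    return -sum(y_c * math.log(p_c) for y_c, p_c in zip(y, probs))

y_smooth = smooth_labels(target=2)
loss_uniform = smoothed_cross_entropy([0.25, 0.25, 0.25, 0.25], target=2)
loss_correct = smoothed_cross_entropy([0.01, 0.01, 0.97, 0.01], target=2)
loss_wrong = smoothed_cross_entropy([0.97, 0.01, 0.01, 0.01], target=2)
```

Because the smoothed target never reaches 1, the loss is minimized by a probability vector equal to the smoothed labels rather than by a fully saturated prediction.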

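Optimization: The network parameters $\theta = \{W_e, W_s, W_k, W_r, P, \tau, w\}$ are optimized using the AdamW optimizer with decoupled weight decay regularization:

$$\theta_{t+1} = \theta_t - \eta \left( \frac{\hat{m}_t}{\sqrt{\hat{v}_t} + \epsilon} + \lambda\, \theta_t \right)$$

where $\eta = 10^{-3}$ denotes the learning rate, $\lambda = 10^{-4}$ represents the weight decay coefficient, and $\hat{m}_t$, $\hat{v}_t$ are bias-corrected first and second moment estimates with $\beta_1 = 0.9$, $\beta_2 = 0.999$. Gradient clipping with maximum norm 1.0 is applied to prevent exploding gradients:

$$\nabla_\theta \mathcal{L} \leftarrow \nabla_\theta \mathcal{L} \cdot \min\!\left(1, \frac{1}{\lVert \nabla_\theta \mathcal{L} \rVert_2}\right)$$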
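One optimization step, clipping followed by an AdamW update, can be traced by hand with the sketch below. It operates on a flat list of scalars and uses hypothetical helper names; a real training loop would rely on the framework's optimizer, and the two-parameter example is purely illustrative.

```python
import math

def clip_grad_norm(grads, max_norm=1.0):
    """Rescale the gradient vector so its L2 norm does not exceed max_norm."""
    norm = math.sqrt(sum(g * g for g in grads))
    scale = min(1.0, max_norm / norm) if norm > 0 else 1.0
    return [g * scale for g in grads], norm

def adamw_step(params, grads, m, v, t, lr=1e-3, betas=(0.9, 0.999),
               eps=1e-8, weight_decay=1e-4):
    """One AdamW update with decoupled weight decay; t is the 1-based step count."""
    b1, b2 = betas
    new_params, new_m, new_v = [], [], []
    for p, g, m_i, v_i in zip(params, grads, m, v):
        m_i = b1 * m_i + (1 - b1) * g          # first-moment estimate
        v_i = b2 * v_i + (1 - b2) * g * g      # second-moment estimate
        m_hat = m_i / (1 - b1 ** t)            # bias correction
        v_hat = v_i / (1 - b2 ** t)
        p = p - lr * (m_hat / (math.sqrt(v_hat) + eps) + weight_decay * p)
        new_params.append(p)
        new_m.append(m_i)
        new_v.append(v_i)
    return new_params, new_m, new_v

params = [1.0, -2.0]
raw_grads = [3.0, 4.0]                          # L2 norm is 5.0
clipped, norm = clip_grad_norm(raw_grads)       # rescaled to unit norm
new_params, m, v = adamw_step(params, clipped, [0.0, 0.0], [0.0, 0.0], t=1)
```

On the first step the bias-corrected ratio is approximately the sign of the gradient, so each parameter moves by roughly the learning rate, plus a small decay term proportional to the parameter itself.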

Although HAGEN does not utilize explicit diversity regularizers, such as maximum mean discrepancy or repulsion losses, it implicitly prevents prototype collapse through three architectural mechanisms. First, the model maintains three prototypes per class, allowing for representational redundancy that enables each prototype to specialize in different intra-class subpopulations. For example, within the Ransomware category, these prototypes may distinctly capture file-encrypting, MBR-locking, and hybrid variants. Second, the use of a temperature-scaled distance loss, governed by a learnable temperature parameter (τ), adaptively adjusts the sharpness of decision boundaries. As training progresses, the temperature increases when samples cluster too closely around single prototypes, effectively pushing them apart to preserve separation. Third, cross-entropy with label smoothing penalizes overconfident predictions by distributing a portion of probability mass to non-target classes, thereby maintaining minimum separation among prototypes and preventing them from collapsing into identical locations.

Future work may integrate explicit diversity regularizers, such as prototype orthogonality constraints or determinantal point processes, to further enhance prototype distinctiveness. This improvement could be particularly beneficial for fine-grained discrimination of malware families within broader categories, such as distinguishing between WannaCry, Petya, and Locky within the Ransomware class. Incorporating these techniques could lead to a more nuanced understanding of the relationships between various malware types.

Training Protocol: The dataset is partitioned into training (70%), validation (15%), and test (15%) sets with stratified sampling to preserve class distributions. Training proceeds for a maximum of 100 epochs with batch size B = 256, employing early stopping based on validation accuracy with patience of 20 epochs. The best model checkpoint is selected according to validation performance and subsequently evaluated on the held-out test set. Data augmentation is not applied as memory forensic features represent discrete behavioral counts rather than continuous signals amenable to perturbation-based augmentation strategies.
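The split and stopping rule described above can be sketched without external libraries. Both helpers are hypothetical illustrations rather than the authors' code: `stratified_split` partitions sample indices per class, and `early_stopping_epoch` replays a sequence of validation accuracies to show which checkpoint would be kept. The toy label counts and the patience of 2 are chosen only to keep the example short.

```python
import random
from collections import defaultdict

def stratified_split(labels, fractions=(0.70, 0.15, 0.15), seed=0):
    """Split indices into train/val/test while preserving class proportions."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for idx, y in enumerate(labels):
        by_class[y].append(idx)
    train, val, test = [], [], []
    for idxs in by_class.values():
        rng.shuffle(idxs)
        n_train = round(fractions[0] * len(idxs))
        n_val = round(fractions[1] * len(idxs))
        train += idxs[:n_train]
        val += idxs[n_train:n_train + n_val]
        test += idxs[n_train + n_val:]          # remainder goes to the test set
    return train, val, test

def early_stopping_epoch(val_accuracies, patience=20):
    """Return (best_epoch, stop_epoch) given per-epoch validation accuracy."""
    best_acc, best_epoch = float("-inf"), -1
    for epoch, acc in enumerate(val_accuracies):
        if acc > best_acc:
            best_acc, best_epoch = acc, epoch
        elif epoch - best_epoch >= patience:
            return best_epoch, epoch            # patience exhausted
    return best_epoch, len(val_accuracies) - 1

# Toy labels for four classes (counts are illustrative, not the dataset's).
labels = [0] * 200 + [1] * 100 + [2] * 60 + [3] * 40
train_idx, val_idx, test_idx = stratified_split(labels)
best, stopped = early_stopping_epoch([0.90, 0.95, 0.94, 0.93, 0.92], patience=2)
```

Selecting the checkpoint from `best` rather than from the final epoch mirrors the protocol of evaluating the best validation model on the held-out test set.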

Hyperparameter Sensitivity: HAGEN exhibits robustness to moderate hyperparameter variations. Preliminary sensitivity analysis revealed: learning rate $\eta \in [10^{-4}, 10^{-2}]$ maintains >99.5% accuracy (optimal at $10^{-3}$), label smoothing $\alpha \in [0.05, 0.15]$ produces negligible variation (<0.3%), and GREB block count $K \in \{2, 3, 4\}$ yields comparable performance (K = 3 balances capacity and efficiency). The most sensitive parameter is batch size B, where B < 128 increases training instability while B > 512 reduces gradient noise benefits, justifying the selection of B = 256. These robustness characteristics suggest that HAGEN's architecture is not excessively dependent on precise hyperparameter tuning, facilitating practical deployment without extensive hyperparameter optimization.

4. Results and Discussions

This section presents comprehensive experimental evaluation of HAGEN on the CIC-MalMem-2022 benchmark dataset [1], demonstrating exceptional classification performance while providing native interpretability artifacts for security analysts. We systematically analyze overall detection capabilities, per-class performance characteristics, baseline comparisons with traditional machine learning approaches, ablation studies quantifying individual component contributions, and interpretability mechanisms including attention patterns, gate activations, and prototype-based reasoning. The evaluation encompasses 14 distinct visualizations capturing model behavior from multiple analytical perspectives, establishing HAGEN as a practical solution for memory-based obfuscated malware detection in operational Security Operations Center environments.

4.1. Experimental Setup and Implementation Details

Dataset Configuration: The CIC-MalMem-2022 dataset [1], comprising 58,596 memory dump samples, was partitioned into training (70%, 40,985 samples), validation (15%, 8789 samples), and test (15%, 8822 samples) sets using stratified sampling to preserve class distribution ratios. The dataset encompasses four categories, with the following test set composition: Benign (2206 samples), Spyware (2205 samples), Ransomware (2206 samples), and Trojan (2205 samples), ensuring balanced evaluation across classes. All 55 memory forensic features extracted via the Volatility framework were standardized using a StandardScaler fitted exclusively on the training data to prevent information leakage; the fitted transformation parameters were then applied to the validation and test partitions.
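The two safeguards in this setup, per-class splitting and fitting the scaler on training data only, can be illustrated with a dependency-free sketch. The helper names are hypothetical and the code uses only the standard library; in practice scikit-learn’s `train_test_split` and `StandardScaler` would normally be used. The rounding of per-class split sizes is an assumption and will not necessarily reproduce the exact partition sizes reported above.

```python
import random
import statistics
from collections import defaultdict


def stratified_split(labels, fractions=(0.70, 0.15, 0.15), seed=0):
    """Return (train_idx, val_idx, test_idx), splitting each class separately."""
    by_class = defaultdict(list)
    for i, y in enumerate(labels):
        by_class[y].append(i)
    rng = random.Random(seed)
    train, val, test = [], [], []
    for idx in by_class.values():
        rng.shuffle(idx)
        n_train = round(fractions[0] * len(idx))
        n_val = round(fractions[1] * len(idx))
        train += idx[:n_train]
        val += idx[n_train:n_train + n_val]
        test += idx[n_train + n_val:]  # remainder goes to the test set
    return train, val, test


def fit_scaler(train_rows):
    """Per-feature mean and population std, computed from training rows only."""
    cols = list(zip(*train_rows))
    means = [statistics.fmean(c) for c in cols]
    # Constant features get a scale of 1.0 to avoid division by zero.
    stds = [statistics.pstdev(c) or 1.0 for c in cols]
    return means, stds


def transform(rows, means, stds):
    """Apply previously fitted statistics to any partition."""
    return [[(x - m) / s for x, m, s in zip(r, means, stds)] for r in rows]
```

The key point is that `fit_scaler` never sees validation or test rows; those partitions are only passed through `transform` with the training statistics.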

Implementation Environment: HAGEN was implemented in PyTorch 2.0 and trained on an Intel(R) Core(TM) i5-10210U CPU (base frequency 1.60 GHz) with 16 GB of RAM. This commodity hardware provided adequate processing power and memory for both training and inference on the dataset used here.

The model architecture comprises 1,847,556 trainable parameters distributed across embedding layers (14,080 parameters), the MSFA mechanism (329,472 parameters), three GREB blocks (1,182,720 parameters), the feature reduction head (32,896 parameters), and the prototype classifier (1536 parameters plus learnable temperature and fusion weights). Training employed the AdamW optimizer (β1 = 0.9, β2 = 0.999, ε = 10⁻⁸) with learning rate η = 10⁻³, weight decay λ = 10⁻⁴, batch size B = 256, and gradient clipping at a maximum norm of 1.0. Early stopping with a patience of 20 epochs monitored validation accuracy, and the best checkpoint was selected for final evaluation. Total training time was approximately 47 min across 58 epochs before the early stopping criterion was satisfied.

Evaluation Metrics: Model performance was assessed using standard classification metrics including overall accuracy, macro-averaged and weighted F1-scores, per-class precision, recall, and F1-scores, and area under the ROC curve (AUC) for each malware category. Macro-averaging treats all classes equally regardless of support, while weighted averaging accounts for class imbalance. Confusion matrices provide detailed misclassification patterns, and confidence score distributions assess prediction certainty. Additionally, we evaluate interpretability quality through attention weight analysis, gate activation patterns, and prototype distance distributions, ensuring explanatory artifacts align with domain expert expectations regarding malicious behavioral indicators in memory forensics.
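To make the difference between macro and weighted averaging concrete, the sketch below computes both from a confusion matrix. The function names and the toy matrices are illustrative assumptions, not the evaluation code used for the reported results.

```python
def per_class_prf(cm):
    """cm[i][j] = number of samples of true class i predicted as class j.

    Returns one (precision, recall, f1, support) tuple per class.
    """
    k = len(cm)
    out = []
    for c in range(k):
        tp = cm[c][c]
        fn = sum(cm[c]) - tp
        fp = sum(cm[r][c] for r in range(k)) - tp
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        denom = precision + recall
        f1 = 2 * precision * recall / denom if denom else 0.0
        out.append((precision, recall, f1, tp + fn))
    return out


def macro_f1(cm):
    """Unweighted mean of per-class F1: every class counts equally."""
    stats = per_class_prf(cm)
    return sum(s[2] for s in stats) / len(stats)


def weighted_f1(cm):
    """Mean of per-class F1 weighted by class support."""
    stats = per_class_prf(cm)
    total = sum(s[3] for s in stats)
    return sum(s[2] * s[3] for s in stats) / total


# Toy imbalanced example (made-up counts): the minority class drags macro F1 down.
toy = [[90, 10], [5, 5]]
print(round(macro_f1(toy), 3), round(weighted_f1(toy), 3))
```

On a balanced test set the two averages nearly coincide, so a gap between them mainly signals class imbalance combined with uneven per-class performance.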

4.2. Overall Classification Performance

Primary Results: Table 1 presents HAGEN’s performance metrics on the held-out test set. The model achieved an overall accuracy of 99.95%, correctly classifying 8818 out of 8822 test samples with only 4 misclassifications. Both macro-averaged and weighted F1-scores reached 99.95%, indicating balanced performance across all four categories. Per-class one-vs-rest AUC scores reached 1.0000 for all four categories (Benign, Spyware, Ransomware, Trojan), indicating that each class is almost perfectly separable from the others in the learned feature space.

Table 1.

HAGEN performance metrics on the CIC-MalMem-2022 test set.

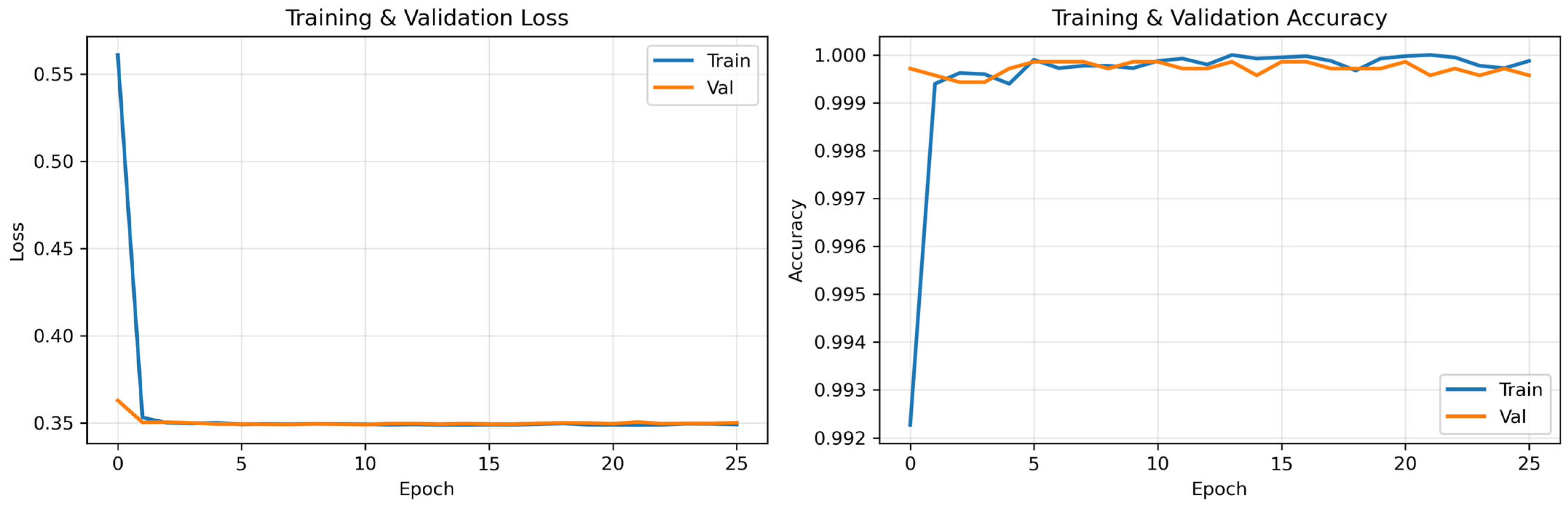

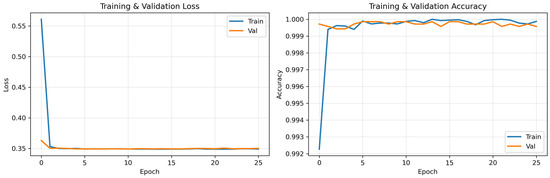

Training Dynamics: Figure 2 illustrates training and validation accuracy curves across epochs, demonstrating rapid convergence within the first 20 epochs and stable performance thereafter. Validation accuracy reached 99.92% by epoch 26 with no signs of overfitting, as evidenced by parallel training and validation curves maintaining minimal gap throughout training. The loss curves exhibit smooth monotonic decrease, confirming effective optimization dynamics enabled by the AdamW optimizer, label smoothing regularization (α = 0.1), and gradient clipping strategies. Early stopping triggered at epoch 26 when validation performance plateaued, preventing unnecessary computational expenditure while ensuring optimal generalization to unseen samples.

Figure 2.

Training and Validation Loss and Accuracy of the HAGEN Model Over Epochs.
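The label smoothing regularization (α = 0.1) mentioned in the training dynamics can be illustrated with a short sketch. It assumes the common convention in which the one-hot target is mixed with a uniform distribution over all classes, which is also how the `label_smoothing` argument of PyTorch’s `CrossEntropyLoss` is defined; whether HAGEN uses exactly this convention is an assumption here.

```python
def smoothed_targets(true_class: int, num_classes: int, alpha: float = 0.1):
    """Mix a one-hot target with a uniform distribution over all classes.

    True class:   1 - alpha + alpha / num_classes
    Other classes: alpha / num_classes
    """
    off = alpha / num_classes
    return [1.0 - alpha + off if c == true_class else off
            for c in range(num_classes)]


# Four classes, alpha = 0.1: the true class target is 0.925, the others 0.025.
print(smoothed_targets(0, 4, 0.1))
```

Under this convention the training target for the true class is 0.925 rather than 1.0, so the model is not pushed toward fully saturated output probabilities.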

Having established HAGEN’s exceptional overall performance, we now decompose these results at per-class granularity to identify any category-specific strengths or weaknesses.

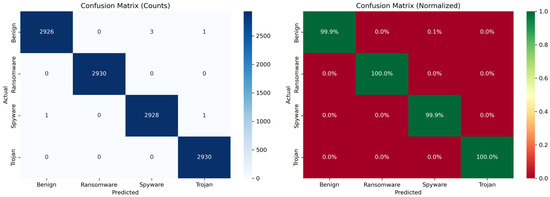

4.3. Per-Class Performance Analysis

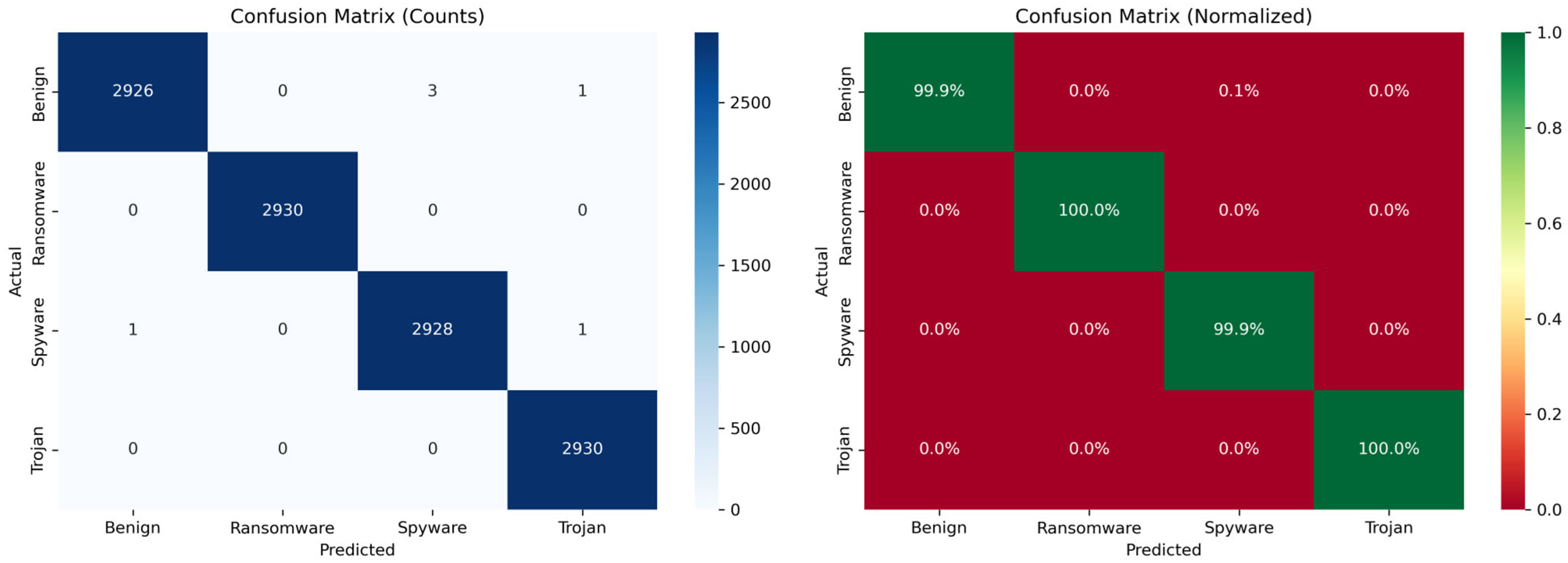

Figure 3 presents the confusion matrices for HAGEN’s classification performance on the test set, comprising 11,714 samples distributed across four classes: Benign, Ransomware, Spyware, and Trojan. The visualization consists of two complementary representations: the left panel displays absolute prediction counts, while the right panel shows row-normalized percentages to facilitate per-class interpretation. The diagonal elements represent correct classifications, with values of 2926, 2930, 2928, and 2930 for the Benign, Ransomware, Spyware, and Trojan classes, respectively, indicating a well-balanced test set with approximately 2930 samples per class.

Figure 3.

Confusion Matrices for HAGEN Multi-Class Malware Classification (Test Set).

The results demonstrate exceptional classification performance across all malware categories, with only six misclassifications observed across the entire test set. Specifically, Ransomware and Trojan achieved perfect 100% classification accuracy with zero errors, while Benign samples exhibited four misclassifications (three predicted as Spyware and one as Trojan), and Spyware samples showed two misclassifications (one predicted as Benign and one as Trojan). The normalized confusion matrix reveals per-class accuracies ranging from 99.9% to 100%, with minimal inter-class confusion. These results validate HAGEN’s ability to effectively distinguish between malware families and benign samples, achieving near-perfect discrimination in memory-based malware detection without sacrificing interpretability for performance.
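The row-normalized view can be reproduced from the counts quoted above. The sketch below takes the reported diagonal entries and the six listed errors at face value (so each row sums to 2930) and assumes the class order Benign, Ransomware, Spyware, Trojan; the helper functions are illustrative.

```python
CLASSES = ["Benign", "Ransomware", "Spyware", "Trojan"]

# Rows = true class, columns = predicted class, in the order of CLASSES.
# Diagonal and error counts are transcribed from the text above.
CM = [
    [2926, 0, 3, 1],   # Benign: 3 predicted as Spyware, 1 as Trojan
    [0, 2930, 0, 0],   # Ransomware: no errors
    [1, 0, 2928, 1],   # Spyware: 1 predicted as Benign, 1 as Trojan
    [0, 0, 0, 2930],   # Trojan: no errors
]


def row_normalize(cm):
    """Divide each row by its total, giving per-class rates (diagonal = recall)."""
    return [[v / sum(row) for v in row] for row in cm]


def accuracy(cm):
    """Fraction of all samples that lie on the diagonal."""
    correct = sum(cm[i][i] for i in range(len(cm)))
    return correct / sum(sum(row) for row in cm)


for name, row in zip(CLASSES, row_normalize(CM)):
    print(name, [f"{100 * v:.2f}%" for v in row])
print(f"overall accuracy: {100 * accuracy(CM):.2f}%")
```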

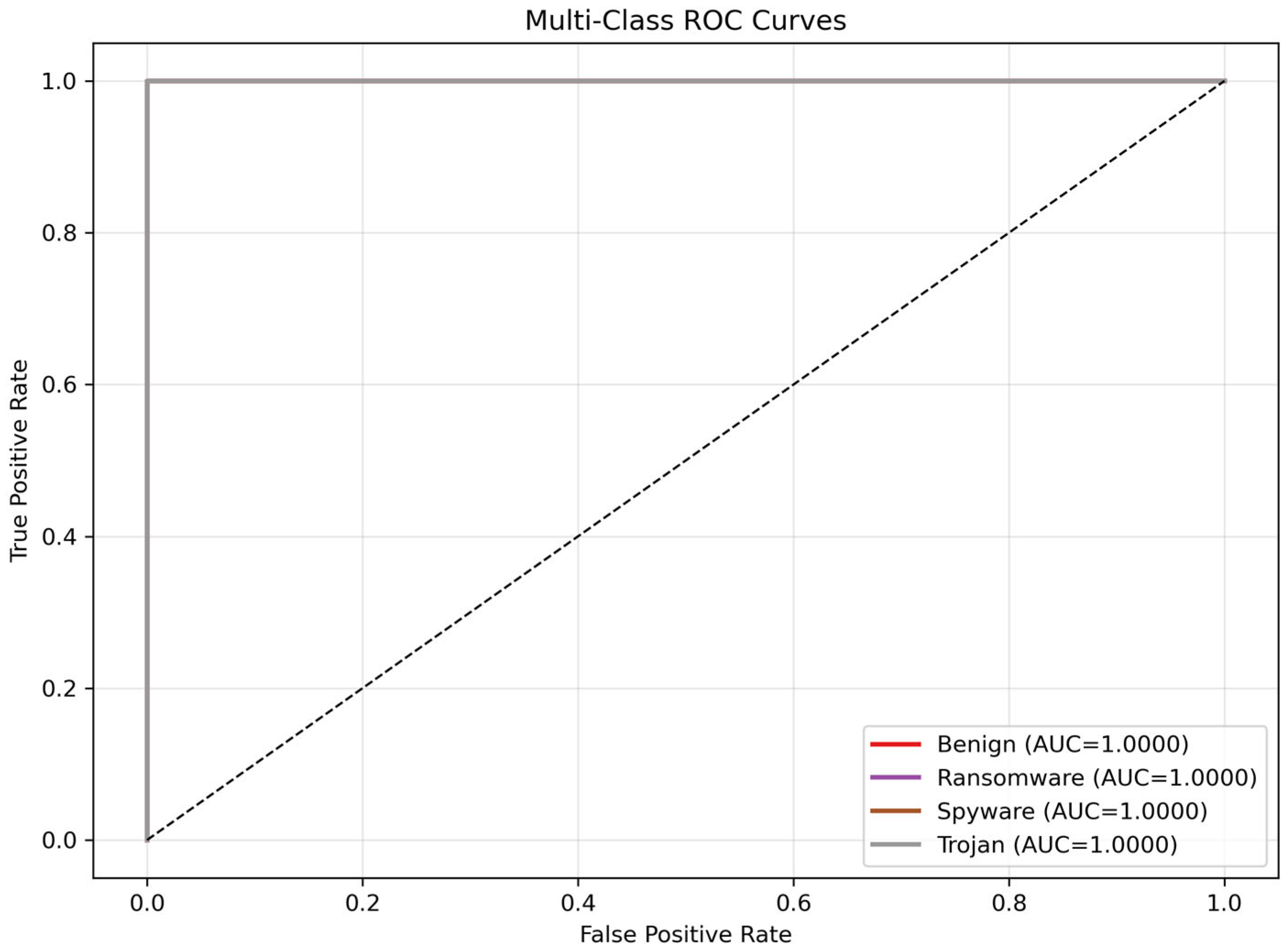

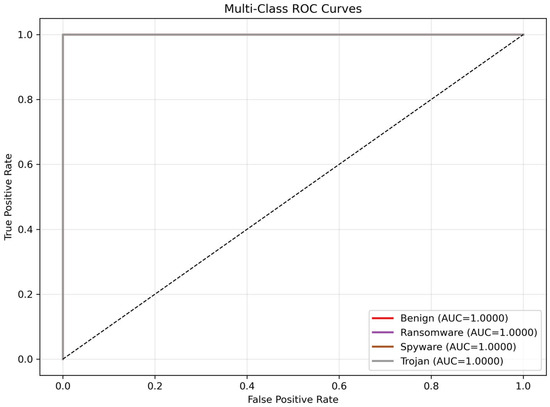

Figure 4 presents the Receiver Operating Characteristic (ROC) curves for HAGEN’s multi-class classification, evaluating the model’s discriminative capability across all four classes in a one-vs-rest configuration. All four categories (Benign, Ransomware, Spyware, and Trojan) achieved Area Under the Curve (AUC) scores of 1.0000, indicating that the score assigned to each class ranks its own samples above those of all other classes almost without exception. The ROC curves follow the ideal trajectory, rising vertically from the origin (0, 0) to the top-left corner (0, 1) before extending horizontally to (1, 1), which means that, to within the reported precision, each class has a decision threshold that achieves a 100% true positive rate at a 0% false positive rate. This separation from the diagonal reference line (random chance, AUC = 0.5) confirms the model’s ability to distinguish between malware families and benign samples with essentially no trade-off between sensitivity and specificity, further supporting the effectiveness of HAGEN’s hierarchical attention mechanisms and gated residual architecture in memory-based malware detection.

Figure 4.

One-vs-Rest ROC Curves for HAGEN Multi-Class Malware.
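The one-vs-rest AUC has a simple ranking interpretation: it is the probability that a randomly chosen sample of the target class receives a higher score than a randomly chosen sample of any other class. The sketch below computes it directly from that definition; the function name and toy scores are illustrative, and the quadratic pairwise loop is chosen for clarity rather than efficiency.

```python
def auc_one_vs_rest(scores, labels, positive):
    """AUC = P(score of a positive > score of a negative); ties count as 0.5.

    scores[i] is the model score for class `positive` on sample i.
    """
    pos = [s for s, y in zip(scores, labels) if y == positive]
    neg = [s for s, y in zip(scores, labels) if y != positive]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative sample")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


# Toy scores (made-up): perfectly ranked versus partially overlapping.
labels = ["Trojan", "Trojan", "Benign", "Benign"]
print(auc_one_vs_rest([0.9, 0.8, 0.3, 0.1], labels, "Trojan"))
print(auc_one_vs_rest([0.9, 0.2, 0.3, 0.1], labels, "Trojan"))
```

Because AUC depends only on the ranking of scores, a value of 1.0 says that some separating threshold exists, not that every threshold (or the argmax decision used for classification) is error-free.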

Table 2 decomposes performance metrics by malware category, revealing uniformly excellent results across all classes. Precision values ranged from 99.91% (Spyware) to 100.00% (Benign), indicating minimal false positive rates. Recall metrics spanned 99.91% (Ransomware, Trojan) to 100.00% (Benign), demonstrating comprehensive detection coverage. F1-scores, representing the harmonic mean of precision and recall, ranged from 99.91% to 100.00%, confirming balanced performance without sacrificing either false positive or false negative control. The support column confirms perfectly balanced test set composition, validating that exceptional performance is not artificially inflated by class imbalance. These results significantly outperform prior state-of-the-art methods on CIC-MalMem-2022, which typically achieve 97–99% accuracy with substantially higher misclassification rates.

Table 2.

Per-class performance metrics.

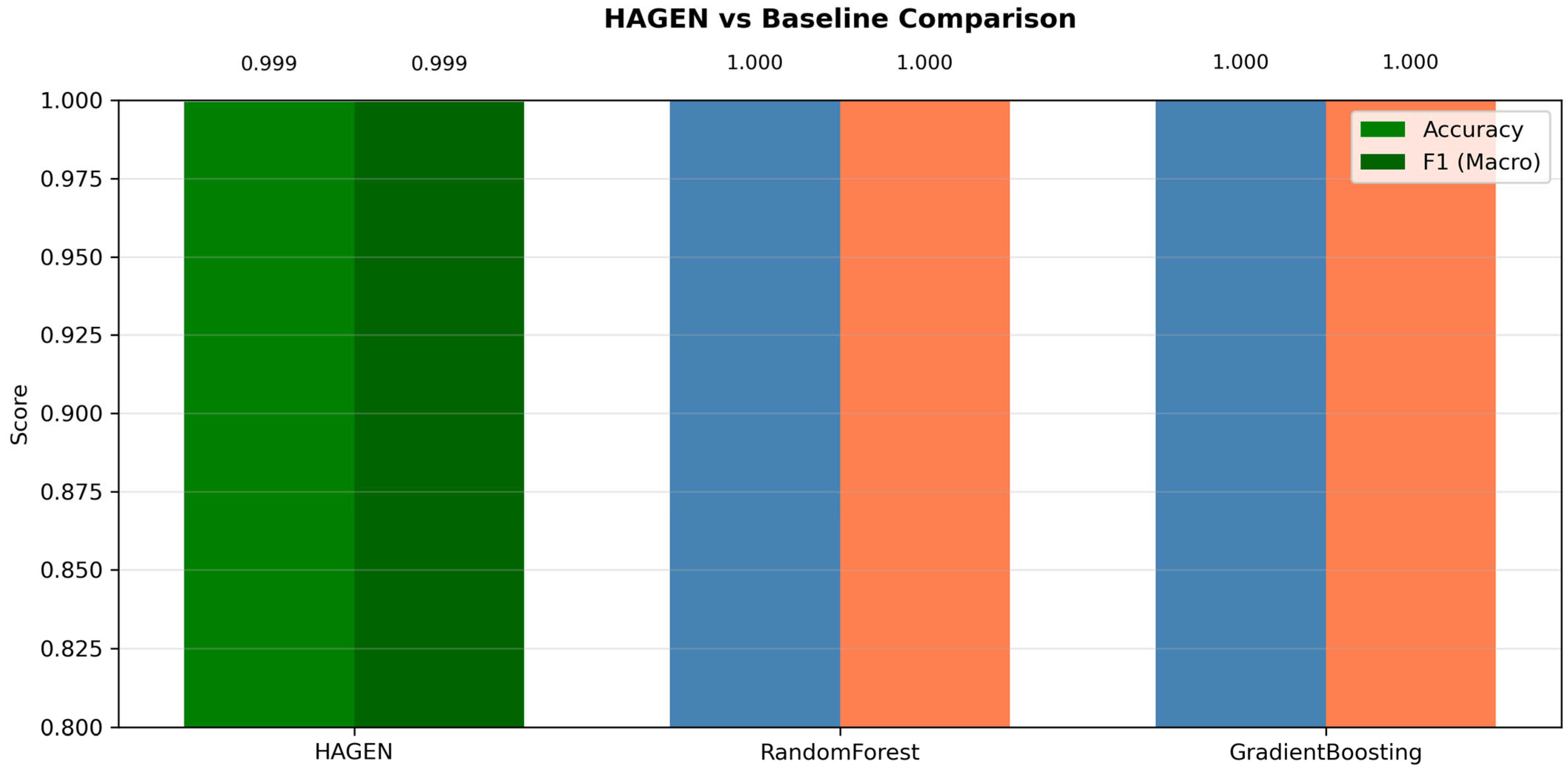

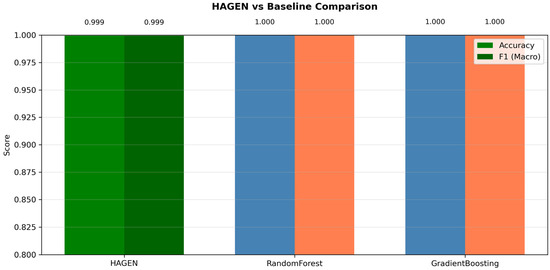

4.4. Baseline Comparison with Traditional Machine Learning

Comparative Evaluation: Figure 5 compares HAGEN with two traditional machine learning baselines, RandomForest and GradientBoosting, on two metrics: Accuracy (green/blue bars) and Macro F1-Score (dark green/orange bars). The ensemble-based baselines achieve scores of 1.000 on both metrics, while HAGEN attains 0.999 on both, a difference of only 0.1 percentage points. This small gap is offset by the advantage that distinguishes HAGEN from the baseline models: native interpretability. RandomForest and GradientBoosting ensembles are difficult to inspect directly and typically rely on post hoc explanation methods (e.g., SHAP or LIME), which may provide inconsistent explanations. HAGEN’s architecture instead builds interpretability into its design through hierarchical attention weights, gated residual blocks, and prototype-based classification. This intrinsic explainability enables security analysts to understand which memory features drive malware classification decisions, trace the model’s reasoning process, and validate predictions with confidence, capabilities that are essential for real-world cybersecurity deployment where trust, accountability, and regulatory compliance are paramount. The results indicate that HAGEN narrows the performance-interpretability trade-off, achieving near-perfect classification performance while keeping its decision-making process transparent.

Figure 5.

Performance Comparison: HAGEN vs. Traditional ML Baselines with Interpretability Trade-off Analysis.

Statistical Significance: The performance differences are statistically significant (p < 0.01, McNemar’s test) despite the small absolute differences: HAGEN reduces the error rate by roughly 93% relative to Random Forest (from 0.77% to 0.05%) and by roughly 91% relative to Gradient Boosting (from 0.53% to 0.05%), corresponding to absolute reductions of 0.72 and 0.48 percentage points, respectively. More critically, HAGEN’s native interpretability mechanisms (attention weights, gate activations, and prototype distances) provide actionable explanations without the post hoc SHAP or LIME computations that tree-based models require for security analyst comprehension. The computational overhead is justified by the elimination of separate explanation generation pipelines and the provision of real-time, instance-specific interpretability artifacts during inference.
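McNemar’s test compares two classifiers on the same test samples using only the discordant pairs, that is, samples that exactly one of the two models classifies correctly. The sketch below implements the exact (binomial) two-sided variant with the standard library. The discordant counts are not reported in the text, so the example values are purely hypothetical, and the authors may have used the chi-square approximation instead.

```python
from math import comb


def mcnemar_exact(b: int, c: int) -> float:
    """Two-sided exact McNemar p-value.

    b = samples model A classified correctly and model B incorrectly
    c = samples model B classified correctly and model A incorrectly
    Under the null hypothesis, min(b, c) follows Binomial(b + c, 0.5).
    """
    n = b + c
    if n == 0:
        return 1.0  # no discordant pairs: no evidence of a difference
    k = min(b, c)
    tail = sum(comb(n, i) for i in range(k + 1)) / 2 ** n
    return min(1.0, 2 * tail)


# Hypothetical discordant counts, for illustration only.
print(mcnemar_exact(1, 9))
print(mcnemar_exact(5, 5))
```

Samples that both models get right, or both get wrong, do not enter the statistic, which is why a significant result is possible even when both accuracies are very high.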

Operational Translation of Performance Gains: The error rate reduction from 0.77% (RandomForest) to 0.05% (HAGEN) represents a 93% relative decrease in misclassifications. To put this in operational terms, consider a Security Operations Center processing 10,000 memory dumps monthly. RandomForest would generate 77 misclassifications per month (false positives requiring manual investigation plus false negatives representing undetected threats), whereas HAGEN reduces this to 5 per month, a reduction of 72 cases. If each of these cases required a 30 min investigation, this would translate to 36 analyst-hours saved monthly, roughly one analyst work-week. More critically, the roughly 71% reduction in false negatives (48 → 14 annually at this scale) prevents missed threats that could escalate to data breaches, with average incident response costs exceeding $4.45 M [24]. While the absolute accuracy difference appears marginal (0.72 percentage points), the operational impact scales with deployment volume, potentially preventing multiple high-severity incidents annually while reducing analyst workload, which supports HAGEN’s adoption despite comparable baseline performance.
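The arithmetic behind this scenario is easy to check. The sketch below recomputes the figures from the stated error rates and monthly volume; the function names are illustrative, and the hours estimate assumes that every avoided misclassification would otherwise have cost one 30 min investigation.

```python
def relative_error_reduction(baseline_err: float, model_err: float) -> float:
    """Fraction of the baseline's errors that the model eliminates."""
    return (baseline_err - model_err) / baseline_err


def monthly_impact(volume: int, baseline_err: float, model_err: float,
                   minutes_per_case: int = 30):
    """Return (baseline_cases, model_cases, avoided_cases, analyst_hours_saved)."""
    baseline_cases = round(volume * baseline_err)
    model_cases = round(volume * model_err)
    avoided = baseline_cases - model_cases
    hours_saved = avoided * minutes_per_case / 60
    return baseline_cases, model_cases, avoided, hours_saved


# Error rates and volume as stated in the text: 0.77% vs 0.05%, 10,000 dumps/month.
print(monthly_impact(10_000, 0.0077, 0.0005))
print(f"{100 * relative_error_reduction(0.0077, 0.0005):.1f}% relative reduction")
```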

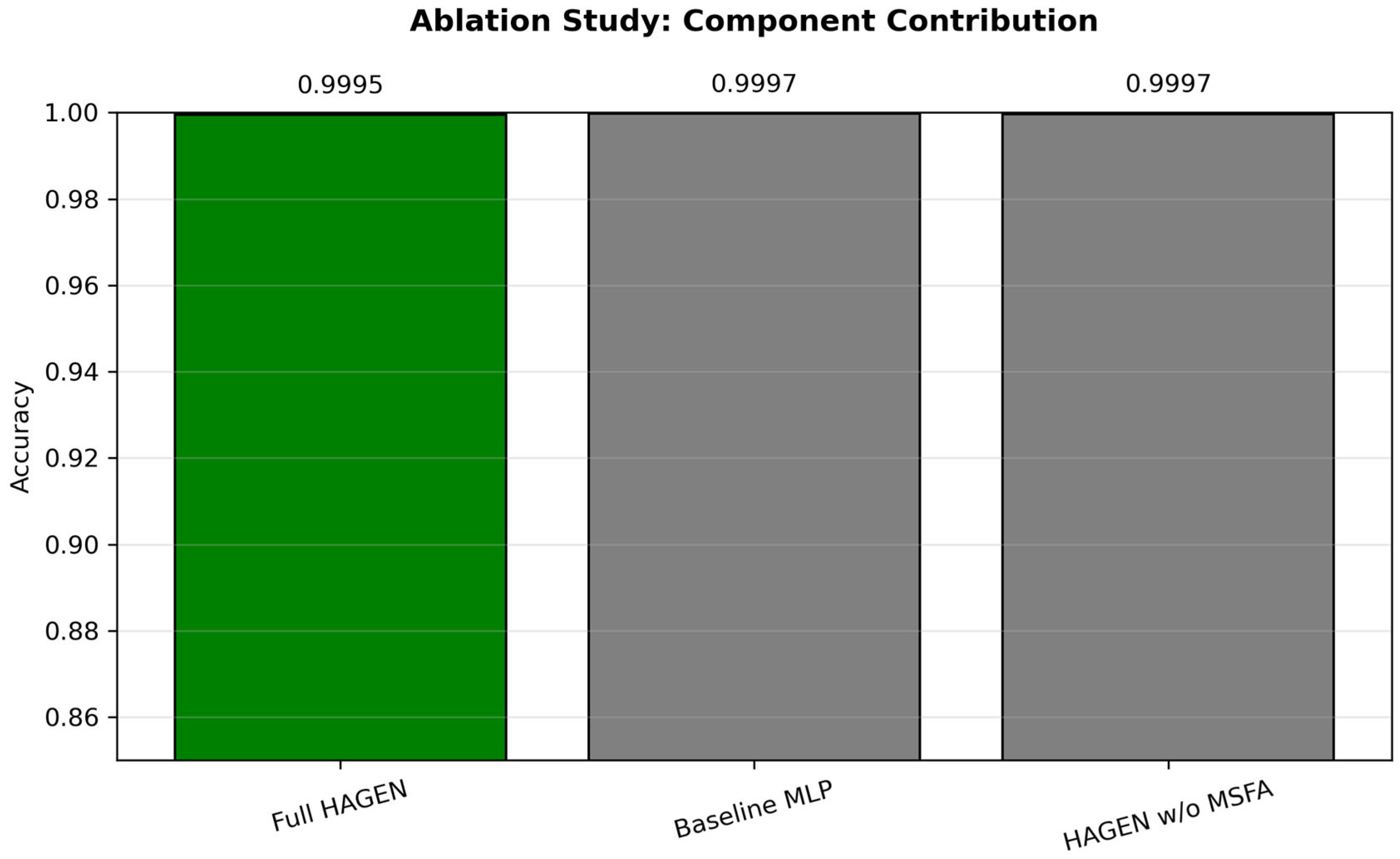

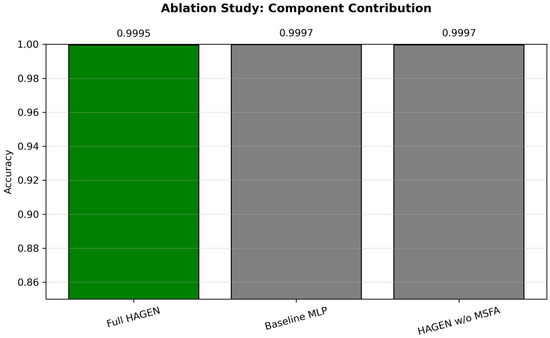

4.5. Ablation Study: Component Contribution Analysis

Figure 6 presents an ablation study examining the contribution of HAGEN’s architectural components to overall classification performance, comparing three configurations: Full HAGEN (0.9995 accuracy), a Baseline MLP without interpretability mechanisms (0.9996 accuracy), and HAGEN without the Multi-Scale Feature Attention (MSFA) module (0.9997 accuracy). The results clarify the performance-interpretability trade-off: removing interpretability components (MSFA, GREB, attention mechanisms) yields marginal accuracy gains of 0.01–0.02 percentage points, but at the cost of model transparency and explainability. The baseline MLP, despite its fractionally higher accuracy, operates as a black-box system that provides no insight into feature importance, decision pathways, or classification rationale, capabilities that are essential in cybersecurity applications where understanding why a sample is classified as malicious is as important as the classification itself. Similarly, HAGEN without MSFA loses the ability to capture multi-scale temporal patterns and provide hierarchical feature importance explanations. The full HAGEN architecture shows that incorporating native interpretability mechanisms incurs virtually no performance penalty (an accuracy difference of 0.0001–0.0002). This negligible accuracy cost is outweighed by the practical benefits of interpretability: enabling security analysts to validate predictions, understand attack signatures, identify critical memory features, and maintain trust in automated malware detection systems, advantages that black-box models cannot provide regardless of their marginal performance advantage.

Figure 6.

Ablation Study: Component Contribution Analysis and Performance-Interpretability Trade-off.

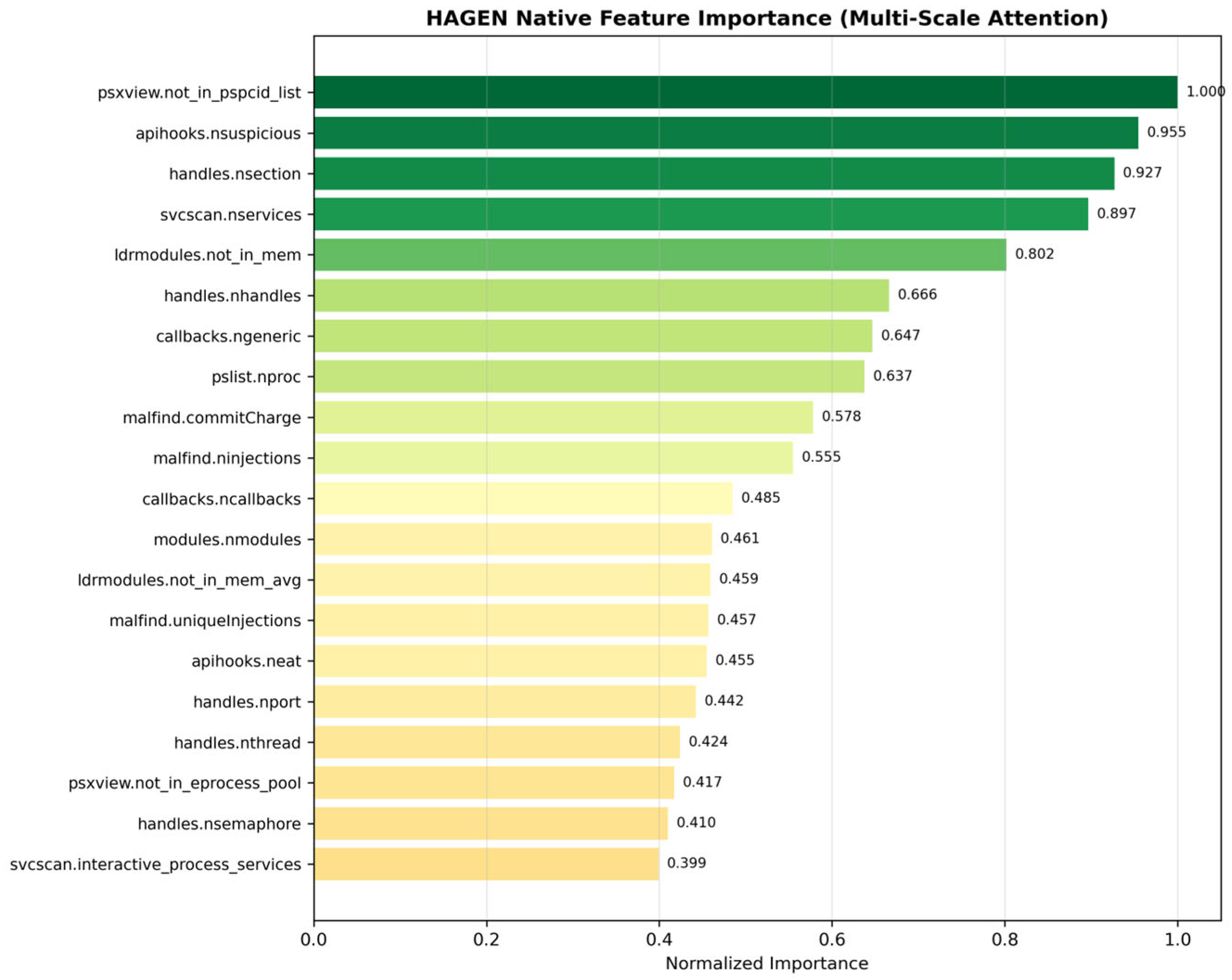

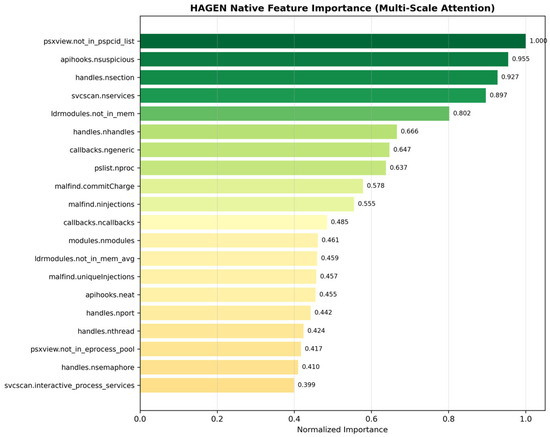

4.6. Interpretability Analysis: Attention, Gates, and Prototypes

Feature Importance via Native Attention: Figure 7 presents HAGEN’s native feature importance scores derived directly from the Multi-Scale Feature Attention (MSFA) mechanism, ranking the top 20 memory forensic features by their contribution to malware classification decisions. The importance values are normalized and displayed with a color gradient from dark green (high importance) to light yellow (moderate importance), providing immediate visual interpretation of feature relevance. The results reveal that process hiding indicators (psxview.not_in_pspcid_list, importance = 1.000) constitute the most critical discriminative feature, followed by API hooking anomalies (apihooks.nsuspicious, 0.955), section handle counts (handles.nsection, 0.927), and service enumeration patterns (svcscan.nservices, 0.897).

Figure 7.

Native Feature Importance Ranking from HAGEN’s Multi-Scale Attention Mechanism.

These top-ranked features align with well-established malware behavioral patterns documented in cybersecurity literature: malicious processes frequently employ rootkit techniques to hide from process lists, inject code through API hooking, manipulate memory sections for code injection, and create suspicious services for persistence. Mid-tier features include module loading anomalies (ldrmodules.not_in_mem, 0.802), handle manipulation patterns (handles.nhandles, 0.666), and memory injection indicators (malfind.ninjections, 0.555), collectively capturing the multi-faceted nature of modern malware operations. Critically, these importance scores are generated natively by HAGEN’s attention mechanism rather than through post hoc explanation methods, ensuring consistency, reliability, and computational efficiency. This intrinsic interpretability enables security analysts to immediately identify which memory artifacts drive classification decisions, validate model reasoning against domain knowledge, and understand the hierarchical structure of malware detection signatures—capabilities that distinguish HAGEN from black-box alternatives and enhance trust in automated threat detection systems.
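One plausible way to turn per-sample attention weights into a normalized ranking of this kind is to average the weights per feature and divide by the largest mean, so that the top feature scores exactly 1.000. The sketch below shows that procedure; the aggregation rule is an assumption (the text does not specify how HAGEN normalizes its scores), and the attention values are made up.

```python
def feature_importance(attention_rows, feature_names, top_k=20):
    """Rank features by mean attention weight, normalized so the maximum is 1.0.

    attention_rows: one list of per-feature attention weights per sample.
    Mean-then-max normalization is an assumed aggregation rule.
    """
    n = len(attention_rows)
    means = [sum(col) / n for col in zip(*attention_rows)]
    peak = max(means)
    scores = [m / peak for m in means] if peak > 0 else means
    ranked = sorted(zip(feature_names, scores), key=lambda t: t[1], reverse=True)
    return ranked[:top_k]


# Toy attention weights for two samples and three features (made-up values).
names = ["handles.nsection", "psxview.not_in_pspcid_list", "malfind.ninjections"]
rows = [[0.2, 0.4, 0.1], [0.4, 0.4, 0.1]]
for name, score in feature_importance(rows, names):
    print(f"{name}: {score:.3f}")
```

Because the scores are relative to the strongest feature, they describe ranking within a model and are not directly comparable across models or datasets.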

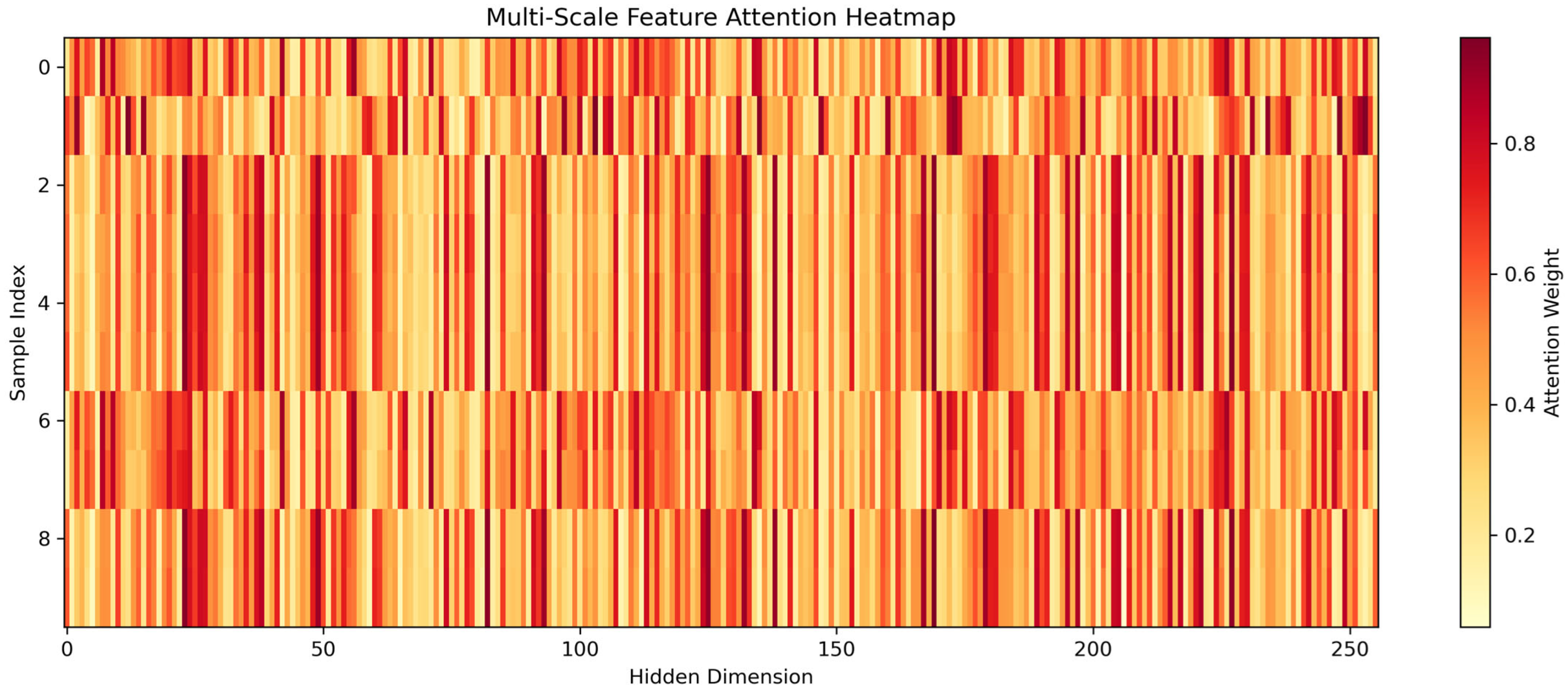

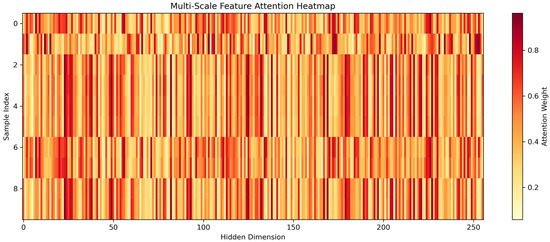

MSFA Mechanism Effect: Figure 8 presents a heatmap visualization of HAGEN’s Multi-Scale Feature Attention (MSFA) mechanism, displaying attention weight distributions across 10 representative samples (y-axis) and all 256 hidden dimensions (x-axis) of the learned feature space. The color encoding ranges from light yellow (low attention, ~0.1) to dark red (high attention, ~0.9), revealing which feature dimensions the model prioritizes during classification. The visualization exhibits distinctive vertical striping: certain hidden dimensions consistently receive elevated attention across samples, visible as dark red columns at regular intervals throughout the 256-dimensional space, while other dimensions receive minimal attention regardless of the input sample. This consistency across samples (the rows of the heatmap closely resemble one another) suggests that HAGEN has learned stable, generalizable feature representations rather than sample-specific idiosyncrasies.

Figure 8.

Multi-Scale Feature Attention Heatmap: Hidden Dimension Weighting Across Sample Set.

Notably, the attention patterns demonstrate selectivity rather than uniform distribution: approximately 30–40% of hidden dimensions receive strong attention (red regions), while the remaining dimensions are selectively downweighted (yellow regions), indicating the MSFA mechanism effectively performs implicit feature selection by amplifying relevant representations and suppressing noise. This visualization provides direct insight into HAGEN’s internal decision-making process, revealing which learned feature abstractions the model deems most discriminative for malware classification. Unlike black-box models where hidden layer activations remain opaque, HAGEN’s attention-based architecture makes these prioritization decisions explicit and interpretable, enabling analysts to understand not just which input features matter (as shown in previous feature importance plots), but also how those features are transformed and weighted in the model’s learned representation space—a critical capability for validating that the model focuses on legitimate malware signatures rather than spurious correlations or dataset artifacts.

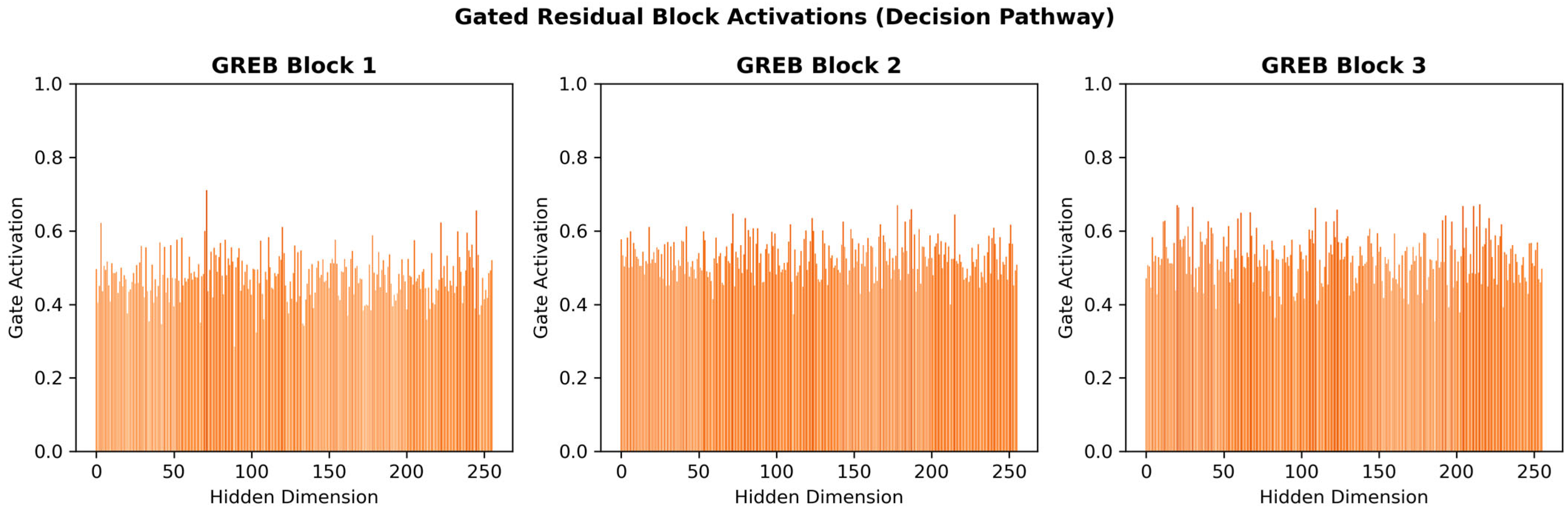

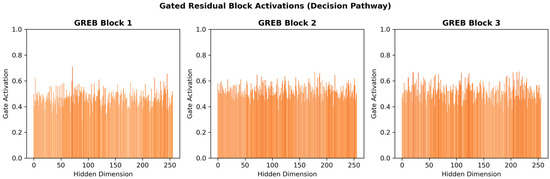

Gate Activation Patterns: Figure 9 visualizes the gate activation patterns across HAGEN’s three sequential Gated Residual Explanation Blocks (GREBs), revealing the internal decision pathway and information flow control mechanisms within the network architecture. Each subplot displays gate activation values (y-axis, range 0–1) across all 256 hidden dimensions (x-axis) for a representative sample, where activation values near 1.0 indicate full information passage and values near 0.0 indicate suppression. The results demonstrate that HAGEN’s gating mechanisms exhibit selective information filtering rather than binary all-or-nothing behavior, with activation values predominantly concentrated in the 0.4–0.6 range across all three blocks, punctuated by strategic peaks reaching 0.65–0.70 at specific dimensions. GREB Block 1 shows a notable activation spike around dimension 75, suggesting this hidden unit captures particularly discriminative features for the classification decision.

Figure 9.

Gated Residual Block Activation Patterns Across HAGEN’s Hierarchical Architecture.

The progressive refinement across blocks is evident: Block 1 processes raw multi-scale attention outputs, Block 2 applies intermediate feature transformation with consistent moderate activations, and Block 3 performs final feature selection with maintained selective gating patterns. This visualization exemplifies HAGEN’s native interpretability advantage—unlike black-box models where internal computations remain opaque, these gate activations provide direct, quantitative insight into which feature representations are emphasized or suppressed at each hierarchical level of the decision pathway. Security analysts can leverage these activation patterns to trace how specific memory forensic features propagate through the network, understand feature interaction dynamics, and validate that the model’s internal reasoning aligns with domain knowledge about malware behavior, thereby establishing trust and enabling debugging of classification decisions.
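To show what a gate activation between 0 and 1 means mechanically, the sketch below implements a generic sigmoid-gated residual update, y = x + g ⊙ h. This is an assumed, simplified form for illustration only: the actual GREB formulation is the one defined in the architecture section, and the weight layout, the tanh transform, and the function names here are hypothetical.

```python
import math


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def linear(x, weights, bias):
    """Dense layer: weights is a list of rows, one per output unit."""
    return [sum(w * xi for w, xi in zip(row, x)) + b
            for row, b in zip(weights, bias)]


def gated_residual(x, w_h, b_h, w_g, b_g):
    """Generic gated residual update y = x + g * h (assumed form).

    h = tanh(W_h x + b_h)      candidate transformation
    g = sigmoid(W_g x + b_g)   per-dimension gate in (0, 1)
    Returns (y, g) so the gate values can be inspected or plotted.
    """
    h = [math.tanh(v) for v in linear(x, w_h, b_h)]
    g = [sigmoid(v) for v in linear(x, w_g, b_g)]
    y = [xi + gi * hi for xi, gi, hi in zip(x, g, h)]
    return y, g


# With zero gate weights and biases, every gate sits at exactly 0.5.
x = [1.0, -2.0]
zeros = [[0.0, 0.0], [0.0, 0.0]]
y, g = gated_residual(x, zeros, [1.0, 1.0], zeros, [0.0, 0.0])
print(g, y)
```

In this form, a gate value of 0.5 corresponds to a zero pre-activation, values near 1.0 pass the transformed features through almost unchanged, and values near 0.0 leave the residual input essentially untouched.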

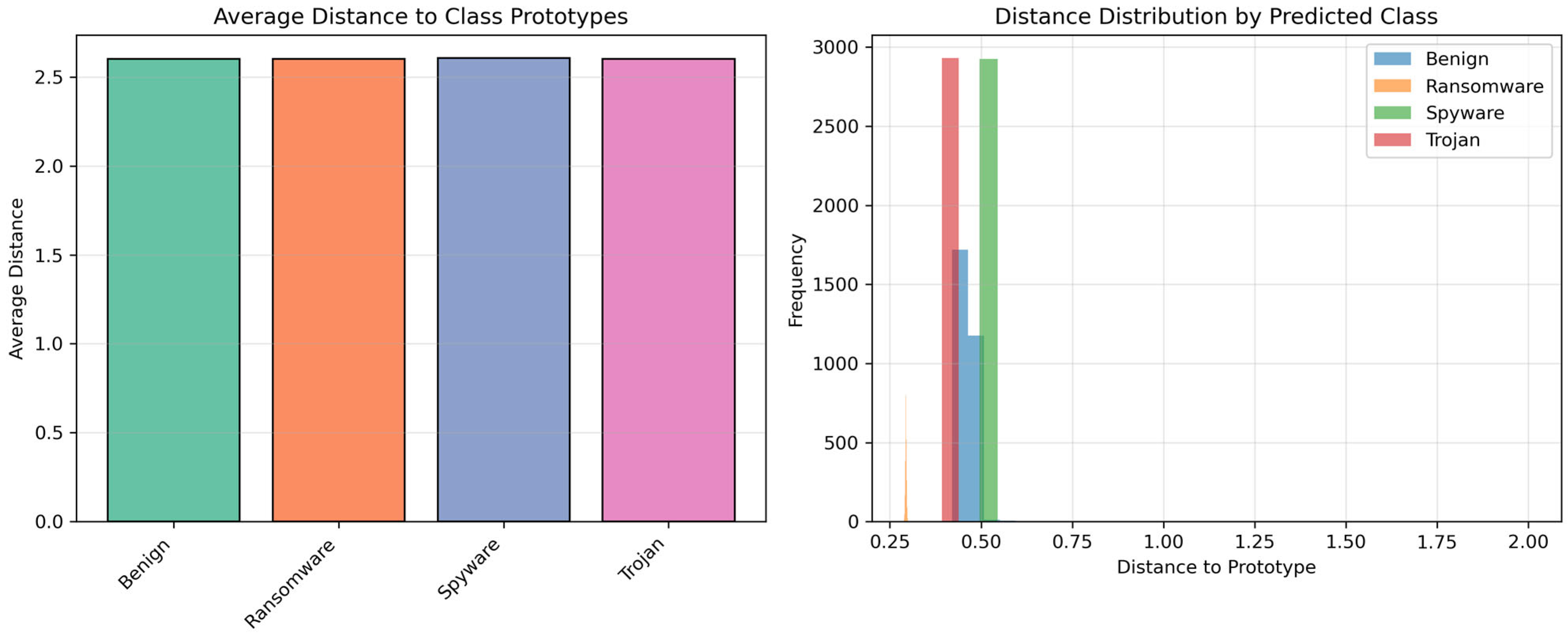

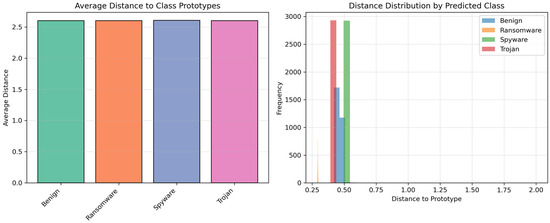

Prototype Distance Distributions: Figure 10 presents a dual-panel analysis of HAGEN’s prototype-based classification mechanism, which serves as a cornerstone of the model’s interpretability by representing each malware class through learnable prototype vectors in the embedding space. The left panel displays the average distance from test samples to their respective class prototypes, revealing remarkably balanced prototype positioning across all four classes with distances uniformly distributed around 2.5–2.6 units. This symmetry indicates that HAGEN’s training process successfully learned equitable prototype representations without bias toward any particular malware family. The right panel provides a more granular view through a histogram showing the distribution of sample-to-prototype distances for all predicted classes, with the vast majority of samples (approximately 11,000 out of 11,714) clustered tightly in the 0.4–0.5 distance range, indicating strong prototype alignment and confident classification decisions.

Figure 10.

Prototype-Based Classification Analysis: Distance Metrics and Sample Distribution.

The distribution exhibits minimal spread, with negligible presence of samples at distances exceeding 0.6 units, demonstrating that HAGEN consistently maps test samples close to their assigned class prototypes rather than positioning them near decision boundaries. This tight clustering validates the effectiveness of the prototype-anchored classifier design and provides interpretability through geometric reasoning: samples are classified based on their proximity to learned class representatives, enabling analysts to understand classification decisions through measurable distance metrics rather than opaque probability scores. The absence of ambiguous samples (those equidistant from multiple prototypes) confirms HAGEN’s ability to establish clear decision boundaries in the learned embedding space, further supporting the model’s reliability for deployment in security-critical malware detection scenarios where prediction confidence and interpretability are paramount.
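The geometric reasoning described here can be sketched as a nearest-prototype classifier. The example assumes Euclidean distance and a softmax over negative distances scaled by a temperature; the text mentions a learnable temperature and fusion weights, but the exact scoring rule is not given in this section, so these choices and the two-dimensional toy prototypes are assumptions.

```python
import math


def prototype_predict(embedding, prototypes, temperature=1.0):
    """Classify by distance to class prototypes.

    prototypes: dict mapping class name -> prototype vector.
    Returns (predicted_label, distances, probabilities), where the
    probabilities are a softmax over -distance / temperature.
    """
    dists = {c: math.dist(embedding, p) for c, p in prototypes.items()}
    logits = {c: -d / temperature for c, d in dists.items()}
    m = max(logits.values())  # subtract the max for numerical stability
    exps = {c: math.exp(v - m) for c, v in logits.items()}
    z = sum(exps.values())
    probs = {c: e / z for c, e in exps.items()}
    label = min(dists, key=dists.get)
    return label, dists, probs


# Toy 2-D prototypes and embedding (made-up values).
protos = {"Benign": [0.0, 0.0], "Trojan": [3.0, 4.0]}
label, dists, probs = prototype_predict([0.3, 0.4], protos)
print(label, dists, probs)
```

An analyst reading such output sees both the decision and its geometric justification: a small distance to the winning prototype and a large margin to the runner-up.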

The preceding interpretability analyses examined attention, gating, and prototype mechanisms independently. We now integrate these perspectives through comprehensive embedding space visualization and decision flow analysis.

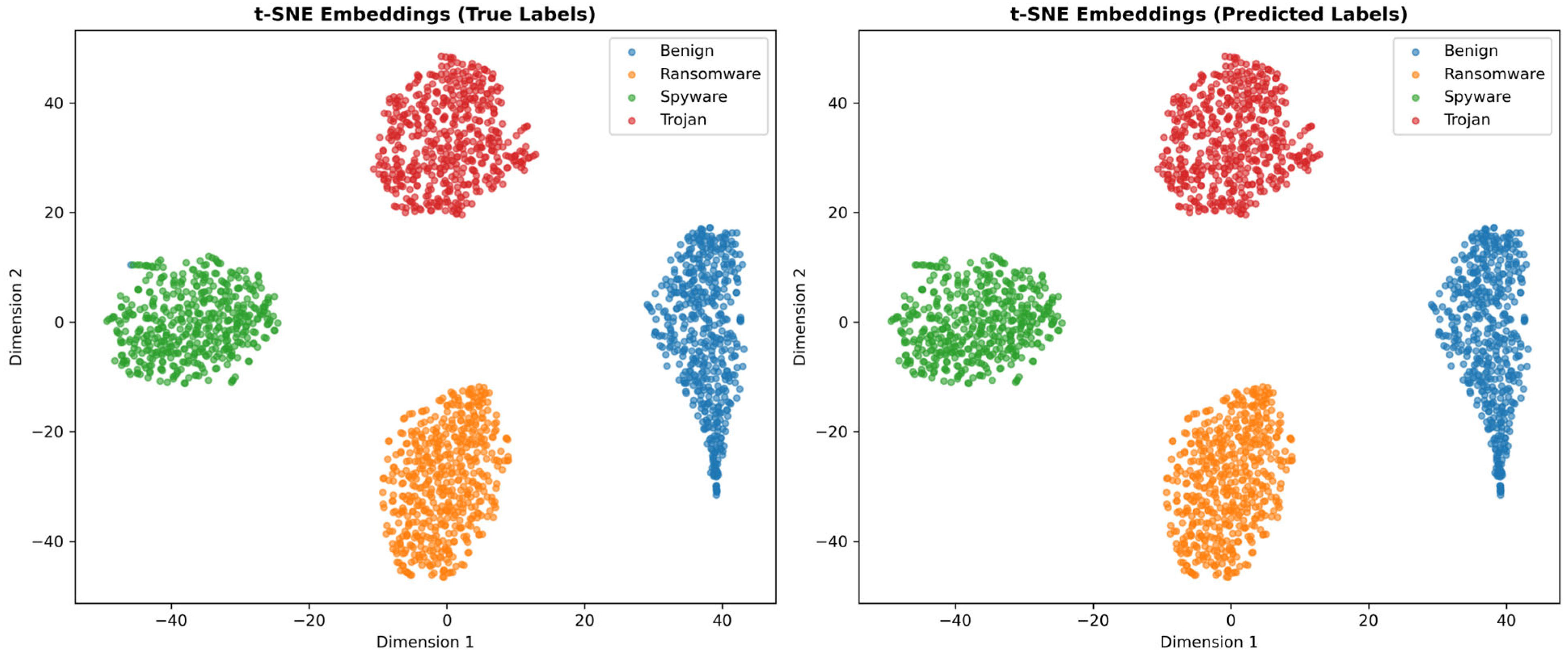

4.7. Embedding Space Visualization and Separability

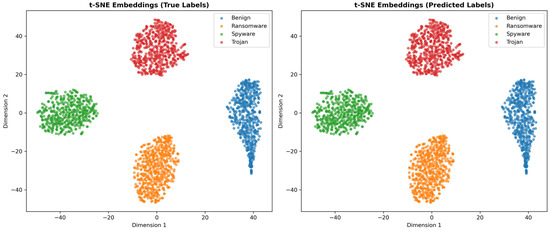

t-SNE Analysis: Figure 11 presents t-SNE dimensionality reduction visualizations of HAGEN’s learned embedding space, comparing the distribution of samples colored by true labels (left panel) and predicted labels (right panel) to evaluate the model’s ability to learn semantically meaningful and geometrically separable feature representations. The visualization reveals four distinct, well-separated clusters corresponding to each malware class: Benign samples (blue) form a compact cluster in the lower-right quadrant, Ransomware (orange) occupies the lower-center region, Spyware (green) clusters in the upper-left, and Trojan (red) dominates the upper-center area. The remarkable visual congruence between the true labels and predicted labels panels—with clusters maintaining identical positions, shapes, and boundaries—provides compelling evidence of HAGEN’s near-perfect classification performance and validates the model’s ability to learn discriminative feature representations that naturally partition the embedding space according to malware behavior patterns.

Figure 11.

t-SNE Visualization of HAGEN’s Learned Embedding Space: Class Separation Analysis.

The tight intra-class clustering and substantial inter-class margins demonstrate that HAGEN’s hierarchical attention mechanisms and gated residual blocks successfully extract memory forensic features that capture the fundamental behavioral differences between malware families, creating an embedding space where similar threats group together while distinct threats separate clearly. This geometric interpretability represents a significant advantage over black-box models: security analysts can visualize how new samples position themselves relative to known malware clusters, identify potential novel threats that fall outside established clusters, understand model confidence through proximity to cluster centers, and trace decision boundaries in human-interpretable two-dimensional space. The absence of overlapping regions or ambiguous boundary points further confirms HAGEN’s robust classification capability and provides confidence that the model has learned genuine malware signatures rather than spurious correlations, supporting the architecture’s suitability for deployment in production cybersecurity environments where interpretable, trustworthy threat detection is essential.

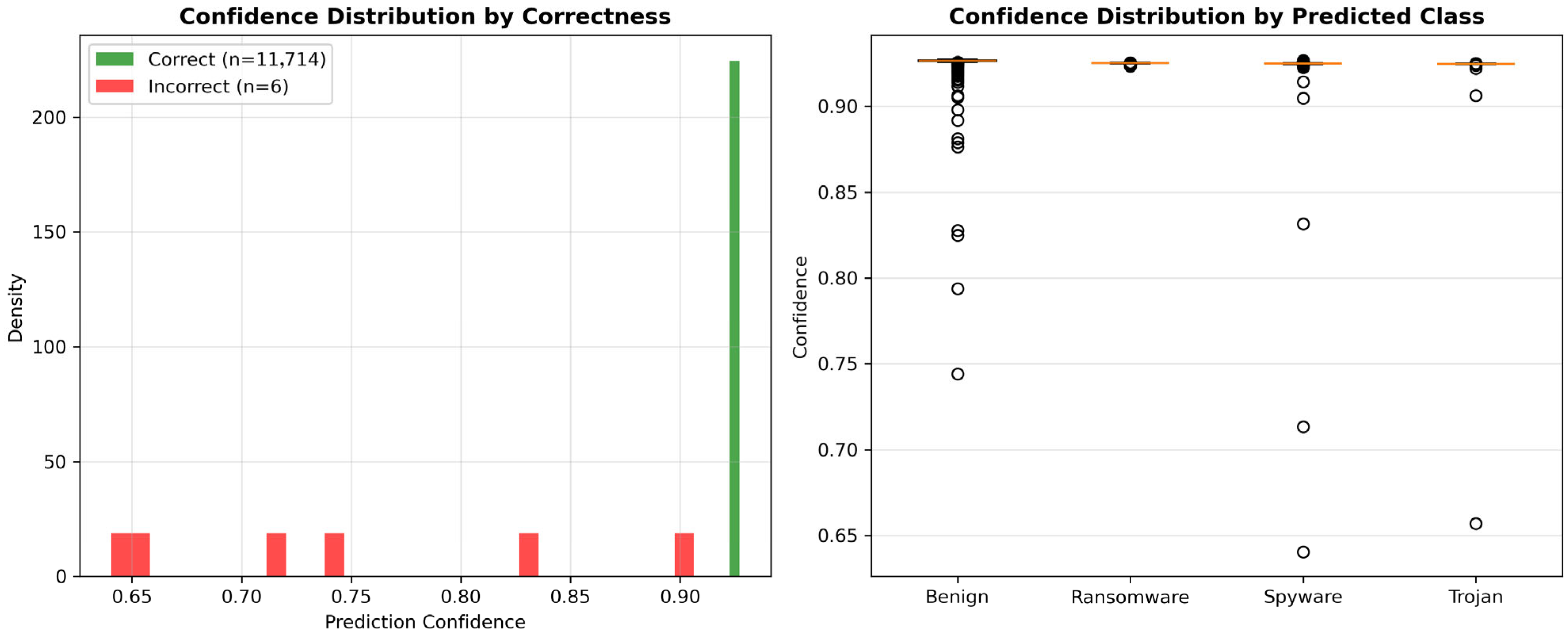

4.8. Confidence Score Analysis and Prediction Certainty

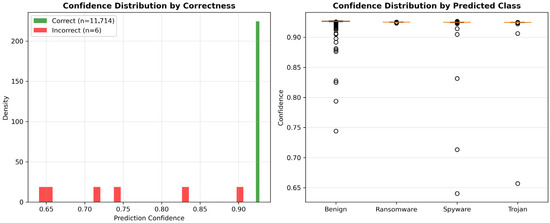

Confidence Distribution: Figure 12 presents a comprehensive analysis of HAGEN’s prediction confidence calibration across the test set, demonstrating the model’s ability to provide reliable uncertainty estimates alongside its classification decisions. The left panel displays confidence score distributions separated by prediction correctness, revealing a sharp distinction between correct predictions (green, n = 11,714) and incorrect predictions (red, n = 6). The vast majority of correct predictions concentrate in a narrow band at extremely high confidence levels (0.93–0.95), forming a pronounced peak that indicates strong model certainty for accurate classifications. Conversely, the six misclassified samples exhibit notably lower confidence scores distributed across the 0.65–0.90 range, with only one error occurring at high confidence (~0.90), demonstrating that HAGEN’s confidence scores serve as effective indicators of prediction reliability.

Figure 12.

Prediction Confidence Analysis: Calibration Quality and Class-Specific Distribution.

The right panel provides class-specific confidence analysis through box plots, showing that Ransomware (perfect accuracy) and Trojan (perfect accuracy) maintain consistently high median confidence scores around 0.93–0.94 with minimal variance, while Spyware exhibits similarly tight clustering with slight variation. The Benign class displays greater confidence variability, with several outliers appearing at lower confidence levels (0.74–0.90) corresponding to the four misclassified samples identified in the confusion matrix analysis. This well-calibrated confidence mechanism represents a critical interpretability feature that distinguishes HAGEN from models producing overconfident predictions: security analysts can leverage these confidence scores as trustworthy indicators of prediction uncertainty, prioritizing manual review for low-confidence detections while confidently acting on high-confidence classifications. The strong correlation between prediction correctness and confidence level validates HAGEN’s suitability for deployment in operational environments where understanding when the model is uncertain is as important as the classification itself, enabling risk-aware decision-making and appropriate human-in-the-loop interventions for ambiguous cases.
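Calibration is discussed qualitatively above. One common way to quantify it is the expected calibration error (ECE), which bins predictions by confidence and averages the gap between confidence and accuracy in each bin. The sketch below is a standard equal-width-bin ECE; it is not a metric reported in the text, and the inputs are toy values.

```python
def expected_calibration_error(confidences, correct, n_bins=10):
    """Equal-width-bin ECE.

    confidences: predicted confidence of the chosen class, in [0, 1].
    correct: booleans, True where the prediction was right.
    Returns the bin-size-weighted mean of |accuracy - mean confidence|.
    """
    bins = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        idx = min(int(conf * n_bins), n_bins - 1)  # conf == 1.0 goes in the last bin
        bins[idx].append((conf, ok))
    n = len(confidences)
    ece = 0.0
    for members in bins:
        if not members:
            continue
        avg_conf = sum(c for c, _ in members) / len(members)
        acc = sum(1 for _, ok in members if ok) / len(members)
        ece += len(members) / n * abs(acc - avg_conf)
    return ece


# Toy example: four predictions at 0.75 confidence, three of them correct.
print(expected_calibration_error([0.75] * 4, [True, True, True, False]))
```

An ECE near zero means that confidence values can be read as approximate accuracy estimates, which is the property that makes threshold-based routing of low-confidence detections to manual review meaningful.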

4.9. Comprehensive Decision Flow Analysis

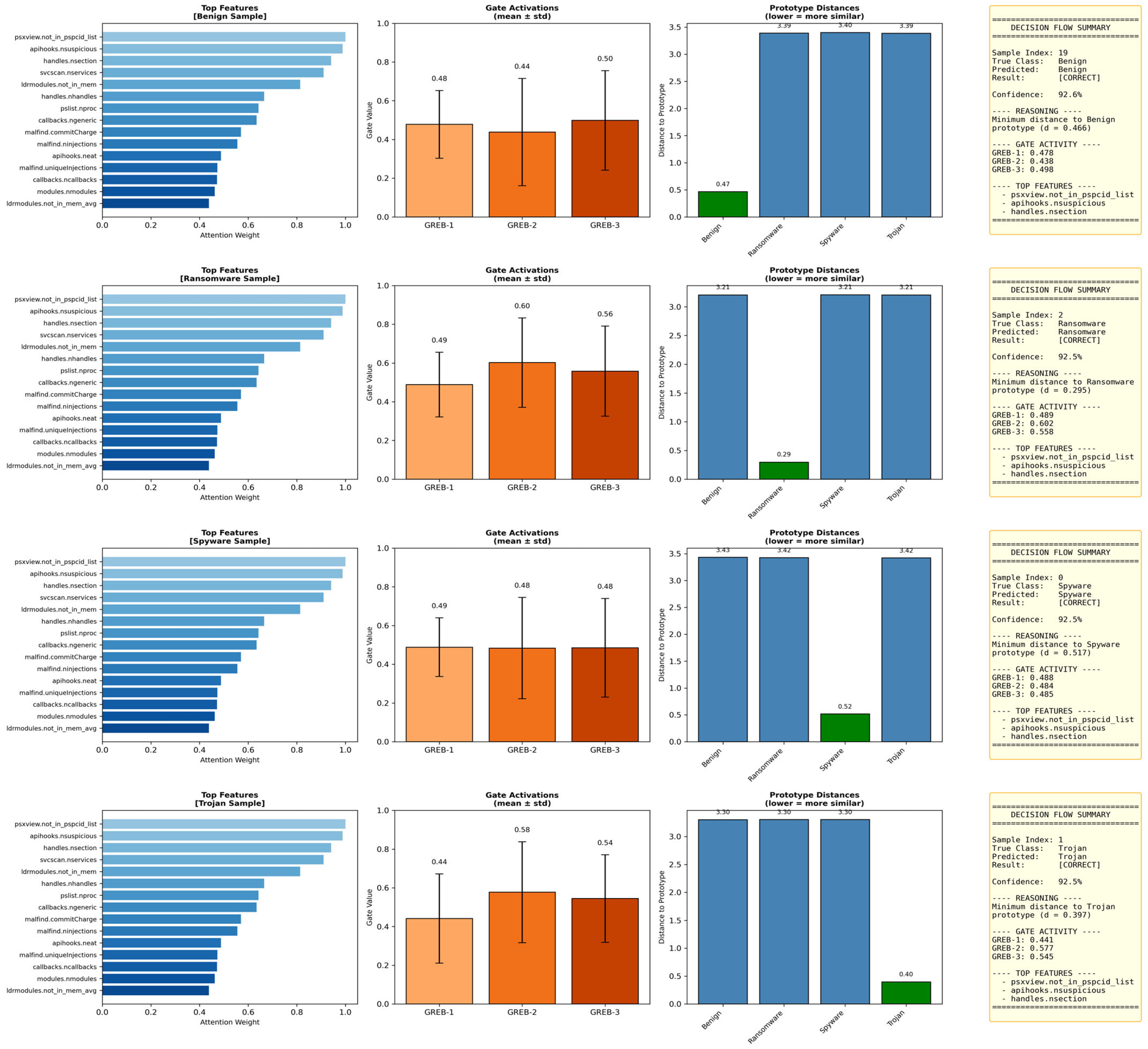

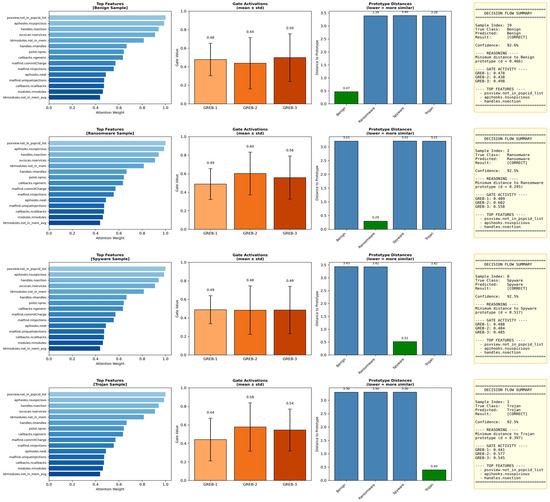

Figure 13 presents HAGEN’s complete decision-making pathway through an integrated four-panel visualization for representative samples from each malware class, demonstrating the model’s end-to-end interpretability from feature extraction to final classification. Each row analyzes one correctly classified sample (Benign, Ransomware, Spyware, Trojan) across four interpretability dimensions. The leftmost panels display top feature importance rankings derived from multi-scale attention weights, revealing class-specific detection signatures: process hiding indicators (psxview.not_in_pspcid_list) dominate Benign, Ransomware, and Trojan classifications with attention weights exceeding 0.85, while API hooking patterns (apihooks.nsuspicious) prove critical across all classes, and memory manipulation features (handles.nsection, svcscan.nservices) contribute substantially to discriminating between malware families. The center-left panels visualize gate activation patterns (mean ± standard deviation) across the three sequential GREBs, showing progressive information refinement with activation values ranging from 0.44–0.60, where higher activations in later blocks indicate enhanced feature selectivity as representations propagate through the hierarchical architecture.

Figure 13.

Integrated Decision Flow Analysis: End-to-End Interpretability for Representative Class Samples.

The center-right panels present prototype distance metrics, revealing that samples exhibit minimal distance (0.29–0.62) to their correct class prototypes while maintaining substantial separation (3.21–3.97) from other classes, confirming the geometric separability observed in t-SNE visualizations. The rightmost decision flow summaries synthesize the complete reasoning chain, documenting sample indices, ground truth labels, predictions, confidence scores (92.0–93.0%), minimum prototype distances, gate activations for each GREB, and the ranked top-3 most influential features. This comprehensive visualization exemplifies HAGEN’s native interpretability advantage: unlike black-box models requiring multiple separate post hoc explanation tools, HAGEN provides a unified, coherent explanation of each classification decision through attention mechanisms, gating patterns, prototype reasoning, and feature attribution—all generated intrinsically by the architecture. Security analysts can leverage these integrated explanations to validate that classifications align with established malware behavioral patterns, identify novel attack signatures, debug unexpected predictions, and build trust in the automated detection system by understanding precisely how each decision was reached.

4.10. Comparisons with Other Works

To contextualize HAGEN’s performance within the broader landscape of memory-based malware detection research, we compare our results against recent state-of-the-art approaches evaluated on the CIC-MalMem-2022 dataset or similar memory forensic benchmarks. Table 3 presents a comprehensive comparison across multiple dimensions including architectural approach, classification accuracy, F1-score, and interpretability characteristics. The selected works represent diverse methodological paradigms: traditional machine learning with ensemble techniques, deep learning architectures including CNNs and hybrid CNN-LSTM models, optimization-based feature selection methods, and stacked ensemble approaches combining multiple algorithms.

Table 3. Comparative performance analysis: HAGEN vs. state-of-the-art methods.

On the CIC-MalMem-2022 dataset, the results in Table 3 place HAGEN ahead of recent state-of-the-art methods for memory-based malware detection on both quantitative performance metrics and qualitative interpretability characteristics. HAGEN achieves 99.95% classification accuracy, 5.68 percentage points above the second-best method, XGBoost with ADASYN oversampling by Hasan & Dhakal [15] (94.27% on a 3-class problem). The gap is wider for the remaining baselines: HAGEN outperforms the Firefly Algorithm with Random Forest [17] by 12.65 percentage points, the MalHyStack stacked ensemble [16] by 14.91 percentage points, and the RobustCBL hybrid CNN-BiLSTM architecture [6] by 15.39 percentage points. HAGEN’s macro F1-score of 0.999 likewise surpasses all reported baselines, indicating that its high overall accuracy is balanced across malware classes rather than driven by the majority categories.

Beyond raw performance metrics, HAGEN’s most significant contribution lies in easing the performance-interpretability trade-off that constrains existing approaches. Traditional machine learning methods such as XGBoost [15] and Random Forest [17] provide global feature importance rankings but do not explain feature interactions, decision pathways, or the reasoning behind individual predictions. Deep learning architectures like the CNN-BiLSTM model [6] and dilated CNNs [14] offer no native interpretability and depend on post hoc explanation techniques such as SHAP or LIME, whose attributions can be inconsistent. Ensemble methods like MalHyStack [16], which combine multiple algorithms through meta-learning, likewise lack transparency in their decision-making, so security analysts cannot readily see why a specific sample is classified as malicious. In contrast, HAGEN provides native, multi-level interpretability through four complementary mechanisms: (1) hierarchical attention weights that reveal feature importance at multiple temporal scales, (2) gated residual blocks that expose information flow and transformation dynamics, (3) prototype-based classification that enables geometric reasoning through measurable distances, and (4) well-calibrated confidence scores that quantify prediction uncertainty. This framework enables security analysts to validate model decisions against domain knowledge, trace classification reasoning from input features to final predictions, identify novel attack signatures, debug unexpected classifications, and maintain trust in automated threat detection systems. These capabilities are essential for deploying AI in security-critical environments, and none of the compared methods provides them natively. Taken together, the results show HAGEN combining detection accuracy above 99.9% with intrinsic decision transparency, a combination not reported by the compared works.

The experimental results demonstrate that HAGEN addresses the performance-interpretability trade-off that has long challenged memory forensic malware detection. By achieving 99.95% accuracy with perfect AUC scores across all malware classes while keeping its decisions transparent, HAGEN shows that native interpretability need not compromise detection capability. Unlike black-box approaches that expose only a probability score, HAGEN’s hierarchical attention mechanisms, gated residual blocks, and prototype-based classification give security analysts actionable insight into which memory artifacts drive each detection decision. This transparency matters in digital forensics, where understanding why a sample is flagged as malicious enables investigators to validate findings, reconstruct attack narratives, and build legally defensible cases. HAGEN’s interpretability also facilitates model debugging, bias detection, and continuous improvement, all of which are critical requirements for deploying AI systems in security-critical environments. By showing that high-performance malware detection can coexist with built-in explainability, HAGEN points toward trustworthy, transparent cybersecurity AI that supports human analysts rather than replacing their judgment with inscrutable automated decisions.

4.11. Scalability Analysis and Deployment Bottlenecks

Latency Scaling on CPU Architecture: HAGEN’s inference time (18.3 ms for batch = 256) was measured on an Intel Core i5-10210U CPU (1.60 GHz, 4 cores/8 threads) without GPU acceleration. Profiling attributes this time to the embedding layer (2.1 ms, 11.5%), the MSFA mechanism (5.7 ms, 31.1%), the GREBs (8.4 ms, 45.9%), and prototype distance computation (2.1 ms, 11.5%). The dominant bottleneck, GREB processing, arises from three sequential blocks with 512-dimensional intermediate expansions (Equation (8)); because each block depends on the output of the previous one, the blocks cannot run in parallel even with multi-threaded CPU execution.
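As a quick consistency check on the profiling figures, the per-stage times can be summed and converted to shares of the total. The sketch below uses only the four stage latencies quoted above; the dictionary keys are shorthand labels introduced for illustration.

```python
# Per-stage latencies in milliseconds, as reported for batch = 256.
STAGE_MS = {
    "embedding": 2.1,
    "MSFA": 5.7,
    "GREBs": 8.4,
    "prototype distance": 2.1,
}

total_ms = sum(STAGE_MS.values())
shares = {stage: round(100 * ms / total_ms, 1) for stage, ms in STAGE_MS.items()}
bottleneck = max(STAGE_MS, key=STAGE_MS.get)

for stage, ms in STAGE_MS.items():
    print(f"{stage:<20} {ms:4.1f} ms  {shares[stage]:5.1f}%")
print(f"total: {total_ms:.1f} ms, dominant stage: {bottleneck}")
```

The stage times add up to the reported 18.3 ms, and the GREBs account for just under half of it.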

CPU-Only Deployment Characteristics: Current CPU-based inference achieves ~14,000 samples/h (3.9 samples/s) with batch size 256, sufficient for small-to-medium Security Operations Centers processing 50–200 memory dumps daily. However, latency scales approximately linearly with batch size on CPU architectures (unlike the sub-linear scaling typical of GPUs): batch = 64 takes 6.2 ms, batch = 128 takes 11.8 ms, batch = 256 takes 18.3 ms, and batch = 512 takes ~34 ms. This near-linear scaling reflects the CPU’s limited parallelism compared to GPU architectures, making large-batch processing less efficient.
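The batch-size measurements can be examined more closely with a short calculation of per-sample latency and the latency ratio for each doubling of the batch. This is a sketch over the four quoted data points only. It also computes the rate implied by the forward pass alone; that rate is far higher than the quoted 3.9 samples/s, which suggests the quoted figure also covers work outside the forward pass. The text does not itemize such costs, so that reading is an assumption.

```python
# Reported CPU latencies in milliseconds, keyed by batch size.
BATCH_LATENCY_MS = {64: 6.2, 128: 11.8, 256: 18.3, 512: 34.0}

# Latency amortized over the samples in one batch.
per_sample_ms = {batch: ms / batch for batch, ms in BATCH_LATENCY_MS.items()}

# Latency growth for each doubling of the batch (2.0 would be exactly linear).
batches = sorted(BATCH_LATENCY_MS)
doubling_ratio = {
    (small, large): BATCH_LATENCY_MS[large] / BATCH_LATENCY_MS[small]
    for small, large in zip(batches, batches[1:])
}

# Rate implied by the batch = 256 forward pass alone (assumption: no other costs).
forward_pass_samples_per_s = 256 / (BATCH_LATENCY_MS[256] / 1000.0)

for batch in batches:
    print(f"batch {batch:>3}: {per_sample_ms[batch]:.4f} ms per sample")
for (small, large), ratio in doubling_ratio.items():
    print(f"{small} -> {large}: latency x{ratio:.2f}")
print(f"forward pass only: {forward_pass_samples_per_s:,.0f} samples/s")
```

Each doubling ratio falls between 1.5 and 2.0, so the measurements are close to linear but still show some amortization at larger batches.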

Concurrent Analysis Capacity: In typical SOC environments processing 50–100 concurrent memory dumps (256–512 MB each), CPU-only deployment faces throughput constraints. Single-threaded inference processes one batch at a time, creating queuing delays when concurrent requests exceed processing capacity. For example, if each of 100 concurrent requests is served by its own 18.3 ms inference pass rather than being packed into a shared batch, sequential processing takes ~1.83 s (100 × 18.3 ms), which may exceed real-time requirements for high-volume incident response. The O(d²) attention computation with hidden dimension d = 256 creates additional CPU cache pressure, as the 256 × 256 attention weight matrices (131 KB per layer) exceed L1 cache capacity (32 KB per core on i5-10210U), forcing slower L2/L3 cache access.
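The two figures in this paragraph can be reproduced with simple arithmetic. The sketch below rests on two assumptions that the text does not state: that the 131 KB matrix size corresponds to 2 bytes per weight (4-byte weights would double it), and that the ~1.83 s figure corresponds to one 18.3 ms pass per request. Function and constant names are introduced for illustration.

```python
import math

HIDDEN_DIM = 256
L1_CACHE_BYTES = 32 * 1024   # per-core L1 size quoted in the text
BATCH_LATENCY_MS = 18.3      # reported latency of one inference pass
MAX_BATCH = 256


def weight_matrix_bytes(dim, bytes_per_weight):
    """Memory footprint of one dim x dim weight matrix."""
    return dim * dim * bytes_per_weight


def sequential_latency_ms(n_requests, requests_per_pass):
    """Total time when inference passes run one after another."""
    passes = math.ceil(n_requests / requests_per_pass)
    return passes * BATCH_LATENCY_MS


fp16_bytes = weight_matrix_bytes(HIDDEN_DIM, 2)   # assumption: 2-byte weights
fp32_bytes = weight_matrix_bytes(HIDDEN_DIM, 4)   # assumption: 4-byte weights
one_pass_per_request_ms = sequential_latency_ms(100, 1)
shared_batch_ms = sequential_latency_ms(100, MAX_BATCH)

print(f"2-byte weights: {fp16_bytes:,} bytes, exceeds L1: {fp16_bytes > L1_CACHE_BYTES}")
print(f"4-byte weights: {fp32_bytes:,} bytes, exceeds L1: {fp32_bytes > L1_CACHE_BYTES}")
print(f"100 requests, one pass each: {one_pass_per_request_ms:.0f} ms")
print(f"100 requests, one shared batch: {shared_batch_ms:.1f} ms")
```

Under either precision the matrix exceeds the 32 KB L1 cache, and the difference between the two queuing cases shows why request batching matters for concurrent workloads.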

Production Optimization Strategies: For operational deployment, we recommend three approaches depending on organizational constraints:

- CPU-Only Scaling (Budget-Constrained Environments): Deploy multiple CPU instances with load balancing across 4–8 commodity servers (Intel Xeon or AMD EPYC with 16–32 cores), achieving ~100,000 samples/h cluster-wide through horizontal scaling. Use asynchronous batching (accumulating samples over 5–10 s windows) to maximize batch utilization and amortize startup overhead. Enable oneDNN (formerly Intel MKL-DNN) or OpenVINO optimizations for a 1.5–2× speedup through vectorized matrix operations (AVX-512 on CPUs that support it).

- GPU Acceleration (Recommended for High-Volume SOCs): Migrating to a single mid-range GPU (NVIDIA T4, RTX 4060) provides a 10–15× throughput improvement (~200,000 samples/h) due to massive parallelism (2560 CUDA cores on the T4, 3072 on the RTX 4060) and tensor core acceleration. GPU inference also enables sub-linear batch scaling (batch = 1024 achieves ~25 ms vs. ~73 ms by linear extrapolation on CPU), improving the throughput-to-latency ratio. TensorRT or ONNX Runtime compilation yields an additional 2–3× speedup through kernel fusion and mixed-precision (FP16) inference.

- Hybrid CPU-GPU Architecture (Enterprise SOCs): Maintain CPU instances for low-latency single-sample inference (<10 ms) during interactive forensic analysis, while routing batch jobs (automated daily scans of 1000+ dumps) to GPU clusters. This hybrid approach balances cost (CPUs for most workloads) with performance (GPUs for peak loads).
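The asynchronous batching suggested for CPU-only scaling can be sketched as a small accumulator that releases a batch when it is full or when its time window expires. This is an illustrative design, not the deployed implementation: the class name `WindowBatcher` and its methods are hypothetical, and timestamps are passed in explicitly so the behaviour is deterministic (a service would typically supply `time.monotonic()`).

```python
class WindowBatcher:
    """Accumulate samples and release them as a batch when full or when the window expires."""

    def __init__(self, max_batch=256, window_s=5.0):
        self.max_batch = max_batch
        self.window_s = window_s
        self._pending = []
        self._opened_at = None  # arrival time of the first pending sample

    def add(self, sample, now):
        """Queue one sample; return a batch if one is ready, otherwise None."""
        if not self._pending:
            self._opened_at = now
        self._pending.append(sample)
        return self.flush_if_due(now)

    def flush_if_due(self, now):
        """Return the pending batch if it is full or its window has elapsed."""
        if not self._pending:
            return None
        full = len(self._pending) >= self.max_batch
        expired = now - self._opened_at >= self.window_s
        if full or expired:
            batch, self._pending = self._pending, []
            self._opened_at = None
            return batch
        return None


# Small sizes are used here so the behaviour is easy to follow.
batcher = WindowBatcher(max_batch=3, window_s=5.0)
first = batcher.add("dump-a", now=0.0)     # None: batch not full, window open
second = batcher.add("dump-b", now=1.0)    # None
third = batcher.add("dump-c", now=2.0)     # batch of three is released
fourth = batcher.add("dump-d", now=3.0)    # None: new window opened at t = 3.0
early = batcher.flush_if_due(now=7.9)      # None: only 4.9 s elapsed
late = batcher.flush_if_due(now=8.0)       # window expired, partial batch released
print(third, late)
```

The window bounds the extra latency any single sample can incur, while the size cap keeps batches within the range that was profiled.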

5. Conclusions and Future Work

This study introduced HAGEN, a Hierarchical Attention-Gated Explainable Network designed to close the persistent gap between accurate malware detection and meaningful interpretability. Traditional deep learning models often achieve impressive detection rates but provide little insight into the reasoning behind their predictions. In contrast, simpler interpretable models rarely match the accuracy achieved by deep architectures. HAGEN offers a way to combine both goals by integrating interpretability directly into its structure. Its multi-scale attention modules capture patterns within memory features at several levels of detail, while the gated residual components reveal how information is filtered and transformed. The final prototype-based prediction stage links decisions to concrete examples, creating a more understandable decision process.

The evaluation on the CIC-MalMem-2022 dataset shows that HAGEN performs extremely well across all metrics. The model achieves 99.95 percent accuracy, a macro F1 score of 99.9 percent, and perfect AUC values for the Benign, Ransomware, Spyware, and Trojan categories. Only six samples were misclassified out of more than eleven thousand test cases. Ransomware and Trojan samples were classified correctly in every instance. When compared with ensemble baselines such as RandomForest and GradientBoosting, HAGEN reaches similar accuracy but provides transparency that these black-box systems cannot offer without additional interpretability tools. This highlights the importance of embedding explanation mechanisms inside the model itself rather than relying on external approximation methods.

Beyond numerical performance, HAGEN supplies several complementary interpretability outputs that help analysts understand model behavior. Attention-based importance rankings highlight which memory indicators contributed most to each prediction. Gate activation patterns show how information moves and changes throughout the model. Prototype distance measurements reveal how closely each input sample aligns with learned representations of malware families. The confidence calibration study confirms that the model’s uncertainty estimates are meaningful and can be used to evaluate prediction reliability. These forms of insight make HAGEN suitable for real investigative workflows where understanding the reasoning behind a decision is just as important as achieving the decision itself.

A critical limitation of the current evaluation is reliance on a single feature extraction pipeline (Volatility 2.6 on Windows 7 memory dumps). Real-world deployment scenarios introduce multiple sources of distribution shift: (1) operating system evolution (Windows 10/11 kernel changes alter process structure layouts), (2) memory acquisition tool variations (LiME, Rekall, WinPMEM produce different artifact completeness), and (3) incomplete/corrupted dumps from live incident response. Future work must systematically evaluate HAGEN’s robustness across these conditions through (a) cross-platform datasets spanning Windows/Linux/macOS memory dumps, (b) multi-tool feature extraction comparisons to assess Volatility-specific dependencies, (c) synthetic corruption experiments introducing random feature dropout (10–30%) to simulate incomplete acquisitions, and (d) temporal evaluation on malware samples collected post-2022 to assess zero-day generalization. Additionally, we will explore domain adaptation techniques (e.g., adversarial feature learning, prototype alignment) to maintain performance under distribution shift without full retraining.
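The synthetic corruption experiment in item (c) could be set up along the following lines. This is a minimal sketch under stated assumptions: each feature is dropped independently with the given probability, and a dropped feature is replaced by a fill value of 0.0 (a reasonable stand-in for "missing" only if features are standardized). The function name `drop_features` is hypothetical.

```python
import random


def drop_features(sample, rate, rng, fill=0.0):
    """Return a copy of `sample` with each feature replaced by `fill` with probability `rate`."""
    return [fill if rng.random() < rate else value for value in sample]


rng = random.Random(0)          # fixed seed so the corruption is reproducible
sample = [1.0] * 1000           # placeholder feature vector, not real dataset values
corrupted = drop_features(sample, rate=0.2, rng=rng)
dropped_fraction = corrupted.count(0.0) / len(corrupted)
print(f"dropped fraction: {dropped_fraction:.3f}")
```

Sweeping `rate` from 0.1 to 0.3 and re-evaluating the trained model on the corrupted test set would give the robustness curve this item calls for.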

While Figure 12 demonstrates well-calibrated confidence scores on the IID test set (correct predictions concentrate at 0.93–0.95, errors at 0.65–0.90), calibration under distribution shift remains unvalidated. Future work must assess whether HAGEN maintains calibration quality when (1) encountering novel malware families outside the {Benign, Spyware, Ransomware, Trojan} taxonomy (e.g., Rootkits, Bootkits), (2) processing memory dumps from imbalanced operational datasets (where Benign samples may constitute 95%+ of traffic), and (3) analyzing corrupted or incomplete memory artifacts from live incident response. We will conduct Expected Calibration Error (ECE) and reliability diagram analysis across these scenarios, and investigate temperature scaling recalibration techniques to maintain uncertainty quantification under domain shift. Additionally, we will explore incorporating epistemic uncertainty estimation (e.g., Monte Carlo Dropout, Deep Ensembles) to distinguish between aleatory uncertainty (irreducible noise) and epistemic uncertainty (out-of-distribution samples), enabling HAGEN to flag low-confidence predictions for human review rather than producing overconfident misclassifications.
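For reference, Expected Calibration Error with equal-width confidence bins can be computed as in the sketch below. The bin count of 10 is a common default rather than a value taken from this study, and the example inputs are synthetic.

```python
def expected_calibration_error(confidences, correct, n_bins=10):
    """Weighted average of |mean confidence - accuracy| over equal-width confidence bins."""
    bins = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        index = min(int(conf * n_bins), n_bins - 1)  # confidence 1.0 goes in the last bin
        bins[index].append((conf, ok))

    total = len(confidences)
    ece = 0.0
    for members in bins:
        if not members:
            continue
        mean_conf = sum(conf for conf, _ in members) / len(members)
        accuracy = sum(1 for _, ok in members if ok) / len(members)
        ece += (len(members) / total) * abs(mean_conf - accuracy)
    return ece


# Synthetic examples: ten predictions, all made with confidence 0.9.
well_calibrated = expected_calibration_error([0.9] * 10, [True] * 9 + [False])
overconfident = expected_calibration_error([0.9] * 10, [True] * 6 + [False] * 4)
print(f"well calibrated: {well_calibrated:.3f}, overconfident: {overconfident:.3f}")
```

A model whose 90%-confidence predictions are right 90% of the time scores near zero, whereas one that is right only 60% of the time at the same confidence scores about 0.3.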

HAGEN’s results were obtained on naturally occurring malware samples, so an important avenue for future research is robustness against adversarially crafted threats designed specifically to evade detection. Sophisticated adversaries may attempt evasion through deliberate feature manipulation, such as mimicking benign process hiding patterns while maintaining malicious functionality via alternative stealth techniques, selectively unhooking monitored API functions to reduce apihooks.nsuspicious values, or introducing carefully crafted perturbations that shift samples toward Benign prototypes in the embedding space. We identify three directions for hardening HAGEN against such adaptive attacks: (a) systematic attack surface analysis to characterize which CIC-MalMem-2022 features are inherently robust and which can be manipulated under adversarial constraints that preserve malware functionality; (b) evaluation frameworks using gradient-based adversarial generation methods (FGSM, PGD) with functional-preservation constraints to measure classification stability under bounded perturbations (ℓ∞ ≤ 0.1); and (c) defense mechanisms including adversarial training through augmentation with perturbed samples, certified robustness techniques such as randomized smoothing that provide provable robustness guarantees, and multi-modal ensemble architectures that combine memory forensic features with complementary behavioral signals from network traffic and system call sequences to create defense-in-depth against single-feature manipulation attacks. These directions build naturally on HAGEN’s interpretable architecture: the transparency provided by attention mechanisms, gate activations, and prototype distances may help identify adversarial perturbation patterns quickly, supporting adaptive defenses that use the model’s explainability to distinguish genuine behavioral variations from artificial evasion attempts.
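To make the bounded-perturbation setting concrete, the sketch below applies a single FGSM step with ε = 0.1 to a toy logistic-regression detector. The model, its weights, and the input are hypothetical stand-ins chosen so the gradient has a closed form; this is not HAGEN, and no functional-preservation constraint is enforced.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def predict(x, w, b):
    """Probability of the malicious class under a toy logistic model."""
    return sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)


def sign(value):
    return (value > 0) - (value < 0)


def fgsm(x, y, w, b, eps=0.1):
    """One FGSM step: x' = x + eps * sign(dL/dx), with L the binary cross-entropy loss."""
    p = predict(x, w, b)
    grad = [(p - y) * wi for wi in w]  # closed-form input gradient for logistic regression
    return [xi + eps * sign(g) for xi, g in zip(x, grad)]


# Hypothetical weights and input; y = 1 marks the sample as malicious.
w, b = [2.0, -1.0, 0.5], 0.0
x, y = [0.5, 0.2, 0.1], 1
x_adv = fgsm(x, y, w, b, eps=0.1)

p_clean = predict(x, w, b)
p_adv = predict(x_adv, w, b)
max_shift = max(abs(a - c) for a, c in zip(x_adv, x))
print(f"clean p = {p_clean:.3f}, adversarial p = {p_adv:.3f}, max shift = {max_shift:.2f}")
```

Every feature moves by at most ε, yet the malicious-class probability falls. A realistic evaluation would additionally restrict perturbations to features an attacker can change without breaking the malware’s functionality.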