Journal Description

Computation is a peer-reviewed journal of computational science and engineering published monthly online by MDPI.

- Open Access: free for readers, with article processing charges (APC) paid by authors or their institutions.

- High Visibility: indexed in Scopus, ESCI (Web of Science), CAPlus / SciFinder, Inspec, dblp, and other databases.

- Journal Rank: JCR - Q2 (Mathematics, Interdisciplinary Applications) / CiteScore - Q1 (Applied Mathematics)

- Rapid Publication: manuscripts are peer-reviewed and a first decision is provided to authors approximately 14.8 days after submission; acceptance to publication is undertaken in 5.6 days (median values for papers published in this journal in the second half of 2025).

- Recognition of Reviewers: reviewers who provide timely, thorough peer-review reports receive vouchers entitling them to a discount on the APC of their next publication in any MDPI journal, in appreciation of the work done.

- Journal Cluster of Mathematics and Its Applications: AppliedMath, Axioms, Computation, Fractal and Fractional, Geometry, International Journal of Topology, Logics, Mathematics, and Symmetry.

Impact Factor: 1.9 (2024); 5-Year Impact Factor: 1.9 (2024)

Latest Articles

Multiregional Forecasting of Traffic Accidents Using Prophet Models with Statistical Residual Validation

Computation 2026, 14(4), 78; https://doi.org/10.3390/computation14040078 - 26 Mar 2026

Abstract

This study develops a multiregional forecasting framework for road traffic accidents in Ecuador, addressing a critical limitation in existing predictive approaches that rely predominantly on point error metrics without validating the statistical assumptions underlying forecast uncertainty. Although the analysis is conducted at the provincial level, the spatial dimension is used primarily for cross-regional comparison and risk classification rather than for explicit spatial interaction modeling. Using a dataset of 27,648 monthly observations covering all 24 provinces from 2014 to 2025, the study applies the Prophet model within a Design Science Research paradigm and a CRISP-DM implementation cycle. Separate provincial models are estimated with a 24-month forecasting horizon, and methodological rigor is ensured through systematic residual diagnostics using the Shapiro–Wilk test for normality and the Ljung–Box test for temporal independence. Empirical results indicate that the Prophet-based artifact outperforms a naïve seasonal benchmark in 70.8% of the provinces, demonstrating excellent predictive accuracy in structurally stable regions such as Tungurahua (MAPE = 10.9%). At the same time, the framework enables the identification of critical emerging risks in provinces such as Santo Domingo and Cotopaxi, where projected increases exceed 49% despite acceptable point forecasts. The findings confirm that point accuracy alone does not guarantee the validity of confidence intervals and that residual validation is essential for trustworthy uncertainty quantification. Overall, the proposed approach provides a robust foundation for a predictive surveillance system capable of supporting differentiated, evidence-based road safety policies in territorially heterogeneous contexts.
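
The evaluation logic described above can be sketched in plain Python. It covers a 12-month seasonal naive benchmark, MAPE as the point-accuracy metric, and the Ljung–Box Q statistic for residual independence. This is an illustrative sketch, not the authors' code, and the function names are hypothetical. In practice, Q would be compared against the upper quantile of a χ² distribution with `lags` degrees of freedom, for example using a statistics library.

```python
import math
from typing import List, Sequence


def seasonal_naive(history: Sequence[float], horizon: int, season: int = 12) -> List[float]:
    """Forecast each future month with the value observed one season earlier."""
    if len(history) < season:
        raise ValueError("need at least one full season of history")
    last_season = list(history[-season:])
    # Step h (0-based) reuses the value at the same position in the last observed season.
    return [last_season[h % season] for h in range(horizon)]


def mape(actual: Sequence[float], forecast: Sequence[float]) -> float:
    """Mean absolute percentage error in percent; undefined when an actual value is zero."""
    if len(actual) != len(forecast) or not actual:
        raise ValueError("actual and forecast must be non-empty and equally long")
    if any(a == 0 for a in actual):
        raise ValueError("MAPE is undefined for zero actual values")
    return 100.0 * sum(abs((a - f) / a) for a, f in zip(actual, forecast)) / len(actual)


def ljung_box_q(residuals: Sequence[float], lags: int) -> float:
    """Ljung-Box statistic Q = n(n+2) * sum_{k=1..lags} rho_k^2 / (n-k)."""
    n = len(residuals)
    if not 0 < lags < n:
        raise ValueError("lags must satisfy 0 < lags < len(residuals)")
    mean = sum(residuals) / n
    dev = [r - mean for r in residuals]
    denom = sum(d * d for d in dev)
    if denom == 0:
        raise ValueError("residuals are constant; autocorrelation is undefined")
    q = 0.0
    for k in range(1, lags + 1):
        rho_k = sum(dev[t] * dev[t - k] for t in range(k, n)) / denom  # sample autocorrelation at lag k
        q += rho_k ** 2 / (n - k)
    return n * (n + 2) * q
```

A province would count as beating the benchmark when `mape(actual, model_forecast) < mape(actual, seasonal_naive(train, 24))` over the hold-out horizon, where `model_forecast` is a hypothetical name for the fitted model's output. A large Q relative to the χ² critical value signals autocorrelated residuals. In that case the prediction intervals are suspect even when point accuracy looks good.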

(This article belongs to the Section Computational Engineering)

Open Access Article

Patient-Specific CFD Analysis of Carotid Artery Haemodynamics: Impact of Anatomical Variations on Atherosclerotic Risk

by

Abhilash Hebbandi Ningappa, S. M. Abdul Khader, Harishkumar Kamat, Masaaki Tamagawa, Ganesh Kamath, Raghuvir Pai B., Prakashini Koteswar, Irfan Anjum Badruddin, Mohammad Zuber, Kevin Amith Mathias and Gowrava Shenoy Baloor

Computation 2026, 14(4), 77; https://doi.org/10.3390/computation14040077 - 26 Mar 2026

Abstract

Understanding the hemodynamics of the carotid artery is essential for assessing atherosclerotic disease progression and identifying regions vulnerable to plaque formation. Background: Disturbed flow patterns and abnormal shear stresses, particularly near the carotid bifurcation, are known to influence endothelial dysfunction; therefore, this study aims to quantify the impact of patient-specific carotid artery geometry on key hemodynamic parameters associated with atherosclerotic risk. Methods: Four patient-specific carotid artery geometries were reconstructed from medical imaging data, processed using MIMICS, and analyzed using computational fluid dynamics in ANSYS Fluent, with blood modeled as an incompressible non-Newtonian fluid using the Carreau–Yasuda viscosity model under pulsatile flow conditions; velocity streamlines, pressure distribution, time-averaged wall shear stress (TAWSS), and oscillatory shear index (OSI) were evaluated at early systole, peak systole, and peak diastole. Results: The simulations revealed complex flow behaviour, including flow reversal, pressure build-up, and low-shear regions concentrated near the carotid bulb and bifurcation, with TAWSS consistently identifying low-shear zones (<1 Pa) across all geometries and OSI exhibiting pronounced directional oscillations in models with increased curvature and wider bifurcation angles. Conclusions: These findings demonstrate that geometric characteristics such as bifurcation angle, vessel tortuosity, and asymmetry play a critical role in shaping local haemodynamics, underscoring the utility of patient-specific CFD analysis as a diagnostic and predictive tool for atherosclerotic risk assessment and supporting more informed, personalized clinical decision-making.
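
Two ingredients of the methods described above are compact enough to sketch: the Carreau–Yasuda viscosity law, and the post-processing of wall shear stress (WSS) into TAWSS and OSI. The standard definitions are TAWSS = (1/T)∫|τ_w| dt and OSI = ½(1 − |∫τ_w dt| / ∫|τ_w| dt).

Two assumptions apply. First, the default viscosity parameters below are placeholder values of the magnitude often quoted for blood, not the values used in this study. Second, the WSS samples are assumed to be uniformly spaced over one cardiac cycle.

```python
import math
from typing import Sequence, Tuple


def carreau_yasuda(shear_rate: float, mu0: float = 0.056, mu_inf: float = 0.00345,
                   lam: float = 1.902, n: float = 0.22, a: float = 1.25) -> float:
    """Apparent viscosity [Pa s]: mu_inf + (mu0 - mu_inf) * (1 + (lam*g)^a)^((n-1)/a).

    Default parameters are illustrative placeholders, not values from the study.
    """
    return mu_inf + (mu0 - mu_inf) * (1.0 + (lam * shear_rate) ** a) ** ((n - 1.0) / a)


def tawss_osi(wss: Sequence[Tuple[float, float, float]]) -> Tuple[float, float]:
    """TAWSS and OSI at one wall point from WSS vectors sampled uniformly over one cycle."""
    if not wss:
        raise ValueError("need at least one WSS sample")
    # With uniform sampling, sums stand in for the time integrals (the dt factors cancel in OSI).
    magnitude_sum = sum(math.hypot(x, y, z) for x, y, z in wss)
    sx = sum(v[0] for v in wss)
    sy = sum(v[1] for v in wss)
    sz = sum(v[2] for v in wss)
    vector_sum_magnitude = math.hypot(sx, sy, sz)
    tawss = magnitude_sum / len(wss)
    osi = 0.5 * (1.0 - vector_sum_magnitude / magnitude_sum) if magnitude_sum > 0 else 0.0
    return tawss, osi
```

With WSS in pascals, a wall point falls into the low-shear category mentioned in the results when `tawss_osi(samples)[0] < 1.0`. OSI ranges from 0 (unidirectional shear) to 0.5 (purely oscillatory shear).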

(This article belongs to the Section Computational Engineering)

Open Access Article

Computational Economics of Circular Construction: Machine Learning and Digital Twins for Optimizing Demolition Waste Recovery and Business Value

by

Marta Torres-Polo and Eduardo Guzmán Ortíz

Computation 2026, 14(4), 76; https://doi.org/10.3390/computation14040076 - 25 Mar 2026

Abstract

Construction and demolition waste (CDW) represents a critical environmental challenge in the building sector, with global generation exceeding 3.57 billion tonnes annually. The circular economy (CE) framework offers a transformative pathway through selective deconstruction and material recovery, yet implementation faces significant barriers including information asymmetry, supply chain fragmentation, and regulatory uncertainty. This study conducts a systematic literature review using the Context–Mechanism–Outcome (CMO) framework to analyze how computational methods, specifically Digital Twins (DT), Building Information Modeling (BIM), Internet of Things (IoT), blockchain, artificial intelligence, and robotics, act as enablers for resilience in CDW management. Following PRISMA 2020 guidelines and realist synthesis principles, we analyzed 42 high-quality empirical studies from Web of Science and Scopus (2015–2025). Our analysis identifies seven primary mechanisms: traceability (M1), simulation (M2), classification (M3), tracking (M4), collaboration (M5), analytics (M6) and robotics (M7). These mechanisms interact with four critical contexts (information asymmetry, supply chain fragmentation, economic uncertainty, operational risks) to generate outcomes at two levels: resilience capabilities (visibility, monitoring, collaboration, flexibility, anticipation) and performance indicators (recovery rates, cost reduction, CO2 emissions mitigation, occupational safety). Key findings from the CMO analysis reveal that blockchain-enabled traceability increases material recovery rates by 15–25%, DT simulation reduces deconstruction costs by 20–30%, and computer vision automation improves sorting accuracy to 85–95%. 
The study contributes middle-range theories explaining how digital technologies enable circular transitions under specific contextual conditions, offering actionable strategic implications for researchers, project managers, technology developers, and policymakers committed to advancing computational economics in sustainable construction.

(This article belongs to the Special Issue Modern Applications for Computational Methods in Applied Economics and Business Engineering)

Open Access Article

Reinforcement-Learning-Based Optimization of Convective Fluxes for High-CFL Finite-Volume Schemes

by

Andrey Rozhkov, Andrey Kozelkov, Vadim Kurulin and Maxim Shishlenin

Computation 2026, 14(4), 75; https://doi.org/10.3390/computation14040075 - 24 Mar 2026

Abstract

In this article, we explore the possibility of using reinforcement learning to create convective flux approximation schemes that maintain accuracy and stability at high Courant-Friedrichs-Lewy (CFL) numbers in the finite-volume discretization of advection equations. Unlike most existing data-driven discretization methods, which primarily concentrate on spatial grid refinement, this work emphasizes increasing the allowable time step without compromising solution accuracy. This approach reduces the total number of time integration steps, thereby enabling faster computation. A neural network is used as a surrogate model for reconstructing the convective flux; it takes as input local information about the flow, scalars, and geometry and predicts scalar values at node points. Training is formulated as a reinforcement learning policy optimization problem, where the long-term reward is defined as the difference between the numerical and reference solutions over the entire simulation period. Both a genetic algorithm and the Deep Deterministic Policy Gradient (DDPG) method are investigated. The effectiveness of the approach is evaluated on a one-dimensional nonlinear advection problem with a constant velocity field. Despite the simplicity of the test case, the results demonstrate that the trained convective flux approximation scheme achieves accuracy comparable to or better than the classical second-order linear upwind (LUD) scheme while operating at CFL numbers 2–50 times higher than the optimal CFL for LUD, thereby reducing the simulation time by the same factor. This widens the range of stability and accuracy of the finite-volume method and allows larger time steps without compromising the quality of the solution. The study is intentionally limited to a single spatial dimension and serves as a basic analysis of the method’s applicability.
The results demonstrate that reinforcement learning can successfully find convective flux approximation schemes that are more efficient at high CFL numbers than conventional explicit second-order schemes. This establishes a framework that our follow-up work extends to improved training methods and complex three-dimensional transport problems. The proposed method improves the spatial discretization of convective fluxes, which is independent of the choice of time integration scheme. Therefore, the neural reconstruction can in principle be used in both explicit and implicit finite-volume solvers.
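
To make the baseline and the cost argument concrete, the sketch below implements the classical second-order linear upwind (LUD) face reconstruction. It targets linear advection u_t + a u_x = 0 with a > 0 on a periodic 1D grid and is advanced with a third-order SSP Runge–Kutta step. A helper shows how the step count scales inversely with the CFL number C = aΔt/Δx.

This is a generic textbook sketch. The grid, the time integrator, and the function names are assumptions. The article's learned neural flux is not reproduced; it would replace the face-value line inside `lud_rhs`.

```python
import math
from typing import List, Sequence


def lud_rhs(u: Sequence[float], courant: float) -> List[float]:
    """Return -C * (u_{i+1/2} - u_{i-1/2}) for a > 0 on a periodic grid.

    Face values use the linear upwind (LUD) reconstruction
    u_{i+1/2} = 1.5 * u_i - 0.5 * u_{i-1}; a learned reconstruction would replace this line.
    """
    n = len(u)
    face = [1.5 * u[i] - 0.5 * u[i - 1] for i in range(n)]  # face[i] is the value at i+1/2
    # Negative indices wrap around in Python, which gives the periodic boundary.
    return [-courant * (face[i] - face[i - 1]) for i in range(n)]


def ssp_rk3_step(u: Sequence[float], courant: float) -> List[float]:
    """One third-order strong-stability-preserving Runge-Kutta (Shu-Osher) step."""
    k0 = lud_rhs(u, courant)
    u1 = [a + b for a, b in zip(u, k0)]
    k1 = lud_rhs(u1, courant)
    u2 = [0.75 * a + 0.25 * (b + c) for a, b, c in zip(u, u1, k1)]
    k2 = lud_rhs(u2, courant)
    return [a / 3.0 + 2.0 / 3.0 * (b + c) for a, b, c in zip(u, u2, k2)]


def steps_needed(t_end: float, dx: float, speed: float, courant: float) -> int:
    """Number of time steps needed to reach t_end when dt = C * dx / a."""
    dt = courant * dx / speed
    return math.ceil(t_end / dt)
```

At fixed Δx = 1/64, raising C from 0.5 to 8 cuts `steps_needed(1.0, 1/64, 1.0, C)` from 128 to 8, a 16-fold reduction. However, the classical explicit LUD update is only conditionally stable, so its C cannot simply be raised. Widening that limit is the gap the learned reconstruction targets.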

(This article belongs to the Section Computational Engineering)

Open Access Article

Heat Transfer Coefficient Between Spherical Particles in Low-Conducting Fluid

by

Andrei I. Malinouski, Oscar S. Rabinovich and Heorhi U. Barakhouski

Computation 2026, 14(3), 74; https://doi.org/10.3390/computation14030074 - 20 Mar 2026

Abstract

Calculation of heat transfer in granular materials is an important task for many applications, from thermal management in electronics to the exploration of celestial soils. Usually, an effective thermal-conductivity model is employed to predict heat flux in unstructured granular media, such as a packed bed. However, a more advanced approach, the discrete element method (DEM), can capture the complex effects of mechanical loading and material mixtures on thermal transport coefficients, which traditional models struggle to represent. Pivotal for this approach is knowing the heat transfer coefficient between two adjacent particles. Currently, most DEM-capable software assigns non-zero heat conduction only to particles in direct surface contact. We propose also accounting for particles that are close to each other but have no contact area. We perform numerical modeling of the conductive heat transfer coefficient between equal spherical particles separated by a fluid medium, assuming the fluid’s thermal conductivity is at least an order of magnitude lower than that of the particles. We use numerical solutions of the governing differential equations to account for thermal resistance both within the particles and across the gap between them. We found a simple generalized correlation for the heat transfer coefficient between particles and a general formula for the angular distribution of heat flux density across the particle surface. Following a non-dimensional approach, both formulas are expressed in terms of two non-dimensional parameters: the ratio of the particle’s thermal conductivity to that of the medium, and the ratio of the gap width between particles to their radius. The resulting formula is simple and convenient for DEM heat transfer calculations in packed and fluidized beds.
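
The abstract closes by noting that the correlation can be plugged into DEM heat transfer calculations. One common way a pairwise coefficient enters such a calculation is an explicit update in which each pair exchanges heat at a rate H_ij (T_j − T_i). The sketch below shows that structure, including the non-contacting pairs the paper argues should be counted.

`h_pair` is a hypothetical placeholder, since the paper's correlation is not reproduced here. The cutoff `max_gap_ratio`, the explicit Euler step, and the equal heat capacities are also assumptions.

```python
import math
from typing import Callable, List, Sequence, Tuple

Point = Tuple[float, float, float]


def conduction_step(temps: Sequence[float], positions: Sequence[Point], radius: float,
                    heat_capacity: float, dt: float,
                    h_pair: Callable[[float], float], max_gap_ratio: float) -> List[float]:
    """One explicit Euler step of C_p dT_i/dt = sum_j H_ij (T_j - T_i) for equal spheres.

    h_pair(delta) returns the pair coefficient H [W/K] for the gap-to-radius ratio delta;
    it is a hypothetical stand-in for a correlation such as the one proposed in the paper.
    """
    n = len(temps)
    heat_in = [0.0] * n  # energy [J] gained by each particle during this step
    for i in range(n):
        for j in range(i + 1, n):
            gap = math.dist(positions[i], positions[j]) - 2.0 * radius
            # Overlapping pairs are clamped to delta = 0 here; a real code would use a contact model.
            delta = max(gap, 0.0) / radius
            if delta > max_gap_ratio:
                continue  # too far apart: neglect conduction through the fluid
            q = h_pair(delta) * (temps[j] - temps[i]) * dt
            heat_in[i] += q
            heat_in[j] -= q  # equal and opposite, so total energy is conserved
    return [t + e / heat_capacity for t, e in zip(temps, heat_in)]
```

The explicit step is only stable when `dt` is small relative to C_p divided by the sum of a particle's coefficients. A placeholder such as `lambda delta: 0.01 / (0.1 + delta)` merely decreases with the gap and carries no physical calibration.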

(This article belongs to the Special Issue Computational Heat and Mass Transfer (ICCHMT 2025))

Open Access Article

Online Point-of-Interest Recommendations in Data Streams

by

Giannis Christoforidis and Apostolos N. Papadopoulos

Computation 2026, 14(3), 73; https://doi.org/10.3390/computation14030073 - 20 Mar 2026

Abstract

In recent years, social networks have seen a large influx of new users and traffic. As their popularity grows, so does research interest in processing the available information to produce useful knowledge. One direction is making personalized recommendations based on users’ preferences, their social behavior, and related characteristics. Static recommendations, however, can become highly inaccurate, since people change their preferences over time and make decisions that differ from those previously predicted. This calls for an adaptive algorithm that tracks changes in users’ preferences and habits. Handling the information stream is challenging, as new data can substantially change the recommendations for many users. In this work, we propose a novel streaming Point-of-Interest recommendation algorithm that explicitly incorporates location-aware features into its dynamic update mechanism, enabling continuous adaptation to newly arriving data. The proposed approach is evaluated experimentally on real-life data sets containing both the network structure and check-in information. The results show high accuracy together with significant runtime savings compared to conventional approaches.
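
The sketch below is a generic illustration of what a streaming, location-aware update can look like; it is not the algorithm proposed in this article. It keeps an exponentially time-decayed preference score for each user and POI, and updates that score in O(1) per check-in. A user's POIs are ranked by the decayed score multiplied by a distance penalty.

The half-life, the exponential distance kernel, the planar (x, y) coordinates in kilometres, and all names are assumptions. A full system would also draw on the social graph and on POIs the user has not yet visited.

```python
import math
from collections import defaultdict
from typing import Dict, List, Tuple

Location = Tuple[float, float]  # planar coordinates in km (assumption)


class StreamingPoiRecommender:
    def __init__(self, half_life: float, distance_scale_km: float) -> None:
        self.decay_rate = math.log(2.0) / half_life  # a score halves every `half_life` time units
        self.distance_scale = distance_scale_km
        # user -> {poi: (score at last update, time of last update)}
        self.scores: Dict[str, Dict[str, Tuple[float, float]]] = defaultdict(dict)
        self.poi_location: Dict[str, Location] = {}

    def _decayed(self, score: float, last_time: float, now: float) -> float:
        return score * math.exp(-self.decay_rate * (now - last_time))

    def observe(self, user: str, poi: str, location: Location, timestamp: float) -> None:
        """Process one check-in from the stream: decay the stored score lazily, then add 1."""
        self.poi_location[poi] = location
        prefs = self.scores[user]
        old_score, last_time = prefs.get(poi, (0.0, timestamp))
        prefs[poi] = (self._decayed(old_score, last_time, timestamp) + 1.0, timestamp)

    def recommend(self, user: str, here: Location, now: float, k: int) -> List[str]:
        """Top-k POIs by decayed preference times exp(-distance / scale)."""
        ranked = []
        for poi, (score, last_time) in self.scores.get(user, {}).items():
            distance = math.dist(here, self.poi_location[poi])
            relevance = self._decayed(score, last_time, now) * math.exp(-distance / self.distance_scale)
            ranked.append((relevance, poi))
        ranked.sort(reverse=True)
        return [poi for _, poi in ranked[:k]]
```

Decay is applied lazily, only when a score is read or updated. Each arriving check-in therefore touches a single entry instead of rescoring every user.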

(This article belongs to the Special Issue Computational Social Science and Complex Systems—2nd Edition)

Open Access Article

Comparative Analysis of Machine Learning Algorithms to Predict Municipal Solid Waste

by

Pedro Aguilar-Encarnacion, Pedro Peñafiel-Arcos, Marcos Barahona Morales and Wilson Chango

Computation 2026, 14(3), 72; https://doi.org/10.3390/computation14030072 - 19 Mar 2026

Abstract

The management of municipal solid waste in intermediate cities exhibits high daily variability and source heterogeneity, which hinders operational sizing and material recovery. Reliable predictions are required from heterogeneous and often-scarce data. However, studies that compare multiple machine learning algorithms with temporal validation on short time series in intermediate cities are still limited. This study compares fourteen machine learning algorithms to predict the daily generation of organic and inorganic waste in La Joya de los Sachas, Ecuador, formulating the problem as a multi-output regression problem. An adapted CRISP-DM design was employed, using primary data from a waste characterization campaign, temporal feature engineering, variable encoding, and an expanding-window backtesting protocol against lag-7 persistence and ARIMA. Tree-based ensembles achieved the best performance. AdaBoost provided the best organic forecasts (

(This article belongs to the Section Computational Engineering)
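
The expanding-window backtesting protocol against a lag-7 persistence baseline, mentioned in the abstract above, can be sketched as follows. This is an illustrative sketch under stated assumptions:

- The split sizes and function names are hypothetical.
- The baseline repeats the last observed week of each training window, so no test-period values leak into the forecast.
- MAE is used as the error metric.

Any of the compared regressors would be plugged in where the baseline is called, once per output (organic and inorganic).

```python
from typing import List, Sequence, Tuple


def expanding_window_splits(n_obs: int, initial_train: int, horizon: int,
                            step: int) -> List[Tuple[int, int]]:
    """(train_end, test_end) pairs: train on [0, train_end), test on [train_end, test_end)."""
    splits = []
    train_end = initial_train
    while train_end + horizon <= n_obs:
        splits.append((train_end, train_end + horizon))
        train_end += step  # the training window grows while its start stays at day 0
    return splits


def lag7_persistence(train: Sequence[float], horizon: int) -> List[float]:
    """Repeat the last observed week: the forecast for each day is the same weekday last week."""
    if len(train) < 7:
        raise ValueError("need at least 7 days of training data")
    last_week = list(train[-7:])
    return [last_week[h % 7] for h in range(horizon)]


def backtest_mae(series: Sequence[float], initial_train: int, horizon: int, step: int) -> float:
    """Pooled mean absolute error of the baseline over all expanding-window folds."""
    errors = []
    for train_end, test_end in expanding_window_splits(len(series), initial_train, horizon, step):
        forecast = lag7_persistence(series[:train_end], test_end - train_end)
        actual = series[train_end:test_end]
        errors.extend(abs(a - f) for a, f in zip(actual, forecast))
    if not errors:
        raise ValueError("series too short for the requested splits")
    return sum(errors) / len(errors)
```

Unlike a single random train/test split, every fold trains only on days that precede its test window. This keeps the evaluation faithful to how daily forecasts would be produced operationally.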

Open Access Article

Sensitivity Analysis of CO2 Emitted in Clinker and Cement Production

by

Dimitris Tsamatsoulis

Computation 2026, 14(3), 71; https://doi.org/10.3390/computation14030071 - 18 Mar 2026

Abstract

This study performs a sensitivity analysis of CO2 emissions from clinker and cement production using life cycle assessment (LCA). Both local and global sensitivity analyses (LSA and GSA) are conducted. LSA uses outputs from the GCCA EPD tool (developed by the Global Cement and Concrete Association to facilitate Environmental Product Declarations) and examines correlations between perturbed input variables and the resulting output changes. For GSA, we present an analytical derivation of Sobol’ indices. We derive quantitative relationships between alternative materials and fuels and key technical indices, while preserving clinker and cement quality throughout the sensitivity analysis. Increasing the share of each alternative fuel (AF) category and of recycled concrete reduces the CO2 emitted per unit of clinker (CO2/CL). The largest CO2/CL reductions arise from high-biomass fuels, followed by alternative solid fuels and refuse-derived fuels, shredded tires, and, lastly, recycled concrete. The clinker-to-cement ratio (CL/CEM) dominates the CO2 emitted per unit of cement (CO2/CEM): a 1% change in CL/CEM produces a 0.926–0.956% change in CO2/CEM. Clinker-level CO2 reductions transmit to cement with only minor variation, as confirmed by the Sobol’ indices. Aside from reducing CO2/CL by increasing the use of alternative materials and fuels, the two principal approaches to lowering CO2/CEM are: (i) minimizing the clinker content of cement where permitted by applicable standards while maintaining the same performance, and (ii) designing new cement types that deliver equivalent performance with lower clinker content.
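
A CO2/CEM elasticity below 1 can be illustrated with a simplified decomposition. This decomposition is our assumption, not the GCCA EPD tool's model:

CO2/CEM ≈ (CL/CEM)(CO2/CL) + c

Here c lumps all non-clinker contributions. The local elasticity of CO2/CEM with respect to CL/CEM is then (CL/CEM)(CO2/CL)/(CO2/CEM), which is below 1 whenever c > 0.

For the product term alone, with independent inputs, the first-order Sobol’ indices have a closed form, since Var(X1X2) = μ2²σ1² + μ1²σ2² + σ1²σ2². The sketch below computes both quantities, and any numbers used with it are hypothetical.

```python
from typing import Callable, Tuple


def elasticity(f: Callable[[float], float], x: float, rel_step: float = 1e-6) -> float:
    """Local sensitivity: percent change in f per 1% change in x (central difference)."""
    h = x * rel_step
    derivative = (f(x + h) - f(x - h)) / (2.0 * h)
    return derivative * x / f(x)


def product_sobol(mu1: float, var1: float, mu2: float, var2: float) -> Tuple[float, float, float]:
    """First-order indices S1, S2 and interaction index S12 for Y = X1 * X2 (independent inputs)."""
    total = mu2 ** 2 * var1 + mu1 ** 2 * var2 + var1 * var2  # Var(X1 * X2)
    if total == 0:
        raise ValueError("output variance is zero")
    return mu2 ** 2 * var1 / total, mu1 ** 2 * var2 / total, var1 * var2 / total
```

Consider hypothetical inputs CO2/CL = 820 kg/t, c = 30 kg/t, and CL/CEM = 0.75. With these, `elasticity(lambda r: 820 * r + 30, 0.75)` evaluates to 615/645 ≈ 0.953, meaning a 1% change in the clinker ratio moves cement-level CO2 by slightly less than 1%.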

(This article belongs to the Section Computational Engineering)

Open Access Article

Optimization-Driven Multimodal Brain Tumor Segmentation Using α-Expansion Graph Cuts

by

Roaa Soloh, Bilal Nakhal and Abdallah El Chakik

Computation 2026, 14(3), 70; https://doi.org/10.3390/computation14030070 - 15 Mar 2026

Abstract

Precise segmentation of brain tumors from multimodal MRI scans is essential for accurate neuro-oncological diagnosis and treatment planning. To address this challenge, we propose a label-free optimization-driven segmentation framework based on the

(This article belongs to the Section Computational Biology)

Open Access Article

A Hybrid Model Reduction Method for Dual-Continuum Model with Random Inputs

by

Lingling Ma

Computation 2026, 14(3), 69; https://doi.org/10.3390/computation14030069 - 13 Mar 2026

Abstract

In this paper, a hybrid model reduction method for solving flows in fractured media is proposed. The approach integrates the Generalized Multiscale Finite Element Method (GMsFEM) with a novel variable-separation (VS) technique. Compared with many widely used variable-separation methods, the proposed model reduction method shares their merits but has lower computational complexity and higher efficiency. Within this framework, a low-rank variable-separation expansion of the dual-continuum model solutions is obtained through systematic enrichment, with no iteration performed at each enrichment step. The expansion is constructed using two sets of basis functions: stochastic basis functions and deterministic physical basis functions, both derived from offline, model-oriented computations. To construct the stochastic basis functions efficiently, the original model is used to learn stochastic information. Meanwhile, the deterministic physical basis functions are trained using solutions obtained by applying an uncoupled GMsFEM to the dual-continuum system at a small number of selected optimal samples. Once these bases are established, the online evaluation for each new random sample becomes highly efficient, allowing a large number of stochastic realizations to be computed at minimal cost. To demonstrate the performance of the proposed method, two numerical examples for dual-continuum models with random inputs are presented. The results confirm that the hybrid model reduction method is efficient and achieves high approximation accuracy.

(This article belongs to the Special Issue Advances in Computational Methods for Fluid Flow)

Open Access Article

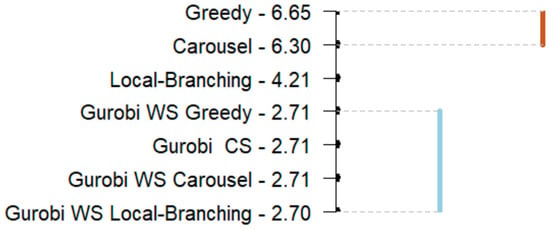

Determining When Gurobi Generates Optimal Solutions for the Partial Coverage Weighted Set Covering Problem

by

Myung Soon Song, Amber Kulp, Yun Lu and Francis J. Vasko

Computation 2026, 14(3), 68; https://doi.org/10.3390/computation14030068 - 12 Mar 2026

Abstract

The partial coverage weighted set covering problem (PCWSCP) allows fewer than 100% of the rows of a weighted set covering problem (WSCP) to be covered. This paper does not claim to contribute to operations research (OR) theory or methodology. Instead, it demonstrates that a large number of PCWSCPs based on WSCPs from the OR literature can be solved efficiently using the software Gurobi 12 with default parameter settings on a standard PC. This is an important practical result because it indicates which types of PCWSCPs can be solved optimally with commercial software, without resorting to customized algorithms that guarantee neither optimality nor even bounds on their solutions. Specifically, from 105 WSCP instances in the literature, 420 PCWSCP instances are generated: 105 at each of the 80%, 85%, 90%, and 95% coverage levels. Using Gurobi on a standard PC, optimal solutions were obtained within 300 s (17 s on average) for instances with up to 800 rows, 8000 columns, and 2% density. These instances make up about 86% of the 420 instances. As expected, execution time generally decreases as the required row coverage decreases. Furthermore, initializing (“warm-starting”) Gurobi with solutions from a greedy, carousel greedy, or local branching algorithm yields no statistically significant difference in performance compared with Gurobi’s cold start. Hence, there is no advantage to warm-starting Gurobi with one of these common heuristic approaches when solving PCWSCPs. Finally, this is the first discussion of the weighted version of the partial coverage set covering problem in the literature; all previous discussions dealt only with solution approaches specifically developed for the unit-cost version of the problem.
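
For reference, a standard binary integer programming statement of the PCWSCP is given below; this is the kind of model passed to a solver such as Gurobi. The notation is ours, and the paper's exact formulation may differ:

- a_ij = 1 if column j covers row i, and 0 otherwise.
- c_j > 0 is the cost of column j.
- m is the number of rows and n the number of columns.
- p is the required coverage fraction (0.80, 0.85, 0.90, or 0.95 in the experiments).

Each y_i indicates whether row i counts as covered. Setting p = 1 forces every y_i = 1 and recovers the ordinary WSCP.

```latex
\begin{aligned}
\min_{x,\,y}\quad & \sum_{j=1}^{n} c_j x_j \\
\text{s.t.}\quad & \sum_{j=1}^{n} a_{ij} x_j \ge y_i, && i = 1,\dots,m,\\
& \sum_{i=1}^{m} y_i \ge \lceil p\,m \rceil, \\
& x_j \in \{0,1\},\ j = 1,\dots,n; \qquad y_i \in \{0,1\},\ i = 1,\dots,m.
\end{aligned}
```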

(This article belongs to the Section Computational Engineering)

Open Access Article

Performance Analysis of the YOLO Object Detection Algorithm in Embedded Systems: Generated Code vs. Native Implementation

by

Pablo Martínez Otero, Alberto Tellaeche and Mar Hernández Melero

Computation 2026, 14(3), 67; https://doi.org/10.3390/computation14030067 - 12 Mar 2026

Abstract

This paper evaluates the current maturity of automatic code-generation workflows for deploying modern CNN-based object detectors on embedded GPU platforms. We compare a native pipeline against a code-generation pipeline based on a Model-Based Engineering (MBE) approach, using YOLOv8/YOLOv9 inference on NVIDIA Jetson Orin Nano and Jetson AGX Orin as representative edge-GPU workloads. We report detection-quality metrics (mAP, PR curves) and system-level metrics (latency distribution and initialization overhead) under a controlled single-class scenario based on a CARLA-generated sequence with frame-level annotations. Absolute accuracy and latency values are scenario-dependent and may vary with camera optics, illumination, motion blur, sensor noise, occlusion patterns, and multi-class scenes. The results quantify the performance gap between the code-generation and native pipelines and show that, for the evaluated workloads, the automated pipeline remains less competitive in both latency and accuracy. We discuss the implications of this gap for deployment workflows in safety-oriented domains and outline bottlenecks that should be addressed. The study is intended as a controlled traffic-light detection micro-benchmark and does not aim to validate full ADAS perception stacks.
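
The system-level metrics mentioned above are latency distribution and initialization overhead. These are commonly computed by timing each inference call, treating the first calls as warm-up/initialization, and reporting percentiles of the remaining steady-state latencies. The sketch below shows that bookkeeping. The warm-up count, the nearest-rank percentile definition, and `infer` (any callable that runs one frame through a detector) are assumptions, not the authors' protocol.

```python
import math
import statistics
import time
from typing import Callable, Dict, Iterable, List


def time_calls(infer: Callable[[object], object], frames: Iterable[object]) -> List[float]:
    """Wall-clock latency of each call, in milliseconds."""
    latencies = []
    for frame in frames:
        start = time.perf_counter()
        infer(frame)
        latencies.append((time.perf_counter() - start) * 1000.0)
    return latencies


def summarize_latency(latencies_ms: List[float], warmup: int) -> Dict[str, float]:
    """Separate initialization/warm-up calls from steady state and report percentiles."""
    if not 0 <= warmup < len(latencies_ms):
        raise ValueError("warmup must leave at least one steady-state sample")
    steady = sorted(latencies_ms[warmup:])

    def percentile(p: int) -> float:
        rank = max(1, math.ceil(p * len(steady) / 100))  # nearest-rank method
        return steady[rank - 1]

    return {
        "warmup_total_ms": sum(latencies_ms[:warmup]),
        "mean_ms": statistics.fmean(steady),
        "p50_ms": percentile(50),
        "p95_ms": percentile(95),
        "p99_ms": percentile(99),
    }
```

On a GPU, execution is asynchronous, so the timed callable must block until results are ready (for example, by synchronizing the device before returning). Otherwise, the measured latency reflects little more than kernel launch time.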

(This article belongs to the Special Issue Object Detection Models for Transportation Systems)

Open Access Article

Advanced Thick FGM Plate–Cylindrical Shells in Supersonic Air Flow by Navier–Stokes Equation Analytical–Numerical Flow Model

by

Chih-Chiang Hong

Computation 2026, 14(3), 66; https://doi.org/10.3390/computation14030066 - 6 Mar 2026

Abstract

This study investigates the thermal vibrations of thick-walled functionally graded material (FGM) plate–cylindrical shells in unsteady supersonic flow, using a Navier–Stokes equation analytical–numerical flow model and third-order shear deformation theory (TSDT) displacement models. The aerodynamic pressure load is provided by the Navier–Stokes equation analytical–numerical flow model. The effects of the aerodynamic pressure load and of the TSDT equations of motion on the thermal stress and displacement of the FGM plate–cylindrical shells in unsteady supersonic flow are computed with the generalized differential quadrature (GDQ) method. The Navier–Stokes equation analytical–numerical flow model, the TSDT model, and the advanced shear correction coefficient each have an additional effect on the computed displacement and stress values.
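
The abstract names the generalized differential quadrature (GDQ) method as the solver. As a reminder of how GDQ discretizes derivatives, the sketch below computes first- and second-order weighting coefficients using Shu's explicit formulas on a Chebyshev–Gauss–Lobatto grid. The grid choice and function names are assumptions. The sketch shows only the derivative discretization, not the paper's shell equations.

```python
import math
from typing import List, Sequence, Tuple

Matrix = List[List[float]]


def cgl_points(n: int, length: float = 1.0) -> List[float]:
    """Chebyshev-Gauss-Lobatto points on [0, length], clustered near both edges."""
    return [0.5 * length * (1.0 - math.cos(math.pi * i / (n - 1))) for i in range(n)]


def gdq_weights(x: Sequence[float]) -> Tuple[Matrix, Matrix]:
    """First- and second-order GDQ weighting coefficients via Shu's explicit formulas."""
    n = len(x)
    # M(x_i) = prod over k != i of (x_i - x_k)
    m = [math.prod(x[i] - x[k] for k in range(n) if k != i) for i in range(n)]
    a = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(n):
            if j != i:
                a[i][j] = m[i] / ((x[i] - x[j]) * m[j])
        a[i][i] = -sum(a[i][j] for j in range(n) if j != i)  # each row sums to zero
    b = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(n):
            if j != i:
                b[i][j] = 2.0 * a[i][j] * (a[i][i] - 1.0 / (x[i] - x[j]))
        b[i][i] = -sum(b[i][j] for j in range(n) if j != i)
    return a, b


def differentiate(weights: Matrix, values: Sequence[float]) -> List[float]:
    """Apply DQ weights: the derivative at x_i is approximated by sum_j w_ij * f(x_j)."""
    return [sum(w * v for w, v in zip(row, values)) for row in weights]
```

In a GDQ solution of the shell problem, every spatial derivative in the equations of motion is replaced by weighted sums of this kind over the grid points. This turns the governing partial differential equations into an algebraic system, or an ODE system in time.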

(This article belongs to the Section Computational Engineering)

Open Access Article

Exploring a Family-Based Approach as a Control Strategy for Gastric Ulcers and Gastric Cancer: A Mathematical Modeling Approach

by

Glory Kawira Mutua, Musyoka Kinyili and Dominic Makaa Kitavi

Computation 2026, 14(3), 65; https://doi.org/10.3390/computation14030065 - 5 Mar 2026

Abstract

This study formulates a deterministic model to assess the effect of a family-based control and management (FBCM) strategy against the transmission of Helicobacter pylori infection and its subsequent progression to gastric ulcers and gastric cancer. The model includes nine epidemiological compartments describing disease transmission and contact between susceptible and infected individuals. In the model analysis, we establish the positivity of solutions and the invariant region, and we analyze the equilibria, their stability, and bifurcations. We calculate the control reproduction number

(This article belongs to the Section Computational Biology)

Open Access Article

Lyapunov-Based Synthesis of Self-Organizing Nonlinear Integrators for Stage Motion Control Under Parametric Uncertainty

by

Raigul Tuleuova, Nurgul Shazhdekeyeva, Sharbat Nurzhanova, Aigul Myrzasheva, Saltanat Sharmukhanbet, Maxot Rakhmetov, Makhatova Valentina and Lyailya Kurmangaziyeva

Computation 2026, 14(3), 64; https://doi.org/10.3390/computation14030064 - 3 Mar 2026

Abstract

Linear integrators are traditionally used in motion control systems to compensate for static effects and suppress low-frequency disturbances. However, their use is inevitably accompanied by phase delays that limit the performance and robustness of control systems, especially under parametric uncertainty. For this reason, nonlinear integrators have been considered for several decades as a promising alternative that can relax phase constraints and improve transient quality. In this paper, the concept of nonlinear integrators is reinterpreted in the context of self-organizing motion control of precision stages. In contrast to traditional approaches, which focus primarily on frequency analysis and the describing function method, a method is proposed for synthesizing a self-organizing control system for nonlinear SISO plants based on catastrophe theory, specifically within the class of elliptical dynamics with the property of structural stability. The control action is formed so that transitions between stable modes occur through bifurcation-conditioned self-organization, without external switching logic. To provide strict analytical stability guarantees, the Lyapunov gradient-velocity vector function method is used, which guarantees aperiodic robust stability, suppression of oscillatory and chaotic modes, and monotonic convergence of trajectories under parameter uncertainty. The parameters of the nonlinear integrator are adapted using Self-Organizing Maps (SOM), and parameter changes are allowed only within regions that satisfy the Lyapunov stability conditions. This approach aligns analytical and data-driven methods without violating the structural stability of the system.
The results of numerical experiments demonstrate the superiority of the proposed method over classical linear and adaptive controllers in stage motion control problems, especially near bifurcation boundaries and under significant parametric uncertainty. The results confirm that integrating nonlinear integrators with catastrophe theory and self-organization mechanisms forms a promising basis for a new generation of robust, high-precision motion control systems.

Full article

(This article belongs to the Section Computational Engineering)
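For context on the nonlinear integrators discussed above, the classical example is the Clegg reset integrator, which integrates like a linear integrator but resets its state, reducing the phase lag of pure integration. The sketch below is that textbook element in discrete time, not the catastrophe-theory/SOM integrator synthesized in the paper; the reset rule used (reset when input and state have opposite signs, a common formulation) and the forward-Euler step are assumptions of this illustration.

```python
def clegg_integrator(errors, dt):
    """Discrete-time Clegg (reset) integrator.

    Integrates the input with forward Euler, but first resets the state to
    zero whenever the input and the stored state have opposite signs.
    """
    x = 0.0
    outputs = []
    for e in errors:
        if e * x < 0.0:  # input sign opposes the integrator state
            x = 0.0      # reset instead of slowly unwinding
        x += e * dt      # ordinary integration step
        outputs.append(x)
    return outputs

out = clegg_integrator([1.0, 1.0, 1.0, -1.0, -1.0], dt=0.1)
```

A plain linear integrator fed the same input would unwind from 0.3 to 0.2 and 0.1, while the reset integrator jumps to zero and immediately follows the new input sign (about -0.1, then -0.2).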

Open Access Article

Surrogate-Based Multi-Objective Bayesian Optimization for Automated Parameter Identification in 3D Mesoscale Concrete Fatigue Modeling

by

Himanshu Rana and Adnan Ibrahimbegovic

Computation 2026, 14(3), 63; https://doi.org/10.3390/computation14030063 - 2 Mar 2026

Abstract

Prediction of fatigue failure in concrete structures remains a major challenge due to progressive material degradation. Reliable prediction therefore requires modeling the 3D heterogeneous microstructure of concrete to explain the underlying mechanisms governing fatigue failure. While such mesoscale models can reliably predict fatigue-induced fracture mechanisms, identifying the associated material parameters remains a significant challenge because of the high-dimensional parameter space the model introduces. The key challenge addressed in this study is to capture microcrack initiation and coalescence under fatigue loading using a model capable of representing the full fracture process: crack initiation, crack propagation, and final failure. First, the concrete domain is discretized into Voronoi cells, and the cohesive links connecting these cells are randomly assigned as aggregate or mortar, giving an explicit representation of both phases. Mortar links are then modeled as coupled damage–plasticity 3D Timoshenko beam elements with nonlinear kinematic hardening, and with isotropic softening introduced through an embedded discontinuity formulation that enables fracture Modes I–III, whereas aggregate links are modeled as elastic 3D Timoshenko beam elements. Computational efficiency is further improved with a surrogate model, and the material parameters are identified with a multi-objective Bayesian optimization framework that reproduces experimental results. The performance of the proposed model is illustrated by reproducing experimental results from a concrete cube compression test and a three-point bending test under low-cycle fatigue loading, where the errors between experimental and numerical results are reduced, relative to the initial dataset error, by 82% (stress) and 88% (energy) for the cube test and by 86% (force) and 93% (energy) for the bending test.

Full article

(This article belongs to the Section Computational Engineering)
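In multi-objective calibration, candidate parameter sets are compared by Pareto dominance rather than by a single error value. A minimal sketch of the non-dominated filter that multi-objective optimizers (Bayesian or otherwise) use to report trade-offs between objectives such as a stress error and an energy error; the function name and error values are invented for illustration, and this is not the authors' surrogate model or acquisition strategy.

```python
def pareto_front(points):
    """Return indices of non-dominated points for a minimization problem.

    p dominates q if p is no worse than q in every objective and strictly
    better in at least one.
    """
    front = []
    for i, p in enumerate(points):
        dominated = any(
            all(qk <= pk for qk, pk in zip(q, p)) and any(qk < pk for qk, pk in zip(q, p))
            for j, q in enumerate(points)
            if j != i
        )
        if not dominated:
            front.append(i)
    return front

# Hypothetical candidate parameter sets scored on (stress error, energy error).
errors = [(0.30, 0.20), (0.18, 0.25), (0.25, 0.15), (0.40, 0.40), (0.18, 0.30)]
print(pareto_front(errors))  # → [1, 2]
```

The quadratic scan is fine for the small candidate sets typical of expensive simulations; a Bayesian optimizer would propose new candidates to push this front toward lower errors.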

Open Access Article

MSB-UNet: A Multi-Scale Bifurcation U-Net Architecture for Precise Segmentation of Breast Cancer in Histopathology Images

by

Arda Yunianta

Computation 2026, 14(3), 62; https://doi.org/10.3390/computation14030062 - 2 Mar 2026

Abstract

Accurate segmentation of breast cancer regions in histopathological images is critical for advancing computer-aided diagnostic systems, yet challenges persist due to heterogeneous tissue structures, staining variations, and the need to capture features across multiple scales. This study introduces MSB-UNet, a novel Multi-Scale Bifurcated U-Net architecture designed to address these challenges through a dual-pathway encoder–decoder framework that processes images at multiple resolutions simultaneously. By integrating a bifurcated encoder with a Feature Fusion Module, MSB-UNet effectively captures both fine-grained cellular details and broader tissue-level patterns. MSB-UNet is formulated as a binary segmentation framework (tumor vs. outside region of interest), producing a two-channel probability map via a channel-wise Softmax output. Evaluated on a publicly available breast cancer histopathology dataset, MSB-UNet achieves a Dice Similarity Coefficient (DSC) of 91.3% and a mean Intersection over Union (mIoU) of 84.4%, outperforming state-of-the-art and baseline segmentation models. These results indicate that the architecture has the potential to enhance automated diagnostic tools for breast cancer histopathology.

Full article

(This article belongs to the Section Computational Engineering)
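The two-channel Softmax output and the two reported metrics can be made concrete with a few lines of NumPy. A minimal sketch, assuming logits shaped (2, H, W) with channel 1 as tumor, argmax over the Softmax probabilities to obtain the mask, and mIoU taken as the IoU averaged over the two classes; the function names are hypothetical and the paper's exact evaluation protocol may differ.

```python
import numpy as np

def softmax_channels(logits):
    """Channel-wise softmax for logits shaped (2, H, W)."""
    z = logits - logits.max(axis=0, keepdims=True)  # numerical stability
    e = np.exp(z)
    return e / e.sum(axis=0, keepdims=True)

def dice_and_iou(pred, target):
    """Dice Similarity Coefficient and IoU for binary masks (foreground = True)."""
    pred = np.asarray(pred).astype(bool)
    target = np.asarray(target).astype(bool)
    inter = np.logical_and(pred, target).sum()
    total = pred.sum() + target.sum()
    union = np.logical_or(pred, target).sum()
    dice = 2.0 * inter / total if total else 1.0  # both masks empty: perfect agreement
    iou = inter / union if union else 1.0
    return float(dice), float(iou)

def mean_iou(pred, target):
    """IoU averaged over the two classes (0 = background, 1 = tumor)."""
    ious = [dice_and_iou(pred == c, target == c)[1] for c in (0, 1)]
    return sum(ious) / len(ious)

# Random placeholder logits; channel 1 is assumed to be the tumor class.
rng = np.random.default_rng(0)
probs = softmax_channels(rng.normal(size=(2, 4, 4)))
pred_mask = probs.argmax(axis=0)  # 1 where the tumor probability is larger
```

For a single binary mask the two metrics are linked by Dice = 2 IoU / (1 + IoU), which is why a DSC in the 90% range pairs with an IoU in the 80% range.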

Open Access Article

Modeling a High-Efficiency BMS for Light Electromobility and Energy Storage in Critical Environments

by

Manuel J. Pasion-Fuentes, Mauricio P. Galvez-Legua and Diego E. Galvez-Aranda

Computation 2026, 14(3), 61; https://doi.org/10.3390/computation14030061 - 2 Mar 2026

Abstract

Recent advances in energy storage systems and in increasingly efficient, safe, and energy-dense cell chemistries have driven the need for commercial Battery Management System (BMS) architectures with greater control, data acquisition, and communication capabilities, primarily oriented towards customization. This demand introduces a significant change in how electrical systems are modeled and simulated when they integrate active electrochemical elements such as lithium-ion cells. This work presents the development and modeling of a BMS for critical and high-efficiency applications, based on active balancing techniques and incorporating an additional safety stage to respond to failures when charging

(This article belongs to the Special Issue Energy and Advanced Computing in the Age of Machine Learning: From Quantum to Grid)
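Active balancing, on which this BMS is based, moves charge from stronger cells to weaker ones instead of dissipating the excess as heat. A deliberately simplified sketch, assuming equal cell capacities, a fixed charge quantum per step, and a single lumped transfer efficiency; it ignores converter topology, cell voltages, and the paper's additional safety stage, and every name and parameter value is hypothetical.

```python
def balance_step(soc, capacity_ah, transfer_ah, efficiency):
    """Move one charge quantum from the highest-SOC cell to the lowest-SOC cell.

    soc: state of charge (0..1) of each series cell.
    transfer_ah: charge drawn from the donor cell in this step.
    efficiency: fraction of the drawn charge delivered to the receiving cell.
    """
    hi = max(range(len(soc)), key=soc.__getitem__)
    lo = min(range(len(soc)), key=soc.__getitem__)
    soc = list(soc)
    soc[hi] -= transfer_ah / capacity_ah
    soc[lo] += efficiency * transfer_ah / capacity_ah
    return soc

def balance(soc, capacity_ah=2.5, transfer_ah=0.01, efficiency=0.9,
            tolerance=0.01, max_steps=10_000):
    """Repeat balancing steps until the SOC spread is within tolerance."""
    for _ in range(max_steps):
        if max(soc) - min(soc) <= tolerance:
            break
        soc = balance_step(soc, capacity_ah, transfer_ah, efficiency)
    return soc

initial = [0.80, 0.75, 0.78, 0.70]
balanced = balance(initial)
```

The charge quantum must stay smaller than the tolerance (here 0.004 SOC per step versus 0.01) so the loop cannot overshoot and oscillate between cells; with efficiency below 1 the pack's total charge drops slightly, which is the loss a high-efficiency design tries to minimize.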

Open Access Article

A Novel Approach to Mitigate Blade-to-Blade Interactions in Vertical-Axis Wind Turbines Suitable for Urban Areas

by

Ion Mălăel

Computation 2026, 14(3), 60; https://doi.org/10.3390/computation14030060 - 2 Mar 2026

Abstract

With the growth of urban zones and the increasing need for energy, the use of renewable energy solutions in the built environment has become essential. Because of their small size and their ability to capture wind from any direction, vertical-axis wind turbines (VAWTs) are an alternative to conventional wind energy generators. However, their use in the built environment is hindered by performance inefficiencies, particularly those caused by the intricate aerodynamic characteristics of the blades. This work investigates a method for increasing VAWT efficiency by addressing blade-to-blade interactions using Computational Fluid Dynamics simulations. The study is motivated by the goal of improving turbine design for urban locations. The numerical model employs a uniform inflow to isolate blade-to-blade interaction mechanisms under controlled conditions. By modeling different blade configurations and their interactions, the paper presents a design that minimizes aerodynamic losses, decreases turbulence-induced drag, and increases overall energy capture efficiency. The performance of four asymmetric configurations of blade chord and radius was numerically studied and compared with a symmetric configuration.

Full article

(This article belongs to the Special Issue Advances in Computational Methods for Fluid Flow)
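VAWT configurations like these are usually compared through the tip speed ratio and the power coefficient. A small worked sketch using the standard definitions, lambda = omega R / V and C_P = P / (0.5 rho A V^3) with swept area A = 2 R H for a VAWT; the rotor size, speed, and power values are hypothetical, and for the asymmetric configurations the choice of reference radius is an assumption the reader would need to fix.

```python
RHO_AIR = 1.225  # kg/m^3, standard sea-level air density

def tip_speed_ratio(omega_rad_s, radius_m, wind_speed_m_s):
    """lambda = omega * R / V_inf."""
    return omega_rad_s * radius_m / wind_speed_m_s

def power_coefficient(power_w, radius_m, height_m, wind_speed_m_s, rho=RHO_AIR):
    """C_P = P / (0.5 * rho * A * V_inf^3), with VAWT swept area A = 2 * R * H."""
    area = 2.0 * radius_m * height_m
    return power_w / (0.5 * rho * area * wind_speed_m_s ** 3)

# Hypothetical rotor: R = 0.5 m, H = 1.0 m, 5 m/s wind, 20 rad/s, 20 W shaft power.
tsr = tip_speed_ratio(omega_rad_s=20.0, radius_m=0.5, wind_speed_m_s=5.0)
cp = power_coefficient(power_w=20.0, radius_m=0.5, height_m=1.0, wind_speed_m_s=5.0)
```

Here lambda is 2.0 and C_P is roughly 0.26, comfortably below the Betz limit of 16/27 (about 0.593) that bounds any wind turbine.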

Open Access Article

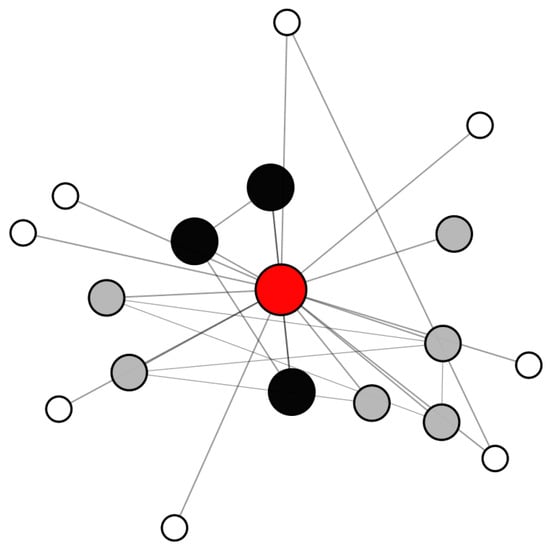

Incremental Recall: An Efficient Method for Estimating Egocentric Network Density

by

Chad A. Davis and Caimiao Liu

Computation 2026, 14(3), 59; https://doi.org/10.3390/computation14030059 - 2 Mar 2026

Abstract

Accurate estimation of network density is central to egocentric social network analysis, yet existing survey-based methods require researchers to balance accuracy against participant burden and systematic recall bias. Traditional approaches, such as fixed-list name generators, tend to overrepresent salient ties. Although the more recent random sampling method yields better accuracy, it relies on exhaustive free recall, which can be cognitively demanding and impractical for researchers. In this study, we introduce and evaluate an alternative approach—incremental recall—that structures alter nomination across relationship categories to improve coverage of differing tie strengths while reducing respondent burden. Using a large-scale Monte Carlo simulation encompassing over 9 million egocentric networks, we compare incremental recall against traditional fixed-list recall and random sampling across a wide range of network sizes, compositions, and recall bias assumptions. Results show that the incremental recall method consistently outperforms traditional fixed-list recall and performs comparably to or better than random sampling under unbiased and moderately biased recall conditions. Performance advantages persist even when respondents are unable to provide the full number of alters specified by design. We further validate these findings using empirical egocentric network data from 103 participants. Treating observed networks as proxy ground truths, empirical results closely mirror the simulation patterns, confirming the robustness of incremental recall under real-world reporting conditions. These findings demonstrate that incremental recall addresses a central practical challenge in egocentric social network research: balancing feasibility and accuracy in density estimation. The proposed method maintains strong performance while substantially reducing respondent burden and simplifying administration for applied studies. 
For researchers conducting large-scale surveys in which network density is one of several measures, incremental recall provides a practical and validated alternative to exhaustive recall that remains robust to realistic reporting biases.

Full article

(This article belongs to the Special Issue Applications of Machine Learning and Data Science Methods in Social Sciences)
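Egocentric network density is the fraction of possible ties among the ego's alters that are actually present, with the ego excluded. A small sketch of that definition and of estimating it from a simple random sample of alters, the general idea behind the random sampling method compared in this study; the helper names are hypothetical, ties are assumed undirected, and the incremental recall procedure itself is not implemented because the abstract does not specify its details.

```python
import itertools
import random

def density(alters, ties):
    """Share of possible undirected alter-alter ties that are present."""
    n = len(alters)
    if n < 2:
        return 0.0
    present = sum(
        1 for a, b in itertools.combinations(alters, 2) if frozenset((a, b)) in ties
    )
    return present / (n * (n - 1) / 2)

def sampled_density(alters, ties, k, seed=None):
    """Estimate density from a simple random sample of k alters."""
    rng = random.Random(seed)
    return density(rng.sample(list(alters), k), ties)

# Hypothetical ego network: four alters connected in a chain A-B-C-D.
alters = ["A", "B", "C", "D"]
ties = {frozenset(pair) for pair in [("A", "B"), ("B", "C"), ("C", "D")]}
full = density(alters, ties)  # 3 of 6 possible ties
```

A Monte Carlo study like the one described would repeat sampled_density over many simulated networks and recall-bias settings and compare the estimates with the full-network value.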

Topics

Topic in

Axioms, Computation, Fractal Fract, Mathematics, Symmetry

Fractional Calculus: Theory and Applications, 2nd Edition

Topic Editors: António Lopes, Liping Chen, Sergio Adriani David, Alireza Alfi

Deadline: 30 May 2026

Topic in

Brain Sciences, NeuroSci, Applied Sciences, Mathematics, Computation

The Computational Brain

Topic Editors: William Winlow, Andrew Johnson

Deadline: 31 July 2026

Topic in

Sustainability, Remote Sensing, Forests, Applied Sciences, Computation

Artificial Intelligence, Remote Sensing and Digital Twin Driving Innovation in Sustainable Natural Resources and Ecology

Topic Editors: Huaiqing Zhang, Ting Yun

Deadline: 31 January 2027

Topic in

Algorithms, AppliedMath, Computation, Mathematics, Symmetry, Sci, Applied Sciences

Intelligent Optimization Algorithm: Theory and Applications, 2nd Edition

Topic Editors: Shi Cheng, Chaomin Luo, Shangce Gao

Deadline: 31 March 2027

Special Issues

Special Issue in

Computation

Mathematical and Computational Modeling of Natural and Artificial Human Senses

Guest Editors: Gustavo Olague, Rocío Ochoa-Montiel, Isidro Robledo-Vega, Juan-Manuel Ahuactzin, Marlen Meza-Sánchez

Deadline: 30 March 2026

Special Issue in

Computation

Recent Advances on Computational Linguistics and Natural Language Processing

Guest Editors: Khaled Shaalan, Filippo Palombi

Deadline: 30 April 2026

Special Issue in

Computation

Selected Papers from the 57th International Carnahan Conference on Security Technology (the 57th Annual ICCST)

Guest Editors: Garzia Fabio, Gordon Thomas, Soodamani Ramalingam

Deadline: 30 April 2026

Special Issue in

Computation

Optimizing Resource Provisioning and Scheduling in Distributed Systems

Guest Editor: Yogesh Sharma

Deadline: 30 April 2026