Journal Description

AI in Education

AI in Education is an international, peer-reviewed, scholarly, open access journal on both the theoretical and practical applications of artificial intelligence (AI) within educational environments, published quarterly online by MDPI.

- Open Access: free for readers, with article processing charges (APC) paid by authors or their institutions.

- Rapid Publication: first decisions in 19 days; acceptance to publication in 8 days (median values for MDPI journals in the second half of 2025).

- Recognition of Reviewers: reviewers who provide timely, thorough peer-review reports receive vouchers entitling them to a discount on the APC of their next publication in any MDPI journal, in appreciation of the work done.

- AI in Education is a companion journal of Education Sciences.

- Journal Cluster of Education and Psychology: Adolescents, AI in Education, Behavioral Sciences, Education Sciences, International Journal of Cognitive Sciences, Journal of Intelligence, Psychology International and Youth.

Latest Articles

Decoding Student–Chatbot Dialogues: How Interaction Structure Is Associated with Learning Gains in AI-Assisted Programming

AI Educ. 2026, 2(2), 15; https://doi.org/10.3390/aieduc2020015 - 9 May 2026

Abstract

►

Show Figures

The study examines how secondary school students interacted with an AI-powered educational chatbot, MyBotBuddy, while working on a programming task, and how observed dialogue structures were associated with differences in pre- to post-test performance. Fifty students first completed an unassisted pre-test, then attempted

[...] Read more.

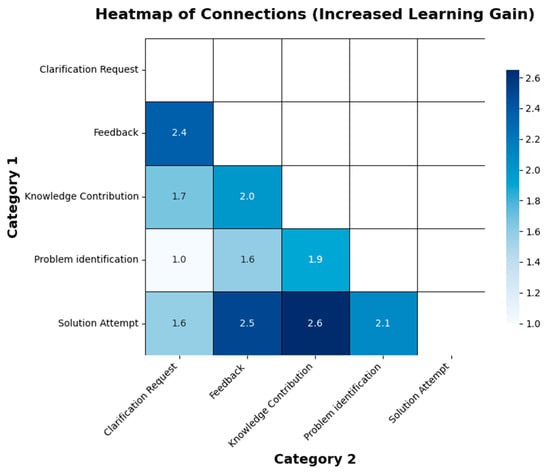

The study examines how secondary school students interacted with an AI-powered educational chatbot, MyBotBuddy, while working on a programming task, and how observed dialogue structures were associated with differences in pre- to post-test performance. Fifty students first completed an unassisted pre-test, then attempted a chatbot-supported programming task, and finally completed an unassisted post-test. Based on score change, students were grouped into learning gain, no gain, and learning loss categories. Dialogue transcripts were analyzed using Epistemic Network Analysis to identify co-occurring discourse patterns, alongside descriptive sentiment analysis to characterize lexical tone. Students in the learning gain group showed more connected multi-turn patterns involving solution attempts, feedback uptake, knowledge-related contributions, and clarification following feedback. In contrast, the no gain and learning loss groups showed less iterative and less systematically connected interaction structures. Average sentiment polarity differed only slightly across groups and is interpreted cautiously because the dialogue was technical and programming focused. The findings are associational and exploratory rather than causal and suggest that learner engagement with a chatbot may be more informative than interaction frequency alone. We discuss implications for educational chatbot design, especially the potential value of multi-turn scaffolding and reflective prompting, while outlining the need for future validation, baseline-controlled analyses, and experimental work.

Open Access Article

Integrating AI Literacy in Chemistry Graduate Education: Harnessing the Power of Transformer-Based Models

by

Yulia V. Sevryugina, Kevyn Collins-Thompson and Nils G. Walter

AI Educ. 2026, 2(2), 14; https://doi.org/10.3390/aieduc2020014 - 4 May 2026

Abstract

►▼

Show Figures

Rapid adoption of general-purpose generative AI (GenAI) tools, such as ChatGPT, is reshaping teaching, learning, and assessment in chemical education. In this study, we expanded the implementation of GenAI tools within an upper-level undergraduate biochemistry course, providing students access to four distinct platforms:

[...] Read more.

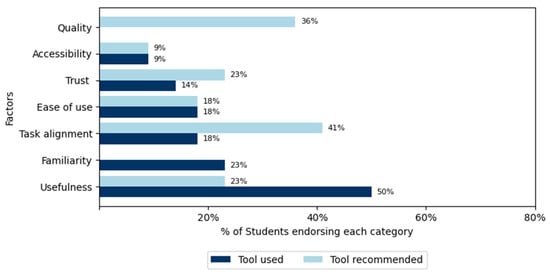

Rapid adoption of general-purpose generative AI (GenAI) tools, such as ChatGPT, is reshaping teaching, learning, and assessment in chemical education. In this study, we expanded the implementation of GenAI tools within an upper-level undergraduate biochemistry course, providing students access to four distinct platforms: commercial chatbots (ChatGPT and LearningClues) and in-house tools developed at the University of Michigan (U-M GPT and U-M Maizey). We analyzed student learning outcomes from GenAI-enhanced writing assignments using pre- and post-surveys. Our results show that integrating GenAI into biochemistry coursework promoted effective and responsible usage, enhanced students’ prompt literacy, built ethical awareness, and increased confidence in utilizing these tools. The study specifically examined factors influencing GenAI acceptance: familiarity, perceived usefulness, ease of use, and trust. Trust emerged as the most significant criterion, with a majority of students recommending in-house chatbots for future cohorts due to strong privacy and ethical standards. Over the last year, we observed a shift in student sentiment from excitement about efficiency to emerging concerns about creativity silencing. This highlights the importance of addressing both the capabilities and the risks of using AI tools through teaching AI literacy.

Open Access Review

Intelligent Immersion: AI and VR Tools for Next-Generation Higher Education

by

Konstantinos Liakopoulos and Anastasios Liapakis

AI Educ. 2026, 2(2), 13; https://doi.org/10.3390/aieduc2020013 - 1 May 2026

Abstract

Learning is fundamentally human, even as Artificial Intelligence (AI) challenges human exclusivity. AI, along with Virtual Reality (VR), emerges as a powerful tool that is set to transform higher education, the institutional embodiment of this pursuit at its highest level. These technologies offer the potential not to replace the human factor, but to enhance our ability to create more adaptive, immersive, and truly human-centric learning experiences, aligning powerfully with the emerging vision of Education 5.0, which emphasizes ethical, collaborative learning ecosystems. This research maps how AI and VR tools act as a disruptive force and also examines their capabilities and limitations. Moreover, it explores how AI and VR interact to overcome traditional pedagogy’s constraints, fostering environments where technology serves human learning goals. Employing a comprehensive two-month audit of over 60 AI, VR, and AI-VR hybrid tools, the study assesses their functionalities and properties such as technical complexity, cost structures, integration capabilities, and compliance with ethical standards. Findings reveal that AI and VR systems offer significant opportunities for the future of education by providing personalized and captivating environments that encourage experiential learning and improve student motivation across disciplines. Nonetheless, challenges such as advanced infrastructure requirements and the need for strategic planning limit widespread adoption. By articulating a structured evaluative framework and highlighting emerging trends, this paper provides practical guidance for educational stakeholders seeking to select and implement AI and VR tools in higher education.

Open Access Article

From Cognitive Necessity to Cognitive Choice: Higher Education Assessment and Learning in the Age of Generative AI

by

Matthew Montebello

AI Educ. 2026, 2(2), 12; https://doi.org/10.3390/aieduc2020012 - 16 Apr 2026

Abstract

The widespread adoption of generative artificial intelligence in higher education has intensified debates around assessment, authorship, and academic integrity. This paper argues that such debates obscure a more fundamental pedagogical shift, namely, the decoupling of assessment performance from cognitive engagement. Historically, assessment functioned not only as a measure of learning, but also as a structural mechanism that implicitly enforced cognitive engagement. With the advent of GenAI, learners can increasingly produce assessment outputs without necessarily engaging in the cognitive processes traditionally associated with learning. As a result, cognitive engagement has shifted from being a pedagogical necessity to an intentional learner choice. This paper conceptualises this shift as the cognitive engagement gap, wherein successful assessment completion no longer reliably indicates learning or epistemic development. Through a theory-informed conceptual analysis, the paper examines how GenAI reconfigures learning processes, challenges the validity of assessment as a proxy for learning, and exposes long-standing assumptions embedded in assessment-centred pedagogies. In response, the paper proposes a Cognitive Engagement-Centred Assessment (CECA) framework, offering principled guidance for designing assessment that foregrounds cognitive processes, metacognition, and learning assurance in AI-mediated environments. The paper concludes by positioning GenAI not as a threat to assessment, but as a catalyst for more intentional, transparent, and learning-centred pedagogical design.

Open Access Systematic Review

Pedagogical Use of Responsible Generative AI in Higher Education; Opportunities and Challenges: A Systematic Literature Review

by

Md Zainal Abedin, Ahmad Hayajneh and Bijan Raahemi

AI Educ. 2026, 2(2), 11; https://doi.org/10.3390/aieduc2020011 - 10 Apr 2026

Abstract

Generative Artificial Intelligence (GenAI) is transforming higher education in terms of pedagogy, student involvement, and academic management. This systematic literature review examines 30 peer-reviewed articles published from 2019 to 2025, adhering to PRISMA 2020 and Kitchenham’s methodologies. Descriptive and thematic analyses highlight five opportunities: (a) tailored and adaptive education; (b) deliberate fostering of critical thinking; (c) enhanced accessibility for varied learners; (d) teaching innovation via multimodal content development and feedback; and (e) collaborative methods that regard AI as a co-teacher. Four ongoing challenge categories also surface: (a) risks to academic integrity; (b) excessive dependence on GenAI that may hinder learner independence; (c) inconsistent faculty preparedness and change-management abilities; and (d) differences in infrastructure and policy both regionally and globally. Intersecting ethical issues, such as data privacy, algorithmic bias, transparency, and accountability, highlight the necessity for governance that aligns with institutional risk and reflects societal values. Analyzing the recent literature, this systematic review offers four contributions: (a) a recommendation model for responsible GenAI implementation in higher education institutions; (b) a framework for sustainable integration of GenAI; (c) a highlight of the future research recommendations; and (d) an integrated policy and pedagogical recommendations roadmap. These models emphasize the integration of AI literacy, ethical considerations, and critical thinking goals into educational programs. The review advocates for a strategic, stakeholder-focused approach to implementation that enhances rather than replaces human instruction, thus connecting GenAI’s educational potential with ethical, context-aware avenues for institutional transformation.

Open Access Essay

Mobile AI as Relational Infrastructure: Translating Meaning and Belonging in International Student Onboarding

by

Jimmie Manning, Md Mahmudur Rahman and Ngozi Oguejiofor

AI Educ. 2026, 2(2), 10; https://doi.org/10.3390/aieduc2020010 - 7 Apr 2026

Abstract

Generative artificial intelligence in higher education is typically framed as either a student productivity tool or an institutional disruption. This agenda-setting essay advances a third position: mobile generative AI functions as relational infrastructure—a persistent communicative presence that mediates identity, meaning-making, and belonging during institutional transition. Focusing on international graduate student onboarding, we abductively “think through” two complementary theoretical lenses. Constitutive Artificial Intelligence Identity Theory (CAIIT) conceptualizes AI as a co-constitutive participant in identity formation through recursive communicative feedback loops. Language Convergence/Meaning Divergence (LC/MD) theory explains how shared institutional language masks interpretive gaps across intercultural and bureaucratic contexts. Reading narrative vignettes through these frameworks, we argue that generative AI is neither simple curricular tool nor personal aid, but both relational and organizational infrastructure, redistributing translational, emotional, and interpretive labor in higher education. We outline four design principles for AI-integrated onboarding: distinguish communicative scaffolding from cognitive replacement; design systems that assume meaning divergence; center equity in AI-mediated transitions; and anticipate ethical risk. Reframing AI as relational infrastructure shifts AI-in-education research toward relational accountability and institutional care.

Open Access Article

Using an Ethical Framework to Examine K-12 Leaders’ Perceived Risks About AI

by

Raffaella Borasi, Jonathan Herington, Karen J. DeAngelis, Yu Jung Han, Sharon Mason, Patricia Vaughan-Brogan and David E. Miller

AI Educ. 2026, 2(2), 9; https://doi.org/10.3390/aieduc2020009 - 1 Apr 2026

Abstract

This article contributes to current debates around the ethics of using AI in K-12 education by extending an ethical framework based on the constructs of wellbeing, autonomy and justice to examine how AI may differentially impact specific stakeholders. Data about K-12 building and district leaders’ perceptions of AI risks were collected during the 2023–24 school year in Western New York as part of an exploratory sequential mixed methods study, which included semi-structured interviews with a diverse group of 36 K-12 leaders, followed by a survey (n = 160). Survey findings confirm K-12 leaders’ widespread recognition, although at varying levels of concern, of AI risks related to (a) students cheating, (b) students’ other questionable AI uses, (c) educators’ questionable AI uses, (d) increasing inequities due to AI, (e) cybersecurity and privacy breaches, and to a much lesser extent, the (f) potential for job replacement. The ethical analysis reveals major differences in the implications of each of these six kinds of AI risk for the wellbeing, autonomy, and justice of K-12 educators, K-12 students, and society, respectively, as well as tensions between competing needs and values, which in turn call for risk-specific strategies as well as inevitable tradeoffs. A comparison with a study of musicians’ perceptions of AI using the same ethical framework reveals interesting similarities and differences in ethical concerns about AI in different fields, suggesting the value of more cross-disciplinary studies.

Open Access Article

Auditing GenAI Literature Search Workflows: A Replicable Protocol for Traceable, Accountable Retrieval in Student-Facing Inquiry

by

Cristo Leon and Michelle Kudelka

AI Educ. 2026, 2(2), 8; https://doi.org/10.3390/aieduc2020008 - 25 Mar 2026

Abstract

Generative AI systems increasingly mediate how students retrieve literature and generate citations, shifting methodological rigor toward the maintenance of an auditable evidence trail. This study audits the search stage of AI-assisted literature review work, focusing on retrieval performance and citation traceability rather than downstream screening or synthesis. Four widely accessible tools were compared across two retrieval postures, and Boolean queries were executed against Scopus and evaluated against a DOI-verified librarian baseline built from Scopus, Web of Science, and Google Scholar. Using a canonical prompt and a bounded top-k capture rule (k = 20), each bibliographic record was evaluated for DOI traceability, DOI resolution integrity, metadata accuracy, and run-to-run drift. Records were screened through staged title/abstract and full-text eligibility review, and the final set after quality appraisal included 37 studies. Across sixteen audit runs, natural-language prompting frequently produced under-target yields, recurrent integrity failures, and low overlap with the librarian benchmark. Boolean translation improved run completion and increased the proportion of auditable records, but reproducibility remained unstable across repeated runs. These findings show that correctness at the record level does not ensure stability at the evidence-set level. Limitations include the bounded tool set, the search-stage focus, and the absence of downstream screening or synthesis evaluation. Retrieval posture, therefore, emerges as a practical governance lever for AI-assisted literature review workflows and supports the use of a student-facing verification checklist anchored in DOI verification and transparent protocol capture. This research received no external funding. OSF registration: Open Science Framework, 10.17605/OSF.IO/U8NHT.

Open Access Article

Something Old, Something New: WebQuests and GenAI in Teacher Education

by

Peter Tiernan, Enda Donlon, Mahmoud Hamash and James Lovatt

AI Educ. 2026, 2(1), 7; https://doi.org/10.3390/aieduc2010007 - 11 Mar 2026

Abstract

Generative artificial intelligence (GenAI) has rapidly emerged as a transformative educational technology, raising questions about how educators and pre-service teachers critically engage with AI-produced content. This case study investigates how WebQuests, a long-established, inquiry-based pedagogical model, can foster critical engagement with GenAI tools. Situated within an initial teacher education programme, a WebQuest, incorporating GenAI sources, was implemented with 24 pre-service language teachers, who engaged with curated resources alongside ChatGPT and Copilot to produce infographics for secondary school audiences. Data were collected through semi-structured interviews and were analysed using Braun and Clarke’s thematic analysis. Findings indicate that scaffolded engagement with GenAI encouraged participants to compare AI-generated outputs with trusted sources, critically evaluate accuracy and reliability, and reflect on integration into their future practice. Whilst pre-service teachers valued GenAI’s accessibility and efficiency, they expressed concerns about clarity, verbosity, and trustworthiness. The WebQuest model effectively supported synthesis of multiple information sources, fostering functional AI engagement and critical evaluation of its affordances and limitations. This case study concludes that integrating GenAI within structured, inquiry-based pedagogies advances digital and AI literacy in initial teacher education, whilst highlighting the need for institutional guidance, professional development, and further research in this area.

Open Access Article

Robust Deep Knowledge Tracing with Out-of-Distribution Detection

by

Riyan Hasan and Yupei Zhang

AI Educ. 2026, 2(1), 6; https://doi.org/10.3390/aieduc2010006 - 9 Mar 2026

Abstract

Modeling the temporal dynamics of student learning is a central goal in educational data mining. Deep Knowledge Tracing (DKT) has emerged as a key approach, yet existing models are highly sensitive to out-of-distribution (OOD) inputs, such as those arising from curriculum changes, new assessment formats, or behavioral noise, which severely degrade predictive reliability. To address this challenge, we propose Energy-Based Out-of-Distribution Deep Knowledge Tracing (EB-OOD DKT), a unified framework that integrates energy-based uncertainty estimation and contrastive representation learning within a transformer-based DKT architecture. The model computes energy scores via the negative log-sum-exponential of prediction logits, serving as confidence indicators for detecting OOD inputs during inference. Additionally, an InfoNCE-based contrastive loss enhances representation robustness by aligning in-distribution samples and separating OOD cases in latent space. Temporal and behavioral context features, such as normalized response intervals and cumulative attempt counts, are incorporated to enrich cognitive-behavioral modeling. Experiments on four public educational datasets demonstrate consistent improvements in prediction accuracy and OOD detection. EB-OOD DKT provides a promising approach for more reliable student modeling across educational platforms with different content distributions.
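
The energy score described in this abstract, the negative log-sum-exponential of the prediction logits, can be sketched as follows. This is a minimal illustration and not the authors' implementation: the function names `energy_score` and `is_ood`, the `temperature` parameter, and the threshold value are assumptions introduced here, and the example logits are made up.

```python
import math
from typing import Sequence


def energy_score(logits: Sequence[float], temperature: float = 1.0) -> float:
    """Return E = -T * log(sum_i exp(logit_i / T)).

    Lower (more negative) energy means the logits are large and confident;
    higher energy suggests the input may be out-of-distribution.
    The temperature T is an illustrative assumption (T = 1 gives the plain
    negative log-sum-exp mentioned in the abstract).
    """
    if not logits:
        raise ValueError("logits must be non-empty")
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [z / temperature for z in logits]
    # Subtract the maximum before exponentiating to avoid overflow.
    m = max(scaled)
    log_sum_exp = m + math.log(sum(math.exp(z - m) for z in scaled))
    return -temperature * log_sum_exp


def is_ood(logits: Sequence[float], threshold: float,
           temperature: float = 1.0) -> bool:
    """Flag an input as OOD when its energy exceeds a chosen threshold.

    The threshold is hypothetical; in practice it would be tuned on
    held-out in-distribution data.
    """
    return energy_score(logits, temperature) > threshold


if __name__ == "__main__":
    confident = [8.0, -8.0]   # made-up logits for a confident prediction
    uncertain = [0.1, -0.1]   # made-up logits for an uncertain prediction
    for name, logits in (("confident", confident), ("uncertain", uncertain)):
        print(name, energy_score(logits), is_ood(logits, threshold=-2.0))
```

Under these assumptions, the confident logits yield a much lower energy than the near-uniform ones, so only the latter crosses the illustrative threshold and is flagged.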

Open Access Article

A Critical AI Media Literacy Perspective on the Future of Higher Education with Artificial Intelligence Through Communities of Practice on Reddit

by

Olivia G. Stewart

AI Educ. 2026, 2(1), 5; https://doi.org/10.3390/aieduc2010005 - 9 Mar 2026

Cited by 1

Abstract

As artificial intelligence (AI) becomes increasingly integrated into higher education, instructors and institutions face urgent questions about its implications for teaching, learning, and scholarly practice as well as power, agency, and access. This study draws on a critical AI media literacy framework to analyze user-generated discussions in the two largest higher education subreddits on Reddit.com. Through thematic content analysis, I explore faculty perceptions, pedagogical tensions, and imaginative possibilities surrounding AI’s academic role in shaping the current and future landscape of higher education. Findings reveal that discussions of student cheating, AI policies, writing practices, and faculty labor are not merely technical debates but sites where surveillance regimes, accountability structures, and academic precarity are negotiated in real time. Ultimately, I argue that AI in higher education is not simply a technological shift but a structural transformation requiring deliberate, critically informed governance grounded in equity and human agency.

Open Access Review

Open-Source Large Language Models in Education: A Narrative Review of Evidence, Pedagogical Roles, and Learning Outcomes

by

Michael Pin-Chuan Lin, Jing-Yuan Huang, Daniel H. Chang, Gerald Tembrevilla, G. Michael Bowen, Eric Poitras, Vasudevan Janarthanan and Jeeho Ryoo

AI Educ. 2026, 2(1), 4; https://doi.org/10.3390/aieduc2010004 - 27 Feb 2026

Abstract

Open-source large language models (LLMs) are increasingly explored in educational contexts due to their transparency, adaptability, and alignment with institutional governance and equity considerations. Despite growing interest, empirical research on how open-source LLMs are deployed in education and what evidence currently supports their integration remains limited and fragmented. This paper presents a state-of-the-art narrative review of peer-reviewed, human empirical studies examining the use of open-source LLMs in education. Guided by three questions, the review synthesizes how open-source LLMs are deployed across instructional contexts, what learner-related evidence is reported, and how teachers engage in human–AI collaboration. The reviewed literature is concentrated in higher education, particularly within computer science and programming domains, with applications focused on post-class tutoring, guidance, and formative feedback. Learner perceptions are generally positive, but evidence linking open-source LLM use to measurable learning outcomes remains emerging and inconsistent. Through interpretive synthesis, the review articulates a four-role model—Designer, Facilitator, Monitor, and Evaluator—that captures how teacher agency is enacted across AI-supported instructional workflows. This review maps recurring orchestration dimensions, decision points, and tensions that characterize early implementations, and it proposes a minimal orchestration reporting scaffold (configuration, boundaries, logging, adjudication) intended to support auditability and cross-study comparison as the empirical base develops.

Open Access Tutorial

CREDIBLE: A Framework for Critical Source Evaluation—From Information Consumers to Critical Evaluators

by

Zoi A. Traga Philippakos

AI Educ. 2026, 2(1), 3; https://doi.org/10.3390/aieduc2010003 - 9 Feb 2026

Abstract

With the rise of social media and the sharing of information, as well as the use of AI tools like ChatGPT in education, the ability to evaluate information credibility has become a crucial skill. The CREDIBLE framework, standing for Credibility, Reliability, Evidence, Date, Intent, Bias, Logic, and Expertise, offers a practical, student-friendly approach to source evaluation, especially suited for secondary and postsecondary learners. Unlike models and frameworks designed for higher education, CREDIBLE helps learners critically assess both online and AI-generated content. This paper introduces the framework and explores how educators can embed it into instruction to foster critical thinking, academic integrity, and responsible digital literacy.

Open Access Article

Key Features to Distinguish Between Human- and AI-Generated Texts: Perspectives from University Professors

by

Georgios P. Georgiou

AI Educ. 2026, 2(1), 2; https://doi.org/10.3390/aieduc2010002 - 2 Feb 2026

Abstract

This study provides direct evidence from university professors’ experiences regarding the key features they use to identify artificial intelligence (AI)–generated texts and ranks these features by their perceived importance. The research was conducted in two phases. In Phase 1, online interviews were used to identify the most salient features professors reported using to detect AI-generated texts. In Phase 2, an online survey asked professors to rate the extent to which each identified feature contributes to the successful detection of AI-generated text. The interview data yielded seven features that professors reported using when they suspected a text was AI-generated. Survey ratings varied across features, with hallucinated facts or explanations, nonexistent sources, and the absence of language errors receiving the highest mean ratings in this sample. The use of difficult words received the lowest mean rating. These results have important pedagogical implications, as they can inform the development of more effective detection tools and guide the design of academic integrity policies and instructional strategies to address the challenges posed by AI-generated content.

Open Access Article

Processing Data Visualizations with Seductive Details Using AI-Enabled Analysis of Eye Movement Saliency Maps

by

Kristine Zlatkovic, Pavlo Antonenko, Do Hyong Koh and Poorya Shidfar

AI Educ. 2026, 2(1), 1; https://doi.org/10.3390/aieduc2010001 - 22 Jan 2026

Abstract

Understanding how learners process data visualizations with seductive details is essential for improving comprehension and engagement. This study examined the influence of task-relevant and task-irrelevant seductive details on attentional distribution and comprehension in the context of data story learning, using COVID-19 data visualizations as experimental materials. A gaze-based methodology was applied, using eye-movement data and saliency maps to visualize learners’ attentional patterns while processing bar graphs with varying embellishments. Results showed that task-relevant seductive details supported comprehension for learners with higher visuospatial abilities by guiding attention toward textual information, while task-irrelevant details hindered comprehension, particularly for those with lower visuospatial abilities who focused disproportionately on visual elements. Working memory capacity emerged as a significant predictor of attentional distribution. Additionally, repeated exposure to data visualizations enhanced participants’ ability to recognize visualization types, improving efficiency and reducing reliance on legends and supplementary text. Overall, this study highlights the cognitive mechanisms underlying visualization processing in data story learning and provides practical implications for education, human–computer interaction, and adaptive technology design, emphasizing the importance of tailoring visualization strategies to individual learner differences.

Open Access Article

Comparative Study of Gamification Interventions for Enhancing Statistics Learning in AI-Focused Education

by

Hongwei Wang, Deepak Ganta, Maria Vasquez and Khaled Enab

AI Educ. 2025, 1(1), 5; https://doi.org/10.3390/aieduc1010005 - 12 Dec 2025

Abstract

Statistical education is a crucial yet often overlooked aspect of AI in higher education. However, traditional approaches usually focus heavily on procedural knowledge, leaving students anxious about statistics and less confident in applying concepts to real-world problems. This study examines a method that enhances statistical learning outcomes by integrating data visualization and gamification strategies. Students were randomly assigned to either a control group (CG) or an intervention group (IG), and each group was further divided into teams. The curriculum was enhanced in a college statistics course offered for both engineering and math majors. IG students applied data visualization and gamification in a hands-on group project aimed at solving a real-world problem and competed as they presented their results. The effectiveness of this approach was assessed through statistical analyses comparing the performance of IG and CG in surveys, final grades, and project grades. The results from evaluation methods indicated that IG students outperformed CG students, demonstrating a positive impact of gamification on statistics education.

Open Access Article

STEM Undergraduates’ Perceptions of AI Chatbots: A Cross-Sectional Descriptive Survey

by

Kamalanathan Kajan, Wenyuan Shi and Dariusz Wanatowski

AI Educ. 2025, 1(1), 4; https://doi.org/10.3390/aieduc1010004 - 18 Nov 2025

Cited by 2

Abstract

We surveyed 297 STEM undergraduates at a single English-medium Sino–UK joint institution to document perceptions of AI chatbots for learning. Students reported high willingness to adopt AI chatbots (78%; 95% CI: 73.1–82.4) alongside concerns about over-reliance (67%; 95% CI: 61.4–72.1), content quality (52%; 95% CI: 46.2–57.5), and reduced human interaction (42%; 95% CI: 36.5–47.8). Over half (52%; 95% CI: 46.3–57.7) requested language/terminology support features, whereas only 16.8% reported language-related barriers. We attempted exploratory factor analysis and k-means clustering, but neither met the inclusion criteria; therefore, we report item-level frequencies only. The findings are descriptive and not generalisable (53% first-year, 80% male convenience sample). These patterns generate testable hypotheses about verification scaffolds, language support utility, and human–AI balance that warrant investigation through controlled studies.

Open Access Article

AI, Ethics, and Cognitive Bias: An LLM-Based Synthetic Simulation for Education and Research

by

Ana Luize Bertoncini, Raul Matsushita and Sergio Da Silva

AI Educ. 2025, 1(1), 3; https://doi.org/10.3390/aieduc1010003 - 4 Oct 2025

Cited by 1

Abstract

This study examines how cognitive biases may shape ethical decision-making in AI-mediated environments, particularly within education and research. As AI tools increasingly influence human judgment, biases such as normalization, complacency, rationalization, and authority bias can lead to ethical lapses, including academic misconduct, uncritical reliance on AI-generated content, and acceptance of misinformation. To explore these dynamics, we developed an LLM-generated synthetic behavior estimation framework that modeled six decision-making scenarios with probabilistic representations of key cognitive biases. The scenarios addressed issues ranging from loss of human agency to biased evaluations and homogenization of thought. Statistical summaries of the synthetic dataset indicated that 71% of agents engaged in unethical behavior influenced by biases like normalization and complacency, 78% relied on AI outputs without scrutiny due to automation and authority biases, and misinformation was accepted in 65% of cases, largely driven by projection and authority biases. These statistics are descriptive of this synthetic dataset only and are not intended as inferential claims about real-world populations. The findings nevertheless suggest the potential value of targeted interventions—such as AI literacy programs, systematic bias audits, and equitable access to AI tools—to promote responsible AI use. As a proof-of-concept, the framework offers controlled exploratory insights, but all reported outcomes reflect text-based pattern generation by an LLM rather than observed human behavior. Future research should validate and extend these findings with longitudinal and field data.

Open Access Article

Student Perceptions of AI-Assisted Writing and Academic Integrity: Ethical Concerns, Academic Misconduct, and Use of Generative AI in Higher Education

by

Brady Lund, Nishith Reddy Mannuru, Zoë Abbie Teel, Tae Hee Lee, Nathanlie Jugan Ortega, Sara Simmons and Evelyn Ward

AI Educ. 2025, 1(1), 2; https://doi.org/10.3390/aieduc1010002 - 2 Sep 2025

Cited by 12

Abstract

The rise of generative AI in higher education has disrupted our traditional understandings of academic integrity, moving our focus from clear-cut infractions to evolving ethical judgment. In this study, a survey of 401 students from major U.S. universities provides insight into how beliefs, behaviors, and policy awareness intersect in shaping how students interact with AI-assisted writing. The findings indicate that students’ ethical beliefs—not institutional policies—are the strongest predictors of perceived misconduct and actual AI use in writing. Policy awareness was found to have no significant effect on ethical judgments or behavior. Instead, students who believe AI writing is cheating were found to be substantially less likely to view it as ethical or engage with it. These findings suggest that many students do not treat AI use in learning activities as an extension of conventional cheating (e.g., plagiarism), but rather as a distinct category of academic conduct/misconduct. Rather than using punitive models to attempt to punish students for using AI, this study suggests that education about AI ethics and the risk of AI overreliance may prove more successful for curbing unethical AI use in higher education.

Open Access Editorial

AI in Education: Towards a Pedagogically Grounded and Interdisciplinary Field

by

Savvas A. Chatzichristofis

AI Educ. 2025, 1(1), 1; https://doi.org/10.3390/aieduc1010001 - 28 Aug 2025

Cited by 1

Abstract

The rapid expansion of Artificial Intelligence in Education (AIED) has created both remarkable opportunities and pressing concerns. Applications of intelligent tutoring systems, learning analytics, generative models, and educational robotics illustrate the transformative momentum of the field, yet they also raise fundamental questions regarding ethics, equity, and sustainability. The mission of AI in Education (MDPI) is to provide a rigorous, interdisciplinary, and inclusive platform where these debates can unfold. The journal bridges pedagogy and engineering, welcomes both empirical evidence of positive impacts and critical examinations of systemic risks, and advances responsible innovation in real educational settings. By integrating methodological standards, governance perspectives, and pedagogical ethics, including teacher-centered validation approaches, AI in Education positions itself as a space for constructive dialogue that values both enthusiasm and critique. Above all, the journal is committed to a human-centered vision for AIED, so that innovation in classrooms remains grounded in care, responsibility, and educational purpose.
