Abstract

This paper identifies theoretical vulnerabilities in quantum integrity verification by demonstrating that Bell inequality (BI) violations, central to the detection of quantum entanglement, can align with predictions from hidden variable theories (HVTs) under specific measurement configurations. By invoking a Heisenberg-inspired measurement resolution constraint and finite-resolution positive operator-valued measures (POVMs), we identify “convergence vicinities” where the statistical outputs of quantum and classical models become operationally indistinguishable. These results do not challenge Bell’s theorem itself; rather, they expose a vulnerability in quantum integrity frameworks that treat observed Bell violations as definitive, experiment-level evidence of nonclassical entanglement correlations. We support our theoretical analysis with simulations and experimental results from IBM quantum hardware. Our findings call for more robust quantum-verification frameworks, with direct implications for the security of quantum computing, quantum-network architectures, and device-independent cryptographic protocols (e.g., device-independent quantum key distribution (DIQKD)).

1. Introduction

The trustworthiness of quantum systems is critically dependent on the ability to verify nonclassical behavior through observed violations of Bell inequalities (BI) [1,2,3]. In the idealized setting of Bell’s theorem, such violations exclude all Bell-local hidden variable explanations under the standard assumptions (locality, realism, and measurement independence) [4]. For this reason, Bell-test statistics have become a central operational primitive in tasks ranging from entanglement verification to DIQKD [5,6,7].

In practical integrity tests, however, the key issue is not Bell’s theorem itself but the way Bell violations are measured, thresholded, and trusted under finite experimental precision. Real devices implement measurement settings with nonzero calibration error, drift, and finite angular or basis resolution. Moreover, verification pipelines often operate near a narrow set of nominally “optimal” configurations rather than across the full configuration space. These constraints motivate a concrete question: when does a Bell-based integrity check become operationally non-discriminating, i.e., unable to reliably distinguish between genuine quantum behavior and a classical adversary exploiting finite precision?

This work analyzes two complementary mechanisms that create such non-discriminating regimes:

- Honest-device finite precision: finite-resolution POVM effects and hardware noise compress the observed Clauser–Horne–Shimony–Holt (CHSH) value toward the classical threshold, reducing the statistical gap between quantum and classical models. In this regime, we assume that the verifier is communicating with the intended (honest) device; we address vulnerabilities in verifying that communication, not the integrity of the device itself.

- Adversarial finite-resolution exploitation: if finite setting resolution permits setting-dependent coarse graining (equivalently, limited measurement independence/setting control), then classical strategies can reproduce Bell-test statistics that would otherwise be interpreted as evidence of entanglement. This corresponds to a known loophole class in the Bell literature (measurement dependence/setting control) rather than a refutation of Bell’s theorem [8,9,10].

We call the resulting near-indistinguishable regions of configuration space convergence vicinities. These are regions where either (i) honest quantum behavior becomes statistically close to Bell-local baselines due to finite precision, or (ii) adversarial setting-dependent coarse graining enables classical mimicry within operational acceptance thresholds. This creates a vulnerability for integrity frameworks that treat a single Bell witness (e.g., CHSH) as definitive experiment-level evidence of nonclassicality.

The main novelties of this paper are (i) an explicit operational definition of convergence vicinities using distributional distance and CHSH-level tolerances; (ii) a unified treatment of finite-resolution measurements via POVMs and their classical coarse-grained analogues; and (iii) a security-focused interpretation that maps convergence vicinities onto concrete verification and DIQKD acceptance logic, including the effect of shot budgets and device drift.

We structure this work as follows: Section 2 reviews CHSH tests, clarifies which Bell assumptions are used, and formalizes convergence vicinities. Section 3 defines the simulation models and operational indistinguishability criteria. Section 4 presents simulation results and validates key observations using IBM quantum hardware, and Section 5 maps convergence vicinities onto known loophole classes and analyzes implications for DIQKD-style acceptance thresholds. Section 6 concludes with mitigation directions, including multi-witness testing and dynamic angle randomization.

2. Theoretical Framework

This section introduces the theoretical constructs underpinning our analysis: Bell inequalities, local hidden variable theories (HVTs), and the Heisenberg Uncertainty Principle (HUP) [11,12]. We establish the mathematical structure used to examine convergence scenarios between quantum predictions and classical probabilistic outcomes under constrained uncertainty.

2.1. Bell Inequalities and Quantum Nonlocality

Bell inequalities quantify the statistical limits of correlations in systems governed by local realism [2]. Their most widely used form, the Clauser–Horne–Shimony–Holt (CHSH) inequality, constrains the outcomes of bipartite measurements performed on spatially separated systems [3]. For two observers, Alice and Bob, each choosing between two dichotomic observables ($A$ or $A'$ for Alice, with settings $a, a'$; $B$ or $B'$ for Bob, with settings $b, b'$), the CHSH parameter is defined as

$$S = E(a,b) - E(a,b') + E(a',b) + E(a',b'), \tag{1}$$

where $E(\cdot,\cdot)$ denotes the expectation value of the product of joint outcomes. Classical local hidden variable theories impose the bound $|S| \le 2$, while quantum mechanics allows violations up to $|S| = 2\sqrt{2}$ (the Tsirelson bound) under optimal entanglement [1,13].
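As a minimal numerical check of these two bounds, the Python sketch below evaluates the CHSH combination $S = E(a,b) - E(a,b') + E(a',b) + E(a',b')$ for an assumed ideal correlator $E = \cos(\theta_a - \theta_b)$, which holds for the Bell state $|\Phi^+\rangle$ when both parties measure in the x–z plane of the Bloch sphere. The angle set is one standard optimum for this sign convention, and the function names are illustrative rather than taken from the paper's code.

```python
import math


def chsh(E, a, a_p, b, b_p):
    """CHSH combination S = E(a,b) - E(a,b') + E(a',b) + E(a',b')."""
    return E(a, b) - E(a, b_p) + E(a_p, b) + E(a_p, b_p)


def e_quantum(theta_a, theta_b):
    """Assumed ideal correlator for |Phi+> with both measurements in the x-z plane."""
    return math.cos(theta_a - theta_b)


# One optimal angle choice for this sign convention (radians).
A, A_P, B, B_P = 0.0, math.pi / 2, math.pi / 4, 3 * math.pi / 4

s_q = chsh(e_quantum, A, A_P, B, B_P)
print(f"S_Q = {s_q:.4f}")  # prints S_Q = 2.8284, the Tsirelson bound 2*sqrt(2)
```

Each of the four terms contributes $1/\sqrt{2}$ here, which is why the quantum value exceeds the local bound of 2 by a factor of $\sqrt{2}$.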

2.2. Hidden Variable Models and Measurement Configuration

A hidden variable model assumes that outcomes of measurements are predetermined by a set of underlying variables $\lambda \in \Lambda$, drawn from a probability distribution $\rho(\lambda)$ [2]. The observable correlations are computed as

$$E(a,b) = \int_{\Lambda} A(a,\lambda)\,B(b,\lambda)\,\rho(\lambda)\,d\lambda, \tag{2}$$

where $A(a,\lambda), B(b,\lambda) \in \{-1,+1\}$ are deterministic local response functions. These models can, in some configurations, closely approximate quantum predictions, depending on the choice of $\rho(\lambda)$ and of the response functions [4]. Our analysis identifies a subset of measurement-vector configurations in which this classical approximation approaches the quantum predictions.

2.3. Heisenberg-Inspired Resolution as an Operational Constraint

In quantum mechanics, the Heisenberg Uncertainty Principle (HUP) bounds the simultaneous sharpness of incompatible observables, e.g., $\Delta X\,\Delta Y \ge \tfrac{1}{2}\left|\langle [X, Y] \rangle\right|$ for observables $X$ and $Y$ [14,15]. In this work, we do not assume that the HUP literally constrains classical hidden variables. Instead, we use it as motivation for an operational statement: real experiments have finite setting resolution and finite detector/estimation resolution, so the implemented measurement differs from the commanded idealization.

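A standard and rigorous way to model finite-resolution measurements is via POVMs (unsharp or coarse-grained measurements) [16]. For a qubit measurement along a Bloch-sphere direction $\mathbf{n}(\theta) = (\sin\theta, 0, \cos\theta)$ (restricted to a plane for simplicity), the ideal projective measurement is described by the POVM elements

$$E_{\pm}(\theta) = \tfrac{1}{2}\left(\mathbb{1} \pm \mathbf{n}(\theta)\cdot\boldsymbol{\sigma}\right). \tag{3}$$

Finite angular resolution can be represented by a smeared POVM,

$$E^{(k)}_{\pm}(\theta) = \int k(\varphi)\,E_{\pm}(\theta + \varphi)\,d\varphi, \tag{4}$$

where $k(\varphi)$ is a normalized resolution kernel (e.g., boxcar or Gaussian) of characteristic width $w$. Writing $\mathbf{n}(\theta+\varphi) = \cos\varphi\,\mathbf{n}(\theta) + \sin\varphi\,\mathbf{n}'(\theta)$ with $\mathbf{n}'(\theta) = d\mathbf{n}/d\theta$, the $\sin\varphi$ component averages to zero for symmetric kernels, $k(\varphi) = k(-\varphi)$. The smeared measurement is then equivalent to an “unsharp” measurement with an effective sharpness factor $\eta$:

$$E^{(k)}_{\pm}(\theta) = \tfrac{1}{2}\left(\mathbb{1} \pm \eta\,\mathbf{n}(\theta)\cdot\boldsymbol{\sigma}\right), \qquad \eta = \int k(\varphi)\cos\varphi\,d\varphi, \tag{5}$$

so that expectation values in the measurement plane are multiplied by $\eta \le 1$.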

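This immediately yields a kernel-sensitivity statement: different kernels of comparable width produce similar effects at leading order because the reduction is governed by $\eta = \langle \cos\varphi \rangle_k \approx 1 - \tfrac{1}{2}\langle \varphi^2 \rangle_k$, i.e., primarily by the kernel's low-order moments. For example, a boxcar kernel supported on $[-w, w]$ gives $\eta = \sin w / w \approx 1 - w^2/6$, while a Gaussian of variance $\sigma^2$ gives $\eta = e^{-\sigma^2/2} \approx 1 - \sigma^2/2$; the two agree to leading order when their variances match ($\sigma^2 = w^2/3$). Thus, qualitative “convergence” behavior is not an artifact of choosing a boxcar kernel.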
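The kernel-sensitivity claim can be checked numerically. The standard-library Python sketch below integrates $\eta = \int k(\varphi)\cos\varphi\,d\varphi$ with a midpoint rule for a boxcar kernel of half-width $w$ and for a Gaussian kernel with matched variance $\sigma^2 = w^2/3$, and compares both with the closed forms $\sin w / w$ and $e^{-\sigma^2/2}$. It then shows how an honest device's ideal CHSH value would be compressed to $\eta^2 \cdot 2\sqrt{2}$ if both parties' measurements were smeared; the value $w = 0.3$ rad and the $|\Phi^+\rangle$-type ideal correlators behind the $\eta^2$ scaling are illustrative assumptions.

```python
import math


def sharpness(kernel, half_range, n=20001):
    """Sharpness factor eta = integral k(phi) cos(phi) dphi for a symmetric kernel.

    `kernel` may be unnormalized: it is renormalized numerically on
    [-half_range, half_range] using a midpoint rule.
    """
    h = 2 * half_range / n
    phis = [-half_range + (i + 0.5) * h for i in range(n)]
    weights = [kernel(p) for p in phis]
    norm = sum(weights)
    return sum(wt * math.cos(p) for wt, p in zip(weights, phis)) / norm


w = 0.3                    # boxcar half-width in radians (illustrative value)
sigma = w / math.sqrt(3)   # Gaussian with the same variance as the boxcar

eta_box = sharpness(lambda p: 1.0, w)
eta_gauss = sharpness(lambda p: math.exp(-p * p / (2 * sigma**2)), 8 * sigma)

print(f"boxcar:   {eta_box:.6f} vs sin(w)/w        = {math.sin(w) / w:.6f}")
print(f"gaussian: {eta_gauss:.6f} vs exp(-sigma^2/2) = {math.exp(-sigma**2 / 2):.6f}")

# Smearing both parties' measurements scales every correlator by eta**2,
# so an ideal CHSH value of 2*sqrt(2) is compressed to eta**2 * 2*sqrt(2).
s_honest = eta_box**2 * 2 * math.sqrt(2)
print(f"compressed S = {s_honest:.4f}")
```

With matched variances the two kernels give sharpness factors that differ by less than $10^{-4}$ here. Under this model, the honest-device value reaches the classical bound once $\eta^2 \cdot 2\sqrt{2} = 2$, i.e., at $\eta = 2^{-1/4} \approx 0.84$.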

In the classical simulations below, we introduce an analogous finite-resolution parameter (denoted $\epsilon$) that coarse-grains the response functions. The role of $\epsilon$ is epistemic/operational: it captures finite resolution in the implemented settings and in the estimator, not a fundamental ontic limitation on classical variables.

2.4. Convergence Vicinities and Probabilistic Geometry

We define a convergence vicinity as a region in measurement-configuration space where the classical (coarse-grained) and quantum models are operationally indistinguishable at the level of observable statistics.

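Let $a$ and $b$ denote Alice’s and Bob’s setting choices, and let $A, B \in \{-1,+1\}$ denote outcomes. Let $P_Q(A,B \mid a,b)$ and $P_C^{\epsilon}(A,B \mid a,b)$ denote the quantum and coarse-grained classical joint distributions (the latter parameterized by the resolution parameter $\epsilon$). We use the total variation (TV) distance

$$D_{\mathrm{TV}}(a,b) = \frac{1}{2}\sum_{A,B \in \{\pm 1\}} \left| P_Q(A,B \mid a,b) - P_C^{\epsilon}(A,B \mid a,b) \right|. \tag{6}$$

We also consider CHSH-level indistinguishability via

$$\Delta S = \left| S_Q - S_C^{\epsilon} \right|. \tag{7}$$

A configuration belongs to a convergence vicinity if it satisfies both a witness-level tolerance and a distribution-level tolerance, e.g.,

$$\Delta S \le \delta \quad \text{and} \quad \max_{a,b} D_{\mathrm{TV}}(a,b) \le \delta_{\mathrm{TV}}. \tag{8}$$

In the main text, we use $\Delta S \le \delta$ as a conservative and easily interpretable criterion, and we report the distribution-level metric in the discussion (Section 5) to clarify when “CHSH-close” also means “distribution-close”.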
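To make the two-part criterion concrete, the following Python sketch checks both tolerances for a given angle set $(a, a', b, b')$. It assumes unbiased marginals, so each joint distribution is $(1 + AB\,E)/4$ and the TV distance for a setting pair reduces to $|E_Q - E_C|/2$. The quantum correlator $\cos(\theta_a - \theta_b)$, the classical triangle-wave correlator of a sign-threshold model, and the tolerances `tol_s` and `tol_tv` are illustrative assumptions, not the paper's simulation settings.

```python
import math


def e_quantum(theta_a, theta_b):
    """Ideal |Phi+> correlator for measurements in a common plane (assumption)."""
    return math.cos(theta_a - theta_b)


def e_classical(theta_a, theta_b):
    """Sign-threshold model with uniform rho: E = 1 - 2|d|/pi, d wrapped to [-pi, pi)."""
    d = (theta_a - theta_b + math.pi) % (2 * math.pi) - math.pi
    return 1 - 2 * abs(d) / math.pi


def joint_dist(E):
    """P(A, B) with unbiased marginals and correlator E: (1 + A*B*E) / 4."""
    return {(x, y): (1 + x * y * E) / 4 for x in (-1, 1) for y in (-1, 1)}


def tv_distance(p, q):
    """Total variation distance between two outcome distributions on the same keys."""
    return 0.5 * sum(abs(p[k] - q[k]) for k in p)


def vicinity_check(angles, tol_s=0.05, tol_tv=0.02):
    """Return (in_vicinity, delta_S, max TV) for angles (a, a', b, b').

    tol_s and tol_tv are hypothetical tolerances chosen for illustration only.
    """
    a, a_p, b, b_p = angles
    pairs = [(a, b), (a, b_p), (a_p, b), (a_p, b_p)]
    signs = [1, -1, 1, 1]
    eq = [e_quantum(*p) for p in pairs]
    ec = [e_classical(*p) for p in pairs]
    s_q = sum(s * x for s, x in zip(signs, eq))
    s_c = sum(s * x for s, x in zip(signs, ec))
    delta_s = abs(s_q - s_c)
    max_tv = max(tv_distance(joint_dist(x), joint_dist(y)) for x, y in zip(eq, ec))
    return delta_s <= tol_s and max_tv <= tol_tv, delta_s, max_tv


near = vicinity_check((0.0, math.pi / 2, 0.0, math.pi / 2))                 # aligned/orthogonal
optimal = vicinity_check((0.0, math.pi / 2, math.pi / 4, 3 * math.pi / 4))  # CHSH-optimal
print(near)
print(optimal)
```

Under these assumptions, the aligned/orthogonal configuration passes both tests (the two models give identical correlators there), while the CHSH-optimal angles fail with $\Delta S = 2\sqrt{2} - 2 \approx 0.83$, illustrating that convergence is confined to particular regions of configuration space.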

Operationally, convergence vicinities arise when finite resolution and/or noise compresses observed quantum statistics toward the classical region, and when adversaries can exploit finite setting resolution to induce setting-dependent coarse graining. These effects do not overturn Bell’s theorem; they highlight that integrity verification depends on experimental resolution and on which Bell premises are guaranteed in the implementation.

2.5. Which Bell Assumptions Are Modified in the Threat Model

The standard CHSH bound holds for Bell-local models under (at least) the following conditions: locality (parameter independence and outcome independence), binary outcomes, no post-selection, and measurement independence (also called settings independence or “free choice”), i.e., the hidden-variable distribution is independent of the chosen settings, $\rho(\lambda \mid a,b) = \rho(\lambda)$.

In our baseline classical simulation (Section 3.2), we assume measurement independence and no post-selection; therefore, the Bell-local bound applies and $|S| \le 2$. Any “classical violation” would indicate that at least one Bell premise has been relaxed or that a numerical/estimation artifact is present.

The security-relevant threat model considered here is that finite setting resolution enables setting-dependent coarse graining (equivalently, limited measurement independence or setting control), so that the effective sampling of hidden variables can depend weakly on the nominal setting pair, i.e., $\rho(\lambda \mid a,b) \ne \rho(\lambda)$. In that case, the standard CHSH derivation does not apply, and relaxed Bell inequalities bound $S$ as a function of the degree of measurement dependence [8,9,10].

3. Methods

This section details the methodology used to construct convergence scenarios between hidden variable theories (HVTs) and quantum mechanical predictions within Bell inequality experiments. We define how classical response functions are synthesized, describe the angular measurement strategy, and introduce uncertainty-constrained sampling that allows mimicry of quantum correlations.

3.1. Parameterizing the Measurement Space

To explore the overlap between classical and quantum regimes, we fix a two-party CHSH configuration with dichotomic measurements $A, A'$ (Alice) and $B, B'$ (Bob) on a shared state. Each measurement is associated with a unit vector in an abstract angle space, e.g., $\mathbf{a} = (\cos\theta_a, \sin\theta_a)$, and likewise for $\mathbf{a}'$, $\mathbf{b}$, and $\mathbf{b}'$ [3]. The angles between these vectors determine the expectation-value structure. In particular, we vary the measurement angles across a discrete grid and monitor the CHSH parameter $S$ to identify candidate regions for classical–quantum convergence.

3.2. Constructing Hidden-Variable Simulations

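We define local deterministic response functions by thresholding continuous hidden variables [2,17], as follows:

$$A(a,\lambda) = \operatorname{sgn}\!\left[\cos(\lambda - \theta_a)\right], \qquad \lambda \in [0, 2\pi), \tag{9}$$

and analogously $B(b,\lambda)$ for Bob, where we take $\operatorname{sgn}(z) = +1$ for $z \ge 0$ and $\operatorname{sgn}(z) = -1$ for $z < 0$. The set of points where $\cos(\lambda - \theta) = 0$ has measure zero in the continuum integral; for numerical stability, we use the convention above (so $\operatorname{sgn}(0) = +1$). The case $\lambda = \theta_a$ is non-singular and simply yields outcome $+1$.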

The response functions are integrated over $\lambda$ using a piecewise-constant approximation of $\rho(\lambda)$, assuming either uniform or modulated priors. The integral is numerically computed to yield each term in the CHSH sum. Closed-form expressions for this baseline deterministic model and the corresponding CHSH bound under measurement independence are provided in Appendix A.
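A minimal sketch of this baseline integration, assuming sign-threshold responses $\operatorname{sgn}[\cos(\lambda - \theta)]$ for both parties and a uniform $\rho(\lambda)$ on a midpoint grid (the grid size and helper names are illustrative). For this particular model the integral has the triangle-wave closed form $E(a,b) = 1 - 2|\theta_a - \theta_b|/\pi$ (angle difference wrapped to $[-\pi, \pi)$), because the two thresholds disagree on a fraction $|\theta_a - \theta_b|/\pi$ of the circle. At the quantum-optimal angles the model saturates, but does not exceed, $S = 2$.

```python
import math


def sgn(z):
    """Sign with the convention sgn(0) = +1."""
    return 1 if z >= 0 else -1


def response(theta, lam):
    """Deterministic local response: threshold cos(lam - theta) at zero."""
    return sgn(math.cos(lam - theta))


def correlator(theta_a, theta_b, n=3600):
    """E(a, b) for uniform rho(lambda) on [0, 2pi), using a midpoint grid of n cells."""
    h = 2 * math.pi / n
    total = sum(response(theta_a, (i + 0.5) * h) * response(theta_b, (i + 0.5) * h)
                for i in range(n))
    return total / n


def closed_form(theta_a, theta_b):
    """Triangle-wave correlator 1 - 2|d|/pi, with d wrapped to [-pi, pi)."""
    d = (theta_a - theta_b + math.pi) % (2 * math.pi) - math.pi
    return 1 - 2 * abs(d) / math.pi


a, a_p, b, b_p = 0.0, math.pi / 2, math.pi / 4, 3 * math.pi / 4
s_c = correlator(a, b) - correlator(a, b_p) + correlator(a_p, b) + correlator(a_p, b_p)
print(f"S_C = {s_c:.4f}")  # prints S_C = 2.0000
print(correlator(0.0, math.pi / 3), closed_form(0.0, math.pi / 3))
```

The grid is chosen so that the sign changes fall on cell edges for these angles, which makes the numerical and closed-form correlators agree to floating-point precision.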

3.3. Implementing Finite-Resolution (HUP-Inspired) Coarse Graining

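To incorporate finite measurement precision into the classical simulation, we introduce a dimensionless resolution parameter $\epsilon$ that coarse-grains the response functions over neighborhoods in the hidden-variable phase. Concretely, we use a normalized kernel $k_\epsilon$ (default: boxcar) and define smoothed responses, as follows:

$$\tilde{A}_\epsilon(a,\lambda) = \int_0^{2\pi} k_\epsilon(\lambda - \lambda')\,A(a,\lambda')\,d\lambda', \qquad \tilde{B}_\epsilon(b,\lambda) = \int_0^{2\pi} k_\epsilon(\lambda - \lambda')\,B(b,\lambda')\,d\lambda', \tag{10}$$

with circular boundary conditions on $\lambda \in [0, 2\pi)$.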

Physical interpretation of . Because the deterministic responses depend on the phase , coarse graining in is equivalent to coarse graining in angle. A window of width in corresponds to an effective angular resolution, as follows:

Thus, $\epsilon$ can be interpreted as a compact parameter that captures finite basis resolution, calibration drift, and effective estimator coarse graining. This is “HUP-inspired” in the sense of modeling an operational resolution floor, not in the sense that classical ontic variables obey quantum uncertainty relations.

Justification of the sweep range. We sweep for two reasons. First, the lower bound is chosen to be comfortably above the discretization step of the hidden-variable grid used in simulations (here ), ensuring that the kernel is resolved numerically. Second, the upper bound spans a regime from near-projective behavior (small ) to strong coarse graining (large ), covering angular resolutions from to via Equation (11).

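The effective expectation becomes

$$E_\epsilon(a,b) = \int_0^{2\pi} \tilde{A}_\epsilon(a,\lambda)\,\tilde{B}_\epsilon(b,\lambda)\,\rho(\lambda)\,d\lambda, \tag{12}$$

where $\rho(\lambda) = 1/(2\pi)$ is uniform in the baseline (measurement-independent) classical model. In the adversarial threat model (Section 2.5), finite setting resolution can induce weak setting dependence, i.e., $\rho(\lambda \mid a,b) \ne \rho(\lambda)$, which is analyzed via relaxed Bell bounds in Appendix B.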
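The coarse-grained baseline can be sketched in a few lines of standard-library Python, assuming a boxcar kernel applied to both parties' sign-threshold responses on a uniform hidden-variable grid with setting-independent $\rho(\lambda)$. The window is expressed as a half-width in grid cells (`half_width_cells`), a hypothetical parametrization that is not calibrated to the paper's $\epsilon$ scale.

```python
import math

N = 3600                   # hidden-variable grid points on [0, 2pi) (illustrative)
H = 2 * math.pi / N        # grid spacing, 0.1 degree


def response_grid(theta):
    """Sign-threshold responses sgn(cos(lam - theta)) on the midpoint grid."""
    return [1.0 if math.cos((i + 0.5) * H - theta) >= 0 else -1.0 for i in range(N)]


def smooth(values, half_width_cells):
    """Circular boxcar average over +/- half_width_cells neighbouring grid points."""
    m = half_width_cells
    if m == 0:
        return list(values)
    ext = values[-m:] + values + values[:m]   # wrap around for circular boundaries
    prefix = [0.0]
    for v in ext:
        prefix.append(prefix[-1] + v)
    width = 2 * m + 1
    return [(prefix[i + width] - prefix[i]) / width for i in range(N)]


def chsh_coarse(angles, half_width_cells):
    """S for smoothed responses under a uniform, setting-independent rho(lambda)."""
    a, a_p, b, b_p = (smooth(response_grid(t), half_width_cells) for t in angles)

    def corr(x, y):
        return sum(u * v for u, v in zip(x, y)) / N

    return corr(a, b) - corr(a, b_p) + corr(a_p, b) + corr(a_p, b_p)


ANGLES = (0.0, math.pi / 2, math.pi / 4, 3 * math.pi / 4)
widths = (0, 18, 90, 360)  # half-widths of 0, 1.8, 9 and 36 degrees
s_values = [chsh_coarse(ANGLES, m) for m in widths]
for m, s in zip(widths, s_values):
    print(f"half-width {m * 0.1:5.1f} deg: S = {s:.4f}")
```

Under measurement independence the smoothed model never exceeds $|S| = 2$. At these angles $S$ stays at 2 until the window is wide enough to reach the kinks of the triangle-wave correlator (once twice the half-width exceeds 45°), after which $S$ decreases; exceeding 2 requires the setting-dependent $\rho(\lambda \mid a,b)$ of the adversarial model.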

3.4. When Can a Classical Model Yield $|S| > 2$? (CHSH Proof vs. Relaxed Premises)

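For deterministic Bell-local models with binary outputs, the CHSH inequality follows from a pointwise bound. For each hidden variable $\lambda$, define

$$s(\lambda) = A(a,\lambda)B(b,\lambda) - A(a,\lambda)B(b',\lambda) + A(a',\lambda)B(b,\lambda) + A(a',\lambda)B(b',\lambda). \tag{13}$$

Since $A(\cdot,\lambda), B(\cdot,\lambda) \in \{-1,+1\}$, one can write

$$s(\lambda) = A(a,\lambda)\left[B(b,\lambda) - B(b',\lambda)\right] + A(a',\lambda)\left[B(b,\lambda) + B(b',\lambda)\right], \tag{14}$$

and because one bracket always vanishes while the other equals $\pm 2$, it follows that $|s(\lambda)| = 2$ for all $\lambda$. Under measurement independence (a single setting-independent $\rho(\lambda)$) and no post-selection, the observed CHSH value is

$$S = \int_{\Lambda} s(\lambda)\,\rho(\lambda)\,d\lambda; \tag{15}$$

hence, $|S| \le \int_{\Lambda} |s(\lambda)|\,\rho(\lambda)\,d\lambda = 2$.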

Therefore, any report of $|S| > 2$ from a classical model requires at least one relaxed premise: e.g., setting-dependent sampling (measurement dependence/setting control), post-selection/detection inefficiency, or nonlocal/contextual dependence of one party’s outcome on the other party’s setting. In this paper, we focus on the setting-dependent coarse-graining interpretation motivated by finite setting resolution. Under measurement dependence, each correlator in the CHSH expression is evaluated under a different effective distribution $\rho(\lambda \mid a,b)$, so the step that combines the four terms into a single integral over $s(\lambda)$ no longer applies.
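Both halves of this argument can be checked by brute force. The Python sketch below enumerates all 16 deterministic local strategies to confirm $|s(\lambda)| = 2$, then builds a deliberately simple, hypothetical measurement-dependent source: a “tuned” hidden variable is emitted with probability $p$ only when the setting pair is $(a, b')$. Outputs remain local deterministic functions of $\lambda$ and the local setting, yet $S = 2 + 2p$, where $p$ is the total variation distance between $\rho(\lambda \mid a,b')$ and the other setting-conditioned distributions. This toy model illustrates relaxed measurement independence in general; it is not the coarse-graining construction used in the paper's simulations.

```python
import itertools


def s_pointwise(a0, a1, b0, b1):
    """s(lambda) for deterministic outputs A(a)=a0, A(a')=a1, B(b)=b0, B(b')=b1."""
    return a0 * b0 - a0 * b1 + a1 * b0 + a1 * b1


# All 16 local deterministic strategies give |s(lambda)| = 2.
pointwise_values = sorted({s_pointwise(*o) for o in itertools.product((-1, 1), repeat=4)})
print(pointwise_values)  # prints [-2, 2]


def toy_measurement_dependent_s(p):
    """Hypothetical adversary: a 'tuned' lambda is emitted with probability p,
    but only when the setting pair is (a, b'); otherwise every output is +1."""
    default = {"A": (1, 1), "B": (1, 1)}   # outputs for (a, a') and (b, b')
    tuned = {"A": (1, 1), "B": (1, -1)}    # local functions with B(b') = -1

    def corr(x, y):
        w = p if (x, y) == (0, 1) else 0.0   # rho(lambda | x, y) depends on settings
        return ((1 - w) * default["A"][x] * default["B"][y]
                + w * tuned["A"][x] * tuned["B"][y])

    return corr(0, 0) - corr(0, 1) + corr(1, 0) + corr(1, 1)


print(f"S = {toy_measurement_dependent_s(0.2):.2f}")  # prints S = 2.40, i.e. 2 + 2p
```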

3.5. Defining the Convergence Vicinity Criterion

We quantify CHSH-level indistinguishability via

$$\Delta S = \left| S_Q - S_C^{\epsilon} \right|. \tag{16}$$

If $\Delta S \le \delta$, the configuration is classified as belonging to a convergence vicinity (see also the distribution-level definition in Equation (8)).

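In this study, we adopt a fixed value of $\delta$ as a pragmatic operational tolerance for visualizing convergence in parameter scans. To relate $\delta$ to experimentally meaningful error budgets, note that if each correlator is estimated from $N$ shots with binary outcomes, then its standard deviation is $\sigma_E = \sqrt{(1 - E^2)/N}$, with the conservative bound $\sigma_E \le 1/\sqrt{N}$. Assuming independent estimates for the four correlators,

$$\sigma_S = \sqrt{\sum_{j=1}^{4} \sigma_{E_j}^2} \le \frac{2}{\sqrt{N}}. \tag{17}$$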
Thus, for shots per correlator, , while for shots, . In security-critical settings (e.g., DIQKD), additional systematic contributions from calibration drift, device aging, and adversarial perturbations can dominate shot noise, motivating conservative acceptance margins that are typically larger than the purely statistical bound [9].
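The bound can be sanity-checked by simulation. This standard-library Python sketch samples $N$ products $AB \in \{\pm 1\}$ per correlator using assumed ideal quantum correlators $\pm 1/\sqrt{2}$, repeats the CHSH estimate many times, and compares the empirical spread with the exact value $\sqrt{\sum_j (1 - E_j^2)/N}$ and with the conservative bound $2/\sqrt{N}$. The shot count, trial count, and seed are illustrative choices.

```python
import math
import random
import statistics


def estimate_correlator(E, n_shots, rng):
    """Estimate a correlator from n_shots products A*B in {-1, +1} with mean E."""
    p_same = (1 + E) / 2
    return sum(1 if rng.random() < p_same else -1 for _ in range(n_shots)) / n_shots


def simulate_s(correlators, signs, n_shots, trials, seed=1):
    """Repeat four independent correlator estimates and return the S samples."""
    rng = random.Random(seed)
    return [sum(s * estimate_correlator(e, n_shots, rng) for s, e in zip(signs, correlators))
            for _ in range(trials)]


r = 1 / math.sqrt(2)
ideal = [r, -r, r, r]   # ideal quantum correlators at CHSH-optimal angles (assumed)
signs = [1, -1, 1, 1]   # S = E(a,b) - E(a,b') + E(a',b) + E(a',b')
N = 500                 # shots per correlator (illustrative)

samples = simulate_s(ideal, signs, N, trials=300)
print(f"mean S = {statistics.mean(samples):.3f}")
print(f"empirical sigma_S = {statistics.stdev(samples):.4f}")
print(f"exact sigma_S = {math.sqrt(sum(1 - e * e for e in ideal) / N):.4f}")
print(f"conservative bound 2/sqrt(N) = {2 / math.sqrt(N):.4f}")
```

For correlators of magnitude $1/\sqrt{2}$ the exact spread is $\sqrt{2/N}$, about 70% of the conservative bound, so $2/\sqrt{N}$ leaves some headroom before the systematic contributions discussed below are added.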

3.6. Simulation Parameters and Implementation

Simulations were performed using Python (version 3.12.7, 2024) with NumPy (version 2.0.2, 2024) and Matplotlib (version 3.9.2, 2024) for numerical integration and visualization. Measurement angles and were sampled at resolution in the range . Hidden variables were discretized into points, and uncertainty windows were swept logarithmically from to [18]. Simulation code is available from the corresponding author upon request.

4. Results

This section presents the empirical and simulation results supporting our theoretical claim that hidden variable theories (HVTs), when constrained by Heisenberg-like uncertainty, can converge toward quantum predictions in Bell-type experiments. We analyze multiple measurement configurations (aligned, orthogonal, and random) to demonstrate this convergence and explore its security implications.

4.1. Simulation of CHSH Parameter Under Angular Variation

We simulated CHSH experiments using the Qiskit AerSimulator [19] and IBM Q’s real hardware [20], employing measurement angles and spanning the full range at resolution. The CHSH parameter S was computed across this configuration space under both quantum mechanical and HVT-constrained models, incorporating Heisenberg-inspired resolution averaging, as described in Section 3.3.

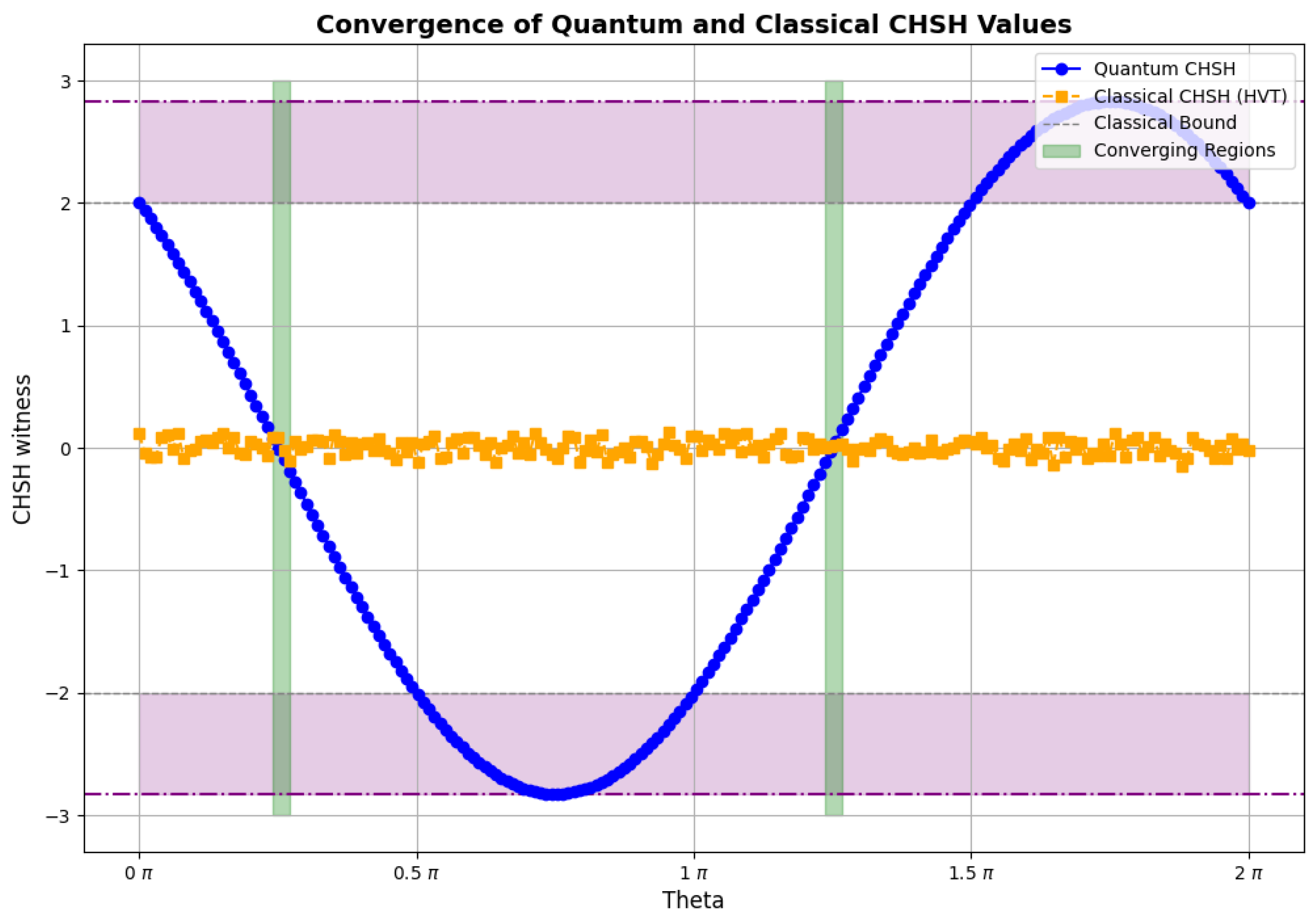

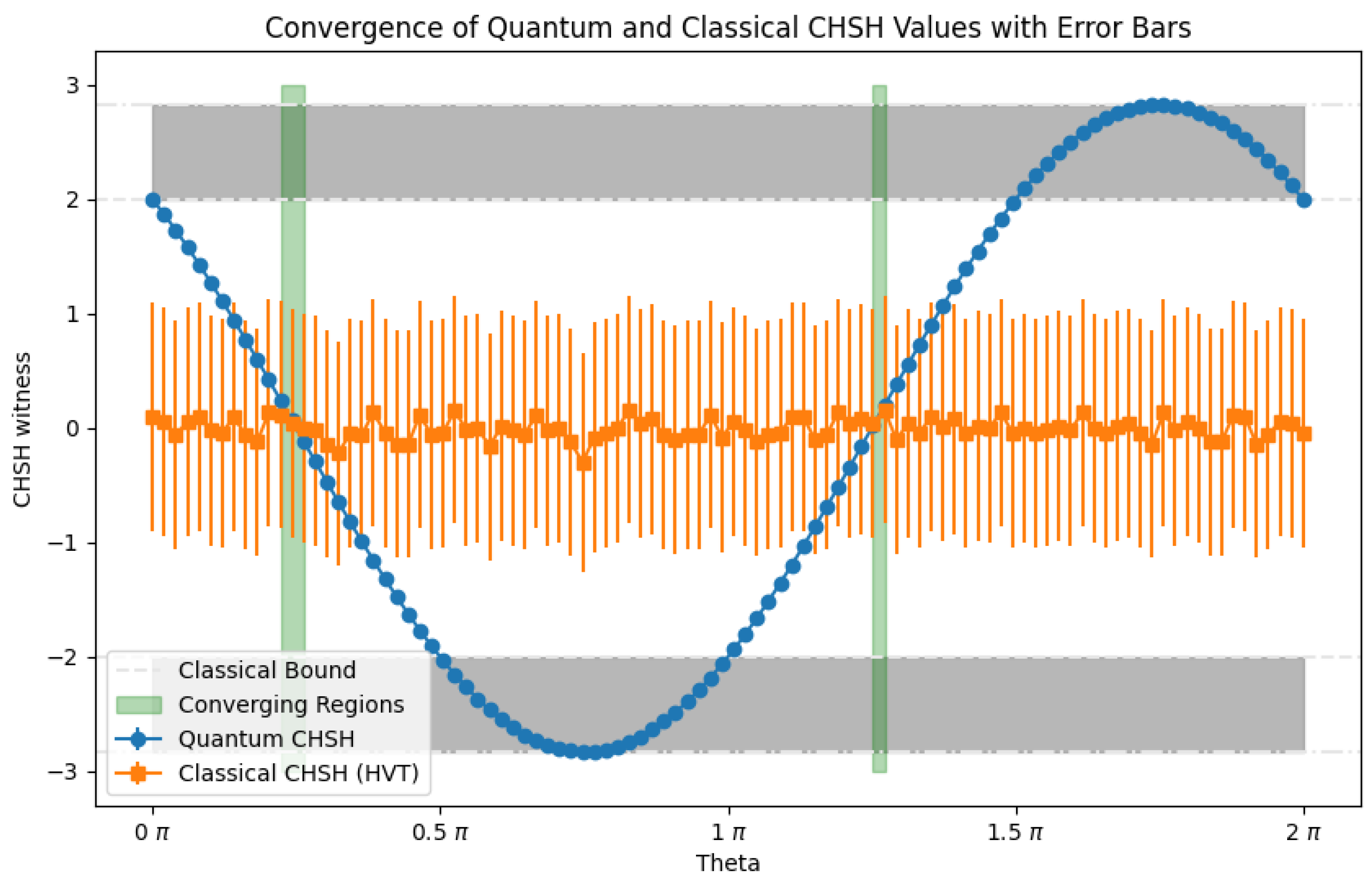

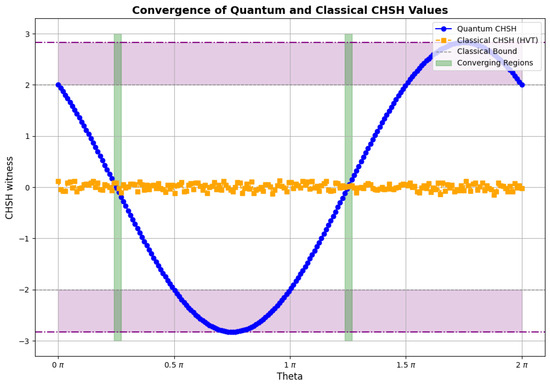

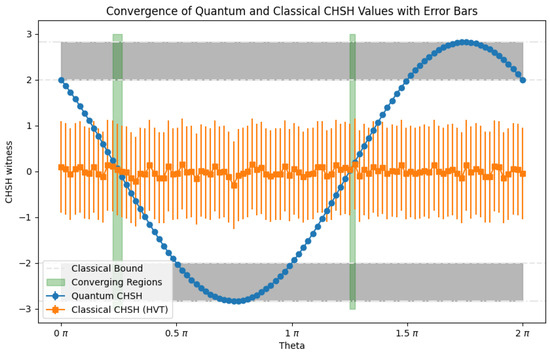

As shown in Figure 1 and Figure 2, adapted from our previous work [21], we identified distinct “convergence vicinities”, angular intervals where classical HVT predictions (orange curve) nearly replicate the quantum values (blue curve). In these zones, the simulated CHSH values under HVT assumptions reach up to , closely tracking the quantum violations up to , particularly when uncertainty radius . This numerical behavior confirms our theoretical prediction of near indistinguishability between classical and quantum statistics within certain measurement intervals. The corresponding verification-threat model used to interpret these results is summarized in Figure 3.

Figure 1.

Numerical simulations of the CHSH witness S as a function of the scanned measurement-angle parameter. Blue curve: ideal noise-free quantum prediction (computed from the quantum model; in our implementation via noiseless simulation/analytic expectation). Orange curve: coarse-grained classical model with resolution parameter (Section 3.3). Green-shaded regions indicate “convergence vicinities”, where the two models are indistinguishable within the operational tolerance . All results shown here are classical computations (no quantum hardware data are used in this figure).

Figure 2.

CHSH witness S as a function of the scanned measurement-angle parameter with sampling variability. Blue curve: ideal noise-free quantum prediction. Orange curve: coarse-grained classical model. Error bars indicate finite-shot (finite-sample) variability. Green-shaded regions highlight angles where the curves overlap within that variability. As in Figure 1, these are simulations rather than quantum hardware results.

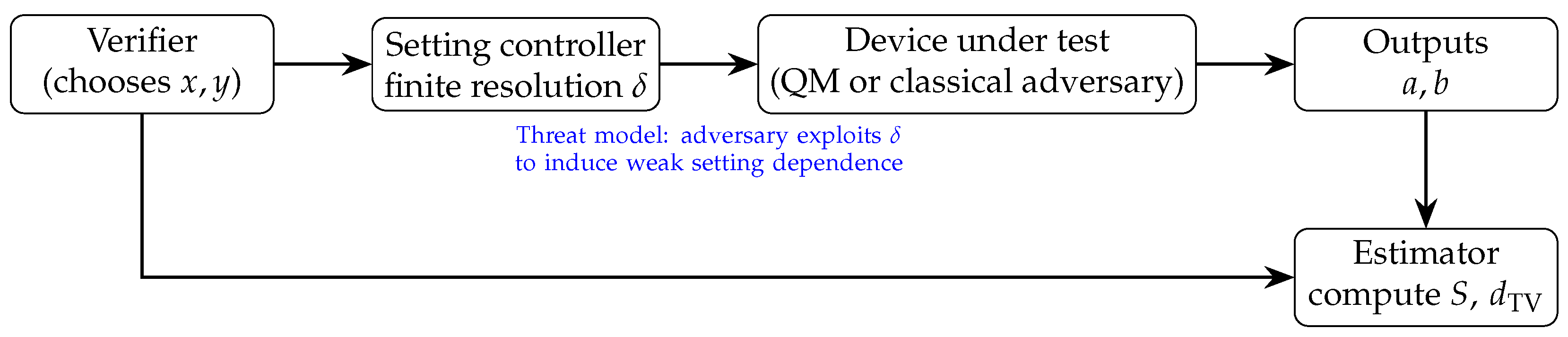

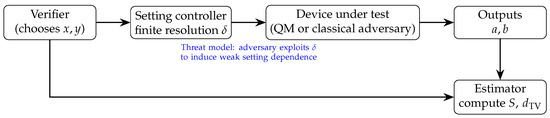

Figure 3.

System model for Bell-based integrity verification. Finite resolution can reduce quantum violations for an honest device and, under adversarial setting control, can induce setting-dependent coarse graining (measurement dependence), under which relaxed Bell bounds apply.

4.2. Convergence Patterns Across Measurement Configurations

Table 1 from [21] summarizes the correlation function behavior under different vector configurations. Aligned directions yield maximal correlations for both quantum and HVT models. Orthogonal settings predict null correlations in both regimes. Critically, in the “vicinity” of these configurations, defined by small perturbations of measurement vectors constrained by the Heisenberg Uncertainty Principle, the outcome distributions remain nearly identical across quantum and classical models, as also explored in [17].

Table 1.

Correlation function predictions for key measurement configurations. Both quantum and classical models display convergence within Heisenberg-defined vicinities (adapted with permission from Rosas-Bustos et al., 2024 [21]).

These findings illustrate that, under such constraints and when setting independence is not enforced (Section 2.5), violations of Bell inequalities are not uniquely attributable to quantum correlations, an insight aligned with earlier loophole analyses [4,17] and extended here to Heisenberg-constrained HVT scenarios.

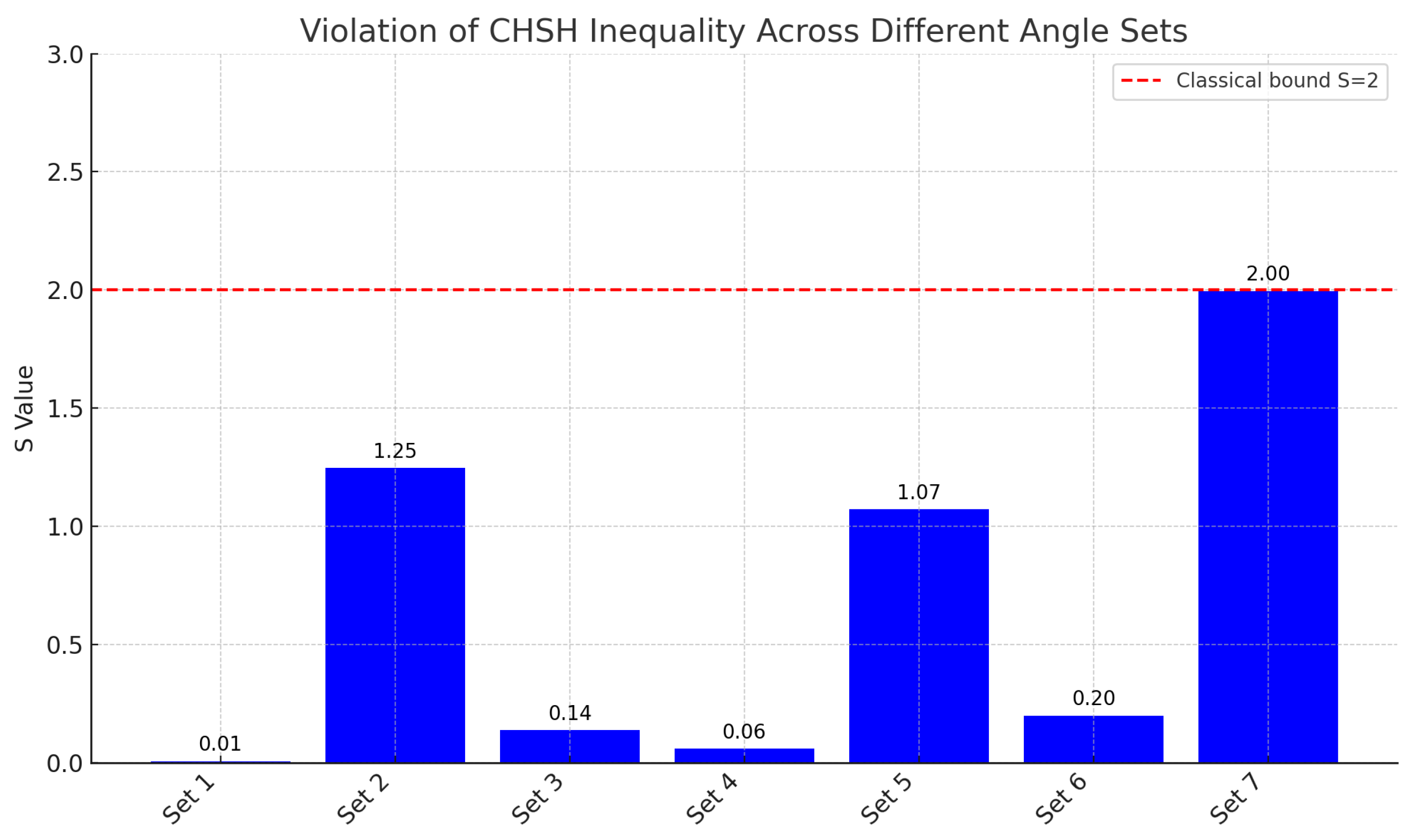

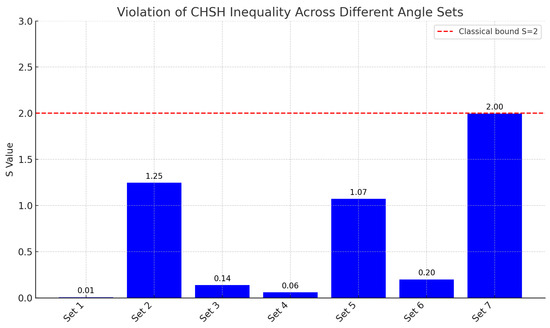

4.3. Quantum Hardware Validation and Noise Considerations

To validate the simulated CHSH violations under real-world conditions, we used Qiskit to program and execute the CHSH circuits on IBM’s publicly accessible quantum backends [18]. As expected, the experimentally measured S values were suppressed relative to their noiseless simulation counterparts, owing to cumulative effects from gate infidelities, decoherence, and readout noise.

As shown in Figure 4, even angle sets that theoretically produce maximal CHSH violations failed to consistently exceed the classical bound of $S = 2$. This result underscores a crucial limitation: in realistic hardware, the statistical signatures of entanglement may fall within classical tolerances, making convergence regions between quantum and classical models practically undetectable. This challenge is in line with findings from recent superconducting Bell tests that highlight loophole sensitivities in noisy quantum devices [22].

Figure 4.

CHSH inequality results from IBM quantum hardware. Despite the use of theoretically optimal measurement angles, none of the tested configurations surpasses the classical threshold ($S = 2$), underscoring the significant influence of quantum hardware noise and decoherence. Angle sets correspond to discrete angular configurations intended to probe maximum and near-maximum violation regimes under experimental conditions (adapted with permission from Rosas-Bustos et al., 2024 [21]).

4.4. Security Implications for DIQKD and Integrity Protocols

The identified convergence zones, particularly under aligned or nearly aligned configurations, constitute a practical vulnerability within the measurement-dependence loophole class. They may allow adversaries to emulate quantum entanglement with classically engineered statistics in device-independent QKD (DIQKD) protocols, effectively bypassing entanglement verification tests without triggering integrity alarms [5,7].

Our findings call for a re-examination of measurement strategies in quantum cryptography and suggest that additional entanglement witnesses or hybrid certification mechanisms be deployed to detect such subtle convergence-based threats.

5. Discussion

The results presented here reveal a subtle but consequential challenge to the conventional interpretation of Bell inequality violations as unambiguous indicators of quantum entanglement. By incorporating Heisenberg-like uncertainty constraints into classical hidden variable simulations, we identified non-trivial regions, termed convergence vicinities, where classical and quantum statistical predictions become nearly indistinguishable. These findings contribute to a growing body of work that questions the robustness of quantum verification in device-independent settings, especially when noise, measurement resolution, and loophole sensitivities are taken into account [17,22,23].

From a foundational perspective, the existence of convergence vicinities calls for a re-evaluation of the boundary between classical and quantum regimes. While Bell’s theorem formally excludes local realism under ideal assumptions, our results suggest that, under realistic constraints, such as finite detector resolution and angular uncertainty motivated by the Heisenberg Uncertainty Principle (HUP), this distinction may blur in operational practice. This is not a refutation of quantum nonlocality, but rather a demonstration that its empirical detection depends sensitively on how precisely one can resolve measurement settings and isolate systems from noise.

5.1. Mapping Convergence Vicinities to Known Loophole Classes

Convergence vicinities can be interpreted within the established loophole taxonomy [17]. In the honest-device regime, finite-resolution POVMs and hardware noise compress $S$ toward the classical threshold, reducing discriminative power even when Bell premises hold. In the adversarial regime, finite setting resolution can enable setting-dependent coarse graining (measurement dependence/setting control), i.e., $\rho(\lambda \mid a,b) \ne \rho(\lambda)$, for which relaxed Bell inequalities bound S as a function of the measurement-dependence degree [8,9,10,24]. This is conceptually distinct from detection-loophole post-selection models [25,26], but it is operationally similar in the sense that a verification pipeline can accept statistics that do not uniquely certify nonclassicality unless the relevant premise (here, setting independence) is enforced.

5.2. How the Tolerance Scales with Device and Security Parameters

The operational tolerance $\delta$ determines the practical “attack surface” of a convergence vicinity. Even when shot-noise bounds are small, systematic contributions from calibration drift, crosstalk, and adversarial perturbations can dominate. In DIQKD-style settings, security proofs introduce additional finite-size terms and confidence parameters, so the effective acceptance margin on S can be significantly larger than the naive sampling error. Therefore, while we use a fixed $\delta$ for visualization in scans, a conservative deployment should treat the effective operational tolerance as a device- and protocol-dependent quantity that must include systematic margins and worst-case adversarial modeling.

5.3. DIQKD Case Study: CHSH-Based Acceptance Under Finite Resolution

Consider a standard CHSH-based DIQKD protocol family [5] in which the parties estimate a CHSH value $\hat{S}$ from finite samples and proceed only if $\hat{S} \ge S_{\mathrm{thr}}$ for a protocol-specific threshold $S_{\mathrm{thr}} > 2$. In the ideal device-independent model, $\hat{S}$ bounds Eve’s information via the observed nonlocality. However, if finite setting resolution permits weak setting dependence (i.e., measurement independence is partially lost), then a classical adversary can reproduce $\hat{S} \ge S_{\mathrm{thr}}$ while retaining full knowledge of the output bits.

A quantitative way to express this is via relaxed Bell bounds. For example, Hall constructs deterministic locally causal models with measurement dependence parameter M for which the CHSH value can reach

under his M-definition [8]. Thus, an observed “weak violation” such as is consistent with a locally causal model with only a small measurement-dependence budget . Operationally, if setting control at the resolution scale permits correlations between the implemented settings and internal device variables, then acceptance based only on may not guarantee secrecy. This motivates integrity tests that (i) enforce high-quality, side-channel-resistant randomness for settings; (ii) randomize measurement angles dynamically; and (iii) include additional cross-checks beyond a single witness (e.g., multiple observables, distribution-level metrics, and consistency checks across setting subsets).
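To illustrate point (iii), the Python sketch below constructs a hypothetical measurement-dependent toy source (a “tuned” hidden variable appears with probability $p$ only for the setting pair $(a, b')$; otherwise all outputs are $+1$), computes its exact statistics, and applies two checks: a naive single-witness rule $\hat{S} > S_{\mathrm{thr}}$ and a no-signaling consistency check on the marginals. The threshold `S_THR`, the tolerance `SIGNAL_TOL`, and the toy source itself are assumptions chosen for illustration, not parameters of any specific DIQKD protocol.

```python
S_THR = 2.2        # hypothetical acceptance threshold
SIGNAL_TOL = 0.01  # hypothetical no-signaling tolerance


def toy_stats(p):
    """Exact P(A, B | x, y) for the hypothetical toy source; x, y in {0, 1}."""
    default = {"A": (1, 1), "B": (1, 1)}   # outputs indexed by local setting
    tuned = {"A": (1, 1), "B": (1, -1)}
    stats = {}
    for x in (0, 1):
        for y in (0, 1):
            w = p if (x, y) == (0, 1) else 0.0   # setting-dependent rho(lambda | x, y)
            dist = {}
            for lam, weight in ((default, 1 - w), (tuned, w)):
                key = (lam["A"][x], lam["B"][y])
                dist[key] = dist.get(key, 0.0) + weight
            stats[(x, y)] = dist
    return stats


def chsh_from_stats(stats):
    def corr(d):
        return sum(a * b * q for (a, b), q in d.items())
    return (corr(stats[(0, 0)]) - corr(stats[(0, 1)])
            + corr(stats[(1, 0)]) + corr(stats[(1, 1)]))


def max_signaling(stats):
    """Largest shift in one party's P(+1) when only the other party's setting changes."""
    def p_plus(d, party):
        return sum(q for outcome, q in d.items() if outcome[party] == 1)
    gaps = [abs(p_plus(stats[(0, y)], 1) - p_plus(stats[(1, y)], 1)) for y in (0, 1)]
    gaps += [abs(p_plus(stats[(x, 0)], 0) - p_plus(stats[(x, 1)], 0)) for x in (0, 1)]
    return max(gaps)


stats = toy_stats(0.2)
s_hat = chsh_from_stats(stats)
naive_accept = s_hat > S_THR
no_signaling_ok = max_signaling(stats) < SIGNAL_TOL
print(f"S = {s_hat:.2f}, naive accept = {naive_accept}, no-signaling ok = {no_signaling_ok}")
# prints: S = 2.40, naive accept = True, no-signaling ok = False
```

Here the naive rule accepts, while Bob's marginal for setting $b'$ shifts by $p = 0.2$ depending on Alice's setting, so the consistency check rejects. A more careful adversary could balance its marginals, so a no-signaling test is one cross-check among several rather than a complete defense.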

From a practical perspective, especially in the context of quantum cryptography and quantum computing, these results raise urgent questions regarding the reliability of quantum integrity verification. Device-independent quantum key distribution (DIQKD) protocols, for instance, hinge critically on the assumption that any observed violation of a Bell inequality signals genuine quantum entanglement between untrusted devices [5,7]. The demonstrated ability of classical models with HUP-informed finite resolution to produce near-identical CHSH statistics within these bounded vicinities points to a potential exploit: adversaries could use classical resources to mimic entanglement.

Furthermore, our quantum hardware validation shows that noise and decoherence exacerbate these concerns. Even under theoretically optimal configurations, the tested IBM devices did not consistently exceed the classical bound (Figure 4), and such noise generally accumulates as circuit depth and system size grow. This implies that even well-intentioned implementations may inadvertently operate within convergence zones, mistakenly interpreting classical mimicry as quantum behavior.

Given these findings, several implications arise for the design of secure quantum protocols. First, reliance on a single statistical witness, such as the CHSH parameter, may be insufficient for robust entanglement verification in adversarial environments. Second, additional observables or joint statistical tests that incorporate temporal correlations or cross-device symmetries could provide better discrimination between classical and quantum origins. Third, protocol designers must account for device imperfections not merely as nuisance parameters, but as exploitable dimensions within an adversary’s toolkit.

In summary, this study underscores the necessity of rethinking quantum verification strategies under more nuanced models of classical mimicry and quantum indistinguishability. It also highlights the importance of experimental fidelity, not only to observe Bell violations, but to maintain the operational security guarantees that depend on them.

6. Conclusions

This study has introduced and formalized the concept of convergence vicinities, measurement configurations where classical hidden variable theories (HVTs), under Heisenberg-inspired uncertainty constraints, become statistically indistinguishable from quantum mechanical predictions. We demonstrated both analytically and through simulation that, within these vicinities, classical models (in particular, those in which finite setting resolution relaxes measurement independence) can replicate CHSH statistics traditionally considered unique indicators of entanglement.

By embedding Heisenberg-like resolution limits into deterministic response functions, our findings reveal a subtle erosion of the boundary between classical and quantum descriptions. When applied to device-independent quantum verification protocols, this indistinguishability manifests as a latent vulnerability. In particular, quantum integrity verification schemes such as device-independent quantum key distribution (DIQKD) may be susceptible to adversarial mimicry if convergence vicinities are not adequately accounted for.

Our hardware validation using IBM’s quantum devices further substantiates the practical implications of this convergence. The inability of current noisy intermediate-scale quantum (NISQ) systems to consistently surpass the classical CHSH bound undercuts the empirical reliability of Bell-based verification protocols in realistic deployments. These results are consistent with emerging evidence from loophole-free Bell tests conducted on superconducting architectures.

Future work should focus on three critical directions: first, extending convergence analysis to multipartite and high-dimensional entanglement scenarios; second, developing statistical frameworks that incorporate multi-observable tests to discriminate classical mimicry from genuine quantum correlations; and third, improving hardware-level mitigation of decoherence and noise to strengthen the operational gap between classical and quantum statistical regimes.

Ultimately, this work calls for a revised epistemology in quantum certification, one that recognizes the fragility of entanglement indicators under both theoretical and hardware-imposed constraints. A deeper integration of physical uncertainty bounds into verification models may yield more resilient quantum integrity mechanisms, reinforcing the trust architecture required for scalable quantum communication and computation. In particular, we advocate including randomized angle scrambling (dynamic measurement setting variation) as a built-in subroutine of Bell-based verification protocols. The mechanism is straightforward: convergence vicinities exploited by a classical adversary are typically localized in configuration space and rely on pre-optimizing response functions for a small set of nominal angles. If angles are varied dynamically and unpredictably across trials, the adversary must either (i) track and re-optimize its strategy across a much larger setting space (increasing detection risk and reducing achievable mimicry), or (ii) fall back to a conservative strategy that no longer matches the verifier’s acceptance statistics. In addition, dynamic variation enables stronger consistency tests, because the verifier can compare distribution-level metrics across disjoint random subsets of settings rather than relying on a single aggregated S.
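A minimal sketch of dynamic angle randomization for an honest device, in standard-library Python: each round draws the setting pair and a fresh common rotation offset from `random.SystemRandom` (used here as a stand-in for a certified, side-channel-resistant randomness source), and the verifier then estimates $S$ separately on two disjoint subsets of offsets. The state model (ideal $|\Phi^+\rangle$ correlator $\cos(\theta_a - \theta_b)$, which is invariant under a common rotation), the 1° offset grid, and the round count are illustrative assumptions.

```python
import math
import random

ALICE = (0.0, math.pi / 2)              # nominal angles for a, a'
BOB = (math.pi / 4, 3 * math.pi / 4)    # nominal angles for b, b'
SIGNS = {(0, 0): 1, (0, 1): -1, (1, 0): 1, (1, 1): 1}


def run_rounds(n_rounds, n_offsets=360, seed=7):
    """Simulate an honest device; each round uses a fresh common rotation offset phi."""
    setting_rng = random.SystemRandom()   # OS entropy source for settings and offsets
    outcome_rng = random.Random(seed)     # seeded PRNG for the simulated outcomes only
    records = []
    for _ in range(n_rounds):
        x, y = setting_rng.randrange(2), setting_rng.randrange(2)
        phi = 2 * math.pi * setting_rng.randrange(n_offsets) / n_offsets
        # A common rotation leaves cos(theta_a - theta_b) unchanged for this state.
        corr = math.cos((ALICE[x] + phi) - (BOB[y] + phi))
        product = 1 if outcome_rng.random() < (1 + corr) / 2 else -1
        records.append((x, y, phi, product))
    return records


def chsh_estimate(records):
    """Estimate S from (x, y, phi, A*B) records, pooling all offsets in the subset."""
    sums = {k: 0 for k in SIGNS}
    counts = {k: 0 for k in SIGNS}
    for x, y, _, product in records:
        sums[(x, y)] += product
        counts[(x, y)] += 1
    return sum(SIGNS[k] * sums[k] / counts[k] for k in SIGNS)


records = run_rounds(20000)
low = [r for r in records if r[2] < math.pi]    # offsets in [0, pi)
high = [r for r in records if r[2] >= math.pi]  # offsets in [pi, 2pi)
s_low, s_high = chsh_estimate(low), chsh_estimate(high)
print(f"S(low offsets) = {s_low:.3f}, S(high offsets) = {s_high:.3f}")
```

For an honest device both subset estimates agree with $2\sqrt{2}$ within sampling error; an adversary whose mimicry is tuned to a few nominal angles would have to reproduce consistent statistics across every offset subset to pass the same comparison.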

Author Contributions

Conceptualization, J.R.R.-B.; methodology, J.R.R.-B. and J.V.G.T.; investigation, J.R.R.-B.; validation, J.V.G.T. and R.A.F.; formal analysis, J.R.R.-B.; writing, original draft, J.R.R.-B.; writing, review and editing, J.V.G.T., R.A.F., S.R.V., N.S. and A.T.; supervision, R.A.F. All authors have read and agreed to the published version of the manuscript.

Funding

This research was funded in part by the Natural Sciences and Engineering Research Council of Canada (NSERC), Discovery Grants Program, Grant No. RGPIN-2023-04513.

Institutional Review Board Statement

Not applicable.

Informed Consent Statement

Not applicable.

Data Availability Statement

Data available from the corresponding author upon reasonable request.

Acknowledgments

The authors thank the IBM Quantum initiative for providing access to the experimental platforms used in this research. We acknowledge the support of the Natural Sciences and Engineering Research Council of Canada (NSERC; in French, Conseil de recherches en sciences naturelles et en génie du Canada, CRSNG), RGPIN-2023-04513.

Conflicts of Interest

Authors Jose R. Rosas-Bustos and Jesse Van Griensven Thé were employed by the company LAKES Environmental Research Inc. Author Andy Thanos was employed by the company Cisco Systems, Inc. The remaining authors declare that the research was conducted in the absence of any commercial or financial relationships that could be construed as a potential conflict of interest.

Abbreviations

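| Abbreviation | Meaning |
| --- | --- |
| BI | Bell inequality |
| CHSH | Clauser–Horne–Shimony–Holt |
| DIQKD | device-independent quantum key distribution |
| HUP | Heisenberg Uncertainty Principle |
| HVT | hidden variable theory |
| LHVT | local hidden variable theory |
| NISQ | noisy intermediate-scale quantum |
| POVM | positive operator-valued measure |
| QKD | quantum key distribution |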

Appendix A. Closed-Form Classical Correlations and CHSH Bounds

This appendix provides closed-form expressions for the baseline deterministic classical model used in Section 3.2, and clarifies why the CHSH bound $|S| \le 2$ holds under measurement independence.

Appendix A.1. Baseline Correlation Function for the Deterministic Model

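For the response functions in Equation (9) with a uniform hidden-variable distribution, the correlation depends only on the relative angle between the two measurement settings (modulo the period of the response functions). Over one half-period of the relative angle, the correlation varies linearly between perfect correlation and perfect anticorrelation, with the extension to the remaining relative angles by symmetry and periodic continuation. This piecewise-linear “V-shaped” correlation is the familiar result for sign-thresholded cosine response functions with uniform hidden phase.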
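The closed-form behavior can be checked numerically. This sketch assumes response functions $A = \operatorname{sgn}\cos(\lambda - a)$ and $B = \operatorname{sgn}\cos(\lambda - b)$ with $\lambda$ uniform on $[0, 2\pi)$, a common sign-thresholded cosine model that may differ from Equation (9) in sign convention or angle scaling. It averages over a uniform grid of hidden phases, compares the result with the linear form $E(\theta) = 1 - 2|\theta|/\pi$ for $|\theta| \le \pi$, and then evaluates CHSH at the standard angles, where this model sits exactly at the classical bound. Function names are hypothetical.

```python
import math


def sgn(x):
    """Binary +/-1 output; the measure-zero case x == 0 is mapped to +1."""
    return 1 if x >= 0 else -1


def correlation(a, b, n=50_000):
    """E(a, b) for A = sgn(cos(lam - a)), B = sgn(cos(lam - b)), lam uniform on [0, 2*pi).

    Midpoint-rule average over n equally spaced hidden phases.
    """
    total = 0
    for i in range(n):
        lam = 2.0 * math.pi * (i + 0.5) / n
        total += sgn(math.cos(lam - a)) * sgn(math.cos(lam - b))
    return total / n


def v_shape(theta):
    """Closed form for |theta| <= pi: linear in the relative angle."""
    return 1.0 - 2.0 * abs(theta) / math.pi


for theta in (0.0, math.pi / 4, math.pi / 2, 3 * math.pi / 4, math.pi):
    print(f"theta = {theta:.3f}: numeric {correlation(0.0, theta):+.4f}, "
          f"closed form {v_shape(theta):+.4f}")

# CHSH at a = 0, a' = pi/2, b = pi/4, b' = -pi/4: this model reaches the classical bound.
a, a2, b, b2 = 0.0, math.pi / 2, math.pi / 4, -math.pi / 4
S = correlation(a, b) + correlation(a, b2) + correlation(a2, b) - correlation(a2, b2)
print(f"S = {S:.4f}")
```

At these angles the quantum cosine correlation would give $2\sqrt{2}$, while the V-shaped model gives exactly 2, because $E(\pi/4) = 1/2$ and $E(3\pi/4) = -1/2$.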

Appendix A.2. CHSH Bound Under Measurement Independence

Using the pointwise argument in Section 3.4, any model with binary outcomes and a single setting-independent hidden-variable distribution obeys $|S| \le 2$. This remains true under local coarse graining that preserves measurement independence and does not introduce post-selection, because the CHSH proof uses only the algebraic constraints on the outputs (each output lies in $[-1, 1]$).
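The pointwise argument can be verified by brute force. In this sketch (helper names hypothetical), each of the 16 deterministic local strategies assigns ±1 outputs to the two settings on each side. The pointwise CHSH combination $A_0(B_0 + B_1) + A_1(B_0 - B_1)$ is always ±2, so any setting-independent mixture of strategies, and any local coarse graining that only replaces outputs by values in $[-1, 1]$, stays within $|S| \le 2$.

```python
import itertools
import random


def chsh_pointwise(A0, A1, B0, B1):
    """A0*(B0 + B1) + A1*(B0 - B1): the CHSH combination for one hidden-variable value."""
    return A0 * (B0 + B1) + A1 * (B0 - B1)


# Each deterministic local strategy fixes +/-1 outputs for both settings on both sides.
strategies = list(itertools.product((-1, 1), repeat=4))
pointwise = [chsh_pointwise(*s) for s in strategies]
print(len(strategies), sorted(set(pointwise)))

rng = random.Random(0)

# Measurement independence: a single weight vector over strategies serves all four
# correlators, so S is a convex combination of the pointwise values.
worst_mix = 0.0
for _ in range(10_000):
    w = [rng.random() for _ in strategies]
    total = sum(w)
    worst_mix = max(worst_mix, abs(sum(wi * v for wi, v in zip(w, pointwise)) / total))

# Local coarse graining: outputs replaced by averages in [-1, 1] still obey the bound,
# because |B0 + B1| + |B0 - B1| = 2 * max(|B0|, |B1|) <= 2.
worst_coarse = max(abs(chsh_pointwise(*(rng.uniform(-1, 1) for _ in range(4))))
                   for _ in range(10_000))
print(worst_mix <= 2, worst_coarse <= 2)
```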

Appendix B. Relaxed Bell Bounds Under Setting Dependence (Measurement Dependence)

If finite setting resolution enables setting-dependent effective sampling of hidden variables, i.e., if the hidden-variable distribution depends on the measurement settings, then the standard CHSH derivation no longer applies, because the four correlators entering S are computed under different effective distributions. Relaxed Bell inequalities characterize this situation quantitatively [8,9,10,24]. In particular, Hall constructs deterministic locally causal models for which the CHSH value can reach the bound given in Equation (18), where M is a measure of the degree of measurement dependence (loss of setting independence) [8]. This provides a rigorous way to interpret weak apparent violations (e.g., values of S only slightly above 2) as consistent with locally causal behavior when setting dependence is present, and motivates mitigation mechanisms that enforce setting independence and detect setting-control side channels.
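A toy model makes the failure of the standard derivation concrete. In the following sketch, every hidden-variable value is a deterministic local strategy (A's output depends only on x, B's only on y), but a hypothetical source changes which strategy it emits in the (x, y) = (1, 1) run with probability p. The CHSH value then grows as S = 2 + 2p, reaching the algebraic maximum 4 at p = 1. The L1 distance computed alongside is a measure of the same type as M, but its normalization relative to Equation (18) is an assumption; the model is not Hall's construction and does not reproduce singlet statistics. All names are hypothetical.

```python
from fractions import Fraction

# CHSH signs for the correlators E(x, y), settings x, y in {0, 1}.
SIGNS = {(0, 0): 1, (0, 1): 1, (1, 0): 1, (1, 1): -1}

# A hidden-variable value is a deterministic local strategy (A0, A1, B0, B1).
ALIGNED = (1, 1, 1, 1)    # A = +1 and B = +1 for every setting
FLIPPED = (1, 1, -1, -1)  # A = +1 and B = -1 for every setting


def rho(x, y, p):
    """Hypothetical setting-dependent source: distribution over strategies given (x, y)."""
    if (x, y) == (1, 1):
        return {ALIGNED: 1 - p, FLIPPED: p}  # biased only in the run with a minus sign
    return {ALIGNED: Fraction(1)}


def correlator(x, y, p):
    # A's output is lam[x] and B's is lam[2 + y], so each strategy is local.
    return sum(w * lam[x] * lam[2 + y] for lam, w in rho(x, y, p).items())


def chsh(p):
    return sum(sign * correlator(x, y, p) for (x, y), sign in SIGNS.items())


def l1_dependence(p):
    """Largest L1 distance between the setting-conditional distributions."""
    dists = [rho(x, y, p) for (x, y) in SIGNS]
    support = {lam for d in dists for lam in d}
    return max(sum(abs(d1.get(lam, 0) - d2.get(lam, 0)) for lam in support)
               for d1 in dists for d2 in dists)


for p in (Fraction(0), Fraction(1, 4), Fraction(1, 2), Fraction(1)):
    print(f"p = {p}: S = {chsh(p)}, L1 dependence = {l1_dependence(p)}")
```

Exact fractions are used so that the linear relation between S and the setting dependence is visible without rounding noise.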


References

1. Aspect, A.; Dalibard, J.; Roger, G. Experimental Test of Bell’s Inequalities Using Time-Varying Analyzers. Phys. Rev. Lett. 1982, 49, 1804–1807.

2. Bell, J.S. On the Einstein Podolsky Rosen paradox. Phys. Phys. Fiz. 1964, 1, 195–200.

3. Clauser, J.F.; Horne, M.A.; Shimony, A.; Holt, R.A. Proposed Experiment to Test Local Hidden-Variable Theories. Phys. Rev. Lett. 1969, 23, 880–884.

4. Brunner, N.; Cavalcanti, D.; Pironio, S.; Scarani, V.; Wehner, S. Bell nonlocality. Rev. Mod. Phys. 2014, 86, 419–478.

5. Acín, A.; Brunner, N.; Gisin, N.; Massar, S.; Pironio, S.; Scarani, V. Device-Independent Security of Quantum Cryptography against Collective Attacks. Phys. Rev. Lett. 2007, 98, 230501.

6. Pironio, S.; Acín, A.; Massar, S.; De La Giroday, A.B.; Matsukevich, D.N.; Maunz, P.; Olmschenk, S.; Hayes, D.; Luo, L.; Manning, T.A.; et al. Random numbers certified by Bell’s theorem. Nature 2010, 464, 1021–1024.

7. Pironio, S.; Acín, A.; Brunner, N.; Gisin, N.; Massar, S.; Scarani, V. Device-independent quantum key distribution secure against collective attacks. New J. Phys. 2009, 11, 045021.

8. Hall, M.J.W. Local Deterministic Model of Singlet State Correlations Based on Relaxing Measurement Independence. Phys. Rev. Lett. 2010, 105, 250404.

9. Koh, D.E.; Hall, M.J.W.; Setiawan; Pope, J.E.; Marletto, C.; Kay, A.; Scarani, V.; Ekert, A. Effects of Reduced Measurement Independence on Bell-Based Randomness Expansion. Phys. Rev. Lett. 2012, 109, 160404.

10. Pütz, G.; Rosset, D.; Barnea, T.J.; Liang, Y.C.; Gisin, N. Arbitrarily Small Amount of Measurement Independence Is Sufficient to Manifest Quantum Nonlocality. Phys. Rev. Lett. 2014, 113, 190402.

11. Aspect, A.; Grangier, P.; Roger, G. Experimental Realization of Einstein-Podolsky-Rosen-Bohm Gedankenexperiment: A New Violation of Bell’s Inequalities. Phys. Rev. Lett. 1982, 49, 91–94.

12. Leggett, A.J. Nonlocal Hidden-Variable Theories and Quantum Mechanics: An Incompatibility Theorem. Found. Phys. 2003, 33, 1469–1493.

13. Cirel’son, B.S. Quantum generalizations of Bell’s inequality. Lett. Math. Phys. 1980, 4, 93–100.

14. Heisenberg, W. Über den anschaulichen Inhalt der quantentheoretischen Kinematik und Mechanik. Z. Für Phys. 1927, 43, 172–198.

15. Robertson, H.P. The Uncertainty Principle. Phys. Rev. 1929, 34, 163–164.

16. Busch, P.; Heinonen, T.; Lahti, P. Heisenberg’s uncertainty principle. Phys. Rep. 2007, 452, 155–176.

17. Larsson, J.Å. Loopholes in Bell inequality tests of local realism. J. Phys. A Math. Theor. 2014, 47, 424003.

18. Aleksandrowicz, G.; Alexander, T.; Barkoutsos, P.; Bello, L.; Ben-Haim, Y.; Bucher, D.; Cabrera-Hernández, F.J.; Carballo-Franquis, J.; Chen, A.; Chen, C.F.; et al. Qiskit: An Open-Source Framework for Quantum Computing, Version 0.7.2; Zenodo: Geneva, Switzerland, 2019.

19. Qiskit/Qiskit-Aer. 2024. Available online: https://github.com/Qiskit/qiskit-aer (accessed on 5 January 2025).

20. IBM Quantum. IBM Quantum Platform (IBM Quantum Cloud). 2024. Available online: https://quantum.cloud.ibm.com/ (accessed on 3 January 2026).

21. Rosas-Bustos, J.R.; Thé, J.V.G.; Fraser, R.A. Unveiling Hidden Vulnerabilities in Quantum Systems by Expanding Attack Vectors through Heisenberg’s Uncertainty Principle. arXiv 2024, arXiv:2409.18471.

22. Storz, S.; Schär, J.; Kulikov, A.; Magnard, P.; Kurpiers, P.; Lütolf, J.; Walter, T.; Copetudo, A.; Reuer, K.; Akin, A.; et al. Loophole-free Bell inequality violation with superconducting circuits. Nature 2023, 617, 265–270.

23. Wiseman, H.M. The two Bell’s theorems of John Bell. J. Phys. A Math. Theor. 2014, 47, 424001.

24. Friedman, A.S.; Guth, A.H.; Hall, M.J.W.; Kaiser, D.I.; Gallicchio, J. Relaxed Bell Inequalities with Arbitrary Measurement Dependence for Each Observer. Phys. Rev. A 2019, 99, 012121.

25. Pearle, P.M. Hidden-Variable Example Based upon Data Rejection. Phys. Rev. D 1970, 2, 1418–1425.

26. Eberhard, P.H. Background Level and Counter Efficiencies Required for a Loophole-Free Einstein-Podolsky-Rosen Experiment. Phys. Rev. A 1993, 47, R747–R750.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.