Abstract

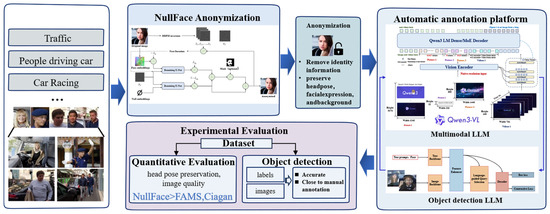

Large-scale collection and annotation of sensitive facial data in real-world traffic scenarios face significant hurdles regarding privacy protection, temporal consistency, and cost. To address these issues, this work proposes an integrated method for sensitive information anonymization and semi-automatic image annotation (semi-AIA). First, the NullFace anonymization model is applied to remove identity information from facial data while preserving non-identity attributes, including pose, expression, and background, that are relevant to downstream vision tasks. Second, the Qwen3-VL multimodal foundation model is combined with the Grounding DINO detection model to build an end-to-end annotation platform on the Dify workflow engine, covering data cleaning and automated labeling. A traffic-sensitive information dataset with diverse and complex backgrounds is then constructed. Systematic experiments on a WIDER FACE subset show that NullFace significantly outperforms baseline methods, including FAMS and CIAGAN, in head pose preservation and image quality. Finally, evaluation on object detection further confirms the effectiveness of the proposed approach: the proposed method reaches an accuracy of 91.05%, outperforming AWS and nearly matching manual annotation. This demonstrates that the anonymization process maintains the semantic details required for effective object detection.

1. Introduction

Image data in traffic scenarios carries rich environmental information and behavioral characteristics. Generally, these data are regarded as the core input for perception and decision making in intelligent connected vehicles [1], supporting applications including traffic condition understanding, driver assistance, and behavior analysis. However, images and videos captured by vehicle cameras, dashcams, and in-cabin monitoring often contain highly identifiable information, particularly license plates and faces [2]. Once exchanged across domains or stored remotely, such data may be misused or maliciously re-identified, leading to precise tracking or privacy breaches [3]. Therefore, to meet the visual information needs of intelligent mobility services while complying with privacy regulations, notably the General Data Protection Regulation (GDPR), effective desensitization of license plates and faces is vitally important throughout the data processing pipeline [4]. However, due to the massive scales of camera data, efficiently detecting and cleaning sensitive information remains a major challenge.

Traditionally, sensitive information is cleaned through manual annotation and processing: staff mark faces and license plates by hand and then occlude or blur them. This manual approach cannot scale to the growing volume of surveillance data. It is slow, prone to missed or inconsistent labels, and often fails to meet strict privacy requirements. With the maturing of natural language processing (NLP) and computer vision (CV), automatic image annotation (AIA) has rapidly developed into a key task in artificial intelligence (AI) [5]. AI, pattern recognition, and CV are the primary technologies for AIA and are employed to analyze the visual features of digital images and assign metadata as captions or keywords [6]. Automatic annotation of objects in traffic scenes enables fast detection and classification of vehicles, pedestrians, and other traffic elements. This step forms the basis for later desensitization and directly determines the effectiveness of anonymization.

Existing AIA methods typically rely on deep learning algorithms that are not fully supervised. Their goal is to reduce the effort required to obtain labeled data and to lower model dependence on labels while maintaining annotation accuracy. Common approaches include semi-supervised learning [7], transfer learning [8], reinforcement learning [9], and active learning [10]. Transfer learning moves knowledge from one task or domain to another to reduce labeling needs for the new task. In image annotation, common practices are domain adaptation and pretraining with fine-tuning. For cross-domain annotation, Yang et al. [11] proposed frequency domain adaptation, which reduces distribution gaps by swapping low-frequency components between the source and target domains. Semi-supervised learning, in turn, leverages unlabeled data to strengthen model training. Tan et al. [12] proposed a deep semi-supervised paradigm for powder bed defect segmentation that enables learning with limited annotations. However, the theoretical assumptions underlying many semi-supervised methods may not hold for real-world data distributions, which can cause the model to learn incorrect information.

Active learning shares similarities with semi-supervised learning in that both use unlabeled data to train and improve models. Active learning, however, is distinguished by its query strategies: the most representative samples are selected from the unlabeled data and handed to annotators for labeling, and the resulting training set is used to continue model training. Hoxha et al. [13] proposed annotation cost-efficient active learning (ANNEAL), an active learning method for remote sensing built on content-based image retrieval. ANNEAL aims to build a small but informative training set composed of similar and dissimilar image pairs to learn a precise metric space. Reinforcement learning has also been applied to annotation tasks to optimize labeling strategies. A prominent line of inquiry formulates active learning as a Markov decision process (MDP) and uses deep reinforcement learning to derive optimal sample selection policies. Jiu et al. [14] introduced deep reinforcement active learning, a method that ranks unlabeled samples by uncertainty and then uses the ranked feature representations as state inputs for an actor–critic agent. By employing the deep deterministic policy gradient algorithm and label feedback, the agent acquires a dynamic sampling policy. This method adaptively selects samples for annotation based on the current model and data distribution, avoiding errors from fixed heuristic rules. Nevertheless, most of the methods above assume strong distribution consistency between unlabeled and labeled data or rely on fixed heuristic sample selection strategies. In real traffic scenarios, these assumptions often fail due to the high diversity of weather conditions, viewpoints, illumination, and imaging devices. This mismatch can lead to the accumulation of annotation errors and limited model generalization ability.

In recent years, foundation models have shown significant advantages in multimodal understanding and semantic reasoning. Their introduction into automated data annotation pipelines has partially alleviated issues such as high label noise and weak cross-scene generalization in traditional methods. This progress makes large-scale dataset construction with low human involvement feasible.

However, the improvement of automatic annotation capability also drives traffic data collection toward large-scale and highly automated processes. This trend further amplifies the potential risk of large-scale identification and dissemination of sensitive information, such as faces and license plates, in raw data. Without effective privacy protection mechanisms, automated annotation systems may increase the risk of personal privacy leakage while improving data utilization efficiency. Traditional desensitization methods, including pixel-level replacement and occlusion, struggle to balance privacy and visual usability. Pixelation and blurring can make sensitive content unrecognizable to human viewers, while automated models can still extract sensitive features. For this reason, generative methods have been proposed in recent years. Generative approaches modify or replace original image content to protect privacy while keeping the image visually coherent [15]. Goodfellow et al. [16] introduced generative adversarial networks (GANs), which facilitate the synthesis of realistic samples via a generator network while generated samples are distinguished from real data by an adversarial discriminator, driving the generator toward the true data distribution. GANs are well-suited to face deidentification because they can generate new samples that follow the learned input distribution.

Recently, diffusion models have achieved breakthroughs in image generation and editing. Their high generation quality and stable training have made them a new direction for image privacy research [17,18,19]. Diffusion models generate high-fidelity images by gradually adding and removing noise [20]. Compared with GANs, they offer more stable generation and greater diversity. They have been widely applied to anonymization, face swapping, and image restoration in privacy-related tasks [21,22]. In earlier research, Li et al. [23] proposed latent diffusion-based face anonymization, a two-stage diffusion anonymization framework. This method first uses a face detection model to locate the face region and then uses a latent diffusion model (LDM) to perform generative inpainting on that region, thereby synthesizing an anonymized face image. Since an LDM samples and generates in latent space rather than pixel space, it better preserves image semantics and background consistency [24]. Chen et al. [25] introduced Diff-Privacy, which uses a custom image inversion module to map images into latent space for identity replacement while maintaining content fidelity. Shaheryar et al. [26] proposed a dual-conditional diffusion-based identity anonymization method that integrates identity and non-identity features to achieve controllable, high-quality face deidentification.

Although generative anonymization and AIA have advanced significantly, their coupling in real traffic scenarios remains limited. Few works consider the temporal consistency of generative anonymization together with seamless integration into AIA and quantitative evaluation of the privacy-utility trade-off. There is therefore an urgent need for a solution that provides high-quality visual anonymization while ensuring downstream annotation accuracy and temporal consistency, so as to support large-scale data collection and semi-automatic annotation in real-world traffic scenarios. To meet this need, this study proposes an anonymization and AIA method for sensitive information in complex traffic environments. First, NullFace diffusion anonymization is applied to the collected face dataset to satisfy strict requirements on target fidelity and background realism. Then, visual workflow orchestration in the Dify engine is combined with Qwen3-VL and Grounding DINO to link the cleaning and detection steps. This enables text-driven data cleaning and open-vocabulary AIA and produces a traffic-sensitive information dataset with diverse scenes. Through empirical analysis on the constructed traffic scene dataset, we verify the applicability and annotation performance of this method in diverse scenarios. The comparison of the proposed method with existing methods is shown in Table 1.

Table 1.

Comparison of the proposed method with existing methods.

The remainder of this paper is organized as follows. Section 2 presents the anonymization method. Section 3 gives an overview of automatic annotation for sensitive information. Section 4 describes the dataset and evaluation metrics. Section 5 presents the experimental setup and results. Section 6 concludes the paper and outlines future work.

2. Privacy Protection and Automated Data Construction

With tightening privacy regulations, protecting personally identifiable information in traffic monitoring and autonomous driving datasets is essential. To remove sensitive facial identity while preserving non-sensitive attributes that matter for downstream training, including gaze, pose, and expression, this study adopts the NullFace anonymization framework [27] for the front end of the data processing pipeline. The framework is based on denoising diffusion probabilistic models, which use diffusion inversion to extract an initial noise representation of each image. A two-path conditional guidance mechanism is then applied to directionally erase and reconstruct facial identity features in traffic scenes without any model fine-tuning.

2.1. Diffusion Model Inversion and Latent Space Mapping

The inversion mechanism of the denoising diffusion probabilistic model (DDPM) in the NullFace anonymization model generates a deterministic noise representation of the input image. This representation helps ensure high structural consistency between the anonymized output and the original image. Unlike noise drawn by random sampling, the inversion process seeks the specific initial noise and the corresponding intermediate noise trajectory that can precisely reconstruct the original input. Using the DDPM scheduler, the initial latent variable is determined and the noise term required for transitions between diffusion steps is computed. The noise term is computed as follows:

$$z_t = \frac{x_{t-1} - \mu_t(x_t, c)}{\sigma_t} \tag{1}$$

where $x_{t-1}$ denotes the image latent variable at the previous time step, and $c$ represents the conditional input, which is usually set to the empty condition during the inversion stage. $\mu_t(x_t, c)$ is the posterior mean predicted by the denoising U-Net given the current noisy input $x_t$. $\sigma_t$ is a predefined variance schedule parameter associated with the time step $t$. Through this inversion process, the system obtains a specific noise sequence $\{z_t\}$ and an initial Gaussian noise $x_T$. This stored noise sequence enables the model, during the subsequent generation stage, to enforce pixel-level alignment with the original image structure by fixing $x_T$ and $\{z_t\}$. As a result, it mitigates the structure drift problem of conventional generative methods and effectively preserves the background, illumination, and facial geometric details. The pseudocode for the inversion process is shown in Table 2.

Table 2.

Pseudocode for the inversion process.
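Independently of Table 2, the noise-term computation described above can be illustrated with a minimal sketch. It assumes a noisy trajectory built from the clean latent with independent noise per step, and it uses a hypothetical `posterior_mean` callable as a stand-in for the denoising U-Net; the schedule values and function names are illustrative assumptions and do not come from the NullFace implementation.

```python
import math
import random


def invert(x0, alpha_bars, sigmas, posterior_mean, rng):
    """Return (x_T, {t: z_t}) such that replaying the noise terms rebuilds x0.

    x0: flattened latent as a list of floats.
    alpha_bars[t], sigmas[t]: schedule values for t = 1..T (index 0 unused).
    posterior_mean(x_t, t): hypothetical stand-in for the U-Net posterior mean.
    """
    num_steps = len(alpha_bars) - 1
    # Assumption: each x_t is noised directly from x0 with independent noise.
    trajectory = [list(x0)]
    for t in range(1, num_steps + 1):
        a = alpha_bars[t]
        trajectory.append(
            [math.sqrt(a) * v + math.sqrt(1.0 - a) * rng.gauss(0.0, 1.0) for v in x0]
        )
    # Noise term per step: z_t = (x_{t-1} - mu_t(x_t)) / sigma_t
    noise_terms = {}
    for t in range(num_steps, 0, -1):
        mu = posterior_mean(trajectory[t], t)
        noise_terms[t] = [(prev - m) / sigmas[t] for prev, m in zip(trajectory[t - 1], mu)]
    return trajectory[num_steps], noise_terms


def reconstruct(x_T, noise_terms, sigmas, posterior_mean):
    """Replay the stored noise terms: x_{t-1} = mu_t(x_t) + sigma_t * z_t."""
    x = list(x_T)
    for t in range(len(sigmas) - 1, 0, -1):
        mu = posterior_mean(x, t)
        x = [m + sigmas[t] * z for m, z in zip(mu, noise_terms[t])]
    return x


def toy_posterior_mean(x_t, t):
    """Toy mean predictor used only to make the sketch runnable."""
    return [0.9 * v for v in x_t]
```

Because every step reuses the stored noise term, the reverse pass returns to the original latent up to floating-point error, which is the structural-consistency property the text attributes to fixing the noise sequence.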

2.2. Dual-Path Denoising with Identity Negative Guidance

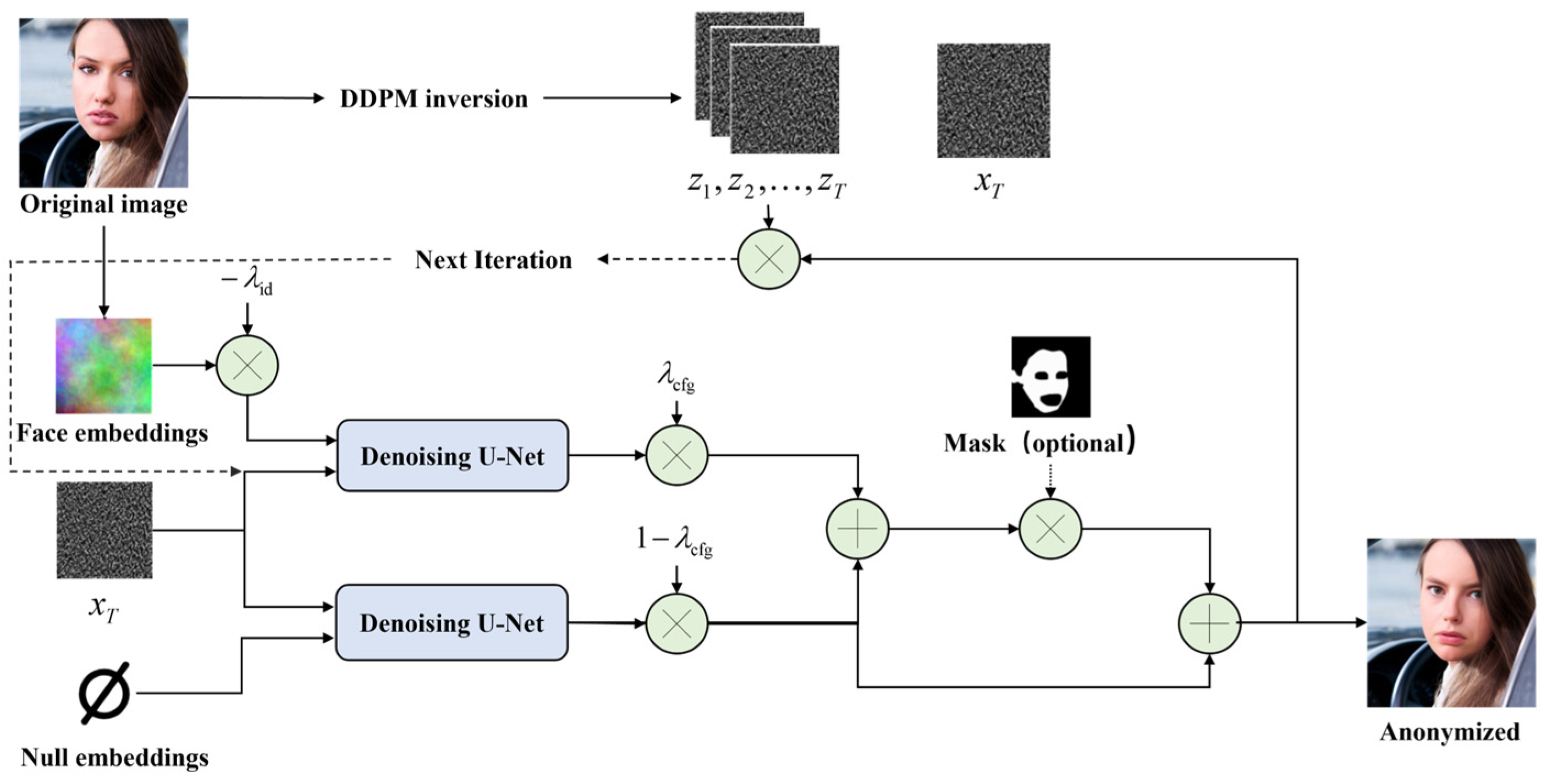

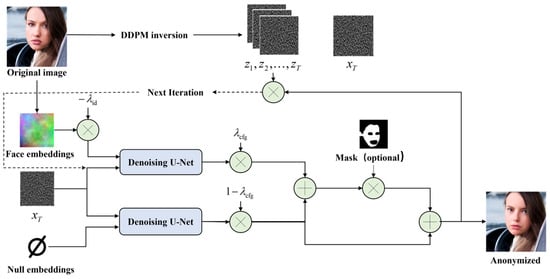

After obtaining the latent representation that contains the structural information of the original image, the NullFace framework performs identity removal through a dual-path conditional injection strategy combined with an IP-Adapter. The core idea is to construct accurate negative identity guidance. The overall model architecture is shown in Figure 1.

Figure 1.

NullFace Model Structure.

To capture high-dimensional identity features, the system first uses a pretrained face recognition model, ArcFace [28], to extract an identity embedding vector $e_{\text{id}}$ from the input image. Traditional personalized generation aims to approach a target identity, whereas anonymization is the inverse process. Therefore, the framework introduces a tunable anonymization strength parameter $\lambda$ to construct a negative identity condition $c_{\text{neg}}$. Specifically, the identity features are mapped and inverted under the control of $\lambda$. As $\lambda$ increases, the conditional vector moves farther away from the original identity in the feature space, and the generated facial features become more distinct.

During denoising inference, the network runs two generation paths in parallel to balance the anonymization effect and image quality. The first path is the conditional path, which receives the negative identity condition $c_{\text{neg}}$. This guides the model to suppress features related to the original identity, including facial shape and unique skin texture. The second path is the unconditional path, which receives a null embedding $\varnothing$ and helps the model focus on general priors that are independent of identity, including common face structure and ambient lighting. The final noise prediction is fused using a modified classifier-free guidance formula. Equation (2) shows this fusion,

$$\hat{\epsilon}_t = \epsilon_\theta(x_t, \varnothing) + w \left( \epsilon_\theta(x_t, c_{\text{neg}}) - \epsilon_\theta(x_t, \varnothing) \right) \tag{2}$$

where $w$ is the guidance scale parameter, which determines how strongly the final generation follows the negative identity condition. The formulation shows that the final denoising direction is mainly guided by the unconditional path to preserve naturalness, while an additional gradient component pushes the result away from the original identity. In practice, $w$ plays a key role in balancing the degree of identity removal and the quality of image generation. A larger value strengthens the modification of the original identity features. Experiments on the CelebA HQ and FFHQ datasets show that a suitable setting of the anonymization strength and the guidance scale can exploit the general response of pretrained diffusion models in the embedding space. This setting enables robust control from slight feature adjustment to complete identity reconstruction [27].
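The fusion of the two paths can be illustrated with a short sketch. It implements the standard classifier-free guidance combination applied to the negative identity condition; the function and argument names are illustrative assumptions, and any further modification used by NullFace is not reproduced here.

```python
def fuse_noise(eps_uncond, eps_neg, guidance_scale):
    """Combine the two noise predictions element-wise.

    eps_uncond: prediction from the unconditional (null embedding) path.
    eps_neg: prediction from the path conditioned on the negative identity.
    guidance_scale: weight of the identity-removal direction.
    Returns eps_uncond + guidance_scale * (eps_neg - eps_uncond).
    """
    return [u + guidance_scale * (c - u) for u, c in zip(eps_uncond, eps_neg)]
```

With a scale of 0 the result equals the unconditional prediction, with a scale of 1 it equals the conditional prediction, and larger values extrapolate further along the direction that moves away from the original identity.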

2.3. Region-Aware Pixel-Level Fusion Strategy

Although diffusion inversion provides strong structure preservation, slight semantic drift may still occur in non-face regions, such as background vehicles and road signs, during full image generation. To meet the strict requirements on background realism in traffic datasets, this study adopts a semantic segmentation-based local repainting strategy provided by NullFace.

In the implementation, the system integrates a pretrained BiSeNet model [29]. This model is trained on the CelebAMask HQ dataset and can accurately parse an image into 19 semantic categories, including skin, facial components, hair, and background. In this work, semantic labels related to skin and facial components are merged to generate a high-precision binary face mask $M$, where face regions are marked as 1 and background regions are marked as 0. At each time step of the denoising process, the system spatially blends the anonymized noise $\epsilon_t$ generated by the model with the background noise $\epsilon_t^{\text{inv}}$ obtained from inversion of the original image. The computation is defined as follows:

$$\tilde{\epsilon}_t = M \odot \epsilon_t + (1 - M) \odot \epsilon_t^{\text{inv}}$$

This mechanism confines anonymization to the masked regions, while areas outside the mask directly reuse the noise computed during the inversion stage. As a result, the original background details are preserved at the pixel level. The strategy is also robust to segmentation errors. In cases of under-segmentation, facial boundary regions that are not covered by the mask reuse the inversion noise that contains the original pixel details. These regions naturally recover the original image texture, which enables seamless integration between the anonymized area and the background and avoids the hard boundary artifacts commonly observed in traditional inpainting methods. In cases of over-segmentation, the strong contextual awareness of the diffusion model keeps mistakenly included background pixels semantically consistent with their surroundings. This local processing strategy improves overall image fidelity and suppresses boundary artifacts, so that the anonymized data remain suitable for high-precision traffic scene analysis.
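The region-aware blending step can be sketched in a few lines. The example treats the mask and both noise maps as equally sized 2D lists; the function name and the list-based representation are illustrative assumptions rather than the NullFace code.

```python
def blend_noise(mask, eps_anon, eps_inv):
    """Blend two noise maps per pixel.

    mask: 2D list with 1 for face pixels and 0 for background pixels.
    eps_anon: noise produced by the anonymizing denoising path.
    eps_inv: noise recovered by inverting the original image.
    Face pixels take eps_anon; background pixels take eps_inv.
    """
    return [
        [m * a + (1 - m) * b for m, a, b in zip(mask_row, anon_row, inv_row)]
        for mask_row, anon_row, inv_row in zip(mask, eps_anon, eps_inv)
    ]
```

Since background pixels receive exactly the inversion noise, the reverse process reproduces them from the original image, which is why under-segmented boundary pixels fall back to the original texture.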

3. Semi-AIA System Based on Multimodal Foundation Models

Traffic data processed by NullFace must be converted into a standard dataset with precise semantic labels before it can serve downstream training. Manual annotation is slow, costly, and inconsistent, and it cannot scale to large volumes. To address this, we designed and implemented a semi-automatic pipeline based on the Dify workflow engine. The system integrates state-of-the-art foundation models, including Qwen3-VL, Grounding DINO, and Deepseek-R1, to automate the full process from data cleaning to standardized output.

3.1. Data Cleaning by Visual Instruction Fine-Tuning

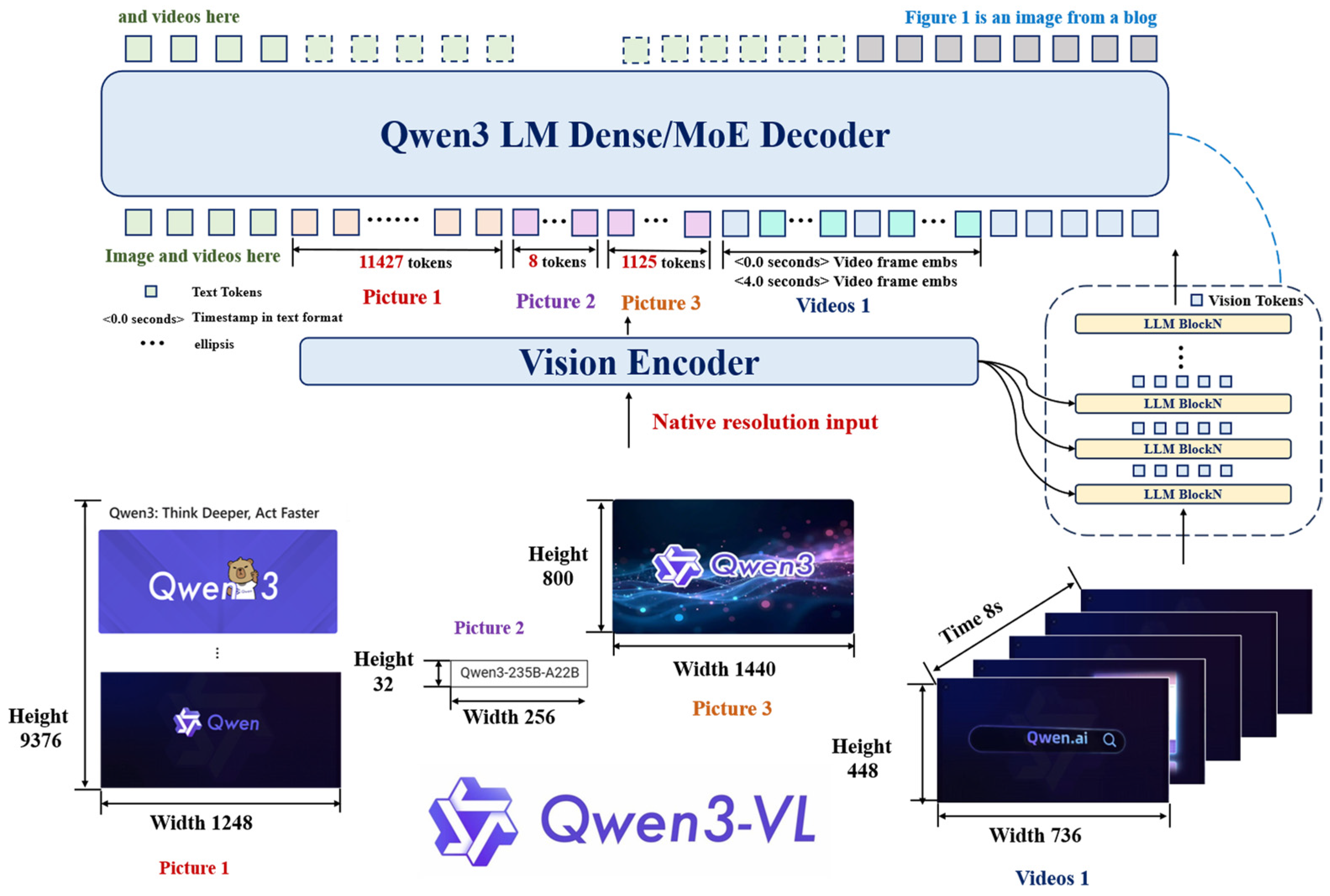

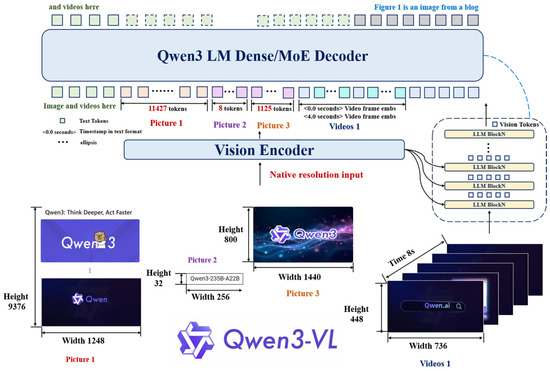

Data cleaning is the first line of defense for building a high-quality dataset. To quickly remove empty frames with no vehicles or no faces from massive surveillance video, the Qwen3-VL family of multimodal foundation models is applied as an intelligent filter. The model architecture is shown in Figure 2.

Figure 2.

Qwen3-VL model structure.

Qwen3-VL adopts a deeply coupled architecture that combines a Vision Transformer and a large language model. This design provides strong visual understanding and instruction-following capability. The data cleaning task is formulated as a standardized visual question answering process. Each image is input to the model together with a carefully designed prompt. The prompt explicitly asks the model to determine whether a face is present in the image and strictly constrains the output to a JSON format, such as {"face": false} or {"face": true}. With this prompt design, the system can directly parse the returned JSON object. When all key fields are false, the sample is automatically marked as invalid and removed from the processing queue. This mechanism exploits the zero-shot generalization ability of multimodal large models. It avoids the cost of training a dedicated classifier and ensures that computational resources in the subsequent annotation stage are focused on valid data.
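The filtering rule can be sketched as a small parser for the model reply. The function name and the optional `plate` key used in the usage note are hypothetical; only the `face` key appears in the text above.

```python
import json


def is_valid_sample(raw_reply, keys=("face",)):
    """Return True if at least one watched key is true in the JSON reply.

    raw_reply: the model's text output, expected to be a JSON object.
    keys: fields to check; a sample is invalid only when all are false.
    Raises ValueError for malformed replies so the caller can retry
    instead of silently discarding a frame.
    """
    reply = json.loads(raw_reply)  # json.JSONDecodeError is a ValueError
    if not isinstance(reply, dict):
        raise ValueError("expected a JSON object")
    return any(reply.get(key) is True for key in keys)
```

For example, `is_valid_sample('{"face": false}')` rejects the frame, while `is_valid_sample('{"face": false, "plate": true}', keys=("face", "plate"))` keeps it. Raising on malformed output rather than returning `False` is a design choice that favors recall.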

To support large-scale deployment, the platform uses the Qwen3-VL 30B A3B Instruct model. Since the data cleaning task only requires basic binary existence judgment, the 30B parameter model shows clear performance redundancy in this scenario. Therefore, we adopt 4-bit quantized loading and multi-GPU parallel inference to improve resource efficiency. It is worth noting that no valid samples are missed during the subsequent manual review of the constructed dataset. This empirical result confirms that the 4-bit quantized model achieves very high recall and reliability in data cleaning tasks.

3.2. Open Vocabulary Detection and Annotation

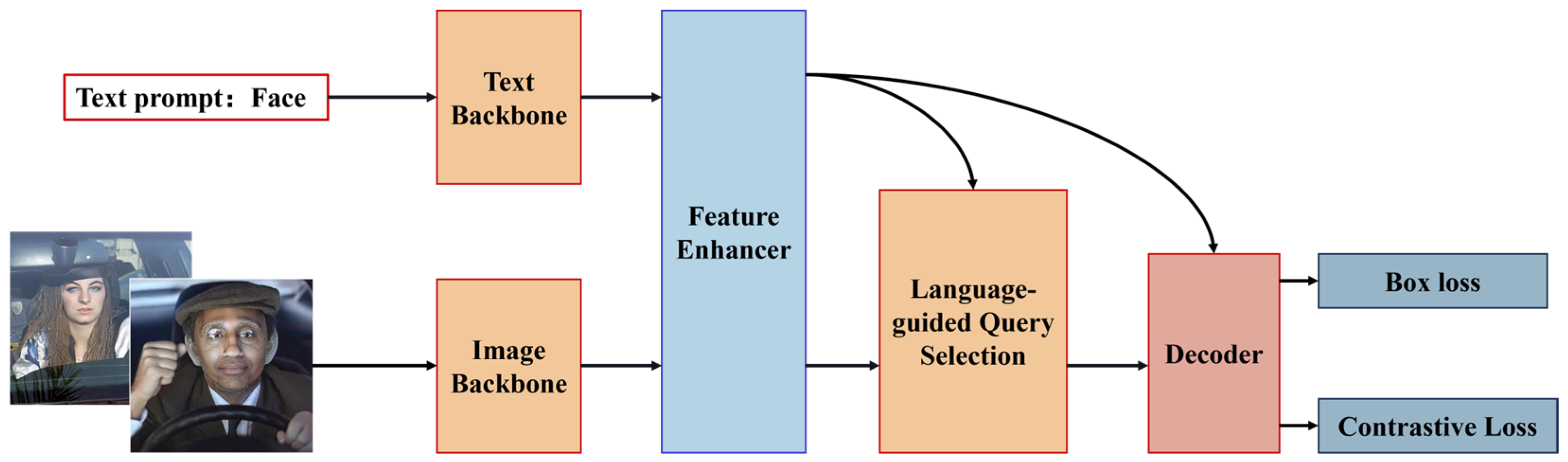

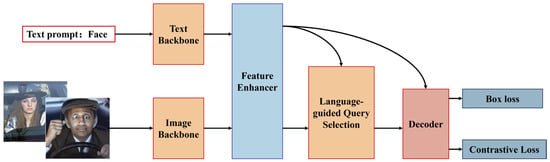

Valid samples pass to the core automatic annotation stage. Traditional detectors, including You Only Look Once (YOLO) and Faster R-CNN, are restricted to predefined categories. To overcome this limitation, this study introduces the Grounding DINO model, which has open-set detection capabilities, as shown in Figure 3. This lets users detect arbitrary classes with natural language prompts.

Figure 3.

Grounding DINO model structure.

In our system, we call Grounding DINO via API and set the text prompt to the word "face". The prompt directs the model to search for regions that match the face concept in feature space. The model outputs detection boxes, confidence scores, and short phrase labels. A postprocessing module then prepares these outputs for common training frameworks: it denormalizes box coordinates, applies a confidence threshold, and converts formats. The result is a standard YOLO annotation file containing class ID, center coordinates, width, and height. In practice, this method keeps recall high and processes each image in only a few seconds, far faster than manual labeling.
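The format conversion at the end of postprocessing can be sketched as follows. The sketch assumes the boxes have already been denormalized to pixel corner coordinates; the function name, the input tuple layout, and the default threshold of 0.35 are illustrative assumptions, not values reported in this work.

```python
def to_yolo_lines(detections, img_w, img_h, class_id=0, min_score=0.35):
    """Convert pixel boxes to YOLO label lines.

    detections: iterable of (x_min, y_min, x_max, y_max, score) in pixels.
    Returns one "class cx cy w h" string per kept box, with the center,
    width, and height normalized by the image size.
    """
    lines = []
    for x_min, y_min, x_max, y_max, score in detections:
        if score < min_score:
            continue  # drop low-confidence boxes
        cx = (x_min + x_max) / 2 / img_w
        cy = (y_min + y_max) / 2 / img_h
        w = (x_max - x_min) / img_w
        h = (y_max - y_min) / img_h
        lines.append(f"{class_id} {cx:.6f} {cy:.6f} {w:.6f} {h:.6f}")
    return lines
```

Each returned string corresponds to one line of the label file that accompanies an image.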

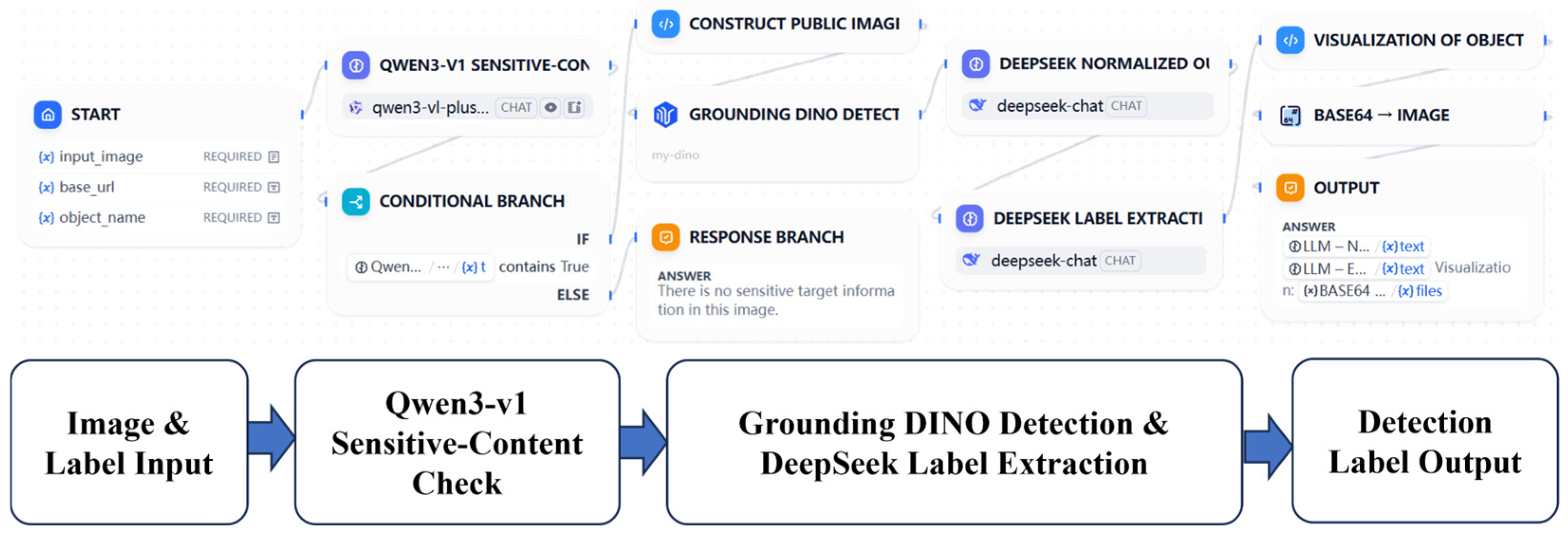

3.3. Dify Workflow Orchestration and Human–Machine Collaboration

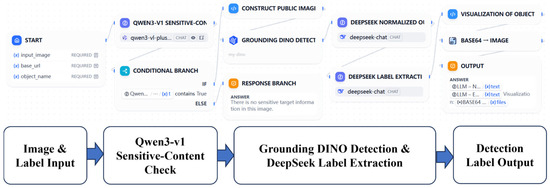

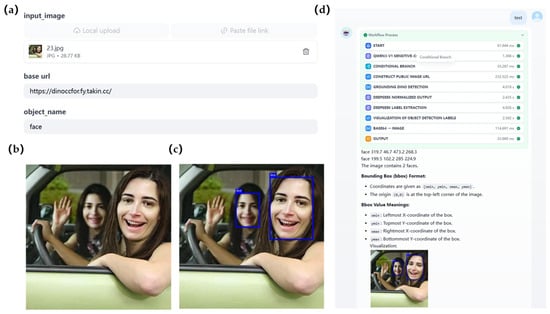

To make the model modules usable in production, we built a unified system using Dify workflow visual orchestration. The architecture is shown in Figure 4. The workflow links cleaning and detection steps and also integrates Deepseek-R1 to improve interpretability.

Figure 4.

Semi-AIA workflow platform structure.

After detection, the workflow calls Deepseek-R1 for secondary processing. Deepseek-R1 acts both as an information extractor and as a semantic explainer. It pulls key statistics from the raw Grounding DINO JSON and converts coordinate data into natural language descriptions including object count, object positions, YOLO labels, and the meaning of each label value. These descriptions provide intuitive reference information for manual review.

To balance throughput and label quality, the platform supports two operation modes. The first mode is an interactive review interface for human verification, as shown in Figure 5. In this mode, the system retains the Deepseek-R1 explanations and presents the image, annotation boxes, and semantic explanations to the operator in a question-and-answer style. Operators can quickly inspect and slide through results and manually correct difficult or ambiguous samples. This mode takes about 14.92 s per image but substantially reduces human cognitive load. The second mode is an API batch mode for large-scale dataset construction. For maximum efficiency, this mode skips Deepseek-R1 explanation generation and runs only the cleaning and detection steps. This reduces the per-image time to about 6.12 s. At that rate, a single workflow instance can automatically label more than 14,000 images per day. This delivers a model-driven, human-reviewed data production paradigm. Table 3 reports the timing for each workflow step for a 640 × 640 image. Grounding DINO inference and semantic extraction by Deepseek-R1 are the main sources of latency in the workflow. The latter is enabled only in the dialogue interface mode to generate interpretable descriptions. The system thus balances interpretability and throughput and supports flexible configuration for data needs of different scales.

Figure 5.

Semi-AIA workflow platform dialogue interface: (a) workflow parameter configuration. (b) input image. (c) output image. (d) dialogue mode.

The proposed pipeline adopts a strictly sequential design. Only the Qwen3-VL model is deployed locally, while the Grounding DINO and Deepseek-R1 modules are accessed via external APIs. Although network communication latency between modules is not independently profiled, it is implicitly captured in the per-module runtime analysis presented in Table 3, as all API processing and data transformation steps are implemented via explicit code nodes. Furthermore, since only the Qwen3-VL model runs on the local GPU, cross-module GPU memory sharing is not applicable in this deployment.

Table 3.

Performance comparison of different annotation methods.

4. Dataset and Evaluation Metrics

4.1. Datasets

To evaluate temporal consistency, we adopt the DH-FaceVid-1K dataset [30], which provides continuous facial tracks across video frames and is suitable for measuring identity stability over time. Temporal consistency is an identity-level property: it mainly depends on whether the anonymized face remains stable for the same individual across consecutive frames, rather than on scene semantics or background complexity. For anonymization quality assessment and downstream annotation experiments in traffic scenarios, we instead use the WIDER FACE [31] and RealFace [32] datasets. Both datasets contain rich traffic-related backgrounds and are more representative of the scene conditions encountered by intelligent connected vehicles.

The WIDER FACE dataset was jointly released by the Chinese University of Hong Kong and SenseTime, and its images were carefully selected from the publicly available WIDER dataset. It has become one of the most challenging benchmarks for evaluating face detection algorithms. The dataset contains a total of 32,203 images, designed to push the development of face detection technology for real-world complex scenarios through its unprecedented diversity and complexity.

A key feature of the WIDER FACE dataset is its comprehensive simulation of various real-world challenges. The dataset includes a wide range of head poses, covering frontal, side, upward, and downward angles. The lighting conditions vary dramatically, including strong light, shadows, and backlighting, with many faces partially occluded by objects like glasses and hats. It also includes diverse natural facial expressions, including smiling, closed eyes, and open mouths, and various complex backgrounds, including natural environments, urban streets, and indoor settings. However, the dataset also contains densely crowded scenes with severe occlusion between faces, including very small faces smaller than 10 pixels. These small faces are often unclear and do not pose a privacy concern. Therefore, we selected the “Medium” and “Easy” difficulty subsets from typical traffic scenes, including driving, traffic, and racing, for anonymization, and then annotated the anonymized data.

We first applied YOLO-based object detection to the original dataset. The overlap between the predicted bounding boxes and the ground-truth boxes was measured using intersection over union (IoU). Based on the IoU values, the samples were divided into three subsets, namely Easy, Medium, and Hard. Specifically, samples with IoU ≥ 0.7 were classified as Easy, those with 0.3 ≤ IoU < 0.7 were classified as Medium, and the remaining samples were labeled as Hard. The Easy and Medium samples were selected as training samples. After filtering, the image ratio among the driving, traffic, and racing categories was set to 2:2:1. In addition, experiments were conducted on the RealFace dataset. This dataset contains more than 11,000 pedestrian images captured in real-world scenarios and is well suited to street and traffic surveillance environments. Its difficulty levels were categorized and filtered using the same criteria. The number of images in the WIDER FACE dataset and the RealFace dataset before and after filtering is shown in Table 4.
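The IoU-based difficulty split can be sketched as follows. The thresholds 0.7 and 0.3 come from the text; the box layout as corner coordinates and the function names are illustrative assumptions.

```python
def iou(box_a, box_b):
    """Intersection over union of two boxes given as (x_min, y_min, x_max, y_max)."""
    inter_w = max(0.0, min(box_a[2], box_b[2]) - max(box_a[0], box_b[0]))
    inter_h = max(0.0, min(box_a[3], box_b[3]) - max(box_a[1], box_b[1]))
    inter = inter_w * inter_h
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def difficulty(iou_value):
    """Map an IoU value to the Easy / Medium / Hard subsets used for filtering."""
    if iou_value >= 0.7:
        return "Easy"
    if iou_value >= 0.3:
        return "Medium"
    return "Hard"
```

A prediction that overlaps half of an equally sized ground-truth box has an IoU of one third and therefore falls into the Medium subset.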

Table 4.

The number of images in the dataset before and after filtering.

4.2. Evaluation Metrics

The anonymization performance of the proposed method was assessed from three aspects, namely identity attributes, non-identity attributes, and image quality. For identity attributes, the reidentification rate was evaluated. ArcFace was used to measure the probability that the original identity could be reidentified based on feature similarity matching. For non-identity attributes, three factors were considered, including head pose, gaze direction, and facial expression. Head pose is represented by Euler angles, including yaw, pitch, and roll [33]. These angles represent the left or right rotation, the upward or downward tilt, and the left or right tilt of the head, respectively. In a 3D coordinate system, these three rotations correspond to rotations around fixed axes, typically defined as pitch around the X axis, yaw around the Y axis, and roll around the Z axis. The combination of these three Euler angles uniquely defines the head's orientation relative to a reference coordinate system. Gaze direction was evaluated using the Gaze360 [34] model. The main metric was the angular error $\theta$ between the predicted gaze vector $\hat{g}$ and the ground-truth gaze vector $g$. This error was computed as follows:

$$\theta = \arccos\left( \frac{\hat{g} \cdot g}{\lVert \hat{g} \rVert \, \lVert g \rVert} \right)$$
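The angular error between two gaze vectors can be computed as the arccosine of their normalized dot product. The sketch below is a minimal illustration with an assumed function name; it reports the error in degrees and clamps the cosine to guard against rounding slightly outside the valid range.

```python
import math


def angular_error_deg(pred, truth):
    """Angle in degrees between a predicted and a reference 3D gaze vector."""
    dot = sum(p * t for p, t in zip(pred, truth))
    norm = math.sqrt(sum(p * p for p in pred)) * math.sqrt(sum(t * t for t in truth))
    cosine = max(-1.0, min(1.0, dot / norm))  # clamp against rounding error
    return math.degrees(math.acos(cosine))
```

Identical directions give an error of 0 degrees and orthogonal directions give 90 degrees. A zero-length vector raises `ZeroDivisionError`, so such inputs need to be filtered beforehand.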

Facial expression preservation was measured using the consistency rate. This evaluation was conducted with the Emo-AffectNet model [35].

For image quality, the Fréchet inception distance (FID) [36] measures the difference in feature space between the generated image distribution and the real image distribution. Research shows that FID correlates well with human visual quality judgment and can be used to evaluate the quality of image samples. The basic idea of FID is to compute the Fréchet distance between the Gaussian distributions fitted to the feature representations of the two image sets:

$$\text{FID} = \lVert \mu_r - \mu_g \rVert_2^2 + \operatorname{Tr}\left( \Sigma_r + \Sigma_g - 2 \left( \Sigma_r \Sigma_g \right)^{1/2} \right)$$

where $\mu_r$ and $\mu_g$ are the means of the real and generated distributions, and $\Sigma_r$ and $\Sigma_g$ are the covariances of the real and generated distributions, respectively. The FID value consists of the mean difference term and the covariance difference term. The mean difference reflects the overall position shift between the two image distributions in the feature space, while the covariance difference expresses the shape difference of the distributions. A smaller FID value indicates that the distributions of the two sets of images are closer, meaning the generated images have higher quality and diversity. Ideally, if the distributions are identical, FID equals 0. Since FID cannot pinpoint quality issues in a single image, the multiscale image quality (MUSIQ) [37] evaluation method was also used. MUSIQ, based on the Transformer architecture, directly handles images at their original resolution and predicts human subjective image quality scores by integrating multiscale information. Its core idea is to simulate how the human visual system perceives image information at different scales.
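The two terms of the Fréchet distance can be illustrated with a simplified sketch. It assumes diagonal covariances, so that the matrix square root reduces to an element-wise square root; this is a deliberate simplification for illustration, whereas the standard FID uses the full covariance of Inception features. The function name is an assumption.

```python
import math


def fid_diagonal(mu_r, var_r, mu_g, var_g):
    """Fréchet distance between two Gaussians with diagonal covariances.

    mu_r, mu_g: per-dimension means of the real and generated features.
    var_r, var_g: per-dimension variances (the covariance diagonals).
    """
    mean_term = sum((a - b) ** 2 for a, b in zip(mu_r, mu_g))
    cov_term = sum(vr + vg - 2.0 * math.sqrt(vr * vg) for vr, vg in zip(var_r, var_g))
    return mean_term + cov_term
```

Identical statistics give a distance of 0, a pure mean shift contributes its squared Euclidean length, and a pure variance mismatch contributes only through the covariance term.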

Finally, to evaluate the performance of the AIA method, precision and recall were calculated for each label in the test set. For a label $i$, $N_i^{m}$ denotes the number of images labeled with $i$ in the original manual annotation, $N_i^{t}$ denotes the number of images labeled with $i$ in the test process, and $N_i^{c}$ is the number of correctly labeled images. The calculations for precision $P_i$, recall $R_i$, and the $F_1$ score are as follows:

$$P_i = \frac{N_i^{c}}{N_i^{t}}, \qquad R_i = \frac{N_i^{c}}{N_i^{m}}, \qquad F_{1,i} = \frac{2 P_i R_i}{P_i + R_i}$$
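The per-label metrics can be sketched in a few lines. This is an illustrative helper under the definitions given in the text (counts of manually labeled, automatically labeled, and correctly labeled images); the function name, argument names, and the example counts are hypothetical.

```python
def precision_recall_f1(n_manual, n_predicted, n_correct):
    """Per-label precision, recall, and F1 from image counts.

    n_manual:    images carrying the label in the manual annotation
    n_predicted: images assigned the label by the annotation method under test
    n_correct:   images where the assigned label is correct
    """
    precision = n_correct / n_predicted if n_predicted else 0.0
    recall = n_correct / n_manual if n_manual else 0.0
    denom = precision + recall
    f1 = 2.0 * precision * recall / denom if denom else 0.0
    return precision, recall, f1


# Toy counts (not results from the paper).
p, r, f1 = precision_recall_f1(n_manual=100, n_predicted=90, n_correct=81)
print(f"precision={p:.3f} recall={r:.3f} f1={f1:.3f}")
```

Returning 0.0 when a denominator is zero is a design choice of this sketch; other conventions (such as skipping the label) are equally defensible.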

By anonymizing the WIDER FACE subdataset and then using the proposed semi-AIA platform to annotate the anonymized images, a deidentified dataset suitable for downstream tasks is obtained. The overall framework of the work is shown in Figure 6.

Figure 6.

Overall framework diagram for traffic data privacy protection and collaborative annotation.

5. Experimental Results and Analysis

In the experiments, we first conducted consistency verification and hyperparameter sensitivity analysis to determine the parameters of the anonymization model. Second, to evaluate the effectiveness of the anonymization model, we compared it with existing approaches on the WIDER FACE subset and the RealFace dataset to assess its ability to preserve non-identity facial attributes while removing personally identifiable information. Third, we validated the advantages of the proposed AIA method by training an object detection model on the anonymized dataset. Finally, we investigated the impact of anonymization on downstream performance by training object detection models on both the anonymized and original datasets.

5.1. Hardware Configuration and Scalability

The experiments were conducted on a Windows 11 operating system. The hardware configuration included an AMD Ryzen 9 9950X 16 Core Processor and an NVIDIA GeForce RTX 4090 D graphics card with 24 GB of video memory. The CUDA version was 11.1. All experiments were implemented using the PyTorch 1.8 deep learning framework under the Python 3.12 environment.

The proposed anonymization and annotation pipeline required 17.22 s to process a single 640 × 640 image. Of this time, the NullFace-based anonymization required 2.30 s per image, and the semi-automated annotation platform, run in an interactive review mode with human verification, required approximately 14.92 s. When constructing large-scale datasets, an API-based batch processing mode can be adopted, which reduces the annotation time to 6.12 s per image. At this speed, the overall workflow (2.30 s + 6.12 s = 8.42 s per image) can handle more than 420 images per hour, which meets the requirements of large-scale dataset applications. Because each image is processed independently by the same models, output quality does not depend on the size of the dataset.
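The throughput figure can be checked with simple arithmetic. The sketch below uses the per-image timings reported above and assumes that anonymization and annotation run sequentially for each image with no parallelism; the constant and function names are hypothetical.

```python
# Per-image timings in seconds, as reported in the text.
ANONYMIZE_S = 2.30
ANNOTATE_INTERACTIVE_S = 14.92
ANNOTATE_BATCH_S = 6.12


def images_per_hour(*stage_seconds):
    """Throughput when the given stages run one after another for each image."""
    return 3600.0 / sum(stage_seconds)


interactive = images_per_hour(ANONYMIZE_S, ANNOTATE_INTERACTIVE_S)
batch = images_per_hour(ANONYMIZE_S, ANNOTATE_BATCH_S)
print(f"interactive: {interactive:.0f} images/h, batch: {batch:.0f} images/h")
# prints "interactive: 209 images/h, batch: 428 images/h"
```

Under these assumptions the batch mode roughly doubles throughput, consistent with the stated figure of more than 420 images per hour.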

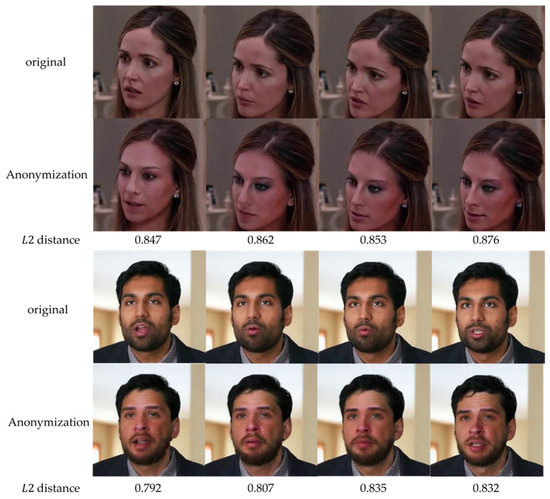

5.2. Consistency Verification and Sensitivity Analysis

To verify the temporal consistency of anonymization, experiments were first conducted on the DH-FaceVid-1K dataset, and the stability of the anonymization results across video frame sequences was evaluated. A fixed random seed was used during anonymization so that the same subject received the same anonymized identity in every frame of the video, as illustrated in Figure 7. The high visual similarity across consecutive frames and the small differences in L2 (Euclidean) distance between faces before and after anonymization indicate that the anonymization results for the same target remain stable across adjacent frames.

Figure 7.

Temporal consistency verification.

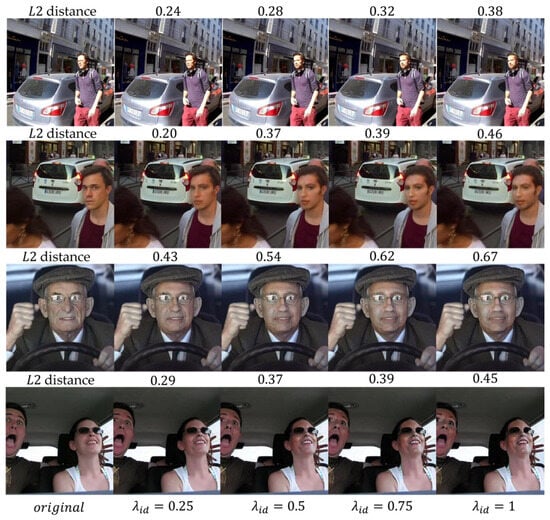

We then conducted a sensitivity analysis of the controllable anonymization strength parameter on the WIDER FACE and RealFace datasets to illustrate the degree of anonymization achieved by the model. Figure 8 presents the original face and four anonymized versions generated with strength values of 0.25, 0.50, 0.75, and 1.0. Identity variation was quantified by computing the Euclidean distance between the ArcFace embeddings of the anonymized faces and the original face. As the strength increases, both visual inspection and the identity Euclidean distance indicate a larger deviation from the original identity.
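The identity distance used in this analysis can be sketched as follows. The example assumes that face embeddings (for instance from ArcFace) are already available as plain lists of floats and that they are L2-normalized before comparison; the normalization step, the function names, and the toy vectors are assumptions made for illustration, not details confirmed by the text.

```python
import math


def l2_normalize(vec):
    """Scale a vector to unit length."""
    norm = math.sqrt(sum(v * v for v in vec))
    if norm == 0.0:
        raise ValueError("embedding must be non-zero")
    return [v / norm for v in vec]


def identity_distance(emb_a, emb_b):
    """Euclidean distance between two L2-normalized embeddings (range 0 to 2)."""
    a, b = l2_normalize(emb_a), l2_normalize(emb_b)
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def is_non_decreasing(values):
    """True if each value is at least as large as the one before it."""
    return all(later >= earlier for earlier, later in zip(values, values[1:]))


# Toy 2-D "embeddings" (not real ArcFace outputs) drifting away from the original.
original = [1.0, 0.0]
anonymized = [[0.9, 0.1], [0.7, 0.3], [0.4, 0.6], [0.0, 1.0]]
distances = [identity_distance(original, emb) for emb in anonymized]
print(is_non_decreasing(distances))
```

A check such as `is_non_decreasing` is one simple way to confirm that larger strength values push the anonymized identity further from the original.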

Figure 8.

The impact of the anonymization strength parameter on the degree of anonymization.

Based on this result, the identity strength coefficient was selected for subsequent training. The remaining hyperparameter settings are summarized in Table 5. NullFace adopts Stable Diffusion 1.5 as the pretrained generative model. The total number of diffusion sampling steps was set to 100 to balance generation quality and computational cost. The conditional guidance strength was set to 10, which is a commonly used configuration in Stable Diffusion. This setting ensures that the conditional information provides sufficient guidance during denoising and helps preserve the overall facial structure and visual consistency. The IP-Adapter conditional channel strength was set to 1 to maintain stable conditioning during cross-attention injection and to avoid unintended structural distortions caused by excessive amplification or suppression of conditional features. During denoising, the model skipped the first 70 steps and started reverse diffusion from an intermediate timestep with a lower noise level, so that only the remaining 30 steps were executed. This strategy preserves facial pose, illumination, and geometric structure while only moderately reconstructing identity-related features. In the sampling equation, the noise coefficient η was set to 1 to introduce moderate randomness. This enhances generation diversity and identity irreversibility, thereby further reducing the risk of identity recovery.
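The settings described above can be collected in a small configuration object. The sketch below is only an illustration: the class `AnonymizerConfig` and its field names are hypothetical and do not correspond to the NullFace code base. The helper `ddim_sigma` implements the per-step noise scale from the DDIM sampling formulation [19], which shows how η controls the amount of injected randomness.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class AnonymizerConfig:
    """Hypothetical container for the hyperparameters stated in the text."""
    num_inference_steps: int = 100   # total diffusion sampling steps
    guidance_scale: float = 10.0     # conditional guidance strength
    ip_adapter_scale: float = 1.0    # IP-Adapter conditional channel strength
    skipped_steps: int = 70          # initial steps skipped during denoising
    eta: float = 1.0                 # noise coefficient in the sampling equation

    @property
    def active_steps(self):
        """Number of denoising steps that are actually executed."""
        return self.num_inference_steps - self.skipped_steps


def ddim_sigma(alpha_bar_t, alpha_bar_prev, eta):
    """DDIM noise scale for one step; eta=0 is deterministic sampling."""
    return (
        eta
        * math.sqrt((1.0 - alpha_bar_prev) / (1.0 - alpha_bar_t))
        * math.sqrt(1.0 - alpha_bar_t / alpha_bar_prev)
    )


cfg = AnonymizerConfig()
print(cfg.active_steps)  # 30
```

With η = 0 the noise scale is zero for every step, while η = 1 recovers the stochastic DDPM-style update.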

Table 5.

NullFace hyperparameter configuration.

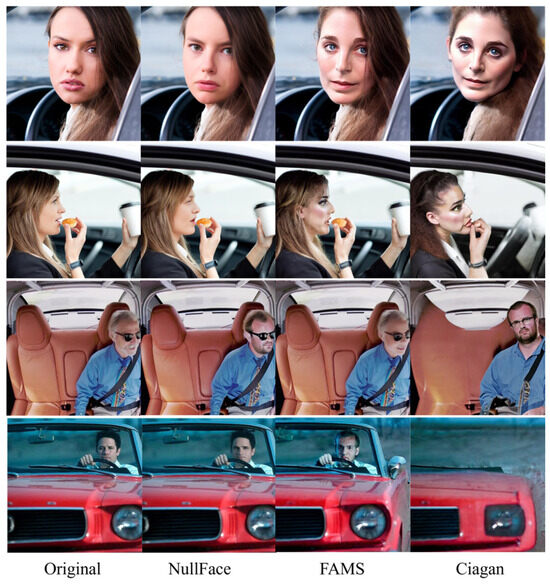

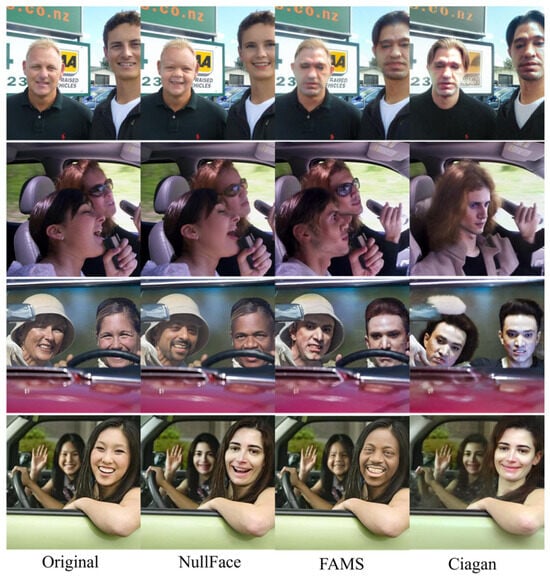

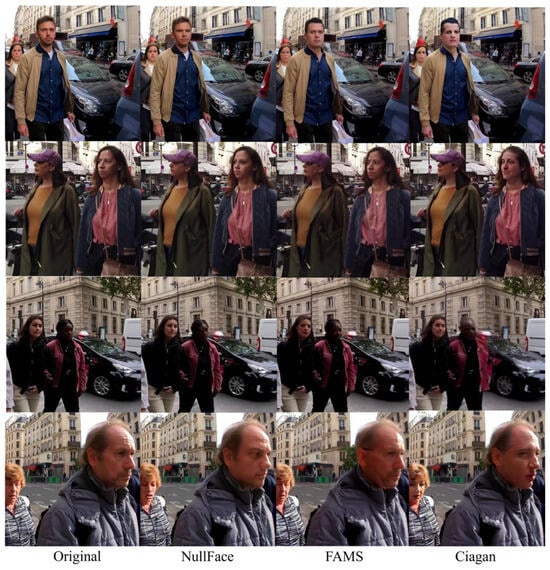

5.3. Comparison of Model Results Before and After Anonymization

To demonstrate the advantage of the NullFace anonymization method, we evaluated the proposed approach on the WIDER FACE and RealFace datasets and compared it with two baselines, FAMS [38] and Ciagan [39]. Table 6 and Table 7 present the comparisons. First, in terms of the reidentification metric, all three anonymization methods reduce identity reidentification rates. Among them, NullFace achieves the lowest Re-ID scores on both datasets, indicating the most effective identity anonymization. For attribute preservation, head pose, expression consistency, and gaze direction error were evaluated. A head pose estimation model [33] was used to predict facial orientations in both the generated images and the original images, and the quaternion angular distance between these orientations was then computed. NullFace performs slightly worse than FAMS on the pitch angle metric but shows a clear improvement over Ciagan. In addition, NullFace exhibits more stable performance in expression consistency and gaze direction error. These results suggest that NullFace effectively removes identity information while preserving the original facial geometry and semantic attributes, which supports downstream detection tasks.
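The quaternion angular distance between two head poses can be sketched as below. The example assumes the axis convention used earlier in the paper (pitch about X, yaw about Y, roll about Z) and, as a further assumption, composes the rotations in the order yaw, pitch, roll; the actual convention of the head pose estimator [33] may differ, and all function names are hypothetical.

```python
import math


def axis_quat(axis, angle_deg):
    """Unit quaternion (w, x, y, z) for a rotation about a unit axis."""
    half = math.radians(angle_deg) / 2.0
    s = math.sin(half)
    return (math.cos(half), axis[0] * s, axis[1] * s, axis[2] * s)


def quat_mul(a, b):
    """Hamilton product of two quaternions."""
    w1, x1, y1, z1 = a
    w2, x2, y2, z2 = b
    return (
        w1 * w2 - x1 * x2 - y1 * y2 - z1 * z2,
        w1 * x2 + x1 * w2 + y1 * z2 - z1 * y2,
        w1 * y2 - x1 * z2 + y1 * w2 + z1 * x2,
        w1 * z2 + x1 * y2 - y1 * x2 + z1 * w2,
    )


def euler_to_quat(yaw, pitch, roll):
    """Quaternion for yaw (Y), then pitch (X), then roll (Z), in degrees."""
    q_yaw = axis_quat((0.0, 1.0, 0.0), yaw)
    q_pitch = axis_quat((1.0, 0.0, 0.0), pitch)
    q_roll = axis_quat((0.0, 0.0, 1.0), roll)
    return quat_mul(quat_mul(q_yaw, q_pitch), q_roll)


def quat_angular_distance_deg(q1, q2):
    """Smallest rotation angle in degrees that maps one orientation to the other."""
    # abs() treats q and -q as the same rotation; min() guards acos against rounding.
    dot = min(1.0, abs(sum(a * b for a, b in zip(q1, q2))))
    return math.degrees(2.0 * math.acos(dot))


# Toy poses (not measured data): original versus anonymized head orientation.
original = euler_to_quat(yaw=0.0, pitch=0.0, roll=0.0)
anonymized = euler_to_quat(yaw=30.0, pitch=0.0, roll=0.0)
print(round(quat_angular_distance_deg(original, anonymized), 3))
```

A single angular distance of this kind summarizes the combined yaw, pitch, and roll deviation, which avoids comparing the three Euler angles separately.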

Table 6.

Comparison of face anonymization methods on the WIDER FACE dataset.

Table 7.

Comparison of face anonymization methods on the RealFace dataset.

We next measured image quality using FID. The proposed method yielded the lowest FID on both datasets, substantially outperforming Ciagan. We also measured the difference in MUSIQ score between generated and original images to assess quality retention. The NullFace model showed a strong ability to retain the original image quality. On the WIDER FACE dataset, methods such as FAMS produced higher MUSIQ scores but a larger MUSIQ distance from the original images because they performed visible enhancement. By contrast, owing to the inversion process, NullFace preserves the source image quality instead of applying unnatural enhancement.

Figure 9 and Figure 10 show results under single-person conditions, including occluded and extreme-angle faces, from the WIDER FACE and RealFace datasets. The proposed method effectively anonymizes identity while preserving identity-independent details, including pose, expression, and background. The outputs are visually realistic. Compared with other methods, NullFace does not introduce obvious artifacts or geometric distortions, and it does not disrupt the scene layout, accessories, or other details relevant to downstream tasks. FAMS failed to preserve participant expressions. Ciagan produced less realistic outputs and missed anonymization targets.

Figure 9.

Comparison results in single-person scenarios from the WIDER FACE dataset.

Figure 10.

Comparison results of single-person scenarios from the RealFace dataset.

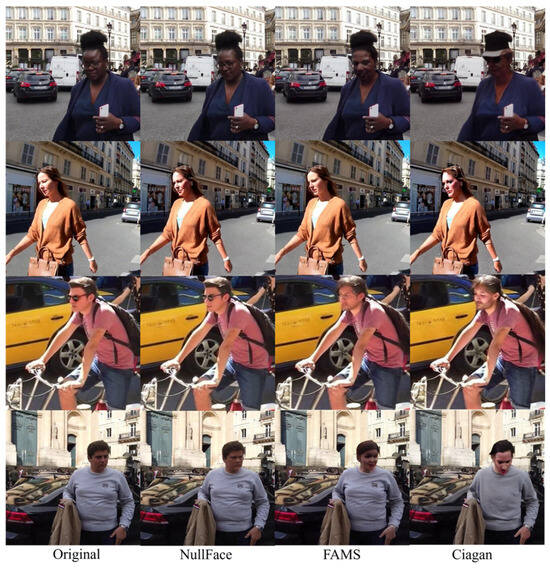

As shown in Figure 11 and Figure 12, in two-person scenes from the WIDER FACE and RealFace datasets, NullFace can anonymize multiple participants in the same image and generate internally consistent and mutually distinguishable new identities for each participant. Across different viewpoints, each participant’s facial appearance remains coherent in expression and pose while background details are preserved. Other methods often mix faces or produce inconsistent poses and expressions for multiple people. NullFace remains stable in two-person scenes and produces high-quality anonymization, which demonstrates stronger adaptation to complex scenarios and better privacy protection.

Figure 11.

Comparison results in two-person scenarios from the WIDER FACE dataset.

Figure 12.

Comparison results in two-person scenarios from the RealFace dataset.

5.4. Evaluation of Object Detection Models Under Different Annotation Methods

After anonymization, images were annotated using the foundation-model-based method described in Section 2. To compare the impact of different AIA methods on detector training, the YOLOv8 model was trained using two annotation sets, manual labels and semi-automatic labels. Training used gradient descent with an initial learning rate of 0.01. Models were trained for 60 epochs with a batch size of 32 and an input image size of 512. In addition to the default augmentation, we applied rotation, shear, perspective transforms, and vertical flips. No additional pretraining was used. Data augmentation was disabled for the final 10 epochs. The early-stopping patience was set to 30 epochs.
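A training setup along these lines could be expressed with the Ultralytics YOLO Python interface, as sketched below. Several points are assumptions rather than details from the text: the optimizer is taken to be SGD, the model size (`yolov8n.yaml`) and the augmentation magnitudes are placeholders, and `close_mosaic=10` only disables mosaic augmentation in the last 10 epochs, which may not match exactly how augmentation was switched off in the experiments. The function `train_detector` and the dataset path passed to it are hypothetical.

```python
# Settings stated in the text, mapped onto Ultralytics training arguments.
TRAIN_ARGS = {
    "epochs": 60,
    "batch": 32,
    "imgsz": 512,
    "lr0": 0.01,            # initial learning rate
    "optimizer": "SGD",     # assumption: the text only says "gradient descent"
    "patience": 30,         # early stopping after 30 epochs without improvement
    "pretrained": False,    # no additional pretraining
    "close_mosaic": 10,     # assumption: mosaic disabled for the final 10 epochs
    # Extra augmentations named in the text; magnitudes are placeholders.
    "degrees": 10.0,        # rotation range in degrees
    "shear": 2.0,           # shear range in degrees
    "perspective": 0.0005,  # perspective distortion strength
    "flipud": 0.5,          # probability of a vertical flip
}


def train_detector(data_yaml, model_cfg="yolov8n.yaml"):
    """Train YOLOv8 from a model definition file on the given dataset config."""
    # Imported lazily so the configuration can be inspected without the package.
    from ultralytics import YOLO

    model = YOLO(model_cfg)  # building from a .yaml file starts from random weights
    return model.train(data=data_yaml, **TRAIN_ARGS)
```

Calling `train_detector` once with the manually labeled dataset and once with the semi-automatically labeled dataset would keep all other settings identical between the two runs.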

Table 8 reports the performance comparison for different annotation methods. AWS is the Amazon Web Services SageMaker Ground Truth annotation service. Using the default built-in algorithm with bounding box annotations, an automatic labeling task was constructed for face detection. Consistent with the proposed strategy, an IoU threshold of 0.5 was adopted as the matching criterion. As expected, the manually annotated dataset gave the best performance. The semi-automatic annotated dataset achieved results similar to manual annotation. The AWS annotation method performed the worst. These results indicate that the proposed annotation pipeline is clearly superior to the AWS labeling approach. Overall, precision values for all models were similar and above 90 percent, which is a strong score for detection tasks.
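The IoU matching criterion can be illustrated with a short sketch. It assumes axis-aligned boxes in `(x1, y1, x2, y2)` format, predictions already sorted by descending confidence, and a greedy one-to-one assignment; these choices and the function names are assumptions for illustration and are not taken from the evaluation code used in the paper.

```python
def iou(box_a, box_b):
    """Intersection over union of two (x1, y1, x2, y2) boxes."""
    ix1, iy1 = max(box_a[0], box_b[0]), max(box_a[1], box_b[1])
    ix2, iy2 = min(box_a[2], box_b[2]), min(box_a[3], box_b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def match_detections(predictions, ground_truths, iou_threshold=0.5):
    """Greedy one-to-one matching; returns (true positives, false positives, false negatives)."""
    matched = set()
    true_pos = 0
    for pred in predictions:
        best_iou, best_index = 0.0, None
        for index, gt in enumerate(ground_truths):
            if index in matched:
                continue  # each ground-truth box can be matched only once
            overlap = iou(pred, gt)
            if overlap > best_iou:
                best_iou, best_index = overlap, index
        if best_index is not None and best_iou >= iou_threshold:
            matched.add(best_index)
            true_pos += 1
    false_pos = len(predictions) - true_pos
    false_neg = len(ground_truths) - true_pos
    return true_pos, false_pos, false_neg


# Toy boxes (not dataset annotations).
gts = [(0, 0, 10, 10), (20, 20, 30, 30)]
preds = [(1, 1, 10, 10), (50, 50, 60, 60)]
print(match_detections(preds, gts))  # (1, 1, 1)
```

Precision and recall then follow as TP / (TP + FP) and TP / (TP + FN), respectively.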

Table 8.

Performance comparison of different annotation methods on the WIDER FACE and RealFace datasets.

Finally, we evaluated the annotated datasets with the trained detectors; the results are shown in Figure 13. Under the same training and evaluation settings, YOLOv8 maintained strong detection performance on anonymized images. Although confidence scores dropped slightly after anonymization, the detector still localized targets accurately and produced high-confidence predictions. This finding indicates that the anonymization removed identity features while preserving head and face geometry and semantic context, keeping the data useful for downstream visual tasks.

Figure 13.

Comparison of dataset detection before and after annotation.

6. Conclusions

This paper addresses privacy protection and annotation needs in real traffic data collection. We propose a unified framework that deeply couples generative anonymization with foundation-model-driven automatic annotation. The main conclusions are listed below.

First, the NullFace diffusion anonymization method shows superior performance in complex traffic scenes. Quantitative evaluations show that it achieves the lowest reidentification rate while effectively preserving head pose, facial expression, and gaze direction. In addition, NullFace attains the lowest FID and the smallest MUSIQ difference from the original images, indicating superior visual quality and quality retention. The method outperforms mainstream techniques in producing high-quality and realistic anonymized images that remain close to the original image distribution. It effectively removes identity features while preserving expression, head pose, and in-cabin background details, and it maintains consistency across scenarios.

Second, the semi-AIA system, which integrates Qwen3-VL, Grounding DINO, and Deepseek-R1, automates the full pipeline from data cleaning to standardized output. The system balances processing efficiency and label quality. Detection metrics for models trained on data from this pipeline match those trained on manual labels and are significantly better than results from the AWS auto-labeling service.

Finally, the tightly coupled anonymization and semi-AIA pipeline preserves downstream usability. YOLOv8 comparison experiments show that models trained on data processed and labeled by our method retain detection performance comparable to preanonymization levels. This indicates that the anonymization did not damage the geometric and semantic context that is critical for downstream vision tasks.

This work fills a gap in applying generative anonymization and automatic annotation to real traffic scenes. It provides a reference for future research on privacy protection and intelligent annotation for larger-scale, multimodal, and cross-domain traffic data.

Author Contributions

Conceptualization, T.W.; methodology, F.G.; software, Z.M. and P.Z.; validation, Z.M. and P.Z.; formal analysis, G.D.; writing—original draft preparation, T.W.; writing—review and editing, H.X.; visualization, Z.M. and P.Z.; supervision, H.X. All authors have read and agreed to the published version of the manuscript.

Funding

This research received no external funding.

Data Availability Statement

The original contributions presented in this study are included in the article. Further inquiries can be directed to the corresponding author.

Conflicts of Interest

Authors Tong Wang, Feng Gao, Zian Meng, and Guohao Duan were employed by CATARC Europe Testing and Certification GmbH. The remaining authors declare that the research was conducted in the absence of any commercial or financial relationships that could be construed as a potential conflict of interest.

Abbreviations

The following abbreviations are used in this manuscript:

| Abbreviation | Definition |
| --- | --- |
| AIA | Automatic Image Annotation |
| GDPR | General Data Protection Regulation |
| NLP | Natural Language Processing |
| CV | Computer Vision |
| AI | Artificial Intelligence |
| ANNEAL | Annotation Cost-Efficient Active Learning |
| GANs | Generative Adversarial Networks |
| LDM | Latent Diffusion Model |
| DDPM | Denoising Diffusion Probabilistic Model |
| YOLO | You Only Look Once |
| MUSIQ | Multiscale Image Quality |

References

- Li, Y.; Moreau, J.; Ibanez-Guzman, J. Emergent visual sensors for autonomous vehicles. IEEE Trans. Intell. Transp. Syst. 2023, 24, 4716–4737.

- Qi, Y.; Bi, J.; Li, L.; Peng, H.; Zhang, R.; He, X. A quantum-resistant lightweight hierarchical privacy protection scheme for traffic images. IEEE Internet Things J. 2025, 12, 24615–24630.

- He, X.; Li, L.; Peng, H.; Tong, F. A multi-level privacy-preserving scheme for extracting traffic images. Signal Process. 2024, 220, 109445.

- Lu, Z.; Qu, G.; Liu, Z. A survey on recent advances in vehicular network security, trust, and privacy. IEEE Trans. Intell. Transp. Syst. 2018, 20, 760–776.

- Li, H.; Li, W.; Zhang, H.; He, X.; Zheng, M.; Song, H. Automatic image annotation by sequentially learning from multi-level semantic neighborhoods. IEEE Access 2021, 9, 135742–135754.

- Lotfi, F.; Jamzad, M.; Beigy, H.; Farhood, H.; Sheng, Q.Z.; Beheshti, A. Knowledge graph construction in hyperbolic space for automatic image annotation. Image Vis. Comput. 2024, 151, 105293.

- Chen, S.; Zhang, Z. A Semi-Automatic Magnetic Resonance Imaging Annotation Algorithm Based on Semi-Weakly Supervised Learning. Sensors 2024, 24, 3893.

- Yu, Z.; Ye, Y.; Pan, J. scMapNet: Marker-based cell type annotation of scRNA-seq data via vision transfer learning with tabular-to-image transformations. J. Adv. Res. 2025, 76, 715–729.

- Rennie, S.J.; Marcheret, E.; Mroueh, Y.; Ross, J.; Goel, V. Self-Critical Sequence Training for Image Captioning. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Honolulu, HI, USA, 21–26 July 2017; pp. 7008–7024.

- Boukthir, K.; Qahtani, A.M.; Almutiry, O.; Dhahri, H.; Alimi, A.M. Reduced annotation based on deep active learning for Arabic text detection in natural scene images. Pattern Recognit. Lett. 2022, 157, 42–48.

- Yang, Y.; Soatto, S. FDA: Fourier Domain Adaptation for Semantic Segmentation. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Seattle, WA, USA, 13–19 June 2020; pp. 4085–4095.

- Tan, K.; Tang, J.; Zhao, Z.; Wang, C.; Zhang, X.; Miao, H.; Chen, X. Learning with limited annotations: Deep semi-supervised learning paradigm for layer-wise defect detection in laser powder bed fusion. Opt. Laser Technol. 2025, 185, 112586.

- Hoxha, G.; Sumbul, G.; Henkel, J.; Möllenbrok, L.; Demir, B. Annotation Cost-Efficient Active Learning for Deep Metric Learning-Driven Remote Sensing Image Retrieval. IEEE Trans. Geosci. Remote Sens. 2024, 62, 5636211.

- Jiu, M.; Song, X.; Sahbi, H.; Li, S.; Chen, Y.; Guo, W.; Guo, L.; Xu, M. Image classification with deep reinforcement active learning. arXiv 2024, arXiv:2412.19877.

- Wen, W.; Yuan, Z.; Zhang, Y.; Wang, T.; Xiao, X.; Zhao, R.; Fang, Y. Image Privacy Protection: A Survey. arXiv 2024, arXiv:2412.15228.

- Goodfellow, I.J.; Pouget-Abadie, J.; Mirza, M.; Xu, B.; Warde-Farley, D.; Ozair, S.; Courville, A.; Bengio, Y. Generative adversarial nets. In Proceedings of the 27th International Conference on Neural Information Processing Systems (NeurIPS 2014), Montréal, QC, Canada, 8–13 December 2014; pp. 2672–2680.

- Ho, J.; Jain, A.; Abbeel, P. Denoising Diffusion Probabilistic Models. Adv. Neural Inf. Process. Syst. 2020, 33, 6840–6851.

- Nichol, A.Q.; Dhariwal, P. Improved Denoising Diffusion Probabilistic Models. In Proceedings of the 38th International Conference on Machine Learning (ICML 2021), Virtual Event (Originally Vienna, Austria), 18–24 July 2021; Volume 139, pp. 8162–8171.

- Song, J.; Meng, C.; Ermon, S. Denoising Diffusion Implicit Models. In Proceedings of the International Conference on Learning Representations (ICLR 2021), Virtual Event (Vienna, Austria), 3–7 May 2021.

- Rombach, R.; Blattmann, A.; Lorenz, D.; Esser, P.; Ommer, B. High-Resolution Image Synthesis with Latent Diffusion Models. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), New Orleans, LA, USA, 19–24 June 2022; pp. 10684–10695.

- Saharia, C.; Chan, W.; Ho, J.; Kim, J.; Salimans, T.; Denton, E.; Ghasemipour, S.K.S.; Ayan, B.K.; Mahdavi, S.S.; Lopes, R.G.; et al. Photorealistic Text-to-Image Diffusion Models with Deep Language Understanding. In Proceedings of the 36th International Conference on Neural Information Processing Systems (NeurIPS 2022), New Orleans, LA, USA, 28 November–9 December 2022; Volume 35, pp. 36479–36494.

- Dhariwal, P.; Nichol, A. Diffusion Models Beat GANs on Image Synthesis. In Proceedings of the 35th International Conference on Neural Information Processing Systems (NeurIPS 2021), Virtual Event, 6–14 December 2021; Volume 34, pp. 8780–8794.

- Li, Y.; Zhang, T.; Wang, J.; Zhao, Q. LDFA: Latent Diffusion-Based Face Anonymization Framework. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition Workshops (CVPRW), Vancouver, BC, Canada, 18–22 June 2023; pp. 3198–3204.

- Kingma, D.P.; Welling, M. Auto-Encoding Variational Bayes. In Proceedings of the International Conference on Learning Representations (ICLR), Banff, AB, Canada, 14–16 April 2014.

- Chen, H.; Liu, Z.; Wang, Y.; Zhang, H. Diff-Privacy: Personalized Face Privacy Protection via Diffusion Models. In Proceedings of the IEEE International Conference on Computer Vision (ICCV), Paris, France, 2–6 October 2023.

- Shaheryar, M.; Lee, J.T.; Jung, S.K. IDDiffuse: Dual-Conditional Diffusion Model for Enhanced Facial Image Anonymization. In Proceedings of the 17th Asian Conference on Computer Vision (ACCV 2024), Singapore, 2–6 December 2024; pp. 4017–4033.

- Kung, H.-W.; Varanka, T.; Sim, T.; Sebe, N. NullFace: Training-Free Localized Face Anonymization. arXiv 2025, arXiv:2503.08478.

- Deng, J.; Guo, J.; Xue, N.; Zafeiriou, S. ArcFace: Additive Angular Margin Loss for Deep Face Recognition. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Long Beach, CA, USA, 16–19 June 2019; pp. 4690–4699.

- Yu, C.; Wang, J.; Peng, C.; Gao, C.; Yu, G.; Sang, N. BiSeNet: Bilateral segmentation network for real-time semantic segmentation. In Proceedings of the European Conference on Computer Vision (ECCV), Munich, Germany, 8–14 September 2018; pp. 325–341.

- Di, D.; Feng, H.; Sun, W.; Ma, Y.; Li, H.; Chen, W.; Fan, L.; Su, T.; Yang, X. DH-FaceVid-1K: A Large-Scale High-Quality Dataset for Face Video Generation. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), Honolulu, HI, USA, 21–23 October 2025; pp. 12124–12134.

- Yang, S.; Luo, P.; Loy, C.C.; Tang, X. WIDER FACE: A Face Detection Benchmark. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Las Vegas, NV, USA, 27–30 June 2016; pp. 5525–5533.

- Thomas, L.R. RealFace–Pedestrian Face Dataset. arXiv 2024, arXiv:2409.00283.

- Ruiz, N.; Chong, E.; Rehg, J.M. Fine-Grained Head Pose Estimation Without Keypoints. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition Workshops (CVPRW), Salt Lake City, UT, USA, 18–22 June 2018; pp. 2074–2083.

- Kellnhofer, P.; Recasens, A.; Stent, S.; Matusik, W.; Torralba, A. Gaze360: Physically Unconstrained Gaze Estimation in the Wild. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), Seoul, Republic of Korea, 27 October–2 November 2019; pp. 6912–6921.

- Ryumina, E.; Dresvyanskiy, D.; Karpov, A. In Search of a Robust Facial Expressions Recognition Model: A Large-Scale Visual Cross-Corpus Study. Neurocomputing 2022, 514, 435–450.

- Heusel, M.; Ramsauer, H.; Unterthiner, T.; Nessler, B.; Hochreiter, S. GANs trained by a two time-scale update rule converge to a local Nash equilibrium. In Proceedings of the 30th International Conference on Neural Information Processing Systems (NeurIPS 2017), Long Beach, CA, USA, 4–9 December 2017; pp. 6626–6637.

- Ke, J.; Wang, Q.; Wang, Y.; Milanfar, P.; Yang, F. MUSIQ: Multi-Scale Image Quality Transformer. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), Virtual Event (Montreal, QC, Canada), 11–17 October 2021; pp. 5148–5157.

- Kung, H.-W.; Varanka, T.; Saha, S.; Sim, T.; Sebe, N. Face Anonymization Made Simple. In Proceedings of the IEEE/CVF Winter Conference on Applications of Computer Vision (WACV), Tucson, AZ, USA, 1–3 March 2025; pp. 1040–1050.

- Maximov, M.; Elezi, I.; Leal-Taixé, L. CIAGAN: Conditional Identity Anonymization Generative Adversarial Networks. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Seattle, WA, USA, 13–19 June 2020; pp. 5447–5456.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Published by MDPI on behalf of the World Electric Vehicle Association. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.