1. Introduction

The successful use of automated vehicles will have a great impact on human live [

1]. It will bring more convenient and easier driving experiences for human. How to offer accurate, stable, and reliable navigation information is pivotal in order to keep an automated vehicle safe. The inertial navigation system (INS) has been widely used for automated vehicle positioning and navigating because of its high autonomy, concealment, continuum, insusceptible climate, and the successive supply of position, velocity, and attitude (PVA) information [

2]. The strapdown inertial navigation system (SINS) is simple, small in size, and is convenient in maintenance compared with plat INS (PINS) and is also very popular in vehicle navigation systems [

3,

4]. However, the random drift error of gyroscope in SINS may lead to serious accumulated navigation errors during the long operation of SINS alone. As a result, how to keep the accuracy of SINS has attracted the attention of many researchers.

There are two ways to improve the accuracy of SINS. One uses an enhanced production technology of IMU such as improving the structure of the gyroscope [

5,

6,

7] and using more advanced materials [

8,

9] to improve the accuracy of SINS. However, these methods will increase the production cost and make a longer research cycle of IMU. The other way is to combine IMU with other sensors to mitigate the SINS drift. Integrated IMU with Global Navigation Satellite System (GNSS) is a widely used approach to improve the accuracy of SINS [

10,

11,

12]. Map-based navigation is another approach to get high accuracy navigation [

13]. Fusing map matching with SINS can also improve the accuracy of vehicle navigation [

14]. However, the signals of GNSS and satellite imageries can be jammed easily and are sensitive to weather and environmental conditions [

15]. In addition, using GNSS and map matching will cause the navigation system to lose the high autonomy of SINS.

Machine vision is increasingly being used in automated driving. An automated car is usually driven on structural roads [

16]. The characteristics of structural roads include clear road markings, a sample background, and obvious geometric shapes. Satzoda et al. propose a drive analysis method using a camera, IMU, and the Global Position System (GPS) to get the position of the vehicle [

17]. Vivacqua et al. propose the low-cost sensors approach for accurate vehicle localization and autonomous driving that uses a camera and SINS [

18]. However, in these applications, the visual sensor is only used for the lanes detection and the visual information is not fused for navigation. An improved Features from Accelerated Segment Test (FAST) feature extraction based on the Random Sample Consensus (RANSAC) method is proposed to remove the mismatched points in Reference [

19]. It uses point feature extraction to improve the accuracy of the navigation system during driving. These studies are all about moving vehicles. There is no study on the inertial and visual integrated navigation system when the vehicle stops. However, stops, such as waiting for the traffic lights, comity pedestrians that are inevitable in automated driving. The navigation information in static state is an important part of navigation systems as well. Thus, it is necessary to study the application of the navigation system at a static situation. Moreover, all these published papers on integrated navigation systems are combined with GNSS and lose the autonomy of navigation systems.

In this paper, a novel integrated navigation method only based on inertial visual sensors is proposed. The camera is not used for lane detection. It is the first time that a combined line feature in the image with SINS, in order to constitute an integrated navigation system, has been presented. Additionally, the feasibility of the proposed method is proved by the static experiment. It lays a theoretical foundation for the research of the proposed method in a dynamic situation. Experimental results show that the proposed inertial-visual integrated navigation system can improve the accuracy and reliability of the navigation system.

3. Visual Image Processing

In this section, we discuss how to obtain the line feature from the image, and how to translate the line feature to the navigation information, which can be combined with SINS. Firstly, the line feature includes the angle feature parameter

, which is extracted by the Hough transform [

22]. Next, by analyzing the mean of

, we get the relative attitude error of the vehicle when it stops. Then, it projected the relative attitude error from the

im-coordinate to the

b-coordinate. Thus, the relative attitude error can be combined with the attitude error of SINS via KF.

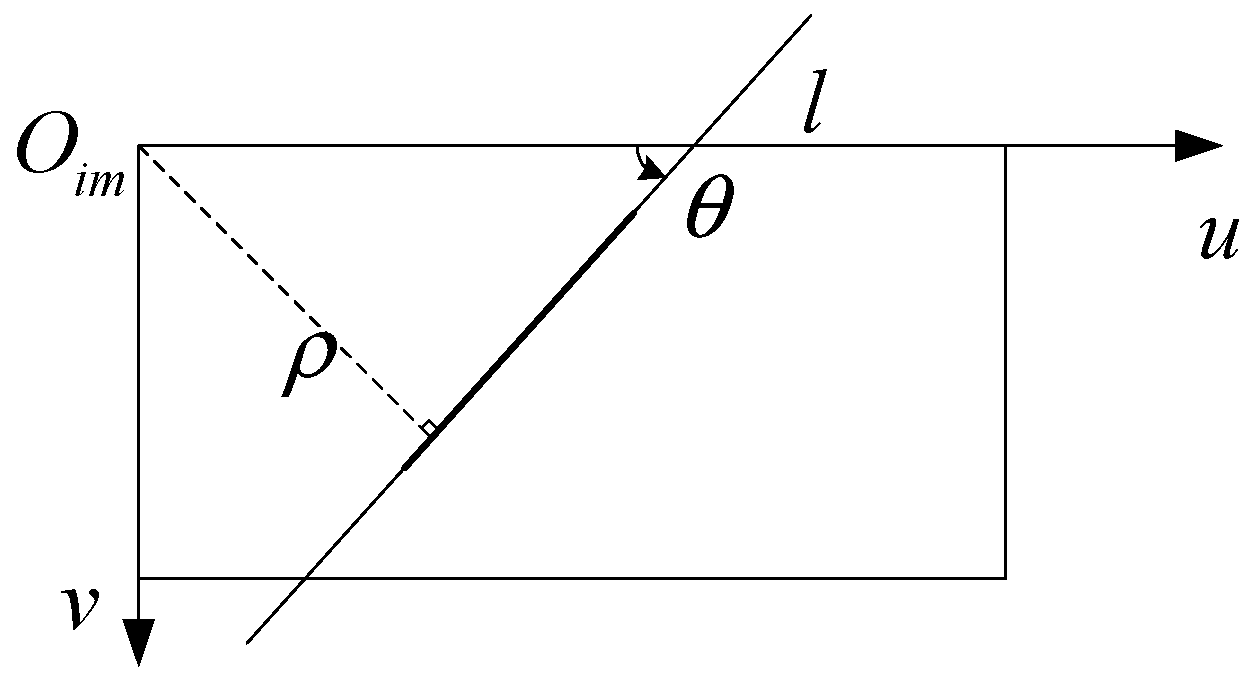

In

Figure 1, the line feature extracted by the Hough transform in image processing is given by [

22]

where

,

, and

denote the origin point and axis of the image plane, and the axis of the image plane,

denotes the angle of the line and axis

,

,

denote the distance of line and

.

It is important to transform the line feature in the n-coordinate as a navigation parameter for fusing image information into the navigation system. As per the discussion below, we can obtain a related attitude error, which can be combined with SINS, from the angle feature parameter .

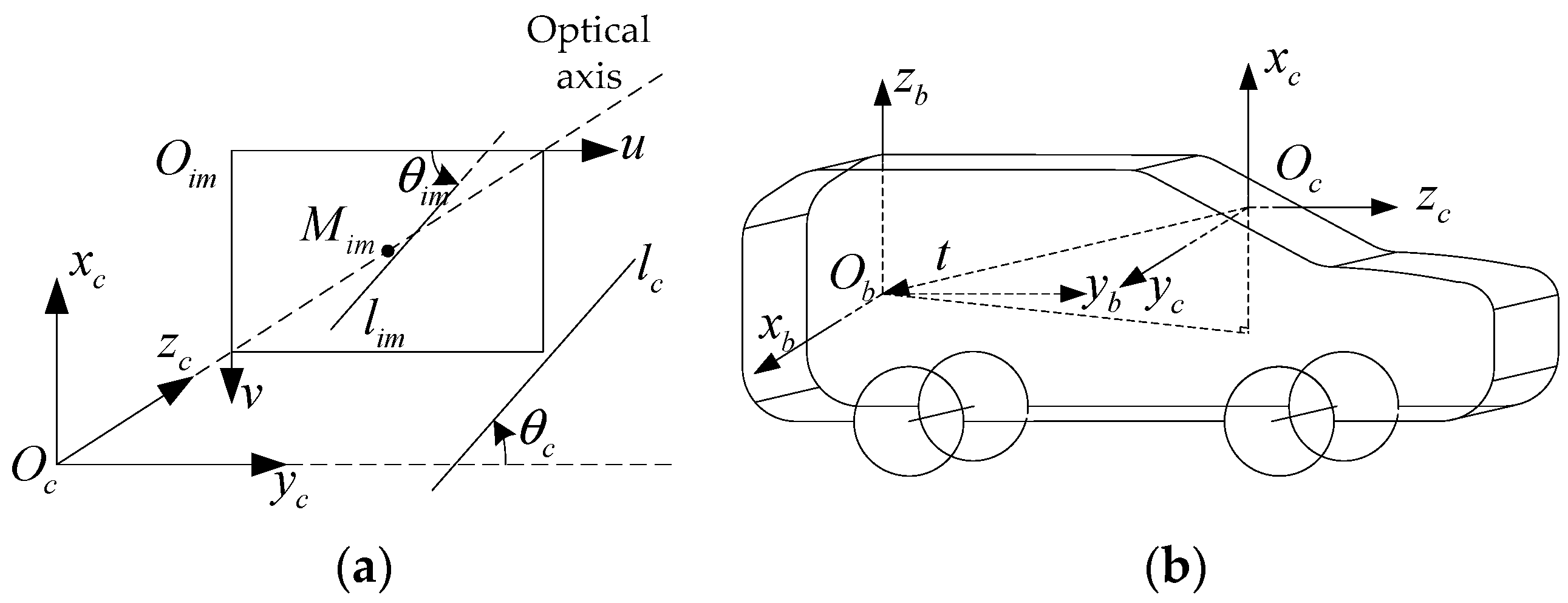

From the process of a camera record, as is known to all, we can get the relationship between the

im-coordinate and the

c-coordinate, as shown in

Figure 2a. The optical axis of the camera superposes with the

axis and crosses the image plane in the middle point

.

In

Figure 2a, the line

lim is in the

im-coordinate, and its angle feature parameter is

θim. The projection of

in

c-coordinate is

, and the angle feature parameter is

. According to the principle of camera imaging, the image plane parallels with the

plane in the

c-coordinate. So,

. Thus,

. In other words, the feature angle in the

c-coordinate is the same as within the

im-coordinate.

Figure 2b shows the relationship between the

c-coordinate and

b-coordinate. From it, the transfer matrix between the

c-coordinate and

b-coordinate is given by

The vehicle attitude includes the pitching angle, rolling angle, and heading angle. They mean the angle

b-coordinate rotates around the

,

, and

axis, respectively. In this paper, we set that the

c-coordinate is orthogonal to the

b-coordinate. So, the angle caused by heading and pitching will be not projected onto the

zc axis. Thus, when the vehicle is rolling,

θc will be changed. Additionally, the pitching and heading of the vehicle cannot change

. Thus, we can use

to describe the vehicle-rolling angle. We define the vehicle rolling angle error as

, it is the difference between

and the mean value of

. Thus, the visual attitude error

in the

c-coordinate is derived as

Thus,

can be translated into the

b-coordinate as

, which is expressed as

Thus, the visual attitude error in the

n-coordinate is derived as

4. The Proposed Fusion Algorithm

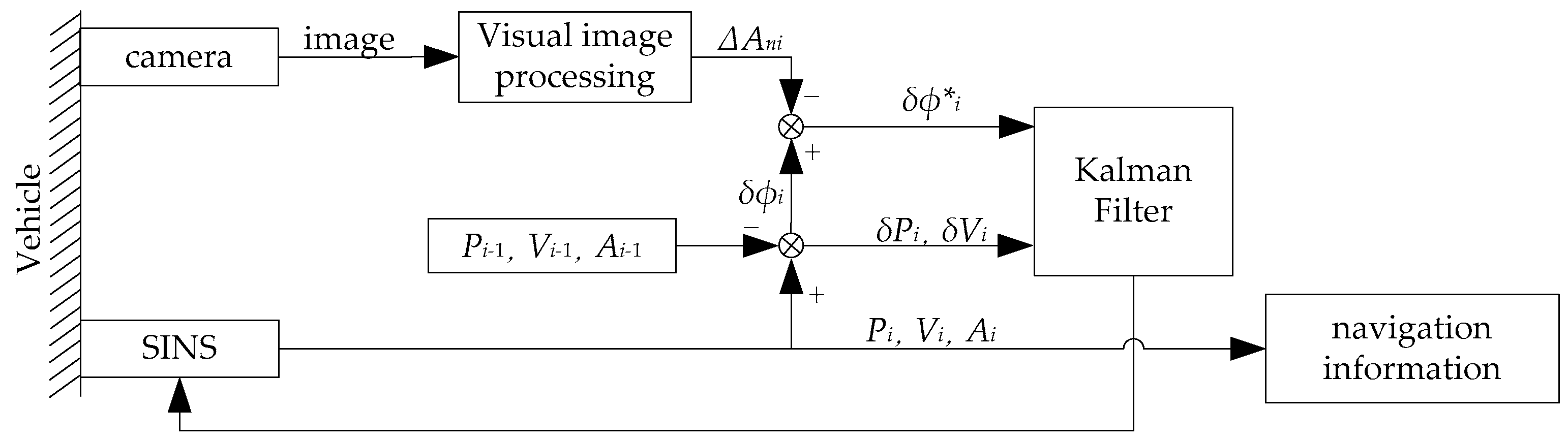

In this section, we describe how to fuse the visual attitude error from images with SINS. The inertial-visual integrated navigation system schematic is shown in

Figure 3. We fuse the visual attitude error with the SINS attitude error. Then, using Kalman Filter to estimate errors of position, velocity and attitude to improve the accuracy of SINS. In this section, we discuss the algorithm of how to fuse the visual attitude error with SINS.

To fuse the visual attitude error with SINS, the attitude error of inertial-visual integrated navigation system can be obtained as

Thus, the new measurement vector is expressed as

An image is constituted by pixels. The image offered by the camera, which is fixed on the static vehicle, only has slight variations. The line feature in those images is a random value in finite countable values. It is not vailed that applying the white noise model to the inertial-visual integrated navigation system measurement noise. Thus, we cannot fuse the visual attitude error with the SINS directly. It is necessary to find a new model for the inertial-visual integrated navigation system measurement noise.

Ideally, the line feature of images from the camera fixed on static vehicles is the same. That means that the visual attitude error of static vehicles is zero. However, there are some noise sources, like the shuddering of the engine and the actions of the driver and passengers. Thus, can be thought of as the visual attitude measurement noise.

In a known size image, the status number of line feature

is finite and enumerable. For an appointed line, in all the

frames of the video, line feature

is only related to the last one. In other words, we define

as the state space of

. For any

, there are

,

,…,

. When it is known that

,

,…,

, the condition probability curve is related to

, and is not related to

,

,…,

. That means

Thus, the visual attitude measurement noise can be modeled by the first-order Markov Process.

The inertial-visual integrated navigation system measurement noise,

, includes SINS measurement noise and the visual attitude measurement noise. SINS measurement noise is a Gaussian White noise. Additionally, the inertial-visual integrated navigation system is a linear system. Thus,

also can be modeled by the first-order Markov Process. Thus,

satisfies the equation as

where

,

is the inverse correlation time constant of the first-order Markov Process,

is the sample interval,

is white noise with a zero mean and is uncorrelated with

[

20].

From Equation (12),

can be expressed as

We have combined the Equations (1), (22), and (23) into the right side of Equation (2) here

By rearranging Equation (24), it becomes

The measurement matrix and measurement noise vector can then be expressed as

Additionally, the new measurement equation becomes

The mean and variance of

can be expressed as

where

is white noise with a zero-mean. Thus, the covariance matrix of

can be expressed as

is correlated with

, and the correlation coefficient is

. Therefore, Equations (3), (5), and (7) become

5. Experiment and Results

As shown in

Figure 4, an experimental system is assembled to evaluate the proposed approach. The system includes an IMU, a camera, a GPS, and a power system on a vehicle. IMU is constituted by a three-axis fiber-optic gyroscope with three accelerometers on each gyro-axis. The camera is a vehicle data recorder. The IMU, GPS receiver, and power system are in the vehicle trunk. The IMU is fixed on the vehicle via a steel plate which is parallel with the under panel of the vehicle. The GPS antennas are on the top of the vehicle. The camera is attached to the windshield. When installing the camera, the Gradienter, and the Vertical marker are used to make sure the optical axis is parallel with the North-East plane of the

n-coordinate.

During the experiment, the vehicle is started and stopped. In this experiment, the GPS is used to provide a coarse alignment of the position system and to provide a position reference. In the experiment, the frequency of the IMU is 100 Hz, the frequency of the video is 25 Hz, the image resolution is 1920 × 1080, and the static time length is two min.

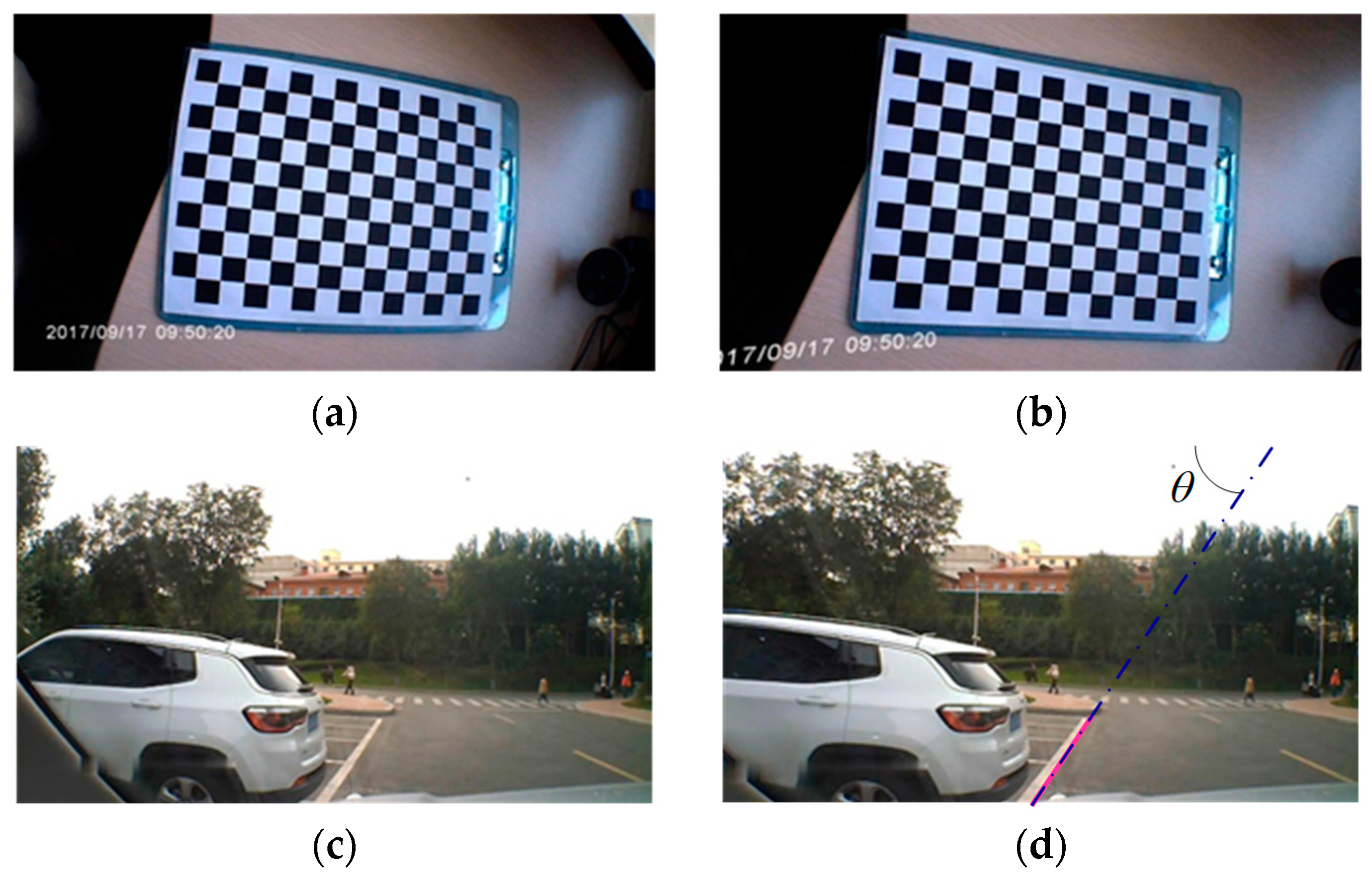

5.1. Camera Information Pre-Processing

The camera used in the experiment is a wide-angle automobile data recorder. As the picture taken from the wide-angle is distorted, the picture needs calibration. Thus, the picture needs pre-process to remove its distortion. We calibrated the camera in a lab environment to get the parameters of the camera using the Lee-method [

23]. Camera calibration results are shown in

Figure 5a,b. We then used the parameters from the calibration to calibrate the on-road experiment images, as shown in

Figure 5c,d. Apparently, the distortion was reduced.

During the experiment, the vehicle stops, the view of the camera is fixed. There are two-lane lines, the white solid line, and the yellow dotted line. We chose the white one as the reference object and extracted its line feature parameters, as shown in Equation (18). As shown in

Figure 5d, the pink line is the extraction line, and the blue solid-dotted line is the schematic of the extraction line. After extracting all images in the video during this static experiment, we get

in Equation (14) of all the images.

Based on the line feature parameter

, and the transfer matrixes

and

, calculated the attitude error

offered by image processing as shown in

Section 3.

5.2. Experimental Results and Discussions

In this section, we discuss the experimental results from two aspects, static attitude error and static position estimation. The experimental results prove that the proposed inertial-visual integrated navigation system can improve the accuracy and stability of the navigation system.

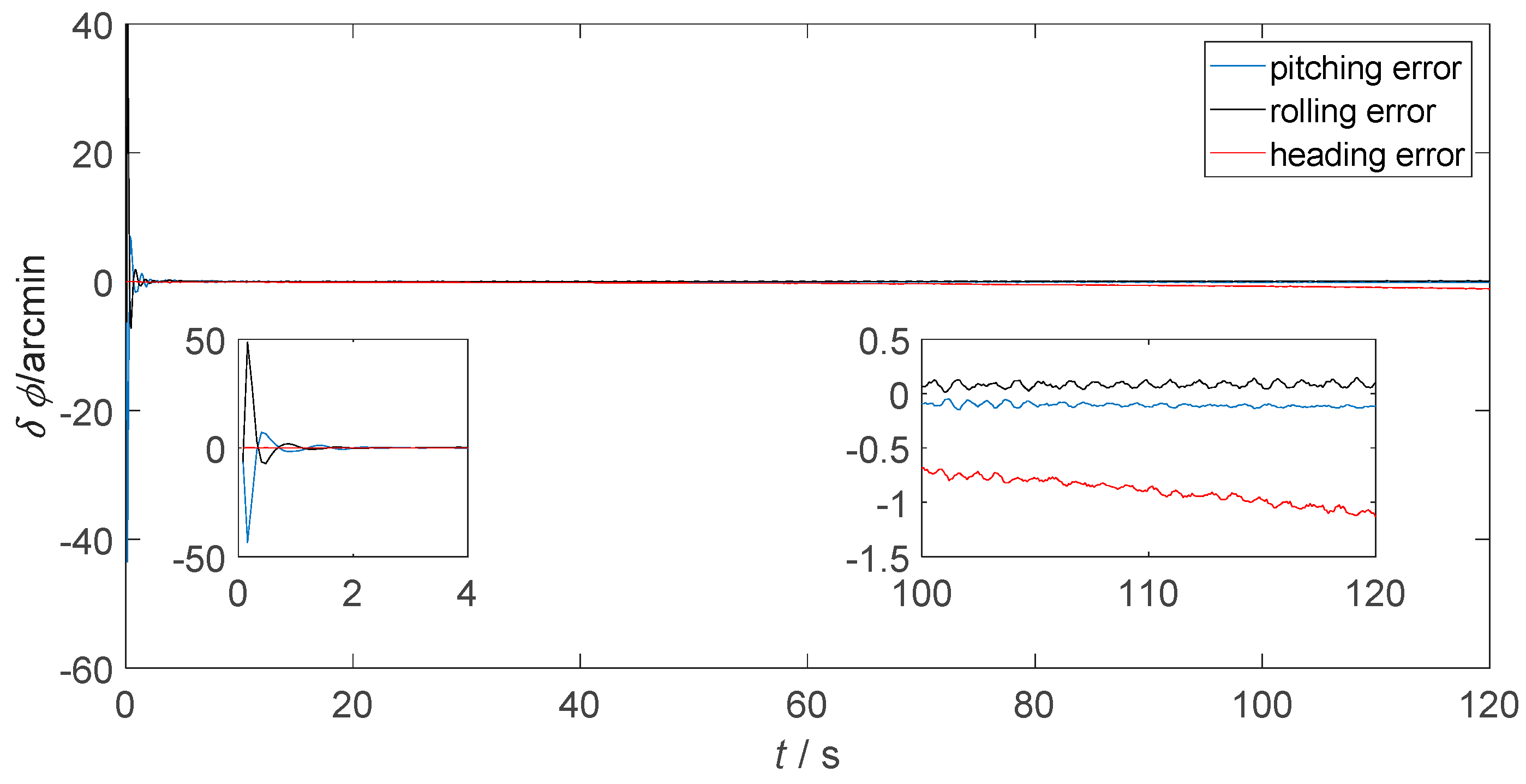

Figure 6 presents the static attitude error of only-SINS.

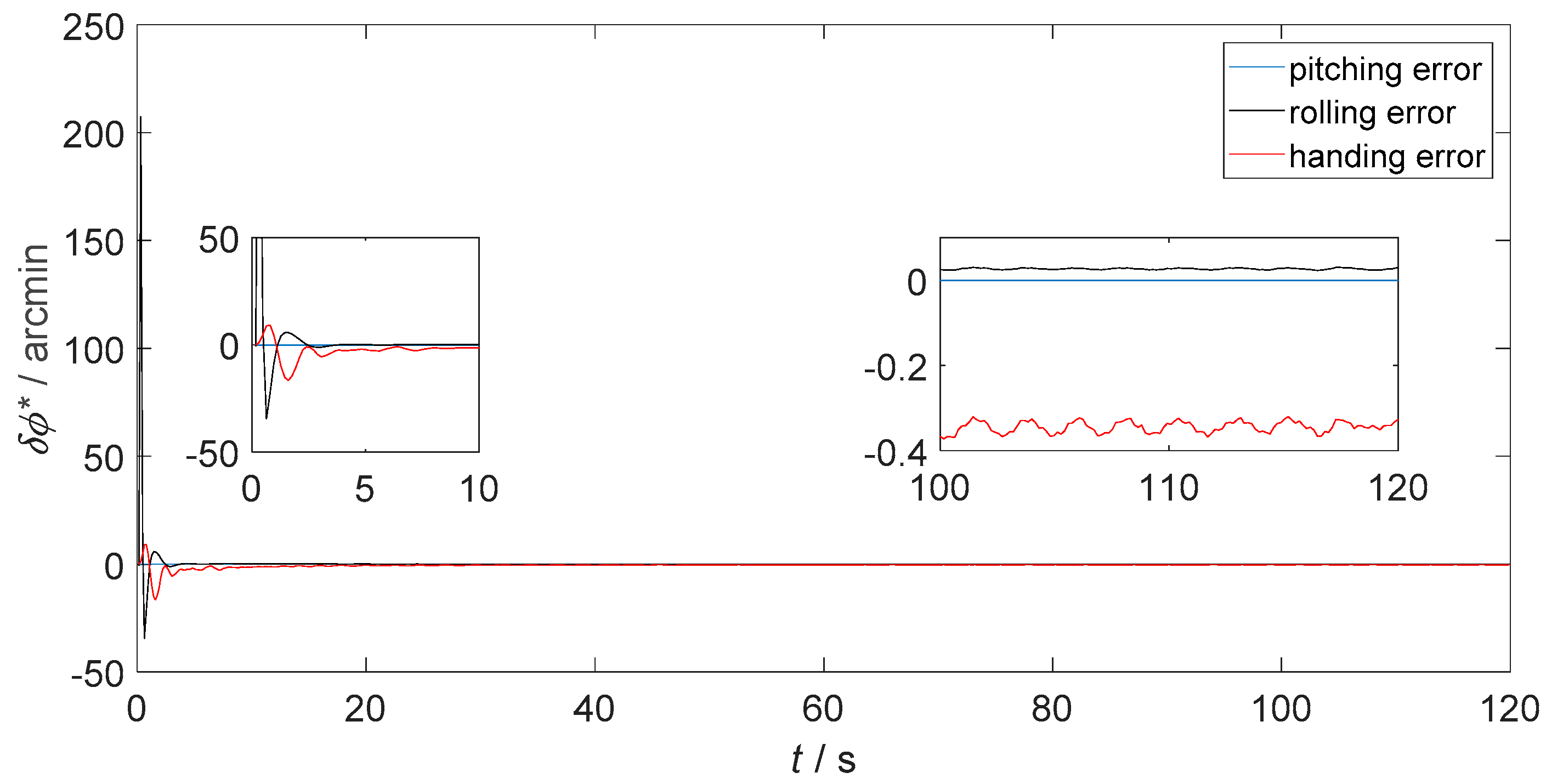

Figure 7 and

Figure 8 show the static attitude error of the inertial-visual integrated navigation system that fused directly and as proposed, respectively. The attitude error includes the pitching error, rolling error, and heading error, as shown in the legend. While, the initial alignment times of integrated navigation systems are longer than only-SINS. The initial alignment time of the proposed inertial-visual integrated navigation system meets the requirements of automated vehicle applications. Besides, the integrated navigation system has a longer initial alignment time that resulted from the data rate of the camera being lower than that of the IMU. Thus, using high data rate cameras is a useful method to reduce the initial alignment time of the integrated navigation system [

24].

The attitude errors from 100 s to 120 s are shown in the enlarged inset pictures of

Figure 6,

Figure 7 and

Figure 8. For only-SINS, the heading error was increased, up to −0.65 arcmin at 120 s. For the direct inertial-visual integrated navigation system, the heading error was increased too, up to −1.20 arcmin at 120 s. It confirms that the white noise model does not fit to the inertial-visual integrated navigation system measurement noise, while the attitude errors of the proposed model are stable, as shown in

Figure 8. The heading error keeps at −0.35 arcmin in the enlarged picture. The heading error of the proposed integrated method is more stable than the only-SINS and the direct integrated method during, from 20 s to 120 s. It is decreased by 46.15% compared to the heading error of only-SINS at 120 s. The

Figure 6,

Figure 7 and

Figure 8 show that the proposed inertial-visual integrated navigation system improves the accuracy and stability of the navigation system.

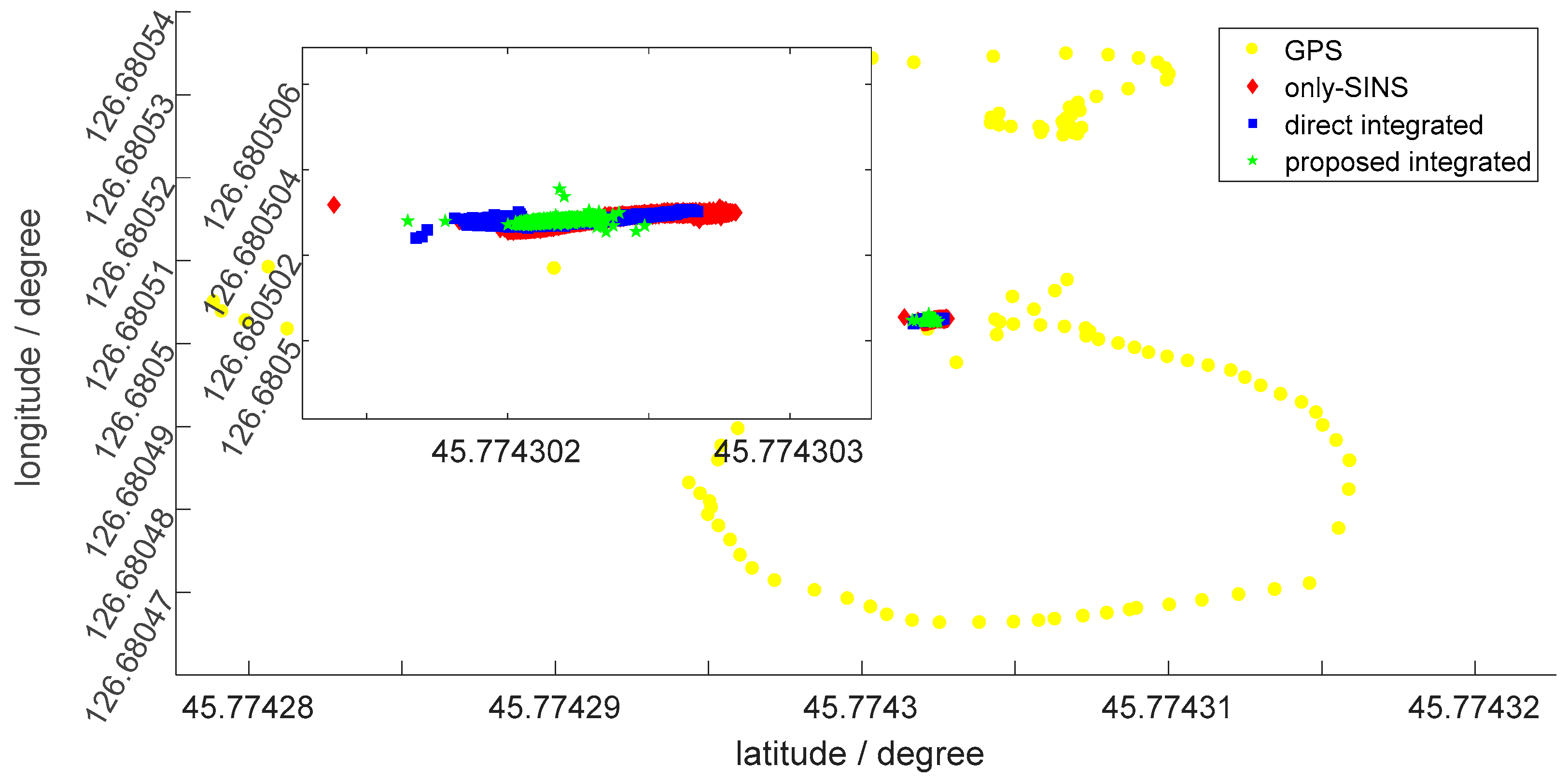

Figure 9 shows the static position, during 120 s, by the latitude and longitude of the GPS, only-SINS, direct inertial-visual integrated navigation system, and the proposed inertial-visual integrated navigation system, as shown in the legend. The enlarged inset picture indicates the position of the only-SINS and integrated navigation system. As shown in

Figure 9, the position estimation range of the proposed inertial-visual integrated navigation system is more concentrated than the only-SINS and direct inertial-visual integrated navigation system. For GPS, the amplitude variation of latitude and longitude are 4 × 10

−50 and 8 × 10

−50, respectively. The GPS position can prove that the position estimations of the other three navigation modes is receivable. For the only-SINS, the amplitude variation of latitude and longitude are 1.4236 × 10

−60 and 6.3310 × 10

−70, respectively. For the direct inertial-visual integrated navigaiton system, the amplitude variation of latitude and longitude are 9.9625 × 10

−70 and 6.7123 × 10

−70, respectively. The position range is not obvious difference with only-SINS. Thus, the direct integrated method cannot improve the accuracy of the navigation system. For the proposed inertial-visual integrated navigaiton system, the amplitude variation of latitude and longitude are 8.3885 × 10

−70 and 3.5869 × 10

−70, respectively. The position estimation of the proposed inertial-visual integrated navigation system is more stable than that of only-SINS and direct inertial-visual integrated navigation system. It also can be reflected by the position standard deviation, as listed in

Table 1.

Figure 9 shows that the proposed inertial-visual integrated navigation system improves the accuracy of the position estimation.

Table 1 lists the position standard deviation of GPS, only-SINS direct inertial-visual integrated navigation system, and the proposed inertial-visual integrated navigation system. It shows the standard deviation of the proposed inertial-visual integrated navigation system is lower than only-SINS and direct inertial-visual integrated navigation systems. Compared with only-SINS, standard deviation of the proposed inertial-visual integrated navigation system decreases by 69.34% and 21.27% of latitude and longitude, respectively. The latitude and longitude standard deviation of the proposed inertial-visual integrated navigation system are 35.59% and 72.99% of the direct inertial-visual integrated navigation system. The data in

Table 1 verifies that the inertial-visual fusion method can improve the stability of the navigation system.

6. Conclusions

In this paper, it is the first time that the inertial-visual integrated navigation system, which combined the line feature in the image with SINS via KF, has been proposed. The experimental results show that the proposed inertial-visual integrated navigation system improves the accuracy and reliability of the navigation system prominently. In the meantime, the inertial-visual integrated system can keep the autonomy of the SINS. This method provides a high accuracy inertial-visual integrated navigation system and fills the static condition of an automated vehicle. At the same time, it lays a theoretical foundation for the research of the proposed method in dynamic situation.

The future work plan includes the proposed inertial-visual integrated system used on automated vehicle moves, like straight driving and making a turn. For the information fusion algorithm, the improved Unscented Kalman Filter (UKF) will be used to reduce the computational load and improve the robustness of the KF [

25,

26]. Additionally, the line feature recognition algorithm will be perfected to improve the accuracy, stability, and reliability of the navigation system.