Abstract

Engineering laboratory courses are essential for developing conceptual understanding and practical skills; however, the time students spend assembling prototypes and troubleshooting wiring issues often reduces opportunities for analysis, programming, and reflective learning. To address this limitation, this study designed and evaluated an integrated STM32-based educational laboratory that consolidates the main peripherals required in a microcontroller course into a single Printed Circuit Board (PCB) platform. A quasi-experimental intervention was implemented with 40 engineering students divided into a control group using the traditional STM32 Blue Pill with breadboard connections and an experimental group using the integrated platform. Throughout ten laboratory sessions, data were collected through pre- and post-tests, laboratory logs, and the Motivated Strategies for Learning Questionnaire Short Form (MSLQ-SF). The experimental group achieved a Hake normalized learning gain of 40.09% compared with 16.22% in the control group, completed the sessions an average of 27 min faster, and made substantially fewer hardware- and connection-related errors. Significant improvements were also observed in metacognitive, motivational, and self-regulated learning scores. Overall, the findings indicate that reducing operational barriers in laboratory work enhances both cognitive and motivational learning processes, supporting the adoption of integrated educational hardware to optimize learning outcomes in engineering laboratory courses.

1. Introduction

Education 4.0 promotes an educational model that integrates digital technologies, artificial intelligence, automation, and connectivity to address the demands of the fourth industrial revolution (Bygstad et al., 2022; Chaka, 2022). This approach creates interactive learning environments that blend theory, practice, and collaboration to develop technical, analytical, and socio-emotional skills (Otto et al., 2024). Within the context of STEM disciplines (Science, Technology, Engineering, and Mathematics), this paradigm encourages students to adapt to evolving technological environments, solve complex problems, and apply their knowledge in real-world situations (Cao et al., 2025).

Engineering disciplines focused on the development of electronic, mechatronic, and embedded systems rely significantly on hands-on learning (Charitopoulos et al., 2022). In these fields, the laboratory plays a crucial role in the educational process, enabling students to apply theoretical principles, validate models, design circuits, and develop practical skills (Das et al., 2022). Courses such as electrical circuits, analog and digital electronics, instrumentation, microcontrollers and sensors require practical experience to demonstrate the physical behavior of systems. This hands-on approach fosters meaningful, contextually relevant learning closely aligned with the technological demands of modern industry (Li & Liang, 2024).

In applied engineering education, traditional methods often require students to completely build and set up circuits or systems before they can conduct experiments. While this approach helps strengthen fundamental assembly and verification skills, it requires significant time spent on operational tasks and troubleshooting (Tokatlidis et al., 2024). Consequently, the time available for analysis, interpretation, and understanding operating principles is diminished, limiting the depth of learning and the overall benefits of laboratory work (Moloi, 2024).

At more advanced levels of training, the goal of practical exercises shifts from circuit assembly to the direct application of course knowledge. This includes tasks such as designing control algorithms, programming microcontrollers, and analyzing signals (Smolaninovs & Terauds, 2025). To achieve this objective, it is essential to have pre-structured platforms that allow laboratory time to be dedicated to experimentation, analysis, and concept validation (Escobar-Castillejos et al., 2024). This approach ensures a more efficient learning experience that focuses on a functional understanding of systems (Nazarov & Jumayev, 2022).

To effectively implement practical exercises and maximize laboratory time, it is essential to include structured learning resources that support their execution and maintain consistent working conditions (Weisberg & Dawson, 2023). In this context, integrated instructional laboratories built on compact Printed Circuit Board (PCB)-based platforms consolidate the critical elements of the experimental environment into a single system. This simplification reduces errors during the execution of practical exercises (Luo et al., 2025). By relying on such integrated laboratory platforms, students can dedicate more time to analysis, programming, and conceptual application, thereby enhancing technical understanding and fostering meaningful learning (Alessa, 2025).

In this context, the Robotics and Mechatronics Engineering Program at the Autonomous University of Zacatecas proposes the implementation of a customized integrated educational laboratory that incorporates peripherals and modules representing the main topics of the microcontroller course. This tool is designed as a compact and functional laboratory that allows students to carry out the practical exercises corresponding to the course content without requiring external setups.

This work presents the design and implementation of an integrated educational laboratory built on a compact STM32-based PCB platform. Conceived as a unified system with General-Purpose Input/Output (GPIO) pins, Analog-to-Digital Converters (ADCs), timers, Inter-Integrated Circuit (I2C) and Serial Peripheral Interface (SPI) communication interfaces, as well as sensors and motor-control modules, the platform eliminates the need for external hardware during practical exercises. Additionally, this study includes a pedagogical evaluation conducted with 40 students enrolled in a microcontroller course. The evaluation methodology comprised diagnostic and post-test surveys, as well as a motivation survey, to assess the integrated laboratory’s impact on learning outcomes, laboratory efficiency, and student perceptions in comparison to traditional teaching methods.

The main contributions of this paper are summarized as follows:

- The hardware design was developed in alignment with the educational and thematic goals of the microcontroller course, ensuring that each module and peripheral of the integrated laboratory directly reinforces the theoretical concepts and practical skills of the subject. The design also supports remote integration, favoring scalability and long-term adaptability for microcontroller learning environments.

- Institutional autonomy and capacity for technological innovation are promoted by developing an educational resource adapted to the specific needs of the academic program.

- A comprehensive pedagogical evaluation is included to assess the impact of using the PCB on student learning, the efficiency of practice development, learning strategies, and motivation compared to traditional methods.

- The quasi-experimental intervention offers evidence of the platform’s impact, showing improvements in conceptual understanding, reductions in execution time, fewer operational errors, and enhanced motivational and self-regulated learning outcomes.

In this context, the following research question arises: To what extent can an integrated educational platform enhance learning compared to traditional methods by reducing operational errors, optimizing practice time, and improving perceived conceptual understanding and laboratory efficiency?

The remainder of this paper is organized as follows: Section 2 reviews related work on the use of educational platforms and their impact on engineering learning. Section 3 describes the development of the integrated laboratory built on a compact STM32-based PCB platform. Section 4 details the experimental study design, the academic context, the participants, and the instruments used to evaluate student performance and perception. Section 5 presents the results, while Section 6 discusses the impact of using the integrated laboratory on the teaching and learning process. Finally, Section 7 presents the conclusions and future perspectives of this work.

2. Related Work

Recent literature has emphasized the importance of educational hardware as a crucial tool for enhancing hands-on learning and conceptual understanding in engineering education. The research reviewed over the past few years includes the design of open-source platforms, as well as the development of educational kits for microcontrollers and the Internet of Things (IoT). While not all studies directly compare traditional methods with hardware-based approaches, there is consensus that interacting with physical devices boosts student motivation, improves knowledge retention, and supports the acquisition of practical skills.

Among the works that have contributed most to the integration of hardware for educational purposes, Cornetta et al. (2025) and Takács et al. (2023) developed open platforms for teaching multisensor instrumentation and automatic control, respectively. These systems, which utilize ESP32 and STM32 microcontrollers, enabled students to engage in experimental embedded design and programming activities, leading to improved understanding and engagement.

Building on these initiatives, other researchers have focused on developing custom hardware boards. Holub et al. (2025) highlighted the extensive use of commercial kits and simulations for teaching analog circuits. Their findings indicated sustained improvements in student performance and satisfaction. Similarly, Ramos et al. (2020) introduced HOPPY, an open-source educational robot designed for teaching dynamics and control, which significantly increased participation in mechatronics courses.

The use of custom-developed hardware has become increasingly important in recent years. Sabari Raj et al. (2023) proposed an educational board based on the Raspberry Pi Pico microcontroller to teach embedded systems concepts in an accessible and reproducible environment. Similarly, Budihal et al. (2022) redesigned the Digital Circuits course by incorporating PCB projects and results-based learning, resulting in a significant improvement in student motivation and mastery of simulation tools.

Several studies have specifically examined microcontroller-based education, focusing on both hardware development and pedagogical effectiveness. Onyeka et al. (2022) created a kit using the ATmega32A microcontroller with integrated peripherals, while Eliza et al. (2025) designed a trainer kit based on the Arduino UNO, which enhanced student motivation and active participation. Both studies highlight the significance of hardware accessibility and modularity in fostering autonomous learning. Similarly, Mahmood et al. (2022) developed a training kit for blended learning environments that used the ATmega2560, combining face-to-face practice with simulation in Proteus, resulting in a 34% increase in perceived learning.

Recent studies have connected microcontroller kits with IoT technologies and more rigorous assessments of student learning. Habibi and Buditjahjanto (2024) demonstrated that a NodeMCU ESP8266-based kit enhances cognitive outcomes when compared to a conventional kit. Additionally, Habibi et al. (2025) found significant improvements in psychomotor skills using an experimental design with Analysis of Variance (ANOVA). Meanwhile, Tkachuk et al. (2025) integrated microprocessor simulation and programming tools, observing an increase of 17% in academic performance and over 40% in digital skills within the experimental group. Collectively, these studies underscore the positive effects of educational hardware on students’ cognitive abilities, practical skills, and digital competencies.

Alternative materials and approaches for teaching electronics have also been explored. Peppler et al. (2023) compared paper circuits with traditional breadboards and concluded that the former promotes a deeper understanding of polarity and PCB design in the early stages of learning. These results, although at a pre-university engineering level, support the idea that the type of hardware directly influences how students conceptualize circuits.

Recent studies that formally compare traditional methods with dedicated hardware are closely aligned with this research. Peter et al. (2024) conducted a quasi-experimental study comparing a customized educational laboratory to the traditional method using breadboards and conventional instrumentation. The results demonstrated that dedicated hardware improved students’ initial conceptual understanding by 23 percentage points (83% for the hardware group compared to 60% for the traditional group), increased knowledge retention by 4 percentage points (80% versus 76%), and achieved 100% hands-on participation, a level of engagement that the traditional group did not reach. Additionally, sentiment analysis using BERT revealed that 75% of students expressed positive perceptions of the PCB experience.

3. Design and Development of the Educational PCB

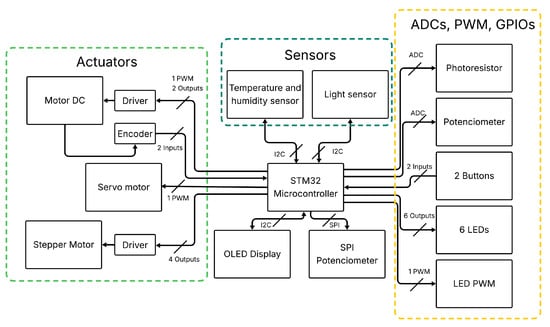

The integrated educational laboratory serves as a comprehensive teaching tool to optimize practical exercises in the microcontroller course. It was developed to address the need for a device that combines a microcontroller’s primary peripherals on a single platform, thereby eliminating reliance on external components and minimizing connection errors. The developed integrated laboratory, called STM32Lab, involved a hardware requirements analysis and the corresponding circuit and PCB design.

3.1. Hardware Characteristics

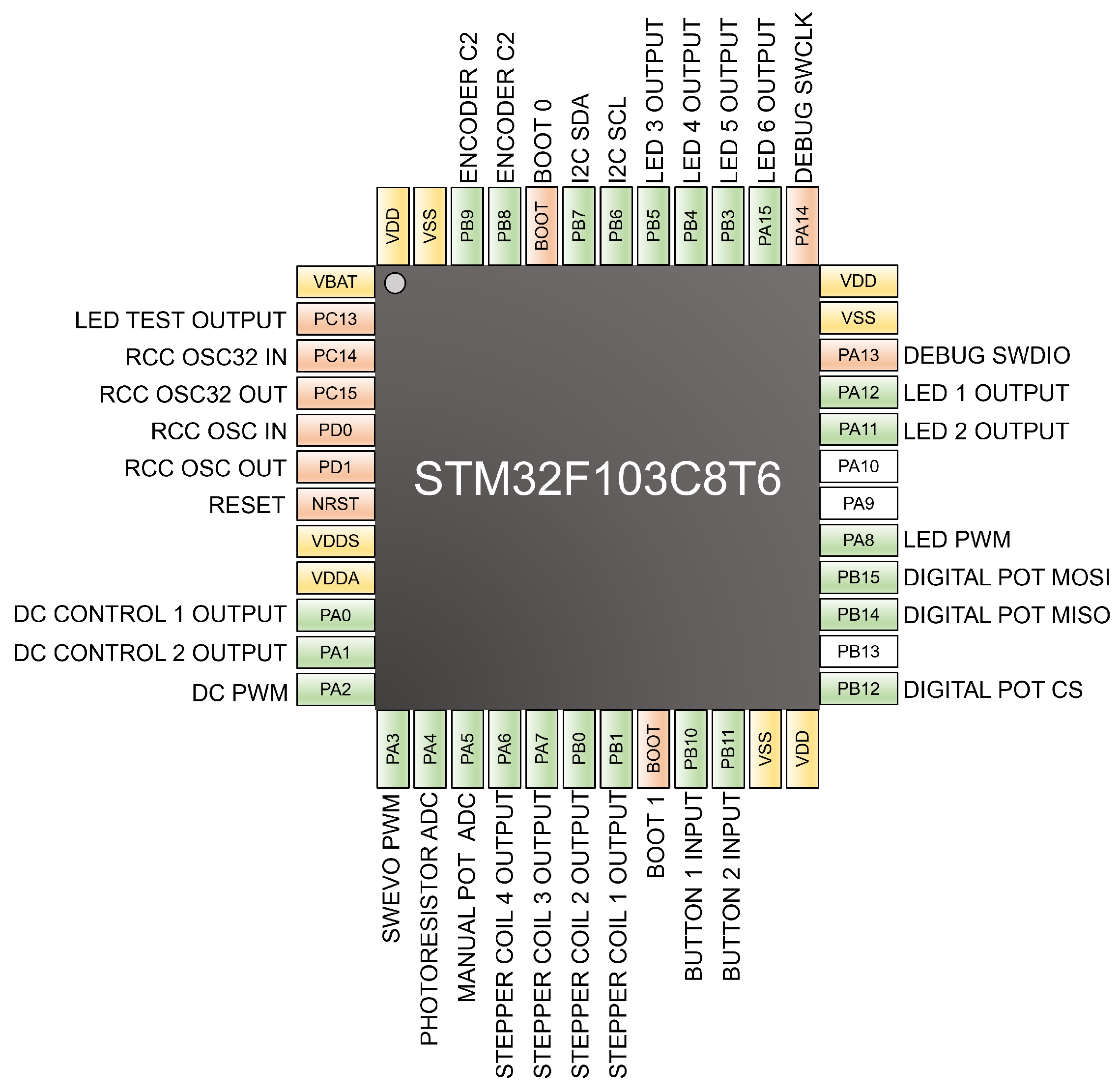

The STM32F103C8T6 microcontroller (STM32F103C8T6, 2023) is the core of the STM32Lab, chosen for its effective balance of performance, cost, and availability in academic environments (Jacko et al., 2022; Sozański, 2023). This device, based on the Advanced RISC Machine (ARM) Cortex-M3 core, delivers a high-performance 32-bit processing architecture capable of running at up to 72 MHz, supporting efficient interrupt handling, precise timer operations, and low-latency peripheral control. These features allow students to work with real-world embedded applications rather than simplified abstractions. In addition, it integrates essential peripherals for modern microcontroller education, including 12-bit ADCs, Pulse-Width Modulation (PWM) timers, and communication interfaces such as Universal Synchronous/Asynchronous Receiver-Transmitter (USART), SPI, and I2C. Its compatibility with widely used development tools, such as STM32CubeMX (STMicroelectronics, 2025) and Keil uVision (Arm Ltd., 2024), further facilitates the creation of structured, reproducible exercises within the course.
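As an illustration of what the 12-bit resolution means in practice, the following minimal Python sketch converts a raw ADC code into a voltage. It assumes a 3.3 V reference and the full-scale convention in which the largest code (4095) maps to the reference voltage; both are assumptions for illustration, and the function name `adc_to_volts` is hypothetical rather than part of the STM32Lab material.

```python
ADC_BITS = 12                      # ADC resolution stated for the STM32F103C8T6
ADC_MAX = (1 << ADC_BITS) - 1      # largest 12-bit code: 4095
VREF_VOLTS = 3.3                   # assumed reference voltage (illustrative)


def adc_to_volts(code: int, vref: float = VREF_VOLTS) -> float:
    """Convert a raw 12-bit ADC code to volts, assuming ADC_MAX maps to vref."""
    if not 0 <= code <= ADC_MAX:
        raise ValueError(f"code must be in 0..{ADC_MAX}")
    return code * vref / ADC_MAX


if __name__ == "__main__":
    # Print the voltage for the lowest, a mid-scale, and the highest code.
    for code in (0, 2048, ADC_MAX):
        print(code, round(adc_to_volts(code), 4))
```

Under these assumptions one code step corresponds to roughly 0.8 mV, which is the granularity students would observe when reading the potentiometer.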

The STM32Lab architecture is designed with a modular approach. Each component represents a key peripheral of the STM32 microcontroller and directly aligns with the themes of the course. This design ensures that each module contributes to the gradual development of the technical skills outlined in the academic program.

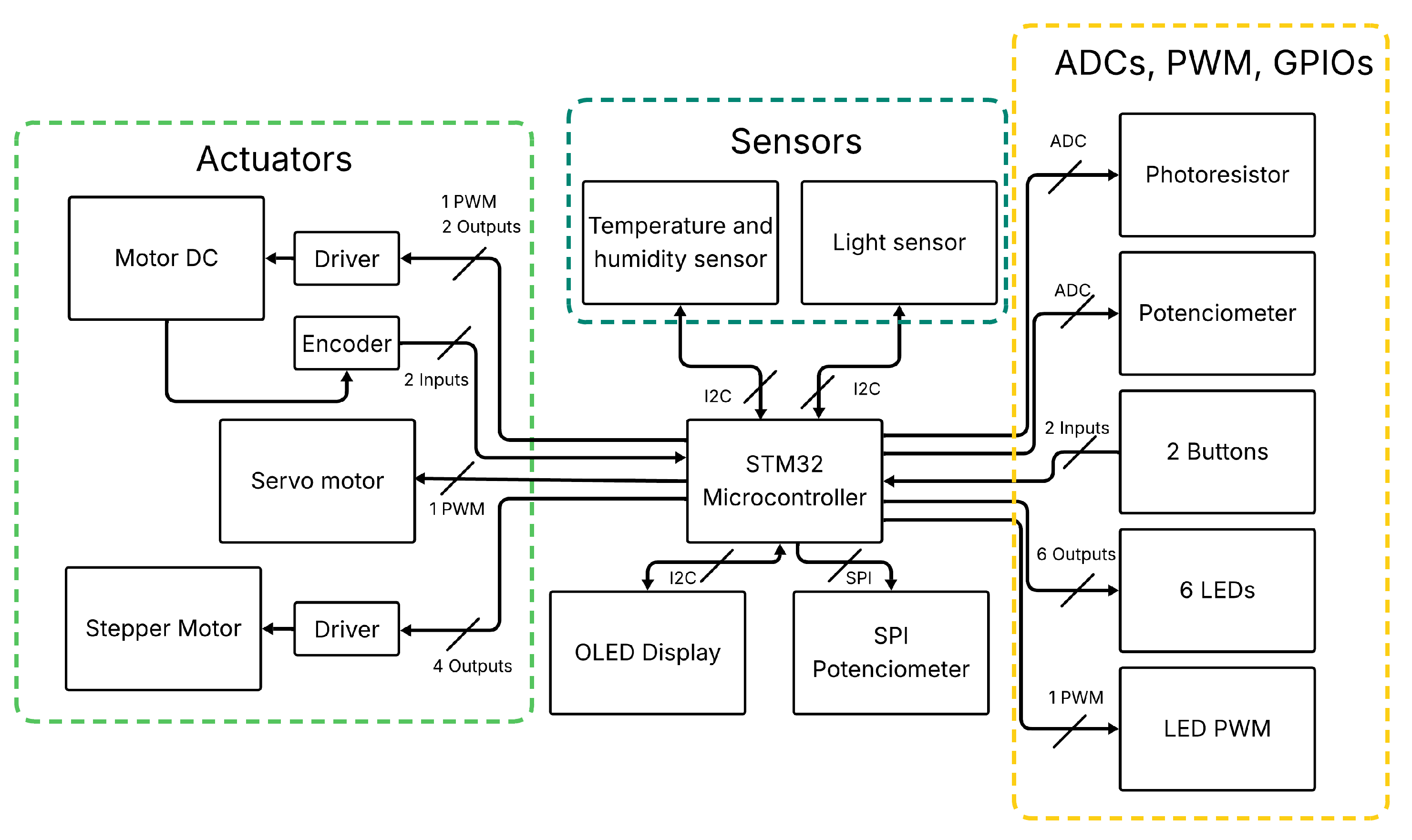

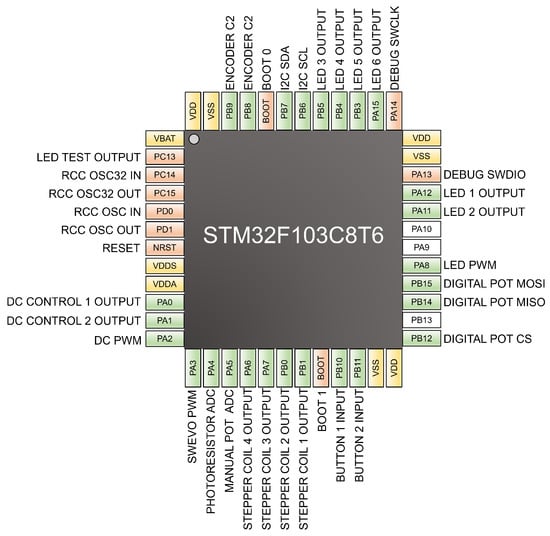

Figure 1 shows the general architecture of the STM32Lab, organized into functional blocks representing the main microcontroller peripherals and their relationships to the input, output, sensing, and control modules. In total, the PCB uses 26 signals that students can manipulate directly during laboratory activities.

Figure 1. Functional architecture of the STM32Lab integrated educational laboratory.

The STM32Lab architecture consists of thirteen main modules, which are described as follows:

1. Six indicator Light-Emitting Diodes (LEDs): Connected to digital outputs with current-limiting resistors. Used for digital control exercises and modular function implementation.

2. Two Push Buttons with Pull-Up Resistors: Configurable as interrupt or polling inputs. Used to study events, external interrupts, and debounce techniques.

3. 10 kΩ Potentiometer: Acts as a voltage divider for analog signal acquisition. Enables ADC conversion and data measurement activities.

4. Digital Potentiometer AD8400ARZ1: Programmable resistor controlled via SPI, featuring an LED at its output for visual feedback. Used for SPI communication and mixed-signal control applications.

5. 0.96” Organic Light-Emitting Diode (OLED) Display (SSD1306): Monochrome 128 × 64 graphical display managed through I2C. Supports data visualization and interface handling.

6. Temperature and Humidity Sensor (HTU21D): Digital sensor for temperature and relative humidity. Used for environmental data acquisition through I2C communication.

7. Ambient Light Sensor (VEML7700-TR): Measures illuminance in lux using I2C communication. Supports activities on light sensing and data interpretation.

8. Light-Dependent Resistor (LDR) (TEMT6000X01): Analog sensor for light intensity measurement. Enables comparison between analog and digital sensing methods.

9. External LED PWM Connector: Output for brightness control via pulse-width modulation. Used for experiments on PWM-based analog signal emulation.

10. Direct Current (DC) Motor N20 with TB6612FNG Driver: Bidirectional DC motor with speed and direction control using GPIO and PWM signals.

11. Microservo MG90S: Metal gear servo with angular position control through PWM signals.

12. Stepper Motor 28BYJ-48 with ULN2003ADR Driver: Unipolar stepper motor controlled by sequential GPIO signals. Used for timing and step sequence control activities.

13. Debug Connector: External header that allows monitoring and measurement of signals during laboratory activities, facilitating real-time debugging and verification of microcontroller outputs.
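Several of the modules above (external LED, DC motor, microservo) are driven by PWM, so a typical exercise involves choosing timer settings for a target PWM frequency. The sketch below illustrates the usual relation f_PWM = f_timer / ((PSC + 1)(ARR + 1)). It assumes a 72 MHz timer clock, a 50 Hz servo frame, and a 1000 to 2000 µs pulse range over 180°; these values are common hobby-servo conventions taken as assumptions here, not specifications from the STM32Lab documentation, and the function names are hypothetical.

```python
TIMER_CLOCK_HZ = 72_000_000   # assumed: timer clocked at the 72 MHz maximum
REGISTER_MAX = 0xFFFF         # prescaler and auto-reload are 16-bit values


def pwm_timer_settings(pwm_hz: int, tick_hz: int = 1_000_000,
                       timer_hz: int = TIMER_CLOCK_HZ) -> tuple[int, int]:
    """Return (PSC, ARR) such that timer_hz / ((PSC + 1) * (ARR + 1)) == pwm_hz."""
    if timer_hz % tick_hz != 0 or tick_hz % pwm_hz != 0:
        raise ValueError("frequencies must divide evenly")
    psc = timer_hz // tick_hz - 1      # prescaler: timer clock -> counter tick
    arr = tick_hz // pwm_hz - 1        # auto-reload: ticks per PWM period
    if psc > REGISTER_MAX or arr > REGISTER_MAX:
        raise ValueError("value does not fit a 16-bit register")
    return psc, arr


def servo_compare(angle_deg: float, min_us: int = 1000, max_us: int = 2000,
                  span_deg: float = 180.0) -> int:
    """Map an angle to a compare value, assuming a 1 MHz tick (1 count = 1 µs)."""
    if not 0.0 <= angle_deg <= span_deg:
        raise ValueError("angle out of range")
    return round(min_us + (max_us - min_us) * angle_deg / span_deg)


if __name__ == "__main__":
    print(pwm_timer_settings(50))   # settings for a 50 Hz servo frame
    print(servo_compare(90))        # compare value for the mid position
```

Choosing a 1 MHz counter tick keeps the compare value numerically equal to the pulse width in microseconds, which makes the arithmetic easy to verify on an oscilloscope.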

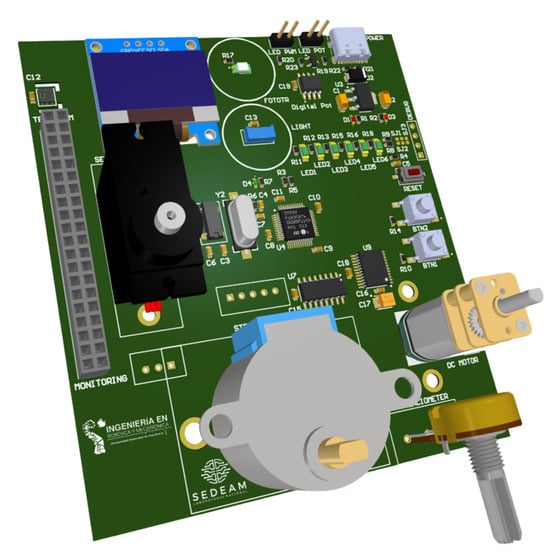

3.2. STM32Lab PCB Design

The PCB design was developed with a focus on engineering principles that emphasize reliability and ease of manufacturing. Key elements of the design included careful component layout, minimizing electromagnetic interference, and separating analog and digital signals. A four-layer configuration was chosen, incorporating dedicated ground and power planes to ensure lower impedance and improve measurement stability.

The power, control, and sensing modules were organized hierarchically, making it straightforward to identify each functional block during practical exercises. Additionally, the placement of external headers and test points was thoughtfully planned to ensure that students could easily access the most relevant system signals for analysis or debugging in the laboratory.

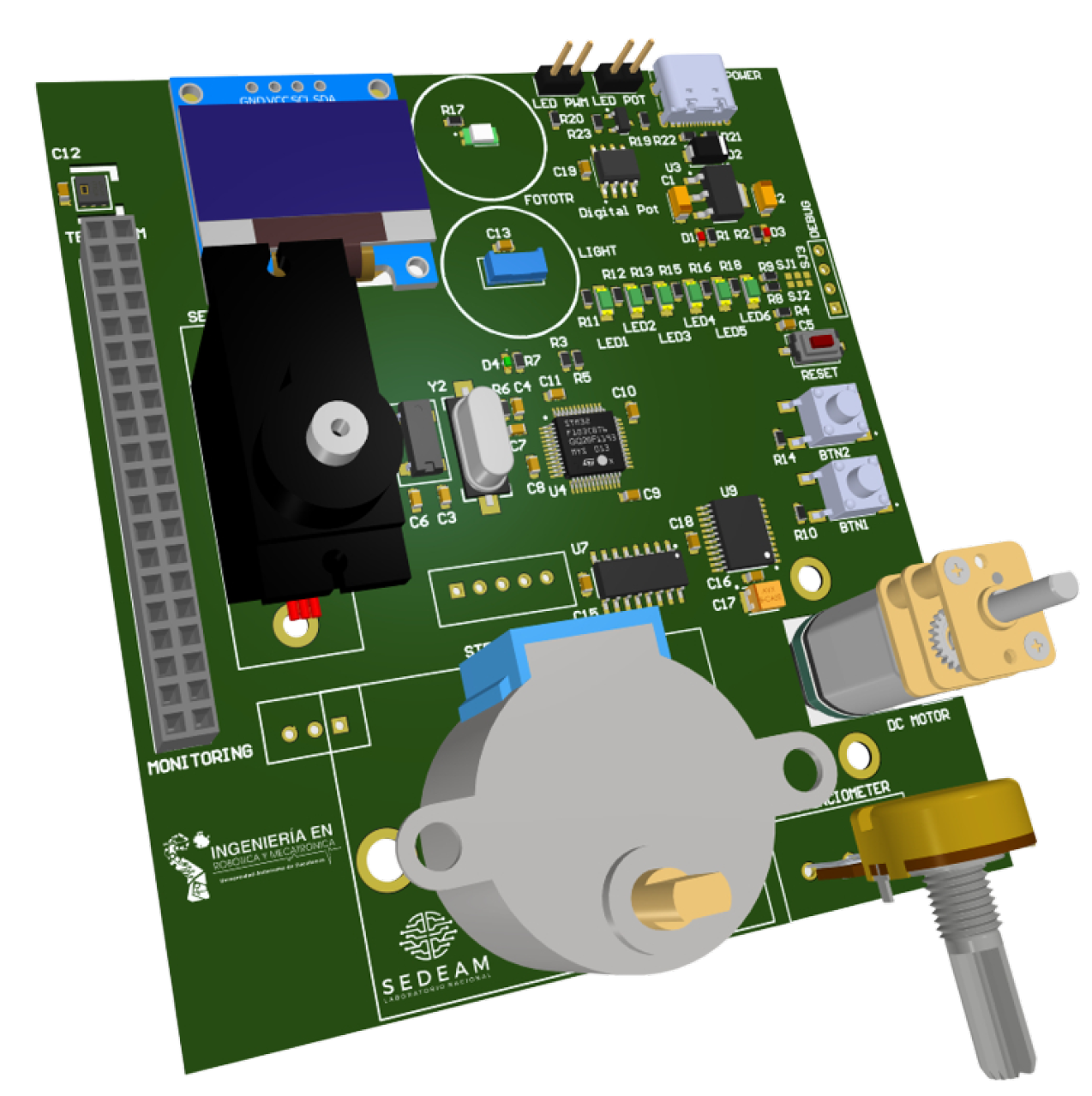

Figure 2 shows the electronic design of the STM32Lab PCB.

Figure 2. PCB layout of the STM32Lab integrated laboratory platform.

The STM32Lab PCB measures 10 cm by 9 cm. It is powered via a USB Type-C connector, making it easy for students to connect without an external power source. The system operates at 5 V for the actuators and 3.3 V for the microcontroller and other peripherals. It features a linear regulator equipped with reverse-polarity and overcurrent protection. Additionally, an external debugging connector allows monitoring both analog and digital signals with an oscilloscope or a multimeter.

Figure 3 presents a descriptive overview of the 26 signals available on the STM32Lab, illustrating their association with the corresponding peripherals and hardware elements that students can manipulate during laboratory activities. This diagram is also used in the introductory session to familiarize students with the board’s pinout and signal distribution.

Figure 3. Signals overview and their corresponding peripherals on the STM32Lab board.

4. Methodology of Educational Study

To evaluate the pedagogical effectiveness of using STM32Lab, a quasi-experimental study with a quantitative, descriptive design was conducted. The methodology accounted for the educational context, the participants, the evaluation instruments, the plan for practical exercises, and the data analysis.

4.1. Educational Context

The study was conducted at the Autonomous University of Zacatecas (UAZ), specifically in the Academic Unit of Electrical Engineering, within the Robotics and Mechatronics Engineering Program, which lasts ten semesters. This program aims to provide students with a solid foundation in engineering fundamentals, integrating mechanics, electronics, computer science, and control systems, equipping students with the skills to understand and solve complex problems in robotics and mechatronics.

The implementation took place in the microcontroller course, which corresponds to the fifth semester of the curriculum. This course consists of two hours of lectures and three hours of practical sessions each week. It enhances the graduate’s profile by equipping students with essential skills in the design, programming, and application of microcontroller-based systems, which are vital in modern engineering. Throughout the course, students learn to create software using low- and high-level programming languages, such as assembly and C, while also integrating interfaces and peripherals for applications in embedded systems, robotics, IoT, and automation.

The course is designed using a competency-based approach combined with project-based learning principles, emphasizing the practical application of knowledge in real-world contexts and the creation of functional solutions. Key topics include GPIOs, ADCs, PWM generation, timers, serial communication, and the interaction with sensors and actuators, which together form the experimental foundation of the course.

Laboratory sessions are conducted in person in an environment equipped with computers, oscilloscopes, power supplies, signal generators, and electronic components (resistors, capacitors, potentiometers, LEDs, buttons, motors, and photoresistors). In the traditional approach, students use STM32 boards such as Blue Pill (STM32, 2026), breadboards, and discrete connections, resulting in lengthy assembly and verification times before conducting experiments. Therefore, this course is an ideal opportunity to evaluate the effectiveness of new teaching tools that integrate the necessary hardware for practical exercises.

4.2. Participants and Study Design

The study involved 40 students, comprising 6 women (15%) and 34 men (85%), all aged 20–24 years.

In this study, the students were divided into two groups, each consisting of 20 members. Group 1 (the control group) employed traditional laboratory methods, assembling circuits using STM32 Blue Pill boards, breadboards, and various external components. Group 2 (the experimental group) utilized the STM32Lab.

The microcontroller course is mandatory in the Robotics and Mechatronics Engineering program and is offered during the fifth semester. Before the semester began, the institution set up two parallel course sections with different schedules, allowing students to enroll based on their availability. Each section accommodates up to 20 students. This administrative self-enrollment process defined student group assignment and was independent of the research intervention. One section was designated as the control group, while the other served as the experimental group prior to the start of the course. The researchers did not influence student enrollment or group composition, so the study used a quasi-experimental design with pre-existing groups.

The same instructor taught both groups, which followed an identical syllabus, learning objectives, contact hours for theoretical and practical sessions, and assessment criteria, all aligned with the institutionally approved course curriculum. The only difference between groups was the laboratory platform used during practical sessions.

During laboratory sessions, students in both the control and experimental groups worked together in small teams. The teams were formed by the students themselves within each course section, and the research intervention did not influence this formation. The same collaborative work dynamics, team size criteria, and evaluation procedures were applied to both groups, ensuring that teamwork did not serve as a confounding variable in the study outcomes.

4.3. Evaluation Instruments

To obtain a comprehensive understanding of how different teaching methodologies influenced learning in the microcontroller course, the study employed quantitative and qualitative evaluation instruments. This combination enabled the collection of information on perceived conceptual understanding, practical performance, student motivation, and the self-regulation processes involved in learning. Data from an ad hoc questionnaire, practice-based work logs, and the Motivated Strategies for Learning Questionnaire Short Form (MSLQ-SF) enabled a broader, more contextually informed interpretation of the results.

4.3.1. Ad Hoc Pre- and Post-Test Questionnaire

To assess changes in students’ perceived learning and learning-related perceptions, an ad hoc questionnaire was administered at two points: at the beginning of the course (pre-test) and after the completion of the practical activities (post-test). The instrument consisted of 17 Likert-type items, with response options ranging from 1 (strongly disagree) to 5 (strongly agree), along with three open-ended qualitative questions, for a total of 20 items.

The questions were organized into five sections that addressed core components of the learning process: perceived conceptual understanding and practical application, experimentation and design skills, peripheral integration and communication, self-efficacy and motivation, and a final section with open-ended questions aimed at exploring qualitative perceptions regarding limitations, suggestions, and proposals related to the course and its practical activities.

The ad hoc design of the questionnaire enabled its alignment with the course content, competencies, and instructional methodologies, consistent with the recommendations of Creswell and Creswell (2017) and Artino et al. (2014), who emphasize that context-specific instruments are particularly appropriate when evaluating targeted learning outcomes or course-related experiences.

Before accessing the questionnaire, students received the institutional privacy notice and an informed consent form, according to the ethical guidelines for data collection.

To ensure the quality of the ad hoc questionnaire, a two-stage validation process was conducted. First, content validity was established through a panel of academic experts in embedded systems and microcontroller education, who verified the alignment of the 17 items with the course’s learning objectives and disciplinary standards. Second, the instrument’s internal consistency was examined using Cronbach’s alpha, a widely used reliability indicator for Likert-type scales (Tavakol & Dennick, 2011). The analysis yielded excellent reliability coefficients for both the pre-test () and the post-test (). These results significantly exceed the standard threshold of 0.70, confirming that the instrument provides a highly consistent and robust measurement of the targeted conceptual constructs.
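For reference, Cronbach’s alpha for a scale of k items is α = k/(k − 1) · (1 − Σ s²ᵢ / s²ₜ), where s²ᵢ is the variance of item i and s²ₜ is the variance of the students’ total scores. The following minimal Python sketch shows one way to compute it using only the standard library. The function name and the response matrix are hypothetical and serve only as an illustration; they are not the study’s data or its analysis code.

```python
from statistics import variance


def cronbach_alpha(responses: list[list[int]]) -> float:
    """Cronbach's alpha for rows of per-student item scores (sample variances)."""
    n_items = len(responses[0])
    if len(responses) < 2 or n_items < 2:
        raise ValueError("need at least two respondents and two items")
    if any(len(row) != n_items for row in responses):
        raise ValueError("every respondent must answer every item")
    # Variance of each item (column) across respondents.
    item_variances = [variance(column) for column in zip(*responses)]
    # Variance of each respondent's total score.
    total_variance = variance([sum(row) for row in responses])
    if total_variance == 0:
        raise ValueError("total scores have zero variance")
    return n_items / (n_items - 1) * (1 - sum(item_variances) / total_variance)


if __name__ == "__main__":
    # Hypothetical Likert responses (4 students x 3 items), illustration only.
    demo = [[4, 5, 4], [3, 3, 2], [5, 5, 5], [2, 3, 2]]
    print(round(cronbach_alpha(demo), 3))
```

With the 17 Likert items of the questionnaire, each row would hold 17 scores between 1 and 5.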

4.3.2. Practice-Based Work Logs

Practice-based work logs were employed as a qualitative tool for procedural analysis, capturing detailed information about students’ performance. This included execution time, process observations, difficulties encountered, errors made, and problem-solving strategies. The instructor completed the worksheets at the end of each session, after providing direct guidance to students during the practical activities.

Their use is grounded in the need to capture direct evidence of learning. According to Kolb (2014), documenting the experience, reflection, conceptualization, and experimentation cycle is essential for fostering deep learning in engineering and applied science contexts. In this sense, the work logs facilitate the identification of cognitive and procedural processes underlying the resolution of practical problems.

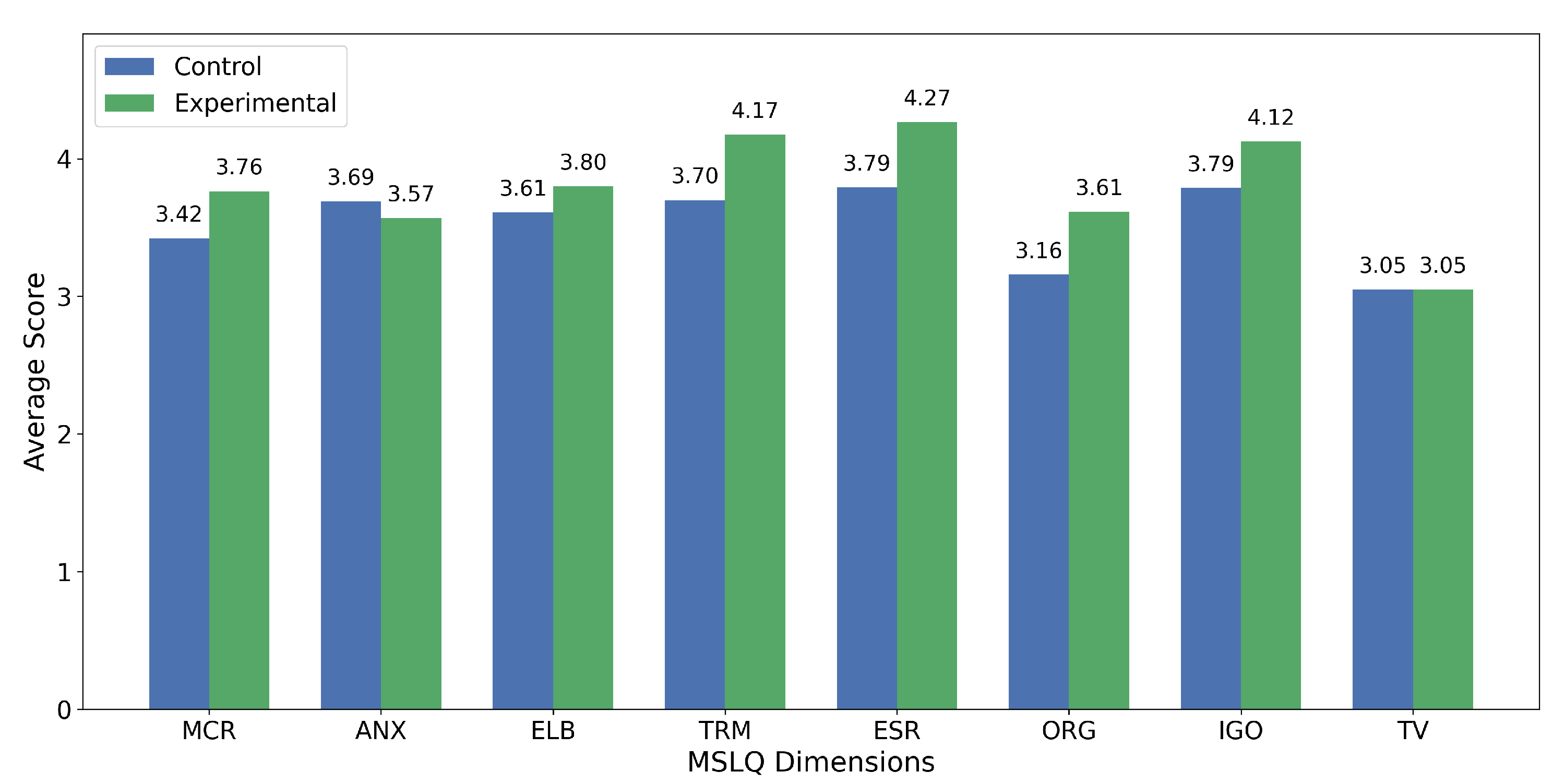

4.3.3. Motivated Strategies for Learning Questionnaire (MSLQ-SF)

The MSLQ-SF is an instrument used to evaluate motivational, cognitive, and self-regulatory processes associated with academic performance in university contexts. Its theoretical basis is Pintrich’s Model of Self-Regulated Learning, which conceptualizes learning as comprising three major components: motivational beliefs, cognitive strategies, and self-regulation of learning (Pintrich & De Groot, 1990; Pintrich, 1991). From this perspective, students learn more effectively when they can monitor, regulate, and control their cognitions, motivations, and behaviors throughout the academic process.

The MSLQ-SF was administered only once, at the end of the intervention. This decision was made to prevent survey fatigue during the five-week laboratory sequence and to focus on comparing motivational and self-regulatory differences between the control and experimental groups, rather than on measuring changes over time. Consequently, the results are interpreted as a cross-sectional comparison at the conclusion of the educational experience rather than as evidence of longitudinal change.

Within its general structure, the MSLQ-SF integrates eight specific factors that enable a detailed examination of the processes involved: Task Value (TV), Effort Regulation (ESR), Anxiety (ANX), Elaboration (ELB), Organization (ORG), Intrinsic Goal Orientation (IGO), Metacognitive & Behavioral Self-Regulation (MCR), and Time & Resource Management (TRM). Previous research has shown that these components are significant predictors of academic performance and active engagement in fields such as engineering, applied sciences, and related areas (Vargas-Mendoza & Gallardo, 2023).

For this study, we used the 37-item version validated in Latin American populations, as proposed by Masso et al. (2024). This instrument reports a Cronbach’s alpha of 0.88 and a Kaiser–Meyer–Olkin (KMO) value of 0.89, supporting its validity and reliability in university settings. It is organized into two overarching scales—Learning Strategies (six dimensions) and Motivation (two dimensions)—and evaluated using a five-point Likert scale (1 = Strongly disagree; 5 = Strongly agree) (see Table 1) (Tinoco et al., 2011; Vargas-Mendoza & Gallardo, 2023).

Table 1. Structure of the 37-item MSLQ: Scales, dimensions, and corresponding items.

4.4. Educational Plan and Laboratory Practices

The practical activities program was structured as a progressive sequence of ten experimental practices, designed to address the main peripherals and functions of the STM32Lab gradually. Each exercise was intentionally planned to integrate theoretical content and key competencies from the microcontroller course, ensuring a precise alignment between learning objectives and practical applications. This structure allowed students to advance from basic concepts of digital configuration and control to more complex embedded applications that incorporate multiple system modules.

Table 2 summarizes the overall plan of activities conducted during the five weeks of the educational intervention.

Table 2. Summary of laboratory practices developed during the educational intervention.

4.5. Data Collection and Analysis

The pre-test, post-test, and MSLQ-SF questionnaires were administered digitally via Google Forms. This approach ensured efficient distribution, automatic recording, and centralized storage of student responses. The collected data were then processed and analyzed using Python-based notebooks (Python 3.10) in Google Colab. This setup allowed for the calculation of descriptive statistics, normalized learning gains, and inferential analyses, as well as the creation of visualizations.
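The normalized learning gain mentioned above is commonly computed with Hake’s formula, g = (post − pre) / (max − pre), applied to group mean scores. The sketch below is a minimal illustration in Python. The helper names, the default maximum of 100, and the input values in the demo are assumptions for illustration; how the study rescaled its Likert scores is not specified here. The low, medium, and high bands follow Hake’s conventional thresholds of 0.3 and 0.7.

```python
def hake_gain(pre_mean: float, post_mean: float, max_score: float = 100.0) -> float:
    """Hake normalized gain: fraction of the possible improvement achieved."""
    if pre_mean >= max_score:
        raise ValueError("pre-test mean must be below the maximum score")
    return (post_mean - pre_mean) / (max_score - pre_mean)


def gain_band(g: float) -> str:
    """Classify a gain using the conventional 0.3 and 0.7 thresholds."""
    if g >= 0.7:
        return "high"
    if g >= 0.3:
        return "medium"
    return "low"


if __name__ == "__main__":
    # Hypothetical group means on a 0-100 scale, illustration only.
    g = hake_gain(pre_mean=50.0, post_mean=70.0)
    print(f"{g:.2%}", gain_band(g))
```

Under this convention, the reported gains of 40.09% and 16.22% would fall in the medium and low bands, respectively.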

5. Results

The results are presented across four main areas: functional validation of the STM32Lab; perceived conceptual performance evaluated through pre-test and post-test analyses; laboratory performance assessed via observation logs and recorded errors; and evaluation conducted using the MSLQ-SF questionnaire.

5.1. Functional Validation of the STM32Lab PCB

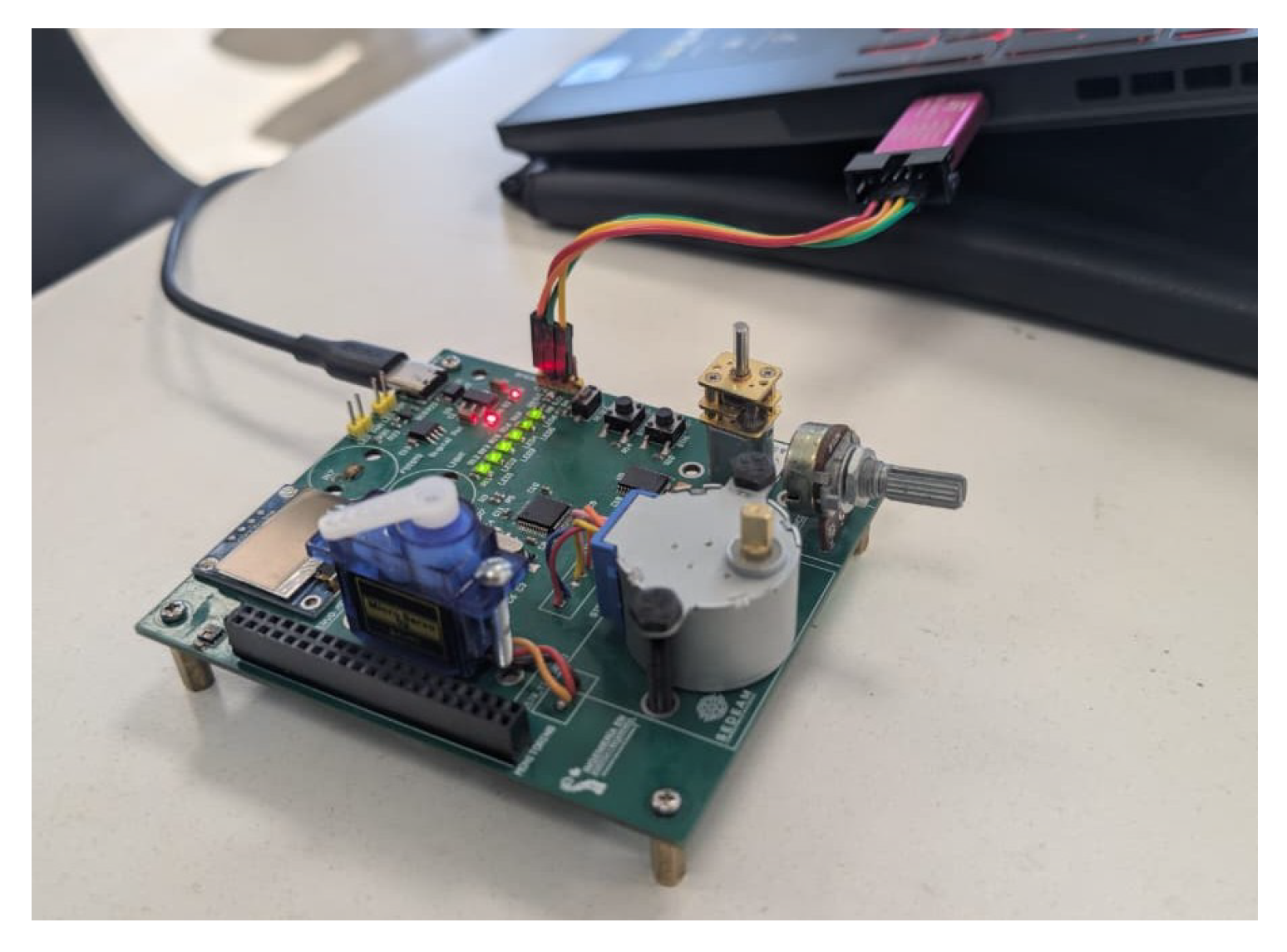

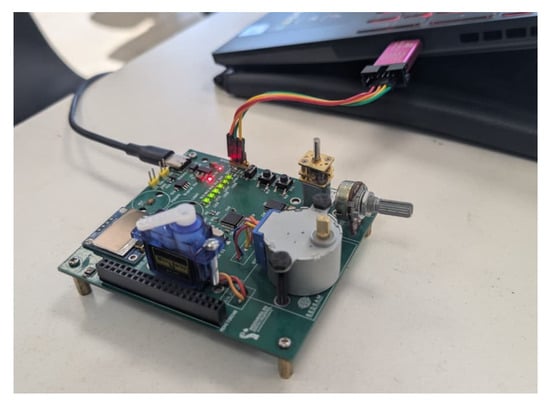

Before beginning the educational intervention, the functionality of the STM32Lab was validated. Figure 4 illustrates the STM32Lab PCB, highlighting the arrangement of sensors, actuators, and peripherals integrated into the laboratory.

Figure 4.

STM32Lab educational PCB overview.

- Analog Acquisition: The responses of the potentiometer and photoresistor were verified, confirming their stability and the ADC’s linearity across different input levels.

- PWM Generation: The PWM signals for the external LED, DC motor, and microservo were evaluated, confirming temporal stability and appropriate duty cycle variation within the expected operating ranges.

- SPI Communication: Communication with the AD8400 digital potentiometer was validated through successful write and update sequences, including responses from the programmable resistive network.

- I2C Communication: Read and write operations were verified with the SSD1306 OLED display, HTU21D sensor, and VEML7700 on a shared I2C bus, with no bus contention or synchronization errors.

- Actuator Control: The performance of the N20 DC motor, MG90S micro servo, and 28BYJ-48 stepper motor was tested for speed, position, and step-sequence variations, confirming that their behavior aligned with the expected electrical specifications.

- Debugging Connector: The exposed signals were inspected using instrumentation, including an oscilloscope and a multimeter, which confirmed their stability and utility for real-time diagnostics during experimental activities.

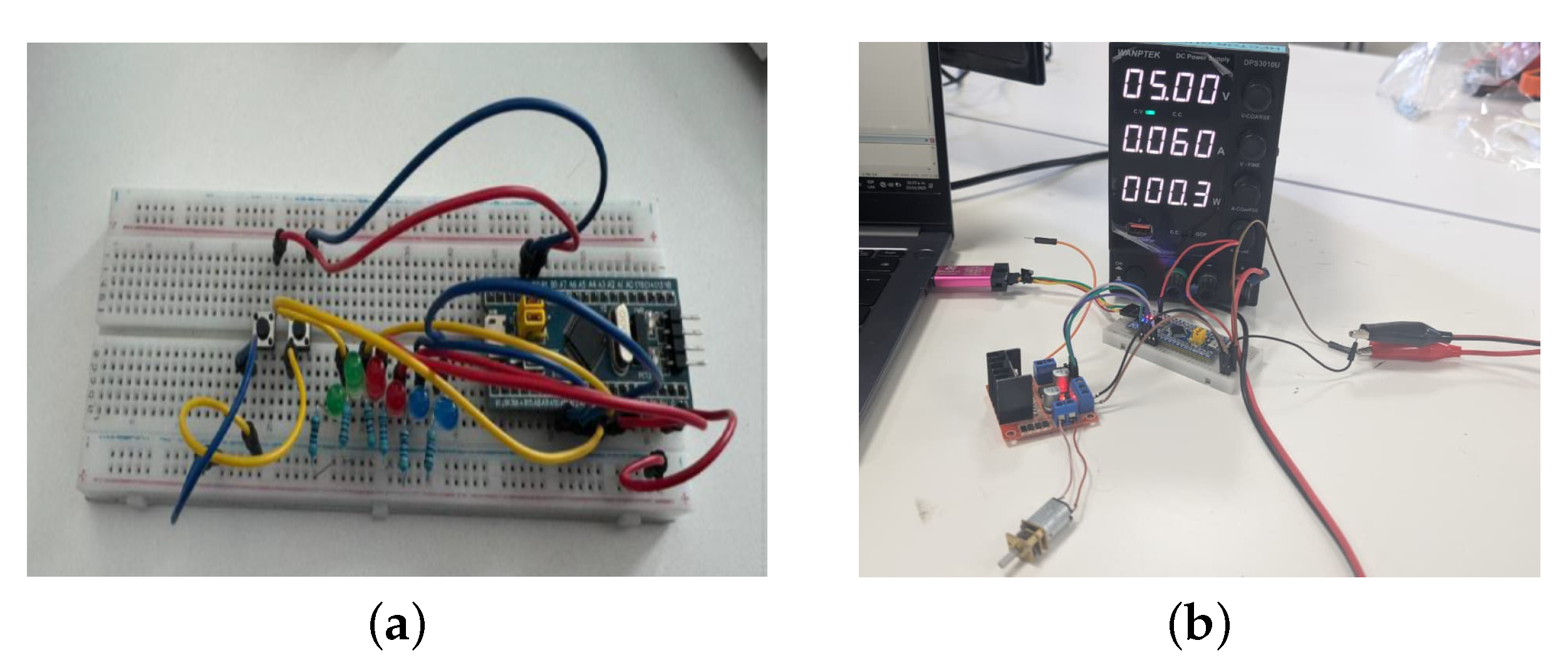

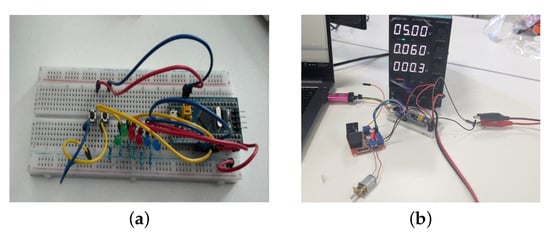

After completing the functional validation, the educational intervention began. At the end of each practice, each student submitted a technical report detailing the configuration, code implementation, and experimental results obtained during the session. Figure 5 and Figure 6 illustrate how students conducted the laboratory exercises using both the traditional approach and the integrated STM32Lab platform.

Figure 5.

Traditional laboratory implementation using the STM32 Blue Pill and breadboard connections. (a) LED control, (b) DC motor control practices.

Figure 6.

Laboratory implementation using the integrated STM32Lab platform.

5.2. Pre-Test and Post-Test Performance

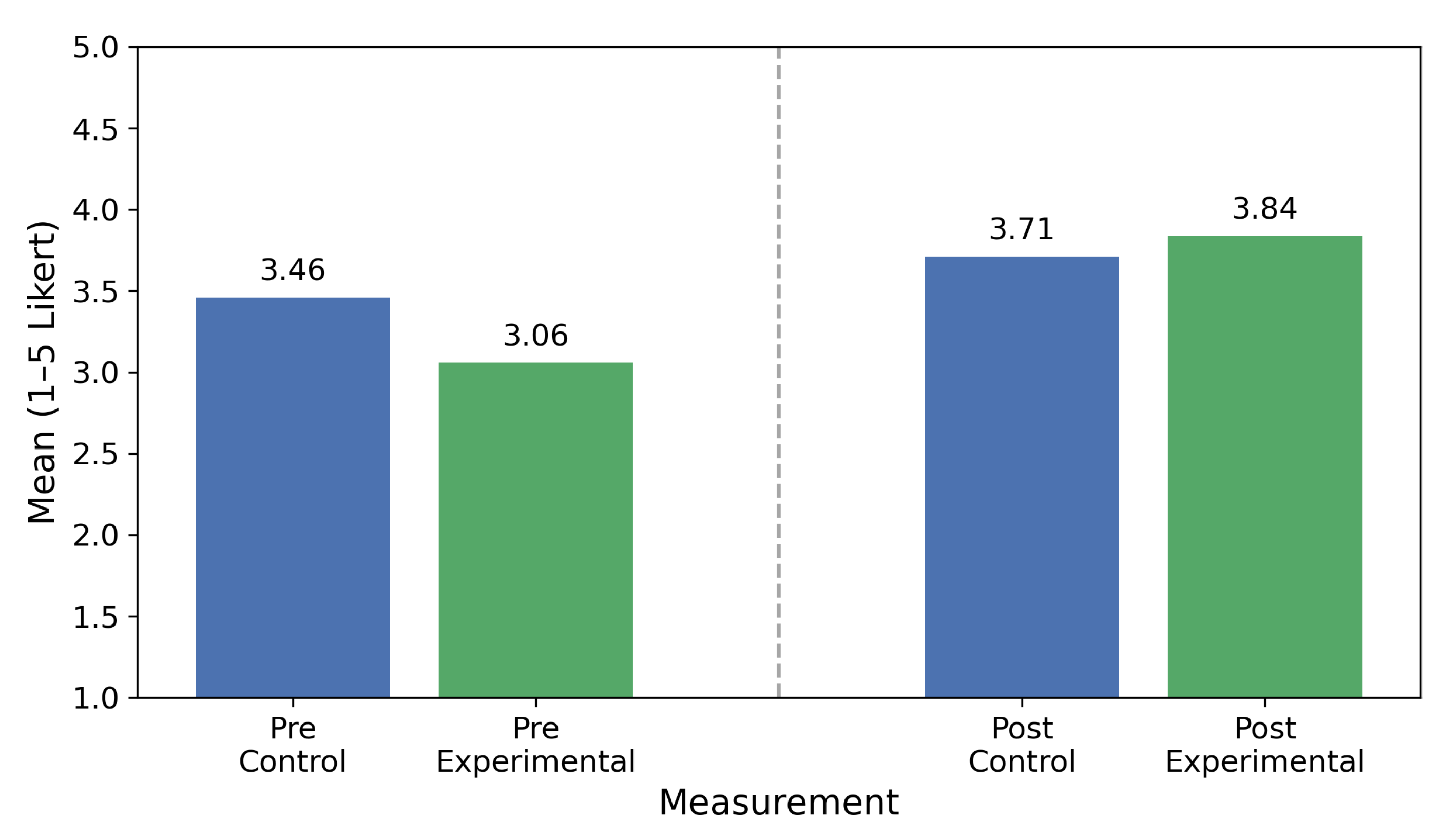

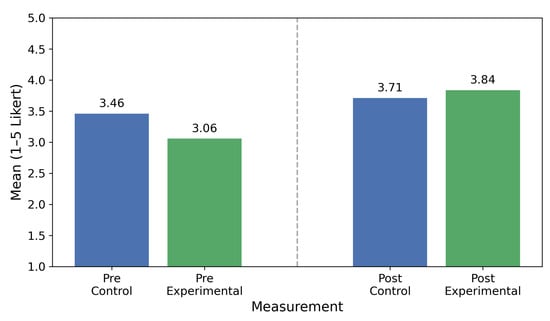

Both groups took the pre-test during the introductory session and completed the post-test at the end of the intervention. Figure 7 displays the overall results, illustrating the average scores for both groups in the pre-test and post-test. In addition, Table 3 reports the corresponding descriptive statistics (mean ± Standard Deviation (SD)).

Figure 7.

Mean pre-test and post-test scores for the control and experimental groups.

Table 3.

Pre-test and post-test descriptive statistics (mean ± SD).

Given the quasi-experimental nature of the study and the use of pre-existing groups, differences in pre-test scores were expected and interpreted as baseline variability. To account for these initial disparities, the analysis focused on the relative improvement observed between the pre-test and post-test within each group. Consequently, the Hake normalized learning gain was employed. This metric mitigates the ceiling effect by calculating the ratio of the actual average gain to the maximum possible average gain (Ruiz Loza et al., 2022), as shown in Equation (1):

$$g = \frac{\bar{x}_{\text{post}} - \bar{x}_{\text{pre}}}{x_{\max} - \bar{x}_{\text{pre}}} \tag{1}$$

where $\bar{x}_{\text{pre}}$ and $\bar{x}_{\text{post}}$ represent the mean pre-test and post-test scores, respectively, and $x_{\max}$ corresponds to the maximum value of the scale. In this study, the pre-test and post-test scores were obtained from a 5-point Likert scale; therefore, $x_{\max}$ was set to 5. This indicator reflects the fraction of the total possible improvement achieved during the intervention. It takes values between 0 and 1 when scores improve, with values closer to 1 indicating a greater normalized learning gain, and it becomes negative when the post-test mean falls below the pre-test mean.
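As a minimal sketch of this calculation, the function below computes the normalized gain as the achieved improvement divided by the maximum possible improvement on a 5-point scale. The function name `hake_gain` and the example means are illustrative assumptions, not the authors' code or data.

```python
def hake_gain(pre_mean: float, post_mean: float, max_score: float = 5.0) -> float:
    """Normalized gain: achieved improvement over the maximum possible improvement."""
    if pre_mean >= max_score:
        # No room for improvement, so the ratio is undefined.
        raise ValueError("pre_mean must be below max_score")
    return (post_mean - pre_mean) / (max_score - pre_mean)


# Hypothetical group means on the 5-point scale (illustrative, not study data).
example_gain = hake_gain(pre_mean=3.0, post_mean=4.0)
print(f"Normalized gain: {example_gain:.2%}")
```

A post-test mean below the pre-test mean yields a negative value, which is how a decline in perceived understanding would appear in this metric.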

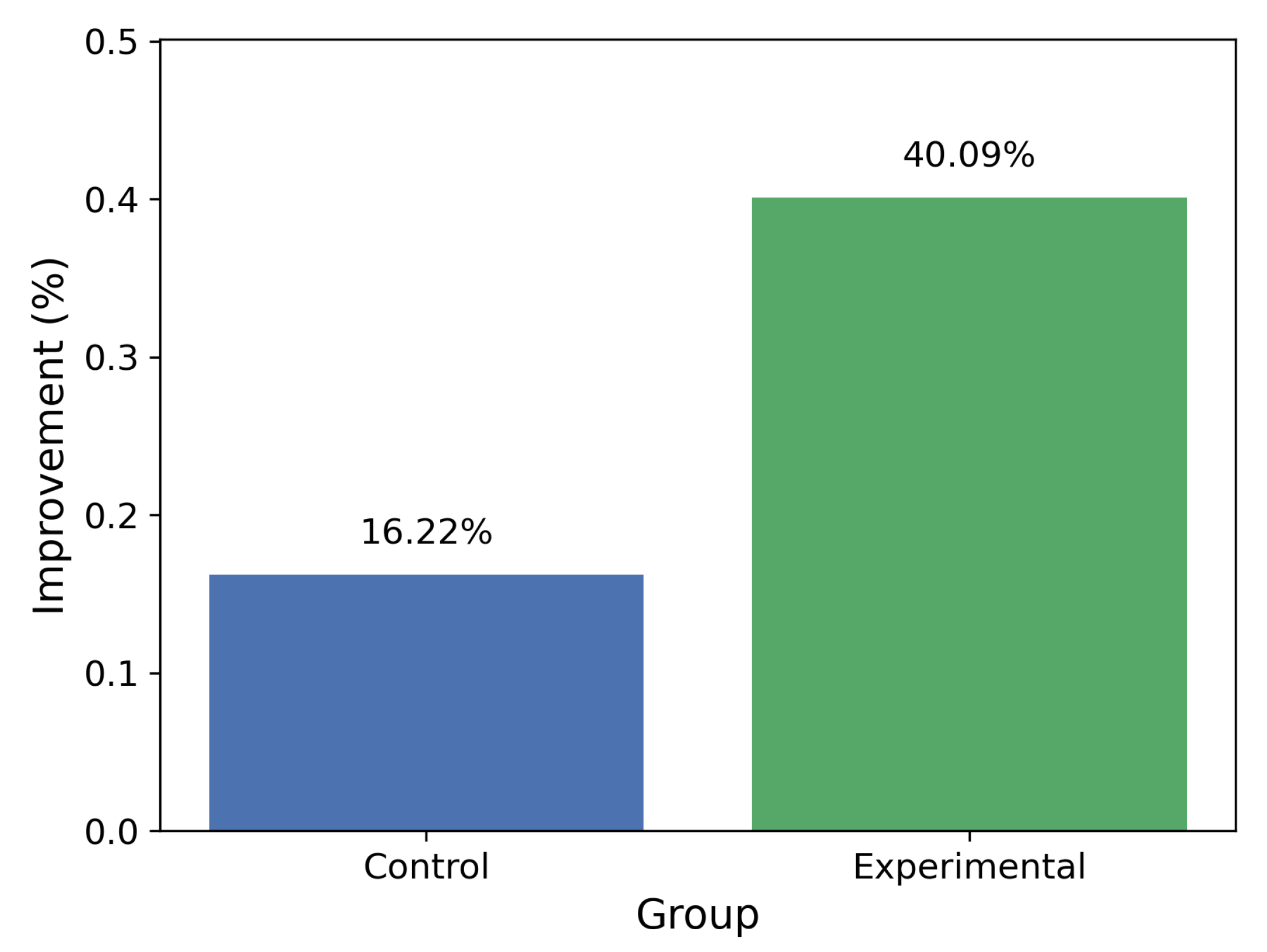

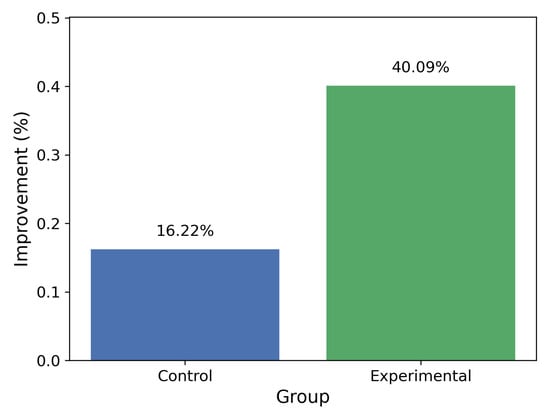

Using the pre-test and post-test means, the Hake gain was calculated for each group, quantifying the improvement relative to the maximum possible gain (Ruiz Loza et al., 2022). Figure 8 shows these gains, highlighting the difference in perceived conceptual improvement between the two groups.

Figure 8.

Overall improvement obtained by each group based on the difference between pre-test and post-test averages.

The analysis of average scores indicates that the experimental group (STM32Lab) experienced a greater improvement than the control group. Specifically, the experimental group achieved a normalized learning gain of 40.09% from the pre-test to the post-test, while the control group achieved 16.22%. This difference highlights the positive effect of using the STM32Lab on students’ perceived conceptual understanding and overall progress during the intervention.

Table 4 shows the detailed performance of each of the 17 items. For each item, the pre-test and post-test means are presented for both groups, along with the corresponding normalized learning gain.

Table 4.

Pre-test and post-test results per item for the control and experimental groups.

5.3. Laboratory Log Results: Time Efficiency and Error Analysis

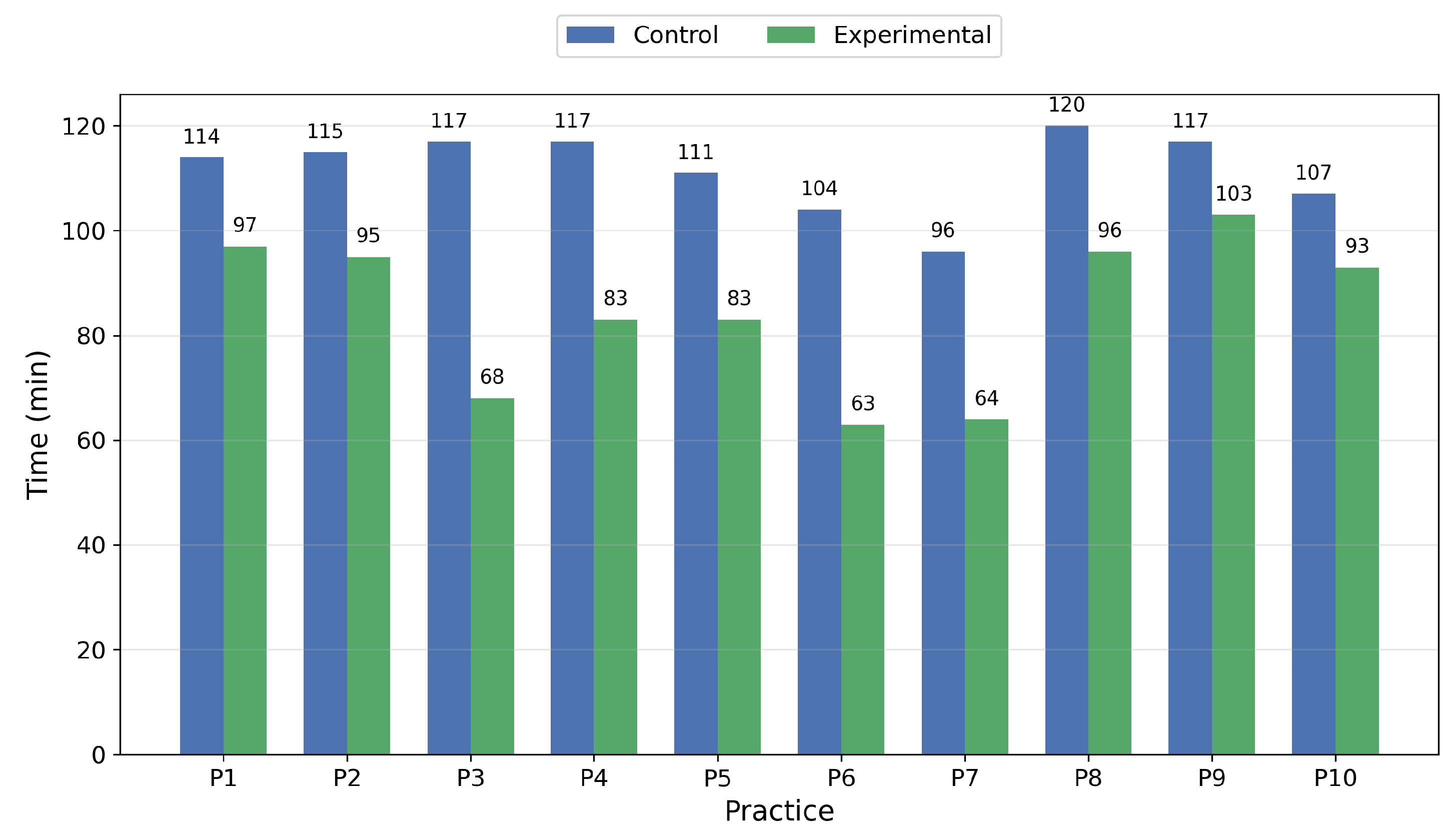

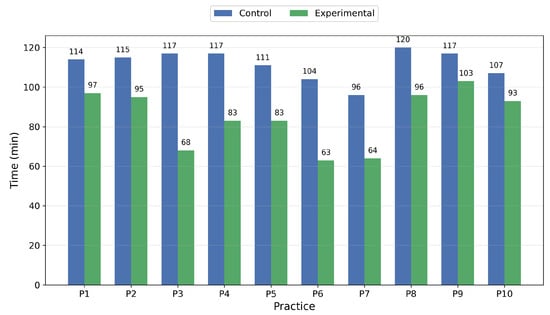

An analysis of the recorded times shows distinct differences in operational efficiency between the two groups. In most practices, the control group took longer to complete the tasks, primarily due to the assembly and verification of connections on the breadboard. In contrast, the experimental group demonstrated shorter and more consistent completion times.

Figure 9 shows a comparison of the average time per exercise between both groups.

Figure 9.

Average completion time per practice for the control and experimental groups.

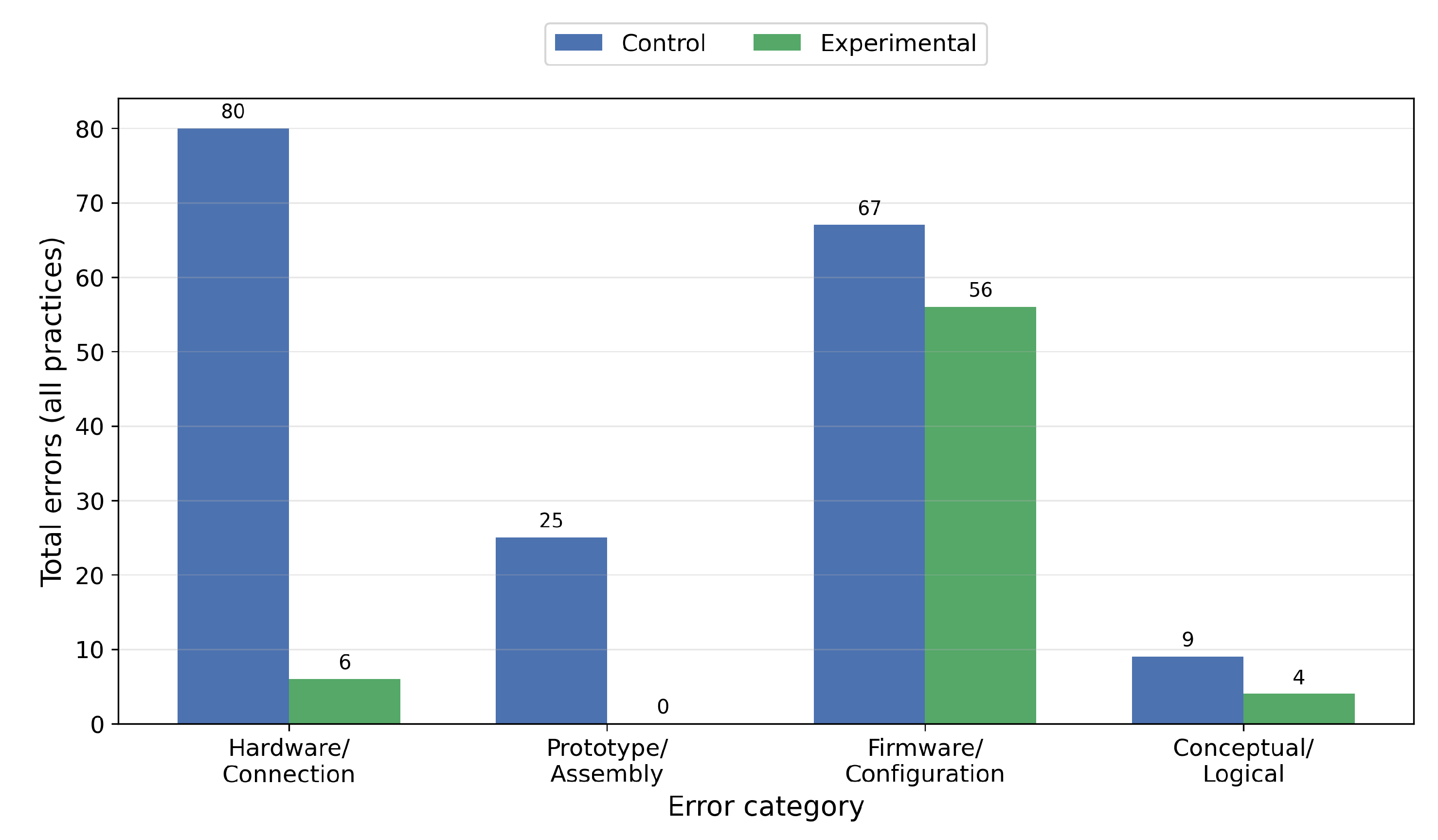

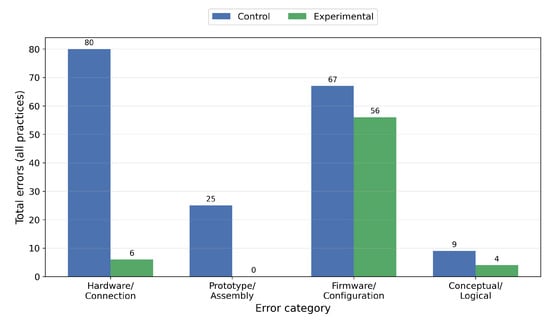

To analyze the errors systematically, they were classified into categories to help identify their nature and the types of difficulties encountered during the activities:

- Hardware/Connection: errors related to wiring, continuity, polarity, or incorrect pin connections.

- Prototype/Assembly: failures associated with the physical assembly of the prototype, loose components, or improper assembly.

- Firmware/Configuration: errors in microcontroller configuration, incorrect initialization of peripherals, or incorrectly defined parameters in the code.

- Conceptual/Logical: failures resulting from an incomplete understanding of the system’s operation, incorrect logical sequences, or inappropriate use of functions.

Based on this classification, a quantitative analysis was conducted to compare the total error frequency across ten practical sessions for each group. The results clearly indicate that the platform type affects both error patterns and the nature of difficulties encountered during experimental development. Figure 10 illustrates the overall distribution of errors by category for both groups, emphasizing the differences in the quantity and types of errors recorded.
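The tallying step described above can be sketched as follows. This is only an illustration under assumed names: the record layout `(group, session, category)`, the function `tally_errors`, and the sample entries are hypothetical and do not come from the study's laboratory logs.

```python
from collections import Counter

# The four error categories defined in the classification above.
CATEGORIES = (
    "Hardware/Connection",
    "Prototype/Assembly",
    "Firmware/Configuration",
    "Conceptual/Logical",
)


def tally_errors(log_entries):
    """Count logged errors per (group, category) pair across all sessions."""
    counts = Counter()
    for group, _session, category in log_entries:
        if category not in CATEGORIES:
            raise ValueError(f"unknown category: {category}")
        counts[(group, category)] += 1
    return counts


# Hypothetical log entries: (group, session number, category).
sample_log = [
    ("control", 1, "Hardware/Connection"),
    ("control", 1, "Prototype/Assembly"),
    ("control", 2, "Hardware/Connection"),
    ("experimental", 1, "Firmware/Configuration"),
    ("experimental", 2, "Conceptual/Logical"),
]

totals = tally_errors(sample_log)
for group in ("control", "experimental"):
    for category in CATEGORIES:
        # Counter returns 0 for pairs that never appear in the log.
        print(group, category, totals[(group, category)])
```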

Figure 10.

Total number of errors per category for the control and experimental groups.

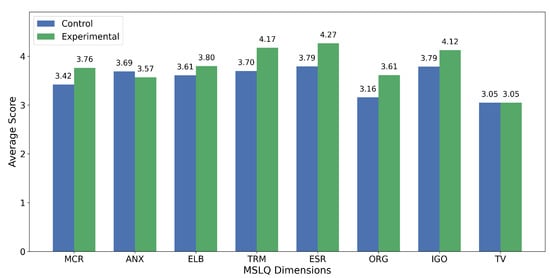

5.4. MSLQ-SF Performance

At the end of the intervention, students completed the MSLQ-SF to evaluate their motivational and self-regulated learning. Figure 11 presents the average scores for both groups across the eight MSLQ-SF dimensions. Each bar in the figure represents the mean value of the items associated with its corresponding dimension and group.
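A possible way to obtain such per-dimension means is sketched below. The item-to-dimension mapping, the item labels, and the responses are hypothetical placeholders (the actual assignment is the one listed in Table 1), and averaging per student before averaging across the group is an assumption.

```python
from statistics import mean

# Hypothetical mapping for two dimensions only; Table 1 defines the real one.
ITEMS_BY_DIMENSION = {
    "TV": ["q1", "q2"],
    "ANX": ["q3", "q4"],
}


def dimension_scores(responses, items_by_dimension):
    """Average each dimension's items per student, then average across students."""
    scores = {}
    for dimension, items in items_by_dimension.items():
        per_student = [mean(student[item] for item in items) for student in responses]
        scores[dimension] = mean(per_student)
    return scores


# Hypothetical 5-point Likert responses for two students in one group.
group_responses = [
    {"q1": 4, "q2": 5, "q3": 2, "q4": 3},
    {"q1": 3, "q2": 4, "q3": 3, "q4": 4},
]

group_scores = dimension_scores(group_responses, ITEMS_BY_DIMENSION)
print(group_scores)
```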

Figure 11.

Average scores of the eight MSLQ-SF dimensions for the control and experimental groups.

To assess whether the differences between the control and experimental groups were statistically significant, an independent-samples t-test was conducted for each MSLQ-SF dimension. The p-value is the probability, under the null hypothesis of equal group means, of observing a difference at least as large as the one measured (Gong et al., 2024). The t-statistic used to compute the p-value is defined in Equation (2):

$$t = \frac{\bar{x}_E - \bar{x}_C}{\sqrt{\dfrac{s_C^2}{n_C} + \dfrac{s_E^2}{n_E}}} \tag{2}$$

where $\bar{x}_C$ and $\bar{x}_E$ are the means of the control and experimental groups, $s_C^2$ and $s_E^2$ are their variances, and $n_C$ and $n_E$ represent the respective sample sizes.
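The sketch below shows how such a statistic could be computed with the Python standard library. It assumes the unequal-variance (Welch) form, in which each group's variance is divided by its own sample size, and it adds the Welch-Satterthwaite degrees of freedom needed to look up a p-value. The function name and the scores are hypothetical.

```python
from math import sqrt
from statistics import mean, variance


def welch_t(control, experimental):
    """Return (t, df) for two independent samples without assuming equal variances."""
    n_c, n_e = len(control), len(experimental)
    # statistics.variance is the sample variance (divides by n - 1).
    se_c = variance(control) / n_c
    se_e = variance(experimental) / n_e
    t = (mean(experimental) - mean(control)) / sqrt(se_c + se_e)
    # Welch-Satterthwaite approximation of the degrees of freedom.
    df = (se_c + se_e) ** 2 / (se_c ** 2 / (n_c - 1) + se_e ** 2 / (n_e - 1))
    return t, df


# Hypothetical dimension scores (illustrative, not study data).
control_scores = [3.0, 3.5, 4.0, 3.5, 3.0]
experimental_scores = [4.0, 4.5, 4.0, 5.0, 4.5]

t_stat, dof = welch_t(control_scores, experimental_scores)
print(f"t = {t_stat:.3f}, df = {dof:.1f}")
```

The two-sided p-value then follows from Student's t distribution with `df` degrees of freedom. If SciPy is available, `scipy.stats.ttest_ind(experimental_scores, control_scores, equal_var=False)` is one way to obtain the statistic and the p-value in a single call.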

Furthermore, the effect size was determined using Cohen’s d, a widely recognized metric in educational and behavioral research that quantifies the magnitude of the difference between two independent groups, irrespective of sample size (Ting et al., 2023). Cohen’s d is computed as in Equation (3):

$$d = \frac{\bar{x}_E - \bar{x}_C}{s_p} \tag{3}$$

where the pooled standard deviation is defined in Equation (4):

$$s_p = \sqrt{\frac{(n_E - 1)\,s_E^2 + (n_C - 1)\,s_C^2}{n_E + n_C - 2}} \tag{4}$$

where $\bar{x}_E$ and $\bar{x}_C$ denote the means of the experimental and control groups, respectively, $s_E^2$ and $s_C^2$ are their variances, $n_E$ and $n_C$ are their sample sizes, and $s_p$ combines the variability of both groups into a single standardized measure.
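A minimal sketch of the effect-size computation follows. It assumes the common pooled standard deviation that weights each group's sample variance by its degrees of freedom. The function name `cohens_d` and the scores are hypothetical.

```python
from math import sqrt
from statistics import mean, variance


def cohens_d(experimental, control):
    """Standardized mean difference using the pooled standard deviation."""
    n_e, n_c = len(experimental), len(control)
    pooled_variance = (
        (n_e - 1) * variance(experimental) + (n_c - 1) * variance(control)
    ) / (n_e + n_c - 2)
    return (mean(experimental) - mean(control)) / sqrt(pooled_variance)


# Hypothetical dimension scores (illustrative, not study data).
experimental_scores = [4.0, 4.5, 4.0, 5.0, 4.5]
control_scores = [3.0, 3.5, 4.0, 3.5, 3.0]

effect = cohens_d(experimental_scores, control_scores)
print(f"d = {effect:.2f}")
```

With equal group sizes, this pooled variance reduces to the simple average of the two sample variances.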

Table 5 summarizes, for each dimension, the control and experimental group means, the p-value of the t-test, and the effect size (Cohen’s d).

Table 5.

MSLQ-SF dimension scores reported as mean ± SD, with p-values, and effect sizes.

6. Discussion

The study’s results provide insights into the pedagogical and operational impact of the STM32-based integrated educational laboratory. Beyond comparing different groups, the experience gained from the ten sessions highlights important aspects of the hardware design, its suitability for the course, and the opportunities it presents for enhancing practical training in subsequent subjects. As a result, the discussion focuses on the technical, educational, and performance implications observed during the intervention.

6.1. Scope of the STM32Lab Modules Used

The STM32Lab consists of thirteen modules to cover the course topics, but not all were used in this work. This is because the Microcontrollers program focuses on essential peripherals, which require an understanding of basic register configuration, clock initialization, interrupt handling, and the direct use of GPIO, ADC, PWM, and low-level communication interfaces. However, unused modules, particularly those for additional sensors and actuators, can be incorporated into courses such as Sensors and Actuators. These courses share a similar educational context and explore the characterization and experimental comparison of various devices. In that setting, these modules enable students to fully harness the hardware’s potential and enhance their opportunities for hands-on learning.

6.2. Perceived Conceptual Performance

Although Hake’s normalized learning gain was initially proposed for objective concept tests, recent studies in engineering and computing education have demonstrated that normalized gain metrics can also serve as comparative indicators of pre-to-post change using Likert-type instruments (Mendoza Diaz & Sotomayor, 2023; Payne & Crippen, 2022; Toor, 2025). Therefore, in this study, normalized gain is interpreted with caution, as a measure of relative change in students’ perceived conceptual understanding rather than a direct measure of objective content mastery.

According to widely used interpretation thresholds in the literature, normalized learning gains are classified as follows: low for gains below 30%, medium for gains between 30% and 70%, and high for gains above 70%. This classification provides a meaningful indicator of relative conceptual change during an intervention (Ruiz Loza et al., 2022). Based on these criteria, the experimental group achieved a gain of 40.09%, which falls within the medium-gain level. In contrast, the control group achieved a gain of 16.22%, which was categorized as a low gain. This comparison shows that the integrated STM32Lab platform was more effective in helping students develop a greater perceived conceptual understanding than the traditional approach.
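The thresholds above can be expressed as a small helper, sketched here with the two group gains reported in this study. The function name `classify_gain` and the treatment of the exact boundary values (30% and 70% counted as medium) are assumptions.

```python
def classify_gain(gain: float) -> str:
    """Map a normalized gain (as a fraction) to the low/medium/high bands."""
    if gain < 0.30:
        return "low"
    if gain <= 0.70:
        return "medium"
    return "high"


# Group gains reported in the study, expressed as fractions.
for label, gain in (("experimental", 0.4009), ("control", 0.1622)):
    print(label, classify_gain(gain))
```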

The most significant improvements were observed in the key conceptual items of the questionnaire. Among these items, the highest normalized learning gains were noted for Q17 (“I understand the course competencies and their professional usefulness,” with a gain of 68.0%, categorized as medium-high), followed by Q1 (“I understand the basic principles of microcontrollers,” showing a 62.5% gain, categorized as medium), Q2 (“I feel capable of programming a microcontroller,” with a 51.2% gain, also medium), and Q3 (“I can explain how microcontrollers communicate with other devices,” exhibiting a 48.8% gain, classified as medium). These results indicate that the integrated laboratory significantly enhanced students’ self-reported understanding of fundamental concepts related to microcontroller architecture, communication interfaces, and embedded programming practices.

Despite the observed progress, negative normalized gains were noted in the control group. Specifically, question 14 (“I feel prepared to work on interdisciplinary projects”) showed a 9.1% decrease, while question 17 (“I understand how this course contributes to professional competencies”) experienced an even larger decline of 36.4%. These negative results suggest that the traditional approach, which primarily focuses on basic prototyping and discrete wiring, may not adequately help students recognize the broader interdisciplinary significance of the course or its impact on their professional development.
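To illustrate how a negative normalized gain arises, consider a hypothetical item whose mean drops from 4.0 in the pre-test to 3.8 in the post-test on the 5-point scale. These values are chosen only for illustration and are not taken from Table 4:

$$g = \frac{3.8 - 4.0}{5 - 4.0} = \frac{-0.2}{1.0} = -0.20$$

Because the denominator shrinks as the pre-test mean approaches the scale maximum, even a small decline on an item that started high produces a sizeable negative gain.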

6.3. Analysis of Times and Error Patterns in Laboratory Logs

An analysis of the lab logs showed differences in operational efficiency between the two groups. Over ten sessions, the experimental group completed the exercises in an average of 84.5 min, while the control group took an average of 111.8 min. The gap of more than 27 min per session indicates that using an integrated platform significantly reduces the time spent on setup, connection verification, and initial diagnostics, which can be redirected toward completing additional practices or reinforcing more advanced concepts.
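Using the two averages reported above, the absolute and relative time savings work out as follows:

$$\Delta t = 111.8 - 84.5 = 27.3\ \text{min}, \qquad \frac{\Delta t}{111.8} \approx 0.244$$

That is, the experimental group needed roughly 24% less time per session than the control group.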

The logs indicated that the control group encountered more errors related to hardware, connections, and prototype assembly. These issues included continuity problems, incorrect wiring, reversed polarities, and unstable breadboard setups. The frequency of these errors was directly correlated with increased execution time; the prototyping exercises with the most errors were the ones that took the longest to complete. In contrast, the experimental group demonstrated fewer errors of this kind, supporting the notion that an integrated platform helps reduce operational failures and enhance efficiency during practical sessions.

6.4. Analysis and Interpretation of MSLQ-SF

The MSLQ-SF results offer insight into how students perceived their motivational and self-regulated learning strategies. Statistical significance was evaluated using an independent-samples t-test; values of $p < 0.05$ indicate significant differences between groups (Gong et al., 2024). Additionally, the magnitude of these differences was examined using Cohen’s d, interpreted conventionally as small ($d \approx 0.2$), medium ($d \approx 0.5$), or large ($d \geq 0.8$) (Ting et al., 2023).
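These interpretation rules can be sketched as two small helpers. The cut-offs used here (a 0.05 significance level and Cohen's conventional 0.2, 0.5, and 0.8 benchmarks) are the usual conventions and are stated as assumptions. The function names are hypothetical.

```python
def is_significant(p_value: float, alpha: float = 0.05) -> bool:
    """True when the p-value falls below the chosen significance level."""
    return p_value < alpha


def effect_size_label(d: float) -> str:
    """Label the magnitude of Cohen's d using the conventional benchmarks."""
    magnitude = abs(d)
    if magnitude < 0.2:
        return "negligible"
    if magnitude < 0.5:
        return "small"
    if magnitude < 0.8:
        return "medium"
    return "large"


# The p-value reported for IGO (0.073), paired with a hypothetical d of 0.5.
print(is_significant(0.073), effect_size_label(0.5))
```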

Based on these criteria, the differences observed are discussed as follows:

- MCR: The experimental group achieved a higher mean score than the control group, with a statistically significant difference and a medium effect size (see Table 5). This indicates that students using the STM32Lab demonstrated better planning, monitoring, and regulation of their learning behaviors compared to those using traditional setups.

- ANX: No significant difference was observed between groups, and the effect size was negligible (see Table 5). This suggests that the intervention did not meaningfully decrease test-related anxiety, and both groups experienced similar levels of apprehension throughout the course.

- ELB: Although the experimental group reported a higher mean, the difference was not statistically significant. However, the effect size was in the small-to-medium range (see Table 5), suggesting a positive trend toward deeper processing of information.

- TRM: A statistically significant advantage was observed for the experimental group, with a medium effect size (see Table 5). This finding aligns with the laboratory logs, which indicate that the experimental group completed the practices in shorter and more consistent times than the control group. This suggests a direct relationship between reduced operational overhead and improved time management.

- ESR: This dimension revealed one of the largest differences in the dataset, with a statistically significant p-value and a large effect size (see Table 5). These results suggest that students in the experimental group were more effective in persisting through challenging tasks, likely because they experienced fewer operational setbacks.

- ORG: The mean score for the experimental group was substantially higher than that of the control group, with a significant difference and a medium-to-large effect size (see Table 5). These findings suggest that the STM32Lab offered a more structured environment, which helped improve the organization of materials and study routines.

- IGO: Although the difference between the groups did not reach statistical significance (p = 0.073), the effect size was medium (see Table 5). This trend indicates that the experimental group may have experienced higher levels of interest and internal motivation, although the small sample size may have limited the power to detect a significant difference.

- TV: Both groups reported identical mean scores, so neither a significant difference nor a measurable effect size was observed (see Table 5). This indicates that students had similar valuations of the course content, regardless of the instructional materials used.

Overall, the strongest improvements observed in the experimental group were associated with effort regulation, organization, and time/resource management, suggesting that reducing operational friction in laboratory work can positively influence self-regulation processes central to engineering learning.

6.5. Design Considerations for Remote Laboratory Integration

From its inception, the STM32Lab was designed to operate in a hybrid mode, supporting both face-to-face sessions, where students interact directly with the hardware, and remote sessions, with the aim of integrating it into a remote microcontroller laboratory. This approach leverages the program’s previous experience, particularly with the remote laboratory infrastructure used in courses such as digital systems. In that course, students program and verify Field-Programmable Gate Array (FPGA) devices through a web platform (Guerrero-Osuna et al., 2024). Although this infrastructure has not yet been extended to the microcontroller course, its existence allows for the potential integration of STM32Lab into the UAZ Labs ecosystem without necessitating significant structural modifications.

6.6. Comparison with Related Works

Several studies in the literature present educational hardware tools designed to improve learning outcomes compared to traditional methods; however, these studies are highly diverse and lack a standardized methodology for evaluating the effectiveness of these platforms. Among them, the study by Peter et al. (2024) is most closely aligned with this research, as it directly compares traditional breadboard-based practices with a dedicated educational PCB using a quasi-experimental design. Their results demonstrated significant improvements: a 23-percentage-point increase in initial conceptual understanding (83% versus 60%) and a 4-percentage-point gain in retention (80% versus 76%). However, it is important to note that their work does not report normalized learning gains, which limits comparability across different cohorts. Additionally, while their study employs the full version of the MSLQ, the present research uses the validated short form, reflecting a different approach to assessing motivational and self-regulated learning dimensions within the context of laboratory-based instruction.

Despite these contributions, existing research has not consistently combined quasi-experimental designs with quantitative learning metrics within curricular engineering contexts. Most prior work emphasizes platform design or isolated learning indicators rather than integrating multiple dimensions of learning within a single analytical framework. This study addresses this methodological gap by proposing an integrated analytical approach that triangulates (1) cognitive improvement using Hake’s normalized gain from pre-test and post-test assessments, (2) technical proficiency through laboratory performance indicators, and (3) motivational and self-regulatory dimensions measured by the MSLQ-SF. This unified approach advances beyond descriptive analysis and provides a transferable framework for evaluating the educational impact of dedicated instructional hardware.

7. Conclusions

This study presents the design, implementation, and pedagogical evaluation of an integrated educational laboratory based on an STM32 microcontroller. It was created as an integrated platform to enhance practical exercises in a microcontroller course. The paper outlines the technical characteristics, the functional organization of the modules, and the methodology used to compare its performance with the traditional approach, which utilizes the STM32 Blue Pill and a breadboard. The study comprised 10 experimental sessions and employed conceptual, motivational, and observational assessment tools, enabling a thorough analysis of the platform’s impact on learning.

The results support the hypothesis that an integrated platform reduces operational errors, decreases the time needed to complete practical exercises, and improves students’ perceived conceptual understanding. The experimental group achieved a normalized learning gain of 40.09%, compared with 16.22% for the control group. Additionally, the integrated platform reduced the time required to complete each session by an average of 27 min by eliminating the need for assembly and connection verification. A substantial reduction in hardware, connection, and assembly errors was also noted, allowing students to concentrate on analysis, programming, and result validation. These findings suggest that an integrated platform fosters more efficient and more consistent learning. The MSLQ-SF results further supported these findings, revealing that the experimental group exhibited higher levels of metacognitive and behavioral self-regulation, effort regulation, organization, and time and resource management. These dimensions are closely associated with effective learning processes in engineering. The observed improvements, which showed statistically significant differences and medium-to-large effect sizes, suggest that reducing operational friction not only enhances performance but also strengthens students’ motivational and self-regulatory strategies.

One of the main limitations of the study is that the implementation lasted only five weeks, corresponding to the C programming language module in the microcontroller program. Additionally, the present study focused on students’ perceived learning outcomes; therefore, future work will incorporate objective assessments to directly measure content knowledge and complement the perception-based analysis. Although the integrated laboratory incorporates 13 modules, we have used only those relevant to the course content, meaning its full potential has yet to be evaluated. Future work will involve extending the application of the integrated laboratory to cover the assembly language unit and increasing the number of experimental sessions. Additionally, we plan to integrate the platform into the UAZ Labs remote laboratory, leveraging the existing infrastructure for remote practices. This will enable remote programming, monitoring, and control of the microcontroller, thereby expanding access to and maintaining the continuity of hands-on learning.

Author Contributions

Conceptualization, H.A.G.-O., L.F.L.-V. and T.I.-P.; methodology, A.C.-A. and F.G.-V.; software, F.G.-V., S.V.-R. and J.A.N.-P.; validation, A.C.-A., P.C.M.-B. and J.A.N.-P.; formal analysis, A.C.-A., P.C.M.-B. and M.d.R.M.-B.; investigation, A.C.-A. and P.C.M.-B.; resources, H.A.G.-O. and T.I.-P.; data curation, F.G.-V. and J.A.N.-P.; writing—original draft preparation, A.C.-A., S.V.-R. and P.C.M.-B.; writing—review and editing, H.A.G.-O., L.F.L.-V. and T.I.-P.; visualization, F.G.-V. and M.d.R.M.-B.; supervision, H.A.G.-O. and L.F.L.-V.; project administration, H.A.G.-O. and S.V.-R.; funding acquisition, T.I.-P. and M.d.R.M.-B. All authors have read and agreed to the published version of the manuscript.

Funding

This research received no external funding.

Institutional Review Board Statement

Not applicable.

Informed Consent Statement

Not applicable.

Data Availability Statement

Data supporting the reported results can be found at https://github.com/fabianngv29/STM32Lab-Intrument-Data (accessed on 1 December 2025).

Acknowledgments

The authors want to thank the Mexican Secretariat of Science, Humanities, Technology and Innovation (SECIHTI by its initials in Spanish) for its support of the National Laboratory of Embedded Systems, Advanced Electronics Design and Micro Systems (LN-SEDEAM by its initials in Spanish) (project numbers 282357, 293384, 299061, 314841, 315947, and 321128 and scholarship numbers 1012274 and 856644).

Conflicts of Interest

The authors declare no conflicts of interest.

Abbreviations

The following abbreviations are used in this manuscript:

| Abbreviation | Definition |
| --- | --- |
| ADC | Analog-to-Digital Converter |
| ANX | Anxiety |
| ARM | Advanced RISC Machine |
| DC | Direct Current |
| ELB | Elaboration |
| ESR | Effort Regulation |
| GPIO | General-Purpose Input/Output |
| I2C | Inter-Integrated Circuit |
| IGO | Intrinsic Goal Orientation |
| LDR | Light-Dependent Resistor |
| LED | Light-Emitting Diode |
| MCR | Metacognitive & Behavioral Self-Regulation |
| MSLQ-SF | Motivated Strategies for Learning Questionnaire Short Form |
| OLED | Organic Light-Emitting Diode |
| ORG | Organization |
| PCB | Printed Circuit Board |
| PWM | Pulse-Width Modulation |
| RAM | Random Access Memory |
| SD | Standard Deviation |
| SPI | Serial Peripheral Interface |
| STM32 | 32-bit Microcontroller Family by STMicroelectronics |
| TRM | Time and Resource Management |
| TV | Task Value |
| USART | Universal Synchronous/Asynchronous Receiver-Transmitter |
| USB | Universal Serial Bus |

References

- Alessa, M. A. (2025). Exploring the influence of science teachers’ conceptions of teaching and learning on technology-enhanced instructional strategies. International Journal of Instruction, 18(3), 76–96. [Google Scholar] [CrossRef]

- Arm Ltd. (2024). Keil MDK uVision integrated development environment for ARM Cortex-M microcontrollers. Version 5.40. Arm Ltd. Available online: https://www.keil.com/mdk5/uvision/ (accessed on 15 January 2026).

- Artino, A. R., Jr., La Rochelle, J. S., Dezee, K. J., & Gehlbach, H. (2014). Developing questionnaires for educational research: AMEE guide no. 87. Medical Teacher, 36(6), 463–474. [Google Scholar] [CrossRef] [PubMed]

- Budihal, S., Salunke, M., Kinnal, B., & Iyer, N. (2022). Redesign of digital circuits course for enhanced learning. Journal of Engineering Education Transformations, 35(8), 163–170. [Google Scholar] [CrossRef]

- Bygstad, B., Øvrelid, E., Ludvigsen, S., & Dæhlen, M. (2022). From dual digitalization to digital learning space: Exploring the digital transformation of higher education. Computers & Education, 182, 104463. [Google Scholar] [CrossRef]

- Cao, X., Lu, H., Wu, Q., & Hsu, Y. (2025). Systematic review and meta-analysis of the impact of STEM education on students learning outcomes. Frontiers in Psychology, 16, 1579474. [Google Scholar] [CrossRef] [PubMed]

- Chaka, C. (2022). Is Education 4.0 a sufficient innovative and disruptive educational trend to promote sustainable open education for higher education institutions? A review of literature trends. Frontiers in Education, 7, 824976. [Google Scholar] [CrossRef]

- Charitopoulos, A., Rangoussi, M., & Koulouriotis, D. (2022). Blending e-learning with hands-on laboratory instruction in engineering education: An experimental study on early prediction of student performance and behavior. International Journal of Emerging Technologies in Learning, 17(20), 213–230. [Google Scholar] [CrossRef]

- Cornetta, G., Fatimi, S., Kochaji, A., Moussa, O., Almaleky, M. S., Lamrini, M., & Touhafi, A. (2025). An open-source educational platform for multi-sensor environmental monitoring applications. Hardware, 3(4), 13. [Google Scholar] [CrossRef]

- Creswell, J. W., & Creswell, J. D. (2017). Research design: Qualitative, quantitative, and mixed methods approaches. SAGE Publications. [Google Scholar]

- Das, S., Chin, C., & Hill, S. (2022, June 26–29). Development of open-source comprehensive circuit analysis laboratory instructional resources for improved student competence [Conference paper]. ASEE Annual Conference & Exposition, Minneapolis, MN, USA. [Google Scholar]

- Eliza, F., Candra, O., Mukhaiyar, R., Fadli, R., & Wibowo, Y. E. (2025). Development of a microcontroller training kit to increase student learning motivation. Engineering, Technology & Applied Science Research, 15(3), 22319–22326. [Google Scholar] [CrossRef]

- Escobar-Castillejos, D., Sigüenza-Noriega, I., Noguez, J., Escobar-Castillejos, D., & Berumen-Glinz, L. A. (2024). Enhancing methods engineering education with a digital platform: Usability and educational impact on industrial engineering students. Frontiers in Education, 9, 1438882. [Google Scholar] [CrossRef]

- Gong, J., Cai, S., & Cheng, M. (2024). Exploring the effectiveness of flipped classroom on STEM student achievement: A meta-analysis. Technology, Knowledge and Learning, 29(2), 1129–1150. [Google Scholar] [CrossRef]

- Guerrero-Osuna, H. A., García-Vázquez, F., Ibarra-Delgado, S., Mata-Romero, M. E., Nava-Pintor, J. A., Ornelas-Vargas, G., Castañeda-Miranda, R., Rodríguez-Abdalá, V. I., & Solís-Sánchez, L. O. (2024). Developing a cloud and IoT-integrated remote laboratory to enhance education 4.0: An approach for FPGA-based motor control. Applied Sciences, 14(22), 10115. [Google Scholar] [CrossRef]

- Habibi, M. W., & Buditjahjanto, I. G. P. A. (2024). Impact of training kit-based internet of things to learn microcontroller viewed in cognitive domain. TEM Journal, 13(2), 1157–1164. [Google Scholar] [CrossRef]

- Habibi, M. W., Buditjahjanto, I. G. P. A., Rijanto, T., Anifah, L., Abdillah, R., Al Rosyid, H., Hakiki, M., Wibawa, R. C., & Husnaini, S. J. (2025). The effect of an IoT-based microcontroller training kit on psychomotor learning outcomes in microcontroller and microprocessor programming. E3S Web of Conferences, 645, 06003. [Google Scholar] [CrossRef]

- Holub, J., Svatos, J., & Vedral, J. (2025). Teaching of electronic circuits for measuring technology at the Faculty of electrical engineering, department of measurement, CTU in Prague. Measurement: Sensors, 38, 101312. [Google Scholar]

- Jacko, P., Bereš, M., Kováčová, I., Molnár, J., Vince, T., Dziak, J., Fecko, B., Gans, Š., & Kováč, D. (2022). Remote IoT education laboratory for microcontrollers based on the STM32 chips. Sensors, 22(4), 1440. [Google Scholar] [CrossRef]

- Kolb, D. A. (2014). Experiential learning: Experience as the source of learning and development. FT Press. [Google Scholar]

- Li, J., & Liang, W. (2024). Effectiveness of virtual laboratory in engineering education: A meta-analysis. PLoS ONE, 19(12), e0316269. [Google Scholar] [CrossRef]

- Luo, Y., Qin, S. B., & Wang, D. S. (2025). Reform of embedded system experiment course based on engineering education accreditation. International Journal of Electrical Engineering & Education, 62(1), 3–16. [Google Scholar]

- Mahmood, A. F., Ahmed, K. A., & Mahmood, H. A. (2022). Design and implementation of a microcontroller training kit for blended learning. Computer Applications in Engineering Education, 30(4), 1236–1247.

- Masso Viatela, J., & Fonseca Gómez, L. R. (2024). Cuestionario de motivación y estrategias de aprendizaje forma corta—MSLQ SF en estudiantes universitarios: Análisis de la estructura interna. Comunicaciones en Estadística, 17(1), 81–97.

- Mendoza Diaz, N. V., & Sotomayor, T. (2023). Effective teaching in computational thinking: A bias-free alternative to the exclusive use of students’ evaluations of teaching (SETs). Heliyon, 9(8), e18997.

- Moloi, M. J. (2024, August 2–4). Research and teaching methods for electrical circuits in science, technology, and engineering: A bibliometric analysis [Conference paper]. International Academic Conference on Education, Stockholm, Sweden.

- Nazarov, S., & Jumayev, B. (2022). Project-based laboratory assignments to support digital transformation of education in Turkmenistan. Journal of Engineering Education Transformations, 36(1), 40–48.

- Onyeka, E., Chibuzor, O., Nicholas, O., Jacob, E., Prince, E., & Emmanuel, O. (2022). Design and implementation of a microcontroller development kit for STEM education. Global Scientific Journal (GSJ), 10(7), 1897–1907.

- Otto, S., Bertel, L. B., Lyngdorf, N. E. R., Markman, A. O., Andersen, T., & Ryberg, T. (2024). Emerging digital practices supporting student-centered learning environments in higher education: A review of literature and lessons learned from the COVID-19 pandemic. Education and Information Technologies, 29(2), 1673–1696.

- Payne, C., & Crippen, K. J. (2022, February 20–23). Promoting first-semester persistence of engineering majors with design experiences in general chemistry laboratory [Conference paper]. 2022 CoNECD (Collaborative Network for Engineering & Computing Diversity), New Orleans, LA, USA.

- Peppler, K. A., Sedas, R. M., & Thompson, N. (2023). Paper circuits vs. breadboards: Materializing learners’ powerful ideas around circuitry and layout design. Journal of Science Education and Technology, 32(4), 469–492.

- Peter, O. O. E., Akingbola, O. G., Abiodun, P. O., Rahman, M. M., Shourabi, N. B., Goddard, L., & Owolabi, O. A. (2024, June 23–26). Comparative study of digital electronics learning: Using PCB versus traditional methods in an Experiment-Centered Pedagogy (ECP) approach for engineering students [Conference paper]. 2024 ASEE Annual Conference & Exposition, Portland, OR, USA.

- Pintrich, P. R. (1991). A manual for the use of the Motivated Strategies for Learning Questionnaire (MSLQ). University of Michigan.

- Pintrich, P. R., & De Groot, E. V. (1990). Motivational and self-regulated learning components of classroom academic performance. Journal of Educational Psychology, 82(1), 33–40.

- Ramos, J., Ding, Y., Sim, Y. W., Murphy, K., & Block, D. (2020). Hoppy: An open-source and low-cost kit for dynamic robotics education. arXiv, arXiv:2010.14580.

- Ruiz Loza, S., Medina Herrera, L. M., Molina Espinosa, J. M., & Huesca Juárez, G. (2022). Facilitating mathematical competencies development for undergraduate students during the pandemic through ad-hoc technological learning environments. Frontiers in Education, 7, 830167.

- Sabari Raj, R., Singaravel, M., & Pradeepkumar, D. (2023). Hoppy: Design and development of open source embedded hardware board for education use. International Research Journal of Engineering and Technology (IRJET), 10, 235–240.

- Smolaninovs, V., & Terauds, M. (2025). Microcontroller-based electronic laboratory measurement device for distance education. Electronics, 14(3), 438.

- Sozański, K. (2023). Low cost PID controller for student digital control laboratory based on Arduino or STM32 modules. Electronics, 12(15), 3235.

- STM32-Base Project. (2026). STM32F103C8T6 blue pill board reference. Available online: https://stm32-base.org/boards/STM32F103C8T6-Blue-Pill.html (accessed on 15 January 2026).

- STM32F103C8T6. (2023). STM32F103C8T6 datasheet: ARM Cortex-M3 32-bit MCU with 64 kB flash, 20 kB RAM, ADC, timers, USART, SPI, I2C. Revision 18. STMicroelectronics. Available online: https://www.st.com/resource/en/datasheet/stm32f103c8.pdf (accessed on 15 January 2026).

- STMicroelectronics. (2025). STM32CubeMX: Graphical tool for STM32 microcontroller configuration and initialization code generation. Version 6.11.0. STMicroelectronics. Available online: https://www.st.com/en/development-tools/stm32cubemx.html (accessed on 15 January 2026).

- Takács, G., Mikuláš, E., Gulan, M., Vargová, A., & Boldocký, J. (2023). Automationshield: An open-source hardware and software initiative for control engineering education. IFAC-PapersOnLine, 56(2), 9594–9599.

- Tavakol, M., & Dennick, R. (2011). Making sense of Cronbach’s alpha. International Journal of Medical Education, 2, 53–55.

- Ting, F. S., Shroff, R. H., Lam, W. H., Garcia, R. C., Chan, C. L., Tsang, W. K., & Ezeamuzie, N. O. (2023). A meta-analysis of studies on the effects of active learning on Asian students’ performance in science, technology, engineering and mathematics (STEM) subjects. The Asia-Pacific Education Researcher, 32(3), 379–400.

- Tinoco, L. F. S., Heras, E. B., Hern, A., & Zapata, L. (2011). Validación del cuestionario de motivación y estrategias de aprendizaje forma corta–MSLQ SF, en estudiantes universitarios de una institución pública–Santa Marta. Psicogente, 14(25), 36–50.

- Tkachuk, V. V., Yechkalo, V. Y. V., & Ruban, S. A. (2025, May 12). Implementation of educational tools for microprocessor systems design: Fostering digital competence among engineering students [Conference paper]. CEUR Workshop Proceedings, Kyiv, Ukraine.

- Tokatlidis, C., Tselegkaridis, S., Rapti, S., Sapounidis, T., & Papakostas, D. (2024). Hands-on and virtual laboratories in electronic circuits learning—Knowledge and skills acquisition. Information, 15(11), 672.

- Toor, I. U. (2025). Integrating active learning in an undergraduate corrosion science and engineering course—KFUPM’s active learning initiative. Sustainability, 17(23), 10704.

- Vargas-Mendoza, L., & Gallardo, K. (2023). Influence of self-regulated learning on the academic performance of engineering students in a blended-learning environment. International Journal of Engineering Pedagogy, 13(8), 84.

- Weisberg, L., & Dawson, K. (2023). The intersection of equity pedagogy and technology integration in preservice teacher education: A scoping review. Journal of Teacher Education, 74(4), 327–342.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.