Abstract

In the Ultra-High-Definition (UHD) domain, blind image quality assessment remains challenging due to the high dimensionality of UHD images, which exceeds the input capacity of deep learning networks. Motivated by the visual discrepancies observed between high- and low-quality images after down-sampling and Super-Resolution (SR) reconstruction, we propose a SUper-Resolved Pseudo References In Dual-branch Embedding (SURPRIDE) framework tailored for UHD image quality prediction. SURPRIDE employs one branch to capture intrinsic quality features from the original patch input and the other to encode comparative perceptual cues from the SR-reconstructed pseudo-reference. The fusion of the complementary representation, guided by a novel hybrid loss function, enhances the network’s ability to model both absolute and relational quality cues. Key components of the framework are optimized through extensive ablation studies. Experimental results demonstrate that the SURPRIDE framework achieves competitive performance on two UHD benchmarks (AIM 2024 Challenge, PLCC = 0.7755, SRCC = 0.8133, on the testing set; HRIQ, PLCC = 0.882, SRCC = 0.873). Meanwhile, its effectiveness is verified on high- and standard-definition image datasets across diverse resolutions. Future work may explore positional encoding, advanced representation learning, and adaptive multi-branch fusion to align model predictions with human perceptual judgment in real-world scenarios.

1. Introduction

Blind Image Quality Assessment (BIQA) plays a critical role in a wide range of applications, including device benchmarking and image-driven pattern analysis. Its influence spans multiple domains from data compression, signal transmission, media quality assurance, image enhancement, to content understanding [1,2,3,4]. Unlike full- or reduced-reference methods [5,6,7,8], BIQA aims to estimate perceptual image quality solely based on the distorted image itself, without access to any corresponding reference image or related metadata. This no-reference characteristic makes BIQA especially well-suited for real-world deployments in industrial and consumer-level scenarios, where reference images are typically unavailable. BIQA is not only foundational for improving automated visual systems but also essential for ensuring quality consistency in ubiquitous imaging.

With the rapid advancement of imaging hardware and the widespread deployment of high-performance chips, UHD images have become increasingly accessible, not only through professional imaging equipment but also via consumer-grade mobile devices. This surge in image resolution presents significant challenges for UHD-BIQA. The resolution of realistic images in public datasets has increased from ≈500 × 500 pixels in early databases, such as LIVE Challenge (CLIVE) [9], to 3840 × 2160 pixels in recent benchmarks [10]. Despite this progress in data availability, effectively handling UHD images remains an open problem due to high computational costs, increased visual complexity, and the scale sensitivity of existing models.

To address these challenges, existing approaches offer valuable insights into modeling perceptual quality under High-Resolution (HR) imaging conditions. These methods can be categorized based on their methodological foundations, architectural designs, and feature extraction strategies. (a) Machine learning-based methods quantify an input image using a compact feature vector, which serves as the input to a regression model for score prediction. Chen et al. compute maximum gradient variations and local entropy across multiple image channels, which are combined to assess image sharpness [11]. Yu et al. treat the outputs of BIQA indicators as mid-level features, and various regression models are investigated for scoring perceptual quality [12]. Wu et al. collect multi-stage semantic features from a pre-trained deep neural network (DNN) and refine them using a multi-level channel attention module to improve prediction accuracy [13]. Chen and Yu utilize a pre-trained DNN as a fixed feature extractor and evaluate a range of regression models [14]. Although these feature-based approaches [11,12,13,14,15] are computationally efficient, their reliance on handcrafted features often limits their representational capacity, making it challenging to capture the rich visual structures and complex distortions of UHD images. (b) Patch-based deep learning methods sample numerous sub-regions from an input image and feed these patches into a neural network. The quality of the image is predicted through jointly optimizing the feature representations and network parameters. Yu et al. develop a shallow network, and random patches from each image are used to train the network by minimizing the prediction error [16,17]. Bianco et al. explore the features from pre-trained networks and fine-tuning strategies, and the final image quality score is obtained by average-pooling the predicted scores across multiple patches [18]. Ma et al. propose a multi-task learning network that simultaneously predicts distortion types and image quality by using two sub-networks [19]. Su et al. introduce a hyper-network architecture that divides the BIQA process into content understanding, perception rule learning, and self-adaptive score regression [20]. While these deep learning methods [16,17,18,19,20] are effective in a data-efficient manner, they typically assign the same global quality score to all patches regardless of local variation. This simplification overlooks the spatial heterogeneity and region-specific distortions pronounced in UHD images.

To the best of our knowledge, only a few studies have attempted to address UHD-BIQA challenges. These efforts have explored various strategies for the high computational and perceptual complexity of UHD content. Huang et al. integrate ResNet [21], Vision Transformer (ViT) [22], and recurrent neural network (RNN) [23] to benchmark their curated HR image database [24]. This hybrid architecture is designed to combine spatial feature extraction, global context modeling, and sequential processing. Sun et al. develop a multi-branch framework that leverages to extract features corresponding to global aesthetic attributes, local technical distortions, and salient content. These diverse feature representations of perceptual quality are fused and regressed into final quality scores [25]. Tan et al. explore Swin Transformer [26] to process full-resolution images. Their approach mimics human visual perception by assigning adaptive weights to different sub-regions and incorporates fine-grained frequency-domain information to enhance prediction accuracy [27]. However, these approaches [24,25,27] rely on complex multi-branch architectures and Transformer-based pyramid perception mechanisms, which demand substantial computational resources and processing times. These limitations hinder their scalability and practicality in real-world UHD-BIQA applications.

This study presents a novel framework, SUper-resolved Pseudo References In Dual-branch Embedding (SURPRIDE), to address UHD-BIQA challenges. The core idea is to leverage SR as a lightweight and deterministic transformation to generate pseudo-reference from the distorted input. Although SR is not intended to restore ground-truth quality, it introduces structured distortions that provide informative visual contrasts. Further, by pairing each distorted image with its corresponding SR version, we construct a dual-branch architecture that simultaneously learns intrinsic quality representations from the original input and comparative quality cues from the pseudo-reference. This design enables the model to better capture fine-grained differences that are especially critical in UHD images. The main contributions of this work are summarized as follows:

- We propose the SURPRIDE framework, which leverages SR reconstruction as a self-supervised transformation to generate external quality representations.

- We implement a dual-branch network that jointly models intrinsic quality from distorted images and comparative cues from generated pseudo-references.

- We design a hybrid loss function incorporating cosine similarity between dual-branch output features, enabling the model to learn from the relational differences between input patch pairs.

- We conduct extensive experiments on UHD, high-definition (HD), and standard-definition (SD) image datasets, demonstrating SURPRIDE’s competitive performance and strong generalization ability in BIQA tasks.

The rest of this paper is organized as follows. Section 2 details the proposed framework, including the motivation behind the dual-branch architecture and descriptions of its core components: patch preparation, the dual-branch network, and the hybrid loss function. Section 3 outlines the experimental setup, including datasets, baseline BIQA models for comparison, implementation details, and evaluation protocol. Section 4 presents a comprehensive analysis of the experimental results across UHD, HD, and SD image databases, along with supporting ablation studies on the UHD-IQA database [10] and the intuitive explanation of the proposed hybrid loss function from the perspective of feature similarity change. Section 5 discusses key findings, practical implications, and current limitations of the proposed framework. Finally, Section 6 summarizes the work and highlights potential directions for future UHD-BIQA research.

2. The Proposed SURPRIDE Framework

2.1. One Phenomenon Observed in Image Quality Degradation

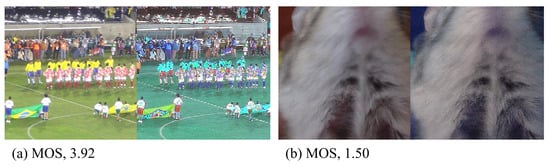

Figure 1 illustrates two images before and after SR reconstruction. The image on the left has a subjective mean opinion score (MOS) of 3.92, indicating high perceived visual quality, while the image on the right has a MOS of 1.50, reflecting significantly lower quality. In this example, each original image is first down-sampled to one-quarter of its original resolution, and then an SR algorithm of HAT [28] is employed to upscale the low-resolution version by a factor of four. The original and SR-reconstructed images are shown side by side for visual perception and comparison.

Figure 1.

Original images with varying MOS values and corresponding reconstructed images side by side. Notably, higher-quality images exhibit more noticeable distortions after SR reconstruction.

In Figure 1, a notable phenomenon is observed: a higher-quality image tends to exhibit more visible degradation after SR reconstruction. These degradations manifest as altered colors, distorted shapes, and loss of structural details. In contrast, lower-quality images undergo comparatively minor perceptual changes when reconstructed, making them visually more consistent with their original versions. This observation suggests that SR reconstruction can serve as a meaningful auxiliary signal, as it introduces distortion patterns that align well with human visual sensitivity to quality differences.

This phenomenon is closely related to the concept of just noticeable difference [29], which describes the smallest perceptible change that can be detected by the human visual system. In the context of image processing, the concept typically refers to the minimum level of distortion or variation, such as changes in brightness, color, or texture, that a viewer can perceive. By identifying the threshold at which visual differences become noticeable, it serves as a perceptual boundary for distinguishing between imperceptible and perceivable distortions [30].

Inspired by this insight, we propose leveraging both the original distorted image and its SR reconstructed image within a dual-branch deep learning architecture. This design enables the model to simultaneously capture intrinsic quality characteristics from the input image and extract comparative perceptual cues from the reconstructed image for enhancing the overall UHD-BIQA performance.

2.2. The Proposed Framework

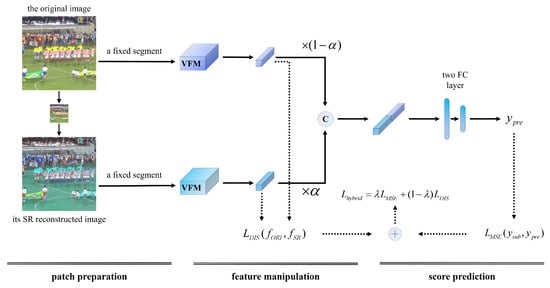

Figure 2 illustrates the SURPRIDE framework, which comprises patch preparation, feature manipulation, and score prediction. Specifically, in the dual-branch structure, one branch processes the original distorted image, while the other processes its SR reconstruction. For each branch, a fixed segment is passed through a visual foundation model (VFM) to extract high-level representations. This results in two feature vectors, from the original image and from the SR-reconstructed image. These two vectors are then weighted and concatenated to form a comprehensive embedding that captures both intrinsic and comparative cues. Finally, the concatenated feature vector is passed through a two-layer full connection (FC) network to predict the final score of image quality. This architecture is lightweight yet effective, enabling the model to leverage both content and restoration differences for improved quality estimation.

Figure 2.

The framework consists of two branches, each followed by a VFM backbone for feature extraction. The output features are then weighted and concatenated as the input of a module with two FC layers. In model training, added to forms a hybrid loss function for guiding the model toward improved performance. Here, denotes the scalar weighting parameter; “×” denotes scalar—vector multiplication; VFM refers to the vision foundation model used as the backbone.

Segment Preparation

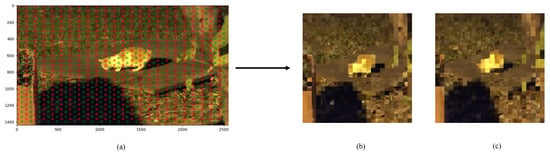

Patch-based sampling is effective in preserving spatial relationships and texture structures in images [10,25]. Inspired by these approaches, an adaptive fixed-position patch segmentation strategy was designed which extracts patches uniformly along the horizontal and vertical directions of the images, as shown in Figure 3. These patches are reassembled into a square segment while preserving their spatial relationships, ensuring that both the original and super-resolved images undergo the same patch segmentation process. This consistency preserves meaningful comparative information and fine-grained local details. Therefore, it can yield a segment of size [384, 384] from successive patches of typical size [16, 16], and such patch segments can be extracted many times strategically.

Figure 3.

Illustration of segment preparation. (a) An exemplary image with a resolution of 2560 × 1440, red and green squares denote the basic patches sized 16 × 16. (b) An image patch sized 384 × 384 composed of successive red patches. (c) An image patch sized 384 × 384 formed by successive green patches. Notably, these patches are uniformly sampled across the entire image. Throughout the training process, both the original and SR reconstructed images are subjected to an identical and fixed sampling strategy.

In the current study, to improve the receptive field of the network, a two-stage sampling strategy is used. In other words, the image is sampled twice at different locations, generating two pairs of segments. In a single training loop, these two pairs of segments flow sequentially through the network, yielding model outputs two times, and the final model output is the average of these two outputs.

2.3. A Dual-Branch Network Architecture

The dual-branch network architecture consists of a branch that receives the original image patch as input, and a branch that receives the SR reconstructed image patch as input. This proposal is distinctive to those models that exclusively employ VFM backbones as the backbone, circumventing the extraction of intermediate feature layers and the addition of various specialized modules to alter the model architecture. This methodology fully capitalizes on the inherent capabilities of these VFM backbone, ensuring computational efficiency and ease of reproducibility, while avoiding the complexity and potential over-engineering associated with extensive architectural modifications. By preserving the integrity of the VFM backbone’s original structure, we anticipate the model’s performance will naturally advance in tandem with the evolution of the VFM backbone.

Specifically, for the original image patch , it is firstly resized to the specified size to obtain by using Bi-cubic interpolation. Next, is magnified by an SR reconstruction technique at the specified scaling factor, and the resulting image is denoted as . Equation (1) shows the entire process.

The fixed segments, and , are then fed into its sequential VFM, generating two feature vectors, and , correspondingly. After that, features are weighted and concated to generate a longer feature vector, , as shown in Equation (2), where is the weighting parameter.

Finally, a two FC layer multi-layer perceptron (MLP) network is designed to regress the feature to get a predicted score of image quality, .

2.4. The Proposed Hybrid Loss Function

The MSE is commonly used as a loss function to minimize the difference between the predicted quality score and the subjective ground truth score in regression tasks. When using m segments for model optimization, the MSE loss function can be formulated as in Equation (3) where j stands for the j-th image segment.

As shown in Figure 1, a higher-quality image tends to exhibit more visible degradation after SR reconstruction. Therefore, we introduce another loss function that measures the dissimilarity between the i-th features from the original branch () and the SR branch () by using the cosine similarity. Equation (4) shows the loss function in which stands for the vector similarity measure by using the cosine function.

Subsequently, the hybrid loss function is defined as the weighting of and as shown in Equation (5), where is a weighting parameter to balance the loss functions.

Therefore, a hybrid loss function concerning both prediction difference and feature dissimilarity is proposed for the UHD-BIQA problem.

3. Materials and Methods

3.1. Databases

Six databases with authentic distortions are analyzed which encompass a wide range of contents, distortions, and imaging devices. Image resolution ranges from SD (the CLIVE [9] and BIQ2021 [31] databases), to HD (the CID [32] and KonIQ-10k [33] databases), and to UHD (the HRIQ [24] and UHD-IQA [10] databases). Due to input size requirements for sequential analysis, the images are resized to specific resolutions using B-cubic interpolation. Table 1 summarizes the database configurations.

Table 1.

Summary of database configurations.

3.2. Involved BIQA Models

The proposed SURPRIDE framework is evaluated and compared to those state-of-the-art (SOTA) works. On the HRIQ [24] and BIQ [31] datasets, the best-performing methods are summarized. On the UHD-IQA dataset [10], the top-ranking teams in the AIM 2024 challenge [34] are presented. On the CID dataset [32], the works include MetaIQA [35], DACNN [36], GCN-IQD [37], and MFFNet [38]. On the KonIQ-10k [33] and CLIVE [9] datasets, the methods are HyperIQA [20], ReIQA [39], TReS [40], QCN [41], ATTIQA [42], GMC-IQA [43], Prompt-IQA [44], and SGIQA [45].

3.3. Experimental Design and Performance Evaluation

The proposed framework adopts a dual-branch architecture, where one branch processes the original input images and the other processes their SR counterparts. This design enables the model to leverage complementary information from both native and SR images, thereby improving the robustness and accuracy.

To extract rich visual representations from both branches, we employ SOTA pre-trained models, specifically DeiT [46] and ConvNeXt [47], as candidate VFMs. These models are chosen based on their demonstrated superiority over architectures such as ViT [22] and ResNet [21], in challenging visual tasks [48].

To explore the impact of input resolution, we evaluate the segment sizes of 224 × 224 and 384 × 384. As for the SR branch, SwinFIR [49] and HAT [28] are considered, and two up-scaling factors of ×2 and ×4 are examined for comparative analysis.

We further investigate the effect of varying basic patch sizes, testing 8 × 8, 16 × 16, and 32 × 32. Hyperparameters and , which control the reference feature weighting and the loss balance, respectively, are selected through a grid search ( and ).

The training setup is configured as follows: the model is optimized using the AdamW optimizer [50] with a learning rate of , a weight decay of , and a batch size of 4. Training is conducted for 15 epochs, and the best-performing model on the validation set is retained for final testing.

Unless otherwise stated, the default configuration uses a ConvNeXt backbone with an input resolution of 384 × 384 and a patch size of 16 × 16. The weighting parameters are set to for the reference features and for the cosine similarity loss component.

All experiments are run on an NVIDIA RTX 3090 GPU with 24 GB VRAM and implemented by using Python 3.10, CUDA 12.1, and PyTorch 2.1.0. The source code is available online at https://github.com/LoudLove/SURPRIDE (accessed on 28 August 2025).

Finally, the performance of each BIQA model is evaluated using Pearson’s linear correlation coefficient (PLCC) and Spearman’s rank-order correlation coefficient (SRCC) to assess the correlation between predicted and ground truth quality scores.

4. Results

This section presents the BIQA performance of SOTA methods and the SURPRIDE framework across several public databases. Given the diversity of these databases, a wide range of BIQA methods are selected for comprehensive comparison. For each database, the highest metric values are underlined for comparison.

4.1. Performance on the UHD-IQA Image Database

4.1.1. Achievement on the Database

Table 2 presents the top-performing results from participant teams in the AIM 2024 Challenge [34] on the UHD-IQA database [10]. Using the officially fixed subsets, the proposed SURPRIDE demonstrated competitive performance on the testing set and the validation set. It ranked within the top five among the achievements on the database, slightly below the top-ranking models. Notably, the SJTU team designed three branches of feature extraction [25], in which each branch employed a Swin Transformer [26] pre-trained on a large-scale aesthetic image dataset [51] as the backbone for quality representation. Although this approach achieved top performance, it required substantial computational resources and extensive fine-tuning, incurring very high time and resource costs.

Table 2.

Achievement on the AIM 2024 Challenge using official data splitting.

4.1.2. Computing Efficiency

The computing efficiency related parameters are shown in Table 3, from the training time in hours (h), whether training on extra databases, the number of embedded parameters in millions (M) to the deployment on GPU hardware.

Table 3.

Computing efficiency analysis using different hardware.

The proposed SURPRIDE achieves competitive training time (6 h) without requiring extra data when running on an RTX 3090 GPU. Despite having the largest parameter count (176.2 M) among the listed models, SURPRIDE maintains a reasonable training cost comparable to the baseline and lower than many others, such as MobileNet-IQA (48 h) and EQCNet (22 h). Several models, such as ICL and CLIP-IQA, offer faster training but rely on high-end GPUs (A100, A6000), possibly due to pre-trained components. The SJTU model requires longer training times (12 h) and has fewer parameters, yet it does not well leverage additional data as effectively. Overall, SURPRIDE balances high model capacity with efficient training time and standard hardware, making it both scalable and practical for UHD-BIQA.

4.1.3. Inference Time in SR Reconstruction

Table 4 shows the inference time in SR reconstruction. The SR processing time increases with image resolution. For UHD images, the average time is 3.98 s; while for HD images (1024 × 768), it drops significantly to 0.89 s; and for SD images (496 × 496), the SR time is only 0.34 s. This demonstrates the scalability of the SR module, with efficiency maintained at lower resolutions and predictable cost growth at higher resolutions.

Table 4.

SR time cost across different image resolutions.

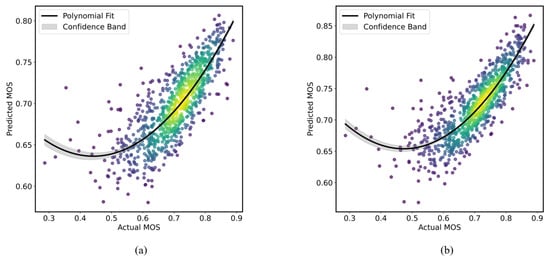

4.1.4. Perceived BIQA Performance on the UHD-IQA Dataset

Figure 4 shows the relationship between the predicted and subjective MOS values on the officially fixed testing split. Each plot includes a quadratic polynomial fit with a gray confidence band, and a yellow-highlighted region via the Gaussian kernel density estimation. As shown in Figure 4a, the proposed SURPRIDE framework achieves a good correlation, comparable to that of the top-performing model [25] displayed in Figure 4b.

Figure 4.

Scatter plots of predicted MOS versus subjective MOS values. (a) The proposed SURPRIDE algorithm; (b) The UIQA model. Each plot includes a quadratic fit line with confidence bands through Gaussian kernel density estimation.

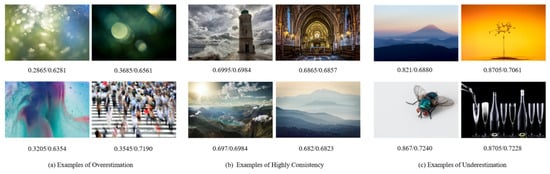

4.1.5. Visual Perception of BIQA Prediction

Figure 5 presents examples of predicted MOS scores which are categorized into overestimation (a), high consistency (b), and underestimation (c) cases. Overestimation often occurs in UHD images with high visual complexity and blurriness. These characteristics may lead the model to misinterpret smoothness or artistic effects as indicators of high quality, thereby predicting higher scores than the actual MOS scores. In contrast, images that are visually well-composed, sharp, and rich in detail, such as landscapes or scenes, typically result in highly consistent predictions. Their clear structures and balanced compositions align well with learned quality indicators, leading to accurate MOS estimations. Underestimation tends to happen with images that contain fine detail and clarity. Despite high visual quality, these images may receive lower predicted scores, possibly reflecting a bias toward more dynamic or textured visuals that are often perceived as more engaging.

Figure 5.

Examples of overestimated, consistent, and underestimated prediction cases (ground/predicted MOS score).

4.2. Ablation Studies on the UHD-IQA Image Database

On the UHD-IQA database, the ablation studies examine the effects of various factors in the proposed SURPRIDE framework on BIQA performance, including different SR methods, scaling factors, parameter configurations ( and ), patch sizes, dual-branch network settings, input image sizes, and loss functions.

4.2.1. Setting of the Dual-Branch Networks

Both ViT and CNN architectures are explored as the candidate VFM backbones [21,22]. For the ViT-based approach, DeiT [46] is used, which produces a 768-dimensional (d) feature vector (Pre-trained DeiT model: https://huggingface.co/facebook/deit-base-distilled-patch16-384 (accessed on 28 August 2025)). For the CNN-based approach, ConvNeXt [47] is used, generating a 1024-d output Pre-trained ConvNeXt model: https://huggingface.co/facebook/convnext-base-384-22k-1k (accessed on 28 August 2025). To ensure compatibility between different backbone outputs, the 768-d feature from DeiT is passed through a FC layer to project itself into a 1024-d space, matching the output of ConvNeXt. When both branches use DeiT, their outputs have the same dimensionality, and no additional FC layers are needed.

On the UHD-IQA dataset, the backbone networks of the dual-branch framework are determined by comparing the different combinations of DeiT [46] and ConvNeXt [47]. As shown in Table 5, the BIQA performance reaches the highest values when both the original and the SR branch use ConvNeXt [47]. When ConvNeXt performs as the original branch, its prediction results are much higher than that using DeiT as the original branch. Therefore, the dual branches, i.e., the original branch and the SR branch, are settled with the pre-trained ConvNeXt [47] in this study.

Table 5.

Dual-branch settings on the UHD-IQA database.

4.2.2. Determination of the Input Image Sizes

Table 6 summarizes the performance of the VFMs using the ConvNeXt model at two different input resolutions (224 × 224 and 384 × 384) on the UHD-IQA dataset. Increasing the resolution from 224 × 224 to 384 × 384 results in a noticeable improvement in both PLCC (+2.45%) and SRCC (+2.86%). This suggests that higher-resolution inputs enable ConvNeXt to capture more detailed information for better performance.

Table 6.

Effect of input image sizes on the UHD-IQA database.

4.2.3. Effect of SR Methods and Scaling Factors

Different SR reconstruction methods and scaling factors are investigated on the UHD-IQA dataset. The SR methods include SwinFIR [49] and HAT [28], and the scaling factors are considered as ×2 and ×4. The BIQA results are shown in Table 7.

Table 7.

Selection of SR reconstruction methods and scaling factors.

The proposed SURPRIDE framework demonstrates stable performance across different SR methods and scaling factors. Overall, both SR methods tend to perform best with a scaling factor of ×4 on the UHD-IQA dataset, and the differences in metric values remain small. To balance BIQA performance, inference time, and computational cost, SwinFIR and a scaling factor of ×4 are selected as the final setting due to its favorable overall performance and limited computational request.

4.2.4. Optimization of Parameter and Configurations

The parameters and were optimized through grid searching, with the corresponding BIQA results shown in Table 8. Generally, the proposed SURPRIDE framework achieves PLCC/SRCC values with minimal fluctuation when the parameters change. The best result is obtained when and , yielding a PLCC of 0.784 and an SRCC value of 0.819, both slightly higher than those obtained with other parameter pairs.

Table 8.

Effect of parameter configurations of and on the UHD-IQA database.

4.2.5. Effect of the Basic Patch Sizes

Table 9 shows the effect of patch sizes for the fixed input segments sized [384, 384]. On the UHD-IQA database, it is found that increasing patch sizes slightly improves the PLCC and SRCC values, and the patch size of 16 × 16 can well balance local texture embedding and prediction performance.

Table 9.

Effect of patch sizes for the fixed input segments.

4.2.6. Effect of the SR Branch on Score Prediction

The SURPRIDE framework consists of dual branches that use the ConvNeXt model as the backbone. Table 10 shows the BIQA performance with regard to input image sizes and with (w) or without (w/o) the SR branch on three databases.

Table 10.

Effect of input resolution and SR branch.

Several findings are observed. First, when improving the image resolution, the metric values are correspondingly increased, with or without the SR branch. Second, when using an image segment sized 384 × 384 as input, an additional branch further improve the BIQA performance on the three databases. Specifically, the dual-branch network with higher input resolution leads to better BIQA performance.

4.2.7. Effect of the Loss Function

The effect of the loss function is investigated on the BIQA performance by using different input image sizes. The results are shown in Table 11. It is observed that, additionally using the loss function corresponds to an increase in metric values regardless of input image sizes except for the KonIQ-10k database. Meanwhile, increasing the input image size from 224 × 224 to 384 × 384 positively improves metric values, with an increase of ≈0.02 observed across the databases.

Table 11.

Effect of the loss function.

4.3. Visual Understanding of the Hybrid Loss Function

To evaluate the representational contribution and learning dynamics of the dual-branch architecture, we conduct a comprehensive analysis, including gradient norm monitoring per loss component and branch, global representation similarity via linear centered kernel alignment (CKA) [52], and t-distributed stochastic neighbor embedding (t-SNE) visualization with sample-wise correspondence [53]. These components aim to visualize how the hybrid loss facilitates complementary learning between the internal and comparative branches in feature representation.

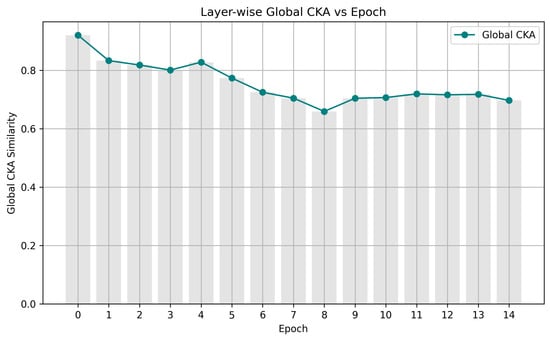

4.3.1. Linear CKA for Global Feature Similarity

To quantify the alignment of learned representations, we compute the linear CKA between the dual-branch output features of and on the UHD-IQA database as shown in Figure 6. This metric measures the similarity of representational subspaces without being affected by orthogonal transformations.

Figure 6.

Global CKA similarity between the two branches over training epochs.

Initially, both branches learn highly aligned global representations (CKA ≈ 0.92) at epoch 0, and a steady decline in CKA similarity to about 0.80 over several epochs, indicating that the branches progressively learn more diverse or specialized representations. The similarity continues to decrease, reaching its lowest point (≈0.65) at epoch 8, suggesting that the maximum divergence between the branches. After that, the similarity stabilizes around 0.70, and the learned representations maintain a moderate level of divergence while still sharing some common structure. Overall, a growing divergence in the features is captured by each branch, aligning with the goal of disentangled representation learning driven by distinct loss components.

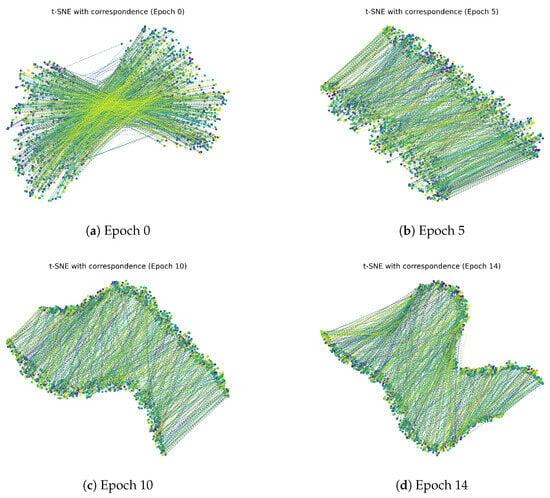

4.3.2. The t-SNE Visualization of Feature Similarity

The t-SNE visualization on and features is shown in Figure 7 with sample-wise dashed lines connecting the feature projections at the 0th, 5th, 10th, and 14th epochs when training the model on the UHD-IQA database.

Figure 7.

The t-SNE distribution of the similarity between the feature vectors.

Generally, the features of the training samples from the two branches cluster closely, denoting shared information, and the inter-branch gap for several points also grows as training advances. At the 0th epoch, the feature points from the two branches are highly intermixed with numerous crossing correspondence lines, indicating that the initial feature representations are unstructured and lack discriminative separation. At the 5th epoch, the feature points begin to form more coherent clusters, and correspondence lines are shorter and more aligned, suggesting improved alignment and partial separation in feature space. At the 10th epoch, the clusters become more compact, with clearer separation and more localized correspondence, reflecting enhanced discriminative power and stronger inter-branch consistency. At the 14th epoch, the feature distributions remain well-clustered and aligned, indicating that the model has stabilized and learned robust, consistent representations across branches. The progression from the 0th epoch to the 14th epoch illustrates how training improves the separation, compactness, and correspondence of features between the two branches, moving from chaotic initial embeddings to well-structured and aligned feature spaces. This visually supports that the branches, though initially synchronized, gradually specialize toward different representational regimes.

4.3.3. Intuitive Summarization

The perceived analyses from the linear CKA similarity (Figure 6) and the t-SNE visualization (Figure 7) validate that our proposed hybrid loss leads to a functional specialization of the two branches. The internal branch benefits from direct regression (), while the comparative branch emphasizes relational consistency (). Gradient-based and representation-based diagnostics both confirm that the branches learn complementary cues rather than redundantly increasing complexity.

4.4. Performance on Several Other Image Databases

The proposed dual-branch network, SURPRIDE, is further evaluated on one additional UHD image database (HRIQ [24]), two HD image databases (CID [32] and KonIQ-10k [33]), and two SD image databases (CLIVE [9] and BIQ2021 [31]). The performance of corresponding SOTA models are also introduced.

4.4.1. Results on Another UHD Image Database

As shown in Table 12, SURPRIDE performs slightly below the top-performing algorithm HR-BIQA [24], with 0.041 lower PLCC and 0.047 lower SRCC values, while outperforming the other three SOTA methods.

Table 12.

Achievement on the HRIQ database.

Interestingly, HR-IQA [24] consists of two streams for the original and down-sampled resolution, patch-based feature extraction, spatial pooling, and score regression. The two streams are the pre-trained ResNet50 [21] fine-tuned for image quality representation and the ViT model [22] used for semantic content embedding, and RNN [23] is modified for score prediction.

4.4.2. Results on Two HD Image Databases

Performance on the CID Database. As shown in Table 13, SURPRIDE outperforms several strong baselines, including MFFNet [38], GCN-IQD [37], and DACNN [36]. It establishes a new benchmark on this dataset by achieving the highest PLCC and SRCC values, indicating superior consistency with human perceptual judgments.

Table 13.

Achievement on the CID database.

Notably, MFFNet [38] employs a dual-branch multi-layer feature fusion architecture, where the primary branch is responsible for extracting and integrating fine-grained semantic features, while the secondary sub-branch focuses on capturing local visual cues at the super-pixel level.

Performance on the KonIQ-10k dataset. As shown in Table 14, SURPRIDE achieves comparable performance to the ATTIQA model [42], and outperforms several other prominent BIQA methods, including HyperIQA [20], TReS [40], and ReIQA [39].

Table 14.

Performance on the KonIQ-10k database.

Notably, ATTIQA [42] leverages a vision–language pre-trained large model and selectively extracts quality-relevant features using attention-based mechanisms. In addition, carefully crafted text prompts are incorporated to guide the network in focusing on key perceptual attributes associated with image quality.

4.4.3. Results on Two SD Image Databases

Performance on the CLIVE dataset. As shown in Table 15, SURPRIDE demonstrates competitive performance compared to several SOTA works, including Prompt-IQA [44], GMC-IQA [43], ATTIQA [42], and SGIQA [45], with minor differences in evaluation metrics. At the same time, SURPRIDE consistently outperforms other methods such as HyperIQA [20], TReS [40], and ReIQA [39].

Table 15.

Performance on the CLIVE database.

In particular, Prompt-IQA [44] benefits from being trained on a combination of multiple databases with extensive data augmentation, enabling rapid generalization to new datasets without the need for fine-tuning. Moreover, it employs image–score pairings that are tailored for the specific characteristics of the target datasets.

Performance on the BIQ2021 dataset. As shown in Table 16, SURPRIDE achieves the highest SRCC of 0.895 among the five BIQA models [6,56,57,58], and its SRCC outperforms the second-best model, IQA-NRTL [58], by a margin of 0.045. While its PLCC is slightly lower than that of the top-performing method, SURPRIDE shows consistent overall performance of the metrics on the database.

Table 16.

Performance on the BIQ2021 database.

It is worth noting that IQA-NRTL [58] captures multi-scale semantic features to model complex perceptual quality, and these features are adaptively fused and regressed to generate final image quality scores.

5. Discussion

The UHD-BIQA task remains challenging. Most existing approaches rely heavily on handcrafted features [11,12,13,14,15] and patch-based deep learning [16,17,18,19,20,43]. A limited number of models have been specifically tailored for UHD images [24,25,27], and these typically involve complex architectures and computationally intensive modules such as Transformers [22,26,45,59]. While effective, such designs are often impractical for real-world deployment due to their high resource requirements and limited scalability. To address these limitations, we propose a lightweight dual-branch framework, SURPRIDE, to represent image quality by learning from both the original input and a super-resolved pseudo-reference. Specifically, ConvNeXt [47] is employed as the VFM for efficient feature extraction, and SwinFIR [49] is used for fast SR reconstruction. Inspired by the visual differences observed between high- and low-quality images after down-sampling and SR reconstruction, a hybrid loss function is introduced that balances prediction accuracy with feature similarity. This design enables the network to effectively learn both absolute and comparative quality cues.

The SURPRIDE framework demonstrates top-tier BIQA performance on the UHD-IQA (Table 2, Figure 4) and HR-IQA (Table 12) databases, both of which contain authentic UHD images. The effectiveness of the proposed method can be attributed to several key factors. First, the use of the pre-trained ConvNeXt [47] as the VFM backbone in both branches allows for the extraction of intrinsic characteristics from the original input and comparative embeddings from the SR-reconstructed patch (Table 5), while the primary branch plays the dominant role in BIQA performance, the SR branch provides valuable complementary information, leading to further improvements (Table 10). Motivated by the observed phenomenon that higher-quality images often exhibit more noticeable degradation after SR reconstruction (Figure 1), the features from both branches are weighted and concatenated to form a more robust representation for quality prediction. Second, critical parameters, including input image size, patch size, SR method, and scaling factor, are systematically optimized through extensive ablation studies (Table 6, Table 7, Table 8, Table 9 and Table 10). These settings enable the framework to achieve optimal performance, with additional applications across HD and SD databases. Third, the proposed hybrid loss function encourages the learning of comparative embedding by leveraging differences introduced through SR reconstruction. This loss formulation enhances the network’s ability to model perceptual quality more accurately (Table 8 and Table 11). In summary, SURPRIDE combines a deep learning architecture with strategically tuned components and loss design to deliver superior or competitive results on the UHD image databases, while remaining efficient and scalable for real-world deployment.

The proposed SURPRIDE framework demonstrates strong performance not only on the UHD image databases (Table 2 and Table 12), but also on the HD (Table 13 and Table 14) and SD (Table 15 and Table 16) image databases. Falling under the category of patch-based deep learning methods, SURPRIDE randomly samples patches from the original images and reconstructs corresponding SR patches [49]. These pairs of patches are used to extract deep representative features, which are weighted and concatenated for robust quality representation and score prediction. Unlike earlier approaches that use small patches of size 16 × 16 or 32 × 32 [16,17,58], SURPRIDE adopts a much larger patch size of 384 × 384 (Table 6), which better captures contextual and structural information. Prior studies suggest that larger patch sizes contribute positively to performance in BIQA tasks [18,25,37,41,43]. Notably, SURPRIDE avoids reliance on high-computation modules or complex attention mechanisms. Its use of ConvNeXt [47] for feature extraction proves effective across a wide range of image resolutions. However, the proposed SURPRIDE also shows slightly inferior performance compared with certain specialized BIQA algorithms, including HR-BIQA [24] on the HRIQ database (Table 12), ATTIQA [42] on the KonIQ-10k database (Table 14), and Prompt-IQA [44] on the CLIVE database (Table 15). This inferiority may be attributed to the use of highly customized architectural designs in these models. For example, HR-BIQA [24] incorporates semantic content embeddings, ATTIQA [42] leverages vision–language-based quality-relevant feature extraction, and Prompt-IQA [44] benefits from image–score pairing in combination with extensive data augmentation tailored to the target databases. In addition, the diverse and dataset-specific characteristics [14] pose inherent challenges in BIQA, making it difficult for a single algorithm to achieve consistent superiority across all benchmarks. Therefore, further investigation is needed to understand the underlying causes of SURPRIDE’s performance limitations on these particular databases and to explore potential strategies for improving its adaptability.

The success of SURPRIDE across databases with varying resolutions (Table 4) can be attributed to several key factors. (a) HR inputs enable fine-grained distortion learning. Large patch sizes (384 × 384 or 224 × 224) retain sufficient local information while also capturing broader spatial context. These patches represent meaningful regions of the image, enabling the model to learn fine-grained distortion patterns that are often consistent within HR content [41,55]. (b) Training with multiple patches per image increases data diversity and supports better generalization [39,43,55,58]. By sampling image patches either randomly or strategically, the model is exposed to a diverse range of distortions and scene content, helping to compensate for the unavailability of full-resolution images during training. (c) Patch-level features tend to be scale-invariant, allowing models trained on HR patches to generalize well across different image sizes [39,43,55]. Local distortions in UHD images often exhibit characteristics similar to those in HD or SD images. (d) Moreover, processing entire UHD images directly is computationally prohibitive in terms of memory and training time. Patch-based learning provides a practical alternative, enabling the reuse of deep networks [21,22,26,37,42,43,47,59] without compromising batch size or training stability. (e) The proposed SURPRIDE learns image quality representation without large-scale external datasets. Many SOTA works require external data, HR inputs, or large computational budgets to perform well on certain datasets [34]. However, such resources are not always accessible in real-world applications. By generating pseudo-reference images directly from a single image, we provide a self-sufficient mechanism that preserves performance without relying on external ground truths. That ensures distributional consistency between the input image and the generated supervision and mitigates the domain gap typically introduced by training on external datasets with potentially mismatched distributions. In conclusion, patch-based learning strategies remain highly effective for UHD-BIQA, offering a favorable balance between computational efficiency and model accuracy.

Unsurprisingly, dual- and multi-branch network architectures have gained increasing prominence in advancing BIQA tasks [24,25,27,37,38]. This trend reflects the growing demand for richer and more nuanced modeling of perceptual image quality that single-branch models often struggle to achieve effectively. First, these architectures enable the separation and specialization of complementary information [37,39,55,58]. Dual-branch networks typically extract different feature types, such as global semantics (or quality-aware encoding) in one branch and local distortions (or content-aware encoding) in the other. Multi-branch networks may explicitly model multiple perceptual dimensions, including aesthetic quality, data fidelity, object saliency, and content structure. This separation facilitates better feature disentanglement, allowing the network to handle the complex, multidimensional characteristics of human visual perception effectively [37,39]. Second, robustness and adaptability across distortion types are enhanced. Real-world images often exhibit mixed or unknown distortion types, and no single representation is sufficient to capture all relevant quality variations [43,51]. Branching allows each sub-network to specialize in detecting specific distortions or perceptual cues, leading to improved generalization and performance across diverse scenarios. Third, dual- and multi-branch architectures provide flexibility for adaptive fusion [37,38,39,55,58]. By incorporating attention mechanisms, gating functions, or learned weighting schemes, these models can dynamically integrate information from multiple branches. This enables the network to emphasize the most relevant features at inference time, which is particularly important for UHD content or images with complex structures, where different regions may contribute unequally to perceived quality. Ultimately, the popularity of dual- and multi-branch networks is driven by their superior ability to align with human perceptual processes. Their modular and interpretable design supports the modeling of multi-scale, multi-type distortions in a way that more closely reflects how humans assess image quality. As a result, such architectures have consistently demonstrated strong performance on challenging, distortion-rich datasets in both synthetic and authentic environments.

To improve understanding of the proposed hybrid loss function, we employed both linear CKA (Figure 6) and t-SNE (Figure 7) to analyze the feature representations learned by the network. The hybrid loss was designed to balance prediction accuracy (via quality regression loss, ) and feature similarity (via perceptual alignment loss, ), thereby encouraging the model to capture not only absolute quality cues but also relative perceptual differences. This combination helps stabilize training and guides the network toward more discriminative and perceptually aligned representations. Visualization techniques offer an intuitive perspective on the effectiveness of the hybrid loss from representational consistency [60] and representation learning [61,62] by examining the behavior of dual-branch architectures and their learned feature spaces. Linear CKA provides a quantitative measure of similarity between feature spaces under different training objectives, revealing how the hybrid loss promotes stronger alignment between features from the original and reconstructed images [52]. Meanwhile, t-SNE maps high-dimensional features into a low-dimensional space, allowing us to visually observe cluster separation and structural consistency among images of different quality levels [53]. Together, these analyses confirm that the hybrid loss promotes functional disentanglement across branches and enhances robustness and generalization in perceptual quality prediction without incurring redundant architectural complexity, ultimately contributing to improved BIQA performance.

Several limitations remain in the current study. First, the design and exploration of loss functions are not comprehensive. Alternative loss functions, such as marginal cosine similarity loss [63], may offer additional benefits for enhancing feature similarity learning and improving quality prediction accuracy. Second, the feature fusion strategy employed is relatively simplistic. More advanced fusion techniques, including feature distribution matching [64] and cross-attention-based fusion [65], could be explored to enrich the representational power of the dual-branch framework. Third, the choices of backbone and SR architectures are not exhaustively evaluated. Integrating other promising modules, such as MobileMamba [66], arbitrary-scale SR [67], uncertainty-aware models [68], or sequential feature processing and score prediction [69], may further boost performance. Fourth, the proposed framework lacks fine-grained optimization of hyper-parameters and architectural configurations. A more systematic exploration of design choices could lead to additional performance gains for UHD images. Fifth, the time cost of the SR branch remains a major bottleneck of the real-time UHD-BIQA task (Table 4). Promising solutions can be drawn from lightweight SR networks, which leverage techniques such as knowledge distillation, algorithmic and procedural optimization, problem re-parameterization, dedicated DNN architecture design, and model quantization [70]. These strategies not only reduce computational burden but also preserve quality-related feature embedding, thereby making real-time deployment more feasible. Finally, Transformer-based and other advanced large multi-modal models [22,26,59] that exploit image content and additional text cues could be investigated in the UHD-BIQA field.

6. Conclusions

The SURPRIDE framework is proposed to address the UHD-BIQA challenges. Key components of the framework, including the VFM backbone, SR algorithm, scaling factor, weighting parameters ( and ), input size, and patch size, are determined via comprehensive ablation studies. The effectiveness of SURPRIDE is demonstrated on two UHD image databases and further validated on two HD and two SD databases, confirming its robustness across varying image resolutions. Motivated by the observed visual discrepancies between high- and low-quality images after down-sampling and SR reconstruction, the framework employs a dual-branch architecture. One branch extracts intrinsic quality features from the original input image, while the other captures comparative perceptual cues from the SR-reconstructed counterpart. The fusion of these two complementary streams enables richer and more discriminative quality representations, leading to improved performance. In future work, attention could be directed toward the integration of positional encoding, advanced representation learning, and adaptive dual- or multi-branch fusion to better align algorithmic predictions with human visual perception in real-world UHD scenarios.

Author Contributions

Conceptualization, J.G., Q.M. and Q.S.; Data curation, J.G. and Q.M.; Formal analysis, S.Y. and Q.S.; Funding acquisition, S.Y. and Q.S.; Investigation, S.Y. and Q.S.; Methodology, J.G., Q.M., S.Z. and Y.W.; Project administration, Q.S.; Software, J.G., Q.M., S.Z. and Y.W.; Supervision, Q.S.; Validation, S.Z. and Y.W.; Visualization, J.G., S.Z. and Y.W.; Writing—Original draft, J.G. and S.Y.; Writing—Review and editing, S.Y. and Q.S. All authors have read and agreed to the published version of the manuscript.

Funding

The work was in part supported by the National Key Research and Develop Program of China (Grant No. 2022ZD0115901), the National Natural Science Foundation of China (Grant No. 62177007), the China-Central Eastern European Countries High Education Joint Education Project (Grant No. 202012), the Application of Trusted Education Digital Identity in the Construction of Smart Campus in Vocational Colleges (Grant No. 2242000393), the Knowledge Blockchain Research Fund (Grant No. 500230), and the Medium- and Long-term Technology Plan for Radio, Television and Online Audiovisual (Grant No. ZG23011).

Institutional Review Board Statement

Not applicable.

Informed Consent Statement

Not applicable.

Data Availability Statement

The datasets supporting the current study are available online: the CLIVE database at https://live.ece.utexas.edu/research/ChallengeDB/, the BIQ2021 database at https://github.com/nisarahmedrana/BIQ2021, the CID database at https://qualinet.github.io/databases/image/cid2013_camera_image_database/, the KonIQ-10k database at https://database.mmsp-kn.de/koniq-10k-database.html, the HRIQ database at https://github.com/jarikorhonen/hriq, and the UHD-IQA database at https://database.mmsp-kn.de/uhd-iqa-benchmark-database.html. The pre-trained VFM used in this study are publicly available at https://huggingface.co/facebook/convnext-base-384-22k-1k and https://huggingface.co/facebook/deit-base-distilled-patch16-384. The SR models are obtained from https://github.com/Zdafeng/SwinFIR and https://github.com/XPixelGroup/HAT (all accessed on 1 July 2025).

Conflicts of Interest

The authors declare no conflicts of interest. The funders had no role in the design of the study; in the collection, analyses, or interpretation of data; in the writing of the manuscript; or in the decision to publish the results.

Abbreviations

The following abbreviations are used in this manuscript:

| UHD | ultra-high-definition |

| SURPRIDE | super-resolved pseudo references in dual-branch embedding |

| SR | super-resolution |

| BIQA | blind image quality assessment |

| CLIVE | LIVE challenge |

| HR | high-resolution |

| DNN | deep neural network |

| ViT | Vision Transformer |

| RNN | recurrent neural network |

| MOS | mean opinion score |

| VFM | visual foundation model |

| FC | full connection |

| MLP | multi-layer perceptron |

| SOTA | state-of-the-art |

| PLCC | Pearson’s linear correlation coefficient |

| SRCC | Spearman’s rank-order correlation coefficient |

| CKA | centered kernel alignment |

| t-SNE | t-distributed stochastic neighbor embedding |

References

- Dai, G.; Wang, Z.; Li, Y.; Chen, Q.; Yu, S.; Xie, Y. Evaluation of no-reference models to assess image sharpness. In Proceedings of the 2017 IEEE International Conference on Information and Automation (ICIA), Macau, China, 18–20 July 2017; pp. 683–687. [Google Scholar]

- Zhai, G.; Min, X. Perceptual image quality assessment: A survey. Sci. China Inf. Sci. 2020, 63, 1–52. [Google Scholar] [CrossRef]

- Yang, P.; Sturtz, J.; Qingge, L. Progress in blind image quality assessment: A brief review. Mathematics 2023, 11, 2766. [Google Scholar] [CrossRef]

- Zhang, Q.; Zhu, Y.; Chen, Q.; Cordeiro, F. PSSCL: A progressive sample selection framework with contrastive loss designed for noisy labels. Pattern Recognit. 2025, 161, 111284. [Google Scholar] [CrossRef]

- Chen, L.; Jiang, F.; Zhang, H.; Wu, S.; Yu, S.; Xie, Y. Edge preservation ratio for image sharpness assessment. In Proceedings of the 2016, 12th World Congress on Intelligent Control and Automation (WCICA), Guilin, China, 12–15 June 2016; pp. 1377–1381. [Google Scholar]

- Lang, S.; Liu, X.; Zhou, M.; Luo, J.; Pu, H.; Zhuang, X.; Wang, J.; Wei, X.; Zhang, T.; Feng, Y.; et al. A full-reference image quality assessment method via deep meta-learning and conformer. IEEE Trans. Broadcast. 2023, 70, 316–324. [Google Scholar] [CrossRef]

- Soundararajan, R.; Bovik, A.C. RRED indices: Reduced reference entropic differencing for image quality assessment. IEEE Trans. Image Process. 2011, 21, 517–526. [Google Scholar] [CrossRef]

- Wu, J.; Lin, W.; Shi, G.; Liu, A. Reduced-reference image quality assessment with visual information fidelity. IEEE Trans. Multimed. 2013, 15, 1700–1705. [Google Scholar] [CrossRef]

- Ghadiyaram, D.; Bovik, A.C. Massive online crowdsourced study of subjective and objective picture quality. IEEE Trans. Image Process. 2015, 25, 372–387. [Google Scholar] [CrossRef] [PubMed]

- Hosu, V.; Agnolucci, L.; Wiedemann, O.; Iso, D.; Saupe, D. UHD-IQA benchmark database: Pushing the boundaries of blind photo quality assessment. In Proceedings of the European Conference on Computer Vision, Milan, Italy, 29 September–4 October 2024; pp. 467–482. [Google Scholar]

- Chen, J.; Li, S.; Lin, L. A no-reference blurred colourful image quality assessment method based on dual maximum local information. Iet Signal Process. 2021, 15, 597–611. [Google Scholar] [CrossRef]

- Yu, S.; Wang, J.; Gu, J.; Jin, M.; Ma, Y.; Yang, L.; Li, J. A hybrid indicator for realistic blurred image quality assessment. J. Vis. Commun. Image Represent. 2023, 94, 103848. [Google Scholar] [CrossRef]

- Wu, W.; Huang, D.; Yao, Y.; Shen, Z.; Zhang, H.; Yan, C.; Zheng, B. Feature rectification and enhancement for no-reference image quality assessment. J. Vis. Commun. Image Represent. 2024, 98, 104030. [Google Scholar] [CrossRef]

- Chen, Z.; Yu, S. Taylor expansion-based Kolmogorov-Arnold network for blind image quality assessment. J. Vis. Commun. Image Represent. 2025, 112, 104571. [Google Scholar] [CrossRef]

- Yu, S.; Chen, Z.; Yang, Z.; Gu, J.; Feng, B.; Sun, Q. Exploring Kolmogorov-Arnold networks for realistic image sharpness assessment. In Proceedings of the ICASSP 2025–2025 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), Hyderabad, India, 6–11 April 2025; pp. 1–5. [Google Scholar]

- Yu, S.; Jiang, F.; Li, L.; Xie, Y. CNN-GRNN for image sharpness assessment. In Proceedings of the Asian Conference on Computer Vision, Taipei, Taiwan, 20–24 November 2016; pp. 50–61. [Google Scholar]

- Yu, S.; Wu, S.; Wang, L.; Jiang, F.; Xie, Y.; Li, L. A shallow convolutional neural network for blind image sharpness assessment. PLoS ONE 2017, 12, e0176632. [Google Scholar] [CrossRef]

- Bianco, S.; Celona, L.; Napoletano, P.; Schettini, R. On the use of deep learning for blind image quality assessment. Signal Image Video Process. 2018, 12, 355–362. [Google Scholar] [CrossRef]

- Ma, K.; Liu, W.; Zhang, K.; Duanmu, Z.; Wang, Z.; Zuo, W. End-to-end blind image quality assessment using deep neural networks. IEEE Trans. Image Process. 2017, 27, 1202–1213. [Google Scholar] [CrossRef]

- Su, S.; Yan, Q.; Zhu, Y.; Zhang, C.; Ge, X.; Sun, J.; Zhang, Y. Blindly assess image quality in the wild guided by a self-adaptive hyper network. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Seattle, WA, USA, 14–19 June 2020; pp. 3667–3676. [Google Scholar]

- He, K.; Zhang, X.; Ren, S.; Sun, J. Deep residual learning for image recognition. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Las Vegas, NV, USA, 27–30 June 2016; pp. 770–778. [Google Scholar]

- Dosovitskiy, A.; Beyer, L.; Kolesnikov, A.; Weissenborn, D.; Zhai, X.; Unterthiner, T.; Dehghani, M.; Minderer, M.; Heigold, G.; Gelly, S.; et al. An image is worth 16x16 words: Transformers for image recognition at scale. arXiv 2020, arXiv:2010.11929. [Google Scholar]

- Bengio, Y.; Simard, P.; Frasconi, P. Learning long-term dependencies with gradient descent is difficult. IEEE Trans. Neural Netw. 1994, 5, 157–166. [Google Scholar] [CrossRef] [PubMed]

- Huang, H.; Wan, Q.; Korhonen, J. High resolution image quality database. In Proceedings of the ICASSP 2024–2024 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), Seoul, Republic of Korea, 14–19 April 2024. [Google Scholar]

- Sun, W.; Zhang, W.; Cao, Y.; Cao, L.; Jia, J.; Chen, Z.; Zhang, Z.; Min, X.; Zhai, G. Assessing UHD image quality from aesthetics, distortions, and saliency. In Proceedings of the European Conference on Computer Vision, Milan, Italy, 29 September–4 October; Springer: Berlin/Heidelberg, Germany, 2025; pp. 109–126. [Google Scholar]

- Liu, Z.; Lin, Y.; Cao, Y.; Hu, H.; Wei, Y.; Zhang, Z.; Lin, S.; Guo, B. Swin transformer: Hierarchical vision transformer using shifted windows. In Proceedings of the IEEE/CVF International Conference on Computer Vision, Virtual, 11–17 October 2021; pp. 10012–10022. [Google Scholar]

- Tan, X.; Zhang, J.; Quan, Y.; Li, J.; Wu, Y.; Bian, Z. Highly efficient no-reference 4k video quality assessment with full-pixel covering sampling and training strategy. In Proceedings of the 32nd ACM International Conference on Multimedia, Melbourne, Australia, 28 October–1 November 2024; pp. 9913–9922. [Google Scholar]

- Chen, X.; Wang, X.; Zhang, W.; Kong, X.; Qiao, Y.; Zhou, J.; Dong, C. Hat: Hybrid attention transformer for image restoration. arXiv 2023, arXiv:2309.05239. [Google Scholar] [CrossRef]

- Stern, M.K.; Johnson, J.H. Just noticeable difference. In The Corsini Encyclopedia of Psychology; Wiley: Hoboken, NJ, USA, 2010; pp. 1–2. [Google Scholar]

- Yu, A.; Grauman, K. Just noticeable differences in visual attributes. In Proceedings of the IEEE International Conference on Computer Vision, Santiago, Chile, 7–13 December 2015; pp. 2416–2424. [Google Scholar]

- Ahmed, N.; Asif, S. BIQ2021: A large-scale blind image quality assessment database. J. Electron. Imaging 2022, 31, 053010. [Google Scholar] [CrossRef]

- Virtanen, T.; Nuutinen, M.; Vaahteranoksa, M.; Oittinen, P.; Häkkinen, J. CID2013: A database for evaluating no-reference image quality assessment algorithms. IEEE Trans. Image Process. 2014, 24, 390–402. [Google Scholar] [CrossRef]

- Hosu, V.; Lin, H.; Sziranyi, T.; Saupe, D. KonIQ-10k: An ecologically valid database for deep learning of blind image quality assessment. IEEE Trans. Image Process. 2020, 29, 4041–4056. [Google Scholar] [CrossRef] [PubMed]

- Hosu, V.; Conde, M.V.; Agnolucci, L.; Barman, N.; Zadtootaghaj, S.; Timofte, R.; Sun, W.; Zhang, W.; Cao, Y.; Cao, L.; et al. AIM 2024 challenge on UHD blind photo quality assessment. In Proceedings of the European Conference on Computer Vision, Milan, Italy, 29 September–4 October 2024; Springer: Berlin/Heidelberg, Germany, 2025; pp. 261–286. [Google Scholar]

- Zhu, H.; Li, L.; Wu, J.; Dong, W.; Shi, G. MetaIQA: Deep meta-learning for no-reference image quality assessment. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Seattle, WA, USA, 13–19 June 2020; pp. 14143–14152. [Google Scholar]

- Pan, Z.; Zhang, H.; Lei, J.; Fang, Y.; Shao, X.; Ling, N.; Kwong, S. DACNN: Blind image quality assessment via a distortion-aware convolutional neural network. IEEE Trans. Circuits Syst. Video Technol. 2022, 32, 7518–7531. [Google Scholar] [CrossRef]

- Gao, Y.; Min, X.; Cao, Y.; Liu, X.; Zhai, G. No-Reference Image Quality Assessment: Obtain MOS from Image Quality Score Distribution. IEEE Trans. Circuits Syst. Video Technol. 2024, 35, 1840–1854. [Google Scholar] [CrossRef]

- Zhao, W.; Li, M.; Xu, L.; Sun, Y.; Zhao, Z.; Zhai, Y. A Multi-Branch Network with Multi-Layer Feature Fusion for No-Reference Image Quality Assessment. IEEE Trans. Instrum. Meas. 2024, 73, 5021511. [Google Scholar]

- Saha, A.; Mishra, S.; Bovik, A.C. Re-iqa: Unsupervised learning for image quality assessment in the wild. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Vancouver, BC, Canada, 17–24 June 2023; pp. 5846–5855. [Google Scholar]

- Golestaneh, S.A.; Dadsetan, S.; Kitani, K.M. No-reference image quality assessment via transformers, relative ranking, and self-consistency. In Proceedings of the IEEE/CVF Winter Conference on Applications of Computer Vision, Waikoloa, HI, USA, 3–8 January 2022; pp. 1220–1230. [Google Scholar]

- Shin, N.H.; Lee, S.H.; Kim, C.S. Blind image quality assessment based on geometric order learning. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Seattle, WA, USA, 17–21 June 2024; pp. 12799–12808. [Google Scholar]

- Kwon, D.; Kim, D.; Ki, S.; Jo, Y.; Lee, H.E.; Kim, S.J. ATTIQA: Generalizable Image Quality Feature Extractor using Attribute-aware Pretraining. In Proceedings of the Asian Conference on Computer Vision, Hanoi, Vietnam, 8–12 December 2024; pp. 4526–4543. [Google Scholar]

- Chen, Z.; Wang, J.; Li, B.; Yuan, C.; Hu, W.; Liu, J.; Li, P.; Wang, Y.; Zhang, Y.; Zhang, C. Gmc-iqa: Exploiting global-correlation and mean-opinion consistency for no-reference image quality assessment. arXiv 2024, arXiv:2401.10511. [Google Scholar]

- Chen, Z.; Qin, H.; Wang, J.; Yuan, C.; Li, B.; Hu, W.; Wang, L. Promptiqa: Boosting the performance and generalization for no-reference image quality assessment via prompts. In Proceedings of the European Conference on Computer Vision, Milan, Italy, 29 September–4 October 2024; Springer: Berlin/Heidelberg, Germany, 2024; pp. 247–264. [Google Scholar]

- Pan, L.; Zhang, X.; Xie, F.; Zhang, H.; Zheng, Y. SGIQA: Semantic-guided no-reference image quality assessment. IEEE Trans. Broadcast. 2024, 40, 1292–1301. [Google Scholar] [CrossRef]

- Touvron, H.; Cord, M.; Douze, M.; Massa, F.; Sablayrolles, A.; Jégou, H. Training data-efficient image transformers & distillation through attention. In Proceedings of the International Conference on Machine Learning, Vienna, Austria, 18–24 July 2021; pp. 10347–10357. [Google Scholar]

- Liu, Z.; Mao, H.; Wu, C.Y.; Feichtenhofer, C.; Darrell, T.; Xie, S. A convnet for the 2020s. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, New Orleans, LA, USA, 18–24 June 2022; pp. 11976–11986. [Google Scholar]

- Hatamizadeh, A.; Kautz, J. Mambavision: A hybrid mamba-transformer vision backbone. In Proceedings of the Computer Vision and Pattern Recognition Conference, Nashville, TN, USA, 11–15 June 2025; pp. 25261–25270. [Google Scholar]

- Zhang, D.; Huang, F.; Liu, S.; Wang, X.; Jin, Z. Swinfir: Revisiting the swinir with fast fourier convolution and improved training for image super-resolution. arXiv 2012, arXiv:2208.11247. [Google Scholar]

- Loshchilov, I.; Hutter, F. Decoupled weight decay regularization. arXiv 2017, arXiv:1711.05101. [Google Scholar]

- Murray, N.; Marchesotti, L.; Perronnin, F. AVA: A large-scale database for aesthetic visual analysis. In Proceedings of the 2012 IEEE Conference on Computer Vision and Pattern Recognition, Providence, RI, USA, 16–21 June 2012; pp. 2408–2415. [Google Scholar]

- Kornblith, S.; Norouzi, M.; Lee, H.; Hinton, G. Similarity of neural network representations revisited. In Proceedings of the International Conference on Machine Learning, Long Beach, CA, USA, 9–15 June 2019; pp. 3519–3529. [Google Scholar]

- Maaten, L.v.d.; Hinton, G. Visualizing data using t-SNE. J. Mach. Learn. Res. 2008, 9, 2579–2605. [Google Scholar]

- Yang, S.; Wu, T.; Shi, S.; Lao, S.; Gong, Y.; Cao, M.; Wang, J.; Yang, Y. Maniqa: Multi-dimension attention network for no-reference image quality assessment. In Proceedings of the IEEE/CVF conference on Computer Vision and Pattern Recognition, New Orleans, LA, USA, 18–24 June 2022; pp. 1191–1200. [Google Scholar]

- Huang, Z.; Liu, H.; Jia, Z.; Zhang, S.; Zhang, Y.; Liu, S. Texture dominated no-reference quality assessment for high resolution image by multi-scale mechanism. Neurocomputing 2025, 636, 130003. [Google Scholar] [CrossRef]

- Valicharla, S.K.; Li, X.; Greenleaf, J.; Turcotte, R.; Hayes, C.; Park, Y.L. Precision detection and assessment of ash death and decline caused by the emerald ash borer using drones and deep learning. Plants 2023, 12, 798. [Google Scholar] [CrossRef]

- König, M.; Seeböck, P.; Gerendas, B.S.; Mylonas, G.; Winklhofer, R.; Dimakopoulou, I.; Schmidt-Erfurth, U.M. Quality assessment of colour fundus and fluorescein angiography images using deep learning. Br. J. Ophthalmol. 2024, 108, 98–104. [Google Scholar] [CrossRef]

- Yang, Y.; Liu, C.; Wu, H.; Yu, D. A quality assessment algorithm for no-reference images based on transfer learning. PeerJ Comput. Sci. 2025, 11, e2654. [Google Scholar] [CrossRef]

- Vaswani, A.; Shazeer, N.; Parmar, N.; Uszkoreit, J.; Jones, L.; Gomez, A.N.; Kaiser, Ł.; Polosukhin, I. Attention is all you need. Adv. Neural Inf. Process. Syst. 2017, 30, 5998–6008. [Google Scholar]

- Shi, R.; Li, T.; Zhang, L.; Yamaguchi, Y. Visualization comparison of vision transformers and convolutional neural networks. IEEE Trans. Multimed. 2023, 26, 2327–2339. [Google Scholar] [CrossRef]

- Lu, Y.; Li, X.; Liu, J.; Chen, Z. StyleAM: Perception-Oriented Unsupervised Domain Adaption for No-Reference Image Quality Assessment. IEEE Trans. Multimed. 2024, 27, 2043–2058. [Google Scholar] [CrossRef]

- Srinath, S.; Mitra, S.; Rao, S.; Soundararajan, R. Learning generalizable perceptual representations for data-efficient no-reference image quality assessment. In Proceedings of the IEEE/CVF Winter Conference on Applications of Computer Vision, Waikoloa, HI, USA, 3–8 January 2024; pp. 22–31. [Google Scholar]

- Yao, J.; Yang, B.; Wang, X. Reconstruction vs. generation: Taming optimization dilemma in latent diffusion models. In Proceedings of the Computer Vision and Pattern Recognition Conference, Nashville, TN, USA, 11–15 June 2025; pp. 15703–15712. [Google Scholar]

- Zhou, Y.; Ye, Y.; Zhang, P.; Wei, X.; Chen, M. Exact fusion via feature distribution matching for few-shot image generation. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Seattle, WA, USA, 17–21 June 2024; pp. 8383–8392. [Google Scholar]

- Yu, S.; Meng, J.; Fan, W.; Chen, Y.; Zhu, B.; Yu, H.; Xie, Y.; Sun, Q. Speech emotion recognition using dual-stream representation and cross-attention fusion. Electronics 2024, 13, 2191. [Google Scholar] [CrossRef]

- He, H.; Zhang, J.; Cai, Y.; Chen, H.; Hu, X.; Gan, Z.; Wang, Y.; Wang, C.; Wu, Y.; Xie, L. Mobilemamba: Lightweight multi-receptive visual mamba network. In Proceedings of the Computer Vision and Pattern Recognition Conference, Nashville, TN, USA, 11–15 June 2025; pp. 4497–4507. [Google Scholar]

- Yue, Z.; Liao, K.; Loy, C.C. Arbitrary-steps image super-resolution via diffusion inversion. In Proceedings of the Computer Vision and Pattern Recognition Conference, Nashville, TN, USA, 11–15 June 2025; pp. 23153–23163. [Google Scholar]

- Zhang, L.; You, W.; Shi, K.; Gu, S. Uncertainty-guided Perturbation for Image Super-Resolution Diffusion Model. In Proceedings of the Computer Vision and Pattern Recognition Conference, Nashville, TN, USA, 11–15 June 2025; pp. 17980–17989. [Google Scholar]

- Chan, K.H.; Im, S.K. Sentiment analysis by using Naïve-Bayes classifier with stacked CARU. Electron. Lett. 2022, 58, 411–413. [Google Scholar] [CrossRef]

- Rasool, M.A.; Ahmad, S.; Mardieva, S.; Akter, S.; Whangbo, T.K. A comprehensive survey on real-time image super-resolution for IoT and delay-sensitive applications. Appl. Sci. 2024, 15, 274. [Google Scholar] [CrossRef]

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content. |

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).