Abstract

Crop row detection is one of the foundational and pivotal technologies of agricultural robots and autonomous vehicles for navigation, guidance, path planning, and automated farming in row crop fields. However, due to the complex and dynamic agricultural environment, crop row detection remains a challenging task. The surrounding background, such as weeds, trees, and stones, can interfere with crop appearance and increase the difficulty of detection. The detection accuracy of crop rows is also affected by different growth stages, environmental conditions, curved rows, and occlusion. Therefore, appropriate sensors and multiple adaptable models are required to achieve high-precision crop row detection. This paper presents a comprehensive review of the methods and applications related to crop row detection for agricultural machinery navigation. Particular attention is paid to the sensors and systems used for crop row detection and to how they improve perception and detection capabilities. The advantages and disadvantages of current mainstream crop row detection methods, including various traditional methods and deep learning frameworks, are also discussed and summarized. Additionally, the applications of crop row detection to different tasks, including irrigation, harvesting, weeding, and spraying, in various agricultural scenarios, such as drylands, paddy fields, orchards, and greenhouses, are reported.

1. Introduction

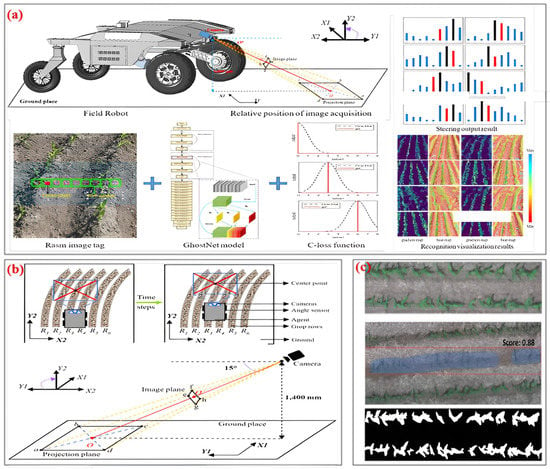

The global population and food challenges have called for advances in agricultural science. Integrating advanced technologies such as artificial intelligence, navigation, sensing systems, and communication, modern agricultural equipment can improve agricultural productivity and promote the development of smart agriculture [1]. Autonomous navigation technology is essential in realizing the intellectualization and modernization of agricultural machinery. This technology allows machinery to move with precision and accuracy, perform field tasks efficiently, and monitor crop growth and health. Figure 1 depicts a few examples of field applications, including fertilization robots, irrigation robots, weeding robots, and picking robots. However, the complexity and unstructured nature of the agricultural environment pose a challenge to achieve accurate navigation and the autonomous operation of agricultural machinery. Accurate row detection can effectively promote autonomous navigation and the safe operation of robots and vehicles in agricultural environments [2].

Figure 1. Applications of agricultural robots working in row-crop fields.

Using row detection to support diverse robotic operations is of great value to precision agriculture [3,4]. Based on crop row detection results, the motion controller guides the agricultural robot to operate automatically and safely without damaging the crop by adjusting the forward speed and direction of the front and rear wheels. The row detection-based navigation of agricultural machinery is widely used in different agricultural tasks such as spraying, mowing, irrigation, harvesting, fertilization, and plant protection [5]. The complexity and diversity of agricultural environments impose varying requirements on crop row detection and the autonomous navigation of agricultural robots. Typical agricultural scenarios include drylands, paddy fields, orchards, and greenhouses. Drylands are usually uneven, and crop growth can be messy; paddy fields have different water depths, and different crops can grow together; orchards have a dense canopy of fruit trees, and there may be weeds and other plants growing with the fruit trees; greenhouses have a variety of plants that need to be distinguished [6]. In addition, curved crop rows are common in some agricultural fields due to topography. Curved crop rows usually have complex shapes and occur not only in agricultural terraces but also in flat plots with irregular geometry. This makes it difficult to accurately detect and measure target crop rows; traditional line detection algorithms cope poorly with such cases, which poses challenges for the safe navigation of agricultural robots [7]. Therefore, in order to improve the accuracy of crop row detection, appropriate sensors and computer algorithms need to be selected according to the application scenario [8].

With the development of computer technology and intelligent equipment, various types of intelligent sensors and systems have been widely used for row detection in agricultural environments to help solve the problem of the autonomous navigation of agricultural robots [9,10]. Vision sensors have been extensively used as a reliable source of information for agricultural robots due to their wide measurement range, rich signal information, and low cost [11,12]. Based on the detected crop row images, information on crop row spacing, contours, and obstacle locations can be obtained in real time for the robot to achieve row tracking and navigation [13,14]. Scholars have developed many algorithms for crop row recognition and path extraction based on these vision sensors and systems, including traditional methods and learning-based methods. Traditional crop row detection methods are simple to implement, widely applicable, cost-effective, and easy to visualize [15]. However, environmental factors such as lighting and shading strongly affect the accuracy and reliability of traditional crop row detection methods in practical applications. In recent years, the development and application of machine learning and deep learning have provided strong theoretical support for vision-based crop row detection [16]. In image segmentation, feature detection, target recognition, and other visual information processing tasks, machine learning can replace traditional methods to reduce the interference of environmental noise and vegetation overlap and improve the accuracy of crop row detection [17,18]. Moreover, traditional visual inspection techniques are prone to occlusion and missed detection in agricultural environments with high crop density, such as orchards, sorghum fields, and corn fields. In this case, LiDAR is a good alternative, with strong penetration and high accuracy.
In addition, multi-sensor fusion is an important development direction in crop row detection. By integrating information from multiple sensors, multi-sensor fusion makes up for the limitations of a single sensor and improves the accuracy and robustness of crop row detection. In general, the application of different sensors and their corresponding algorithms can effectively improve the crop row detection accuracy and navigation robustness of agricultural robots and promote the transition of modern agriculture to high efficiency, automation, and precision [19,20].

Despite the increasing popularity of this topic in agricultural navigation, the related methods and applications based on crop row detection have not been summarized in detail or systematically. This hinders the understanding of agricultural robot navigation methods based on crop row detection. Therefore, in order to promote the further development of crop row detection technology and agricultural robot navigation, this paper provides a comprehensive review of the literature on the current state of research on crop row detection. This paper is organized as follows: Section 2 provides a comprehensive introduction to the sensors and systems used in navigation systems, as well as their advantages and disadvantages. In Section 3, crop row recognition and detection algorithms are classified into two main categories: traditional methods and machine learning methods. The applications of crop row detection in robot navigation are presented in Section 4 according to various perceptual conditions. Section 5 discusses the challenges and prospects of this topic.

2. Sensors and Systems for Crop-Row Detection

2.1. Monocular Cameras

Monocular vision is a fundamental building block for other vision systems, such as binocular stereo-vision and multi-vision systems [21]. The imaging principle of a monocular camera is to generate a projection onto the camera plane, reflecting the three-dimensional (3D) world in a two-dimensional (2D) form [22]. According to their signal readout processes, the image sensors of monocular cameras are usually divided into two kinds: the charge-coupled device (CCD) and the complementary metal-oxide-semiconductor (CMOS) [23]. The advantages of the monocular camera include a simple structure, low cost, and low power consumption, making it a convenient tool for crop row detection. Additionally, monocular cameras can detect color, texture, and other features in agricultural scenes, providing useful information for the positioning and navigation of agricultural robots and vehicles [24]. However, due to the limited amount of information provided by a single camera, auxiliary algorithms are often required to determine the distance between the target and the camera [25,26]. Moreover, depth information cannot be directly collected from monocular cameras because of their single viewing angle.
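The loss of depth in monocular imaging can be illustrated with the standard pinhole projection model. The following is a minimal sketch; the focal lengths `fx`, `fy` and the principal point `cx`, `cy` are hypothetical values chosen for illustration and do not come from any camera discussed in this review.

```python
def project_point(X, Y, Z, fx, fy, cx, cy):
    """Project a 3D point in the camera frame (metres) to pixel coordinates (pinhole model)."""
    if Z <= 0:
        raise ValueError("point must lie in front of the camera (Z > 0)")
    u = fx * X / Z + cx
    v = fy * Y / Z + cy
    return u, v


# Hypothetical intrinsics (assumed for illustration only).
intrinsics = dict(fx=800.0, fy=800.0, cx=320.0, cy=240.0)

# Two points on the same viewing ray, at 2 m and 4 m from the camera.
near = project_point(0.5, 0.2, 2.0, **intrinsics)
far = project_point(1.0, 0.4, 4.0, **intrinsics)
```

Both points land on the same pixel, which is why a single image cannot by itself recover the distance to a plant and auxiliary algorithms are needed.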

2.2. Binocular Cameras

The binocular stereo vision technique is commonly utilized in crop row detection because it can provide precise and efficient depth information [27]. A binocular camera comprises two monocular cameras and is commonly used as a passive rangefinder. By capturing two images of an object from different positions, binocular cameras can determine the 3D geometric information of the object by calculating the position deviation between the corresponding points of the images [28]. This process is based on the principle of parallax, and it enables the camera to accurately measure the distance to the object, providing important information for crop row detection [29]. Stereo vision-based crop row detection has demonstrated superior performance in challenging field conditions such as high crop density or varying lighting [30]. Compared to monocular vision, stereo vision is less sensitive to the effects of shadows and sunlight, providing more reliable results in these environments [31]. However, the configuration and calibration of binocular cameras can be complex and require careful attention to detail. Additionally, in the absence of texture features, stereo-matching algorithms can fail to accurately identify the corresponding points between images [32].
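For a rectified stereo pair, the parallax principle reduces to the relation Z = f·B/d, where d is the disparity between corresponding points. The sketch below assumes a rectified pair; the focal length and baseline are hypothetical values.

```python
def depth_from_disparity(disparity_px, focal_px, baseline_m):
    """Depth (metres) of a point from its disparity in a rectified stereo pair: Z = f * B / d."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive; zero disparity means no match or infinite range")
    return focal_px * baseline_m / disparity_px


# Hypothetical rig: 800 px focal length, 12 cm baseline, 40 px measured disparity.
z = depth_from_disparity(disparity_px=40.0, focal_px=800.0, baseline_m=0.12)
```

Because depth is inversely proportional to disparity, a one-pixel matching error matters far more for distant plants than for nearby ones, which is one reason texture-poor scenes degrade stereo ranging.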

2.3. RGB-D Cameras

RGB-D cameras have become increasingly widespread in crop row detection due to their capacity to capture both color and depth information [33]. Structured light and Time-of-Flight (ToF) cameras, which are capable of measuring both RGB and depth information, fall under the category of RGB-D cameras [34]. Structured light systems can capture both 2D planar and 3D depth information [35]. Such a system is composed of projectors, cameras, image acquisition systems, and processing systems, which work together to project a specific light pattern onto the surface of the object to be measured and then calculate the position and depth information according to the changes in the light signal on the surface of the object [36]. ToF cameras work on the principle of emitting a short burst of infrared light and measuring the time it takes for the light to return after reflecting off objects in the scene [37]. The camera sensor detects the reflected light and calculates the distance to each point based on the time of flight. These data are then used to create a depth map of the scene, which provides information about the distances between the camera and various objects within the field of view [38]. Unlike the passive ranging of binocular cameras, RGB-D sensors actively emit signal waves and capture the waves that are reflected back from objects [39]. The depth information obtained from RGB-D cameras provides additional geometric information that can be used to detect crop rows and estimate plant height more accurately [40]. However, the performance of RGB-D cameras may be limited in situations where the crop density is high or occlusion occurs. In such scenarios, some crop plants may be hidden from view, or the depth data may be noisy, leading to inaccurate detection [41]. In addition, processing the large amounts of 3D data generated by RGB-D cameras is computationally intensive and time-consuming [42].
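The ToF ranging principle can be written as d = c·t/2, since the measured time covers the path to the object and back. A minimal sketch follows; the 20 ns round-trip time is an illustrative value, not a measurement from any cited device.

```python
SPEED_OF_LIGHT = 299_792_458.0  # metres per second


def tof_distance(round_trip_s):
    """Distance (metres) to a surface from the round-trip travel time of a light pulse."""
    if round_trip_s < 0:
        raise ValueError("round-trip time cannot be negative")
    # The pulse travels to the object and back, so the one-way distance is half the path.
    return SPEED_OF_LIGHT * round_trip_s / 2.0


d = tof_distance(20e-9)  # a 20 ns round trip corresponds to roughly 3 m
```

Applying the same conversion to every pixel of the sensor yields the depth map described above.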

2.4. Panorama Cameras

Panoramic cameras can enable the large-scale and dead-angle-free monitoring of crops in the field [43]. Based on bionics technology, panoramic cameras work by using the spherical mirror transmission and reflection of physical optics for imaging. By rotating the camera, the photographic field can be scanned at a large angle, and the panoramic range can be achieved by stitching technology, which is enough to reach 360° [44]. The remarkable feature of panoramic cameras is that they can capture a large amount of the surrounding crop structure information in a single image, making them more suitable for large-scale farmland monitoring [45]. Fisheye panoramic cameras are one of the most commonly used types of panoramic cameras in agriculture due to their broad field of view and ability to capture rich environmental information [46]. However, the images captured by fisheye panoramic cameras suffer from large distortion and lack the details required for accurate object detection, which can limit their usefulness in certain applications [47,48]. To address these issues, deformation correction techniques, such as equidistant, stereographic, or orthographic projection models, can be used to rectify these images and remove the distortion [49].
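The projection models named above relate the incidence angle θ of a ray to its radial distance r from the image centre: r = f·θ (equidistant), r = 2f·tan(θ/2) (stereographic), and r = f·sin θ (orthographic). The sketch below shows these mappings and, assuming an ideal equidistant lens with a hypothetical focal length, how a fisheye radius could be converted to the radius an undistorted perspective image would have (r = f·tan θ). Real lenses usually need additional calibrated distortion terms.

```python
import math


def fisheye_radius(theta, f, model="equidistant"):
    """Radial image distance (pixels) of a ray at incidence angle theta (radians)."""
    if model == "equidistant":
        return f * theta
    if model == "stereographic":
        return 2.0 * f * math.tan(theta / 2.0)
    if model == "orthographic":
        return f * math.sin(theta)
    raise ValueError(f"unknown projection model: {model}")


def rectify_radius_equidistant(r_fisheye, f):
    """Map an equidistant fisheye radius to the equivalent perspective (pinhole) radius."""
    theta = r_fisheye / f
    if theta >= math.pi / 2:
        raise ValueError("rays at 90 degrees or more cannot be mapped to a perspective image")
    return f * math.tan(theta)


f = 300.0  # hypothetical focal length in pixels
r_fish = fisheye_radius(math.pi / 4, f)          # ray at 45 degrees
r_persp = rectify_radius_equidistant(r_fish, f)  # same ray in a rectified image
```

The rectified radius grows without bound as θ approaches 90°, so rectification necessarily crops the outer part of the fisheye field of view.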

2.5. Spectral Imaging Systems

The spectral imaging system is a combination of imaging technology and spectral information acquisition technology, which shows great potential in crop detection applications [50]. This system obtains the data cube composed of 2D spatial information and the one-dimensional (1D) spectral information of the measured object by spectral scanning [51]. According to different spectral resolution capabilities, common spectral imaging techniques can be divided into multi-spectral, hyper-spectral, and ultra-spectral [52]. Hyperspectral imaging systems provide a higher spectral resolution, enabling the more detailed and accurate identification of different objects in the field. By acquiring spectral data and analyzing the differences in their spectral reflection, this system can differentiate between crops and non-crop areas more accurately [53,54]. However, for early-growing crops, which may have similar spectral characteristics to weeds, spectral detection may not be as effective [55]. In addition, processing a large amount of imaging spectral data quickly and reliably remains a challenge for spectral imaging systems [56].

2.6. LiDAR Sensors

LiDAR, which stands for Light Detection and Ranging, is an advanced and reliable sensor that has been widely used in the field of crop row detection and robot navigation. This sensor is known for its high precision, wide range, and strong anti-interference capability [57]. The operating principle of LiDAR is based on the emission of visible or near-infrared light waves by the transmitting system. These waves are then reflected off the target and detected by the receiving system. The data obtained are subsequently processed to produce parameter information, including distance [58]. LiDAR sensors have been utilized in crop row detection to provide highly accurate and detailed 3D maps of crop canopies [59]. Additionally, LiDAR sensors are able to penetrate vegetation and capture data from the ground surface, which can aid in detecting crop rows even in highly vegetated fields [60]. However, the high cost of LiDAR sensors remains a major drawback, limiting their use in small-scale farming operations. Additionally, LiDAR sensors require high computational power to process large amounts of data, which could be a bottleneck in real-time applications [61].

2.7. Multi-Sensor Fusion Systems

As mentioned above, both vision and LiDAR sensors have their own limitations in crop row detection and robot navigation. The multi-sensor fusion system can exploit the complementary and redundant nature of multiple sensor data to fuse different environmental information and enhance the performance of crop row detection [62,63]. Considering the variability of soil disturbance, vegetation level, and machinery speed in agricultural environments, the fusion of LiDAR and vision sensors can enable robust weed detection and crop row tracking tasks [64]. Combining the plane data given by the LiDAR with the colorful representation provided by the images, the noise of grass or leaves in the environment can be eliminated, and the detection ability of crop rows improved [65]. Since the boundaries between harvested and unharvested crops in the field are not always straight lines, the fusion of vision sensors with the Global Positioning System (GPS) is also an effective fusion scheme [66]. The use of vision sensors alone for crop row detection may result in missed detections during image processing while using a GPS device alone can produce certain errors in determining the navigation baseline. The fusion of vision and GPS improves the integrity of crop row feature information extraction and enhances localization accuracy and detection robustness. In addition, the fusion of vision sensors, encoders, and inertial measurement units (IMU) is also common in crop row detection [67]. Although multi-sensor fusion systems have additional complexities, these can be effectively mitigated by employing optimal techniques [68]. When the data are optimally integrated, the information from different sensors can give an accurate crop row detection model in the current agricultural environment.
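As a simple illustration of what integrating complementary sensor data can mean, the sketch below fuses two independent estimates of the same quantity, for example the lateral offset of the robot from the crop row as seen by a camera and by GPS, using inverse-variance weighting. This is one basic fusion rule chosen for illustration; it is not claimed to be the scheme used in the cited works, and the numerical values are hypothetical.

```python
def fuse_estimates(value_a, var_a, value_b, var_b):
    """Inverse-variance weighted fusion of two independent estimates of one quantity."""
    if var_a <= 0 or var_b <= 0:
        raise ValueError("variances must be positive")
    w_a = 1.0 / var_a
    w_b = 1.0 / var_b
    fused_value = (w_a * value_a + w_b * value_b) / (w_a + w_b)
    fused_var = 1.0 / (w_a + w_b)  # never larger than the smaller input variance
    return fused_value, fused_var


# Hypothetical lateral offsets (metres): vision is more precise here than GPS.
offset, variance = fuse_estimates(value_a=0.10, var_a=0.01, value_b=0.16, var_b=0.04)
```

The fused estimate lies closer to the more reliable sensor, and its variance is lower than that of either input, which is the sense in which fusion compensates for the limitations of a single sensor.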

3. Methods and Algorithms for Crop-Row Detection

3.1. Traditional Methods

3.1.1. Hough Transform (HT)

HT is a classical computer vision algorithm for crop row detection and navigation line extraction [69]. The idea behind this approach is to transform the image-coordinate space to the Hough-parameter space using the mapping relationship between points and lines, followed by detecting the target lines in the image. The HT-based detection approach tolerates moderate image noise and outliers and performs well even in parallel-structure crop fields with gaps [70]. To improve the efficiency and accuracy of the detection results, edge detection and image binarization are often performed prior to the HT-based detection process [71]. One limitation of the classic Hough transform is its high computational complexity, which makes it unsuitable for real-time applications. Another limitation is that its voting can still be degraded by heavy noise and numerous outliers. To address this issue, researchers have proposed various modifications to HT, such as the Probabilistic Hough Transform (PHT), which uses a probabilistic voting scheme to reduce the effect of noise and outliers [72]. Other modifications include the Directional Hough Transform (DHT), which was designed to detect lines with a specific orientation [73], and the Multi-scale Hough Transform (MHT), which detects lines of different scales [74].
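The point-to-line voting idea can be sketched in a few lines. The example below implements the classic transform with the normal parameterization x·cos θ + y·sin θ = ρ on a small set of feature points; the point set is synthetic, and the function name and bin sizes are illustrative assumptions rather than settings from the cited studies.

```python
import math
from collections import Counter


def hough_strongest_line(points, n_theta=180, rho_step=1.0):
    """Return (rho, theta_deg, votes) of the line x*cos(theta) + y*sin(theta) = rho with most votes."""
    votes = Counter()
    for x, y in points:
        # Each point votes for every (rho, theta) line that passes through it.
        for k in range(n_theta):
            theta = math.pi * k / n_theta
            rho = x * math.cos(theta) + y * math.sin(theta)
            votes[(round(rho / rho_step), k)] += 1
    (rho_bin, k), count = votes.most_common(1)[0]
    return rho_bin * rho_step, 180.0 * k / n_theta, count


# Synthetic feature points: a vertical crop row at x = 20 plus two off-row weed pixels.
row_pixels = [(20, y) for y in range(0, 50, 5)] + [(3, 7), (41, 30)]
rho, theta_deg, count = hough_strongest_line(row_pixels)
```

The nested loop over points and angles makes the computational cost noted above explicit: the work grows with the number of feature points times the number of angle bins.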

3.1.2. Linear Regression Method (LRM)

LRM is a widely utilized technique for detecting crop rows through image analysis. In regression analysis, one or more independent variables are studied to determine their impact on the dependent variable, with the aim of modeling the relationship between them [75]. The most common implementation of LRM is the least squares method, where the sum of the squared errors between the predicted and actual values is minimized to find the best-fit line. In the context of crop row detection, LRM can be used to predict the position and orientation of crop rows using image data. The goal is to find a linear relationship between the independent variables (such as pixel coordinates) and the dependent variable (crop row position or orientation). Before applying LRM to crop row detection, image preprocessing steps such as image segmentation and feature extraction can be performed to isolate the crop rows from the background and extract useful features for regression analysis [76]. One of the advantages of LRM is its simplicity and computational efficiency. However, it may encounter difficulties in handling complex, noisy data from farmland. In such cases, additional preprocessing steps, such as separating weed and crop pixels, or non-linear regression techniques may be necessary to improve the accuracy of the model [77].
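A minimal least squares sketch is given below. It regresses the column coordinate x on the row coordinate y (x = a·y + b), an assumption made here because crop rows often appear close to vertical in forward-looking images, where a fit of y on x would be ill-conditioned. The feature points are synthetic.

```python
def fit_row_line(points):
    """Least squares fit of x = slope * y + intercept to (x, y) feature points."""
    n = len(points)
    if n < 2:
        raise ValueError("at least two points are required")
    mean_x = sum(x for x, _ in points) / n
    mean_y = sum(y for _, y in points) / n
    s_yy = sum((y - mean_y) ** 2 for _, y in points)
    s_xy = sum((x - mean_x) * (y - mean_y) for x, y in points)
    if s_yy == 0:
        raise ValueError("all points share the same y; the line is undefined")
    slope = s_xy / s_yy
    intercept = mean_x - slope * mean_y
    return slope, intercept


# Synthetic crop-row feature points lying on x = 0.2 * y + 100.
feature_points = [(100.0, 0.0), (102.0, 10.0), (104.0, 20.0), (106.0, 30.0)]
slope, intercept = fit_row_line(feature_points)
```

Because every point contributes to the squared-error sum, a few weed pixels far from the row can pull the fitted line noticeably, which is the noise sensitivity described above.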

3.1.3. Horizontal Strips Method

The horizontal strips method is a reliable approach for detecting crop rows using agronomic image analysis [78]. The key concept of this technique is to divide the input image into several horizontal strips, which serve as regions of interest (ROIs). Within each ROI, feature points are determined based on the calculated center of gravity. Compared with other crop row detection methods, the horizontal strips method does not require an additional image segmentation step, which improves the computational efficiency of image processing and reduces storage space [79]. Moreover, this technique is clearly superior in terms of real-time performance and precision for continuous crop rows with low weed density. Nevertheless, the horizontal strips method might not perform well in agricultural environments where crop rows are partially missing or overgrown with weeds, as these factors can affect the accuracy of feature point detection. Furthermore, the accuracy of this method is sensitive to the camera angle, which can affect the determination of feature pixel values. To mitigate this issue, the vertical projection method is often used in conjunction with the horizontal strips method to enhance accuracy [80].
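The strip-and-centroid idea can be sketched as follows. The example assumes the input is a single-row region given as a grid of non-negative vegetation intensities (for instance a greenness index), and it computes an intensity-weighted center of gravity per strip; the toy image and function name are illustrative.

```python
def strip_centroids(image, n_strips):
    """Return one (x_centroid, y_center) feature point per horizontal strip of the image."""
    height = len(image)
    if not 0 < n_strips <= height:
        raise ValueError("n_strips must be between 1 and the image height")
    strip_h = height // n_strips
    points = []
    for s in range(n_strips):
        top = s * strip_h
        bottom = height if s == n_strips - 1 else top + strip_h  # last strip takes the remainder
        total = 0.0
        weighted = 0.0
        for row in image[top:bottom]:
            for x, value in enumerate(row):
                total += value
                weighted += x * value
        if total > 0:  # strips with no vegetation yield no feature point
            points.append((weighted / total, (top + bottom - 1) / 2.0))
    return points


# Toy 6x7 image with one crop row drifting from column 2 to column 4.
image = [
    [0, 0, 1, 0, 0, 0, 0],
    [0, 0, 1, 0, 0, 0, 0],
    [0, 0, 0, 1, 0, 0, 0],
    [0, 0, 0, 1, 0, 0, 0],
    [0, 0, 0, 0, 1, 0, 0],
    [0, 0, 0, 0, 1, 0, 0],
]
points = strip_centroids(image, n_strips=3)
```

The resulting feature points can then be passed to a line-fitting step. A strip containing a gap returns no point, and a strip dominated by weeds returns a shifted one, which mirrors the failure modes described above.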

3.1.4. Blob Analysis (BA)

The Blob Analysis (BA) method is a useful technique for crop row detection that operates on binarized images to group connected pixels into blobs with the same gray value [81]. The blobs that contain more than a certain number of pixels are then used to generate straight lines that represent crop rows. Unlike other machine vision techniques, BA considers features in an image as objects rather than individual pixels or lines, leading to more accurate identification of crop rows [82]. This approach leverages the unique shape and color characteristics of crop rows to accurately locate and identify them by calculating the center of gravity and principal axis position of each crop row [83]. In crop row detection, the BA technique has proven effective, particularly in situations where the crop rows have a clear definition and a distinct contrast with the surrounding field, such as in the case of newly planted crops with a different color or texture than the soil. However, BA may have limitations in fields with a high weed density or an unclear crop row definition. In such cases, the noise in the clustered blobs can lead to errors, which can affect the accuracy of the crop row detection results [84].
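A minimal sketch of the steps described above is given below: connected pixels of a binarized image are grouped into blobs with 4-connectivity, small blobs are discarded, and the center of gravity and principal axis of each remaining blob are computed from its second-order central moments. The mask, the size threshold, and the function names are illustrative assumptions.

```python
import math
from collections import deque


def find_blobs(mask, min_pixels):
    """Group 4-connected foreground pixels; keep blobs with at least min_pixels pixels."""
    h, w = len(mask), len(mask[0])
    seen = [[False] * w for _ in range(h)]
    blobs = []
    for r in range(h):
        for c in range(w):
            if not mask[r][c] or seen[r][c]:
                continue
            queue, pixels = deque([(r, c)]), []
            seen[r][c] = True
            while queue:  # breadth-first flood fill of one blob
                y, x = queue.popleft()
                pixels.append((x, y))
                for dy, dx in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                    ny, nx = y + dy, x + dx
                    if 0 <= ny < h and 0 <= nx < w and mask[ny][nx] and not seen[ny][nx]:
                        seen[ny][nx] = True
                        queue.append((ny, nx))
            if len(pixels) >= min_pixels:
                blobs.append(pixels)
    return blobs


def centroid_and_axis(pixels):
    """Center of gravity and principal-axis angle (degrees from the x-axis) of a blob."""
    n = len(pixels)
    cx = sum(x for x, _ in pixels) / n
    cy = sum(y for _, y in pixels) / n
    mu20 = sum((x - cx) ** 2 for x, _ in pixels) / n
    mu02 = sum((y - cy) ** 2 for _, y in pixels) / n
    mu11 = sum((x - cx) * (y - cy) for x, y in pixels) / n
    return (cx, cy), math.degrees(0.5 * math.atan2(2.0 * mu11, mu20 - mu02))


# Toy mask: a vertical crop row in column 1 and a single noise pixel at the right edge.
mask = [
    [0, 1, 0, 0, 0],
    [0, 1, 0, 0, 0],
    [0, 1, 0, 0, 1],
    [0, 1, 0, 0, 0],
    [0, 1, 0, 0, 0],
]
blobs = find_blobs(mask, min_pixels=3)
centre, angle_deg = centroid_and_axis(blobs[0])
```

The size threshold removes the isolated noise pixel, while the five-pixel column is kept and reported with a vertical principal axis. With dense weeds, neighbouring blobs would merge and distort both the centroid and the axis.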

3.1.5. Random Sample Consensus (RANSAC)

The RANSAC algorithm is a robust and widely used technique for row detection in crops. The algorithm estimates a mathematical model and calculates the optimal solution of parameters from a dataset that may contain outliers [85]. In crop row detection, outliers can be weed points, soil points, or other objects that do not belong to the crop row. This property makes it suitable for the centerline fitting of crop rows, even when a significant proportion of weed data points are present [86]. Furthermore, the RANSAC algorithm can optimize point cloud matching and 3D coordinate calculations for complex 3D crop row detection [87]. However, the effectiveness of the RANSAC algorithm depends on several factors, such as the number of iterations, the threshold values, and the size of the data set. In the case of crop row detection, the quality of the feature points extracted from the image data also plays a crucial role in the success of the algorithm [88]. In recent years, several variations of the RANSAC algorithm have been proposed to address some of its limitations in crop row detection, such as the Progressive Sample Consensus (PROSAC) algorithm and the M-estimator Sample Consensus (MSAC) algorithm [89].
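The following sketch shows a basic RANSAC loop for fitting a crop-row centerline x = a·y + b to feature points contaminated by weed points. The iteration count, the inlier threshold (measured here as a horizontal pixel distance rather than a perpendicular one), the fixed random seed, and the data are all illustrative assumptions.

```python
import random


def ransac_line(points, n_iters=200, threshold=1.0, seed=0):
    """Fit x = a * y + b by RANSAC; returns (a, b, inliers) of the best model found."""
    rng = random.Random(seed)
    best = (0.0, 0.0, [])
    for _ in range(n_iters):
        # 1. Draw a minimal sample: two points define a candidate line.
        (x1, y1), (x2, y2) = rng.sample(points, 2)
        if y1 == y2:
            continue  # horizontal pair cannot be written as x = a * y + b
        a = (x2 - x1) / (y2 - y1)
        b = x1 - a * y1
        # 2. Count points whose horizontal residual is within the threshold.
        inliers = [(x, y) for x, y in points if abs(x - (a * y + b)) <= threshold]
        # 3. Keep the candidate supported by the most inliers.
        if len(inliers) > len(best[2]):
            best = (a, b, inliers)
    return best


# Synthetic data: ten crop points on x = 0.1 * y + 50 and three weed points.
crop = [(50.0 + 0.1 * y, float(y)) for y in range(0, 100, 10)]
weeds = [(10.0, 15.0), (90.0, 40.0), (30.0, 85.0)]
a, b, inliers = ransac_line(crop + weeds)
```

In practice the selected inliers are usually refitted with least squares. The example also makes the parameter dependence noted above concrete: too few iterations or a poorly chosen threshold can leave the best model undiscovered or admit weed points as inliers.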

3.1.6. Frequency Analysis

Frequency analysis is a signal processing technique for analyzing local spatial patterns, which is widely used in crop row detection [90]. This mathematical method involves converting images from the image space to the frequency space through frequency domain filtering. By analyzing the resulting spectrum, this method can extract details from the image and enhance object detection with some simple logical operations. Common methods used in frequency-domain characterization include Fourier transform (FT), fast Fourier transform (FFT), and wavelet analysis [91]. Through these methods, the grayscale levels of weeds and shadows (tractors or crops) in field images can be attenuated, enabling the efficient detection of the position and direction of crop rows [92]. However, the frequency analysis method may not be suitable for the detection of curved crop rows with irregular crop spacing. Furthermore, the accuracy of this method may be affected by factors such as lighting conditions and the presence of noise in the image [93].
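One way to see how the frequency domain exposes row structure is to sum the vegetation response of an image column by column and look for the dominant periodic component of that profile. The sketch below does this with a direct discrete Fourier transform on a synthetic profile; in practice an FFT routine would be used for speed, and the profile, image width, and function name are illustrative assumptions.

```python
import cmath
import math


def dominant_period(profile):
    """Period (in samples) of the strongest non-constant frequency component of a 1D profile."""
    n = len(profile)
    mean = sum(profile) / n
    best_k, best_mag = 0, 0.0
    for k in range(1, n // 2 + 1):
        # Direct DFT coefficient at frequency index k (mean removed to suppress the DC term).
        coeff = sum((v - mean) * cmath.exp(-2j * math.pi * k * i / n)
                    for i, v in enumerate(profile))
        if abs(coeff) > best_mag:
            best_k, best_mag = k, abs(coeff)
    if best_mag < 1e-9:
        raise ValueError("profile is constant; no periodic structure found")
    return n / best_k


# Synthetic column-sum profile: one crop row every 16 pixels across a 64-pixel-wide image.
profile = [1.0 + math.cos(2.0 * math.pi * x / 16.0) for x in range(64)]
spacing = dominant_period(profile)
```

The approach relies on regular spacing: curved rows or irregular planting spread the energy over many frequencies, so no single peak stands out, which is consistent with the limitation noted above.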

3.2. Machine Learning Methods

3.2.1. Clustering

The clustering algorithm is an unsupervised learning method that automatically groups data points into clusters according to attributes or features such as color, texture, or edge information [94]. This method does not require labeled data, which makes it a useful tool for detecting crop rows. Cluster-based algorithms are known for their quick detection of objects, high efficiency, and fast operation speed [95]. Data clustering methods mainly include partition-based methods, density-based methods, and hierarchical methods. Among these, the K-means clustering algorithm is the simplest and most commonly used method in crop row detection [96]. It can cluster data effectively, even when weed pixels are present between rows, provided that the weeds are significantly smaller than the planted crops. The scalability and efficiency of the K-means algorithm make it suitable for processing large datasets in cropland [97]. However, it has been noted that the K-means algorithm assumes that the clusters are spherical, equally sized, and have similar densities, which can lead to over-clustering or under-clustering in certain situations [98]. In recent years, several studies have attempted to address the limitations of traditional clustering algorithms in crop row detection. For example, some researchers have used hybrid clustering algorithms that combine the strengths of multiple clustering methods to achieve better results. Others have adopted clustering algorithms that can detect irregularly shaped clusters, such as Gaussian mixture models (GMMs) or fuzzy clustering algorithms [99].
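The K-means idea can be illustrated on a deliberately simple case: clustering the column coordinates of vegetation pixels so that each cluster center approximates the lateral position of one crop row. The sketch below assumes the number of rows k is known in advance and uses a deterministic initialization for reproducibility; both choices, and the data, are illustrative.

```python
def kmeans_1d(values, k, n_iters=50):
    """Lloyd's algorithm on scalar values; returns the sorted cluster centers."""
    if not 0 < k <= len(values):
        raise ValueError("k must be between 1 and the number of values")
    ordered = sorted(values)
    # Deterministic initialization: spread the initial centers across the sorted data.
    centers = [float(ordered[(2 * i + 1) * len(ordered) // (2 * k)]) for i in range(k)]
    for _ in range(n_iters):
        # Assignment step: attach every value to its nearest center.
        groups = [[] for _ in range(k)]
        for v in values:
            nearest = min(range(k), key=lambda j: abs(v - centers[j]))
            groups[nearest].append(v)
        # Update step: move each center to the mean of its group (keep it if the group is empty).
        updated = [sum(g) / len(g) if g else centers[j] for j, g in enumerate(groups)]
        if updated == centers:
            break  # converged
        centers = updated
    return sorted(centers)


# Column coordinates of vegetation pixels from three rows near x = 20, 60, and 100.
xs = [19, 20, 21, 20, 59, 60, 61, 60, 99, 100, 101, 100]
row_centers = kmeans_1d(xs, k=3)
```

The need to fix k beforehand and the implicit assumption of compact, similar-sized clusters are visible here: a large weed patch between rows would either capture a center or drag a neighbouring one away from its row.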

3.2.2. Deep Learning

Deep learning is a new research direction of machine learning that has been applied to crop row detection [100]. Unlike traditional shallow learning, deep learning places more emphasis on the depth and feature learning of model structures, with the goal of establishing a neural network that can analyze and learn in a manner similar to the human brain. This method has demonstrated significant improvements over traditional computer vision algorithms for identifying crop rows, especially in challenging conditions such as variable lighting, weather, and field conditions [101]. One of the main advantages of deep learning is that it can autonomously learn from large datasets and adapt to new data distributions. This makes it well-suited for precision agriculture, where it can be used to identify crops, pests, and diseases, optimize planting patterns, and monitor crop growth and health. Object detection and semantic segmentation play crucial roles in crop row detection by enhancing the accuracy and understanding of field images. Object detection algorithms enable the identification and localization of crop rows within an image, allowing for the precise mapping and measurement of their positions. This helps when optimizing planting patterns and ensuring uniform spacing between the rows. Moreover, object detection enables the detection of other objects or obstacles in the field, such as machinery or structures, which can help to avoid potential collisions or disturbances during farming operations [102]. On the other hand, semantic segmentation goes beyond object detection by providing detailed pixel-level labeling of an image. In the context of crop row detection, semantic segmentation helps differentiate the crop rows from other objects or background elements that are present in the image. By accurately segmenting the crop rows, semantic segmentation facilitates the analysis of their spatial distribution and arrangement [103]. 
It enables the identification of irregularities or gaps between rows, which can indicate potential issues such as missing plants, weed infestations, or uneven growth. This information is invaluable for farmers when making informed decisions regarding subsequent farming operations. Recent studies have used deep learning techniques such as Faster R-CNN, YOLOv3, Mask R-CNN, and DeepLabv3+ to detect crop rows from images captured by drones, tractors, or robots [104]. A major challenge of deep learning-based crop row detection is the lack of annotated training data for specific crops, growth stages, and field conditions [105]. Creating such datasets requires considerable time and resources, and their quality and size strongly affect the accuracy and robustness of the models. Moreover, the computational cost of training deep learning models can be prohibitive for resource-constrained devices and systems [106].

5. Conclusions

In conclusion, crop row detection is a critical task in precision agriculture that enables various agricultural applications, including pesticide spraying, crop health monitoring, and weed detection. Traditional image processing techniques, machine learning-based approaches, and deep learning-based methods are all viable options for crop row detection, each with its own advantages and limitations. Nonetheless, recent advances in computer vision and machine learning technologies have made deep learning-based methods, notably convolutional neural networks, the most promising option for crop row detection.

Deep learning methods have demonstrated superior performance in various computer vision tasks, including object detection and semantic segmentation, which are fundamental to crop row detection. CNNs excel at learning complex features and patterns from large datasets, enabling them to automatically extract relevant information from field images and accurately identify crop rows. The ability of deep learning models to generalize well to different lighting conditions, weather variations, and field environments has further contributed to their suitability for crop row detection in real-world scenarios.

Despite the progress made, several challenges persist in the field of crop row detection. One challenge involves ensuring that detection algorithms are robust to diverse lighting conditions and environmental factors, as agricultural settings can be highly variable and unpredictable. Additionally, the scalability of detection algorithms to different crops is essential, as crop rows can vary in shape, size, and appearance across plant species. Integrating crop row detection with other agricultural technologies, such as robotics, drones, and data analytics, presents a further challenge that requires seamless interoperability.

The prospects for crop row detection in precision agriculture are promising. Researchers and industry experts are actively working on developing more accurate, efficient, and scalable methods that address existing challenges. Advancements in deep learning architectures, such as novel CNN architectures and attention mechanisms, hold the potential to further improve crop row detection performance. Furthermore, the fusion of crop row detection with other advanced technologies, including remote sensing, the Internet of Things (IoT), and big data analytics, could enhance the overall effectiveness of precision agriculture systems.

Overall, the advancement of crop row detection in precision agriculture has the potential to transform farming practices, leading to improved productivity, resource management, and agricultural sustainability. By leveraging deep learning and embracing collaboration, future work on crop row detection can help enhance crop yield, minimize environmental impact, and benefit the agricultural industry as a whole.

Author Contributions

Conceptualization, J.S. and Y.B.; methodology, J.S. and Y.B.; analysis, J.S.; investigation, J.S., Y.B., Z.D., J.Z., X.Y. and B.Z.; resources, Z.D. and B.Z.; data curation, J.S.; writing—original draft preparation, J.S.; writing—review and editing, J.S., Y.B. and X.Y.; visualization, J.S.; supervision, J.Z. and Z.D.; funding acquisition, B.Z. All authors have read and agreed to the published version of the manuscript.

Funding

This work was supported by the Jiangsu Modern Agricultural Equipment and Technology Demonstration & Promotion Project (project No. NJ2021-60), the National Natural Science Foundation of China (project No. 31901415), and the Jiangsu Agricultural Science and Technology Innovation Fund (JASTIF) (Grant No. CX(21)3146).

Data Availability Statement

Not applicable.

Conflicts of Interest

The authors declare no conflict of interest.

References

- Maddikunta, P.K.R.; Hakak, S.; Alazab, M.; Bhattacharya, S.; Gadekallu, T.R.; Khan, W.Z.; Pham, Q.V. Unmanned aerial vehicles in smart agriculture: Applications, requirements, and challenges. IEEE Sens. J. 2021, 21, 17608–17619.

- Subeesh, A.; Mehta, C.R. Automation and digitization of agriculture using artificial intelligence and internet of things. Artif. Intell. Agric. 2021, 5, 278–291.

- Karkee, M. Fundamentals of Agricultural and Field Robotics; Zhang, Q., Ed.; Springer International Publishing: Cham, Switzerland, 2021.

- Plessen, M.G. Freeform path fitting for the minimisation of the number of transitions between headland path and interior lanes within agricultural fields. Artif. Intell. Agric. 2021, 5, 233–239.

- Shalal, N.; Low, T.; McCarthy, C.; Hancock, N. A preliminary evaluation of vision and laser sensing for tree trunk detection and orchard mapping. In Proceedings of the Australasian Conference on Robotics and Automation (ACRA 2013), Sydney, Australia, 2–4 December 2013; pp. 1–10, Australasian Robotics and Automation Association.

- McCarthy, C.L.; Hancock, N.H.; Raine, S.R. Applied machine vision of plants: A review with implications for field deployment in automated farming operations. Intell. Serv. Robot. 2010, 3, 209–217.

- Rocha, B.M.; Vieira, G.S.; Fonseca, A.U.; Sousa, N.M.; Pedrini, H.; Soares, F. Detection of Curved Rows and Gaps in Aerial Images of Sugarcane Field Using Image Processing Techniques. IEEE Can. J. Electr. Comput. Eng. 2022, 45, 303–310.

- Singh, N.; Tewari, V.K.; Biswas, P.K.; Pareek, C.M.; Dhruw, L.K. Image processing algorithms for in-field cotton boll detection in natural lighting conditions. Artif. Intell. Agric. 2021, 5, 142–156.

- Emmi, L.; Gonzalez-de-Soto, M.; Pajares, G.; Gonzalez-de-Santos, P. Integrating sensory/actuation systems in agricultural vehicles. Sensors 2014, 14, 4014–4049.

- Bonadies, S.; Gadsden, S.A. An overview of autonomous crop row navigation strategies for unmanned ground vehicles. Eng. Agric. Environ. Food 2019, 12, 24–31.

- Vázquez-Arellano, M.; Griepentrog, H.W.; Reiser, D.; Paraforos, D.S. 3-D imaging systems for agricultural applications—A review. Sensors 2016, 16, 618.

- Tian, H.; Wang, T.; Liu, Y.; Qiao, X.; Li, Y. Computer vision technology in agricultural automation—A review. Inf. Process. Agric. 2020, 7, 1–19.

- English, A.; Ross, P.; Ball, D.; Corke, P. Vision based guidance for robot navigation in agriculture. In Proceedings of the 2014 IEEE International Conference on Robotics and Automation (ICRA), Beijing, China, 20–21 May 2014; pp. 1693–1698.

- Zhai, Z.; Zhu, Z.; Du, Y.; Zhang, S.; Mao, E. Method for detecting crop rows based on binocular vision with Census transformation. Trans. Chin. Soc. Agric. Eng. 2016, 32, 205–213.

- Tang, Y.; Chen, M.; Wang, C.; Luo, L.; Li, J.; Lian, G.; Zou, X. Recognition and localization methods for vision-based fruit picking robots: A review. Front. Plant Sci. 2020, 11, 510.

- Pajares, G.; García-Santillán, I.; Campos, Y.; Montalvo, M.; Guerrero, J.M.; Emmi, L.; Gonzalez-de-Santos, P. Machine-vision systems selection for agricultural vehicles: A guide. J. Imaging 2016, 2, 34.

- Zheng, Y.Y.; Kong, J.L.; Jin, X.B.; Wang, X.Y.; Su, T.L.; Zuo, M. CropDeep: The crop vision dataset for deep-learning-based classification and detection in precision agriculture. Sensors 2019, 19, 1058.

- Fayyad, J.; Jaradat, M.A.; Gruyer, D.; Najjaran, H. Deep learning sensor fusion for autonomous vehicle perception and localization: A review. Sensors 2020, 20, 4220.

- Shamshiri, R.R.; Weltzien, C.; Hameed, I.A.; Yule, I.J.; Grift, T.E.; Balasundram, S.K.; Chowdhary, G. Research and development in agricultural robotics: A perspective of digital farming. Int. J. Agric. Biol. Eng. 2018, 11, 1–14.

- Han, X.; Xu, L.; Peng, Y.; Wang, Z. Trend of Intelligent Robot Application Based on Intelligent Agriculture System. In Proceedings of the 2021 3rd International Conference on Artificial Intelligence and Advanced Manufacture (AIAM), Manchester, UK, 23–25 October 2021; pp. 205–209.

- Delmerico, J.; Scaramuzza, D. A benchmark comparison of monocular visual-inertial odometry algorithms for flying robots. In Proceedings of the 2018 IEEE International Conference on Robotics and Automation (ICRA), Brisbane, QLD, Australia, 21–25 May 2018; pp. 2502–2509.

- Aharchi, M.; Ait Kbir, M. A review on 3D reconstruction techniques from 2D images. In Proceedings of the 4th International Conference on Smart City Applications (SCA’19), Casablanca, Morocco, 2–4 October 2019; Springer International Publishing: Berlin/Heidelberg, Germany, 2020; pp. 510–522, Innovations in Smart Cities Applications Edition 3.

- Huang, R.; Yamazato, T. A Review on Image Sensor Communication and Its Applications to Vehicles. Photonics 2023, 10, 617.

- Ai, C.; Geng, D.; Qi, Z.; Zheng, L.; Feng, Z. Research on AGV Navigation System Based on Binocular Vision. In Proceedings of the 2021 IEEE International Conference on Real-time Computing and Robotics (RCAR), Xining, China, 15–19 July 2021; pp. 851–856.

- Chen, Y.; Hou, C.; Tang, Y.; Zhuang, J.; Lin, J.; He, Y.; Luo, S. Citrus tree segmentation from UAV images based on monocular machine vision in a natural orchard environment. Sensors 2019, 19, 5558.

- Zhou, C.; Ye, H.; Hu, J.; Shi, X.; Hua, S.; Yue, J.; Yang, G. Automated counting of rice panicle by applying deep learning model to images from unmanned aerial vehicle platform. Sensors 2019, 19, 3106.

- Ball, D.; Upcroft, B.; Wyeth, G.; Corke, P.; English, A.; Ross, P.; Bate, A. Vision-based obstacle detection and navigation for an agricultural robot. J. Field Robot. 2016, 33, 1107–1130.

- Vrochidou, E.; Oustadakis, D.; Kefalas, A.; Papakostas, G.A. Computer vision in self-steering tractors. Machines 2022, 10, 129.

- Ren, J.; Guan, F.; Wang, T.; Qian, B.; Luo, C.; Cai, G.; Li, X. High Precision Calibration Algorithm for Binocular Stereo Vision Camera using Deep Reinforcement Learning. Comput. Intell. Neurosci. 2022, 2022, 6596868.

- Königshof, H.; Salscheider, N.O.; Stiller, C. Realtime 3d object detection for automated driving using stereo vision and semantic information. In Proceedings of the 2019 IEEE Intelligent Transportation Systems Conference (ITSC), Auckland, New Zealand, 27–30 October 2019; pp. 1405–1410.

- Kneip, J.; Fleischmann, P.; Berns, K. Crop edge detection based on stereo vision. Robot. Auton. Syst. 2020, 123, 103323.

- Lati, R.N.; Filin, S.; Eizenberg, H. Plant growth parameter estimation from sparse 3D reconstruction based on highly-textured feature points. Precis. Agric. 2013, 14, 586–605.

- Aghi, D.; Mazzia, V.; Chiaberge, M. Local motion planner for autonomous navigation in vineyards with a RGB-D camera-based algorithm and deep learning synergy. Machines 2020, 8, 27.

- Giancola, S.; Valenti, M.; Sala, R. A Survey on 3D Cameras: Metrological Comparison of Time-of-Flight, Structured-Light and Active Stereoscopy Technologies; Springer Nature: Berlin/Heidelberg, Germany, 2018.

- Rosell-Polo, J.R.; Cheein, F.A.; Gregorio, E.; Andújar, D.; Puigdomènech, L.; Masip, J.; Escolà, A. Advances in structured light sensors applications in precision agriculture and livestock farming. Adv. Agron. 2015, 133, 71–112.

- Wu, D.; Chen, T.; Li, A. A high precision approach to calibrate a structured light vision sensor in a robot-based three-dimensional measurement system. Sensors 2016, 16, 1388.

- Zanuttigh, P.; Marin, G.; Dal Mutto, C.; Dominio, F.; Minto, L.; Cortelazzo, G.M. Time-of-flight and structured light depth cameras. In Technology and Applications; Springer International: Berlin/Heidelberg, Germany, 2016.

- Condotta, I.C.; Brown-Brandl, T.M.; Pitla, S.K.; Stinn, J.P.; Silva-Miranda, K.O. Evaluation of low-cost depth cameras for agricultural applications. Comput. Electron. Agric. 2020, 173, 105394.

- Shahnewaz, A.; Pandey, A.K. Color and depth sensing sensor technologies for robotics and machine vision. In Machine Vision and Navigation; Springer: Cham, Switzerland, 2020; pp. 59–86.

- Chen, M.K.; Liu, X.; Wu, Y.; Zhang, J.; Yuan, J.; Zhang, Z.; Tsai, D.P. A Meta-Device for Intelligent Depth Perception. Adv. Mater. 2022, 9, 2107465.

- Qiu, R.; Zhang, M.; He, Y. Field estimation of maize plant height at jointing stage using an RGB-D camera. Crop J. 2022, 10, 1274–1283.

- Milella, A.; Marani, R.; Petitti, A.; Reina, G. In-field high throughput grapevine phenotyping with a consumer-grade depth camera. Comput. Electron. Agric. 2019, 156, 293–306.

- Birklbauer, C.; Bimber, O. Panorama light-field imaging. In Computer Graphics Forum; Wiley Online Library: Hoboken, NJ, USA, 2014; Volume 33, pp. 43–52.

- Gao, S.; Yang, K.; Shi, H.; Wang, K.; Bai, J. Review on Panoramic Imaging and Its Applications in Scene Understanding. arXiv 2022, arXiv:2205.05570.

- Lai, J.S.; Peng, Y.C.; Chang, M.J.; Huang, J.Y. Panoramic Mapping with Information Technologies for Supporting Engineering Education: A Preliminary Exploration. ISPRS Int. J. Geo-Inf. 2020, 9, 689.

- Yang, K.; Hu, X.; Chen, H.; Xiang, K.; Wang, K.; Stiefelhagen, R. Ds-pass: Detail-sensitive panoramic annular semantic segmentation through swaftnet for surrounding sensing. In Proceedings of the 2020 IEEE Intelligent Vehicles Symposium (IV), Las Vegas, NV, USA, 19 October–13 November 2020; pp. 457–464.

- Häne, C.; Heng, L.; Lee, G.H.; Fraundorfer, F.; Furgale, P.; Sattler, T.; Pollefeys, M. 3D visual perception for self-driving cars using a multi-camera system: Calibration, mapping, localization, and obstacle detection. Image Vis. Comput. 2017, 68, 14–27.

- Kumar, V.R.; Eising, C.; Witt, C.; Yogamani, S. Surround-view Fisheye Camera Perception for Automated Driving: Overview, Survey and Challenges. arXiv 2022, arXiv:2205.13281.

- Chan, S.; Zhou, X.; Huang, C.; Chen, S.; Li, Y.F. An improved method for fisheye camera calibration and distortion correction. In Proceedings of the 2016 International Conference on Advanced Robotics and Mechatronics (ICARM), Macau, China, 18–20 August 2016; pp. 579–584.

- Signoroni, A.; Savardi, M.; Baronio, A.; Benini, S. Deep learning meets hyperspectral image analysis: A multidisciplinary review. J. Imaging 2019, 5, 52.

- Liu, X.; Jiang, Z.; Wang, T.; Cai, F.; Wang, D. Fast hyperspectral imager driven by a low-cost and compact galvo-mirror. Optik 2020, 224, 165716.

- Shaikh, M.S.; Jaferzadeh, K.; Thörnberg, B.; Casselgren, J. Calibration of a hyper-spectral imaging system using a low-cost reference. Sensors 2021, 21, 3738.

- Lottes, P.; Hoeferlin, M.; Sander, S.; Müter, M.; Schulze, P.; Stachniss, L.C. An effective classification system for separating sugar beets and weeds for precision farming applications. In Proceedings of the 2016 IEEE International Conference on Robotics and Automation (ICRA), Stockholm, Sweden, 16–21 May 2016; pp. 5157–5163.

- Louargant, M.; Jones, G.; Faroux, R.; Paoli, J.N.; Maillot, T.; Gée, C.; Villette, S. Unsupervised classification algorithm for early weed detection in row-crops by combining spatial and spectral information. Remote Sens. 2018, 10, 761.

- Su, W.H. Crop plant signaling for real-time plant identification in smart farm: A systematic review and new concept in artificial intelligence for automated weed control. Artif. Intell. Agric. 2020, 4, 262–271.

- Adão, T.; Hruška, J.; Pádua, L.; Bessa, J.; Peres, E.; Morais, R.; Sousa, J.J. Hyperspectral imaging: A review on UAV-based sensors, data processing and applications for agriculture and forestry. Remote Sens. 2017, 9, 1110.

- Wang, X.; Pan, H.; Guo, K.; Yang, X.; Luo, S. The evolution of LiDAR and its application in high precision measurement. IOP Conf. Ser.: Earth Environ. Sci. 2020, 502, 012008.

- Chazette, P.; Totems, J.; Hespel, L.; Bailly, J.S. Principle and physics of the LiDAR measurement. In Optical Remote Sensing of Land Surface; Elsevier: Amsterdam, The Netherlands, 2016; pp. 201–247.

- Moreno, H.; Valero, C.; Bengochea-Guevara, J.M.; Ribeiro, Á.; Garrido-Izard, M.; Andújar, D. On-ground vineyard reconstruction using a LiDAR-based automated system. Sensors 2020, 20, 1102.

- Liu, J.; Sun, Q.; Fan, Z.; Jia, Y. TOF lidar development in autonomous vehicle. In Proceedings of the 2018 IEEE 3rd Optoelectronics Global Conference (OGC), Shenzhen, China, 4–7 September 2018; pp. 185–190.

- Wang, T.; Chen, B.; Zhang, Z.; Li, H.; Zhang, M. Applications of machine vision in agricultural robot navigation: A review. Comput. Electron. Agric. 2022, 198, 107085.

- Gao, X.; Li, J.; Fan, L.; Zhou, Q.; Yin, K.; Wang, J.; Wang, Z. Review of wheeled mobile robots’ navigation problems and application prospects in agriculture. IEEE Access 2018, 6, 49248–49268.

- Qu, Y.; Yang, M.; Zhang, J.; Xie, W.; Qiang, B.; Chen, J. An outline of multi-sensor fusion methods for mobile agents indoor navigation. Sensors 2021, 21, 1605.

- Shalal, N.; Low, T.; McCarthy, C.; Hancock, N. A review of autonomous navigation systems in agricultural environments. In Proceedings of the SEAg 2013: Innovative Agricultural Technologies for a Sustainable Future, Barton, Australia, 22–25 September 2013.

- Benet, B.; Lenain, R. Multi-sensor fusion method for crop row tracking and traversability operations. In Proceedings of the Conférence AXEMA-EURAGENG 2017, Paris, France, 10–11 February 2017; p. 10.

- Shaikh, T.A.; Rasool, T.; Lone, F.R. Towards leveraging the role of machine learning and artificial intelligence in precision agriculture and smart farming. Comput. Electron. Agric. 2022, 198, 107119.

- Yan, Y.; Zhang, B.; Zhou, J.; Zhang, Y.; Liu, X.A. Real-Time Localization and Mapping Utilizing Multi-Sensor Fusion and Visual–IMU–Wheel Odometry for Agricultural Robots in Unstructured, Dynamic and GPS-Denied Greenhouse Environments. Agronomy 2022, 12, 1740.

- Kolar, P.; Benavidez, P.; Jamshidi, M. Survey of datafusion techniques for laser and vision based sensor integration for autonomous navigation. Sensors 2020, 20, 2180.

- de Silva, R.; Cielniak, G.; Gao, J. Towards agricultural autonomy: Crop row detection under varying field conditions using deep learning. arXiv 2021, arXiv:2109.08247.

- Meng, Q.; Qiu, R.; He, J.; Zhang, M.; Ma, X.; Liu, G. Development of agricultural implement system based on machine vision and fuzzy control. Comput. Electron. Agric. 2015, 112, 128–138.

- Xu, Z.; Shin, B.S.; Klette, R. Closed form line-segment extraction using the Hough transform. Pattern Recognit. 2015, 48, 4012–4023.

- Marzougui, M.; Alasiry, A.; Kortli, Y.; Baili, J. A lane tracking method based on progressive probabilistic Hough transform. IEEE Access 2020, 8, 84893–84905.

- Chung, K.L.; Huang, Y.H.; Tsai, S.R. Orientation-based discrete Hough transform for line detection with low computational complexity. Appl. Math. Comput. 2014, 237, 430–437.

- Chai, Y.; Wei, S.J.; Li, X.C. The multi-scale Hough transform lane detection method based on the algorithm of Otsu and Canny. Adv. Mater. Res. 2014, 1042, 126–130.

- Akinwande, M.O.; Dikko, H.G.; Samson, A. Variance inflation factor: As a condition for the inclusion of suppressor variable(s) in regression analysis. Open J. Stat. 2015, 5, 754.

- Andargie, A.A.; Rao, K.S. Estimation of a linear model with two-parameter symmetric platykurtic distributed errors. J. Uncertain. Anal. Appl. 2013, 1, 13.

- Milioto, A.; Lottes, P.; Stachniss, C. Real-time semantic segmentation of crop and weed for precision agriculture robots leveraging background knowledge in CNNs. In Proceedings of the 2018 IEEE International Conference on Robotics and Automation (ICRA), Brisbane, QLD, Australia, 21–25 May 2018; pp. 2229–2235.

- Yang, Z.; Yang, Y.; Li, C.; Zhou, Y.; Zhang, X.; Yu, Y.; Liu, D. Tasseled Crop Rows Detection Based on Micro-Region of Interest and Logarithmic Transformation. Front. Plant Sci. 2022, 13, 916474.

- Zheng, L.Y.; Xu, J.X. Multi-crop-row detection based on strip analysis. In Proceedings of the 2014 International Conference on Machine Learning and Cybernetics, Lanzhou, China, 13–16 July 2014; Volume 2, pp. 611–614.

- Zhou, Y.; Yang, Y.; Zhang, B.; Wen, X.; Yue, X.; Chen, L. Autonomous detection of crop rows based on adaptive multi-ROI in maize fields. Int. J. Agric. Biol. Eng. 2021, 14, 217–225.

- Zhai, Z.; Zhu, Z.; Du, Y.; Song, Z.; Mao, E. Multi-crop-row detection algorithm based on binocular vision. Biosyst. Eng. 2016, 150, 89–103.

- Benson, E.R.; Reid, J.F.; Zhang, Q. Machine vision–based guidance system for an agricultural small–grain harvester. Trans. ASAE 2003, 46, 1255.

- Fontaine, V.; Crowe, T.G. Development of line-detection algorithms for local positioning in densely seeded crops. Can. Biosyst. Eng. 2006, 48, 7.

- Wang, A.; Zhang, W.; Wei, X. A review on weed detection using ground-based machine vision and image processing techniques. Comput. Electron. Agric. 2019, 158, 226–240.

- Zhou, M.; Xia, J.; Yang, F.; Zheng, K.; Hu, M.; Li, D.; Zhang, S. Design and experiment of visual navigated UGV for orchard based on Hough matrix and RANSAC. Int. J. Agric. Biol. Eng. 2021, 14, 176–184.

- Khan, N.; Rajendran, V.P.; Al Hasan, M.; Anwar, S. Clustering Algorithm Based Straight and Curved Crop Row Detection Using Color Based Segmentation. In Proceedings of the ASME 2020 International Mechanical Engineering Congress and Exposition, Virtual, 16–19 November 2020; American Society of Mechanical Engineers: New York, NY, USA, 2020; Volume 84553, p. V07BT07A003.

- Ghahremani, M.; Williams, K.; Corke, F.; Tiddeman, B.; Liu, Y.; Wang, X.; Doonan, J.H. Direct and accurate feature extraction from 3D point clouds of plants using RANSAC. Comput. Electron. Agric. 2021, 187, 106240.

- Guo, J.; Wei, Z.; Miao, D. Lane detection method based on improved RANSAC algorithm. In Proceedings of the 2015 IEEE Twelfth International Symposium on Autonomous Decentralized Systems, Taichung, Taiwan, 25–27 March 2015; pp. 285–288.

- Ma, S.; Guo, P.; You, H.; He, P.; Li, G.; Li, H. An image matching optimization algorithm based on pixel shift clustering RANSAC. Inf. Sci. 2021, 562, 452–474.

- Bossu, J.; Gée, C.; Jones, G.; Truchetet, F. Wavelet transform to discriminate between crop and weed in perspective agronomic images. Comput. Electron. Agric. 2009, 65, 133–143.

- Arts, L.P.; van den Broek, E.L. The fast continuous wavelet transformation (fCWT) for real-time, high-quality, noise-resistant time–frequency analysis. Nat. Comput. Sci. 2022, 2, 47–58.

- Hague, T.; Tillett, N.D. A bandpass filter-based approach to crop row location and tracking. Mechatronics 2001, 11, 1–12.

- García-Santillán, I.D.; Montalvo, M.; Guerrero, J.M.; Pajares, G. Automatic detection of curved and straight crop rows from images in maize fields. Biosyst. Eng. 2017, 156, 61–79.

- Saxena, A.; Prasad, M.; Gupta, A.; Bharill, N.; Patel, O.P.; Tiwari, A.; Lin, C.T. A review of clustering techniques and developments. Neurocomputing 2017, 267, 664–681.

- Vidović, I.; Scitovski, R. Center-based clustering for line detection and application to crop rows detection. Comput. Electron. Agric. 2014, 109, 212–220.

- Behura, A. The cluster analysis and feature selection: Perspective of machine learning and image processing. Data Anal. Bioinform. Mach. Learn. Perspect. 2021, 10, 249–280.

- Steward, B.L.; Gai, J.; Tang, L. The use of agricultural robots in weed management and control. Robot. Autom. Improv. Agric. 2019, 44, 1–25.

- Yu, Y.; Bao, Y.; Wang, J.; Chu, H.; Zhao, N.; He, Y.; Liu, Y. Crop row segmentation and detection in paddy fields based on treble-classification otsu and double-dimensional clustering method. Remote Sens. 2021, 13, 901.

- Ezugwu, A.E.; Ikotun, A.M.; Oyelade, O.O.; Abualigah, L.; Agushaka, J.O.; Eke, C.I.; Akinyelu, A.A. A comprehensive survey of clustering algorithms: State-of-the-art machine learning applications, taxonomy, challenges, and future research prospects. Eng. Appl. Artif. Intell. 2022, 110, 104743.

- Lachgar, M.; Hrimech, H.; Kartit, A. Optimization techniques in deep convolutional neuronal networks applied to olive diseases classification. Artif. Intell. Agric. 2022, 6, 77–89.

- Kamilaris, A.; Prenafeta-Boldú, F.X. Deep learning in agriculture: A survey. Comput. Electron. Agric. 2018, 147, 70–90.

- De Castro, A.I.; Torres-Sánchez, J.; Peña, J.M.; Jiménez-Brenes, F.M.; Csillik, O.; López-Granados, F. An automatic random forest-OBIA algorithm for early weed mapping between and within crop rows using UAV imagery. Remote Sens. 2018, 10, 285.

- You, J.; Liu, W.; Lee, J. A DNN-based semantic segmentation for detecting weed and crop. Comput. Electron. Agric. 2020, 178, 105750.

- Doha, R.; Al Hasan, M.; Anwar, S.; Rajendran, V. Deep learning based crop row detection with online domain adaptation. In Proceedings of the 27th ACM SIGKDD Conference on Knowledge Discovery Data Mining, Singapore, 14–18 August 2021; pp. 2773–2781.

- Picon, A.; San-Emeterio, M.G.; Bereciartua-Perez, A.; Klukas, C.; Eggers, T.; Navarra-Mestre, R. Deep learning-based segmentation of multiple species of weeds and corn crop using synthetic and real image datasets. Comput. Electron. Agric. 2022, 194, 106719.

- de Silva, R.; Cielniak, G.; Wang, G.; Gao, J. Deep learning-based Crop Row Following for Infield Navigation of Agri-Robots. arXiv 2022, arXiv:2209.04278.

- Kumar, R.; Singh, M.P.; Kumar, P.; Singh, J.P. Crop Selection Method to maximize crop yield rate using machine learning technique. In Proceedings of the 2015 International Conference on Smart Technologies and Management for Computing, Communication, Controls, Energy and Materials (ICSTM), Avadi, India, 6–8 May 2015; pp. 138–145.

- Fue, K.G.; Porter, W.M.; Barnes, E.M.; Rains, G.C. An extensive review of mobile agricultural robotics for field operations: Focus on cotton harvesting. AgriEngineering 2020, 2, 150–174.

- Sankaran, S.; Khot, L.R.; Espinoza, C.Z.; Jarolmasjed, S.; Sathuvalli, V.R.; Vandemark, G.J.; Pavek, M.J. Low-altitude, high-resolution aerial imaging systems for row and field crop phenotyping: A review. Eur. J. Agron. 2015, 70, 112–123.

- Rejeb, A.; Rejeb, K.; Zailani, S.; Keogh, J.G.; Appolloni, A. Examining the interplay between artificial intelligence and the agri-food industry. Artif. Intell. Agric. 2022, 6, 111–128.

- Jiang, Y.; Li, C.; Paterson, A.H.; Sun, S.; Xu, R.; Robertson, J. Quantitative analysis of cotton canopy size in field conditions using a consumer-grade RGB-D camera. Front. Plant Sci. 2018, 8, 2233.

- Yao, Y.; Sun, J.; Tian, Y.; Zheng, C.; Liu, J. Alleviating water scarcity and poverty in drylands through telecouplings: Vegetable trade and tourism in northwest China. Sci. Total Environ. 2020, 741, 140387.

- Jha, K.; Doshi, A.; Patel, P.; Shah, M. A comprehensive review on automation in agriculture using artificial intelligence. Artif. Intell. Agric. 2019, 2, 1–12.

- Yu, J.; Cheng, T.; Cai, N.; Zhou, X.G.; Diao, Z.; Wang, T.; Zhang, D. Wheat Lodging Segmentation Based on Lstm_PSPNet Deep Learning Network. Drones 2023, 7, 143.

- Emmi, L.; Herrera-Diaz, J.; Gonzalez-de-Santos, P. Toward Autonomous Mobile Robot Navigation in Early-Stage Crop Growth. In Proceedings of the 19th International Conference on Informatics in Control, Automation and Robotics (ICINCO 2022), Lisbon, Portugal, 14–16 July 2022; pp. 411–418.

- Liang, X.; Chen, B.; Wei, C.; Zhang, X. Inter-row navigation line detection for cotton with broken rows. Plant Methods 2022, 18, 1–12.

- Wei, C.; Li, H.; Shi, J.; Zhao, G.; Feng, H.; Quan, L. Row anchor selection classification method for early-stage crop row-following. Comput. Electron. Agric. 2022, 192, 106577.

- Winterhalter, W.; Fleckenstein, F.; Dornhege, C.; Burgard, W. Localization for precision navigation in agricultural fields—Beyond crop row following. J. Field Robot. 2021, 38, 429–451.

- Bakken, M.; Ponnambalam, V.R.; Moore, R.J.; Gjevestad, J.G.O.; From, P.J. Robot-supervised Learning of Crop Row Segmentation. In Proceedings of the 2021 IEEE International Conference on Robotics and Automation (ICRA), Xi’an, China, 30 May–5 June 2021; pp. 2185–2191.

- Xie, Y.; Chen, K.; Li, W.; Zhang, Y.; Mo, J. An Improved Adaptive Threshold RANSAC Method for Medium Tillage Crop Rows Detection. In Proceedings of the 2021 6th International Conference on Intelligent Computing and Signal Processing (ICSP), Xi’an, China, 9–11 April 2021; pp. 1282–1286.

- He, C.; Chen, Q.; Miao, Z.; Li, N.; Sun, T. Extracting the navigation path of an agricultural plant protection robot based on machine vision. In Proceedings of the 2021 40th Chinese Control Conference (CCC), Shanghai, China, 26–28 July 2021; pp. 3576–3581.

- Gai, J.; Xiang, L.; Tang, L. Using a depth camera for crop row detection and mapping for under-canopy navigation of agricultural robotic vehicle. Comput. Electron. Agric. 2021, 188, 106301.

- Ahmadi, A.; Nardi, L.; Chebrolu, N.; Stachniss, C. Visual servoing-based navigation for monitoring row-crop fields. In Proceedings of the 2020 IEEE International Conference on Robotics and Automation (ICRA), Paris, France, 31 May 2020; pp. 4920–4926.

- Iqbal, J.; Xu, R.; Sun, S.; Li, C. Simulation of an autonomous mobile robot for LiDAR-based in-field phenotyping and navigation. Robotics 2020, 9, 46.

- Ponnambalam, V.R.; Bakken, M.; Moore, R.J.; Gjevestad, J.G.O.; From, P.J. Autonomous crop row guidance using adaptive multi-ROI in strawberry fields. Sensors 2020, 20, 5249.

- Velasquez, A.E.B.; Higuti, V.A.H.; Guerrero, H.B.; Gasparino, M.V.; Magalhaes, D.V.; Aroca, R.V.; Becker, M. Reactive navigation system based on H∞ control system and LiDAR readings on corn crops. Precis. Agric. 2020, 21, 349–368.

- Li, X.; Peng, X.; Fang, H. Navigation path detection of plant protection robot based on RANSAC algorithm. Nongye Jixie Xuebao/Trans. Chin. Soc. Agric. Mach. 2020, 51, 41–46.

- Liao, J.; Wang, Y.; Zhu, D.; Zou, Y.; Zhang, S.; Zhou, H. Automatic segmentation of crop/background based on luminance partition correction and adaptive threshold. IEEE Access 2020, 8, 202611–202622.

- Simon, N.A.; Min, C.H. Neural Network Based Corn Field Furrow Detection for Autonomous Navigation in Agriculture Vehicles. In Proceedings of the 2020 IEEE International IOT, Electronics and Mechatronics Conference (IEMTRONICS), Vancouver, BC, Canada, 9–12 September 2020; pp. 1–6.

- Higuti, V.A.; Velasquez, A.E.; Magalhaes, D.V.; Becker, M.; Chowdhary, G. Under canopy light detection and ranging-based autonomous navigation. J. Field Robot. 2019, 36, 547–567.

- Winterhalter, W.; Fleckenstein, F.V.; Dornhege, C.; Burgard, W. Crop row detection on tiny plants with the pattern hough transform. IEEE Robot. Autom. Lett. 2018, 3, 3394–3401.

- Zhang, X.; Li, X.; Zhang, B.; Zhou, J.; Tian, G.; Xiong, Y.; Gu, B. Automated robust crop-row detection in maize fields based on position clustering algorithm and shortest path method. Comput. Electron. Agric. 2018, 154, 165–175.

- Li, J.; Zhu, R.; Chen, B. Image detection and verification of visual navigation route during cotton field management period. Int. J. Agric. Biol. Eng. 2018, 11, 159–165. [Google Scholar] [CrossRef]

- Meng, Q.; Hao, X.; Zhang, Y.; Yang, G. Guidance line identification for agricultural mobile robot based on machine vision. In Proceedings of the 2018 IEEE 3rd Advanced Information Technology, Electronic and Automation Control Conference (IAEAC), Chongqing, China, 12–14 October 2018; pp. 1887–1893. [Google Scholar]

- Yang, S.; Mei, S.; Zhang, Y. Detection of maize navigation centerline based on machine vision. IFAC-PapersOnLine 2018, 51, 570–575. [Google Scholar] [CrossRef]

- Reiser, D.; Miguel, G.; Arellano, M.V.; Griepentrog, H.W.; Paraforos, D.S. Crop row detection in maize for developing navigation algorithms under changing plant growth stages. In Proceedings of the Robot 2015: Second Iberian Robotics Conference, Lisbon, Portugal, 19–21 November 2015; Springer: Cham, Switzerland, 2016; pp. 371–382. [Google Scholar]

- Liu, L.; Mei, T.; Niu, R.; Wang, J.; Liu, Y.; Chu, S. RBF-based monocular vision navigation for small vehicles in narrow space below maize canopy. Appl. Sci. 2016, 6, 182. [Google Scholar] [CrossRef]

- Jiang, G.; Wang, X.; Wang, Z.; Liu, H. Wheat rows detection at the early growth stage based on Hough transform and vanishing point. Comput. Electron. Agric. 2016, 123, 211–223. [Google Scholar] [CrossRef]

- Tu, C.; Van Wyk, B.J.; Djouani, K.; Hamam, Y.; Du, S. An efficient crop row detection method for agriculture robots. In Proceedings of the 2014 7th International Congress on Image and Signal Processing, Dalian, China, 14–16 October 2014; pp. 655–659. [Google Scholar]

- Zhu, Z.X.; He, Y.; Zhai, Z.Q.; Liu, J.Y.; Mao, E.R. Research on cotton row detection algorithm based on binocular vision. Appl. Mech. Mater. 2014, 670, 1222–1227. [Google Scholar] [CrossRef]

- Su, D.; Qiao, Y.; Kong, H.; Sukkarieh, S. Real time detection of inter-row ryegrass in wheat farms using deep learning. Biosyst. Eng. 2021, 204, 198–211. [Google Scholar] [CrossRef]

- Du, Y.; Mallajosyula, B.; Sun, D.; Chen, J.; Zhao, Z.; Rahman, M.; Jawed, M.K. A Low-cost Robot with Autonomous Recharge and Navigation for Weed Control in Fields with Narrow Row Spacing. In Proceedings of the 2021 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Prague, Czech Republic, 27–30 September 2021; pp. 3263–3270. [Google Scholar]

- Rabab, S.; Badenhorst, P.; Chen, Y.P.P.; Daetwyler, H.D. A template-free machine vision-based crop row detection algorithm. Precis. Agric. 2021, 22, 124–153. [Google Scholar] [CrossRef]

- Czymmek, V.; Schramm, R.; Hussmann, S. Vision based crop row detection for low cost UAV imagery in organic agriculture. In Proceedings of the 2020 IEEE International Instrumentation and Measurement Technology Conference (I2MTC), Dubrovnik, Croatia, 25–28 May 2020; pp. 1–6. [Google Scholar]

- Pusdá-Chulde, M.; Giusti, A.D.; Herrera-Granda, E.; García-Santillán, I. Parallel CPU-based processing for automatic crop row detection in corn fields. In Proceedings of the XV Multidisciplinary International Congress on Science and Technology, Quito, Ecuador, 19–23 October 2020; Springer: Cham, Switzerland, 2020; pp. 239–251. [Google Scholar]

- Kulkarni, S.; Angadi, S.A.; Belagavi, V.T.U. IoT based weed detection using image processing and CNN. Int. J. Eng. Appl. Sci. Technol. 2019, 4, 606–609. [Google Scholar]

- Czymmek, V.; Harders, L.O.; Knoll, F.J.; Hussmann, S. Vision-based deep learning approach for real-time detection of weeds in organic farming. In Proceedings of the 2019 IEEE International Instrumentation and Measurement Technology Conference (I2MTC), Auckland, New Zealand, 20–23 May 2019; pp. 1–5. [Google Scholar]

- Hassanein, M.; Khedr, M.; El-Sheimy, N. Crop row detection procedure using low-cost UAV imagery system. Int. Arch. Photogramm. Remote Sens. Spat. Inf. Sci. 2019, 42, 349–356. [Google Scholar] [CrossRef]

- Bah, M.D.; Hafiane, A.; Canals, R. CRowNet: Deep network for crop row detection in UAV images. IEEE Access 2019, 8, 5189–5200. [Google Scholar] [CrossRef]

- Tenhunen, H.; Pahikkala, T.; Nevalainen, O.; Teuhola, J.; Mattila, H.; Tyystjärvi, E. Automatic detection of cereal rows by means of pattern recognition techniques. Comput. Electron. Agric. 2019, 162, 677–688. [Google Scholar] [CrossRef]

- García-Santillán, I.; Guerrero, J.M.; Montalvo, M.; Pajares, G. Curved and straight crop row detection by accumulation of green pixels from images in maize fields. Precis. Agric. 2018, 19, 18–41. [Google Scholar] [CrossRef]

- Kaur, M.; Min, C.H. Automatic crop furrow detection for precision agriculture. In Proceedings of the 2018 IEEE 61st International Midwest Symposium on Circuits and Systems (MWSCAS), Windsor, ON, Canada, 5–8 August 2018; pp. 520–523. [Google Scholar]

- Hamuda, E.; Mc Ginley, B.; Glavin, M.; Jones, E. Improved image processing-based crop detection using Kalman filtering and the Hungarian algorithm. Comput. Electron. Agric. 2018, 148, 37–44. [Google Scholar] [CrossRef]

- Bah, M.D.; Hafiane, A.; Canals, R. Deep learning with unsupervised data labeling for weed detection in line crops in UAV images. Remote Sens. 2018, 10, 1690. [Google Scholar] [CrossRef]

- Malavazi, F.B.; Guyonneau, R.; Fasquel, J.B.; Lagrange, S.; Mercier, F. LiDAR-only based navigation algorithm for an autonomous agricultural robot. Comput. Electron. Agric. 2018, 154, 71–79. [Google Scholar] [CrossRef]

- Lavania, S.; Matey, P.S. Novel method for weed classification in maize field using Otsu and PCA implementation. In Proceedings of the 2015 IEEE International Conference on Computational Intelligence Communication Technology, Ghaziabad, India, 13–14 February 2015; pp. 534–537. [Google Scholar]

- Nan, L.; Chunlong, Z.; Ziwen, C.; Zenghong, M.; Zhe, S.; Ting, Y.; Junxiong, Z. Crop positioning for robotic intra-row weeding based on machine vision. Int. J. Agric. Biol. Eng. 2015, 8, 20–29. [Google Scholar]

- Pérez-Ortiz, M.; Peña, J.M.; Gutiérrez, P.A.; Torres-Sánchez, J.; Hervás-Martínez, C.; López-Granados, F. A semi-supervised system for weed mapping in sunflower crops using unmanned aerial vehicles and a crop row detection method. Appl. Soft Comput. 2015, 37, 533–544. [Google Scholar] [CrossRef]

- Kiani, S.; Jafari, A. Crop detection and positioning in the field using discriminant analysis and neural networks based on shape features. J. Agr. Sci. Tech. 2012, 14, 755–765. [Google Scholar]

- Burgos-Artizzu, X.P.; Ribeiro, A.; Guijarro, M.; Pajares, G. Real-time image processing for crop/weed discrimination in maize fields. Comput. Electron. Agric. 2011, 75, 337–346. [Google Scholar] [CrossRef]

- Hemming, J.; Nieuwenhuizen, A.T.; Struik, L.E. Image Analysis System to Determine Crop Row and Plant Positions for an Intra-Row Weeding Machine. 2011, 6, pp. 1–7. Available online: https://edepot.wur.nl/180044 (accessed on 11 June 2023).

- Ota, K.; Kasahara, J.Y.L.; Yamashita, A.; Asama, H. Weed and Crop Detection by Combining Crop Row Detection and K-means Clustering in Weed Infested Agricultural Fields. In Proceedings of the 2022 IEEE/SICE International Symposium on System Integration (SII), Narvik, Norway, 9–12 January 2022; pp. 985–990. [Google Scholar]

- Cao, M.; Tang, F.; Ji, P.; Ma, F. Improved Real-Time Semantic Segmentation Network Model for Crop Vision Navigation Line Detection. Front. Plant Sci. 2022, 13, 898131. [Google Scholar] [CrossRef] [PubMed]

- Basso, M.; Pignaton de Freitas, E. A UAV guidance system using crop row detection and line follower algorithms. J. Intell. Robot. Syst. 2020, 97, 605–621. [Google Scholar] [CrossRef]

- Fue, K.; Porter, W.; Barnes, E.; Li, C.; Rains, G. Evaluation of a stereo vision system for cotton row detection and boll location estimation in direct sunlight. Agronomy 2020, 10, 1137. [Google Scholar] [CrossRef]

- Fareed, N.; Rehman, K. Integration of remote sensing and GIS to extract plantation rows from a drone-based image point cloud digital surface model. ISPRS Int. J. Geo-Inf. 2020, 9, 151. [Google Scholar] [CrossRef]

- Wang, L.; Yang, Y.; Shi, J. Measurement of harvesting width of intelligent combine harvester by improved probabilistic Hough transform algorithm. Measurement 2020, 151, 107130. [Google Scholar] [CrossRef]

- Li, X.; Lloyd, R.; Ward, S.; Cox, J.; Coutts, S.; Fox, C. Robotic crop row tracking around weeds using cereal-specific features. Comput. Electron. Agric. 2022, 197, 106941. [Google Scholar] [CrossRef]

- Casuccio, L.; Kotze, A. Corn planting quality assessment in very high-resolution RGB UAV imagery using YOLOv5 and Python. AGILE GISci. Ser. 2022, 3, 28. [Google Scholar] [CrossRef]

- LeVoir, S.J.; Farley, P.A.; Sun, T.; Xu, C. High-Accuracy adaptive low-cost location sensing subsystems for autonomous rover in precision agriculture. IEEE Open J. Ind. Appl. 2020, 1, 74–94. [Google Scholar] [CrossRef]

- Tian, Z.; Junfang, X.; Gang, W.; Jianbo, Z. Automatic navigation path detection method for tillage machines working on high crop stubble fields based on machine vision. Int. J. Agric. Biol. Eng. 2014, 7, 29. [Google Scholar]

- Ulloa, C.C.; Krus, A.; Barrientos, A.; del Cerro, J.; Valero, C. Robotic fertilization in strip cropping using a CNN vegetables detection-characterization method. Comput. Electron. Agric. 2022, 193, 106684. [Google Scholar] [CrossRef]

- Azeta, J.; Bolu, C.A.; Alele, F.; Daranijo, E.O.; Onyeubani, P.; Abioye, A.A. Application of Mechatronics in Agriculture: A review. J. Phys. Conf. Ser. 2019, 1378, 032006. [Google Scholar] [CrossRef]

- Klein, L.J.; Hamann, H.F.; Hinds, N.; Guha, S.; Sanchez, L.; Sams, B.; Dokoozlian, N. Closed loop controlled precision irrigation sensor network. IEEE Internet Things J. 2018, 5, 4580–4588. [Google Scholar] [CrossRef]

- Rehman, A.; Saba, T.; Kashif, M.; Fati, S.M.; Bahaj, S.A.; Chaudhry, H. A revisit of internet of things technologies for monitoring and control strategies in smart agriculture. Agronomy 2022, 12, 127. [Google Scholar] [CrossRef]

- Wu, J.; Deng, M.; Fu, L.; Miao, J. Vanishing Point Conducted Diffusion for Crop Rows Detection. In Proceedings of the International Conference on Intelligent and Interactive Systems and Applications, Bangkok, Thailand, 28–30 June 2018; Springer: Cham, Switzerland, 2018; pp. 404–416. [Google Scholar]

- Ronchetti, G.; Mayer, A.; Facchi, A.; Ortuani, B.; Sona, G. Crop row detection through UAV surveys to optimize on-farm irrigation management. Remote Sens. 2020, 12, 1967. [Google Scholar] [CrossRef]

- Singh, A.K.; Tariq, T.; Ahmer, M.F.; Sharma, G.; Bokoro, P.N.; Shongwe, T. Intelligent Control of Irrigation Systems Using Fuzzy Logic Controller. Energies 2022, 15, 7199. [Google Scholar] [CrossRef]

- Pang, Y.; Shi, Y.; Gao, S.; Jiang, F.; Veeranampalayam-Sivakumar, A.N.; Thompson, L.; Liu, C. Improved crop row detection with deep neural network for early-season maize stand count in UAV imagery. Comput. Electron. Agric. 2020, 178, 105766. [Google Scholar] [CrossRef]

- Zhang, W.; Miao, Z.; Li, N.; He, C.; Sun, T. Review of Current Robotic Approaches for Precision Weed Management. Curr. Robot. Rep. 2022, 3, 139–151. [Google Scholar] [CrossRef]

- Li, Y.; Guo, Z.; Shuang, F.; Zhang, M.; Li, X. Key technologies of machine vision for weeding robots: A review and benchmark. Comput. Electron. Agric. 2022, 196, 106880. [Google Scholar] [CrossRef]

- Wendel, A.; Underwood, J. Self-supervised weed detection in vegetable crops using ground based hyperspectral imaging. In Proceedings of the 2016 IEEE International Conference on Robotics and Automation (ICRA), Stockholm, Sweden, 16–21 May 2016; pp. 5128–5135. [Google Scholar]

- Zhao, Y.; Gong, L.; Huang, Y.; Liu, C. A review of key techniques of vision-based control for harvesting robot. Comput. Electron. Agric. 2016, 127, 311–323. [Google Scholar] [CrossRef]

- Li, B.; Yang, Y.; Qin, C.; Bai, X.; Wang, L. Improved random sampling consensus algorithm for vision navigation of intelligent harvester robot. Ind. Robot. Int. J. Robot. Res. Appl. 2020, 47, 881–887. [Google Scholar] [CrossRef]

- Benson, E.R.; Reid, J.F.; Zhang, Q.; Pinto, F.A.C. An adaptive fuzzy crop edge detection method for machine vision. In Proceedings of the Annual International Meeting Paper, New York, NY, USA, 3–5 July 2000; No. 001019. pp. 49085–49659. [Google Scholar]

- Pilarski, T.; Happold, M.; Pangels, H.; Ollis, M.; Fitzpatrick, K.; Stentz, A. The Demeter system for automated harvesting. Auton. Robot. 2002, 13, 9–20. [Google Scholar] [CrossRef]

- Chen, J.; Qiang, H.; Wu, J.; Xu, G.; Wang, Z. Navigation path extraction for greenhouse cucumber-picking robots using the prediction-point Hough transform. Comput. Electron. Agric. 2021, 180, 105911. [Google Scholar] [CrossRef]

- Xu, S.; Wu, J.; Zhu, L.; Li, W.; Wang, Y.; Wang, N. A novel monocular visual navigation method for cotton-picking robot based on horizontal spline segmentation. In MIPPR 2015: Automatic Target Recognition and Navigation; SPIE: Bellingham, WA, USA, 2015; Volume 9812, pp. 310–315. [Google Scholar]

- Choi, K.H.; Han, S.K.; Park, K.H.; Kim, K.S.; Kim, S. Vision based guidance line extraction for autonomous weed control robot in paddy field. In Proceedings of the 2015 IEEE International Conference on Robotics and Biomimetics (ROBIO), Zhuhai, China, 6–9 December 2015; pp. 831–836. [Google Scholar]

- Li, D.; Li, B.; Long, S.; Feng, H.; Xi, T.; Kang, S.; Wang, J. Rice seedling row detection based on morphological anchor points of rice stems. Biosyst. Eng. 2023, 226, 71–85. [Google Scholar] [CrossRef]

- Hu, Y.; Huang, H. Extraction Method for Centerlines of Crop Row Based on Improved Lightweight YOLOv4. In Proceedings of the 2021 6th International Symposium on Computer and Information Processing Technology (ISCIPT), Changsha, China, 11–13 June 2021; pp. 127–132. [Google Scholar]

- Tao, Z.; Ma, Z.; Du, X.; Yu, Y.; Wu, C. A crop root row detection algorithm for visual navigation in rice fields. In Proceedings of the 2020 ASABE Annual International Virtual Meeting, St. Joseph, MI, USA, 3–5 April 2020; p. 1. [Google Scholar]

- Kanagasingham, S.; Ekpanyapong, M.; Chaihan, R. Integrating machine vision-based row guidance with GPS and compass-based routing to achieve autonomous navigation for a rice field weeding robot. Precis. Agric. 2020, 21, 831–855. [Google Scholar] [CrossRef]