Abstract

As artificial intelligence (AI) poses significant challenges for education, understanding and addressing the training needs of in-service teachers has become a critical issue for ensuring a responsible and long-term technological transition. Framed within Sustainable Development Goal 4 (SDG 4) and the principles of Education for Sustainable Development (ESD), teacher preparation in AI is increasingly recognized as a key mechanism for promoting ethical, equitable, and inclusive educational transformation. This study explores the influence of several key variables on the intention to learn about AI and on AI learning behaviors among a sample of 704 Spanish in-service teachers (71% women) from all compulsory educational levels. Using a validated questionnaire, this study assessed teachers’ anxiety toward AI, basic AI knowledge, personal relevance of AI, AI for social good, perceived self-efficacy, social pressure, and perceived usefulness of AI. Structural equation modeling (SEM) was employed to analyze the direct and indirect relationships among these variables. The results indicate that the perceived usefulness of AI and self-efficacy directly and positively influence the behavioral intention to learn about AI. Furthermore, basic AI knowledge indirectly influences this intention through perceived self-efficacy. In turn, both behavioral intention and social pressure significantly predicted AI learning behaviors. The model demonstrates strong explanatory power, accounting for 91% of the variance in the behavioral intention to learn about AI. These findings provide evidence to inform the design of teacher training initiatives and policies that promote responsible, ethical, and inclusive integration of AI in educational settings, contributing to sustainable development through education.

1. Introduction

1.1. AI, Education and Sustainability: Challenges and Opportunities

Artificial intelligence (AI) in education extends far beyond the deployment of digital tools; it represents a transformative approach to learning, teaching, and assessment. Rather than focusing solely on technology, AI in education encompasses how intelligent systems can personalize instruction, support teachers’ decision-making, and foster inclusive and equitable learning environments [1]. Within this context, AI serves as a catalyst for reimagining educational practices aligned with broader sustainability goals, particularly by promoting accessible, efficient, and lifelong learning opportunities for diverse learner populations.

However, the adoption of AI in educational contexts brings pedagogical and ethical challenges that require teachers to develop new competencies. As reported by Adil [2], educators are not merely implementers of AI tools but critical mediators who must interpret, adapt, and evaluate these technologies within real classroom contexts. Addressing these challenges goes beyond traditional digital literacy and points toward AI literacy, understood as the combination of technical knowledge and critical, ethical engagement with AI. Without adequately prepared teachers, the rapid expansion of AI risks exacerbating digital divides and undermining the goal of sustainable, human-centered education. Developing teachers’ competencies in AI literacy, particularly ethical awareness and reflective practice, is therefore essential for achieving a responsible technological transition. Tagare et al. [3] argue that ethical competencies such as fairness and transparency are essential for the use of AI in schools. Such values help ensure that technology promotes social justice and sustainability instead of increasing bias and exclusion. In this sense, teacher preparation emerges as a key lever for aligning AI’s adoption in education with the principles of sustainability and long-term societal well-being.

1.2. Teacher Transformation and AI Literacy in Education

The rapid growth of AI is changing the teaching profession and the way teachers, students, and knowledge interact. As AI takes over tasks once performed by educators, their role is shifting toward guiding and managing the learning process with critical judgment. Without proper support, this change can overwhelm teachers who are not ready to use AI, highlighting the urgent need for continuous professional development [4].

A major challenge is teacher training. Tedre et al. [5] note that many educators still struggle to integrate computational thinking and basic AI concepts into their lessons. Therefore, as highlighted by Bellas et al. [6], high-quality resources and effective professional development are essential to build teachers’ confidence and competence in AI. At the core of this transformation is the need to foster AI literacy among both teachers and students. Adams et al. [7] describe AI literacy as an extension of digital literacy that includes understanding AI concepts, programming skills, and ethical awareness. Hollands et al. [8] argue that while not all students must learn to develop AI applications, achieving universal AI literacy is both attainable and essential. More recently, Druga et al. [9] have highlighted the ways in which existing AI resources support pedagogical practices and teachers, while pointing out that limitations in resource personalization and prevailing attitudes toward AI remain barriers to effective integration. The evolution of the teaching role, AI literacy, and teacher training are all interconnected. To ensure that AI integration creates inclusive and ethical learning environments, we need more than just technical training. It is also essential to invest in teacher-centered design, recognizing educators as active contributors to AI-supported learning.

1.3. AI and Education for Sustainable Development

The integration of AI in education is increasingly framed within the global agenda for sustainable development, where education is recognized as a foundational enabler of social, economic, and technological transformation. In this context, Sustainable Development Goal 4 (SDG 4) explicitly positions education as a key driver for ensuring inclusive and equitable quality education and promoting lifelong learning opportunities for all. From this perspective, the relevance of AI in education lies not in technological innovation per se but in its potential to strengthen education systems’ long-term capacity to deliver equitable and high-quality learning. International organizations emphasize that AI should be understood as a strategic tool to support educational inclusion, informed decision-making, and system-level effectiveness, provided that its adoption is guided by human-centered and ethical principles. UNESCO explicitly frames AI as a potential accelerator for progress toward SDG 4 when embedded within national education systems and aligned with the Education 2030 Agenda [10]. This framing indicates that AI integration in education must prioritize pedagogical value, equity, and institutional viability over short-term efficiency gains. This perspective is reinforced by UNESCO’s Guidance for Generative AI in Education and Research [11]. This document aligns emerging AI technologies with the Education 2030 Agenda, suggesting that their use must support inclusive, ethical, and human-centered development. Earlier international reports [12,13] further emphasized how emerging digital technologies, including AI, can contribute to sustainable development and broader societal well-being.

Teacher competence in AI is therefore not merely a technical requirement but a structural condition for aligning technological adoption with broader educational and societal goals. This perspective is reflected in the UNESCO AI Competency Framework for Teachers [14]. This framework defines the ethical, pedagogical, and professional capacities teachers need to engage critically with AI and integrate it meaningfully into the classroom. In the context of this study, sustainability in AI-related teacher learning is operationalized not as a general normative principle but as a set of concrete educational conditions. Specifically, it refers to teachers’ capacity for continuous and self-directed learning about AI, the ethical and inclusive use of AI in pedagogical practice, and the existence of institutional structures that support scalable and long-term professional development. This operationalization aligns sustainability with lifelong learning, equity, and system-level viability, consistent with the objectives of SDG 4 and Education for Sustainable Development.

By conceptualizing AI literacy as a core component of Education for Sustainable Development, this study positions teacher professional development as a key mechanism through which SDG 4 can be operationalized in digitally transformed education systems. This framing provides the conceptual bridge between the global sustainability agenda and the theoretical models of teacher knowledge and learning examined in the following section.

1.4. Conceptual Bases and Theoretical Models

To address the gaps in AI curriculum design and the pedagogical use of technology, Falloon [15] argued that teachers should not only possess technical proficiency but also demonstrate ethical and pedagogical awareness when using digital tools. Within his Teacher Digital Competency (TDC) framework, technology is framed as a means to enhance learning, foster student engagement, and address diverse learning needs through reflective and informed practice. Another influential model is the Technological Pedagogical Content Knowledge (TPACK) framework developed by Mishra and Koehler [16], which conceptualizes the integration of technological, pedagogical, and content knowledge. According to Crompton et al. [17], “the TPACK framework continues to serve as a foundational model for guiding educators in the effective integration of technology into teaching practices.” Celik [18] further asserted that “TPACK, when aligned with the technological and pedagogical contributions of AI, provides a robust framework for understanding teacher knowledge for AI-based instruction.”

In the context of AI education, these frameworks also emphasize the importance of sustained teacher engagement and professional learning as prerequisites for meaningful classroom integration. Research on AI education initiatives shows that teachers’ participation in AI-related projects and professional development is essential for translating conceptual frameworks into pedagogical practice [19,20].

Building upon these frameworks, Chiu et al. [21] identified four fundamental approaches to curriculum design that focus on content, product, process, and praxis, which provide a structured basis for developing AI curricula that connect theory with practice. Similarly, Ng et al. [22] integrated the concept of AI literacy within the TPACK framework, showing how AI can strengthen teachers’ technological, pedagogical, and content knowledge. This approach aligns with previous studies by Reyes et al. [23] and Tondeur et al. [24], who recommended using TPACK as a foundation for teacher education programs.

Within K-12 AI education, the AI4K12 project represents a prominent initiative focused on developing a national framework for AI curricula in the United States, grounded in the Five Big Ideas in AI proposed by Touretzky and Gardner-McCune [25]. These ideas—Perception, Representation, Learning, Natural Interaction, and Societal Impact—serve to introduce foundational AI concepts and foster ethical and societal awareness. Marques et al. [26] expanded these ideas as student competencies, building upon the earlier conceptualization by Touretzky et al. [27]. Together, these frameworks establish the pedagogical foundation upon which empirical studies have begun to explore teachers’ perceptions, motivations, and intentions to engage with AI education.

1.5. Previous Studies on AI Teacher Training

Recent studies have explored teachers’ training needs, barriers, and attitudes toward AI integration. Tedre et al. [5] and Bellas et al. [6] highlighted the lack of pedagogical and technical preparation among educators and the need for ongoing professional development to support effective AI-related teaching practices. Similar challenges have also been reported by Williams et al. [28] and Cohn [29]. Conversely, facilitators include access to professional learning communities, high-quality instructional resources, and institutional support structures that enable sustained engagement with AI-related learning [9,21,24]. These studies emphasize that teachers’ opportunities to meaningfully engage with AI are shaped not only by individual motivation but also by organizational and contextual conditions that support experimentation and reflective practice. Despite these advances, few studies have empirically modeled how these factors interact to influence teachers’ learning and adoption of AI. Existing research has tended to examine training needs or attitudes in isolation, without integrating these dimensions into a unified explanatory framework. This gap justifies the need for integrative theoretical approaches. By combining psychological, pedagogical, and contextual variables, we can better understand how teachers learn how to use AI and how their professional development trajectories evolve.

1.6. Studies Using TAM, TPB, and SEM Approaches

Several models have been employed to analyze teachers’ technology adoption and learning behavior, most notably the Technology Acceptance Model (TAM) and the Theory of Planned Behavior (TPB). These frameworks have been widely used to examine how attitudes, perceived usefulness, self-efficacy, and social norms shape teachers’ intentions to engage with emerging technologies. Empirical applications of these models in AI-related contexts have further demonstrated their suitability for analyzing teachers’ learning intentions and behaviors. For instance, Lu [30] applied an integrated TAM–TPB model to examine teachers’ willingness to use generative AI, revealing that attitudes, perceived usefulness, and social norms significantly predicted behavioral intention. Similarly, Choi et al. [31] used Self-Determination Theory (SDT) and the Motivation–Opportunity–Ability (MOA) framework to study teacher motivation. Their findings emphasize that both intrinsic motivation and contextual conditions are essential for teachers to engage with AI learning.

Studies such as that of Sanusi et al. [32] have further validated the application of TPB in understanding AI learning intentions, highlighting how anxiety, self-efficacy, and perceived relevance shape behavioral intention. Structural equation modeling (SEM) has been widely used to test these relationships, offering a comprehensive view of direct and indirect effects. Taken together, these findings provide a rationale for applying an integrated structural model in the present study to examine the determinants of teachers’ AI learning behavior.

1.7. Research Gap and Contribution

While research on AI in education has expanded, most studies focus on pre-service teachers or theoretical frameworks rather than the empirical learning behaviors of in-service educators. Furthermore, few studies integrate psychological, pedagogical, and contextual variables within a sustainability framework. Addressing this gap is essential to understand how teachers develop the competencies for ethical AI use, moving beyond stated intentions to actual, sustained learning behaviors. This insight is crucial for designing effective professional development and supporting teachers’ adaptation to emerging technologies.

This study therefore contributes to the literature by empirically validating a structural model that explains teachers’ behavioral intentions and AI learning behaviors, integrating elements from the Technology Acceptance Model (TAM), the Theory of Planned Behavior (TPB), and related motivational perspectives. By simultaneously examining cognitive, motivational, and social factors, the model provides a comprehensive account of the mechanisms underlying teachers’ engagement with AI learning. In addition, by aligning teacher AI learning with Sustainable Development Goal 4 (SDG 4), this study frames professional development as an essential tool for high-quality, inclusive education. In doing so, it highlights how training supports the transition toward equitable and digitally transformed school systems. While sustainability is not treated as a direct analytical construct within the model, it provides the policy and educational context that underscores the relevance of fostering teachers’ long-term capacity for ethical and responsible engagement with AI.

1.8. The Present Study

Building upon the identified gaps and prior research, such as that of Sanusi et al. [32], the present study proposes a structural model to examine the relationships among key psychological and contextual factors influencing in-service teachers’ learning of AI. The model integrates variables such as anxiety toward AI, basic AI knowledge, personal relevance, perceived self-efficacy, AI for social good, social pressure, and perceived usefulness of AI, and explores their effects on both behavioral intention and AI learning behaviors.

The model thus integrates elements from TAM, TPB, and sustainability-oriented education frameworks to explain teachers’ behavioral intentions and AI learning behaviors. Accordingly, this study is guided by the following research question:

RQ: What psychological and contextual factors influence in-service teachers’ intention to learn about artificial intelligence and their AI learning behaviors?

1.9. Objectives and Hypotheses

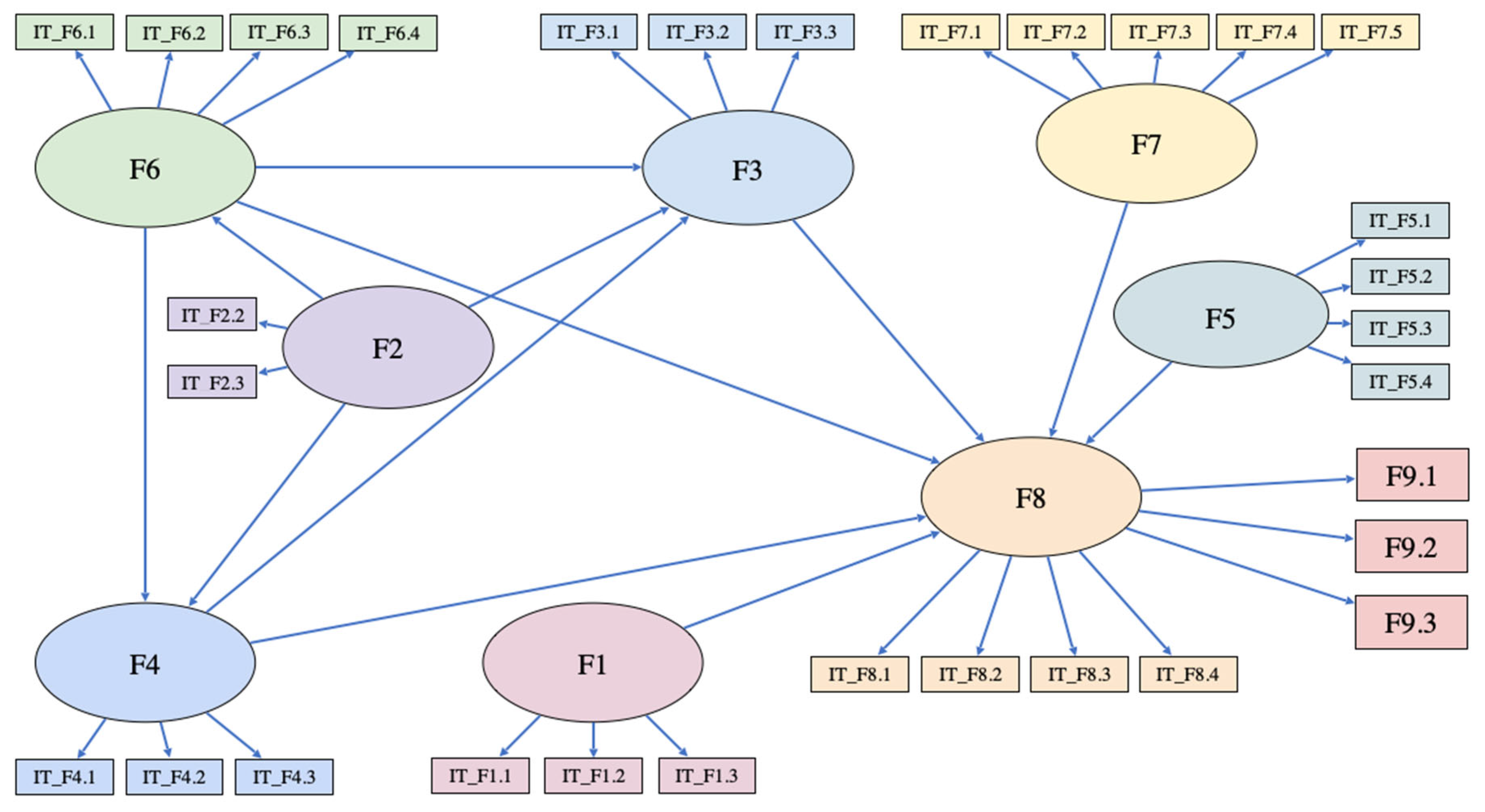

The structural model developed in this work aims to integrate the relationships between several key variables—including anxiety toward AI, basic AI knowledge, personal relevance, perceived self-efficacy, AI for social good, social pressure, and perceived usefulness of AI—and their influence on the intention to learn and AI learning behaviors among in-service teachers. As shown in Figure 1, our initial model leads to the following hypotheses:

Figure 1.

Hypothetical model (Model 1). F1 = AI anxiety; F2 = basic AI knowledge; F3 = personal relevance; F4 = perceived self-efficacy; F5 = AI for social good; F6 = social pressure; F7 = perceived usefulness of AI; F8 = behavioral intention to learn AI; F9.1 = AI learning behaviors via books and research papers; F9.2 = AI learning behaviors via training courses; F9.3 = AI learning behaviors via apps.

H1.

AI anxiety will exert a negative direct effect on in-service teachers’ behavioral intention toward AI learning.

H2.

Basic AI knowledge will exert a positive effect on behavioral intention, personal relevance, perceived self-efficacy, and social pressure associated with learning AI.

H3.

Social pressure will exert a positive effect on in-service teachers’ behavioral intention, personal relevance, and perceived self-efficacy toward AI learning.

H4.

Perceived self-efficacy will exert a positive effect on behavioral intention and personal relevance regarding AI learning.

H5.

Personal relevance will exert a positive effect on behavioral intention toward AI learning.

H6.

AI for social good will have a positive effect on behavioral intention toward AI learning.

H7.

Perceived usefulness of AI will have a positive effect on behavioral intention toward AI learning.

H8.

Behavioral intention toward AI learning will have a positive effect on in-service teachers’ AI learning behaviors through the following:

H8.1.

Books and research papers.

H8.2.

Training courses.

H8.3.

Apps.

2. Materials and Methods

2.1. Participants

The sample comprised 704 Spanish in-service teachers (71% women) from a wide range of demographic and professional backgrounds. Participants represented diverse age groups, with the largest proportion aged between 46 and 55 years (36.1%), followed by teachers aged 36–45 (22.6%) and 25–35 (19.7%). Teaching experience was also heterogeneous, with a substantial proportion of participants reporting more than 20 years of professional experience (41.6%), alongside early- and mid-career teachers.

In terms of educational background, most participants held a university degree or higher, including undergraduate degrees (37.0%), long-cycle degrees (30.3%), and master’s degrees (28.3%), while a smaller proportion reported doctoral-level studies. The sample covered early childhood education (15.8%), primary education (35.0%), and compulsory secondary education (49.2%), and included teachers from public institutions (87.4%), state-subsidized schools (9.7%), and private schools (2.9%).

Finally, participants reported varying levels of prior familiarity with AI tools, with 47.0% indicating low familiarity, 44.9% moderate familiarity, and 8.2% high familiarity.

Participation was open to in-service teachers working in formal educational settings. No additional inclusion criteria were applied beyond current teaching status. Given the voluntary, email-based recruitment procedure, an exact response rate could not be calculated. Responses with substantial missing data were excluded prior to analysis.

2.2. Measures

The instrument used in this study was a Spanish adaptation of the questionnaire originally proposed by Sanusi et al. [32]. The validated Spanish version consisted of 28 items grouped into 8 factors: anxiety toward AI (3 items), basic AI knowledge (2 items), personal relevance of AI (3 items), perceived self-efficacy (3 items), AI for social good (4 items), social pressure to learn AI (4 items), perceived usefulness of AI (5 items), and behavioral intention to learn about AI (4 items). In line with the Theory of Planned Behavior, the construct referred to as social pressure in this study corresponds to subjective norms, understood as teachers’ perceptions of institutional and professional expectations regarding AI-related learning.

The reliability of the questionnaire factors for the study sample ranged from α = 0.705, ω = 0.707 for Factor 2 (basic AI knowledge) to α = 0.914, ω = 0.919 for Factor 8 (behavioral intention to learn AI).
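For readers less familiar with these coefficients, the sketch below shows how α and ω are commonly computed. It is a minimal illustration, not the authors' analysis script: the function names are hypothetical, α is computed from raw item scores, ω is the omega-total formula for a one-factor model with standardized loadings, and the data are simulated.

```python
import numpy as np


def cronbach_alpha(items: np.ndarray) -> float:
    """Cronbach's alpha for an (n_respondents x k_items) matrix of item scores."""
    items = np.asarray(items, dtype=float)
    k = items.shape[1]
    sum_item_var = items.var(axis=0, ddof=1).sum()  # sum of the k item variances
    total_var = items.sum(axis=1).var(ddof=1)       # variance of the summed scale score
    return k / (k - 1) * (1 - sum_item_var / total_var)


def mcdonald_omega(loadings) -> float:
    """Omega total from the standardized loadings of a one-factor (congeneric) model."""
    lam = np.asarray(loadings, dtype=float)
    common = lam.sum() ** 2            # variance due to the common factor
    unique = (1 - lam ** 2).sum()      # standardized residual (unique) variances
    return float(common / (common + unique))


# Hypothetical example: 200 simulated respondents answering 3 items of one factor.
rng = np.random.default_rng(0)
factor = rng.normal(size=(200, 1))
scores = 0.8 * factor + 0.6 * rng.normal(size=(200, 3))
print(round(cronbach_alpha(scores), 3))
print(round(mcdonald_omega([0.8, 0.8, 0.8]), 3))  # 0.842
```

Alpha assumes equal loadings (tau-equivalence), whereas omega uses the estimated loadings, which is one reason both are often reported side by side.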

Additionally, AI learning behaviors were measured with three self-reported items assessing teachers’ engagement in different AI learning modalities: participation in AI-related courses (“I have attended AI-related classes or courses inside or outside the school”), use of AI applications to understand how they function (“I have used AI applications to understand how they work”), and learning through books and academic articles (“I have learned about AI through books and articles”). Responses were collected on a five-point Likert scale ranging from 1 (strongly disagree) to 5 (strongly agree). These items capture teachers’ accumulated engagement in AI learning activities and were not restricted to a specific time frame. In accordance with the technology adoption models proposed by Davis [33] and later extended by Venkatesh et al. [34], AI learning behaviors were operationalized through behavioral engagement indicators. The frequency and variety of learning-related actions, such as attending courses, consulting specialized literature, or exploring AI tools, serve as valid proxies for engagement in professional development [35]. This methodological choice allows the structural model to capture teachers’ transition from intention to active skill-seeking behavior, consistent with previous research on digital competency acquisition [36].

2.3. Procedure

As the original scale by Sanusi et al. [32] lacked established psychometric properties within the Spanish context, we adapted it following a rigorous parallel back-translation procedure as described by Brislin [37], in accordance with the protocols set by the International Test Commission as outlined by Muñiz et al. [38]. The process unfolded in several stages:

- Forward Translation: Two independent translators produced separate Spanish versions of the original scale.

- Expert Review: A panel of three experts in educational psychology and AI reviewed both translations. They assessed the quality and clarity of each item, eliminated ambiguities, and ensured that the language was relevant and natural for teachers in early education.

- Back-Translation: We then gave the consensus Spanish version to the translators for back-translation into English. This step allowed the research team to detect and correct any semantic or conceptual drift.

- Final Consensus: The back-translated version was reviewed, and a final Spanish version was approved after full expert agreement.

A Confirmatory Factor Analysis (CFA) was performed to establish the reliability and validity of the adapted scale, ensuring internal consistency and construct validity within the target population.

Ethical oversight was provided by the University of Alicante Ethics Committee (Ref. UA-2025-09-16), confirming that all procedures adhered to ethical standards regarding data protection and participant rights. Participants were fully informed about this study’s purpose and characteristics. Confidentiality was guaranteed, and informed consent was obtained from each participant, emphasizing the voluntary nature of their participation and their right to withdraw at any time by contacting the research team.

Following the consent process, participants completed the study scale online.

2.4. Design and Data Analysis

This study employed a correlational and predictive design. Structural equation modeling (SEM) was used to examine the proposed associations between the variables.

Given the sample size and data characteristics, we employed the robust Diagonally Weighted Least Squares (DWLS) estimator, specifically its WLSMV implementation, which is designed for ordinal data derived from Likert scales and is suitable for non-normal data, as noted by Kyriazos and Poga-Kyriazou [39]. The model was estimated from a polychoric correlation matrix, which yields more precise parameter estimates and less biased fit indices than Pearson-based methods when variables are not continuous. All models were estimated with the lavaan engine, so that standard errors and fit indices were adjusted for robust estimation.

After potential outliers were identified, the initial model’s fit was evaluated using a combination of absolute and comparative fit indices: chi-square (χ²), Goodness of Fit Index (GFI), Standardized Root Mean Square Residual (SRMR), Root Mean Square Error of Approximation (RMSEA), and Comparative Fit Index (CFI). Model acceptance was based on conventional thresholds: values greater than 0.95 for GFI and CFI, and values lower than 0.06 for RMSEA and SRMR, following Byrne [40]. Finally, direct, indirect, and total effects among the variables were examined. All statistical analyses were conducted using JASP, version 0.19.3 [41], whose SEM module is built on lavaan.
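The acceptance rule described above can be expressed as a small check. This is an illustrative sketch only: the dictionary layout and the function name are hypothetical, the cutoffs are those stated in the text, and indices that are not supplied are skipped. The example values are the Model 6 and CFA indices reported in the Results.

```python
# Cutoffs stated in the text (following Byrne [40]): (direction, threshold).
CUTOFFS = {
    "CFI": (">", 0.95),
    "GFI": (">", 0.95),
    "RMSEA": ("<", 0.06),
    "SRMR": ("<", 0.06),
}


def check_fit(indices: dict) -> dict:
    """Return {index: passes_cutoff} for every index present in `indices`."""
    results = {}
    for name, (direction, threshold) in CUTOFFS.items():
        if name not in indices:
            continue  # e.g. GFI was not reported for the CFA
        value = indices[name]
        results[name] = value > threshold if direction == ">" else value < threshold
    return results


model6 = {"CFI": 0.997, "GFI": 0.988, "RMSEA": 0.018, "SRMR": 0.047}
cfa = {"CFI": 0.940, "RMSEA": 0.057, "SRMR": 0.054}
print(check_fit(model6))  # {'CFI': True, 'GFI': True, 'RMSEA': True, 'SRMR': True}
print(check_fit(cfa))     # {'CFI': False, 'RMSEA': True, 'SRMR': True}
```

Hard cutoffs like these are conventions; in practice the indices are usually interpreted jointly rather than one at a time.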

3. Results

Before testing the structural relationships, a Confirmatory Factor Analysis (CFA) was conducted to assess the psychometric properties of the measurement instrument. The measurement model showed an acceptable fit to the data: χ² = 1050.267, df = 322, CFI = 0.940, RMSEA = 0.057, and SRMR = 0.054. RMSEA and SRMR met the 0.06 cutoff, whereas CFI fell slightly below 0.95.
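As a consistency check, the reported RMSEA can be approximately recovered from χ², df, and the sample size with the standard point-estimate formula RMSEA = sqrt(max(χ² − df, 0) / (df (N − 1))). The sketch below assumes N = 704 (the sample size given in the Abstract) and the N − 1 convention. Software differs on N versus N − 1 and, under WLSMV, may use a scaled statistic, so agreement to about three decimals is all that should be expected.

```python
import math


def rmsea(chi2: float, df: int, n: int) -> float:
    """RMSEA point estimate: sqrt(max(chi2 - df, 0) / (df * (n - 1)))."""
    return math.sqrt(max(chi2 - df, 0.0) / (df * (n - 1)))


# CFA values reported in the text; n = 704 is taken from the Abstract.
print(f"{rmsea(1050.267, 322, 704):.3f}")  # 0.057
```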

As shown in Table 1, all standardized factor loadings were significant (p < 0.001) and ranged from 0.542 to 0.903, supporting adequate indicator reliability. Convergent validity was supported, as the Average Variance Extracted (AVE) exceeded the 0.50 threshold for all constructs. Discriminant validity was assessed with the Heterotrait/Monotrait (HTMT) ratio: all HTMT values fell below the conservative threshold of 0.85 (range 0.132–0.794), indicating that each construct is empirically distinct from the others.
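The two validity criteria can be illustrated with a short sketch. It is hypothetical code, not the authors' pipeline: AVE is computed as the mean of the squared standardized loadings of one construct, and HTMT follows its usual definition (mean between-construct item correlation divided by the geometric mean of the two mean within-construct item correlations). The correlation matrix in the example is invented.

```python
import numpy as np


def ave(loadings) -> float:
    """Average Variance Extracted: mean squared standardized loading of one construct."""
    lam = np.asarray(loadings, dtype=float)
    return float(np.mean(lam ** 2))


def htmt(R, items_a, items_b) -> float:
    """Heterotrait/Monotrait ratio for two constructs, given an item correlation matrix R."""
    R = np.asarray(R, dtype=float)
    hetero = R[np.ix_(items_a, items_b)].mean()  # correlations across the two constructs

    def mono(items):
        block = R[np.ix_(items, items)]
        upper = np.triu_indices(len(items), k=1)  # distinct item pairs only
        return block[upper].mean()

    return float(hetero / np.sqrt(mono(items_a) * mono(items_b)))


# Invented 4-item example: items 0-1 belong to construct A, items 2-3 to construct B.
R = np.array([
    [1.0, 0.6, 0.3, 0.3],
    [0.6, 1.0, 0.3, 0.3],
    [0.3, 0.3, 1.0, 0.5],
    [0.3, 0.3, 0.5, 1.0],
])
print(f"AVE  = {ave([0.8, 0.6]):.3f}")           # 0.500
print(f"HTMT = {htmt(R, [0, 1], [2, 3]):.3f}")   # 0.548
```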

Table 1.

Standardized factor loadings.

The internal consistency of the constructs was evaluated using three indicators, namely, Cronbach’s alpha (α), McDonald’s omega coefficient (ω), and the Spearman–Brown coefficient, the latter being the most appropriate for scales composed of only two items [42]. As detailed in Table 2, all constructs exceeded the recommended threshold of 0.70.
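For a two-item scale such as basic AI knowledge, the Spearman–Brown coefficient is obtained from the single inter-item correlation r as ρ = 2r / (1 + r). The short sketch below (function names hypothetical) also inverts the formula to show which inter-item correlation a two-item scale needs to reach the 0.70 threshold.

```python
def spearman_brown(r: float, k: float = 2.0) -> float:
    """Reliability of a scale k times as long as one item with inter-item correlation r.
    With k = 2 this is the two-item coefficient 2r / (1 + r)."""
    return k * r / (1 + (k - 1) * r)


def required_r(target: float) -> float:
    """Inter-item correlation a two-item scale needs to reach a target reliability."""
    return target / (2 - target)


print(f"{spearman_brown(0.5):.3f}")   # 0.667
print(f"{required_r(0.70):.3f}")      # 0.538
```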

Table 2.

Measurement instrument reliability.

Table 3 shows the correlations between the study variables.

Table 3.

Correlations between the study variables.

Absolute and comparative fit indices were employed to assess the initial model’s overall fit (see Table 4).

Table 4.

Fit indices.

As shown in Table 4, the χ² statistic was significant for all six tested models, indicating that none of them reproduces the observed data exactly. However, the χ² statistic inflates as sample size increases and may be unreliable when n > 200 [43,44]. For this reason, alternative fit indices, such as those reported below, are preferred.
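The sensitivity of χ² to sample size can be made concrete with a small sketch. Assuming maximum-likelihood-style scaling, in which the test statistic follows a noncentral χ² distribution with mean df + (N − 1)·df·ε² for a fixed population RMSEA ε, the expected statistic grows linearly with N while df stays fixed. The misfit level ε = 0.03 is a hypothetical choice; df = 239 is the value reported for Model 6.

```python
from scipy.stats import chi2


def expected_chi2(n: int, df: int, eps: float) -> float:
    """Mean of the noncentral chi-square under population RMSEA eps:
    df + lambda, with noncentrality lambda = (n - 1) * df * eps**2."""
    return df + (n - 1) * df * eps ** 2


df, eps = 239, 0.03  # df as reported for Model 6; eps = 0.03 is a hypothetical misfit level
for n in (100, 200, 704, 2000):
    stat = expected_chi2(n, df, eps)
    p = chi2.sf(stat, df)  # p-value of the exact-fit test at that statistic
    print(f"N = {n:5d}  expected chi2 = {stat:7.1f}  p = {p:.2g}")
```

Although the assumed misfit corresponds to an RMSEA well inside conventional cutoffs, the exact-fit p-value shrinks toward zero as N grows.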

In Model 1 (the initial model), the Comparative Fit Index (CFI) reached 0.989, exceeding the recommended threshold of 0.95 [45]. Root Mean Square Error of Approximation (RMSEA) values between 0.05 and 0.10 are considered acceptable [46], with lower values indicating closer fit; Model 1 yielded an RMSEA of 0.029. Similarly, Standardized Root Mean Square Residual (SRMR) values between 0.05 and 0.08 suggest acceptable fit [47]; Model 1 yielded an SRMR of 0.052.

In Model 1, all regression coefficients were statistically significant except for the paths F3–F4, F8–F1, F8–F3, F8–F5, and F8–F2. Removing these non-significant paths in Model 2 did not substantially improve model fit.

In Model 2, all regression coefficients were significant except F3–F6, so this path was removed in Model 3, which slightly improved model fit.

In Model 3, the F4–F6 regression coefficient was not significant and was therefore removed. In the resulting Model 4, all regression coefficients were significant, although fit did not improve substantially.

To improve model fit, we then consulted the modification indices (MI), which suggested adding a direct path from F6 to F9.2 (MI = 130.225). Incorporating this path improved all fit indices in Model 5. However, the F8–F6 regression coefficient then became non-significant and was removed in Model 6.

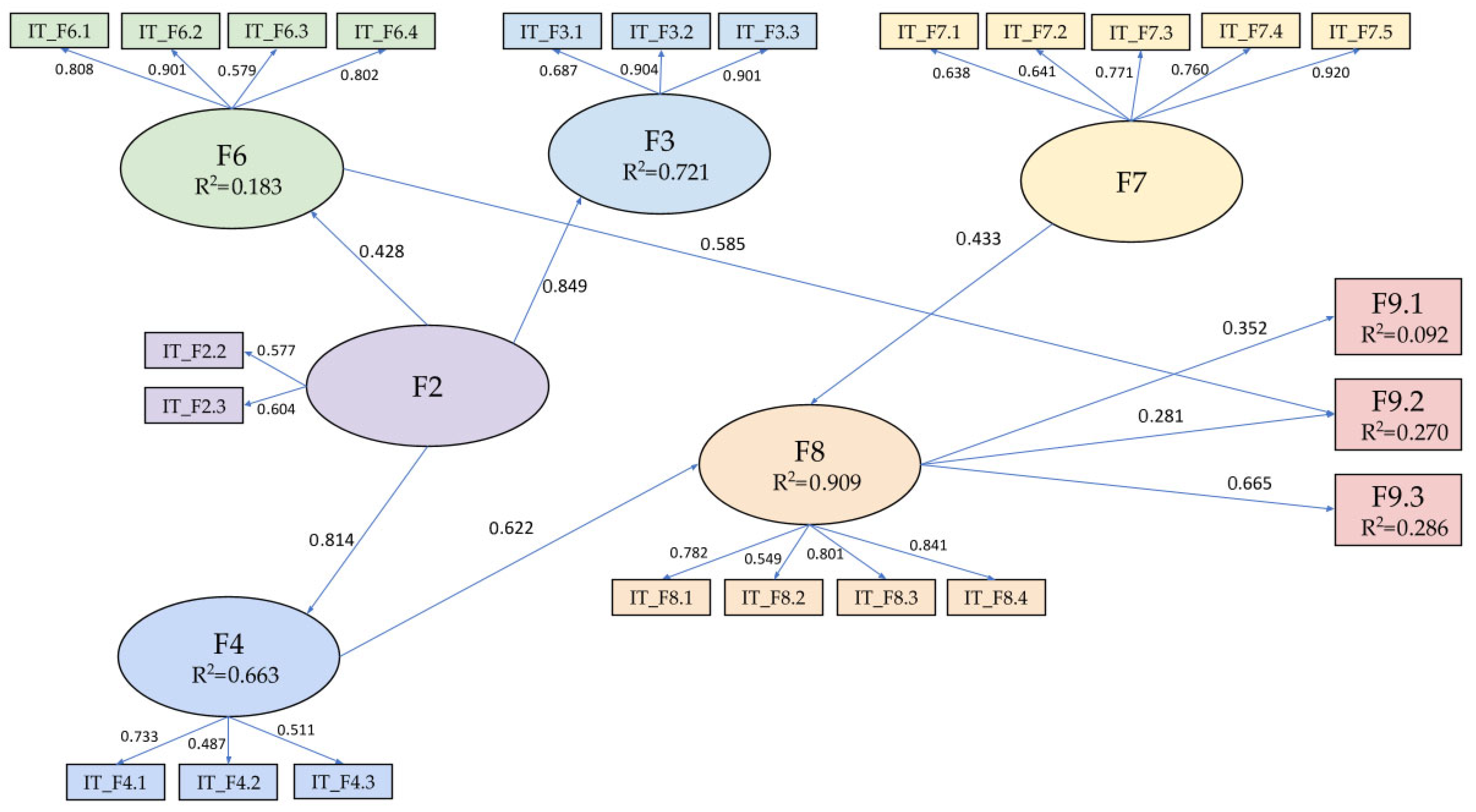

Model 6 yielded CFI = 0.997, GFI = 0.988, RMSEA = 0.018, and SRMR = 0.047, all indicating good fit, and it was therefore selected as the final model (illustrated in Figure 2). Although Model 5 also fit well, Model 6 retained the same CFI (0.997) and RMSEA (0.018) while gaining one degree of freedom (df = 239). Following the criteria of Cheung and Rensvold [48], ΔCFI and ΔRMSEA between the final iterations were well within the recommended threshold (≤0.01), indicating that Model 6 is the more parsimonious account of the data without loss of fit. Furthermore, a bootstrap procedure with 2000 resamples was conducted: all path coefficients remained significant, with 95% bias-corrected confidence intervals excluding zero.

Figure 2.

Final model (Model 6). F2: basic AI knowledge; F3: personal relevance; F4: perceived self-efficacy; F6: social pressure; F7: perceived usefulness of AI; F8: behavioral intention to learn AI; F9.1: AI learning behaviors via books and research papers; F9.2: AI learning behaviors via training courses; F9.3: AI learning behaviors via apps.
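The bias-corrected bootstrap reported above can be sketched for a single indirect effect. This is a simplified illustration under stated assumptions, not the SEM procedure used in the study: it uses simulated observed variables (x, m, and y are hypothetical stand-ins for a predictor, a mediator, and an outcome), estimates the indirect effect a·b with ordinary least squares rather than WLSMV, and applies the standard bias correction z0 = Φ⁻¹(proportion of bootstrap estimates below the original estimate).

```python
import numpy as np
from scipy.stats import norm


def indirect_effect(x, m, y) -> float:
    """a*b: slope of m on x, times the slope of y on m controlling for x (OLS)."""
    a = np.polyfit(x, m, 1)[0]                       # polyfit returns [slope, intercept]
    design = np.column_stack([np.ones_like(x), x, m])
    b = np.linalg.lstsq(design, y, rcond=None)[0][2]
    return float(a * b)


def bc_bootstrap_ci(x, m, y, n_boot=2000, alpha=0.05, seed=0):
    """Bias-corrected percentile bootstrap CI for the indirect effect."""
    rng = np.random.default_rng(seed)
    est = indirect_effect(x, m, y)
    n = len(x)
    boot = np.empty(n_boot)
    for i in range(n_boot):
        idx = rng.integers(0, n, size=n)             # resample cases with replacement
        boot[i] = indirect_effect(x[idx], m[idx], y[idx])
    # Bias-correction constant; the proportion is clipped so that z0 stays finite.
    prop_below = np.clip(np.mean(boot < est), 1 / n_boot, 1 - 1 / n_boot)
    z0 = norm.ppf(prop_below)
    z = norm.ppf([alpha / 2, 1 - alpha / 2])
    lo, hi = np.percentile(boot, 100 * norm.cdf(2 * z0 + z))
    return est, lo, hi


# Simulated data with a true indirect effect of 0.5 * 0.4 = 0.2.
rng = np.random.default_rng(42)
x = rng.normal(size=300)
m = 0.5 * x + rng.normal(size=300)
y = 0.4 * m + 0.1 * x + rng.normal(size=300)
est, lo, hi = bc_bootstrap_ci(x, m, y)
print(f"indirect = {est:.3f}, 95% BC CI [{lo:.3f}, {hi:.3f}]")
```

The bias correction shifts the percentile positions when the bootstrap distribution is not centered on the original estimate, which is common for products of coefficients.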

The final model accounts for 91% of the variance in the behavioral intention to learn about AI. It also explains 10% of the variance in AI learning behaviors via books and research papers, 27% of the variance in AI learning behaviors via training courses, and 29% of the variance in AI learning behaviors via apps.

Despite the high explained variance in behavioral intention (R² = 0.91), diagnostic analyses showed no evidence of multicollinearity or construct redundancy. All variance inflation factors (VIF) were below 2.01, well under the threshold of 3.3 [49]. Together with heterotrait–monotrait (HTMT) ratios below 0.85, these results indicate that the predictors are empirically distinct and suggest that the magnitude of R² reflects the strength of the structural relationships within the analyzed sample of teachers rather than overlapping constructs.
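A back-of-the-envelope check illustrates how an R² of 0.91 can coexist with low VIFs. Assuming that perceived self-efficacy (F4) and perceived usefulness of AI (F7) are the only direct predictors of F8 in the final model, as the reported paths indicate, the standardized R² for two predictors is R² = β₁² + β₂² + 2β₁β₂r, where r is the F4–F7 correlation. The Python sketch below solves this for r using the rounded values reported in the text and computes the corresponding two-predictor VIF, 1/(1 − r²). It is an approximation based on rounded coefficients, not a re-analysis of the data; the constant and function names are illustrative.

```python
# Rounded values reported in the text: standardized paths into F8 and R² of F8.
# Assumption: F4 and F7 are the only direct predictors of F8 in the final model.
BETA_F4 = 0.622
BETA_F7 = 0.433
R2_F8 = 0.91


def implied_predictor_correlation(b1: float, b2: float, r2: float) -> float:
    """Solve R² = b1² + b2² + 2·b1·b2·r for r (two standardized predictors)."""
    return (r2 - b1 ** 2 - b2 ** 2) / (2 * b1 * b2)


def vif_two_predictors(r: float) -> float:
    """VIF of either predictor when only two are present: 1 / (1 - r²)."""
    return 1 / (1 - r ** 2)


r12 = implied_predictor_correlation(BETA_F4, BETA_F7, R2_F8)
vif = vif_two_predictors(r12)
print(f"implied r(F4, F7) = {r12:.3f}, VIF = {vif:.2f}")
```

With these inputs the implied correlation is about 0.62 and the VIF about 1.6, under the reported ceiling of 2.01: the high R² is compatible with two substantial, moderately correlated predictors rather than near-redundant ones.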

The final structural model is shown in Figure 2, and the standardized path coefficients, standard errors, and significance levels for each relationship are detailed in Table 5. The strongest direct relationship runs from basic AI knowledge to personal relevance, F2 → F3 (β = 0.849, p < 0.001). Basic AI knowledge also has a strong direct effect on perceived self-efficacy, F2 → F4 (β = 0.841, p < 0.001), and, through perceived self-efficacy, an indirect effect on behavioral intention to learn AI, F2 → F4 → F8 (β = 0.506, p < 0.001). Through self-efficacy and behavioral intention, it further has indirect effects on AI learning behaviors via books and research papers, F2 → F4 → F8 → F9.1 (β = 0.178, p < 0.001), via training courses, F2 → F4 → F8 → F9.2 (β = 0.142, p < 0.001), and via apps, F2 → F4 → F8 → F9.3 (β = 0.337, p < 0.001).

Table 5.

Standardized direct and indirect effects within the structural model.

Meanwhile, perceived self-efficacy exerts a direct effect on behavioral intention to learn AI, F4 → F8 (β = 0.622, p < 0.001), and, through behavioral intention, indirect effects on AI learning behaviors via books and research papers, F4 → F8 → F9.1 (β = 0.219, p < 0.001), via training courses, F4 → F8 → F9.2 (β = 0.175, p < 0.001), and via apps, F4 → F8 → F9.3 (β = 0.414, p < 0.001).

In addition, basic AI knowledge exerts a direct effect on social pressure, F2 → F6 (β = 0.428, p < 0.001), and, through social pressure, an indirect effect on AI learning behaviors via training courses, F2 → F6 → F9.2 (β = 0.251, p < 0.001). Social pressure, in turn, has a direct effect on AI learning behaviors via training courses, F6 → F9.2 (β = 0.585, p < 0.001).

Furthermore, perceived usefulness of AI has a direct effect on behavioral intention to learn AI, F7 → F8 (β = 0.433, p < 0.001), and, through behavioral intention, indirect effects on AI learning behaviors via books and research papers, F7 → F8 → F9.1 (β = 0.153, p < 0.001), via training courses, F7 → F8 → F9.2 (β = 0.122, p < 0.001), and via apps, F7 → F8 → F9.3 (β = 0.288, p < 0.001).

Finally, behavioral intention to learn AI has direct effects on AI learning behaviors via books and research papers, F8 → F9.1 (β = 0.352, p < 0.001), via training courses, F8 → F9.2 (β = 0.281, p < 0.001), and via apps, F8 → F9.3 (β = 0.665, p < 0.001).
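Under the path-tracing rules for standardized recursive models, the specific indirect effect along a single chain of paths equals the product of the standardized coefficients on that chain. The short Python sketch below recomputes several of the indirect effects reported in this section from the corresponding direct paths. Because the inputs are rounded to three decimals, agreement is expected only to within about 0.001; the dictionary layout and the function name are illustrative.

```python
# Standardized direct paths as reported in the text, keyed by (source, target).
paths = {
    ("F4", "F8"): 0.622,
    ("F7", "F8"): 0.433,
    ("F2", "F6"): 0.428,
    ("F6", "F9.2"): 0.585,
    ("F8", "F9.1"): 0.352,
    ("F8", "F9.2"): 0.281,
    ("F8", "F9.3"): 0.665,
}


def chain_effect(*nodes):
    """Specific indirect effect along a chain of factors: product of its paths."""
    effect = 1.0
    for src, dst in zip(nodes, nodes[1:]):
        effect *= paths[(src, dst)]
    return effect


# Indirect effects as reported in the text, for comparison.
reported = {
    ("F4", "F8", "F9.1"): 0.219,
    ("F4", "F8", "F9.2"): 0.175,
    ("F4", "F8", "F9.3"): 0.414,
    ("F7", "F8", "F9.1"): 0.153,
    ("F7", "F8", "F9.2"): 0.122,
    ("F7", "F8", "F9.3"): 0.288,
    ("F2", "F6", "F9.2"): 0.251,
}

for chain, value in reported.items():
    print(" -> ".join(chain), f"product = {chain_effect(*chain):.3f}", f"reported = {value:.3f}")
```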

4. Discussion

This study aimed to develop and test a structural model integrating several key factors (anxiety toward AI, basic AI knowledge, personal relevance, perceived self-efficacy, AI for social good, social pressure, and perceived usefulness of AI) to predict in-service teachers’ behavioral intention to learn about AI and their AI learning behaviors. The final model explained a notably high proportion of the variance in behavioral intention to learn AI (91%), highlighting the central role of perceived self-efficacy and perceived usefulness of AI, the two direct predictors of intention retained in the final model. For AI learning behaviors, the model explained 10% of the variance in learning through books and research papers, 27% through training courses, and 29% through applications. Because these indicators are self-reported, they should be interpreted as engagement in AI-related learning activities rather than as objectively verified learning outcomes.

The high explanatory power for behavioral intention contrasts with the more modest variance explained for AI learning behaviors. This discrepancy reflects the “intention–behavior gap” frequently observed in professional development contexts [50]. While in-service teachers may show a strong intention to engage with AI because they find it useful and feel capable of learning it, the translation of this intention into concrete actions, such as attending courses or consulting the specialized literature, may be moderated by structural constraints [51]. Factors such as time scarcity, lack of institutional resources, or the absence of immediate pedagogical rewards may hinder the practical implementation of these intentions. Acknowledging this gap is crucial for policy design, as it suggests that fostering positive attitudes alone is insufficient: training programs must also provide the structural support needed to turn intention into active learning behavior. Future research should further explore these moderating environmental factors to better understand how to bridge this gap in sustainable teacher development.

These findings confirm some, but not all, of the proposed hypotheses and reveal marked differences between the factors predicting in-service teachers’ intention to learn AI and those reported for pre-service teachers [32]. These differences should be interpreted as context-dependent associations rather than as causal effects of professional status. Whereas pre-service teachers operate within a structured academic environment where learning is the primary organizational goal, in-service educators must fit AI upskilling around established workloads and administrative demands. In this context, structural constraints, particularly time scarcity and institutional pressure, may act as organizational barriers that limit the translation of intention into sustained learning behavior [52]. Such factors may function as boundary conditions that moderate the strength of psychological predictors such as anxiety or self-efficacy. From a strategic management perspective, this suggests that institutional measures, such as protected professional development time, are needed to mitigate systemic stressors and complement individual motivation.

Hypothesis 1 was not supported. In the correlation analysis, AI anxiety (F1) correlated significantly and negatively with most variables, particularly self-efficacy (F4) and behavioral intention (F8), suggesting that anxiety may initially act as a psychological barrier that undermines teacher confidence. However, AI anxiety was removed from the final structural model because its paths did not reach statistical significance once all variables were modeled simultaneously. This implies that, while anxiety is present, its effect may be absorbed or better explained by other cognitive factors. Contrary to the results obtained by Sanusi et al. [32] with pre-service teachers, where AI anxiety was negatively associated with the behavioral intention to learn AI, our structural analysis of in-service teachers showed no significant association between AI anxiety and either their intention to learn AI or their AI learning behaviors. Practicing teachers may have already overcome initial apprehensions about AI, or their professional experience may moderate such feelings.

Hypothesis 2 was partially confirmed. Basic AI knowledge was positively associated with the personal relevance of learning AI, perceived self-efficacy, and social pressure, but it showed no direct association with the behavioral intention to learn AI. Instead, its link to intention operated through a mediated pathway via perceived self-efficacy. The path from basic AI knowledge to personal relevance was the strongest in the structural model (β = 0.849), closely followed by the path to self-efficacy (β = 0.841). Because only the self-efficacy pathway carried through to intention, this suggests that, for teachers, the value of initial AI knowledge for learning intention lies primarily in its capacity to strengthen confidence in their own competence.

This result aligns with previous studies demonstrating that AI training significantly enhances teachers’ self-efficacy [47]. In practical terms, this implies that the initial stages of AI training should prioritize accessible, confidence-building content rather than technical mastery, thus laying the psychological foundation for sustained learning. In fact, even a basic understanding of AI may be sufficient to foster practical adoption in the classroom, as suggested by Bellas et al. [6].

Hypothesis 3 was not supported. Among in-service teachers, social pressure showed no significant association with the behavioral intention to learn AI, the perceived personal relevance of learning AI, or perceived self-efficacy. However, in the final model, social pressure was significantly related to AI learning behaviors through training courses. This finding suggests that social or institutional expectations may be associated with participation in formal training even when they show no direct statistical link to personal motivation. This pattern is consistent with the institutional context of the Spanish education system, where professional development is largely coordinated by the education administration and policy-driven. Participation in formal AI-related courses may thus reflect institutional expectations rather than intrinsic motivation, which may explain why social pressure predicts engagement in structured training but not in self-initiated learning.

Hypothesis 4 was partially confirmed. Although perceived self-efficacy showed no significant association with the personal relevance of learning AI, it emerged as a positive predictor of behavioral intention. This finding aligns with Social Cognitive Theory, which identifies self-efficacy as a pivotal determinant of intention and action [53]: teachers who do not feel capable are unlikely to engage in active training. The technical empowerment of teachers, as a prerequisite for a successful technological transition, should therefore be prioritized in educational sustainability strategies.

Within the context of AI, numerous studies have identified teachers’ technological self-efficacy as a key determinant of successful technology adoption and classroom integration [47,54,55]. Teachers’ beliefs in their ability to use technology effectively are significantly associated with their motivation and persistence when implementing new digital tools. In addition, self-efficacy mediates the relationship between TPACK and educators’ intentions to adopt AI in their teaching practices [18]. Strengthening teachers’ self-efficacy may therefore enhance their readiness to integrate AI-driven pedagogies over time.

Beyond intention formation, perceived self-efficacy has been linked to teachers’ persistence and sustained engagement in integrating digital technologies into classroom practice. Empirical studies indicate that higher self-efficacy supports continued professional learning and experimentation with digital tools [56,57,58], reinforcing its central role in long-term AI integration.

Hypotheses 5 and 6 were not supported, as neither personal relevance nor AI for social good showed a significant association with the behavioral intention to learn AI. Given the strong path from basic AI knowledge to personal relevance, personal relevance may emerge as a consequence of knowledge rather than as a precursor to intention: teachers may come to perceive AI as personally meaningful only after acquiring a foundational understanding, which would support initial mandatory training that builds both competence and awareness of AI’s relevance. Similarly, AI for social good may be perceived as a relatively abstract or distal consideration compared with more immediate professional concerns. While ethical and societal dimensions are conceptually central to Education for Sustainable Development, behavioral intentions in this context may be shaped more strongly by proximal, pragmatic motivations such as classroom applicability or workload efficiency.

Hypothesis 7 was supported. Perceived usefulness of AI was positively associated with the behavioral intention to learn AI, suggesting that teachers are willing to learn about AI insofar as they perceive these tools as having a direct and beneficial application in their daily work. Teachers’ intentions thus appear to be shaped primarily by pragmatic evaluations of AI’s professional value, particularly for improving classroom practices and student learning outcomes. In practice, to bridge the skills gap, institutions should focus on demonstrating real-world use cases that save time or improve student learning, moving beyond abstract technological theory.

Finally, the model identifies key factors associated with AI learning behaviors, categorized by the resources used (books/articles, training courses, and apps). Behavioral intention to learn AI showed a positive and significant direct path toward all three forms of AI learning behaviors, emerging as a consistent predictor of these activities.

This confirms Hypothesis 8 and underscores the pivotal position of intention as a direct predictor of AI learning behaviors across modalities, with application use showing the strongest statistical association (β = 0.665).

The non-significant roles of AI anxiety and AI for social good merit further reflection. Although AI for social good is conceptually central to Education for Sustainable Development (ESD), its lack of statistical significance in this model suggests that Spanish in-service teachers may prioritize pragmatic motivations (performance expectancy) over altruistic goals when deciding to engage in AI training. Similarly, the non-significance of AI anxiety may indicate that the perceived professional urgency of acquiring AI skills outweighs the technostress associated with its adoption. To maintain model parsimony and focus on the most robust predictors of behavioral intention, both variables were excluded from the final structural model. Their conceptual relevance nonetheless remains, and they should be explored in future studies as teachers’ AI literacy matures.

The link between social pressure and AI learning behaviors is a relevant finding and constitutes one of this study’s theoretical contributions. Contrary to what the Theory of Planned Behavior (TPB) would predict, social pressure was not significantly related to the general intention to learn. However, it showed a strong positive association with AI learning behaviors conducted through training courses, which points to the possible role of institutional mandates in shaping formal upskilling activities.

This pattern of results suggests a functional separation between self-initiated motivation and institutionally driven learning behaviors. Behavioral intention to learn AI, shaped by self-efficacy and perceived usefulness, predicts all three forms of learning, including the self-directed ones (books and research papers, and apps). Social pressure (the subjective norm), by contrast, directly predicts only formal learning through courses, suggesting that teachers may participate in these programs partly to comply with institutional expectations. In this context, social pressure can be understood as reflecting institutional expectations that facilitate participation in formally structured training activities rather than directly shaping intrinsic motivation. The prominence of perceived usefulness further suggests that teachers’ intentions are anchored mainly in pragmatic evaluations of AI’s direct value for the classroom and for their workload.

Within the specific landscape of the Spanish educational system, the significant link between social pressure and participation in training courses may stem from a dual mechanism that combines organizational expectations with collective professional identity. On the one hand, the education administration rewards certified training with professional development credits and career advancement incentives. On the other hand, horizontal influence among colleagues may be strong, suggesting that the decision to engage with AI is also shaped by the informal professional community. Furthermore, the strong association between perceived ease of use and self-efficacy suggests that, in this context, autonomy is closely linked to reducing the initial technological barrier to AI, which may be perceived as more disruptive than previous digital tools.

From a theoretical perspective, these findings suggest that self-efficacy and social pressure operate through complementary rather than overlapping mechanisms. While self-efficacy supports autonomous motivation and sustained engagement with AI learning, social pressure primarily functions as an external regulatory factor that facilitates participation in formal, institutionally organized training. This interpretation aligns with Self-Determination Theory, which distinguishes between autonomous and controlled motivation. It also aligns with extended TPB frameworks, which allow contextual and organizational factors to directly influence behavior without necessarily shaping intention.

Overall, the findings offer an integrated view of the psychological and contextual factors underlying in-service teachers’ AI learning. They highlight self-efficacy and perceived usefulness as key predictors of autonomous learning intention, while institutional expectations align more closely with participation in formal training. From a systemic sustainability perspective, AI-related professional learning should not be interpreted merely as technological upgrading, but as an investment in human capital that strengthens the long-term adaptive capacity of educational institutions. Sustainable educational ecosystems depend on teachers’ ability to critically and responsibly integrate emerging technologies into pedagogical practice. In the absence of such distributed professional competence, AI integration risks becoming fragmented, inequitable, or compliance-driven without pedagogical grounding, as critically discussed by Selwyn [59]. These insights inform the design of pedagogically meaningful, confidence-building, and institutionally supported AI-related professional development, reinforcing the importance of teachers’ AI literacy for responsible and sustainable educational practice. Institutions can also reinforce constructive social norms through formal recognition mechanisms and peer-support structures that encourage sustained engagement with AI learning.

From a practical perspective, the results suggest that AI-related professional development for in-service teachers should be structured progressively. It should begin with low-threshold introductory modules that prioritize confidence-building and perceived usefulness over technical complexity, followed by guided modules that gradually scaffold teachers’ self-efficacy, a key driver of autonomous learning intention. Institutionally supported courses can serve as the entry point to this progression, complemented by voluntary, self-directed learning opportunities that foster sustained engagement over time.

Limitations and Future Research Directions

Although the present study offers relevant insights into the mechanisms underlying in-service teachers’ AI learning, several limitations should be acknowledged. Firstly, the sample was composed exclusively of Spanish in-service teachers, which may limit the generalizability of the findings to other cultural or educational contexts. In addition, participation was voluntary and recruitment was email-based, which may have introduced self-selection bias by favoring teachers with a greater interest in technology or professional development. Future studies could test the same structural model with teachers in other countries to compare cross-cultural patterns, and could also examine it across relevant subgroups (e.g., teaching level, gender, or years of professional experience) to explore whether contextual or demographic factors moderate the observed associations.

Secondly, the cross-sectional design restricts causal interpretations of the relationships between variables. From a methodological standpoint, it is important to address the potential threats to validity inherent in cross-sectional, self-reported data. The high correlations observed in our structural model may be susceptible to common method variance (CMV), as respondents might seek internal consistency in their answers regarding intentions and behaviors [60]. Furthermore, the issue of endogeneity cannot be fully ruled out; for instance, unmeasured variables such as ‘proactive personality’ or ‘prior digital competence’ could simultaneously reduce AI-related anxiety and increase learning intentions. This possibility is particularly relevant when explaining the ‘intention–behavior gap’ [61], where high intentions do not always translate into action due to these unmeasured individual or structural factors. While our study identifies robust predictors, these should be interpreted as context-dependent associations rather than strictly causal mechanisms. Future research employing longitudinal designs or objective behavioral metrics (e.g., platform logs) would be essential to control for these trait-based omitted variables and to establish more definitive causal paths.

Thirdly, AI learning behaviors were assessed with self-report measures (items F9.1–F9.3). While these indicators offer valuable information on perceived engagement, they lack behavioral validation through objective triangulation, such as usage logs or completion tracking. Consequently, the validity of the dependent variables may be affected by social desirability or recall bias. Future research should incorporate objective metrics and data-driven logs to complement self-reported data and provide a more robust assessment of AI learning behaviors. In addition, including more detailed demographic variables in future studies would help test the generalizability and increase the explanatory power of the proposed model.

Fourthly, while the model explained a substantial proportion of variance in behavioral intention, the explained variance for AI learning behaviors was more modest. This suggests that other unmeasured variables—such as institutional support, access to resources, or attitudes toward generative AI—may also play important roles.

In addition, future studies should examine how key variables such as self-efficacy, self-transcendence, and social pressure interact with teachers’ ethical awareness and sustainability-oriented attitudes toward AI. Mixed-methods approaches combining quantitative and qualitative data may provide richer insights into teachers’ experiences of AI integration. Moreover, further research should explicitly investigate the role of contextual and organizational conditions, including technological infrastructure, institutional support, and school leadership, as these factors shape teachers’ opportunities to engage with AI learning. Such factors may act as enabling or constraining conditions that moderate the relationship between individual dispositions and AI learning behaviors, and understanding them is essential for explaining how motivation translates into sustained professional practice.

Furthermore, the brevity of some scales (such as those for knowledge and self-efficacy) limits the breadth with which these constructs are covered. Although the items capture the core dimensions of the constructs, future research should consider expanding measurement breadth to include more specific technical and ethical facets of AI knowledge and thereby reduce potential attenuation effects.

Finally, although the present study identifies a robust model for the entire sample, preliminary analyses revealed significant differences in the baseline levels of certain constructs across educational stages. However, a unified structural approach was prioritized to establish a generalizable framework for the teaching profession. This decision is aligned with the European Framework for the Digital Competence of Educators (DigCompEdu), which establishes a common set of digital skills for all teachers regardless of their level [62]. Furthermore, within the Spanish context, the LOMLOE [63] and subsequent regulations for the Digital Competence of Teachers [64] treat AI literacy as a transversal professional requirement. Future research should employ multi-group methodologies to investigate how specific pedagogical environments moderate the identified structural paths. Additionally, future studies should formally evaluate the instrument’s metric equivalence across early childhood, elementary, and secondary school teachers.

5. Conclusions

Building upon the presented findings, this study offers implications for teacher education, institutional policy, and future research. The results highlight the need for comprehensive strategies that strengthen both intrinsic motivation and institutional support to promote long-term and ethically grounded AI literacy among in-service teachers. This dual approach can help ensure that AI integration in education remains ethical, equitable, and aligned with the principles of sustainable development.

These findings can also serve as a foundation for future research and professional development programs aimed at improving teachers’ readiness for AI integration, thereby enhancing the overall sustainability of educational systems. In particular, teacher training initiatives should take into account psychological and contextual factors such as self-efficacy and social pressure, which in this study were associated with behavioral intention (self-efficacy) and with participation in training courses (social pressure).

Furthermore, the results underscore the importance of institutional policies and continuous professional development strategies in driving formal AI training. While self-efficacy supports autonomy and self-directed learning, social pressure appears to be an effective lever for encouraging teacher participation in structured training programs. Such programs are crucial for achieving the ethical and strategic integration of AI into education, as emphasized by Peñafiel-Jurado et al. [65].

In conclusion, the novelty of this study lies in its focus on practicing teachers and the simultaneous examination of multiple cognitive, social, and personal variables—an approach that has been underexplored in existing research. By integrating these dimensions within a structural model, this work contributes to a deeper understanding of how intention and behavior interact in the context of teachers’ professional learning about AI. The model and its findings provide an empirical basis for developing targeted interventions that promote a responsible and sustainable adoption of artificial intelligence in education, contributing to the objectives of Sustainable Development Goal 4 as emphasized by UNESCO [10].

Author Contributions

Conceptualization, I.C., R.G.-C. and M.P.; methodology, R.G.-C. and M.P.; software, R.G.-C. and M.P.; validation, I.C., R.G.-C. and M.P.; formal analysis, I.C.; investigation, I.C., R.G.-C. and M.P.; resources, M.P.; data curation, R.G.-C.; writing—original draft preparation, I.C., R.G.-C. and M.P.; writing—review and editing, I.C., R.G.-C. and M.P.; visualization, I.C.; supervision, R.G.-C. and M.P.; project administration, M.P.; funding acquisition, M.P. All authors have read and agreed to the published version of the manuscript.

Funding

This research was funded by the Generalitat Valenciana (Department of Education, Research, Culture and Sport) through the project NL4DISMIS: Natural Language Technologies to Address Disinformation and Misinformation (CIPROM/2021/021).

Institutional Review Board Statement

The study was conducted in accordance with the Declaration of Helsinki and approved by the Ethics Committee of University of Alicante (UA-2025-09-16, 10/02/2025).

Informed Consent Statement

Informed consent was obtained from all subjects involved in the study.

Data Availability Statement

The raw data supporting the conclusions of this article will be made available by the authors on request.

Conflicts of Interest

The authors declare no conflicts of interest. The funders had no role in the design of the study; in the collection, analyses, or interpretation of data; in the writing of the manuscript; or in the decision to publish the results.

References

- Tan, Q.; Tang, X. Unveiling AI literacy in K-12 education: A systematic literature review of empirical research. Interact. Learn. Environ. 2025, 33, 5347–5363. [Google Scholar] [CrossRef]

- Adil, J. AI in Education: A Systematic Literature Review of Emerging Trends, Benefits, and Challenges. Semin. Med. Writ. Educ. 2025, 4, 795. [Google Scholar] [CrossRef]

- Tagare, D.; Karki, T.; Yu, W. K-12 teachers’ ethical competencies for AI literacy: Insights from a systematic literature review. Comput. Educ. 2025, 239, 105435. [Google Scholar] [CrossRef]

- Ilkka, T. The Impact of Artificial Intelligence on Learning, Teaching, and Education: Policies for the Future; European Commission Joint Research Centre (JRC): Luxembourg, 2018; EUR 29442 EN. [Google Scholar]

- Tedre, M.; Vartiainen, H.; Kahila, J.; Pellas, L.; Jormanainen, I.; Valtonen, T. Teaching Machine Learning in K–12 Classroom: Pedagogical and Technological Trajectories for Artificial Intelligence Education. IEEE Access 2021, 9, 110558–110572. [Google Scholar] [CrossRef]

- Bellas, F.; Naya-Varela, M.; Mallo, A.; Paz-Lopez, A. Education in the AI era: A long-term classroom technology based on intelligent robotics. Humanit. Soc. Sci. Commun. 2024, 11, 1425. [Google Scholar] [CrossRef]

- Adams, C.; Pente, P.; Lemermeyer, G.; Rockwell, G. Ethical principles for artificial intelligence in K-12 education. Comput. Educ. Artif. Intell. 2023, 4, 100131. [Google Scholar] [CrossRef]

- Hollands, F.M.; DiPaola, D.; Breazeal, C.; Ali, S. AI mastery may not be for everyone, but AI literacy should be. In Proceedings of the 2024 ACM Virtual Global Computing Education Conference (V.1), Virtual Event, NC, USA, 5–8 December 2024; pp. 60–66. [Google Scholar] [CrossRef]

- Druga, S.; Otero, N.; Ko, A.J. The landscape of teaching resources for AI education. In Proceedings of the 27th ACM Conference on Innovation and Technology in Computer Science Education, Dublin, Ireland, 8–13 July 2022; pp. 96–102. [Google Scholar] [CrossRef]

- UNESCO. AI and Education: Guidance for Policy-Makers; UNESCO: Paris, France, 2021; ISBN 978-92-3-100447-6. [Google Scholar]

- UNESCO. Guidance for Generative AI in Education and Research; UNESCO: Paris, France, 2023; Available online: https://www.unesco.org/en/articles/guidance-generative-ai-education-and-research (accessed on 20 October 2025).

- UNESCO. Artificial Intelligence for Sustainable Development: Synthesis Report; UNESCO: Paris, France, 2019. [Google Scholar]

- UNESCO. Advancing Progress on the Sustainable Development Goals through UNESCO’s AI Initiatives; UNESCO: Paris, France, 2021. [Google Scholar]

- UNESCO. UNESCO AI Competency Framework for Teachers; UNESCO: Paris, France, 2024. [Google Scholar]

- Falloon, G. From digital literacy to digital competence: The teacher digital competency (TDC) framework. Educ. Technol. Res. Dev. 2020, 68, 2449–2472. [Google Scholar] [CrossRef]

- Koehler, M.J.; Mishra, P. What is technological pedagogical content knowledge (TPACK)? Contemp. Issues Technol. Teach. Educ. 2009, 9, 60–70. [Google Scholar] [CrossRef]

- Crompton, H.; Jones, M.V.; Burke, D. Affordances and challenges of artificial intelligence in K-12 education: A systematic review. J. Res. Technol. Educ. 2024, 56, 248–268. [Google Scholar] [CrossRef]

- Celik, I. Towards Intelligent-TPACK: An empirical study on teachers’ professional knowledge to ethically integrate (AI)-based tools into education. Comput. Hum. Behav. 2023, 138, 107468. [Google Scholar] [CrossRef]

- Van Brummelen, J.; Lin, P.; Patton, R.; Long, D. Teaching Artificial Intelligence in K-12: Lessons Learned from AI4K12 Initiatives. Proc. AAAI Conf. Artif. Intell. 2021, 35, 15633–15640. [Google Scholar] [CrossRef]

- Touretzky, D.; Gardner-McCune, C.; Seehorn, D. Machine learning and the five big ideas in AI. Int. J. Artif. Intell. Educ. 2023, 33, 233–266. [Google Scholar] [CrossRef]

- Chiu, T.K.; Ahmad, Z.; Ismailov, M.; Sanusi, I.T. What are artificial intelligence literacy and competency? A comprehensive framework to support them. Comput. Educ. Open 2024, 6, 100171. [Google Scholar] [CrossRef]

- Ng, D.T.K.; Leung, J.K.L.; Chu, S.K.W.; Qiao, M.S. Conceptualizing AI literacy: An exploratory review. Comput. Educ. Artif. Intell. 2021, 2, 100041. [Google Scholar] [CrossRef]

- Reyes, V.C., Jr.; Reading, C.; Doyle, H.; Gregory, S. Integrating ICT into teacher education programs from a TPACK perspective: Exploring perceptions of university lecturers. Comput. Educ. 2017, 115, 1–19. [Google Scholar] [CrossRef]

- Tondeur, J.; Aesaert, K.; Pynoo, B.; Van Braak, J.; Fraeyman, N.; Erstad, O. Developing a validated instrument to measure preservice teachers’ ICT competencies. Br. J. Educ. Technol. 2017, 48, 462–472. [Google Scholar] [CrossRef]

- Touretzky, D.S.; Gardner-McCune, C.; Martin, F.G.; Seehorn, D. Envisioning AI for K-12: What should every child know about AI? Proc. AAAI Conf. Artif. Intell. 2019, 33, 9795–9799. [Google Scholar] [CrossRef]

- Marques, L.S.; Gresse von Wangenheim, C.; Hauck, J.C.R. Teaching machine learning in school: A systematic mapping of the state of the art. Inform. Educ. 2020, 19, 283–321. [Google Scholar] [CrossRef]

- Touretzky, D.; Gardner-McCune, C.; Breazeal, C.; Martin, F.; Seehorn, D. A year in K-12 AI education. AI Mag. 2019, 40, 88–90. [Google Scholar] [CrossRef]

- Williams, R.; Park, H.J.; Son, J. Teacher readiness for artificial intelligence integration in the classroom. Br. J. Educ. Technol. 2021, 52, 2419–2433. [Google Scholar] [CrossRef]

- Cohn, C.; Snyder, C.; Fonteles, J.H.; TS, A.; Montenegro, J.; Biswas, G. A multimodal approach to support teacher, researcher and AI collaboration in STEM+C learning environments. Br. J. Educ. Technol. 2024, 56, 595–620. [Google Scholar] [CrossRef]

- Lu, H.; He, L.; Yu, H.; Pan, T.; Fu, K. A study on teachers’ willingness to use generative AI technology and its influencing factors: Based on an integrated model. Sustainability 2024, 16, 7216. [Google Scholar] [CrossRef]

- Choi, S.; Jeon, J.; Jang, Y. Exploring teacher intention to teach AI: Self-determination theory (SDT) and motivation–opportunity–ability (MOA) perspectives. Educ. Inf. Technol. 2025, 30, 24173–24200. [Google Scholar] [CrossRef]

- Sanusi, I.; Ayanwale, M.; Tolorunleke, A. Investigating pre-service teachers’ artificial intelligence perception from the perspective of planned behavior theory. Comput. Educ. Artif. Intell. 2024, 6, 100202. [Google Scholar] [CrossRef]

- Davis, F.D. Perceived usefulness, perceived ease of use, and user acceptance of information technology. MIS Q. 1989, 13, 319–340. [Google Scholar] [CrossRef]

- Venkatesh, V.; Morris, M.G.; Davis, G.B.; Davis, F.D. User acceptance of information technology: Toward a unified view. MIS Q. 2003, 27, 425–478. [Google Scholar] [CrossRef]

- Venkatesh, V.; Bala, H. Technology acceptance model 3 and a research agenda on interventions. Decis. Sci. 2008, 39, 273–315. [Google Scholar] [CrossRef]

- Scherer, R.; Siddiq, F.; Tondeur, J. The technology acceptance model (TAM): A meta-analytic structural equation modeling approach to explaining teachers’ adoption of digital technology in education. Comput. Educ. 2019, 128, 13–35. [Google Scholar] [CrossRef]

- Brislin, R.W. The wording and translation of research instruments. In Field Methods in Cross-Cultural Research; Lonner, W.J., Berry, J.W., Eds.; Sage: Beverly Hills, CA, USA, 1986; pp. 137–164. [Google Scholar]

- Muñiz, J.; Elosua, P.; Hambleton, R.K. International Test Commission guidelines for test translation and adaptation: Second edition. Psicothema 2013, 25, 151–157. [Google Scholar] [CrossRef]

- Kyriazos, T.; Poga-Kyriazou, M. Applied psychometrics: Estimator consideration in commonly encountered conditions in CFA, SEM, and EFA practice. Psychology 2023, 14, 799–828. [Google Scholar] [CrossRef]

- Byrne, B.M. Structural Equation Modeling with EQS: Basic Concepts, Applications, and Programming; Routledge: New York, NY, USA, 2006. [Google Scholar]

- JASP Team. JASP, Version 0.19.3; JASP Team: Amsterdam, The Netherlands, 2024. Available online: https://jasp-stats.org/download/ (accessed on 8 September 2025).

- Eisinga, R.; Te Grotenhuis, M.; Pelzer, B. The reliability of a two-item scale: Pearson, Cronbach, or Spearman-Brown? Int. J. Public Health 2013, 58, 637–642. [Google Scholar] [CrossRef] [PubMed]

- Bollen, K.A. Structural Equations with Latent Variables; John Wiley & Sons: New York, NY, USA, 1989. [Google Scholar]

- Hu, L.-T.; Bentler, P.M. Cutoff criteria for fit indexes in covariance structure analysis: Conventional criteria versus new alternatives. Struct. Equ. Model. 1999, 6, 1–55. [Google Scholar] [CrossRef]

- Browne, M.W.; Cudeck, R. Single sample cross-validation indices for covariance structures. Multivar. Behav. Res. 1989, 24, 445–455. [Google Scholar] [CrossRef]

- Kline, R.B. Principles and Practice of Structural Equation Modeling, 2nd ed.; The Guilford Press: New York, NY, USA, 2015. [Google Scholar]

- Wang, Y.-Y.; Wang, Y.-S. Development and validation of an artificial intelligence anxiety scale: An initial application in predicting motivated learning behavior. Interact. Learn. Environ. 2022, 30, 619–634. [Google Scholar] [CrossRef]

- Cheung, G.W.; Rensvold, R.B. Evaluating goodness-of-fit indexes for testing measurement invariance. Struct. Equ. Model. 2002, 9, 233–255. [Google Scholar] [CrossRef]

- Kock, N. Common method bias in PLS-SEM: A full collinearity assessment approach. Int. J. E-Collab. 2015, 11, 1–10. [Google Scholar] [CrossRef]

- Sheeran, P.; Webb, T.L. The intention–behavior gap. Soc. Personal. Psychol. Compass 2016, 10, 503–518. [Google Scholar] [CrossRef]

- Ajzen, I. Theory of planned behavior. Organ. Behav. Hum. Decis. Process. 1991, 50, 179–211. [Google Scholar] [CrossRef]

- Kyriacou, C. Teacher stress: Directions for future research. Educ. Rev. 2001, 53, 27–35. [Google Scholar] [CrossRef]

- Bandura, A. Self-Efficacy: The Exercise of Control; W.H. Freeman and Company: New York, NY, USA, 1997. [Google Scholar]

- Chai, C.S.; Lin, P.Y.; Jong, M.S.Y.; Dai, Y.; Chiu, T.K.F.; Qin, J. Perceptions of and behavioral intentions towards learning artificial intelligence in primary school students. Educ. Technol. Soc. 2021, 24, 89–101. [Google Scholar]

- Li, X.; Jiang, M.Y.-c.; Jong, M.S.-y.; Zhang, X.; Chai, C.-s. Understanding medical students’ perceptions of and behavioral intentions toward learning artificial intelligence: A survey study. Int. J. Environ. Res. Public Health 2022, 19, 8733. [Google Scholar] [CrossRef] [PubMed]

- Herzallah, A.M.; Makaldy, R. Technological self-efficacy and sense of coherence: Key drivers in teachers’ AI acceptance and adoption. Comput. Educ. Artif. Intell. 2025, 8, 100377. [Google Scholar] [CrossRef]

- Dahri, N.A.; Al-Rahmi, W.M.; Almogren, A.S.; Yahaya, N.; Vighio, M.S.; Al-maatuok, Q.; Al-Rahmi, A.M.; Al-Adwan, A.S. Acceptance of mobile learning technology by teachers: Influencing mobile self-efficacy and 21st-century skills-based training. Sustainability 2023, 15, 8514. [Google Scholar] [CrossRef]

- Krismiyati, K. Technology readiness segment analysis of teachers in using mobile-based teaching applications: An Indonesian context. J. Technol. Sci. Educ. 2025, 15, 588–601. [Google Scholar] [CrossRef]

- Selwyn, N. Should Robots Replace Teachers? AI and the Future of Education; Polity Press: Cambridge, UK, 2020. [Google Scholar]

- Podsakoff, P.M.; MacKenzie, S.B.; Podsakoff, N.P. Sources of method bias in social science research and recommendations on how to control it. Annu. Rev. Psychol. 2012, 63, 539–569. [Google Scholar] [CrossRef]

- Sheeran, P. Intention–behavior relations: A conceptual and empirical review. Eur. Rev. Soc. Psychol. 2002, 12, 1–36. [Google Scholar] [CrossRef]

- Redecker, C.; Punie, Y. European Framework for the Digital Competence of Educators: DigCompEdu; Publications Office of the European Union: Luxembourg, 2017. [Google Scholar] [CrossRef]

- Agencia Estatal Boletín Oficial del Estado. Ley Orgánica 3/2020, de 29 de diciembre, por la que se modifica la Ley Orgánica 2/2006, de 3 de mayo, de Educación (LOMLOE). Boletín Oficial del Estado, 30 December 2020. [Google Scholar]

- Agencia Estatal Boletín Oficial del Estado. Resolución de 4 de mayo de 2022, de la Dirección General de Evaluación y Cooperación Territorial, por la que se publica el Acuerdo de la Conferencia Sectorial de Educación sobre la actualización del Marco de Referencia de la Competencia Digital Docente. Boletín Oficial del Estado, 16 May 2022. [Google Scholar]

- Peñafiel-Jurado, R.; Márquez-Márquez, N.; Guamán-Villa, I. Inteligencia artificial en la educación: Revisión sistemática de perspectivas, beneficios y desafíos en la práctica docente. S. Am. Res. J. 2024, 4, 5–15. [Google Scholar] [CrossRef]

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.