Abstract

This paper introduces a novel methodology that integrates 6G wireless Federated Edge Learning (FEEL) frameworks with MAC/PHY cross-layer optimization strategies. In mobile edge computing, ensuring robust channel estimation in 6G use cases is challenging, particularly with respect to managing data retransmissions. Inaccurate updates from distributed 6G devices can undermine the reliability of federated learning and degrade its overall performance. To address this, rather than relying on direct evaluations of the objective function, we propose an AI/ML-assisted global optimization algorithm based on radial basis functions (RBFs), in which a decision-making process assesses learned preference options.

1. Introduction

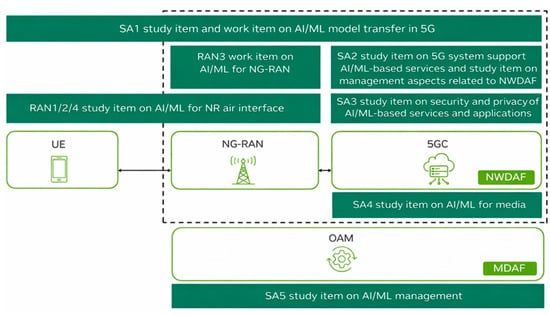

Federated learning (FL), as defined by 3GPP [1] and IEEE [2,3], represents an innovative paradigm in machine learning that addresses the challenges of decentralized data environments [4]. The integration of artificial intelligence (AI) into the 3GPP framework has reached a significant milestone, with ongoing research and specifications aimed at enhancing data utilization and predictive capabilities expected to be featured in 3GPP Release 19 and Release 20. This development underscores the organization’s commitment to leveraging advanced analytical techniques for improving telecommunications standards. Within this context, both the Technical Specification Group for Radio Access Networks (TSG RAN) and the Technical Specification Group for Service and System Aspects (TSG SA) have outlined specific requirements to incorporate AI alongside its companion, machine learning (ML).

1.1. Background and Motivation

Notably, Working Group RAN3 has finalized Technical Report 37.817, which investigates enhancements for data collection relating to New Radio (NR) and E-UTRA-NR Dual Connectivity (EN-DC). This report highlights three primary use cases where AI and ML can provide meaningful solutions. The first, Network Energy Savings, addresses strategies such as traffic offloading, coverage modification, and cell deactivation to reduce energy consumption. The second, Load Balancing, covers techniques that optimize load distribution across cells or groups of cells in multi-frequency and multi-radio access technology (RAT) environments, thereby improving overall network performance based on predictive analytics. The third, Mobility Optimization, aims to maintain robust network performance during user equipment (UE) mobility events by selecting optimal mobility targets based on predictions of service delivery. These focal points illustrate a strategic approach towards evolving network management through intelligent, data-driven decision-making.

The FL architecture is supported in the 3GPP standard by the Network Data Analytics Function (NWDAF) [5]. The NWDAF collects data from user equipment, network functions, and Operation and Maintenance (OAM) systems within the 5G Core, cloud, and edge networks. These data are then used to trigger 5G analytics, enabling better insights and actions that enhance the overall end-user experience. Federated Edge Learning (FEEL) must therefore comply with the existing NWDAF and 5G System (5GS) frameworks specified in [6,7,8,9]. Artificial intelligence (AI) and machine learning (ML) over NWDAF have become pivotal technologies driving advancements in wireless communication networks, offering solutions that enhance the efficiency, scalability, and performance of modern network infrastructures. Recognizing their potential, 3GPP has integrated AI/ML technologies into the Radio Access Network (RAN) as part of Release 18, which marks the beginning of 5G-Advanced [10]. This inclusion underscores the importance of AI/ML in advancing the capabilities of 5G networks.

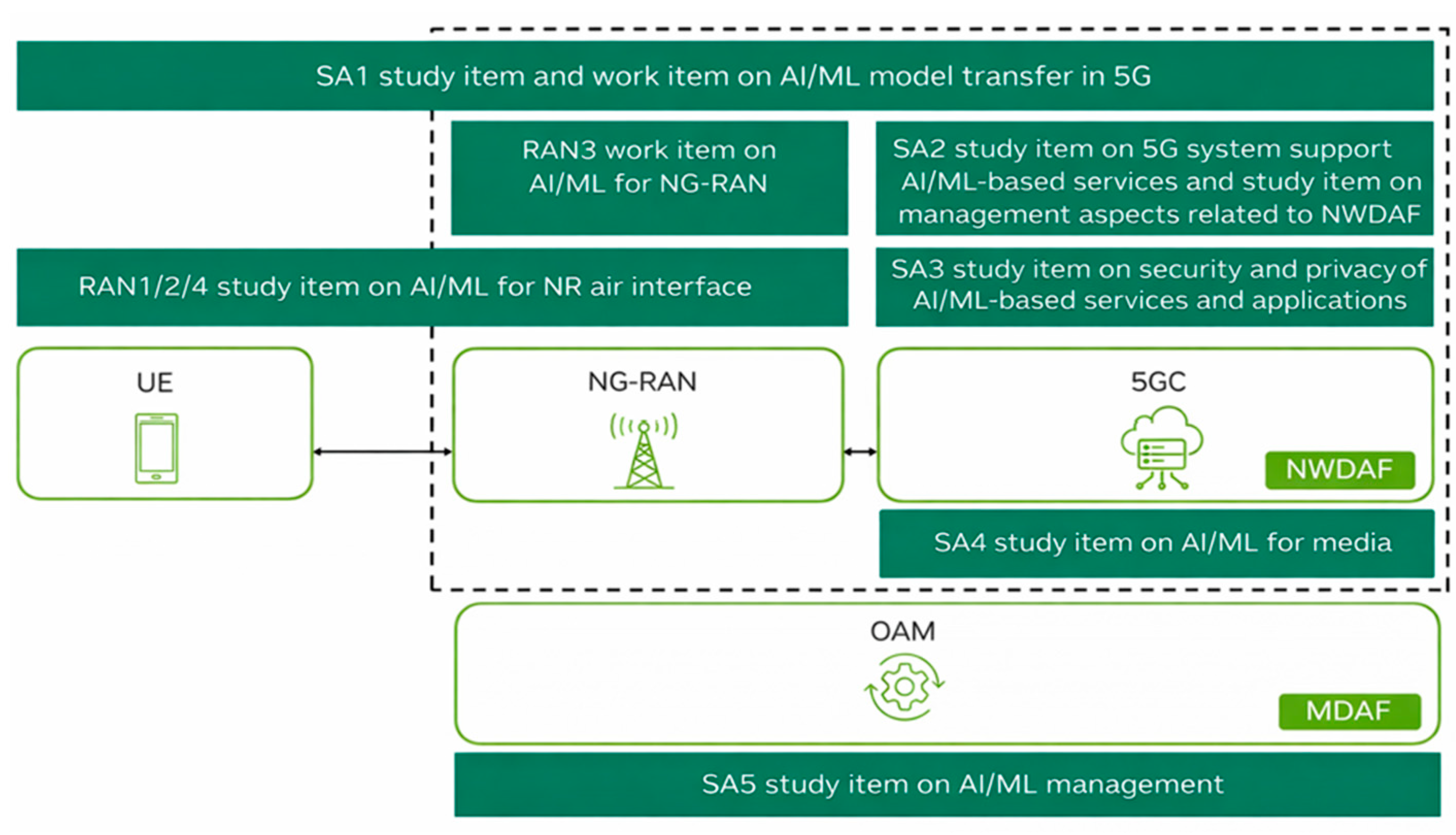

The Management Data Analytics Function (MDAF) serves as a fundamental component for enabling network automation and intelligence. It processes data on network conditions and service events to generate detailed analytics reports, drawing on data from various network functions (e.g., NWDAF) and entities (e.g., 6G NB or 5G gNB). MDAF aims to deliver comprehensive end-to-end or cross-domain analytics [11]. Efforts within 3GPP prior to Release 18 to integrate AI/ML and advanced data analytics into 5G system design established a robust framework for further development in 5G-Advanced. Building on this, Release 18 incorporates an extensive range of AI/ML study and work items, with contributions from multiple 3GPP working groups, thereby paving the way for enhanced network optimization and intelligence, as indicated in Figure 1 [11].

Figure 1. 5G-Advanced AI/ML in 3GPP Release 18.

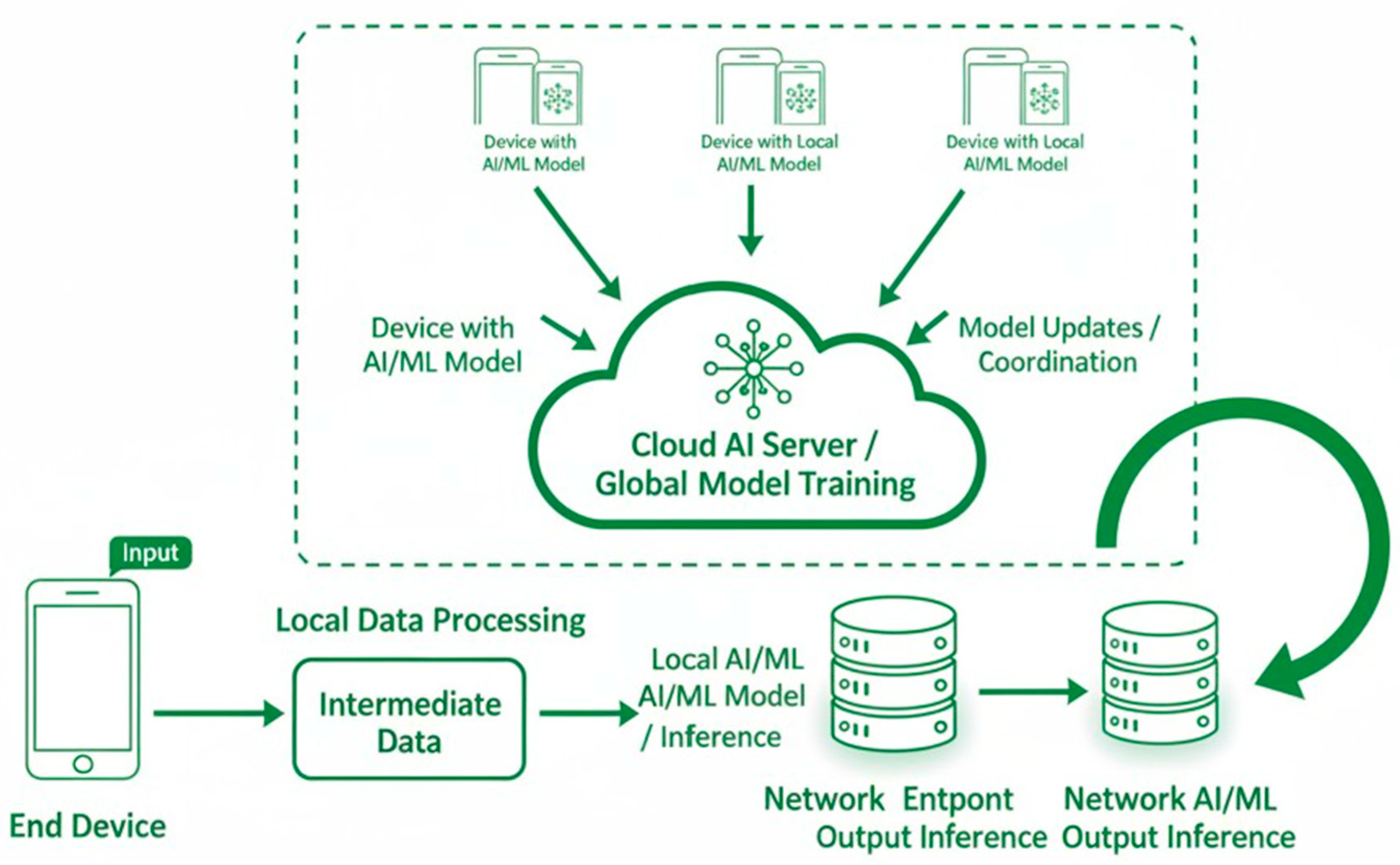

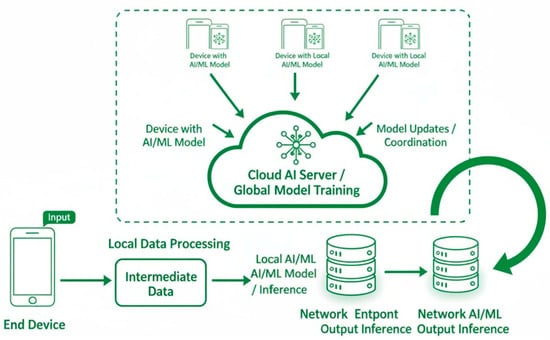

AI/ML technologies play a crucial role in mobile devices within the 5G ecosystem, supporting functionalities such as image recognition, speech processing, and video analysis. However, preloading all possible AI/ML models onto user equipment (UE) is impractical. As a result, models often need to be downloaded dynamically according to specific requirements. Additionally, some UEs may lack the computational resources needed to perform inference locally, necessitating the offloading of these tasks to the 5G cloud core or edge infrastructure. Furthermore, collaborative training of global AI/ML models across multiple entities in the 5G framework requires efficient mechanisms for sharing training data. The growing demand for transferring both AI/ML models and data introduces a new category of network traffic to be accommodated within 5G systems. The 3GPP SA1 group, tasked with defining service and performance requirements for 3GPP systems, initiated a study in Release 18 to explore use cases and establish the requirements for AI/ML model transfers [12]. This study identified three key types of AI/ML operations, as shown in Figure 2 [11].

Figure 2. Federated AI/ML over 5G/6G 3GPP networks.

The first type, AI/ML Operation Splitting, divides tasks between endpoints: privacy-sensitive or latency-critical components are retained within the UE, while computationally intensive tasks are offloaded to the network endpoints. The second type, Model and Data Distribution, enables adaptive model downloading from network endpoints to UEs according to specific needs. The third type, Distributed Learning or FL, allows UEs to perform partial training on local datasets, with a central entity aggregating these results into a unified global model [13]. The study identified potential service requirements and performance metrics, including those related to training, inference, distribution, monitoring, prediction, and management of AI/ML models within the 5G ecosystem. Following this initial exploration, 3GPP SA1 launched a subsequent work item in Release 18 to define normative service and performance requirements, building on the findings of the study to address the evolving demands of AI/ML integration into 5G systems [13]. Moreover, the 3GPP study in [14] aimed to lay the groundwork for leveraging AI/ML to enhance the air interface. Key areas of focus included defining the AI/ML algorithm deployment stages, determining the required degree of collaboration between the gNB and UE, identifying the datasets needed for AI/ML model training, validation, and testing, and managing the entire AI/ML model life cycle. These efforts are essential for the effective and efficient integration of AI/ML technologies into current and future network architectures, setting the stage for AI/ML-based 6G.

1.2. The International Literature Survey

Unlike traditional centralized learning approaches that aggregate data, FEEL-based AI/ML enables multiple entities, often referred to as clients, to collaboratively train a shared model while their data remain localized. This approach is particularly significant where data privacy, security, and regulatory compliance are critical concerns, as in the mobile and wireless telecommunications sector. A distinguishing characteristic of FEEL is its approach to data heterogeneity. In decentralized configurations, data samples across clients are not guaranteed to be independent and identically distributed, i.e., they may be non-IID. This stands in contrast to centralized models, where a uniform data distribution is common practice or at least a common assumption. This inherent heterogeneity poses unique challenges and necessitates tailored algorithms to ensure effective learning across diverse data distributions. A key motivation for FEEL is its potential to address data minimization and optimization challenges, especially where data privacy, bandwidth efficiency, and throughput optimization are critical. By training models locally on client devices or nodes and sharing only model parameters, such as weights and biases, FEEL minimizes the need for raw data exchange [15]. This not only reduces privacy risks but also eases bandwidth constraints, making it an appealing solution for large-scale distributed systems such as current 5G and future 6G telecommunications networks.

At the core of the FEEL paradigm is a collaborative training process that iteratively combines local computations into a global model. Each client trains a model on its local dataset and periodically transmits the updated parameters to a central aggregator. The aggregator then consolidates these updates to refine the global model, which is subsequently shared back with the clients. This iterative process continues until the model achieves a predefined level of performance [16]. The potential of FEEL extends far beyond data privacy. It aligns seamlessly with the growing emphasis on distributed computing and edge intelligence in modern network architectures. For example, in 5G/6G telecommunication networks, FEEL can facilitate real-time optimization of network resources, enhance service delivery, and drive innovation in predictive maintenance and user behavior analytics [17]. However, implementing FEEL in practice introduces several challenges, including communication overhead, computational constraints at edge devices, and the need for robust algorithms to handle non-IID data. Addressing these challenges requires interdisciplinary efforts that span machine learning, distributed computing, and network optimization [18].

Recent advances in FEEL have explored techniques to improve communication efficiency, such as model compression and adaptive update mechanisms. Additionally, privacy-preserving technologies, including secure aggregation and differential privacy, are increasingly being integrated into FEEL frameworks to protect sensitive information throughout the training process [19]. In 6G networks, and in mobile communications in general, FEEL holds promise for transforming network management and optimization. By leveraging localized data at various network nodes, operators can enhance coverage, capacity, and user experience while adhering to stringent privacy regulations. Furthermore, FEEL aligns with the broader trend toward 6G networks, which emphasize edge intelligence, data efficiency, and distributed learning [20]. The significance of FEEL is underscored by its applicability across a diverse range of domains, including healthcare, finance, and industrial automation.

Several algorithms for federated optimization have already been proposed in the literature. Deep learning training often uses variations of federated stochastic gradient descent (FedSGD), where gradients are computed on a randomly selected portion of the dataset and then used to update the model through a single step of gradient descent [21]. Federated Averaging (FedAvg) builds upon FedSGD by enabling local nodes to perform multiple updates on their local datasets before sharing their parameters with the central server. Unlike FedSGD, where the exchanged information consists of gradients calculated after a single update, FedAvg directly aggregates the locally updated model weights [22]. The insight underpinning this approach is that, when local models start from identical initial conditions, averaging their gradients in FedSGD is mathematically equivalent to averaging the model weights after one local step. FedAvg goes further by averaging the weights of locally tuned models, a process that maintains, if not enhances, the performance of the aggregated global model. This enhancement arises because the averaging process captures the learning progress made by each node, even with heterogeneous local data distributions.
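The relationship between the two aggregation rules can be checked numerically. The following is a minimal sketch, not the implementation of [21] or [22]: it assumes a toy least-squares model, full-batch local gradients instead of stochastic mini-batches, and sample-count weighting; the function names are hypothetical.

```python
import numpy as np

def local_gradient(w, X, y):
    """Gradient of the loss 0.5 * mean((Xw - y)^2) on one client's data."""
    return X.T @ (X @ w - y) / len(y)

def fedsgd_round(w, clients, lr):
    """Server averages client gradients (weighted by sample count), then takes one step."""
    n_total = sum(len(y) for _, y in clients)
    g = sum(len(y) * local_gradient(w, X, y) for X, y in clients) / n_total
    return w - lr * g

def fedavg_round(w, clients, lr, local_steps):
    """Each client runs `local_steps` gradient steps; server averages the resulting weights."""
    n_total = sum(len(y) for _, y in clients)
    w_new = np.zeros_like(w)
    for X, y in clients:
        w_k = w.copy()
        for _ in range(local_steps):
            w_k -= lr * local_gradient(w_k, X, y)
        w_new += len(y) * w_k / n_total
    return w_new

# Three clients with different amounts of synthetic data (illustrative only).
rng = np.random.default_rng(0)
clients = [(rng.normal(size=(n, 3)), rng.normal(size=n)) for n in (20, 50, 30)]
w0 = np.zeros(3)

w_sgd = fedsgd_round(w0, clients, lr=0.1)
w_avg_1 = fedavg_round(w0, clients, lr=0.1, local_steps=1)
w_avg_5 = fedavg_round(w0, clients, lr=0.1, local_steps=5)
same_after_one_step = np.allclose(w_sgd, w_avg_1)
```

With one local step the two rules produce the same global model; with several local steps FedAvg departs from FedSGD, which is where the communication savings come from.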

FL with dynamic regularization (FedDyn) addresses a critical challenge: handling heterogeneous data distributions across devices [23]. When device datasets are not identically distributed, minimizing the individual device loss functions does not necessarily minimize the global loss function. FedDyn introduces a dynamic regularization mechanism that adjusts each device’s local loss function so that the aggregated modifications contribute effectively to global loss minimization. By aligning local losses with the global objective, FedDyn becomes robust to varying degrees of heterogeneity, enabling devices to perform full local optimization without sacrificing overall model convergence. Dynamic aggregation using inverse distance weighting (IDA) is another adaptive technique designed to address unbalanced and non-IID data in FL environments. This method dynamically assigns weights to clients based on meta-information, aiming to improve both the robustness and the efficiency of model aggregation [24]. The key principle of IDA lies in leveraging the distance between model parameters. By using the inverse of this distance as a weighting factor, the method reduces the influence of outlier models, which may arise from significant differences in data distribution or from irregular client behavior. This strategy not only mitigates the negative impact of outliers but also speeds up the global model’s convergence by ensuring that updates from more representative or reliable clients carry greater weight in the aggregation process. The integration of IDA into federated frameworks shows promising results, with improved model accuracy and faster convergence reported across various experimental setups. This method represents a significant step toward more adaptive and resilient FL systems, where the quality and relevance of client contributions are dynamically optimized.
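The inverse-distance idea can be sketched in a few lines. This is an illustration under stated assumptions rather than the exact rule of [24]: the distance is taken as the Euclidean norm between each client's parameters and the plain average, and the function name and `eps` guard are hypothetical.

```python
import numpy as np

def ida_aggregate(client_weights, eps=1e-8):
    """Aggregate client models with coefficients inversely proportional to
    each model's distance from the plain average of all client models."""
    W = np.stack(client_weights)                 # shape (K, d)
    w_avg = W.mean(axis=0)                       # reference point (assumption)
    dist = np.linalg.norm(W - w_avg, axis=1)     # one distance per client
    coeff = 1.0 / (dist + eps)                   # eps avoids division by zero
    coeff /= coeff.sum()                         # normalize to sum to one
    return coeff @ W, coeff

# Three similar clients and one outlier (synthetic values).
models = [np.array([1.0, 1.0]), np.array([1.1, 0.9]),
          np.array([0.9, 1.1]), np.array([5.0, -3.0])]
w_ida, coeff = ida_aggregate(models)
w_mean = np.mean(models, axis=0)
```

In this toy case the outlier receives the smallest coefficient, so the aggregate stays closer to the cluster of similar clients than the unweighted mean does.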

Combining FL with optimization algorithms has also been studied in the literature. For example, the study in [25] addresses an FL scenario operating over wireless channels, explicitly considering coding rates and packet transmission errors. The communication channels are modeled as packet erasure channels (PECs), where the probability of packet erasure depends on factors such as block length, coding rate, and signal-to-noise ratio (SNR). To mitigate the adverse effects of packet erasure on FL performance, two strategies are introduced in which the central node (CN), upon packet loss, either reuses past local updates or reverts to the previous global parameters. The study in [26] addresses the critical challenge of unreliable communication in decentralized FL by introducing the Soft-DSGD algorithm. Unlike traditional FL approaches, which depend on a CN for parameter aggregation, decentralized methods allow devices to exchange model updates directly. However, existing frameworks for decentralized learning often assume idealized conditions in which devices reliably exchange information, such as gradients or model parameters, without any loss or error. Real-world communication networks are rarely that reliable, as they are susceptible to packet loss and transmission errors, and ensuring communication reliability often comes at a significant cost. Extending the analysis in [26], the authors in [27] presented a robust solution for decentralized learning in dynamic and unreliable wireless environments. The proposed approach is specifically tailored to wireless networks characterized by random, time-varying communication topologies. In these networks, participating devices may experience communication impairments, and some devices can become stragglers, failing to meet computational or communication demands, at any point during the training process. To mitigate the impact of these challenges, the algorithm incorporates a novel consensus strategy that leverages time-varying mixing matrices, which are adjusted dynamically based on the instantaneous state of the network. By adapting to the current network topology, the algorithm ensures robust communication and improves the overall efficiency of the learning process.
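A consensus step with a time-varying mixing matrix can be illustrated as follows. This sketch does not reproduce the algorithm of [27]; it assumes Metropolis-Hastings weights, a common choice that yields a symmetric, doubly stochastic matrix for any undirected set of working links, and it models link availability as independent coin flips.

```python
import numpy as np

def metropolis_mixing_matrix(adj):
    """Build a symmetric, doubly stochastic mixing matrix from the links that
    are currently up. `adj` must be symmetric with a zero diagonal."""
    adj = np.asarray(adj, dtype=bool)
    deg = adj.sum(axis=1)
    n = len(adj)
    W = np.zeros((n, n))
    for i in range(n):
        for j in range(n):
            if i != j and adj[i, j]:
                W[i, j] = 1.0 / (1 + max(deg[i], deg[j]))
        W[i, i] = 1.0 - W[i].sum()   # an isolated (straggling) device keeps its own model
    return W

rng = np.random.default_rng(1)
n = 5
params = rng.normal(size=(n, 3))       # one parameter vector per device
target = params.mean(axis=0)           # the network-wide average

for _ in range(200):
    up = np.triu(rng.random((n, n)) < 0.6, k=1)   # each link is up with probability 0.6
    adj = up | up.T                                # undirected topology for this round
    params = metropolis_mixing_matrix(adj) @ params
```

Because every per-round matrix is doubly stochastic, the network-wide average is preserved in each round, and the devices drift toward it even though the topology changes and some devices are temporarily isolated.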

Based on the preceding literature review, our work is motivated by the observation that retransmissions are a common source of performance degradation in many modern wireless communication networks based on standards such as 3GPP 5G-Advanced and IEEE Wi-Fi. However, while Hybrid Automatic Repeat Request (HARQ) retransmission has been extensively explored in traditional communication systems, its application in distributed learning remains relatively under-researched. For example, the work in [28] presents a statistical quality-of-service (QoS) analysis for a block-fading device-to-device (D2D) communication link within a multi-tier cellular network comprising a macro base station (BSMC) and a micro base station (BSmC), both operating in full-duplex (FD) mode. The effective capacity (EC) is computed for the D2D link, assuming no channel state information (CSI) at the transmitting D2D node, which operates at a fixed transmission rate and power. The communication link is modeled as a six-state Markov system under both overlay and underlay configurations. To enhance throughput, the study incorporates HARQ and truncated HARQ schemes, along with two queue models based on the responses to decoding failures. Simulation results reveal that superior self-interference cancellation at BSmC and BSMC in FD mode enhances the EC. However, to our knowledge, no similar analysis exists for FL in 6G networks with multiple collaborative devices.

The paper in [29] examines the complex landscape of distributed intelligence, critically analyzing key research advancements in the field; however, the importance of MAC (Layer 2) HARQ retransmissions is not fully explored. Moreover, a semantic-aware HARQ (SemHARQ) framework for robust and efficient transmission was introduced in [30]. A multi-task semantic encoder enhances the robustness of semantic coding, while a feature importance ranking (FIR) method prioritizes the delivery of critical features under constrained channel resources. Additionally, a novel feature distortion evaluation (FDE) network detects transmission errors and supports an efficient HARQ scheme by retransmitting corrupted features with incremental updates; however, MAC HARQ retransmissions for cooperative FL devices are neither mentioned nor studied.

1.3. Paper Contribution

The closest HARQ analysis for FL is found in [31], which introduces a FEEL framework that addresses the challenges posed by unreliable wireless channels. Gradients from local devices are divided into packets that are subject to packet error rates (PERs). Unreliable transmissions introduce a bias between the actual and theoretical global gradients, adversely affecting model training. A mathematical analysis evaluates the impact of PER on the convergence rate and the communication cost, and an optimized device retransmission selection scheme is proposed, obtained by solving a classical convex optimization problem through the Karush–Kuhn–Tucker (KKT) conditions, which balances convergence performance against communication overhead. The paper derives the optimal retransmission strategy for efficient model training and provides a framework for analyzing its effectiveness.

The motivation of our work is to examine the implications of retransmission strategies for distributed learning, focusing on balancing the dual objectives of reliability (throughput) and timeliness in order to optimize performance in diverse communication environments. Unreliable wireless channels fundamentally challenge 6G FEEL networks, since gradient delivery is constrained not only by packet error rates but also by stringent timeliness requirements. Existing HARQ-aware FL analyses, notably [31], focus primarily on retransmission reliability and convergence under a given packet error probability, implicitly assuming that delayed yet successful transmissions remain equally valuable. However, this assumption neglects the concept of eventual throughput, i.e., the effective contribution of information that arrives after its learning utility has expired. In dynamic 6G environments with fast model evolution, excessive retransmissions can render gradients stale, introducing an implicit learning inefficiency that is not captured by reliability-only metrics. Our paper studies unreliable wireless channels following [31], but extends the analysis by considering the timeliness of data transmission through the concept of “eventual throughput”. In certain scenarios, prioritizing timeliness over reliability may be a desirable trade-off.
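The tension between reliability and timeliness can be made concrete with a toy calculation. The sketch below is not the eventual-throughput definition used in this paper; it assumes that each HARQ attempt fails independently with the same probability (no soft-combining gain), that every attempt takes the same time, and that an update is useful only if it is decoded before a deadline. All function names are hypothetical.

```python
def residual_error(per, max_tx):
    """Probability that a packet is still undecoded after `max_tx` attempts."""
    return per ** max_tx

def expected_transmissions(per, max_tx):
    """Mean number of attempts under truncated HARQ: attempt i+1 happens only
    if the first i attempts failed, so the mean is sum_{i<max_tx} per**i."""
    return (1.0 - per ** max_tx) / (1.0 - per)

def timely_delivery_prob(per, max_tx, t_tx, deadline):
    """Probability of decoding before the deadline; attempts that would finish
    after the deadline add latency but no useful delivery."""
    usable = min(max_tx, int(deadline // t_tx))
    return 1.0 - per ** usable

# Raising the retransmission limit improves reliability, but only the attempts
# that fit inside the deadline improve timely delivery.
rows = [(n, residual_error(0.1, n), expected_transmissions(0.1, n),
         timely_delivery_prob(0.1, n, t_tx=1.0, deadline=2.5))
        for n in (1, 2, 3, 4)]
```

In this example the timely delivery probability stops improving once the retransmission limit exceeds the two attempts that fit within the deadline, while the expected number of transmissions keeps growing.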

To optimize the performance of FL over unreliable fading wireless channels, we build on the analysis in [32], where decentralized stochastic gradient descent (DSGD) is applied to large-scale AI/ML problems over ideal D2D communication topologies, guaranteeing convergence to optimal solutions under assumptions of convexity and connectivity. In our view, the DSGD algorithm is a superior alternative to the classical KKT-based convex optimization approach for large-scale FEEL under fading wireless channels. While KKT-based algorithms provide an elegant framework for finding optimal solutions to convex problems, their reliance on centralized computation and global knowledge of the constraints limits their applicability to large-scale decentralized environments. In such systems, the dynamic and distributed nature of data across devices, combined with the unpredictability of fading wireless channels, poses significant challenges to centralized KKT-based methods. DSGD excels in these environments because it performs local updates on individual devices, which are then aggregated through peer-to-peer communication. This reduces the need for centralized control, making DSGD scalable to networks with many devices. Furthermore, DSGD is robust to communication impairments caused by fading channels, as it can operate effectively with partial or asynchronous updates, mitigating the impact of packet loss or delays that often hinder KKT-based approaches. Finally, KKT-based solutions typically involve solving complex optimization problems that require significant computational resources and are sensitive to changes in network conditions. DSGD, on the other hand, employs stochastic updates, allowing devices to compute gradients on smaller data subsets and thereby reducing computation and energy requirements. This makes DSGD particularly well suited to resource-constrained devices in FEEL settings, where its iterative nature ensures gradual convergence even in non-ideal conditions. The algorithm adapts to variations in wireless channel conditions by integrating local updates and employing mixing matrices or weights that account for communication reliability. This adaptability is critical for maintaining performance in environments with time-varying channel quality, where centralized KKT-based methods struggle to maintain consistency.

In general, optimizing HARQ retransmissions under the constraints of unreliable wireless channels with fading presents a significant challenge, due to the dynamic nature of the environment and the processing load required. The constraints and variables involved in the optimization process change more rapidly than the feedback rate, i.e., the rate at which the system can provide updates. In 6G networks, the FEEL paradigm, which involves many devices collaborating in a decentralized manner, adds further complexity to this optimization task. Traditional global optimization techniques focus on finding the global minimum or maximum of a function, even when its analytical expression is unavailable but can be estimated. However, such estimations are often computationally expensive, particularly in wireless networks such as 5G and 6G, where real-time processing is critical. A promising development in this domain is the adoption of techniques based on radial basis functions (RBFs), which show significant potential in tackling global optimization problems, especially for partially known functions [33]. The strength of RBF methods lies in their ability to approximate complex functions, providing a practical way to navigate optimization landscapes where explicit mathematical formulations are infeasible. In the specific context of 6G networks, RBFs have facilitated advancements in federated edge computing and learning [34]. These methods enable efficient optimization by leveraging the decentralized nature of FL, distributing the computational load across edge devices while accounting for the unreliability of wireless channels. By approximating the cost and utility functions with RBFs, it becomes possible to make near-optimal decisions in real time, despite rapidly changing network conditions [35].

The RBF approach offers distinct advantages over the DSGD algorithm for the global optimization of HARQ retransmissions in large-scale FEEL under fading wireless channels. Unlike DSGD, which is iterative and depends on stochastic updates, RBF methods construct surrogate models that approximate the underlying cost function. This allows RBF methods to evaluate complex, partially known functions with fewer iterations, making them more computationally efficient in resource-constrained FEEL scenarios. Another key advantage is the ability of RBF methods to adapt to limited and noisy feedback from the network, reducing the dependency on gradient information that may be unreliable in fading wireless environments. While DSGD relies on consistent communication among devices for gradient updates, RBF methods can operate effectively with sparse or incomplete data by leveraging their interpolation capabilities. This flexibility makes them particularly suitable for HARQ retransmissions in FL scenarios, where the interplay between communication and computation demands careful consideration. Several recent studies investigate FL in IoT and cross-domain environments, focusing on service provisioning, trust establishment, authentication, and cross-layer orchestration. While these works demonstrate the broad applicability of FL in distributed systems, they do not address MAC layer HARQ dynamics, non-convex retransmission optimization, or learning-driven PHY/MAC adaptation. The present work complements these studies by explicitly modeling and optimizing the interaction between FL and HARQ mechanisms at the MAC layer. To our knowledge, no recent study uses the RBF global optimization approach to address the challenge of minimizing HARQ retransmission delays while maximizing data reliability in 5G or 6G networks.
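The surrogate idea can be illustrated with a small interpolation example. This is a minimal sketch and not the optimizer proposed in this paper: it assumes a Gaussian kernel with a hand-picked shape parameter, a hypothetical one-dimensional cost function standing in for the unknown gain-cost function, and a simple grid search over the surrogate.

```python
import numpy as np

def fit_rbf(X, y, eps=1.0):
    """Solve for RBF coefficients so that the surrogate interpolates (X, y).
    Uses a Gaussian kernel phi(r) = exp(-(eps * r)^2)."""
    d = np.linalg.norm(X[:, None, :] - X[None, :, :], axis=-1)
    return np.linalg.solve(np.exp(-(eps * d) ** 2), y)

def predict_rbf(X_train, coef, X_new, eps=1.0):
    """Evaluate the surrogate at new points."""
    d = np.linalg.norm(X_new[:, None, :] - X_train[None, :, :], axis=-1)
    return np.exp(-(eps * d) ** 2) @ coef

def toy_cost(x):
    """Hypothetical non-convex cost, assumed expensive to evaluate."""
    return (x - 2.0) ** 2 + np.sin(3.0 * x)

# Only nine "expensive" evaluations are used to build the surrogate.
X = np.linspace(0.0, 4.0, 9)[:, None]
y = toy_cost(X[:, 0])
coef = fit_rbf(X, y, eps=1.5)

# The cheap surrogate is then searched densely for a candidate minimizer.
grid = np.linspace(0.0, 4.0, 401)[:, None]
surrogate = predict_rbf(X, coef, grid, eps=1.5)
x_best = grid[np.argmin(surrogate), 0]
```

In a full surrogate-based optimizer the candidate `x_best` would be evaluated on the true function and added to the sample set before refitting; that loop, and any exploration term, is omitted here for brevity.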

In this work, we propose a methodology based on RBF preference learning to optimize key parameters of an FL system. It is important to note that the proposed approach is not an FL algorithm itself, but rather an external optimization framework applied to a model of the FEEL system. The method aims to tune specific system parameters to improve performance, such as convergence speed and resource efficiency, without modifying the underlying learning protocols. This distinction is central to the contribution of the paper: while conventional FEEL research focuses on the design of aggregation rules, model updates, or communication-efficient protocols, our work demonstrates how an external optimizer can leverage system-level models to guide parameter selection and enhance overall performance. The scope of our work is to reach an optimal global decision on HARQ retransmissions by selecting the variable values that yield the most desirable outcomes. By enabling adaptive decision-making and reducing processing overhead, the RBF-based optimization of HARQ retransmissions provides a robust framework for addressing the complexities of 6G networks and FEEL environments. Ultimately, this approach represents a significant step forward in achieving efficient and scalable HARQ retransmission optimization under challenging wireless channel conditions.

In summary, our paper extends prior research by explicitly incorporating eventual throughput into the FEEL–HARQ interaction, revealing a fundamental reliability–timeliness trade-off that governs learning performance under fading wireless channels. By accounting for latency-induced obsolescence of updates, the proposed framework more accurately captures the practical learning dynamics of decentralized 6G systems, where delayed yet reliable updates may lose their learning relevance. The analysis demonstrates that prioritizing reliability through aggressive HARQ retransmissions is not universally optimal; instead, controlled information loss can, in many regimes, improve global learning efficiency. To operationalize this trade-off, our paper introduces an RBF-based global optimization framework that optimizes key FEEL system parameters directly at the communication–learning interface. Rather than modifying the FL algorithm itself, RBF optimization is employed to tune HARQ-related control variables, including the average retransmission index, the retransmission–latency trade-off factor λ, the packet error probability (PER), and the resulting latency inflation factor. By constructing a surrogate model of the non-convex gain–cost function that jointly captures retransmission-induced reliability gains and latency penalties, the RBF approach enables efficient, scalable, and feedback-driven optimization under unreliable channel conditions. This perspective extends beyond [31,32] by moving from reliability-centric retransmission control to learning-aware, system-level optimization, aligning communication-layer decisions with learning relevance and enabling more robust and scalable FEEL operation in 6G networks.

1.4. Paper Organization

The remainder of the paper is organized as follows. Section 2 presents the theoretical framework, including the modeling of HARQ retransmissions, the derivation of packet error probability, and the definition of retransmission gain and latency inflation factors in FEEL environments. Section 3 introduces the proposed RBF-based optimization approach, detailing its integration with the HARQ-FEEL system, the preference learning mechanism, and the formulation of the cost function. Section 4 provides a comprehensive discussion of the simulation setup, key parameters, and performance comparison with benchmark algorithms, including KKT, DSGD, and SGD, highlighting system-level outcomes such as retransmissions, PER, latency, and training efficiency. Finally, Section 5 concludes the paper, summarizing the main contributions, key findings, and potential directions for future research in adaptive HARQ optimization under dynamic wireless conditions.

2. The Cross Layer Optimization Model

Modern 5G and prospective 6G services operate exclusively on IP-based technology. In this framework, IP service packets are segmented at the RLC/MAC layer into smaller MAC segments (i.e., transport blocks, Trblk), which are then allocated to scheduling blocks (SBs) for transmission over the air interface resources. Each MAC Trblk packet may undergo several retransmissions across the air interface, in parallel with several other packets, before the next group of packets can begin transmission, adhering to a Transmission Time Interval (TTI) whose duration depends on the sub-carrier spacing. On the uplink, multiple MAC packets are queued at the device (i.e., user equipment (UE)) transmitter, awaiting scheduling and mapping onto SBs. Upon reaching the edge server receiver, these packets are acknowledged via a new uplink scheduling grant carried on the PDCCH.

Following the analysis in [31] an FL system is considered with one edge server (i.e., a 6G node) and k = 0, 1, …, K connected devices. Each single device out of the k devices stores data for transmission and the total amount of data in the entire edge server system can be represented as . The uploaded data rate for each of the k devices in the FL system, during the training period τ, is defined as or

where , is the transmitted UL power of device k, is the channel power gain between the device and the edge server, and N0 is the noise power over the whole bandwidth B. Consequently ≈ Rk/τ assuming negligible overhead bits during transmission.
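As a hedged numerical illustration of the rate model above, the sketch below assumes that the uplink rate of device k takes the usual Shannon-capacity form, R_k = B·log2(1 + P_k·h_k / N0). The source equation is not reproduced in this text, so this form, the function names, and all numerical values are assumptions made for illustration only.

```python
import math


def uplink_rate_bps(tx_power_w, channel_gain, noise_power_w, bandwidth_hz):
    """Assumed Shannon-capacity estimate of the uplink rate in bit/s.

    tx_power_w    : transmitted UL power of device k (W)
    channel_gain  : channel power gain between device k and the edge server
    noise_power_w : noise power N0 over the whole bandwidth B (W)
    bandwidth_hz  : bandwidth B (Hz)
    """
    snr = tx_power_w * channel_gain / noise_power_w
    return bandwidth_hz * math.log2(1.0 + snr)


def upload_time_s(data_bits, rate_bps):
    """Time needed to upload data_bits at rate_bps, ignoring overhead bits."""
    return data_bits / rate_bps


if __name__ == "__main__":
    # Hypothetical link budget: 0.2 W, -100 dB channel gain, 20 MHz bandwidth.
    rate = uplink_rate_bps(0.2, 1e-10, 4e-13, 20e6)
    print(f"rate = {rate / 1e6:.1f} Mbit/s")
    print(f"upload time for 1 MB = {upload_time_s(8e6, rate) * 1e3:.2f} ms")
```

The helper `upload_time_s` neglects protocol overhead, in line with the negligible-overhead assumption stated in the text.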

In the context of our mathematical analysis, we consider IP packets that are segmented at the upper layers and processed at the MAC layer. The MAC scheduler acts as a single edge server, distributing packets in the downlink and receiving packets in the uplink across multiple resources. These resources, termed channels in our model, correspond to the scheduling blocks (SBs). For simplicity, the analysis assumes that there are m parallel channels in the queue model. The Trblk fragments of an IP packet are stored in a finite-length queue before scheduling. The queue is considered empty when there are n < m packets in the system, that is, when the number of occupied resources is less than the maximum of m channels available on the air interface; otherwise, any additional IP MAC Trblk fragments are held in the queue. The arrival of IP packets for both uplink and downlink follows a Poisson distribution with an average overall (service-dependent) arrival rate, also called intensity, measured in IP packets/s. Due to the PDCP, RLC and MAC protocols, the IP packets are fragmented into MAC Trblk packets and control symbols are added to each packet before the information is sent, leading to a MAC Trblk transmission intensity measured in MAC information packets/s.

The uplink and downlink MAC Trblk packet transmissions are governed by the MAC HARQ process, in which the base station receives an information packet and determines whether it is correctable. For each non-correctable information packet, the MAC sends a negative acknowledgment (NACK) report. The model tracks the intensity of these NACK reports (NACK packets/s), the intensity of retransmissions (retransmitted packets/s after a NACK indication), and the intensity of positive acknowledgments sent on the uplink or downlink path. The MAC packet feedback is conveyed over a feedback link of HARQ acknowledgment packets (both positive and negative acknowledgments), with its own intensity in acknowledgment packets/s, while the correctable received MAC packets forwarded to the upper protocol layers have a separate intensity. The overall input (uplink or downlink) MAC transmission intensity is expressed in packets/s. Based on 3GPP layer 2 MAC functionality, when an information packet has been retransmitted ν times and is still corrupted, retransmission stops and the corrupted MAC packet is forwarded to the receiving RLC/PDCP layers with its own intensity.

The service time for each channel is assumed to be constant, reflecting minimal variation in transmission delays due to minor processor load fluctuations. Transit time effects are excluded from this analysis because the MAC scheduler operates continuously, ensuring a seamless scheduling process without interruptions or transit delays. This assumption allows for a simplified yet practical model of the 5G/6G MAC layer operation and its scheduling dynamics. For queue equilibrium, the mathematical analysis always assumes that the system operates under the condition m > k.

2.1. The Average HARQ Retransmissions

Let us define three quantities: the probability that n MAC packets are in the system (queued or in service) at a given time τ; the probability that no more than n MAC packets exist in the system at time τ; and the probability that the queue is empty while m MAC packets are in service at the beginning of a unit of time. For a constant service time, we take the service time as the unit of time. Then the probability that exactly n MAC packets exist in the system at the unit of time equals [36,37]:

The probability of non-existent packets in the buffer (i.e., the condition that there are n < m occupied channels over the air interface), named as non-delay probability P0, is given by [37]:

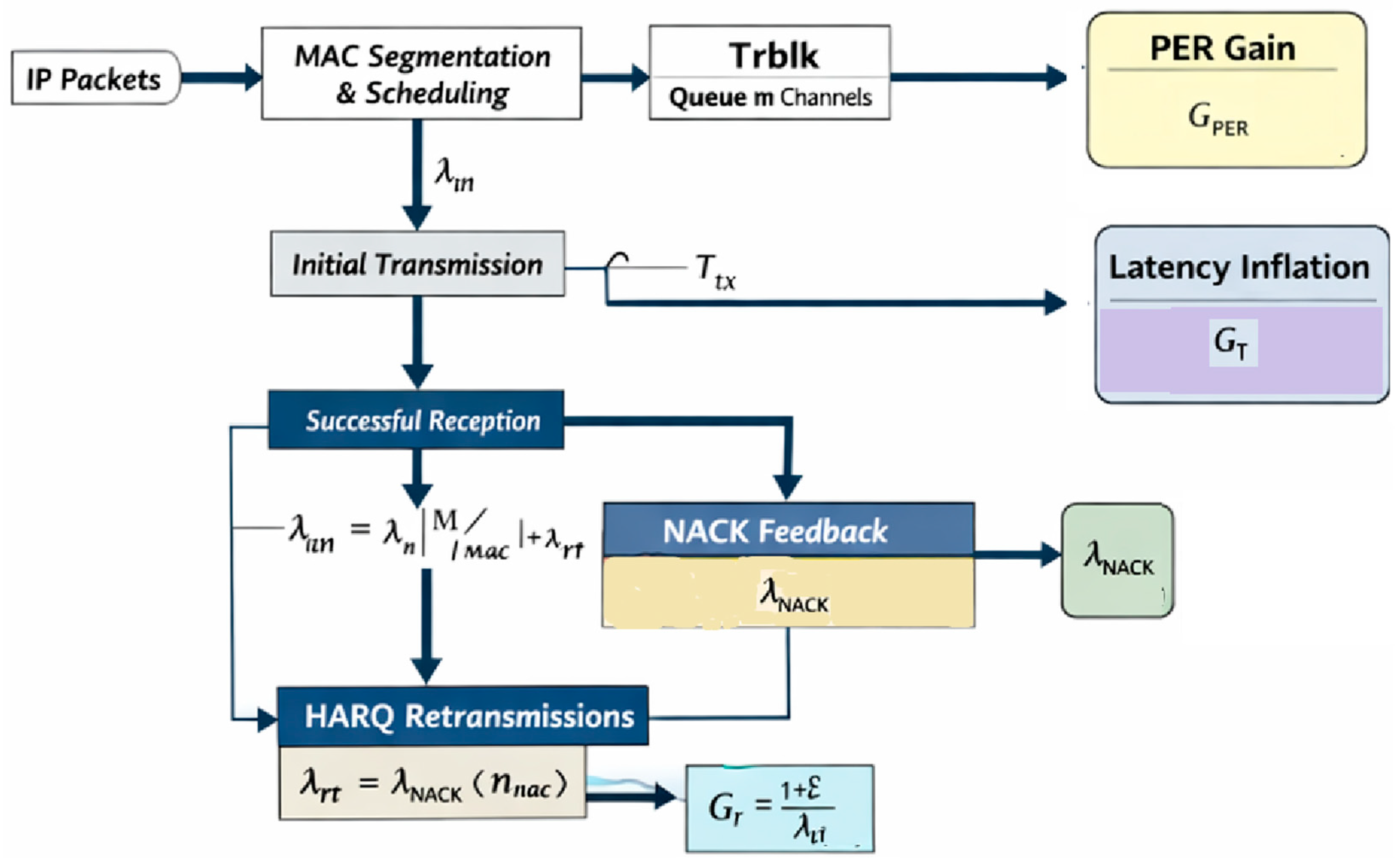

In 5G/6G Layer2 MAC scheduler, scheduling decisions are mostly restricted by multiple typical constraints such as the QoS profile, the radio link quality (i.e., SINR) CQI reports, BLER/HARQ retransmissions and UE uplink buffer sizes BSR (signaled to the edge server using available uplink resources and procedures) [38,39,40]. In Figure 3 a consecutive packet flow conditional analysis is illustrated to clarify our further mathematical analysis on the packet transmission/retransmission cases. A service produces IP information packets with a rate (intensity) of (IP packets/s). An IP information packet of variable bits per packet and average bits per IP packet is considered to be segmented into average number of MAC packets per IP information packet, where each MAC packet has variable length (bits per MAC packet), containing a fixed number of overhead bits per packet [37]. For overall MAC overhead, the total average MAC transmitted bits will be and the average MAC transmission intensity (MAC packets/s):

Figure 3.

The 3GPP based 5G/6G networks cross layer optimization model.

Wireless channels are generally unreliable. Because the uplink is power limited, uplink channel errors must be considered for the whole uplink transmission during AI/ML training, where each Trblk transmission carries redundant CRC encoding for error detection and HARQ retransmission, as in 5G/6G. Successful packet delivery is expected to require, on average, some HARQ retransmissions [38,39,40], which increases the transmission delay. Corrupted packets in the transmission process are assumed to be uncorrelated with each other. In this analysis the Code Block Groups (CBGs) of LDPC coding are not considered. This keeps the analysis simpler, yet still practical and realistic for many vendor equipment solutions operating in the sub-6 GHz band, whose proprietary features rely on single code-word transmission driven by packet error rate (PER) performance.

The PER expression for the SINR of the kth device must therefore be assessed. For a single MAC Trblk packet transmission, the probability of successful delivery depends on whether the signal quality is sufficient to meet the decoding requirements, often defined by a Modulation and Coding Scheme (MCS) decision threshold SINRthr, which in turn depends on a BLER (%) threshold. Hence a packet is successfully decoded if the received SINR exceeds the threshold, i.e., SINRk ≥ SINRthr. The average number of retransmissions nmac is a function of the MAC PER. An uplink MAC Trblk packet is received and decoded correctly with packet success probability p at the first transmission interval, p(1 − p) at the second transmission interval, and so on up to the νth (maximum) transmission attempt (ν is a 3GPP MAC layer parameter [38,39,40]), with probability p(1 − p)^(ν−1). If the packet is still corrupted after the νth transmission, which happens with probability (1 − p)^ν, it is forwarded to the upper RLC layer for further RLC ARQ functionality [38,39,40], and the mean number of retransmissions can be calculated as

Leading into (geometric series expansion),

Since , the mean number of HARQ packet retransmissions is estimated to be [41]

PER is the number of incorrectly received data packets divided by the total number of received packets. Its expectation for each device k is the packet error probability pp = 1 − (packet success probability) = 1 − p. Considering our previous federated edge server model, with the transmitted UL power of device k, the channel power gain between the device and the edge server, and N0 the noise power over the whole bandwidth B, the successful decoding of a Trblk MAC packet depends on the received SINR, and the packet error probability (probability of failure) pp is then expressed as [36]

And the mean number of HARQ packet retransmissions is finally expressed as

From Equation (9), it is obvious that the average number of retransmissions depends explicitly on the maximum number of HARQ attempts v, on the and on the size of the MAC packet . Due to vendor specific 5G/6G HARQ functional implementation, one MAC packet will be retransmitted a maximum number of v times under the restriction TTI < τmax ≤ (n + ν) Ts where considering the restriction: .
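The truncated-geometric behavior described in Section 2.1 can be checked numerically. The sketch below is only an illustration under stated assumptions: it counts transmission attempts (the first transmission plus retransmissions), it weights a packet that fails all ν attempts with ν attempts, and it assumes independent attempts with a fixed success probability p > 0. The function names are hypothetical, and the closed form shown is the standard geometric-series result rather than a transcription of the paper's Equation (9).

```python
def mean_attempts_sum(p_success, max_attempts):
    """Mean number of attempts, computed term by term.

    Attempt i succeeds with probability p(1 - p)^(i - 1); a packet that
    fails all max_attempts attempts (probability (1 - p)^max_attempts)
    still consumed max_attempts attempts.
    """
    q = 1.0 - p_success
    delivered = sum(i * p_success * q ** (i - 1)
                    for i in range(1, max_attempts + 1))
    return delivered + max_attempts * q ** max_attempts


def mean_attempts_closed(p_success, max_attempts):
    """Closed form of the same sum: (1 - (1 - p)^nu) / p, valid for p > 0."""
    q = 1.0 - p_success
    return (1.0 - q ** max_attempts) / p_success


if __name__ == "__main__":
    for per in (0.01, 0.1, 0.3):
        p = 1.0 - per
        print(per, mean_attempts_sum(p, 4), mean_attempts_closed(p, 4))
```

Both functions agree up to rounding, which illustrates how the mean grows with the packet error probability 1 − p and saturates at the maximum number of attempts ν.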

The NACK transmission intensity is estimated as

And the intensity of HARQ packet retransmissions is given by

Given the previous analysis, the transmission intensity of MAC information packets (being retransmitted ν times with but still corrupted), which is forwarded to the receiving RLC/PDCP layers, is given by

Moreover, the intensity of correctable received MAC packets to be forwarded to upper receiving RLC/PDCP layers is denoted as :

2.2. The HARQ Retransmission Gain

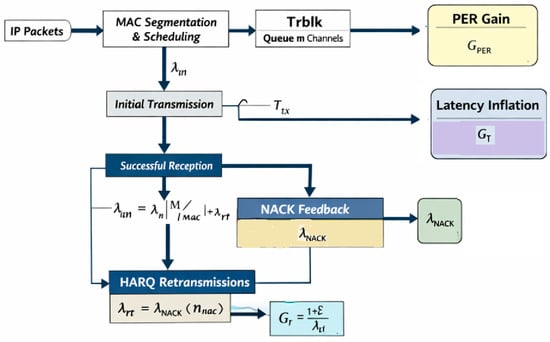

The current HARQ implementation in 5G-Advanced and potential implementation in the emerging 6G networks marks a significant step toward improving data reliability and optimizing spectral efficiency in wireless communications. HARQ integrates forward error correction (FEC) with CBG and retransmission mechanisms to counteract packet losses, ensuring dependable data transmission even in fluctuating and complex channel environments. The iterative nature of HARQ retransmissions, especially when using incremental redundancy, enhances overall system performance by boosting throughput and reducing latency through the avoidance of redundant retransmissions. FEEL, as a decentralized machine learning framework, allows edge devices to collaboratively train models without exchanging raw data, thereby maintaining privacy while leveraging distributed intelligence. Integrating FEEL with HARQ retransmissions offers notable advantages by optimizing a defined cost function. Figure 4 illustrates the proposed HARQ–FEEL optimization model, highlighting the joint impact of HARQ retransmissions on transmission reliability and latency in a federated learning environment. The figure conceptually captures the dual effect of retransmissions: improving packet decoding success (PER reduction) while inflating transmission delay. This interaction directly affects the timeliness and relevance of local model updates in FEEL. The depicted gain–cost trade-off motivates the subsequent mathematical formulation of retransmission gain, latency inflation, and eventual throughput. Based on this model, an optimization framework is developed to balance reliability and timeliness under fading wireless channels.

Figure 4.

The proposed HARQ/FEEL optimization model.

Following Figure 4, FEEL offers several benefits. First, FEEL algorithms can predict optimal transmission settings, such as MCS, using locally observed channel conditions, thereby improving HARQ performance. Second, FEEL supports adaptive learning across diverse devices, enabling HARQ mechanisms to adjust dynamically to changing channel conditions and user demands. Furthermore, the synergy between HARQ and FEEL helps tackle key challenges in 5G-Advanced. FEEL-driven predictive models can anticipate retransmission-prone scenarios, allowing proactive adjustments in transmission power and resource allocation. Additionally, by distributing computational tasks across the network, FEEL reduces the processing load on central units, complementing the decentralized architecture of 5G networks. Preliminary research [31], incorporating insights from 3GPP Release 18 specifications and recent IEEE contributions, suggests that HARQ mechanisms enhanced by FEEL can improve spectral efficiency by up to 20% and reduce latency by approximately 15% compared to conventional methods, as in [42].

The key idea of this work is to combine FEEL with HARQ retransmissions, which presents significant opportunities for improving system performance. By integrating a cost function that balances HARQ retransmission benefits against the potential latency increase, FEEL algorithms can optimize both communication and computational efficiency. Radial basis functions are especially effective in capturing the nonlinear relationships between retransmission performance and latency trade-offs. The fusion of HARQ and FEEL, supported by RBF-based cost functions, enables the development of resilient, low-latency, and scalable next-generation communication networks.

To systematically derive the gain function for PER reduction after retransmission for device k, we begin with a simplified analysis. The initial PER for device k is given in Equation (8). Suppose that after an initial transmission failure, the packet undergoes an average of nmac retransmissions. Throughout this process, the probability of error decreases due to enhanced redundancy in encoding or improved channel conditions. For simplicity, we assume that the effective SINR experiences a retransmission gain factor Gr > 1 because of HARQ, contributing to improved reliability.

The SINR gain due to HARQ retransmissions in 5G/6G depends on several factors, including the number of retransmissions, channel response characteristics, and fluctuating radio link conditions. The PER improvement (gain) is defined as the ratio of the initial packet error probability pp before retransmissions to the packet error probability after retransmissions. The initial probability of failure (PER) per device k before retransmissions is given by Equation (8). Each retransmission improves the effective SINR due to redundancy and potential channel variations. Defining Gr as the retransmission gain factor, which accounts for the improvement in SINR after retransmissions, the effective SINR after nmac retransmissions becomes Gr · SINRk, and the new packet error probability after retransmissions can be written as

In practical 6G FEEL deployments, where training takes place at the network-edge gNBs as a form of federated learning native to edge telecom systems, the reliability of the global learning process is strongly influenced by the accuracy and timeliness of the local updates transmitted by distributed devices. Due to wireless channel impairments [43], limited uplink power, and dynamic interference conditions, locally computed gradients or model parameters may be corrupted, excessively delayed, or lost during transmission. Inaccurate updates can therefore enter the aggregation process at the edge server, leading to biased gradient estimates, slower convergence, or even divergence of the global model.

This issue is exacerbated in low-SINR regimes, where repeated HARQ retransmissions increase latency and cause local updates to become stale with respect to the current global model state. Such outdated or partially erroneous updates undermine the reliability of FL beyond mere privacy considerations, as they directly affect learning stability, convergence speed, and final model accuracy. Consequently, reliability-aware communication mechanisms are essential to ensure that only timely and sufficiently accurate updates contribute to global aggregation.

Motivated by this observation, the proposed framework explicitly incorporates HARQ retransmission behavior into the optimization process. By jointly considering packet error probability, retransmission intensity, and latency inflation, the proposed RBF-based optimization aims to improve the fidelity of transmitted updates while controlling delays. This coupling between communication reliability and learning dynamics is fundamental for robust FEEL operation in 6G wireless environments.

The HARQ process improves the SINR through error correction mechanisms and retransmission diversity (soft combining or incremental redundancy). The retransmission intensity represents the additional attempts needed to successfully decode a packet. At this stage, an effective SINR gain due to HARQ retransmissions is introduced as a simplified, heuristic representation. While a detailed derivation from communication theory would require modeling mutual information accumulation or soft combining per code block, a linear approximation provides a tractable system-level estimate of PER improvement and facilitates the FEEL optimization framework. Hence the retransmission gain factor is modeled as a linear function of the normalized retransmission intensity. This linear approximation is motivated by the Chase Combining principle, where the effective SNR increases roughly linearly with the number of retransmissions under quasi-static channel conditions. The associated scaling factor captures system-level effects such as combining efficiency and channel variations.

Hence, the effective SINR improvement for the average number of retransmissions can be modeled, in simplified form, as a function of the ratio between the retransmission intensity, the total transmitted packet intensity, and the acknowledgment intensity:

Here the scaling factor represents the retransmission efficiency contributing to SINR improvements. The PER improvement (gain) due to retransmissions is defined as the ratio of the initial to the post-retransmission PER.
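As a rough numerical illustration of the gain definition in Section 2.2, the sketch below combines a linear retransmission gain factor with a PER model. Several ingredients are assumptions that do not come from the paper: the PER is modeled as a Rayleigh block-fading outage probability, 1 − exp(−SINRthr / mean SINR); the gain factor is written as Gr = 1 + α·ρ, with ρ the normalized retransmission intensity in [0, 1] and α a hypothetical scaling factor; all values are in linear scale, not dB.

```python
import math


def per_rayleigh(mean_sinr, sinr_threshold):
    """Assumed PER model: outage probability under Rayleigh block fading."""
    return 1.0 - math.exp(-sinr_threshold / mean_sinr)


def retransmission_gain_factor(alpha, rho):
    """Assumed linear gain model Gr = 1 + alpha * rho (Gr >= 1)."""
    return 1.0 + alpha * rho


def per_gain(mean_sinr, sinr_threshold, alpha, rho):
    """Ratio of the initial PER to the PER after the SINR is scaled by Gr."""
    g_r = retransmission_gain_factor(alpha, rho)
    per_before = per_rayleigh(mean_sinr, sinr_threshold)
    per_after = per_rayleigh(g_r * mean_sinr, sinr_threshold)
    return per_before / per_after


if __name__ == "__main__":
    # Hypothetical operating point: mean SINR 4 (about 6 dB), threshold 2.
    for rho in (0.0, 0.2, 0.4, 0.8):
        print(rho, round(per_gain(4.0, 2.0, alpha=1.5, rho=rho), 3))
```

With ρ = 0 the gain is exactly 1 (no retransmissions, no improvement), and it increases monotonically with ρ, which matches the qualitative behavior the text attributes to HARQ combining.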

2.3. Transmission Latency Inflation Factor Due to HARQ Retransmissions

HARQ plays a critical role in improving transmission efficiency in wireless networks by enhancing data reliability and mitigating packet losses, as described so far in Section 2.2. By leveraging error detection and correction mechanisms, HARQ dynamically retransmits erroneous packets, ensuring successful data delivery even under adverse channel conditions. This results in improved spectral efficiency, reduced packet loss rates, and enhanced overall network performance. The combination of FEC and retransmission strategies, such as soft combining and incremental redundancy, further optimizes link adaptation, leading to higher throughput and reduced retransmission overhead.

However, while HARQ improves transmission reliability, it introduces additional transmission latency due to repeated packet retransmissions. Each retransmission incurs a round-trip time (RTT) delay due to MAC scheduler decisions, which accumulates as the number of retransmissions increases. This delay is particularly critical in ultra-low latency applications, such as real-time communications and autonomous systems, where even small delays can impact performance. Other services, such as Mobile Broadband (MBB), instead suffer from throughput reduction. Furthermore, higher retransmission intensities increase network congestion, leading to additional queuing delays and resource contention. Thus, while HARQ enhances network efficiency by improving packet delivery success rates, it simultaneously introduces trade-offs in transmission latency.

In summary, the MAC transmission latency due to HARQ retransmissions is driven by the additional time required for retransmissions before a packet is successfully decoded or discarded. The total transmission delay consists of the initial transmission delay Ttx, the time taken for the first transmission attempt; the retransmission delay Trt, which accumulates with each failed attempt; and the total HARQ latency THARQ, which accounts for the number of retransmissions per packet. Let KHARQ be the average number of retransmissions per packet and TRTT the round-trip time for HARQ feedback, so that each retransmission incurs an additional HARQ RTT delay before the next attempt. The total latency due to retransmissions therefore grows with the product of KHARQ and TRTT, and the latency inflation factor is defined as
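A minimal numerical sketch of this latency model follows. It assumes, as one plausible reading of the text, that the total HARQ latency is THARQ = Ttx + KHARQ·TRTT and that the latency inflation factor is the ratio THARQ / Ttx. The paper's exact expression is not reproduced here, so both formulas and the function names are assumptions.

```python
def harq_latency(t_tx, t_rtt, k_harq):
    """Assumed total HARQ latency: first attempt plus k_harq feedback RTTs."""
    return t_tx + k_harq * t_rtt


def latency_inflation(t_tx, t_rtt, k_harq):
    """Assumed inflation factor: total HARQ latency relative to t_tx."""
    return harq_latency(t_tx, t_rtt, k_harq) / t_tx


if __name__ == "__main__":
    # Hypothetical timings in milliseconds: 0.5 ms slot, 2 ms HARQ RTT.
    for k in (0.0, 0.5, 1.5, 3.0):
        print(k, latency_inflation(0.5, 2.0, k))
```

Under these assumptions the factor equals 1 when no retransmission occurs and grows linearly with the average number of retransmissions, which is the cost side of the trade-off discussed in Section 3.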

3. The Optimization Approach

In a network setup with a single edge server and k devices, as described in [31], collaborative network optimization through FL enables all k devices to train a shared machine learning model w using only their locally available data. This training process is coordinated with the edge server and follows an SGD methodology. SGD is an iterative optimization technique designed for objective functions that exhibit suitable smoothness properties, such as differentiability or sub-differentiability. Unlike conventional gradient descent, which computes gradients based on the entire dataset, SGD estimates gradients using randomly selected data subsets. This approach reduces computational complexity, particularly in high-dimensional optimization scenarios, allowing for faster iterations. However, this efficiency comes at the cost of a potentially slower convergence rate compared to full-batch gradient descent.

3.1. The Optimization Problem Statement

Both statistical estimation and machine learning address the task of minimizing an objective function Q that has the form of a sum over observations. The minimization is carried out over the parameter w to be estimated (the machine learning training model), and each summand Qi is associated with the ith observation in the data set. The objective takes the following form:

In statistical learning theory, empirical risk minimization is a foundational concept that underpins a family of learning algorithms designed to assess performance on a given, fixed dataset. This approach leverages the law of large numbers, focusing on minimizing the empirical risk, which is the average loss calculated over the training data. The “true risk” (the expected loss over the actual data distribution) cannot be directly evaluated since the true distribution of the data is unknown. Empirical risk serves as a practical proxy, enabling optimization of the algorithm’s performance based on the observed training dataset. The loss function, a key element in this framework, quantifies the discrepancy between predicted and actual outcomes, forming the basis for the sum-minimization objective in risk analysis. This is particularly relevant in the stochastic gradient descent method, where iterative updates aim to minimize the empirical risk by approximating the global minimum. Hence Qi(w) can be interpreted as the value of the loss function at the ith example. During the sum-minimization of Q(w) within the SGD procedure, a gradient descent method performs the following iterative updates in a time frame τ with learning rate η, a tuning parameter that determines the step size at each iteration while moving toward a minimum of the loss function:
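To make the sum-minimization concrete, the sketch below runs plain SGD on a toy one-parameter least-squares problem. It assumes the standard textbook forms Q(w) = (1/n) Σ Qi(w) and the per-sample update w ← w − η ∇Qi(w); the toy loss Qi(w) = ½(w·xi − yi)², the data, and the function names are hypothetical and chosen only for illustration.

```python
import random


def empirical_risk(w, xs, ys):
    """Average loss Q(w) = (1/n) * sum_i 0.5 * (w * x_i - y_i)^2."""
    return sum(0.5 * (w * x - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


def sgd_fit_slope(xs, ys, learning_rate=0.1, epochs=50, seed=0):
    """Fit y ~ w * x with stochastic gradient descent, one sample per step."""
    rng = random.Random(seed)
    w = 0.0
    order = list(range(len(xs)))
    for _ in range(epochs):
        rng.shuffle(order)  # visit the samples in a random order each epoch
        for i in order:
            grad_i = (w * xs[i] - ys[i]) * xs[i]  # gradient of Q_i at w
            w -= learning_rate * grad_i
    return w


if __name__ == "__main__":
    xs = [0.5, 1.0, 1.5, 2.0]
    ys = [1.5, 3.0, 4.5, 6.0]  # noise-free data with true slope 3
    w_hat = sgd_fit_slope(xs, ys)
    print(w_hat, empirical_risk(w_hat, xs, ys))
```

Each step uses the gradient of a single summand instead of the full sum, which is what keeps the per-iteration cost low in the FEEL setting where every device holds only its local data.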

As analyzed in the previous sections, data retransmission is widely recognized as an effective solution to unreliable data transmission. This approach is well suited to conventional communication systems, where throughput and reliability are the primary performance metrics. However, as already discussed in Section 1.3, the focus shifts significantly in the context of FEEL. The main objective in FEEL systems is not solely reliability or throughput but maximizing training accuracy within a constrained training period. This distinct goal arises from the nature of FEEL, where collaborative model training across distributed devices must balance communication efficiency with learning performance. Given this shift, conventional retransmission protocols are insufficient for FEEL’s unique requirements. Instead, there is a need for an optimization strategy specifically designed to enhance training accuracy while efficiently utilizing the available training period. Such a protocol would align with FEEL’s goals, ensuring effective communication without compromising the FL process.

Optimizing HARQ retransmissions for FEEL systems requires balancing training accuracy against the communication overhead that retransmissions introduce. Retransmissions help mitigate packet errors, ensuring that the gradient updates received at the edge server are closer to the true gradients. This improvement can enhance the convergence rate and increase the accuracy of the trained model [31]. On the other hand, retransmissions inherently lead to higher communication latency, which can extend the overall training duration [31]. This trade-off between accuracy and latency is a critical challenge in FEEL system design. To address it effectively, a strategic approach is needed to determine the average number of retransmission attempts that contributes significantly to model accuracy while minimizing unnecessary delays.

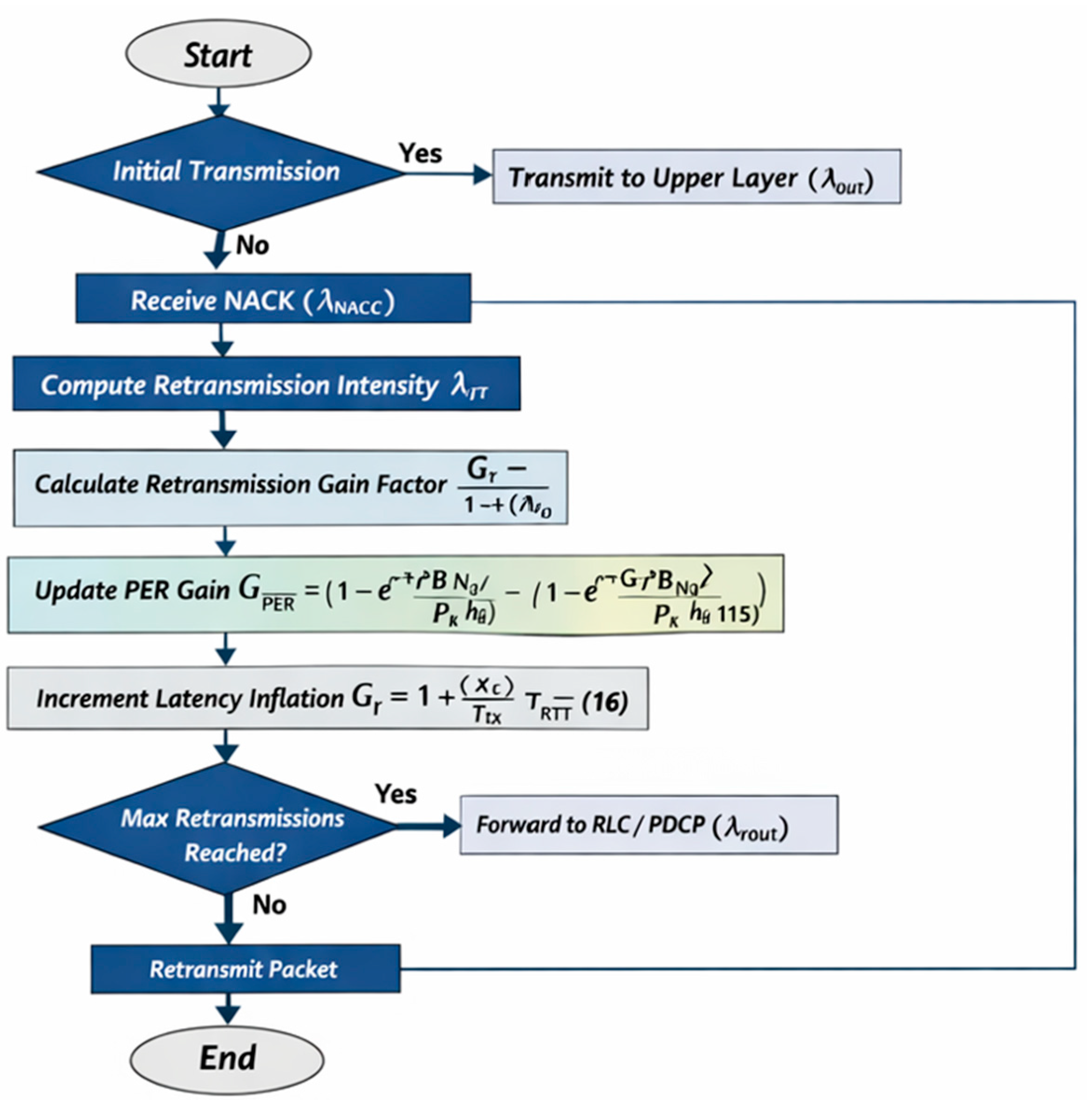

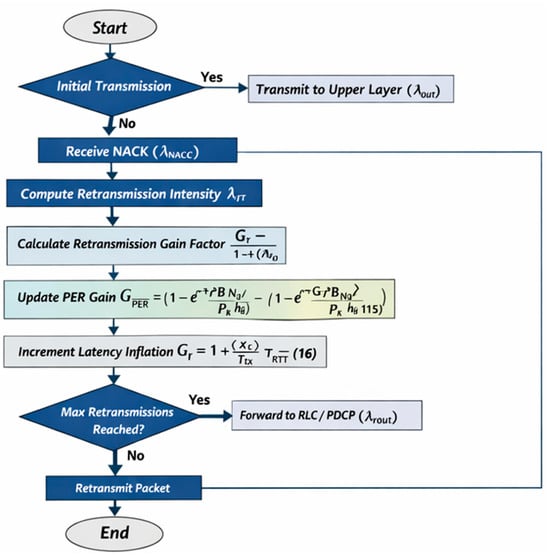

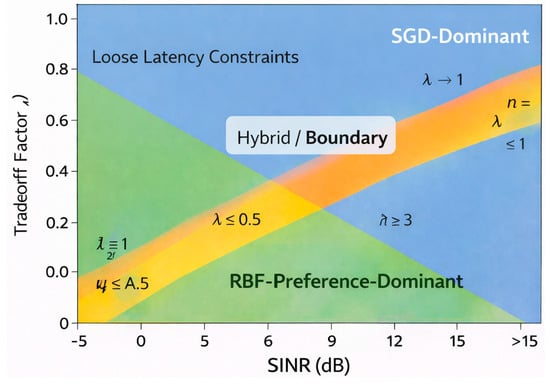

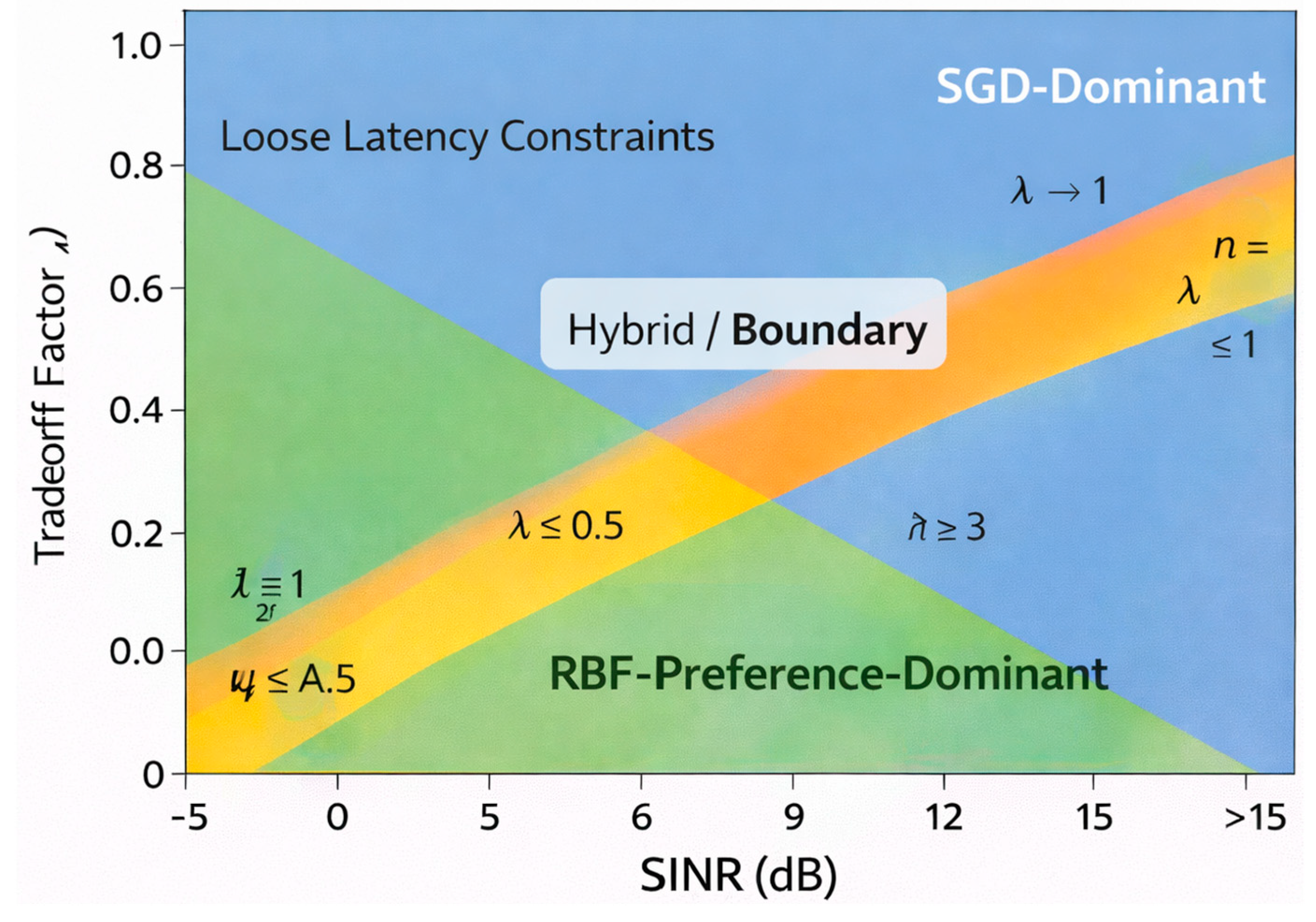

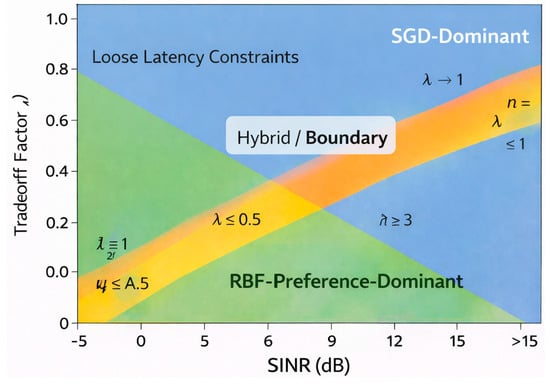

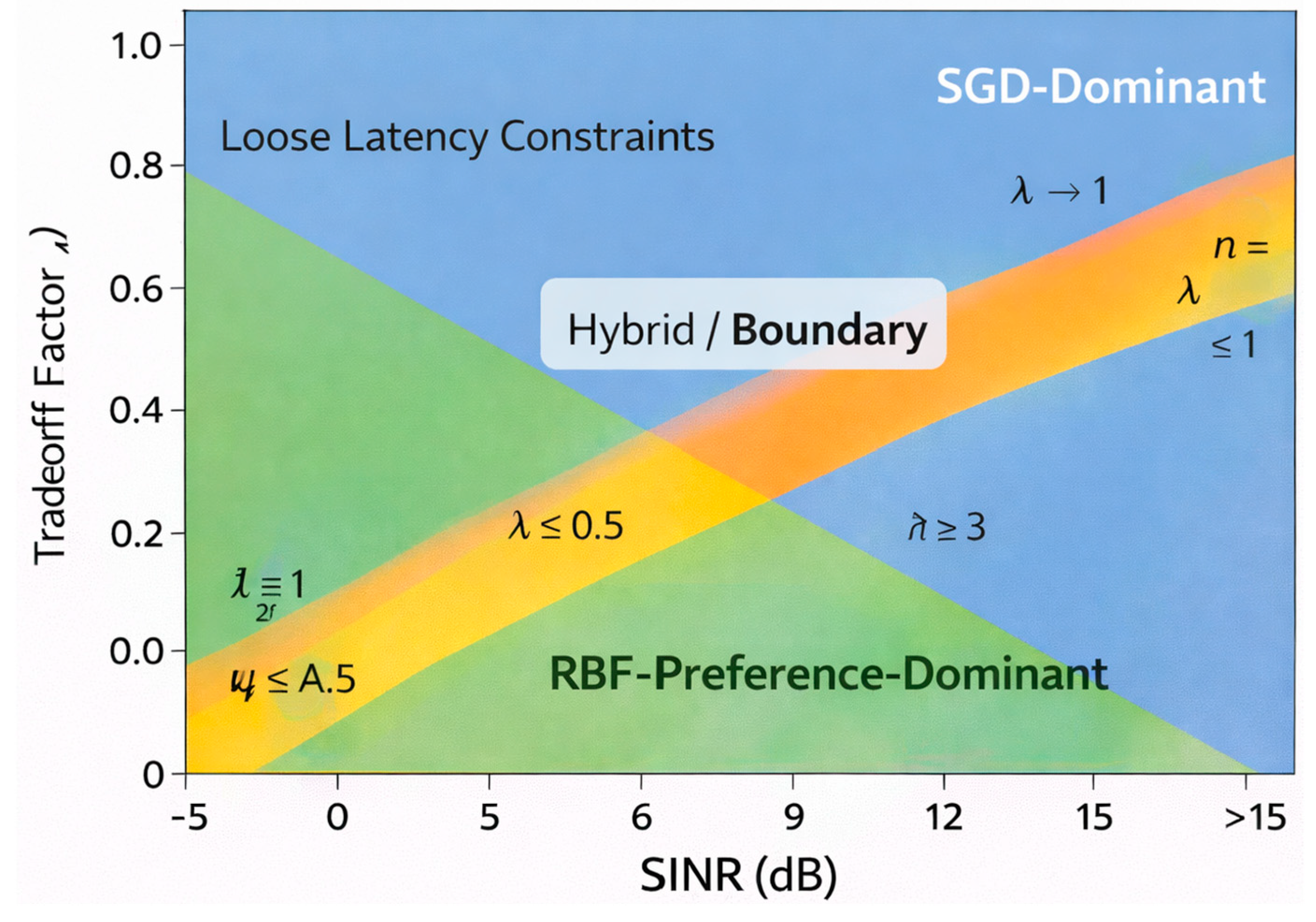

An efficient cost function is needed to account for the benefits and losses associated with retransmissions and to balance the trade-off between gains and costs when designing a retransmission strategy. Figure 5 depicts the proposed optimization approach for HARQ-aware FEEL based on RBF modeling. The framework transforms the discrete, non-convex retransmission control problem into a tractable global optimization task. RBF surrogate functions are used to approximate the retransmission gain and latency cost components of the system. This enables efficient exploration of the reliability–timeliness trade-off under dynamic channel conditions. The figure motivates the subsequent mathematical formulation of the RBF-based cost function and preference-driven optimization process. The target objective is to optimize the number of retransmissions nmac so as to maximize the performance improvement gained from retransmissions while minimizing the associated communication expenses. To address this, we introduce a trade-off factor, λ ∈ [0, 1], which represents the balance between retransmission gain and cost. Using this factor, the retransmission gain–cost trade-off can be formulated as a mathematical optimization problem. The goal is to derive a scheme that efficiently allocates retransmission resources, ensuring optimal learning performance without unnecessary increases in communication overhead:

s.t. λ ∈ [0, 1], the trade-off factor between the retransmission gain and the latency cost.

Figure 5.

The optimization approach based on FEEL with radial basis functions (RBFs).

nmac ∈ {0, 1, …, ν}, the retransmission index for device k, with ν as the maximum retransmission limit.

- To the best of our knowledge, this is a discrete, non-convex optimization problem due to the constraint nmac ∈ {0, 1, …, ν}. Solving it directly with SGD involves approximating gradients and handling combinatorial constraints.
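Because the feasible set {0, 1, …, ν} is small, the discrete problem can be illustrated by exhaustive search. The sketch below assumes a weighted objective of the form λ·G(n) − (1 − λ)·C(n), which is one common way to express a gain–cost trade-off and is not a transcription of the paper's problem statement. The toy gain and cost functions, their parameters, and all names are hypothetical.

```python
def best_retransmission_index(gain, cost, trade_off, max_retx):
    """Exhaustively maximize trade_off * gain(n) - (1 - trade_off) * cost(n)
    over the integer retransmission indices n in {0, 1, ..., max_retx}."""
    if not 0.0 <= trade_off <= 1.0:
        raise ValueError("trade_off (lambda) must lie in [0, 1]")

    def objective(n):
        return trade_off * gain(n) - (1.0 - trade_off) * cost(n)

    return max(range(max_retx + 1), key=objective)


def toy_gain(n, per=0.3):
    """Hypothetical gain: delivery probability after n retransmissions."""
    return 1.0 - per ** (n + 1)


def toy_cost(n, cost_per_retx=0.1):
    """Hypothetical latency cost, linear in the number of retransmissions."""
    return cost_per_retx * n


if __name__ == "__main__":
    for lam in (0.0, 0.5, 1.0):
        print(lam, best_retransmission_index(toy_gain, toy_cost, lam, 4))
```

With λ = 0 only latency matters and no retransmission is selected, with λ = 1 only reliability matters and the maximum ν is selected, and intermediate values pick an interior index. Exhaustive search is viable here only because ν is small; it serves as a reference point for the surrogate-based approach that follows.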

3.2. The Proposed Optimization Algorithm

Optimization of complex cost functions is a fundamental challenge in machine learning and engineering applications, particularly when dealing with non-convexity, high-dimensional spaces, or inherent noise. Traditional approaches such as SGD are widely used, but they come with several limitations, including their reliance on local gradient information and their susceptibility to getting stuck in local minima. In contrast, global optimization strategies that integrate active preference learning with RBF support provide a more effective alternative by leveraging structured exploration and efficient function approximation, as seen in Figure 5.

Active preference learning is a methodology that dynamically refines the search space by incorporating feedback from a user or an automated system. This feedback helps direct the optimization process toward promising regions, avoiding exhaustive exploration of the entire solution space. By combining this approach with radial basis functions, a surrogate model can be constructed to approximate the cost function globally. The RBF model serves as an interpolative framework, capturing the underlying structure of the objective function and reducing the reliance on direct evaluations of the often-expensive cost function.

A major advantage of this approach lies in its ability to efficiently explore and exploit the search space. Unlike SGD, which depends solely on local gradient information and updates parameters iteratively based on stochastic estimates, RBF-based optimization methods utilize a global perspective. The surrogate model identifies promising regions with greater precision, thus accelerating the convergence process. This is particularly useful in scenarios where the cost function exhibits multiple local optima, rendering traditional gradient-based techniques less effective. Another significant benefit of RBF-based optimization is computational efficiency. Cost function evaluations can be expensive, particularly in problems where each function call involves complex simulations or large-scale computations. By employing an RBF surrogate model, the number of direct evaluations is significantly reduced, alleviating the computational burden. In contrast, SGD relies on repeated gradient calculations, which can be resource-intensive, especially in high-dimensional spaces or when dealing with large datasets. This reduction in computational load makes RBF-based methods more practical for real-world optimization tasks where efficiency is crucial. Furthermore, the robustness of RBF-based optimization against noisy data provides a notable advantage. Noise in optimization problems can arise from measurement inaccuracies, stochastic system behavior, or inherent uncertainties in the data. The smooth approximation properties of RBF mitigate these issues by filtering out noise and producing a more stable optimization landscape. SGD, on the other hand, is highly sensitive to noise, as its reliance on local gradients can lead to erratic updates and poor convergence behavior in the presence of high variance in the data.

An additional strength of RBF-based optimization is its applicability to non-differentiable cost functions. Many real-world problems involve discontinuities or non-smooth objective functions, where gradient-based approaches struggle due to undefined or misleading gradients. Since RBF methods construct an approximation based on function values rather than derivatives, they can effectively handle these challenges, broadening their applicability to a wider range of optimization problems. Active preference learning further enhances the effectiveness of this approach by prioritizing regions of interest based on available information. Unlike SGD, which follows a predefined learning rate and update schedule, active preference learning enables the optimization process to dynamically allocate resources where they are most needed. This results in a more efficient and adaptive optimization process, ensuring that computational effort is focused on the most promising areas of the search space.

FL involves decentralized model training across multiple devices with constraints on communication bandwidth and computational resources. Traditional SGD-based optimization in FL can suffer from inefficiencies due to high communication overhead and slow convergence rates. By transforming the problem into a global optimization framework with active preference learning and RBFs, it becomes possible to balance retransmission gains against cost factors more effectively. The advantages of this approach extend beyond FL to other domains, including engineering design, machine learning hyperparameter tuning, and automated decision-making systems. The ability to incorporate domain knowledge through active preference learning, combined with the global modeling capabilities of RBFs, makes this methodology highly adaptable to diverse optimization challenges.

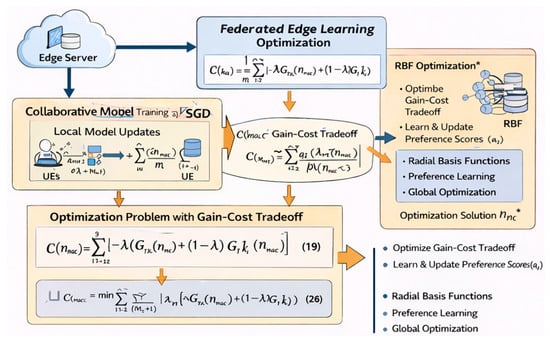

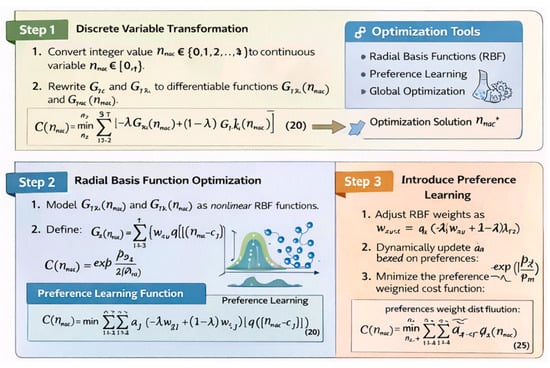

To address the retransmission gain–cost trade-off optimization problem in an FL setup, we transform the SGD optimization problem into a global optimization problem over a cost function that incorporates RBF-based active preference learning (Figure 6).

Figure 6. The FEEL/RBF optimization approach steps.

- Step 1: Discrete variable transformation

To facilitate gradient-based optimization:

- Convert the integer variable nmac ∈ {0, 1, 2, …, ν} to a continuous variable nmac ∈ [0, ν].

- Rewrite the retransmission gain and the latency cost as continuously differentiable functions of the relaxed nmac.

And the converted problem (19) is transformed into

s.t: λ ∈ [0, 1],

- Step 2: Radial Basis Functions

RBFs are used to model the retransmission gain and the latency cost as nonlinear functions, which keeps the relaxed problem amenable to optimization. By definition, a radial function is a function φ: [0, ∞) → ℝ; when paired with a norm ‖·‖ on a vector space V (e.g., the Euclidean norm), a function of the form φc(x) = φ(‖x − c‖) is a radial kernel centered at c ∈ V [44]. An RBF model approximates a function as a weighted sum of Gaussian kernels:

where is the Gaussian RBF kernel with parameter controlling the spread, are the RBF centers, and are the weights, approximating the retransmission gain and the latency cost as

Hence, the global cost function over all K devices becomes
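To make Step 2 concrete, the following is a minimal sketch of a Gaussian RBF surrogate, not the exact model used in the paper. The toy gain and cost curves, the spread parameter `gamma`, the choice of centers, and the scalarized form `-lam * gain + (1 - lam) * cost` are all illustrative assumptions; only the Gaussian kernel and the weighted-sum structure come from the text.

```python
import numpy as np

def gaussian_kernel(x, centers, gamma):
    """Gaussian RBF values exp(-gamma * (x - c)^2) for scalar inputs x and centers c."""
    diff = np.asarray(x, dtype=float)[:, None] - np.asarray(centers, dtype=float)[None, :]
    return np.exp(-gamma * diff ** 2)

def fit_rbf(centers, values, gamma):
    """Solve Phi w = values so that the surrogate interpolates the samples at the centers."""
    phi = gaussian_kernel(centers, centers, gamma)
    return np.linalg.solve(phi, values)

def rbf_predict(x, centers, weights, gamma):
    """Evaluate the weighted sum of Gaussian kernels at the points x."""
    return gaussian_kernel(x, centers, gamma) @ weights

# Hypothetical toy curves over the relaxed retransmission index n in [0, nu].
nu = 10
centers = np.linspace(0.0, nu, 6)
gain = 1.0 - np.exp(-0.6 * centers)   # assumed saturating reliability gain
cost = centers / nu                   # assumed linear latency cost
gamma = 0.5                           # assumed kernel spread parameter

w_gain = fit_rbf(centers, gain, gamma)
w_cost = fit_rbf(centers, cost, gamma)

# Assumed scalarization: reward gain, penalize cost, weighted by lam in [0, 1].
lam = 0.7
grid = np.linspace(0.0, nu, 201)
surrogate = (-lam * rbf_predict(grid, centers, w_gain, gamma)
             + (1.0 - lam) * rbf_predict(grid, centers, w_cost, gamma))
n_star = grid[np.argmin(surrogate)]   # relaxed (continuous) minimizer on the grid
```

Because the surrogate is cheap to evaluate, a dense grid search over [0, ν] suffices in this one-dimensional toy case; a higher-dimensional problem would need a proper global search over the surrogate.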

- Step 3: Introduce a Preference Learning variant

After solving the relaxed optimization problem, the continuous optimal value of nmac is mapped to an integer by projecting it onto the nearest value in {0, 1, …, ν}. This nearest-integer projection ensures feasibility with respect to HARQ protocol constraints. The retransmission cost function is monotonic and piecewise smooth in nmac. Since nmac takes values only in a small, bounded integer range, the projection error is itself bounded: nearest-integer rounding moves the relaxed optimum by at most 1/2.

As a result, the objective deviation introduced by projection is upper-bounded and does not alter the qualitative behavior of the solution. Empirically, no noticeable performance degradation is observed compared to exhaustive integer evaluation in the considered parameter range.
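The projection step can be sketched as follows. The function name and the round-half-up tie-breaking rule are assumptions made for illustration; the text only specifies a nearest-integer projection onto the admissible retransmission range.

```python
import math

def project_to_harq_index(n_relaxed, nu):
    """Clip the relaxed optimum to [0, nu], then round to the nearest integer.

    Ties (x.5) are rounded up, which is an assumed convention.
    """
    clipped = min(max(float(n_relaxed), 0.0), float(nu))
    return int(math.floor(clipped + 0.5))

# Worst-case distance between a feasible relaxed value and its projection.
nu = 10
max_error = max(abs(project_to_harq_index(i / 100.0, nu) - i / 100.0)
                for i in range(0, 100 * nu + 1))
```

For any relaxed value already inside [0, ν], the projection moves it by no more than 0.5, which is the bound the preceding paragraph relies on.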

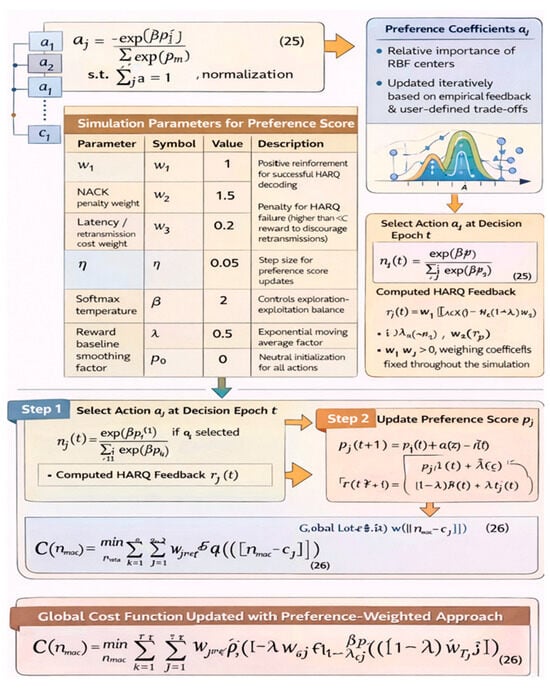

Preference learning is incorporated to adjust the trade-off dynamically based on user or system preferences (Figure 7). It introduces a weighting or ranking scheme for the RBF components based on prior knowledge or empirical data, either by assigning higher weights to RBF centers that correspond to desirable trade-offs between retransmission gain and latency cost, or by using domain-specific knowledge to determine the relative importance of the two. Using the learning weights in Table 1, we propose to modify each RBF weight by a preference factor for the ith RBF center, derived from preference learning algorithms. The preference coefficients represent the relative importance of the different RBF centers in modeling the global cost function. They are updated dynamically by active preference learning, which adapts to empirical data or to user-defined trade-offs between gain and cost.

Figure 7. The proposed RBF preference learning algorithm.

Table 1. Simulation parameters for preference score action set.

To ensure a smooth preference transition and maintain convexity in the optimization framework, we define as per Figure 7 the preference coefficient factors as

s.t. normalization , preserving the probabilistic interpretation of the preference coefficient factors.

- where in Table 1

is the preference score assigned to the jth RBF center, computed based on empirical feedback or predefined heuristics.

is a scaling parameter that controls the sensitivity of the preference weighting distribution.
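One form consistent with the description above (a per-center preference score, a scaling parameter that sets sensitivity, and a normalization constraint) is a softmax over the scores. The symbols $\alpha_i$, $s_i$, and $\beta$ below are assumed names for the preference coefficient, the preference score, and the scaling parameter; they are not taken from the original equations.

```latex
\alpha_i = \frac{\exp(\beta s_i)}{\sum_{j=1}^{J} \exp(\beta s_j)},
\qquad \sum_{i=1}^{J} \alpha_i = 1,
\qquad \alpha_i > 0 .

% Worked example with J = 2, s = (0, 1), \beta = 1:
\alpha_1 = \frac{1}{1 + e} \approx 0.269,
\qquad
\alpha_2 = \frac{e}{1 + e} \approx 0.731 .
```

Under this assumed form, letting $\beta \to 0$ yields uniform coefficients $\alpha_i = 1/J$, while a large $\beta$ concentrates the weight on the highest-scoring center, which matches the role of a sensitivity parameter.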

Let denote the finite action set (e.g., candidate HARQ-related control actions or scheduling configurations). Each action is associated with a preference score , representing the algorithm’s confidence in the long-term utility of selecting action at decision epoch .

During the simulation procedure, at initialization ∀j ∈ {1,…,J} where is a neutral prior (set to zero in all simulations). At each decision epoch , the probability of selecting action is obtained using a softmax policy:

where controls the exploration–exploitation trade-off.

After executing action , the system observes a scalar feedback signal , computed from measurable HARQ-level performance metrics. In the simulations, the feedback signal is defined as where

- and are binary indicators of HARQ success or failure,

- is the normalized retransmission or latency cost,

- are weighting coefficients, fixed throughout the simulation.

The preference scores are updated online using a stochastic gradient ascent rule inspired by policy-gradient methods:

where

- is the learning rate,

- is an exponential moving average baseline, used to reduce variance and stabilize convergence.
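The decision loop described above (softmax action selection, scalar HARQ-level feedback, and a baseline-corrected stochastic gradient ascent update) can be sketched as below. This is a gradient-bandit style update, used here as a stand-in because the exact update rule is not recoverable from the text. The per-action success rates, latency costs, weighting coefficients, learning rate, temperature, and smoothing factor are all hypothetical values.

```python
import numpy as np

def softmax(scores, temperature):
    """Numerically stable softmax policy over preference scores."""
    z = np.asarray(scores, dtype=float) / temperature
    z = z - z.max()
    e = np.exp(z)
    return e / e.sum()

def update_scores(scores, action, reward, baseline, lr, temperature):
    """One ascent step: raise the chosen action's score if reward beats the baseline."""
    probs = softmax(scores, temperature)
    one_hot = np.zeros_like(probs)
    one_hot[action] = 1.0
    # The 1/temperature factor of the exact gradient is absorbed into lr.
    return scores + lr * (reward - baseline) * (one_hot - probs)

rng = np.random.default_rng(0)
success_prob = np.array([0.55, 0.80, 0.95])  # hypothetical HARQ success rate per action
latency_cost = np.array([0.10, 0.30, 0.90])  # hypothetical normalized cost per action
w_success, w_cost = 1.0, 0.5                 # assumed fixed weighting coefficients

scores = np.zeros(3)                         # neutral prior, as in the simulations
baseline = 0.0
lr, temperature, ema = 0.1, 1.0, 0.9
for _ in range(3000):
    probs = softmax(scores, temperature)
    action = rng.choice(3, p=probs)
    success = float(rng.random() < success_prob[action])
    reward = w_success * success - w_cost * latency_cost[action]
    scores = update_scores(scores, action, reward, baseline, lr, temperature)
    baseline = ema * baseline + (1.0 - ema) * reward  # exponential moving average

final_policy = softmax(scores, temperature)
```

Subtracting the moving-average baseline does not change the expected update direction but reduces its variance, which is the stabilizing role the text attributes to it.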

The global cost function over all K devices then becomes

With and being updated iteratively based on active preference learning decisions, the above algorithm converges in a finite number of steps (Appendix A).

Finally, the trade-off factor λ controls the relative importance of retransmission-related gain and latency cost. This formulation corresponds to a scalarization of a bi-objective optimization problem, where varying λ moves the operating point along the same Pareto-optimal frontier without altering the feasible set. Since both cost components are bounded and continuous, the resulting objective function is Lipschitz continuous with respect to λ. Moreover, the RBF-based approximation preserves this continuity, as the basis functions are independent of λ and only the associated weights are linearly scaled. Consequently, the solution obtained via the RBF method varies smoothly with λ, ensuring robustness of the proposed approach across a wide range of trade-off values (Appendix B).
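The Lipschitz claim can be checked in one line under an assumed scalarized form. Here $G$ and $C$ denote the retransmission gain and the latency cost, $G_{\max}$ and $C_{\max}$ their bounds, and the objective $J_\lambda = -\lambda G + (1-\lambda) C$ is an illustrative assumption rather than the paper's exact cost function (Appendix B gives the authors' argument).

```latex
J_\lambda(n) = -\lambda\, G(n) + (1 - \lambda)\, C(n),
\qquad |G(n)| \le G_{\max}, \quad |C(n)| \le C_{\max} .

\left| J_\lambda(n) - J_{\lambda'}(n) \right|
  = |\lambda - \lambda'| \, \left| G(n) + C(n) \right|
  \le \left( G_{\max} + C_{\max} \right) |\lambda - \lambda'| .
```

Since the bound holds uniformly in $n$, the optimal value $\min_n J_\lambda(n)$ is Lipschitz in $\lambda$ with the same constant. The same argument applies to the RBF surrogate whenever the approximated gain and cost remain bounded.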

The computational complexity and overhead cost analysis in Appendix C demonstrates that the proposed RBF-based active preference learning optimization framework is substantially more efficient than conventional SGD-based approaches in FEEL systems. SGD incurs a computational complexity that scales linearly with both the training dataset size and the model dimensionality, i.e., , requiring repeated transmission of high-dimensional gradient vectors under HARQ. Our proposed method replaces iterative gradient evaluations with a global surrogate model of complexity , where the number of RBF centers and preference learning iterations is typically small and bounded. Moreover, the communication overhead is significantly reduced, as the proposed framework relies on scalar HARQ-level feedback and preference scores rather than full gradient exchanges, resulting in an overhead of order , compared to for SGD-based FEEL.

Although the proposed RBF-based preference learning framework provides substantial gains in low-SINR and latency-constrained FEEL scenarios, SGD may outperform the proposed method in regimes characterized by very high SINR, negligible HARQ retransmissions, and reliability-dominated optimization objectives (), as seen in Appendix C. In such cases, gradient variance is low, retransmission overhead is minimal, and the asymptotic convergence guarantees of SGD become dominant. However, as SINR degrades, HARQ retransmissions increase, or latency constraints tighten, the effective SGD convergence rate deteriorates proportionally to the average retransmission count, while the proposed approach maintains stable performance through global surrogate modeling and preference-guided optimization. This establishes a clear operational boundary under which the proposed method is preferable for practical FEEL deployments.

In conclusion, the proposed approach explicitly optimizes the retransmission index through a preference-weighted cost function, which further limits unnecessary HARQ retransmissions and yields additional latency and energy savings. Overall, this analysis confirms that the proposed algorithm achieves superior scalability and communication efficiency while maintaining effective optimization performance, making it particularly well-suited for FEEL under stringent latency and bandwidth constraints.

4. Discussion

The theoretical framework outlined above establishes the foundational principles for optimizing HARQ retransmissions in FEEL environments. To summarize, the proposed framework explicitly addresses the inherent trade-off between transmission reliability and latency introduced by HARQ retransmissions. On one hand, additional retransmissions increase decoding reliability by improving the effective SINR through redundancy and diversity gain, thereby reducing the packet error rate (PER). On the other hand, each retransmission incurs an additional round-trip delay, leading to transmission latency inflation and increased resource occupation. These two objectives are fundamentally conflicting, particularly in dynamic wireless environments where channel conditions fluctuate rapidly.

This trade-off is formally captured in the proposed cost function through the weighting parameter λ, which controls the relative importance of HARQ retransmission gain versus latency penalty. A larger value of λ biases the optimization toward reliability, favoring retransmission-intensive strategies that minimize PER, while a smaller value prioritizes latency reduction by limiting retransmissions. The proposed RBF-based optimization framework learns this balance adaptively from observed system behavior, enabling dynamic adjustment of retransmission strategies without requiring an explicit analytical model of the underlying wireless channel.

Importantly, the trade-off is not resolved by selecting a single optimal operating point, but by allowing the optimizer to continuously adapt decisions based on instantaneous HARQ outcomes, channel conditions, and learning dynamics. This adaptive handling of the reliability–latency trade-off is a key advantage of the proposed approach over static or expectation-based optimization methods and is particularly well-suited for FEEL-enabled 6G systems where both ultra-reliability and low latency are critical performance requirements.

To validate the efficacy of the proposed methodology, simulation-based analyses were conducted under realistic wireless conditions. These simulations account for fluctuating SINR, PER, and the resource constraints inherent to wireless RF communication scenarios.

Key parameters included

- Network Topology: A single edge server with K connected devices, each storing nk local datasets.

- Wireless Environment: Fading channels with varying SINR and packet error rates (PER).

- Compared Algorithms: The proposed RBF-based optimization was benchmarked against SGD, DSGD, and KKT-based optimization.

- Metrics Evaluated: Convergence rate, accuracy, retransmissions, and latency.

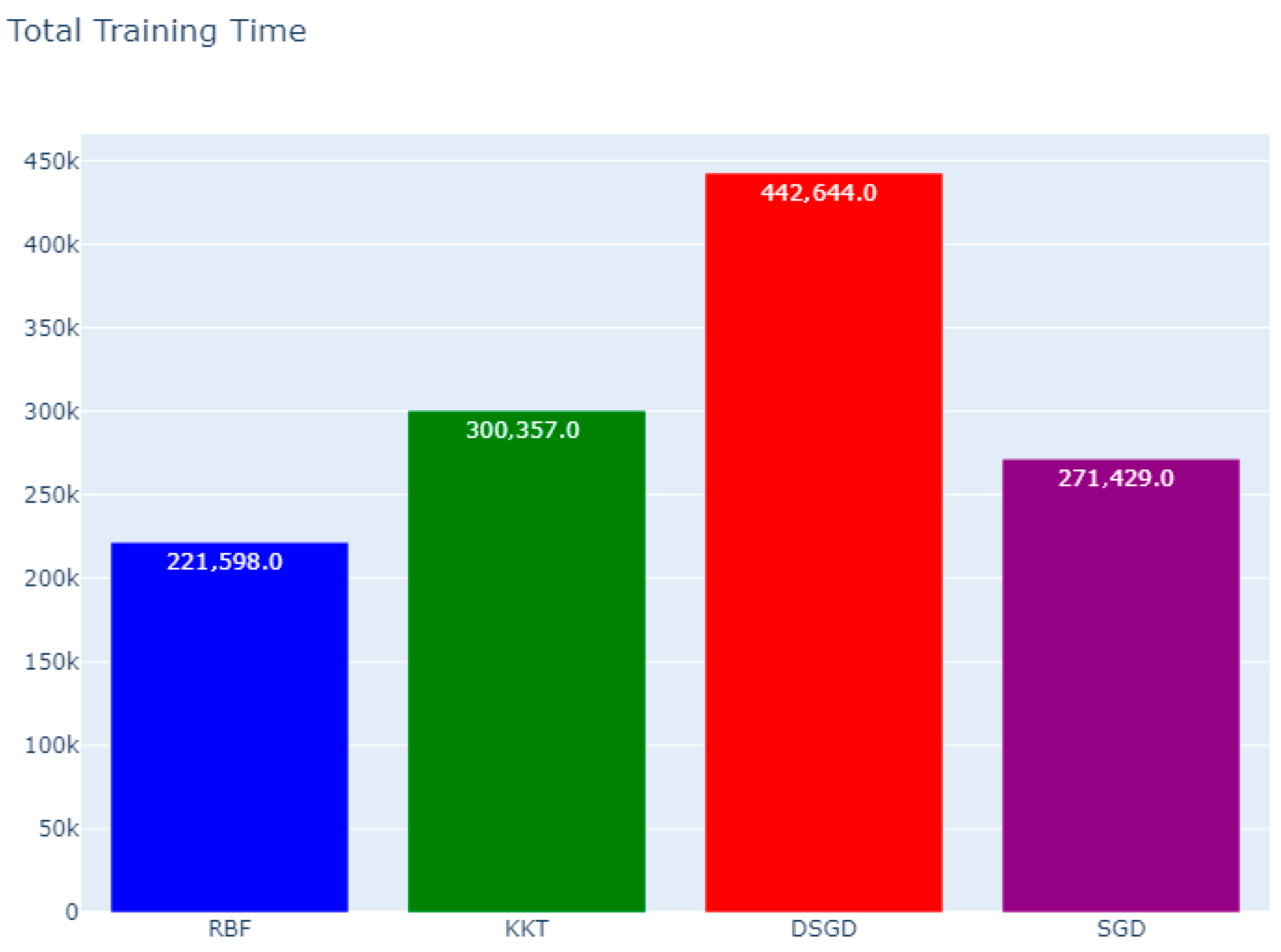

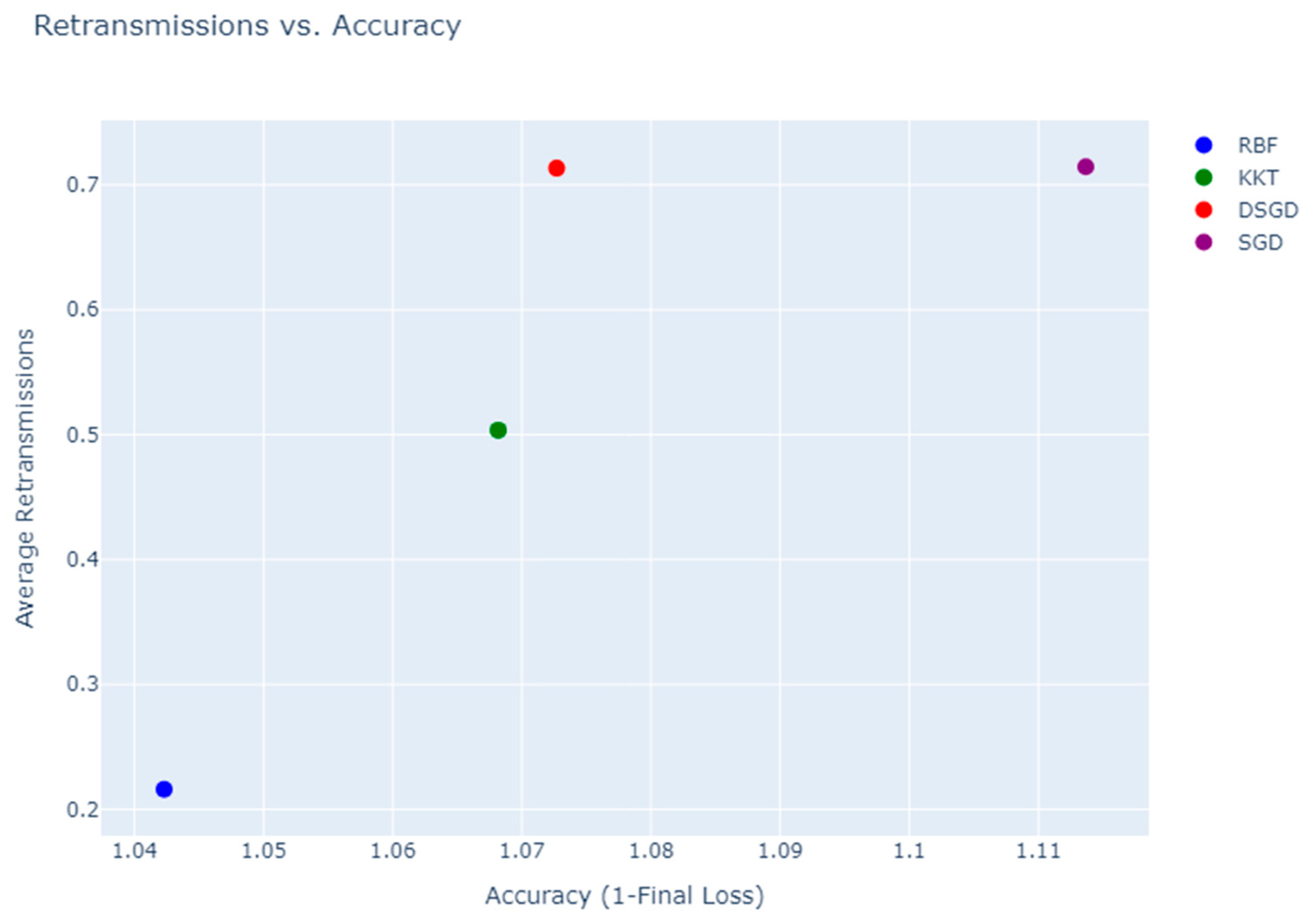

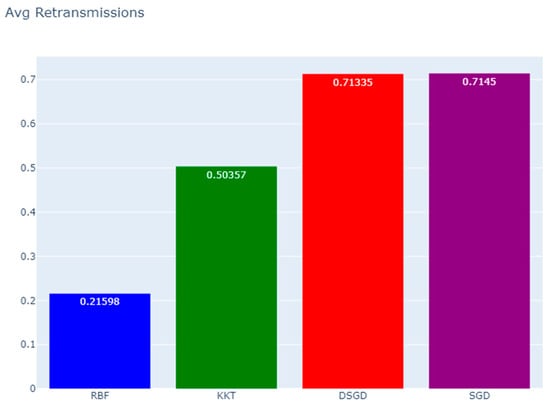

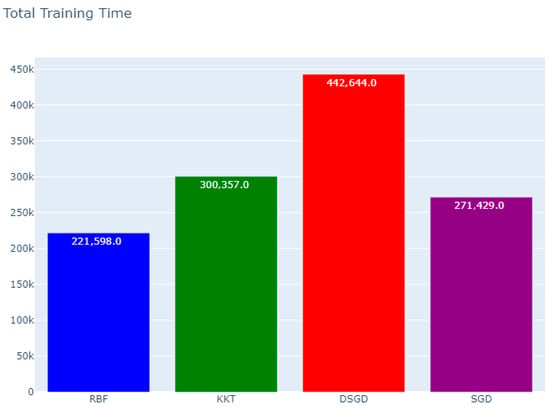

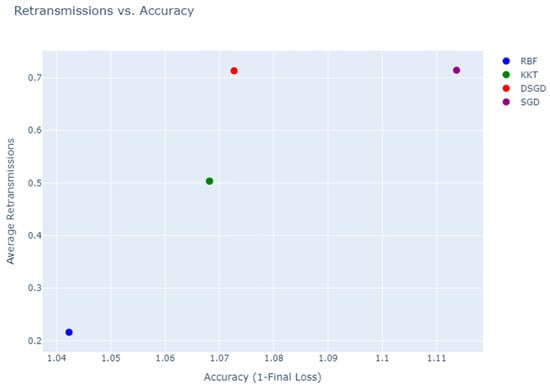

The goal of this simulation is to assess and compare four optimization techniques within a FEEL framework: radial basis functions (RBFs), stochastic gradient descent (SGD), decentralized stochastic gradient descent (DSGD), and Karush–Kuhn–Tucker (KKT). The focus is on optimizing HARQ retransmissions to balance reliability, latency, and convergence speed. The intent is to demonstrate that RBF surpasses the other methods in terms of convergence, final global loss, and communication efficiency.

Although 6G systems are expected to operate across heterogeneous propagation environments, Rayleigh fading remains a well-justified and widely adopted baseline model for analyzing learning–communication interactions under channel uncertainty. In our analysis of distributed and federated learning over unreliable wireless networks, the primary objective is not to capture environment-specific propagation details, but to characterize the stochastic unreliability of packet delivery, which directly affects gradient timeliness, retransmission behavior, and learning convergence. Rayleigh fading accurately models rich-scattering, non-line-of-sight (NLoS) conditions commonly encountered in dense deployments, indoor scenarios, cell-edge operation, and ultra-dense edge networks, all of which are central to practical FEEL implementations.

More importantly, Rayleigh fading induces random, memoryless packet error behavior, enabling analytically tractable and statistically representative modeling of PER and HARQ retransmission dynamics. This is essential for isolating the fundamental reliability–timeliness trade-off that the paper investigates. From a learning perspective, the proposed framework is agnostic to the specific fading distribution; it relies on the induced PER and retransmission-induced latency inflation rather than on channel-specific parameters. The concept of eventual throughput, as introduced in this work, captures the effective learning contribution of updates under delayed or unreliable delivery, independent of whether the underlying fading follows Rayleigh, Rician, or composite models. As such, Rayleigh fading serves as a conservative, worst-case baseline, ensuring that the derived insights remain valid under more favorable propagation conditions.

Finally, the use of Rayleigh fading aligns with established practice in both 5G and emerging 6G literature when evaluating protocol-level and learning-aware mechanisms, particularly when the goal is to demonstrate robustness and generality rather than environment-specific optimization. Extending the analysis to alternative fading models is straightforward and left as future work, without affecting the validity of the conclusions drawn in this paper.

The simulation, summarized in Table 2, is based on the following network and learning conditions:

Table 2. Overall simulation parameters.

- Channel Model: Rayleigh fading to simulate dynamic wireless channel behavior.

- SINR Variations: SINR is dynamic per round, varying between 0 and 12 dB.

- HARQ Model: A maximum of 10 retransmissions per packet, triggered according to the packet error rate (PER).
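The channel and HARQ conditions listed above can be illustrated with a small Monte Carlo sketch. It makes several simplifying assumptions that are not stated in the paper: the PER is modeled as the Rayleigh outage probability against a hypothetical 3 dB decoding threshold, the per-round mean SINR is drawn uniformly from 0 to 12 dB, and transmission attempts are independent with no combining gain across retransmissions.

```python
import numpy as np

def rayleigh_per(mean_sinr_db, threshold_db):
    """Outage-style PER under Rayleigh fading: P(instantaneous SINR < threshold).

    The instantaneous SINR is exponentially distributed around its mean.
    """
    mean_lin = 10.0 ** (mean_sinr_db / 10.0)
    thr_lin = 10.0 ** (threshold_db / 10.0)
    return 1.0 - np.exp(-thr_lin / mean_lin)

def simulate_harq(per, max_retx, rng):
    """Return (delivered, retransmissions used) for one packet with i.i.d. attempts."""
    for retx in range(max_retx + 1):  # first transmission plus up to max_retx retransmissions
        if rng.random() >= per:
            return True, retx
    return False, max_retx

rng = np.random.default_rng(1)
max_retx = 10
threshold_db = 3.0                    # hypothetical decoding threshold
n_rounds = 2000
retx_counts, delivered = [], 0
for _ in range(n_rounds):
    sinr_db = rng.uniform(0.0, 12.0)  # assumed uniform per-round mean SINR
    ok, retx = simulate_harq(rayleigh_per(sinr_db, threshold_db), max_retx, rng)
    delivered += int(ok)
    retx_counts.append(retx)

delivery_ratio = delivered / n_rounds
mean_retx = float(np.mean(retx_counts))
```

The pair (`delivery_ratio`, `mean_retx`) is the kind of reliability versus latency summary that the retransmission optimization trades off; a model with combining gain would lower the PER on each successive attempt.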

For the FL framework, the simulation assumes 20 edge devices that collaboratively train a global model, where each device holds 100 data points for a linear regression task. Global aggregation follows a centralized FEEL framework in which local gradients are transmitted to a parameter server. The optimization methods to be compared are

- RBF: Utilizes surrogate modeling for efficient global optimization.

- KKT: Applies a fixed retransmission-based optimization strategy.

- DSGD: A decentralized learning framework where nodes exchange information among themselves.