Aris-RPL: A Multi-Objective Reinforcement Learning Framework for Adaptive and Load-Balanced Routing in IoT Networks

Abstract

1. Introduction

- Introducing a Q-learning-based approach with a novel three-phase adaptive routing framework, including exploration, exploitation, and monitoring to effectively improve RPL data forwarding performance.

- Utilizing well-selected routing metrics, including Buffer Utilization, Energy Level, Received Signal Strength Indicator (RSSI), Overflow Ratio, and Child Count, to provide nodes with the ability to observe the neighborhood.

- Applying a hybrid topology monitoring mechanism that suppresses DIOs during stability and monitors overflow ratio to reactively trigger updates, balancing overhead and responsiveness.

2. Preliminaries

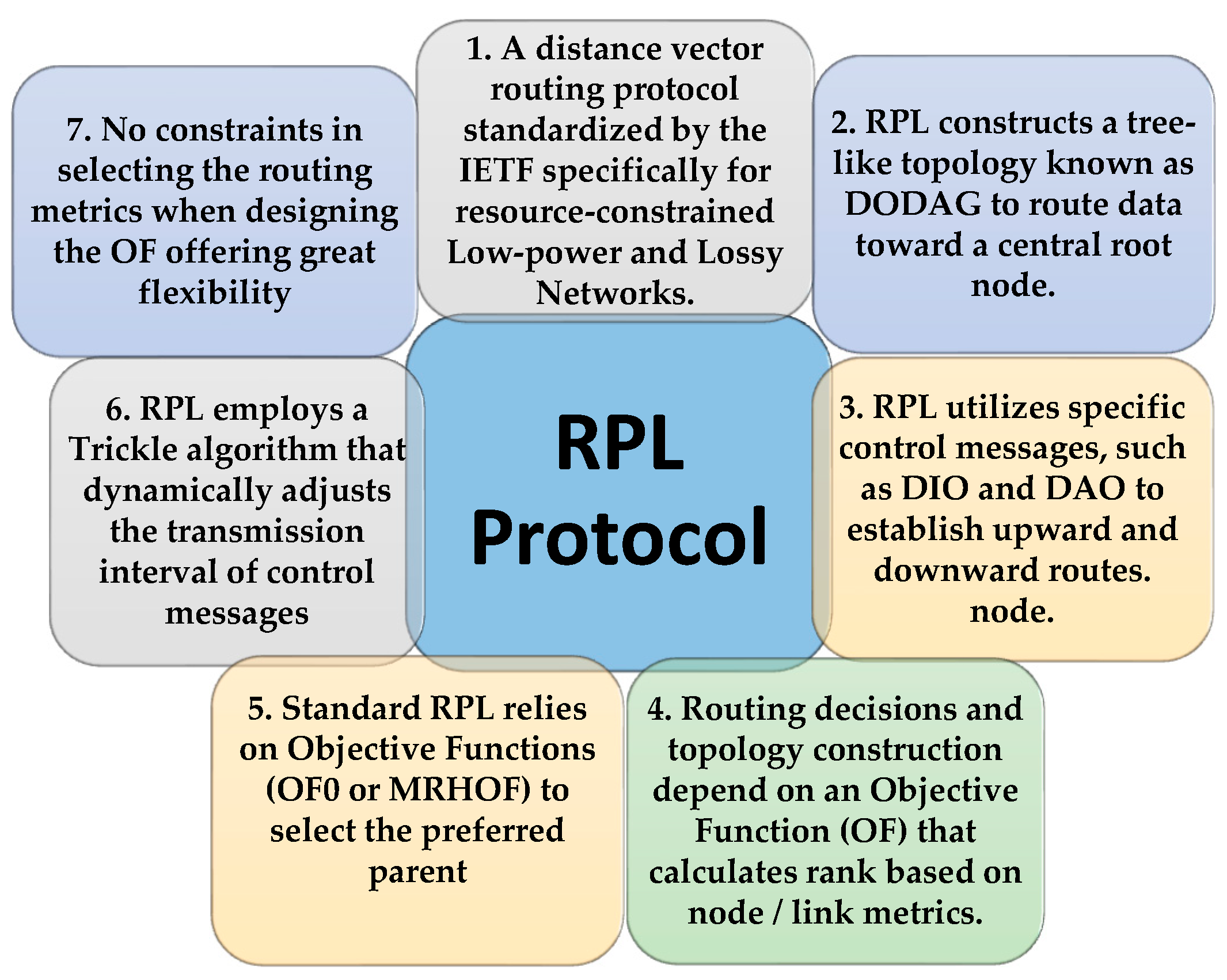

2.1. RPL Protocol Overview

2.2. Reinforcement Learning Overview

3. Related Work

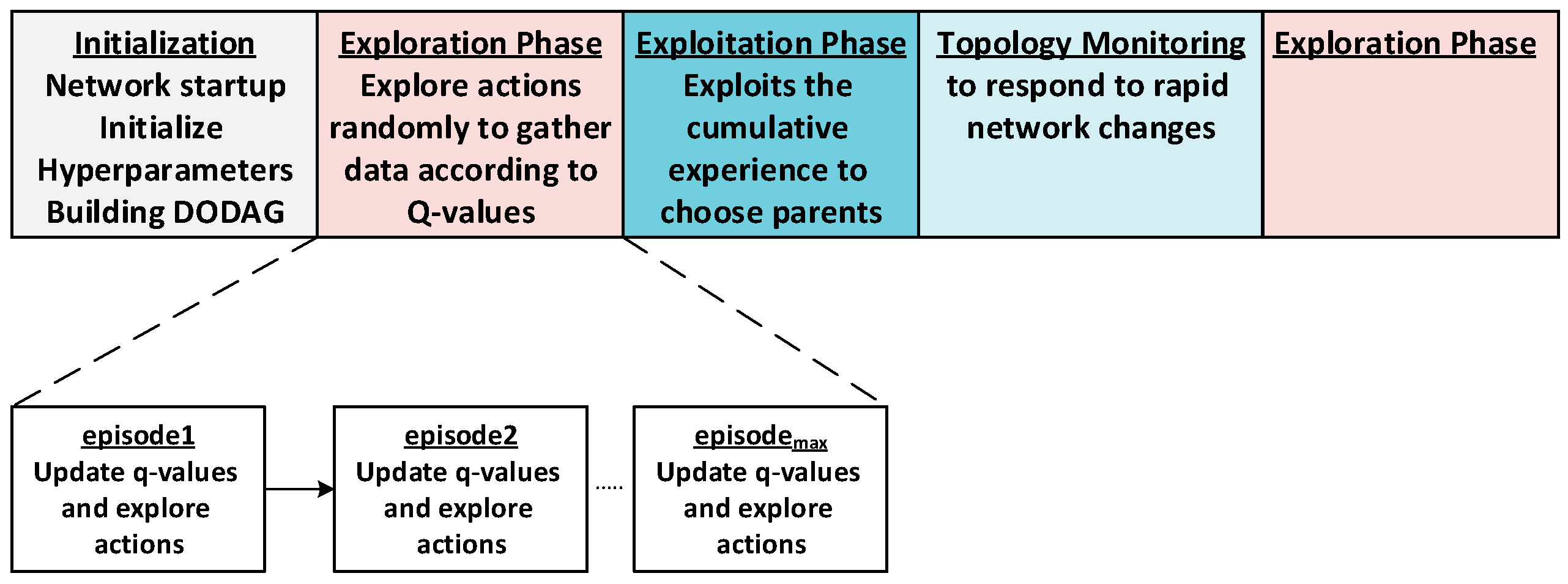

4. Methodology of the Proposed Routing Mechanism

4.1. System Model and Assumptions

- State Space: a finite set of states in the environment, which represents the WSN. Each node is a state in which the agent takes an action; Q-learning uses the current state to identify the possible actions and evaluate their expected rewards. Once a packet is forwarded, the next node becomes the new state, so the state space is the set of network nodes.

- Action Space: when the agent resides in a state, it observes the environment, takes an action, and moves to the next state. The agent (the node) selects one of its neighbors as the next forwarder, so the current node's neighbor set is its action set, and the number of available actions equals the node's number of neighbors. Q-learning uses this action space to update the Q-value of each possible action in the current state and refine its decisions.

- Reward: the agent calculates the reward upon receiving a signal from a state in the environment, based on the employed node and link metrics. According to (1), the Q-learning agent learns all available actions in each state and updates the corresponding Q-values using the reward signals, so that it can eventually choose the action with the maximum cumulative reward. The reward is detailed in Section 4.3.1.

- Distributed Network: The network is decentralized, without any central controller.

- Adherence to RPL Standards: Aris-RPL follows the RPL standards. All nodes (excluding the root node) are homogeneous regarding resource constraints such as power and radio range specifications.

- Symmetrical and Bidirectional Interactions: Communication between nodes is bidirectional and symmetrical. Network connectivity is dynamic according to network and node condition changes.

- Single/Multi-Hop Communication: The sink receives data packets from network nodes via single or multi-hop paths, depending on the nodes’ position.
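The state, action, and reward definitions above can be illustrated with a minimal tabular Q-learning step. This is only a sketch: it assumes the textbook Q-learning update rule (Equation (1) is not reproduced in this section), takes α = 0.4 and γ = 0.7 from the simulation parameters, and uses hypothetical node names and a hypothetical reward value.

```python
from collections import defaultdict

ALPHA = 0.4  # learning rate (α in the simulation parameters)
GAMMA = 0.7  # discount factor (γ in the simulation parameters)


def q_update(q_table, state, action, reward, next_state, next_actions):
    """One textbook Q-learning step (assumed form, not the paper's exact Equation (1))."""
    # Best Q-value reachable from the next state; 0.0 if it has no known actions.
    best_next = max((q_table[(next_state, a)] for a in next_actions), default=0.0)
    old = q_table[(state, action)]
    q_table[(state, action)] = old + ALPHA * (reward + GAMMA * best_next - old)
    return q_table[(state, action)]


# State = current node, action = neighbor chosen as next forwarder.
q = defaultdict(float)
new_q = q_update(q, "n3", "n1", reward=1.0, next_state="n1", next_actions=["root"])
```

Because the chosen neighbor becomes the next state, the table is keyed by (node, neighbor) pairs, and each node only needs the rows for its own neighbor set.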

4.2. Routing Metrics Used in Aris-RPL

4.2.1. Received Signal Strength Indicator (RSSI)

4.2.2. Buffer Utilization (BU)

4.2.3. Overflow Ratio (OFR)

4.2.4. Remaining Energy (RE)

4.2.5. Child Count

4.3. The Q-Learning Algorithm

4.3.1. The Reward Function

4.3.2. Q-Learning Value Function

| Algorithm 1 The Q-learning Algorithm | |||

| Input: Incoming DIO message from Neighboring j | |||

| Output: Outgoing DIO message with Updated metrics, Update the Q-Table | |||

| 1 | Define: D_Factor γ, L_Rate α, Buffer Utilization threshold, | ||

| 2 | Overflow Ratio threshold, RSSImax, RSSImin, | ||

| 3 | Max Child Count (CC), Extra, Penalty, Rmax; | ||

| 4 | Define: Routing Metrics (BU, OFR, RE, CC); | ||

| 5 | Neighboring_Node Nj, Receiving_Node Ni | ||

| 6 | Begin: | ||

| 7 | RSSIj ← Calculate RSSIj; | ||

| 8 | Calculate OFR, BU according to (6) and (7); | ||

| 9 | Normalize RSSIj; | ||

| 10 | Rewardj ← Calculate Reward (Routing Metrics); | ||

| 11 | if Nj is new then | ||

| 12 | Parent-Set[j].ParentID ← DIOj.NodeID; | ||

| 13 | Q-Table[j].ParentID ← Parent-Set[j].ParentID; | ||

| 14 | Q-Table[j].Parentqvalue ← QValueInitial; | ||

| 15 | end | ||

| 16 | if Nj is not new then | ||

| 17 | if Nj is the Network_root then | ||

| 18 | Q-Table[j].Parentqvalue is updated with (Rmax); | ||

| 19 | end | ||

| 20 | else if Nj is a root’s child then | ||

| 21 | Q-Table[j].Parentqvalue is updated with Rewardj + Extra; | ||

| 22 | end | ||

| 23 | else if Nj.Rank >Ni.Rank or CC is maximum then | ||

| 24 | Q-Table[j].Parentqvalue is updated with Penalty; | ||

| 25 | end | ||

| 26 | else | ||

| 27 | Q-Table[j].Parentqvalue is updated with Rewardj; | ||

| 28 | end | ||

| 29 | end | ||

| 30-31 | // Outgoing DIO message contains the updated routing metrics and the maximum neighboring Q-value; | ||

| 32 | exit: | ||
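The branch structure of Algorithm 1 can be sketched as follows. The listing only says that the Q-value "is updated with" a given signal, so the concrete update rule below (a standard Q-learning step that uses the maximum Q-value advertised in the neighbor's DIO) is an assumption, as are the `Dio` record, its field names, and `Q_INITIAL`. The numeric constants come from the simulation parameters.

```python
from dataclasses import dataclass

ALPHA, GAMMA = 0.4, 0.7                      # α, γ from the simulation parameters
R_MAX, R_EXTRA, PENALTY = 100.0, 5.0, -3.0   # RMax, Rextra, Penalty
MAX_CHILD_COUNT = 5
Q_INITIAL = 0.0                              # hypothetical: QValueInitial is not given


@dataclass
class Dio:
    """Hypothetical view of the fields a node reads from an incoming DIO."""
    node_id: str
    rank: int
    child_count: int
    is_root: bool
    is_root_child: bool
    max_q: float  # maximum neighboring Q-value advertised by the sender


def effective_reward(dio, my_rank, reward):
    """Choose the reward signal in the same branch order as Algorithm 1."""
    if dio.is_root:
        return R_MAX
    if dio.is_root_child:
        return reward + R_EXTRA
    if dio.rank > my_rank or dio.child_count >= MAX_CHILD_COUNT:
        return PENALTY
    return reward


def on_dio(q_table, dio, my_rank, reward):
    """Process one DIO: initialise a new neighbor, otherwise update its Q-value."""
    if dio.node_id not in q_table:
        q_table[dio.node_id] = Q_INITIAL
        return q_table[dio.node_id]
    r = effective_reward(dio, my_rank, reward)
    old = q_table[dio.node_id]
    # Assumed update rule (standard Q-learning), not confirmed by the listing.
    q_table[dio.node_id] = old + ALPHA * (r + GAMMA * dio.max_q - old)
    return q_table[dio.node_id]


q_table = {}
crowded = Dio("n1", rank=512, child_count=5, is_root=False, is_root_child=False, max_q=0.0)
on_dio(q_table, crowded, my_rank=768, reward=0.8)  # first DIO: entry is initialised
on_dio(q_table, crowded, my_rank=768, reward=0.8)  # child count at maximum: penalty applies
```

In this example the second DIO drives the entry negative, which makes the crowded neighbor less likely to be chosen during selection.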

4.4. Selection Algorithm

| Algorithm 2 Subsequent Node Selection Algorithm | ||||

| Input: Data Packet from a child node | ||||

| Parent-set and Q-table of the current node | ||||

| Output: Preferred next hop node (Parent) | ||||

| 1 | Define: Init value , episodeMax, | |||

| 2 | Begin: | |||

| 3 | if (Parent-set is Empty) then | |||

| 4 | broadcast a DIS message; | |||

| 5 | end | |||

| 6 | if episode ≤ episodeMax then //Exploration | |||

| 7 | according to (12); | |||

| 8 | Preferred-Parent ← Select from parent-set according to PRi(j) in (11); | |||

| 9 | episode++; | |||

| 10 | end | |||

| 11 | if episode > episodeMax then // Exploitation | |||

| 12 | Pmax ← Find QvalueMax(Parent-set, Q-Table); | |||

| 13 | return Pmax; | |||

| 14 | end | |||

| 15 | exit: Selected preferred parent | |||
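A compact sketch of the two selection phases in Algorithm 2 follows. The selection probability PRi(j) of (11) and the rule in (12) are not reproduced in this section, so a Boltzmann (softmax) distribution over Q-values with a hypothetical `temperature` parameter stands in for them; only the episode threshold (Episodemax = 10) is taken from the simulation parameters.

```python
import math
import random

EPISODE_MAX = 10  # Episodemax from the simulation parameters


def selection_probabilities(q_values, temperature=1.0):
    """Softmax over Q-values: an assumed stand-in for PRi(j) in (11)."""
    peak = max(q_values.values())  # subtract the peak for numerical stability
    weights = {p: math.exp((q - peak) / temperature) for p, q in q_values.items()}
    total = sum(weights.values())
    return {p: w / total for p, w in weights.items()}


def select_parent(q_values, episode, rng=random):
    """Return (preferred_parent, next_episode); a None parent means 'broadcast a DIS'."""
    if not q_values:                      # empty parent set
        return None, episode
    if episode <= EPISODE_MAX:            # exploration: probabilistic choice
        probs = selection_probabilities(q_values)
        parents = list(probs)
        choice = rng.choices(parents, weights=[probs[p] for p in parents], k=1)[0]
        return choice, episode + 1
    # exploitation: parent with the maximum Q-value
    return max(q_values, key=q_values.get), episode


parent, episode = select_parent({"a": 1.0, "b": 2.0}, episode=11)
```

During exploration every parent keeps a non-zero chance of being tried, while after `EPISODE_MAX` episodes the choice becomes deterministic until the episode counter is reset.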

4.5. Network Topology Monitoring Management Function

| Algorithm 3 Topology Monitoring and Management | ||||

| Input: Data Packet from a child node, | ||||

| Q-table of the current node | ||||

| Output: DIO message, possible exploration activating | ||||

| 1 | Define: Timer T, congestion threshold | |||

| 2 | Begin: | |||

| 3 | while Exploitation do | |||

| 4 | Start TIMER T; | |||

| 5 | Calculate OFR, and other metrics every T; | |||

| 6 | if OFR ≥ congestion threshold then | |||

| 7 | broadcast a DIO message with the last node metrics; | |||

| 8 | end exit; | |||

| 9 | end | |||

| 10 | if local or global repair then | |||

| 11 | Reset the Trickle timer t; | |||

| 12 | Reset episode counter; | |||

| 13 | end | |||

| 14 | exit: | |||
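The monitoring loop of Algorithm 3 can be sketched as a small state holder. This is an illustration under assumptions: the overflow ratio is computed here as dropped packets over received packets within one timer period (Equation (6) is not reproduced in this section), the threshold value in the usage lines is hypothetical, and resetting the episode counter to 0 is a guess at the initial value. Resetting the Trickle timer is left to the RPL stack.

```python
class TopologyMonitor:
    """Reactive DIO trigger during exploitation, plus the repair hook."""

    def __init__(self, ofr_threshold, episode_max=10):
        self.ofr_threshold = ofr_threshold
        self.episode_max = episode_max
        self.episode = episode_max + 1  # start in the exploitation phase
        self.received = 0
        self.dropped = 0

    def record_packet(self, dropped):
        """Count one incoming packet and whether the buffer overflowed."""
        self.received += 1
        if dropped:
            self.dropped += 1

    def on_timer(self):
        """Called every period T; returns True when a DIO should be broadcast."""
        ofr = self.dropped / self.received if self.received else 0.0
        self.received = self.dropped = 0  # start a fresh measurement window
        return ofr >= self.ofr_threshold

    def on_repair(self):
        """Local or global repair: restart exploration by resetting the episode counter."""
        self.episode = 0


monitor = TopologyMonitor(ofr_threshold=0.3)  # hypothetical threshold
for i in range(10):
    monitor.record_packet(dropped=(i < 4))    # 4 of 10 packets overflow
must_broadcast = monitor.on_timer()
```

While the overflow ratio stays below the threshold, no DIO is sent, which is how the mechanism keeps control overhead low in stable periods.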

5. System Setup and Results

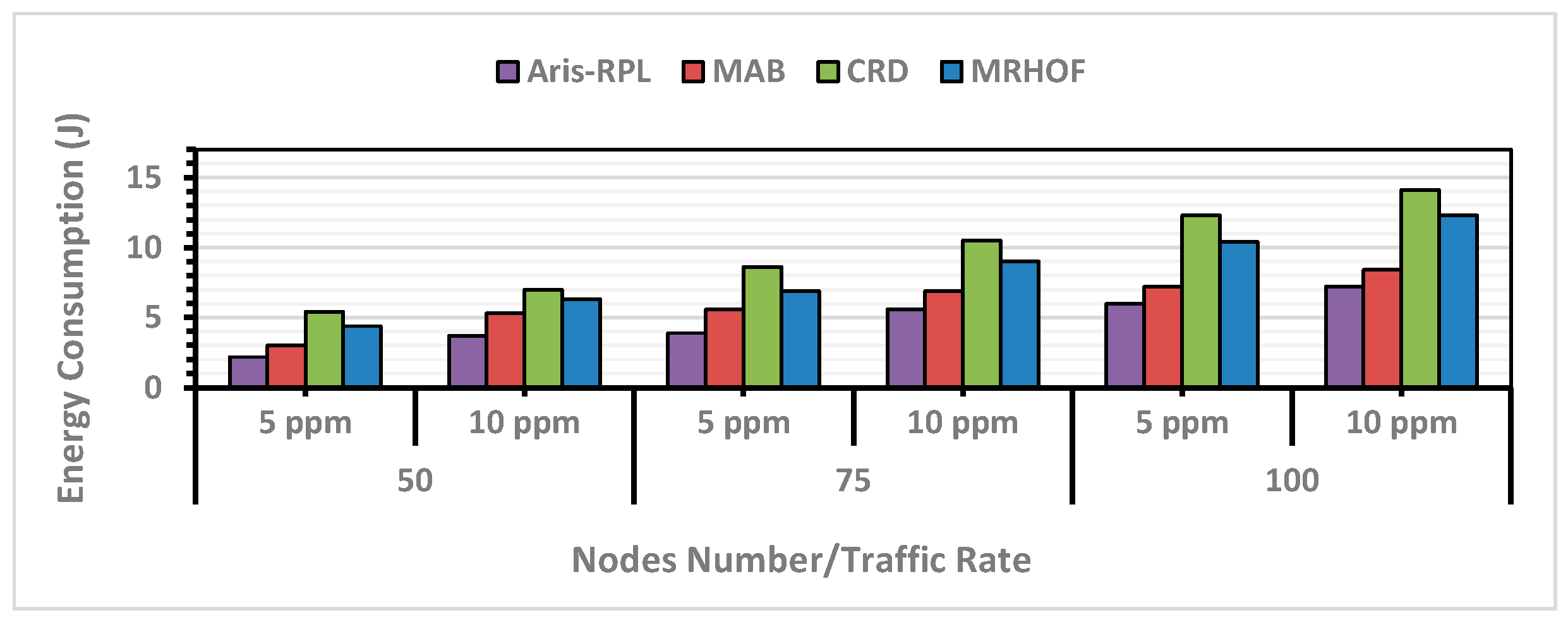

- RPL-MRHOF represents the original version of RPL. Its objective function uses the ETX link-quality metric as the selection criterion for the preferred parent [21]. Comparing against RPL-MRHOF highlights the improvements that Aris-RPL achieves over traditional RPL.

- Learning-Based Resource Management for Low-Power and Lossy IoT Networks [9], referred to as MAB in later sections. Like Aris-RPL, it is a machine learning approach that runs on distributed nodes within the Contiki/RPL framework; it uses the multi-armed bandit (MAB) technique to optimize performance in dynamic IoT networks. According to its authors, MAB outperforms load-balancing and congestion-aware RPL enhancements such as [26,27]. Algorithms 4 and 5 describe the MAB approach as used in this study, and Table 4 details the related simulation settings.

- Congestion-Aware Routing in Dynamic IoT Networks [41], referred to as CRD in later sections. It is a Q-learning approach for dynamic IoT networks under heavy traffic load, and it aligns closely with the reinforcement learning principles used in Aris-RPL: each node runs the Q-learning algorithm to learn an optimal parent selection policy that addresses the load-balancing challenges in RPL networks. Algorithms 6 and 7 describe the CRD approach as used in this study, and Table 5 details the related simulation settings.

| Algorithm 4 Multi-armed Bandit-learning-based algorithm MAB | |||

| Input: Neighbor set N(x) for each node x that contains n neighbors. | |||

| Output: Update the Q-Table | |||

| 1 | Define: L_Rate α, r+, r−, Neighbor j; | ||

| 2 | re_limitsmax = 3 | ||

| 3 | back-off_stagesmax = 5 | ||

| 4 | Define: CWmin = 0, CWmax = 31 | ||

| 5 | c_reward = 0, Qn(a) = 0, Qn+1(a) = 0 | ||

| 6 | Begin: | ||

| 7 | ETXj ← Calculate ETX using neighbor_link_callback (); | ||

| 8 | EEXj ← Calculate EEX from ETX; | ||

| 9 | Evaluate reward; | ||

| 10 | if C_EEX ≤ Pr_EEX then reward = r+ | ||

| 11 | else reward = r− | ||

| 12 | For Action (a) update r_table; | ||

| 13 | Using the following update the Q-values table | ||

| 14 | |||

| 15 | end if | ||

| 16 | End | ||

| 17 | exit: | ||
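The reward rule and value update of Algorithm 4 can be sketched in a few lines. The update equation itself is missing from the listing, so the incremental bandit form below is an assumption; the learning rate (0.6) and the +1/−1 rewards are taken from the MAB simulation settings, and the EEX values in the usage lines are hypothetical.

```python
ALPHA = 0.6                    # learning rate from the MAB settings
R_PLUS, R_MINUS = 1.0, -1.0    # reward for improved / worsened EEX


def mab_reward(current_eex, previous_eex):
    """r+ when the current EEX is no worse than the previous one, else r-."""
    return R_PLUS if current_eex <= previous_eex else R_MINUS


def mab_update(q, reward, alpha=ALPHA):
    """Incremental value update (assumed form): move q toward the reward by a step alpha."""
    return q + alpha * (reward - q)


q_a = 0.0
q_a = mab_update(q_a, mab_reward(current_eex=2.0, previous_eex=3.0))  # EEX improved
q_b = mab_update(q_a, mab_reward(current_eex=4.0, previous_eex=2.0))  # EEX worsened
```

With a step size of 0.6, a single worsened observation is enough to flip the sign of the estimate, so this scheme reacts quickly to link degradation.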

| Algorithm 5 MAB Selection algorithm | |||

| Input: Data Packet from a child node | |||

| Output: Preferred next hop node (Parent) | |||

| 1 | Define: I = Imin, counter c = 0, Root = R, Node = n, Parent = p; | ||

| 2 | Begin: | ||

| 3 | set I = I × 2 | ||

| 4 | if Imax ≤ I then I = Imax | ||

| 5 | end if | ||

| 6 | if exploitation then | ||

| 7 | Select parent y with minimum Q-value ∀ y ∈ N(x) | ||

| 8 | Suppress DIO transmissions | ||

| 9 | end if | ||

| 10 | if exploration then | ||

| 11 | if n = R then R rank = 1 | ||

| 12 | end if | ||

| 13 | if p = null then rank = pathrankmax | ||

| 14 | end if | ||

| 15 | if p != null then rank = h + Rank(pi) + r_increase | ||

| 16 | r_increase = EEX | ||

| 17 | end if | ||

| 18 | return MIN (Baserank + r_increase) | ||

| 19 | embed r_increase in DIO | ||

| 20 | ttimer = random [I/2, I] | ||

| 21 | if (network is stable) then counter ++ | ||

| 22 | else | ||

| 23 | I = Imin | ||

| 24 | if (ttimer expires) then broadcast DIO | ||

| 25 | end if | ||

| 26 | end if | ||

| 27 | end | ||

| 28 | exit: Selected preferred parent | ||
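The timer handling in Algorithm 5 follows the usual Trickle pattern: the interval doubles up to a cap while the network is stable, falls back to the minimum otherwise, and the transmission time is drawn from the second half of the interval. The sketch below treats intervals as unitless numbers (the settings table gives Imin = 10 and Imax = 8 doublings without units); the function names are hypothetical.

```python
import random


def next_trickle_interval(interval, i_min, i_max_doublings, stable):
    """Double the interval while stable (capped at Imax), else fall back to Imin."""
    if not stable:
        return i_min
    i_max = i_min * 2 ** i_max_doublings
    return min(interval * 2, i_max)


def pick_transmit_time(interval, rng=random):
    """Draw the DIO transmission time uniformly from [I/2, I]."""
    return rng.uniform(interval / 2, interval)


interval = 10
for _ in range(3):  # three stable rounds: 10 -> 20 -> 40 -> 80
    interval = next_trickle_interval(interval, i_min=10, i_max_doublings=8, stable=True)
t = pick_transmit_time(interval, random.Random(1))
```

Drawing the time from the second half of the interval leaves a listening period at the start, which is what allows redundant DIOs to be suppressed.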

| Algorithm 6 Congestion-Aware Routing using Q-Learning (CRD) | |||

| Input: Incoming DIO message from Neighboring j | |||

| Output: Outgoing DIO message with Updated metrics, Update the Q-Table | |||

| 1 | Define: BFth, L_Rate α, η; | ||

| 2 | Define: Routing Metrics (BFj, HCj); | ||

| 3 | Neighboring_Node Nj, Receiving_Node Ni | ||

| 4 | Begin: | ||

| 5 | ETXj ← Calculate ETXj; | ||

| 6 | Decode BFj and HCj from the received DIO message; | ||

| 7 | max (BFj/BFth, 1 − BFj/BFth) | ||

| 8 | Rj ← BFj + ETXj + HCj | ||

| 9 | if Nj is new then | ||

| 10 | Parent-Set[j].ParentID ← DIOj.NodeID; | ||

| 11 | Q-Table[j].ParentID ← Parent-Set[j].ParentID; | ||

| 12 | Q-Table[j].Parentqvalue ← QValueInitial; | ||

| 13 | end | ||

| 14 | if Nj is not new then | ||

| 15 | Q-Table[j].Parentqvalue ← Qold(j) + α[Rj − Qold(j)]; | ||

| 16 | end | ||

| 17 | BFi ← Calculate BFi; | ||

| 18 | HCi ← Calculate HCi; | ||

| 19 | Encode BFi and HCi in the Outgoing DIO message | ||

| 20 | // Outgoing DIO message contains updated routing metrics; | ||

| 21 | exit: | ||
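Assuming the learning rate α scales the bracketed term in the Q-value update of Algorithm 6 (the CRD settings list a learning rate, so this seems the natural reading), the update is an exponentially weighted moving average of the observed cost. Unrolling it for a constant $R_j$ shows how quickly the Q-value tracks that cost:

$$
Q_k(j) = (1-\alpha)\,Q_{k-1}(j) + \alpha R_j
\quad\Longrightarrow\quad
Q_k(j) = R_j + (1-\alpha)^k \bigl(Q_0(j) - R_j\bigr)
$$

With the listed value α = 0.3, the gap to $R_j$ shrinks by a factor of 0.7 per received DIO, so about $0.7^5 \approx 0.17$ of the initial gap remains after five updates.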

| Algorithm 7 CRD Selection Algorithm | |

| Input: Data Packet from a child node | |

| Parent-set and Q-table of the current node | |

| Output: Preferred next hop node (Parent) | |

| 1 | Define: exploration factor θ, consecutive q_losses, Timer X, Imin |

| 2 | Begin: |

| 3 | if (Parent-set is Empty) then |

| 4 | broadcast a DIS message; end |

| 5 | Compute selection probabilities using: |

| 6 | Select the preferred parent y with the highest probability Px(y) |

| 7 | Reset Trickle Timer: |

| 8 | Reset the Trickle timer to Imin if the node detects consecutive queue losses |

| 9 | Increase by after each reset to limit overhead |

| 10 | Reinitialize timer values if no losses occur within interval X |

| 11 | exit: Selected preferred parent |

| Parameter | Value |

|---|---|

| Micro-Controller Unit (MCU) | 2nd MSP430 generation |

| Architecture | 16-bit RISC (Upgraded to 20 bits) |

| Radio Module | CC2420 |

| Operating MCU Voltage Range | 1.8 V < V < 3.6 V |

| CC2420 Voltage Range | 2.1 V < V < 3.6 V |

| Operating Temperature | −40 °C < θ < +85 °C |

| Off Mode Current | 0.1 µA |

| Radio Transmitting Mode @ 0 dBm | 17.4 mA |

| Radio Receiving Mode Current | 18.8 mA |

| Radio IDLE Mode Current | 426 µA |

| Parameter | Value |

|---|---|

| Area | 100 m × 100 m |

| Number of Nodes | 50, 75, 100 |

| Number of Sinks | 1 |

| Radio Channel Model | UDGM Distance Loss |

| Com./Interference Range | 30 m/40 m |

| Traffic Rate | 5 ppm, 10 ppm |

| α, γ | 0.4, 0.7 |

| 0.3, 0.7 | |

| Penalty | −3.0 |

| RMax, Rextra | 100, 5.0 |

| Max Child Count CC | 5 |

| , , | 2.0, 0.2, 0.3 |

| Episodemax | 10 |

| Initialization time | 180 s |

| Simulation Duration | 3600 s |

| Simulation Speed | No speed limit |

| Simulation Iteration Number | 5 times |

| Parameter | Value |

|---|---|

| PHY and MAC protocol | IEEE 802.15.4 with CSMA/CA |

| Radio model | Unit Disk Graph Medium (UDGM) |

| Buffer size | 4 packets |

| UIP payload size | 140 bytes |

| Imin | 10 |

| Imax | 8 doublings |

| Initial reward | 0 |

| Initial Q-value Q1(a) | 0 |

| Learning Rate (α) | 0.6 |

| Reward Function | +1 (improved EEX), −1 (worsened EEX) |

| Maximum retry limit | 3 |

| Maximum Backoff stage | 5 |

| Number of stored reward values for action a | 5 |

| Parameter | Value |

|---|---|

| PHY and MAC protocol | IEEE 802.15.4 with CSMA/CA |

| Radio model | UDGM |

| Learning rate (α) | 0.3 |

| Congestion threshold (BFth) | 0.5 |

| η (positive integer that enables correct decoding) | 100 |

| Exploration factor (θ) | 2 |

| Number of consecutive queue losses of a node | 2 |

| Timer X | 100 ms |

| Imin | 3 s |

5.1. Packet Delivery Ratio (PDR)

5.2. Control Traffic Overhead

5.3. Energy Consumption

5.4. E2E Delay

5.5. Computational Complexity Analysis

5.6. Protocol Implementation of Aris-RPL

5.7. Comparative Study

| Characteristic | RPL-MRHOF | CRD | MAB | Aris-RPL |

|---|---|---|---|---|

| Objective Function Criteria | The standardized RPL objective function with a focus on minimizing ETX | Multi-metric Q-learning-based optimal parent selection to address congestion and load-balancing in dynamic networks | Multi-armed bandit-based adaptive parent selection in dynamic networks with a focus on energy efficiency | Three-phase Q-learning framework with a hybrid monitoring mechanism and a composite reward metric |

| Complexity per Node | O(n) (number of neighbors) | O(n) (number of neighbors) | O(n) (number of neighbors) | O(n) (number of neighbors) |

| Performance in Large Networks | Good-Low PDR, High C. Overhead, High E. Consumption, Moderate E2E delay | Good PDR, High C. Overhead, High E. Consumption, Moderate E2E delay | Good PDR, Moderate C. Overhead, Moderate E. Consumption, Moderate-High E2E delay | Good PDR, Moderate C. Overhead, Low-Moderate E. Consumption, Moderate-High E2E delay |

5.8. Sensitivity Analysis

6. Conclusions and Future Work

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| IETF | Internet Engineering Task Force |

| RPL | Routing Protocol for Low Power and Lossy Networks |

| LLN | Low-Power and Lossy Network |

| WSN/IoT | Wireless Sensor Network/Internet of Things |

| RL | Reinforcement Learning |

| PDR | Packet Delivery Ratio |

| E2E | End-to-End |

| RSSI | Received Signal Strength Indicator |

| DAG | Directed Acyclic Graph |

| DODAG | Destination-Oriented Directed Acyclic Graph |

| DIO | DODAG Information Object |

| DAO | Destination Advertisement Object |

| DAO-ACK | Destination Advertisement Object-Acknowledgment |

| DIS | DODAG Information Solicitation |

| OF | Objective Function |

| MRHOF | Minimum Rank with Hysteresis Objective Function |

| HC | Hop Count |

| QU | Queue Utilization |

| ETX | Expected Transmission Count |

| BU | Buffer Utilization |

| OFR | Overflow Ratio |

| EWMA | Exponentially Weighted Moving Average |

| RE | Remaining Energy |

| CC | Child Count |

| Imin | Minimum Trickle Algorithm Interval |

| UBD | Upper Bound on Recovery Delay |

| PPM | Packet Per Minute |

| ROM/RAM | Read-Only Memory/Random Access Memory |

References

- Qiu, T.; Chi, J.; Zhou, X.; Ning, Z.; Atiquzzaman, M.; Wu, D.O. Edge Computing in Industrial Internet of Things: Architecture, Advances and Challenges. IEEE Commun. Surv. Tutor. 2020, 22, 2462–2488.

- Abdulsattar, N.F.; Abbas, A.H.; Mutar, M.H.; Hassan, M.H.; Jubair, M.A.; Habelalmateen, M.I. An Investigation Study for Technologies, Challenges and Practices of IoT in Smart Cities. In Proceedings of the 2022 5th International Conference on Engineering Technology and Its Applications (IICETA), Al-Najaf, Iraq, 31 May–1 June 2022; IEEE: Al-Najaf, Iraq, 2022; pp. 554–557.

- Alshehri, F.; Muhammad, G. A Comprehensive Survey of the Internet of Things (IoT) and AI-Based Smart Healthcare. IEEE Access 2021, 9, 3660–3678.

- Ibrahim, O.A.; Sciancalepore, S.; Di Pietro, R. MAG-PUFs: Authenticating IoT Devices via Electromagnetic Physical Unclonable Functions and Deep Learning. Comput. Secur. 2024, 143, 103905.

- Ni, Q.; Guo, J.; Wu, W.; Wang, H. Influence-Based Community Partition with Sandwich Method for Social Networks. IEEE Trans. Comput. Soc. Syst. 2023, 10, 819–830.

- Rehman, S.; Tu, S.; Rehman, O.; Huang, Y.; Magurawalage, C.; Chang, C.-C. Optimization of CNN through Novel Training Strategy for Visual Classification Problems. Entropy 2018, 20, 290.

- Darabkh, K.A.; Al-Akhras, M.; Zomot, J.N.; Atiquzzaman, M. RPL Routing Protocol over IoT: A Comprehensive Survey, Recent Advances, Insights, Bibliometric Analysis, Recommendations, and Future Directions. J. Netw. Comput. Appl. 2022, 207, 103476.

- Darabkh, K.A.; Al-Akhras, M. RPL over Internet of Things: Challenges, Solutions, and Recommendations. In Proceedings of the 2021 IEEE International Conference on Mobile Networks and Wireless Communications (ICMNWC), Tumkur, Karnataka, 3–4 December 2021; IEEE: Tumkur, Karnataka, India, 2021; pp. 1–7.

- Musaddiq, A.; Ali, R.; Kim, S.W.; Kim, D.-S. Learning-Based Resource Management for Low-Power and Lossy IoT Networks. IEEE Internet Things J. 2022, 9, 16006–16016.

- Musaddiq, A.; Zikria, Y.B.; Zulqarnain; Kim, S.W. Routing Protocol for Low-Power and Lossy Networks for Heterogeneous Traffic Network. J. Wirel. Com. Netw. 2020, 2020, 21.

- Lamaazi, H.; Benamar, N. A Comprehensive Survey on Enhancements and Limitations of the RPL Protocol: A Focus on the Objective Function. Ad Hoc Netw. 2020, 96, 102001.

- Pourghebleh, B.; Hayyolalam, V. A Comprehensive and Systematic Review of the Load Balancing Mechanisms in the Internet of Things. Clust. Comput. 2020, 23, 641–661.

- Pancaroglu, D.; Sen, S. Load Balancing for RPL-Based Internet of Things: A Review. Ad Hoc Netw. 2021, 116, 102491.

- Barto, A.; Sutton, R.S. Reinforcement Learning: An Introduction; MIT Press: Cambridge, MA, USA, 1998; Volume 10.

- Van Hasselt, H.; Guez, A.; Silver, D. Deep Reinforcement Learning with Double Q-Learning. In Proceedings of the AAAI Conference Artificial Intelligence, Phoenix, AZ, USA, 12–17 February 2016; Volume 30, pp. 2094–2100.

- Lei, J.; Liu, J. Reinforcement Learning-Based Load Balancing for Heavy Traffic Internet of Things. Pervasive Mob. Comput. 2024, 99, 101891.

- Tran, T.-N.; Nguyen, T.-V.; Shim, K.; Da Costa, D.B.; An, B. A Deep Reinforcement Learning-Based QoS Routing Protocol Exploiting Cross-Layer Design in Cognitive Radio Mobile Ad Hoc Networks. IEEE Trans. Veh. Technol. 2022, 71, 13165–13181.

- Brandt, A.; Hui, J.; Kelsey, R.; Levis, P.; Pister, K.; Struik, R.; Vasseur, J.P.; Alexander, R. RPL: IPv6 Routing Protocol for Low Power and Lossy Networks; Winter, T., Thubert, P., Eds.; IETF (Internet Engineering Task Force): Fremont, CA, USA, 2012.

- Youssef, M.; Youssef, A.; Younis, M. Overlapping Multihop Clustering for Wireless Sensor Networks. IEEE Trans. Parallel Distrib. Syst. 2009, 20, 1844–1856.

- Thubert, P. Objective Function Zero for the Routing Protocol for Low-Power and Lossy Networks (RPL); IETF (Internet Engineering Task Force): Fremont, CA, USA, 2012.

- Gnawali, O.; Levis, P. The Minimum Rank with Hysteresis Objective Function; IETF (Internet Engineering Task Force): Fremont, CA, USA, 2012.

- Levis, P.; Clausen, T.; Hui, J.; Gnawali, O.; Ko, J. The Trickle Algorithm; IETF (Internet Engineering Task Force): Fremont, CA, USA, 2011.

- Russell, S.; Norvig, P. Artificial Intelligence: A Modern Approach; Pearson: Harlow, UK, 2016.

- Lindelauf, R. Nuclear Deterrence in the Algorithmic Age: Game Theory Revisited. In NL ARMS Netherlands Annual Review of Military Studies 2020; Osinga, F., Sweijs, T., Eds.; NL ARMS; T.M.C. Asser Press: The Hague, The Netherlands, 2021; pp. 421–436. ISBN 978-94-6265-418-1.

- Musaddiq, A.; Olsson, T.; Ahlgren, F. Reinforcement-Learning-Based Routing and Resource Management for Internet of Things Environments: Theoretical Perspective and Challenges. Sensors 2023, 23, 8263.

- Musaddiq, A.; Nain, Z.; Ahmad Qadri, Y.; Ali, R.; Kim, S.W. Reinforcement Learning-Enabled Cross-Layer Optimization for Low-Power and Lossy Networks under Heterogeneous Traffic Patterns. Sensors 2020, 20, 4158.

- Kim, H.-S.; Kim, H.; Paek, J.; Bahk, S. Load Balancing Under Heavy Traffic in RPL Routing Protocol for Low Power and Lossy Networks. IEEE Trans. Mob. Comput. 2017, 16, 964–979.

- Singh, P.; Chen, Y.-C. RPL Enhancement for a Parent Selection Mechanism and an Efficient Objective Function. IEEE Sens. J. 2019, 19, 10054–10066.

- Behrouz Vaziri, B.; Toroghi Haghighat, A. Brad-OF: An Enhanced Energy-Aware Method for Parent Selection and Congestion Avoidance in RPL Protocol. Wirel. Pers. Commun. 2020, 114, 783–812.

- Seyfollahi, A.; Ghaffari, A. A Lightweight Load Balancing and Route Minimizing Solution for Routing Protocol for Low-Power and Lossy Networks. Comput. Netw. 2020, 179, 107368.

- Safaei, B.; Monazzah, A.M.H.; Ejlali, A. ELITE: An Elaborated Cross-Layer RPL Objective Function to Achieve Energy Efficiency in Internet-of-Things Devices. IEEE Internet Things J. 2021, 8, 1169–1182.

- Acevedo, P.D.; Jabba, D.; Sanmartin, P.; Valle, S.; Nino-Ruiz, E.D. WRF-RPL: Weighted Random Forward RPL for High Traffic and Energy Demanding Scenarios. IEEE Access 2021, 9, 60163–60174.

- Pushpalatha, M.; Anusha, T.; Rama Rao, T.; Venkataraman, R. L-RPL: RPL Powered by Laplacian Energy for Stable Path Selection during Link Failures in an Internet of Things Network. Comput. Netw. 2021, 184, 107697.

- Kalantar, S.; Jafari, M.; Hashemipour, M. Energy and Load Balancing Routing Protocol for IoT. Int. J. Commun. 2023, 36, e5371.

- Gaddour, O.; Koubaa, A.; Baccour, N.; Abid, M. OF-FL: QoS-Aware Fuzzy Logic Objective Function for the RPL Routing Protocol. In Proceedings of the 2014 12th International Symposium on Modeling and Optimization in Mobile, Ad Hoc, and Wireless Networks (WiOpt), Hammamet, Tunisia, 12–16 May 2014; IEEE: Hammamet, Tunisia, 2014; pp. 365–372.

- Kechiche, I.; Bousnina, I.; Samet, A. A Novel Opportunistic Fuzzy Logic Based Objective Function for the Routing Protocol for Low-Power and Lossy Networks. In Proceedings of the 2019 15th International Wireless Communications & Mobile Computing Conference (IWCMC), Tangier, Morocco, 24–28 June 2019; IEEE: Tangier, Morocco, 2019; pp. 698–703.

- Frikha, M.S.; Gammar, S.M.; Lahmadi, A.; Andrey, L. Reinforcement and Deep Reinforcement Learning for Wireless Internet of Things: A Survey. Comput. Commun. 2021, 178, 98–113.

- Park, H.; Kim, H.; Kim, S.-T.; Mah, P. Multi-Agent Reinforcement-Learning-Based Time-Slotted Channel Hopping Medium Access Control Scheduling Scheme. IEEE Access 2020, 8, 139727–139736.

- Banerjee, P.S.; Mandal, S.N.; De, D.; Maiti, B. RL-Sleep: Temperature Adaptive Sleep Scheduling Using Reinforcement Learning for Sustainable Connectivity in Wireless Sensor Networks. Sustain. Comput. Inform. Syst. 2020, 26, 100380.

- Saleem, A.; Afzal, M.K.; Ateeq, M.; Kim, S.W.; Zikria, Y.B. Intelligent Learning Automata-Based Objective Function in RPL for IoT. Sustain. Cities Soc. 2020, 59, 102234.

- Farag, H.; Stefanovic, C. Congestion-Aware Routing in Dynamic IoT Networks: A Reinforcement Learning Approach. In Proceedings of the 2021 IEEE Global Communications Conference (GLOBECOM), Madrid, Spain, 7–11 December 2021; IEEE: Madrid, Spain, 2021; pp. 1–6.

- Zahedy, N.; Barekatain, B.; Quintana, A.A. RI-RPL: A New High-Quality RPL-Based Routing Protocol Using Q-Learning Algorithm. J. Supercomput. 2024, 80, 7691–7749.

- Han, X.; Xie, M.; Yu, K.; Huang, X.; Du, Z.; Yao, H. Combining Graph Neural Network with Deep Reinforcement Learning for Resource Allocation in Computing Force Networks. Front. Inf. Technol. Electron. Eng. 2024, 25, 701–712.

- Manogaran, N.; Raphael, M.T.M.; Raja, R.; Jayakumar, A.K.; Nandagopal, M.; Balusamy, B.; Ghinea, G. Developing a Novel Adaptive Double Deep Q-Learning-Based Routing Strategy for IoT-Based Wireless Sensor Network with Federated Learning. Sensors 2025, 25, 3084.

- Zhang, S.; Tong, X.; Chi, K.; Gao, W.; Chen, X.; Shi, Z. Stackelberg Game-Based Multi-Agent Algorithm for Resource Allocation and Task Offloading in MEC-Enabled C-ITS. IEEE Trans. Intell. Transport. Syst. 2025, 26, 17940–17951.

- Scarvaglieri, A.; Panebianco, A.; Busacca, F. MAGELLAN: A Distributed MAB-Based Algorithm for Energy-Fair and Reliable Routing in Multi-Hop LoRa Networks. In Proceedings of the GLOBECOM 2024—2024 IEEE Global Communications Conference, Cape Town, South Africa, 8–12 December 2024; IEEE: Cape Town, South Africa, 2024; pp. 2250–2255.

- Santana, P.; Moura, J. A Bayesian Multi-Armed Bandit Algorithm for Dynamic End-to-End Routing in SDN-Based Networks with Piecewise-Stationary Rewards. Algorithms 2023, 16, 233.

- Tanyingyong, V.; Olsson, R.; Hidell, M.; Sjodin, P.; Ahlgren, B. Implementation and Deployment of an Outdoor IoT-Based Air Quality Monitoring Testbed. In Proceedings of the 2018 IEEE Global Communications Conference (GLOBECOM), Abu Dhabi, United Arab Emirates, 9–13 December 2018; IEEE: Abu Dhabi, United Arab Emirates, 2018; pp. 206–212.

- Teles Hermeto, R.; Gallais, A.; Theoleyre, F. On the (over)-Reactions and the Stability of a 6TiSCH Network in an Indoor Environment. In Proceedings of the 21st ACM International Conference on Modeling, Analysis and Simulation of Wireless and Mobile Systems, Montreal, QC, Canada, 28 October–2 November 2018; ACM: Montreal, QC, Canada, 2018; pp. 83–90.

- Bildea, A.; Alphand, O.; Rousseau, F.; Duda, A. Link Quality Metrics in Large Scale Indoor Wireless Sensor Networks. In Proceedings of the 2013 IEEE 24th Annual International Symposium on Personal, Indoor, and Mobile Radio Communications (PIMRC), London, UK, 8–11 September 2013; IEEE: London, UK, 2013; pp. 1888–1892.

- Srinivasan, K.; Levis, P. RSSI Is under Appreciated. In Proceedings of the Third Workshop on Embedded Networked Sensors (EmNets 2006), Cambridge, MA, USA, 30–31 May 2006; Volume 2006, pp. 1–5.

- Osterlind, F.; Dunkels, A.; Eriksson, J.; Finne, N.; Voigt, T. Cross-Level Sensor Network Simulation with COOJA. In Proceedings of the 2006 31st IEEE Conference on Local Computer Networks, Tampa, FL, USA, 14–16 November 2006; IEEE: Tampa, FL, USA, 2006; pp. 641–648.

- Eriksson, J.; Österlind, F.; Finne, N.; Tsiftes, N.; Dunkels, A.; Voigt, T.; Sauter, R.; Marrón, P.J. COOJA/MSPSim: Interoperability Testing for Wireless Sensor Networks. In Proceedings of the Second International ICST Conference on Simulation Tools and Techniques, Rome, Italy, 2–6 March 2009; ICST: Rome, Italy, 2009.

- Contiki: The Open Source Operating System for the Internet of Things. Available online: http://www.contikios.org (accessed on 5 October 2025).

- Shirbeigi, M.; Safaei, B.; Mohammadsalehi, A.; Monazzah, A.M.H.; Henkel, J.; Ejlali, A. A Cluster-Based and Drop-Aware Extension of RPL to Provide Reliability in IoT Applications. In Proceedings of the 2021 IEEE International Systems Conference (SysCon), Vancouver, BC, Canada, 15 April–15 May 2021; IEEE: Vancouver, BC, Canada, 2021; pp. 1–7.

- Al-Hadhrami, Y.; Hussain, F.K. Real Time Dataset Generation Framework for Intrusion Detection Systems in IoT. Future Gener. Comput. Syst. 2020, 108, 414–423.

- Sun, G.; Liu, Y.; Chen, Z.; Wang, A.; Zhang, Y.; Tian, D.; Leung, V.C.M. Energy Efficient Collaborative Beamforming for Reducing Sidelobe in Wireless Sensor Networks. IEEE Trans. Mob. Comput. 2021, 20, 965–982.

- Dunkels, A.; Osterlind, F.; Tsiftes, N.; He, Z. Software-Based on-Line Energy Estimation for Sensor Nodes. In Proceedings of the 4th Workshop on Embedded Networked Sensors, Cork, Ireland, 25–26 June 2007; ACM: Cork, Ireland, 2007; pp. 28–32.

- Zolertia Z1 Mote. Available online: https://github.com/Zolertia/Resources/wiki/The-Z1-mote (accessed on 15 October 2025).

- Thubert, P. Compression Format for IPv6 Datagrams over IEEE 802.15.4-Based Networks; IETF (Internet Engineering Task Force): Fremont, CA, USA, 2011.

- Vandervelden, T.; Deac, D.; Van Glabbeek, R.; De Smet, R.; Braeken, A.; Steenhaut, K. Evaluation of 6LoWPAN Generic Header Compression in the Context of a RPL Network. Sensors 2023, 24, 73.

| Ref./Pub. Year | Methodology | Research Field | Key Metrics | Simulator | Major Contributions/Results | Limitations |
|---|---|---|---|---|---|---|
| [27] 2017 | Rule-based objective function | Routing optimization for RPL networks | QU, HC, ETX | Testbed | According to the simulation scenarios, it improved the packet delivery ratio (PDR) by up to 147% compared to standard RPL. | Omitting metrics such as energy usage limits its applicability under dynamic network changes. |
| [28] 2019 | Rule-based objective function | Routing optimization for RPL networks | ETX, QU, Node Lifetime, Latency, Bottleneck Nodes | COOJA | According to the simulation scenarios, it reduced energy consumption by 46.5% and communication overhead by 60%, while incurring a 27% increase in delay compared to standard RPL. | Lacked handling of dynamics in larger networks. |
| [29] 2020 | Rule-based objective function | Congestion control in RPL networks | ETX, Residual Energy, Delay, Traffic Intensity | NS-2 | According to the simulation scenarios, it reduced energy consumption by up to 40% compared to RPL-MRHOF while maintaining a balanced distribution of traffic load. | Scalability challenges in large networks. |
| [30] 2020 | Rule-based objective function | QoS and load balancing in RPL networks | Parent Conditions, Child Count, Parent Rank | COOJA | According to the simulation scenarios, it reduced energy consumption by 23% and network overhead by 12%, while improving PDR compared to RPL-MRHOF. | Could not handle network dynamics effectively. |
| [31] 2021 | Rule-based objective function | Energy efficiency in RPL networks | Energy Efficiency, MAC, and RPL Layer Metrics | COOJA | According to the simulation scenarios, it improved control overhead, PDR, and energy consumption by 36.6%, 11.7%, and 39%, respectively, compared to its counterparts. | Constrained performance under high traffic loads, with high E2E delay. |
| [32] 2021 | Rule-based objective function | Congestion control in RPL networks | Congestion Rates, HC, Remaining Energy | COOJA | According to the simulation scenarios, it improved PDR and control overhead by an average of 11% and 20%, respectively, compared to RPL-MRHOF. | Lacked link-quality metrics for decision-making. |
| [33] 2021 | Rule-based objective function | Communication quality in RPL networks | ETX, Link Estimation | COOJA | According to the simulation scenarios, it improved PDR, delay, and energy consumption by 20%, 24%, and 36%, respectively, compared to its counterparts. | High overhead. |
| [34] 2023 | Rule-based objective function | QoS and load balancing in RPL networks | Multi-Metric Parent Selection, Traffic Distribution | COOJA | According to the simulation scenarios, it improved PDR, delay, and control overhead by 25%, 20%, and 22%, respectively, compared to standard RPL. | High overhead and challenges with dynamic node conditions. |
| [35] 2014 | Fuzzy logic objective function | Effective objective function design for RPL using fuzzy rules | Link Quality, E2E Delay, HC, Energy Consumption | COOJA | According to the simulation scenarios, it reduced the delay by up to 15% and achieved a more balanced energy distribution compared to standard RPL. | Limited adaptability due to reliance on predefined rules. |
| [36] 2019 | Fuzzy logic objective function | Reliability optimization in RPL networks | ETX, HC, Parent Load, Neighbor Load | COOJA | According to the simulation scenarios, compared to standard RPL, it maintained a stable PDR above 94% and reduced delay while effectively balancing node load using the novel Children Number metric. | Limitations in handling network dynamics in large networks. |
| [40] 2020 | Reinforcement learning objective function | Performance optimization in RPL networks | ETX, Packet Loss Ratio | COOJA | According to the simulation scenarios, it improved the Packet Reception Ratio (PRR) by an average of 7.04% and energy consumption by 17.52%, reduced DIO overhead by 18.72%, and achieved marginally lower transmission delays than MRHOF. | Did not address load balancing explicitly. |
| [9] 2022 | Reinforcement learning objective function | Performance optimization in RPL networks | EEX (Energy-Enhanced ETX) | COOJA | According to the simulation scenarios, it improved PDR, reduced energy consumption and control overhead, and maintained lower delay compared to MRHOF. | Did not consider more complex evaluation scenarios with different traffic rates. |
| [26] 2020 | Reinforcement learning objective function | Performance optimization in RPL networks | MAC Contention Metrics | COOJA | According to the simulation scenarios, it improved PRR, reduced control overhead by approximately 25–50%, and lowered total energy consumption compared to MRHOF. | Increased computational complexity in large networks. |
| [41] 2021 | Reinforcement learning objective function | Congestion control in dynamic RPL networks | Buffer Utilization, HC, ETX | MATLAB | According to the simulation scenarios, it improved network performance by reducing node congestion, resulting in a 16% enhancement in PDR and a 27% reduction in delay compared to RPL-MRHOF. | Did not integrate broader metrics such as energy consumption. |
| [42] 2024 | Reinforcement learning objective function | Quality optimization in RPL networks | Transmission Success Probability, Residual Energy, Buffer Utilization, HC, ETX | COOJA | According to the simulation scenarios, it improved successful delivery, delay, and energy consumption on average by 15.39%, 8.66%, and 25.23%, respectively, compared to its counterparts. | High overhead in larger networks and a lack of load-balancing considerations. |
| [43] 2024 | Hybrid GNN and DRL | Dynamic resource allocation | Allocation Efficiency, Topology Generalization | Python/TensorFlow | According to the simulation scenarios, it increased cumulative reward across all topologies on average by 58.0% and 111.2% compared to the Net_First and Com_First baselines, respectively. | Requires extra techniques to handle heavy computation. |
| [44] 2025 | Federated Double Deep Q-Learning | Energy efficiency in WSNs | Energy, Latency, Lifetime | MATLAB | According to the simulation scenarios, it enhanced PDR by 5.74%, while significantly reducing delay, energy consumption, and message overhead compared to the baselines. | High computational cost and model synchronization overhead. |
| [45] 2025 | Stackelberg Game-Based Multi-Agent Policy Gradient (SG-MAPG) | Task offloading in MEC-enabled C-ITS | Computation Rate, Bandwidth, Channel Gain | Numerical simulation | Distributed game-theoretic RL approach for joint task offloading and bandwidth resource allocation. | Less focus on network-layer routing. |
| [46] 2024 | Distributed routing algorithm based on Multi-Armed Bandit (MAB) learning | Wireless sensor networks, focusing on multi-hop LoRa networking | Energy Consumption, PDR | LoRa EnergySim | According to the simulation scenarios, it introduced MAGELLAN, which reduced energy consumption by up to 10% and improved PDR by 14% compared to state-of-the-art baselines. | While it contributes to its domain, LoRa is a message-based communication environment that differs from the IEEE 802.15.4 standard used in Aris-RPL. |
| [47] 2023 | React-UCB, a Bayesian-based Multi-Armed Bandit algorithm | Wireless sensor networks (WSN) and environmental monitoring | Throughput, Delay, Control Overhead | Numerical analysis and case study comparison | According to the simulation scenarios, it achieved performance enhancements in scalability, throughput, and delay. | While it contributes to its domain, its SDN-based approach relies on a centralized controller to manage routing tables and calculate rewards, whereas Aris-RPL is distributed. |
| Proposed | Reinforcement learning objective function | Adaptive routing in dynamic RPL networks | Buffer Utilization, RSSI, Overflow Ratio, Child Count, Energy Usage | COOJA | According to the simulation scenarios, it improved control overhead, PDR, delay, and energy consumption across all scenarios on average by 39%, 25%, 7%, and 38%, respectively, compared to its counterparts. | Lack of latency metric consideration; slightly higher E2E delay in large scenarios. |

| RPL Approach | ROM Code Size (Bytes) | % Change in ROM | RAM Consumption (Bytes) | % Change in RAM |
|---|---|---|---|---|
| Standard RPL (MRHOF) | 50,974 | --- | 6030 | --- |
| CRD | 51,147 | +0.34% | 6136 | +1.76% |
| MAB | 51,176 | +0.4% | 6056 | +0.43% |
| Aris-RPL | 52,142 | +2.3% | 6060 | +0.50% |
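The percentage columns in the memory-footprint table above can be reproduced from the byte counts alone. The following is a minimal sketch, assuming each percentage is the relative change with respect to the Standard RPL (MRHOF) row; the names `FOOTPRINT`, `percent_change`, and `overhead_vs_baseline` are illustrative and do not come from the paper or from any Contiki/COOJA tooling.

```python
# Byte counts copied from the memory-footprint table: (ROM bytes, RAM bytes).
FOOTPRINT = {
    "Standard RPL (MRHOF)": (50_974, 6_030),
    "CRD": (51_147, 6_136),
    "MAB": (51_176, 6_056),
    "Aris-RPL": (52_142, 6_060),
}


def percent_change(value: int, baseline: int) -> float:
    """Relative change of `value` with respect to `baseline`, in percent."""
    return 100.0 * (value - baseline) / baseline


def overhead_vs_baseline(footprint, baseline_name="Standard RPL (MRHOF)"):
    """Return {approach: (ROM % change, RAM % change)} rounded to 2 decimals.

    Assumption: the baseline is the Standard RPL (MRHOF) row of the table.
    """
    base_rom, base_ram = footprint[baseline_name]
    return {
        name: (
            round(percent_change(rom, base_rom), 2),
            round(percent_change(ram, base_ram), 2),
        )
        for name, (rom, ram) in footprint.items()
        if name != baseline_name
    }


if __name__ == "__main__":
    for name, (rom_pct, ram_pct) in overhead_vs_baseline(FOOTPRINT).items():
        print(f"{name}: ROM {rom_pct:+.2f}%, RAM {ram_pct:+.2f}%")
```

Under this assumption the computed values agree with the table to the precision it reports: the Aris-RPL ROM increase works out to about 2.29%, which the table rounds to +2.3%.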

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Halloum, N.; Ahmadi, A.; Darmani, Y. Aris-RPL: A Multi-Objective Reinforcement Learning Framework for Adaptive and Load-Balanced Routing in IoT Networks. Future Internet 2026, 18, 72. https://doi.org/10.3390/fi18020072