MobilePrune: Neural Network Compression via ℓ0 Sparse Group Lasso on the Mobile System

Abstract

1. Introduction

2. Related Work

2.1. Sparsity for Deep Learning Models

2.2. Learning Algorithms for ℓ0 Norm

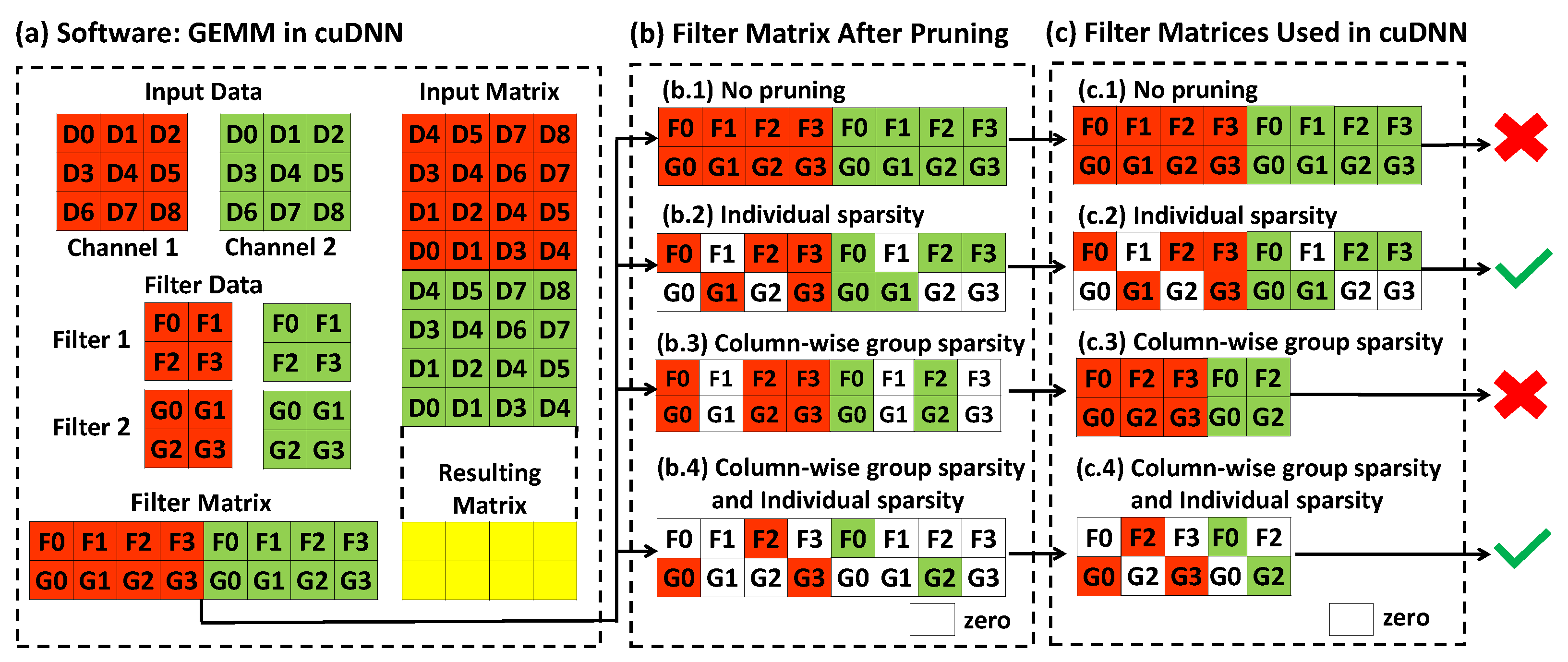

2.3. Software & Hardware Compatibility

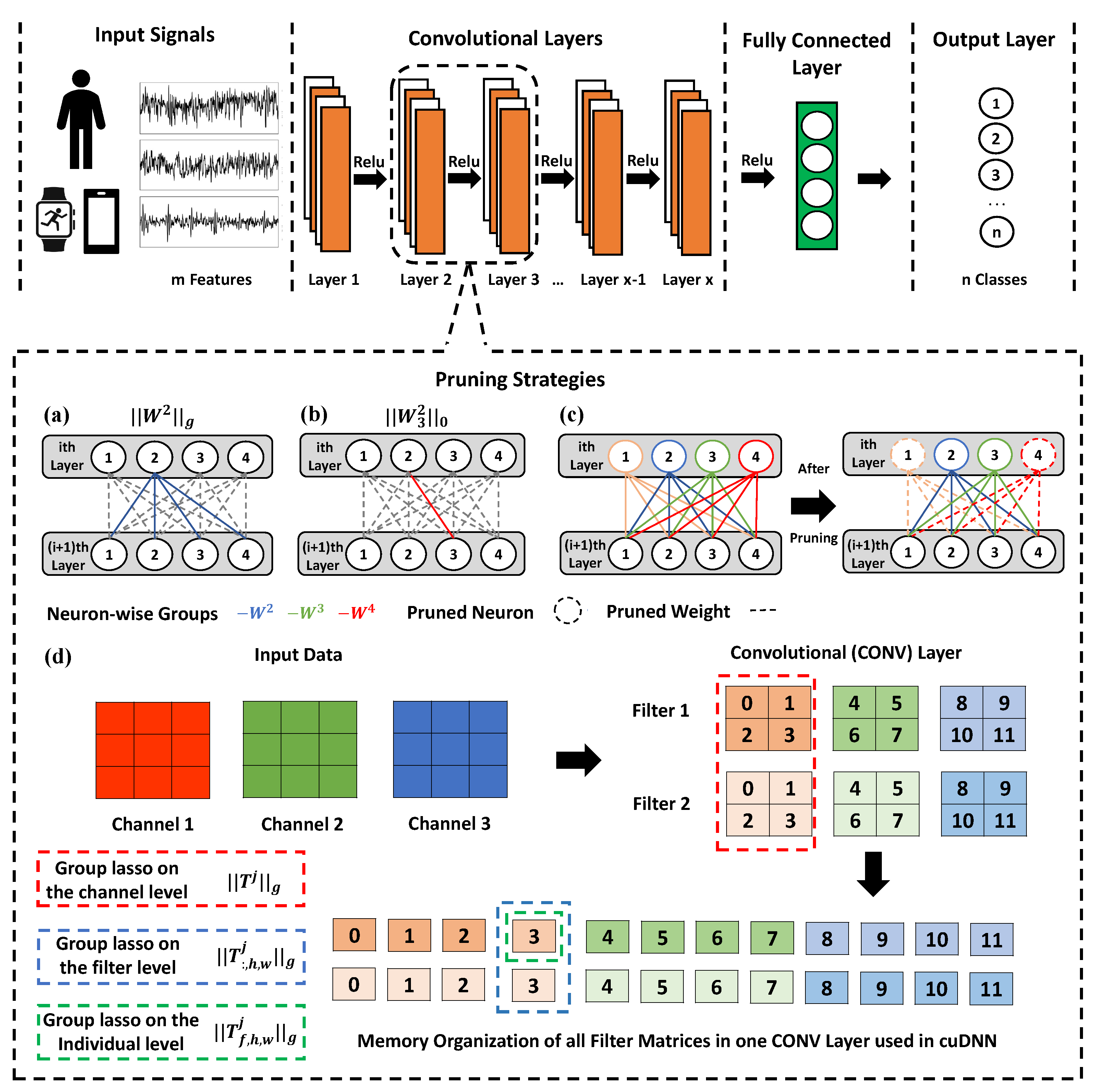

3. Overview

4. Methods

4.1. Sparse Group Lasso
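To make the regularizer concrete, here is a minimal sketch that evaluates an ℓ0 sparse group lasso penalty of the form λ0‖W‖0 + λg Σg ‖Wg‖2 for one fully connected weight matrix. For illustration only, it assumes that each group is one row of W (the weights attached to one neuron). The function name and the coefficient names `lam0` and `lam_group` are hypothetical. The convolutional models in this paper also use channel and filter-shape groups (see the hyper-parameter table in Appendix B.2), which this sketch does not cover.

```python
import math
from typing import List

def l0_sparse_group_lasso_penalty(W: List[List[float]], lam0: float, lam_group: float) -> float:
    """Return lam0 * ||W||_0 + lam_group * sum_g ||W_g||_2, treating each row of W as one group."""
    # ℓ0 term: number of nonzero weights anywhere in the matrix
    nnz = sum(1 for row in W for w in row if w != 0.0)
    # group lasso term: Euclidean norm of each row (neuron), summed over rows
    group_norms = sum(math.sqrt(sum(w * w for w in row)) for row in W)
    return lam0 * nnz + lam_group * group_norms

# A 3-neuron layer with 2 inputs: the row norms are 5, 0 and 1, and there are 3 nonzero weights.
W = [[3.0, 4.0], [0.0, 0.0], [0.0, 1.0]]
print(l0_sparse_group_lasso_penalty(W, lam0=0.1, lam_group=0.5))  # approximately 3.3
```

The ℓ0 term counts weights regardless of their magnitude, so it has no useful gradient. The group term can drive an entire row to zero, which is what removes a neuron.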

4.2. Exact Optimization by PALM

Algorithm 1. The framework of the MobilePrune algorithm.
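As a hedged illustration of the PALM scheme (Bolte, Sabach, and Teboulle [37]), the sketch below alternates over parameter blocks, which stand in for layers. Each block takes a gradient step on the smooth loss with step 1/ci, where ci = γLi and Li is a Lipschitz constant of that block's partial gradient. It then applies the proximal map of its own regularizer. The toy objective, the names `palm` and `hard_threshold`, and γ = 1.1 are illustrative assumptions, not MobilePrune's settings.

```python
from typing import Callable, List

Vector = List[float]
Blocks = List[Vector]

def hard_threshold(v: Vector, t: float) -> Vector:
    """Proximal map of t * ||.||_0: keep v_i when v_i**2 > 2t, otherwise set it to zero."""
    return [x if x * x > 2.0 * t else 0.0 for x in v]

def palm(blocks: Blocks,
         partial_grads: List[Callable[[Blocks], Vector]],
         lipschitz: List[Callable[[Blocks], float]],
         proxes: List[Callable[[Vector, float], Vector]],
         iters: int = 100,
         gamma: float = 1.1) -> Blocks:
    """Gauss-Seidel PALM: linearize the smooth term in one block, then take a proximal step."""
    blocks = [list(b) for b in blocks]
    for _ in range(iters):
        for i in range(len(blocks)):
            g = partial_grads[i](blocks)       # partial gradient at the latest iterate
            c = gamma * lipschitz[i](blocks)   # c_i = gamma * L_i with gamma > 1
            v = [w - gi / c for w, gi in zip(blocks[i], g)]
            blocks[i] = proxes[i](v, 1.0 / c)  # prox of (1 / c_i) * R_i
    return blocks

# Hypothetical toy problem: H(x, y) = 0.5 * ||x + y - b||^2 with R_x = R_y = lam * ||.||_0
b, lam = [3.0, 0.1], 0.5

def grad(bl: Blocks) -> Vector:
    # dH/dx and dH/dy coincide for this H; both are 1-Lipschitz in their own block
    return [x + y - bi for x, y, bi in zip(bl[0], bl[1], b)]

def objective(bl: Blocks) -> float:
    r = sum((x + y - bi) ** 2 for x, y, bi in zip(bl[0], bl[1], b))
    nnz = sum(1 for blk in bl for w in blk if w != 0.0)
    return 0.5 * r + lam * nnz

def l0_prox(v: Vector, step: float) -> Vector:
    return hard_threshold(v, step * lam)

start = [[0.0, 0.0], [0.0, 0.0]]
x, y = palm(start, [grad, grad], [lambda bl: 1.0] * 2, [l0_prox, l0_prox])
print(x, y, objective(start), objective([x, y]))
```

On this toy problem the ℓ0 prox zeroes the small second coordinate and the redundant y block, while x[0] converges to 3. In a network, `partial_grads` would come from backpropagation, and `proxes` would be operators of the kind discussed in Section 4.3.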

4.3. Efficient Computation of Proximal Operators

4.3.1. Proximal Operator

Algorithm 2. Efficient calculation of the proximal operator in Section 4.3.1.
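The following is a sketch, under our own assumptions, of how an ℓ0 plus group ℓ2 proximal operator can be computed exactly for a single group v: argmin over w of ½‖w − v‖² + λ‖w‖0 + μ‖w‖2. For a fixed support size k, the best support is the k largest-magnitude entries, and the best values on it are the group soft-threshold vS(1 − μ/‖vS‖2). Compared with w = 0, this lowers the objective by ½(‖vS‖2 − μ)₊² − λk. One sort followed by a scan over k therefore yields an exact minimizer in O(n log n) time. The function name is hypothetical, and we do not claim that this matches the authors' Algorithm 2 line for line.

```python
import math
from typing import List

def prox_l0_group_l2(v: List[float], lam: float, mu: float) -> List[float]:
    """Exact minimizer of 0.5*||w - v||^2 + lam*||w||_0 + mu*||w||_2 over one group."""
    order = sorted(range(len(v)), key=lambda i: abs(v[i]), reverse=True)
    best_k, best_gain = 0, 0.0  # k = 0 is the all-zero solution (gain 0 by definition)
    sq = 0.0
    for k, i in enumerate(order, start=1):
        sq += v[i] * v[i]
        r = math.sqrt(sq)  # norm of the k largest-magnitude entries
        gain = 0.5 * max(r - mu, 0.0) ** 2 - lam * k  # decrease relative to w = 0
        if gain > best_gain:
            best_k, best_gain = k, gain
    w = [0.0] * len(v)
    if best_k == 0:
        return w
    support = order[:best_k]
    r = math.sqrt(sum(v[i] * v[i] for i in support))
    scale = 1.0 - mu / r  # group soft-thresholding restricted to the support
    for i in support:
        w[i] = scale * v[i]
    return w

print(prox_l0_group_l2([3.0, -0.5, 1.2], lam=0.5, mu=0.0))  # hard thresholding: [3.0, 0.0, 1.2]
print(prox_l0_group_l2([3.0, 4.0], lam=0.0, mu=1.0))        # group soft-thresholding, about [2.4, 3.2]
print(prox_l0_group_l2([0.3, 0.4], lam=0.01, mu=1.0))       # whole group removed: [0.0, 0.0]
```

Inside a PALM step with constant ci, the function would be called with lam/ci and mu/ci. Two special cases serve as sanity checks on the derivation: setting mu = 0 recovers elementwise hard thresholding at √(2λ), and setting lam = 0 recovers plain group soft-thresholding.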

4.3.2. Proximal Operator

Algorithm 3. Efficient calculation of the proximal operator in Section 4.3.2.

5. Experimental Setup and Results

5.1. Performance on Image Benchmarks

5.1.1. MNIST Dataset

5.1.2. CIFAR-10 Dataset

5.1.3. Tiny-ImageNet Dataset

5.2. Performance on Human Activity Recognition Benchmarks

5.2.1. Performance on the Desktop

5.2.2. Performance on Mobile Phones

5.3. Ablation Studies

6. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

Appendix A

Appendix A.1. The Convergence Analysis of Applying PALM Algorithm to Deep Learning Models

1. The regularization functions are lower semi-continuous.
2. The derivatives of the loss function ℓ and all activation functions are bounded and Lipschitz continuous.
3. The loss function ℓ, the activation functions, and the regularization functions are either real analytic or semi-algebraic [37] and continuous on their domains.

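1. The objective function is a Kurdyka-Łojasiewicz (KL) function.
2. The partial gradient of the smooth term with respect to each block is Lipschitz continuous, and there exist positive constants that bound these Lipschitz moduli from below and above.
3. The gradient of the smooth term is Lipschitz continuous on any bounded set.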

Algorithm A1. PALM algorithm for deep learning models.

Appendix A.2. The Lemma Used in the Proof of Theorem 1

Appendix A.3. The Algorithm for ℓ1 Sparse Group Lasso

Algorithm A2. DNN_PALM algorithm for ℓ1 norm group lasso.

Appendix B

Appendix B.1. Iterative Method

Appendix B.2. Hyper-Parameter Settings

| Hyper-Parameter | LeNet-300 | LeNet-5 | VGG-Like | ResNet-32 | VGG-19 | Description |
|---|---|---|---|---|---|---|
| learning rate | 1 × 10⁻³ | 1 × 10⁻³ | 1 × 10⁻³ | 1 × 10⁻³ | 1 × 10⁻³ | The learning rate used in the retraining process |
| gradient momentum | 0.9 | 0.9 | 0.9 | 0.9 | 0.9 | The gradient momentum used in the retraining process |
| weight decay | 1 × 10⁻⁴ | 1 × 10⁻⁵ | 5 × 10⁻⁴ | 1 × 10⁻⁴ | 1 × 10⁻⁴ | The weight decay factor used in the retraining process |
| minibatch size | 100 | 600 | 1000 | 300 | 400 | The number of training samples over which each SGD update is computed during retraining |
| norm factor | 4 × 10⁻⁴ | 2 × 10⁻⁴ | 1 × 10⁻⁶ | 1 × 10⁻⁸ | 1 × 10⁻¹⁰ | The shrinkage coefficient for norm regularization |
| channel factor | - | 1 × 10⁻³ | 1 × 10⁻³–1 × 10⁻² ¹ | 5 × 10⁻² | 5 × 10⁻² | The shrinkage coefficient of channels for group Lasso |
| neuron factor | 2 × 10⁻⁴ | 2 × 10⁻⁴ | 1 × 10⁻⁴ | 0 | 1 × 10⁻² | The shrinkage coefficient of neurons for group Lasso |
| filter size factor | - | 1 × 10⁻³ | 1 × 10⁻⁴ | 1 × 10⁻⁴ | 1 × 10⁻⁴ | The shrinkage coefficient of filter shapes for group Lasso |
| pruning frequency (epochs/minibatches) | 10 | 10 | 1 | 2 | 1 | Number of epochs (LeNet) or minibatches (VGGNet/ResNet) of pruning before retraining |
| retraining epochs | 30 | 30 | 20 | 30 | 15 | The number of retraining epochs after pruning |
| iterations | 74 | 102 | 63 | 2 | 66 | The number of iterations for obtaining the final results |

Appendix C

Appendix C.1. Computational Efficiency

Appendix C.2. Results for SSL

| Method | Base/Pruned Accuracy (%) | Filter Size | Remaining Filters | Remaining Parameters | FLOPs (K) |
|---|---|---|---|---|---|
| Baseline | - | 25–500 | 20–50 | 500–25,000 | 2464 |
| SSL [24] | 99.10/99.00 | 7–14 | 1–50 | - | 63.82 |
| MobilePrune | 99.12/99.03 | 14–9 | 4–16 | 46–26 | 51.21 |

Appendix C.3. Additional Ablation Studies

| Penalty | Base/Pruned Accuracy (%) | Original/Remaining Parameters (K) | Pruned Architecture | Filter Size | FLOPs (K) | Sparsity (%) |
|---|---|---|---|---|---|---|
| norm | 99.12/99.20 | 431/321.00 | 20-50-800-500 | 25–500 | 2293.0 | 74.48 |
| Group Lasso | 99.12/99.11 | 431/8.81 | 4-19-301-29 | 25–99 | 187.00 | 2.04 |
| Group Lasso | 99.12/99.03 | 431/9.98 | 4-17-271-82 | 23–99 | 183.83 | 2.32 |
| sparse group lasso | 99.12/99.11 | 431/2.31 | 5-14-151-57 | 16–65 | 113.50 | 0.54 |
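The FLOPs and sparsity columns of the LeNet-5 table above can be cross-checked with simple arithmetic. The sketch below is our reading of the counting convention, not a definition stated in the text. It counts multiply-accumulates of the two convolutional and two fully connected layers of the 28 × 28 LeNet-5 variant, assuming 5 × 5 kernels, stride 1, no padding, 2 × 2 pooling, and 10 classes. It reads the Pruned Architecture as conv1 filters, conv2 filters, FC input width, and FC hidden width, and it reads the Filter Size range as the per-filter weight counts of conv1 and conv2. Under these assumptions, it reproduces the 2293.0 K, 187.00 K, and 113.50 K entries, but it does not exactly match 183.83 K. Sparsity (%) corresponds to remaining/original parameters × 100 (for example, 321/431 gives 74.48). The function names are hypothetical.

```python
from typing import Tuple

def lenet5_flops(arch: Tuple[int, int, int, int], filter_sizes: Tuple[int, int],
                 in_hw: int = 28, k: int = 5, n_classes: int = 10) -> int:
    """Multiply-accumulate count of conv1, conv2, fc1 and fc2 for a (pruned) LeNet-5."""
    c1, c2, fc_in, fc_hidden = arch
    fs1, fs2 = filter_sizes          # weights per filter in conv1 and conv2
    h1 = in_hw - k + 1               # 24: conv1 output side (stride 1, no padding)
    h2 = h1 // 2 - k + 1             # 8: conv2 output side after 2x2 pooling
    conv1 = h1 * h1 * c1 * fs1
    conv2 = h2 * h2 * c2 * fs2
    fc1 = fc_in * fc_hidden
    fc2 = fc_hidden * n_classes
    return conv1 + conv2 + fc1 + fc2

def sparsity_pct(original: float, remaining: float) -> float:
    """Remaining parameters as a percentage of the original count."""
    return round(100.0 * remaining / original, 2)

print(lenet5_flops((20, 50, 800, 500), (25, 500)))  # 2293000, i.e. 2293.0 K
print(lenet5_flops((5, 14, 151, 57), (16, 65)))      # 113497, i.e. about 113.50 K
print(sparsity_pct(431, 2.31))                       # 0.54
```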

| Penalty | Base/Pruned Accuracy (%) | Original/Remaining Parameters (Mil) | Pruned Architecture | FLOPs (Mil) |
|---|---|---|---|---|
| norm | 92.96/93.40 | 15/3.39 | 18-43-92-99-229-240-246-507-504-486-241-114-428-168 | 210.94 |
| Group Lasso | 92.96/92.47 | 15/0.84 | 17-43-89-99-213-162-93-42-32-28-8-5-429-168 | 78.07 |
| Group Lasso | 92.96/92.90 | 15/0.61 | 17-43-92-99-229-240-246-323-148-111-41-39-159-161 | 134.35 |
| sparse group lasso | 92.96/92.94 | 15/0.60 | 17-43-87-99-201-185-80-37-27-25-9-4-368-167 | 77.83 |

| Penalty | Base/Pruned Accuracy (%) | Original/Remaining Parameters (Mil) | FLOPs (Mil) | Sparsity (%) |
|---|---|---|---|---|
| norm | 95.29/95.68 | 7.42/6.74 | 993.11 | 90.84 |
| Group Lasso | 95.29/95.30 | 7.42/3.43 | 393.09 | 45.95 |
| Group Lasso | 95.29/95.04 | 7.42/5.66 | 735.12 | 76.28 |
| sparse group lasso | 95.29/95.47 | 7.42/2.93 | 371.30 | 39.49 |

| Penalty | Test Accuracy (%) | Remaining Parameters (Mil) | Pruned Architecture | FLOPs (Mil) |
|---|---|---|---|---|
| Baseline | 61.56 | 20.12 | 64-64-128-128-256-256-256-256-512-512-512-512-512-512-512-512 | 1592.53 |
| norm | 61.99 | 19.29 | 45-64-114-128-256-256-256-256-512-511-512-509-512-512-512-512 | 1519.23 |
| Group Lasso | 53.25 | 5.93 | 23-61-80-128-122-114-164-253-255-322-412-462-23-93-129-512 | 683.99 |
| Group Lasso | 53.97 | 0.21 | 29-64-109-128-254-246-254-256-510-509-509-509-512-512-484-512 | 1282.82 |
| sparse group lasso | 56.27 | 4.05 | 19-48-57-102-79-83-100-179-219-273-317-341-256-158-116-512 | 407.37 |

Appendix C.4. Additional Comparison between ℓ0 Sparse Group Lasso and ℓ1 Norm Sparse Group Lasso

Appendix C.5. The Effect of the Coefficient of the ℓ0 Norm Regularizer

| Penalty Coefficient | Base/Pruned Accuracy (%) | Original/Remaining Parameters (Mil) | Pruned Architecture | FLOPs (Mil) |
|---|---|---|---|---|
| 1 × 10⁻⁴ | 92.96/89.77 | 15/0.06 | 17-43-83-99-161-105-57-28-24-15-11-4-104-157 | 56.43 |
| 1 × 10⁻⁵ | 92.96/92.19 | 15/0.30 | 16-43-85-99-171-155-75-33-23-18-10-3-264-167 | 66.82 |
| 1 × 10⁻⁶ | 92.96/92.94 | 15/0.60 | 17-43-87-99-201-185-80-37-27-25-9-4-368-167 | 77.83 |
| 1 × 10⁻⁷ | 92.96/92.54 | 15/0.74 | 17-43-87-99-213-188-91-40-26-27-9-4-400-168 | 81.64 |

| Penalty Coefficient | Base/Pruned Accuracy (%) | Original/Remaining Parameters (Mil) | FLOPs (Mil) | Sparsity (%) |
|---|---|---|---|---|
| 1 × 10⁻⁶ | 95.29/95.11 | 7.42/2.06 | 330.90 | 27.76 |
| 1 × 10⁻⁷ | 95.29/95.33 | 7.42/2.72 | 369.36 | 36.66 |
| 1 × 10⁻⁸ | 95.29/95.47 | 7.42/2.93 | 371.30 | 39.49 |
| 1 × 10⁻⁹ | 95.29/95.44 | 7.42/3.02 | 372.98 | 40.70 |

Appendix D

Appendix D.1. HAR Dataset Description

Appendix D.1.1. WISDM Dataset

Appendix D.1.2. UCI-HAR Dataset

Appendix D.1.3. PAMAP2 Dataset

Appendix D.2. 1D CNN Model

Appendix D.3. Data Pre-Processing

Appendix D.3.1. Re-Scaling and Standardization
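A common way to standardize multichannel sensor data is per-channel z-scoring, with the statistics computed on the training split only so that the test fold does not influence the normalization. The sketch below assumes this approach. It is not necessarily the paper's exact pre-processing, and the function names are hypothetical.

```python
import statistics
from typing import List, Tuple

Rows = List[List[float]]

def fit_standardizer(train_rows: Rows) -> Tuple[List[float], List[float]]:
    """Per-channel mean and population std, estimated from training rows only."""
    columns = list(zip(*train_rows))
    means = [statistics.fmean(c) for c in columns]
    # a constant channel would give std 0; fall back to 1 so that it maps to all zeros
    stds = [statistics.pstdev(c) or 1.0 for c in columns]
    return means, stds

def standardize(rows: Rows, means: List[float], stds: List[float]) -> Rows:
    return [[(x - m) / s for x, m, s in zip(row, means, stds)] for row in rows]

# Hypothetical 3-axis accelerometer samples (one row per time step)
train = [[0.0, 9.8, 1.0], [2.0, 9.8, 3.0]]
means, stds = fit_standardizer(train)
print(standardize(train, means, stds))  # [[-1.0, 0.0, -1.0], [1.0, 0.0, 1.0]]
```

The same `means` and `stds` would then be reused, unchanged, to transform the held-out fold.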

Appendix D.3.2. Segmentation
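Segmenting a continuous sensor stream usually means cutting fixed-length, possibly overlapping windows and assigning each window a single activity label. The sketch below assumes a majority-vote label per window. The window length and step are hypothetical parameters, not the values used in the paper.

```python
from collections import Counter
from typing import List, Sequence, Tuple, TypeVar

T = TypeVar("T")

def sliding_windows(samples: Sequence[T], labels: Sequence[str],
                    window: int, step: int) -> Tuple[List[List[T]], List[str]]:
    """Cut windows of `window` samples every `step` samples; label each by majority vote."""
    segments, seg_labels = [], []
    # trailing samples that do not fill a whole window are dropped
    for start in range(0, len(samples) - window + 1, step):
        segments.append(list(samples[start:start + window]))
        seg_labels.append(Counter(labels[start:start + window]).most_common(1)[0][0])
    return segments, seg_labels

# 9 time steps: 5 of "walk" followed by 4 of "sit"; non-overlapping windows of 3
segs, labs = sliding_windows(list(range(9)), ["walk"] * 5 + ["sit"] * 4, window=3, step=3)
print(segs)  # [[0, 1, 2], [3, 4, 5], [6, 7, 8]]
print(labs)  # ['walk', 'walk', 'sit']
```

Choosing `step` smaller than `window` (for example, half of it) produces overlapping windows and more training segments from the same recording.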

Appendix D.3.3. K-Fold Cross-Validation
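K-fold cross-validation rotates the held-out part of the data. With k folds, each fold serves once as the test set, and the remaining k − 1 folds are used for training. The results for each fold can then be compared or averaged, as in the five folds per dataset reported in the Appendix D.4 table. The minimal index-splitting sketch below assumes contiguous folds without shuffling, because shuffling overlapping windows may place near-duplicate segments in both splits. The function name is hypothetical.

```python
from typing import Iterator, List, Tuple

def k_fold_indices(n: int, k: int) -> Iterator[Tuple[List[int], List[int]]]:
    """Yield (train_idx, test_idx) for k contiguous folds whose sizes differ by at most one."""
    sizes = [n // k + (1 if i < n % k else 0) for i in range(k)]
    start = 0
    for size in sizes:
        test = list(range(start, start + size))
        train = list(range(0, start)) + list(range(start + size, n))
        yield train, test
        start += size

for fold, (train_idx, test_idx) in enumerate(k_fold_indices(11, 5), start=1):
    print(fold, len(train_idx), test_idx)
```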

Appendix D.4. Hyper-Parameters Tuning

Appendix D.4.1. Cross-Validation Tuning

Appendix D.4.2. Learning Rate Tuning

| Dataset | Type | Value | Base/Pruned Accuracy (%) | Parameter Nonzero (%) | Parameter Remaining (%) | Node Remaining (%) |
|---|---|---|---|---|---|---|
| WISDM | Fold Number | 1 | 93.52/92.68 | 11.64 | 32.49 | 57.42 |
| | | 2 | 94.88/93.70 | 10.03 | 30.35 | 55.08 |
| | | 3 | 94.45/93.48 | 9.45 | 27.97 | 52.13 |
| | | 4 | 94.97/94.65 | 9.52 | 28.03 | 53.52 |
| | | 5 | 93.52/92.68 | 11.64 | 32.49 | 57.42 |
| | Learning Rate | 1.0 | 89.55/86.72 | 27.09 | 93.50 | 96.68 |
| | | 5.0 | 92.93/84.36 | 9.41 | 40.44 | 64.06 |
| | | 1.0 | 94.97/94.65 | 27.09 | 28.03 | 53.52 |
| | | 1.5 | 94.96/94.88 | 10.38 | 27.26 | 52.54 |
| | | 1.0 | 94.65/94.57 | 10.54 | 32.38 | 56.84 |
| UCI-HAR | Fold Number | 1 | 78.42/78.08 | 15.53 | 31.99 | 56.64 |
| | | 2 | 89.89/89.28 | 32.49 | 64.29 | 80.27 |
| | | 3 | 79.13/79.37 | 16.02 | 32.25 | 56.84 |
| | | 4 | 78.22/78.22 | 18.69 | 40.02 | 63.48 |
| | | 5 | 90.06/89.96 | 23.00 | 46.83 | 68.75 |
| | Learning Rate | 1.0 | 85.27/85.51 | 77.98 | 94.66 | 97.27 |
| | | 5.0 | 89.38/89.24 | 16.69 | 85.77 | 92.58 |
| | | 1.0 | 90.06/89.96 | 23.00 | 46.83 | 68.75 |
| | | 1.5 | 90.94/90.91 | 16.69 | 31.04 | 56.45 |
| | | 2.0 | 90.40/90.43 | 13.24 | 29.10 | 54.10 |
| PAMAP2 | Fold Number | 1 | 96.89/96.95 | 1.26 | 3.72 | 10.74 |
| | | 2 | 92.29/92.28 | 1.27 | 3.15 | 10.35 |
| | | 3 | 96.49/96.28 | 1.81 | 4.74 | 14.84 |
| | | 4 | 95.08/94.99 | 1.20 | 3.42 | 10.55 |
| | | 5 | 94.81/94.81 | 1.46 | 3.71 | 11.52 |
| | Learning Rate | 1.0 | 93.63/85.80 | 7.93 | 28.61 | 49.22 |
| | | 5.0 | 94.25/93.89 | 3.90 | 11.81 | 28.32 |
| | | 1.0 | 96.89/96.95 | 1.26 | 3.72 | 10.74 |
| | | 1.5 | 96.57/96.62 | 1.12 | 2.36 | 7.62 |
| | | 2.0 | 94.89/94.99 | 0.68 | 2.02 | 7.62 |

Appendix D.4.3. Number of Epochs Tuning

Appendix D.4.4. Prune Threshold Tuning
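One way to decide which small weights count as exactly zero after training is a magnitude threshold. Raising the threshold lowers metrics such as those in the Appendix D.4 table. The sketch below applies such a threshold to one fully connected weight matrix. It then reports the share of nonzero weights and the share of neurons (rows) that keep at least one nonzero weight. Both metric definitions, the row-as-neuron convention, and the function name are our assumptions. The paper's exact definitions of Parameter Nonzero, Parameter Remaining, and Node Remaining are not reproduced here.

```python
from typing import List, Tuple

Matrix = List[List[float]]

def prune_and_measure(W: Matrix, threshold: float) -> Tuple[Matrix, float, float]:
    """Zero out |w| < threshold; return pruned W, % nonzero weights, % rows still alive."""
    pruned = [[w if abs(w) >= threshold else 0.0 for w in row] for row in W]
    total = sum(len(row) for row in W)
    nonzero = sum(1 for row in pruned for w in row if w != 0.0)
    alive = sum(1 for row in pruned if any(w != 0.0 for w in row))
    return pruned, 100.0 * nonzero / total, 100.0 * alive / len(W)

# Hypothetical 3-neuron layer with 2 inputs per neuron
W = [[0.5, 0.01], [0.001, -0.002], [-0.3, 0.2]]
for t in (0.001, 0.05, 0.4):
    _, nz, alive = prune_and_measure(W, t)
    print(f"threshold={t}: nonzero={nz:.1f}%, neurons alive={alive:.1f}%")
```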

References

- Frankle, J.; Carbin, M. The Lottery Ticket Hypothesis: Finding Sparse, Trainable Neural Networks. In Proceedings of the 7th International Conference on Learning Representations, ICLR 2019, New Orleans, LA, USA, 6–9 May 2019; Available online: https://arxiv.org/abs/1803.03635 (accessed on 24 May 2022).

- Hassibi, B.; Stork, D. Second order derivatives for network pruning: Optimal brain surgeon. In Advances in Neural Information Processing Systems 5 (NIPS 1992); Morgan Kaufmann Publishers Inc.: San Francisco, CA, USA, 1993.

- Castellano, G.; Fanelli, A.M.; Pelillo, M. An iterative pruning algorithm for feedforward neural networks. IEEE Trans. Neural Netw. 1997, 8, 519–531.

- Han, S.; Pool, J.; Tran, J.; Dally, W. Learning both Weights and Connections for Efficient Neural Networks. In Advances in Neural Information Processing Systems 28; Curran Associates, Inc.: Red Hook, NY, USA, 2015; pp. 1135–1143.

- Chetlur, S.; Woolley, C.; Vandermersch, P.; Cohen, J.; Tran, J.; Catanzaro, B.; Shelhamer, E. cuDNN: Efficient Primitives for Deep Learning. arXiv 2014, arXiv:1410.0759.

- Ding, X.; Ding, G.; Guo, Y.; Han, J.; Yan, C. Approximated oracle filter pruning for destructive CNN width optimization. In Proceedings of the 36th International Conference on Machine Learning, PMLR 97, Long Beach, CA, USA, 9–15 June 2019; pp. 1607–1616.

- Neklyudov, K.; Molchanov, D.; Ashukha, A.; Vetrov, D.P. Structured Bayesian Pruning via Log-Normal Multiplicative Noise. In Advances in Neural Information Processing Systems 30; Curran Associates, Inc.: Red Hook, NY, USA, 2017; pp. 6775–6784.

- Louizos, C.; Welling, M.; Kingma, D.P. Learning Sparse Neural Networks through L0 Regularization. In Proceedings of the International Conference on Learning Representations, Vancouver, BC, Canada, 30 April–3 May 2018.

- Louizos, C.; Ullrich, K.; Welling, M. Bayesian compression for deep learning. In Proceedings of the Advances in Neural Information Processing Systems, Long Beach, CA, USA, 4–9 December 2017; pp. 3288–3298.

- Liu, Z.G.; Whatmough, P.N.; Mattina, M. Sparse Systolic Tensor Array for Efficient CNN Hardware Acceleration. arXiv 2020, arXiv:2009.02381.

- Liu, Z.; Whatmough, P.N.; Mattina, M. Systolic Tensor Array: An Efficient Structured-Sparse GEMM Accelerator for Mobile CNN Inference. IEEE Comput. Archit. Lett. 2020, 19, 34–37.

- Pilanci, M.; Wainwright, M.J.; El Ghaoui, L. Sparse learning via Boolean relaxations. Math. Program. 2015, 151, 63–87.

- Bertsimas, D.; Pauphilet, J.; Van Parys, B. Sparse Regression: Scalable algorithms and empirical performance. arXiv 2019, arXiv:1902.06547.

- Tibshirani, R. Regression Shrinkage and Selection via the Lasso. J. R. Stat. Soc. Ser. B 1996, 58, 267–288.

- Zou, H.; Hastie, T. Regularization and variable selection via the Elastic Net. J. R. Stat. Soc. Ser. B 2005, 67, 301–320.

- Fan, J.; Li, R. Variable selection via nonconcave penalized likelihood and its oracle properties. J. Am. Stat. Assoc. 2001, 96, 1348–1360.

- Zhang, C.H. Nearly unbiased variable selection under minimax concave penalty. Ann. Stat. 2010, 38, 894–942.

- Hazimeh, H.; Mazumder, R. Fast Best Subset Selection: Coordinate Descent and Local Combinatorial Optimization Algorithms. arXiv 2018, arXiv:1803.01454.

- Guo, Y.; Yao, A.; Chen, Y. Dynamic Network Surgery for Efficient DNNs. In Advances in Neural Information Processing Systems 29; Curran Associates, Inc.: Red Hook, NY, USA, 2016; pp. 1379–1387.

- Ding, X.; Ding, G.; Zhou, X.; Guo, Y.; Han, J.; Liu, J. Global Sparse Momentum SGD for Pruning Very Deep Neural Networks. In Advances in Neural Information Processing Systems 32; Curran Associates, Inc.: Red Hook, NY, USA, 2019; pp. 6379–6391.

- Xiao, X.; Wang, Z.; Rajasekaran, S. AutoPrune: Automatic Network Pruning by Regularizing Auxiliary Parameters. In Advances in Neural Information Processing Systems 32; Curran Associates, Inc.: Red Hook, NY, USA, 2019; pp. 13681–13691.

- Alvarez, J.M.; Salzmann, M. Learning the Number of Neurons in Deep Networks. In Advances in Neural Information Processing Systems 29; Curran Associates, Inc.: Red Hook, NY, USA, 2016; pp. 2270–2278.

- Liu, B.; Wang, M.; Foroosh, H.; Tappen, M.; Pensky, M. Sparse Convolutional Neural Networks. In Proceedings of the IEEE Computer Society Conference on Computer Vision and Pattern Recognition, Boston, MA, USA, 7–12 June 2015. Available online: https://ieeexplore.ieee.org/document/7298681 (accessed on 24 May 2022).

- Wen, W.; Wu, C.; Wang, Y.; Chen, Y.; Li, H. Learning Structured Sparsity in Deep Neural Networks. In Proceedings of the Advances in Neural Information Processing Systems, Barcelona, Spain, 5–10 December 2016; Available online: https://arxiv.org/abs/1608.03665 (accessed on 24 May 2022).

- Yang, C.; Yang, Z.; Khattak, A.M.; Yang, L.; Zhang, W.; Gao, W.; Wang, M. Structured Pruning of Convolutional Neural Networks via L1 Regularization. IEEE Access 2019, 7, 106385–106394.

- Yang, H.; Gui, S.; Zhu, Y.; Liu, J. Automatic Neural Network Compression by Sparsity-Quantization Joint Learning: A Constrained Optimization-based Approach. arXiv 2020, arXiv:1910.05897.

- Zhang, T.; Ye, S.; Zhang, K.; Tang, J.; Wen, W.; Fardad, M.; Wang, Y. A Systematic DNN Weight Pruning Framework using Alternating Direction Method of Multipliers. arXiv 2018, arXiv:1804.03294.

- He, Y.; Liu, P.; Wang, Z.; Hu, Z.; Yang, Y. Filter Pruning via Geometric Median for Deep Convolutional Neural Networks Acceleration. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Long Beach, CA, USA, 15–20 June 2019.

- Ren, A.; Zhang, T.; Ye, S.; Li, J.; Xu, W.; Qian, X.; Lin, X.; Wang, Y. ADMM-NN: An Algorithm-Hardware Co-Design Framework of DNNs Using Alternating Direction Methods of Multipliers. In Proceedings of the Twenty-Fourth International Conference on Architectural Support for Programming Languages and Operating Systems (ASPLOS ’19), Providence, RI, USA, 13–17 April 2019; Association for Computing Machinery: New York, NY, USA, 2019; pp. 925–938.

- Liu, J.; Ye, J. Moreau-Yosida Regularization for Grouped Tree Structure Learning. In Advances in Neural Information Processing Systems 23; Lafferty, J.D., Williams, C.K.I., Shawe-Taylor, J., Zemel, R.S., Culotta, A., Eds.; Curran Associates, Inc.: Red Hook, NY, USA, 2010; pp. 1459–1467.

- Collins, M.D.; Kohli, P. Memory Bounded Deep Convolutional Networks. arXiv 2014, arXiv:1412.1442.

- Li, H.; Kadav, A.; Durdanovic, I.; Samet, H.; Graf, H.P. Pruning Filters for Efficient ConvNets. In Proceedings of the International Conference on Learning Representations, Toulon, France, 24–26 April 2017.

- Liu, Z.; Li, J.; Shen, Z.; Huang, G.; Yan, S.; Zhang, C. Learning efficient convolutional networks through network slimming. In Proceedings of the IEEE International Conference on Computer Vision, Venice, Italy, 22–29 October 2017; pp. 2736–2744.

- Yoon, J.; Hwang, S.J. Combined Group and Exclusive Sparsity for Deep Neural Networks. In Proceedings of the 34th International Conference on Machine Learning, Sydney, NSW, Australia, 6–11 August 2017; PMLR: Proceedings of Machine Learning Research, Precup, D., Teh, Y.W., Eds.; International Convention Centre: Sydney, Australia, 2017; Volume 70, pp. 3958–3966.

- Scardapane, S.; Comminiello, D.; Hussain, A.; Uncini, A. Group sparse regularization for deep neural networks. Neurocomputing 2017, 241, 81–89.

- Liu, Z.G.; Whatmough, P.N.; Zhu, Y.; Mattina, M. S2TA: Exploiting Structured Sparsity for Energy-Efficient Mobile CNN Acceleration. In Proceedings of the 2022 IEEE International Symposium on High-Performance Computer Architecture (HPCA), Seoul, Korea, 2–6 April 2022.

- Bolte, J.; Sabach, S.; Teboulle, M. Proximal alternating linearized minimization for nonconvex and nonsmooth problems. Math. Program. 2014, 146, 459–494.

- Beck, A.; Teboulle, M. A fast iterative shrinkage-thresholding algorithm for linear inverse problems. SIAM J. Imaging Sci. 2009, 2, 183–202.

- Dai, B.; Zhu, C.; Guo, B.; Wipf, D. Compressing Neural Networks using the Variational Information Bottleneck. In Proceedings of the 35th International Conference on Machine Learning (ICML 2018), Stockholm, Sweden, 10–15 July 2018.

- LeCun, Y.; Denker, J.S.; Solla, S.A. Optimal Brain Damage. In Advances in Neural Information Processing Systems 2; Touretzky, D.S., Ed.; Morgan-Kaufmann: Burlington, MA, USA, 1990; pp. 598–605.

- Zeng, W.; Urtasun, R. MLPrune: Multi-Layer Pruning for Automated Neural Network Compression. 2019. Available online: https://openreview.net/forum?id=r1g5b2RcKm (accessed on 24 May 2022).

- Wang, C.; Grosse, R.; Fidler, S.; Zhang, G. EigenDamage: Structured Pruning in the Kronecker-Factored Eigenbasis. In Proceedings of the 36th International Conference on Machine Learning, Long Beach, CA, USA, 9–15 June 2019; Volume 97, pp. 6566–6575.

- Deng, L. The MNIST database of handwritten digit images for machine learning research. IEEE Signal Process. Mag. 2012, 29, 141–142.

- Zagoruyko, S. 92.45% on CIFAR-10 in Torch. 2015. Available online: http://torch.ch/blog/2015/07/30/cifar.html (accessed on 24 May 2022).

- Zhang, G.; Wang, C.; Xu, B.; Grosse, R. Three Mechanisms of Weight Decay Regularization. In Proceedings of the International Conference on Learning Representations, New Orleans, LA, USA, 6–9 May 2019.

- Krizhevsky, A.; Nair, V.; Hinton, G. CIFAR-10 (Canadian Institute for Advanced Research). Available online: http://www.cs.toronto.edu/~kriz/cifar.html (accessed on 24 May 2022).

- Le, Y.; Yang, X.S. Tiny ImageNet Visual Recognition Challenge. CS 231N 2015, 7, 3.

- Simonyan, K.; Zisserman, A. Very Deep Convolutional Networks for Large-Scale Image Recognition. In Proceedings of the International Conference on Learning Representations, San Diego, CA, USA, 7–9 May 2015.

- Kwapisz, J.R.; Weiss, G.M.; Moore, S.A. Activity Recognition Using Cell Phone Accelerometers. SIGKDD Explor. Newsl. 2011, 12, 74–82.

- WISDM: Wireless Sensor Data Mining. Available online: https://www.cis.fordham.edu/wisdm/dataset.php (accessed on 24 May 2022).

- Anguita, D.; Ghio, A.; Oneto, L.; Parra, X.; Reyes-Ortiz, J. A Public Domain Dataset for Human Activity Recognition using Smartphones. In Proceedings of the 21st International European Symposium on Artificial Neural Networks, Computational Intelligence and Machine Learning, Bruges, Belgium, 24–26 April 2013.

- Human Activity Recognition Using Smartphones Data Set. Available online: https://archive.ics.uci.edu/ml/datasets/human+activity+recognition+using+smartphones (accessed on 24 May 2022).

- Reiss, A.; Stricker, D. Introducing a New Benchmarked Dataset for Activity Monitoring. In Proceedings of the 2012 16th International Symposium on Wearable Computers, Newcastle, UK, 18–22 June 2012.

- Reiss, A.; Stricker, D. Creating and Benchmarking a New Dataset for Physical Activity Monitoring. In PETRA ’12, Proceedings of the 5th International Conference on PErvasive Technologies Related to Assistive Environments, Crete, Greece, 6–8 June 2012; Association for Computing Machinery: New York, NY, USA, 2012.

- PAMAP2 Physical Activity Monitoring Data Set. Available online: https://archive.ics.uci.edu/ml/datasets/PAMAP2+Physical+Activity+Monitoring (accessed on 24 May 2022).

- Google Colab. Available online: https://research.google.com/colaboratory/faq.html (accessed on 24 May 2022).

- PyTorch Mobile. Available online: https://pytorch.org/mobile/android/ (accessed on 24 May 2022).

- Profile Battery Usage with Batterystats and Battery Historian. Available online: https://developer.android.com/topic/performance/power/setup-battery-historian (accessed on 24 May 2022).

- Yuan, L.; Liu, J.; Ye, J. Efficient Methods for Overlapping Group Lasso. In Advances in Neural Information Processing Systems 24; Shawe-Taylor, J., Zemel, R.S., Bartlett, P.L., Pereira, F., Weinberger, K.Q., Eds.; Curran Associates, Inc.: Red Hook, NY, USA, 2011; pp. 352–360.

- Zeng, J.; Lau, T.T.K.; Lin, S.B.; Yao, Y. Global convergence of block coordinate descent in deep learning. In Proceedings of the 36th International Conference on Machine Learning, ICML 2019, Long Beach, CA, USA, 9–15 June 2019; Available online: https://arxiv.org/abs/1803.00225 (accessed on 24 May 2022).

- Bao, C.; Ji, H.; Quan, Y.; Shen, Z. L0 norm based dictionary learning by proximal methods with global convergence. In Proceedings of the IEEE Computer Society Conference on Computer Vision and Pattern Recognition, Columbus, OH, USA, 23–28 June 2014.

- Lau, T.T.K.; Zeng, J.; Wu, B.; Yao, Y. A Proximal Block Coordinate Descent Algorithm for Deep Neural Network Training. In Proceedings of the 6th International Conference on Learning Representations, ICLR 2018 Workshop Track Proceedings, Vancouver, BC, Canada, 30 April–3 May 2018; Available online: https://arxiv.org/abs/1803.09082 (accessed on 24 May 2022).

- Attouch, H.; Bolte, J. On the convergence of the proximal algorithm for nonsmooth functions involving analytic features. Math. Program. 2009, 116, 5–16.

- Bach, F.R.; Mairal, J.; Ponce, J. Convex Sparse Matrix Factorizations. arXiv 2008, arXiv:0812.1869.

- Shomron, G.; Weiser, U. Non-Blocking Simultaneous Multithreading: Embracing the Resiliency of Deep Neural Networks. In Proceedings of the 2020 53rd Annual IEEE/ACM International Symposium on Microarchitecture (MICRO), Athens, Greece, 17–21 October 2020; Available online: https://arxiv.org/abs/2004.09309 (accessed on 24 May 2022).

- Wang, Z.; Oates, T. Imaging Time-Series to Improve Classification and Imputation. arXiv 2015, arXiv:1506.00327.

- Tamilarasi, P.; Rani, R. Diagnosis of Crime Rate against Women using k-fold Cross Validation through Machine Learning. In Proceedings of the 2020 Fourth International Conference on Computing Methodologies and Communication (ICCMC), Erode, India, 11–13 March 2020; pp. 1034–1038.

- Brownlee, J. Understand the Impact of Learning Rate on Neural Network Performance. 2019. Available online: https://machinelearningmastery.com/understand-the-dynamics-of-learning-rate-on-deep-learning-neural-networks (accessed on 24 May 2022).

| Dataset | Model | Methods | Base/Pruned Accuracy (%) | Original/Remaining Parameters (K for MNIST; Mil otherwise) | FLOPs (K for MNIST; Mil otherwise) |
|---|---|---|---|---|---|
| MNIST | LeNet-300-100 | BC-GNJ [9] | 98.40/98.20 | 267.00/28.73 | 28.64 |
| | | BC-GHS [9] | 98.40/98.20 | 267.00/28.17 | 28.09 |
| | | L0 [8] | -/98.60 | - | 69.27 |
| | | L0-sep [8] | -/98.20 | - | 26.64 |
| | | MobilePrune | 98.24/98.23 | 267.00/5.25 | 25.79 |
| | LeNet-5 | SBP [7] | -/99.14 | - | 212.80 |
| | | BC-GNJ [9] | 99.10/99.00 | 431.00/3.88 | 282.87 |
| | | BC-GHS [9] | 99.10/99.00 | 431.00/2.59 | 153.38 |
| | | L0 [8] | -/99.10 | - | 1113.40 |
| | | L0-sep [8] | -/99.00 | - | 390.68 |
| | | MobilePrune | 99.12/99.11 | 431.00/2.31 | 113.50 |
| CIFAR-10 | VGG-like | Original [44] | -/92.45 | 15.00/- | 313.5 |
| | | PF [32] | -/93.40 | 15.00/5.4 | 206.3 |
| | | SBP [7] | 92.80/92.50 | 15.00/- | 136.0 |
| | | SBPa [7] | 92.80/91.00 | 15.00/- | 99.20 |
| | | VIBNet [39] | -/93.50 | 15.00/0.87 | 86.82 |
| | | MobilePrune | 92.96/92.94 | 15.00/0.60 | 77.83 |
| | ResNet-32 | C-OBD [40] | 95.30/95.27 | 7.42/2.92 | 488.85 |
| | | C-OBS [2] | 95.30/95.30 | 7.42/3.04 | 378.22 |
| | | Kron-OBD [40,41] | 95.30/95.30 | 7.42/3.26 | 526.17 |
| | | Kron-OBS [2,41] | 95.30/95.46 | 7.42/3.23 | 524.52 |
| | | EigenDamage [42] | 95.30/95.28 | 7.42/2.99 | 457.46 |
| | | MobilePrune | 95.29/95.47 | 7.42/2.93 | 371.30 |
| Tiny-ImageNet | VGG-19 | NN slimming [33] | 61.56/40.05 | 20.12/5.83 | 158.62 |
| | | C-OBD [40] | 61.56/47.36 | 20.12/4.21 | 481.90 |
| | | C-OBS [2] | 61.56/39.80 | 20.12/6.55 | 210.05 |
| | | Kron-OBD [40,41] | 61.56/44.41 | 20.12/4.72 | 298.28 |
| | | Kron-OBS [2,41] | 61.56/44.54 | 20.12/5.26 | 266.43 |
| | | EigenDamage [42] | 61.56/56.92 | 20.12/5.21 | 408.17 |
| | | MobilePrune | 61.56/56.27 | 20.12/4.05 | 407.37 |

| Dataset | Penalty | Base/Pruned Accuracy (%) | Parameter Nonzero (%) | Parameter Remaining (%) | Node Remaining (%) | Base/Pruned Response Delay (s) | Time Saving Percentage (%) |
|---|---|---|---|---|---|---|---|
| WISDM | norm | 94.72/94.79 | 63.36 | 100.00 | 100.00 | 0.38/0.39 | 0.00 |
| | norm | 94.30/93.84 | 13.58 | 46.26 | 68.16 | 0.38/0.24 | 36.84 |
| | norm | 94.61/94.54 | 56.28 | 90.46 | 95.12 | 0.38/0.35 | 7.89 |
| | Group lasso | 94.68/94.32 | 48.23 | 89.73 | 94.73 | 0.38/0.35 | 7.89 |
| | sparse Group lasso | 94.81/94.79 | 17.91 | 53.41 | 73.83 | 0.41/0.26 | 36.59 |
| | MobilePrune | 94.97/94.65 | 9.52 | 28.03 | 52.52 | 0.50/0.17 | 66.00 |
| UCI-HAR | norm | 91.52/91.48 | 88.49 | 100.00 | 100.00 | 0.84/0.80 | 4.76 |
| | norm | 90.46/90.33 | 81.58 | 98.47 | 99.22 | 0.81/0.82 | 0.00 |
| | norm | 91.01/90.94 | 88.35 | 100.00 | 100.00 | 0.79/0.80 | 0.00 |
| | Group lasso | 90.80/90.84 | 82.91 | 100.00 | 100.00 | 0.83/0.78 | 6.02 |
| | sparse Group lasso | 91.11/91.04 | 81.21 | 97.70 | 98.83 | 0.84/0.80 | 4.76 |
| | MobilePrune | 90.06/89.96 | 23.00 | 46.83 | 68.75 | 1.01/0.43 | 57.43 |
| PAMAP2 | norm | 93.15/93.07 | 69.27 | 100.00 | 100.00 | 0.41/0.41 | 0.00 |
| | norm | 95.22/95.29 | 1.46 | 7.28 | 19.73 | 0.40/0.08 | 80.00 |
| | norm | 92.08/92.09 | 65.32 | 94.93 | 97.27 | 0.41/0.39 | 4.88 |
| | Group lasso | 93.30/93.28 | 61.78 | 100.00 | 100.00 | 0.41/0.41 | 0.00 |
| | sparse Group lasso | 96.87/97.20 | 2.67 | 9.72 | 26.17 | 0.40/0.10 | 75.00 |
| | MobilePrune | 96.89/96.95 | 1.26 | 3.72 | 10.74 | 0.51/0.05 | 90.20 |

| Dataset | Device | Penalty | Base/Pruned Response Delay (s) | Time Saving Percentage (%) | Base/Pruned Estimated Battery Use (%/h) | Battery Saving Percentage (%) |
|---|---|---|---|---|---|---|
| WISDM | Huawei P20 | norm | 1.40/1.27 | 9.29 | 0.71/0.70 | 1.41 |
| | | norm | 1.33/0.71 | 46.62 | 0.74/0.65 | 12.16 |
| | | norm | 1.28/1.21 | 5.47 | 0.74/0.77 | 0.00 |
| | | Group lasso | 1.27/1.27 | 0.00 | 0.74/0.77 | 0.00 |
| | | sparse Group lasso | 1.25/0.81 | 35.20 | 0.74/0.68 | 8.11 |
| | | MobilePrune | 1.34/0.51 | 61.94 | 0.72/0.45 | 37.50 |
| | OnePlus 8 Pro | norm | 0.57/0.49 | 14.04 | 0.34/0.32 | 5.88 |
| | | norm | 0.48/0.34 | 29.17 | 0.35/0.30 | 14.29 |
| | | norm | 0.48/0.40 | 16.67 | 0.34/0.34 | 0.00 |
| | | Group lasso | 0.49/0.45 | 8.16 | 0.34/0.35 | 0.00 |
| | | sparse Group lasso | 0.48/0.33 | 31.25 | 0.35/0.30 | 14.29 |
| | | MobilePrune | 0.48/0.23 | 52.08 | 0.34/0.23 | 32.35 |
| UCI-HAR | Huawei P20 | norm | 1.43/1.43 | 0.00 | 0.84/0.84 | 0.00 |
| | | norm | 1.42/1.42 | 0.00 | 0.85/0.84 | 1.18 |
| | | norm | 1.43/1.43 | 0.00 | 0.84/0.84 | 0.00 |
| | | Group lasso | 1.43/1.43 | 0.00 | 0.84/0.82 | 2.38 |
| | | sparse Group lasso | 1.42/1.41 | 0.70 | 0.85/0.82 | 3.53 |
| | | MobilePrune | 1.42/0.85 | 40.14 | 0.84/0.55 | 34.52 |
| | OnePlus 8 Pro | norm | 0.53/0.53 | 0.00 | 0.35/0.35 | 0.00 |
| | | norm | 0.54/0.51 | 5.56 | 0.37/0.36 | 2.70 |
| | | norm | 0.54/0.53 | 1.85 | 0.37/0.37 | 0.00 |
| | | Group lasso | 0.53/0.52 | 1.89 | 0.36/0.36 | 0.00 |
| | | sparse Group lasso | 0.53/0.52 | 1.89 | 0.36/0.36 | 0.00 |
| | | MobilePrune | 0.54/0.42 | 22.22 | 0.36/0.29 | 19.44 |
| PAMAP2 | Huawei P20 | norm | 2.64/2.72 | 0.00 | 0.76/0.79 | 0.00 |
| | | norm | 2.74/0.45 | 83.58 | 0.79/0.53 | 32.91 |
| | | norm | 2.67/2.56 | 4.12 | 0.78/0.78 | 0.00 |
| | | Group lasso | 2.67/2.68 | 0.00 | 0.78/0.78 | 0.00 |
| | | sparse Group lasso | 2.69/0.55 | 79.55 | 0.79/0.57 | 27.85 |
| | | MobilePrune | 2.70/0.32 | 88.15 | 0.79/0.50 | 36.71 |
| | OnePlus 8 Pro | norm | 0.94/0.93 | 1.06 | 0.88/0.88 | 0.00 |
| | | norm | 0.93/0.25 | 73.12 | 0.87/0.55 | 36.78 |
| | | norm | 0.93/0.91 | 2.15 | 0.88/0.87 | 1.14 |
| | | Group lasso | 0.94/0.95 | 0.00 | 0.89/0.89 | 0.00 |
| | | sparse Group lasso | 0.95/0.29 | 69.47 | 0.88/0.59 | 32.95 |
| | | MobilePrune | 0.94/0.21 | 77.66 | 0.87/0.54 | 37.93 |
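The Time Saving Percentage and Battery Saving Percentage columns in the two tables above appear to be relative reductions, (base − pruned)/base × 100. Cases in which the pruned model is slower or uses more battery are reported as 0.00; for example, 2.64/2.72 s gives 0.00 rather than a negative value. The helper below encodes this reading, which we inferred from the reported numbers rather than from a stated formula. The function name is hypothetical.

```python
def saving_pct(base: float, pruned: float) -> float:
    """Relative reduction from base to pruned in percent, reported as 0 when pruned > base."""
    return round(max(0.0, (base - pruned) / base * 100.0), 2)

# (base, pruned) pairs taken from the tables above
print(saving_pct(1.34, 0.51))  # response delay, WISDM on Huawei P20: 61.94
print(saving_pct(0.72, 0.45))  # estimated battery use, same setting: 37.5
print(saving_pct(2.64, 2.72))  # pruned model slower: 0.0
```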

| Network Model | Penalty | Base/Pruned Accuracy (%) | Original/Remaining Parameters | FLOPs | Sparsity (%) |
|---|---|---|---|---|---|
| LeNet-300 | norm | 98.24/98.46 | 267 K/57.45 K | 143.20 | 21.55 |
| | Group lasso | 98.24/98.17 | 267 K/32.06 K | 39.70 | 12.01 |
| | sparse group lasso | 98.24/98.00 | 267 K/15.80 K | 25.88 | 5.93 |
| | sparse group lasso | 98.24/98.23 | 267 K/5.25 K | 25.79 | 1.97 |
| LeNet-5 | norm | 99.12/99.20 | 431 K/321.0 K | 2293.0 | 74.48 |
| | Group lasso | 99.12/99.11 | 431 K/8.81 K | 187.00 | 2.04 |
| | sparse group lasso | 99.12/99.03 | 431 K/9.98 K | 183.83 | 2.32 |
| | sparse group lasso | 99.12/99.11 | 431 K/2.31 K | 113.50 | 0.54 |
| VGG-like | norm | 92.96/93.40 | 15 M/3.39 M | 210.94 | 22.6 |
| | Group lasso | 92.96/92.47 | 15 M/0.84 M | 78.07 | 5.60 |
| | sparse group lasso | 92.96/92.90 | 15 M/0.61 M | 134.35 | 4.06 |
| | sparse group lasso | 92.96/92.94 | 15 M/0.60 M | 77.83 | 4.00 |
| ResNet-32 | norm | 95.29/95.68 | 7.42 M/6.74 M | 993.11 | 90.84 |
| | Group lasso | 95.29/95.30 | 7.42 M/3.03 M | 373.09 | 40.84 |
| | sparse group lasso | 95.29/95.04 | 7.42 M/5.66 M | 735.12 | 76.28 |
| | sparse group lasso | 95.29/95.47 | 7.42 M/2.93 M | 371.30 | 39.49 |
| VGG-19 | norm | 61.56/61.99 | 138 M/19.29 M | 1519.23 | 13.98 |
| | Group lasso | 61.56/53.25 | 138 M/5.93 M | 683.99 | 4.30 |
| | sparse group lasso | 61.56/53.97 | 138 M/0.21 M | 1282.82 | 0.15 |
| | sparse group lasso | 61.56/56.27 | 138 M/4.05 M | 407.37 | 2.93 |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Shao, Y.; Zhao, K.; Cao, Z.; Peng, Z.; Peng, X.; Li, P.; Wang, Y.; Ma, J. MobilePrune: Neural Network Compression via ℓ0 Sparse Group Lasso on the Mobile System. Sensors 2022, 22, 4081. https://doi.org/10.3390/s22114081
