Abstract

Invertebrates are abundant in horticultural and agricultural environments and can be detrimental to crops. Early pest detection, as part of an integrated pest-management system that combines physical, biological, and prophylactic methods, has great potential to improve crop yields. Computer vision techniques applied to multispectral images can detect and classify pests under dynamic environmental conditions, such as sunlight variation, partial occlusion, and low contrast. Various state-of-the-art deep learning approaches have been proposed, but they have major limitations. First, labelled images are required to supervise the training of deep networks, and labelling is laborious. Second, a large in-situ database covering varied environmental conditions is not available for deep learning, and is difficult to build for bioaggressors. In this paper, we propose a machine-vision-based multispectral pest-detection algorithm that does not require any supervised network training. Multispectral images are used as input, and each image provides comprehensive information about textural and morphological features, as well as visible information, i.e., the size, shape, orientation, color, and wing patterns of each insect. Interest points are detected with the SURF algorithm, and the image transform model is estimated by least median of squares (LMEDS) regression. After an affine transformation, the features of the RGB and NIR images are fused onto the coordinates of the ultraviolet (UV) image. The proposed method outperforms the state-of-the-art methods in terms of the mean Type I, Type II, and total identification errors. With a UV weight of 6.672%, the Type I, Type II, and total mean errors were reduced to 1.62, 40.27, and 3.26, respectively.

1. Introduction

Early, real-time pest detection and identification can improve pest management, reducing both crop damage and pesticide costs. Used as a component of an integrated pest-management (IPM) system, multispectral machine vision can provide a more robust and flexible solution for scouting a wide range of invertebrates (e.g., the pink bollworm). Thermal imaging, as a component of the same machine-vision system, can be used to detect and identify various vertebrates (e.g., wild boars and rodents). Such scouting must be done in real time, which is a tedious and non-trivial task for human scouts, who rely on conventional sampling methods such as sweep nets, traps, or beat sheets. Therefore, an autonomous aerial vehicle, e.g., a quadcopter equipped with multispectral and thermal imaging systems, can be used to scout cotton crops by day or night. Real-time autonomous scouting also provides information on the population size and location of pests and vertebrates. Specific pesticides can then be sprayed only on the small affected area, instead of spraying a broad-spectrum pesticide over the whole field, and appropriate measures can likewise be taken against vertebrates. This matters because pesticide use also kills beneficial species, such as bees and the natural predators of pests [1]. A modern IPM and precision-agriculture system using multispectral and thermal machine vision can therefore improve crop production while minimizing crop damage and pesticide costs.

Liu et al. [2] achieved good pest-detection results using near-infrared (NIR) images in the 700–1500 nm range and soft X-ray images between 0.1 nm and 10 nm to detect invertebrates. They found that the selection of weights was crucial, and that more errors were introduced when the weights were set to either extreme. Boissard et al. [3] proposed an early pest-detection algorithm based on a cognitive vision approach; their work is limited by its use of a sensor that captures only still images. Ye et al. [4] explored a ground-based hyperspectral imaging system; because the sensor captures only small scenes, its application to large-scale vegetation is limited. Feng et al. [5] used an RGB imaging system to diagnose plant diseases visible in the color imaging range, which often yielded poor detection results; multispectral imaging could improve on this. Solis-Sánchez et al. [6] proposed a machine-vision technique for scouting whiteflies in greenhouses; their system could be strengthened by including other pest types and by repeating the experiments both in the laboratory and in the field.

In this article, we propose a machine-vision-based multispectral pest-detection algorithm that does not require supervised network training. Multispectral images are used because they cope with dynamic environmental conditions (e.g., sunlight variation and partial occlusion) better than RGB images. UV light between 100 nm and 400 nm has not yet been explored for invertebrate detection; we therefore evaluate the UV spectrum of green leaves for the detection and identification of nine invertebrate species.

2. Materials and Methods

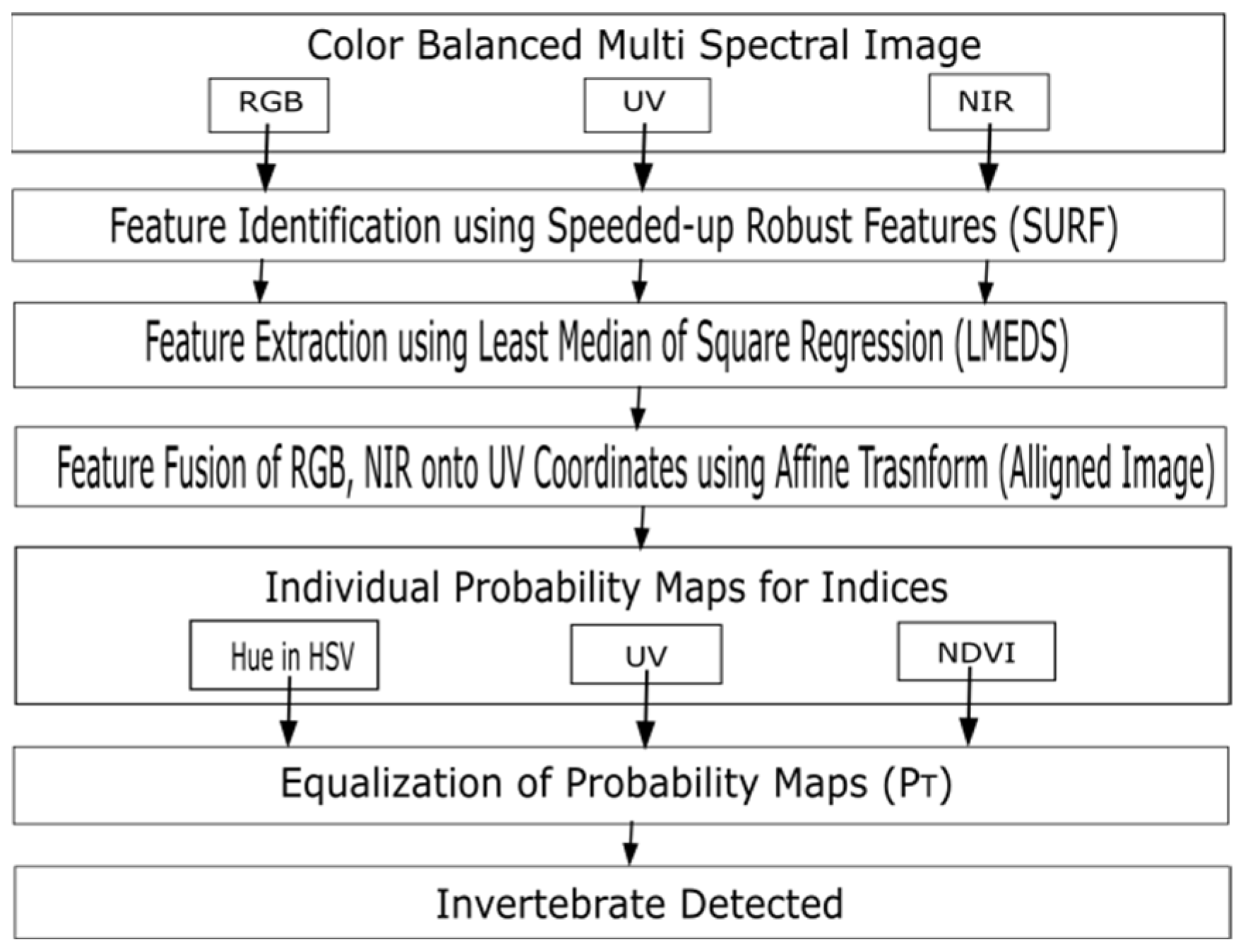

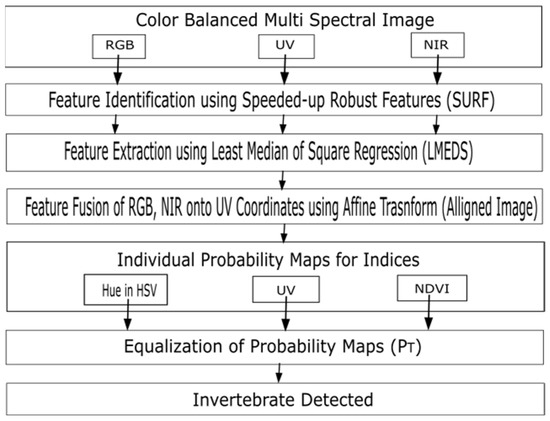

Pests are detected from multispectral images in the UV, RGB, and NIR wavebands. As shown in Figure 1, the first step is to color-balance the input images, so that the contribution of each waveband to the identification of invertebrates on plants can be estimated.
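The paper does not state which color-balancing method is applied. As an illustration only, the sketch below assumes a gray-world white balance on the RGB image, which scales each channel so that its mean equals the mean of all three channels. The function name `gray_world_balance` is hypothetical.

```python
def gray_world_balance(pixels):
    """Gray-world color balance (an assumed method, not confirmed by the paper).

    pixels: list of (r, g, b) tuples with values in [0, 255].
    Returns a new list in which each channel is scaled so that its mean
    equals the mean over all three channels, clipped to [0, 255].
    """
    n = len(pixels)
    # Per-channel means over the whole image
    means = [sum(p[c] for p in pixels) / n for c in range(3)]
    gray = sum(means) / 3.0
    # One gain per channel; leave an all-zero channel unchanged
    gains = [gray / m if m > 0 else 1.0 for m in means]
    return [
        tuple(min(255.0, max(0.0, p[c] * gains[c])) for c in range(3))
        for p in pixels
    ]
```

For example, `gray_world_balance([(200, 100, 50), (100, 50, 25)])` maps both pixels to neutral gray values, because the image-wide color cast is removed by the per-channel gains.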

Figure 1.

Block diagram of the proposed pest-detection algorithm. The first block (top) represents the color-balanced multispectral input images. In the second block, salient features are extracted using SURF. In the third block, features are selected using least median of squares (LMEDS) regression. In the fourth block, features are fused using an affine transformation. In the fifth block, features are classified using different indices. In the sixth block, the total probability is calculated from the individual probability of each index, and the invertebrate is identified.
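The third and fourth blocks can be sketched as follows, assuming that SURF detection and matching have already produced corresponding point pairs (x, y) between an RGB (or NIR) image and the UV image. This is a minimal pure-Python illustration of least-median-of-squares estimation for an affine model, not the authors' implementation: it fits an exact affine transform to random three-point samples and keeps the one whose median squared residual over all pairs is smallest. The helper names and the `trials` and `seed` parameters are hypothetical; in OpenCV, `cv2.estimateAffine2D` with `method=cv2.LMEDS` provides a comparable library routine.

```python
import random
from statistics import median


def solve3(a, b):
    """Solve the 3x3 system a @ x = b by Cramer's rule; None if singular."""
    def det(m):
        return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
                - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
                + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))
    d = det(a)
    if abs(d) < 1e-12:
        return None  # collinear sample points
    x = []
    for col in range(3):
        m = [row[:] for row in a]
        for r in range(3):
            m[r][col] = b[r]
        x.append(det(m) / d)
    return x


def affine_from_3(src, dst):
    """Exact affine (a, b, tx, c, d, ty) mapping 3 src points onto 3 dst points."""
    a = [[x, y, 1.0] for x, y in src]
    row_x = solve3(a, [p[0] for p in dst])
    row_y = solve3(a, [p[1] for p in dst])
    if row_x is None or row_y is None:
        return None
    return row_x + row_y


def apply_affine(t, pt):
    """Map point (x, y) with u = a*x + b*y + tx, v = c*x + d*y + ty."""
    x, y = pt
    return (t[0] * x + t[1] * y + t[2], t[3] * x + t[4] * y + t[5])


def lmeds_affine(src, dst, trials=500, seed=0):
    """Least-median-of-squares affine estimate of src -> dst (hypothetical helper)."""
    rng = random.Random(seed)
    best, best_med = None, float("inf")
    idx = list(range(len(src)))
    for _ in range(trials):
        s = rng.sample(idx, 3)
        t = affine_from_3([src[i] for i in s], [dst[i] for i in s])
        if t is None:
            continue
        # Squared residual of every correspondence under this candidate
        res = []
        for p, q in zip(src, dst):
            u, v = apply_affine(t, p)
            res.append((u - q[0]) ** 2 + (v - q[1]) ** 2)
        med = median(res)
        if med < best_med:
            best, best_med = t, med
    return best, best_med
```

Because the median ignores up to half of the residuals, a few mismatched SURF pairs do not disturb the estimate, which is the main reason to prefer LMEDS over an ordinary least-squares fit at this step.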

The next step was to align the RGB and NIR images with the coordinates of the UV images. The Speeded-Up Robust Features (SURF) algorithm identified interest points on the RGB, UV, and NIR images simultaneously, and an image transform model was then estimated using least median of squares (LMEDS) regression. An affine transform was used to fuse the coordinates of the RGB and NIR images onto the UV coordinates and to check the resulting displacement error. After feature fusion, in the final step, three indices (UV, the Normalized Difference Vegetation Index (NDVI), and the hue component of the Hue, Saturation, Value (HSV) color space) were used to identify invertebrates on host plants. To evaluate the contribution of each index, probability maps P_UV, P_HUE, and P_NDVI were computed from their intensity values. To increase reliability, these maps were combined into a total probability map; by selecting a suitable threshold T, each pixel can then be classified as belonging to either an invertebrate or the plant.
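The paper does not give the formulas for the probability maps or for combining them. The sketch below is one possible reading, and every rule in it is an assumption: each index is min-max normalized to [0, 1]; low NDVI, a hue far from green, and high UV are taken to indicate an invertebrate; the maps are fused as a weighted sum in which UV receives weight `w_uv` (defaulting to the 6.672% reported in the results) and NDVI and hue share the remainder equally; and pixels whose total probability exceeds T (the hypothetical parameter `threshold`, set to 0.5) are labelled invertebrate. NDVI uses the standard definition (NIR − R)/(NIR + R), with NIR and red on the same intensity scale.

```python
import colorsys


def minmax(values):
    """Scale a list of numbers to [0, 1]; a constant list maps to zeros."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.0] * len(values)
    return [(v - lo) / (hi - lo) for v in values]


def hue_distance_from_green(r, g, b):
    """Circular distance (0 to 0.5) of the HSV hue from pure green (hue 1/3)."""
    h, _, _ = colorsys.rgb_to_hsv(r / 255.0, g / 255.0, b / 255.0)
    d = abs(h - 1.0 / 3.0)
    return min(d, 1.0 - d)


def classify(rgb, nir, uv, w_uv=0.06672, threshold=0.5):
    """Return (mask, total) for co-registered pixels (hypothetical helper).

    rgb: list of (r, g, b) in 0..255; nir, uv: lists of intensities on the
    UV grid. mask[i] is True when pixel i is labelled invertebrate.
    """
    ndvi = [(n - p[0]) / (n + p[0]) if (n + p[0]) > 0 else 0.0
            for p, n in zip(rgb, nir)]
    # Assumed orientations: low NDVI, off-green hue, high UV -> invertebrate
    p_ndvi = [1.0 - v for v in minmax(ndvi)]
    p_hue = minmax([hue_distance_from_green(*p) for p in rgb])
    p_uv = minmax(uv)
    # Weighted fusion; the three weights sum to 1, so total stays in [0, 1]
    w_other = (1.0 - w_uv) / 2.0
    total = [w_uv * u + w_other * (n + h)
             for u, n, h in zip(p_uv, p_ndvi, p_hue)]
    return [t > threshold for t in total], total
```

Sweeping `w_uv` over several values and scoring each resulting mask against the ground truth is one way to reproduce a weight study such as the one reported in the results.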

3. Results

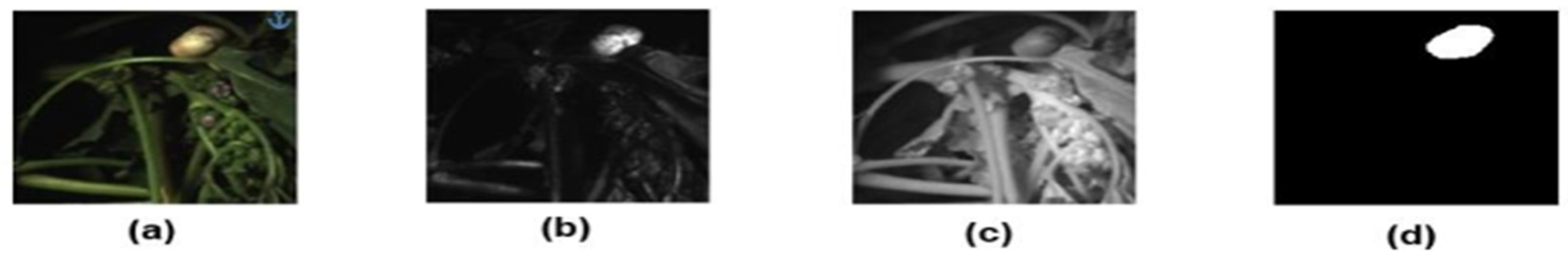

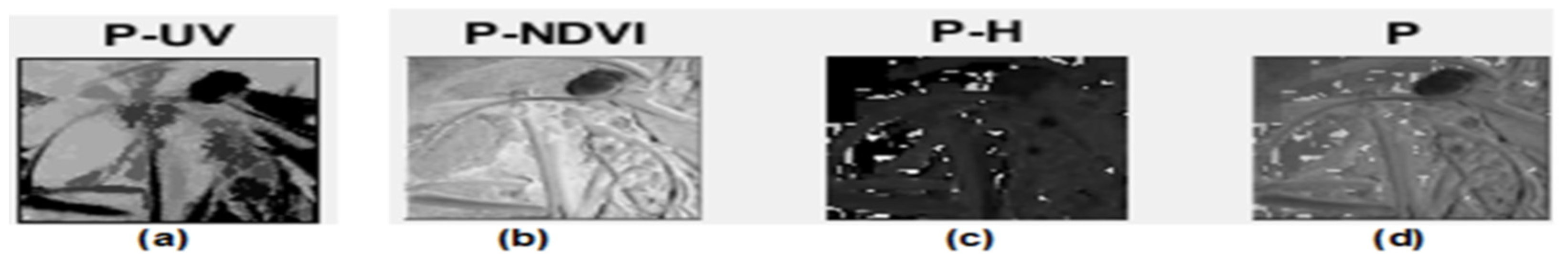

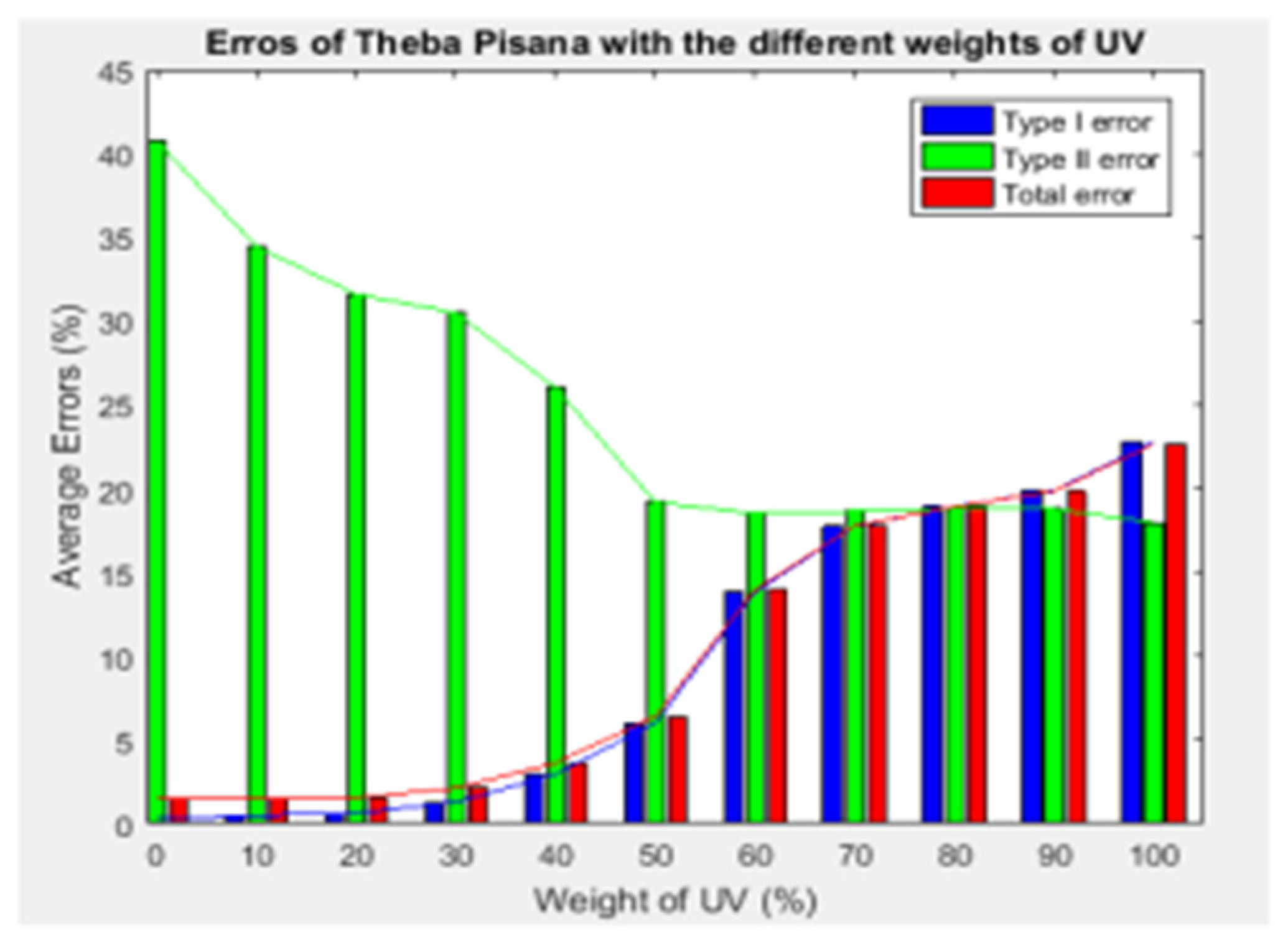

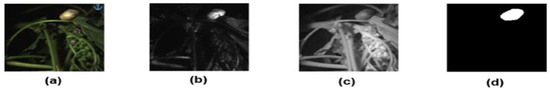

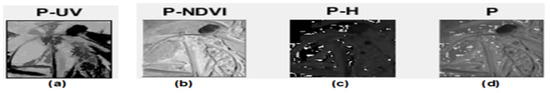

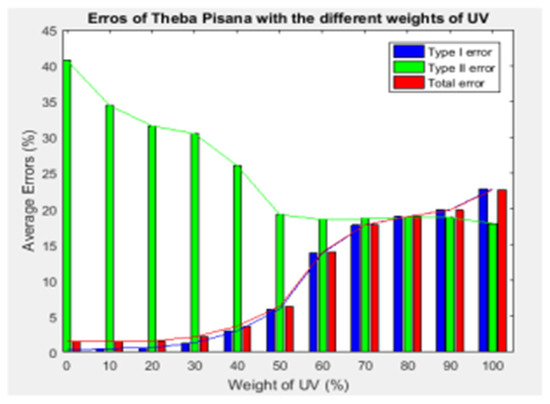

The performance of the proposed pest-detection algorithm was evaluated using multispectral pest images taken from [2]. Nine sets of images, each containing three images (RGB, UV, and NIR), were used to identify invertebrates on the leaves and stems of different plants. As an example, Figure 2 shows the multispectral images of Theba pisana, along with a ground-truth image. The computed probability maps are shown in Figure 3, and Figure 4 compares the segmentation produced by the proposed algorithm with the ground truth. The contribution of the UV image was also evaluated by varying its weight; the effect of the UV weight on prediction accuracy, in terms of Type I, Type II, and total errors, is shown in Figure 5. The proposed algorithm outperforms the state-of-the-art works in terms of Type I, Type II, and total errors, all of which were reduced significantly.

Figure 2.

(a) Color image of Theba pisana, with brown stripes on its back. The size of phylliidae varies between 5 mm and 10 mm; (b) image of Theba pisana captured in the ultraviolet spectrum; (c) image of Theba pisana captured in the near-infrared spectrum; (d) manual segmentation of Theba pisana, used as the ground-truth image.

Figure 3.

Probability maps for Theba pisana calculated by the algorithm: (a) UV; (b) NDVI; (c) hue; (d) total probability map.

Figure 4.

(a) Manually segmented image of Theba pisana, used as the ground truth; (b) automatically segmented image of Theba pisana generated by the algorithm.

Figure 5.

Average errors for Theba pisana, with blue, green, and red bars representing Type I, Type II, and total errors, respectively, plotted against eleven different UV weights.

Mean Type I, Type II, and total errors were calculated for UV weights of W_UV = 0%, 6.672%, and 100%. A comprehensive comparison between a previously published paper [2] and the proposed technique is provided in Table 1. Type I errors correspond to image areas that belong to the leaf but were identified as insect, so a reduction in Type I error means fewer false insect detections. With no UV contribution (0%), the average Type I error was reduced from 22 to 3.75; with a UV weight of 6.672%, it was further reduced to 1.62. The average total error was reduced from 19 to 4.78 with no UV contribution, and was 3.26 and 5.51 with UV weights of 6.672% and 33%, respectively. Type II errors increased, owing to insufficient information in the multispectral images. All input images were cropped from [2].
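The paper does not define the error measures formally. Assuming the usual pixel-wise definitions (Type I: plant pixels labelled as invertebrate, as a percentage of all plant pixels; Type II: invertebrate pixels labelled as plant, as a percentage of all invertebrate pixels; total: all misclassified pixels as a percentage of all pixels), they can be computed from a predicted mask and a ground-truth mask as in this hypothetical helper.

```python
def segmentation_errors(predicted, truth):
    """Type I, Type II and total error rates (in %) for binary masks.

    predicted, truth: equal-length sequences of booleans (True = invertebrate).
    Assumed definitions (not confirmed by the paper):
      Type I  = plant pixels labelled invertebrate / all plant pixels
      Type II = invertebrate pixels labelled plant / all invertebrate pixels
      total   = all misclassified pixels / all pixels
    """
    if len(predicted) != len(truth):
        raise ValueError("masks must have the same length")
    fp = sum(1 for p, t in zip(predicted, truth) if p and not t)  # false alarms
    fn = sum(1 for p, t in zip(predicted, truth) if t and not p)  # misses
    n_pos = sum(1 for t in truth if t)
    n_neg = len(truth) - n_pos
    type1 = 100.0 * fp / n_neg if n_neg else 0.0
    type2 = 100.0 * fn / n_pos if n_pos else 0.0
    total = 100.0 * (fp + fn) / len(truth)
    return type1, type2, total
```

Under these definitions, the total error is the average of the Type I and Type II errors weighted by the fractions of plant and invertebrate pixels, so when invertebrates occupy only a small part of the image, a large Type II error can coexist with a small total error.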

Table 1.

Comparison of the errors of [2] and the proposed method for different UV weight contributions.

4. Conclusions

In this paper, a multispectral pest-detection algorithm was proposed. Images from three spectral bands (RGB, UV, and NIR) were used to identify nine different pest species. The proposed approach performed well, attaining the lowest Type I, Type II, and total errors, of 1.62, 40.27, and 3.26, respectively. In future work, the performance of the proposed algorithm can be further validated on a wider range of pests.

Funding

This work was funded by the Higher Education Commission, Pakistan, under NRPU project number 9595.

Informed Consent Statement

Not applicable.

Data Availability Statement

Not applicable.

Conflicts of Interest

The authors declare no conflict of interest.

References

- Schellhorn, N.; Renwick, A.; Macfadyen, S. The real cost of pesticides in Australia’s food boom. Conversat. Aust. Retrieved July 2013, 10, 2016.

- Liu, H.; Lee, S.A.; Chahl, J.S. A review of recent sensing technologies to detect invertebrates on crops. Precis. Agric. 2016, 18, 635–666.

- Boissard, P.; Martin, V.; Moisan, S. A Cognitive Vision Approach to Early Pest Detection in Greenhouse Crops. Comput. Electron. Agric. 2008, 62, 81–93.

- Ye, X.; Sakai, K.; Okamoto, H.; Garciano, L.O. A ground based hyper spectral imaging system for characterizing vegetation spectral features. Comput. Electron. Agric. 2008, 63, 13–21.

- Feng, J.; Li, H.; Shi, J.; Yang, W.; Liao, N. Cucumber Diseases Diagnosis using Multispectral Images. In Proceedings of the PIAGENG 2009: Image Processing and Photonics for Agricultural Engineering, Zhangjiajie, China, 11–12 July 2009.

- Solis-Sánchez, L.O.; García-Escalante, J.J.; Castañeda-Miranda, R.; Torres-Pacheco, I.; Guevara-González, R. Machine vision algorithm for whiteflies (Bemisia tabaci Genn.) scouting under greenhouse environment. J. Appl. Entomol. 2009, 133, 546–552.

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).