Abstract

Macroscopic Fourier ptychography (FP) is regarded as a highly promising approach for creating a synthetic aperture in macroscopic visible imaging to achieve sub-diffraction-limited resolution. However, most existing macro FP techniques rely on a high-precision translation stage to scan the laser or the camera, which increases system complexity and bulk. The scanning process is also slow, which hinders rapid imaging. In this paper, we introduce an illumination scheme that employs a spatial light modulator to achieve precise, programmable variable-angle illumination at a relatively long distance. The scheme can also freely adjust the illumination spot size through phase coding, which avoids the issues of limited field of view and excessive dispersion of illumination energy. Coupled with a camera array, it can significantly reduce the number of shots taken by the imaging system and enables a lightweight, highly efficient solid-state macro FP imaging system with a large equivalent aperture. The effectiveness of the method is experimentally validated using various optically rough diffuse objects and a USAF target at laboratory-scale distances.

1. Introduction

In long-distance imaging systems, spatial resolution degradation caused by diffraction blur from limited aperture sizes remains a critical challenge. A conventional solution involves employing large-aperture telephoto lenses; however, these devices are often prohibitively expensive, bulky, and exhibit exponentially increasing costs and weight with aperture size [1,2]. Fourier ptychography (FP), initially developed for microscopic imaging, offers an alternative by synthesizing a larger numerical aperture computationally, thereby overcoming the diffraction limit of the objective lens [3]. Inspired by its success in microscopy, recent efforts have extended FP to macroscopic and long-range imaging to address diffraction-induced resolution limitations [2,4,5,6,7,8,9,10,11].

The camera scanning FP technique was pioneered by Dong et al. [5]. It exploits the natural Fourier-plane constraint of a lens aperture by physically shifting the camera to capture overlapping spectral bands of stationary far-field objects. This method first achieved super-diffraction imaging at 0.7 m; it was later extended to 1.5 m by Holloway et al. [2] and adapted to reflective setups, making it suitable for objects with optically rough surfaces [7].

The mechanisms that enable high-precision camera scanning are typically quite large, which prolongs scanning and delays the capture of low-resolution images. To tackle this challenge, Holloway et al. [2] first suggested using coherent camera arrays to improve the efficiency of data collection. However, this approach still requires angle-varied illumination to ensure spectral overlap between sub-apertures. More recently, a growing number of studies [12,13,14] have used deep learning to reconstruct a super-resolved, high-quality image solely from low-resolution intensity images that correspond to different non-overlapping spectral regions. However, deep learning requires a large amount of high-quality, diverse training data to generalize well, which hinders its practical application. Li et al. [10] introduced a one-shot macroscopic Fourier ptychography technique that combines three-wavelength illumination multiplexing with camera array acquisition. Nevertheless, this method is most effective for targets with minimal color variation, for which the intensities at the three illumination wavelengths remain closely correlated.

Converging light is typically used for illumination to satisfy the far-field condition, which restricts the field of view (FOV) of camera scanning FP. Li et al. [8] placed a pair of lenses in front of the aperture to facilitate spectrum reformation, enabling the use of a quasi-plane wave to illuminate the target across a large FOV. Furthermore, building on a new principle for the far-field condition of an optically rough object, a more recent study [9] developed and experimentally validated several FP systems that use divergent illumination beams, achieving optical synthetic aperture imaging with an imaging aperture of 200 mm over a distance of 120 m.

Notably, angle-varied illumination is a key step in Fourier ptychographic microscopy (FPM): it produces the relative movement between the sample spectrum and the camera aperture, and it can be implemented easily in several ways. For instance, an LED array has been used from the outset to provide angle-varied illumination [3,15], while a mirror array [16] or a digital micromirror device (DMD) [17] allows rapid adjustment of the laser illumination angle. However, achieving precise angle-varied illumination over long distances is considerably more difficult. Laser-scanning FP techniques are another type of macro FP approach and resemble the angle-varied illumination used in FPM. The quasi-plane wave illumination scheme was first introduced by Xiang et al. [4]. In this method, the laser source is positioned at the focal plane of the illumination lens to produce a quasi-plane wave, and moving the light source spatially generates angle-varied coherent light that illuminates the sample and shifts its frequency spectrum. Next, Tian et al. [11] introduced a second scheme that uses divergent illumination. In this method, the wavefront in front of the object is a spherical wave, and shifting the light source alters a linear phase term, which produces relative shifts in the spectrum of the dummy object. Divergent illumination also allows a significant increase in both the imaging FOV and the operational distance.

A comprehensive analysis of existing macro FP technology indicates that most current solutions require the assistance of high-precision mechanical displacement devices in either the illumination path or the detection path, resulting in a complex and cumbersome overall system. This also leads to a slow and time-consuming scanning process, making it difficult to achieve fast imaging.

In the Theory and Method section of the paper, we first demonstrate, through a combination of theory and simulated experiments, that the far-field diffraction condition is not necessary for macroscopic FP imaging of objects with optically rough surfaces. This analysis also holds for the other types of macroscopic FP methods: for camera scanning FP or camera array FP, we are no longer constrained to convergent illumination in order to satisfy the far-field diffraction condition. This alleviates practical application constraints and enhances the usability of these methods.

In addition, we propose a novel illumination scheme suitable for macro FP. It uses a spatial light modulator (SLM) to programmatically modulate the coherent illumination and thereby achieves quick and accurate angle-varied illumination without any moving parts, as shown in Figure 1A. It can also freely adjust the illumination spot size through phase coding to achieve different FOVs, thus avoiding the issues of limited FOV and excessive dispersion of illumination energy. There is a quasi-Fourier transform relationship between the illumination light field and the complex field loaded on the SLM, so a spatial translation of the phase block on the SLM adds a linear phase term to the illumination light. This term acts as an angle-varied illumination wave vector and causes a relative shift in the object spectrum.

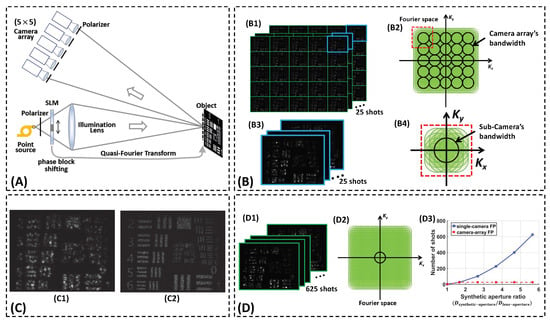

Figure 1.

(A) Schematic diagram of our camera array FP. The imaging end is composed of multiple small-aperture cameras, while the illumination end employs the novel solid-state macro FP illumination scheme based on an SLM. The illumination scheme provides accurate variable-angle illumination to ensure overlap between the bandwidths of adjacent sub-cameras in Fourier space, ultimately integrating multiple small-aperture cameras into an equivalent large-aperture camera. (B) The camera array FP setup can achieve spectral overlap with only 25 shots under different illumination angles. Each shot from the camera array simultaneously acquires multiple low-resolution images whose spectra in Fourier space are adjacent but non-overlapping and collectively form the bandwidth of the camera array. The sample raw data and the Fourier coverage of the camera array are shown in (B1) and (B2), respectively. The images captured by a single camera in the array (marked with a blue border) and their corresponding spectral overlaps (marked by the red dashed border) are shown in (B3) and (B4), respectively. (C) The resolution improvement of our method is demonstrated by comparing (C1) a low-resolution image captured by a small-aperture sub-camera with (C2) the high-resolution image reconstructed by our camera array FP. (D) To achieve the same SAR and spectral overlap as the camera array FP setup, single camera scanning FP typically requires 625 shots. (D1,D2) The sample raw data captured in 625 shots and the corresponding Fourier coverage for single camera scanning FP. (D3) Compared with camera array FP, single camera FP requires a significantly larger number of shots as the SAR increases. This relationship exhibits nearly exponential growth.

Moreover, we utilize this illumination scheme in conjunction with a camera array to achieve a large equivalent aperture and fast macro FP imaging system with high acquisition efficiency. The overall scheme is illustrated in Figure 1A.

The imaging end is composed of multiple small-aperture cameras, while the illumination end employs the solid-state novel macro FP illumination scheme based on SLM. The innovative illumination scheme provides accurate variable-angle illumination to ensure the overlap between each adjacent sub-camera’s bandwidth in Fourier space, achieving the concept that was not specifically realized in Ref. [2], and ultimately integrating multiple small-aperture cameras into an equivalent large-aperture camera.

Based on the experimental setup in the schematic diagram, each shot from the camera array simultaneously acquires multiple low-resolution images. Their spectra in Fourier space are adjacent but non-overlapping and collectively form the bandwidth of the camera array, as shown in Figure 1(B2). Each illumination at a different angle shifts the object’s entire spectrum relative to the camera array’s bandwidth, thereby ensuring sufficient overlap in Fourier space between low-resolution images captured in different shots.
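The spectrum shift produced by tilted illumination follows from the modulation property of the Fourier transform. The NumPy sketch below illustrates this principle only: the random test object and the integer tilt `(ky, kx)`, expressed in DFT bins, are hypothetical values chosen for the demonstration.

```python
import numpy as np

def tilt_illuminate(obj, ky, kx):
    """Multiply a 2D complex field by a linear phase ramp (a tilted plane wave)."""
    n_rows, n_cols = obj.shape
    rows = np.arange(n_rows)[:, None]
    cols = np.arange(n_cols)[None, :]
    ramp = np.exp(2j * np.pi * (ky * rows / n_rows + kx * cols / n_cols))
    return obj * ramp

rng = np.random.default_rng(0)
obj = rng.standard_normal((32, 32)) + 1j * rng.standard_normal((32, 32))  # hypothetical object
ky, kx = 3, -5                                                            # hypothetical tilt, in DFT bins

spectrum = np.fft.fft2(obj)
tilted_spectrum = np.fft.fft2(tilt_illuminate(obj, ky, kx))

# The tilted-illumination spectrum is the original spectrum, circularly shifted by (ky, kx).
spectrum_is_shifted = np.allclose(tilted_spectrum, np.roll(spectrum, shift=(ky, kx), axis=(0, 1)))
print(spectrum_is_shifted)
```

Because the camera bandwidth stays fixed while the spectrum moves, each illumination angle lets every sub-camera sample a different spectral region.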

For example, the proposed camera array FP setup can achieve spectral overlap and a synthetic aperture ratio of 5 with only 25 shots under different illumination angles. Here, the synthetic aperture ratio (SAR) is defined as the ratio of the synthetic aperture diameter to the lens aperture diameter. The sample raw data and Fourier coverage are shown in Figure 1(B1,B2). Additionally, the 25 low-resolution images captured by one sub-camera, along with their corresponding spectral overlaps, are illustrated in Figure 1(B3,B4).

To achieve the same SAR and spectral overlap as the proposed camera array FP setup, the single camera scanning FP typically requires 625 shots as shown in Figure 1(D1,D2). Moreover, it is readily observable that, compared to camera array FP, the single camera FP requires a significantly larger number of shots under the same spectral overlap condition as the desired SAR increases. This relationship exhibits nearly exponential growth, as illustrated in Figure 1(D3).
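The two shot counts can be reproduced with a simple counting model. The sketch below is an assumption made for illustration, not the authors' derivation. It assumes a square array with `cams_per_axis` sub-cameras per axis and `shifts_per_axis` illumination angles per axis, and it assumes that a single scanning camera must visit every resulting Fourier-plane position by itself.

```python
def shots_camera_array(shifts_per_axis: int) -> int:
    """Shots for the camera array: one shot per illumination angle."""
    return shifts_per_axis ** 2

def shots_single_camera(cams_per_axis: int, shifts_per_axis: int) -> int:
    """Shots for one scanning camera covering the same Fourier-plane sampling grid."""
    return (cams_per_axis * shifts_per_axis) ** 2

# Hypothetical 5 x 5 array with a 5 x 5 illumination grid.
array_shots = shots_camera_array(5)
single_shots = shots_single_camera(5, 5)
print(array_shots, single_shots)

# Shot counts for a few hypothetical array sizes with the same illumination grid.
for cams in (1, 3, 5, 7):
    print(cams, shots_camera_array(5), shots_single_camera(cams, 5))
```

With these assumed parameters the model yields the 25 and 625 shots quoted above. In this model the camera array count depends only on the illumination grid, while the single camera count grows with the number of sub-aperture positions to be covered.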

In addition, the experimental reconstruction results in Section 3.3 demonstrate that the reconstruction quality of the proposed camera array FP is comparable to that of single camera scanning FP. This indicates that the proposed camera array FP can significantly improve both data acquisition efficiency and imaging speed without sacrificing image quality.

2. Theory and Method

2.1. Imaging by Sub-Cameras in a Camera Array

In this subsection, we will first demonstrate, through a combination of theory and simulated experiments, that the far-field diffraction condition is not necessary for macroscopic FP imaging of objects with rough optical surfaces. This is a crucial theoretical component of our entire methodology.

The sample object is directly and coherently imaged by sub-cameras at different positions, and this process can be formally expressed as follows:

$$I_n(\mathbf{x}) = \left| \mathcal{F}^{-1}\left\{ \mathcal{F}\left\{ O(\mathbf{x})\, Q_z(\mathbf{x}) \right\} \cdot P(\mathbf{u} - \mathbf{u}_n) \right\} \right|^2 \tag{1}$$

where $I_n(\mathbf{x})$ is the intensity image recorded by the $n$th sub-camera, $O(\mathbf{x})$ is the complex field of the object, and $\mathcal{F}$ and $\mathcal{F}^{-1}$ represent the Fourier transform and the inverse Fourier transform, respectively. The distance from the object to the imaging lens is given by $z$. The quadratic phase term introduced by the free diffraction over this distance is denoted as $Q_z(\mathbf{x})$. The pupil function of the lens is represented as $P(\mathbf{u})$, and the vector $\mathbf{u}$ is the frequency coordinate. In addition, $\mathbf{u}_n$ represents the position of the $n$th sub-camera in the camera array, and the vector $\mathbf{x}$ is the spatial coordinate.

Note that we do not take into account the offset of images between sub-cameras here; it is assumed that the images are already registered. From Equation (1), it can be seen that the purpose of using convergent illumination in camera scanning FP is to eliminate the quadratic phase term $Q_z(\mathbf{x})$, so that the far-field diffraction condition is approximately satisfied. Only when this condition is met does the lens aperture naturally serve as an aperture constraint at the object’s Fourier plane.

However, we can define a dummy object as $\tilde{O}(\mathbf{x}) = O(\mathbf{x})\, Q_z(\mathbf{x})$, and Equation (1) can be rewritten in the following form:

$$I_n(\mathbf{x}) = \left| \mathcal{F}^{-1}\left\{ \mathcal{F}\left\{ \tilde{O}(\mathbf{x}) \right\} \cdot P(\mathbf{u} - \mathbf{u}_n) \right\} \right|^2 \tag{2}$$

This indicates that if camera scanning FP or camera array FP does not employ convergent illumination, the high-resolution information recovered by the reconstruction algorithm actually belongs to the dummy object $\tilde{O}(\mathbf{x})$.
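The coherent sub-camera imaging process described above can be sketched numerically as a low-pass filter in the Fourier domain. The following is a minimal illustration under stated assumptions: the helper names `circular_pupil` and `sub_camera_image`, the grid size, the pupil radius and offset (in DFT bins), and the test object are all hypothetical and do not come from the paper.

```python
import numpy as np

def circular_pupil(n, radius, center=(0, 0)):
    """Binary circular pupil on an n x n centered frequency grid (units: DFT bins)."""
    coords = np.arange(n) - n // 2
    fx, fy = np.meshgrid(coords, coords)
    return ((fx - center[0]) ** 2 + (fy - center[1]) ** 2 <= radius ** 2).astype(float)

def sub_camera_image(field, pupil):
    """Intensity recorded after the pupil low-pass filters the spectrum of the field."""
    spectrum = np.fft.fftshift(np.fft.fft2(field))                  # centered spectrum
    image_field = np.fft.ifft2(np.fft.ifftshift(spectrum * pupil))  # back to image space
    return np.abs(image_field) ** 2

rng = np.random.default_rng(0)
n = 64
amplitude = np.ones((n, n))
amplitude[24:40, 28:36] = 0.2                                       # hypothetical dark bar
field = amplitude * np.exp(1j * rng.uniform(0, 2 * np.pi, (n, n)))  # random phase of a rough surface

open_image = sub_camera_image(field, np.ones((n, n)))               # no aperture limit
low_res = sub_camera_image(field, circular_pupil(n, radius=6, center=(5, 0)))
print(open_image.sum(), low_res.sum())
```

With a fully open pupil the function returns the object intensity itself; a small, off-center pupil passes only one spectral band, which is the data that a single sub-camera contributes to the reconstruction.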

For objects with optically rough surfaces, their phases are typically random, and this phase information is generally not what we are interested in. Instead, we mainly care about the intensity information of the object’s imaging.

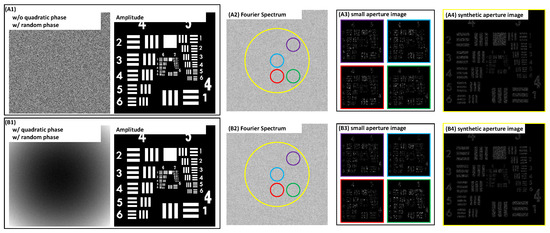

A simple simulation experiment demonstrates that, for optically rough diffuse objects, the synthetic aperture results recovered by FP for the dummy object $\tilde{O}(\mathbf{x})$, which contains the quadratic phase term, show no significant difference in image intensity from those for the real object $O(\mathbf{x})$, as shown in Figure 2. In addition, the corresponding Fourier spectra and the four small-aperture images for diffuse objects with and without the quadratic phase term are also similar. Therefore, it is reasonable to set the dummy object as the target for high-resolution FP reconstruction in this case.

Figure 2.

Simulation experiment: FP-based synthetic aperture imaging for diffuse objects with and without the quadratic phase term. (A1–A4) The phase image and amplitude image of a USAF target with random phase and without quadratic phase, the corresponding Fourier spectrum, the four small-aperture images, and the synthetic aperture image. (B1–B4) The phase image and amplitude image of a USAF target with random phase and with quadratic phase, the corresponding Fourier spectrum, the four small-aperture images, and the synthetic aperture image. The colored circles in the Fourier spectrum represent the different spectral bands of the objects, and the corresponding images are marked with borders in the same colors.

This also indicates that for imaging optically rough diffuse objects using camera scanning or camera array FP, the far-field diffraction condition is no longer a necessary requirement. This is an important finding of the paper. Crucially, our proposed method does not recover the object’s true phase information, meaning it is unsuitable for objects that necessitate high-resolution phase information.
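The reason the quadratic phase term does not affect the intensity of the recovered object is that it has unit modulus. The short sketch below checks this for a synthetic rough object. The wavelength and distance are the values quoted in the experiments, while the object-plane sampling `pixel`, the grid size, and the random object are hypothetical.

```python
import numpy as np

wavelength = 532e-9   # m, laser wavelength quoted in the experiments
z = 1.5               # m, object distance quoted in the experiments
pixel = 50e-6         # m, hypothetical object-plane sampling
n = 128

coords = (np.arange(n) - n // 2) * pixel
x, y = np.meshgrid(coords, coords)
# Quadratic phase exp(j k r^2 / (2 z)) with k = 2 pi / wavelength.
quadratic_phase = np.exp(1j * np.pi / (wavelength * z) * (x ** 2 + y ** 2))

rng = np.random.default_rng(0)
amplitude = rng.uniform(0.2, 1.0, (n, n))
real_object = amplitude * np.exp(1j * rng.uniform(0, 2 * np.pi, (n, n)))
dummy_object = real_object * quadratic_phase

same_intensity = np.allclose(np.abs(dummy_object) ** 2, np.abs(real_object) ** 2)
same_field = np.allclose(dummy_object, real_object)
print(same_intensity, same_field)
```

The two fields share the same intensity but differ in phase, which is why the approach suits intensity imaging of rough objects and not phase imaging.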

2.2. Illumination Light Analysis

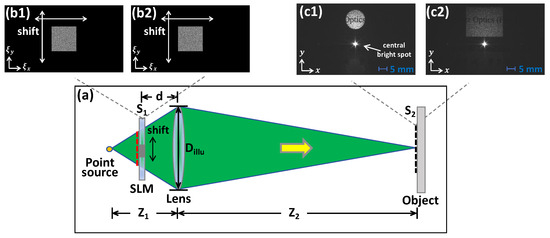

In this section, we will conduct a detailed analysis of the illumination scheme through Figure 3a.

Figure 3.

(a) The illumination scheme for the proposed camera array FP. (b1,b2) Two specially designed phase blocks generated with the GS algorithm. (c1,c2) The corresponding actual circular and rectangular illumination spots.

A pure-phase SLM is used in this work. The zeroth-order light that is not modulated by the SLM converges to form a central bright spot in the actual illumination. This central bright spot is separated from the illumination produced by the SLM’s moving phase block by incorporating a blazed grating phase into the moving phase pattern, as demonstrated in Figure 3(c1).

The phase blocks can be encoded with various iterative methods from computer-generated holography (CGH), such as the Gerchberg–Saxton (GS) algorithm [18], the Wirtinger algorithm [19], and non-convex optimization algorithms [20]. By encoding the phase blocks, we can produce illumination spots of different sizes and shapes, thus avoiding the issues of limited FOV and excessive dispersion of illumination energy. Two specially encoded phase blocks generated with the GS algorithm are shown in Figure 3(b1,b2), and the corresponding actual circular and rectangular spots are shown in Figure 3(c1,c2).
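As an illustration of how a phase block might be encoded, the following is a minimal textbook Gerchberg–Saxton sketch for a phase-only modulator. It is not the authors' implementation: it models the SLM-to-target propagation as a plain discrete Fourier transform, and the function name, grid size, iteration count, and circular target spot are hypothetical.

```python
import numpy as np

def gerchberg_saxton(target_amplitude, iterations=50, seed=0):
    """Return a phase-only pattern whose far-field amplitude approximates the target.

    Also returns the amplitude error recorded at the start of every iteration.
    """
    rng = np.random.default_rng(seed)
    # Scale the target so its energy matches that of a unit-amplitude SLM field.
    target = target_amplitude / np.linalg.norm(target_amplitude) * np.sqrt(target_amplitude.size)
    phase = rng.uniform(0, 2 * np.pi, target.shape)
    errors = []
    for _ in range(iterations):
        far_field = np.fft.fft2(np.exp(1j * phase), norm="ortho")
        errors.append(np.linalg.norm(np.abs(far_field) - target))
        far_field = target * np.exp(1j * np.angle(far_field))  # impose the target amplitude
        slm_field = np.fft.ifft2(far_field, norm="ortho")
        phase = np.angle(slm_field)                            # phase-only constraint
    return phase, errors

n = 64
yy, xx = np.mgrid[-n // 2:n // 2, -n // 2:n // 2]
disk = (xx ** 2 + yy ** 2 <= 8 ** 2).astype(float)             # hypothetical circular spot
phase, errors = gerchberg_saxton(np.fft.ifftshift(disk))
print(errors[0], errors[-1])
```

Changing the target amplitude, for example to a rectangle, changes the shape and size of the resulting spot without any change to the optics.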

As shown in Figure 3a, the illumination light field is located on the image plane of the point source. In other words, the distance $d_1$ from the point source to the illumination lens and the distance $d_2$ from the illumination lens to the illuminated plane obey the lens law of geometrical optics:

$$\frac{1}{d_1} + \frac{1}{d_2} = \frac{1}{f} \tag{3}$$

where $f$ represents the focal length of the illumination lens. Consequently, drawing from the fundamental principles of Fourier optics [21], we can infer that there exists a quasi-Fourier transform relationship between the illumination light field and the complex field encoded on the SLM, which can be formally articulated as follows:

where and .

When the phase block placed on the SLM is shifted in the spatial domain, the resulting illumination light field can be described in accordance with the properties of the Fourier transform as follows:
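The Fourier-transform property invoked here is the shift theorem: translating a pattern multiplies its Fourier transform by a linear phase. The sketch below verifies the discrete version with NumPy. The block size, its random phase content, and the translation `(dy, dx)` are hypothetical, and a plain DFT stands in for the quasi-Fourier transform of the optical system.

```python
import numpy as np

n = 64
rng = np.random.default_rng(1)

# Hypothetical 16 x 16 phase block on an otherwise empty 64 x 64 SLM plane.
block = np.zeros((n, n), dtype=complex)
block[24:40, 24:40] = np.exp(1j * rng.uniform(0, 2 * np.pi, (16, 16)))

dy, dx = 4, -6                                   # translation of the block, in pixels
shifted = np.roll(block, shift=(dy, dx), axis=(0, 1))

fy = np.fft.fftfreq(n)[:, None]                  # cycles per pixel along rows
fx = np.fft.fftfreq(n)[None, :]                  # cycles per pixel along columns
linear_phase = np.exp(-2j * np.pi * (fy * dy + fx * dx))

# Shift theorem: FFT of the translated block equals FFT of the block times a linear phase.
shift_theorem_holds = np.allclose(np.fft.fft2(shifted), np.fft.fft2(block) * linear_phase)
print(shift_theorem_holds)
```

The amplitude of the transformed field is unchanged by the translation; only a linear phase is added, which corresponds to a change in illumination angle.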

2.3. Forward Imaging Model

With the modulated light illuminating the sample object, the complete imaging model is derived by combining Equations (1) and (5):

where denotes the image captured by the nth sub-camera when the illumination light is modulated by the SLM for the ith time.

Subsequently, we redefine a dummy object as follows:

The target directly detected by the imaging system is the overall complex field of the object under illumination, denoted as , which differs from the dummy object by a quadratic phase term . Consequently, consistent with our previous analysis, when dealing with optically rough diffuse objects, it would be reasonable to set the dummy object as the target for high-resolution FP reconstruction.

2.4. Reconstruction Algorithm

Based on the forward imaging model derived from the previous analysis, it can be seen that the reconstruction algorithm we need is essentially aimed at solving the FP phase retrieval problem, subject to the constraint of spectrum overlapping of different sub-apertures. While a substantial amount of innovative work [22,23,24,25,26,27,28,29,30] has been conducted in the field to address this problem, the Alternating Projection (AP) method, derived from the Gerchberg–Saxton (GS) algorithm, remains a stable and widely used solution.

In the mth iteration, the current estimates of the object spectrum and the pupil function are denoted as and . The sub-spectrum estimate corresponding to the th image could be:

And it could be inversely Fourier transformed to form the estimate of the image field, denoted as follows:

Then we impose the magnitude constraint to in the image domain as follows:

Back to the spectral domain, we can obtain the updated sub-spectrum estimate as follows:

Next, and could be updated as follows:

The Fourier transform of the average of all captured intensity images is chosen to initialize . Besides the initial estimate of the pupil function is defined as follows:

It should be mentioned that, for the sake of simplicity in the theoretical analysis presented in this paper, we assume a uniform aperture function across all sub-cameras in the camera array. However, this assumption may not hold in practical scenarios. This limitation can be readily addressed by modifying the reconstruction algorithm to accommodate an individual aperture function for each sub-camera, with the appropriate aperture function selected in the update process.
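The alternating projection steps described above can be sketched as follows. This is a minimal illustration under stated assumptions and not the authors' code: the update rule is the commonly used ePIE-style rule, sub-spectra are cropped as square windows from a centered high-resolution spectrum, the scale factor between high- and low-resolution grids is ignored, and all names and sizes are hypothetical.

```python
import numpy as np

def ap_pass(spectrum, pupil, images, corners, eps=1e-12):
    """One alternating-projection sweep over all low-resolution images.

    spectrum : (N, N) complex, centered estimate of the object spectrum
    pupil    : (m, m) complex pupil estimate shared by all sub-cameras
    images   : list of (m, m) measured intensity images
    corners  : list of (row, col) top-left corners of each sub-spectrum window
    """
    spectrum, pupil = spectrum.copy(), pupil.copy()
    m = pupil.shape[0]
    for intensity, (r, c) in zip(images, corners):
        window = spectrum[r:r + m, c:c + m].copy()
        psi = window * pupil                                   # sub-spectrum estimate
        phi = np.fft.ifft2(np.fft.ifftshift(psi))              # image-field estimate
        phi = np.sqrt(intensity) * np.exp(1j * np.angle(phi))  # magnitude constraint
        diff = np.fft.fftshift(np.fft.fft2(phi)) - psi         # updated minus old sub-spectrum
        # ePIE-style updates (assumed rule, see lead-in).
        spectrum[r:r + m, c:c + m] = window + np.conj(pupil) * diff / (np.abs(pupil).max() ** 2 + eps)
        pupil = pupil + np.conj(window) * diff / (np.abs(window).max() ** 2 + eps)
    return spectrum, pupil

# Synthetic consistency check with hypothetical sizes.
rng = np.random.default_rng(0)
N, m = 32, 16
true_spectrum = rng.standard_normal((N, N)) + 1j * rng.standard_normal((N, N))
yy, xx = np.mgrid[-m // 2:m // 2, -m // 2:m // 2]
pupil0 = (xx ** 2 + yy ** 2 <= 6 ** 2).astype(complex)         # circular initial pupil
corners = [(r, c) for r in (0, 8, 16) for c in (0, 8, 16)]     # 50% overlapping windows
images = [np.abs(np.fft.ifft2(np.fft.ifftshift(true_spectrum[r:r + m, c:c + m] * pupil0))) ** 2
          for r, c in corners]

fixed_spectrum, fixed_pupil = ap_pass(true_spectrum, pupil0, images, corners)
print(np.allclose(fixed_spectrum, true_spectrum))
```

The check confirms that the true spectrum is a fixed point of the sweep when the measurements are noise-free. In practice the sweep is repeated from an initial guess until the estimate stops changing.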

3. Experiments

3.1. Experimental Setup

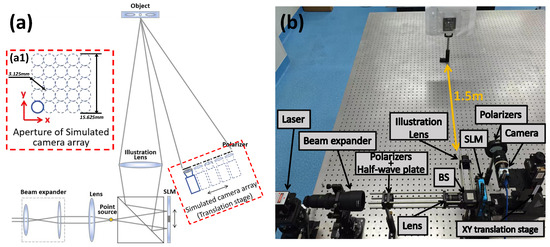

In this study, a reflective pure-phase SLM was employed as a substitute for a transmissive configuration due to the existing laboratory constraints. This adaptation resulted in slight deviations from the theoretical optical path sketch illustrated in Figure 1A, with the actual experimental setup depicted in Figure 4a. In addition, we created a camera array prototype by mounting a single camera on an XY translation stage and moving it spatially, also due to the limitations of the laboratory conditions.

Figure 4.

(a) Schematic diagram of the actual experimental setup. (a1) The aperture of the simulated camera array. (b) View of the experimental setup. A reflective pure-phase SLM was used instead of a transmissive one in the actual experiments, according to the existing conditions in our laboratory. A camera array prototype was created by mounting a single camera on an XY translation stage and moving it spatially.

Here, it should be noted that although the experiments in this paper use a pure-phase SLM, theoretically, other types of SLMs can also be used to modulate the illumination light required for macro FP, such as amplitude-type SLMs like a digital micromirror device (DMD).

Within the illumination subsystem, a 532 nm semiconductor laser beam is collimated by a beam expander and then focused into a point source by a convex lens. The light is subsequently modulated by a reflective SLM (FSLM-2K55-P02, Cas Microstar, Xi’an, China; 6.4 μm pixel pitch), which is integrated into the optical train through a beam-splitting (BS) prism. The illumination lens is a plano-convex element with a diameter of 25.4 mm and a focal length of 175 mm.

Our imaging system incorporates a digital camera (MER2-630-60U3M-L, Daheng Imaging, Beijing, China; 2.4 μm pixel pitch) coupled with an Edmund Optics lens with a focal length of 50 mm and a small aperture. The camera is mounted on an XY translation stage (GCD-202100M, Daheng Optics, Beijing, China) and moved at regular intervals in the X and Y directions to create a simulated camera array, as illustrated in Figure 4(a1).

Imaging targets are placed 1.5 m away from the imaging system and the illumination system, i.e., mm. By adjusting the illumination path, the distance between the point source and the illumination lens is set to mm, ensuring that the object is located on the imaging plane of the point source. In addition, the distance between the SLM and the illumination lens is set to mm.
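As a worked example of the lens law of geometrical optics, the sketch below computes the point-source distance that images the source onto the target plane. It assumes the 175 mm focal length of the illumination lens and takes the lens-to-object distance to be the 1.5 m target distance; the result is an illustrative calculation under that assumption, not the setting reported for the experiment.

```python
def source_distance_mm(focal_length_mm: float, image_distance_mm: float) -> float:
    """Solve 1/d_source + 1/d_image = 1/f for the source distance."""
    return 1.0 / (1.0 / focal_length_mm - 1.0 / image_distance_mm)

d_source = source_distance_mm(175.0, 1500.0)   # assumed: object plane 1500 mm from the lens
print(f"{d_source:.1f} mm")                    # 198.1 mm
```

The source sits slightly beyond the focal length, as expected for a target at a finite distance.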

The size of the phase block is set to pixels and it moves in increments of 20 pixels along both X and Y axes from to 40 pixels, taking the center of the SLM as the origin. A grid of low-resolution images is collected for reconstruction with only 25 shots, and the overlap ratio between adjacent measurements could be about in the Fourier domain. The final equivalent aperture of the system would be approximately 5 times the aperture of the imaging lens, theoretically reaching 15.625 mm, as shown in Figure 4(a1).
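The scan of the phase block can be enumerated as a small grid. The sketch below assumes the scan is symmetric about the SLM center; the lower bound of −40 pixels is an assumption chosen so that the 20-pixel steps give the stated 25 shots. It also converts the step to a physical shift using the 6.4 μm pixel pitch of the SLM.

```python
import itertools

PIXEL_PITCH_UM = 6.4                        # SLM pixel pitch quoted in the setup
STEP_PX = 20                                # step of the phase block along X and Y

offsets_px = range(-40, 41, STEP_PX)        # assumed symmetric scan: -40, -20, 0, 20, 40
shifts = list(itertools.product(offsets_px, offsets_px))
step_um = STEP_PX * PIXEL_PITCH_UM          # physical step of the block on the SLM

print(len(shifts), step_um)
```

Each `(dx, dy)` pair corresponds to one illumination angle, so the whole acquisition is a sequence of pattern updates on the SLM with no mechanical motion in the illumination path.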

3.2. Experiments on Optically Rough Diffuse Objects

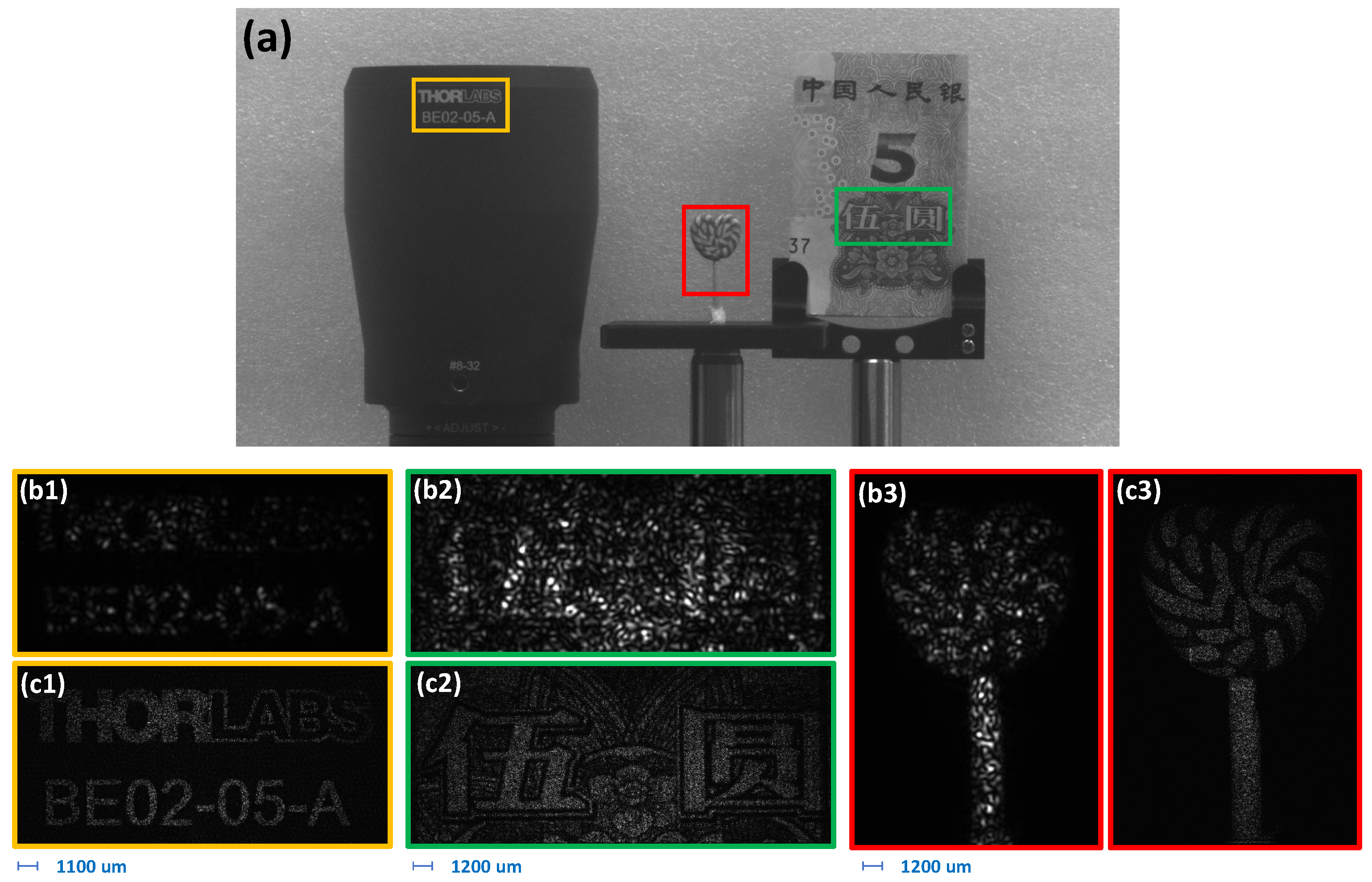

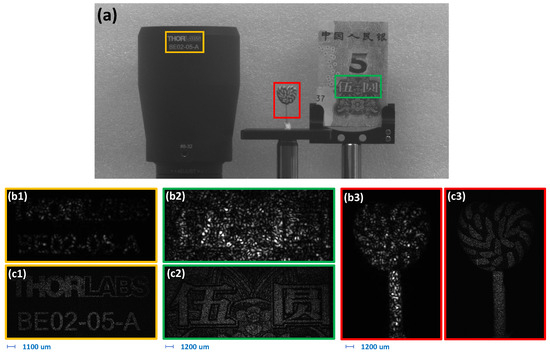

Three optically rough diffuse objects were selected for imaging experiments to verify the validity of the proposed method: the logo on an optical beam expander (BE02-05-A, THORLABS, Newton, NJ, USA), a CNY 5 paper note, and a miniature plastic lollipop. The imaging scenes of the three targets are presented in Figure 5a. Figure 5(b1–b3) shows individual raw images of each object, which correspond closely to conventional imaging results obtained under uniform laser illumination. Finally, the reconstructions obtained with the proposed technique are displayed in Figure 5(c1–c3), illustrating the enhancement achieved by the method.

Figure 5.

(a) The imaging scenarios of the three optically rough diffuse objects, including the logo on the Optical Beam Expander (BE02-05-A) produced by THORLABS, a CNY 5 paper note and a miniature plastic lollipop. They are marked with different colored boxes, and their corresponding raw images and reconstructed images are also annotated with borders of the same colors. (b1–b3) The single raw images of all objects. (c1–c3) The corresponding recovered high-resolution images.

For coherent imaging of optically rough diffuse objects, a small camera aperture has two fundamental consequences: (1) pronounced diffraction blur and (2) large speckle grains. Both are evident in Figure 5(b1–b3), where the details of the three objects are essentially drowned out by speckle noise and diffraction blur, so that structural details below the speckle correlation length cannot be resolved.

Employing FP reconstruction, the system’s theoretical synthetic aperture is demonstrably expanded relative to the original aperture. Consequently, both the effects of diffraction-induced blurring and the resulting speckle size are significantly reduced, thereby facilitating the discernible revelation of numerous fine image details, as evidenced in Figure 5(c1–c3).

3.3. Experiments on USAF Target

Further, the USAF target was used as an imaging object to quantitatively explore the performance of the method proposed in this paper.

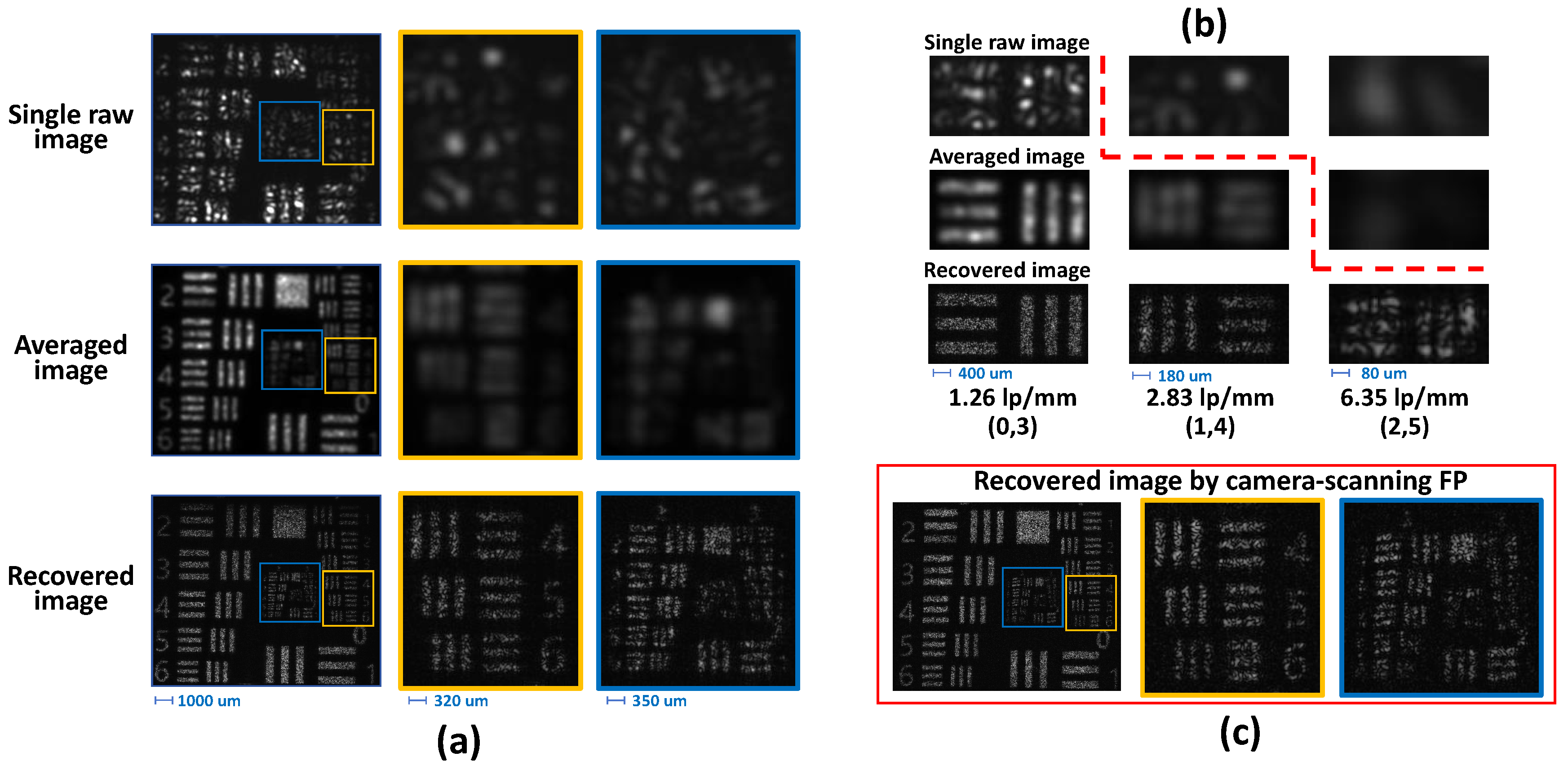

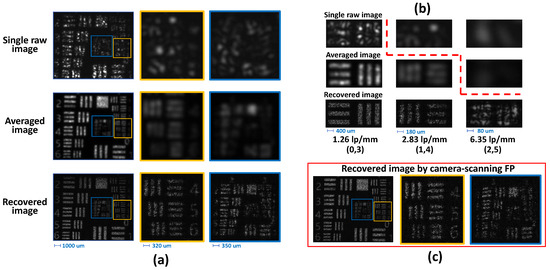

The experimental results demonstrate characteristic speckle patterns in the acquired raw dataset, as exemplified by the representative single-frame capture presented in Figure 6a. Quantitative resolution analysis reveals that individual raw images exhibit a resolution limited to 1.26 line pairs per millimeter (lp/mm), constrained by the compounded effects of speckle noise and diffraction-induced blurring.

Figure 6.

(a) Resolution of a USAF target under various imaging modalities. Row 1: Captured raw images exhibit large diffraction blur and speckle. Row 2: Averaging all the raw images reduces the speckle noise. Row 3: SLM macro FP creates a synthetic aperture to achieve sub-diffraction-limited resolution. The original view and the magnified view of the same image region are marked with bounding boxes of the same color. (b) Magnified regions of various bar groups recovered by the three techniques. The dashed red line demarcates resolvable features. (c) Results recovered by typical camera scanning FP.

Following established practice in coherent imaging, speckle noise can be reduced and resolution improved by combining multiple images with statistically independent speckle fields, which can be generated by different illumination angles [31]. In this experiment, the inherent speckle noise was readily suppressed by simply averaging all raw images, which correspond to the imaging results under distinct illumination angles, as shown in the average image in Figure 6a. This yields a higher resolution of 2.83 lp/mm. Although averaging effectively suppresses speckle noise and improves resolution, the result still suffers from noticeable diffraction blur, as seen in the zoomed-in view in Figure 6a.
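The effect of averaging can be illustrated with a small statistical simulation. The sketch assumes fully developed, statistically independent speckle patterns, which is an idealization of the experiment, and uses hypothetical sizes. Under this assumption the speckle contrast (standard deviation divided by mean) of a single pattern is close to 1, and averaging M patterns lowers it to roughly 1/sqrt(M).

```python
import numpy as np

def speckle_contrast(intensity):
    """Speckle contrast: standard deviation of the intensity divided by its mean."""
    return intensity.std() / intensity.mean()

rng = np.random.default_rng(0)
n_patterns, shape = 25, (256, 256)

# Circular complex Gaussian fields model fully developed speckle.
fields = rng.standard_normal((n_patterns, *shape)) + 1j * rng.standard_normal((n_patterns, *shape))
intensities = np.abs(fields) ** 2

single_contrast = speckle_contrast(intensities[0])
averaged_contrast = speckle_contrast(intensities.mean(axis=0))
print(single_contrast, averaged_contrast)
```

Averaging lowers the speckle contrast but does not enlarge the passband of the imaging system, which is consistent with the residual diffraction blur noted above.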

In contrast, the proposed camera array FP configuration enables a larger synthetic aperture. It not only reduces speckle size but also diminishes diffraction blurring, leading to enhanced resolution. As a result, features as small as 6.35 lp/mm are resolvable, as demonstrated by the reconstructed image in Figure 6a. This represents approximately a five-fold improvement in resolution compared to direct imaging, a value consistent with theoretical predictions.
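The quoted resolutions coincide with entries of the standard USAF-1951 chart, whose spatial frequency in lp/mm is 2^(group + (element − 1)/6). The group and element assignments in the sketch below are inferred from the quoted values and are not stated in the text.

```python
def usaf_lp_per_mm(group: int, element: int) -> float:
    """Spatial frequency (lp/mm) of a USAF-1951 bar element."""
    return 2.0 ** (group + (element - 1) / 6.0)

raw = usaf_lp_per_mm(0, 3)            # matches the quoted 1.26 lp/mm
averaged = usaf_lp_per_mm(1, 4)       # matches the quoted 2.83 lp/mm
reconstructed = usaf_lp_per_mm(2, 5)  # matches the quoted 6.35 lp/mm
gain = reconstructed / raw

print(round(raw, 2), round(averaged, 2), round(reconstructed, 2), round(gain, 2))
```

Under these assignments the reconstruction resolves 14 chart elements beyond the raw image, a factor of 2^(14/6), or about 5.04.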

The bar groups corresponding to the limiting resolutions of the three results are magnified and compared in Figure 6b, in which images above the dashed red line are not resolvable.

In addition, we conducted a camera scanning FP experiment using a translation stage to move the camera. The SAR was set to 5, with a spectral overlap rate of approximately 80%, for a total of 625 shots. This is 25 times as many shots as our proposed method requires (625 versus 25). The reconstruction results (Figure 6c) demonstrate a resolution comparable to that of our proposed method. This further confirms that, compared with typical camera scanning FP techniques, our approach eliminates the need for bulky high-precision translation stages, resulting in a more compact and lightweight system. Moreover, it can significantly improve both data acquisition efficiency and imaging speed without sacrificing image quality.

4. Discussion and Conclusions

In this work, we present a novel and efficient solid-state macro FP imaging scheme based on programmable illumination and a camera array. The proposed illumination scheme provides accurate, programmable variable-angle illumination via an SLM to ensure overlap between the bandwidths of adjacent sub-cameras in Fourier space, ultimately integrating multiple small-aperture cameras into an equivalent large-aperture camera through the FP reconstruction algorithm.

We conducted imaging experiments on three different optically rough diffuse objects, and the results demonstrate the practical effectiveness of the new technique. In addition, we performed a quantitative analysis of performance using a USAF target. The results reveal that, compared with direct imaging, the resolution of the proposed camera array FP is increased by about five times, which agrees with the theoretical value. Furthermore, we showed that our reconstruction results are comparable to those of the typical camera scanning FP method. This indicates that our approach can yield a more compact and lightweight overall system while significantly improving data acquisition efficiency and imaging speed without sacrificing image quality. Nevertheless, it should be pointed out that our proposed method cannot recover the true phase information of objects, and therefore it is not applicable to objects that require high-resolution phase information.

It is worth noting that a recent study [9] achieved super-resolution imaging over a distance of 120 m using typical camera scanning FP methods. This suggests, to some extent, that although our solid-state macro FP imaging scheme has so far been tested only at laboratory-scale range, it has the potential to scale to longer ranges.

Finally, it is important to acknowledge that this work represents an initial step. As with other existing macro FP techniques, several challenges must be addressed for the practical realization of long-distance FP imaging, including the mitigation of atmospheric turbulence and stray light effects. We are indebted to Ref. [11] for suggesting several promising solutions that warrant further investigation, and believe that the proposed efficient solid-state macro FP imaging scheme has the potential to be integrated with micro Unmanned Aerial Vehicle (micro-UAV) systems in the future to enhance their existing imaging resolution. These will be the focus of our future research.

Author Contributions

Conceptualization, D.Y. and H.M.; methodology, D.Y.; software, D.Y.; validation, G.R. and H.M.; resources, G.R. and H.M.; writing—original draft preparation, D.Y.; writing—review and editing, D.Y. and H.M.; visualization, D.Y.; project administration, H.M.; funding acquisition, H.M. All authors have read and agreed to the published version of the manuscript.

Funding

This research was funded by the Open Research Fund of the State Key Laboratory of Pulsed Power Laser Technology (SKL2018KF05), the National Natural Science Foundation of China (62005289, 62175243), the Excellent Youth Foundation of Sichuan Scientific Committee (2019JDJQ0012), and the Youth Innovation Promotion Association of the Chinese Academy of Sciences (2018411, 2020372).

Data Availability Statement

The data mentioned in the manuscript may be requested by email from the author.

Conflicts of Interest

The authors declare no conflicts of interest.

References

- van Belle, G.T.; Meinel, A.B.; Meinel, M.P. The scaling relationship between telescope cost and aperture size for very large telescopes. In Ground-Based Telescopes, Proceedings of the SPIE Astronomical Telescopes + Instrumentation, Scotland, UK, 21–24 June 2004; SPIE: Bellingham, WA, USA, 2004; Volume 5489, pp. 563–570.

- Holloway, J.; Asif, M.S.; Sharma, M.K.; Matsuda, N.; Horstmeyer, R.; Cossairt, O.; Veeraraghavan, A. Toward Long-Distance Subdiffraction Imaging Using Coherent Camera Arrays. IEEE Trans. Comput. Imaging 2016, 2, 251–265.

- Zheng, G.; Horstmeyer, R.; Yang, C. Wide-field, high-resolution Fourier ptychographic microscopy. Nat. Photonics 2013, 7, 739–745.

- Xiang, M.; Pan, A.; Zhao, Y.; Fan, X.; Zhao, H.; Li, C.; Yao, B. Coherent synthetic aperture imaging for visible remote sensing via reflective Fourier ptychography. Opt. Lett. 2021, 46, 29.

- Dong, S.; Horstmeyer, R.; Shiradkar, R.; Guo, K.; Ou, X.; Bian, Z.; Xin, H.; Zheng, G. Aperture-scanning Fourier ptychography for 3D refocusing and super-resolution macroscopic imaging. Opt. Express 2014, 22, 13586.

- Dong, S.; Nanda, P.; Guo, K.; Liao, J.; Zheng, G. Incoherent Fourier ptychographic photography using structured light. Photonics Res. 2015, 3, 19.

- Holloway, J.; Wu, Y.; Sharma, M.K.; Cossairt, O.; Veeraraghavan, A. SAVI: Synthetic apertures for long-range, subdiffraction-limited visible imaging using Fourier ptychography. Sci. Adv. 2017, 3, 1–12.

- Li, S.; Wang, B.; Liang, K.; Chen, Q.; Zuo, C. Far-Field Synthetic Aperture Imaging via Fourier Ptychography with Quasi-Plane Wave Illumination. Adv. Photonics Res. 2023, 4, 2300180.

- Zhang, Q.; Lu, Y.; Guo, Y.; Shang, Y.; Pu, M.; Fan, Y.; Zhou, R.; Li, X.; Pan, A.; Zhang, F.; et al. 200 mm optical synthetic aperture imaging over 120 m distance via macroscopic Fourier ptychography. Opt. Express 2024, 32, 44252.

- Li, S.; Wang, B.; Guan, H.; Zheng, G.; Chen, Q.; Zuo, C. Snapshot macroscopic Fourier ptychography: Far-field synthetic aperture imaging via illumination multiplexing and camera array acquisition. Adv. Imaging 2024, 1, 011005.

- Tian, Z.; Zhao, M.; Yang, D.; Wang, S.; Pan, A. Optical remote imaging via Fourier ptychography. Photonics Res. 2023, 11, 2072.

- Wang, C.; Hu, M.; Takashima, Y.; Schulz, T.J.; Brady, D.J. Snapshot ptychography on array cameras. Opt. Express 2022, 30, 2585.

- Zhou, R.; Guo, Y.; Zhang, Q.; Pu, M.; Lu, Y.; Shang, Y.; Li, X.; Zhang, F.; Xu, M.; Luo, X. TurbFPNet: Neural far-field turbulent Fourier ptychography with a camera array. Optica 2025, 12, 1068.

- Wang, B.; Li, S.; Chen, Q.; Zuo, C. Learning-based single-shot long-range synthetic aperture Fourier ptychographic imaging with a camera array. Opt. Lett. 2023, 48, 263.

- Tian, L.; Li, X.; Ramchandran, K.; Waller, L. Multiplexed coded illumination for Fourier Ptychography with an LED array microscope. Biomed. Opt. Express 2014, 5, 2376.

- Chung, J.; Lu, H.; Ou, X.; Zhou, H.; Yang, C. Wide-field Fourier ptychographic microscopy using laser illumination source. Biomed. Opt. Express 2016, 7, 4787.

- Kuang, C.; Ma, Y.; Zhou, R.; Lee, J.; Barbastathis, G.; Dasari, R.R.; Yaqoob, Z.; So, P.T.C. Digital micromirror device-based laser-illumination Fourier ptychographic microscopy. Opt. Express 2015, 23, 26999.

- Gerchberg, R.; Saxton, W. A practical algorithm for the determination of phase from image and diffraction plane pictures. Optik 1971, 35, 237–250.

- Chakravarthula, P.; Peng, Y.; Kollin, J.; Fuchs, H.; Heide, F. Wirtinger holography for near-eye displays. ACM Trans. Graph. 2019, 38, 1–13.

- Zhang, J.; Pégard, N.; Zhong, J.; Adesnik, H.; Waller, L. 3D computer-generated holography by non-convex optimization. Optica 2017, 4, 1306.

- Goodman, J.W.; Cox, M.E. Introduction to Fourier Optics. Phys. Today 1969, 22, 97–101.

- Ou, X.; Zheng, G.; Yang, C. Embedded pupil function recovery for Fourier ptychographic microscopy: Erratum. Opt. Express 2015, 23, 33027.

- Yeh, L.H.; Dong, J.; Zhong, J.; Tian, L.; Chen, M.; Tang, G.; Soltanolkotabi, M.; Waller, L. Experimental robustness of Fourier ptychography phase retrieval algorithms. Opt. Express 2015, 23, 33214.

- Zuo, C.; Sun, J.; Chen, Q. Adaptive step-size strategy for noise-robust Fourier ptychographic microscopy. Opt. Express 2016, 24, 20724.

- Bian, L.; Suo, J.; Zheng, G.; Guo, K.; Chen, F.; Dai, Q. Fourier ptychographic reconstruction using Wirtinger flow optimization. Opt. Express 2015, 23, 4856.

- Bian, L.; Suo, J.; Chung, J.; Ou, X.; Yang, C.; Chen, F.; Dai, Q. Fourier ptychographic reconstruction using Poisson maximum likelihood and truncated Wirtinger gradient. Sci. Rep. 2016, 6, 27384.

- Chen, S.; Xu, T.; Zhang, J.; Wang, X.; Zhang, Y. Optimized Denoising Method for Fourier Ptychographic Microscopy Based on Wirtinger Flow. IEEE Photonics J. 2019, 11, 1–14.

- Liu, J.; Li, Y.; Wang, W.; Tan, J.; Liu, C. Accelerated and high-quality Fourier ptychographic method using a double truncated Wirtinger criteria. Opt. Express 2018, 26, 26556.

- Zhang, Y.; Song, P.; Dai, Q. Fourier ptychographic microscopy using a generalized Anscombe transform approximation of the mixed Poisson-Gaussian likelihood. Opt. Express 2017, 25, 168.

- Fan, Y.; Sun, J.; Chen, Q.; Wang, M.; Zuo, C. Adaptive denoising method for Fourier ptychographic microscopy. Opt. Commun. 2017, 404, 23–31.

- Goodman, J.W. Speckle Phenomena in Optics: Theory and Applications, 2nd ed.; SPIE: Bellingham, WA, USA, 2020.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.