Abstract

This study examines the relationship between writing performance, assessed with the Early Warning System in Writing (SISAT), and linguistic patterns in student narratives from one public and one private university in northeastern Mexico. Variables such as lexical density and richness, text volume, and thematic progression were analyzed to explore how institutional context influences narrative writing and its assessment. A non-experimental, descriptive–comparative design with interpretive triangulation was employed. The corpus comprised 148 narratives produced over three academic periods, analyzed using automated linguistic tools alongside SISAT scores. Descriptive statistics, Spearman correlations, and Kruskal–Wallis tests were applied to examine differences between the two institutions and across periods. The results indicate intermediate performance at both universities, with differentiated patterns: at the public university, lexical richness and density positively correlated with SISAT scores, while greater text volume was negatively associated; at the private university, both text length and diversity were positively related, though excessive lexical density appeared counterproductive. No statistically significant differences were observed between periods or between the two universities. Our findings highlight that quantitative linguistic indicators complement normative assessment and underscore the role of institutional context in writing development. The study also emphasizes the formative and expressive functions of narrative writing, supporting pedagogical strategies that integrate automated assessment with qualitative analysis to foster self-regulation, symbolic expression, and ethical reflection.

1. Introduction

1.1. Academic Writing in Higher Education: An Integrative Approach

Academic writing in higher education requires an integrative theoretical framework that captures its multiple dimensions. It can be understood simultaneously as a cognitive process, a discursive and rhetorical practice, a linguistic phenomenon, and a situated social activity. Within this perspective, the present study brings together five complementary approaches—rhetorical, linguistic, sociocultural, didactic, and technological—which underpin the automated analysis of university students’ narrative writing and its relationship with institutional writing assessment.

1.2. Writing as a Cognitive and Self-Regulated Process

From the perspective of cognitive psychology, writing has been conceptualized as a complex process involving planning, textualization, and revision, mediated by working memory and prior knowledge (Flower & Hayes, 1981). This model established writing as a recursive and non-linear activity in which writers continually make decisions about content, organization, and audience.

Self-regulated learning theory subsequently expanded this view by emphasizing learners’ active role in planning, monitoring, and evaluating their own writing processes and outcomes (Zimmerman, 2000). Academic writing therefore requires metacognitive skills that allow students to evaluate idea clarity, adjust discourse strategies, and respond to external quality criteria. Empirical studies consistently show that higher levels of self-regulation are associated with more coherent, structured, and genre-appropriate academic texts (Chaverra Fernández et al., 2022; Sandoval-Cárcamo et al., 2024). This perspective is particularly relevant for automated writing assessment, as artificial intelligence-based systems can scaffold self-regulation through immediate, objective, and iterative feedback, thereby supporting cycles of continuous writing improvement.

1.3. Rhetorical and Discursive Perspectives on Academic Writing

From a rhetorical standpoint, academic writing is conceived as a communicative and persuasive act oriented toward a specific audience and governed by shared disciplinary conventions. Its foundations can be traced to Aristotle’s classical rhetoric, particularly the principles of ethos, pathos, and logos, which remain relevant in contemporary academic discourse.

In research on academic genres, Swales (1990) introduced the notion of discourse communities and the CARS (Create a Research Space) model, highlighting the strategic rhetorical moves that structure research writing. Hyland (2002, 2005) further developed this perspective by examining the interpersonal dimension of academic discourse and the role of metadiscourse, stance, and evaluative resources in constructing authorial voice and credibility. From this view, academic writing involves positioning knowledge claims in relation to prior research, anticipating readers’ expectations, and guiding interpretation. Automated narrative assessment can operationalize these principles by identifying discursive organization patterns, thematic development, and argumentative markers relevant to writing quality.

1.4. Linguistic Approach to Academic Writing

The linguistic approach focuses on the formal language features that support meaning construction in academic texts. Halliday and Hasan’s (1976) concept of textual cohesion highlights mechanisms such as reference, ellipsis, conjunction, and lexical repetition as essential for coherence. Within systemic functional linguistics, language is further described through three metafunctions: ideational, interpersonal, and textual (Halliday, 1985).

Research indicates that academic writing typically exhibits high lexical density, frequent nominalizations, abstract nouns, impersonal constructions, and logical connectors, with variation across genres, disciplines, and educational levels (Chyzhykova, 2024; Dong et al., 2023; Lipková, 2024; Istiqomah & Basthomi, 2024; Nguyen & Edwards, 2015). Corpus-based approaches have enabled the empirical description of these features through large-scale analyses of expert and student texts (Biber et al., 1999; McEnery & Hardie, 2012). Learner corpora, in particular, have been widely used for diagnostic, evaluative, and pedagogical purposes in higher education writing research (Boulton & Cobb, 2017; Ma et al., 2023; Ueno & Takeuchi, 2023; Granger, 2024). In this context, automated linguistic analysis allows for the objective measurement of variables such as lexical density, lexical diversity, and grammatical pattern frequency, providing quantitative evidence to support writing assessment.

1.5. Sociocultural Perspectives and Academic Literacy

From a sociocultural perspective, academic writing is understood as a situated social practice shaped by institutional norms, power relations, and cultural values (Barton & Hamilton, 1998; Street, 1984, 2015). Learning to write at university entails appropriating disciplinary genres and legitimized ways of constructing and communicating knowledge (Lea & Street, 1998). This perspective has highlighted the challenges faced by first-generation students, learners from underrepresented backgrounds, and second-language writers, for whom academic writing may function as a mechanism of exclusion (Lillis, 2001).

In response, academic literacy models have emphasized the integration of tutoring, collaborative writing, and formative feedback within disciplinary teaching (Calvo et al., 2020; Davis et al., 2025; Fuster-Barcelo et al., 2025; Zheldibayeva, 2025). Automated assessment systems, by offering continuous and accessible feedback, have the potential to support more equitable writing development, provided they are implemented ethically and in coordination with instructor guidance.

1.6. Didactic Approach and Artificial Intelligence in Writing Instruction

The didactic approach views academic writing as a competence that must be taught explicitly, progressively, and in close alignment with disciplinary curricula. Carlino (2005, 2013, 2023) argues that writing is not a generic skill acquired prior to university study, but a situated disciplinary practice that requires sustained pedagogical support. Consequently, effective instruction should integrate critical reading, guided text production, revision processes, and metacognitive reflection. Narrative and creative writing have also been incorporated as pedagogical strategies to enhance motivation, expressiveness, and engagement with academic discourse (Barbara et al., 2024; Bruner, 2001).

However, the assessment of academic writing remains constrained by subjectivity, inter-rater variability, and instructor workload (Hyland, 2019; Sánchez-Rivas et al., 2023). In this context, artificial intelligence-based language models such as ChatGPT 5.2 offer new possibilities for automated text analysis, enabling the evaluation of coherence, grammatical accuracy, discursive structure, and linguistic patterns, as well as the generation of immediate and adaptive feedback (Amabile, 2018; OpenAI, 2023). Recent studies suggest that these tools support idea organization, linguistic revision, and autonomous learning among both students and pre-service teachers (Güler et al., 2025; Kaur & Kapoor, 2025; Ravšelj et al., 2025). From rhetorical, linguistic, sociocultural, and didactic perspectives, artificial intelligence is therefore conceptualized as a complementary resource that enhances formative assessment rather than replacing teacher judgment.

1.7. Theoretical Articulation and Projection of the Study

Building on this theoretical convergence, this article proposes a technical–methodological model for the automated evaluation of narrative writing based on the architecture of language models such as ChatGPT. The model integrates contributions from rhetoric, linguistics, sociocultural theory, and didactics, together with the pedagogical benefits of narrative and creative writing. It is proposed that combining traditional pedagogical approaches with artificial intelligence technologies can improve the quality of academic writing, strengthen student self-regulation, and offer immediate and personalized feedback in diverse university contexts. The study has four aims: (1) to analyze the relationship between institutional writing assessment and the linguistic patterns present in the narratives of higher education students; (2) to identify linguistic and rhetorical indicators associated with different levels of performance; (3) to compare institutional contexts; and (4) to explore the pedagogical potential of automated assessment as a complement to traditional academic writing assessment.

2. Materials and Methods

Large-scale assessments conducted by the Secretaría de Educación Pública (SEP, Mexico’s Ministry of Education) and the Comisión Nacional para la Mejora Continua de la Educación (MEJOREDU, National Commission for the Continuous Improvement of Education) have consistently reported persistent weaknesses in students’ writing competence, particularly in orthography, textual cohesion, and communicative clarity at the secondary level. Regional assessments such as APRENDE Tamaulipas, a state-level educational assessment program, further indicate that these difficulties affect both public and private institutions, with no sustained improvement over time. However, while these evaluations provide valuable diagnostic information for basic education, little is known about whether such deficiencies persist in higher education, or how they are manifested in university-level writing across different institutional contexts.

2.1. Contextualization of the Study

This study was conducted at two universities located in Tamaulipas, a state in northeastern Mexico. One institution is public, and the other is private. These universities were selected intentionally to explore potential differences in students’ writing performance associated with institutional type. Public and private universities in Tamaulipas differ in terms of student population, available resources, pedagogical practices, and access to academic support, which may influence the quality of written expression. By examining both types of institutions, the study aims to identify not only general patterns of writing competence among university students but also the ways in which institutional context shapes linguistic and discursive performance. This regional focus also contributes to understanding writing development in northeastern Mexico, a context for which there is limited empirical evidence at the higher education level.

2.2. Theoretical Linkage and Operationalization

The study integrates cognitive, self-regulation, rhetorical–discursive, and sociocultural frameworks. These perspectives guided the following (Table 1):

- Selection of automatic metrics: lexical density, lexical richness, syntactic complexity, and thematic progression.

- Narrative analysis: cohesion, planning, and argumentation.

- Institutional comparison: public vs. private universities, to capture sociocultural differences.

Table 1.

Theoretical–metric linkage.

2.3. Corpus and Unit of Analysis

The corpus consisted of 148 narratives produced by university students, distributed equally between public and private universities (74 texts per institution) in the first, second, and third periods of 2025. The unit of analysis was the complete narrative text, which allowed for the identification of collective linguistic and discursive patterns. The texts were regular academic productions, without experimental intervention. All participating students provided written informed consent, agreeing to the collection and analysis of their narrative texts anonymously and voluntarily. Confidentiality and the right of participants to withdraw at any time were guaranteed. To protect the identity of the participants, alphanumeric codes were assigned to each text (e.g., S01 and S02).

2.4. Procedure and Analysis of the Texts

The design of the analysis puts into practice the theoretical frameworks presented in the Introduction; the steps for identifying lexical–discursive patterns reflect the integration of cognitive, rhetorical, linguistic, sociocultural, and didactic perspectives in the automated evaluation of academic writing. Thus, each methodological stage was selected and applied to capture both the linguistic competence and the discursive and pedagogical dimensions of the students’ texts.

2.4.1. Institutional Assessment: SISAT

To contextualize the linguistic results, the Early Warning System in Writing (SISAT), developed by the Ministry of Public Education, was used. This instrument assesses six writing indicators on a scale from 1 to 3, giving a total score between 6 and 18 and classifying performance into three levels: Needs support (≤9), Developing (10–14), and Expected level (15–18). This assessment provides the institutional benchmark with which the automatically extracted linguistic indicators are correlated. Table A1 presents the SISAT performance scale. A detailed description of the instrument is provided in Appendix A (see also Table A2 and Table A3), and the student narratives are presented in Appendix B.
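The scoring rule described above can be illustrated with a minimal sketch. The function name `sisat_level` is hypothetical and is not part of the instrument; only the six indicators, the 1 to 3 scale, and the cut-off points are taken from the text.

```python
from typing import Sequence

def sisat_level(indicator_scores: Sequence[int]) -> tuple[int, str]:
    """Sum six indicator scores (each 1-3) and map the total to a SISAT level."""
    if len(indicator_scores) != 6:
        raise ValueError("SISAT uses exactly six indicators")
    if any(score not in (1, 2, 3) for score in indicator_scores):
        raise ValueError("each indicator is scored 1, 2, or 3")
    total = sum(indicator_scores)  # always between 6 and 18
    if total <= 9:
        level = "Needs support"
    elif total <= 14:
        level = "Developing"
    else:
        level = "Expected level"
    return total, level

# Hypothetical scores for one narrative (not study data)
print(sisat_level([2, 2, 3, 2, 2, 1]))  # (12, 'Developing')
```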

2.4.2. Preprocessing: Tokenization and Lexical Normalization

Before analysis, the texts underwent tokenization and lexical normalization.

Tokenization segments the text into minimal units of analysis—words and punctuation marks—facilitating the identification of lexical patterns, spelling errors, and grammatical relationships. For example, the text “The girl wrote a story.” is tokenized as: (“The,” “girl,” “wrote,” “a,” “story,” “.”). This allows the software to analyze each word independently and calculate automatic metrics such as word frequency, sentence length, and grammatical patterns.
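As an illustration only, the tokenization step could be sketched with a regular expression. This is an assumption about one possible implementation, not a description of the tools used in the study.

```python
import re

# \w+ matches a run of letters, digits, or underscores (Unicode-aware in
# Python 3); [^\w\s] matches a single punctuation mark.
TOKEN_PATTERN = re.compile(r"\w+|[^\w\s]")

def tokenize(text: str) -> list[str]:
    """Split a text into word and punctuation tokens."""
    return TOKEN_PATTERN.findall(text)

print(tokenize("The girl wrote a story."))
# ['The', 'girl', 'wrote', 'a', 'story', '.']
```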

Lexical normalization unifies morphological forms (run, ran, running → run), standardizes the use of lowercase letters, and corrects frequent typos. This step ensures that morphological variation or minor errors do not distort the results, improving the accuracy of metrics such as lexical diversity and syntactic complexity.
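A minimal sketch of this normalization step follows. The lemma and typo tables are toy, hypothetical examples; a full analysis would presumably rely on a lemmatizer for the language of the corpus.

```python
# Toy lemma table for illustration only (hypothetical entries).
LEMMAS = {"ran": "run", "running": "run", "runs": "run"}
# Hypothetical table of frequent typos and their corrections.
TYPOS = {"teh": "the"}

def normalize(tokens: list[str]) -> list[str]:
    """Lowercase each token, correct known typos, and map forms to a lemma."""
    normalized = []
    for token in tokens:
        word = token.lower()           # standardize case
        word = TYPOS.get(word, word)   # correct frequent typos
        word = LEMMAS.get(word, word)  # unify morphological forms
        normalized.append(word)
    return normalized

print(normalize(["Teh", "girl", "ran", "while", "Running"]))
# ['the', 'girl', 'run', 'while', 'run']
```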

2.4.3. Extraction of Linguistic Metrics

After preprocessing, the following metrics were calculated automatically:

- (a) Lexical density and lexical richness, to assess the variety and sophistication of vocabulary.

- (b) Frequency of grammatical categories, such as verbs, nouns, and adjectives, to examine discourse structure.

- (c) Number of sentences and syntactic complexity, reflecting the organization of ideas.

- (d) Thematic progression, to identify content coherence and sequencing.

These metrics allow us to relate objective linguistic variables to the performance levels established by SISAT and to possible differences between public and private universities, in line with cognitive and self-regulated learning frameworks.
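The text does not give formulas for these metrics, so the following sketch relies on common operationalizations that are assumptions here: lexical density as the percentage of content words among all words, lexical richness as the number of unique words, and mean sentence length as a rough proxy for syntactic complexity. The function-word list is a small, hypothetical English example.

```python
import re

# Small illustrative list of English function words (an assumption); a real
# analysis would use part-of-speech tags for the corpus language instead.
FUNCTION_WORDS = {"the", "a", "an", "and", "or", "but", "of", "in", "on", "to",
                  "it", "she", "he", "was", "is", "her", "his", "that"}

def lexical_metrics(text: str) -> dict[str, float]:
    """Compute simple lexical and sentence-level metrics for one text."""
    words = [w.lower() for w in re.findall(r"\w+", text)]
    if not words:
        raise ValueError("text contains no words")
    # Sentences are approximated as spans ending in '.', '!' or '?'.
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    content_words = [w for w in words if w not in FUNCTION_WORDS]
    return {
        "tokens": len(words),
        "unique_words": len(set(words)),  # lexical richness
        "type_token_ratio": len(set(words)) / len(words),
        "lexical_density_pct": 100 * len(content_words) / len(words),
        "sentences": len(sentences),
        "mean_sentence_length": len(words) / len(sentences),
    }

sample = "The girl wrote a story. The story was short."
for name, value in lexical_metrics(sample).items():
    print(f"{name}: {value:.2f}")
```

Because the result depends heavily on how content words are identified, values from this sketch are not directly comparable with the percentages reported in the Results.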

2.4.4. Identification of Lexical–Discursive Patterns

Based on the metrics extracted, a qualitative and quantitative analysis of the texts was performed to identify recurring lexical–discursive patterns associated with performance levels and institutional differences:

- (a) Automated analysis: Co-occurrence of words, frequency of grammatical structures, and syntactic patterns.

- (b) Interpretative analysis: Thematic progression, textual cohesion, and discursive organization.
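To illustrate the automated part of this step, word co-occurrence can be counted within a sliding window. This is a sketch under stated assumptions: the window size and the helper name `cooccurrences` are hypothetical choices, not details reported for the study.

```python
from collections import Counter

def cooccurrences(tokens: list[str], window: int = 2) -> Counter:
    """Count unordered word pairs appearing within `window` tokens of each other."""
    pairs = Counter()
    for i, left in enumerate(tokens):
        for right in tokens[i + 1 : i + 1 + window]:
            if left != right:
                pairs[tuple(sorted((left, right)))] += 1
    return pairs

# Hypothetical token sequence (not taken from the corpus)
tokens = ["my", "mother", "told", "my", "sister", "a", "story"]
print(cooccurrences(tokens).most_common(3))
```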

The integration of these techniques allowed for a contextualized diagnosis of written competence, connecting linguistic evidence with institutional assessments and providing a solid basis for pedagogical recommendations. The choice of metrics and procedures was based on the following:

- (a) Cognitive and self-regulation perspectives: To capture planning, clarity, and textual cohesion.

- (b) Rhetorical and discursive principles: To analyze narrative organization, thematic progression, and argumentative markers.

- (c) Systemic functional linguistics: To select indicators of lexical density, richness, and syntactic complexity.

- (d) Sociocultural perspective: To justify the comparison between public and private universities.

- (e) Didactic and technological perspectives: To integrate artificial intelligence-based tools that provide objective, immediate, and individualized feedback, complementing traditional methods of institutional assessment.

2.4.5. Software and Analytical Tools

To ensure a systematic, replicable, and context-sensitive analysis of students’ narrative texts, this study employed a combination of corpus-based analytical frameworks and digital tools specifically selected to support each stage of the linguistic and discourse analysis. The software and tools were aligned with established practices in corpus linguistics and automated writing assessment, enabling the integration of quantitative metric extraction and qualitative pattern identification.

Corpus-Based Analytical Framework

The overall analytical design was based on the principles of corpus linguistics, which allow for the empirical examination of authentic texts using computational methods (Biber et al., 1999; McEnery & Hardie, 2012). This framework guided the selection of linguistic variables, the organization of the textual dataset, and the interpretation of the structural and frequency-based patterns observed in the students’ narratives.

Analytical Tools Developed by the Researchers

Customized tools integrated by the researchers were used for text preprocessing and automated linguistic analysis. These tools enabled tokenization, lexical normalization, metric calculation, and the identification of recurring lexical–discursive patterns. Their design responded to the specific linguistic characteristics of academic writing in Spanish and to the evaluative criteria established by the institutional evaluation system (SISAT), which facilitated alignment between linguistic metrics and educational performance indicators.

Integration and Documentation of the Analytical Procedures

The combination of corpus-based software and researcher-developed tools allowed for efficient processing of the text corpus and ensured consistency across the different analytical stages. Detailed descriptions of these tools, their functions, and access links are provided (see Figure A1, Figure A2 and Figure A3; Appendix C) to ensure transparency and replicability, while avoiding interruptions to the methodological flow of the main text.

2.4.6. Data Analysis

Data analysis followed a multilevel strategy aligned with the study’s objectives:

- Descriptive analysis: Means, percentages, and distributions by academic period and university, providing an overview of the characteristics of the corpus.

- Correlational analysis: Spearman’s correlation to explore relationships between institutional performance (SISAT) and linguistic variables. This was calculated separately for public and private universities, considering institutional differences in resources, student profiles, and teaching practices.

- Comparative analysis: Kruskal–Wallis tests to examine differences between academic periods and between institutions. Although inferential tests are not the main focus, they provide additional context to the descriptive and correlational findings.

- Quantitative–qualitative triangulation: Statistical results combined with analysis of lexical–discursive and rhetorical patterns, offering an integrated interpretation of writing development according to institutional context.

This strategy allows us to link metrics of lexical richness, cohesion, and narrative structure with institutional performance, demonstrating how differences in context can influence writing competence.
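For readers unfamiliar with the comparative test named above, the Kruskal–Wallis H statistic can be sketched in plain Python. The scores below are hypothetical and are not the study's data; the implementation uses average ranks with the standard tie correction, which matters because SISAT totals are integers and ties are frequent.

```python
from collections import Counter

def average_ranks(values):
    """Rank values from 1 to n, giving tied values the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks

def kruskal_wallis_h(*groups):
    """Return the tie-corrected Kruskal-Wallis H statistic for two or more groups."""
    pooled = [x for group in groups for x in group]
    n = len(pooled)
    ranks = average_ranks(pooled)
    weighted, start = 0.0, 0
    for group in groups:
        rank_sum = sum(ranks[start:start + len(group)])
        weighted += rank_sum ** 2 / len(group)
        start += len(group)
    h = 12 / (n * (n + 1)) * weighted - 3 * (n + 1)
    tie_term = sum(t ** 3 - t for t in Counter(pooled).values())
    correction = 1 - tie_term / (n ** 3 - n)
    if correction == 0:
        raise ValueError("all observations are identical; H is undefined")
    return h / correction

# Hypothetical SISAT totals for three academic periods (not study data)
period_1 = [10, 12, 11, 13]
period_2 = [12, 14, 11, 12]
period_3 = [13, 12, 10, 15]
print(f"H = {kruskal_wallis_h(period_1, period_2, period_3):.3f}")
```

With three groups, H is compared against a chi-square distribution with 2 degrees of freedom, whose critical value at the 0.05 level is approximately 5.99.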

3. Results

3.1. Characteristics of the Public University Corpus

The corpus consisted of 74 narratives from public university students, distributed across three academic periods. Lexical density varied between 21.5% and 29.6%, indicating texts of intermediate lexical complexity. Lexical richness (unique words) was higher in the first period and slightly lower in the third period, reflecting the evolution of writing towards more experiential and descriptive narratives (Table 2).

Table 2.

Relationship between lexical metrics and writing performance (SISAT) by academic term at public university.

3.2. Linguistic and Thematic Patterns

Nouns related to childhood, family, and school predominate, reflecting an affective and educational focus in the narratives. Four recurring thematic nuclei were identified: family/affection, mystery/fantasy, space/fear, and loss/rebirth. Youth narratives are characterized by tripartite structures (beginning–climax–ending) and limited use of connectors, especially at ‘Developing’ performance levels (Table 3).

Table 3.

Relationship between SISAT levels and dominant linguistic features.

3.3. General Trends in Narrative Corpus

The highest lexical density was observed in the second period, suggesting texts with greater semantic load. The third period shows greater thematic and expressive diversity, although with slightly lower lexical density, indicating a transition towards more descriptive and experiential narratives. The corpus reflects a coherent youth narrative universe, in which writing fulfills affective, identity, and learning functions.

3.4. Characteristics of the Private University Corpus

The corpus included 74 narratives, distributed across three academic periods. Lexical density ranged from 22% to 39.6%, and vocabulary richness varied according to the period, reflecting an evolution towards more diverse and expressive narratives (Table 4).

Table 4.

Relationship between lexical metrics and writing performance (SISAT) by academic term at private university.

3.5. Linguistic and Thematic Patterns

Nouns related to childhood, family, time, and values predominate, evidencing an affective, moral, and formative approach. Recurring thematic nuclei include familial–affective, symbolic–existential, social–everyday, and moral–formative. The narrative has a tripartite structure (beginning → conflict → lesson), with a predominance of the imperfect and perfect past tenses, simple temporal connectors and reflective closure at intermediate levels. The most frequent rhetorical figures were metaphor, personification, anaphora, hyperbole and antithesis, contributing to a style of symbolic and moralizing realism (Table 5).

Table 5.

Relationship between SISAT levels and dominant linguistic features.

Most students are at the “Developing” level, with functional narrative skills but room for improvement in cohesion, lexical variety and discourse complexity. The correlation between SISAT and linguistic analysis confirms that the instrument reflects performance patterns consistent with textual quality.

3.6. General Trends in Narrative Corpus

The public university has a higher text volume, while lexical density is higher in the private university during the first period. The average SISAT score shows slightly higher trends in the private institution (Table 6 and Table 7).

Table 6.

Comparison of lexical metrics and SISAT performance by institution.

Table 7.

Spearman correlations between linguistic indicators and SISAT scores by type of university.

As indicated in the Methods section, Spearman’s correlation was used because SISAT scores are ordinal, some linguistic variables are not normally distributed, and the sample size per period is limited. Correlations were calculated separately for public and private universities to explore whether the relationship between linguistic characteristics and performance varied according to institutional context, given that student profiles and educational practices differ between types of institutions. In the private university, some linguistic variables (unique words and text volume) rose monotonically with SISAT scores in this sample, resulting in a perfect correlation of ρ = 1.00. Although uncommon, this outcome reflects the homogeneity of narrative development in this institution for the analyzed period, considering the limited sample size (n = 74). This strategy allows the results to be interpreted in a contextualized manner, rather than assuming a homogeneous relationship for both groups (Table 8).
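Because Spearman’s ρ is computed on ranks, it equals 1.00 whenever two variables are ordered identically, whether or not the relationship is linear. The sketch below illustrates this with hypothetical values (not study data); the helper names are illustrative.

```python
def average_ranks(values):
    """Rank values from 1 to n, giving tied values the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks

def spearman_rho(x, y):
    """Spearman's rho: the Pearson correlation computed on average ranks."""
    rx, ry = average_ranks(x), average_ranks(y)
    n = len(x)
    mean_x, mean_y = sum(rx) / n, sum(ry) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(rx, ry))
    var_x = sum((a - mean_x) ** 2 for a in rx)
    var_y = sum((b - mean_y) ** 2 for b in ry)
    return cov / (var_x * var_y) ** 0.5

# Hypothetical values: unique-word counts grow non-linearly, yet their
# ordering matches the ordering of the SISAT totals exactly.
unique_words = [80, 95, 130, 210, 400]
sisat_scores = [9, 10, 12, 13, 17]
print(spearman_rho(unique_words, sisat_scores))  # 1.0
```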

Table 8.

Kruskal–Wallis comparisons of SISAT scores across periods and institutions.

At the aggregate level, no statistically significant differences are observed between periods or institutions. However, descriptive and correlational analyses show distinct institutional patterns in the relationship between lexical richness, lexical density, textual volume, and SISAT performance. This highlights the importance of combining statistical and descriptive analyses to interpret the development of creative writing according to institutional context.

4. Discussion

The analysis of narrative corpora from students at a public and a private university allows us to reflect on academic writing in higher education, integrating rhetorical, linguistic, sociocultural, cognitive, and didactic perspectives. The use of corpus linguistics as a systematic and empirical framework facilitates the identification of lexical–grammatical, discursive, and rhetorical patterns associated with different levels of performance (Biber et al., 1999; Granger, 2024; Hyland, 2016, 2019; McEnery & Hardie, 2012). Metrics such as lexical density and richness, text volume, syntactic complexity, and thematic progression provide quantitative evidence of writing proficiency and its relationship with institutional criteria (Barbara et al., 2024; Biber, 2012; Lusta et al., 2023; Ten Peze et al., 2024; Ueno & Takeuchi, 2023).

The use of ad hoc corpora and automated techniques based on artificial intelligence enables efficient tokenization, tagging, and calculation of linguistic indicators, integrating institutional performance analysis with pedagogical evidence (O’Donnell et al., 2025). This demonstrates how automated assessment can complement teaching by providing objective and timely feedback without replacing pedagogical judgment.

From the perspective of cognitive psychology, writing involves planning, textualization, and revision mediated by working memory and prior knowledge (Flower & Hayes, 1981). The results show that lexical richness and density are associated with performance on the SISAT, although patterns vary according to institutional context: in public universities, a greater volume of text does not guarantee high scores, while in private universities, textual diversity and length are related to better results, reflecting differences in self-regulation strategies (Zimmerman, 2000).

From a rhetorical perspective, thematic progression, strategic organization, and the use of discourse markers reveal the audience’s expectations and the author’s position (Hyland, 2002; Swales, 1990). Automated assessment that identifies these patterns allows for a comprehensive evaluation of writing. Lexical richness and the use of connectors, consistent with systemic functional linguistics and corpus studies (Biber et al., 1999; Halliday, 1985), stand out as key indicators. Texts from public universities show greater volume but lower lexical density, while those from private universities tend to be more cohesive and denser, influenced by the sociocultural context (Barton & Hamilton, 1998; Street, 1984).

Narrative and creative writing strengthen self-regulation, symbolic expression, and ethical reflection (Barbara et al., 2024; Bruner, 2001). The application of automated techniques offers immediate and objective feedback, although it must be complemented by teacher assessment to preserve creativity and stylistic diversity.

Taken together, these findings show that academic writing develops gradually and is mediated by multiple factors. Although the study is limited to two universities and does not allow for broad generalizations, it provides novel evidence on how institutional context influences the relationship between textual characteristics and assessment performance. The results suggest the need for differentiated pedagogical strategies and the integration of automated assessment with qualitative analysis, promoting expressive, critical, and reflective skills in various university contexts.

5. Conclusions

The differences in the relationship between text length, lexical richness, and writing performance between the two universities suggest that the institutional context shapes how writing skills develop in university students, providing evidence that academic writing depends not only on individual skills but also on educational and sociocultural factors. Lexical diversity and textual cohesion emerge as consistent indicators of performance, validating the usefulness of automated and corpus metrics to complement institutional assessment by providing objective data on areas for improvement in academic writing. Narratives show that writing fulfills expressive, reflective, and formative functions beyond institutional assessment, suggesting that narrative work can strengthen self-regulation, symbolic expression, and ethical reflection by integrating the academic dimension with the personal and social dimensions of learning. Although this study is limited to two universities, its findings offer insights applicable to the teaching of university writing, particularly regarding how to adapt pedagogical strategies to different institutional contexts and how to integrate automated tools with qualitative analysis to strengthen writing competence.

Author Contributions

N.B.R.: Conceptualization, Methodology, Drafting—Original Draft. D.D.B.G.: Validation, Data Curation, Drafting—Revision and Editing. M.L.R.C.: Supervision, Formal Analysis. C.A.C.: Software, Visualization. All authors have read and agreed to the published version of the manuscript.

Funding

This research received no external funding.

Institutional Review Board Statement

The study was conducted in accordance with the Declaration of Helsinki, and approved by the Directorate of Research and Technological Development (DIDT), Autonomous University of Tamaulipas (protocol code UAT/SIP/PIRP/2023/025 and date of approval 15 August 2025).

Informed Consent Statement

Informed consent was obtained from all subjects involved in the study.

Data Availability Statement

Data are available upon request due to ethical restrictions.

Conflicts of Interest

The authors declare no conflicts of interest.

Appendix A. SISAT Scale and Narrative Analysis Examples

Appendix A.1. SISAT Scale for Written Text Exploration (SEP, 2019)

The student narratives were evaluated with the SISAT scale, designed to explore the quality of written texts in higher education. Performance is classified into three levels based on the total score: Expected Level (15–18 points), Developing Level (10–14 points), and Requires Support (9 points or fewer). The scale evaluates six dimensions: readability, fulfillment of communicative purpose, relationship between words and sentences, lexical diversity, correct use of punctuation, and proper application of spelling rules. Internal consistency is α ≈ 0.74, indicating acceptable reliability.

Table A1.

SISAT Scale for written text exploration.

| Dimension | Score 3 | Score 2 | Score 1 |
|---|---|---|---|
| I. Readability | Legible: correct word separation, hyphenation, and letter spelling | Moderately legible: substitution, omission, or addition errors; partial word separation | Illegible: pre-alphabetic writing; incorrect separation; unreadable |
| II. Communicative Purpose | Clear, organized, and coherent message | Partially clear: incomplete or mixed ideas; missing components | Not clear: message confused, sequence lost, no proper organization |
| III. Word–Sentence Relationship | Correct verb tenses, gender, number; variety of connectors | Some errors in tenses, gender, number; limited connectors | Incorrect use of tenses, gender, number; no connectors |
| IV. Lexical Diversity | Rich, varied, and context-appropriate vocabulary | Limited or repetitive; some inappropriate words | Very reduced or irrelevant vocabulary; minimal text production |
| V. Punctuation | Correct use of period, comma, and other required marks (?, !, quotes) | Partial use, some omissions | Punctuation absent or incorrect throughout |
| VI. Spelling Rules | Correct capitalization, accents, and letters representing sounds | Minor errors; incorrect accentuation in common words | Serious errors: missing capitals, accents, incorrect letters |

Note: Total score is the sum of all dimensions (3, 2, or 1 point per dimension).
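The scoring rule described above (six dimensions, each scored 1 to 3, summed and mapped to a performance level) can be illustrated with a minimal Python sketch. The function and dimension names below are hypothetical and chosen only for illustration; they are not taken from the authors' SISAT evaluator. The cut-offs are those stated in Appendix A.1.

```python
from typing import Dict

# Hypothetical identifiers for the six SISAT dimensions in Table A1.
DIMENSIONS = (
    "readability",
    "communicative_purpose",
    "word_sentence_relationship",
    "lexical_diversity",
    "punctuation",
    "spelling",
)


def sisat_total(scores: Dict[str, int]) -> int:
    """Sum the six dimension scores after checking each is 1, 2, or 3."""
    missing = [d for d in DIMENSIONS if d not in scores]
    if missing:
        raise ValueError(f"missing dimensions: {missing}")
    for d in DIMENSIONS:
        if scores[d] not in (1, 2, 3):
            raise ValueError(f"score for {d} must be 1, 2, or 3")
    return sum(scores[d] for d in DIMENSIONS)


def sisat_level(total: int) -> str:
    """Map a total score (6 to 18) to the level named in Appendix A.1."""
    if total >= 15:
        return "Expected"
    if total >= 10:
        return "Developing"
    return "Requires Support"


# Example: a narrative scoring 3 on two dimensions and 2 on the other four.
example = dict(zip(DIMENSIONS, (3, 3, 2, 2, 2, 2)))
print(sisat_total(example), sisat_level(sisat_total(example)))
```

Because every dimension contributes at least 1 point, totals range from 6 to 18, so the Requires Support band covers totals from 6 to 9.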

Appendix A.2. Narrative Examples and Linguistic Analysis

Appendix A.2.1. Example 1: Developing Level (Public University)

Excerpt 1 (SISAT: Developing Level): “Es una niña llamada Isabella, procedente de una familia con bajos ingresos, que quería tener un gran futuro para ayudar a sus padres. La pequeña Isabella tenía muchos sueños que quería cumplir. Un día decidió esforzarse para cambiar su vida y apoyar a su familia, porque sabía que su futuro dependía de ello.”

Linguistic and Discursive Analysis:

- Clear narrative purpose and thematic continuity focused on family and personal aspiration.

- Recurrent orthographic errors and limited punctuation.

- Extended syntactic structures with weak segmentation.

- Moderate lexical density, with repetition of general verbs (quería and tener).

- Cohesion relies mainly on chronological sequencing, without explicit connectors.

SISAT Level: Developing.

Appendix A.2.2. Example 2: Expected Level (Private University)

Excerpt 2 (SISAT: Expected Level): “Desde la infancia, Troy sintió que el mundo se extendía más allá de las montañas que rodeaban su pueblo. Mientras ayudaba a su abuelo en el campo, imaginaba caminos que lo conducirían a otros lugares. Al regresar a casa, comprendió que la verdadera magia no estaba en los objetos, sino en la capacidad de imaginar y decidir su propio destino.”

Linguistic and Discursive Analysis:

- Clear cohesion, varied and precise vocabulary, controlled syntactic structures.

- Medium–high lexical density, supported by abstract nouns and specific verbs (comprendió, imaginaba, and conducirían).

- Temporal and cohesive connectors enhance thematic progression.

- Reflective closure integrated naturally into the narrative.

SISAT Level: Expected.

Appendix A.3. Comparative Summary of Linguistic Features

| Feature | Developing Level | Expected Level |
|---|---|---|
| Narrative Purpose | Clear but basic | Clear and rhetorically sustained |
| Lexical Density | Moderate | Medium–high |
| Lexical Richness | Limited, repetitive | Varied and precise |
| Cohesion | Chronological | Explicit cohesive devices |
| Syntax | Long, weakly segmented clauses | Balanced coordination and subordination |
| Orthography | Frequent inaccuracies | Mostly correct |
| Reflective Closure | Explicit and simple | Integrated and elaborated |

Appendix A.4. Pedagogical Relevance

These examples illustrate how narrative writing quality varies according to performance level and institutional context. The contrast between Developing and Expected levels supports the study’s quantitative findings, showing that automated linguistic indicators such as lexical density and richness meaningfully correspond to institutional assessment. Including narrative exemplars enhances transparency and provides concrete pedagogical insight into the developmental nature of academic writing in higher education.

Appendix B. Student Narratives

Table A2.

Text production at the public university by period.

| First Period | Second Period | Third Period |
|---|---|---|
| SO1: My Friend the Bunny | SO1: The Macabre House | SO1: Luly’s Magical Adventure |
| SO2: The Girl and the Fairy | SO2: My Life Changed | SO2: The Kingdom of Luminara |
| SO3: SN | SO3: Fly | SO3: The Discovery of Reading |
| SO4: The Last Song | SO4: The City Girl | SO4: SN |
| SO5: The Ballerina | SO5: Distance | SO5: Love and Sadness |
| SO6: SN | SO6: Love for Soccer | SO6: The Betrayal of the Valley |
| SO7: The Happy Farm | SO7: The Island | SO7: The Power of Love |
| SO8: The Love-Struck Rat | SO8: The Lion Who Couldn’t Write | SO8: The Medium |
| SO9: The Village | SO9: SN | SO9: The Shadow in the Mirror |
| SO10: The Garden of Dreams | SO10: Rap Monster | SO10: The Shadow of the Lake |
| SO11: The Need | SO11: Yeji’s Bakery | SO11: SN |
| SO12: Forever | SO12: Ross’s Dream | SO12: Learning to Love Myself |
| SO13: Heart’s Desire | SO13: Shared Destiny | SO13: SN |
| SO14: When Love Changes | SO14: The Last Train | SO14: The City of Valdia |
| SO15: SN | SO15: Distance and Love | SO15: SN |
| SO16: Sometimes They Are Only for a Moment | SO16: SN | SO16: Between Desks and Offices |
| SO17: The Witch | SO17: A Great Future | SO17: Motherly Love |
| SO18: The Dog City and Coco | SO18: The Forest of Lost Voices | SO18: SN |
| SO19: The Little Star | SO19: July 15 | SO19: Encounter in the Rain |
| SO20: Juanito | SO20: My Dream Come True | SO20: My Little Star |
| SO21: Maria | SO21: Sofia and Emotional Intelligence | SO21: Love with Prejudices |
| SO22: Bruno the Math Donkey | SO22: The Hero’s Journey | SO22: The Last Train to Hope |
| SO23: Rescue at the Zoo | SO23: The Boy and the Balloon | SO23: SN |
| SO24: SN | SO24: The Lost Girl of the Forest | SO24: The Library of Destiny |
| SO25: (no entry) | SO25: Wonderful World of Aurora | SO25: SN |

Note: SN = untitled story.

Table A3.

Text production at the private university by period.

| First Period | Second Period | Third Period |
|---|---|---|
| SO1: (no entry) | SO1: The Clock | SO1: Hallway 9 |
| SO2: The Last Threshold | SO2: I Will No Longer Suffer | SO2: Butterflies and Loyalty |
| SO3: SN | SO3: Juan and the Fairy | SO3: The Big Salad |
| SO4: SN | SO4: Road Trip | SO4: The Call of the Ashes |
| SO5: Troy and the Magic Box | SO5: Alba and Her Friend Esthertita | SO5: In the Huasteca |
| SO6: The Princess and the Ghost | SO6: The Boy and the Elf | SO6: The Adventure of Luno, the Curious Spider |
| SO7: SN | SO7: Alba | SO7: The Lost Mushrooms |
| SO8: Jason’s First Splash | SO8: The Abandoned Penguin | SO8: The Whisper of the Sunset |
| SO9: Nothing Is as It Seems | SO9: The Hard Farewell | SO9: Behind You… |
| SO10: The Trapped Peasant Girl | SO10: Morgana’s Magic | SO10: The Halloween Wish |
| SO11: SN | SO11: Hanna’s Shadow | SO11: The Last Memory |
| SO12: SN | SO12: SN | SO12: The Path of a Life |
| SO13: SN | SO13: One Last Journey | SO13: The Coffee Fairies |
| SO14: One Goal, One Dream, and a Source of Energy | SO14: The Evicted | SO14: Sporlax: Under the Microscope |
| SO15: The Time Traveler | SO15: The Magic Portal | SO15: Night of Eternal Friendships |
| SO16: One More Doctor | SO16: The Return of Memories | SO16: Alinne’s Labyrinths |
| SO17: A Journey Around the World | SO17: I Can Write My Way | SO17: A Night of Terror |
| SO18: The Story of Lia the Squirrel | SO18: My Friend and I | SO18: The Tree of Dreams |
| SO19: SN | SO19: First Day of Classes | SO19: Not Visible |
| SO20: Max’s Journey | SO20: Heaven’s Limbo | SO20: Not Visible |
| SO21: The Story of Love | SO21: SN | SO21: The Hug of the Stars |
| SO22: Panadizo | SO22: DIEGO IT’S A LEGEND | SO22: Leonardo and All the Animals |
| SO23: Respect | SO23: The Last Summer Night | SO23: Story “The Friends” |
| SO24: Beyond the Moon and the Stars | SO24: A Dream | SO24: The Midnight Star |
| SO25: (no entry) | SO25: Melody of Melancholy | SO25: A Night of Memories |

Note: SN = untitled story.

Appendix C. Automated Linguistic Analysis and Study Variables

Appendix C.1. Automated Linguistic Analysis

Automated linguistic analysis was conducted using text processing techniques, including tokenization and lexical normalization. These procedures enabled the calculation of quantitative linguistic indicators such as lexical density, lexical richness, and the frequency of words and grammatical categories, as well as the identification of frequent words, key phrases, and thematic categories.

The analysis was carried out using custom-developed online tools to ensure consistency, transparency, and replicability of the evaluation process.
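To make the indicators concrete, the following minimal Python sketch computes two of them from a short Spanish text. It is not the authors' tool. It assumes two common operationalizations that this appendix does not itself specify: lexical richness as the type-token ratio (distinct words over total words) and lexical density as the share of tokens that are not function words. The `FUNCTION_WORDS` list is a small hypothetical sample; a fuller stop-word list or part-of-speech tagging would be needed in practice.

```python
import re
from typing import List

# Hypothetical, deliberately small sample of Spanish function words.
FUNCTION_WORDS = {
    "a", "al", "con", "de", "del", "el", "en", "la", "las", "lo", "los",
    "para", "por", "que", "se", "su", "sus", "un", "una", "y", "o",
}


def tokenize(text: str) -> List[str]:
    """Lowercase the text and keep runs of letters (accents included)."""
    return re.findall(r"[^\W\d_]+", text.lower())


def lexical_richness(tokens: List[str]) -> float:
    """Type-token ratio: distinct tokens divided by total tokens."""
    return len(set(tokens)) / len(tokens) if tokens else 0.0


def lexical_density(tokens: List[str]) -> float:
    """Share of tokens that are not in the function-word list."""
    if not tokens:
        return 0.0
    content = [t for t in tokens if t not in FUNCTION_WORDS]
    return len(content) / len(tokens)


sample = "La niña quería un futuro y la niña tenía sueños"
tokens = tokenize(sample)
print(len(tokens), lexical_richness(tokens), lexical_density(tokens))
```

The type-token ratio falls as texts get longer, so comparisons across narratives of very different lengths may call for a length-normalized variant.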

Appendix C.2. Online Tools

Appendix C.2.1. Linguistic Pattern Evaluator

The first tool, Linguistic Patterns, is an online linguistic pattern evaluator developed by one of the researchers and is fully available in Spanish. This tool performs lexical and grammatical analysis of written texts and provides automated metrics related to lexical density, lexical richness, and thematic distribution.

URL: https://chatgpt.com/g/g-682b7d9107d88191850ccd0c8e8c58a7 (accessed on 23 January 2026).

Figure A1.

Linguistic pattern evaluator interface.

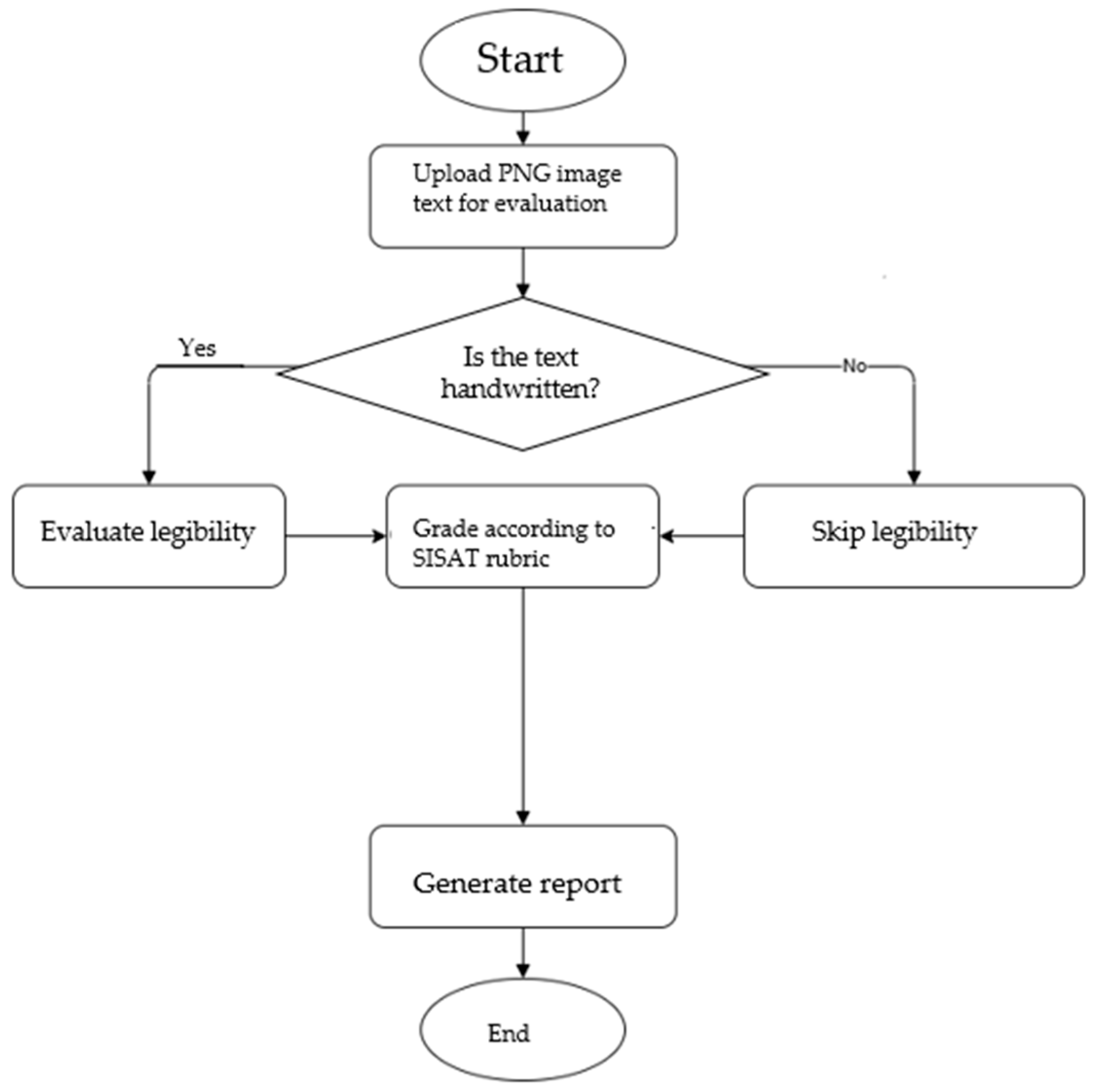

Appendix C.2.2. SISAT Evaluator

The second tool is the SISAT evaluator, also developed by one of the researchers and available entirely in Spanish. This system processes texts extracted from PNG images.

As shown in Figure A3, the system first determines whether the input text is handwritten. If handwriting is detected, the system evaluates text legibility. Subsequently, the text is assessed using the corresponding SISAT rubric, and an automated evaluation report is generated.

URL: https://chatgpt.com/g/g-67e1b0bffcb4819198589c95fb6f7ce7 (accessed on 5 July 2025).

Figure A2.

SISAT evaluator interface.

Figure A3.

Flowchart of the SISAT evaluation model.

Appendix C.3. Study Variables

The study variables were classified into institutional and linguistic variables.

- Institutional variable:

  - SISAT performance level (Requires Support, Developing, and Expected).

- Linguistic variables:

  - Lexical density.

  - Lexical richness.

  - Frequency of grammatical categories.

  - Number of sentences.

  - Thematic complexity.

References

- Amabile, T. M. (2018). Creativity in context: Update to the social psychology of creativity. Routledge.

- Barbara, S. W. Y., Afzaal, M., & Aldayel, H. S. (2024). A corpus-based comparison of linguistic markers of stance and genre in the academic writing of novice and advanced engineering learners. Humanities and Social Sciences Communications, 11(1), 284.

- Barton, D., & Hamilton, M. (1998). Local literacies: Reading and writing in one community. Routledge.

- Biber, D. (2012). Register, genre, and style. Cambridge University Press.

- Biber, D., Conrad, S., Reppen, R., & Leech, G. (1999). Corpus linguistics: Investigating language structure and use. International Journal of Corpus Linguistics, 4(1), 185–188.

- Boulton, A., & Cobb, T. (2017). Corpus use in language learning: A meta-analysis. Language Learning, 67(2), 348–393.

- Bruner, J. S. (2001). El proceso mental en el aprendizaje (Vol. 88). Narcea Ediciones.

- Calvo, S., Celini, L., Morales, A., Martínez, J. M. G., & Núñez-Cacho Utrilla, P. (2020). Academic literacy and student diversity: Evaluating a curriculum-integrated inclusive practice intervention in the United Kingdom. Sustainability, 12(3), 1155.

- Carlino, P. (2005). Escribir, leer y aprender en la universidad. Fondo de Cultura Económica.

- Carlino, P. (2013). Alfabetización académica diez años después. Revista Mexicana de Investigación Educativa, 18(57), 355–381.

- Carlino, P. (2023). Leer y escribir en la universidad: Nuevas perspectivas. Paidós.

- Chaverra Fernández, D. I., Calle-Álvarez, G. Y., Hurtado Vergara, R. D., & Bolívar Buriticá, W. A. (2022). Revisión de investigaciones sobre escritura académica para la construcción de un centro de escritura digital en educación superior. Íkala, Revista de Lenguaje y Cultura, 27(1), 224–247.

- Chyzhykova, O. (2024). Analyzing lexical features and academic vocabulary in academic writing. International Journal of Philology, 28(1), 72–80.

- Davis, C., Lawson, K., & Duffy, L. (2025). Academic literacy in enabling education programs in Australian universities: A shared pedagogy. The Australian Educational Researcher, 52, 539–561.

- Dong, J., Wang, H., & Buckingham, L. (2023). Mapping out the disciplinary variation of syntactic complexity in student academic writing. System, 113, 1–15.

- Flower, L., & Hayes, J. (1981). A cognitive process theory of writing. College Composition and Communication, 32(4), 365–387.

- Fuster-Barcelo, C., Rios-Munoz, G. R., & Munoz-Barrutia, A. (2025). Scaffolding collaborative learning in STEM: A two-year evaluation of a tool-integrated project-based methodology. arXiv, arXiv:2509.02355.

- Granger, S. (2024). From early to future learner corpus research. International Journal of Learner Corpus Research, 10(2), 247–279.

- Güler, M., Çekmez, E., & Arslan, Z. (2025). Future mathematics teachers’ perceptions of using ChatGPT in the classroom. Innoeduca: International Journal of Technology and Educational Innovation, 11(2), 25–41.

- Halliday, M. A. K. (1985). An introduction to functional grammar. Edward Arnold.

- Halliday, M. A. K., & Hasan, R. (1976). Cohesion in English. Longman.

- Hyland, K. (2002). Authority and invisibility. Journal of Pragmatics, 34(8), 1091–1112.

- Hyland, K. (2005). Metadiscourse: Exploring interaction in writing. Continuum.

- Hyland, K. (2016). Academic publishing and the myth of linguistic injustice. Journal of Second Language Writing, 31, 58–69.

- Hyland, K. (2019). Second language writing. Cambridge University Press.

- Istiqomah, F., & Basthomi, Y. (2024). Exploring nominalization and lexical density deployed within research article abstracts: A grammatical metaphor analysis. Englisia: Journal of Language, Education, and Humanities, 11(2), 14–28.

- Kaur, D., & Kapoor, V. (2025). Perspectivas de los estudiantes sobre los beneficios educativos de ChatGPT: Una exploración cuantitativa. Innoeduca: International Journal of Technology and Educational Innovation, 11(2), 5–24.

- Lea, M. R., & Street, B. V. (1998). Student writing in higher education: An academic literacies approach. Studies in Higher Education, 23(2), 157–172.

- Lillis, T. (2001). Student writing: Access, regulation, desire. Routledge.

- Lipková, M. (2024). Lexical density in academic writing: Lexical features and learner corpora analysis in L2 tertiary students’ essays and didactic implications. In Proceedings of the Asian conference on education 2023: Official conference proceedings, Tokyo, Japan, November 22–25 (pp. 1175–1188). The International Academic Forum (IAFOR).

- Lusta, A., Demirel, Ö., & Mohammadzadeh, B. (2023). Language corpus and data driven learning (DDL) in language classrooms: A systematic review. Heliyon, 9(12), e22731.

- Ma, H., Wang, J., & He, L. (2023). Linguistic features distinguishing students’ writing ability aligned with CEFR levels. Applied Linguistics, 45(4), 637–657.

- McEnery, T., & Hardie, A. (2012). Corpus linguistics: Method, theory and practice. Cambridge University Press.

- Nguyen, T. H. T., & Edwards, E. C. (2015). An investigation of nominalization and lexical density in undergraduate research proposals. Language Education in Asia, 6(1), 17–30.

- O’Donnell, F., Porter, M., & Fitzgerald, D. S. (2025). The role of artificial intelligence in higher education: Higher education students’ use of AI in academic assignments. Irish Journal of Technology Enhanced Learning, 8(1).

- OpenAI. (2023). GPT-4 technical report. arXiv, arXiv:2303.08774.

- Ravšelj, D., Keržič, D., Tomaževič, N., Umek, L., Brezovar, N., Iahad, N. A., Abdulla, A. A., Akopyan, A., Aldana Segura, M. W., AlHumaid, J., Allam, M. F., Alló, M., Andoh, R. P. K., Andronic, O., Arthur, Y. D., Aydın, F., Badran, A., Balbontín-Alvarado, R., Ben Saad, H., … Aristovnik, A. (2025). Higher education students’ perceptions of ChatGPT: A global study of early reactions. PLoS ONE, 20(2), e0315011.

- Sandoval-Cárcamo, J., Arias-Roa, N., & Arancibia-Gutiérrez, B. M. (2024). Cognitive skills and critical thinking interventions for the development of academic writing in higher education students: A systematic review. Ciencia y Tecnología, 4, 698.

- Sánchez-Rivas, E., Ramos Núñez, M. F., Ramos Navas-Parejo, M., & De La Cruz-Campos, J. C. (2023). Narrative-based learning using mobile devices. Education + Training, 65(2), 284–297.

- Secretaría de Educación Pública (SEP). (2019). Orientaciones para el establecimiento del Sistema de Alerta Temprana SisAT. Available online: https://siase2.edomex.gob.mx/documents/MANUALES/Manual%20SisAT.pdf (accessed on 19 January 2026).

- Street, B. (1984). Literacy in theory and practice. Cambridge University Press.

- Street, B. (2015). Social literacies. Routledge.

- Swales, J. (1990). Genre analysis. Cambridge University Press.

- Ten Peze, A., Janssen, T., Rijlaarsdam, G., & Van Weijen, D. (2024). Instruction in creative and argumentative writing: Transfer and crossover effects on writing process and text quality. Instructional Science, 52(3), 341–383.

- Ueno, S., & Takeuchi, O. (2023). Effective corpus use in second language learning: A meta analytic approach. Applied Corpus Linguistics, 3(3), 100076.

- Zheldibayeva, R. (2025). The impact of AI and peer feedback on research writing skills: A study using the CGScholar platform among Kazakhstani scholars. Scientific Journal of Astana IT University, 21, 186–195.

- Zimmerman, B. J. (2000). Attaining self-regulation. In M. Boekaerts, P. R. Pintrich, & M. Zeidner (Eds.), Handbook of self-regulation (pp. 13–39). Academic Press.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.