Abstract

Graph Neural Networks (GNNs) have become a central methodology for modelling biological systems where entities and their interactions form inherently non-Euclidean structures. From protein interaction networks and gene regulatory circuits to molecular graphs and multi-omics integration, the relational nature of biological data makes GNNs particularly well-suited for capturing complex dependencies that traditional deep learning methods fail to represent. Despite their rapid adoption, the effectiveness of GNNs in bioinformatics depends not only on model design but also on how biological graphs are constructed, parameterised and trained. In this review, we provide a structured framework for understanding and applying GNNs in bioinformatics, organised around three key dimensions: (1) graph construction and representation, including strategies for deriving biological networks from heterogeneous sources and selecting biologically meaningful node and edge features; (2) GNN architectures, covering spectral and spatial formulations, representative models such as Graph Convolutional Networks (GCNs), Graph Attention Networks (GATs), Graph Sample and AggregatE (GraphSAGE) and Graph Isomorphism Network (GIN), and recent advances including transformer-based and self-supervised paradigms; and (3) applications in biomedical domains, spanning disease–gene association prediction, drug discovery, protein structure and function analysis, multi-omics integration and biomedical knowledge graphs. We further examine training considerations, including optimisation techniques, regularisation strategies and challenges posed by data sparsity and noise in biological settings. By synthesising methodological foundations with domain-specific applications, this review clarifies how graph quality, architectural choice and training dynamics jointly influence model performance. We also highlight emerging challenges such as modelling temporal biological processes, improving interpretability, and enabling robust multimodal fusion that will shape the next generation of GNNs in computational biology.

1. Introduction

Biological systems are fundamentally organised as multi-scale, interdependent networks wherein genes, proteins, metabolites and phenotypes interact through highly structured yet heterogeneous relationships. These interactions are not arbitrary but shaped by biological principles such as molecular specificity, hierarchical regulation, pathway modularity, and spatial or temporal constraints. Advances in high-throughput sequencing, proteomics, chemical biology, and functional genomics have enabled the systematic characterisation of these interactions on an unprecedented scale, yielding diverse graph-based representations including protein–protein interaction networks, gene regulatory networks, molecular graphs, and multi-omics association graphs [1,2,3].

Biological systems pose a distinctive class of inference problems in which scientific questions are fundamentally relational rather than independent. Central tasks in bioinformatics–including disease–gene association discovery, molecular property prediction, drug–target interaction modelling, protein structure and function inference, pathway analysis, and multi-omics integration–require reasoning over complex networks of interacting biological entities. In these settings, biological meaning emerges not from isolated features but from patterns of connectivity, hierarchy, and context across genes, proteins, molecules, and phenotypes.

Crucially, the complexity of biomolecular systems manifests not only in the volume of data but also in the relational dependencies encoded within these graphs, encompassing non-Euclidean topologies, variable neighbourhood structures, and multi-scale connectivity. Addressing such tasks is essential for advancing disease modelling, therapeutic design, and precision medicine. However, traditional computational approaches often struggle to jointly capture long-range dependencies, multiscale organisation, and uncertainty inherent in biological systems, while also providing interpretable outputs that can support experimental validation and hypothesis-driven research [4,5].

Graph-based representations provide a natural abstraction for modelling these biological systems, as they explicitly encode molecular interactions, regulatory relationships, spatial proximity, and functional associations. Graph neural networks (GNNs) extend this representation by enabling end-to-end learning over biological graphs, allowing models to propagate, integrate, and transform information across interacting entities [6]. Importantly, GNNs are not merely predictive tools; when appropriately designed, they offer a framework for generating experimentally testable hypotheses by identifying influential nodes, critical interactions, and dysregulated network modules.

In biomolecular research, the value of graph neural networks extends beyond improved predictive performance to the level of molecular interpretation and mechanistic hypothesis generation. By operating directly on molecular graphs, interaction networks, and regulatory architectures, GNNs enable the systematic integration of structural, biochemical, and contextual information. In drug discovery, this includes learning structure–activity relationships, identifying functionally relevant substructures, and prioritising candidate compound–target interactions for downstream validation [7]. In protein science, GNN-based models can capture residue-level dependencies, domain organisation, and interaction interfaces that underpin structure–function relationships. In systems and regulatory biology, graph-based learning supports the identification of dysregulated pathways, key regulatory nodes, and multi-step signalling dependencies that are difficult to infer from feature-based models alone.

Importantly, these capabilities position GNNs as computational tools for narrowing experimental search spaces and generating biologically grounded hypotheses, rather than as substitutes for biochemical or cellular validation. When combined with domain knowledge and experimental evidence, graph-based models can help translate large-scale molecular data into testable insights about biomolecular function, interaction, and regulation.

For biological and clinical researchers, the appeal of GNNs lies in their ability to balance predictive accuracy with interpretability, to model long-range biological dependencies, and to scale to large, sparse, and heterogeneous datasets commonly encountered in genomics, proteomics, pharmacology, and systems biology. These properties position GNNs as a promising computational paradigm for bridging high-throughput data analysis with mechanistic biological understanding [3,8,9].

Despite the rapid growth of GNN applications in bioinformatics, existing surveys remain largely method-driven and may be difficult to navigate for biologists and physicians seeking to understand the practical benefits and limitations of these approaches [6,10,11,12]. Many reviews focus on cataloguing architectural variants or benchmarking performance, while providing limited guidance on how modelling choices relate to biological assumptions, experimental constraints, or interpretability requirements. As a result, critical challenges such as data noise, incompleteness, heterogeneity, uncertainty, and biological plausibility are often discussed in isolation rather than as central design considerations. Additionally, existing reviews seldom provide systematic coverage of biological datasets, leaving gaps in the documentation of data provenance, scale, modality, and evaluation protocols, which limits reproducibility and cross-study comparison. Furthermore, most prior works introduce GNN models without integrating biological constraints such as graph noise, sparsity, heterogeneity, interpretability requirements, and temporal dynamics. Methodologically, most reviews enumerate models without a unified framework linking Graph -> GNN -> GCN, limiting both depth and generalisation [13,14,15,16,17].

With the goal of making graph learning approaches more accessible and actionable for biological and clinical researchers, this review provides a widely, biologically grounded, and process-oriented synthesis of graph neural networks in bioinformatics. Our work differs from the existing literature through a multi-level integrative framework that organises the field across four conceptual layers: (1) biological graph construction grounded in domain principles; (2) methodological taxonomy spanning classical to modern GNN architectures; (3) systematised benchmarking through harmonised datasets and evaluation protocols; and (4) domain-centred applications highlighting both performance trends and biological constraints. This hierarchical organisation enables a coherent narrative from theoretical origins to application-level insights, unifying components that existing reviews treat separately. Our contributions are fourfold:

First, we establish a unified framework for understanding how biological data are transformed into graph representations, clarifying the principles, assumptions and limitations underlying PPI networks, gene regulatory graphs, molecular structures and multi-omics association graphs.

Second, we systematically organise GNN methodologies, ranging from spectral and spatial formulations to modern architectures, such as attention-based, structure-aware and transformer-integrated models, and contextualise their design choices in terms of biological relevance.

Third, we synthesise recent advances across major bioinformatics tasks, including disease–gene association prediction, drug discovery, protein structure and function modelling, multi-omics integration and multimodal biomedical analysis, providing a coherent comparison of application settings, datasets and performance trends.

Fourth, we analyse a broad collection of graph-based biological datasets, including molecular-level benchmarks, protein interaction resources, gene regulatory networks, drug–target interaction datasets and multimodal biomedical graphs. It offers an organised reference that supports reproducible evaluation and facilitates future GNN research in the life sciences. We further highlight overarching challenges such as graph construction quality, data noise, scalability, interpretability and temporal dynamics, offering perspectives on future methodological directions essential for enabling robust and biologically meaningful GNN models.

In summary, our main contributions are as follows:

- We synthesise recent GNN developments relevant to biological network modelling, spanning both classical and emerging architectures.

- We introduce a unified methodological framework that links graph construction, model design and training principles.

- We curate and systematise biological graph datasets across molecular, protein, regulatory, pharmacological and multimodal domains.

- We review major applications in bioinformatics and clinical analysis, covering molecular prediction, disease association, drug discovery and protein modelling.

- We identify key limitations and outline future research directions centred on graph quality, heterogeneity, scalability, interpretability and dynamic modelling.

2. Biological Networks and Data Representation

2.1. Overview of Biological Graph Structures in Bioinformatics: PPI, GRN, Molecular Graphs, Knowledge Graphs

A central premise of graph learning in bioinformatics is that many core biological questions are inherently relational. Genes do not act in isolation, proteins exert function through physical and regulatory interactions, and molecular phenotypes emerge from coordinated activity across multiple biological scales. Importantly, different biological tasks give rise to distinct graph structures, each reflecting specific biological assumptions and constraints.

Biological systems are inherently relational. Genes regulate each other, proteins assemble into complexes, cells communicate through ligand–receptor interactions, and tissues maintain structural organisation through spatial coordination. These biological dependencies naturally induce graph structures, in which nodes represent biological entities and edges encode functional, physical, or statistical relationships. Graph representations provide a principled way to formalise these dependencies by encoding biological entities as nodes and their relationships as edges. Importantly, different biological tasks give rise to distinct graph structures, each reflecting specific biological assumptions and constraints.

2.1.1. Protein–Protein Interaction (PPI)

Protein–protein interaction (PPI) networks model physical or functional interactions among proteins and serve as a foundational abstraction for studying cellular machinery, signalling pathways, and disease mechanisms. In PPI graphs, nodes represent proteins and edges denote experimentally measured or computationally inferred interactions. These networks implicitly assume that protein function is shaped by local neighbourhood context and network topology, an assumption supported by observations that disease-associated proteins often cluster within interaction modules [18]. PPI graphs are therefore well suited for tasks such as disease–gene association prediction, pathway enrichment analysis, and drug target identification, where relational proximity and network centrality carry biological meaning.

2.1.2. Gene Regulatory Networks (GRNs)

Gene regulatory networks (GRNs) encode directed and often hierarchical relationships between transcription factors, regulatory elements, and target genes. Unlike PPIs, GRNs reflect causal or directional influence, capturing how regulatory signals propagate across molecular layers. Nodes correspond to genes or regulatory factors, while edges represent activation or repression relationships inferred from transcriptomic, epigenomic, or perturbation data. GRNs embody biological assumptions of regulatory hierarchy, context dependence, and temporal control, making them particularly relevant for modelling cell fate decisions, developmental processes, and disease-associated dysregulation [19]. Their directed and dynamic nature poses additional challenges for graph learning, as models must account for asymmetry, feedback loops, and condition-specific rewiring.

2.1.3. Molecular Graphs

Molecular graphs represent individual chemical compounds or biomolecules at atomic or residue resolution, where nodes correspond to atoms (or amino acid residues) and edges encode covalent bonds or spatial proximity. These graphs are grounded in physicochemical principles, assuming that molecular properties and biological activity arise from local chemical environments and structural configuration. Molecular graphs underpin tasks such as quantum property prediction, drug-like molecule screening, and drug–target interaction modelling [7]. In this setting, graph representations must preserve chemical validity, stereochemistry, and local geometric constraints, highlighting the importance of biologically and physically informed graph construction.

2.1.4. Knowledge Graphs and Heterogeneous Biological Graphs

Knowledge graphs and heterogeneous biological graphs, beyond single-modality networks, integrate multiple entity types into a unified relational framework, such as genes, proteins, diseases, drugs, phenotypes, and pathways. These graphs capture cross-scale and cross-domain relationships derived from curated databases, literature mining, and experimental evidence. By explicitly modelling heterogeneous node and edge semantics, knowledge graphs support integrative tasks including drug repurposing, disease mechanism elucidation, and multi-omics association analysis. Their structure reflects the assumption that biological insight emerges from the interaction of diverse evidence sources rather than any single data modality [20,21].

Across these graph types, a common challenge lies in balancing biological fidelity with computational tractability. Biological graphs are often incomplete, noisy, and context dependent, reflecting limitations of experimental measurement and biological variability. Consequently, graph construction is not a neutral preprocessing step but a biologically informed modelling decision that strongly influences downstream learning and interpretation.

In general, nodes represent phenomena spanning multiple biological scales. At the molecular level, nodes may correspond to genes, proteins, miRNAs, or metabolites, each characterised by expression profiles, sequence features, or structural descriptors. Edges describe the relationships governing biological systems, reflecting diverse forms of biological knowledge, including physical interaction edges, regulatory edges, metabolic edges, spatial edges, and statistical relationships. The construction of such graphs plays a central and scientifically pivotal role in biosystem formation. The choice of how nodes and edges are defined determines the flow of biological logical information within GNNs, directly shaping the model’s interpretability, generalisability, and capacity to reflect underlying biological mechanisms. Understanding the assumptions embedded in different graph structures is therefore essential for selecting appropriate graph neural network architectures and for interpreting model outputs in a biologically meaningful way [20].

2.2. Data Characteristics and Challenges (Noise, Incompleteness, Heterogeneity)

Biological data are inherently relational. Genes regulate each other through transcriptional programmes, proteins interact to form functional complexes, and small molecules bind to targets to modulate physiological processes. These interactions naturally form networks whose topology encodes mechanistic and functional dependencies within living systems [20]. As a result, the construction and curation of biological graph datasets have become a central component in computational biology, particularly as graph neural networks (GNNs) increasingly serve as powerful tools for analysing such structured information.

Unlike generic graph datasets used in computer science, biological graph datasets are derived from experimental measurements, curated biomedical knowledge, or multi-omics integration pipelines. They are often noisy, incomplete, heterogeneous across data sources, and shaped by biological constraints such as molecular structure, evolutionary conservation, or physical interaction interfaces [22]. These characteristics introduce unique modelling challenges but also provide rich opportunities for extracting biologically meaningful patterns [19].

The rapid adoption of GNNs in bioinformatics has accelerated the development of diverse biological graph datasets—ranging from protein–protein interaction networks and drug–target interaction databases to molecular graphs, gene regulatory networks, knowledge graphs, and multimodal association graphs derived from genomics, transcriptomics, and clinical data. Systematic investigation of these datasets is essential not only for benchmarking GNN algorithms but also for understanding how graph construction choices, annotation quality, and biological context affect downstream model performance.

Therefore, summarising representative biological graph datasets provides a foundation for evaluating existing GNN-based approaches and guiding future research on more biologically informed, robust, and interpretable graph learning models in the life sciences.

In this section, we will briefly introduce some existing datasets related to graph neural networks. To avoid conceptual ambiguity and to improve clarity regarding data provenance and reproducibility, we explicitly distinguish three complementary levels of biological data resources used in graph learning research, which are summarised in Table 1, Table 2 and Table 3.

Table 1.

Representative Benchmark Datasets for Graph Learning in Bioinformatics.

Table 2.

Major Biological Databases and Knowledgebases Used for Dataset Construction.

Table 3.

Dataset statistics commonly reported in graph learning benchmarks.

Table 1 presents representative benchmark datasets that are commonly used for training and evaluating graph neural networks in bioinformatics. These datasets correspond to fixed, task-defined collections of graphs (e.g., molecular property prediction benchmarks, protein classification datasets, or disease association datasets) with clearly specified learning objectives and labels. They serve as standardised evaluation benchmarks and enable controlled comparison of GNN architectures across studies.

Table 2 summarises major biological databases and knowledgebases that provide the raw experimental evidence or curated biological knowledge from which many benchmark datasets are derived. Resources such as ChEMBL, STRING, and KEGG are continuously updated and do not constitute datasets in a strict sense; instead, they function as foundational data sources supplying molecular interactions, pathways, and annotations that are subsequently filtered, sampled, or integrated to construct task-specific graph datasets.

Table 3 reports dataset statistics commonly cited in graph learning benchmarks, including the number of graphs, nodes, edges, feature dimensions, and class labels. For datasets originating from continuously evolving databases (e.g., ChEMBL or ZINC), the reported statistics correspond to representative subsets or snapshots adopted in prior studies, rather than the complete underlying resource. This table is included to facilitate methodological comparison and computational cost assessment across GNN models, while preserving transparency regarding the nature of the underlying data sources.

2.2.1. Quantum/Property Prediction

QM9

Ramakrishnan et al. [23] assembled a dataset intending to offer quantum chemical attributes across a pertinent, coherent, and extensive range of minor organic molecules. This dataset encompasses computations encompassing geometric, energetic, electronic, and thermodynamic characteristics of stable minor organic molecules, totalling 134,000 entries. These molecules constitute a subset of the complete 133,885 distinct species possessing up to 9 heavy atoms (CONFs) within the expansive GDB-17 chemical domain, consisting of a staggering 166 billion organic molecules. This repository serves as a valuable resource for the assessment and validation of current methodologies, as well as the formulation of novel approaches like hybrid quantum mechanics/machine learning, and the elucidation of relationships between molecular structure and properties.

ZINC

Irwin and Shoichet [24] collected ZINC database is a curated collection of commercially available compounds designed to support large-scale virtual screening and molecular property prediction. It provides three-dimensional structures, molecular descriptors, and bioactive-relevant chemical scaffolds for millions of drug-like molecules, and in total 12,000 graphs. The subset commonly used in graph learning research contains carefully filtered small molecules with balanced physicochemical characteristics, ensuring their relevance for pharmaceutical discovery. Due to its breadth and diversity, ZINC serves as an essential benchmark for evaluating molecular representation learning methods, enabling the development of models that generalise across heterogeneous chemical spaces and support downstream applications such as de novo drug design, lead optimisation, and quantitative structure–activity relationship analysis.

2.2.2. Drug-like Molecule Collections

D&D

Dobson and Doig [25] constructed a dataset containing 1178 protein structures and their corresponding secondary structure content, amino acid obligations, surface properties, and ligands. This dataset is used to predict protein structure or analyse protein function.

PROTEIN

Borgwardt et al. [26] collected a dataset for protein function prediction. This dataset contains information on the sequence, structure, chemical properties, amino acid motifs, and interaction partners or physiological profiles of proteins. Researchers can use protein information to predict functional class membership of enzymes and non-enzymes. This dataset contains 1113 samples.

ChEMBL

Gaulton et al. [27] collected the ChEMBL database, which is a manually curated repository of bioactive molecules with experimentally measured interactions against a diverse array of biological targets. It integrates chemical structures, binding affinities, pharmacokinetic metadata, and assay information derived from the medicinal chemistry literature. The dataset encompasses millions of compound–target associations, covering enzymes, GPCRs, ion channels, and other therapeutically relevant proteins. Owing to its scale and biological diversity, ChEMBL provides a rich foundation for training graph-based models in drug–target interaction prediction, mechanism-of-action inference, polypharmacology analysis, and multi-target drug discovery. Its comprehensive coverage positions it as a cornerstone dataset for method development in computational drug design. This dataset contains 5200 protein targets.

MoleculeNet

Wu et al. [28] introduced MoleculeNet, which is an extensive benchmark suite introduced to standardise the evaluation of machine learning models in molecular science. It aggregates a broad spectrum of datasets derived from quantum chemistry, physical chemistry, biophysics, and physiology, including Tox21, HIV, BACE, and FreeSolv. These datasets encompass tasks ranging from toxicity prediction and biochemical binding affinity estimation to solubility and hydration energy regression. MoleculeNet further provides split protocols, evaluation metrics, and consistent preprocessing pipelines, thereby enabling rigorous and reproducible comparisons across modelling approaches. As such, it has become a foundational resource for assessing the capability of graph neural networks to capture complex chemical semantics and molecular structure–property relationships.

2.2.3. Systems Biology Datasets

PPI

Greene et al. [29] utilised low-throughput tissue-specific gene expression data to map genes onto the Human Protein Reference Database (HPRD) tissues. This gene-to-tissue mapping was then integrated with the human protein–protein interaction (PPI) network. The outcome was a multi-layer tissue network comprising 107 layers, each representing a tissue-specific PPI network. The initial human PPI network was curated from diverse sources, encompassing studies by Orchard et al. (2013) [30], Rolland et al. (2014) [31], Chatr-Aryamontri et al. (2014) [32], Prasad et al. (2008) [33], Ruepp et al. (2009) [34], and Menche et al. (2015) [35]. This collection specifically focused on physical PPIs that were substantiated by experimental evidence, excluding interactions based on gene expression and evolutionary data. The comprehensive, unweighted human PPI network consisted of 21,557 interconnected proteins, forming 342,353 interactions. For each of the 107 unique tissues, a tissue-specific PPI network was generated from the overarching PPI network. During this process, every link within the overarching PPI network was identified as specifically co-expressed within that particular tissue. This categorisation followed the criteria outlined by Greene, et al. [29], where interactions were labelled as specifically co-expressed if both associated proteins were tissue-exclusive or if one was tissue-specific and the other broadly expressed. The list of specifically co-expressed proteins was extracted from Greene, et al. [29]. Ultimately, a specific tissue’s PPI network constitutes a subset of the overall PPI network, formed by the collection of edges that are specifically co-expressed within that tissue.

2.2.4. Bio-Chemical Graph Classification Benchmarks

NCI-I

Wale, et al. [36] extended the dataset, which is obtained from the National Cancer Institute’s DTP AIDS Antiviral Screen programme, based on vectors to enable classification and sorting retrieval in the process of studying the underlying molecular topology of drug compounds. The dataset has 4110 graphs and 37 features.

PTC

Toivonen, et al. [37] collected this dataset for studying the relationship between chemical molecular structure and carcinogenicity. The dataset contains 2694 samples, including 1954 for training and 740 for testing.

MUTAG

Debnath, et al. [38] collected the MUTAG dataset, which consists of nitroaromatic compounds labelled according to their mutagenic effects on the bacterium Salmonella typhimurium, which includes 7831 graphs. Each molecule is encoded as a graph with atoms as nodes and chemical bonds as edges, supplemented with functional group information known to influence mutagenicity. As one of the earliest benchmarks in chemical graph analysis, MUTAG offers a compact yet biologically meaningful classification task. Its well-characterised structure–activity relationships make it suitable for assessing the ability of graph neural networks to capture molecular substructures associated with toxicological outcomes.

2.3. Common Graph Construction Strategies in Bioinformatics

Constructing biologically meaningful graphs is a foundational step for applying graph neural networks (GNNs) to bioinformatics tasks. Biological datasets are inherently heterogeneous, noisy, and often incomplete, requiring graph construction strategies that balance biological plausibility with computational tractability. This section summarises common principles and methodological categories for constructing graphs from biological data, and highlights their relevance across molecular biology, systems biology, and biomedical informatics.

When applying graph neural networks to biomedical information systems (such as gene regulatory networks, single-cell atlases, and drug–target interaction graphs), raw data typically exists in high-dimensional, sparse, and heterogeneous forms (e.g., gene expression matrices, protein sequences, and electronic health record event streams). Consequently, the core task of data processing is to transform raw biomedical data into graph representations that are structurally coherent, noise-controlled, and information-rich.

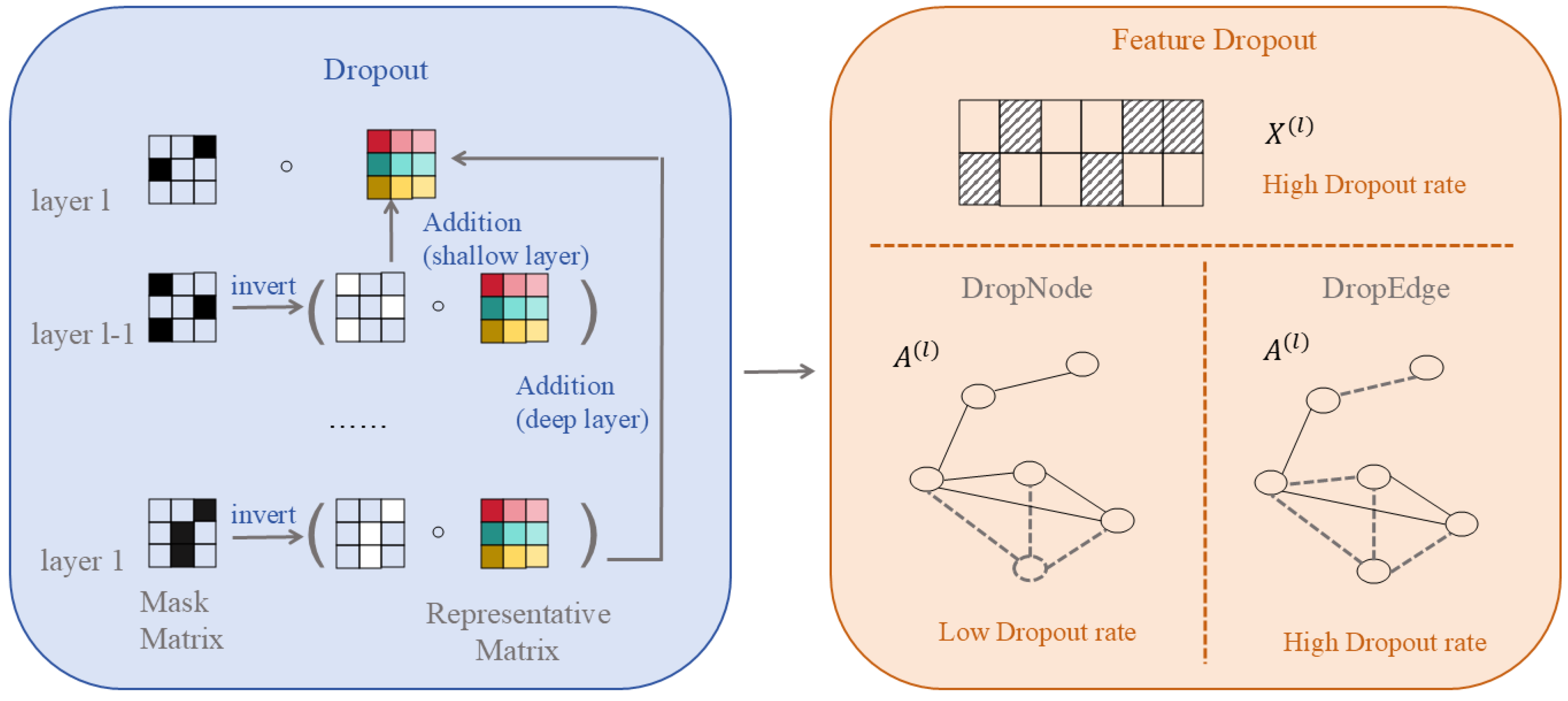

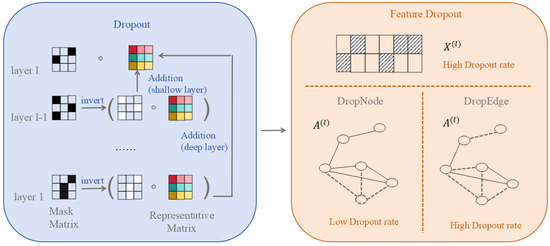

It should be noted that graph structure optimisation can be categorised into two types: one involves learning a fixed optimised graph (such as GSL or curvature reconnection) prior to or during training, constituting data preprocessing; the other entails randomly perturbing the graph structure during each training iteration (such as DropEdge), representing a training regularisation strategy, which will be detailed in Section 2.3.

Graph construction involves mapping raw biological entities and their relationships onto nodes and edges. In biomedical contexts, common construction strategies include:

Co-occurrence/similarity-based: For instance, in single-cell RNA-seq analysis, cells serve as nodes, with edges constructed by calculating inter-cellular similarity via k-nearest neighbours (kNN) or Gaussian kernels; in disease–gene–gene association studies, nodes represent ICD codes or Gene Ontology (GO) terms, with co-occurrence frequency serving as edge weight.

Prior knowledge-based: For instance, utilising the STRING database [39] to construct protein–protein interaction (PPI) networks, or building metabolic reaction graphs based on KEGG pathways [40].

Multimodal fusion graphs, e.g., unifying heterogeneous entities such as genes, cells, and drugs into a heterogeneous graph, defining cross-type relationships via meta-paths.

High-quality graph construction directly impacts downstream task performance, necessitating a balance between biological plausibility and computational scalability.

In biomedical contexts, graph construction involves mapping biological entities and their relationships onto nodes and edges. These relationships are derived from experimental measurements, prior knowledge, or statistical associations. Common construction strategies include:

(1) Graph Structure Learning (GSL): Treating the adjacency matrix as learnable parameters, dynamically adjusting edge weights or topology via end-to-end optimisation. For instance, combining node embedding similarity with the original structure to generate an optimised adjacency matrix through attention or interpolation mechanisms.

(2) Curvature Rewiring: Uses Jost–Liu curvature [41], a graph-based analogue of Ricci curvature that measures how easily information can be transported across an edge, to distinguish bottleneck edges connecting different network modules from redundant edges within densely connected regions. Edges with negative curvature typically indicate structural bridges, whereas positive curvature reflects local redundancy. Curvature-guided edge addition or removal helps regulate message propagation and alleviates over-smoothing and over-squeezing in biological graphs.

(3) Effective Resistance Optimisation: By minimising the graph’s total effective resistance, this approach holistically regulates edge deletion (reducing redundancy) and edge insertion (alleviating sparsity), thereby enhancing connectivity and information propagation efficiency.

(4) Edge Removal Sampling: By probabilistically or strategically removing edges—particularly those connecting nodes of different classes or with low task-specific importance—this strategy sparsifies the graph to slow down over-convergence of node representations, thereby improving computational efficiency and mitigating over-smoothing while preserving essential topological properties.

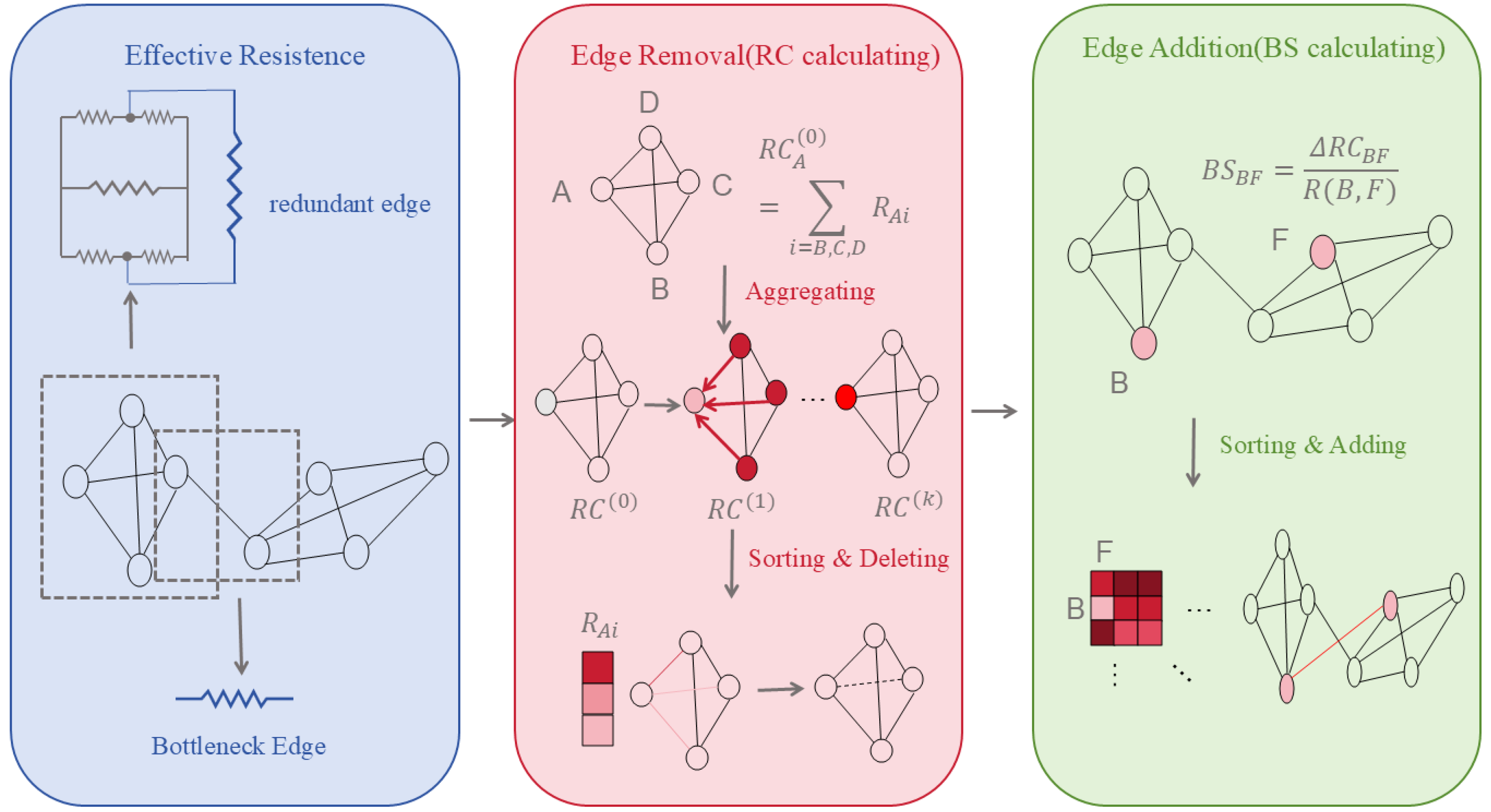

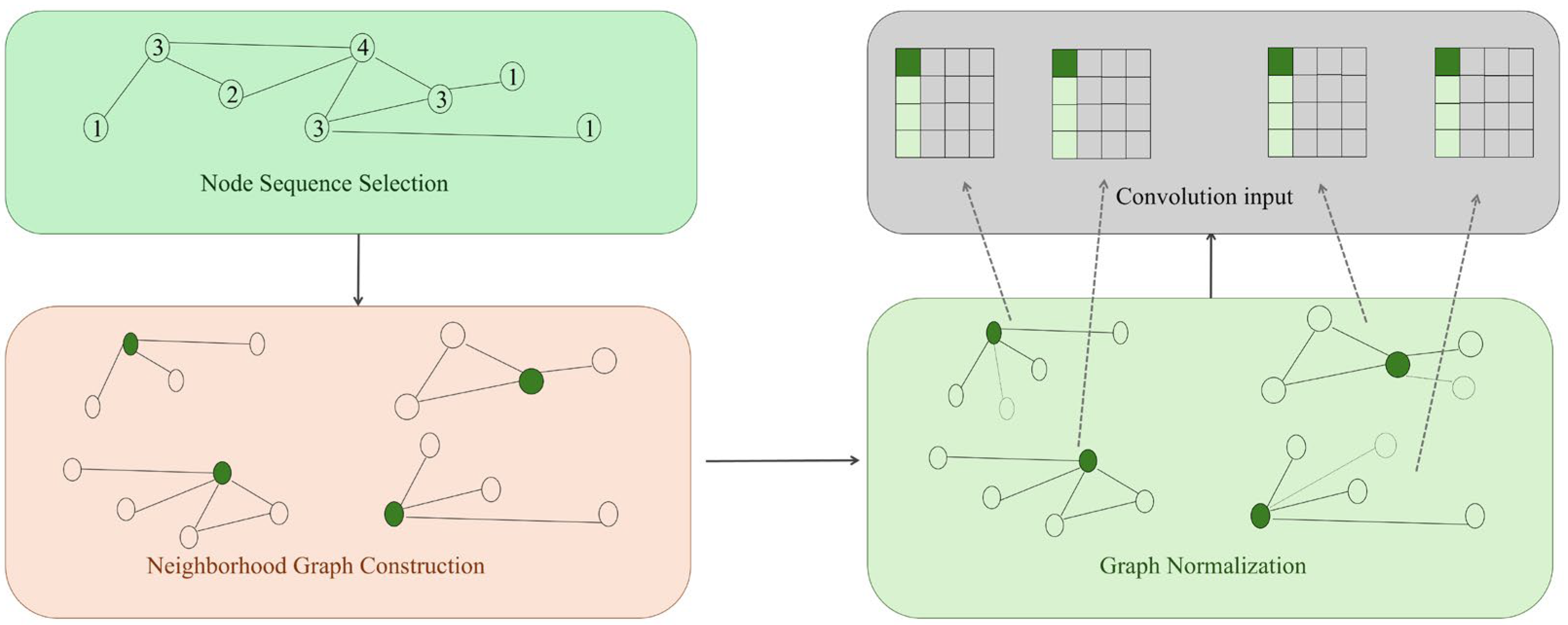

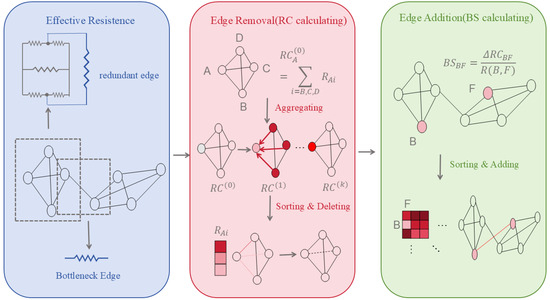

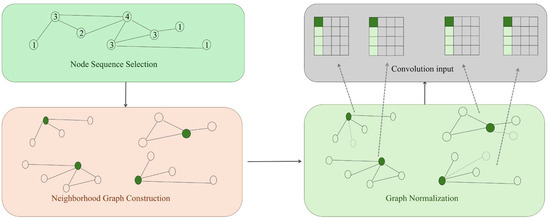

These methods prove particularly suited to biomedical scenarios characterised by sparse annotations and high structural noise (e.g., novel target discovery, rare disease network modelling). The changes are shown in Figure 1.

Figure 1.

The Evolution of Effective Resistance Optimisation.

2.3.1. Graph Structure Learning (GSL)

The most common graph optimisation technique is GSL, which treats graph data as the learnable entity itself. While using GCN to learn node representations, it dynamically learns/modifies the graph’s adjacency structure (adding edges, removing edges, adjusting weights). Since it dynamically adjusts the graph structure based on the actual problem and model, GSL can optimise graph neural networks in various aspects (such as preventing overfitting and over-smoothing) and significantly enhance model robustness against adversarial attacks [42,43,44,45].

Specifically, GSL’s structural modelling involves modifying the original graph’s adjacency matrix:

: Candidate adjacency obtained through a structural modelling function (dependent on node features X, embeddings Z, or learnable parameters ), : Update function (e.g., interpolation, attention fusion, direct replacement).

2.3.2. Curvature Rewiring

However, in recent years, as graph neural network architectures have grown increasingly complex, over-smoothing and over-squashing have emerged as two opposing challenges. Over-smoothing occurs when node embeddings gradually converge as the number of convolutional layers increases, blending representations from different clusters and causing each node to lose its distinctive features. Over-squashing, on the other hand, refers to information compression issues during long-range dependencies: Features from distant nodes must propagate through a finite number of layers, forcing an exponential amount of neighbour information into a fixed-dimensional vector. This causes the contribution of long-range dependencies to sharply decay (especially in “bottleneck” scenarios with few connections between nodes). These two issues often come at the expense of each other—mitigating one typically exacerbates the other. To address this, researchers have introduced curvature reconnection methods.

Specifically, we have a definition to quantitatively describe the training characteristics: Let be a node set, and let denote the set of edges crossing between and , i.e., The Cheeger constant of graph is defined as [46]:

where .

Intuitive interpretation: If there exists a bottleneck in the graph, then is small. It is known that for a connected graph, , Furthermore, is related to by the Cheeger inequality:

If there exists a partition , where both sides have large volumes but few connecting edges, then this ratio will be small, which indicates a significant bottleneck in the graph. So, the will be larger and will be larger.

The Stochastic Jost–Liu curvature-based Rewiring (SJLR) method can be defined as follows:

Curvature Calculation: For each edge , compute the Jost–Liu curvature:

Here, is the number of triangles containing the edge , and are the degrees of the nodes, and indicates taking the positive value. The smaller the JLC, the more likely it is a bottleneck edge (more likely to be removed).

2.3.3. Edge Removal Sampling

For existing edge E:

Specifically, is the result of concatenating the JLC values of each edge, followed by a normalisation process, and is the normalised node embedding distance (representing local information disparity).

Edge Addition Sampling

For candidate non-edges (u, v), we define as the improvement score resulting from the addition of edge . A higher value signifies that incorporating this edge leads to a substantial increase in local curvature, thereby mitigating bottleneck structures within the graph:

After concatenating and normalising we obtain , we also introduced , which represents the distance between the embeddings of the nodes connected by a candidate edge.

2.3.4. Effective Resistance Optimisation

In 2024, Xu Shen et al. [47] introduced the concept of effective resistance to unify these two problems into a single framework: minimising the total constrained effective resistance. This is achieved by manipulating edge deletion and insertion, defined as:

where the resistance matrix is computed using the Laplacian pseudoinverse:

Thus, the condition for edge deletion is maximising the topological redundancy coefficient, calculated as follows:

The condition for edge insertion is minimising the bottleneck sparsity coefficient, calculated as follows:

For the n nodes with the largest RC, delete one of their smallest adjacent edges; for the n candidate edges with the smallest BS, insert one new edge with the largest value in their respective neighbourhoods. Thus, by removing edges and adding edges, the issues of excessive smoothing and excessive compression can be mitigated.

3. Graph Neural Networks for Biological Data: Foundations and Key Architectures

The construction of biologically meaningful graphs defines the foundation upon which graph neural networks operate. Once biological entities and their relationships are encoded into graphs through molecular structures, interaction networks, or multi-omics integration, the next challenge is selecting computational frameworks that can learn from these complex, irregular topologies. Unlike traditional machine learning methods that assume grid-like or sequential structures, GNNs are explicitly designed to model relational dependencies embedded within biological systems. Understanding their theoretical underpinnings and architectural variations is therefore essential for bridging the transition from biological graph construction to effective downstream analysis. In this chapter, we introduce the core formulations and key model families that enable learning on non-Euclidean biological data. Additionally, this chapter emphasises the biological assumptions, applicability, and limitations of major GNN design paradigms, highlighting when specific modelling choices are appropriate or inappropriate for different classes of biomolecular graphs.

3.1. Spectral and Spatial Formulations

In the realm of graph theory, any dataset can establish topological relationships within a normed space [5]. Topological connectivity serves as a versatile data structure, offering the potential to harness this data for diverse tasks spanning various domains, including computer vision and natural language processing, despite differences in data categorisation [5,48,49]. However, a significant challenge arises when attempting to apply traditional Convolutional Neural Network (CNN) architectures to data with non-Euclidean structures. This challenge stems from the fact that in a topological graph, the number of adjacent vertices for each vertex may vary, rendering the utilisation of a fixed-size convolution kernel for convolutional processing impractical. In particular, relational data in the real world, such as protein interactions and patient–disease associations, naturally manifest as non-Euclidean graph structures. The number of neighbours for each node is not fixed, precluding the direct application of fixed-size convolutional kernels.

To overcome this limitation, researchers have innovatively introduced Graph Convolutional Networks (GCNs). These GCNs are specifically designed to extract spatial features from topological maps, enabling the effective processing of data characterised by variable connectivity patterns. This ‘message passing’ mechanism aligns with intuition: the semantic meaning of a node should be influenced by its local topological environment.

In the context of utilising Convolutional Neural Networks (CNNs) to extract spatial features from a topological map, a fundamental requirement is to enable the convolutional layer to compute the topological map. This entails the projection of the topological map from a non-Euclidean space to a Euclidean space.

As the property of a real symmetric positive semi-definite, the symmetrically normalised Laplacian matrix can be factored as , where is a matrix consisting of eigenvectors of the Laplacian matrix where is also the well-known Fourier functions, and is a diagonal matrix of eigenvalues of the Laplacian matrix where is also called the spectrum of a graph. These Fourier functions form an orthonormal basis; thus, , .

The lead-in to graph convolution is the Fourier transformation defined by graph signal processing academics for use with graph data. A graph signal in graph signal processing is a vector consisting of the features of all nodes in the graph, where is the feature of the th node. The Fourier series can be calculated by decomposing the graph signal with Fourier functions [50]:

Thus, the graph Fourier transform to a graph signal can be defined as

Since each column vector of and each row vector of is an eigenvector of the graph Laplacian, and the property of mutual orthogonality of the graph Laplacian eigenvectors [51], the product of and realises the orthogonal projection of the graph signal and the orthogonal basis is formed by the eigenvectors of the graph Laplacian. In addition, the inverse graph Fourier transform can be defined as

where the is the vector consists of the coordinates of the graph signal in the frequency domain.

According to the convolution theorem, which states that the Fourier transform of the convolution of two functions is the product of their individual Fourier transforms, the convolution result of a graph signal and a convolution kernel can be obtained by applying the inverse Fourier transform to the product of their individual Fourier transforms:

Building upon this foundation, investigators project the graph’s structure from a non-Euclidean space onto a Euclidean space, subsequently conducting convolution operations on the data within this projected space. The outcomes of these convolutions are subsequently reconstructed in the original space. This methodology facilitates the employment of Convolutional Neural Networks (CNNs) for the learning and prediction of relational graphs. This conceptualisation directly paved the way for the emergence of Spectral Graph Convolutional Networks (Spectral GCNs).

Spectral GCNs are a class of graph convolutional networks that primarily leverage the spectral domain to perform convolution operations on graph-structured data. These networks are built upon the theoretical foundation of graph signal processing, which involves using the eigenvalues and eigenvectors of a graph’s Laplacian matrix to analyse and process graph data.

Spectral GCNs use a convolution algorithm to define graph convolution from the spectral domain. As noted above, for the vector , which is constituted by the feature extraction of the graph nodes, the basic formula of the convolutional output of a GCN is as follows:

where the is the activation function.

In Spectral GCNs, convolution operations are performed by transforming the graph’s data into the spectral domain, applying spectral filters to this transformed data, and then converting it back to the spatial domain. This process allows the network to capture both local and global graph structures and dependencies. Spectral GCNs have shown promise in various applications, including node classification, link prediction, and graph classification. However, they also have limitations, such as scalability issues when dealing with large graphs and difficulties in handling dynamic graphs.

The approaches delineated above predominantly initiate graph convolution within the spectral domain, commencing from the convolution algorithm as a foundational basis. Conversely, the spatial approach embarks from the node domain, thereby individuating each central node and its associated neighbours via the specification of an aggregation function. Notably, the Chebyshev network and first-order Graph Convolutional Network can be likened to Laplacian matrices, where their variables play an analogous role akin to aggregation functions. In light of this, recent research endeavours have drawn inspiration from this perspective, endeavouring to directly acquire knowledge regarding aggregation functions from the node domains, employing mechanisms such as attention mechanisms [52] and Recurrent Neural Networks (RNNs) [53]. Furthermore, Danel [54] and Qin [55] have introduced a comprehensive framework for GCNs from a spatial vantage point, elucidating the internal intricacies of spatial GCNs. The spatial convolution-based method directly prescribes convolutional operations for the connected relationships of each node, resembling conventional convolution within traditional Convolutional Neural Networks. This universal framework’s formulation illuminates the core considerations in GCN and provides a foundation for the comparative analysis of existing research efforts.

Comparison Between Spectral and Spatial GCNs

In recent years, spatial models have gained increasing prominence, serving as complementary theoretical foundations alongside spectral models in the realm of graph processing within graph convolutional networks. It is noteworthy that spatial models offer several advantages that are challenging for spectral models to match.

Firstly, spatial models excel in terms of efficiency, particularly when dealing with expansive graphs. As graph size escalates, the computational complexity of spectral models undergoes a steep rise, posing significant hurdles in handling a diverse spectrum of graph structures. Spectral models typically necessitate the computation of feature vectors or the processing of the entire graph, thereby leading to scalability concerns. Conversely, spatial models have the capacity to operate on selected nodes rather than the entire graph, harnessing node sampling techniques to enhance efficiency.

Secondly, relative to spectral models, spatial models confer heightened flexibility. Spectral models are primarily tailored for undirected graphs, as Laplace matrices lack a definition for directed graphs. When spectral models are applied to directed graphs, they frequently convert them into undirected ones, thereby constraining their applicability. In contrast, spatial models demonstrate versatility by accommodating any graph structure, whether directed or undirected [56].

Furthermore, with regard to model universality, spectral models embrace a fixed graph structure, rendering them less adaptable to the addition of new nodes or the ever-evolving dynamics of networks [57]. Conversely, spatial models effectively facilitate weight sharing across diverse positions and structures. These models facilitate localised convolution at each node, enabling them to adapt to dynamically changing graph configurations and seamlessly integrate new nodes.

In conclusion, as depicted in Table 4, spatial GCNs have showcased notable advantages in computational efficiency, flexibility, and adaptability, positioning them as a promising avenue for advancing graph convolution technology.

Table 4.

The comparison between spectral and spatial GCNs.

Despite the remarkable success of GCNs, they still face three fundamental challenges that directly drive subsequent innovations in data processing, architectural design, and training strategies:

Over-smoothing: Node representations in deep networks tend to converge, losing discriminative power;

Structural dependency: Performance is highly dependent on the quality of the in-put graph, whereas real biomedical graphs often contain noise or missing edges;

Scalability and generalisation: Difficulty in processing large-scale graphs or generating embeddings for unseen nodes.

3.2. Core GNN Architectures for Molecular and Omics Graphs

In biomolecular systems, graph representations are not merely computational abstractions but reflect fundamental biological organisation principles. Nodes typically correspond to biologically meaningful entities such as genes, proteins, metabolites, or cells, while edges encode biochemical interactions, regulatory relationships, or spatial proximity. Consequently, the choice of graph neural network architecture is often guided by biological assumptions, including pathway modularity, hierarchical regulation, molecular specificity, and context-dependent interactions. GNNs are particularly well suited to biomolecular data because they enable structured information propagation that mirrors how biological signals are transmitted across molecular networks, rather than treating biological features as independent or exchangeable variables. In this section, we therefore reinterpret core GNN architectures through a biological lens, emphasising the modelling assumptions each design embodies and the types of biomolecular questions it is best suited to address. Specifically, this section outlines representative aggregation mechanisms, highlighting how each method family captures unique biological properties and supports a broad range of tasks in bioinformatics and computational biology.

3.2.1. Mean Pooling-Based Aggregation

Mean pooling-based aggregation represents one of the earliest and most widely adopted paradigms in graph neural networks. From a biological perspective, this aggregation strategy embodies a simplifying but often biologically meaningful assumption: that the functional state of a biological entity can be approximated by the average influence of its local interaction partners. In many biomolecular systems, such averaging reflects collective or redundant effects, where no single interaction dominates system behaviour but coordinated activity across multiple neighbours determines function.

Formally, mean aggregation updates a node representation by computing the average of feature vectors from its neighbouring nodes, optionally including the node itself. This operation enforces local smoothness on the graph, encouraging connected biological entities to acquire similar representations. In protein–protein interaction networks, for example, mean pooling implicitly models the observation that proteins participating in the same functional module or pathway often share related biological roles. Likewise, in gene co-expression or regulatory graphs, mean aggregation reflects the assumption that gene activity is influenced by the collective regulatory environment rather than isolated regulators alone.

Mean pooling-based GNNs are particularly effective in biological settings characterised by relatively homogeneous interaction semantics and moderate network density. Representative architectures such as Graph Convolutional Networks (GCNs) and GraphSAGE with mean aggregation have been successfully applied to disease–gene association prediction, protein function annotation, and multi-omics patient stratification. In these tasks, averaging neighbour information can stabilise learning under noisy or incomplete interaction data, a common challenge in biological networks derived from high-throughput experiments.

However, the biological assumptions underlying mean aggregation also impose limitations. By treating all neighbours as equally informative, mean pooling neglects interaction specificity, regulatory directionality, and context-dependent effects that are central to many biological processes. For example, in gene regulatory networks, a master transcription factor may exert disproportionate influence compared to peripheral regulators, while in signalling pathways, upstream and downstream interactions are not interchangeable. Mean aggregation may therefore oversmooth biologically distinct signals, obscuring rare but functionally critical interactions such as tumour suppressor genes or low-degree regulatory hubs.

Consequently, while mean pooling-based aggregation provides a robust and interpretable baseline for biological graph learning, its suitability depends on the underlying biological task and graph structure. In practice, it often serves as a foundational model against which more expressive aggregation mechanisms are evaluated, such as attention-based, structure-aware, or hierarchical approaches. Understanding the biological assumptions encoded by mean aggregation is essential for interpreting its predictions and for determining when more specialised architectures are required to capture the complexity of biomolecular systems.

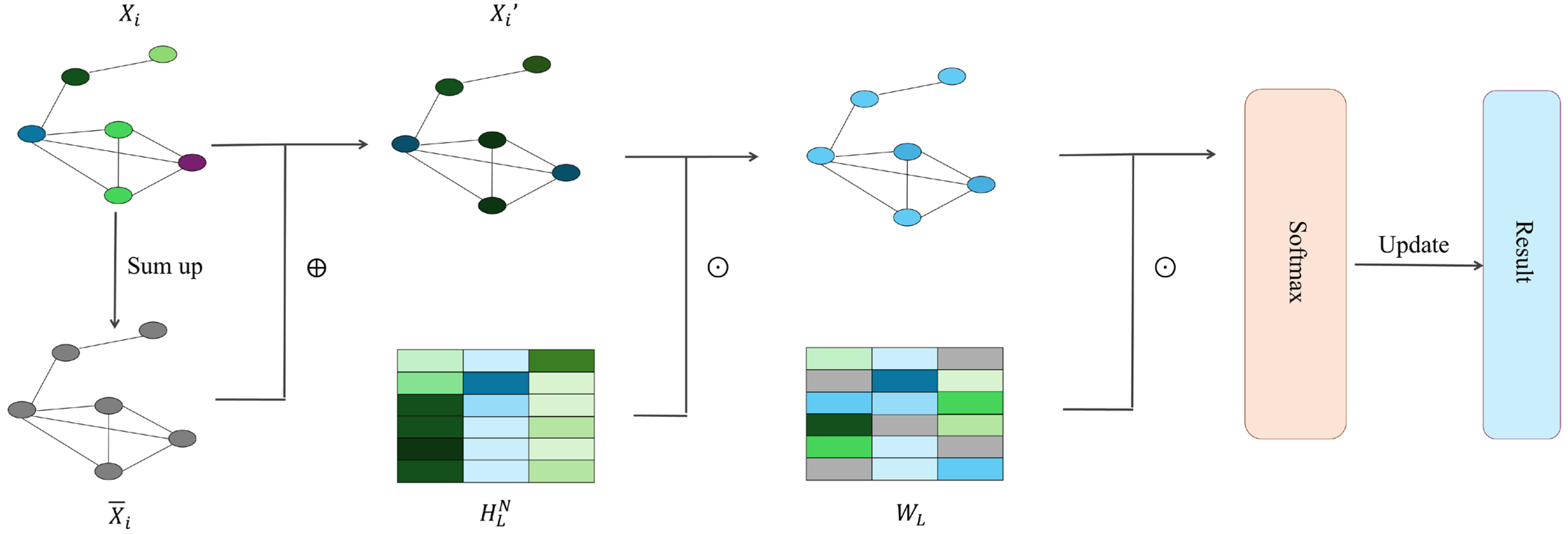

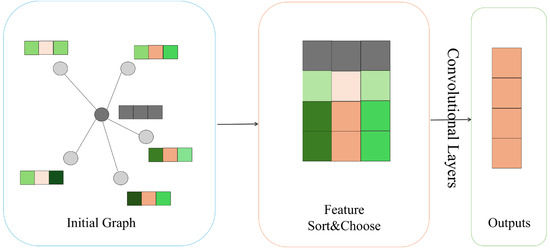

Neural-Fingerprint

Traditionally, most machine learning pipelines for studying properties of novel molecules can only work on fixed-size input. Duvenaud et al. [58] introduced a differentiable neural network that can handle graphs of arbitrary size and shape, with vertices and edges respectively representing individual atoms and bonds of original molecules. Compared to circular fingerprints that compute a fixed-length binary molecular fingerprint vector for downstream analysis, Duvenaud et al. [58] used a graph as input to produce a real-valued vector representing information about atoms and their neighbouring substructures. In addition, discrete operations in circular fingerprints are replaced with differentiable operations. This network exhibits convolutional properties, specifically manifested in nodes with identical degrees sharing the same local filters to aggregate information from neighbouring nodes. The way of information aggregation between neighbours in the graph is shown as follows,

where is the features of the node , is the vertex degree of the node , represents the weight matrixes for the vertex degree in the iteration of information aggregation, and the is a smooth activation function in the single layer neural network. The smooth activation function can lead to similar activations for local molecular structure varying in different ways. For each time when features of a node are updated within an iteration of the whole graph, the fingerprint vector for the graph is updated accordingly as below,

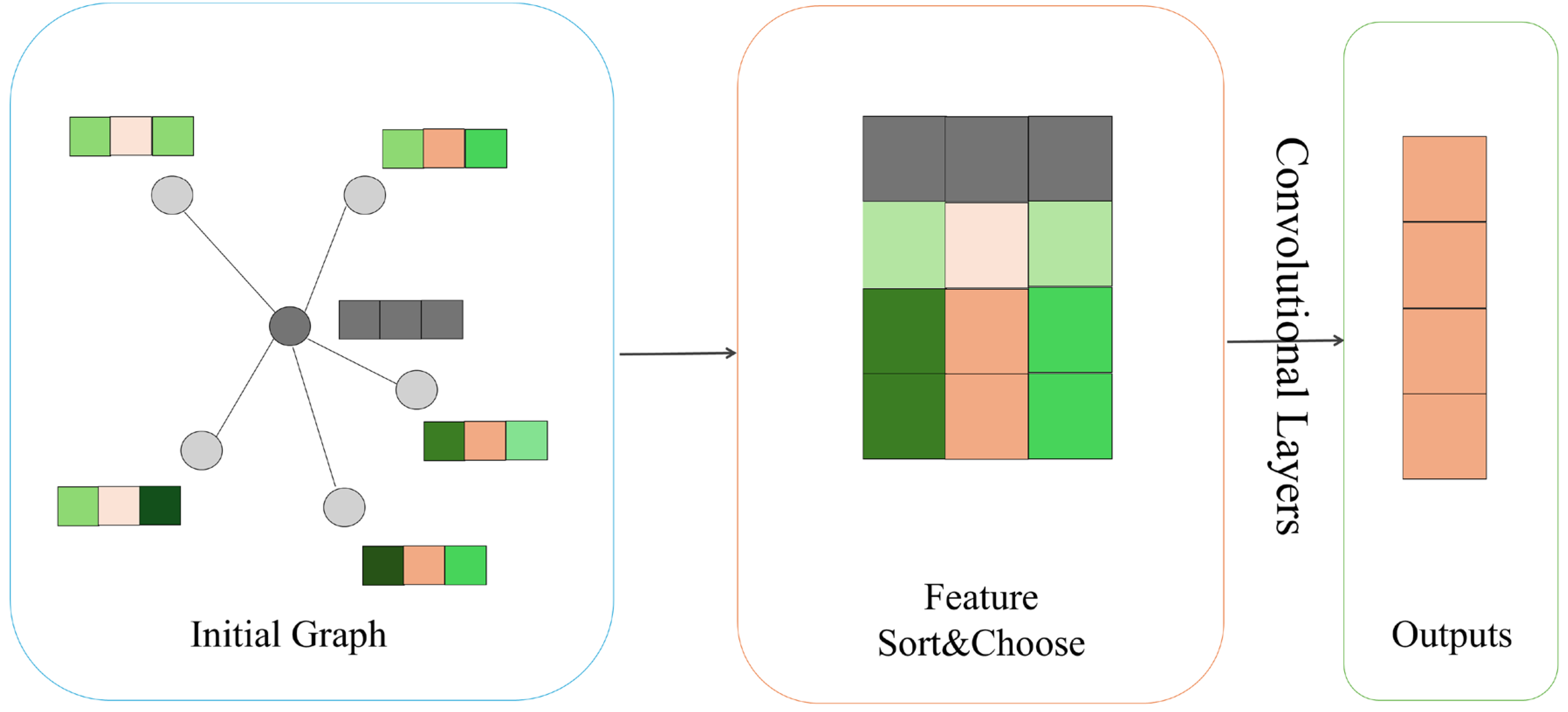

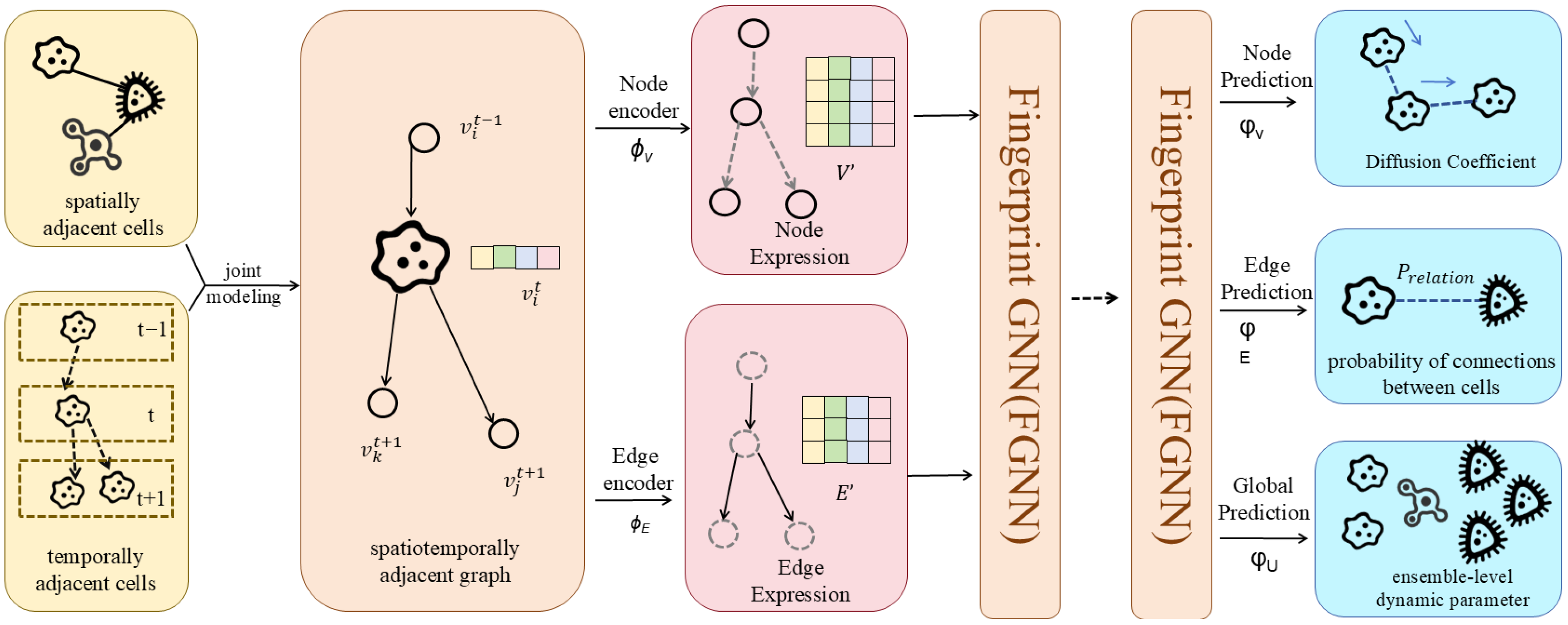

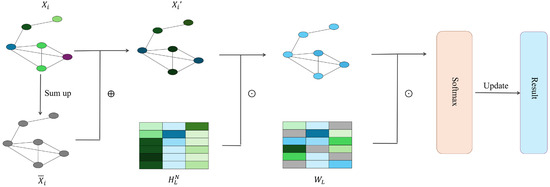

where is the real-value fingerprint vector and is the output weight matrix in the iteration of information aggregation. The is introduced to produce a classification label vector for each node, and the sum of these label vectors is the final fingerprint. Figure 2 illustrates how information flows in the neural network.

Figure 2.

Neural-fingerprint’s procedure.

Compared to traditional fixed fingerprints, the neural graph fingerprints can achieve better predictive performance and only encode relevant features, considering the similarity between molecular fragments.

Diffusion-Convolution Neural Networks (DCNNs)

Many approaches have been proposed towards extending convolutional neural networks to graph-structured data since deep learning techniques have achieved promising results on image-based data. James, et.al. introduced a ‘diffusion-convolution’ operation that learns latent representations by performing a diffusion process across each node in the graph [59]. Their proposed diffusion-convolutional neural networks (DCNNs) are flexible and effective models that can handle general graphical data and various classification tasks, including node classification, edge classification and graph classification. The graph can be weighted or unweighted, directed, or undirected.

Each node is represented by features. The vertices can be denoted as , where is the number of nodes in the graph , and identifies a graph in the set of graphs . DCNNs use the power series of to model the graph diffusion process, where is a degree-normalised transition matrix for the graph which gives the probability of node jumping to node in one hop, and is defined by,

where is the adjacency matrix of the graph , and is the degree matrix.

In the node classification task, the information propagation through the graph can be formulated as follows:

where denotes node features of the , is an tensor representing the power series with as the number of hops of graph diffusion over features, is the trainable weight matrix, the operator is an element-wise multiplication, and is a nonlinear differentiable activation function. A dense layer is connected subsequently to output the hard prediction or a conditional probability distribution:

where is the prediction for the label , and is a conditional probability distribution.

To extend the DCNNs to graph classification, the first equation is simply modified to take the mean activation over the nodes shown as follows,

where is an vector of ones.

In the edge classification task, an edge is considered as a node which is adjacent to the pair of connected nodes . The adjacency matrix for the graph is updated as follows,

where represents connections between the edge and its end nodes, and is the transpose of . The new degree-normalised transition matrix is computed based on the updated adjacency matrix .

GraphSAGE

Although many existing approaches have successfully extended convolution operations to fixed graphs where all nodes are included in network training, generalising such graph convolutional networks to unseen nodes under an inductive setting remains challenging. To address this challenge, Hamilton et al. [60] proposed a general framework, termed GraphSAGE (SAmple and aggreGatE), for learning aggregator functions that can generate embeddings on existing or extremely unseen nodes.

GraphSAGE has designed a unique forward propagation method, where at each iteration (or search depth), vertices aggregate information from their local neighbours, and as this process iterates, vertices obtain information from farther and farther away, which is shown in Algorithm 1. The forward propagation algorithm used by PinSAGE is the same as GraphSAGE, which is the theoretical foundation of PinSAGE.

| Algorithm 1: GraphSAGE embedding generation (i.e., forward propagation) algorithm | |||

| Input | Graph ; input features ; depth K; weight matrices ; non-linearity ; differentiable aggregator functions ; neighbourhood function | ||

| Output | Vector representation for all | ||

| 1 | ; | ||

| 2 | For do | ||

| 3 | For do | ||

| 4 | , | ||

| 5 | , | ||

| 6 | end | ||

| 7 | , | ||

| 8 | end | ||

| 9 | , | ||

An unsupervised loss function was designed in the article, allowing GraphSAGE to train without task supervision.

in which, is the embedding generated by GraphSAGE for node u; Node v is the “neighbour” that node u randomly walks to; is the sigmoid function; is the probability distribution of negative sampling, similar to negative sampling in Word2Vec; Q is the number of negative samples.

The representation of the input loss function in the text is generated from features containing a local neighbour of a vertex, unlike previous methods such as DeepWalk, which train a unique embedding for each vertex and then perform a simple embedding search operation to obtain it.

LightGCN

Although GCNs have shown promising results in learning latent features and performing prediction for collaborative filtering tasks, He et al. [61] empirically showed that feature transformation and nonlinear activation in the standard propagation rule can be removed in view of the user–item interaction graph. The reason is that, unlike those graphs where each node has rich features, the node in a user–item interaction graph is described by a one-hot ID without semantics. Their experiments showed that excluding feature transformation and nonlinear activation in Neural Graph Collaborative Filtering (NGCF) [62] surprisingly leads to substantial improvements in prediction accuracy. The embedding propagation rule in NGCF for each user and node is defined as follows,

where and respectively represent the learned embedding of user and item in the layer , represents the neighbours of user node , represents the neighbours of item node , and represent a trainable weight matrix for performing feature transformation in the layer , denotes the nonlinear activation function, and denotes the element-wise product. The embeddings learned by different layers for each user and item are respectively concatenated to obtain their final embedding. The prediction score for the interaction between user and item is generated by conducting an inner product between the final embeddings of user and item .

Inspired by the results of empirical experiments on NGCF [62], He, et al. [61] proposed a largely simplified GCN model, termed LightGCN, where only the core component of GCN’s ‘neighbourhood aggregation’ is reserved, but feature transformation and nonlinear activation function are removed. The embedding propagation rule in LightGCN is defined as follows,

Different from most of the GCN models that combine information from neighbours and the target node, only the embeddings of neighbours contribute to information aggregation. The novelty of the model is that, instead of assigning a fixed non-semantic identifier for each user and item as input, the initial embeddings for them are the only trainable parameters in the model and can be learned by propagation.

The final representation for each entity in the graph is obtained by combining its embeddings learned in different layers:

By analysing the two layers of LightGCN, the smoothness of the embedding can be intuitively and reasonably demonstrated in the penultimate equation. Considering the embedding learned for a user in the second layer of LightGCN, it can be obtained as

The coefficient for two users who has co-interacted items that then can be obtained by

The interpretation of the coefficient is straightforward: (1) the more co-interacted items they share, the more effects they have on each other; (2) the more popular the item is, the less influence it has on the smoothing strength between two users; and (3) the more active a user is, the less influence that user has on other users.

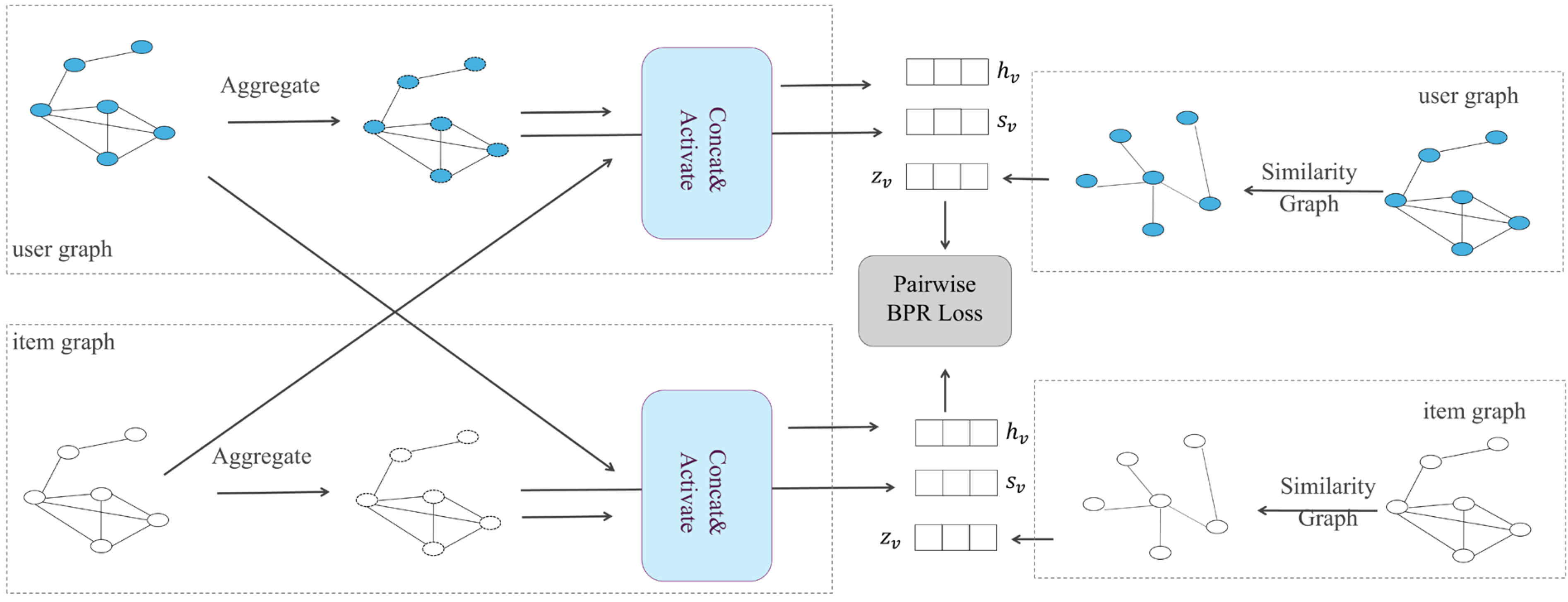

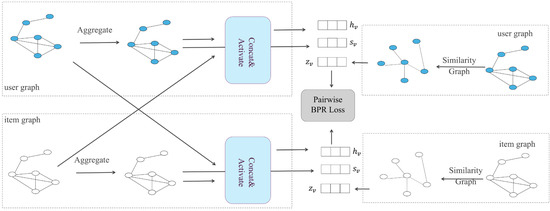

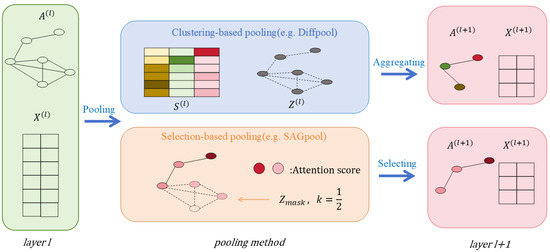

Multi-Graph Convolutional Collaborative Filtering (Multi-GCCF)

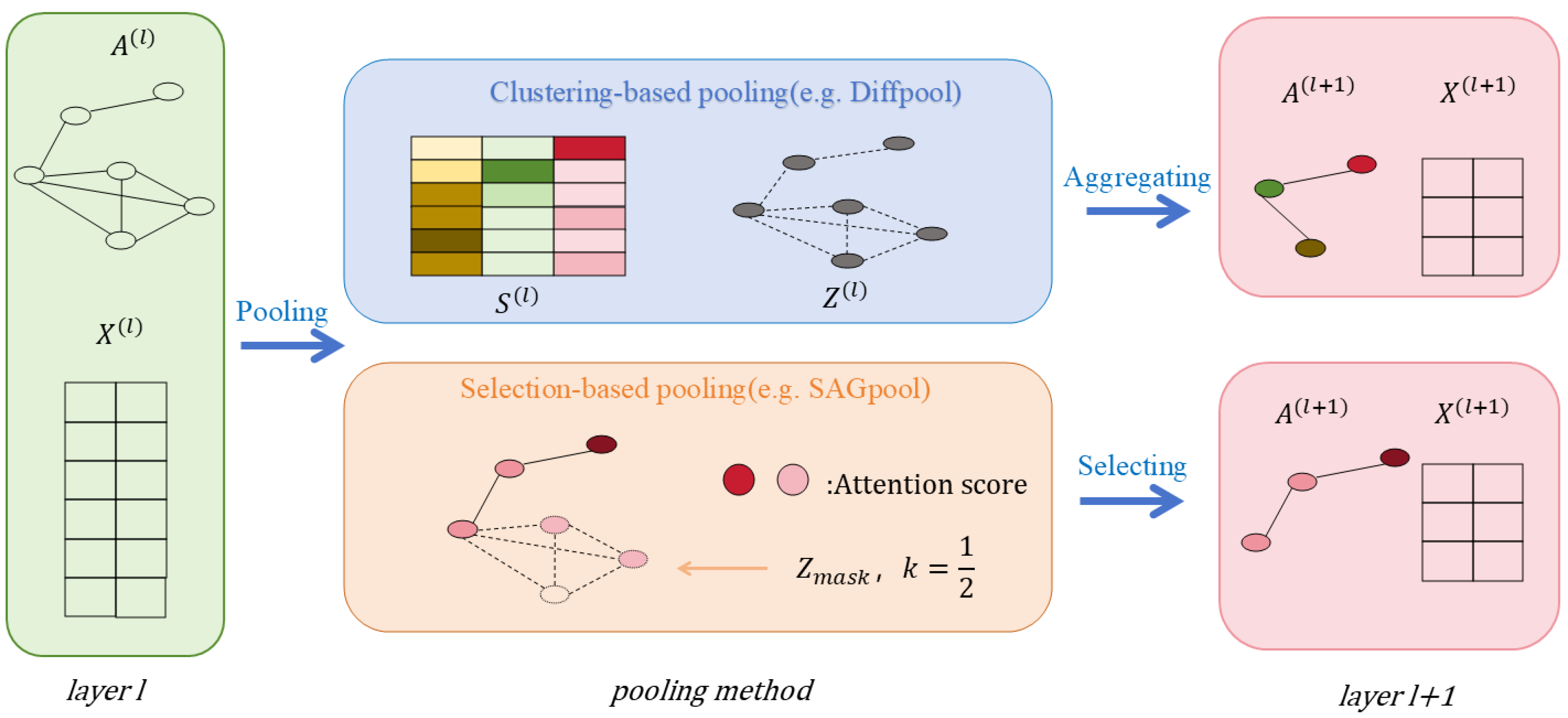

A recommendation system is one of the applications of a graph convolutional network. The user–item interaction in a recommendation scenario can be represented by a bipartite graph whose nodes can be divided into two disjoint and independent sets: user nodes and item nodes. A GCN-based recommendation system framework, Multi-GCCF, proposed by Sun et al. [63], showed that using separate aggregation and transformation functions for user nodes and item nodes can learn more precise embeddings as the intrinsic difference between users and nodes is captured. This framework consists of three components: a bipartite convolutional graph (Bipar-GCN) representing the user–item interaction, two convolutional graphs with a Multi-Graph Encoding (MGE) layer for generating additional embedding nodes and a skip-connection mechanism. The architecture of Multi-GCCF is illustrated in Figure 3. The embeddings of the user node in layer of the Bipar-GCN can be represented as:

Figure 3.

The overall architecture of Multi-GCCF.

Similarly, the embeddings of the item node in layer of the Bipar-GCN can be represented as:

The user–user graph and item–item graph constructed by computing pairwise cosine similarities aim to generate additional embedding for user nodes and item nodes to alleviate the data sparsity problem. The MGE layer consists of a one-hope convolution layer and a sum aggregator. The embeddings for target nodes can be represented as:

3.2.2. Attention-Based Aggregation

Attention-based aggregation extends mean pooling by relaxing the assumption that all biological interactions contribute equally to a node’s functional state. From a biological perspective, attention mechanisms encode the hypothesis that interaction strength, regulatory influence, or functional relevance varies across neighbours, and that selectively weighting these interactions is critical for capturing biologically meaningful dependencies. This assumption aligns closely with many biomolecular systems, where a small number of key regulators, binding partners, or signalling interactions exert disproportionate influence.

In attention-based graph neural networks, such as Graph Attention Networks (GATs), node representations are updated through a weighted combination of neighbour features, where attention coefficients are learned as a function of node attributes and local graph structure. Biologically, these coefficients can be interpreted as context-dependent measures of interaction importance. For example, in gene regulatory networks, attention weights may reflect differential regulatory influence among transcription factors; in protein–protein interaction networks, they may prioritise interfaces critical for complex formation or signal transduction.

Attention-based aggregation is particularly well suited for biological graphs characterised by heterogeneity, sparsity, and interaction specificity. In disease–gene association studies, attention mechanisms can emphasise disease-relevant subnetworks while suppressing background interactions. In protein structure and function modelling, attention can highlight spatially or chemically significant residue interactions over structurally adjacent but functionally neutral contacts. Similarly, in multi-omics integration, attention allows models to dynamically balance contributions from different molecular layers, such as transcriptomic, epigenomic, and proteomic signals, depending on biological context.

Beyond predictive performance, attention-based GNNs offer enhanced interpretability, a property of central importance for biological and clinical applications. Learned attention weights provide a transparent mechanism for identifying influential nodes, edges, or pathways, thereby supporting hypothesis generation and experimental follow-up. For biologists and physicians, this interpretability facilitates reasoning about why a model associates a gene with a disease, prioritises a drug–target interaction, or stratifies patients into molecular subtypes.

Nevertheless, attention-based aggregation also introduces challenges that must be considered in biological settings. Attention weights are learned from data and may reflect dataset biases, noise, or confounding correlations rather than true causal influence. Moreover, attention scores are not guaranteed to correspond to mechanistic importance unless supported by biological priors or experimental validation. Computationally, attention mechanisms increase model complexity and may limit scalability when applied to very large biological graphs, such as single-cell atlases or population-scale interaction networks.

In summary, attention-based aggregation provides a biologically motivated extension of mean pooling by modelling interaction heterogeneity and context-dependent influence. When applied judiciously and interpreted with appropriate biological caution, attention mechanisms enable GNNs to move beyond uniform smoothing toward more selective and interpretable representations of complex biomolecular systems.

Graph Attention Networks (GAT)

Most prior approaches that operate convolution operations on graph-structured data explicitly specify the importance of neighbours to a target node based on the structure of graphs or as a costly learnable weight matrix when aggregating information across neighbourhoods. Motivated by the fact that the self-attention mechanism has shown tremendous ability to achieve state-of-the-art performance in machine translations [64], Veličković et al. [65] proposed graph attention networks (GAT) that utilise self-attention to efficiently compute the attention coefficients across pairs of nodes by using a single-layer neural network. Let and be node features before and after an aggregation of information, where and are the number of features. The computation of the attention coefficient for two connected nodes can be expressed as

where denotes a shared learnable linear transformation matrix, is a shared attentional mechanism, an is an unnormalised coefficient which weights the importance of neighbouring node to target node . The coefficients are then normalised using the softmax function to enable comparison across neighbourhoods of different nodes:

where denotes a set of neighbouring nodes of node . The aggregation of information across the neighbourhood of node can be formulated as follows,

where is a non-linear activation function. Multi-head attention mechanism is applied by combining several self-attention heads obtained from independently replicating the penultimate equation times, aiming to make the learning process of self-attention more stable and accurate:

where denotes the linear transformation in the replica of the self-attention mechanism , the operator represents the concatenation operation, and the dimension of the is . The aggregated features can also be summarised by taking the element-wise mean of feature vectors before a non-linear operation:

The GAT layer satisfies many properties that a standard convolution operation has, including a fixed number of parameters regardless of the structure of the graph, and information aggregation from the local neighbourhood based on learned attentional coefficients and the capability to handle inductive problems. Compared to prior GCN methods, it implicitly assigns different importance to a node and its neighbours and enables efficient computation without eigen decomposition or other costly matrix operations.

Despite its flexibility, the original GAT exhibits a known limitation: the attention coefficients are computed using a static linear transformation of node features, which can restrict the expressive power of attention and prevent effective discrimination between structurally similar neighbours [66]. This limitation may lead to reduced sensitivity to feature interactions and neighbourhood context, particularly in deep or heterogeneous biological graphs.

To address this issue, Graph Attention Network v2 (GATv2) [66] was proposed as a refinement that allows attention scores to be computed as a dynamic function of interacting node features, thereby increasing expressiveness and mitigating attention collapse. GATv2 has been shown to provide more stable optimisation and improved performance across diverse graph learning tasks, and it has therefore become the default attention-based architecture in many recent bioinformatics applications.

Overall, attention-based GNNs provide an adaptive mechanism for weighting biological relationships, but their effectiveness depends critically on the design of the attention function and its ability to capture context-dependent molecular or regulatory interactions.

Factorizable Graph Convolutional Networks (FactorGCN)

In many real-world graphs, a pair of nodes is only connected via a single collapsed edge, even though the relations between them are heterogeneous. This might lead to the concealment of latent intrinsic connections. The work of Yang et al. [67] focuses on factorising relations on the graph level to learn those latent disentangled features, which shows improvement in performance for downstream tasks. Their proposed GCN framework, termed FactorGCN, is made up of several layers, each of which contains three steps: disentangling a single graph into several factorised graphs, performing information aggregation independently of each graph from the other and merging latent disentangled features together by concatenation to form the final features for nodes in the original graph. By stacking several such disentangle layers, FactorGCN can decompose the input data at different levels in different relation spaces, allowing information propagation to perform in disjoint spaces.

In the disentangling step, factor graphs are generated separately through the attention mechanism, where the coefficients for a pair of nodes in the factor graph are learned, and with the range of :

where denotes the set of nodes with features, is a linear transformation matrix, is a one-layer MLP that takes the features of the pair of nodes as input to compute the attention score of the edge for factor graph , is the normalised attention score of the edge from node to node , is the transformed features of nodes shared across all . An additional head is introduced to the disentangle layer to make sure that no factor graphs are redundant and that each factor graph can be distinguished to the rest.

In the aggregation step, the process of information aggregation takes place independently in different factor graphs as follows,

where denotes the updated features of node in the layer of the factor graph , is the coefficient of the edge in the factor graph , is a normalisation term, and is the same linear transformation matrix as the one used in the disentangling step. All disentangled features derived from the aggregation step are concatenated to produce the final form of features for node as follows,

where is the final form of features for node , is the number of factor graphs, and the operator represents the concatenation operation on disentangled features.

3.2.3. Structure-Aware/Spatial-Aware Aggregation

Structure-aware and spatial-aware aggregation mechanisms extend graph neural networks beyond abstract connectivity by explicitly incorporating geometric, topological, and spatial constraints that are intrinsic to biological systems. From a biological standpoint, these approaches reflect the fundamental assumption that the function of biomolecules and cells is not determined solely by interaction existence but by the spatial organisation, relative positioning, and structural context in which interactions occur.

In many biological settings, spatial proximity and geometric arrangement play a decisive role. In molecular graphs, chemical reactivity and binding affinity depend on three-dimensional conformation rather than graph connectivity alone. In protein structures, residue interactions are governed by spatial distance, orientation, and physicochemical compatibility, while in tissues or spatial transcriptomics data, cellular behaviour is shaped by local neighbourhood architecture and microenvironmental context. Structure-aware aggregation mechanisms are designed to capture these constraints by modulating message passing according to geometric features, spatial distance metrics, or higher-order structural descriptors.

Computationally, structure-aware GNNs incorporate additional information such as inter-node distances, angles, relative coordinates, or topological roles when aggregating neighbour information. Rather than treating neighbours as an unordered set, these models preserve structural patterns that distinguish biologically meaningful interactions from incidental proximity. For example, in protein structure modelling, spatial-aware aggregation enables the network to prioritise contacts that are close in three-dimensional space but distant along the amino acid sequence, a key requirement for identifying functional sites and allosteric interactions. Similarly, in molecular property prediction, geometry-aware aggregation supports learning representations that respect chemical validity and stereochemical constraints.

Structure-aware aggregation is also critical for modelling higher-level biological organisation. In cellular and tissue-scale graphs, spatial-aware GNNs can capture gradients, niches, and boundary effects that influence cell fate and function. In brain networks and other anatomical systems, preserving spatial topology allows models to distinguish between structurally conserved regions and functionally specialised modules. These capabilities are essential for moving from pattern recognition toward mechanistic interpretation, particularly in applications involving spatial omics, histopathology, and organ-level modelling.

Despite their biological relevance, structure-aware and spatial-aware approaches introduce additional challenges. Incorporating geometric information increases model complexity and computational cost, and spatial data are often incomplete, noisy, or platform dependent. Moreover, structural features alone do not guarantee biological causality; spatial correlation may arise from shared developmental origin or measurement artefacts rather than direct functional interaction. Consequently, structure-aware aggregation is most effective when combined with biological priors, experimental annotations, or complementary data modalities that help disambiguate structural association from functional relevance.

In summary, structure-aware and spatial-aware aggregation mechanisms represent a critical step toward biologically faithful graph learning. By embedding spatial organisation and structural constraints directly into message passing, these approaches enable GNNs to better reflect the physical and organisational principles governing biomolecular systems, thereby enhancing both predictive accuracy and biological interpretability.

Spatial Graph Convolution Network (SGCN)