1. Introduction

For team leaders to maximize the effectiveness and efficiency of their team as individuals and as a whole, they need to understand the current status of their team members, as described in the empathic computing research field [1]. For these leaders, workspace awareness defines the knowledge required for workers to interact with a system, utilizing the up-to-the-moment understanding of other people’s actions [2]. However, actively communicating this information can be complex, given that the team constitutes many individuals. Additionally, each team member must manage their own tasks, workload, and stress levels, which team leaders must be aware of and actively monitor.

We envision that a team leader could view the other team members with Augmented Reality (AR) workspace awareness information. Given that one of the roles of a team leader is to accomplish work through other people [3], we aim to minimize the time it takes for a leader to make decisions that optimize the whole team’s performance and the well-being of the team members. Although team leaders can obtain feedback on their team performance and status through 2D interfaces, AR presents in situ information related to team members in the physical space [4]. This information could be represented in various ways, e.g., textual versus graphical [2,5], and could even include state information, for example, whether the team member is browsing information or performing focused work on it [6].

Previous work has examined the effects of sharing physiological states in collaborative Virtual Reality (VR) (e.g., heart rate [7,8]), as well as the development of collaborative awareness using realistic avatars [9,10], sharing gaze [11,12,13], and even emotions [14]. Understanding a team member’s direct and passive actions and behaviors provides team leaders with a much broader range of information when making decisions that impact a team member [15]. This work explores the development of AR visual cues to help team leaders understand the state of their working team members when allocating new tasks to them, as explored by Lee et al., where a local worker shares visual cues with a remote helper [16]. This allows the team leader to individually manage the workload and well-being of individual members while maximizing task outputs for the team overall.

We developed different AR presentation methods of status information to a team leader, communicating individual team members’ status. The goal is not to investigate methods of physiological sensing or human tracking technologies but to determine the effectiveness of different visual attributes of AR presentations. This is why simulated team members can be employed in our experiments, as we focus on the effect of sharing the team members’ status and not on how we gather it. The AR information is simulated in a VR environment, and the simulated team members are placed in that VR setting. The simulated team members’ well-being is presented to the team leader labeled as stress (Stress is an extremely complex concept and topic. Our use of this term is meant to provide a high-level term covering a person who is experiencing such emotions as being overworked, unable to finish tasks on time, pressured, and falling behind on their work.), following Lazarus and Folkman’s definition as “a particular relationship between the person and the environment that is appraised by the person as taxing or exceeding his or her resources and endangering his or her well-being” [17]. This investigation focuses on improving information presented to the team leaders who are required to know who is over or underloaded and how they are coping with that work. The presentation of this information aims to allow the team leader to balance having the work done and maintaining a healthy and efficient working environment. Future research will investigate AR-presented information in a physical setting with human team members and physiological sensing.

In this paper, two research questions are addressed:

RQ1—Does providing information about a team member’s stress status and current workload help team leaders manage a team?

RQ2—Which AR visualizations are most effective in helping team leaders understand the status of their team members?

After reviewing related work, this paper presents the developed visualization techniques and experimental platform, then the study design and results, and ends with a discussion and final thoughts.

2. Related Work

Based on previous work, we detail which information is relevant to support awareness of collaboration, how physiological data can further improve awareness, and how all this information can be visualized.

2.1. Awareness in Collaborative XR

When working together in a shared space, the perceptive elements of the environment enable people to be aware of the collaborative situation. This awareness of collaboration is defined by Gutwin and Greenberg [5] as the set of knowledge and the environmental understanding for answering the questions Who?, What?, How?, Where?, and When? about collaborative tasks. The notion of awareness has been widely studied and characterized, whether by focusing on how people interact with each other and with the system [18] or by focusing on the proximity between the perceptive elements and their observer [19].

AR can be used in many situations to improve collaborative work by providing additional awareness cues. For example, capturing a team member’s active and passive actions and behaviors would provide other team members and team leaders with a much broader range of information when confronted with decision-making [15]. To improve the awareness of collaboration, several techniques have been explored: representing hands, arms, and full-body gestures [20,21,22,23]; sharing the view frustum as a visible cone or pyramid, or head or eye gaze as visible rays [1,6,11,12,13,22,24,25,26]; giving people a pointer, with a shared visual representation, to clarify what they want to designate [21,24,27,28]; or providing the ability to sketch on surfaces or in mid-air [21,28].

2.2. Improving Awareness with Physiological Data

Improving awareness goes beyond just understanding people’s actions, requiring more information about how they feel, why they acted, how they did what they did, and other elements that may influence collaboration. To help people obtain awareness from their perceptions, previous work proposed to provide verbal communication, to embody people with realistic avatars [9,10,26], to share emotions [1,14,26,29,30], and to share physiological data [6,7,8,26]. For example, Dey et al. shared the heart rate between two participants with a heart icon in their field of view [7] and later shared the heart rate between two participants through VR controllers’ vibration [8]. Luong et al. estimated mental workload by integrating sensors in a head-mounted display and found that ocular activity was the best indirect indicator of mental workload [31]. They then showed that designing complex training scenarios with multiple parallel tasks while modulating the user’s mental workload over time (MATB-II) was possible [32].

2.3. Visualizing Information

Information can be displayed either with a textual presentation (raw data) or a graphical one (with abstraction) [2,5]. In terms of placement, information could be displayed at one or more fixed locations for the user, such as signs and panels; as a virtual movable tablet that could be resized, enabled, and disposed of on demand (e.g., Tablet Menus [33]); or attached to the user’s body (e.g., on their wrist, fingers, legs, or torso [33,34]). This variety of placement options is already used in the gaming industry (such as Lone Echo [35] or Zenith [36]) as well as in academic research [2,6,37,38]. In addition, Yang et al. [4] explored collaborative sensemaking tasks for groups of users immersed in VR. The outcomes of their study demonstrate the potential benefits of spatially distributing information in VR compared to aggregating it in a traditional desktop environment.

2.4. Synthesis of Our Literature Review

According to these related works, effective collaboration hinges on individuals with a good awareness of collaboration: a profound understanding of their collaborators and actions. Our analysis underscores the necessity for shared environments to provide perceptive elements, utilizing both natural means like visible bodies, gestures, and facial expressions, and XR to present internal information such as feelings, physiological data, and well-being. This synthesis of findings emphasizes the essential role of awareness of collaboration in optimizing shared environments for enhanced collaborative experiences, laying the foundation for further exploration in this study. As Gutwin and Greenberg wrote, “The input and output devices used in groupware systems and a user’s interaction with a computational workspace generate only a fraction of the perceptual information that is available in a face-to-face workspace. Groupware systems often do not present even the limited awareness information that is available to the system.” [5]. Through this assertion, they identify two research directions: generating more perceptual information and presenting already available data, with existing literature suggesting methods for both. This study focuses on the latter, investigating how to effectively provide team members’ performance and physiological data feedback to their leader using AR visualizations.

3. Visualization Techniques

Our review of the literature showed the potential benefits of presenting information with XR. With this work, we aim to apply this observation to collaborative teamwork with AR visual cues. This is why we developed AR visualization techniques to support the team leader in assessing the current workflow situation and team members’ well-being by providing feedback on the team members’ status. We define the team member’s status as the sum of the team member’s task status and inner status. Task status refers to all information related to the user’s current, previous, and future tasks. Inner status encapsulates one’s well-being-related information, e.g., physiological levels (such as heart rate, body temperature, skin conductance), emotions, and fatigue. For the purposes of this work, we do not examine a complete set of inner status attributes. We instead measure how the team leader’s actions put team members under stress. In our experiment, we do not directly measure stress. Instead, stress is calculated from known parameters such as the amount of work and rest time the team leader provides and will be addressed as simulated stress. The formal definition of how we determine simulated stress is presented in Section 4.2.

A previous study [

39] showed that providing

task status to team members helped them to know how well others were performing. We can thus infer that

task status can also help the team leader determine which team member is best suited to be assigned a task. Our study shares similarities with prior work where a local worker shares visual cues (gaze, facial expressions, physiological signals, and environmental views) with a remote helper who provides guidance [

16], but our research diverges in its collaborative context. Indeed, we specifically focus on team monitoring and task allocation while concurrently sharing a worker’s status to understand their actions and well-being. To present the task status and the inner status with AR, the graphical assets must be easy to understand and avoid occlusion [

40]. Both text and visual elements can be used; text must be simple and written on a billboard to contrast with the background [

41,

42], and icons, symbols, and simple 2D graphic elements can support or replace text in AR-presented information [

43]. Thus, to answer RQ1 and RQ2, we developed a set of AR visualizations of

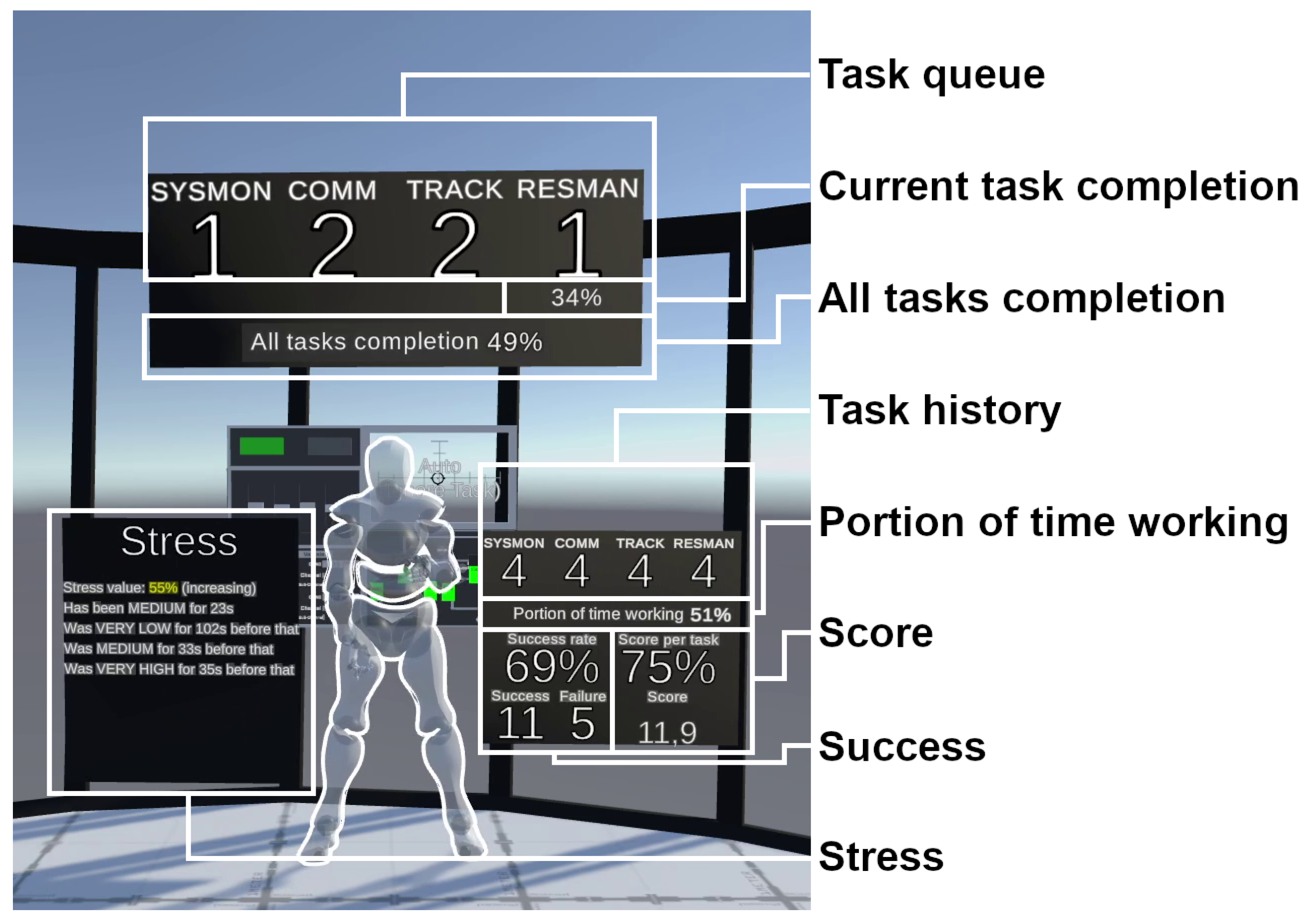

team member’s status as a set of awareness components with two different presentation styles:

textual (

Figure 1) and

graphical (

Figure 2). The textual style employs numbers and text to present the different attributes of user status information. All numbers and text were displayed in white with a black background, where the graphical presentation employs graphs and simple 3D models. All components are displayed on 2D panels floating around workers to avoid occlusion. A description of each component is as follows:

Task Queue displays the number and the type of tasks a team member has yet to complete. The textual style had four numbers, one for each task type. The graphical presentation had a virtual shelf that held a stack for each task type, with tasks represented as cubes like those on the team leader’s table (see Figure 3).

Current Task Completion shows which task a team member is currently performing and its progress. The textual style presents this as a numeric percentage, and the graphical presentation employs a progress bar.

All Task Completion shows the progress of a team member on a task batch they have received. Both styles employ the Current Task Completion visualizations.

Task History shows the number and the type of tasks completed from the start of the work session. Both styles employ scaled-down versions of the Task Queue component.

Portion of Time Working represents the proportion of time a team member spent working since the start of the scenario, and the complement to 100% represents the time spent resting. Both styles employ the Current Task Completion visualizations.

Success shows if a team member succeeded or failed their assigned tasks. The textual style presents success and failure as integer values and the ratio of success/failure as a percentage. Instead of using numbers, the graphical style presents a disc that fills radially with the success percentage.

Score shows how well a team member completed their tasks. Each task is rated on a scale from 0 to 1 inclusive (see Section 4.2 for more details). The component displays a cumulative score since the beginning of the scenario (the sum of all the individual task scores) and the average score per task. The textual style presents the current score as a real number and the score per task as a numeric percentage. The graphical style only presents the score per task percentage as a disc that fills radially.

Stress shares a team member’s inner status summarized under the simulated stress term. The textual style presents a history of stress levels an operator has been going through and the current level and value of their simulated stress. The written value of the current simulated stress is colored in accordance with the stress level (low: green; medium: yellow; high: red; see Section 4.2 for more details). The graphical style presents a 2D graph with time as the x-axis and simulated stress as the y-axis. The line drawn on this graph shows the stress level recorded at that time.

Figure 1. Awareness components with a textual presentation.


Figure 2. Awareness components with a graphical presentation.


The organization of components within panels was refined through pilot experiments, where valuable feedback was obtained. Three main categories emerged based on this feedback: the present and future workload of a team member (comprising Task Queue, Current Task Completion, and All Tasks Completion components), their past performance (including Task History, Portion Of Time Working, Success, and Score components), and their past and current inner status (represented by the Stress component). To effectively organize and present this information, we opted for a structured approach. Instead of having everything plotted in one unique panel, we created three dedicated panels, each tailored to one of these categories. The final placement and design of these components aimed to achieve two key objectives. First, it sought to minimize overlap between panels of different team members. Second, the design was crafted with a focus on improving the overall readability of all components.

4. Simulated AR in VR Application: MATB-II-Collab

To evaluate the eight awareness components of the textual and graphical AR presentations, we developed a Unity experimental platform in which people were immersed using an HTC Vive Cosmos headset. The experimental platform enables a participant to function as the team leader, assigning tasks to virtual team members, the operators, and being presented with AR visualizations concerning those operators. Operators are part of the experimental platform and were fully simulated to keep the system invariant from one participant to another. They are virtual team members whose behavior is influenced across iterations of the simulation by the tasks assigned. The platform then gathers data from a team leader who assigns tasks to this simulated team. The experimental platform is in VR, and the AR is simulated in the platform. The remainder of this section presents both the experimental platform and the operator model.

4.1. Experimental Platform

We developed an experimental platform where a participant performs the role of a team leader facing virtual team members labeled operators. The number and placement of operators in the virtual environment can be set or modified depending on the experiment conducted. The system automatically creates tasks that the team leader must assign to operators. Cubes are used to represent the tasks the team leader must allocate to operators by throwing the task cube in the corresponding basket of each operator (see Figure 3). The system drops a set of tasks onto the table in front of the team leader. The team leader either picks up the tasks from the table by hand or assigns them to the different operators. The sets of tasks are in blocks of 5 to 20 cubes every 30 s.

Figure 3. The virtual environment used in the experiment was populated by six virtual team members. The team leader’s table is in the center of the room, and the six black buckets are where tasks are allocated. The tasks are represented as cubes on the table.


We modelled the operators to perform tasks at different degrees of success, which is represented by the score, a decimal value between 0 and 1 inclusive given to an operator after performing a task, depending on how stressed they are; Section 4.2 fully describes this model. The operators have humanoid avatars to tackle the same occlusion and placement issues we would have in an AR workspace. The experimental platform integrates the visualization techniques presented in Section 3, providing AR-displayed information around each operator, as feedback on the operator’s status. This AR display provides data to inform the team leader’s choice of an operator to assign a task to, thus optimizing the team’s overall success. The experiment was designed for the participants to receive tasks over time to assign to the operators to achieve the greatest team performance. The team performance was calculated by how well the operators performed the given tasks. The team score is calculated as the sum of the scores of all the tasks performed by the operators. The experimental platform enabled two factors to be manipulated: (1) the presentation of indicators (textual and graphical) and (2) whether the simulated stress feedback is shown or not shown.

4.2. Operator Model

Operators are automated virtual team members controlled by the experimental platform. Their avatar is either controlled by inverse kinematics animation for the four tasks or by skeleton animation when the avatar is idle. The operators virtually perform the four tasks of the NASA MATB-II [44] on a virtual screen interface in front of them (see Figure 3). The avatars of the operators replay previously recorded movements with inverse kinematics (using FinalIK). The movement data set comprises more than ten different recordings for each task, recorded from a person performing the four MATB-II tasks in a VR setting with the experimental platform. The playback speed for each animation was adjusted to last for 10 s. If not replaying a recorded animation, the avatar of the operator plays one randomly chosen idle animation among the six skeleton animations from the Mixamo library. Inverse kinematics interpolates the positions of the hands and head to ensure consistently smooth movement when the avatar transitions between recorded movements and other animations [45].

The team leader puts tasks in the operators’ task queues. Operators will perform their tasks one after another without resting between them until the queue is empty, following a FIFO (First In, First Out) pattern. When an operator’s task queue is empty, they are on a rest break until new tasks are placed in their queue.
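The queue behavior described above can be illustrated with a minimal Python sketch. The class and method names (`OperatorQueue`, `assign`, `next_task`) are hypothetical and are not taken from the experimental platform, which is a Unity application; the task labels are only illustrative.

```python
from collections import deque


class OperatorQueue:
    """Hypothetical stand-in for one operator's task queue (FIFO)."""

    def __init__(self):
        self._tasks = deque()

    def assign(self, task):
        # The team leader adds a task at the back of the queue.
        self._tasks.append(task)

    def next_task(self):
        # The operator takes the oldest task first; None means nothing to do.
        return self._tasks.popleft() if self._tasks else None

    @property
    def resting(self):
        # An operator with an empty queue is on a rest break.
        return not self._tasks


op = OperatorQueue()
op.assign("tracking")
op.assign("communications")
first = op.next_task()   # oldest task is served first
second = op.next_task()
```

Once both tasks have been taken, `op.resting` is true again until the team leader assigns more work.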

Each operator has a speed multiplier; with a speed of 1.0, an operator will execute a task in 10 s (the normalized time for all tasks); with a speed of 1.5, they will execute the task in 6.7 s and, with a speed of 0.75, they will execute the tasks in 13.3 s. Two operators have a speed multiplier of 0.75, two operators have a speed multiplier of 1.0, and two have a speed multiplier of 1.5.
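The durations quoted above follow from dividing the normalized task time by the speed multiplier. The short Python sketch below reproduces that arithmetic; the function and constant names are illustrative and not part of the platform.

```python
NORMALIZED_TASK_SECONDS = 10.0  # task time at a speed multiplier of 1.0


def task_duration(speed_multiplier):
    """Seconds an operator needs for one task at the given speed multiplier."""
    return NORMALIZED_TASK_SECONDS / speed_multiplier


# Durations for the three multipliers used in the study, rounded to 0.1 s.
durations = {speed: round(task_duration(speed), 1) for speed in (0.75, 1.0, 1.5)}
```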

Operators have a simulated stress attribute to refer to their internal status (normalized to a 0.0–1.0 range). The team leader’s action of adding a task to an operator’s task queue progressively increases the simulated stress experienced by that operator, which we call stress charge. The total amount of simulated stress per task experienced by an operator is not impacted by the speed at which the operator completes the task; thus, the stress charge is obtained by multiplying the speed of the operator by the stress charge parameter. Operators’ simulated stress decreases with time, which we call stress cooldown; all operators have the same rate of stress cooldown, another parameter of the experimental platform. The stress charge parameter must be stronger than the stress cooldown parameter to increase operators’ simulated stress when they work, as their simulated stress evolves by the following Algorithm: As long as the simulation is running, the operator’s simulated stress decreases by the cooldown rate, then it increases by the charge rate if the operator has a task to perform.
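As a rough illustration of this update rule, the following Python sketch applies one simulation step. The default rates are the values reported in Section 5.1 (2% per second charge, 0.1% per second cooldown). The explicit time step `dt`, the clamping to the 0.0–1.0 range, and the function name are assumptions made for this sketch and are not details confirmed by the platform.

```python
def step_stress(stress, has_task, speed, dt, charge=0.02, cooldown=0.001):
    """Advance simulated stress by dt seconds (sketch, not the platform code).

    stress decreases by the cooldown rate, then increases by the charge rate
    (scaled by the operator's speed) if the operator has a task to perform.
    """
    stress -= cooldown * dt
    if has_task:
        stress += charge * speed * dt
    # Assumption: values are kept inside the normalized 0.0-1.0 range.
    return min(1.0, max(0.0, stress))


# One full task for a fast operator (speed 1.5 finishes in 10 / 1.5 seconds).
fast_duration = 10.0 / 1.5
after_fast_task = step_stress(0.0, True, 1.5, fast_duration)
```

Because the charge is scaled by speed while the task duration is divided by it, the gross stress added per task is 0.02 × 10 = 0.2 for every operator under these parameters; only the cooldown accumulated during the task differs with speed.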

The experimental platform makes a probabilistic determination of whether an operator is successful for each task. The more stressed an operator is, the more likely they will not succeed with the task. We distinguish three different levels of simulated stress: low, medium, and high, with two configurable thresholds to separate them. With a low level of simulated stress, there is a low chance of failure by an operator, and at a high level of simulated stress, the operator is almost certain to fail at the task. A configurable function defines a task’s failure probability according to the simulated stress level. As a performance measure, a score of 1 minus the failure probability is given to an operator after performing a task. Operators have no preference or skill differences among tasks.
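The relationship between simulated stress, failure probability, and score can be sketched as follows. The thresholds (0.4 and 0.7) and the use of a sigmoid come from Section 5.1; the sigmoid's `midpoint` and `steepness` values, the treatment of values exactly on a threshold, and all function names are assumptions chosen only for illustration. The score is read here as one minus the failure probability.

```python
import math
import random

LOW_MEDIUM_THRESHOLD = 0.4   # from Section 5.1
MEDIUM_HIGH_THRESHOLD = 0.7  # from Section 5.1


def stress_level(stress):
    """Map a simulated stress value to one of the three levels."""
    if stress < LOW_MEDIUM_THRESHOLD:
        return "low"
    if stress < MEDIUM_HIGH_THRESHOLD:
        return "medium"
    return "high"


def failure_probability(stress, midpoint=0.55, steepness=12.0):
    """Sigmoid failure probability; midpoint and steepness are assumed values."""
    return 1.0 / (1.0 + math.exp(-steepness * (stress - midpoint)))


def task_score(stress):
    """Score given after a task: one minus the failure probability."""
    return 1.0 - failure_probability(stress)


def task_succeeds(stress, rng=random):
    """Probabilistic success draw: fails with probability failure_probability."""
    return rng.random() >= failure_probability(stress)
```

With these assumed sigmoid parameters, an unstressed operator scores close to 1 and a fully stressed operator scores close to 0, which matches the qualitative behavior described above.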

Initial conditions of the virtual environments and operators’ attributes are described in Section 5.1.

5. Experimental Design—Material and Methods

We performed a 2 × 2 within-subjects study to evaluate whether the presence of stress feedback (Stress and No Stress) helped the team leader and which presentation of data (textual or graphical) helped best. The study thus compared four conditions: Textual∼No Stress, Textual∼Stress, Graphical∼No Stress, and Graphical∼Stress. The Textual∼No Stress–Textual∼Stress conditions and Graphical∼No Stress–Graphical∼Stress conditions were designed to evaluate RQ1 on the effect of providing stress feedback to team leaders to improve team performance. The Textual∼Stress and Graphical∼Stress conditions enable the evaluation of the impact of the representation of that stress on user performance to assess RQ2. With this study, we investigate the effect and relevance of providing team members’ status to their leaders in a work context where team members must perform their tasks reliably with little to no mistakes.
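The factorial structure of the design can be made explicit with a short Python sketch that enumerates the four conditions and the comparisons tied to each research question. The variable and function names are illustrative only.

```python
from itertools import product

PRESENTATIONS = ("Textual", "Graphical")
STRESS_FEEDBACK = ("No Stress", "Stress")

# The four conditions of the 2 x 2 design, as (presentation, feedback) pairs.
CONDITIONS = list(product(PRESENTATIONS, STRESS_FEEDBACK))


def rq1_pairs():
    """Pairs that differ only in stress feedback (same presentation)."""
    return [((p, "No Stress"), (p, "Stress")) for p in PRESENTATIONS]


def rq2_pair():
    """The two conditions that show stress, differing only in presentation."""
    return (("Textual", "Stress"), ("Graphical", "Stress"))
```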

5.1. Parameters and Initial Conditions

Pilot experiments determined the study’s parameters and initial conditions. The stress charge parameter was set to 2% per second, and the stress cooldown parameter was set to −0.1% per second. The threshold between low and medium levels of simulated stress was set to 0.4, and the threshold between medium and high levels of simulated stress was set to 0.7. For the failure probability function, we used a simple sigmoid function so that it would be easy for the participants to understand. The virtual scene has six operators (see Section 4.2) arranged in an arc centered on the participant to add a constraint to the experiment. This way, the participant cannot see all operators at once and has to turn their head throughout the experiment, without the excessive effort that a full-circle arrangement or navigation in a complex workspace would require. Each operator is assigned a speed factor, a simulated stress offset, and some initial tasks. At the start of each trial, two operators are randomly chosen to become slow operators with a 0.75 speed multiplier, two others are randomly chosen to become fast operators with a 1.5 speed multiplier, and the last two operators remain normal speed operators with a speed multiplier of 1.0. At the same time, two operators are randomly chosen to begin with low simulated stress (0.3), two others are randomly chosen to begin with medium simulated stress (0.5), and the last two begin with no simulated stress at all (0.0). The trial then starts by assigning 12 tasks among operators: two operators are randomly chosen to receive one task, two other operators receive two tasks each, and the last two operators are given three tasks each. The random assignment of initial conditions for the operators removes any learning effects between trials on each of the operator’s abilities. In the worst case, there will only be one failure with the initial conditions, which will have minimal impact on overall team performance.
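The random draw of initial conditions could look like the following Python sketch. It assumes that the three attributes (speed, initial stress, initial tasks) are shuffled independently of one another, which the text does not state explicitly; the function name and dictionary keys are hypothetical.

```python
import random

SPEEDS = [0.75, 0.75, 1.0, 1.0, 1.5, 1.5]          # slow, normal, fast
INITIAL_STRESS = [0.0, 0.0, 0.3, 0.3, 0.5, 0.5]    # none, low, medium
INITIAL_TASKS = [1, 1, 2, 2, 3, 3]                 # 12 tasks in total


def draw_initial_conditions(rng):
    """Return one randomly drawn configuration for the six operators.

    Assumption: each attribute is shuffled independently of the others.
    """
    speeds = rng.sample(SPEEDS, k=len(SPEEDS))
    stresses = rng.sample(INITIAL_STRESS, k=len(INITIAL_STRESS))
    tasks = rng.sample(INITIAL_TASKS, k=len(INITIAL_TASKS))
    return [
        {"speed": s, "stress": x, "initial_tasks": t}
        for s, x, t in zip(speeds, stresses, tasks)
    ]


operators = draw_initial_conditions(random.Random(0))
```

The 12 initial tasks, together with the 160 tasks distributed during a trial (Section 5.6.2) and a maximum score of 1 per task, account for the upper bound of 172.0 on the team score reported in Section 5.3.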

5.2. Hypotheses

To answer RQ1 and RQ2, we made the following hypotheses:

H1—Providing operators’ stress feedback to team leaders improves team performance. During the training, participants were informed that the stress directly impacted team members’ performance, and they were informed on how their actions increased and decreased team members’ stress levels. Providing participants with information on team members’ stress levels will help them make better decisions and improve the overall team performance.

H2—Team leaders will prefer the presentation of operators’ stress in graphical form rather than textual. A graph helps to read multidimensional data, and the participants will prefer to read a graph rather than multiple lines of text to have information on the team members’ stress over time.

5.3. Data Collection

Experiment independent variables are the presence of simulated stress feedback (Stress and No Stress) and presentation (Textual or Graphical).

Experiment-dependent variables are as follows:

The team score (real between 0.0 and 172.0): sum of the score of all the tasks performed by the operators.

The trial duration (real from 455 s to 1052 s): time the team takes to complete all tasks.

The answers to the NASA-TLX (Task Load indeX [46]), a survey on six different dimensions: the mental demand, physical demand, temporal demand, the performance, effort, and frustration felt by the subject during the task. Each dimension has been assessed on a five-level Likert scale.

The preferred condition for each component (what helped them the most).

Which components the participant prefers in the textual presentation.

Which components the participant prefers in the graphical presentation.

Additional data were collected during the experiment:

The adopted strategy of assigning tasks after every trial.

A further explanation of the evolution of the participant’s strategy at the end of the experiment.

Participants’ opinion on the importance of stress feedback.

5.4. Apparatus

The study was conducted in a quiet room exclusively dedicated to the study for its duration. COVID-19 protocols were followed (disinfection of equipment, wearing a mask, etc.). The experiment was run on a VR-ready computer (DELL laptop, DELL, Round Rock, TX, USA, i7-10850H, 32GB RAM, NVIDIA Quadro RTX 3000), using an immersive head-mounted display (HTC Vive Cosmos, Taoyuan City, Taiwan) with controllers. The experimental platform was the MATB-II-CVE application, a custom-developed software using Unity 2018.4.30f1, C#, and the SteamVR framework. The Unity project of the experimental platform application is open access: https://github.com/ThomasRinnert/MATB-II_CollabVR (accessed on 29 August 2023).

5.5. Participants

We recruited 24 volunteers (6 females, 18 males) aged from 18 to 54 years old (Mean: 29.3, SD: 8.4), who were rewarded with gift cards after the experiment. The participants self-reported their handedness (1 left-handed, 23 right-handed), and they rated on a scale from 0 (“Not at all”) to 4 (“A lot”) their familiarity with and use of video games (Mean: 3.6, SD: 1.2), 3D environments (Mean: 3.5, SD: 1.5) and immersive technologies (Mean: 3.5, SD: 1.4).

5.6. Protocol

The participants were first welcomed and asked to read an information sheet, and if they agreed to participate, they signed a consent form. They were then informed of the objectives of this experiment: to help team leaders manage their team by being better informed concerning the team members’ status feedback. The participants were then informed how operators work (see Section 4.2), particularly how the task score is affected by the operator’s stress level. The textual and graphical presentations of the proposed visualization techniques were then presented to the participants (see Section 3), and they were asked to achieve the best team performance while minimizing operators’ stress. Participants were aware that time was not a constraint. The university’s ethics committee approved this experimental protocol.

5.6.1. Training

After the introduction, the participants were equipped with the VR headset and its controllers, and the training phase then started. There were five phases of training: the initial training and a training phase before each trial. During the initial training, participants could experience how to assign tasks to operators. They could ask to retry as many times as they wanted until they were ready to begin a trial. Before each condition, the awareness components were shown to participants, and they could assign some tasks, like during the initial training phase, to understand how the awareness components evolved.

5.6.2. Task Assignment Trial

During the experiment, the participant had to complete four trials in the virtual environment, each consisting of assigning tasks to the six operators. A trial had no fixed duration: 160 task artifacts were given to the participants over approximately 8 min (5 to 20 every 30 s) for them to assign to operators. A trial ends when all tasks are assigned and completed. This number of tasks was determined during preliminary pilot experiments, where we investigated whether there were enough tasks to place the virtual operators in a high-stress state. With the chosen parameters, operators can reach a fully stressed state even when tasks are assigned with care. Even though the number of tasks is constant (160), each cube depicting a task is randomly generated as one of the four MATB-II tasks. The only difference between tasks is the name: all tasks take the same time to complete, and operators have no preferences or skill differences among the four types of tasks. Participants were asked to answer questionnaires between each trial and at the end of the experiment. The experiment lasted for approximately one hour and a half for each participant.
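A possible way to generate such a task stream is sketched below in Python. The text only states that 160 tasks arrive in blocks of 5 to 20 every 30 s with a random type; the uniform draw of block sizes, the truncation of the final block (which can therefore be smaller than 5), and the function name are assumptions of this sketch. The four labels are the standard MATB-II task names and are not listed in the text.

```python
import random

TOTAL_TASKS = 160
BATCH_INTERVAL_S = 30
# Standard MATB-II task names, used here only as labels.
TASK_TYPES = ("system monitoring", "tracking", "communications", "resource management")


def generate_batches(rng, total=TOTAL_TASKS, low=5, high=20):
    """Split `total` tasks into blocks of `low`..`high` randomly typed tasks.

    Assumptions: block sizes are uniform, and the last block is truncated
    so that the overall number of tasks is exactly `total`.
    """
    batches = []
    remaining = total
    while remaining > 0:
        size = min(rng.randint(low, high), remaining)
        batches.append([rng.choice(TASK_TYPES) for _ in range(size)])
        remaining -= size
    return batches


batches = generate_batches(random.Random(1))
# Time at which each block is dropped on the team leader's table.
drop_times = [i * BATCH_INTERVAL_S for i in range(len(batches))]
```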

6. Results

Following the methods of the reference books [47,48], we analyzed all quantitative data with the same process, starting with a Shapiro–Wilk test to check for normality. For normally distributed data, two-way ANOVAs were used (after checking with Mauchly's test that the sphericity assumption was met), with Tukey HSD tests run as post hoc analysis. For data not following a normal distribution, we ran two different analyses:

Both conditions with a textual presentation were aggregated and compared with a Wilcoxon test to the two other conditions with a graphical presentation, and the same was carried out for conditions with and without simulated stress feedback;

Friedman tests were used to check for significant differences between conditions, then pairwise Wilcoxon tests were used as a post hoc analysis with an alpha correction to 0.01 to compare the conditions.
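As an illustration of the non-parametric branch, the Friedman statistic can be computed by hand as sketched below. This is a standard-library sketch and not the analysis script used for the study: the function names are hypothetical, ties receive average ranks without a tie correction, and the p-value helper uses the closed form of the chi-square survival function for three degrees of freedom, which only applies to the four-condition design. In practice, a statistics package would also provide the Shapiro–Wilk and Wilcoxon tests.

```python
import math
from itertools import combinations

CONDITIONS = ["Textual No Stress", "Textual Stress",
              "Graphical No Stress", "Graphical Stress"]
POST_HOC_ALPHA = 0.01  # corrected alpha stated in the text
# The six condition pairs compared by the post hoc Wilcoxon tests.
POST_HOC_PAIRS = list(combinations(CONDITIONS, 2))


def average_ranks(values):
    """Rank values from 1..k, giving tied values the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1  # positions i..j share this 1-based rank
        for idx in order[i:j + 1]:
            ranks[idx] = mean_rank
        i = j + 1
    return ranks


def friedman_statistic(rows):
    """Friedman chi-square for rows = one list of k measures per participant."""
    n, k = len(rows), len(rows[0])
    rank_sums = [0.0] * k
    for row in rows:
        for j, rank in enumerate(average_ranks(row)):
            rank_sums[j] += rank
    return 12.0 / (n * k * (k + 1)) * sum(r * r for r in rank_sums) - 3.0 * n * (k + 1)


def chi2_sf_df3(x):
    """Survival function of the chi-square distribution with 3 degrees of freedom."""
    if x <= 0:
        return 1.0
    return math.erfc(math.sqrt(x / 2)) + math.sqrt(2 * x / math.pi) * math.exp(-x / 2)
```

Calling `friedman_statistic` on one row per participant (one value per condition) and passing the result to `chi2_sf_df3` gives the omnibus p-value; only if it is significant would the pairs in `POST_HOC_PAIRS` be compared at `POST_HOC_ALPHA`.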

6.1. Objective Measures

6.1.1. Team Score

A significant effect of the stress feedback on Team Score was found. Participants achieved better performance with the Stress conditions than with the No Stress conditions (see Figure 4). This supports H1: providing stress feedback improves performance. The presentation alone had no significant effect on Team Score. Further analysis showed that the Textual∼Stress condition led to a significantly higher Team Score than the Textual∼No Stress and Graphical∼No Stress conditions.

6.1.2. Trial Duration

There was no explicit request to complete the experiment as fast as possible; however, in reviewing the overall Trial Duration, we observed a significant effect of both factors (stress feedback and presentation) as well as an interaction effect (see Figure 5). The post hoc analysis showed that the Graphical∼Stress condition led to a longer Trial Duration than all other conditions (Textual∼No Stress, Textual∼Stress, and Graphical∼No Stress).

6.2. Questionnaires

6.2.1. NASA Task Load IndeX

In reviewing the overall NASA-TLX score, no significant difference was found. However, a detailed assessment of the questionnaire revealed that participants reported significantly lower mental demand, effort, and frustration when provided with stress feedback than without it.

6.2.2. Strategy

After each trial, participants were asked to explain their task assignment strategy in an open-ended response. The responses revealed that when stress feedback was not provided, participants mainly focused on the Success component when choosing to whom to assign a task, as an indirect way to assess operators’ stress and chances of succeeding in tasks. Eighteen participants reported doing so after the Textual No Stress condition and 17 after the Graphical No Stress condition. When available, the Stress component was the main focus instead. Ten participants stated that their strategy was based on the Stress component after the Textual Stress condition, and 16 reported the same after the Graphical Stress condition. Following operators’ stress feedback, participants aimed at assigning tasks to operators with a low or medium simulated stress level: 16 participants reported doing so after the Textual Stress condition and 12 after the Graphical Stress condition. The presentation seems to have little effect on the task assignment strategy except for the Stress component: more participants reported focusing on the stress indicator when it had a graphical presentation than a textual one, which supports H2.

6.2.3. Component Feedback

In the final questionnaire, participants had to choose which components they found most helpful for each condition. The only significant difference was for the Stress component: participants preferred having it displayed with a graphical presentation, which also supports H2.

An open-ended question asked participants to share their points of view on the textual and graphical presentations of the awareness components (listed in Section 3). Regarding the graphical presentation, 15 participants reported that they liked the Stress component, and 5 said that this presentation improved their understanding of the data. For the textual presentation, 5 participants said they enjoyed the Success component, 6 the Score component, 5 stated that the written numbers were a perk of the textual presentation, and 6 reported that it was easier to understand the data with this presentation.

Asked if they felt that having stress feedback is essential, all participants answered “yes”. They were then asked to explain their answer, and 11 participants reported that having stress feedback helped them obtain a better score. One participant explicitly mentioned that having stress feedback was better than determining the stress level from other components.

To evaluate the possibility of hybrid components (mixing textual and graphical presentations), participants were asked which hybrid components they might find helpful. Some participants suggested keeping a purely textual presentation for some components rather than making them hybrid; conversely, others suggested keeping some components purely graphical. No strong tendencies could be identified except for the Task Queue and the Stress components. Among participants with an opinion on the Task Queue component, 6 recommended keeping a textual presentation, 2 recommended keeping a graphical presentation, and only 1 suggested changing it to a hybrid component. Opinions on the Stress component confirmed the preference for the graphical presentation, which 11 participants mentioned; however, 7 participants suggested turning it into a hybrid component, and only 1 suggested keeping a textual presentation.

7. Discussion

In answering RQ1 and RQ2, the paper presents three contributions: a set of visualization techniques that present team members’ stress and workload to team leaders to help them allocate tasks; a study evaluating the effectiveness of the proposed visualization techniques for team leaders; and MATB-II-Collab, an open-access Unity collaborative VR experimental platform that we developed.

The use of virtual workers in our experiments enables us to investigate the impact of providing feedback on their workload, individual performance, and inner status on overall team performance. However, before deploying such visualizations in a team of real people, obtaining explicit consent from team members for sharing their personal data would be mandatory. Such monitoring would not be imposed on them but conducted with their informed consent. Importantly, any use of such data should be solely dedicated to improving their well-being at work and must not, under any circumstances, become a tool for exploiting people to the limit of their resources.

7.1. Study Outcomes

Results showed that stress feedback significantly improved performance, supporting H1. Stress feedback also changed the participants’ task assignment strategy. Participants mainly focused on the stress level of operators, as it was their primary way to ensure better performance. When the participants did not have stress feedback, they had to infer the stress level of operators, using their judgment based on other indicators. With stress feedback, the participants found it easier to manage the stress level of operators. Overall, most participants could monitor their operators’ well-being, which led to better team performance in our system. Providing operators’ status feedback to team leaders is more direct and efficient than having them assess how well their team is doing via performance measures. Future AR information displays for team leaders should therefore include the stress level of the team members who could potentially receive workload.

Given that a stress indicator improves team performance, the question is which stress presentation provides the most improvement. The results showed that the textual presentation of stress gave a better score, but the participants preferred the graphical presentation, supporting H2. Based on user feedback, some of the AR information presented to the team leader (the Success and Score components) is more understandable with a textual presentation because of the numbers it displays and the accuracy they bring. Other AR information, such as stress, was easier to understand with a graphical presentation because it conveys both the evolution of the value and a global idea of it. The Textual Stress condition yielded the best performance without the longer trial duration observed in the Graphical Stress condition. The analysis of the participants’ strategy explanations revealed that, although participants did not focus on time, they found the graphical presentation of the stress component easier and quicker to comprehend when completing their tasks. As a result, before assigning tasks, participants waited for the stress level of operators to drop lower than they did with the textual presentation. Another factor is that the graph takes more time to read than the Textual Stress panel and the other textual indicators. Our experiment did not put time pressure on participants, so that inexperienced people could take part in the study with only basic training for the task. To further study the effect of the presentation style and the presence of stress feedback on the trial duration, a future study could propose a similar task to people with a management background.

We recommend that collaborative setting designers provide both textual and graphical presentations of information to team leaders. Indeed, the textual information leads to better performance, being more precise and processed faster, and the graphical presentation of information is preferred and is enough to obtain a rough idea of the information at a glance. Pursuing research on sharing people’s status should investigate hybrid presentations to take advantage of both textual precision and graphical abstraction.

7.2. Limitations

There are some limitations to this study. We compared only two levels of abstraction of the information; we used a team of virtual operators; we considered that the team leader could see the team members or at least an avatar of theirs; and we ran one-hour-only experiments.

Our study compared only the two extremes of abstraction level in visualizations, which is a limitation of the approach. The continuum of abstraction offers numerous possibilities, but our goal was to highlight the advantages of each extreme in order to recommend a suitable approach later. Quantitative results and participant feedback showed that graphical and textual information each have their respective benefits. Consequently, we recommend a balance between presenting raw information textually and offering abstracted information through symbols, a balance that must be adapted to specific use cases.

We studied the impact of different feedback provided to a team leader with a virtual team where team members were simulated with our simple operator model (see Section 4.2). Although our experimental setup allowed us to gain valuable insights, it is essential to consider the potential limitations in generalizing our findings to real-world scenarios with human teams and authentic stress measures. Would the same effects of the indicators’ presentation and stress feedback be observed in a real team of humans with real stress measures, would the team leaders adopt the same strategy, and would they feel the same empathy towards the team members? Our experimental design intentionally simplified the way stress affected the operators for ease of understanding among participants. However, this simplicity may not fully capture the intricacies of how real stress influences human performance and team dynamics. In our experimental context, the failure probability reached its lower limit when stress levels were at their lowest. This differs from theories such as the ‘Flow Theory’ [49] and ‘Yerkes-Dodson’s law’ [50], which suggest that human performance tends to peak under moderate stress levels rather than low stress. To address the limitations of our current study, a crucial next step would involve conducting experiments with human operators and using physiological sensors to accurately measure stress levels. This approach would offer a more realistic understanding of how stress influences human performance in team settings. Importantly, readers should interpret our findings with the awareness that the simulated stress in our study might not fully capture the nuances of real-world stress, and the extrapolation of results to human teams requires cautious consideration of these experimental constraints.
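The contrast between the two views of stress can be made concrete with a small sketch. The operator model itself is defined in Section 4.2 and is not reproduced here: the linear and quadratic shapes, the function names, and all constants below (`P_MIN`, `P_MAX`, the optimum at 0.5) are hypothetical and only illustrate where the minimum failure probability falls in each case.

```python
# Hypothetical bounds on the probability that an operator fails a task.
P_MIN = 0.05
P_MAX = 0.50


def _clamp01(stress):
    """Keep the normalized stress level within [0, 1]."""
    return max(0.0, min(1.0, stress))


def failure_prob_monotonic(stress):
    """Failure probability that only grows with stress (lowest at zero stress),
    the qualitative behaviour described for the simulated operators."""
    return P_MIN + (P_MAX - P_MIN) * _clamp01(stress)


def failure_prob_inverted_u(stress, optimum=0.5):
    """Failure probability that is lowest at a moderate stress level, in the
    spirit of the Yerkes-Dodson law (performance peaks at `optimum`)."""
    s = _clamp01(stress)
    # Normalized distance to the optimum, so the worst case maps to 1.
    distance = abs(s - optimum) / max(optimum, 1.0 - optimum)
    return P_MIN + (P_MAX - P_MIN) * distance ** 2
```

Under the first function a team leader minimizes failures by always choosing the least stressed operator, whereas under the second an operator with no load at all would perform worse than a moderately loaded one, which could change the best assignment strategy.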

An additional limitation of our study was that team leaders had visibility of operators; however, the collaborative presence was not systematically measured. The ability of team leaders to visually perceive operators through avatars during the study may have influenced the dynamics of the interaction, creating empathy toward the operators. This empathetic connection might have been further accentuated in the presence of stress feedback, especially when presented graphically. During the study, a subset of participants explicitly reported heightened assertiveness tied to their empathetic response, particularly in situations where stress feedback was incorporated and even more so with graphical representation. This limitation is crucial to acknowledge as it introduces a variable that may have influenced the team leader’s behavior beyond the specific factors we were measuring. The absence of a systematic measurement of collaborative presence makes it challenging to isolate and quantify the impact of this variable. Consequently, readers need to consider this potential influence when interpreting and generalizing the results of our study. Future research could explore this aspect more explicitly, incorporating measurements of collaborative presence to understand further its role in the dynamics of team interactions within the context of AR-supported environments.

Another limitation of our study pertains to the constrained time allocated to each participant during the experiments. One hour may seem relatively brief for an investigation into workload balancing and stress management, and longer experiments that delve deeper into these aspects could offer valuable insights. However, extending the duration of experiments poses challenges. In fields such as AR and VR, one hour is a standard duration, and surpassing this timeframe can be impractical for various reasons, including participant availability and associated costs. Moreover, spending more than an hour in VR raises ethical concerns due to potential mental and physical stress. Despite these limitations, we remain confident that our findings hold relevance for longer team management scenarios. Incorporating workers’ inner status feedback is expected to consistently benefit team leaders in making nuanced decisions when assigning workload to a team. Although the graphical representation of workers’ stress may prove advantageous in longer cases, as suggested in the Study Outcomes section, our recommendation is to maintain a balanced approach by combining both textual and graphical information for a more comprehensive design choice. For future research, it would be valuable to explore longer experiments conducted in a physical setup with human teams to validate and extend our assumptions.

8. Conclusions

This study shows that different presentations of status information, including how team members cope, affect team performance with a simulated team. Investigating the effect of providing feedback on the users’ inner status, we found that it enhances team performance and potentially improves how team leaders consider team members’ status. This suggests that we might have to change how we manage teams to achieve better team performance and members’ well-being. Indeed, providing users’ status to team leaders should be strongly considered in work where team members must perform their tasks reliably with little to no mistakes. The comparison we conducted between graphical and textual presentations of visual cues suggests that the textual presentation of inner status achieves better performance, even if people prefer the graphical presentation. The raw performance information presented to a team leader is more understandable with a textual presentation because of the numbers it displays and the accuracy they bring. On the other hand, more complex information, such as inner status feedback, seems more straightforward to understand with a graphical presentation because of the abstraction this presentation provides.

The implications of this study extend beyond the simulated team environment, prompting considerations for implementation in various industries. The application of different presentations of status information and strategies for team coping, as identified in this research, has the potential to reshape team management practices. In real work settings, implementing feedback on users’ inner status for team leaders could enhance team performance and contribute to the overall well-being of team members. This shift towards a more empathic teamwork approach may prove valuable in industries where reliable task performance with minimal errors is crucial. Although our study focused on collocated teams, the insights gained are transferable to diverse teamwork scenarios, including remote collaborations facilitated through shared workspaces such as Collaborative Virtual Environments.

However, we recognize that the practical implementation of these techniques comes with its challenges. As soon as real people are involved in such settings, ethical problems will have to be addressed: sharing user status must follow privacy rules and ethics and must require the consent of monitored subjects. Moreover, factors such as adapting the feedback mechanisms to specific industry needs, addressing potential resistance to change, and integrating these techniques into existing workflows should be considered. Further research and collaboration with industry professionals can provide valuable insights into overcoming these challenges and optimizing the application of our findings in real-world work settings.

Future work will investigate interactions between the team leader and the indicators (resize/dispose of indicators, alerts…). This includes the effect of providing an estimation of the evolution of the stress level of team members to team leaders. A future direction is to investigate more complex and realistic virtual operators in the experiments.

Author Contributions

Conceptualization, T.R., J.W., C.F., G.C., T.D. and B.H.T.; data curation, T.R.; formal analysis, T.R., C.F., G.C. and B.H.T.; funding acquisition, T.D.; investigation, T.R.; methodology, T.R., J.W., C.F., T.D. and B.H.T.; project administration, T.D. and B.H.T.; resources, J.W. and B.H.T.; software, T.R.; supervision, J.W., C.F., G.C., T.D. and B.H.T.; validation, J.W., C.F., T.D. and B.H.T.; visualization, T.R.; writing—original draft, T.R.; writing—review and editing, T.R., J.W., C.F., T.D. and B.H.T. All authors have read and agreed to the published version of the manuscript.

Funding

This research was supported by the Centre National de la Recherche Scientifique (CNRS) with international Ph.D. funding and by the University of South Australia (UniSA) through a Ph.D. scholarship. This work was also supported by French government funding managed by the National Research Agency under the Investments for the Future program (PIA) grant ANR-21-ESRE-0030 (CONTINUUM).

Institutional Review Board Statement

The study was conducted in accordance with the Declaration of Helsinki and approved by the Ethics Committee of the University of South Australia (approved on 20 September 2022, application ID: 204935).

Informed Consent Statement

Informed consent was obtained from all subjects involved in the study.

Data Availability Statement

The data presented in this study are openly available on GitHub at https://github.com/ThomasRinnert/MATB-II_CollabVR, accessed on 16 November 2023. The MATB-II-Collab application can be found as a Unity 2018 project in this repository. The measures acquired during the user study can be found in the same repository as “XP2_Results.csv”.

Acknowledgments

We would like to thank Nicolas Barbotin, Pierre Bégout, Nicolas Delcombel, and Anthony David for their help with Unity development; Crossing lab members for helping to sort out some ideas—Jean-Philippe Diguet, Cédric Buche, Maëlic Nau, Thomas Ung; IVE students for pilot testing my study (Spencer O’Keeffe, Radhika Jain, Kieran May, Chenkai Zhang); Thomas Clarke, Allison Jing, Adam Drogemuller, and Brandon Matthews for helping with the statistical analysis; and all participants, especially those who told their friends and family about the study.

Conflicts of Interest

The authors declare no conflict of interest.

Abbreviations

The following abbreviations are used in this manuscript:

| Abbreviation | Definition |
|---|---|
| AR | Augmented Reality |
| VR | Virtual Reality |
| MATB-II | Multi-Attribute Task Battery II |

References

- Piumsomboon, T.; Lee, Y.; Lee, G.A.; Dey, A.; Billinghurst, M. Empathic Mixed Reality: Sharing What You Feel and Interacting with What You See. In Proceedings of the 2017 International Symposium on Ubiquitous Virtual Reality, ISUVR 2017, Nara, Japan, 27–29 June 2017; pp. 38–41. [Google Scholar] [CrossRef]

- Sereno, M.; Wang, X.; Besancon, L.; Mcguffin, M.J.; Isenberg, T. Collaborative Work in Augmented Reality: A Survey. IEEE Trans. Vis. Comput. Graph. 2020, 28, 2530–2549. [Google Scholar] [CrossRef] [PubMed]

- Hotek, D.R. Skills for the 21st Century Supervisor: What Factory Personnel Think. Perform. Improv. Q. 2002, 15, 61–83. [Google Scholar] [CrossRef]

- Yang, Y.; Dwyer, T.; Wybrow, M.; Lee, B.; Cordeil, M.; Billinghurst, M.; Thomas, B.H. Towards immersive collaborative sensemaking. Proc. ACM Hum.-Comput. Interact. 2022, 6, 722–746. [Google Scholar] [CrossRef]

- Gutwin, C.; Greenberg, S. A descriptive framework of workspace awareness for real-time groupware. In Computer Supported Cooperative Work; Kluwer Academic Publishers: Dordrecht, The Netherlands, 2002; Volume 11, pp. 411–446. [Google Scholar] [CrossRef]

- Jing, A.; Gupta, K.; Mcdade, J.; Lee, G.A.; Billinghurst, M. Comparing Gaze-Supported Modalities with Empathic Mixed Reality Interfaces in Remote Collaboration. In Proceedings of the 2022 IEEE International Symposium on Mixed and Augmented Reality (ISMAR), Singapore, 17–21 October 2022; pp. 837–846. [Google Scholar] [CrossRef]

- Dey, A.; Piumsomboon, T.; Lee, Y.; Billinghurst, M. Effects of sharing physiological states of players in collaborative virtual reality gameplay. In Proceedings of the Conference on Human Factors in Computing Systems—Proceedings Association for Computing Machinery, Denver, CO, USA, 6–11 May 2017; Volume 2017, pp. 4045–4056. [Google Scholar] [CrossRef]

- Dey, A.; Chen, H.; Hayati, A.; Billinghurst, M.; Lindeman, R.W. Sharing manipulated heart rate feedback in collaborative virtual environments. In Proceedings of the 2019 IEEE International Symposium on Mixed and Augmented Reality, ISMAR 2019, Beijing, China, 14–18 October 2019; pp. 248–257. [Google Scholar] [CrossRef]

- Beck, S.; Kunert, A.; Kulik, A.; Froehlich, B. Immersive Group-to-Group Telepresence. IEEE Trans. Vis. Comput. Graph. 2013, 19, 616–625. [Google Scholar] [CrossRef]

- Yoon, B.; Kim, H.I.; Lee, G.A.; Billinghurst, M.; Woo, W. The effect of avatar appearance on social presence in an augmented reality remote collaboration. In Proceedings of the 26th IEEE Conference on Virtual Reality and 3D User Interfaces, VR 2019, Osaka, Japan, 23–27 March 2019; pp. 547–556. [Google Scholar] [CrossRef]

- Piumsomboon, T.; Dey, A.; Ens, B.; Lee, G.; Billinghurst, M. The effects of sharing awareness cues in collaborative mixed reality. Front. Robot. AI 2019, 6, 1–18. [Google Scholar] [CrossRef]

- Fraser, M.; Benford, S.; Hindmarsh, J.; Heath, C. Supporting awareness and interaction through collaborative virtual interfaces. In Proceedings of the Twelfth Annual ACM Symposium on User Interface Software and Technology, Asheville, NC, USA, 7–10 November 1999; Volume 1, pp. 27–36. [Google Scholar] [CrossRef]

- Bai, H.; Sasikumar, P.; Yang, J.; Billinghurst, M. A User Study on Mixed Reality Remote Collaboration with Eye Gaze and Hand Gesture Sharing. In Proceedings of the HI Conference on Human Factors in Computing Systems, Honolulu, HI, USA, 25–30 April 2020; pp. 1–13. [Google Scholar] [CrossRef]

- Niewiadomski, R.; Hyniewska, S.J.; Pelachaud, C. Constraint-based model for synthesis of multimodal sequential expressions of emotions. IEEE Trans. Affect. Comput. 2011, 2, 134–146. [Google Scholar] [CrossRef]

- Irlitti, A.; Smith, R.T.; Itzstein, S.V.; Billinghurst, M.; Thomas, B.H. Challenges for Asynchronous Collaboration in Augmented Reality. In Proceedings of the 2016 IEEE International Symposium on Mixed and Augmented Reality (ISMAR-Adjunct), Merida, Mexico, 19–23 September 2016; pp. 31–35. [Google Scholar] [CrossRef]

- Lee, Y.; Masai, K.; Kunze, K.; Sugimoto, M.; Billinghurst, M. A Remote Collaboration System with Empathy Glasses. In Proceedings of the 2016 IEEE International Symposium on Mixed and Augmented Reality (ISMAR-Adjunct), Merida, Mexico, 19–23 September 2016; pp. 342–343. [Google Scholar] [CrossRef]

- Lazarus, R.S.; Folkman, S. Stress, Appraisal, and Coping; Springer Publishing Company: New York, NY, USA, 1984. [Google Scholar]

- Benford, S.; Fahlén, L. A Spatial Model of Interaction in Large Virtual Environments. In Proceedings of the ECSCW ’93—Third European Conference on Computer-Supported Cooperative Work, Milan, Italy, 13–17 September 1993; pp. 109–124. [Google Scholar] [CrossRef]

- LeChénéchal, M. Awareness Model for Asymmetric Remote Collaboration in Mixed Reality. Ph.D. Thesis, INSA de Rennes, Rennes, France, 2016. [Google Scholar]

- Becquet, V.; Letondal, C.; Vinot, J.L.; Pauchet, S. How do Gestures Matter for Mutual Awareness in Cockpits? In Proceedings of the DIS ’19—2019 on Designing Interactive Systems Conference, San Diego, CA, USA, 23–28 June 2019; pp. 593–605. [Google Scholar] [CrossRef]

- Huang, W.; Kim, S.; Billinghurst, M.; Alem, L. Sharing hand gesture and sketch cues in remote collaboration. J. Vis. Commun. Image Represent. 2019, 58, 428–438. [Google Scholar] [CrossRef]

- Piumsomboon, T.; Day, A.; Ens, B.; Lee, Y.; Lee, G.; Billinghurst, M. Exploring enhancements for remote mixed reality collaboration. In Proceedings of the SIGGRAPH Asia 2017 Mobile Graphics and Interactive Applications, SA 2017, Bangkok, Thailand, 27–30 November 2017; pp. 1–5. [Google Scholar] [CrossRef]

- LeChénéchal, M.; Duval, T.; Gouranton, V.; Royan, J.; Arnaldi, B. Help! I Need a Remote Guide in My Mixed Reality Collaborative Environment. Front. Robot. AI 2019, 6, 106. [Google Scholar] [CrossRef]

- Gupta, K.; Lee, G.A.; Billinghurst, M. Do you see what i see? the effect of gaze tracking on task space remote collaboration. IEEE Trans. Vis. Comput. Graph. 2016, 22, 2413–2422. [Google Scholar] [CrossRef] [PubMed]

- Piumsomboon, T.; Lee, G.; Irlitti, A.; Ens, B.; Thomas, B.H.; Billinghurst, M. On the shoulder of the giant: A multi-scale mixed reality collaboration with 360 video sharing and tangible interaction. In Proceedings of the CHI Conference on Human Factors in Computing Systems, Glasgow, UK, 4–9 May 2019. [Google Scholar] [CrossRef]

- Hart, J.D.; Piumsomboon, T.; Lee, G.; Billinghurst, M. Sharing and Augmenting Emotion in Collaborative Mixed Reality. In Proceedings of the 2018 IEEE International Symposium on Mixed and Augmented Reality Adjunct (ISMAR-Adjunct), Munich, Germany, 16–20 October 2018; pp. 212–213. [Google Scholar] [CrossRef]

- Duval, T.; Fleury, C. An asymmetric 2D Pointer/3D Ray for 3D Interaction within Collaborative Virtual Environments. In Proceedings of the Web3D 2009: The 14th International Conference on Web3D Technology, Darmstadt, Germany, 16–17 June 2009; Volume 1, pp. 33–41. [Google Scholar] [CrossRef]

- Teo, T.; Lee, G.A.; Billinghurst, M.; Adcock, M. Investigating the use of different visual cues to improve social presence within a 360 mixed reality remote collaboration. In Proceedings of the VRCAI 2019: 17th ACM SIGGRAPH International Conference on Virtual-Reality Continuum and Its Applications in Industry, Brisbane, QLD, Australia, 14–16 November 2019. [Google Scholar] [CrossRef]

- Ajili, I.; Mallem, M.; Didier, J.Y. Human motions and emotions recognition inspired by LMA qualities. Vis. Comput. 2019, 35, 1411–1426. [Google Scholar] [CrossRef]

- Ajili, I.; Ramezanpanah, Z.; Mallem, M.; Didier, J.Y. Expressive motions recognition and analysis with learning and statistical methods. Multimed. Tools Appl. 2019, 78, 16575–16600. [Google Scholar] [CrossRef]

- Luong, T.; Martin, N.; Raison, A.; Argelaguet, F.; Diverrez, J.M.; Lecuyer, A. Towards Real-Time Recognition of Users Mental Workload Using Integrated Physiological Sensors into a VR HMD. In Proceedings of the 2020 IEEE International Symposium on Mixed and Augmented Reality, ISMAR 2020, Porto de Galinhas, Brazil, 9–13 November 2020; pp. 425–437. [Google Scholar] [CrossRef]

- Luong, T.; Argelaguet, F.; Martin, N.; Lecuyer, A. Introducing Mental Workload Assessment for the Design of Virtual Reality Training Scenarios. In Proceedings of the 2020 IEEE Conference on Virtual Reality and 3D User Interfaces, VR 2020, Atlanta, GA, USA, 22–26 March 2020; IEEE: New York, NY, USA, 2020; pp. 662–671. [Google Scholar] [CrossRef]

- Bowman, D.A.; Wingrave, C.A. Design and evaluation of menu systems for immersive virtual environments. In Proceedings of the Virtual Reality Annual International Symposium, Yokohama, Japan, 13–17 March 2001; pp. 149–156. [Google Scholar] [CrossRef]

- Wagner, J.; Nancel, M.; Gustafson, S.; Wendy, E.; Wagner, J.; Nancel, M.; Gustafson, S.; Body, W.E.M.A.; Wagner, J.; Nancel, M.; et al. A Body-centric Design Space for Multi-surface Interaction. In Proceedings of the CHI ’13: SIGCHI Conference on Human Factors in Computing Systems, Paris, France, 27 April 2013. [Google Scholar]

- Ready at Dawn. Lone Echo. 2017. Available online: https://www.oculus.com/lone-echo (accessed on 15 January 2021).

- Ramen VR. Zenith: The Last City. 2022. Available online: https://zenithmmo.com/ (accessed on 15 January 2021).

- Bégout, P.; Duval, T.; Kubicki, S.; Charbonnier, B.; Bricard, E. WAAT: A Workstation AR Authoring Tool for Industry 4.0. In Augmented Reality, Virtual Reality, and Computer Graphics. AVR 2020; Lecture Notes in Computer Science (Including Subseries Lecture Notes in Artificial Intelligence and Lecture Notes in Bioinformatics); Springer: Cham, Switzerland, 2020; Volume 12243 LNCS, pp. 304–320.

- Sasikumar, P.; Pai, Y.S.; Bai, H.; Billinghurst, M. PSCVR: Physiological Sensing in Collaborative Virtual Reality. In Proceedings of the 2022 IEEE International Symposium on Mixed and Augmented Reality Adjunct (ISMAR-Adjunct), Singapore, 17–21 October 2022.

- Rinnert, T.; Walsh, J.; Fleury, C.; Coppin, G.; Duval, T.; Thomas, B.H. How Can One Share a User’s Activity during VR Synchronous Augmentative Cooperation? Multimodal Technol. Interact. 2023, 7, 20.

- Gattullo, M.; Scurati, G.W.; Evangelista, A.; Ferrise, F.; Fiorentino, M.; Uva, A.E. Informing the Use of Visual Assets in Industrial Augmented Reality. In Design Tools and Methods in Industrial Engineering; Rizzi, C., Andrisano, A.O., Leali, F., Gherardini, F., Pini, F., Vergnano, A., Eds.; Springer: Cham, Switzerland, 2020; pp. 106–117.

- Gattullo, M.; Uva, A.E.; Fiorentino, M.; Monno, G. Effect of Text Outline and Contrast Polarity on AR Text Readability in Industrial Lighting. IEEE Trans. Vis. Comput. Graph. 2015, 21, 638–651.

- Gattullo, M.; Uva, A.E.; Fiorentino, M.; Gabbard, J.L. Legibility in Industrial AR: Text Style, Color Coding, and Illuminance. IEEE Comput. Graph. Appl. 2015, 35, 52–61.

- Gattullo, M.; Scurati, G.W.; Fiorentino, M.; Uva, A.E.; Ferrise, F.; Bordegoni, M. Towards augmented reality manuals for industry 4.0: A methodology. Robot. Comput.-Integr. Manuf. 2019, 56, 276–286.

- Santiago-Espada, Y.; Myer, R.R.; Latorella, K.A.; Comstock, J.R. The Multi-Attribute Task Battery II (MATBII): Software for Human Performance and Workload Research: A User’s Guide; NASA Tech Memorandum 217164; National Aeronautics and Space Administration, Langley Research Center: Hampton, VA, USA, 2011.

- Aristidou, A.; Lasenby, J.; Chrysanthou, Y.; Shamir, A. Inverse kinematics techniques in computer graphics: A survey. Comput. Graph. Forum 2018, 37, 35–58.

- Hart, S.G.; Staveland, L.E. Development of NASA-TLX (Task Load Index): Results of Empirical and Theoretical Research. In Human Mental Workload; Hancock, P.A., Meshkati, N., Eds.; North-Holland: Amsterdam, The Netherlands, 1988; Volume 52, pp. 139–183.

- Field, A.; Miles, J.; Field, Z. Discovering Statistics Using R; SAGE Publications: Thousand Oaks, CA, USA, 2012.

- Robertson, J.; Kaptein, M. An Introduction to Modern Statistical Methods in HCI; Springer: Berlin, Germany, 2016.

- Csikszentmihalyi, M. The flow experience and its significance for human psychology. In Optimal Experience: Psychological Studies of Flow in Consciousness; Cambridge University Press: Cambridge, UK, 1988; Volume 2, pp. 15–35.

- Yerkes, R.M.; Dodson, J.D. The relation of strength of stimulus to rapidity of habit-formation. J. Comp. Neurol. Psychol. 1908, 18, 459–482.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).