Abstract

Structured extraction of emergency event information can effectively enhance the ability to respond to emergencies. This article focuses on Chinese document-level emergency event extraction and addresses two key issues in this field: the lack of related datasets, and role overlapping among candidate entities. Role overlapping has been overlooked in existing DEE (document-level event extraction) studies, which predominantly employ sequence annotation for candidate entity extraction. To tackle these challenges, we constructed a Chinese document-level emergency event extraction dataset (CDEEE), which provides annotations for argument scattering, multiple events, and role overlapping. Additionally, we propose a model named RODEE to address the role overlapping problem in DEE tasks. RODEE employs two independent modules to represent the head and tail positions of candidate entities and uses a multiplicative attention mechanism to model the interaction between the two, generating a score matrix. Role-overlapping candidate entities are then predicted from this matrix to support the DEE task. Experiments were conducted on our manually annotated dataset, CDEEE, and the results show that RODEE effectively addresses role overlapping among candidate entities and improves the performance of the DEE model, reaching an F1 score of 77.7%.

1. Introduction

In the real world, emergency events [1], such as traffic accidents, fires, forest fires, earthquakes, and health hazards, pose a serious threat to human life and property and therefore attract widespread concern across all sectors of society. In the Internet era, information about emergency events spreads and escalates rapidly through online media, which can give rise to public opinion incidents and affect public safety. Accurate and efficient access to structured information about emergency events can help relevant personnel detect and respond to such events early, reduce the emergence of public opinion incidents, and safeguard public safety.

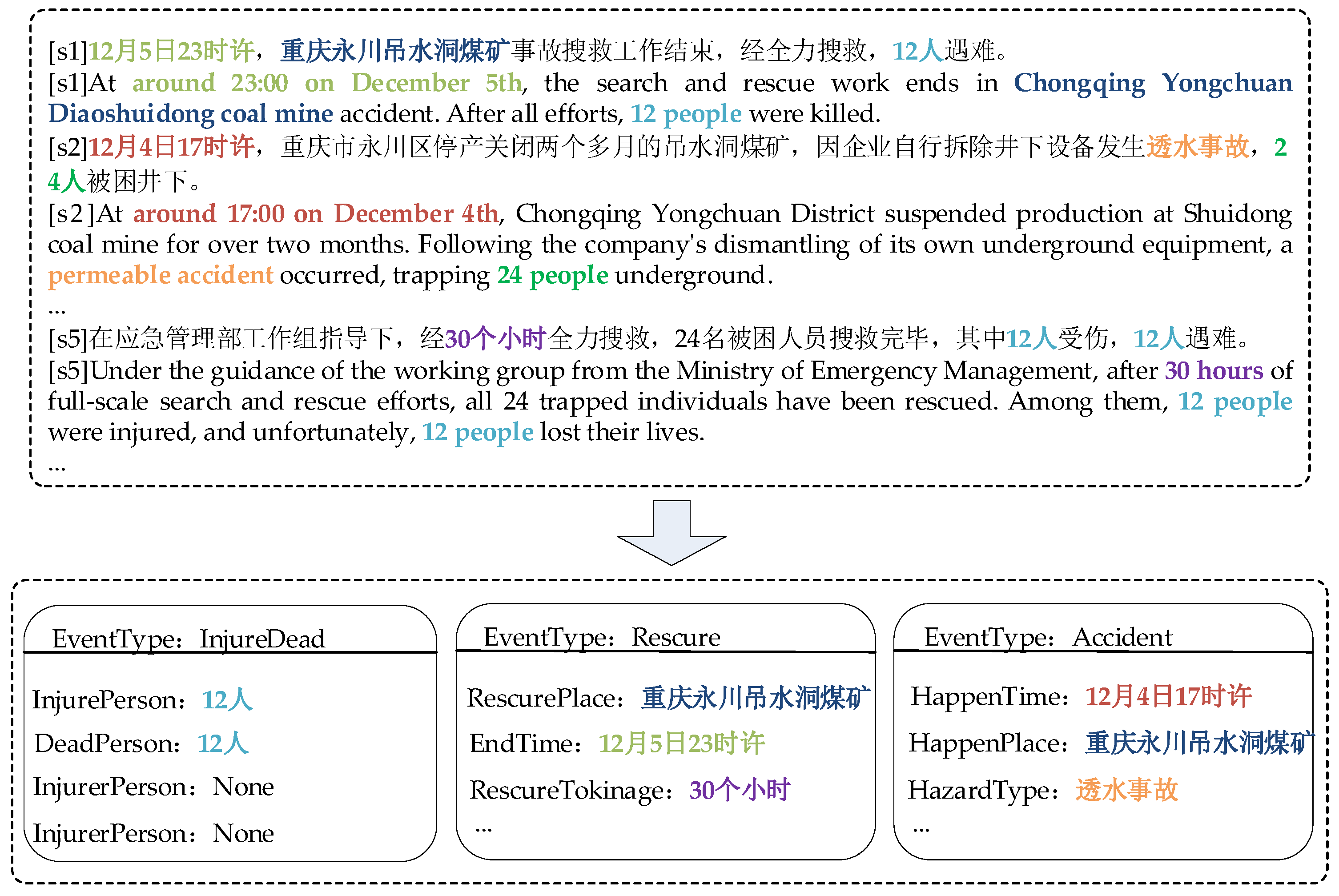

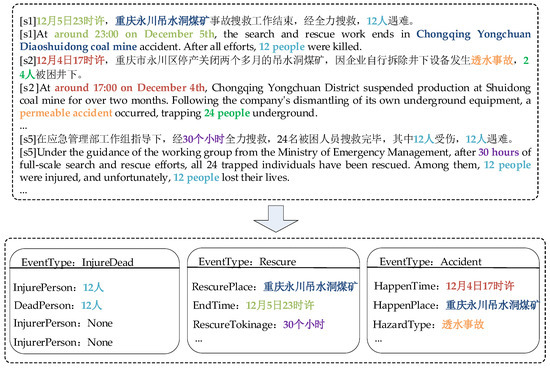

The goal of event extraction is to identify pre-specified types of events and their corresponding event arguments in plain text. Most previous studies have focused on sentence-level event extraction [2,3,4] and evaluated their methods on the ACE dataset [5]. These sentence-level approaches make predictions within a single sentence and cannot extract events that span multiple sentences. In real-world text, however, the arguments of an event often cannot all be found in a single sentence. For example, as shown in Figure 1, the arguments “重庆永川吊水洞煤矿” (Chongqing Yongchuan Diaoshuidong coal mine) and “12月4日17时许” (around 17:00 on 4 December) of the “Accident” event are distributed across two different sentences, s1 and s2. This motivates our focus on DEE. To extract structured event information from documents, researchers have proposed numerous models, together with datasets for training and validating them. In the following, we review the DEE datasets and the DEE models and methods proposed in previous work.

Figure 1.

Event extraction instance. “12人” corresponds to “12 people”; “重庆永川吊水洞煤矿” corresponds to “Chongqing Yongchuan Diaoshuidong coal mine”; “12月5日2时许” corresponds to “around 23:00 on December 5th”; “30个小时” corresponds to “30 hours”; “12月4日17时许” corresponds to “around 17:00 on December 4th”; “透水事故” corresponds to “water inrush accident”.

Researchers have conducted extensive work on DEE datasets for training and validating DEE models and have constructed numerous such datasets. For example, the MUC-4 [6] dataset consists of 1700 documents annotated with associated role-filler templates. The Twitter dataset was constructed by collecting and annotating English tweets posted in December 2010; it includes 20 event types and 1000 tweets. The WIKIEVENTS dataset, published by Li et al. [7] as a document-level benchmark, uses English Wikipedia articles as its data source. Yang et al. [8] conducted experiments on four types of financial events, namely equity freeze, equity pledge, equity repurchase, and equity increase events, and annotated a total of 2976 announcements.

Although researchers in China and abroad have constructed numerous DEE datasets, two issues remain. First, most of these datasets are in English, so they cannot be used to train and validate Chinese DEE models. Second, there is a lack of DEE datasets constructed specifically for the emergency event domain. Hence, a current priority is to develop a Chinese document-level emergency event extraction dataset to fill this gap.

Regarding DEE models and methods, many researchers have focused on two challenges: argument scattering and multiple events. Argument scattering refers to the situation where the arguments of an event are dispersed across multiple sentences of a document. For instance, as shown in Figure 1, the arguments “重庆永川吊水洞煤矿” (Chongqing Yongchuan Diaoshuidong coal mine) and “30个小时” (30 h) of the “Rescure” event appear in two different sentences, s1 and s5. Multiple events refers to the situation where a document contains several distinct events. For example, Figure 1 includes three different events: “InjureDead”, “Rescure”, and “Accident”.

In a previous study, Yang et al. [8] proposed the DCFEE model, which extracts trigger words and arguments sentence by sentence and then uses a convolutional neural network to classify each sentence as a key sentence or not. To obtain complete event arguments, they further proposed an argument completion strategy that supplements the extracted arguments with those found in the sentences surrounding the key event sentence. Zheng et al. [9] redesigned DEE as a trigger-word-free table-filling task in which candidate entities are filled into a predefined event table. Specifically, they modeled DEE as a sequential prediction paradigm in which arguments are predicted in a predefined role order and multiple events are extracted in a predefined event order. Although this method accomplishes DEE without trigger words, it introduces error propagation: because arguments are predicted in a fixed role order, earlier argument decisions cannot take later ones into account. Yang et al. [10] proposed an end-to-end model that first obtains an overall document representation with multiple encoders and then uses a multi-granularity decoder to extract multiple events and their arguments from the document simultaneously, in parallel. Based on previous work, we divide the DEE task into three subtasks: candidate entity extraction, event type detection, and argument identification. Candidate entity extraction extracts event-related entities from the text; event type detection determines which types of events are present in the text; and argument identification determines which candidate entities serve as the arguments of each event.
Candidate entity extraction, as the first subtask of DEE, affects the effectiveness of the two subsequent subtasks. Previous research has focused on issues such as argument scattering and multiple events and has overlooked role overlapping in this first subtask, even though it significantly affects the performance of both subsequent subtasks and of the overall DEE task. Role overlapping refers to a candidate entity playing multiple roles in the same event or in several different events. For example, in Figure 1, the entity “12人” (12 people) plays both the “InjureDead” and “DeadPerson” roles in the “InjureDead” event, and the entity “重庆永川吊水洞煤矿” (Chongqing Yongchuan Diaoshuidong coal mine) plays the “RescurePlace” role in the “Rescure” event and the “HappenPlace” role in the “Accident” event.
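To make role overlapping concrete, the Figure 1 example can be written down as role-to-argument maps and scanned for entities that fill more than one role. This is an illustrative sketch only: it assumes each event is a plain Python dict from role name to argument string, and the role names `HappenTime` and `RescueDuration` are hypothetical placeholders, since the text does not name the roles of those two arguments.

```python
from collections import defaultdict

# Events from the Figure 1 example as (event type, {role: argument}) pairs.
# "HappenTime" and "RescueDuration" are hypothetical role names used only for
# illustration; the other role names are the ones given in the text.
events = [
    ("Accident", {"HappenPlace": "重庆永川吊水洞煤矿", "HappenTime": "12月4日17时许"}),
    ("Rescure", {"RescurePlace": "重庆永川吊水洞煤矿", "RescueDuration": "30个小时"}),
    ("InjureDead", {"InjureDead": "12人", "DeadPerson": "12人"}),
]


def roles_by_entity(events):
    """Map each argument string to every (event type, role) pair it fills."""
    table = defaultdict(list)
    for event_type, roles in events:
        for role, argument in roles.items():
            table[argument].append((event_type, role))
    return dict(table)


def overlapping_entities(events):
    """Keep only the entities that fill more than one role (role overlapping)."""
    return {ent: pairs for ent, pairs in roles_by_entity(events).items() if len(pairs) > 1}
```

Here `overlapping_entities(events)` returns two entries: the coal mine, which fills `HappenPlace` and `RescurePlace` in two different events, and `12人`, which fills two roles within the same event.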

To address the above problems of missing datasets and role overlapping, we make two contributions. On the one hand, to address the lack of datasets, we defined a framework for emergency event extraction by analyzing and summarizing information about Chinese emergency events, and constructed CDEEE, a Chinese document-level emergency event extraction dataset. We defined 4 event types and 19 role types in this dataset and provided annotations for three specific issues: argument scattering, multiple events, and role overlapping. The resulting CDEEE dataset consists of 5000 annotated documents containing 10,755 events. On the other hand, to address the role overlapping problem, we propose the DEE model RODEE. In this model, we first use the pre-trained language model RoBERTa-wwm [11] to embed the text and then encode the embeddings with a Transformer encoder to obtain a text representation that captures the overall context. We then design two separate modules to represent the start position information and end position information of candidate entities, and use multiplicative attention to combine the two into a score matrix, from which candidate entities are predicted to support the event extraction task.

Overall, our main contributions are in the following three areas:

- We constructed a Chinese document-level emergency event extraction dataset called CDEEE through manual annotation. During the annotation process, we addressed not only the problem of scattered arguments and multiple events but also the issue of role overlapping.

- We propose RODEE, a DEE model designed to handle the role overlapping problem. It utilizes two independent matrices to represent the start and end positions of candidate entities. Additionally, it employs multiplicative attention to generate a score matrix for predicting entities with role overlapping, thereby assisting in the event extraction task.

- We compared RODEE with existing DEE models on the CDEEE dataset, and the experimental results show that RODEE outperforms them.

2. Related Work

2.1. Document-Level Event Extraction Dataset

Event extraction datasets serve as the cornerstone of event extraction research. In this section, we review the existing DEE datasets.

MUC-4 [6]: MUC-4 was presented at the Fourth Message Understanding Conference. The dataset consists of 1700 documents in which five types of events are annotated with associated role-filler templates. It mainly targets the challenge of argument scattering in task-oriented scenarios.

WIKIEVENTS: WIKIEVENTS was published by Li et al. [7] as a document-level benchmark dataset. It is derived from English Wikipedia articles that describe real-world events.

Google: The Google dataset is a subset of the GDELT Event Database collected through event-related keyword searches; it contains documents related to 30 event types drawn from 11,909 news articles.

Twitter: The Twitter dataset was collected from tweets posted in December 2010 using the Twitter Streaming API. It includes 20 event types and 1000 tweets.

NO.ANN, NO.POS, NO.NEG [8]: The researchers studied four types of financial events (equity freeze, equity pledge, equity repurchase, and equity increase) and labeled 2976 announcements. NO.ANN is the number of announcements that can be automatically labeled for each event type, NO.POS is the total number of positive mentions, and NO.NEG is the number of negative mentions.

ChFinAnn [9]: Using Chinese financial announcements as the data source, Zheng et al. annotated events with an event knowledge base via distant supervision. Building on NO.ANN, NO.POS, and NO.NEG, this Chinese financial DEE dataset was further enriched and extended to 32,040 documents and 5 event types: equity freeze, equity repurchase, equity reduction, equity increase, and equity pledge.

Roles Across Multiple Sentences (RAMS): RAMS was annotated by Ebner et al. [12] using news articles as the data source. The dataset contains 3194 documents covering 139 event types and a total of 9124 events.

Table 1 summarizes preliminary statistics of the available DEE datasets.

Table 1.

Document-level event extraction dataset.

Most existing DEE datasets are annotated in English, which greatly benefits English DEE research. However, datasets for Chinese DEE are relatively scarce, and none of them target DEE for emergency events.

2.2. Document-Level Event Extraction

DEE can extract the event arguments of interest to users directly from documents and has therefore received a great deal of attention. Some studies [13,14] treat document-level event argument extraction as a template-filling task that follows the MUC-4 setting and focus on extracting event arguments scattered across documents. In addition, Yang et al. [8], Huang et al. [15], and Li et al. [7] first detect the event type and then extract event arguments: specific trigger words are identified to determine the event type, and event arguments beyond the sentence boundaries are then extracted. Nevertheless, trigger-word-based event extraction does not perform well in DEE, because documents frequently contain events whose trigger words are obscure or absent. Therefore, researchers [9,10,16,17] have performed DEE in a trigger-word-free manner, determining the event type directly from the document semantics. These approaches have addressed argument scattering and multiple events to some extent and achieved good results. However, they ignore the role overlapping problem, so candidate entities with overlapping roles cannot be extracted accurately, which in turn degrades the overall performance of event extraction.

2.3. Event Role Overlapping

Traditional joint methods [18,19,20,21,22] for the role overlapping problem extract trigger words and candidate entities together. They use sequence annotation, tagging each sentence only once to extract both trigger words and candidate entities. However, these methods cannot handle overlapping event extraction, because a character that belongs to several overlapping spans would need multiple labels, which leads to label conflicts. Other researchers [23,24,25,26] extract trigger words and candidate entities in separate stages. Although such pipelined approaches can in principle resolve role overlapping, they usually lack explicit dependencies between trigger words and candidate entities and also suffer from error propagation. Among these studies, Yang et al. [27] and Xu et al. [28] built multiple classifiers to address role overlapping and achieved some success in sentence-level event extraction. These studies, each offering its own methods for and insights into the role overlapping problem, have alleviated it to some extent. However, because they target sentence-level event extraction, they cannot be directly transferred to DEE tasks, so role overlapping in DEE remains an open problem.
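The label conflict that single-pass sequence annotation runs into can be reproduced by writing BIO tags for two spans that cover the same characters. This is a minimal illustration under our own assumptions (character-level BIO tags, inclusive span ends, a hypothetical helper `bio_tags`), not the tagging scheme of any specific cited method.

```python
def bio_tags(length, spans):
    """Write BIO tags for (start, end, role) spans into one tag sequence.

    A sequence labeler has exactly one tag slot per character, so a later span
    overwrites an earlier one wherever they share characters. The overwritten
    (position, tag) pairs are returned to make the conflict visible.
    `end` is inclusive.
    """
    tags = ["O"] * length
    lost = []
    for start, end, role in spans:
        for i in range(start, end + 1):
            new_tag = ("B-" if i == start else "I-") + role
            if tags[i] != "O":
                lost.append((i, tags[i]))  # an existing label is discarded
            tags[i] = new_tag
    return tags, lost


# "12人" (characters 0-2) fills two roles in the InjureDead event.
spans = [(0, 2, "InjureDead"), (0, 2, "DeadPerson")]
tags, lost = bio_tags(3, spans)
# tags == ['B-DeadPerson', 'I-DeadPerson', 'I-DeadPerson']
# lost == [(0, 'B-InjureDead'), (1, 'I-InjureDead'), (2, 'I-InjureDead')]

# A span-level representation keeps both labels without any conflict.
span_set = set(spans)
```

The tag sequence can only remember the last role written, whereas a set of (start, end, role) triples stores both roles for the same span, which is the motivation for span-based candidate entity extraction.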

3. Chinese Document-Level Emergency Event Extraction Dataset

3.1. Data Choice

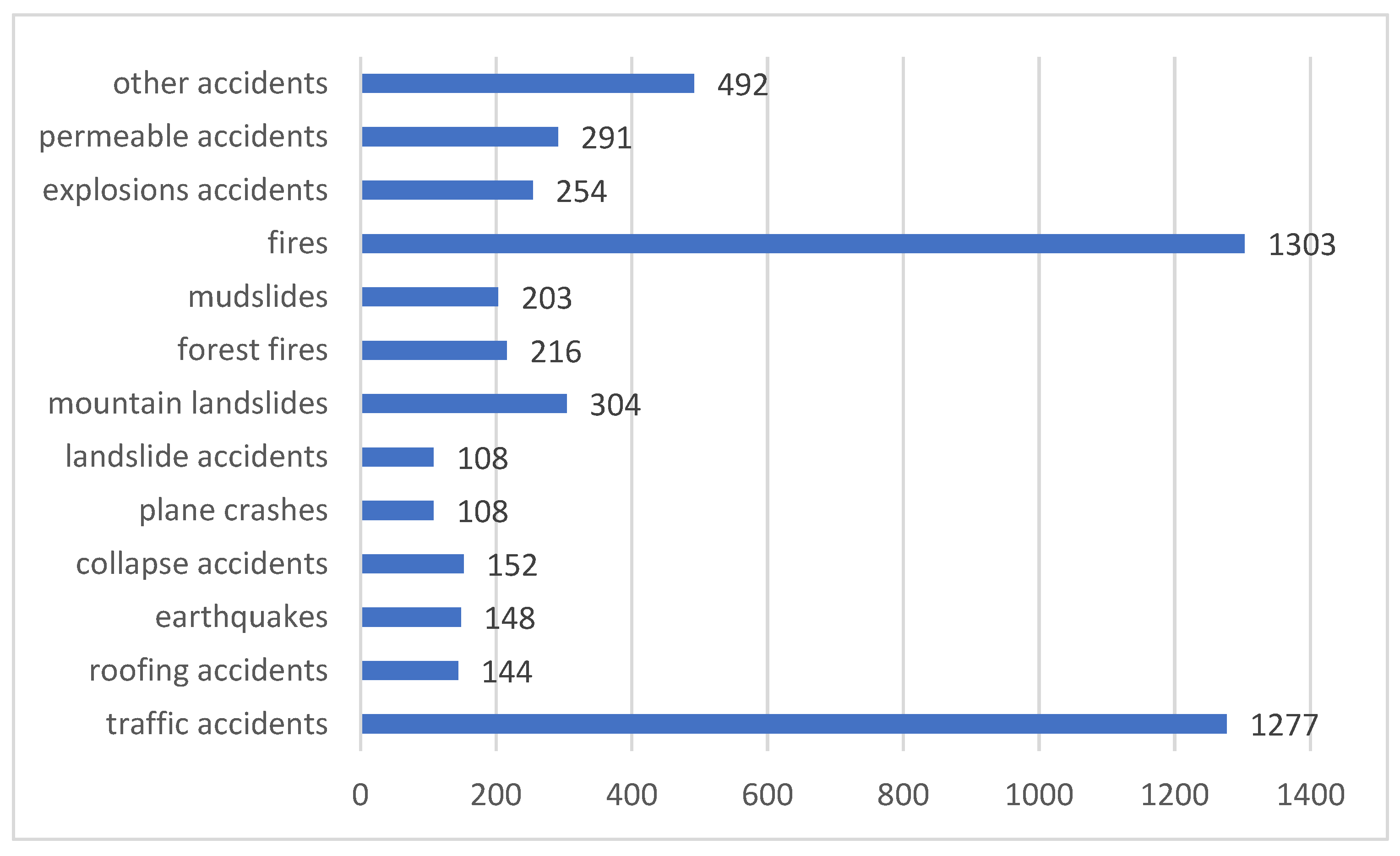

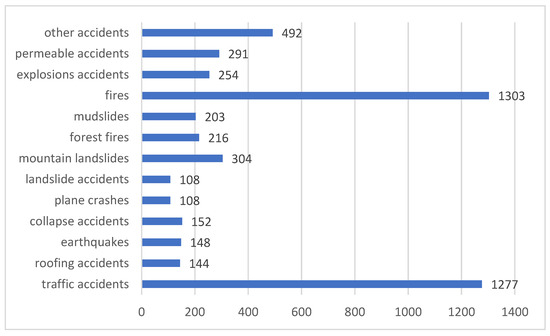

To build high-quality corpus data, we collected news reports on 13 types of common emergency events, including traffic accidents, roofing accidents, earthquakes, collapse accidents, plane crashes, landslide accidents, mountain landslides, fires, mudslides, explosion accidents, water inrush accidents, forest fires, and other types of accidents, from the National Emergency Alert Information Release Network (http://www.12379.cn/html/gzaq/fmytplz/index.shtml; accessed on 10 November 2022), China News (https://www.chinanews.com.cn/; accessed on 10 November 2022), Sohu News (https://news.sohu.com/; accessed on 10 November 2022), Sina News (https://news.sina.com.cn/; accessed on 10 November 2022), People’s Daily (http://www.people.com.cn/; accessed on 10 November 2022), Tencent News (https://news.qq.com/; accessed on 10 November 2022), and Today’s Headlines (https://www.toutiao.com/; accessed on 10 November 2022). From the collected reports, we selected those that describe the overall situation of an emergency in detail as the data to be labeled; after this screening, 5000 news reports met the requirements. Figure 2 shows the number of collected reports for each type of emergency event.

Figure 2.

Statistics on the number of various emergency events.

3.2. Dataset Construction

The construction of the event extraction dataset can be divided into two parts: the development of the event extraction structure and the event extraction data annotation. The event extraction structure, as the basis of the event extraction task, specifies the structured information to be annotated and extracted. Therefore, the characteristics of the information to be annotated and the characteristics of the event extraction task should be considered in the process of developing the event extraction structure.

On the one hand, our analysis of the 5000 collected news reports showed that emergency events come in too many types to enumerate. We therefore classified emergencies by whether they were caused by natural factors and retained the original emergency type as the value of the “HazardType” role. At the same time, we defined four event types covering the emergency event itself, the casualties it causes, and the rescue efforts of organizations: “InjureDead”, “Rescure”, “Accident”, and “NaturalHazard”. Each event type is described in Appendix A.

On the other hand, when developing an event extraction structure, a verb that denotes a change in things or states is generally used as the trigger word, and the time, place, and participants of the event are treated as its key elements. However, DEE research has shown that documents often contain events without obvious trigger words or without any trigger words at all, so trigger-word-based event extraction methods do not work well for DEE. Zheng et al. [9] therefore redesigned the DEE task, constructed ChFinAnn, a DEE dataset without trigger words, and validated its performance. Their experimental results show that this trigger-word-free construction meets the requirements of the DEE task and greatly improves the efficiency of data annotation. Since our study also focuses on document-level tasks, we adopted this trigger-word-free approach to formulate the event extraction structure.

Considering the above two aspects together, we defined 19 event role types to describe event information. In Appendix A, we introduce each role and provide an explanation of the event type to which it belongs.

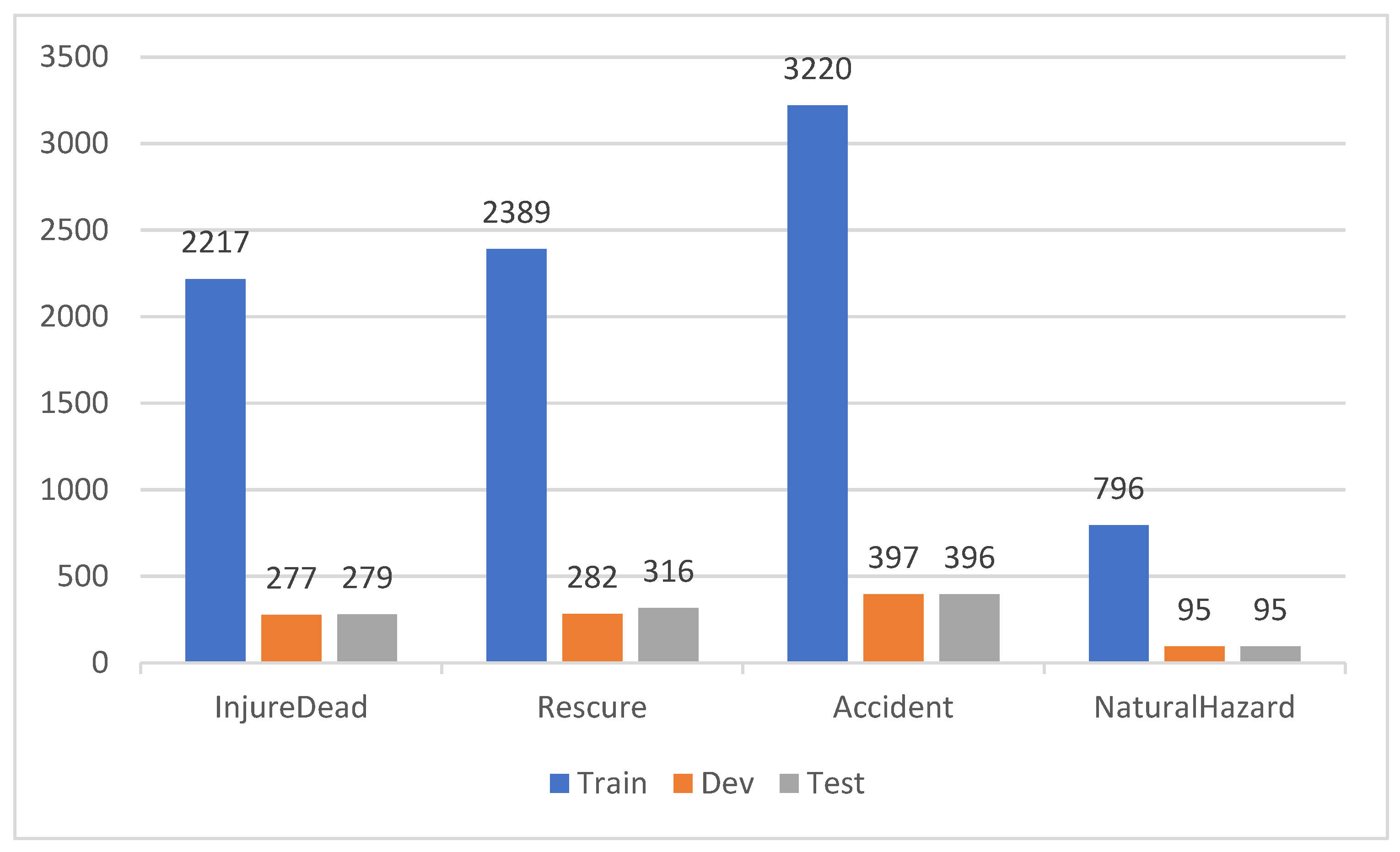

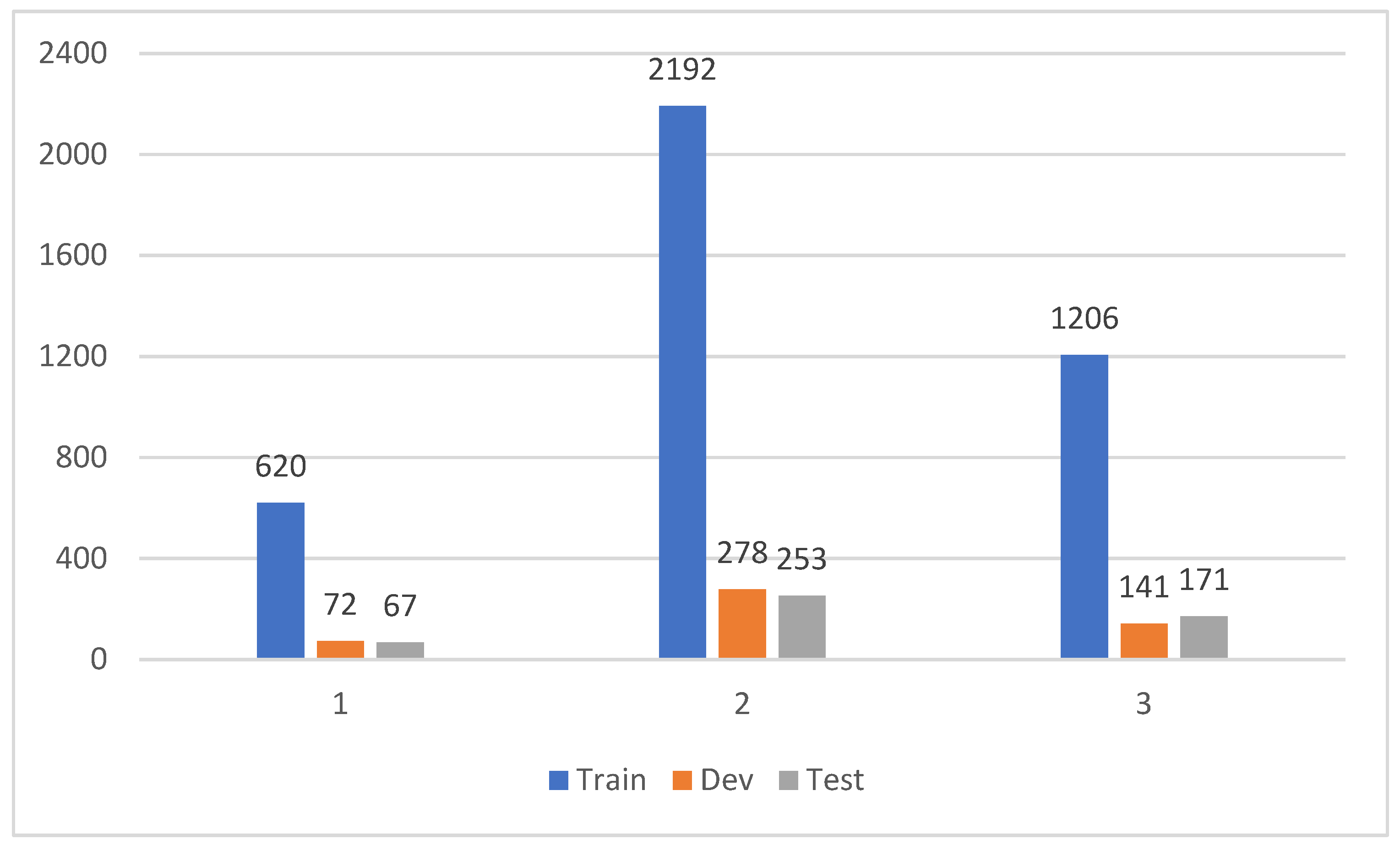

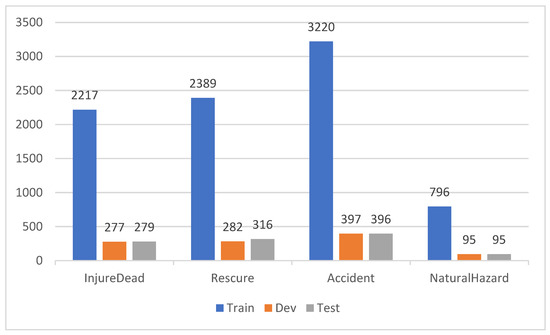

After developing the event extraction structure, we manually annotated the 5000 collected emergency event documents according to it. In total, we annotated more than 10,000 events and divided the annotated data into training, validation, and test sets at a ratio of 8:1:1. Figure 3 shows the number of events in each category after the split.
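An 8:1:1 split like this one can be reproduced with a seeded shuffle. The sketch below rests on assumptions the text does not state: that documents (not individual events) are the unit being split, so all events of a document stay in the same subset, and that a random shuffle with a fixed seed is used. `split_8_1_1` and the seed value are hypothetical.

```python
import random


def split_8_1_1(doc_ids, seed=42):
    """Shuffle document ids with a fixed seed and split them 8:1:1.

    Splitting at the document level keeps every event of a document in the
    same subset. Any remainder from integer division goes to the test set.
    """
    ids = list(doc_ids)
    random.Random(seed).shuffle(ids)
    n_train = len(ids) * 8 // 10
    n_dev = len(ids) // 10
    train = ids[:n_train]
    dev = ids[n_train:n_train + n_dev]
    test = ids[n_train + n_dev:]
    return train, dev, test


train, dev, test = split_8_1_1(range(5000))
# (len(train), len(dev), len(test)) == (4000, 500, 500)
```

Using a dedicated `random.Random` instance keeps the split reproducible without touching the global random state.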

Figure 3.

Number of events of each type in the CDEEE dataset.

To ensure the reliability and accuracy of the annotated data, we arranged the annotation process as follows: the data annotated by annotator A were validated by annotator B, the data annotated by annotator B were validated by annotator C, the data annotated by annotator C were validated by annotator A, and so on. To further strengthen verification, we used voting to resolve disagreements. After manually verifying the dataset, we further assessed its quality with existing DEE models, as described in the experimental section.
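The cyclic validation arrangement and the voting step can be sketched as two small functions. The text does not specify the voting rule, so `majority_vote` assumes a strict majority and returns `None` when there is none (for example, to send the item back for discussion); both function names are hypothetical.

```python
from collections import Counter


def validation_rotation(annotators):
    """Assign each annotator's output to the next annotator in a cycle.

    For ["A", "B", "C"] this yields A -> B, B -> C, C -> A.
    """
    n = len(annotators)
    return {annotators[i]: annotators[(i + 1) % n] for i in range(n)}


def majority_vote(labels):
    """Return the label chosen by a strict majority of voters, else None.

    The strict-majority rule is an assumption; the source only says that
    voting was used to resolve disagreements.
    """
    if not labels:
        return None
    label, count = Counter(labels).most_common(1)[0]
    return label if count > len(labels) / 2 else None


print(validation_rotation(["A", "B", "C"]))  # {'A': 'B', 'B': 'C', 'C': 'A'}
print(majority_vote(["HappenPlace", "HappenPlace", "RescurePlace"]))  # HappenPlace
```

The rotation guarantees that no annotator validates their own work, and an explicit "no majority" result makes unresolved disagreements visible instead of silently picking one label.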

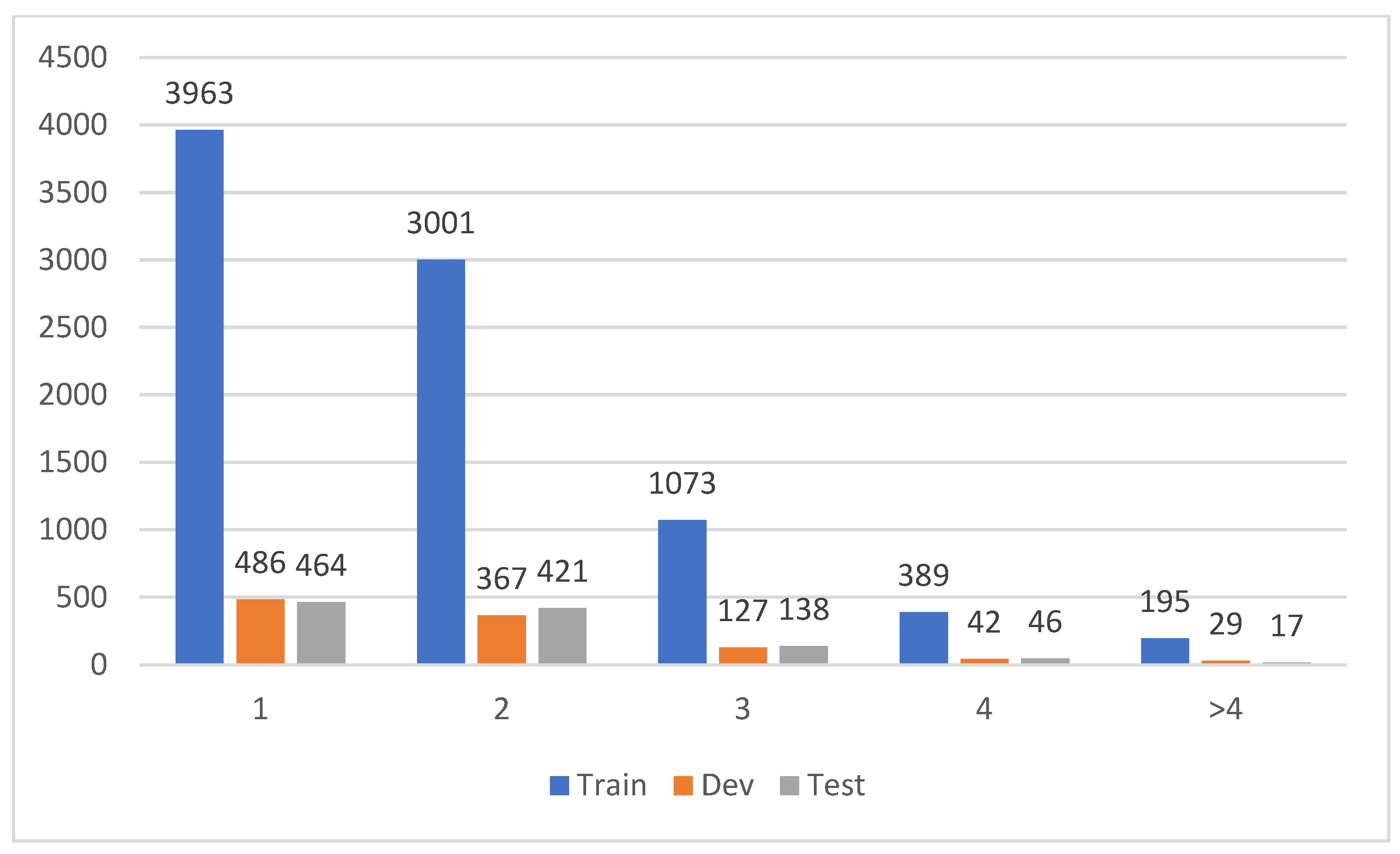

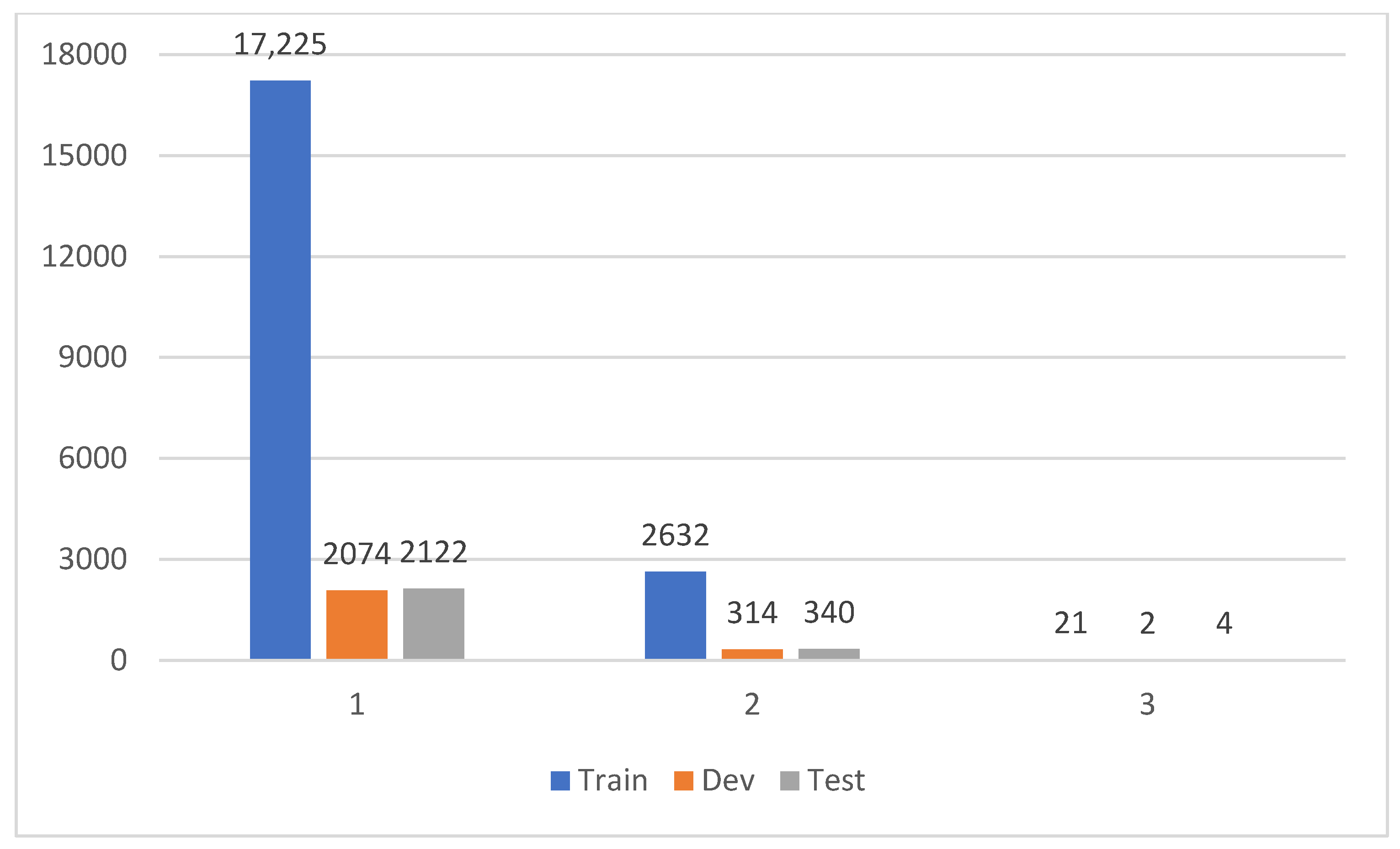

3.3. Dataset Features

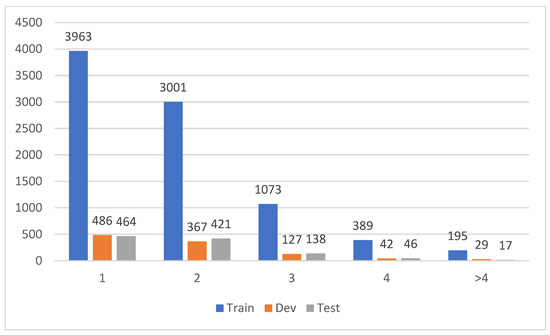

Our annotations cover not only argument scattering and multiple events, which are characteristic of DEE, but also role overlapping, which is often overlooked in DEE. First, we annotated event arguments across the whole document rather than within a single sentence. Figure 4 shows the number of sentences that contain the arguments of each event; the Y-axis represents the number of events. Although all arguments of some events can be found in a single sentence, most events have arguments spread over multiple sentences, which highlights the prevalence of argument scattering in DEE.
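The statistic behind Figure 4 amounts to counting, for each event, the distinct sentences that contain its arguments. The sketch below assumes each argument is associated with the single sentence in which it was annotated; the function name, the role names `HappenTime` and `RescueDuration`, and the third toy event are hypothetical, while the sentence pairs of the first two events follow the Figure 1 example (s1 and s2, s1 and s5; indices are 0-based).

```python
from collections import Counter


def sentences_per_event(events):
    """Number of distinct sentences holding each event's arguments.

    Each event is a list of (role, sentence_index) pairs giving the sentence
    in which each argument was annotated (a simplifying assumption).
    """
    return [len({sent for _, sent in args}) for args in events]


events = [
    [("HappenPlace", 0), ("HappenTime", 1)],       # Accident: s1 and s2
    [("RescurePlace", 0), ("RescueDuration", 4)],  # Rescure: s1 and s5
    [("DeadPerson", 2)],                           # toy single-sentence event
]
counts = sentences_per_event(events)  # [2, 2, 1]
histogram = Counter(counts)           # Counter({2: 2, 1: 1}), the bars of Figure 4
```

Any event whose count is greater than one exhibits argument scattering.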

Figure 4.

Statistics for the number of sentences involved in event arguments.

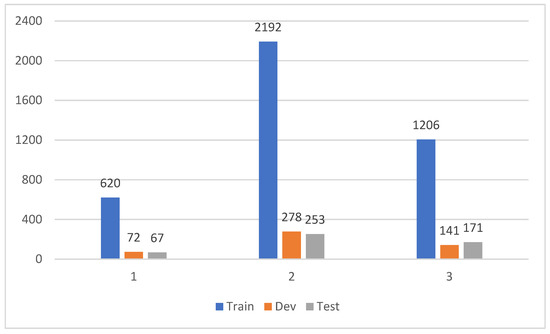

Second, we annotated multiple different events in the same document. Figure 5 shows the number of events per document, where the Y-axis represents the number of documents. Apart from a few documents that contain only one event, most documents contain two or three events, which reflects the fact that documents often describe multiple events.

Figure 5.

Statistics for event count of documents.

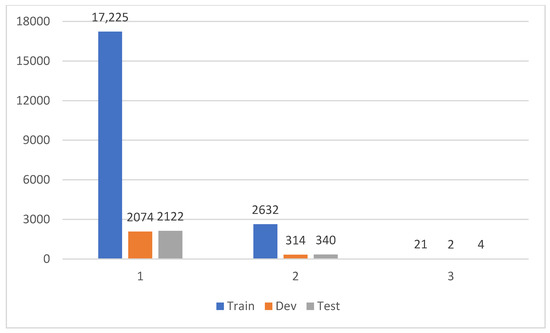

Third, we labeled the role overlapping problem where a candidate entity plays different roles in one or more events, and Figure 6 shows the number of roles played by the candidate entities, with the Y-axis representing the number of events. We can see from the figure that although in most cases a candidate entity plays only one role, there are still a significant number of candidate entities that play two or three different roles at the same time.

Figure 6.

Statistics on the number of roles played by candidate entities.

3.4. Dataset Summary

Compared with some existing event extraction datasets, CDEEE has the following advantages. First, it fills the gap in datasets for Chinese document-level emergency event extraction, allowing relevant models to be trained and validated. Second, it is manually annotated and, with 5000 documents, larger than most existing DEE datasets. Third, it annotates role overlapping, which better reflects real-world settings and text complexity.

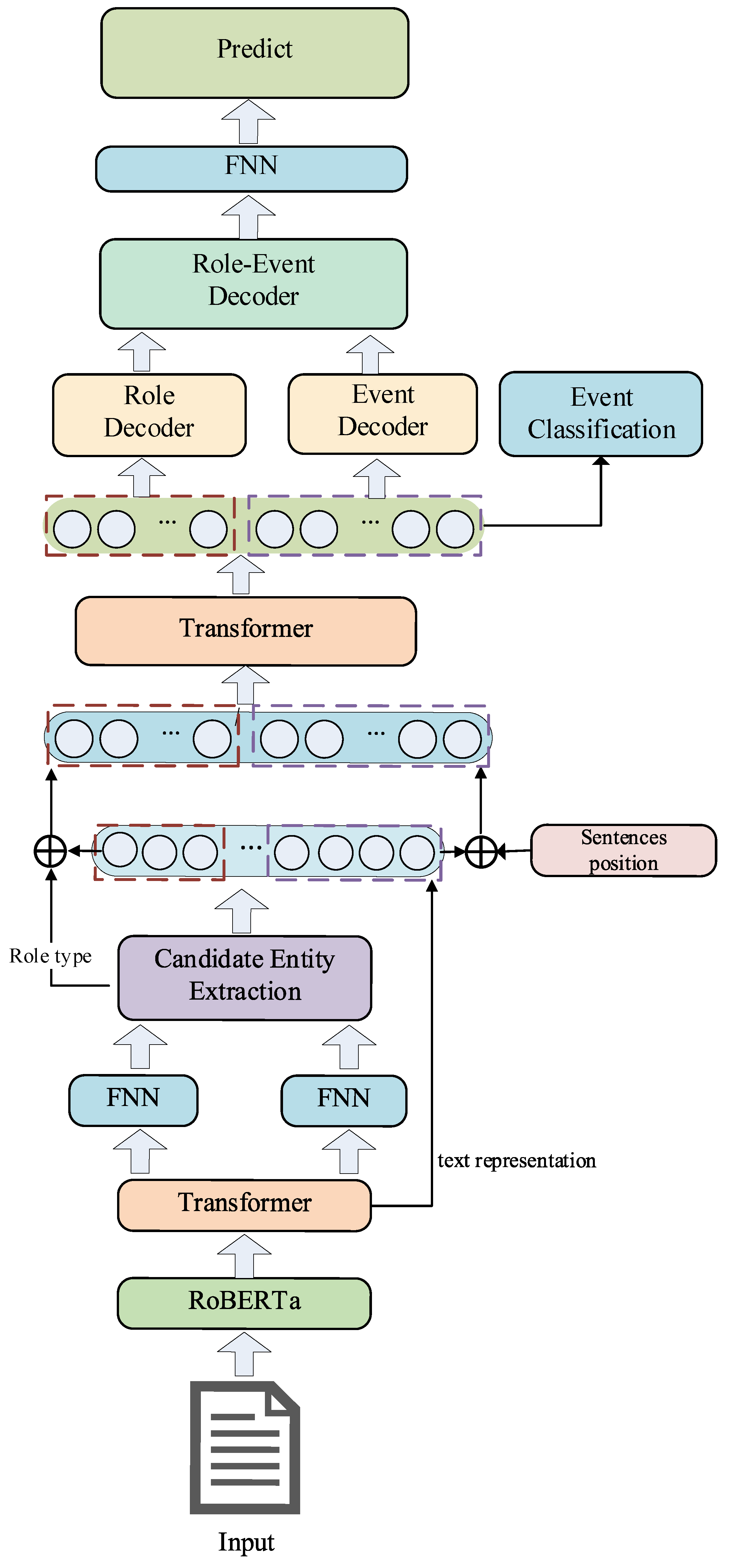

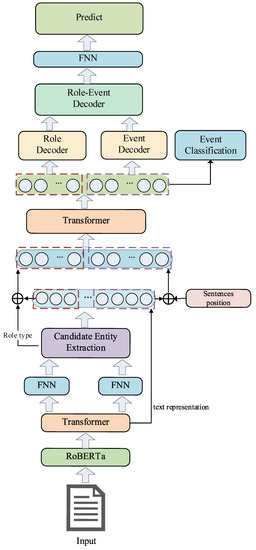

4. Proposed Method

The model described in this paper handles three main subtasks: candidate entity extraction, event type detection, and argument identification. First, text embeddings and text representations are obtained with the pre-trained language model RoBERTa-wwm and a Transformer encoder [29], respectively. Then, two independent modules extract the head and tail position information of candidate entities, and a multiplicative attention mechanism models the interaction between the two, producing a score matrix for predicting candidate entities. Next, the candidate entity representations are fused with the sentence representations and fed into a Transformer to obtain the document representation used for event type detection. Finally, a multi-granularity decoder decodes the event and role information to identify and predict arguments. The structure of the proposed model is shown in Figure 7.

Figure 7.

Overall model structure of RODEE.

The DEE task can be described as extracting one or more structured events $Y = \{y_1, y_2, \ldots, y_k\}$ from an input document $D = \{s_1, s_2, \ldots, s_{N_s}\}$ consisting of $N_s$ sentences, where $k$ represents the number of events contained in the document. Each event extracted from the document consists of an event type and its associated roles. We denote the set of all event types as $\mathcal{T}$ and the set of all role types as $\mathcal{R}$. Each structured event extracted from the document is denoted as $y_i = (t_i, \{(r^i_j, a^i_j)\}_j)$, where $t_i \in \mathcal{T}$ represents the event type. The roles corresponding to the event type and the arguments that fill them are denoted as $r^i_j \in \mathcal{R}$ and $a^i_j$, respectively.

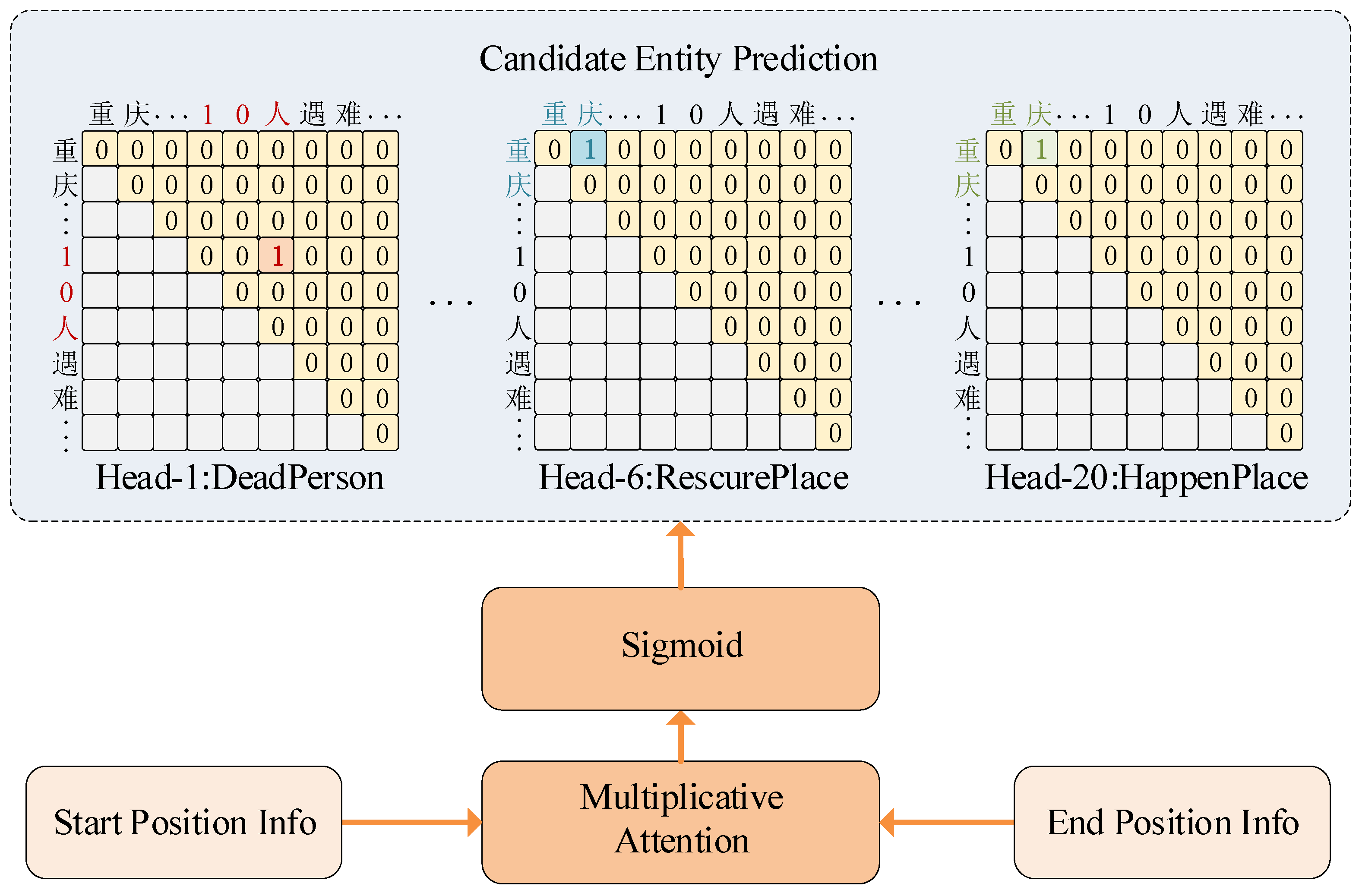

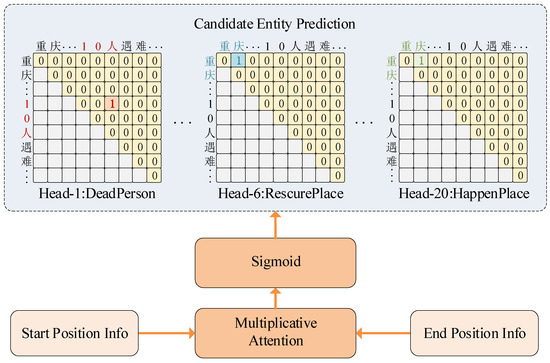

4.1. Candidate Entity Extraction

Candidate entity extraction, as the first subtask of DEE, strongly affects the performance of the two subsequent subtasks, event type detection and argument identification. In previous work, however, candidate entities are usually treated as flat entities and extracted with sequence annotation. Although sequence labeling works well for flat entity extraction, it cannot handle overlapping roles and therefore cannot accurately extract entities that play multiple roles. To solve this problem, in this stage we first use two different matrices to represent the head position information and tail position information of candidate entities, respectively. We then apply multiplicative attention so that the two matrices interact, capturing the dependencies between start and end positions. This yields a score matrix, which is then used to extract candidate entities.

Specifically, given a document $D = \{s_1, s_2, \ldots, s_{N_s}\}$, where each sentence is represented as $s_i = \{w_{i,1}, w_{i,2}, \ldots, w_{i,N_w}\}$, we first embed each sentence with the pre-trained language model RoBERTa-wwm to obtain an embedded representation $\mathbf{X}_i$, where $N_w$ represents the sentence length. To obtain the textual representation, we then encode the embedded representation with a Transformer encoder, which yields the text representation of each sentence:

$$\mathbf{H}_i = \mathrm{Transformer}(\mathbf{X}_i),$$

where $\mathbf{X}_i, \mathbf{H}_i \in \mathbb{R}^{N_w \times d}$ and $d$ is the hidden layer size.

Finally, to accurately extract candidate entities whose characters overlap, we use two feed-forward networks (FFNs) to generate two different matrices, $\mathbf{H}^{st}_i$ and $\mathbf{H}^{ed}_i$, which represent the head position information and tail position information of candidate entities and capture the contextual information of the target characters. By representing and training the head and tail position information with separate matrices, the start and end positions of candidate entities can be identified separately. Because the contexts of the start and end positions of a candidate entity differ, using separate matrices for them greatly improves task accuracy compared with using the Transformer output directly. As illustrated in Figure 8, we employ multiplicative attention to let the start position information and end position information interact. This interaction produces a score matrix $\mathbf{S} \in \mathbb{R}^{C \times N_w \times N_w}$ for candidate entity prediction, where $C$ is the number of predefined role types plus one (for the non-predefined role type). We obtain the score matrix as follows:

where the window size is a hyperparameter that determines the context used to build the contextual embedding of each target character. We set it to 64, so that the 64 characters preceding and following the target character form its contextual representation, which serves as the start or end position information of that character. $\mathbf{h}^{st}_{c,p}$ and $\mathbf{h}^{ed}_{c,q}$ represent the start position information and end position information of a candidate entity with role type $c$ and span $(p, q)$, where $1 \le p \le q \le N_w$. To let the start and end position information of the target characters interact for each role type, we transpose the end position representation, that is, we swap the last two dimensions of $\mathbf{H}^{ed}$, denoted as $(\mathbf{H}^{ed})^{\top}$.
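The core of a per-role multiplicative interaction is a dot product between the start representation at every position and the end representation at every position, computed separately for each role type (the start matrix times the transposed end matrix). The pure-Python sketch below shows only that product plus a mask for invalid spans whose start lies after their end; the 64-character context window, the FFNs, and any scaling in RODEE's actual formula are not modeled, and `score_tensor` is a hypothetical name.

```python
def score_tensor(h_start, h_end):
    """Per-role multiplicative scores between start and end representations.

    h_start[c][p] and h_end[c][q] are d-dimensional vectors for role type c at
    character positions p and q. Returns S with S[c][p][q] equal to the dot
    product of h_start[c][p] and h_end[c][q], i.e. the start matrix times the
    transposed end matrix for each role. Spans with p > q are masked to -inf.
    """
    scores = []
    for starts, ends in zip(h_start, h_end):
        grid = []
        for p, hs in enumerate(starts):
            row = []
            for q, he in enumerate(ends):
                if p > q:
                    row.append(float("-inf"))  # invalid span: start after end
                else:
                    row.append(sum(a * b for a, b in zip(hs, he)))
            grid.append(row)
        scores.append(grid)
    return scores


# Two role types, two character positions, d = 2.
h_start = [[[1.0, 0.0], [0.0, 1.0]], [[1.0, 1.0], [0.0, 0.0]]]
h_end = [[[2.0, 0.0], [0.0, 3.0]], [[1.0, 2.0], [1.0, 0.0]]]
print(score_tensor(h_start, h_end))
# [[[2.0, 0.0], [-inf, 3.0]], [[3.0, 1.0], [-inf, 0.0]]]
```

Because every role type gets its own start-by-end grid, the same span can score highly under several roles at once, which a single tag per character cannot express.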

Figure 8.

Candidate entity prediction. “重庆” corresponds to “Chongqing”; “10人遇难” corresponds to “10 people died”.

After obtaining the score matrix $\mathbf{S}$, we generate the candidate entity prediction matrix using the following formula:

where $\mathbf{W}$ is a trainable matrix and $\mathbf{P}$ is the candidate entity prediction matrix shown in Figure 8. After this transformation, we obtain the predicted start position, end position, and role type of each candidate entity, as shown in Figure 8. We denote each candidate entity extracted from a sentence as a triplet $(p, q, c)$, where $p$ is its start position, $q$ is its end position, and $c$ is its role type. We denote the set of all candidate entities extracted from the whole document as $\mathcal{E}$ and represent each of them as a quadruple $(i, p, q, c)$, where $i$ is the index of the sentence containing the candidate entity.
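Reading (sentence index, start, end, role) quadruples off a prediction matrix can be sketched as follows. We assume here that each predefined role type is decided independently with a sigmoid threshold, so one span can receive several roles as role overlapping requires, and that class index 0 is the extra non-predefined role type; the text does not specify the decision rule, and `decode_entities` is a hypothetical name. Comparing the raw logit against the logit of the threshold is equivalent to thresholding the sigmoid but cannot overflow.

```python
import math


def decode_entities(logits, sentence_index, role_names, threshold=0.5):
    """Turn per-role span logits into (sentence, start, end, role) quadruples.

    logits[c][p][q] scores the span from character p to character q (inclusive)
    for role type c. Index 0 is assumed to be the non-predefined role type and
    is skipped; every other role is decided independently, so one span may
    receive several roles.
    """
    # sigmoid(x) > threshold  <=>  x > log(threshold / (1 - threshold))
    cutoff = math.log(threshold / (1.0 - threshold))
    quads = []
    for c in range(1, len(logits)):
        for p, row in enumerate(logits[c]):
            for q in range(p, len(row)):  # only spans with start <= end
                if row[q] > cutoff:
                    quads.append((sentence_index, p, q, role_names[c]))
    return quads


def single_span(n, p, q, hit=2.0, miss=-3.0):
    """Toy n x n logit grid that is positive only at span (p, q)."""
    return [[hit if (i, j) == (p, q) else miss for j in range(n)] for i in range(n)]


roles = ["None", "InjureDead", "DeadPerson"]
# "12人" occupies characters 0-2 and is scored positive for two roles.
logits = [[[0.0] * 3 for _ in range(3)], single_span(3, 0, 2), single_span(3, 0, 2)]
print(decode_entities(logits, sentence_index=0, role_names=roles))
# [(0, 0, 2, 'InjureDead'), (0, 0, 2, 'DeadPerson')]
```

Collecting these quadruples over all sentences gives the document-level candidate entity set.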

Regarding the loss function in this section, the cross-entropy loss function is used as follows:

4.2. Event Type Detection

Before performing event type detection, the model needs to understand the document as a whole, which means obtaining document-level contextual encodings. To obtain a holistic representation of the document, we use a Transformer encoder that lets all sentence information and candidate entity information interact. Specifically, we first apply max pooling to obtain the textual representation $\mathbf{e}_j$ of each candidate entity and the textual representation $\mathbf{c}_i$ of each sentence. This gives candidate entity representations the same dimension as sentence representations, so that the two can interact. Then, sentence information and candidate entity information are embedded separately. The sentence position information is integrated with the max-pooled sentence representation. For candidate entities, we embed the role type into the max-pooled entity representation; for a candidate entity with multiple role types, each role type is embedded separately and the resulting embeddings are fused. Finally, the embedded sentence representations and candidate entity representations are fed into the Transformer encoder, which lets them interact and yields the representation of the entire document:

$$\mathbf{H}^{D} = [\mathbf{H}^{C}; \mathbf{H}^{E}] = \mathrm{Transformer}\big(\mathbf{c}_1 + \mathrm{PE}(1), \ldots, \mathbf{c}_{N_s} + \mathrm{PE}(N_s), \mathrm{Fuse}(\mathbf{e}_1), \ldots, \mathrm{Fuse}(\mathbf{e}_{N_e})\big),$$

where $\mathbf{H}^{C}$ represents the sentence representations within the document representation, $\mathbf{H}^{E}$ represents the candidate entity representations, $\mathbf{H}^{D}$ represents the document representation, $N_e$ represents the number of candidate entities extracted from the document, $\mathrm{PE}$ represents sentence position information, and $\mathrm{Fuse}(\cdot)$ represents the feature fusion embedding.
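The per-item inputs to the document encoder described above can be sketched in a few lines. This is a minimal illustration, assuming that fusion means averaging the embeddings of all role types an entity plays and adding the result to the max-pooled span vector, and that the sentence position embedding is added to the max-pooled sentence vector; the text does not give these formulas, and all function names are hypothetical.

```python
def max_pool(vectors):
    """Element-wise max over a list of equal-length vectors."""
    return [max(column) for column in zip(*vectors)]


def entity_input(token_vectors, start, end, roles, role_embedding):
    """Max-pool an entity span and add the mean of its role-type embeddings.

    Averaging is one simple way to fuse several role embeddings for a
    role-overlapping entity; it is an assumption, not RODEE's formula.
    `end` is inclusive.
    """
    pooled = max_pool(token_vectors[start:end + 1])
    fused = [sum(vals) / len(roles) for vals in zip(*(role_embedding[r] for r in roles))]
    return [a + b for a, b in zip(pooled, fused)]


def sentence_input(token_vectors, position_embedding):
    """Max-pool a whole sentence and add its sentence-position embedding."""
    return [a + b for a, b in zip(max_pool(token_vectors), position_embedding)]


token_vectors = [[1.0, 0.0], [0.5, 2.0], [0.0, 1.0]]
role_embedding = {"InjureDead": [0.5, 0.0], "DeadPerson": [0.0, 0.25]}
print(entity_input(token_vectors, 0, 1, ["InjureDead", "DeadPerson"], role_embedding))
# [1.25, 2.125]
print(sentence_input(token_vectors, [0.0, 0.5]))
# [1.0, 2.5]
```

Max pooling makes every entity and sentence a single d-dimensional vector regardless of length, which is what allows them to be placed side by side in one encoder input sequence.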

After obtaining the overall document representation, we use the sentence representations within it for event type detection. Specifically, we apply max pooling to and then perform binary classification for each event type, as follows:

where represents the probability that the i-th event is an event of type , and represents the number of predefined event types.

Regarding the loss function in this section, the cross-entropy loss function is used as follows:
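The following sketch illustrates event type detection as one two-way (absent/present) classifier per event type on top of max-pooled sentence representations, with the two-class cross-entropy summed over event types. The parameter layout `W_types` of shape (T, d, 2) is an assumption made for illustration.

```python
import numpy as np


def detect_event_types(sent_repr, W_types):
    """sent_repr: (S, d) sentence part of the document representation.
    W_types: (T, d, 2) one two-way (absent/present) classifier per event type.
    Returns P(present) for each of the T event types."""
    g = sent_repr.max(axis=0)                    # max pooling over sentences -> (d,)
    logits = np.einsum("d,tdc->tc", g, W_types)  # (T, 2)
    logits = logits - logits.max(axis=1, keepdims=True)
    p = np.exp(logits)
    p = p / p.sum(axis=1, keepdims=True)
    return p[:, 1]


def detection_loss(p_present, labels, eps=1e-12):
    """Sum over event types of the two-class cross-entropy."""
    labels = np.asarray(labels, dtype=float)
    return float(-np.sum(labels * np.log(p_present + eps)
                         + (1.0 - labels) * np.log(1.0 - p_present + eps)))
```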

4.3. Argument Identification

In this stage, we need to match and fill in the arguments and event roles for the existing events. Following Yang et al. [10], we use a multi-granularity decoder to extract events in a parallel manner. This method consists of three parts: an event decoder, a role decoder, and an event-to-role decoder.

The event decoder is designed to support the parallel extraction of all events and is used to model interactions between events. A learnable query matrix is generated for event extraction, where represents the number of events contained in the document and is a hyperparameter. The event query matrix is then passed through a non-autoregressive decoder, which consists of multiple identical stacks of Transformer layers. In each layer, there is a multi-head self-attention mechanism that simulates interactions between events. Additionally, there is a multi-head cross-attention mechanism that integrates the document-aware representation into the event query :

where .
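To make the non-autoregressive decoding concrete, the sketch below updates all event queries at once: self-attention lets events interact, and cross-attention reads the document representation. It is a simplified assumption, using single-head attention without learned projections, feed-forward sublayers, or layer normalization; the helper names are hypothetical.

```python
import numpy as np


def attention(q, k, v):
    """Single-head scaled dot-product attention without learned projections."""
    s = q @ k.T / np.sqrt(q.shape[-1])
    s = s - s.max(axis=-1, keepdims=True)
    w = np.exp(s)
    w = w / w.sum(axis=-1, keepdims=True)
    return w @ v


def decoder_layer(queries, memory):
    queries = queries + attention(queries, queries, queries)  # event-event interaction
    queries = queries + attention(queries, memory, memory)    # fuse document representation
    return queries


def event_decoder(event_queries, doc_repr, num_layers=2):
    """Non-autoregressive: all m event queries are updated together in every layer."""
    q = event_queries
    for _ in range(num_layers):
        q = decoder_layer(q, doc_repr)
    return q
```

Because no query depends on a previously generated event, permuting the input queries simply permutes the output rows.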

The role decoder is designed in a similar way to support parallel filling of all roles in an event and to model interactions between roles. A learnable query matrix is generated for role filling, where represents the number of roles associated with the corresponding event. The role query matrix is then fed into a decoder with the same architecture as the event decoder: the self-attention mechanism models relationships between roles, and the cross-attention mechanism integrates the candidate entity representations with the document representation.

where .

In order to generate different events and their corresponding roles, we designed an event-to-role decoder to simulate the interaction between event information and role information.

where .
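One plausible reading of the event-to-role decoder is sketched below: every decoded role representation is conditioned on a given event by addition, and the roles of that event then interact through self-attention. The addition-based conditioning and all names are assumptions for illustration, not the decoder defined in this paper or in DE-PPN.

```python
import numpy as np


def attention(q, k, v):
    """Single-head scaled dot-product attention without learned projections."""
    s = q @ k.T / np.sqrt(q.shape[-1])
    s = s - s.max(axis=-1, keepdims=True)
    w = np.exp(s)
    return (w / w.sum(axis=-1, keepdims=True)) @ v


def event_to_role(event_repr, role_repr):
    """event_repr: (m, d) decoded events; role_repr: (R, d) decoded roles.
    Returns (m, R, d): one set of role representations per event."""
    out = []
    for e in event_repr:
        h = role_repr + e                   # condition each role on this event (assumption)
        out.append(h + attention(h, h, h))  # let the roles of this event interact
    return np.stack(out)
```

In this form, each event's role representations depend only on that event, so different events can be filled with different arguments.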

Finally, after decoding with a multi-granularity decoder, we transform event queries and role queries into predicted events and their corresponding predicted roles. To filter out false events, we assess whether each predicted event is non-empty. Specifically, predicted events can be obtained through the following approach:

where is a learnable matrix.
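A minimal sketch of the non-empty event filter: a linear two-way classifier over each decoded event, keeping events whose non-empty probability exceeds 0.5. The threshold and the [empty, non-empty] column order of the hypothetical `W_judge` are assumptions.

```python
import numpy as np


def judge_events(event_repr, W_judge, threshold=0.5):
    """event_repr: (m, d) decoded events; W_judge: (d, 2) scores for [empty, non-empty].
    Returns P(non-empty) per event and the indices of the events that are kept."""
    logits = event_repr @ W_judge
    logits = logits - logits.max(axis=1, keepdims=True)
    p = np.exp(logits)
    p = p / p.sum(axis=1, keepdims=True)
    return p[:, 1], np.flatnonzero(p[:, 1] > threshold)
```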

Afterwards, for each predicted event with predefined roles, we decode the predicted arguments by filling the candidate indices or null values with an class classifier.

where , and are learnable matrices, and . It is worth noting that we carried out some dimensional transformations during the actual operation process.
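The argument-filling step can be sketched as a pointer-style classifier that scores each (event, role) slot against the N candidate entities plus one null option. The additive tanh scoring form and the appended learnable null vector are assumptions made for this illustration; the parameter names are hypothetical.

```python
import numpy as np


def fill_arguments(role_repr, cand_repr, null_vec, W1, W2, v):
    """Pointer-style (N+1)-way classifier for every (event, role) slot.

    role_repr: (m, R, d) role representations per predicted event.
    cand_repr: (N, d) candidate entity representations.
    null_vec: (d,) hypothetical learnable "no argument" vector, appended as index N.
    W1, W2: (d, h) and v: (h,) learnable parameters.
    Returns probabilities of shape (m, R, N + 1).
    """
    cands = np.vstack([cand_repr, null_vec[None, :]])             # (N+1, d)
    a = role_repr @ W1                                             # (m, R, h)
    b = cands @ W2                                                 # (N+1, h)
    logits = np.tanh(a[:, :, None, :] + b[None, None, :, :]) @ v   # (m, R, N+1)
    logits = logits - logits.max(axis=-1, keepdims=True)
    p = np.exp(logits)
    return p / p.sum(axis=-1, keepdims=True)
```

Taking `argmax` over the last axis yields, for each role of each event, either a candidate index or the null index N.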

So far, we have obtained predicted events, and the candidate entities for each role corresponding to each event, . This completes the event extraction, as well as the identification, matching, and filling of the corresponding arguments.

Regarding the loss function for this section, we first use the assignment problem in operations research [30] to find the optimal assignment between the predicted events and the ground truth events:

where represents the permutation space with a length of , and denotes the pairwise matching cost between the ground truth data and the predicted data with the index . To account for all the predicted instances of event roles, we define as follows:

where indicates that the event is not empty. The optimal assignment can be effectively calculated using the Hungarian algorithm [30]. Then, based on all optimal assignments, we define a loss function with negative log likelihood:
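The matching and loss can be sketched in plain Python under simplifying assumptions: the pairwise cost is the negated sum of the non-empty probability and the probabilities assigned to the gold arguments, unmatched prediction slots are pushed toward "empty", and the optimal assignment is found by exhaustive search over permutations. The exhaustive search returns the same optimum as the Hungarian algorithm but is only practical for a small number of generated events (4 in Table A3); the function names are hypothetical.

```python
import math
from itertools import permutations


def pair_cost(judge_p, role_p, gold_args):
    """Cost of matching one prediction to one gold event (lower is better).

    judge_p: predicted probability that the event is non-empty.
    role_p[j][c]: probability that role j is filled by candidate c (last index = null).
    gold_args[j]: gold candidate index for role j.
    """
    return -(judge_p + sum(role_p[j][c] for j, c in enumerate(gold_args)))


def optimal_assignment(preds, golds):
    """Exhaustive search over injective maps gold event -> prediction slot."""
    best, best_cost = None, math.inf
    for perm in permutations(range(len(preds)), len(golds)):
        cost = sum(pair_cost(*preds[p], g) for p, g in zip(perm, golds))
        if cost < best_cost:
            best, best_cost = perm, cost
    return best, best_cost


def matching_nll(preds, golds, eps=1e-12):
    """Negative log-likelihood under the optimal assignment."""
    perm, _ = optimal_assignment(preds, golds)
    loss = 0.0
    for p_idx, gold_args in zip(perm, golds):
        judge_p, role_p = preds[p_idx]
        loss -= math.log(judge_p + eps)
        loss -= sum(math.log(role_p[j][c] + eps) for j, c in enumerate(gold_args))
    for p_idx, (judge_p, _) in enumerate(preds):
        if p_idx not in perm:
            loss -= math.log(1.0 - judge_p + eps)  # unmatched slots should be "empty"
    return loss
```

For larger numbers of generated events, the exhaustive search would be replaced by a polynomial-time Hungarian solver over the same cost matrix.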

Finally, our overall loss function considers the candidate entity extraction loss , the event type detection loss , and the event argument recognition loss , which involves filling the entity–role pairs, as shown below:

where , , and are hyperparameters.

5. Experiments

5.1. Experimental Setting

We used our annotated Chinese document-level emergency event extraction dataset, CDEEE, as the experimental data. It contains a total of 5000 documents covering four event types: “InjureDead”, “Rescure”, “Accident”, and “NaturalHazard”.

For a fair comparison, we adopted the evaluation standard used in Doc2EDAG and DE-PPN. Specifically, for each predicted event, we select the most similar ground-truth event without replacement and calculate precision (P), recall (R), and the F1 score. Because an event type typically includes multiple roles, we use the micro-averaged role-level F1 score as the final DEE metric. Our hyperparameter settings follow those of the DE-PPN model. A detailed description of the experimental environment and hyperparameters is provided in Appendix A.
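The evaluation procedure described above can be sketched as follows. This is a simplified reading of the Doc2EDAG-style metric, assuming that "most similar" means the gold event sharing the most exactly matching arguments and that `None` marks an empty role; counts are summed over all event types before computing micro-averaged P, R, and F1. The function names are hypothetical.

```python
def role_counts(pred, gold):
    """Role-level TP/FP/FN between two argument lists of the same event type."""
    tp = fp = fn = 0
    for p, g in zip(pred, gold):
        if p is not None and p == g:
            tp += 1
            continue
        if p is not None:
            fp += 1  # predicted a wrong or spurious argument
        if g is not None:
            fn += 1  # missed the gold argument
    return tp, fp, fn


def micro_prf(pred_by_type, gold_by_type):
    """pred_by_type / gold_by_type: {event_type: [argument list, ...]}."""
    tp = fp = fn = 0
    for etype in set(pred_by_type) | set(gold_by_type):
        remaining = list(gold_by_type.get(etype, []))
        for pred in pred_by_type.get(etype, []):
            if remaining:
                # most similar gold event, selected without replacement
                best = max(remaining, key=lambda g: role_counts(pred, g)[0])
                remaining.remove(best)
                t, f_p, f_n = role_counts(pred, best)
            else:
                t, f_p, f_n = 0, sum(a is not None for a in pred), 0
            tp, fp, fn = tp + t, fp + f_p, fn + f_n
        for gold in remaining:  # unmatched gold events count as misses
            fn += sum(a is not None for a in gold)
    p = tp / (tp + fp) if tp + fp else 0.0
    r = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * p * r / (p + r) if p + r else 0.0
    return p, r, f1
```

For example, a predicted “Accident” event that gets two of three arguments right yields P = R = F1 = 2/3.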

5.2. Comparison Experiment and Result Analysis

Given that our dataset adopts trigger-free annotation, we used the following models as baselines for the comparison experiments and to validate the quality of the dataset:

- Doc2EDAG: An end-to-end model that transforms DEE into a table-filling task and fills the event table by expanding paths of an entity-based directed acyclic graph (EDAG).

- GreedyDec: A baseline introduced in the Doc2EDAG paper that fills the event table with a greedy strategy.

- DE-PPN: This model uses multi-granularity decoders for parallel extraction of events to improve the speed of event extraction while effectively addressing the challenges of multiple events and argument scattering of document-level events.

In addition, to verify the quality of the dataset, we conducted a manual extraction experiment on document-level event information. For this human evaluation, we randomly selected 1000 events and asked three annotators to extract the event information manually. The reported result is the average of the three annotators’ scores.

Under the experimental setup described in Section 5.1, we compared manually extracted event information with the results of the baseline models on the CDEEE dataset. We also trained our proposed RODEE model and compared it with the baseline models under the same experimental conditions.

It should be noted that in the experimental results below, we highlight in bold the data from the human evaluation (Human), the RODEE model, and other baseline models that outperform the RODEE model.

Table 2 and Table 3 show the results of each model on the CDEEE dataset for each event type, as well as the overall results. The scores achieved by humans on the CDEEE dataset were much higher than those of the existing DEE models. This indicates the high quality of our annotated dataset and also shows that there is still substantial room for improvement in the DEE task.

Table 2.

Experimental results of DEE models for each event type on the CDEEE dataset.

Table 3.

Overall experimental results of DEE on the CDEEE dataset.

Existing DEE methods use sequence annotation to predict candidate entities in the candidate entity extraction stage and then embed the role types of the candidate entities to assist the DEE task. Because sequence annotation assigns only one label per token, it cannot represent a candidate entity that plays several roles, whereas our CDEEE dataset explicitly annotates such role overlapping. The role type embedding in these models therefore captures only part of the role information. To measure the effect of this, we removed the role type embedding module from each baseline model and named the modified baselines Doc2EDAG*, GreedyDec*, and DE-PPN*.
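The following toy example, with hypothetical helper names, illustrates why a sequence-labeling scheme loses overlapping roles: a BIO tag sequence holds one label per token, so when the same place mention is both HappenPlace and RescurePlace, only one role survives the round trip. Keeping the first span is an assumed tie-break; any one-tag-per-token scheme must drop one of the two roles.

```python
def spans_to_bio(n_tokens, spans):
    """Encode (start, end, role) spans as one BIO tag per token.

    A span that overlaps an already tagged token is dropped (assumed tie-break),
    because a single tag sequence has no slot for a second role.
    """
    tags = ["O"] * n_tokens
    for start, end, role in spans:
        if any(tags[i] != "O" for i in range(start, end + 1)):
            continue
        tags[start] = "B-" + role
        for i in range(start + 1, end + 1):
            tags[i] = "I-" + role
    return tags


def bio_to_spans(tags):
    """Decode a BIO tag sequence back into (start, end, role) spans."""
    spans, start, role = [], None, None
    for i, tag in enumerate(tags + ["O"]):      # sentinel closes a trailing span
        if tag.startswith("I-") and tag[2:] == role:
            continue                            # the open span continues
        if start is not None:
            spans.append((start, i - 1, role))  # close the open span
            start, role = None, None
        if tag.startswith("B-"):
            start, role = i, tag[2:]
    return spans
```

Encoding `[(0, 1, "HappenPlace"), (0, 1, "RescurePlace")]` and decoding it again returns only the HappenPlace span, whereas a span-level (start, end, role) representation keeps both.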

As Table 2 and Table 3 show, on the CDEEE dataset, which is annotated with overlapping roles, the models that embed a single role type per candidate entity performed worse overall than the corresponding models without role type embedding. We therefore believe that incomplete role type information not only fails to improve DEE performance but may also reduce it to some extent.

Table 2 and Table 3 also show that the overall performance of our proposed RODEE model was better than that of the existing DEE models. Its precision (P) was 7 percentage points higher than that of DE-PPN, the best-performing baseline on that metric, and its F1 score was 3.9 points higher than that of Doc2EDAG*, the best-performing baseline in terms of F1. However, the recall (R) and F1 score of RODEE on “InjureDead” events were lower than those of Doc2EDAG*. We attribute this to the fact that the “InjureDead” event has only four role types and a low role overlapping rate, so a model designed for the candidate entity role overlapping problem has less to gain on this event type. Similarly, Doc2EDAG* achieved slightly higher recall and F1 scores than our model on “Rescure” events. Although the “Rescure” event has more role types than the “InjureDead” event, its overall role overlap is also relatively low, which we believe explains this result.

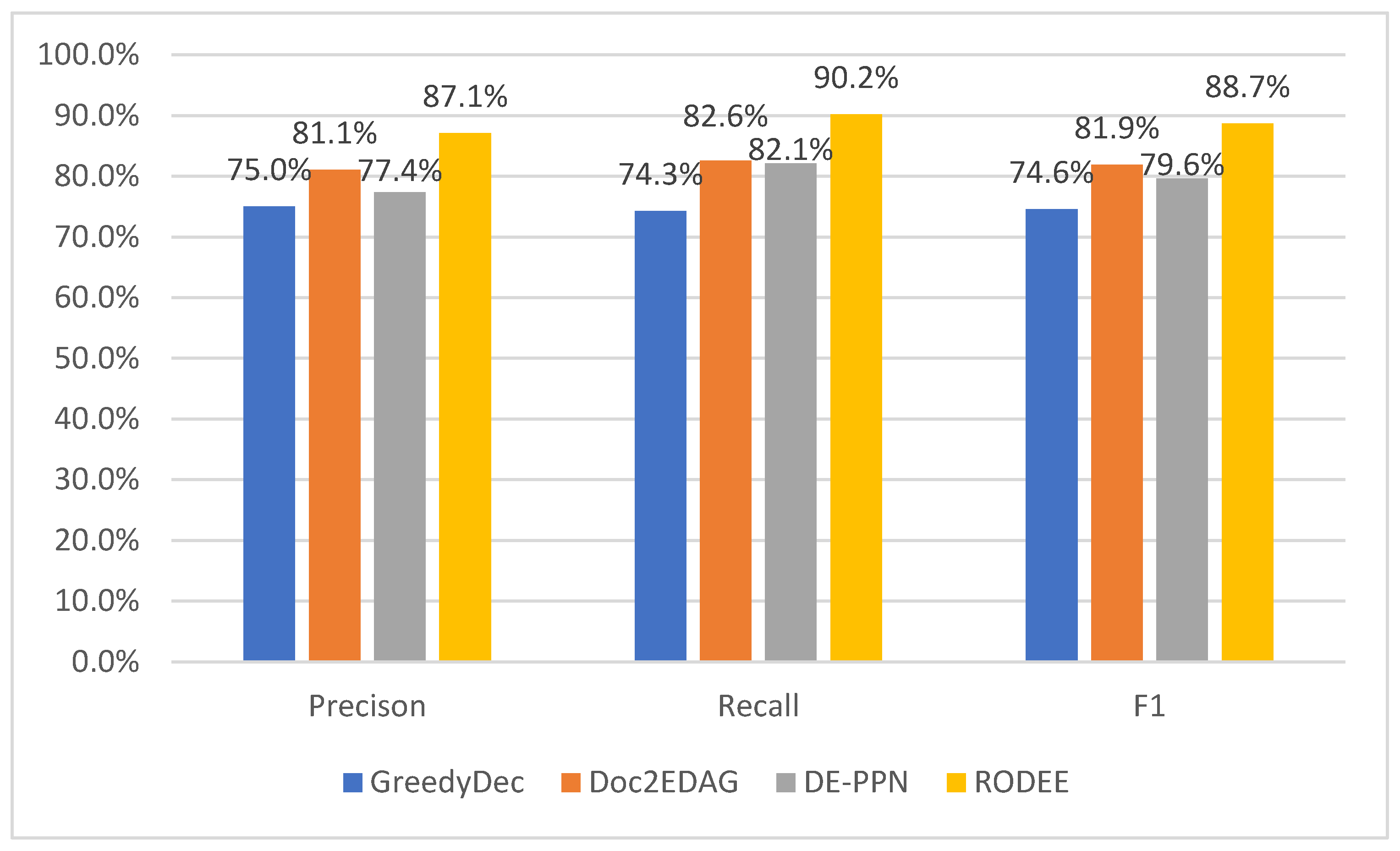

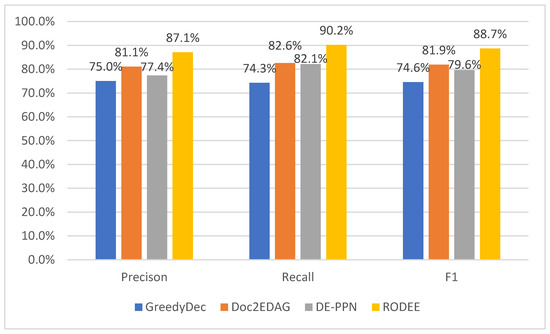

To further analyze the performance of RODEE, we also conducted experiments on the candidate entity extraction subtask; the results are shown in Figure 9. RODEE outperformed the other models not only on the DEE task but also on this subtask, improving the F1 score for candidate entity extraction by at least 11 percentage points over the other models. We attribute this gain to the finer-grained textual information that RODEE captures for candidate entity extraction. These results suggest that candidate entity extraction, as the first subtask of DEE, has a significant impact on overall DEE performance, and that improvements in this subtask carry over to the DEE task.

Figure 9.

Comparison of candidate entity extraction results.

5.3. Ablation Experiment

To validate the effectiveness of our improvements, we conducted ablation experiments on two components. First, we ablated the feature fusion of candidate entity role information. Because removing the role information features from the baseline models had improved their results, we needed to verify whether fusing role information helps or harms our model. Second, because RODEE uses the pre-trained language model RoBERTa-wwm, we needed to verify whether the overall performance gain is attributable solely to the pre-trained language model. Pre-trained language models are known to significantly improve performance on many natural language processing tasks. To address the overlapping problem of argument entity roles, we required more fine-grained information and therefore adopted RoBERTa-wwm, which captures richer textual information, as an important component of our model. Rather than removing it from RODEE, which would discard the fine-grained information our design relies on, we added it to the baseline DE-PPN. In summary, we compared the DE-PPN model, which uses neither role type embedding nor a pre-trained language model, with RODEE and the following two ablated variants:

- −RoleEmb: removes the role type embedding module from the RODEE model.

- +BERT: adds the RoBERTa-wwm pre-trained language model to the DE-PPN baseline model.

Table 4 presents the results of −RoleEmb, our model with the candidate entity role information features removed, and of +BERT, the baseline DE-PPN augmented with the same pre-trained language model that we used. Our model retains its advantage over both. RODEE achieved an F1 score 1.1 points higher than −RoleEmb, which shows that incorporating correct candidate entity role information benefits the DEE task. Conversely, as shown in Section 5.2, incomplete or incorrect candidate entity role features can degrade the overall performance of document-level event extraction. Furthermore, DE-PPN gained 6.9 points in F1 score after incorporating the pre-trained language model, yet it still trailed RODEE by 3.2 points. We conclude that, although our model leverages the strong performance of the pre-trained language model, our improvements for the candidate entity role overlapping problem still contribute significantly to DEE performance.

Table 4.

Results of ablation experiment.

6. Conclusions

In this study, we constructed a Chinese document-level emergency event extraction dataset to address the scarcity of data for Chinese document-level emergency event extraction, and validated it using existing DEE models, demonstrating its feasibility and research value. The dataset supports the development of Chinese document-level event extraction, lays a foundation for research on related causal knowledge graphs, and can help relevant personnel respond to emergencies in a timely and effective manner. While constructing the dataset, we identified the role overlapping problem of candidate entities, which is common in the real world but often overlooked in this field. To address it, we proposed the RODEE model and evaluated it on our dataset, where it significantly improved overall DEE performance compared with the baseline models. Specifically, instead of the conventional sequence labeling approach to candidate entity extraction, we used two modules to represent the head and tail positions of candidate entities and let them interact to extract candidate entity information. This enables the extraction of candidate entities with overlapping roles, which sequence labeling methods, limited to flat entities, cannot capture. The improved candidate entity extraction in turn raises the overall performance, in particular the F1 score, of the document-level event extraction task. For future work, we expect that introducing external knowledge bases, such as knowledge graphs and factual knowledge graphs, will further improve the model’s ability to handle the role overlapping problem and thus the overall performance of the DEE task.

Author Contributions

Conceptualization, K.C. and W.Y.; methodology, K.C.; validation, K.C., F.W. and J.S.; data curation, K.C., F.W. and J.S.; writing—original draft preparation, K.C.; writing—review and editing, K.C., W.Y. and F.W.; supervision, K.C. and W.Y.; funding acquisition, W.Y. All authors have read and agreed to the published version of the manuscript.

Funding

This research was funded by the Natural Science Foundation of China under grant number 202204120017 and by the Autonomous Region Science and Technology Program under grant numbers 2022B01008-2 and 2020A02001-1.

Institutional Review Board Statement

Not applicable.

Informed Consent Statement

Not applicable.

Data Availability Statement

Not applicable.

Conflicts of Interest

The authors declare no conflict of interest.

Appendix A

For the Chinese document-level emergency event extraction dataset, the event types and their corresponding role types are described below.

Table A1.

Event type information description.

| ID | EventType | Description |
|---|---|---|
| 0 | InjureDead | Used to describe personnel casualties caused by unexpected events. |
| 1 | Rescure | Used to describe the rescue and aftermath operations of the relevant organizations after an accident or disaster occurs. |
| 2 | Accident | Used to describe sudden accidents caused by non-natural factors. |
| 3 | NaturalHazard | Used to describe unexpected events caused by natural factors. |

Table A2.

Event role type information description.

| ID | RoleType | Description |
|---|---|---|
| 0 | InjurePerson | Used to describe the number of injured people in an InjureDead event. |
| 1 | DeadPerson | Used to describe the number of deaths in an InjureDead event. |
| 2 | InjurerPerson | Used to describe the person who caused the injuries in an InjureDead event, such as the culprit in a criminal case. |
| 3 | InjurerArticle | Used to describe the item that caused the injuries in an InjureDead event, such as the weapon used by the perpetrator in a criminal case. |
| 4 | RescureOrg1 | Used to describe the organizations involved in rescue operations in Rescure events. Our analysis of emergency event texts showed that typically no more than three rescue organizations are involved, so we defined three role types: RescureOrg1, RescureOrg2, and RescureOrg3. |
| 5 | RescureOrg2 | See RescureOrg1. |
| 6 | RescureOrg3 | See RescureOrg1. |
| 7 | StartTime | Used to describe the time at which rescue organizations start rescue operations in a Rescure event, indicating how quickly the rescue organization responded. |
| 8 | EndTime | Used to describe the time at which the rescue organization completes the rescue operation in a Rescure event; compared with StartTime, it can be used to evaluate the efficiency of the rescue operation. |
| 9 | RescureTokinage | Used to describe the duration of the rescue mission performed by rescue organizations in a Rescure event, which directly reflects the efficiency of the rescue operation. Although StartTime and EndTime together convey similar information, RescureTokinage does not always co-occur with them. |
| 10 | RescurePlace | Used to describe the location where rescue organizations conduct rescue operations in a Rescure event. |
| 11 | RescuredTarget | Used to describe the individuals rescued by rescue organizations in a Rescure event. |
| 12 | HappenTime | Used to describe the time at which the emergency occurred in Accident and NaturalHazard events. |
| 13 | HappenPlace | Used to describe the location of the incident in Accident and NaturalHazard events. |
| 14 | HazardType | Used to describe the type of emergency in Accident and NaturalHazard events. In Accident events, types include traffic accidents, roof collapses, fires, etc.; in NaturalHazard events, they include earthquakes, mudslides, landslides, etc. |
| 15 | AffectOject | Used to describe information about the individuals affected by the emergency in Accident and NaturalHazard events. |
| 16 | CauseObject1 | Used to describe the main parties involved in an Accident event, for example, Car A and Car B colliding with each other in a traffic accident. Based on the analysis of a large amount of text, we defined three role types: CauseObject1, CauseObject2, and CauseObject3. |
| 17 | CauseObject2 | See CauseObject1. |
| 18 | CauseObject3 | See CauseObject1. |

Regarding the experimental environment, we used an NVIDIA GeForce RTX 3090 graphics card for model training and testing. The hyperparameter settings of the model are shown in Table A3.

Table A3.

Hyperparameter settings.

| Parameter Name | Parameter Value |
|---|---|
| Batch size | 4 |
| Epochs | 100 |
| Number of generated events | 4 |
| Embedding size | 768 |
| Hidden size | 768 |
| Learning rate for Transformer | 1 × 10⁻⁵ |
| Learning rate for RoBERTa-wwm | 2 × 10⁻⁵ |
| Learning rate for Decoder | 2 × 10⁻⁵ |
| Dropout | 0.1 |

References

- Hong, Y.; Kim, J.-S.; Xiong, L. Media Exposure and Individuals’ Emergency Preparedness Behaviors for Coping with Natural and Human-Made Disasters. J. Environ. Psychol. 2019, 63, 82–91.

- Liu, J.; Chen, Y.; Liu, K.; Bi, W.; Liu, X. Event extraction as machine reading comprehension. In Proceedings of the 2020 Conference on Empirical Methods in Natural Language Processing (EMNLP), Online, 16–20 November 2020.

- Huang, L.; Ji, H.; Cho, K.; Voss, C.R. Zero-shot transfer learning for event extraction. arXiv 2017, arXiv:1707.01066.

- Hong, Y.; Zhang, J.; Ma, B.; Yao, J.; Zhou, G.; Zhu, Q. Using Cross-Entity Inference to Improve Event Extraction. In Proceedings of the Meeting of the Association for Computational Linguistics: Human Language Technologies, Portland, OR, USA, 19–24 June 2011.

- Doddington, G.; Mitchell, A.; Przybocki, M.; Ramshaw, L.; Strassel, S.; Weischedel, R. The Automatic Content Extraction (ACE) Program Tasks, Data, and Evaluation. In Proceedings of the Fourth International Conference on Language Resources and Evaluation (LREC’04), Lisbon, Portugal, 26–28 May 2004.

- Grishman, R.; Sundheim, B. Message Understanding Conference-6: A Brief History. In Proceedings of the 16th Conference on Computational Linguistics, Copenhagen, Denmark, 5–9 August 1996.

- Li, S.; Ji, H.; Han, J. Document-Level Event Argument Extraction by Conditional Generation. arXiv 2021, arXiv:2104.05919.

- Yang, H.; Chen, Y.; Liu, K.; Xiao, Y.; Zhao, J. DCFEE: A Document-Level Chinese Financial Event Extraction System Based on Automatically Labeled Training Data. In Proceedings of the Meeting of the Association for Computational Linguistics, Melbourne, Australia, 15–20 July 2018.

- Zheng, S.; Cao, W.; Xu, W.; Bian, J. Doc2EDAG: An End-to-End Document-Level Framework for Chinese Financial Event Extraction. In Proceedings of the 2019 Conference on Empirical Methods in Natural Language Processing and the 9th International Joint Conference on Natural Language Processing (EMNLP-IJCNLP), Hong Kong, China, 3–7 November 2019.

- Yang, H.; Sui, D.; Chen, Y.; Liu, K.; Zhao, J.; Wang, T. Document-Level Event Extraction via Parallel Prediction Networks. In Proceedings of the 59th Annual Meeting of the Association for Computational Linguistics and the 11th International Joint Conference on Natural Language Processing, Online, 1–6 August 2021.

- Cui, Y.; Che, W.; Liu, T.; Qin, B.; Yang, Z.; Wang, S.; Hu, G. Pre-Training with Whole Word Masking for Chinese BERT; Institute of Electrical and Electronics Engineers (IEEE): Piscataway, NJ, USA, 2021.

- Ebner, S.; Xia, P.; Culkin, R.; Rawlins, K.; Van Durme, B. Multi-Sentence Argument Linking. In Proceedings of the 58th Annual Meeting of the Association for Computational Linguistics, Online, 5–10 July 2020.

- Du, X.; Cardie, C. Document-Level Event Role Filler Extraction Using Multi-Granularity Contextualized Encoding. In Proceedings of the 58th Annual Meeting of the Association for Computational Linguistics, Online, 5–10 July 2020.

- Du, X.; Rush, A.; Cardie, C. GRIT: Generative Role-Filler Transformers for Document-Level Event Entity Extraction. In Proceedings of the 16th Conference of the European Chapter of the Association for Computational Linguistics: Main Volume, Online, 19–23 April 2021.

- Huang, K.-H.; Peng, N. Document-Level Event Extraction with Efficient End-to-End Learning of Cross-Event Dependencies. In Proceedings of the Third Workshop on Narrative Understanding, Virtual, 11 June 2021.

- Huang, Y.; Jia, W. Exploring Sentence Community for Document-Level Event Extraction. In Proceedings of the Findings of the Association for Computational Linguistics: EMNLP 2021, Punta Cana, Dominican Republic, 16–20 November 2021.

- Xu, R.; Liu, T.; Li, L.; Chang, B. Document-Level Event Extraction via Heterogeneous Graph-Based Interaction Model with a Tracker. In Proceedings of the 59th Annual Meeting of the Association for Computational Linguistics and the 11th International Joint Conference on Natural Language Processing, Online, 1–6 August 2021.

- Nguyen, T.H.; Cho, K.; Grishman, R. Joint Event Extraction via Recurrent Neural Networks. In Proceedings of the 2016 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, San Diego, CA, USA, 12–17 June 2016.

- Li, Q.; Ji, H.; Huang, L. Joint Event Extraction via Structured Prediction with Global Features. In Proceedings of the 51st Annual Meeting of the Association for Computational Linguistics, Sofia, Bulgaria, 4–9 August 2013.

- Nguyen, T.M.; Nguyen, T.H. One for All: Neural Joint Modeling of Entities and Events. In Proceedings of the Thirty-First Innovative Applications of Artificial Intelligence Conference, Honolulu, HI, USA, 27 January–1 February 2019.

- Sha, L.; Qian, F.; Chang, B.; Sui, Z. Jointly Extracting Event Triggers and Arguments by Dependency-Bridge RNN and Tensor-Based Argument Interaction. In Proceedings of the AAAI Conference on Artificial Intelligence, New Orleans, LA, USA, 2–7 February 2018.

- Liu, X.; Luo, Z.; Huang, H. Jointly Multiple Events Extraction via Attention-Based Graph Information Aggregation. In Proceedings of the 2018 Conference on Empirical Methods in Natural Language Processing, Brussels, Belgium, 31 October–4 November 2018.

- Wadden, D.; Wennberg, U.; Luan, Y.; Hajishirzi, H. Entity, Relation, and Event Extraction with Contextualized Span Representations. In Proceedings of the 2019 Conference on Empirical Methods in Natural Language Processing and the 9th International Joint Conference on Natural Language Processing, Hong Kong, China, 3–7 November 2019.

- Li, F.; Peng, W.; Chen, Y.; Wang, Q.; Pan, L.; Lyu, Y.; Zhu, Y. Event Extraction as Multi-Turn Question Answering. In Proceedings of the Findings of the Association for Computational Linguistics, Online, 16–20 November 2020.

- Du, X.; Cardie, C. Event Extraction by Answering (Almost) Natural Questions. In Proceedings of the 2020 Conference on Empirical Methods in Natural Language Processing, Online, 16–20 November 2020.

- Chen, Y.; Chen, T.; Ebner, S.; White, A.S.; Van Durme, B. Reading the Manual: Event Extraction as Definition Comprehension. In Proceedings of the Fourth Workshop on Structured Prediction for NLP, Online, 20 November 2020.

- Yang, S.; Feng, D.; Qiao, L.; Kan, Z.; Li, D. Exploring Pre-Trained Language Models for Event Extraction and Generation. In Proceedings of the 57th Conference of the Association for Computational Linguistics, Florence, Italy, 28 July–2 August 2019.

- Xu, N.; Xie, H.; Zhao, D. A Novel Joint Framework for Multiple Chinese Events Extraction. In Proceedings of the Chinese Computational Linguistics-19th China National Conference, Haikou, China, 30 October–1 November 2020.

- Vaswani, A.; Shazeer, N.; Parmar, N.; Uszkoreit, J.; Jones, L.; Gomez, A.N.; Kaiser, L.; Polosukhin, I. Attention Is All You Need. In Proceedings of the Advances in Neural Information Processing Systems 30: Annual Conference on Neural Information Processing Systems 2017, Long Beach, CA, USA, 4–9 December 2017.

- Kuhn, H.W. The Hungarian Method for the Assignment Problem. Nav. Res. Logist. Q. 1955, 2, 83–97.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).