Abstract

As artificial intelligence (AI) systems are increasingly adopted in recruitment practices, applicants’ responses to AI-mediated interviews have become an important issue for organizations. Understanding how applicants interpret these systems is relevant for organizational attractiveness and employer branding. Drawing on social exchange theory and signaling theory, this study examines the role of AI interview explainability in shaping applicants’ evaluations of organizations. It proposes that explainability influences organizational attractiveness through two parallel mechanisms: perceived organizational support and perceived innovativeness. Survey data were collected from 196 job applicants with experience in AI-based interviews. The results show that higher perceived explainability of AI interviews is associated with stronger perceptions of organizational support and organizational innovativeness. Both perceptions are positively related to organizational attractiveness. These findings support a dual-mediation model and suggest that explainable AI interview systems communicate both supportive intentions and technological capability to applicants. By focusing on applicants’ perceptions, this study contributes to the growing literature on AI use in human resource management. It highlights the importance of explainable system design in shaping early applicant reactions. The findings also provide practical implications for organizations seeking to implement AI-based recruitment tools that are transparent, credible, and attractive to potential applicants.

1. Introduction

The advent of artificial intelligence (AI) has precipitated a paradigm shift in the domain of recruitment. According to Facts & Factors (2024), the global AI interview market reached 692 million USD in 2023 and is projected to grow at a compound annual growth rate of 6.5%, surpassing 890 million USD by 2028. This growing trend in technological adoption is evidenced by data from Resume Builder, which shows that 43% of companies plan to implement AI interview systems by 2025. Within the paradigm of algorithmic management, AI is responsible not only for the execution of conventional recruitment functions such as the screening of résumés and the evaluation of interviews (Weng & Why, 2025), but also for an increasing involvement in employee training (Madhumithaa et al., 2025) and even employment relationship decisions (Kellogg et al., 2020).

However, the efficiency gains brought by AI are accompanied by a profound crisis of trust. Although AI technologies significantly improve recruitment efficiency (Al-Quhfa et al., 2024), their “black box” nature may trigger systematic anxiety among job applicants (Adadi & Berrada, 2018). Most current AI systems provide only outcome predictions while concealing the underlying decision logic, and this lack of explainability has been shown to reduce user acceptance (Cysneiros et al., 2018), as well as erode trust and reliance on AI systems (Hasan et al., 2021). In response, regulatory bodies have begun to address this concern. For instance, the European Commission (2021) has mandated that AI systems must offer understandable explanations to end users, not merely to technical experts, in order to foster trust. Nonetheless, effectively communicating AI decision-making processes to non-technical users remains a complex design challenge (Weitz et al., 2021).

The necessity for the development of explainable artificial intelligence (XAI) has been increasingly emphasized in recent research. XAI refers to AI systems capable of generating understandable reasoning or providing decision-relevant information tailored to specific end users (Arrieta et al., 2020), thereby enabling individuals to comprehend the logic underlying AI-driven decisions (Laato et al., 2022). Prior studies have shown that explainable AI can generate several positive outcomes for users, including enhanced perceived usefulness and ease of use (Meske et al., 2022), increased trust in AI systems (Habbal et al., 2024), and higher levels of acceptance and satisfaction (Huo et al., 2025).

In AI-mediated recruitment interviews, explainability typically manifests through several practical features, including the transparency of decision logic, the clarity of evaluation criteria, and the interpretability of system feedback provided to applicants. These elements enable applicants to better understand how their interview responses are assessed and how algorithmic decisions are generated, thereby reducing uncertainty in technology-mediated hiring processes. Although explainability is conceptually related to constructs such as perceived transparency and fairness perceptions, these constructs capture different aspects of applicant evaluations. Transparency reflects the perceived clarity of the system’s reasoning process, whereas fairness typically concerns the perceived equity of evaluation outcomes. Explainability, by contrast, represents a broader design principle aimed at making AI decision processes understandable to end users. In this study, we conceptualize AI explainability at the perceptual level as applicants’ perceived transparency regarding the AI interviewer’s decision logic, representing the core dimension through which individuals interpret algorithmic explanations.

Despite these potential benefits, the first critical research gap lies in the prevailing technical orientation of the field. Extant research in XAI has focused predominantly on the technical validity and algorithmic transparency of explanation methods (Haque et al., 2023; Arrieta et al., 2020). Comparatively fewer studies have examined explainable AI from the perspective of end users, particularly in terms of how AI-generated explanations influence users’ subjective experiences and behavioral responses (Haque et al., 2023).

Building on this general limitation, a second and more specific research gap exists within the recruitment context. While applicants actively seek signals about organizational practices (Walker et al., 2012), there is a lack of theoretical understanding regarding how the explainability of algorithmic decisions functions as an informational cue in early-stage hiring (Cai et al., 2025; Meijerink et al., 2021). In this study, the AI interviewer refers to an automated interview evaluation system that relies on machine learning and natural language-processing techniques to analyze applicants’ verbal or textual responses and generate structured assessments. When AI systems provide understandable explanations regarding how interview responses are evaluated, applicants may perceive the organization as more transparent, technologically competent, and respectful toward candidates. From a signaling perspective, explainable AI therefore functions as an important organizational signal that communicates organizational values and capabilities. From a social exchange perspective, providing understandable explanations may also convey consideration and respect toward applicants, thereby fostering positive relational expectations. Consequently, AI explainability can be theoretically positioned as a key antecedent of organizational attractiveness, as it shapes both applicants’ cognitive evaluations of organizational attributes and their affective interpretations of how the organization treats candidates during the recruitment process.

2. Literature Review

To integrate Social Exchange Theory and Signaling Theory in a theoretically coherent manner, this study adopts a dual-process perspective on applicant sensemaking in AI-mediated recruitment. Dual-process theories posit that individuals form evaluations through the parallel operation of affective–experiential and cognitive–analytic processing systems, particularly in contexts characterized by uncertainty and high personal involvement. Recruitment interactions—and AI-based interviews in particular—constitute such contexts, as applicants simultaneously experience emotional reactions to the interaction process and cognitively evaluate the organizational implications conveyed by recruitment practices.

Building on this perspective, we conceptualize applicants’ evaluations of organizational attractiveness as the joint outcome of two complementary psychological pathways. First, drawing on Social Exchange Theory, AI explainability is theorized to influence applicants’ affective responses by signaling organizational care, fairness, and respect, thereby shaping perceptions of organizational support and reciprocal expectations even prior to formal employment. Second, informed by Signaling Theory, AI explainability functions as a salient organizational signal that enables applicants to infer the organization’s technological competence, innovativeness, and strategic orientation under conditions of information asymmetry.

2.1. External Job Applicants’ Perceptions of AI Interview Interaction and Organizational Attractiveness

Organizational attractiveness is conceptualized as applicants’ positive attention towards an organization’s reputation, accompanied by a resultant willingness to join and remain within the organization (Zaki & Pusparini, 2020). It functions as a pivotal metric in the evaluation of applicants’ experiences in the recruitment process (Bauer et al., 2006) and exerts a substantial influence on their propensity to apply for and subsequently join an organization (Hoffman & Woehr, 2006). Organizational attractiveness is a core component of employer branding, reflecting the organization’s ability to position itself as a ‘preferred employer’ in a competitive talent market (Leekha Chhabra & Sharma, 2014). By enhancing explainability, organizations can signal a brand identity characterized by transparency and innovation.

As the initial point of contact between job applicants and organizations, AI-based interview processes exert considerable influence on applicants’ perceptions of organizational attractiveness (Cai et al., 2025). Empirical evidence suggests that the perceived use of artificial intelligence tools in recruitment is positively associated with employer attractiveness (Horodyski, 2023). This relationship can be attributed to the fact that information encountered by applicants during the recruitment and selection process significantly shapes their attitudes and behavioral intentions (Chapman et al., 2005). Specifically, when the recruitment process is perceived as transparent, fair, and efficient, applicants are more likely to evaluate the organization positively, thereby enhancing their perceptions of organizational attractiveness (Hausknecht et al., 2004). Consequently, the examination of applicant responses and perceptions is imperative in studies of organizational image, reputation, and attractiveness.

2.2. Explainable Artificial Intelligence (XAI)

In the context of AI-based recruitment, the concept of explainable artificial intelligence (XAI) has emerged as a salient factor influencing applicants’ perceptions (Hamm et al., 2023). XAI refers to the capability of AI systems to articulate their decision-making processes and outcomes in a manner comprehensible to human users (Vilone & Longo, 2021). However, as the intricacy of AI systems and algorithms continues to escalate, they are increasingly perceived as opaque “black boxes” due to the extensive technical expertise required to interpret their functioning and outputs (Adadi & Berrada, 2018). The majority of users possess limited understanding of how such systems generate decisions, and this growing complexity contributes to a lack of explainability, thereby hindering comprehension and eroding trust (Adadi & Berrada, 2018).

Nonetheless, existing research has primarily concentrated on factors affecting organizational attractiveness in traditional recruitment contexts, with limited attention to the distinctive role of AI explainability in recruitment (van der Waa et al., 2021). Empirical evidence indicates that insufficient explainability in AI systems may lead to user distrust and resistance (Druce et al., 2021), particularly in high-stakes decision-making domains such as recruitment and medical diagnostics. The enhancement of AI explainability is not merely a technical imperative, but also an ethical and legal necessity (Hamm et al., 2023).

When applicants perceive the XAI interview process as highly explainable, they are more likely to interpret the organization’s decision-making as transparent and fair (Mujtaba & Mahapatra, 2024), a positive signal which in turn promotes more favorable evaluations of the organization’s attractiveness (Joo et al., 2016). Furthermore, by providing clear and accessible feedback mechanisms, XAI reduces applicants’ uncertainty and signals that the organization is attentive to their interests (Ochmann et al., 2024). Based on the existing literature, it is reasonable to assume that XAI facilitates users’ understanding of the recruitment process, thereby enhancing perceptions of organizational attractiveness.

H1.

The perceived explainability of the AI interview process positively affects job applicants’ perceptions of organizational attractiveness.

2.3. The Mediating Role of Perceived Organizational Support

According to social exchange theory (Cropanzano & Mitchell, 2005), social interactions are fundamentally characterized by reciprocal exchanges of resources, wherein individuals assess the value of continuing a relationship based on perceived costs and benefits. In recruitment contexts, applicants interpret organizational behaviors, such as the implementation of AI-based interview systems, as indicative of the extent to which the organization values their interests and experiences (Paramita et al., 2024). Within AI-based recruitment processes, applicants’ subjective evaluations of AI explainability, including perceptions of transparent assessment criteria and clear feedback mechanisms (Yu et al., 2025), function as a basis for inferring organizational intentions.

Due to the “black-box” nature of AI, organizations make extra efforts to enhance its explainability by providing more information, offering clear guidelines and incorporating user-friendly interfaces that allow applicants to better understand how the AI evaluates and selects candidates. When applicants perceive the AI decision-making process to be highly explainable, they are more likely to regard such transparency as a purposeful organizational gesture that communicates respect, fairness, and attentiveness to applicant concerns (Eisenberger et al., 2019). This perception reinforces their sense of perceived organizational support (POS), defined as the belief that the organization values their contributions and genuinely cares about their well-being (Kurtessis et al., 2017). From the perspective of social exchange theory, the explainability of AI systems serves as a symbolic cue that the organization is committed to equitable and supportive treatment of prospective employees, thereby enhancing applicants’ POS (Eisenberger et al., 2019).

A substantial body of empirical research has established that POS is a critical determinant of the employee–organization relationship, positively associated with job satisfaction, organizational commitment, and favorable employer evaluations (Kurtessis et al., 2017). When individuals perceive high levels of support from the organization, they are more likely to reciprocate through positive attitudes and behavioral intentions, including stronger perceptions of organizational attractiveness and increased willingness to join the organization. However, existing studies have primarily concentrated on interpersonal interactions in traditional recruitment contexts. There remains a paucity of research that systematically examines how the technological features of AI systems, such as explainability, contribute to POS and, in turn, influence perceptions of organizational attractiveness. Based on the foregoing discussion, the following hypothesis is proposed:

H2.

Perceived organizational support mediates the relationship between applicants’ perceptions of AI explainability and their perceptions of organizational attractiveness.

2.4. The Mediating Role of Perceived Innovativeness

According to signaling theory (Spence, 1973), individuals rely on all available information to infer unknown qualities and reduce uncertainty in decision-making. In contexts characterized by information asymmetry, organizations can convey their internal quality or capabilities to external stakeholders (e.g., applicants) by sending observable signals, such as technological innovation or transparency (Bafera & Kleinert, 2023). The effectiveness of these signals depends on their credibility and observability, whether they help recipients reduce uncertainty and form meaningful evaluations of the organization (Spence, 1973). This signaling mechanism is particularly salient in personnel selection processes. During recruitment, applicants continuously receive new information, such as the use of technology and communication styles, and adjust their perceptions and attitudes toward the organization accordingly.

In AI-based recruitment contexts, when applicants perceive the decision-making processes of AI systems as highly explainable, they tend to interpret this perception as indicative of the organization’s investment in and capability for technological innovation (Sandeep et al., 2025). Organizations that implement AI technologies not only enhance recruitment efficiency but also communicate a forward-thinking and innovative organizational image to applicants (Sommer et al., 2017). This innovation signal strengthens applicants’ evaluation of the organization’s innovativeness, as the deployment of explainable AI technologies often demands additional organizational resources, including research and development expenditure and technical infrastructure, thereby demonstrating the organization’s technological strength (Van Esch et al., 2021).

Empirical research has consistently shown that organizational attractiveness is closely associated with the manner in which companies deploy their strategic advantages to engage potential applicants, particularly in relation to technological advancement (Soeling et al., 2022). When applicants evaluate recruitment technologies as objective (Minge & Thüring, 2018), user-oriented (Howardson & Behrend, 2014), efficient (Gonzalez et al., 2019), innovative (Sommer et al., 2017), and consistent with their personal values (Vanderstukken et al., 2016), their perceptions of the organization’s attractiveness are significantly enhanced. Furthermore, the novelty inherent in AI-based recruitment systems may be particularly appealing to applicants who are intrinsically motivated by innovation and technological development (Vanderstukken et al., 2016). Therefore, we propose the following hypothesis:

H3.

Perceived innovativeness mediates the relationship between applicants’ perceptions of AI explainability and perceived organizational attractiveness.

2.5. The Moderating Role of AI Use Anxiety

AI use anxiety refers to the tension, uneasiness, or apprehension experienced by individuals when interacting with AI technologies in the workplace (J. Park & Woo, 2022). This construct not only reflects individuals’ general attitudes toward technological innovation, including resistance or acceptance tendencies, but is also shaped by contextual features of the work environment (Yuan & Woodman, 2010). AI use anxiety may indirectly influence perceptions of organizational attractiveness by diminishing applicants’ perceived organizational support and perceived innovativeness.

2.5.1. The Moderation of Perceived Organizational Support

Perceived organizational support is fundamentally rooted in social exchange processes. Applicants form this perception when they interpret explainable AI as an indication of organizational care and support (Eisenberger et al., 1986). Given its emotionally grounded nature, perceived organizational support is highly susceptible to affective states such as anxiety.

Applicants’ judgments about organizational support depend on whether they believe the organization values their contributions and cares about their well-being (Eisenberger et al., 2019). Explainable AI, through transparent decision-making and clear feedback mechanisms, generally enhances this perception (Yu et al., 2025). However, when applicants experience elevated levels of anxiety toward AI, they may question the authenticity or intent behind the explanations, even when the AI processes are highly explainable (Suseno et al., 2023). For instance, experimental evidence from Vuori et al. (2025) shows that highly anxious individuals are prone to perceive transparent AI explanations as superficial rather than as sincere expressions of organizational support. In addition, individuals experiencing high anxiety are more likely to focus on potential negative outcomes, including concerns that the AI system may inaccurately assess them. This affective state can overshadow the positive signals embedded in the explainable features of the system, leading to a transfer of negative emotion toward the organization as a whole.

H4.

Elevated levels of AI use anxiety weaken the positive relationship between explainable AI and perceived organizational support.

2.5.2. The Moderation of Perceived Innovativeness

Prior research indicates that individuals’ perceptions of AI reflect a cognitive assessment of its anticipated benefits and costs. When stress and anxiety are salient in relation to AI use, individuals tend to adopt more negative views regarding its implementation (E. H. Park et al., 2022). This attitude contributes to increased turnover intentions, greater burnout, and heightened resistance to change and to external technologies (Arias-Pérez & Vélez-Jaramillo, 2022).

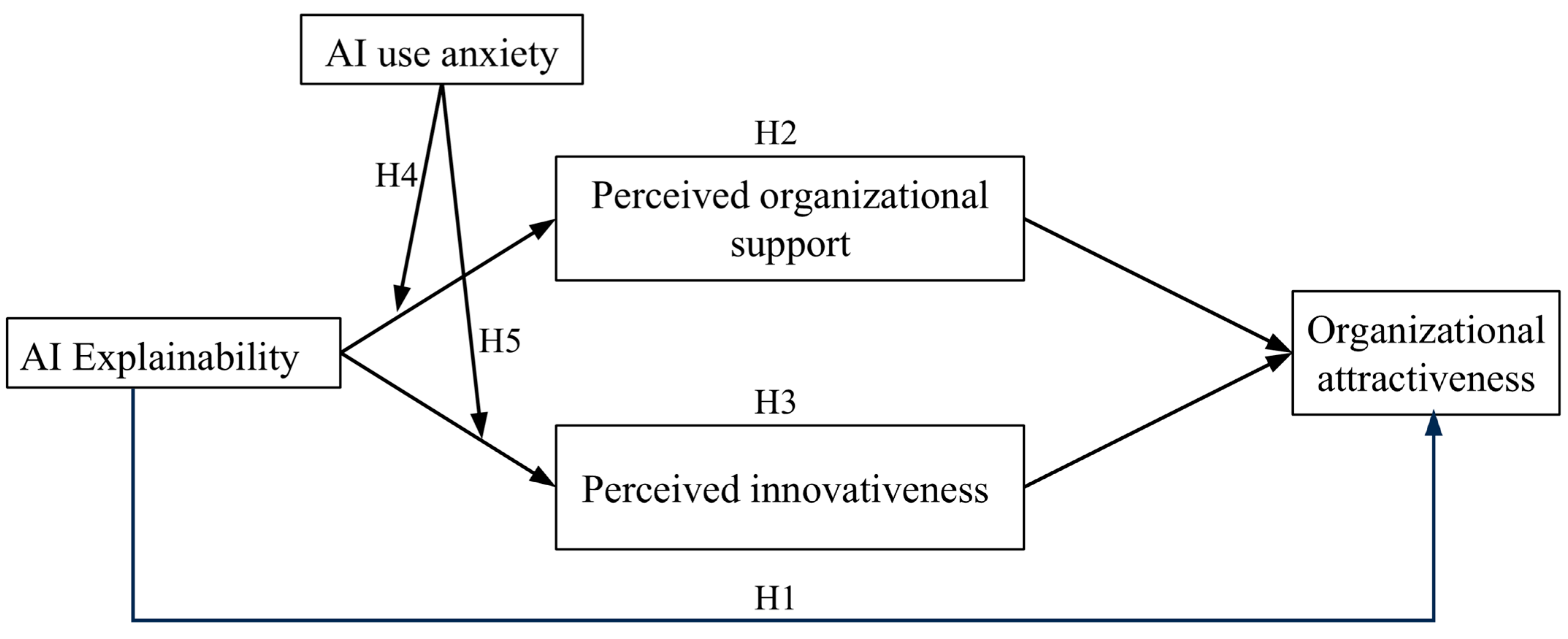

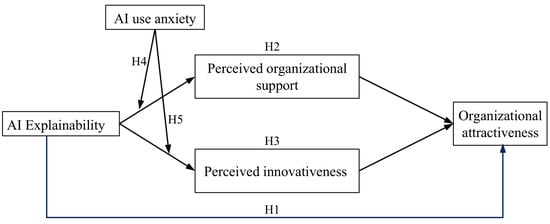

We argue that once AI use anxiety reaches a certain threshold, it interferes with the normal functioning of the signaling process. This interference may occur through the depletion of cognitive resources, which limits applicants’ ability to process and interpret innovation-related cues. As a result, their evaluation of the organization’s innovative capacity becomes distorted (Figure 1).

Figure 1.

The proposed theoretical model.

H5.

Elevated levels of AI use anxiety weaken the positive relationship between explainable AI and perceived innovativeness.

3. Research Methodology

We recruited employees (N = 219) from enterprises located in eastern China, including those operating in the finance, accounting, and technology service sectors. These industries were selected for two main reasons. First, organizations in these fields tend to place a higher emphasis on innovation and often prioritize the selection and development of employees with innovative capabilities. Second, these firms frequently encourage their employees to leverage personal strengths to accomplish work tasks, which supports the role of perceived organizational support in facilitating strength use. To eliminate constraints related to time and location, we used the Credamo 2.0 platform to administer an online experiment and collect the corresponding data.

4. Experimental Design and Performance Evaluation

4.1. Experimental Environment

To examine the relationships among organizational attractiveness, perceived organizational support, perceived innovativeness, and explainable AI, we employed a cross-sectional design.

Participants were invited to complete an online questionnaire. Each participant was asked to read a brief scenario describing an AI-based recruitment process and to picture themselves applying for a highly desirable position. The recruitment process involved AI-based résumé screening followed by an AI-scored digital interview. Participants were then asked to respond to items measuring their perceptions of organizational attractiveness and the other constructs. Two attention check questions were embedded in the survey. Participants who failed either question were excluded from further analysis.

4.2. Experimental Materials

The final valid sample size was 196. Among the participants, 40.1 percent identified as male and 59.9 percent as female. The average age was 26.3 years. All survey items were rated using a five-point Likert scale ranging from 1 (strongly disagree) to 5 (strongly agree).

4.3. Parameter Settings

- AI explainability:

Applicants’ perceptions of AI explainability were assessed using the perceived transparency scale developed by Wang and Benbasat (2016), which consists of five items measured on a five-point Likert scale. In the present study, AI explainability is explicitly conceptualized and operationalized primarily through perceived transparency, referring to the extent to which the decision logic of the AI system is understandable and comprehensible to applicants. While the broader Explainable AI (XAI) literature encompasses multiple dimensions—including interpretability, justification, and controllability—transparency can be understood as the core perceptual dimension through which applicants experience explainability during interview interactions. We adopted the established scale by Wang and Benbasat (2016) because its focus on the clarity of system reasoning aligns closely with applicants’ formation of explainability perceptions in AI-mediated contexts. This operationalization ensures conceptual convergence at the perceptual level between our theoretical definition and empirical measurement. An example item is “It is easy for me to understand how the AI interviewer works internally.” A pilot test confirmed the internal consistency of the scale in the current context (Cronbach’s α = 0.83).

- Perceived Organizational Support:

We measured perceived organizational support using the eight-item scale developed by Eisenberger et al. (1986). Responses were recorded on a five-point Likert scale. A sample item is “This organization that uses AI interviews cares about my opinions.”

- Perceived Innovativeness:

To assess perceived innovativeness, we drew on the six items originally developed by Slaughter et al. (2004). All measurement items were translated using a translation–back-translation procedure. To ensure cultural appropriateness, the translated items were further evaluated by two bilingual experts in human resource management and organizational psychology. Based on expert feedback and a small-scale pilot test, one innovativeness item (“Do you think this company is plain?”) was identified as culturally ambiguous in the Chinese recruitment context. A pilot confirmatory factor analysis further indicated that this item exhibited a substantially lower factor loading than the other items. Accordingly, the item was removed prior to the main data collection to enhance content validity and measurement reliability. All items were rated on a five-point Likert scale. One sample item is “I think this company is interesting.”

- AI Use Anxiety:

Applicants’ AI-related anxiety was measured using a four-item scale developed by J. Park and Woo (2022). Each item was rated on a five-point Likert scale. A sample item is “I would feel uneasy if my job required me to use AI.”

- Organizational Attractiveness:

Perceived organizational attractiveness was measured using five items from the scale developed by Lievens and Highhouse (2003). Each item was rated on a five-point Likert scale. Higher scores indicate more favorable evaluations of the organization, and lower scores indicate less favorable evaluations.

- Control Variables

The control variables in this study were gender, age, and educational background. Gender was coded as male (1) or female (2). Educational background was measured on an ordinal scale ranging from 1 (middle school or below) to 5 (graduate degree or above). Age and organizational tenure were measured as continuous variables, expressed in years. Familiarity with AI interviews was assessed using a five-point Likert scale, ranging from 1 (very unfamiliar) to 5 (very familiar). Gender, age, and educational background were included because these factors are often associated with varying levels of technology readiness and baseline expectations toward recruitment fairness (Venkatesh & Thong, 2016). All regression analyses included these control variables in order to reduce potential confounding effects on the relationships among the key variables and to provide a more conservative test of our dual-mediation model.

4.4. Performance Evaluation

This study employed SPSS 27.0 and AMOS 28 to examine the reliability and validity of the measurement instruments. The confirmatory factor analysis (CFA) results indicated satisfactory convergent validity, with all composite reliability (CR) values exceeding the recommended threshold of 0.70 (Hair et al., 2019). Discriminant validity was assessed using the Fornell–Larcker criterion, under which the square root of the average variance extracted (√AVE) for each construct should exceed its highest correlation with any other construct. The criterion was met with one exception: the correlation between innovativeness (INNO) and organizational attractiveness (ATTR) (r = 0.694) slightly exceeded the √AVE of INNO (0.64). This result aligns with theoretical expectations regarding the strong influence of perceived innovativeness on organizational attractiveness (Van Esch et al., 2021), and we therefore do not regard it as compromising the overall discriminant validity of the measurement model. To further examine discriminant validity, alternative CFA models were estimated by combining theoretically related constructs. The hypothesized five-factor model demonstrated a significantly better fit than the four-factor model in which innovativeness and organizational attractiveness were merged (Δχ² = 47.54, p < 0.001), supporting the distinctiveness of the two constructs.
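The convergent and discriminant validity checks described above can be illustrated with a minimal sketch. It assumes standardized factor loadings are available for each construct; the function names and the example loadings are hypothetical and are not the study's estimates. Only the √AVE of INNO (0.64) and its correlation with ATTR (0.694) are taken from the text.

```python
def ave(loadings):
    """Average variance extracted: mean of squared standardized loadings."""
    return sum(l * l for l in loadings) / len(loadings)


def composite_reliability(loadings):
    """CR = (sum of loadings)^2 / ((sum of loadings)^2 + sum of error variances)."""
    total = sum(loadings)
    error = sum(1 - l * l for l in loadings)
    return total * total / (total * total + error)


def fornell_larcker_ok(sqrt_ave, correlations):
    """True when sqrt(AVE) exceeds every absolute correlation with other constructs."""
    return all(sqrt_ave > abs(r) for r in correlations)


# Hypothetical loadings, for illustration only.
example_loadings = [0.7, 0.7, 0.7, 0.7]
print("AVE:", round(ave(example_loadings), 3))
print("CR:", round(composite_reliability(example_loadings), 3))

# Values reported in the text: sqrt(AVE) of INNO = 0.64, r(INNO, ATTR) = 0.694.
print("INNO passes Fornell-Larcker vs ATTR:", fornell_larcker_ok(0.64, [0.694]))
```

Because 0.64 is smaller than 0.694, the check returns False for that pair, which is the exception the paragraph discusses and the reason the nested model comparison is reported.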

As presented in Table 1, the Cronbach’s alpha coefficients for all variables exceeded 0.7, indicating satisfactory internal consistency of the primary constructs. The composite reliability (CR) values also exceeded 0.7, suggesting good convergent validity across all scales used in this study.
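For readers who wish to reproduce the internal consistency check outside SPSS, the following is a minimal sketch of Cronbach's alpha using only the Python standard library. The function name and the response data are hypothetical; the study's item-level data are not reproduced here.

```python
from statistics import variance


def cronbach_alpha(rows):
    """Cronbach's alpha for one scale.

    rows: list of respondents, each a list of item scores for the same scale.
    Uses sample variances (n - 1 in the denominator).
    """
    k = len(rows[0])
    item_vars = [variance([row[j] for row in rows]) for j in range(k)]
    total_var = variance([sum(row) for row in rows])
    return k / (k - 1) * (1 - sum(item_vars) / total_var)


# Hypothetical five-point Likert responses (5 respondents x 4 items).
responses = [
    [4, 5, 4, 4],
    [3, 3, 2, 3],
    [5, 5, 4, 5],
    [2, 2, 3, 2],
    [4, 4, 4, 3],
]
print("alpha:", round(cronbach_alpha(responses), 3))
```

A value above 0.7 would meet the threshold applied in this study.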

Table 1.

Convergent validity and discriminant validity.

The results of the confirmatory factor analysis (Table 2) indicated that the five-factor model demonstrated a good fit with the data (χ²/df = 1.520, CFI = 0.911, IFI = 0.913, RMSEA = 0.053), reflecting adequate discriminant validity among the constructs.

Table 2.

Overall model fit indices.

To address the potential influence of common method bias due to the homogeneity of data sources, this study conducted Harman’s one-factor test using SPSS 27. The results identified seven factors in total, with the first factor accounting for 28.31 percent of the total variance. The results of CFA and Harman’s one-factor test indicated that common method bias is unlikely to pose a significant threat in this study.
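The logic of Harman's one-factor test can be sketched as follows. The sketch assumes an unrotated principal component extraction on the item correlation matrix and computes only the share of variance attributable to the first component; it is not a reproduction of the SPSS procedure, and the function names and data are hypothetical. The largest eigenvalue is obtained by power iteration to keep the example free of external dependencies.

```python
from statistics import mean, pstdev


def correlation_matrix(rows):
    """Pearson correlations between the columns of a respondents x items table."""
    n = len(rows)
    z = []
    for col in zip(*rows):
        m, s = mean(col), pstdev(col)
        z.append([(v - m) / s for v in col])
    return [[sum(a * b for a, b in zip(zi, zj)) / n for zj in z] for zi in z]


def largest_eigenvalue(matrix, iterations=500):
    """Power iteration for a symmetric positive semi-definite matrix.

    Assumes the start vector is not orthogonal to the dominant eigenvector.
    """
    p = len(matrix)
    vec = [1.0 + 0.1 * i for i in range(p)]
    value = 0.0
    for _ in range(iterations):
        nxt = [sum(matrix[i][j] * vec[j] for j in range(p)) for i in range(p)]
        value = sum(x * x for x in nxt) ** 0.5
        vec = [x / value for x in nxt]
    return value


def first_factor_share(rows):
    """Proportion of total variance explained by the first unrotated component."""
    corr = correlation_matrix(rows)
    return largest_eigenvalue(corr) / len(corr)


# Hypothetical responses (6 respondents x 3 items), for illustration only.
data = [[4, 5, 2], [3, 3, 4], [5, 4, 1], [2, 2, 5], [4, 4, 3], [1, 2, 4]]
print("first-factor share:", round(first_factor_share(data), 3))
```

A commonly used heuristic treats a first-factor share below 50% as indicating that a single method factor does not dominate; the 28.31% reported here falls below that level.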

The means, standard deviations, and intercorrelations among the primary variables were examined, as presented in Table 3. Perceived AI explainability was positively correlated with perceived innovativeness (r = 0.439, p < 0.01), perceived organizational support (r = 0.553, p < 0.01), and organizational attractiveness (r = 0.311, p < 0.01). Additionally, both perceived innovativeness (r = 0.694, p < 0.01) and perceived organizational support (r = 0.517, p < 0.01) were significantly and positively associated with organizational attractiveness. These results provided preliminary support for the proposed research hypotheses.

Table 3.

Descriptive statistics and discriminant validity tests.

Hierarchical regression analysis was conducted to test the hypothesized relationships, controlling for gender, age, and education level (see Table 4).

Table 4.

Results of parallel mediation model for the bootstrap analysis.

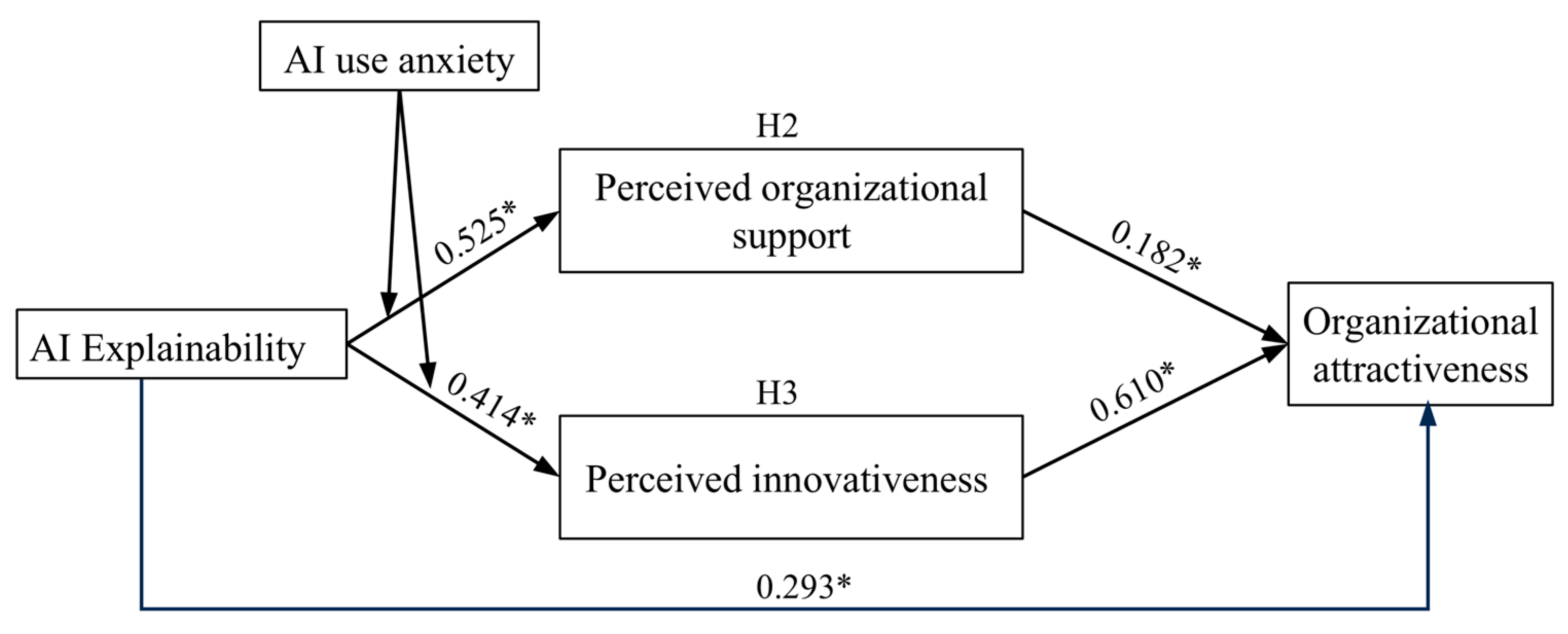

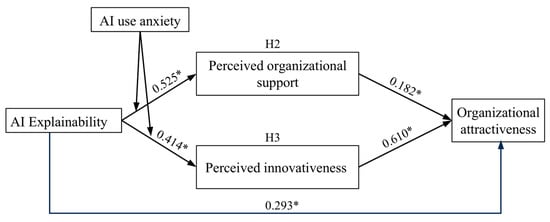

AI explainability had significant positive effects on organizational attractiveness (Model 1: β = 0.293, p < 0.001), perceived organizational support (Model 3: β = 0.525, p < 0.001), and perceived innovativeness (Model 5: β = 0.414, p < 0.001), supporting Hypothesis 1 and the first-stage paths of Hypotheses 2 and 3.

Furthermore, both perceived organizational support (Model 2: β = 0.182, p < 0.01) and perceived innovativeness (Model 2: β = 0.301, p < 0.001) significantly predicted organizational attractiveness, confirming Hypotheses 2 and 3.

To test moderation, we conducted hierarchical regression after mean-centering AI explainability and AI use anxiety. Model 4 in Table 4 showed a significant negative interaction effect between AI explainability and AI use anxiety on perceived organizational support (β = −0.3192, p < 0.05). However, the interaction was not significant for perceived innovativeness (Model 6).

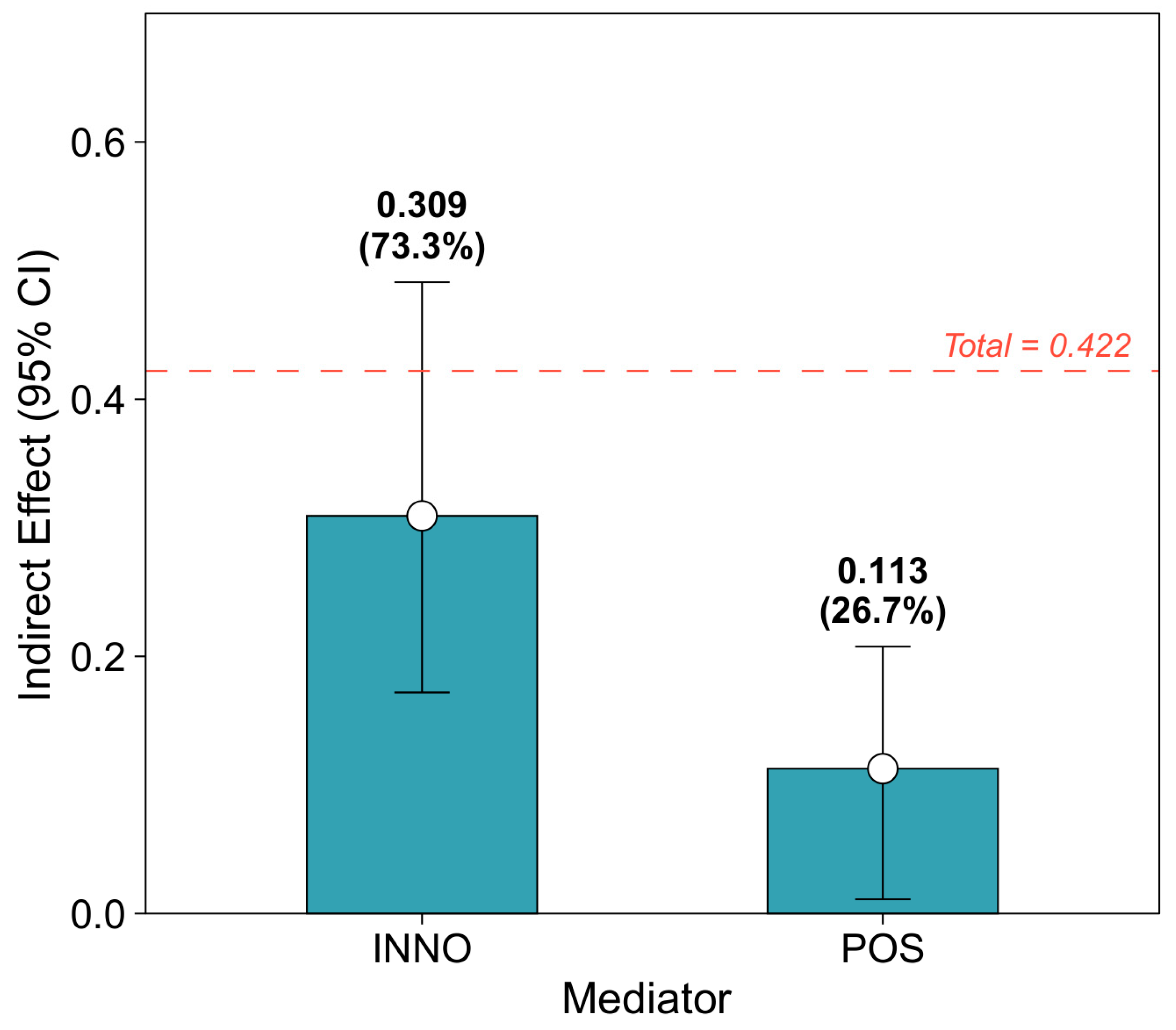

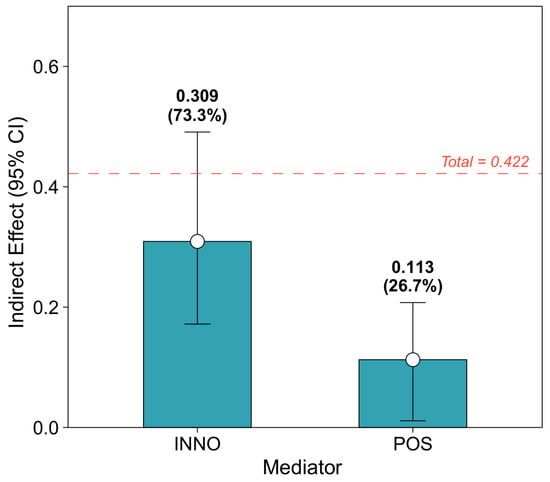

To further validate the mediation effects, a bootstrapping procedure with 5000 resamples was conducted using PROCESS in SPSS 27.0. Results (Table 5) showed a total indirect effect of 0.422 (p < 0.001), with the 95% confidence interval excluding zero, indicating a significant overall mediation.
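The bootstrapping logic behind such a test can be sketched in plain Python. This is a simplified illustration, not the PROCESS implementation: it uses a single mediator rather than the two parallel mediators analyzed here, a percentile confidence interval (one common variant), and hypothetical simulated data. All function and variable names are illustrative.

```python
import random


def cov(u, v):
    """Sample covariance of two equal-length sequences."""
    mean_u, mean_v = sum(u) / len(u), sum(v) / len(v)
    return sum((a - mean_u) * (b - mean_v) for a, b in zip(u, v)) / (len(u) - 1)


def indirect_effect(x, m, y):
    """a*b, where a is the slope of M on X and b the slope of Y on M controlling for X."""
    sxx, smm, sxm = cov(x, x), cov(m, m), cov(x, m)
    sxy, smy = cov(x, y), cov(m, y)
    a = sxm / sxx
    b = (smy * sxx - sxm * sxy) / (sxx * smm - sxm ** 2)
    return a * b


def percentile_bootstrap_ci(x, m, y, resamples=5000, alpha=0.05, seed=1):
    """Percentile bootstrap confidence interval for the indirect effect."""
    rng = random.Random(seed)
    n = len(x)
    estimates = []
    for _ in range(resamples):
        # Resample respondents with replacement and re-estimate a*b.
        idx = [rng.randrange(n) for _ in range(n)]
        estimates.append(indirect_effect([x[i] for i in idx],
                                         [m[i] for i in idx],
                                         [y[i] for i in idx]))
    estimates.sort()
    lower = estimates[int(alpha / 2 * resamples)]
    upper = estimates[int((1 - alpha / 2) * resamples) - 1]
    return lower, upper


# Hypothetical data with a true indirect effect of 0.6 * 0.5 = 0.3.
rng = random.Random(7)
x = [rng.gauss(0, 1) for _ in range(196)]
m = [0.6 * xi + rng.gauss(0, 1) for xi in x]
y = [0.5 * mi + 0.1 * xi + rng.gauss(0, 1) for xi, mi in zip(x, m)]

# 1000 resamples keep the demo fast; the analysis above used 5000.
lower, upper = percentile_bootstrap_ci(x, m, y, resamples=1000)
print(f"95% CI for the indirect effect: [{lower:.3f}, {upper:.3f}]")
```

An interval that excludes zero is the criterion used above to judge an indirect effect significant.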

Table 5.

Indirect effect mediation analysis.

The indirect effect of AI explainability on organizational attractiveness via perceived innovativeness was 0.3093, accounting for 73.3% of the total indirect effect. The indirect effect via perceived organizational support was 0.1127, accounting for 26.7% (Figure 2). These results support Hypotheses 2 and 3, confirming dual mediation, with perceived innovativeness playing the dominant mediating role (Figure 3).
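The decomposition above is simple arithmetic on the two reported specific indirect effects, which the following short Python sketch reproduces. Only the two effect sizes come from the text; the variable names are illustrative.

```python
# Specific indirect effects reported in the text.
indirect = {"INNO": 0.3093, "POS": 0.1127}

# The total indirect effect is the sum of the specific indirect effects.
total = sum(indirect.values())

# Each mediator's share of the total indirect effect.
shares = {name: effect / total for name, effect in indirect.items()}

print(f"Total indirect effect: {total:.3f}")
for name, share in shares.items():
    print(f"Share via {name}: {share:.1%}")
```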

Figure 2.

Comparison of indirect effects of XAI on organizational attractiveness (ATTR) via perceived innovativeness (INNO) and perceived organizational support (POS).

Figure 3.

Results of model effect analysis. Note: * p < 0.05.

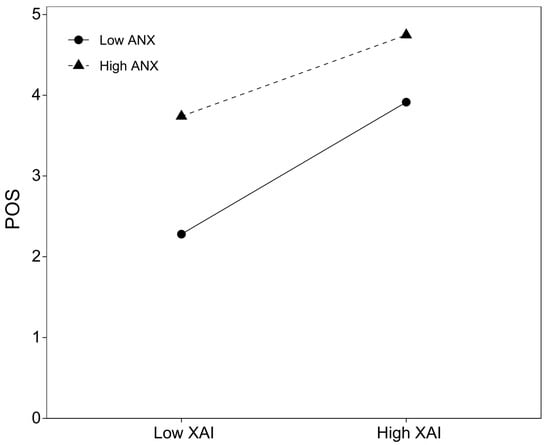

To test moderated mediation (Hypotheses 4 and 5), we used PROCESS Model 7 with 5000 bootstrap samples and a 95% confidence level.

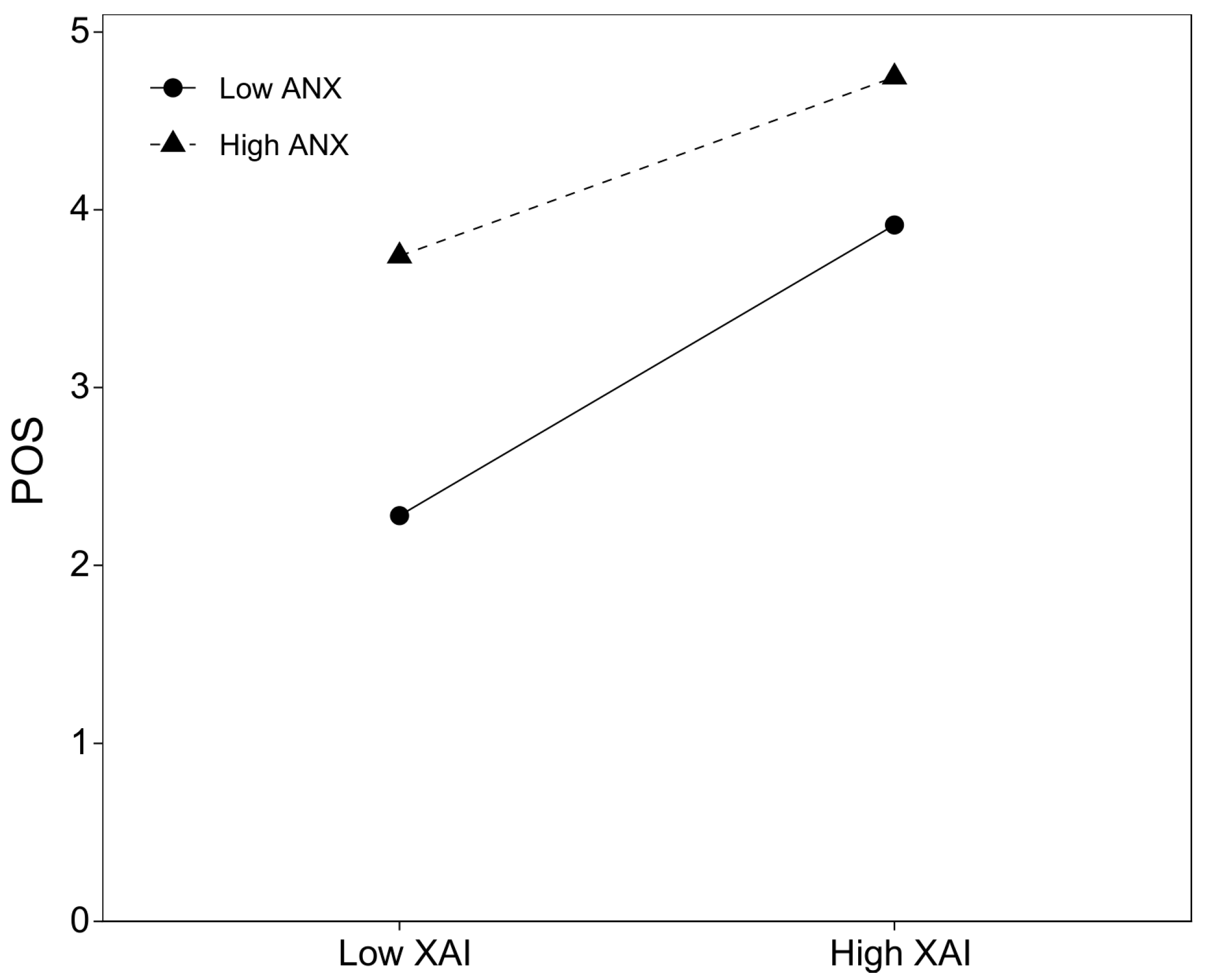

As shown in Table 6, the index of moderated mediation for perceived organizational support was significant (Index = −0.0529, 95% CI = [−0.1457, −0.0005]), confirming that AI use anxiety moderates the mediation of perceived organizational support between AI explainability and organizational attractiveness.

Table 6.

Moderated mediation analysis.

Specifically, the indirect effect through perceived organizational support was significant under both low-AI anxiety (0.1175, 95% CI = [0.0116, 0.2149]) and high-AI anxiety (0.0911, 95% CI = [0.0079, 0.1703]) conditions.

The difference between the two groups (∆ = −0.0265, 95% CI = [−0.0728, −0.0003]) was also significant, indicating stronger mediation under low-anxiety conditions. These findings support Hypothesis 4 (Figure 4).

Figure 4.

Interaction effect of explainable AI (XAI) and AI use anxiety (ANX) on perceived organizational support (POS). Note: Low and high levels of XAI and ANX represent M − 1 SD and M + 1 SD, respectively. Predicted values are computed from the standardized regression coefficients with control variables held constant.

In contrast, AI use anxiety did not significantly moderate the mediation through perceived innovativeness (Index = −0.0638, 95% CI = [−0.3801, 0.0986]), and the difference in indirect effects between the high- and low-anxiety groups was non-significant (∆ = −0.0319, 95% CI = [−0.1901, 0.0493]).
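In a first-stage moderated mediation model of the kind PROCESS Model 7 estimates, the conditional indirect effect is linear in the moderator, so the difference between the indirect effects at two moderator values equals the index of moderated mediation multiplied by the gap between those values. The Python sketch below uses only the figures reported above to back out that gap. The resulting value is an inference from the reported numbers, not a figure stated in the text.

```python
def implied_moderator_gap(index: float, difference: float) -> float:
    """Gap between 'high' and 'low' moderator values implied by difference = index * gap."""
    return difference / index


# Figures reported in the text (Table 6).
pos_gap = implied_moderator_gap(index=-0.0529, difference=-0.0265)
inno_gap = implied_moderator_gap(index=-0.0638, difference=-0.0319)

# Cross-check: the POS difference should equal the high-anxiety minus low-anxiety effect.
pos_difference = 0.0911 - 0.1175

print(f"Implied moderator gap (POS path):  {pos_gap:.3f}")
print(f"Implied moderator gap (INNO path): {inno_gap:.3f}")
print(f"High minus low indirect effect (POS): {pos_difference:.4f}")
```

Both paths imply nearly the same gap, and the POS difference matches the reported ∆ up to rounding, so the reported indices and group differences are internally consistent.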

4.5. Discussion

Overall, the results provide strong support for the hypothesized model. Specifically, AI explainability significantly enhanced organizational attractiveness (H1), and this effect was mediated by both perceived organizational support (H2) and perceived innovativeness (H3). Among the two mediators, innovativeness played a more dominant role, accounting for 73.3% of the total indirect effect.

Although the results confirm the hypothesized effects, a more important theoretical insight from a dual-process perspective lies in the relative strength of the two mediating mechanisms. The finding that perceived innovativeness accounts for a substantially larger share of the indirect effect than perceived organizational support suggests that, in AI-mediated recruitment contexts, cognitive signaling mechanisms may outweigh affective exchange processes in shaping organizational attractiveness.

From a signaling theory perspective, explainable AI functions as a high-credibility innovation signal that allows applicants to infer an organization’s technological capability, ethical awareness, and future orientation (Lavanchy et al., 2023). Unlike affective signals, which rely heavily on interpersonal cues, innovation signals embedded in AI explainability are transmitted through objective system features such as algorithmic logic, evaluation dimensions, and transparency mechanisms (Haque et al., 2023). In technology-intensive recruitment contexts characterized by high uncertainty and limited human interaction, applicants are therefore more likely to rely on cognitively processed signals to form organizational evaluations (Huo et al., 2025).

This interpretation helps explain why AI use anxiety moderated the relationship between explainability and perceived organizational support, but not between explainability and perceived innovativeness. While affective exchange processes are sensitive to emotional states and perceived loss of control, assessments of organizational innovativeness appear to be more resilient to individual anxiety, because they are grounded in observable technological attributes rather than emotional experience.

Finally, this perspective helps reconcile inconsistent findings in prior research reporting negative applicant reactions to AI-based recruitment. Such reactions are often observed in contexts where AI systems are opaque, uncontrollable, or framed as substituting human judgment (Malin et al., 2025). In contrast, the present findings demonstrate that it is not AI per se, but explainable AI, that transforms algorithmic decision-making from a source of resistance into a positive organizational signal, thereby enhancing organizational attractiveness in technology-mediated recruitment contexts.

5. Conclusions

5.1. Theoretical Implications

This study contributes to the AI recruitment literature in three key ways. First, it extends social exchange theory by identifying AI explainability as a novel antecedent of perceived organizational support (POS) in technology-mediated interactions. The findings demonstrate that transparent AI decision-making processes can fulfill applicants’ needs for organizational care, an effect previously associated only with human agents in social exchange (Eisenberger et al., 2019). We show that AI explainability signals that “the organization values the applicant experience” through mechanisms such as transparent feedback and fair assessments, thereby eliciting applicants’ reciprocal beliefs (POS) and enhancing organizational attractiveness. This finding links a technological characteristic (algorithm explainability) with the social exchange concept of perceived organizational care, addressing a theoretical gap in AI recruitment research and responding to the call by van der Waa et al. (2021).

Second, the study advances signaling theory by proposing a dual-mediation framework. This dual-pathway model resolves the paradox of how transparent AI-driven organizations can attract both relationally oriented applicants and innovation-seeking applicants, offering a more comprehensive perspective than previous single-pathway studies (e.g., Van Esch et al., 2021).

Third, by revealing the asymmetric moderating role of AI use anxiety, the study refines affective event theory. Specifically, AI use anxiety undermines the affective pathway of perceived organizational support while leaving the cognitive pathway of innovativeness intact. This delineates the boundary conditions of emotional versus cognitive processing in AI-mediated recruitment. The coexistence of affective (POS-driven) and cognitive (innovativeness-driven) paths to organizational attractiveness explains why some applicants with high AI anxiety may still be attracted to highly explainable AI systems, as the innovation signal can offset the negative emotional impact. This challenges the traditional assumption that technological anxiety universally diminishes organizational attractiveness (cf. Arias-Pérez & Vélez-Jaramillo, 2022).

Overall, these insights bridge macro-level organizational signaling and micro-level applicant cognition and emotion, enriching our understanding of technology-infused social exchange dynamics.

5.2. Practical Implications

This study offers several practical implications for the application of AI in recruitment management. First, AI explainability should be positioned as a core organizational communication mechanism in recruitment rather than a purely technical feature. The findings show that explainability reduces information asymmetry and uncertainty in AI-based recruitment, thereby enhancing organizational attractiveness. Organizations should explicitly communicate key evaluation criteria, scoring logic, and feedback mechanisms embedded in AI interview systems so that applicants can understand how decisions are made and form early perceptions of organizational fairness and responsibility.

Second, explainable AI systems should be integrated with human-centered recruitment practices to sustain perceived organizational support. Although AI systems conduct evaluations, applicants’ perceptions of organizational support depend on whether they feel respected and considered. The results indicate that explainability strengthens perceived organizational support by signaling organizational attentiveness. In practice, organizations should complement AI interviews with supportive interaction cues, such as personalized system messages, empathetic feedback, or post-interview clarification channels, to maintain socio-emotional continuity in technology-mediated recruitment.

Third, explainable AI recruitment can be strategically leveraged to enhance organizational innovativeness and employer branding while addressing applicant anxiety. Applicants tend to interpret explainable AI systems as signals of technological sophistication and ethical responsibility. Organizations should integrate AI explainability into their employer branding communications. For instance, marketing the use of ‘transparent and applicant-centric AI’ can differentiate a company from competitors, attracting high-quality talent who value both innovation and fairness. At the same time, because AI-related anxiety weakens the positive effects of explainability, organizations should proactively reduce uncertainty by providing clear pre-interview information on AI decision logic, data protection, and human oversight mechanisms.

5.3. Limitations and Future Research

Despite its contributions, this study has several methodological limitations that must be acknowledged. First, this study utilized a scenario-based survey design. While this approach ensures control over experimental conditions, it may lack the “psychological realism” of actual high-stakes job interviews, potentially influencing the intensity of applicants’ reactions. Second, our reliance on self-reported data introduces potential risks of common method variance (CMV) and social desirability bias. Although we implemented a time-lagged design (Time 1 and Time 2) to mitigate these effects, the absence of objective behavioral data (e.g., actual job acceptance) means the findings primarily reflect subjective perceptions. Third, although the time-lagged approach provides a stronger basis for temporal precedence than cross-sectional designs, it still falls short of definitive causal inference. Omitted variable bias or reverse causality cannot be entirely ruled out without a purely longitudinal or laboratory-controlled experiment. Finally, the external validity of our findings is constrained by the specific context and sample size (N = 196). The results, derived primarily from the tech industry in China, may not be fully generalizable to labor-intensive industries or cultural contexts with different orientations toward algorithmic authority.

Despite its limitations, this study also opens several avenues for future research. First, future studies could extend the proposed model by examining cross-cultural and cross-industry boundary conditions using comparative research designs. Building on signaling theory and social exchange theory, future research may investigate whether the effects of AI explainability on perceived organizational support and organizational attractiveness vary across cultural contexts with different levels of uncertainty avoidance or power distance. Methodologically, multi-country survey studies or cross-cultural experiments that manipulate levels of AI explainability could provide stronger causal evidence and enhance the external validity of the model. Similarly, comparative field studies across knowledge-intensive and labor-intensive industries could clarify industry-specific dynamics of AI-enabled recruitment.

Second, future research should adopt experimental and multi-method approaches to strengthen causal inference and reduce common method bias. While this study relied on self-reported data, future studies could employ controlled experiments that manipulate AI explainability (e.g., high- vs. low-explainability conditions) and measure applicants’ real-time emotional responses, perceived organizational support, and behavioral intentions. In addition, combining self-report measures with behavioral indicators (e.g., application completion rates, withdrawal decisions) or physiological data (e.g., stress or anxiety indicators) would provide a more comprehensive understanding of applicant reactions to AI-based recruitment. Future studies are encouraged to apply more stringent discriminant validity assessments, such as the heterotrait–monotrait ratio (HTMT), particularly when examining conceptually adjacent constructs in AI-mediated recruitment contexts.

Third, future research could examine the role of explainability across different AI recruitment tools and stages of the recruitment process. Although transparency is widely recognized as a central dimension of AI explainability, the measurement instrument used in this study does not capture all possible facets of explainability. For instance, other aspects such as decision rationale, underlying decision processes, and counterfactual explanations were not explicitly assessed. As a result, the current measurement may reflect only part of applicants’ perceptions of AI explainability. This study focused on AI-based interviews, yet AI is increasingly used in resume screening, chatbot-based communication, and automated assessments. Future studies could employ comparative experimental designs to test whether explainability plays different roles across recruitment stages and AI applications. Such research would contribute to a more nuanced, process-oriented understanding of how explainable AI shapes organizational attractiveness throughout the recruitment journey.

Author Contributions

Conceptualization, Q.Z.; methodology, Q.Z.; software, C.-H.W. and X.Z.; validation, Q.Z.; formal analysis, Q.Z. and H.L.; investigation, Q.Z.; resources, C.-H.W.; data curation, Q.Z. and X.Z.; writing—original draft preparation, Q.Z.; writing—review and editing, C.-H.W.; visualization, H.L.; supervision, C.-H.W.; project administration, C.-H.W.; funding acquisition, Q.Z. All authors have read and agreed to the published version of the manuscript.

Funding

This research received no external funding.

Institutional Review Board Statement

The study was conducted in accordance with the Declaration of Helsinki and approved by the Institutional Review Board of Shanghai University (protocol code 2024.21021002; date of approval 4 March 2024).

Informed Consent Statement

Written informed consent to publish this paper has been obtained from the participants.

Data Availability Statement

The data supporting the conclusions of this article will be made available by the authors on request.

Acknowledgments

We wish to sincerely thank all the participants for their valuable contributions to this study. All acknowledged individuals have provided consent.

Conflicts of Interest

The authors declare no conflicts of interest.

References

- Adadi, A., & Berrada, M. (2018). Peeking inside the black-box: A survey on explainable artificial intelligence (XAI). IEEE Access, 6, 52138–52160.

- Al-Quhfa, H., Mothana, A., Aljbri, A., & Song, J. (2024). Enhancing talent recruitment in business intelligence systems: A comparative analysis of machine learning models. Analytics, 3(3), 297–317.

- Arias-Pérez, J., & Vélez-Jaramillo, J. (2022). Ignoring the three-way interaction of digital orientation, not-invented-here syndrome and employee’s artificial intelligence awareness in digital innovation performance: A recipe for failure. Technological Forecasting and Social Change, 174, 121305.

- Arrieta, A. B., Díaz-Rodríguez, N., Del Ser, J., Bennetot, A., Tabik, S., Barbado, A., García, S., Gil-López, S., Molina, D., Benjamins, R., Chatila, R., & Herrera, F. (2020). Explainable artificial intelligence (XAI): Concepts, taxonomies, opportunities and challenges toward responsible AI. Information Fusion, 58, 82–115.

- Bafera, J., & Kleinert, S. (2023). Signaling theory in entrepreneurship research: A systematic review and research agenda. Entrepreneurship Theory and Practice, 47(6), 2419–2464.

- Bauer, T. N., Truxillo, D. M., Tucker, J. S., Weathers, V., Bertolino, M., Erdogan, B., & Campion, M. A. (2006). Selection in the information age: The impact of privacy concerns and computer experience on applicant reactions. Journal of Management, 32(5), 601–621.

- Cai, Z., Li, X., Mao, Y., He, H., & Fu, Q. (2025). Who are favored by AI and why them? Influencing factors of job applicants’ interview performance and intention to use AVI-AI. International Journal of Organizational Leadership, 14(1).

- Chapman, D. S., Uggerslev, K. L., Carroll, S. A., Piasentin, K. A., & Jones, D. A. (2005). Applicant attraction to organizations and job choice: A meta-analytic review of the correlates of recruiting outcomes. Journal of Applied Psychology, 90(5), 928–944.

- Cropanzano, R., & Mitchell, M. S. (2005). Social exchange theory: An interdisciplinary review. Journal of Management, 31(6), 874–900.

- Cysneiros, L. M., Raffi, M., & do Prado Leite, J. C. S. (2018). Software transparency as a key requirement for self-driving cars. In 2018 IEEE 26th international requirements engineering conference (RE) (pp. 382–387). IEEE.

- Druce, J., Harradon, M., & Tittle, J. (2021). Explainable artificial intelligence (XAI) for increasing user trust in deep reinforcement learning driven autonomous systems. arXiv, arXiv:2106.03775.

- Eisenberger, R., Huntington, R., Hutchison, S., & Sowa, D. (1986). Perceived organizational support. Journal of Applied Psychology, 71(3), 500–507.

- Eisenberger, R., Rockstuhl, T., Shoss, M. K., Wen, X., & Dulebohn, J. H. (2019). Is the employee–organization relationship dying or thriving? A temporal meta-analysis. Journal of Applied Psychology, 104(8), 1036–1057.

- European Commission. (2021). Regulatory framework proposal on artificial intelligence. Available online: https://digital-strategy.ec.europa.eu/en/policies/regulatory-framework-ai (accessed on 10 March 2026).

- Facts & Factors. (2024). Generative AI market size, share global analysis report, 2024–2032. Available online: https://www.fnfresearch.com/generative-ai-market (accessed on 23 October 2024).

- Gonzalez, M. F., Capman, J. F., Oswald, F. L., Theys, E. R., & Tomczak, D. L. (2019). “Where’s the IO?” Artificial intelligence and machine learning in talent management systems. Personnel Assessment and Decisions, 5(1), 5.

- Habbal, A., Ali, M. K., & Abuzaraida, M. A. (2024). Artificial Intelligence trust, risk and security management (AI trism): Frameworks, applications, challenges and future research directions. Expert Systems with Applications, 240, 122442.

- Hair, J. F., Risher, J. J., Sarstedt, M., & Ringle, C. M. (2019). When to use and how to report the results of PLS-SEM. European Business Review, 31(1), 2–24.

- Hamm, P., Klesel, M., Coberger, P., & Wittmann, H. F. (2023). Explanation matters: An experimental study on explainable AI. Electronic Markets, 33(1), 17.

- Haque, A. B., Islam, A. N., & Mikalef, P. (2023). Explainable Artificial Intelligence (XAI) from a user perspective: A synthesis of prior literature and problematizing avenues for future research. Technological Forecasting and Social Change, 186, 122120.

- Hasan, R., Shams, R., & Rahman, M. (2021). Consumer trust and perceived risk for voice-controlled artificial intelligence: The case of Siri. Journal of Business Research, 131, 591–597.

- Hausknecht, J. P., Day, D. V., & Thomas, S. C. (2004). Applicant reactions to selection procedures: An updated model and meta-analysis. Personnel Psychology, 57(3), 639–683.

- Hoffman, B. J., & Woehr, D. J. (2006). A quantitative review of the relationship between person–organization fit and behavioral outcomes. Journal of Vocational Behavior, 68(3), 389–399.

- Horodyski, P. (2023). Applicants’ perception of artificial intelligence in the recruitment process. Computers in Human Behavior Reports, 11, 100303.

- Howardson, G. N., & Behrend, T. S. (2014). Using the Internet to recruit employees: Comparing the effects of usability expectations and objective technological characteristics on Internet recruitment outcomes. Computers in Human Behavior, 31, 334–342.

- Huo, W., Li, Q., Liang, B., Wang, Y., & Li, X. (2025). When healthcare professionals use AI: Exploring work well-being through psychological needs satisfaction and job complexity. Behavioral Sciences, 15(1), 88.

- Joo, Y. R., Moon, H. K., & Choi, B. K. (2016). A moderated mediation model of CSR and organizational attractiveness among job applicants: Roles of perceived overall justice and attributed motives. Management Decision, 54(6), 1269–1293.

- Kellogg, K. C., Valentine, M. A., & Christin, A. (2020). Algorithms at work: The new contested terrain of control. Academy of Management Annals, 14(1), 366–410.

- Kurtessis, J. N., Eisenberger, R., Ford, M. T., Buffardi, L. C., Stewart, K. A., & Adis, C. S. (2017). Perceived organizational support: A meta-analytic evaluation of organizational support theory. Journal of Management, 43(6), 1854–1884.

- Laato, S., Tiainen, M., Najmul Islam, A. K. M., & Mäntymäki, M. (2022). How to explain AI systems to end users: A systematic literature review and research agenda. Internet Research, 32(7), 1–31.

- Lavanchy, M., Reichert, P., Narayanan, J., & Savani, K. (2023). Applicants’ fairness perceptions of algorithm-driven hiring procedures. Journal of Business Ethics, 188(1), 125–150.

- Leekha Chhabra, N., & Sharma, S. (2014). Employer branding: Strategy for improving employer attractiveness. International Journal of Organizational Analysis, 22(1), 48–60.

- Lievens, F., & Highhouse, S. (2003). The relation of instrumental and symbolic attributes to a company’s attractiveness as an employer. Personnel Psychology, 56(1), 75–102.

- Madhumithaa, N., Sharma, A., Adabala, S. K., Siddiqui, S., & Kothinti, R. R. (2025). Leveraging AI for personalized employee development: A new era in human resource management. Advances in Consumer Research, 2, 134–141.

- Malin, C., Fleiß, J., Ortlieb, R., & Thalmann, S. (2025). Rejected by an AI? Comparing job applicants’ fairness perceptions of artificial intelligence and humans in personnel selection. Frontiers in Artificial Intelligence, 8, 1671997.

- Meijerink, J., Boons, M., Keegan, A., & Marler, J. (2021). Algorithmic human resource management: Synthesizing developments and cross-disciplinary insights on digital HRM. The International Journal of Human Resource Management, 32(12), 2545–2562.

- Meske, C., Bunde, E., Schneider, J., & Gersch, M. (2022). Explainable artificial intelligence: Objectives, stakeholders, and future research opportunities. Information Systems Management, 39(1), 53–63.

- Minge, M., & Thüring, M. (2018). Hedonic and pragmatic halo effects at early stages of user experience. International Journal of Human-Computer Studies, 109, 13–25.

- Mujtaba, D. F., & Mahapatra, N. R. (2024). Fairness in AI-driven recruitment: Challenges, metrics, methods, and future directions. arXiv, arXiv:2405.19699.

- Ochmann, J., Michels, L., Tiefenbeck, V., Maier, C., & Laumer, S. (2024). Perceived algorithmic fairness: An empirical study of transparency and anthropomorphism in algorithmic recruiting. Information Systems Journal, 34(2), 384–414.

- Paramita, D., Okwir, S., & Nuur, C. (2024). Artificial intelligence in talent acquisition: Exploring organisational and operational dimensions. International Journal of Organizational Analysis, 32(1), 108–131.

- Park, E. H., Werder, K., Cao, L., & Ramesh, B. (2022). Why do family members reject AI in health care? Competing effects of emotions. Journal of Management Information Systems, 39(3), 765–792.

- Park, J., & Woo, S. E. (2022). Who likes artificial intelligence? Personality predictors of attitudes toward artificial intelligence. The Journal of Psychology, 156(1), 68–94.

- Sandeep, M., Lavanya, V., & Balakrishnan, J. (2025). Leveraging AI in recruitment: Enhancing intellectual capital through resource-based view and dynamic capability framework. Journal of Intellectual Capital. Advance online publication.

- Slaughter, J. E., Zickar, M. J., Highhouse, S., & Mohr, D. C. (2004). Personality trait inferences about organizations: Development of a measure and assessment of construct validity. Journal of Applied Psychology, 89(1), 85–103.

- Soeling, P. D., Arsanti, S. D. A., & Indriati, F. (2022). Organizational reputation: Does it mediate the effect of employer brand attractiveness on intention to apply in Indonesia? Heliyon, 8(12), e11839.

- Sommer, L. P., Heidenreich, S., & Handrich, M. (2017). War for talents—How perceived organizational innovativeness affects employer attractiveness. R&D Management, 47(2), 299–310.

- Spence, M. (1973). Job market signaling. The Quarterly Journal of Economics, 87(3), 355–374.

- Suseno, Y., Chang, C., Hudik, M., & Fang, E. S. (2023). Beliefs, anxiety and change readiness for artificial intelligence adoption among human resource managers: The moderating role of high-performance work systems. In S. K. Malik, A. K. Mishra, & R. Singh (Eds.), Artificial intelligence and international HRM (pp. 144–171). Routledge.

- van der Waa, J., Nieuwburg, E., Cremers, A., & Neerincx, M. (2021). Evaluating XAI: A comparison of rule-based and example-based explanations. Artificial Intelligence, 291, 103404.

- Van Esch, P., Black, J. S., & Arli, D. (2021). Job candidates’ reactions to AI-enabled job application processes. AI and Ethics, 1, 119–130.

- Vanderstukken, A., Van den Broeck, A., & Proost, K. (2016). For love or for money: Intrinsic and extrinsic value congruence in recruitment. International Journal of Selection and Assessment, 24(1), 34–41.

- Venkatesh, V., & Thong, J. (2016). Unified theory of acceptance and use of technology: A synthesis and the road ahead. Journal of the Association for Information Systems, 17(5), 328–376.

- Vilone, G., & Longo, L. (2021). Notions of explainability and evaluation approaches for explainable artificial intelligence. Information Fusion, 76, 89–106.

- Vuori, N., Burkhard, B., & Pitkäranta, L. (2025). It’s amazing–but terrifying!: Unveiling the combined effect of emotional and cognitive trust on organizational members’ behaviours, AI performance, and adoption. Journal of Management Studies. Advance online publication.

- Walker, H. J., Feild, H. S., Bernerth, J. B., & Becton, J. B. (2012). Diversity cues on recruitment websites: Investigating the effects on job seekers’ information processing. Journal of Applied Psychology, 97(1), 214–224.

- Wang, W., & Benbasat, I. (2016). Empirical assessment of alternative designs for enhancing different types of trusting beliefs in online recommendation agents. Journal of Management Information Systems, 33(3), 744–775.

- Weitz, K., Schiller, D., Schlagowski, R., Huber, T., & André, E. (2021). “Let me explain!”: Exploring the potential of virtual agents in explainable AI interaction design. Journal on Multimodal User Interfaces, 15(1), 87–98.

- Weng, C. K., & Why, N. K. (2025). Resume data extract and job recruitment Chatbot features for AI-based resume screening & analytics. MethodsX, 16, 103775.

- Yu, J., Ma, Z., & Zhu, L. (2025). The configurational effects of artificial intelligence-based hiring decisions on applicants’ justice perception and organisational commitment. Information Technology & People, 38(2), 553–581.

- Yuan, F., & Woodman, R. W. (2010). Innovative behavior in the workplace: The role of performance and image outcome expectations. Academy of Management Journal, 53(2), 323–342.

- Zaki, M. N., & Pusparini, E. (2020). What constitute intentions to apply for the job in Indonesia technology-based start-ups companies? In Proceedings of the international conference on business and management research (ICBMR 2020) (pp. 306–313). Atlantis Press.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.