The Impact of Educational LLM Agent Use on Teachers’ Curriculum Content Creation: The Chain Mediating Role of School Support and Teacher Self-Efficacy

Abstract

1. Introduction

- To what extent, and in what ways, does the use of educational LLM agents significantly influence the quality of teachers’ curriculum content creation?

- What mediating role does school support play in the association between educational LLM agent use and the quality of teachers’ curriculum content creation, and what is the size of this effect?

- To what degree does teachers’ self-efficacy mediate the relationship between the use of educational LLM agents and the quality of their curriculum content creation?

- How does school support, by enhancing teachers’ self-efficacy, indirectly improve the quality of their curriculum content creation, and what is the magnitude of this chain mediation effect?

2. Literature Review

2.1. The Impact of Educational LLM Agents on Teachers’ Curriculum Content Creation

2.2. The Mediating Role of School Support

2.3. The Mediating Role of Teacher Self-Efficacy

2.4. The Chain Mediating Role of School Support and Teacher Self-Efficacy

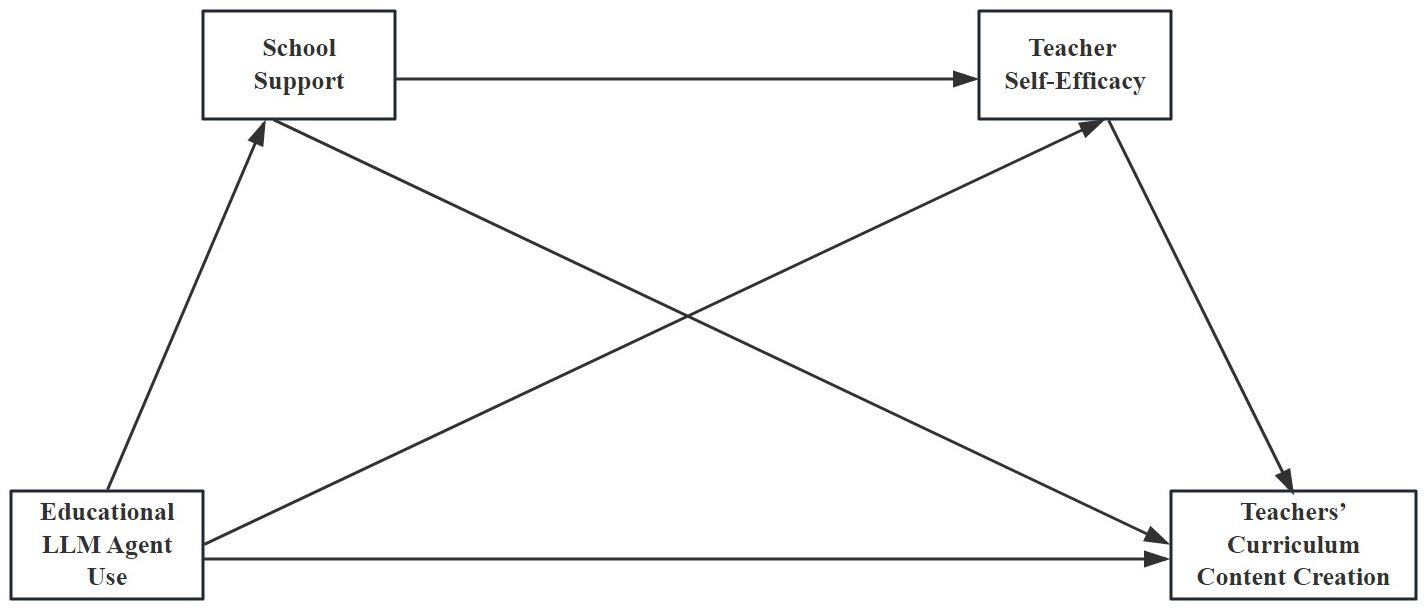

3. The Research Hypotheses

4. Method

4.1. Participants

4.2. Instruments

4.2.1. Key Scales

Educational LLM Agent Use Scale

School Support Scale

Teacher Self-Efficacy Scale

Curriculum Content Creation Scale

4.2.2. Measurement Model

4.3. Data Analysis
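The mediation results report a bootstrap SE and a 95% CI for each effect, and a path is marked significant when its interval excludes zero (the school-support-only path, −0.0258 to 0.1357, does not). The source does not state the software, the number of resamples, or the interval type. The sketch below therefore assumes a plain percentile bootstrap of a serial two-mediator model (X → M1 → M2 → Y), uses only the Python standard library, and runs on simulated data. All function names and the simulated coefficients are hypothetical, not the authors' procedure.

```python
import random
from statistics import fmean, quantiles, stdev


def solve(a, b):
    """Solve the small linear system a @ x = b by Gaussian elimination."""
    n = len(b)
    m = [list(row) + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        piv = max(range(col, n), key=lambda i: abs(m[i][col]))  # partial pivoting
        m[col], m[piv] = m[piv], m[col]
        for i in range(col + 1, n):
            f = m[i][col] / m[col][col]
            for j in range(col, n + 1):
                m[i][j] -= f * m[col][j]
    x = [0.0] * n
    for i in reversed(range(n)):  # back substitution
        x[i] = (m[i][n] - sum(m[i][j] * x[j] for j in range(i + 1, n))) / m[i][i]
    return x


def ols_slopes(y, predictors):
    """OLS slopes of y on the predictor columns; centering removes the intercept."""
    centered = []
    for col in predictors:
        mu = fmean(col)
        centered.append([v - mu for v in col])
    mu_y = fmean(y)
    cy = [v - mu_y for v in y]
    xtx = [[sum(p * q for p, q in zip(ci, cj)) for cj in centered] for ci in centered]
    xty = [sum(p * q for p, q in zip(ci, cy)) for ci in centered]
    return solve(xtx, xty)


def indirect_effects(x, m1, m2, y):
    """Indirect effects in the serial model X -> M1 -> M2 -> Y."""
    (a1,) = ols_slopes(m1, [x])
    a2, d21 = ols_slopes(m2, [x, m1])
    _, b1, b2 = ols_slopes(y, [x, m1, m2])
    return {
        "X->M1->Y": a1 * b1,
        "X->M2->Y": a2 * b2,
        "X->M1->M2->Y": a1 * d21 * b2,
    }


def percentile_bootstrap(data, n_boot=1000, seed=1):
    """Bootstrap SE and 2.5th/97.5th percentile limits of each indirect effect."""
    rng = random.Random(seed)
    n = len(data["y"])
    draws = {}
    for _ in range(n_boot):
        idx = [rng.randrange(n) for _ in range(n)]  # resample cases with replacement
        sample = {k: [col[i] for i in idx] for k, col in data.items()}
        for path, est in indirect_effects(**sample).items():
            draws.setdefault(path, []).append(est)
    summary = {}
    for path, vals in draws.items():
        cuts = quantiles(vals, n=40)  # 39 cut points: first = 2.5%, last = 97.5%
        summary[path] = (stdev(vals), cuts[0], cuts[-1])
    return summary


def simulate(n=464, seed=7):
    """Hypothetical data loosely patterned on the reported paths (not the study data)."""
    rng = random.Random(seed)
    data = {"x": [], "m1": [], "m2": [], "y": []}
    for _ in range(n):
        x = rng.gauss(0, 1)
        m1 = 0.8 * x + rng.gauss(0, 0.6)
        m2 = 0.6 * x + 0.3 * m1 + rng.gauss(0, 0.4)
        y = 0.7 * x + 0.05 * m1 + 0.2 * m2 + rng.gauss(0, 0.4)
        for k, v in zip(data, (x, m1, m2, y)):
            data[k].append(v)
    return data


if __name__ == "__main__":
    data = simulate()
    point = indirect_effects(**data)
    for path, (se, lo, hi) in percentile_bootstrap(data).items():
        flag = "significant" if lo > 0 or hi < 0 else "not significant"
        print(f"{path}: {point[path]:.4f}, SE {se:.4f}, 95% CI [{lo:.4f}, {hi:.4f}] {flag}")
```

A bias-corrected interval or a different resample count would change the limits somewhat; the percentile version is used here only because it is the simplest to read.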

5. Results

5.1. Common Method Bias Test

5.2. Descriptive Statistics and Correlation Analysis

5.3. Testing the Chain Mediation Model of School Support and Teacher Self-Efficacy

5.3.1. Regression Analyses

5.3.2. Mediation Analyses

6. Discussion

6.1. The Relationship Between Educational LLM Agent Use and Teachers’ Curriculum Content Creation

6.2. The Influence of School Support and Teacher Self-Efficacy

6.3. The Chain Mediation of School Support and Teacher Self-Efficacy

7. Limitations and Future Directions

8. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

Appendix A. Survey Instruments in the Study

- 1. Basic information
  - Your gender: □ Male □ Female
  - Your domicile: □ Rural area □ Township □ County □ Prefecture-level city □ Provincial capital □ Municipality directly under the Central Government
  - The middle school grade you teach: □ 1st grade □ 2nd grade □ 3rd grade □ Cross-grade teaching (please specify: ______)
  - The subject you teach: □ Chinese □ Mathematics □ English □ Physics □ Chemistry □ Biology □ History □ Geography □ Politics (Ethics & Rule of Law) □ Music □ Fine Arts □ Physical Education □ Information Technology □ Others (please specify: ______)
- 2. Agent tools and functional cognition
  Please tick “√” after each option that matches your situation; multiple options may be selected.
  - I understand the following agent tool platforms: □ Domestic: Baidu Wenxin Agent □ Domestic: iFLYTEK Spark Platform □ Domestic: Tencent Hunyuan Platform □ Others (please specify: ______)
  - I understand the following agent functions: □ Text-to-Video Function □ Text-to-Document Function □ Question-Answering Function □ Text-to-Image/Diagram Function □ Audio Clip Function □ Code Generation Function □ Others (please specify: ______)
- 3. Attitude towards the use and operation of agent tools
  The response options are defined as follows (same below):
  - 5 Very true: this statement holds true for you in almost all cases;
  - 4 Conforming: under normal circumstances, this statement is consistent with you;
  - 3 Uncertain: this statement is consistent with you in about half of the cases;
  - 2 Not conforming: under normal circumstances, this statement is not consistent with you;
  - 1 Very inconsistent: in almost all cases, this statement is inconsistent with you.

| Scale Problem | Degree of Conformity | ||||

| 1 | 2 | 3 | 4 | 5 |

| 1 | 2 | 3 | 4 | 5 |

| 1 | 2 | 3 | 4 | 5 |

| 1 | 2 | 3 | 4 | 5 |

| 1 | 2 | 3 | 4 | 5 |

| 1 | 2 | 3 | 4 | 5 |

| 1 | 2 | 3 | 4 | 5 |

| 1 | 2 | 3 | 4 | 5 |

| 1 | 2 | 3 | 4 | 5 |

| 1 | 2 | 3 | 4 | 5 |

| 1 | 2 | 3 | 4 | 5 |

| 1 | 2 | 3 | 4 | 5 |

| 1 | 2 | 3 | 4 | 5 |

| 1 | 2 | 3 | 4 | 5 |

| 1 | 2 | 3 | 4 | 5 |

| 1 | 2 | 3 | 4 | 5 |

| 1 | 2 | 3 | 4 | 5 |
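The appendix does not show which of the 17 rating items belong to which scale, so the item mapping below is hypothetical. The sketch assumes each scale score is the mean of its 1 to 5 item ratings, which is consistent with the M ± SD values in the correlation table lying on the same 1 to 5 metric; the names `SCALE_ITEMS`, `scale_score` and `describe` are illustrative.

```python
from statistics import mean, stdev

# Hypothetical item-to-scale mapping: the appendix lists 17 items rated 1-5
# but does not show which items belong to which construct.
SCALE_ITEMS = {
    "llm_agent_use": [1, 2, 3, 4],
    "school_support": [5, 6, 7, 8],
}

VALID_RATINGS = {1, 2, 3, 4, 5}  # 1 = very inconsistent ... 5 = very true


def scale_score(responses, items):
    """Average one respondent's ratings over the items of a scale."""
    ratings = [responses[i] for i in items]
    if not set(ratings) <= VALID_RATINGS:
        raise ValueError("ratings must be integers from 1 to 5")
    return mean(ratings)


def describe(scores):
    """Sample mean and standard deviation, as reported in an 'M ± SD' column."""
    return mean(scores), stdev(scores)


if __name__ == "__main__":
    respondents = [
        {1: 4, 2: 5, 3: 4, 4: 3, 5: 2, 6: 3, 7: 3, 8: 2},
        {1: 3, 2: 3, 3: 2, 4: 3, 5: 3, 6: 3, 7: 4, 8: 3},
    ]
    use_scores = [scale_score(r, SCALE_ITEMS["llm_agent_use"]) for r in respondents]
    m, sd = describe(use_scores)
    print(f"{m:.2f} ± {sd:.3f}")
```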


| Variable | Category | Frequency | Percentage |
|---|---|---|---|
| Gender | Male | 149 | 32.1% |
| | Female | 315 | 67.9% |
| Household Registration | Rural | 350 | 75.4% |
| | Urban | 114 | 24.6% |
| Teaching Grade | Grade 7 | 215 | 46.3% |
| | Grade 8 | 147 | 31.7% |
| | Grade 9 | 102 | 22.0% |
| Teaching Subject | Science | 188 | 40.5% |
| | Information Technology | 113 | 24.4% |
| | Mathematics | 163 | 35.1% |
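A small consistency check for the sample table, assuming only that each percentage is the category count divided by N = 464 and rounded to one decimal. Every variable sums to 464, and recomputing Grade 7 gives 215 / 464 ≈ 46.3%. The names `SAMPLE` and `percentages` are illustrative.

```python
# Counts from the sample characteristics table (N = 464 teachers).
SAMPLE = {
    "Gender": {"Male": 149, "Female": 315},
    "Household Registration": {"Rural": 350, "Urban": 114},
    "Teaching Grade": {"Grade 7": 215, "Grade 8": 147, "Grade 9": 102},
    "Teaching Subject": {"Science": 188, "Information Technology": 113, "Mathematics": 163},
}


def percentages(counts, decimals=1):
    """Share of each category in percent, rounded as in the table."""
    total = sum(counts.values())
    return {cat: round(100 * n / total, decimals) for cat, n in counts.items()}


if __name__ == "__main__":
    for variable, counts in SAMPLE.items():
        assert sum(counts.values()) == 464, variable  # every variable covers the full sample
        print(variable, percentages(counts))
```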

| Model | χ² | df | χ²/df | CFI | TLI | NFI | GFI | RMSEA | SRMR | Δχ² |
|---|---|---|---|---|---|---|---|---|---|---|
| Baseline Model (M1) | 416.794 | 113 | 3.688 | 0.970 | 0.964 | 0.959 | 0.904 | 0.076 | 0.0234 | |
| Model 1 | 868.401 | 116 | 7.486 | 0.925 | 0.912 | 0.915 | 0.783 | 0.118 | 0.0409 | 451.607 |
| Model 2 | 570.135 | 116 | 4.915 | 0.955 | 0.947 | 0.944 | 0.866 | 0.092 | 0.0288 | 153.341 |
| Model 3 | 763.599 | 116 | 6.583 | 0.936 | 0.925 | 0.925 | 0.807 | 0.110 | 0.0349 | 346.805 |
| Model 4 | 961.679 | 118 | 8.150 | 0.916 | 0.903 | 0.906 | 0.768 | 0.124 | 0.0418 | 544.885 |
| Model 5 | 1287.207 | 119 | 10.817 | 0.884 | 0.867 | 0.874 | 0.692 | 0.146 | 0.0466 | 870.413 |
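The Δχ² column appears to be each alternative model's χ² minus the baseline's; recomputing it reproduces Models 2 to 5 exactly and gives 451.607 for Model 1. The df increments of 3, 5 and 6 are consistent with Models 1 to 3 merging two of four factors, Model 4 collapsing them into two factors, and Model 5 into one; the source does not define the models, so this is an inference. `FIT` and `compare_to_baseline` are illustrative names.

```python
# (chi-square, df) for each measurement model, from the model-comparison table.
FIT = {
    "Baseline Model (M1)": (416.794, 113),
    "Model 1": (868.401, 116),
    "Model 2": (570.135, 116),
    "Model 3": (763.599, 116),
    "Model 4": (961.679, 118),
    "Model 5": (1287.207, 119),
}
BASELINE = "Baseline Model (M1)"


def compare_to_baseline(name):
    """Return (chi2/df, delta chi2, delta df) of a model relative to the baseline."""
    chi2, df = FIT[name]
    chi2_b, df_b = FIT[BASELINE]
    return round(chi2 / df, 3), round(chi2 - chi2_b, 3), df - df_b


if __name__ == "__main__":
    for name in FIT:
        if name != BASELINE:
            print(name, compare_to_baseline(name))
```

If a p-value for each nested comparison is wanted, `scipy.stats.chi2.sf(delta_chi2, delta_df)` returns the upper-tail probability of the chi-square difference test.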

| Variable | M ± SD | 1. Educational LLM Agent Use | 2. School Support | 3. Teacher Self-Efficacy |
|---|---|---|---|---|
| 1. Educational LLM Agent Use | 3.17 ± 0.814 | | | |
| 2. School Support | 2.98 ± 0.825 | 0.777 ** | | |
| 3. Teacher Self-Efficacy | 3.11 ± 0.809 | 0.905 ** | 0.827 ** | |
| 4. Teachers’ Curriculum Content Creation | 3.20 ± 0.808 | 0.902 ** | 0.748 ** | 0.857 ** |

| Regression Path | | Overall Fit Indices | | | Predictors (IVs) | |
|---|---|---|---|---|---|---|
| Outcome Variable (DV) | Predictor Variable (IV) | R | R² | F | β | t |
| School Support | Educational LLM Agent Use | 0.777 | 0.604 | 704.445 | 0.777 | 26.541 *** |
| Teacher Self-Efficacy | Educational LLM Agent Use | 0.926 | 0.858 | 1392.108 | 0.664 | 23.796 *** |
| | School Support | | | | 0.311 | 11.146 *** |
| Teachers’ Curriculum Content Creation | Educational LLM Agent Use | 0.908 | 0.824 | 717.136 | 0.690 | 14.856 *** |
| | School Support | | | | 0.063 | 1.793 |
| | Teacher Self-Efficacy | | | | 0.181 | 3.481 ** |
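Assuming the β values in the regression table are standardized OLS coefficients (the total effect of 0.902 equals the correlation between LLM agent use and content creation, which fits that reading), they can be approximately recovered from the correlation matrix by solving R_xx β = r_xy, with R² = Σ β·r. The short labels X, M1, M2, Y and the helper names are hypothetical; small differences at the third decimal come from the correlations being rounded.

```python
# Correlations from the descriptive statistics table. Hypothetical short labels:
# X = educational LLM agent use, M1 = school support,
# M2 = teacher self-efficacy, Y = curriculum content creation.
CORR = {
    ("X", "M1"): 0.777, ("X", "M2"): 0.905, ("M1", "M2"): 0.827,
    ("X", "Y"): 0.902, ("M1", "Y"): 0.748, ("M2", "Y"): 0.857,
}


def r(u, v):
    """Look up a correlation symmetrically; a variable correlates 1 with itself."""
    if u == v:
        return 1.0
    return CORR[(u, v)] if (u, v) in CORR else CORR[(v, u)]


def solve(a, b):
    """Solve the small linear system a @ x = b by Gaussian elimination."""
    n = len(b)
    m = [list(row) + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        piv = max(range(col, n), key=lambda i: abs(m[i][col]))  # partial pivoting
        m[col], m[piv] = m[piv], m[col]
        for i in range(col + 1, n):
            f = m[i][col] / m[col][col]
            for j in range(col, n + 1):
                m[i][j] -= f * m[col][j]
    x = [0.0] * n
    for i in reversed(range(n)):  # back substitution
        x[i] = (m[i][n] - sum(m[i][j] * x[j] for j in range(i + 1, n))) / m[i][i]
    return x


def standardized_regression(outcome, predictors):
    """Standardized betas and R^2 from correlations: solve R_xx @ beta = r_xy."""
    r_xx = [[r(p, q) for q in predictors] for p in predictors]
    r_xy = [r(p, outcome) for p in predictors]
    betas = solve(r_xx, r_xy)
    r_squared = sum(b * c for b, c in zip(betas, r_xy))
    return betas, r_squared


if __name__ == "__main__":
    for outcome, preds in [("M1", ["X"]), ("M2", ["X", "M1"]), ("Y", ["X", "M1", "M2"])]:
        betas, r2 = standardized_regression(outcome, preds)
        print(outcome, [round(b, 3) for b in betas], round(r2, 3))
```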

| Pathway | Effect | Bootstrap SE | 95% CI | Significance | Proportion of Effect |
|---|---|---|---|---|---|
| Total Effect | 0.902 | 0.0200 | [0.8569, 0.9353] | Significant | 100% |
| Direct Effect | 0.6895 | 0.0464 | [0.5983, 0.7807] | Significant | 76.4% |
| Total Indirect Effect | 0.2125 | 0.0592 | [0.1036, 0.3338] | Significant | 23.6% |
| Educational LLM Agent Use → School Support → Teachers’ Curriculum Content Creation | 0.0488 | 0.0412 | [−0.0258, 0.1357] | Not Significant | n/a |
| Educational LLM Agent Use → Teacher Self-Efficacy → Teachers’ Curriculum Content Creation | 0.1200 | 0.0446 | [0.0364, 0.2113] | Significant | 13.3% |
| Educational LLM Agent Use → School Support → Teacher Self-Efficacy → Teachers’ Curriculum Content Creation | 0.0437 | 0.0159 | [0.0146, 0.0763] | Significant | 4.8% |
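In a serial two-mediator path model estimated by OLS, each indirect effect is the product of the coefficients along its path, and the total effect equals the direct effect plus the three indirect effects. The sketch below, which plugs in the standardized coefficients from the regression table (the dictionary keys are hypothetical labels), reproduces the point estimates and proportions in the mediation table to within rounding. Whether a path is significant is judged from its bootstrap CI, not from these point estimates.

```python
# Standardized path coefficients from the regression table (labels are ours).
PATHS = {
    "a1": 0.777,       # LLM agent use -> school support
    "a2": 0.664,       # LLM agent use -> self-efficacy
    "d21": 0.311,      # school support -> self-efficacy
    "b1": 0.063,       # school support -> curriculum content creation
    "b2": 0.181,       # self-efficacy -> curriculum content creation
    "c_prime": 0.690,  # direct effect of LLM agent use
}


def decompose(p):
    """Split the total effect of a two-mediator serial model into its parts."""
    effects = {
        "via school support": p["a1"] * p["b1"],
        "via self-efficacy": p["a2"] * p["b2"],
        "via school support -> self-efficacy": p["a1"] * p["d21"] * p["b2"],
    }
    total_indirect = sum(effects.values())
    total = p["c_prime"] + total_indirect
    effects["total indirect"] = total_indirect
    effects["direct"] = p["c_prime"]
    shares = {name: value / total for name, value in effects.items()}
    return total, effects, shares


if __name__ == "__main__":
    total, effects, shares = decompose(PATHS)
    print(f"total effect ≈ {total:.3f}")
    for name, value in effects.items():
        print(f"{name}: {value:.4f} ({shares[name]:.1%})")
```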

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Share and Cite

Xu, H.; Chen, M.; Wang, M.; Lu, J. The Impact of Educational LLM Agent Use on Teachers’ Curriculum Content Creation: The Chain Mediating Role of School Support and Teacher Self-Efficacy. Behav. Sci. 2026, 16, 124. https://doi.org/10.3390/bs16010124

Xu H, Chen M, Wang M, Lu J. The Impact of Educational LLM Agent Use on Teachers’ Curriculum Content Creation: The Chain Mediating Role of School Support and Teacher Self-Efficacy. Behavioral Sciences. 2026; 16(1):124. https://doi.org/10.3390/bs16010124

Chicago/Turabian Style: Xu, Huifen, Minjing Chen, Minjuan Wang, and Jijian Lu. 2026. "The Impact of Educational LLM Agent Use on Teachers’ Curriculum Content Creation: The Chain Mediating Role of School Support and Teacher Self-Efficacy" Behavioral Sciences 16, no. 1: 124. https://doi.org/10.3390/bs16010124

APA Style: Xu, H., Chen, M., Wang, M., & Lu, J. (2026). The Impact of Educational LLM Agent Use on Teachers’ Curriculum Content Creation: The Chain Mediating Role of School Support and Teacher Self-Efficacy. Behavioral Sciences, 16(1), 124. https://doi.org/10.3390/bs16010124