Abstract

Strong gravitational lensing provides valuable insights into the mass distribution of galaxies and the nature of dark matter. However, its modeling is computationally demanding given the large volume of strong lensing observations. In this work, we explore the application of Convolutional Neural Networks (CNNs) to infer physical parameters from simulated galaxy–galaxy lens systems in which the lens galaxy is described by the Singular Isothermal Ellipsoid (SIE) profile. We construct a dataset of 76,396 synthetic lensing images derived from the China Space Station Telescope catalog and use it to train a modified CNN, based on the AlexNet architecture, to predict four key SIE parameters: the Einstein radius, the axis ratio, and the two ellipticity components. We analyze the network performance under three distinct dropout configurations to quantify their influence on generalization and parameter inference accuracy. The results, obtained with a 4-fold cross-validation procedure, indicate that dropout is critical for the precision and robustness of the estimated parameters. When dropout layers are included, we obtain coefficients of determination up to for most SIE parameters and mean peak signal-to-noise ratios of up to ∼37 dB. Relative to the configuration without dropout, the use of dropout reduces the relative errors in the inferred SIE parameters by approximately 60–76%, resulting in errors of at most ∼9% at the 90% confidence level for the majority of parameters. These findings highlight the potential of deep learning approaches to enable scalable, computationally efficient, and high-precision modeling of strong gravitational lensing systems.

1. Introduction

A gravitational lens is any massive object, such as a galaxy, galaxy cluster, or quasar, whose gravitational field curves space-time and thereby alters the trajectory of light from more distant objects. When the projected mass within the Einstein radius is sufficient to produce multiple images, the phenomenon is known as a strong gravitational lens (SGL). When the observer, lens, and source are perfectly aligned and the source is extended, the resulting image is a ring known as an Einstein ring [1,2,3,4,5]. Such phenomena impose significant constraints on the projected mass of the lens, in agreement with the predictions of Einstein’s theory of general relativity. Thus, strong lensing is a vital tool for probing dark matter within the central regions of halos and for determining the slope of the inner mass density profile of galaxies [5,6]. Moreover, strong lenses facilitate the study of high-redshift sources because they can magnify the background source by a factor of up to 30 [7]. Grillo et al. [8] proposed a methodology to estimate cosmological parameters using Strong Gravitational Systems (SGSs). This method leverages the correlation between the Einstein radius and the central stellar velocity dispersion, assuming an isothermal total density distribution for the lens galaxy. However, its application is limited by the mass-sheet degeneracy and by deviations from isothermality and orbital isotropy, which can cause the observed velocity dispersion to differ by up to [5]. Breaking this degeneracy requires spatially resolved kinematics, which might not be readily available for the thousands of lensed systems expected in future surveys. Thus, the method proposed in [8] is a first approximation for such a large sample of systems. Another application of strong lensing is the estimation of the Hubble constant from the time delays between the multiple images of a lensed quasar [5,9,10]. However, this method requires months or years of photometric follow-up observations.

Advanced telescopes, including Euclid [11,12], the Very Large Telescope [13], and the James Webb Space Telescope (JWST) [14,15], are already providing unprecedented data. Furthermore, next-generation facilities such as the Simonyi Survey Telescope at the Vera C. Rubin Observatory [16], the Extremely Large Telescope (ELT) [17,18], and the China Space Station Telescope (CSST) [19] are expected to begin operations in the coming years. Together, these instruments will collect extensive datasets of ∼$10^5$ images [20] that will enhance our understanding of SGSs. Known large galaxy–galaxy lens surveys include the Cosmic Lens All-Sky Survey (CLASS) [21,22], the COSMOS Survey [23], and the Sloan Lens ACS Survey (SLACS) [24,25]. Such an extensive data collection, comprising more than 100,000 images, poses a challenge to traditional analytical methods, which may require substantial processing time. Conventional lens modeling techniques address the non-linear inverse problem of reconstructing the brightness of the lensed sources while simultaneously modeling the gravitational potential of the lenses.

Once lenses have been detected, a model that accurately describes the total mass distribution must be developed, which also helps to eliminate potential false positives. Markov Chain Monte Carlo (MCMC) techniques are currently used for system modeling; however, these methods are becoming inadequate given the increasing volume of data [26]. To mitigate this issue, artificial intelligence tools such as Convolutional Neural Networks (CNNs) are highly valued because of their proven success in image classification, and they have become a rapidly growing technique for lens search and identification. CNNs were first introduced in 1988, when Zhang [27] proposed the first two-dimensional convolutional neural network, known as the shift-invariant artificial neural network. The first application of CNNs in astronomical classification was the spectrum classification in the SDSS documented by Hála [28]. Petrillo et al. [29] pioneered the use of a CNN-based morphological classification method to identify strong gravitational lenses in the 225 Kilo Degree Survey. Hezaveh et al. [30] showcased the proficiency of CNNs in predicting the parameter values of mass models using preprocessed high-resolution lens images. This team further expanded their research by presenting a CNN specifically designed for modeling high-resolution lens images. The Highly Optimized Lensing Investigations of Supernovae, Microlensing Objects, and Kinematics of Ellipticals and Spirals (HOLISMOKES) team developed a CNN for modeling strongly lensed galaxy images [31], designed to predict the five parameters of the SIE mass model. Morningstar et al. [32] introduced a machine learning approach to reconstructing undistorted images of background sources in strongly lensed systems.
Additionally, Bayesian Neural Networks (Bayesian NNs), a probabilistic variant of deep neural networks, have been very successful in extracting highly abstract information from complex image data. This capability is exemplified in the work of Park et al. [33], who used simulated lens time delays to determine the Hubble constant, and Pearson et al. [34], who trained an approximate Bayesian CNN to predict the parameters of the mass profile. More recently, the Euclid Collaboration [35] designed a Bayesian NN, LEMON, to model gravitational lenses automatically and efficiently. With LEMON, key parameters of the lens mass profile, such as the Einstein radius, are estimated together with the parameters of the light distribution of the lens galaxy and their uncertainties. LEMON was applied to simulated lenses (with characteristics similar to those captured by the Euclid telescope) and also to real lenses. Further works, such as that of Parlange et al. [36], explore transformer models to detect strong gravitational lenses in the sets from HOLISMOKES VI and SuGOHI X.

Given the efficacy of CNNs in analyzing SGSs, this study provides a comprehensive examination of CNN performance in predicting the physical parameters associated with strong lensing. Specifically, our research emphasizes the influence of the CNN architecture and regularization strategies, with particular attention to the effect of dropout layers on the accuracy and robustness of parameter estimation. Dropout, a widely used technique introduced by Srivastava et al. [37], plays a crucial role in mitigating overfitting in deep learning models, thereby enhancing their generalization capabilities. It consists of randomly turning off a set of neurons during each training iteration, which prevents the units from depending on each other and enables the network to learn more complex structures. The dropout technique is described in more detail in Section 3. In contexts such as gravitational lensing, where the recovery of model parameters such as ellipticity and external shear is acutely sensitive to minute variations in image features, the optimization of dropout placement and rate is crucial for prediction quality. Consequently, our objective is to determine the optimal configuration of dropout layers within a CNN model to enable precise and reliable estimation of the parameters of the Singular Isothermal Ellipsoid (SIE) model. Our analysis also compares the simulated images with images re-simulated from the CNN-predicted parameter values. The synthetic dataset comprises 76,396 images of simulated galaxy–galaxy lens systems derived from the China Space Station Telescope (CSST) catalog, generated in a noise-free environment. The dataset was partitioned into 70,000 images for the k-fold cross-validation process, in which each fold used 75% of the images for training and 25% for validation. The remaining 6396 images were used for testing.
A model based on the AlexNet architecture [38] was selected because current deep architectures are excessive and computationally expensive for processing the millions of images that large telescopes are going to deliver. The modifications made to the network yield a smaller architecture than current ones, facilitating rapid predictions. The addition of a convolutional layer to the central block deepens feature extraction and increases non-linearity. The model predicts four parameters of the SIE lens model: the two complex ellipticity components, the axis ratio f, and the Einstein radius (see Section 2 for their definitions). The predictive accuracy of the model is assessed by measuring the relative error between the actual and predicted values. Through this investigation, we aim to advance the interpretability and effectiveness of deep learning methodologies for modeling gravitational lenses, by validating dropout as a critical tool for the reliability of predictions and by using a lighter architecture designed for massive datasets.

The manuscript is organized as follows. Sections 2 and 3 provide the theoretical foundation concerning gravitational lensing and briefly describe CNNs. Section 4 describes the synthetic data used in this investigation, details the methodological approach, and gives an overview of the evaluation metrics used to assess the performance of the model and analyze the relative errors. Section 5 summarizes the performance of the model, presents the relative errors associated with the predictions, and discusses these findings. Finally, Section 6 presents the conclusions of the study and outlines recommendations for future research.

2. Gravitational Lensing Background

2.1. Strong Lensing Theory

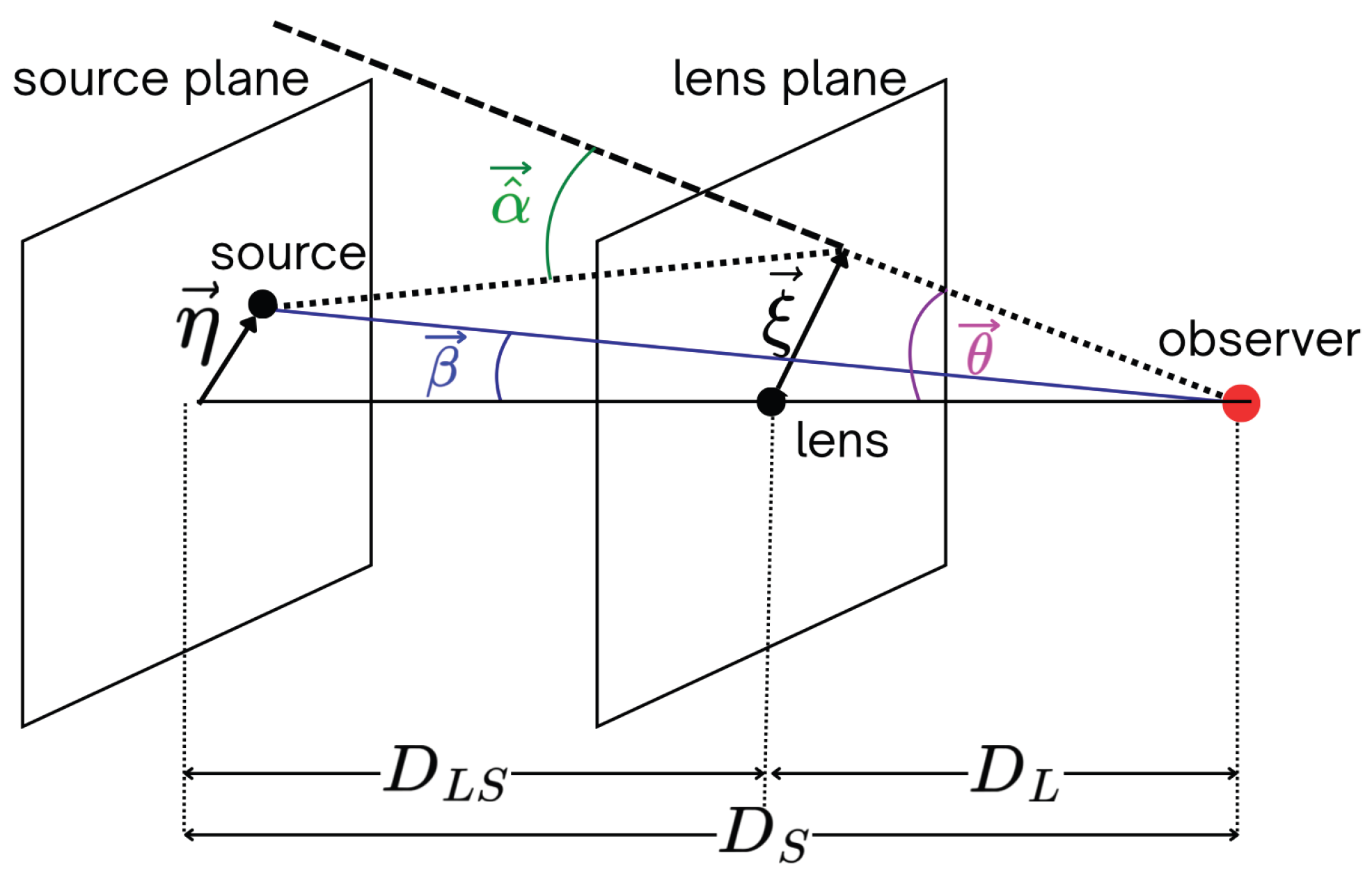

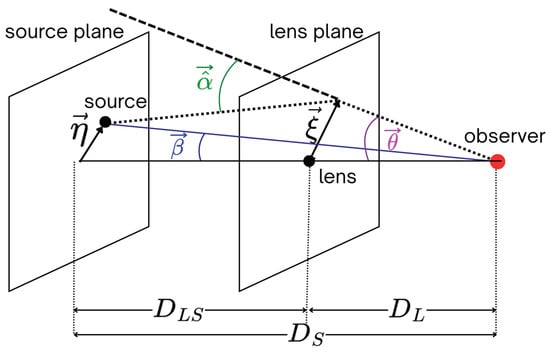

A representative scenario in gravitational lensing is depicted in Figure 1, where a mass concentration at a distance $D_d$ from the observer deflects light rays emanating from a source located at a distance $D_s$. In the absence of additional deflectors near the line of sight, and assuming that the extent of the deflecting mass along the line of sight is considerably smaller than both $D_d$ and the distance from the deflector to the source, light rays are considered to be deflected in a plane (thin lens hypothesis). The magnitude and direction of this deflection are characterized by the deflection angle $\hat{\boldsymbol{\alpha}}$, which depends on the mass distribution of the deflector and the impact vector of the light ray [1]. The real position of the source relative to the observed position of the images in the sky is therefore given by the lens equation, which defines a mapping from the lens plane to the source plane for any mass distribution. For a source in the source plane with real angular position $\boldsymbol{\beta}$, the light rays that arrive at the lens plane at a distance $\boldsymbol{\xi}$ from the optical axis (defined by the lens-observer line) are deflected by the angle $\hat{\boldsymbol{\alpha}}$ before reaching the observer. As a result of the deflection, the observer perceives images of the source at the apparent angular positions $\boldsymbol{\theta}_i$, with i labeling each image. If $\boldsymbol{\beta}$, $\boldsymbol{\theta}$, and $\hat{\boldsymbol{\alpha}}$ are small angles (of the order of arcseconds), and the reduced deflection angle is defined by

$$\boldsymbol{\alpha}(\boldsymbol{\theta}) = \frac{D_{ds}}{D_s}\,\hat{\boldsymbol{\alpha}}(D_d\,\boldsymbol{\theta}),$$

where $D_{ds}$ is the angular diameter distance between the lens and the source, the lens equation can be written as

$$\boldsymbol{\beta} = \boldsymbol{\theta} - \boldsymbol{\alpha}(\boldsymbol{\theta}).$$

One important consequence of gravitational lensing is image distortion, which can be described by the Jacobian matrix as

$$\mathcal{A}_{ij} = \frac{\partial \beta_i}{\partial \theta_j} = \delta_{ij} - \frac{\partial^2 \psi}{\partial \theta_i\,\partial \theta_j},$$

where $\psi$ represents the effective lensing potential. By separating the isotropic component (associated with the mass of the main deflector) from the Jacobian and extracting its trace-free part, we obtain the shear tensor, which encapsulates the external perturbation and thereby characterizes the distortions of background sources. The expression for the shear tensor is

$$\Gamma = \begin{pmatrix} \gamma_1 & \gamma_2 \\ \gamma_2 & -\gamma_1 \end{pmatrix},$$

where $\gamma_1 = \frac{1}{2}\left(\psi_{11} - \psi_{22}\right)$ and $\gamma_2 = \psi_{12}$, with $\psi_{ij} \equiv \partial^2\psi/\partial\theta_i\,\partial\theta_j$.

Figure 1.

Sketch (not drawn to scale) illustrating the parameters involved in a typical gravitational lensing system. $\boldsymbol{\beta}$ represents the real position of the source, $\boldsymbol{\theta}$ represents the apparent position of the source, and $\hat{\boldsymbol{\alpha}}$ represents the deflection angle.
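As a minimal illustration of the lens equation, the sketch below solves it for the simplest axisymmetric case, a singular isothermal sphere (SIS), for which the reduced deflection has a constant magnitude equal to the Einstein radius. The function names `sis_image_positions` and `sis_magnification` and the numerical values are illustrative assumptions and are not taken from this work.

```python
import numpy as np

def sis_image_positions(beta, theta_e):
    """Solve the 1D SIS lens equation beta = theta - theta_e * sign(theta).

    beta and theta_e are angles in the same units (e.g. arcsec).
    """
    beta = abs(beta)
    images = [beta + theta_e]          # image on the same side as the source
    if beta < theta_e:                 # strong-lensing regime: second image
        images.append(beta - theta_e)  # negative value: opposite side of the lens
    return np.array(images)

def sis_magnification(theta, theta_e):
    """Signed magnification 1/det(A) of an SIS image located at theta."""
    return abs(theta) / (abs(theta) - theta_e)

# Hypothetical example: source offset of 0.3 arcsec, Einstein radius of 1 arcsec.
images = sis_image_positions(0.3, 1.0)
```

In this example the two images are separated by twice the Einstein radius, which is why the image separation is such a direct probe of the projected lens mass.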

2.2. SIE Model Parametrization

In order to construct realistic models of gravitational lens systems, we must employ mass distributions defined by elliptical isodensity contours. In this research, we focus on the surface density of the Singular Isothermal Ellipsoid (SIE) model [4,39,40,41]. The SIE model can be understood as a natural generalization of the singular isothermal sphere (SIS), which assumes spherical symmetry and an isothermal velocity distribution. In the SIS model, the three-dimensional mass density ($\rho$) follows:

$$\rho(r) = \frac{\sigma_v^2}{2\pi G\, r^2},$$

where $\sigma_v$ represents the line-of-sight velocity dispersion of the lens galaxy, $G$ is the gravitational constant, and r is given by , which represents the spherical radial coordinate. To account for deviations from spherical symmetry, the SIE model introduces ellipticity in the projected mass distribution by replacing the circular radial coordinate in the lens plane with an elliptical one. Following [39], this is achieved through the transformation . Under this prescription, the projected density profile and the corresponding surface mass density ($\Sigma$) become

In this context, f refers to the axis ratio of the ellipses, specified within the interval $0 < f \le 1$. The SIE profile is fundamentally defined by the line-of-sight velocity dispersion of the lens galaxy, $\sigma_v$. This is a key motivation, as $\sigma_v$ serves as an observational input for an alternative estimate of the galaxy mass. Consequently, the dimensionless surface mass density, or convergence, is directly related to this dispersion. Given the angular coordinates , the convergence is given by

The expression describes the projected surface mass density within the lens. Within this formulation, given a perfect source-lens-observer alignment, $\theta_E$ denotes the Einstein radius, which is defined by

$$\theta_E = 4\pi \left(\frac{\sigma_v}{c}\right)^2 \frac{D_{ds}}{D_s},$$

where c denotes the speed of light, and $D_{ds}$ and $D_s$ are the angular diameter distances from the lens to the source and from the observer to the source, respectively. Using polar coordinates , where is the polar angle measured from the semi-major axis (), and taking into account the relationship delineated in , where represents the lensing potential, the potential for the SIE model can be expressed as

where and characterizes the shape of the equipotential surfaces and tends to unity in the circular limit [1,4,39]. The deflection angle for the SIE model can be expressed as follows:

where and denote the unit vectors along the principal axes and the deflection angle is related to the potential by . In addition to the free parameters of the model, which include the central coordinates and the position angle between the semi-major axis of the mass ellipse of the lens and the x axis of the lens plane, the ellipticity is typically parameterized by the eccentricity moduli

which are continuously defined according to , thus removing the periodic boundaries associated with and addressing the discontinuity in as noted in [42].
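To make the parametrization more concrete, the following sketch evaluates the standard isothermal Einstein radius, $\theta_E = 4\pi(\sigma_v/c)^2 D_{ds}/D_s$, and converts an axis ratio and position angle into two ellipticity components. The ellipticity convention used here, $e_1 = \frac{1-f}{1+f}\cos 2\phi$ and $e_2 = \frac{1-f}{1+f}\sin 2\phi$, is a commonly used one and is an assumption; the definition adopted in this work may differ. All function names and numerical values are hypothetical.

```python
import numpy as np

C_KM_S = 299_792.458  # speed of light in km/s

def einstein_radius_arcsec(sigma_v_km_s, d_ds_over_d_s):
    """Isothermal Einstein radius in arcsec from the velocity dispersion."""
    theta_rad = 4.0 * np.pi * (sigma_v_km_s / C_KM_S) ** 2 * d_ds_over_d_s
    return np.degrees(theta_rad) * 3600.0

def ellipticity_components(f, phi):
    """Assumed convention: modulus (1 - f)/(1 + f), phase 2*phi (phi in radians)."""
    modulus = (1.0 - f) / (1.0 + f)
    return modulus * np.cos(2.0 * phi), modulus * np.sin(2.0 * phi)

def axis_ratio_and_angle(e1, e2):
    """Inverse mapping of ellipticity_components."""
    modulus = np.hypot(e1, e2)
    phi = 0.5 * np.arctan2(e2, e1)
    return (1.0 - modulus) / (1.0 + modulus), phi

# Hypothetical lens: sigma_v = 250 km/s and D_ds/D_s = 0.5.
theta_e = einstein_radius_arcsec(250.0, 0.5)
e1, e2 = ellipticity_components(0.6, 0.3)
```

Because the pair of components varies smoothly when the position angle wraps around, a regression network does not have to learn a periodic target, which is the practical motivation for this kind of parametrization.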

2.3. Light Model Parametrization

We assume that the lens and source light follow the elliptical Sérsic distribution, which allows a flexible but physically motivated description of galaxy morphology while keeping the number of free parameters manageable for lens modeling. The Sérsic profile for the surface brightness is defined by the following expression:

$$I(R) = I_e \exp\left\{-b_n\left[\left(\frac{R}{R_e}\right)^{1/n} - 1\right]\right\},$$

where $R$ is the projected radius, $I_e$ denotes the surface brightness at the half-light radius $R_e$, $n$ is the Sérsic index, and $b_n$ represents a constant related to the Sérsic index by [43,44,45,46].
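The Sérsic profile can be sketched in a few lines. The relation between the constant $b_n$ and the Sérsic index $n$ used below, $b_n \approx 1.9992\,n - 0.3271$, is a widely used approximation and is an assumption here, since the exact relation adopted in this work is not reproduced; the function name and the example values are also hypothetical.

```python
import numpy as np

def sersic_profile(r, i_e, r_e, n):
    """Sersic surface brightness at projected radius r.

    i_e: surface brightness at the half-light radius r_e
    n:   Sersic index
    """
    b_n = 1.9992 * n - 0.3271  # assumed approximation for b_n
    return i_e * np.exp(-b_n * ((np.asarray(r, dtype=float) / r_e) ** (1.0 / n) - 1.0))

# Hypothetical radial grid (arcsec) for a profile with r_e = 0.5 and n = 2.
radii = np.linspace(0.05, 3.0, 60)
brightness = sersic_profile(radii, i_e=1.0, r_e=0.5, n=2.0)
```

By construction the profile equals `i_e` at `r = r_e`, and larger values of `n` give a more centrally concentrated profile with more extended wings.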

3. Convolutional Neural Networks Theory

Convolutional neural networks, although analogous to traditional feedforward neural networks, are characterized by their ability to extract hierarchical features through convolutional processes, thus reducing computational expenses [47,48]. CNNs have several advantages over conventional neural networks. Firstly, they reduce the number of parameters and enhance convergence by establishing neuron connections with smaller subsets of neurons from the previous layer. Secondly, the method of weight-sharing decreases parameters by distributing weights across various connections. Lastly, CNNs incorporate downsampling, which reduces the input dimensionality, making them suitable for smaller input sizes.

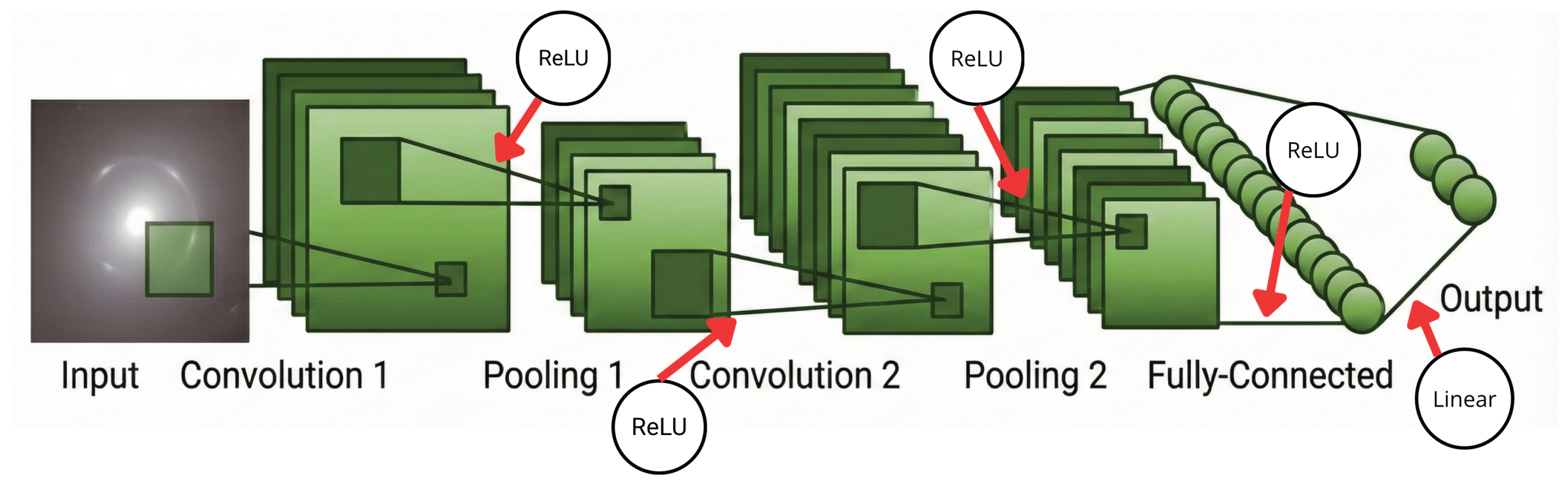

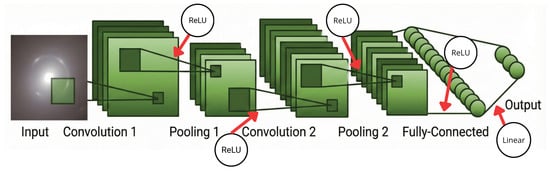

The CNN architecture comprises four main components (convolutional layers, pooling layers, activation functions, and fully connected layers), as depicted in Figure 2. Convolutional layers apply filters to the input data to identify patterns. Pooling layers downsample the activation maps along their spatial dimensions while preserving critical features, which reduces computational demands, helps control overfitting, and yields representations that are invariant to small translations. Activation functions are integral to the expression of complex features, acting similarly to the neuronal model of the human brain by determining which information propagates to the succeeding neuron. The Rectified Linear Unit (ReLU) is the most commonly used activation function [38,49,50]; it helps the model to capture non-linear image patterns. If the input x is negative, its function value is 0, while if x is non-negative, its function value equals x itself. The ReLU function is written as

$$f(x) = \max(0, x).$$

Figure 2.

Illustrative architecture of a CNN model. The design incorporates convolutional layers for the extraction of local features, which are then reduced in spatial dimensions through pooling layers. The processed features are passed to fully connected layers to yield the final output, corresponding either to the prediction of physical parameters or to classification tasks. An important component of NNs is the activation function, which manages the activation of the neurons in each layer.

Although the term CNN dates from the 1980s, it was Yann LeCun [51] who pioneered the effective application of the backpropagation method to CNNs for handwritten zip code recognition, coining the term convolution in the process. Dropout is a regularization technique, introduced at the 2012 Conference on Neural Information Processing Systems (NIPS) [52], that randomly drops neurons during training to prevent the co-adaptation of feature detectors¹. Dropout facilitates the training of numerous network configurations within reasonable timescales [53], alleviates overfitting, and offers an effective approximation to combining exponentially many different NN structures [37,54]. Consider an NN with L hidden layers, where $\mathbf{z}^{(l)}$ is the input vector of layer l, $\mathbf{y}^{(l)}$ is the output vector of layer l, and $\mathbf{W}^{(l)}$ and $\mathbf{b}^{(l)}$ are the weights and biases of layer l. With dropout, the feedforward operation becomes

$$r_j^{(l)} \sim \mathrm{Bernoulli}(p),$$
$$\tilde{\mathbf{y}}^{(l)} = \mathbf{r}^{(l)} * \mathbf{y}^{(l)},$$
$$z_i^{(l+1)} = \mathbf{w}_i^{(l+1)}\,\tilde{\mathbf{y}}^{(l)} + b_i^{(l+1)},$$
$$y_i^{(l+1)} = f\left(z_i^{(l+1)}\right),$$

where $f$ is the activation function, $*$ denotes the element-wise product, and $\mathbf{r}^{(l)}$ represents a vector of independent Bernoulli random variables, each of which is 1 with probability p. The output of layer l is multiplied element-wise by $\mathbf{r}^{(l)}$ to produce the thinned outputs $\tilde{\mathbf{y}}^{(l)}$ [37]. The appropriate dropout probability depends on the architecture and the size of the dataset, and studying it is crucial to obtain good results and reduce overfitting. Typically, the dropout rate is chosen between 20% and 50%. In the subsequent section, we examine three scenarios concerning the influence of dropout on CNN outputs.
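The dropout feedforward step described above can be sketched with NumPy for a single dense layer. This is a minimal illustration under stated assumptions and not the implementation used in this work: `p_keep` is the probability of retaining a unit (so a dropout rate of 20% corresponds to `p_keep = 0.8`), the weights are scaled by `p_keep` at evaluation time as in the original dropout formulation [37], and all names and values are hypothetical.

```python
import numpy as np

def relu(x):
    """ReLU activation: max(0, x) applied element-wise."""
    return np.maximum(0.0, x)

def dense_with_dropout(y, weights, bias, p_keep, rng, training=True):
    """Dense layer whose input y is thinned by a Bernoulli mask during training."""
    if training:
        r = rng.binomial(1, p_keep, size=y.shape)  # r_j ~ Bernoulli(p_keep)
        z = weights @ (r * y) + bias               # thinned input
    else:
        z = (p_keep * weights) @ y + bias          # evaluation: scaled weights
    return relu(z)

# Hypothetical two-unit layer.
rng = np.random.default_rng(0)
W = np.array([[1.0, -1.0], [0.5, 2.0]])
b = np.array([0.1, -0.2])
y_in = np.array([1.0, 2.0])
y_train = dense_with_dropout(y_in, W, b, p_keep=0.8, rng=rng)
y_eval = dense_with_dropout(y_in, W, b, p_keep=0.8, rng=rng, training=False)
```

Each training call samples a different mask and therefore a different thinned network, whereas the evaluation call is deterministic; scaling the weights by `p_keep` makes the evaluation input match the expected thinned input.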

4. Methodology

4.1. Simulated Sample from China Space Station Telescope (CSST)

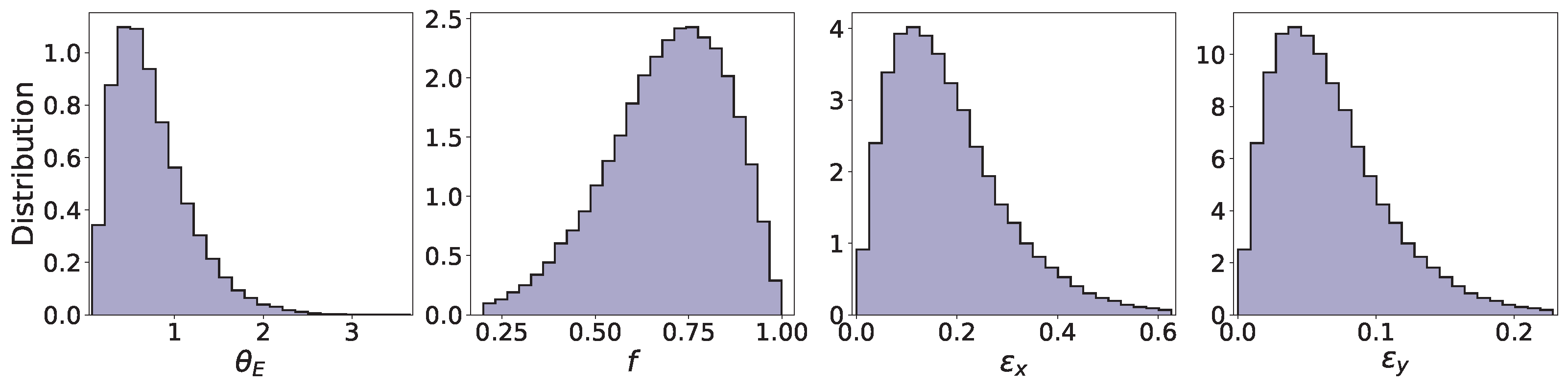

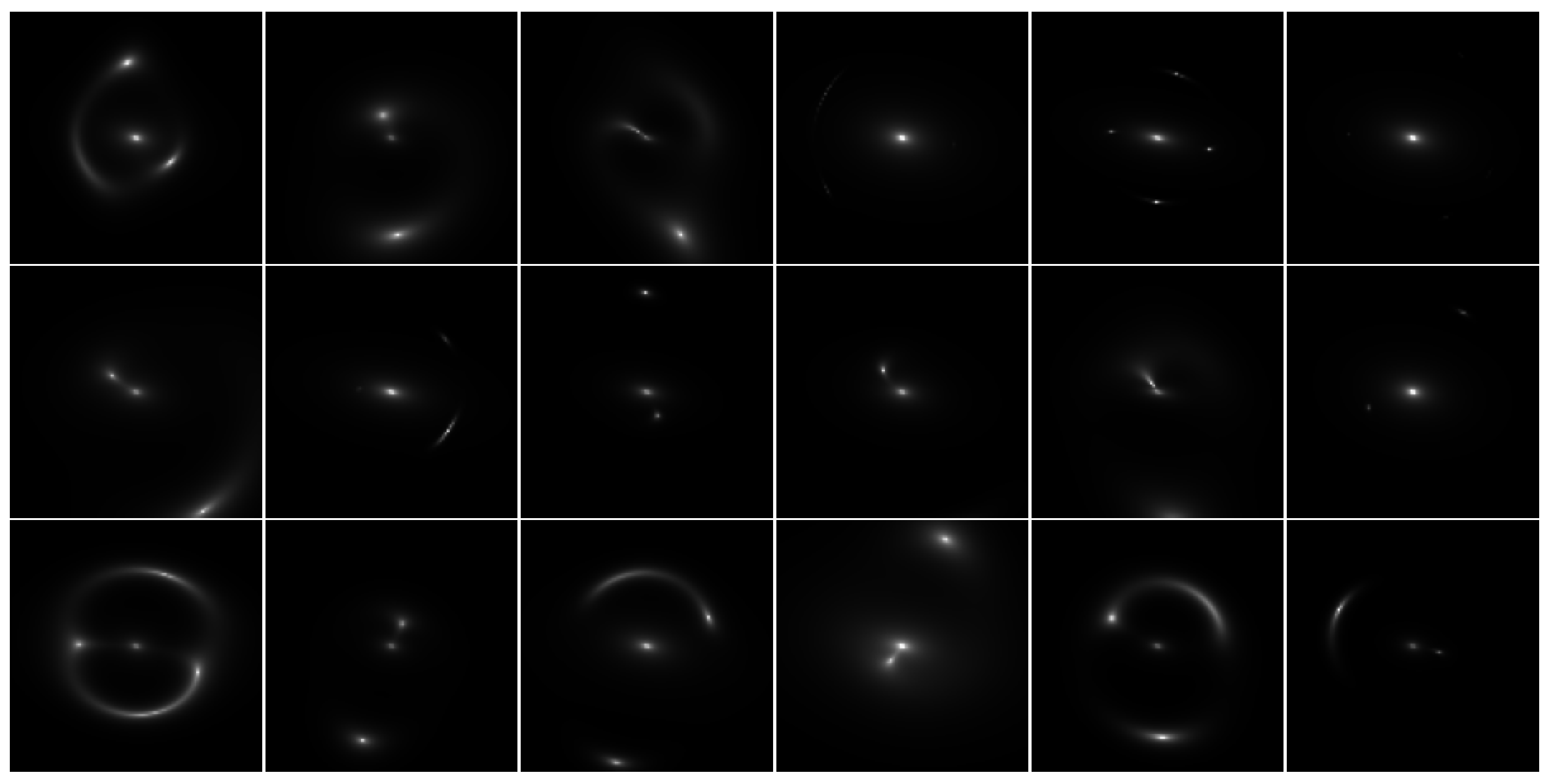

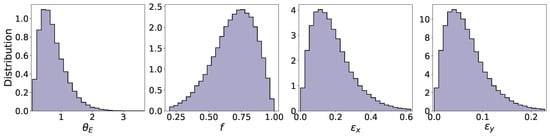

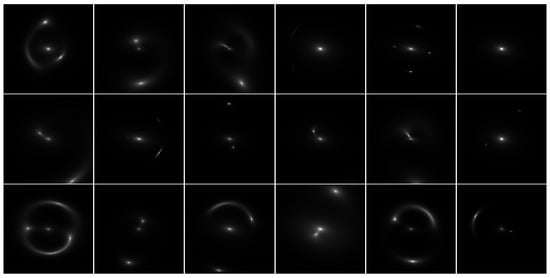

The dataset used to train, validate, and test the CNN model consists of 76,396 images, each with dimensions of pixels, one channel, and a resolution of 0.06 arcseconds/pixel, based on the configuration of strong lens systems presented for the CSST [55], corresponding to the single-band Wide-Field sample. The images are generated using the Lenstronomy package in a Python (3.11.10) environment [56]. The source is modeled by the Sérsic model characterized by the space parameter , where x and y represent the position of the source with respect to the lens plane and setting the Sérsic index . This value of the Sérsic index was chosen because it is intermediate between those of distinct galaxy populations ( for disk galaxies and for elliptical galaxies). Furthermore, Mukherjee et al. [57] found that the precise choice of source parameters is of secondary importance and does not significantly bias lens modeling. As shown in Equations (12) and (13), the ellipticity components were computed from the axis ratio given by the configurations of the CSST sample [55]. For this work, the position angle (p.a.) used to compute the ellipticity components of the lens () was arbitrarily set at deg from North, while the p.a. used to calculate the ellipticity components of the source was extracted from the CSST sample. The lens is characterized by the SIE model for the mass distribution with parameters and the Sérsic model for the brightness distribution, which is used for both the source and the lens. For the following sections, the notation (,) will be adopted to refer to the ellipticities of the lens. Figure 3 shows the distribution of each parameter used in training. The distributions of the Einstein radius and the ellipticity components exhibit a clear positive skew, while the axis ratio (f) shows a concentration toward higher values. The Einstein radius takes values in [0.07, 3.65] and f in [0.20, 1.00].
The two ellipticity components take values in [0.00, 0.63] and [0.00, 0.23], respectively. Figure 4 shows several random examples of the synthetic images used in the analysis. To train the model, the dataset was partitioned into 70,000 images for the k-fold cross-validation process² [58] and 6396 images for independent testing. Within the 4-fold cross-validation scheme, each fold used 75% of the images (52,500) for training and 25% (17,500) for validation.
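The partition described above can be sketched as follows. Only the sample sizes come from the text; the shuffling, the random seed, and the function name `kfold_indices` are assumptions made for illustration.

```python
import numpy as np

def kfold_indices(n_samples, k, seed=0):
    """Yield (train_idx, val_idx) pairs for a shuffled k-fold split."""
    rng = np.random.default_rng(seed)
    folds = np.array_split(rng.permutation(n_samples), k)
    for i in range(k):
        train_idx = np.concatenate([folds[j] for j in range(k) if j != i])
        yield train_idx, folds[i]

N_TOTAL = 76_396                 # full synthetic dataset
N_CV = 70_000                    # images used for cross-validation
N_TEST = N_TOTAL - N_CV          # images held out for independent testing

splits = list(kfold_indices(N_CV, k=4))
fold_sizes = [(len(train), len(val)) for train, val in splits]
```

With four folds, every image in the cross-validation set is used exactly once for validation and three times for training, which is what makes the fold-averaged metrics less sensitive to a particular split.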

Figure 3.

Marginal distributions of the training parameters of the SIE lens model.

Figure 4.

Random examples of synthetic images of galaxy–galaxy lens systems used in training with a resolution of 0.06 arcsec/pixel. The pixel² grid ensures a field of view that captures the position of the lensed images. The lens systems were simulated considering the SIE lens model and using the CSST catalog [55].

4.2. CNN

The primary objective is to train a modified AlexNet CNN (described below) to predict the four characteristic parameters of the SIE model (the Einstein radius, the axis ratio f, and the two ellipticity components) for lens modeling, and to assess the performance of the CNN model under three configurations of the dropout layers. As mentioned previously, the dataset was partitioned into 70,000 images for training and validation with 4-fold cross-validation and 6396 images for independent testing. In the 4-fold cross-validation scheme, each fold used 75% of the images for training and 25% for validation. A crucial element of the dataset is the binary format used to store the images, namely TFRecords, which efficiently stores sequences of data and is optimized for use with TensorFlow [59]. This format is well suited to large datasets, as it allows more compact storage and faster data reading during model training, even if the data do not fit completely in memory. It also facilitates the systematic labeling and organization of images, allowing a single file to be partitioned into training, validation, and test samples. Furthermore, its support for grayscale images streamlines the preprocessing procedures.

The CNN model used in this research is based on the AlexNet architecture, with modifications in both the number of layers and the number of neurons. This architecture, introduced by Alex Krizhevsky et al. in 2012 [38,60,61], achieved significant success in the ImageNet Large Scale Visual Recognition Challenge of 2012, a competition that evaluates algorithmic performance in object detection and image classification. AlexNet achieved a top-5 error rate of 15.3% in the classification of large-scale images. AlexNet expands the foundational concepts of LeNet and applies the core principles of CNNs to a larger and deeper network. Furthermore, AlexNet integrates the ReLU activation function, dropout, and local response normalization (LRN) for the first time within a CNN. For the purposes of this preliminary study, this model was selected among the available models because of its notable performance, as evidenced by works such as that of Perreault et al. [62], which demonstrate its ability to accurately estimate lens parameters.

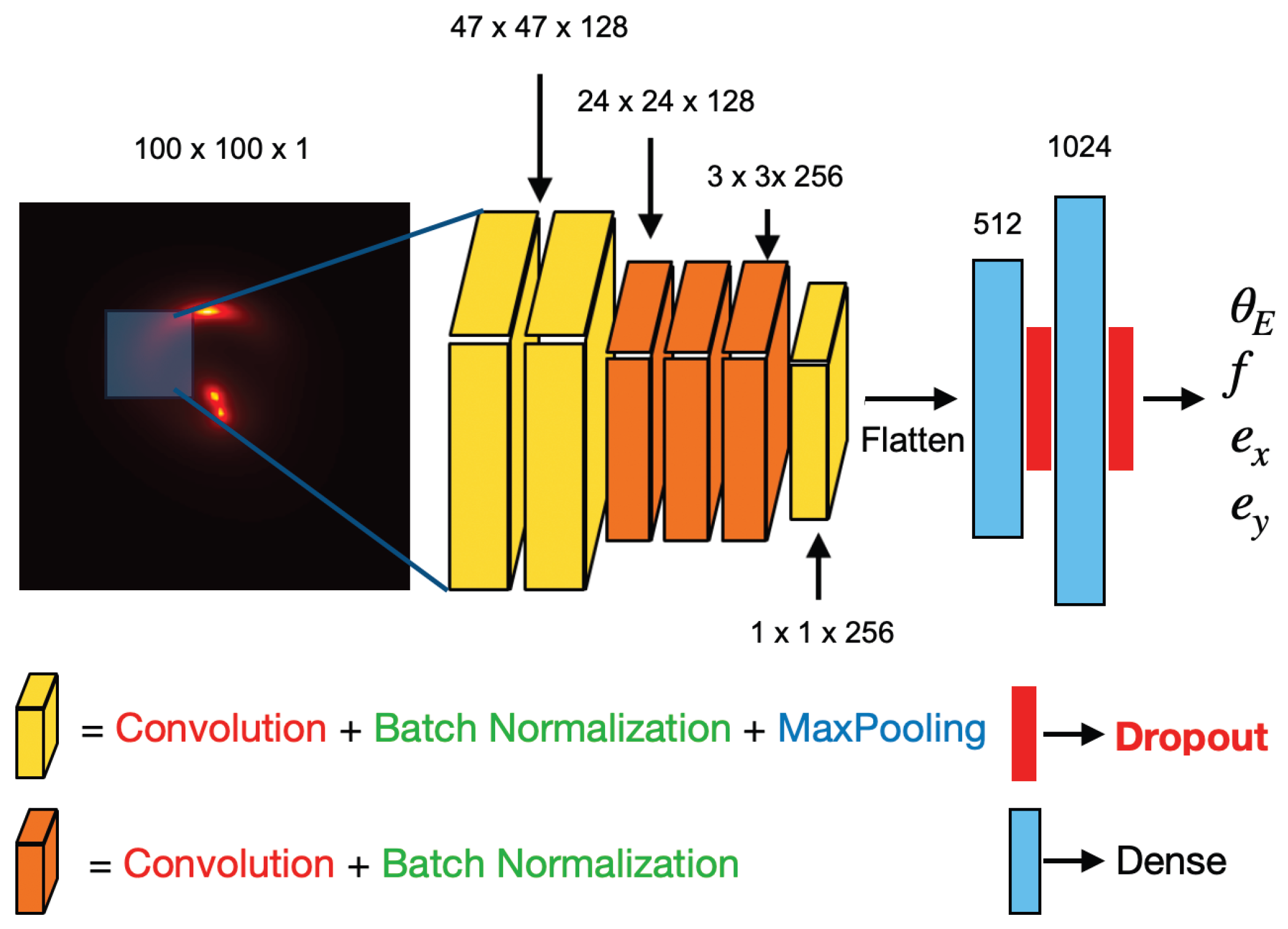

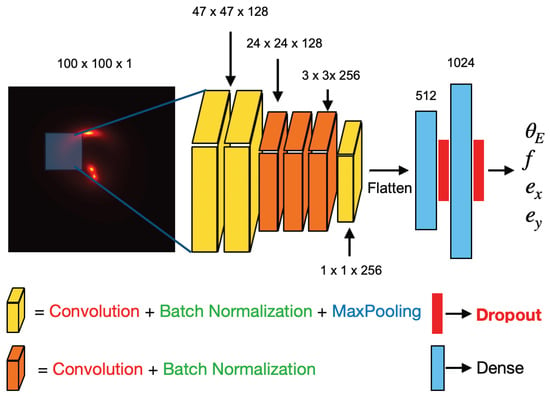

The architecture of the AlexNet-based CNN model is shown in Figure 5. The model comprises six blocks preceding the fully connected layers. The initial blocks consist of a convolutional layer followed by batch normalization and max-pooling layers. The first convolutional layer applies 128 filters of size with a stride of 2, thereby reducing the spatial resolution. The second convolutional layer uses 256 filters of size with a stride of 3, facilitating the extraction of mid-scale features. The deep convolutional block, consisting of four convolutional layers with 256 filters of size , captures hierarchical representations and increases the depth of the network without considerably increasing the number of parameters. This block concludes with a max-pooling layer to further reduce the spatial dimensionality. The flattened feature maps are processed by two dense layers of 512 and 1024 neurons, respectively. The investigation places particular emphasis on the dropout layers, examining changes in dropout rates across three scenarios. Finally, the model ends with a dense layer with linear activation that yields four outputs corresponding to the parameters of interest. The main improvement of the model is the use of batch normalization layers, which stabilize training, allow higher learning rates, and reduce sensitivity to initialization. Another advantage of the architecture used in this work is the inclusion of an additional convolutional layer, which enhances the ability of the model to extract details from the images. Additionally, the architecture changes significantly with the implementation of filter factorization within the convolutional layer block, which provides a better representation of the features without increasing the computational cost.
Because the ellipticity components produce weaker variations in the images than the other parameters of the lens model, we observed that the network tended to underfit them. To mitigate this effect, we use a weighting scheme in the loss function defined by Equation (20), assigning higher weights to the terms associated with the two ellipticity components. The weights were 1.0 for the Einstein radius and the axis ratio and 3.0 for the ellipticity components. This strategy increases the contribution of these parameters to the gradient during training, encouraging the model to learn representations that are more sensitive to their morphological signatures.
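The weighting scheme can be illustrated with a short NumPy sketch. The weights (1.0, 1.0, 3.0, 3.0) come from the text; the ordering of the four outputs and the normalization (a mean over samples and parameters) are assumptions, and `weighted_mse` is a hypothetical name rather than the loss implementation used in this work.

```python
import numpy as np

# Assumed output order: Einstein radius, axis ratio f, ellipticity 1, ellipticity 2.
LOSS_WEIGHTS = np.array([1.0, 1.0, 3.0, 3.0])

def weighted_mse(y_true, y_pred, weights=LOSS_WEIGHTS):
    """Mean over samples and parameters of the weighted squared error."""
    sq_err = (np.asarray(y_true, dtype=float) - np.asarray(y_pred, dtype=float)) ** 2
    return float(np.mean(sq_err * weights))

# The same absolute error costs three times more on an ellipticity component.
truth = np.zeros((1, 4))
loss_radius = weighted_mse(truth, np.array([[0.1, 0.0, 0.0, 0.0]]))
loss_ellipticity = weighted_mse(truth, np.array([[0.0, 0.0, 0.1, 0.0]]))
```

Since the gradient of each term scales with its weight, the ellipticity outputs receive proportionally larger updates, which is the mechanism the weighting relies on.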

Figure 5.

The CNN that we use consists of a series of convolution groups interspersed with max pooling layers and batch normalization terminating in two dense layers with two layers of dropout followed by an output layer as shown in the figure.

The NAdam optimizer, an adaptation of the Adam optimizer that incorporates Nesterov momentum [63], was used to update the weights. Nesterov’s Accelerated Gradient (NAG) is a first-order optimization technique that ensures a higher convergence rate in certain contexts than the traditional gradient descent method. NAG differs from classical momentum in that it first takes a step in the direction of the accumulated gradient and then evaluates the gradient at the resulting look-ahead position to apply a correction. NAG achieves a global convergence rate of $O(1/k^2)$ after k iterations, an improvement over the $O(1/k)$ convergence rate of gradient descent [64]. This refinement not only accelerates convergence but also enhances precision in minimizing the loss function. To mitigate overfitting, a ReduceLROnPlateau callback was integrated into the model, systematically reducing the learning rate and thus improving the training metrics. This technique allows a dynamic adaptation of the learning rate: if the validation loss does not improve significantly after n epochs (the patience), the learning rate is reduced by a given factor [65,66]. In this work, the factor for ReduceLROnPlateau was fixed at . The chosen minimum learning rate was . A key experiment involved modifying the rates of the two dropout layers, which were tested under three conditions: first with dropout rates of 20% and 30%, then with a uniform dropout rate of 20% in both layers, and finally with the dropout layers disabled entirely. For further evaluation of the model, a test sample of 6396 images was used, and the relative errors of the predictions were visualized.
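The learning-rate schedule described above can be illustrated with a simplified, framework-free re-implementation of the plateau logic. It is a sketch rather than the Keras callback itself, and the initial learning rate, factor, patience, and minimum learning rate in the example are hypothetical values chosen only to show the behavior.

```python
def reduce_lr_on_plateau(val_losses, lr0, factor, patience, min_lr, min_delta=0.0):
    """Return the learning rate in effect after each epoch.

    The rate is multiplied by `factor` whenever the validation loss has not
    improved by more than `min_delta` for `patience` consecutive epochs,
    and it is never reduced below `min_lr`.
    """
    lr, best, wait = lr0, float("inf"), 0
    history = []
    for loss in val_losses:
        if loss < best - min_delta:
            best, wait = loss, 0       # improvement: reset the counter
        else:
            wait += 1
            if wait >= patience:       # plateau detected: reduce the rate
                lr = max(lr * factor, min_lr)
                wait = 0
        history.append(lr)
    return history

# Hypothetical validation losses and settings.
losses = [1.0, 0.8, 0.8, 0.8, 0.7, 0.7, 0.7, 0.7, 0.7]
schedule = reduce_lr_on_plateau(losses, lr0=1e-3, factor=0.5, patience=2, min_lr=4e-4)
```

In this example the rate is halved after the first plateau and then clipped at the minimum value, so later plateaus no longer change it.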

4.3. Evaluation Metrics

To assess and compare the performance of the model, the weighted Mean Squared Error (MSE) was selected as the loss function, with the weights for the Einstein radius and the axis ratio set to 1.0 and the weights for the ellipticity components of the lens set to 3.0, following the Multi-Task Learning approach proposed by Caruana (1997) [67], while the Mean Absolute Error (MAE) served as the evaluation metric [68,69]. Furthermore, the coefficient of determination $R^2$ was calculated; it indicates the extent to which the variance in the observed values is explained by the predictions of the model, with values near 1 expected for an accurate model [70]. These metrics are given by the following expressions:

$$\mathrm{MSE}_w = \frac{1}{N P}\sum_{i=1}^{N}\sum_{j=1}^{P} w_j \left(y_{ij} - \hat{y}_{ij}\right)^2, \qquad \mathrm{MAE} = \frac{1}{N}\sum_{i=1}^{N}\left|y_i - \hat{y}_i\right|, \qquad R^2 = 1 - \frac{\sum_{i=1}^{N}\left(y_i - \hat{y}_i\right)^2}{\sum_{i=1}^{N}\left(y_i - \bar{y}\right)^2},$$

in which $y_i$ denotes the actual value, $\hat{y}_i$ represents the predicted value, $\bar{y}$ signifies the mean of the actual values, $w_j$ is the weight assigned to the j-th of the $P = 4$ parameters, and $N$ is the number of samples. Additionally, we assess the relative error of the predictions by computing the residuals between the predicted and actual values and then plotting the actual values against the predicted values [71]. The relative error was evaluated using its elementary definition,

$$\delta = \frac{\left|\hat{y} - y\right|}{\left|y\right|}.$$

To evaluate the accuracy of the model predictions on the test images, we computed the bias, defined as

where corresponds to the predicted values array and to the true values array. The scatter of the distribution can be obtained by computing using the 16th and the 84th percentiles of the distribution through the following definitions:

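Furthermore, we determined the Normalized Median Absolute Deviation (NMAD), a robust statistic used to measure data dispersion, defined as the Median Absolute Deviation (MAD) scaled by a factor of approximately 1.4826 [72], which makes it consistent with the standard deviation for normally distributed data. NMAD is defined as

$$\mathrm{NMAD} = 1.4826 \times \mathrm{median}\left(\left|x_i - \mathrm{median}(x)\right|\right),$$

where $x$ denotes the sample whose dispersion is measured.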
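A minimal NumPy sketch of these robust dispersion measures is given below. The NMAD follows the standard definition with the 1.4826 scaling; the percentile-based scatter is taken here as half the width of the 16th to 84th percentile interval, which is an assumption for illustration and may differ from the exact definition adopted in this work. Both function names are hypothetical.

```python
import numpy as np


def nmad(x):
    """Normalized median absolute deviation: 1.4826 * median(|x - median(x)|)."""
    x = np.asarray(x, dtype=float)
    return float(1.4826 * np.median(np.abs(x - np.median(x))))


def percentile_scatter(x):
    """Half-width of the 16th-84th percentile interval (assumed definition)."""
    p16, p84 = np.percentile(np.asarray(x, dtype=float), [16, 84])
    return float(0.5 * (p84 - p16))


# Illustrative residuals with one outlier: the NMAD is barely affected by it,
# whereas the standard deviation would be dominated by the last value.
residuals = np.array([1.0, 2.0, 3.0, 4.0, 100.0])
robust_spread = nmad(residuals)
```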

A critical check to reinforce the findings consists of simulating images with the outputs predicted by the CNN model and comparing them with the original images using the PSNR and MSE, which allows an objective assessment of the quality of the results. Given a reference image X and a test image Y of size $M \times N$, the PSNR between them, measured in dB, is given by the following expression:

$$\mathrm{PSNR} = 10 \log_{10}\left(\frac{\mathrm{MAX}^2}{\mathrm{MSE}}\right),$$

where

$$\mathrm{MSE} = \frac{1}{MN}\sum_{i=1}^{M}\sum_{j=1}^{N}\left(X_{ij} - Y_{ij}\right)^2$$

and $\mathrm{MAX}$ is the maximum value that a pixel can have in the image. A good value of PSNR is in a range between 30 and 40 dB. A PSNR value above 40 dB means a high-quality reconstruction of the original image [73,74].
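The PSNR comparison between a reference image and a reconstructed image can be sketched as follows. This is an illustrative NumPy version under stated assumptions: the function name `psnr` is hypothetical, and when no peak value is supplied the maximum of the reference image is used, which may not match the convention adopted for the figures in this work.

```python
import numpy as np


def psnr(reference, test, max_value=None):
    """Peak signal-to-noise ratio in dB between two images of equal shape."""
    reference = np.asarray(reference, dtype=float)
    test = np.asarray(test, dtype=float)
    mse = np.mean((reference - test) ** 2)  # pixel-wise mean squared error
    if mse == 0:
        return float("inf")  # identical images
    if max_value is None:
        max_value = reference.max()  # assumption: peak taken from the reference
    return float(10.0 * np.log10(max_value ** 2 / mse))


# Illustrative use: a flat image compared with a uniformly offset copy.
reference_image = np.ones((4, 4))
offset_image = reference_image - 0.1
value_db = psnr(reference_image, offset_image)
```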

5. Results

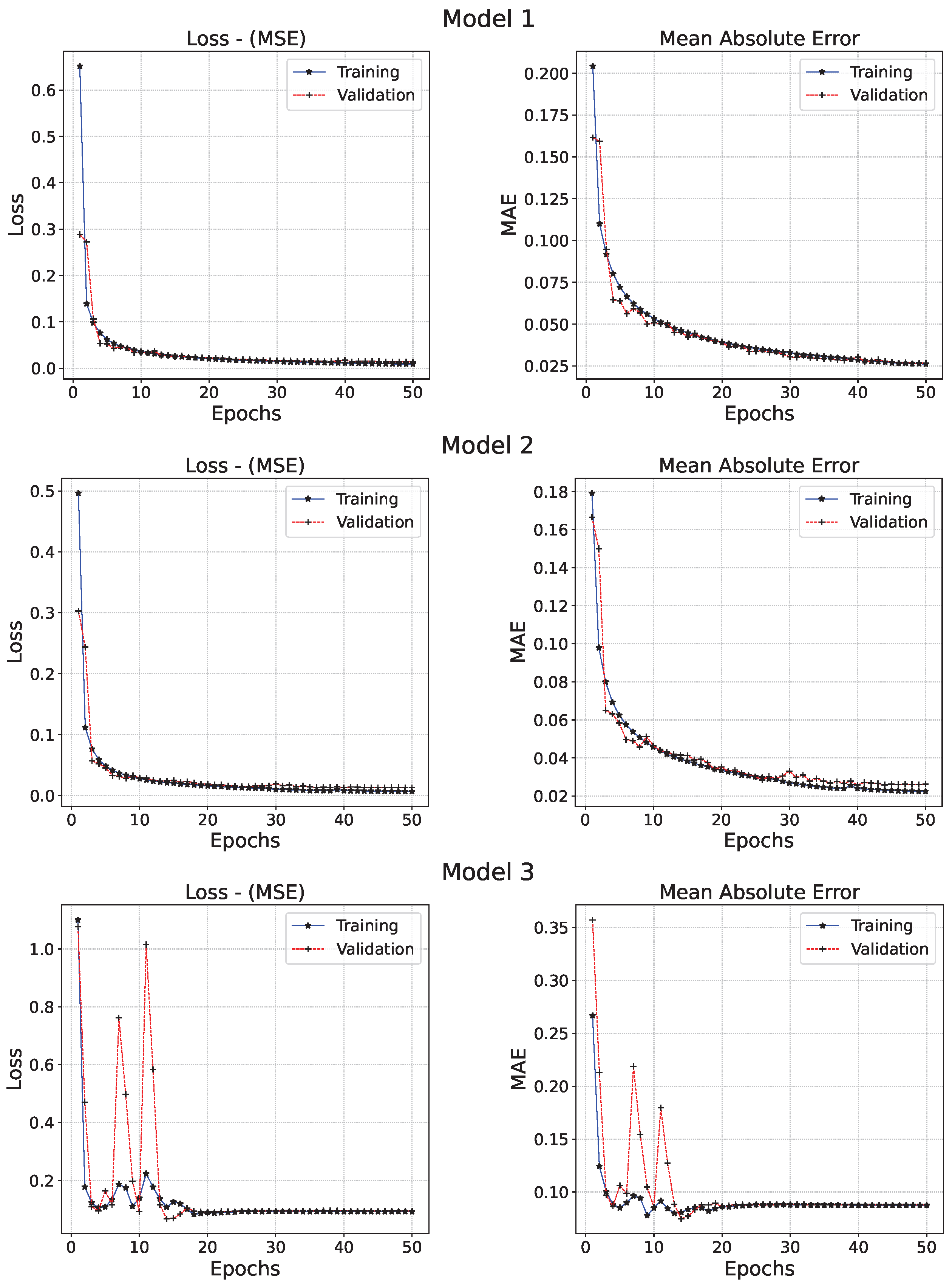

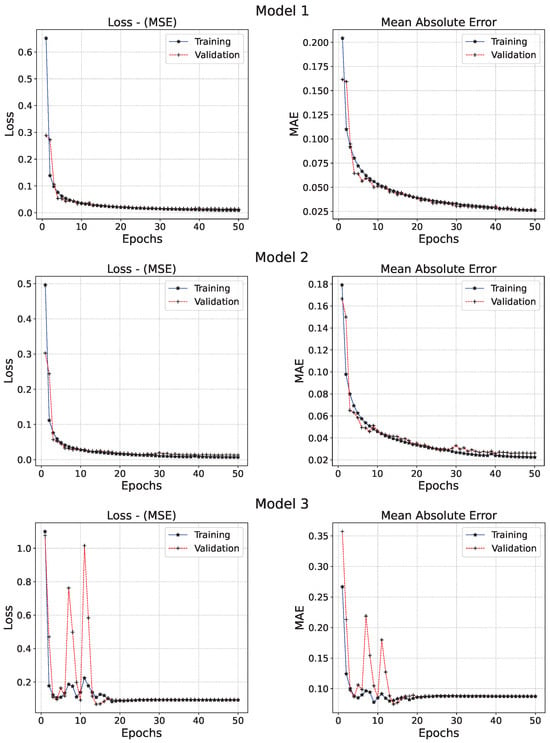

This section is devoted to analyzing the performance of the CNN model in predicting four parameters of the SIE model (lens galaxy). We note that the reported results correspond to the average values and their standard deviation obtained from a cross-validation procedure employing a 4-fold partitioning scheme. Specifically, the dataset was divided into four independent subsets. Figure 6 illustrates the evolution of loss and MAE functions with respect to the epochs, for which Models 1 and 2 exhibit consistent behavior. Although both functions of Model 3 display greater instability during the initial training phase, they eventually converge. Nevertheless, based on the minimum values attained by these metrics, as shown in Table 1, Model 3 achieves the highest (i.e., worst) loss and MAE values among the three CNN models.

Figure 6.

The performance metrics of the three models that were analyzed during the training phase. The MSE average values and their standard deviation at the end of the training for Model 1, Model 2, and Model 3 were , , and , respectively, while the corresponding MAE average values and their standard deviation were , , and , respectively.

Table 1.

Results for $R^2$ and median relative error of each parameter for each model. The effect of dropout on generalization is evident. Models 1 and 2 show consistent performance in terms of $R^2$, while the absence of dropout in Model 3 leads to overfitting and reduced generalization capability. Each relative error includes its standard deviation according to the 4-fold cross-validation procedure.

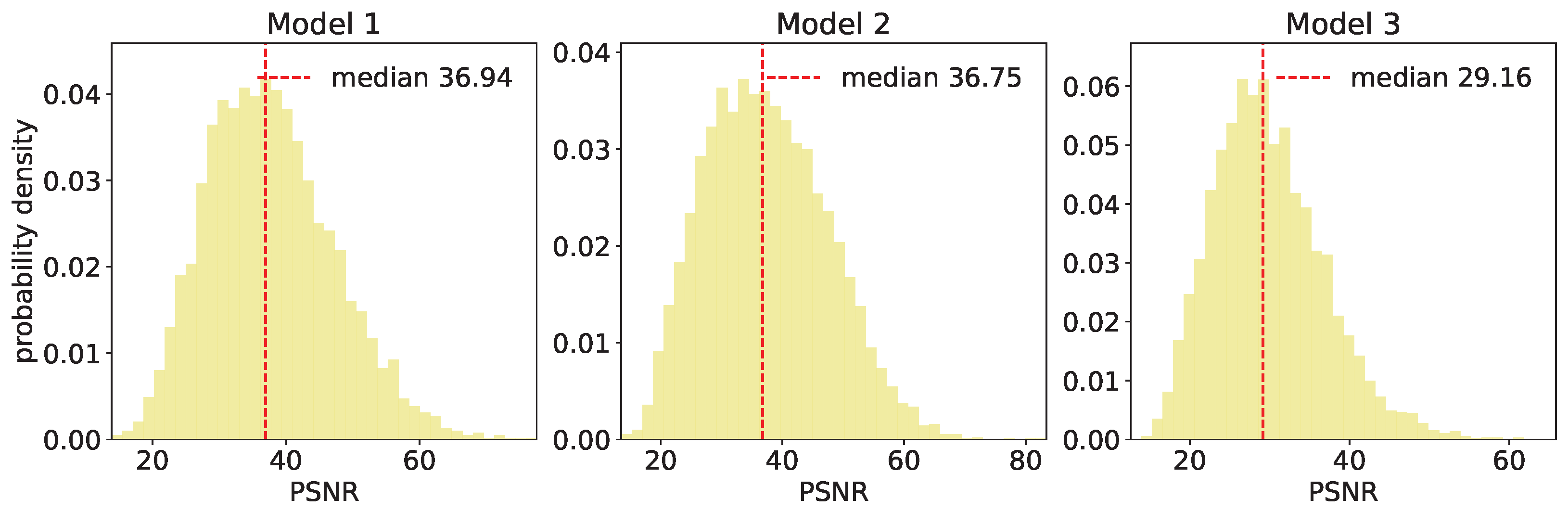

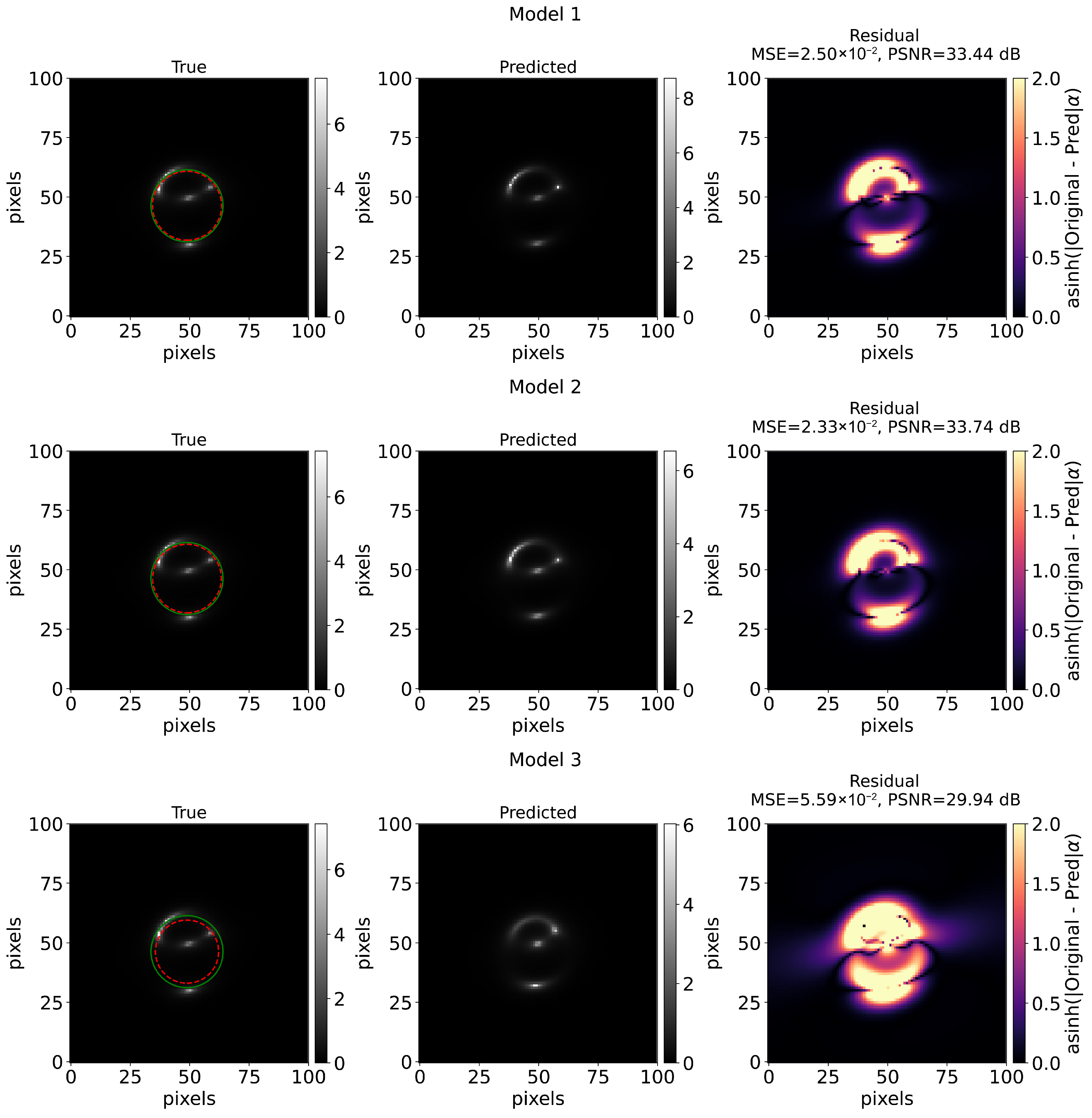

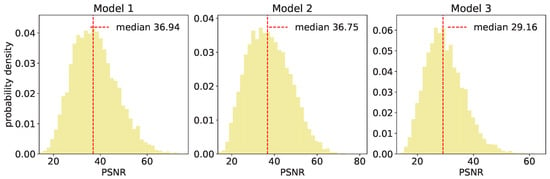

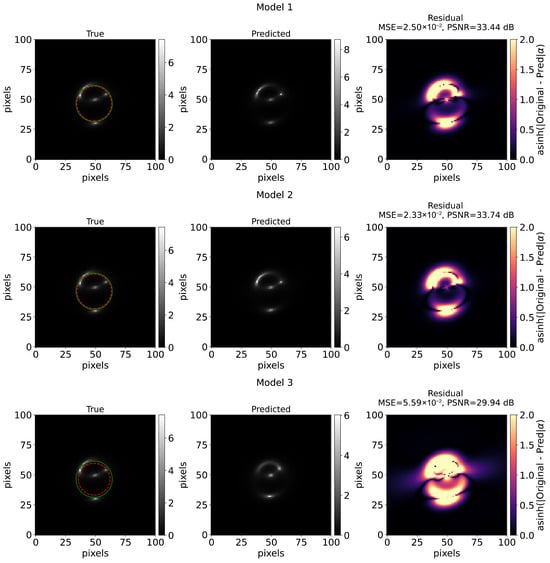

In the following, the results presented are obtained using the test sample of 6396 images. The coefficient of determination $R^2$ indicates that the models that incorporate dropout exhibit superior predictive performance for the SIE parameters (see Table 1), achieving values in the range of 0.95 to 0.97, while Model 3 achieves comparatively lower values (>0.56). Furthermore, Figure 7 shows the distribution of the PSNR for the three models, in which the median value is denoted by a dotted red line. Based on this quantity, Models 1 and 2 reconstruct the images of the lensed source better than Model 3, with similar results (median values of ≈37 dB) when dropout is used. Figure 8 displays a random example of a galaxy–galaxy lens system reconstructed using the true values (left panel) and the predicted values (middle panel). The right panel shows the residual distribution obtained as the difference between the true image and the predicted one. As the intensity of the residual images is very small, we use a Lupton-type asinh transformation [75] that highlights the details of bright and weak areas by transforming weak signals linearly and strong signals logarithmically. We observe that the worst predicted reconstruction occurs when Model 3 is used.

Figure 7.

PSNR values for all the images in the test sample, where the red dotted line indicates the median value. The predictions of Models 1 and 2 generate higher-quality images than those of the third model.

Figure 8.

Random example of a galaxy–galaxy lens system. The left column shows the original synthetic image and the middle column shows the predicted synthetic image for each of the three CNN models. The right column shows the distribution of the residual, i.e., the difference between the original image and the predicted one. Furthermore, the Einstein ring is drawn using the predicted values (green circles) and the true values (red circles) of the lens system. The intensity of the residual distribution is scaled using the Lupton transformation to show details of bright and weak areas. This transformation behaves linearly for weak signals and logarithmically for strong signals by setting ; which in this case is given a value of , related to the 95th percentile of the means of the residuals to avoid extreme atypical values in the display scale.

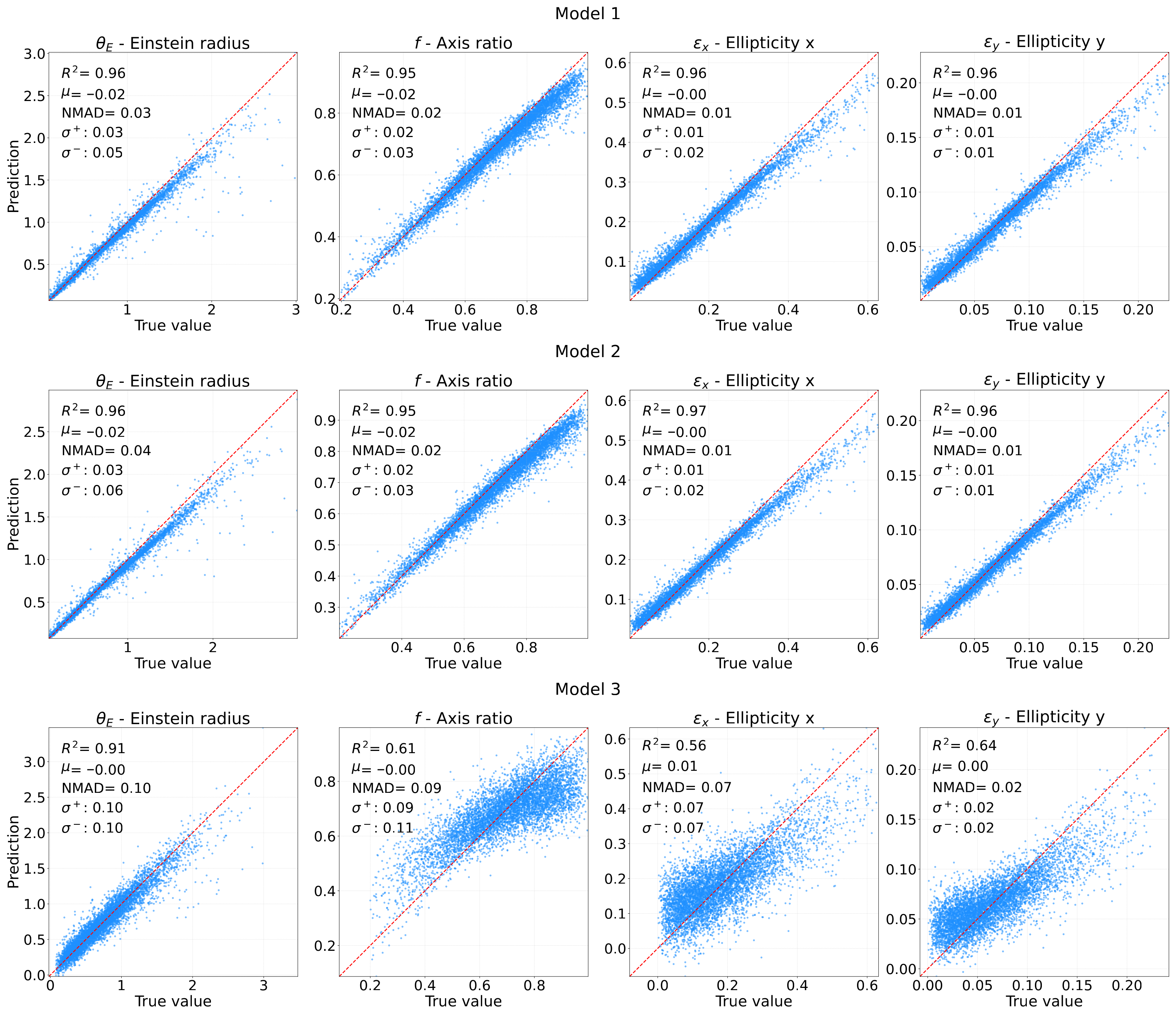

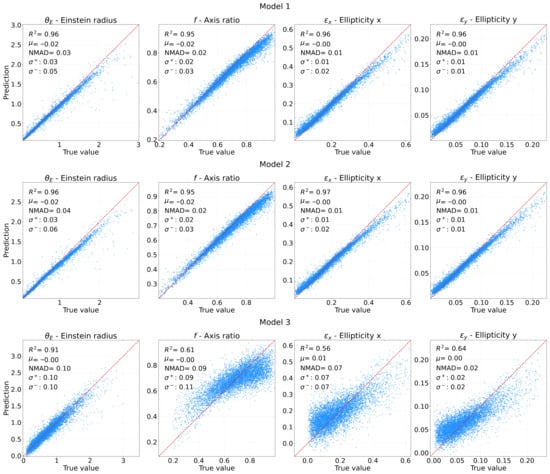

Figure 9 presents the scatter distribution between the true and predicted values of the SIE parameters. Exact predictions lie on the 45-degree diagonal (solid red line), and ideal predictions correspond to a distribution tightly aligned with this line. As anticipated, Models 1 and 2 show broadly consistent results: they exhibit a higher predictive accuracy for low values of , while a systematic bias becomes apparent for . This can be associated with the values of utilized for training, as depicted in Figure 3. This figure illustrates a significant concentration of values within the range of . Similarly, the parameter f displays a noticeable bias for , where the models systematically underestimate the true values. For components and , the bias is even more pronounced in the regimes and , respectively. In contrast, Model 3 shows the largest dispersion and bias among the three, clearly revealing the significant impact of dropout on the quality of the predictions. To assess the uncertainties of the models, we also computed the bias, the standard deviation and the NMAD, which serve as indicators of the accuracy of the predictions. These metrics reveal that Models 1 and 2 exhibit a negligible systematic bias () and extremely low NMAD values between 0.01 and 0.04, confirming that the predictions are precise and show minimal dispersion about the one-to-one line. In contrast, while Model 3 maintains a low average bias (), its high residual dispersion (NMAD ≈ 0.07–0.10) indicates a regime of high variance that limits its reliability for individual parameter inference.

Figure 9.

Predicted vs. true parameters for the lens SIE model (Einstein radius, axis ratio, and ellipticity components) for the three models. The ideal recovery of the predictions is represented as a red dashed line. Models 1 and 2 show consistent high accuracy, while Model 3 has significant dispersion, especially in the ellipticity and axis ratio predictions. The bias () is shown in the plots, along with the NMAD and dispersion limits. Predicted values are averages from the 4-fold cross-validation.

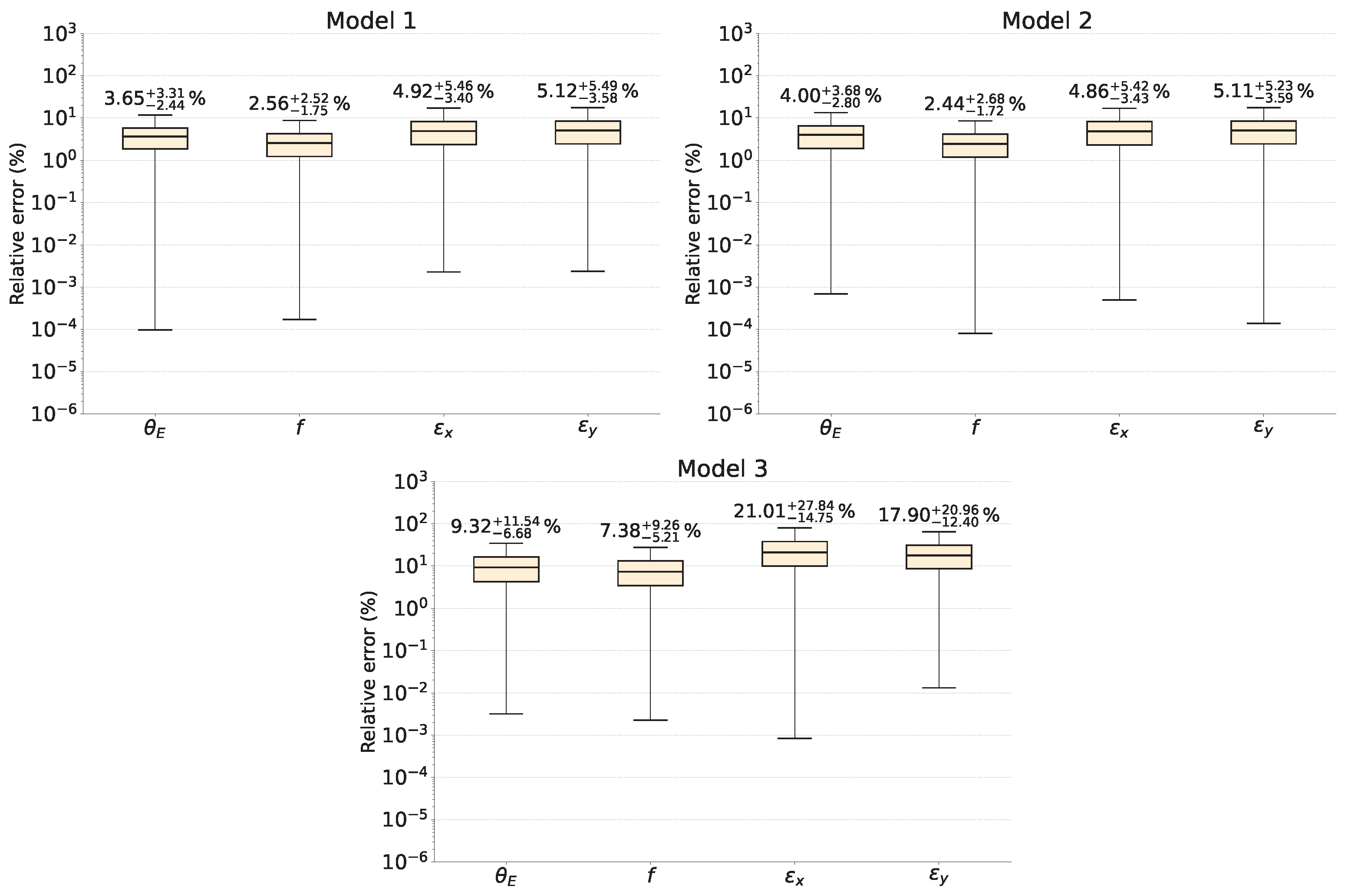

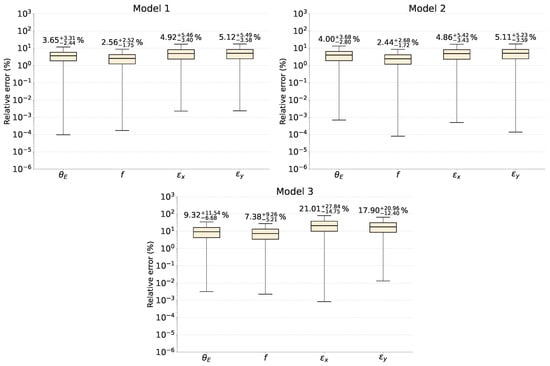

Finally, the relative errors of the SIE parameters for the three CNN architectures are presented in Figure 10. The values shown at the top of each boxplot correspond to the median relative error of the associated parameter. The best predictive performance is obtained for the parameter f, with median relative errors as low as for the CNN models that incorporate dropout layers; this median error increases by approximately a factor of three when dropout is disabled. The poorest performance is observed for the ellipticity parameters, which exhibit median relative errors up to for Models 1 and 2, and up to for Model 3. These median values are also summarized in Table 1.

Figure 10.

Relative errors of the SIE parameters for the three CNN models. Median values and their uncertainties at the 68% confidence level are shown at the top of the boxplots.

6. Discussion and Conclusions

The AlexNet-based CNN architecture was implemented to predict the parameters of the SIE lens model from 76,396 simulated galaxy–galaxy lens systems from the CSST sample [55]. The study compared three model configurations: Model 1 added dropout rates of 20% and 30% for the first and second dense NN layers; Model 2 used dropout rates of 20% in both layers; and Model 3 turned off dropout layers to assess their impact on generalization and predictive capabilities. Additionally, the reported uncertainties of the results account for a cross-validation procedure implemented using a 4-fold partitioning scheme.

First, although Model 3 did not exhibit overfitting, both the MSE and MAE metrics converged to local minima, with values of 0.092 and 0.085, respectively. These are considerably higher than the corresponding minimum values obtained for Models 1 and 2, which are approximately 15 times and 4 times lower for MSE and MAE, respectively. This limitation also affected other performance indicators, such as $R^2$ and PSNR, suggesting that models that incorporate dropout produce higher-quality predictions. We consistently observed that the prediction errors are substantially reduced in magnitude, as reported in Table 1. Additionally, we note that Model 1 differs from Model 2 by a 50% increase in the dropout rate in one of the dense layers. The two models behave similarly during the training phase and yield comparable final results, which supports the robustness of the proposed technique; both achieve $R^2$ values greater than for the predicted parameters, in contrast to values in the range 0.56–0.91 obtained with Model 3. Regarding image reconstruction performance, Figure 7 reports the PSNR distributions, with median values of 36.9 dB, 36.8 dB, and 29.2 dB for Models 1, 2, and 3, respectively. When dropout is incorporated into the models, these values place the reconstructed images in the lower range of the high-quality reconstruction regime. The residual dispersion analysis shown in Figure 9 indicates that Models 1 and 2 present negligible systematic bias () and low NMAD values (0.01–0.04), that is, precise predictions with minimal dispersion and remarkable stability across all morphological parameters. In contrast, while Model 3 has a low average bias (), its high residual dispersion (NMAD ≈ 0.07–0.10) limits its reliability for individual parameter inference, indicating a structural inability to capture the data variance.

All three models demonstrated proficiency in predicting the Einstein radius and the axial ratio f, both of which consistently exhibited the lowest relative errors (Figure 10). Models 1 and 2 achieved relative errors with an upper limit around 5–9% in the CL for these two parameters. The parameters related to ellipticity (, ) proved more difficult to predict, presenting larger and more dispersed relative errors. This difficulty can be attributed to the degeneracy of the lens equation, where different parameter combinations yield similar images, and to the potential confusion between ellipticity components and source asymmetries. Despite these challenges, the overall performance was remarkable, with relative errors around 5–12% at the CL for most SIE parameters. Furthermore, the model struggled to correctly learn the high values for , suggesting a scarcity of training samples with high values or the complexity of interpreting the ellipticity.

The use of dropout in gravitational lens studies aligns with previous work, such as Perreault Levasseur et al. [62], who optimized this hyperparameter to obtain the expected coverage probabilities. Consistent with their findings, this work observed that networks with optimized dropout (Model 2) provided accurate predictions, while the absence of dropout (Model 3) resulted in larger errors and less reliable predictions. Similarly, Morningstar et al. [76] demonstrated that changing dropout rates affects predictions, achieving good calibration with a dropout rate in a recurrent model. More recently, the LEMON project by Gentile et al. [77] and the Euclid Collaboration [35] used a Bayesian NN with a dropout rate (keep rate ) to model lenses for HST and Euclid. Their results, achieving and MAE , are comparable to the results obtained in this work ($R^2$ up to 0.97). However, we note that these works use different samples and include noise. The relevance of the results obtained with Model 2 lies in its balance between computational efficiency and analytical accuracy. While previous CNN-based lens modeling work used dense architectures that require significant resources, our use of an optimized version of AlexNet shows that it is possible to achieve a determination coefficient of with a reduced computational load. Since the Einstein radius () is the main indicator of the projected mass within the critical radius, reaching an uncertainty of just 9% (at 90% CL) for this parameter has a direct impact on the estimation of the mass enclosed within the . This precision is vital for cosmological studies that constrain dark matter profiles [78,79]. Therefore, the model not only delivers a quick prediction but also ensures that the derived scientific parameters maintain the integrity necessary for large-scale analysis in missions such as CSST and Euclid.

Finally, this study confirms that CNNs are promising tools for reducing prediction errors in gravitational lensing modeling. Regularization through dropout is crucial to reduce the variability of these errors and prevent overfitting. The predictions of Models 1 and 2 offer high-quality image reconstruction with a minimal MSE around . Furthermore, this method is significantly faster than traditional Markov Chain Monte Carlo (MCMC) techniques, efficiently predicting SIE parameters using a single GPU. This efficiency facilitates future analyses of large datasets from modern telescopes such as the China Space Station Telescope (CSST) and the Euclid Space Telescope [19,55]. Future research should investigate alternative network architectures, such as ResNet [80], ConvNeXt [81], and U-Net [82], or suitably modified variants thereof, and should incorporate more advanced data augmentation strategies. This would enable a more rigorous characterization of distortions induced by convergence and shear components, particularly in scenarios where background noise and the finite spatial resolution of the detector degrade the images, affecting the model estimation and significantly complicating the analysis.

Author Contributions

Conceptualization, J.J.A.-F., A.H.-A. and V.M.; methodology, J.J.A.-F. and A.H.-A.; formal analysis, J.J.A.-F. and A.H.-A.; writing—original draft preparation, J.J.A.-F., A.H.-A. and V.M.; writing—review and editing, A.H.-A. and V.M. All authors have read and agreed to the published version of the manuscript.

Funding

This research received no external funding.

Institutional Review Board Statement

Not applicable.

Informed Consent Statement

Not applicable.

Data Availability Statement

The synthetic data is described in Section 4.1 and is based on the configuration of the CSST [55].

Acknowledgments

We thank the anonymous referees for their thoughtful remarks and suggestions. J.J.A.-F. thanks the Secretaría de Ciencia, Humanidades, Tecnología e Innovación (SECIHTI) for the master’s scholarship support. A.H.-A. acknowledges the support from cátedra Marcos Moshinsky (MM) and Universidad Iberoamericana for support with the SNI grant; the numerical analysis was carried out on the Numerical Integration for Cosmological Theory and Experiments in High-energy Astrophysics (Nicte Ha) cluster at IBERO University, acquired through cátedra MM support. V.M. acknowledges support from ANID FONDECYT Regular grant number 1231418 and Centro de Astrofísica de Valparaíso CIDI 21. J.J.A.-F., A.H.-A., and V.M. acknowledge partial support from project ANID Vinculación Internacional FOVI240098.

Conflicts of Interest

The authors declare no conflicts of interest.

Notes

| 1 | Co-adaptation occurs when several feature detectors “agree” and work well only if they are all present together. Each neuron stops learning useful signals on its own and becomes a “parasite” of the rest. Dropout breaks that group behavior: it turns off random neurons during training, forcing the remaining ones to learn robust and independent features. |

| 2 | k-fold cross-validation is a statistical technique used to evaluate the generalization capacity of a model and prevent overfitting. It consists of dividing the dataset into k folds; the model is trained using k − 1 of those groups and is validated with the remaining group that was left out. |

References

- Schneider, P.; Kochanek, C.; Wambsganss, J. Gravitational Lensing: Strong, Weak and Micro; Saas-Fee Advanced Course 33; Springer Science & Business Media: Berlin/Heidelberg, Germany, 2006; Volume 33.

- Schneider, P. Extragalactic Astronomy and Cosmology: An Introduction; Springer: Berlin/Heidelberg, Germany, 2015.

- Hezaveh, Y.D.; Dalal, N.; Marrone, D.P.; Mao, Y.Y.; Morningstar, W.; Wen, D.; Blandford, R.D.; Carlstrom, J.E.; Fassnacht, C.D.; Holder, G.P.; et al. Detection of lensing substructure using ALMA observations of the dusty galaxy SDP.81. Astrophys. J. 2016, 823, 37.

- Meneghetti, M. Introduction to Gravitational Lensing: With Python Examples; Springer Nature: Cham, Switzerland, 2021; Volume 956.

- Shajib, A.J.; Vernardos, G.; Collett, T.E.; Motta, V.; Sluse, D.; Williams, L.L.R.; Saha, P.; Birrer, S.; Spiniello, C.; Treu, T. Strong Lensing by Galaxies. Space Sci. Rev. 2024, 220, 87.

- Treu, T. Strong Lensing by Galaxies. Annu. Rev. Astron. Astrophys. 2010, 48, 87–125.

- Natarajan, P.; Williams, L.L.R.; Bradač, M.; Grillo, C.; Ghosh, A.; Sharon, K.; Wagner, J. Strong Lensing by Galaxy Clusters. Space Sci. Rev. 2024, 220, 19.

- Grillo, C.; Lombardi, M.; Bertin, G. Cosmological Parameters from Strong Gravitational Lensing and Stellar Dynamics in Elliptical Galaxies. Astron. Astrophys. 2008, 477, 397–406.

- Chae, K.H. The Cosmic Lens All-Sky Survey: Statistical Strong Lensing, Cosmological Parameters, and Global Properties of Galaxy Populations. Mon. Not. R. Astron. Soc. 2003, 346, 746–772.

- Birrer, S.; Millon, M.; Sluse, D.; Shajib, A.J.; Courbin, F.; Erickson, S.; Koopmans, L.V.E.; Suyu, S.H.; Treu, T. Time-Delay Cosmography: Measuring the Hubble Constant and Other Cosmological Parameters with Strong Gravitational Lensing. Space Sci. Rev. 2024, 220, 48.

- Mellier, Y. et al. [Euclid Collaboration] Euclid: I. Overview of the Euclid Mission. Astron. Astrophys. 2025, 697, A1.

- Laureijs, R.; Amiaux, J.; Arduini, S.; Auguères, J.L.; Brinchmann, J.; Cole, R.; Cropper, M.; Dabin, C.; Duvet, L.; Ealet, A.; et al. Euclid Definition Study Report. arXiv 2011.

- Enard, D. The European Southern Observatory Very Large Telescope. J. Opt. 1991, 22, 33.

- McElwain, M.W.; Feinberg, L.D.; Perrin, M.D.; Clampin, M.; Mountain, C.M.; Lallo, M.D.; Lajoie, C.P.; Kimble, R.A.; Bowers, C.W.; Stark, C.C.; et al. The James Webb Space Telescope Mission: Optical Telescope Element Design, Development, and Performance. Publ. Astron. Soc. Pac. 2023, 135, 058001.

- Gardner, J.P.; Mather, J.C.; Clampin, M.; Doyon, R.; Greenhouse, M.A.; Hammel, H.B.; Hutchings, J.B.; Jakobsen, P.; Lilly, S.J.; Long, K.S.; et al. The James Webb Space Telescope. Space Sci. Rev. 2006, 123, 485–606.

- Bianco, F.B.; Ivezić, Ž.; Jones, R.L.; Graham, M.L.; Marshall, P.; Saha, A.; Strauss, M.A.; Yoachim, P.; Ribeiro, T.; Anguita, T.; et al. Optimization of the Observing Cadence for the Rubin Observatory Legacy Survey of Space and Time: A Pioneering Process of Community-focused Experimental Design. Astrophys. J. Suppl. Ser. 2021, 258, 1.

- Hook, I. The Science Case for the European ELT. In Science with the VLT in the ELT Era; Moorwood, A., Ed.; Springer: Dordrecht, The Netherlands, 2009; pp. 225–232.

- Palle, E.; Biazzo, K.; Bolmont, E.; Mollière, P.; Poppenhaeger, K.; Birkby, J.; Brogi, M.; Chauvin, G.; Chiavassa, A.; Hoeijmakers, J.; et al. Ground-Breaking Exoplanet Science with the ANDES Spectrograph at the ELT. Exp. Astron. 2025, 59, 29.

- Gong, Y. et al. [CSST Collaboration] Introduction to the Chinese Space Station Survey Telescope (CSST). Sci. China Phys. Mech. Astron. 2026, 69, 239501.

- Collett, T.E. The Population of Galaxy–Galaxy Strong Lenses in Forthcoming Optical Imaging Surveys. Astrophys. J. 2015, 811, 20.

- Myers, S.T.; Jackson, N.J.; Browne, I.W.A.; de Bruyn, A.G.; Pearson, T.J.; Readhead, A.C.S.; Wilkinson, P.N.; Biggs, A.D.; Blandford, R.D.; Fassnacht, C.D.; et al. The Cosmic Lens All-Sky Survey—I. Source Selection and Observations. Mon. Not. R. Astron. Soc. 2003, 341, 1–12.

- Browne, I.W.A.; Wilkinson, P.N.; Jackson, N.J.F.; Myers, S.T.; Fassnacht, C.D.; Koopmans, L.V.E.; Marlow, D.R.; Norbury, M.; Rusin, D.; Sykes, C.M.; et al. The Cosmic Lens All-Sky Survey—II. Gravitational Lens Candidate Selection and Follow-Up. Mon. Not. R. Astron. Soc. 2003, 341, 13–32.

- Scoville, N.; Aussel, H.; Brusa, M.; Capak, P.; Carollo, C.M.; Elvis, M.; Giavalisco, M.; Guzzo, L.; Hasinger, G.; Impey, C.; et al. The Cosmic Evolution Survey (COSMOS): Overview. Astrophys. J. Suppl. Ser. 2007, 172, 1.

- Bolton, A.S.; Burles, S.; Koopmans, L.V.E.; Treu, T.; Moustakas, L.A. The Sloan Lens ACS Survey. I. A Large Spectroscopically Selected Sample of Massive Early-Type Lens Galaxies. Astrophys. J. 2006, 638, 703.

- Bolton, A.S.; Burles, S.; Koopmans, L.V.E.; Treu, T.; Gavazzi, R.; Moustakas, L.A.; Wayth, R.; Schlegel, D.J. The Sloan Lens ACS Survey. V. The Full ACS Strong-Lens Sample. Astrophys. J. 2008, 682, 964.

- Fowlie, A.; Handley, W.; Su, L. Nested Sampling Cross-Checks Using Order Statistics. Mon. Not. R. Astron. Soc. 2020, 497, 5256–5263.

- Zhang, W.; Hasegawa, A.; Matoba, O.; Itoh, K.; Ichioka, Y.; Doi, K. Shift-Invariant Neural Network for Image Processing: Learning and Generalization. Appl. Artif. Neural Netw. III 1992, 1709, 257–268.

- Hála, P. Spectral classification using convolutional neural networks. arXiv 2014, arXiv:1412.8341.

- Petrillo, C.E.; Tortora, C.; Chatterjee, S.; Vernardos, G.; Koopmans, L.V.E.; Verdoes Kleijn, G.; Napolitano, N.R.; Covone, G.; Schneider, P.; Grado, A.; et al. Finding Strong Gravitational Lenses in the Kilo Degree Survey with Convolutional Neural Networks. Mon. Not. R. Astron. Soc. 2017, 472, 1129–1150.

- Hezaveh, Y.D.; Levasseur, L.P.; Marshall, P.J. Fast Automated Analysis of Strong Gravitational Lenses with Convolutional Neural Networks. Nature 2017, 548, 555–557.

- Schuldt, S.; Suyu, S.H.; Meinhardt, T.; Leal-Taixé, L.; Cañameras, R.; Taubenberger, S.; Halkola, A. HOLISMOKES—IV. Efficient Mass Modeling of Strong Lenses through Deep Learning. Astron. Astrophys. 2021, 646, A126.

- Morningstar, W.R.; Levasseur, L.P.; Hezaveh, Y.D.; Blandford, R.; Marshall, P.; Putzky, P.; Rueter, T.D.; Wechsler, R.; Welling, M. Data-driven Reconstruction of Gravitationally Lensed Galaxies Using Recurrent Inference Machines. Astrophys. J. 2019, 883, 14.

- Park, J.W.; Wagner-Carena, S.; Birrer, S.; Marshall, P.J.; Lin, J.Y.Y.; Roodman, A.; The LSST Dark Energy Science Collaboration. Large-Scale Gravitational Lens Modeling with Bayesian Neural Networks for Accurate and Precise Inference of the Hubble Constant. Astrophys. J. 2021, 910, 39.

- Pearson, J.; Maresca, J.; Li, N.; Dye, S. Strong Lens Modelling: Comparing and Combining Bayesian Neural Networks and Parametric Profile Fitting. Mon. Not. R. Astron. Soc. 2021, 505, 4362–4382.

- Busillo, V.; Tortora, C.; Metcalf, R.; Nightingale, J.; Meneghetti, M.; Gentile, F.; Gavazzi, R.; Zhong, F.; Li, R.; Clément, B.; et al. Euclid Quick Data Release (Q1). LEMON—LEns MOdelling with Neural networks. Automated and fast modelling of Euclid gravitational lenses with a singular isothermal ellipsoid mass profile. Astron. Astrophys. 2026.

- Parlange, R.; Cuevas-Tello, J.C.; Valenzuela, O.; Cabrera-Rosas, O.d.J.; Verdugo, T.; More, A.; Jaelani, A.T. GraViT: Transfer Learning with Vision Transformers and MLP-Mixer for Strong Gravitational Lens Discovery. Mon. Not. R. Astron. Soc. 2025, 545, staf1747.

- Srivastava, N.; Hinton, G.; Krizhevsky, A.; Sutskever, I.; Salakhutdinov, R. Dropout: A Simple Way to Prevent Neural Networks from Overfitting. J. Mach. Learn. Res. 2014, 15, 1929–1958.

- Krizhevsky, A.; Sutskever, I.; Hinton, G.E. ImageNet Classification with Deep Convolutional Neural Networks. Commun. ACM 2017, 60, 84–90.

- Kormann, R.; Schneider, P.; Bartelmann, M. Isothermal Elliptical Gravitational Lens Models. Astron. Astrophys. 1994, 284, 285–299.

- Tessore, N.; Metcalf, R.B. The Elliptical Power Law Profile Lens. Astron. Astrophys. 2015, 580, A79.

- Asada, H.; Hamana, T.; Kasai, M. Images for an Isothermal Ellipsoidal Gravitational Lens from a Single Real Algebraic Equation. Astron. Astrophys. 2003, 397, 825–829.

- Etherington, A.; Nightingale, J.W.; Massey, R.; Cao, X.; Robertson, A.; Amorisco, N.C.; Amvrosiadis, A.; Cole, S.; Frenk, C.S.; He, Q.; et al. Automated Galaxy–Galaxy Strong Lens Modelling: No Lens Left Behind. Mon. Not. R. Astron. Soc. 2022, 517, 3275–3302.

- Sérsic, J.L. Influence of the Atmospheric and Instrumental Dispersion on the Brightness Distribution in a Galaxy. Bol. Asoc. Argent. Astron. 1963, 6, 41–43.

- Sersic, J.L. Atlas de Galaxias Australes; Observatorio Astronómico: Cordoba, Argentina, 1968.

- Ciotti, L.; Bertin, G. Analytical properties of the R(1/m) luminosity law. arXiv 1999, arXiv:astro-ph/9911078.

- Cardone, V.F. The Lensing Properties of the Sersic Model. Astron. Astrophys. 2004, 415, 839–848.

- Li, Z.; Liu, F.; Yang, W.; Peng, S.; Zhou, J. A Survey of Convolutional Neural Networks: Analysis, Applications, and Prospects. IEEE Trans. Neural Netw. Learn. Syst. 2022, 33, 6999–7019.

- Alzubaidi, L.; Zhang, J.; Humaidi, A.J.; Al-Dujaili, A.; Duan, Y.; Al-Shamma, O.; Santamaría, J.; Fadhel, M.A.; Al-Amidie, M.; Farhan, L. Review of Deep Learning: Concepts, CNN Architectures, Challenges, Applications, Future Directions. J. Big Data 2021, 8, 53.

- Nair, V.; Hinton, G.E. Rectified Linear Units Improve Restricted Boltzmann Machines. In Proceedings of the 27th International Conference on Machine Learning, ICML’10, Haifa, Israel, 21–24 June 2010; pp. 807–814.

- Glorot, X.; Bordes, A.; Bengio, Y. Deep Sparse Rectifier Neural Networks. Proc. Mach. Learn. Res. 2011, 15, 315–323.

- LeCun, Y.; Boser, B.; Denker, J.S.; Henderson, D.; Howard, R.E.; Hubbard, W.; Jackel, L.D. Backpropagation applied to handwritten zip code recognition. Neural Comput. 1989, 1, 541–551.

- Baldi, P.; Sadowski, P.J. Understanding Dropout. In Proceedings of the 27th International Conference on Neural Information Processing Systems, Lake Tahoe, NV, USA, 5–10 December 2013; Curran Associates, Inc.: Red Hook, NY, USA, 2013; Volume 26.

- Hinton, G.E.; Srivastava, N.; Krizhevsky, A.; Sutskever, I.; Salakhutdinov, R.R. Improving Neural Networks by Preventing Co-Adaptation of Feature Detectors. arXiv 2012.

- Salehin, I.; Kang, D.K. A Review on Dropout Regularization Approaches for Deep Neural Networks within the Scholarly Domain. Electronics 2023, 12, 3106.

- Cao, X.; Li, R.; Li, N.; Li, R.; Chen, Y.; Ding, K.; Shan, H.; Zhan, H.; Zhang, X.; Du, W.; et al. CSST Strong Lensing Preparation: Forecasting the Galaxy–Galaxy Strong Lensing Population for the China Space Station Telescope. Mon. Not. R. Astron. Soc. 2024, 533, 1960–1975.

- Birrer, S.; Amara, A. Lenstronomy: Multi-purpose Gravitational Lens Modelling Software Package. Phys. Dark Universe 2018, 22, 189–201.

- Mukherjee, S.; Koopmans, L.V.E.; Metcalf, R.B.; Tessore, N.; Tortora, C.; Schaller, M.; Schaye, J.; Crain, R.A.; Vernardos, G.; Bellagamba, F.; et al. SEAGLE—I. A Pipeline for Simulating and Modelling Strong Lenses from Cosmological Hydrodynamic Simulations. Mon. Not. R. Astron. Soc. 2018, 479, 4108–4125.

- Stone, M. Cross-Validatory Choice and Assessment of Statistical Predictions. J. R. Stat. Soc. Ser. B (Methodol.) 1974, 36, 111–133.

- TensorFlow Authors. TFRecord and tf.train.Example. 2024. Available online: https://www.tensorflow.org/tutorials/load_data/tfrecord (accessed on 10 December 2025).

- Ballester, P.; Araujo, R. On the Performance of GoogLeNet and AlexNet Applied to Sketches. In Proceedings of the AAAI Conference on Artificial Intelligence, Phoenix, AZ, USA, 12–17 February 2016; Volume 30.

- Zhang, X. The AlexNet, LeNet-5 and VGG NET Applied to CIFAR-10. In Proceedings of the 2021 2nd International Conference on Big Data & Artificial Intelligence & Software Engineering (ICBASE), Zhuhai, China, 24–26 September 2021; pp. 414–419. [Google Scholar] [CrossRef]

- Perreault Levasseur, L.; Hezaveh, Y.D.; Wechsler, R.H. Uncertainties in Parameters Estimated with Neural Networks: Application to Strong Gravitational Lensing. Astrophys. J. Lett. 2017, 850, L7. [Google Scholar] [CrossRef]

- Dozat, T. Incorporating Nesterov Momentum into Adam. In Proceedings of the 4th International Conference on Learning Representations, Workshop Track, San Juan, Puerto Rico, 2–4 May 2016; pp. 1–4. [Google Scholar]

- Sutskever, I.; Martens, J.; Dahl, G.; Hinton, G. On the Importance of Initialization and Momentum in Deep Learning. Proc. Mach. Learn. Res. 2013, 28, 1139–1147. [Google Scholar]

- Keras. 2015. Available online: https://keras.io (accessed on 10 December 2025).

- Goodfellow, I.; Bengio, Y.; Courville, A. Deep Learning; MIT Press: Cambridge, MA, USA, 2016; Available online: http://www.deeplearningbook.org (accessed on 15 November 2025).

- Caruana, R. Multitask Learning. Mach. Learn. 1997, 28, 41–75.

- Ruppert, D. The elements of statistical learning: Data mining, inference, and prediction. J. Am. Stat. Assoc. 2004, 99, 567.

- Botchkarev, A. A New Typology Design of Performance Metrics to Measure Errors in Machine Learning Regression Algorithms. Interdiscip. J. Inf. Knowl. Manag. 2019, 14, 45–76.

- Chicco, D.; Warrens, M.J.; Jurman, G. The coefficient of determination R-squared is more informative than SMAPE, MAE, MAPE, MSE and RMSE in regression analysis evaluation. PeerJ Comput. Sci. 2021, 7, e623.

- Hyndman, R.J.; Koehler, A.B. Another look at measures of forecast accuracy. Int. J. Forecast. 2006, 22, 679–688.

- Huber, P.J. Robust Statistics. In International Encyclopedia of Statistical Science; Lovric, M., Ed.; Springer: Berlin/Heidelberg, Germany, 2011; pp. 1248–1251.

- Sadykova, D.; James, A.P. Quality assessment metrics for edge detection and edge-aware filtering: A tutorial review. In Proceedings of the 2017 International Conference on Advances in Computing, Communications and Informatics (ICACCI), Udupi, India, 13–16 September 2017; pp. 2366–2369.

- Petrovic, V.; Pavlović, B.; Andrić, M.; Bondzulic, B. Performance of peak signal-to-noise ratio quality assessment in video streaming with packet losses. Electron. Lett. 2016, 52, 454–456.

- Lupton, R.; Blanton, M.R.; Fekete, G.; Hogg, D.W.; O’Mullane, W.; Szalay, A.; Wherry, N. Preparing Red-Green-Blue Images from CCD Data. Publ. Astron. Soc. Pac. 2004, 116, 133.

- Morningstar, W.R.; Hezaveh, Y.D.; Levasseur, L.P.; Blandford, R.D.; Marshall, P.J.; Putzky, P.; Wechsler, R.H. Analyzing interferometric observations of strong gravitational lenses with recurrent and convolutional neural networks. arXiv 2018, arXiv:1808.00011.

- Gentile, F.; Tortora, C.; Covone, G.; Koopmans, L.V.E.; Li, R.; Leuzzi, L.; Napolitano, N.R. lemon: LEns MOdelling with Neural networks—I. Automated modelling of strong gravitational lenses with Bayesian Neural Networks. Mon. Not. R. Astron. Soc. 2023, 522, 5442–5455.

- Binney, J.; Tremaine, S. Galactic Dynamics; Princeton Series in Astrophysics; Princeton University Press: Princeton, NJ, USA, 1987.

- Koopmans, L.V.E.; Treu, T. The Structure and Dynamics of Luminous and Dark Matter in the Early-Type Lens Galaxy of 0047–281 at z = 0.485. Astrophys. J. 2003, 583, 606.

- He, K.; Zhang, X.; Ren, S.; Sun, J. Deep Residual Learning for Image Recognition. In Proceedings of the 2016 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Las Vegas, NV, USA, 27–30 June 2016; pp. 770–778.

- Liu, Z.; Mao, H.; Wu, C.Y.; Feichtenhofer, C.; Darrell, T.; Xie, S. A ConvNet for the 2020s. In Proceedings of the 2022 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), New Orleans, LA, USA, 18–24 June 2022; pp. 11966–11976.

- Ronneberger, O.; Fischer, P.; Brox, T. U-Net: Convolutional Networks for Biomedical Image Segmentation. In Medical Image Computing and Computer-Assisted Intervention—MICCAI 2015; Navab, N., Hornegger, J., Wells, W.M., Frangi, A.F., Eds.; Springer: Cham, Switzerland, 2015; pp. 234–241.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.