Abstract

In the intelligent operation and maintenance of industrial equipment, labeling failure data remains a challenging task due to its high cost and low efficiency. Although incorporating a large amount of unlabeled data alongside limited labeled samples can partially alleviate this “labeling bottleneck,” the performance and robustness of models still heavily depend on the scale and quality of annotated data, which often leads to generalization issues in real industrial scenarios. To address these challenges, this paper proposes an unsupervised fault diagnosis method based on an efficient domain adaptation model named E-DANNMK. This approach reduces reliance on manually labeled fault data, thereby mitigating annotation-related issues such as high cost and potential bias. The E-DANNMK model integrates residual networks, an efficient channel attention mechanism, and domain adversarial neural networks to improve both feature discriminability and cross-domain adaptability. To validate its effectiveness, experiments were conducted on two major bearing fault datasets. The results demonstrate that the proposed E-DANNMK model achieves an average diagnostic accuracy of 94.21%, outperforming mainstream domain adaptation methods—including CDAN, CORAL, DANN, CNN-Transformer, DMT and DANN-MK—by a margin ranging from 3.12% to 7.15%.

1. Introduction

As a key component of mechanical systems, the operating condition of bearings is critical to the safety and reliability of the entire system [1]. Research on bearing fault diagnosis is essential for predictive maintenance and industrial intelligence, forming the foundation of a safer, more efficient modern industrial system [2].

In recent years, data-driven fault diagnosis methods have gained considerable attention due to their high accuracy and ease of deployment [3]. For instance, hybrid models combining ResNet with transfer learning [4] and attention mechanisms have been developed to improve feature selection and recognition. Internationally, innovations include using Generative Adversarial Networks to address data scarcity [5] and integrating bearing dynamics with Graph Neural Networks for interpretable diagnostics [6]. Much focus has been on domain adaptation methods to tackle the domain shift caused by varying operating conditions [7,8,9].

Early work established basic domain adaptation frameworks, such as intermediate domain techniques that showed robustness to speed variations and cross-domain scenarios [10]. With advances in deep learning, research evolved to extract transferable features and align distributions explicitly [11]. Methods included deep sparse filtering with domain classifiers [12], instance-level weighted adversarial learning to enhance the domain adaptation model [13], deep belief networks with multi-kernel alignment [14], and later combinations such as Deep CORAL with Joint Maximum Mean Discrepancy [15].

Subsequent efforts refined alignment mechanisms and classifier structures. Examples include using Local Maximum Mean Discrepancy (LMMD) within one-dimensional ConvNeXt for sub-domain adaptation [16], combining LMMD with adversarial learning and attention mechanisms [17], and employing geodesic flow kernels with non-parametric classifiers [18]. Other approaches introduced symmetry constraints [19], gradient supervision [20], and strategies to maximize inter-domain discrepancies for stable features [21,22]. Further work integrated graph networks with adversarial training [23], proposed “Maximum Domain Discrepancy” strategies [24], and used multi-scale convolutions with dynamic loss balancing [25].

Recent trends emphasize multi-technique integration, practical deployment, and robustness. This includes multi-scale feature fusion with attention mechanisms [26,27], combining physical models with data-driven approaches to generate simulated data [28], and developing lightweight adaptive methods such as meta-learning with adversarial adaptation [29]. Novel paradigms such as “source-free” domain adaptation [30] and adaptation using only normal target data [31] are also emerging to address privacy and deployment challenges. Additionally, advanced diagnostic modeling methods have achieved significant progress in industrial visual inspection, offering new insights for related technologies. For example, the vision-language cyclic interaction model enhances boundary perception and decision-making accuracy in complex scenarios through a cyclic interaction mechanism [32], while the multi-expert diffusion model, from a generative perspective, enables multi-scale defect detection under strong interference by leveraging multi-expert selection and large-kernel convolution [33]. The innovations of these methods in feature interaction, multi-scale perception, and generative modeling provide valuable references for addressing challenges such as variable speeds and limited samples in bearing fault diagnosis.

This paper aims to systematically review the evolution and current limitations of deep transfer learning in bearing fault diagnosis, with a particular focus on practical industrial challenges such as variable speeds and loads. Building on this, an innovative collaborative optimization framework is proposed, which integrates multi-source domain alignment strategies with a modular integration mechanism to significantly enhance the model’s generalization capability and diagnostic accuracy in complex and variable industrial environments. Compared to existing methods, the proposed model effectively improves transferability.

2. Unsupervised Fault Diagnosis Method Based on E-DANNMK Model and Introduction to Comparative Models

2.1. E-DANNMK Model

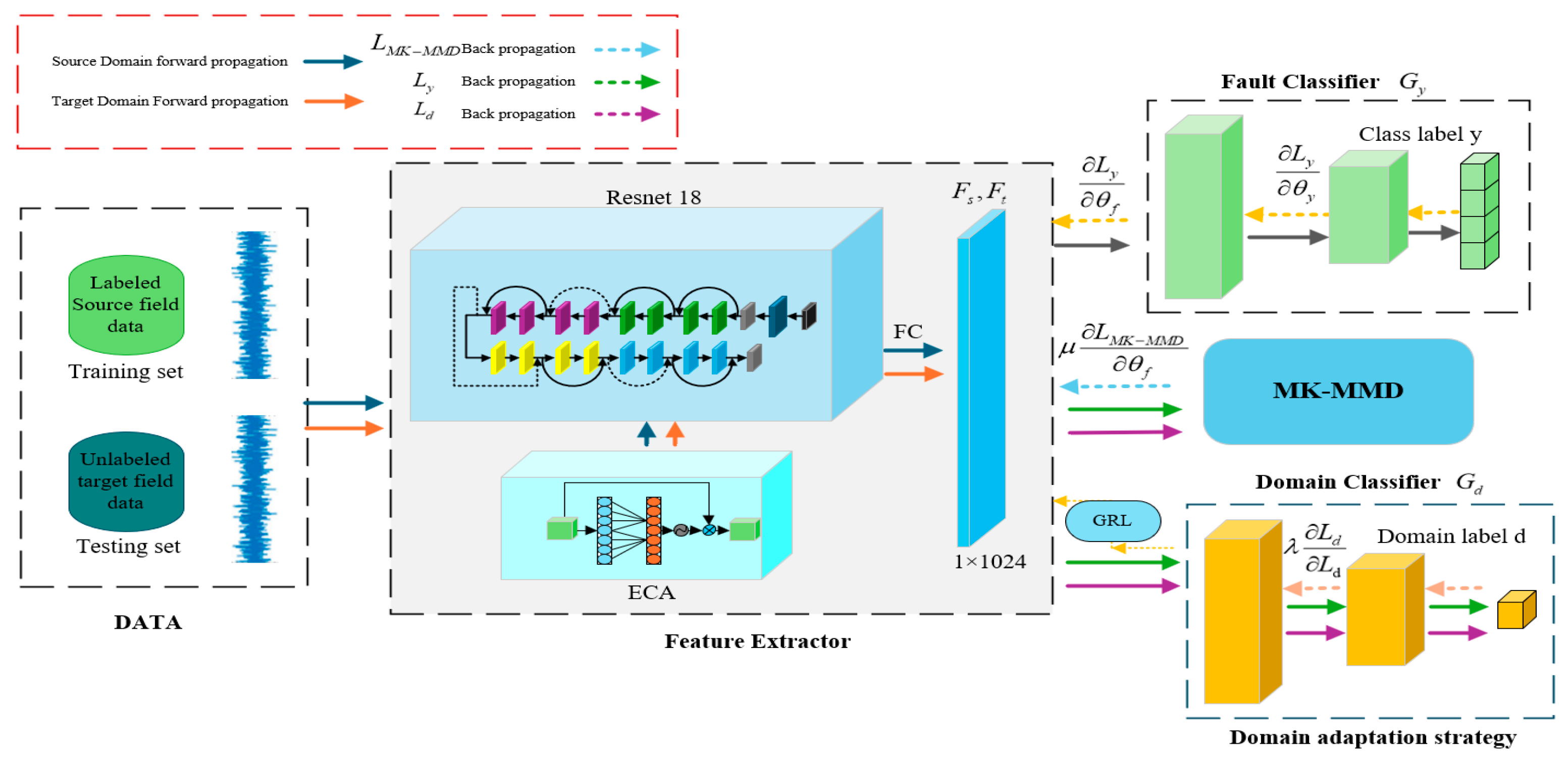

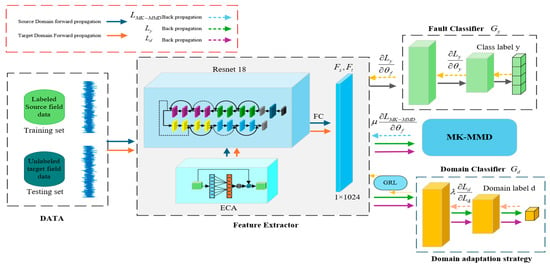

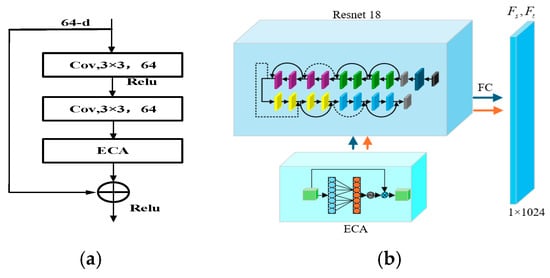

The model architecture constructed in this study is illustrated in Figure 1. It comprises three core modules: an efficient feature extractor, a fault classifier, and a domain classifier. The feature extractor is built by embedding an efficient channel attention module into the ResNet-18 architecture. Leveraging the local cross-channel interaction of adaptive one-dimensional convolution, this module enables the model to adaptively calibrate channel-wise feature responses, thereby enhancing its capability to capture fault-related information from bearing monitoring data. Furthermore, the model incorporates a domain adversarial neural network by combining the Multi-Kernel Maximum Mean Discrepancy (MK-MMD) metric with the domain adversarial training paradigm. This design exploits their complementary strengths: MK-MMD aligns high-order statistical moments between domains through a multi-kernel function, while adversarial learning confuses domain decision boundaries via gradient reversal. These two mechanisms operate at different levels of abstraction, with MK-MMD ensuring similarity in distribution shape and adversarial learning promoting domain-invariant feature representations, together forming a more comprehensive multi-level alignment strategy than either approach alone. A joint loss function is introduced to collaboratively drive the model to learn domain-invariant features that exhibit strong discriminability and high generalizability. The weighting coefficients in this joint loss require careful tuning; for instance, reducing the classification loss weight directly impairs accuracy, while excessively high adversarial or MK-MMD weights may lead to feature degradation or training instability.
Experiments suggest an initial ratio of 1:0.3:0.2 among the classification loss, adversarial loss, and MK-MMD loss, while the optimal configuration should be adjusted according to the specific degree of domain shift. The MK-MMD component employs three Gaussian kernels with bandwidth parameters σ set to 0.1, 1, and 10 to measure multi-scale feature distribution discrepancies. This integrated approach supports the robustness and diagnostic accuracy of the bearing fault diagnosis model under varying operational conditions.

Figure 1.

Schematic diagram of the E-DANNMK model. In the feature extractor, the solid line denotes identity mapping with unchanged input and output dimensions, while the dashed line represents downsampling via a 1 × 1 convolution to adjust dimensions for matching the output.

The overall objective of the model is driven by three loss components: the classification loss $L_c$ ensures the discriminative power of features for fault categories; the adversarial loss $L_d$ promotes the learning of domain-invariant features; and the MK-MMD loss $L_{\mathrm{MMD}}$ directly aligns the feature distributions of the source and target domains. The total loss function is defined as:

$$L = L_c + \lambda L_d + \mu L_{\mathrm{MMD}}$$

where $\lambda$ and $\mu$ are the weighting coefficients that balance the three loss terms.

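Let the features extracted from the source domain data by the deep feature extractor be denoted as $F_s$, and let the corresponding features of the target domain data be denoted as $F_t$. Then $L_{\mathrm{MMD}}$ denotes the Multi-Kernel Maximum Mean Discrepancy (MK-MMD) loss function between $F_s$ and $F_t$, whose expression is given by:

$$L_{\mathrm{MMD}}(F_s, F_t) = \left\| \mathbb{E}\left[\phi(F_s)\right] - \mathbb{E}\left[\phi(F_t)\right] \right\|_{\mathcal{H}_k}^{2}$$

where $\mathbb{E}[\cdot]$ denotes the mathematical expectation, $\phi(\cdot)$ represents the mapping function to the Reproducing Kernel Hilbert Space (RKHS), and $\mathcal{H}_k$ signifies the RKHS endowed with the characteristic kernel $k$.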
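The MK-MMD term can be illustrated with a minimal NumPy sketch. It uses the three Gaussian bandwidths stated above (0.1, 1, and 10); the kernel parameterization exp(−d²/(2σ²)), the equal weighting of the three kernels, the biased estimator, and the random stand-in features are assumptions made for illustration and are not taken from the paper.

```python
import numpy as np

def gaussian_kernel(x, y, sigma):
    """Kernel matrix with entries exp(-||x_i - y_j||^2 / (2 * sigma^2))."""
    sq_dist = (
        np.sum(x ** 2, axis=1)[:, None]
        + np.sum(y ** 2, axis=1)[None, :]
        - 2.0 * x @ y.T
    )
    sq_dist = np.maximum(sq_dist, 0.0)  # clip tiny negatives caused by round-off
    return np.exp(-sq_dist / (2.0 * sigma ** 2))

def mk_mmd(source, target, sigmas=(0.1, 1.0, 10.0)):
    """Biased estimate of the squared MMD, averaged over the Gaussian kernels.

    Equal kernel weights are an assumption of this sketch.
    """
    total = 0.0
    for sigma in sigmas:
        k_ss = gaussian_kernel(source, source, sigma).mean()
        k_tt = gaussian_kernel(target, target, sigma).mean()
        k_st = gaussian_kernel(source, target, sigma).mean()
        total += k_ss + k_tt - 2.0 * k_st
    return total / len(sigmas)

# Random stand-ins for extracted features (64 samples, 8 dimensions each).
rng = np.random.default_rng(0)
f_s = rng.normal(0.0, 1.0, size=(64, 8))       # source features
f_t_near = rng.normal(0.0, 1.0, size=(64, 8))  # target with the same distribution
f_t_far = rng.normal(2.0, 1.0, size=(64, 8))   # target with a shifted mean

print("MK-MMD, similar domains:", mk_mmd(f_s, f_t_near))
print("MK-MMD, shifted domains:", mk_mmd(f_s, f_t_far))
```

The shifted target yields the larger value, which is the quantity the MK-MMD loss drives down during training.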

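$L_d$ (discriminative loss): In adversarial training, the domain discriminator $G_d$ is employed to distinguish whether sample data originate from the source or the target domain. The loss function encompasses two backpropagation processes, which update the network parameters of the feature extractor $G_f$ and the domain discriminator $G_d$. The Gradient Reversal Layer (GRL) establishes an adversarial relationship between these processes, optimizing through backpropagation to achieve Nash equilibrium. Its expression is given by:

$$L_d = -\frac{1}{n_s + n_t} \sum_{i=1}^{n_s + n_t} \left[ d_i \log G_d\left(G_f(x_i)\right) + (1 - d_i) \log\left(1 - G_d\left(G_f(x_i)\right)\right) \right]$$

where $n_s$ and $n_t$ are the numbers of source and target samples, and $d_i \in \{0, 1\}$ is the domain label of sample $x_i$.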
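The effect of the GRL can be sketched without a deep learning framework. The example below is illustrative only: the linear domain classifier, its weights `w`, the feature vector `f`, the reversal strength `lam`, and the step size are hypothetical values, not the paper's configuration. It shows that the layer is an identity in the forward pass and that a descent step along the reversed gradient raises the domain classifier's loss, which is how the feature extractor is pushed toward domain-confusing features.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def domain_loss(features, w, label):
    """Binary cross-entropy of a linear domain classifier (1 = source, 0 = target)."""
    p = sigmoid(features @ w)
    return -(label * np.log(p) + (1.0 - label) * np.log(1.0 - p))

def grad_wrt_features(features, w, label):
    """Analytic gradient of domain_loss with respect to the features."""
    return (sigmoid(features @ w) - label) * w

class GradientReversal:
    """Identity in the forward pass; scales the gradient by -lam in the backward pass."""

    def __init__(self, lam=1.0):
        self.lam = lam

    def forward(self, features):
        return features

    def backward(self, grad_output):
        return -self.lam * grad_output

# Hypothetical classifier weights and one source-domain feature vector.
w = np.array([0.5, -1.0, 2.0])
f = np.array([1.0, 0.2, -0.3])
label = 1.0

grl = GradientReversal(lam=1.0)
grad_to_extractor = grl.backward(grad_wrt_features(grl.forward(f), w, label))

# A plain gradient-descent step on the features, using the reversed gradient.
step_size = 0.1
f_updated = f - step_size * grad_to_extractor

loss_before = domain_loss(f, w, label)
loss_after = domain_loss(f_updated, w, label)
print("domain loss before:", loss_before, "after:", loss_after)
```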

$L_c$ (classification loss): The bearing condition classifier, composed of fully connected layers and a softmax function, is designed to output the category prediction results for the source domain data. The fault classification loss is computed via cross-entropy, which measures the discrepancy between the predicted labels and the true labels of the source domain. Its expression is given by:

$$L_c = -\frac{1}{N} \sum_{i=1}^{N} \sum_{k=1}^{K} \mathbb{1}\left[y_i = k\right] \log p_{i,k}$$

where $K$ is the number of fault categories, $N$ is the total number of samples, $\mathbb{1}[\cdot]$ is the indicator function (taking values of 0 or 1), and $p_{i,k}$ denotes the predicted probability that sample $i$ belongs to category $k$.
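As a small worked illustration of the cross-entropy computation, the sketch below evaluates the loss on two samples with three categories. The logits and labels are made-up values; only the softmax and cross-entropy definitions come from the description above.

```python
import math

def softmax(logits):
    """Numerically stable softmax over one sample's logits."""
    peak = max(logits)
    exps = [math.exp(z - peak) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def cross_entropy(batch_logits, labels):
    """Mean negative log-probability assigned to the true category."""
    loss = 0.0
    for logits, true_class in zip(batch_logits, labels):
        # The indicator function keeps only the term of the true category.
        loss -= math.log(softmax(logits)[true_class])
    return loss / len(labels)

# Made-up logits for two samples and three fault categories.
logits = [[2.0, 0.5, 0.1], [0.2, 0.1, 3.0]]
loss_correct = cross_entropy(logits, [0, 2])  # labels match the largest logits
loss_wrong = cross_entropy(logits, [1, 0])    # labels disagree with the logits
print("loss with matching labels:", loss_correct)
print("loss with mismatched labels:", loss_wrong)
```

The loss is small when the classifier puts high probability on the true category and grows as the predictions and labels diverge.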

The ReLU activation function used in the residual blocks is a simple yet efficient nonlinear function well suited to deep learning models. Its derivative in the positive region is a constant 1, which mitigates the vanishing-gradient problem commonly encountered with the traditional Sigmoid and Tanh functions in deep networks and accelerates training, while the function itself is cheap to compute. It is defined as:

$$f(x) = \max(0, x)$$

where $x$ represents the input value.

2.1.1. Efficient Feature Extractor

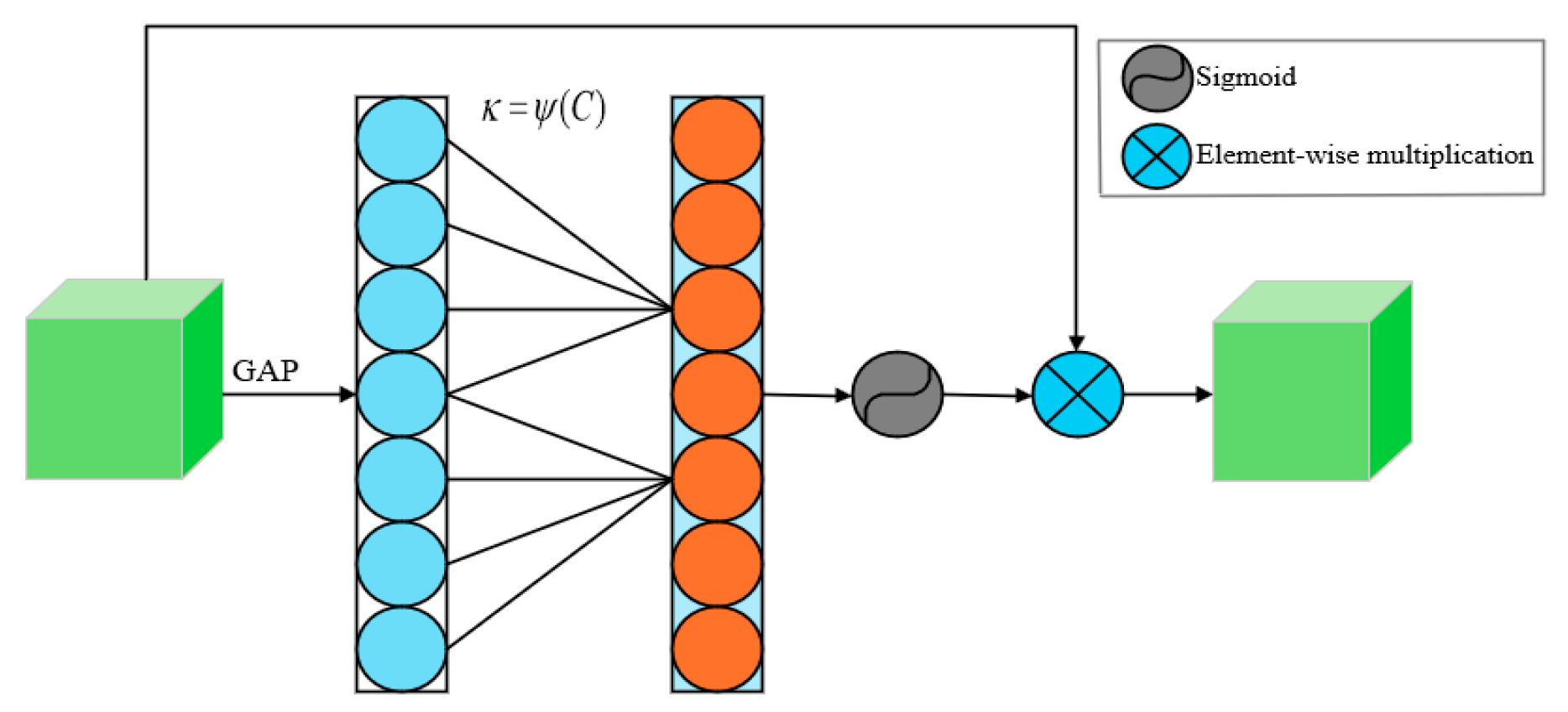

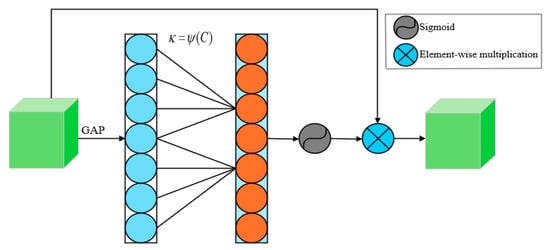

The Efficient Channel Attention (ECA) [34] is a lightweight attention mechanism designed to dynamically enhance the discriminability of feature channels through local cross-channel interactions. Its core approach replaces the fully connected layers in traditional channel attention with one-dimensional convolution. This avoids the information loss caused by dimensionality reduction operations while adaptively optimizing the range of cross-channel interaction via an adaptive kernel size—determined by a nonlinear mapping of the channel dimension—thereby improving the model’s ability to focus on key channel features.

As shown in Figure 2, the ECA module first applies Global Average Pooling (GAP) to the input feature map. It then learns interdependencies among channels via a one-dimensional convolution operation, followed by a Sigmoid function to generate the channel attention map. Finally, the output of the ECA module is obtained by performing element-wise multiplication between this channel attention map and the input feature map of the module.

Figure 2.

ECA module.
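The ECA steps described above (GAP, one-dimensional convolution across channels, Sigmoid, channel-wise rescaling) can be sketched in NumPy as follows. The kernel-size rule uses the defaults γ = 2 and b = 1 from the original ECA-Net work, which is an assumption here because this paper does not state the values it uses; the feature map shape and the convolution weights are random stand-ins.

```python
import math
import numpy as np

def eca_kernel_size(channels, gamma=2, b=1):
    """Adaptive kernel size: nearest odd value of (log2(C) + b) / gamma (assumed defaults)."""
    t = int(abs((math.log2(channels) + b) / gamma))
    return t if t % 2 else t + 1

def eca_forward(feature_map, conv_weights):
    """Apply ECA to a feature map of shape (C, H, W).

    conv_weights has shape (k,) and is shared across all channel positions.
    """
    channels = feature_map.shape[0]
    k = conv_weights.shape[0]
    pooled = feature_map.mean(axis=(1, 2))  # global average pooling -> (C,)
    padded = np.pad(pooled, k // 2)         # zero padding keeps the output length C
    mixed = np.array([padded[i:i + k] @ conv_weights for i in range(channels)])
    attention = 1.0 / (1.0 + np.exp(-mixed))  # Sigmoid -> channel weights in (0, 1)
    return feature_map * attention[:, None, None], attention

rng = np.random.default_rng(0)
x = rng.normal(size=(64, 4, 4))             # stand-in feature map with 64 channels
kernel_size = eca_kernel_size(64)
weights = rng.normal(size=kernel_size)      # stand-in for learned 1-D conv weights
y, attention = eca_forward(x, weights)

print("kernel sizes for 64/128/256/512 channels:",
      [eca_kernel_size(c) for c in (64, 128, 256, 512)])
print("output shape:", y.shape)
```

Because the convolution spans only `kernel_size` neighbouring channels, the module adds just that many parameters per layer, which is the reason it is described as lightweight.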

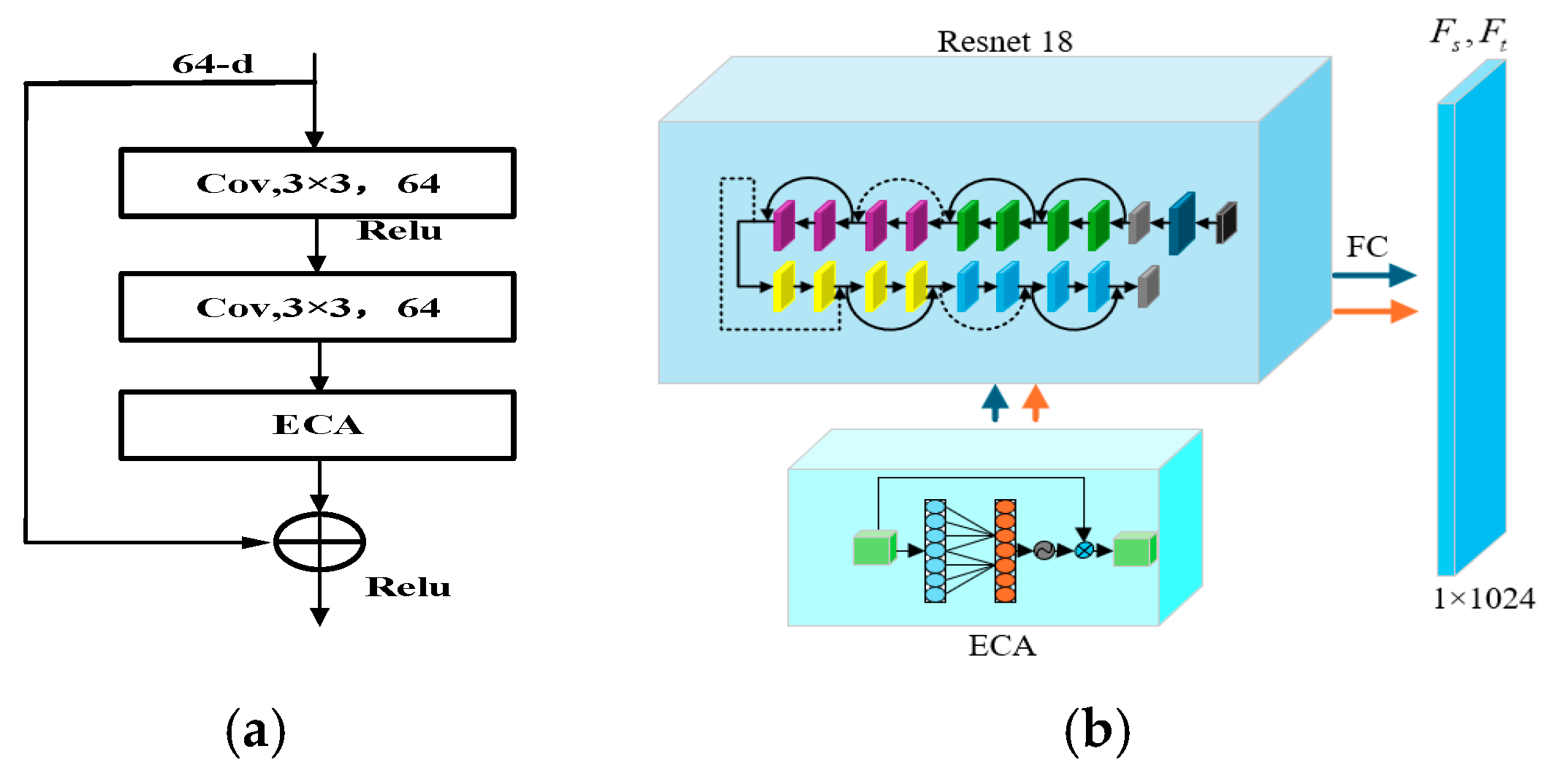

The E-DANNMK model embeds the ECA module into ResNet-18 as its core feature extractor and integrates it with the DANN framework, with the specific embedding location illustrated in Figure 3a. A dual-branch structure with shared weights is employed to simultaneously process labeled source domain data and unlabeled target domain data. To further enhance domain invariance, the model introduces a domain classifier to perform binary discrimination on the origin of the extracted features. A GRL is utilized to implement adversarial training, where minimizing the domain discrimination loss forces the feature extractor to generate cross-domain shared features that confuse the domain classifier. During backpropagation, the model jointly optimizes the classification loss, the MK-MMD domain adaptation loss, and the domain adversarial loss. Hyperparameters are used to dynamically balance the learning weights between feature discriminability and domain invariance, ultimately achieving synergistic improvement in both fault diagnosis accuracy and cross-operational-condition generalization capability. The efficient feature extractor is depicted in Figure 3b.

Figure 3.

(a) Embedding location of the ECA module; (b) Efficient Feature Extractor of the E-DANNMK Model. The solid line denotes identity mapping, preserving the input and output dimensions, while the dashed line represents downsampling through a 1 × 1 convolution to align the output dimensions accordingly.

The source and target domain data are processed through convolutional layers and the ECA module, respectively, generating high-dimensional feature vectors. The source domain features are fed into the fault classifier to output fault category labels, while the target domain features undergo distribution alignment with the source domain features via the MK-MMD metric. By embedding the ECA module into the ResNet-18 network, the feature selection capability of ResNet-18 is optimized during the feature extraction stage through a dynamic channel weighting mechanism. While the convolutional kernels in the traditional ResNet-18 can progressively extract high-level features through multi-layer stacking, their fixed-weight convolution operations lack adaptability to the dynamic variations of fault features under complex working conditions. With the introduction of the ECA module, the network can adjust the weights of each channel in real time based on the characteristics of the input signal, enabling the model to accurately pinpoint fault-related frequency bands even during sudden speed changes, thereby enhancing the robustness of feature representation.

2.1.2. Fault Classifier and Domain Classifier

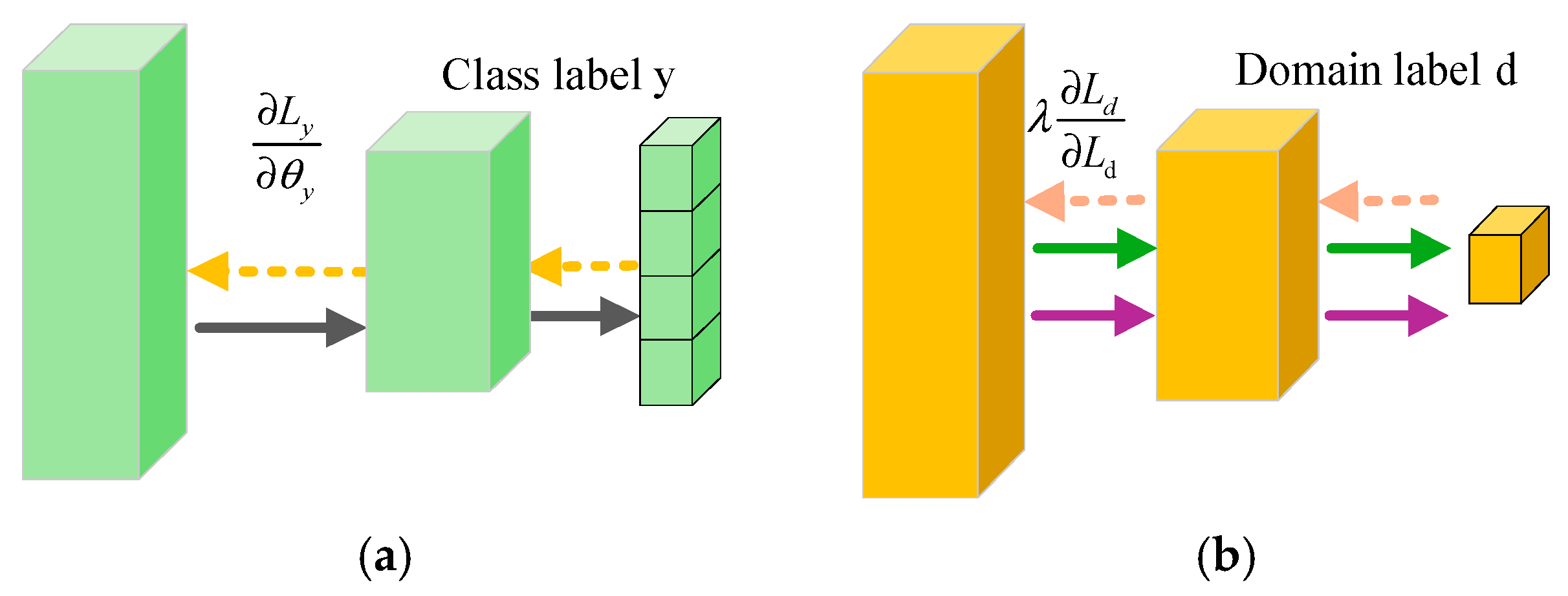

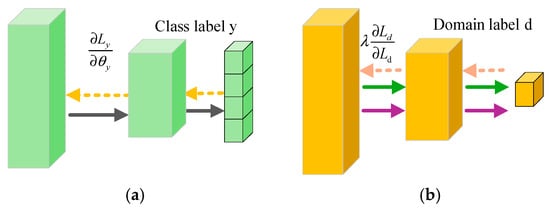

The fault classifier serves as the core component of this model for achieving intelligent diagnostic functionality, as illustrated in Figure 4a. The model undergoes end-to-end supervised training based on the bearing fault labels of the source domain data. This training aims to guide the efficient feature extractor to learn highly discriminative fault-sensitive features, thereby enabling accurate identification and classification of different fault modes.

Figure 4.

(a) Fault classifier; (b) Domain classifier.

The domain classifier is illustrated in Figure 4b. It achieves domain adaptation through adversarial training, with its core mechanism lying in the dynamic game between the feature extractor and the domain classifier: the latter strives to distinguish the domain origin of the features, while the former, via a reverse optimization strategy, aims to eliminate domain-specific information from the features. This reduces the model’s dependency on specific operational conditions, thereby effectively transferring the fault diagnosis knowledge learned from the source domain and applying it to unlabeled target domain data under unknown working conditions.

2.2. Introduction to Comparative Models

To validate the effectiveness and superiority of the proposed E-DANNMK, comparisons were conducted with existing mainstream domain adaptation methods. This section introduces the following six methods:

2.2.1. Conditional Domain Adversarial Networks (CDANs) [35]

CDAN deeply integrates category information with feature representations, enabling the domain discriminator to directly align the joint distribution. This method addresses the shortcomings of traditional domain adversarial networks when facing conditional distribution shifts, significantly enhancing the model’s adaptive capability and transfer robustness in complex scenarios such as inter-class distribution divergence, label drift, or absence of target domain categories.

2.2.2. Convolutional Neural Networks (CNNs) [36]

CNNs excel at processing spatially correlated data such as images and can automatically learn high-dimensional features. They are widely applied in fields including image classification, object detection, and medical image analysis. In fault diagnosis, a CNN can be trained directly on bearing vibration data from the source operating conditions and then used to identify fault types under the target operating conditions.

2.2.3. CORrelation Alignment (CORAL) [37]

The CORAL method addresses distribution shift in unsupervised domain adaptation by minimizing the discrepancy between the feature covariance matrices of the source and target domains, thereby aligning their second-order statistics. CORAL requires no target domain labels or complex adversarial training, which reduces computational complexity and implementation difficulty.
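A minimal sketch of the covariance-alignment idea is given below. It uses the Deep CORAL form of the loss, the squared Frobenius norm of the covariance difference scaled by 1/(4d²); the random features are stand-ins, and this is not claimed to be the exact configuration of the comparison model used in the experiments.

```python
import numpy as np

def coral_loss(source, target):
    """Squared Frobenius distance between feature covariances, scaled by 1 / (4 d^2)."""
    d = source.shape[1]
    cov_s = np.cov(source, rowvar=False)  # (d, d) covariance, columns are features
    cov_t = np.cov(target, rowvar=False)
    return np.sum((cov_s - cov_t) ** 2) / (4.0 * d * d)

rng = np.random.default_rng(0)
feats = rng.normal(size=(128, 6))         # stand-in source features

print("identical features:", coral_loss(feats, feats))
print("mean-shifted target:", coral_loss(feats, feats + 5.0))
print("rescaled target:", coral_loss(feats, 3.0 * feats))
```

A pure mean shift leaves the loss at essentially zero while a change of scale does not, which illustrates that CORAL aligns second-order statistics only.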

2.2.4. Domain Adversarial Neural Network (DANN) [38]

DANN pioneered adversarial training in unsupervised domain adaptation by implicitly aligning cross-domain feature distributions. Its core mechanism is the GRL, which simultaneously minimizes source classification error and maximizes domain discrimination error in an end-to-end adversarial framework. This approach suits scenarios with labeled source and unlabeled target domains and has greatly advanced adversarial transfer learning.

2.2.5. CNN-Transformer [39]

The architecture integrates the local feature extraction capability of CNN with the global dependency modeling strength of Transformer. Specifically, the convolutional modules capture fine-grained local features, while the self-attention modules mine long-range feature dependencies. Through feature fusion, the model outputs representations that simultaneously exhibit local discriminability and global contextual relevance, effectively balancing local details with holistic information. This design demonstrates strong generalization ability, maintains diagnostic accuracy while balancing computational efficiency, and is well-suited for multi-condition fault diagnosis scenarios.

2.2.6. Dynamic Multiscale Transformer (DMT) [40]

The architecture employs multi-scale convolutional layers to extract fault features at different frequencies in parallel, combined with a dynamic attention mechanism to adaptively focus on fault-sensitive regions while suppressing noise interference. Through hierarchical fusion, effective features are progressively enhanced. The key advantages include outstanding multi-scale adaptability, excellent noise resistance, effective identification of early weak faults and compound faults, and strong robustness under non-stationary operating conditions.

3. Results

Data-driven fault diagnosis methods depend on extensive annotated data and perform best when the training and test data follow the same distribution. However, distribution shifts caused by complex industrial conditions limit model generalization. Transfer learning and domain adaptation address data scarcity and distribution differences. These approaches are applied not only in fault diagnosis but also in remaining useful life prediction, where frameworks such as federated transfer learning enable privacy-preserving cross-device knowledge transfer [41]. To validate and compare methods within this framework, two experimental bearing fault monitoring datasets are selected in this study: the Case Western Reserve University (CWRU) Rolling Bearing Dataset [42] and the Paderborn University Bearing Dataset [43]. The two datasets are widely recognized and complementary in fault diagnosis research. The CWRU data are collected under stable operating conditions and cover typical fault types and sizes, making them suitable for validating the fundamental diagnostic capabilities of models. In contrast, the Paderborn data originate from a real-world test rig and contain natural damage, multiple variable operating conditions, and environmental interference, thereby better reflecting the challenges of complex industrial scenarios. Their combination allows for the systematic evaluation of method generalization under both controlled and near-industrial conditions.

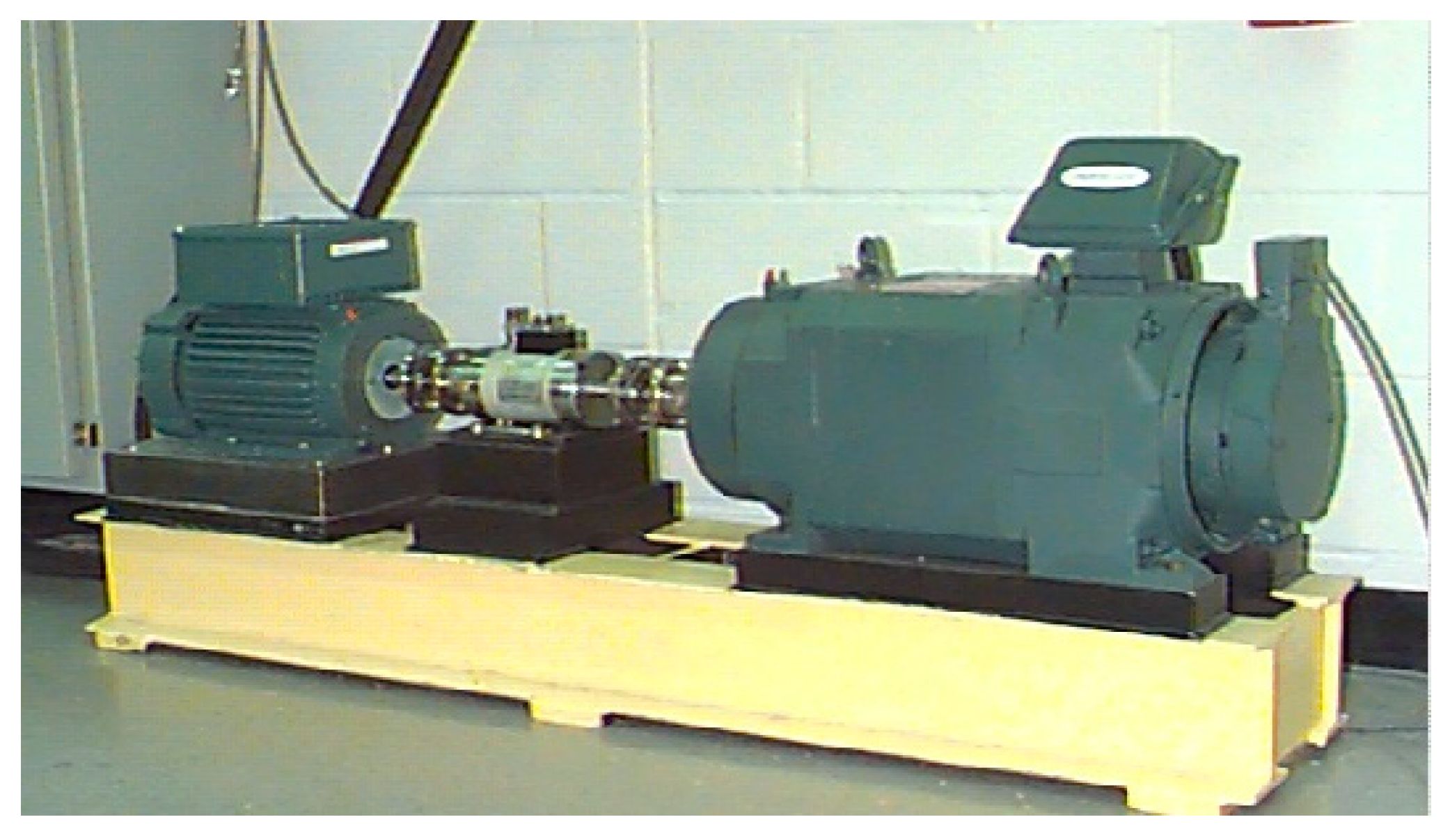

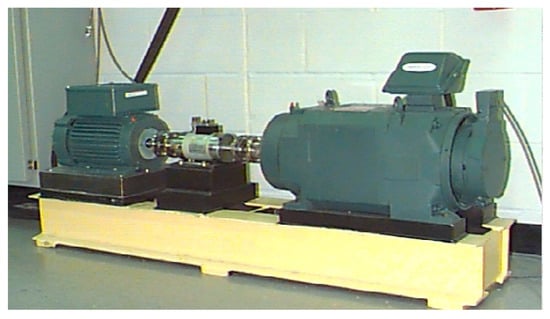

The Case Western Reserve University bearing dataset is collected from the test bench shown in Figure 5. From left to right, the setup consists of a 1.5 kW motor, a torque transducer, a rolling bearing test module, and a dynamometer. An accelerometer is mounted on the bearing housing, and vibration signals are collected using a 16-channel data recorder. The motor speed is maintained at approximately 1800 rpm, with a sampling frequency of 12 kHz. To obtain different types of fault data, single-point faults are artificially introduced via electrical discharge machining on different components of the SKF 6205 deep-groove ball bearing, simulating multiple fault conditions including inner race faults, outer race faults, and rolling element faults.

Figure 5.

Bearing data test bench.

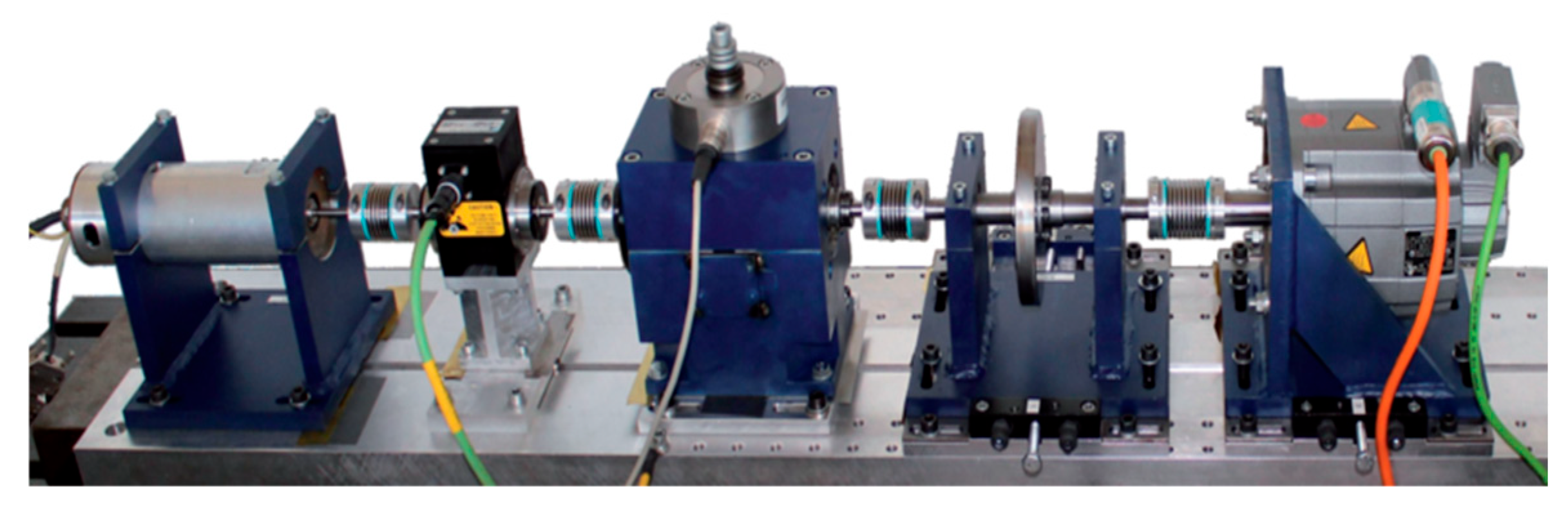

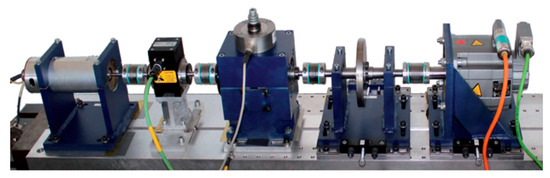

The Paderborn University bearing dataset test bench is illustrated in Figure 6. It is driven by an electric motor and equipped with a torque measurement shaft for real-time monitoring and measurement of torque within the transmission system. The bearing test module simulates actual bearing operating conditions and collects experimental data, while a flywheel and a load motor provide the required load. During the experiments, various types, locations, and severities of bearing damage are artificially simulated on the test module. The bearing model used is a 6203 ball bearing. A piezoelectric accelerometer is mounted on the bearing housing to acquire vibration signals, with a sampling frequency set to 64 kHz. The sampling duration is 4 s per measurement, under operating conditions of 1500 rpm, a load torque of 0.7 N·m, and a radial load of 1000 N.

Figure 6.

Bearing data experimental platform.

3.1. Experimental Results of the Case Western Reserve University Dataset

In this experiment, transfer performance testing was conducted on the CDAN, CNN, CORAL, DANN, DANN-MK, CNN-Transformer, DMT and E-DANNMK models. Through a comparative experiment, four different operating conditions (C0, C1, C2, and C3) from the Case Western Reserve University Bearing Dataset were selected to design 12 transfer tasks, as detailed in Table 1. To ensure statistical robustness, all experiments were repeated independently five times, and the reported results are the averages over these runs. To ensure the comparability of data distributions between the source and target domains and eliminate scale differences, this study applies a consistent preprocessing pipeline to the vibration signal data from both domains. First, each sample is independently standardized using sample-wise Z-score normalization, i.e., subtracting its own mean and dividing by its standard deviation. This operation does not rely on any global statistics, thereby avoiding systematic bias introduced by inter-domain overall distribution differences. Meanwhile, during model training, batch normalization layers dynamically adjust the distribution of internal network features to further adapt and reduce inter-domain discrepancies. The entire preprocessing procedure remains entirely consistent across the source and target domains, ensuring that any differences between them originate solely from genuine variations in operating conditions or fault patterns, rather than from inconsistencies in data processing.
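The sample-wise Z-score step described above can be sketched as follows. The use of the population standard deviation and the small constant `eps` that guards against division by zero are assumptions of this sketch, and the two short signals are made-up values.

```python
import statistics

def zscore(sample, eps=1e-8):
    """Standardize one sample using only its own mean and standard deviation."""
    mean = statistics.fmean(sample)
    std = statistics.pstdev(sample)  # population standard deviation (assumed)
    return [(value - mean) / (std + eps) for value in sample]

# Two made-up vibration segments with the same shape but different amplitude.
low_amplitude = [1.0, 2.0, 3.0, 4.0]
high_amplitude = [10.0, 20.0, 30.0, 40.0]

norm_low = zscore(low_amplitude)
norm_high = zscore(high_amplitude)
print(norm_low)
print(norm_high)
```

Both segments map to nearly identical normalized values, so amplitude differences between domains do not enter the model through the raw signal scale, and no statistics are shared across samples or domains.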

Table 1.

Transfer tasks under different operating conditions.
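The count of 12 transfer tasks is consistent with taking every ordered pair of distinct conditions as one (source, target) task, since 4 × 3 = 12. The sketch below enumerates such pairs; the enumeration order is arbitrary and is not claimed to match the T1 to T12 numbering used in Table 1.

```python
from itertools import permutations

conditions = ["C0", "C1", "C2", "C3"]

# Every ordered (source, target) pair with distinct conditions is one task.
tasks = list(permutations(conditions, 2))

for source, target in tasks:
    print(f"{source} -> {target}")
print("number of tasks:", len(tasks))
```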

The operating condition information of the Case Western Reserve University dataset is presented in Table 2. This table records key parameters under different rotational speeds and the corresponding classification information associated with each speed, providing foundational data support for further analysis of system characteristics under varying conditions. The table parameters include: fault labels, covering inner race fault (IR), ball fault (BR), and outer race fault (OR); horsepower (HP); rotational speed (r/min); and condition labels, with four representative test conditions (C0–C3) selected in the table.

Table 2.

Operating condition information of the Case Western Reserve University dataset.

In this section, eight different models were applied to conduct 12 transfer tasks, and the fault diagnosis accuracy for each task is presented in Table 3. To ensure the statistical reliability of the results, each transfer task was independently repeated 5 times under the same hyperparameter settings. All diagnostic accuracy values reported in this paper are the averages of these five independent runs.

Table 3.

Experimental Results of Different Transfer Learning Methods on the Case Western Reserve University Dataset (%).

The model proposed in this paper achieves an accuracy of 95.37%, compared with 91.20% for DANN-MK and 76.39% for the baseline, an improvement of 4.17 and 18.98 percentage points, respectively. It also outperforms CNN-Transformer (92.78%) and DMT (93.56%) by 2.59 and 1.81 percentage points, respectively. In several tasks, such as T5, T9, and T12, E-DANNMK consistently maintains accuracy above 99.00%, exceeding CNN-Transformer and DMT by an average of 0.4–0.7%. This demonstrates stable, high-precision diagnostic capability in well-conditioned tasks, with an overall improvement range of approximately 1–5%.

However, in challenging tasks involving significant differences in operating conditions, the advantage of E-DANNMK narrows, revealing its performance boundaries. For instance, in tasks T1 and T3, where the source domain conditions (1 HP, 1772 r/min) differ notably from the target domain conditions (2/3 HP, 1730 r/min) in load and rotational speed, cross-domain adaptation becomes more difficult, and E-DANNMK’s accuracy does not exceed 90.00%. In T1, its 87.50% trails CNN-Transformer’s 89.55% and DMT’s 88.75% by 2.05 and 1.25 percentage points, respectively; in T3, its 84.26% slightly surpasses CNN-Transformer’s 83.84% and DMT’s 84.10%. This indicates that E-DANNMK remains competitive even under large distribution discrepancies, although it does not lead in every such task.

In summary, the E-DANNMK model delivers stable performance improvements of 1–5% in most tasks, particularly under moderate differences in operating conditions, demonstrating strong domain adaptation capability. Its limitation appears in tasks with extreme domain shifts, such as CWRU tasks T1 and T3, where loads and rotational speeds differ widely between domains. There, the divergence in feature distributions exceeds the alignment capacity of the current MK-MMD and adversarial learning framework, so accuracy stays on par with mainstream models rather than ahead of them. To address this in complex and unpredictable industrial settings, potential strategies include online fine-tuning with limited target data or incorporating physics-informed model guidance to enhance robustness.

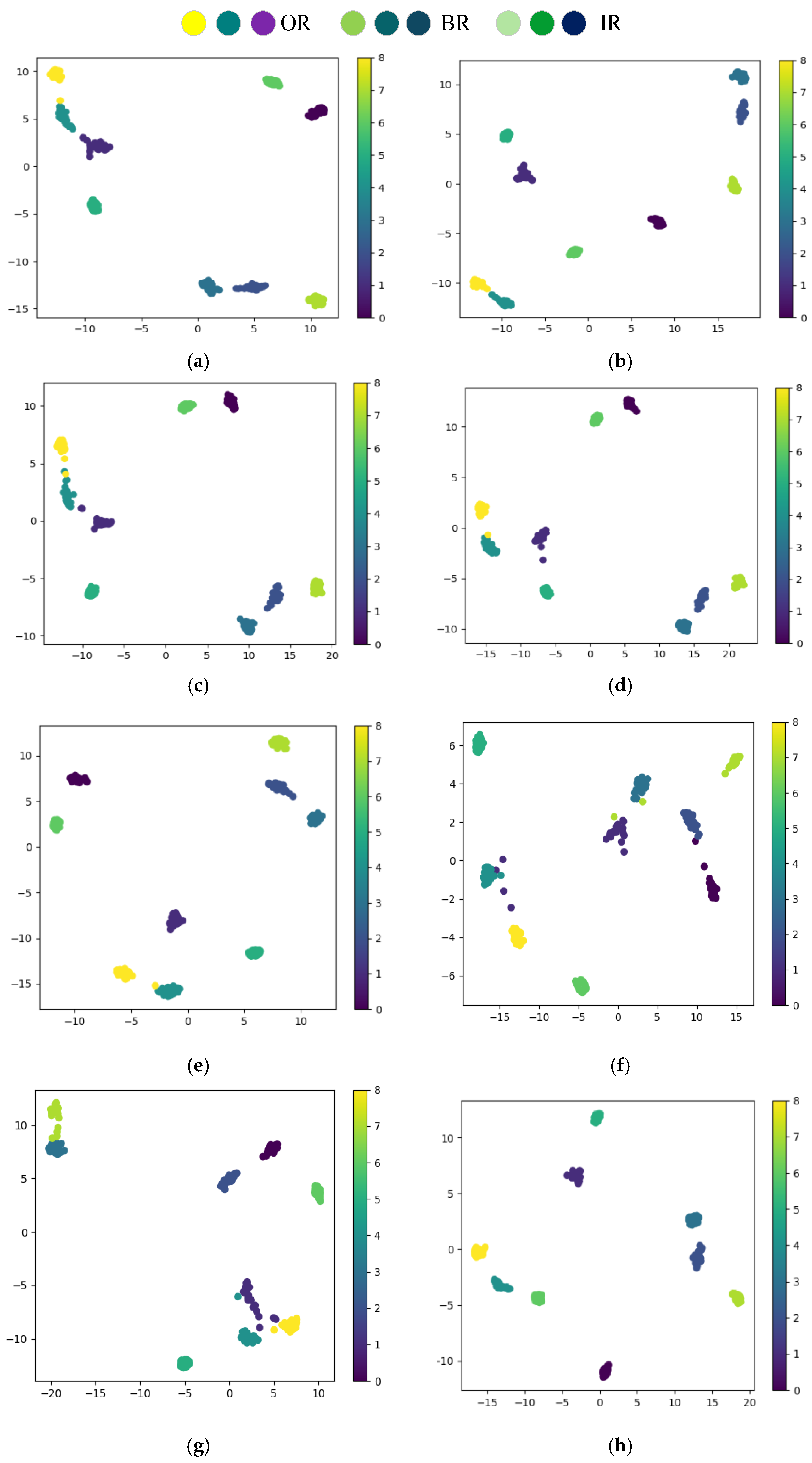

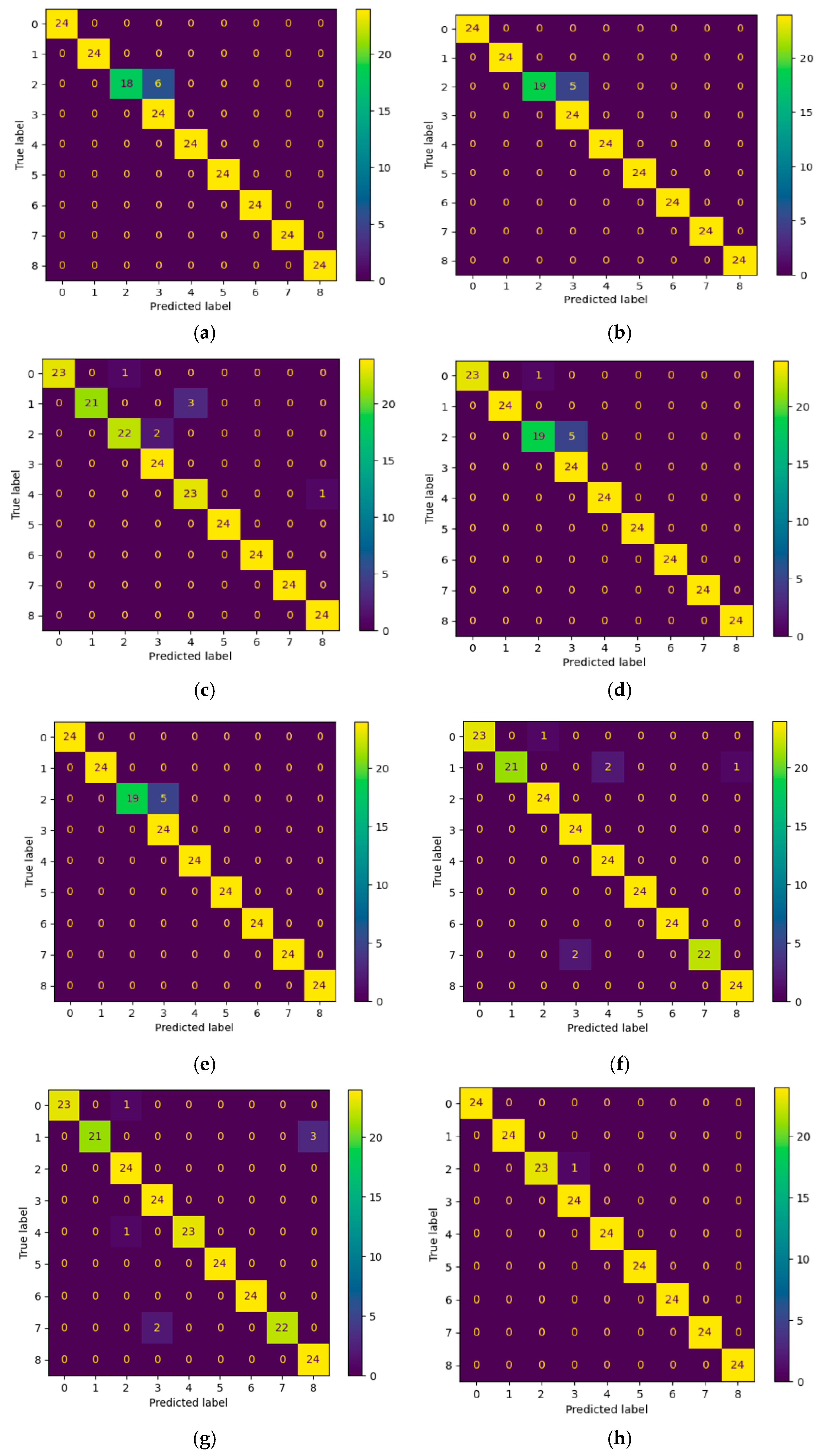

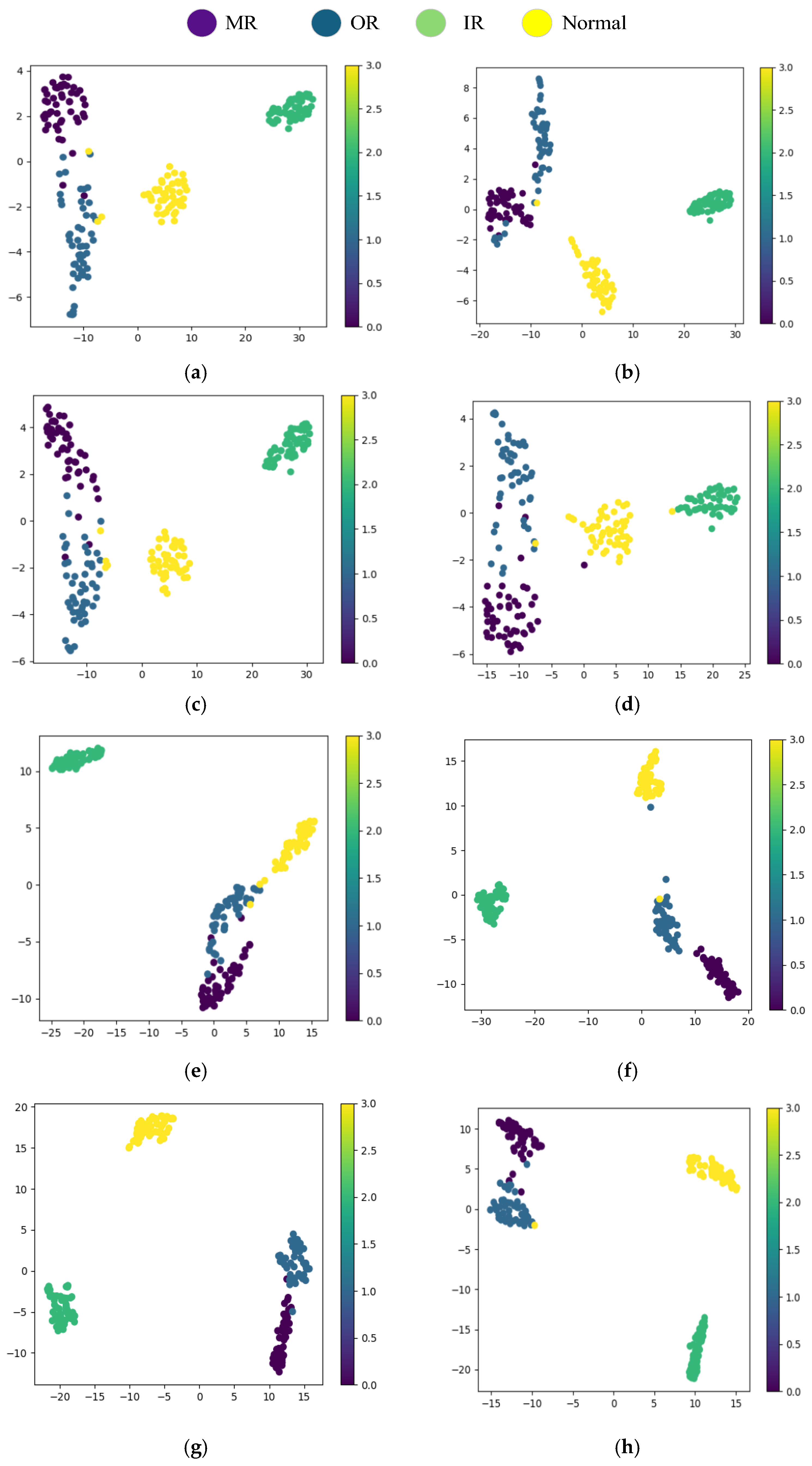

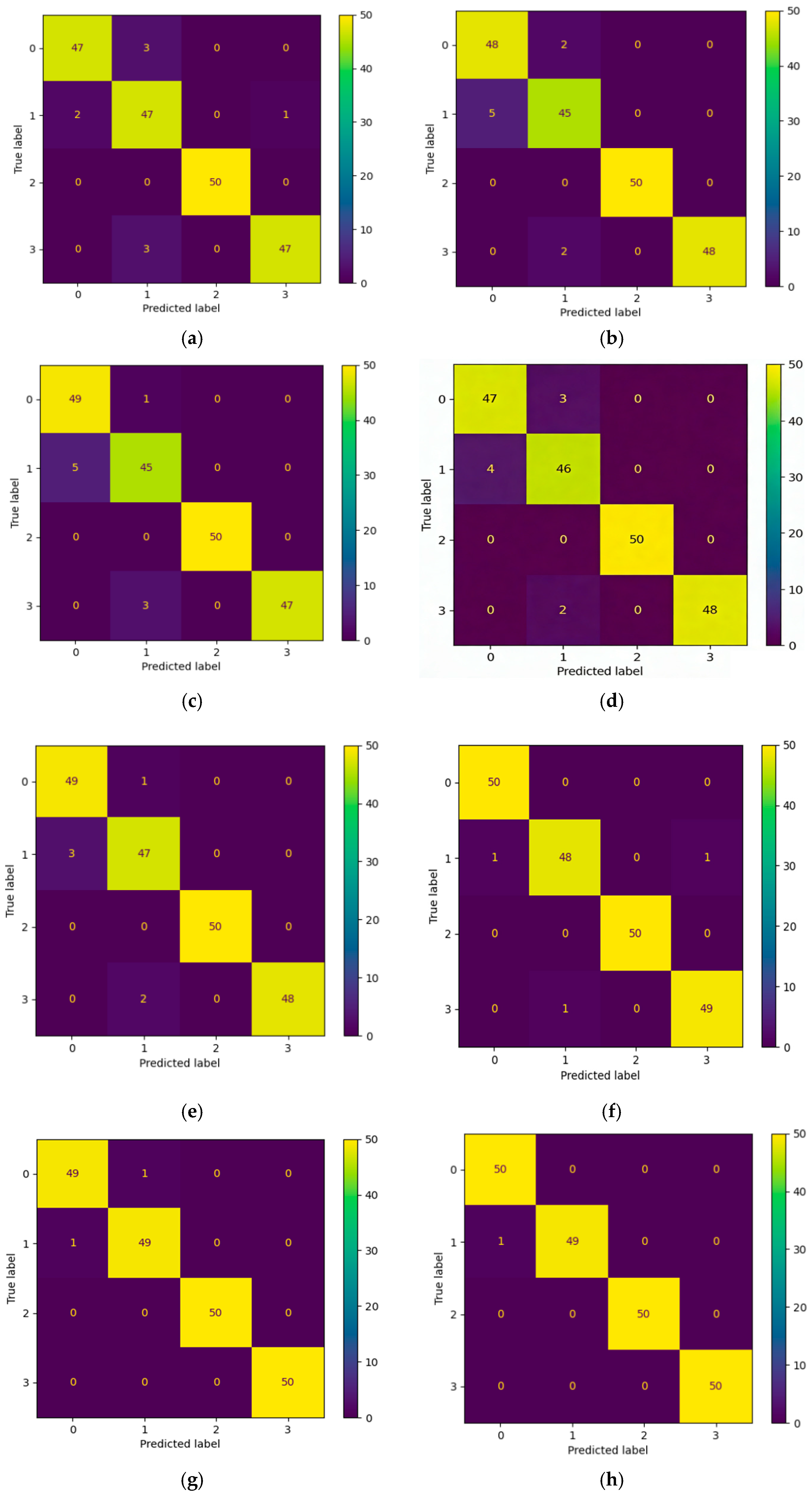

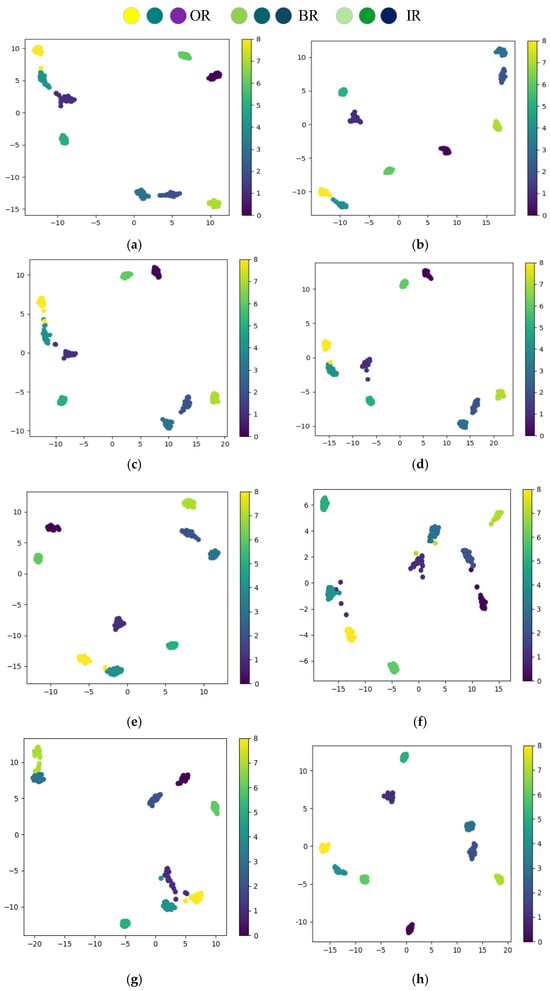

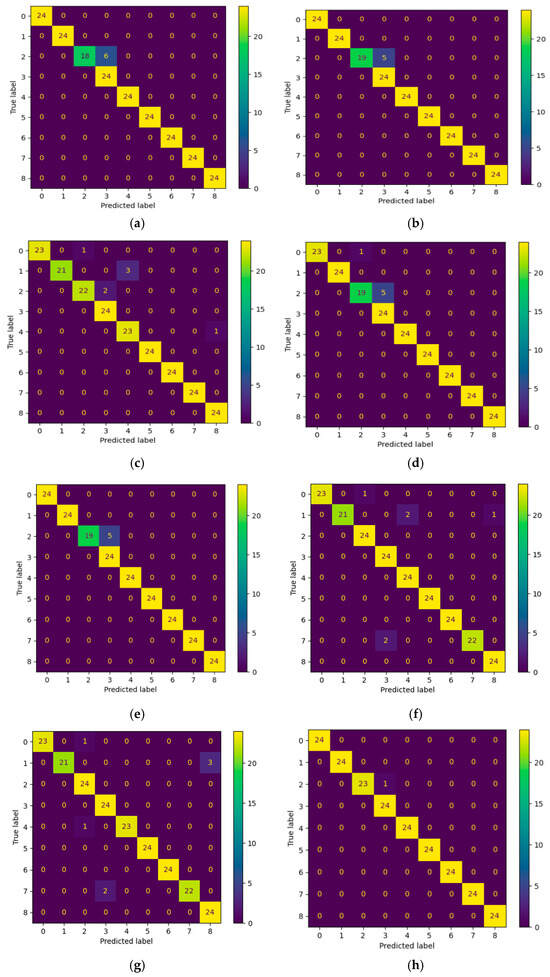

To comprehensively evaluate the generalization performance of the proposed efficient domain adaptation model E-DANNMK, the T4 transfer task is selected for visual analysis. Figures 7 and 8 present, respectively, the t-SNE distribution plots and the confusion matrices for the classification predictions of the trained CDAN, CNN, CORAL, DANN, DANN-MK, CNN-Transformer, DMT and E-DANNMK models on the test set.

Figure 7.

Comparison of t-SNE distribution plots across different models on the CWRU dataset: (a) CDAN; (b) CNN; (c) CORAL; (d) DANN; (e) DANN-MK; (f) CNN-Transformer; (g) DMT; (h) E-DANNMK.

Figure 8.

Comparison of confusion matrices across different models on the CWRU dataset: (a) CDAN; (b) CNN; (c) CORAL; (d) DANN; (e) DANN-MK; (f) CNN-Transformer; (g) DMT; (h) E-DANNMK.

The fault diagnosis results are shown in Figure 7 and Figure 8. Note: The t-SNE visualization is for qualitative analysis; the presented plot is a representative result from multiple runs with fixed random seed for reproducibility. The proposed E-DANNMK method demonstrates notable clustering advantages in the feature space, with samples of different bearing fault states exhibiting high intra-class compactness, clear inter-class separation, and well-defined cluster boundaries. In the corresponding confusion matrix, only one value in the diagonal region is 23, while all others are 24. Observing the non-zero values in the off-diagonal region reveals that the misclassification occurs between the second-level and third-level ball faults. These results indicate that, in most cases, the proposed model can effectively distinguish faults at different locations and severity levels, with only a few misclassifications occurring between different severity levels of the same fault type.

In contrast, the t-SNE plots of the comparison models show varying degrees of overlap and mixing among points representing different bearing fault states. In the results of the CDAN, CNN, and DANN-MK models, points of two fault types are close to each other, while in the results of the CORAL and DANN models, points from two fault types overlap. Further analysis of the confusion matrices reveals that the diagonal values for CDAN, CNN, and DANN-MK all contain at least one value not equal to 24, with some falling below 20. Misclassifications mainly occur in the second-level ball fault state, indicating that the misclassification rates of these three models are higher than that of E-DANNMK. For CORAL and DANN, there are four and two non-24 diagonal values, respectively, with misclassifications occurring in ball fault states and inner race fault states, suggesting increased misclassification rates and correspondingly reduced fault diagnosis accuracy.

3.2. Experimental Results of the Paderborn University Dataset

Table 4 lists the operating condition information of the Paderborn University dataset, which includes the following parameters: fault type, load torque (N·m), rotational speed (rpm), and radial force (N).

Table 4.

Operating Condition Information of the Paderborn University Dataset.

The transfer performance of the CDAN, CNN, CORAL, DANN, DANN-MK, CNN-Transformer, DMT and E-DANNMK models was evaluated through a comparative experiment. Specifically, four operating conditions from the Paderborn University dataset (T0, T1, T2, and T3) were selected to design 12 transfer tasks, enabling a systematic assessment of the differences in cross-domain diagnostic capability among the models. The classification accuracy of each model is shown in Table 5.
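The count of 12 transfer tasks follows from taking every ordered (source, target) pair of distinct operating conditions among the four. The sketch below enumerates them; the helper name `build_transfer_tasks` is hypothetical, and the enumeration order is not claimed to match the task numbering used in Table 5.

```python
from itertools import permutations


def build_transfer_tasks(conditions):
    """Return every ordered (source, target) pair of distinct conditions."""
    return list(permutations(conditions, 2))


conditions = ["T0", "T1", "T2", "T3"]
tasks = build_transfer_tasks(conditions)

print(len(tasks))  # 12
for source, target in tasks:
    # Labeled source-domain data -> unlabeled target-domain data
    print(f"{source} -> {target}")
```

Because source and target play different roles in unsupervised domain adaptation, T0 -> T1 and T1 -> T0 are counted as separate tasks.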

Table 5.

Experimental Results of Different Transfer Learning Methods on the Paderborn University Dataset (%).

In the transfer diagnosis tasks of this study, the E-DANNMK model demonstrates stable and leading performance in most cases. For instance, in task T11, E-DANNMK achieves an accuracy of 97.00%, an improvement of 1.0 and 0.5 percentage points over CNN-Transformer (96.00%) and DMT (96.50%), respectively. In task T7, E-DANNMK reaches 99.50%, outperforming CNN-Transformer (98.50%) and DMT (99.00%) by 1.0 and 0.5 percentage points, respectively. In tasks such as T3, T4, T5, and T9, E-DANNMK consistently maintains accuracy above 98.00%, performing comparably to CNN-Transformer and DMT, with differences ranging from −0.5 to 1.0 percentage points. This indicates that E-DANNMK possesses stable and high-precision diagnostic capability under relatively smooth variations in operational conditions.

However, E-DANNMK also reveals certain performance limitations in some tasks, highlighting its adaptation boundaries. For example, in tasks T1, T2, T6, T10, and T12, its accuracy is slightly lower than that of CNN-Transformer or DMT, with gaps ranging from approximately 0.5% to 1.0%. Take T6 as an example: E-DANNMK achieves 98.5%, which is 1.0% lower than DMT. This is related to the combined variations in operational parameters such as rotational speed, load torque, and radial force. For instance, when the rotational speed decreases from 1500 rpm to 900 rpm while the load torque drops from 0.7 N·m to 0.1 N·m, the inter-domain distribution discrepancy significantly increases, thereby raising the difficulty of cross-domain adaptation for the model.

Overall, E-DANNMK maintains performance comparable to or slightly better than CNN-Transformer and DMT in most tasks, demonstrating good robustness in stable operational condition transfers. However, when faced with complex cross-domain scenarios involving significant multi-parameter variations, its performance still exhibits fluctuations, indicating room for improvement in the model’s adaptability to extreme distribution discrepancies.

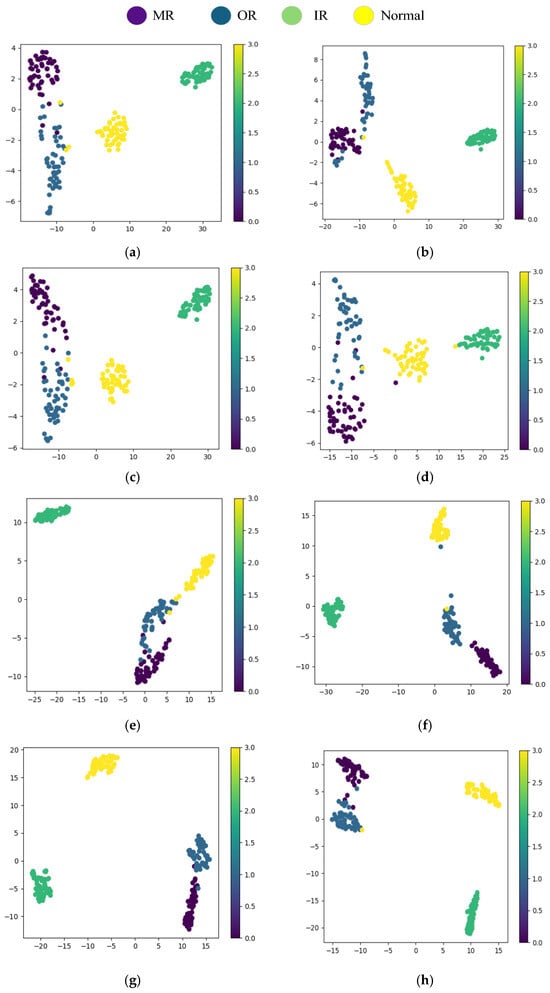

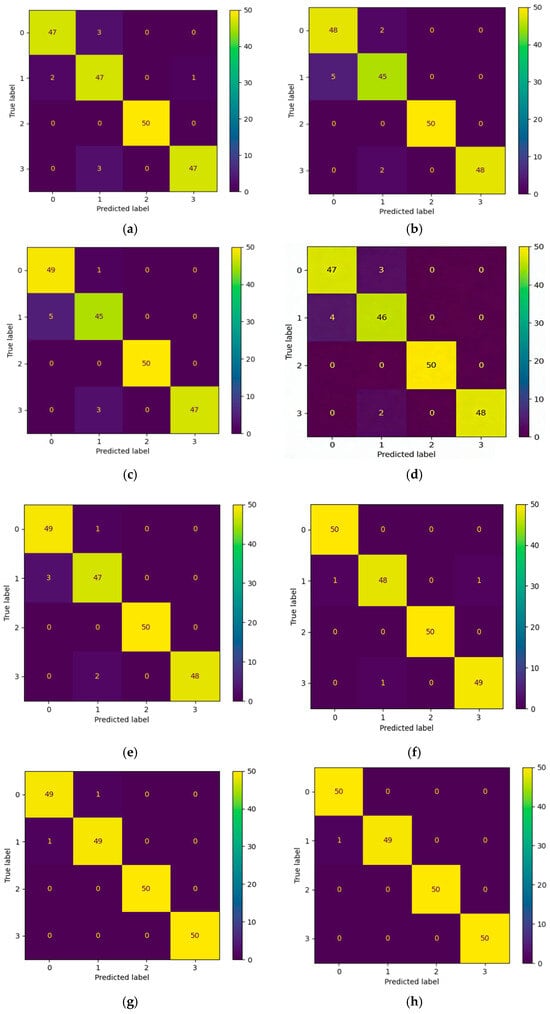

To comprehensively evaluate the generalization performance of the efficient domain adaptation model E-DANNMK proposed in this paper, the T7 transfer task was selected for visual analysis. Figure 9 and Figure 10 present the t-SNE distribution plots and confusion matrices, respectively, for the classification predictions of the trained CDAN, CNN, CORAL, DANN, DANN-MK, CNN-Transformer, DMT and E-DANNMK models on the test set.

Figure 9.

Comparison of t-SNE distribution plots across different models on the PU dataset: (a) CDAN; (b) CNN; (c) CORAL; (d) DANN; (e) DANN-MK; (f) CNN-Transformer; (g) DMT; (h) E-DANNMK.

Figure 10.

Comparison of confusion matrices across different models on the PU dataset: (a) CDAN; (b) CNN; (c) CORAL; (d) DANN; (e) DANN-MK; (f) CNN-Transformer; (g) DMT; (h) E-DANNMK.

Figure 9 and Figure 10 show the t-SNE distribution maps and the corresponding confusion matrices. The t-SNE visualization is intended for qualitative analysis only; the plot shown is a representative result from multiple runs with a fixed random seed for reproducibility. The E-DANNMK method proposed in this paper demonstrates favorable clustering characteristics in the feature space, with samples of each category concentrated and clear boundaries between classes. Its confusion matrix shows completely accurate classification for most categories, with only one diagonal value not equal to 50. A few samples are confused between inner race faults (Category 1) and rolling element faults (Category 2), but the overall classification accuracy is high and stable. In contrast, traditional methods such as CDAN, CNN, and DANN-MK show obvious inter-class overlap in their t-SNE maps, and their confusion matrices reveal misclassifications across multiple categories, particularly between rolling element faults and normal states; some models have significantly fewer than 50 correct classifications for Category 2, reflecting insufficient diagnostic reliability. For the two more advanced models, CNN-Transformer and DMT, the feature distributions are better separated than those of the traditional methods, but their confusion matrices still show multiple misclassifications across categories. Their ability to distinguish rolling element faults from inner race faults remains unstable, and the number of correct classifications for certain categories does not reach 50, indicating that their classification consistency falls short of E-DANNMK. In summary, on the Paderborn dataset, E-DANNMK exhibits superior feature separability together with higher accuracy and stronger stability in practical classification tasks.

4. Discussion

This paper proposes an efficient domain adaptation model, E-DANNMK, aimed at addressing data distribution discrepancies caused by variations in operating conditions in bearing fault diagnosis. By integrating residual networks, an efficient channel attention mechanism, and domain adversarial networks, an unsupervised diagnostic framework is constructed. Experiments conducted on two major bearing datasets, Case Western Reserve University and Paderborn University, demonstrate that the E-DANNMK model achieves the highest average diagnostic accuracy among the compared methods (94.21%), although it does not lead on every individual transfer task (Section 3.2). The specific conclusions are as follows:

- A lightweight fault feature extractor design is proposed. The ECA module is embedded into the traditional ResNet-18 architecture to construct a dynamic feature calibration mechanism, enabling dynamic allocation of channel weights. This design achieves adaptive learning of inter-channel dependencies through lightweight computation, further enhancing the feature response intensity in key fault frequency bands.

- A dual-path domain adaptation fault diagnosis framework is proposed, which integrates multi-kernel statistical alignment and gradient reversal adversarial training to achieve precise cross-condition feature distribution alignment. On one hand, MK-MMD is introduced to explicitly constrain global distribution shift and accurately quantify high-order statistical differences between the source and target domains. On the other hand, gradient reversal adversarial training is adopted to implicitly optimize local feature confusion, significantly increasing the domain classification error rate in transfer tasks on the two major bearing datasets. These two components synergistically form a dual-drive mechanism, which substantially enhances cross-condition generalization robustness compared to using either method alone.

- The proposed method still exhibits limitations in distinguishing between different severity levels of the same fault type and in differentiating healthy states from incipient faults. Experimental analysis reveals that the primary misclassifications occur in two scenarios: first, between different severity levels of the same fault type (e.g., Grade 2 and Grade 3 ball faults), and second, between healthy states and early-stage outer race faults. This indicates that, while the model effectively learns domain-invariant features for distinguishing major fault categories across varying operational conditions under strong domain adaptation objectives, its ability to capture and discern fine-grained “quantitative-change” features that characterize fault progression, as well as subtle early-stage fault signatures, remains insufficient.
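The statistical-alignment path described in the second conclusion can be illustrated with a minimal multi-kernel MMD estimate. This is a sketch under stated assumptions and not the authors' implementation: it uses the biased (V-statistic) estimator, an equal-weight mixture of Gaussian kernels, and arbitrary bandwidths, whereas the paper's MK-MMD may weight or select kernels differently. The adversarial path (gradient reversal) is not covered here.

```python
import math


def gaussian_kernel(x, y, bandwidth):
    """Gaussian (RBF) kernel between two feature vectors."""
    sq_dist = sum((a - b) ** 2 for a, b in zip(x, y))
    return math.exp(-sq_dist / (2.0 * bandwidth ** 2))


def multi_kernel(x, y, bandwidths):
    """Equal-weight average of Gaussian kernels (an assumed weighting)."""
    return sum(gaussian_kernel(x, y, b) for b in bandwidths) / len(bandwidths)


def mk_mmd2(source, target, bandwidths=(0.5, 1.0, 2.0, 4.0)):
    """Biased estimate of the squared MMD between two sets of feature vectors."""
    def mean_kernel(a, b):
        total = sum(multi_kernel(x, y, bandwidths) for x in a for y in b)
        return total / (len(a) * len(b))

    return (mean_kernel(source, source)
            + mean_kernel(target, target)
            - 2.0 * mean_kernel(source, target))


# Hypothetical 2-D features standing in for extracted source and target features.
src = [[0.0, 0.0], [1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
near = [[x + 0.1, y + 0.1] for x, y in src]   # small domain shift
far = [[x + 3.0, y + 3.0] for x, y in src]    # large domain shift

print(mk_mmd2(src, src))    # identical sets give zero discrepancy
print(mk_mmd2(src, near))
print(mk_mmd2(src, far))
```

In a training loop, a term of this kind would be added to the classification loss so that minimizing it pulls the source and target feature distributions together; using several bandwidths makes the measure sensitive to discrepancies at more than one scale.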

Author Contributions

Methodology, P.L.; software, P.L. and Z.L.; writing—original draft preparation, P.L.; writing—review and editing, P.L. and Z.L.; funding acquisition, Z.L. All authors have read and agreed to the published version of the manuscript.

Funding

This research was funded by the Scientific Research Foundation of Hunan Provincial Education Department, grant number 23B0698.

Data Availability Statement

The publicly available datasets supporting the conclusions of this study are derived from two well-established benchmarks: (1) the CWRU Bearing Data Center, accessible via its official channels (see reference [42]); and (2) the PU bearing dataset, as detailed in reference [43]. No new archived data were created by the authors in this study.

Conflicts of Interest

The authors declare that there is no conflict of interest regarding the publication of this paper.

Abbreviations

The following abbreviations are used in this manuscript:

| Abbreviation | Definition |
|---|---|
| LMMD | Local Maximum Mean Discrepancy |
| GRL | Gradient Reversal Layer |
| ECA | Efficient Channel Attention |
| GAP | Global Average Pooling |
| MK-MMD | Multiple Kernel Maximum Mean Discrepancy |
| CDAN | Conditional Domain Adversarial Network |
| CNN | Convolutional Neural Network |
| CORAL | CORrelation Alignment |
| DANN | Domain Adversarial Neural Network |
| DMT | Dynamic Multiscale Transformer |

References

- Fang, M.; Yu, M.; Guo, G.; Feng, Z. Research on compound faults identification of aeroengine inter-shaft bearing based on CCF-complexity-VMD-SVD. Struct. Health Monit. 2023, 22, 2688–2707.

- Zhou, J.; Yang, X.; Liu, L.; Wang, Y.; Wang, J.; Hou, G. Fuzzy broad learning system combined with feature-engineering-based fault diagnosis for bearings. Machines 2022, 10, 1229.

- Zhang, W.; Li, X.; Ding, Q. Deep residual learning-based fault diagnosis method for rotating machinery. ISA Trans. 2019, 95, 295–305.

- Razavi, M.; Mavaddati, S.; Koohi, H. ResNet deep models and transfer learning technique for classification and quality detection of rice cultivars. Expert Syst. Appl. 2024, 247, 123276.

- Gan, Y.; Xiang, T.; Liu, H.; Ye, M.; Zhou, M. Generative adversarial networks with adaptive learning strategy for noise-to-image synthesis. Neural Comput. Appl. 2023, 35, 6197–6206.

- Bongini, P.; Pancino, N.; Bendjeddou, A.; Scarselli, F.; Maggini, M.; Bianchini, M. Composite graph neural networks for molecular property prediction. Int. J. Mol. Sci. 2024, 25, 6583.

- Jiao, Z.; Zhang, Z.; Li, Y.; Wu, Y.; Liu, L.; Shao, S. Rolling bearing fault diagnosis based on the fusion of sparse filtering and discriminative domain adaptation method under multi-channel data-driven. Meas. Sci. Technol. 2024, 35, 066112.

- Lei, Y.; Fan, C.; He, H.; Xie, Y. Enabling efficient cross-building HVAC fault inferences through novel unsupervised domain adaptation methods. Build. Environ. 2025, 272, 112678.

- Jia, Z.; Ren, L.; Shen, Z.-J.M.; Yang, L.T. Multisource domain separation network for industrial intelligent monitoring in unseen conditions. IEEE Trans. Cybern. 2025, online ahead of print.

- Schwendemann, S.; Amjad, Z.; Sikora, A. Bearing fault diagnosis with intermediate domain based layered maximum mean discrepancy: A new transfer learning approach. Eng. Appl. Artif. Intell. 2021, 105, 104415.

- Zhang, W.; Li, X.; Ma, H.; Luo, Z.; Li, X. Transfer learning using deep representation regularization in remaining useful life prediction across operating conditions. Reliab. Eng. Syst. Saf. 2021, 211, 107556.

- Zhang, Z.; Shao, M.; Ma, C.; Lv, Z.; Zhou, J. An enhanced domain-adversarial neural networks for intelligent cross-domain fault diagnosis of rotating machinery. Nonlinear Dyn. 2022, 108, 2385–2404.

- Zhang, W.; Li, X.; Ma, H.; Luo, Z.; Li, X. Open-set domain adaptation in machinery fault diagnostics using instance-level weighted adversarial learning. IEEE Trans. Ind. Inform. 2021, 17, 7445–7455.

- Che, C.; Wang, H.; Ni, X.; Fu, Q. Domain adaptive deep belief network for rolling bearing fault diagnosis. Comput. Ind. Eng. 2020, 143, 106427.

- Hei, Z.; Shi, Q.; Fan, X.; Qian, F.; Kumar, A.; Zhong, M.; Zhou, Y. Distance-guided domain adaptation for bearing fault diagnosis under variable operating conditions. Meas. Sci. Technol. 2024, 35, 086128.

- Fu, H.; Yu, D.; Zhan, C.; Zhu, X.; Xie, Z. Unsupervised rolling bearing fault diagnosis method across working conditions based on multiscale convolutional neural network. Meas. Sci. Technol. 2024, 35, 035018.

- Cheng, W.; Liu, X.; Xing, J.; Chen, X.; Ding, B.; Zhang, R.; Zhou, K.; Huang, Q. AFARN: Domain adaptation for intelligent cross-domain bearing fault diagnosis in nuclear circulating water pump. IEEE Trans. Ind. Inform. 2023, 19, 3229–3239.

- Lei, P.; Shen, C.; Wang, D.; Chen, L.; Zhou, Z.; Zhu, Z. A new transferable bearing fault diagnosis method with adaptive manifold probability distribution under different working conditions. Measurement 2021, 173, 108565.

- Mao, W.; Liu, Y.; Ding, L.; Safian, A.; Liang, X. A new structured domain adversarial neural network for transfer fault diagnosis of rolling bearings under different working conditions. IEEE Trans. Instrum. Meas. 2021, 70, 3509013.

- Li, J.; Chen, B.; Shen, C.; Wang, D.; Shi, J.; Jiang, X. A new probability guided domain adversarial network for bearing fault diagnosis. IEEE Sens. J. 2023, 23, 1462–1470.

- Zhang, Y.; Ren, Z.; Zhou, S.; Feng, K.; Yu, K.; Liu, Z. Supervised contrastive learning-based domain adaptation network for intelligent unsupervised fault diagnosis of rolling bearing. IEEE/ASME Trans. Mechatron. 2022, 27, 5371–5380.

- Zhong, Z.; Zhang, Z.; Cui, Y.; Xie, X.; Hao, W. Failure mechanism information-assisted multi-domain adversarial transfer fault diagnosis model for rolling bearings under variable operating conditions. Electronics 2024, 13, 2133.

- Liu, F.; Zhang, F.; Geng, X.; Mu, L.; Zhang, L.; Sui, Q.; Jia, L.; Jiang, M.; Gao, J. Structural discrepancy and domain adversarial fusion network for cross-domain fault diagnosis. Adv. Eng. Inform. 2023, 58, 102217.

- Wang, C.; Wu, S.; Shao, X. Unsupervised domain adaptive bearing fault diagnosis based on maximum domain discrepancy. EURASIP J. Adv. Signal Process. 2024, 2024, 11.

- Zhang, Q.; Lv, Z.; Hao, C.; Yan, H.; Fan, Q. Intelligent fault diagnosis of bearings in unsupervised dynamic domain adaptation networks under variable conditions. IEEE Access 2024, 12, 82911–82925.

- Wang, H.; Li, Y.; Jiang, M.; Zhang, F. Partial transfer learning method based on MDWCAN for rolling bearing fault diagnosis under noisy conditions. Proc. Inst. Mech. Eng. Part C J. Mech. Eng. Sci. 2024, 238, 8561–8575.

- Yu, X.; Wang, Y.; Liang, Z.; Shao, H.; Yu, K.; Yu, W. An adaptive domain adaptation method for rolling bearings’ fault diagnosis fusing deep convolution and self-attention networks. IEEE Trans. Instrum. Meas. 2023, 72, 3509814.

- Niu, M.; Jiang, H.; Shao, H. Dynamic weighted adversarial domain adaptation network with sparse representation denoising module for rotating machinery fault diagnosis. Eng. Appl. Artif. Intell. 2025, 142, 109963.

- Du, Z.; Liu, D.; Cui, L. Dynamic model-driven dictionary learning-inspired domain adaptation strategy for cross-domain bearing fault diagnosis. Reliab. Eng. Syst. Saf. 2025, 258, 110905.

- Li, J.; Yue, K.; Wu, Z.; Jiang, F.; Zhong, Z.; Li, W.; Zhang, S. Source-free progressive domain adaptation network for universal cross-domain fault diagnosis of industrial equipment. IEEE Sens. J. 2025, 25, 8067–8078.

- Goodarzi, P.; Schutze, A. Practical test-time domain adaptation for industrial condition monitoring by leveraging normal-class data. Sensors 2025, 25, 7614.

- Shen, X.; Li, L.; Ma, Y.; Xu, S.; Liu, J.; Yang, Z.; Shi, Y. VLCIM: A vision-language cyclic interaction model for industrial defect detection. IEEE Trans. Instrum. Meas. 2025, 74, 2538713.

- Shen, X.; Wang, Y.; Ma, Y.; Li, L.; Niu, Y.; Yang, Z.; Shi, Y. A multi-expert diffusion model for surface defect detection of valve cores in special control valve equipment systems. Mech. Syst. Signal Process. 2025, 237, 113117.

- Xu, B.; Tan, C.; Wu, Y.; Li, F. Gradually vanishing bridge based on multi-kernel maximum mean discrepancy for breast ultrasound image classification. J. Adv. Comput. Intell. Intell. Inform. 2024, 28, 835–844.

- Qin, H.; Pan, J.; Li, J.; Huang, F. Improved conditional domain adversarial networks for intelligent transfer fault diagnosis. Mathematics 2024, 12, 481.

- Liang, M.; Zhou, K. Joint loss learning-enabled semi-supervised autoencoder for bearing fault diagnosis under limited labeled vibration signals. J. Vib. Control 2024, 30, 4537–4550.

- Wang, Z.; Xuan, J.; Shi, T. Domain reinforcement feature adaptation methodology with correlation alignment for compound fault diagnosis of rolling bearing. Expert Syst. Appl. 2025, 262, 125594.

- Jin, Y.; Song, X.; Yang, Y.; Hei, X.; Feng, N.; Yang, X. An improved multi-channel and multi-scale domain adversarial neural network for fault diagnosis of the rolling bearing. Control Eng. Pract. 2025, 154, 106120.

- Chen, Q.; Zhang, F.; Wang, Y.; Yu, Q.; Lang, G.; Zeng, L. Bearing fault diagnosis based on efficient cross space multiscale CNN transformer parallelism. Sci. Rep. 2025, 15, 12344.

- Chen, H.; Zou, L.; Zhuang, K.; Deng, B.; Hu, J. Adaptive noise-resilient fault diagnosis for rotating machinery via dynamic multiscale transformer networks. Struct. Health Monit. 2025, online ahead of print.

- Zhang, W.; Jiang, N.; Yang, S.; Li, X. Federated transfer learning for remaining useful life prediction in prognostics with data privacy. Meas. Sci. Technol. 2025, 36, 076107.

- Smith, W.A.; Randall, R.B. Rolling element bearing diagnostics using the Case Western Reserve University data: A benchmark study. Mech. Syst. Signal Process. 2015, 64–65, 100–131.

- Lessmeier, C.; Kimotho, J.K.; Zimmer, D.; Sextro, W. Condition monitoring of bearing damage in electromechanical drive systems by using motor current signals of electric motors: A benchmark data set for data-driven classification. PHM Soc. Eur. Conf. 2016, 3.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.