1. Introduction

The pantograph–catenary interaction is one of the three principal dynamic coupling relationships in high-speed railway systems [1], with contact behavior between the pantograph and the catenary critically influencing operational safety and economic efficiency. As the current collection apparatus, the pantograph may induce extreme contact force fluctuations during engagement with the catenary, leading to contact anomalies: excessive force accelerates wear on the collector head and catenary wire [2,3,4], potentially causing stripping or fractures, whereas insufficient force results in intermittent contact, producing abrupt voltage differentials that manifest as electrical arcing [5,6,7]. These adverse contact phenomena are shown in Figure 1. Traditional passive control approaches primarily focus on structural parameter optimization, which offers limited effectiveness at high implementation cost. Active control algorithms mitigate contact force oscillations by applying external forces to regulate the lift amplitude of the pantograph. This methodology not only delivers markedly better control performance but is also a software-driven solution with clear practical advantages.

Academically, research on active pantograph control algorithms mainly focuses on improving traditional control methods. Al-Awad et al. [8] employed a genetic algorithm to optimize PID parameters on a reduced-order model, achieving smaller overshoot and a faster response. Still, the model is overly idealized and fails to capture the contact force dynamics arising from real pantograph–catenary coupling. Farhan et al. [9] proposed a simplified fuzzy controller to reduce design complexity, demonstrating effective suppression of contact force oscillations; however, the simplified fuzzy rule set offers limited adaptability and lacks robustness guarantees against unknown disturbances. Song et al. [10] introduced a mechanical impedance-based PD sliding-mode surface design, theoretically reducing system impedance in the dominant contact-force frequency band, yet the switching gain depends on manual tuning without algorithmic optimization. Wang et al. [11] applied fractional-order modeling to the pantograph base air spring and used LQR via feedback linearization to handle time-varying stiffness in the pantograph–catenary coupling; nevertheless, the Q and R weight matrices are still set empirically, without further validation of optimal performance. The foregoing studies reveal a common drawback of conventional active control algorithms: they all depend on experience-driven tuning of controller parameters.

In recent years, deep reinforcement learning (DRL), as a decision-making approach, has been widely applied in control engineering [12,13,14]. Building on this approach, numerous studies have also emerged in the field of train control [15,16]. Wang et al. [17,18] pioneered the application of DRL to active pantograph control. Their work focuses on modifying DRL algorithms to accommodate the dynamic characteristics of pantograph–catenary systems, validating the method’s effectiveness in mitigating extreme oscillations of the pantograph–catenary contact force (PCCF) by training agents in simulation environments constructed with real-world railway-line parameters. Wang et al. [19] conducted further research on the feasibility of improved DRL algorithms for pantograph active control, based on the Deep Deterministic Policy Gradient (DDPG) framework. Sharma et al. [20], despite the “deep reinforcement learning” label, actually combined deep learning and traditional control: a Bi-LSTM network predicts contact force fluctuations to drive a fuzzy fractional PID controller, with Aquila optimization tuning the parameters, offering a novel perspective for pantograph control. However, their deep-learning application centers on prediction and parameter optimization rather than genuine reinforcement learning. Leveraging its trial-and-error decision making, a DRL agent can function as a self-contained controller that autonomously learns corrective control forces to suppress extreme PCCF oscillations, thereby complementing and enhancing conventional control strategies.

Given that the pantograph can be modeled as a spring–damper system [

21,

22,

23], its control strategy can draw on active suspension control methods used for similarly modeled vehicle suspensions, where LQR has been widely applied in automotive suspension and railway bogie control [

24,

25,

26,

27]. Accordingly, by introducing the Crowned Porcupine Optimization algorithm (CPO) to optimize the

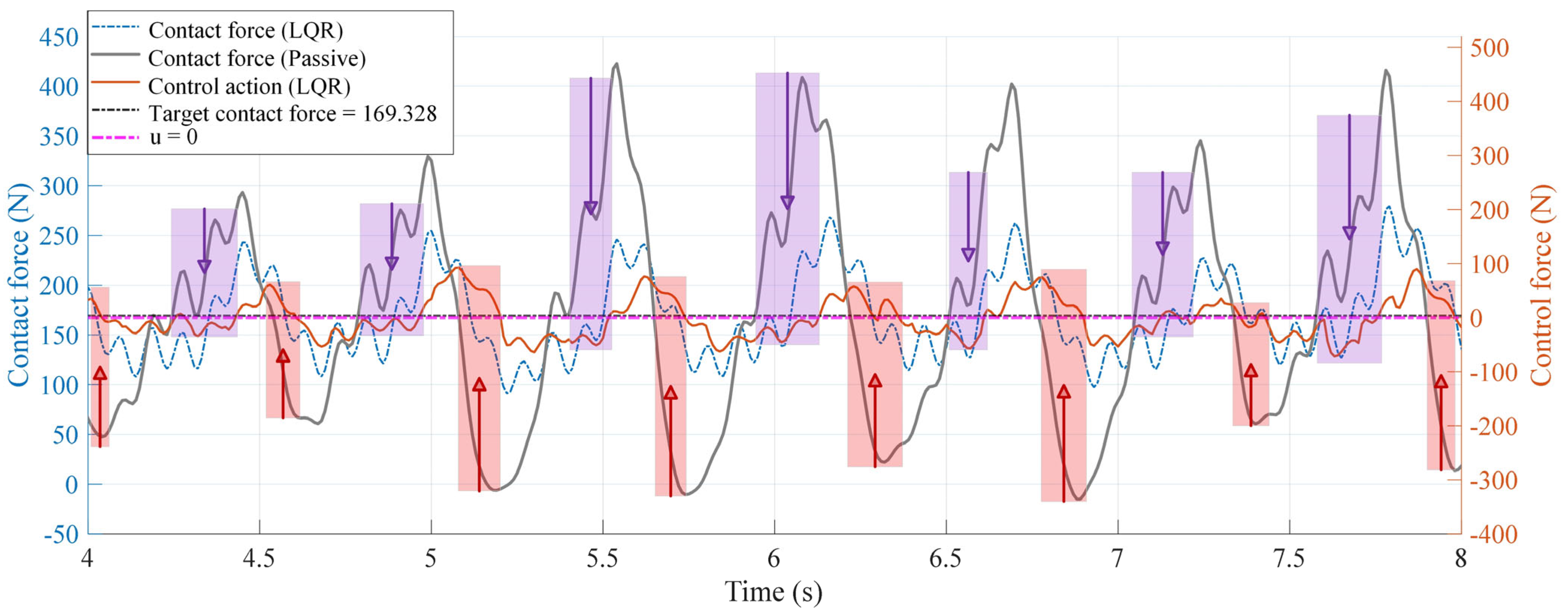

-weight matrix coefficients, optimal LQR feedback control actions are achieved. However, due to the coupled dynamics of the pantograph–catenary interaction, the PCCF still oscillates and remains significantly deviated from the target contact force [

28,

29] even under the LQR control situation. To address this issue, we designed an offline dual-model-based control law for secondary tuning of the LQR output, generating an ideal action distribution that brings the actual contact force closer to the target. However, since the dual-model strategy requires two controlled pantograph instances and cannot be directly deployed in a real-time system—and simply implementing the control law on a single-model pantograph would disrupt real-time LQR computation—we treat the tuned actions as “expert actions” and employ a combined imitation learning (IL) and DRL approach to learn the expert action distribution and online compensate the real-time CPO-LQR controller output. This enables, within a single-model framework, significant suppression of extreme contact force oscillations beyond the theoretical baseline.

Specifically, the main contributions of this paper are as follows:

A CPO-LQR baseline controller is developed by optimizing the Q-weight matrix via the CPO algorithm, achieving strong PCCF suppression; its control outputs are further refined using an offline control law based on the dual pantograph–catenary model structure, resulting in expert actions that outperform the baseline and provide theoretically optimal control performance.

To enhance practical applicability, a compensation control strategy is proposed that superimposes the offline expert force and the compensation force onto the real-time CPO-LQR output, enabling an active controller based on a single pantograph–catenary model structure and yielding superior suppression of extreme oscillations.

The compensatory action is trained using the BC-SAC algorithm, which embeds behavioral cloning loss into SAC to allow partial expert imitation while preserving the environment-driven interaction capabilities; an attenuation mechanism further balances exploration and imitation, leading to better performance than pure expert-based compensation.

This article is organized as follows: Section 2 establishes the pantograph–catenary coupling dynamic model and defines the control objectives; Section 3 presents the core control framework proposed herein; Section 4 provides simulation experiments and validations; and Section 5 concludes the paper.

2. Preliminaries and Problem Formulation

To elucidate the dynamic characteristics of contact force during pantograph–catenary coupling and provide a basis for subsequent active control strategy design, this section first establishes a mathematical model of the pantograph–catenary interaction to describe the system’s dynamic response under operating conditions. The model is then embedded in a Markov decision process (MDP) framework, defining the state and action spaces for the active pantograph control task and laying a formal foundation for compensatory controller design and training based on the DRL algorithm. To ensure that the control objectives are well founded and comparable, this section also quantifies the target contact force and several performance metrics according to standards [28,29], and designs corresponding objective and reward functions, providing quantitative criteria for training and simulation validation of the control strategies.

2.1. Mathematical Modeling of the Pantograph–Catenary Coupling System

The pantograph–catenary coupling model comprises two subsystems, which are the catenary system and the pantograph system. In this work, MATLAB/Simulink R2021b is used to model both the pantograph and the catenary system as the controlled plant for offline training and online validation of the control strategy.

The catenary is a complex, nonlinear structure that must be simplified for practical modeling. Following the approach in [30], we apply the following simplifications:

Thus, the simplified stiffness expression is derived by fitting the actual stiffness curve obtained from finite element analysis using least squares, from which the expression [30] can be written as follows:

where the expression depends on the operating speed of the train, in units of m/s; the span of the contact wire; the distance between adjacent dropper lines; the average stiffness of the catenary system; and five stiffness coefficients of the catenary system. The span and the dropper spacing are set to 50 m and 8 m, respectively, and the average stiffness is equal to 3694.5 N·m−1.
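To make the role of the quantities above concrete, the following minimal sketch evaluates a time-varying catenary stiffness. It assumes a truncated cosine-series form that is commonly used in the pantograph-control literature; this form, the function name `catenary_stiffness`, and the coefficient tuple `ALPHAS_PLACEHOLDER` are assumptions for illustration and are not taken from [30]. Only the span (50 m), the dropper spacing (8 m), and the average stiffness (3694.5 N·m−1) come from the text.

```python
import math


def catenary_stiffness(t, v, span, dropper_spacing, k0, alphas):
    """Time-varying catenary stiffness (N/m) at time t (s) for speed v (m/s).

    Assumed form: k0 * (1 + a1*f1 + a2*f2 + a3*f1^2 + a4*f3^2 + a5*f4^2),
    with cosine terms at the span-passing and dropper-passing frequencies.
    """
    a1, a2, a3, a4, a5 = alphas
    f1 = math.cos(2.0 * math.pi * v * t / span)
    f2 = math.cos(2.0 * math.pi * v * t / dropper_spacing)
    f3 = math.cos(math.pi * v * t / span)
    f4 = math.cos(math.pi * v * t / dropper_spacing)
    return k0 * (1.0 + a1 * f1 + a2 * f2 + a3 * f1**2 + a4 * f3**2 + a5 * f4**2)


# Placeholder coefficients for illustration only; NOT the fitted values from [30].
ALPHAS_PLACEHOLDER = (0.1, 0.1, 0.1, -0.1, -0.1)

# Speed is converted from km/h to m/s, as the stiffness expression expects m/s.
k_demo = catenary_stiffness(
    t=0.0, v=320.0 / 3.6, span=50.0, dropper_spacing=8.0,
    k0=3694.5, alphas=ALPHAS_PLACEHOLDER,
)
```

With all coefficients set to zero the function returns the average stiffness, which is a quick sanity check on any fitted coefficient set.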

Numerous pantograph models have been developed for specific research objectives [33,34]. However, simpler approaches, such as the two-mass model in [8,11], cannot fully capture the pantograph’s overall structural response. Therefore, we adopt a more comprehensive three-mass model to represent the pantograph’s three main components (the collector head, the upper frame, and the lower frame), as illustrated in Figure 3. The three-mass model is sufficient to characterize the pantograph’s dynamic behavior and has been validated in [22].

Based on the three-mass pantograph model, force analysis is performed, and for each mass, the dynamic differential equation is derived as follows:

The above expressions can be arranged into the state-space function as follows:

where the three displacement variables represent the oscillatory displacements of the collector head, upper frame, and lower frame of the pantograph, respectively; the static uplift force is applied to the pantograph; and the disturbance is transmitted from carriage oscillations to the pantograph base.

In this paper, we adopt the parameters of the high-speed pantograph type DSA380, where the three masses are 7.12 kg, 6 kg, and 5.8 kg, respectively; the three stiffness coefficients are 9430 N·m−1, 14,100 N·m−1, and 0.1 N·m−1, respectively; and the three damping coefficients are 0 N·s·m−1, 0 N·s·m−1, and 70 N·s·m−1, respectively.
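As an illustration of how a three-mass model turns forces into accelerations, the sketch below uses the DSA380 values quoted above. The equations of motion are a standard lumped-mass chain and are an assumption here: the assignment of the first, second, and third parameter to the collector head, upper frame, and lower frame, the point of application of the uplift and control forces (lower frame), and the function name `accelerations` are all hypothetical.

```python
# DSA380 parameters quoted in the text; the ordering (head, upper frame,
# lower frame) is an assumption made for this sketch.
MASSES = (7.12, 6.0, 5.8)          # kg
STIFFNESS = (9430.0, 14100.0, 0.1)  # N/m
DAMPING = (0.0, 0.0, 70.0)          # N*s/m


def accelerations(x, xd, contact_force, uplift, control=0.0, disturbance=0.0):
    """Return (a1, a2, a3) for displacements x and velocities xd.

    Assumed topology: head -(k1, c1)- upper frame -(k2, c2)- lower frame
    -(k3, c3)- base. The contact force pushes the head down; uplift, control
    force, and base disturbance act on the lower frame.
    """
    m1, m2, m3 = MASSES
    k1, k2, k3 = STIFFNESS
    c1, c2, c3 = DAMPING
    x1, x2, x3 = x
    v1, v2, v3 = xd
    f12 = k1 * (x1 - x2) + c1 * (v1 - v2)   # head <-> upper frame link
    f23 = k2 * (x2 - x3) + c2 * (v2 - v3)   # upper <-> lower frame link
    f3b = k3 * x3 + c3 * v3                 # lower frame <-> base link
    a1 = (-f12 - contact_force) / m1
    a2 = (f12 - f23) / m2
    a3 = (f23 - f3b + uplift + control + disturbance) / m3
    return a1, a2, a3
```

In a coupled simulation, `contact_force` would be the catenary stiffness multiplied by the collector-head displacement, as stated in Section 2.2.1.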

2.2. Markov Decision Process Modeling

When formulating the active pantograph control problem as a Markov Decision Process, the state space and action space must be explicitly defined to enable the agent to make decisions based on current observations at each timestep while dynamically interacting with the environment, as illustrated in Figure 4. This section details the state space and control actions required for implementing the deep reinforcement learning-based active pantograph control algorithm.

2.2.1. State Space

The state should include sufficient information to characterize the current system dynamics and environmental disturbances, enabling the control policy to compensate for oscillations or deviations promptly. Assuming control is executed at discrete time steps, the evolution of the pantograph–catenary coupling system state over each sampling period can be approximated as a Markov transition, where the next state depends only on the current state and the applied action. Therefore, the state space is defined as follows:

where the state includes the pantograph–catenary contact force. According to the first mass equation in (2), the contact force equals the variable stiffness coefficient of the catenary multiplied by the displacement of the collector head. Therefore, we directly focus on the state information of the contact force rather than using the collector-head displacement, adopting the contact force as the primary controlled state variable to enable the agent to make more accurate control decisions.

2.2.2. Action Space

The agent’s action corresponds to the compensation force applied to the output of the real-time LQR controller by the proposed framework. Considering the actuator’s physical capabilities and safety limits, the compensation force must be restricted to a bounded interval, and the agent selects actions within this range based on the observed state. To facilitate network training and output, we normalize the policy network’s raw output to a fixed range, then use a linear mapping to obtain the actual compensation force value as follows:

At each discrete time step, the agent observes the system state and outputs a normalized action. This action is mapped to a compensatory force, which is superimposed on the baseline LQR controller output to form the composite control input. Within our framework, a one-dimensional continuous compensatory force suffices to suppress contact force oscillations while reducing policy learning complexity.
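The mapping and superposition described above can be sketched as follows. The normalized range of [-1, 1], the force limits of ±50 N, and the function names `map_action` and `composite_control` are assumptions chosen for illustration; the actual bounds used in this work are not reproduced here.

```python
def map_action(a_norm, f_min, f_max):
    """Linearly map a normalized action in [-1, 1] to a force in [f_min, f_max]."""
    a = max(-1.0, min(1.0, a_norm))  # clip to the assumed normalized range
    return f_min + 0.5 * (a + 1.0) * (f_max - f_min)


def composite_control(u_lqr, a_norm, f_min=-50.0, f_max=50.0):
    """Superimpose the mapped compensation force on the baseline LQR output.

    The default limits are hypothetical actuator bounds, not values from the paper.
    """
    return u_lqr + map_action(a_norm, f_min, f_max)


u_total = composite_control(u_lqr=100.0, a_norm=0.5)
```

Clipping before mapping keeps the compensation force inside the actuator limits even if the policy output drifts slightly outside the normalized range.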

2.3. Definition of the Objective Function and the Reward Function

In this subsection, the desired contact force state for the pantograph active control task is specified in detail based on international standards. On this basis, an objective function is designed for optimizing the Q-weight matrix coefficients of the LQR primary controller via the CPO algorithm, and the reward function is defined to guide policy learning for the compensatory controller trained by the DRL algorithm.

2.3.1. Specification of Performance Metrics

According to Reference [17] and Standards [28,29], the target value of the desired contact force varies with train operating speed, as expressed by the following equation:

where the target contact force is given in N and the operating speed of the train is given in km/h.

We define the control error as the deviation between the controlled contact force and the target contact force; it assesses how closely the controller drives the contact force toward its target value. Ideally, a smaller error indicates more effective suppression of extreme contact force fluctuations. The control error is expressed as:

The standard deviation of the contact force is a key performance metric, as higher standard deviations reflect more extreme fluctuations. Ideally, this metric should be minimized. The acceptable range of the statistical contact force is calculated based on the standard deviation and is defined as:

where the range is built from the mean contact force and the standard deviation, as specified by standards [28,29].

Figure 5 shows the variation of the target contact force when train speeds exceed 300 km/h and, for contrast, the statistically derived contact force ranges under passive control at different speeds (computed per (8)). As speed increases, the fluctuation range of the contact force expands notably, indicating significant amplification of contact force oscillations.

2.3.2. Objective Function

The objective function drives the offline CPO algorithm’s search for optimal LQR solutions. Its design principles encompass a dynamic target contact force, permissible standard deviation ranges with safety thresholds, and a penalty mechanism for constraint violations. The overarching objective is to minimize the standard deviation of the contact force as much as possible while satisfying safety and convergence requirements; if significant deviations from the target contact force or potentially unsafe force levels arise, penalty terms steer the optimization process away from such solutions. The specific formulation is as follows:

where the formulation uses the mean contact force; the contact force standard deviation under active control; the contact force standard deviation under passive control; and the safety threshold for the contact force standard deviation, set to 30% of the mean contact force. The first term reflects the degree to which the fluctuation amplitude exceeds acceptable levels. The second term quantifies the deviation of the mean contact force from the target value, using an absolute value to capture deviations when the mean is above the target or below the lower bound. The third term is a fixed penalty: a constant +10 is added to assign a large fitness value to severe violations, guiding the optimization algorithm to avoid such solutions. When all constraints are satisfied, the fitness value is determined solely by the first term. The optimization objective is thus reduced to minimizing this standard deviation to suppress contact force fluctuations while maintaining the mean contact force around the target value.
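The three-term structure described above can be sketched as a fitness function for a metaheuristic optimizer. This is an illustration under stated assumptions, not the paper’s formulation: the first term is taken as the ratio of active to passive standard deviation, the mean-deviation penalty is activated by a hypothetical tolerance `mean_tolerance`, and the function name `cpo_fitness` is invented. Only the 30% threshold and the +10 penalty come from the text.

```python
def cpo_fitness(mean_force, std_active, std_passive, target_force,
                mean_tolerance=5.0, penalty=10.0):
    """Fitness to be minimized; lower means smaller contact-force fluctuation."""
    # First term (assumed form): fluctuation relative to the passive case.
    fitness = std_active / std_passive

    # Second term: deviation of the mean from the target, applied only when
    # it exceeds a hypothetical tolerance (N).
    mean_deviation = abs(mean_force - target_force)
    if mean_deviation > mean_tolerance:
        fitness += mean_deviation

    # Third term: fixed penalty when the standard deviation exceeds the
    # safety threshold of 30% of the mean contact force.
    if std_active > 0.3 * mean_force:
        fitness += penalty

    return fitness
```

When no constraint is violated, only the first term remains, so the optimizer is effectively minimizing the standard deviation, consistent with the description above.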

2.3.3. Reward Function

The reward function serves as the quantitative evaluation mechanism bridging agent–environment interactions, directly governing the convergence quality and ultimate performance of learned policies. Given that the primary objective of active pantograph control is to regulate uncontrolled contact force fluctuations around the target value, we consider the error metric as the absolute deviation between actual and target states to guide the policy exploration, formally expressed as:

where the reward depends on the environmental state variable and the target contact force value, scaled by a weighting coefficient equal to 0.5.

By penalizing the absolute error—whether the contact force overshoots or undershoots the target—with a negative reward, the agent is compelled to drive this error toward zero. Maximizing the cumulative reward thus directly corresponds to minimizing the contact force deviation. In the ideal scenario, when the measured force exactly equals the target, the absolute error vanishes and the total reward achieves its theoretical maximum of zero, indicating perfect fulfillment of the control objective.
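A minimal sketch of such a reward is given below, assuming the reward is the negative weighted absolute deviation of the contact force from its target. The weight of 0.5 is taken from the text; the function name `reward` and the exact functional form are assumptions.

```python
def reward(contact_force, target_force, weight=0.5):
    """Negative weighted absolute error; the maximum value is 0 at the target."""
    return -weight * abs(contact_force - target_force)


# Overshoot and undershoot of the same magnitude are penalized equally.
r_over = reward(180.0, 170.0)
r_under = reward(160.0, 170.0)
```

Because the reward is never positive, the cumulative return over an episode is bounded above by zero, which matches the theoretical maximum noted above.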

4. Experimental Validation and Result Analysis

In this section, based on the pantograph–catenary coupling model established in Section 2.1 and the performance metrics defined in Section 2.3.1, we conduct a detailed comparative analysis and validate the effectiveness of the proposed active compensation control framework. We present the training and testing results of both the primary controller and the compensatory controller, and illustrate the control performance of the control schemes under various speed conditions.

4.1. Hyperparameter Settings

Table 1 summarizes the definitions and values of the hyperparameters used in the CPO-optimized LQR primary controller.

For the BC-SAC algorithm, which is used to train the compensatory control policy, the hyperparameters are listed in Table 2.

The detailed parameters of the pantograph and catenary used in the validation are provided in Section 2.1. All experiments were conducted on a host configured with an Intel(R) Core(TM) i7-10700F CPU @2.90 GHz (Intel Corporation, Santa Clara, CA, USA), NVIDIA GeForce RTX 3080 (NVIDIA Corporation, Santa Clara, CA, USA), and 16 GB of RAM; software interaction was facilitated by PyCharm 2022 with MATLAB/Simulink R2021b, and the core algorithms were built within the PyTorch 1.13.1+cu116 environment constructed on the Python 3.9 interpreter.

4.2. Control Performance of the Baseline Controller

As the baseline control method in the proposed framework, the control performance of CPO-LQR must be optimal to highlight the value of the compensatory control policy, i.e., to further improve upon a fully exploited baseline. This subsection presents a comparative validation of the proposed CPO-LQR and CPO-LQR-CtrlLaw.

4.2.1. Training and Testing Results

As Figure 5 shows, when the train operates above 300 km/h, the pantograph–catenary contact force exhibits severe oscillations. Therefore, we select four speed conditions (320 km/h, 340 km/h, 360 km/h, and 380 km/h) and optimize the corresponding Q-weight matrix for each case. To highlight the superiority of the CPO algorithm over other classical metaheuristic methods, we compare CPO with the genetic algorithm (GA) and the particle swarm optimization algorithm (PSO) used in References [24,25,26] under identical parameter settings. The convergence curves of the three algorithms for each speed condition are presented in Figure 10.

Figure 10 shows that the CPO algorithm exhibits the best overall convergence performance. Specifically, although PSO converges fastest among the three algorithms, it also settles at the highest final fitness value, indicating inferior exploration due to premature convergence. GA converges faster than CPO but still ends with a higher final fitness value; it enters a steady state in fewer iterations, risking entrapment in local optima. In the figure, GA’s final fitness matches CPO’s only at 360 km/h, while at all other speeds its final values remain higher than CPO’s. Owing to its four-stage exploration mechanism, CPO maintains a broad search scope, which naturally results in relatively slower convergence but ultimately achieves the lowest final fitness value among the three algorithms. This indicates that, with identical parameter settings, CPO can discover better Q-weight matrix coefficients, resulting in superior control performance. The optimized Q values for each speed condition are summarized in Table 3.

Table 3 shows that the CPO-tuned Q-weight matrices vary in all six diagonal weight coefficients across speeds, with no uniform trend. This variability indicates that CPO adapts each controller’s emphasis to the specific dynamic behavior at each speed. Such flexibility ensures that the baseline LQR is matched to its operating condition before the matrices are applied.

By loading the optimized Q-weight matrix into the LQR controller, we conducted online tests at all four speed conditions and compared three control strategies: passive control, empirically tuned LQR, and CPO-LQR. In the empirical LQR method, the Q and R matrices are set according to Reference [11].

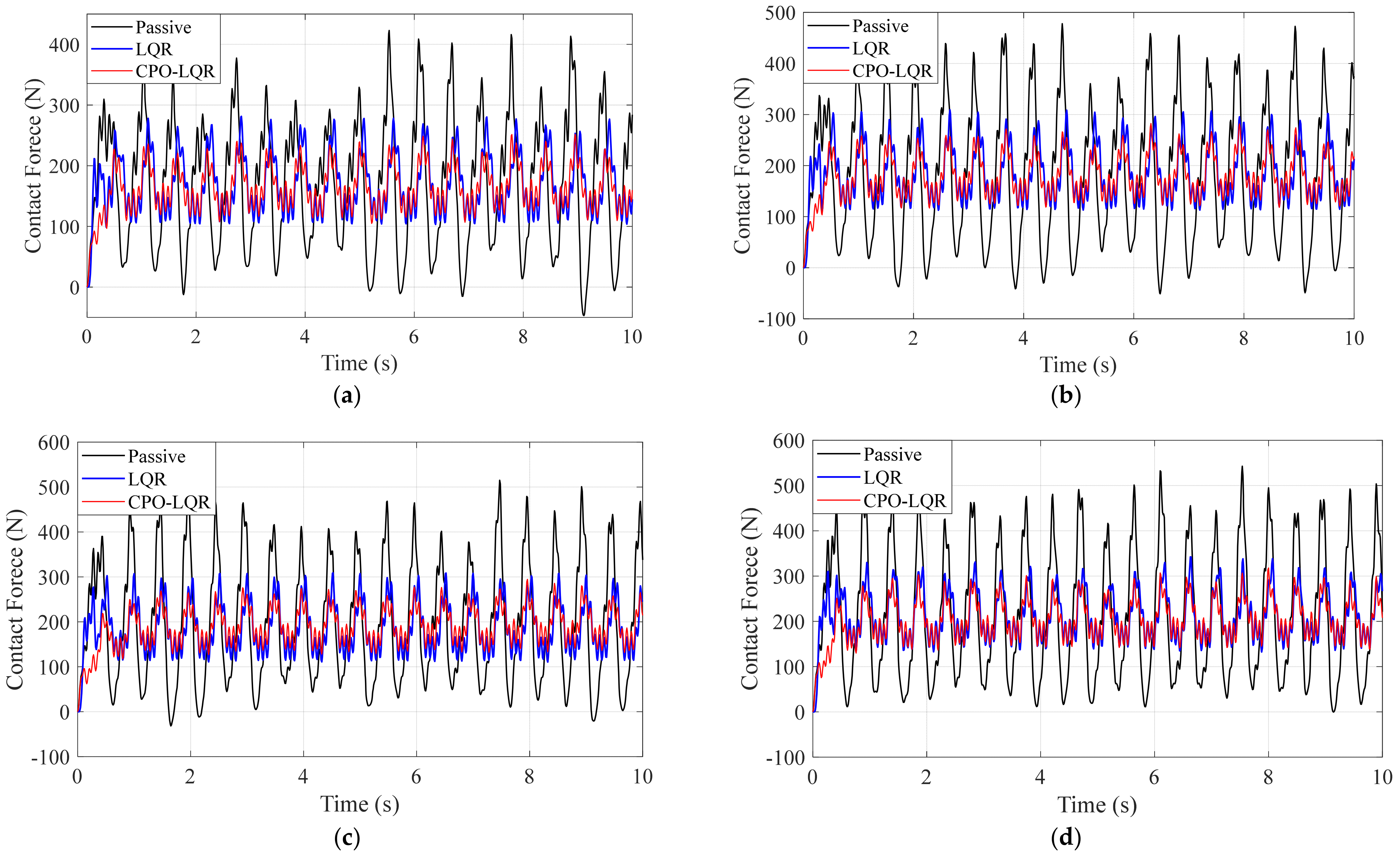

Figure 11 illustrates the control performance of the three control strategies across four speed conditions. The experimental results show that the CPO-optimized controller consistently and markedly reduces oscillation amplitudes in all cases. Compared to the uncontrolled case (Passive Control) and the empirically tuned LQR controller, CPO-LQR yields significantly lower force peaks as well as higher force valleys, demonstrating the smallest contact force fluctuation and superior contact force suppression performance.

4.2.2. Comparative Validation with the Offline Control Law

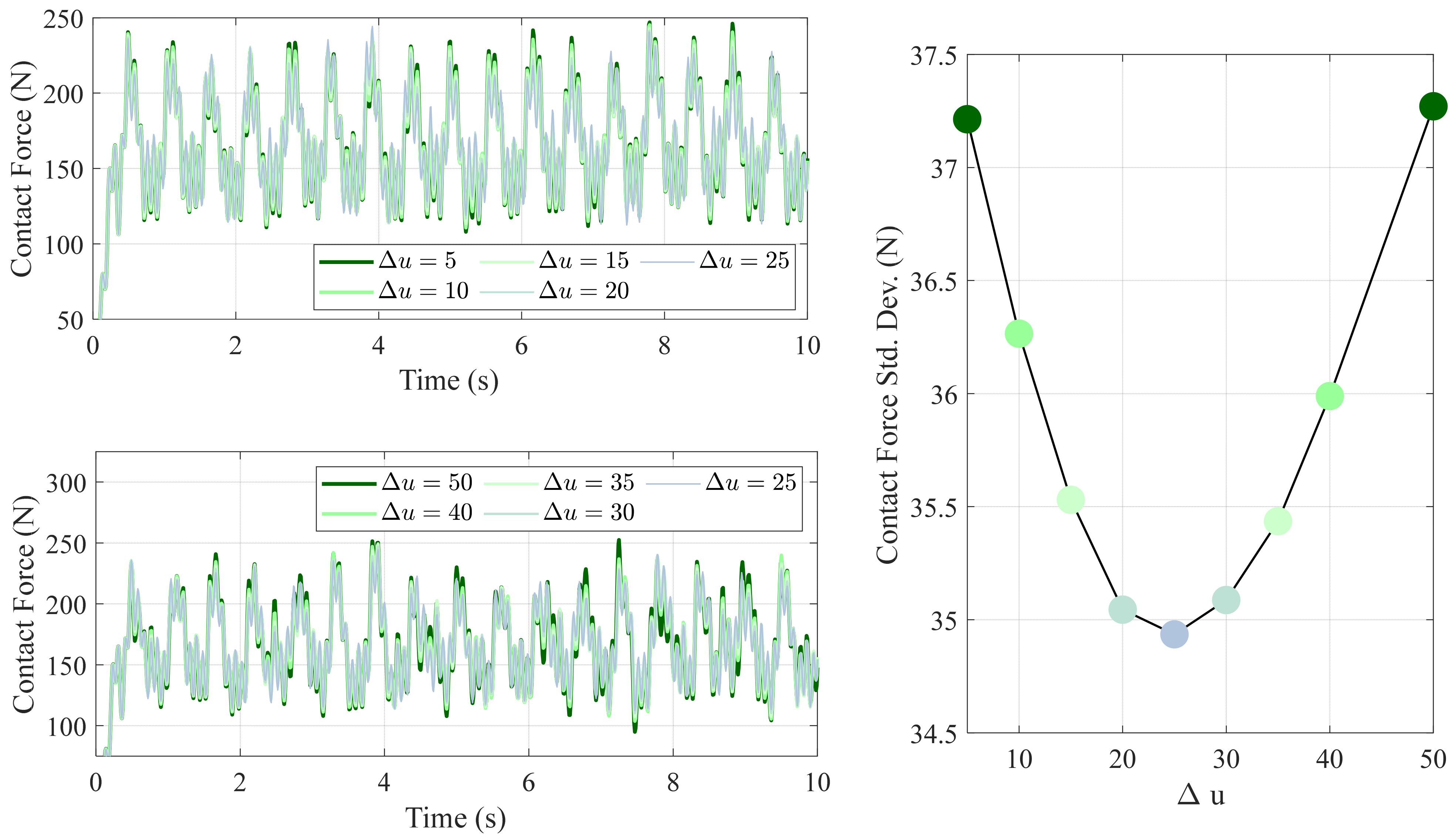

As mentioned earlier, the value of the increment significantly affects the performance of the control law. We validated a range of increment values (in N) to identify the optimal one.

As shown in Figure 12, when the increment value is below 25 N, the control action converges toward the baseline controller, yielding diminishing improvements. This behavior is expected because, per (18), as the increment approaches zero, the correction term vanishes and the controller effectively reduces to the baseline controller, offering no noticeable advantage. Conversely, increment values above 25 N lead to excessive corrections and degraded performance rather than further improvement. This trend is reflected in the curve of the contact force standard deviation: both very small and very large increment values increase the standard deviation and thus the contact force oscillation amplitude. The minimum standard deviation occurs at 25 N, indicating that this increment yields the best overall control performance.

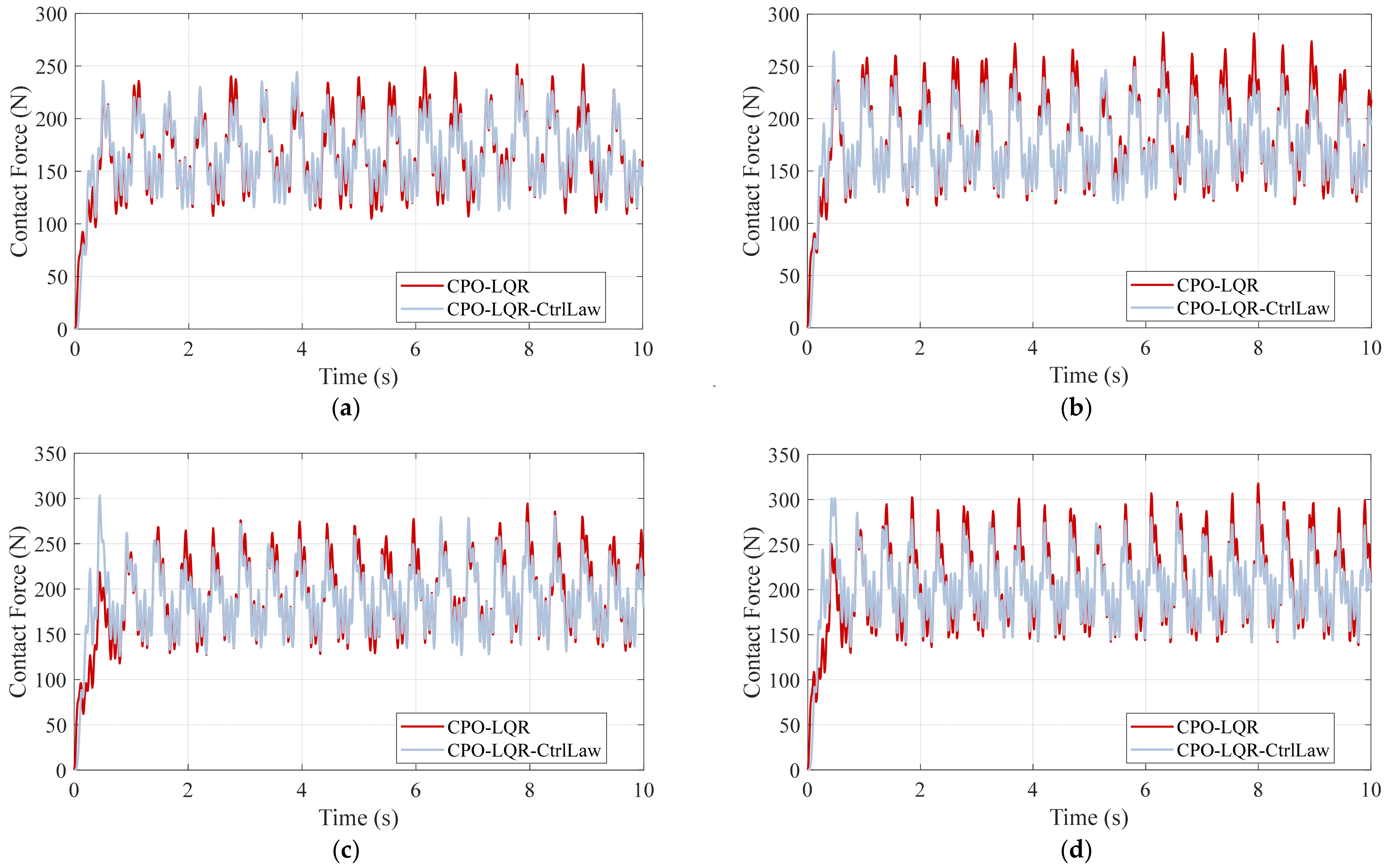

We then validated the dual-model-based secondary-tuning control law (CPO-LQR-CtrlLaw) under the same four speed conditions, as shown in Figure 13.

As shown in Figure 13, the experimental results indicate that CPO-LQR-CtrlLaw not only reduces the excessive peaks of the contact force waveform but also raises the deep troughs, bringing both extremes closer to the corresponding target contact force under all four speed conditions. Consequently, the secondarily adjusted baseline actions further enhance overall suppression of the contact-force fluctuations, confirming that the CPO-LQR-CtrlLaw method outperforms the baseline CPO-LQR controller.

4.3. Control Performance of the Proposed Framework

Building upon the aforementioned baseline controller and the control law method, we further validated the online performance of the proposed unified compensation control framework, examining both its convergence behavior during training and its suppression effectiveness on contact force under different speed conditions in online testing.

4.3.1. Training and Testing Results

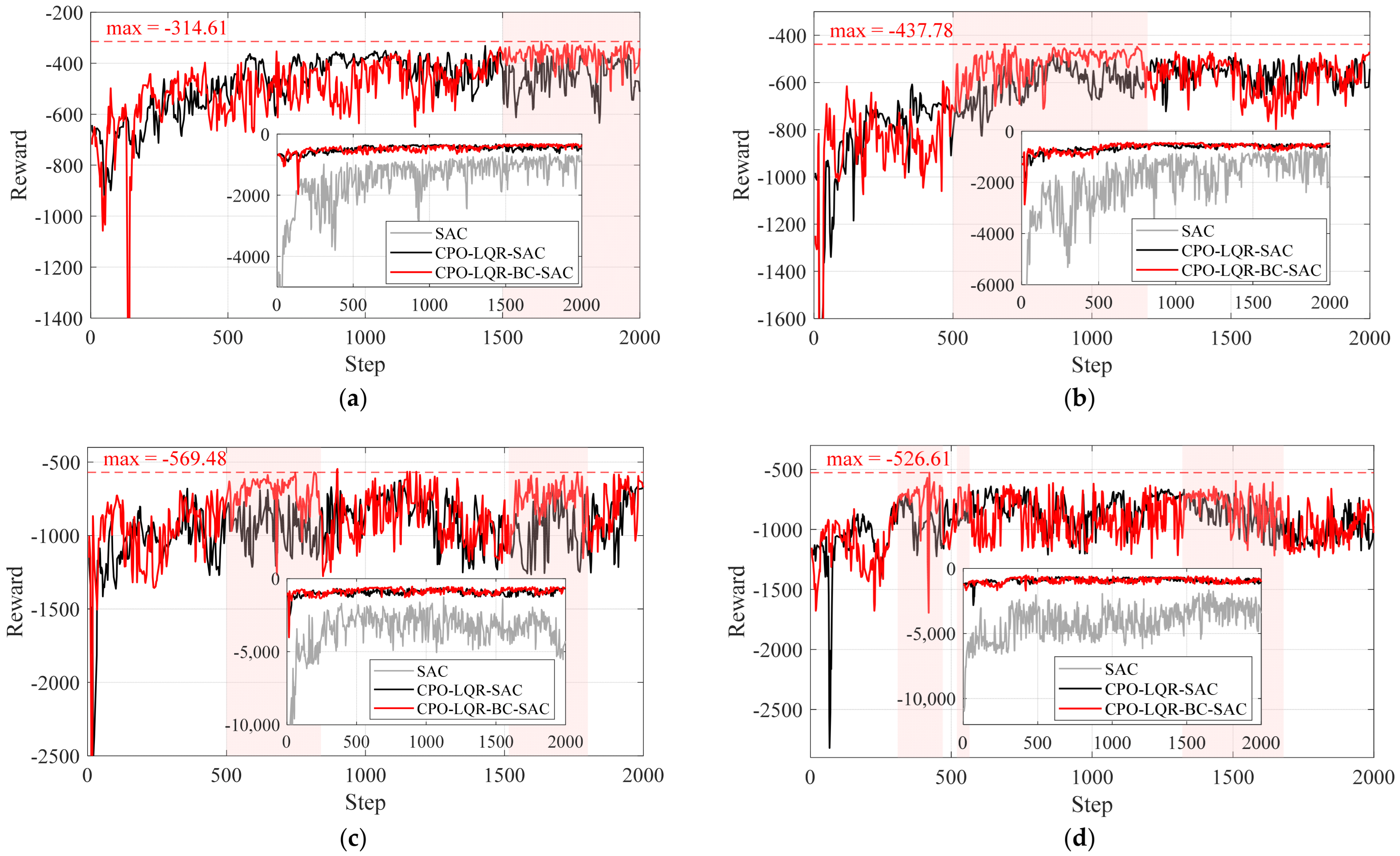

To comprehensively evaluate the contribution of each module in the proposed framework to control performance, we compared three schemes: the standalone SAC, the combined framework with CPO-LQR as the primary controller and SAC as the compensatory controller, and the proposed framework with the added behavior-cloning loss term. Their reward convergence curves are shown in Figure 14.

Regardless of speed, the standalone SAC algorithm exhibits the worst convergence: its reward curve fluctuates wildly and settles at the lowest final value, indicating failure to explore an optimal policy. When SAC compensation is grafted onto the CPO-LQR baseline at 320 km/h, fluctuations are damped, but the peak reward stalls and even declines after roughly 800–1200 episodes, failing to continue improving. In contrast, CPO-LQR-BC-SAC, which integrates behavior cloning, demonstrates a consistently increasing reward trend over 2000 episodes and reaches the highest final reward. This advantage persists at higher speeds: at 340 km/h, it converges to a significantly higher reward between episodes 500 and 1200; at 360 km/h, it outperforms in two distinct bands (episodes 500–840 and 1515–1800); and at 380 km/h, it remains superior over episodes 310–470, 520–565 and 1320–1680. These findings demonstrate that adding behavior cloning effectively guides the agent toward targeted exploration in the policy space, enhancing learning stability and ultimately discovering superior control strategies. It is worth noting that the reward curves at 360 km/h and 380 km/h are more volatile than at lower speeds—a reasonable outcome given that contact-force oscillations intensify with train velocity.

The online test results for all speed conditions are presented in Figure 15. The standalone SAC policy fails to outperform the baseline CPO-LQR at any speed. However, when SAC is employed as a compensator alongside the CPO-LQR primary controller, the fluctuation suppression performance is significantly enhanced, as demonstrated by reduced oscillation amplitudes and smaller control errors across all four speed conditions. Furthermore, with the addition of BC guidance, the proposed framework achieves the best performance among the four methods, exhibiting the lowest oscillation amplitude and the smallest deviation from the target contact force, thereby confirming its superiority.

4.3.2. Comparative Validation Based on Expert Action Compensation

As previously described, to address the impracticality of deploying the dual-model offline control law directly, we propose superimposing the secondarily tuned baseline control actions onto the real-time CPO-LQR outputs, thereby achieving performance beyond that of standalone CPO-LQR. These tuned actions are treated as expert demonstrations for subsequent behavior cloning. To validate the effectiveness of expert action compensation and to demonstrate that the proposed framework outperforms pure expert action imitation, Figure 16 compares the performance of four control schemes under the 320 km/h, 340 km/h, 360 km/h, and 380 km/h conditions. Here, Expert-Compensated CPO-LQR (ECCPO-LQR) refers to the control framework that relies entirely on expert-action-based compensation.

The experimental results in Figure 16 demonstrate that the ECCPO-LQR scheme significantly outperforms the CPO-LQR baseline and closely matches the performance of the ideal CPO-LQR-CtrlLaw. Hence, using these generated actions as “expert demonstrations” for behavior cloning is well justified. Furthermore, across all speed conditions, the CPO-LQR-BC-SAC framework not only retains the strengths of the expert demonstrations but, through autonomous exploration in deep reinforcement learning, surpasses both the CPO-LQR-CtrlLaw and ECCPO-LQR schemes. These results empirically demonstrate the effectiveness of our proposed active compensation control architecture, with imitation learning as its foundation and adaptive exploration enabling performance gains beyond the baseline.

4.4. Performance Evaluation

This subsection first conducts a comparative analysis of the proposed framework against various control algorithms under different speed conditions. Subsequently, to further verify its robustness, the trained control strategy is transferred to two additional pantograph types for testing, and its performance retention is evaluated.

4.4.1. Speed Range Performance Evaluation and Comparative Validation

Using the performance metrics defined in Section 2.3.1, we quantitatively compared the online test results of all control schemes discussed in this paper. The statistical summary of the control performance is presented in Table 4.

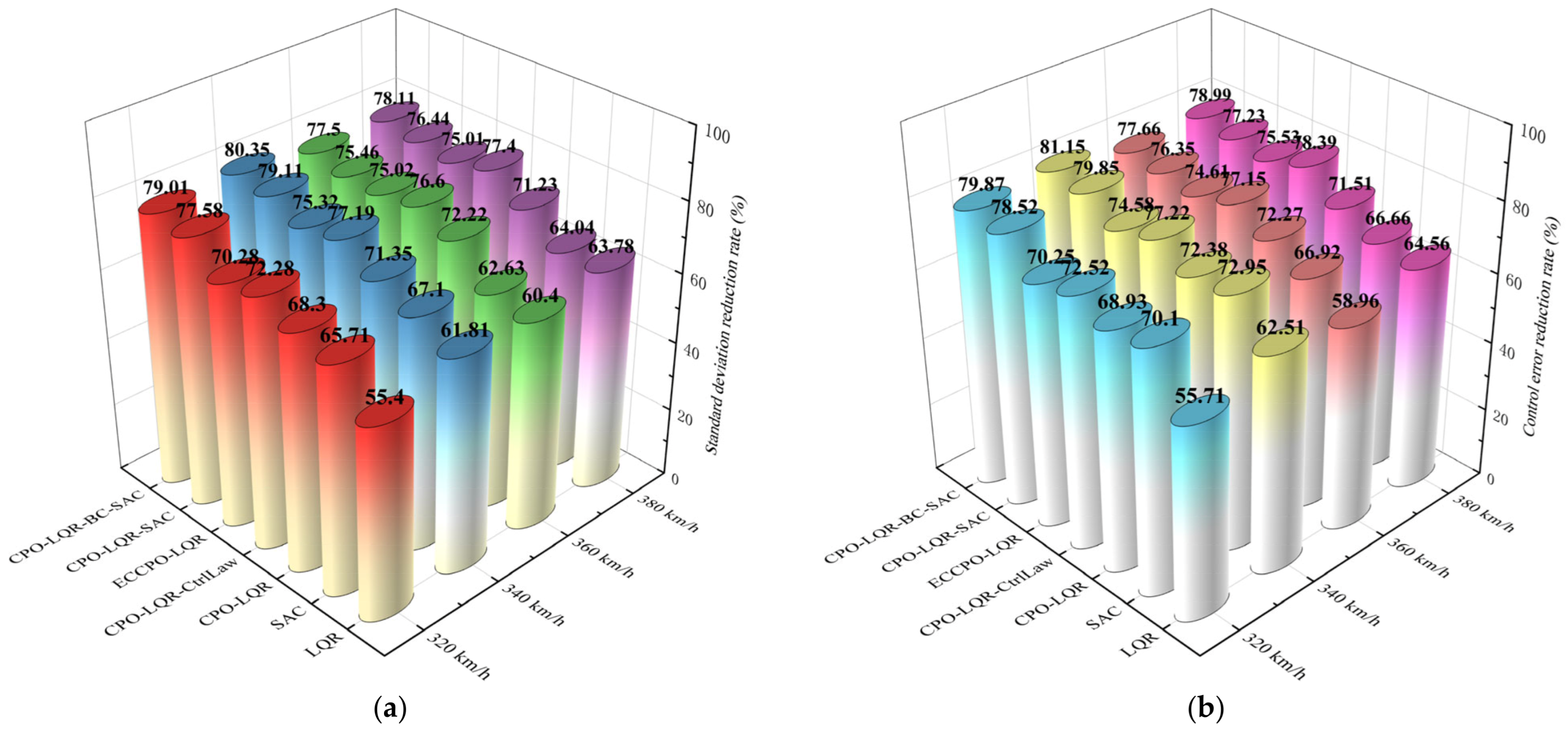

As observed in Table 4, the proposed framework achieves the lowest standard deviation of contact force across all four speed conditions compared to the other control schemes, with a maximum standard deviation reduction rate of 80.35% and reduction rates exceeding 77% at every speed. It also attains the minimum mean error, with a maximum error reduction rate of 81.15%, likewise exceeding 77% under all four speed conditions. These metrics substantiate the superior control performance of CPO-LQR-BC-SAC over the other control architectures. Crucially, progressive performance enhancement is evident: moving from SAC to CPO-LQR-CtrlLaw and ultimately to CPO-LQR-BC-SAC yields successively decreasing standard deviation values and monotonically increasing standard deviation reduction rates. This systematic improvement pattern, mirrored in the error metrics, supports the tiered optimization design of our compensation framework. For easier visual comparison of the control schemes across speed conditions, the tabular data are replotted in Figure 17.
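For readers reproducing the reduction rates, one plausible definition is the relative decrease of a metric with respect to the passive-control case, expressed as a percentage. The sketch below assumes that definition; the function name `reduction_rate` is hypothetical, and the numbers in the usage line are illustrative inputs, not results from Table 4.

```python
def reduction_rate(passive_value, controlled_value):
    """Percentage reduction of a metric relative to the passive-control value."""
    if passive_value <= 0:
        raise ValueError("passive_value must be positive")
    return 100.0 * (passive_value - controlled_value) / passive_value


# Illustrative inputs only: a standard deviation falling from 40 N to 10 N.
example_rate = reduction_rate(40.0, 10.0)
```

The same function applies to the mean control error, provided the passive-control error is used as the reference value.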

As shown in Figure 17a, for standard deviation reduction across all speed conditions, the seven control schemes display an overall increasing trend in reduction rates, and our CPO-LQR-BC-SAC framework consistently achieves the highest values. Although the ideal dual-model offline control method CPO-LQR-CtrlLaw also performs strongly at all speeds (ranking second at 360 km/h and 380 km/h, and third at 320 km/h and 340 km/h), its reduction rate is always lower than that of the proposed framework. This not only verifies the effectiveness of the offline tuning actions but also highlights the advantages of our proposed framework. From the control schemes’ perspective, the standard deviation reduction rate generally increases with speed; however, in the higher speed conditions (360–380 km/h), the gains for both CPO-LQR-BC-SAC and CPO-LQR-SAC taper off. This is likely due to more severe contact-force oscillations at higher speeds and the resulting instability in the agent’s exploration strategy, which limits further improvements and is consistent with their reward convergence behavior. Even in this speed band, our framework remains the top performer. The control error reduction shown in Figure 17b follows a similar pattern. Notably, SAC alone achieves error reduction rates of 70.10% and 72.95% at 320 km/h and 340 km/h, slightly above CPO-LQR’s 68.93% and 72.38%, but these gaps are minimal. At all other speeds, the improvement trends mirror those of the standard deviation reduction.

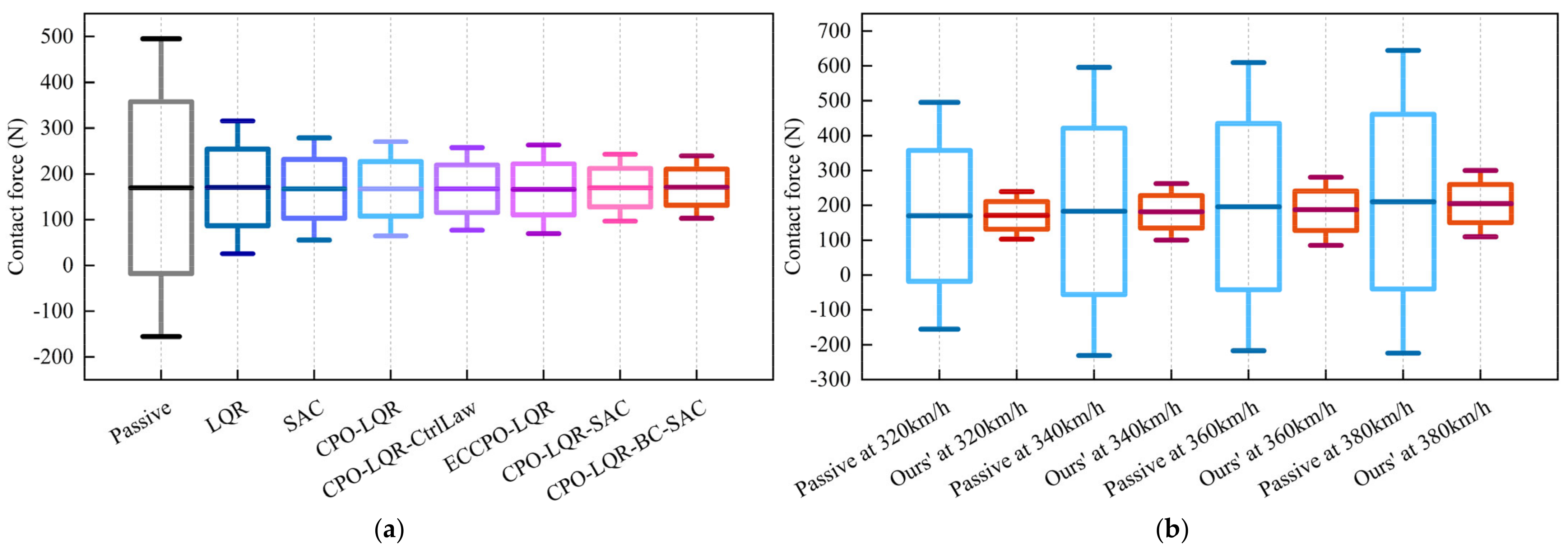

Based on the statistical contact force ranges discussed in Section 2.3.1, we compared the statistical range of the contact force under each control scheme at 320 km/h, as shown in Figure 18a. We also compared the statistical ranges under passive control and under the proposed framework across all four speed conditions, as shown in Figure 18b. Whether examined by control scheme (Figure 18a) or by speed condition (Figure 18b), the statistical contact force range under the proposed framework consistently remains close to the target value, whereas under passive control the range is markedly wider. These results further confirm the superior performance of the proposed compensation control framework in suppressing contact force fluctuations.
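For readers who wish to reproduce this kind of range comparison, the sketch below computes a statistical range from a contact force trace. It assumes the mean ± 3σ convention that is common in pantograph–catenary assessment; the definition given in Section 2.3.1 takes precedence if it differs, and the multiplier `k` is exposed as a parameter for that reason.

```python
import numpy as np

def statistical_range(force, k: float = 3.0):
    """Return (lower, upper) bounds mean - k*std and mean + k*std of a force trace in N.

    k = 3 is an assumed convention, not a value taken from this study.
    """
    force = np.asarray(force, dtype=float)
    mean = float(np.mean(force))
    std = float(np.std(force))  # population standard deviation (ddof=0)
    return mean - k * std, mean + k * std

def range_width(force, k: float = 3.0) -> float:
    """Width of the statistical range; a narrower range means smaller fluctuations."""
    lower, upper = statistical_range(force, k)
    return upper - lower
```

Under this convention the width equals 2kσ, so a controller that lowers the standard deviation narrows the range around the mean force by the same proportion.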

Furthermore, the adoption of LQR and SAC in the proposed framework is motivated by previous research findings. Specifically, LQR is included based on the results reported in [11], where it was shown to achieve highly effective control performance. Similarly, SAC is incorporated with reference to [17, 18], which demonstrated that SAC outperforms other DRL-based control algorithms in exploring control actions for continuously oscillatory tasks such as pantograph–catenary contact force regulation. Therefore, LQR and SAC were selected as the core components for comparative evaluation in this study. To provide an approximate performance reference relative to existing work, we extracted control performance metrics from two recent studies, CB-DMRL [18] and IDDPG [19], which were conducted under comparable speed conditions (320–380 km/h). A summary of these results is presented in Table 5.

Although the exact simulation environments and disturbance assumptions in those works differ from ours, a speed-matched comparison shows that our proposed CPO-LQR-BC-SAC framework achieves markedly lower contact force standard deviations and higher reduction rates across all test speeds. For instance, at 320 km/h, our method reduces the standard deviation to 22.77 N (a 79.01% reduction), outperforming both CB-DMRL (32.82 N, 14.71%) and IDDPG (42.98 N, 45.12%). Similarly, at 380 km/h, our framework maintains a reduction rate of 78.11%, considerably higher than CB-DMRL’s 35.69%.
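When reading such cross-study figures, it can help to recover the uncontrolled baseline that each pair of numbers implies. The sketch below does this for the 320 km/h values quoted above, assuming that each percentage is a reduction rate relative to that study’s own uncontrolled standard deviation; this interpretation is an assumption, and the helper name is illustrative.

```python
def implied_baseline_std(controlled_std: float, reduction_pct: float) -> float:
    """Invert reduction = (baseline - controlled) / baseline to recover the baseline in N."""
    if not 0.0 <= reduction_pct < 100.0:
        raise ValueError("reduction_pct must lie in [0, 100)")
    return controlled_std / (1.0 - reduction_pct / 100.0)

# (controlled standard deviation in N, reduction rate in %) at 320 km/h, as quoted above
reported_320 = {
    "CPO-LQR-BC-SAC": (22.77, 79.01),
    "CB-DMRL": (32.82, 14.71),
    "IDDPG": (42.98, 45.12),
}

implied = {
    name: implied_baseline_std(std, pct) for name, (std, pct) in reported_320.items()
}
```

Under this assumption the implied uncontrolled standard deviations are roughly 108 N, 38 N, and 78 N, respectively. The baselines therefore differ considerably between studies, so the absolute values and the reduction rates are best read together.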

Since vehicle speed is the most critical factor affecting control performance, surpassing the impact of other disturbances in both simulations and real-world applications, we aligned the test conditions in terms of speed to make the comparison as fair as possible. Additionally, our work adopts modeling procedures based on the EN 50318 [28] and GB/T 32591 [29] standards, ensuring consistency in the modeling framework. Because the experimental platforms are not identical and some implementation details of the two referenced works are not fully disclosed, this comparison is not intended as a strict benchmark. Rather, it serves as a contextual reference that highlights the potential performance advantage of our method under similar speed conditions. Within these limits, our approach consistently delivers better control performance at the same speeds, which suggests both its effectiveness and its potential for practical application.

4.4.2. Robustness Validation Across Different Pantograph Types

The control strategy trained using the parameters of the DSA380 pantograph was transferred directly, without retraining, to two different pantograph types (DSA350S and SSS400+) and tested in simulation at 320 km/h to evaluate whether the proposed framework maintains its control performance under parameter variations. The model parameters of the two additional pantograph types are listed in Table 6.

As shown in Figure 19, even with changes in the model parameters, the proposed framework not only clearly outperforms passive control but also consistently outperforms the baseline CPO-LQR method, effectively suppressing contact force fluctuations. Both the contact force and error waveforms show that the oscillation amplitude is notably reduced for both pantograph types and that the errors are smaller than those under the baseline control. It should be noted, however, that although strong control performance is retained, some performance drop relative to the DSA380 results is to be expected. To illustrate this difference more clearly, a cross-type comparison of performance metrics is presented in Table 7.

The results show that the standard deviation reduction rate is lowest on DSA350S and slightly higher on SSS400+, with both below the 79.01% achieved on DSA380. The largest performance drop occurs on DSA350S, partly because its standard deviation under passive control is already lower, leaving less room for improvement. Nevertheless, the performance on both additional types remains high and surpasses that of the baseline CPO-LQR, indicating that the control strategy exhibits a degree of robustness to model variations. This robustness stems from two main factors: (1) the baseline CPO-LQR controller is inherently robust, and (2) the BC-SAC compensation strategy does not compromise system stability but instead enhances the suppression of fluctuations within a controlled range. Consequently, the proposed framework maintains strong control performance even under variations in pantograph model parameters.
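The second factor can be illustrated with a bounded additive compensation, in which the learned correction is clipped before it is added to the baseline control force. This is a minimal sketch of that idea, not the implementation used in this study: the additive structure, the bound `delta_max`, and the actuator limits `u_min` and `u_max` are all assumptions introduced for illustration.

```python
import numpy as np

def compensated_action(u_baseline, delta, delta_max, u_min, u_max):
    """Add a bounded learned correction to the baseline control force (all in N).

    u_baseline: output of the baseline controller (e.g., CPO-LQR)
    delta:      raw correction proposed by the learned policy
    delta_max:  hypothetical bound on the correction magnitude
    u_min/max:  hypothetical actuator force limits
    """
    bounded_delta = np.clip(delta, -delta_max, delta_max)  # keep the correction small
    return np.clip(u_baseline + bounded_delta, u_min, u_max)  # respect actuator limits
```

With this structure, a zero correction returns the baseline action unchanged, and the compensated action never deviates from the baseline by more than `delta_max`. A poorly generalizing policy on an unseen pantograph type can therefore degrade the baseline behavior only by a bounded amount.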

5. Conclusions

This article addresses extreme PCCF fluctuations in high-speed railway systems by proposing a CPO-LQR-BC-SAC active compensation control framework, which progressively integrates optimization, imitation learning, and reinforcement learning to achieve high-performance suppression of contact force fluctuations. The main conclusions are as follows:

- (1) The baseline CPO-LQR controller is constructed and optimized using the CPO algorithm, yielding a high-performance control policy that effectively reduces PCCF fluctuations across varying speeds.

- (2) An offline secondary-tuned control law based on a dual-model structure further refines the control actions and provides expert demonstrations that enhance oscillation suppression.

- (3) A practical compensation strategy is developed by integrating real-time CPO-LQR outputs with expert action corrections within a unified single-model framework.

- (4) The CPO-LQR-BC-SAC learning framework is trained through a hybrid of behavior cloning and SAC, enabling it to imitate expert actions while maintaining exploratory capabilities.

The proposed framework reduces the standard deviation of PCCF by over 77% at all tested speeds, and the trained control strategy transfers to different pantograph types while retaining strong performance, which indicates its generalization capability. Future research will aim to enhance model fidelity by incorporating extreme-condition factors such as line tension variations, crosswinds, and structural heterogeneity. Furthermore, hardware-in-the-loop experiments will be conducted to validate the practical stability of the framework and support its real-world deployment.