Highlights

What is the main finding?

- A multi-level collaborative fusion strategy (MLC) is developed for multi-source optical data that integrates the strengths of both pixel-level and feature-level fusion.

What are the implications of the main findings?

- An optical–SAR fusion framework is proposed that integrates multi-source optical and multi-temporal Sentinel-1 SAR images for mapping forest AGC in mixed broad-leaved and coniferous forests.

- The saturation effect commonly observed in optical data is effectively mitigated by the optical–SAR collaborative approach.

Abstract

For assessing forest resource quality and carbon sequestration, both optical and synthetic aperture radar (SAR) remote sensing data have been widely used to map forest aboveground carbon storage (AGC), demonstrating considerable potential across diverse forest types. However, fusing SAR and optical data remains technically challenging, particularly when multi-source optical and multi-temporal SAR datasets are combined. In this study, multiple optical datasets with varying spatial resolutions and spectral bands (Landsat-9, Sentinel-2, GF-6 PMS, and GF-6 WFV) and time-series Sentinel-1 data acquired within the same year were used to develop an optical–SAR fusion framework for mapping forest AGC in mixed broadleaf–coniferous forests. First, a multi-level collaborative fusion strategy (MLC) was developed for the multi-source optical data by integrating the strengths of both pixel-level and feature-level fusion. Second, a multi-temporal SAR combining approach was designed based on seasonal variation patterns using one year of time-series Sentinel-1 data. Finally, an optical–SAR modeling approach was established to map forest AGC using multiple machine learning models combined with sequential forward feature selection. The results demonstrate that the proposed MLC fusion method for multi-source optical data offers significant advantages in improving estimation accuracy and model robustness. Furthermore, when multi-temporal Sentinel-1 data were integrated with the MLC-fused optical data, the optical–SAR collaborative approach further improved the coefficient of determination (R2) and effectively mitigated the saturation effect commonly observed in optical data. The best performance was achieved by the SVR model using spring-acquired multi-temporal Sentinel-1 data, which yielded an R2 of 0.69 and reduced rRMSE to 18.03%. These results indicate that an appropriate strategy for fusing optical and SAR data can substantially improve both the accuracy and the reliability of forest AGC mapping.

1. Introduction

Mapping forest aboveground carbon storage (AGC) is crucial for quantifying carbon sequestration and supporting reliable global carbon budget assessments. Recently, driven by rapid advancements in remote sensing technologies and artificial intelligence, data-driven approaches that integrate remote sensing data with ground-based samples have emerged as the dominant method for mapping forest AGC [1]. Due to differences in data collection methodologies between sensors, both optical and synthetic aperture radar (SAR) data exhibit distinct advantages and limitations in observing forest parameters [2,3].

Optical data remain the primary data source owing to their wide availability and rich spectral information [4,5]. However, optical sensors face an inherent trade-off between spatial and spectral resolution, which limits further improvements in the accuracy of forest AGC mapping [6,7]. Moreover, because individual sensors are limited in spatial, spectral, or temporal resolution, precisely characterizing the intricate relationships between forest parameters and features extracted from optical data remains a significant challenge. Additionally, accurate monitoring of forest AGC in heterogeneous regions requires high-frequency, temporally continuous observations [4,8,9]. Yet challenging geographical and climatic conditions, including rugged terrain, frequent rainfall, fog, and persistent cloud cover, substantially reduce the temporal frequency and continuity of usable optical data. Integrating multi-source optical data with diverse spatial resolutions, temporal resolutions, and spectral bands is therefore a crucial way to fill the frequent observational gaps in heterogeneous forest regions.

To address the inherent trade-off among temporal, spatial, and spectral resolution in single-source optical data, previous studies have extensively explored multi-source optical data fusion techniques. These methods aim to exploit the complementary advantages of different sensors and platforms to generate fused outputs that simultaneously offer high temporal resolution, high spatial resolution, and high spectral fidelity for targeted applications [10]. Fusion approaches have evolved from early component substitution methods, such as the Gram–Schmidt (GS) and Brovey transforms, through multi-resolution analysis techniques (e.g., wavelet transforms) and model-driven strategies such as the spatial–temporal adaptive reflectance fusion model (STARFM) [10,11], to the end-to-end deep learning-based fusion networks toward which the field has recently shifted [11]. Despite the considerable potential of multi-source optical data fusion, significant challenges remain in fusion strategies. In particular, pixel-level fusion is prone to spectral distortion, whereby the spatial resolution of the fused imagery is enhanced at the expense of spectral fidelity, so that the fused data may deviate from the original multispectral or hyperspectral data [8,9]. Feature-level fusion, while facilitating information complementarity, often suffers from noise interference and data inconsistency [8]. Moreover, mainstream feature-level fusion strategies sharply increase the number of features, which complicates feature selection, substantially increases the complexity of inversion models, and raises the risk of overfitting [9]. In forest AGC mapping, the objective of multi-source optical data fusion is to strengthen the sensitivity of key remote sensing features to AGC by mitigating the spatial–temporal inconsistency of remote sensing data. However, most approaches focus on improving spatiotemporal resolution rather than on enhancing the sensitivity of feature descriptors to AGC. Consequently, developing an optimal multi-source optical data fusion strategy remains challenging, given the structural complexity of forests and the heterogeneity of sensors.

SAR and polarimetric SAR data, by contrast, offer all-weather, day-and-night observation capabilities together with partial vegetation penetration, enabling more reliable multi-temporal monitoring of forested areas through canopy-level observations [5]. Currently, polarimetric SAR data, such as dual-polarization Sentinel-1 imagery, have become increasingly important in forest AGC estimation [12,13]. However, the temporal non-stationarity of polarimetric SAR data, frequently observed between acquisitions separated by short time intervals, can lead to significant inconsistencies in forest parameter inversion across dates, severely undermining the reliability of forest AGC products [13,14]. Multi-temporal polarimetric SAR data fusion, which jointly exploits temporal and polarimetric information, is considered a key strategy for mitigating the spatiotemporal instability of polarimetric features [15]. Previous studies have shown that improving the temporal stability of these features increases the accuracy of parameter inversion, with multi-temporal averaging being the most commonly used method [13,14,15]. Moreover, feature-level integration of multi-temporal SAR data has been shown to improve regression model performance, yielding more accurate inversion results [15,16]. Nevertheless, uncertainties in the selection and fusion of multi-temporal polarimetric SAR data persist because of factors such as forest growth dynamics, precipitation events, and variations in soil moisture, limiting their accuracy in mapping forest AGC [2,3,17].

To exploit the respective advantages of optical and SAR data, developing effective strategies for fusing multi-source and multi-temporal remote sensing data has become a critical direction for improving the accuracy of forest AGC mapping [6,18,19,20]. The core advantage of integrating optical and SAR data for mapping forest AGC lies in their ability to provide complementary, multi-dimensional information [21,22]. However, fundamental differences in imaging mechanisms and electromagnetic wave properties between optical and SAR sensors make their effective integration challenging [19,20]. As a result, current fusion strategies typically operate at the feature level: vegetation indices and textural metrics extracted from optical data are combined with SAR-derived features, including backscatter coefficients, interferometric coherence, and polarimetric decomposition parameters, to form an enhanced feature set for quantitative forest parameter inversion [19]. Previous studies suggest that feature-level fusion, which combines spectral indices from optical data with polarimetric features from SAR data into joint high-dimensional feature sets, can effectively leverage the complementary strengths of multi-source data in forest parameter estimation and shows significant potential [20,23,24]. Nevertheless, because polarimetric features are generally less strongly correlated with forest parameters than spectral features, simple feature concatenation may cause models to learn insufficiently from SAR-derived information, reducing the contribution of SAR data and limiting the benefit of fusing multi-source datasets [24,25]. It is therefore essential to enhance the spatiotemporal stability of polarimetric features through multi-temporal observations in order to leverage the complementary strengths of optical and SAR data for mapping forest AGC.

To reduce the redundancy of features derived from multi-source optical data and to improve the spatiotemporal stability of polarimetric features, this study aims to develop an optical–SAR fusion framework for mapping forest AGC by integrating multi-temporal SAR data with multi-source optical data. First, a multi-level collaborative fusion framework (MLC) was proposed to integrate multi-source optical data (Landsat-9, Sentinel-2, GF-6 PMS, and GF-6 WFV) by incorporating the goal of forest AGC mapping into a unified multi-source data fusion process. Second, to reduce the influence of the spatial–temporal non-stationarity of polarimetric SAR data on feature sensitivity, a multi-temporal SAR combining approach based on seasonal variation patterns was developed using one year of time-series Sentinel-1 data. Finally, an optical–SAR modeling approach was established to map forest AGC using multiple machine learning models combined with sequential forward feature selection. Various fusion strategies for both the multi-source optical and the multi-temporal Sentinel-1 data were systematically evaluated, and the potential of combining multi-source optical and multi-temporal SAR data to improve forest AGC mapping accuracy was investigated in comparison with using multi-source optical data alone.

2. Study Area and Data

2.1. Study Area

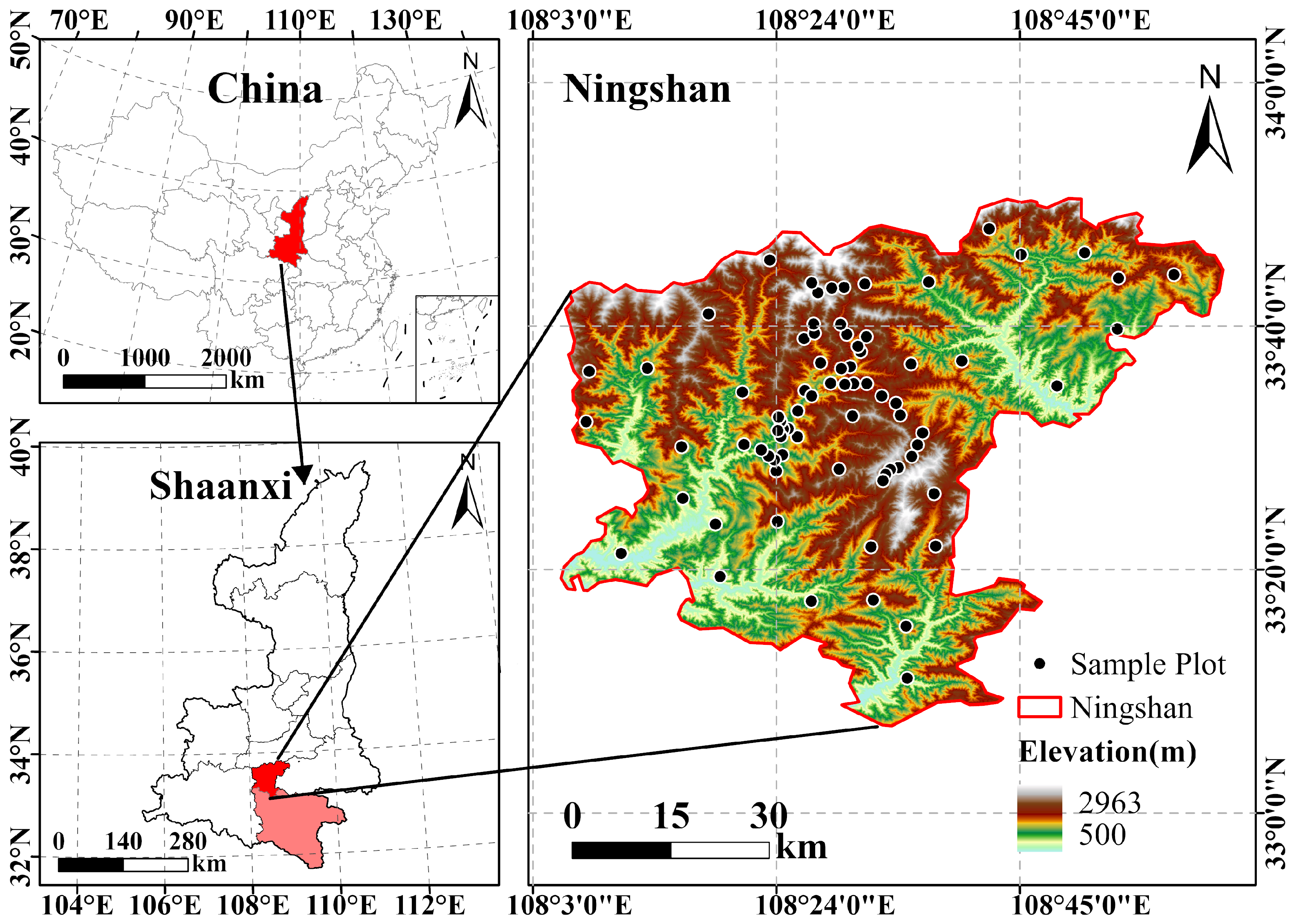

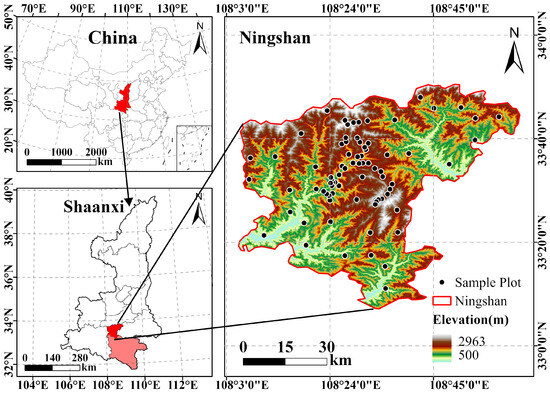

Ningshan County, located between 33°7′11″N and 33°50′38″N and between 108°2′33″E and 108°56′48″E (Figure 1), was selected as the study area. Situated in the northwest of Ankang City, Shaanxi Province, it lies on the southern slope of the central Qinling Mountains and belongs to the Hanjiang River system of the Yangtze River Basin. The region has a semi-humid subtropical climate, with a mean annual temperature of 12.5 °C, mean annual precipitation of 873.2 mm, and approximately 210 frost-free days [26]. The landscape is predominantly mountainous and deeply dissected, shaped largely by the main Qinling range and the Pinghe Ridge. The terrain is highly complex, with an elevation difference of 2425 m between the highest and lowest points, resulting in pronounced vertical zonation in climate and ecosystems. In high-altitude zones, brown and yellow-brown soils predominate, supporting forest vegetation dominated by fir, birch, and mixed coniferous–broadleaved forests. Mid-elevation areas are primarily covered by yellow-brown soil and host vegetation such as oak, Huashan pine, larch, and bamboo. Lowland regions are characterized by paddy soils and chernozem, with forest cover including walnut, chestnut, Quercus variabilis, and patches of cultivated farmland.

Figure 1.

Maps of the study area and the ground-measured sample plots.

2.2. Ground Data

To map forest AGC, 77 standard plots (20 m × 20 m) were established through stratified random sampling based on continuous forest inventory data. The plots were located on slopes ranging mainly from 18° to 35°. For every tree with a diameter at breast height (DBH) exceeding 5 cm, individual tree parameters were measured, including DBH, crown width, and canopy height. In addition, high-precision geographic coordinates of each plot’s four corners and center were measured using global navigation satellite system (GNSS) equipment [27]. Individual tree volumes were calculated from the field measurements using a binary volume table, and the total volume per unit area was obtained by summing the volumes of all trees within each plot [28]. Forest aboveground biomass was then calculated using the continuous function method of conversion factors described in the “Assessment of Biomass and Carbon Storage of Forest Vegetation in China” [29]. Finally, the carbon storage per unit area of the tree layer in each plot (excluding deadwood) was calculated by applying carbon content coefficients specific to the dominant tree species.

$$B = aV + b$$

$$C = B \times CF$$

where V is the total stand volume per unit area (m³/hm²); B is forest aboveground biomass (t/hm²); a and b are the conversion parameters between volume and biomass; C is carbon storage (MgC/hm²); and CF is the carbon content coefficient. In this study, the sample plots represented six major forest types: Pinus tabulaeformis forests (11 plots), Cunninghamia lanceolata forests (one plot), Quercus forests (15 plots), Larix gmelinii forests (one plot), broad-leaved mixed forests (47 plots), and Abies fabri forests (two plots). The aboveground biomass (AGB) and AGC conversion parameters of the main dominant tree species are listed in Table 1.
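The plot-level chain from tree volumes to carbon storage can be sketched as follows. This minimal sketch assumes the linear volume-to-biomass form B = aV + b implied by the parameter definitions, together with C = B × CF; the tree volumes and the values of a, b, and CF are hypothetical placeholders, not the species-specific parameters in Table 1.

```python
# Minimal sketch of the plot-level V -> B -> C chain.
# ASSUMPTIONS: B = a*V + b and C = B*CF; every numeric value below is a
# hypothetical placeholder, not a Table 1 parameter.

PLOT_AREA_HM2 = (20 * 20) / 10_000  # a 20 m x 20 m plot covers 0.04 hm2


def stand_volume(tree_volumes_m3):
    """Total stem volume of one plot, scaled to m3/hm2."""
    return sum(tree_volumes_m3) / PLOT_AREA_HM2


def aboveground_biomass(volume, a, b):
    """Aboveground biomass (t/hm2) from stand volume (m3/hm2)."""
    return a * volume + b


def aboveground_carbon(biomass, cf):
    """Aboveground carbon (MgC/hm2); t and Mg are the same mass unit."""
    return biomass * cf


# Usage with hypothetical tree volumes (m3) and hypothetical parameters
trees = [0.12, 0.35, 0.08, 0.50, 0.21]
volume = stand_volume(trees)
biomass = aboveground_biomass(volume, a=0.75, b=30.0)
carbon = aboveground_carbon(biomass, cf=0.5)
```

The resulting plot-level carbon value is the response variable that the remote sensing features are later regressed against.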

Table 1.

AGB and AGC transformation parameters of dominant tree species.

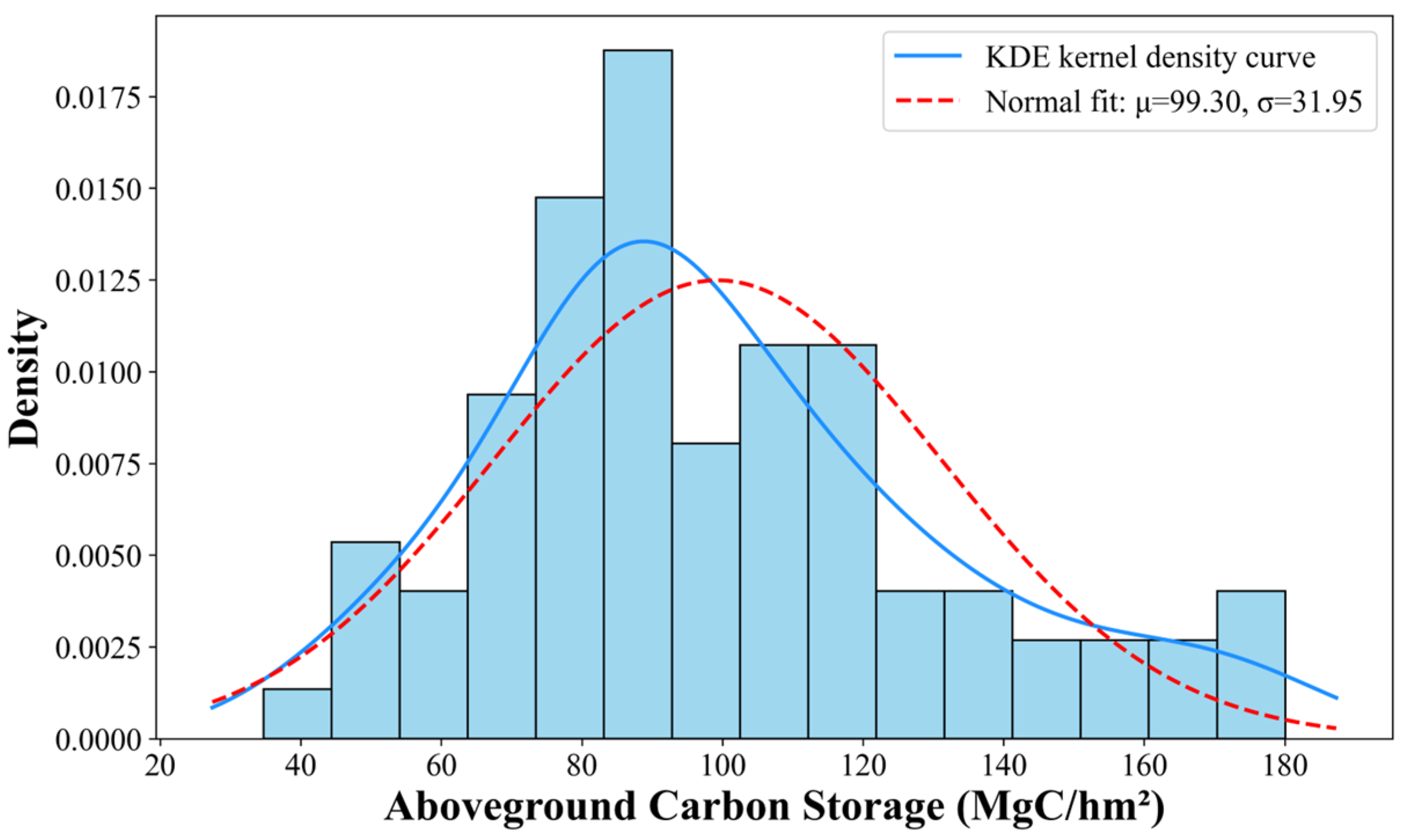

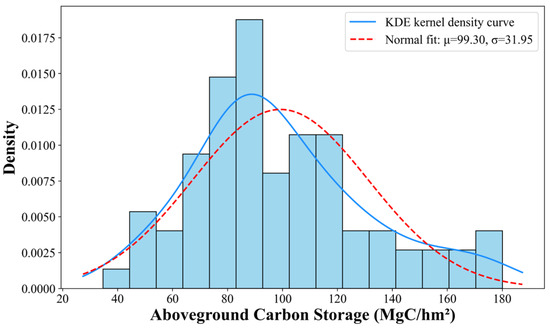

The AGC values of the sample plots approximately follow a normal distribution (Figure 2): the histogram is predominantly bell-shaped, with a slight positive skew evident in the extended tail toward higher carbon storage values. Despite these minor deviations, the distribution is close enough to normal to be considered appropriate for the subsequent parametric statistical analyses.
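The normality judgment above is made from the histogram. One simple numerical complement, sketched here as an assumption rather than as the authors' procedure, is to compute the sample skewness and excess kurtosis of the plot AGC values: both are near zero for normally distributed data, and a positive skewness corresponds to a longer upper tail. A formal test such as `scipy.stats.shapiro` could be used instead; the AGC values in the usage lines are hypothetical.

```python
def skewness_and_excess_kurtosis(values):
    """Moment-based (biased) sample skewness and excess kurtosis.

    Both statistics are close to 0 for normally distributed data; a positive
    skewness indicates a longer tail toward high values.
    """
    n = len(values)
    if n < 2:
        raise ValueError("need at least two values")
    mean = sum(values) / n
    m2 = sum((x - mean) ** 2 for x in values) / n
    if m2 == 0:
        raise ValueError("values are constant")
    m3 = sum((x - mean) ** 3 for x in values) / n
    m4 = sum((x - mean) ** 4 for x in values) / n
    return m3 / m2 ** 1.5, m4 / m2 ** 2 - 3.0


# Usage with hypothetical AGC values (MgC/hm2), not the study's field data
agc = [62.0, 75.5, 81.2, 90.3, 95.0, 99.8, 104.1, 112.7, 128.4, 160.9]
skew, kurt = skewness_and_excess_kurtosis(agc)
```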

Figure 2.

The histogram of forest AGC for all samples.

2.3. Remote Sensing Data and Preprocessing

In this study, two types of remote sensing data, namely multi-source optical data and multi-temporal SAR data, were integrated to construct an enhanced variable set (Table 2). To fully utilize the synergistic advantages of spatial resolution, temporal resolution, and spectral characteristics, the optical data were mainly sourced from China’s Gaofen-6 (GF-6) PMS and WFV sensors [30], the European Space Agency’s Sentinel-2 mission, and NASA’s Landsat-9 satellite [31]. Additionally, due to the high-frequency acquisition capability of Sentinel-1 data, 12 dual-polarization (VV and VH) Sentinel-1 SAR images were acquired between January and December 2023. These SAR images have a spatial resolution of 10 m and an incidence angle ranging from 35.06° to 35.08°.

Table 2.

The information of acquired remote sensing data.

Before features were extracted from the multi-source optical data, a comprehensive preprocessing workflow including radiometric calibration, atmospheric correction, terrain correction, and geometric correction was carried out. To extract polarization information from the Sentinel-1 data, the multi-temporal images were first precisely co-registered using SNAP software (version 10.0.0) [15,26]. Multi-temporal filtering was then applied to the SAR data to reduce speckle noise and improve image quality. Finally, an external digital elevation model (DEM) with a spatial resolution of 12.5 m was used for geocoding and radiometric calibration.
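The text does not name the multi-temporal speckle filter that was applied. As an illustration only, the sketch below implements the widely cited Quegan multi-temporal filter, in which each date's local mean is multiplied by the average, over all N dates, of each image's ratio to its own local mean: J_k = (E[I_k]/N) Σ_i I_i/E[I_i]. The box-window local mean, the window size, and the `eps` guard are assumptions, and the input is assumed to be a co-registered stack of linear intensities.

```python
import numpy as np


def box_mean(img, size):
    """Local mean over a size x size window (odd size), reflecting at edges."""
    if size % 2 == 0:
        raise ValueError("size must be odd")
    img = np.asarray(img, dtype=float)
    pad = size // 2
    padded = np.pad(img, pad, mode="reflect")
    # Summed-area table with a leading row and column of zeros
    sat = np.zeros((padded.shape[0] + 1, padded.shape[1] + 1))
    sat[1:, 1:] = padded.cumsum(axis=0).cumsum(axis=1)
    h, w = img.shape
    window_sum = (sat[size:size + h, size:size + w] - sat[:h, size:size + w]
                  - sat[size:size + h, :w] + sat[:h, :w])
    return window_sum / (size * size)


def quegan_filter(stack, size=5, eps=1e-12):
    """Multi-temporal speckle filter for an (N, H, W) stack of linear intensities.

    Output for date k: local_mean_k * mean over i of (I_i / local_mean_i).
    """
    stack = np.asarray(stack, dtype=float)
    local_means = np.stack([box_mean(img, size) for img in stack])
    ratio_mean = (stack / (local_means + eps)).mean(axis=0)
    return local_means * ratio_mean
```

Because every date contributes to the ratio term, speckle is averaged across time while each output image keeps its own local mean backscatter.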

3. Methodology

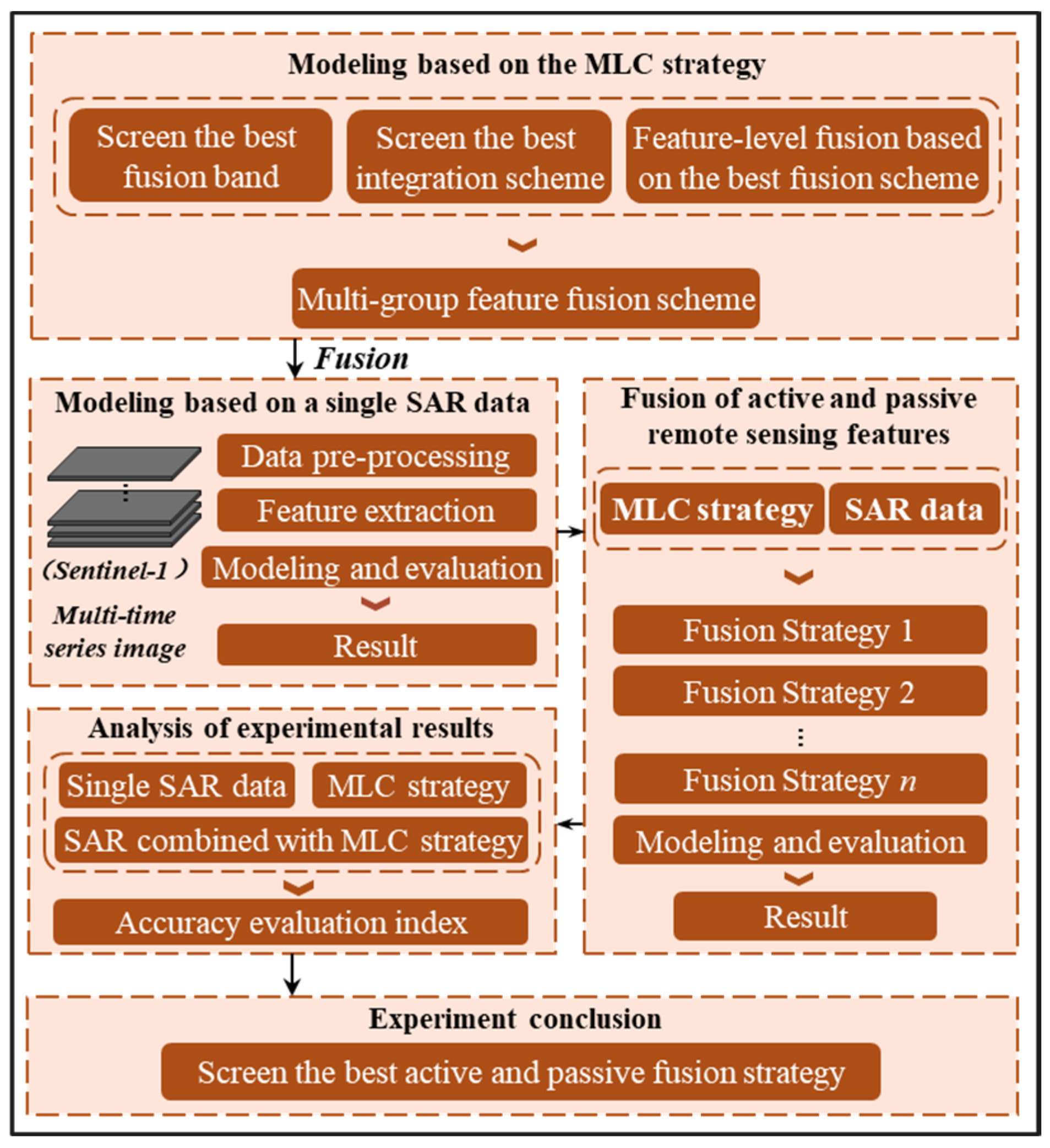

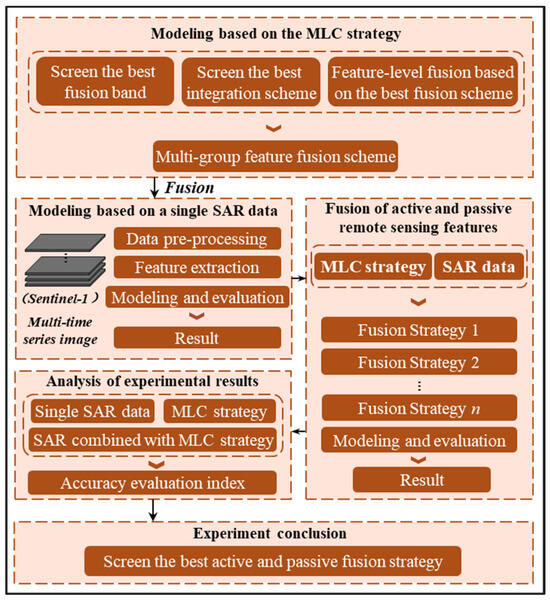

To improve the accuracy of mapping forest AGC by fusing multi-source optical and multi-temporal Sentinel-1 data in mixed broadleaf–coniferous forests, the multi-level collaborative fusion framework (MLC) and the multi-temporal SAR combining approach were initially proposed based on multi-source optical data and multi-temporal Sentinel-1 images, respectively. Subsequently, various feature sets were extracted from fused optical data and multi-temporal Sentinel-1 images by leveraging seasonal variation patterns. Finally, an optical–SAR modeling approach was developed using machine learning models, and a comprehensive evaluation of the model performance was conducted to demonstrate the potential of combining optical and SAR data for improving the accuracy of forest AGC mapping (Figure 3).

Figure 3.

Flowchart of optical–SAR fusion framework for mapping forest AGC by integrating multi-temporal SAR data with multi-source optical data.

3.1. Multi-Level Collaborative Fusion Framework Using Multi-Source Optical Images

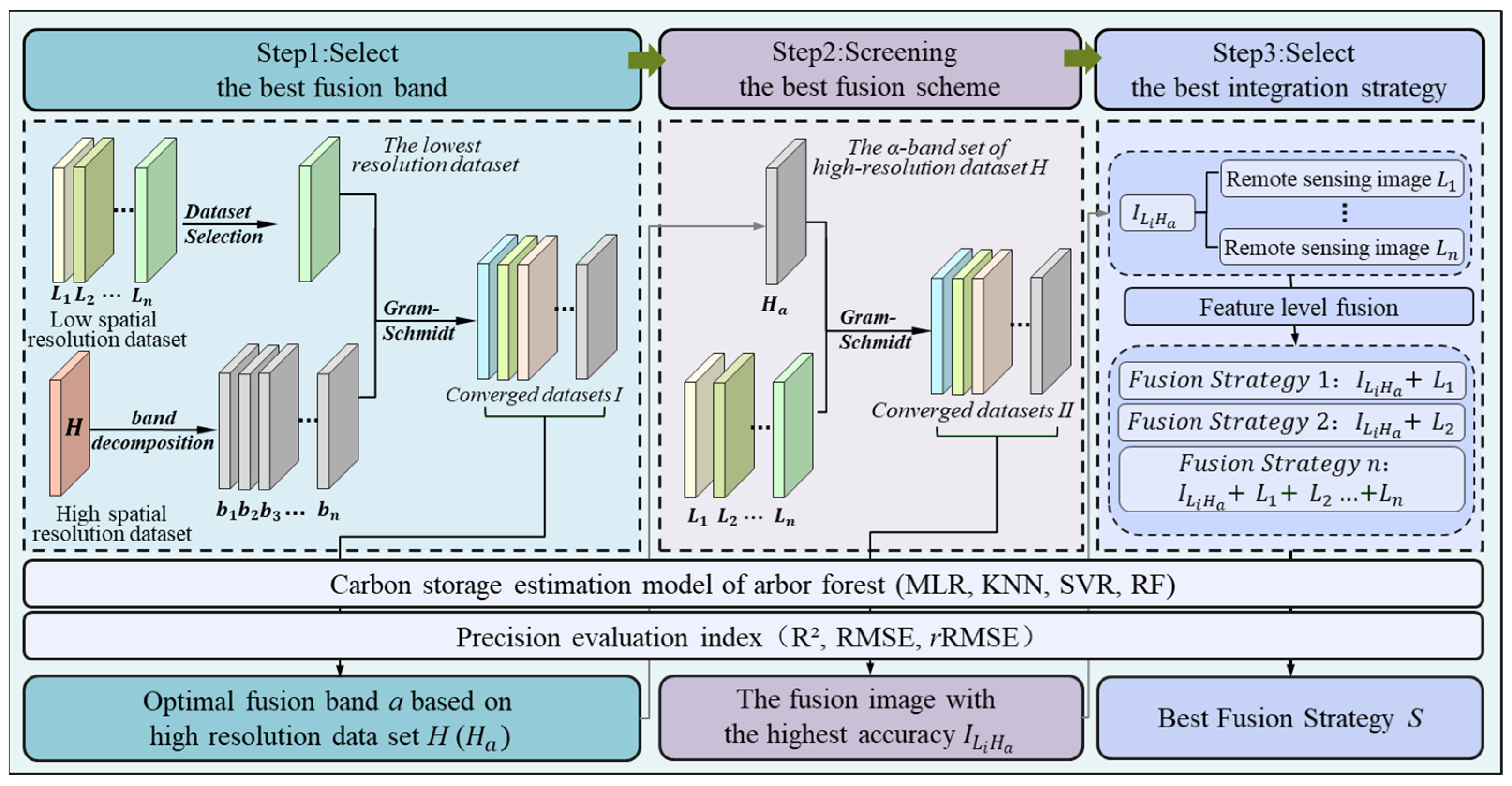

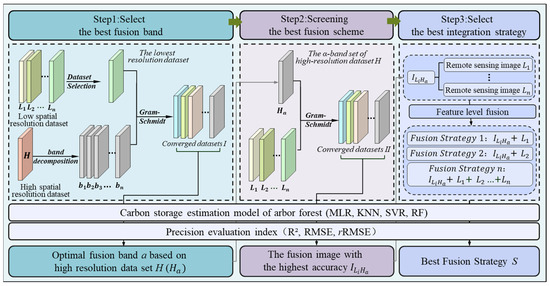

Because individual optical sensors are constrained in detection capability and observation frequency, integrating multi-source optical data can improve the accuracy of forest AGC estimation. Leveraging the complementary strengths of pixel-level and feature-level fusion, this study proposes a multi-level collaborative fusion framework (MLC) that incorporates AGC estimation into a unified multi-source data fusion framework (Figure 4). The framework uses hierarchical fusion, modeling, and evaluation to systematically identify the optimal fusion strategy.

Figure 4.

The flowchart of multi-level collaborative fusion using multi-source optical images.

First, the multi-source optical data are divided into a low-spatial-resolution group and a high-spatial-resolution group, and the dataset with the lowest spatial resolution is selected from the low-resolution group. This image is then fused with each band of the high-spatial-resolution data using the Gram–Schmidt (GS) method, a widely adopted pan-sharpening technique for merging high-spatial-resolution panchromatic data with lower-resolution multispectral imagery. The fusion results for the different bands are then evaluated with quantitative accuracy metrics for forest AGC mapping, and the best-performing high-resolution band is designated the optimal band (α).
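The GS step can be illustrated with a simplified component-substitution form of GS pan-sharpening, in which the synthetic low-resolution intensity is the mean of the multispectral bands and each band receives an injection gain of cov(MS_k, I)/var(I). This is a sketch under stated assumptions, not the commercial GS implementation used in practice: the multispectral bands are assumed to be already co-registered and resampled to the grid of the high-resolution band, and, as in the MLC procedure, the "panchromatic" input may be any single high-resolution band.

```python
import numpy as np


def gs_component_substitution(ms_up, pan):
    """Simplified Gram-Schmidt-style (component substitution) pan-sharpening.

    ms_up: (K, H, W) low-resolution bands resampled to the high-res grid.
    pan:   (H, W) single high-resolution band used as the "panchromatic" input.
    """
    ms_up = np.asarray(ms_up, dtype=float)
    pan = np.asarray(pan, dtype=float)
    # Synthetic low-resolution intensity: plain mean of the bands
    intensity = ms_up.mean(axis=0)
    # Match the high-res band's mean and standard deviation to the intensity
    pan_matched = (pan - pan.mean()) * (intensity.std() / pan.std()) + intensity.mean()
    detail = pan_matched - intensity  # spatial detail to inject
    var_i = intensity.var()
    fused = np.empty_like(ms_up)
    for k, band in enumerate(ms_up):
        # Injection gain: cov(band, intensity) / var(intensity)
        gain = np.cov(band.ravel(), intensity.ravel(), bias=True)[0, 1] / var_i
        fused[k] = band + gain * detail
    return fused
```

Because the gains sum to the number of bands, the band-average of the fused image equals the matched high-resolution band, which is how the spatial detail of that band is transferred to the coarser data.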

Next, a cross-platform data fusion framework is established. At the pixel level, each low-spatial-resolution dataset is fused with the optimal band (α), producing one fused image set per low-resolution source. Regression models are then developed that relate the field-measured forest AGC of the samples to features extracted from these fused images. Three statistical metrics, namely the coefficient of determination (R2), root mean square error (RMSE), and relative root mean square error (rRMSE), are used to quantify the accuracy of forest AGC estimation, and the optimal cross-sensor, cross-scale fusion scheme is identified from this set of fused images.

Finally, multiple fusion strategies are generated by combining the optimal fusion scheme with the remaining low-spatial-resolution data sources at the feature level, and the feature sets extracted from each strategy are used to construct models for estimating forest AGC. The optimal fusion strategy S is selected through a comprehensive evaluation of the quantitative accuracy metrics. In addition, the results are systematically compared with those obtained from conventional feature-level fusion to validate the effectiveness of the multi-level collaborative fusion strategy (MLC) in estimating forest AGC.
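The three-stage search can be summarized as a generic procedure. In this sketch, `fuse` stands for a pixel-level fusion routine (for example, GS pan-sharpening) and `evaluate` for a scoring function such as the cross-validated R2 of an AGC model; both callables, the function name `mlc_search`, and the dictionary layout are hypothetical and serve only to make the order of the steps explicit.

```python
from itertools import combinations


def mlc_search(low_res, high_res_bands, fuse, evaluate):
    """Hypothetical three-stage MLC search.

    low_res: {name: (resolution_m, data)} for low-spatial-resolution sources
    high_res_bands: {band_name: data} for bands of the high-resolution sensor
    fuse(low_data, band_data) -> fused data (pixel-level fusion)
    evaluate(list_of_datasets) -> score, higher is better
    """
    # Stage 1: fuse the coarsest source with each high-res band; keep band alpha
    coarsest = max(low_res, key=lambda name: low_res[name][0])
    coarse_data = low_res[coarsest][1]
    alpha = max(high_res_bands,
                key=lambda band: evaluate([fuse(coarse_data, high_res_bands[band])]))

    # Stage 2: fuse every low-res source with band alpha; keep the best one
    fused = {name: fuse(data, high_res_bands[alpha])
             for name, (_, data) in low_res.items()}
    best_fused = max(fused, key=lambda name: evaluate([fused[name]]))

    # Stage 3: feature-level combinations of the best fused set with the
    # remaining original (unfused) low-res sources
    others = [name for name in low_res if name != best_fused]
    candidates = []
    for r in range(len(others) + 1):
        for combo in combinations(others, r):
            datasets = [fused[best_fused]] + [low_res[name][1] for name in combo]
            candidates.append(((best_fused,) + combo, evaluate(datasets)))
    strategy, score = max(candidates, key=lambda cand: cand[1])
    return alpha, best_fused, strategy, score
```

With the study's data, `low_res` would hold Landsat-9, Sentinel-2, and GF-6 WFV, and `high_res_bands` the GF-6 PMS bands, so that stage 1 corresponds to choosing among the Landsat-9/GF-6 PMS band fusions.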

3.2. Variables Extracted from Optical and SAR Images

To construct the forest AGC mapping models, three types of features were extracted from the optical remote sensing data: spectral reflectance, vegetation indices, and texture variables [13,15,32]. Spectral reflectance comprised both the raw reflectance values and their standardized forms for the blue, green, red, and near-infrared bands. Nine vegetation indices commonly used in forest parameter estimation (NDVI, EVI, SAVI, RVI, DVI, ARVI, TVI, MSR, and NLI) were selected to enhance sensitivity to forest vegetation cover and aboveground carbon storage [25,26,32]. Texture features were derived using the gray-level co-occurrence matrix (GLCM) method, computing eight statistics, including contrast, entropy, and correlation [32,33,34,35]. Based on the spatial relationship between the plots and the image pixels, 5 × 5 and 7 × 7 sliding windows were used to ensure adequate coverage of the plot areas, and the GLCM was computed in four directions (0°, 45°, 90°, and 135°). To eliminate directional dependence and improve feature stability, the final texture layer was obtained by averaging the outputs over all four directions, which captures the spatial heterogeneity of the canopy structure (Table 3).
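The direction-averaged GLCM texture computation can be sketched with NumPy as below. The linear quantization to a fixed number of gray levels, the pixel distance of 1, the symmetric and normalized co-occurrence matrix, and the exact statistic formulas (common GLCM conventions, e.g., homogeneity as Σp/(1+(i−j)²)) are assumptions; software packages differ in these details. In practice the whole image would be quantized once before windows are extracted.

```python
import numpy as np

# Pixel offsets (row, col) at distance 1 for the four GLCM directions
OFFSETS = {0: (0, 1), 45: (-1, 1), 90: (-1, 0), 135: (-1, -1)}


def quantize(img, levels=8):
    """Linearly rescale an image to integer gray levels 0..levels-1."""
    img = np.asarray(img, dtype=float)
    lo, hi = img.min(), img.max()
    if hi == lo:
        return np.zeros(img.shape, dtype=int)
    q = np.floor((img - lo) / (hi - lo) * levels).astype(int)
    return np.clip(q, 0, levels - 1)


def glcm(window, levels, offset):
    """Symmetric, normalized co-occurrence matrix of a quantized window."""
    dr, dc = offset
    h, w = window.shape
    p = np.zeros((levels, levels))
    for r in range(h):
        for c in range(w):
            r2, c2 = r + dr, c + dc
            if 0 <= r2 < h and 0 <= c2 < w:
                p[window[r, c], window[r2, c2]] += 1
    p = p + p.T  # count each pixel pair in both orders
    return p / p.sum()


def glcm_stats(p):
    """Eight common GLCM statistics of a normalized matrix p."""
    i, j = np.indices(p.shape)
    mu_i, mu_j = (i * p).sum(), (j * p).sum()
    var_i, var_j = ((i - mu_i) ** 2 * p).sum(), ((j - mu_j) ** 2 * p).sum()
    nz = p[p > 0]
    denom = np.sqrt(var_i * var_j)
    return {
        "mean": mu_i,
        "variance": var_i,
        "homogeneity": (p / (1.0 + (i - j) ** 2)).sum(),
        "contrast": ((i - j) ** 2 * p).sum(),
        "dissimilarity": (np.abs(i - j) * p).sum(),
        "entropy": -(nz * np.log(nz)).sum(),
        "second_moment": (p ** 2).sum(),
        "correlation": ((i - mu_i) * (j - mu_j) * p).sum() / denom if denom > 0 else 0.0,
    }


def direction_averaged_texture(window, levels=8):
    """Average each GLCM statistic over the 0, 45, 90 and 135 degree offsets."""
    per_direction = [glcm_stats(glcm(window, levels, off)) for off in OFFSETS.values()]
    return {k: float(np.mean([d[k] for d in per_direction])) for k in per_direction[0]}
```

Averaging over the four directions is what removes the orientation dependence: horizontal stripes, for example, have zero contrast at 0° but non-zero contrast in the other three directions.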

Table 3.

The table of variables extracted from optical and Sentinel-1 data.

In addition, four types of variables—backscatter coefficients, derived features, texture features, and index features [12,13,15]—were extracted from Sentinel-1 SAR images. First, the backscatter coefficients (σVH and σVV) for VH and VV polarizations were extracted from each Sentinel-1 SAR dataset [12]. Second, six derived features related to the backscatter coefficients were calculated through mathematical combinations [18]. Third, texture analysis was conducted on the grayscale images (σVH and σVV) using the gray-level co-occurrence matrix (GLCM). Sixteen texture features (eight per polarization) were derived with a window size of 5 × 5, including mean, variance, homogeneity, contrast, dissimilarity, entropy, second moment, and correlation. Furthermore, the radar vegetation index (RVI) and the enhanced dual-polarization SAR vegetation index (EDPSVI) were incorporated into alternative feature sets for mapping forest AGC (Table 3) [34,35].
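The backscatter-based SAR variables can be sketched as follows. The text states that six derived features were obtained through mathematical combinations of σVH and σVV but does not list them, so the six combinations below are hypothetical examples. The radar vegetation index uses one widely used dual-polarization form, RVI = 4σVH/(σVV + σVH), computed in linear units; whether the study used exactly this form is an assumption, and EDPSVI is not reproduced here.

```python
import numpy as np


def to_db(sigma0_linear):
    """Convert linear backscatter (power) to decibels."""
    return 10.0 * np.log10(sigma0_linear)


def sentinel1_features(vv, vh):
    """Backscatter, hypothetical derived features, and dual-pol RVI.

    vv, vh: calibrated sigma0 in LINEAR power units (not dB), same shape.
    """
    vv = np.asarray(vv, dtype=float)
    vh = np.asarray(vh, dtype=float)
    return {
        # Backscatter coefficients in dB
        "sigma_VV_dB": to_db(vv),
        "sigma_VH_dB": to_db(vh),
        # Hypothetical examples of six derived combinations
        "VH_over_VV": vh / vv,
        "VV_over_VH": vv / vh,
        "VV_plus_VH": vv + vh,
        "VV_minus_VH": vv - vh,
        "norm_diff": (vv - vh) / (vv + vh),
        "VV_times_VH": vv * vh,
        # Dual-polarization radar vegetation index (linear units)
        "RVI": 4.0 * vh / (vv + vh),
    }
```

Ratios and indices are computed in linear units here; in dB, a ratio becomes a simple difference.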

3.3. The Optical–SAR Modeling Approach by Integrating Optical and SAR Images

Optical remote sensing data have inherent limitations in capturing forest vertical structure, particularly in densely forested areas, where their sensitivity to key structural attributes such as tree height and crown width is limited [36]. To overcome these constraints, an optical–SAR modeling approach is proposed that integrates optical and SAR imagery to improve the accuracy of forest AGC estimation. This approach uses C-band Sentinel-1 SAR data to capture seasonal structural dynamics in forests and applies a multi-temporal variable integration method to extract polarimetric features across different phenological stages [37,38]. In this study, the time-series Sentinel-1 images are partitioned into four multi-temporal datasets according to season (spring, summer, autumn, and winter). The polarimetric features derived from each seasonal dataset are then combined with features from the MLC-fused optical data to build the optical–SAR collaborative approach for AGC estimation.
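The seasonal partitioning of the monthly Sentinel-1 series can be sketched as below. The month-to-season mapping (meteorological seasons, e.g., March to May as spring) is an assumption, since the text does not list the months in each group. The per-season pixel-wise mean in linear units illustrates multi-temporal averaging, which the Introduction describes as the most common approach; the study's actual combining rule may differ, for example by stacking per-date features.

```python
import numpy as np

# ASSUMPTION: meteorological seasons; not specified in the source text.
SEASON_OF_MONTH = {
    3: "spring", 4: "spring", 5: "spring",
    6: "summer", 7: "summer", 8: "summer",
    9: "autumn", 10: "autumn", 11: "autumn",
    12: "winter", 1: "winter", 2: "winter",
}


def seasonal_composites(images_by_month):
    """Pixel-wise mean of co-registered monthly sigma0 images per season.

    images_by_month: {month (1-12): 2-D array of LINEAR sigma0}.
    Averaging is done in linear power; convert to dB afterwards if needed.
    """
    groups = {}
    for month, img in sorted(images_by_month.items()):
        season = SEASON_OF_MONTH[month]
        groups.setdefault(season, []).append(np.asarray(img, dtype=float))
    return {season: np.mean(imgs, axis=0) for season, imgs in groups.items()}
```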

In addition, to comprehensively evaluate the effectiveness of the multi-source optical and multi-temporal SAR data fusion strategy proposed in this study, we constructed various dataset combinations, including single-sensor, multi-source optical, multi-temporal SAR, and fused optical–SAR datasets, to systematically investigate the impacts of multi-sensor inputs, temporal diversity, and different fusion approaches on the accuracy and reliability of forest aboveground carbon stock estimation (Table 4).

Table 4.

Dataset combinations derived from multi-source optical and multi-temporal Sentinel-1 data.

3.4. Models and Accuracy Evaluation

In this study, three machine learning models [10,15], namely support vector regression (SVR), K-nearest neighbor regression (KNN), and random forest (RF), along with a stepwise multiple linear regression model (MLR), were developed to estimate forest AGC [39,40,41]. An optimal feature subset was identified for each variable combination through forward feature selection. Model performance was assessed with leave-one-out cross-validation (LOOCV) [42] using three evaluation metrics: the coefficient of determination (R2), root mean square error (RMSE), and relative root mean square error (rRMSE, %) [27]. The metrics are calculated as follows:

$$R^2 = 1 - \frac{\sum_{i=1}^{n}(y_i - \hat{y}_i)^2}{\sum_{i=1}^{n}(y_i - \bar{y})^2}$$

$$RMSE = \sqrt{\frac{1}{n}\sum_{i=1}^{n}(y_i - \hat{y}_i)^2}$$

$$rRMSE = \frac{RMSE}{\bar{y}} \times 100\%$$

where $n$ is the number of samples, $y_i$ is the measured aboveground carbon stock of the $i$-th sample, $\bar{y}$ is the mean of the measured forest AGC over all samples, and $\hat{y}_i$ is the predicted forest AGC of the $i$-th sample.
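The evaluation protocol (LOOCV combined with forward feature selection and the three accuracy metrics, with R2 = 1 − SSE/SST and rRMSE = RMSE/mean × 100) can be sketched in NumPy. Ordinary least squares stands in for the SVR, KNN, RF, and MLR models purely to keep the example self-contained; the stopping rule (stop when no candidate improves the cross-validated R2) and the `max_features` cap are assumptions. scikit-learn's `SequentialFeatureSelector(direction="forward")` with a `LeaveOneOut` splitter offers a comparable search for arbitrary estimators, although with single-sample folds R2 cannot be computed per fold, so an error-based score would be needed there.

```python
import numpy as np


def accuracy_metrics(y_true, y_pred):
    """Return (R2, RMSE, rRMSE in %)."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    resid = y_true - y_pred
    rmse = float(np.sqrt(np.mean(resid ** 2)))
    r2 = 1.0 - float(np.sum(resid ** 2) / np.sum((y_true - y_true.mean()) ** 2))
    return r2, rmse, 100.0 * rmse / float(y_true.mean())


def loocv_predictions(X, y):
    """Leave-one-out predictions from an OLS stand-in model."""
    n = len(y)
    A = np.column_stack([np.ones(n), X])  # intercept + features
    preds = np.empty(n)
    for i in range(n):
        train = np.arange(n) != i
        coef, *_ = np.linalg.lstsq(A[train], y[train], rcond=None)
        preds[i] = A[i] @ coef
    return preds


def forward_selection(X, y, max_features=5):
    """Greedily add the feature that most improves LOOCV R2."""
    X = np.asarray(X, dtype=float)
    y = np.asarray(y, dtype=float)
    selected, best_r2 = [], -np.inf
    remaining = list(range(X.shape[1]))
    while remaining and len(selected) < max_features:
        scored = [(accuracy_metrics(y, loocv_predictions(X[:, selected + [j]], y))[0], j)
                  for j in remaining]
        r2, j = max(scored)
        if r2 <= best_r2:
            break  # no remaining feature improves cross-validated R2
        selected.append(j)
        remaining.remove(j)
        best_r2 = r2
    return selected, best_r2
```

Because every prediction comes from a model that never saw that plot, the pooled LOOCV metrics penalize features that only fit noise, which is what lets the forward search stop on its own.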

4. Results

4.1. The Results of Estimated Forest AGC Using Single-Source Optical Data

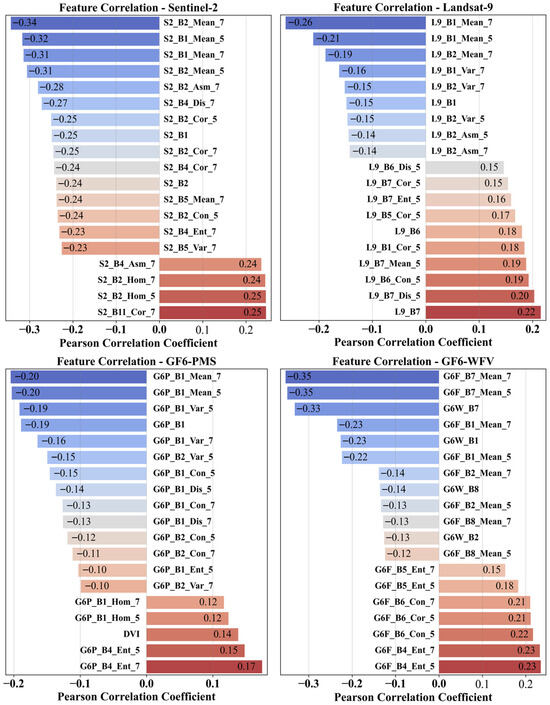

In this study, Pearson correlation coefficients were used to assess the sensitivity of features extracted from the various optical datasets to forest AGC; the 18 features with the strongest correlations are shown in Figure 5. The coefficients range from −0.35 to 0.25, indicating generally weak linear relationships. Texture features are more sensitive than the spectral features derived from single-source optical data. This is likely because texture information captures the spatial heterogeneity of forest stands and reflects structural attributes such as canopy architecture, vegetation density, and vertical complexity, thus providing greater explanatory power for carbon storage estimation.
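The correlation screening can be sketched as below. Ranking by the absolute value of Pearson's r is an assumption, consistent with Figure 5 containing both negative and positive coefficients; the feature names in the test are hypothetical.

```python
import numpy as np


def rank_features_by_correlation(features, agc, k=18):
    """Return the k (name, r) pairs with the largest |Pearson r| against AGC.

    features: {feature_name: per-plot values}, aligned with agc.
    """
    agc = np.asarray(agc, dtype=float)
    scored = []
    for name, values in features.items():
        r = float(np.corrcoef(np.asarray(values, dtype=float), agc)[0, 1])
        scored.append((name, r))
    # Strongest relationships first, regardless of sign
    scored.sort(key=lambda item: abs(item[1]), reverse=True)
    return scored[:k]
```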

Figure 5.

Pearson correlation coefficients between forest AGC and the top 18 features extracted from Sentinel-2, Landsat-9, GF6-PMS, and GF6-WFV optical images. S2, L9, G6P, and G6W denote the corresponding sensors, and B1, B2, B3, … denote spectral bands. Texture features were derived from the gray-level co-occurrence matrix (GLCM) and include Mean, Var, Hom, Con, Dis, Ent, Asm, and Cor (mean, variance, homogeneity, contrast, dissimilarity, entropy, angular second moment, and correlation). The numbers 5 and 7 indicate the texture window sizes.

For the candidate feature sets extracted from single-source optical data, regression models for estimating forest AGC were constructed by combining forward feature selection with several machine learning models, and their accuracy was used to evaluate the potential of each single-source optical dataset (Table 5). For the GF6-WFV feature set, all models achieve an R2 greater than 0.32, with the RF model performing best (R2 = 0.41, rRMSE = 24.73%). For the GF6-PMS_MS data, the SVR and RF models perform similarly, with R2 values of 0.34 and 0.33, respectively, and rRMSE between 26.15% and 26.41%. For Sentinel-2, the MLR and RF models attain R2 values of 0.33 and 0.32, respectively, whereas the KNN model performs substantially worse, with the lowest R2 (0.14) and an rRMSE as high as 29.89%. The Landsat-9_MS data yield the weakest overall performance, with the SVR model reaching an R2 of only 0.31 and an rRMSE of 26.73%. These results indicate that single-source optical data hold potential for mapping forest AGC, but their accuracy and reliability remain constrained by the limited spectral and structural information a single sensor provides. Further integration of multi-source remote sensing data is therefore needed to improve the robustness and accuracy of forest AGC estimation.

Table 5.

Summary of accuracy metrics for estimating forest AGC using single-source optical data.

4.2. The Results of Forest AGC by MLC Fusion with Multi-Source Optical Data

To compare the differences among various fusion strategies, this study applies the GS fusion algorithm to perform pixel-level fusion between the multispectral (MS) and panchromatic (PAN) bands of Landsat-9 and GF6-PMS data, generating the fused datasets Landsat-9_PAN and GF6PMS_PAN. Subsequently, using the PAN band of GF6-PMS as the reference, the same fusion algorithm is employed to integrate Sentinel-2 and GF6-WFV data with the GF6-PMS PAN data, resulting in the fused datasets SG6P_PAN and GG6P_PAN. Feature selection and modeling evaluation are then systematically conducted on these fused datasets.

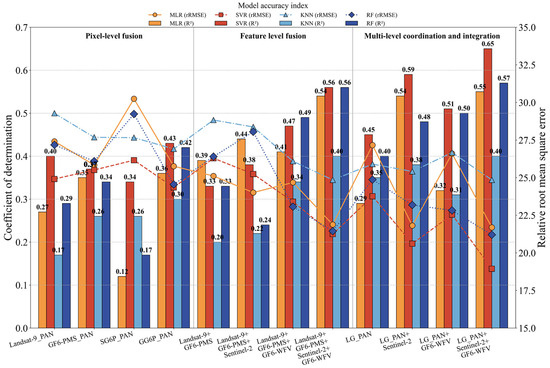

The results show that pixel-level fusion improves forest AGC estimation, although the degree of improvement varies across the single-source optical datasets (Figure 6). Specifically, Landsat-9_PAN outperforms Landsat-9_MS, with R2 increasing from 0.31 to 0.40 in the optimal model, and the R2 for GF6PMS_PAN improves by 0.03 over GF6PMS_MS. The other fused datasets (SG6P_PAN and GG6P_PAN) also show a consistent increase in accuracy. In feature-level fusion, prediction accuracy increases progressively as more datasets are added (Figure 6), with the highest accuracy achieved when all four data sources are integrated (R2 = 0.56 for both the SVR and RF models). These findings suggest that both pixel-level and feature-level fusion contribute to more accurate forest AGC estimation. However, the gains from pixel-level fusion are limited by the fusion algorithm's capacity to preserve spatial detail, and although feature-level fusion improves accuracy more substantially, multicollinearity among the stacked features may undermine model stability and generalization.

Figure 6.

The histograms of accuracy indices for mapping forest AGC with the various fusion strategies.

To integrate the complementary advantages of multi-source optical remote sensing data in spatial and spectral information, this study proposed the multi-level collaborative (MLC) fusion framework that integrates data from multiple sensors. The MLC fusion strategy is guided by forest AGC estimation accuracy. In the pixel-level fusion stage, multispectral imagery from Landsat-9 and individual bands from GF-6 PMS are fused to generate five fused datasets: LG_BLUE, LG_GREEN, LG_RED, LG_NIR, and LG_PAN. The optimal band for forest AGC mapping is selected from these datasets based on accuracy evaluation metrics. This optimal band is then used to perform pixel-level fusion with Sentinel-2 and GF-6 WFV data, respectively. By comparing model performance in forest AGC estimation, the best-performing pixel-level fused dataset is identified. In the feature-level fusion stage, candidate feature sets are extracted from the optimal pixel-level fused data, as well as from the original Sentinel-2 and GF-6 WFV data. Finally, multiple regression models are constructed to estimate forest AGC using these feature sets within the MLC fusion framework.

Figure 6 presents the forest AGC estimation accuracy under the different fusion strategies. Both pixel-level and feature-level fusion improve model performance, but each is limited in its capacity to fully exploit multi-source information. Models based on pixel-level fusion generally attain R2 values below 0.45, with rRMSE between 24% and 26%, suggesting that forest AGC mapping is still constrained by the spectral information of individual data sources. Feature-level fusion markedly improves model performance, reaching a maximum R2 of 0.56 and reducing rRMSE to 21.25%, which highlights the potential of multi-source data to enrich feature representation and improve estimation accuracy. The MLC fusion strategy further improves both the accuracy and stability of forest AGC estimation: the optimal model achieves an R2 of 0.65 and reduces RMSE and rRMSE to 18.80 MgC/hm² and 18.94%, respectively. These results demonstrate that the MLC fusion strategy offers clear advantages over conventional pixel- and feature-level approaches in improving estimation accuracy.
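For reference, the accuracy indices used above can be computed as in the sketch below. It assumes the common definitions R2 = 1 - SS_res / SS_tot, RMSE = sqrt(mean((y - y_hat)^2)), and rRMSE = RMSE / mean(y) x 100%; this section does not restate the exact formulas, so these are assumptions (some studies instead report R2 as the squared Pearson correlation).

```python
import numpy as np


def accuracy_indices(observed, predicted) -> dict:
    """R2, RMSE (same unit as the input, e.g. MgC/hm²) and rRMSE (%)."""
    y = np.asarray(observed, dtype=float)
    y_hat = np.asarray(predicted, dtype=float)
    resid = y - y_hat
    rmse = float(np.sqrt(np.mean(resid ** 2)))
    r2 = float(1.0 - np.sum(resid ** 2) / np.sum((y - y.mean()) ** 2))
    rrmse = 100.0 * rmse / float(y.mean())  # relative to the mean observed AGC
    return {"R2": r2, "RMSE": rmse, "rRMSE": rrmse}
```

Under this rRMSE definition, the reported RMSE of 18.80 MgC/hm² and rRMSE of 18.94% for the optimal MLC model would correspond to a mean observed AGC of roughly 18.80 / 0.1894 ≈ 99.3 MgC/hm².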

4.3. Mapping Forest AGC Using Multi-Temporal Sentinel-1 Data

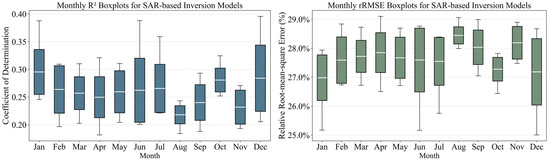

Because forest AGC in the study area increases only slowly from year to year, monthly dual-polarization Sentinel-1 images acquired throughout 2023 were used. Three types of features (backscatter coefficients, polarization ratios, and texture metrics) were extracted from these data to construct a comprehensive feature set, and forest AGC estimation models were developed by combining forward feature selection with machine learning algorithms. Figure 7 shows boxplots of the R2 and rRMSE obtained with the data from each month. Model performance varies substantially from month to month, with R2 ranging from 0.18 to 0.39 and rRMSE from 24.95% to 29.08%. These findings suggest that forest AGC estimation from single-temporal dual-polarization Sentinel-1 data is constrained by limited predictive capacity and high sensitivity to environmental variability, resulting in inconsistent and unreliable estimates.
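The forward feature selection step can be sketched as follows. This is an illustrative implementation, not the study's code: the study pairs forward selection with machine learning regressors such as SVR and RF, whereas here a k-fold cross-validated ordinary least-squares model stands in as the scoring model so that the example needs only NumPy. The stopping tolerance `tol` (the minimum score gain required to add a feature) and the number of folds are assumed values.

```python
import numpy as np


def cv_r2_ols(X: np.ndarray, y: np.ndarray, n_folds: int = 5, seed: int = 0) -> float:
    """K-fold cross-validated R2 of an OLS model (a stand-in for SVR/RF)."""
    n = len(y)
    idx = np.random.default_rng(seed).permutation(n)
    pred = np.empty(n)
    for test in np.array_split(idx, n_folds):
        train = np.setdiff1d(idx, test)
        design = np.column_stack([np.ones(len(train)), X[train]])
        coef, *_ = np.linalg.lstsq(design, y[train], rcond=None)
        pred[test] = np.column_stack([np.ones(len(test)), X[test]]) @ coef
    return float(1.0 - np.sum((y - pred) ** 2) / np.sum((y - y.mean()) ** 2))


def forward_select(X, y, max_features=None, tol=1e-4, score=cv_r2_ols):
    """Sequential forward selection: greedily add the feature that most
    improves the score; stop when no feature improves it by more than `tol`."""
    n_features = X.shape[1]
    limit = n_features if max_features is None else min(max_features, n_features)
    selected, best = [], -np.inf
    while len(selected) < limit:
        remaining = [j for j in range(n_features) if j not in selected]
        trial = {j: score(X[:, selected + [j]], y) for j in remaining}
        j_best = max(trial, key=trial.get)
        if trial[j_best] <= best + tol:
            break  # no remaining feature gives a meaningful improvement
        selected.append(j_best)
        best = trial[j_best]
    return selected, best
```

Swapping `score` for a function that cross-validates an SVR or RF model keeps the same selection loop but is considerably slower, since every step refits the model once per remaining candidate feature.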

Figure 7.

The boxplots of the R2 and rRMSE in mapping forest AGC using monthly Sentinel-1 data.

To address these limitations of single-temporal Sentinel-1 data, multi-temporal Sentinel-1 images acquired within the same season were grouped according to the seasonal dynamics of forest growth and surface moisture. Feature sets were extracted for each season, and this multi-temporal SAR combining approach based on seasonal variation patterns was used to develop forest AGC models. The potential of single-temporal and multi-temporal Sentinel-1 data for AGC estimation is compared in Figure 8.
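One simple way to realize the seasonal combination is to concatenate, for each plot, the features of all monthly acquisitions that fall in the same season. The month-to-season mapping below (meteorological seasons, with December 2023 grouped with January and February 2023 as winter) is an assumption for illustration; the study's exact grouping and per-month feature definitions are not restated in this section.

```python
import numpy as np

# Assumed month-to-season mapping (meteorological seasons, Northern Hemisphere).
# With a single year of data, December is grouped with January and February.
SEASONS = {
    "spring": (3, 4, 5),
    "summer": (6, 7, 8),
    "autumn": (9, 10, 11),
    "winter": (12, 1, 2),
}


def seasonal_stack(monthly: dict, feature_names: list) -> dict:
    """Concatenate the per-month SAR features of each season.

    monthly: month number (1-12) -> array of shape (n_plots, n_features),
             rows referring to the same plots in the same order every month.
    Returns: season -> (matrix of shape (n_plots, n_months * n_features), column names).
    """
    stacked = {}
    for season, months in SEASONS.items():
        present = [m for m in months if m in monthly]  # tolerate missing months
        if not present:
            continue
        matrix = np.hstack([monthly[m] for m in present])
        names = [f"m{m:02d}_{f}" for m in present for f in feature_names]
        stacked[season] = (matrix, names)
    return stacked
```

Each seasonal matrix can then be passed to the same forward-selection and modeling step used for single months.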

Figure 8.

The histograms of accuracy indices in mapping forest AGC using the multi-temporal SAR combining approach based on seasonal variation patterns.

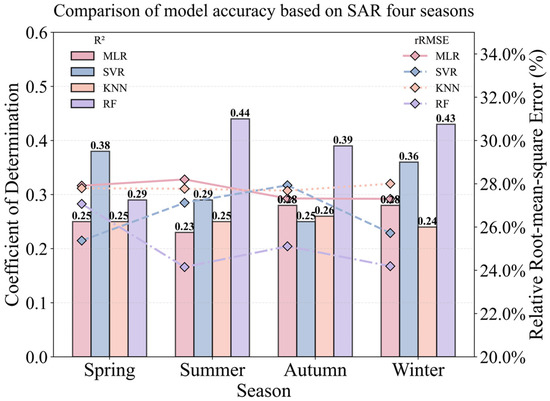

The results indicate that the multi-temporal combining approach based on seasonal variation patterns improves estimation accuracy and stability, with rRMSE values between 24.19% and 28.20%. Differences among the seasonal combinations are small, and the RF model performs best. Multi-temporal Sentinel-1 data can therefore partially mitigate the uncertainties associated with single-temporal observations. However, accuracy and reliability remain insufficient when a single SAR data source is used for forest AGC estimation, which calls for synergistic inversion methods that integrate multi-source optical remote sensing data with multi-temporal SAR data.

4.4. Integrating Multi-Source Optical and Multi-Temporal Sentinel-1 Data for Mapping Forest AGC

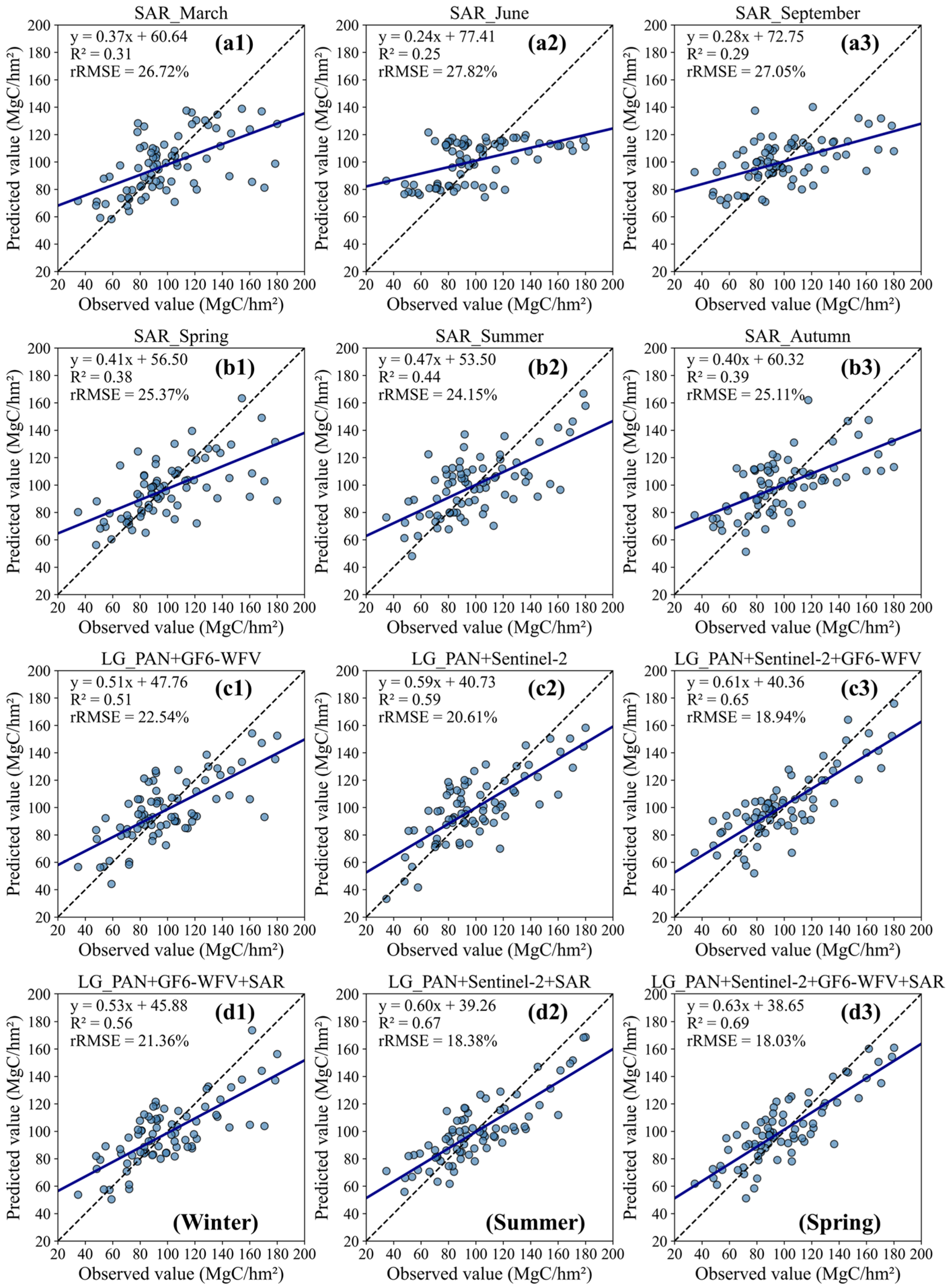

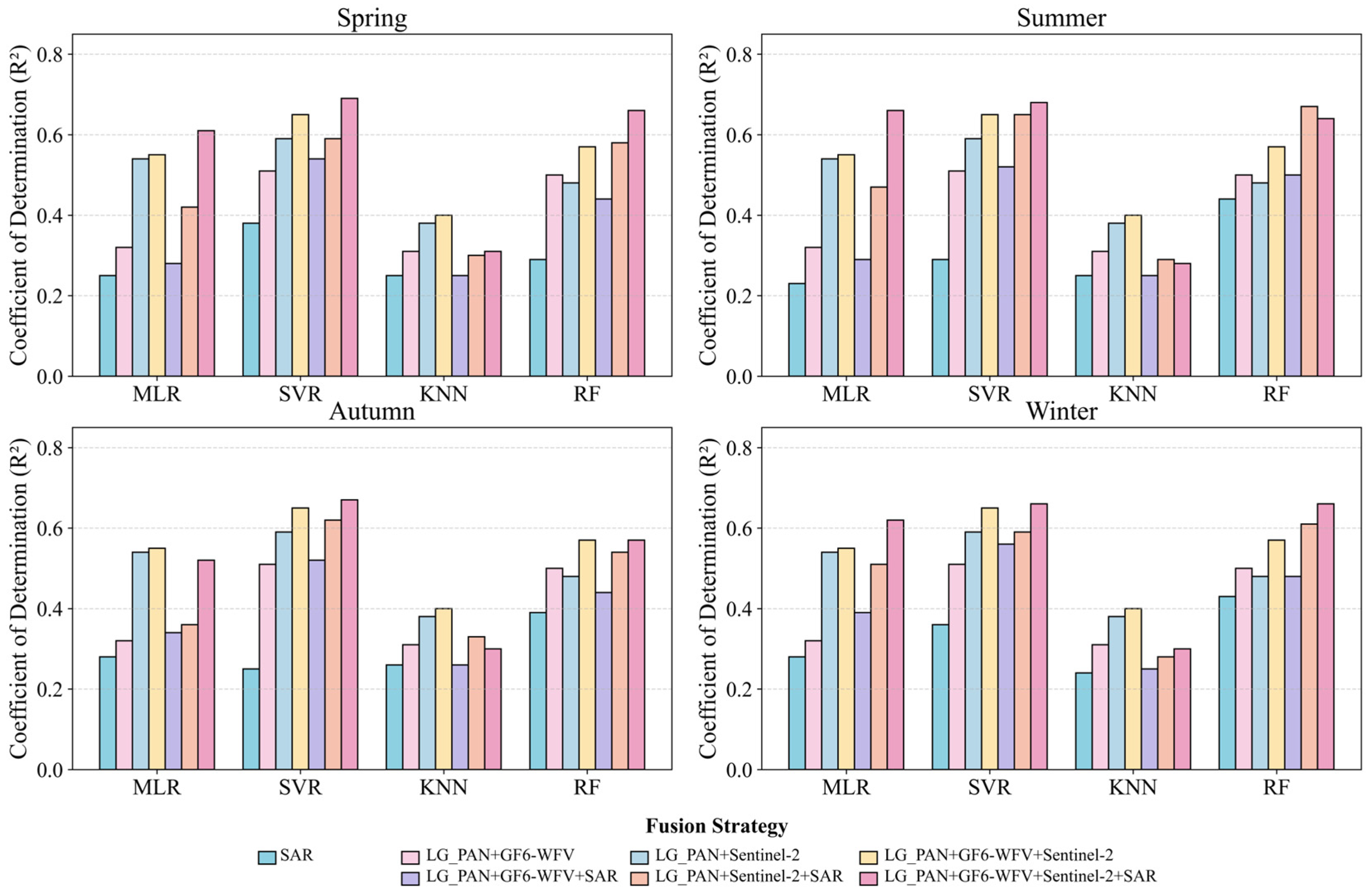

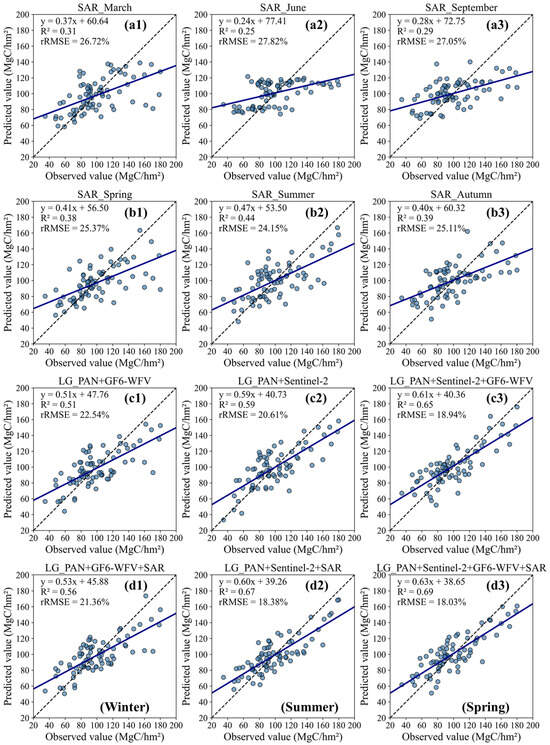

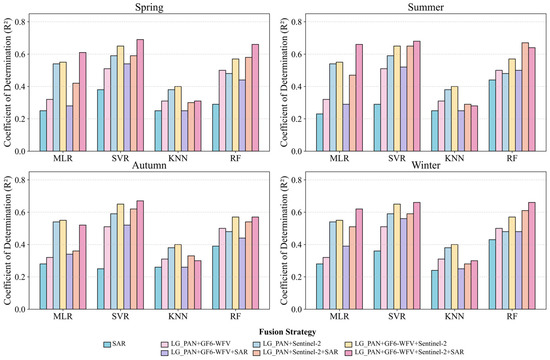

To further improve the accuracy and reliability of forest AGC estimation, the multi-source optical datasets fused by the MLC strategy (LG6P + S, LG6P + W, and LG6P + W + S) were combined with the multi-temporal Sentinel-1 SAR datasets grouped by season (spring, summer, autumn, and winter), and an optical–SAR collaborative inversion model was developed for forest AGC mapping. The performance of these models is compared with that of the MLC-fused optical datasets and the four multi-temporal Sentinel-1 datasets used alone (Table 6). The optical–SAR collaborative approach clearly improves model performance, with the most pronounced gain in R2 (Figure 9).
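At the feature level, the optical–SAR combination amounts to pairing every MLC-fused optical feature set with every seasonal SAR feature set and concatenating their columns plot by plot, which gives 3 x 4 = 12 candidate datasets here. The sketch below assumes that both kinds of feature sets are NumPy arrays whose rows refer to the same sample plots in the same order; the dataset names come from the text, while the function name is hypothetical.

```python
from itertools import product

import numpy as np


def optical_sar_combinations(optical: dict, sar: dict) -> dict:
    """Concatenate each optical feature set with each seasonal SAR feature set.

    optical: name -> array (n_plots, p); sar: season -> array (n_plots, q).
    Returns (optical_name, season) -> array (n_plots, p + q).
    """
    combos = {}
    for (opt_name, opt_feats), (season, sar_feats) in product(optical.items(), sar.items()):
        if opt_feats.shape[0] != sar_feats.shape[0]:
            raise ValueError(f"{opt_name} and {season} SAR features cover different plots")
        combos[(opt_name, season)] = np.hstack([opt_feats, sar_feats])
    return combos
```

Because SVR is sensitive to feature scale, any standardization of the concatenated features should be fitted on the training folds only, inside the model-selection loop, so that no information leaks from the validation plots.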

Table 6.

Accuracy indices of mapping forest AGC using optical–SAR modeling approach.

Figure 9.

The scatterplots of ground-observed versus predicted AGC using the SVR model with various fusion strategies; (a1–a3) are obtained from single-temporal Sentinel-1 images, (b1–b3) from multi-temporal Sentinel-1 images grouped by season, (c1–c3) from multi-source optical data fused with the MLC strategy, and (d1–d3) from the optical–SAR collaborative approach.

Among the datasets employing the MLC fusion strategy, the LG6P + W + S dataset combined with multi-temporal Sentinel-1 data in spring achieves the highest estimation accuracy within the SVR model, yielding an R2 of 0.69. This finding further underscores the advantage of SAR data in capturing vegetation structural information. Furthermore, among the four regression models evaluated, the SVR and RF models exhibit superior stability and estimation accuracy, suggesting that the multi-source data fusion strategy demonstrates better adaptability and generalization capability within these model frameworks. In conclusion, SAR data plays a significant role in enhancing the capacity of multi-source remote sensing for forest AGC inversion and demonstrates strong adaptability and robustness in forest carbon stock estimation.

To evaluate how the multi-source optical fusion strategies and multi-temporal SAR combinations affect forest AGC estimation accuracy, selected results from the SVR model are analyzed further. These cover feature sets extracted from single-temporal Sentinel-1 data, seasonally grouped multi-temporal Sentinel-1 combinations, multi-source optical datasets fused by the MLC method, and integrated optical–SAR datasets. Scatter plots of observed versus predicted values are used to assess the performance of these data fusion approaches (Figure 9). With single-temporal Sentinel-1 data alone, high AGC values are underestimated and low values are overestimated, which substantially limits estimation accuracy. Combining multi-temporal Sentinel-1 data by season partially mitigates the underestimation of high AGC values and improves overall accuracy, although the improvement for low-AGC samples remains limited. The MLC-fused multi-source optical datasets slightly alleviate spectral saturation but still show notable prediction biases in non-saturated regions. In contrast, fusing multi-source optical and multi-temporal SAR data reduces spectral saturation and lowers estimation errors across the full range of samples, yielding a marked increase in R2. These findings indicate that, by strategically integrating optical and SAR data and exploiting their complementary strengths, the optical–SAR collaborative approach can substantially enhance both the accuracy and reliability of forest AGC estimation.
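The under- and overestimation patterns read from the scatter plots can also be quantified. The sketch below is one possible diagnostic, assumed here rather than taken from the study: it fits a line of predicted on observed AGC (a slope well below 1 indicates the compression typical of saturation) and reports the mean bias in the lowest and highest thirds of observed AGC.

```python
import numpy as np


def saturation_diagnostics(observed, predicted, low_q=1 / 3, high_q=2 / 3) -> dict:
    """Slope of predicted vs. observed AGC and mean bias at the low/high ends."""
    y = np.asarray(observed, dtype=float)
    y_hat = np.asarray(predicted, dtype=float)
    slope, _intercept = np.polyfit(y, y_hat, 1)  # y_hat ≈ slope * y + intercept
    low_cut, high_cut = np.quantile(y, [low_q, high_q])
    bias = y_hat - y
    return {
        "slope": float(slope),                           # < 1: predictions compressed
        "bias_low": float(bias[y <= low_cut].mean()),    # > 0: low AGC overestimated
        "bias_high": float(bias[y >= high_cut].mean()),  # < 0: high AGC underestimated
    }
```

Comparing these three numbers across the single-temporal SAR, seasonal SAR, MLC optical, and optical–SAR models would express the visual comparison of the scatter plots in a compact, repeatable form.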

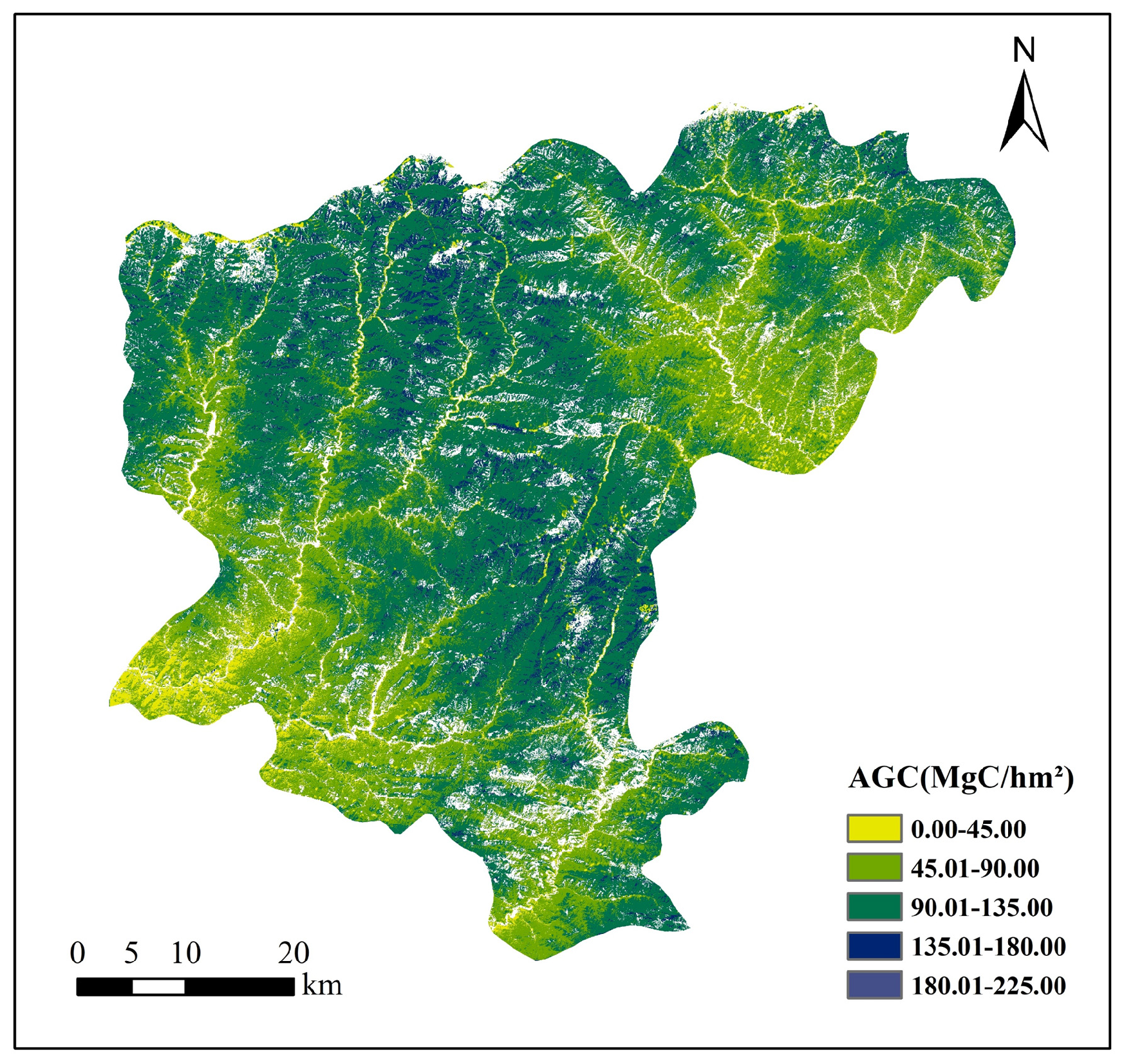

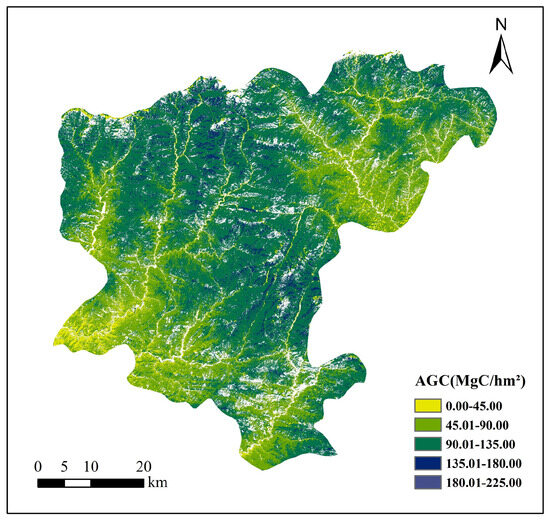

Finally, the map of forest AGC in the study area is generated using the optimal model derived from the optical–SAR collaborative approach with the SVR algorithm (Figure 10). The results indicate higher AGC in the central-southern and northwestern regions, while lower values are observed in the eastern and southwestern parts. High AGC zones are predominantly distributed in mid-to-high altitude mountainous and hilly areas, characterized by extensive coverage of mature and over-mature forests. In contrast, low AGC areas are mainly located in river valleys and low-lying terrains, where intensified anthropogenic disturbances, such as urban expansion and infrastructure development, have led to increased forest fragmentation, impeded vegetation recovery, and diminished carbon sequestration potential.
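Wall-to-wall mapping amounts to applying the fitted model to every pixel of the co-registered feature stack. The sketch assumes that the selected optical and SAR features have already been resampled to a common grid and stacked as an array of shape (features, rows, cols), with non-forest or missing pixels set to NaN; `predict` stands for any callable that maps an (n_pixels, n_features) array to predictions, for example the `predict` method of a fitted scikit-learn regressor.

```python
from typing import Callable

import numpy as np


def predict_raster(stack: np.ndarray,
                   predict: Callable[[np.ndarray], np.ndarray]) -> np.ndarray:
    """Apply a per-pixel regression model to a (n_features, rows, cols) stack.

    Pixels with any NaN/inf feature (e.g. masked non-forest) stay NaN in the output.
    """
    n_features, rows, cols = stack.shape
    pixels = stack.reshape(n_features, -1).T  # (rows * cols, n_features)
    valid = np.all(np.isfinite(pixels), axis=1)
    agc = np.full(rows * cols, np.nan)
    if valid.any():
        agc[valid] = predict(pixels[valid])
    return agc.reshape(rows, cols)
```

The feature order in the stack must match the column order used when the model was trained; for large scenes the same function can be applied tile by tile to limit memory use.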

Figure 10.

The maps of forest AGC in the study area using the optical–SAR collaborative approach with the SVR model (LG6P + W + S dataset combined with multi-temporal Sentinel-1 data in spring).

5. Discussions

5.1. The Potential and Limitations of Fusion Strategies for Multi-Source Optical Data

The results of this study demonstrate that feature sets extracted from fused multi-source optical data offer significant advantages over those derived from single optical data sources, as shown by the higher R2 and lower RMSE values of the models (Table 6). Moreover, the improvement in the accuracy of mapping forest aboveground carbon storage (AGC) depends closely on the multi-source optical data fusion method (Figure 6). Compared with pixel-level fusion, feature-level fusion strategies further enhance the estimation accuracy of forest AGC. However, combining features substantially enlarges the pool of candidate features, which reduces the efficiency of feature selection. Previous studies have verified that feature-level fusion of multi-source optical data has greater potential than single data sources for forest parameter estimation [43], and numerous advanced methods have been developed to identify the optimal feature subset from large candidate feature sets for constructing forest parameter prediction models. Nevertheless, the efficiency of feature selection remains a significant challenge [44,45].
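The efficiency argument can be made concrete by counting model fits. In plain sequential forward selection that picks k features from p candidates, step i (counting from 0) evaluates the p - i features not yet chosen, so with F-fold cross-validation the total number of model fits is:

$$
N_{\text{fits}} = F \sum_{i=0}^{k-1} (p - i) = F\left(kp - \frac{k(k-1)}{2}\right)
$$

For hypothetical values p = 200, k = 10, and F = 5, this gives 5 × (2000 - 45) = 9775 fits, and doubling the candidate pool to p = 400 raises it to 5 × (4000 - 45) = 19775. The cost therefore grows roughly in proportion to the number of candidate features, which is why shrinking the candidate pool before selection pays off directly.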

By embedding the goal of mapping forest AGC into a unified multi-source data fusion framework, the proposed multi-level collaborative (MLC) fusion strategy combines multiple optical datasets, namely Landsat-9, GF-6 PMS, Sentinel-2, and GF-6 WFV, and achieves substantial improvements in forest AGC estimation in this study. These results further illustrate that the effectiveness of multi-source data fusion depends strongly on the specific fusion strategy. Because it integrates pixel-level and feature-level fusion, the MLC strategy produces substantially fewer candidate features than feature-level fusion alone, which improves the efficiency of the feature selection process. In addition, embedding the task objective into the fusion procedure allows the data and feature combinations that improve forest AGC mapping accuracy to be identified rapidly. For comparison, conventional feature-level fusion reached its highest accuracy when four data sources were integrated, with both the SVR and RF models reaching an R2 of 0.56, whereas the optimal MLC model reached an R2 of 0.65. The estimation accuracy achieved in this study for heterogeneous forests is comparable to that reported for forest AGC estimation using multi-source optical data fusion in plantation forests [44,46,47].

More broadly, multi-source remote sensing data hold considerable potential for improving model performance. Such data provide richer band combinations, diverse observation modes, and finer spatial resolution, all of which can, in principle, increase a model's sensitivity to variations in carbon storage [22]. The main challenge lies in designing rational and efficient information integration mechanisms that overcome the inherent limitations of multi-source remote sensing data [43]. In recent years, deep learning-based image fusion approaches, such as convolutional neural networks, transformers, and graph neural networks, have made substantial progress in remote sensing image processing [47,48,49]. These methods can extract high-level semantic features with less stringent requirements for geometric alignment or spectral consistency and support adaptive fusion of multi-source data, offering a promising solution for integrating heterogeneous remote sensing imagery [50,51].

5.2. The Advantages of Optical–SAR Modeling Approach in Mapping Forest AGC

In this study, forward feature selection combined with machine learning was used to construct a feature-level fusion of multi-temporal Sentinel-1 SAR data for forest AGC mapping. Compared with single-temporal Sentinel-1 data, the multi-temporal SAR combining approach based on seasonal variation patterns improved the accuracy and reliability of forest AGC mapping and substantially reduced the spatiotemporal instability of polarimetric features. Multi-temporal Sentinel-1 data can therefore partly mitigate the uncertainties associated with single-temporal observations. Previous studies in boreal and plantation forests reported comparable results, which are largely consistent with those presented here and further illustrate both the advantages and the limitations of SAR data in mapping forest AGC [15,19,52]. However, although integrating multi-temporal Sentinel-1 data that capture seasonal variations can enhance the accuracy of forest AGC estimation, the performance remains inferior to that achieved with multi-source optical data fusion alone [44].

It is widely recognized that the penetration capability of SAR is a key means of mitigating the saturation effects that occur in optical data [18,20,45]. The combination of SAR and optical data therefore holds substantial potential for improving the accuracy of forest AGC estimation [52,53]. In this study, although fusing multi-source optical data partially mitigated the underestimation of high values, the saturation effect of the optical data remained pronounced (Figure 9). After applying the proposed optical–SAR modeling approach, saturation was markedly reduced and the accuracy of forest AGC mapping improved notably (Table 6). Moreover, comparisons across models, data combinations, and seasonal SAR configurations indicate that feature-level integration of optical and SAR data not only improves estimation accuracy but also enhances the consistency of the results, with R2 showing the largest improvement (Figure 11). These findings are consistent with those of similar studies, further confirming that integrating optical and SAR data helps improve AGC estimation accuracy in complex forests [43,53].

Figure 11.

The histograms of R2 extracted from four models using various multi-source optical data with MLC fused method and multi-temporal Sentinel-1 data.

However, the quality of both data types, and their capacity to capture information on forest canopy structure, including both surface and internal characteristics, are often constrained by multiple factors. The fusion method proposed in this study is largely confined to feature-level integration and relies on statistical correlations rather than mechanistic representations of physical processes. This limitation reduces model adaptability in heterogeneous environments and hinders a comprehensive understanding of interactions between features. Consequently, achieving deep and meaningful integration of optical and SAR data remains a significant challenge.

6. Conclusions

To reduce the redundancy of features derived from multi-source optical data and enhance the spatiotemporal stability of polarimetric SAR features, a comprehensive set of spectral features derived from multi-source optical data (Landsat-9, Sentinel-2, and GF-6) and polarization features extracted from multi-temporal SAR data (Sentinel-1) were integrated into the proposed optical–SAR fusion framework to improve the accuracy of mapping AGC in heterogeneous forest regions. By embedding the goal of mapping forest AGC into a unified multi-source data fusion framework, the proposed MLC fusion strategy for multi-source optical data offers significant advantages over conventional pixel-level and feature-level fusion; its optimal model achieved an R2 of 0.65, with the rRMSE reduced to 18.94%. With the proposed optical–SAR modeling approach, the complementary spectral characteristics of optical data and the penetration capability of SAR data effectively alleviate the saturation effect in the estimation results, thereby improving the accuracy of forest AGC mapping. The highest performance was achieved using spring-acquired multi-temporal Sentinel-1 data with the SVR model, yielding an R2 of 0.69 and reducing the rRMSE to 18.03%. These findings indicate that the optical–SAR collaborative approach has great potential for improving the accuracy of forest AGC estimation. However, given the complexity of forest structure and the differences between sensor imaging mechanisms, improving forest AGC mapping through optical–SAR fusion depends heavily on effective fusion strategies, and achieving deep and meaningful integration of optical and SAR data remains a significant challenge. This is especially true for complex, high-dimensional variables such as forest AGC, for which a consistent representation of multi-scale and multi-dimensional features from different data sources is crucial for improving both estimation accuracy and model generalization.

Author Contributions

Conceptualization, G.W. and G.X.; methodology, C.X.; software, C.X. and S.W.; validation, J.L., X.Y. and Y.D.; formal analysis, J.L. and G.X.; investigation, C.X. and S.W.; writing—original draft preparation, G.X. and C.X.; writing—review and editing, G.X. and J.L.; visualization, H.L.; supervision, H.L.; project administration, J.L.; funding acquisition, S.W. and G.W. All authors have read and agreed to the published version of the manuscript.

Funding

This research was funded by the Innovation Project of the Northwest Surveying and Planning Institute of the National Forestry and Grassland Administration (project numbers: XBY-KJCX-2023-03 and XBY-KJCX-2021-12).

Data Availability Statement

The raw data supporting the conclusions of this article will be made available by the authors on request.

Conflicts of Interest

The authors declare no conflicts of interest.

References

1. Ehlers, D.; Wang, C.; Coulston, J.; Zhang, Y.; Pavelsky, T.; Frankenberg, E.; Woodcock, C.; Song, C. Mapping Forest Aboveground Biomass Using Multisource Remotely Sensed Data. Remote Sens. 2022, 14, 1115.

2. Chen, C.; He, Y.; Zhang, J.; Xu, D.; Han, D.; Liao, Y.; Luo, L.; Teng, C.; Yin, T. Estimation of Above-Ground Biomass for Pinus densata Using Multi-Source Time Series in Shangri-La Considering Seasonal Effects. Forests 2023, 14, 1747.

3. Sun, X.; Li, G.; Wang, M.; Fan, Z. Analyzing the Uncertainty of Estimating Forest Aboveground Biomass Using Optical Imagery and Spaceborne LiDAR. Remote Sens. 2019, 11, 722.

4. Joshi, N.; Baumann, M.; Ehammer, A.; Fensholt, R.; Grogan, K.; Hostert, P.; Jepsen, M.R.; Kuemmerle, T.; Meyfroidt, P.; Mitchard, E.T.A.; et al. A Review of the Application of Optical and Radar Remote Sensing Data Fusion to Land Use Mapping and Monitoring. Remote Sens. 2016, 8, 70.

5. Indirabai, I.; Nair, M.H.; Nair, J.R.; Nidamanuri, R.R. Optical Remote Sensing for Biophysical Characterisation in Forests: A Review. Int. J. Appl. Eng. Res. 2019, 14, 344–354.

6. Huang, C.; Gong, W.; Pang, Y. Remote Sensing and Forest Carbon Monitoring—A Review of Recent Progress, Challenges and Opportunities. J. Geod. Geoinf. Sci. 2022, 5, 124–147.

7. Karimi, A.; Abtahi, B.; Kabiri, K. Mapping and Estimating Blue Carbon in Mangrove Forests Using Drone and Field-Based Tree Height Data: A Cost-Effective Tool for Conservation and Management. Forests 2025, 16, 1196.

8. Ghamisi, P.; Rasti, B.; Yokoya, N.; Wang, Q.; Hofle, B.; Bruzzone, L.; Bovolo, F.; Chi, M.; Anders, K.; Gloaguen, R.; et al. Multisource and Multitemporal Data Fusion in Remote Sensing: A Comprehensive Review of the State of the Art. IEEE Geosci. Remote Sens. Mag. 2019, 7, 6–39.

9. Wang, Z.; Ma, Y.; Zhang, Y. Review of pixel-level remote sensing image fusion based on deep learning. Inf. Fusion 2023, 90, 36–58.

10. Mao, Z.; Deng, L.; Liu, X.; Wang, Y. A Comparative Analysis of SAR and Optical Remote Sensing for Sparse Forest Structure Parameters: A Simulation Study. Forests 2025, 16, 1244.

11. Zhang, S.; Han, Y.; Wang, H.; Hou, D. Gram–Schmidt Remote Sensing Image Fusion Algorithm Based on Matrix Elementary Transformation. J. Phys. Conf. Ser. 2022, 2410, 012001.

12. Peng, C.; Fang, C.; Long, J.; Zhang, T.; Zheng, H.; Ye, Z. Estimation of Forest Aboveground Biomass Using Multitemporal Quad-Polarimetric PALSAR-2 SAR Data by Model-Free Decomposition Approach in Planted Forest. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2025, 18, 13519–13537.

13. Zhang, T.; Long, J.; Lin, H.; Liu, Z.; Ye, Z.; Zheng, H. A Novel Feature Evaluation Method in Mapping Forest AGB by Fusing Multiple Evaluation Metrics Using PolSAR Data. IEEE Geosci. Remote Sens. Lett. 2024, 21, 4006605.

14. Marino, A. Trace Coherence: A New Operator for Polarimetric and Interferometric SAR Images. IEEE Trans. Geosci. Remote Sens. 2017, 55, 2326–2339.

15. Chou, X.; Yun, S.; Zi, W.; Fengli, Z. Multitemporal Polarimetric SAR Data Fusion for Land Cover Mapping. In Proceedings of the 18th International Conference on Geoinformatics, Beijing, China, 18–20 June 2010; pp. 1–5.

16. Shakya, A.; Biswas, M.; Pal, M. Fusion and Classification of Multi-Temporal SAR and Optical Imagery Using Convolutional Neural Network. Int. J. Image Data Fusion 2022, 13, 113–135.

17. Li, Q.; Lin, H.; Long, J.; Liu, Z.; Ye, Z.; Zheng, H.; Yang, P. Mapping Forest Stock Volume Using Phenological Features Derived from Time-Serial Sentinel-2 Imagery in Planted Larch. Forests 2024, 15, 995.

18. Fu, Y.; Yang, S.; Yan, H.; Xue, Q.; Shi, Z.; Hu, X. Optical and SAR Image Fusion Method with Coupling Gain Injection and Guided Filtering. J. Appl. Remote Sens. 2022, 16, 046505.

19. Zhang, Y.; He, B.; Chen, R.; Zhang, H.; Fan, C.; Yin, J.; Li, Y. The Potential of Optical and SAR Time-Series Data for the Improvement of Aboveground Biomass Carbon Estimation in Southwestern China’s Evergreen Coniferous Forests. GIScience Remote Sens. 2024, 61, 2345438.

20. Alparone, L.; Garzelli, A.; Zoppetti, C. Fusion of VNIR Optical and C-Band Polarimetric SAR Satellite Data for Accurate Detection of Temporal Changes in Vegetated Areas. Remote Sens. 2023, 15, 638.

21. Mohammadpour, P.; Viegas, C. Applications of Multi-Source and Multi-Sensor Data Fusion of Remote Sensing for Forest Species Mapping. In Advances in Remote Sensing for Forest Monitoring; John Wiley & Sons: Hoboken, NJ, USA, 2022; pp. 255–287.

22. Tian, X.; Li, J.; Zhang, F.; Zhang, H.; Jiang, M. Forest Aboveground Biomass Estimation Using Multisource Remote Sensing Data and Deep Learning Algorithms: A Case Study over Hangzhou Area in China. Remote Sens. 2024, 16, 1074.

23. Nurmemet, I.; Aili, Y.; Xiang, Y.; Aihaiti, A.; Qin, Y.; Aizezi, B. A Three-Dimensional Feature Space Model for Soil Salinity Inversion in Arid Oases: Polarimetric SAR and Multispectral Data Synergy. Agronomy 2025, 15, 1590.

24. Kong, Y.; Yan, B.; Liu, Y.; Leung, H.; Peng, X. Feature-Level Fusion of Polarized SAR and Optical Images Based on Random Forest and Conditional Random Fields. Remote Sens. 2021, 13, 1323.

25. Liu, C.; Sun, Y.; Xu, Y.; Sun, Z.; Zhang, X.; Lei, L.; Kuang, G. A Review of Optical and SAR Image Deep Feature Fusion in Semantic Segmentation. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2024, 17, 12910–12930.

26. Lei, D.; Zhou-Ping, S. Characteristics of Forest Vegetation Carbon Storage and Carbon Density in Ningshan County, Qinling Mountain. Acta Bot. Boreal. Occident. Sin. 2011, 31, 2310–2320.

27. Alauddin, M.W.; Anshori, M.; Wicaksono, A.S.; Utaminingrum, F. Comparative Study Based on Error Calculation in Multiple Linear Regression Coefficient for Forest Fires Prediction. In Proceedings of the 2018 International Conference on Sustainable Information Engineering and Technology (SIET), Malang, Indonesia, 10–12 November 2018; pp. 115–120.

28. Zeng, W.S. Development of Monitoring and Assessment of Forest Biomass and Carbon Storage in China. For. Ecosyst. 2014, 1, 20.

29. GB/T 43648-2024; National Technical Committee on Forest Resources Standardization (SAC/TC 370). Biomass Models and Carbon Accounting Parameters for Major Tree Species. China Standard Press: Beijing, China, 2024.

30. Gray, H.R. Volume Measurement of Single Trees. Aust. For. 1944, 8, 44–61.

31. Wang, H.; He, Z.; Wang, S.; Zhang, Y.; Tang, H. Radiometric Cross-Calibration of GF6-PMS and WFV Sensors with Sentinel 2-MSI and Landsat 9-OLI2. Remote Sens. 2024, 16, 1949.

32. Zhu, X.; Cai, F.; Tian, J.; Williams, T.K.-A. Spatiotemporal Fusion of Multisource Remote Sensing Data: Literature Survey, Taxonomy, Principles, Applications, and Future Directions. Remote Sens. 2018, 10, 527.

33. Poudel, A.; Shrestha, H.L.; Mahat, N.; Sharma, G.; Aryal, S.; Kalakheti, R.; Lamsal, B. Modeling and Mapping of Aboveground Biomass and Carbon Stock Using Sentinel-2 Imagery in Chure Region, Nepal. Int. J. For. Res. 2023, 2023, 5553957.

34. Iqbal, N.; Mumtaz, R.; Shafi, U.; Zaidi, S.M.H. Gray Level Co-Occurrence Matrix (GLCM) Texture Based Crop Classification Using Low Altitude Remote Sensing Platforms. PeerJ Comput. Sci. 2021, 7, e536.

35. Wood, E.M.; Pidgeon, A.M.; Radeloff, V.C.; Keuler, N.S. Image Texture as a Remotely Sensed Measure of Vegetation Structure. Remote Sens. Environ. 2012, 121, 516–526.

36. Zhao, J.; Li, J.; Liu, Q. Review of Forest Vertical Structure Parameter Inversion Based on Remote Sensing Technology. J. Remote Sens. 2013, 17, 697–716.

37. Abdikan, S.; Sekertekin, A.; Ustunern, M.; Sanli, F.B.; Nasirzadehdizaji, R. Backscatter Analysis Using Multi-Temporal Sentinel-1 SAR Data for Crop Growth of Maize in Konya Basin, Turkey. Int. Arch. Photogramm. Remote Sens. Spat. Inf. Sci. 2018, 42, 9–13.

38. Santos, E.P.; Santos, I.C.; Bussinguer, J.F.; Cruz, R.R.P.; Amaral, C.H.D.; da Silva, D.D.; Moreira, M.C. Dual-Polarization Vegetation Indices for the Sentinel-1 SAR Sensor and its Correlation to Forest Biomass from Atlantic Forest Fragments. CERNE 2024, 30, e-103286.

39. Zhao, Q.; Yu, S.; Zhao, F.; Tian, L.; Zhao, Z. Comparison of Machine Learning Algorithms for Forest Parameter Estimations and Application for Forest Quality Assessments. For. Ecol. Manag. 2019, 434, 224–234.

40. Cosenza, D.N.; Korhonen, L.; Maltamo, M.; Packalen, P.; Strunk, J.L.; Næsset, E.; Gobakken, T.; Soares, P.; Tomé, M. Comparison of Linear Regression, k-Nearest Neighbour and Random Forest Methods in Airborne Laser-Scanning-Based Prediction of Growing Stock. Forestry 2021, 94, 311–323.

41. Yan, X.; Li, J.; Smith, A.R.; Yang, D.; Ma, T.; Su, Y.; Shao, J. Evaluation of Machine Learning Methods and Multi-Source Remote Sensing Data Combinations to Construct Forest Above-Ground Biomass Models. Int. J. Digit. Earth 2023, 16, 4471–4491.

42. Sreedharan, R.; Prajapati, J.; Engineer, P.; Prajapati, D. Leave-One-Out Cross-Validation in Machine Learning. In Ethical Issues in AI for Bioinformatics and Chemoinformatics; CRC Press: Boca Raton, FL, USA, 2023; pp. 56–71.

43. Liang, X.; Yu, S.; Meng, B.; Wang, X.; Yang, C.; Shi, C.; Ding, J. Multi-Source Remote Sensing and GIS for Forest Carbon Monitoring Toward Carbon Neutrality. Forests 2025, 16, 971.

44. Li, X.; Ye, Z.; Long, J.; Zheng, H.; Lin, H. Inversion of Coniferous Forest Stock Volume Based on Backscatter and InSAR Coherence Factors of Sentinel-1 Hyper-Temporal Images and Spectral Variables of Landsat 8 OLI. Remote Sens. 2022, 14, 2754.

45. Xu, X.; Lin, H.; Liu, Z.; Ye, Z.; Li, X.; Long, J. A Combined Strategy of Improved Variable Selection and Ensemble Algorithm to Map the Growing Stem Volume of Planted Coniferous Forest. Remote Sens. 2021, 13, 4631.

46. Solanky, V.; Katiyar, S.K. Pixel-Level Image Fusion Techniques in Remote Sensing: A Review. Spat. Inf. Res. 2016, 24, 475–483.

47. Zhang, D.; Yue, P.; Yan, Y.; Niu, Q.; Zhao, J.; Ma, H. Multi-Source Remote Sensing Images Semantic Segmentation Based on Differential Feature Attention Fusion. Remote Sens. 2024, 16, 4717.

48. Xiang, B.; Pan, C.; Liu, J. A deep learning network for fusing optical and infrared images in complex imaging environments by using the modified U-Net. J. Opt. Soc. Am. A 2023, 40, 1644–1653.

49. Yun, T.; Li, J.; Ma, L.; Zhou, J.; Wang, R.; Eichhorn, M.P.; Zhang, H. Status, Advancements and Prospects of Deep Learning Methods Applied in Forest Studies. Int. J. Appl. Earth Obs. Geoinf. 2024, 131, 103938.

50. Saidi, S.; Idbraim, S.; Karmoude, Y.; Masse, A.; Arbelo, M. Deep-Learning for Change Detection Using Multi-Modal Fusion of Remote Sensing Images: A Review. Remote Sens. 2024, 16, 3852.

51. Hussain, M.; O’Nils, M.; Lundgren, J.; Mousavirad, S.J. A Comprehensive Review on Deep Learning-Based Data Fusion. IEEE Access 2024, 12, 180093–180124.

52. Zheng, H.; Long, J.; Zang, Z.; Lin, H.; Liu, Z.; Zhang, T.; Yang, P. Interpreting the Response of Forest Stock Volume with Dual Polarization SAR Images in Boreal Coniferous Planted Forest in the Non-Growing Season. Forests 2023, 14, 1700.

53. Huang, X.; Ziniti, B.; Torbick, N.; Ducey, M.J. Assessment of forest above ground biomass estimation using multi-temporal C-band Sentinel-1 and Polarimetric L-band PALSAR-2 data. Remote Sens. 2018, 10, 1424.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.