Abstract

Change detection in very-high-spatial-resolution (VHR) remote sensing images is a challenging area with applicability in many problems ranging from damage assessment to land management and environmental monitoring. In this study, we investigated the change detection problem associated with analysing the vegetation corresponding to crops and natural ecosystems over VHR multispectral and hyperspectral images obtained by sensors onboard drones or satellites. The main challenge in applying change detection methods to these images is that the vegetation elements they contain have similar spectral signatures. To solve this issue, a consensus multi-scale binary change detection technique based on the extraction of object-based features was developed. To capture changes at different granularity levels by taking advantage of the high spatial resolution of the VHR images, and because segmentation is not a well-defined operation, we propose using several detectors based on different segmentation algorithms, each applied at different scales. As changes in vegetation also vary considerably with capture conditions such as illumination, we also propose applying change vector analysis with the spectral angle mapper distance (CVA-SAM) at the segment level instead of at the pixel level. The results revealed the effectiveness of the proposed approach for identifying changes over land cover vegetation images with different types of changes and different spatial and spectral resolutions.

1. Introduction

Land cover change detection based on remotely sensed data [1] is a technique whose main objective is to identify differences on the ground surface in multitemporal or bitemporal images. This process is widely used in the field of remote sensing for a wide range of applications on vegetation images including monitoring changes in the vegetation cover, identifying areas affected by natural disasters such as wildfires, assessing the effects of climate change on vegetation patterns, or detecting invasive vegetation species [2,3,4].

Change detection in remote sensing images is highly important in many applications related to vegetation, natural spaces, agriculture, and different ecosystems. For example, the mapping of vegetation cover in terrestrial and aquatic environments is a key indicator of environmental health in marine and freshwater ecosystems [5]. Change detection enables the identification of temporal variations in land cover or land use, providing critical insights for diverse applications spanning agriculture, geology, forestry, regional mapping and planning, oil pollution monitoring, and surveillance [6,7]. These techniques can also be used to monitor environmental conditions such as the impact of natural disasters, evaluate desertification, or detect specific urban or natural variations in the environment [3].

In the domain of change detection in vegetation images, a critical challenge is the detection of changes between different types of vegetation [8]. This complexity is primarily due to the similar spectral signatures that different vegetation types exhibit [1]. Therefore, pixel-level change detection methods based on comparing spectral signatures are limited in detecting these changes, a task that becomes particularly vital when identifying changes between native and foreign vegetation [9,10]. Detecting such changes is crucial for maintaining ecological balance and managing biodiversity, which underlines the practical relevance of the proposed methodology. Different vegetation types have been correctly classified in the literature using object-based algorithms based on spatial information and textures [11].

With the increasing improvement of the sensors used to capture images of the Earth’s surface, the resolution per pixel has also increased, specifically in the case of very-high-spatial-resolution (VHR) images. These images usually have a resolution from sub-metre to several metres per pixel [12,13] and contain a large amount of spatial information, but present several problems in terms of change detection. Since VHR images contain limited spectral information of only four or five bands [14], it is more difficult to separate classes containing a similar spectral signature because of the low variance between classes [15]. Moreover, there is a higher intra-class variance of the classes represented in the image because of the higher spatial resolution of each pixel [16,17].

Consequently, the pixel-level change detection used thus far generates rather poor results in terms of accuracy for VHR images because such algorithms only rely on the use of spectral information. These algorithms include CVA-based techniques [1,4,18,19,20,21,22,23,24] and do not exploit the extensive amount of spatial information provided by the structures and textures of the VHR images [25,26,27]. Therefore, different object-based change detection (OBCD) algorithms have been incorporated to take advantage of the spatial information [28,29,30,31], which can also be used to detect homogeneous structures to reduce the computational cost of the techniques.

Change detection algorithms can be classified as pixel-level or object-level according to the minimum unit or structure used for the detection [32]. Traditionally, pixel-level methods have been used on low–medium-resolution images; for example, change vector analysis (CVA) is widely used [33] to analyse the change vector between pairs of pixels of bi-temporal images and generate a difference image, or magnitude image [21]. Each pixel of this image stores the intensity of change, and a binary thresholding algorithm is used to determine the intensity above which a pixel is labelled as a change. Statistical techniques such as expectation–maximisation (EM) [34] or, most frequently, the Otsu algorithm [35,36,37,38] have been used to obtain an optimal threshold.

Several detection techniques based on the fusion of results from different methods, such as different object scales (multi-scale approach) [39] or different algorithms (multi-algorithm approach) [40], have been developed to take full advantage of the properties of spatial information extraction algorithms. Multi-scale and multi-algorithm techniques have one step in common: merging of results. The most-commonly used methods in the literature for this purpose are majority vote (MV) [41], Dempster–Shafer (DS) [42], or fuzzy integral (FI) [43]. Currently, most detection methods that use fusion techniques are based on deep learning and supervised detection. The main approach is to have several classifiers in parallel with the same objective, but to vary the parameters of the supervised learning [44,45,46], for example using different convolutional neural networks (CNNs) differing in the convolutional filter size (e.g., 3 × 3, 5 × 5, and 7 × 7) in parallel and then fusing their results [47,48]. Another reported approach is to perform feature-based change detection using textures and morphological profiles at different scales [49]. There are also object-based multi-scale techniques where variability is achieved by varying the scale of each object using circular structures [50] or segmentation algorithms [51]. Ensemble learning has also been used to perform fusion for change detection [52,53,54] and is based on a combination of multiple classifiers to make a final decision, whereby the main objective is to minimise the errors of a single classifier by compensating them with other classifiers, resulting in a higher overall accuracy [55].

The most-commonly used algorithms for object extraction in a non-regular context are segmentation algorithms, which divide the image into segments, homogeneous regions according to some criterion. Segmentation is not a well-defined problem, so different algorithms have been proposed based on different approximations. The watershed transform [56] is a region-based technique that can find the catchment basins and watershed lines for any grayscale image. It is not directly applicable to multidimensional images, so it is common to use an algorithm such as robust colour morphological gradient (RCMG) [57], which reduces the dimensionality to a single band before segmentation. Superpixel methods are another type of segmentation algorithm, which can modify the granularity of the segmentation depending on parameters such as segment size or regularity. Superpixel techniques are separated into different categories [58,59]: watershed-based such as waterpixels [60] and morphological superpixel segmentation (MSS) [61] based on the watershed algorithm; density-based such as quick-shift (QS) [62]; graph-based, which interpret the image as a non-directional graph such as entropy rate superpixels (ERSs) [63]; clustering-based, which use clustering algorithms to generate the segmentation. The latter group involves simple linear iterative clustering (SLIC) [64] or extended topology preserving (ETPS) [65]. Based on these segmentation algorithms, different multi-resolution approaches have been proposed by applying a segmentation algorithm at different spatial resolutions. These methods are especially adequate for VHR images or for those datasets that present change areas of different sizes [66]. The multi-resolution segmentation (MRS) algorithm [67] and the MSEG algorithm [68] are commonly used multi-resolution segmentation algorithms. 
In general, all segmentation algorithms can be designed to exploit not only the spatial information of the images, but also the spectral information by considering the different bands of the image for each pixel. In this study, three segmentation algorithms that exploit all the spectral information available in the images were selected: the watershed algorithm, because it is very efficient in remote sensing [69,70], and SLIC and waterpixels, because they preserve the edges and boundaries in the images and offer flexibility, as superpixel algorithms can be tuned by adjusting the size and shape of the superpixels.

Different techniques can be used to analyse multi-temporal images, and they are classified into two main groups: those based on multi-temporal information at the feature level and those based on the multi-temporal information at the decision level [71,72]. The feature-based group is directly related to unsupervised change detection using techniques such as the calculation of the ratio or pixel-to-pixel difference between two images. The decision-based change detection approach is usually based on the post-classification of the processed images and on the calculation of the differences between the generated classification maps [73,74,75] or performing a joint classification of multiple images [49,76] with domain adaptation [77,78].

The two most common types of change detection are binary detection and multiclass detection. The first generates a change map where each pixel is classified as change or no change, whereas multiclass detection classifies the pixels associated with changes into a set of classes corresponding to different types of changes [79,80,81].

Regarding the field of application, some multi-scale methods focus on detecting changes at the level of structures or buildings [82] using the LEVIR-CD building dataset consisting of VHR images taken from Google Earth. Some researchers have developed methods for detecting changes in vegetation [44,49,83,84,85], specifically for detecting the rapid growth of invasive species. In particular, one research group [49] used texture extraction and multi-scale for performing an extended morphological profile, which was then used as the input for a set of support vector machine (SVM) classifiers. The objective was to detect changes related to the replacement of vegetation by buildings using the multispectral Steinacker dataset composed of two pan-sharpened QuickBird images. The method proposed in [44] uses a similar approach, but instead of using multi-scale object-based techniques, it uses the multiresolution segmentation algorithm (MRS) with multiple SVM classifiers for detecting changes between VHR multispectral images in land use mainly from cultivated land to bare soil. Using algebraic techniques not based on machine learning, Reference [86] extracted textures with a single object-level descriptor (not multi-scale) and differentiated between them; this method has been used on VHR images of vegetation, in particular for changes from vegetation to bare ground or from vegetation to buildings.

The main contribution of this paper is the proposed multi-scale binary change detection based on consensus techniques for multi-algorithm fusion. Consensus exploits the same idea as ensemble learning, but from a classical algorithmic approach rather than a machine learning approach. Ensemble learning uses the combination of multiple classifiers with the same objective and, therefore, is more robust than individual algorithms in detecting changes [54,55]. By using multiple algorithms, ensemble learning is also less sensitive to noise and outliers in the data, which can lead to more accurate results [53]. Overall, ensemble learning is a powerful approach to improving the robustness and flexibility of change detection in remote sensing. The proposed method was designed for multispectral and hyperspectral VHR multi-temporal vegetation datasets using the CVA-SAM at the segment level to compute the difference maps. This technique applies a consensus fusion to the results obtained by several detectors, each based on a different multi-scale segmentation algorithm. The result is a binary change detection map that indicates whether there is a change or not for each pixel.

2. Materials and Methods

2.1. Dataset Description

This subsection presents and describes the bi-temporal change detection datasets used in this study. Each dataset contains a pair of multispectral or hyperspectral images and a reference map where each pixel is labelled as change or no change. The reference information was produced by experts in the field using visual inspection, information from public repositories, and labelling techniques.

Five datasets were selected for the evaluation of different scenarios related to changes in forest and crop vegetation. In particular, three of the datasets allowed us to evaluate the changes in crops due to agricultural cycles. On the other hand, the other two datasets were specifically selected to evaluate the changes between different types of vegetation in forests. They included scenarios of vegetation change, such as the transition from pine to eucalyptus.

The three low–medium-resolution hyperspectral datasets contain vegetation changes related to crops [87] and were captured by the HYPERION [88] and AVIRIS [89] sensors. The other two datasets are VHR images taken by unmanned aerial vehicles (UAVs) using the MicaSense RedEdge-MX sensor [90] and contain vegetation changes in natural ecosystems of river watersheds in Galicia (Spain) [91]. Note that, although the datasets were collected in the same phenological period, they show substantial changes. For these datasets, the changes between images are known and represent the reference information. Table 1 shows the characteristics of each dataset along with the information related to the sensors used to capture it.

Table 1.

Description of the change detection datasets used and the amount of spectral information and resolution of each image.

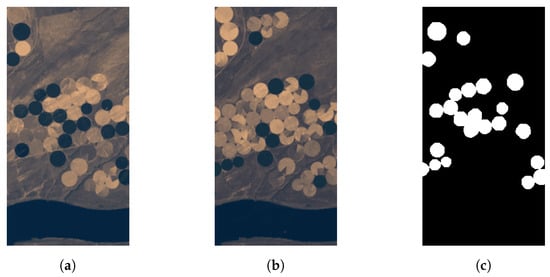

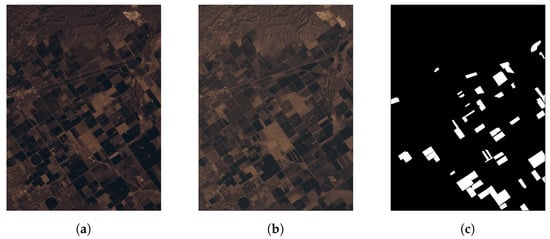

Regarding the satellite change detection datasets, Figure 1 shows the Hermiston scene consisting of two images obtained by the HYPERION sensor over an area of Hermiston in Oregon (45°58′49.4″N, 119°13′09.6″W). The dataset shows a rural area with several circular crop fields. The images correspond to the years 2004 and 2007 and were downloaded from the United States Geological Survey (USGS) website [88]. The other two datasets, Santa Barbara and Bay Area, were captured by the AVIRIS [89] sensor with a resolution of 20 m/pixel. Figure 2 shows the Bay Area dataset, taken over the city of Patterson in California (United States) in 2013 and 2015. Santa Barbara is shown in Figure 3 and was captured in 2013 and 2014 over the city of Santa Barbara in California (United States).

Figure 1.

Change detection dataset of Hermiston city: (a) original image taken in 2004; (b) image with changes taken in 2007; (c) change reference information.

Figure 2.

Change detection dataset of Bay Area in Patterson city: (a) original image taken in 2013; (b) image with changes taken in 2015; (c) change reference information.

Figure 3.

Change detection dataset of Santa Barbara city: (a) original image taken in 2013; (b) image with changes taken in 2014; (c) change reference information.

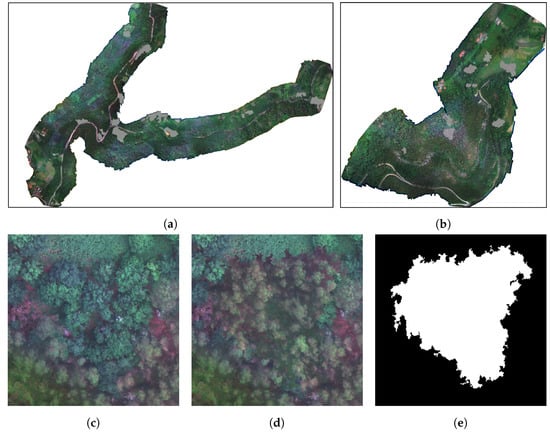

The UAV datasets were taken in 2018 with a MicaSense RedEdge-MX multispectral camera [90] onboard a UAV flying at an altitude of 120 m. This camera captures five spectral bands corresponding to the following wavelengths: 475 nm (blue), 560 nm (green), 668 nm (red), 717 nm (red edge), and 842 nm (near-infrared). The VHR change detection datasets are shown in Figure 4a,b, including in grey the regions corresponding to changes. As usual, the vegetation changes do not correspond to isolated pixels, but to regions of the images. Figure 4c–e show a crop of the images of the Oitavén dataset, illustrating an example of vegetation change.

Figure 4.

VHR change detection datasets, original image and reference information of: (a) Oitavén dataset and (b) Ermidas dataset. Details of the changes for an area of the Oitavén dataset: (c) original area; (d) area with changes; (e) reference information of changes.

2.2. Methodology

This paper proposes a binary change detector based on consensus techniques and the integration of spatial–spectral information using multi-scale segmentation. The change detection process was performed at the segment level, and the use of segments instead of regular patches allowed for the exploitation of the image spatial information.

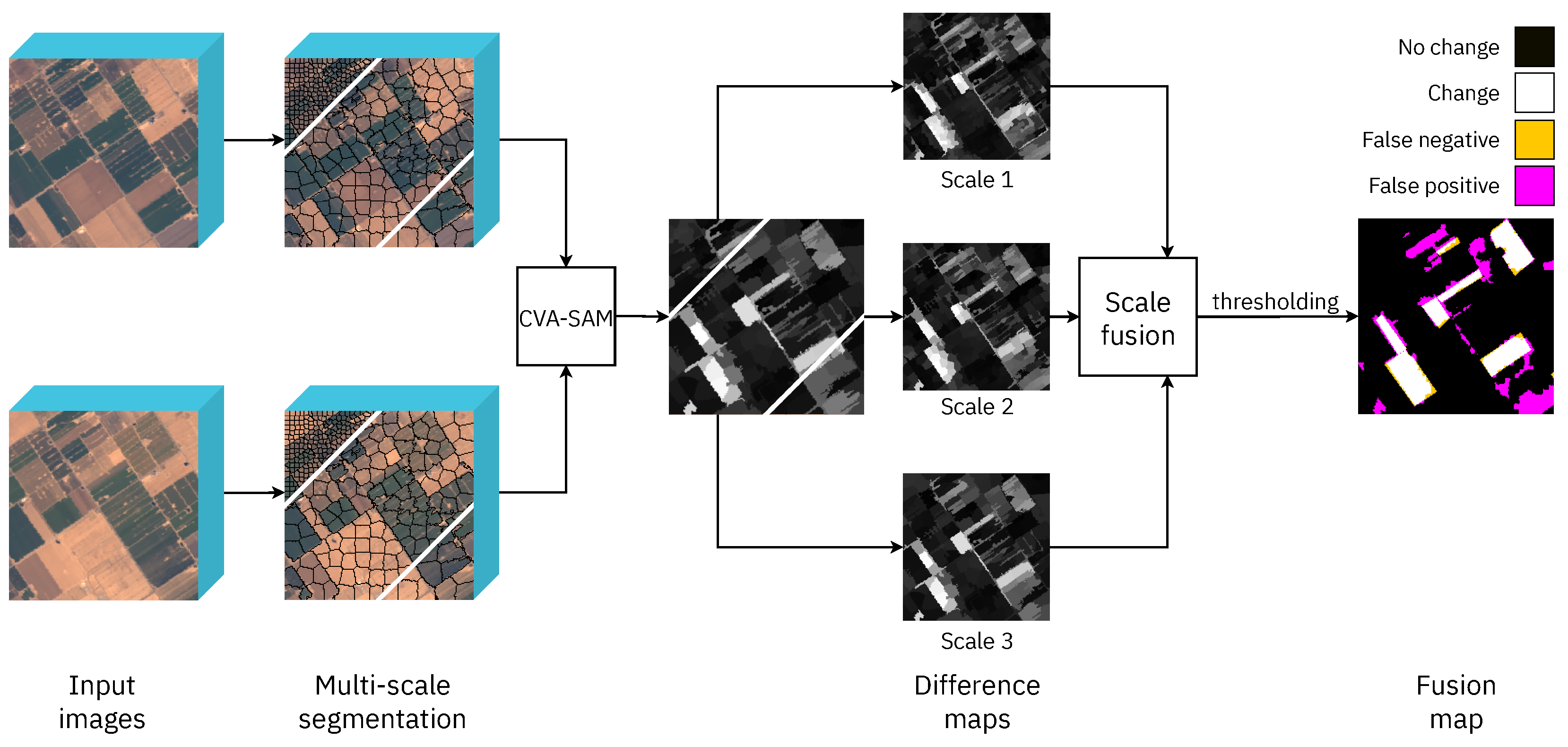

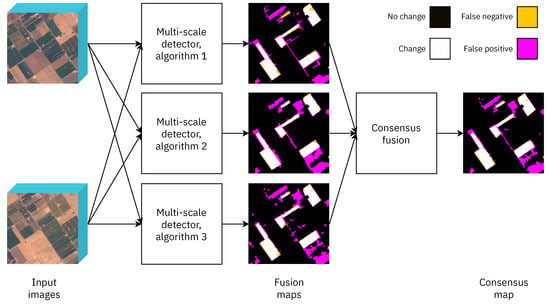

The proposed technique is shown in Figure 5, where the detection is applied to two images obtained at different times (input images in the figure). Each multi-scale detector shown in the figure used a different segmentation algorithm applied at different scales to perform multi-scale segmentation and obtained a binary change detection map (fusion maps in the figure). These maps were then merged using consensus techniques to obtain a single binary change map (consensus map in the figure). This last fusion was a decision-level fusion: a consensus was reached among the different detectors to decide, for each pixel, whether it was classified as a change or not. The different steps of the proposed technique are detailed in the following paragraphs.

Figure 5.

Proposed technique for binary change detection using three different multi-scale detectors, each with a different segmentation algorithm (algorithms 1, 2 and 3). The yellow pixels correspond to the changes that the detector has not been able to identify. The magenta pixels are those that the algorithm has erroneously detected as change.

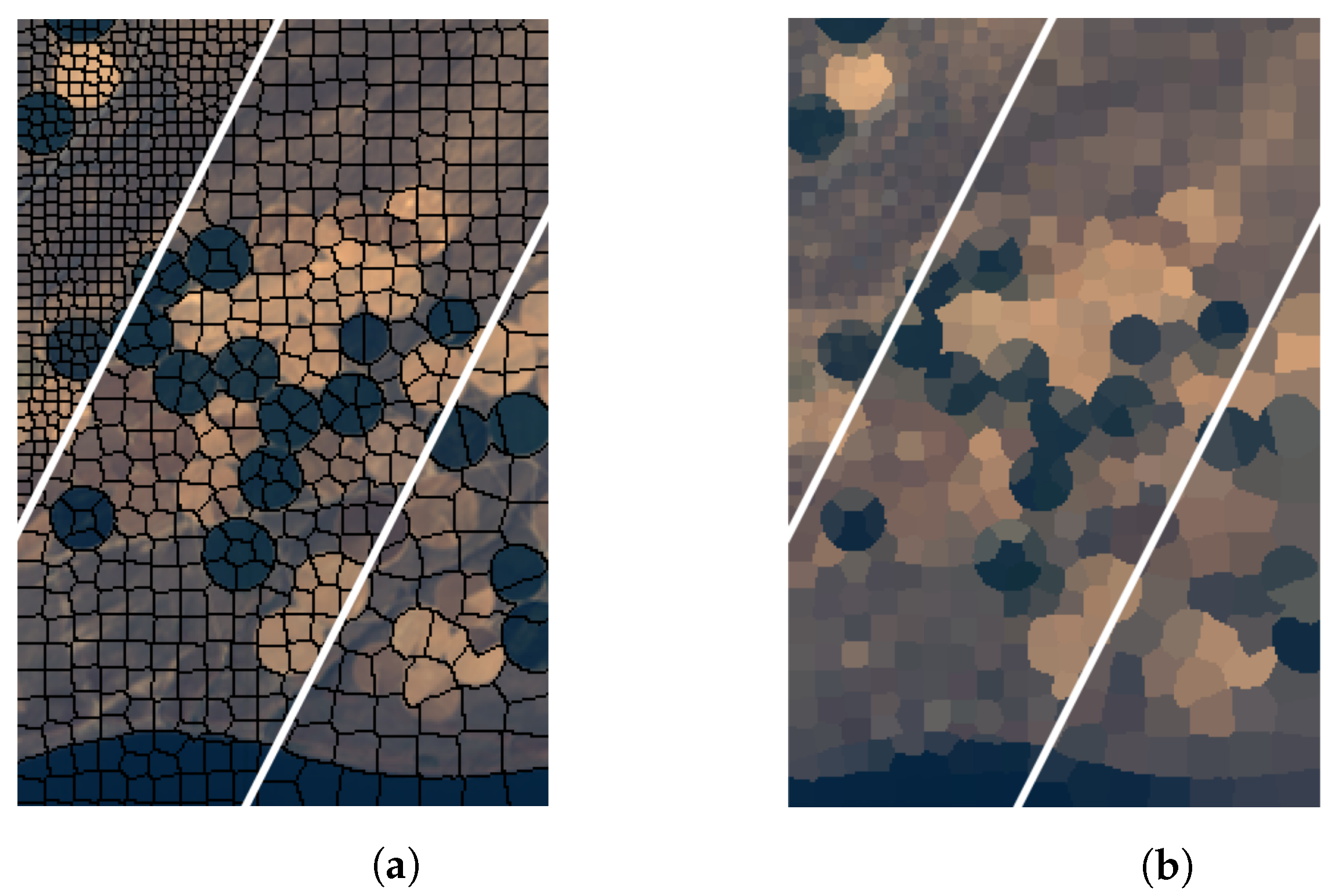

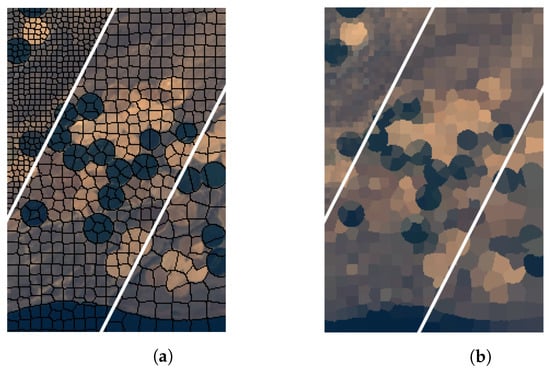

Segmentation algorithms: Objects were extracted from the image using segmentation algorithms to take advantage of the high spatial resolution of the VHR images. The result was a map that indicated the segment to which each pixel belonged. As segmentation is not a well-defined task and different approaches are possible, several segmentation algorithms were used in this study, as is shown in Figure 5, and their results were combined by applying a consensus technique. In particular, watershed [56], waterpixels [60], and SLIC [64,92,93] were applied because they use all the spectral information of each pixel and are efficient at adapting to objects of different sizes. The segmentation algorithm was computed on the image that had changed, the most up-to-date in time, and the resulting segmentation map was applied to both images. Thus, both images were partitioned into segments of the same shape and size. For example, Figure 6 shows the results obtained by applying SLIC at three different scales for the Hermiston image.

Figure 6.

Example of a multi-scale segmentation of the Hermiston dataset using SLIC: (a) image segmented at three different scales; (b) image after segmentation at different scales and assigning one representative pixel to each segment.

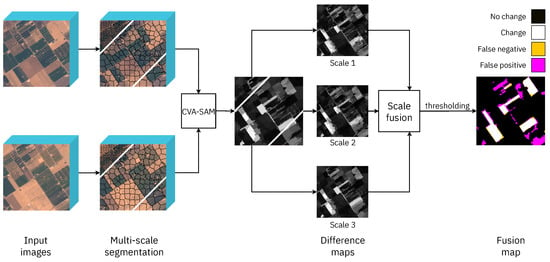

Multi-scale detector: Figure 7 shows the proposed technique for each individual multi-scale detector in Figure 5. Each multi-scale detector used a different segmentation algorithm to obtain a binary change map using multi-scale segmentation, that is, by performing a segmentation at different scales. As shown in Figure 7, each multi-scale detector was composed of a segmenter that operated at multiple scales, a CVA-SAM module that generated difference maps at the corresponding scales, and a scale fusion module. Once the segmentation was calculated, a difference operation between both images was applied at the segment level. In this study, the difference method applied was the CVA-SAM, based on CVA with the spectral angle mapper (SAM) distance, which is one of the best-performing techniques in the literature related to change detection [94,95]. As shown in Figure 7, for each segmentation, the CVA-SAM was computed, producing a difference map representing the magnitude of the change for each segment (all pixels in a segment had the same value in the difference map). Therefore, different instances of the CVA-SAM were computed and, subsequently, merged using a scale fusion algorithm. Finally, a thresholding operation based on the Otsu algorithm was applied to obtain a single binary change map (fusion map in the figure). Each of these elements is discussed in detail below.

Figure 7.

Detail of each multi-scale detector shown in Figure 5. The segmentation algorithm is applied at different spatial scales, and the CVA-SAM at the region level computes the image difference for each scale.

Segment-based CVA-SAM: The CVA technique is based on computing the vector of change between each pair of pixels corresponding to the same position in both images [33], and in particular, the SAM technique calculates the similarity between two pixels by analysing the angle of the change vector [96]. This vector is obtained by computing the difference between the pixel vectors of the changed and the original images. Once the change vector is obtained, it is analysed using different metrics such as distance or angle.
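As a minimal sketch of the pixel-level operations described above, the following NumPy code computes the change vector, its magnitude, and the spectral angle between the two pixel spectra. The function names and the toy pixel values are illustrative assumptions, not the implementation used in this study. The example also shows why an angular measure is attractive under varying illumination: scaling a spectrum by a constant changes the magnitude but not the angle.

```python
import numpy as np

def change_vectors(image_t1, image_t2):
    """Per-pixel change vector: changed image minus original image, shape (H, W, B)."""
    return image_t2.astype(float) - image_t1.astype(float)

def cva_magnitude(image_t1, image_t2):
    """Euclidean length of the change vector at every pixel, shape (H, W)."""
    return np.linalg.norm(change_vectors(image_t1, image_t2), axis=-1)

def spectral_angle(image_t1, image_t2, eps=1e-12):
    """Angle (radians) between the two spectra at every pixel, shape (H, W)."""
    a = image_t1.astype(float)
    b = image_t2.astype(float)
    dot = np.sum(a * b, axis=-1)
    norms = np.linalg.norm(a, axis=-1) * np.linalg.norm(b, axis=-1)
    # eps guards against all-zero spectra; clip guards against rounding outside [-1, 1].
    return np.arccos(np.clip(dot / np.maximum(norms, eps), -1.0, 1.0))

# Toy 1x1 image with three bands (values are made up for illustration).
pixel_t1 = np.array([[[1.0, 2.0, 2.0]]])
pixel_t2 = 2.0 * pixel_t1  # same spectral shape, twice as bright

magnitude = cva_magnitude(pixel_t1, pixel_t2)  # non-zero: brightness changed
angle = spectral_angle(pixel_t1, pixel_t2)     # numerically zero: shape unchanged
```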

Other techniques for computing the difference between images can be found for change detection in the literature, such as the neighbourhood correlation image (NCI) [97], a method that calculates the correlation between the pixels of the images to check whether there is a change or not. In this study, CVA was used instead of the NCI technique because of the former’s lower computational cost.

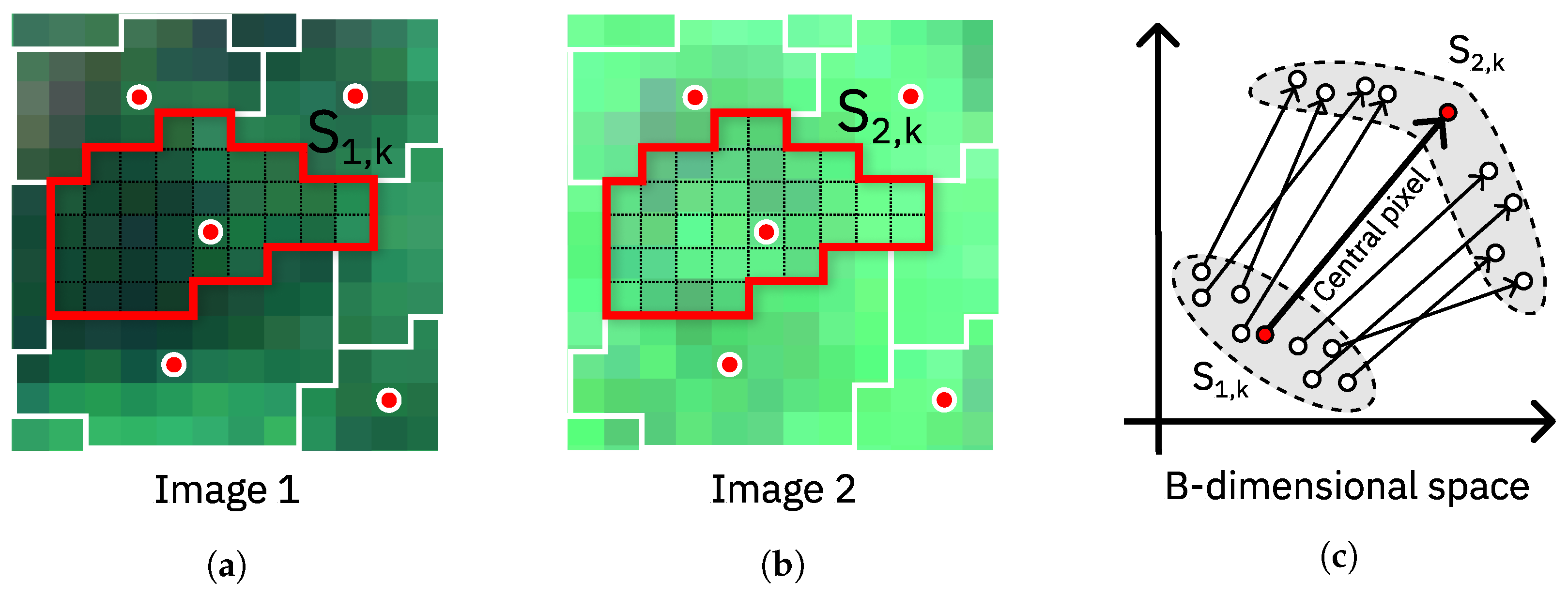

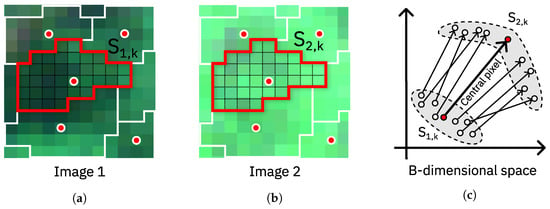

The main problem with using a technique such as CVA is that it performs a pixel-by-pixel difference between both images without using spatial or context information. Therefore, a CVA-SAM method was used that exploits the segmentation information through two representatives of each segment: the first was the pixel vector corresponding to the central pixel of the segment, and the second was the mean of the pixel vectors of the segment. Thus, a segment-by-segment difference was calculated using the representative pixel vectors of each segment, so the operations were computed at the segment level instead of pixel-by-pixel, mitigating the effect of noise on the detection. The use of these representative pixels also reduced the computational cost by reducing the data dimensionality. Figure 8 shows an example of the CVA-SAM computation between two segments where the change vector corresponding to the central pixel clearly indicates the main magnitude and direction of change.
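The two segment representatives described above can be sketched as follows. This is an illustrative NumPy version under stated assumptions: the label map uses every integer from 0 to K-1, and the "central pixel" is taken to be the pixel of the segment closest to its centroid, which is one possible reading of the text. The function name `segment_representatives` and the toy data are hypothetical. Note that the same label map is applied to both images, so both are partitioned identically.

```python
import numpy as np

def segment_representatives(image, labels):
    """Return (central, mean) representatives, each of shape (K, B).

    image:  (H, W, B) array.
    labels: (H, W) integer segment map using every value 0..K-1 (assumption).
    """
    n_segments = int(labels.max()) + 1
    n_bands = image.shape[-1]
    rows, cols = np.indices(labels.shape)
    central = np.zeros((n_segments, n_bands))
    mean = np.zeros((n_segments, n_bands))
    for k in range(n_segments):
        mask = labels == k
        r, c = rows[mask], cols[mask]
        # Assumed definition of "central": the segment pixel nearest to the centroid.
        nearest = np.argmin((r - r.mean()) ** 2 + (c - c.mean()) ** 2)
        central[k] = image[r[nearest], c[nearest]]
        mean[k] = image[mask].mean(axis=0)
    return central, mean

# Toy label map: a 3x3 segment (label 0) and a 3x1 segment (label 1).
labels = np.array([[0, 0, 0, 1],
                   [0, 0, 0, 1],
                   [0, 0, 0, 1]])
image_t1 = np.arange(24, dtype=float).reshape(3, 4, 2)
image_t2 = 2.0 * image_t1

# One segmentation, two images: both share the same partition.
central_t1, mean_t1 = segment_representatives(image_t1, labels)
central_t2, mean_t2 = segment_representatives(image_t2, labels)
```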

Figure 8.

Computation of the CVA-SAM at the region level over two segments (segment selected in red): (a) first image, (b) second image, and (c) representation of the change vectors enhancing the change vector for the central pixel (red point of the segment) in a B-dimensional space with B as the number of bands.

Equation (1) shows the computation of the difference between the pixel vector representatives $\mathbf{r}_k^{1}$ and $\mathbf{r}_k^{2}$ of segment $k$ using the SAM distance. A single value using all the spectral information was computed for each pixel corresponding to the same position in both multi-temporal images. The resulting difference map, made up of these change intensity values, each one being representative of a segment of the images, was thresholded to discriminate between pixels with and without change.

$$\alpha_k = \cos^{-1}\left(\frac{\mathbf{r}_k^{1} \cdot \mathbf{r}_k^{2}}{\|\mathbf{r}_k^{1}\|\,\|\mathbf{r}_k^{2}\|}\right) \qquad (1)$$

where:

- $\mathbf{r}_k^{1}$ and $\mathbf{r}_k^{2}$ are the spectra of the representatives of segment $k$ in Images 1 and 2.

- $\cdot$ is the dot product and $\|\cdot\|$ the module of the vector.

- $\alpha_k$ is the spectral angle between the spectra $\mathbf{r}_k^{1}$ and $\mathbf{r}_k^{2}$.
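The segment-level SAM computation can be illustrated with a short NumPy sketch. It assumes that the representatives of each segment are already available as two (K, B) arrays and that the label map indexes segments from 0 to K-1; the function name `segment_sam_map` and the toy values are hypothetical. Every pixel of a segment receives the angle computed for that segment, which yields the difference map.

```python
import numpy as np

def segment_sam_map(reps_t1, reps_t2, labels, eps=1e-12):
    """Spectral angle per segment, broadcast to a per-pixel difference map.

    reps_t1, reps_t2: (K, B) representative spectra of the K segments.
    labels:           (H, W) integer segment map with values 0..K-1.
    """
    dot = np.sum(reps_t1 * reps_t2, axis=1)
    norms = np.linalg.norm(reps_t1, axis=1) * np.linalg.norm(reps_t2, axis=1)
    # clip keeps the cosine inside [-1, 1] despite floating-point rounding.
    angles = np.arccos(np.clip(dot / np.maximum(norms, eps), -1.0, 1.0))
    return angles[labels]  # all pixels of a segment share the same value

# Toy example with two segments and two bands.
labels = np.array([[0, 0, 1, 1]])
reps_t1 = np.array([[1.0, 0.0], [1.0, 1.0]])
reps_t2 = np.array([[0.0, 1.0], [2.0, 2.0]])  # segment 0 rotated, segment 1 only scaled

difference_map = segment_sam_map(reps_t1, reps_t2, labels)
```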

Thresholding: This step determined the change intensity above which a pixel was considered a change. The Otsu algorithm, based on thresholding the histogram of the difference map, is commonly used for this task because of its high efficiency [38], as well as its low computational cost.

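The Otsu algorithm selects the threshold that minimises the intraclass variance, which is equivalent to maximising the interclass variance. Once the optimal threshold $t$ has been obtained, the difference map is processed by filtering the pixels that fall below the threshold value and keeping as a change those that exceed it, as shown in Equation (2), where $D(i,j)$ indicates the pixel value at position $(i,j)$ and $CM(i,j)$ the resulting value in the binary change map.

$$CM(i,j) = \begin{cases} 1 & \text{if } D(i,j) > t \\ 0 & \text{otherwise} \end{cases} \qquad (2)$$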
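A compact histogram-based version of this thresholding step is sketched below in NumPy. It is a generic textbook formulation of the Otsu criterion (maximising the between-class variance), not necessarily the exact implementation used in the experiments; the number of bins and the toy difference map are assumptions made for illustration.

```python
import numpy as np

def otsu_threshold(values, n_bins=256):
    """Threshold that maximises the between-class variance of the histogram."""
    hist, edges = np.histogram(values.ravel(), bins=n_bins)
    centers = (edges[:-1] + edges[1:]) / 2.0
    # Cumulative pixel counts of the lower and upper class for every split.
    weight_low = np.cumsum(hist)
    weight_high = np.cumsum(hist[::-1])[::-1]
    # Cumulative class means (guards avoid division by zero for empty classes).
    mean_low = np.cumsum(hist * centers) / np.maximum(weight_low, 1)
    mean_high = (np.cumsum((hist * centers)[::-1])
                 / np.maximum(np.cumsum(hist[::-1]), 1))[::-1]
    between = weight_low[:-1] * weight_high[1:] * (mean_low[:-1] - mean_high[1:]) ** 2
    return centers[np.argmax(between)]

# Toy difference map: twelve low-intensity pixels and four high-intensity ones.
difference_map = np.array([0.1] * 12 + [0.9] * 4).reshape(4, 4)
t = otsu_threshold(difference_map)
change_map = difference_map > t  # pixels above the threshold are labelled as change
```

Using the strict inequality mirrors the rule of keeping as a change only the pixels that exceed the threshold.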

Scale fusion and consensus methods: Two types of fusion techniques were applied in this study, a data-level fusion for each multi-scale detector, i.e., for merging the difference maps obtained for each segmentation algorithm at different scales, and a decision-level fusion, a consensus technique used for merging the change maps obtained by the different multi-scale detectors.

The selected fusion methods are described below and were selected instead of Dempster–Shafer (DS) [42,98,99] or the fuzzy integral (FI) theory [43] because of their simplicity and efficiency:

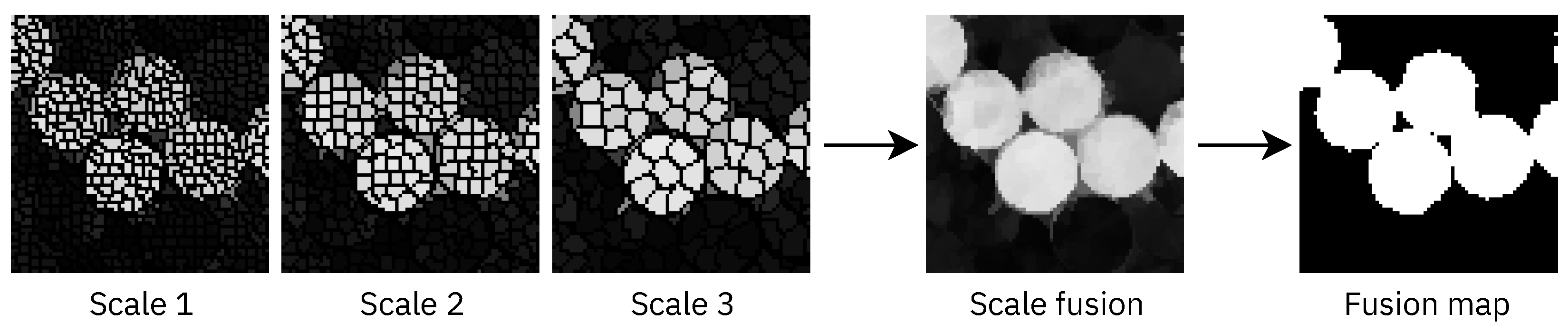

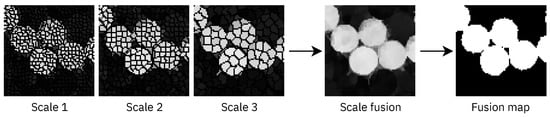

- Scale fusion: The techniques listed in Table 2 were used to merge the change intensity maps obtained after applying the same segmentation algorithm at different scales. Figure 9 shows an example of scale fusion for three scales of a Hermiston cropping, showing the difference map obtained after fusion and the subsequent thresholded map (fusion map in the figure).

Table 2. Equations of the scale-fusion techniques used in this study, where $D_k(i,j)$ indicates the intensity value of pixel $(i,j)$ of map $k$ and $n$ denotes the number of maps to be fused.

Figure 9. Example of scale fusion of three difference maps at three different segmentation scales for a Hermiston cropping. The result of the fusion (scale fusion) and the subsequent thresholding of the result (fusion map) are shown.

In the case of weighted fusion, a weight inversely proportional to the segment size was assigned to each difference map as shown in Equation (3), where $w_i$ indicates the weight of the map corresponding to the $i$-th scale segmentation. This implied that the smaller the segment size corresponding to a difference map, the higher the weight assigned to the map.

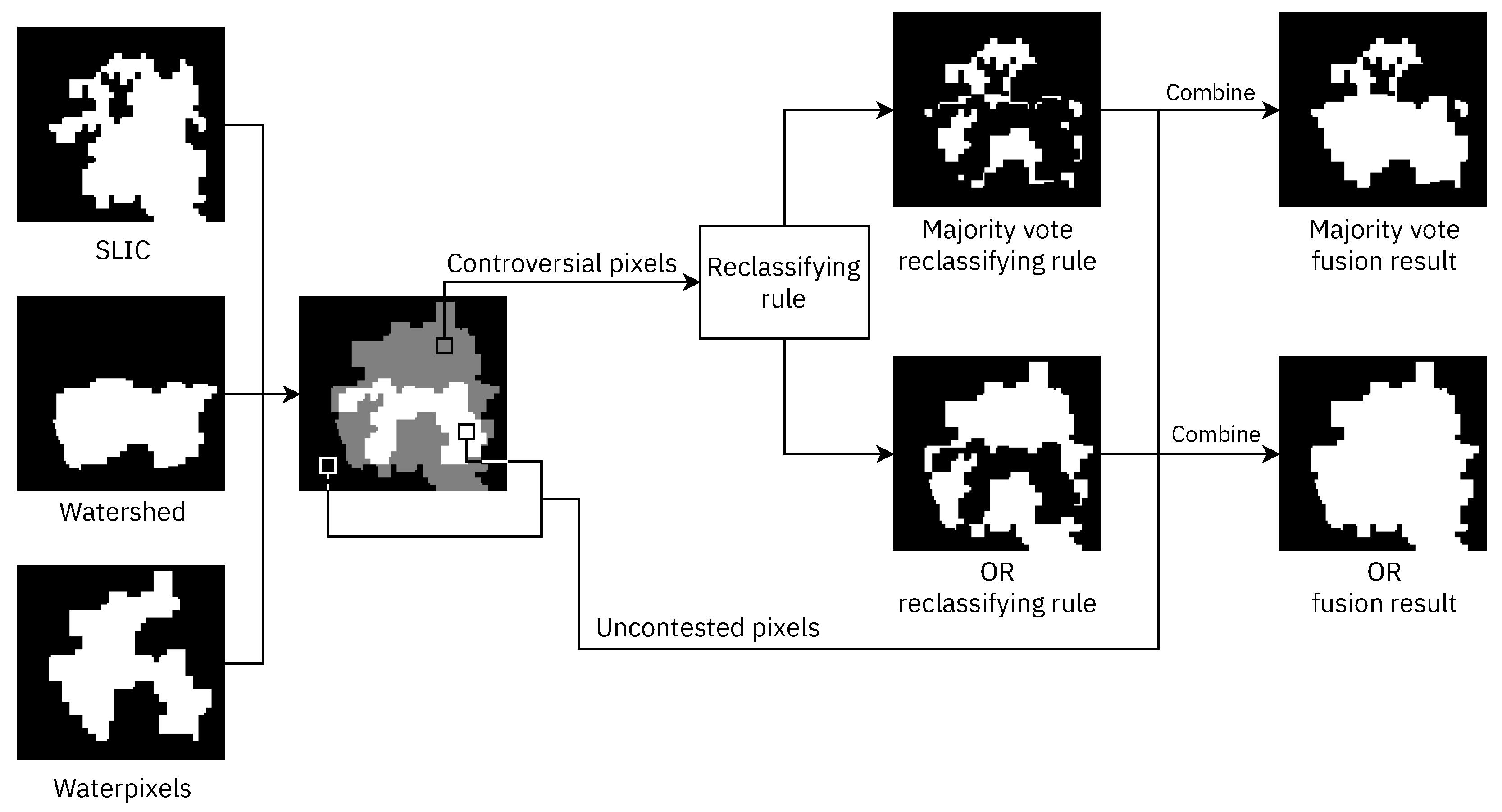

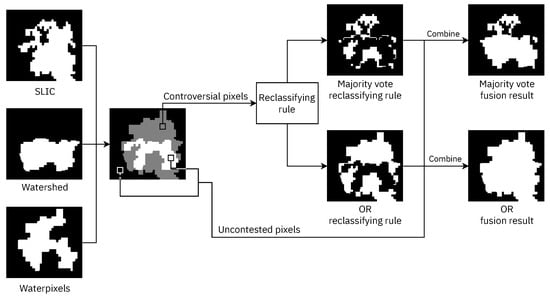

- Consensus technique: A final decision was made based on multiple binary change maps. Figure 10 illustrates the process, where SLIC, watershed, and waterpixels denote the change maps produced by the three different multi-scale detectors. Two types of pixels were considered: controversial and uncontested pixels [100]. An uncontested pixel was one for which all of the input maps agreed on the class to which it belonged (change or no change), whereas controversial pixels were those for which there was no consensus between the input maps and which, therefore, required a reclassification to make a final decision. After the reclassification, the reclassified pixels were combined with the uncontested pixels, generating a change map. The reclassification or consensus techniques used are shown below:

Figure 10. Consensus techniques for decision fusion, with the reclassification of controversial pixels using two techniques: majority vote and OR.

- Majority vote fusion (MV): For each pixel, each detector took a vote with its result, so if the majority of detectors detected a change, the final map would classify that pixel as a change.


- OR fusion (OR): If a single detector had classified a pixel as a change, this pixel was classified as a change in the final map.

As the objective of this application was to detect all of the changes in vegetation, even at the cost of having some false positives, OR fusion was the best solution, as confirmed by the experimental results.
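The two fusion stages described above can be sketched in NumPy as follows. This is an illustration under explicit assumptions rather than the implementation used in the experiments: the weights of the weighted scale fusion are taken as the inverse of the average segment size and normalised to sum to one (the normalisation is an assumption), and the names `weighted_scale_fusion` and `consensus` are hypothetical.

```python
import numpy as np

def weighted_scale_fusion(diff_maps, segment_sizes):
    """Data-level fusion of n difference maps, shape (n, H, W) -> (H, W).

    Each map is weighted inversely to its average segment size, so finer
    segmentations contribute more. Normalising the weights is an assumption.
    """
    weights = 1.0 / np.asarray(segment_sizes, dtype=float)
    weights /= weights.sum()
    return np.tensordot(weights, np.asarray(diff_maps, dtype=float), axes=1)

def consensus(change_maps, rule="or"):
    """Decision-level fusion of binary change maps, shape (detectors, H, W).

    Returns the fused map and a mask of the controversial pixels.
    """
    maps = np.asarray(change_maps, dtype=bool)
    votes = maps.sum(axis=0)
    uncontested = (votes == 0) | (votes == maps.shape[0])
    if rule == "or":
        reclassified = votes >= 1                  # one detector is enough
    elif rule == "mv":
        reclassified = votes > maps.shape[0] / 2   # strict majority
    else:
        raise ValueError("rule must be 'or' or 'mv'")
    # Uncontested pixels keep their agreed class; the rest are reclassified.
    fused = np.where(uncontested, maps[0], reclassified)
    return fused, ~uncontested

# Scale fusion of three toy difference maps with average segment sizes 1, 2 and 4.
diff_maps = np.array([[[0.0, 1.0]], [[0.0, 1.0]], [[1.0, 1.0]]])
fused_diff = weighted_scale_fusion(diff_maps, segment_sizes=[1, 2, 4])

# Consensus of three toy binary maps, one per multi-scale detector.
binary_maps = np.array([[[1, 1, 0, 0]], [[1, 0, 0, 0]], [[1, 0, 1, 0]]])
or_map, controversial = consensus(binary_maps, rule="or")
mv_map, _ = consensus(binary_maps, rule="mv")
```

In this toy case, OR fusion labels the two controversial pixels as change while majority vote rejects them, which illustrates why OR favours recall at the cost of more false positives.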

2.3. Experimental Methodology

This sub-section focuses on defining the metrics used to assess the effectiveness of the change detection method in terms of accuracy. Each result of the binary change detection was a map where each pixel was classified as “change” (positive) or “no change” (negative) and was compared to the reference information of the change pixels to analyse the quality of these results. In a binary classification, such as binary change detection, pixels can be divided into different sets: true positive (TP), true negative (TN), false positive (FP), and false negative (FN). True pixels correspond to the pixels correctly classified within the corresponding category (change or no change).

The following metrics were used to evaluate the quality of the change detection results following the nomenclature used in [101]:

- Completeness (CP) or recall: is the percentage of positives (change pixels) correctly detected by the technique.

- No change accuracy (NCA): is the percentage of negatives (no change pixels) correctly detected by the technique.

- Correctness (CR) or precision: is the percentage of changes correctly detected over the number of changes detected by the algorithm.

- Overall accuracy (OA): is the percentage of hits of the algorithm. This is the metric most commonly used for measuring the performance of classification algorithms. Nevertheless, it does not describe correctly the results of change detection as the percentage of hits in change and no change are jointly measured even if the percentages of changed and unchanged pixels in the reference data are very different.

- $F_\beta$-score: is a generalisation of the $F_1$-score, which computes the harmonic mean between the recall (CP) and the precision (CR); this is useful for comparing the performance of different change detection algorithms: $F_\beta = (1+\beta^2)\,\frac{CR \cdot CP}{\beta^2\, CR + CP}$, where $\beta$ determines the ratio of the influence of recall over the precision [54]. The main problem with the $F_1$-score ($\beta = 1$) is that it gives the same importance to precision as to recall when in many applications of binary change detection (and binary classification in general), detecting all the changes (higher recall) is critical even if a detected change is not really a change (lower precision). This was the case in this study because detecting all of the possible changes in vegetation was the priority even if some false positives were obtained, so we used the $F_\beta$-score with $\beta > 1$.
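The metrics listed above follow the standard confusion-matrix definitions, which can be written compactly in NumPy. The sketch below is illustrative: the function name, the toy maps, and the value of `beta` passed in the usage lines are assumptions chosen only to exercise the code, and the function does not guard against empty classes (which would cause a division by zero).

```python
import numpy as np

def change_detection_metrics(predicted, reference, beta):
    """CP (recall), NCA, CR (precision), OA and F-beta for binary change maps."""
    predicted = np.asarray(predicted, dtype=bool)
    reference = np.asarray(reference, dtype=bool)
    tp = np.sum(predicted & reference)    # change correctly detected
    tn = np.sum(~predicted & ~reference)  # no change correctly detected
    fp = np.sum(predicted & ~reference)   # false alarms
    fn = np.sum(~predicted & reference)   # missed changes
    cp = tp / (tp + fn)
    nca = tn / (tn + fp)
    cr = tp / (tp + fp)
    oa = (tp + tn) / (tp + tn + fp + fn)
    f_beta = (1 + beta ** 2) * cr * cp / (beta ** 2 * cr + cp)
    return {"CP": cp, "NCA": nca, "CR": cr, "OA": oa, "F_beta": f_beta}

# Toy maps flattened to one row: 2 TP, 1 FP, 1 FN, 4 TN.
predicted = [1, 1, 1, 0, 0, 0, 0, 0]
reference = [1, 1, 0, 1, 0, 0, 0, 0]
metrics = change_detection_metrics(predicted, reference, beta=2.0)  # beta is illustrative
```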

Regarding the configuration parameters of the segmentation algorithms required for performing segmentation at different scales, in the case of the superpixel-based methods (SLIC and waterpixels), the parameter modified for the different scales was the average size of the superpixels, i.e., the segments. In the case of watershed, the region marker threshold was modified to achieve different scales. The selected values for the superpixel sizes and markers for the three different scales considered in the experiments (L0, L1, and L2) are presented in Table 3. Additionally, the average segment size for each segmentation configuration is shown, thus indicating the growth of the granularity with the modified parameter.

Table 3.

Segmentation configurations for the three scales L0, L1, and L2 and for each dataset. The first number is the parameter related to the average segment size for each algorithm (superpixel size in the case of SLIC and waterpixels and region marker threshold in the case of watershed). The second number shows the resulting segment average size (number of pixels per segment).

3. Results

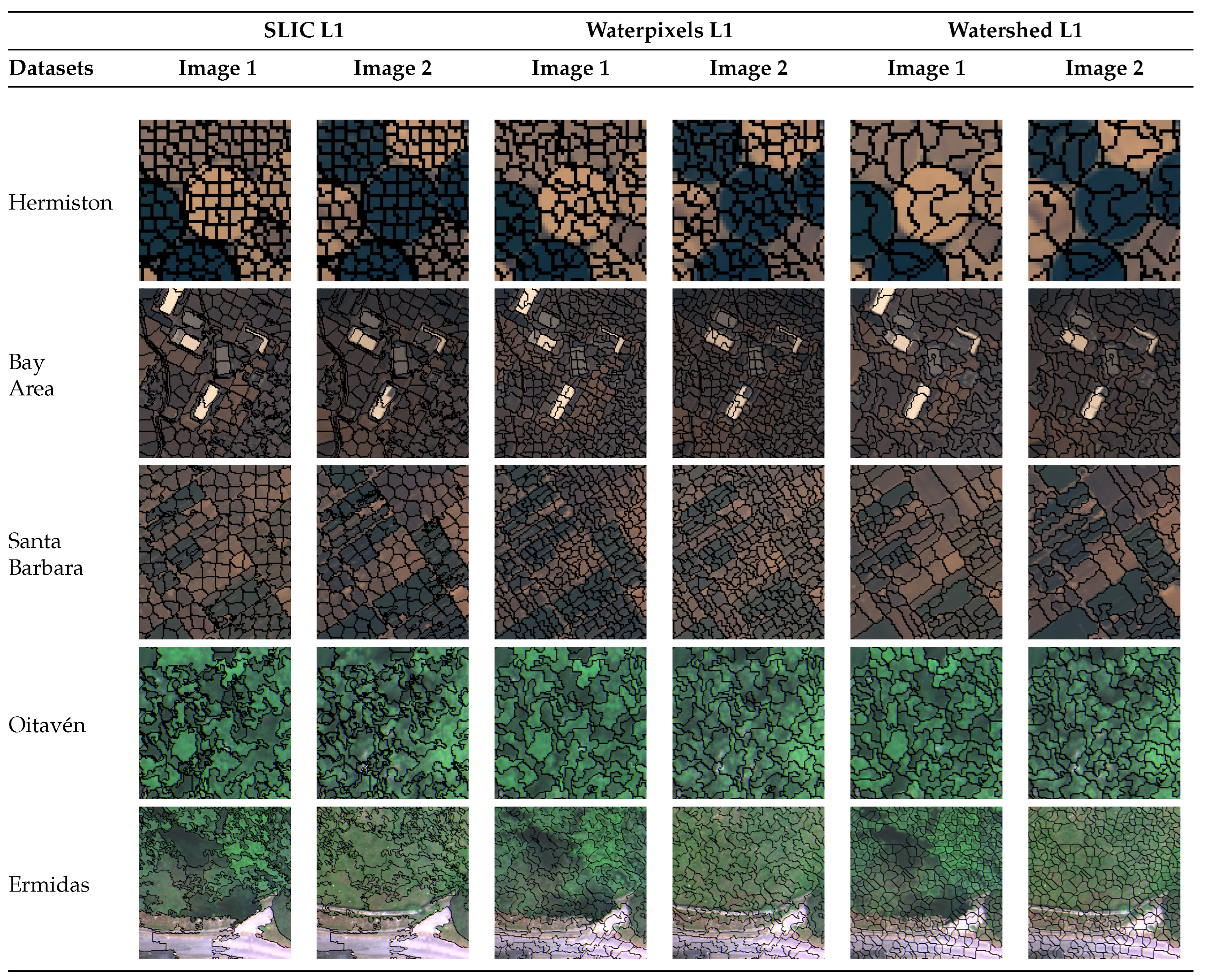

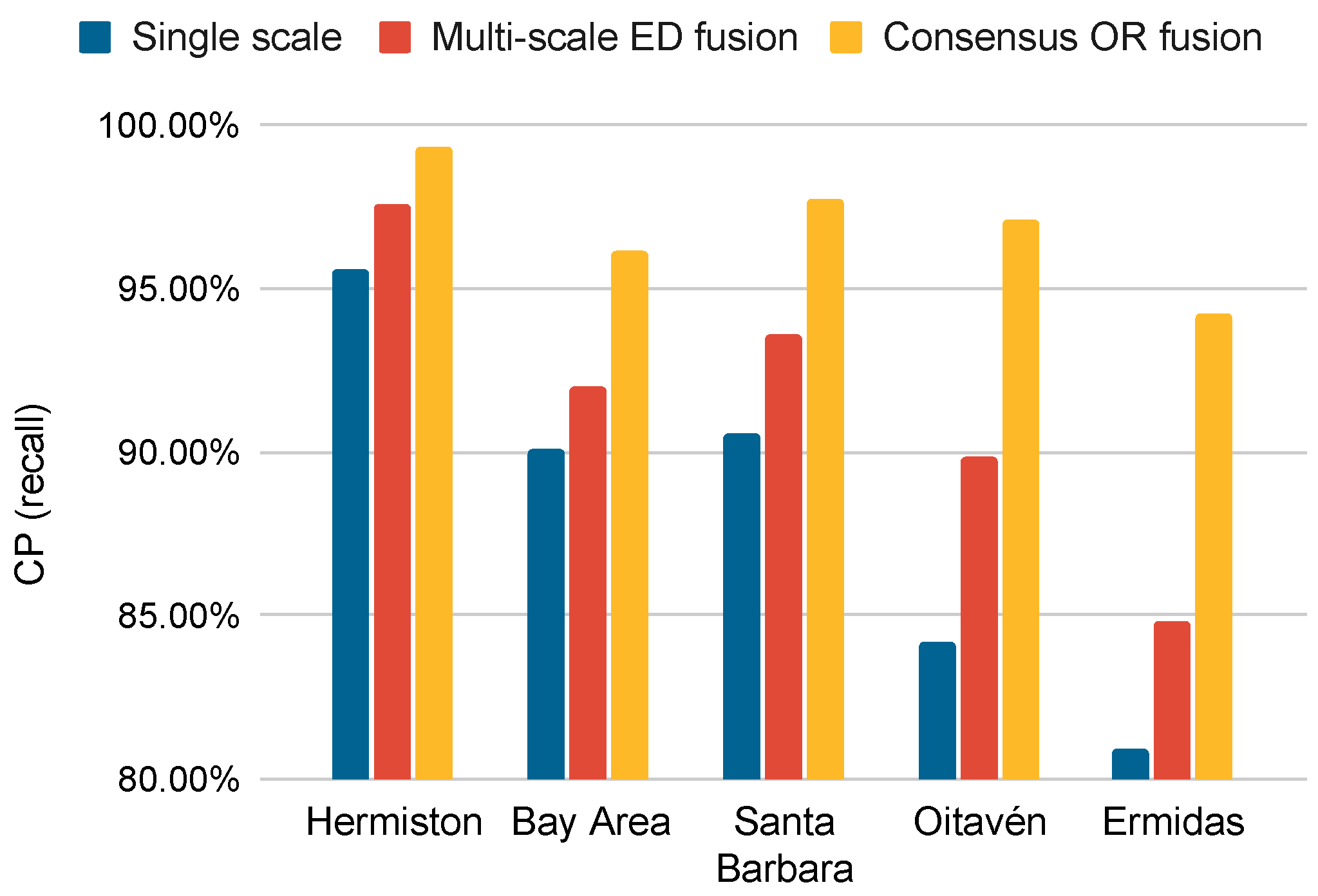

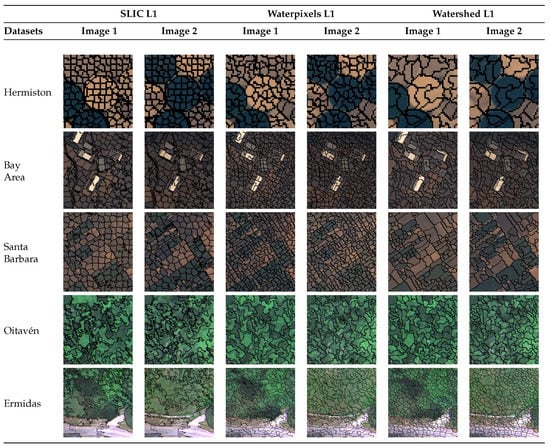

This section analyses the accuracy of the proposed multi-scale approach based on consensus techniques and compares different configurations. The metrics used to assess the quality of change detection are described in Section 2.3. Table 4 shows the results obtained by the proposed change detection (CD) method in terms of CP (recall) using three levels of segmentation for each of the three segmentation algorithms. The results for all of the intermediate phases are also shown: the accuracy of every single level of segmentation (single scale), the accuracy after merging several levels with the five different fusion algorithms described in Section 2 (Columns 5–9) and, finally, the accuracy after merging the results of each multi-scale segmentation using two consensus techniques (last two columns in the table). The colour code from dark red to dark green makes it easy to observe that the worst results were obtained using a single-scale technique. Multi-scale fusion yielded better results, particularly with the fusion based on the Euclidean distance (ED), for all of the datasets. The ED detected more changes at the cost of some erroneous detections, as mentioned in the previous section. Finally, the best results were obtained by performing consensus fusion, i.e., merging the results of each ED multi-scale fusion, in particular with the OR fusion technique. These results confirmed the effectiveness of the proposed method with ED multi-scale fusion and OR consensus fusion, obtaining accuracies (in terms of CP) exceeding 94% in both VHR and low- or medium-resolution datasets.

Figure 11 presents crops of the Level-1 segmentation maps generated for each dataset using SLIC, waterpixels, and watershed, showing that, for the same image (one row of the figure), the three segmentation algorithms provided different spatial information even when the same average segment size was used for all.

Table 4.

CP in percentage using the proposed multi-scale scheme for each dataset. The results are shown for each dataset with a colour gradient from dark red (worst result) to dark green (best result). Accuracy values were computed by varying the scale and algorithm fusion type. The results are detailed progressively, first the single scale for each segmentation, then the fusion of the three scales, and finally, the fusion of the results for the different segmentation algorithms. This last consensus fusion merged the results obtained by the ED scale fusion (marked in bold) using the consensus fusion algorithms MV and OR.

Figure 11.

Level-1 (L1) segmentation map generated by each algorithm (SLIC, waterpixels, and watershed) for each dataset. One crop from each dataset is displayed to visualise the segmentation. The segmentation was computed on the most recent image and was used for both images. Images 1 and 2 represent the images of each dataset obtained on different dates.

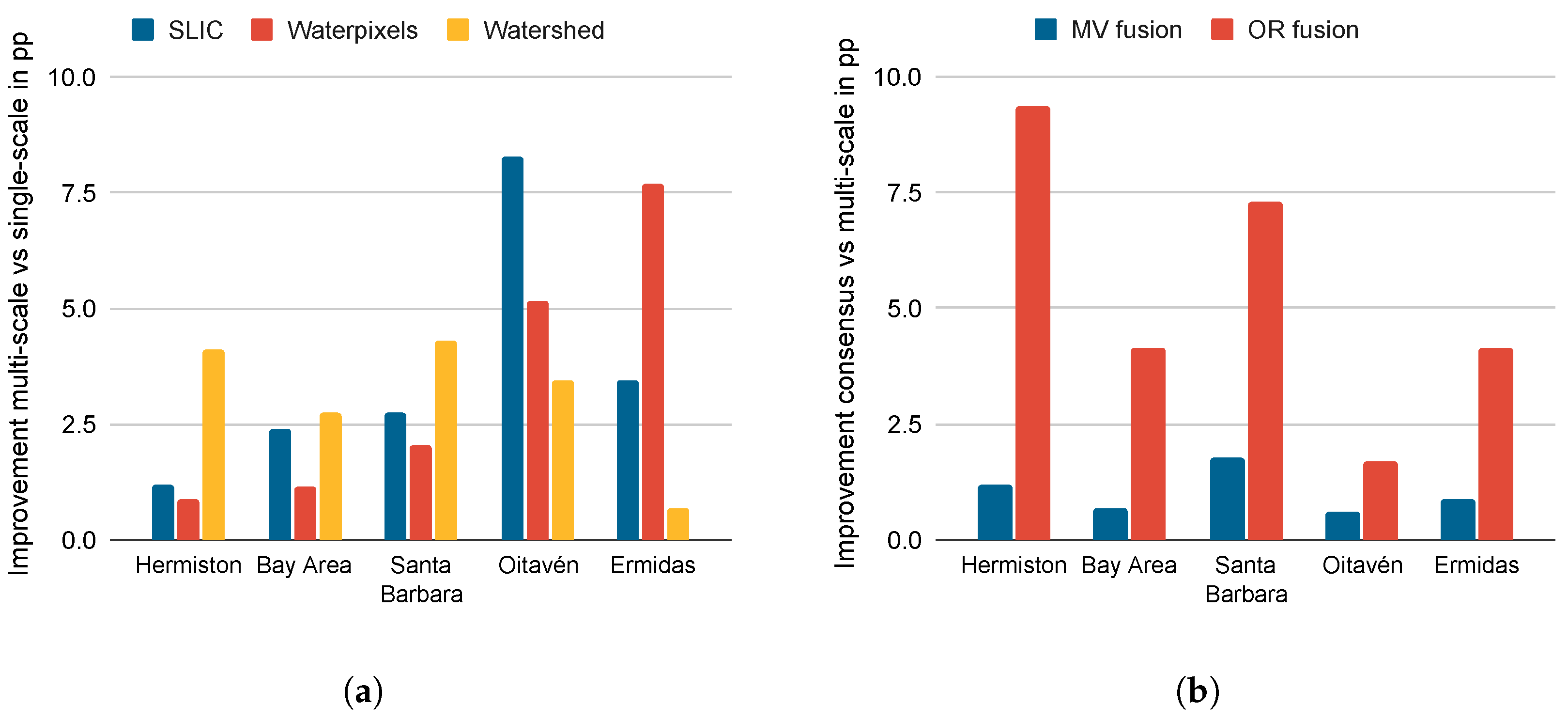

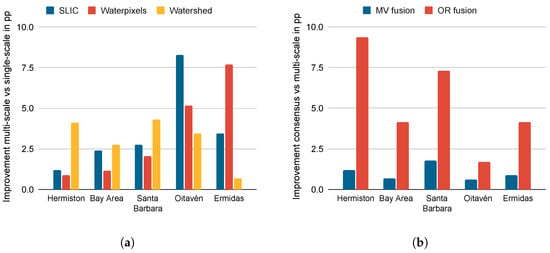

Detecting changes in vegetation, particularly from VHR images, requires first considering that vegetation changes usually occur at the level of objects of irregular sizes and shapes; thus, segmentation at several granularity scales was considered. Our first hypothesis was therefore that multi-scale segmentation improves CP (recall) with respect to a single scale. Figure 12a presents the difference between the results obtained by the multi-scale approach using the best fusion method (ED fusion in Table 4) and the average of the accuracies obtained using the single-scale detector with the scale L0, showing that merging the scales improved CP in all of the datasets and with all of the segmentation algorithms.
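To make the data-level scale fusion more concrete, the following sketch fuses three per-scale difference maps pixel by pixel. It assumes that ED fusion takes the Euclidean norm of the per-scale difference values, which is an interpretation for illustration and not a definition taken from this section; the function names, the difference values, and the fixed threshold are all hypothetical.

```python
import math


def ed_fusion(diff_maps):
    """Fuse flattened per-scale difference maps with a per-pixel Euclidean norm.

    Assumption: this norm stands in for the ED fusion named in the text.
    """
    return [math.sqrt(sum(d * d for d in pixel)) for pixel in zip(*diff_maps)]


def binarise(values, threshold):
    """Label a pixel as change (1) when its difference exceeds the threshold."""
    return [1 if v > threshold else 0 for v in values]


# Hypothetical difference values for four pixels at scales L0, L1 and L2.
l0 = [0.10, 0.50, 0.05, 0.30]
l1 = [0.10, 0.10, 0.05, 0.30]
l2 = [0.10, 0.05, 0.05, 0.30]

fused = ed_fusion([l0, l1, l2])
change_map = binarise(fused, threshold=0.4)  # arbitrary illustrative threshold
print(change_map)
```

Under this assumption the fused value is never smaller than the largest per-scale difference, so a change that is strong at any single scale survives the fusion, which is consistent with the higher CP reported for the multi-scale detectors.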

Figure 12.

Improvements in CP (recall) (a) using multi-scale segmentation for each algorithm with respect to the average using a single-scale segmentation; (b) using consensus and different segmentation algorithms with respect to the average of the multi-scale approach.

In addition, as segmentation is not a well-defined problem and VHR images present richer spatial information, different segmentation algorithms were used, and their results were combined using consensus techniques. It was therefore necessary to test the hypothesis that consensus-based fusion among segmentation algorithms improves the accuracy of the multi-scale version with only one segmentation algorithm. Figure 12b shows the improvements in CP of the consensus approach over a single multi-scale segmentation algorithm: improvements were obtained for all of the datasets, the highest with the OR fusion technique. As expected, the improvement was particularly high for the VHR datasets: the average improvement was +5.33 percentage points (pp) overall and +8.33 pp for the two VHR datasets (Oitavén and Ermidas).

Table 5 presents the accuracy results for each step of the technique evaluated in terms of different metrics: CP, NCA, and OA, showing the multi-scale detection results using only the best option selected above, the ED fusion, and the results using consensus fusion by MV and OR. Since the MV technique reduces the number of FPs (higher NCA) at the cost of detecting fewer changes (a higher number of FNs and a lower CP), this technique obtains results where each detected change has a higher probability of being a real change. In contrast, the OR fusion technique has the opposite behaviour, as it detects a larger number of changes (reduces the number of FNs and increases CP) at the cost of an increase in the number of FPs (lower NCA). Therefore, the latter technique allows the generation of change maps for which the number of undetected changes is minimal. The best consensus method for the main objective of this paper is the OR fusion because it obtained the best CP results (marked in bold) with only small OA decreases.
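The trade-off between the two consensus rules can be reproduced on a toy example. The sketch below is only illustrative: the binary maps are invented, the function names are hypothetical, and the three detector maps stand in for the multi-scale outputs of the three segmentation algorithms.

```python
def or_fusion(maps):
    """A pixel is change if at least one detector labels it as change."""
    return [1 if any(votes) else 0 for votes in zip(*maps)]


def mv_fusion(maps):
    """A pixel is change if more than half of the detectors label it as change."""
    return [1 if 2 * sum(votes) > len(votes) else 0 for votes in zip(*maps)]


def cp_and_nca(pred, ref):
    """Return (CP, NCA) in percent for a predicted map against the reference."""
    tp = sum(1 for p, r in zip(pred, ref) if p == 1 and r == 1)
    fn = sum(1 for p, r in zip(pred, ref) if p == 0 and r == 1)
    tn = sum(1 for p, r in zip(pred, ref) if p == 0 and r == 0)
    fp = sum(1 for p, r in zip(pred, ref) if p == 1 and r == 0)
    return 100.0 * tp / (tp + fn), 100.0 * tn / (tn + fp)


# Hypothetical flattened maps (1 = change, 0 = no change).
reference   = [1, 1, 1, 1, 0, 0, 0, 0]
slic        = [1, 1, 0, 0, 0, 1, 0, 0]
waterpixels = [1, 0, 1, 0, 0, 0, 0, 0]
watershed   = [1, 1, 0, 0, 0, 0, 0, 0]
detectors = [slic, waterpixels, watershed]

or_cp, or_nca = cp_and_nca(or_fusion(detectors), reference)
mv_cp, mv_nca = cp_and_nca(mv_fusion(detectors), reference)
print(f"OR: CP={or_cp:.0f} NCA={or_nca:.0f}")
print(f"MV: CP={mv_cp:.0f} NCA={mv_nca:.0f}")
```

On these invented maps OR reaches a higher CP than MV while MV keeps a higher NCA, mirroring the behaviour described for Table 5.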

Table 5.

CP, NCA, and OA in percentage using the proposed multi-scale technique for each dataset. First, the accuracies for multi-scale fusion using the ED fusion technique are shown. Then, consensus fusion using the MV and OR fusion techniques is shown. The best results in each category (CP, NCA, and OA) for each dataset are shown in bold.

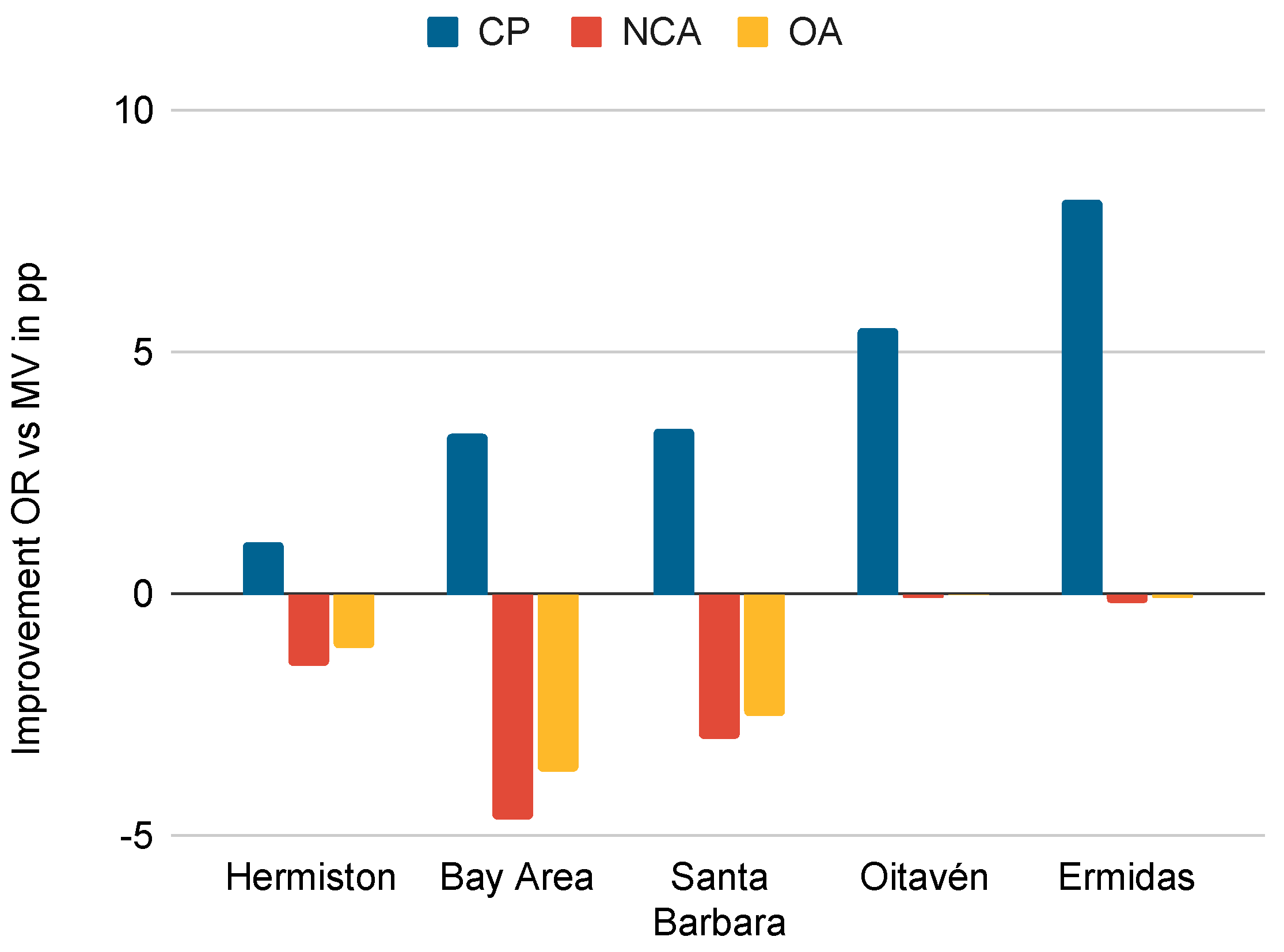

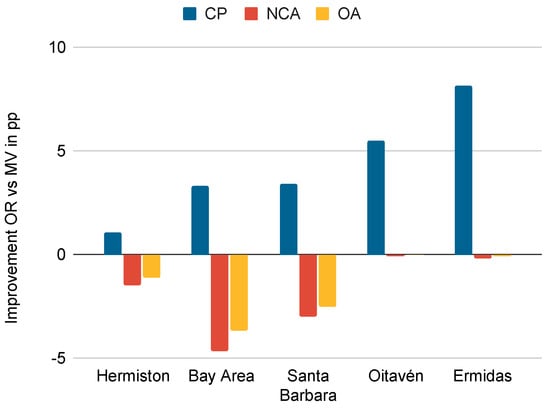

Figure 13 shows a detailed comparison of the OR and MV fusion techniques for the reclassification of the controversial pixels, indicating whether the quality of OR increased (positive value) or decreased (negative value) with respect to MV. Across the datasets, CP improved by +4.30 pp on average when using the OR technique instead of MV, while the OA decreased by no more than 1.48 pp on average. This reflects that the OR fusion technique aims to maximise the number of detected changes at the cost of failing in some pixels, as can be seen in the increase in CP with respect to MV for all datasets. In this paper, the OR technique was selected as the best consensus technique because it detected more changes than MV without committing many more errors.

Figure 13.

Accuracy improvement in percentage points of consensus by OR over consensus by MV for the accuracy metrics CP, NCA, and OA.

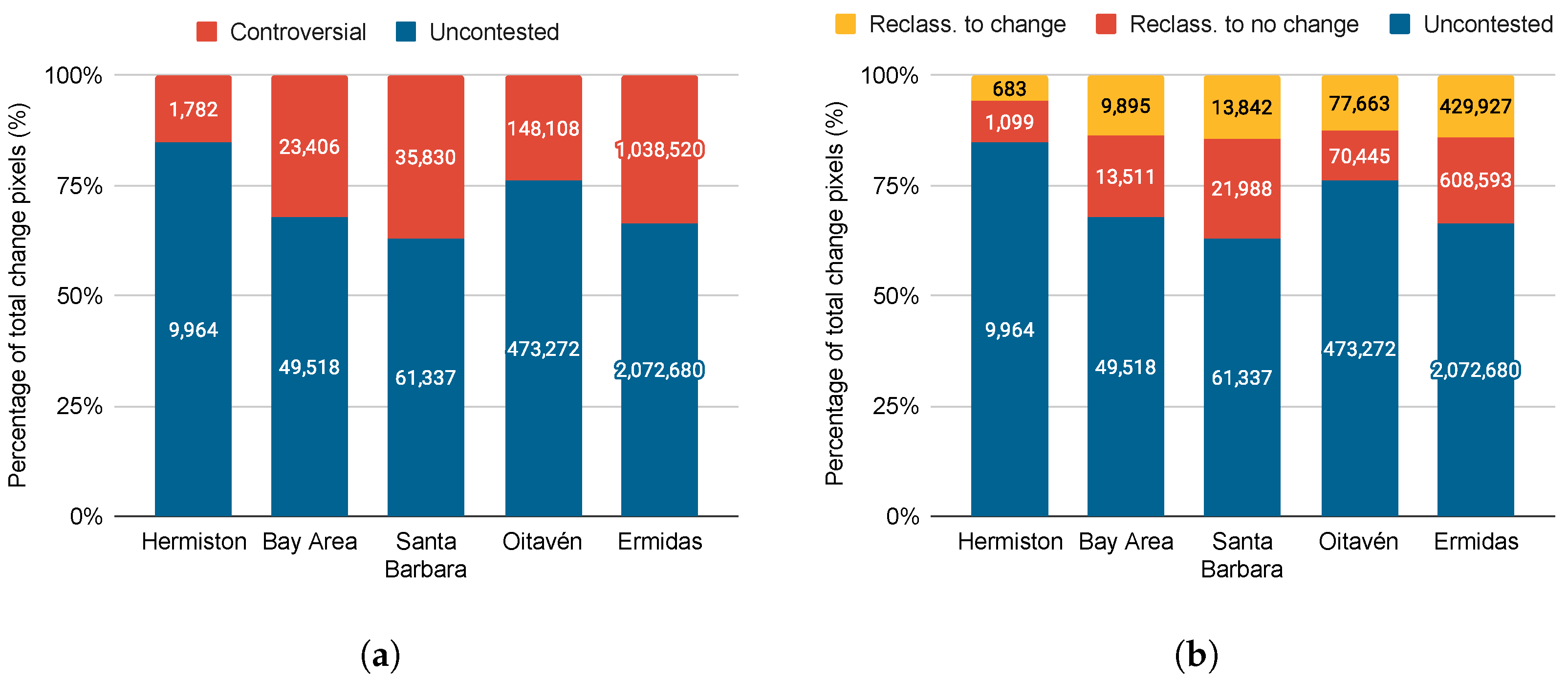

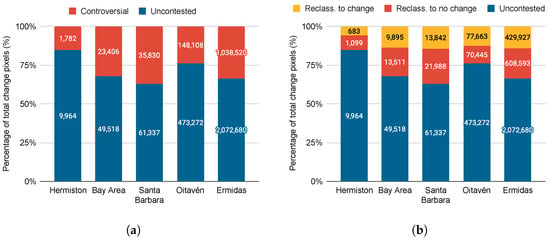

Focusing on the consensus techniques, Table 6 shows the uncontested and controversial change pixels for each dataset and the subsequent reclassification of the controversial pixels. As explained in Section 2, the uncontested pixels were assigned to the same class by all three detectors; for the controversial pixels, there was no consensus among the detectors, so they had to be reclassified. Figure 14a shows the percentage of pixels of each type for each dataset, with a higher percentage of uncontested pixels than controversial pixels: the three detectors agreed on 70.90% of the pixels on average without the application of any additional technique, and the remaining 29.10% had to be reclassified. Two techniques were applied to reclassify the controversial pixels: OR fusion, which reclassified all of them as change (Table 6), and MV fusion, shown in Figure 14b, which reclassified on average 56.93% of them as no change and the rest as change.
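The split into uncontested and controversial pixels can be sketched as a simple vote count. The example below is a hedged illustration with invented maps and a hypothetical function name; it counts only pixels flagged as change by at least one detector, which is an assumption about how the change pixels in Table 6 are tallied.

```python
def consensus_breakdown(maps):
    """Count uncontested and controversial change pixels among detector maps.

    Uncontested change: every detector votes change.
    Controversial: at least one, but not all, detectors vote change.
    """
    n = len(maps)
    counts = {"uncontested": 0, "controversial": 0,
              "mv_to_change": 0, "mv_to_no_change": 0}
    for votes in zip(*maps):
        s = sum(votes)
        if s == n:
            counts["uncontested"] += 1
        elif s > 0:
            counts["controversial"] += 1
            if 2 * s > n:            # majority vote keeps the pixel as change
                counts["mv_to_change"] += 1
            else:                    # majority vote discards it
                counts["mv_to_no_change"] += 1
    return counts


# Hypothetical flattened maps from three detectors (1 = change).
slic        = [1, 1, 0, 0, 0, 1, 0, 0, 1, 1]
waterpixels = [1, 0, 1, 0, 0, 0, 0, 0, 1, 1]
watershed   = [1, 1, 0, 0, 0, 0, 0, 0, 1, 0]

breakdown = consensus_breakdown([slic, waterpixels, watershed])
print(breakdown)
```

OR fusion would relabel every controversial pixel as change, so its reclassification counts follow directly from `breakdown["controversial"]`, whereas MV splits them according to the majority.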

Table 6.

Number of uncontested and controversial change pixels for each dataset along with the results of classifying the controversial pixels as change or no change.

Figure 14.

Total percentage of change pixels for the use of consensus techniques showing the following pixel types: (a) controversial and uncontested; (b) uncontested, reclassified as change and reclassified as no change.

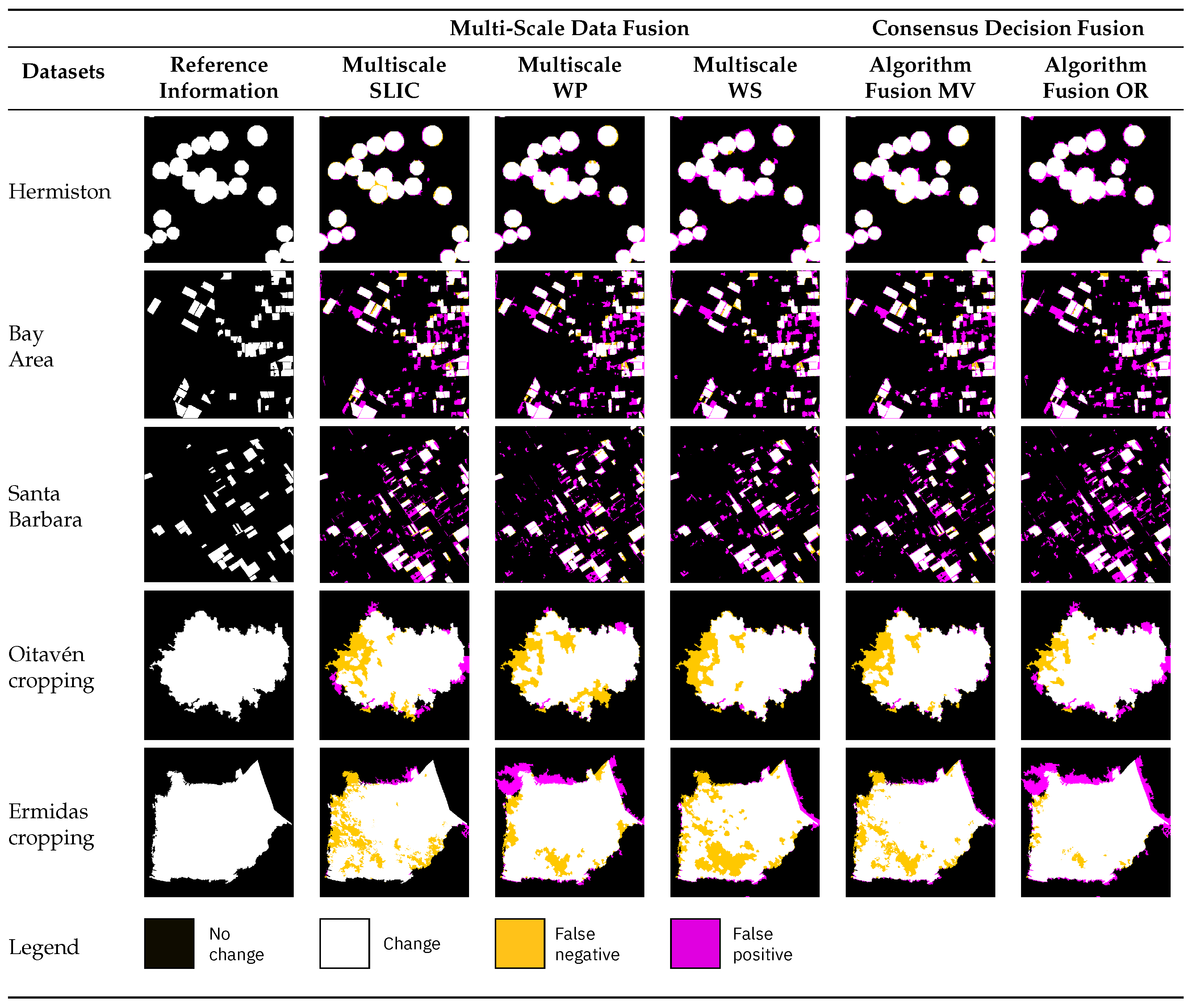

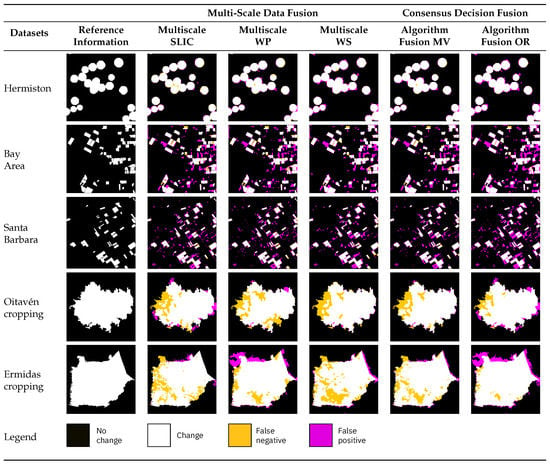

Figure 15 shows the binary change maps for each dataset obtained at each stage of the proposed technique: the multi-scale data fusion of each segmentation algorithm and, finally, the consensus decision fusion of all of the segmentation algorithms used. The multi-scale data fusion columns are the result of merging the difference maps (data-level fusion) of each single-scale segmentation with the ED fusion technique for the segmentation algorithm indicated. The consensus decision fusion maps are the result of merging the multi-scale maps shown in the previous columns using the indicated fusion technique (MV or OR). White pixels denote the changes correctly detected; magenta pixels correspond to pixels wrongly classified as change (FP); yellow pixels indicate the changes not detected by the algorithm (FN). Note that the number of undetected changes (yellow pixels) produced by the individual segmentation-based detectors (Columns 2–4 in each row) for the Oitavén and Ermidas examples decreased when the three segmentation algorithms were combined (last two columns in the figure), while the number of pixels wrongly classified as change (in magenta) did not increase after fusing the results of the different detectors.
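The colour coding used in these maps can be expressed as a per-pixel comparison between a predicted map and the reference. This is a minimal sketch with a hypothetical function name; the text specifies white, magenta, and yellow, while the black colour for unchanged pixels correctly classified (TN) is an assumption.

```python
WHITE = (255, 255, 255)    # change correctly detected (TP)
MAGENTA = (255, 0, 255)    # wrongly classified as change (FP)
YELLOW = (255, 255, 0)     # change not detected (FN)
BLACK = (0, 0, 0)          # assumed background for correct no change (TN)


def colour_change_map(pred, ref):
    """Map each (predicted, reference) pixel pair to an RGB colour."""
    colours = []
    for p, r in zip(pred, ref):
        if p and r:
            colours.append(WHITE)
        elif p and not r:
            colours.append(MAGENTA)
        elif r:
            colours.append(YELLOW)
        else:
            colours.append(BLACK)
    return colours


# Hypothetical flattened maps covering the four possible cases.
predicted = [1, 1, 0, 0]
reference = [1, 0, 1, 0]
coloured = colour_change_map(predicted, reference)
print(coloured)
```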

Figure 15.

Binary change maps generated through the proposed multi-scale technique. Columns 2–4 from the left represent the results obtained by each segmentation technique applied (SLIC, waterpixels, and watershed) using the ED fusion method. The last two columns show the results of merging the previous multi-scale maps with the MV or OR algorithm. White pixels are correctly classified changes; magenta pixels are incorrectly classified changes (FP); yellow pixels are undetected changes (FN).

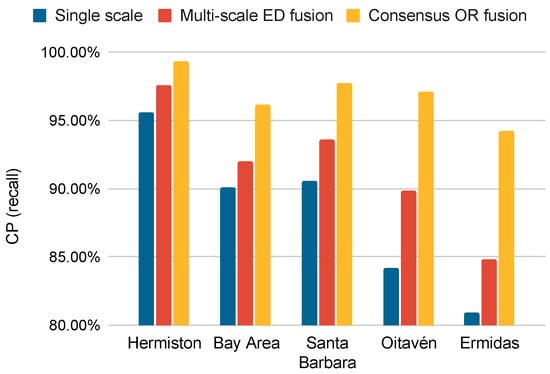

Figure 16 shows that the two initial hypotheses were fulfilled: first, the multi-scale approach detected more changes than a single-scale approach and, second, the consensus between different detectors increased the CP with respect to a single detector. Figure 16 reports three values for each dataset: for the single-scale approach, the average of the CP values obtained for the three different segmentations; for the multi-scale approach, the average for the three multi-scale detectors with ED fusion; and the OR consensus result.

Figure 16.

CP obtained for each stage of the proposed method: single-scale approach, multi-scale ED fusion, and finally, consensus OR fusion with different segmentation algorithms.

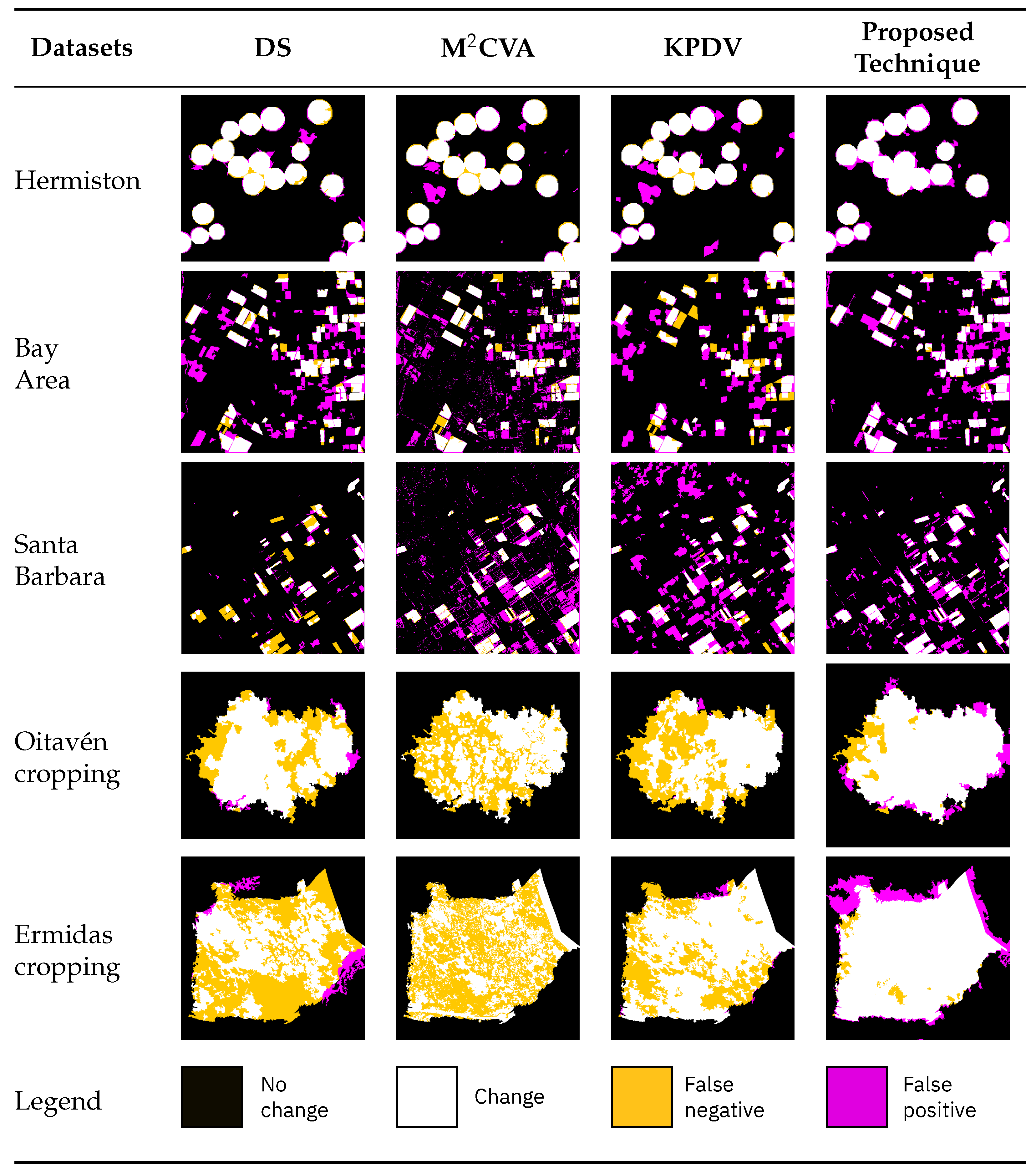

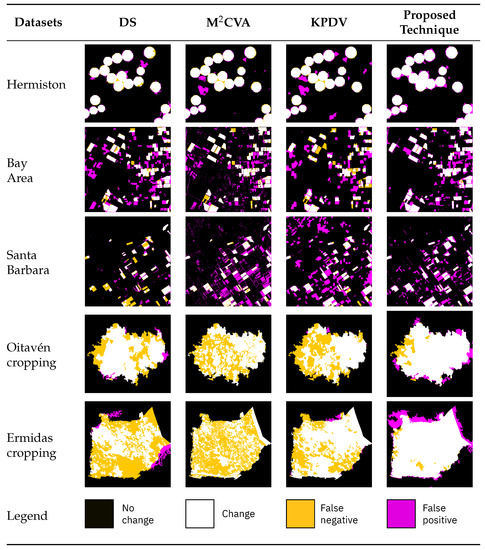

The proposed technique was compared to related works on change detection applied to vegetation or building CD datasets. In addition to CP and NCA, the comparison used the $F_\beta$-score, which was the most interesting measure for this study because it assigns a higher weight to CP than to CR. Table 7 shows the accuracies obtained in terms of CP, NCA, and $F_\beta$-score for each of the techniques in the literature and the proposed method, with the best results in each category (CP, NCA, and $F_\beta$-score) for each dataset shown in bold. The first technique in Table 7, DS [40], performed multi-algorithm change detection to calculate a binary change map using three pixel-level detection algorithms: CVA, IRMAD, and PCA. Then, it performed a fusion of these algorithms using a segmentation map and the Dempster–Shafer algorithm. The second method is MCVA [39], which performed change detection based on feature extraction using morphological profiles, varying the scale of the structural element and merging the results at the data level using CVA. Finally, the last method is KPVD [86], which performed an object-based change detection using a single segmentation scale with a proposed key point vector distance (KPVD). Previously, two of these methods (MCVA and KPVD) were applied to the VHR multispectral vegetation dataset [39,86], while DS was applied to detect changes in the VHR multispectral buildings dataset. These methods were chosen because they use techniques related to the one proposed in this paper. DS [40] proposes a multi-algorithm approach with the use of consensus techniques and different segmentation algorithms. Additionally, MCVA [39] proposes using different scales of spatial information extraction using morphological profiles. Finally, KPVD [86] proposes a spatial information extraction technique based on segments. From Table 7, it can be observed that the best results in terms of CP and $F_\beta$-score were obtained by the technique proposed in this paper.

As shown in Table 7, DS, MCVA, and KPVD achieved high values of no change accuracy (NCA) and relatively low values of completeness (CP) and $F_\beta$-score, possibly because these methods focused on increasing the accuracy in the detection of non-changed pixels (NCA measurement), while our proposal prioritised the accuracy in the detection of changed pixels (CP measurement).

Table 7.

Comparison of CP, NCA, and $F_\beta$-score in percentage for each of the methods in the literature and the proposed technique. The best results in each category (CP, NCA, and $F_\beta$-score) for each dataset are in bold.

Finally, Figure 17 illustrates the comparative results by displaying the binary change maps obtained by the selected methods, showing that the proposed method produced fewer false negatives (FNs) than the related methods for all the datasets. This observation is consistent with the CP values in Table 7.

Figure 17.

Comparisons of cropped binary change maps obtained by the proposed method and other methods in the literature.

4. Discussion

The main contribution of this paper is a method for binary change detection over medium-resolution and VHR multispectral and hyperspectral images for land cover vegetation applications, based on the extraction of object-based features and multi-scale detection. The method uses several detectors, each one built over a segmentation algorithm applied at different scales. As changes in vegetation present high variability depending on the capture conditions such as illumination, CVA using the SAM distance (called the CVA-SAM in this paper) was applied at the segment level. The quality of the proposed method was evaluated using different configurations and metrics and compared to other similar reported methods, showing that the proposed method achieved high accuracy in terms of recall (CP) for every single level of segmentation and after merging the results of each multi-scale segmentation using consensus techniques. CP is the preferred metric for evaluation, as the accuracy in the detection of changed pixels is prioritised over the accuracy in the detection of non-changed pixels.

Section 3 highlights different features of the proposed method as they significantly contribute to the change detection results obtained. Firstly, the use of object-based features and multi-scale segmentation allowed adequate exploitation of the spatial information contained in the images and the identification of changes at different granularity levels. Secondly, the use of several detectors based on different segmentation algorithms improved the accuracy of the change detection process over the use of a single segmentation algorithm. Finally, the use of CVA-SAM applied at the segment level instead of at the pixel level improved the robustness of the approach to changes in the images such as those produced by illumination conditions.

Moreover, the proposed method can detect changes between different vegetation types with better results than those reported in the literature. Several characteristics make it particularly effective for detecting changes in vegetation. The segmentation-based approach averages the spectral characteristics of the vegetation, thereby accounting for the growth stage, variability in canopy structure, shading, lighting, and so on. In addition, by using angle instead of magnitude, the CVA-SAM is more robust to environmental or atmospheric changes, e.g., lighting, while multi-scale segmentation makes the method more adaptable to changes of different granularities, such as changes in vegetation. Finally, the use of consensus techniques allowed the application of different segmentation methods to extract different information from the segments, as discussed above.
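The robustness of an angle-based distance to illumination can be illustrated with two spectra that differ only by a scale factor. The sketch below uses the standard spectral angle definition; the reflectance values, the 30% darkening factor, and the variable names are hypothetical and are not taken from the datasets of this study.

```python
import math


def spectral_angle(a, b):
    """Spectral angle (radians) between two spectra of equal length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    cosine = max(-1.0, min(1.0, dot / (norm_a * norm_b)))  # guard rounding
    return math.acos(cosine)


def euclidean_distance(a, b):
    """Magnitude of the spectral change vector."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


# Hypothetical reflectances (blue, green, red, near-infrared).
vegetation_t1 = [0.05, 0.08, 0.04, 0.50]
vegetation_t2_darker = [0.7 * v for v in vegetation_t1]  # same cover, less light
soil_t2 = [0.12, 0.15, 0.18, 0.25]                       # real change of cover

angle_same = spectral_angle(vegetation_t1, vegetation_t2_darker)
angle_changed = spectral_angle(vegetation_t1, soil_t2)
magnitude_same = euclidean_distance(vegetation_t1, vegetation_t2_darker)
print(f"{angle_same:.4f} {angle_changed:.4f} {magnitude_same:.4f}")
```

The angle stays essentially at zero when the spectrum is only scaled, whereas the magnitude does not, so a magnitude-based detector could flag the darker vegetation as a change while the angle-based one would not.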

Regarding the limitations of the method, the computational cost in terms of execution time is high, as several segmentation algorithms are computed at different scales. However, computing changes on a segment basis instead of on a pixel basis reduces this cost, since the CVA-SAM is computed using a representative pixel vector for each segment of the image. The need for user intervention in the selection of the parameters could be considered a strong limitation: several parameters that affect the accuracy of the results must be selected, such as the number of segmentation levels and the parameters of the segmentation algorithms (e.g., segment size). Nevertheless, the impact of the parameter selection on the detection results was small; for example, when the scaling parameter was changed by ±10%, CP varied by less than 2 percentage points. Regarding the number of segmentation levels, varying it between 3 and 9 changed the resulting CP values by only ±1.5 pp on average compared to the use of 3 levels. To alleviate the burden of parameter tuning for users, the parameters could be determined based on the characteristics of the sensor used to capture the images.
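The cost reduction obtained by working on segments can be seen in a sketch where one spectral angle is computed per segment and then broadcast to its pixels. The example assumes that the representative vector of a segment is the mean spectrum of its pixels, which is an illustrative choice; the function names and the tiny two-band images are hypothetical.

```python
import math


def segment_means(pixels, labels):
    """Mean spectrum per segment (assumed representative vector)."""
    sums, counts = {}, {}
    for spectrum, label in zip(pixels, labels):
        acc = sums.setdefault(label, [0.0] * len(spectrum))
        for i, value in enumerate(spectrum):
            acc[i] += value
        counts[label] = counts.get(label, 0) + 1
    return {label: [v / counts[label] for v in acc] for label, acc in sums.items()}


def spectral_angle(a, b):
    """Spectral angle (radians) between two spectra."""
    dot = sum(x * y for x, y in zip(a, b))
    norms = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return math.acos(max(-1.0, min(1.0, dot / norms)))


def segment_difference_map(image_1, image_2, labels):
    """Return the per-pixel difference map and the number of angles computed."""
    means_1 = segment_means(image_1, labels)
    means_2 = segment_means(image_2, labels)
    per_segment = {s: spectral_angle(means_1[s], means_2[s]) for s in means_1}
    return [per_segment[s] for s in labels], len(per_segment)


# Hypothetical flattened images: six pixels, two bands, two segments.
labels = [0, 0, 0, 1, 1, 1]
image_1 = [[1.0, 1.0]] * 3 + [[1.0, 0.0]] * 3
image_2 = [[2.0, 2.0]] * 3 + [[0.0, 1.0]] * 3   # segment 0 brighter, segment 1 changed

difference, evaluations = segment_difference_map(image_1, image_2, labels)
print(evaluations, [round(d, 3) for d in difference])
```

Only two angles are evaluated for six pixels here; on real images the number of evaluations scales with the number of segments rather than with the number of pixels.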

Future work will involve an optimisation algorithm to configure the parameters of the methods, such as the number of levels, initial scales, and steps between scales as a function of the input images. Furthermore, the incorporation of additional context information available in the VHR images by the use of, for example, textures will be considered. In addition, some improvements could be applied to the computation of the difference images by adapting the CVA-SAM to the spectral patterns of variability observed in vegetation images. Finally, as computational efficiency is also relevant, an exhaustive analysis of the performance in terms of execution time and computational resources required is also necessary, as well as the use of hardware accelerators to reduce execution time while maintaining correctness and accuracy.

5. Conclusions

This paper proposed an unsupervised binary change detection technique using multi-scale segmentation and merging by consensus. The technique was adapted to detect changes in multispectral and hyperspectral vegetation images, in particular VHR images, with changes detected at the level of objects and priority given to minimise the undetected changes. The use of multi-scale and consensus techniques allowed the detection of all possible changes at different granularity levels, taking advantage of the high spatial information provided by VHR images. The CVA-SAM algorithm applied at the level of uniform regions produced by the segmentation algorithms allowed the different stages to be analysed in terms of accuracy, with the final results compared to those of other reported techniques.

The proposed method was effective for identifying vegetation changes for three reasons. Firstly, the use of multi-scale segments instead of pixels allows adapting to the granularity of changes, which is especially important for irregular changes, for example in forests; the spatial information extracted in this way is essential for discerning various vegetation types. Secondly, vegetation changes can exhibit considerable variability depending on image capture conditions, such as illumination, which is addressed by applying the CVA-SAM algorithm at the segment level. Lastly, the incorporation of a consensus approach among different multi-scale detectors, each using a different spatial information extraction technique, maximises the number of changes detected, resulting in an efficient and robust technique.

Five change detection datasets consisting of multispectral and hyperspectral images with vegetation changes were used, two of them being multispectral VHR images of rivers in Galicia. The experiments showed that the use of multi-scale segmentation improved the CP results compared to a single-scale version. Additionally, the incorporation of consensus techniques between different multi-scale detectors obtained more accurate results. For example, in the case of the Oitavén dataset, a CP of 97.60% was obtained compared to an average of 89.15% in the single-scale approach. The best method for scale fusion was ED fusion, which maximised the number of changes detected by the technique, and OR fusion improved the accuracy with respect to MV.

The proposed technique also improved, in terms of accuracy and $F_\beta$-score, the results obtained by the other solutions proposed in the literature, with improvements in CP (recall) of +18.90 pp and, in the case of the VHR datasets, +19.61 pp on average. The $F_\beta$-score improved by +12.91 pp on average.

Author Contributions

Conceptualisation, F.A. and D.B.H.; methodology, F.A., D.B.H. and F.J.C.; experiments F.J.C.; validation, F.A., D.B.H. and F.J.C.; investigation, F.A., D.B.H. and F.J.C.; data curation, F.J.C.; writing, F.A., D.B.H. and F.J.C.; visualisation, F.J.C.; supervision, F.A. and D.B.H. All authors have read and agreed to the published version of the manuscript.

Funding

This work was supported in part by grants PID2019-104834GB-I00, TED2021-130367B-I00, and FJC2021-046760-I funded by MCIN/AEI/10.13039/501100011033 and by “European Union NextGenerationEU/PRTR”. It was also supported by Xunta de Galicia - Consellería de Cultura, Educación, Formación Profesional e Universidades (Centro de investigación de Galicia accreditation 2019–2022 ED431G-2019/04 and Reference Competitive Group accreditation, ED431C-2022/16), by Junta de Castilla y León (Project VA226P20 (PROPHET-II)), and by the European Regional Development Fund (ERDF).

Data Availability Statement

In the case that the paper is accepted, the change detection datasets used in this article will be made available.

Acknowledgments

The authors would like to thank Babcock International for the use of unmanned technologies to capture the very-high-resolution images of Galicia rivers.

Conflicts of Interest

The authors declare no conflict of interest. The funders had no role in the design of the study; in the collection, analyses, or interpretation of the data; in the writing of the manuscript; nor in the decision to publish the results.

Abbreviations

| Abbreviation | Meaning |
| --- | --- |
| AP | Attribute profile |
| CD | Change detection |
| CNN | Convolutional neural network |
| CP | Completeness |
| CR | Correctness |
| CVA | Change vector analysis |
| DS | Dempster–Shafer |
| ED | Euclidean distance |
| EM | Expectation–maximisation |
| EMP | Extended morphological profile |
| FI | Fuzzy integral |
| FN | False negative |
| FP | False positive |
| GM | Geometry mean |
| HM | Harmonic mean |
| KPVD | Key point vector distance |
| MN | Mean |
| MP | Morphological profile |
| MRS | Multiresolution segmentation |
| MV | Majority vote |
| NCA | No change accuracy |
| NCI | Neighbourhood correlation image |
| OA | Overall accuracy |
| OBCD | Object-based change detection |
| PCA | Principal component analysis |
| pp | Percentage points |
| RCMG | Robust colour morphological gradient |
| SAM | Spectral angle mapper |
| SE | Structural element |
| SLIC | Simple linear iterative clustering |
| SVM | Support vector machine |
| TN | True negative |
| TP | True positive |
| UAV | Unmanned aerial vehicle |
| USGS | United States Geological Survey |
| VHR | Very high spatial resolution |
| WG | Weighted mean |

References

- Liu, S.; Marinelli, D.; Bruzzone, L.; Bovolo, F. A review of change detection in multitemporal hyperspectral images: Current techniques, applications, and challenges. IEEE Geosci. Remote Sens. Mag. 2019, 7, 140–158. [Google Scholar] [CrossRef]

- Panuju, D.R.; Paull, D.J.; Griffin, A.L. Change Detection Techniques Based on Multispectral Images for Investigating Land Cover Dynamics. Remote Sens. 2020, 12, 1781. [Google Scholar] [CrossRef]

- Afaq, Y.; Manocha, A. Analysis on change detection techniques for remote sensing applications: A review. Ecol. Inform. 2021, 63, 101310. [Google Scholar] [CrossRef]

- Lv, Z.; Liu, T.; Benediktsson, J.A.; Falco, N. Land Cover Change Detection Techniques: Very-high-resolution optical images: A review. IEEE Geosci. Remote Sens. Mag. 2022, 10, 44–63. [Google Scholar] [CrossRef]

- Xie, Y.; Sha, Z.; Yu, M. Remote sensing imagery in vegetation mapping: A review. J. Plant Ecol. 2008, 1, 9–23. [Google Scholar] [CrossRef]

- Kumar, S.; Anouncia, M.; Johnson, S.; Agarwal, A.; Dwivedi, P. Agriculture change detection model using remote sensing images and GIS: Study area Vellore. In Proceedings of the 2012 International Conference on Radar, Communication and Computing (ICRCC), Tiruvannamalai, India, 21–22 December 2012; pp. 54–57. [Google Scholar] [CrossRef]

- Gandhi, G.M.; Parthiban, S.; Thummalu, N.; Christy, A. Ndvi: Vegetation Change Detection Using Remote Sensing and Gis—A Case Study of Vellore District. Procedia Comput. Sci. 2015, 57, 1199–1210. [Google Scholar] [CrossRef]

- Walter, V. Object-based classification of remote sensing data for change detection. ISPRS J. Photogramm. Remote Sens. 2004, 58, 225–238. [Google Scholar] [CrossRef]

- De Castro, A.I.; Shi, Y.; Maja, J.M.; Peña, J.M. UAVs for Vegetation Monitoring: Overview and Recent Scientific Contributions. Remote Sens. 2021, 13, 2139. [Google Scholar] [CrossRef]

- Zhang, N.; Yang, G.; Pan, Y.; Yang, X.; Chen, L.; Zhao, C. A Review of Advanced Technologies and Development for Hyperspectral-Based Plant Disease Detection in the Past Three Decades. Remote Sens. 2020, 12, 3188. [Google Scholar] [CrossRef]

- Blanco, S.R.; Heras, D.B.; Argüello, F. Texture Extraction Techniques for the Classification of Vegetation Species in Hyperspectral Imagery: Bag of Words Approach Based on Superpixels. Remote Sens. 2020, 12, 2633. [Google Scholar] [CrossRef]

- Longbotham, N.; Chaapel, C.; Bleiler, L.; Padwick, C.; Emery, W.J.; Pacifici, F. Very High Resolution Multiangle Urban Classification Analysis. IEEE Trans. Geosci. Remote Sens. 2012, 50, 1155–1170. [Google Scholar] [CrossRef]

- Wen, D.; Huang, X.; Bovolo, F.; Li, J.; Ke, X.; Zhang, A.; Benediktsson, J.A. Change Detection from Very-High-Spatial-Resolution Optical Remote Sensing Images: Methods, applications, and future directions. IEEE Geosci. Remote Sens. Mag. 2021, 9, 68–101. [Google Scholar] [CrossRef]

- Dalla Mura, M.; Prasad, S.; Pacifici, F.; Gamba, P.; Chanussot, J.; Benediktsson, J.A. Challenges and Opportunities of Multimodality and Data Fusion in Remote Sensing. Proc. IEEE 2015, 103, 1585–1601. [Google Scholar] [CrossRef]

- Huang, X.; Wen, D.; Li, J.; Qin, R. Multi-level monitoring of subtle urban changes for the megacities of China using high-resolution multi-view satellite imagery. Remote Sens. Environ. 2017, 196, 56–75. [Google Scholar] [CrossRef]

- Huang, X.; Zhang, L.; Li, P. A multiscale feature fusion approach for classification of very high resolution satellite imagery based on wavelet transform. Int. J. Remote Sens. 2008, 29, 5923–5941. [Google Scholar] [CrossRef]

- Huang, X.; Cao, Y.; Li, J. An automatic change detection method for monitoring newly constructed building areas using time-series multi-view high-resolution optical satellite images. Remote Sens. Environ. 2020, 244, 111802. [Google Scholar] [CrossRef]

- Bovolo, F.; Bruzzone, L. A theoretical framework for unsupervised change detection based on change vector analysis in the polar domain. IEEE Trans. Geosci. Remote Sens. 2007, 45, 218–236. [Google Scholar] [CrossRef]

- Liu, S.; Bruzzone, L.; Bovolo, F.; Zanetti, M.; Du, P. Sequential spectral change vector analysis for iteratively discovering and detecting multiple changes in hyperspectral images. IEEE Trans. Geosci. Remote Sens. 2015, 53, 4363–4378. [Google Scholar] [CrossRef]

- Thonfeld, F.; Feilhauer, H.; Braun, M.; Menz, G. Robust Change Vector Analysis (RCVA) for multi-sensor very high resolution optical satellite data. Int. J. Appl. Earth Obs. Geoinf. 2016, 50, 131–140. [Google Scholar] [CrossRef]

- Singh, S.; Talwar, R. Review on different change vector analysis algorithms based change detection techniques. In Proceedings of the 2013 IEEE Second International Conference on Image Information Processing (ICIIP-2013), Shimla, India, 9–11 December 2013; pp. 136–141. [Google Scholar] [CrossRef]

- Marinelli, D.; Bovolo, F.; Bruzzone, L. A Novel Change Detection Method for Multitemporal Hyperspectral Images Based on Binary Hyperspectral Change Vectors. IEEE Trans. Geosci. Remote Sens. 2019, 57, 4913–4928. [Google Scholar] [CrossRef]

- Saha, S.; Bovolo, F.; Bruzzone, L. Unsupervised deep change vector analysis for multiple-change detection in VHR images. IEEE Trans. Geosci. Remote Sens. 2019, 57, 3677–3693. [Google Scholar] [CrossRef]

- Wang, X.; Du, P.; Liu, S.; Senyshen, M.; Zhang, W.; Fang, H.; Fan, X. A novel multiple change detection approach based on tri-temporal logic-verified change vector analysis in posterior probability space. Int. J. Appl. Earth Obs. Geoinf. 2022, 111, 102852. [Google Scholar] [CrossRef]

- Chen, H.; Wu, C.; Du, B.; Zhang, L. Change Detection in Multi-temporal VHR Images Based on Deep Siamese Multi-scale Convolutional Networks. arXiv 2019. [Google Scholar] [CrossRef]

- Huo, C.; Zhou, Z.; Lu, H.; Pan, C.; Chen, K. Fast Object-Level Change Detection for VHR Images. IEEE Geosci. Remote Sens. Lett. 2010, 7, 118–122.

- Xian, G.; Homer, C. Updating the 2001 National Land Cover Database Impervious Surface Products to 2006 using Landsat Imagery Change Detection Methods. Remote Sens. Environ. 2010, 114, 1676–1686.

- Hussain, M.; Chen, D.; Cheng, A.; Wei, H.; Stanley, D. Change detection from remotely sensed images: From pixel-based to object-based approaches. ISPRS J. Photogramm. Remote Sens. 2013, 80, 91–106.

- Xu, Y.; Huo, C.; Xiang, S.; Pan, C. Robust VHR image change detection based on local features and multi-scale fusion. In Proceedings of the 2013 IEEE International Conference on Acoustics, Speech and Signal Processing, Vancouver, BC, Canada, 26–31 May 2013; pp. 1991–1995.

- Huang, J.; Liu, Y.; Wang, M.; Zheng, Y.; Wang, J.; Ming, D. Change Detection of High Spatial Resolution Images Based on Region-Line Primitive Association Analysis and Evidence Fusion. Remote Sens. 2019, 11, 2484.

- Bansal, P.; Vaid, M.; Gupta, S. OBCD-HH: An object-based change detection approach using multi-feature non-seed-based region growing segmentation. Multimed. Tools Appl. 2022, 81, 8059–8091.

- Song, A.; Kim, Y.; Han, Y. Uncertainty Analysis for Object-Based Change Detection in Very High-Resolution Satellite Images Using Deep Learning Network. Remote Sens. 2020, 12, 2345.

- Malila, W.A. Change vector analysis: An approach for detecting forest changes with Landsat. In Proceedings of the LARS Symposia, Malmo, Sweden, 30–31 May 1980; p. 385.

- Bruzzone, L.; Prieto, D. Automatic analysis of the difference image for unsupervised change detection. IEEE Trans. Geosci. Remote Sens. 2000, 38, 1171–1182.

- Otsu, N. A Threshold Selection Method from Gray-Level Histograms. IEEE Trans. Syst. Man Cybernet. 1979, 9, 62–66.

- Seydi, S.T.; Shah-Hosseini, R.; Hasanlou, M. New framework for hyperspectral change detection based on multi-level spectral unmixing. Appl. Geomat. 2021, 13, 763–780.

- Seydi, S.T.; Shah-Hosseini, R.; Amani, M. A Multi-Dimensional Deep Siamese Network for Land Cover Change Detection in Bi-Temporal Hyperspectral Imagery. Sustainability 2022, 14, 12597.

- Xing, H.; Zhu, L.; Chen, B.; Liu, C.; Niu, J.; Li, X.; Feng, Y.; Fang, W. A comparative study of threshold selection methods for change detection from very high-resolution remote sensing images. Earth Sci. Inform. 2022, 15, 369–381.

- Liu, S.; Du, Q.; Tong, X.; Samat, A.; Bruzzone, L.; Bovolo, F. Multiscale morphological compressed change vector analysis for unsupervised multiple change detection. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2017, 10, 4124–4137.

- Han, Y.; Javed, A.; Jung, S.; Liu, S. Object-Based Change Detection of Very High Resolution Images by Fusing Pixel-Based Change Detection Results Using Weighted Dempster–Shafer Theory. Remote Sens. 2020, 12, 983.

- Shao, P.; Shi, W.; Liu, Z.; Dong, T. Unsupervised Change Detection Using Fuzzy Topology-Based Majority Voting. Remote Sens. 2021, 13, 3171.

- Shafer, G. A Mathematical Theory of Evidence; Princeton University Press: Princeton, NJ, USA, 1976.

- Du, P.; Liu, S.; Gamba, P.; Tan, K.; Xia, J. Fusion of Difference Images for Change Detection Over Urban Areas. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2012, 5, 1076–1086.

- Zhang, Y.; Peng, D.; Huang, X. Object-based change detection for VHR images based on multiscale uncertainty analysis. IEEE Geosci. Remote Sens. Lett. 2018, 15, 13–17.

- Hao, M.; Shi, W.; Deng, K.; Zhang, H.; He, P. An Object-Based Change Detection Approach Using Uncertainty Analysis for VHR Images. J. Sens. 2016, 2016, 9078364.

- Zhang, X.; He, L.; Qin, K.; Dang, Q.; Si, H.; Tang, X.; Jiao, L. SMD-Net: Siamese Multi-Scale Difference-Enhancement Network for Change Detection in Remote Sensing. Remote Sens. 2022, 14, 1580.

- Chen, H.; Wu, C.; Du, B.; Zhang, L. Deep Siamese Multi-scale Convolutional Network for Change Detection in Multi-Temporal VHR Images. In Proceedings of the 2019 10th International Workshop on the Analysis of Multitemporal Remote Sensing Images, MultiTemp 2019, Shanghai, China, 5–7 August 2019.

- Song, A.; Choi, J. Fully Convolutional Networks with Multiscale 3D Filters and Transfer Learning for Change Detection in High Spatial Resolution Satellite Images. Remote Sens. 2020, 12, 799.

- Volpi, M.; Tuia, D.; Bovolo, F.; Kanevski, M.; Bruzzone, L. Supervised change detection in VHR images using contextual information and support vector machines. Int. J. Appl. Earth Obs. Geoinf. 2013, 20, 77–85.

- Wang, C.; Xu, M.; Wang, X.; Zheng, S.; Ma, Z. Object-oriented change detection approach for high-resolution remote sensing images based on multiscale fusion. J. Appl. Remote Sens. 2013, 7, 073696.

- Guo, Q.; Zhang, J.; Li, T.; Lu, X. Change detection for high-resolution remote sensing imagery based on multi-scale segmentation and fusion. In Proceedings of the International Geoscience and Remote Sensing Symposium (IGARSS), Fort Worth, TX, USA, 23–28 July 2017; pp. 1919–1922.

- Cui, B.; Zhang, Y.; Yan, L.; Wei, J.; Wu, H. An Unsupervised SAR Change Detection Method Based on Stochastic Subspace Ensemble Learning. Remote Sens. 2019, 11, 1314.

- Wang, X.; Liu, S.; Du, P.; Liang, H.; Xia, J.; Li, Y. Object-Based Change Detection in Urban Areas from High Spatial Resolution Images Based on Multiple Features and Ensemble Learning. Remote Sens. 2018, 10, 276.

- Lim, K.; Jin, D.; Kim, C.S. Change Detection in High Resolution Satellite Images Using an Ensemble of Convolutional Neural Networks. In Proceedings of the 2018 Asia-Pacific Signal and Information Processing Association Annual Summit and Conference, APSIPA ASC 2018, Honolulu, HI, USA, 12–15 November 2018; pp. 509–515.

- Sagi, O.; Rokach, L. Ensemble learning: A survey. In Wiley Interdisciplinary Reviews: Data Mining and Knowledge Discovery; Wiley: Hoboken, NJ, USA, 2018; Volume 8.

- Zhang, Y.; Feng, X.; Le, X. Segmentation on multispectral remote sensing image using watershed transformation. In Proceedings of the 2008 Congress on Image and Signal Processing, Las Vegas, NV, USA, 30 March–4 April 2008; Volume 4, pp. 773–777.

- Tarabalka, Y.; Chanussot, J.; Benediktsson, J.A. Segmentation and classification of hyperspectral images using watershed transformation. Pattern Recognit. 2010, 43, 2367–2379.

- Ren, X.; Malik, J. Learning a classification model for segmentation. In Proceedings of the Ninth IEEE International Conference on Computer Vision, Washington, DC, USA, 13–16 October 2003; Volume 1, pp. 10–17.

- Stutz, D.; Hermans, A.; Leibe, B. Superpixels: An evaluation of the state-of-the-art. Comput. Vis. Image Underst. 2018, 166, 1–27.

- Machairas, V.; Faessel, M.; Cárdenas-Peña, D.; Chabardes, T.; Walter, T.; Decencière, E. Waterpixels. IEEE Trans. Image Process. 2015, 24, 3707–3716.

- Benesova, W.; Kottman, M. Fast superpixel segmentation using morphological processing. In Proceedings of the Conference on Machine Vision and Machine Learning, Prague, Czech Republic, 28–31 July 2014; pp. 67-1–67-9.

- Vedaldi, A.; Soatto, S. Quick shift and kernel methods for mode seeking. In Proceedings of the Computer Vision – ECCV 2008: 10th European Conference on Computer Vision, Marseille, France, 12–18 October 2008; pp. 705–718.

- Yao, J.; Boben, M.; Fidler, S.; Urtasun, R. Real-time coarse-to-fine topologically preserving segmentation. In Proceedings of the IEEE Computer Society Conference on Computer Vision and Pattern Recognition, Boston, MA, USA, 7–12 June 2015; pp. 2947–2955.

- Achanta, R.; Shaji, A.; Smith, K.; Lucchi, A.; Fua, P.; Süsstrunk, S. SLIC superpixels compared to state-of-the-art superpixel methods. IEEE Trans. Pattern Anal. Mach. Intell. 2012, 34, 2274–2282.

- Liu, M.Y.; Tuzel, O.; Ramalingam, S.; Chellappa, R. Entropy rate superpixel segmentation. In Proceedings of the IEEE Computer Society Conference on Computer Vision and Pattern Recognition, Colorado Springs, CO, USA, 20–25 June 2011; pp. 2097–2104.

- Chen, Y.; Chen, Q.; Jing, C. Multi-resolution segmentation parameters optimization and evaluation for VHR remote sensing image based on meanNSQI and discrepancy measure. J. Spat. Sci. 2021, 66, 253–278.

- Kavzoglu, T.; Tonbul, H. A comparative study of segmentation quality for multi-resolution segmentation and watershed transform. In Proceedings of the 2017 8th International Conference on Recent Advances in Space Technologies (RAST), Istanbul, Turkey, 19–22 June 2017; pp. 113–117.

- Tzotsos, A.; Argialas, D. MSEG: A generic region-based multi-scale image segmentation algorithm for remote sensing imagery. In Proceedings of the ASPRS 2006 Annual Conference, Reno, NV, USA, 1–5 May 2006; pp. 1–13.

- Hall, O.; Hay, G.J. A Multiscale Object-Specific Approach to Digital Change Detection. Int. J. Appl. Earth Obs. Geoinf. 2003, 4, 311–327.

- Xue, Y.; Zhao, J.; Zhang, M. A Watershed-Segmentation-Based Improved Algorithm for Extracting Cultivated Land Boundaries. Remote Sens. 2021, 13, 939.

- Bovolo, F.; Bruzzone, L. The time variable in data fusion: A change detection perspective. IEEE Geosci. Remote Sens. Mag. 2015, 3, 8–26.

- Jiang, H.; Peng, M.; Zhong, Y.; Xie, H.; Hao, Z.; Lin, J.; Ma, X.; Hu, X. A Survey on Deep Learning-Based Change Detection from High-Resolution Remote Sensing Images. Remote Sens. 2022, 14, 1552.

- Ling, F.; Li, W.; Du, Y.; Li, X. Land Cover Change Mapping at the Subpixel Scale With Different Spatial-Resolution Remotely Sensed Imagery. IEEE Geosci. Remote Sens. Lett. 2011, 8, 182–186.

- Kempeneers, P.; Sedano, F.; Strobl, P.; McInerney, D.O.; San-Miguel-Ayanz, J. Increasing robustness of postclassification change detection using time series of land cover maps. IEEE Trans. Geosci. Remote Sens. 2012, 50, 3327–3339.

- Zhu, Q.; Guo, X.; Deng, W.; Guan, Q.; Zhong, Y.; Zhang, L.; Li, D. Land-Use/Land-Cover change detection based on a Siamese global learning framework for high spatial resolution remote sensing imagery. ISPRS J. Photogramm. Remote Sens. 2022, 184, 63–78.

- Song, W.; Quan, H.; Chen, Y.; Zhang, P. SAR Image Feature Selection and Change Detection Based on Sparse Coefficient Correlation. In Proceedings of the 2022 17th International Conference on Control, Automation, Robotics and Vision (ICARCV), Singapore, 11–13 December 2022; pp. 326–329.

- Demir, B.; Bovolo, F.; Bruzzone, L. Detection of Land-Cover Transitions in Multitemporal Remote Sensing Images with Active-Learning-Based Compound Classification. IEEE Trans. Geosci. Remote Sens. 2012, 50, 1930–1941.

- Zhang, C.; Feng, Y.; Hu, L.; Tapete, D.; Pan, L.; Liang, Z.; Cigna, F.; Yue, P. A domain adaptation neural network for change detection with heterogeneous optical and SAR remote sensing images. Int. J. Appl. Earth Obs. Geoinf. 2022, 109, 102769.

- López-Fandiño, J.; Heras, D.B.; Argüello, F. Multiclass change detection for multidimensional images in the presence of noise. In Proceedings of the High-Performance Computing in Geoscience and Remote Sensing VIII. International Society for Optics and Photonics, Berlin, Germany, 12–13 September 2018; Volume 10792, p. 1079204.

- Pirrone, D.; Bovolo, F.; Bruzzone, L. A Novel Framework Based on Polarimetric Change Vectors for Unsupervised Multiclass Change Detection in Dual-Pol Intensity SAR Images. IEEE Trans. Geosci. Remote Sens. 2020, 58, 4780–4795.

- Zhu, Q.; Guo, X.; Li, Z.; Li, D. A review of multi-class change detection for satellite remote sensing imagery. Geo-Spat. Inf. Sci. 2022, in press.

- Chen, J.; Fan, J.; Zhang, M.; Zhou, Y.; Shen, C. MSF-Net: A Multiscale Supervised Fusion Network for Building Change Detection in High-Resolution Remote Sensing Images. IEEE Access 2022, 10, 30925–30938.

- Liu, M.; Chai, Z.; Deng, H.; Liu, R. A CNN-Transformer Network With Multiscale Context Aggregation for Fine-Grained Cropland Change Detection. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2022, 15, 4297–4306.

- Yang, M.; Jiao, L.; Liu, F.; Hou, B.; Yang, S.; Jian, M. DPFL-Nets: Deep Pyramid Feature Learning Networks for Multiscale Change Detection. IEEE Trans. Neural Netw. Learn. Syst. 2022, 33, 6402–6416.

- Manninen, T.; Jaaskelainen, E.; Tomppo, E. Nonlocal Multiscale Single Image Statistics From Sentinel-1 SAR Data for High Resolution Bitemporal Forest Wind Damage Detection. IEEE Geosci. Remote Sens. Lett. 2022, 19, 2504705.

- Lv, Z.; Liu, T.; Benediktsson, J.A. Object-oriented key point vector distance for binary land cover change detection using VHR remote sensing images. IEEE Trans. Geosci. Remote Sens. 2020, 58, 6524–6533.

- López-Fandiño, J.; Heras, D.B.; Argüello, F.; Dalla Mura, M. GPU framework for change detection in multitemporal hyperspectral images. Int. J. Parallel Program. 2019, 47, 272–292.

- Earth Resources Observation and Science (EROS) Center. Earth Observing One (EO-1)—Hyperion. Available online: https://www.usgs.gov/centers/eros/science/usgs-eros-archive-earth-observing-one-eo-1-hyperion (accessed on 14 March 2023).

- AVIRIS. Airborne Visible/Infrared Imaging Spectrometer. Available online: https://aviris.jpl.nasa.gov/dataportal/ (accessed on 14 March 2023).

- AgEagle Sensor Systems Inc., d/b/a MicaSense. RedEdge—MX | MicaSense. 2021. Available online: https://micasense.com/rededge-mx/ (accessed on 14 March 2023).