A Preliminary Study of Deep Learning Sensor Fusion for Pedestrian Detection

Abstract

1. Introduction

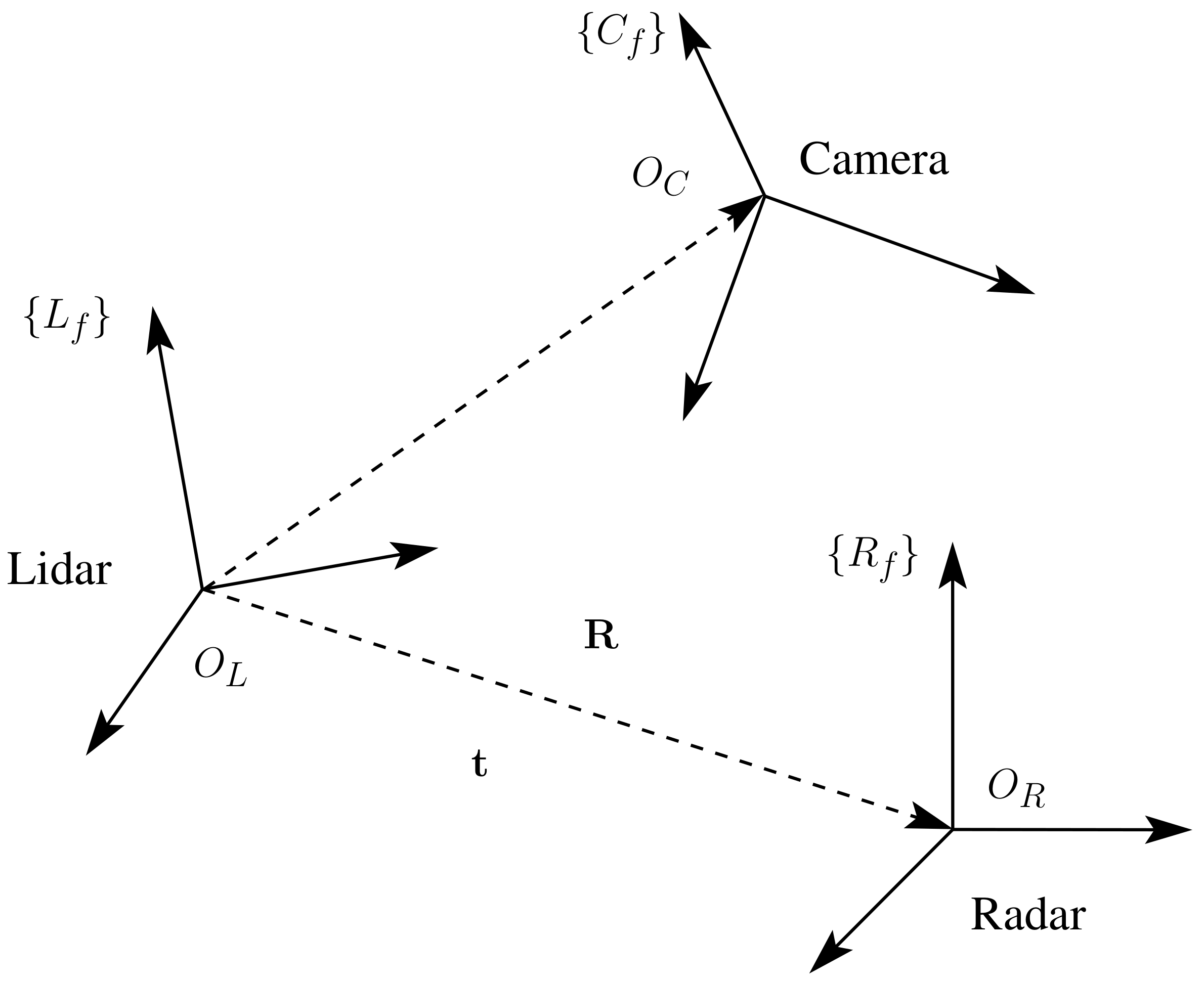

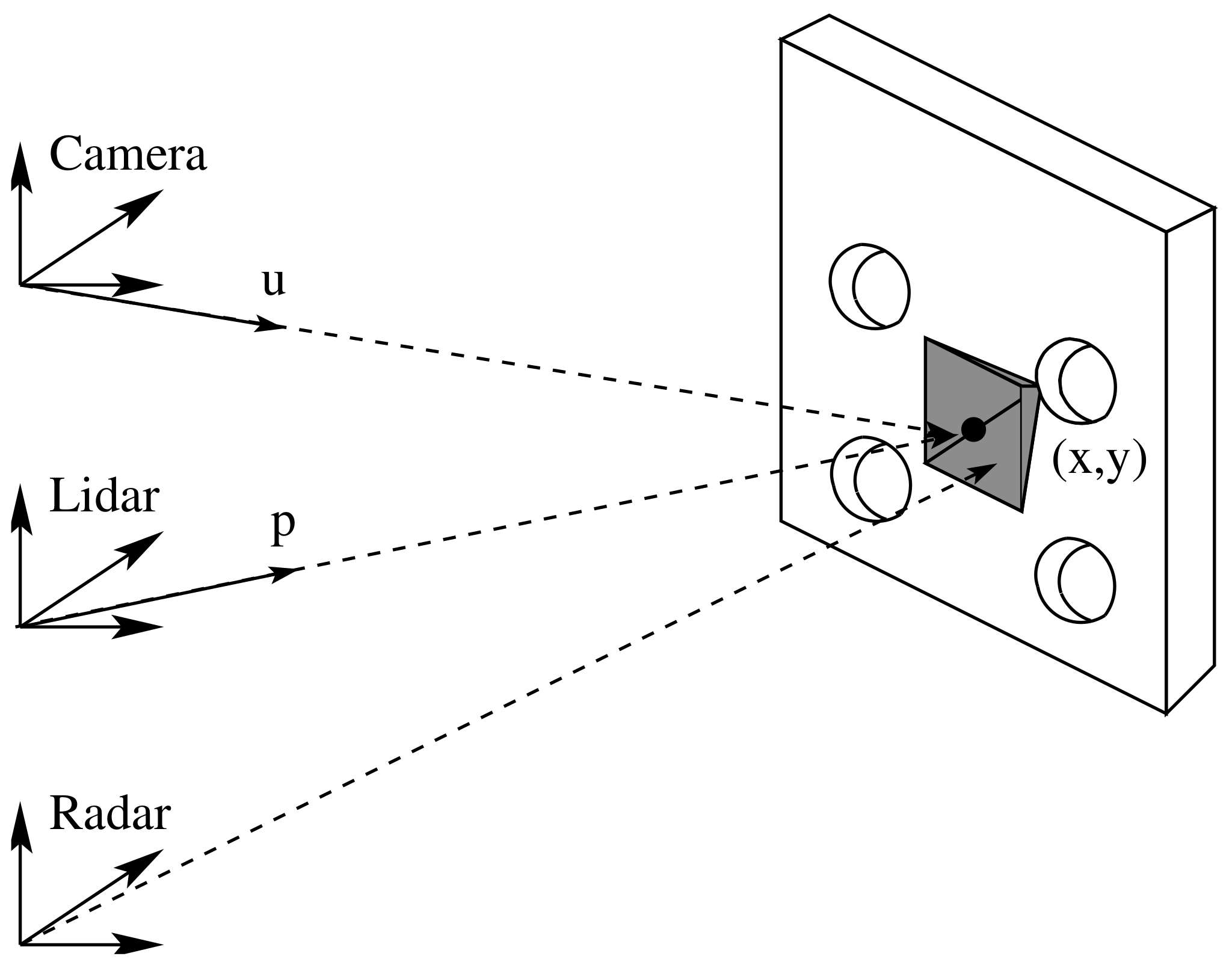

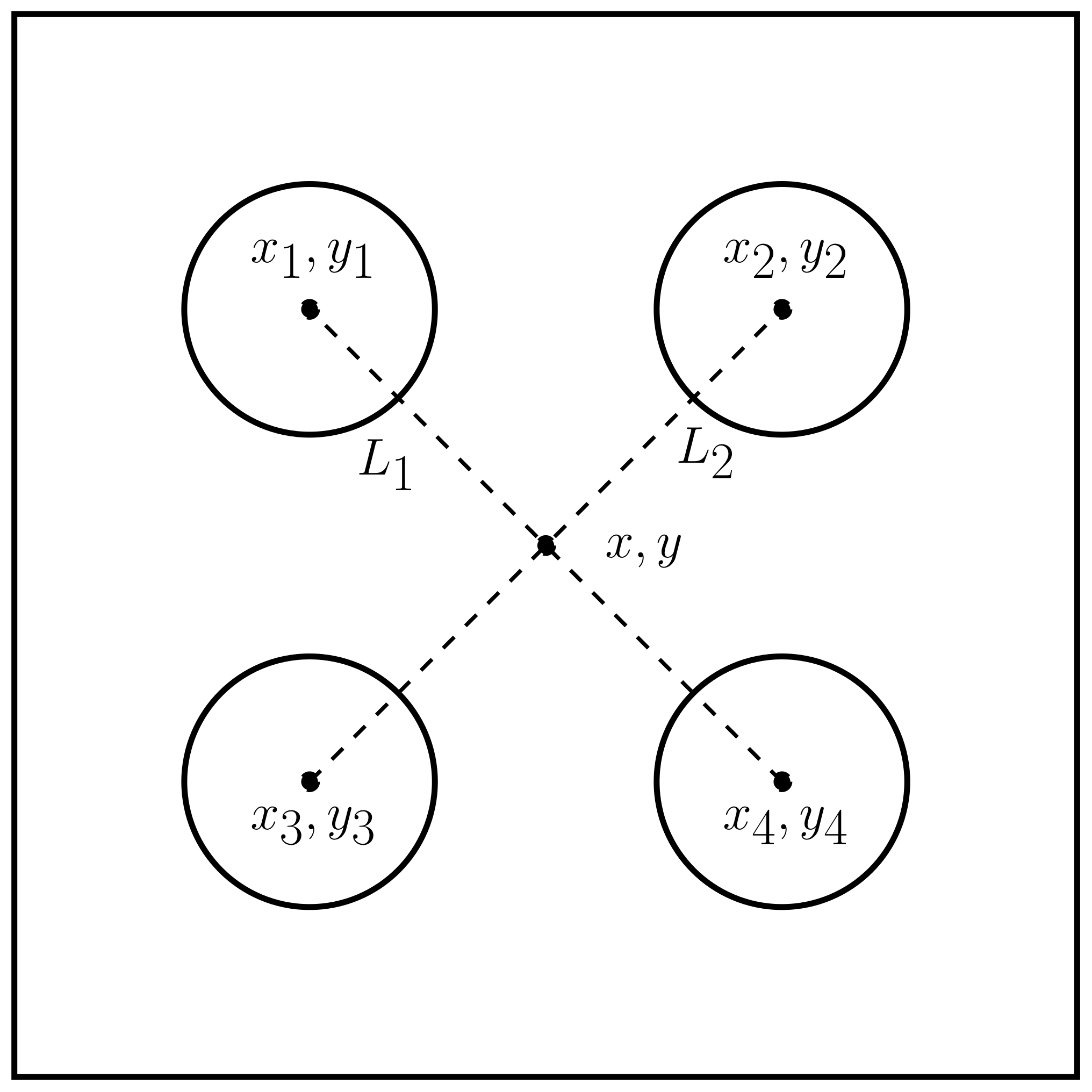

2. Sensor Calibration

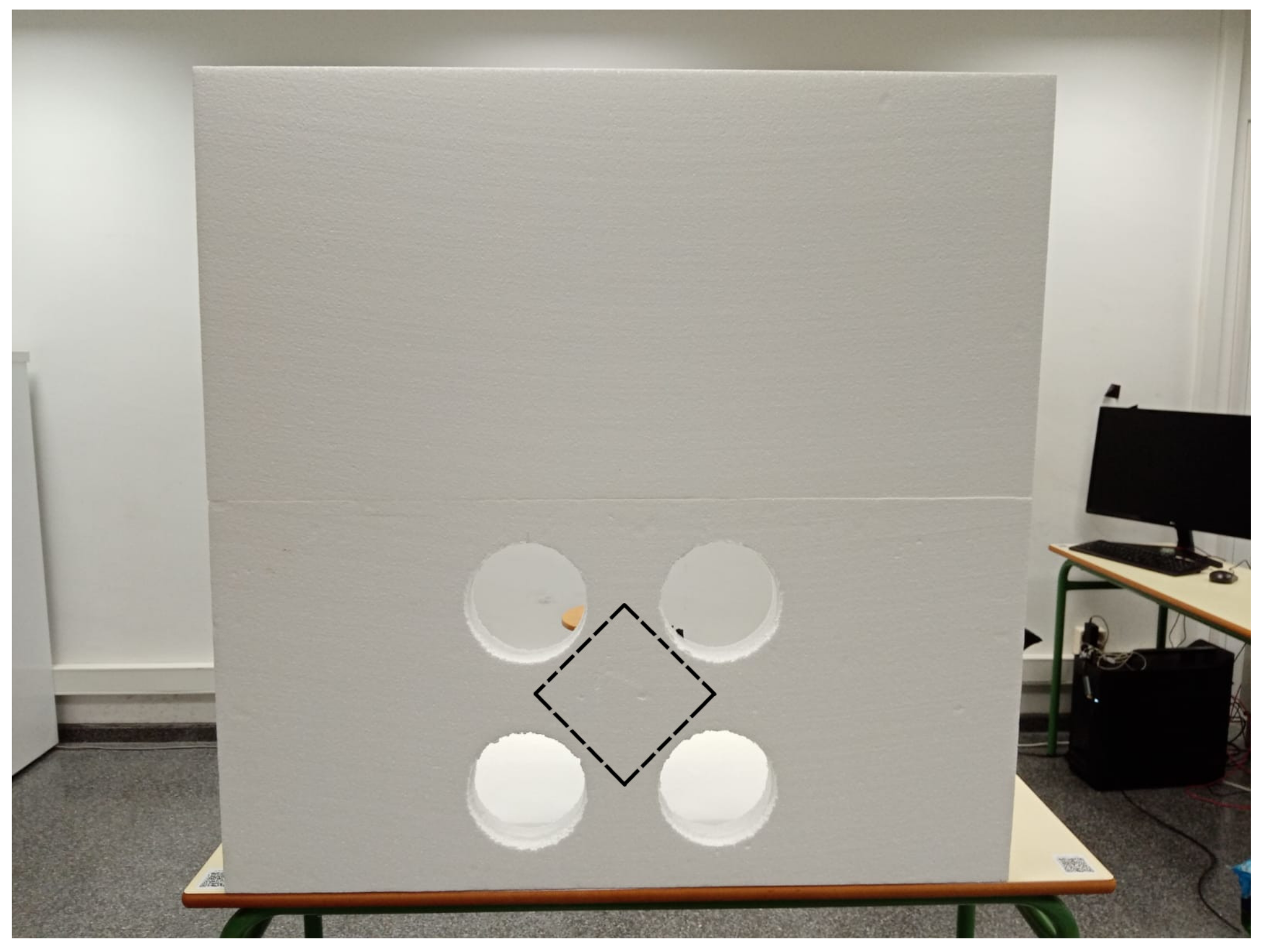

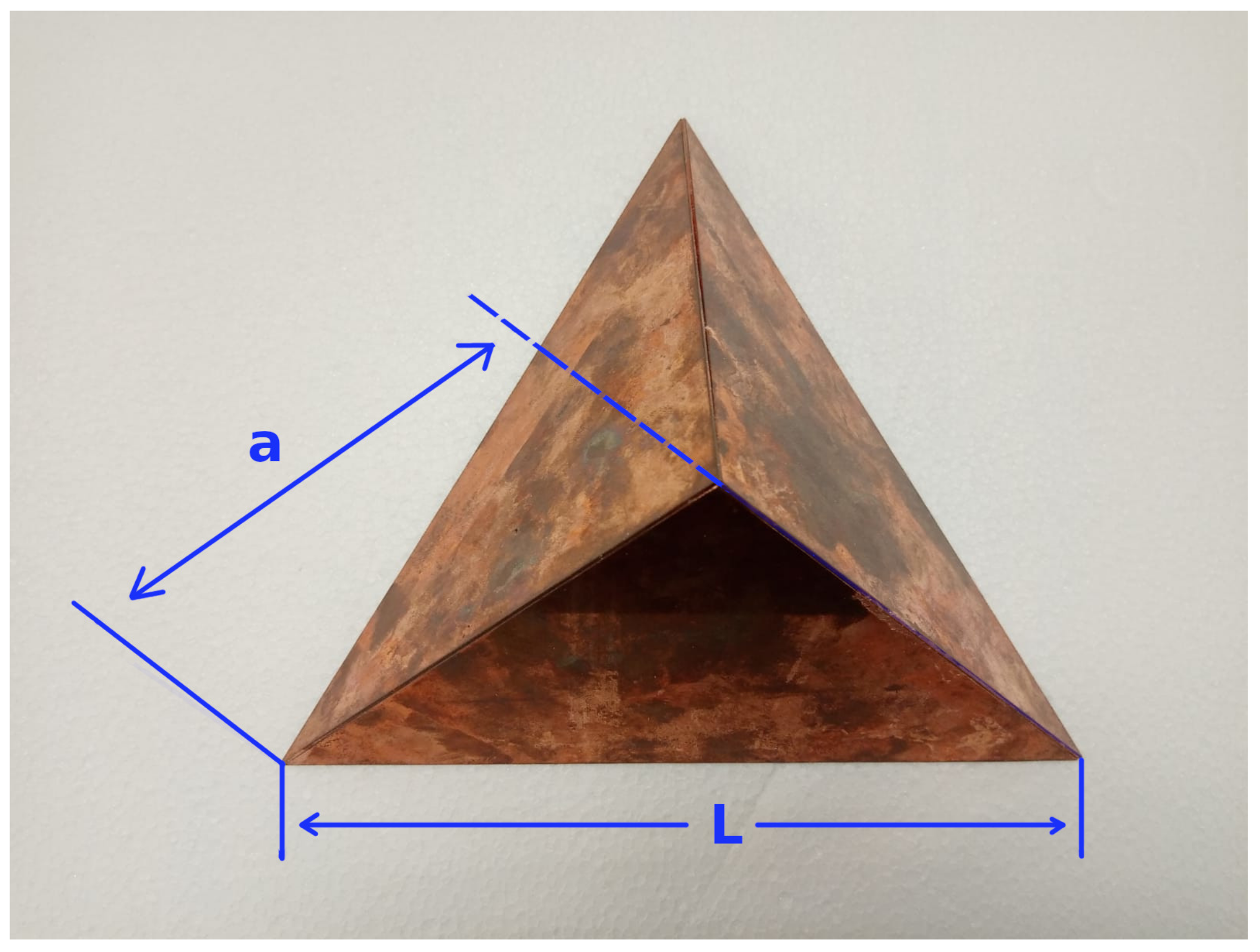

2.1. Calibration Board

2.2. Location and Calibration

2.2.1. Step 1: Image Point Segmentation

2.2.2. Step 2: Lidar Point

| Algorithm 1 Lidar point coordinates | |
|---|---|
| 1: | procedure |
| 2: | • Get the undistorted point in pixel coordinates. |
| 3: | function UndistortPoint() |
| 4: | |
| 5: | |
| 6: | return |
| 7: | end function |
| 8: | • Get the directional vector in the camera frame. |
| 9: | function DirectionalVector() |
| 10: | |
| 11: | |
| 12: | |
| 13: | return |
| 14: | end function |
| 15: | • Get the directional vector in the lidar frame. |
| 16: | function Camera2LidarRotation() |
| 17: | |
| 18: | return |
| 19: | end function |
| 20: | • Compute the intersection point between a line and a plane. |
| 21: | function PlaneLineCrossingPoint() |
| 22: | ▷ creates the vector |
| 23: | ▷ board’s parallel plane model |
| 24: | ▷ plane normal vector |
| 25: | ▷ a point in the model plane |
| 26: | ▷ translation vector from camera to lidar |
| 27: | |
| 28: | ▷ dot product |
| 29: | ▷ dot product |
| 30: | |
| 31: | ▷ intersection point |
| 32: | return |
| 33: | end function |
| 34: | end procedure |
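The four functions of Algorithm 1 can be sketched in Python as follows. This is a minimal sketch under stated assumptions, not the authors' implementation: it assumes a pinhole camera with intrinsics (fx, fy, cx, cy) and radial-only distortion (k1, k2) inverted by fixed-point iteration, a camera-to-lidar rotation R, a ray origin equal to the camera center expressed in the lidar frame, and a board plane a·x + b·y + c·z + d = 0 fitted in the lidar frame. The snake_case names are hypothetical counterparts of the algorithm's functions, and the original equations (lost in this version of the text) may differ.

```python
import math

# Hypothetical Python counterparts of the functions in Algorithm 1.
# Assumptions: pinhole intrinsics (fx, fy, cx, cy), radial-only distortion
# (k1, k2), a camera-to-lidar rotation R given as 3x3 nested lists, the camera
# center expressed in the lidar frame as the ray origin, and a board plane
# a*x + b*y + c*z + d = 0 fitted in the lidar frame.

def undistort_point(u, v, fx, fy, cx, cy, k1=0.0, k2=0.0, iters=10):
    """Undistorted pixel coordinates; radial distortion inverted by fixed-point iteration."""
    xd, yd = (u - cx) / fx, (v - cy) / fy  # distorted normalized coordinates
    x, y = xd, yd
    for _ in range(iters):
        r2 = x * x + y * y
        scale = 1.0 + k1 * r2 + k2 * r2 * r2
        x, y = xd / scale, yd / scale
    return fx * x + cx, fy * y + cy

def directional_vector(u, v, fx, fy, cx, cy):
    """Unit vector in the camera frame pointing through pixel (u, v)."""
    x, y = (u - cx) / fx, (v - cy) / fy
    norm = math.sqrt(x * x + y * y + 1.0)
    return (x / norm, y / norm, 1.0 / norm)

def camera_to_lidar_rotation(R, d):
    """Rotate a camera-frame direction into the lidar frame (matrix product R @ d)."""
    return tuple(sum(R[i][j] * d[j] for j in range(3)) for i in range(3))

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def plane_line_crossing_point(plane, origin, direction):
    """Intersection of the line origin + s * direction with the plane (a, b, c, d)."""
    a, b, c, d = plane
    normal = (a, b, c)                          # plane normal vector
    nn = dot(normal, normal)
    p0 = tuple(-d * n / nn for n in normal)     # a point in the plane model
    denom = dot(normal, direction)              # first dot product
    if abs(denom) < 1e-12:
        raise ValueError("the line is parallel to the board plane")
    w = tuple(p - o for p, o in zip(p0, origin))
    s = dot(normal, w) / denom                  # second dot product gives the line parameter
    return tuple(o + s * k for o, k in zip(origin, direction))
```

A typical call chain mirrors the procedure: `undistort_point` → `directional_vector` → `camera_to_lidar_rotation` → `plane_line_crossing_point`, yielding one lidar-frame point per segmented image point.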

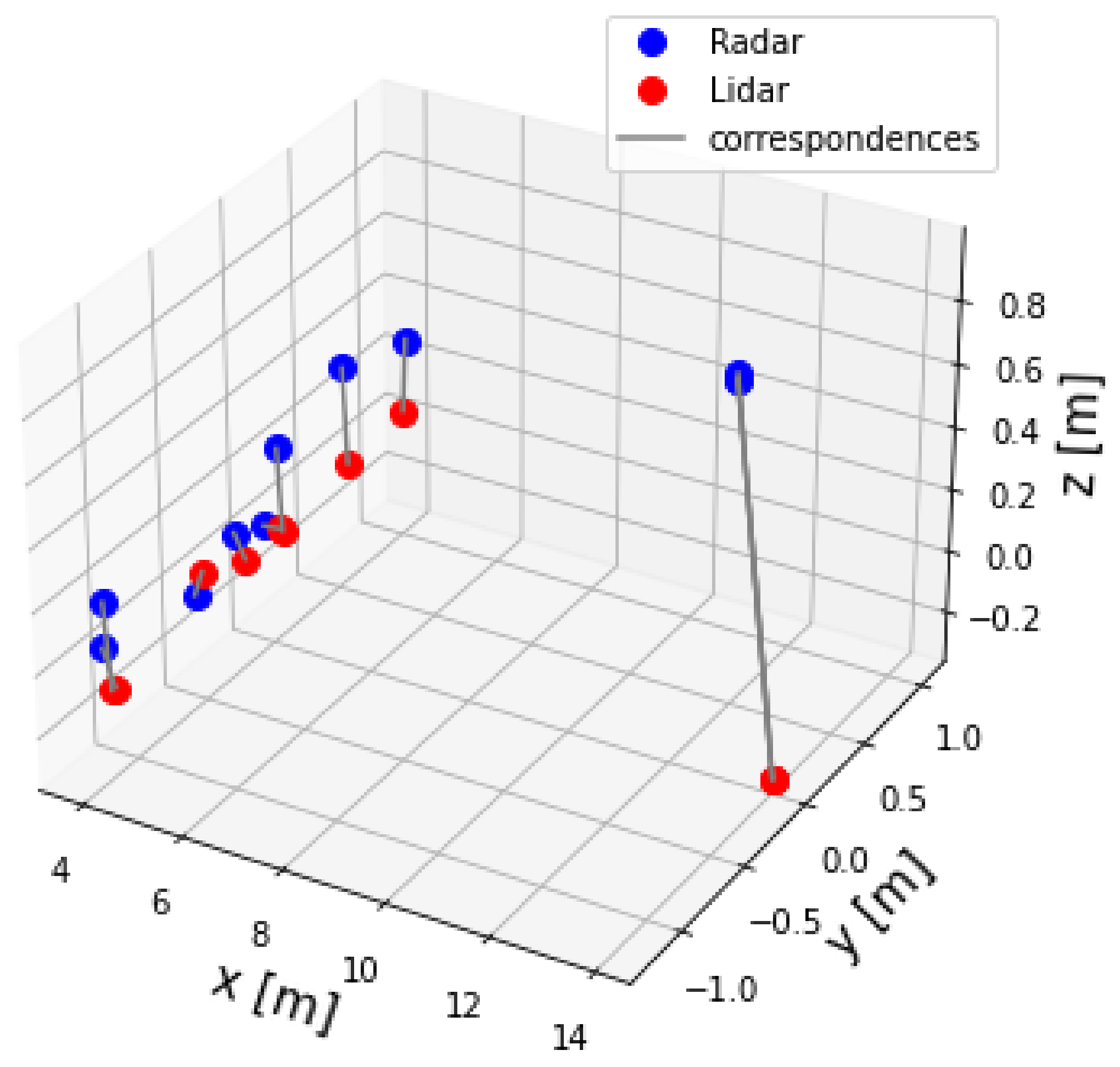

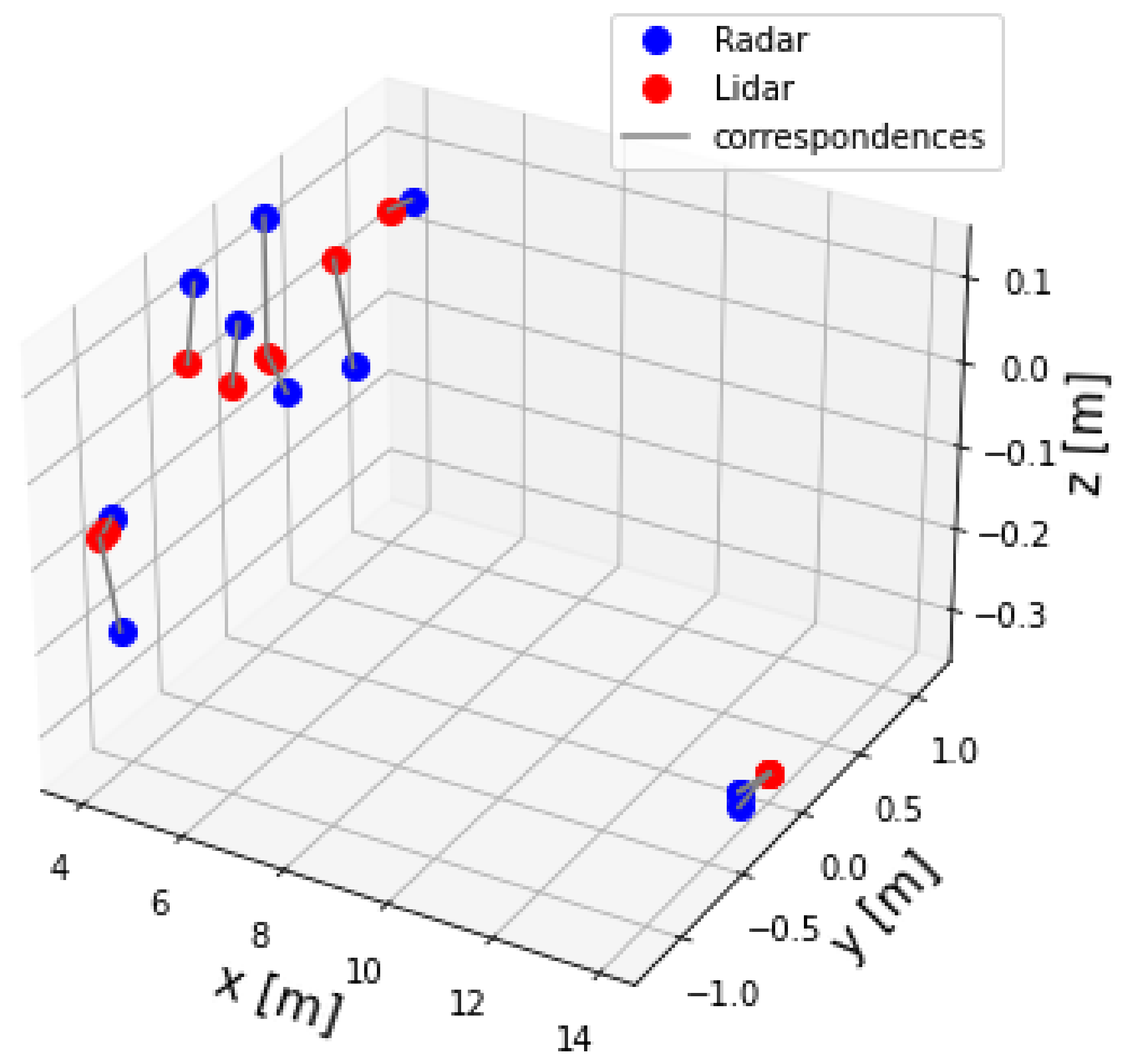

2.2.3. Step 3: Extrinsic Calibration Matrix

| Algorithm 2 Least-squares alignment of two 3D point sets based on SVD | |
|---|---|
| 1: | procedure |
| 2: | Let X and Y be two sets of corresponding points. |
| 3: | function AlignmentSVD() |
| 4: | ▷ mean of the data point set X |
| 5: | ▷ mean of the data point set Y |
| 6: | ▷ cross-covariance between X and Y |
| 7: | ▷ singular value decomposition of H |
| 8: | ▷ rotation matrix |
| 9: | ▷ translation vector |
| 10: | ▷ extrinsic parameter matrix |
| 11: | return |
| 12: | end function |
| 13: | end procedure |
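The steps of Algorithm 2 correspond to the standard SVD-based least-squares fit of two 3D point sets (Arun et al.). Below is a minimal NumPy sketch, assuming each set is an (N, 3) array with one point per row and that row i of X corresponds to row i of Y. The function name `alignment_svd` is a hypothetical counterpart of AlignmentSVD, and the determinant check that guards against a reflection is a common safeguard added here; it may not be part of the original algorithm.

```python
import numpy as np

def alignment_svd(X, Y):
    """Return a 4x4 matrix T such that Y[i] ~= R @ X[i] + t (least squares)."""
    X = np.asarray(X, dtype=float)
    Y = np.asarray(Y, dtype=float)
    mu_x = X.mean(axis=0)                 # mean of the data point set X
    mu_y = Y.mean(axis=0)                 # mean of the data point set Y
    H = (X - mu_x).T @ (Y - mu_y)         # 3x3 cross-covariance between X and Y
    U, _, Vt = np.linalg.svd(H)           # singular value decomposition of H
    R = Vt.T @ U.T                        # rotation matrix
    if np.linalg.det(R) < 0:              # reflection: flip the last singular vector
        Vt[-1, :] *= -1
        R = Vt.T @ U.T
    t = mu_y - R @ mu_x                   # translation vector
    T = np.eye(4)                         # extrinsic parameter matrix [R | t]
    T[:3, :3] = R
    T[:3, 3] = t
    return T
```

In a calibration setting, X would hold the board points in one sensor frame and Y the same points in the other, so T maps coordinates from the first frame into the second.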

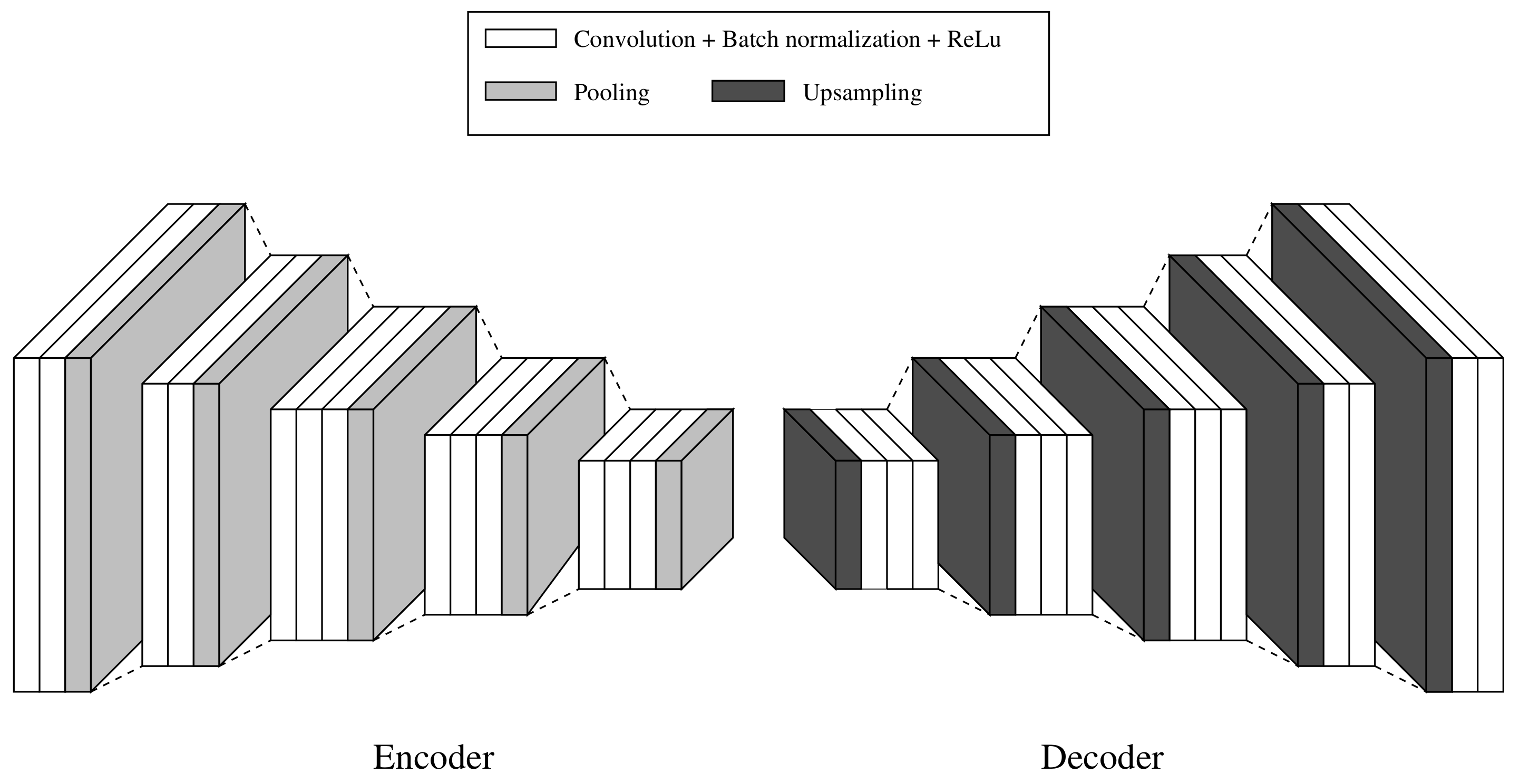

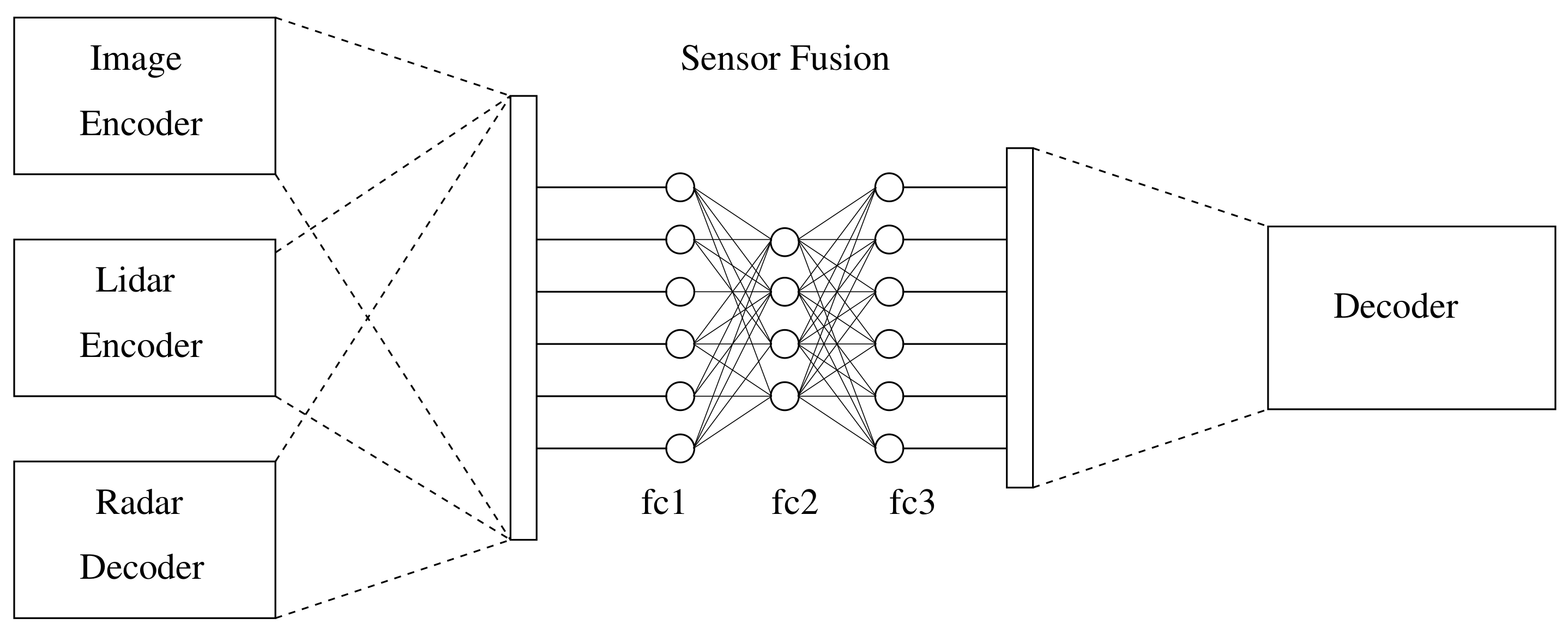

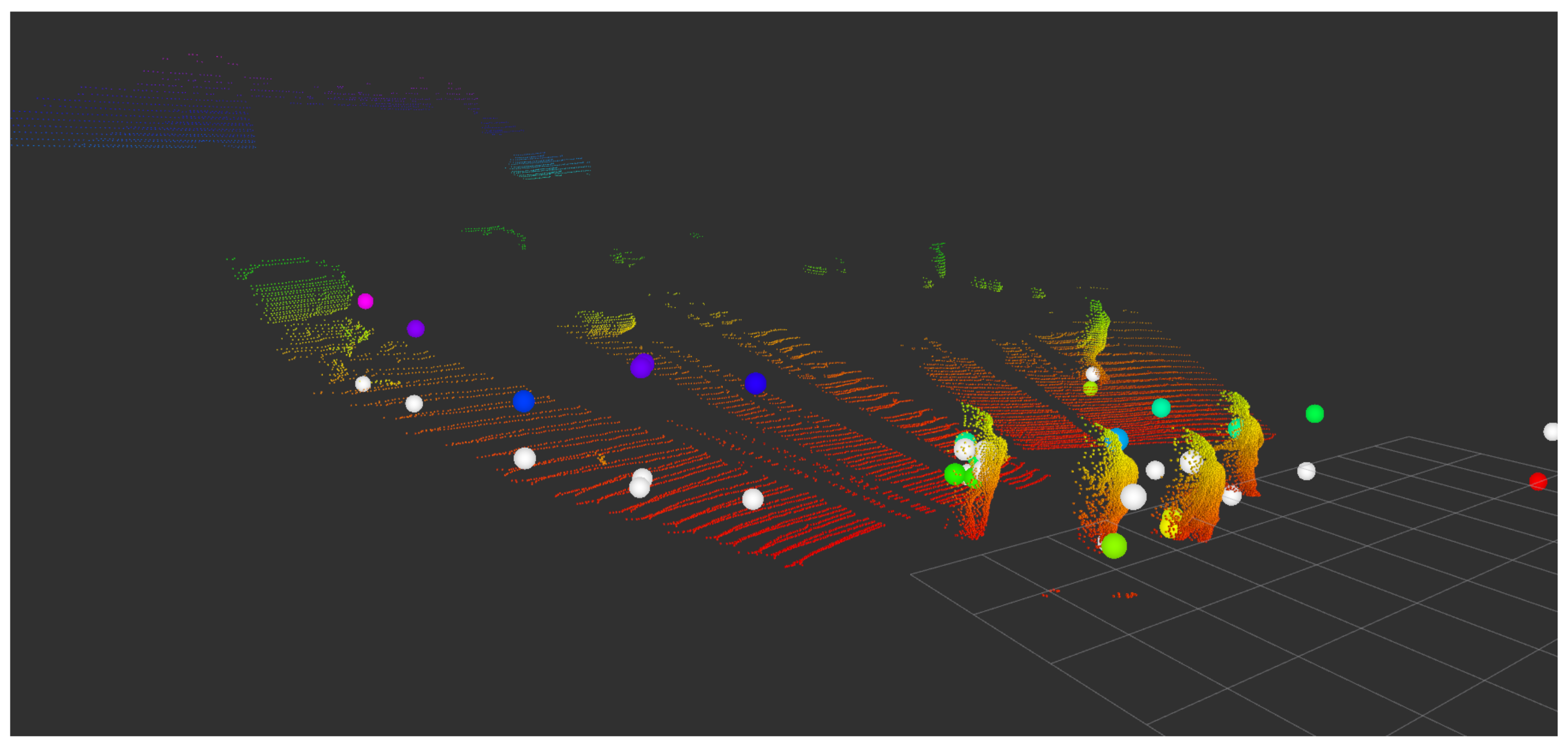

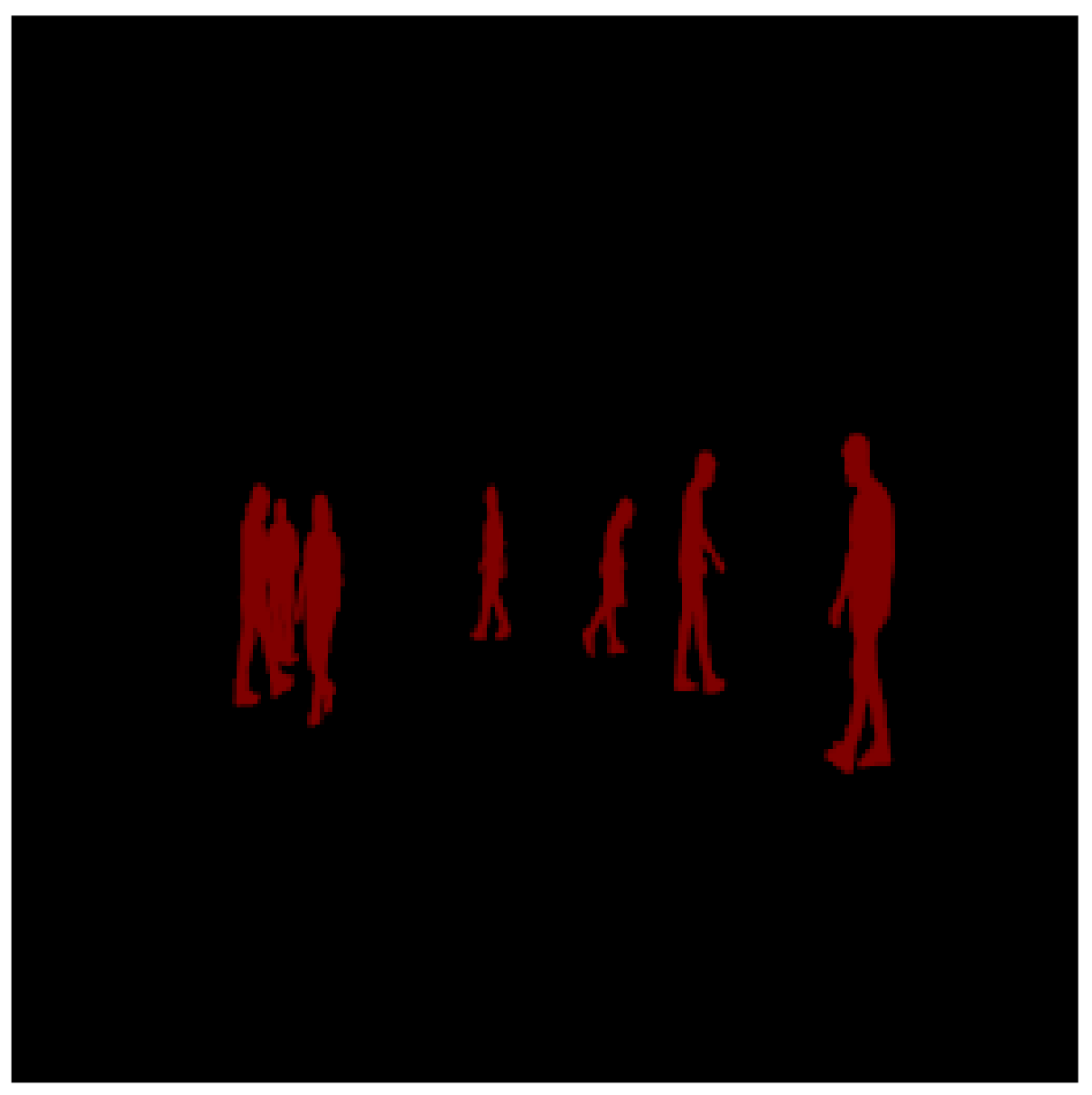

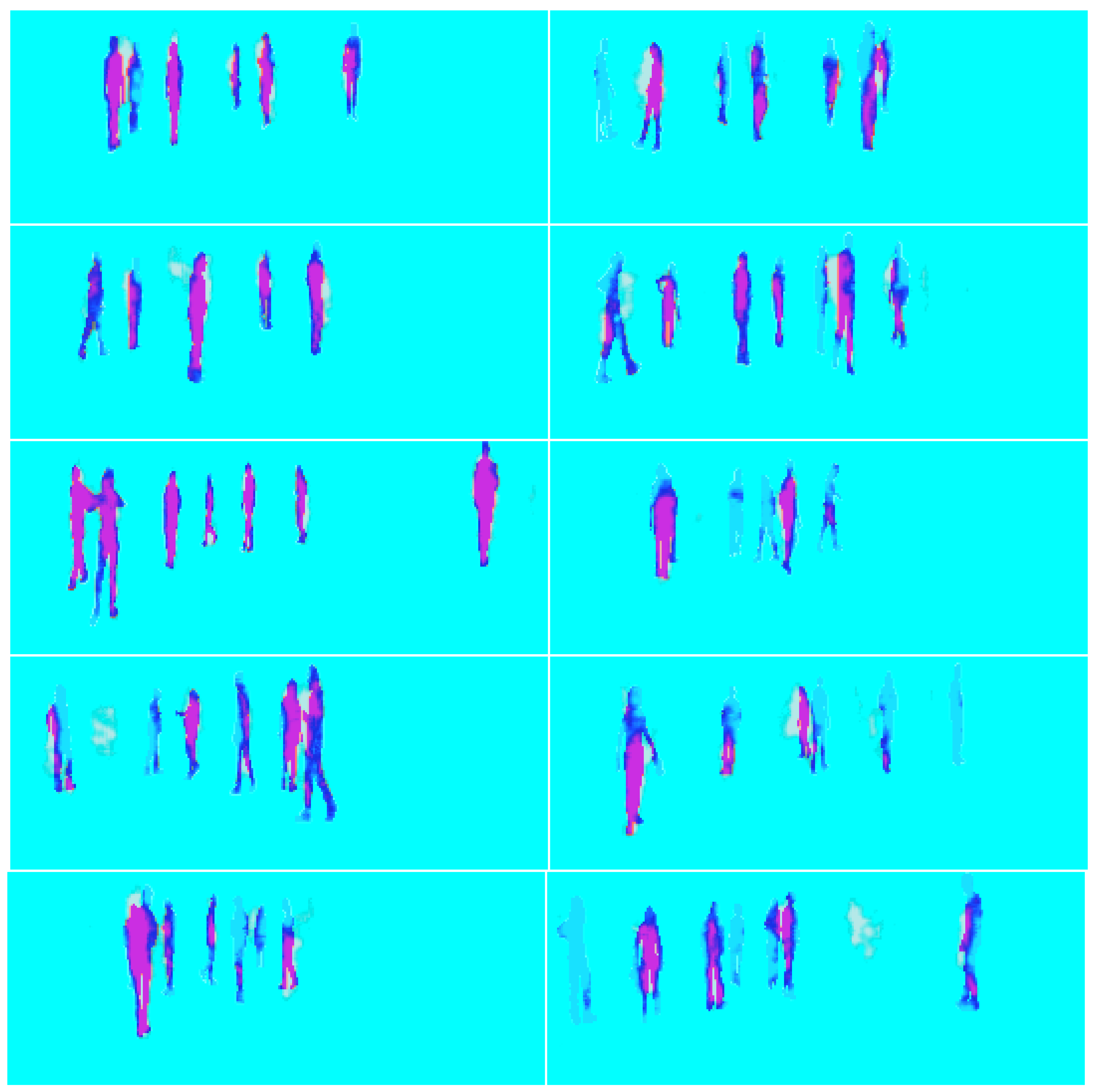

3. Proposed Architecture

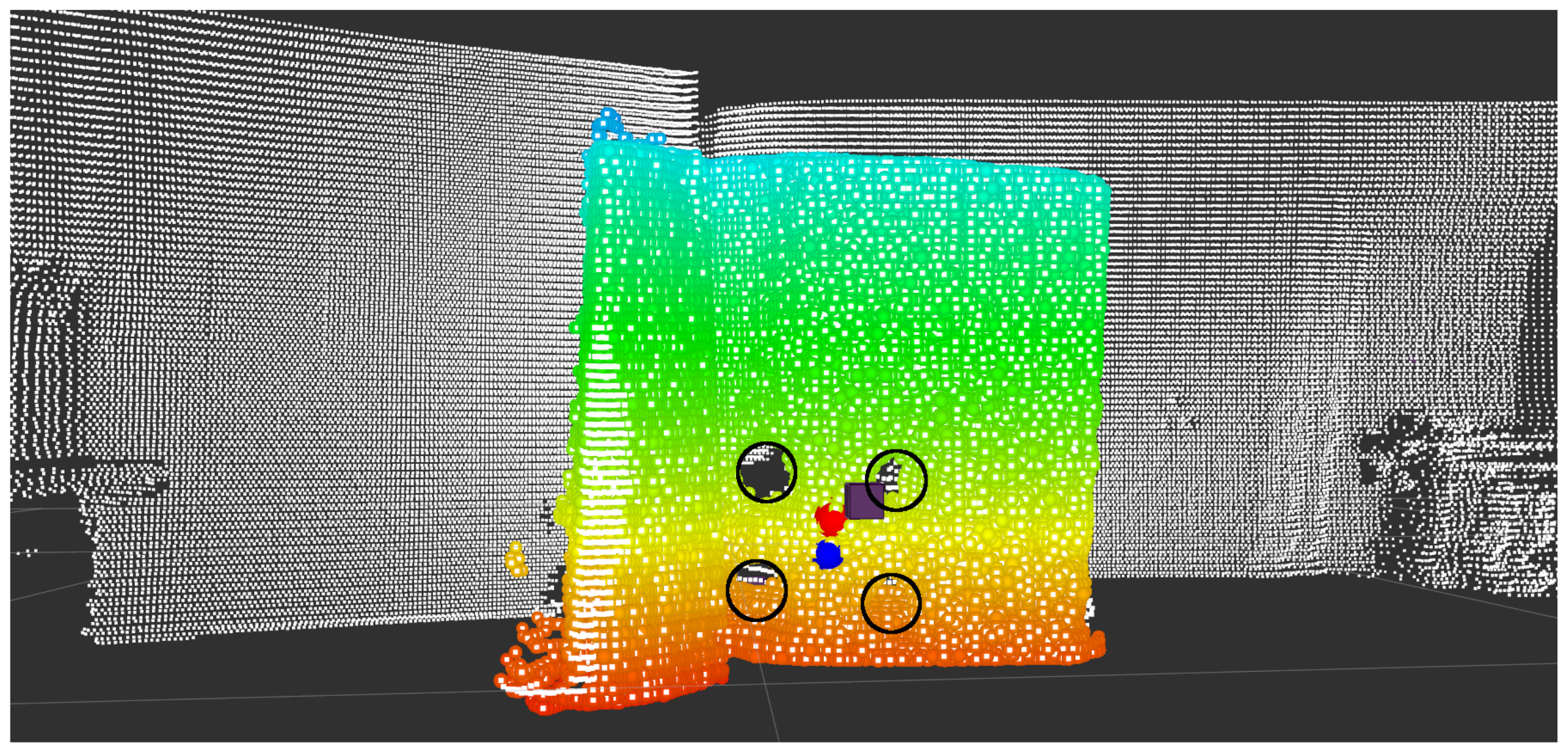

4. Results

4.1. Extrinsic Parameters Matrix

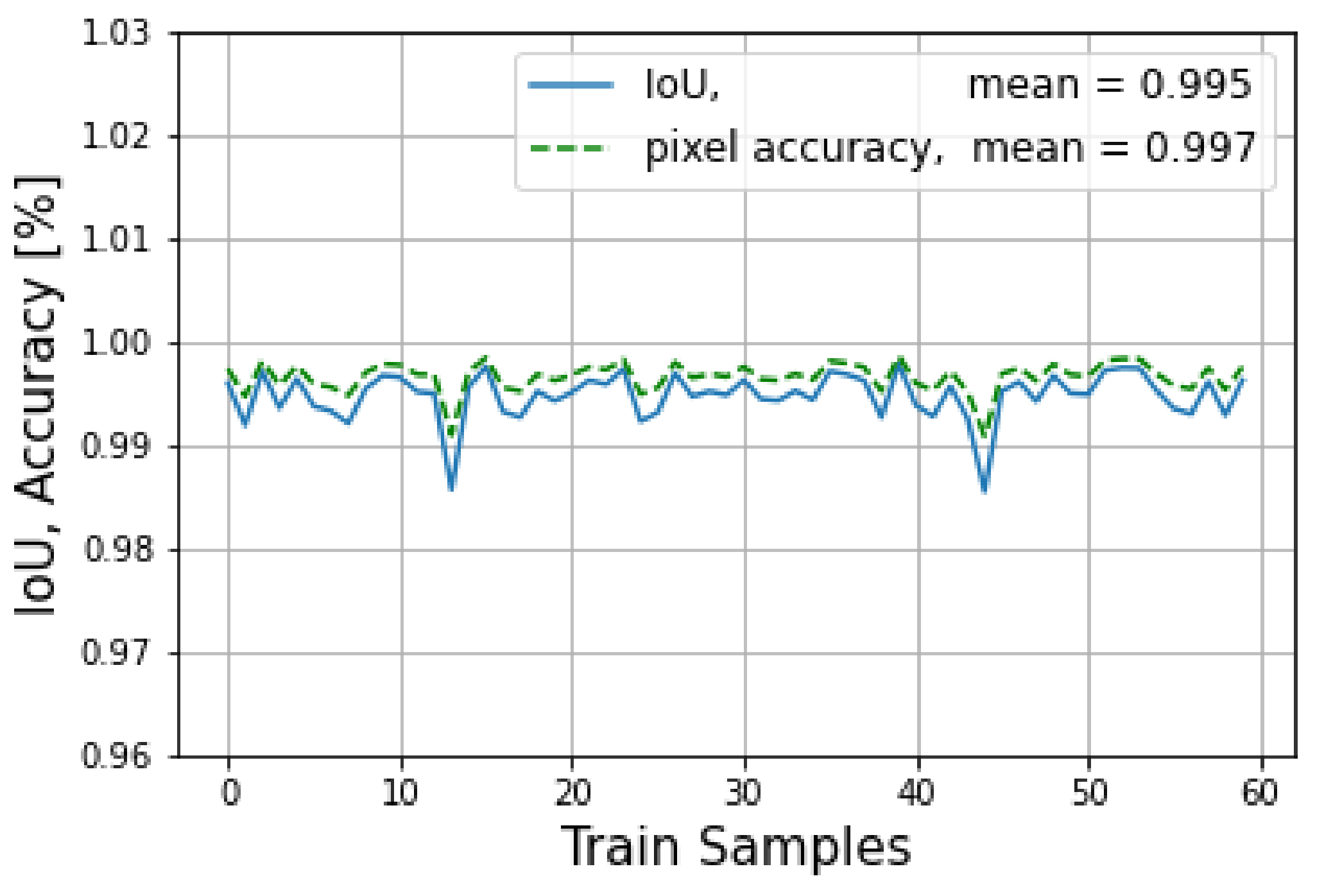

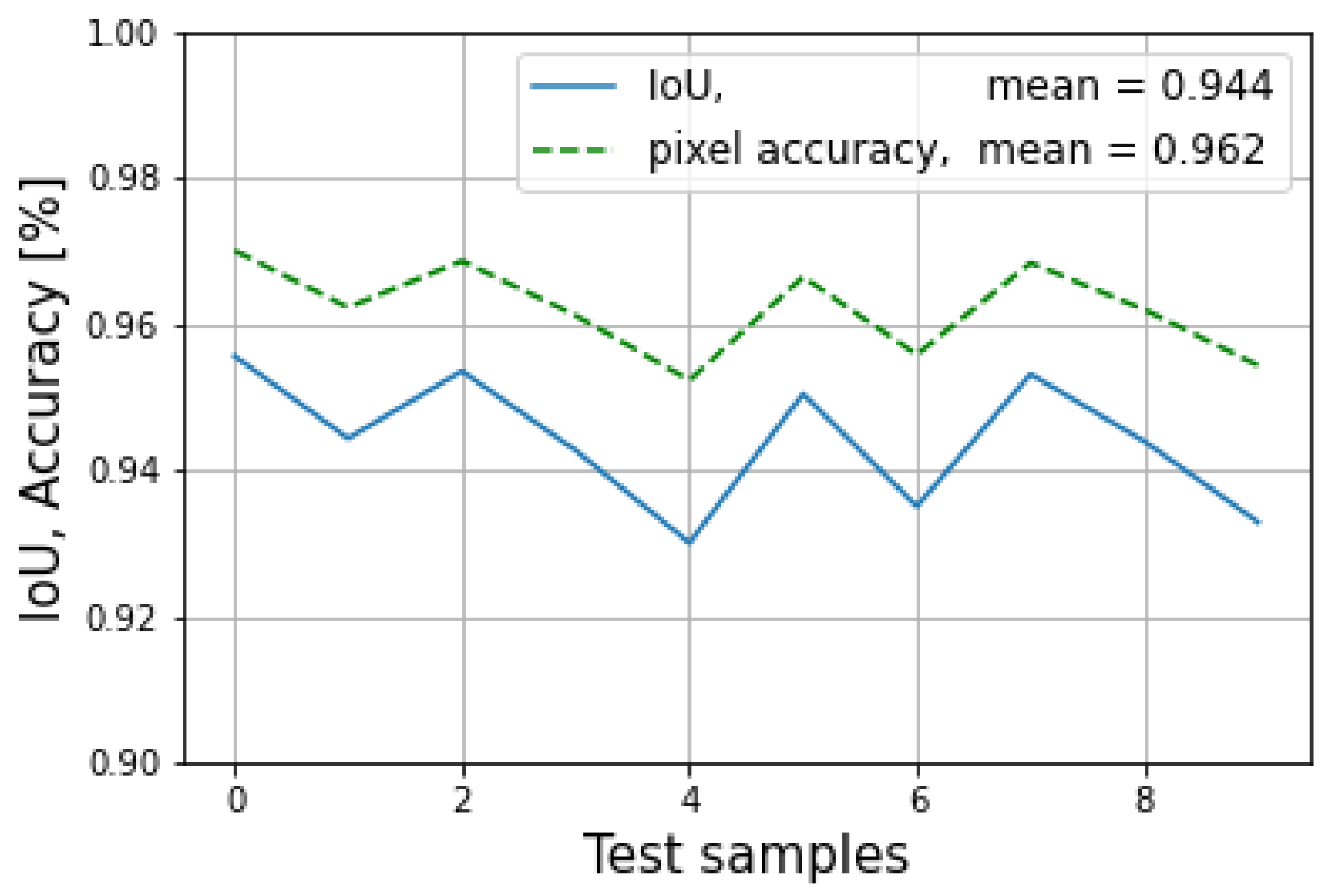

4.2. Network

- The intersection over union (IoU) is the ratio of the number of pixels in the intersection of the target mask and the prediction mask to the number of pixels in their union, and is given by Equation (10).

- The pixel accuracy is the ratio of correctly classified pixels (true positives plus true negatives) to the total number of pixels in the image, and is given by Equation (11).
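Both metrics reduce to pixel counts. In their standard form, $\mathrm{IoU} = \frac{TP}{TP + FP + FN}$ and pixel accuracy $= \frac{TP + TN}{TP + TN + FP + FN}$, where TN denotes true negatives; we assume these match Equations (10) and (11). The sketch below computes both for binary masks given as lists of rows. The function names are hypothetical, and scoring two empty masks as IoU = 1 is a convention chosen here.

```python
from itertools import chain

def _confusion_counts(target, pred):
    """Count TP, FP, FN and TN pixels for two binary masks (lists of rows)."""
    t_flat = list(chain.from_iterable(target))
    p_flat = list(chain.from_iterable(pred))
    if len(t_flat) != len(p_flat):
        raise ValueError("masks must have the same number of pixels")
    tp = fp = fn = tn = 0
    for t, p in zip(t_flat, p_flat):
        if t and p:
            tp += 1
        elif p:
            fp += 1
        elif t:
            fn += 1
        else:
            tn += 1
    return tp, fp, fn, tn

def iou(target, pred):
    """Intersection over union of the positive (pedestrian) pixels."""
    tp, fp, fn, _ = _confusion_counts(target, pred)
    union = tp + fp + fn
    return 1.0 if union == 0 else tp / union  # both masks empty: treated as a perfect match

def pixel_accuracy(target, pred):
    """Fraction of all pixels whose label is predicted correctly."""
    tp, fp, fn, tn = _confusion_counts(target, pred)
    return (tp + tn) / (tp + fp + fn + tn)
```

For target `[[1, 1], [0, 0]]` and prediction `[[1, 0], [1, 0]]` there is one pixel of each kind, so the IoU is 1/3 while the pixel accuracy is 0.5. This gap illustrates why IoU is the stricter measure when pedestrians occupy a small fraction of the image.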

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| DL | deep learning |
| RNN | recurrent neural networks |
| CNN | convolutional neural networks |
| ADC | autonomous driving cars |
| RoI | region of interest |
| RGB | red green blue |
| R-CNN | region-based CNN |
| SSD | single shot multibox detector |
| YOLO | you only look once |
| RF | reference frame |
| SVD | singular value decomposition |
| EN | encoder network |
| DN | decoder network |
| FCNN | fully connected neural network |
| SegNet | segmentation network |
| ROS | Robot Operating System |
| PCD | point cloud data |
| RRL | radar, RGB, lidar |
| TP | true positive |
| S | segmentation |
| FP | false positive |
| FN | false negative |

References

- Alzubaidi, L.; Zhang, J.; Humaidi, A.J.; Al-dujaili, A.; Duan, Y.; Al-Shamma, O.; Santamaría, J.; Fadhel, M.A.; Al-Amidie, M.; Farhan, L. Review of deep learning: Concepts, CNN architectures, challenges, applications, future directions. J. Big Data 2021, 8, 53.

- Bimbraw, K. Autonomous cars: Past, present and future a review of the developments in the last century, the present scenario and the expected future of autonomous vehicle technology. In Proceedings of the 2015 12th International Conference on Informatics in Control, Automation and Robotics (ICINCO), Colmar, France, 21–23 July 2015; Volume 1, pp. 191–198.

- Yao, G.; Lei, T.; Zhong, J. A review of Convolutional-Neural-Network-based action recognition. Pattern Recognit. Lett. 2019, 118, 14–22, Cooperative and Social Robots: Understanding Human Activities and Intentions.

- Soga, M.; Kato, T.; Ohta, M.; Ninomiya, Y. Pedestrian Detection with Stereo Vision. In Proceedings of the 21st International Conference on Data Engineering Workshops (ICDEW’05), Tokyo, Japan, 3–4 April 2005; p. 1200.

- Yu, X.; Marinov, M. A Study on Recent Developments and Issues with Obstacle Detection Systems for Automated Vehicles. Sustainability 2020, 12, 3281.

- Hall, D.; Llinas, J. An introduction to multisensor data fusion. Proc. IEEE 1997, 85, 6–23.

- Bhateja, V.; Patel, H.; Krishn, A.; Sahu, A.; Lay-Ekuakille, A. Multimodal Medical Image Sensor Fusion Framework Using Cascade of Wavelet and Contourlet Transform Domains. IEEE Sensors J. 2015, 15, 6783–6790.

- Liu, X.; Liu, Q.; Wang, Y. Remote sensing image fusion based on two-stream fusion network. Inf. Fusion 2020, 55, 1–15.

- Smaili, C.; Najjar, M.E.E.; Charpillet, F. Multi-sensor Fusion Method Using Dynamic Bayesian Network for Precise Vehicle Localization and Road Matching. In Proceedings of the 19th IEEE International Conference on Tools with Artificial Intelligence (ICTAI 2007), Patras, Greece, 29–31 October 2007; Volume 1, pp. 146–151.

- Kam, M.; Zhu, X.; Kalata, P. Sensor fusion for mobile robot navigation. Proc. IEEE 1997, 85, 108–119.

- Fayyad, J.; Jaradat, M.A.; Gruyer, D.; Najjaran, H. Deep Learning Sensor Fusion for Autonomous Vehicle Perception and Localization: A Review. Sensors 2020, 20, 4220.

- Shakeri, A.; Moshiri, B.; Garakani, H.G. Pedestrian Detection Using Image Fusion and Stereo Vision in Autonomous Vehicles. In Proceedings of the 2018 9th International Symposium on Telecommunications (IST), Tehran, Iran, 17–19 December 2018; pp. 592–596.

- Suganuma, N.; Matsui, T. Robust environment perception based on occupancy grid maps for autonomous vehicle. In Proceedings of the SICE Annual Conference 2010, Taipei, Taiwan, 18–21 August 2010; pp. 2354–2357.

- Torresan, H.; Turgeon, B.; Ibarra-Castanedo, C.; Hebert, P.; Maldague, X. Advanced surveillance systems: Combining video and thermal imagery for pedestrian detection. In Proceedings of the SPIE—The International Society for Optical Engineering, Orlando, FL, USA, April 2004.

- Musleh, B.; García, F.; Otamendi, J.; Armingol, J.M.; De la Escalera, A. Identifying and Tracking Pedestrians Based on Sensor Fusion and Motion Stability Predictions. Sensors 2010, 10, 8028–8053.

- Schlosser, J.; Chow, C.K.; Kira, Z. Fusing LIDAR and images for pedestrian detection using convolutional neural networks. In Proceedings of the 2016 IEEE International Conference on Robotics and Automation (ICRA), Stockholm, Sweden, 16–21 May 2016; pp. 2198–2205.

- Baabou, S.; Fradj, A.B.; Farah, M.A.; Abubakr, A.G.; Bremond, F.; Kachouri, A. A Comparative Study and State-of-the-art Evaluation for Pedestrian Detection. In Proceedings of the 2019 19th International Conference on Sciences and Techniques of Automatic Control and Computer Engineering (STA), Sousse, Tunisia, 24–26 March 2019; pp. 485–490.

- Liu, J.; Zhang, S.; Wang, S.; Metaxas, D. Multispectral Deep Neural Networks for Pedestrian Detection. In Proceedings of the British Machine Vision Conference (BMVC), York, UK, 19–22 September 2016; Wilson, R.C., Hancock, E.R., Smith, W.A.P., Eds.; BMVA Press: Durham, UK, 2016; pp. 73.1–73.13.

- Kim, J.H.; Batchuluun, G.; Park, K.R. Pedestrian detection based on faster R-CNN in nighttime by fusing deep convolutional features of successive images. Expert Syst. Appl. 2018, 114, 15–33.

- Chen, A.; Asawa, C. Going beyond the Bounding Box with Semantic Segmentation. Available online: https://thegradient.pub/semantic-segmentation/ (accessed on 15 December 2022).

- Rawashdeh, N.; Bos, J.; Abu-Alrub, N. Drivable path detection using CNN sensor fusion for autonomous driving in the snow. In Proceedings of the Autonomous Systems: Sensors, Processing, and Security for Vehicles and Infrastructure 2021, Online, 12–17 April 2021; Dudzik, M.C., Jameson, S.M., Axenson, T.J., Eds.; International Society for Optics and Photonics, SPIE: Orlando, FL, USA, 2021; Volume 11748, pp. 36–45.

- Zhang, R.; Candra, S.A.; Vetter, K.; Zakhor, A. Sensor fusion for semantic segmentation of urban scenes. In Proceedings of the 2015 IEEE International Conference on Robotics and Automation (ICRA), Seattle, WA, USA, 26–30 May 2015; pp. 1850–1857.

- Madawy, K.E.; Rashed, H.; Sallab, A.E.; Nasr, O.; Kamel, H.; Yogamani, S.K. RGB and LiDAR fusion based 3D Semantic Segmentation for Autonomous Driving. arXiv 2019, arXiv:1906.00208.

- Badrinarayanan, V.; Kendall, A.; Cipolla, R. SegNet: A Deep Convolutional Encoder-Decoder Architecture for Image Segmentation. IEEE Trans. Pattern Anal. Mach. Intell. 2017, 39, 2481–2495.

- Sordo, M.; Zeng, Q. On Sample Size and Classification Accuracy: A Performance Comparison. In Proceedings of the Biological and Medical Data Analysis; Oliveira, J.L., Maojo, V., Martín-Sánchez, F., Pereira, A.S., Eds.; Springer: Berlin/Heidelberg, Germany, 2005; pp. 193–201.

- Althnian, A.; AlSaeed, D.; Al-Baity, H.; Samha, A.; Dris, A.B.; Alzakari, N.; Abou Elwafa, A.; Kurdi, H. Impact of Dataset Size on Classification Performance: An Empirical Evaluation in the Medical Domain. Appl. Sci. 2021, 11, 796.

- Barbedo, J.G.A. Impact of dataset size and variety on the effectiveness of deep learning and transfer learning for plant disease classification. Comput. Electron. Agric. 2018, 153, 46–53.

- Domhof, J.; Kooij, J.F.; Gavrila, D.M. An Extrinsic Calibration Tool for Radar, Camera and Lidar. In Proceedings of the 2019 International Conference on Robotics and Automation (ICRA), Montreal, QC, Canada, 20–24 May 2019; pp. 8107–8113.

- Arun, K.S.; Huang, T.S.; Blostein, S.D. Least-Squares Fitting of Two 3-D Point Sets. IEEE Trans. Pattern Anal. Mach. Intell. 1987, PAMI-9, 698–700.

- Chávez Plascencia, A.; García-Gómez, P.; Bernal Perez, E.; DeMas-Giménez, G.; Casas, J.R.; Royo Royo, S. Deep Learning Sensor Fusion for Pedestrian Detection. Available online: https://github.com/ebernalbeamagine/DeepLearningSensorFusionForPedestrianDetection (accessed on 13 December 2022).

- Beamagine. Available online: https://beamagine.com/ (accessed on 30 October 2020).

- Smartmicro. Available online: https://www.smartmicro.com/ (accessed on 1 January 2022).

- Reflector. Available online: https://www.miwv.com/wp-content/uploads/2020/06/Trihedral-Reflectors-for-Radar-Applications.pdf (accessed on 2 January 2022).

- Sors, M.S.; Carbonell, V. Analytic Geometry; Joaquin Porrua: Coyoacán, Mexico, 1983.

- Bellekens, B.; Spruyt, V.; Berkvens, R.; Weyn, M. A Survey of Rigid 3D Pointcloud Registration Algorithms. In Proceedings of the Fourth International Conference on Ambient Computing, Applications, Services and Technologies, Rome, Italy, 24–28 August 2014.

Encoder Network

| Layer | In Channels | Out Channels | Kernel | Padding | Stride |
|---|---|---|---|---|---|
| conv 1 | 3 | 64 | 3 | 1 | 1 |
| conv 2 | 64 | 64 | 3 | 1 | 1 |
| MaxPool 1 | | | 2 | 0 | 2 |
| conv 3 | 64 | 128 | 3 | 1 | 1 |
| conv 4 | 128 | 128 | 3 | 1 | 1 |
| MaxPool 2 | | | 2 | 0 | 2 |
| conv 5 | 128 | 256 | 3 | 1 | 1 |
| conv 6 | 256 | 256 | 3 | 1 | 1 |
| conv 7 | 256 | 256 | 3 | 1 | 1 |
| MaxPool 3 | | | 2 | 0 | 2 |
| conv 8 | 256 | 512 | 3 | 1 | 1 |
| conv 9 | 512 | 512 | 3 | 1 | 1 |
| conv 10 | 512 | 512 | 3 | 1 | 1 |
| MaxPool 4 | | | 2 | 0 | 2 |
| conv 11 | 512 | 512 | 3 | 1 | 1 |
| conv 12 | 512 | 512 | 3 | 1 | 1 |
| conv 13 | 512 | 512 | 3 | 1 | 1 |
| MaxPool 5 | | | 2 | 0 | 2 |
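The encoder table can be checked with a small shape calculator. The sketch below transcribes the table's channel counts and applies the usual output-size formula ⌊(H + 2p − k)/s⌋ + 1: each 3 × 3 convolution with padding 1 and stride 1 preserves the spatial size, and each 2 × 2 max pool with stride 2 halves it. The input resolutions used in the tests are hypothetical, since the table does not state the input size, and the helper names are illustrative only.

```python
def conv_out(size, kernel, padding, stride):
    """Output length along one axis for a convolution or pooling window."""
    return (size + 2 * padding - kernel) // stride + 1

# (kind, in_channels, out_channels) transcribed from the encoder table.
# Convolutions: kernel 3, padding 1, stride 1; max pools: kernel 2, padding 0, stride 2.
ENCODER = [
    ("conv", 3, 64), ("conv", 64, 64), ("pool",),
    ("conv", 64, 128), ("conv", 128, 128), ("pool",),
    ("conv", 128, 256), ("conv", 256, 256), ("conv", 256, 256), ("pool",),
    ("conv", 256, 512), ("conv", 512, 512), ("conv", 512, 512), ("pool",),
    ("conv", 512, 512), ("conv", 512, 512), ("conv", 512, 512), ("pool",),
]

def encoder_output_shape(height, width, in_channels=3):
    """Return (channels, height, width) after the whole encoder, checking channel chaining."""
    c, h, w = in_channels, height, width
    for layer in ENCODER:
        if layer[0] == "conv":
            _, cin, cout = layer
            if cin != c:
                raise ValueError(f"channel mismatch: layer expects {cin}, got {c}")
            c, h, w = cout, conv_out(h, 3, 1, 1), conv_out(w, 3, 1, 1)
        else:
            h, w = conv_out(h, 2, 0, 2), conv_out(w, 2, 0, 2)
    return c, h, w
```

With five pooling stages the spatial size shrinks by a factor of 32, so a hypothetical 256 × 256 input leaves a 512 × 8 × 8 feature map (32,768 values when flattened).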

Fully Connected Network

| Layer | In Features | Out Features |
|---|---|---|
| fc1 | 98,304 | 2048 |
| fc2 | 2048 | 1024 |
| fc3 | 1024 | 65,536 |
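The size of the fully connected block follows directly from the table. This worked count assumes every layer has a bias term, as PyTorch's `nn.Linear` does by default; the table itself does not say whether biases are used, and the helper names are hypothetical.

```python
# (name, in_features, out_features) transcribed from the fully connected table.
FC_LAYERS = [("fc1", 98_304, 2048), ("fc2", 2048, 1024), ("fc3", 1024, 65_536)]

def linear_params(n_in, n_out, bias=True):
    """Weights plus (optionally) one bias per output unit."""
    return n_in * n_out + (n_out if bias else 0)

def fc_parameter_counts(layers=FC_LAYERS, bias=True):
    """Per-layer and total parameter counts, checking that consecutive sizes chain."""
    counts = {}
    for (name, n_in, n_out), prev in zip(layers, [None] + layers[:-1]):
        if prev is not None and prev[2] != n_in:
            raise ValueError(f"{name}: expected {prev[2]} inputs, got {n_in}")
        counts[name] = linear_params(n_in, n_out, bias)
    counts["total"] = sum(counts.values())
    return counts
```

Under these assumptions fc1 has 201,328,640 parameters, fc2 has 2,098,176 and fc3 has 67,174,400, for a total of 270,601,216 (about 1.08 GB in 32-bit floats). fc1 alone accounts for roughly 74% of the block, so the flattened encoder output dominates the model size.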

Decoder Network

| Layer | In Channels | Out Channels | Kernel | Padding | Stride |
|---|---|---|---|---|---|
| MaxUnpool 1 | | | 2 | 0 | 2 |
| conv 1 | 512 | 512 | 3 | 1 | 1 |
| conv 2 | 512 | 512 | 3 | 1 | 1 |
| conv 3 | 512 | 512 | 3 | 1 | 1 |
| MaxUnpool 2 | | | 2 | 0 | 2 |
| conv 4 | 512 | 512 | 3 | 1 | 1 |
| conv 5 | 512 | 512 | 3 | 1 | 1 |
| conv 6 | 512 | 256 | 3 | 1 | 1 |
| MaxUnpool 3 | | | 2 | 0 | 2 |
| conv 7 | 256 | 256 | 3 | 1 | 1 |
| conv 8 | 256 | 256 | 3 | 1 | 1 |
| conv 9 | 256 | 128 | 3 | 1 | 1 |
| MaxUnpool 4 | | | 2 | 0 | 2 |
| conv 10 | 128 | 128 | 3 | 1 | 1 |
| conv 11 | 128 | 64 | 3 | 1 | 1 |
| MaxUnpool 5 | | | 2 | 0 | 2 |
| conv 12 | 64 | 64 | 3 | 1 | 1 |
| conv 13 | 64 | 3 | 3 | 1 | 1 |
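The decoder mirrors the encoder, and its shapes can be traced the same way. This sketch uses the unpooling output-size formula (H − 1)·s − 2p + k, which is the one PyTorch documents for `nn.MaxUnpool2d`. It tracks shapes only: a real max-unpooling layer also needs the pooling indices saved by the encoder. The 512 × 8 × 8 starting shape in the tests is a hypothetical choice, because the table does not state how the fc3 output is reshaped before the first unpooling layer.

```python
def conv_out(size, kernel=3, padding=1, stride=1):
    """Output length along one axis for a convolution (defaults: the decoder's 3x3 convs)."""
    return (size + 2 * padding - kernel) // stride + 1

def unpool_out(size, kernel=2, padding=0, stride=2):
    """Output length along one axis for max-unpooling (inverse of a 2x2, stride-2 pool)."""
    return (size - 1) * stride - 2 * padding + kernel

# ("unpool",) or ("conv", in_channels, out_channels), transcribed from the decoder table.
DECODER = [
    ("unpool",), ("conv", 512, 512), ("conv", 512, 512), ("conv", 512, 512),
    ("unpool",), ("conv", 512, 512), ("conv", 512, 512), ("conv", 512, 256),
    ("unpool",), ("conv", 256, 256), ("conv", 256, 256), ("conv", 256, 128),
    ("unpool",), ("conv", 128, 128), ("conv", 128, 64),
    ("unpool",), ("conv", 64, 64), ("conv", 64, 3),
]

def decoder_output_shape(channels, height, width):
    """Return (channels, height, width) after the whole decoder, checking channel chaining."""
    c, h, w = channels, height, width
    for layer in DECODER:
        if layer[0] == "unpool":
            h, w = unpool_out(h), unpool_out(w)
        else:
            _, cin, cout = layer
            if cin != c:
                raise ValueError(f"channel mismatch: layer expects {cin}, got {c}")
            c, h, w = cout, conv_out(h), conv_out(w)
    return c, h, w
```

Each of the five unpooling stages doubles the spatial size, so under this assumption an 8 × 8 map is restored to 256 × 256 with the 3 output channels of conv 13.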

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Plascencia, A.C.; García-Gómez, P.; Perez, E.B.; DeMas-Giménez, G.; Casas, J.R.; Royo, S. A Preliminary Study of Deep Learning Sensor Fusion for Pedestrian Detection. Sensors 2023, 23, 4167. https://doi.org/10.3390/s23084167

Plascencia AC, García-Gómez P, Perez EB, DeMas-Giménez G, Casas JR, Royo S. A Preliminary Study of Deep Learning Sensor Fusion for Pedestrian Detection. Sensors. 2023; 23(8):4167. https://doi.org/10.3390/s23084167

Chicago/Turabian Style: Plascencia, Alfredo Chávez, Pablo García-Gómez, Eduardo Bernal Perez, Gerard DeMas-Giménez, Josep R. Casas, and Santiago Royo. 2023. "A Preliminary Study of Deep Learning Sensor Fusion for Pedestrian Detection" Sensors 23, no. 8: 4167. https://doi.org/10.3390/s23084167

APA Style: Plascencia, A. C., García-Gómez, P., Perez, E. B., DeMas-Giménez, G., Casas, J. R., & Royo, S. (2023). A Preliminary Study of Deep Learning Sensor Fusion for Pedestrian Detection. Sensors, 23(8), 4167. https://doi.org/10.3390/s23084167