Development of a Biosensory Computer Application to Assess Physiological and Emotional Responses from Sensory Panelists

Abstract

1. Introduction

2. Materials and Methods

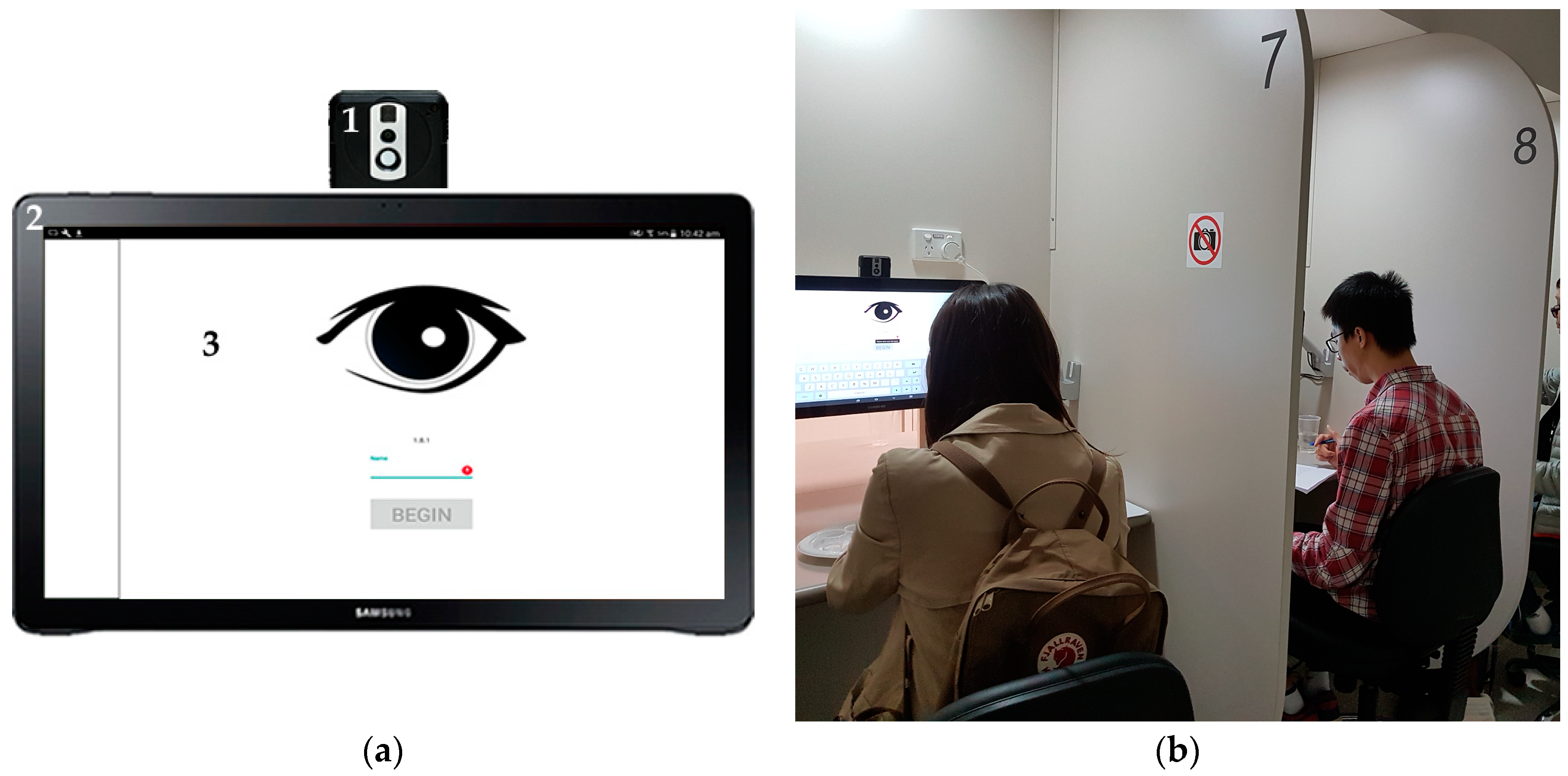

2.1. Integrated Video- and Thermal-Camera Description

2.2. Integrated System and Biosensory App Development

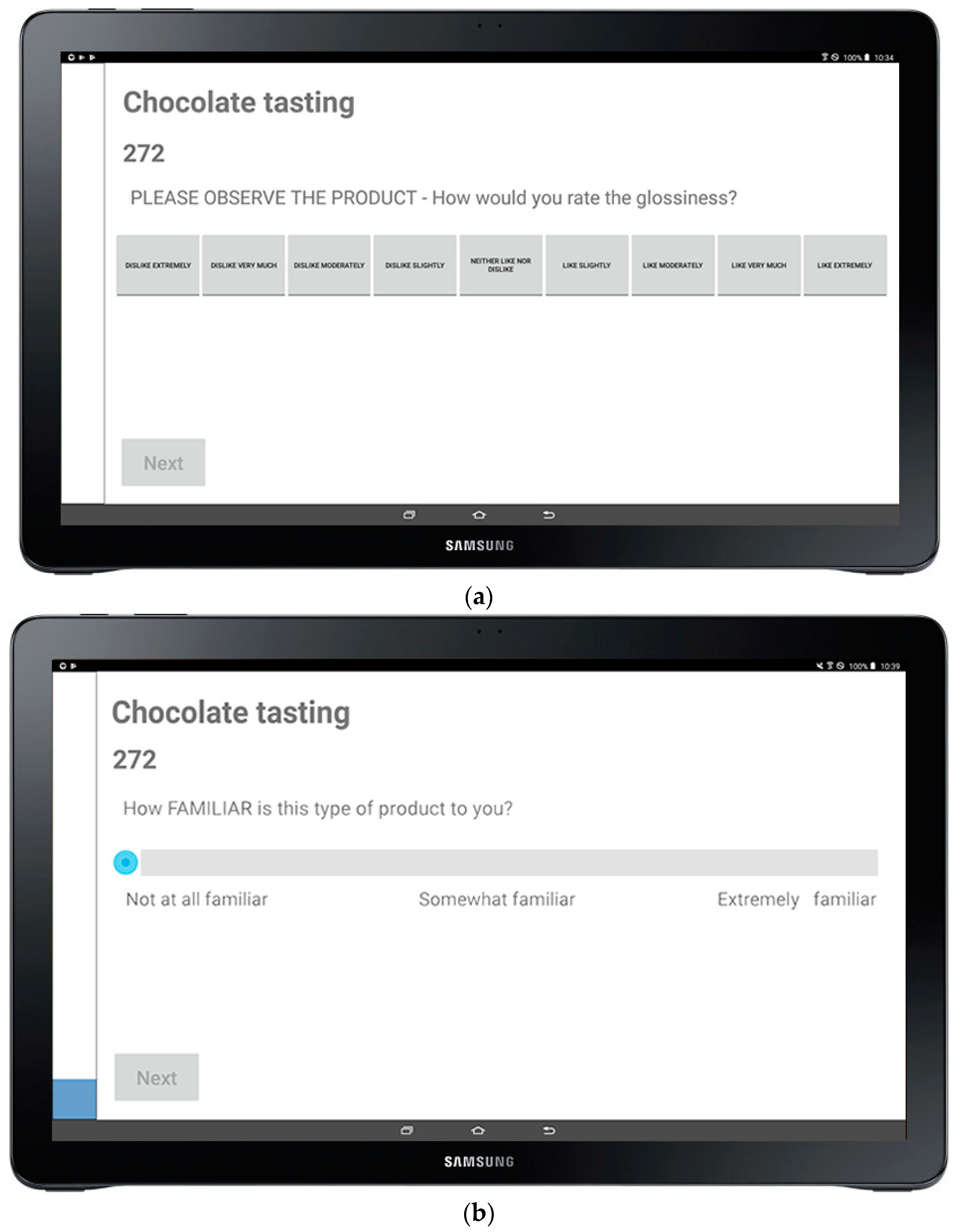

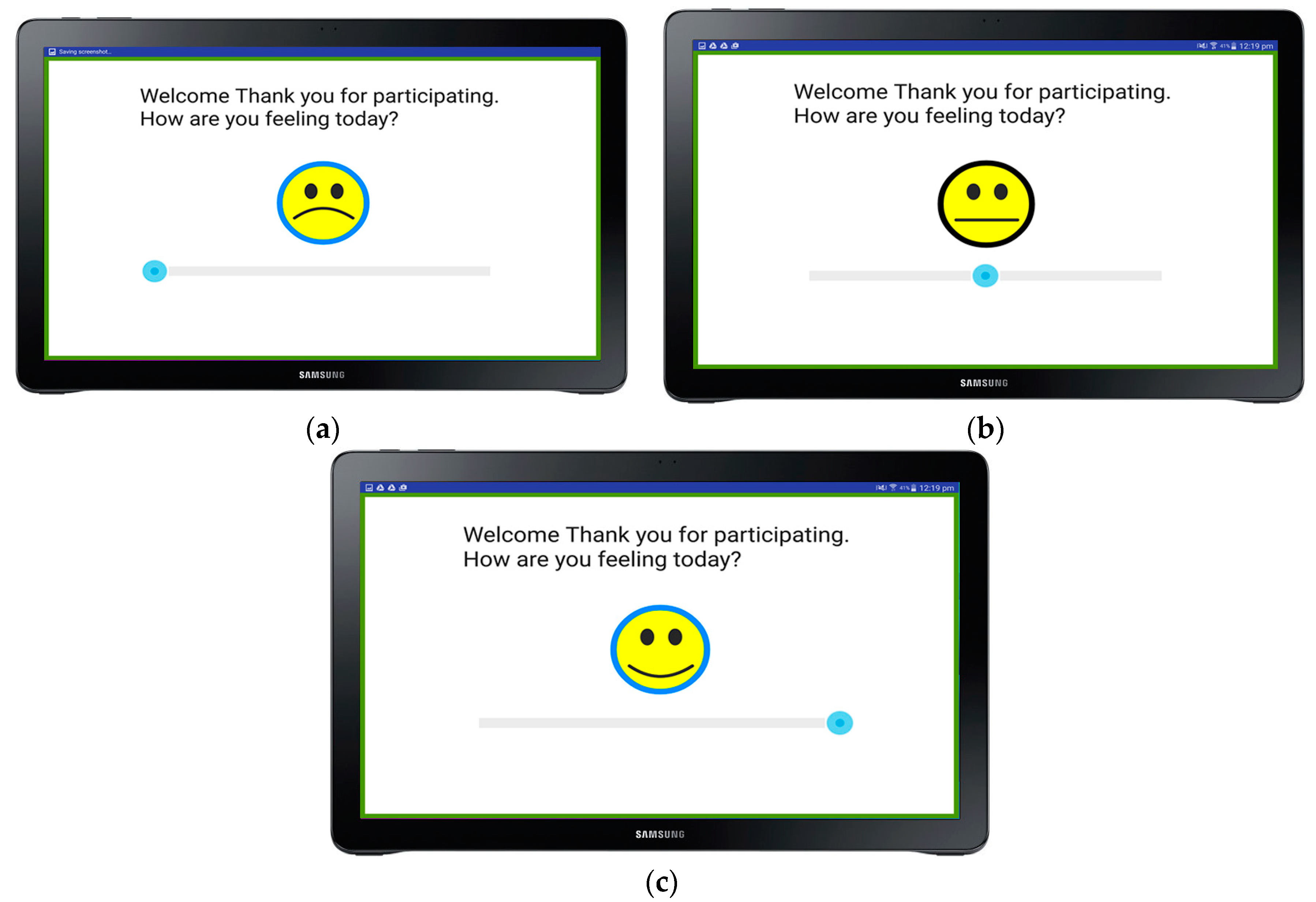

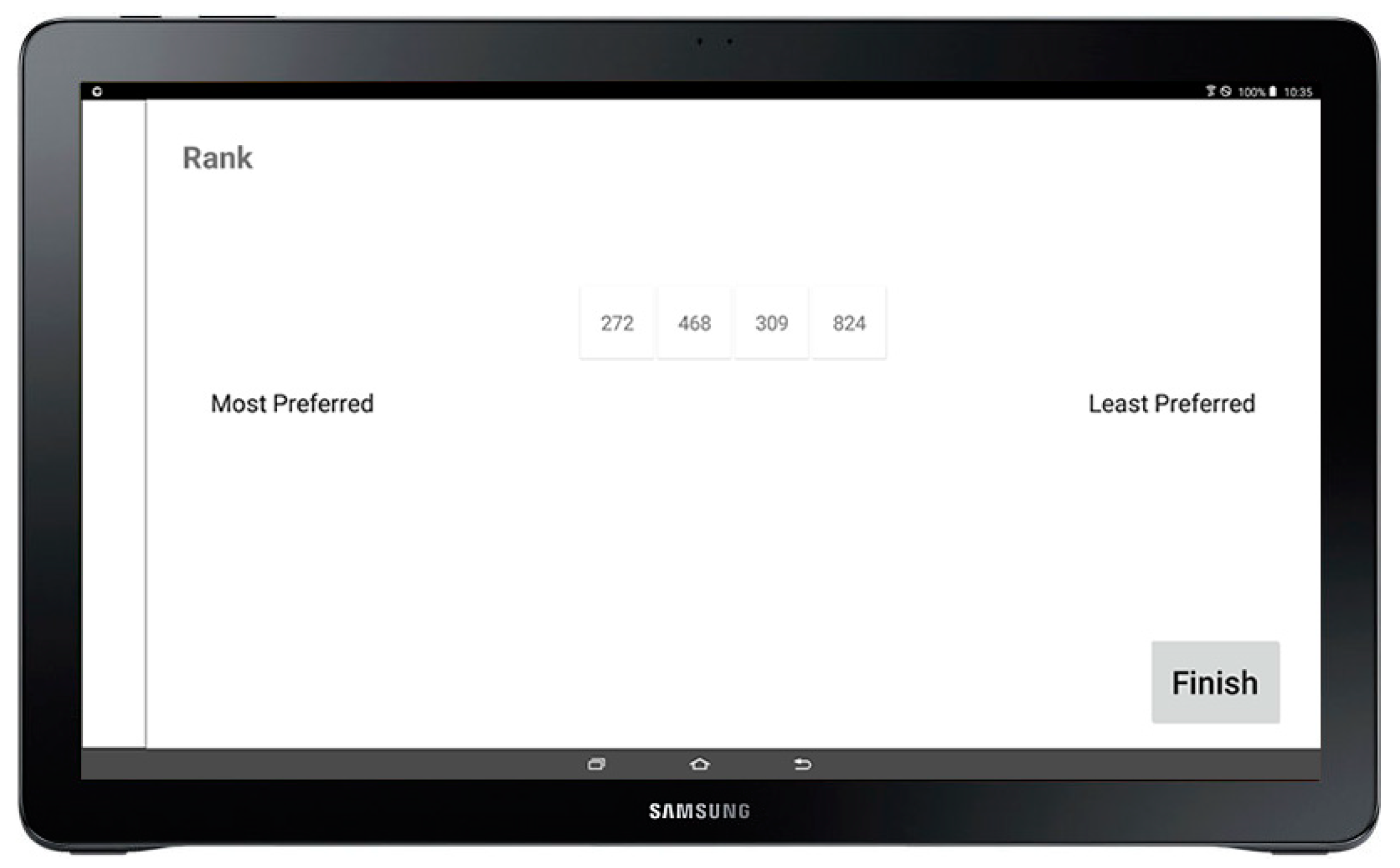

2.2.1. Biosensory App Development

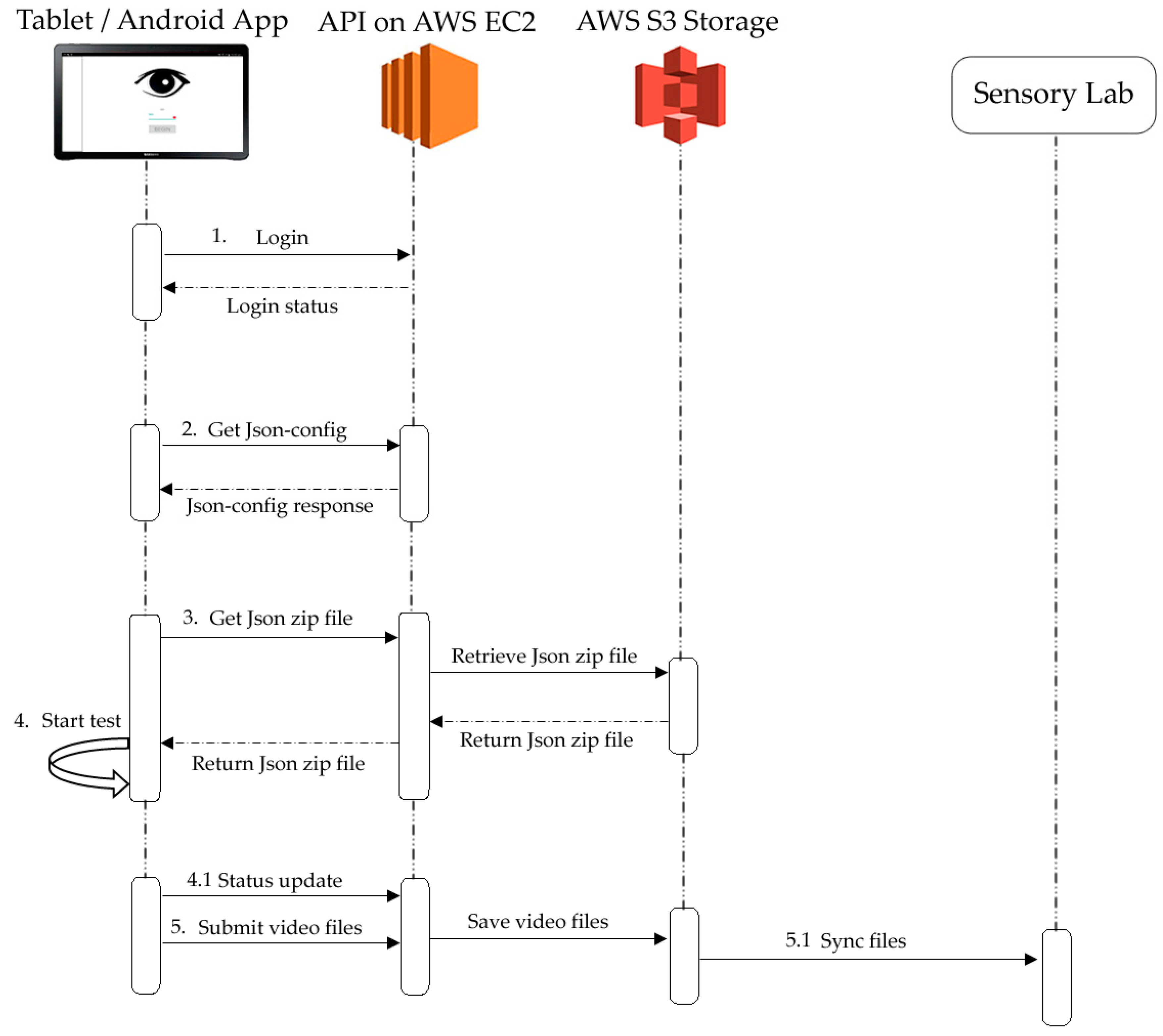

2.2.2. Monitoring-System and Cloud-System Description

2.3. How the Biosensory App Works

2.4. Algorithms Used for Image, Video, and Infrared Thermography Analyses

2.5. Examples of the Use of the Integrated Camera System in Sensory Sessions Using Beer and Images as Stimuli

3. Results from Examples

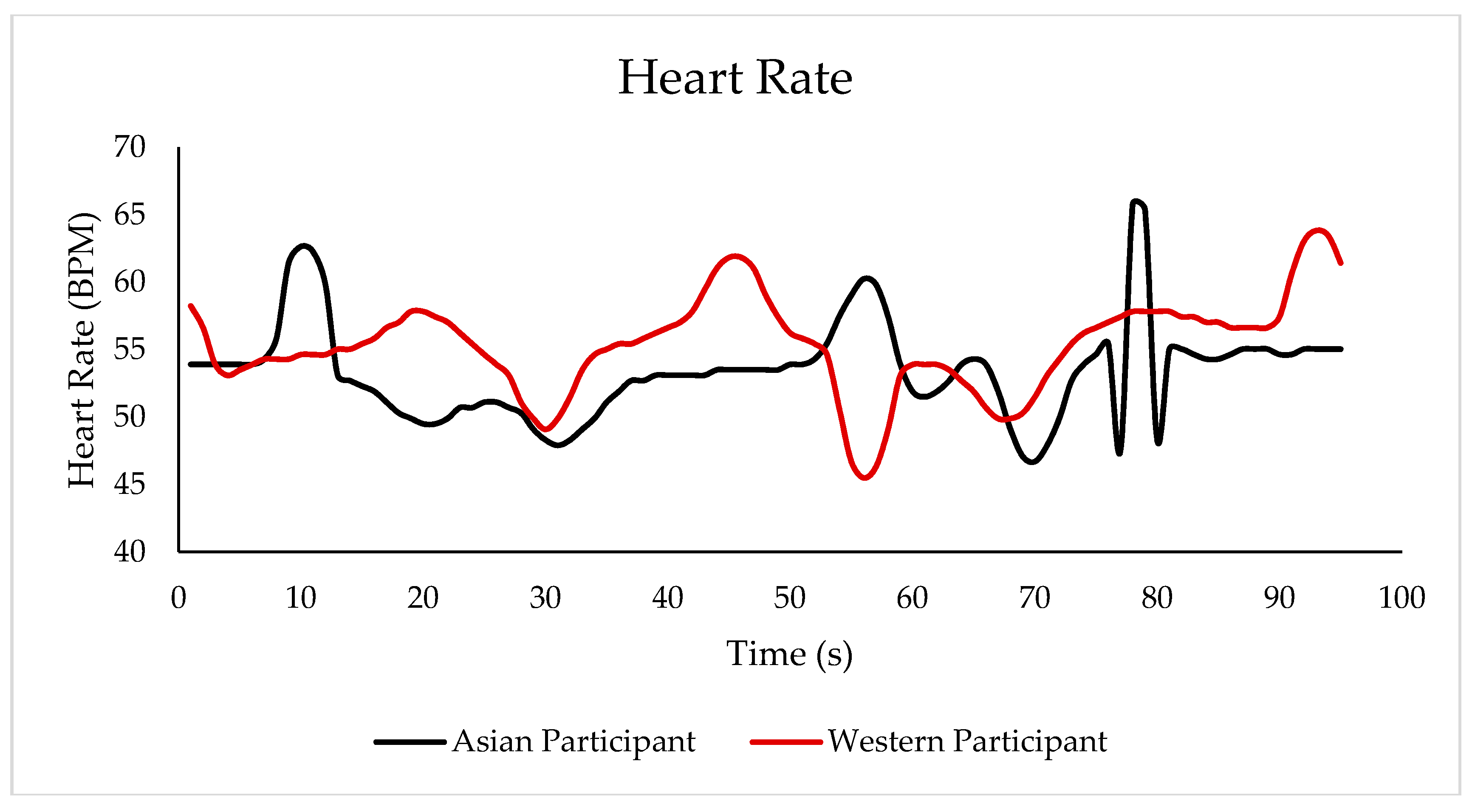

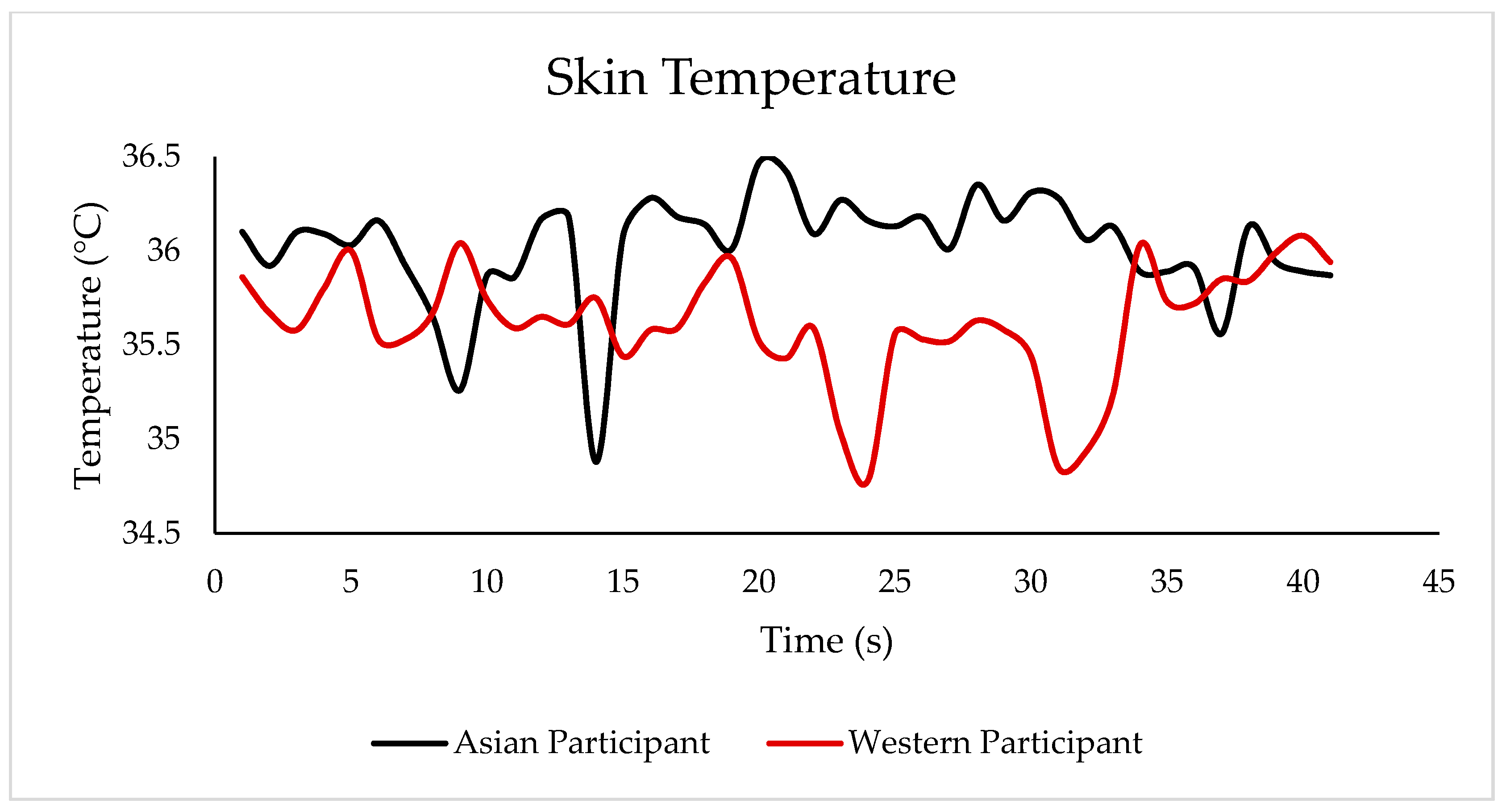

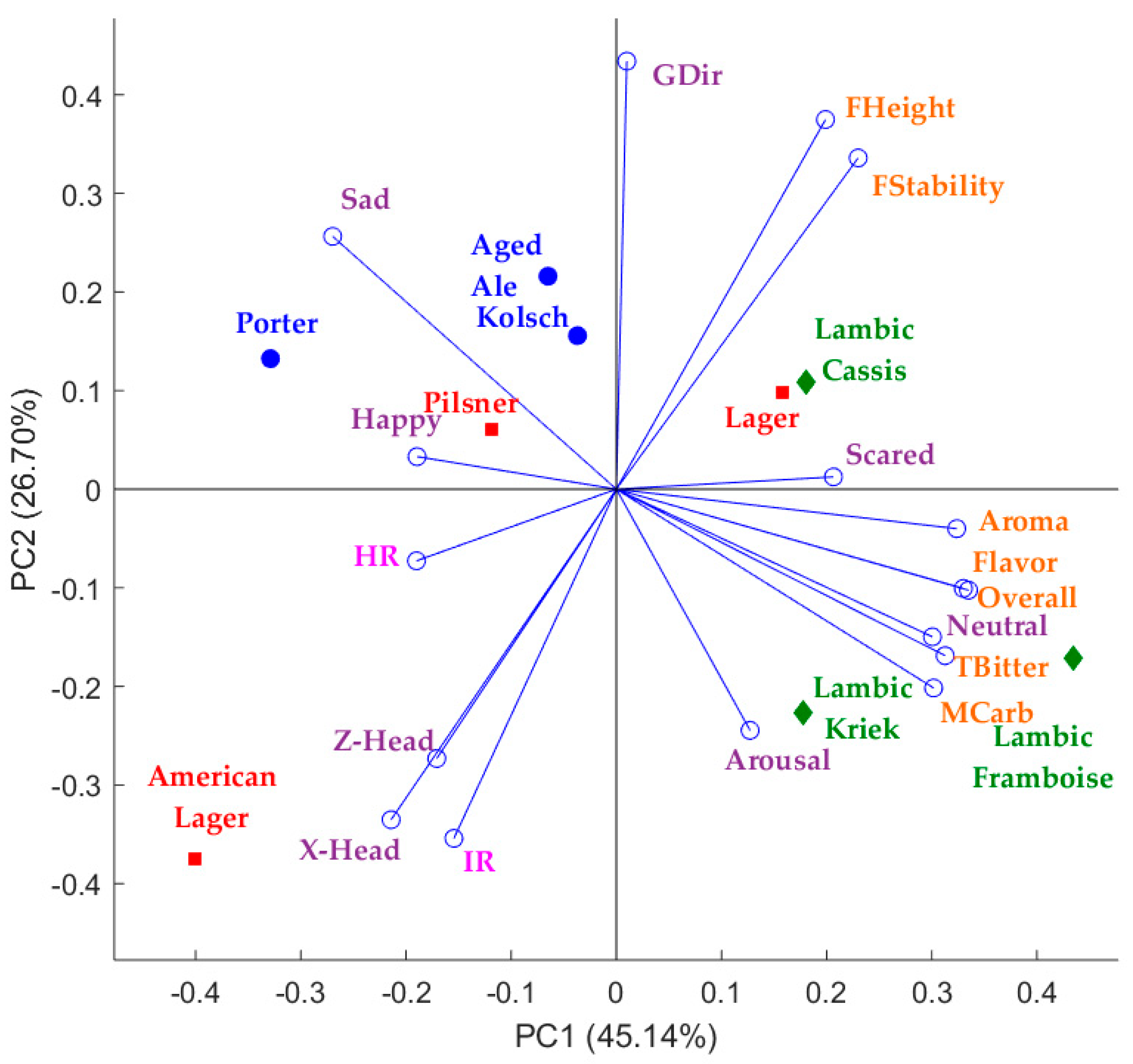

3.1. Results Obtained from the Biosensory App Using the Integrated Camera System for the Sensory Sessions Using Beer Samples as Stimuli

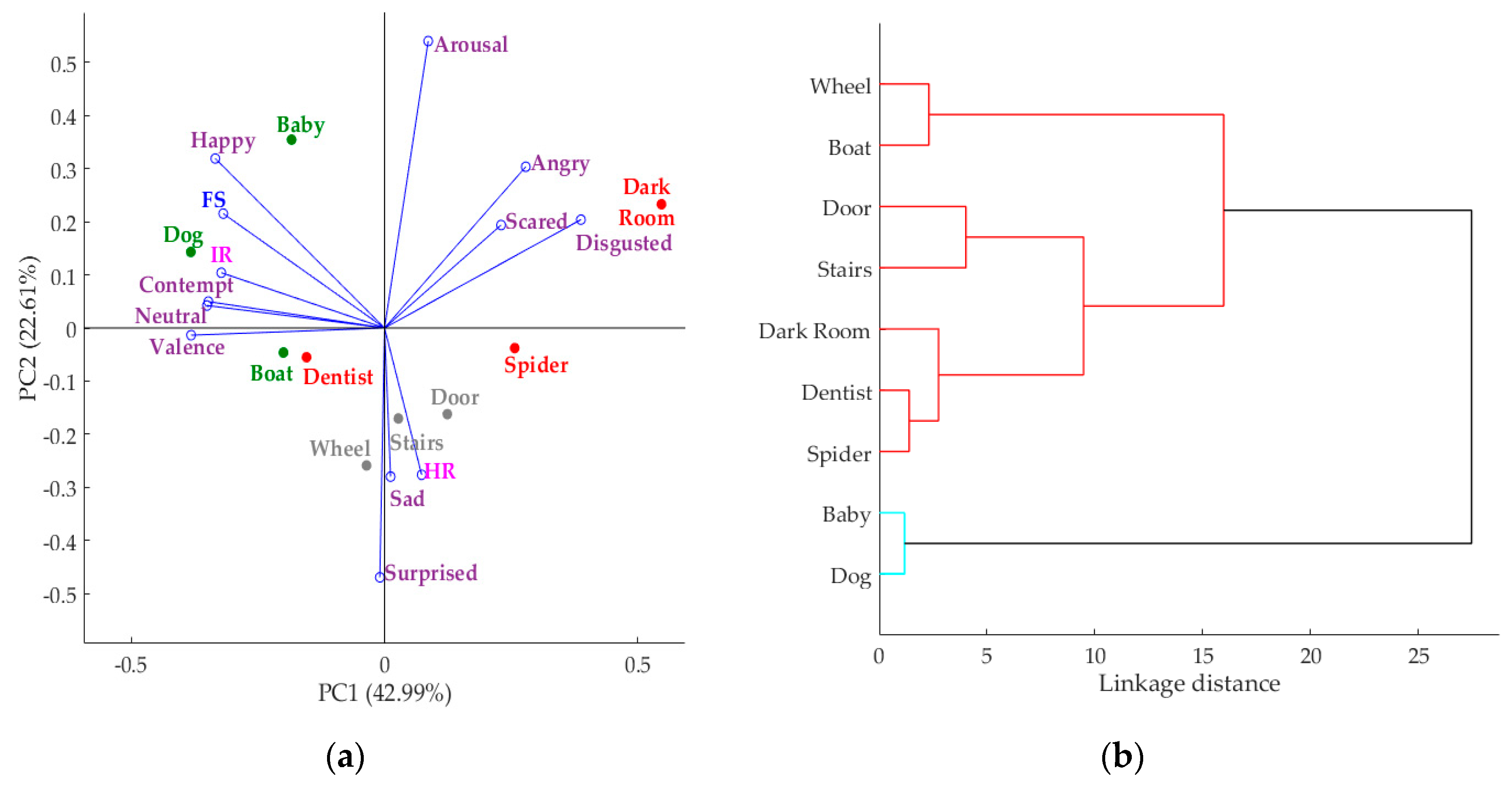

3.2. Results Obtained from the Biosensory App Using the Integrated Camera System for the Sensory Sessions Using Images as Stimuli

4. Discussions

4.1. Application of the Biosensory App Using the Integrated Camera System for the Sensory Sessions Using Beer and Images as Stimuli

4.2. Example of Application of the Biosensory App Using an Integrated Camera System to Other Products to Obtain Machine-Learning Models

5. Conclusions

Author Contributions

Funding

Conflicts of Interest

| Assessment | Sample | Scale | Progress | Time |
|---|---|---|---|---|
| Image | Baby | Face scale | 85 | 10:23:03 |
| Image | Dark room | Face scale | 13 | 10:24:48 |
| Image | Spider | Face scale | 22 | 10:26:06 |
| Image | Stairs | Face scale | 48 | 10:28:55 |
| Image | Boat | Face scale | 79 | 10:29:23 |
| Image | Dentist | Face scale | 30 | 10:31:01 |
| Image | Door | Face scale | 52 | 10:31:53 |
| Image | Wheel | Face scale | 55 | 10:32:14 |
| Image | Dog | Face scale | 94 | 10:33:23 |

| Rank position | Sample |
|---|---|
| 1 | Baby |
| 2 | Dog |
| 3 | Boat |

© 2018 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Fuentes, S.; Gonzalez Viejo, C.; Torrico, D.D.; Dunshea, F.R. Development of a Biosensory Computer Application to Assess Physiological and Emotional Responses from Sensory Panelists. Sensors 2018, 18, 2958. https://doi.org/10.3390/s18092958