Human-Computer Interaction in Pervasive Computing Environments

A topical collection in Sensors (ISSN 1424-8220). This collection belongs to the section "Intelligent Sensors".

Editor

Interests: information security; cyber physical systems; cloud computing; blockchain technologies; intrusion detection; artificial intelligence; social media and networking


Topical Collection Information

Dear Colleagues,

Sensors are widely used in everyday life today. They are present in a wide variety of areas, offering an excellent opportunity to address challenges in medicine and healthcare, smart cities, smart homes, smart learning, and entertainment, among others. Sensors bring technology closer to humans in an increasingly transparent and natural way, building genuine technological ecosystems in which human–computer interaction plays a key role.

Despite this widespread adoption, the design, implementation, and use of sensors must continue to improve in order to enhance usability, accessibility, and user experience in smart environments.

The aim of this Topical Collection is to highlight recent advances and trends in human–computer interaction in pervasive computing environments. It will address a broad range of topics related to smart environments, including, but not limited to, the following:

- Usability, accessibility, and sustainability;

- User experience;

- Natural user interfaces;

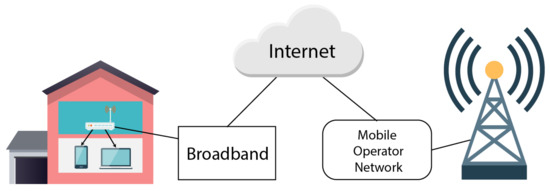

- Sensor networks;

- Haptic computing;

- Ambient-assisted living;

- Healthcare environments;

- Smart cities design;

- Multimodal systems and interfaces;

- IoT dashboards and platforms;

- Technological ecosystems for smart environments;

- Service-oriented information visualization;

- Smart interfaces for learning;

- Ambient and pervasive interactions.

Dr. Brij B. Gupta

Collection Editor

Manuscript Submission Information

Manuscripts should be submitted online at www.mdpi.com by registering and logging in to this website. Once registered, authors can proceed to the submission form. All submissions that pass pre-check are peer-reviewed. Accepted papers will be published continuously in the journal (as soon as accepted) and will be listed together on the collection website. Research articles, review articles, and short communications are invited. For planned papers, a title and short abstract (about 250 words) can be sent to the Editorial Office for assessment.

Submitted manuscripts should not have been published previously, nor be under consideration for publication elsewhere (except conference proceedings papers). All manuscripts are thoroughly refereed through a single-blind peer-review process. A guide for authors and other relevant information for the submission of manuscripts are available on the Instructions for Authors page. Sensors is an international, peer-reviewed, open access semimonthly journal published by MDPI.

Please visit the Instructions for Authors page before submitting a manuscript. The Article Processing Charge (APC) for publication in this open access journal is 2600 CHF (Swiss Francs). Submitted papers should be well formatted and written in good English. Authors may use MDPI's English editing service prior to publication or during author revisions.

Keywords

- smart environment

- smart spaces

- smart cities

- smart interfaces for learning

- human–computer interaction

- usability, accessibility and sustainability

- user experience

- natural user interfaces

- sensor networks

- haptic computing

- ambient assisted living

- healthcare environments

- multimodal systems

- multimodal interfaces

- IoT dashboards

- IoT platforms

- technological ecosystems

- service-oriented information visualization

- ambient and pervasive interactions