User Experience in Social Robots

Abstract

1. Introduction

2. Research Methodology

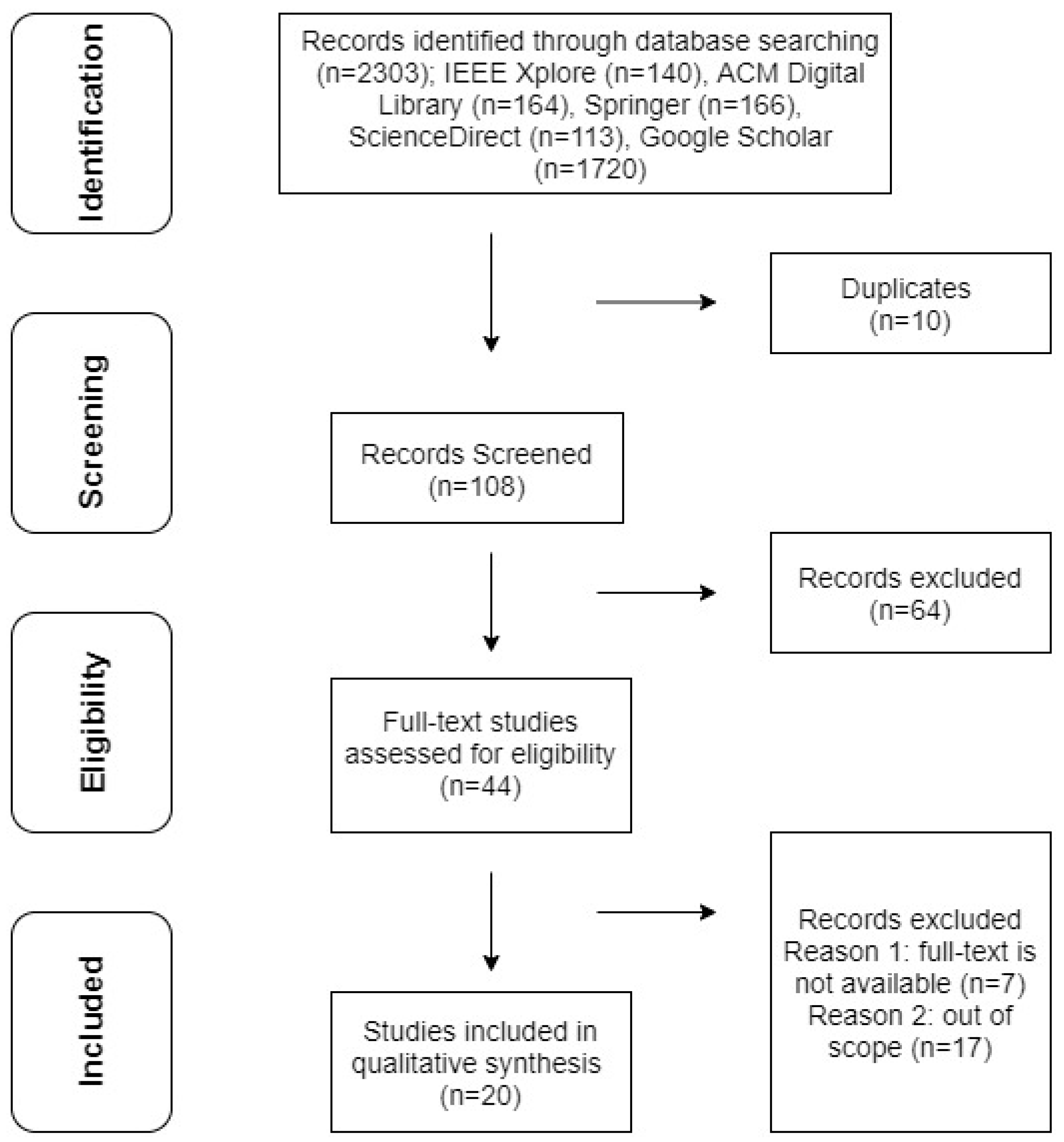

2.1. Systematic Literature Review Using PRISMA Guidelines

- RQ1: What has been studied about user experience in social robots operating in different domains?

- RQ2: What are the reported techniques for assessing UX in social robots?

- RQ3: What are the reported challenges and benefits in evaluating UX for social robots?

2.2. Eligibility Criteria

2.3. Information Sources and Search

- IEEE Xplore

- ACM Digital Library

- Springer

- ScienceDirect

- Google Scholar

2.4. Study Selection and Data Collection Process

2.5. Data Extraction and Synthesis

2.6. Risk of Bias

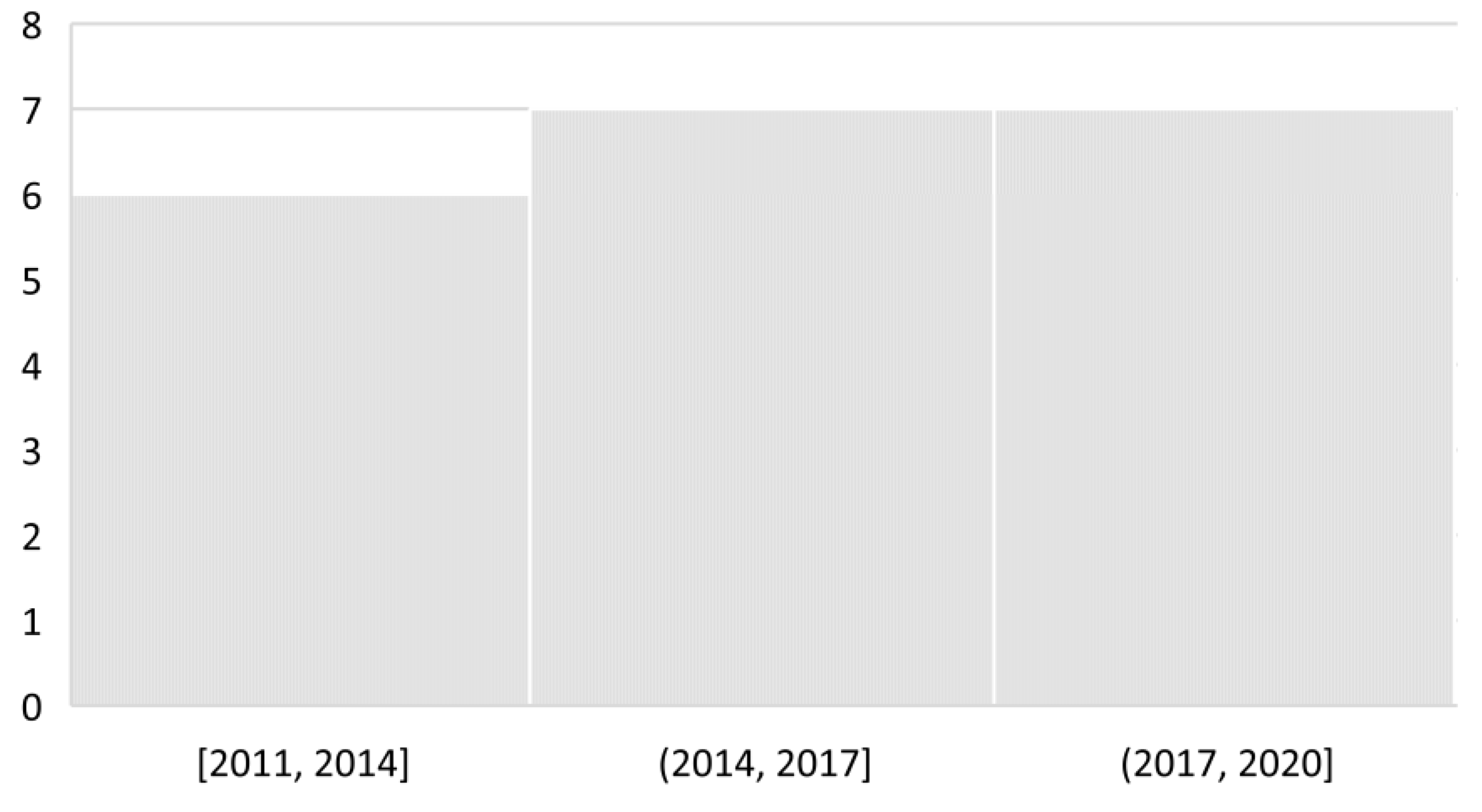

3. Results

3.1. RQ1: What Has Been Studied About User Experience in Social Robots Operating in Different Domains?

3.2. RQ2: What Are the Reported Techniques for Assessing UX in Social Robots?

- Attractiveness: the impression of the product

- Perspicuity: how easy the product is to use and follow

- Efficiency: solving users’ tasks without additional work

- Dependability: users’ feeling of being in control of the interaction

- Stimulation: how engaging the product is

- Novelty: how innovative and creative the product is

- Perceived Humanness: the level of humanity and human-like characteristics of the robot

- Eeriness: the feeling of strangeness, disgust, and familiarity

- Attractiveness: the level of physical attraction

- Sub-scale 1: Negative Attitudes towards Situations and Interactions with Robots (six items)

- Sub-scale 2: Negative Attitudes towards Social Influence of Robots (five items)

- Sub-scale 3: Negative Attitudes towards Emotions in Interaction with Robots (three items)

3.3. RQ3: What Are the Reported Challenges and Benefits in Evaluating UX for Social Robots?

4. Discussion

4.1. UX in Social Robots: An Overview

4.2. Limitations

5. Conclusions and Future Work

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

Appendix A

| ID | Reference | Year | Title | Source |
|---|---|---|---|---|
| 1 | [44] | 2020 | Evaluating the User Experience of Human–Robot Interaction | Book Chapter (HRI) |
| 2 | [41] | 2019 | User Experience for Social Human-Robot Interactions | Conference (AICAI) |
| 3 | [84] | 2019 | A Survey of Behavioral Models for Social Robots | Journal (Robotics) |
| 4 | [67] | 2018 | Assessment of Perceived Attractiveness, Usability, and Societal Impact of a Multimodal Robotic Assistant for Aging Patients with Memory Impairments | Journal (Front. Neurol) |
| 5 | [74] | 2018 | Design Methodology for the UX of HRI: A Field Study of a Commercial Social Robot at an Airport | Conference (HRI) |
| 6 | [78] | 2018 | Some Brief Thoughts on the Past and Future of Human-Robot Interaction | Journal (HRI) |
| 7 | [42] | 2018 | Agile UX Design for a Quality User Experience | Book |
| 8 | [81] | 2017 | Design and Evaluation of a Short Version of the User Experience Questionnaire | Journal (IJIMAI) |
| 9 | [69] | 2016 | Long-Term Evaluation of a Telepresence Robot for the Elderly: Methodology and Ecological Case Study | Journal (Int J of Soc Robotics) |
| 10 | [87] | 2016 | Current Challenges for UX Evaluation of Human-Robot Interaction | Conference (AHFE) |
| 11 | [85] | 2016 | Promoting Interactions Between Humans and Robots Using Robotic Emotional Behavior | Journal (IEEE Trans Cybern.) |
| 12 | [70] | 2015 | Walking in the uncanny valley: importance of the attractiveness on the acceptance of a robot as a working partner | Journal (Front. Psychol.) |
| 13 | [68] | 2015 | Learning with Educational Companion Robots? Toward Attitudes on Education Robots, Predictors of Attitudes, and Application Potentials for Education Robots | Journal (Int J of Soc Robotics) |
| 14 | [86] | 2015 | Extensive assessment and evaluation methodologies on assistive social robots for modelling human–robot interaction | Journal (IS) |
| 15 | [73] | 2014 | Inventing Japan’s ‘robotics culture’: The repeated assembly of science, technology, and culture in social robotics | Journal (Soc Stud Sci) |
| 16 | [79] | 2014 | Designing Robots in the Wild: In situ Prototype Evaluation for a Break Management Robot | Journal (HRI) |
| 17 | [80] | 2014 | Review: Seven Matters of Concern of Social Robots and Older People | Journal (Int J of Soc Robotics) |
| 18 | [76] | 2014 | When to Use Which User-Experience Research Methods | Nielsen Norman Group |
| 19 | [71] | 2013 | Exploring influencing variables for the acceptance of social robots | Journal (RAS) |
| 20 | [77] | 2011 | User Experience and Experience Design | Book Chapter |

References

- Sandry, E. (Ed.) Introduction. In Robots and Communication; Palgrave Macmillan: London, UK, 2015; pp. 1–10.

- Zhang, C.; Wang, W.; Xi, N.; Wang, Y.; Liu, L. Development and Future Challenges of Bio-Syncretic Robots. Engineering 2018, 4, 452–463.

- Duffy, B.R. Anthropomorphism and the social robot. Robot. Auton. Syst. 2003, 42, 177–190.

- Malik, A.A.; Brem, A. Digital twins for collaborative robots: A case study in human-robot interaction. Robot. Comput. Manuf. 2021, 68, 102092.

- Graaf, D.M. Living with Robots: Investigating the User Acceptance of Social Robots in Domestic Environments. Ph.D. Thesis, University of Twente, Twente, The Netherlands, 2015.

- Duffy, B.R.; Rooney, C.; O’Hare, G.M.P.; O’Donoghue, R. What is a Social Robot? In Proceedings of the 10th Irish Conference on Artificial Intelligence & Cognitive Science, Cork, Ireland, 1–3 September 1999.

- Siciliano, B.; Khatib, O. Humanoid Robots: Historical Perspective, Overview, and Scope. In Humanoid Robotics: A Reference; Goswami, A., Vadakkepat, P., Eds.; Springer: Berlin/Heidelberg, Germany, 2019; pp. 3–8.

- Breazeal, C. Designing Sociable Robots. Des. Sociable Robot. 2004.

- Malinowska, J.K. Can I Feel Your Pain? The Biological and Socio-Cognitive Factors Shaping People’s Empathy with Social Robots. Int. J. Soc. Robot. 2021, 1–15.

- Henschel, A.; Laban, G.; Cross, E.S. What Makes a Robot Social? A Review of Social Robots from Science Fiction to a Home or Hospital Near You. Curr. Robot. Rep. 2021, 2, 9–19.

- Kennedy, J.; Ramachandran, A.; Scassellati, B.; Tanaka, F. Social robots for education: A review. Sci. Robot. 2018, 3, eaat5954.

- Lytridis, C.; Bazinas, C.; Sidiropoulos, G.; Papakostas, G.A.; Kaburlasos, V.G.; Nikopoulou, V.-A.; Holeva, V.; Evangeliou, A. Distance Special Education Delivery by Social Robots. Electronics 2020, 9, 1034.

- Rosenberg-Kima, R.B.; Koren, Y.; Gordon, G. Robot-Supported Collaborative Learning (RSCL): Social Robots as Teaching Assistants for Higher Education Small Group Facilitation. Front. Robot. AI 2020, 6, 148.

- Berghe, R.V.D.; Verhagen, J.; Oudgenoeg-Paz, O.; van der Ven, S.; Leseman, P. Social Robots for Language Learning: A Review. Rev. Educ. Res. 2019, 89, 259–295.

- Kanero, J.; Geçkin, V.; Oranç, C.; Mamus, E.; Küntay, A.; Göksun, T. Social Robots for Early Language Learning: Current Evidence and Future Directions. Child Dev. Perspect. 2018, 12, 146–151.

- Belpaeme, T.; Vogt, P.; Berghe, R.V.D.; Bergmann, K.; Göksun, T.; De Haas, M.; Kanero, J.; Kennedy, J.; Küntay, A.; Oudgenoeg-Paz, O.; et al. Guidelines for Designing Social Robots as Second Language Tutors. Int. J. Soc. Robot. 2018, 10, 325–341.

- Logan, D.E.; Breazeal, C.; Goodwin, M.S.; Jeong, S.; O’Connell, B.; Smith-Freedman, D.; Heathers, J.; Weinstock, P. Social Robots for Hospitalized Children. Pediatrics 2019, 144, e20181511.

- Robinson, N.L.; Cottier, T.V.; Kavanagh, D.J. Psychosocial Health Interventions by Social Robots: Systematic Review of Randomized Controlled Trials. J. Med. Internet Res. 2019, 21, e13203.

- Chen, S.; Jones, C.; Moyle, W. Social Robots for Depression in Older Adults: A Systematic Review. J. Nurs. Sch. 2018, 50, 612–622.

- Scoglio, A.A.; Reilly, E.D.; Gorman, J.A.; Drebing, C.E. Use of Social Robots in Mental Health and Well-Being Research: Systematic Review. J. Med. Internet Res. 2019, 21, e13322.

- Pu, L.; Moyle, W.; Jones, C.; Todorovic, M. The Effectiveness of Social Robots for Older Adults: A Systematic Review and Meta-Analysis of Randomized Controlled Studies. Gerontologist 2019, 59, e37–e51.

- De Kervenoael, R.; Hasan, R.; Schwob, A.; Goh, E. Leveraging human-robot interaction in hospitality services: Incorporating the role of perceived value, empathy, and information sharing into visitors’ intentions to use social robots. Tour. Manag. 2020, 78, 104042.

- Nakanishi, J.; Kuramoto, I.; Baba, J.; Ogawa, K.; Yoshikawa, Y.; Ishiguro, H. Continuous Hospitality with Social Robots at a hotel. SN Appl. Sci. 2020, 2, 1–13.

- Huang, H.-L.; Cheng, L.-K.; Sun, P.-C.; Chou, S.-J. The Effects of Perceived Identity Threat and Realistic Threat on the Negative Attitudes and Usage Intentions Toward Hotel Service Robots: The Moderating Effect of the Robot’s Anthropomorphism. Int. J. Soc. Robot. 2021, 1–13.

- Fuentes-Moraleda, L.; Díaz-Pérez, P.; Orea-Giner, A.; Muñoz-Mazón, A.; Villacé-Molinero, T. Interaction between hotel service robots and humans: A hotel-specific Service Robot Acceptance Model (sRAM). Tour. Manag. Perspect. 2020, 36, 100751.

- Garcia-Haro, J.M.; Oña, E.D.; Hernandez-Vicen, J.; Martinez, S.; Balaguer, C. Service Robots in Catering Applications: A Review and Future Challenges. Electronics 2020, 10, 47.

- De Boer, S.; Jansen, B.; Bustos, V.M.; Prinse, M.; Horwitz, Y.; Hoorn, J.F. Social Robotics in Eastern and Western Newspapers: China and (Even) Japan are Optimistic. Int. J. Innov. Technol. Manag. 2021, 18, 2040001.

- Horstmann, A.C.; Krämer, N.C. Great Expectations? Relation of Previous Experiences with Social Robots in Real Life or in the Media and Expectancies Based on Qualitative and Quantitative Assessment. Front. Psychol. 2019, 10, 939.

- Čaić, M.; Mahr, D.; Odekerken-Schröder, G. Value of social robots in services: Social cognition perspective. J. Serv. Mark. 2019, 33, 463–478.

- Chi, O.H.; Jia, S.; Li, Y.; Gursoy, D. Developing a formative scale to measure consumers’ trust toward interaction with artificially intelligent (AI) social robots in service delivery. Comput. Hum. Behav. 2021, 118, 106700.

- Mubin, O.; Ahmad, M.I.; Kaur, S.; Shi, W.; Khan, A. Social Robots in Public Spaces: A Meta-review. In Social Robotics; Ge, S.S., Cabibihan, J.-J., Salichs, M.A., Eds.; Springer International Publishing: Berlin/Heidelberg, Germany, 2018; pp. 213–220.

- Pieterson, W.; Ebbers, W.; Madsen, C.Ø. New Channels, New Possibilities: A Typology and Classification of Social Robots and Their Role in Multi-channel Public Service Delivery. In Electronic Government; Janssen, M., Axelsson, K., Glassey, O., Eds.; Springer International Publishing: Berlin/Heidelberg, Germany, 2018; pp. 47–59.

- Thunberg, S.; Ziemke, T. Are People Ready for Social Robots in Public Spaces? In Proceedings of the Companion of the 2020 ACM/IEEE International Conference on Human-Robot Interaction, Cambridge, UK, 23–26 March 2020; pp. 482–484.

- Mintrom, M.; Sumartojo, S.; Kulić, D.; Tian, L.; Carreno-Medrano, P.; Allen, A. Robots in public spaces: Implications for policy design. Policy Des. Pract. 2021, 1–16.

- Aymerich-Franch, L.; Ferrer, I. Social Robots as a Brand Strategy. In Innovation in Advertising and Branding Communication; Routledge: New York, NY, USA, 2020; pp. 86–102.

- Leite, I.; Martinho, C.; Paiva, A. Social Robots for Long-Term Interaction: A Survey. Int. J. Soc. Robot. 2013, 5, 291–308.

- Mandal, F.B. Nonverbal Communication in Humans. J. Hum. Behav. Soc. Env. 2014, 24, 417–421.

- Yamazaki, A.; Yamazaki, K.; Kuno, Y.; Burdelski, M.; Kawashima, M.; Kuzuoka, H. Precision Timing in Human-Robot Interaction: Coordination of Head Movement and Utterance. In Proceedings of the CHI ’08: CHI Conference on Human Factors in Computing Systems, Florence, Italy, 5–10 April 2008; pp. 131–140.

- Breazeal, C.; Scassellati, B. Robots that imitate humans. Trends Cogn. Sci. 2002, 6, 481–487.

- Lambert, A.; Norouzi, N.; Bruder, G.; Welch, G. A Systematic Review of Ten Years of Research on Human Interaction with Social Robots. Int. J. Hum. Comput. Interact. 2020, 36, 1804–1817.

- Greunen, D.v. User Experience for Social Human-Robot Interactions. In Proceedings of the Amity International Conference on Artificial Intelligence (AICAI), Dubai, United Arab Emirates, 4–6 February 2019; pp. 32–36.

- Hartson, R.; Pyla, P.S. The UX Book: Agile UX Design for a Quality User Experience; Morgan Kaufmann: Cambridge, MA, USA, 2018.

- ISO 8968-1: Milk and Milk Products—Determination of Nitrogen Content—Part 1: Kjeldahl Principle and Crude Protein Calculation. Available online: https://www.iso.org/obp/ui/#iso:std:iso:8968:-1:ed-2:v1:en (accessed on 12 January 2021).

- Lindblom, J.; Alenljung, B.; Billing, E. Evaluating the User Experience of Human–Robot Interaction. In Springer Series on Bio- and Neurosystems; Springer Science and Business Media LLC: Berlin/Heidelberg, Germany, 2020; pp. 231–256.

- Maia, C.L.B.; Furtado, E. A Systematic Review About User Experience Evaluation. In Transactions on Petri Nets and Other Models of Concurrency XV; Springer Science and Business Media LLC: Berlin/Heidelberg, Germany, 2016; pp. 445–455.

- Moher, D.; Shamseer, L.; Clarke, M.; Ghersi, D.; Liberati, A.; Petticrew, M.; Shekelle, P.; Stewart, L.A.; PRISMA-P Group. Preferred reporting items for systematic review and meta-analysis protocols (PRISMA-P) 2015 statement. Syst. Rev. 2015, 4, 1.

- Shamseer, L.; Moher, D.; Clarke, M.; Ghersi, D.; Liberati, A.; Petticrew, M.; Shekelle, P.; Stewart, L.A.; PRISMA-P Group. Preferred reporting items for systematic review and meta-analysis protocols (PRISMA-P) 2015: Elaboration and explanation. BMJ Br. Med. J. 2015, 349, g7647.

- Page, M.J.; McKenzie, J.E.; Bossuyt, P.M.; Boutron, I.; Hoffmann, T.C.; Mulrow, C.D.; Shamseer, L.; Tetzlaff, J.M.; Moher, D. Updating guidance for reporting systematic reviews: Development of the PRISMA 2020 statement. J. Clin. Epidemiol. 2021, 134, 103–112.

- Şalvarlı, Ş.İ.; Griffiths, M.D. Internet Gaming Disorder and Its Associated Personality Traits: A Systematic Review Using PRISMA Guidelines. Int. J. Ment. Health Addict. 2019, 1–23.

- Gaikwad, M.; Ahirrao, S.; Phansalkar, S.; Kotecha, K. Online Extremism Detection: A Systematic Literature Review with Emphasis on Datasets, Classification Techniques, Validation Methods and Tools. IEEE Access 2021, 48364–48404.

- Frizzo-Barker, J.; Chow-White, P.A.; Adams, P.R.; Mentanko, J.; Ha, D.; Green, S. Blockchain as a disruptive technology for business: A systematic review. Int. J. Inf. Manag. 2020, 51, 102029.

- Çetin, M.; Demircan, H.Ö. Empowering technology and engineering for STEM education through programming robots: A systematic literature review. Early Child Dev. Care 2020, 190, 1323–1335.

- Savela, N.; Turja, T.; Oksanen, A. Social Acceptance of Robots in Different Occupational Fields: A Systematic Literature Review. Int. J. Soc. Robot. 2018, 10, 493–502.

- Gualtieri, L.; Rauch, E.; Vidoni, R. Emerging research fields in safety and ergonomics in industrial collaborative robotics: A systematic literature review. Robot. Comput. Manuf. 2021, 67, 101998.

- Buettner, R.; Renner, A.; Boos, A. A Systematic Literature Review of Research in the Surgical Field of Medical Robotics. In Proceedings of the 2020 IEEE 44th Annual Computers, Software, and Applications Conference (COMPSAC), Madrid, Spain, 13–17 July 2020; pp. 517–522.

- Tijani, B.; Feng, Y. Social Impacts of Adopting Robotics in the Construction Industry: A Systematic Literature Review. In Proceedings of the 23rd International Symposium on Advancement of Construction Management and Real Estate; Long, F., Zheng, S., Wu, Y., Eds.; Springer: Singapore, 2021; pp. 668–680.

- Schulz, T.; Torresen, J.; Herstad, J. Animation Techniques in Human-Robot Interaction User Studies. ACM Trans. Hum. Robot Interact. 2019, 8, 1–22.

- Zafrani, O.; Nimrod, G. Towards a Holistic Approach to Studying Human–Robot Interaction in Later Life. Gerontologist 2018, 59, e26–e36.

- Nelles, J.; Kwee-Meier, S.T.; Mertens, A. Evaluation Metrics Regarding Human Well-Being and System Performance in Human-Robot Interaction–A Literature Review. In Proceedings of the 20th Congress of the International Ergonomics Association (IEA 2018); Bagnara, S., Tartaglia, R., Albolino, S., Eds.; Springer International Publishing: Berlin/Heidelberg, Germany, 2019; pp. 124–135.

- Esterwood, C.; Robert, L.P. Personality in Healthcare Human Robot Interaction (H-HRI): A Literature Review and Brief Critique. In Proceedings of the 8th International Conference on Human-Agent Interaction; Association for Computing Machinery: New York, NY, USA, 2020; pp. 87–95.

- González-González, C.S.; Gil-Iranzo, R.M.; Paderewski-Rodríguez, P. Human–Robot Interaction and Sexbots: A Systematic Literature Review. Sensors 2020, 21, 216.

- Lin, C.-C.; Liao, H.-Y.; Tung, F.-W. Design Guidelines of Social-Assisted Robots for the Elderly: A Mixed Method Systematic Literature Review. In Late Breaking Papers: Cognition, Learning and Games; Stephanidis, C., Harris, D., Li, W.-C., Eds.; HCI International 2020; Springer International Publishing: Berlin/Heidelberg, Germany, 2020; pp. 90–104.

- Kitchenham, B. Procedures for Performing Systematic Reviews; Keele University: Keele, UK, 2004; pp. 1–26.

- Petersen, K.; Feldt, R.; Mujtaba, S.; Mattsson, M. Systematic Mapping Studies in Software Engineering. In Proceedings of the International Conference on Evaluation and Assessment in Software Engineering (EASE), Bari, Italy, 26–27 June 2008; pp. 1–10.

- Garousi, V.; Felderer, M.; Mäntylä, M.V. Guidelines for including grey literature and conducting multivocal literature reviews in software engineering. Inf. Softw. Technol. 2019, 106, 101–121.

- Kitchenham, B.; Charters, S. Guidelines for Performing Systematic Literature Reviews in Software Engineering; Keele University: Keele, UK, 2017.

- Gerłowska, J.; Skrobas, U.; Grabowska-Aleksandrowicz, K.; Korchut, A.; Szklener, S.; Szczęśniak-Stańczyk, D.; Tzovaras, D.; Rejdak, K. Assessment of Perceived Attractiveness, Usability, and Societal Impact of a Multimodal Robotic Assistant for Aging Patients with Memory Impairments. Front. Neurol. 2018, 9.

- Reich-Stiebert, N.; Eyssel, F. Learning with Educational Companion Robots? Toward Attitudes on Education Robots, Predictors of Attitudes, and Application Potentials for Education Robots. Int. J. Soc. Robot. 2015, 7, 875–888.

- Cesta, A.; Cortellessa, G.; Orlandini, A.; Tiberio, L. Long-Term Evaluation of a Telepresence Robot for the Elderly: Methodology and Ecological Case Study. Int. J. Soc. Robot. 2016, 8, 421–441.

- Destephe, M.; Brandão, M.; Kishi, T.; Zecca, M.; Hashimoto, K.; Takanishi, A. Walking in the uncanny valley: Importance of the attractiveness on the acceptance of a robot as a working partner. Front. Psychol. 2015, 6, 204.

- De Graaf, M.M.; Ben Allouch, S. Exploring influencing variables for the acceptance of social robots. Robot. Auton. Syst. 2013, 61, 1476–1486.

- Korn, O.; Akalin, N.; Gouveia, R. Understanding Cultural Preferences for Social Robots. ACM Trans. Hum. Robot Interact. 2021, 10, 1–19.

- Šabanović, S. Inventing Japan’s ‘robotics culture’: The repeated assembly of science, technology, and culture in social robotics. Soc. Stud. Sci. 2014, 44, 342–367.

- Tonkin, M.; Vitale, J.; Herse, S.; Williams, M.A.; Judge, W.; Wang, X. Design Methodology for the UX of HRI: A Field Study of a Commercial Social Robot at an Airport. In Proceedings of the 2018 ACM/IEEE International Conference on Human-Robot Interaction; ACM: Chicago, IL, USA, 2018; pp. 407–415.

- Gothelf, J.; Seiden, J. Lean UX: Designing Great Products with Agile Teams; O’Reilly Media, Inc.: Newton, MA, USA, 2016.

- Rohrer, C. When to Use Which User-Experience Research Methods; Nielsen Norman Group: Fremont, CA, USA, 2014.

- Hassenzahl, M. User Experience and Experience Design; Interaction Design Foundation: Aarhus, Denmark, 2011.

- Dautenhahn, K. Some Brief Thoughts on the Past and Future of Human-Robot Interaction. ACM Trans. Hum. Robot Interact. 2018, 7, 1–3.

- Šabanović, S.; Reeder, S.M.; Kechavarzi, B. Designing Robots in the Wild: In situ Prototype Evaluation for a Break Management Robot. J. Hum. Robot Interact. 2014, 3, 70–88.

- Frennert, S.; Östlund, B. Review: Seven Matters of Concern of Social Robots and Older People. Int. J. Soc. Robot. 2014, 6, 299–310.

- Schrepp, M.; Hinderks, A.; Thomaschewski, J. Design and Evaluation of a Short Version of the User Experience Questionnaire (UEQ-S). Int. J. Interact. Multimed. Artif. Intell. 2017, 4, 103.

- Schrepp, M. User Experience Questionnaire Handbook; Version 2; SAP Research: Walldorf, Germany, 2016.

- Nomura, T.; Kanda, T.; Suzuki, T. Experimental investigation into influence of negative attitudes toward robots on human–robot interaction. AI Soc. 2005, 20, 138–150.

- Nocentini, O.; Fiorini, L.; Acerbi, G.; Sorrentino, A.; Mancioppi, G.; Cavallo, F. A Survey of Behavioral Models for Social Robots. Robotics 2019, 8, 54.

- Ficocelli, M.; Terao, J.; Nejat, G. Promoting Interactions Between Humans and Robots Using Robotic Emotional Behavior. IEEE Trans. Cybern. 2016, 46, 2911–2923.

- Sim, D.Y.Y.; Loo, C.K. Extensive assessment and evaluation methodologies on assistive social robots for modelling human–robot interaction—A review. Inf. Sci. 2015, 301, 305–344.

- Lindblom, J.; Andreasson, R. Current Challenges for UX Evaluation of Human-Robot Interaction. In Advances in Ergonomics of Manufacturing: Managing the Enterprise of the Future; Schlick, C., Trzcieliński, S., Eds.; Springer International Publishing: Berlin/Heidelberg, Germany, 2016; pp. 267–277.

- Cao, H.-L.; Esteban, P.G.; De Beir, A.; Simut, R.; Van De Perre, G.; Lefeber, D.; Vanderborght, B. A Survey on Behavior Control Architectures for Social Robots in Healthcare Interventions. Int. J. Hum. Robot. 2017, 14, 1750021.

- Amanatiadis, A.; Kaburlasos, V.; Dardani, C.; Chatzichristofis, S. Interactive social robots in special education. In Proceedings of the 2017 IEEE 7th International Conference on Consumer Electronics-Berlin (ICCE-Berlin), Berlin, Germany, 3–6 September 2017; pp. 126–129.

- Niemelä, M.; Heikkilä, P.; Lammi, H.; Oksman, V. A Social Robot in a Shopping Mall: Studies on Acceptance and Stakeholder Expectations. In Social Robots: Technological, Societal and Ethical Aspects of Human-Robot Interaction; Korn, O., Ed.; Springer International Publishing: Berlin/Heidelberg, Germany, 2019; pp. 119–144.

- De Carolis, B.; Palestra, G.; Della Penna, C.; Cianciotta, M.; Cervelione, A. Social robots supporting the inclusion of unaccompanied migrant children: Teaching the meaning of culture-related gestures. J. E-Learning Knowl. Soc. 2019, 15, 43–57.

- Edwards, A.; Edwards, C.; Westerman, D.; Spence, P.R. Initial expectations, interactions, and beyond with social robots. Comput. Hum. Behav. 2019, 90, 308–314.

- Miler, J.; Menjega-Schmidt, M. Evaluation of Selected UX Techniques by Product Managers—A Preliminary Survey. In Integrating Research and Practice in Software Engineering; Jarzabek, S., Poniszewska-Marańda, A., Madeyski, L., Eds.; Springer International Publishing: Berlin/Heidelberg, Germany, 2020; pp. 159–169.

- Alomari, H.W.; Ramasamy, V.; Kiper, J.D.; Potvin, G. A User Interface (UI) and User eXperience (UX) evaluation framework for cyberlearning environments in computer science and software engineering education. Heliyon 2020, 6, e03917.

- Biduski, D.; Bellei, E.A.; Rodriguez, J.P.M.; Zaina, L.A.M.; De Marchi, A.C.B. Assessing long-term user experience on a mobile health application through an in-app embedded conversation-based questionnaire. Comput. Hum. Behav. 2020, 104, 106169.

- Lindblom, J.; Alenljung, B. The ANEMONE: Theoretical Foundations for UX Evaluation of Action and Intention Recognition in Human-Robot Interaction. Sensors 2020, 20, 4284.

- Chavan, A.L.; Prabhu, G. Should We Measure UX Differently? In Design, User Experience, and Usability. Interaction Design; Marcus, A., Rosenzweig, E., Eds.; Springer International Publishing: Berlin/Heidelberg, Germany, 2020; pp. 176–187.

- Zhou, X.; Jin, Y.; Zhang, H.; Li, S.; Huang, X. A Map of Threats to Validity of Systematic Literature Reviews in Software Engineering. In Proceedings of the 2016 23rd Asia-Pacific Software Engineering Conference (APSEC), Hamilton, New Zealand, 6–9 December 2016; pp. 153–160.

- Wohlin, C.; Runeson, P.; Höst, M.; Ohlsson, M.; Regnell, B.; Wesslén, A. Experimentation in Software Engineering; Springer Science & Business Media: Berlin/Heidelberg, Germany, 2012.

| Study | Publication Year | Author | Scope |
|---|---|---|---|
| [41] | 2019 | D. van Greunen | Social Robots Application Areas |
| [69] | 2016 | A. Cesta et al. | Evaluation of Telepresence Social Robot Assistant for the Elderly |
| [67] | 2018 | J. Gerłowska et al. | Assessment of Impact of a Robotic Assistant for Aging Patients with Memory Impairments |
| [70] | 2015 | M. Destephe et al. | Assessment of UX and the acceptance of a robot as a working partner |
| [68] | 2015 | N. Reich-Stiebert et al. | Education Robots and teacher assistance |
| [73] | 2014 | S. Šabanović | Integration of Social Robots and cultural and traditional themes |
| [74] | 2018 | M. Tonkin et al. | Implementation of Commercial Social Robot at an Airport |
| [75] | 2016 | J. Gothelf et al. | Integration of Lean UX with HRI research |

| No. | Questionnaire Item | Sub-Scale |
|---|---|---|
| 1 | I would feel uneasy if robots really had emotions. | S2 |
| 2 | Something bad might happen if robots developed into living beings. | S2 |
| 3 | I would feel relaxed talking with robots. * | S3 |
| 4 | I would feel uneasy if I was given a job where I had to use robots. | S1 |
| 5 | If robots had emotions, I would be able to make friends with them. * | S3 |
| 6 | I feel comforted being with robots that have emotions. * | S3 |
| 7 | The word “robot” means nothing to me. | S1 |
| 8 | I would feel nervous operating a robot in front of other people. | S1 |
| 9 | I would hate the idea that robots or artificial intelligences were making judgements about things. | S1 |
| 10 | I would feel very nervous just standing in front of a robot. | S1 |
| 11 | I feel that if I depend on robots too much, something bad might happen. | S2 |
| 12 | I would feel paranoid talking with a robot. | S1 |
| 13 | I am concerned that robots would be a bad influence on children. | S2 |
| 14 | I feel that in the future, society will be dominated by robots. | S2 |
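The questionnaire table above can be turned into sub-scale scores with a few lines of code. The following is a minimal sketch, not the authors' scoring procedure: the item-to-sub-scale mapping is copied from the table, while the 1 to 5 response range, the reading of the asterisk as "reverse-scored item", and the function name `score_nars` are assumptions made for illustration.

```python
# Item number -> sub-scale, copied from the questionnaire table.
SUBSCALE = {
    1: "S2", 2: "S2", 3: "S3", 4: "S1", 5: "S3", 6: "S3", 7: "S1",
    8: "S1", 9: "S1", 10: "S1", 11: "S2", 12: "S1", 13: "S2", 14: "S2",
}

# Items marked with * in the table. ASSUMPTION: the asterisk means the
# item is reverse-scored before summing.
REVERSED = {3, 5, 6}

# ASSUMPTION: answers are given on a 1..5 Likert scale.
SCALE_MIN, SCALE_MAX = 1, 5


def score_nars(responses):
    """Return the summed score per sub-scale for one participant.

    `responses` maps item number (1..14) to the participant's answer.
    """
    if set(responses) != set(SUBSCALE):
        raise ValueError("expected answers for exactly items 1..14")
    totals = {"S1": 0, "S2": 0, "S3": 0}
    for item, answer in responses.items():
        if not SCALE_MIN <= answer <= SCALE_MAX:
            raise ValueError(f"item {item}: answer {answer} is out of range")
        if item in REVERSED:
            # Flip the answer around the scale midpoint (1 <-> 5, 2 <-> 4).
            answer = SCALE_MIN + SCALE_MAX - answer
        totals[SUBSCALE[item]] += answer
    return totals


if __name__ == "__main__":
    # A participant who answers "3" (neutral) to every item.
    neutral = {item: 3 for item in SUBSCALE}
    print(score_nars(neutral))
```

With this mapping, S1 collects six items, S2 five, and S3 three, which matches the sub-scale sizes listed in Section 3.2. If a study uses a different response range, only `SCALE_MIN` and `SCALE_MAX` need to change.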

| Study | Publication Year | Author(s) | Method |
|---|---|---|---|
| [69] | 2016 | Cesta et al. | Multidimensional Assessment of Telepresence Robot (MARTA) |
| [67] | 2018 | Gerłowska et al. | User Experience Questionnaire (UEQ) and survey |
| [70] | 2015 | Destephe et al. | MacDorman questionnaire, personality questionnaire, and Ho’s questionnaire |
| [73] | 2014 | Šabanović et al. | In situ evaluation (pre- and post-interview, online questionnaire, self-report, final focus group) |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Shourmasti, E.S.; Colomo-Palacios, R.; Holone, H.; Demi, S. User Experience in Social Robots. Sensors 2021, 21, 5052. https://doi.org/10.3390/s21155052
