Abstract

Background: This paper is a systematic review of 100 studies (2015–2025) on artificial intelligence (AI) applications in auditing, covering machine learning, natural language processing, robotic process automation, and other AI methods. Purpose: The paper examines how these AI technologies are integrated into the audit workflow; their empirical implications for audit effectiveness, efficiency, and quality; and the technical, organizational, and regulatory obstacles that continue to limit wider adoption. Methods: Five major databases and supplementary sources were searched, and studies were selected following PRISMA 2020; structured data were extracted, study quality was assessed, and the evidence was synthesized narratively and thematically. Results: The findings indicate that machine learning-based anomaly detection and predictive analytics, NLP-based document analysis, and RPA-based automation are becoming part of planning, risk assessment, control testing, and substantive procedures and reporting, with improvements in detection capability, coverage, and efficiency reported across empirical and design science studies. The review also identifies common architectural models of AI-enabled audit processes, including layered data and governance, model development and oversight, orchestration and automation, auditor-facing applications, and human-in-the-loop controls. Conclusions: The article proposes a reference architecture for AI-based audit workflows and summarizes evidence on opportunities, threats, and implementation obstacles, highlighting gaps in longitudinal assessment, comparative evaluation of AI methods, and regulatory guidance. The results have practical implications for auditors, standard-setters, and system designers seeking to revise audit approaches and regulations to enable AI-driven assurance.

1. Introduction

The introduction of artificial intelligence (AI), machine learning, and data analytics is changing the auditing profession [1]. Traditionally, audit opinions were based on representative sampling, expert judgment, and rule-based control testing [2,3]. However, the growth of digital transactional data and sophisticated AI algorithms has driven a shift toward population-level analysis, anomaly detection, continuous monitoring, and predictive risk analysis [4,5]. AI-enabled platforms are now applied at different stages of the audit process, for example, to identify high-risk accounts during planning, generate automated internal control tests, detect anomalies in journal entries, analyze unstructured text, and build real-time dashboards of control deviations [6,7]. The Big Four accounting firms, as well as smaller and mid-tier firms, are investing heavily in AI by acquiring AI startups, building in-house teams, or licensing specialized audit platforms [8,9].

Despite these advances, the adoption of AI in auditing has prompted regulatory bodies such as the IAASB and PCAOB to raise concerns about auditor judgment, explainability, and the appropriate use of technology [10,11]. Standard-setters have begun to issue guidance on how auditors should oversee the appropriateness of AI tools and remain accountable for conclusions reached through AI-assisted procedures [12,13]. Although AI in auditing is gaining momentum, the academic and professional literature remains fragmented, addressing individual technologies or applications, such as fraud detection or internal audit, rather than the integration of AI across the entire audit process [14,15,16]. Existing reviews tend to focus on subdomains, leaving a gap in understanding how AI components are integrated across the overall audit workflow and organization [17,18].

To fill this gap, a systematic review is needed to pool empirical evidence on the role of AI in auditing. This review categorizes AI techniques and their applications at every stage of the audit, identifies research gaps, and derives academic and practical implications. The aim is not only to provide a conceptual framework for incorporating AI throughout the audit process but also to offer an integrated approach to technology orchestration. The review makes three main contributions: (i) an AI technique-based taxonomy aligned with audit stages, (ii) a reference architecture describing functional layers, control points, and boundaries between human and AI responsibilities, and (iii) an analysis of adoption barriers and research gaps for system designers and audit practitioners. This framework goes beyond descriptive surveys by offering actionable recommendations for system design and practical implementation.

1.1. Research Gaps and Contributions

Current scholarly and practitioner research on AI in auditing is dispersed and often concentrates on a single technology or application, such as anomaly-detection models, internal audit analytics, or continuous auditing software. Prior reviews tend to focus on subdomains (such as audit analytics or digital transformation) and lack a system-wide perspective on how AI components are coordinated throughout the audit process and integrated into audit organizations. Consequently, there is still no unified mapping of AI methods to audit phases, no unified architecture of AI-enabled audit processes, and no synthesis of empirical evidence on how AI affects audit quality and efficiency or on the technical, organizational, and regulatory issues that impede widespread implementation.

This review addresses these gaps by (i) creating an AI technique-based taxonomy aligned with the phases of the audit workflow, (ii) offering a reference architecture that defines data, model, orchestration, application, and governance layers and human-in-the-loop interaction, and (iii) summarizing reported benefits, challenges, and research gaps as they apply to system designers, audit practitioners, and standard-setters. In doing so, the review offers a workflow-based approach that connects AI methods, system design, and empirical findings in auditing.

1.2. Research Questions

This gap motivates the following research questions:

RQ1: At which stages of the audit workflow is AI applied, and what application goals and results are reported?

RQ2: How are the functions of AI architecturally integrated into audit systems, and what are the design principles of the interaction between humans and AI?

RQ3: What empirical evidence exists on the effect of AI on audit effectiveness, efficiency, and quality?

RQ4: Which technical, organizational, and regulatory obstacles prevent the widespread use of AI in audit processes?

2. Materials and Methods

This systematic review was planned and reported in accordance with the PRISMA 2020 guidelines. The protocol was prepared in advance but was not registered in a public repository (e.g., PROSPERO). The review design also drew on established guidelines for systematic literature reviews [19,20,21], as adapted for software engineering and information systems research in the audit field [22,23,24].

2.1. Search Strategy and Information Sources

Electronic searches were conducted in five major multidisciplinary databases (Scopus, Web of Science, IEEE Xplore, ScienceDirect, and Google Scholar). These databases were chosen because they cover publications in accounting, auditing, information systems, computer science, artificial intelligence, and management, reflecting the inherently interdisciplinary nature of AI in auditing [25,26,27,28].

The search strategy was a combination of controlled vocabulary (MeSH, Scopus subject terms) and keyword searches. Core search strings included the following:

- Search String 1: (“machine learning” OR “deep learning” OR “neural network” OR “artificial intelligence”) AND (“auditing” OR “auditor” OR “audit” OR “internal audit”).

- Search String 2: (“NLP” OR “text mining” OR “natural language processing”) AND (“auditing” OR “audit” OR “audit workflow”).

- Search String 3: (“RPA” OR “process automation” OR “robotic process automation”) AND (“audit” OR “internal audit”).

- Search String 4: (“continuous monitoring” OR “real-time audit*” OR “continuous audit*”) AND (“artificial intelligence” OR “machine learning”).

- Search String 5: (“audit effectiveness” OR “audit quality”) AND (“automation” OR “data analytics” OR “artificial intelligence”).

- Timeframe: 1 January 2015–31 December 2025 (an 11-year period). This period was selected because it spans the most recent peak in AI-enabled audit innovation while capturing contemporary trends in machine learning and AI techniques.

- Language: English-language articles only.

We also conducted backward snowballing (screening the reference lists of included articles) and forward snowballing (identifying studies that cited the included research) to identify additional relevant literature and minimize the risk of missing influential articles.

Gray literature from major audit firms, professional bodies, and standard-setting organizations was also reviewed. Such documents were treated as substantive only when they contained technical or methodological detail on AI-enabled audit tools, workflows, or governance; these were classified as practice-oriented reports [29,30].

2.2. Inclusion and Exclusion Criteria

- Inclusion Criteria

- Studies focused on the application of artificial intelligence, machine learning, deep learning, natural language processing, robotic process automation, or similar techniques in audit or assurance.

- Studies describing AI applications, architectures, frameworks, or tools, or reporting human assessments or empirical evaluations, that relate to at least one identifiable stage of the audit workflow (planning, risk assessment, controls testing, substantive procedures, reporting, or continuous monitoring).

- Peer-reviewed journal articles, peer-reviewed conference proceedings, or high-quality institutional/professional reports from recognized audit firms, standard-setting bodies, or research organizations.

- For empirical studies, sufficient methodological information to allow judgment of the study design, sample characteristics, and evaluation criteria.

  - For example, Rahman and Ziru [31] examined client and audit digitalization in the Chinese audit market, providing empirical panel evidence on client digitalization and audit quality based on a digital expertise analytics approach and reporting that digital clients mediate the relationship between client digitalization and audit quality.

  - In a case-based empirical investigation of the global fintech lending environment, Sachan et al. [32] showed that human–AI collaboration in decision support systems can reduce noise in decision-making, providing empirical grounds for such collaboration and for the use of AI.

  - Sun and Vasarhelyi [33] proposed a technical and empirical framework for textual data analytics in auditing and demonstrated how deep learning NLP models can improve the efficiency and effectiveness of audits of textual data in the global audit analytics environment.

- Exclusion Criteria

- Studies on generic data analytics, business intelligence, or business process management without explicitly mentioning AI/machine learning approaches.

- Articles focusing on applications of AI or machine learning in accounting, finance, or other business areas outside audit or assurance.

- Pure opinion pieces, editorial commentaries, or speculative pieces without substantive technical, methodological, or empirical content.

- Studies published in languages other than English, or studies lacking sufficient detail for data extraction.

- Duplicate articles or multiple publications of the same work.

2.3. Study Selection Process

Two independent reviewers screened the titles and abstracts of all records obtained from the database and supplementary searches against the inclusion and exclusion criteria, using DistillerSR (2.31.0) or similar systematic review software [34,35]. Disagreements were resolved through discussion between the two reviewers until consensus was reached; no third reviewer was involved. Full texts of all potentially relevant records were retrieved, and their eligibility was independently evaluated by both reviewers using the same criteria. Reasons for exclusion at the full-text stage were recorded [36,37].

The process yielded a final sample of 100 studies for full review and data extraction. This sample size was considered appropriate to ensure breadth across audit contexts, technologies, and research approaches while remaining manageable for in-depth analysis and synthesis [38].

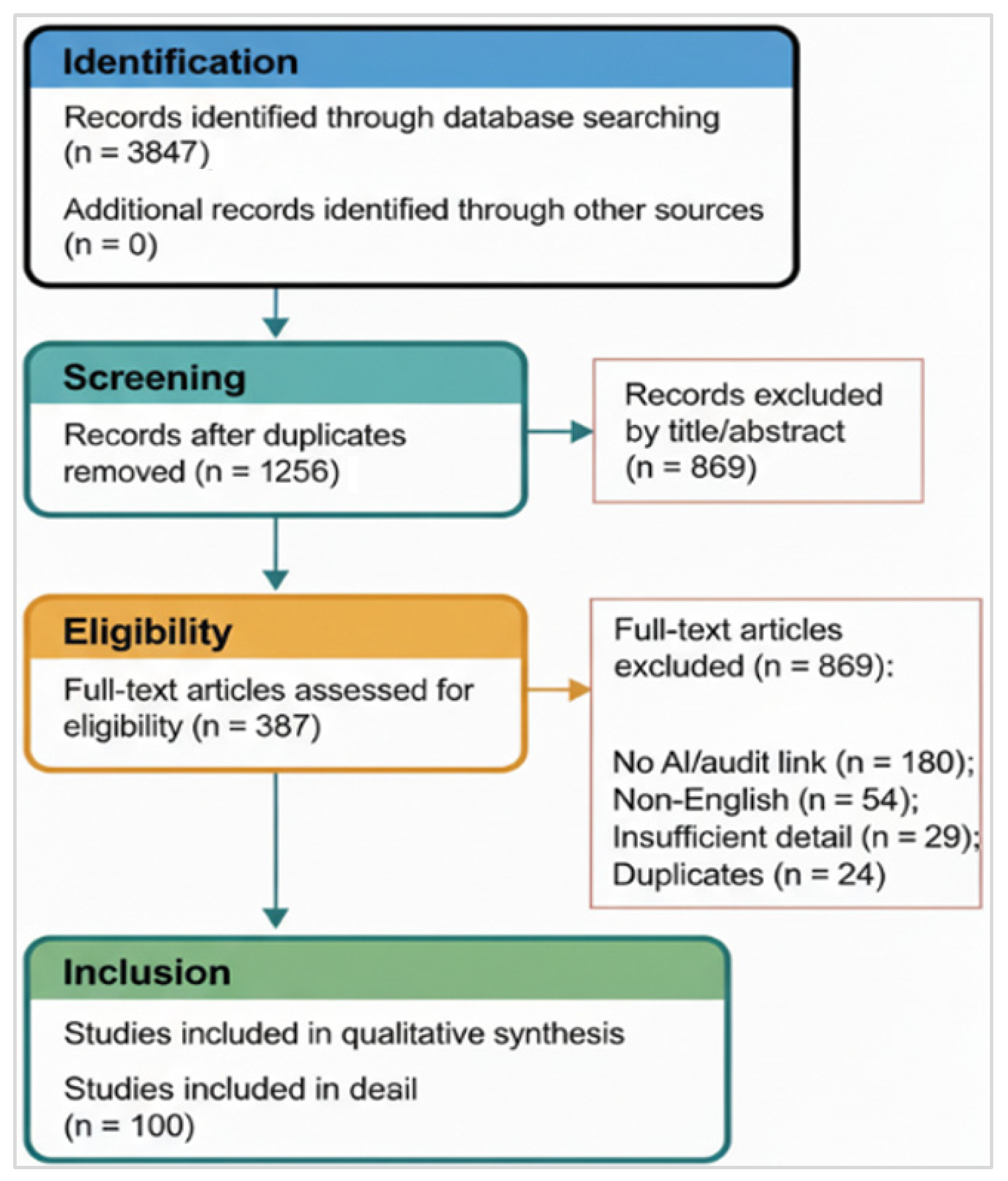

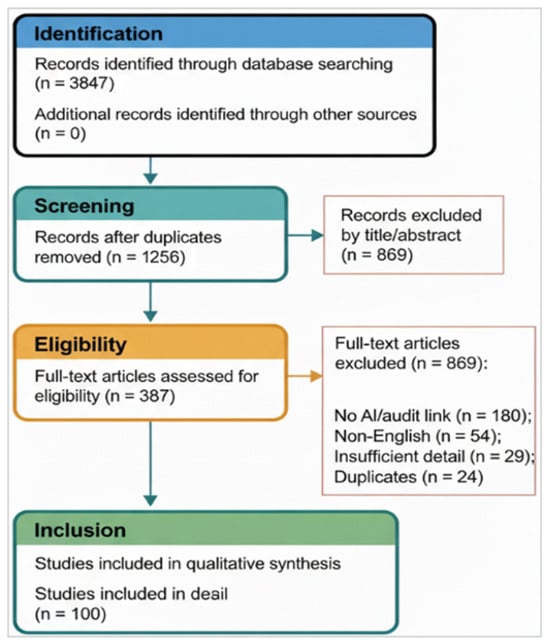

The PRISMA 2020 flow diagram is presented in Figure 1, and the completed PRISMA checklist is provided in Supplementary File S1. Database and supplementary searches yielded 3847 records, of which 1256 remained after de-duplication. After title and abstract screening, 387 full-text articles were assessed for eligibility, and full-text assessment identified 100 studies that met the final inclusion and exclusion criteria.

Figure 1.

PRISMA 2020 flow diagram of the study selection.
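The exclusions implied at each screening stage can be derived directly from the reported counts. The sketch below is only arithmetic on the figures stated above; the derived exclusion counts are not reported separately in the text and should be cross-checked against Figure 1.

```python
# Record counts reported for the PRISMA 2020 flow.
identified = 3847          # database and supplementary searches
after_dedup = 1256         # records remaining after de-duplication
full_text_assessed = 387   # full texts assessed for eligibility
included = 100             # studies meeting the final criteria

# Exclusions implied at each stage (derived, not separately reported).
duplicates_removed = identified - after_dedup
excluded_title_abstract = after_dedup - full_text_assessed
excluded_full_text = full_text_assessed - included
inclusion_rate = included / identified

print(f"duplicates removed:           {duplicates_removed}")
print(f"excluded at title/abstract:   {excluded_title_abstract}")
print(f"excluded at full text:        {excluded_full_text}")
print(f"included share of identified: {inclusion_rate:.1%}")
```

Run as-is, this reports 2591 duplicates removed, 869 records excluded at title/abstract screening, and 287 exclusions at the full-text stage, so roughly 2.6% of identified records were ultimately included.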

The 100 studies comprised the following:

- Empirical studies (45%): Case studies, controlled experiments, field evaluation, surveys, mixed-method studies.

- Design science and development studies (28%): Prototype development, system design descriptions, technical architecture papers.

- Frameworks, position papers, and narrative syntheses (17%): Conceptual studies and literature reviews.

- Practice-oriented reports (10%): White papers/technical guidance from major audit firms and professional organizations.

- Publication timeline: The earliest included publication dates from 2015. Publications began to accelerate around 2018 and grew markedly from 2020 to 2025, reflecting increasing attention to AI in auditing as the technology advanced and competing firms adopted these tools.

- Geographic distribution: Authors and studies originated mainly from Europe (38%), North America (35%), Asia-Pacific (20%), and Africa/Middle East (7%), with substantial contributions from jurisdictions with strong audit-firm engagement and advanced financial markets.

- Audit contexts addressed: The included studies cover a range of audit contexts. Forty-two studies focused on internal auditing, which emphasizes monitoring and evaluating an organization's internal processes. Thirty-five addressed external financial statement auditing, focusing on the transparency and accuracy of financial reporting. Twelve examined public sector and government audits, which assess the efficiency and accountability of government expenditure in specific sectors. Eight covered tax and compliance audits, reflecting the importance of compliance with financial and tax laws and regulatory standards. Finally, three examined forensic and fraud audits, which focus on detecting and preventing fraudulent activities in organizations.
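Because the sample contains exactly 100 studies, the counts and percentages above coincide, and each breakdown should sum to 100 if every study is assigned to exactly one category. The short check below uses only the reported figures (category labels are shortened for illustration). It also makes explicit that the largest study-type group (empirical, 45%) is a plurality rather than an absolute majority.

```python
N_STUDIES = 100

# Reported breakdowns of the 100 included studies (labels shortened).
breakdowns = {
    "study type": {"empirical": 45, "design science": 28,
                   "framework/review": 17, "practice report": 10},
    "region": {"Europe": 38, "North America": 35,
               "Asia-Pacific": 20, "Africa/Middle East": 7},
    "audit context": {"internal": 42, "external": 35, "public sector": 12,
                      "tax/compliance": 8, "forensic/fraud": 3},
}

def summarize(counts):
    """Return (total, largest category, whether that category is an absolute majority)."""
    total = sum(counts.values())
    largest = max(counts, key=counts.get)
    return total, largest, counts[largest] > total / 2

for name, counts in breakdowns.items():
    total, largest, majority = summarize(counts)
    # Flag any breakdown whose categories do not account for every study.
    status = "ok" if total == N_STUDIES else f"MISMATCH ({total})"
    print(f"{name}: {status}; largest = {largest} ({counts[largest]}), majority = {majority}")
```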

Inter-reviewer reliability in the title/abstract screening and quality assessment steps was measured using percent agreement and Cohen’s kappa and was substantial (κ > 0.75). The review process followed the PRISMA reporting guidelines, and the completed PRISMA checklist is included in the Supplementary Materials (File S1).
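Cohen's kappa corrects raw percent agreement for agreement expected by chance: κ = (p_o − p_e) / (1 − p_e), where p_o is the observed agreement and p_e is the chance agreement implied by each reviewer's label frequencies. The sketch below computes it for two reviewers' screening decisions; the decisions shown are hypothetical and are not the review's screening data.

```python
from collections import Counter

def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters who labeled the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("raters must label the same, non-empty set of items")
    n = len(rater_a)
    # Observed agreement: share of items given the same label by both raters.
    p_o = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement: product of each rater's marginal label proportions.
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    p_e = sum(counts_a[label] * counts_b[label] for label in counts_a) / (n * n)
    if p_e == 1.0:
        return 1.0  # both raters used one identical label for every item
    return (p_o - p_e) / (1 - p_e)

# Hypothetical include/exclude decisions on ten records.
reviewer_1 = ["inc", "inc", "exc", "exc", "exc", "inc", "exc", "exc", "exc", "inc"]
reviewer_2 = ["inc", "inc", "exc", "exc", "exc", "exc", "exc", "exc", "exc", "inc"]
print(round(cohens_kappa(reviewer_1, reviewer_2), 3))
```

Here the reviewers agree on 9 of 10 records (p_o = 0.90), chance agreement is p_e = (4·3 + 6·7)/100 = 0.54, and the script prints 0.783, which shows how 90% raw agreement translates into a kappa just above the 0.75 threshold.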

2.4. Quality Assessment

A structured quality assessment checklist was adapted from systematic review guidelines in software engineering and information systems [39,40]. Its key dimensions were as follows:

- Clarity/specificity of research objectives and scope;

- Transparency of methodologies, including description of data source and selection of sample;

- Adequacy of data description and auditable quality checks;

- Specification of AI techniques, models, and parameters;

- Appropriateness of evaluation design (e.g., empirical methods, metrics, comparators);

- Completeness of reporting of results;

- Acknowledgement and discussion of limitations and possible sources of bias;

- Clarity regarding generalizability and applicability to other contexts.

Each study was independently assessed on each dimension by two reviewers using a three-level scale: high (clear and comprehensive, with low risk of bias), moderate (adequate, with some gaps in clarity or completeness of information), or low (significant gaps in transparency or rigor). The overall quality rating of each study was determined from the pattern of its dimension ratings. Studies rated as moderate or low quality were not excluded but were flagged, and the strength of evidence for particular findings was qualified by the quality of the underlying studies [41,42,43].
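The text does not specify how the pattern of dimension ratings was turned into an overall rating. Purely as an illustration of what such a rule could look like, the sketch below maps the eight dimension ratings to an overall rating using hypothetical thresholds; these thresholds are assumptions, not the review's protocol.

```python
from collections import Counter

LEVELS = {"high", "moderate", "low"}
N_DIMENSIONS = 8   # the eight checklist dimensions listed above

def overall_quality(ratings):
    """Map eight dimension ratings to an overall rating (hypothetical thresholds).

    Assumed rule, for illustration only:
      - "low" if three or more dimensions are rated low;
      - "high" if at least six dimensions are high and none is low;
      - "moderate" otherwise.
    """
    if len(ratings) != N_DIMENSIONS:
        raise ValueError(f"expected {N_DIMENSIONS} ratings, got {len(ratings)}")
    if not set(ratings) <= LEVELS:
        raise ValueError(f"unknown rating level(s): {set(ratings) - LEVELS}")
    counts = Counter(ratings)
    if counts["low"] >= 3:
        return "low"
    if counts["high"] >= 6 and counts["low"] == 0:
        return "high"
    return "moderate"

print(overall_quality(["high"] * 7 + ["moderate"]))         # all but one dimension high
print(overall_quality(["high"] * 6 + ["moderate", "low"]))  # a single low rating
print(overall_quality(["low"] * 3 + ["moderate"] * 5))      # several low ratings
```

The three calls print `high`, `moderate`, and `low`. Writing the rule down this explicitly, whatever thresholds are actually used, makes the overall ratings reproducible by a second reviewer.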

2.5. Data Extraction

A standardized data extraction form (spreadsheet or specialized software) was created and pilot-tested on a subsample of studies to ensure consistency. The form captured the following information:

- Bibliographic information: Author(s), year of publication, type of study (empirical, design science, conceptual or review), source (journal name, conference, report).

- Audit context: Type of audit (internal audit, external audit/financial statement audit, public sector audit, tax audit/compliance audit, forensic/fraud audit, others).

- Participants/setting: Organization type (multinational firm, Big Four firm, small and medium-sized entity (SME), public/government body, or non-profit) and audit domain.

- AI techniques and tools: Specific AI techniques that were used (machine learning algorithms and approaches for NLP, RPA platforms, knowledge-based systems, and hybrids) and software/platforms that were mentioned.

- Audit workflow coverage: The audit workflow stages in which AI supported tasks (planning, risk assessment, controls testing, substantive procedures, reporting, and continuous monitoring).

- Key findings and outcomes: Reported benefits, efficiency measures, detection rates, accuracy measures, user satisfaction, lessons learnt.

- Architectural/design features: system architecture, data sources, model governance, explainability mechanisms, human–AI interaction design, integration points.

- Challenges and barriers: Technical: data quality, model performance; Organizational: adoption, skills, change management; Regulatory/ethical: compliance, bias, transparency; Governance.

- Research gaps and future directions: The open questions and recommendations that emerged from the research.

Data extraction was performed by one reviewer and validated by a second reviewer on a random 20% sample to determine extraction accuracy [43,44].
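The extraction form and the 20% validation step can be sketched as a typed record plus a reproducible random sample. This is a minimal sketch under stated assumptions: the `ExtractionRecord` class, its field names, the `validation_sample` helper, and the seed value are all hypothetical and cover only a subset of the form's fields.

```python
import random
from dataclasses import dataclass, field

# Workflow stages used to code each study.
STAGES = ("planning", "risk assessment", "controls testing",
          "substantive procedures", "reporting", "continuous monitoring")

@dataclass
class ExtractionRecord:
    """Hypothetical subset of the extraction form fields for one study."""
    study_id: int
    study_type: str                     # e.g., "empirical", "design science"
    audit_context: str                  # e.g., "internal", "external"
    ai_techniques: list = field(default_factory=list)
    stages: list = field(default_factory=list)

    def __post_init__(self):
        # Reject stage labels outside the coding scheme to keep coding consistent.
        unknown = [s for s in self.stages if s not in STAGES]
        if unknown:
            raise ValueError(f"unknown workflow stage(s): {unknown}")

def validation_sample(records, fraction=0.2, seed=2025):
    """Draw a reproducible random sample of records for second-reviewer checks."""
    k = max(1, round(len(records) * fraction))
    return random.Random(seed).sample(records, k)

# Placeholder records standing in for the 100 coded studies.
records = [ExtractionRecord(i, "empirical", "internal", ["ML"], ["planning"])
           for i in range(1, 101)]
to_validate = validation_sample(records)
print(len(to_validate), sorted(r.study_id for r in to_validate)[:5])
```

Fixing the seed means the same 20 records are drawn each time the script runs, so the validation subset can be documented and audited.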

Data availability: The 100 eligible studies [1,2,3,4,5,6,7,8,9,10,11,12,13,14,15,16,17,18,19,20,21,22,23,24,25,26,27,28,29,30,31,32,33,34,35,36,37,38,39,40,41,42,43,44,45,46,47,48,49,50,51,52,53,54,55,56,57,58,59,60,61,62,63,64,65,66,67,68,69,70,71,72,73,74,75,76,77,78,79,80,81,82,83,84,85,86,87,88,89,90,91,92,93,94,95,96,97,98,99,100] and their features are summarized in Supplementary Table S1.

2.6. Synthesis Approach

Given the heterogeneity of study designs, audit contexts, and AI techniques, the synthesis combined narrative thematic analysis with mapping and tabulation approaches [45,46].

- Thematic analysis: Extracted data were coded by research question, audit workflow stage, AI technique, and challenge type, and themes and subthemes were then refined iteratively [47,48].

- Mapping: Structured tables and visual diagrams were created to relate AI techniques to audit workflow stages, architecture elements, opportunities, and challenges, facilitating pattern recognition and gap identification.

- Descriptive summary: Study characteristics (e.g., year of publication, audit context, and AI techniques) and excerpts from study abstracts are presented in a quantitative summary [49,50].
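The mapping step amounts to cross-tabulating coded studies by AI technique and workflow stage and then looking for empty cells. A minimal sketch follows, assuming each study has already been coded with lists of techniques and stages; the example studies and abbreviated labels are hypothetical, not drawn from the review's data.

```python
from collections import defaultdict

def crosstab(studies):
    """Count studies per (technique, stage) cell; each study counts once per cell."""
    table = defaultdict(int)
    for study in studies:
        for tech in set(study["techniques"]):
            for stage in set(study["stages"]):
                table[(tech, stage)] += 1
    return dict(table)

def empty_cells(table, techniques, stages):
    """Technique-stage combinations with no studies (candidate research gaps)."""
    return [(t, s) for t in techniques for s in stages if table.get((t, s), 0) == 0]

# Hypothetical coded studies, not drawn from the review's data.
studies = [
    {"techniques": ["ML", "NLP"], "stages": ["planning", "substantive"]},
    {"techniques": ["ML"], "stages": ["substantive"]},
    {"techniques": ["RPA"], "stages": ["controls"]},
]
table = crosstab(studies)
print(table[("ML", "substantive")])
print(empty_cells(table, ["ML", "NLP", "RPA"], ["planning", "controls", "substantive"]))
```

Because a study using several techniques contributes to several cells, cell counts can sum to more than the number of studies. The technique totals reported in Section 3.1 (58 + 31 + 24 + 15 = 128 for 100 studies) follow the same logic.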

A meta-analysis of quantitative outcomes was considered but judged inappropriate because of substantial heterogeneity in study designs, outcome measures, and contexts; instead, findings were synthesized narratively, with tabular summaries of effect estimates and outcome measures where available [50].

3. Results

3.1. AI Techniques and Tools Identified

Machine learning and predictive analytics (58 studies): Supervised and unsupervised machine learning methods and predictive risk models featured in the largest share of studies, including the following:

- Classification algorithms (random forests, gradient boosting, support vector machines, logistic regression) for anomaly detection and classification (fraud and spam) and risk scoring.

- Clustering methods (k-means, hierarchical clustering, DBSCAN) for transaction segmentation and pattern discovery.

- Deep learning architectures (multilayer perceptrons, convolutional neural networks, recurrent neural networks, and LSTMs) for sequential pattern recognition and time-series forecasting.

- Ensemble methods that combine multiple models to improve robustness.
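Of the classification algorithms listed above, logistic regression is the simplest to show end to end. The sketch below trains it by batch gradient descent in plain Python (no ML library, to stay self-contained) and uses the predicted probability as a transaction risk score. The three features, the toy labels, and the learning-rate and epoch settings are hypothetical choices for illustration only.

```python
import math

def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

def train_logistic(X, y, lr=0.5, epochs=5000):
    """Fit logistic regression by batch gradient descent on mean log-loss."""
    n, d = len(X), len(X[0])
    w, b = [0.0] * d, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * d, 0.0
        for xi, yi in zip(X, y):
            # Gradient of log-loss w.r.t. the linear score is (prediction - label).
            err = sigmoid(sum(wj * xj for wj, xj in zip(w, xi)) + b) - yi
            for j in range(d):
                grad_w[j] += err * xi[j]
            grad_b += err
        w = [wj - lr * g / n for wj, g in zip(w, grad_w)]
        b -= lr * grad_b / n
    return w, b

def risk_score(w, b, x):
    """Estimated probability that transaction x is anomalous."""
    return sigmoid(sum(wj * xj for wj, xj in zip(w, x)) + b)

# Hypothetical features: [scaled amount, posted after hours, new counterparty].
X = [[0.10, 0, 0], [0.20, 0, 0], [0.15, 0, 1], [0.30, 0, 0],
     [0.90, 1, 1], [0.80, 1, 0], [0.95, 1, 1], [0.70, 0, 1]]
y = [0, 0, 0, 0, 1, 1, 1, 1]   # 1 = later confirmed as error or fraud (toy labels)

w, b = train_logistic(X, y)
for x in ([0.90, 1, 1], [0.10, 0, 0]):
    print(x, round(risk_score(w, b, x), 3))
```

In practice, the studies reviewed here typically rely on library implementations and on tree ensembles such as random forests or gradient boosting. The from-scratch version only makes the scoring logic visible.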

Natural language processing (31 studies): Natural language processing techniques for unstructured audit data:

- Named entity recognition (NER) and information extraction for contract analysis and regulatory compliance document review.

- Sentiment and tone analysis for management commentary, earnings calls, and internal communications.

- Topic modeling (Latent Dirichlet Allocation, Non-negative Matrix Factorization) for categorizing and summarizing audit documentation.

- Document matching and validation based on text classification and semantic similarity.

- Large language models (GPT variants, BERT, transformer architectures) for document summarization and question answering.
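Document matching can be illustrated with a lexical baseline: TF-IDF vectors compared by cosine similarity, used here to pair an invoice with the most similar purchase order. TF-IDF is a deliberately simple stand-in. The studies above may use embeddings or transformer models for semantic similarity. The example documents and the smoothed IDF variant are assumptions.

```python
import math
import re
from collections import Counter

def tokenize(text):
    """Lowercase alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())

def tfidf_vectors(docs):
    """Sparse TF-IDF vectors with smoothed IDF: ln((1 + N) / (1 + df)) + 1."""
    tokenized = [tokenize(d) for d in docs]
    n = len(docs)
    df = Counter(term for tokens in tokenized for term in set(tokens))
    idf = {term: math.log((1 + n) / (1 + count)) + 1 for term, count in df.items()}
    return [{t: tf * idf[t] for t, tf in Counter(tokens).items()} for tokens in tokenized]

def cosine(u, v):
    """Cosine similarity of two sparse vectors stored as dicts."""
    dot = sum(weight * v.get(term, 0.0) for term, weight in u.items())
    norm_u = math.sqrt(sum(w * w for w in u.values()))
    norm_v = math.sqrt(sum(w * w for w in v.values()))
    return dot / (norm_u * norm_v) if norm_u and norm_v else 0.0

def best_match(query, candidates):
    """Return (index, score) of the candidate document most similar to the query."""
    vectors = tfidf_vectors([query] + candidates)
    scores = [cosine(vectors[0], v) for v in vectors[1:]]
    best = max(range(len(scores)), key=scores.__getitem__)
    return best, scores[best]

# Hypothetical supporting documents.
invoice = "Invoice 4471: office chairs, qty 20, supplier Acme"
purchase_orders = [
    "PO 4471 office chairs quantity 20 Acme Ltd",
    "PO 5120 laptops quantity 5 Globex",
]
print(best_match(invoice, purchase_orders))
```

A low best score would indicate an invoice with no convincing supporting document, which is the kind of exception an auditor would then review manually.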

Robotic process automation (24 studies): Rule-based automation and workflow orchestration:

- RPA bots that extract data, navigate across systems, and produce reports on demand.

- Integration of RPA with machine learning (“intelligent automation”) to enable context-aware decision-making.

- Workflow orchestration engines that coordinate multiple bots and AI services.

- Exception handling and escalation mechanisms for cases that bots cannot process.
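The orchestration and escalation pattern described above can be sketched in a few lines: run bot tasks in sequence, retry transient failures, and route persistent failures to a human review queue rather than halting the workflow. This is a minimal sketch. The `Orchestrator` class and the task names are hypothetical, and plain Python callables stand in for RPA bots. The sketch does not use any RPA platform's API.

```python
from dataclasses import dataclass, field

@dataclass
class Orchestrator:
    """Runs bot tasks in sequence, retries failures, then escalates to auditors."""
    max_retries: int = 2
    log: list = field(default_factory=list)          # (task, attempt, outcome)
    escalations: list = field(default_factory=list)  # tasks needing human review

    def run(self, tasks):
        results = {}
        for name, task in tasks:
            for attempt in range(1, self.max_retries + 2):   # first try + retries
                try:
                    results[name] = task()
                    self.log.append((name, attempt, "ok"))
                    break
                except Exception as exc:  # a bot error should not halt the workflow
                    self.log.append((name, attempt, f"error: {exc}"))
            else:
                # Every attempt failed: hand the exception to an auditor queue.
                self.escalations.append(name)
        return results

# Hypothetical bot tasks.
attempts = {"fetch_bank_statement": 0}

def fetch_bank_statement():
    attempts["fetch_bank_statement"] += 1
    if attempts["fetch_bank_statement"] < 2:
        raise TimeoutError("portal timeout")   # transient failure, succeeds on retry
    return {"closing_balance": 10500.0}

def match_invoice():
    raise ValueError("invoice number missing")  # persistent data problem

orchestrator = Orchestrator()
results = orchestrator.run([
    ("extract_ledger", lambda: [{"id": "JE1", "amount": 500.0}]),
    ("fetch_bank_statement", fetch_bank_statement),
    ("match_invoice", match_invoice),
])
print(sorted(results), orchestrator.escalations)
```

The log records every attempt and outcome, which keeps an audit trail of what the automation did and why a case reached a human reviewer.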

Other AI techniques (15 studies): Expert systems and knowledge-based reasoning, reinforcement learning for dynamic sampling and testing strategy optimization, process mining and discovery, and computer vision for physical asset verification.

3.2. Audit Workflow Stages and AI Application Patterns

Planning and risk assessment (42 studies): AI supports the earliest stages of the audit engagement, including the following:

- Integrated risk scoring models use combinations of financial metrics, control scoring, process indicators, and management narrative analysis to prioritize accounts, entities, or processes to focus audits.

- Time-series forecasting and anomaly detection on historical financial data to flag unusual trends that point to potential risk areas.

- Sentiment and tone analysis of management commentary and regulatory filings to assess tone at the top and disclosure quality.

- Entity linking and network analysis to identify related parties and complex structures requiring increased audit attention.

- Machine learning-based inherent risk models based on client industry, regulatory context, and organizational factors.
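An integrated risk score of the kind described in the first bullet can be sketched as a weighted sum of normalized indicators, which is then used to rank accounts for audit focus. This is a minimal sketch. The indicators, their values, and the weights are all hypothetical, and min-max scaling is one simple normalization choice among several.

```python
def min_max(values):
    """Rescale values to [0, 1]; a constant column maps to 0."""
    lo, hi = min(values), max(values)
    return [0.0 if hi == lo else (v - lo) / (hi - lo) for v in values]

def rank_by_risk(accounts, weights):
    """Weighted sum of min-max normalized indicators, highest risk first."""
    names = list(accounts)
    scores = dict.fromkeys(names, 0.0)
    for indicator, weight in weights.items():
        normalized = min_max([accounts[n][indicator] for n in names])
        for name, value in zip(names, normalized):
            scores[name] += weight * value
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)

# Hypothetical indicators per account and hypothetical weights.
accounts = {
    "Revenue":   {"abs_yoy_change": 0.35, "control_deficiencies": 2, "negative_tone": 0.6},
    "Inventory": {"abs_yoy_change": 0.10, "control_deficiencies": 4, "negative_tone": 0.2},
    "Payables":  {"abs_yoy_change": 0.05, "control_deficiencies": 0, "negative_tone": 0.1},
}
weights = {"abs_yoy_change": 0.5, "control_deficiencies": 0.3, "negative_tone": 0.2}

for name, score in rank_by_risk(accounts, weights):
    print(f"{name:10s} {score:.2f}")
```

With these inputs, Revenue ranks first (0.85), followed by Inventory (0.42) and Payables (0.00). Min-max scaling is relative to the accounts being compared, so scores are comparable only within one engagement and one scoring run.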

Tests of controls and control monitoring (38 studies): Continuous or periodic AI-enabled control monitoring includes the following:

- Real-time analysis of system logs and user access patterns to identify segregation-of-duties violations and unauthorized system transactions.

- Process mining algorithms that reconstruct actual process flows from transaction logs and compare them with the designed controls, flagging deviations.

- Rule-based and machine learning models to monitor transaction approvals, authorization limits, and approval patterns.

- Continuous monitoring dashboards that raise alarms for auditors concerning breaches of controls, anomalies, or exceptions in near real-time.
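Two of the control checks above can be sketched directly: a segregation-of-duties test (the same user both created and approved a transaction) and a comparison of observed process traces against the designed control sequence. The conformance function is a simplified sequence comparison. It is not a full process-mining technique such as process discovery, token replay, or alignments. Field names, activity names, and case data are hypothetical.

```python
def sod_violations(entries):
    """IDs of transactions created and approved by the same user."""
    return [e["id"] for e in entries if e["created_by"] == e["approved_by"]]

def conformance_deviations(traces, designed):
    """Compare each case's observed activities with the designed control sequence."""
    deviations = {}
    for case, activities in traces.items():
        missing = [a for a in designed if a not in activities]
        unexpected = [a for a in activities if a not in designed]
        # Order of designed activities as observed; repeated steps also count as deviations.
        observed = [a for a in activities if a in designed]
        expected = [a for a in designed if a in activities]
        out_of_order = observed != expected
        if missing or unexpected or out_of_order:
            deviations[case] = {"missing": missing, "unexpected": unexpected,
                                "out_of_order": out_of_order}
    return deviations

# Hypothetical approval records and purchase-to-pay traces.
entries = [
    {"id": "PAY-101", "created_by": "alice", "approved_by": "bob"},
    {"id": "PAY-102", "created_by": "carol", "approved_by": "carol"},
]
designed = ["create_po", "approve_po", "receive_goods", "pay_invoice"]
traces = {
    "C1": ["create_po", "approve_po", "receive_goods", "pay_invoice"],
    "C2": ["create_po", "receive_goods", "pay_invoice"],                # approval skipped
    "C3": ["create_po", "pay_invoice", "approve_po", "receive_goods"],  # paid before approval
}
print(sod_violations(entries))
print(conformance_deviations(traces, designed))
```

Case C1 conforms, C2 is flagged for a missing approval, and C3 is flagged because payment occurred before approval. These are the kinds of deviations a continuous monitoring dashboard would surface to auditors.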

Substantive procedures and transaction testing (48 studies): The largest group of studies addressed AI in substantive testing, including the following:

- Unsupervised and supervised anomaly detection used for journal entries, accounts receivable, inventory, and other transaction populations to find unusual transactions for focused audit investigation.

- Automated verification and validation of supporting documents (invoices, purchase orders, receipts, contracts) with the help of NLP and computer vision.

- Journal entry testing that combines rule-based tests (unusual timing, round amounts, top accounts) with machine learning-based anomaly detection.

- Predictive models that estimate the likelihood of misstatement or expected account balances, providing a basis for audit judgment and identifying unexpected variances.

- Fraud risk scoring, which is based on characteristics of the transaction, user behavior, and historical patterns, to prioritize items for substantive review.
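The combination of rule-based journal entry tests and model-based anomaly scores can be sketched as follows. Rules flag weekend postings, after-hours postings, round amounts, and manual entries, and entries are prioritized by flag count, then by an anomaly score supplied by any model. The thresholds (business hours 07:00 to 20:00, round multiples of 1000), the field names, and the scores are assumptions. The "top accounts" rule mentioned above is not implemented here.

```python
from datetime import datetime

def rule_flags(entry, round_base=1000, business_hours=(7, 20)):
    """Rule-based red flags for one journal entry (thresholds are assumptions)."""
    flags = []
    posted = entry["posted_at"]
    if posted.weekday() >= 5:                      # Saturday = 5, Sunday = 6
        flags.append("weekend posting")
    start, end = business_hours
    if not start <= posted.hour < end:
        flags.append("after-hours posting")
    if entry["amount"] != 0 and entry["amount"] % round_base == 0:
        flags.append("round amount")
    if entry.get("manual", False):
        flags.append("manual entry")
    return flags

def prioritize(entries, anomaly_scores):
    """Order entry IDs by number of rule flags, then by a model's anomaly score."""
    keyed = [(len(rule_flags(e)), anomaly_scores[e["id"]], e["id"]) for e in entries]
    keyed.sort(key=lambda k: (k[0], k[1]), reverse=True)
    return [entry_id for _, _, entry_id in keyed]

# Hypothetical journal entries and anomaly scores from some upstream model.
entries = [
    {"id": "JE1", "posted_at": datetime(2024, 6, 1, 23, 15), "amount": 50000, "manual": True},
    {"id": "JE2", "posted_at": datetime(2024, 6, 3, 10, 0), "amount": 1234.56, "manual": False},
    {"id": "JE3", "posted_at": datetime(2024, 6, 4, 11, 0), "amount": 2000, "manual": False},
]
scores = {"JE1": 0.2, "JE2": 0.9, "JE3": 0.4}
for e in entries:
    print(e["id"], rule_flags(e))
print(prioritize(entries, scores))
```

Here JE1 (a manual, round-amount entry posted late on a Saturday) is reviewed first despite its low model score. Ordering by flags first is only one design choice. A weighted blend of rule flags and model scores is an equally plausible alternative.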

Reporting, communication, and ongoing assurance (31 studies): AI also supports post-fieldwork and continuous activities, including the following:

- Automated generation of audit documentation, workpaper summaries, and management letters with the findings and recommendations.

- Interactive dashboards and visualization tools for communicating risk heat maps, anomaly profiles, control status, and findings to the audit committee and management.

- Continuous auditing and monitoring systems to allow the ongoing assessment of control effectiveness and emerging risks instead of point-in-time audit opinions.

- Predictive models that forecast future control performance or misstatement likelihood to inform audit strategy and resource allocation.

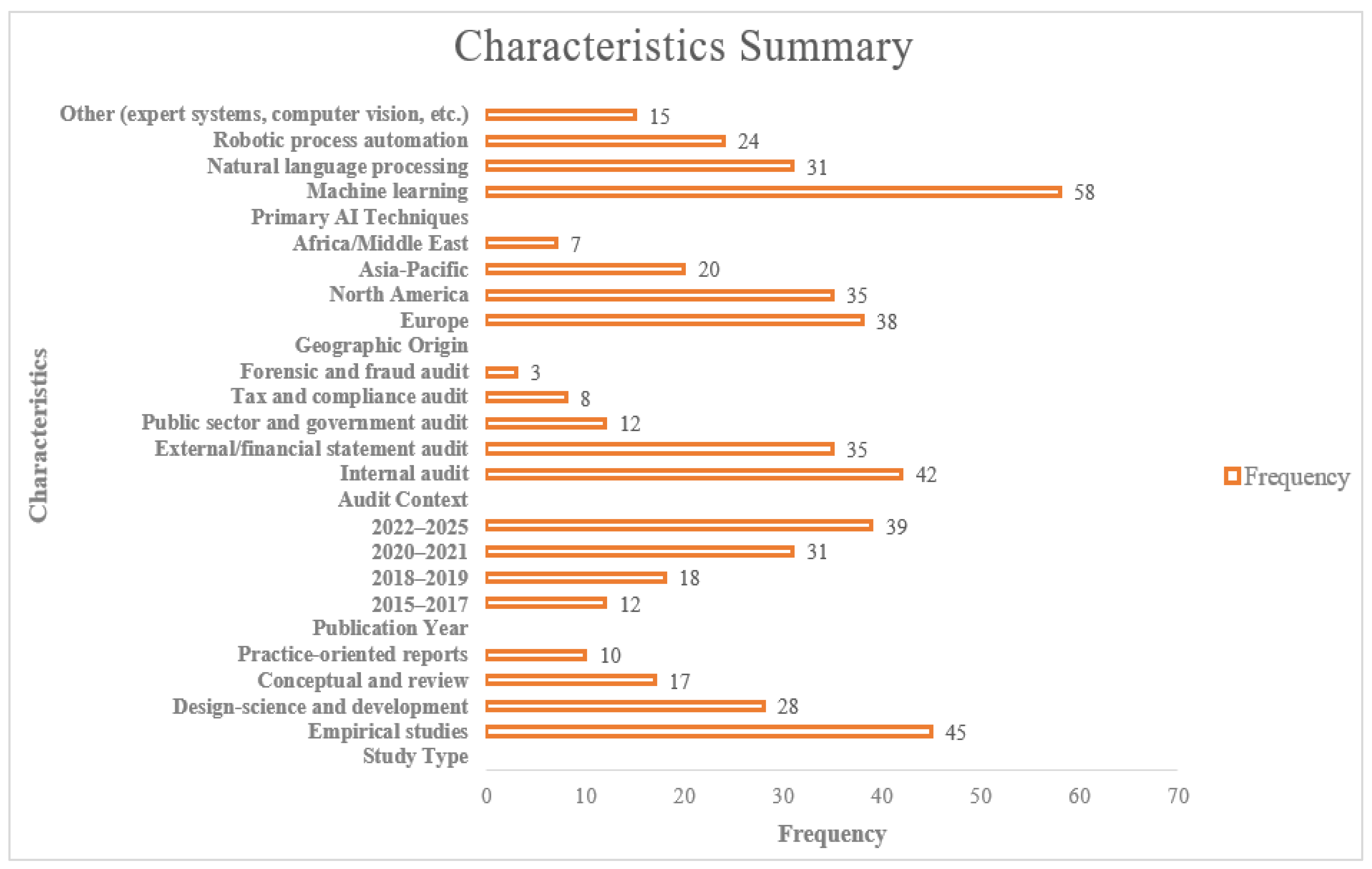

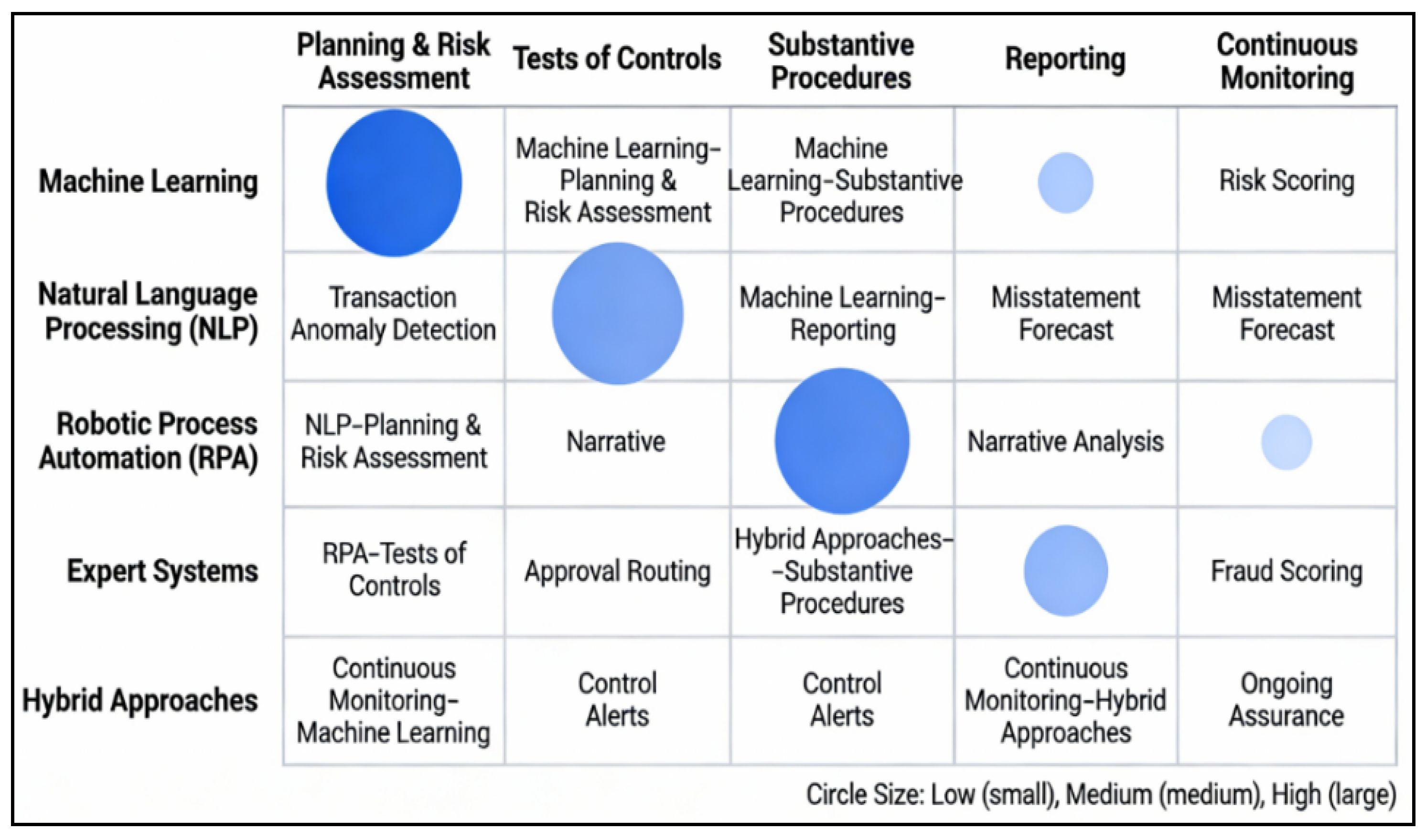

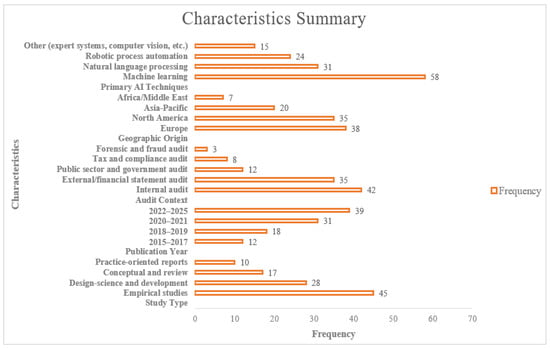

The AI methods identified in the research are applied across different steps of the audit process, improving its effectiveness and efficiency. In the planning and risk assessment phase, machine learning (ML) algorithms support predictive analytics and risk scoring to prioritize accounts, identify high-risk areas, and detect anomalies. In tests of controls, robotic process automation (RPA) and process mining are used to monitor controls continuously and detect possible violations, while machine learning supports transaction approval testing and segregation-of-duties testing. During substantive procedures, machine learning and natural language processing (NLP) are used for anomaly detection, journal entry testing, and document verification, and computer vision supports inventory monitoring and asset verification. In the reporting and continuous assurance phases, dashboards, predictive analytics, and machine learning are used to visualize risk matrices, measure control effectiveness in real time, and issue ongoing alerts, making the audit process more dynamic and continuous. Figure 2 summarizes the characteristics of the 100 studies: empirical studies form the largest group, and most publications are recent. Research focuses primarily on internal and external audits, originates mainly from Europe and North America, and centers on machine learning, NLP, and automation, often combining multiple AI techniques.

Figure 2.

Summary of characteristics.

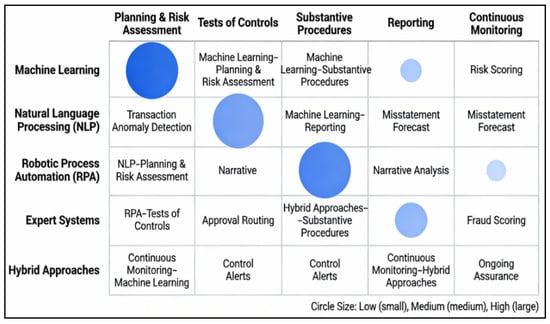

Figure 3 maps AI techniques to audit workflow stages, showing where machine learning, NLP, RPA, expert, and hybrid approaches add value. Circle size reflects the relative influence or significance of each technology at each stage, and color distinguishes technique groups, with darker shades indicating greater applicability or emphasis, for example, in planning, risk assessment, and continuous monitoring. Applications concentrate on planning, control testing, and substantive procedures, with growing roles in reporting and continuous monitoring for enterprise assurance and risk-focused activities.

Figure 3.

AI techniques by audit workflow stage.

3.3. Study Characteristics

Table 1 provides an aggregate overview of the evidence, grouping studies along four dimensions (study type, audit context, primary AI technique, and main contribution) rather than listing all 100 studies individually. Empirical quantitative and survey-based research in internal and external audit settings generally reports benefit–challenge trade-offs for machine learning-based anomaly detection, risk scoring, and efficiency gains, while conceptual and framework-based research provides taxonomies, reference models, and future research agendas for AI-enabled auditing. Practice-oriented and design science contributions present real-world AI-based systems and processes, including RPA-based process automation, NLP-based semantic document analysis, and knowledge graph-supported audit processes. For traceability, the full study-level table listing all 100 citations with their study type, context, AI technique, and primary contribution is provided in Supplementary File S2.

Table 1.

Synthesized patterns across the 100 included studies by audit context, dominant AI techniques, typical main contributions, and corresponding references.

The referenced literature includes empirical quantitative literature, survey literature, archival literature, conceptual framework literature, and systematic or structured literature reviews, which reflect the methodological diversity of literature that analyzes AI applications in auditing [1,2,3,4,5,6,7,8,9,10,11,12,13,14,15,16,17,18,19,20,21,22,23,24,25,26,27,28,29,30,31,32,33,34,35,36,37,38,39,40,41,42,43,44,45,46,47,48,49,50,51,52,53,54,55,56,57,58,59,60,61,62,63,64,65,66,67,68,69,70,71,72,73,74,75,76,77,78,79,80,81,82,83,84,85,86,87,88,89,90,91,92,93,94,95,96,97,98,99,100]. The sample is dominated by empirical studies, specifically field and archival research on audit quality, audit efficiency, audit risk assessment, and audit professional judgment [1,2,3,4,8,9,14,17,21,23,24,25,31,35,36,37,46,67,71,80,91,92,93].

Contextually, the research covers a very broad scope of audit situations, such as external financial audit, internal auditing, audit risk and fraud detection, audit reporting, continuous auditing, and technology-enabled assurance services [1,2,3,4,5,6,12,16,18,24,30,41,45,57,61,66,81]. Other studies also extend beyond the field of traditional auditing into adjacent areas, like automation of accounting systems, regulation, blocks, reporting on blockchains, and AI-based decision support, showing the widening scope of audit research [19,28,57,58,59,73,77,78,79,82,95].

Regarding the AI methods, machine learning models, deep learning architectures, natural language processing, expert systems, robotic process automation, and hybrid AI-blockchain/AI-knowledge graph methods are cited regularly [1,9,12,16,17,33,35,37,53,60,71,75,81,87,93]. Conceptual and review-based papers highlight the strategic and ethical and governance implication of these technologies, and empirical research provides evidence of performance benefits, adoption trends, and new skill requirements in the auditor profession [5,7,10,15,20,39,54,61,70,88,89].

Overall, Supplementary Table S1 illustrates that the included studies collectively report a gradual shift towards data-driven, intelligent, and technology-assisted auditing, while noting persistent challenges related to uneven adoption, institutional preparedness, and methodological integration across audit settings [1,2,3,4,5,6,7,8,9,10,11,12,13,14,15,16,17,18,19,20,21,22,23,24,25,26,27,28,29,30,31,32,33,34,35,36,37,38,39,40,41,42,43,44,45,46,47,48,49,50,51,52,53,54,55,56,57,58,59,60,61,62,63,64,65,66,67,68,69,70,71,72,73,74,75,76,77,78,79,80,81,82,83,84,85,86,87,88,89,90,91,92,93,94,95,96,97,98,99,100].

4. Findings: AI Technologies in Audit

4.1. Machine Learning and Anomaly Detection

Machine learning techniques are extensively represented in the AI-in-auditing literature because they are central to detecting anomalies and risks. Supervised learning approaches use historical labeled data (e.g., transactions later determined to be fraudulent or erroneous) to train models that classify new transactions as normal or anomalous [28,39,54,55].

- Transaction-level anomaly detection: Random forests, gradient boosting machines (XGBoost 3.0.0, LightGBM 4.6.0), and logistic regression are widely used to detect outlier transactions in journal entries, receivables, payables, and inventory [16,33,35,46]. These models learn patterns from millions of routine transactions and flag transactions with unusual characteristics (amount, timing, counterparty, approval chain, and account combination) [25,33,35]. Studies report that such models, when properly trained and validated, can detect more fraud and errors than manual sampling [25,35].

- Unsupervised anomaly detection (clustering, isolation forests, and autoencoders) is useful when labeled fraud data are scarce, as is often the case in audit environments [35]. These methods identify transactions that deviate significantly from learned patterns of normal behavior without requiring explicit fraud labels [33,38].

- Key findings: Empirical studies report improvements in detection rates of 20–70% compared with manual sampling, although absolute detection rates vary greatly with data quality, feature engineering, and the actual prevalence of anomalies in the dataset [25,35]. However, several studies also report high false-positive rates, which require auditors to review and triage alerts, and emphasize the need to invest in data quality, feature engineering, and model validation [39,41].
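To make the unsupervised idea concrete, the sketch below scores journal-entry amounts within each account using a modified z-score based on the median absolute deviation (MAD). This is a deliberately simple stand-in for the isolation forests and autoencoders cited above, not a method taken from any reviewed study; the field names (`id`, `account`, `amount`) and the 3.5 cut-off are assumptions.

```python
from statistics import median

# Cut-off of 3.5 follows a common rule of thumb for modified z-scores (an assumption, not an audit standard).
THRESHOLD = 3.5

def robust_z_scores(amounts):
    """Modified z-scores based on the median and the median absolute deviation (MAD)."""
    med = median(amounts)
    mad = median(abs(a - med) for a in amounts)
    if mad == 0:
        # No spread in the data: report no deviation instead of dividing by zero.
        return [0.0] * len(amounts)
    # 0.6745 rescales MAD so scores resemble standard z-scores for normally distributed data.
    return [0.6745 * (a - med) / mad for a in amounts]

def flag_outliers(entries, threshold=THRESHOLD):
    """Flag entries whose amount is an outlier relative to other entries in the same account.

    Each entry is a dict with hypothetical keys 'id', 'account', and 'amount'.
    """
    by_account = {}
    for entry in entries:
        by_account.setdefault(entry["account"], []).append(entry)
    flagged = []
    for account, rows in by_account.items():
        scores = robust_z_scores([row["amount"] for row in rows])
        for row, z in zip(rows, scores):
            if abs(z) > threshold:
                flagged.append((row["id"], account, round(z, 1)))
    return flagged
```

Because the median and MAD are barely moved by a single extreme value, one large posting does not mask itself the way it can with a mean and standard deviation. Every flag is still only a candidate for auditor triage, which is where the false-positive burden noted above arises.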

4.2. Natural Language Processing and Document Analysis

NLP applications help address the audit challenge of handling large volumes of unstructured text. Contracts, board minutes, policy documents, email communications, and management narratives are increasingly subjected to automated analysis [50,51,53].

- Contract and regulatory document analysis: NLP models are used to identify important clauses (covenants, termination conditions, related-party terms, and contingencies) in contracts and to detect deviations from templates or standard language [51,52,54]. Named entity recognition tools extract entities such as persons, dates, and monetary amounts [55,56]. These capabilities support audit procedures for verifying contract completeness, identifying unusual contract terms, and assessing the adequacy of disclosures [57,58,59].

- Sentiment and tone analysis: Tools measure tone, complexity, and linguistic indicators of possible bias or management override in earnings call transcripts, management commentary, and internal communications [59,60,61]. Studies suggest that combining quantitative sentiment measures with human review can improve auditors’ ability to evaluate management’s attitude toward controls and the tone at the top [62,63,64].

- Document classification and clustering: Topic modeling and text classification are used to label audit documents by category (e.g., control narratives, risk assessments, and regulatory filings) and to retrieve and rank documents for review [51,52,65]. Large language models (LLMs), such as GPT and BERT, can be used to summarize long documents and answer questions about their content, which could save auditors time when reading and synthesizing information [56,61].

- Challenges and limitations: NLP performance depends on domain adaptation; without it, general-purpose language models may perform poorly on technical accounting texts and documents [51,52,61]. Multilingual content, sarcasm, and implicit meanings pose further challenges [56,65]. Studies highlight the importance of carefully validating NLP tools in audit settings and of communicating clear information on confidence and explainability to auditors and clients [60,61].
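As a hedged illustration of the clause and entity extraction described above, the following sketch uses keyword matching and regular expressions. It is a rule-based baseline, not named entity recognition or a trained NLP model; the keyword lists, clause categories, and the money and ISO-date patterns are hypothetical choices that a domain-adapted model would replace.

```python
import re

# Hypothetical keyword lists per clause type; a production system would use a trained, domain-adapted model.
CLAUSE_KEYWORDS = {
    "covenant": ["covenant", "shall maintain", "debt-to-equity"],
    "termination": ["terminate", "termination"],
    "related_party": ["related party", "affiliate"],
    "contingency": ["contingent", "indemnif"],
}
# Simple patterns for currency amounts and ISO dates (assumed formats).
MONEY = re.compile(r"(?:USD|EUR|\$|€)\s?\d[\d,]*(?:\.\d+)?")
DATE = re.compile(r"\b\d{4}-\d{2}-\d{2}\b")

def analyze_clause(text):
    """Return matched clause types plus monetary amounts and dates found in the text."""
    lower = text.lower()
    types = sorted(
        clause_type
        for clause_type, keywords in CLAUSE_KEYWORDS.items()
        if any(keyword in lower for keyword in keywords)
    )
    return {"types": types, "amounts": MONEY.findall(text), "dates": DATE.findall(text)}
```

Such rules are transparent and easy to validate, but they miss paraphrases and implicit meanings, which is precisely the gap that the domain adaptation and validation concerns above refer to.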

4.3. Robotic Process Automation and Workflow Orchestration

RPA is used to automate repetitive, rule-based tasks in the audit workflow [65,66]. Audit bots extract data from ERP systems, perform reconciliations, populate spreadsheets, and produce standardized reports, and are typically triggered on a schedule or by events [66].

- Data preparation and integration: RPA bots navigate multiple systems to retrieve and consolidate data for audit analysis, reducing the time and errors associated with manual data compilation [67,68,69]. This improves the efficiency and reliability of the data foundation for subsequent AI analysis [65,70].

- Intelligent automation: RPA can be combined with machine learning to enable “intelligent automation,” in which bots apply decision logic to route transactions, approve exceptions, or complete fields based on learned patterns or model scores [65,71]. For example, a bot could score transactions as normal or anomalous using a deployed machine learning model and forward high-risk items to human auditors for analysis [66,72].

- Continuous control monitoring: RPA scripts can be configured to run continuously or at high frequency, monitoring control logs and flagging control violations, unauthorized activities, or policy breaches in near real time [66,73,74]. This supports a transition from periodic audit testing to continuous assurance [66,72].

- Challenges: Governance and change management of RPA scripts, including versioning, exception handling, and documentation, are key issues [72,74]. Studies stress the need for robust audit controls over RPA bots to ensure that they operate as designed and that exceptions are managed properly [65,75].
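The reconciliation step that audit bots perform can be sketched as follows. This is a minimal illustration, assuming ledger and bank records share a reference key and use `Decimal` amounts; the field names and the zero tolerance are hypothetical, and commercial RPA platforms configure such steps through their own tooling rather than code like this.

```python
from decimal import Decimal

def reconcile(ledger, bank, tolerance=Decimal("0.00")):
    """Match ledger and bank records by reference and compare amounts.

    Records are dicts with hypothetical keys 'ref' and 'amount' (Decimal).
    Returns the number of clean matches and a list of (exception_type, ref) for human review.
    """
    bank_by_ref = {record["ref"]: record for record in bank}
    exceptions = []
    matched = 0
    for entry in ledger:
        counterpart = bank_by_ref.pop(entry["ref"], None)
        if counterpart is None:
            exceptions.append(("missing_in_bank", entry["ref"]))
        elif abs(entry["amount"] - counterpart["amount"]) > tolerance:
            exceptions.append(("amount_mismatch", entry["ref"]))
        else:
            matched += 1
    # Anything left in the bank index has no ledger counterpart.
    for ref in bank_by_ref:
        exceptions.append(("missing_in_ledger", ref))
    return matched, exceptions
```

The bot resolves clean matches automatically and routes only the exception list to auditors; versioning and documenting parameters such as the tolerance is part of the RPA governance concern noted above.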

4.4. Hybrid and Emerging Approaches

Hybrid and emerging approaches combine advanced technologies to improve the audit process. Process mining tools reconstruct actual process flows from system event logs and compare them with the designed process, surfacing deviations that support control testing and reveal unusual process execution patterns [66,74]. Reinforcement learning has been proposed for adaptive audit sampling, in which the sequence of tests is adjusted dynamically in response to feedback, although few practitioners currently apply it. Computer vision is increasingly used for inventory observation, asset condition assessment, and physical checks, particularly where items are remote or volumes are high. These innovations aim to make audits more efficient and accurate; however, this potential has not yet been realized consistently across industry sectors [72].
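The comparison between observed and designed process flows can be illustrated with a simplified, precedence-based conformance check. This is not a full process mining algorithm (such as token replay or alignments); the purchase-to-pay activities and their required predecessors are a hypothetical designed process, and events are assumed to arrive as `(case_id, activity, timestamp)` tuples.

```python
# Hypothetical designed purchase-to-pay process: each activity lists the activities
# that must already have occurred in the same case.
REQUIRED_BEFORE = {
    "create_po": set(),
    "approve_po": {"create_po"},
    "receive_goods": {"approve_po"},
    "record_invoice": {"create_po"},
    "pay_invoice": {"approve_po", "receive_goods", "record_invoice"},
}

def traces_from_log(event_log):
    """Group (case_id, activity, timestamp) events into time-ordered traces per case."""
    traces = {}
    for case_id, activity, _ts in sorted(event_log, key=lambda e: (e[0], e[2])):
        traces.setdefault(case_id, []).append(activity)
    return traces

def check_conformance(traces):
    """Return (case_id, activity, problem) for every step that violates the designed order."""
    deviations = []
    for case_id, trace in traces.items():
        seen = set()
        for activity in trace:
            if activity not in REQUIRED_BEFORE:
                deviations.append((case_id, activity, "unknown activity"))
            else:
                missing = REQUIRED_BEFORE[activity] - seen
                if missing:
                    deviations.append((case_id, activity, "missing " + ", ".join(sorted(missing))))
            seen.add(activity)
    return deviations
```

A payment recorded before approval and goods receipt, for instance, surfaces as a single deviation for the auditor to investigate as part of control testing.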

5. Opportunities and Benefits

5.1. Enhanced Detection Capability

One of the main opportunities of AI in auditing is its capability to analyze entire transaction populations (rather than samples), perform sophisticated pattern recognition, and detect anomalies and exceptions that human reviewers might miss [72,75]. Empirical studies show that appropriately designed machine learning models can reveal fraud, errors, and misstatements at rates and speeds that manual methods cannot achieve [65,72].

Implications: This capability has the potential to enhance the effectiveness of auditing in terms of identifying a wider variety of issues, including subtle or low-value anomalies that aggregate to material amounts [65,75].

5.2. Expanded Audit Coverage and Population-Level Analysis

Rather than relying on representative samples, AI allows auditors to test entire populations or very high proportions of them, increasing assurance and facilitating a transition from sample-based testing to comprehensive analysis [75]. This is particularly useful for high-volume routine transactions [76].

Implications: Expanded coverage may increase audit quality by reducing the risk of missing material items, and may improve auditor efficiency by automating routine testing of low-risk populations, freeing time for higher-value, judgment-intensive work [75].

5.3. Continuous and Real-Time Monitoring

AI enables continuous auditing and monitoring systems that assess control effectiveness, transaction compliance, and risk status on a rolling basis rather than only at period-end [77,78,79]. This aligns audit assurance with real-time business cycles and decision-making [80,81].

Implications: Continuous monitoring can enable faster detection and resolution of control failures, support dynamic risk assessment, and reduce audit lag and cycle time [75,78,81].
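A rolling control check of the kind continuous monitoring relies on can be sketched as an event-by-event segregation-of-duties test. The example is illustrative only: the event structure, the `create`/`approve` action names, and the single rule (a document should not be approved by its creator) are assumptions rather than a control set drawn from the reviewed studies.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class ControlEvent:
    doc_id: str
    action: str  # hypothetical action names: "create" or "approve"
    user: str

class SoDMonitor:
    """Processes control events as they arrive and records segregation-of-duties violations."""

    def __init__(self):
        self._creators = {}  # doc_id -> user who created the document
        self.violations = []

    def process(self, event):
        if event.action == "create":
            self._creators[event.doc_id] = event.user
        elif event.action == "approve":
            creator = self._creators.get(event.doc_id)
            if creator is None:
                self.violations.append((event.doc_id, "approved without recorded creation"))
            elif creator == event.user:
                self.violations.append((event.doc_id, f"self-approval by {event.user}"))
```

Because each event is evaluated on arrival, a violation can be flagged shortly after it occurs rather than at period-end, which is the source of the reduced audit lag described above.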

5.4. Improved Efficiency and Resource Optimization

Automation of routine, data-intensive procedures (data gathering, reconciliation, document matching, and rule-based testing) reduces manual effort, errors, and audit cycle time [82]. This allows audit professionals to focus on higher-value activities, such as interpretation, judgment, and client engagement [75,81].

Evidence: Pilot projects and case studies report efficiency gains of 10–50%, with variation attributable to differences in implementation scope and baseline efficiency [81,82].

5.5. Deeper Insights and Richer Analysis

AI analysis of unstructured data (contracts, communications, and documents) reveals insights that would be impractical to obtain through time-consuming manual review [80,82]. Combined with structured data analysis, this supports a more holistic understanding of risk, control effectiveness, and management intent [75,79].

Implications: Richer analysis may allow auditors to offer more valuable advisory insights to audit committees and management [76,79].

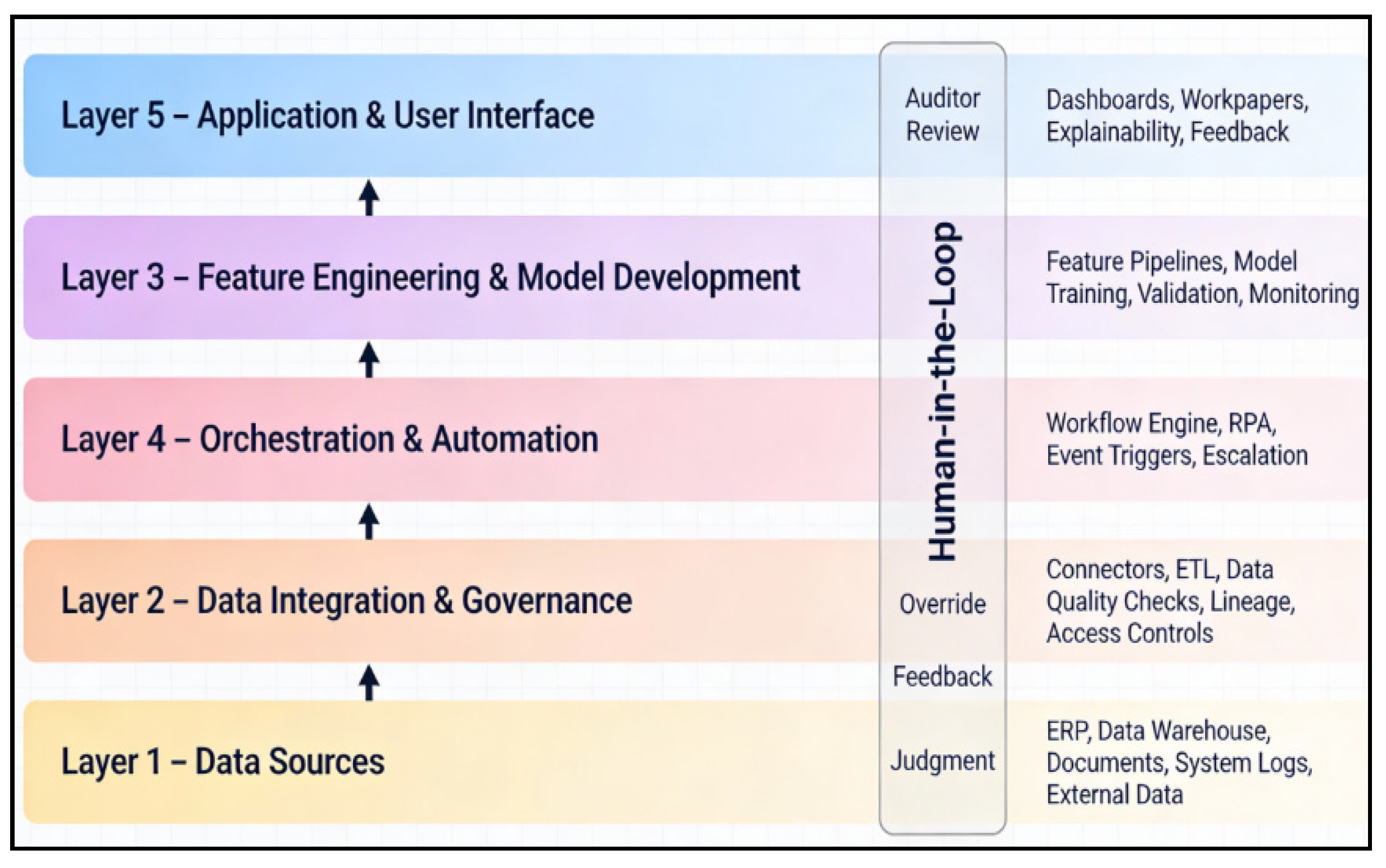

6. Overview of the Reference Architecture for AI-Enabled Audit Workflow

6.1. Conceptual Layers and Components

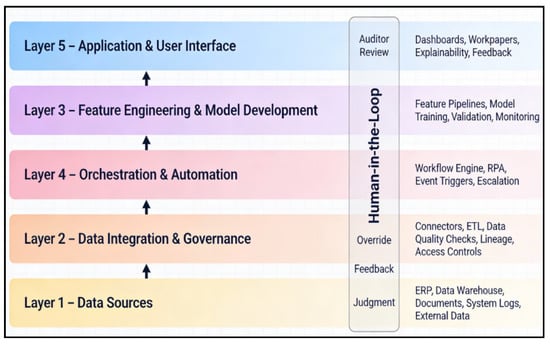

The conceptual model for the AI-enabled audit workflow comprises five major interrelated layers, each playing a distinct role in the overall process. These layers are intended to support the integration, development, and deployment of artificial intelligence (AI) models in an organized, controlled, and efficient way and, ultimately, to improve the audit process. The reference architecture for the AI-enabled audit workflow is shown in Figure 3.

Layer 1: Data Integration and Data Governance

The foundation of any AI-enabled audit system is the Data Integration and Governance layer [18,28,57]. This layer ingests, validates, and integrates structured and unstructured data from disparate sources, including ERP systems, data warehouses, logs, documents, and external data sources [66]. Its key components are data connectors and ETL (Extract, Transform, Load) pipelines that reliably extract data from multiple systems in different formats. Data quality checks and anomaly detection mechanisms are applied at the point of ingestion to flag data quality issues that could affect the analysis [73]. Data lineage and provenance tracking support the audit trail and evidence documentation, ensuring the transparency and traceability of the data [77]. Access and security controls in this layer safeguard confidential audit and client data, while data governance policies define rules for data ownership, retention, and use. This governance structure ensures that data are handled consistently, reliably, and securely, which builds trust in the entire audit process [79].
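Ingestion-time quality checks and lineage capture can be sketched together. The example assumes a CSV extract with a hypothetical four-column schema; it records a SHA-256 fingerprint of the exact bytes received so that later evidence can be traced back to an unaltered extract, and it reports missing fields, duplicate identifiers, and non-numeric amounts without silently discarding rows.

```python
import csv
import hashlib
import io

REQUIRED_FIELDS = ("entry_id", "posting_date", "account", "amount")  # hypothetical schema

def ingest(raw_bytes, source_name):
    """Validate a CSV extract and return (rows, issues, lineage record)."""
    lineage = {
        "source": source_name,
        # Fingerprint of the exact extract, so the audit trail can show the data were not altered.
        "sha256": hashlib.sha256(raw_bytes).hexdigest(),
    }
    rows = list(csv.DictReader(io.StringIO(raw_bytes.decode("utf-8"))))
    issues, seen_ids = [], set()
    for line_no, row in enumerate(rows, start=2):  # line 1 holds the header
        missing = [f for f in REQUIRED_FIELDS if not (row.get(f) or "").strip()]
        if missing:
            issues.append((line_no, "missing " + ",".join(missing)))
            continue
        if row["entry_id"] in seen_ids:
            issues.append((line_no, "duplicate entry_id"))
        seen_ids.add(row["entry_id"])
        try:
            float(row["amount"])
        except ValueError:
            issues.append((line_no, "non-numeric amount"))
    lineage["row_count"] = len(rows)
    return rows, issues, lineage
```

Returning the issue list alongside the rows, instead of dropping bad records, keeps the data-quality findings themselves available as audit evidence.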

Layer 2: Feature Engineering and AI Model Development

The second layer, Feature Engineering and AI Model Development, transforms raw data into actionable insights through AI models [25,38,53]. It includes the workflows for data transformation, feature engineering, and model training that turn raw data into predictions and classifications. Feature engineering pipelines calculate derived variables, aggregate statistics, and temporal features from raw data [46]. Machine learning models are then trained, either in a supervised manner on historical labeled data or in an unsupervised manner to discover patterns. Model architecture selection and hyperparameter tuning are performed to optimize performance and ensure accurate results. Model validation and back-testing confirm that models perform well on holdout data or historical test cases [75]. Continuous model monitoring is implemented to detect performance degradation (model drift) or data drift over time. Explainability and interpretability methods, such as SHAP (SHapley Additive exPlanations) values or Local Interpretable Model-agnostic Explanations (LIME), are incorporated so that auditors can understand why specific predictions are made, which is essential for making AI-driven decisions transparent.
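The three feature types named above (derived variables, aggregate statistics, and temporal features) can be illustrated with a small feature-engineering function. The specific features, the 08:00 to 18:00 business-hours window, and the input keys (`id`, `account`, `amount`, `posted_at` as an ISO timestamp) are assumptions chosen for illustration, not a feature set reported in the reviewed studies.

```python
from datetime import datetime
from statistics import mean

def engineer_features(entries):
    """Derive illustrative per-entry features from raw journal entries (hypothetical feature set)."""
    by_account = {}
    for e in entries:
        by_account.setdefault(e["account"], []).append(e["amount"])
    # Aggregate statistic: mean amount per account across the population.
    account_mean = {acct: mean(values) for acct, values in by_account.items()}

    features = []
    for e in entries:
        posted = datetime.fromisoformat(e["posted_at"])
        m = account_mean[e["account"]]
        features.append({
            "id": e["id"],
            "amount_to_account_mean": e["amount"] / m if m else 0.0,
            "is_weekend": posted.weekday() >= 5,          # temporal: Saturday=5, Sunday=6
            "is_after_hours": not 8 <= posted.hour < 18,  # temporal: assumed business hours
            "is_round_amount": e["amount"] % 1000 == 0,   # derived variable
        })
    return features
```

Features of this kind are simple enough to explain to auditors, which also makes downstream explanations (for example, SHAP attributions) easier to interpret.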

Layer 3: Orchestration and Intelligent Automation

The third layer, Orchestration and Intelligent Automation, manages the execution of AI models and automated processes within audit procedures [45,55]. It coordinates robotic process automation (RPA) bots, workflow engines, and API-based services, triggering AI models and automation throughout the audit process [59]. Its components include a workflow engine that orchestrates multi-step audit procedures, coordinating data extraction, model inference, result interpretation, and escalation [81]. RPA bots automate routine tasks, improving efficiency and reducing manual errors. An event-driven architecture triggers AI models at specific points in the audit process, such as when new transactions are posted or when substantive testing begins. Exception handling and escalation rules route high-priority items or unexpected results to human reviewers for further investigation. Audit trail logging is also a key element of this layer, recording all automated actions and AI decisions for later review to support transparency and accountability.
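A minimal sketch of the event-driven pattern follows: each new transaction triggers scoring, high-risk items and model failures are escalated to a review queue, and every step is written to an append-only log. The `score_fn` callable stands in for a deployed model and is hypothetical, as is the 0.8 escalation threshold; a production workflow engine would add persistence, retries, and access control.

```python
import json
from datetime import datetime, timezone

class AuditOrchestrator:
    """Event-driven step: score each new transaction, escalate high-risk items, log every action."""

    def __init__(self, score_fn, escalation_threshold=0.8):
        self.score_fn = score_fn                # hypothetical deployed model: txn -> risk score in [0, 1]
        self.threshold = escalation_threshold  # assumed cut-off, set by the engagement team
        self.audit_log = []                     # append-only JSON records of automated actions
        self.review_queue = []                  # transaction ids awaiting human review

    def _log(self, action, txn_id, **details):
        record = {"time": datetime.now(timezone.utc).isoformat(), "action": action, "txn_id": txn_id}
        record.update(details)
        self.audit_log.append(json.dumps(record, sort_keys=True))

    def on_new_transaction(self, txn):
        try:
            score = self.score_fn(txn)
        except Exception as exc:
            # Model failure: route to a human instead of silently dropping the item.
            self._log("model_error", txn["id"], error=str(exc))
            self.review_queue.append(txn["id"])
            return
        self._log("scored", txn["id"], score=score)
        if score >= self.threshold:
            self.review_queue.append(txn["id"])
            self._log("escalated", txn["id"], reason="score at or above threshold")
```

Treating a model error as an escalation, rather than as a pass, is a deliberate design choice: an automated step that fails should never reduce the evidence presented to the auditor.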

Layer 4: Application and User Interface

The fourth layer, Application and User Interface, provides auditors with tools to interact with AI outputs, conduct in-depth investigations, and feed their findings back into model learning [32]. This layer provides dashboards and visualizations, including risk heatmaps, anomaly profiles, control status, and key findings, to inform auditors and other decision-makers [33,36]. Workpaper integration brings AI-generated summaries, alerts, and evidence directly into the audit documentation process. Explainability interfaces help auditors understand why particular transactions or accounts were flagged by displaying model confidence scores and the factors that contributed to each flag. Feedback mechanisms allow auditors to mark false positives or false negatives and to select cases for model retraining [50,72]. Together, these interfaces help auditors interact effectively with AI models and improve the efficiency and accuracy of the audit process.
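The feedback mechanism can be sketched as a summary of auditor dispositions on AI alerts. This is a simplified illustration: the `disposition` values and alert structure are hypothetical, and because it only covers items the model alerted on, it measures precision and collects labeled examples but cannot capture false negatives, which require auditors to flag unalerted items separately.

```python
from collections import Counter

def summarize_feedback(alerts):
    """Summarize auditor dispositions on AI alerts and collect labeled retraining examples.

    Each alert is a dict with hypothetical keys 'features' and 'disposition', where the
    auditor sets disposition to 'true_positive', 'false_positive', or 'pending'.
    """
    counts = Counter(alert["disposition"] for alert in alerts)
    reviewed = counts["true_positive"] + counts["false_positive"]
    precision = counts["true_positive"] / reviewed if reviewed else None
    # Reviewed alerts become (features, is_anomaly) pairs for the next training cycle.
    retraining_set = [
        (alert["features"], alert["disposition"] == "true_positive")
        for alert in alerts
        if alert["disposition"] != "pending"
    ]
    return {
        "reviewed": reviewed,
        "pending": counts["pending"],
        "precision": precision,
        "retraining_set": retraining_set,
    }
```

Tracking alert precision over time also gives the governance layer a concrete indicator of whether the false-positive burden on auditors is falling after retraining.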

Layer 5: Governance, Compliance, and Security

The Governance, Compliance, and Security layer provides oversight and control mechanisms throughout the operation of AI systems to ensure that they function reliably, ethically, and in compliance with relevant audit standards and regulations [2,5,6]. This includes model governance policies that span the life cycle of AI models, from development and validation to deployment and retirement. Change management and version control processes track changes to models and help ensure that they remain reliable over time [21,23]. Performance monitoring and KPI dashboards track model accuracy, coverage, and compliance with service level agreements (SLAs). Bias and fairness assessments are conducted to detect and mitigate discriminatory outcomes from AI models [39]. Regulatory compliance checks are built into this layer to ensure that professional standards and regulations (such as ISA, PCAOB standards, and SEC rules) and data protection laws (such as the GDPR) are met. Security controls, such as data encryption, authentication, and information protection, are also essential. Incident response procedures address potential model failures, data breaches, or anomalous AI behavior to safeguard the integrity of the audit process [40,80]. Finally, documentation and evidence management procedures are developed to meet audit quality requirements and regulatory expectations, so that all AI-driven decisions are tracked and validated.
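Life-cycle governance and version control can be illustrated with a small model registry. The sketch assumes a hypothetical transition policy (development, validation, deployment, retirement) and derives each version identifier from a hash of the model configuration, so any configuration change produces a new, separately governed version; it is not modeled on any specific registry product.

```python
import hashlib
import json

# Hypothetical transition policy mirroring the model life cycle described above.
TRANSITIONS = {
    "development": {"validation"},
    "validation": {"development", "deployment"},
    "deployment": {"retirement"},
    "retirement": set(),
}

class ModelRegistry:
    def __init__(self):
        self.models = {}   # version id -> {"config": ..., "stage": ...}
        self.history = []  # (version id, from stage, to stage, approver)

    def register(self, name, config):
        # The version id is derived from the exact configuration, so any change yields a new version.
        digest = hashlib.sha256(json.dumps(config, sort_keys=True).encode()).hexdigest()[:12]
        version_id = f"{name}@{digest}"
        self.models.setdefault(version_id, {"config": config, "stage": "development"})
        return version_id

    def promote(self, version_id, new_stage, approver):
        """Move a model to a new stage if the policy allows it, recording who approved the change."""
        record = self.models[version_id]
        if new_stage not in TRANSITIONS[record["stage"]]:
            raise ValueError(f"{record['stage']} -> {new_stage} not permitted")
        self.history.append((version_id, record["stage"], new_stage, approver))
        record["stage"] = new_stage
```

The approval history doubles as documentation evidence: it shows which version was in use at any point and who authorized each change.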

Together, these five layers form an AI-enabled audit system intended to improve the efficiency and reliability of the audit process while ensuring compliance and transparency.

6.2. Human-in-the-Loop Design and Professional Judgment

A common theme in the literature is the emphasis on maintaining human judgment, auditor responsibility, and professional skepticism in audits that use AI-enabled tools. To ensure that these elements are maintained, reference architectures for AI-assisted auditing should account for human-in-the-loop (HITL) designs, in which auditors are actively involved in major decision-making processes. The following are the critical aspects of these frameworks:

- Review and Interpret AI Outputs: Auditors must review flagged transactions, anomaly explanations, and AI-generated risk scores before drawing conclusions, so that they retain responsibility for professional judgment and decision-making [2,6,25,36].

- Override AI Recommendations: Where an AI recommendation conflicts with an auditor’s judgment based on other relevant information, auditors may reject the AI conclusion and document their reasons to preserve accountability [2,5,39].

- Provide Feedback for Model Improvement: Auditors can provide feedback, such as annotated examples and flagged misclassifications, and work with data scientists to improve model behavior and accuracy [16,61].

- Maintain Zones of Judgment: Some audit tasks remain fully manual because they require deep contextual understanding, professional skepticism, or judgment that is unsuited to automation [21,36,39].

The reference architecture, as shown in Figure 4, should specify which tasks are automated (rules-based, low risk), augmented (AI recommendations validated by auditors), and manual (requiring the auditor’s professional judgment), ensuring accountability while avoiding excessive reliance on AI.

Figure 4.

Reference architecture for AI-enabled audit workflow.

Human-in-the-loop mechanisms set explicit boundaries between automated analysis and professional judgment. AI systems produce risk scores, anomaly flags, and summaries, but auditors retain the authority to accept, override, and document conclusions. All overrides must be logged with a rationale to preserve accountability. Tasks that involve contextual reasoning, ethical assessment, or materiality determinations remain unautomated. This allocation maintains adherence to auditing standards while allowing AI assistance to scale.
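The rule that every override must carry a documented rationale can be enforced in the decision record itself. The sketch below is a minimal illustration with hypothetical field names and conclusion labels (for example `"exception"` and `"no_exception"`); it rejects an override without a written rationale while recording accepted AI recommendations as they are.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

@dataclass(frozen=True)
class Decision:
    item_id: str
    ai_recommendation: str   # hypothetical labels, e.g. "exception" or "no_exception"
    auditor_conclusion: str
    auditor: str
    rationale: str = ""
    timestamp: str = field(default_factory=lambda: datetime.now(timezone.utc).isoformat())

    @property
    def is_override(self) -> bool:
        return self.auditor_conclusion != self.ai_recommendation

def record_decision(log: list, decision: Decision) -> None:
    """Append an auditor decision; overrides without a written rationale are rejected."""
    if decision.is_override and not decision.rationale.strip():
        raise ValueError(f"override of {decision.item_id} requires a documented rationale")
    log.append(decision)
```

The auditor, not the model, supplies the final conclusion in every record, so the log documents where professional judgment departed from the AI output and why.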

6.3. Conceptual Validation of the Proposed Architecture

To assess the conceptual validity of the proposed reference architecture, the 100 studies selected for this systematic review were synthesized systematically. The workflow components and architectural layers were derived by identifying recurring design patterns, system elements, and integrations reported in empirical studies, design science research, and professional reports.

Validation was conducted by cross-comparing the architectural elements reported in the literature, such as data integration pipelines, model development practices, workflow orchestration mechanisms, user interaction interfaces, and governance controls. The recurrence of consistent architectural patterns across studies supports the proposed layered architecture. The design was also assessed for logical consistency with general auditing standards and professional requirements, particularly those concerning audit evidence, documentation, professional judgment, and human oversight. However, the proposed architecture is a conceptual reference model grounded in current research and professional practice, not an implemented system. Future research could evaluate it empirically through case studies and practical implementations.

7. Challenges and Implementation Barriers

The implementation of artificial intelligence (AI) in auditing can deliver significant benefits. However, its practical application is restricted by various interrelated challenges. These barriers span data availability and quality, technical limitations of models, organizational and human factors, and regulatory and governance considerations. Understanding these challenges is crucial for developing realistic, responsible, and effective AI-enabled audit frameworks.

7.1. Data-Related Challenges

Data quality and completeness remain fundamental issues in AI-driven auditing. Machine learning models are sensitive to the quality of input data, and problems such as incomplete records, missing values, inconsistent data definitions across systems, and incorrect data entries can significantly degrade model performance and introduce systematic bias [83,84]. Many organizations lack mature data governance structures, standardized data taxonomies, and effective data stewardship practices, which makes it difficult to achieve the level of data reliability that AI applications in auditing require [85,86,87].

Another important issue is the lack of labeled data. Supervised machine learning methods for detecting fraud or material misstatements depend on labeled examples of confirmed fraud or error. In practice, such data are scarce and highly imbalanced, because most transactions are legitimate and only a small percentage of fraud cases are identified and confirmed [87,88]. This scarcity constrains model training, validation, and performance benchmarking, thereby limiting trust in AI results [89].
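A short worked example shows why class imbalance complicates validation and benchmarking. Using a hypothetical population of 10,000 transactions containing 20 confirmed frauds (illustrative numbers, not data from the reviewed studies), a "model" that labels every transaction as legitimate achieves 99.8% accuracy while detecting no fraud at all, so accuracy alone is a misleading benchmark.

```python
def evaluate(y_true, y_pred):
    """Return (accuracy, recall, precision) for boolean labels where True means fraud."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t and p)
    fn = sum(1 for t, p in pairs if t and not p)
    fp = sum(1 for t, p in pairs if not t and p)
    accuracy = sum(1 for t, p in pairs if t == p) / len(pairs)
    recall = tp / (tp + fn) if tp + fn else 0.0
    precision = tp / (tp + fp) if tp + fp else 0.0
    return accuracy, recall, precision

# Hypothetical population: 10,000 transactions, of which 20 are confirmed fraud.
y_true = [True] * 20 + [False] * 9_980
always_legit = [False] * 10_000  # trivial model that never flags anything

accuracy, recall, precision = evaluate(y_true, always_legit)
# accuracy is 0.998, yet recall is 0.0: no fraud case is detected.
```

This is why studies evaluating fraud models on imbalanced audit data typically report recall, precision, or related measures instead of raw accuracy.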

System integration and data access further complicate AI deployment. Many audit clients have fragmented IT environments with legacy systems, siloed databases, and poor integration of financial, operational, and transactional data sources [89,90]. Restricted data access, inconsistent data formats, and manual data extraction add considerable effort to data preparation, which compromises the scalability and efficiency of AI-based audit procedures [90,91].

7.2. Model and Technical Challenges

A significant technical challenge is model explainability and interpretability. Advanced AI models, especially deep neural networks and ensemble techniques, can act as “black boxes” that produce accurate predictions without an interpretable rationale [91,92]. In auditing, where auditors, regulators, and stakeholders require transparent justification for flagged transactions or assessed risk levels, this opacity undermines trust and complicates audit documentation and review [92,93].

Monitoring of model performance and drift is another issue of concern. AI models trained on past data can suffer from performance degradation when the underlying data distributions shift owing to changes in business practices, economic conditions, or fraud tactics [93]. Continuous monitoring, drift detection mechanisms, and structured retraining processes are therefore necessary; however, many organizations do not yet possess the infrastructure and/or governance maturity to implement them effectively [94,95].
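One widely used drift measure is the Population Stability Index (PSI), which compares the binned distribution of a variable in the training baseline with its distribution in current data: PSI = Σᵢ (aᵢ − eᵢ) ln(aᵢ / eᵢ), where eᵢ and aᵢ are the baseline and current proportions in bin i. The sketch below is one possible drift check, not a method prescribed by the reviewed studies; the bin edges are assumptions, and the 0.1 and 0.25 thresholds are an industry rule of thumb rather than an auditing standard.

```python
import bisect
import math

def psi(expected, actual, edges, eps=1e-6):
    """Population Stability Index between a baseline sample and a current sample.

    edges: ascending bin boundaries. Values below edges[0] fall in the first bin and
    values at or above edges[-1] fall in the last bin. eps guards against log(0) for empty bins.
    """
    def proportions(values):
        counts = [0] * (len(edges) + 1)
        for v in values:
            counts[bisect.bisect_right(edges, v)] += 1
        return [max(c / len(values), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))

def drift_status(value):
    # Common rule of thumb (an assumption here, not an auditing standard).
    if value < 0.1:
        return "stable"
    if value <= 0.25:
        return "moderate shift"
    return "significant shift"
```

For example, if the share of transactions in the lowest amount bin falls from 50% to 20% while the share in the highest bin rises from 10% to 40%, PSI equals 0.3 ln 10 (about 0.69), signalling that the model should be reviewed and possibly retrained.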

Additionally, generalization across contexts is limited. Models trained on data from one organization, industry, or audit domain often do not transfer well to other environments [95], which impedes the reuse of AI solutions and increases the cost and effort of customizing them for specific settings. Concerns about robustness and adversarial manipulation make deployment even more challenging, as fraudsters can intentionally change their behavior to evade AI-based detection systems [96].

7.3. Organizational and Human Issues

Beyond technical issues, organizational factors and change management are important for AI adoption. Successful implementation requires cultural change, redesign of audit processes, and long-term investment in infrastructure and talent, which many audit organizations find difficult [55,61,82]. Resistance from audit professionals, fear of job displacement, and reliance on established methods can slow or block adoption [83,85,88].

A deficit in skills and competencies is also a significant obstacle. Effective AI-enabled auditing depends on close cooperation among auditors, data scientists, and IT professionals, but many audit firms lack in-house data science expertise or structured programs that build auditors' AI literacy and their ability to evaluate AI outputs critically [83,91,92]. Developing auditors who can exploit AI tools while maintaining professional skepticism is a significant training challenge [91,92,94].

Furthermore, integrating AI with existing audit methodologies remains complex. AI tools must be consistent with existing audit standards, workpaper systems, and quality control processes. This requires updating audit methodology guidance to specify appropriate use cases and to formally incorporate AI-assisted procedures.

7.4. Regulatory, Compliance, and Governance Issues

Regulatory expectations and guidance on the use of AI in auditing are evolving but remain fragmented. Regulators and standard-setters have begun to pay closer attention to AI-assisted audits, yet uncertainty persists about what audit evidence is acceptable, how reliance on AI should be documented, and which validation criteria will apply [82,86].

Issues of professional responsibility and accountability are closely related. When AI systems produce biased or erroneous outputs, it is unclear whether the auditor, the audit firm, or the AI vendor is responsible [94,95]. Existing professional standards and liability frameworks have not yet been fully developed to address AI-based decision-making.

Ethical considerations and data protection add further complexity. AI models can reproduce historical biases embedded in their training data, leading to discriminatory outcomes that compromise fairness and trust [95,96]. Moreover, the use of sensitive financial and personal data requires strict adherence to data protection rules (e.g., the GDPR and local privacy laws) [88,92].

7.5. AI Governance and Quality Assurance

Proper model governance and lifecycle management are essential for the effective use of AI in auditing. Organizations need to document model assumptions, track and update versions, and decommission obsolete models to avoid uncontrolled “model sprawl” and ensure accountability [93,95,97]. However, many audit organizations lack mature governance frameworks to support these activities [87,98].

Finally, validation, testing, and incident management are crucial for audit quality. AI systems must be rigorously validated through back-testing, sensitivity analysis, and stress testing against adverse conditions to comply with professional auditing standards [80,84,99,100]. Continuous monitoring and incident response procedures should be implemented to identify failures, data-related problems, or unexpected behaviors and to maintain trust in AI-aided auditing [91,93].

7.6. Risks of Artificial Intelligence in Auditing

Although the opportunities that artificial intelligence offers are substantial, applying AI in auditing also entails several risks that must be addressed effectively.

One significant risk stems from dependence on algorithms and data. AI models trained on historical audit data can reproduce existing biases or errors, resulting in misleading risk assessments or incorrect audit findings. Another significant issue is the lack of transparency of complex AI models. Black-box algorithms may produce accurate predictions while offering little explanation, making it difficult for auditors to justify their conclusions to regulators and other stakeholders. Moreover, AI-driven auditing heightens data protection and cybersecurity risks, because large volumes of personal financial and organizational information must be collected, analyzed, and stored digitally. Excessive reliance on automated decision systems also poses a professional risk: overreliance on AI tools can undermine professional judgment if auditors accept automated results without adequate skepticism. Lastly, regulatory uncertainty is a considerable risk, since auditing standards and legal frameworks are still adapting to the use of artificial intelligence in assurance services.

7.7. Summary of Research Question Findings

The results address each of the four research questions developed in Section 1.2.

RQ1: AI Use in the Audit Process: The review indicates that artificial intelligence is used in every phase of the audit process, including planning, risk assessment, control testing, substantive procedures, and reporting. Machine learning techniques are most commonly used for anomaly detection and predictive risk assessment, natural language processing for document analysis, and robotic process automation (RPA) for workflow automation.

Referenced Section for RQ1: Section 3.1 and Section 4 (AI application across audit phases).

RQ2: AI Architectural Integration: The findings suggest that AI is integrated through common system structures comprising data assimilation, model development, coordination, application interfaces, and control features. The human-in-the-loop systems are necessary to ensure auditor supervision and professional judgment.

Referenced Section for RQ2: Section 6.1 (AI system architecture).
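The human-in-the-loop control described under RQ2 can be illustrated with a small routing gate placed between model outputs and auditor-facing applications. This is a hypothetical sketch, not the reference architecture proposed in this review: `ModelOutput`, `HitlGate`, the two routes, and the 0.7 risk and 0.8 confidence thresholds are all assumptions. Every item still reaches an auditor; the gate only decides whether it is reviewed individually or signed off in a batch, and it logs each decision to an audit trail.

```python
from dataclasses import dataclass, field
from enum import Enum


class Route(Enum):
    INDIVIDUAL_REVIEW = "individual_review"  # auditor examines the item on its own
    BATCH_SIGNOFF = "batch_signoff"          # auditor signs off low-risk items together


@dataclass
class ModelOutput:
    item_id: str
    risk_score: float  # hypothetical model risk score in [0, 1]
    confidence: float  # hypothetical model confidence in [0, 1]


@dataclass
class HitlGate:
    risk_threshold: float = 0.7   # assumed cut-off for high-risk items
    min_confidence: float = 0.8   # below this, the model output is not relied on
    audit_trail: list = field(default_factory=list)

    def route(self, out: ModelOutput) -> Route:
        # High-risk OR low-confidence outputs always go to individual review.
        if out.risk_score >= self.risk_threshold or out.confidence < self.min_confidence:
            decision = Route.INDIVIDUAL_REVIEW
        else:
            decision = Route.BATCH_SIGNOFF
        # Log the routing decision so a reviewer can reconstruct it later.
        self.audit_trail.append(
            {"item": out.item_id, "risk": out.risk_score,
             "confidence": out.confidence, "route": decision.value}
        )
        return decision

    def record_auditor_conclusion(self, item_id: str, auditor: str, conclusion: str) -> None:
        # The auditor's judgment is logged alongside, not replaced by, the model output.
        self.audit_trail.append({"item": item_id, "auditor": auditor, "conclusion": conclusion})


gate = HitlGate()
routes = [
    gate.route(ModelOutput("JE-1", risk_score=0.92, confidence=0.95)),
    gate.route(ModelOutput("JE-2", risk_score=0.15, confidence=0.55)),
    gate.route(ModelOutput("JE-3", risk_score=0.15, confidence=0.97)),
]
print([r.value for r in routes])
# ['individual_review', 'individual_review', 'batch_signoff']
gate.record_auditor_conclusion("JE-1", auditor="reviewer_01", conclusion="explained: year-end accrual")
```

Routing low-confidence outputs to individual review, even when the risk score is low, reflects the concern that auditors should not accept automated results without scrutiny.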

RQ3: Effect on Audit Performance: Empirical evidence indicates that AI can improve audit effectiveness and efficiency by enabling population-level testing, better anomaly detection, and automated data processing. However, the magnitude of the improvement depends heavily on data quality and organizational maturity.

Referenced Section for RQ3: Section 4.1 (Empirical data on AI impact).

RQ4: Implementation Barriers: The primary obstacles to AI adoption in auditing are poor data quality, insufficient technical expertise, integration difficulties, and regulatory uncertainty. Governance issues further deter broad implementation.

Referenced Section for RQ4: Section 6.3 (Barriers to AI adoption).

8. Discussion and Future Research

8.1. Research Gaps

The existing literature shows that AI in auditing is ripe for further research, particularly on real-world impact and on comparisons between AI methods. A major concern is the lack of longitudinal studies on the long-term consequences of AI tools for audit quality, efficiency, and judgment; because many studies are confined to short-term, controlled settings, the sustained impact of AI remains poorly understood. There is also little research comparing the effectiveness of different AI approaches, such as competing anomaly detection algorithms or natural language processing techniques, across audit tasks. The organizational and behavioral aspects of AI adoption, such as how auditors interact with AI recommendations, are likewise underexplored. Finally, the absence of regulatory and standardization frameworks acts as a barrier to AI integration, and audit standard-setting bodies need to develop guidelines for AI validation and documentation to overcome it.

8.2. Future Research Directions

Future studies should address the gaps identified in this review to support the further development and implementation of AI in auditing. One promising area is Explainable AI (XAI), which is essential for building trust among auditors and stakeholders while preserving high accuracy. Techniques such as few-shot learning, transfer learning, and federated learning should be investigated to improve model adaptability while preserving data privacy, as they can help AI systems perform well with limited data and in decentralized environments. In addition, incorporating reinforcement learning and causal inference into audit processes could improve decision-making and process optimization, resulting in more efficient audits. This study also calls for dedicated governance frameworks for AI in auditing, aligned with professional standards and ethical guidelines, to ensure that AI systems are transparent and fair. Auditors' AI literacy also needs to be developed, and professional responsibility frameworks amended, to support the ethical, legal, and effective deployment of AI. Future work should further prioritize longitudinal research on the long-term effects of AI on auditing, comparative research to identify which AI methods work best, and more advanced XAI methods. If these areas are addressed, AI could contribute significantly to audit quality and efficiency and add value for stakeholders in a data-rich, digital business environment.

9. Conclusions

This research makes several new contributions to the literature on artificial intelligence in auditing. First, through a systematic analysis of 100 studies, it provides an overview of AI applications throughout the audit process. Second, it proposes a reference architecture in which AI is incorporated into the audit process through well-defined functional layers. Third, it synthesizes existing empirical evidence on the effect of AI on audit quality, efficiency, and effectiveness. Theoretically, the work contributes a workflow perspective on AI implementation to auditing research. In practice, the proposed architecture and taxonomy offer guidance to audit firms, system developers, and standard-setters interested in deploying AI-based auditing solutions. Academically, the synthesis provides a foundation for further empirical studies.

Overall, this research synthesizes ten years of studies on AI-assisted auditing and offers a system-level view of how AI can be used in the audit process. By mapping AI methods to the phases of the audit process and deriving a reference architecture from empirical evidence, this study moves beyond isolated cases toward a holistic design of audit systems. The findings reveal that the efficacy of AI in auditing depends far more on governance, information standards, and human supervision than on the choice of algorithm. The proposed reference architecture includes key components such as data integration, feature engineering, and human-in-the-loop supervision, and positions them as central to the responsible adoption of AI in auditing. Successful integration of AI requires overcoming technical, organizational, regulatory, and governance hurdles. To achieve this, organizations should prioritize building robust data governance foundations, implementing comprehensive AI frameworks, and providing auditors with targeted AI training.

This research also has limitations that should be considered when interpreting its findings. First, the review is confined to English-language studies published between 2015 and 2025, so it may miss relevant research published in other languages or before 2015. Second, it relies mainly on published scholarly literature and selected professional reports, which may not fully represent the proprietary AI applications used in audit firms. Third, the proposed reference architecture is a conceptual model derived from the literature and has not yet been empirically validated in practice. Finally, because AI technologies evolve rapidly, some findings may be superseded as new methods and applications emerge.

Supplementary Materials

The following supporting information can be downloaded at https://www.mdpi.com/article/10.3390/accountaudit2010004/s1, File S1: Extended PRISMA checklist (See Ref. [101]); File S2: Full list of 100 reviewed articles with extraction details; Table S1: Summary of characteristics of included studies (N = 100).

Author Contributions

Conceptualization, A.A.; methodology, A.A. and M.O.A.; validation, A.A. and M.O.A.; formal analysis, A.A. and M.O.A.; investigation, A.A. and M.O.A.; writing—original draft preparation, A.A. and M.O.A.; writing—review and editing, A.A. and M.O.A.; visualization, A.A.; supervision, M.O.A. All authors have read and agreed to the published version of the manuscript.

Funding

This research received no external funding.

Institutional Review Board Statement

Not applicable.

Informed Consent Statement

Not applicable.

Data Availability Statement

The original contributions presented in this study are included in the article. Further inquiries can be directed to the corresponding author.

Acknowledgments

All figures/tables were created by the authors—no GenAI was used. The authors have reviewed and edited the output and take full responsibility for the content of this publication.

Conflicts of Interest

Author Ashif Anwar was employed by Wolters Kluwer United States Inc. The remaining authors declare that the research was conducted in the absence of any commercial or financial relationships that could be construed as a potential conflict of interest.

Abbreviations

| Term | Full Form | Term | Full Form |
| --- | --- | --- | --- |
| AI | Artificial Intelligence | GDPR | General Data Protection Regulation |
| ML | Machine Learning | PCAOB | Public Company Accounting Oversight Board |
| NLP | Natural Language Processing | IAASB | International Auditing and Assurance Standards Board |
| RPA | Robotic Process Automation | LSTM | Long Short-Term Memory |
| HITL | Human-in-the-loop | XGBoost | Extreme Gradient Boosting |
| XAI | Explainable AI | RF | Random Forest |
| ERP | Enterprise Resource Planning | Autoencoders | A type of artificial neural network used for unsupervised learning |
| RPA Bots | Robotic Process Automation Bots | KPI | Key Performance Indicators |
| CLV | Customer Lifetime Value | PRISMA | Preferred Reporting Items for Systematic Reviews and Meta-Analyses |
| SLAs | Service Level Agreements | AML | Anti-Money Laundering |

References

- Adelakun, B.O.; Fatogun, D.T.; Majekodunmi, T.G.; Adediran, G.A. Integrating machine learning algorithms into audit processes: Benefits and challenges. Financ. Account. Res. J. 2024, 6, 1000–1016.

- Kokina, J.; Blanchette, S.; Davenport, T.H.; Pachamanova, D. Challenges and opportunities for artificial intelligence in auditing: Evidence from the field. Int. J. Account. Inf. Syst. 2025, 56, 100734.

- Pérez-Calderón, E.; Alrahamneh, S.A.; Montero, P.M. Impact of artificial intelligence on auditing: An evaluation from the profession in Jordan. Discov. Sustain. 2025, 6, 251.

- Chen, S.; Yang, J. Intelligent manufacturing, auditor selection and audit quality. Manag. Decis. 2025, 63, 964–997.

- Al-Omush, A.; Almasarwah, A.; Al-Wreikat, A. Artificial intelligence in financial auditing: Redefining accuracy and transparency in assurance services. EDPACS 2025, 70, 1–20.

- Leocádio, D.; Reis, J.; Malheiro, L. Trends and Challenges in Auditing and Internal Control: A Systematic Literature Review. 2024. Available online: https://ssrn.com/abstract=4872558 (accessed on 8 January 2026).

- Almufadda, G.; Almezeini, N.A. Artificial Intelligence Applications in the Auditing Profession: A Literature Review. J. Emerg. Technol. Account. 2022, 19, 29–42.

- Lontsi, U.J.S.; Ektik, D. Auditors’ opinion about AI and the impact of AI on audit quality: A study on qualified auditors in Africa. Siirt Sos. Araştırmalar Derg. 2025, 4, 1–17.

- Ham, C.; Hann, R.N.; Rabier, M.; Wang, W. Auditor Skill Demands and Audit Quality: Evidence from Job Postings. Manag. Sci. 2025, 71, 5805–5829.

- Shazly, M.A.; AbdElAlim, K.; Zakaria, H. The Impact of Artificial Intelligence on Audit Quality. In Technological Horizons; Emerald Publishing Limited: Leeds, UK, 2025; pp. 1–10.

- Khayoon, D.F.; Hawi, H.T.; Abdullh, L.Q. The Impact of Using Artificial Intelligence Technology (Expert Systems) on Audit Quality. In Proceedings of the 2025 XXVIII International Conference on Soft Computing and Measurements (SCM), Saint Petersburg, Russia, 28–30 May 2025; IEEE: Piscataway, NJ, USA, 2025; pp. 266–273.

- Awad, K.A.; Ali, W.A.M. Utilizing Robotic Process Automation and Artificial Intelligence in Auditing to Mitigate Audit Risks. Technol. Soc. Sci. J. 2024, 66, 1–14.

- Afroze, D.; Aulad, A. Perception of Professional Accountants about the Application of Artificial Intelligence (AI) in Auditing Industry of Bangladesh. J. Soc. Econ. Res. 2020, 7, 51–61.

- Chen, X.; Guo, Y. Does the executive pay gap affect audit risk? BCP Bus. Manag. 2023, 49, 308–317.

- Suyono, W.P.; Puspa, E.S.; Anugrah, S.; Firnanda, R. Artificial Intelligence in Auditing: A Systematic Review of Tools, Applications, and Challenges. RIGGS J. Artif. Intell. Digit. Bus. 2025, 4, 3393–3401.

- Yuan, T.; Zhang, X.; Chen, X. Machine learning based enterprise financial audit framework and high-risk identification. arXiv 2025, arXiv:2507.06266.

- Saidi, J.; Sari, R.N.; Hendriani, S.; Machasin, M.; Savitri, E.; Efni, Y.; Iznillah, M.L. The Role of Audit Report Readability in Linking Management Characteristics to Corporate Sustainability Performance: A Study of Indonesian SOEs. Qubahan Acad. J. 2025, 5, 61–83.

- Abu Huson, Y.; García, L.S.; Benau, M.A.G.; Aljawarneh, N.M. Cloud-based artificial intelligence and audit report: The mediating role of the auditor. VINE J. Inf. Knowl. Manag. Syst. 2025, 55, 1553–1574.

- Abu Huson, Y.; Sierra-García, L.; Garcia-Benau, M.A. A bibliometric review of information technology, artificial intelligence, and blockchain on auditing. Total. Qual. Manag. Bus. Excel. 2024, 35, 91–113.

- Alareeni, B.; Hamdan, A. The Impact of Artificial Intelligence on Accounting and Auditing in Light of the COVID-19 Pandemic. In Artificial Intelligence and COVID Effect on Accounting; Springer Nature: Singapore, 2022; pp. 3–7.

- Ismail, I.H.M. A Systematic Literature Review of the External Audit Reliance Issues. Pak. J. Life Soc. Sci. (PJLSS) 2024, 22, 7115–7135.

- Aslan, L. The evolving competencies of the public auditor and the future of public sector auditing. In Contemporary Studies in Economic and Financial Analysis; Emerald Publishing Limited: Leeds, UK, 2021; pp. 113–129.

- Benhayoun, I.; Bougrine, S.; Sassioui, A. Readiness for artificial intelligence adoption by auditors in emerging countries—A PLS-SEM analysis of Moroccan firms. J. Financ. Rep. Account. 2025, 23, 1486–1508.

- Choi, S.U.; Lee, K.C.; Na, H.J. Exploring the deep neural network model’s potential to estimate abnormal audit fees. Manag. Decis. 2022, 60, 3304–3323.