Abstract

The article presents an overview of several studies in the field of Brain–Computer Interfaces (BCIs), the requirements for the architecture of such promising devices, and a multi-modal BCI for drone control in a smart-city environment. Distinctive features of the proposed solution are the simplicity of the architecture (a single smartphone both receives and processes bio-signals from the headset and transmits commands to the drone), an open-source software solution for signal processing and for generating and sending commands to the unmanned aerial vehicle (UAV), and the multimodality of the BCI (the use of both electroencephalographic (EEG) and electrooculographic (EOG) signals of the operator). For bio-signal acquisition, we used the NeuroSky Mindwave Mobile 2 headset, which is connected to an Android-based smartphone via Bluetooth. The developed Android application (Tello NeuroSky) processes signals from the headset and generates and transmits commands to the DJI Tello UAV via Wi-Fi. The decrease (depression) and increase of α- and β-rhythms of the brain, as well as EOG signals that occur during blinking, were the triggers for UAV commands. The developed software allows the manual setting of the minimum, maximum, and threshold values for the processed bio-signals. The following commands for the UAV were implemented: take-off, landing, forward movement, and backward movement. To increase performance, the software uses two threads of the smartphone’s central processing unit (CPU): one for signal processing (1-D Daubechies 2 (db2) wavelet transform) and updating data on the diagrams, and one for generating and transmitting commands to the drone.

1. Introduction

The current concept of a smart city assumes increasing autonomy of unmanned vehicles (UVs), as well as growth in the amount of data generated and transmitted. Decisions on changing the trajectory and modes of movement will be made either by the UVs themselves or by traffic control centers. The operators in these centers will monitor and control the current road and air situation. In addition, in the case of emergencies, the operators will be able to take control of one or more UVs to prevent road or air incidents. The rapid development of BCI-based control of robotics, UAVs, and other objects (including in smart environments [1,2,3,4]) suggests that this way of controlling UVs could be implemented in traffic control centers, including as a backup option. UV control using BCI has the potential to reduce the time needed to transmit commands, as well as to enable simultaneous control of multiple vehicles by one operator. Thus, the problem of developing methods, techniques, algorithms, and software for the control of UVs in smart cities using BCI is relevant. It should be noted that this paper focuses exclusively on the control of aerial objects (UAVs), but the given solution can be adapted for other similar objects, including ground and surface UVs.

It is customary to allocate two main data-processing and transmission nodes in the loop of UV/UAV control using the operator’s bio-signals:

- BCI, designed to acquire, convert, and process these bio-signals, classify and detect features;

- Computer–Machine Interface (CMI), designed to convert the output of BCI to drone/robot/machine-compatible control commands and transmit them to the control object.
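The split between the two nodes can be illustrated with a minimal sketch. It is only a schematic of the division of responsibilities, not an implementation from any of the cited works: the `Feature` type, the feature labels, and the command strings are hypothetical placeholders.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Feature:
    """Output of the BCI node: a detected event and how sure the detector is."""
    label: str         # hypothetical label, e.g. "double_blink"
    confidence: float  # 0.0 .. 1.0


# Hypothetical lookup used by the CMI node (placeholder labels and commands).
COMMAND_TABLE = {
    "double_blink": "takeoff",
    "triple_blink": "land",
}


def bci_node(blink_count: int) -> Optional[Feature]:
    """BCI side: reduce a processed bio-signal summary to a feature."""
    if blink_count == 2:
        return Feature("double_blink", 1.0)
    if blink_count == 3:
        return Feature("triple_blink", 1.0)
    return None


def cmi_node(feature: Optional[Feature], min_confidence: float = 0.5) -> Optional[str]:
    """CMI side: translate a feature into a machine-compatible command."""
    if feature is None or feature.confidence < min_confidence:
        return None
    return COMMAND_TABLE.get(feature.label)
```

Keeping the two functions separate means that the control object can be swapped by replacing only the lookup table and the transport behind `cmi_node`.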

Although the general solution for operating a robot/UAV using human bio-signals would be better called the Brain–Machine Interface, the literature also refers to it as the BCI (as a combination of the Brain–Computer Interface itself and the CMI). Hereinafter, the term BCI will be used [5].

The work is structured as follows. Section 2 presents a description of existing BCI-based UAV control solutions (related work), the types of bio-signals used, and their analysis. Section 3 provides the requirements for promising BCIs for UV control in a smart-city environment, as well as the aim of the research. Section 4 presents the description of the architecture of the proposed BCI, its hardware, and software. Section 5 presents a discussion on the current state of BCI-based solutions for UAV control, including in smart cities. Section 6 concludes the paper.

2. Related Work

One of the main steps in the development of a BCI is the choice of the bio-signal in which changes are to be identified and matched with a certain command for the control object. In particular, such signals may include [1,2,3,4,5,6,7]:

1. EOG, from blinking and eye movement;

2. Electromyographic (EMG) data resulting from tension/relaxation of facial muscles;

3. EEG, e.g., the increase of α-rhythms and decrease of β-rhythms due to mental relaxation (meditation) or the decrease of α-rhythms and increase of β-rhythms when concentrating.

The latter type of signals includes:

- Steady-state visual-evoked potentials (SSVEPs) generated by the brain in response to visual stimulation of a certain frequency (flashes, brightness changes, etc.);

- Motor imagery (MI)—signals that occur during the imagination of performing motor movements;

- Visual imagery (VI)—signals that occur during the visual representation of objects in the absence of appropriate real visual stimuli;

- Speech imagery (SI)—signals that occur during the mental pronouncing of letters or words;

- Mental commands to the control object—signals that occur when imagining the desired state or action of the object (movement, rotation, etc.).

In addition, it is possible to select and use several types of signals; in this case, such BCIs are referred to as multi-modal [6,7]. Below is a description of several existing BCI-based solutions for UAV control, corresponding approaches, methods, algorithms, hardware, and software, as well as the results achieved.

2.1. EOG and EMG

In paper [8], a Parrot Mambo Fly UAV was controlled based on the changes in EOG and EMG signals of the operator. These signals were transmitted to a personal computer (PC) for processing, including signal filtering, the use of a convolutional neural network, and various classifiers (random forest (RF), nearest neighbors, and convolutional) for feature detection. The drone was controlled as follows: the movement axis was selected by raising the eyebrows, and the blinking of the left and right eyes corresponded to forward and backward flight along the selected axis. The advantages of the proposed approach are high recognition accuracy (above 80%) and a short response time (42.21 ms). However, the description of the actual application of the proposed BCI architecture to the control of the Parrot Mambo Fly UAV was insufficient, and the overall drone control process is relatively complex.
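The control scheme described for [8] amounts to a small state machine, sketched below. This is an illustration only: the event names, the axis order, and the idea that each eyebrow raise cycles to the next axis are assumptions, since the text does not specify how the axis selection proceeds.

```python
# Assumed axis order; the source does not state it.
AXES = ("x", "y", "z")


class AxisController:
    """Tracks the selected movement axis and turns blinks into moves."""

    def __init__(self):
        self.axis_index = 0

    @property
    def axis(self):
        return AXES[self.axis_index]

    def handle(self, event):
        """Return (axis, direction) for a movement event, otherwise None.

        Event names are hypothetical labels for classifier outputs.
        """
        if event == "eyebrow_raise":
            # Assumption: each raise selects the next axis in a cycle.
            self.axis_index = (self.axis_index + 1) % len(AXES)
            return None
        if event == "blink_left":
            return (self.axis, +1)   # forward along the selected axis
        if event == "blink_right":
            return (self.axis, -1)   # backward along the selected axis
        return None
```

The sketch also makes the drawback noted above visible: reaching a particular direction may take several axis-selection events before the actual movement command.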

The authors of [9] presented a method of UAV control using widely available and relatively inexpensive equipment. In particular, the researchers used the TGAM module sensor made by NeuroSky Shennian Technology with two electrodes. Data were transferred from the BCI to an Arduino Pro Mini with NodeMcu for further processing via Bluetooth (HC-05 module). The UAV (DJI Tello) was connected and controlled via Wi-Fi. EMG and EOG signals were used to form commands. The EMG triggers are eyebrow pick and single, double, and triple blink, corresponding to four commands for the UAV: forward, backward, left, and right. EMG signals were used to prevent losing control of the drone. Several other commands are stated to be implemented, including take-off, landing, move up, and move down. The authors name high control signal delay, low recognition accuracy, and inflexible switching between actions as the disadvantages of the proposed solution. In addition, it is generally not clear how the drone was operated, i.e., what signals (except EMG) were eventually used to form other commands for the UAV.

2.2. Mental Concentration and Relaxation

The authors of [10] proposed an approach to control a virtual drone using an Emotiv Insight EEG headset by changing the states of mental concentration and relaxation of the operator. A virtual scene was implemented in the Unity Game Engine environment. The virtual UAV was controlled using proprietary Emotiv Emokey software, which emulates keystrokes. Forward acceleration along the predefined path (track) was applied when the operator entered the active mental state. In a neutral state (mental relaxation), the drone slowed down until it stopped. The disadvantages are that only two commands were implemented and that the drone followed a fixed route.
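The two-state behavior described for [10] can be written as a simple speed update rule. The following is a sketch under assumed values: the acceleration, deceleration, speed limit, and time step are placeholders and are not reported in the text.

```python
def update_speed(speed, active, accel=0.5, decel=0.5, max_speed=5.0, dt=0.1):
    """Advance the drone speed along the fixed track by one time step.

    active=True  -> operator is in the concentrated state: accelerate.
    active=False -> neutral/relaxed state: slow down until stopped.
    All numeric defaults are illustrative assumptions.
    """
    if active:
        return min(max_speed, speed + accel * dt)
    return max(0.0, speed - decel * dt)


def simulate(states, speed=0.0):
    """Apply a sequence of mental states and return the speed history."""
    history = []
    for active in states:
        speed = update_speed(speed, active)
        history.append(speed)
    return history
```

For example, `simulate([True] * 10 + [False] * 20)` ramps the speed up during concentration and brings it back to zero during relaxation.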

2.3. SSVEPs

The authors in [11] presented a method of UAV control and the corresponding BCI based on the Unicorn software and hardware and the g.tec. Hybrid Black EEG headset. The EEG signal was transmitted via Bluetooth to the Unicorn Speller PC. UAV control commands were formed using SSVEPs: the operator focused on certain flashing characters in the Unicorn Speller program. Data from the Unicorn Speller were then passed to another PC via UDP, converted to UAV-compatible commands using a Python API, and then transmitted via Wi-Fi to the Parrot Bebop 2 drone. The researchers implemented 12 SSVEP-based commands (take-off, right, left, up, down, move forward, backward, take picture, start video stream, pause, land, and emergency stop). The disadvantages are the bulky hardware architecture of the solution and delays in the transmission of control signals.

Another approach to UAV control using an SSVEP-based BCI is given in article [12]. An Emotiv EPOC headset and Easycap devices were used to collect EEG data. Visual stimuli at four frequencies (5.3, 7, 9.4, and 13.5 Hz) were used to form UAV control commands. An 8th-order Butterworth bandpass filter and the Fast Fourier Transform algorithm were used for data filtering and SSVEP feature detection, respectively. The use of four SSVEPs allowed the implementation of the corresponding number of commands for UAV control: take-off, land, move forward, and right turn. The researchers reached a feature-detection accuracy of 92.5%. However, the architecture of the proposed solution and the stack of tools used are quite complicated: the user needs the EEG headset, the Easycap device, one PC to generate the SSVEP stimuli, and another PC to process the EEG signals.
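The idea behind frequency-based SSVEP detection can be sketched as follows. This is not the pipeline of [12]: the Butterworth filtering stage is omitted, and instead of a full Fast Fourier Transform the sketch evaluates the spectral power directly at each candidate stimulus frequency (a single-frequency discrete Fourier sum). The function names and the sampling rate in the usage note are assumptions.

```python
import math

# The four stimulus frequencies reported in [12], in Hz.
STIMULUS_FREQS_HZ = (5.3, 7.0, 9.4, 13.5)


def power_at(samples, sample_rate, freq):
    """Spectral power of `samples` at one frequency (direct Fourier sum)."""
    re = 0.0
    im = 0.0
    for n, x in enumerate(samples):
        angle = 2.0 * math.pi * freq * n / sample_rate
        re += x * math.cos(angle)
        im -= x * math.sin(angle)
    return re * re + im * im


def detect_ssvep(samples, sample_rate, freqs=STIMULUS_FREQS_HZ):
    """Return the candidate frequency with the largest spectral power."""
    powers = {f: power_at(samples, sample_rate, f) for f in freqs}
    return max(powers, key=powers.get)
```

With an assumed sampling rate of 128 Hz and a four-second window, a synthetic signal dominated by a 9.4 Hz component is attributed to the 9.4 Hz stimulus. A practical detector would also need a rejection rule for windows in which no stimulus is attended.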

The authors in [13] presented a BCI for drone control capable of operating in VR and AR environments using head-mounted displays. A DSI VR300 device was used to acquire EEG signals. A virtual scene was developed in Unity, and OpenViBE software was used to communicate with the EEG headset. Eight control commands (turn right, turn left, move up, move down, move left, move right, move forward, and move backward) were formed using SSVEPs (interface buttons flashing with different frequencies). It is possible to control a virtual drone and a real DJI Tello UAV in VR and AR modes, respectively. However, the disadvantage of the proposed solution is similar to previous SSVEP-based BCIs [11,12]—bulky architecture and a large stack of hardware used.

2.4. Motor Imagery

The authors in [5] presented a BCI based on motor imagery ( brain-wave response) for drone control purposes. The OpenBCI hardware platform was used to acquire the EEG signals. Common Spatial Patterns and Linear Discriminant Analysis methods were used to process EEG data. The authors proposed an algorithm for forming commands for the drone based on the classifier. To verify the proposed approach and algorithm, a virtual simulator with AR Drone 2.0 UAV (including its dynamic model) was used. However, only two commands were implemented—turn left and turn right—by changing the yaw angle, while the UAV in the simulator was moving with a constant velocity.

The authors in [14] proposed the use of LabVIEW and MATLAB software and the Undecimated Wavelet Transform algorithm for noise reduction and resolution analysis. A method for extracting the features of EEG signals using Independent Component Analysis and the coefficient of determination was developed. A hybrid neural network was developed to classify sensorimotor rhythms (MI) in EEG signals. The obtained maximum classification rate result was 95.67%. The LabVIEW environment was used to control a virtual drone.

2.5. Mental Commands

The authors in [15] presented an approach to UAV control based on the mental commands (mind concentration and relaxation) of the operator. The authors presented a mathematical model for processing EEG data in a MATLAB framework. The Emotiv Insight BCI headset was used to acquire the EEG signal, and a PC with the EmotivBCI application was used to process signals and form commands for the DJI Tello UAV via the appropriate API. Three commands were mentioned in the paper (move left, move up, and move down), but only the latter two were described. A similar approach is given by the authors in [16], its fundamental difference being the purposeful use of consumer-grade EEG headsets for BCI. In particular, Emotiv Insight and Muse (Interaxon) devices were used to acquire EEG signals. The paper also proposes the use of a machine-learning model for classification and feature extraction, considering two classifiers: RF and Support Vector Machine (SVM). The use of the Muse EEG headset and the SVM classifier resulted in 70% feature extraction accuracy. However, the movement of the Crazyflie 2.0 drone in the practical experiment was realized using only two commands (move forward and move backward), and there is no description of other commands (choosing the direction of movement, turns, altitude changes, etc.).

The authors in [17] presented a similar approach to UAV control. An Emotiv Insight headset was used for EEG signal retrieval. The object of control was the Parrot Rolling Spider quadcopter. The MATLAB EEGLAB toolbox and BCI2000 software were used to process EEG signals. Feature extraction and training were carried out using the Emotiv Xavier Control Panel software. Five commands for the UAV are available: take-off, move up, move down, change of pitch angle with right turn, and change of pitch angle with left turn. The main disadvantages are the lack of practical testing results for the proposed solution and the use of proprietary systems for feature extraction and training.

2.6. Multi-Modal BCIs

The authors in [18] proposed a BCI that combines the use of MI and SSVEPs. A Geodesic Sensor Net (Electrical Geodesics Inc.) was used to acquire the EEG signal. The processed data were transmitted at a fixed time interval to the DJI Matrice 100 quadcopter via Wi-Fi. As a result of the processing of detected features, the following commands for the UAV were implemented: left-forward, right-forward, move up, and move down. Eye-blinking was used to switch between the two flight modes. The main shortcoming of the proposed approach was the relative complexity of the architecture (the use of an EEG headset, one PC for signal processing, and another PC with a monitor for generating and showing visual stimuli).

The authors in [19] proposed an approach to the combined use of MI, VI, and SI techniques for EEG-based control of a drone swarm. A headset with 64 electrodes and BrainVision Recorder (BrainProducts GmbH) software were used to retrieve the EEG signal. The left and right hands were assigned to choose the left or right direction of the swarm of drones, respectively, and arms and legs were assigned to high- and low-altitude flights, respectively (MI usage). Swarm maneuvering, merging, and splitting were carried out via VI, and flight control was conducted using SI. Machine-learning algorithms were used to classify patterns in EEG signals.

The authors in [20] presented an approach to UAV control using a BCI based on the recognition of the mental commands of the operator and of EMG signals resulting from changes in the operator’s facial expression. An Emotiv Insight headset was used to acquire the EEG signal, which was then transferred via Bluetooth to a PC for further processing. Preliminary control signals were transmitted to the Raspberry Pi board, which converted them into drone-compatible commands and sent them to the Parrot Mambo MiniDrone UAV via Wi-Fi. Five commands were implemented: move backward, move forward, turn left, turn right, and land. The authors claim 88% precision in recognizing the mental commands of the operator. However, the influence of facial motor skills on the EEG signals in the practical experiment was not fully disclosed. Moreover, the architecture of the proposed solution was relatively complex.

The authors in [21] proposed EEG-based drone control algorithms based on monitoring the operator’s blinking and mental concentration. A NeuroSky device was used for EEG signal retrieval, and the data acquired were then transferred to a PC via Bluetooth for further processing. The classification system was based on a neural network, the SVM classifier, the Linear Regression Method, and a dynamic concentration threshold value. Based on the number of operator blinks within five seconds, a 4-bit sequence corresponding to a specific command for the drone was generated. Thus, it is possible to form up to 16 commands, and the following nine commands were implemented in the paper: take-off, land, move up, move down, move forward, move backward, move to the right, move to the left, and stop. However, the article does not fully address the problem of false commands for the UAV arising when several control signals are generated within less than five seconds.
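One possible reading of the blink-based coding in [21] is sketched below. It is an interpretation, not the algorithm of the thesis: the sketch assumes that the blink count within the five-second window is simply written as a 4-bit binary number, and the assignment of codes to the nine command names is invented for illustration.

```python
WINDOW_SECONDS = 5.0

# Placeholder code table: the nine command names come from the text,
# the 4-bit codes assigned to them are hypothetical.
CODE_TABLE = {
    "0001": "take-off",
    "0010": "land",
    "0011": "move up",
    "0100": "move down",
    "0101": "move forward",
    "0110": "move backward",
    "0111": "move right",
    "1000": "move left",
    "1001": "stop",
}


def count_blinks(blink_times, window_start, window=WINDOW_SECONDS):
    """Count blink timestamps (in seconds) that fall inside one window."""
    return sum(1 for t in blink_times if window_start <= t < window_start + window)


def blinks_to_code(count):
    """Encode a blink count as a 4-bit string; None if it does not fit."""
    if not 0 <= count <= 15:
        return None
    return format(count, "04b")


def decode(blink_times, window_start):
    """Map the blinks of one window to a command name, or None."""
    code = blinks_to_code(count_blinks(blink_times, window_start))
    return CODE_TABLE.get(code)
```

Because a command is only known once the window has closed, two intended commands issued less than five seconds apart would be merged into a single count, which illustrates the false-command concern mentioned above.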

2.7. Analysis of the Proposed Solutions

In general, based on the studies considered, it is possible to identify the following main shortcomings of the existing BCI solutions for UAV control:

1. The complexity of the control process and of switching between actions, an insufficient number of implemented commands, and a priori simplification of the UAV control (e.g., moving in a straight line, with a fixed velocity, etc.).

2. The complexity of the architecture of the solution (the use of one or more PCs, Arduino/Raspberry Pi boards, as well as various software), which leads to high control signal delay.

3. Proprietary and open-source software solutions for signal retrieval and processing are deployed mainly on PCs.

4. The need to use additional hardware for bio-signal processing (for example, SSVEP-based interfaces may require an additional monitor and a PC to display visual stimuli).

Based on the identified shortcomings, it is possible to form requirements for a promising BCI-based solution for drone control, as well as to state the problem of the research.

3. Requirements for a Promising BCI and Problem Statement

Based on the review of the considered studies that propose different approaches, methods, and algorithms for BCI-based drone control, as well as the shortcomings identified, it is advisable to highlight the following four main requirements for the promising solutions:

1. The implementation of at least 10 control commands (take-off, land, move forward, move backward, move left, move right, move up, move down, turn left, and turn right).

2. Ease of control and switching between actions, for example, using different bio-signals to generate different commands within a multi-modal BCI.

3. Simplicity of the BCI architecture and the use of the minimum necessary hardware and software stack for drone control. It is preferable to use open-source software solutions.

4. Taking into account the unique features of the brain activity of each operator, including adaptive adjustments via the use of machine-learning methods when processing bio-signals.

Problem Statement

In this paper, we aim to develop a BCI architecture and corresponding software that meet the third requirement and, partially, the second. The aim of the work is to simplify the hardware components of the BCI, as well as to develop an open-source solution for processing bio-signals and generating commands for a drone. The multimodality of the developed BCI involves the use of both EEG and EOG signals.

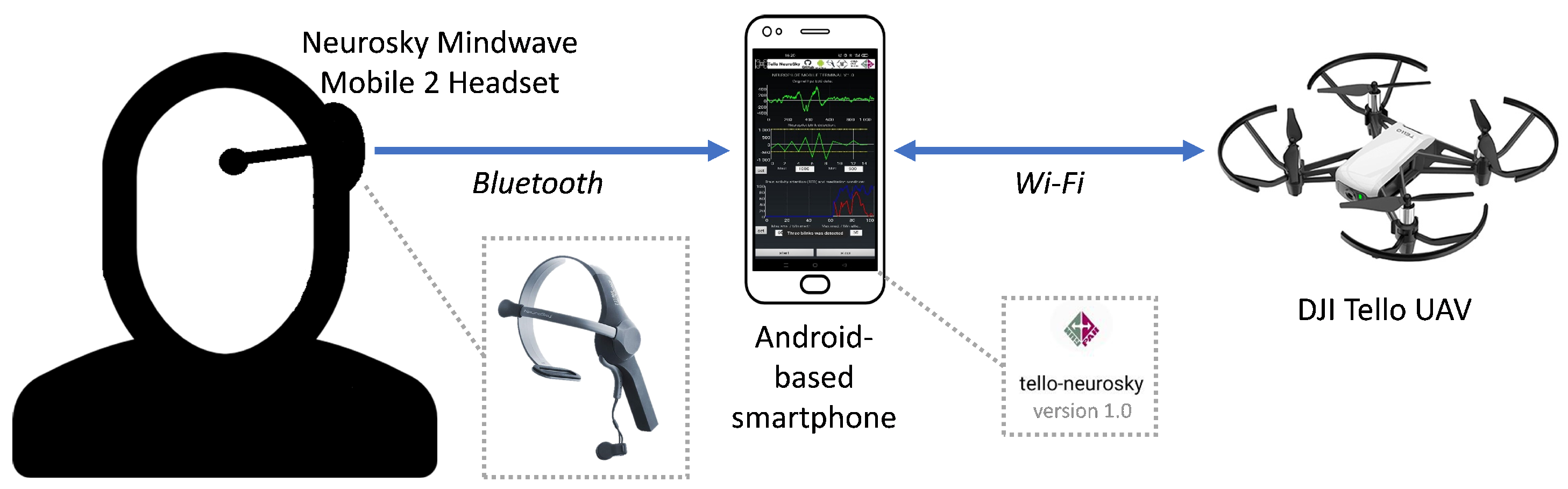

4. BCI Architecture, Hardware and Software

This paper uses the proposed concept of non-invasive BCI, as well as methods of EEG signal retrieval and processing [22,23]. To acquire EEG and EOG signals, we used the NeuroSky Mindwave Mobile 2 headset, which was connected to an Android-based smartphone via Bluetooth. The developed Android application—Tello NeuroSky (v. 1.0)—processes the signals received from the headset, generates drone-compatible commands, and transmits them to the DJI Tello UAV via Wi-Fi. The architecture of the proposed BCI and the equipment used are shown in Figure 1 and Figure 2, respectively.

Figure 1. The architecture of the proposed BCI-based UAV control solution.

Figure 2. BCI equipment and hardware.

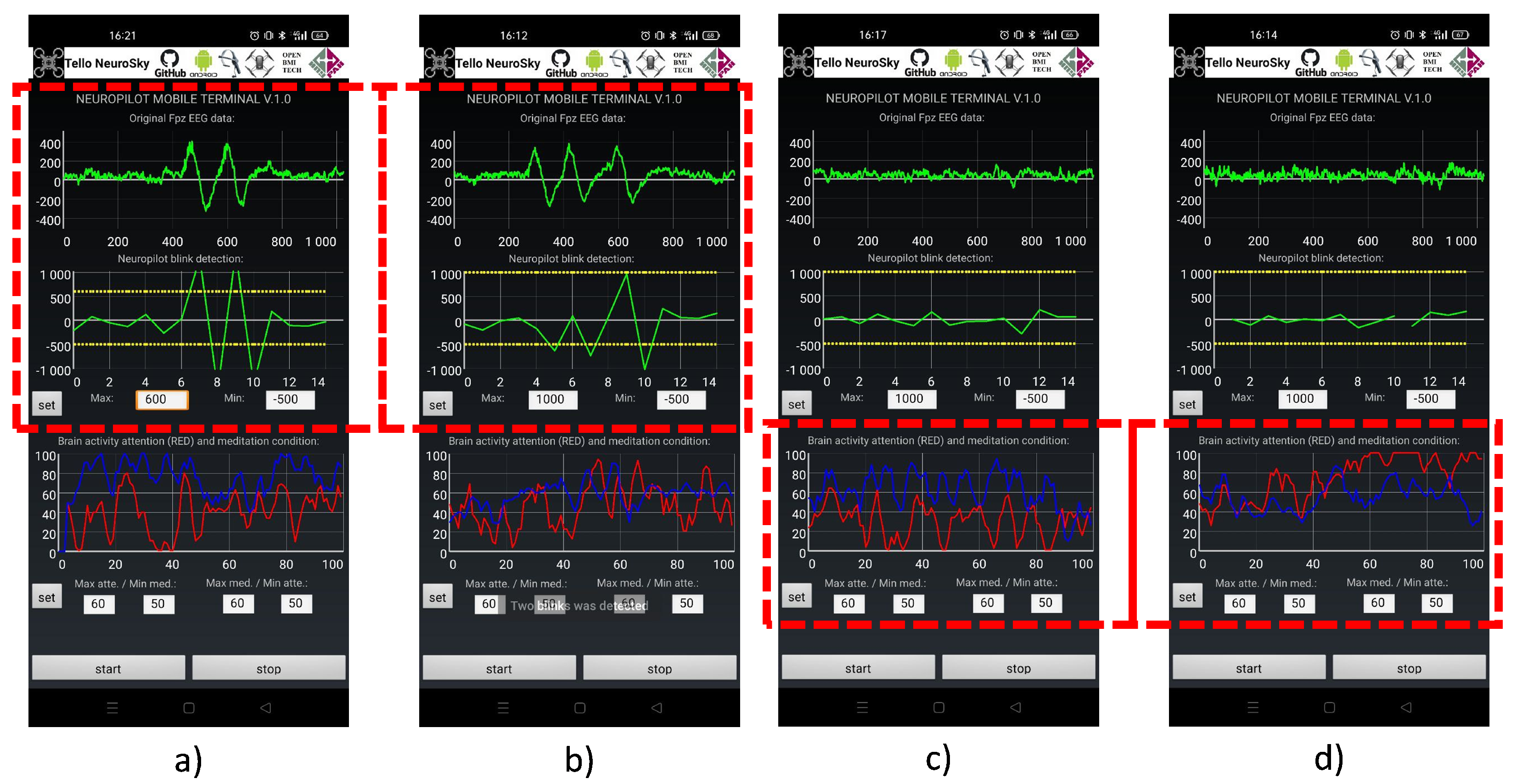
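The last hop of this architecture, from the smartphone to the drone, relies on the plain-text UDP commands of the Tello SDK. The sketch below illustrates that hop in Python rather than in the language of the Android application, and it is not taken from the Tello NeuroSky source code. The helper names `encode_command`, `open_socket`, and `send_sequence` are hypothetical; the sketch assumes the default SDK address 192.168.10.1:8889 and the SDK limit of 20 to 500 cm for movement distances.

```python
import socket

# Default address of a Tello acting as a Wi-Fi access point (Tello SDK).
TELLO_ADDRESS = ("192.168.10.1", 8889)

SIMPLE_COMMANDS = {"command", "takeoff", "land"}  # take no argument
MOVE_COMMANDS = {"forward", "back"}               # take a distance in cm


def encode_command(name, distance_cm=None):
    """Build the ASCII payload of one SDK command."""
    if name in SIMPLE_COMMANDS and distance_cm is None:
        return name.encode("ascii")
    if name in MOVE_COMMANDS and distance_cm is not None:
        if not 20 <= distance_cm <= 500:
            raise ValueError("distance must be between 20 and 500 cm")
        return f"{name} {distance_cm}".encode("ascii")
    raise ValueError(f"unsupported command: {name!r}")


def open_socket():
    """Create the UDP socket used to talk to the drone."""
    return socket.socket(socket.AF_INET, socket.SOCK_DGRAM)


def send_sequence(sock, steps, address=TELLO_ADDRESS):
    """Send (name, distance) pairs as consecutive UDP datagrams.

    A real client should wait for the drone's reply after each command;
    that handshake is left out of this sketch.
    """
    for name, distance_cm in steps:
        sock.sendto(encode_command(name, distance_cm), address)
```

A flight covering the four commands of the proposed solution would be `send_sequence(open_socket(), [("command", None), ("takeoff", None), ("forward", 20), ("back", 20), ("land", None)])`, where the initial `command` switches the drone into SDK mode.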

The developed software uses the increase and decrease of α- and β-rhythms and EOG signals from blinking as triggers to generate commands for the UAV. The application implements manual adjustment of minimum, maximum, and threshold values for α- and β-rhythms, as well as for EOG signals. The following commands have been implemented: two blinks in a row for take-off; three blinks in a row for landing; increase of -rhythms (above the threshold and -rhythms) for moving forward by 20 cm; increase of -rhythms (above the threshold and -rhythms) for moving backward by 20 cm. Due to the high computational load on the smartphone CPU, the software uses two separate threads: one for signal processing (1-D Daubechies 2 (db2) wavelet transform) and visualizing data on charts, and another one for generating and transmitting commands to the drone. Figure 3 shows the graphical user interface (GUI) of the developed software.
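The trigger rules in this paragraph can be summarized in a short sketch. It is an illustration in Python, not the code of the Android application. Two points are assumptions: the manually set minimum and maximum are used here to rescale a raw value to the range 0 to 1 before it is compared with the threshold, and which of the two EEG rhythms drives which direction is left as a parameter (`first_command`, `second_command`). The `Calibration` class and function names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Calibration:
    """Manually set limits for one bio-signal channel."""
    minimum: float
    maximum: float
    threshold: float  # on the normalized 0..1 scale (assumption)

    def normalize(self, value):
        """Clip a raw value to [minimum, maximum] and rescale to 0..1."""
        clipped = min(max(value, self.minimum), self.maximum)
        return (clipped - self.minimum) / (self.maximum - self.minimum)


def blink_command(blinks_in_a_row):
    """Two blinks in a row: take-off; three blinks in a row: land."""
    return {2: "takeoff", 3: "land"}.get(blinks_in_a_row)


def rhythm_command(first, second, cal_first, cal_second,
                   first_command="forward 20", second_command="back 20"):
    """Pick a move when one rhythm exceeds its threshold and the other rhythm.

    The default assignment of rhythms to directions is a placeholder.
    """
    a = cal_first.normalize(first)
    b = cal_second.normalize(second)
    if a > cal_first.threshold and a > b:
        return first_command
    if b > cal_second.threshold and b > a:
        return second_command
    return None
```

Requiring the winning rhythm to exceed both its threshold and the other rhythm keeps the two movement commands mutually exclusive for any single reading.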

Figure 3.

Software GUI (a) detection of two blinks in a row; (b) detection of three blinks in a row; (c) detection of -rhythm amplification and -rhythm depression; (d) detection of -rhythm amplification and -rhythm depression.
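The division of work between the two threads, together with a single level of the db2 wavelet transform, can be sketched as follows. This is a Python illustration under stated assumptions, not the application code: the transform uses periodic wrap-around at the chunk boundary (library implementations may use other boundary rules and coefficient ordering), the feature passed between threads is the energy of the detail coefficients, and the `decide` rule that turns a feature into a command is a caller-supplied placeholder.

```python
import math
import queue
import threading

_S3 = math.sqrt(3.0)
_NORM = 4.0 * math.sqrt(2.0)
# db2 low-pass (scaling) filter and the matching high-pass (wavelet) filter.
LOW = [(1 + _S3) / _NORM, (3 + _S3) / _NORM, (3 - _S3) / _NORM, (1 - _S3) / _NORM]
HIGH = [LOW[3], -LOW[2], LOW[1], -LOW[0]]


def db2_step(signal):
    """One db2 decomposition level with periodic wrap-around."""
    n = len(signal)
    if n < 4 or n % 2:
        raise ValueError("need an even number of samples, at least 4")
    approx, detail = [], []
    for i in range(0, n, 2):
        window = [signal[(i + k) % n] for k in range(4)]
        approx.append(sum(h * x for h, x in zip(LOW, window)))
        detail.append(sum(g * x for g, x in zip(HIGH, window)))
    return approx, detail


def processing_worker(chunks, out_queue):
    """Thread 1: transform each chunk and publish its detail energy."""
    for chunk in chunks:
        _, detail = db2_step(chunk)
        out_queue.put(sum(d * d for d in detail))
    out_queue.put(None)  # sentinel: no more data


def command_worker(in_queue, decide, send):
    """Thread 2: turn features into commands and hand them to `send`."""
    while True:
        energy = in_queue.get()
        if energy is None:
            return
        command = decide(energy)
        if command is not None:
            send(command)


def run_pipeline(chunks, decide, send):
    """Run both workers to completion on a finite list of chunks."""
    q = queue.Queue()
    workers = [
        threading.Thread(target=processing_worker, args=(chunks, q)),
        threading.Thread(target=command_worker, args=(q, decide, send)),
    ]
    for w in workers:
        w.start()
    for w in workers:
        w.join()
```

The queue decouples the two threads, so a slow network send does not stall signal processing and chart updates. In the application, `send` would be the Wi-Fi transmission to the drone.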

5. Discussion

Within the formed list of requirements for a promising BCI-based solution for UAV control (including in smart cities), we managed to implement the third requirement as well as part of the second. The simplicity of the architecture of the proposed solution lies in the use of only one smartphone for retrieving and processing bio-signals and for transmitting control commands to the drone; the software solution is open-source and free to the public. The developed BCI is multi-modal: changes in α- and β-rhythms and eye-blinking are tracked to form different commands for the UAV. We plan to introduce additional commands for the UAV: changes in -rhythms of the brain can be used to implement left/right movement. In addition, machine-learning methods and a neural network will be introduced to better detect blinking and take into account the individual characteristics of the bio-signals of each operator. This paper focuses exclusively on the control of aerial objects (UAVs), though the proposed solution can be adapted for other objects, including ground and surface UVs. The software code for the presented solution is available at [24].

6. Conclusions

The article presents an overview of the current state of research in BCI for UAV control and requirements for similar promising solutions, and proposes a multi-modal BCI to control drones in a smart-city environment. The developed software uses the depression and amplification of α- and β-rhythms of the brain and EOG signals that occur during blinking as commands to control the flight of aerial objects. Four commands are implemented: take-off, land, move forward, and move backward.

The distinctive feature of the proposed solution is the simplicity of its architecture: only one Android-based smartphone is used both for receiving and processing signals from the headset and for generating and transmitting commands to the drone. These functions are implemented in the developed open-source, publicly available software. The proposed BCI is multi-modal since both EEG and EOG signals are processed. It is planned to introduce additional commands for a UAV (move left/right) and to implement a neural network and machine-learning methods to take into account the individual characteristics of the bio-signals of each operator. The presented solution can be adapted to control other mobile objects in smart cities, including ground and surface UVs.

Author Contributions

Data curation, D.W.; formal analysis, D.W. and M.M.; funding acquisition, E.J.; investigation, D.W.; methodology, E.J.; project administration, E.J.; resources, D.W.; software, D.W.; supervision, E.J.; validation, M.M.; visualization, M.M.; writing—original draft, M.M.; writing—review and editing, M.M. All authors have read and agreed to the published version of the manuscript.

Funding

The reported study was partially funded by RFBR, project number 19-29-06044.

Institutional Review Board Statement

Not applicable.

Informed Consent Statement

Informed consent was obtained from all subjects involved in the study.

Data Availability Statement

Data are available in a publicly accessible repository that does not issue DOIs. URL: https://github.com/Runsolar/tello-neurosky.

Conflicts of Interest

The authors declare no conflict of interest.

References

1. Latif, M.Y.; Naeem, L.; Hafeez, T.; Raheel, A.; Saeed, S.M.U.; Awais, M.; Alnowami, M.; Anwar, S.M. Brain computer interface based robotic arm control. In Proceedings of the 2017 International Smart Cities Conference (ISC2), Wuxi, China, 14–17 September 2017; pp. 1–5.

2. Al-Turabi, H.; Al-Junaid, H. Brain computer interface for wheelchair control in smart environment. In Proceedings of the Smart Cities Symposium 2018, Zallaq, Bahrain, 22–23 April 2018; pp. 1–6.

3. Li, Y.; Zhang, F.; Yang, Y. Smart House Control System Controlled by Brainwave. In Proceedings of the 2019 International Conference on Intelligent Transportation, Big Data & Smart City (ICITBS), Changsha, China, 12–13 January 2019; pp. 536–539.

4. Thum, G.E.; Gaffar, A. The future of brain-computer interaction: How future cars will interact with their passengers. In Proceedings of the 2017 IEEE SmartWorld, Ubiquitous Intelligence & Computing, Advanced & Trusted Computed, Scalable Computing & Communications, Cloud & Big Data Computing, Internet of People and Smart City Innovation (SmartWorld/SCALCOM/UIC/ATC/CBDCom/IOP/SCI), San Francisco, CA, USA, 4–8 August 2017; pp. 1–5.

5. Duarte, R.M. Low Cost Brain Computer Interface System for AR.Drone Control. Master’s Thesis, Universidade Federal de Santa Catarina Centro Tecnológico Programa de Pós-Graduação em Engenharia de Automação e Sistemas, Florianópolis, Brazil, 2017.

6. Hekmatmanesh, A.; Nardelli, P.H.J.; Handroos, H. Review of the State-of-the-Art of Brain-Controlled Vehicles. IEEE Access 2021, 9, 110173–110193.

7. Värbu, K.; Muhammad, N.; Muhammad, Y. Past, Present, and Future of EEG-Based BCI Applications. Sensors 2022, 22, 3331.

8. Villegas, I.D.; Camargo, J.R.; Perdomo, C.C.A. Recognition and Characteristics EEG Signals for Flight Control of a Drone. IFAC-PapersOnLine 2021, 54, 50–55.

9. Sun, S.; Ma, J. Brain Wave Control Drone. In Proceedings of the 2019 International Conference on Artificial Intelligence and Advanced Manufacturing (AIAM), Dublin, Ireland, 16–18 October 2019; pp. 300–304.

10. Tezza, D.; Garcia, S.; Hossain, T.; Andujar, M. Brain eRacing: An Exploratory Study on Virtual Brain-Controlled Drones. In Virtual, Augmented and Mixed Reality. Applications and Case Studies, Proceedings of the 11th International Conference, VAMR 2019, Held as Part of the 21st HCI International Conference, HCII 2019, Orlando, FL, USA, 26–31 July 2019; Springer International Publishing: Cham, Switzerland, 2019; Volume 11575, pp. 150–162.

11. Al-Nuaimi, F.A.; Al-Nuaimi, R.J.; Al-Dhaheri, S.S.; Ouhbi, S.; Belkacem, A.N. Mind Drone Chasing Using EEG-Based Brain Computer Interface. In Proceedings of the 2020 16th International Conference on Intelligent Environments (IE), Madrid, Spain, 20–23 July 2020; pp. 74–79.

12. Chiuzbaian, A.; Jakobsen, J.; Puthusserypady, S. Mind Controlled Drone: An Innovative Multiclass SSVEP based Brain Computer Interface. In Proceedings of the 2019 7th International Winter Conference on Brain-Computer Interface (BCI), Gangwon, Republic of Korea, 18–20 February 2019; pp. 1–5.

13. Kim, S.; Lee, S.; Kang, H.; Kim, S.; Ahn, M. P300 Brain–Computer Interface-Based Drone Control in Virtual and Augmented Reality. Sensors 2021, 21, 5765.

14. Dumitrescu, C.; Costea, I.-M.; Semenescu, A. Using Brain-Computer Interface to Control a Virtual Drone Using Non-Invasive Motor Imagery and Machine Learning. Appl. Sci. 2021, 11, 11876.

15. Reddy, M.H.H.N. Brain Computer Interface Drone. In Brain-Computer Interface; IntechOpen: Rijeka, Croatia, 2021; pp. 1–19.

16. Peining, P.; Tan, G.; Aung, A.; Phyo Wai, A. Evaluation of Consumer-Grade EEG Headsets for BCI Drone Control. In Proceedings of the IRC Conference on Science, Engineering, and Technology, Singapore, 10 August 2017; pp. 1–6.

17. Rosca, S.; Leba, M.; Ionica, A.; Gamulescu, O. Quadcopter control using a BCI. IOP Conf. Ser. 2018, 294, 0120485.

18. Duan, X.; Xie, S.; Xie, X.; Meng, Y.; Xu, Z. Quadcopter Flight Control Using a Non-invasive Multi-Modal Brain Computer Interface. Front. Neurorobot. 2019, 13, 23.

19. Lee, D.-H.; Ahn, H.-J.; Jeong, J.-H.; Lee, S.-W. Design of an EEG-based Drone Swarm Control System using Endogenous BCI Paradigms. In Proceedings of the 2021 9th International Winter Conference on Brain-Computer Interface (BCI), Gangwon, Republic of Korea, 22–24 February 2021; pp. 1–5.

20. Marin, I.; Al-Battbootti, M.J.H.; Goga, N. Drone Control based on Mental Commands and Facial Expressions. In Proceedings of the 2020 12th International Conference on Electronics, Computers and Artificial Intelligence (ECAI), Bucharest, Romania, 25–27 June 2020; pp. 1–4.

21. Abdulwahhab, A.H. Improved Algorithms for EEG-Based BCI Application. Master’s Thesis, Istanbul Gelisim University, Institute of Graduate Studies, Istanbul, Turkey, 2021.

22. Turovskiy, Y.; Volf, D.; Iskhakova, A.; Iskhakov, A.Y. Neuro-Computer Interface Control of Cyber-Physical Systems. In High-Performance Computing Systems and Technologies in Scientific Research, Automation of Control and Production, Proceedings of the 11th International Conference, HPCST 2021, Barnaul, Russia, 21–22 May 2021; Springer International Publishing: Cham, Switzerland, 2022; Volume 1526, pp. 338–353.

23. Kharchenko, S.; Meshcheryakov, R.; Turovsky, Y.; Volf, D. Implementation of Robot–Human Control Bio-Interface When Highlighting Visual-Evoked Potentials Based on Multivariate Synchronization Index. In Proceedings of the 15th International Conference on Electromechanics and Robotics “Zavalishin’s Readings”, Ufa, Russia, 15–18 April 2020; Smart Innovation, Systems and Technologies. Springer: Singapore, 2021; Volume 187, pp. 225–236.

24. GitHub Repository. Tello-Neurosky. 2022. Available online: https://github.com/Runsolar/tello-neurosky (accessed on 1 September 2022).


© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).