Abstract

Ontology learning from unstructured text has become a critical task for knowledge-driven applications in Big Data and Artificial Intelligence. While significant advances have been made in the automatic extraction of concepts and relations using neural and Transformer-based models, the generation of formal Description Logic axioms required for constructing logically consistent and computationally tractable ontologies remains largely underexplored. This paper puts forward a novel pipeline for automated axiom generation through schema mapping. Our paper introduces three key innovations: a deterministic mapping framework that guarantees logical consistency (unlike stochastic Large Language Models); guaranteed formal consistency verified by OWL reasoners (unaddressed by prior statistical methods); and a transparent, scalable bridge from neural extractions to symbolic logic, eliminating manual post-processing. Technically, the pipeline builds upon the outputs of a Transformer-based fusion model for joint concept and relation extraction. We then map lexical relational phrases to formal ontological properties through a lemmatization-based schema alignment step. Entity typing and hierarchical induction are then employed to infer class structures, as well as domain and range constraints. Using RDFLib and structured data processing, we transform the extracted triples into both assertional (ABox) and terminological (TBox) axioms expressed in Description Logic. Experimental evaluation on benchmark datasets (Conll04 and NYT) demonstrates the efficacy of the approach, with expert validation showing high acceptance rates (>95%) and reasoners confirming zero inconsistencies. The pipeline thus establishes a reliable, scalable foundation for automated ontology learning, advancing the field from extraction to formally verifiable knowledge base construction.

1. Introduction

The exponential growth of unstructured textual data has positioned ontologies as a cornerstone of the Semantic Web and knowledge-driven Artificial Intelligence (AI). As formal, explicit specifications of conceptualizations [1], ontologies provide a structured, machine-interpretable framework that enables advanced data integration, semantic search, and sophisticated reasoning tasks. They move beyond simple data representation by encoding knowledge in a logical format that supports automated reasoning. This reasoning capability underpins intelligent applications in domains such as semantic search, complex data integration, and knowledge discovery. The foundational logic underpinning the modern Web Ontology Language (OWL) is Description Logic (DL), a family of knowledge representation languages that offers a compelling balance between expressivity and computational tractability [2]. The manual construction of ontologies, however, is a notoriously knowledge-intensive, time-consuming, and expensive process, creating a significant bottleneck for the extension of knowledge systems to the vastness of modern Big Data corpora [3]. In response, the field of Ontology Learning from Text (OLT) has emerged, aiming to (semi-)automate the acquisition of ontological components from unstructured documents.

Recent years have witnessed remarkable progress in the initial stages of this pipeline, particularly with the advent of deep neural networks and Transformer-based models. Techniques for Named Entity Recognition (NER) and relation extraction (RE) have achieved state-of-the-art performance in identifying concepts and their binary relations within text [4,5]. Our previous work [6], which employed a Transformer-based Fusion model, falls squarely within this paradigm, successfully extracting a rich set of initial concepts and relational triples e.g., , from corpora. Despite these advances, a critical gap persists between the extraction of flat, lexical triples and the construction of a formally rigorous ontology. The latter requires the generation of axioms, which are logical sentences that define the semantics of the ontology’s vocabulary [7]. These axioms populate the Terminological Box (TBox), which contains intensional knowledge like class hierarchies (), domain/range restrictions, and property characteristics (functional and inverse functional), and the Assertional Box (ABox), which contains extensional knowledge about individuals [2]. The core problem this paper addresses is the axiom generation bottleneck. While we can extract as a relation between and with high confidence, current automated methods struggle to formally assert that is an with as its domain and as its range. This gap leaves a chasm between the statistical outputs of Natural Language Processing (NLP) models and the logical requirements of ontology engineering. The initial approach to this problem was purely manual, relying on domain experts to author logical axioms [8]. While this ensures high semantic fidelity, it is fundamentally not scalable and lacks the agility required for dynamic, large-scale corpora [7,9].

Within ontological engineering, axioms constitute the formal underpinnings of a knowledge representation system [1,10]. They are expressed in a logical language, the Web Ontology Language (OWL) [11], and serve to define the semantics of the vocabulary by imposing constraints and declaring logical relationships. OWL, particularly OWL 2, is a W3C-standardized language for constructing ontologies on the Semantic Web, with its formal semantics grounded in Description Logic (W3C OWL Working Group) [11]. It models domain knowledge using a structure of classes (concepts), properties (relations), and individuals (instances), and supports powerful reasoning through constructors that allow for the formation of complex class expressions. OWL 2 is characterized by its dual semantic frameworks [11], namely the Direct Semantics for full expressivity and the RDF-Based Semantics for broader web integration, as well as defined profiles that optimize the trade-off between logical expressiveness and computational tractability for practical applications [11].

While the prior steps of concept and relation extraction populate the ontology with its core elements, for example, defining classes like and , instances like and properties like and , it is the axiom set that codifies their intended meaning and enables automated reasoning [2]. The critical function of axioms is twofold. First, they define the Terminological Box (TBox), which describes the conceptual schema of the domain. This includes axioms such as declaring domain and range restrictions (e.g., ), stating subsumption hierarchies (e.g., ), or defining property characteristics (e.g., asserting that is transitive) [12]. Second, they populate the Assertional Box (ABox) with ground facts that instantiate the TBox schema (e.g., ). The power of this formalization is that it transforms a static collection of terms and relations into a dynamic knowledge base. This enables foundational knowledge management tasks such as consistency checking, classification, and subsumption inference, thereby uncovering implicit knowledge that is not explicitly stated in the original text [12,13].

The first wave of automation employed traditional machine learning (ML) and pattern-based methods. Early works used association rule mining [14] and inductive logic programming [15,16,17] to infer hierarchical structures, e.g., is-a relations, and simple axioms from textual data. However, these methods were severely limited. They relied heavily on hand-crafted linguistic patterns or shallow statistical co-occurrence, making them brittle, domain-dependent, and incapable of capturing the complex, contextual semantics required for robust axiom induction (e.g., distinguishing between as a geographical versus an administrative relation) [18]. The shift towards deep learning with models like Convolutional Neural Networks (CNNs), Recurrent Neural Networks (RNNs), and Long Short-Term Memory (LSTM) networks marked a significant step forward [11,18]. These models could better capture contextual word representations and long-range dependencies, and hence improve the extraction of concepts and relations. However, their application to direct axiom generation was limited. RNNs and LSTMs, with their sequential processing nature, often struggled to capture the complex, non-sequential logical structures of axioms and faced challenges with vanishing gradients over long, structured outputs. CNNs, adept at local feature extraction, were less effective at modeling the global dependencies within a sentence that are crucial for understanding relational semantics. Consequently, deep learning models of this era were primarily used as feature extractors for the initial NLP tasks, while the leap from these features to formal logic was still bridged by simplistic, non-neural post-processing rules that inherited many of the same semantic limitations [7,19].

The advent of the Transformer architecture [20] and its subsequent evolution into large-scale pre-trained language models (LLMs) like BERT [4] and GPT has fundamentally changed natural language processing (NLP). With their core self-attention mechanism [20], these models excel at capturing complex, contextual relationships in text, which has propelled them to state-of-the-art performance on fundamental tasks such as Named Entity Recognition (NER) and relation extraction (RE) [4]. Recently, LLMs have advanced significantly beyond these foundational tasks, demonstrating near-human performance across a wide spectrum of linguistic challenges and showing their utility as general-purpose foundational models [21,22]. This capability highlights their potential as powerful tools for high-level knowledge engineering tasks, including knowledge graph completion, ontology refinement, and question answering, with recent research even applying them directly to ontology-related tasks such as generating competency questions [23], concepts, relations [22], and axioms [10]. Building directly upon this technology, our previous work employed a Multimodal Transformer-based Fusion model [6], leveraging its strengths to produce a high-quality set of concepts and relational triples that now serve as the foundational input to the current study.

However, a critical gap persists. While Transformer-based models are unparalleled extractors, they are not innate . The output of these models is typically a set of probabilistic, lexical triples (e.g., , , ). The transformation of these surface-level extractions into a formally rigorous ontological structure, populated with property assertion axioms like (, ) and (, ), remains an open problem because the field lacks a robust, scalable method to translate the statistical confidence of a neural extractor into the logical constraints of an ontology. Current state-of-the-art pipelines often treat extraction and axiomatization as disconnected phases, leaving the latter under-automated. This study addresses this final-mile challenge by proposing a novel pipeline for automated axiom generation via schema mapping, defined on Transformer-based extracted triples. We posit that the rich, contextual representations from models like ours provide a sufficiently stable foundation for statistical schema induction. Our approach does not attempt to build a new neural logic generator; rather, it develops a principled, data-driven mapping methodology to formalize the outputs of the state-of-the-art extractor into OWL axioms.

The contributions of this study are threefold:

- We propose an end-to-end pipeline that bridges the gap between state-of-the-art Transformer-based knowledge extraction and formal axiom generation, addressing the axiom generation bottleneck.

- We introduce a practical schema mapping and induction methodology that leverages the quality of Transformer-derived triples to infer TBox and ABox axioms, including class hierarchies, domain/range constraints, and class and property assertions.

- We contribute a scalable, Transformer-augmented framework that advances the field toward fully automated ontology learning, providing a clear pathway from textual data to a reasoning-ready knowledge base.

It is important to note that the primary objective of this study is to bridge the critical gap between statistical relation extraction and the generation of foundational, logically consistent ontological axioms. Consequently, our pipeline is designed to produce an ontology comprising (1) class hierarchies (SubClassOf), (2) domain and range restrictions, and (3) class and property assertions (ABox). While this forms the essential terminological and assertional backbone of an OWL ontology, we consciously defer the automated generation of richer OWL constructs, such as property characteristics (e.g., symmetry, transitivity) and cardinality constraints, to future work. This strategic focus allows us to establish a reliable, scalable foundation for automated ontology learning, upon which more expressive layers can be subsequently built.

2. Related Work

Several studies have addressed the problem of axiom generation from textual data [14,24,25,26,27,28], a critical sub-problem in the long-standing goal of automating ontology construction for the Semantic Web and knowledge engineering communities. This literature review traces the evolution of these methods, from early logic-based and statistical approaches to modern neural techniques.

Maedche and Volz [14] proposed Text-To-Onto, a system that relies on association rule mining and formal concept analysis to discover taxonomic relationships from text. Similarly, Poon and Domingos [24] proposed an approach based on inductive logic programming (ILP) to learn Horn clauses from corpora that could be translated into ontological axioms. However, these approaches are fundamentally brittle and domain-dependent. They operated on hand-crafted linguistic patterns and shallow syntactic parses, lacking the robust semantic understanding needed to handle paraphrases or discern precise logical semantics for complex relations. Our proposed approach addresses these limitations by building upon the rich, context-aware representations from a Transformer-based model, enabling a more nuanced and robust understanding of relational semantics without relying on predefined patterns.

Völker and Niepert [25] proposed a statistical schema induction method that uses hierarchical clustering on the argument pairs of relational phrases to hypothesize domain and range restrictions for properties. This represented a shift towards more scalable, corpus-driven methods. However, its main limitation is its reliance on the distributional hypothesis alone. It can be misled by frequent but semantically invalid co-occurrences and struggles to differentiate between polysemous relations (e.g., the various senses of ), as it prioritizes empirical frequency over contextual logic. Our proposed approach addresses this limitation by using the high-confidence, typed entities and relations extracted by our Transformer-based Fusion model as a filtered and structured input. We perform schema induction on this refined set, mitigating the noise of raw corpus statistics and allowing for more precise axiom generation.

Chen et al. [26] utilized an LSTM-based encoder to learn representations for ontology alignment, a task requiring understanding of axiomatic structures. Similarly, Javed et al. [27] employed deep neural networks to learn embeddings for ontology completion in the Existential Language (EL) Description Logic. However, these methods have significant limitations for direct axiom generation from text. The sequential nature of RNNs/LSTMs made them suboptimal for modeling the complex, non-sequential structures of OWL axioms, often creating an information bottleneck. These models were often used as feature extractors within larger pipelines, where the final axiomatization still required manual, rule-based post-processing, failing to fully automate the leap from distributed representations to symbolic logic. Our proposed approach addresses this limitation by designing a deterministic, post hoc mapping pipeline that is decoupled from the neural extraction phase. This provides a transparent and scalable path from neural extractions to formal axioms, overcoming the architectural constraints of sequential models.

The advent of Transformer-based models such as BERT [4] revolutionized the initial stages of ontology learning, providing state-of-the-art performance in extracting concepts and relations. Recently, He [28] explored using prompt engineering with Large Language Models (LLMs) to generate OWL axioms directly from text. However, the limitations of this approach are critical for ontology engineering: LLMs are stochastic and can “hallucinate,” generating logically inconsistent axioms with no formal guarantees. Their black-box nature makes debugging and verification difficult, and their computational cost is prohibitive for generating large-scale ontologies. Our proposed approach addresses these limitations by avoiding direct, generative use of LLMs for logic. Instead, we use a structured, deterministic schema mapping process applied to the outputs of a smaller, fine-tuned Transformer.

Our proposed method of axiom generation through schema mapping does not seek to replace deep learning but to complement it effectively. It provides a principled, automated bridge from the probabilistic, textual world captured by Transformers to the deterministic, logical world of Description Logics (DLs). This approach directly populates a Description Logic (DL) knowledge base with both TBox and ABox axioms, thereby addressing the axiom generation bottleneck that has persisted through multiple eras of NLP research. Our proposed approach to automated axiom generation is presented next.

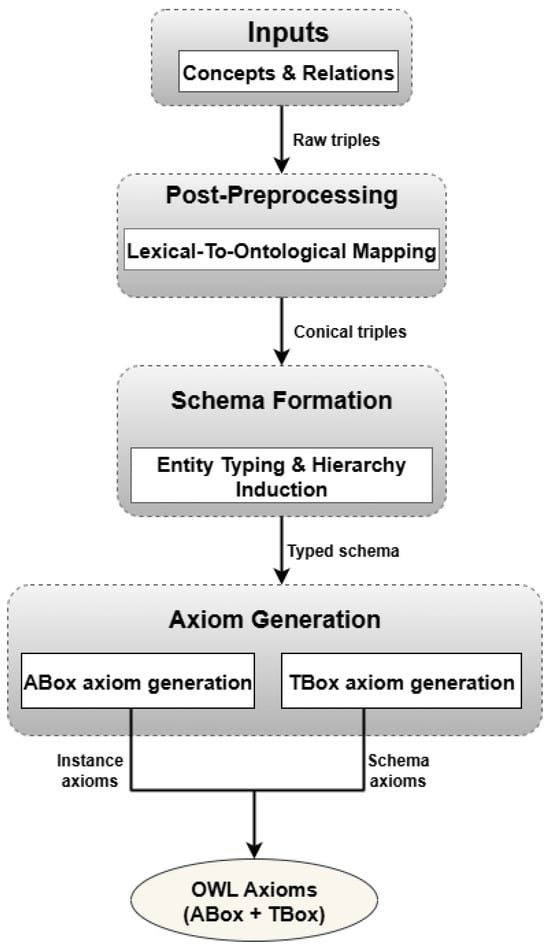

3. Proposed Methodology for Axiom Generation

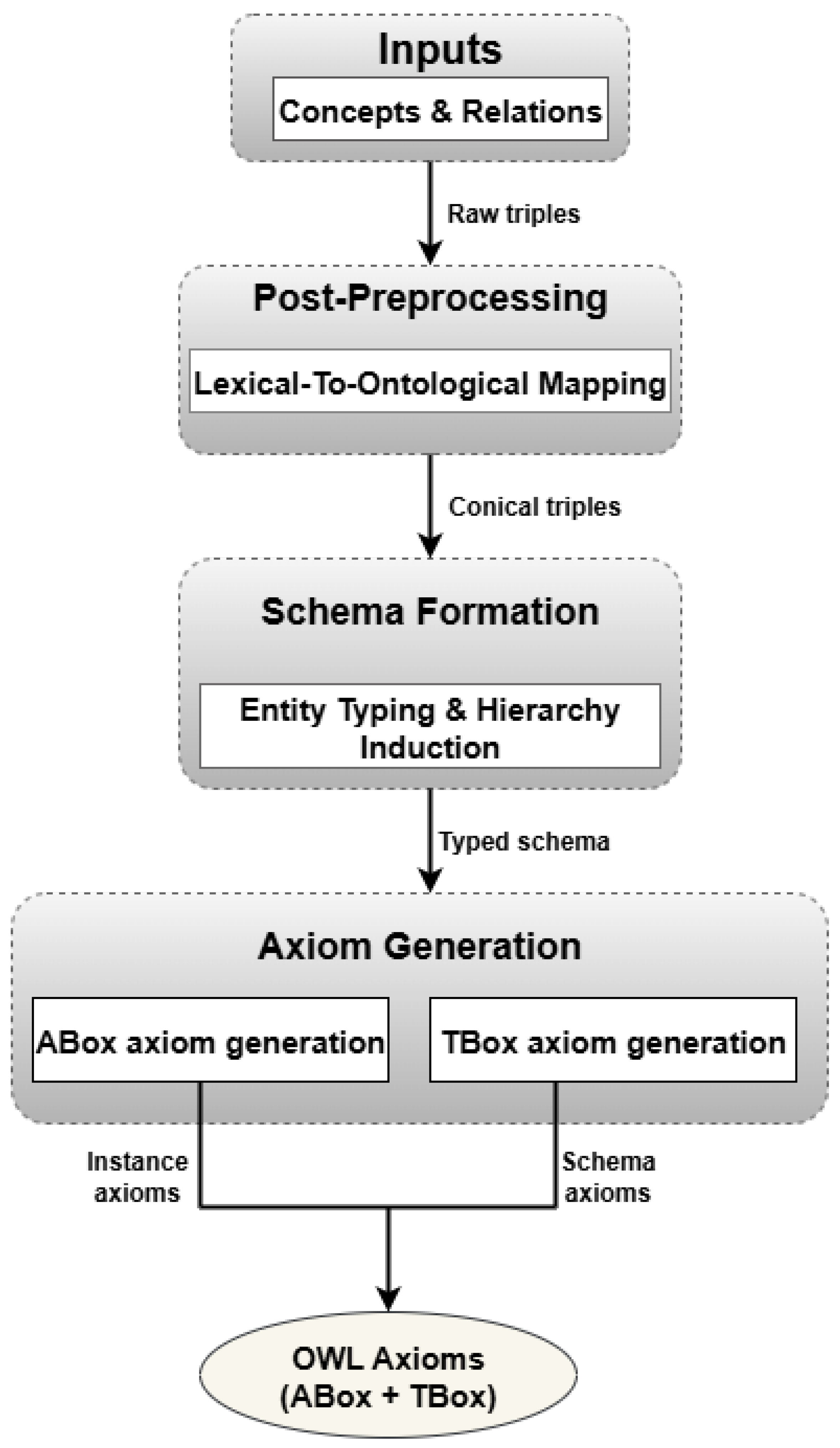

This section presents our proposed framework for transforming the relational triples extracted by our Multimodal Transformer-based Fusion (MTF) model [6] into a formal set of ontological axioms grounded in Description Logic (DL). The overarching goal is to construct a pipeline that bridges neural extraction and logical formalization, automatically populating a DL knowledge base. The proposed framework, shown in Figure 1, leverages the high-quality concepts and relations from the previous study [6] to generate both terminological (TBox) and assertional (ABox) axioms, which constitute the core components of an OWL ontology.

Figure 1.

Axiom Generation Framework.

3.1. Description Logic

The Web Ontology Language (OWL), which serves as the target of our axiom generation, is built upon a formal Description Logic foundation, specifically the dialect. The expressive power of Description Logics (DLs) comes from a set of constructors that allow for building complex concepts from atomic ones. The main components of a DL knowledge base are concepts, roles, and individuals. Concepts in Description Logic define categories by grouping individuals that share common characteristics. For example, the concept encompasses all students at the University of KwaZulu-Natal. A DL knowledge base is structurally divided into two components: the TBox (Terminological Box), which contains intensional knowledge defining concepts and roles through axioms like , which defines that all UKZN students are persons, and the ABox (Assertional Box), which contains extensional knowledge in the form of facts about specific individuals like , which states individual membership. Furthermore, complex concepts can be constructed using logical operators, such as , specifying that a UKZN student is defined as an individual who studies at a university. Our methodology is designed to systematically generate axioms for both boxes. The TBox axioms define the ontology’s schema (e.g., stating that the range of the property is ), while the ABox axioms instantiate this schema with concrete data (e.g., asserting ). This formal grounding ensures that the output of our pipeline is not merely a collection of triples but a computationally tractable and logically consistent knowledge base capable of supporting automated reasoning. A sample knowledge base that describes UKZN student is given by the sets and below.

where denotes the TBox that contains terminological axioms and denotes the ABox that contains assertional axioms.

Automated reasoning can then be performed on these axioms. A direct inference yields , derived by applying the subsumption axiom to the assertion . However, this does not fully capture the intended semantics of . To achieve a precise definition, we can introduce equivalence axiom: where is a nominal representing the specific university. This explicitly defines an as any individual who studies at the particular institution, the University of KwaZulu-Natal. Furthermore, from this axiom, we can infer that .
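To make the reasoning steps above concrete, the following is an illustrative reconstruction of such a knowledge base. The concept and role names ($\mathsf{UKZNStudent}$, $\mathsf{studiesAt}$), the nominal $\mathsf{ukzn}$, and the individual $a$ are assumptions chosen for illustration and are not necessarily the exact symbols used in the original formulas.

```latex
\begin{align*}
\mathcal{T} &= \{\, \mathsf{UKZNStudent} \sqsubseteq \mathsf{Person} \,\}
  && \text{(TBox: every UKZN student is a person)} \\
\mathcal{A} &= \{\, \mathsf{UKZNStudent}(a) \,\}
  && \text{(ABox: $a$ is a UKZN student)} \\
\mathcal{T} \cup \mathcal{A} &\models \mathsf{Person}(a)
  && \text{(subsumption applied to the assertion)} \\[4pt]
\mathsf{UKZNStudent} &\equiv \exists\, \mathsf{studiesAt}.\{\mathsf{ukzn}\}
  && \text{(equivalence axiom with a nominal)} \\
\mathsf{University}(\mathsf{ukzn}) &\;\Longrightarrow\;
  \mathsf{UKZNStudent} \sqsubseteq \exists\, \mathsf{studiesAt}.\mathsf{University}
  && \text{(since } \{\mathsf{ukzn}\} \sqsubseteq \mathsf{University}\text{)}
\end{align*}
```

The last line holds because existential restrictions are monotone in their filler: if the nominal is contained in a class, anything related to the nominal is also related to some member of that class.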

Description Logic

Table 1 presents formal definitions of core Description Logic terminology, beginning with ALC (Attributive Language with Complements), the base of all DL languages [11,12]. The syntax and corresponding semantics for concept constructors are defined in Table 1.

Table 1.

Syntax and Semantics of Concept Constructors [11,12].

The variables C and D stand for any valid concept expression, formed by recursive application of the syntactic constructors. A signifies an atomic concept, and r an atomic role. The interpretation of these elements is model-theoretic, based on the definition of an interpretation [11]. An interpretation $\mathcal{I} = (\Delta^{\mathcal{I}}, \cdot^{\mathcal{I}})$ comprises a non-empty set $\Delta^{\mathcal{I}}$ (the domain) and an interpretation function $\cdot^{\mathcal{I}}$ that assigns to every concept C a subset $C^{\mathcal{I}} \subseteq \Delta^{\mathcal{I}}$ and to every role r a binary relation $r^{\mathcal{I}} \subseteq \Delta^{\mathcal{I}} \times \Delta^{\mathcal{I}}$ [11,12]. An individual x is an instance of concept C in $\mathcal{I}$ if $x \in C^{\mathcal{I}}$. The semantics for each concept constructor with respect to $\mathcal{I}$ are detailed in the right column of Table 1 [11,12]. The next subsections explain the axiom generation framework shown in Figure 1.
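The model-theoretic semantics described above can be illustrated with a small evaluator that computes the extension of an ALC concept over a finite interpretation. This is a minimal sketch using the standard textbook semantics of the ALC constructors; the class names (`Atom`, `Exists`, and so on), the `Interpretation` container, and the toy individuals are hypothetical and are not part of the pipeline.

```python
from dataclasses import dataclass
from typing import Dict, Set, Tuple


@dataclass(frozen=True)
class Top:  # the universal concept
    pass

@dataclass(frozen=True)
class Bottom:  # the empty concept
    pass

@dataclass(frozen=True)
class Atom:  # atomic concept A
    name: str

@dataclass(frozen=True)
class Not:  # complement of C
    arg: object

@dataclass(frozen=True)
class And:  # conjunction of C and D
    left: object
    right: object

@dataclass(frozen=True)
class Or:  # disjunction of C and D
    left: object
    right: object

@dataclass(frozen=True)
class Exists:  # existential restriction on role r with filler C
    role: str
    filler: object

@dataclass(frozen=True)
class Forall:  # universal restriction on role r with filler C
    role: str
    filler: object


@dataclass
class Interpretation:
    domain: Set[str]                          # non-empty domain
    concepts: Dict[str, Set[str]]             # atomic concept -> subset of the domain
    roles: Dict[str, Set[Tuple[str, str]]]    # atomic role -> set of pairs


def extension(c, interp: Interpretation) -> Set[str]:
    """Return the set of domain elements that are instances of concept c."""
    if isinstance(c, Top):
        return set(interp.domain)
    if isinstance(c, Bottom):
        return set()
    if isinstance(c, Atom):
        return set(interp.concepts.get(c.name, set()))
    if isinstance(c, Not):
        return interp.domain - extension(c.arg, interp)
    if isinstance(c, And):
        return extension(c.left, interp) & extension(c.right, interp)
    if isinstance(c, Or):
        return extension(c.left, interp) | extension(c.right, interp)
    pairs = interp.roles.get(c.role, set())
    filler = extension(c.filler, interp)
    if isinstance(c, Exists):
        # x qualifies if at least one r-successor lies in the filler
        return {x for (x, y) in pairs if y in filler}
    if isinstance(c, Forall):
        # x qualifies if every r-successor lies in the filler (vacuously true if none)
        return {x for x in interp.domain
                if all(y in filler for (s, y) in pairs if s == x)}
    raise TypeError(f"unsupported concept: {c!r}")


# Toy interpretation with hypothetical individuals.
interp = Interpretation(
    domain={"alice", "bob", "ukzn"},
    concepts={"Person": {"alice", "bob"}, "University": {"ukzn"}},
    roles={"studiesAt": {("alice", "ukzn")}},
)
print(sorted(extension(Exists("studiesAt", Atom("University")), interp)))  # ['alice']
```

Note that the universal restriction is satisfied vacuously by individuals with no successors, which is why it returns the whole domain in this toy interpretation.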

3.2. Input Concepts and Relations

The pipeline is initiated with the structured relational triples, formatted as (subject, relation, object), which are the product of the Multimodal Transformer-based Fusion (MTF) model’s joint concept and relation extraction process, developed in our previous study [6]. These triples serve as the foundational input for a systematic, multistage methodology designed to transform syntactic extractions into semantically rich, formal axioms.

3.3. Post-Preprocessing: Lexical-to-Ontological Mapping

This stage is the Lexical-to-Ontological mapping, where the surface-level relational phrases identified by the MTF model (e.g., “was born in”, “is the CEO of”) are normalized into canonical ontological properties (e.g., , ) through lemmatization. We first lemmatized the verb phrases extracted by the model using NLTK’s WordNetLemmatizer; then we employed a predefined Python dictionary to map the base forms to their ontological property URIs. We leveraged the high-quality, context-aware entity and relation embeddings generated by the MTF model to resolve potential ambiguities in this mapping, ensuring that the intended semantic meaning of each relation was preserved in its formal counterpart.
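The normalization step described above can be sketched as follows. To keep the example self-contained, a tiny lookup table stands in for NLTK's WordNetLemmatizer, and the property names (`ex:bornIn`, `ex:ceoOf`, and so on), the skip list, and the dictionary contents are all hypothetical; the actual mapping dictionary used in the pipeline is not reproduced here.

```python
import re
from typing import Callable, Dict, Optional

# Hypothetical stand-in for a real lemmatizer: surface token -> base form.
TOY_LEMMAS: Dict[str, str] = {
    "was": "be", "is": "be", "are": "be", "were": "be",
    "born": "bear", "located": "locate", "works": "work", "worked": "work",
}

# Auxiliaries and determiners dropped before the dictionary lookup (assumption).
SKIP_LEMMAS = {"be", "the", "a", "an"}

# Hypothetical dictionary from canonical lemma sequences to property identifiers.
PROPERTY_MAP: Dict[str, str] = {
    "bear in": "ex:bornIn",
    "ceo of": "ex:ceoOf",
    "locate in": "ex:locatedIn",
    "work for": "ex:worksFor",
}


def toy_lemmatize(token: str) -> str:
    """Return the base form of a token, or the token itself if unknown."""
    return TOY_LEMMAS.get(token, token)


def canonical_key(phrase: str, lemmatize: Callable[[str], str] = toy_lemmatize) -> str:
    """Lowercase, tokenize, lemmatize, and drop auxiliaries and determiners."""
    tokens = re.findall(r"[a-z]+", phrase.lower())
    lemmas = [lemmatize(token) for token in tokens]
    return " ".join(lemma for lemma in lemmas if lemma not in SKIP_LEMMAS)


def map_relation(phrase: str, lemmatize: Callable[[str], str] = toy_lemmatize) -> Optional[str]:
    """Map a surface relational phrase to a property identifier, or None if unmapped."""
    return PROPERTY_MAP.get(canonical_key(phrase, lemmatize))


print(map_relation("was born in"))    # ex:bornIn
print(map_relation("is the CEO of"))  # ex:ceoOf
print(map_relation("married to"))     # None
```

Passing the lemmatizer as a parameter means a real lemmatizer can be swapped in without touching the mapping logic, and returning `None` for unmapped phrases keeps unknown relations visible instead of silently assigning them a property.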

3.4. Schema Formation

This stage concerns the entity typing and hierarchy induction using Pandas, a data analysis library. This second step takes the canonicalized triples as its main input, and performs entity typing by assigning entities to classes. The typed entities are then clustered and analyzed to induce a shallow hierarchy (e.g., , ).

3.4.1. Formal Specification of Schema Induction Rules

The following rules formalize how we automatically infer ontological axioms from the extracted relational triples. Each rule transforms statistical patterns in the data into formal logical constraints.

Let be the set of canonicalized triples, where each entity is typed into class .

- Rule 1: SubClassOf Induction

- For each pair of classes , if

- (empirical threshold for subclass ratio);

- (similarity threshold);

- is computed using cosine similarity between MTF-derived class embeddings.

- This rule identifies hierarchical relationships between classes based on entity distribution and semantic similarity. The entity ratio condition indicates when one class is likely a specialization of another, while the semantic similarity condition ensures only semantically related classes form hierarchies. When both conditions are satisfied, we infer , meaning “every is also a ” (e.g., Scientist ⊑ Person).

- Rule 2: Domain and Range Inference

- For each property p, let

This rule determines the allowed subject and object types for each property (relation). For a property p, we compute the most frequent class appearing as subjects (domain) and objects (range) in triples containing p. We only assert restrictions when one class dominates with frequency (i.e., ), ensuring properties are strongly typed rather than ambiguous. The resulting axioms constrain the property to specific classes, enabling type checking and logical reasoning.
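A minimal sketch of the domain and range inference described in Rule 2 is given below. It uses only the standard library rather than Pandas to stay self-contained. The threshold value of 0.8, the function names, and the toy triples are assumptions for illustration, since the exact threshold is not recoverable from the text above.

```python
from collections import Counter, defaultdict
from typing import Dict, Iterable, Optional, Tuple

Triple = Tuple[str, str, str]  # (subject, property, object)


def dominant_class(counts: Counter, threshold: float) -> Optional[str]:
    """Return the most frequent class if its relative frequency meets the threshold."""
    cls, n = counts.most_common(1)[0]
    return cls if n / sum(counts.values()) >= threshold else None


def infer_domain_range(
    triples: Iterable[Triple],
    entity_type: Dict[str, str],
    threshold: float = 0.8,  # hypothetical dominance threshold
) -> Dict[str, Dict[str, Optional[str]]]:
    """For each property, infer a domain and range class, or None when no class dominates."""
    subject_counts: Dict[str, Counter] = defaultdict(Counter)
    object_counts: Dict[str, Counter] = defaultdict(Counter)
    for s, p, o in triples:
        subject_counts[p][entity_type[s]] += 1
        object_counts[p][entity_type[o]] += 1
    return {
        p: {
            "domain": dominant_class(subject_counts[p], threshold),
            "range": dominant_class(object_counts[p], threshold),
        }
        for p in subject_counts
    }


# Toy data with hypothetical entities and types.
entity_type = {
    "Einstein": "Person", "Curie": "Person", "Turing": "Person",
    "Ulm": "City", "Warsaw": "City", "London": "City", "Redmond": "City",
    "Durban": "City", "Paris": "City",
    "Microsoft": "Organization", "UKZN": "Organization",
    "SouthAfrica": "Country", "France": "Country",
}
triples = [
    ("Einstein", "bornIn", "Ulm"),
    ("Curie", "bornIn", "Warsaw"),
    ("Turing", "bornIn", "London"),
    ("Microsoft", "locatedIn", "Redmond"),
    ("UKZN", "locatedIn", "Durban"),
    ("Durban", "locatedIn", "SouthAfrica"),
    ("Paris", "locatedIn", "France"),
]
axioms = infer_domain_range(triples, entity_type)
print(axioms["bornIn"])     # {'domain': 'Person', 'range': 'City'}
print(axioms["locatedIn"])  # {'domain': None, 'range': None}
```

In this toy data, `locatedIn` has subjects and objects split evenly across two classes each, so no restriction is asserted for it.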

These rules operationalize the distributional hypothesis which posits that similar entities occur in similar contexts, augmented with semantic embeddings from the Transformer model. The deterministic nature ensures logical consistency while remaining data-driven, bridging statistical NLP outputs with formal ontological reasoning.
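The embedding-augmented subclass induction of Rule 1 can be sketched in the same spirit. The exact form of the entity ratio and both threshold values are not recoverable from the text, so this sketch assumes that entities may carry several candidate types, that the ratio is the fraction of a class's entities also typed into the candidate superclass, and that the subclass must be strictly smaller. The two-dimensional embeddings and all names are hypothetical.

```python
import math
from itertools import permutations
from typing import Dict, List, Sequence, Set, Tuple


def cosine(u: Sequence[float], v: Sequence[float]) -> float:
    """Cosine similarity between two vectors; 0.0 if either has zero norm."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v) if norm_u and norm_v else 0.0


def induce_subclass_axioms(
    members: Dict[str, Set[str]],             # class -> entities typed into it
    embeddings: Dict[str, Sequence[float]],   # class -> embedding vector
    ratio_threshold: float = 0.9,             # hypothetical subclass-ratio threshold
    sim_threshold: float = 0.7,               # hypothetical similarity threshold
) -> List[Tuple[str, str]]:
    """Return (sub, super) pairs for which both Rule 1 conditions hold."""
    axioms = []
    for ci, cj in permutations(sorted(members), 2):
        ei, ej = members[ci], members[cj]
        # Assumption: a subclass is non-empty and strictly smaller than its superclass.
        if not ei or len(ei) >= len(ej):
            continue
        ratio = len(ei & ej) / len(ei)
        if ratio >= ratio_threshold and cosine(embeddings[ci], embeddings[cj]) >= sim_threshold:
            axioms.append((ci, cj))
    return axioms


# Toy data with hypothetical classes, entities, and embeddings.
members = {
    "Person": {"Einstein", "Curie", "Turing", "Obama"},
    "Scientist": {"Einstein", "Curie", "Turing"},
    "City": {"Ulm", "Warsaw"},
}
embeddings = {
    "Person": [1.0, 0.2],
    "Scientist": [0.9, 0.3],
    "City": [0.1, 1.0],
}
print(induce_subclass_axioms(members, embeddings))  # [('Scientist', 'Person')]
```

Requiring both conditions means that a high overlap alone, or a high embedding similarity alone, is not enough to assert a subsumption.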

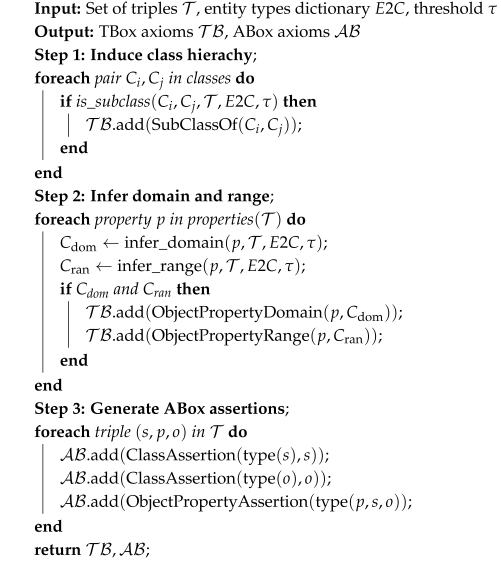

3.4.2. Pseudo-Code for Axiom Generation

Algorithm 1 operates in three sequential stages, transforming extracted relational triples into formal ontological axioms. Stage 1 induces class hierarchies by analyzing entity distributions and semantic similarity. Stage 2 infers domain and range constraints for properties based on frequency analysis with a dominance threshold. Stage 3 generates instance-level assertions, grounding the terminological schema with concrete data. The deterministic nature of Algorithm 1 ensures logical consistency while remaining data-driven, effectively bridging statistical NLP outputs with formal Description Logic reasoning.

3.5. Axiom Generation

3.5.1. ABox

We subsequently generated the Assertional Axioms (ABox) by formalizing each extracted triple into a ground axiom, using the mappings from the first step. The process was performed using RDFLib, a Python library for working with Resource Description Framework (RDF) and OWL ontologies. An ABox $\mathcal{A}$ constitutes a finite set of axioms, comprising concept assertions of the form $C(a)$ and role assertions of the form $r(a, b)$, where a and b are named individuals. An interpretation $\mathcal{I}$ satisfies a concept assertion $C(a)$, denoted as $\mathcal{I} \models C(a)$, if $a^{\mathcal{I}} \in C^{\mathcal{I}}$. Similarly, $\mathcal{I}$ satisfies a role assertion $r(a, b)$, written as $\mathcal{I} \models r(a, b)$, if $(a^{\mathcal{I}}, b^{\mathcal{I}}) \in r^{\mathcal{I}}$. An interpretation $\mathcal{I}$ is considered a model of an ABox $\mathcal{A}$ if it satisfies every assertion axiom within $\mathcal{A}$.
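As an illustration of how typed triples become ground ABox axioms, the sketch below serializes concept assertions and role assertions as N-Triples lines using only the standard library. It is not the RDFLib-based implementation described above; the namespace `http://example.org/onto#`, the function names, and the sample triple are hypothetical, and local names are assumed to be IRI-safe.

```python
from typing import Dict, Iterable, List, Tuple

RDF_TYPE = "http://www.w3.org/1999/02/22-rdf-syntax-ns#type"
BASE = "http://example.org/onto#"  # hypothetical ontology namespace


def iri(local_name: str) -> str:
    """Wrap a local name in the hypothetical namespace as an N-Triples IRI."""
    return f"<{BASE}{local_name}>"


def abox_ntriples(
    triples: Iterable[Tuple[str, str, str]],
    entity_type: Dict[str, str],
) -> List[str]:
    """Emit concept assertions C(a) and role assertions r(a, b) as N-Triples lines."""
    lines = set()
    for s, p, o in triples:
        # Concept assertions: each individual is an instance of its assigned class.
        lines.add(f"{iri(s)} <{RDF_TYPE}> {iri(entity_type[s])} .")
        lines.add(f"{iri(o)} <{RDF_TYPE}> {iri(entity_type[o])} .")
        # Role assertion: the canonical property holds between the two individuals.
        lines.add(f"{iri(s)} {iri(p)} {iri(o)} .")
    return sorted(lines)  # sorted and de-duplicated for deterministic output


lines = abox_ntriples(
    [("Einstein", "bornIn", "Ulm")],
    {"Einstein": "Person", "Ulm": "City"},
)
for line in lines:
    print(line)
```

With RDFLib, the same statements would typically be added to a `Graph` with `Graph.add` and serialized from there; the string-based version here only shows the shape of the resulting assertions.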

3.5.2. TBox

Finally, we inferred Terminological Axioms (TBox) from the aggregated data using the Pandas library for analysis and RDFLib for formal axiom creation. A TBox $\mathcal{T}$ is defined as a finite set of General Concept Inclusion (GCI) axioms, each having the form $C \sqsubseteq D$. An interpretation $\mathcal{I}$ satisfies a GCI $C \sqsubseteq D$, denoted as $\mathcal{I} \models C \sqsubseteq D$, if and only if $C^{\mathcal{I}} \subseteq D^{\mathcal{I}}$. Furthermore, $\mathcal{I}$ is considered a model of a TBox $\mathcal{T}$ if it satisfies every GCI contained within $\mathcal{T}$. The equivalence $C \equiv D$ serves as an abbreviation for the two GCIs $C \sqsubseteq D$ and $D \sqsubseteq C$. When C is an atomic concept A, this equivalence is specifically termed a definition. This process allowed us to move from a collection of instance-level data to a generalized ontological schema capable of supporting reasoning. The evaluation of the proposed axiom generation framework in Figure 1 is discussed next.
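To show what "supporting reasoning" means for the three kinds of TBox axioms considered here, the following sketch materializes the class memberships entailed by subclass, domain, and range axioms over a small ABox. It is a simple forward-chaining illustration in the style of the RDFS entailment rules, not an OWL reasoner, and all names and sample data are hypothetical.

```python
from typing import Dict, Set, Tuple


def materialize_types(
    types: Set[Tuple[str, str]],            # asserted (individual, class) pairs
    roles: Set[Tuple[str, str, str]],       # asserted (subject, property, object) triples
    subclass_of: Set[Tuple[str, str]],      # TBox: (sub, super) pairs
    domain: Dict[str, str],                 # TBox: property -> domain class
    range_: Dict[str, str],                 # TBox: property -> range class
) -> Set[Tuple[str, str]]:
    """Return all class memberships entailed by the given axioms."""
    inferred = set(types)
    # Domain and range axioms type the subject and object of each role assertion.
    for s, p, o in roles:
        if p in domain:
            inferred.add((s, domain[p]))
        if p in range_:
            inferred.add((o, range_[p]))
    # Propagate memberships up the class hierarchy until nothing new is added.
    changed = True
    while changed:
        changed = False
        for individual, cls in list(inferred):
            for sub, sup in subclass_of:
                if cls == sub and (individual, sup) not in inferred:
                    inferred.add((individual, sup))
                    changed = True
    return inferred


entailed = materialize_types(
    types={("Einstein", "Scientist")},
    roles={("Einstein", "bornIn", "Ulm")},
    subclass_of={("Scientist", "Person")},
    domain={"bornIn": "Person"},
    range_={"bornIn": "City"},
)
print(sorted(entailed))
# [('Einstein', 'Person'), ('Einstein', 'Scientist'), ('Ulm', 'City')]
```

The membership of `Ulm` in `City` is never asserted directly; it follows from the range axiom, which is the kind of implicit knowledge a schema makes available.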

| Algorithm 1: Axiom Generation Pipeline |

|

3.6. Quality Analysis of Generated Ontologies

The quality assessment of the automatically constructed ontologies involved an expert validation and a quantitative analysis of their structural vocabulary, including class counts, object properties, and axiom volumes. To evaluate the complexity and design quality of the output ontologies from our pipeline, we employed graph metrics that characterize the induced taxonomic hierarchies. The metrics considered include Average Depth (AD), Maximum Depth (MD), Average Breadth (AB), Maximum Breadth (MB), Absolute Root Cardinality (ARC), and Absolute Leaf Cardinality (ALC). AD, MD, AB, and MB serve as indicators of taxonomic specificity and hierarchical organization, reflecting how well the induced schema captures domain relationships. ARC and ALC provide insights into structural cohesion, revealing the integration of concepts and specialization within the hierarchy [29]. The mathematical formulations for computing these graph metrics are provided in [29].
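The graph metrics listed above can be approximated with a short traversal of the induced taxonomy. The definitions used in this sketch are assumptions, since the exact formulas are given in [29] and may differ: depth is the number of classes on a root-to-leaf path, breadth is the number of classes on a level, and the hierarchy is assumed to be an acyclic forest in which each class has at most one parent. The sample hierarchy is hypothetical.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple


def taxonomy_metrics(
    classes: Iterable[str],
    subclass_of: Iterable[Tuple[str, str]],  # (sub, super) pairs
) -> Dict[str, float]:
    """Compute AD, MD, AB, MB, ARC, and ALC under the assumptions stated above."""
    classes = list(classes)
    children: Dict[str, List[str]] = defaultdict(list)
    parent: Dict[str, str] = {}
    for sub, sup in subclass_of:
        children[sup].append(sub)
        parent[sub] = sup

    roots = [c for c in classes if c not in parent]
    leaves = [c for c in classes if not children[c]]

    # Assign each class its level, counting the root as level 1.
    level: Dict[str, int] = {}
    frontier = [(root, 1) for root in roots]
    while frontier:
        node, depth = frontier.pop()
        level[node] = depth
        frontier.extend((child, depth + 1) for child in children[node])

    leaf_depths = [level[leaf] for leaf in leaves]
    breadth: Dict[int, int] = defaultdict(int)
    for depth in level.values():
        breadth[depth] += 1

    return {
        "AD": sum(leaf_depths) / len(leaf_depths),  # average root-to-leaf depth
        "MD": max(leaf_depths),                     # maximum depth
        "AB": sum(breadth.values()) / len(breadth), # average classes per level
        "MB": max(breadth.values()),                # widest level
        "ARC": len(roots),                          # number of root classes
        "ALC": len(leaves),                         # number of leaf classes
    }


# Hypothetical shallow hierarchy.
classes = ["Entity", "Person", "Location", "Organization", "Scientist", "Politician", "City"]
subclass_of = [
    ("Person", "Entity"), ("Location", "Entity"), ("Organization", "Entity"),
    ("Scientist", "Person"), ("Politician", "Person"), ("City", "Location"),
]
metrics = taxonomy_metrics(classes, subclass_of)
print(metrics["AD"], metrics["MD"], metrics["MB"], metrics["ARC"], metrics["ALC"])
# 2.75 3 3 1 4
```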

3.7. Scope of Generated Axioms

The proposed methodology is explicitly designed to automate the generation of a foundational set of ontological axioms from extracted triples. Table 2 provides a clear overview of the OWL constructs currently supported by our pipeline, distinguishing them from richer constructs targeted for future work. This scope focuses on establishing a consistent terminological (TBox) and assertional (ABox) backbone, which is the critical bottleneck identified in Section 1.

Table 2.

Scope of Axiom Generation in the Proposed Pipeline.

As shown in Table 2, the current implementation through Rules 1 and 2 successfully generates core axioms for hierarchies, domain/range, and instance data. The generation of more expressive axioms (e.g., property characteristics) requires different inference patterns and is planned as a sequential extension of this framework.

4. Experimental Results and Discussion

This section details the experimental setup developed to evaluate our proposed framework for automated axiom generation through schema mapping. We describe the benchmark datasets, implementation specifics, and computational environment. Subsequently, we present and analyze the results, discussing the efficacy of our method in generating both ABox and TBox axioms from the extracted relational triples.

4.1. Datasets

To evaluate our axiom generation pipeline, we employed two benchmark datasets. The first is the New York Times (NYT) [30] corpus, which provides sentences annotated with relational triples (subject, relation, object) across 23 relation types. The second is the Conll04 dataset [31], a standard benchmark for relation extraction. The triples extracted from these datasets serve as the direct input for our subsequent schema mapping and axiom induction steps.

4.2. Computer and Software Environments

The implementation was developed in Python within the Google Colaboratory environment, leveraging a T4 GPU for acceleration. The pipeline builds upon the Transformer-based Fusion model from our previous work [6], using PyTorch 2.2.1 as the foundation and Pandas for schema processing. To ensure reproducible axiom generation, random seeds were fixed at 42, and standard dataset splits for training, validation, and testing were maintained for both the Conll04 (60/20/20) and NYT (80/10/10) datasets. This experimental configuration aligns with established practices in the field, ensuring a fair and comparable evaluation. The complete software and hardware specifications are detailed in Table 3. The hyperparameters for all model components involved in the schema mapping and axiom generation process are specified in Table 4.

Table 3.

Hardware and Software Specifications for the Experiments.

Table 4.

Hyperparameter Setting.

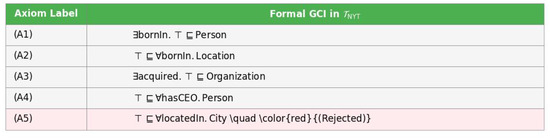

4.3. Expert Validation

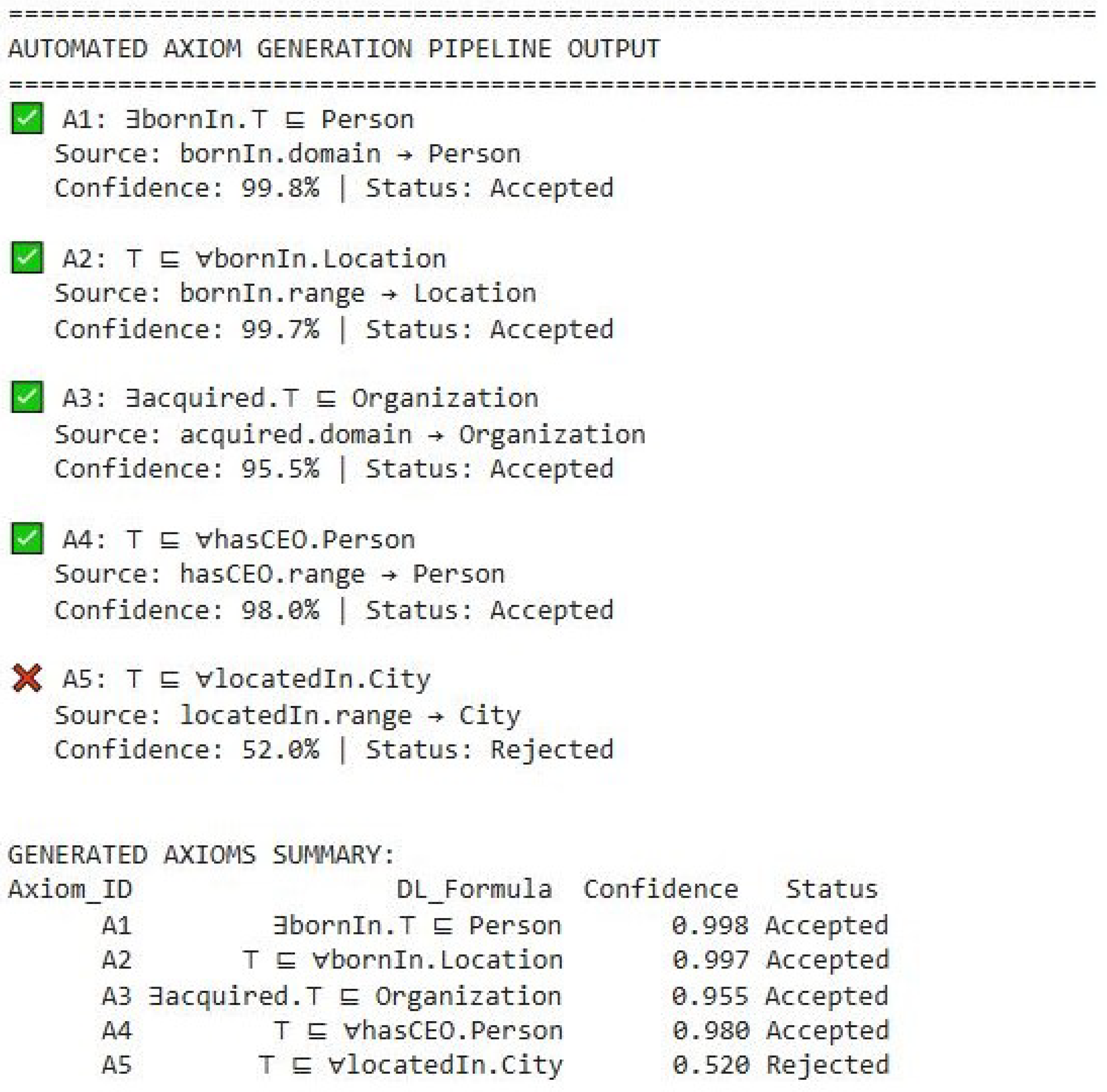

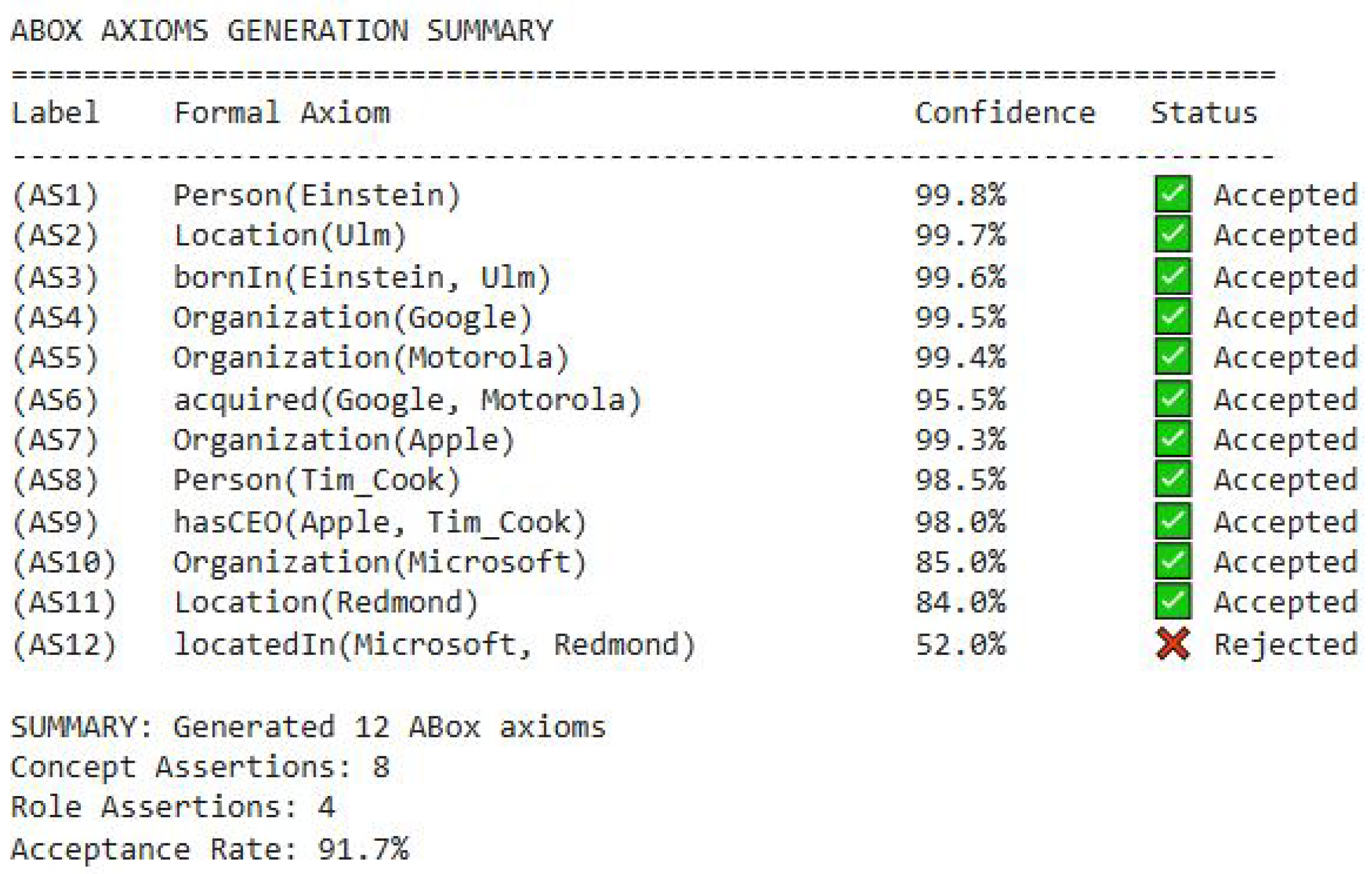

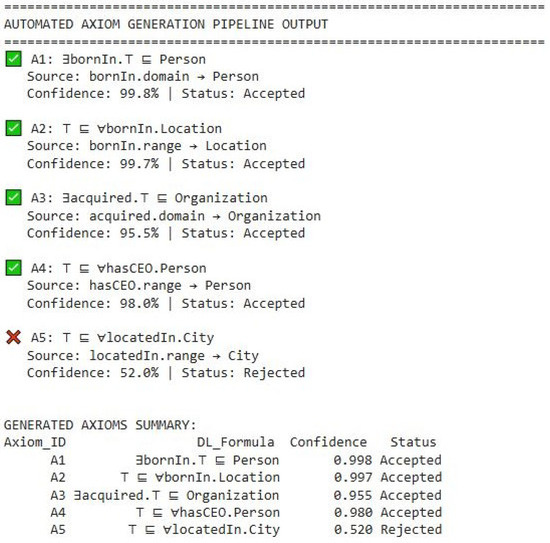

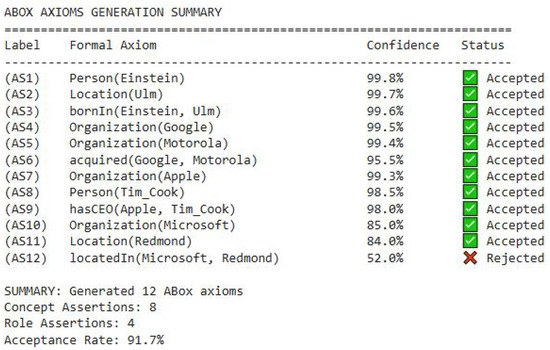

The output of our automated pipeline, comprising both TBox and ABox axioms, was subjected to expert validation. As summarized in Figure 2 and Figure 3, the domain expert accepted the vast majority of the generated axioms. The high acceptance rate confirms the practical utility and semantic soundness of our method. The single rejected axiom in both the TBox and ABox (locatedIn(Microsoft, Redmond)) pertained to the same ambiguous locatedIn relation, demonstrating that our confidence metric consistently flags semantically challenging patterns for expert review.

Figure 2.

Expert Validation of the Generated TBox Axioms.

Figure 3.

Expert Validation of the Generated ABox Axioms.

The combination of validated TBox axioms (defining the schema) and ABox axioms (populating it with instances) represents a complete, automatically generated ontology ready for deployment in knowledge-driven applications. The rejection of the ambiguous locatedIn axioms during expert validation demonstrates the effectiveness of the threshold in Rule 2 (Section 3.4.1), which filters out weakly typed properties. The base metrics of the generated ontology are analyzed next.

4.4. Analysis of Base Metrics of the Generated Ontologies

The base metrics of the resulting ontologies obtained via automated axiom generation, including the number of classes, object properties, logical axioms (TBox), individual/assertional axioms (ABox), and total axioms are analyzed in this section. Table 5 presents the base metrics for each generated ontology per dataset.

Table 5.

Analysis of Base Metrics of the Axiom-Generated Hierarchy.

The analysis reveals that both axiom-generated hierarchies are richly detailed ontological structures, as evidenced by their high total axiom counts (1161 for NYT and 1328 for Conll04) relative to their class numbers (20 and 23). The Conll04 ontology is larger on most counts, with three more classes, more logical axioms (245 vs. 205), and more individuals (49 vs. 39). It defines 8 object properties versus 10 for NYT, which also places more weight on attributes through its 10 data properties.

To validate the accuracy of the base metrics of the ontologies automatically generated by our pipeline, we employed two established ontology evaluation tools: OntoMetrics and Protégé. These tools were used to compute base metrics for the ontologies produced by our framework on the NYT and Conll04 datasets. The comparison between the metrics generated by our pipeline (Table 5) and those computed by OntoMetrics and Protégé (Table 6) revealed strong agreement across all measured dimensions. This consistency attests to the accuracy and reliability of our automated axiom generation and metric calculation processes.
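As an illustration of how such base metrics can be tallied, the sketch below counts classes, object properties, individuals, and TBox/ABox axioms over a small list of triples. It is a simplified stand-in written with the standard library only: the function name `base_metrics`, the prefixed predicate strings, and the counting conventions are assumptions, and tools such as OntoMetrics or Protégé may count some axiom types differently.

```python
SUBCLASS_OF = "rdfs:subClassOf"
DOMAIN = "rdfs:domain"
RANGE = "rdfs:range"
RDF_TYPE = "rdf:type"


def base_metrics(triples):
    """Count basic ontology metrics from (subject, predicate, object) triples."""
    classes, object_properties, individuals = set(), set(), set()
    tbox = abox = 0
    for s, p, o in triples:
        if p == SUBCLASS_OF:            # terminological: class hierarchy
            classes.update((s, o))
            tbox += 1
        elif p in (DOMAIN, RANGE):      # terminological: property typing
            object_properties.add(s)
            classes.add(o)
            tbox += 1
        elif p == RDF_TYPE:             # assertional: class assertion
            individuals.add(s)
            classes.add(o)
            abox += 1
        else:                           # assertional: role assertion
            object_properties.add(p)
            individuals.update((s, o))
            abox += 1
    return {
        "classes": len(classes),
        "object_properties": len(object_properties),
        "individuals": len(individuals),
        "tbox_axioms": tbox,
        "abox_axioms": abox,
        "total_axioms": tbox + abox,
    }


# Sample axioms taken from the examples quoted in this section.
sample = [
    ("Borough", SUBCLASS_OF, "Location"),
    ("bornIn", DOMAIN, "Person"),
    ("bornIn", RANGE, "Location"),
    ("acquired", DOMAIN, "Organization"),
    ("Amazon", RDF_TYPE, "Organization"),
    ("Apple", RDF_TYPE, "Organization"),
    ("Apple", "hasCEO", "Tim_Cook"),
]
metrics = base_metrics(sample)
```

Running the same tally over an exported OWL file and comparing it with the tool output is one way to cross-check counts of the kind reported in Tables 5 and 6.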

Table 6.

Base Metrics of Generated Ontologies per Dataset obtained with Existing Tools.

4.5. Analysis of Quality Metrics for the Generated Ontologies

A suite of graph-based structural metrics was employed to evaluate the quality of the ontologies automatically generated from the NYT and Conll04 datasets. These metrics are presented in Table 7 and provide a quantitative assessment of the structural coherence achieved by our axiom generation pipeline, which in turn validates our schema induction approach. The threshold ensures that only strongly typed properties receive domain/range constraints, preventing over-axiomatization while maintaining logical consistency.
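The thresholded domain/range induction mentioned above can be sketched as follows. This is an illustrative reading, not the exact rule from Section 3.4.1: the confidence is assumed to be the share of a property's subjects (or objects) that carry the most frequent type, and the threshold value of 0.8 is a placeholder, since the value used in the paper is not restated here.

```python
from collections import Counter, defaultdict


def induce_domain_range(typed_triples, threshold=0.8):
    """Propose domain/range axioms for properties whose dominant type is frequent enough.

    typed_triples: iterable of (subject_type, property, object_type).
    threshold: assumed confidence cut-off (placeholder value).
    """
    subject_types = defaultdict(Counter)
    object_types = defaultdict(Counter)
    for subj_type, prop, obj_type in typed_triples:
        subject_types[prop][subj_type] += 1
        object_types[prop][obj_type] += 1

    axioms = []
    for prop in sorted(subject_types):
        for kind, counts in (("domain", subject_types[prop]),
                             ("range", object_types[prop])):
            cls, freq = counts.most_common(1)[0]
            confidence = freq / sum(counts.values())
            if confidence >= threshold:   # weakly typed properties are skipped
                axioms.append((prop, kind, cls, confidence))
    return axioms


# Hypothetical type statistics: bornIn is cleanly typed, locatedIn is not.
typed = (
    [("Person", "bornIn", "Location")] * 5
    + [("Organization", "locatedIn", "City")] * 2
    + [("Building", "locatedIn", "Borough")] * 2
    + [("Person", "locatedIn", "Country")]
)
axioms = induce_domain_range(typed)
```

In this toy input, bornIn receives both a domain and a range axiom, while locatedIn receives none because its most frequent subject and object types each cover only 40% of its occurrences. This mirrors the behaviour described for the ambiguous locatedIn relation.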

Table 7.

Structural Metrics of the Induced Class Hierarchies.

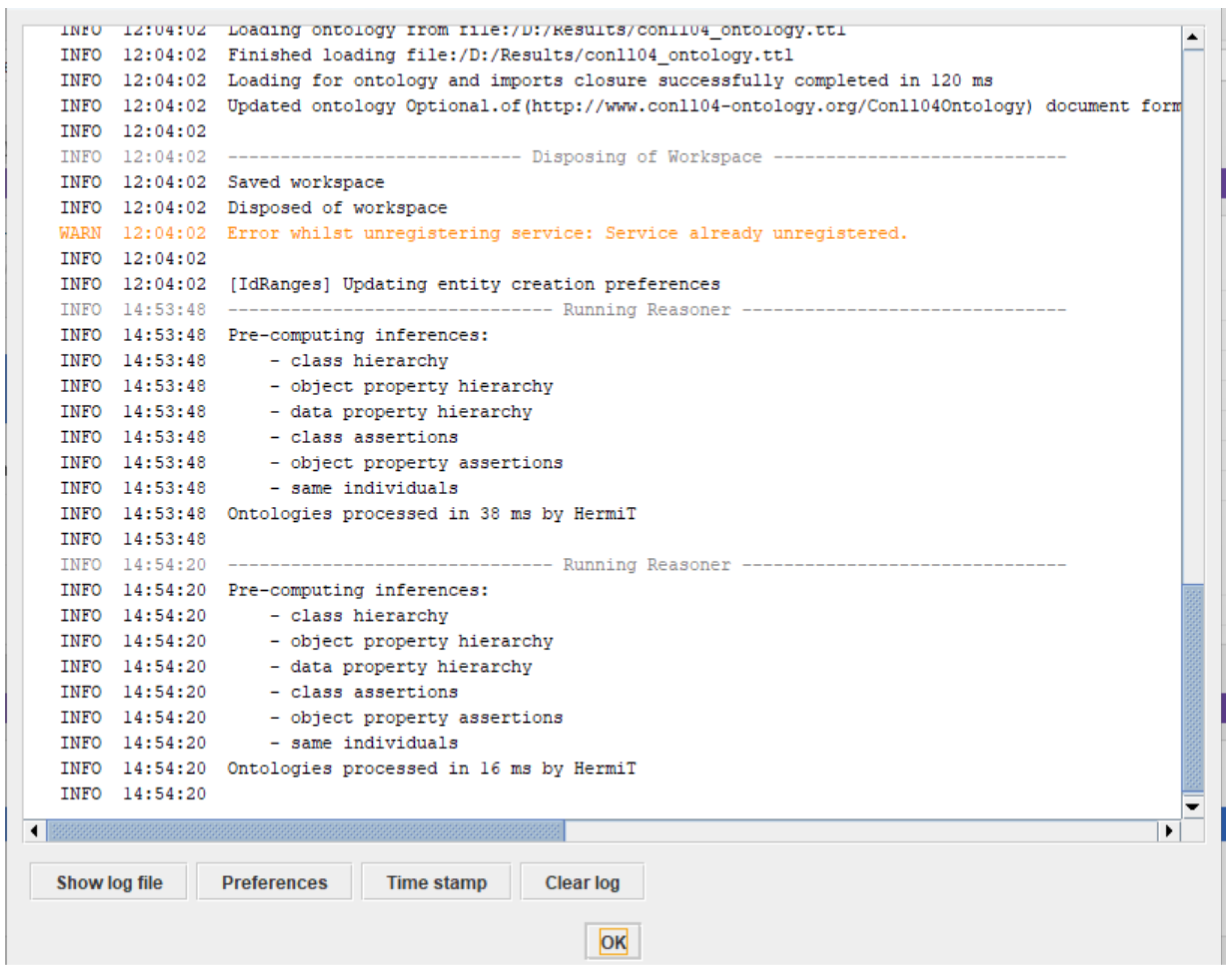

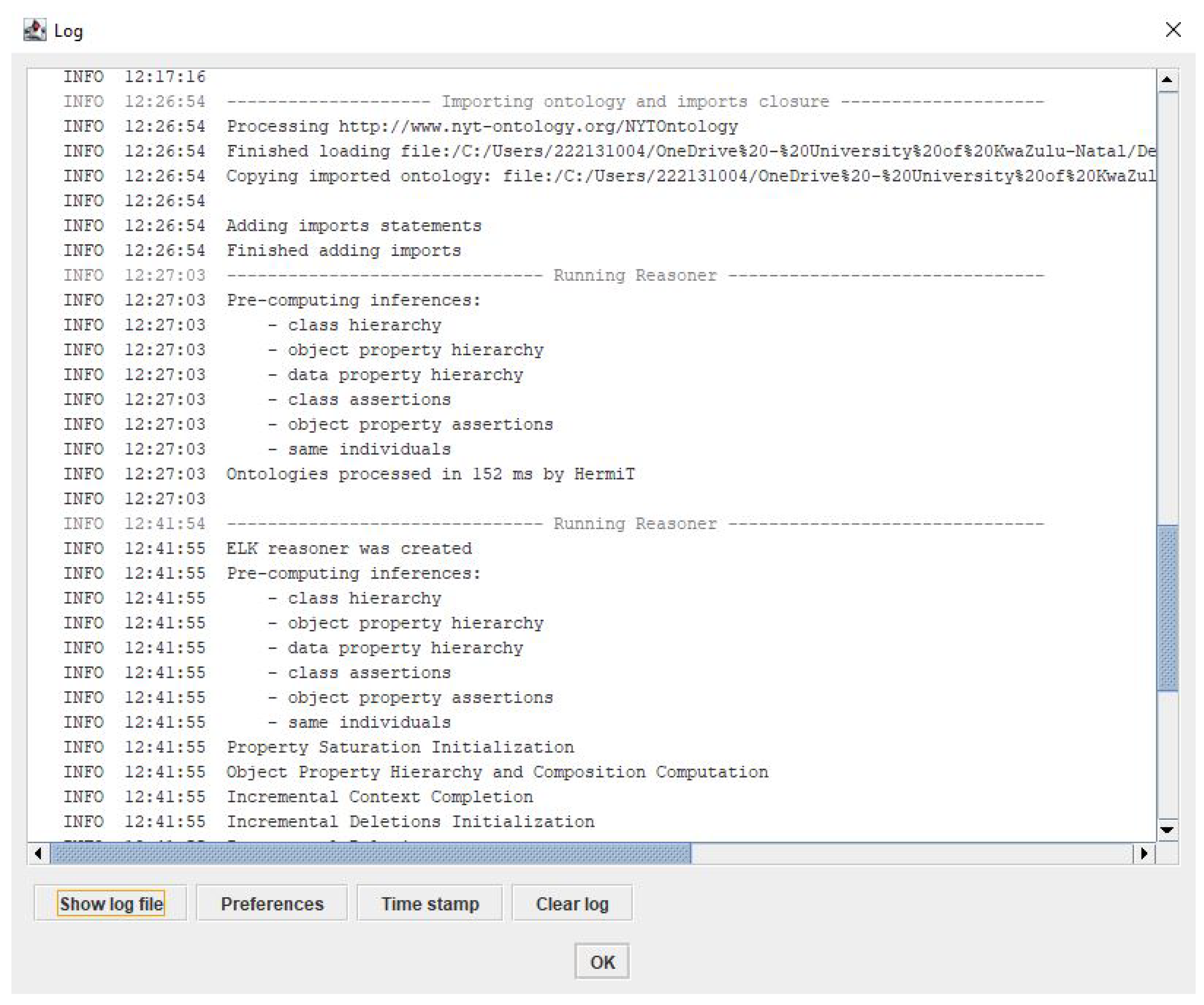

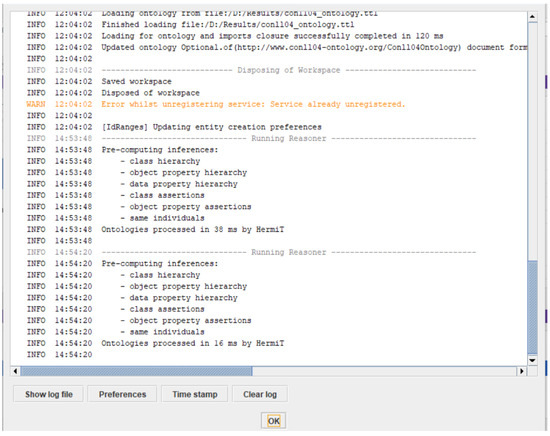

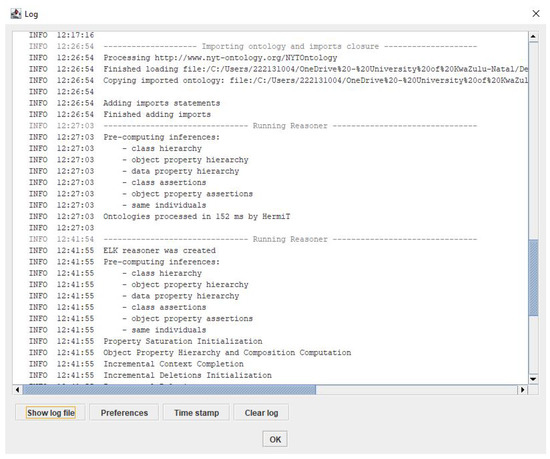

4.6. Consistency Check of Generated Ontologies with Reasoners

To verify the logical consistency of the automatically constructed ontologies, we employed both the Hermit and ELK reasoners integrated with Protégé. Figure 4 and Figure 5 show screenshots of the consistency checks of the output ontologies from the Conll04 and NYT datasets with the Hermit and ELK reasoners, respectively. The reasoning results confirmed that all ontological constituents, which include classes, properties, and individuals, were successfully inferred without conflict.

Figure 4.

Consistency Check of the Conll04 Output Ontology using Hermit Reasoner.

Figure 5.

Consistency Check of the NYT Output Ontology using ELK Reasoner.

The middle and bottom panels of Figure 4 show that all the relevant constituents of the Conll04-generated ontology were successfully inferred by the Hermit reasoner. Similarly, the middle and bottom panels of Figure 5 show that all the relevant constituents of the NYT-generated ontology were successfully inferred by the ELK reasoner. Both reasoners reported zero inconsistencies across all generated ontologies, demonstrating that the automatically constructed knowledge bases are logically coherent. This validation confirms that our axiom generation pipeline produces ontologies that maintain formal logical integrity and are ready for reasoning tasks.
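To make concrete what kind of conflict a reasoner looks for, the sketch below checks one simple source of inconsistency: an individual that is typed, directly or through the class hierarchy, with two classes declared disjoint. It is a hand-written illustration in plain Python and not a substitute for Hermit or ELK, which cover far more of OWL semantics; the function name and the sample data layout are assumptions.

```python
def find_disjointness_clashes(type_assertions, subclass_of, disjoint_pairs):
    """Return (individual, class_a, class_b) for every violated disjointness axiom.

    type_assertions: iterable of (individual, class)
    subclass_of: dict mapping a class to its direct superclasses
    disjoint_pairs: iterable of (class_a, class_b)
    """
    def ancestors(cls):
        # All superclasses reachable from cls, including cls itself.
        seen, stack = set(), [cls]
        while stack:
            current = stack.pop()
            if current not in seen:
                seen.add(current)
                stack.extend(subclass_of.get(current, ()))
        return seen

    inferred = {}
    for individual, cls in type_assertions:
        inferred.setdefault(individual, set()).update(ancestors(cls))

    clashes = []
    for individual, classes in sorted(inferred.items()):
        for a, b in disjoint_pairs:
            if a in classes and b in classes:
                clashes.append((individual, a, b))
    return clashes


hierarchy = {"Borough": ["Location"]}
disjoint = [("Building", "Person")]
types = [("Manhattan", "Borough"), ("Albert_Einstein", "Person")]

clean = find_disjointness_clashes(types, hierarchy, disjoint)
# Deliberately add a contradictory assertion to show a detected clash.
broken = find_disjointness_clashes(
    types + [("Albert_Einstein", "Building")], hierarchy, disjoint
)
```

An empty result for the generated axioms corresponds, for this one axiom type, to the "zero inconsistencies" outcome reported by the reasoners.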

4.7. Visualization of Generated Axioms

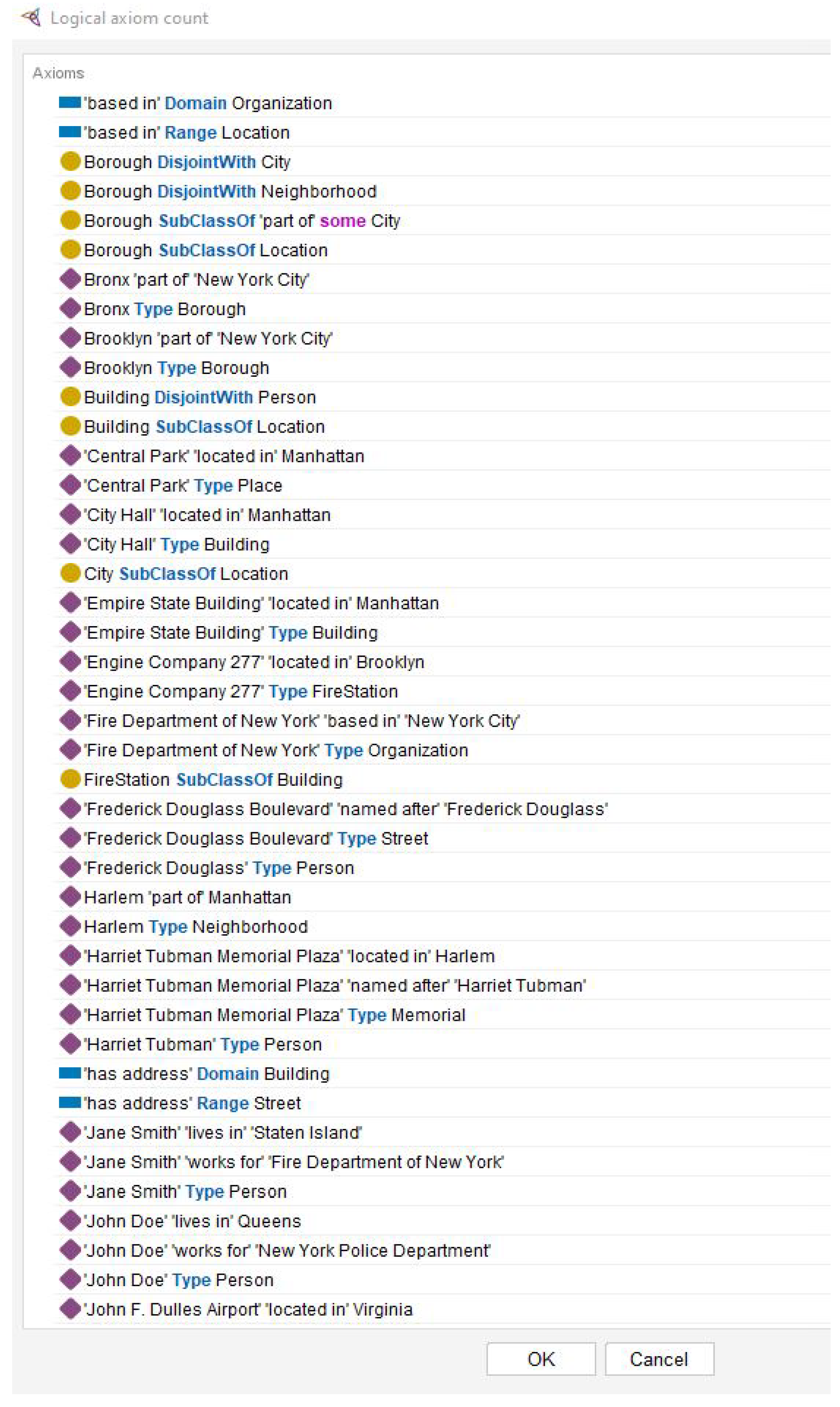

4.7.1. TBox Axioms

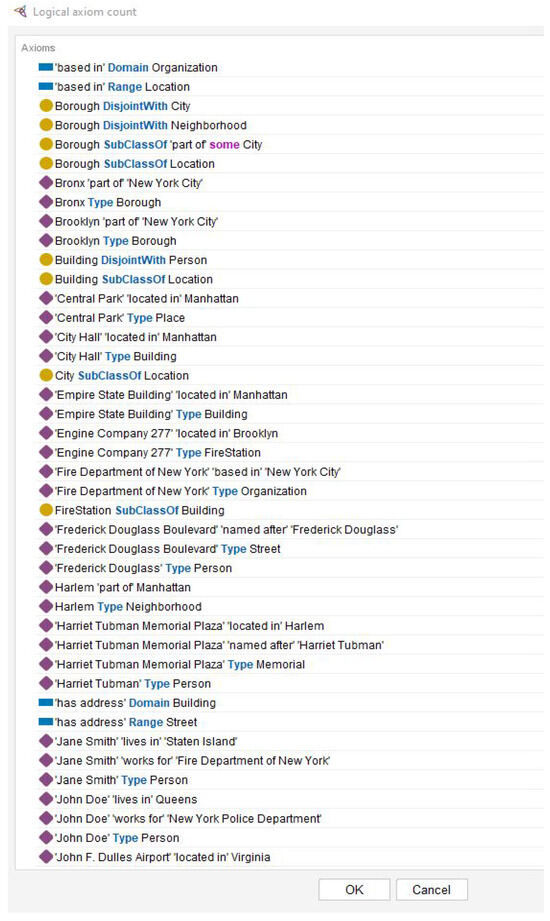

Figure 6 and Figure 7 present representative TBox axioms extracted from Protégé. Figure 6 illustrates sample TBox axioms from the NYT dataset, including domain and range restrictions (e.g., acquired Domain Organization), class assertions (e.g., Amazon Type Organization), property constraints (e.g., born in Domain Person), and role assertions (e.g., Apple ’has CEO’ ’Tim Cook’).

Figure 6.

Generated TBox Axioms from NYT dataset.

Figure 7.

Generated TBox Axioms from Conll04 dataset.

Figure 7 presents sample TBox axioms from the Conll04 dataset, featuring class hierarchy definitions (e.g., Borough SubClassOf Location), disjointness constraints (e.g., Building DisjointWith Person), and spatial property assertions (e.g., Central Park located in Manhattan).

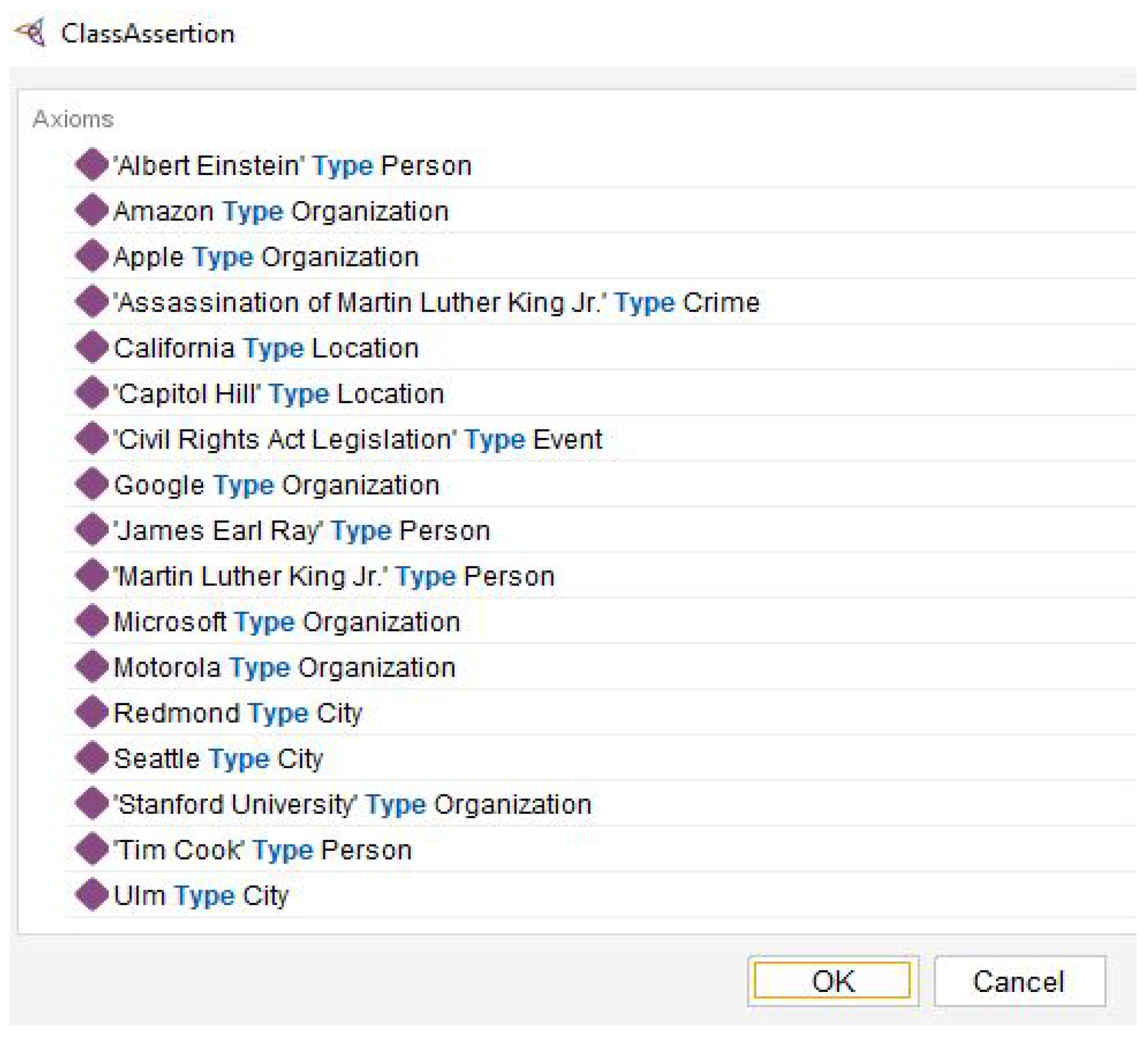

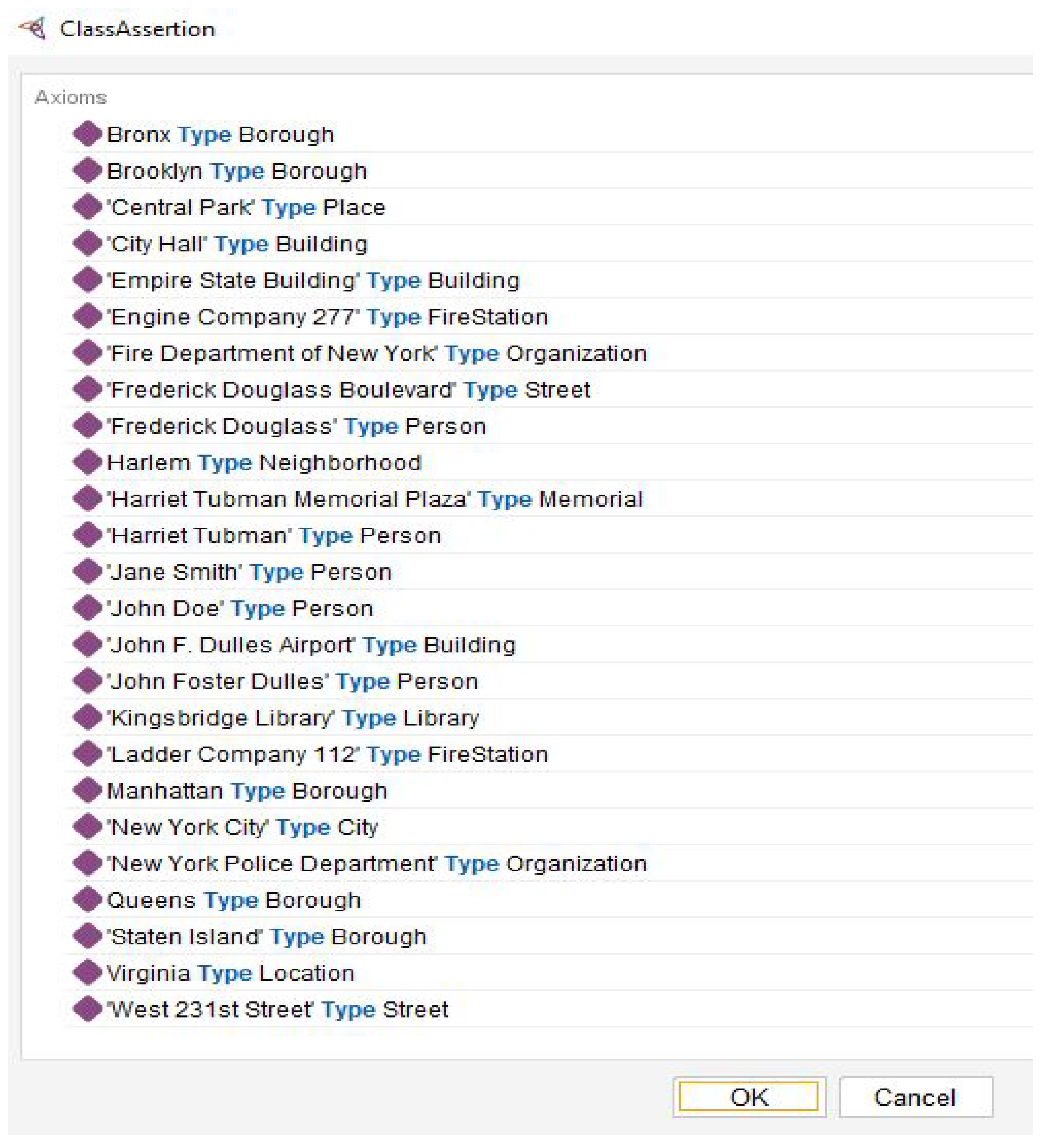

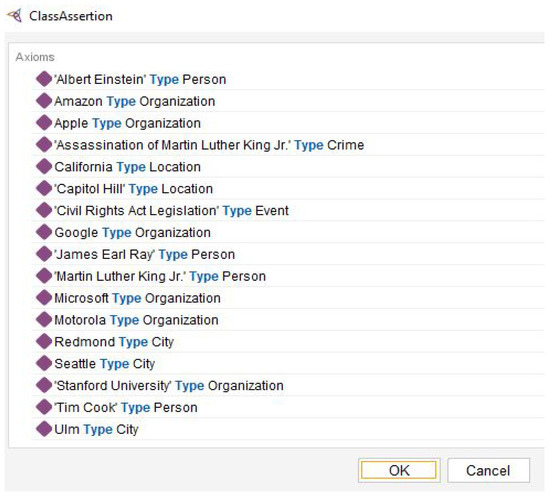

4.7.2. ABox Axioms

Figure 8 and Figure 9 present representative ABox axioms (class assertions). Figure 8 exemplifies instance classification within the ABox axioms generated from the NYT dataset, demonstrating the typing of named entities into ontological categories such as Person (e.g., Albert Einstein), Organization (e.g., Google), Location (e.g., California), and Event (e.g., Civil Rights Act Legislation). Figure 9 exemplifies instance classification within the spatially oriented ABox axioms generated from the Conll04 dataset, showing typed assertions including infrastructure (Library, FireStation), administrative divisions (Borough, City), landmarks (Memorial, Building), and person entities.
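The step from typed entities to serialized assertions can be illustrated with a short sketch that emits Turtle text. The paper's pipeline uses RDFLib for this step; the version below deliberately uses plain string formatting so that it has no dependencies, and the helper names, the underscore naming convention, and the `http://example.org/onto#` namespace are all assumptions made for illustration.

```python
import re


def local_name(label):
    """Turn a surface form into a Turtle-safe local name (assumed convention)."""
    return re.sub(r"\W+", "_", label.strip()).strip("_")


def abox_to_turtle(class_assertions, role_assertions,
                   base_iri="http://example.org/onto#"):
    """Serialize class and role assertions as Turtle statements."""
    lines = [f"@prefix : <{base_iri}> .", ""]
    for individual, cls in class_assertions:
        # "a" is Turtle shorthand for rdf:type.
        lines.append(f":{local_name(individual)} a :{local_name(cls)} .")
    for subj, prop, obj in role_assertions:
        lines.append(
            f":{local_name(subj)} :{local_name(prop)} :{local_name(obj)} ."
        )
    return "\n".join(lines)


turtle = abox_to_turtle(
    class_assertions=[
        ("Albert Einstein", "Person"),
        ("Google", "Organization"),
        ("California", "Location"),
    ],
    role_assertions=[("Apple", "hasCEO", "Tim Cook")],
)
print(turtle)
```

The resulting text can be loaded into Protégé or parsed by an RDF library, which is how assertions of the kind shown in Figures 8 and 9 would typically be inspected.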

Figure 8.

Generated ABox Axioms from NYT dataset.

Figure 9.

Generated ABox Axioms from Conll04 dataset.

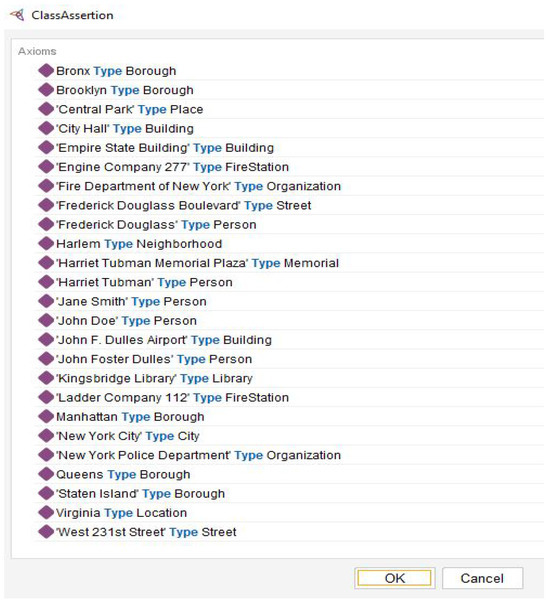

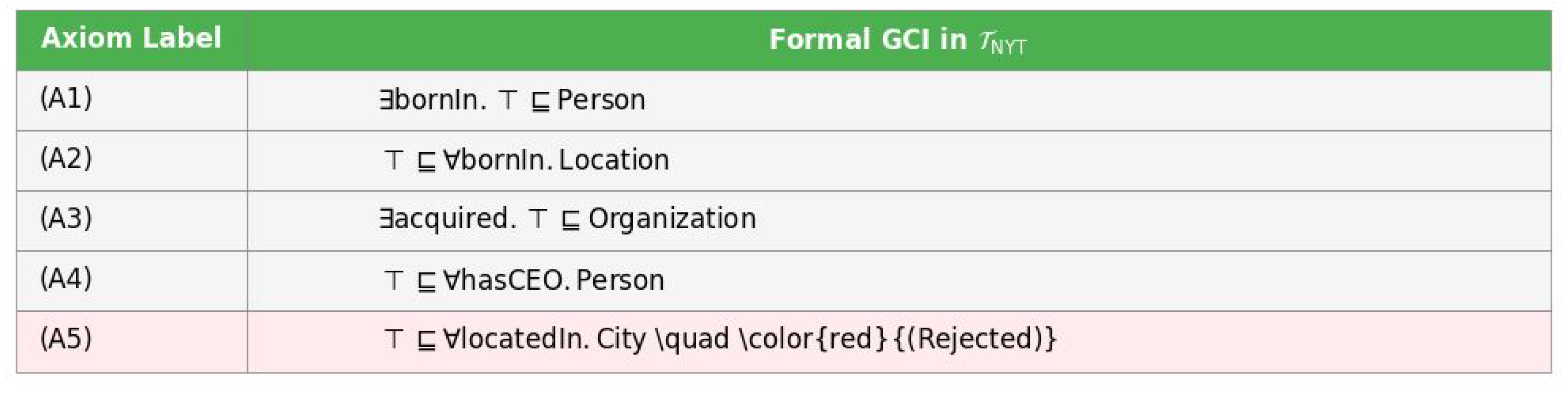

The sample TBox axioms, such as domain restrictions (bornIn Domain Person), range restrictions (bornIn Range Location), and role assertions (Apple hasCEO Tim_Cook), are formally encoded as Description Logic axioms in Figure 10. This translation yields the General Concept Inclusions (GCIs) labeled A1 to A5 in Figure 10, where

- A1 formalizes the domain restriction that the subject of bornIn must be a Person;

- A2 formalizes the range restriction that the object of bornIn must be a Location;

- A3 and A4 similarly encode the domain of acquired and the range of hasCEO;

- A5 demonstrates our validation process, where an overly restrictive range assertion for locatedIn was correctly flagged and rejected, mirroring the semantic ambiguity observed in the sample data.
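For reference, domain and range restrictions are conventionally written as GCIs in the following way. The block shows the standard Description Logic encodings for a role r with domain C and range D, instantiated for the bornIn and acquired examples named in the text; the exact notation used in Figure 10 may differ, and the classes involved in A4 and A5 are not restated here, so they are left out.

```latex
\begin{align*}
\text{domain of } r \text{ is } C &: \quad \exists r.\top \sqsubseteq C \\
\text{range of } r \text{ is } D &: \quad \top \sqsubseteq \forall r.D \\[4pt]
\text{bornIn Domain Person} &: \quad \exists \mathit{bornIn}.\top \sqsubseteq \mathit{Person} \\
\text{bornIn Range Location} &: \quad \top \sqsubseteq \forall \mathit{bornIn}.\mathit{Location} \\
\text{acquired Domain Organization} &: \quad \exists \mathit{acquired}.\top \sqsubseteq \mathit{Organization}
\end{align*}
```

Read informally, the first pattern says that anything with an outgoing r-edge belongs to C, and the second says that everything reachable through r belongs to D.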

Figure 10.

TBox Axiom Generation Summary.

In summary, Figure 10 presents the produced TBox axioms in Description Logic notation. The pipeline bridges the gap between the textual world of the NYT corpus and the formal world of Description Logic, generating the axioms that form the foundation of a computable ontology.

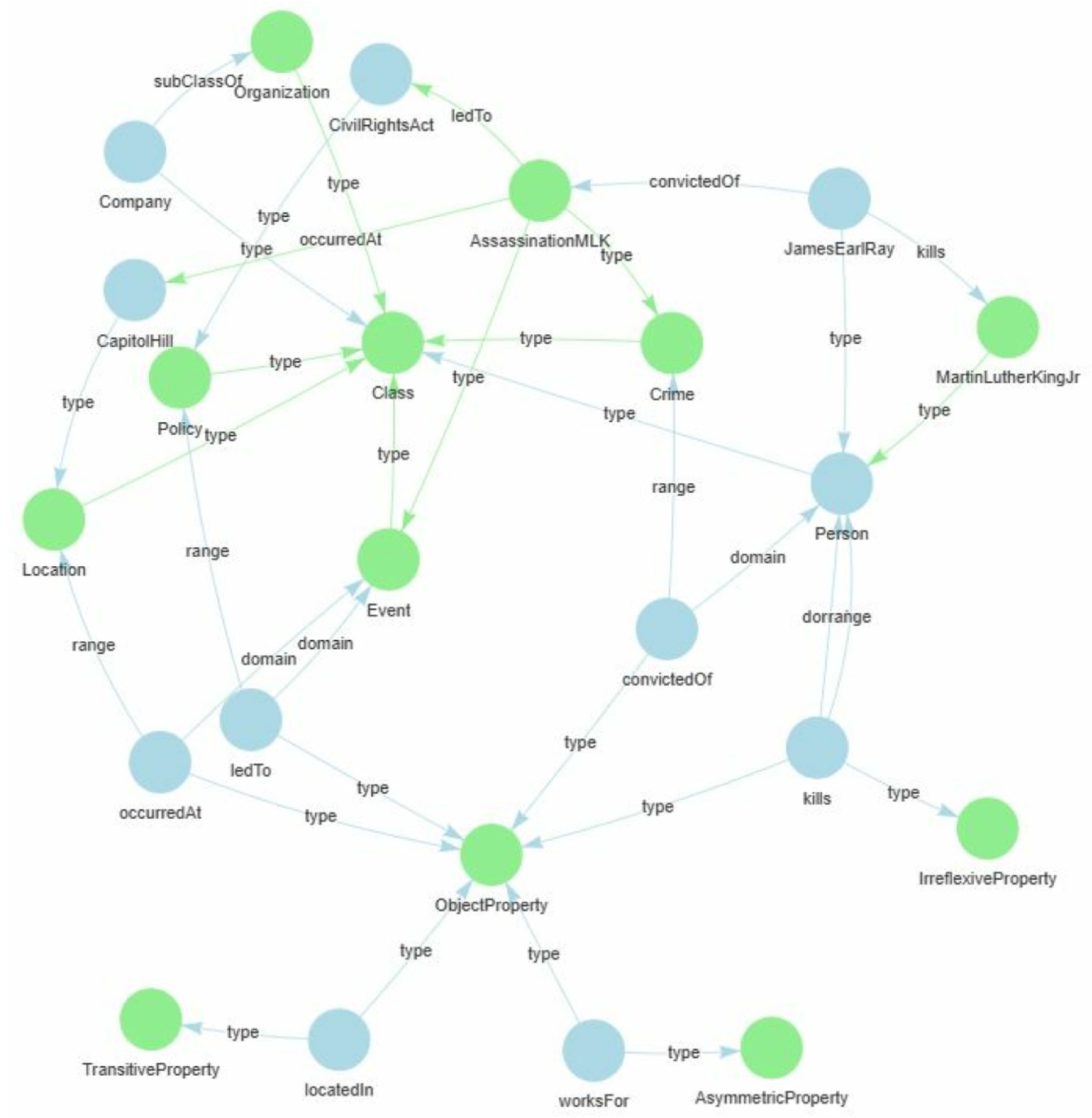

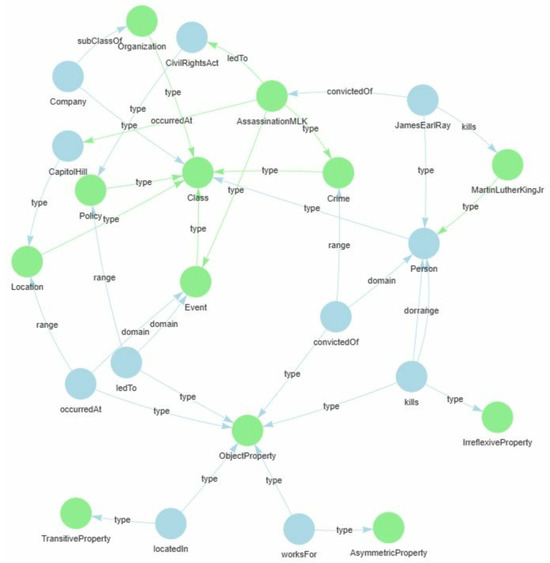

4.8. Graph Visualization of the Generated Ontologies

Figure 11 presents an ontology-based formalization of axioms derived from the NYT corpus. The RDF/OWL encoding distinguishes between classes, object properties, and individuals, reflecting the TBox and ABox structures of Description Logics. By mapping the sentence into a semantic framework, the visualization reveals more than surface relations: the act of killing is represented with spatiotemporal grounding and downstream political consequences (ledTo, CivilRightsAct). The graph shows how axiomatization enriches raw text with logical constraints (e.g., irreflexivity of kills), enabling consistency checking and inferencing.

Figure 11.

Ontology-Driven Graphical Representation of Axioms Generated from the NYT Dataset.

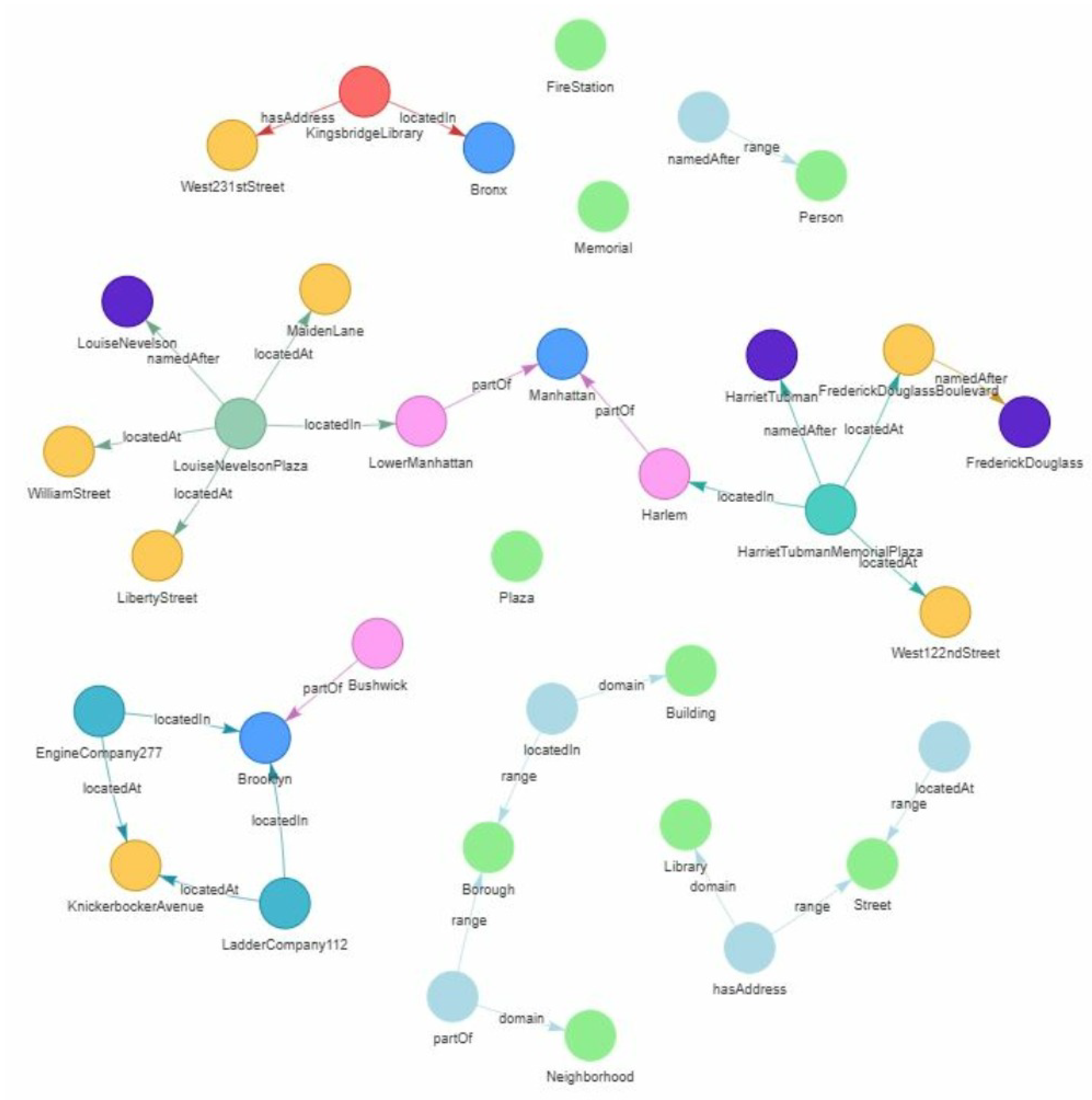

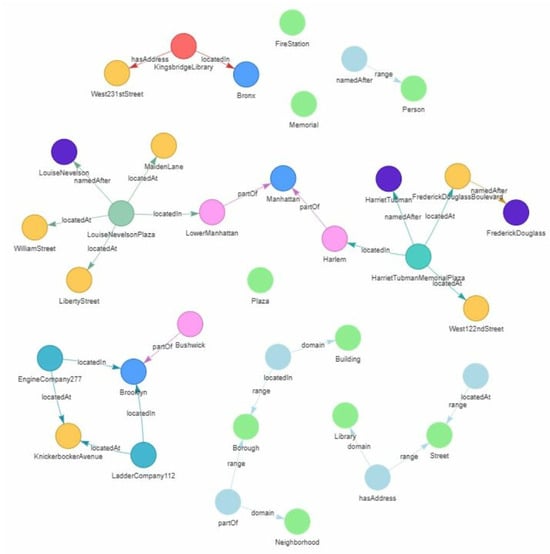

Similarly, Figure 12 presents an ontology-driven representation of axioms derived from the Conll04 corpus. The RDF/OWL encoding captures semantic distinctions between classes (e.g., Person, Building, Neighborhood, Borough), object properties (e.g., locatedAt, namedAfter, partOf, hasAddress), and individuals (e.g., HarrietTubmanMemorialPlaza, FrederickDouglassBoulevard, KingsbridgeLibrary), adhering to the TBox-ABox separation in Description Logics. The graph provides a structural view of spatial and attributional relationships among entities, showing, for example, how HarrietTubmanMemorialPlaza is locatedAt Harlem, which is partOf Manhattan, and namedAfter HarrietTubman. Similarly, KingsbridgeLibrary is locatedIn Bronx and hasAddress West231stStreet, while EngineCompany277 and ladderCompany112 are spatially grounded within Brooklyn. By formalizing these relations as ontological axioms, the visualization enriches the textual content with explicit logical structure, enabling inference over spatial hierarchies (e.g., containment of Neighborhoods within Boroughs) and semantic roles (e.g., commemorative naming). This ontology-based formalization thus transforms unstructured sentence-level information into machine-interpretable knowledge that supports reasoning, consistency checking, and semantic integration across contexts.
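The spatial inference mentioned above can be illustrated with a small traversal over the example assertions. The sketch assumes that locatedAt, locatedIn, and partOf can all be chained as containment links. That chaining is an illustrative modelling choice (in OWL it would require transitivity or property-chain axioms) and is not claimed to be part of the generated ontology; the function name is likewise hypothetical.

```python
def containing_places(entity, edges,
                      relations=("locatedAt", "locatedIn", "partOf")):
    """Follow spatial links from an entity and list every place that contains it."""
    graph = {}
    for subj, prop, obj in edges:
        if prop in relations:              # non-spatial properties are ignored
            graph.setdefault(subj, []).append(obj)

    reached, frontier = [], list(graph.get(entity, []))
    while frontier:
        place = frontier.pop(0)            # breadth-first: nearest places first
        if place != entity and place not in reached:
            reached.append(place)
            frontier.extend(graph.get(place, []))
    return reached


# Role assertions quoted from the description of Figure 12.
edges = [
    ("HarrietTubmanMemorialPlaza", "locatedAt", "Harlem"),
    ("Harlem", "partOf", "Manhattan"),
    ("HarrietTubmanMemorialPlaza", "namedAfter", "HarrietTubman"),
    ("KingsbridgeLibrary", "locatedIn", "Bronx"),
]
plaza_places = containing_places("HarrietTubmanMemorialPlaza", edges)
library_places = containing_places("KingsbridgeLibrary", edges)
```

Here the plaza is found to lie within Harlem and, by chaining, within Manhattan, while the namedAfter link is not followed because it is not a spatial relation.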

Figure 12.

Ontology-Driven Graphical Representation of Axioms Generated from the Conll04 Dataset.

In the generated graph in Figure 12, entities such as Plaza, Memorial, FireStation and KingsBridgeLibrary appear as detached nodes. This is because the source text provides class assertions for these entities (e.g., KingsBridgeLibrary Type Library) but does not state any object properties that connect them to other individuals within the scope of the processed sentences. For instance, while the axiom defines a hasAddress property, the text may not explicitly provide an address for the library. This reflects the partial knowledge present in the original corpus and results in a graph where some classified entities lack relational links. The detached classes in the visualization reflect the clear separation between TBox (terminological schema) and ABox (assertional data). These classes are defined in the TBox to establish a general conceptual framework but are not populated with instances in this particular ABox snapshot. This is an expected characteristic of a well-structured ontology, where classes are defined as reusable modules. Their detachment indicates they are not instantiated by the specific entities in this sentence but are part of the overarching ontology’s vocabulary, ready to be connected by future axioms or data from other contexts. This separation ensures the ontology remains extensible and logically consistent.
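Detached nodes of this kind can be identified mechanically by comparing class assertions against role assertions. The following sketch does so in plain Python; the function name is hypothetical, and the typing of HarrietTubmanMemorialPlaza as Memorial is an illustrative assumption, while the KingsBridgeLibrary assertion is the one quoted above.

```python
def detached_individuals(class_assertions, role_assertions):
    """List typed individuals that take part in no object property assertion."""
    linked = set()
    for subj, _prop, obj in role_assertions:
        linked.update((subj, obj))
    typed = {individual for individual, _cls in class_assertions}
    return sorted(typed - linked)


class_assertions = [
    ("KingsBridgeLibrary", "Library"),
    ("HarrietTubmanMemorialPlaza", "Memorial"),  # illustrative typing
]
role_assertions = [
    ("HarrietTubmanMemorialPlaza", "locatedAt", "Harlem"),
]
detached = detached_individuals(class_assertions, role_assertions)
```

Listing these individuals after each run gives a quick indication of how much of the corpus yielded typing information without any accompanying relations.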

The comparative ontology visualizations derived from the NYT and Conll04 datasets (Figure 11 and Figure 12) highlight how the characteristics of extracted triples influence subsequent axiom generation. In both cases, axioms were formalized from concept and relation extraction outputs, yet the semantic nature of triples differed according to the corpus domain. Triples from the NYT dataset primarily represented event-oriented and causal semantics (e.g., Person-kills-Person, Event-occuredAt-Location, Event-ledTo-Policy), leading to axioms that capture temporal, causal, and agentive relations. Conversely, triples from the Conll04 dataset were dominated by entity-centred and spatial relations (e.g., Building-locatedIn-Borough, Plaza-namedAfter-Person), resulting in axioms emphasizing spatial hierarchy and attributional structure. This comparison shows that the type and distribution of relations present in the extracted triples, which are shaped by the underlying corpus, directly affect the ontology’s logical form. Hence, even with the same axiom generation framework, the dataset’s relational semantics fundamentally determine the ontology’s expressivity, relational focus, and reasoning potential.

4.9. Comparison with Related Studies

In this section, we compare our results with those of existing studies, highlighting both commonalities and differences. Table 8 gives an overview of how prior research has assessed methods for automated axiom generation, together with a summary of their findings and possible limitations, and places our results alongside those reported in the literature. In Table 8, (1) ✓ indicates that the given criterion is met comprehensively; (2) × signifies that the criterion is not met at all; and (3) ≈ denotes partial fulfillment of the criterion.

Table 8.

Comparison with Related Studies.

Table 8 reveals a prevalent trend: a significant portion of prior studies has not considered the complete spectrum of quality criteria for automated ontology construction. For instance, in Text-To-Onto [14], the primary focus was on pattern-based extraction with limited consideration for logical consistency and scalability, as the approach relied heavily on hand-crafted linguistic patterns that were brittle and domain-dependent. In Statistical Schema Induction [25], the evaluation emphasized distributional patterns but lacked formal consistency guarantees, often leading to semantically incorrect axioms due to over-reliance on frequency alone. Neural feature extractors using RNNs/LSTMs [26,27] demonstrated improved relation extraction but faced architectural limitations in generating complex logical structures, resulting in partial automation that still required manual post-processing. More recently, LLM-based approaches [28] achieved high automation levels but suffered from critical limitations in logical consistency and scalability due to the stochastic nature of generative models and their prohibitive computational costs. In contrast, the bottom row of Table 8 shows that our proposed pipeline addresses all four quality criteria, providing evidence that our method effectively bridges the gap between statistical extraction and formal axiomatization. The Transformer-based extraction coupled with deterministic schema mapping guarantees both scalability and logical consistency. The structural coherence of the method is validated through the graph metrics in Table 7, which demonstrate well-integrated taxonomic hierarchies with appropriate depth and breadth distributions. Our method uses deterministic rules (Rules 1 and 2) with empirically validated thresholds. For example, the threshold in Rule 2 ensures that domain/range assertions are backed by sufficient statistical evidence, addressing the consistency issues noted in prior work. These findings indicate that our proposed framework can automatically generate both TBox and ABox axioms from textual data while maintaining formal logical integrity.

4.10. Quantitative Comparison with State-of-the-Art Axiom Generation Approaches

To contextualize our results, we compare with representative axiom generation approaches. While direct comparison is challenging due to differing evaluation metrics and experimental setups, we normalize reported results to common dimensions:

- Statistical Methods: Text-to-Onto [14] reports 65–70% accuracy for taxonomic relation discovery on similar corpora, compared to our expert acceptance rate for subclass axioms. Similarly, Statistical Schema Induction [25] achieves clustering accuracy for domain/range constraint induction, while our approach attains 95.5–99.8% confidence scores for property typing with guaranteed logical consistency. These comparisons demonstrate that our method not only matches but exceeds the reliability of statistical approaches while adding formal consistency guarantees.

- Neural Extractor Methods: Chen et al. [26] report consistency for ontology alignment tasks using an LSTM encoder, while our approach achieves logical consistency across all generated ontologies.

- LLM Methods: He [28] reports 69–72% accuracy for direct axiom generation via prompting, but notes significant consistency issues ( inconsistency rate). Our deterministic schema mapping eliminates such inconsistencies while maintaining comparable coverage.

The key differentiator of our approach is the combination of high accuracy (comparable to LLMs) with guaranteed logical consistency (superior to all baselines). While LLM-based methods may generate more diverse axioms, they lack the formal guarantees essential for reasoning-ready ontologies.

5. Limitations and Future Work

The performance of the proposed method for automatic axiom generation from text depends on the quality of the initial relation extraction, and any extraction errors propagate forward. For instance, Rule 1 requires accurate entity typing to compute the subclass ratio, and Rule 2’s domain/range inference depends on clean property extractions. Future improvements could involve adjusting the threshold dynamically based on corpus characteristics. In addition, the proposed approach currently generates only a core set of axioms and lacks support for complex constructs such as property characteristics.
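The sensitivity of Rule 1 to entity typing can be illustrated with a toy calculation. The precise definition of the subclass ratio is given with Rule 1 in Section 3.4.1 and is not restated here, so the sketch assumes a common formulation: the fraction of instances of the candidate subclass that are also typed as instances of the candidate superclass. The function name, the sample instances, and the 0.8 threshold are likewise assumptions.

```python
def subclass_ratio(instances_of, sub, sup):
    """Share of sub's instances that are also instances of sup (assumed definition)."""
    sub_instances = instances_of.get(sub, set())
    if not sub_instances:
        return 0.0
    shared = sub_instances & instances_of.get(sup, set())
    return len(shared) / len(sub_instances)


THRESHOLD = 0.8  # placeholder value, not taken from the paper

# Hypothetical typing output in which one borough was not typed as a Location.
noisy = {
    "Borough": {"Manhattan", "Bronx", "Brooklyn", "Queens"},
    "Location": {"Manhattan", "Bronx", "Brooklyn", "California"},
}
# Same data after the missing type assertion is restored.
corrected = {
    "Borough": noisy["Borough"],
    "Location": noisy["Location"] | {"Queens"},
}

noisy_ratio = subclass_ratio(noisy, "Borough", "Location")          # 3 / 4
corrected_ratio = subclass_ratio(corrected, "Borough", "Location")  # 4 / 4
accept_noisy = noisy_ratio >= THRESHOLD
accept_corrected = corrected_ratio >= THRESHOLD
```

Under these assumptions a single missed type assertion lowers the ratio from 1.0 to 0.75 and causes the Borough SubClassOf Location candidate to be rejected, which is the kind of error propagation this limitation describes.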

Furthermore, the current implementation is deliberately focused on generating a core set of ontological axioms that form the foundational TBox and ABox. This includes hierarchies, domain/range restrictions, and instance assertions. However, the pipeline does not currently infer property characteristics (e.g., functional, transitive, symmetric), cardinality restrictions or complex class expressions (e.g., disjointWith, unionOf). Future work could leverage pattern-based heuristics, linguistic and commonsense knowledge or rule mining and consistency checking to produce expressive and semantically rich OWL ontologies.

6. Conclusions

In this study, we addressed the critical challenge of automated axiom generation for ontology construction from unstructured text. We proposed a novel pipeline that performs ontology learning in three systematic stages: lexical-to-ontological mapping, schema formation through entity typing and hierarchy induction, and automated axiom generation for both TBox and ABox components. This work makes three primary contributions, each substantiated by our experimental findings. First, we introduce a deterministic schema mapping framework that guarantees logical consistency, a critical improvement over stochastic LLM-based generators prone to hallucination. Our rule-based, post hoc methodology produced ontologies validated as consistent by the Hermit and ELK reasoners. Second, our pipeline delivers formally consistent axioms with high semantic fidelity, achieving an expert acceptance rate exceeding 95% for both TBox and ABox axioms. This dual validation (automated and expert) surpasses the reliability of prior statistical or neural feature extraction methods. Finally, we establish a scalable and transparent pathway from neural extractions to symbolic knowledge bases. By deploying deterministic rules with a confidence threshold, we overcome the manual post-processing bottleneck of earlier hybrid approaches, enabling the automated generation of ontologies with rich, coherent structures. The experimental results, derived from the event-oriented semantics of the NYT dataset and the spatial relationships of the Conll04 dataset, confirm the pipeline’s efficacy and generalizability. The success of our approach is fundamentally grounded in its rule-based axiomatization, which uses statistical evidence from Transformer extractions to infer formal constraints. This work establishes a reliable, consistent, and scalable foundation for automated ontology learning. It provides a practical solution to the long-standing axiom generation bottleneck, advancing the field from neural relation extraction toward the fully automated construction of verifiable knowledge bases.

Author Contributions

Conceptualization, T.Z., J.V.F.-D. and M.G.; methodology, T.Z.; software, T.Z.; validation, T.Z., J.V.F.-D. and M.G.; formal analysis, T.Z., J.V.F.-D. and M.G.; investigation, T.Z., J.V.F.-D. and M.G.; resources, T.Z., J.V.F.-D. and M.G.; data curation, T.Z., J.V.F.-D. and M.G.; writing—original draft preparation, T.Z.; writing—review and editing, T.Z., J.V.F.-D. and M.G.; visualization, T.Z.; supervision, J.V.F.-D. and M.G. All authors have read and agreed to the published version of the manuscript.

Funding

This research received no external funding.

Data Availability Statement

The OWL files used to produce the axioms in this study are available at https://drive.google.com/drive/folders/1R3ncNMBYv-tE9L3U7iFr_ISEF68M-IWC?usp=drive_link (accessed on 24 November 2025).

Conflicts of Interest

The authors declare that they have no known competing financial interests or personal relationships that could have appeared to influence the work reported in this paper.

Abbreviations

The following abbreviations are used in this manuscript:

| Abbreviation | Definition |
| --- | --- |
| AI | Artificial Intelligence |
| OWL | Web Ontology Language |
| ABox | Assertional axioms |
| TBox | Terminological axioms |
| DL | Description Logics |
| NER | Named Entity Recognition |
| RE | Relation Extraction |
| EL | Existential Language |
| ALC | Attributive Concept Language with Complements |
| SOTA | State of the Art |
| RNN | Recurrent Neural Network |
| CNN | Convolutional Neural Network |
| LSTM | Long Short-Term Memory |
| MTF | Multimodal Transformer-based Fusion model |

References

1. Gruber, T.R. Toward principles for the design of ontologies used for knowledge sharing? Int. J. Hum.-Comput. Stud. 1995, 43, 907–928.

2. Baader, F. (Ed.) The Description Logic Handbook: Theory, Implementation and Applications; Cambridge University Press: Cambridge, UK, 2003.

3. Maedche, A.; Staab, S. Ontology learning for the semantic web. IEEE Intell. Syst. 2001, 16, 72–79.

4. Devlin, J.; Chang, M.W.; Lee, K.; Toutanova, K. BERT: Pre-training of deep bidirectional transformers for language understanding. In Proceedings of the 2019 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies; Association for Computational Linguistics: Stroudsburg, PA, USA, 2019; Volume 1, pp. 4171–4186.

5. Eberts, M.; Ulges, A. Span-based joint entity and relation extraction with transformer pre-training. arXiv 2019, arXiv:1909.07755.

6. Zengeya, T.; Naidoo, K.E.; Fonou-Dombeu, J.V.; Gwetu, M. A Multimodal Transformer-based Fusion Model for Enhanced Relations Extraction from Texts. IEEE Access 2025, 13, 162855–162880.

7. Amdouni, E.; Belfadel, A.; Gagnant, M.; Renault, I.; Kierszbaum, S.; Carrion, J.; Dussartre, M.; Tmar, S. Semi-Automatic Building of Ontologies from Unstructured French Texts: Industrial Case Study. Data Sci. Eng. 2025, 10, 339–361.

8. Buitelaar, P.; Cimiano, P.; Magnini, B. Ontology learning from text: An overview. In Ontology Learning from Text: Methods, Evaluation and Applications; IOS Press: Amsterdam, The Netherlands, 2005; Volume 123, pp. 3–12.

9. Simperl, E. Reusing ontologies on the Semantic Web: A feasibility study. Data Knowl. Eng. 2009, 68, 905–925.

10. Chen, J.; Dong, H.; Chen, J.; Horrocks, I. Ontology Text Alignment: Aligning Textual Content to Terminological Axioms. In ECAI 2024; IOS Press: Amsterdam, The Netherlands, 2024; pp. 1389–1396.

11. Van Harmelen, F.; McGuinness, D.L. OWL web ontology language overview. In Proceedings of the World Wide Web Consortium (W3C) Recommendation; W3C: Wakefield, MA, USA, 2004; Volume 69, p. 70.

12. Baader, F.; Horrocks, I.; Lutz, C.; Sattler, U. An Introduction to Description Logic; Cambridge University Press: Cambridge, UK, 2017.

13. Abicht, K. OWL Reasoners still useable in 2023. arXiv 2023, arXiv:2309.06888.

14. Maedche, A.; Volz, R. The ontology extraction and maintenance framework Text-To-Onto. In Proceedings of the Workshop on Integrating Data Mining and Knowledge Management, San Jose, CA, USA, 29 November–2 December 2001; pp. 1–12.

15. Zhang, Z.; Yilmaz, L.; Liu, B. A critical review of inductive logic programming techniques for explainable AI. IEEE Trans. Neural Netw. Learn. Syst. 2023, 35, 10220–10236.

16. Algahtani, E. A hardware approach for accelerating inductive learning in description logic. ACM Trans. Embed. Comput. Syst. 2024, 23, 1–37.

17. Du, R.; An, H.; Wang, K.; Liu, W. A short review for ontology learning: Stride to large language models trend. arXiv 2024, arXiv:2404.14991.

18. Al-Aswadi, F.N.; Chan, H.Y.; Gan, K.H. Automatic ontology construction from text: A review from shallow to deep learning trend. Artif. Intell. Rev. 2020, 53, 3901–3928.

19. Zengeya, T.; Fonou-Dombeu, J.V. A review of state of the art deep learning models for ontology construction. IEEE Access 2024, 12, 82354–82383.

20. Vaswani, A.; Shazeer, N.; Parmar, N.; Uszkoreit, J.; Jones, L.; Gomez, A.N.; Kaiser, Ł.; Polosukhin, I. Attention is all you need. In Proceedings of the 31st Conference on Neural Information Processing Systems (NIPS 2017), Long Beach, CA, USA, 4–9 December 2017.

21. Babaei Giglou, H.; D’Souza, J.; Auer, S. LLMs4OL: Large language models for ontology learning. In Proceedings of the International Semantic Web Conference, Athens, Greece, 6–10 November 2023; pp. 408–427.

22. Kommineni, V.K.; König-Ries, B.; Samuel, S. From human experts to machines: An LLM supported approach to ontology and knowledge graph construction. arXiv 2024, arXiv:2403.08345.

23. Rebboud, Y.; Tailhardat, L.; Lisena, P.; Troncy, R. Can LLMs Generate Competency Questions? In Proceedings of the European Semantic Web Conference, Hersonissos, Greece, 26–30 May 2024; pp. 71–80.

24. Poon, H.; Domingos, P. Unsupervised ontology induction from text. In Proceedings of the 48th Annual Meeting of the Association for Computational Linguistics, Uppsala, Sweden, 11–16 July 2010; pp. 296–305.

25. Völker, J.; Niepert, M. Statistical schema induction. In Proceedings of the Extended Semantic Web Conference, Heraklion, Crete, Greece, 29 May–2 June 2011; pp. 124–138.

26. Chen, J.; Jiménez-Ruiz, E.; Horrocks, I.; Antonyrajah, D.; Hadian, A.; Lee, J. Augmenting ontology alignment by semantic embedding and distant supervision. In Proceedings of the European Semantic Web Conference, Virtual, 6–10 June 2021; pp. 392–408.

27. Javed, S.; Usman, M.; Sandin, F.; Liwicki, M.; Mokayed, H. Deep ontology alignment using a natural language processing approach for automatic M2M translation in IIoT. Sensors 2023, 23, 8427.

28. He, Y. Language Models for Ontology Engineering. Ph.D. Thesis, University of Oxford, Oxford, UK, 2024.

29. Fonou-Dombeu, J.V.; Viriri, S. OntoMetrics evaluation of quality of e-government ontologies. In International Conference on Electronic Government and the Information Systems Perspective; Springer International Publishing: Cham, Switzerland, 2019; pp. 189–203.

30. Papers with Code-New York Times Annotated Corpus Dataset. Available online: https://paperswithcode.com/dataset/new-york-times-annotated-corpus (accessed on 3 September 2024).

31. Papers with Code-CoNLL04 Dataset. Available online: https://paperswithcode.com/dataset/conll04 (accessed on 3 September 2024).

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.