Abstract

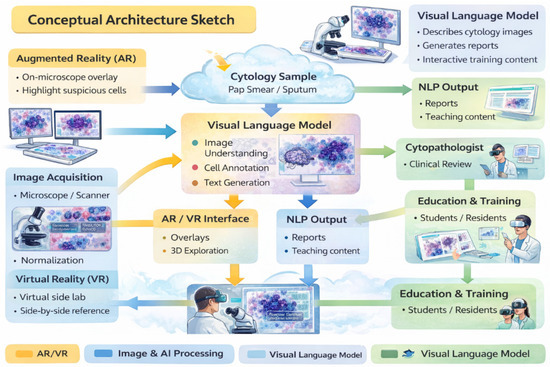

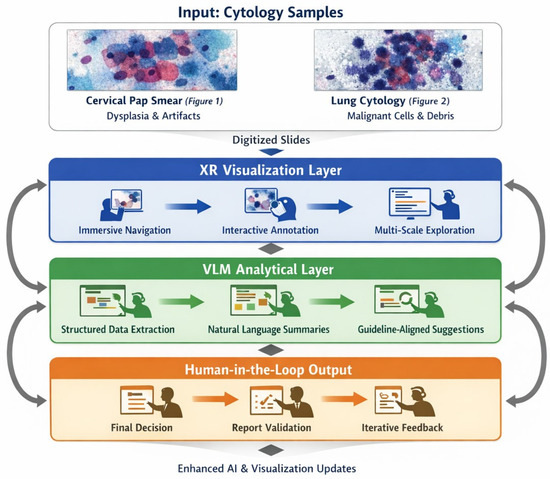

Emerging digital technologies, including immersive environments (VR/AR/XR) and Vision–Language Models (VLMs), have the potential to reshape digital pathology and medical imaging. While immersive tools can enhance spatial visualization and procedural training, VLM-based copilots offer cognitive and workflow support. Their combined impact on cytopathology remains largely conceptual and preclinical. This Conceptual Exploratory Narrative Review (CENR) examines how immersive technologies and VLM-based copilots may jointly influence cytopathologists’ professional workflow, training, and diagnostic processes, introducing the notion of the “augmented cytopathologist.” A structured exploratory approach integrated peer-reviewed literature, position papers, preprints, gray literature (technical reports, white papers, conference abstracts, blogs), and cross-disciplinary perspectives. Database searches (PubMed, Web of Science, Scopus) confirmed a limited number of studies addressing immersive or AI-assisted cytopathology imaging. Thematic analysis focused on four conceptual dimensions: (1) technological capabilities and maturity; (2) workflow and educational applications; (3) professional implications and cytopathologist role; and (4) responsible use of LLMs and VLMs as supportive tools. This approach emphasizes interpretation of emerging trends over aggregation of empirical data, enabling conceptual synthesis of early-stage implementations and perspectives in the field. Immersive technologies facilitate three-dimensional visualization, procedural skill development, and collaborative engagement, whereas VLMs support report generation, literature retrieval, and decision guidance. Together, they offer a synergistic model for perceptual and cognitive augmentation. Key challenges include technical maturity, interoperability, workflow integration, regulatory compliance, and ethical oversight. Figures illustrate representative examples of (1) remote collaborative immersive evaluation and (2) integration of immersive visualization with VLM-based copilots, highlighting potential applications in training and workflow support. The CENR underscores the potential of combining immersive tools and AI copilots to support cytopathology, particularly for education, workflow efficiency, and cognitive augmentation. Adoption should be incremental and carefully governed, emphasizing augmentative rather than transformative use. Future research should focus on clinical validation, scalable integration, and regulatory and ethical frameworks to realize the concept of the augmented cytopathologist in practice.

1. Introduction

1.1. From Conventional Cytopathology to Digital and AI-Driven Transformation

Cytopathology has always been a discipline grounded in visual expertise and interpretive judgment. Since the mid-20th century, it has evolved from conventional smears and manual microscopy toward automated staining, liquid-based cytology, and digital archiving. Despite these technological advances, the core of cytopathological practice remains deeply human: the cytopathologist continuously integrates visual patterns with clinical context, often under conditions of uncertainty and limited tissue availability.

The digital transformation of cytopathology has unfolded progressively over the past two decades, building on early telepathology experiences developed in the late 20th century [1]. Initial efforts focused on the transmission of static images [2,3], with Petrovichev et al. exploring their diagnostic applicability in cytology [3]. Subsequent advances introduced dynamic telepathology systems with robotic control, enabling real-time remote slide examination, as demonstrated by Della Mea et al. [4]. Integrated networks combining robotic and non-robotic telepathology modalities were later described by Dunn et al. [5], while Demichelis et al. confirmed the feasibility of robotic telepathology for intraoperative consultations using still images [6]. Nevertheless, communication latency remained a significant limitation in these systems, as reported by Carr et al. [7].

Virtual microscopy represented a key step in overcoming these constraints. The transition from physical slides to high-resolution digital replicas accessible remotely was documented by Giansanti et al. [1]. Educational platforms such as virtual-microscopy.net [8], histologyguide.com [9], and virtualmicroscopy.co.uk [10] further supported this transition by providing structured repositories and training resources. Donnelly et al. demonstrated the successful adoption of virtual microscopy in cytotechnology education [11], while Saini et al. reviewed the expanding role of Whole Slide Imaging (WSI) in digital cytology [12]. Additional educational and training benefits were highlighted by Chiou and Jia [13], and by Giansanti et al., who described the integration of virtual microscopy into telepathology training programs to strengthen digital competencies [14].

Despite these advances [14,15], digital cytopathology presents challenges that are distinct from those encountered in histopathology. Cytological specimens are inherently heterogeneous and three-dimensional, often requiring multilayer imaging techniques such as Z-stacking to accurately capture cellular depth [16]. Software-based focus enhancement has been proposed to improve image clarity in digital cytology [17], yet implementation of digital workflows frequently entails increased initial workload and infrastructure costs. Survey data from Denmark reported by Smith et al. indicate that the transition to digital pathology can place significant strain on organizational processes [15], a finding echoed by Detlefsen et al. [18]. At the same time, the growing integration of artificial intelligence has added further complexity to digital pathology workflows [19]. Standardization remains a critical issue, as emphasized by Marletta et al. through a Delphi consensus focused on WSI in thyroid cytopathology [20], while Chantziantoniou et al. identified barriers such as pathologist resistance and algorithmic limitations that continue to slow adoption [21]. Regulatory frameworks, including FDA guidelines on WSI, play a central role in ensuring quality, consistency, and compliance during this transition [22].

Within this evolving digital landscape, artificial intelligence has emerged as a major driver of transformation in cytopathology. AI-based systems have demonstrated their ability to enhance diagnostic accuracy and workflow efficiency by supporting cellular image analysis and algorithmic classification [23,24]. Beyond image interpretation, AI has expanded into predictive analytics, enabling disease progression forecasting and supporting clinical decision-making [25]. In high-volume screening settings, AI-driven systems have contributed to a substantial reduction in manual workload for cytotechnologists while maintaining high diagnostic standards [26]. However, the heterogeneity of cytological samples, characterized by variable staining and complex three-dimensional structures, complicates algorithm training and limits model generalizability [27]. Effective deployment therefore requires robust and diverse datasets, as well as architectures capable of adapting to real-world variability [28].

Equally important is the integration of AI into routine clinical workflows. AI tools must align with established diagnostic practices and support, rather than disrupt, the work of cytopathologists. Achieving this alignment requires iterative validation and close collaboration between clinicians, computer scientists, and system developers [29]. While AI holds considerable promise, it has not yet reached full maturity in cytopathology. As highlighted in comprehensive reviews by Giansanti [30,31], the acceleration of digital cytopathology—particularly during the COVID-19 pandemic—has laid the technological and cultural foundations for AI-driven innovation, but ethical, regulatory, and organizational challenges remain. Digitization and AI should therefore be understood as deeply interconnected processes within a dynamic and still-evolving transformation, rather than as sequential or fully stabilized paradigms.

1.2. Immersive Technologies and Vision–Language Models as Emerging Copilots

Building on the combined trajectories of digitization and artificial intelligence, a further layer of innovation is emerging through the integration of immersive technologies and Vision–Language Models (VLMs), including large language models (LLMs) [32,33]. Immersive technologies—such as augmented reality (AR), virtual reality (VR), extended reality (XR), and metaverse-based platforms—function as advanced human–machine interfaces rather than AI per se. Their primary value lies in enhancing spatial understanding, interactive training, real-time collaboration, and contextual data immersion, particularly in complex imaging and educational scenarios relevant to cytopathology.

The development of immersive technologies reflects the progressive evolution of both hardware and software ecosystems. Advances in head-mounted displays, controllers, hand-tracking systems, haptic interfaces, and rendering algorithms have addressed earlier limitations related to computational power, display resolution, and user discomfort. Among current platforms, the HoloLens 2 (Microsoft) represents one of the most advanced AR/MR devices in healthcare, integrating holographic overlays, spatial audio, tracking sensors, and wireless connectivity. Its applications in surgical navigation, anatomical education, and medical training have been highlighted by Palumbo [34], while Veerla et al. demonstrated the potential of combining mixed reality visualization with VLM integration to optimize digital pathology workflows and collaborative diagnostics [35].

Other immersive platforms are increasingly explored in digital pathology and medical education. The Oculus Rift has been explored for the examination of digital pathology slides [33], while the Apple Vision Pro (Apple) has been proposed as a paradigm shift in spatial computing for medical visualization and training [36]. More accessible solutions such as the Valve Index (Valve), Google Cardboard (Google), and Samsung GearVR (Samsung) are commonly adopted in educational and preliminary simulation settings, although further validation is needed for sustained clinical use. Beyond education, immersive technologies have demonstrated broader clinical utility, including enhanced teaching of three-dimensional anatomy and pathology [37] and applications in pain management during medical procedures [38].

Table 1 summarizes key immersive devices, highlighting their primary technological features, typical applications in healthcare and education, and the expected benefits for user training, workflow optimization, and spatial comprehension. Rather than presenting an exhaustive list, the table emphasizes illustrative platforms that are representative of current trends in AR/VR adoption for biomedical purposes, allowing readers to grasp the diversity of hardware capabilities and their relevance to cytopathology and related clinical contexts.

Table 1.

Selected immersive technologies in healthcare and education. The table summarizes key platforms, their main features, typical applications, expected benefits, and relevant references. “Key Features” highlights the technological capabilities; “Typical Applications” lists common use cases in clinical practice and education; “Expected Benefits” outlines the advantages or improvements these technologies offer in healthcare and learning contexts.

In parallel, VLM-based copilots are rapidly transforming biomedical research and healthcare by providing advanced language understanding, reasoning capabilities, and context-aware assistance. Leveraging natural language processing, these systems can synthesize large bodies of biomedical literature, integrate multimodal data, and generate actionable insights that support clinical and research decision-making. Platforms such as Med-PaLM 2 (Google DeepMind), Claude (Anthropic), ChatGPT 5.2 (OpenAI), and BioGPT (Microsoft Research) illustrate how VLMs can support literature summarization, guideline interpretation, hypothesis generation, and decision support across biomedical domains [45,46,47,48].

Table 2 presents representative VLM platforms with their main functionalities, application domains, and demonstrated benefits. The table is designed to provide a conceptual overview of how these AI copilots can assist healthcare professionals and researchers in complex decision-making tasks, facilitating literature synthesis, evidence-based recommendations, and integration with digital workflows. Again, the examples are illustrative rather than exhaustive, underscoring the ongoing development and necessary human oversight associated with these technologies.

Table 2.

Representative Vision–Language Model (VLM) copilots in biomedical applications. The table summarizes key platforms, their application domains, main features, expected benefits, and relevant references. “Application Domain” indicates the clinical or biomedical context in which the VLM is applied; “Key Features” highlights the technological or functional capabilities; “Expected Benefits” describes the advantages provided to clinicians or researchers, such as support in decision-making or protocol adherence.

When integrated with digital infrastructures such as electronic health records, laboratory information systems, and AI-powered imaging platforms, VLM copilots have the potential to form cohesive ecosystems that bridge the gap between raw data and actionable knowledge. However, these systems remain illustrative examples rather than exhaustive solutions, and their deployment continues to require careful human oversight to ensure accuracy, transparency, and clinical safety.

While VLMs, including LLM-enabled systems, hold significant promise in supporting literature synthesis, evidence integration, and workflow facilitation, it is crucial to recognize that these models are not designed for autonomous medical diagnosis. Their use should remain augmentative, providing clinicians and cytopathologists with decision support while maintaining human oversight, adherence to established clinical standards, and reliance on domain-specific medical models. Techniques such as retrieval-augmented generation (RAG) can offer context-sensitive information, yet they cannot replace expert judgment or fully validated diagnostic systems.

At the same time, the broader market trajectory reflects rapid and largely unstoppable growth. The global immersive technology market—including AR, VR, and MR—is projected to increase from approximately USD 1.67 billion in 2025 to over USD 9 billion by 2035, representing a compound annual growth rate (CAGR) of ~18.5% [49]. Similarly, the healthcare AI market, covering machine learning, natural language processing, and LLM-enabled systems, is expected to expand at a CAGR of ~35% over the next decade [50], driven by increasing adoption in diagnostics, decision support, and clinical workflows. This dual perspective highlights that while clinical caution remains essential, the technological and economic momentum of immersive and AI-enabled systems is accelerating decisively, shaping the future landscape of cytopathology and biomedical practice.

1.3. Objective and Structure of the Conceptual Exploratory Narrative Review

In this emerging fields several questions naturally arise: How are immersive technologies being applied in healthcare and cytopathology, and what benefits do they provide for training, diagnostics, and workflow optimization? In what ways can VLM-based copilots support clinical decision-making, report generation, and cognitive augmentation in cytopathology? What technical, operational, and workflow challenges must be addressed to integrate these tools into routine practice? How do ethical, regulatory, and organizational factors influence their safe and effective adoption? And, finally, how is the role of the cytopathologist evolving in response to immersive tools and AI copilots, and what implications emerge for professional competencies and training?

In this conceptual exploratory narrative review (CENR), we address these questions by examining how immersive technologies and VLM-based AI copilots may jointly transform cytopathological practice and training. The CENR approach integrates insights from peer-reviewed literature, preprints, gray sources, and digital platforms to propose an interpretive framework in domains where empirical evidence is still limited. Traditional systematic or scoping reviews are often impractical in emerging areas due to the scarcity of consolidated studies. A conceptual exploratory approach enables the identification of trends, challenges, and opportunities, while supporting interdisciplinary synthesis and forward-looking interpretation.

We focus on two complementary technological strands: immersive technologies, which enhance spatial understanding and procedural skills through interactive three-dimensional visualization, and VLMs, which provide cognitive augmentation by supporting interpretation, report generation, and knowledge synthesis. Their convergence underpins the concept of the “augmented cytopathologist,” a professional who integrates morphological expertise with digital literacy and AI-assisted decision support.

The remainder of the manuscript is organized as follows. Section 2 describes the study design and conceptual approach. Section 3 presents current applications of immersive technologies and VLM copilots in healthcare and cytopathology. Section 4 critically discusses technical, operational, ethical, and professional implications. Section 5 presents an integrative conceptual framework synthesizing insights from immersive technologies and AI copilots in cytopathology. Section 6 discusses the limitation and the future work. Section 7 is dedicated to the conclusions.

2. Methodological Approach

2.1. Study Design and Conceptual Approach

This CENR adopts an exploratory and integrative approach to describe the emerging role of immersive technologies and Vision–Language Models (VLMs), including large language models (LLMs), as copilots in cytopathology. Rather than generating new empirical findings, the goal is to provide an interpretation of ongoing developments, integrating evidence from multiple sources and disciplinary perspectives. This approach is typical of CENRs, which are particularly suitable for areas where empirical literature is sparse and early-stage implementations are limited, allowing conceptual synthesis and framework development.

The CENR follows the editorial guidelines of the journal [51], which specify that “The structure can include an Abstract, Keywords, Introduction, Relevant Sections, Discussion, Conclusions, and Future Directions.” No specific protocol or detailed methodology is required, yet in this section we provide a methodological orientation designed to reveal emerging themes, critical challenges, and conceptual patterns in the integration of immersive technologies and AI copilots into cytopathology, rather than to generate exhaustive empirical data.

Traditional bibliographic databases such as PubMed, Web of Science, and Scopus yielded very limited results, reflecting the early stage of research on immersive simulations and AI copilots in cytopathology. Representative PubMed searches conducted in October 2025 illustrate this scarcity:

- LLMs and copilots in cytopathology:Query: ((LLM[Title/Abstract]) AND (copilot[Title/Abstract])) AND (cytopathology[Title/Abstract]).PubMed link → L1 → 0 results.

- Immersive tools in cytopathology:Query: ((Virtual reality[Title/Abstract]) OR (metaverse[Title/Abstract]) OR (Augmented Reality[Title/Abstract])) AND (cytopathology[Title/Abstract]).PubMed link → L2 → 1 result.

These results clearly indicate that traditional systematic review methods would have been inadequate, as they rely on substantial published empirical evidence. In contrast, a CENR allows for a more flexible and integrative synthesis, capturing early-stage developments, conceptual frameworks, and pilot implementations that are not yet peer-reviewed or formally reported.

To complement these findings, the review also included preprints (arXiv, medRxiv), gray literature (technical reports, white papers, conference abstracts, blogs), and cross-disciplinary sources (computer science, medical informatics, digital pathology, education, human–technology interaction). This combination allowed the capture of early experimental implementations, pilot projects, and emerging discussions in the field, providing a more comprehensive understanding of the state of the art. The investigation draws on three main domains:

- Scientific and Technical Literature: Publications from 2020 onward addressing immersive technologies (VR, AR, XR, metaverse) and VLM-based copilots in healthcare, with emphasis on cytopathology and digital pathology, were included. Boolean-based searches enabled focused exploration of both technological innovation and workflow integration.

- Gray Literature and Digital Platforms: Technical reports, white papers, conference abstracts, blogs, and curated online repositories were included to document early implementations and pilot projects in education and diagnostic practice.

- Cross-Disciplinary Perspectives: Insights from computer science, medical informatics, digital pathology, education, and human–technology interaction were integrated to highlight potential benefits, tensions, and implications for professional roles, training models, and human–AI collaboration.

Overall, the CENR approach is justified by the scarcity of formal evidence, the early stage of technological development, and the cross-disciplinary nature of the field. By integrating preprints, gray literature, and conceptual discussions, this review provides a foundational synthesis that can guide future empirical research, workflow design, and educational strategies in cytopathology.

Table 3 summarizes the key domains, search keywords, and the scope of inquiry, illustrating how each source contributes to understanding emerging immersive and AI-assisted tools in cytopathology workflows. Table 4 expands on the range of sources beyond traditional peer-reviewed literature, including preprints, gray literature, cross-disciplinary perspectives, and software/package repositories. This broader view highlights the diverse origins of emerging evidence, capturing pilot implementations, experimental platforms, and early-stage discussions that are critical to an integrative understanding of immersive technologies and AI copilots in cytopathology.

Table 3.

Key domains, keywords, and scope of inquiry in immersive and AI-assisted cytopathology. The table outlines the main domains or sources considered in the review, the search keywords or queries used to explore each domain, and the purpose or scope of the inquiry. “Domain/Source” indicates the field or dataset analyzed; “Search Keywords/Queries” lists the terms used to identify relevant literature or technologies; “Purpose/Scope” describes the objective of the search or the aspect of cytopathology being investigated.

Table 4.

Sources and scope of inquiry in immersive and AI-assisted cytopathology. The table summarizes the types of sources reviewed, examples or representative platforms, and their purpose in the analysis. “Type/Source” indicates the category of literature or material analyzed; “Examples/Platforms” provides representative journals, conferences, or tools; “Purpose in Analysis” describes the objective of including these sources in the review, such as consolidating evidence on technology, workflow, or applications in cytopathology.

The analysis followed a thematic and iterative approach to explore how immersive technologies and VLM-based copilots are represented in healthcare and cytopathology. Rather than aggregating empirical results, the focus was on identifying key conceptual dimensions relevant to the research questions:

- Technological Capabilities and MaturityExamining hardware, software, and platform developments to understand readiness for integration and potential benefits for training, diagnostics, and workflow optimization.

- Workflow and Educational ApplicationsExploring how these tools may support clinical and educational processes, including cytopathology training, remote collaboration, and report generation, while noting practical and operational constraints.

- Professional Implications and Cytopathologist RoleConsidering how AI and immersive technologies may influence expertise, autonomy, and professional competencies, and how the cytopathologist’s role may evolve in response to digital augmentation.

- Responsible Use of LLMs and CopilotsFraming LLMs and copilots as supportive, augmentative tools rather than autonomous diagnostic systems, focusing on conceptual understanding of their role, applications, and boundaries.

This approach allowed for a broad interpretive synthesis, providing a framework to discuss the applications, benefits, challenges, and professional implications of immersive technologies and AI copilots in cytopathology, aligned with the review’s guiding questions.

2.2. Study Selection Flow

The selection of sources from those listed in Table 4 followed a concept-driven, iterative approach consistent with the CENR methodology. Rather than applying strict criteria of methodological quality, the focus was on relevance to key conceptual domains: technological innovation, workflow integration, educational applications, and professional implications.

D.G. and E.G. independently screened all candidate sources, including peer-reviewed papers, preprints, gray literature, cross-disciplinary studies, and software/package repositories. Each source was evaluated for its ability to inform the conceptual framework of immersive technologies and AI copilots in cytopathology. For every source ultimately selected, a brief consensus report (CR) was drafted to document the rationale for inclusion and ensure traceability of decisions. Any disagreements between reviewers were resolved through discussion and consensus.

The selection flow can be summarized as follows:

- Identification: Retrieval of all candidate sources from bibliographic databases, preprint servers, gray literature, and cross-disciplinary platforms.

- Relevance Screening: Assessment of titles, abstracts, or executive summaries for alignment with the conceptual domains:

- ○

- Technological capabilities and maturity;

- ○

- Workflow and educational applications;

- ○

- Professional implications and cytopathologist role;

- ○

- AI copilots and immersive systems in cytopathology.

- Inclusion in Analysis: Sources contributing meaningful insights to at least one domain were retained for thematic synthesis.

This approach ensured that the CENR captured the diversity of emerging discussions, pilot implementations, and experimental platforms, while remaining faithful to its exploratory and integrative objectives. The use of consensus reports for each selected study further strengthens transparency and rigor in documenting how conceptually relevant sources were identified and retained.

Additional details on the study selection process are reported in the Supplementary Materials (see Supplementary Materials section). In total, 26 references [52,53,54,55,56,57,58,59,60,61,62,63,64,65,66,67,68,69,70,71,72,73,74,75,76,77] were selected and included in the results section.

3. Results

3.1. Exploring Immersive Healthcare Solutions: A Pathway to Digital Cytopathology Integrations

Immersive technologies, encompassing augmented reality (AR), virtual reality (VR), and mixed reality (MR), are transforming healthcare education, procedural training, and diagnostic workflows. Their core strength lies in creating intuitive, interactive environments in which anatomical structures, instruments, and procedural steps can be visualized in three dimensions. These platforms, accessible via VR headsets, AR-enabled devices, or even smartphones, allow learners to acquire foundational and advanced skills in a controlled setting before engaging with real patients. By enabling rehearsal of complex preoperative procedures, including detailed anatomical navigation and sequential operational steps, immersive systems enhance both cognitive and procedural understanding [52]. Compared to traditional textbooks, 2D images, or procedural videos, immersive environments provide richer sensory engagement, combining visual, spatial, and haptic cues, which support long-term knowledge retention and deeper comprehension of complex tasks.

Beyond individual skill acquisition, immersive simulations foster interdisciplinary learning and collaboration among healthcare teams. Integration with AI-driven modules enables real-time feedback, adaptive difficulty adjustment, and performance tracking, reinforcing patient safety and procedural accuracy [53]. Haptic-enabled VR systems further enhance this learning by simulating tactile interactions with tissues and instruments, promoting manual dexterity and coordination within risk-free scenarios [54]. In parallel, patient-centered applications of immersive technologies offer interactive educational experiences that reduce anxiety, improve understanding of procedures, and enhance compliance, particularly in pediatric and geriatric populations [55].

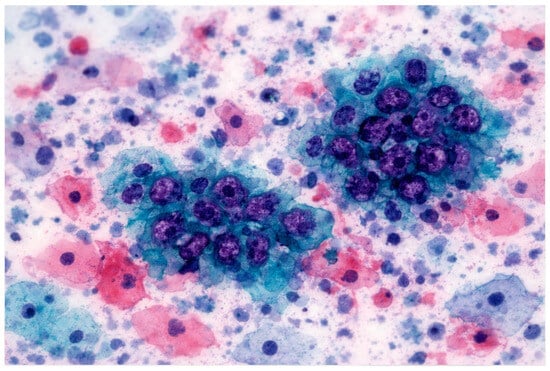

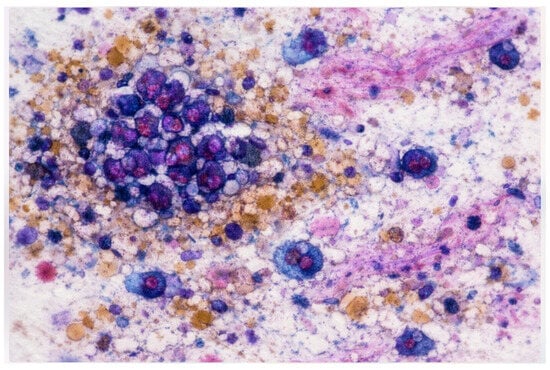

Building on these foundations, immersive technologies are progressively applied to cytopathology education and diagnostic workflows. Virtual microscopy and whole-slide imaging (WSI) platforms allow high-resolution scanning and remote examination of cytology slides, enabling telecytology and distance learning. Commercial solutions such as Leica Aperio, Philips IntelliSite, and Hamamatsu scanners provide high-quality digitized slides, supporting accurate diagnosis and structured educational interactions [56]. Optimized slide preparation and scanning improve image fidelity, which is critical for both diagnostic accuracy and effective training outcomes [56].

Beyond slide visualization, fully simulated laboratory environments are being developed. Immersive replicas of cytopathology labs allow trainees to practice fine-needle aspirations (FNAs), slide preparation, staining techniques, artifact recognition, and rapid on-site evaluations before performing real procedures [57,58,59]. Such simulations enhance procedural consistency, reproducibility, and confidence while minimizing risk and accelerating learning curves. Advanced AR/VR systems introduce interactive 3D cellular models, allowing exploration of spatial and morphological relationships that are difficult to perceive with conventional slides alone [59]. Integrative platforms like Microscope 2.0 overlay AI-generated heatmaps and annotations directly into the pathologist’s field of view, combining immersive visualization with real-time interpretive support [60]. These tools create a continuous learning environment where novices and experienced cytopathologists alike can refine their skills and optimize workflow efficiency.

Taken together, immersive healthcare solutions establish a layered pathway to digital cytopathology. They begin with foundational skill acquisition through interactive simulation, progress to collaborative and interdisciplinary learning, and culminate in application within digital laboratory environments where AI and AR/VR technologies converge. By bridging educational, procedural, and diagnostic domains, these platforms enhance safety, efficiency, and accuracy, offering a cohesive framework for integrating emerging technologies into cytopathology practice.

3.1.1. General Applications of Immersive Technologies in Healthcare

Immersive technologies—including augmented reality (AR), virtual reality (VR), and mixed reality (MR)—are increasingly used in healthcare to provide interactive, intuitive, and flexible learning environments. By enabling visualization of anatomical structures, instruments, and procedural steps in three dimensions, these platforms offer learners the opportunity to acquire both foundational and advanced clinical skills before interacting with real patients, minimizing risk and reducing cognitive load [52]. These immersive experiences allow progressive skill development, enabling trainees to rehearse complex procedures, explore surgical access routes, and internalize sequential steps in a controlled, repeatable setting.

Unlike traditional learning resources such as textbooks, 2D images, or instructional videos, immersive systems combine visual, spatial, and sometimes haptic cues, providing a multisensory experience that enhances engagement, comprehension, and long-term retention. For instance, haptic-enabled VR platforms simulate tactile interactions with tissues or surgical instruments, allowing learners to refine manual dexterity, hand–eye coordination, and procedural fluency in a risk-free environment [54]. This form of simulation is particularly valuable for procedures that require precise manipulations or fine motor skills, which are difficult to practice safely in clinical settings.

Immersive technologies also facilitate interdisciplinary learning, promoting teamwork, communication, and coordination among different healthcare professionals. When integrated with AI-driven modules, immersive platforms can provide real-time feedback, adaptive difficulty adjustment, and performance tracking, reinforcing best practices and patient safety principles [53]. By simulating realistic clinical scenarios, these tools help learners anticipate potential challenges, troubleshoot errors, and internalize standardized protocols, fostering a culture of safety and collaborative problem-solving.

Beyond training healthcare professionals, immersive technologies are increasingly applied for patient education. Interactive AR/VR experiences can help patients, particularly pediatric or geriatric populations, understand the rationale, steps, and expected outcomes of medical procedures. By providing clear visualizations of anatomy, instrumentation, and procedural sequences, these tools can reduce anxiety, improve comprehension, and increase cooperation during interventions [55]. Such patient-centered approaches support shared decision-making and enhance the overall healthcare experience.

To conceptualize the collective impact of immersive technologies, we introduce the Augmented Workforce Training Group (AWTG) framework. The AWTG highlights four key domains where immersive systems contribute to healthcare:

- Skill acquisition and procedural training: enabling interactive rehearsal, repetitive practice, and progressive mastery of clinical tasks [52];

- Interdisciplinary collaboration: fostering teamwork, communication, and adherence to patient safety principles [53];

- Realistic simulation: providing tactile, interactive, and haptic-enabled practice for surgical and procedural training [54];

- Patient engagement and education: enhancing understanding, reducing anxiety, and improving compliance through immersive educational experiences [55].

While these immersive technologies show clear promise in enhancing training, skill retention, and patient experience, it is important to approach them with cautious optimism. Most current implementations serve as supplementary educational and workflow tools rather than replacements for clinical judgment or hands-on experience. Effectiveness depends on the quality of content, realism of simulation, and integration into broader clinical education programs, emphasizing that immersive platforms are enablers rather than autonomous solutions.

Overall, the integration of immersive technologies establishes a structured, safe, and interactive learning pathway that bridges theoretical knowledge, technical skills, and patient-centered practice, providing the conceptual foundation for more specialized applications, such as in digital cytopathology.

3.1.2. Immersive Technologies in Digital Cytopathology: From Visualization to Procedural Mastery

Immersive technologies, including augmented reality (AR), virtual reality (VR), and mixed reality (MR), are progressively transforming cytopathology education and laboratory workflows by providing interactive, highly visual, and context-rich environments. These systems enable trainees and professionals to explore cellular morphology, tissue architecture, and laboratory procedures in three dimensions, significantly enhancing spatial understanding, procedural comprehension, and interpretive reasoning beyond conventional 2D images or video-based learning [56,57,58,59,60].

A principal advantage of these immersive platforms is their ability to simulate complete laboratory environments, from slide preparation and staining protocols to fine-needle aspirations (FNAs) and on-site evaluations. By digitally replicating both routine and complex tasks, immersive systems create a safe, repeatable, and risk-free environment where learners can develop technical dexterity, troubleshoot errors, and internalize standard operating procedures before engaging with real specimens [57,58]. Studies indicate that such simulations improve procedural consistency, confidence, and efficiency, fostering accuracy and reliability in laboratory practice [59].

Beyond procedural rehearsal, immersive technologies provide enhanced interaction with cellular and tissue structures. Advanced AR/VR tools allow the manipulation of 3D cellular models, revealing spatial and morphological relationships that are challenging to perceive using traditional microscopy alone [59]. Some platforms integrate real-time visual overlays, guiding users through complex analyses and highlighting key diagnostic features for educational purposes [60]. These interactive experiences support the development of critical observation skills, pattern recognition, and interpretive reasoning, reinforcing structured learning while maintaining controlled, low-risk conditions.

Immersive platforms also facilitate team-based and interdisciplinary learning, promoting collaboration among cytotechnologists, pathologists, and other laboratory personnel. By simulating integrated laboratory workflows, shared case discussions, and coordinated diagnostic decision-making, these technologies enhance communication, workflow understanding, and adherence to procedural standards, contributing to both educational and operational improvements [53,56].

Additionally, immersive technologies can be applied to patient engagement and education, particularly for procedures that are complex or anxiety-inducing. Interactive 3D visualizations allow patients to understand procedural steps, laboratory workflows, and expected outcomes, improving comprehension, reducing fear, and enhancing compliance with clinical protocols [55]. While the primary focus here remains on cytopathology, these examples illustrate a broader principle: immersive platforms offer multi-sensory, interactive, and context-aware experiences that bridge the gap between theoretical knowledge, technical skill, and practical application [52,53,54,55].

It is, however, important to make the appropriate considerations regarding boundaries.

Despite their advantages, immersive technologies have well-defined limits that must be acknowledged. First, these systems do not replace hands-on experience with actual specimens, nor can they fully substitute the nuanced judgment of experienced cytopathologists. Their strength lies in preparatory training, procedural rehearsal, and enhanced visualization, but diagnostic authority remains with human experts.

Second, immersive platforms are dependent on data quality and fidelity. High-resolution imaging, accurate 3D reconstruction, and realistic simulation of tactile interactions (in haptic-enabled systems) are crucial; poor input quality can propagate errors in learning or misrepresent anatomical and cellular structures [56,57].

Third, technological and logistical constraints, including hardware cost, software compatibility, and infrastructure requirements, may limit widespread adoption, particularly in resource-constrained settings. Careful integration into curricula and workflows is necessary to ensure practical feasibility and sustainability.

Finally, immersive systems should be considered complementary to, not substitutive of, AI-assisted diagnostic tools or traditional teaching methods. While AR/VR can enhance perception, spatial understanding, and procedural rehearsal, they do not inherently perform analysis, generate diagnoses, or replace professional judgment. Future integration with AI-assisted guidance, workflow optimization, or tool-augmented pipelines should be approached cautiously, maintaining human oversight and educational primacy [56,57,58,59,60].

In sum, immersive technologies in digital cytopathology act as comprehensive educational and procedural scaffolds, supporting visualization, procedural rehearsal, interdisciplinary collaboration, and patient engagement. By acknowledging their boundaries, these platforms provide a safe, progressive, and context-aware learning pathway, laying the foundation for future, carefully layered integrations with AI and other digital tools while preserving accuracy, reproducibility, and human oversight [56,57,58,59,60].

Table 5 provides a structured synthesis of key studies that have contributed to understanding the role of immersive and digitally mediated technologies in cytopathology training, laboratory workflows, and patient engagement. While some studies focus on clearly immersive modalities such as virtual reality (VR), augmented reality (AR), and haptic simulations, others [56,57,58] explore virtual digital reconstruction, slide scanning, and computational platforms that, although not immersive per se, provide essential high-fidelity digital environments supporting spatial understanding, procedural rehearsal, and workflow optimization.

Table 5.

Technologies, contexts, purposes, and boundaries of reviewed studies. The table summarizes selected studies, the technologies and settings used, the purpose or focus of each study, and key observations or limitations. “Study/Authors” identifies the cited work; “Technology/Setting” describes the immersive or AI-assisted tool and its context of use; “Purpose/Focus” outlines the main objective of the study; “Observations/Boundaries” highlights notable outcomes, limitations, or areas requiring further validation.

This collection of studies highlights both the opportunities and the boundaries of immersive and digital technologies. Immersive tools enhance procedural training, visualization of cellular and tissue structures, and interdisciplinary collaboration, yet they do not replace hands-on experience or the judgment of experienced cytopathologists. Digital and computational tools further contribute by enabling accurate data capture, repeatable workflows, and safe practice, forming the broader ecosystem in which immersive experiences can be effectively integrated.

By combining these insights, Table 5 summarizes the technologies, contexts, purposes, and observed boundaries of the reviewed studies, providing a clear overview of the landscape that underpins the subsequent discussion on immersive technologies in digital cytopathology.

3.2. Integrating Domain-Specific LLMs and VLMs for Workflow Support in Digital Pathology

3.2.1. Limits of LLMs and VLMs in Direct Medical Diagnosis

The reviewed literature consistently emphasizes that large language models (LLMs), including vision–language models (VLMs), are not designed to function as autonomous diagnostic systems in clinical medicine, digital pathology, or cytopathology. Across multiple clinical domains, LLM-based systems have demonstrated utility primarily as decision-support and reasoning aids rather than as diagnostic authorities. Reviews and perspective articles explicitly frame LLMs as tools for enhancing, rather than replacing, human expertise, highlighting their role in synthesizing guidelines, structuring clinical information, and supporting complex decision-making processes [61].

Empirical studies evaluating LLMs in diagnostic contexts report encouraging performance in structured or simulated settings, but also delineate clear boundaries of applicability. GPT-4–based systems have shown the ability to generate differential diagnoses, summarize longitudinal patient records, and assist in complex case reasoning when provided with curated clinical information [63]. Similarly, guideline-aware implementations, such as those aligned with National Comprehensive Cancer Network (NCCN) protocols, demonstrate value in reinforcing adherence to standardized care pathways, particularly in oncology decision support [62]. However, these systems operate by reasoning over provided abstractions—clinical text, structured inputs, or summarized findings—rather than by extracting diagnostic features directly from raw medical data.

This distinction is further reinforced by evaluations of LLM performance on professional examination-style tasks. Comparative analyses of LLMs on specialty board exam questions in the health domain reveal strong performance in knowledge recall and protocol-based reasoning, yet also expose vulnerabilities to hallucination, contextual misinterpretation, and lack of causal grounding, underscoring their unsuitability for unsupervised clinical diagnosis [63]. Together, these findings indicate that LLM competence is tightly coupled to the structure, completeness, and framing of the input information, rather than to independent diagnostic inference.

The limitations of LLMs become particularly evident in pathology and cytopathology, where diagnosis depends on fine-grained morphological patterns, spatial relationships among cells, and tissue architecture that are not readily reducible to textual descriptors. Even in multimodal settings, general-purpose VLMs rely on visual encoders that are typically less specialized than dedicated pathology models. As shown in studies of multimodal pathology assistants, diagnostic reliability arises from large-scale, domain-specific visual foundation models, while the language component primarily supports interpretation, explanation, and reporting [64,65].

PathChat and TITAN exemplify this paradigm, demonstrating that clinically meaningful performance in digital pathology is achieved through pretrained whole-slide visual encoders, with LLMs serving as interactive and explanatory layers rather than as primary diagnostic engines [65]. Similarly, analyses of generalist multimodal chatbot systems deployed as pathology copilots reveal systematic pitfalls, including reduced robustness to rare entities and sensitivity to prompt formulation, further cautioning against their use as standalone diagnostic tools [67].

In cytopathology, where datasets are smaller and morphological variability is high, these limitations are even more pronounced. Architectures such as GNNFormer explicitly model cellular morphology and spatial organization using graph-based representations, illustrating that accurate cytopathological reasoning requires inductive biases and model structures tailored to biological organization, well beyond the capabilities of general-purpose LLMs or loosely coupled VLMs [66].

From a clinical governance and regulatory standpoint, these findings converge on a consistent conclusion: expecting LLMs or VLMs to perform standalone medical diagnosis is both conceptually and practically inappropriate. Their probabilistic, generative nature, combined with sensitivity to input framing and lack of intrinsic grounding in biological signal extraction, conflicts with requirements for reproducibility, traceability, and clinical validation. Accordingly, their appropriate role lies in collaborative, tool-augmented pipelines, where diagnostic-grade neural networks trained on domain-specific data perform core inference, and LLMs provide orchestration, contextual reasoning, and communication support [61,62,63,64,65,66,67]. Smaller neural networks specialized for specific cytological or pathological tasks can be integrated as modular tools, enhancing the pipeline’s versatility and diagnostic reliability.

3.2.2. Foundation Models and Tool-Augmented Architectures in Digital Pathology

Recent advances in computational pathology have been driven primarily by the emergence of large-scale, domain-specific foundation models trained on whole-slide images (WSIs) and paired visual–textual data. Unlike general-purpose or pathology-adapted VLMs, these foundation models are explicitly designed to capture the multi-scale morphological, spatial, and contextual features that underpin diagnostic reasoning in histopathology and cytopathology. By learning pathology-native visual representations directly from large and heterogeneous datasets, they provide a robust substrate for downstream diagnostic, prognostic, and reporting tasks, reducing reliance on narrowly optimized, task-specific architectures.

This shift marks a transition from task-centric modeling to representation-centric learning in computational pathology. Foundation models emphasize generalizable visual embeddings that can be reused across multiple clinical applications, while language components are introduced selectively to enhance interpretability, interaction, and workflow integration rather than to perform primary diagnostic inference. General-purpose LLMs, such as ChatGPT, serve here mainly as benchmarks to illustrate reasoning capabilities and workflow integration; they are not medically reliable by design.

Domain-Specific Foundation Models

CONCH represents a paradigmatic example of a pathology-native visual–language foundation model. It leverages contrastive learning over more than 1.17 million image–caption pairs derived from histopathology images and biomedical text, enabling task-agnostic pretraining that yields highly transferable representations [68]. CONCH demonstrates strong performance across a wide range of downstream tasks—including classification, segmentation, captioning, and cross-modal retrieval—illustrating how diagnostic versatility emerges from robust visual representations rather than from task-specific optimization.

PathOrchestra further extends this paradigm by scaling both dataset diversity and evaluation breadth. Trained on 287,424 whole-slide images collected across multiple institutions and tissue types, PathOrchestra is evaluated on over 100 clinically relevant tasks, encompassing pan-cancer classification, lesion detection, biomarker assessment, and structured report generation [69]. Its results underscore a key insight: clinically meaningful performance in digital pathology is driven by large-scale, heterogeneous pre-training that captures real-world morphological variability.

Prov-GigaPath reinforces this conclusion by demonstrating that pretraining on provenance-aware, real-world datasets derived from large healthcare networks yields representations that generalize across patients, tissues, and clinical settings [70]. By explicitly addressing distributional shift and institutional variability, Prov-GigaPath highlights the importance of pathology-native foundation models for robust and deployable clinical AI systems.

Recently, PathologyVLM represents a state-of-the-art large vision–language model explicitly designed for pathology image understanding [71]. Trained on high-resolution whole-slide images paired with rich textual annotations, it captures multi-scale morphological features, spatial relationships, and contextual information crucial for histopathology and cytopathology interpretation. Unlike general-purpose multimodal models, PathologyVLM is optimized to align visual and textual representations, enabling clinicians to perform interactive queries, retrieve similar cases, and explore structured and semantically meaningful report drafts. Its integration within tool-augmented workflows allows it to complement pathology-native visual foundation models, providing interpretive and explanatory layers that enhance accessibility, reproducibility, and consistency without taking over diagnostic authority. In practice, PathologyVLM functions as a workflow-integrated copilot: it supports quality assurance, training, and report standardization, facilitates guideline-aligned documentation, and allows for real-time clinician interaction with complex image data. By leveraging a robust foundation in domain-specific data, PathologyVLM illustrates the emerging paradigm in computational pathology, where vision–language models extend the interpretive reach of validated visual encoders while ensuring that core diagnostic decisions remain with human experts and specialized foundation models.

Multimodal Copilots and Workflow Support in Pathology and Cytopathology

Within a foundation-model-centric framework, multimodal copilots are best understood as interface and coordination layers rather than autonomous diagnostic agents. Their primary function is to mediate interactions between clinicians and complex AI systems by translating model outputs into interpretable, structured, and clinically meaningful representations. LLMs pretrained on extensive clinical text corpora, such as those evaluated in the DRAGON benchmark, demonstrate strong performance in annotation, report drafting, clinical information extraction, and normalization of unstructured data, offering scalable and cost-effective support across multiple stages of the pathology workflow without supplanting human expertise or diagnostic responsibility [72].

Beyond efficiency gains, multimodal copilots contribute to standardization and consistency in reporting by enforcing structured templates, harmonizing terminology, and supporting guideline-aligned documentation. These capabilities are particularly relevant in high-throughput pathology settings, where variability in reporting practices and cognitive load can impact turnaround times and report quality. Importantly, the role of the copilot remains explicitly supportive: it facilitates information flow and interpretability while preserving human oversight and accountability.

In cytopathology, where annotated datasets are limited and morphological variability is high, maintaining a clear division of labor between visual inference and language-based interpretation is especially critical. Systems such as PathChat and TITAN exemplify this design philosophy by integrating large, pathology-native visual encoders with language models that summarize, contextualize, and explain model-derived findings rather than independently inferring diagnoses [65,66]. These systems enable natural language interaction with complex visual models, allowing clinicians to query results, explore alternative interpretations, and generate structured reports, while diagnostic reasoning remains anchored in specialized visual representations.

The importance of architecture-aware design is further highlighted by graph-based approaches such as GNNFormer, which explicitly model cell-to-cell relationships and spatial organization to capture biologically meaningful structures essential for accurate cytopathology reporting [66]. By incorporating inductive biases aligned with cellular organization, such models address limitations inherent to language-centric or loosely adapted multimodal systems. In this context, multimodal copilots act as translators between biologically grounded model outputs and clinical narratives, rather than as sources of independent diagnostic inference.

Collectively, these systems demonstrate that clinical value in digital pathology and cytopathology emerges from collaborative, human-in-the-loop architectures. Pathology-native visual models provide the diagnostic signal, pathology-adapted language components enhance interaction, explanation, and reporting, and multimodal copilots coordinate these elements within existing clinical workflows. This collaborative paradigm improves efficiency, report quality, and training while deliberately deferring diagnostic authority to validated models and human experts, in alignment with current best practices for responsible AI use in clinical research settings.

Model Adaptation, RAG, and Cost-Aware Strategies for Digital Cytology

The deployment of AI systems in digital pathology and cytology is shaped not only by algorithmic performance, but also by practical constraints, including computational cost, data availability, infrastructure heterogeneity, and the need for continual adaptation to evolving clinical knowledge and guidelines. These constraints are particularly relevant in cytopathology, where datasets are smaller, annotation is labor-intensive, and subspecialty-specific variability limits the feasibility of large-scale end-to-end model retraining.

Parameter-efficient fine-tuning techniques, such as Low-Rank Adaptation (LoRA) and Quantized LoRA (QLoRA), provide a practical solution. They enable adaptation of large language models to specific institutional, subspecialty, or workflow contexts with substantially reduced computational and memory overhead [72]. By modifying only a small subset of model parameters, these approaches allow customization of LLM behavior—for instance, reporting style, terminology alignment, or local guideline compliance—without requiring full model retraining. This ensures that LLMs support workflow integration without attempting primary diagnostic inference.

When combined with Retrieval-Augmented Generation (RAG), pathology-adapted LLMs can further address limitations related to static training data and knowledge obsolescence. RAG architectures allow LLMs to dynamically access curated, up-to-date, domain-specific knowledge, including clinical guidelines, consensus statements, exemplar cases, and institutional protocols, at inference time without altering the underlying diagnostic model [72,73]. This approach is particularly suitable for digital cytology, where large, curated datasets comparable to those in histopathology are often lacking.

In practice, RAG-enabled LLMs provide interpretive, educational, and reference-driven support rather than direct diagnostic inference. By delivering contextual knowledge alongside model outputs, they enhance clinician understanding, facilitate quality assurance, and support training and continuing education. Importantly, this design reinforces a central principle in computational pathology: LLMs augment human expertise and specialized visual models, while diagnostic authority remains with validated models and clinicians.

MedGemma and Domain-Specific LLMs

MedGemma exemplifies an emerging class of domain-specific large language models optimized for multimodal medical understanding. Built on the Gemma 3 architecture, its multimodal variants (4B and 27B) integrate a pretrained SigLIP image encoder capable of processing histopathology and other clinical images. MedGemma is Google’s medical LLM and serves as a proof-of-concept system; it is not clinically approved. This enables the model to support image interpretation, structured report generation, and clinically grounded reasoning within a unified framework [74,75,76,77]. While not clinically approved, MedGemma serves as a proof of concept for how medical-specific pretraining and architectural alignment can enhance workflow integration without replacing human expertise or validated diagnostic models.

From a systems perspective, MedGemma-like models are best understood as pathology-adapted language components within tool-augmented pipelines rather than standalone diagnostic engines. When integrated with pathology-native visual foundation models, such as CONCH, PathOrchestra, or TITAN, these LLMs [65,66,67,68,69] can:

- Contextualize visual model outputs to improve interpretability;

- Generate structured and standardized reports aligned with clinical guidelines;

- Facilitate interactive exploration of findings for clinicians.

All diagnostic inference remains grounded in validated visual foundation models, ensuring that LLM outputs augment rather than supplant clinical decision-making.

This layered architecture—combining pathology-native foundation models, pathology-adapted LLMs, and cost-aware adaptation strategies (e.g., LoRA/QLoRA fine-tuning)—preserves diagnostic rigor while leveraging the strengths of LLMs in reasoning, communication, and workflow integration. By explicitly separating diagnostic signal extraction from interpretive and narrative functions, these pipelines maintain reproducibility, traceability, and accountability, aligning with emerging regulatory, ethical, and safety expectations for responsible clinical AI deployment in high-stakes domains such as digital pathology and cytopathology.

Finally, RAG further extends the utility of these pipelines by allowing LLMs to access curated, up-to-date, domain-specific information—such as clinical guidelines, exemplar cases, and institutional protocols—without modifying the underlying diagnostic model. In practice, RAG-enabled LLMs provide interpretive, educational, and reference-driven support, enhancing clinician understanding, quality assurance, and training, while diagnostic authority firmly remains with validated pathology models and human experts [72,73]. Smaller, task-specific neural networks can be incorporated as tools to further strengthen domain-specific capabilities, supporting a modular and collaborative AI workflow.

Table 6 provides a structured overview of the studies that informed our analysis of large language models (LLMs), vision–language models (VLMs), and domain-specific foundation models in digital pathology and cytopathology. The table is organized to summarize each study’s technological approach, focus, and observed boundaries, highlighting their role as supportive tools rather than standalone diagnostic systems. This organization emphasizes the distinction between visual foundation models, language components, and tool-augmented pipelines, illustrating how clinical, interpretive, and workflow functions are distributed across these systems. Web-based resources, such as MedGemma, are included to reflect practical examples of workflow-integrated multimodal LLMs.

Table 6.

Technologies, contexts, purposes, and boundaries of reviewed studies involving large language models (LLMs). The table summarizes selected studies, the LLM technologies and their clinical or educational settings, the main purpose or focus of each study, and key observations or limitations. “Study” identifies the cited work; “Technology/Setting” describes the LLM used and its context; “Purpose/Focus” outlines the primary objective of the study; “Observations/Boundaries” highlights notable outcomes, limitations, or considerations for safe and effective use.

4. Discussion

4.1. Discussion—Key Findings and Responses to Research Questions

This review was structured around five key questions: (1) How are immersive technologies being applied in healthcare and cytopathology, and what benefits do they provide for training, diagnostics, and workflow optimization? (2) In what ways can VLM-based copilots support clinical decision-making, report generation, and cognitive augmentation in cytopathology? (3) What technical, operational, and workflow challenges must be addressed to integrate these tools into routine practice? (4) How do ethical, regulatory, and organizational factors influence their safe and effective adoption? (5) How is the role of the cytopathologist evolving in response to immersive tools and AI copilots, and what implications emerge for professional competencies and training?

The literature indicates that immersive technologies, such as virtual and augmented reality platforms, can enhance procedural training, spatial understanding, and collaborative learning (Clay et al., 2024 [52]; Chance, 2025 [53]; Lim & Yap, 2024 [54]; Iqbal et al., 2024 [55]). They allow learners to rehearse clinical procedures in controlled environments, which is particularly valuable in cytopathology, where real patient exposure is limited. However, benefits are often contextual and evidence remains limited: studies frequently involve small cohorts, simulated scenarios, or early-stage prototypes, which restricts generalizability. High-quality empirical validation in real-world clinical workflows is still lacking, and the effectiveness of immersive technologies depends heavily on system fidelity, user training, and integration with standard clinical processes. These observations partially address the first research question, highlighting both potential and limitations.

For VLM-based copilots, evidence shows that these systems can support clinical decision-making, interactive report generation, and structured interpretation of complex data (Lu et al., 2024 [64,68]; Ding et al., 2024 [65]; Yan et al., 2025 [69]; Xu et al., 2024 [70]). They function as augmentative tools rather than autonomous diagnostic agents, providing contextualized suggestions, evidence synthesis, and interactive guidance. Yet, performance is highly dependent on the quality of visual encoders, the specificity of pretraining datasets, and the design of task-specific pipelines. Risks include reduced reliability with rare entities, sensitivity to input prompts, and potential overreliance by users (Liu et al., 2024 [67]; Al-Asi et al., 2025 [73]). This nuanced understanding directly responds to the second research question, illustrating how AI can enhance cognitive processes without replacing human expertise, while also delineating boundaries of applicability.

Integration into clinical practice faces multiple challenges. Technical constraints include variability in hardware, software, and platform maturity, as well as interoperability with existing laboratory information systems (Dai et al., 2025 [71]; Al-Asi et al., 2025 [73]). Operational challenges involve user training, workflow adaptation, and balancing cognitive load. Organizational, regulatory, and ethical considerations impose additional limitations: clear accountability, adherence to clinical guidelines, and structured human oversight are essential to mitigate risks of misuse (Jia Li et al., 2025 [61]; Kaiser et al., 2025 [62]; Eriksen et al., 2024 [63]). These points address the third and fourth research questions, emphasizing that successful adoption requires careful planning, validation, and governance.

Finally, the role of the cytopathologist is evolving in response to these technologies. Professionals are expected to integrate AI copilots and immersive tools into their practice, which may shift required competencies toward digital literacy, data interpretation, and workflow management, while maintaining diagnostic expertise (Zhou et al., 2023 [66]; Liu et al., 2024 [67]). This observation responds to the fifth research question, suggesting that while these technologies provide augmentation, they also reshape professional identity, training needs, and the division of responsibilities within the cytopathology team.

Overall, the evidence presents a balanced picture: immersive technologies and VLM-based copilots offer measurable enhancements in training, workflow, and cognitive support, but their benefits are bounded by technical, operational, and organizational constraints. They are not yet mature for autonomous diagnostic use, and careful integration with validated clinical models and human expertise remains essential. These findings set the stage for a more detailed examination in the subsequent sections of the discussion: Technological Capabilities and Maturity, Workflow and Educational Applications, Professional Implications, and Conceptual Limitations and Responsible Use. The following sections of the discussion are further corroborated by additional evidence from the literature [78,79,80,81,82,83,84,85,86,87,88,89,90,91,92,93,94,95,96,97,98,99,100,101,102,103,104], providing support for the analysis of technological capabilities, workflow applications, professional implications, and ethical considerations.

4.2. Discussion: Conceptual and Practical Considerations for Immersive and AI-Assisted Systems in Cytopathology

4.2.1. Technological Capabilities and Maturity

The reviewed literature suggests that immersive technologies and AI-based copilots in healthcare and cytopathology are characterized by heterogeneous levels of technological maturity. Virtual and augmented reality systems have reached sufficient stability for use in training and educational contexts, where controlled environments and predefined scenarios limit clinical risk (Clay et al., 2024 [52]; Lim & Yap, 2024 [54]; Iqbal et al., 2024 [55]). In these settings, immersive platforms provide high-fidelity simulations that support procedural rehearsal, spatial understanding, and exposure to rare or complex scenarios. However, their translation into routine diagnostic workflows remains limited by hardware costs, infrastructure requirements, and variability in platform performance.

In parallel, advances in computational pathology have been driven primarily by domain-specific visual foundation models rather than by general-purpose LLMs or VLMs. Models such as CONCH, PathOrchestra, and Prov-GigaPath demonstrate that diagnostic performance in digital pathology relies on large-scale, pathology-native pretraining capable of capturing morphological variability across tissues and institutions (Lu et al., 2024 [68]; Yan et al., 2025 [69]; Xu et al., 2024 [70]). Vision–language components, when present, act as interpretive or interactive layers rather than primary sources of diagnostic inference. Pathology-adapted VLMs further extend accessibility through multimodal retrieval and navigation but remain constrained by the quality and specialization of their visual encoders (Dai et al., 2025 [71]).

Overall, the current technological landscape supports augmentation and workflow assistance, but not autonomous diagnostic deployment. Maturity varies across components: while immersive hardware and foundation models are increasingly robust, their integration into cohesive, validated clinical systems is still evolving.

4.2.2. The Copilot Paradigm: Human-in-the-Loop, Trends, and Lessons from Other Diagnostics Fields

Recent bibliometric and narrative trends indicate that the concept of the AI copilot has emerged as a central organizing principle in biomedical artificial intelligence. Searches in PubMed reveal a rapidly expanding body of literature, particularly regarding immersive technologies, up to the period during which this study was conducted. A simple search using the terms.

(“Virtual reality”[Title/Abstract]) OR (“Extended reality”[Title/Abstract]) OR (“Augmented reality”[Title/Abstract])

Demonstrates a total of 31,462 studies [97] since 1991, including 5521 reviews (18%). Among these, 25,362 studies were published in the last 10 years, and 18,536 studies in the last 5 years (59%), highlighting the accelerating adoption of immersive approaches in biomedical research.

Large language models (LLMs), as expected, are of more recent diffusion. A PubMed search using “large language model”[Title/Abstract] retrieved 3901 [98] studies, including 323 reviews (8%). Except for a single study published in 2019, all studies have been concentrated within the last three years, reflecting the rapid rise of LLM-based research in biomedicine. The relatively low proportion of review articles among LLM studies indicates that the field is still in an early, exploratory phase. Most publications focus on original research, model development, or preliminary applications, rather than on synthesis, guidelines, or consolidated evidence. This contrasts with immersive technologies, where the higher number of reviews reflects a more mature body of literature with established conceptual frameworks, methodological consensus, and educational or clinical integration.

The overall literature on immersive technologies and large language models (LLMs) in biomedicine has grown rapidly over the last decade. PubMed searches and bibliometric analyses show accelerating publication rates across original studies, preprints, clinical guidelines, and related databases. However, when the focus is narrowed to cytopathology, the number of studies is extremely limited—so few that they can be “counted on the fingers of one hand.” as reported in Section 2. This scarcity highlights the early stage of empirical research in this domain and motivated the implementation of a CENR, aimed at synthesizing the available evidence from different sources and framing it within a coherent conceptual structure. The purpose is to reaffirm the copilot paradigm: AI systems should be understood as cognitive assistants, supporting and structuring human decision-making, rather than as autonomous diagnostic agents.

To contextualize the copilot concept in cytopathology, it is useful to examine more mature diagnostic fields such as radiology. In radiology, AI systems have evolved from stand-alone classifiers into integrated copilots that actively support multiple stages of clinical workflows, including image triage, lesion detection, automated measurements, report drafting, guideline-aligned decision support, professional training, and even patient-facing applications, such as immersive interventions that reduce anxiety, improve patient understanding, and foster collaborative engagement [99,100,101,102]. Across these applications, diagnostic responsibility remains with the human expert, and emerging regulatory frameworks consistently reinforce that AI should function as a supportive cognitive agent, rather than as an autonomous decision-maker. Workflows are designed so that algorithms propose, rank, or visualize hypotheses, while the clinician interprets, validates, and finalizes decisions.

The value of AI copilots lies not in providing epistemic authority, but in mediating between heterogeneous data sources, complex clinical guidelines, and human reasoning. When combined with immersive or interactive visualization, these copilots allow clinicians to navigate, interrogate, and contextualize high-dimensional biomedical information—capabilities that exceed what static interfaces or isolated tools can achieve [101,102]. Reference [103] directly reinforces this perspective by examining the role of image biomarkers and explainable AI: the study compares handcrafted features versus deep-learned features and highlights how interpretability of AI-derived representations is critical to supporting human understanding, trust, and validation in diagnostic decision-making. This underscores that effective copilot systems require both computational accuracy and transparent feature representation, enabling clinicians to trace AI reasoning and integrate it safely into their workflow.

In cytopathology, the limited availability of copilot-oriented studies does not indicate a lack of clinical relevance; rather, it reflects the absence of a well-defined conceptual and structural framework that connects large language models (LLMs), pathology-native visual foundation models, and immersive interfaces to practical diagnostic workflows. Unlike radiology, which has successfully transitioned from “AI as a tool” to “AI as a copilot” [101,102], cytology remains largely fragmented, with most AI applications evaluated in isolated, narrowly scoped tasks. This fragmentation explains why purely quantitative or systematic syntheses are insufficient to capture the potential of AI-assisted workflows in cytopathology.

By grounding this review in both bibliometric trends and the more mature radiology copilot paradigm, the CENR highlights how human-in-the-loop, immersive, and AI-assisted systems could evolve in cytopathology from isolated diagnostic tools into fully integrated copilots. The focus is on workflow integration, interpretability, and human augmentation, rather than on individual model performance. Incorporating insights from [103] further strengthens this approach by demonstrating that explainable features—whether handcrafted or deep-learned—are essential for clinicians to understand, validate, and trust AI outputs, ensuring safe and effective adoption in high-stakes diagnostic environments. This conceptual framework can guide future research, workflow development, educational programs, and patient engagement strategies, providing a roadmap for translating isolated AI tools into clinically meaningful copilots in cytopathology.

4.2.3. Workflow and Educational Applications

Across the reviewed studies, immersive technologies are most consistently applied in education, training, and workflow simulation, where they offer clear advantages with relatively low risk. VR and AR platforms enable repeated practice, visualization of complex anatomical or cellular structures, and remote collaboration, addressing long-standing limitations in cytopathology training related to case availability and variability (Clay et al., 2024 [52]; Venkatesan et al., 2021 [59]). These benefits align with broader trends in medical education, where immersive tools complement traditional instruction rather than replacing hands-on experience.

VLM-based copilots contribute to workflow support by assisting with report drafting, information retrieval, and interactive exploration of model outputs (Ding et al., 2024 [65]; Liu et al., 2024 [67]). In high-throughput pathology settings, such tools may reduce cognitive load by structuring information, harmonizing terminology, and supporting guideline-aligned documentation (Bosma et al., 2025 [72]). However, evidence of direct improvements in diagnostic accuracy or efficiency remains limited and context-dependent. Integration challenges include interoperability with laboratory information systems, adaptation to local reporting practices, and the need for sustained user training.

Thus, while workflow and educational applications represent the most mature and immediately applicable use cases, their effectiveness depends on careful alignment with existing clinical processes and realistic expectations regarding their capabilities.

4.2.4. Professional Implications and the Evolving Role of the Cytopathologist

The introduction of immersive tools and AI copilots is reshaping the professional landscape of cytopathology, not by displacing expertise, but by reconfiguring how expertise is exercised. Cytopathologists are increasingly positioned as supervisors of AI-assisted workflows, responsible for interpreting outputs, validating findings, and contextualizing results within clinical narratives (Zhou et al., 2023 [66]; Liu et al., 2024 [67]). This shift emphasizes competencies in digital literacy, critical appraisal of AI outputs, and integration of multimodal information.