Abstract

A common issue in the Multi-Objective Portfolio Optimization Problem (MOPOP) is the presence of uncertainty that affects individual decisions, e.g., variations on resources or benefits of projects. Fuzzy numbers are successful in dealing with imprecise numerical quantities, and they found numerous applications in optimization. However, so far, they have not been used to tackle uncertainty in MOPOP. Hence, this work proposes to tackle MOPOP’s uncertainty with a new optimization model based on fuzzy trapezoidal parameters. Additionally, it proposes three novel steady-state algorithms as the model’s solution process. One approach integrates the Fuzzy Adaptive Multi-objective Evolutionary (FAME) methodology; the other two apply the Non-Dominated Genetic Algorithm (NSGA-II) methodology. One steady-state algorithm uses the Spatial Spread Deviation as a density estimator to improve the Pareto fronts’ distribution. This research work’s final contribution is developing a new defuzzification mapping that allows measuring algorithms’ performance using widely known metrics. The results show a significant difference in performance favoring the proposed steady-state algorithm based on the FAME methodology.

1. Introduction

The Portfolio Optimization Problem (POP) is always present in organizations. One key issue in POP’s decision process is the uncertainty caused by the variability in the project benefits and resources. The latter situation arises the necessity of a tool for describing and representing uncertainty associated with real-life decision-making situations. The POP searches a subset of projects under a predefined set of resources that maximizes the produced benefits; its formal definition is as follows.

Let A be a finite set of N projects, each characterized by estimates of its impacts and resource consumption. A portfolio is a subset of A that can be represented by a binary vector that assigns for every financed project i, and otherwise. Let be the vector of impacts resulting from the linear sum of the attribute values of each financed project in x, i.e., the vector of size p representing multiple attributes related to organizational goals that describe the consequences of a portfolio . Assume w.l.o.g. that the higher an attribute’s value is, the better. Then, Problem (1) formally defines POP.

In Problem (1), RF is the space of feasible portfolios, usually determined by the available budget and other constraints that the Decision Maker (DM) wants to impose (e.g., budget limits on types, geographic areas, social roles of projects, etc.).

Different scientific research works address POP’s variant in Problem (1), considering precise values on the available resources and the projects’ impacts [1,2,3,4,5,6]. Moreover, there is an area called Portfolio Decision Analysis (PDA) dedicated to studying mathematical models to solve POP. There are theories, methods, and practices developed within this area to help decision-makers select projects from a very large set of them, taking into account relevant constraints, preferences, uncertainty, or imprecision [7]. PDA-related problems’ difficulty comes from a combination of factors such as (1) large entry space; (2) consequences of multidimensionality in portfolio construction and selection; or (3) qualitative, imprecise or uncertain information.

A large entry space requires a solution process with exponential complexity for decision-making problems, even with simple decisions on allocating resources for candidate projects.

The consequences of multidimensionality in portfolio construction and selection relate conflicting attributes with difficulty in the decision process. Usually, the larger the number of dimensions, the more complex the solution space is. The latter causes a situation with so many solutions that it easily exceeds the human cognitive capabilities for evaluating and selecting the best candidate solutions [8].

The qualitative, imprecise, or uncertain information exists because of the varying nature of the distinct attributes and resources considered in the construction of portfolios. Such information can sometimes occur from different circumstances as a DM needs to use non-numerical data to describe the effects of a project instead of a quantitative measure. Other cases might indicate that there is lack of knowledge about future states of specific criteria, vagueness in the provided information, the values used to describe attributes or resources are not accurately known beforehand, or vague approximations and areas of ignorance. All the previous situations, denoted hereafter as uncertainty, limit the scientific approach in Operational Research-Decision Aiding [9], and modeling them using probability distributions can be a challenge [9].

Several optimization problems use fuzzy numbers to model the uncertainty in parameters’ values from arbitrariness, imprecision, and poor determination [10]. Among the most recent and works related to the Multi-objective Portfolio Optimization Problem are the following: García [11] solved the Multi-objective and Static Portfolio Optimization Problem (MOSPOP) with real parameters using the generational algorithms HHGA-SPPv1 and HHGA-SPPv2 and considering the preferences of a DM. Rivera-Zarate [12] uses the Non-Outranked Ant Colony Optimization (NO-ACO) to address a variant of MOSPOP that includes interdependency among objectives and that has partial support with real parameters. Bastiani [13] solves the MOSPOP variant that includes synergy using ACO-SPRI, ACO-SOP, and ACO-SOP, three strategies based on the ACO that incorporate in their search process priority ranking, preferences, and synergy, respectively. Sánchez [14] proposes using classification methods on the generational algorithms H-MCSGA and I-MCSGA to approximate the Region of Interest (ROI) in MOSPOP. The first algorithm adds the preferences at the beginning of the process, while the second algorithm adds them during the process (while interacting with the DM). Balderas addresses the MOSPOP with uncertainty using intervals; it proposes the generational algorithm I-NOSGA based on NSGAII but incorporates interval numbers. I-NOSGA includes preferences “a priori” and uses Crowding Distance as its density estimator. Martínez [15] addresses the Dynamic Multi-objective Portfolio Optimization Problem (DMOPOP) with real parameters; the proposed approach introduces dynamism by changing the problem definition at the end of each period. Martínez presents three new multi-objective algorithms that also incorporate “a priori” preferences: the generational D-NSGA-II-FF, a new version of a classic genetic algorithm of no-dominance; the D-AbYSS-FF, a modified version of scatter search; and the D-MOEA\D-FF, a new variant of a state-of-the-art algorithm based on decomposition.

Table 1 summarizes the main features of the previously described works. Column 1 cites the research work and the studied POP variant. Columns 2 to 7 show the considered features in the research works: the solution algorithms it proposed, the type of instances it solved, the performance metrics it used, if it integrated preferences in the search process, if it considered a static or dynamic POP’s version, the type of parameters it used, and if it used a steady-state selection scheme or not.

Table 1.

Related works.

It is worth nothing that, from the information in Table 1, only approaches based on intervals address POP’s variant with uncertainty, and none of them utilized a steady-state selection scheme. The Fuzzy Adaptive Multi-objective Evolutionary solution methodology (FAME) has had great success in many optimization problems; however, there is a lack of studies about its performance on the POP. The previous situations open an area of opportunity, addressed in this work, consisting of studying optimization approaches’ performance derived from fuzzy numbers and steady-state selection schemes on their search process to solve the Multi-objective POP with uncertainty (MOPOP).

Evolutionary algorithms commonly use a generational selection scheme to update each generation’s population; the process creates several offspring through genetic operators and combines them with the parents to form the next generation of individuals [10,14,15]. On the other hand, an algorithm using a steaty-state selection scheme produces a single offspring during the reproduction process to combine with the parents. The efficiency of the population’s update process achieved by the latter method is advantageous for any research [16]. Hence, this work proposes a new method based on FAME and fuzzy numbers to handling uncertainty and obtaining more robust solutions in MOPOP; the approach mainly uses fuzzy trapezoidal sets to reflect a magnitude’s imprecision.

This work’s main contributions are: (1) a new mathematical model for MOPOP that considers fuzzy trapezoidal parameters; (2) a new algorithm based on FAME to solve the proposed model; (3) two novel steady-state NSGA-II to solve this MOPOP’s variant; and (4) a novel strategy to measure the performance of the fuzzy multi-objective algorithms with the commonly used real metrics.

The remaining structure of this paper is as follows. Section 2 includes some elements of the fuzzy theory used in this work. Section 3 describes a new mathematical model of the Portfolio Optimization Problem with Trapezoidal Fuzzy Parameters. Section 4 and Section 5 contain the proposed steady-state algorithms: T-NSGA-II and T-FAME, respectively. Section 6 describes the computational experiments done to assess the performance of the algorithms. Finally, Section 7 presents the conclusions.

2. Elements of Fuzzy Theory

This section contains the main concepts of fuzzy theory used in this work.

2.1. Fuzzy Sets

Let X be a collection of objects x, then a fuzzy set A defined over X is a set of ordered pairs A = {(x, μA(x))/x є X} where μA(x) is called the membership function or grade of membership of x in A which maps X to the real membership subspace M [17]. The range of the membership function is a subset of the nonnegative real numbers whose supremum is finite. Elements with a zero degree of membership usually are not listed.

2.2. Generalized Fuzzy Numbers

A generalized fuzzy number A is any fuzzy subset of the real line R, whose membership function satisfies the following conditions [18]:

- is a continuous function from to the closed interval

- , is strictly increasing on

- , for

- is strictly decreasing on

- , for

where are real numbers.

We denote this type of generalized fuzzy number as . When the generalized fuzzy number is denoted as When and are straight lines, then A is a trapezoidal fuzzy number, and denoted as When b = , then A is a triangular fuzzy number, and denoted as .

A triangular membership function definition is as:

A trapezoidal membership function definition is as:

2.3. Trapezoidal Addition Operator

Given two trapezoidal numbers and , then [19]:

2.4. Graded Mean Integration (GMI)

Graded mean integration [19] is a defuzzification method to compare two generalized fuzzy numbers. We compare the numbers based on their defuzzified values. The number with a higher defuzzified value is larger. The formula to calculate the graded mean integration of a trapezoidal number A is given by:

For a trapezoidal fuzzy number , there is a more straightforward expression which is .

2.5. Order Relation in the Set of the Trapezoidal Fuzzy Numbers

Given the trapezoidal fuzzy numbers and , then:

- if only if

- if only if

- if only if

2.6. Pareto Dominance

Given the following fuzzy vectors: and where and are trapezoidal fuzzy numbers, then we say that dominates , if only if for all and for some [20].

3. Multi-Objective Portfolio Optimization Problem with Trapezoidal Fuzzy Parameters

This section presents the proposed mathematical model for MOPOP with Fuzzy Trapezoidal Parameters. It offers a detailed description of the construction of the fuzzy trapezoidal instances used in this work to assess the proposed solution algorithms’ performance. It also includes a description of how the fuzzy trapezoidal parameter’ values participate in evaluating objective functions and the candidate solutions’ feasibility when the solution algorithms search across the solution space.

3.1. Mathematical Model

Let n be the number of projects to consider, C the total available budget, O the number of objectives, the cost of the project i, bij the produced benefit with the execution of the project i in objective j, K the number of areas to consider, M the number of regions, and the lower and upper limits in the available budget for the area k, and and the lower and upper limits in the available budget for the region m. The arrays and contain the area and region assigned to the project i. is a binary vector that specifies the selected projects included in the portfolio. If xi = 1 then the project i is selected, otherwise it is not. Now we define the MOPOP with Fuzzy Trapezoidal parameters as follows:

where

Subject to the following constraints:

In this model, all the parameters and variables in bold and italic are trapezoidal fuzzy numbers.

The objective function tries to maximize the contributions of each objective (6). We calculate each objective by adding all the selected projects’ contributions in the binary vector (7). The constraint (8) makes sure that the sum of the costs required for all the selected projects does not exceed the available budget. The set of constraints (9) makes sure that the sum of the projects’ costs is in the range of the involved areas’ available budget. The set of constraints (10) makes sure that the sum of the projects’ costs is in the range of the available budgets for the corresponding regions. The final set of constraints (11) makes sure that the binary variables can only have values of 0 or 1.

We should note that the problem definition is over the space defined by the binary vectors whose size is 2n. Then the solution algorithms must search across this space to find the Pareto optimal solutions. On the other hand, given that the well-known NP-hard Knapsack problem can be easily reduced to MOPOP, the latter is also NP-hard [21].

3.2. Strategy to Generate the Fuzzy Trapezoidal Instances

This work uses instances initially designed for the POP with interval parameters, where the fuzzy representation of the parameters of the problem uses fuzzy interval type numbers (for example, the interval [76,800, 83,200]) [10]. Fixing the values of , to 0.5, and adding them to any interval in the original POP’s instances allowed the creation of MOPOP’s instances with Trapezoidal Fuzzy Parameters. Following this way, an interval value such as would be seen as in the new set of instances.

To create a random fuzzy interval type instance the following real parameters are considered: budget (B), number of objectives (m), projects (p), areas (a) and regions (r), and ranges of costs (c1, c2), and objectives (m1, m2). Then to generate a fuzzy interval instance the following interval type values must be determined:

- ← Budget as interval

- [ai, a′i] ← Limits of each area I = 1, 2, …, a

- [ri, r′i] ← Limits of each region r = 1, 2, …, r

- [bij, b′ij] ← Benefit from the objective I = 1, 2, ..., m and for each project j = 1, 2, …, p

- {Ci, Ai, Ri} ← Real values of the cost, area and region for each project i = 1, 2, …, p.

Implementing MOPOP’s instances generator combines the previous parameters along with Equations (12)–(24) to create random instances [10].

B = 0.58B B′ = 1.3B

al = (0.7 * B)/(1.7ª + 0.1a2), a′l = (1.27 * B)/(1.7ª + 0.1a2)]

au = ((1.02 + 0.06r) * B)/r), a′u = ((2.635 + 0.155a) * B)/a

ai = al + Random (a′l− al) for i = 1,2,…,a

a′i = au + Random (a′u − al) for i = 1,2,…,a

rl = (0.7 * B)/(1.7a + 0.1a2), r′l = (1.27 * B)/(1.7a + 0.1a2)]

ru = ((1.02 + 0.06r) *B)/r), r′u = ((2.635 + 0.155a) * B)/a

ri = rl + Random (r′l − rl) for i = 1,2,…,r

r′I = ru + Random (r′u − rl) for i = 1,2,…,r

Ai = Random(a) i = 1,2,…,p

Ri = Random(r) i = 1,2,…,p

o = m1 + Random (m2 − m1), bij = 0.8*o,

b′ij = 1.1*o for i = 1, 2, …, p and i = 1, 2, …, m

The interval instances, built with the instances generator, have names under the following format ompn_idI, where m is the number of objectives the instance has, n is the number of projects, id is a consecutive number, and I indicate that the instance is of interval type. An example of this would be the instance o2p100_1I, meaning that it is the instance number 1 with 2 and 100 projects.

The Algorithm 1 details the structure of a fuzzy interval type instance.

| Algorithm 1. o2p25_0I fuzzy interval type instance |

| // Fuzzy interval type value of the total available budget |

| [76800, 83200] |

| // Number of objectives |

| 2 |

| // Number of areas |

| 3 |

| // Fuzzy interval type values of the upper and lower bounds of the available budget // in each area, a row for each area. |

| [13060, 16560] [46245, 49745] |

| [13810, 15810] [47895, 48095] |

| [13210, 16410] [46545, 49445] |

| // Number of regions. |

| 2 |

| // Fuzzy interval type values of the upper and lower bounds of the available budget // in each region, a row for each region. |

| [22775, 24275] [67950, 68050] |

| [23325, 23725] [67900, 68100] |

| // Number of projects |

| 25 |

| // For each project, there is a row that includes the following: fuzzy interval type |

| // value of the project cost, project area, project region, and the fuzzy interval type |

| // value of the benefits obtained with each objective. (only 5 of the 25 projects are |

| // showed) |

| [9308, 10082] [1] [1] [7642, 8278] [231, 249] |

| [8290, 8980] [2] [1] [8506, 9214] [404, 436] |

| [5895, 6385] [3] [1] [3831, 4149] [111, 119] |

| [9053, 9807] [1] [2] [3908, 4232] [399, 431] |

| [6058, 6562] [1] [2] [5760, 6240] [418, 452] |

In order to transform a given fuzzy interval type instance into a fuzzy trapezoidal instance, all the interval values [a, b] are changed to fuzzy trapezoidal values [a, b, a, b] with a = 0.5 and b = 0.5. The Algorithm 2 shows the result of converting the fuzzy interval type instance o2p25_0I to the fuzzy trapezoidal instance o2p25_0T.

| Algorithm 2. o2p25_0T fuzzy trapezoidal instance |

| // Fuzzy trapezoidal value of the total available budget |

| [76800, 83200, 0.5, 0.5] |

| // Number of objectives |

| 2 |

| // Number of areas |

| 3 |

| // Fuzzy trapezoidal values of the upper and lower bounds for the available budget // in each area, a row for each area. |

| [13060, 16560, 0.5, 0.5] [46245, 49745, 0.5, 0.5] |

| [13810, 15810, 0.5, 0.5] [47895, 48095, 0.5, 0.5] |

| [13210, 16410, 0.5, 0.5] [46545, 49445, 0.5, 0.5] |

| // Number of regions. |

| 2 |

| // Fuzzy trapezoidal values of the upper and lower bounds for the available budget |

| // in each region, a row for each region. |

| [22775, 24275, 0.5, 0.5] [67950, 68050, 0.5, 0.5] |

| [23325, 23725, 0.5, 0.5] [67900, 68100, 0.5, 0.5] |

| // Number of projects |

| 25 |

| // For each project, there is a row that includes the following: fuzzy trapezoidal value // of the project cost, project area, project region, and the fuzzy trapezoidal values of |

| // the benefits obtained with each objective. (only 5 of the 25 projects are showed) |

| [9308, 10082, 0.5, 0.5] [1] [1] [7642, 8278, 0.5, 0.5] [231, 249, 0.5, 0.5] |

| [8290, 8980, 0.5, 0.5] [2] [1] [8506, 9214, 0.5, 0.5] [404, 436, 0.5, 0.5] |

| [5895, 6385, 0.5, 0.5] [3] [1] [3831, 4149, 0.5, 0.5] [111, 119, 0.5, 0.5] |

| [9053, 9807, 0.5, 0.5] [1] [2] [3908, 4232, 0.5, 0.5] [399, 431, 0.5, 0.5] |

| [6058, 6562, 0.5, 0.5] [1] [2] [5760, 6240, 0.5, 0.5] [418, 452, 0.5, 0.5] |

3.3. Evaluating the Solutions and Verifying the Feasibility

This section describes how to calculate the objective values of a solution and how to determine its feasibility. To explain this process, let F the trapezoidal fuzzy numbers set, and R the set of real numbers. Now it is described how to apply the map such that . The map associates the GMI value to each trapezoidal fuzzy number. A remarkable property of this map is that if , then , hence, the computation of a vector solution for a MOPOP’s instance with two objectives is transformed into a vector of two trapezoidal fuzzy numbers, which in turn is transformed into a vector of two real numbers. As this process is consistently applied to all the solutions, the algorithms will be performed considering that the binary vector objectives space is the real vector space. The transformation must also be applied to all the trapezoidal fuzzy numbers in the constraints to validate the solutions’ feasibility in the search space process. Equations (25)–(30) shows how evaluate the solution and verify the feasibility.

where

Subject to the following constraints:

An additional benefit is that this mapping transforms the approximated Pareto front in a set of real vectors. In such a case, standard commonly used metrics can be applied to evaluate the performance of the algorithms.

Example: Consider the following simplified instance:

Then using the model, the problem to solve is:

Maximize:

Subject to:

The objectives z1 and z2 are the benefits generated by the projects selected in the binary vector x. The constraint verifies that the cost of that project is not higher than the available budget (C).

Given the solution x = [0, 1,0], then the fuzzy trapezoidal values of the two objectives are the following:

Evaluating the constraint to verify the feasibility of the solution x, we have:

Now the GMI is used to compare the fuzzy trapezoidal numbers. For a trapezoidal fuzzy number , the GMI is:

As P( P([3,20,1,5]) = 12.166, solution x is feasible.

Notice that this process was done in the fuzzy trapezoidal numbers space; only at the end the GMI is used to verify the constraint. To perform the process in the real space, the two fuzzy objectives and the fuzzy costs in the constraint are transformed into real numbers using the GMI. The evaluation of the solution is as follows:

Then = 3 and = 8.966.

Transforming the constraint we have:

Hence, the solution x is feasible given that 12.166.

The algorithms proposed in this work use the evaluation and feasibility verification procedures described in this section. The algorithms must call such methods on every new solution generated by them.

4. Steady-State T-NSGA-II Algorithm

This section presents the design of all the components included in the definition of the proposed algorithm. This is an adaptation of the classic Deb algorithm NSGA-II [22] modified to work with the trapezoidal fuzzy numbers. As all the algorithms proposed in this work, T-NSGA-II updates the population, applying in each generation the steady-state approach to include in the population only one of the generated individuals. In generational algorithms, the new set of offsprings are combined with the parents to create individuals’ next generation; the input to the algorithm is a MOPOP’s instance. The output is an approximate Pareto front for the instance.

4.1. Representation of the Solutions

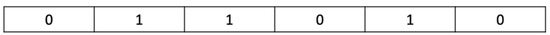

A MOPOP’s solution is represented by binary vector , where n is the number of projects. This vector is a portfolio, and each value si = 1 represents the inclusion of project i in the portfolio. The first element in the vector is s0, and the last is sn–1. Figure 1 shows an example of this representation.

Figure 1.

Representation of a solution.

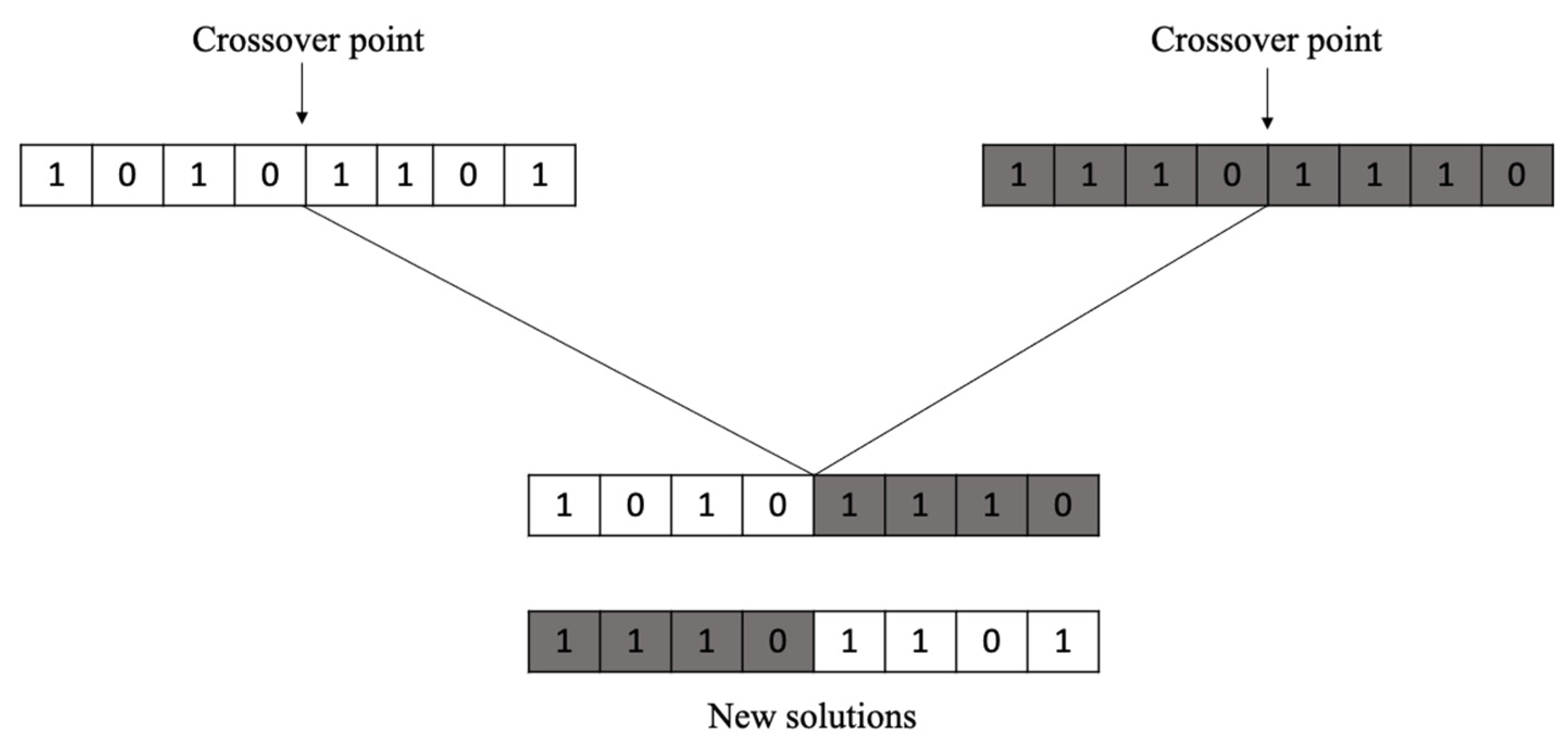

4.2. One-Point Crossover Operator

The one-point crossover operator generates two offsprings from two parents [23]. The process first defines a random cutting point cp in the range [0, n – 1]. After this, it split each parent vector into left and right sections, where for parent i, the lefti contains its values {s0, …, scp}, and the righti contains its values {scp+1, …, sn–1}. Finally, it mixes the split sections to generate two new offsprings h1, h2, where h1 uses left1 and right2, and h2 uses left2 and right1. The parents are chosen at random. The steady-state approach only utilizes the first offspring h1. The number of crossovers that are done is a defined parameter. Figure 2 shows an example of this operator.

Figure 2.

Example of one-point crossover operator at index cp = 3.

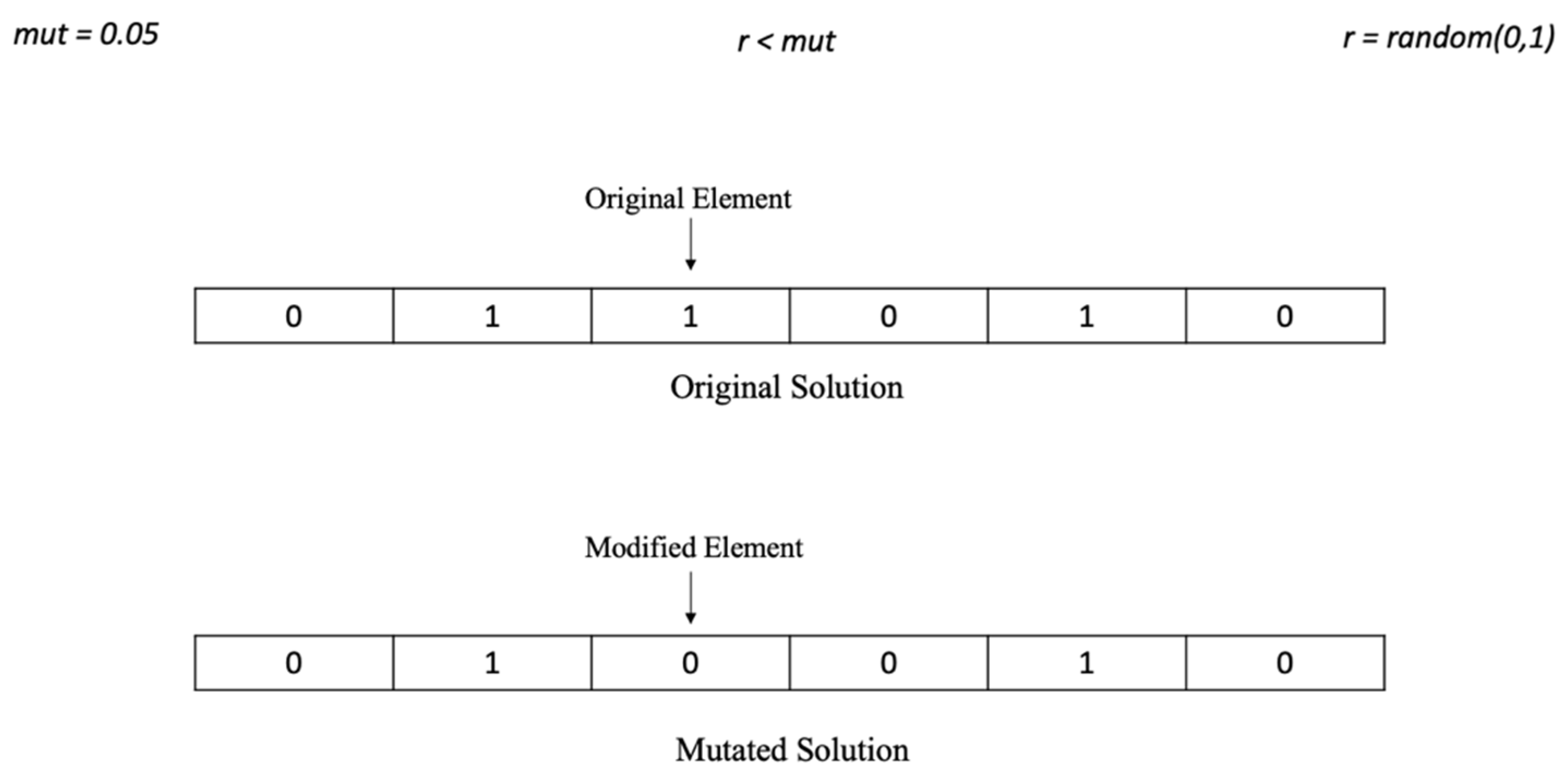

4.3. Uniform Mutation Operator

The uniform mutation operator generates a new solution for the mutation population from given a solution vector S = {s0, s1, …, sn–1 } [24]. The process generates for each index i, for 0 ≤ i ≤ n − 1, a random number u in the range [0, 1], and if u < mut then the value of si changes from 1 to 0 or vice versa, otherwise the value si remains intact. The parameter mut is the mutation probability used by the operator. Figure 3 shows an example of the use of this mutation.

Figure 3.

Example of when an element changes its value.

Another parameter of the operator is the number of new mutated solutions that must be generated. Usually, the solutions that undergo this process come from the crossover operator’s results; otherwise are randomly chosen.

4.4. Initial Population

A predefined number of randomly generated solutions are created to have an initial population. When a new random solution is generated, the objectives vector for the solution is determined and its feasibility is verified.

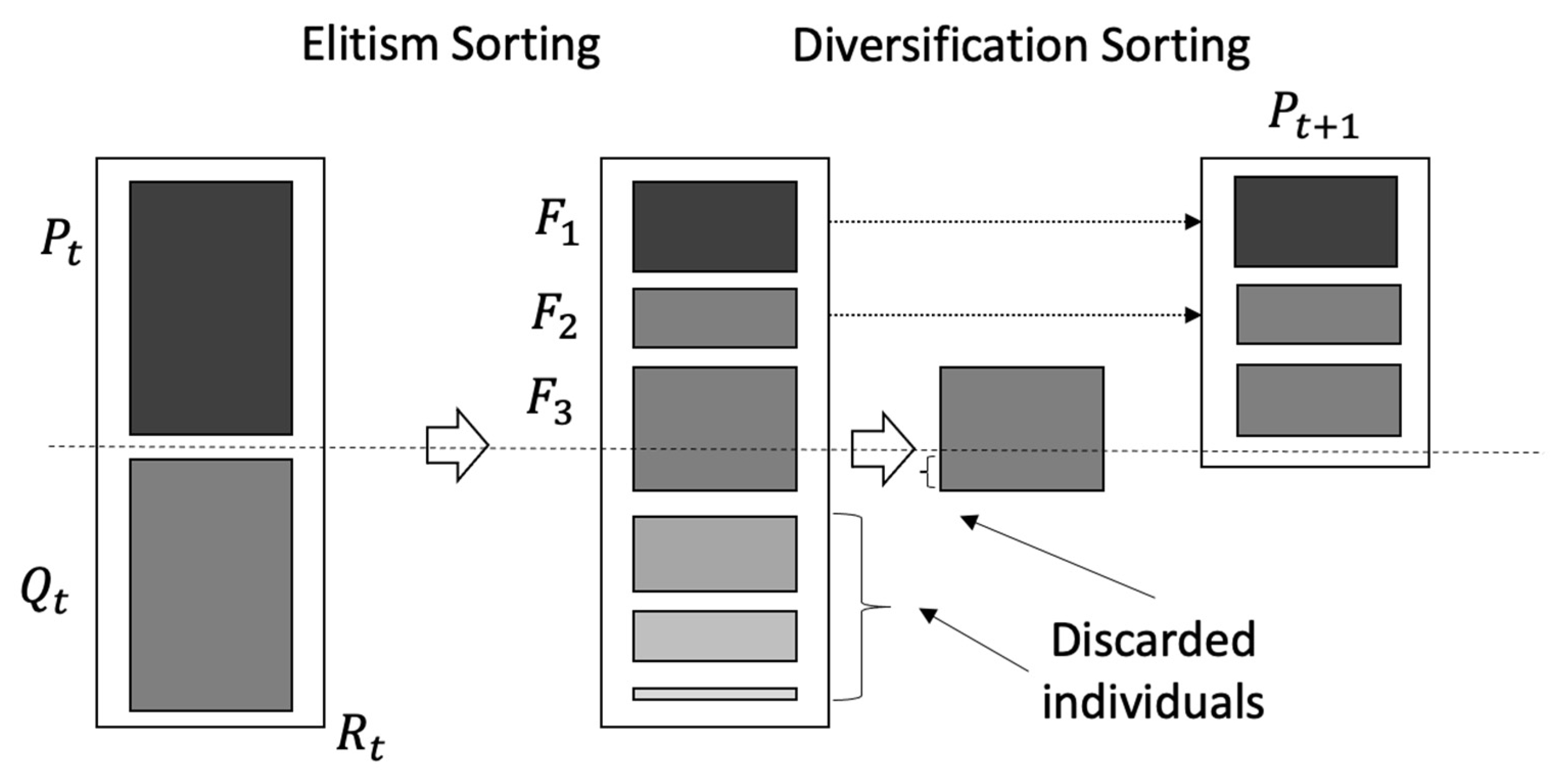

4.5. Population Sorting

This process consists of sorting the solutions of the population, and it is composed of two phases: (1) the elitist phase, which keeps the best solutions; and (2) the diversification phase, which ensures that there are solutions different enough to avoid local optima in the search process of the algorithm. The elitist phase is also known as non-dominated sorting. It consists of separating the population in fronts or sets of non-dominated solutions, making sure that the best solutions are always on the first front. The diversification phase sorts the solutions of a front according to the Crowding Distance indicator. The solutions in the best fronts are included in the population, and when a front cannot be completely inserted, the solutions with the worst crowding distances are discarded. Figure 4 shows both phases.

Figure 4.

Elitism sorting and diversification phases.

4.6. Non-Dominated Sorting

This process has two parts, and works on a given population. The first part constructs the first front with the set of non-dominated solutions identified from the comparison of vectors of objective values among all the population’ solutions. A solution is non-dominated if its vector of objective values is not dominated by any other. Note that the Pareto dominance uses real value vectors in its definition.

The second part builds the remaining fronts one by one. Each new front integrates those solutions that are only dominated by solutions in previously built fronts. The process repeats until no more fronts can be made.

4.7. Calculating the Crowding Distance

According to [22], this process orders the solutions in a front by their Crowding Distance (CD). The distance is a measure of the separation of the solutions, and it is relative to the normalized value of the objectives. The CD identify the solutions with extreme values on the objectives and put it first on the front. After that, the solutions order are according to their accumulated degree of separation per objective, the greatest the separation the better. For each objective, the CD computes the degree of separation using the ordered array of objective values resulting from the front; the solutions with the highest and smallest objective values will have a specific Crowding Distance value d equal to infinite (), while the remaining solutions will be calculated by the following formula:

where d is the Crowding Distance, I is the solution position in the whole population in general, j is the solution position after the ordering by objective m within the front, f is the objective value and m is the current objective. The accumulation of Crowding Distance value d of all the objectives results in the final value of CD for each solution I.

4.8. Calculating the Spatial Spread Deviation (SSD)

The Spatial Spread Deviation (SSD) is a density estimator used to rearrange the solutions in a front, so the spread is not by a wide margin [25]. The method calculates for each solution the SSD value using a matrix of normalized distances between the solutions in the approximated front. The solutions are sorted from the lowest to highest SSD value in order to punish solutions according to their standard deviation and their proximity to their closest -neighbors. The next three equations show how to calculate the SSD values, in the process i is the solution in the front for which the SSD is calculated, and j take values over all the solutions in the front except i.

where is the distance from solution to solution . is the biggest distance between all the solutions and is the closest distance between all the solutions. is the number of neighbors closest to solution . is the initial value of SSD, which is -INF if the solution is at one of the ends of the front when the normalized values of the graded mean integration of the objective values are calculated.

4.9. Pseudocode of the T-NSGA-II Algorithm

The T-NSGA-II is based in the structure of the classic multi-objective algorithm NSGA-II proposed by Deb [22]. As previously described, the algorithm had several modifications to work with trapezoidal fuzzy numbers and the proposed MOPOP model. Algorithm 3 shows the detailed pseudocode of the algorithm T-NSGA-II.

| Algorithm 3. T-NSGA-II pseudocode |

| INPUT: Instance with the trapezoidal parameters of the portfolio problem. OUTPUT: Approximated Pareto Front NOTE: The algorithm is called T-NSGA-II-CD when the Crowding Distance is used, and T-NSGA-II-SSD when is used the Spatial Spread Deviation. *************************************** 1. Create the initial population pop 2. Evaluate all the solutions in pop 3. Order pop using no-dominated Sorting 4. For all solutions in pop calculate Spatial Spread Deviation/Crowding distance |

| 5. pop sorting due to fronts and Spatial Spread Deviation/CD 6. Main loop, until stopping condition is met *** Steady state approach: only one generated individual is considered to include in popc 7. Create popc using crossover operator *********************************************************************** 8. Create popm using mutation operator 9. Join popc and popm to create popj 10. Evaluate solutions in popj and put feasibles in popf 11. Add popf to pop, and calculate objective functions 12. Order pop using no-dominated sorting 13. Calculate Spatial Spread Deviation/Crowding distance 14. pop sorting due to the front ranking and Spatial Spread Deviation/CD 15. Truncate pop to keep a population of original size 16. No-dominated sorting 17. Calculate Spatial Spread Deviation/Crowding distance of the individuals in pop 18. pop sorting due to front ranking and Spatial Spread Deviation/CD 19. End Main loop 20. Return (Front 0). ***Approximated Pareto Front |

5. T-FAME Algorithm

This section presents the design of all the components of the T-FAME algorithm. The algorithm adapts the FAME algorithm to work with the trapezoidal fuzzy numbers [25]. The input to the algorithm is an instance of MOPOP. The output is the approximate Pareto front for that instance. T-FAME updates the population, applying the steady-state approach to include in the population only one of the generated individuals. The following algorithm components are the same described in Section 4: the structure used to represent the solutions, the evaluation of a solution, the construction of the initial population, the sorting of the population, the non-dominated sorting process, and the density SSD estimator. The components described in this section are those not included in the previous description or with significant differences, such as the fuzzy controller, the additional genetic operators, and the structure used to store the approximated Pareto front.

5.1. Fuzzy Controller

This section introduces an intelligent mechanism that allows an MOEA to apply different recombination operators at different search process stages. The use of different operators is dynamically adjusted according to their contribution to the search in the past. Intuitively, the idea is to favor operators generating higher quality solutions over others. For this purpose, the fuzzy controller dynamically tunes the probability selection of the available recombination operators [25].

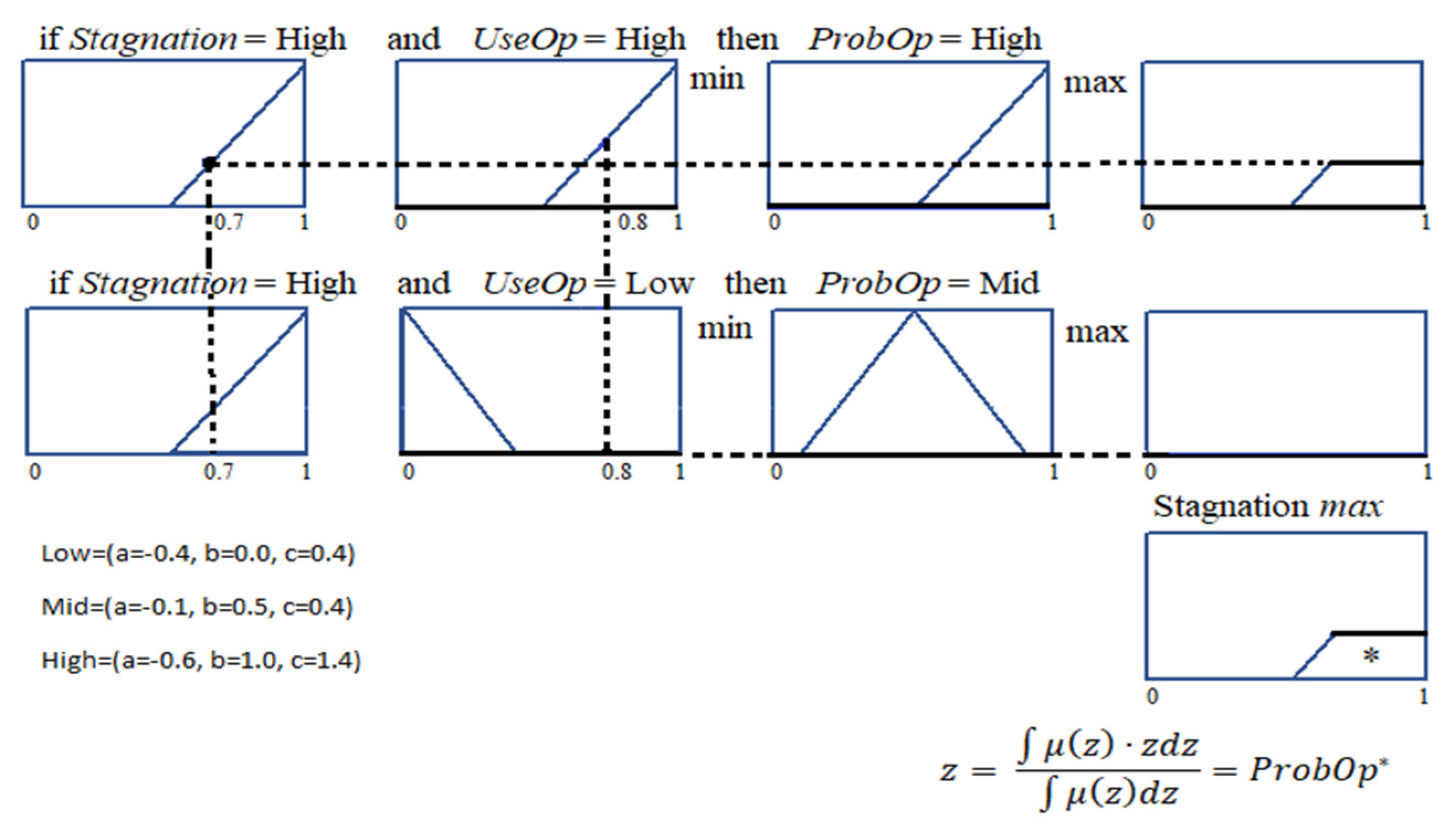

The fuzzy controller uses a Mamdani-Type Fuzzy Inference System (FIS) [26] to compute the probability of applying the different operators. Fuzzy sets defined by membership functions represent the linguistic values of the model’s input and output variables. Regarding the inference, we use the approach originally proposed by Mamdani based on the “max min” composition: using the minimum operator for implication and maximum operator for aggregation. The aggregation of the consequents from the rules are combined into a single fuzzy set (output), to be defuzzified (mapped to a real value). A widely used defuzzification method is the centroid calculation, which returns the area’s center under the curve. We use triangular-shaped membership functions in all inputs and outputs,

the parameters a and c determine the “corners” of the triangle, and b determines the peak. A membership function maps real values of x with a degree of membership 0 ≤ ≤ 1. The used granularity levels were: Low (a = −0.4, b = 0.0, c = 0.4), Mid (a = 0.1, b = 0.5, c = 0.9) and High (a = 0.6, b = 1.0, c = 1.4).

The interaction of the fuzzy controller with the algorithm works as follows: Let Operators the set of genetic operators available. The evolutionary algorithm monitors the search process in a series of time windows, each of size Window. At the end of each time window, the algorithm sends to the fuzzy controller the real values of the input variables Stagnation and UseOp, and receives from the controller the real value of the output variable ProbOp.

Each of the fuzzy variables has associated the fuzzy linguistic values: High, Mid and Low. Then the membership functions of the fuzzy variable Stagnation are: In a similar way, the membership functions are defined for the variables UseOp and ProbOp.

To show how works the fuzzification process consider that the received real values of the input variables are Stagnation = 0.7 and UseOp = 0.8.

The fuzzified values for the Stagnation variable are the membership degrees:

For the UseOp variable the fuzzified values are the membership degrees: All the membership degrees are values in the interval (0,1).

Now the FIS includes a set of fuzzy rules which are specified in terms of the fuzzy variables, the linguistic values, and a set of logic operators. To continue with the previous example, consider that the fuzzy rules in the FIS are:

Once the fuzzification of the inputs is done, the next process is to evaluate the antecedents of the rules determining the following values:

In the rule evaluation, the min operator is associated with the logic operator and, and the max operator is associated to the logic operator or.

Now the membership functions of the consequents of the rules must be determined. For each rule an operator of implication is applied to the antecedent value obtained in the previous process and to the consequent of the rule, to determine the membership function of the conclusion of the rule. The min operator is used to implement the implication logic operator, which truncates the membership function of the rule’s consequent. For example, the truncated membership functions of the consequents are the following:

Now the truncated membership functions are integrated using an aggregation operator to create a new membership function, which is the controller’s fuzzy output. The aggregation operators that are frequently used are max and sum.

For the example, the max operator is used to determine the aggregated membership function, which is the following:

Finally, the defuzzification of the fuzzy output obtained is done. In this step a real number is associated to the aggregated membership function, which is the output of the inference process. In the previous example, the center of the area under the curve of the aggregated membership function is used to defuzzify the output of the controller as following:

Figure 5 graphically shows the fuzzy inference process for the example described.

Figure 5.

Mamdani Fuzzy Inference System used in the fuzzy controller.

All of the controller rules are of the type: Antecedent AND Antecedent then Consequent. The fuzzy rules were designed to have soft changes in the input variables (Stagnation and UseOp), to avoid abrupt changes in the output variable (ProbOp). The configuration was manually done by observing the surface that these three variables generated [25]. Table 2 shows the rules of the fuzzy controller.

Table 2.

Fuzzy controller rules.

The Algorithm 4 shows the structure of the fuzzy controller used in the fuzzy controller implementation with the Java Library Fuzzy Lite 6.0.

| Algorithm 4. Fuzzy controller structure. |

| [System] |

| Name=‘FuzzyController ‘ |

| Type=‘mamdani’ |

| Version=2.0 |

| NumInputs=2 |

| NumOutputs=1 |

| NumRules=9 |

| AndMethod=‘min’ |

| OrMethod=‘max’ |

| ImpMethod=‘min’ |

| AggMethod=‘max’ |

| DefuzzMethod=‘centroid’ |

| [Input1] |

| Name=‘Stagnation’ |

| Range=[0 1] |

| NumMFs=3 |

| MF1=‘Low’:’trimf’,[−0.4 0 0.4] |

| MF2=‘Mid’:’trimf’,[0.1 0.5 0.9] |

| MF3=‘High’:’trimf’,[0.6 1 1.4] |

| [Input2] |

| Name=‘UseOp’ |

| Range=[0 1] |

| NumMFs=3 |

| MF1=‘Low’:’trimf’,[−0.4 0 0.4] |

| MF2=‘Mid’:’trimf’,[0.1 0.5 0.9] |

| MF3=‘High’:’trimf’,[0.6 1 1.4] |

| [Output1] |

| Name=‘ProbOp’ |

| Range=[0 1] |

| NumMFs=3 |

| MF1=‘Low’:’trimf’,[−0.4 0 0.4] |

| MF2=‘Mid’:’trimf’,[0.1 0.5 0.9] |

| MF3=‘High’:’trimf’,[0.6 1 1.4] |

| [Rules] |

| 3 3, 2 (1) : 1 |

| 3 2, 1 (1) : 1 |

| 3 1, 2 (1) : 1 |

| 2 3, 2 (1) : 1 |

| 2 2, 1 (1) : 1 |

| 2 1, 2 (1) : 1 |

| 1 3, 3 (1) : 1 |

| 1 2, 2 (1) : 1 |

| 1 1, 1 (1) : 1 |

In the [Rules] section, the first and second columns contain the linguistic values of the two input variables (1-Low, 2-Mid, 3-High), the third column is the weight of the rules, and the last one indicates the logic operator used in the rule (1-and, 2-or).

The interaction of the fuzzy controller with the algorithm works as follows: Let Operators the set of genetic operators available. The T-FAME algorithm searches in the solutions space in time windows of size Window, each time window the algorithm performs Window iterations. At the end of each time window, the algorithm sends to the fuzzy controller the values of the input variables Stagnation and UseOp[i] for all i ∈ Operator. For each pair of input values, a Fuzzy Inference generates ProbOp[i] for all i ∈ Operator. This process is done for the T-FAME algorithm with the following pseudocode where v is the windows counter:

If (v == Window) then

39. v =0; Stagnation = 0;

40. Endif

The line numbers are those that appear in the T-FAME algorithm pseudocode included in Section 6.4. Notice that in lines 37 and 38, the algorithm uses the fuzzy controller to update all the available recombination genetic operators’ selection probability.

The Stagnation value is shared for all the operators, and it is an indicator of the evolution of the search in the current time window. This is a normalized value that is increased by 1.0/Window each time the generated solution cannot enter the set where the non-dominated solutions are kept and reset when the time window is over. UseOp[i] is a normalized value that is increased by 1.0/Window every time the operator i is used.

5.2. Additional Genetic Operators

Four operators are used in T-FAME to create new solutions: One-point crossover, Uniform Mutation, Fixed Mutation, and Differential Evolution. Two of these operators (One-point crossover and Uniform Mutation) are the same ones that are used on T-NSGA-II, and they are already described in the previous section.

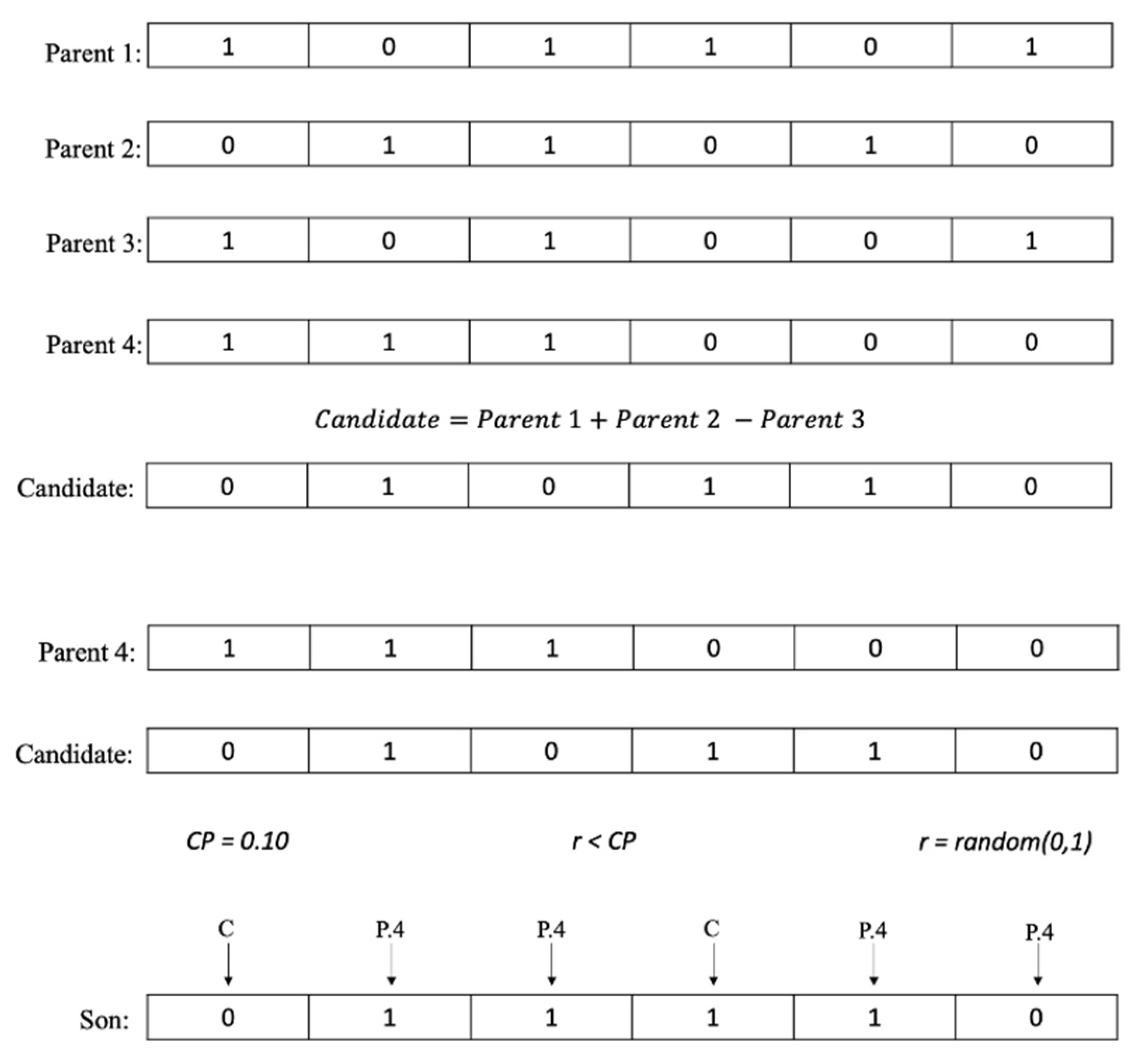

Differential Evolution: This method was proposed by Rainer [27], and its implementation was based on [28]. It uses the four parents obtained with the tournament method. The first part of the process consists of creating a new solution called Candidate using Parent 1, Parent 2, and Parent 3, this solution is obtained by doing a binary addition of the parents. Figure 6 shows an example of how this operator works.

Figure 6.

Differential evolution operator example.

Once the Candidate is calculated, a binary crossover operator is done between the candidate and Parent 4 to create a new solution called Son, this binary crossover operator is different from the one-point crossover operator described previously, and it uses a parameter called crossover percentage (CP). The binary crossover operator consists of the following: For each array index, a random number between 0 and 1 is generated, if that number has a lesser value than CP, then that index receives the value of the Candidate, if this is not the case, then that index receives the value of Parent 4.

Once the new solution Son is completed, a dominance test is done between Son and Parent 4, if the objective values of Parent 4 dominate the objective values of Son, then Parent 4 proceeds to be the new solution, but if this is not the case, then Son proceeds to be the new solution.

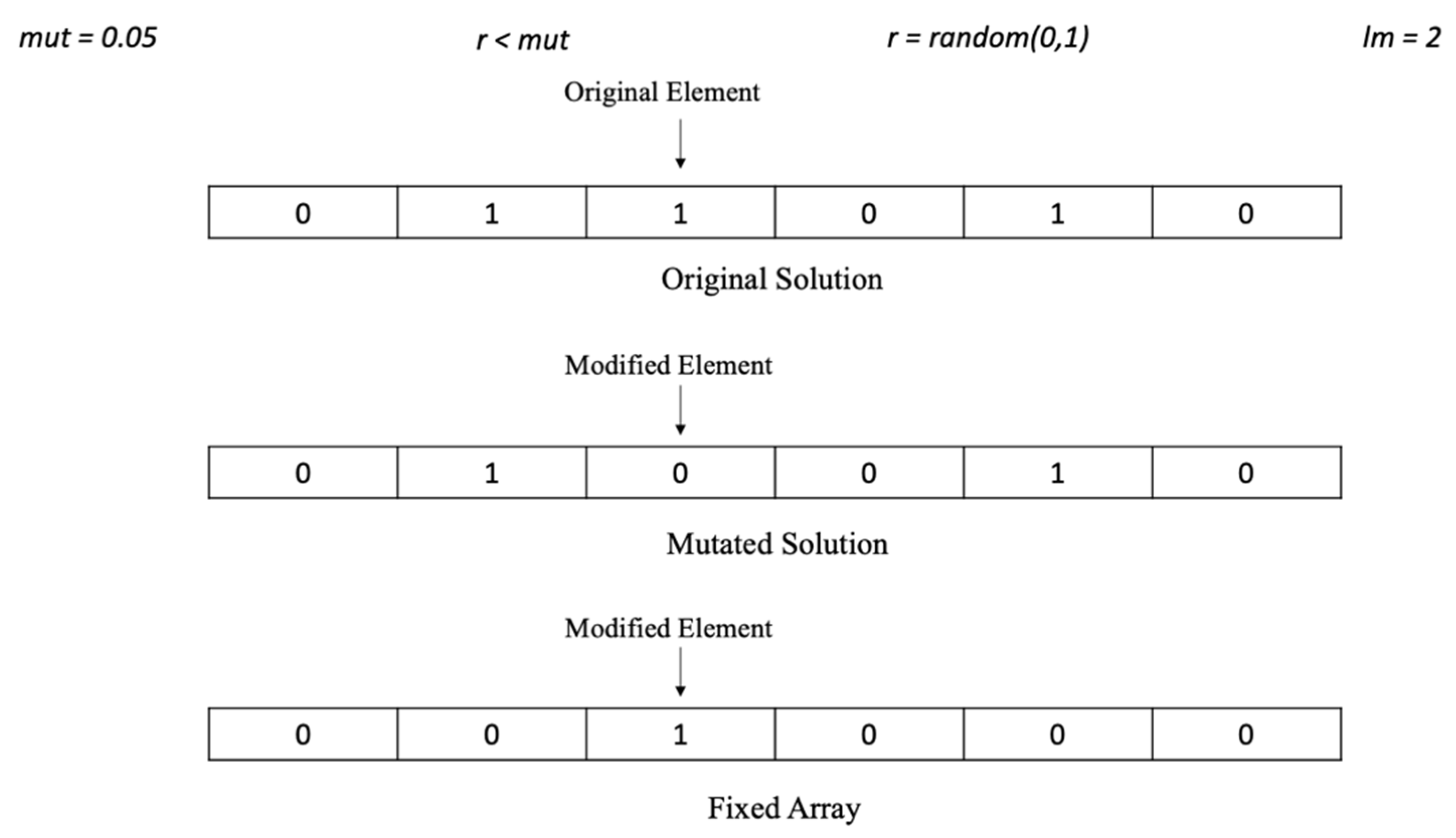

Fixed Mutation: This method is very similar to the uniform mutation operator that was described previously. The main difference lies in the fact that the whole process is done in a loop until n mutations are made, where n is a parameter previously defined. This operator also makes sure that no element in the solution is changed twice or more times, this is done by using a fixed array to keep track of the changed elements in the solution. Figure 7 shows an example of the Fixed Mutation operator.

Figure 7.

Fixed Mutation operator example.

5.3. Used Structures to Store the Population and the Approximated Pareto Front

The algorithm uses the structure pop to maintain a solutions population, which contains the following information for each solution i:

- V(i): vector binary associated to the solution i.

- O1(i) and O2(i): values of the two objectives of the solution i, converted to GMI values.

- r(i): ranking of the solution i is the number of the front in which is located.

- Dominated(i): solutions dominated by the solution i.

- Domines(i): solutions that dominates to solution i.

- CD (i): Crowding Distance value of the solution i.

- SSD(i): Spatial Spread Deviation value of solution i.

The structure Front is used to store the approximated Pareto front, which contains the following information for each stored solution i:

- V(i): vector binary associated to the solution i.

- O(i): real vector of the graded mean values of the fuzzy triangular objectives of the solution V(i).

- r(i): ranking of the solution i is the number of the front in which is located.

- Dominated(i): solutions dominated by the solution i.

- Domines(i): solutions that dominates to solution i.

- SSD (i): Spatial Spread Deviation value of the solution i.

5.4. T-FAME Algorithm Pseudocode

This section presents the pseudocode for the algorithm T-FAME in Algorithm 5.

| Algorithm 5. T-FAME pseudocode |

| INPUT: Instance with the trapezoidal parameters of the portfolio problem. OUTPUT: Approximated Pareto front Variables pop: Population of solutions (binary vectors) Front: Limited sized set were no-dominated solutions are kept Operator: Vector of size SizeOP that contains the index of the available operators Parents: Vector of size NParents that contains the chosen parents ProbOp(i): Probability that operator i has of being chosen, it has values between 0 and 1 UseOp(i): Normalized Indicator of how much operator i has been used, it has values between 0 and 1 Stagnation: Normalized indicator of the number of generated solutions that couldn’t be inserted into Front, because they were either dominated solutions or there was not space available for them, it can have values between 0 and 1. MAXEVAL: Maximum number of evaluations of the objective function (stopping criterion) Window: Size of the time window. eval: Accumulator of the evaluations of the objective function v: Counter of the time windows that have elapsed Functions |

| CreateaSon(Operator(i), Parents): Generates one solution using the previous chosen operator i with the chosen parents (Steady state) Evaluate(Son): Calculates the objective values of Son and verify feasibility FuzzyController(Stagnation, UseOp(i)): Function that invokes the fuzzy controller with Stagnation and UseOp(i) as input values and returns the probability of selection of all the operators no-dominated_sortingSSD(NewPop): Sorts the fronts of NewPop by dominance and uses as ranking the SSD values of the solutions. EliminateWorstSolutionSSD(NewPop): Eliminates from the last front of NewPop the solution with the worst SSD, and assign NewPop to pop. |

| **************************************************************** 1. Create(pop) **Create random population 2. Front=NoDominated(pop) **Insert in Front the no-dominated solutions of pop 3. 1, UseOp(i)=0 4. v =0; Stagnation = 0; eval=0; 5. while (eval<MAXEVAL) do. **** Stop condition ** Chose |NParents| ** With a probability each parent is taken from Front to intensify) and with 1- from pop to diversify. 6. do 7. if (RandomDouble(0,1) ) then **The parent is chosen from Front 8. Parents[i] ← TournamentSSD(Front) 9. Else **The parent is chosen from pop 10. Parents[i] ← TournamentSSD(pop) *** Roulette to choose an operator with the selection probabilities of the operators 11. sum=0 12. 13. sum=sum+ProbOp(i) 14. while (sum>0) do 15. 16. sum=sum+ProbOp(i) ********** ***** The chosen operator is associated with the last value of i ** ***Steady state approach 17. Son ← CreateaSon(Operator(i), Parents) **** Get the objective vector values corresponding to Son and verify feasibility. 18. Evaluate(Son) 19. eval=eval+1 20. UseOp(Operator(i)) = UseOp(Operator(i))+ 1 . 0/ Window 21. v=v+1 ******************************* 22. If (Son dominates a set S of solutions in Front) 23. then { Front=Front\S; Front=Front Son} 24. else If ( s Front such that s dominates Son) 25. then (Stagnation= Stagnation+1.0/ Window) 26. else if (Sizeof(Front)<100) 27. then (Front=Front Son) 28. else { 29. Front=Front Son ** Front[1 00]=Son 30. Calculate SSD for all the solutions in Front 31. Sort the solutions in Front in ascending order by SSD 32. Eliminate the solution in Front with worst SSD:Front[100] 33. If (Son Front) 34. then Stagnation= Stagnation+1.0/ Window 35. } 36. If (v == Window) then **** The Fuzzy Controller is used to update the selection probability ****of all the operators 37. 38. 39. v =0; Stagnation = 0; 40. End if 41. pop=pop Son 42. NewPop=pop 43. no-dominated_sortingSSD(NewPop) 44. pop ← EliminateWorstSolutionSSD(NewPop) 45. End while 46. Return(Front) *** Approximated Pareto front generated |

6. Experimental Results

Two experiments were done in order to evaluate the performance of the proposed algorithms. The tested steady-state algorithms were T-NSGA-II-CD, T-NSGA-II-SSD, and T-FAME. The first experiment was done to make sure the algorithms were implemented correctly, while the second experiment was done to compare the performance between them using performance metrics.

The software and hardware platforms that were used for these experiments include Intel Core i5 1.6GHz processor, RAM 4GB, and IntelliJ IDEA CE IDE.

6.1. Performance Metrics Used

In order to measure the performance of each algorithm, two metrics were used: hypervolume [28] and generalized spread [29].

Hypervolume is the n-dimensional solution space volume that is dominated by the solutions in the reference set. If this space is big, then that means that the set is close to the Pareto Front. It is desirable for the indicator to have large values. Generalized Spread calculates the average of the distances of the points in the reference set to their closest neighbor. If this indicator has small values, then that means the solutions in the reference set are well distributed.

6.2. Experimental Setup

In order to configure the algorithms used in this work, the parameter values reported in the state-of-the-art were considered. The parameter value for the maximum number of evaluations was determined after a preliminary experimental phase. The comparison of all the algorithms, under the same operation conditions, utilizes a steady-state approach, using the dominant son. Table 3 and Table 4 show the values of the parameters used in the algorithms. The configuration of algorithm T-NSGA-II-SSD is the same one as T-NSGA-II-CD, however, it uses Spatial Spread Deviation instead of Crowding Distance as its density estimator.

Table 3.

T-NSGA-II-SSD parameters.

Table 4.

T-FAME parameters.

6.3. Experiment 1. Validating the Implemented Algorithms

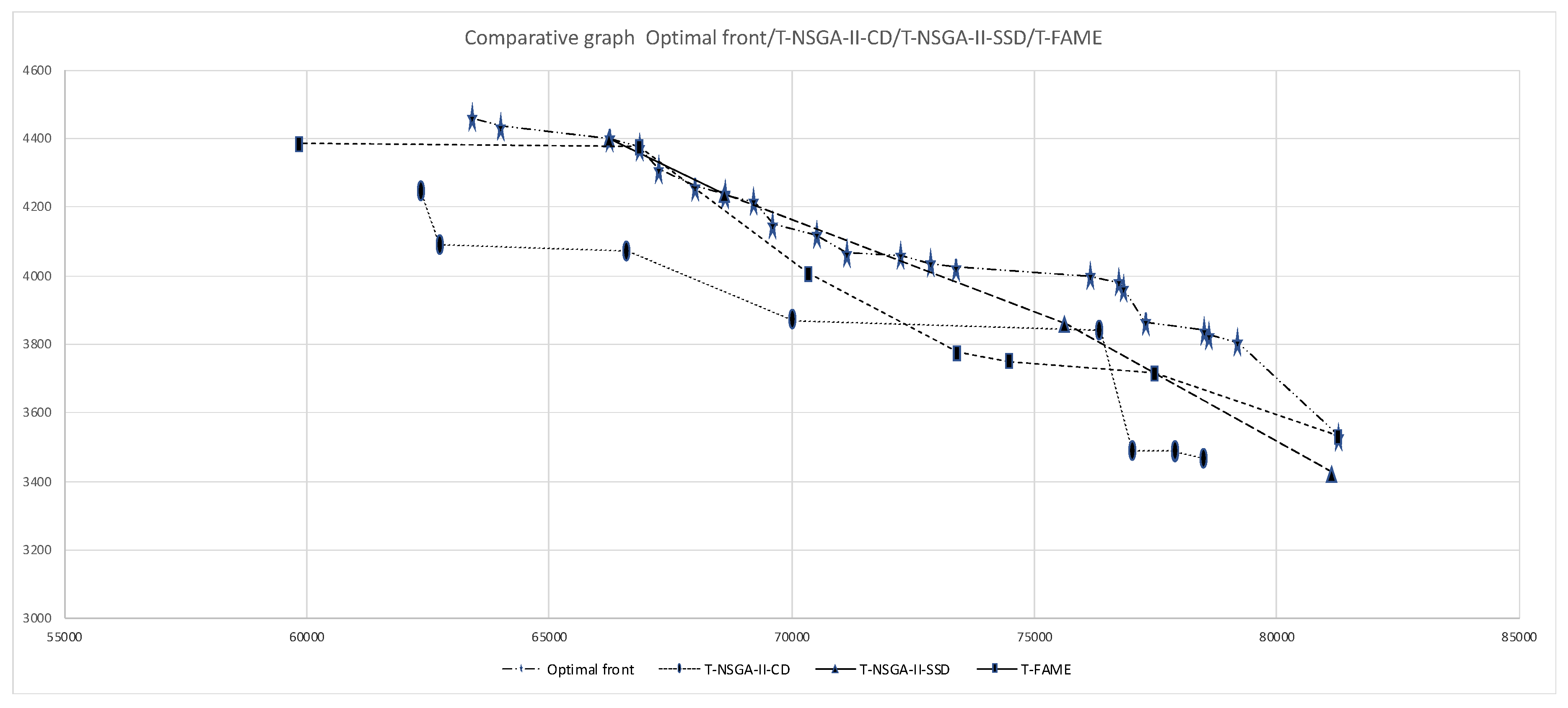

For this experiment, an instance named o2p25_rand was used, this instance was originally created for POP with intervals, which was converted in a trapezoidal fuzzy instance by adding two parameters to the intervals. The optimum Pareto Front was obtained using an exhaustive algorithm, and approximate fronts were obtained with T-NSGA-II-CD, T-NSGA-II-SSD, and T-FAME algorithms. All algorithms solve the MOPOP with Fuzzy Parameters and use a steady-state election mechanism, creating one solution from the genetic operators’ application. This adaptation from FAME has an advantage over algorithms using the classic generational approach in genetic algorithms.

The purpose of this experiment is to validate the correct operation of the implemented algorithms in the project. In the experiment, the fronts are generated, and they are compared to the optimum front, in order to determine if the algorithms are generating similar fronts. All the fronts that were generated are shown in Table 5. Each front is shown in two columns that contain the values of the two objectives that were originally Trapezoidal Fuzzy numbers, but they were converted into real numbers with the transformation based on GMI. The graph the fronts uses the GMI values obtained from the objectives.

Table 5.

Generated fronts of the algorithms with instance o2p25_rand.

It is worth nothing that, in Figure 8, the approximated fronts are relatively close and below the optimum front. Also, observe that the T-NSGA-II-SSD and T-FAME algorithms managed to reach some optimum solutions. Finally, note that the T-FAME algorithm has a good distribution between its solutions.

Figure 8.

Generated fronts of the algorithms with instance o2p25_rand.

6.4. Experiment 2. Evaluating the Performance of the Algorithms with Instances of 25 Projects

This experiment evaluates the performances of algorithms T-NSGA-II-CD, T-NSGA-II-SSD, and T-FAME, and utilizes 13 instances with 2 objectives and 25 projects. In order to compare the performance between the three algorithms, each algorithm was executed 30 times per instance. The performance metrics used were hypervolume and generalized spread. For each instance, the reference set contains the non-dominated solutions obtained from the combination of the 30 generated fronts. The computation of the metrics uses the reference set as an approximation to the optimum Pareto Front. The computation of the median value and interquartile ranges uses the metric values of the 30 instances sorted in ascending order. With the sorted array, the median value was the average of the metric values from positions 15 and 16. At the same time, the interquartile ranges correspond to those in positions 23 and 8, corresponding to the 75% and 25% of the metrics values, respectively. The median value and the interquartile ranges are used instead of the average and the standard deviation because they are less sensitive to extreme values. The experiment performs a hypothesis test to validate the obtained results. The hypothesis was proven using the parametric t student test on those data sets that passed the normality and homoscedasticity tests and using the non-parametric Wilcoxon signed-rank test on those that do not. Both tests apply a confidence level of 95%, pairing T-FAME with each of the other two algorithms. Table 6, Table 7, Table 8 and Table 9 show the results of the normality and homoscedasticity tests done for all the instances used in this work (25 and 100 projects) and the metrics of hypervolume and generalized spread. Table 6 and Table 8 show in the last column pairs (i,j), which indicate that the comparison of T-NSGA-II-CD and T-FAME uses test i, and the comparison T-NSGA-II-SSD and T-FAME uses test j. The values t and W in (i, j) stand for t student test and Wilcoxon test. This work tests each instance separately.

Table 6.

Hypervolume normality test, the null hypothesis is that the samples follow a normal distribution which is accepted (a) when p-value < 0.05 and rejected (r) otherwise.

Table 7.

Hypervolume homoscedasticity test, the null hypothesis is that all the input populations come from populations with equal variances, which is accepted (a) when p-value < 0.05 and rejected (r) otherwise. We can observe that the null hypothesis is accepted (a) for all the instances. The parametric t student test can be applied for all the instances that accept the null hypothesis in the normality tests.

Table 8.

Generalized Spread normality test, the null hypothesis is that the samples follow a normal distribution which is accepted (a) when p-value < 0.05 and rejected (r) otherwise.

Table 9.

Generalized Spread homoscedasticy test, the null hypothesis is that all the input populations come from populations with equal variances, which is accepted (a) when p-value < 0.05 and rejected (r) otherwise. Observe that the null hypothesis is accepted (a) for all the instances. The parametric t student test can be applied for all the instances that accept the null hypothesis in the normality tests.

Table 10 shows the performance results with the hypervolume metric, and Table 11 shows the results with the generalized spread metric. For the hypervolume metric, the algorithm with the largest value is considered to be the one with the best performance. For the generalized spread metric, the best algorithm is considered to be the one with the smallest value. The table’s cells show the median value of the metric (M) and the interquartile range (IRQ) in the following format: MIRQ. In the result tables, for each instance the best and second-best values are marked with solid or light black, respectively. In order to indicate if the observed differences in the performance of the algorithms are significant or not, for each algorithm the symbol indicates that the performance of T-FAME is significantly better that the algorithm which it is being compared. The symbol indicates the opposite, and the symbol indicates that the difference is not significant. These symbols are marked with an asterisk when the t student test was applied. To confirm the results obtained with the paired tests, a global evaluation is done with the three algorithms. This evaluation was done by applying a Friedman test with 95% confidence.

Table 10.

Results with the hypervolume metric.

Table 11.

Results with the generalized spread metric.

The information presented in Table 10 shows that T-NSGA-II-CD stands out as the algorithm with the best performance in 12 of 13 cases. The results on Table 11 shows that T-NSGA-II-SSD positions itself as the best algorithm in 10 of 13 cases and T-FAME in 8 of 13 cases. It can also be observed that these differences are significant in all cases, this is due to the fact that when the differences are not significant between the best and second-best algorithms, then that means the algorithms are considered tied. Table 12 confirms the results observed with the t student and Wilcoxon tests. As a result of applying the Friedman test with the three algorithms, the ones with the lowest rank for the hypervolume and generalized spread metrics are T-NSGA-II-CD and T-NSGA-II-SSD, respectively.

Table 12.

Friedman ranks of all algorithms with hypervolume and generalized spread.

6.5. Experiment 3. Evaluation of the Algorithm’ Perfomances Using Instances with 100 Projects

As indicated previously, the previous experiment was done with instances with 25 projects, for which the algorithms had to navigate in a space of binary vectors of length 25. In that case the size of the solution space was of 225. For this experiment, 9 instances of 2 objectives and 100 projects were used, these instances represented a greater complexity for the algorithms because the solution space increased to 2100. The experiment conditions were just as in the previous one, using the same metrics but in a scenario of greater complexity scenario. For each instance, the reference set contains the non-dominated solutions obtained from the combination of the 30 generated fronts. The computation of the metrics uses the reference set as an approximation to the optimum Pareto Front. The computation of the median value and interquartile ranges uses the metric values of the 30 instances sorted in ascending order. With the sorted array, the median value was the average of the metric values from positions 15 and 16. At the same time, the interquartile ranges correspond to those in positions 23 and 8, corresponding to the 75% and 25% of the metrics values, respectively. The experiment performs a hypothesis test to validate the obtained results. The hypothesis was proven using the parametric t student test on those data sets that passed the normality and homoscedasticity tests and using the non-parametric Wilcoxon signed-rank test on those that do not. Both tests apply a confidence level of 95%, pairing T-FAME with each of the other two algorithms. Table 6, Table 7, Table 8 and Table 9 shows the results of the normality homoscedasticity tests done for all the instances used in this work (25 and 100 projects) and the metrics of hypervolume and generalized spread.

Table 13 shows the results with the hypervolume metric and Table 14 shows the results with the generalized spread metric. For the hypervolume metric, the algorithm with the largest value is considered to be the one with the best performance. For the generalized spread metric, the best algorithm is considered to be the one with the smallest value. The table cells show the median value of the metric (M) and the interquartile range (IRQ) in the following format: MIRq. In the result tables, for each instance the best and second best values are marked with solid or light black, respectively. In order to indicate if the observed differences in the performance of the algorithms are significant or not, for each algorithm the symbol indicates that the performance of T-FAME is significantly better that the algorithm which it is being compared. The symbol indicates the opposite, and the symbol indicates that the difference is not significant. These symbols are marked with an asterisk where the t student test was applied. To confirm the results obtained with the paired tests, a global evaluation is done with the three algorithms. This evaluation was done by applying a Friedman test with 95% confidence.

Table 13.

Results with the hypervolume metric.

Table 14.

Results with the generalized spread metric.

The information presented in Table 13 shows T-FAME stands out as the algorithm with the best performance in 7 of 9 cases and T-NSGA-II-SSD in 5 of 9 cases. The results on Table 14 show that T-FAME stands out as the best algorithm in 6 of 9 cases and T-NSGA-II-SSD in 4 of 9 cases. These differences are significant in all cases, this is due to the fact that when the differences are not significant between the best and second-best algorithms, then that means the algorithms are considered tied. Table 15 confirms the results observed with the t student and Wilcoxon tests. As a result of applying the Friedman test with the three algorithms, the one that has the lowest rank for both metrics is T-FAME.

Table 15.

Friedman ranks of all algorithms with hypervolume and generalized spread.

7. Conclusions and Future Work

This work approaches the Multi-Objective Portfolio Optimization Problem with Trapezoidal Fuzzy Parameters. To the best of our knowledge, there are no reports of this variant of the problem. This work, for the first time, presents a mathematical model of the problem, and, additionally, contributes with a solution algorithm using the Fuzzy Adaptive Multi-objective Evolutionary (FAME) methodology and two novel steady state algorithms that apply the Non-Dominated Genetic Algorithm (NSGA-II) methodology to solve this variant of the problem. Traditionally, these kinds of algorithms use the Crowding Distance density estimator, so this work proposes substituting this estimator for the Spatial Spread Deviation to improve the distribution of the solutions in the approximated Pareto fronts. This work contributes with a defuzzification process that permits measurements on the algorithms’ performances using commonly used real metrics. The computational experiments use a set of problem instances with 25 and 100 projects and hypervolume and generalized spread metrics. The results with the challenging instances of 100 projects show that the algorithm T-FAME has the evaluated algorithms’ best performance. Three hypothesis tests supported these results, and this is encouraging because they confirm the feasibility of the proposed solution approach.

The main open works identified in this research are to develop algorithms for solving the problem with many objectives, preferences, and dynamic variants. Currently, we are working to change the fuzzy controller selector for a selector based on a reinforcement learning agent.

Author Contributions

Conceptualization: A.E.-P., D.L.-G., H.J.F.-H., L.C.-R.; Methodology: M.L.M.-R., N.R.-V.; Investigation: H.J.F.-H., L.C.-R.; Software: C.G.-S., N.R.-V.; Formal Analysis: H.J.F.-H.; Writing review and editing: A.E.-P., D.L.-G., H.J.F.-H., C.G.-S. All authors have read and agreed to the published version of the manuscript.

Funding

Authors thanks to CONACYT for supporting the projects from (a) Cátedras CONACYT Program with Number 3058. (b) CONACYT Project with Number A1-S-11012 from Convocatoria de Investigación Científica Básica 2017–2018 and CONACYT Project with Number 312397 from Programa de Apoyo para Actividades Científicas, Tecnológicas y de Innovación (PAACTI), a efecto de participar en la Convocatoria 2020-1 Apoyo para Proyectos de Investigación Científica, Desarrollo Tecnológico e Innovación en Salud ante la Contingencia por COVID-19. (c) A. Estrada and D. López would like to thank CONACYT for the support numbers 740442 and 931846.

Conflicts of Interest

The authors declare no conflict of interest.

References

- Salo, A.; Keisler, J.; Morton, A. Portfolio Decision Analysis: Improved Methods for Resource Allocation; Springer: Berlin/Heidelberg, Germany, 2011; p. 409. [Google Scholar]

- Carlsson, C.; Fuller, R.; Heikkila, M.; Majlender, P. A fuzzy approach to R&D portfolio selection. Int. J. Approx. Reason. 2007, 44, 93–105. [Google Scholar]

- Coffin, M.A.; Taylor, B.W. Multiple criteria R&D project selection and scheduling using fuzzy sets. Comput. Oper. 1996, 23, 207–220. [Google Scholar]

- Klapka, J.; Pinos, P. Decision support system for multicriterial R&D and information systems projects selection. Eur. J. Oper. Res. 2002, 140, 434–446. [Google Scholar]

- Ringuest, J.L.; Graves, S.B.; Case, R.H. Mean–Gini analysis in R&D portfolio selection. Eur. J. Oper. Res. 2004, 154, 157–169. [Google Scholar]

- Stummer, C.; Heidemberger, K. Interactive R&D portfolio analysis with project interdependencies and time profiles of multiple objectives. IEEE Trans. Eng. Manag. 2003, 30, 175–183. [Google Scholar]

- Salo, A.; Keisler, J.; Morton, A. Portfolio Decision Analysis. Improved Methods for Resource Allocation, International Series in Operations Research & Management Science, Chapter An Invitation to Portfolio Decision Analysis; Springer: New York, NY, USA, 2011; pp. 3–27. [Google Scholar]

- Fernandez, E.; Lopez, E.; Lopez, F.; Coello, C. Increasing selective pressure toward the best compromise in Evolutionary Multiobjective Optimization: The extended NOSGA method. Inf. Sci. 2011, 181, 44–56. [Google Scholar] [CrossRef]

- Roy, B. Robustness for Operations Research and Decision Aiding; Springer: Berlin/Heidelberg, Germany, 2013. [Google Scholar]

- Balderas, F.; Fernandez, E.; Gomez, C.; Rangel, N.; Cruz-Reyes, L. An interval-based approach for evolutionary multi-objective optimization of project portfolios. Int. J. Inf. Technol. Decis. Mak. 2019, 18, 1317–1358. [Google Scholar] [CrossRef]

- García, R.R. Hiper-heurístico para Resolver el Problema de Cartera de Proyectos Sociales. Master’s Thesis, Maestro en Ciencias de la Computación, Instituto Tecnológico de Ciudad Madero, Tamps, Mexico, 2010. [Google Scholar]

- Rivera, Z.G. Enfoque Metaheurístico Híbrido para el Manejo de Muchos Objetivos en Optimización de Cartera de Proyectos Interdependientes con Decisiones de Apoyo Parcial. Ph.D. Thesis, Doctorado en Ciencias de la Computación, Instituto Tecnológico de Tijuana, Tamps, Mexico, 2015. [Google Scholar]

- Bastiani, M.S. Solución de Problemas de Cartera de Proyectos Públicos a partir de Información de Ranking de Prioridades. Ph.D. Thesis, Doctorado en Ciencias de la Computación, Instituto Tecnológico de Tijuana, Tamps, Mexico, 2017. [Google Scholar]

- Sánchez, S.P. Incorporación de Preferencias en Metaheurísticas Evolutivas a través de Clasificación Multicriterio. Ph.D. Thesis, Doctorado en Ciencias de la Computación, Instituto Tecnológico de Tijuana, Tamps, Mexico, 2017. [Google Scholar]

- Martínez, V.D. Optimización Multiobjetivo de Cartera de Proyectos con Fenómenos de Dependencias Temporales y Decisiones Dinámicas de Financiamiento. Ph.D. Thesis, Doctorado en Ciencias de la Computación, Instituto Tecnológico de Tijuana, Tamps, Mexico, 2020. [Google Scholar]

- Durillo, J.J.; Nebro, A.J.; Luna, F.; Alba, E. On the Effect of the Steady-State Selection Scheme in Multi-Objective Genetic Algorithms. In Evolutionary Multi-Criterion Optimization. EMO 2009. Lecture Notes in Computer Science; Ehrgott, M., Fonseca, C.M., Gandibleux, X., Hao, J.K., Sevaux, M., Eds.; Springer: Berlin/Heidelberg, Germany, 2009; p. 5467. [Google Scholar]

- Zadeth, L.A. Fuzzy Sets. Inf. Control 1965, 8, 338–353. [Google Scholar] [CrossRef]

- Vahidi, J.; Rezvani, S. Arithmetic Operations on Trapezoidal Fuzzy Numbers. J. Nonlinear Anal. Appl. 2013, 2013, 1–8. [Google Scholar] [CrossRef][Green Version]

- Kumar, V. Multi-Objective Fuzzy Optimization; Indian Institute of Technology: Kharagpur, India, 2010. [Google Scholar]

- Yao, S.; Jiang, Z.; Li, N.; Zhang, H.; Geng, N. A multi-objective dynamic scheduling approach using multiple attribute decision making in semiconductor manufacturing. Int. J. Prod. Econ. 2011, 130, 125–133. [Google Scholar] [CrossRef]

- Karp, R.M. Reducibility Among Combinatorial Problems. Complex. Comput. Comput. 1972, 85–103. [Google Scholar] [CrossRef]

- Deb, K.; Agrawal, S.; Pratap, A.; Meyarivan, T. A Fast Elitist Non-dominated Sorting Genetic Algorithm for Multi-objective Optimization: NSGA-I. In Proceedings of the Proceedings of the Parallel Problem Solving from Nature VI, Paris, France, 18–20 September 2000; pp. 849–858. [Google Scholar]

- Umbarkar, A.J.; Sheth, P.D. Crossover operators in genetic algorithms: A review. ICTAC J. Soft Comput. 2015, 6, 1083–1092. [Google Scholar]

- Reeves, C.R. Genetic Algorithms. In International Series in Operations Research & Management Science, Handbook of Metaheuristics; Gendreau, M., Potvin, J.Y., Eds.; Springer: Berlin/Heidelberg, Germany, 2010; p. 146. [Google Scholar]

- Santiago, A.; Dorronsoro, B.; Nebro, A.J.; Durillo, J.J.; Castillo, O.; Fraire, H.J. A novel multi-objective evolutionary algorithm with fuzzy logic based adaptive selection of operators: FAME. Inf. Sci. 2019, 471, 233–251. [Google Scholar] [CrossRef]

- Roy, S.; Chakraborty, U. Introduction to Soft Computing: Neurofuzzy and Genetic Algorithms; Dorling-Kindersley: London, UK, 2013. [Google Scholar]

- Rainer, S.; Kenneth, P. Differential Evolution-A Simple and Efficient Heuristic for Global Optimization over Continuous Spaces. J. Glob. Optim. 1997, 11, 341–359. [Google Scholar]

- While, V.; Member, S.; Bradstreet, L.; Barone, V. A Fast Way of Calculating Exact Hypers. IEEE Trans. Evol. Comput. 2012, 16, 86–95. [Google Scholar] [CrossRef]

- Zhou, A.; Jin, Y.; Zhang, Q.; Sendhoff, B.; Tsang, E. Combining Model-based and Genetics-based Offspring Generation for Multi-objective Optimization Using a Convergence Criterion. IEEE Congr. Evol. Comput. 2006, 892–899. [Google Scholar] [CrossRef]

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations. |

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).