Safety Behavior Recognition for Substation Operations Based on a Dual-Path Spatiotemporal Network

Abstract

1. Introduction

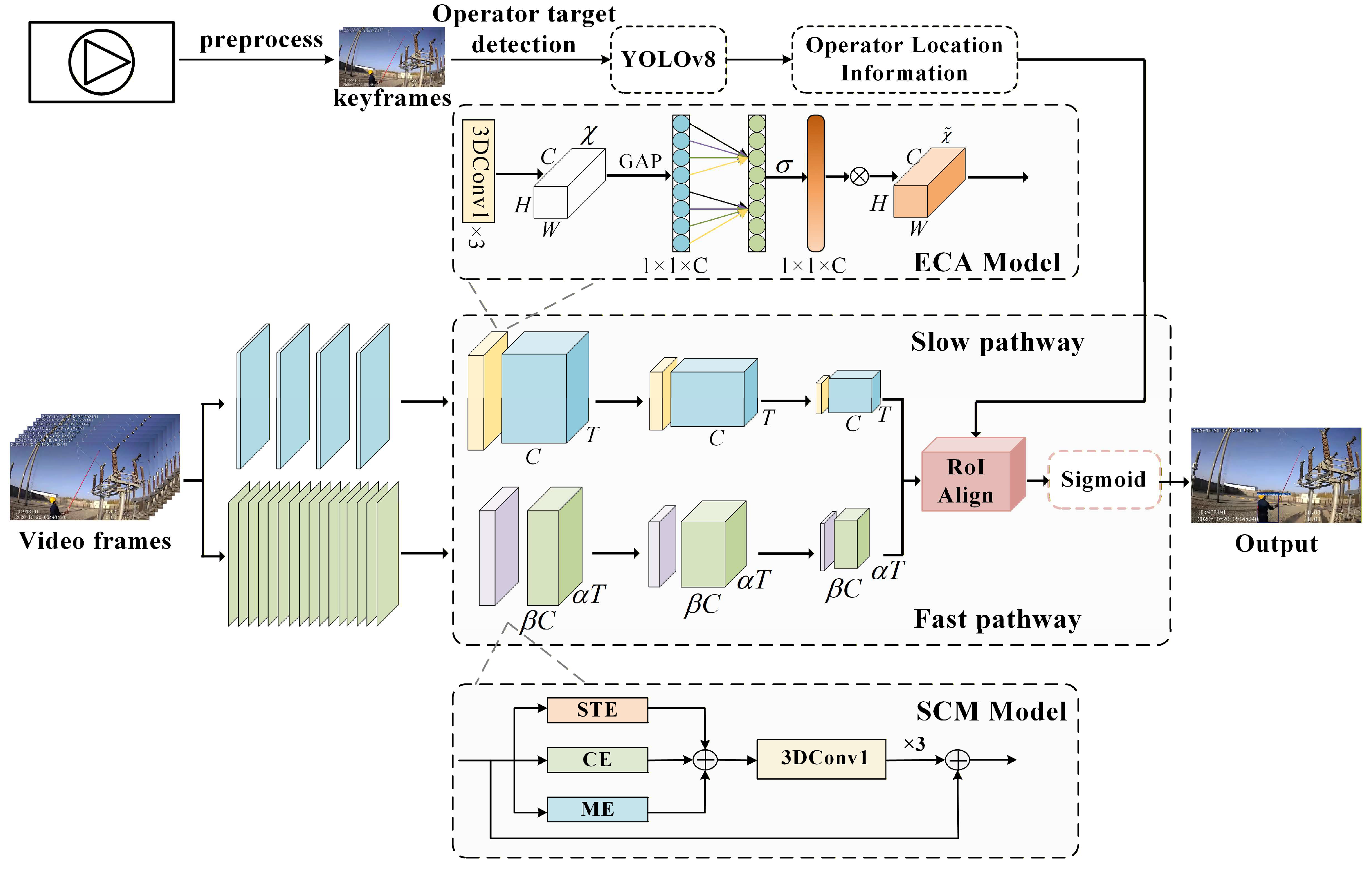

- To model the temporal structure formed by continuous posture variations and alternating fine-grained local actions, this paper proposes a behavior recognition method based on a dual-path spatiotemporal network. The method combines YOLOv8 for static operator localization with SlowFast for dynamic behavior recognition. This lets it detect operator behaviors accurately and analyze them continuously in substation operation scenarios, covering both rapid movements and long-duration sequences, which improves adaptability across scenarios.

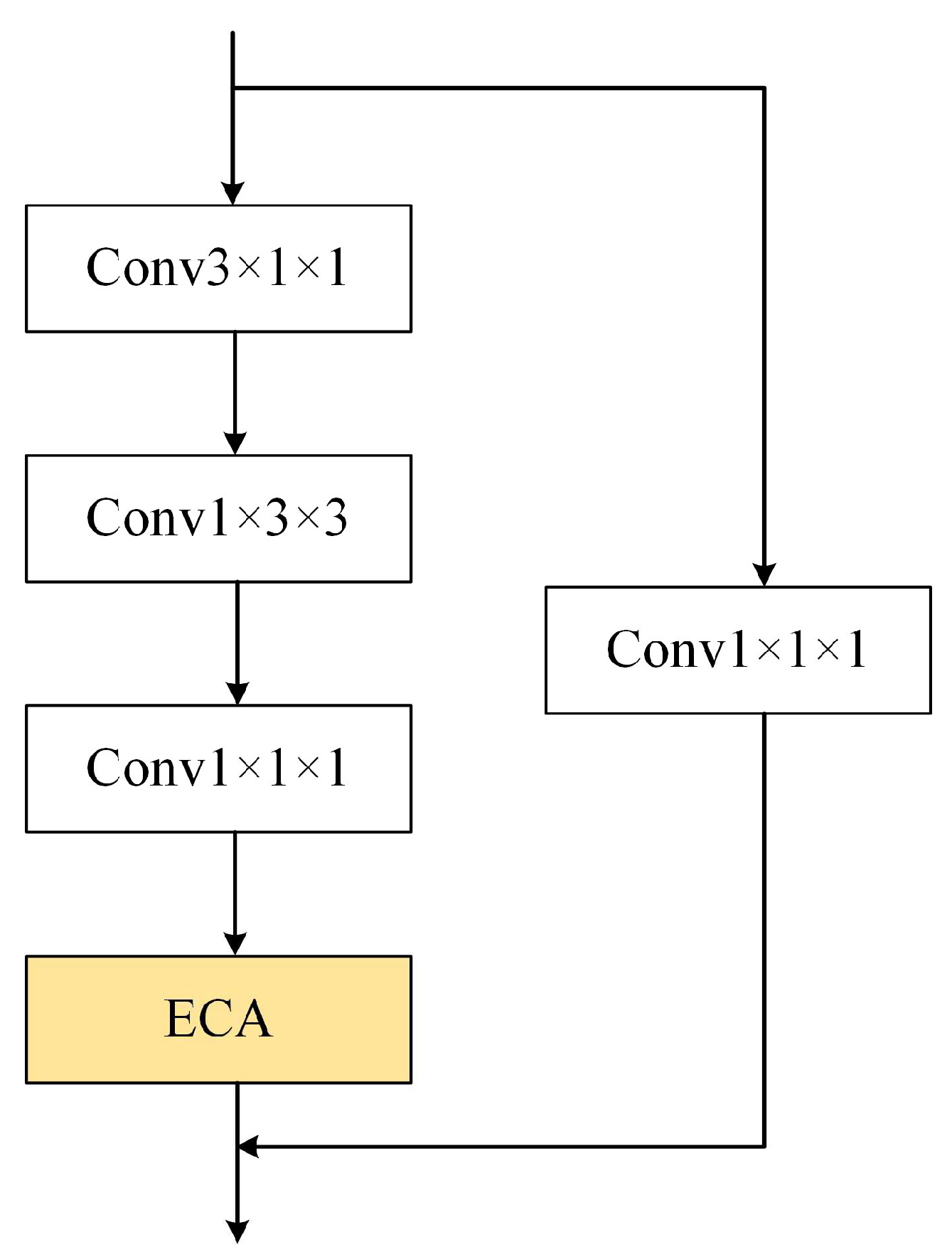

- To address partial occlusion of operators and limited computational efficiency, the Efficient Channel Attention (ECA) mechanism is integrated with the original residual block in the Slow Pathway to form a lightweight ECA-Res module. This design allocates channel weights efficiently. It strengthens the temporal representation of long-duration operational postures and mitigates occlusion-induced interference in dynamic scenes, while the added network complexity remains negligible.

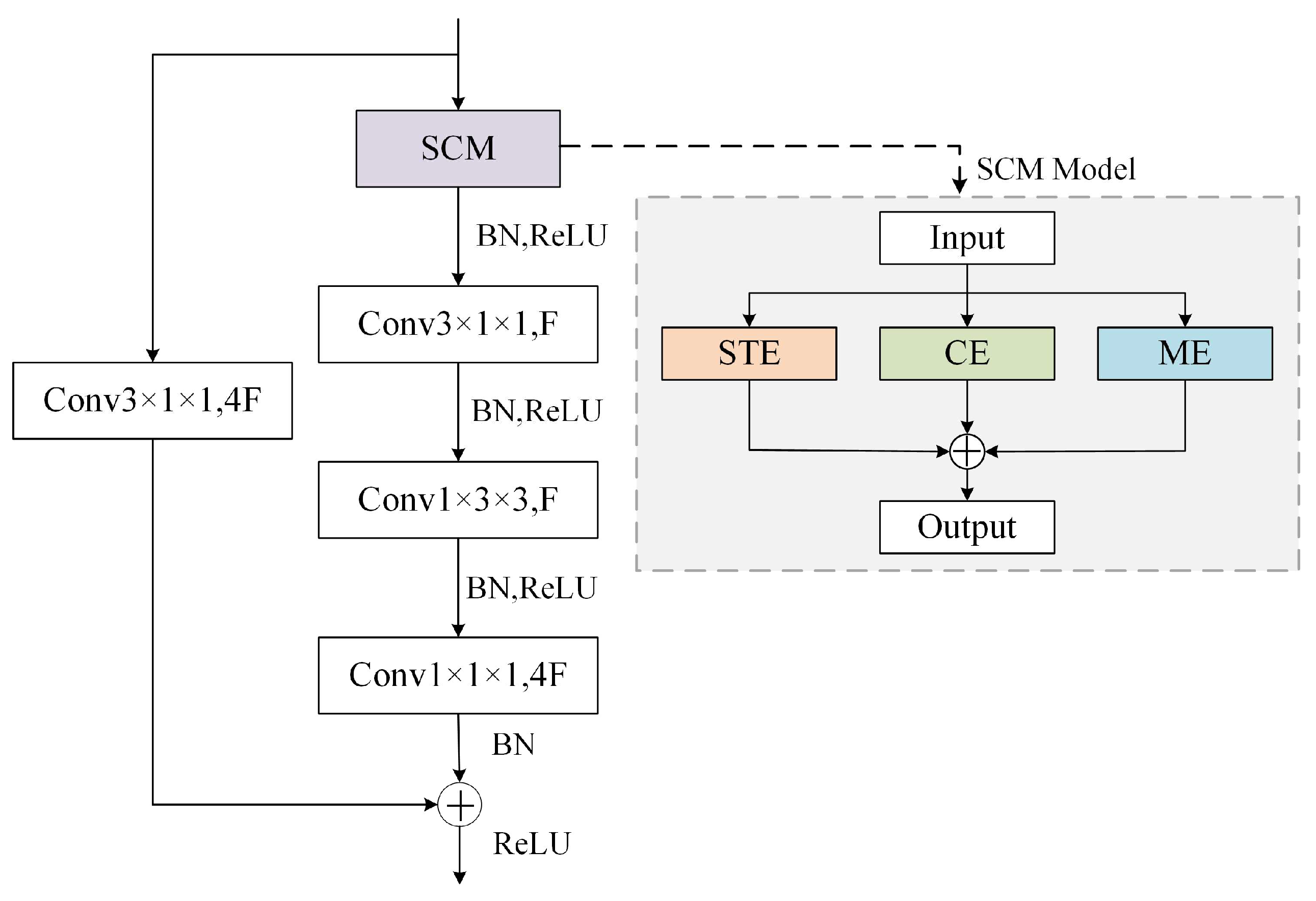

- Considering that operator behaviors exhibit continuity and similarity, a multi-path excitation residual network (SCM-Res) is introduced in the Fast Pathway. By performing weighted fusion of spatial–temporal, channel, and motion information, this module effectively captures fine-grained temporal variations of rapid behaviors between adjacent frames and enhances multi-scale feature fusion capability for fast motions.

- To address class imbalance in substation operation datasets, a binary cross-entropy-based Focal Loss is designed. It down-weights the loss of easily classified samples and focuses training on infrequent classes, improving their recognition.

2. Basic Theory of Behavior Recognition

2.1. YOLOv8 Network

2.2. SlowFast Network

3. Methods

3.1. Method Overview

3.2. Improvements to the SlowFast Network Architecture

3.2.1. Multi-Path Excitation Residual Network
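The contributions describe SCM-Res as a weighted fusion of spatial–temporal, channel, and motion information that captures fine-grained variations between adjacent frames, in the spirit of the multi-path excitation of ACTION-Net listed in the references. The module's exact layers are not given in this section. The following is therefore only a simplified, hypothetical sketch of the motion path. Frame features are spatially pooled, and the difference between adjacent frames drives a sigmoid gate. Each channel is then excited residually as x + x·σ(Δ). The learnable convolutions and channel reduction that a real excitation module would contain are deliberately omitted, and the name `motion_excitation` is illustrative.

```python
import math
from typing import List

Clip = List[List[List[float]]]  # features laid out as x[frame][channel][position]


def motion_excitation(x: Clip) -> Clip:
    """Simplified motion gate: out = x + x * sigmoid(adjacent-frame difference).

    Hypothetical illustration only; no learned convolutions or channel reduction.
    """
    num_frames = len(x)
    # Spatially pooled descriptor for every frame and channel.
    pooled = [[sum(ch) / len(ch) for ch in frame] for frame in x]
    out: Clip = []
    for t in range(num_frames):
        frame_out = []
        for c, ch in enumerate(x[t]):
            # Feature-level motion from frame t to t+1; the last frame has no successor.
            diff = pooled[t + 1][c] - pooled[t][c] if t + 1 < num_frames else 0.0
            gate = 1.0 / (1.0 + math.exp(-diff))
            # Residual excitation: scale channel c of frame t by (1 + gate), gate in (0, 1).
            frame_out.append([v + v * gate for v in ch])
        out.append(frame_out)
    return out
```

For a clip whose features do not change over time, the gate is 0.5 everywhere and the block simply scales the input by 1.5. In this sketch, channels whose response rises toward the next frame receive a larger gain. That is the intuition behind using adjacent-frame differences for rapid actions.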

3.2.2. ECA-Res

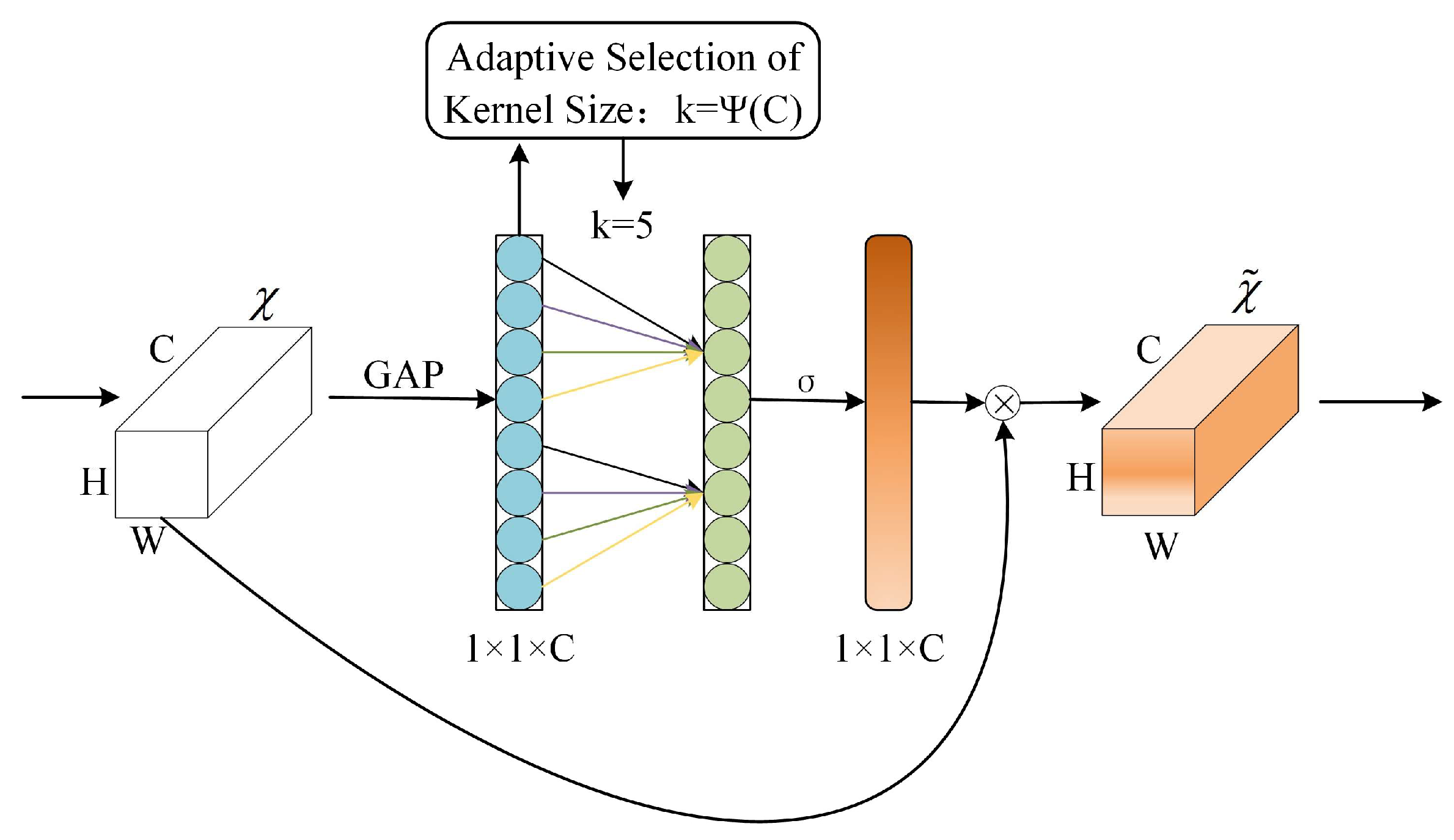

- The feature map is first compressed through convolution to obtain a new feature map χ, which is then processed by global average pooling to produce a 1 × 1 × C vector.

- The size of the adaptive one-dimensional convolution kernel is calculated by Equation (8), $k = \psi(C) = \left| \frac{\log_2 C}{\gamma} + \frac{b}{\gamma} \right|_{odd}$. Here |t|_odd denotes the odd number nearest to t, k is the kernel size, C is the number of channels, and γ and b are hyperparameters set to γ = 2 and b = 1. The hyperparameters γ and b govern how the kernel size scales with the number of channels. This keeps the kernel small, odd-sized, and stable across channel scales, balancing channel-wise coverage against computational efficiency.

- A one-dimensional convolution with the adaptive kernel size k is applied to the pooled vector, and a Sigmoid function then produces the weight of each channel. Because k grows with C, layers with more channels let each channel interact with more of its neighboring channels.

- The generated weights σ are multiplied channel-by-channel with the original feature map χ to obtain the final channel-weighted feature map . This process enhances the response of important channels while suppressing background noise or unimportant information.
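The steps above can be sketched in plain Python, starting from the compressed feature map χ; the preceding convolution is omitted. The kernel-size rule implements Equation (8) with γ = 2 and b = 1. Its rounding follows the public ECA-Net code: truncate, then move an even value up to the next odd one. The kernel weights passed to `eca_forward` stand in for learned parameters and are hypothetical. To keep the sketch dependency-free, tensors are nested lists laid out as `x[channel][position]`, with temporal and spatial positions flattened.

```python
import math
from typing import List


def eca_kernel_size(channels: int, gamma: int = 2, b: int = 1) -> int:
    """Adaptive 1D kernel size k of Equation (8) for C = channels.

    Rounding follows the public ECA-Net code: truncate, then bump even to odd.
    """
    t = int(abs((math.log2(channels) + b) / gamma))
    return t if t % 2 == 1 else t + 1


def eca_forward(x: List[List[float]], weights: List[float]) -> List[List[float]]:
    """Channel attention on a feature map laid out as x[channel][position].

    `weights` is a hypothetical stand-in for the learned, bias-free
    1D convolution kernel of odd length k.
    """
    k = len(weights)
    if k % 2 == 0:
        raise ValueError("ECA kernel size must be odd")
    pad = (k - 1) // 2
    # Global average pooling: one descriptor per channel (the 1 x 1 x C vector).
    y = [sum(ch) / len(ch) for ch in x]
    # 1D convolution across neighboring channels, zero-padded so the length stays C.
    padded = [0.0] * pad + y + [0.0] * pad
    z = [sum(w * padded[c + j] for j, w in enumerate(weights)) for c in range(len(y))]
    # Sigmoid maps each response to a channel weight sigma in (0, 1).
    sigma = [1.0 / (1.0 + math.exp(-v)) for v in z]
    # Multiply every element of channel c by its weight sigma[c].
    return [[s * v for v in ch] for s, ch in zip(sigma, x)]


if __name__ == "__main__":
    print([eca_kernel_size(c) for c in (256, 512, 1024, 2048)])  # [5, 5, 5, 7]
```

For channel widths of 256, 512, 1024, and 2048, the kernel stays small and odd while growing slowly with C. With an all-zero kernel, every channel weight is σ(0) = 0.5, which simply halves the feature map. Selective attention therefore comes entirely from the learned kernel weights.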

3.2.3. Focal Loss Function
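The contributions describe a binary cross-entropy-based Focal Loss that down-weights easily classified samples. The following is a minimal sketch. It assumes the standard sigmoid focal loss of Lin et al., FL(p_t) = −α_t (1 − p_t)^γ log p_t, applied independently to each behavior class with 0/1 targets. The defaults α = 0.25 and γ = 2 are taken from that paper. They are an assumption, not the values used for the substation dataset. This γ is the focusing parameter and is unrelated to the ECA hyperparameter. Setting γ = 0 recovers α-weighted binary cross-entropy.

```python
import math
from typing import Sequence


def binary_focal_loss(logits: Sequence[float], targets: Sequence[int],
                      alpha: float = 0.25, gamma: float = 2.0) -> float:
    """Mean sigmoid focal loss over independent binary (per-class) decisions.

    Sketch only: alpha and gamma default to Lin et al.'s values (assumed here).
    """
    if len(logits) != len(targets) or len(logits) == 0:
        raise ValueError("logits and targets must be non-empty and equally long")
    total = 0.0
    for z, t in zip(logits, targets):
        # Numerically stable binary cross-entropy with logits, equal to -log(p_t).
        ce = max(z, 0.0) - z * t + math.log1p(math.exp(-abs(z)))
        p_t = math.exp(-ce)  # probability the model assigns to the true label
        alpha_t = alpha * t + (1.0 - alpha) * (1 - t)
        # (1 - p_t)^gamma shrinks the loss of well-classified (easy) samples.
        total += alpha_t * (1.0 - p_t) ** gamma * ce
    return total / len(logits)
```

With γ = 2, a confidently correct prediction (logit 4 for a positive) keeps only about (1 − σ(4))² ≈ 0.0003 of its cross-entropy. A badly wrong one (logit −4) keeps about 0.96. This is how the loss shifts attention toward hard and rare samples.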

4. Experimental Design and Result Analysis

4.1. Dataset Construction

4.2. Experimental Environment

4.3. Experimental Results and Analysis

4.3.1. Ablation Studies

4.3.2. Stability and Robustness Analysis

4.3.3. Analysis of Experimental Results on Public Datasets

4.3.4. Comparative Experiments

4.3.5. Operational Scenario Testing

5. Conclusions

- YOLOv8 first replaces the Faster R-CNN person detector of the original pipeline. It precisely localizes operators in video frames and provides reliable spatial information for subsequent behavior feature extraction and recognition.

- By incorporating the ECA attention mechanism into the Slow Pathway and the multi-path excitation residual network into the Fast Pathway, the two pathways form a complementary structure during multi-scale spatiotemporal feature fusion, thereby enabling effective representation of long-term postural states and rapid dynamic behaviors in complex operation scenarios.

- A binary cross-entropy-based Focal Loss is designed to balance the weight distribution of positive and negative samples across different categories, supporting more stable recognition performance for categories with limited samples.

6. Limitations

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

- Ma, F.; Wang, B.; Dong, X. Scene understanding method utilizing global visual and spatial interaction features for safety production. Inf. Fusion 2025, 114, 102668.

- Ramirez-Bettoni, E.; Eblen, M.L.; Nemeth, B. Analysis of live work accidents in overhead power lines and other electrical systems between 2010–2022. In Proceedings of the 2024 IEEE IAS Electrical Safety Workshop (ESW), Tucson, AZ, USA, 4–8 March 2024; pp. 1–5.

- Lin, C.; Xu, Q.; Huang, Y. Pro-control method of power safety accidents based on event evolutionary graph. J. Saf. Sci. Technol. 2021, 17, 39–45.

- Guan, C.; Yan, Y.; Zhang, S. Research on behavioral causes of casualties in electric power enterprises based on 24Model. J. Saf. Environ. 2023, 23, 2788–2793.

- Vukicevic, A.M.; Petrovic, M.; Milosevic, P.; Peulic, A.; Jovanovic, K.; Novakovic, A. A systematic review of computer vision based personal protective equipment compliance in industry practice: Advancements, challenges and future directions. Artif. Intell. Rev. 2024, 57, 319.

- Meng, L.; He, D.; Ban, G. Incremental spatio-temporal augmented sampling for power grid operation behavior recognition. Electronics 2025, 14, 3579.

- Meng, L.; He, D.; Ban, G. Active hard sample learning for violation action recognition in power grid operation. Information 2025, 16, 67.

- Kulsoom, F.; Narejo, S.; Mehmood, Z. A review of machine learning-based human activity recognition for diverse applications. Neural Comput. Appl. 2022, 34, 18289–18324.

- Liu, K.; Zhao, H.; Wu, T.; Wu, C.; Wan, Y. Lightweight human pose estimation for safety identification in live power distribution network operations. J. Saf. Environ. 2025, 25, 3445–3455.

- Wang, B.; Ma, F.; Jia, R. Skeleton-based violation action recognition method for safety supervision in operation field of distribution network based on graph convolutional network. CSEE J. Power Energy 2021, 9, 2179–2187.

- Li, W.; Ma, F.; Zuo, Z. SafetyGPT: An autonomous agent of electrical safety risks for monitoring workers’ unsafe behaviors. Int. J. Electr. Power Energy Syst. 2025, 168, 110672.

- Chandramouli, A.N.; Natarajan, S.; Alharbi, H.A. Enhanced human activity recognition in medical emergencies using a hybrid deep CNN and bi-directional LSTM model with wearable sensors. Sci. Rep. 2024, 14, 30979.

- Wei, D.; Tian, Y.; Wei, L. Efficient dual attention SlowFast networks for video action recognition. Comput. Vis. Image Underst. 2022, 222, 103484.

- Chen, Y.; Ge, H.; Liu, Y. AGPN: Action granularity pyramid network for video action recognition. IEEE Trans. Circuits Syst. Video Technol. 2023, 33, 3912–3923.

- Ma, F.; Liu, Y.; Wang, B. Research on intelligent identification method of distribution grid operation safety risk based on semantic feature parsing. Int. J. Electr. Power Energy Syst. 2024, 160, 110139.

- Feng, X.; Wu, T.; Wan, Y. Behavior recognition method of live working personnel based on human–object interaction detection. J. Saf. Sci. Technol. 2024, 20, 205–211.

- Zhang, Z.; Zhang, Q.; Xu, X. Unsafe behavior recognition model of high-climbing workers based on vision. China Saf. Sci. J. 2025, 35, 144–151.

- Ren, S.; He, K.; Girshick, R. Faster R-CNN: Towards real-time object detection with region proposal networks. IEEE Trans. Pattern Anal. Mach. Intell. 2016, 39, 1137–1149.

- Zou, M.; Zhou, Y.; Jiang, X. Spatio-temporal behavior detection in field manual labor based on improved SlowFast architecture. Appl. Sci. 2024, 14, 2976.

- Li, J.; Xie, S.; Zhou, X. Real-time detection of coal mine safety helmet based on improved YOLOv8. J. Real-Time Image Process. 2024, 22, 26.

- Chen, H.; Zhou, G.; Jiang, H. Student behavior detection in the classroom based on improved YOLOv8. Sensors 2023, 23, 8385.

- Zou, H.; Yang, J.; Sun, J. Detection method of external damage hazards in transmission line corridors based on YOLO-LSDW. Energies 2024, 17, 4483.

- Feichtenhofer, C.; Fan, H.; Malik, J.; He, K. SlowFast networks for video recognition. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV 2019), Seoul, Republic of Korea, 27 October–2 November 2019; pp. 6202–6211.

- Sun, N.; Leng, L.; Liu, J. Multi-stream slowfast graph convolutional networks for skeleton-based action recognition. Image Vis. Comput. 2021, 109, 104141.

- Wang, Z.; She, Q.; Smolic, A. ACTION-net: Multipath excitation for action recognition. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR 2021), Nashville, TN, USA, 19–25 June 2021; pp. 13214–13223.

- Hu, J.; Shen, L.; Sun, G. Squeeze-and-excitation networks. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR 2018), Salt Lake City, UT, USA, 18–23 June 2018; pp. 7132–7141.

- Wang, Q.; Wu, B.; Zhu, P.; Li, P.; Zuo, W.; Hu, Q. ECA-net: Efficient channel attention for deep convolutional neural networks. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR 2020), Seattle, WA, USA, 13–19 June 2020; pp. 11531–11539.

- Lin, T.-Y.; Goyal, P.; Girshick, R.; He, K.; Dollár, P. Focal loss for dense object detection. In Proceedings of the IEEE International Conference on Computer Vision (ICCV 2017), Venice, Italy, 22–29 October 2017; pp. 2999–3007.

- Cui, Y.; Jia, M.; Lin, T.-Y.; Song, Y.; Belongie, S. Class-balanced loss based on effective number of samples. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR 2019), Long Beach, CA, USA, 15–20 June 2019; pp. 9268–9277.

- Li, Y.; Zou, G.; Zou, H. Insulators and defect detection based on the improved focal loss function. Appl. Sci. 2022, 12, 10529.

- Li, Y.; Shi, F.; Hou, S. Feature pyramid attention model and multi-label focal loss for pedestrian attribute recognition. IEEE Access 2020, 8, 164570–164579.

- Yang, F. A multi-person video dataset annotation method of spatio-temporally actions. arXiv 2022, arXiv:2204.10160.

| Reference | Model | Recognized Behaviors | AP (%) | Parameters (M) | GFLOPs | Limitations |
|---|---|---|---|---|---|---|
| [9] | YOLOv8n-Pose + ST-GCN | Live working | 88.00 | 6.23 | 4.50 | Not optimized or validated under complex background clutter and occlusion conditions |
| [15] | ResNet101 + Transformer | Ladder climbing | 91.2 | 121.40 | 202.30 | Limited behavior categories and insufficient generalization capability |
| [16] | OpenPose + ST-GCN + YOLOv5s | Live working | 88.9 | — | — | Human pose estimation is constrained under occlusion conditions |
| [17] | PCT-1DCNN | High-altitude operation | 90.3 | — | — | Limited generalization to other behavior types |

| Stage | Slow Pathway | Fast Pathway | Output T × S² (Slow/Fast) |
|---|---|---|---|
| Raw clip | — | — | 64 × 224² |
| Data layer | stride 16, 1² | stride 2, 1² | 4/32 × 224² |
| Conv1 | 1 × 7², 64 | 5 × 7², 8 | 4/32 × 112² |
| Pool1 | 1 × 3², max | 1 × 3², max | 4/32 × 56² |
| Res2 | [1 × 1², 64; 1 × 3², 64; 1 × 1², 256] × 3 | [3 × 1², 8; 1 × 3², 8; 1 × 1², 32] × 3 | 4/32 × 56² |
| Res3 | [1 × 1², 128; 1 × 3², 128; 1 × 1², 512] × 4 | [3 × 1², 16; 1 × 3², 16; 1 × 1², 64] × 4 | 4/32 × 28² |
| Res4 | [3 × 1², 256; 1 × 3², 256; 1 × 1², 1024] × 6 | [3 × 1², 32; 1 × 3², 32; 1 × 1², 128] × 6 | 4/32 × 14² |
| Res5 | [3 × 1², 512; 1 × 3², 512; 1 × 1², 2048] × 3 | [3 × 1², 64; 1 × 3², 64; 1 × 1², 256] × 3 | 4/32 × 7² |
| Global average pool, concatenate, fc | # classes | | |
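The temporal and channel arithmetic in this table can be checked in a few lines. The 64-frame raw clip is sampled with stride 16 for the Slow pathway (4 frames) and stride 2 for the Fast pathway (32 frames). This gives a frame-rate ratio of α = 16 / 2 = 8. The Fast pathway's Res2, Res4, and Res5 output widths (32, 128, 256) are β = 1/8 of the Slow pathway's widths (256, 1024, 2048). α and β follow the notation of the SlowFast paper. The sketch assumes simple strided sampling starting at frame 0, although real data loaders may choose indices differently. The function names are illustrative.

```python
from typing import List, Tuple


def slowfast_frame_indices(num_frames: int = 64, slow_stride: int = 16,
                           alpha: int = 8) -> Tuple[List[int], List[int]]:
    """Strided frame indices for the Slow and Fast pathways of one raw clip.

    The Fast pathway uses stride slow_stride / alpha, i.e. alpha times more frames.
    """
    if slow_stride % alpha != 0:
        raise ValueError("slow_stride must be divisible by alpha")
    fast_stride = slow_stride // alpha
    slow = list(range(0, num_frames, slow_stride))
    fast = list(range(0, num_frames, fast_stride))
    return slow, fast


def fast_channels(slow_channels: int, beta: float = 1 / 8) -> int:
    """Fast-pathway channel width as a fraction beta of the Slow-pathway width."""
    return int(slow_channels * beta)


slow, fast = slowfast_frame_indices()
print(len(slow), len(fast))  # 4 32
print([fast_channels(c) for c in (256, 1024, 2048)])  # [32, 128, 256]
```

`slowfast_frame_indices()` returns the Slow indices [0, 16, 32, 48] and 32 Fast indices, matching the 4/32 temporal sizes in the table.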

| Behavior Category | Train Videos | Test Videos | Labels | Annotation Strategy |
|---|---|---|---|---|
| Check electricity | 307 | 77 | 2296 | Operator holds a voltage detector and performs electricity-checking behaviors near equipment. |
| Stand | 406 | 102 | 3615 | Operator holds a voltage detector and checks for the absence of voltage to ground near the equipment. |
| Squat | 77 | 19 | 554 | Operator adopts a crouched posture with a lowered center of gravity. |
| Ladder climb | 64 | 16 | 520 | Operator maintains contact with a ladder while moving upward or downward, or remaining stationary. |
| Live working | 283 | 71 | 2580 | Operator performs maintenance, testing, or operational tasks in close proximity to energized equipment. |
| Pole climb | 78 | 20 | 574 | Operator climbs along a utility pole or performs tasks while in contact with the pole. |
| Total | 1215 | 305 | 10,139 | — |
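The class imbalance that motivates the Focal Loss can be quantified directly from this table. The short script below reuses only the table's numbers. It recomputes the totals, the training fraction of the videos, and the ratio between the most and least frequent classes by label count.

```python
# (train videos, test videos, labels) per behavior class, copied from the table.
dataset = {
    "Check electricity": (307, 77, 2296),
    "Stand": (406, 102, 3615),
    "Squat": (77, 19, 554),
    "Ladder climb": (64, 16, 520),
    "Live working": (283, 71, 2580),
    "Pole climb": (78, 20, 574),
}

train_videos = sum(tr for tr, _, _ in dataset.values())
test_videos = sum(te for _, te, _ in dataset.values())
total_labels = sum(n for _, _, n in dataset.values())

# Share of all labels per class and the most-to-least frequent ratio.
label_share = {name: n / total_labels for name, (_, _, n) in dataset.items()}
counts = [n for _, _, n in dataset.values()]
imbalance = max(counts) / min(counts)

print(f"train/test videos: {train_videos}/{test_videos} "
      f"({train_videos / (train_videos + test_videos):.1%} train)")
print(f"labels: {total_labels}, imbalance ratio: {imbalance:.2f}")
for name, share in sorted(label_share.items(), key=lambda kv: -kv[1]):
    print(f"  {name:18s} {share:6.1%}")
```

The script gives 1215 training and 305 test videos, roughly an 80/20 split, and 10,139 labels. Stand (3615 labels, about 36%) outnumbers Ladder climb (520 labels, about 5%) by roughly 7 to 1.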

| Model | SCM-Res | ECA-Res | Check Electricity (%) | Stand (%) | Squat (%) | Ladder Climb (%) | Live Working (%) | Pole Climb (%) | mAP (%) | Params (M) | GFLOPs |
|---|---|---|---|---|---|---|---|---|---|---|---|
| 1 | × | × | 81.03 | 83.71 | 75.03 | 79.91 | 83.27 | 82.19 | 80.86 | 33.568 | 53.10 |
| 2 | × | √ | 83.57 | 84.93 | 75.43 | 82.01 | 83.68 | 84.13 | 82.29 | 33.572 | 53.12 |
| 3 | √ | × | 86.97 | 88.65 | 80.25 | 84.99 | 85.77 | 87.79 | 85.74 | 33.631 | 53.30 |
| 4 | √ | √ | 88.92 | 90.83 | 82.44 | 87.90 | 87.36 | 89.15 | 87.77 | 33.634 | 53.37 |
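The mAP column can be reproduced from the six per-class accuracies. For every row, it equals their unweighted arithmetic mean to two decimals, which suggests that mAP here denotes the mean of the per-class accuracies. The check below uses only the table's values.

```python
from typing import Dict, List, Tuple

# Ablation rows: per-class accuracy (%) in table order, plus the reported mAP (%).
ablation: Dict[int, Tuple[List[float], float]] = {
    1: ([81.03, 83.71, 75.03, 79.91, 83.27, 82.19], 80.86),
    2: ([83.57, 84.93, 75.43, 82.01, 83.68, 84.13], 82.29),
    3: ([86.97, 88.65, 80.25, 84.99, 85.77, 87.79], 85.74),
    4: ([88.92, 90.83, 82.44, 87.90, 87.36, 89.15], 87.77),
}


def mean_accuracy(per_class: List[float]) -> float:
    """Unweighted mean over behavior classes (each class counts equally)."""
    return sum(per_class) / len(per_class)


for model, (acc, reported) in ablation.items():
    print(f"Model {model}: computed {mean_accuracy(acc):.2f}, reported {reported:.2f}")
```

Because each class counts equally, the rare Squat and Ladder climb classes influence this mean as much as the frequent Stand class.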

| Behavior Category | Variance | Std (%) | 95% CI (%) |
|---|---|---|---|
| Check electricity | 0.00021 | 1.45 | [86.10, 91.63] |
| Stand | 0.00018 | 1.34 | [88.42, 93.10] |
| Squat | 0.00039 | 1.97 | [78.66, 85.98] |
| Ladder climb | 0.00026 | 1.61 | [84.82, 90.77] |
| Live working | 0.00015 | 1.22 | [85.01, 89.74] |
| Pole climb | 0.00031 | 1.76 | [85.90, 92.11] |
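The Std column is consistent with the square root of the Variance column. This holds when the variance is expressed in fractional accuracy units and the result is converted to percentage points; for example, √0.00021 ≈ 0.0145, i.e. 1.45%. The snippet below recomputes all six entries. It does not attempt to reproduce the confidence intervals, whose construction is not specified here.

```python
import math

# (variance in fractional accuracy units, reported Std in %) per class.
stability = {
    "Check electricity": (0.00021, 1.45),
    "Stand": (0.00018, 1.34),
    "Squat": (0.00039, 1.97),
    "Ladder climb": (0.00026, 1.61),
    "Live working": (0.00015, 1.22),
    "Pole climb": (0.00031, 1.76),
}


def std_percent(var: float) -> float:
    """Standard deviation in percentage points from a fractional-unit variance."""
    return 100.0 * math.sqrt(var)


for name, (var, reported) in stability.items():
    print(f"{name:18s} computed {std_percent(var):.2f}  reported {reported:.2f}")
```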

| Scenario Factor | Condition | Check Electricity (%) | Stand (%) | Squat (%) | Ladder Climb (%) | Live Working (%) | Pole Climb (%) |
|---|---|---|---|---|---|---|---|
| Lighting | Original | 90.24 | 92.39 | 83.70 | 87.95 | 87.65 | 90.31 |
| Lighting | Brightness enhancement | 89.44 | 91.10 | 83.50 | 87.44 | 87.40 | 89.85 |
| Camera viewpoints | Front view | 89.15 | 92.72 | 84.15 | 86.83 | 87.46 | 90.21 |
| Camera viewpoints | Back view | 88.91 | 91.21 | 82.14 | 84.42 | 85.43 | 89.96 |
| Motion pattern | Continuous motion | 90.51 | 91.45 | 83.81 | 88.58 | 87.34 | 89.42 |
| Motion pattern | Intermittent motion | 89.32 | 91.70 | 83.23 | 87.75 | 86.78 | 89.10 |

| Model | HMDB51 Accuracy (%) | UCF101 Accuracy (%) |
|---|---|---|
| Slow Only | 66.8 | 80.3 |
| TimeSformer | 69.5 | 82.5 |
| AMP-HOI | 76.8 | 89.9 |
| Proposed method | 77.6 | 91.6 |

| Model | Check Electricity (%) | Stand (%) | Squat (%) | Ladder Climb (%) | Live Working (%) | Pole Climb (%) | mAP (%) |
|---|---|---|---|---|---|---|---|
| Slow Only | 78.92 | 87.77 | 74.36 | 76.75 | 84.67 | 71.03 | 78.92 |
| TimeSformer | 84.17 | 85.98 | 77.33 | 80.29 | 85.37 | 76.42 | 81.59 |
| AMP-HOI | 87.30 | 88.71 | 79.50 | 84.31 | 86.59 | 82.52 | 84.82 |
| Proposed method | 88.92 | 90.83 | 82.44 | 87.90 | 87.36 | 89.15 | 87.77 |

| Model | Precision (%) | Recall (%) | F1-Score (%) | Params (M) | GFLOPs | FPS (frames/s) |
|---|---|---|---|---|---|---|
| Slow Only | 78.64 | 76.68 | 77.64 | 31.63 | 63.92 | 101.50 |
| TimeSformer | 81.72 | 77.82 | 79.72 | 115.76 | 146.54 | 75.20 |
| AMP-HOI | 82.91 | 80.00 | 81.43 | 265.16 | 129.83 | 82.60 |
| Proposed method | 89.07 | 82.47 | 85.56 | 33.63 | 53.37 | 117.90 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Share and Cite

Zhao, X.; Ma, F.; Cao, G.; Lv, S.; Liu, Q. Safety Behavior Recognition for Substation Operations Based on a Dual-Path Spatiotemporal Network. Processes 2026, 14, 133. https://doi.org/10.3390/pr14010133

Zhao X, Ma F, Cao G, Lv S, Liu Q. Safety Behavior Recognition for Substation Operations Based on a Dual-Path Spatiotemporal Network. Processes. 2026; 14(1):133. https://doi.org/10.3390/pr14010133

Chicago/Turabian Style: Zhao, Xiaping, Fuqi Ma, Ge Cao, Shixuan Lv, and Qian Liu. 2026. "Safety Behavior Recognition for Substation Operations Based on a Dual-Path Spatiotemporal Network" Processes 14, no. 1: 133. https://doi.org/10.3390/pr14010133

APA Style: Zhao, X., Ma, F., Cao, G., Lv, S., & Liu, Q. (2026). Safety Behavior Recognition for Substation Operations Based on a Dual-Path Spatiotemporal Network. Processes, 14(1), 133. https://doi.org/10.3390/pr14010133