Abstract

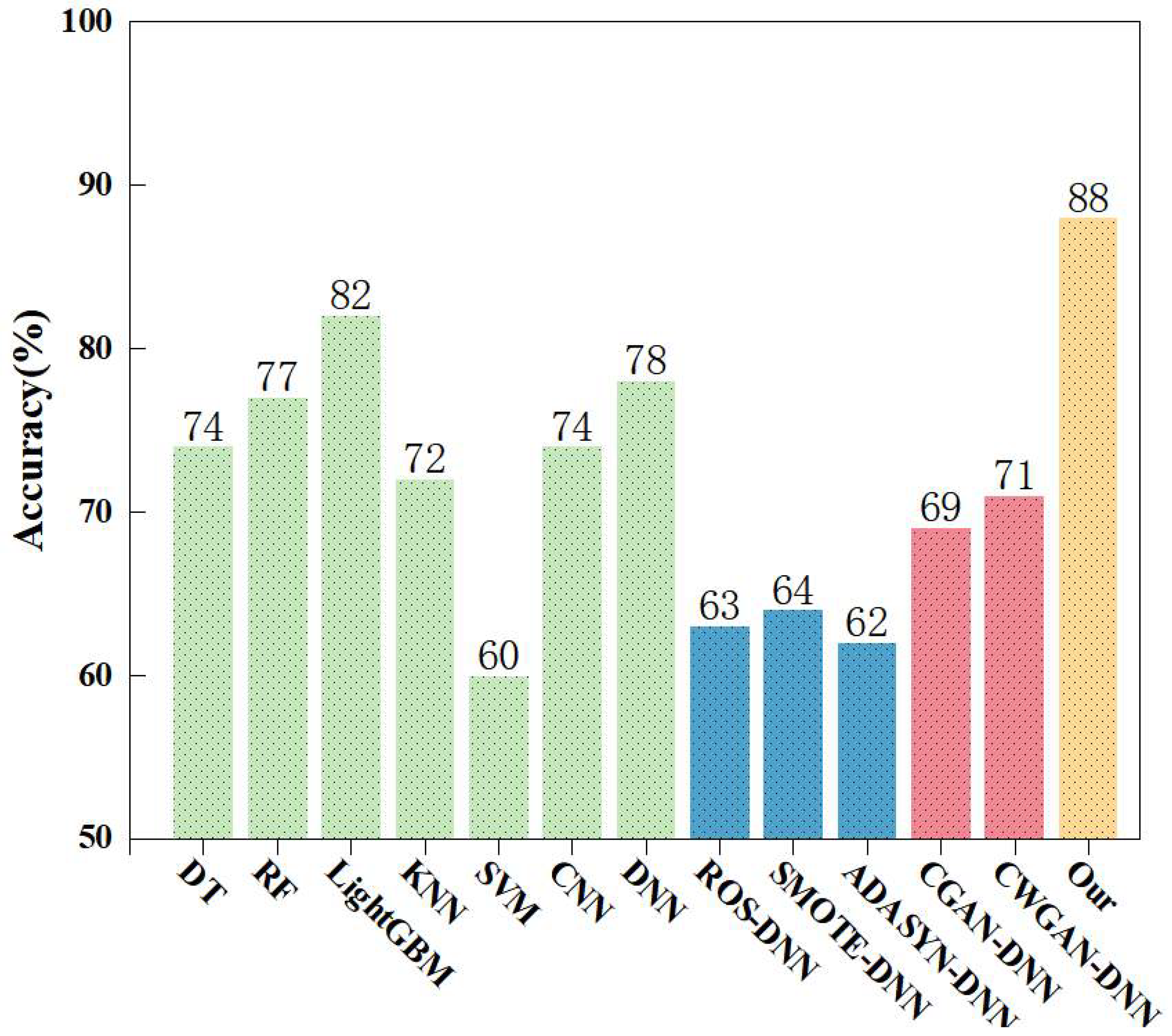

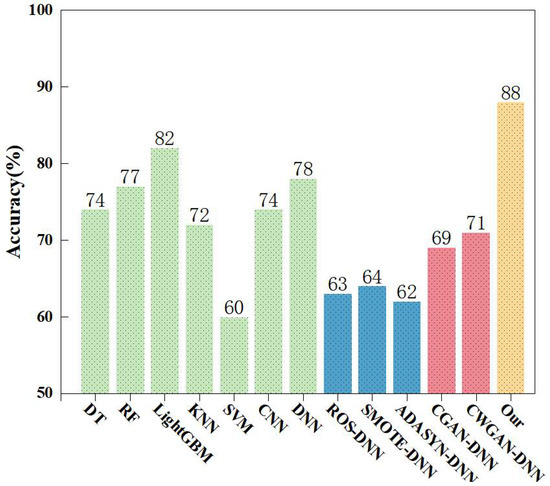

Industrial-Internet security faces a core challenge: improving detection accuracy for critical minority-class network attacks. The existing intrusion detection methods based on Conditional Generative Adversarial Nets (CGANs) aim to achieve data balance by reconstructing minority-class attack samples. However, they encounter problems such as generating deceptive samples, poor sample quality, vanishing gradients and difficulties in training. This paper proposes an intrusion detection method based on the Multi-Discriminator Conditional Classification Generative Adversarial Network (MDCCGAN), an improved variant of CGAN, which integrates multiple discriminators and an independent classifier into the traditional CGAN framework. The multiple discriminators reduce the probability of generating deceptive samples, the independent classifier decouples the classification loss to clarify the direction of gradient updates, and the introduction of the Wasserstein distance fundamentally addresses the gradient-vanishing problem. Experiments conducted on the NSL-KDD and UNSW-NB15 datasets demonstrate that the proposed method significantly improves the recall, F1-score and accuracy for minority-class attacks. Specifically, on the NSL-KDD dataset, the overall accuracy increases from 74% to 94%, and the F1-score for the extremely rare U2R attack surges from 0% to 77%. Similarly, on the UNSW-NB15 dataset, the accuracy reaches 88%, a 10% improvement over the baseline DNN, and the F1-scores for extreme minority attacks such as Analysis, Backdoor, and Worms improved to 97%, 62%, and 84%, respectively. These results confirm that our method effectively outperforms traditional generation models and common class-balancing methods. It provides reliable technical support for industrial-Internet security.

Keywords:

minority-class attack; conditional generative adversarial networks; multiple discriminators; Wasserstein distance; class balance MSC:

68-06

1. Introduction

Intrusion Detection Systems (IDSs) play a critical role in enhancing the cybersecurity of the industrial Internet. As a key technical component of the industrial-Internet security protection system, an IDS can ensure information integrity and the privacy and security of transmitted data [1]. Its working principle is to retrieve known attack signatures or identify abnormal features that deviate from preset normal activities [2]. Leveraging an active defense mode, the system is capable of detecting and reporting any abnormal traffic with network security risks automatically, which covers risk scenarios ranging from internal attacks and external intrusions to operational errors [3]. When network attacks or malicious behaviors occur, the system can automatically trigger alarm mechanisms or implement corresponding protective actions. Therefore, an IDS is pivotal to safeguarding the cybersecurity of the industrial Internet, protecting information integrity and the privacy and security of transmitted data, ensuring data integrity and reliability, and maintaining the normal operation of industrial production.

Currently, deep learning models are widely applied to construct intrusion detection systems, thanks to their capacity for processing massive high-dimensional feature data and their adaptive learning characteristics. Although existing studies have significantly improved the overall accuracy of intrusion detection by optimizing model architectures, these schemes share a critical flaw: detection accuracy of minority-class attack samples is often masked by overall metrics, which weakens the ability of models to identify low-frequency attacks in practical industrial scenarios. Moreover, the imbalance between normal traffic samples and attack traffic samples is particularly pronounced in practical application environments of the industrial Internet [4]. Dimensional completeness and sample diversity of dataset are key prior conditions determining performance of deep learning-based intrusion detection models [5]. This is because deep learning-based intrusion detection methods rely heavily on large-scale labeled samples for model training, which readily leads to the issue of “majority-class-dominated training process” in imbalanced data scenarios. Such a problem impairs the model detection performance on critical minority-class samples in multi-classification tasks, resulting in low detection rates and high false positive rates for minority-class attacks and thus severely limiting practical applicability of the models [6]. Notably, attack behaviors in industrial-Internet scenarios typically exhibit characteristics of concealment, diversity and suddenness. The missed detection of minority-class attack samples is highly likely to trigger serious security incidents such as equipment failures, production interruptions and even industrial control system paralysis. To clarify the problem formulation across different datasets, in this study, we formally define “minority classes” as those attack categories that constitute an extremely small fraction of the overall network traffic, typically accounting for less than 1.5% of the total dataset. For instance, this corresponds to the R2L and U2R aggregated classes in the NSL-KDD dataset, and the Analysis, Backdoors, Shellcode, and Worms categories in the UNSW-NB15 multi-class dataset. Furthermore, these minority attacks are designated as “critical” because, despite their low occurrence frequency, their mechanisms, such as gaining unauthorized root privileges or stealthily bypassing security protocols, pose severe threats. The successful execution of these attacks can lead to devastating system-level compromises, making their accurate detection disproportionately important relative to their minimal sample size. Therefore, addressing the data imbalance problem and improving detection accuracy of minority-class attacks have become core bottlenecks urgently requiring breakthroughs in the field of industrial-Internet intrusion detection, which are directly related to the practical application value of deep learning models in industrial security protection.

In the early stage of research, oversampling technology is one of the classic core methods to address class imbalance problems [7]. Due to its significant advantages of simple principles and convenient implementation, it is widely applied in many classification tasks such as intrusion detection. The core idea of such technologies lies in actively adjusting the distribution ratio of samples of various classes in the dataset. Specifically, the data imbalance can be alleviated through two key approaches: first, directly performing repeated sampling to increase the number of minority-class attack samples, such as Random Over Sampling (ROS); second, generating virtual minority-class samples through interpolation in the feature space, such as Synthetic Minority Oversampling Technique (SMOTE) [8] and Adaptive Synthetic Sampling Approach (ADASYN) [9]. Through the aforementioned approaches, although oversampling technology can assist classification models in learning feature representations of samples from various classes in a more balanced way and thereby improve the ability of models to identify minority-class attacks to a certain extent, the improvement effect is not significant. The root cause of this phenomenon lies in the inherent limitations of oversampling technology, which directly restrict the performance improvement of deep learning-based intrusion detection models. This is specifically manifested in two aspects: first, random oversampling achieves data balance by simply repeating minority-class samples. This mechanical sample augmentation easily leads to data redundancy, causing deep learning models to overfit local features of minority-class samples during training. This significantly impairs model generalization ability and hinders effective adaptation to the practical demand of diverse attack types in industrial scenarios. Second, for interpolation-based oversampling methods such as SMOTE and its improved algorithms, the generated virtual samples often fail to accurately match the distribution of real data and tend to distort the feature space structure of original data. This prevents models from accurately learning essential data features, thereby reducing the detection accuracy of minority-class attacks. These limitations indicate that traditional oversampling technologies cannot fundamentally solve the data imbalance problem in industrial-Internet intrusion detection, nor can they provide high-quality balanced training data for deep learning models. Against this backdrop, the current Conditional Generative Adversarial Nets (CGANs) [10] have stood out due to their powerful capability of generating specified real samples. This type of model introduces a conditional discrimination mechanism on the basis of the generative adversarial network. Through the adversarial training mechanism of the generator and the discriminator, it overcomes the drawback that the generative adversarial networks (GANs) [11] cannot generate labeled samples. A CGAN can learn the distribution characteristics of real data and generate high-fidelity attack samples as specified, precisely making up for the deficiencies of oversampling techniques in terms of sample generation quality and data distribution fitting. Although the traditional CGAN has demonstrated significant advantages in generating targeted samples, when directly applied to the industrial-Internet intrusion detection scenario, it still has inherent drawbacks that cannot be ignored, mainly reflected in the following three aspects:

- Insufficient stability during training. During the adversarial training of a traditional CGAN generator and discriminator, problems are prone to occur. These include difficulty in convergence and training oscillation. Such issues make it hard to achieve dynamic balance between the two components. This further affects the stability and consistency of generated samples. Meanwhile, traditional CGAN with a single discriminator has poor discrimination ability. The generator tends to produce deceptive samples. This leads to imbalance in the generative discrimination cycle game. Consequently, the gradient signal received by the generator approaches zero, resulting in model collapse.

- The quality of generated samples needs improvement. In industrial-Internet scenarios where attack samples have complex features and diverse patterns, the single discriminator of traditional CGANs must undertake two core tasks simultaneously. One is to evaluate the authenticity of generated samples, which is quality discrimination. The other is to verify the matching between generated samples and labels, which is category discrimination. This dual task load makes it difficult for the discriminator to accurately distinguish and optimize discrimination loss and classification loss. It further transmits vague gradient guidance signals to the generator. Eventually, this results in the poor quality of generated samples. These samples can hardly accurately fit the distribution characteristics of real attack samples.

- The category discrimination ability is limited. Traditional CGANs can generate labeled samples. However, in multi-class generation scenarios, the single discriminator’s performance is limited in multi-class scenarios, preventing it from providing the generator with accurate gradients regarding category matching. As a result, the generator generates samples that do not match the target labels. This directly undermines the effectiveness of the data augmentation process.

To address the problem of missed detection of key minority attacks caused by data imbalance in industrial-Internet intrusion detection, as well as the inherent defects of conditional generative adversarial networks, this paper proposes to introduce a multi-discriminator collaborative mechanism and an independent classifier module into the traditional CGAN architecture to construct a Multi-discriminator Conditional Classification Generative Adversarial Network (MDCCGAN) model, and cascades it with a Deep Neural Network (DNN) to form an MDCCGAN-DNN hybrid detection framework. Specifically, the core collaborative mechanism of this framework is divided into two modules. First, the MDCCGAN module is responsible for high-quality sample generation and data balancing. Multiple discriminators jointly judge the authenticity and category consistency of generated samples. An independent classifier is introduced to specifically undertake the task of accurate category determination for generated samples. The gradient loss output by the two components collaboratively guide the generator to generate high-quality and category-accurate minority-class attack samples. By using multiple discriminators and independent classifiers, the discrimination loss and classification loss of the traditional CGAN discriminator can be effectively decoupled. This not only avoids the problem of unclear gradient direction transmission but also significantly reduces the probability of the generator generating deceptive samples, ultimately achieving the expansion and balance of the original imbalanced dataset. Ultimately, the expansion and balancing of the original imbalanced dataset are achieved. Second, the DNN classifier module enables accurate identification of attack patterns. Leveraging the balanced dataset enhanced by MDCCGAN, the DNN deeply mines the high-level semantic features of attack behaviors through stacked layers of nonlinear transformation units, thereby constructing a precise attack pattern recognition model. The collaborative design of this hybrid framework not only effectively mitigates the interference of class imbalance on model training but also significantly improves the detection accuracy and generalization ability of the detection model for critical minority-class attacks by effectively expanding the feature space with generated samples.

This paper is divided into six sections: Section 1 introduces the development of intrusion detection technology and the challenges encountered, followed by the solutions proposed based on these issues; Section 2 systematically elaborates on the applications and advancements of class balancing techniques and generative adversarial networks in the field of intrusion detection; Section 3 provides a detailed elaboration and theoretical explanation of the MDCCGAN-DNN intrusion detection model proposed in this paper; Section 4 presents the experimental results and discussions; Section 5 presents the conclusive opinions; Section 6 addresses the challenges and future prospects.

2. Related Works

Intrusion detection technology has gradually evolved from traditional machine learning models to deep learning models. Early studies mostly relied on shallow learning models, with typical representatives including Logistic Regression (LR) [12], Support Vector Machine (SVM) [13], Random Forest (RF) [14], and Gradient Boosting Decision Tree (GBDT) [15]. The core limitation of such models lies in the fact that their detection performance is highly dependent on manual feature engineering. And they require professional knowledge in the field of industrial-Internet security to extract effective features from the raw data in order to achieve the identification and distinction of attack categories. With the development of deep learning technology, intrusion detection technology has been deeply integrated with various deep learning models. By virtue of the hierarchical feature extraction mechanism of multi-layer neural networks, deep learning can automatically learn and fuse high-level abstract features from low-level raw data such as network traffic packets and host logs. This effectively overcomes the excessive dependence of traditional methods on expert experience [16]. Vigneswaran et al. [17] investigated the application of DNNs in intrusion detection, with a focus on the impact mechanism of network layer depth on detection performance. The experiments conducted on the KDDCup-99 benchmark dataset showed that the DNN model with a three-layer network achieved optimal performance, with a detection accuracy of 93%. Li et al. [18] proposed a heterogeneous ensemble model that integrated Convolutional Neural Network (CNN), Recurrent Neural Network (RNN), and SVM. This model extracted and compressed spatial features from network traffic through a CNN and simultaneously utilized the time-recursive structure of an RNN to capture the temporal dependencies in the traffic sequence. After deep pre-training, the joint spatio-temporal feature vectors were input into the SVM classifier for final decision-making. The binary classification experiments on the CIC-IDS2017, UNSW-NB15, and WSN-DS benchmark datasets showed that the model achieved detection accuracies of 99.59%, 93.68%, and 99.61% respectively, which validated the effectiveness of the joint spatio-temporal feature modeling and classifier cascading mechanism. Bamber et al. [19] proposed an intelligent network intrusion detection system based on a hybrid model of CNN and Long Short-Term Memory (LSTM). The system aimed to improve detection accuracy and robustness by combining the spatial feature extraction capability of a CNN and the time series modeling advantage of LSTM. The study adopted the NSL-KDD dataset as the benchmark, and the experimental results showed that the CNN-LSTM model achieved the optimal performance across multiple evaluation metrics. Without using recursive feature elimination, the model achieved an accuracy of 95%, a recall of 89%, and an F1-score of 94%. Even with recursive feature elimination applied, its accuracy still reached 93%, which was significantly higher than that of other comparative models. In addition, the study further verified the model’s effectiveness in class distinction and false-alarm reduction through ROC curves and confusion matrices, with an AUC value of 0.948, indicating strong classification and discrimination capabilities.

Although the aforementioned studies have fully verified the application value of deep learning technology in the field of intrusion detection, most of these research outcomes have not designed effective detection mechanisms for minority-class attacks. In industrial-Internet environments, minority-class attacks usually exhibit stronger concealment and destructiveness; their successful intrusion may trigger severe industrial production accidents. Therefore, improving the detection rate of critical minority-class attacks has become a research focus in recent years. Against this backdrop, the research hot topic in the intrusion detection field is gradually shifting toward addressing the class imbalance problem of training datasets, which is also the core bottleneck currently restricting the improvement in the detection efficiency of minority-class attacks. With the technological breakthroughs of two mainstream generative models, Variational Auto-encoder (VAE) [20] and GAN, the research paradigm for addressing class imbalance has gradually shifted to minority-class sample augmentation methods based on generative models. Compared with traditional oversampling techniques that are prone to overfitting due to sample redundancy or distribution distortion, generative models can generate high-quality minority-class samples by virtue of their ability to accurately model and learn the latent distribution of data. This characteristic not only effectively addresses the class imbalance problem of datasets but also avoids the degradation of the generalization performance of detection models caused by overfitting. Liu et al. [21] employed a Conditional Variational Autoencoder (CVAE) data augmentation scheme to balance the class distribution of the CSE-CICIDS2018 dataset, resulting in increased sample diversity in the balanced dataset. Experiments showed that training different intrusion detection models with the class-balanced dataset improved their F1-score. Chuang and Huang [22] addressed the issue of insufficient decoder performance in VAEs, thereby improving the classification accuracy of intrusion detection. Xu et al. [23] addressed the challenge of CVAEs in fitting the distribution of minority-class samples by proposing an optimization method based on the log-cosh loss function. By replacing the traditional reconstruction loss function with the log-cosh function, the model’s ability to estimate the density of sparse samples in the discrete feature space was enhanced, improving the quality of generated samples and achieving excellent performance on the NSL-KDD dataset. Javaid et al. [24] and Yan and Han [25] integrated sparse auto-encoders into intrusion detection models and achieve good experimental results, demonstrating that sparse auto-encoders can serve as effective intrusion detection models. Ding et al. [26] conducted a systematic comparative study on traditional oversampling techniques and GAN-based models to address the class imbalance problem in intrusion detection. The experiments selected classic oversampling methods such as Random Over-Sampling (ROS), SMOTE, and ADASYN, and compared their performance with a CGAN, a Wasserstein Generative Adversarial Network (WGAN) [27], and the proposed TACGAN model in that study. Results on three benchmark datasets, KDDCup-99, NSL-KDD and UNSW-NB15, showed that generative models significantly outperformed oversampling techniques in improving detection accuracy. The TACGAN model achieved a 4.5% accuracy improvement on the KDDCup-99 dataset, verifying the technical advantages of generative adversarial networks in solving class imbalance problems. Zhu et al. [28] proposed a deep fusion model of a VAE and a CGAN, constructing a three-stage feature optimization mechanism of “encoding–adversarial enhancement–decoding” by embedding a CGAN module in the latent space between the encoder and decoder. Specifically, the CGAN dynamically adjusted the manifold distribution of minority-class samples in the latent space through adversarial training, making the feature vectors output by the encoder more discriminative in the low-dimensional space and thus improving the decoder’s reconstruction accuracy for sparse samples. That model provided a new paradigm for solving the representation challenge of sparse attack samples in industrial scenarios, while similar VAE-GAN fusion ideas were also explored in Tian et al. [29], Li et al. [30] and Yang et al. [31], further validating the effectiveness of generative model joint modeling in class imbalance problems. Yang et al. [32] addressed the problems of insufficient conditional constraints and difficulty in balancing the quality and diversity of generated samples in traditional GAN for intrusion detection data augmentation. They proposed a conditional aggregated encoding–decoding generative adversarial network (CE-GAN) and constructed an ensemble classifier by integrating the game theory-based Nash equilibrium principle, providing an integrated solution for solving the data imbalance problem in multiple categories intrusion detection. That study conducted experiments on two classic imbalanced datasets, namely, NSL-KDD and UNSW-NB15. The results demonstrated that the CE-GAN could effectively augment minority-class attack samples and significantly improve the recognition performance of the classifier on minority classes. After data augmentation, the ensemble classifier achieved significant improvements in multiple evaluation metrics, especially exhibiting stronger recognition capability for previously hard-to-detect minority-class attacks.

To systematically identify the current research gaps, Table 1 presents a comparison matrix of the aforementioned data augmentation and balancing approaches regarding three critical dimensions: generated sample quality, training stability, and label-consistency. As illustrated in the matrix, while existing methods address certain aspects of the class imbalance problem, none provide a comprehensive solution. Specifically, traditional oversampling techniques offer process stability but severely compromise sample quality by distorting the original feature space. Traditional CGANs introduce conditional generation but suffer from severe training instability (e.g., gradient vanishing) and poor label consistency. This stems from a fundamental structural flaw: a single discriminator is overloaded with dual, often conflicting tasks (verifying sample authenticity and matching categorical labels). Recent hybrid variants (such as VAE-GANs) and WGANs improve stability and feature representation, yet the fundamental issue of coupled optimization objectives in multi-class scenarios remains unresolved. Without a decoupled architecture, the generator struggles to simultaneously produce high-fidelity samples and strictly align them with specific minority class labels. This specific unresolved gap motivates the proposed MDCCGAN. By introducing an independent classifier, our model completely decouples the label consistency task from the discriminator, providing precise category gradients. Concurrently, the multi-discriminator architecture prevents the generator from exploiting structural vulnerabilities, thereby guaranteeing the quality and diversity of the generated samples.

Table 1.

Comparison matrix of existing class-balancing approaches in intrusion detection (Note: The symbol “✓” denotes an advantage in the respective dimension, with a higher number of “✓”s indicating a stronger advantage).

This specific unresolved gap motivates the proposed MDCCGAN. The objective is to construct an efficient detection scheme tailored to industrial-Internet scenarios for critical minority-class attacks. By introducing an independent classifier, our model completely decouples the label consistency task from the discriminator, providing precise category gradients. Concurrently, the multi-discriminator architecture prevents the generator from exploiting structural vulnerabilities, thereby guaranteeing the quality and diversity of the generated samples. This method specifically overcomes the performance bottlenecks of traditional CGAN in terms of generated sample quality, mode diversity, and training stability, as detailed below:

- Design of Multi-Discriminator Collaborative Architecture: by introducing multiple discriminators, a multi-dimensional adversarial learning constraint mechanism is constructed. Compared with the adversarial mode of a single discriminator in traditional CGAN, the multi-discriminator architecture significantly reduces the probability of the generator producing deceptive samples. Meanwhile, it increases the difficulty for the generator to generate samples that conform to the real distribution, forcing the generator to fully learn the multi-dimensional feature distribution of minority-class samples. This effectively alleviates the model-collapse problem that is prone to occur in traditional CGAN and improves the diversity and representativeness of generated samples.

- Decoupling Optimization Mechanism of Independent Classifier: the classification task and the adversarial discrimination task are decoupled, and an independent classifier module is embedded into the generative adversarial network framework. This design clarifies the optimization objective of “class consistency” for the generator in the sample generation process, ensuring that the generated minority-class samples not only are close to real samples in distribution but also can accurately match the core features of the target attack classes.

- Loss Function Improvement Driven by Wasserstein Distance: the Wasserstein distance is adopted to replace the Jensen–Shannon (JS) divergence commonly used in traditional CGANs for constructing the loss function. From the perspective of mathematical principles, the Wasserstein distance can effectively measure the distance between any two distributions. Even in scenarios where data distributions are non-overlapping or have extremely low overlap, it can still provide continuous and smooth gradient information. This fundamentally solves the gradient-vanishing problem caused by the saturation of the JS divergence in traditional CGANs, significantly improving the stability and convergence efficiency of the model training process.

3. Proposed Methodology

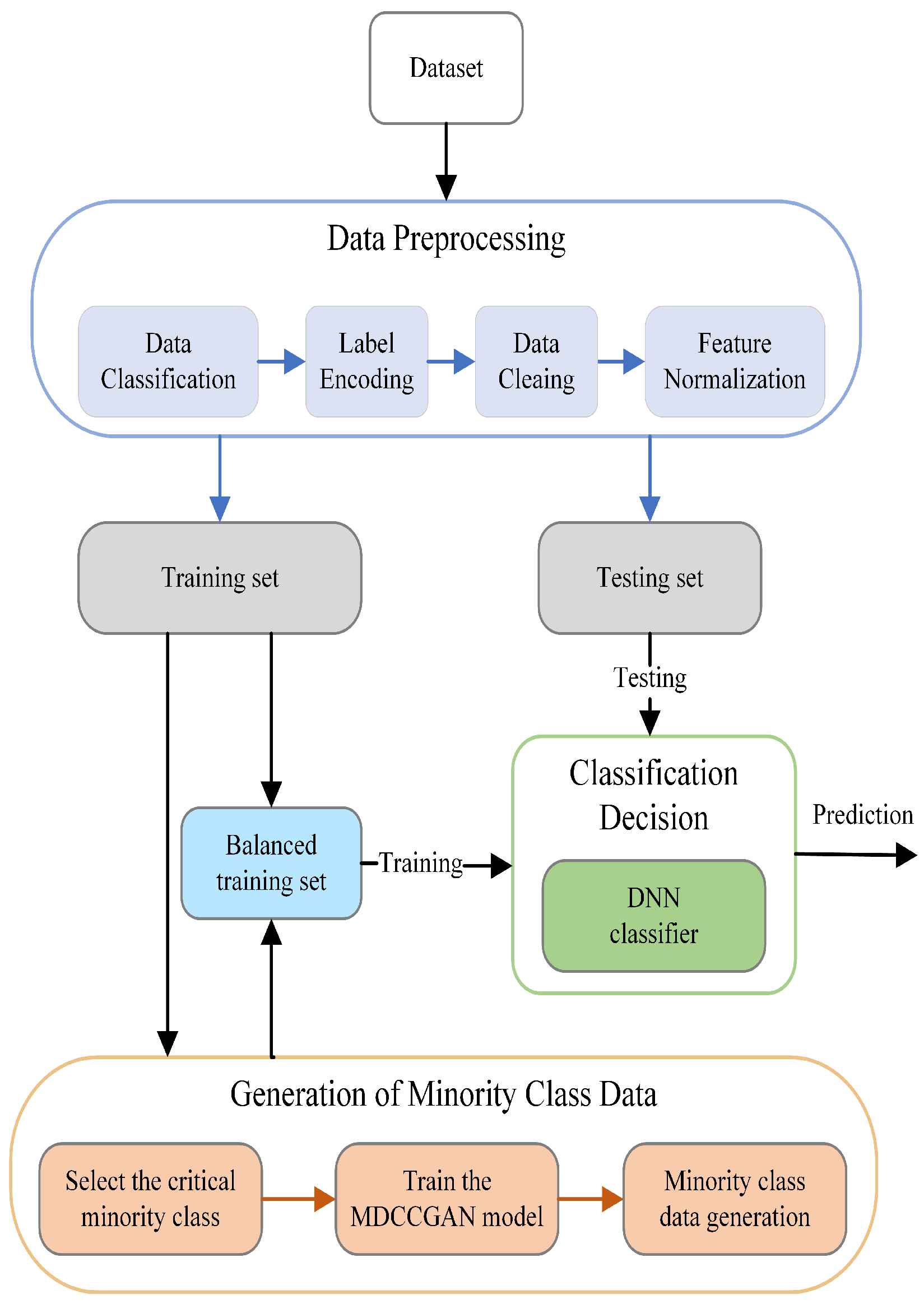

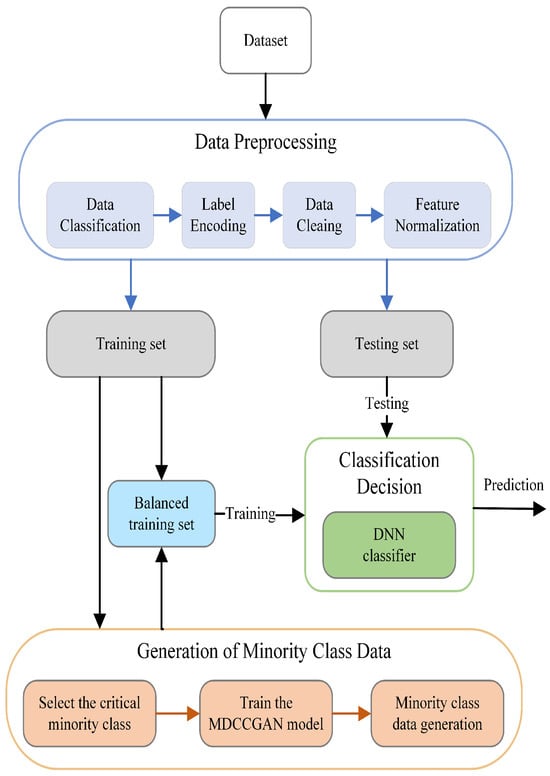

In this chapter, we elaborate in detail on the MDCCGAN-DNN industrial-Internet intrusion detection method proposed in this paper. The core design objective of this method is to address the problem of low detection rate caused by the scarcity of minority-class attack samples in the industrial-Internet intrusion detection scenario. Among them, the MDCCGAN model undertakes the core data augmentation task, leveraging its strong generative capability to generate high-quality minority-class samples for balancing the dataset distribution; the DNN model serves as the back-end intrusion detection classifier, responsible for the accurate classification and recognition of the balanced data. The workflow of the MDCCGAN-DNN industrial-Internet intrusion detection model based on the aforementioned architecture is illustrated in Figure 1. Through a three-stage processing workflow consisting of (1) Data Preprocessing, (2) Generation of Minority Class Data, and (3) Classification Decision, the model constructs a closed-loop system for minority-class attack detection in the industrial internet.

Figure 1.

Intrusion detection architecture based on MDCCGAN-DNN.

3.1. Data Preprocessing

Data preprocessing, as a fundamental step in deep learning model training, aims to transform raw intrusion detection data into standardized feature representations suitable for model learning. The data preprocessing module designed in this study includes the following four-stage processing flow: (1) Data classification; (2) Data cleaning; (3) Label encoding; (4) Feature normalization.

Existing intrusion detection datasets contain numerous attack types and a large number of redundant values, which hinder the learning of attack patterns by intrusion detection models. In this paper, according to the different characteristics of each dataset, attack types are scientifically categorized and reclassified based on the characteristics of each dataset (details of specific data classification and data cleaning are shown in Section 4.1). Meanwhile, the dataset is cleaned by removing redundant samples, samples with missing values, and noisy samples (i.e., samples with identical feature values but different attack labels). Excessive noisy data significantly affect model training, and deleting redundant values, missing values, and noisy samples can improve model performance and reduce the learning cost.

After data cleaning, numerical encoding of non-numerical features is required. Label encoding converts non-numerical features in the dataset into numerical values without increasing dimensionality, where original non-numerical features are sequentially encoded as [0, 1, 2, …, ]. For example, the Protocol Type attribute in the NSL-KDD dataset is encoded as [0, 1, 2] corresponding to the original values [icmp, tcp, udp]. Feature normalization scales feature values to reduce the impact of extreme values on the model. Min-max normalization is a widely recognized and utilized standardization method, which compresses feature values into the range [0, 1] [33]. The formula is as follows:

Here, represents the minimum value of feature , and represents the maximum value of feature . After preprocessing, the dataset can be divided into a training set and a test set.

3.2. Generation of Minority Class Data Based on MDCCGAN

The MDCCGAN-based minority-class data generation module is the core unit of the intrusion detection method proposed in this paper for addressing the class imbalance problem. Its design objective is as follows: utilizing the trained MDCCGAN model to generate high-quality synthetic samples that are highly consistent with the feature distribution of real minority-class attack samples; subsequently fusing these synthetic samples with the original training set to construct an augmented dataset with a balanced class distribution. This provides sufficient and balanced data support for the subsequent training of the DNN decision classification model, ultimately enhancing the model’s detection accuracy and generalization ability for minority-class attacks. This section focuses on elaborating the design logic of MDCCGAN from a theoretical perspective, centering on the inherent drawbacks of traditional CGANs in minority-class data generation tasks, clarifying the targeted improvement directions and technical measures of MDCCGAN, and detailing its network architecture design and model training mechanism.

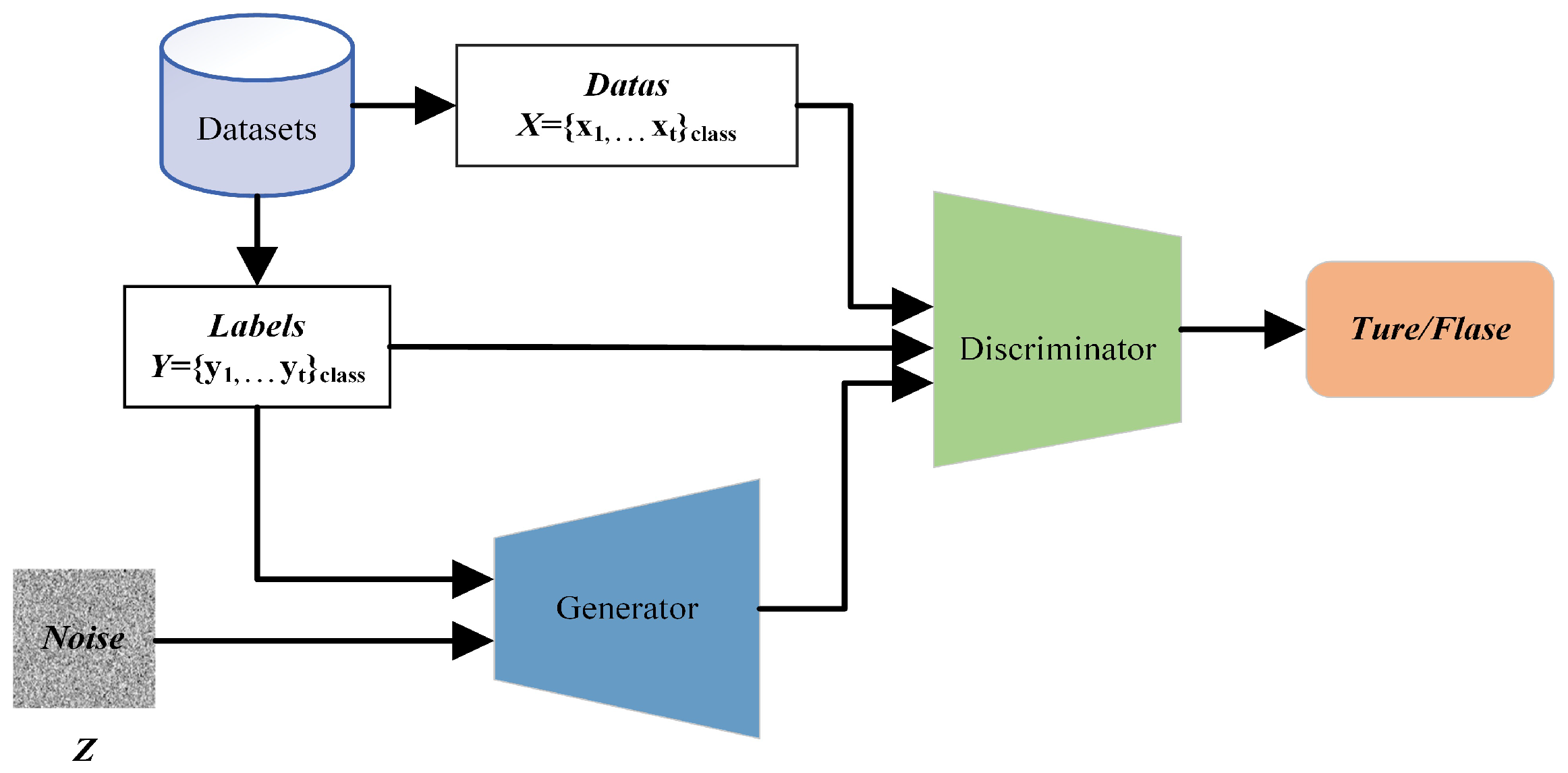

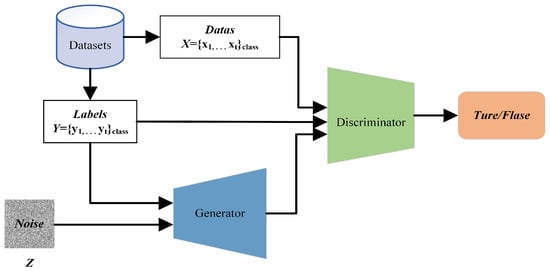

Generative adversarial networks enable the generator and discriminator to learn real data distributions through adversarial training. However, due to the lack of explicit conditional constraint mechanisms, their generation process is uncontrollable, failing to produce generated samples of specified categories. To address this limitation, a CGAN introduce a class-label-conditioned generation mechanism, embedding the class label vector synchronously into the input layers of both the generator and discriminator to construct the conditional constraint architecture shown in Figure 2.

Figure 2.

Basic architecture of a traditional CGAN.

In the traditional CGAN, both the generator and discriminator adopt deep neural network architectures. They achieve controllable sample generation through a minimax adversarial game mechanism under conditional constraints, with the formula as follows:

where denotes the real sample vector, and represents a random noise vector (typically following a Gaussian distribution or uniform distribution). represents the expectation. represents the output of the discriminator, and represents the output of the generator.

In the traditional CGAN, the generator learns the distribution of real samples, fuses input random noise with label vectors, and generates synthetic samples corresponding to the label information. The discriminator undertakes dual tasks: first, determining whether input samples originate from the real data distribution or the synthetic sample distribution; second, verifying the consistency between samples and class label information. Only when the input sample is real data and matches the label will the discriminator give a score close to 1; otherwise, the score approaches 0. Through adversarial training, the generator and discriminator iteratively optimize until can sufficiently fit real samples, making it impossible for to distinguish the sample source. At this point, outputs 0.5, indicating a 50% probability that the input sample is either real or synthetic, and there is no room for improvement for either side, meaning that a Nash equilibrium state has been reached.

By introducing class label information into both the generator and discriminator, a traditional CGAN effectively overcome the limitation of GANs that makes it difficult to generate samples of specified classes, thereby providing a feasible approach for the targeted generation of minority-class attack samples. However, the direct application of traditional CGAN still has unavoidable inherent drawbacks, which restrict their application effectiveness in addressing class imbalance problems. Specifically, these drawbacks are as follows:

(1) In the traditional CGAN, the discriminator’s loss function can be transformed into minimizing the Jensen–Shannon (JS) divergence between the real data distribution and the generated distribution . The JS divergence is introduced to measure the difference between the distributions of real and synthetic samples; the closer the two distributions, the smaller the JS divergence. Derived from the Kullback–Leibler (KL) divergence, the JS divergence is mathematically defined as:

where denotes the Kullback–Leibler divergence. represents the distribution of real samples, and denotes the distribution of generated samples.

During the initial training phase, the distribution of samples generated by the generator may deviate significantly from the real sample distribution. In this case, the discriminator is likely to assign a probability near 0 to the event that generated samples belonging to the real distribution, while assigning a non-zero probability to those belonging to the generated distribution. That is, when and , where denotes the probability that the discriminator assigns to the sample being from the real space, and from the synthetic space, the JS divergence converges to a constant value, expressed as:

This situation leads to the vanishing of the update gradient for the generator .

(2) Due to the architectural characteristics of a traditional CGAN, in the initial training phase, the classification capability of the discriminator is not yet mature. This enables the generator to exploit a certain weakness, namely, learning to generate deceptive samples that meet specific conditions, leading the discriminator to incorrectly classify them as high-scoring samples despite significant deviations between these deceptive samples and the actual data distribution. Once the generator masters this strategy of making fake samples fool the discriminator, it will continuously generate samples of the same type to deceive the discriminator during adversarial training, ultimately causing the model to fall into model collapse.

(3) In the traditional CGAN, the discriminator must perform two critical tasks simultaneously: verifying sample authenticity and ensuring category consistency. This means its gradient update direction is determined by both sample quality (authenticity) and category alignment (label accuracy). The generator’s gradient, however, relies entirely on the discriminator’s feedback. When the discriminator assigns low scores to generated samples, a key challenge emerges: the generator struggles to distinguish whether the low scores result from poor sample quality or misaligned category labels, making it difficult to optimize gradient directions for both objectives simultaneously.

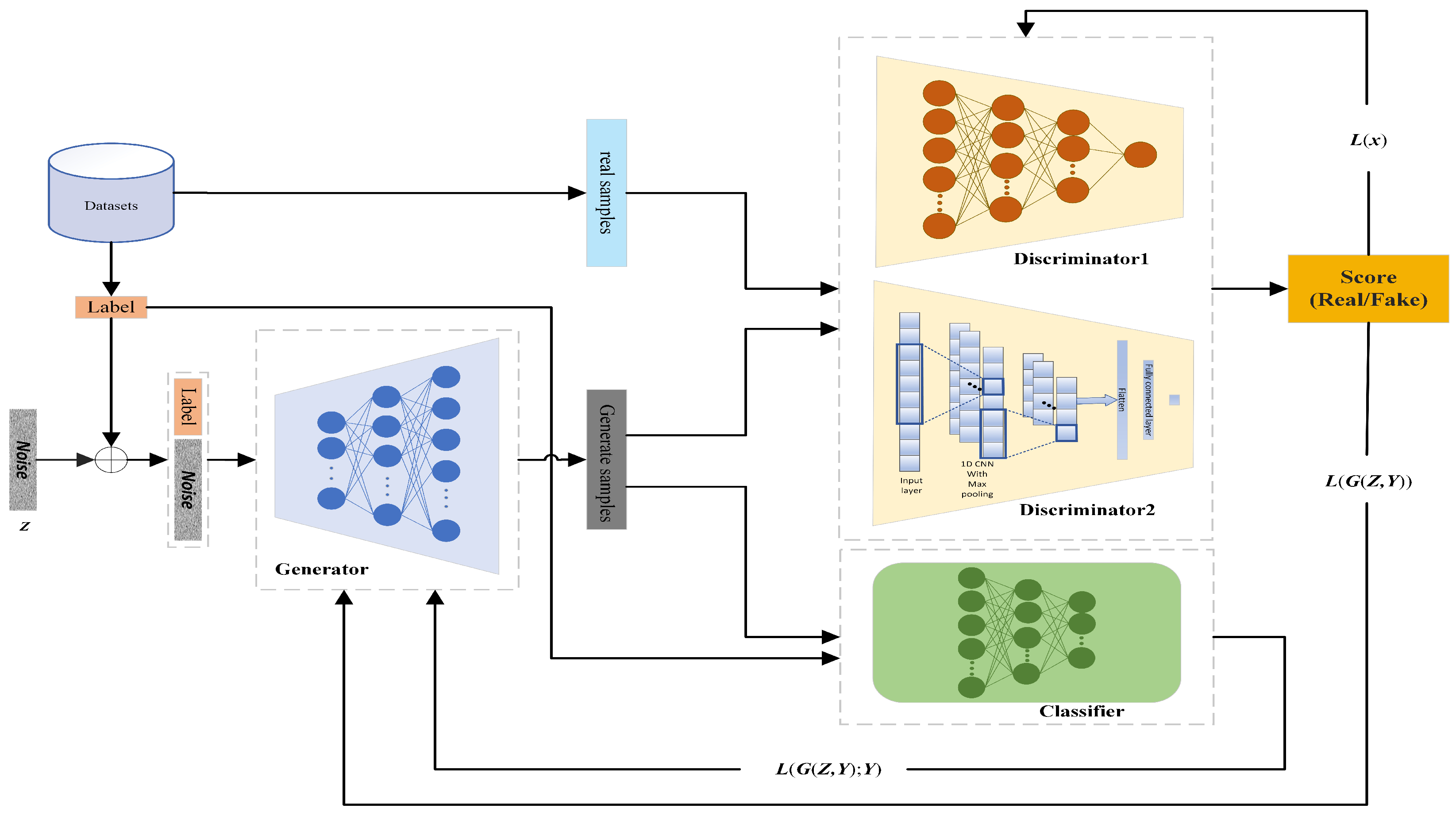

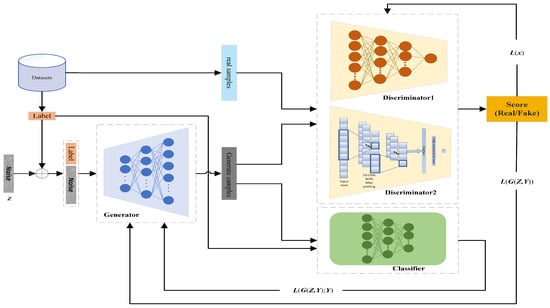

In view of the aforementioned problems, this paper proposes a MDCCGAN model, whose network architecture is shown in Figure 3 and consists of a generator , an independent classifier , and two heterogeneous discriminators and . Figure 3 illustrates the intuitive workflow of the proposed MDCCGAN. The process begins with the generator receiving a joint input of a random noise vector (z) and a specific target label (y). The intuition is that the generator acts as a high-dimensional mapping function, translating this noise into a synthetic feature vector. This synthetic vector is then simultaneously evaluated by three independent modules. and check if the overall structure resembles real traffic, and classifier C strictly verifies if the generated vector truly possesses the label characteristics. This collaborative architecture forces the synthetic sample to be both realistic and categorically accurate. Consistent with the traditional CGAN, the generator, classifier and discriminators of the MDCCGAN are all built based on deep neural networks, and the two discriminators are designed with differentiated network architectures: discriminator adopts a fully connected layer neural network architecture, while discriminator is constructed based on a one-dimensional convolutional neural network. This heterogeneous design requires the generator to simultaneously learn the optimization strategies to fool the two discriminators with distinct feature extraction mechanisms and network architectures during the adversarial training process. Moreover, the probability that the generator can fool the two heterogeneous discriminators at the same time is much lower than that of fooling the single discriminator in the traditional CGAN, and this design artificially increases the difficulty for the generator to generate samples that conform to the real data distribution. This constraint compels the generator to abandon the optimization “shortcut” of exploiting vulnerabilities in a specific network structure but instead to fully and deeply learn the real distribution laws of minority-class samples in the high dimensional feature space. Relying on the above-mentioned multi-module collaborative constraint mechanism, this architecture can effectively alleviate the model-collapse problem in the traditional CGAN caused by the generator exploiting vulnerabilities in the discriminator architecture, which not only improves the stability of model training but also significantly enhances the diversity and feature representativeness of the finally generated samples.

Figure 3.

Architecture of MDCCGAN.

To ensure the reproducibility of the proposed model, the precise network specifications of the four core components within the MDCCGAN (generator, discriminator , discriminator , and classifier) are detailed in Table 2. In this architecture, a leaky ReLU is predominantly used in the discriminators to prevent the “dying ReLU” problem and ensure stable gradient flow. Dropout layers are incorporated to mitigate overfitting. For discriminator , the 1D-CNN extracts local spatial patterns from the sequence of feature inputs through stacked 1D convolutional layers and max-pooling operations. Batch normalization is applied in the generator to stabilize the adversarial training process.

Table 2.

Detailed network specifications of the MDCCGAN components.

The generator of our MDCCGAN follows the “noise + label” conditional input mode of a traditional CGAN. The generator precisely learns the feature distribution of real minority-class samples, and based on the input label vector and random noise, generates synthetic samples corresponding to the target label, ensuring the category orientation of the generated samples. The core differences from a traditional CGAN lie in the design of the multi-discriminator architecture and the decoupling mechanism of classification tasks. The discriminator of a traditional CGAN must undertake the dual tasks of “sample authenticity judgment” and “class consistency verification” simultaneously, which is prone to cause mutual interference between the two tasks and reduce the quality of generated samples and the accuracy of class matching. The MDCCGAN achieves this by employing a multi-discriminator design, enabling the generator to simultaneously acquire the optimization strategies for probabilities that can deceive the discriminators of two different network architectures. This probability is much lower than that of deceiving a single discriminator, thereby effectively alleviating the model-collapse problem caused by the generator exploiting the vulnerabilities of the discriminators. In contrast, the MDCCGAN adopts a multi-discriminator design, enabling the generator to simultaneously acquire the probability of optimizing strategies that can deceive the discriminators of two different network architectures. This probability is much lower than that of deceiving a single discriminator, thereby effectively alleviating the model-collapse problem caused by the generator capturing the vulnerabilities of the discriminators. And the embedded independent classifier is responsible for determining the class consistency between the generated samples and the input labels. By decoupling the classification loss from the discriminator, it clarifies the gradient update direction of the generator, thereby improving the generation quality of samples of the specified class. This scheme not only solves the problem that the generator in the traditional CGAN may generate deceptive samples but also enables the generator to learn the sample distribution features more accurately. This architectural design and function decoupling strategy not only simplifies the learning objective of a single module and improves the stability of adversarial training, but also strengthens the feature consistency between the generated samples and the target class through the exclusive optimization of the classifier, providing a guarantee for the subsequent generation of high-quality minority-class samples.

Compared with the two-player zero-sum game framework of the traditional CGAN, the multi-player game adopted by the MDCCGAN in this paper, consisting of a generator , two discriminators , and an independent classifier , reconstructs the model’s convergence mechanism and Nash equilibrium characteristics from the perspective of game theory. The two-player zero-sum game of the traditional CGAN relies on the antagonistic optimization between the generator and the discriminator, which is prone to gradient vanishing or model collapse due to a single gradient signal, and its theoretical Nash equilibrium point is extremely difficult to converge to in the high-dimensional and sparse traffic data of the industrial Internet. Through a hybrid structure of collaborative confrontation by the dual discriminators and constraint guidance by the classifier, the multi-player game of the MDCCGAN decouples the authenticity discrimination task of a single discriminator into multi-dimensional feature fitting by the dual discriminators: is responsible for capturing global features and for capturing temporal local features, while an independent classifier is introduced to provide categorical consistency constraints. This design enables the generator to obtain richer gradient signals, and its convergence direction is confined to a feasible region of high fidelity and accurate categorization, leading to a significant improvement in training stability. Theoretically, its Nash equilibrium point needs to satisfy distribution equilibrium, categorical equilibrium and collaborative equilibrium simultaneously, forming a robust optimal solution under multiple constraints, rather than the zero-sum antagonistic equilibrium of the traditional CGAN.

To address the gradient-vanishing issue in the traditional CGAN caused by the discriminator’s use of the JS divergence, the MDCCGAN model adopts the Wasserstein distance as the core metric to replace the Jensen–Shannon (JS) divergence of the traditional CGAN. Instead of directly performing a formal rewriting of the Wasserstein distance formula, its discriminator loss function transforms the calculation of the Wasserstein distance into the optimization objective of the discriminator by virtue of the duality theory. The original Wasserstein distance formula is as follows:

In the formula: inf denotes the infimum (the minimum cost of transforming the real distribution into the generated distribution ), represents the set of all possible joint distributions between the real distribution and the generated distribution , and signifies the distance between the real sample x and the generated sample .

In the original definition, refers to an infinite dimensional set of joint distributions, which cannot be directly calculated or optimized and thus cannot be directly used as a loss function to guide model training. This constitutes the core reason for the introduction of the duality theory of the Wasserstein distance in this paper. According to the Kantorovich–Rubinstein duality theorem, when and are probability distributions defined on a compact metric space—in this paper, the normalized network traffic feature space where feature values are compressed into the range [0, 1]—satisfying compactness, and is the 1-norm, the Wasserstein-1 distance can be transformed into its dual form, with the formula given as follows:

where sup denotes the supremum, to find a function f that satisfies the constraints to maximize the difference in the parentheses; f represents a continuous real-valued function mapping from the sample feature space to the real number field, which is embodied by the discriminator in this paper, = , and the discriminator is a neural network that fits this function; means that f satisfies the Lipschitz continuity constraint (with the Lipschitz constant = 1), which is a necessary condition for dual transformation and aims to prevent the divergence of the difference caused by the unboundedness of the function . In this paper, the network traffic features are high-dimensional continuous features, and the 2-norm is adopted to measure the sample distance. Thus, the Lipschitz constant is generalized to , and the constraint is implemented through weight clipping or gradient penalty. The dual form can be extended to an engineering optimizable form as follows:

In this paper, the MDCCGAN is designed with two heterogeneous discriminators and , which share the same loss function form derived directly from the dual form of the Wasserstein distance. The core logic is to define the optimization objective of the discriminators as maximizing the dual form of the Wasserstein distance, and then derive the loss function for minimization. It can be inferred from the dual form of the Wasserstein distance that is positively correlated with . The core task of the discriminators is to accurately distinguish real samples from generated samples, i.e., to maximize the expected difference between the outputs of the discriminator for real and generated samples. Thus, the optimization objective of the discriminator D is given by:

The core of model training in deep learning is to minimize the loss function. Therefore, the maximization objective of the discriminator is transformed into a minimization loss function, that is, let the discriminator loss ; then:

To make the physical meaning of the loss function more intuitive and facilitate its implementation in engineering applications, this paper reverses the sign of the loss function and ultimately obtains the discriminator’s loss function:

The dual transformation of the Wasserstein distance relies on the Lipschitz continuity constraint, which is implemented via the weight clipping method in this paper. The specific operation is as follows: after each round of parameter update for the discriminators and , all their network parameters are clipped to a fixed interval [−, ], where = 0.01 was adopted in this study. This value is the standard recommended in the original WGAN paper, which performs stably in most generation tasks and ensures that the output function of the discriminators satisfies the Lipschitz continuity condition. This operation is an essential step connecting the theoretical Wasserstein distance with the engineered loss function. If this step is omitted, the dual transformation no longer holds, the discriminator loss fails to truly reflect the distance between distributions, and the gradient-vanishing problem existing in the traditional CGAN still arises.

denotes the cross-entropy classification loss of the independent classifier C, with its calculation form given as follows:

where represents the probability predicted by the classifier that the generated sample belongs to the target real class . Based on the classifier’s category judgment results for generated samples, the feature consistency between generated samples and the target attack categories is constrained. This ensures that the generated samples do not fit real samples indiscriminately but accurately generate samples of the specified minority-class attacks, thus solving the problem of category matching deviation caused by the single discriminator undertaking multiple tasks in traditional CGANs.

In this paper, the construction of the generator loss function for the MDCCGAN involves the weighted fusion of the adversarial loss of the discriminators and the categorical classification loss of the classifier, ultimately forming a loss function form adapted to the generation of minority-class attack samples on the industrial Internet. The core training objectives of the generator consist of two dimensions: first, to make the generated samples approximate the real minority-class attack samples in terms of feature distribution (adversarial fitting); second, to make the generated samples accurately match the label of the target attack category (categorical consistency). Based on this, the generator loss function was defined as:

Incorporating the classifier loss as a positive term into the generator loss function, instead of a negative term or a weighted penalty term, is a design based on dual considerations of the training optimization direction of the generator and the task positioning of the independent classifier. From the perspective of the optimization direction, the minimization objective of the classifier loss is fully consistent with the category constraint objective of the generator. The core task of the independent classifier C is to accurately determine whether a generated sample matches the target class , and the value of its cross-entropy loss is negatively correlated with the category matching degree of the generated sample. The category constraint objective of the generator is to maximize the category matching degree of the generated samples, which corresponds to minimizing the classifier loss , while the overall training objective of the generator is to minimize the total loss . Therefore, after incorporating as a positive term into the total loss, the generator naturally minimizes synchronously in the process of minimizing , thereby achieving the objective of category consistency constraint indirectly through the optimization of the total loss. This design eliminates the need for the additional setting of penalty coefficients or designing reverse optimization logic, and ensures the uniqueness and simplicity of the model’s optimization direction. From the perspective of task decoupling, incorporating into the generator loss as a positive term enables the collaborative optimization of the two objectives (adversarial fitting and category constraint) and effectively avoids gradient conflict. A core innovation of the MDCCGAN proposed in this paper is the decoupling of the dual tasks of “authenticity discrimination + category judgment” undertaken by a single discriminator in the traditional CGAN, where independent discriminators are designed to be solely responsible for adversarial fitting and an independent classifier for category judgment. The key essence of this decoupling design is to realize the independent transmission and collaborative optimization of the gradient signals of the two tasks, and incorporating into the generator loss as a positive term is the core means to achieve this design objective. After fusing the adversarial loss and the classification loss in the form of an additive positive term, the total gradient of the generator is the direct sum of the gradients of the two subtasks. This approach neither causes gradient cancellation or conflict due to the superposition of negative terms, nor leads to the dominance of the gradient of a single objective due to the introduction of weighted terms, thus truly achieving the balanced optimization of the two training objectives. It fundamentally solves the problems of low quality of generated samples and poor category matching in the traditional CGAN caused by ambiguous gradient signals. From the perspective of model training stability, incorporating into the generator loss as a positive term imposes a hard constraint on the generator for categorical consistency, which can effectively avoid model collapse and the generation of deceptive samples. In the traditional CGAN, a single discriminator undertakes dual tasks simultaneously, so the generator can easily fool the discriminator by learning deceptive features, which in turn leads to model collapse or a severe deviation of generated samples from the target class. In this paper, incorporating into the generator loss function as a positive term sets a hard constraint for the generator on categorical consistency: if the generator attempts to generate deceptive samples to evade the discriminator’s detection, the categorical features of the generated samples will deviate from the target class . In this case, will surge sharply, directly causing a rise in the generator’s total loss , and such opportunistic optimization directions of the generator will be suppressed directly. This design of positive term incorporation directly avoids the opportunistic optimization behaviors of the generator from the perspective of loss optimization, which not only ensures the stability of model training but also improves the quality and categorical accuracy of generated samples simultaneously.

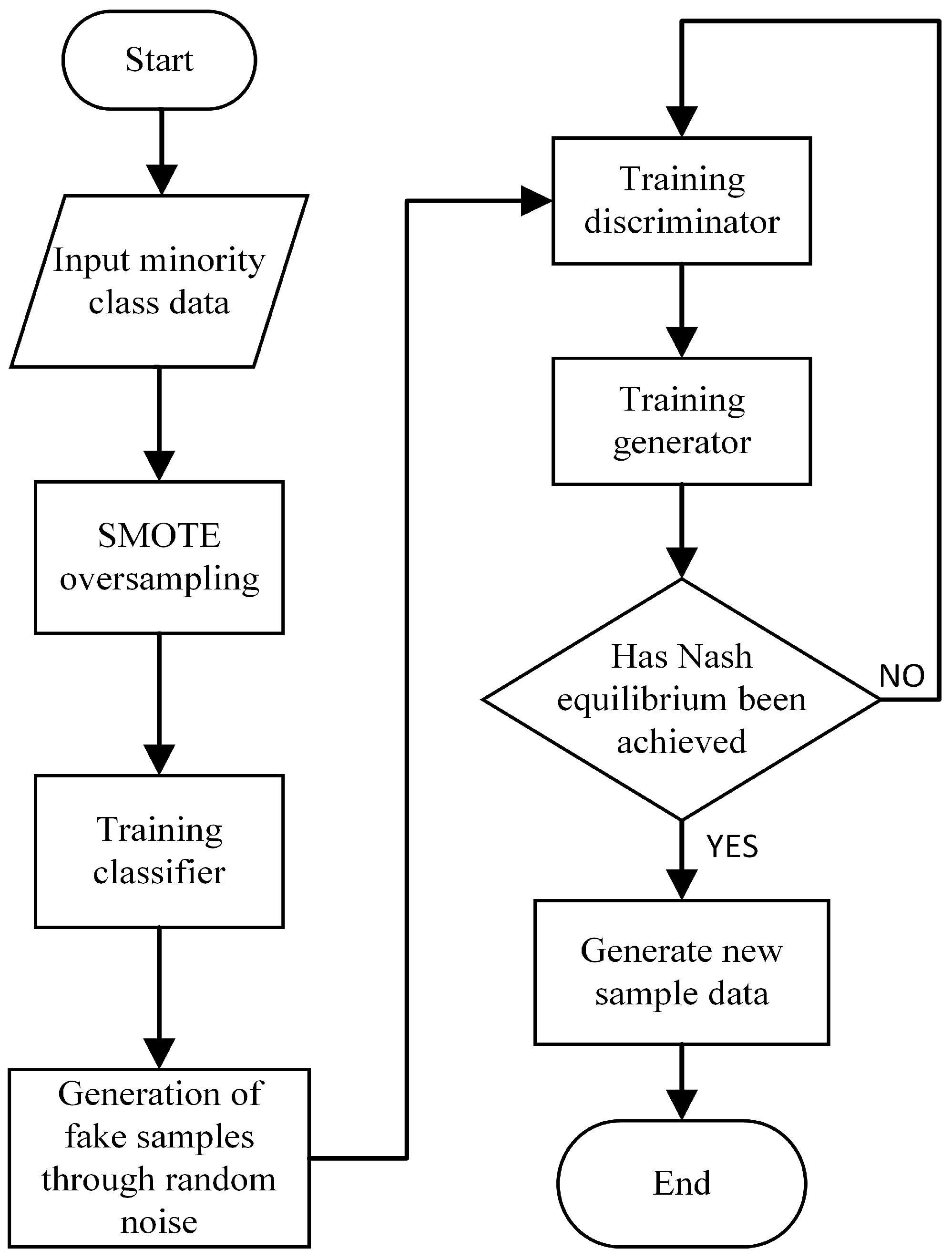

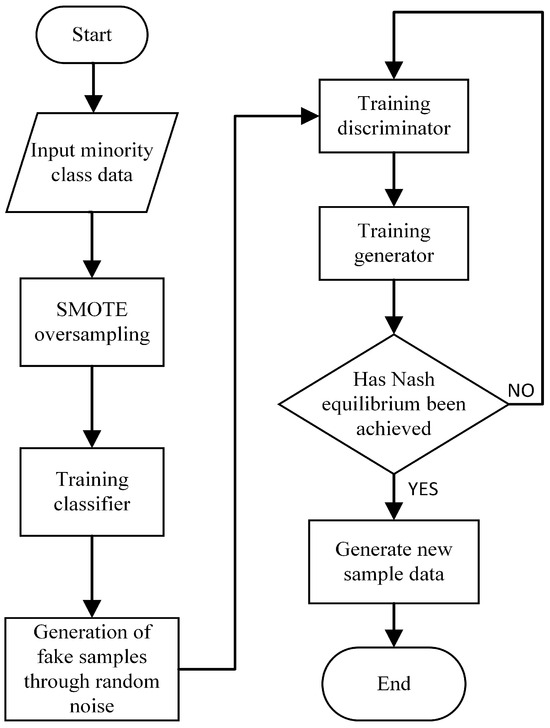

The training process of the proposed MDCCGAN model for generating critical minority-class samples is illustrated in Figure 4, and its training procedure is specified in Algorithm 1 as follows. The specific training process of the model is as follows:

Figure 4.

Training process of MDCCGAN.

- Step 1:

- During the training phase of the MDCCGAN, key minority-class attack samples are first filtered from the preprocessed dataset. Due to the severe imbalance in the distribution of the key minority-class samples that are selected, the original sample size is insufficient to enable the independent classifier to learn stable category features, and it is even unable to provide the generator with an accurate direction for updating the category gradient. Therefore, the SMOTE oversampling method is first employed to augment these samples, and the augmented minority-class samples are then fed into the independent classifier for training, enabling the classifier to fully learn the class distribution characteristics of the key minority-class samples. It is important to note that the core task of this classifier is to determine the class consistency between generated samples and input labels, and its performance relies on the precise fitting of the conditional distribution of real minority-class samples. Unlike conventional classification tasks that aim to pursue generalization and avoid overfitting, the tighter the classifier fits the real minority-class samples within a reasonable range, the more fully it learns the features of minority-class samples, thereby enhancing its ability to judge the class consistency of generated samples. Thus, the classifier in this model does not suffer from the traditional “overfitting flaw”. Augmenting samples via SMOTE oversampling can, to a certain extent, ensure that the classifier comprehensively learns the distribution characteristics of various types of samples in the key minority class, laying a foundation for improving the accuracy of class consistency judgment.

- Step 2:

- During the training phase of the discriminators, the generator parameters are first fixed, and the synthetic samples generated by the generator and the real minority-class samples are input into discriminator and , respectively. It should be emphasized that the loss function of the discriminators in this model is constructed based on the Wasserstein distance, whose core function is to accurately measure the difference between the real sample distribution and the synthetic sample distribution. At this stage, the training objective of the discriminators is to maximize this distribution distance, namely, to enhance their ability to distinguish between real samples and synthetic samples. During the training process, the loss functions and corresponding to discriminators and are minimized through the gradient descent algorithm and Back Propagation algorithm, thereby completing the iterative update of the network parameters of the dual discriminators.

| Algorithm 1 Training algorithm of MDCCGAN |

| Input: training dataset; Output: expanded dataset; |

|

- Step 3:

- During the training phase of the generator, the parameters of the trained discriminators are first fixed; meanwhile, the parameters of the independent classifier have been optimized and fixed in the previous Step 1. The loss function of the generator consists of two components: one is the Wasserstein distance loss fed back by the discriminators, and the other is the class constraint loss provided by the classifier. The specific training process is as follows: the random noise vector is concatenated with the target class label vector and input into the generator G to generate synthetic samples of the target class; subsequently, the synthetic samples are input into discriminators and respectively to calculate the Wasserstein distance loss, and the cross-entropy loss between the generated samples and the input labels is calculated through the independent classifier C; the core training objective of the generator is to minimize the total generation loss , where is defined as the weighted sum of the negative value of the discriminator loss and the classification loss, and the iterative update of the generator network parameters is completed through the gradient descent algorithm.

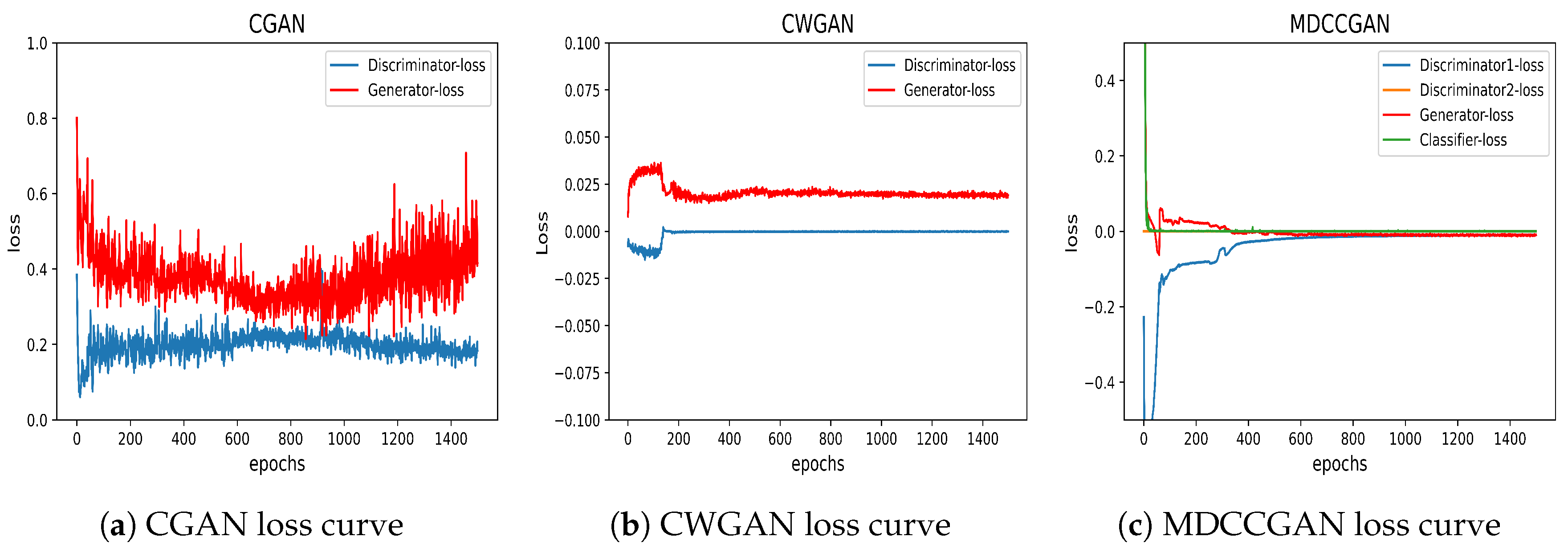

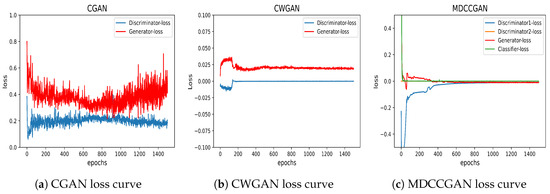

- Step 4:

- Alternately execute the discriminator training Step 2 for n times and the generator training Step 3 for m times. Typically, is set to ensure that the discriminators are fully optimized first. After multiple rounds of iterative training, the discriminator loss and generator loss gradually stabilizes and converges, and the classifier loss also gradually decreases to a convergent state. At this point, the entire model reaches the Nash equilibrium state, and the generator can be stably used to synthesize critical minority-class samples, achieving effective augmentation of the imbalanced dataset.

Unlike the traditional CGAN, which uses the JS divergence and inherently suffers from gradient vanishing when distributions do not overlap, the MDCCGAN model fundamentally addresses this issue by introducing the Wasserstein distance and restructuring the training process. During training, the discriminators are preferentially subjected to n iterative training rounds () to enable them to fully learn the discrepancy between real and generated distributions. Since the Wasserstein distance can still provide meaningful gradient signals in non-overlapping distribution regions, the discriminators can effectively transfer this distribution discrepancy information to the generator to guide its parameter updates. This design allows the generator to more accurately capture the distribution characteristics of real samples, thereby significantly improving the generation quality of minority-class samples.

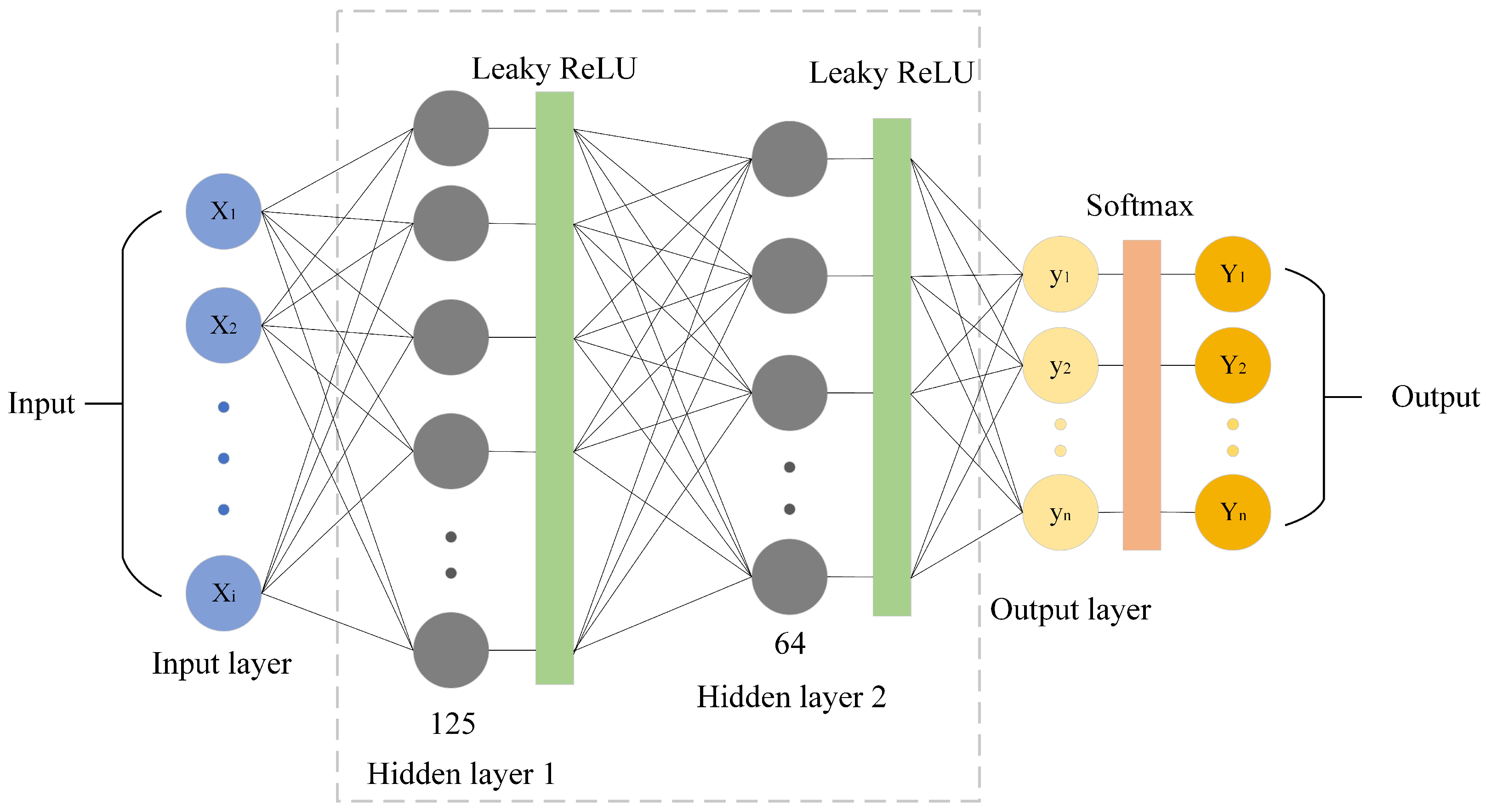

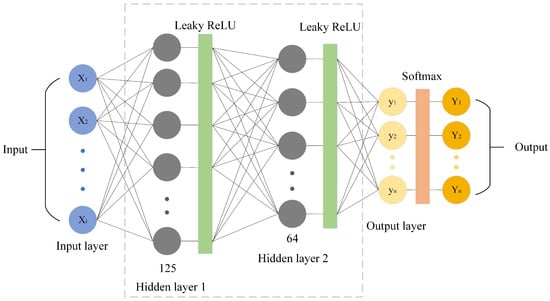

3.3. Classification Decision Module

This paper selected a DNN model as the classification decision model, whose structure is shown in Figure 5. The DNN is composed of three fully connected layers, arranged sequentially as input layer → hidden layer 1 (125 neurons) → hidden layer 2 (64 neurons) → output layer. Notably, the dimensions of the input and output layers are determined by the feature dimensions of the dataset and the number of classification categories, respectively. The hidden layers employ the leaky-ReLU activation function, chosen to mitigate the “dying neuron” issue inherent to the standard ReLU, while the output layer uses the Softmax activation function to produce class probability distributions. To ensure the reproducibility of experiments, the specific training and optimization hyperparameters of the DNN classifier were set as follows. In the training phase, the training dataset balanced by the MDCCGAN was used for model training to improve the detection performance of the model on minority-class critical attacks. Training was stopped when the loss function converged to an extreme value. To prevent overfitting, regularization (weight decay = ) was incorporated into the Adam optimizer (lr = 0.001), and a Dropout layer (rate = 0.2) was added after each hidden layer. Furthermore, since the MDCCGAN module had achieved strict balance among all classes before DNN training, it was unnecessary to set additional class weights, and the standard categorical cross-entropy loss function was directly adopted.

Figure 5.

Architecture of DNN.

The newly balanced training set input was fed to the DNN detection model for training, requiring presetting a reasonable number of training iterations. When the number of model training iterations reached the preset threshold, the training process of the DNN detection model was completed. The test set was used to verify the detection capability of the trained model: by inputting test data, the model outputted detection results, enabling performance evaluation and analysis based on these results and preset evaluation metrics, such as accuracy, recall, F1-score, etc.

4. Experiments and Evaluation

The experiments were conducted on a computing platform with an Intel Core i7-12700 @ 2.10 GHz processor and used the deep learning framework Pytorch 1.12.0 and Sklearn 1.3.2 as the training environment. The hyperparameters of the intrusion detection model and data generation model, including learning rates, input batch sizes, and iteration counts, were determined through combined training. The final values are shown in Table 3.

Table 3.

MDCCGAN model parameters.

4.1. Introduction to the Dataset

This study used NSL-KDD and UNSW-NB15 as benchmark datasets.

4.1.1. NSL-KDD Dataset

The NSL-KDD dataset [34], serving as a standard dataset in the field of intrusion detection, is a reduced version of the KDD-Cup99 dataset [35] which removes missing samples and prunes duplicate and redundant records. Compared with the original KDD-Cup99, NSL-KDD avoids interference from redundant samples, making it more suitable for intrusion detection performance evaluation. The NSL-KDD dataset comprises a designated training set (KDDTrain+) and a test set (KDDTest+), providing standardized dataset partitioning for model training and evaluation. The training set KDDTrain+ contains 125,973 samples, while the test set KDDTest+ includes 22,544 samples. Each sample in NSL-KDD consists of 41 features and one label. The dataset labels consist of one “Normal” class and 39 distinct attack types. These attack types are categorized into four major classes based on the DARPA/CIDF model: DoS (Denial of Service), Probe (Surveillance and Reconnaissance), U2R (User to Root), and R2L (Remote to Local). This classification provides a standardized framework for multiple-category performance evaluation of intrusion detection models. The training set, KDDTrain+, only contains 22 out of the 39 types of attack data, while the remaining 17 types are all in the test set, KDDTest+. These 17 types of attack data that are missing in the training set cover the above four major categories. This partitioning scheme introduces the challenge of detecting unseen attack types during testing, providing a rigorous assessment of the model’s generalization capability of the classification model for various attacks. Table 4 details the distribution of the four major attack categories and the normal category in the NSL-KDD dataset.

Table 4.

Data distribution in NSL-KDD.

Table 4 reveals a severe class imbalance within the NSL-KDD training set: the U2R class contains only 52 samples and the R2L class contains only 995 samples, exhibiting differences exceeding three and one order of magnitude, respectively, compared to the Normal class (67,343 samples). Probe attack samples (11,656) also show a significant deficit. This extreme imbalance induces a gradient update bias in intrusion detection models during training as optimization objectives tend to minimize the classification loss of majority-class samples, thereby suppressing or ignoring the feature learning of minority-class samples. Consequently, intrusion detection models exhibit extremely low detection rates for critical minority-class samples, compromising model performance and posing hidden risks to networks security protection.

4.1.2. UNSW-NB15 Dataset

To verify the model’s generalization ability, this paper conducted supplementary experiments using the UNSW-NB15 [36] intrusion detection dataset. Developed by the Cyber Security Research Centre at the University of New South Wales (Canberra), Australia, this dataset used the IXIA PerfectStom tool to construct a mixed dataset containing modern normal traffic and abnormal traffic. UNSW-NB15 covers one “Normal” class and nine attack categories: Generic, Exploits, Fuzzers, DoS, Reconnaissance, Analysis, Backdoors, Shellcode, and Worms. Their descriptions are as follows:

- Normal: natural transaction data;

- Generic: A technique that works against all block ciphers (with a given block and key size), without consideration about the structure of the block cipher;

- Exploits: The attacker knows of a security problem within an operating system or a piece of software and leverages that knowledge by exploiting the vulnerability;

- Fuzzers: Attempting to cause a program or networks to suspend by feeding it randomly generated data;

- DoS: A malicious attempt to make a server or a networks resource unavailable to users, usually by temporarily interrupting or suspending the services of a host connected to the Internet;

- Reconnaissance: contains all strikes that can simulate attacks that gather information;

- Analysis: It contains different attacks of port scan, spam and html files penetrations;

- Backdoors: A technique in which a system security mechanism is bypassed stealthily to access a computer or its data;

- Shellcode: A small piece of code used as the payload in the exploitation of software vulnerability.

- Worms: A worm replicates itself in order to spread to other computers. Often, it uses a computer networks to spread itself, relying on security failures on the target computer to access it.

The UNSW-NB15 dataset contains 175,341 records in the training set (UNSW-NB15_training) and 82,332 records in the test set (UNSW-NB15_testing), with the specific quantities of each attack type referenced in Table 5. Each record comprises 43 features and two labels (totaling 45 attributes). The “ID” feature serves as a unique identifier and was excluded as non-predictive. The two labels consist of a binary label (“label”) and a multiple categories label (“attack_cat”). As our focus was on multiple categories detection, particularly for minority-attack categories, we retained only the “attack_cat” label. After processing, the dataset contained 42 feature attributes and one multiple-category label attribute. Furthermore, we identified and removed numerous redundant records exhibiting identical feature values but differing attack categories, as such noise can significantly interfere with model training. The training set in the cleaned data contained 101,040 samples, and the test set had 53,946 samples. The overall distribution is shown in Table 6. It should be noted that the removal of redundant records was performed on the original UNSW-NB15_training and UNSW-NB15_test training sets without redividing the dataset. It is evident that the UNSW-NB15, similar to the NSL-KDD, exhibits an extreme class imbalance problem.

Table 5.

Data distribution in UNSW-NB15.

Table 6.

Cleaned data distribution in UNSW-NB15.

4.2. Evaluation Metrics

This paper used four core metrics in multiple categories model evaluation to quantitatively analyze model performance: accuracy, precision, recall, and F1-score. Accuracy, a quantitative indicator of the model’s overall classification performance, is calculated as the ratio of correctly predicted samples to the total number of predicted samples. Precision measures the reliability of predicted positive instances. Recall (also known as sensitivity) evaluates the model’s ability to capture actual positive instances. The F1-score, as the harmonic mean of precision and recall, more comprehensively reflects model performance in class-imbalanced scenarios. It avoids evaluation bias caused by majority-class dominance in single metrics (e.g., accuracy). The calculation formulas for each indicator are as follows:

In Equations (15)–(18), TP (True Positive) denotes the number of samples that are actually positive and correctly predicted as positive. TN (True Negative) denotes the number of samples that are actually negative and correctly predicted as negative. FP (False Positive) denotes the number of samples that are actually negative but mistakenly predicted as positive. FN (False Negative) denotes the number of samples that are actually positive but mistakenly predicted as negative.

4.3. Experiments and Results

This paper used two typical datasets, NSL-KDD and UNSW-NB15, to conduct model evaluation. Cross-dataset validation effectively verified the proposed model’s generalization ability in industrial-Internet scenarios; the differences in traffic features, attack type distributions, and degrees of class imbalance across datasets comprehensively tested the model’s adaptability to diverse industrial network environments, providing more convincing performance references for practical engineering applications.

4.3.1. Results Based on the NSL-KDD Dataset Experiment

To verify the model’s effectiveness, we compared the MDCCGAN against classic oversampling techniques (ROS, SMOTE, ADASYN) for class balancing the NSL-KDD training set. An identical DNN classifier (same architecture and hyperparameters) was then trained on each balanced dataset. Performance was evaluated on the standard test set (KDDTest+). The sample distributions in NSL-KDD after processing by each balancing method (ROS, ROS, SMOTE, ADASYN and MDCCGAN) are shown in Table 7.

Table 7.

Data distributions in NSL-KDD after class balancing.

In the proposed MDCCGAN model, adopting the SMOTE technique to balance the training data of the independent classifier is a critical design choice. To verify the necessity of this method and address the potential concern that SMOTE may introduce artificial noise into the adversarial generation process, we conducted targeted ablation experiments on the NSL-KDD dataset. Specifically, the SMOTE-based pre-training strategy proposed in this paper was compared with three baseline methods: pre-training directly on the original imbalanced data, pre-training using ROS, and pre-training using ADASYN. In terms of the experimental process, we integrated the classifier pre-trained with the aforementioned different sampling strategies into the MDCCGAN model to participate in the subsequent joint training and minority-class sample generation. Subsequently, using the datasets expanded and balanced by each MDCCGAN variant, we trained the downstream DNN intrusion detection classifiers separately and evaluated their performance on an independent test set. The final test performance of the downstream DNN classifiers objectively and intuitively reflects the real sample generation quality of each MDCCGAN variant, and the detailed experimental comparison results are shown in Table 8.

Table 8.

Classifier ablation experiment comparison on NSL-KDD.

As shown in Table 8, when the independent classifier is directly trained on the original imbalanced data, its decision boundary is severely inclined toward the majority class. This bias prevents the classifier from providing accurate category conditional gradients for minority classes (e.g., U2R) to the generator, making it difficult for the generator to learn minority-class label information during adversarial training, resulting in an F1-score of only 12% for U2R. On the other hand, compared with directly using the original dataset, the use of ROS can increase the F1-score of U2R by 15%. However, since ROS merely performs simple physical replication of minority-class samples, it is highly prone to causing the classifier to overfit to duplicate samples. This overfitting transmits rigid gradient guidance signals to the generator, which severely limits the diversity and generalization ability of generated samples, thereby leading to a bottleneck in the overall accuracy of the model that is difficult to further break through. More importantly, when comparing the two advanced synthetic methods, ADASYN performs worse than SMOTE. Because ADASYN adaptively generates more synthetic data for minority samples that are more difficult to learn, usually outliers near dense majority-class regions, this causes the classifier to form an overly aggressive and distorted decision boundary. Therefore, the gradients provided to the generator excessively push the synthetic minority samples into the majority-class regions, thereby increasing the misjudgment rate. In contrast, SMOTE can provide more uniform and stable interpolation, enabling to output smooth and accurate classification guidance. The experimental results also indicate that SMOTE is overall superior to ADASYN in terms of data quality.

Furthermore, it is particularly emphasized that any inherent noise or feature distortion associated with SMOTE is not transmitted to the finally generated samples. In our decoupled architecture, the classifier (C) only provides directional gradients to ensure label consistency. The high-fidelity feature distribution and structural authenticity of the generated samples are completely determined by the dual discriminators ( and ), which are specifically trained using original real network traffic data. Therefore, the dual discriminators act as strict filters to ensure that the generated data are authentic and reliable and completely avoid artifacts caused by SMOTE.

Table 9 demonstrates the detection performance of the DNN model integrated with class balancing techniques for different attack categories. The data indicate that in experiments with the original unbalanced dataset, the DNN intrusion detection model exhibited significant defects in detecting minority-class attacks: the recall rate for R2L attacks was only 6%, while the precision, recall, and F1-score for U2R attacks were all zero. This extreme result demonstrates that when the U2R attack sample size is only 52 (accounting for 0.04% of total samples), the model completely fails to capture the feature patterns of that class, falling into a state of feature learning failure.

Table 9.

Class balancing technique comparison on NSL-KDD.