Abstract

There are three principal novelties in the present investigation. First, Cohen–Grossberg-type neural networks are considered for the first time with the most general delay and advanced piecewise constant arguments. Second, the model is alpha unpredictable with respect to its electrical inputs and is studied under conditions that yield alpha unpredictable and Poisson stable outputs. Thus, the phenomenon of ultra Poincaré chaos, which can be indicated through the analysis of a single motion, is now confirmed for a highly sophisticated neural network. Third, the method of pseudo-quasilinear reduction, in its most effective form, is extended to strong nonlinearities with time switching. The complexity of the discussed model makes it universal and useful for various specific cases. Appropriate examples with simulations that support the theoretical results are provided.

Keywords:

Cohen–Grossberg type neural networks; alpha unpredictable neural networks; Poisson stable piecewise constant argument; method of pseudo-quasilinear reduction; method of included intervals; ultra Poincaré chaos; alpha unpredictable oscillation

MSC:

34K23

1. Introduction

In this paper, we examine a specific class of recurrent neural networks known as the Cohen–Grossberg type, which was introduced by M. Cohen and S. Grossberg in 1983 [1]. These networks have become a foundational model widely applied in fields such as signal processing and optimization. The Cohen–Grossberg model is particularly notable for its stability properties, which arise from the characteristics of the neuron-like components it represents. Significant advancements in this model include modifications to accommodate time delays, impulses, and nonlinear activation functions, thereby greatly enhancing its applicability to complex scenarios. This study is the first to explore Cohen–Grossberg-type neural networks incorporating the most general delay and an advanced piecewise constant argument. The extension further broadens the model’s applicability, allowing for a more comprehensive analysis of complex dynamical behaviors in neural networks.

Neural networks with piecewise constant arguments have become an important tool for modeling systems exhibiting continuous and discontinuous dynamics. These models provide a simplified yet effective way to represent complex systems, reducing computational effort while maintaining practical relevance to real-world applications such as control systems, biological processes, and signal processing. Differential equations are essential tools for understanding how systems change over time, and equations with piecewise constant arguments have become an important research focus in recent years as researchers have developed new approaches to handle systems that combine continuous and discrete behaviors.

The fundamental ideas behind differential equations with generalized piecewise constant arguments were developed in [2,3]. They were first introduced at the Conference on Differential and Difference Equations at the Florida Institute of Technology, 1–5 August 2005, Melbourne, Florida [4]. These equations have attracted significant attention from multiple disciplines because of their theoretical importance and broad applications in mathematics, biology, engineering, electronics, control theory, and neural networks. They are particularly remarkable as they combine elements of both continuous and discrete dynamical systems [5,6,7,8,9].

A significant aspect of neural network research is the analysis of recurrent oscillations, which include periodic and almost periodic motions. Poisson stable motions are the most complex type of oscillations, and there are relatively few results in this area. In this study, we propose examining a subclass of Poisson stable dynamics that are alpha unpredictable [10,11]. This novel concept simplifies the analysis of chaotic systems: traditional methods for verifying the existence and stability of recurrent motions, such as periodic and almost periodic motions, can be applied to complex dynamics. The principles based on alpha unpredictability make the concept convenient for the research of both recurrent motions, in the line that continues from periodicity through almost periodicity, and chaos [10,11]. Alpha unpredictability was previously known as unpredictability [12] and is strongly associated with ultra Poincaré chaos [11] (formerly Poincaré chaos [12]). Ultra Poincaré chaos is an alternative to the types of chaos, such as Li–Yorke and Devaney chaos, that are based on Lorenz sensitivity.

Ultra Poincaré chaos [11] is based on the concept of alpha unpredictability, which focuses solely on a single trajectory, while the well-known Lorenz chaos relies on the collective behavior of orbits, including sensitivity, dense cycles, transitivity, proximality, and frequency separation [13,14]. An unpredictable trajectory is naturally positively Poisson stable, and its key feature is the emergence of chaos within the corresponding quasi-minimal set. This concept offers a new perspective in chaos theory while also reconnecting with the core principles of classical dynamics and modern theories of differential and difference equations, emphasizing motion and stability. This novel understanding of motion advances chaos research both theoretically and in practical applications across various scientific and industrial fields. Moreover, it is particularly valuable in neuroscience, where complex dynamics present significant challenges.

It is well established that chaos plays a vital role in neural network applications. In [15,16], the similarity between naturally occurring chaos observed experimentally in the brain’s neural systems and the chaotic behavior of artificial neural networks was examined, emphasizing asymmetry and nonlinearity in a dynamic model. The phenomenon of neural network chaos is closely associated with an improved comprehension of signal and pattern recognition in noisy environments and the processing and transmission of information. The quantity of information transmitted in chaotic dynamic systems was calculated in [17,18]. It was demonstrated that a chaotic neural network possesses the capability for the efficient transmission of any externally received information.

Chaos plays a significant role in cognitive functions related to memory processes in the human brain. Various models of self-organizing hypothetical computers have been proposed, including the synergetic computer [19], resonance neurocomputers [20], the holonic computer [21], and chaotic computing models [22], as well as chaotic information processing and chaotic neural networks [23]. These models attempt to incorporate dynamical system theory to varying degrees, particularly in nonlinear and far-from-equilibrium states of physical systems, as a possible mechanism for explaining how higher animals process information in the brain.

In [24,25], the adaptive synchronization problem of chaotic Cohen–Grossberg neural networks with mixed time delays and discrete delays was extensively studied. These studies focused on developing synchronization schemes that ensure the stability of chaotic neural networks under various delay conditions, providing theoretical proofs and numerical simulations to support their findings. The problem of adaptive synchronization analysis for a class of Cohen–Grossberg neural networks with mixed time delays has been rigorously analyzed using a Lyapunov–Krasovskii functional approach and the invariant principle of functional differential equations. As described in [24], these methods ensure robustness against system uncertainties and external perturbations, making them highly effective for practical applications in neural network synchronization.

Furthermore, when considering chaos synchronization in the case of alpha unpredictable Cohen–Grossberg neural networks, the synchronization mechanism may be based on a specific approach known as delta synchronization of Poincaré chaos [26]. This method provides a framework for analyzing and controlling chaotic dynamics in unpredictable systems by leveraging Poincaré map techniques and delta synchronization principles. The authors of [26] discuss the effectiveness of delta synchronization in scenarios where other synchronization methods may not be effective. They note that generalized synchronization is a well-known and effective tool; however, delta synchronization was shown to be achievable even when generalized synchronization is not present. This finding suggests that delta synchronization is valuable in cases where other conservative synchronization methods fail.

Our studies provide a mathematical justification for chaos and expand the understanding of this phenomenon. In particular, ultra Poincaré chaos is an alternative to Lorenz chaos and demonstrates alpha unpredictability as a characteristic of isolated motion [11]. The practical application of these concepts is especially relevant in neural networks, where mathematical chaos reflects the complexity of the world and has the potential to enhance the study of intellectual activity in the brain. Modern research integrates the theoretical foundations of classical dynamic systems and differential equations with the study of chaos in neural models [10,11,27].

One of the most common types of differential equations with the piecewise constant argument has the following structure [2]:

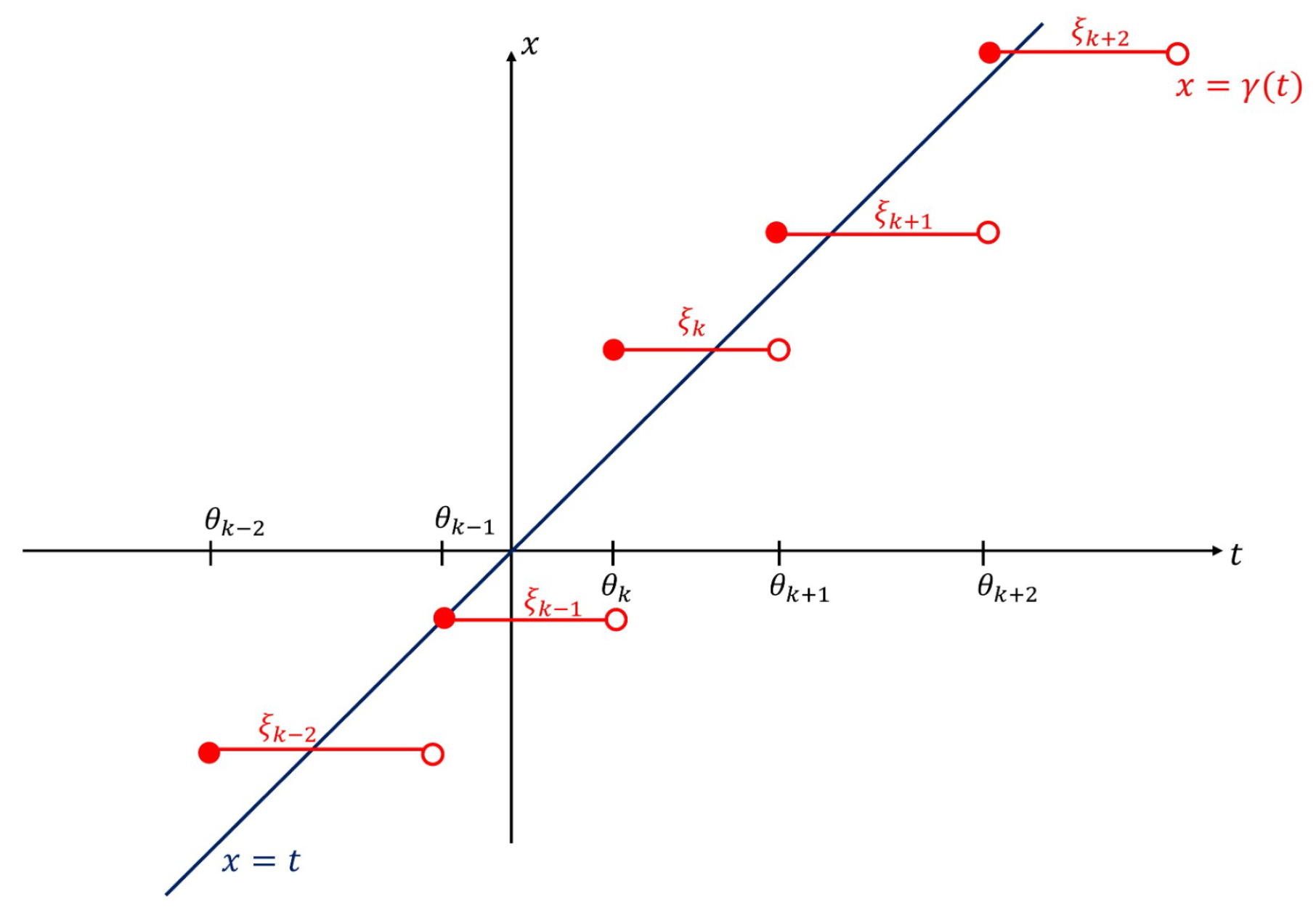

where if where and is a piecewise constant function, and and where are strictly increasing sequences of real numbers, unbounded on the left and on the right such that for all A sketch of the graph of the function is shown in Figure 1.

Figure 1.

The graph of the piecewise constant argument function .
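The construction of a piecewise constant argument can be sketched in a few lines of Python. The names `theta`, `zeta`, and `gamma` are assumed here, following the notation commonly used in the cited literature [2]: the argument function equals a fixed value `zeta[k]` on each interval `[theta[k], theta[k+1])`, with `theta[k] <= zeta[k] <= theta[k+1]`. The sample sequences are hypothetical and cover only a finite window, whereas the sequences in the text are unbounded in both directions.

```python
from bisect import bisect_right

def make_gamma(theta, zeta):
    """Build gamma(t) = zeta[k] for theta[k] <= t < theta[k+1] (finite window)."""
    if len(zeta) != len(theta) - 1:
        raise ValueError("need exactly one zeta value per interval")
    for k, z in enumerate(zeta):
        if not (theta[k] < theta[k + 1] and theta[k] <= z <= theta[k + 1]):
            raise ValueError("require theta[k] <= zeta[k] <= theta[k+1], theta increasing")

    def gamma(t):
        if not (theta[0] <= t < theta[-1]):
            raise ValueError("t lies outside the sampled window")
        k = bisect_right(theta, t) - 1  # index of the interval containing t
        return zeta[k]

    return gamma

# Hypothetical switching moments and argument values.
theta = [0.0, 1.0, 2.5, 3.0]
zeta = [0.4, 2.0, 2.75]
gamma = make_gamma(theta, zeta)

for t in (0.0, 0.9, 1.0, 2.6):
    kind = "advanced" if gamma(t) > t else "delayed" if gamma(t) < t else "neutral"
    print(t, gamma(t), kind)
```

On each interval the argument is advanced for `t < zeta[k]` and delayed for `t > zeta[k]`, which is why such a function is referred to as being of delay-advanced type.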

The development of differential equations with piecewise constant arguments [4], along with subsequent insights from [2,3], has been an important step in understanding models with deviated arguments. Two significant advancements have emerged from this research. First, reducing these systems to equivalent integral equations has proven effective, enabling the application of well-established tools from differential equations. Second, the time intervals where the argument stays constant can be freely chosen. This flexibility allows for better adaptation to specific needs, such as optimization, accuracy, or modeling real-world scenarios in physics and biology, and it makes it possible to study models with natural nonlinearity, which traditional methods for hybrid systems often cannot handle. As a result, this approach enhances our understanding of real-world processes and expands the analytical scope. It is now recognized that these models belong to the broader class of functional differential equations, occupying an intermediate position between ordinary and functional differential equations. This makes them particularly important for the mathematical modeling of phenomena in neuroscience.

To enhance the applicability, a new class of compartmental functions has been recently introduced [10]. Formed by combining Poisson stable or alpha unpredictable functions with periodic or quasi-periodic functions, they are called compartmental Poisson stable or compartmental alpha unpredictable functions. They combine theoretical features of regular and irregular functions, which strongly supports their appearance in real-world problems.

Given the growing interest in neural networks with piecewise constant arguments, this study aims to develop the theoretical framework further and explore new dynamical properties in these models. By integrating concepts such as Poisson stability and alpha unpredictability, we extend previous research [28] and provide a more comprehensive understanding of the interplay between continuous and discrete dynamics in neural networks. Our findings contribute to both the theoretical advancement and the practical application of these models, offering new perspectives on complex dynamical behaviors in neuroscience, control systems, and beyond.

Description of the Model

We investigate the following Cohen–Grossberg neural network:

where , , , corresponds to the state of the ith neuron at time moment t; is a piecewise constant argument function; p is the number of neurons in the network; , ,is an amplification function; and , , are components of the rates with which the neuron self-adjusts or resets its potential when isolated from other neurons and inputs; and , , are the strength of connectivity between cells j and i at moment t; and , , are activation functions; and , , is an external input introduced to cell i from outside the network. Functions and , are continuous, and strengths and as well as inputs , , are alpha unpredictable functions.

A key feature of this model is the set of coefficients that define the rates and the activation functions, which we consider as sums of terms with continuous time and with piecewise constant arguments. Specifically, we add components and to the coefficients and We believe that this approach will allow for a more effective exploitation of models that describe complex biological processes, such as ecological interactions or population dynamics. By incorporating aspects like population growth, reproduction delays, metamorphosis, or other stages of an organism’s life cycle, we aim to better capture the complexities of these processes [29,30,31]. With these additions, we significantly increase the applicability of the model, and in this paper, we apply it to establish the appearance of chaos.

Moreover, the Cohen–Grossberg neural network model we are examining possesses a universal nature, as it can encompass a variety of specific cases by choosing its parameters. For instance, by setting certain parameters such as rates and the activation functions, and , the model simplifies to a standard framework [1,28]. Additionally, if we set and in replace the piecewise constant argument with time delays, the model represents another special case of a generalized argument [32]. In this case, the system captures the dynamics governed by time deviations rather than the original argument structure. For such configurations, the existence and behavior of periodic and almost periodic solutions have been thoroughly investigated in prior research [33,34,35,36,37]. These studies provide a foundation for understanding the diverse solution properties within the broader framework of the Cohen–Grossberg neural network model.

2. Preliminaries

In this section, the basic definitions are provided together with a description of the discontinuous deviated argument, and the reduction to the pseudo-quasilinear model is performed.

First of all, let us start with the classical notations for the natural, integer, and real numbers: , , and , respectively. Within this paper, we utilize the norm where denotes the absolute value, , and .

Definition 1

([38]). A sequence where and , is called Poisson stable provided that it is bounded and there exists a sequence , , of positive integers that satisfies as on bounded intervals of integers.

Definition 2

([38]). A uniformly continuous and bounded function is Poisson stable if there exists a sequence that diverges to infinity such that as uniformly on compact subsets of .

Definition 3

([10]). A uniformly continuous and bounded function is alpha unpredictable if there exist positive numbers , and sequences and , both of which diverge to infinity such that the following hold:

- as uniformly on compact subsets of

- for each and
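For orientation, the two conditions of Definition 3 can be written out in the form usually given for unpredictable functions in the cited literature. The symbols $\psi$, $\epsilon_0$, $\sigma$, $t_n$, and $s_n$ are assumed names for the function, the two positive numbers, and the two divergent sequences; the statement in [10] remains the authoritative one.

$$
\|\psi(t+t_n)-\psi(t)\| \to 0 \quad \text{as } n\to\infty \text{ uniformly on compact subsets of } \mathbb{R},
$$

$$
\|\psi(t+t_n)-\psi(t)\| > \epsilon_0 \quad \text{for each } t\in[s_n-\sigma,\, s_n+\sigma] \text{ and } n\in\mathbb{N}.
$$

The first relation is the convergence (Poisson stability) property along $t_n$, and the second is the separation property on intervals of fixed length $2\sigma$ centered at $s_n$.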

Fix the sequences of real numbers and which are strictly increasing with regard to the indices. Sequences and are unbounded in both directions. Moreover, they satisfy with positive numbers and .

Definitions of the Poisson couple and triple are presented below.

Definition 4

([12]). A couple of sequences and , , is called a Poisson couple if there exists a sequence , , that diverges to infinity such that

uniformly on each bounded interval of integers k.

Definition 5

([12]). A triple of the sequences and , , is called a Poisson triple if there exists a sequence , , of integers that diverges to infinity such that condition (3) is satisfied and

uniformly on each bounded interval of integers k.

2.1. The Delay-Advanced Piecewise Constant Argument

We aim to determine the argument function in (2) by considering its general characteristics, as outlined in [2]. Specifically, we assume that if , . The function is defined over the entire real line and associated with two Poisson sequences and such that the interval satisfies for some positive numbers and applicable to all integer values A prototype of the function is shown in Figure 1.

Alongside and , we fix a sequence , forming a Poisson triple , as per Definition 5. By analyzing for a fixed , we prove that for sufficiently large n, the function takes the form if , where , .

Our goal is now to show that this discontinuous argument function possesses a property known as discontinuous Poisson stability.

Consider the bounded interval with , and choose any positive number such that over this interval. Without loss of generality, we assume and analyze the discontinuity points for within satisfying

It follows that for sufficiently large values n, the condition

holds for all and that

for any , except in cases where t lies between and for a given k. Now, fixing k, in we observe that for a given k, the function satisfies , , and , . For sufficiently large n, the interval is non-empty, and condition (5) holds as a result of (3). Furthermore, from condition (4), it follows that for sufficiently large

for all . Thus, inequalities (5) and (6) are verified.

2.2. Reduction to the Pseudo-Quasilinear System

We will consider a solution, of model (2), which is determined on the real axis, and assume that the solution is bounded, such that , where is a fixed positive number.

To construct an environment for the research, we will use the following notation:

for each .

The following conditions are required:

- (C1)

- Each function , is continuous, and there exist constants and such that for all , and .

- (C2)

- Components and are Lipschitzian: that is, and with positive constants and for all and and .

- (C3)

- Components , , and are alpha unpredictable functions with the common sequences of convergence, and separation, .

- (C4)

- Functions and are Lipschitzian: that is, and if and with positive constants and .

- (C5)

- .

According to , for every , it is possible to determine a function , . Obviously, . Because of the condition , one can obtain that the function is increasing, and the inverse function , , exists and is continuous and differentiable. Moreover, , where is the derivative of function

By the mean value theorem, one can find that

for and and . For this reason, function fulfills the Lipschitz condition:

if and .
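The change of variables behind this reduction can be checked numerically. The sketch below assumes the substitution commonly used for Cohen–Grossberg systems, namely h(u) equal to the integral of 1/a(s) from 0 to u, where a is the amplification function; the sample function `a`, its bounds `A_MIN` and `A_MAX`, and the helper names `h` and `h_inv` are hypothetical and are not taken from the paper. It verifies that h vanishes at zero, that the inverse recovers the original state, and that the inverse is Lipschitz with the upper bound of a as its constant, as the mean value theorem predicts.

```python
import math

A_MIN, A_MAX = 0.5, 1.5  # bounds of the sample amplification function below

def a(s):
    """Hypothetical amplification function with A_MIN <= a(s) <= A_MAX."""
    return 1.0 + 0.5 * math.cos(s)

def h(u, n=2000):
    """Trapezoidal approximation of h(u) = integral from 0 to u of ds / a(s)."""
    if u == 0.0:
        return 0.0
    step = u / n
    total = 0.5 * (1.0 / a(0.0) + 1.0 / a(u))
    for k in range(1, n):
        total += 1.0 / a(k * step)
    return total * step

def h_inv(v, lo=-50.0, hi=50.0, tol=1e-10):
    """Invert the increasing function h by bisection on [lo, hi]."""
    if not (h(lo) <= v <= h(hi)):
        raise ValueError("v is outside the range covered by [lo, hi]")
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        if h(mid) < v:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)

x = 1.3                # a state in the original variable
y = h(x)               # the same state in the new variable
x_back = h_inv(y)      # round trip back to the original variable

# Lipschitz check: |h_inv(v) - h_inv(u)| <= A_MAX * |v - u|.
u, v = 0.2, 0.7
lipschitz_ok = abs(h_inv(v) - h_inv(u)) <= A_MAX * abs(v - u) + 1e-8
print(x, y, x_back, lipschitz_ok)
```

Because 1/a is bounded between 1/A_MAX and 1/A_MIN, h is strictly increasing, which is what makes the bisection in `h_inv` valid.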

Now, new variables,

are proposed, which reduce the highly nonlinear model and admit a pseudo-quasilinear shape.

One can obtain that and . To satisfy the inequality , the motion in the new variables must satisfy the relation , where and .

Consequently, for piecewise constant argument function , it is clear that

and , .

Using (9) and (10) for system (2), we obtain that

where and , Because of the structure of its right-hand side, the last model can be called a pseudo-quasilinear system of differential equations.

A function is considered as a bounded solution of Equation (11) if and only if it satisfies the corresponding integral equation:

with [40].

3. Main Result

Introduce the set of p-dimensional functions , , with the norm such that the following must hold:

- (P1)

- There exists a positive number H that satisfies for all ;

- (P2)

- They are Poisson stable with convergence sequence , .

Suppose that the conditions below hold for every :

- (C6)

- There exist constants , and such that and ;

- (C7)

- There exist positive numbers and such that and for all and ;

- (C8)

- ;

- (C9)

- .

The following notations and lemma are required to prove the main results of this paper.

Denote , where

The proofs for all statements discussed in this work are provided in Appendix A.

Lemma 1.

Assume that conditions (C1)–(C7) hold true. If , , is a motion of model (11), then the inequality given by

is valid.

Define in the operator such that where

Lemma 2.

The operator Π is invariant in .

Lemma 3.

The operator Π is a contraction on .

Theorem 1.

If conditions – are fulfilled, then there exists a unique Poisson stable motion of the network (2).

The following theorem establishes the existence of a unique alpha unpredictable solution for neural networks (2).

Theorem 2.

Under the assumption that conditions (C1)–(C9) hold, the Cohen–Grossberg neural network (2) has a unique alpha unpredictable solution.

Denote and and let be a positive number such that

The requirement is essential for proving exponential stability.

- (C10)

Theorem 3.

Assume that conditions (C1)–(C10) are satisfied. Then, the Poisson stable and alpha unpredictable solutions of the Cohen–Grossberg neural network (2) established in Theorems 1 and 2, respectively, are exponentially stable.

4. An Example

Let us start by creating alpha unpredictable functions. In [11], it was proved that the logistic equation

admits an alpha unpredictable solution if That is, there exist sequences and as and a positive number such that as for each k in the bounded intervals of integers, and for each .

Using the function , if , we construct an integral function, . The function is bounded on . The Poisson stability of the function is proved using the method of included intervals, and the alpha unpredictability is also established as in [10].
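The construction described above can be sketched numerically. The code below is a minimal illustration under stated assumptions, not the authors' simulation: the logistic parameter `MU`, the initial value `X0`, and the decay rate `ALPHA` are hypothetical choices; the piecewise constant function takes the value `psi[i]` on `[i, i+1)`; and the integral is started at zero rather than on the whole real axis. The names `logistic_orbit` and `integral_function` are introduced only for this sketch.

```python
import math

def logistic_orbit(mu, x0, n):
    """Return n iterates of the logistic map x -> mu * x * (1 - x)."""
    xs = [x0]
    for _ in range(n - 1):
        x = xs[-1]
        xs.append(mu * x * (1.0 - x))
    return xs

def integral_function(psi, alpha, steps_per_unit=50):
    """Sample Theta(t) = integral_0^t exp(-alpha * (t - s)) * Omega(s) ds,
    where Omega(s) = psi[i] for s in [i, i + 1).

    Omega is constant on each grid cell, so the update below is exact:
    Theta(t + dt) = exp(-alpha * dt) * Theta(t) + level * (1 - exp(-alpha * dt)) / alpha.
    """
    dt = 1.0 / steps_per_unit
    decay = math.exp(-alpha * dt)
    gain = (1.0 - decay) / alpha
    values = [0.0]
    for level in psi:
        for _ in range(steps_per_unit):
            values.append(decay * values[-1] + gain * level)
    return values

# Hypothetical parameters chosen for illustration only.
MU, X0, ALPHA = 3.9, 0.4, 3.0
psi = logistic_orbit(MU, X0, 200)
theta = integral_function(psi, ALPHA)

print(min(psi), max(psi))          # the orbit stays inside [0, 1]
print(max(theta), 1.0 / ALPHA)     # Theta is bounded by sup|Omega| / alpha
```

Since the orbit takes values in [0, 1], the sampled integral function never exceeds 1/ALPHA, which is the kind of boundedness the text relies on.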

While working on new types of recurrence, we were surprised to discover that, despite extensive literature, there is a lack of numerical examples and simulations for both the functions and solutions of differential equations. Meanwhile, the demands of industries, particularly in fields such as neuroscience, artificial intelligence, and other modern domains, require numerical representations of motions that are already well supported by theoretical frameworks. Our research addresses these challenges comprehensively, as we are the first to construct samples of Poisson stable and alpha unpredictable functions using solutions to the logistic equation.

The following analysis of a neural network confirms the results and illustrates the theorems of this paper.

Consider the following Cohen–Grossberg neural network with a piecewise constant argument:

where . The following functions are used as activations: and . The amplification functions are as follows: and The rates are given by and Neurons are connected through the following measures of connectivity strength: And the external inputs are and .

The piecewise constant argument function is defined by the sequences and , , , which constitute the Poisson triple [10,12].

Calculate and .

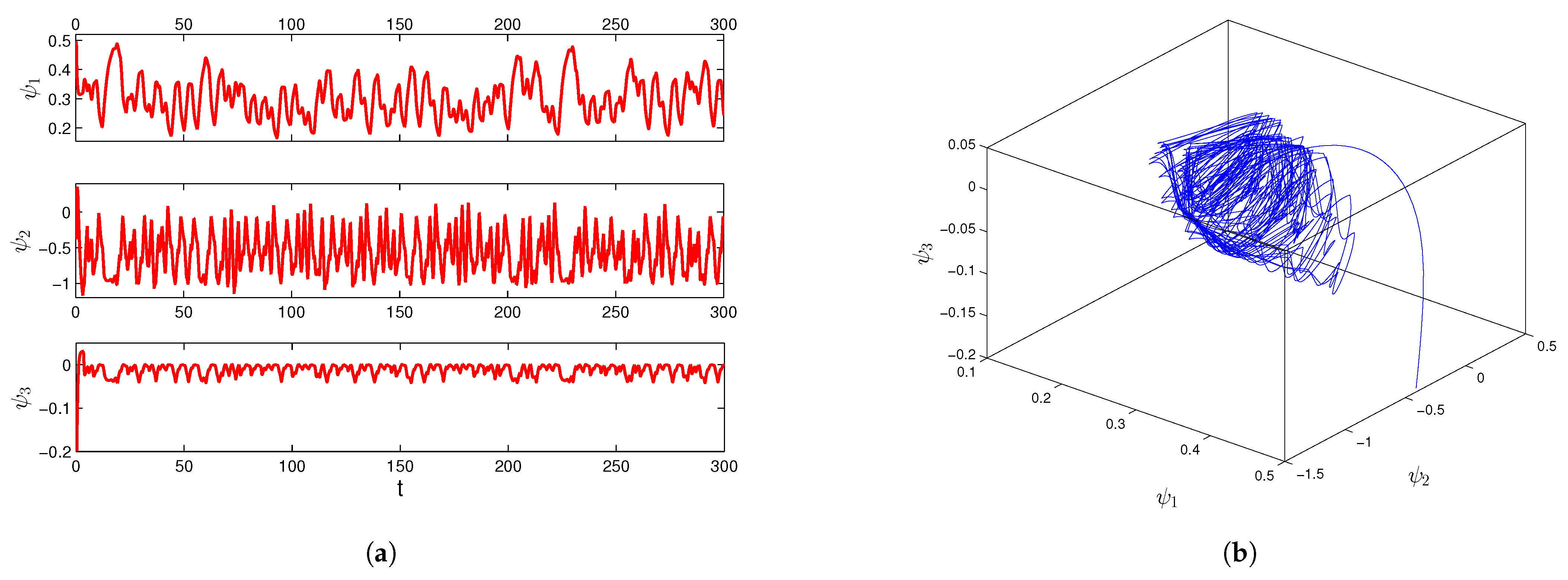

Taking into account that one can find and We have that and such that , and . Thus, we obtain that , , , , and . Functions and satisfy the Lipschitz condition with and and and Conditions (C1)–(C9) hold for the functions and constants described above. Assumption (C10) is valid given that , , and According to Theorems 2 and 3, there exists a unique exponentially stable alpha unpredictable solution, of neural network (15). In Figure 2, coordinates (a) and trajectory (b) of solution which exponentially converges to the alpha unpredictable solution , are shown.

Figure 2.

The coordinates (a) and trajectory (b) of the solution with initial data and .

5. Conclusions

Chaos is a crucial aspect of neural dynamics, influencing cognitive functions such as memory formation, learning, and decision making. In neural networks, chaotic behavior allows for enhanced adaptability, improved pattern recognition, and efficient information processing, closely resembling the dynamics observed in biological brains. The ability to harness chaos in artificial neural networks can lead to the development of more efficient machine learning models.

This study advances the theoretical and applied role of Cohen–Grossberg-type neural networks by introducing a model with the most general delay and an advanced piecewise constant argument. The alpha unpredictability and conditions for Poisson stability are analyzed, confirming the phenomenon of ultra Poincaré chaos in complex neural networks. The model and its recurrence properties pose new challenges in studying neural networks, particularly in proving Poisson stability. Additionally, the system’s complexity is heightened by strong nonlinearities and time-dependent switching, necessitating an extension of the pseudo-quasilinear reduction method.

The theoretical results, supported by numerical simulations, provide valuable insights for practical applications in signal processing, pattern recognition, and synchronization in deterministic and stochastic processes. These findings contribute to the broader understanding of complex neural network behavior and their potential real-world implementations.

Author Contributions

Conceptualization, M.A.; methodology, M.A.; investigation, M.A., Z.N., and R.S.; writing—original draft preparation, R.S.; writing—review and editing, Z.N.; supervision R.S.; software, Z.N. All authors have read and agreed to the published version of the manuscript.

Funding

This research was funded by the Science Committee of the Ministry of Science and Higher Education of the Republic of Kazakhstan (Grant No. AP23487275).

Data Availability Statement

No datasets were generated or analyzed during the current study.

Acknowledgments

The authors wish to express their sincere gratitude to the referees for helpful criticism and valuable suggestions.

Conflicts of Interest

The authors declare no conflicts of interest.

Appendix A. Proofs of the Results

Proof of Lemma 1.

We begin with the consideration of a bounded motion for system (2). It satisfies the following integral equations [40]:

Let us prove that

for all .

First, fix an integer k such that , and then consider two alternative cases: (a) and (b) .

(a) For obtain that

The Gronwall–Bellman Lemma yields that

Moreover,

The last inequality implies relation (A2).
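For reference, the Gronwall–Bellman Lemma used in this step can be stated in its standard integral form. The symbols $u$, $k$, $c$, and the interval $[a,b]$ are generic and are not the paper's notation: if $u$ and $k$ are continuous and nonnegative on $[a,b]$ and $c \ge 0$ is a constant, then

$$
u(t) \le c + \int_a^t k(s)\,u(s)\,ds \quad (a \le t \le b)
\qquad \Longrightarrow \qquad
u(t) \le c\,\exp\!\left(\int_a^t k(s)\,ds\right) \quad (a \le t \le b).
$$

In proofs of this kind, the lemma is typically applied on a single interval between consecutive switching moments, where the piecewise constant argument is fixed.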

Proof of Lemma 2.

Let us denote

We have the following for fixed and :

From the last inequality and condition (C8), we obtain . So, property (P1) is valid for .

Next, let us prove property (P2) for . This requires exhibiting a sequence , , such that for each uniformly over every closed and bounded interval of the real axis. To achieve this, we employ the method of included intervals considered in [10]. Fix an interval where with and a positive real number . To prove the claim, it is sufficient to show that for and large n. Choose numbers and such that

Take n large enough such that , , whenever , and , and , for all , . Then, for , we obtain that

Examine the integral as the sum of its components over the last two intervals and . Using inequalities (A3)–(A5), we obtain that the following estimates are correct for each :

Let us calculate the integral over the interval :

In the following, inequality

where will be used extensively. To evaluate the integral , we use the mean value theorem. According to this theorem, for an exponential function, we have , with and a number between and Additionally, it can be easily shown that relation holds.

Now, let us evaluate the integral using inequality (A6).

Moreover, since the last integral contains a summation with a piecewise constant argument, to evaluate this integral on the interval , we divide this interval into small parts as follows. For a fixed , we assume, without loss of generality, that and . That is, there are exactly r breakpoints in Let the following inequalities

and

be satisfied for the given .

Consider the last integral as follows:

Denote

and

where , and

By condition (6), for , , we have that , Thus, we obtain that

Due to the uniform continuity of , for sufficiently large n and , there exists a corresponding such that if From this, one can conclude that

Moreover, we obtain that

as a consequence of condition (5). Likewise, for the following integral can be estimated:

In this way,

can be obtained.

So,

Proof of Lemma 3.

Let functions and belong to the space . It is true for all that

So, it is true for all that

Consequently, conditions (C6) and (C9) imply that operator is contractive. The lemma is proved. □

Proof of Theorem 1.

Let us first prove that space is complete. Consider a Cauchy sequence in that converges to a limit function on . Let us start with the second condition because the property can be easily checked. Fix a closed and bounded interval . Thus, one can write

It is possible to choose sufficiently large values for k and n so that each term on the right side of inequality (A9) remains smaller than for any given and for all . Inequality (A9) therefore guarantees that uniformly on I. This establishes the completeness of .

By applying Lemmas 2 and 3, the invariance and contractive nature of operator in ensure the existence of a unique point , which is a fixed point of . This point corresponds to a solution of system (11) and satisfies the convergence property. Consequently, function serves as the unique Poisson stable solution of system (11).

Now, consider a function such that According to substitution (9), function is a unique solution of system (2). Let us show that is Poisson stable. Using inequality (8), on a fixed bounded interval we obtain that

for all Therefore, each sequence uniformly converges to as This leads to the conclusion that function represents the unique Poisson stable solution of neural network (2). The theorem is proved. □

Proof of Theorem 2.

According to the previous theorem, it follows that neural network (2) possesses a unique Poisson stable solution, given by . Now, we aim to demonstrate the alpha unpredictability of The first step is to verify that the Poisson stable solution of system (11) fulfills the separation property.

Applying relations

and

one can obtain that

for each

It is possible to find positive numbers and integer values l and k such that the subsequent inequalities hold for all :

Let numbers , and as well as numbers and be fixed. Consider the following two alternatives, (i) and (ii) :

(ii) If it is not difficult to find that (A15) implies the following:

if and Thus, both cases and indicate that solution satisfies the separation property. It follows that qualifies as an alpha unpredictable solution of system (11), as defined in Definition 3, where sequences and along with positive values and are considered.

Next, we demonstrate that the function , which represents a solution of neural network (2), also possesses alpha unpredictability. Utilizing condition (8), we obtain that

for all and This confirms that neural network (2) admits a unique alpha unpredictable solution, as described in Definition 3. The theorem is proved. □

Proof of Theorem 3.

Consider the solution which is proved to be Poisson stable by Theorem 1 and alpha unpredictable by Theorem 2. Since the solution is bounded, it is sufficient to show that it is exponentially stable in order to verify the theorem.

The solution satisfies the following integral equation:

with .

Let be another solution of system (11). Then, for fixed , one can find that

Denote and for all Then, it is true that

Now, let us construct the sequence of successive approximations for the last system considering

Using (A17), we obtain that for each the following inequalities hold:

Assume that for fixed , the following inequality is valid:

By applying the principle of mathematical induction, we can establish that

for every From condition , it follows that as This result confirms that the sequence converges uniformly to the unique solution, of integral equation (A17), satisfying the inequality

Consequently, the solution of system (11) is exponentially stable.

Next, we demonstrate that the solution of neural network (2) also exhibits exponential stability. If represents another solution of system (2), then we obtain

Therefore, the Poisson stable solution of neural network (2) is confirmed to be exponentially stable. □
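The exponential stability argument above can be illustrated numerically on a much simpler equation. The Python sketch below is a toy example under stated assumptions: it uses the scalar equation x'(t) = -a x(t) + b tanh(x(k)) + u_k on each interval [k, k+1), where the retarded argument k = floor(t) is one special case of a piecewise constant argument. The parameters `a = 2`, `b = 0.5`, the inputs `u_k = cos(k)`, and the function names are all hypothetical; none of them is taken from systems (2) or (11).

```python
import math

def step(x_k, u_k, a=2.0, b=0.5, h=1.0):
    """Advance x' = -a*x + b*tanh(x(k)) + u_k exactly over one interval of length h.

    The nonlinear term and the input are frozen at the left endpoint,
    so the equation is linear with constant forcing on the interval.
    """
    decay = math.exp(-a * h)
    forcing = b * math.tanh(x_k) + u_k
    return decay * x_k + (1.0 - decay) / a * forcing

def trajectory(x0, n_steps):
    """Values of the solution at the switching moments 0, 1, ..., n_steps."""
    xs = [x0]
    for k in range(n_steps):
        xs.append(step(xs[-1], math.cos(k)))
    return xs

# Two solutions driven by the same inputs but started far apart.
xs = trajectory(1.0, 10)
ys = trajectory(-3.0, 10)
gaps = [abs(x - y) for x, y in zip(xs, ys)]
ratios = [gaps[k + 1] / gaps[k] for k in range(10)]
print(gaps[0], gaps[-1], max(ratios))
```

Because tanh is 1-Lipschitz, the gap between the two solutions shrinks at every switching moment by a factor of at most exp(-a) + b(1 - exp(-a))/a, which is about 0.3515 for the assumed parameters. This geometric decay at the switching moments is the discrete counterpart of the exponential estimate obtained in the proof.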

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).