Abstract

Nonlinear mixed effects models have become a standard platform for analysis when data is in the form of continuous and repeated measurements of subjects from a population of interest, while temporal profiles of subjects commonly follow a nonlinear tendency. While frequentist analysis of nonlinear mixed effects models has a long history, Bayesian analysis of the models has received comparatively little attention until the late 1980s, primarily due to the time-consuming nature of Bayesian computation. Since the early 1990s, Bayesian approaches for the models began to emerge to leverage rapid developments in computing power, and have recently received significant attention due to (1) superiority to quantify the uncertainty of parameter estimation; (2) utility to incorporate prior knowledge into the models; and (3) flexibility to match exactly the increasing complexity of scientific research arising from diverse industrial and academic fields. This review article presents an overview of modeling strategies to implement Bayesian approaches for the nonlinear mixed effects models, ranging from designing a scientific question out of real-life problems to practical computations.

Keywords:

Bayesian nonlinear hierarchical model; Bayesian nonlinear mixed effects models; inter-individual variation; intra-individual variation; Markov chain Monte Carlo technique MSC:

62-01; 62C10; 62F15; 70K75; 60J22

1. Introduction

One of the common challenges in biological, agricultural, environmental, epidemiological, financial, and medical applications is to make inferences on characteristics underlying profiles of continuous, repeated measures data from multiple individuals within a population of interest [1,2,3,4]. By ‘repeated measures data’ we mean the data type generated by observing a number of individuals repeatedly under differing experimental conditions, where the individuals are assumed to constitute a random sample from a population of interest. A common type of repeated measures data is longitudinal data such that the observations are ordered by time [5,6].

Linear mixed effects models for repeated measures data have become popular due to their straightforward interpretations, flexibility allowing correlation structure among the observations, and utility accommodating unbalanced and multi-level data structure (i.e., clustered designs that vary among individuals) [7,8]. The modeling framework is also intuitively appealing: the central idea that individuals’ responses are governed by a linear model with slope or intercept parameters that vary among individuals seems to be appropriate in many scientific problems (for, e.g., see [9,10]). It also allows practitioners to test and evaluate multivariate causal relationships by conducting regression analysis at the population level. By preserving the multi-level structure in a single model, estimation or prediction for the analyses can take advantage of information borrowing [11].

For many applications, researchers often want to theorize that time courses of individual response commonly follow a certain nonlinear function dictated by a finite number of parameters [12]. These nonlinear functions are based on reasonable scientific hypotheses, typically represented as a differential equation system. By tuning the parameters, the shape of the function in terms of curvature, steepness, scale, height, etc., may change, which is used as the rationale behind describing heterogeneity between subjects. Nonlinear mixed effects models, also referred to as hierarchical nonlinear models, have gained broad acceptance as a suitable framework for these purposes [13,14,15]. Analyses based on this model are now routinely reported in various industrial problems, which is, in part, enabled by the breakthrough development of software [16,17,18,19,20]. The excellent books and review papers were published by [14,15,21]. Although their works were published more than 20 years ago, they still provide statisticians, programmers, and researchers with many pedagogical insights about the modeling framework, implementations, and practical applications of using the nonlinear mixed effects models.

While frequentist analysis of nonlinear mixed effects models has a long history, Bayesian analysis for the models was a relatively dormant field until the late 1980s. This is due primarily to the time-consuming nature of the calculations required for Bayesian computation to implement a Bayesian model [22]. Since the early 1990s, Bayesian approaches began to re-emerge, motivated both by exploitation of rapid developments in computing power and by the growing desire to quantify the uncertainty associated with parameter estimation and prediction [23,24,25]. Since then, Bayesian nonlinear mixed effects models, also called Bayesian hierarchical nonlinear models, have been extensively used in diverse industrial and academic researches, endowed with new computational tools providing a far more flexible framework for statistical inference exactly matching the increasing complexity of scientific research [26,27,28,29,30,31].

The objective of this article is to present an updated look at the Bayesian nonlinear mixed effects models. Although the works of [14,15] discuss some of the Bayesian approaches for the nonlinear mixed effects models, the main perspective adopted in the works is much more oriented to the frequentist framework, and prior distributions and Bayesian computing strategy explained in the works are quite outdated. In the literature, it is striking that very few research works provide an updated overview of the Bayesian methodologies on the nonlinear mixed effects models. Motivated by this, in this article, we provide an overview of modeling strategies to implement Bayesian approaches for the nonlinear mixed effects models, ranging from designing a scientific question out of real-life problems to practical computations. The novelty of this paper is as follow:

- Guidance for Bayesian workflow to solve a real-life problem is provided for domain experts to facilitate efficient collaboration with quantitative researchers;

- Recently developed prior distributions and Bayesian computation techniques for a basic model and its extensions are illustrated for statisticians to develop more complex models built on the basic model;

- Illustrated methodologies can be directly exploited in diverse applications, ranging from small data to big data problems, for quantitative researchers, modeling scientists, and professional programmers working in diverse industries.

This article is organized as follows. In Section 2, we explore trends and workflow on the use of Bayesian nonlinear mixed effects. In Section 3, we motivate readers to understand why it is necessary to use the Bayesian nonlinear mixed effects model by illustrating four real-life problems, which will be conceptualized as a statistical problem. To solve the statistical problem, we suggest a basic version of the Bayesian nonlinear mixed effects models in Section 4, and its likelihood is analyzed in Section 5 wherein frequentist computations are briefly discussed. Section 6 describes modern Bayesian computation strategies to implement the basic model. Popularly used prior distributions are presented in Section 7. Section 8 discusses model selection, and Section 9 reviews recent advances and extensions that build on the basic model. Finally, Section 10 concludes the article.

2. Trends and Workflow of Bayesian Nonlinear Mixed Effects Models

2.1. Rise in the Use of Bayesian Approaches for the Nonlinear Mixed Effects Models

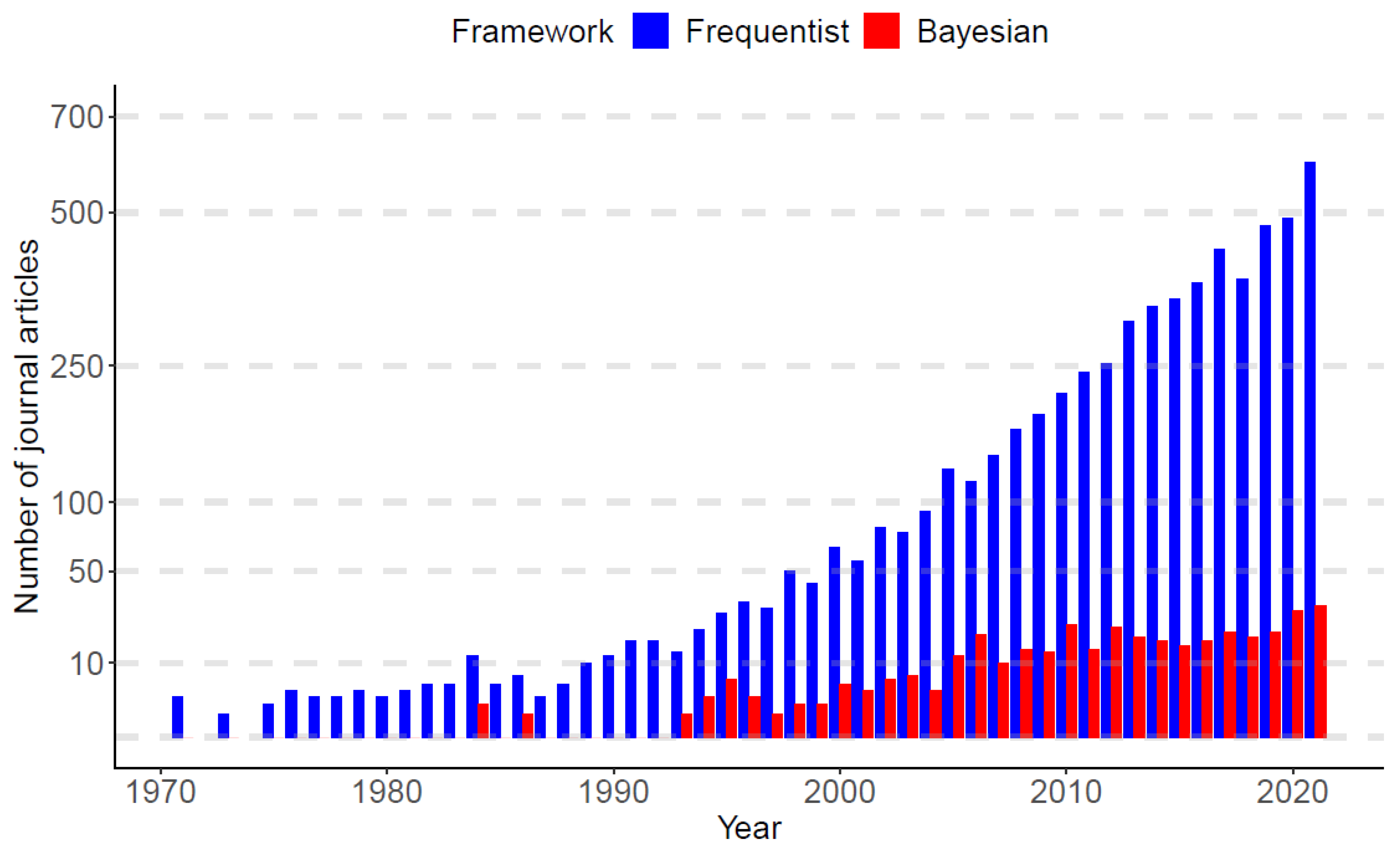

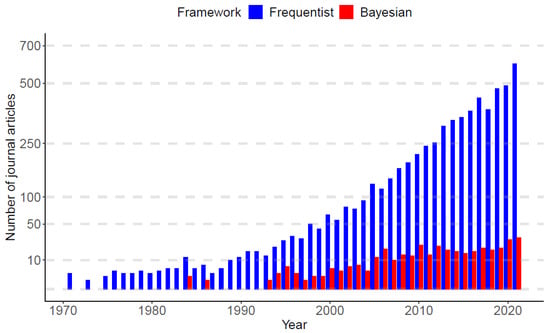

As of January 1970 to December 2021, PubMed.gov (https://pubmed.ncbi.nlm.nih.gov/, accessed on 23 February 2022) searched of “nonlinear mixed-effect models” yielded 6288 publications. Among the published articles, nearly 94% of works used frequentist approaches (5929 articles), while only 6% of works adopted Bayesian approaches (359 articles). Figure 1 displays a bar plot based on the published articles, categorized by the frequentist and Bayesian approaches over time. In the panel, it is observed that, until the late 1980s, the Bayesian research was nearly dormant, but since the early 1990s, Bayesian works begin to re-emerge, and the gap between frequentist and Bayesian works becomes gradually narrower as time evolves.

Figure 1.

Publication trends of the nonlinear mixed-effect models categorized by frequentist and Bayesian frameworks. the x-axis represents the year from 1 January 1970 to 31 December 2021. The value on the y-axis is the number of published articles in each year (data sources: PubMed.gov, accessed on 23 February 2022).

The dormancy of the Bayesian approaches until the late 1980s is mainly due to the time-consuming nature of the calculations based on a sampling scheme, which was previously impossible by the limitation of computing power. Fortunately, a breakthrough in computer processors (for, e.g., Am386 in 1991, Pentium Processor in 1993, etc.) took place in the early 1990s, driving the computing revolution to solve computationally intense problems, and this has given the statistical community the ability to solve statistical questions by using Bayesian methods. This timeline is also aligned with the widespread Markov chain Monte Carlo (MCMC) sampling techniques in the Bayesian community [32,33]. Since then, the Bayesian community has been gradually gaining the momentum to leverage the rapidly growing developments of computing power, and now, assorted Bayesian software packages (e.g., JAGS [34], BUGS [35], and Stan [17]) are available for researchers to answer scientific questions arising from industrial and academic research.

To understand the rise of the Bayesian approaches, we first want to understand what will be some of the advantages of using Bayesian methods over frequentist methods in the context of nonlinear mixed effects models. As the primary focus of this review paper is to provide the readers with some insight on methodologies and practical implementation of using Bayesian approaches, our comparison and exposition below are described from an operational viewpoint. Table 1 summarizes the modeling strategies of using the frequentist and Bayesian approaches for the nonlinear mixed effects models. Broadly speaking, the usual estimation method of the frequentist computation is optimization, while that of the Bayesian computation is sampling. Normally, it is known that the former is much faster than the latter. This is not surprising because a sampling scheme, by its nature, needs to explore a wide range of the parameter space, whereas the optimization only needs to find the best point estimate, which is often described by the maximum likelihood estimate. In many practical problems, widely used frequentist optimization algorithms are the first-order approximation [36], Laplace approximation [37], and stochastic approximation of expectation-maximization algorithm [38]. They will be briefly discussed in Section 5.4. As for the Bayesian sampling algorithms, combinations of Gibbs sampler [39], Metropolis-Hastings algorithm [40], Hamiltonian Monte Carlo [41], and No-U-Turn sampler [42] are popularly used, among many others [43,44,45]. We explain these in detail in Section 6.

Table 1.

Comparison of modeling strategies used in frequentist and Bayesian approaches for the nonlinear mixed effects models from an implementational viewpoint.

The stark difference between using frequentist and Bayesian approaches may be the procedure of describing an uncertainty underlying the parameter estimation for the nonlinear mixed effects models. Here, the parameter which is of primary interest is the population-level parameters (also called fixed effects), typical values for the individual-level parameters. In many cases, frequentist confidence intervals for the parameters of the models are constructed by assuming that asymptotic normality of maximum likelihood estimator holds in a finite sample study, which is actually the most accurate in large sample scenario [51]. Most frequentist software packages, such as NONMEM [13,47], Monolix [48], and nlmixr [18], by default, may print out a confidence interval of the form, “Estimate ± 1.96 × Standard Error”, or some transformation of the lower and upper bounds, if necessary, such that the Standard Error is calculated by using (observed) Fisher information matrix [52,53,54]. Using such a scheme in small sample studies is highly likely to overlook the gap between the reality of the data and the idealistic asymptotic situation.

In contrast, as for the Bayesian approaches, the large-sample theory is not needed for the uncertainty quantification, and the procedure to obtain posterior credible intervals is a lot easier than obtaining confidence intervals (See Chapter 4 of [55]). Furthermore, Bayesian credible intervals based on percentiles of posterior samples allow for a strongly skewed distribution, wherein frequentist confidence intervals (based on large-sample theory) may induce a non-negligible approximating error due to the deviation from the asymptotic normality. Along with that, Bayesian methods are highly appreciated when researchers wish to incorporate prior knowledge from previous studies into the model so that posterior inference provides the researchers with an updated view on the problem, possibly with a more accurate estimation. Using prior information would be particularly useful in small-sample contexts [56,57]. For example, in medical device clinical trials, some opportunities and challenges in developing a new medical device are: (i) there is often a great deal of prior information for a medical device; (ii) a medical device evolves in relatively small increments from previous generations to a new generation; (iii) there are only a few numbers of patients for the trials; and (iv) companies need to make a rational decision promptly to reduce cost. In those settings, Bayesian methods have been demonstrated to be suitable, and their proper use is guided by Food and Drug Administration [58,59].

2.2. Bayesian Workflow

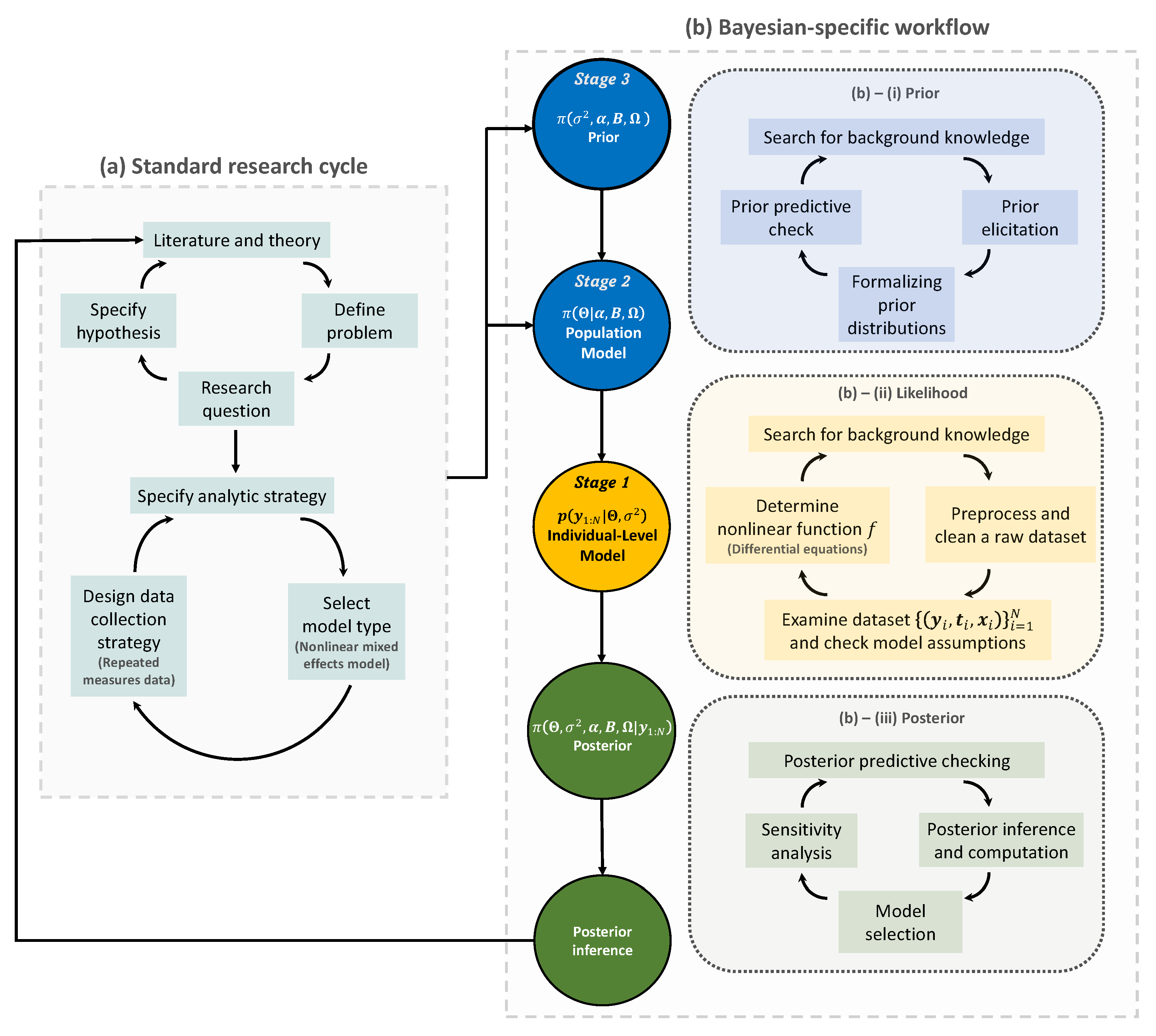

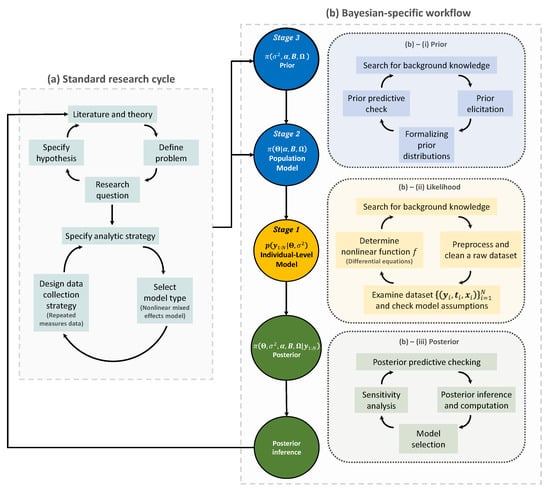

We outline the first two steps in the Bayesian workflow of using Bayesian nonlinear mixed effect models described in Figure 2. The panel includes some mathematical notations that are consistently used throughout the paper. These notations will be clearly understood later. The aim of our explanation at this point is to provide readers with a blueprinted plan to implement Bayesian modeling strategies for the nonlinear mixed effects models. We assume that readers are familiar with basic concepts and generic workflow in Bayesian statistics; see [55,60,61] for those basic concepts and refer to the review paper by [62] and references therein for detailed concepts and general terminologies used in workflow, such as prior and posterior predictive checks, and prior elicitation, etc.

Figure 2.

The Bayesian research cycle. A research cycle using Bayesian nonlinear mixed effects model comprises two steps: (a) standard research cycle and (b) Bayesian-specific workflow. Standard research cycle involves literature review, defining a problem and specifying the research question and hypothesis. Bayesian-specific workflow comprises three sub-steps: (b)–(i) formalizing prior distributions based on background knowledge and prior elicitation; (b)–(ii) determining the likelihood function based on a nonlinear function f; and (b)–(iii) making a posterior inference. The resulting posterior inference can be used to start a new research cycle. Distributions for prior, likelihood, and posterior are colored in blue, yellow, and green, respectively. , model matrix; , error variance parameter; , intercepts; , coefficient matrix; , covariance matrix; , probability distribution; , prior or posterior probability distribution; , data.

The first step of the Bayesian research cycle is (a) standard research cycle [63,64]. Some early activities at this step involve reviewing literature, defining a problem, and specifying a research question and a hypothesis. After that, researchers specify which analytic strategy would be taken to solve the research question and suggest possible model types, followed by data collection. The data type arising in this process may include a response variable and some covariates that are grouped longitudinally, which then formulates repeated measures data of a population of interest. Furthermore, if there appears to be some nonlinear temporal tendency at each subject, then a possible model type for the analysis is a nonlinear mixed effects model [15,65].

The second step of the Bayesian research cycle is (b) Bayesian-specific workflow. Logically, the first thing to do at this step is to determine prior distributions (see Step (b)–(i) in Figure 2). The selection of priors is often viewed as one of the most crucial choices that a researcher makes when implementing a Bayesian model, as it can have a substantial impact on the final results [66]. As exemplified earlier in the context of Bayesian medical device trials, using a prior in small sample studies may improve the estimation accuracy, but an unthoughtful choice of priors would lead to a significant bias in estimation. Prior elicitation effort would require Bayesian expertise to formulate domain expert’s knowledge in a probabilistic form [67]. Strategies for prior elicitation include asking domain experts to provide suitable values for the hyperparameters of the prior [68,69]. After prior is specified, one can check the appropriateness of the priors through prior predictive checking process [70]. For almost all practical problems, prior distribution of Bayesian nonlinear mixed effect models can be hierarchically represented as follow: (1) a prior for the parameters used in likelihood, often called ‘population-level model’ in the literature of mixed effects modeling; and (2) a prior for the parameters used in the population-level model and for the parameters describing the residual errors used in likelihood. It is important to note that the former type of prior distribution (that is, (1)) is also a requirement to implement frequentist approaches for the nonlinear mixed effects model, as a name of ‘distribution for random effects’. Essentially, the defining factor of the Bayesian framework is the latter type of prior distribution (that is, (2)), which is fixed in the frequentist framework, as a name of ‘fixed effects’. Some prior options of the latter type will be discussed in Section 7.

The second task is to determine the likelihood function (see Step (b)–(ii) in the panel). At this time, the raw dataset collected in (a) standard research cycle should be cleaned and preprocessed. Before embarking on more serious statistical modeling, it is a common practice to get some insight about the research question via exploratory data analysis and have a discussion with domain experts such as clinical pharmacologists, clinicians, physicians, engineers, etc. To some extent, eventually, all these efforts are to determine a nonlinear function (denoted as f in this paper) that best describes the temporal profiles of all subjects. This nonlinear function is a known function because it should be specified by researchers. In other words, the branch of the nonlinear mixed effects models belongs to parametric statistics. However, one technical challenge is that, in many problems, such a nonlinear function is represented as a solution of a differential equation system [71,72], and therefore there is no guarantee that we can conveniently work with a closed-form expression of the nonlinear function. For example, if researchers wish to work with nonlinear differential equations [73,74], then some approximation via differential equation solver [75,76] may be needed to calculate the nonlinear function. As such, most software packages dedicated to implementing a nonlinear mixed effect model, or, more generally, a Bayesian hierarchical model, are equipped with several built-in differential equation solvers [17,47,77]. For instance, visit the website (https://mc-stan.org/docs/2_29/stan-users-guide/ode-solver.html, accessed on 20 February 2022) to see some functionality supported in Stan [17].

Finally, the likelihood is combined with the prior to form the posterior distribution (see Step (b)–(iii) in the panel). Given the important roles that the prior and the likelihood have in determining the posterior, this step must be conducted with care. The implementational challenge at this step is to construct an efficient MCMC sampling algorithm. The basic idea behind MCMC here is the construction of a sampler that simulates a Markov chain that is converging to the posterior distribution. One can use software packages if prior distributions to be implemented in Bayesian models exist in the list of prior options available in the packages. Otherwise, professional programmers and Bayesian statisticians are needed to make codes manually; this review paper will be useful for that purpose. Another activity important at this step is to compare multiple models with different priors and nonlinear functions, specified in Step (b)–(i) and (ii), and select the best model out of them. This topic is broadly called the model selection [78], which will be discussed in Section 8.

3. Applications of Bayesian Nonlinear Mixed Effects Model in Real-Life Problems

3.1. The Setting

To exemplify circumstances for which the nonlinear mixed effects model is a suitable modeling framework, we review challenges from several diverse applications. Table 2 summarize four real-life problems that will be illustrated in the next subsections.

Table 2.

Summary of examples.

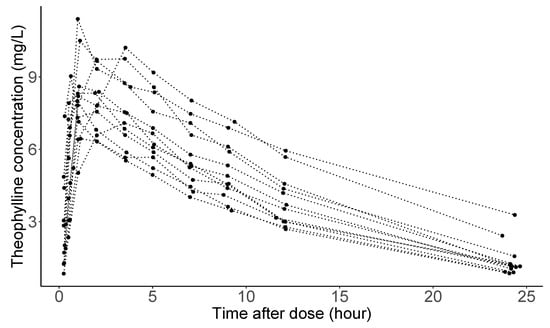

3.2. Example 1: Pharmacokinetics Analysis

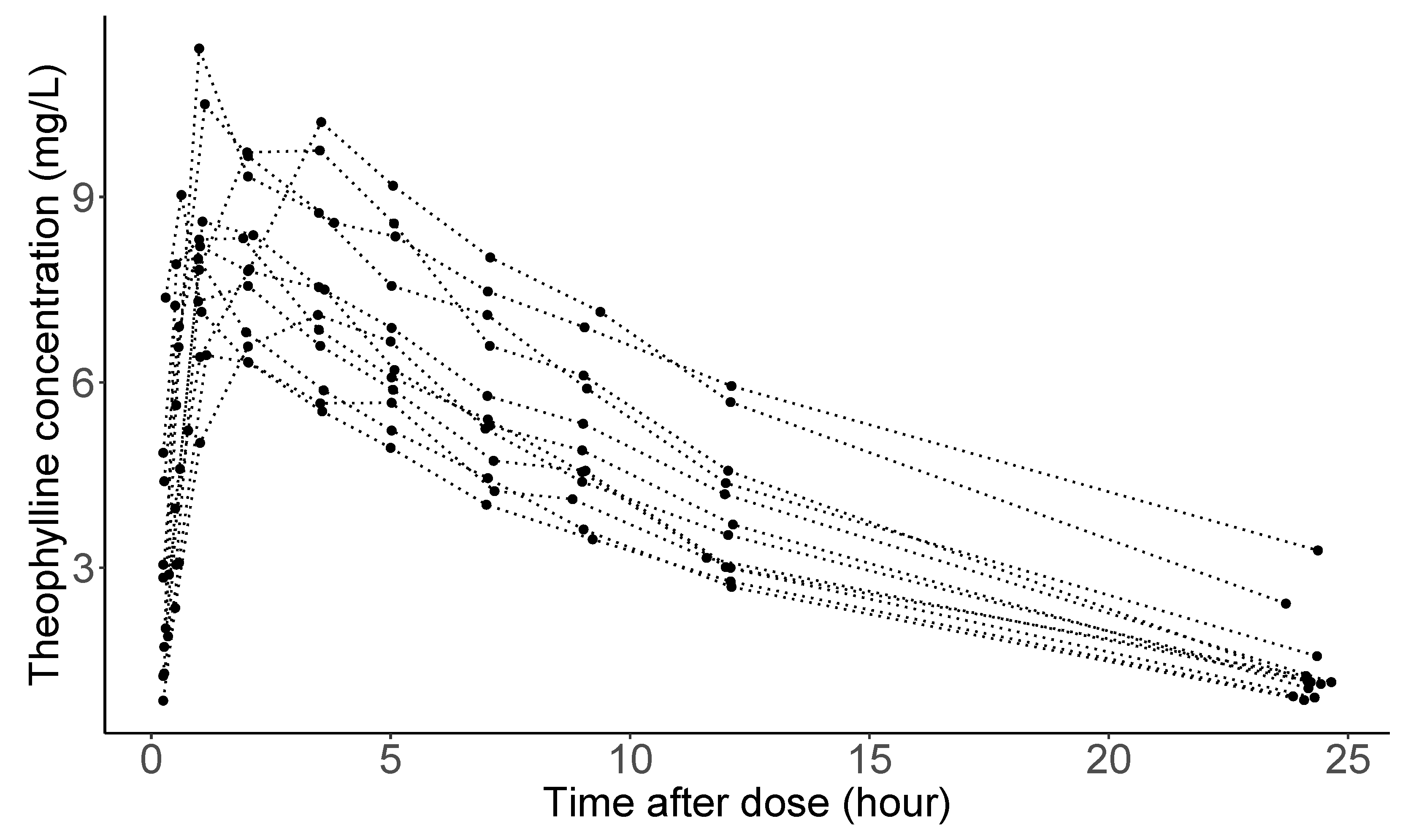

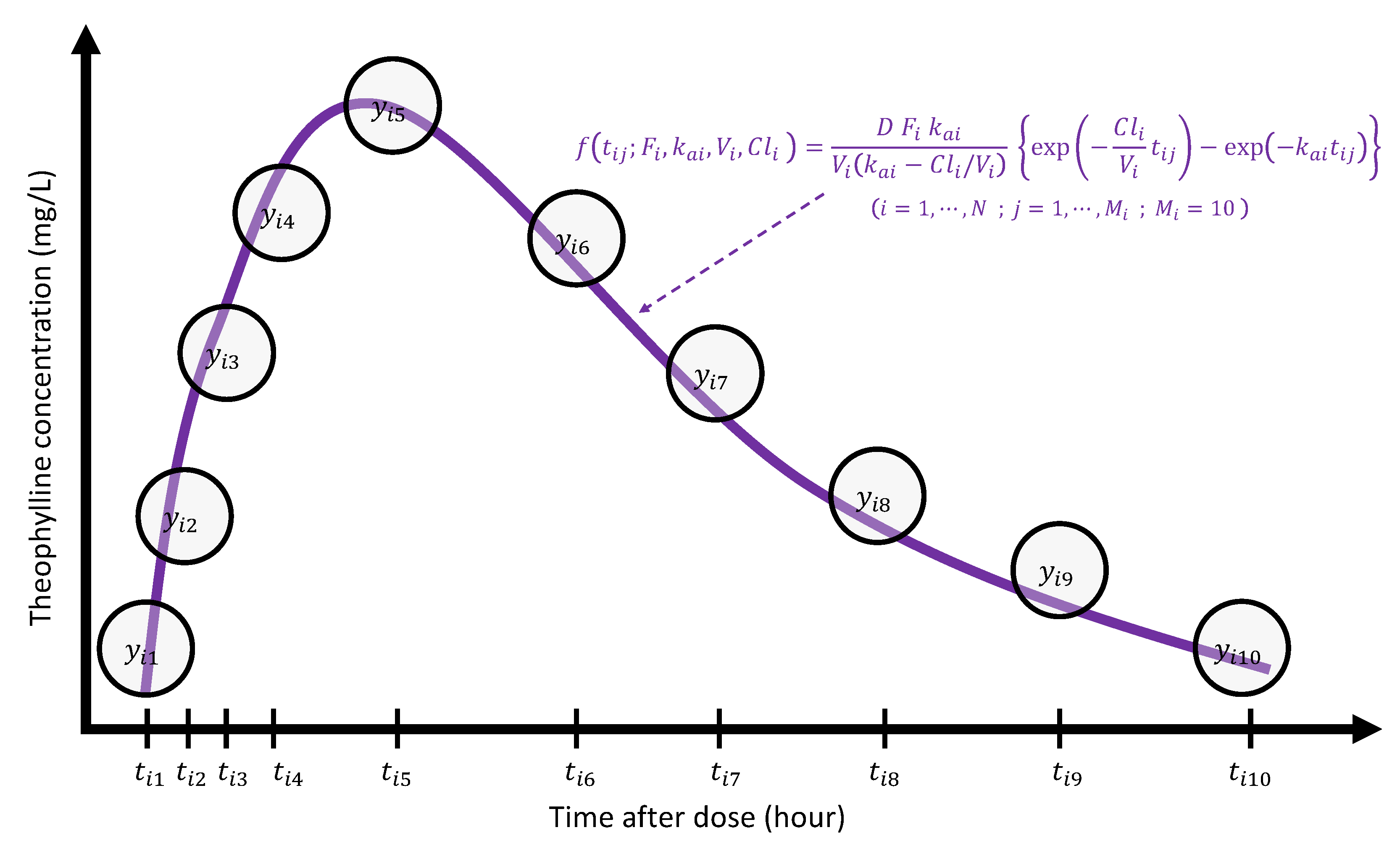

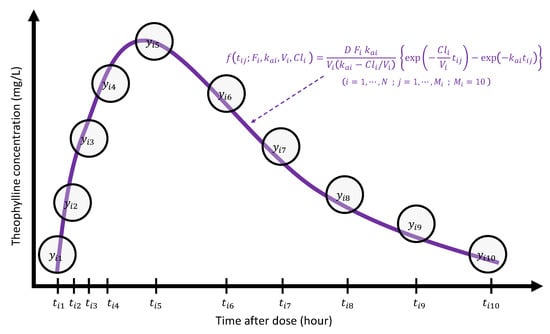

Studies of the pharmacokinetics of drugs help us learn about the variability in drug disposition in a population [92]. Figure 3 shows theophylline concentration in the plasma as a function of time after oral administration of the same amount of anti-asthmatic theophylline for 12 subjects (the data considered here are courtesy of Dr. Robert A. Upton of the University of California, San Francisco.) As seen in the panel, concentration trajectories have a similar functional shape for all individuals. However, and (peak concentration and time when it is achieved), absorption, and elimination phases are substantially different across subjects. Clinical pharmacologists believe that these differences are attributable to between-subject variation in the underlying pharmacokinetic processes, explained by Absorption, Distribution, Metabolism, and Excretion (ADME), understanding of which is crucial in a new drug development in the pharmaceutical industry.

Figure 3.

Theophylline concentrations for 12 subjects following an oral dose.

In pharmacokinetics analysis, often abbreviated by ‘PK analysis’, it is routine to use compartmental modeling to describe the amount of drug in the body by dividing the whole body into one or more compartments [93]. For theophylline, a one-compartment model is normally used, which assumes that the entire body acts like a single, uniform compartment; see page 30 from [94] for a detailed explanation about the model:

where is drug concentration at time t for a single subject following oral dose D at . Here, F is the bioavailability which expresses the proportion of a drug that gains access to the systemic circulation. is the absorption rate constant describing how quickly the drug is absorbed from the gut into the systemic circulation. V is the volume of the central compartment. is the clearance rate representing the volume of plasma from which the drug is eliminated per unit time. Eventually, the pharmacokinetic processes for a given subject is summarized by the 4-dimensional vector with ‘PK parameters’ . Obviously, it is the modeler’s discretion to proceed with a more complex PK model such as a three compartment models with nonlinear clearance to fit the data, but in this case, over-parameterization should be carefully examined [95].

Typically, the dataset collected in a drug development program includes demographic and clinical covariates obtained from each subject, for, e.g., body weight, height, age, sex, creatinine clearance, albumin, etc.; and furthermore, one can also involve genetic information in an individual’s response to drugs. Most covariates are measured at baseline, before assigning the drug, while some covariates can be measured at every sampling time. One of the crucial goals of PK analysis is to illustrate the effect of such covariates on the PK parameters [96]. The causal relationship inferred by the covariate analysis can be used to support physicians in making the necessary judgments about the medicines that they prescribe, tailored to individual patients [97].

In a PK report for a new drug application to government authorities like U.S. Food and Drug Administration (FDA) or European Medicines Agency (EMA), the PK parameters are summarized by mean or median, and very importantly, estimates of parameter precision. Estimates of parameter precision can provide valuable information regarding the adequacy of the data to support those parameters [98]. Parameter uncertainty can be estimated through several methods, including bootstrap procedures [99], log-likelihood profiling [100], or using the asymptotic standard errors of parameter estimates, and recently, Bayesian approaches draw a lot of attention from the pharmaceutical industry [101]. Particularly, Bayesian approach for the population PK analysis can be very useful when there is prior knowledge about PK parameters learned from preclinical studies, published works, etc., and one wants to incorporate them into the prior specification for PK parameters [28].

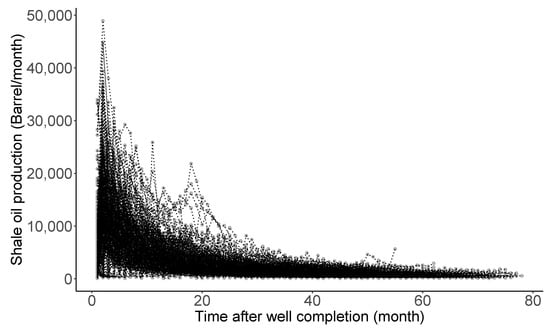

3.3. Example 2: Decline Curve Analysis

The US shale boom—a product of technological advances in horizontal drilling and hydraulic fracturing that unlocked new stores of energy—has greatly benefited the growth in the US economy. Horizontal drilling is a directional drilling technology where a well is drilled parallel to the reservoir bedding plane [102]. Well productivity of a horizontal well is known to often be 3 to 5 times greater than that of a vertical well [103,104], but it also costs 1.5 to 2.5 times more than a vertical well [105]. Therefore, the eventual success of the drilling project of unconventional shale wells relies on a large degree of well construction costs [106]. Because of very low permeability, and a flow mechanism very different from that of conventional reservoirs, estimates for the shale well construction cost often contain high levels of uncertainty. For this reason, one of the crucial tasks of petroleum engineers is to quantify the uncertainty associated with the process of oil or gas production to reduce the extra initial risk for the projects.

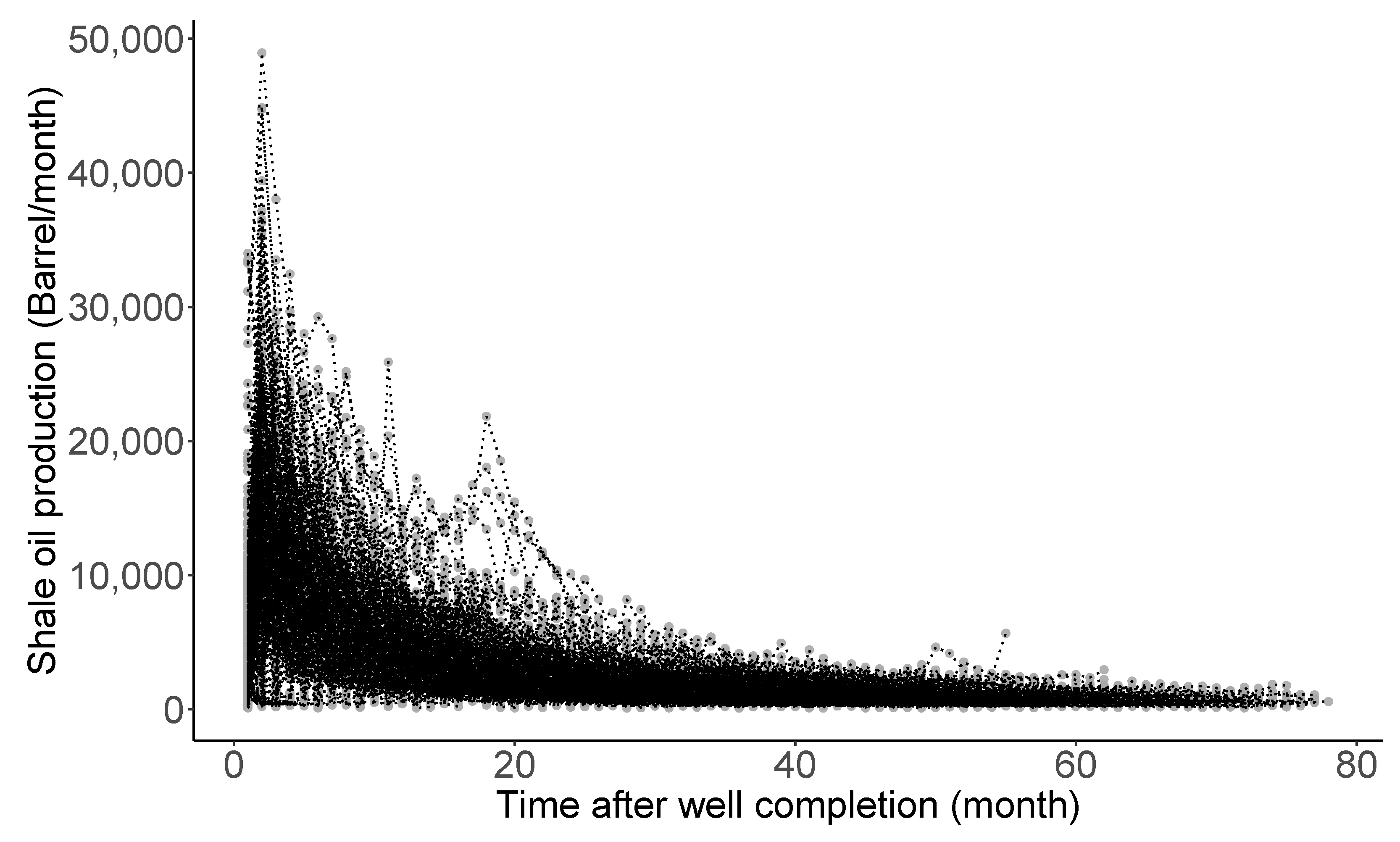

Figure 4 shows monthly production rate trajectories of 360 shale oil wells completed in the Eagle Ford Shale of South Texas, studied by [31]. The declining pattern manifested in the trajectories is commonly observed in almost all oil production rate time series data following well completion. Here, the completion is terminology in petroleum engineering, meaning the process of transforming a well ready for the initial production [107]. Decline curve analysis (DCA), introduced by [83] around 100 years ago, is one of the most popularly utilized methods for petroleum engineers. Its purpose is to (i) theorize a curve describing the declining pattern, (ii) analyze the declining production rates, (iii) characterize the well-productivity, and (iv) forecast the future performance of oil and gas wells. Particularly, estimation and uncertainty quantification of estimated ultimate recovery (EUR) (here, EUR is a special jargon defined as an approximated quantity of oil from a well that is potentially recoverable by the end of its producing life [108]) is the utmost important task and a starting point in the decision-making process for future drilling projects. In addition, the oil and gas companies comply with financial regulations for EUR outlined by the U.S. Securities and Exchange Commission: see https://www.sec.gov/rules/final/2008/33-8995.pdf (accessed on 20 February 2022), for the regulations.

Figure 4.

Production rates for 360 shale oil wells after completion.

Most curves used in DCA are derived from solving certain differential equations that describe a hidden dynamic from production rate trajectory [109,110,111,112,113,114]. See [84,85,115,116] for an overview of such curves. Ref. [31] studied Arps’ hyperbolic, stretched exponentiated decline, Duong, and Weibull curves to fit the trajectories shown in the Figure 4. Particularly, the Duong model was developed for unconventional reservoirs with very low permeability:

where is the production rate at time t for a single well following completion. is the initial rate coefficient, and m and a are additional model parameters. We note that the parameters, , m and a, have their own meanings in terms of well-productivity: see [114] for the interpretation. That being said, the well-productivity for a given well is summarized by the 3-dimensional parameter vector, . In a modeling perspective, the variation of the well-productivity across different wells is attributable to the different values for . To explain this variability, one can regress the values on the well-design parameters such as true vertical depth, measure depth, etc. The causal relationship inferred by the covariate analysis will be used in a future drilling project. Geological information of wells can also be incorporated to make a spatial prediction for the EUR at a new location, as researched by [31].

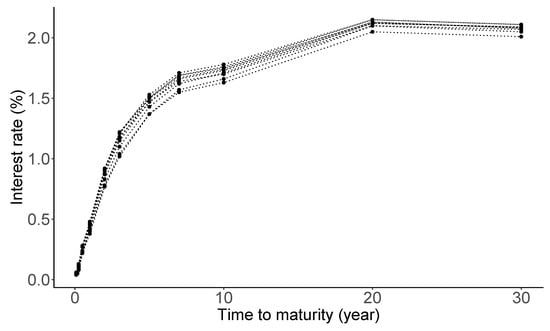

3.4. Example 3: Yield Curve Modeling

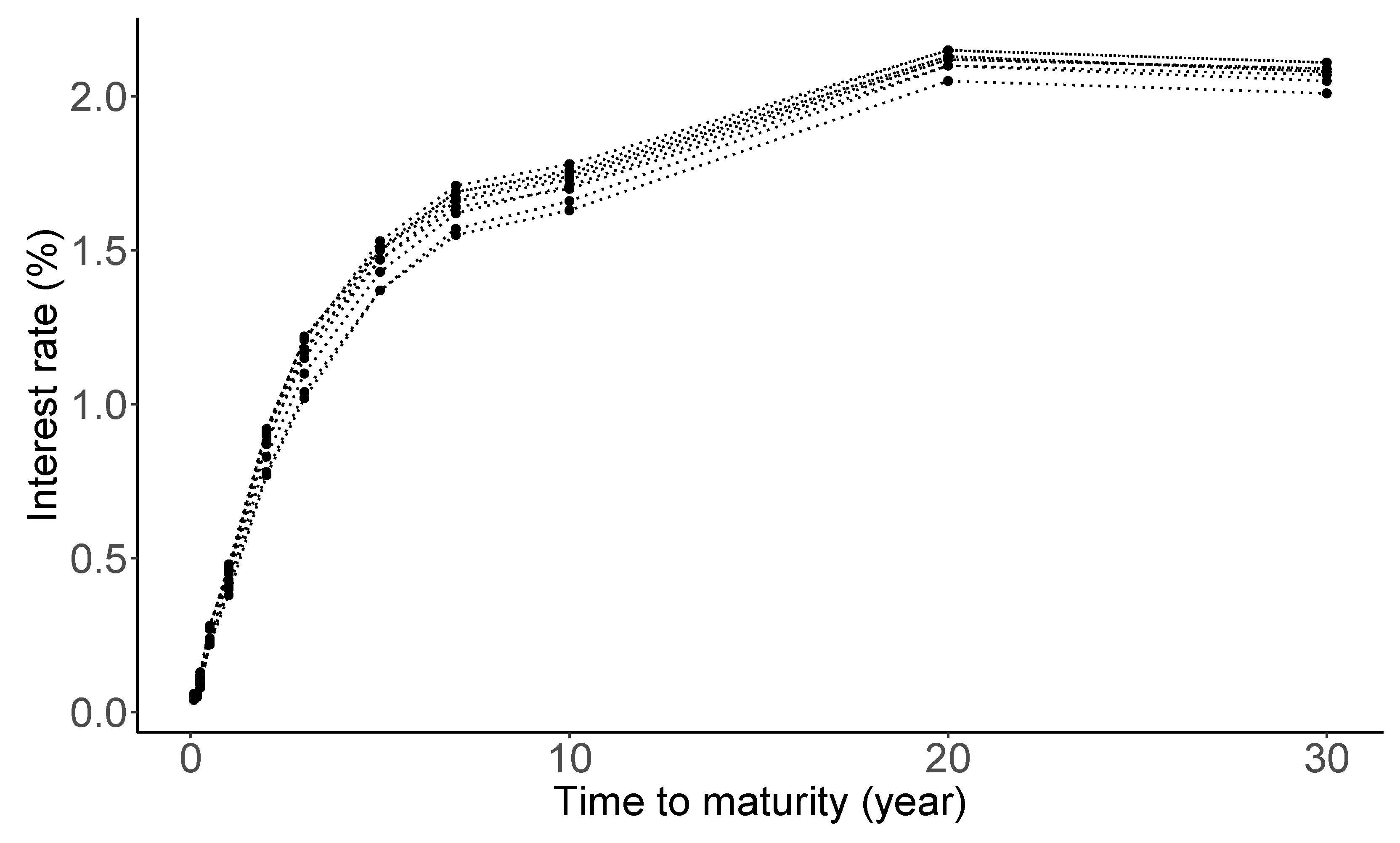

Macroeconomists, financial economists, and market participants all attempt to build good models of the ‘yield curve’ [117]. The yield curve on a given day is a curve showing the interest rates across different maturity spans (one month, one year, five years, etc.) for a similar debt contract at a particular date. It determines the interest rate pattern (i.e., cost of borrowing), which can be used to calculate a bond’s price [118]. Figure 5 shows daily treasury par yield curve rates spanning from 3 to 13 January 2022, with maturities up to 30 years. The data source is from the U.S. Department of the treasury (https://www.treasury.gov/resource-center/data-chart-center/interest-rates/Pages/TextView.aspx?data=yield, accessed on 20 February 2022). As seen from the panel, the shape of the yield curve displays a slightly delayed humped shape. Economists believe that such a shape of the yield curve has an important implication on the economic growth [119].

Figure 5.

Daily Treasury par yield curve rates from 3 to 13 January 2022.

The Nelson–Siegel model [86] is a very popular model in the literature to fit the term structure:

where denotes the (zero-coupon) yield evaluated at , and denotes the time to maturity. The model parameters have a specific financial meaning: , , and are related long-term, short-term, and mid-term effects on the interest rate, respectively, and is referred to as a decay factor [87]. Each of the yield curves is summarized by the 4-dimensional parameter , and it is known that the model can capture a wide range of possible shapes of the yield curve [86,87,120,121]. Therefore, the Nelson–Siegel model is extensively used by central banks and monetary policymakers [122]. For example, The Federal Reserve updates estimates of once per week: visit the website (https://www.federalreserve.gov/data/yield-curve-tables/feds200628_1.html, accessed on 20 February 2022). In recent years, there has been a great deal of interest in the uncertainty quantification of the Nelson–Siegel parameters over time, and their relationship with macroeconomic variables such as inflation and real activity, etc, in financial applications: refer to [121,123,124,125] for some of those works.

3.5. Example 4: Early Stage of Epidemic

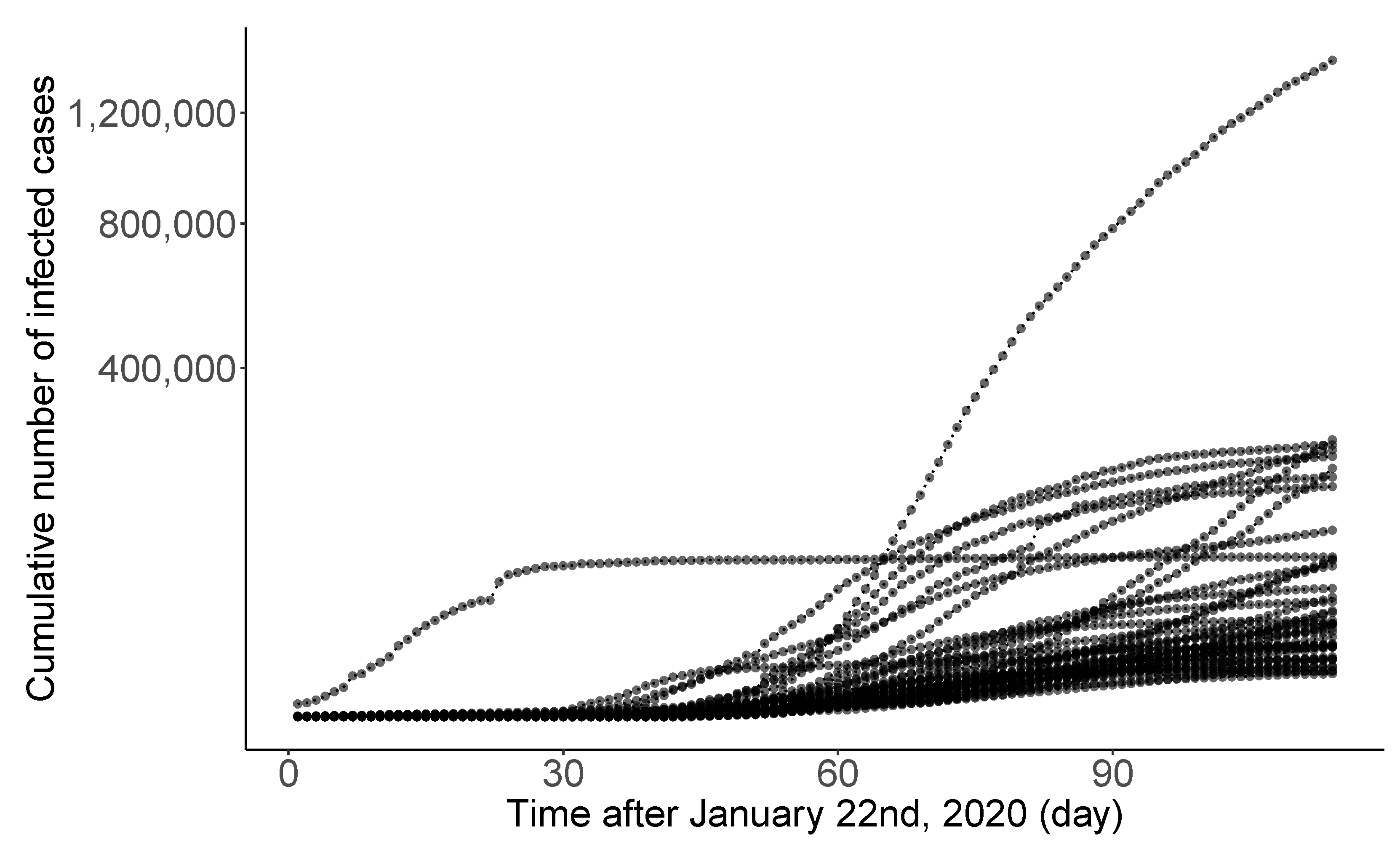

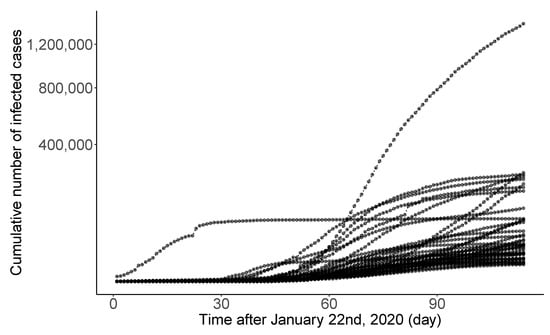

Novel coronavirus disease 2019 (COVID-19) is a big threat to global health. The rapid spread of the virus has created a pandemic, and countries all over the world are struggling with a surge in COVID-19 infected cases. Figure 6 displays the daily infection trajectories describing the cumulative numbers of infected cases for 40 countries, spanning from 22 January to 14 May 2020, studied by [30]. The data source is from COVID-19 Data Repository by the Center for Systems Science and Engineering at Johns Hopkins University (https://coronavirus.jhu.edu/map.html, accessed on 20 February 2022). Refer to Table S.1 in [30] for the list of 40 countries. The time frame of the authors’ research was the early stage of the pandemic when there was no drug or other therapeutics approved by the US FDA.

Figure 6.

Daily trajectories for cumulative numbers of COVID-19 infections for 40 countries from 22 January to 14 May 2020.

In general, during an early phase of a pandemic, information regarding the disease is very limited and scattered, even if it exists. In spite of that, it is crucial to predict future cases of infection or death. In such a situation, one consideration is to use data integration (also called ‘borrowing information’), combining data from diverse sources and eliciting useful information with a unified view of them. Additionally, it is very important to find risk factors relevant to the disease. Reliable and early risk assessment of a developing infectious disease outbreak allow policymakers to make swift and well-informed decisions that would be needed to ensure epidemic control. Quantifying uncertainty about the final epidemic size is also very important.

Richards growth curve [126], so-called the generalized logistic curve [127], is a popularly used growth curve for population studies in situations where growth is not symmetrical about the point of inflection [128,129]. There are variant reparamerized forms of the Richards curve in the literature [130,131,132,133], and one of the frequently used form is

where is the cumulative number of infected cases at time t. Here, epidemiological meanings of the parameters, a, b, and c, are the final epidemic size, infection rate, and lag phase of the trajectory, respectively. The parameter is the shape parameter, and there seems no clear epidemiological meaning [134]. Each infection trajectory in Figure 6 can be characterized by the 4-dimensional parameters if the Richards curve is used. Due to its flexibility originating from the shape parameter , Richards curve has been widely used in epidemiology for real-time prediction of outbreak of diseases, possibly at an early phase of the pandemic when there is no second wave. Examples include SARS [135,136], dengue fever [137,138], pandemic influenza H1N1 [139], and COVID-19 outbreak [30,140].

3.6. Statistical Problem

In the previous subsections, we presented a range of examples in which nonlinear mixed effects models can be exploited. They have their own challenges to solve the problems that are representative of issues many researchers have to deal with in other areas: for example, (1) how to describe a possible nonlinear clearance with a limited number of patients; (2) how to handle an enormously large number of shale oil wells and make a spatial prediction of EUR at a new location; (3) how to describe the dynamic of the financial parameters over time; and (4) how to integrate data from different sources to produce more accurate forecast on the epidemic size.

An emerging issue accompanied by these problems, requested from researchers, government agencies, domain experts, etc., is how to quantify the uncertainty associated with parameter estimation and prediction. Although the traditional nonlinear mixed effects models, based on the maximum likelihood method, can provide confidence intervals and statistical tests, calculations of those generally involve approximations that are most accurate for large sample sizes, as discussed in Section 2.1. On the other hand, in the Bayesian approach—in which the prior automatically imposes the parameter constraints—inferences about parameter values based on the posterior distribution usually require integration rather than maximization, and no further approximation is involved. For that reason, the Bayesian approach is often suggested as a viable alternative to the frequentist approach to solving the problems.

We now formulate these problems as a statistical problem. First, we summarize common features of the dataset for the analysis.

- (1)

- There exist repeated measures of a continuous response over time for each subject;

- (2)

- There exists a variation of individual observations over time;

- (3)

- There exists a variation from subject-to-subject in trajectories;

- (4)

- There exist covariates measured at baseline for each subject.

The subject of sampling units considered in the statistical analysis is quite comprehensive. We have seen that it can be a patient, a shale oil well, a particular date, and a country. As the unique identifier, we assign the index i to each individual. By denoting N as the number of individuals (i.e., the sample size), the index i will take an integer from 1 to N. The sample size N available for the data analysis substantially varies across different industrial problems as well as subfields within the same industry. For example, the number of shale oil wells on Eagle Ford Shale Play can be as large as 6000 [31]. As for the pharmaceutical industry, in phase I cancer clinical trials, the number of cancer patients N may be strictly confined to 25 [141], but for phase III trials for non-oncology drug studies, N can be as large as 2000 [142].

Here the term ‘time’ is meant in the broadest sense. It can be a calendar time, a nominal time, a time after some event (e.g., the time after dose from Figure 3 and the time after well completion from Figure 4), or a time to some event (e.g., the time to maturity from Figure 5). Essentially, time can be defined as a physical quantity that can be indexed with consecutive integers to produce a temporal record. Another important characteristic of the time is that each subject may have different time points where observations are measured. In this article, we use to represent the time point, where the integer indexes the time point from the earliest to the last observations. Thus, represents the number of repeated observations for the i-th individual. When is relatively small (or large), we say the repeated measures are sparsely observed (or densely observed). For example, the theophylline and yield curve data shown in Figure 3 and Figure 5 are sparse data, while the oil production and COVID-19 data shown in Figure 4 and Figure 6 are dense data.

As for the repeated measures, denotes the continuous response of the i-th subject at the time point . We assume that has been already pre-processed so that it is ready to be used for statistical modeling. For most applications, it may be necessary first to transform the data into some new representation before training the model. For example, as seen from Figure 4, oil productions vary substantially across different wells. For that reason, the authors [31] take a logarithm on the productions to derive the response , followed by appropriate statistical modeling on the log-scale. To some extent, data pre-processing may enhance the performance of the model.

Suppose that researchers collected P number of covariates at the baseline from each subject i (). Here, the baseline refers to the time point (or possibly right before the time point ), where at the first response has not been observed yet. Let denote the b-th covariate of the i-th subject (). In general, there are two types of covariates: time-invariant and time-varying covariates. This article mainly concerns the former type. As similar to N, the number of covariates P substantially varies across industries and specific problems. For instance, in pharmacogenetics analysis, the number of protein-coding genes P would be around 20,000 [143]. In the oil and gas industry, if we consider most of the covariates obtained from the well completion procedure, P could be at least 100 [31].

In conclusion, the dataset for the statistical analysis can be represented by the collection of the N triplets . Here, for each subject i (), we formulated two -dimensional vectors and , and a P-dimensional vector .

The data structures described up to this point are commonly encountered in longitudinal data studies [7]. Essentially, the feature of dataset motivating the use of nonlinear mixed effects models is that, for each subject i, the response vector displays some nonlinear tendency over time , as seen in Figure 3, Figure 4, Figure 5 and Figure 6. To explain this nonlinearity, a researcher needs to theorize some nonlinear function, denoted as f, such as one compartment, Duong, Nelson-Siegel, and Richards models, depending on the contexts. The construction of such functions relies on human modelers’ abstraction of data into a suitable dynamical system, which is often represented by a differential equation. Such a differential equation has a finite number of parameters that control the dynamic of the solution of the system, the understanding of which is vital for causal inference for the nature of the system by associating with covariates .

Figure 7 displays a pictorial description about how a PK modeler would see the theophylline concentration trajectory from the modeling perspective, where she theorized that one compartment model (1) would be suitable to describe the trajectories over time for each subject i (). Then the 10-dimensional vector is summarized by a 4-dimensional PK parameter vector ; the dimension reduction is intrinsically embedded in this process. As each of the parameters , , , and has an important clinical meaning, it is very natural to ask how they are related with P covariates to induce a causal relationship. For the purpose of modeling, it may be necessary to transform the original PK parameters to model parameters () so that elements () are supported on the real number by taking transformations , , , and . As these transformations were taken only for the modeling purpose, interpretations on the PK parameter for the PK report should be carried out after transforming back to the original scale.

Figure 7.

Pictorial illustration of PK modeling for the theophylline data.

4. The Model

4.1. Basic Model

Assume that we have dataset for a statistical analysis from N subjects, as explained in Section 3.6. We consider a basic version of the model here. Extensions are discussed in Section 7.3 and Section 9. The usual Bayesian nonlinear hierarchical model may then be written as a three-stage hierarchical model as follows:

- Stage 1: Individual-Level ModelIn (2), the conditional mean is a known function governing within-individual temporal behavior dictated by a K-dimensional parameter specific to the subject i. We assume that the residuals, , are normally distributed with mean zero and with an unknown variance, .

- Stage 2: Population ModelIn (3), the l-th model parameter is used as the response of an ordinary linear regression with predictor , with intercept and coefficient vector . By letting , we assume that the is distributed according a K-dimensional Gaussian distribution with covariance matrix . The diagonality in implies that each model parameter are uncorrelated across l.

- Stage 3: Prior

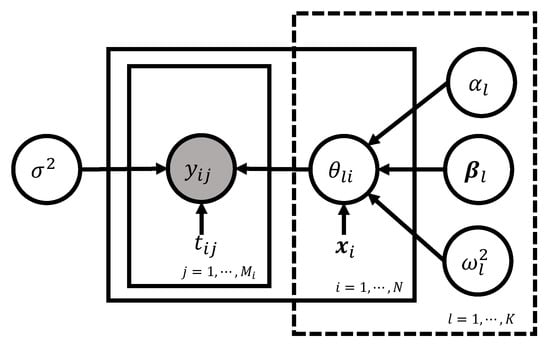

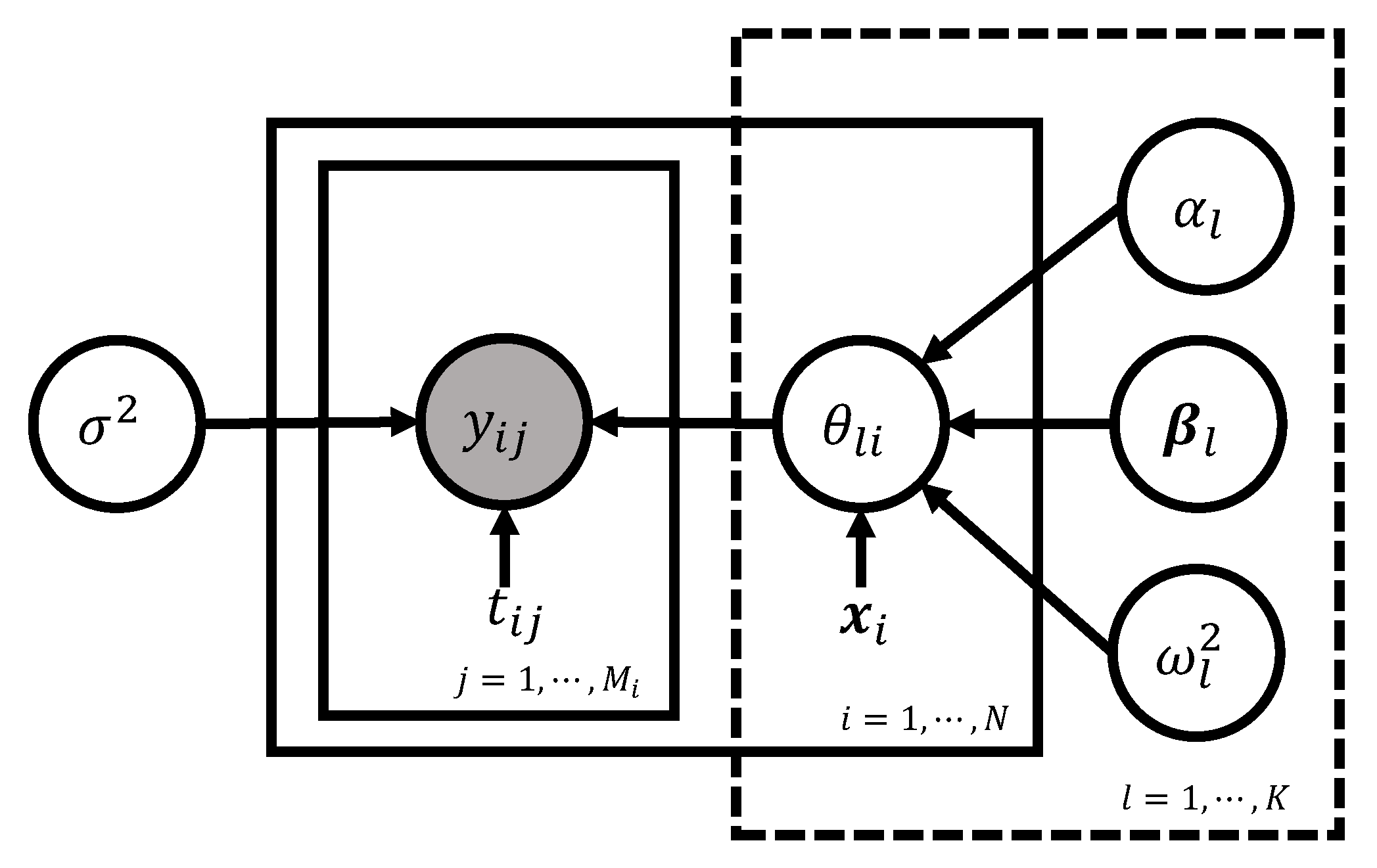

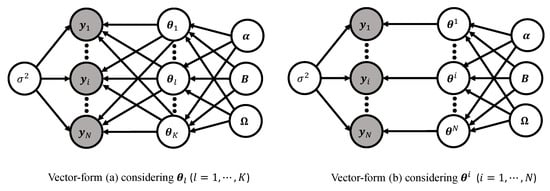

Directed asymmetric graphical (DAG) model representation of the basic model (2)–(4) is depicted in Figure 8. Following the grammar of the graphical model (Chapter 8 of [144]), the circled variables indicate stochastic variables, while the observed ones are additionally colored in gray. Non-stochastic quantities are uncircled. The arrows indicate the conditional dependency between the variables.

4.2. Vectorized Form of the Basic Model

We will often wish to write the hierarchy (2)–(4) for the i-th individual’s entire response vector and represent it with an equivalent vector-form. This turns out to be useful to develop relevant computational algorithms. We first introduce a dimensional matrix frequently used throughout this article:

The matrix (5) is referred to as model matrix because it comprises of scalar model parameters from all subjects. Indeed, most of the computational techniques either via frequentist or Bayesian setting in the literature have been developed to overcome an obstacle of a nonlinear association of the model matrix into the mean function f.

In (5), the subject index i is stacked column-wisely, while model parameter index l is stacked row-wisely, different from the conventional way adopted in most statistics. The column indexing for the subjects (i.e., stacking individual-based vector horizontally) shown in (5) is often adopted in modern computation theory of deep learning [145], and one of the main advantages of using this indexing is that it may give some pedagogical insights on the use of vectorization toward the entries to exploit parallel computations, stochastic updating, etc., in optimization or sampling techniques.

The model matrix (5) can be re-expressed as , obtained by stacking the individual model parameter vector in Stage 1 (2). Alternatively, we can represent the matrix with by defining a N-dimensional vector corresponding the l-th model parameter across all subjects (). Former and latter indexing method are referred to as i-indexing and l-indexing, respectively.

- Stage 1: Individual-Level ModelIn (6), is a -dimensional vector whose elements are temporally stacked: for the subject i. The vector is distributed according to the -dimensional Gaussian distribution with mean and covariance matrix .

- Stage 2: Population Model (l-indexing)In (7), for each l, the N-dimensional model parameter vector is used as the response vector of an ordinary linear regression: (i) N-by-P design matrix ; (ii) intercept ; (iii) coefficient vector , and (iv) isotropic Gaussian error vector with variance . (Notation in (7) represents an all-ones vector.).

- Stage 2: Population Model (i-indexing)Equation (8) is derived by incorporating each of the N columns of the model matrix (5). Here, represents a K-dimensional vector , and represents a K-by-P matrix with rows (). Here, the K-dimensional vector in the right-hand side of (8) is the mathematically identical to , where and ( is the K-by-K identity matrix and ⊗ represents the Kronecker matrix product.). The error vector is distributed according a K-dimensional Gaussian distribution with mean and covariance matrix .

- Stage 3: PriorEach of the parameter blocks in is assumed to be independent a priori.

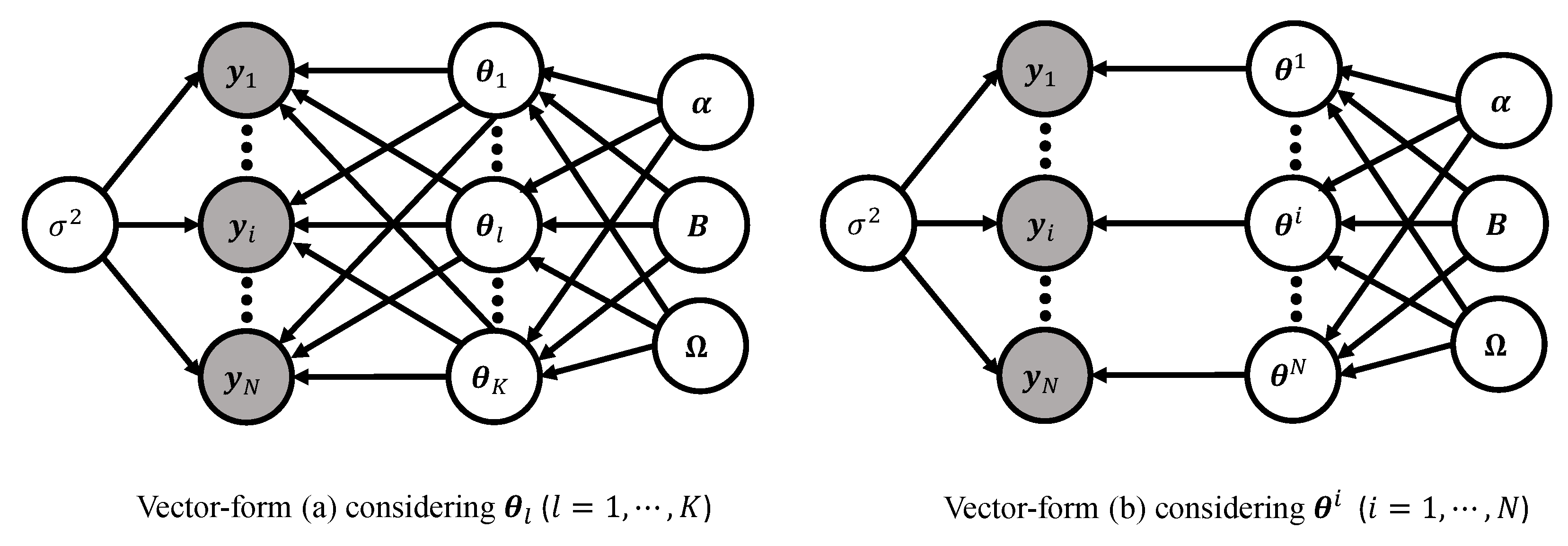

To summarize, we derived two equivalent vectorized formulations representing the basic model (2)–(4) according to how the model matrix (5) is vectorized:

Figure 9 displays the DAG representations of the two vector forms of the basic model. In vector-form (a), K latent nodes are fully connected toward the N response vectors . On the other hand, in vector-form (b), N latent nodes are bijectively connected to the N response vectors for each subject i. These two ways of looking at the framework of the Bayesian nonlinear hierarchical models complement each other for a more proper understanding of the modeling framework and provide modelers with a statistical insight. For example, vector-form (a) is useful to understand some mathematics underlying the linear regression using P regressors, while vector-form (b) makes it easy to comprehend the role of the covariance matrix .

Figure 9.

DAG representations of the basic model (2)–(4) in vector-form (a) (Stage 1—(6), Stage 2—(7) (l-indexing), and Stage 3—(9)) (left) and vector-form (b) (Stage 1—(6), Stage 2—(8) (i-indexing), and Stage 3—(9)) (right). Two vector forms are equivalent, except for the way how the model matrix (5) is vectorized.

5. Likelihood

5.1. Outline

In this section, we investigate a likelihood function based on the basic model (2)–(4). As illustrated in Bayesian workflow in Section 2.2, the likelihood theory is fundamental of Bayesian inference (see Figure 2). Therefore, it is worth spending time to re-study the formulation of the likelihood function. Here, one caveat is that, due to the hierarchical nature of the nonlinear mixed effects model, a notion of the likelihood function depends on what part of the model specification is considered to be part of the likelihood, and what is not. Most papers directly consider the marginal likelihood that will be discussed in Section 5.4. In this paper, before marching there, we study other two formulations of the likelihood in Section 5.2 and Section 5.3 to get some pedagogical insights. We will briefly discuss popularly used frequentist computing strategies in Section 5.4.

5.2. Likelihood Based on Stage 1

As in most of the statistical models, a natural starting point for inference is maximum likelihood estimation. We start with considering only Stage 1 from the basic model in Section 4.1 and ignore Stages 2 and 3 for now. Then the likelihood function for the i-th subject is

Therefore, the likelihood function based on the N subjects is

Now, we maximize the likelihood (10) with respect to the model matrix (5) given fixed:

where is the Euclidean norm.

The estimator of the model matrix (5) can be obtained by various optimization techniques such as Newton-Raphson method or Gradient descent method [146]. Noting from the summation across i in (11), N estimators are independent. We can obtain an estimator of the variance by plugging into the likelihood (10), and then maximize with respect to the . To investigate a denoised temporal tendency for the trajectory , we can simply plug into the function . To see a future pattern, we can extrapolate the function by extending the time index beyond the last time point . Eventually, the illustrated approach is based on traditional least squares estimation.

Unfortunately, there are three major drawbacks in this approach. First, it forfeits the opportunity to use ‘information borrowing’ [30] to improve a predictive accuracy due to the ignorance of Stage 2. What happens in Stage 2 (3) is to borrow strength across N individuals to produce a better estimator for (5) than an estimator simply based on individual data. A similar issue can be found in the Clemente problem from [11] where the James–Stein estimator [147] predicts better than an individual hitter-based estimator. Another example applied to epidemic data can be found in [30]. Second, it is not well-aligned with the generic motivation to use the mixed effects models, whose primary purpose is to understand “typical” values for the model parameters in f, representing whole subjects, which should be addressed by making an inference about the parameters , , and . Third, it only produces point estimates for the parameters, failing to describe the underlying uncertainty.

A remedy of the first two drawbacks is the consideration of Stages 1 (2) and 2 (3) hierarchically in a single model, leading to a frequentist version of nonlinear mixed effects models, which will be discussed in Section 5.3 and Section 5.4. To describe relevant uncertainty within the frequentist framework one may have to resort to bootstrap methods [99] or use a large-sample theory. Uncertainty quantification in frequentist analysis often needs to be done step-wisely. Another solution resolving all the three drawbacks at once is to incorporate Stage 1 (2), 2 (3), and 3 (4) into a single model in a fully Bayesian way, resulting in a Bayesian version of nonlinear mixed effects models, which is the main topic in this paper.

5.3. Likelihood Based on Stage 1 and 2 from Vector-Form (a)

A likehood function based on vector-form (a) is derived. More specifically, we examine the frequentist setting where the assumptions of Stage 1—(6) and Stage 2—(7) are considered, while the parameters introduced in Stage 3—(9) are fixed (i.e., no prior assumptions).

The individual model on Stage 1 (6) yields a conditional density for each subject . Under the population assumption on Stage 2 (7), we have the density for each model parameter index . The joint density of given parameters and is a product-form distribution:

where and . Now, the next step is to integrate out the latent model parameters (or equivalently, the model matrix (5)) from the density above to get a likelihood for the :

In most cases, the integral (12) is not tractable due to the non-linearity of the function with respect to the . Although it may be possible to use numerical techniques for the evaluation of the integral (12), this might require enormous computational effort, which is not really appreciated in the literature due to the high-dimensionality of the integral involving the dimensional model parameters .

5.4. Likelihood Based on Stage 1 and 2 from Vector-Form (b)

A likehood function based on vector-form (b) adopting i-indexing is derived here. Similar to Section 5.3, we preserve the assumption of Stage 1—(6) and Stage 2—(8), but work with fixing the parameters in Stage 3—(9). In these specifications, for each index , the individual model on Stage 1 (6) and population model on Stage 2 (8) lead to densities and , respectively. Thus, the joint density of given parameters and is

Given the parameters , the ordered pairs in the collection are conditionally independent across individuals. Therefore, a likelihood for the is based on the marginal density of :

where the last equality is derived by using the change of variable (8). The last expression (14) is a standard mathematical formulation that many frequentist computing strategies are constructed with: see Equation (3.2) from [14]. Essentially, the part that makes the MLE computation complicated is the mean vector .

As the model parameter in (13) (or similarly, in (14) which is often called random effect in the frequentist framework) participates to the function f in a non-linear fashion, the integral generally cannot be obtained in a closed-form. Benefiting from a conditional independence [148], dimensionality of the N integrals (13) is much lower than that of the integral (12) based on vector-form (a). Analytically, the likelihood functions of the basic model (2)–(4) based on vector-form (a) (12) and vector-form (b) (13) may be equivalent. That being said, minimization of the two functions with respect to the parameters yields the same solution, , so-called maximum likelihood estimators (MLE).

We shall briefly discuss MLE computations. One approach would be to perform a multivariate numerical integration (e.g., Gauss-Hermite quadrature [149]) to each of the N integrals (13), and then obtain the MLE by maximizing the product of the N numerical integrals with respect to the parameters [150]. This approach turns out to be computationally expensive and may have poor converge properties due to the following two reasons [151]. First, the numerical integration necessitates increasingly expensive iterative procedures within a MLE algorithm as the correlation of the model parameters (or equivalently, random effects) increases. Second, convergence property may be highly deteriorated when the number of model parameters K is large (i.e., high-dimensional integral) and the number of sampling times is small (i.e., sparse data) due to the ‘curse of dimensionality’ [152].

A class of common approaches for the MLE computations is based on analytical approximation to each of the N integrals (14) [13,36,153,154,155], and some of them have been successfully adopted to industrial software like NONMEM [47,101] and SAS [46]. Here, we illustrate a key idea of the first-order method attributed to [36]. Let us define a mapping for each subject i, where A is an open set with . Suppose that is smooth on the set A: then, by Taylor’s theorem (page 375 of [156]), we have the best linear approximation of the mapping at the origin given by , where is the Jacobian matrix of at . Now, we shall replace the function in integral (14) with the resulting approximation for each i (), leading to a closed-form expression

To summarize, a linearization was used to convert the nonlinear mixed effects model to a linear mixed effects model, in some sense, equivalent to the Lindley–Smith form [157]. This enables us to integrate out the random vector from the N integrals (15), deriving a marginal likelihood (15) to approximate the exact marginal likelihood (13). MLE can be obtained by jointly maximizing (15) assuming the approximation is exact.

Another way to compute the MLE is through the use of expectation-maximization (EM) algorithm [158]. Borrowing terms from EM updating process [159], , , , and (i.e., the integrand in (13)) can be viewed as observable incomplete data, missing data, complete data, unknown parameters, and density of complete data, respectively, for the i-th subject. The goal is to maximize the exact marginal likelihood (13) by iterating E-step and M-step, leading to the MLE . The E-step computes a conditional expected log-likelihood of based on the hierarchy (2)–(3), followed by the M-step that maximizes the function with respect to . The nonlinearity associated with the model matrix (5) makes the E-step intractable. As a remedy, variant versions of the EM algorithm are proposed; see [160,161,162,163] for a technical detail applied to a hierarchy similar to the basic model. Among them, the scheme of stochastic approximation EM algorithm proposed by [38], splitting the E-step into two steps, namely a simulation step and a stochastic approximation step, is widely used in many applications for its numerical stability, fast computation, and theoretical soundness [164,165], which has been successfully deployed as industrial software including Monolix [48] as well as open source software such as R package nlmixr [18].

6. Bayesian Inference and Implementation

6.1. Bayesian Inference

We briefly overview two contrasting workflows of Bayesian and frequentist approaches for nonlinear mixed effects models before moving to a technical detail. Both settings allow the randomness in the model matrix (5), but then, they diverge when it comes to how parameters are treated. Bayesians treat as random, while frequentists regard it as fixed. To conceptualize a subtlety arising from this difference, let us recap frequentist computing strategies discussed in Section 5.4. There, the model matrix was eventually integrated out from the joint density of , either approximately or exactly, to derive a marginal likelihood of from which the MLE is computed via various optimization methods. After that, frequentists apply standard Bayesian formulas, such as posterior density, posterior mean, and so on, to estimate [7].

In contrast, to drive the Bayesian engine, one would need an appropriate prior . After that, the entire collection of parameters will be updated through the Bayes’ theorem post observing the data , leading to the posterior density [166] (See Figure 2). The essence of the Bayesian viewpoint is that there is no logical distinction between and , which are associated with the random and fixed effects, respectively, from the frequentist perspective. In Bayesian framework, both and are random quantities. It is important to point out that the likelihood principle is naturally incorporated in the Bayes’ theorem [167]. Clearly, modern data complications such as enormous volume, large dimensionality, and multi-level structures may necessitate a sophistication on the prior specifications.

We are now in a position to describe the Bayesian analysis for the basic model (2)–(4), that assumed independence, a priori, for each parameter blocks , , and , . (Our logic below can be generalized to a more complex prior setting.) As discussed in Section 4, it is the discretion of the modeler of how she would treat the model matrix (5) with l-indexing or i-indexing , leading to vector forms (a) and (b), respectively. For the sake of readability, we illustrate the Bayesian inference by using the vector-form (a), but we will sometimes use the vector-form (b) when this seems more understandable.

A central task in the application of the Bayesian nonlinear mixed effects models is to evaluate the posterior density, or indeed to compute expectation with respect to the density:

where the last equation can be detailed as follows

From a Bayesian perspective, all inferential problems regarding the parameter may be addressed in terms of the posterior distribution (16). Unfortunately, for almost all problems, the distribution is intractable. In such situations, we need to resort to approximation techniques, and these fall broadly into two classes, according to whether they rely on stochastic [41,45,168,169] or deterministic [170,171,172,173] approximations. See [174,175] for review papers of these techniques. In this article, we mainly focused on the stochastic approximation. The basic idea behind the methodology is to construct a Markov chain whose stationary distribution is the posterior distribution (16).

6.2. Gibbs Sampling Algorithm

We resort to MCMC technique [174] to sample from the full joint density (16). Among many MCMC techniques, we use the Gibbs sampling algorithm [39,168] to exploit the conditional independence [148] induced by the hierarchical formulation. A generic Gibbs sampler would cycle in turn through each of the conditional distributions for the parameter blocks , and as follows:

Step 1. Sample from its full conditional distribution

Step 2. Sample from its full conditional distribution

Step 3. Sample from its full conditional distribution

Step 4. Sample from its full conditional distribution

Step 5. Sample from its full conditional distribution

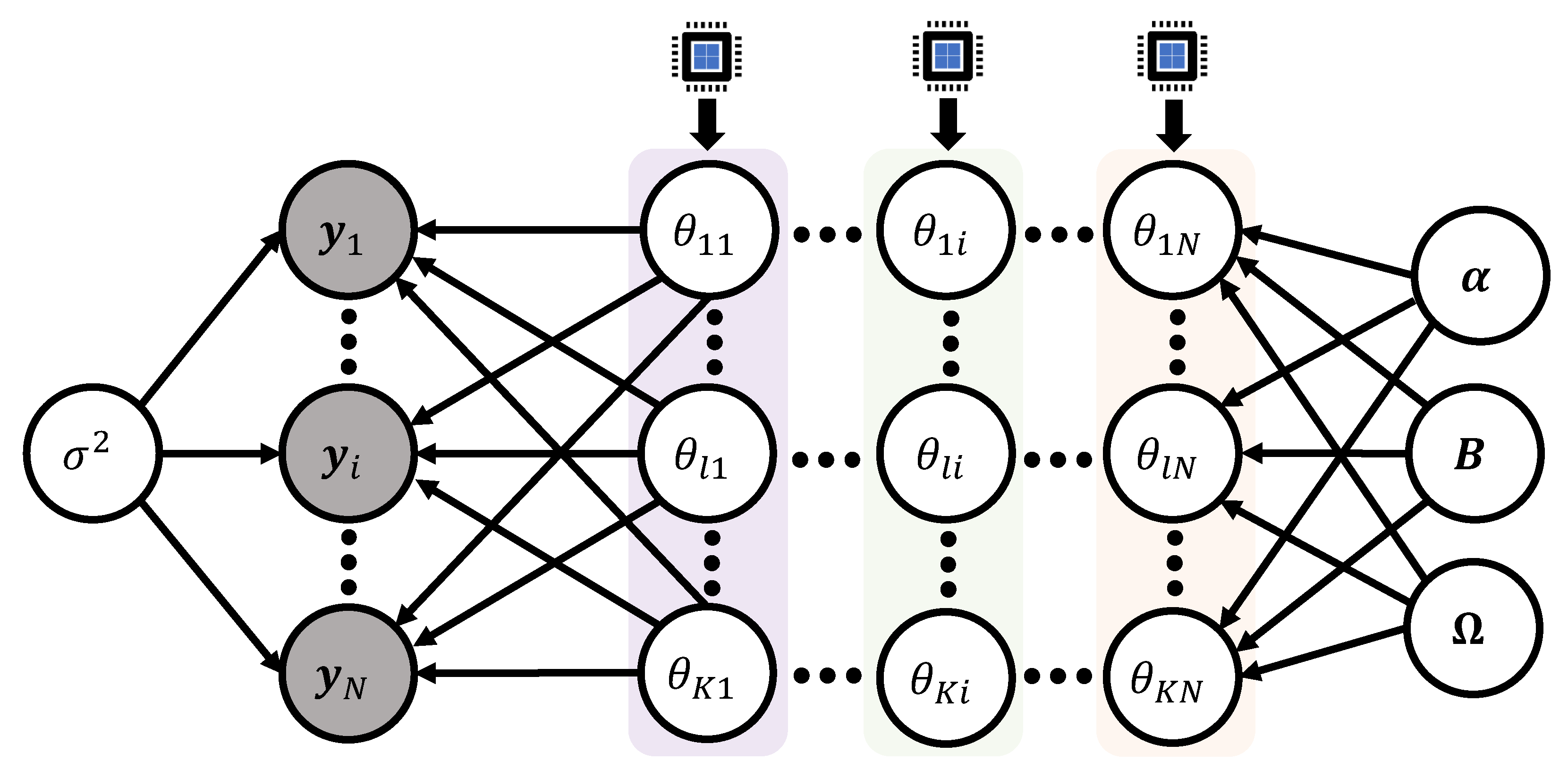

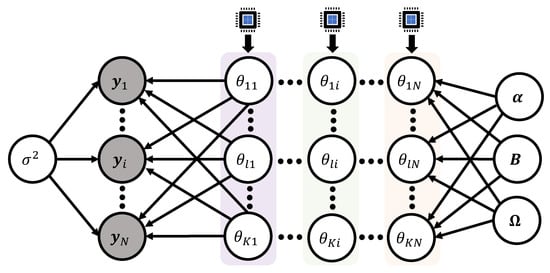

6.3. Parallel Computation for Model Matrix

One of the most computer-intensive steps to implement the Gibbs sampler in Section 6.2 is Step 1 to sample the model matrix (5), or equivalently its entries , from the full conditional distribution (17). Clearly, the nonlinear participation of the model parameters to the function f makes the conditional distribution intractable, hence, non-conjugate sampling is unavoidable, which may suffer from a slow convergence. At the same times, due to the Markovian nature of the Gibbs algorithm, it is difficult to parallelize the whole steps of the Gibbs sampler, which creates difficulties in slower languages like R [176]. Nevertheless, the increasing number of parallel cores that are available at a very low price drives more and more interest in ‘parallel sampling algorithms’ that can benefit from the available parallel processing units on computers [177,178].

We suggest a framework of parallel computations to efficiently update the model matrix . This framework can be particularly appreciated under the setting of Bayesian nonlinear mixed effects models when the number of subjects N is a lot larger than the number of model parameters K ().

The first version of parallel sampling algorithms is based on scalar updating. For the derivation, we start with analyzing the full conditional posterior distribution of a single element ():

where the notation represents the all the entries of except for , that is, . Here, we used a conventional notation in Bayesian computation: ‘’ indicates the density , where the notation ‘−’ in implies all the parameters except for the in the basic model (2)–(4) along with N observations.

Note that the proportional part of the full conditional (22) only involves the i-th column vector of the model matrix (5), that is, in its analytic expression. This implies that we can update the K entries of the vector () independently across subjects. Parallel sampling algorithm can be completed by assigning a single CPU process to each of the subjects i. Within the step to sample the K entries from the vector , it is required to use Gibbs iterative procedure to update the scalar components. Authors [30,31] applied this technique to update the model matrix for a Bayesian nonlinear mixed effects model to train the dataset explained in Section 3.3 and Section 3.5. Figure 10 displays the schematic idea of the parallel sampling algorithm.

The second version of parallel sampling algorithms is based on vector updating. We analyze the full conditional posterior distribution of the vector ():

where the notation represents the all the column vectors of except for . Similar to the first version, we can use the parallel computation to update the model matrix by simultaneously sampling from the full-conditional density (23) across subjects by assigning one CPU to each individual.

6.4. Elliptical Slice Sampler

Due to the issue of non-conjugacy to sample from the univariate density (22) or K-dimensional density (23), the choice of a suitable MCMC method and further the choice of a proposal distribution is crucial for the fast convergence of the Markov chain simulated from the Step 1 within the Gibbs sampler in Section 6.2. The Metropolis-Hastings (MH) algorithm [179,180] is the first solution to consider in such intractable situations: see the Algorithm 1 from [181]. In practice, the performances of the MH algorithm are highly dependent on the choice of the proposal density [182]. In the past decades, numerous MH-type algorithms to improve computational efficiency have been developed, and these fall broadly into two classes, according to whether the proposal density reflects a gradient information [41,43,44,183] or not [169,184]. In specific, the gradient information, here, refers to the first-order derivative of the minus of the log of the target density (i.e., or , where the notation ∇ represents the gradient operator). Typically, gradient-based samplers are attractive in terms of rapid exploration of the state space, but the cost of the gradient computation can be prohibitive when the sample size N or model dimension K is extremely large [185,186]. Fortunately, this requirement can be made less burdensome by using automatic differentiation [187].

In the present subsection, we introduce an efficient gradient-free sampling technique, the elliptical slice sampler (ESS) proposed by [169], to simulate a Markov chain from the density (22). The sampling logic can be directly applied to the situation to sample from the density (23) by simply replacing by and changing the dimension of relevant distributions, stochastic processes, etc, from 1 to K. Conceptually, MH and ESS algorithms are similar in that both comprise two steps: a proposal step and a criterion step. A difference between the two algorithms arises in the criterion step. If a new candidate does not pass the criterion, then the MH algorithm takes the current state as the next state: whereas ESS re-proposes a new candidate until rejection does not take place, rendering the algorithm rejection-free. The utility of ESS can be appreciated when an analytic expression of a target density can be factored to have a Gaussian prior distribution. Unlike the traditional MH algorithm that requires the proposal variance or density, ESS is fully automated, and no tuning is required.

To adapt the ESS to our example, we re-write the density (22) as the following form:

where , and Z is the normalization constant. By introducing the notation , it is our intention that we shall treat the function as a likelihood part temporarily only when sampling from the density (24). Alternatively, one can proceed with the choice as a likelihood part to operate ESS, which then changes the normalization constant accordingly. We recommend to use the simplest functional form for the likelihood part, if possible, to reduce the computation cost.

Algorithm 1 summarizes the ESS in an algorithmic form, where the situation is at the -th iteration of the Gibbs sampler. Therefore, the goal is to draw from the target density () (24), where we already have as the current state for the target variable realized from the s-th iteration:

| Algorithm 1: ESS to sample from (22) |

| Goal: Sampling from the full conditional posterior distribution

Input: Current state . Output: A new state .

|

6.5. Metropolis Adjusted Langevin Algorithm

We introduce the Metropolis adjusted Langevin algorithm (MALA) [43,44] which is popular for its use of problem-specific proposal distribution based on the gradient information of the target density. The main idea of MALA is to use Langevin dynamics to construct the Markov chain. To adapt the sampling technique to our example, we re-write the density (22) as the following form:

where . Now, we consider a stochastic differential equation [188] that characterizes the evolution of the Langevin diffusion with the drift term set by the gradient of the log of the density (25):

where is a standard 1-dimensional Wiener process, or Brownian motion [189]. In (26), t indexes a fictitious continuous time. ∇ represents the gradient operator with respect to . Under fairly mild conditions on the function , Equation (26) has a strong solution that is a Markov process [190]. Furthermore, it can be shown that the distribution of converges to the invariant distribution (25) as .

Since solving the Equation (26) is very difficult, a first-order Euler-Maruyama discretization [191] is used to approximate the solution to the equation:

where is the step size of discretization, and indexes the discrete time steps. This recursive update defines the Langevin Monte Carlo algorithm. Typically, to handle the discretization error and satisfy the detailed balance [192] to make Markov chain converge to the target distribution (25), the MH correction is needed. Algorithm 2 details MALA to sample from the () (25):

| Algorithm 2: MALA to sample from (22) |

| Goal: Sampling from the full conditional posterior distribution

Input: Current state and step size . Output: A new state .

|

6.6. Hamiltonian Monte Carlo

We introduce the Hamiltonian Monte Carlo (HMC) algorithm that employs Hamiltonian dynamics to efficiently explore the parameter space [41,183]. Among many MH-type sampling algorithms, HMC has been recognized as one of the most effective algorithms due to its rapid mixing rate and small discretization error. By that reason, HMC has been deployed as the default sampler in many open packages such as Stan [193] and Tensorflow [194]. A key idea of HMC distinctive from ESS and MALA is the introduction of an auxiliary momentum variable, which is typically assumed to follow as a Gaussian distribution and independent of the target variable. By doing so, the HMC can produce distant proposals for the target variable, thereby avoiding the slow exploration of the state space that results from the diffusive behavior of simple random-walk proposals.

We adapt the HMC to our example. We shall first take a look at a joint density:

where and . The auxiliary variable is distributed according to the univariate Gaussian distribution with variance . Note that it holds due to the independence between and . Therefore, our ultimate goal is to sample from the joint density (28), and take only by marginalization.

Noting from (28), the negative of joint log-posterior is

The physical analogy of the bivariate function (29) is a Hamiltonian [183,195], which describes the sum of a potential energy , defined at the position , and a kinetic energy , where the auxiliary variable can be interpreted as a momentum variable and the variance denotes a mass.

Now, we construct a Hamiltonian system by taking a derivative of H (29) with respect to and , and by introducing a continuous fictitious time t:

where ∇ represents the gradient operator with respect to .

The Hamiltonian systems (30) and (31) have three nice properties. Assume that is a solution curve of the system, where . Then, the following relationships hold:

- (a)

- Preservation of total energy: for all ;

- (b)

- Preservation of volume: for all ;

- (c)

- Time reversibility: The mapping from state at t, , to the state at time , , is one-to-one, and hence has an inverse .

Three properties are eventually related with the following nice properties of the HMC: (a) a high probability of acceptance of proposals; (b) a simple analytic form of acceptance ratio (no need to consider a hard-to-compute Jacobian factor); and (c) a detailed balance with respect to the target density . For a detailed description and extensive review, see [41].

For practical applications, the differential equation system (30) and (31) cannot be solved analytically and numerical methods are required. As the Hamiltonian H in the system is separable (or equivalently, the joint density is factorizable), to traverse the state space more efficiently, the leapfrog integrator method is typically used, which involves a discretized step of the dynamics. As similar to the construction of MALA, the discretization errors arising from the leapfrog integration are addressed by MH correction step. Algorithm 3 details the HMC to sample from the target density (22). In the algorithm, indexes the sampling iteration within the Gibbs sampler, while represents the index introduced due to the discretization.

One caveat in the HMC is that no matter whether we accept or reject the proposal, we draw a new momentum from the kinetic energy at every iteration. To check this, see the Step a in Algorithm 3, where is drawn from the kinetic density . The momentum is only used to formulate the initial pair , where is the current target state , that will be guided by Hamiltonian dynamics (30) and (31) via leapfrog integrator, eventually reaching the last pair which is used as the proposal The momentum is deleted and we will draw a new momentum in the next iteration. This independent drawing of the momentum is the engine that enables HMC to produce distant proposals, but nevertheless maintains a high probability of acceptance.

| Algorithm 3: HMC to sample from (22) |

| Goal: Sampling from the full conditional posterior distribution

Input: Current state , step size , number of steps L, and mass . Output: A new state .

|

The naive HMC (Algorithm 3) requires the users to specify at least three parameters: a step size , a number of steps L, and a mass , for which to run a simulated Hamiltonian system. A poor choice of either of these parameters will result in a dramatic drop in the efficiency HMC. No-U-Turn Sampler (NUTS) developed by [42] is an extension of HMC, which is designed to automatically turn the parameters while fixing , making it possible to run NUTS with no hand-tuning at all. HMC and NUTS are general-purpose inference engines deployed in Stan.

We would like to highlight a difference between MALA (Algorithm 2) and HMC (Algorithm 3). Although both algorithms utilizes the gradient information (that is, ), the former is based on stochastic differential Equation (26) and the latter is based on ordinary differential Equations (30) and (31). From the algorithmic perspective, MALA exhibits a single loop structure and is designed to directly employ the discretization of the underlying Langevin dynamics: see that the index resulting from the discretization in (27) is directly used as the sampling index in Algorithm 2. On the other hand, HMC has a double loop structure: the inner loop (i.e., Step c in Algorithm 3) solves the Hamiltonian dynamics (30) and (31) to make a proposal, while the outer loop judges the proposals. The index in the inner loop, resulting from the leapfrog integrator, and the sampling index of the outer loop in Algorithm 3 are not related [196]. Therefore, one can set the number of steps L for the leapfrog integrator by an arbitrary integer.

7. Prior Options

7.1. Priors for Variance

Provided the assumption of the basic form (2)–(4), the random error terms in Stage 1 and in Stage 2 are the stochastic sources of (remaining) intra-individual and inter-individual variabilities, respectively [8]. Both terms are assumed to follow univariate Gaussian distributions in the basic model. This assumption can be generalized to multivariate Gaussian distribution, t-distribution, mixture of Gaussian distributions, etc., depending on the exhibition of the data or prior guess of perturbation associated with model matrix (5) [197].

Recall that the basic model assumes that data-level errors are distributed according to with variance , independently across times and subjects . We discuss about the -term in Section 7.3. Therefore, the standard deviation describes a vertical difference (i.e., measurement error) between the observation and theory across time and individuals. We can generalize the basic setting by replacing with (a) () or (b) () to accommodate the heterogeneity the measurement error (a) across subjects and (b) across subjects and time, respectively, provided sufficiently large sampling times [28].

For any prior , the full conditional posterior distribution of (18) is given as

where represents the Euclidean norm of the vector .

Popularly used priors (or ) are (i) the Jeffreys prior [198]; (ii) inverse-gamma prior with shape and scale ; and (iii) half-Cauchy prior with scale . Note that half-Cauchy distribution should be given to the standard deviation , not variance . The first two prior options lead to the conjugate update to sample from the density (32). Although the third one induces non-conjugate update to sample from the density , computationally efficient sampling can be constructed by using parameter expansion technique [199] or slice sampler [45].

7.2. Priors for Intercept and Coefficient Vector

One of the central goals of using nonlinear mixed effects models is to identify significant covariates among the P covariates , explaining each of the model parameters . This is because the function f in Stage 1 (2) is typically derived from a differential equation system. Such a differential equation has model parameters that control the dynamic of the solution of the system, and how the parameters are related with covariates is vital to understand causality. For example, in PK analysis, understanding whether and to what extent weight, renal status, disease status, etc., are associated with drug clearance may dictate how these factors can be considered in a dosing schedule.

We explain popularly used priors for the intercept and coefficient vector by taking the vector-form (a) (Stage 1—(6), Stage 2—(7), and Stage 3—(9)) because it directly embeds the framework of linear regression. For each model parameter index , we re-write the Equation (7) for the purpose of illustration:

where . By the assumption (9), we have priors and (). Note that the Equation (33) is a Bayesian multivariate linear regression (page 149 of [60]), and the only difference from the usual context is that the response vector in (33) is latent. Therefore, almost all Bayesian regression techniques [200,201] can be used to the latent regression (33) provided that the model matrix (5) is efficiently realized in Step 1 within the Gibbs sampler.