Abstract

Two new inertial-type extragradient methods are proposed to numerically approximate a common solution of a variational inequality problem involving a pseudomonotone and Lipschitz continuous operator and a fixed point problem for a ρ-demicontractive mapping in real Hilbert spaces. These inertial-type iterative methods use self-adaptive step size rules that do not require prior knowledge of the Lipschitz constant. We show that the proposed methods converge strongly to a solution of the variational inequality and fixed point problems under appropriate standard conditions. Finally, we present several numerical examples that illustrate the effectiveness of the proposed methods and validate the theoretical results.

Keywords:

variational inequalities; fixed point problem; subgradient extragradient method; strong convergence; Tseng’s extragradient method

MSC:

47H09; 47H05; 47J20; 49J15; 65K15

1. Introduction

Assume that $C$ is a nonempty, closed, and convex subset of a real Hilbert space $\mathcal{H}$ with inner product $\langle \cdot, \cdot \rangle$ and induced norm $\|\cdot\|$. The main purpose of this study is to analyze the convergence of iterative schemes for solving variational inequality and fixed point problems in real Hilbert spaces. The motivation for studying such a common solution problem is its potential applicability to mathematical models whose constraints can be expressed as fixed point problems. This is especially relevant in applications such as signal processing, composite minimization, optimal control, and image restoration; see, for example, [1,2,3,4,5]. We now describe the two problems considered in this research.

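Let $\Im: \mathcal{H} \to \mathcal{H}$ be an operator. First, we recall the classic variational inequality problem [6,7], which is to find $u^* \in C$ such that

$$\langle \Im(u^*), v - u^* \rangle \ge 0, \quad \forall v \in C. \tag{1}$$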

The solution set of problem (1) is denoted by $VI(C, \Im)$. The variational inequality problem has been widely used to study real-world applications such as partial differential equations, optimization, optimal control, mechanics, mathematical programming, and finance (see [8,9,10,11,12,13,14]). Problem (1) is therefore of great importance in the applied sciences. Many authors have investigated not only the existence and stability of solutions, but also iterative methods for solving such problems.

Projection methods are important tools for computing numerical solutions of variational inequalities, and several authors have proposed various projection methods for problem (1) (see [15,16,17,18,19,20,21,22,23,24,25,26,27,28,29,30,31,32] for details). Most methods for solving problem (1) require computing projections onto the feasible set $C$. Korpelevich [15] and Antipin [33] introduced the extragradient method, which takes the following form:

$$u_0 \in C, \quad y_n = P_C\big(u_n - \lambda \Im(u_n)\big), \quad u_{n+1} = P_C\big(u_n - \lambda \Im(y_n)\big), \tag{2}$$

where $\lambda \in (0, 1/L)$ and $L$ is the Lipschitz constant of $\Im$. In this method, each iteration requires two projections onto the feasible set $C$; if $C$ has a complicated structure, this can significantly reduce the computational efficiency of the method. Several approaches have been developed to overcome this obstacle. The first is the subgradient extragradient method proposed by Censor et al. [17], which takes the following form:

$$u_0 \in \mathcal{H}, \quad y_n = P_C\big(u_n - \lambda \Im(u_n)\big), \quad u_{n+1} = P_{T_n}\big(u_n - \lambda \Im(y_n)\big), \tag{3}$$

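where $\lambda \in (0, 1/L)$ and

$$T_n = \{ z \in \mathcal{H} : \langle u_n - \lambda \Im(u_n) - y_n, \, z - y_n \rangle \le 0 \}$$

is a half-space containing $C$, so the projection onto $T_n$ has an explicit formula.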
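To make the difference between the two schemes concrete, here is a minimal Python sketch of the extragradient and subgradient extragradient iterations. It assumes, purely for illustration, the feasible set $C = [0,1]^n$ (so that $P_C$ is componentwise clipping) and a hypothetical operator `F(x) = x - c`, which is monotone and 1-Lipschitz; `F` stands in for $\Im$ and `lam` for the step size $\lambda$. The point of the sketch is that the second projection of the subgradient extragradient method is onto a half-space and therefore has a closed form.

```python
import math

# Small vector helpers on plain Python lists (no third-party dependencies).
def sub(x, y):
    return [a - b for a, b in zip(x, y)]

def axpy(alpha, x, y):
    """Return y + alpha * x."""
    return [b + alpha * a for a, b in zip(x, y)]

def dot(x, y):
    return sum(a * b for a, b in zip(x, y))

def norm(x):
    return math.sqrt(dot(x, x))

def proj_box(x, lo=0.0, hi=1.0):
    """Metric projection onto the assumed feasible set C = [lo, hi]^n."""
    return [min(max(a, lo), hi) for a in x]

def proj_halfspace(x, a, y):
    """Closed-form projection of x onto T = {z : <a, z - y> <= 0}."""
    viol = dot(a, sub(x, y))
    aa = dot(a, a)
    if viol <= 0 or aa == 0:   # x already lies in T (or T is the whole space)
        return list(x)
    return axpy(-viol / aa, a, x)

def extragradient(F, u, lam, iters=200):
    """Korpelevich/Antipin scheme: two projections onto C per iteration."""
    for _ in range(iters):
        y = proj_box(axpy(-lam, F(u), u))      # y_n = P_C(u_n - lam F(u_n))
        u = proj_box(axpy(-lam, F(y), u))      # u_{n+1} = P_C(u_n - lam F(y_n))
    return u

def subgradient_extragradient(F, u, lam, iters=200):
    """Censor-Gibali-Reich scheme: the second projection is onto the half-space T_n."""
    for _ in range(iters):
        v = axpy(-lam, F(u), u)                # u_n - lam F(u_n)
        y = proj_box(v)                        # y_n = P_C(u_n - lam F(u_n))
        u = proj_halfspace(axpy(-lam, F(y), u), sub(v, y), y)
    return u

# Usage: F(x) = x - c is monotone and 1-Lipschitz, so lam = 0.5 lies in (0, 1/L).
# The solution of the VI over the unit box is P_C(c) = (1, 0.5).
c = [2.0, 0.5]

def F(x):
    return sub(x, c)

u_eg = extragradient(F, [0.0, 0.0], 0.5)
u_seg = subgradient_extragradient(F, [0.0, 0.0], 0.5)
print(u_eg, u_seg)
```

In this toy problem both projections are cheap, so the sketch only illustrates the structure; the advantage of the half-space step appears when $P_C$ itself requires solving an optimization problem.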

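Furthermore, Tseng’s extragradient method [19] requires only one projection per iteration. It is written as follows:

$$u_0 \in \mathcal{H}, \quad y_n = P_C\big(u_n - \lambda \Im(u_n)\big), \quad u_{n+1} = y_n - \lambda\big(\Im(y_n) - \Im(u_n)\big), \tag{4}$$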

where $\lambda \in (0, 1/L)$. Method (4) is computationally efficient because it requires solving only one minimization problem (the projection onto $C$) per iteration. As a result, it is less expensive than the extragradient method and often performs better in practice.
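A minimal sketch of Tseng's method under illustrative assumptions: a box feasible set $C = [0,1]^n$, a hypothetical operator `F(x) = x - c` standing in for $\Im$, and a step `lam` in $(0, 1/L)$. The correction step is explicit, so only one projection onto $C$ is computed per iteration, and the iterates need not stay in $C$.

```python
import math

def proj_box(x, lo=0.0, hi=1.0):
    """Metric projection onto the assumed feasible set C = [lo, hi]^n."""
    return [min(max(a, lo), hi) for a in x]

def tseng(F, u, lam, iters=200):
    """Tseng's extragradient (forward-backward-forward) iteration."""
    for _ in range(iters):
        Fu = F(u)
        y = proj_box([a - lam * g for a, g in zip(u, Fu)])          # y_n = P_C(u_n - lam F(u_n))
        Fy = F(y)
        u = [b - lam * (gy - gu) for b, gy, gu in zip(y, Fy, Fu)]   # u_{n+1} = y_n - lam (F(y_n) - F(u_n))
    return u

c = [2.0, 0.5]

def F(x):
    """Hypothetical monotone, 1-Lipschitz operator F(x) = x - c."""
    return [a - b for a, b in zip(x, c)]

u_ts = tseng(F, [0.0, 0.0], 0.5)
print(u_ts, math.dist(u_ts, [1.0, 0.5]))
```

Compared with the extragradient sketch, the second call to `proj_box` is replaced by a plain vector update that reuses `F(u)`.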

Let $S: \mathcal{H} \to \mathcal{H}$ be a mapping. The fixed point problem (FPP) for $S$ is to find $u^* \in \mathcal{H}$ such that

$$S(u^*) = u^*. \tag{5}$$

The solution set of problem (5) is called the fixed point set of $S$ and is denoted by $\mathrm{Fix}(S)$. Most methods for solving problem (5) are derived from the standard Mann iteration: starting from $u_0 \in \mathcal{H}$, construct the sequence $\{u_n\}$ for all $n \ge 0$ by

$$u_{n+1} = (1 - \alpha_n) u_n + \alpha_n S(u_n),$$

where the sequence $\{\alpha_n\} \subset (0,1)$ must satisfy certain conditions to guarantee weak convergence. Another iterative approach, which is more effective for obtaining strong convergence in infinite-dimensional Hilbert spaces, is the Halpern iteration:

$$u_{n+1} = \alpha_n u_0 + (1 - \alpha_n) S(u_n),$$

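where $u_0 \in \mathcal{H}$ and the sequence $\{\alpha_n\} \subset (0,1)$ is non-summable and slowly diminishing, i.e.,

$$\lim_{n \to \infty} \alpha_n = 0 \quad \text{and} \quad \sum_{n=0}^{\infty} \alpha_n = +\infty.$$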
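The difference between the plain, Mann, and Halpern iterations can be seen on a toy example. The sketch below assumes a hypothetical mapping `rotate90`, the rotation by 90 degrees in $\mathbb{R}^2$, which is nonexpansive with $\mathrm{Fix}(S) = \{0\}$. The plain iteration $u_{n+1} = S(u_n)$ only rotates and never converges, while the Mann averaging (with the illustrative constant $\alpha_n = 1/2$) and the Halpern anchoring (with $\alpha_n = 1/(n+2)$, which tends to zero and is non-summable) both drive the iterates to the fixed point.

```python
import math

def rotate90(u):
    """Hypothetical S: rotation by 90 degrees in R^2, nonexpansive with Fix(S) = {0}."""
    return [-u[1], u[0]]

def picard(S, u0, iters=100):
    """Plain iteration u_{n+1} = S(u_n); for a rotation the norm never decreases."""
    u = list(u0)
    for _ in range(iters):
        u = S(u)
    return u

def mann(S, u0, alpha=0.5, iters=100):
    """Mann iteration u_{n+1} = (1 - alpha) u_n + alpha S(u_n) with a constant alpha."""
    u = list(u0)
    for _ in range(iters):
        su = S(u)
        u = [(1 - alpha) * a + alpha * b for a, b in zip(u, su)]
    return u

def halpern(S, u0, iters=5000):
    """Halpern iteration u_{n+1} = a_n u_0 + (1 - a_n) S(u_n) with a_n = 1/(n + 2)."""
    u = list(u0)
    for n in range(iters):
        a_n = 1.0 / (n + 2)
        su = S(u)
        u = [a_n * a0 + (1 - a_n) * b for a0, b in zip(u0, su)]
    return u

u0 = [1.0, 0.0]
print(math.hypot(*picard(rotate90, u0)))    # stays 1.0: no convergence
print(math.hypot(*mann(rotate90, u0)))      # essentially 0
print(math.hypot(*halpern(rotate90, u0)))   # small; decays roughly like 1/n
```

Here the Halpern iterates approach the fixed point much more slowly than the Mann iterates, which reflects the slowly diminishing anchor weights.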

Besides the Halpern iteration, it is worth mentioning its generalization, the viscosity method [20], in which a contraction mapping applied to the current iterate replaces the fixed anchor point. Finally, another technique that yields strong convergence is the hybrid steepest descent method proposed in [34].

Tan et al. [35,36] recently introduced new inertial extragradient methods of Mann and viscosity type for solving variational inequalities whose constraint set is the fixed point set of a ρ-demicontractive mapping. These methods combine the extragradient methods [15,17] with the Mann-type method [37] and the viscosity-type method [20]. The authors proved strong convergence of these methods when the operator is pseudomonotone and Lipschitz continuous. As discussed in [35,36], these methods can be implemented numerically with standard optimization tools.

The primary disadvantage of these methods is that they rely on viscosity- and Mann-type techniques to obtain strong convergence. As is well known, strong convergence is highly desirable for iterative sequences, especially in infinite-dimensional spaces, yet only a few strongly convergent techniques use inertial schemes. From an algorithmic perspective, Mann- and viscosity-type steps can be difficult to calibrate, which affects the convergence rate and applicability of the algorithm. These steps also increase the number of computations per iteration, making the scheme more complex.

Hence, a natural question arises:

Is it possible to introduce strongly convergent inertial extragradient methods with a self-adaptive step size rule for solving variational inequalities and fixed point problems, without requiring Mann- or viscosity-type steps?

Motivated by the above and by the works [35,36], we answer this question affirmatively by introducing two strongly convergent extragradient-type methods for solving pseudomonotone variational inequalities and ρ-demicontractive fixed point problems in real Hilbert spaces. In particular, we avoid hybrid schemes such as Mann-type and viscosity-type steps when establishing strong convergence; instead, the proposed methods rely on inertial schemes.

2. Preliminaries

Let $C$ be a nonempty, closed, and convex subset of the real Hilbert space $\mathcal{H}$. We write $u_n \rightharpoonup u$ and $u_n \to u$ for the weak and strong convergence, respectively, of a sequence $\{u_n\}$ to $u$. The following standard facts hold in $\mathcal{H}$:

- (1)

- (2)

- (3)

The metric projection of $u \in \mathcal{H}$ onto $C$ is defined by

$$P_C(u) = \arg\min \{ \|u - v\| : v \in C \}.$$

It is well known that $P_C$ is nonexpansive and satisfies the following conditions:

- (1)

- (2)
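Two standard properties of $P_C$, nonexpansiveness and the characterization $\langle x - P_C(x), y - P_C(x) \rangle \le 0$ for all $y \in C$, can be checked numerically. The sketch below assumes a box $C = [-1,1]^n$, whose projection is componentwise clipping; the sampling ranges and seed are arbitrary illustrative choices. Both returned values should be nonpositive.

```python
import math
import random

def proj_box(x, lo=-1.0, hi=1.0):
    """Metric projection onto the assumed box C = [lo, hi]^n (componentwise clipping)."""
    return [min(max(a, lo), hi) for a in x]

def dot(x, y):
    return sum(a * b for a, b in zip(x, y))

def check_projection(dim=3, trials=1000, seed=0):
    """Return the largest observed values of
    (a) <x - P_C x, y - P_C x> for y in C   (should be <= 0), and
    (b) ||P_C x - P_C z|| - ||x - z||        (should be <= 0, nonexpansiveness)."""
    rng = random.Random(seed)
    worst_a = worst_b = -math.inf
    for _ in range(trials):
        x = [rng.uniform(-3, 3) for _ in range(dim)]
        z = [rng.uniform(-3, 3) for _ in range(dim)]
        y = [rng.uniform(-1, 1) for _ in range(dim)]   # a point of C
        px, pz = proj_box(x), proj_box(z)
        r = [a - b for a, b in zip(x, px)]
        worst_a = max(worst_a, dot(r, [a - b for a, b in zip(y, px)]))
        worst_b = max(worst_b, math.dist(px, pz) - math.dist(x, z))
    return worst_a, worst_b

print(check_projection())
```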

Definition 1.

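In [38], suppose that $T: C \to \mathcal{H}$ is a nonlinear mapping with $\mathrm{Fix}(T) \neq \emptyset$. Then $I - T$ is said to be demiclosed at zero if, for every sequence $\{u_n\}$ in $C$, the following implication holds:

$$u_n \rightharpoonup u \ \text{ and } \ (I - T)u_n \to 0 \implies u \in \mathrm{Fix}(T).$$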

Lemma 1.

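In [39], let $\{a_n\} \subset [0, +\infty)$, $\{\alpha_n\} \subset (0,1)$ with $\sum_{n=1}^{\infty} \alpha_n = +\infty$, and $\{b_n\} \subset \mathbb{R}$ be three sequences satisfying

$$a_{n+1} \le (1 - \alpha_n) a_n + \alpha_n b_n, \quad \forall n \ge 1.$$

If $\limsup_{k \to \infty} b_{n_k} \le 0$ for every subsequence $\{a_{n_k}\}$ of $\{a_n\}$ satisfying

$$\liminf_{k \to \infty} \big(a_{n_k + 1} - a_{n_k}\big) \ge 0,$$

then $\lim_{n \to \infty} a_n = 0$.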

Definition 2.

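In [40,41], for any $u, v \in \mathcal{H}$, an operator $T: \mathcal{H} \to \mathcal{H}$ is said to be

- (1)

- L-Lipschitz continuous if there exists a constant $L > 0$ such that $\|T(u) - T(v)\| \le L \|u - v\|$;

- (2)

- pseudomonotone if $\langle T(u), v - u \rangle \ge 0 \implies \langle T(v), u - v \rangle \le 0$;

- (3)

- sequentially weakly continuous if $\{T(u_n)\}$ converges weakly to $T(u)$ for every sequence $\{u_n\}$ that converges weakly to $u$;

- (4)

- ρ-demicontractive if there exists a constant $\rho \in [0, 1)$ such that $\|T(u) - p\|^2 \le \|u - p\|^2 + \rho \|(I - T)u\|^2$ for all $p \in \mathrm{Fix}(T)$, or equivalently $\langle u - T(u), u - p \rangle \ge \frac{1 - \rho}{2} \|u - T(u)\|^2$ for all $p \in \mathrm{Fix}(T)$.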
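As an illustration of ρ-demicontractivity, the sketch below checks both forms of the inequality, $\|Sx - p\|^2 \le \|x - p\|^2 + \rho\|x - Sx\|^2$ and $\langle x - Sx, x - p \rangle \ge \frac{1-\rho}{2}\|x - Sx\|^2$, for the hypothetical map $S(x) = -2x$ on $\mathbb{R}^2$, whose only fixed point is $p = 0$. Since $\|Sx\|^2 = 4\|x\|^2$ and $\|x - Sx\|^2 = 9\|x\|^2$, the inequality holds exactly when $\rho \ge 1/3$; because $\|Sx - p\| > \|x - p\|$ for $x \neq 0$, this map is demicontractive without being quasi-nonexpansive. The two gaps computed below differ only by a factor of 2, which reflects the equivalence of the two forms.

```python
import random

def sub(x, y):
    return [a - b for a, b in zip(x, y)]

def sq_norm(x):
    return sum(a * a for a in x)

def dot(x, y):
    return sum(a * b for a, b in zip(x, y))

def S(x, k=2.0):
    """Hypothetical example map S(x) = -k x on R^2; Fix(S) = {0} for k > 0."""
    return [-k * a for a in x]

def gaps(x, p, rho, k=2.0):
    """Return (g1, g2); the two inequalities hold iff g1 <= 0 and g2 <= 0.
    g1 = ||Sx - p||^2 - ||x - p||^2 - rho ||x - Sx||^2
    g2 = (1 - rho)/2 ||x - Sx||^2 - <x - Sx, x - p>   (algebraically g1 = 2 * g2)"""
    sx = S(x, k)
    r = sub(x, sx)
    g1 = sq_norm(sub(sx, p)) - sq_norm(sub(x, p)) - rho * sq_norm(r)
    g2 = 0.5 * (1 - rho) * sq_norm(r) - dot(r, sub(x, p))
    return g1, g2

def worst_gap(rho, k=2.0, trials=1000, seed=1):
    """Largest violation over random points x, with p = 0 the unique fixed point."""
    rng = random.Random(seed)
    p = [0.0, 0.0]
    worst = float("-inf")
    for _ in range(trials):
        x = [rng.uniform(-5, 5), rng.uniform(-5, 5)]
        worst = max(worst, *gaps(x, p, rho, k))
    return worst

# For S(x) = -k x the smallest admissible constant is rho = (k - 1)/(k + 1) = 1/3.
print(worst_gap(1.0 / 3.0))   # about 0 up to rounding: the inequalities hold
print(worst_gap(0.3))         # positive: rho = 0.3 is too small
```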

Next, to prove the strong convergence theorems, we assume that the following conditions are satisfied:

- (ℑ 1)

- The solution set $\Omega := VI(C, \Im) \cap \mathrm{Fix}(S)$ is nonempty;

- (ℑ 2)

- The mapping ℑ is pseudomonotone, Lipschitz continuous and sequentially weakly continuous;

- (ℑ 3)

- The mapping $S$ is ρ-demicontractive and $I - S$ is demiclosed at zero.

3. Main Results

In this section, we analyze in detail the convergence of two new inertial extragradient methods for solving variational inequality and fixed point problems. These methods use self-adaptive, non-monotone step size rules.

Lemma 2.

A sequence generated by (15) is convergent to ℷ and bounded by , where .

Proof.

Since the mapping ℑ is Lipschitz continuous, there exists a positive constant It is given that and

Using mathematical induction on the definition of we have

Let and From the definition of , we have

That is, the series is convergent. Next, we need to prove the convergence of Let . For this reason, we have Thus, we obtain

By letting in expression (10), we have as This is a contradiction. Due to the convergence of the series and taking in expression (10), we obtain This completes the proof of the lemma. □
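Lemma 2 concerns a self-adaptive, non-monotone step size. Since rule (15) is not reproduced here, the sketch below assumes one commonly used rule of this type: $\lambda_{n+1} = \min\{\mu\|w_n - y_n\| / \|\Im w_n - \Im y_n\|, \ \lambda_n + p_n\}$ when $\Im w_n \neq \Im y_n$, and $\lambda_{n+1} = \lambda_n + p_n$ otherwise, with $\sum_n p_n < \infty$. Under that assumption, the Lipschitz bound $\|\Im w - \Im y\| \le L\|w - y\|$ gives $\min\{\mu/L, \lambda_1\} \le \lambda_n \le \lambda_1 + \sum_n p_n$, even though the rule itself never uses $L$. The operator `F`, the parameters, and the random stand-ins for $w_n$ and $y_n$ are hypothetical; they only exercise these two bounds.

```python
import math
import random

def next_step(lam, p_n, mu, w, y, Fw, Fy):
    """Assumed non-monotone rule: min(mu*||w - y||/||Fw - Fy||, lam + p_n), or lam + p_n if Fw == Fy."""
    den = math.dist(Fw, Fy)
    if den == 0.0:
        return lam + p_n
    return min(mu * math.dist(w, y) / den, lam + p_n)

def F(x):
    """Hypothetical operator on R^2 with Lipschitz constant L = 2."""
    return [2.0 * x[0], 0.5 * x[1]]

def run(iters=500, lam1=1.0, mu=0.5, seed=2):
    """Generate lambda_1, ..., lambda_iters using random stand-ins for w_n and y_n."""
    rng = random.Random(seed)
    lams = [lam1]
    for n in range(1, iters):
        w = [rng.uniform(-1, 1), rng.uniform(-1, 1)]
        y = [rng.uniform(-1, 1), rng.uniform(-1, 1)]
        p_n = 1.0 / n ** 2                       # summable: the sum over n >= 1 is pi^2/6
        lams.append(next_step(lams[-1], p_n, mu, w, y, F(w), F(y)))
    return lams

lams = run()
lower = min(0.5 / 2.0, 1.0)                      # min(mu/L, lambda_1)
upper = 1.0 + math.pi ** 2 / 6                   # lambda_1 + sum of p_n
print(lower <= min(lams), max(lams) <= upper)
```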

Lemma 3.

The step size sequence generated in (24) is monotonically decreasing and bounded by where

Proof.

It is given that ℑ is Lipschitz-continuous with constant and we have

Using mathematical induction on the definition of we have

Let and From the definition of , we have

That is, the series is convergent. Next, we need to prove the convergence of Let . For this reason, we have Thus, we obtain

By letting in expression (13), we have as This is a contradiction. Due to the convergence of the series and taking in (13), we obtain This completes the proof of the lemma. □

Lemma 4.

Let ℑ be a mapping satisfying conditions (ℑ 1)–(ℑ 2), and let the sequence be generated by Algorithm 1. For each we have

Algorithm 1. Inertial Subgradient Extragradient Method with Non-Monotone Step Size Rule.

Step 0: Take Moreover, select a non-negative real sequence such that and satisfies the following conditions:

Step 1: Compute

while taken as follows:

Moreover, a positive sequence such that

Step 2: Compute

If , then STOP. Else, move to Step 3.

Step 3: First, construct a half-space and compute

Step 4: Compute

Step 5: Compute

Set and go back to Step 1.

Proof.

First, we have to compute the following

It is hypothesized that Thus, we have

It also indicates that

We obtain by combining Equations (16) and (18)

We acquire ℑ on as a result of the definition of a mapping ℑ on Thus, we have

Since we have

By letting we have

Thus, we have

We obtain by combining formulas (19) and (20)

From given we have

From (21) and (22) we obtain

□
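Step 3 of Algorithm 1 replaces a second projection onto $C$ by a projection onto a half-space. Assuming the half-space is built in the usual subgradient extragradient way, $T = \{x : \langle v - y, x - y \rangle \le 0\}$ with $v = w - \lambda \Im(w)$ and $y = P_C(v)$, the characterization of $P_C$ guarantees $C \subseteq T$, which is what makes the cheaper projection safe. The sketch below checks this containment on random samples for an assumed box $C = [-1,1]^3$ and a hypothetical monotone operator `F`; a nonpositive result means that no sampled point of $C$ lies outside $T$.

```python
import random

def dot(x, y):
    return sum(a * b for a, b in zip(x, y))

def proj_box(x, lo=-1.0, hi=1.0):
    """Metric projection onto the assumed box C = [lo, hi]^n."""
    return [min(max(a, lo), hi) for a in x]

def F(x):
    """Hypothetical monotone operator on R^3 (a skew-symmetric part plus a PSD part)."""
    return [x[0] + x[1], x[1] - x[0], 2.0 * x[2]]

def halfspace_worst(lam=0.4, dim=3, trials=200, samples=50, seed=3):
    """Largest value of <v - y, x - y> over random w and random x in C,
    where v = w - lam * F(w), y = P_C(v) and T = {x : <v - y, x - y> <= 0}."""
    rng = random.Random(seed)
    worst = float("-inf")
    for _ in range(trials):
        w = [rng.uniform(-4, 4) for _ in range(dim)]
        v = [a - lam * g for a, g in zip(w, F(w))]
        y = proj_box(v)
        normal = [a - b for a, b in zip(v, y)]            # normal vector of T
        for _ in range(samples):
            x = [rng.uniform(-1, 1) for _ in range(dim)]  # a point of C
            worst = max(worst, dot(normal, [a - b for a, b in zip(x, y)]))
    return worst

print(halfspace_worst())   # <= 0: every sampled point of C lies in T
```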

Lemma 5.

Let ℑ satisfy conditions (ℑ 1)–(ℑ 2), and let the sequence be generated by Algorithm 2. Then, for each we have

Algorithm 2. Inertial Tseng’s Extragradient Method with Non-Monotone Step Size Rule.

Step 0: Take Moreover, select a non-negative real sequence such that and satisfies the following conditions:

Step 1: Compute

while taken as follows:

Moreover, a positive sequence such that

Step 2: Compute

If , then STOP. Otherwise, go to Step 3.

Step 3: Compute

Step 4: Compute

Step 5: Compute

Set and move back to Step 1.

Proof.

From and due to the value of we may write

Due to the value of we have

For some we may write

From Equations (26) and (28) we obtain

Due to the definition of a mapping ℑ on we obtain

Since we have

Substituting we have

From Equations (29) and (30) we obtain

□
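Both algorithms begin with an inertial extrapolation whose parameter is chosen in Step 1. The exact rule is not reproduced here; the sketch below assumes a common choice, $\theta_n = \min\{\theta, \epsilon_n / \|u_n - u_{n-1}\|\}$ if $u_n \neq u_{n-1}$ and $\theta_n = \theta$ otherwise, followed by $w_n = u_n + \theta_n(u_n - u_{n-1})$. With this choice $\theta_n \|u_n - u_{n-1}\| \le \epsilon_n$, which is the kind of bound that lets the inertial term be controlled in strong convergence proofs. All names and values are illustrative.

```python
import math

def inertial_parameter(theta, eps_n, u, u_prev):
    """Assumed rule: theta_n = min(theta, eps_n / ||u_n - u_{n-1}||), or theta if u_n == u_{n-1}."""
    d = math.dist(u, u_prev)
    return theta if d == 0 else min(theta, eps_n / d)

def extrapolate(u, u_prev, theta_n):
    """Inertial point w_n = u_n + theta_n (u_n - u_{n-1})."""
    return [a + theta_n * (a - b) for a, b in zip(u, u_prev)]

u_prev, u = [0.0, 0.0], [1.0, 2.0]
theta_n = inertial_parameter(0.5, 0.1, u, u_prev)
w = extrapolate(u, u_prev, theta_n)
# By construction theta_n * ||u_n - u_{n-1}|| <= eps_n.
print(theta_n, theta_n * math.dist(u, u_prev), w)
```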

Theorem 1.

Let ℑ be an operator satisfying conditions (ℑ 1)–(ℑ 3). Then, the sequence generated by Algorithm 1 converges strongly to where

Proof. Claim 1:

The sequence is bounded.

Indeed, we have Thus, we obtain

Due to the definition of sequence we can write

for some we have

The above expression is derived from Equation (14) as follows:

Since by Lemma 2, step size sequence implies that there exists a fixed number such that

As a result, there exists a finite natural number such that

By Lemma 4, we may rewrite

From expressions (32), (34) and (36), we infer that

Since we obtain

Therefore, we can conclude that the sequence is bounded.

Claim 2:

for some Indeed, it follows from definition of that

Using expression (23) we have

Indeed, it follows from expression (34) that

for some Combining expressions (40)–(42) we obtain

Claim 4: The sequence converges to zero.

Set

and

Then, Claim 4 can be rewritten as follows:

Indeed, from Lemma 1, it suffices to show that for every subsequence of satisfying

This is equivalent to showing that

and

for every subsequence of satisfying

Suppose that is a subsequence of satisfying

Then

It follows from Claim 2 that

The above relation implies that

Therefore, we obtain

Now, we compute

This together with yields that

From one sees that

Thus, we obtain

The above expression implies that

and

Since the sequence is bounded, without loss of generality we can assume that converges weakly to some Next, we need to prove that We have expression (48) and Since converges weakly to and due to the sequence also converges weakly to Next, we need to prove that It gives that

that is equivalent to

As a result of the aforementioned inequality, we have

Consequently, we obtain

Since and is bounded, using and in (58), we obtain

Additionally, it follows that

Since and by the Lipschitz continuity of the mapping, we obtain

which, together with (60) and (61), yields

Next, take a decreasing positive sequence that converges to zero. For every there exists a least positive integer, denoted by , such that

where the existence of follows from expression (62). Since is decreasing, it is easy to see that the sequence is increasing. If there exists a natural number such that for all Thus, we consider that

Using the above value of we obtain

Combining expressions (63) and (65), we obtain

By the pseudomonotonicity of ℑ, we can write

For all we have

Since the sequence weakly converges to Thus, weakly converges to Let that implies that

Since and we have

By letting in expression (68), we obtain

Let be arbitrary element and Let us consider that

Then From expression (71), we have

Hence, we have

Let Then along a line segment. By the continuity of the operator, converges to as It follows from (74) that

Therefore, is a solution of problem (1). From given we have

From (50), one obtains converges weakly to It follows from (51) that converges weakly to By the demiclosedness of we obtain that Thus, Thus, we have

Using the fact . Thus, we have

Combining Claim 3 with Lemma 1, we conclude that as This completes the proof of Theorem 1. □

Theorem 2.

Let ℑ be an operator satisfying conditions (ℑ 1)–(ℑ 3). Then, the sequence generated by Algorithm 2 converges strongly to where

Proof. Claim 1:

The sequence is bounded.

Indeed, we have

Due to the definition of a sequence we have

Thus, we have

where is

The above expression is derived from Equation (24) as follows:

Using Lemma 3, step size sequence such that and

Thus, there exists a finite number such that

By the use of Lemma 5, we may rewrite

From expressions (79), (81) and (83), we infer that

Since we obtain

Finally, we can conclude that is a bounded sequence.

Claim 2:

for some Indeed, it follows from definition of that

Using Lemma 5, we have

Indeed, it follows from expression (81) that

for some Combining expressions (87)–(89) we obtain

Claim 4: The sequence converges to zero.

Set

and

Then, Claim 4 can be rewritten as follows:

Indeed, from Lemma 1, it suffices to show that for every subsequence of satisfying

This is equivalent to showing that

and

for every subsequence of satisfying

Suppose that is a subsequence of satisfying

Then

It follows from Claim 2 that

The above relation implies that

It follows that

The above expression implies that

The rest of the proof is similar to that of Claim 4 in Theorem 1 and is therefore omitted. □

4. Numerical Illustrations

This section reports numerical experiments that compare the proposed methods with methods from the literature and analyzes how changes in the control parameters affect the numerical efficacy of the proposed algorithms.

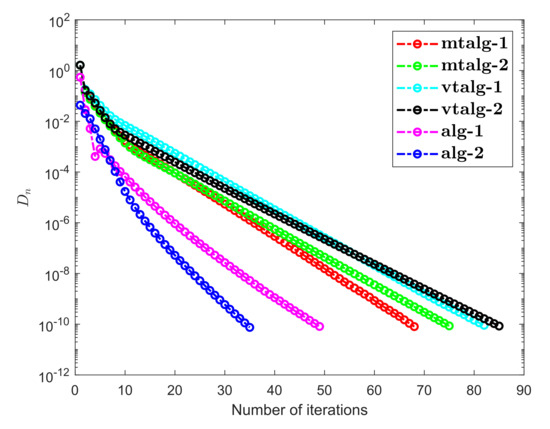

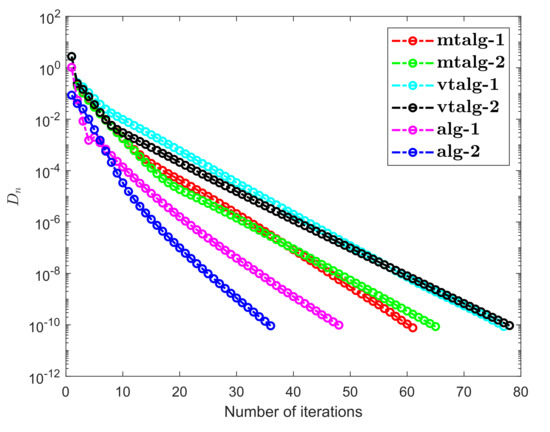

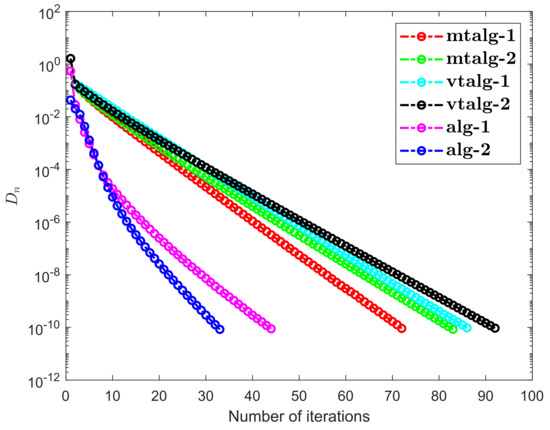

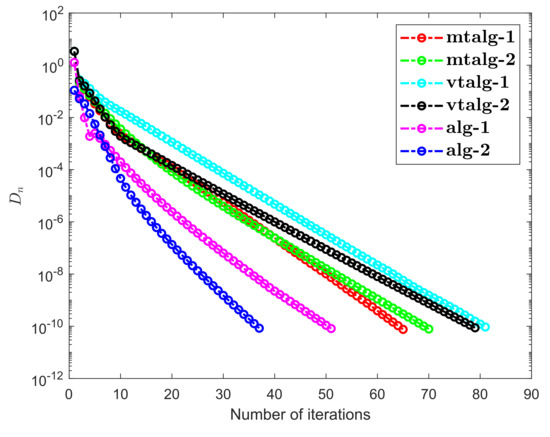

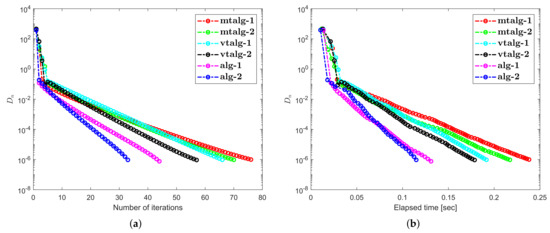

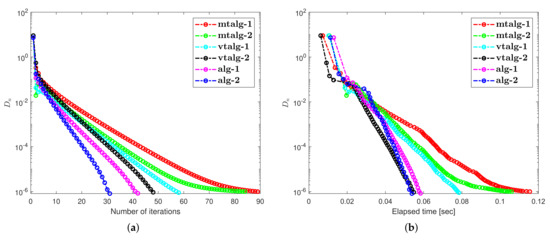

Example 1.

Consider the HpHard problem taken from [42], which many researchers have used in numerical experiments (see, for example, [43,44,45]). Let the mapping ℑ be defined by

and where

We used as a random matrix and as a skew-symmetric matrix with and during this experiment, and denotes a diagonal matrix. The feasible set is defined as follows:

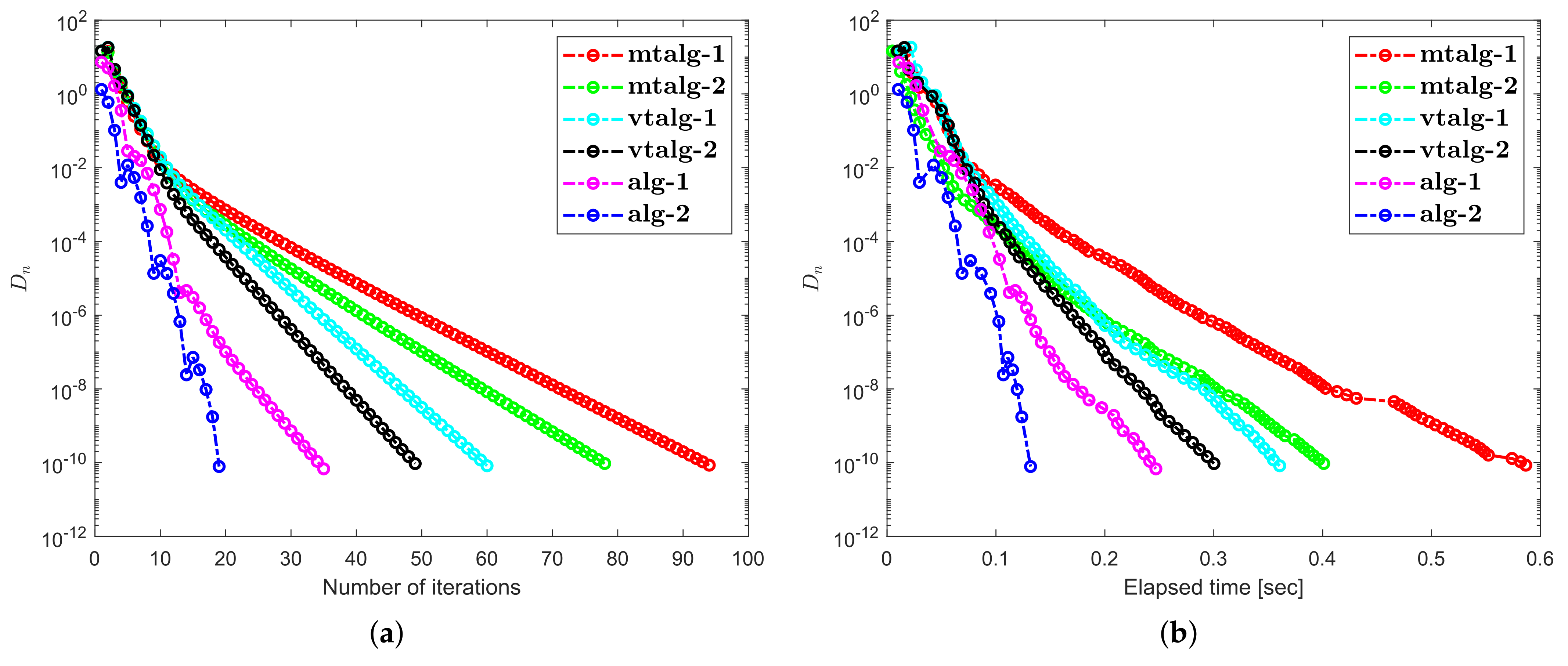

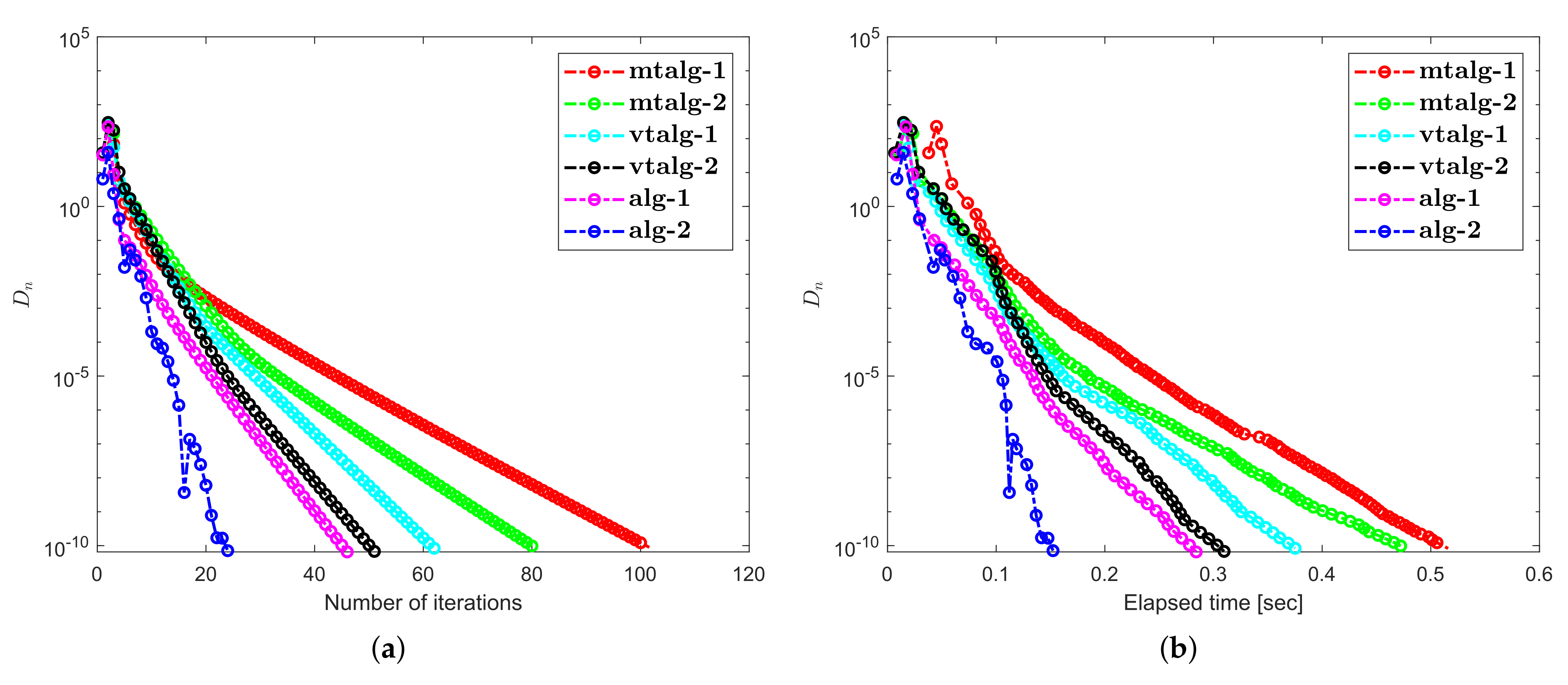

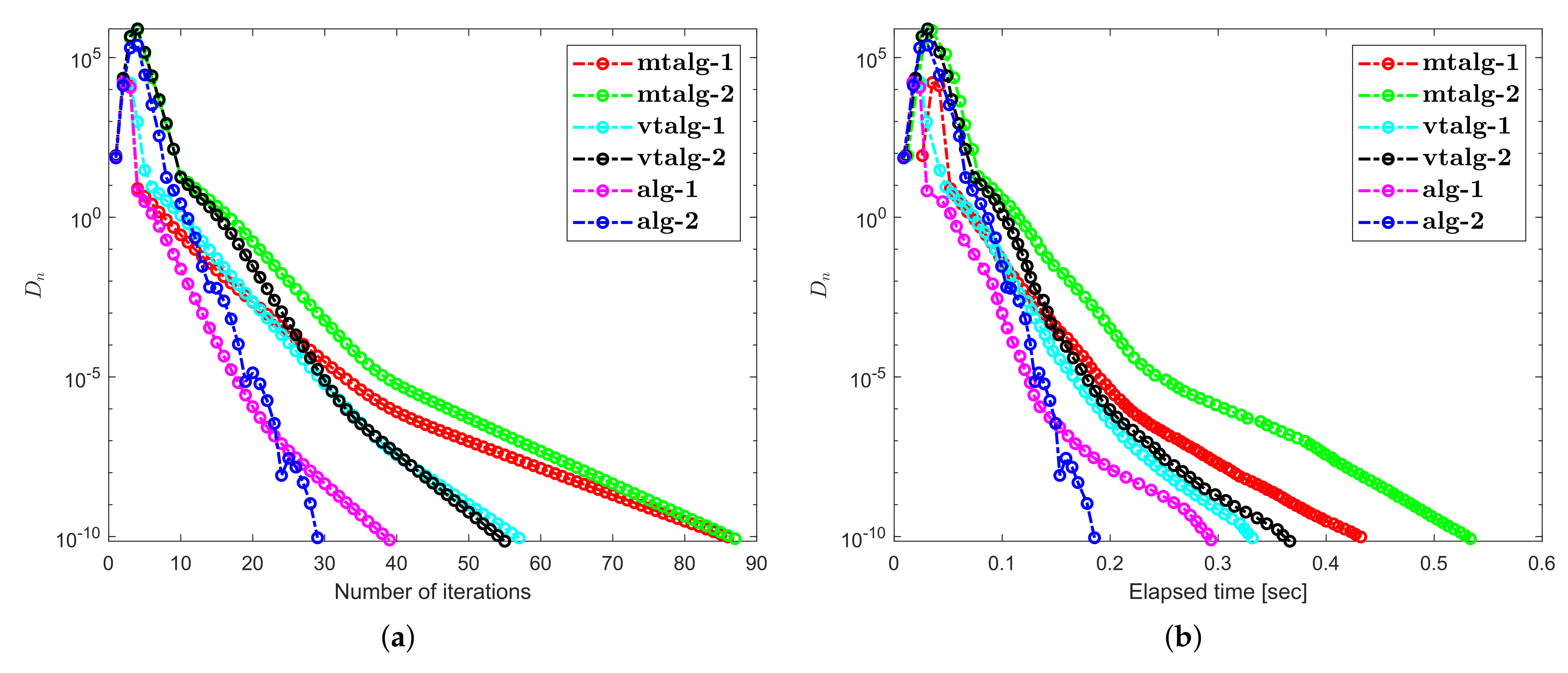

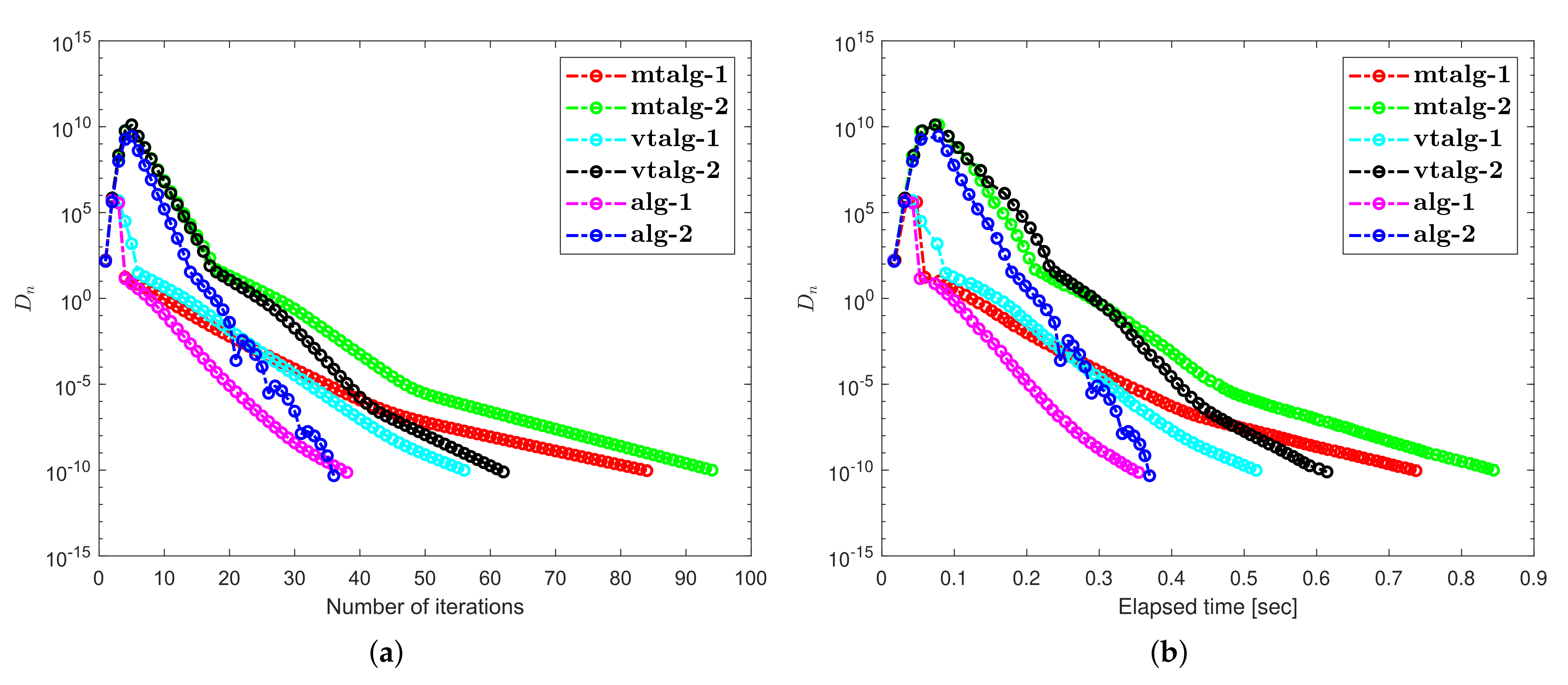

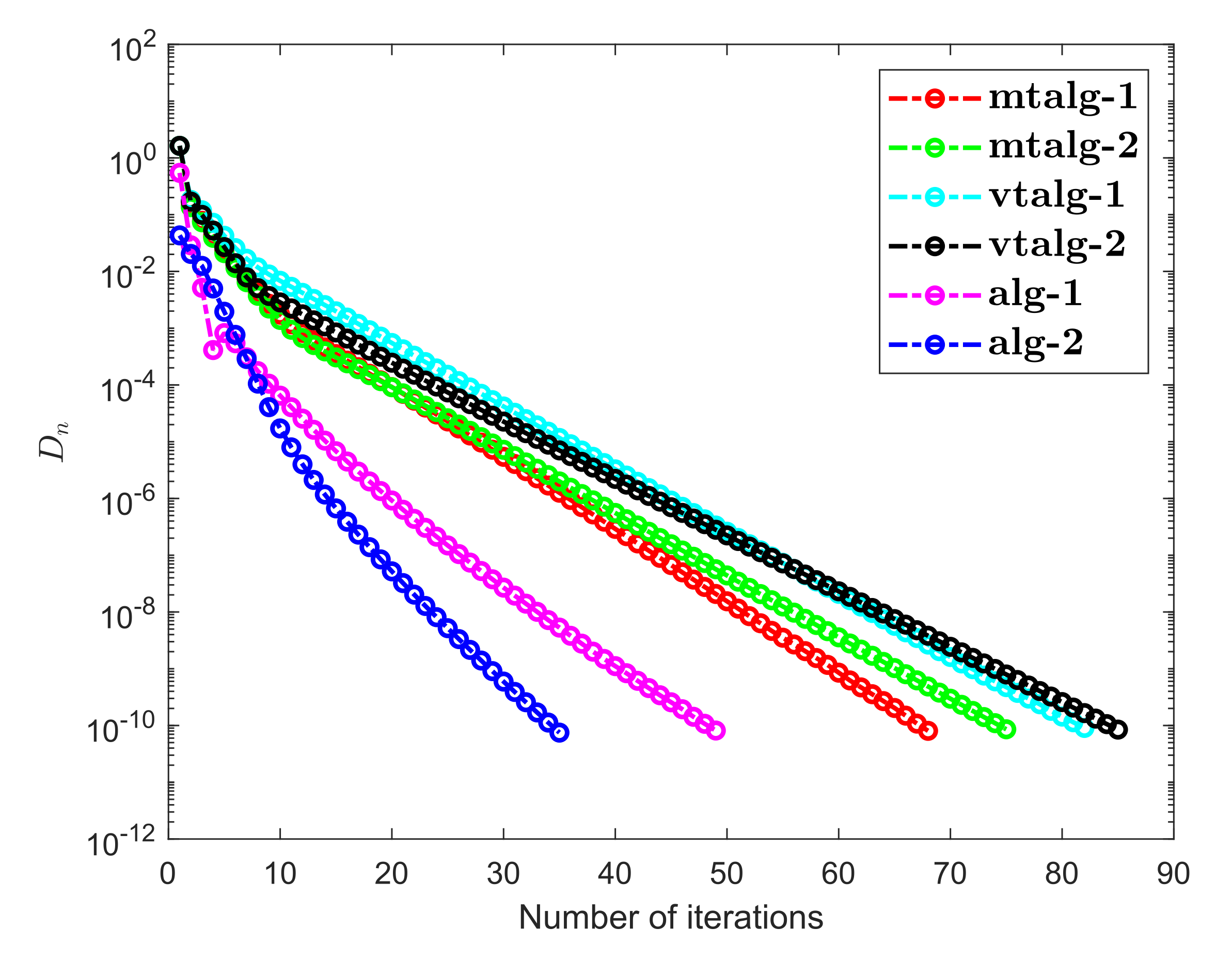

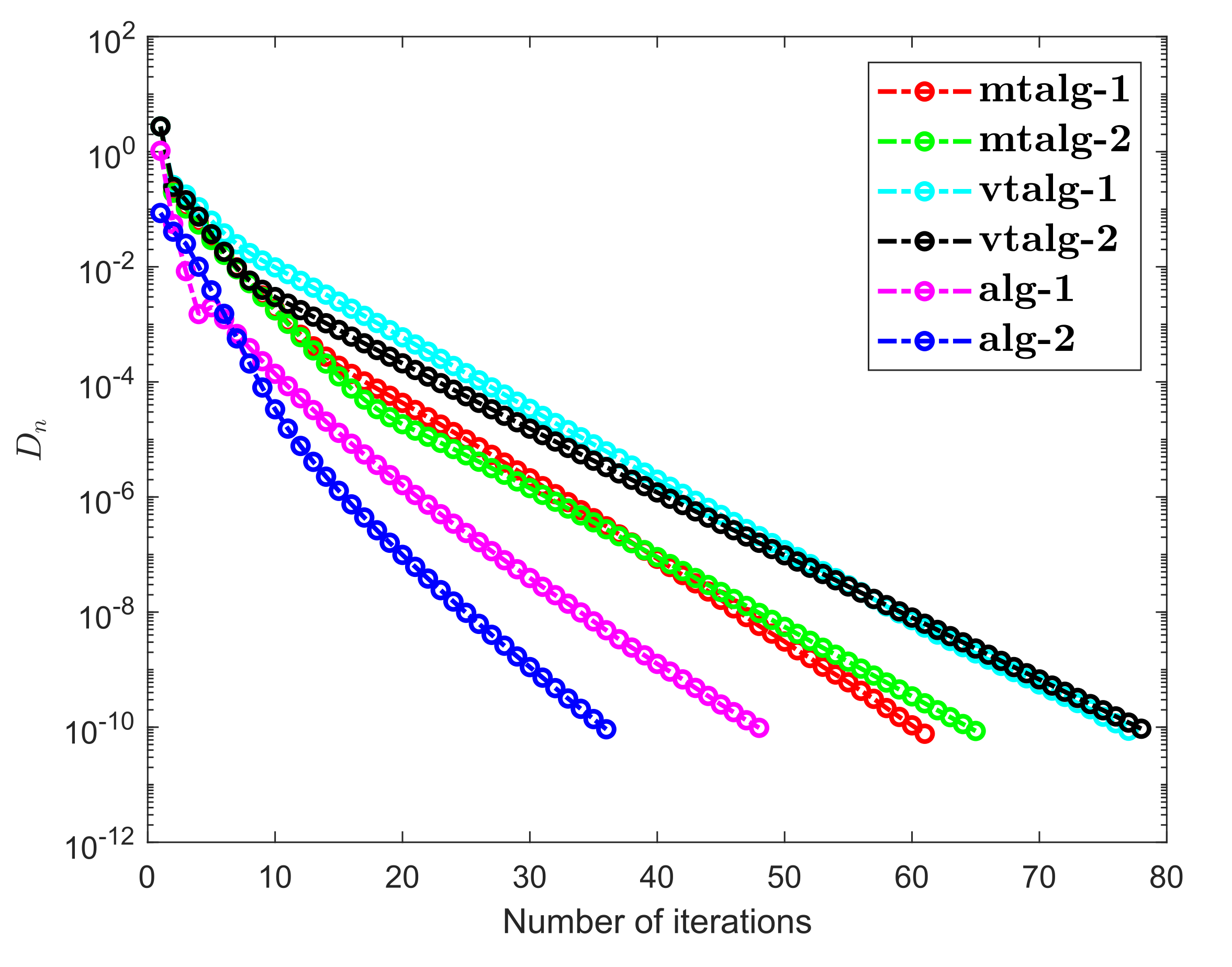

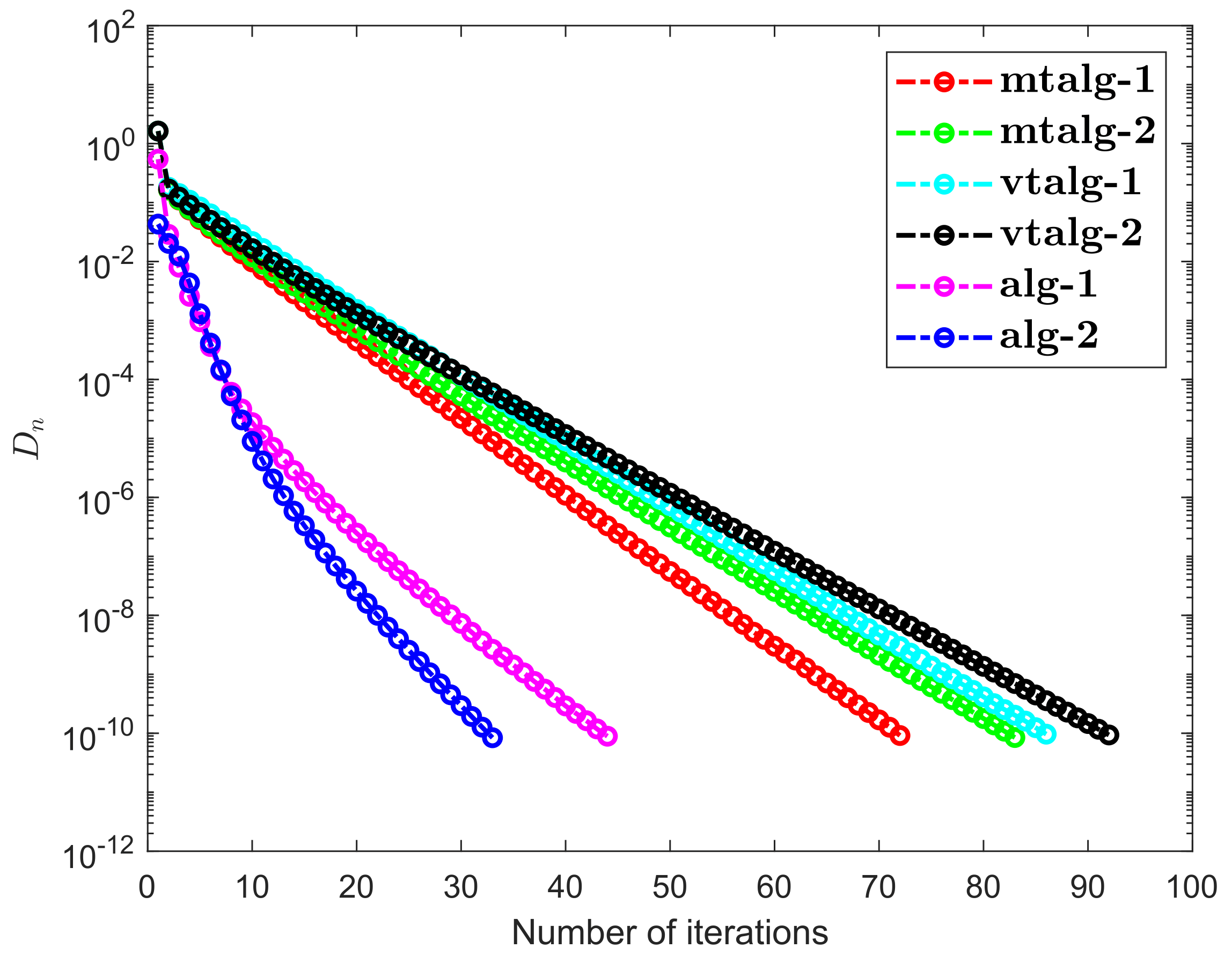

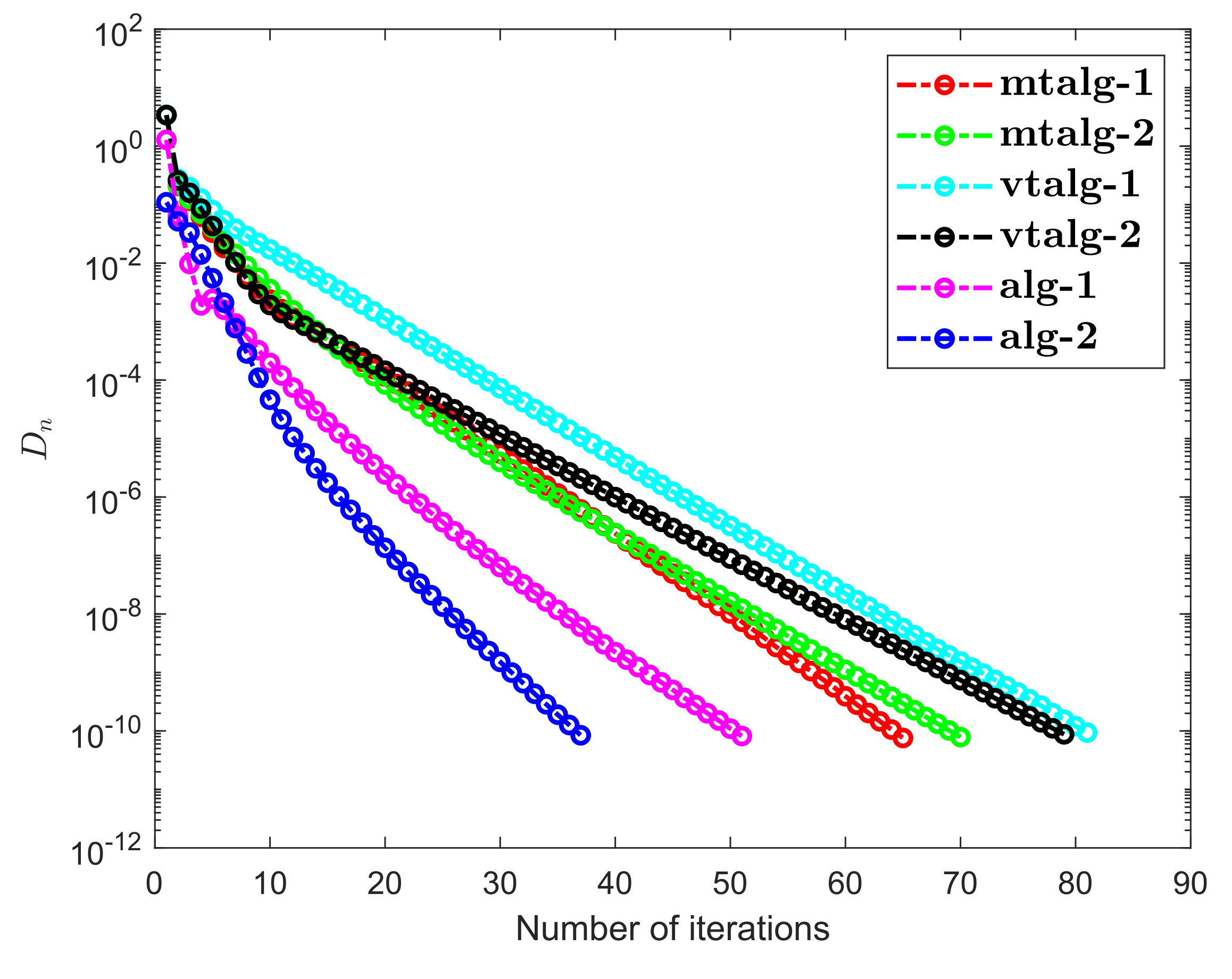

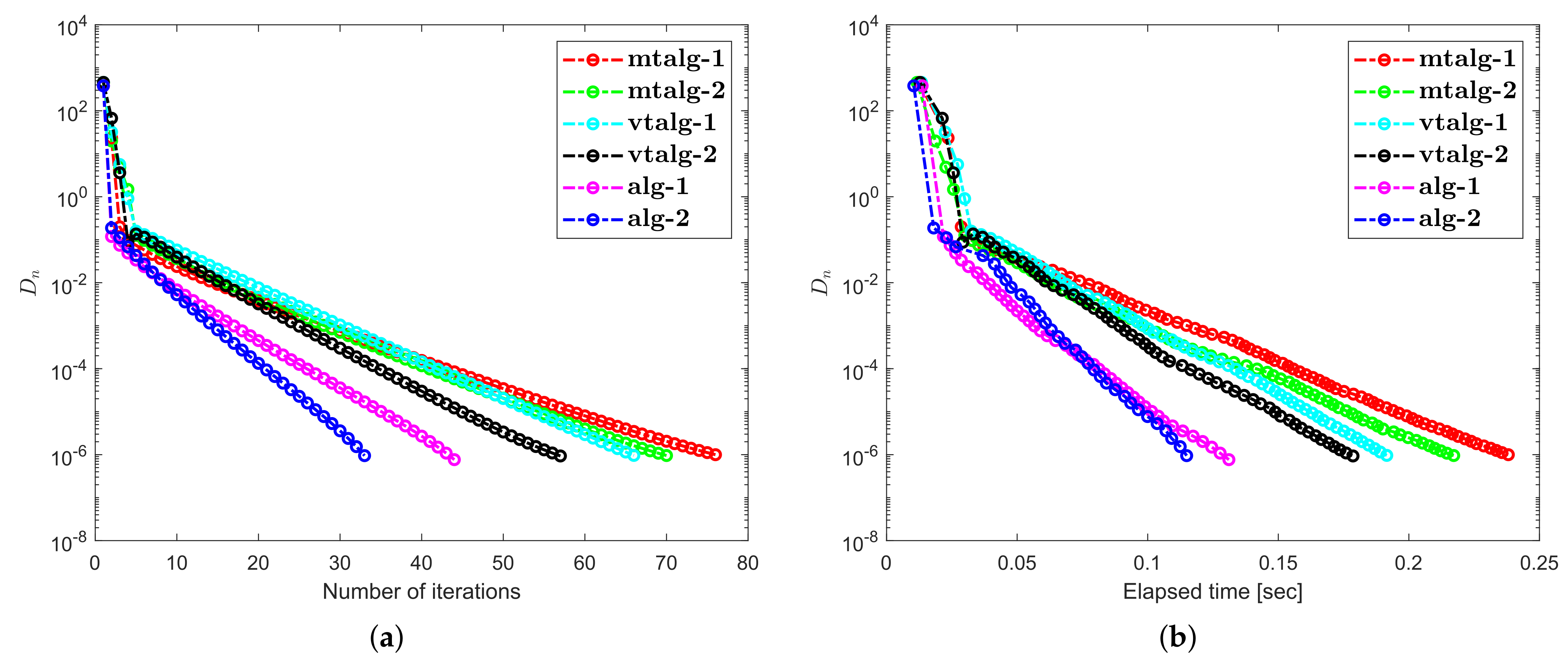

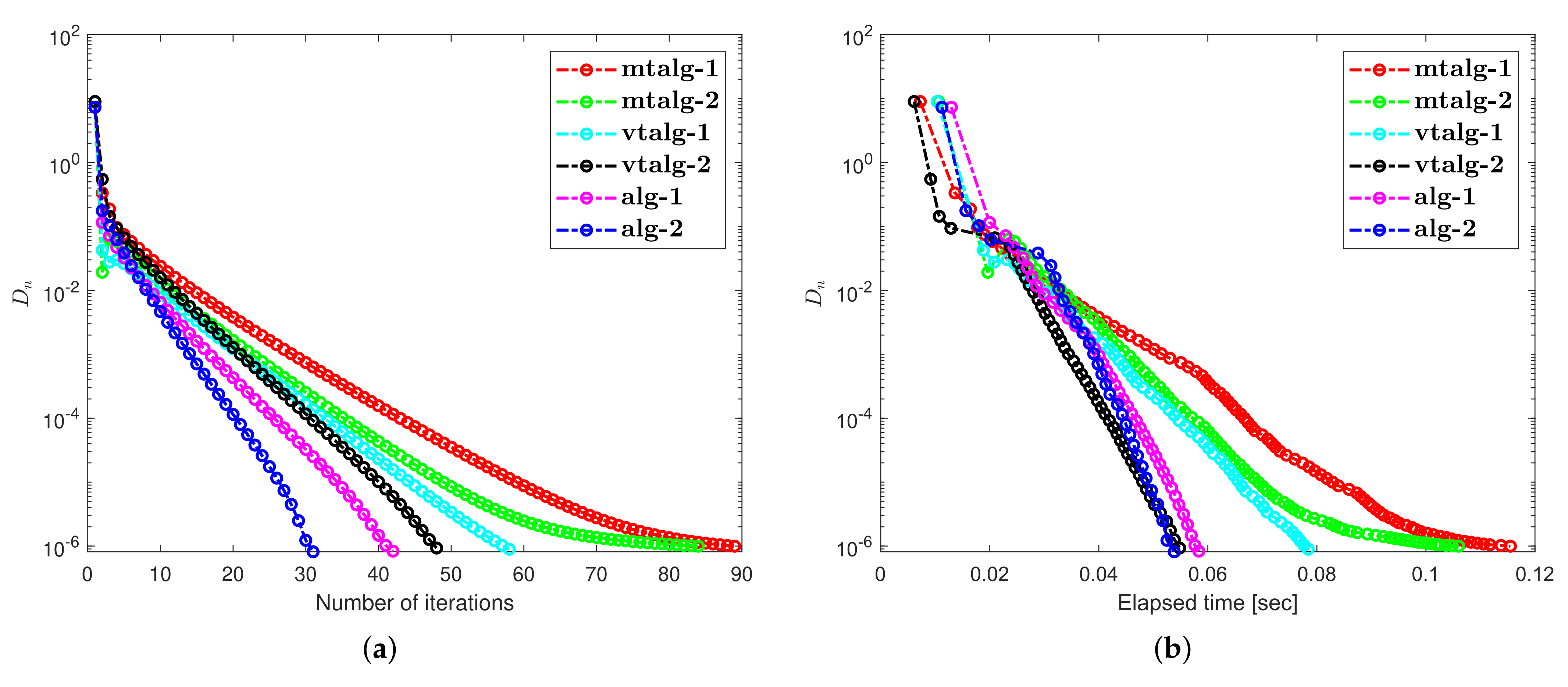

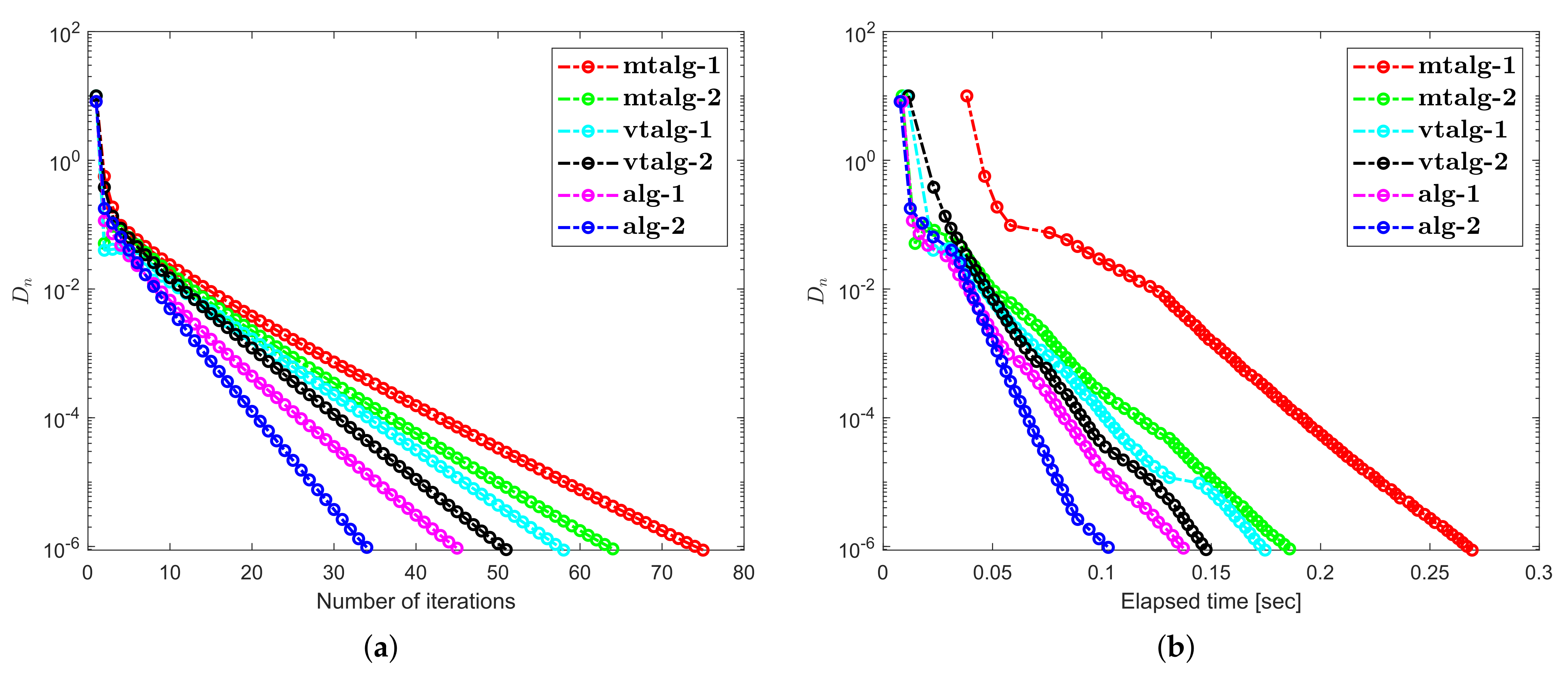

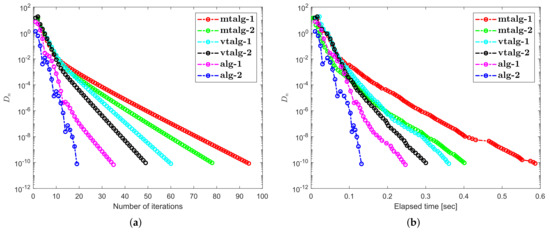

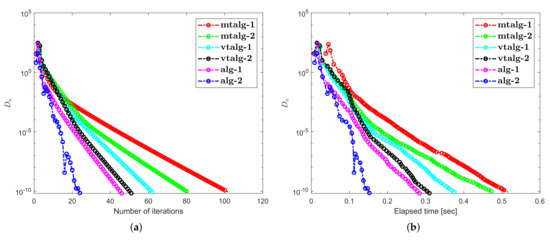

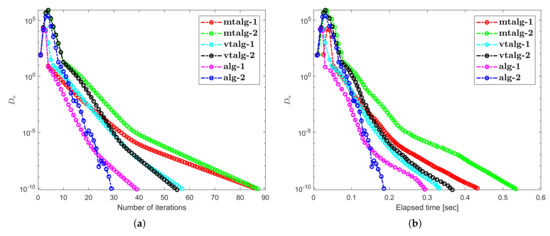

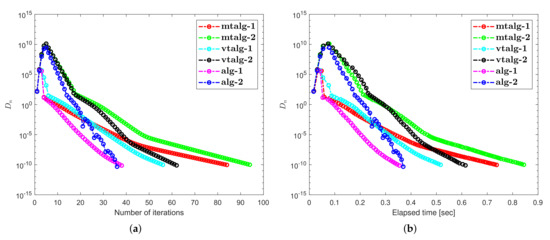

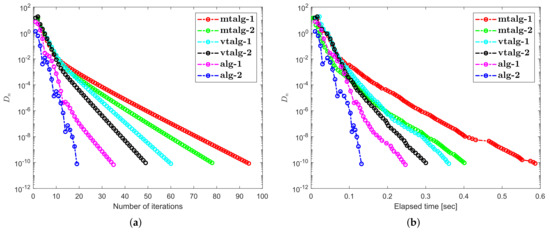

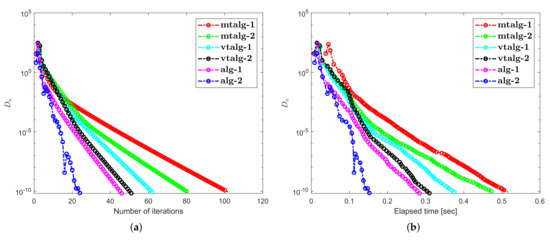

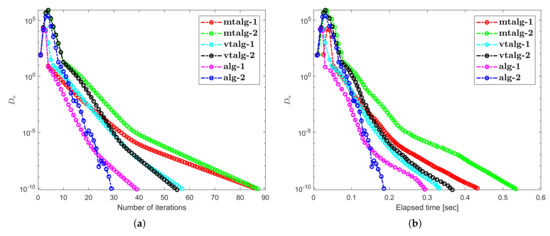

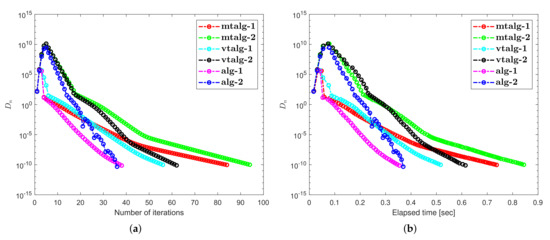

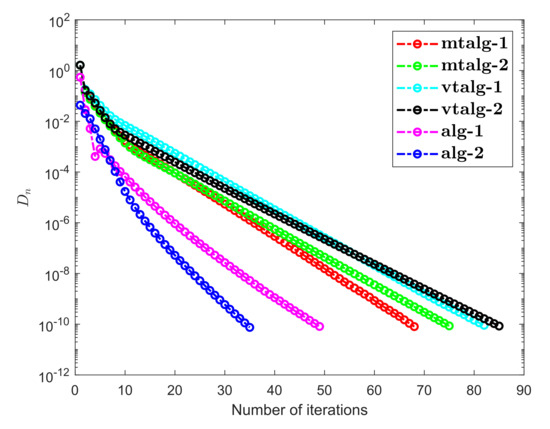

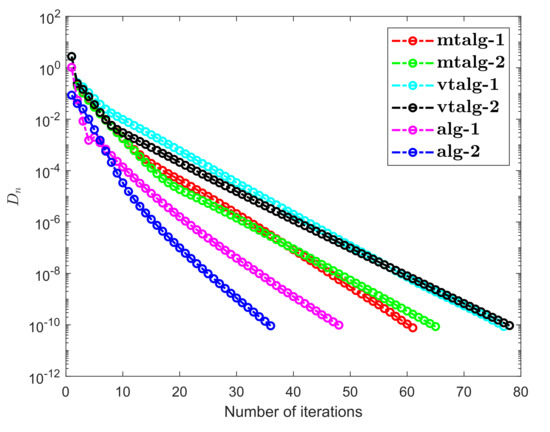

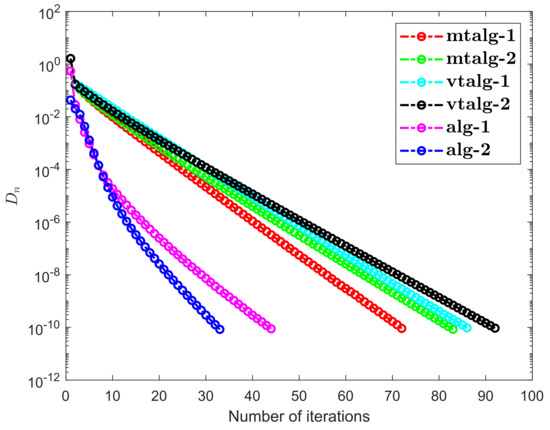

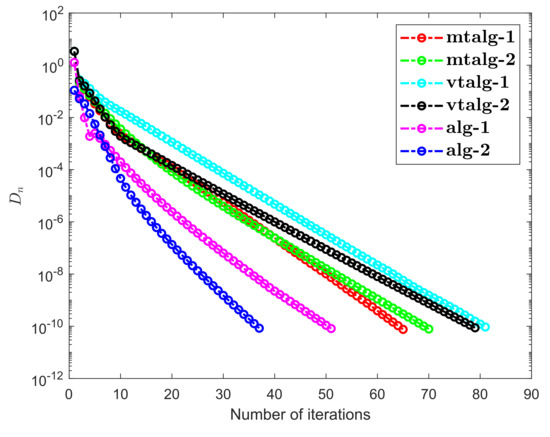

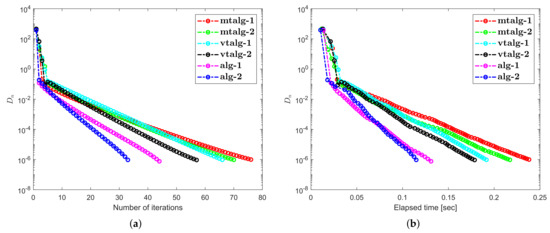

It is easy to see that ℑ is monotone and Lipschitz continuous with constant Let be given by The starting points for this experiment are and the dimension of the space is varied, with stopping criterion Numerical observations for Example 1 are shown in Figure 1, Figure 2, Figure 3 and Figure 4 and Table 1 and Table 2. The control parameters are as follows: (1) Algorithm 1 (abbreviated alg-1): (2) Algorithm 2 (abbreviated alg-2): (3) Algorithm 1 in [36] (abbreviated mtalg-1): (4) Algorithm 2 in [36] (abbreviated mtalg-2): (5) Algorithm 1 in [35] (abbreviated vtalg-1): (6) Algorithm 2 in [35] (abbreviated vtalg-2):
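To set up a problem of this type, the matrix can be assembled as below. The sketch assumes the standard HpHard structure $\Im(u) = Mu + q$ with $M = NN^T + B + D$ ($N$ random, $B$ skew-symmetric, $D$ diagonal with nonnegative entries) and takes $q = 0$; the entry ranges and seeds are hypothetical, not the values used in the experiments above. Because $u^T B u = 0$ and $NN^T$ is positive semidefinite, $\Im$ is monotone, and the Frobenius norm of $M$ is a valid Lipschitz constant since it bounds the spectral norm from above. The function `check` returns the smallest monotonicity ratio and the largest ratio $\|\Im x - \Im y\| / (\|M\|_F \|x - y\|)$ over random pairs.

```python
import math
import random

def hphard_matrix(m, seed=0):
    """M = N N^T + B + D with hypothetical entry ranges (an assumption, not the paper's):
    N and R uniform(-5, 5), B = R - R^T skew-symmetric, D diagonal uniform(0, 0.3)."""
    rng = random.Random(seed)
    N = [[rng.uniform(-5, 5) for _ in range(m)] for _ in range(m)]
    R = [[rng.uniform(-5, 5) for _ in range(m)] for _ in range(m)]
    D = [rng.uniform(0.0, 0.3) for _ in range(m)]
    return [[sum(N[i][k] * N[j][k] for k in range(m))   # (N N^T)_{ij}
             + (R[i][j] - R[j][i])                       # B_{ij}
             + (D[i] if i == j else 0.0)                 # D_{ij}
             for j in range(m)] for i in range(m)]

def operator(M, x, q=None):
    """Im(x) = M x + q, with q = 0 when omitted (assumption)."""
    y = [sum(mij * xj for mij, xj in zip(row, x)) for row in M]
    return y if q is None else [a + b for a, b in zip(y, q)]

def frobenius(M):
    """||M||_F, an easily computed upper bound on the spectral norm ||M||_2."""
    return math.sqrt(sum(v * v for row in M for v in row))

def check(m=5, trials=200, seed=1):
    """Return (min monotonicity ratio, max Lipschitz ratio) over random pairs."""
    M, rng = hphard_matrix(m), random.Random(seed)
    L = frobenius(M)
    mono, lip = math.inf, 0.0
    for _ in range(trials):
        x = [rng.uniform(-10, 10) for _ in range(m)]
        y = [rng.uniform(-10, 10) for _ in range(m)]
        d = [a - b for a, b in zip(x, y)]
        Fd = [a - b for a, b in zip(operator(M, x), operator(M, y))]
        dd = sum(v * v for v in d)
        mono = min(mono, sum(a * b for a, b in zip(Fd, d)) / dd)   # <Fx - Fy, x - y>/||x - y||^2
        lip = max(lip, math.sqrt(sum(v * v for v in Fd) / dd) / L)  # ||Fx - Fy||/(L ||x - y||)
    return mono, lip

print(check())   # expect mono >= 0 and lip <= 1
```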

Figure 1.

Computational illustration of Algorithms 1 and 2 with Algorithm 1 in [36], Algorithm 2 in [36] and Algorithm 1 in [35], Algorithm 2 in [35] when .

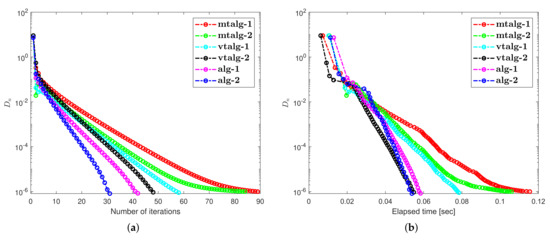

Figure 2.

Computational illustration of Algorithms 1 and 2 with Algorithm 1 in [36], Algorithm 2 in [36] and Algorithm 1 in [35], Algorithm 2 in [35] when .

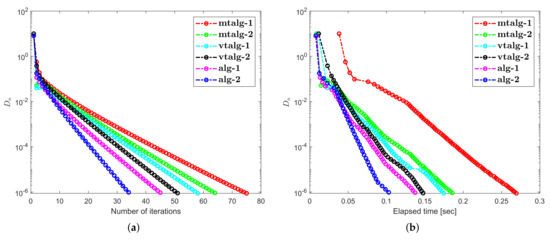

Figure 3.

Computational illustration of Algorithms 1 and 2 with Algorithm 1 in [36], Algorithm 2 in [36] and Algorithm 1 in [35], Algorithm 2 in [35] when .

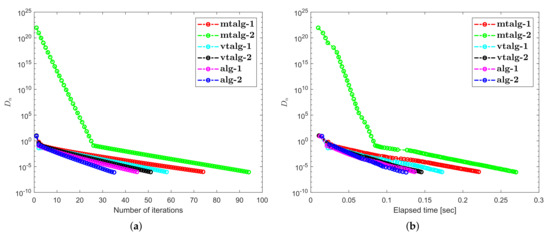

Figure 4.

Computational illustration of Algorithms 1 and 2 with Algorithm 1 in [36], Algorithm 2 in [36] and Algorithm 1 in [35], Algorithm 2 in [35] when .

Table 1.

Numerical results for Example 1.

Table 2.

Numerical results for Example 1.

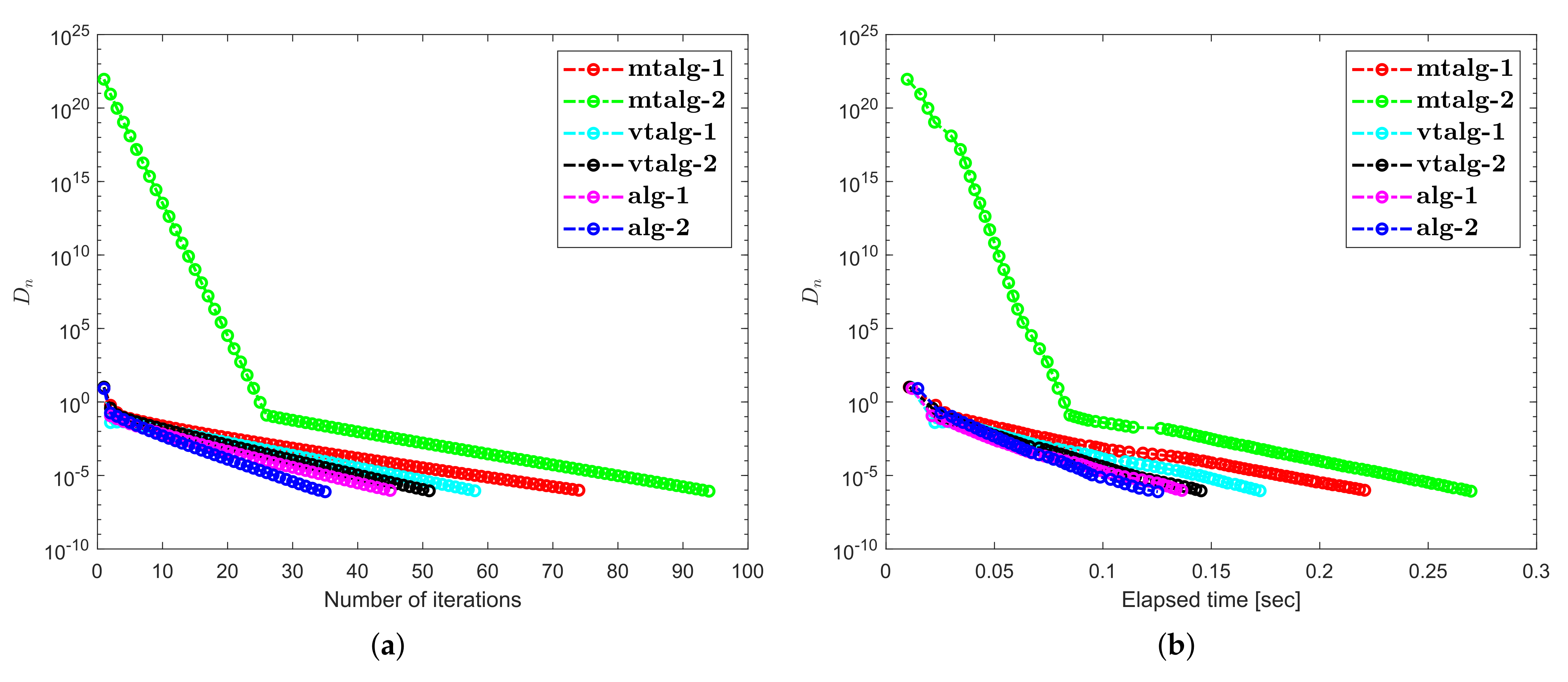

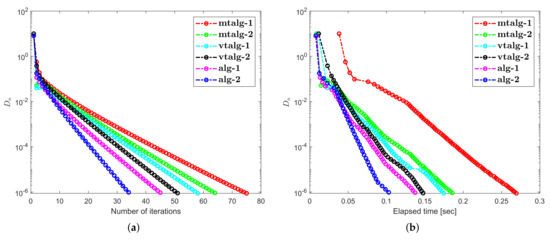

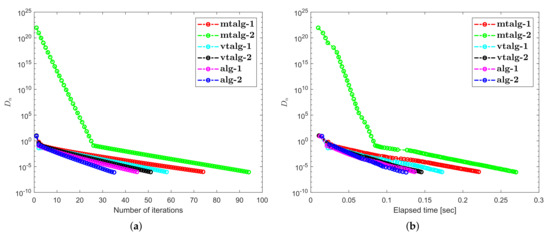

Example 2.

Consider the nonlinear operator ℑ defined by

and let the feasible set be given by . It is easy to check that ℑ is monotone and Lipschitz continuous with constant . Let E be a matrix defined by

We consider the mapping defined by where It is easy to see that is 0-demicontractive and thus The solution of the problem is Different starting points are used for this experiment, with stopping criterion Numerical observations for Example 2 are shown in Figure 5, Figure 6, Figure 7 and Figure 8 and Table 3 and Table 4. The control parameters are as follows: (1) Algorithm 1 (abbreviated alg-1): (2) Algorithm 2 (abbreviated alg-2): (3) Algorithm 1 in [36] (abbreviated mtalg-1): (4) Algorithm 2 in [36] (abbreviated mtalg-2): (5) Algorithm 1 in [35] (abbreviated vtalg-1): (6) Algorithm 2 in [35] (abbreviated vtalg-2):

Figure 5.

Computational illustration of Algorithms 1 and 2 with Algorithm 1 in [36], Algorithm 2 in [36] and Algorithm 1 in [35], Algorithm 2 in [35] when .

Figure 6.

Computational illustration of Algorithms 1 and 2 with Algorithm 1 in [36], Algorithm 2 in [36] and Algorithm 1 in [35], Algorithm 2 in [35] when .

Figure 7.

Computational illustration of Algorithms 1 and 2 with Algorithm 1 in [36], Algorithm 2 in [36] and Algorithm 1 in [35], Algorithm 2 in [35] when .

Figure 8.

Computational illustration of Algorithms 1 and 2 with Algorithm 1 in [36], Algorithm 2 in [36] and Algorithm 1 in [35], Algorithm 2 in [35] when .

Table 3.

Numerical results for Example 2.

Table 4.

Numerical results for Example 2.

Example 3.

Suppose that is a Hilbert space with the inner product

and the induced norm

Let be the unit ball and let ℑ be defined by

where

It is evident that ℑ is Lipschitz continuous with Lipschitz constant and monotone [44]. The projection onto the unit ball has an explicit form, namely,
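For the closed unit ball, the projection is $P_C(u) = u / \max\{1, \|u\|\}$ in any Hilbert space. The sketch below assumes, for illustration only, that the space is $L^2([0,1])$ and discretizes it on a uniform grid with the midpoint rule; the grid size and the test function $u(t) = 3t$ (with $\|u\| = \sqrt{3}$) are hypothetical choices.

```python
import math

def l2_norm(values, h):
    """Discrete L^2([0,1]) norm via the midpoint rule: sqrt(h * sum u_i^2)."""
    return math.sqrt(h * sum(v * v for v in values))

def proj_unit_ball(values, h):
    """P_C(u) = u / max(1, ||u||) for the closed unit ball C."""
    scale = max(1.0, l2_norm(values, h))
    return [v / scale for v in values]

n = 1000
h = 1.0 / n
t = [(i + 0.5) * h for i in range(n)]      # midpoints of the grid cells
u = [3.0 * ti for ti in t]                  # ||u|| = sqrt(3) in L^2([0,1])
pu = proj_unit_ball(u, h)
print(l2_norm(u, h), l2_norm(pu, h))        # approx 1.732 and 1.0
```

Points already inside the ball are returned unchanged, so the projection costs one norm evaluation per call.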

The mapping is of the form

A straightforward computation shows that is 0-demicontractive. The solution of the problem is Different starting points are used for this experiment, with stopping criterion Numerical observations for Example 3 are shown in Figure 9, Figure 10, Figure 11 and Figure 12 and Table 5 and Table 6. The control parameters are as follows: (1) Algorithm 1 (abbreviated alg-1): (2) Algorithm 2 (abbreviated alg-2): (3) Algorithm 1 in [36] (abbreviated mtalg-1): (4) Algorithm 2 in [36] (abbreviated mtalg-2): (5) Algorithm 1 in [35] (abbreviated vtalg-1): (6) Algorithm 2 in [35] (abbreviated vtalg-2):

Figure 9.

Computational illustration of Algorithms 1 and 2 with Algorithm 1 in [36], Algorithm 2 in [36] and Algorithm 1 in [35], Algorithm 2 in [35] when .

Figure 10.

Computational illustration of Algorithms 1 and 2 with Algorithm 1 in [36], Algorithm 2 in [36] and Algorithm 1 in [35], Algorithm 2 in [35] when .

Figure 11.

Computational illustration of Algorithms 1 and 2 with Algorithm 1 in [36], Algorithm 2 in [36] and Algorithm 1 in [35], Algorithm 2 in [35] when .

Figure 12.

Computational illustration of Algorithms 1 and 2 with Algorithm 1 in [36], Algorithm 2 in [36] and Algorithm 1 in [35], Algorithm 2 in [35] when .

Table 5.

Numerical results for Example 3.

Table 6.

Numerical results for Example 3.

5. Discussion about Numerical Illustrations

Regarding the above-mentioned numerical experiments, we have the following findings:

- (1)

- Examples 1–3 report results for several algorithms in both finite- and infinite-dimensional spaces. The proposed algorithms outperformed the others in terms of the number of iterations and elapsed time in almost all cases; overall, the experiments indicate that they perform better than the existing algorithms considered here.

- (2)

- An unsuitable variable step size causes a hump in the graphs of the algorithms in Example 2. This does not noticeably affect their overall performance.

- (3)

- In most cases, the performance of the algorithms depends on the nature of the problem and the tolerance value used.

- (4)

- For large-dimensional problems, all approaches typically took longer, and their execution times varied significantly. The number of iterations, on the other hand, varies less.

- (5)

- The formula used to evaluate the step size improves both the efficiency and the convergence speed of the algorithms; that is, an appropriate variable step size performs better than a fixed step size.

- (6)

- In Examples 2 and 3, it can also be seen that the choice of initial point and the complexity of the operators influence the performance of the algorithms in terms of the number of iterations and execution time in seconds.

Author Contributions

Conceptualization, C.K. and N.P.; Funding acquisition, B.P.; Investigation, N.P.; Methodology, N.P.; Project administration, C.K.; Supervision, B.P.; Validation, C.K. and N.P.; Writing—original draft, C.K. and N.P.; Writing—review & editing, C.K. and N.P. All authors have read and agreed to the published version of the manuscript.

Funding

This research was funded by Chiang Mai University and the NSRF via the Program Management Unit for Human Resources & Institutional Development, Research and Innovation (grant number B05F640183).

Institutional Review Board Statement

Not applicable.

Informed Consent Statement

Not applicable.

Data Availability Statement

Not applicable.

Acknowledgments

The authors would like to thank the referees and editor for reading this paper carefully, providing valuable suggestions and comments, and pointing out minor errors in the original version of this paper. The first and third authors would like to thank Phetchabun Rajabhat University.

Conflicts of Interest

The authors declare no conflict of interest.

References

- Maingé, P.E. A Hybrid Extragradient-Viscosity Method for Monotone Operators and Fixed Point Problems. SIAM J. Control. Optim. 2008, 47, 1499–1515.

- Maingé, P.E.; Moudafi, A. Coupling viscosity methods with the extragradient algorithm for solving equilibrium problems. J. Nonlinear Convex Anal. 2008, 9, 283–294.

- Iiduka, H.; Yamada, I. A subgradient-type method for the equilibrium problem over the fixed point set and its applications. Optimization 2009, 58, 251–261.

- Qin, X.; An, N.T. Smoothing algorithms for computing the projection onto a Minkowski sum of convex sets. Comput. Optim. Appl. 2019, 74, 821–850.

- An, N.T.; Nam, N.M.; Qin, X. Solving k-center problems involving sets based on optimization techniques. J. Glob. Optim. 2019, 76, 189–209.

- Stampacchia, G. Formes bilinéaires coercitives sur les ensembles convexes. Comptes Rendus Hebd. Des Seances Acad. Des Sci. 1964, 258, 4413.

- Konnov, I.V. On systems of variational inequalities. Russ. Math. C/C -Izv.-Vyss. Uchebnye Zaved. Mat. 1997, 41, 77–86.

- Kassay, G.; Kolumbán, J.; Páles, Z. On Nash stationary points. Publ. Math. 1999, 54, 267–279.

- Kassay, G.; Kolumbán, J.; Páles, Z. Factorization of Minty and Stampacchia variational inequality systems. Eur. J. Oper. Res. 2002, 143, 377–389.

- Kinderlehrer, D.; Stampacchia, G. An Introduction to Variational Inequalities and Their Applications; Society for Industrial and Applied Mathematics: Philadelphia, PA, USA, 2000.

- Konnov, I. Equilibrium Models and Variational Inequalities; Elsevier: Amsterdam, The Netherlands, 2007; Volume 210.

- Elliott, C.M. Variational and Quasivariational Inequalities: Applications to Free-Boundary Problems (Claudio Baiocchi and António Capelo). SIAM Rev. 1987, 29, 314–315.

- Nagurney, A. Network Economics: A Variational Inequality Approach; Kluwer Academic Publishers Group: Dordrecht, The Netherlands, 1999.

- Takahashi, W. Introduction to Nonlinear and Convex Analysis; Yokohama Publishers: Yokohama, Japan, 2009.

- Korpelevich, G. The extragradient method for finding saddle points and other problems. Matecon 1976, 12, 747–756.

- Noor, M.A. Some iterative methods for nonconvex variational inequalities. Comput. Math. Model. 2010, 21, 97–108.

- Censor, Y.; Gibali, A.; Reich, S. The subgradient extragradient method for solving variational inequalities in Hilbert space. J. Optim. Theory Appl. 2010, 148, 318–335.

- Censor, Y.; Gibali, A.; Reich, S. Extensions of Korpelevich extragradient method for the variational inequality problem in Euclidean space. Optimization 2012, 61, 1119–1132.

- Tseng, P. A Modified Forward-Backward Splitting Method for Maximal Monotone Mappings. SIAM J. Control. Optim. 2000, 38, 431–446.

- Moudafi, A. Viscosity Approximation Methods for Fixed-Points Problems. J. Math. Anal. Appl. 2000, 241, 46–55.

- Zhang, L.; Fang, C.; Chen, S. An inertial subgradient-type method for solving single-valued variational inequalities and fixed point problems. Numer. Algorithms 2018, 79, 941–956.

- Iusem, A.N.; Svaiter, B.F. A variant of korpelevich’s method for variational inequalities with a new search strategy. Optimization 1997, 42, 309–321.

- Thong, D.V.; Hieu, D.V. Modified subgradient extragradient method for variational inequality problems. Numer. Algorithms 2017, 79, 597–610.

- Thong, D.V.; Hieu, D.V. Weak and strong convergence theorems for variational inequality problems. Numer. Algorithms 2017, 78, 1045–1060.

- Rehman, H.; Kumam, P.; Shutaywi, M.; Alreshidi, N.A.; Kumam, W. Inertial optimization based two-step methods for solving equilibrium problems with applications in variational inequality problems and growth control equilibrium models. Energies 2020, 13, 3292.

- Hammad, H.A.; Rehman, H.; la Sen, M.D. Advanced algorithms and common solutions to variational inequalities. Symmetry 2020, 12, 1198.

- Yordsorn, P.; Kumam, P.; Rehman, H.; Ibrahim, A.H. A weak convergence self-adaptive method for solving pseudomonotone equilibrium problems in a real Hilbert space. Mathematics 2020, 8, 1165.

- Rehman, H.; Gibali, A.; Kumam, P.; Sitthithakerngkiet, K. Two new extragradient methods for solving equilibrium problems. Rev. Real Acad. Cienc. Exactas Fis. Nat. Ser. Mat. 2021, 115.

- Rehman, H.; Kumam, P.; Gibali, A.; Kumam, W. Convergence analysis of a general inertial projection-type method for solving pseudomonotone equilibrium problems with applications. J. Inequalities Appl. 2021, 2021.

- Rehman, H.; Alreshidi, N.A.; Muangchoo, K. A New Modified Subgradient Extragradient Algorithm Extended for Equilibrium Problems with Application in Fixed Point Problems. J. Nonlinear Convex Anal. 2021, 22, 421–439.

- Muangchoo, K.; Rehman, H.; Kumam, P. Weak convergence and strong convergence of nonmonotonic explicit iterative methods for solving equilibrium problems. J. Nonlinear Convex Anal. 2021, 22, 663–681.

- Rehman, H.; Kumam, P.; Özdemir, M.; Karahan, I. Two generalized non-monotone explicit strongly convergent extragradient methods for solving pseudomonotone equilibrium problems and applications. Math. Comput. Simul. 2021.

- Antipin, A.S. On a method for convex programs using a symmetrical modification of the Lagrange function. Ekon. Mat. Metod. 1976, 12, 1164–1173.

- Yamada, I.; Ogura, N. Hybrid Steepest Descent Method for Variational Inequality Problem over the Fixed Point Set of Certain Quasi-nonexpansive Mappings. Numer. Funct. Anal. Optim. 2005, 25, 619–655.

- Tan, B.; Zhou, Z.; Li, S. Viscosity-type inertial extragradient algorithms for solving variational inequality problems and fixed point problems. J. Appl. Math. Comput. 2021.

- Tan, B.; Fan, J.; Qin, X. Inertial extragradient algorithms with non-monotonic step sizes for solving variational inequalities and fixed point problems. Adv. Oper. Theory 2021, 6.

- Mann, W.R. Mean value methods in iteration. Proc. Am. Math. Soc. 1953, 4, 506.

- Zhou, H.; Qin, X. Fixed Points of Nonlinear Operators; De Gruyter: Berlin, Germany, 2020.

- Saejung, S.; Yotkaew, P. Approximation of zeros of inverse strongly monotone operators in Banach spaces. Nonlinear Anal. Theory Methods Appl. 2012, 75, 742–750.

- Hicks, T.L.; Kubicek, J.D. On the Mann iteration process in a Hilbert space. J. Math. Anal. Appl. 1977, 59, 498–504.

- Karamardian, S. Complementarity problems over cones with monotone and pseudomonotone maps. J. Optim. Theory Appl. 1976, 18, 445–454.

- Harker, P.T.; Pang, J.S. A damped-Newton method for the linear complementarity problem. Comput. Solut. Nonlinear Syst. Equ. 1990, 26, 265.

- Solodov, M.V.; Svaiter, B.F. A New Projection Method for Variational Inequality Problems. SIAM J. Control. Optim. 1999, 37, 765–776.

- Van Hieu, D.; Anh, P.K.; Muu, L.D. Modified hybrid projection methods for finding common solutions to variational inequality problems. Comput. Optim. Appl. 2016, 66, 75–96.

- Dong, Q.L.; Cho, Y.J.; Zhong, L.L.; Rassias, T.M. Inertial projection and contraction algorithms for variational inequalities. J. Glob. Optim. 2017, 70, 687–704.

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).