Abstract

This work explores user needs for educational games and gamification that incorporate Generative Artificial Intelligence (GenAI). As GenAI is increasingly incorporated in educational settings, we must consider the wide-ranging literature on games and gamification that have been shown to benefit learning, and characterize the needs and desires of relevant stakeholders in developing educational games that incorporate GenAI, both generally and specifically for higher education. A mixed-methods questionnaire asked 345 undergraduate students about their perceptions, use patterns, needs, and desires related to GenAI, educational and non-educational games, and text-based games. GenAI tools are already widely used for educational purposes, but mostly as a supplementary source. Despite this wide use, participants expressed concerns about accuracy, transparency, and quality. Participants also expressed a desire for an educational game/tool to have scaffolded interactions and to help with learning material in math, science, and language arts. Taken together, the findings provide a roadmap and specific recommendations for developing an educational game incorporating GenAI. The roadmap spans instructional design (i.e., the gamified tools’ content and type(s) of instruction and interaction), preferred platforms, game genres, and gamified properties (e.g., characters, challenges), as well as the concerns students have related to trust and equity, which will need to be addressed in an educational game incorporating GenAI.

1. Introduction

The field of education has recently experienced a technological shift with the rapid advancement of Generative AI (GenAI) (Alasadi & Baiz, 2023; Baidoo-Anu & Ansah, 2023; Uanachain & Aouad, 2025). GenAI tools offer adaptive, generative, and personalized capabilities that can be operationalized at scale, introducing new opportunities for transforming teaching and learning. One educational domain where GenAI shows promise is game-based learning and gamification. GenAI offers properties like dynamic environments (Kiili et al., 2018), adaptive problem solving (Annetta, 2010), and collaboration and teamwork (Squire, 2013). These properties align with long-standing evidence that educational games can improve students’ knowledge retention, foster problem-solving skills, and support the transfer of learning (Clark et al., 2016; Wouters et al., 2013). A compelling application of GenAI in educational games lies in its ability to support dynamic, student-driven narratives. For example, rather than requiring developers to manually construct exhaustive decision trees for narrative progression, GenAI allows for dynamic interaction and can generate context-sensitive storylines in response to student inputs. This shift not only reduces design complexity and development time but also allows for greater narrative flexibility.

In this context, text-based games offer an accessible entry point for incorporating GenAI into educational game design. Commonly used GenAI tools rely on text-based interactions and can be leveraged for educational games and gamification (e.g., Gemini, Google, 2025; ChatGPT, OpenAI, 2023). Thus, educators, researchers, and educational game developers can build on established principles from educational games and gamification to design effective, GenAI-supported tools for learning. However, the combination of GenAI and educational games is relatively novel, and its success partly depends on how well it aligns with users’ experiences, expectations, and needs. Given the growing use of GenAI in learning contexts, it is essential to center learners’ voices in the design of such tools (Shehata et al., 2024).

The current study conducts a user needs analysis using a survey to generate insights for educators and game designers developing GenAI-supported educational games. The following sections review related work on educational games and gamification, text-based games, the potential of GenAI incorporation in these domains, and user needs analysis practices. We then present insights from a survey of undergraduate students (n = 345) and discuss the implications of their responses for the design of learner-centered educational games that integrate GenAI.

2. Related Literature

2.1. Generative AI and Education

The rapid advancements in GenAI, driven by large language models (LLMs), have initiated a paradigm shift in education (Alasadi & Baiz, 2023; Baidoo-Anu & Ansah, 2023; Uanachain & Aouad, 2025). Institutions and schools are widely integrating GenAI tools, creating AI literacy modules, and issuing guidelines to support responsible and effective classroom use of GenAI (Ng et al., 2025). As such, a growing body of research has reported that GenAI-supported tools are increasingly implemented in both teaching and learning processes. For example, educators are leveraging GenAI to automate lesson planning (Moundridou et al., 2024) and generate assessment questions (Kaldaras et al., 2024). On the learner side, GenAI enables personalized learning experiences (Udeh, 2025), interactive virtual tutoring (Belawati & Prasetyo, 2025), and real-time formative feedback (Uanachain & Aouad, 2025). Such integration of GenAI into educational settings holds the potential to reshape traditional models of instruction and learning. By offering adaptive and personalized support, GenAI can meet diverse learner needs, enhance student engagement, and enable self-directed learning that goes beyond one-size-fits-all approaches (Ayeni et al., 2024). However, achieving these potential benefits requires careful consideration of issues related to user needs, equity, accessibility, and ethical usage to ensure that these technologies serve all learners fairly and responsibly (Nguyen, 2025). In this study, we focus on the practical application in educational games, a domain where GenAI’s adaptive and generative capabilities can be operationalized at scale.

2.2. Educational Games, Gamification, and GenAI

Educational games are games that are explicitly designed for the purpose of learning (Gee, 2003; Plass et al., 2015). These games integrate content knowledge acquisition, skill-building, and pedagogical frameworks into interactive gameplay experiences aimed at both education and entertainment, a segment of a wider category called “serious games” (Michael & Chen, 2005). Educational games typically blend narrative, simulation, and systems-thinking mechanics, offering situated learning contexts in which learners apply knowledge to solve meaningful challenges (Gee, 2003). Research in this domain demonstrates that educational games can enhance knowledge retention, transfer of learning, and problem-solving skills across a wide range of cognitive domains (Clark et al., 2016; Wouters et al., 2013).

Science-based games enable learners to test hypotheses in simulated environments, while mathematics games can foster conceptual understanding through adaptive problem solving (Annetta, 2010). Importantly, these games facilitate active learning by requiring learners to engage with content dynamically and experientially, rather than passively receiving information in written or auditory form (Kiili et al., 2018). Beyond cognitive outcomes, studies highlight the affective and social dimensions of educational games. Multiplayer and collaborative environments foster teamwork, communication, and interpersonal skills (Squire, 2013), while story-driven educational games can nurture empathy and ethical reasoning, skills aligned with 21st-century learning goals (Schrader & McCreery, 2008). Yet, challenges persist with educational game adoption, including concerns around scalability, costs, curriculum integration, and varied learner acceptance (de Freitas, 2006; H. N. Eukel et al., 2017). These challenges can be alleviated by incorporating user (i.e., student) input in the design process. Involving learners contributes to educational game design that aligns with user needs and preferences, making games more engaging, accessible, and, as a result, more widely adopted.

Recent research has increasingly emphasized adaptive educational games, which can personalize learning through data-driven adjustments to difficulty, feedback, and narrative progression (Plass et al., 2015). The incorporation of GenAI extends these capabilities by enabling real-time personalization grounded in learners’ performance, preferences, and behavioral patterns (Borah et al., 2024; Moon et al., 2025; Oliveira et al., 2023). As a result, educational games can adapt interactions to support students’ strengths, preferences, and knowledge gaps. Personalization can be achieved through adaptive game world building, narrative branching, and interactive dialog systems (French et al., 2023; J. S. Park et al., 2023; Ratican et al., 2023; Xu et al., 2023). It can also include adjusting question difficulty and providing dynamic, more human-like feedback to support the learning process (Anjum et al., 2024). Moreover, GenAI enables feedback loops and performance-based responses without developers hand-authoring exhaustive decision trees (Koivisto & Hamari, 2019). GenAI can also automate goal setting and progress visualization, which have been shown to add to learners’ sense of achievement and learning agency (Seaborn & Fels, 2015), without programmers having to develop dedicated mechanisms to meet those goals.

Gamification is another way to make learning immersive and engaging that has been widely researched and lends itself to GenAI applications. Gamification, the use of game mechanics such as points, badges, and quests in non-game contexts, has been shown to increase motivation (intrinsic and extrinsic, Toda et al., 2019) and engagement in learning (Deterding et al., 2011), and to foster autonomy, persistence, and deeper interaction with learning materials (Hamari et al., 2014). For the purposes of this study, we consider gamification not only as a motivational tool but also as a structural framework to support interactive, learner-centered engagement in text-based games. That said, GenAI-powered gamification comes with important caveats. Critics warn against superficial implementations of gamification, noting that over-reliance on extrinsic rewards may undermine long-term motivation (Hanus & Fox, 2015). To maximize educational impact, gamification should be principled in its alignment with pedagogical intentions, providing meaningful learning experiences rather than merely incentivizing participation (Domínguez et al., 2013; J. J. Lee & Hammer, 2011).

Considerable research has investigated games and gamified environments and indicates that both are useful in education. The exponential growth of GenAI is rapidly reducing barriers to entry into the development of new educational tools that are more engaging for learners. Thus, we frame GenAI integration as a continuation of the broader shift toward adaptive, personalized educational games and gamification.

2.3. Text-Based Games for GenAI Gamification

Text-based games offer a low barrier to incorporating GenAI into educational games due to their lower graphic design and programming requirements. These games use natural language as the primary interface for world modeling and interaction, with minimal or no visual elements. Prior work has developed and studied text-based games and authoring platforms both in educational settings and beyond (Côté et al., 2018; Hausknecht et al., 2020; Madotto et al., 2020; Shridhar et al., 2020). Historically, text-based games involved game designers crafting a finite set of narratives, responses, eventualities, and actions, and players typed their responses from within that set. If a player typed words or phrases that were not programmed into the game, they would get an error or be asked to type a valid input. GenAI, by contrast, accepts free-form input from users and generates relevant responses to it (Karpouzis et al., 2024). Moreover, prompt engineering for GenAI tools allows educators and designers with minimal coding and visual design experience to easily build conversational and gamified learning experiences.
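To make the contrast concrete, the following is a minimal sketch of the finite, pre-scripted input handling that classic text-based games relied on. The command table, the responses, and the function name `respond` are hypothetical and chosen only for illustration; they are not drawn from any of the cited systems.

```python
# Hypothetical finite command table for a classic text-based game.
# All names and strings here are illustrative assumptions.
COMMANDS = {
    "look": "You are in a small lab. A microscope sits on the bench.",
    "use microscope": "You see cells dividing on the slide.",
    "go north": "You walk into the hallway.",
}


def respond(player_input: str) -> str:
    """Return the scripted response, or ask the player for a valid input."""
    # Normalize case and collapse repeated whitespace before the lookup.
    key = " ".join(player_input.lower().split())
    if key in COMMANDS:
        return COMMANDS[key]
    # Anything the designer did not anticipate falls through to an error prompt.
    return "I don't understand that. Try one of: " + ", ".join(sorted(COMMANDS))


print(respond("Look"))
print(respond("ask the professor about mitosis"))
```

The second call shows the limitation: a reasonable, on-topic request is rejected because it is not in the table. A GenAI-backed game would instead pass such free-form input to a language model, which is the design shift discussed in this section.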

The ‘quick and easy’ affordance of GenAI makes it appealing for integrating gamification into text-based learning experiences. However, further characterization of user needs and testing of educational games and gamification incorporating GenAI is required for these newly available GenAI affordances (Oliveira et al., 2023). It is crucial to understand the needs, preferences, and stances of the intended users to ensure the game design is both human-centered and inclusive such that the system can leverage the strengths of diverse users. The following section describes the user needs analysis methodology and its utility in this context.

2.4. Understanding User Needs

Designing high quality, adoptable tools or products requires consideration of the relevant stakeholders’ needs and desires (e.g., Bardzell, 2010; de Freitas & Jarvis, 2006; Kashfi et al., 2017) alongside the stakeholders’ trust requirements (Goldshtein et al., 2022). In the case of educational games, stakeholders can include the students/users, instructors if the game is employed in a school setting, and the developers of the game (Bunt et al., 2024; Goldshtein et al., 2025). User needs analyses can lead to insights pertaining to user behaviors (Owen & Baker, 2020) like the type of game mechanics students prefer; cognitive preferences such as feedback mechanisms (S. S. Lee & Moore, 2024); and educational games’ technological requirements, such as platforms.

Designing an educational game should involve users in various stages of the design to ensure it meets students’ needs (see Maxim & Arnedo-Moreno, 2025), beginning with conceptualization (Oliveira et al., 2023; E. J. Park, 2021; Ravyse et al., 2017; Suresh Babu & Dhakshina Moorthy, 2024), continuing after developing a prototype (H. Eukel & Morrell, 2021; Eun et al., 2022), and lastly, in assessing the efficacy and user experience of a completed game. Iterative work on the product can then proceed in this user-experience cycle, which can also be characterized as a learning engineering cycle when applied to educational contexts (Baker et al., 2022; Goodell & Kolodner, 2022; McNamara, 2024).

Identifying users’ needs and experiences increases use, improves usability, and builds trust (Chiou & Lee, 2023). For example, previous research on user needs in the domain of educational games has shown that student engagement and participation increase when games incorporate students’ preferred forms and modalities of feedback (Hyland & Hyland, 2019; Zhang, 2020).

Finally, because needs and abilities vary across populations, inclusive design is essential in games, especially for educational games that address specific user needs such as learning disabilities (Hassan, 2024; Lukava et al., 2022; Spiel & Gerling, 2021). These considerations are even more salient with GenAI, which enables highly personalizable experiences that can better adapt to individual learners (Khan et al., 2024; Moon et al., 2025).

The current study focuses on characterizing the needs and preferences of students, as they are a consistent stakeholder, whether an educational game is employed in a school setting or outside it. In exploring more specific contexts (e.g., the classroom), researchers must also consider other relevant stakeholders’ needs in their analysis (e.g., instructors, Jiang & Ziden, 2025; parents and therapists, Stefanidi et al., 2023).

2.5. Current Study

This study reports on a user needs analysis survey conducted with 345 participants to examine students’ preferences and stances related to games, educational games, text-based educational games, GenAI, and the combination of these. Our research questions are as follows:

- RQ1: Based on their experiences, what are students’ perceptions on GenAI in everyday life and educational contexts?

- RQ2: Based on their experiences, what are students’ perceptions of games and educational games?

- RQ3: What are students’ perceptions regarding the incorporation of GenAI in educational games?

Study findings will inform our own work on developing educational gamified tools incorporating GenAI but will also serve as guidelines for other game developers and educational researchers for incorporating GenAI in educational settings, specifically in gamified educational tools.

3. Materials and Methods

3.1. Participants

A total of 345 students consented to participate in the study. Participants’ ages ranged from 18 to over 26, with an average age of 19.35 years (SD = 1.73). One entry was removed from the age analysis due to missing age data. In terms of academic standing, most participants were freshmen (62.32%), followed by sophomores (22.03%), juniors (11.01%), seniors (4.06%), and graduate students (0.58%). The sample was predominantly female (57.10%), with 41.74% male and 1.16% preferring not to disclose their gender. Participants represented a variety of academic disciplines. The most common majors were Business (45.80%), STEM (34.20%), and Social Sciences (6.38%). A smaller proportion reported majors in Arts (3.48%), Humanities (1.16%), or other (8.99%) fields. Within the ‘other’ category, notable concentrations included Nursing, Graphic Design, and Criminal Justice.

3.2. Study Design

This study employed an exploratory mixed-methods survey design to examine user needs and preferences related to the use of GenAI and games in educational and non-educational contexts. The survey included both quantitative (e.g., Likert scale) and qualitative (e.g., open-ended) items. The survey was designed to include items spanning several fields: education, educational games, gaming, and use of Generative AI. Prior to the beginning of the survey, all participants were presented with an informed consent statement outlining the purpose of the study, the voluntary nature of participation, and data confidentiality. Surveys were administered online via Qualtrics over a period of four weeks. Approval for the study was granted by the researchers’ Institutional Review Board (IRB). Participants were recruited through the psychology subject pool and received course credit for participation. The full questionnaire is provided in Appendix A.

3.3. Survey Structure

The survey began with demographic information, e.g., age, gender, educational level, and major. The rest of the survey contained 36 questions (a mix of open-ended and multiple choice questions, see Appendix A) and was structured into four sections addressing key themes relevant to GenAI and educational game design:

- Usage of GenAI in personal and educational contexts: Participants were asked about their usage patterns of GenAI tools such as ChatGPT, DALL-E, Perplexity, and Gemini. Participants were asked to report which tools they use, as well as the frequency and context (e.g., personal vs. educational) of their GenAI usage. Participants were further asked how such tools were employed in educational contexts, if applicable. Based on their responses, participants were prompted to elaborate on the tools used, reasons for non-use (if participants reported not using GenAI tools), and learning scenarios in which GenAI played a role (e.g., concept clarification, assignment help, writing support, etc.). In addition to reporting their usage of GenAI tools, participants also rated the tools’ perceived helpfulness in educational contexts. Additionally, respondents indicated the subjects for which they found GenAI most useful (e.g., science, math, language arts) and selected the importance of GenAI in their learning process (e.g., primary resource, supplementary tool, last resort). To explore some limitations of GenAI use in different contexts, a checklist item assessed common challenges associated with GenAI use for learning, such as difficulty understanding responses, misinformation, ethical concern, and lack of guidance. The responses to these questions provided essential insights to inform learner-centered design in educational game development.

- Familiarity with games, educational games, and text-based games: To understand participants’ gaming behaviors and preferences, this section focused on games in both recreational and educational contexts and included a set of questions focused on frequency, modality, and content of gameplay. Participants reported how often they play games, with options ranging from “every day” to “never”. Those who indicated any level of game play were asked to specify their preferred game modalities (e.g., console, computer, phone, etc.) and genres (e.g., strategy, RPGs, etc.), as well as to list examples of games they enjoy. An additional item assessed participants’ preferred game features (e.g., storytelling, problem-solving, multiplayer interaction, etc.). Furthermore, to assess familiarity with educational games, participants were asked whether they had played such games, and if so, to describe them. Follow-up questions probed the location and timing of playing educational games (e.g., at school, home, etc.), what topics the game addressed (e.g., science, math, coding), and the devices used to access them (e.g., PC, mobile, VR). Finally, participants were asked to indicate which STEM-related subjects they would prefer to learn in a future educational game. The survey also included questions aimed at capturing students’ prior experiences and preferences. Responses to these questions offer insights into how gameplay habits in recreational and educational contexts can inform the design of an engaging, learner-centered AI-supported narrative game.

- Perceptions of games within GenAI context: This section of the survey explored participants’ perceptions of the potential future applications of GenAI in learning and gaming contexts. Participants were asked whether they believed GenAI tools could provide new ways to support their learning beyond their current use of those tools, with an open-ended follow-up prompting ideas for additional use cases. They were also asked whether they believed GenAI should be used in games, and if so, to suggest types of games that might benefit from AI integration. Lastly, participants indicated their willingness to engage with GenAI features within gameplay, offering insight into their openness to AI-enhanced gaming experiences.

- Design Recommendations: The final section of the survey focused on eliciting participants’ design preferences for a GenAI-supported educational game. Open-ended questions asked participants to describe the features that would make a GenAI-supported game appealing to them and how they envisioned GenAI enhancing their overall gaming experience. Lastly, participants were encouraged to offer additional suggestions for features they would like to see in a STEM-focused educational game using GenAI.

3.4. Data Processing and Analysis

Survey responses were cleaned and then analyzed using descriptive statistics and thematic coding to derive insights to guide the design of an AI-driven narrative educational game. For multiple-choice questions, we calculated descriptive statistics (i.e., means, standard deviations, distributions) to summarize trends, while open-ended questions were examined through thematic analysis.

3.4.1. Data Cleaning

The raw dataset contained 401 survey responses. A multi-step data cleaning process was employed to ensure data quality and validity. Entries without participant consent and entries with a completion rate below 100% were excluded from the dataset. Duplicate responses were identified and removed by keeping only the first submission associated with each unique participant, so as to capture their first interaction with the questionnaire. Following cleaning, we assessed response validity based on survey completion time. The average completion time was 22.37 min (SD = 93.15). An outlier threshold was defined as two standard deviations above the mean. Implementing the data cleaning steps resulted in a sample consisting of 345 responses.
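The cleaning steps above can be sketched as follows. This is an illustrative reconstruction, not the authors' script: the field names (`participant_id`, `consented`, `progress`, `minutes`) and the toy rows are hypothetical, and the use of the sample standard deviation is an assumption, since the text does not say which estimator was used. The sketch only computes the outlier threshold; how flagged responses were handled is not stated in the text, so no removal step is shown.

```python
from statistics import mean, stdev


def clean_responses(rows):
    """Apply the exclusion steps in order; `rows` must be in submission order."""
    kept, seen = [], set()
    for row in rows:
        if not row["consented"]:        # drop entries without consent
            continue
        if row["progress"] < 100:       # drop entries below 100% completion
            continue
        if row["participant_id"] in seen:  # keep only the first submission
            continue
        seen.add(row["participant_id"])
        kept.append(row)
    return kept


def outlier_threshold(minutes):
    """Mean plus two standard deviations (sample SD is an assumption)."""
    return mean(minutes) + 2 * stdev(minutes)


# Hypothetical toy data, for illustration only.
rows = [
    {"participant_id": "p1", "consented": True, "progress": 100, "minutes": 18.0},
    {"participant_id": "p1", "consented": True, "progress": 100, "minutes": 5.0},
    {"participant_id": "p2", "consented": False, "progress": 100, "minutes": 20.0},
    {"participant_id": "p3", "consented": True, "progress": 60, "minutes": 9.0},
    {"participant_id": "p4", "consented": True, "progress": 100, "minutes": 25.0},
]
cleaned = clean_responses(rows)
print([r["participant_id"] for r in cleaned])

# Threshold implied by the reported summary statistics (in minutes).
print(round(22.37 + 2 * 93.15, 2))
```

With the reported mean of 22.37 min and SD of 93.15, the threshold works out to 22.37 + 2 × 93.15 = 208.67 min.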

3.4.2. Thematic Analysis

Three raters in total coded the open-ended questions, with two raters collaboratively coding each question. Seven open-ended questions were coded using a conventional content analysis procedure (e.g., Hsieh & Shannon, 2005). Specifically, for each question, two raters read through all responses and inductively generated a coding schema based on the topics identified in the responses. The two coding schemas were then compared until an agreed-upon coding schema was derived. The two raters then separately coded each question according to the agreed coding schema until an agreement rate of 75% was reached. At that point, disagreements were resolved through discussion until 100% agreement was reached. When raters were unable to reach an agreement rate of 75%, coding schemas were changed to better reflect the data or adjusted to be more well-defined for both raters, before re-coding the data.
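As a rough illustration of the agreement check described above, the sketch below computes simple percent agreement between two raters and lists the responses that would go to discussion. It rests on assumptions: the text does not specify how the agreement rate was calculated, so simple percent agreement with one code per response is assumed here, and the function names, code labels, and example data are hypothetical.

```python
AGREEMENT_THRESHOLD = 75.0  # percent, as reported in the text


def percent_agreement(codes_a, codes_b):
    """Share of responses (in percent) to which both raters assigned the same code."""
    if len(codes_a) != len(codes_b) or not codes_a:
        raise ValueError("Both raters must code the same non-empty set of responses.")
    matches = sum(a == b for a, b in zip(codes_a, codes_b))
    return 100 * matches / len(codes_a)


def disagreements(codes_a, codes_b):
    """Indices of responses the raters coded differently (to resolve by discussion)."""
    return [i for i, (a, b) in enumerate(zip(codes_a, codes_b)) if a != b]


# Hypothetical codes assigned by two raters to four responses.
rater_a = ["concepts", "writing", "quizzes", "concepts"]
rater_b = ["concepts", "writing", "concepts", "concepts"]

rate = percent_agreement(rater_a, rater_b)
if rate >= AGREEMENT_THRESHOLD:
    print("Resolve by discussion:", disagreements(rater_a, rater_b))
else:
    print("Revise the coding schema and re-code.")
```

Percent agreement does not correct for chance agreement; a chance-corrected statistic such as Cohen's kappa would be an alternative, but the text reports only an agreement rate.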

4. Results

This section reports students’ experiences with and attitudes toward GenAI across personal and educational contexts. Following the research questions, we summarize patterns of adoption, common use-cases, perceived usefulness, and concerns that shape how students engage with GenAI tools.

4.1. RQ1—Based on Their Experiences, What Are Students’ Perceptions on GenAI in Everyday Life and Educational Contexts?

Responses to the first section of the survey questionnaire (Usage of GenAI in personal and educational contexts) address this research question. Participants described their GenAI usage patterns in general, and for educational purposes. They also shared their stances on the helpfulness of GenAI tools in general and within educational contexts.

- Usage of GenAI in Personal and Educational Contexts

Participants’ usage of GenAI is described in terms of perceived value of GenAI tools, challenges and reasons for not using GenAI tools, and perceived future potential uses for such tools.

- Overall Use and Perceived Value of GenAI Tools

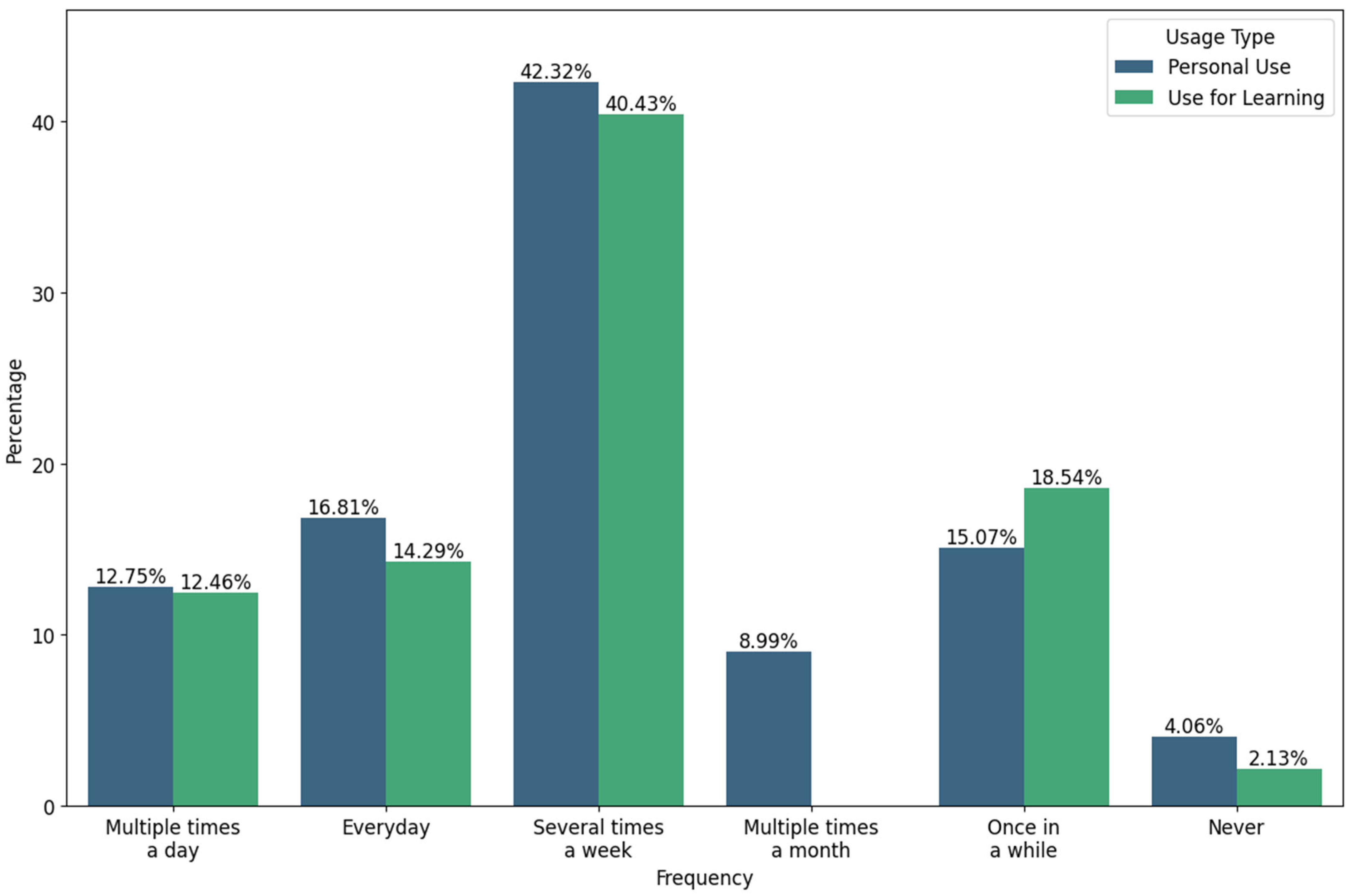

Participants reported widespread use of GenAI tools in both daily life and educational contexts. Over 70% indicated using GenAI tools several times a week or more (Figure 1), with ChatGPT being the most frequently mentioned, followed by tools such as Gemini, Copilot, Grammarly, and Snapchat. Educational usage was particularly prominent: over 95% of participants reported using GenAI for both school-related purposes (e.g., assignments) and informal learning (e.g., self-directed study or information search), with more than 65% indicating that they used GenAI for learning several times a week.

Figure 1.

Reported frequency of GenAI use for personal and educational purposes.

When describing their daily GenAI use, participants most commonly cited support for learning academic concepts (42%), helping with assignments (35%), writing and grammar assistance (23%), and summarizing texts (5%) (Table 1). Other frequently mentioned uses included ideation (26%) and information seeking as a replacement or enhancement to traditional search engines (24%), while only 1.5% reported not using GenAI at all. It is worth noting that when asked about personal use of GenAI, student responses indicate that use for educational purposes (e.g., help with writing tasks, homework) overlaps with non-education related use (e.g., ideation, search for information).

Table 1.

Participants’ reported general use of GenAI. Responses are summarized in themes and percentage counts.

In a multiple-response question specifically about educational use cases (Table 2), the most common uses included searching for and understanding information, brainstorming project ideas, and writing/editing text, with less frequent but notable mentions of language learning.

Table 2.

Participants’ reported educational uses of GenAI tools. The table below shows the percentage of participants selecting each option (multiple selections allowed).

The perceived value of GenAI for learning was overwhelmingly positive: 97% of participants found these tools helpful, and over 80% rated them as very or extremely helpful. Mathematics, language arts, and science emerged as the most common subject areas in which students applied GenAI for academic support.

- Challenges and reasons for not using

The most commonly reported challenge to using GenAI tools for learning was inaccurate or misleading information (78%). About a third reported ethical concerns (36%) and difficulty interpreting AI responses (35%). Among participants who reported not using GenAI tools for learning, the most common reasons were lack of interest/need or perceived usefulness, concerns about AI unreliability, and a preference for other resources or one’s own work. A small share (0.29%) cited environmental harm.

- Perceived future potential

Most participants (70%) reported that GenAI features can be leveraged to aid their learning in ways that extend beyond their current use; 24.6% were unsure, and 4.4% did not feel that GenAI can be useful for learning. When asked to describe what future uses GenAI may have in education, participants most often brought up uses like understanding concepts (31%) and generating practice questions and quizzes (14%). Some participants mentioned GenAI’s ability to assist with their personal learning work such as homework and assignments (2.7%) and help with their writing (7%). More specifically to GenAI, participants mentioned that GenAI can contribute to a more personalized (7%) and engaging (4%) learning experience. A small number of participants (4%) brought up additional responses like generally incorporating GenAI within schools (e.g., ‘I think it would be helpful if ai became more acceptable to use for school, i feel like it is an extremely useful tool and many professors already support using it’ and ‘One engineered for school’), and 10% of participants did not know or did not respond to the question.

4.2. RQ2—Based on Their Experiences, What Are Students’ Perceptions of Games and Educational Games?

The two following questionnaire sections in the survey serve to address our second research question. In these sections, participants reported their experience with games, educational games, and text-based games and shared their perceptions about incorporating GenAI into those types of games. We describe the responses to each subsection separately below.

4.2.1. Familiarity with Games, Educational Games and Text-Based Games

Participants’ familiarity with games, educational games, and text-based games was assessed through their exposure, context of use, and preferred devices. Participants also stated their needs and priorities for both general and educational gameplay.

Games (General)

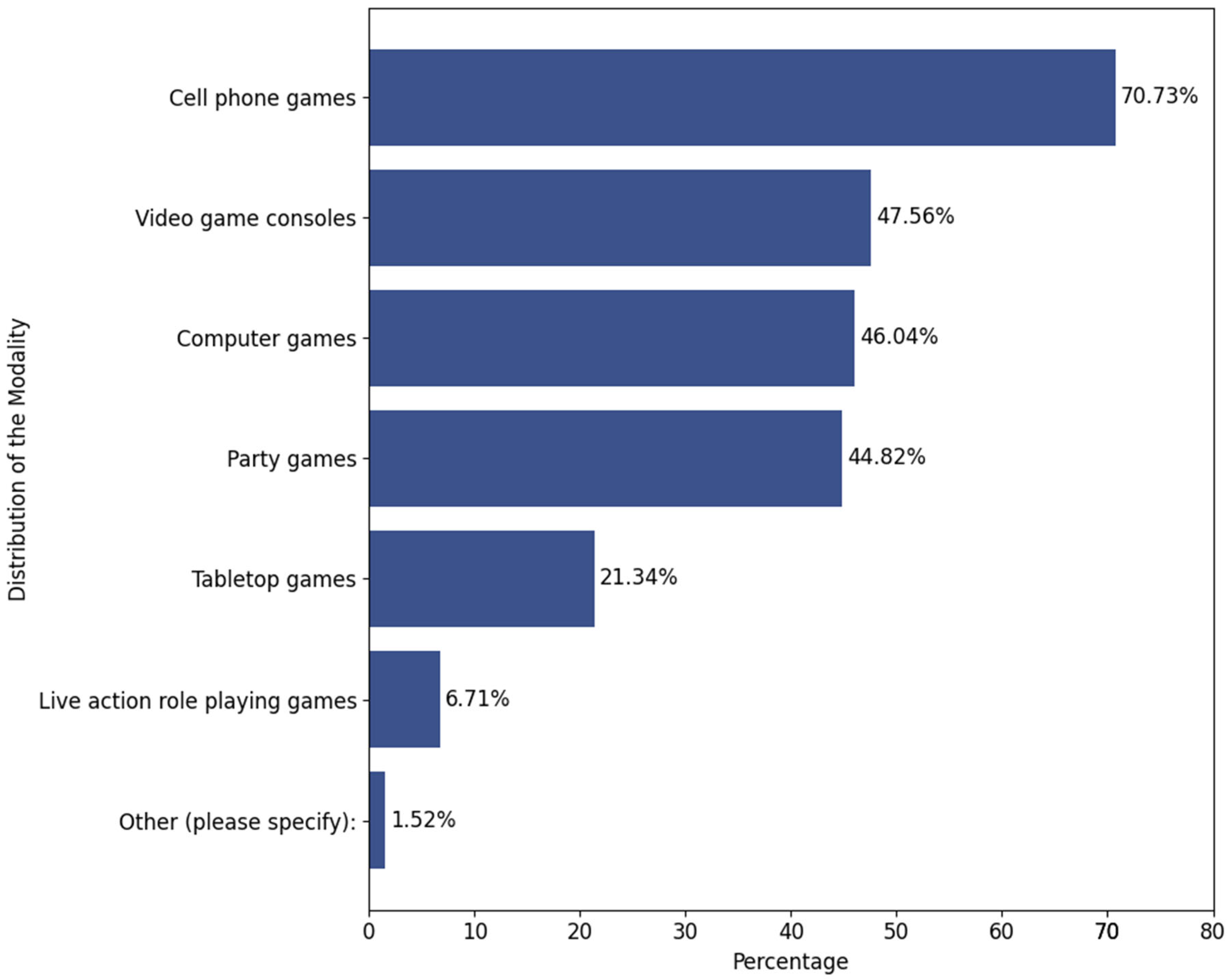

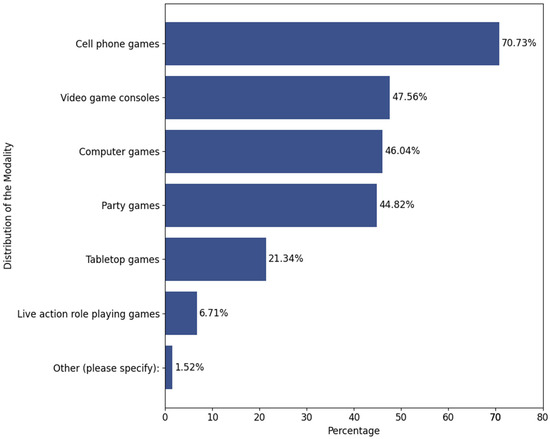

Participants exhibited diverse gaming habits, ranging from daily play (31.9%) to rare or no play (22.9%). While 44% reported playing games at least weekly, a substantial portion engaged occasionally, providing a broad range of prior gaming experience among respondents. The most popular modalities were mobile devices, gaming consoles, and computers (Figure 2). The most popular game genres reported were action/adventure and puzzle. Participants reported rewards and progression as a feature they most enjoy in games, but also mentioned problem-solving, social/multiplayer interaction, and customization as desirable features.

Figure 2.

Proportion of game modalities played by participants. Response options refer to technology-related modalities (cell phone, consoles, personal computers), and in-person modalities (party games, table top games, live action role playing games). In addition, participants could refer to modalities not mentioned by choosing ‘other’ (note. Participants could choose multiple options).

Educational Games

We also asked participants about their experience with educational games. Nearly two-thirds of participants (64.35%) reported having played educational games.

- Contexts

When asked about the stages of education at which they played educational games, participants most commonly reported playing them in high school (57.6%), followed by middle school (53.6%) and elementary school (50.7%); fewer reported playing them in college/university (36.6%). With respect to location, participants reported playing educational games mostly at school (50.7%), followed by at home or at a friend’s house (34.8%). The most commonly cited educational game topics were math problem solving (27.8%) and language arts (26.7%), followed by problem-solving skills (20.9%); additional topics included science concept understanding (15.7%), history/social studies (14.5%), creativity (14.2%), and coding/computer skills (9.9%) (these percentages reflect a multi-select item).
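Because the topic question was a select-all-that-apply item, each percentage is computed per option over all respondents, so the values need not sum to 100%. The following is a minimal Python sketch of that kind of tabulation. The function name and the toy responses are hypothetical and invented for illustration; they are not the study's data or analysis code.

```python
from collections import Counter

def multi_select_percentages(responses, n_respondents):
    """Percentage of respondents who chose each option in a multi-select item."""
    # set() guards against an option being counted twice for one respondent.
    counts = Counter(option for chosen in responses for option in set(chosen))
    return {option: round(100 * count / n_respondents, 1)
            for option, count in counts.items()}

# Toy data, invented for illustration only (not the study's responses).
toy_responses = [
    ["math", "language arts"],
    ["math"],
    ["science", "math"],
    [],  # a respondent who selected nothing
]
percentages = multi_select_percentages(toy_responses, n_respondents=len(toy_responses))
print(percentages)
```

In this toy case the per-option percentages add up to more than 100%, which is expected whenever respondents can select several options.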

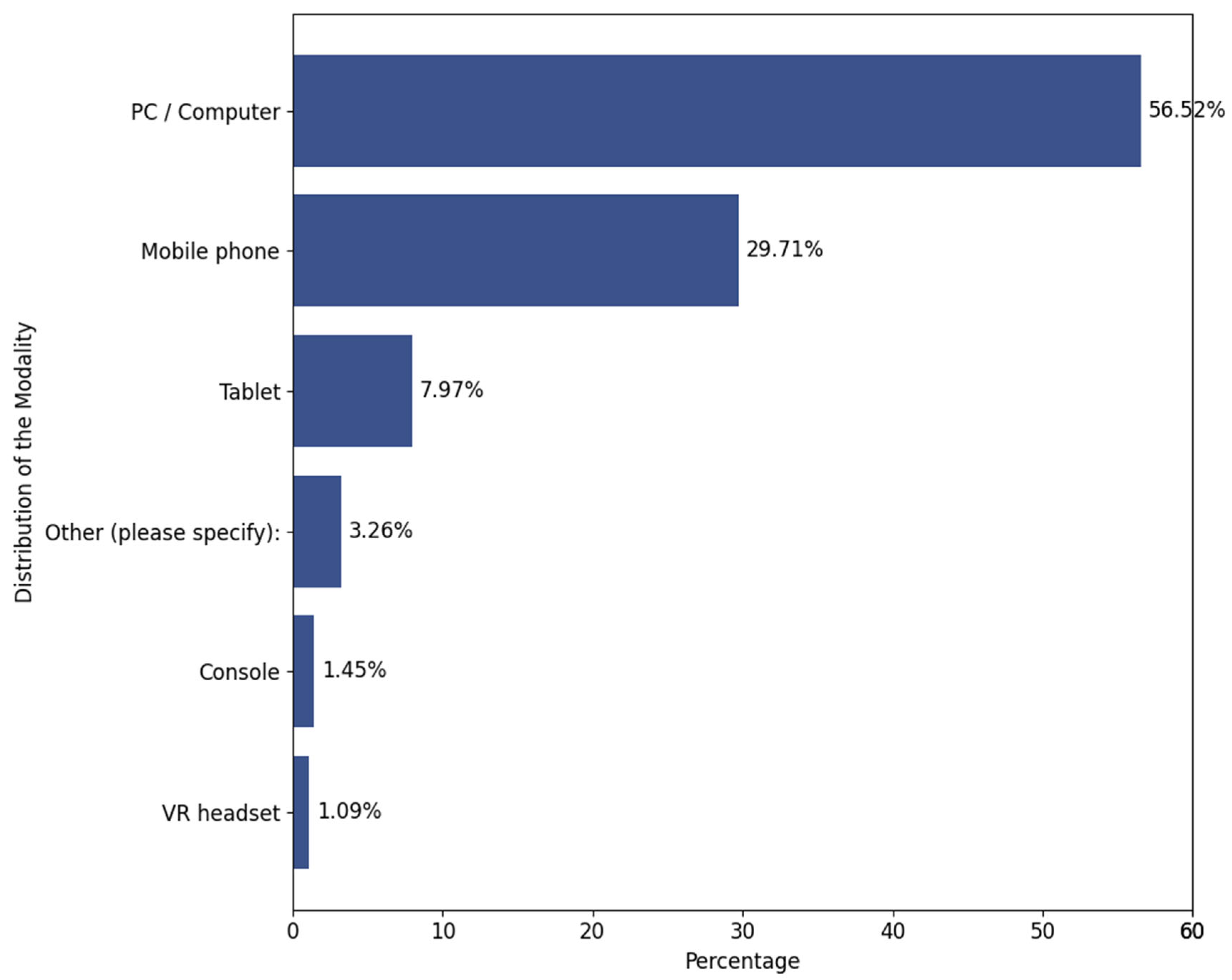

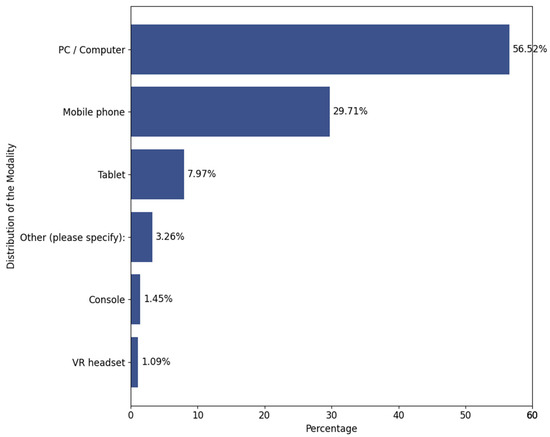

- Devices and user needs/priorities

Unlike the responses for games (Figure 2), participants typically used PCs/computers (56.5%) to play educational games. Some mentioned using mobile phones (29.7%) and tablets (8.0%), and very few cited consoles (1.5%) or VR headsets (1.1%) (Figure 3). When asked which STEM subject would be most useful for a future educational game, participants prioritized science (53.9%), followed by mathematics (24.1%) and technology (14.5%); relatively few selected literature learning (8.1%), health science (5.2%), or environmental science (2.6%).

Figure 3.

Proportions of modalities of educational games played. Response options refer to technology-related modalities (mobile phone, consoles, personal computers, tablets, VR headsets). In addition, participants could refer to modalities not mentioned by choosing ‘other’. (Note: participants could choose multiple options.)

Text-Based Games

Participants were also asked about their exposure, preferred features, and challenges associated with text-based games.

- Exposure

When asked specifically about their experience playing text-based games, over 60% of the participants reported either never playing text-based games or being unsure, while 38% reported some level of familiarity (n = 131). Among participants who reported familiarity with text-based games, most named titles that align closely with the genre (e.g., A Dark Room, 20 questions), while 22% were unable to name any specific text-based games. Some participants listed games that are not strictly text-based but include text elements within primarily visual gameplay, such as Legend of Zelda: Breath of the Wild, Pokémon, and Mario Party. These responses suggest that the term “text-based game” may be poorly defined or misunderstood by those less familiar with the genre. The remaining questions about text-based games were answered by this subgroup of 131 participants.

- Preferred Existing Features

Participants were asked about their favorite features of text-based games. Engaging storytelling (74%) and flexibility in choices and outcomes (65%) were the most-liked features. These were followed by imaginative gameplay (53%), character development (42%), and problem solving (34%). A small percentage of participants (9%) indicated that they do not enjoy text-based games.

- Desired Features

Participants were further asked about features they would like to see in new text-based games being developed. Responses (Table 3) mentioned the desire to have solid and engaging storylines (26.2%), visuals (3.2%), characters (6.2%), and rewards (1.7%). Participants also mentioned wanting the game to be in their preferred genre (15.2%) and referred to specific preferred types of tasks and challenges (10.7%) and game durations (9.7%). Participants mentioned these elements generally (e.g., “interactive story”) but also envisioned ways in which those elements might be improved using GenAI (e.g., “I would like to see one where you make up your own scenario and generative AI makes up a long adventure based off that prompt.”). These GenAI-specific improvements included personalization and interactive affordances (19.2%).

Table 3.

Categories and examples of responses to the open-ended question ‘What features would you like to see in a text-based game that is being developed (e.g., type of story, duration, type of task, etc.)?’. The percentage for each response is out of a sample of 345. Some responses received multiple codes, when relevant.

- Challenges and uncertainty

When asked about challenges regarding text-based games, a large group of responses (34.4%) included unclear or blank responses, lack of suggestions, or explicit disinterest in text-based games. The ‘other’ category captured unique suggestions from individual participants, such as making the text-based game free to use, focusing more on accessibility, or adding cooperative/multiplayer modes. See Table 3 for examples of all response categories.

4.3. RQ3—What Are Student Thoughts, Desires, and Perceptions Regarding the Incorporation of GenAI in Educational Games?

The last section of the questionnaire was used to answer RQ3. In this section, participants could express their thoughts, desires, and concerns related to the idea of incorporating GenAI in educational games. This section explicitly asked participants to think of GenAI features that would be appealing to them and would enhance their gaming experience, and to add any comments, suggestions, or ideas they had not been asked about.

4.3.1. Perceptions of Games Within GenAI Context

When asked whether participants think that GenAI can be used in gaming, 41.3% of participants responded ‘Yes’, 51.2% responded ‘Maybe’, and the remaining 7.5% of participants responded ‘No’. While most participants reported either a desire to use GenAI tools in a game (30%) or potentially considering that option (52%), 18% of participants reported not wanting to do that.

4.3.2. Design Recommendations

Participants were asked four open-ended questions probing for their design recommendations. When asked to describe features they would like in a GenAI-supported game (Table 4), many participants brought up improvements in two domains: First, gamified features such as characters (17%), multiplayer (5%), enhanced storytelling (17%), specific game genres (25%), and the desire to form games similar to other games they like (3%). Second, participants mentioned features pertaining to personalized user experiences that they believe GenAI could enhance, such as general personalization (12%), learning assistant (7.5%), and help in solving problems (3%).

Table 4.

Categories and examples of responses to the open-ended question ‘Please describe the features that would make a GenAI-supported game appealing to you. (e.g., game genre, type of story, type of task, type of input from players, or type of response)?’. The percentage for each response is out of a sample of 345.

When asked about ways in which GenAI could enhance their gaming experience (Table 5), participants said they believe GenAI will generally improve games (e.g., ‘…add something extra…’). Participants also mentioned improved visuals (24%), improvement in the narrative, story, and genre elements (21%), and potentially making the game more fun and engaging (4%). Some participants mentioned enhancing personalization and support in learning (2%). A relatively small percentage of participants (7%) mentioned they did not want GenAI involved in games, fearing that it would have a negative impact on game quality (e.g., narrative, visuals). While some did not provide specific reasoning, others felt that GenAI was suited to enhancing stories but not visuals, or the other way around (e.g., ‘I don’t feel like AI could create compelling stories’, or ‘AI shouldn’t touch storytelling, visual art, or programming in any way’).

Table 5.

Categories and examples of responses to the open-ended question ‘How would GenAI enhance your gaming experience? (e.g., AI affecting story, AI affecting visual elements or type of input from players.)’. The percentage for each response is out of a sample of 345.

Lastly, participants were asked ‘Do you have any additional suggestions or comments about features you would like to see in a STEM educational game that uses GenAI (e.g., abilities, type of game, type of character, type of device it is designed for)?’ to provide them with an opportunity to bring up any thoughts they were not previously asked about. A total of 37% of the participants responded to this question. Of those participants, 20% indicated they would be interested in availability in multiple modalities (e.g., phone, computer); 30% mentioned they would like the GenAI-powered educational game to be similar to existing games and to have additional gamified features like more characters, better character design, or more choices in the game narrative (e.g., ‘maybe a character that adapts using ai responses instead of general npcs with the same coded lines and interactions.’, ‘choice-based storyline’, or ‘customization, different choices, realistic visuals’); and 9% indicated an interest in personalization in learning.

5. Discussion

This study explored students’ experiences and perspectives on GenAI usage as well as their familiarity with games and educational games, with a focus on the potential incorporation of GenAI. The findings are framed through the research questions and suggest implications for educators and educational game designers who wish to leverage GenAI in education and the classroom.

5.1. RQ1—Based on Their Experiences, What Are Students’ Perceptions on GenAI in Everyday Life and Educational Contexts?

Survey results reveal that GenAI tools are being widely adopted by students, particularly for education-related uses. A majority of the participants reported weekly use of GenAI, with over 95% using it specifically for educational purposes. Interestingly, when asked about personal use, many of the examples brought up were also related to education. However, over half of the respondents characterized their use of GenAI as a supplemental resource rather than a primary one, suggesting that students leverage these tools primarily as supports to traditional instruction rather than replacements. This aligns with growing expert consensus that GenAI should be used to enhance, not replace, human-guided teaching and learning (Kloos et al., 2024). Framing GenAI as an instructional support layer allows for more flexible, responsible, and gradual integration strategies and highlights the need for pedagogical design.

The results provide finer granularity on the types of tasks for which students find GenAI most valuable. Both open-ended responses and checklist selection responses converge on use cases such as concept understanding, brainstorming, search, and writing-related tasks such as drafting and editing. These findings align with previous studies which found GenAI tools are widely adopted in the educational context (Ng et al., 2025). Educators can leverage GenAI for developing cognitive support functions such as ideation, explanation, and content organization that could enhance student learning. Despite the high rates of perceived usefulness of GenAI (e.g., 97% of the students reported it as helpful), a majority of the students cited concerns about accuracy (78%), and sizable minorities cited concerns about ethical use (36%) and lack of explainability (35%). These findings carry important instructional design implications for educators: GenAI holds promise as a learning support tool, but educators must integrate it thoughtfully to mitigate risks of misinformation, over-reliance, and uncritical use. To support responsible integration, educators can (1) incorporate AI literacy instruction by teaching students how to evaluate AI-generated content for accuracy, bias, and reliability; (2) establish responsible use policies, such as requiring students to disclose and reflect on their use of GenAI tools in assignments (Guest et al., 2025); and (3) emphasize transparency and verification through classroom tasks that include explainability prompts (i.e., asking students to justify or critique GenAI outputs) or designing rubrics that reward thoughtful revision and AI-assisted drafting. Together, these strategies promote the effective and ethical use of GenAI in educational settings, ensuring it enhances rather than replaces human-guided learning.

Finally, student reports of current use along with suggestions for future uses of GenAI indicate a broad interest in expanding its educational applications. Subject-specific responses showed the greatest current utility for students in math, language arts, and science, with frequent use reported for assignments and writing. Importantly, the strong interest students expressed in future GenAI integration emphasizes the urgency of developing equitable and functional access, particularly for students who may be less familiar with these tools or lack adequate support.

5.2. RQ2—Based on Their Experiences, What Are Students’ Perceptions of Games and Educational Games?

Survey responses suggest that students are actively engaged with games, particularly games played on mobile devices, PCs, and consoles. While general gaming trends skew toward mobile platforms, educational games are more commonly accessed via computers. Current topics for educational games are concentrated in math and language arts, but students expressed a desire to see future educational games expand into science. These patterns present clear design implications: educational game designers should optimize for computer use but also consider cross-platform compatibility for different environments. Developing versions that maintain consistent learning objectives and interactions across multiple devices (e.g., tablet, phone, or laptop) may enhance accessibility and sustained use. In addition, an expansion of the topics covered by educational games to include the sciences is warranted.

Interestingly, despite high general gaming familiarity, students showed relatively low familiarity with text-based games, with over 60% reporting lack of familiarity or uncertainty. Many students appeared to confuse text-based games with text-heavy visual games, such as Role Playing Games (RPGs). Nevertheless, among those who had experience with text-based games, features such as engaging storytelling (74%) and flexible, player-driven choices (65%) were highly valued. These findings suggest that while there might be a learning curve, students do see potential in narrative-driven experiences. It is also worth noting that even students who are not familiar with text-based games are still well versed in text-based environments, as most student interactions with GenAI platforms take place in such environments. Together, these findings point to several considerations for game designers. To support user onboarding, developers should incorporate tutorials or example playthroughs that clarify the format and mechanics of text-based games. Enhancing text-based gameplay with optional visuals, diagrams, or text-to-speech features can lower the barrier to entry and accommodate diverse learning preferences (Cezarotto et al., 2022; Hassan, 2024). Additionally, developers should leverage existing GenAI platforms as a foundation for building narrative-rich, interactive learning games that align with students’ familiarity with text-based interfaces.

5.3. RQ3—What Are Students’ Thoughts, Desires, and Perceptions Regarding the Incorporation of GenAI in Educational Games?

Student attitudes reflect openness to the use of GenAI in games. Most students thought that GenAI could (41%) or might (51%) be used in gaming, and most would use (30%) or would consider using (52%) GenAI tools to play a game, while 18% would not want to do so. Some students also voiced specific concerns (e.g., preferring human-designed game plots and visuals). This hesitation may partly reflect concerns around trust and lack of perceived utility (Đerić et al., 2025; Luo, 2025). Both educators and educational game designers should emphasize transparency and student agency in the design and implementation of GenAI-powered features. To achieve this, several actions are recommended: (1) developers should make features such as adaptive feedback, personalized hints, or scaffolding opt-in supports rather than default settings, giving students control over how and when GenAI is used; (2) educators should clearly communicate how student data is used and how GenAI contributes to learning, as this transparency is key to building trust; and (3) educators should focus on creating evaluation strategies that go beyond usability to include measures of learning outcomes, trust, and user acceptance, ensuring that GenAI integration meaningfully supports pedagogical goals. Lastly, as students reported relatively low rates of educational game use in college, there is an opportunity for educators to reintroduce game-based learning at the post-secondary level through GenAI-powered systems that are relevant, personalized, and aligned with students’ evolving digital learning practices.

Student feedback via open-ended questions shows strong interest in using GenAI as a curated narrative engine within educational games. Participants mentioned GenAI’s potential to enhance storytelling (17%) and generate stories in various genres (25%). This aligns with earlier findings from students familiar with text-based games who preferred similar features as well. These insights suggest an opportunity to leverage GenAI for dialog variation and multiple narrative outcomes, along with creating diverse narrative genres, while operating under guardrails to preserve coherence and pedagogical integrity. As such, game designers should have narrative generation be guided by intentional guardrails that balance creative flexibility with educational structure. By carefully constraining and shaping the expressive outputs of GenAI, designers can deliver immersive, responsive narratives that remain aligned with educational goals.

Personalization also emerged as a critical area of value, in addition to narrative features. Students expressed interest in learning assistance (7.5%), game mechanics (e.g., characters, rewards), and adaptive experiences tailored to their needs (12%). These results highlight key design implications for game designers attempting to create effective GenAI-supported learning games: personalization features should be implemented in ways that actively support learning while maintaining user control. To do this, systems should provide clear, optional support such as tailored hints, feedback explanations, or scaffolding prompts. These features should be visible and actionable, allowing students to engage with them as needed rather than by default. Such design choices not only enhance usability but also promote metacognitive awareness and learner autonomy. Implementing these design suggestions will also, in turn, contribute to fostering trust and transparency, which are essential for encouraging the adoption and sustained use of GenAI-supported educational games. While many participants viewed GenAI’s role in educational games positively, particularly in enhancing game mechanics like characters, rewards, and personalization, others expressed skepticism about the quality of GenAI-generated content, especially in narrative coherence and visual design, indicating room for improvement in these features. These mixed perceptions may stem from differences in user experience; a post hoc observation suggests that more critical respondents could be frequent gamers or more literate GenAI users with higher expectations for creativity, accuracy, and system performance.
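The opt-in recommendation above can be illustrated with a small configuration sketch. This is a minimal Python example under stated assumptions: the class `GenAISupportSettings`, its field names, and the helper `enabled_supports` are hypothetical and are not part of any tool described in this study. The point is only that every GenAI support starts switched off and becomes active when the student explicitly enables it.

```python
from dataclasses import dataclass, fields

@dataclass
class GenAISupportSettings:
    """Hypothetical per-student settings; every GenAI support defaults to off."""
    tailored_hints: bool = False
    feedback_explanations: bool = False
    scaffolding_prompts: bool = False

def enabled_supports(settings: GenAISupportSettings) -> list[str]:
    """Return the names of the supports the student has explicitly turned on."""
    return [f.name for f in fields(settings) if getattr(settings, f.name)]

# A new student starts with no GenAI support active.
default_settings = GenAISupportSettings()
print(enabled_supports(default_settings))  # []

# The student opts in to hints only; the other supports stay off.
opted_in = GenAISupportSettings(tailored_hints=True)
print(enabled_supports(opted_in))  # ['tailored_hints']
```

Keeping the defaults at `False` means that a student who never opens the settings is never exposed to GenAI-generated support, which is one way to honor the user control described above.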

Finally, although not explicitly prompted in research questions, a notable pattern emerged around students requesting step-by-step support in their learning processes. Several open-ended responses described a desire for GenAI to function in a Socratic tutor-like role (Favero et al., 2024; Zare & Mukundan, 2015), guiding learners through problems interactively rather than simply supplying answers. This aligns with broader educational interest in inquiry-based learning approaches (Deák et al., 2021) and suggests that designers of GenAI-supported educational games should integrate Socratic prompting and conversational scaffolding to encourage active reasoning and deeper conceptual understanding. Incorporating such guidance mechanisms can transform GenAI from a content provider into a facilitator of reflective and inquiry-driven learning.
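One possible way to express Socratic prompting in a game's GenAI layer is sketched below in Python. Everything here is an assumption made for illustration: the function name `build_socratic_messages`, the wording of the instructions, and the example problem are hypothetical, and the role/content message layout is only a common chat convention rather than the interface of any specific model or of a system built in this study. The sketch assembles the messages and does not call a model.

```python
def build_socratic_messages(problem: str, student_attempt: str) -> list[dict]:
    """Assemble chat-style messages that ask a model to guide, not answer."""
    # Hypothetical instruction text: steer the model toward guiding questions.
    system_prompt = (
        "You are a tutor inside an educational game. "
        "Do not state the final answer. "
        "Ask one guiding question at a time and wait for the student's reply."
    )
    user_prompt = f"Problem: {problem}\nStudent's attempt so far: {student_attempt}"
    return [
        {"role": "system", "content": system_prompt},
        {"role": "user", "content": user_prompt},
    ]

# Invented example problem and attempt, for illustration only.
messages = build_socratic_messages(
    problem="Solve 2x + 3 = 11",
    student_attempt="I subtracted 3 and got 2x = 8, but I am stuck.",
)
for message in messages:
    print(message["role"], "->", message["content"])
```

Including the student's own attempt in the request lets the guidance start from where the learner currently is, in line with the step-by-step support participants asked for.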

6. Conclusions

This paper offers insight into how students perceive, engage with, and envision GenAI tools in educational contexts, particularly within game-based learning environments. Survey results (n = 345) indicate widespread and frequent use of GenAI, especially for educational purposes, though students primarily view these tools as supplemental supports rather than replacements for traditional instruction. Additionally, engagement with educational games and text-based games varied, highlighting the need for clearer definitions, improved onboarding, trust-building, and alignment with students’ evolving digital habits and, in some cases, concerns about GenAI. Overall, although this study centers on the student perspective, the findings point to several key design implications for both educators and educational game designers. Future research can expand on these findings by incorporating perspectives from educators and designers to extend our understanding across diverse learning contexts. Additionally, usability studies on educational games and gamified tools using GenAI will expand our understanding of student engagement with and preferences for GenAI incorporation. Exploring these complementary angles will help further validate and scale the design implications outlined in this work.

Author Contributions

Conceptualization, M.G., I.A. and F.Y.; methodology, M.G. and F.Y.; formal analysis, I.A., M.G. and V.V.; investigation, M.G. and I.A.; resources, D.M.; data curation, I.A.; writing—original draft preparation, M.G., I.A. and V.V.; writing—review and editing, M.G., I.A., T.A. and D.M.; visualization, I.A.; supervision, T.A.; project administration, M.G.; funding acquisition, T.A. and D.M. All authors have read and agreed to the published version of the manuscript.

Funding

The research reported here was supported by the National Science Foundation, through Grant NSF IIS 2202506 to University of Massachusetts/Arizona State University. The opinions expressed are those of the authors and do not represent views of the NSF.

Institutional Review Board Statement

The study was conducted in accordance with the Declaration of Helsinki and approved by the Institutional Review Board of Arizona State University (protocol code STUDY00016553, approved on 7 February 2025).

Informed Consent Statement

Informed consent was obtained from all subjects involved in the study.

Data Availability Statement

The current study was preregistered at https://doi.org/10.17605/OSF.IO/5P92F. Survey materials and deidentified data are available at https://osf.io/5p92f/files/osfstorage, accessed on 22 December 2025.

Conflicts of Interest

The authors declare no conflicts of interest.

Abbreviation

The following abbreviation is used in this manuscript:

| Abbreviation | Definition |
| --- | --- |
| GenAI | Generative Artificial Intelligence |

Appendix A. User Needs Analysis Questionnaire

- I. Demographic questions
  1. What is your age: (select number from list: 18–26, over 26)
  2. Please select your current grade level
     - Freshman
     - Sophomore
     - Junior
     - Senior
     - Graduate
     - Other _____
  3. Select your gender:
     - Male
     - Female
     - Prefer not to say
     - Prefer to self-describe: ___
  4. What major is your field of study in?
     - Science/Technology/Engineering/Mathematics (STEM)
     - Humanities (e.g., English, Language, Comparative Literature)
     - Social Sciences (e.g., psychology, sociology)
     - Business
     - Arts
     - Other (please specify): ___

- II. Usage of Generative AI in daily life
  1. How often do you use generative AI tools (e.g., ChatGPT, Dall-E, Perplexity, Gemini)?
     - Multiple times a day
     - Everyday
     - Several times a week
     - Multiple times a month
     - Once in a while
     - Never
  2. What GenAI tool(s) do you use? ______________
  3. What do you use generative AI tools for in your daily life? Please describe the specific ways in which you use these tools. (open ended)

- III. Usage of Generative AI in Education/Learning
  1. Have you ever specifically used generative AI tools (e.g., ChatGPT) at school/college (e.g., help with assignments, general information to support studying) or for educational purposes outside school (e.g., searching for information about things, learning on your own)?
     - only school/college
     - only outside school
     - Both
     - neither
  2. [If (1) a/b/c] Which generative AI tool(s) have you used for educational purposes? (Open-ended). Response: ______________________________________
  3. [If (1) d] Please describe the reason(s) for not using generative AI tools for learning. ___
  4. [if (1) a/b/c] In what scenarios have you used generative AI tools for learning? (Select all that apply)
     - Searching for information
     - Understanding new concepts or topics
     - Assisting with assignments or homework
     - Researching academic topics
     - Practicing or reviewing material
     - Brainstorming ideas for projects
     - Language learning or translation assistance
     - Writing or editing text
     - Other (please specify): ____________________
  5. [if (1) a/b/c] Frequency of Use: How often do you use generative AI tools (e.g., ChatGPT) for learning purposes?
     - Multiple times a day
     - Everyday
     - Several times a week
     - Multiple times a month
     - Once in a while
     - Never
  6. [if (1) a/b/c] How helpful do you find generative AI tools for your learning?
     - Extremely helpful
     - Very helpful
     - Somewhat helpful
     - Not at all helpful
  7. Challenges with Using Generative AI for Learning: [if (1) a/b/c] Have you experienced any of the following challenges when using generative AI tools for learning? (Select all that apply)
     - Understanding the AI’s responses
     - Inaccurate or misleading information
     - Difficulty integrating AI responses into assignments
     - Limited availability or access to certain generative AI tools
     - Ethical concerns about using AI in academic work (e.g., Academic Integrity, Bias in AI Output, Intellectual Property)
     - Lack of guidance on how to use AI effectively
     - Other (please specify): ____________________
     - I have not experienced any challenges
  8. Preferred Subjects or Topics: What are the subjects or topics for which you find generative AI most useful? (Select all that apply)
     - Science (e.g., biology, chemistry, physics)
     - Mathematics
     - Language arts (e.g., English, writing)
     - Social studies (e.g., history, geography)
     - Programming or computer science
     - Language learning (e.g., foreign languages)
     - Art or creative subjects
     - Other (please specify): ____________________
  9. AI’s Role in Your Learning Process: [if (1) a/b/c] What role does generative AI play in your learning process?
     - It’s a primary resource.
     - It’s a supplementary tool.
     - It’s a last resort if other resources (e.g., class materials, instructors) don’t help.
     - Other (please specify): ____________________

- IV. Familiarity with Games
  - How often do you play games (e.g., video games, computer games, phone games, tabletop games, live action role playing games, party games)?
    - Every day
    - Once a week
    - Sometimes
    - Only with friends
    - Rarely
    - Never
  - (if (1) NOT f) What modality of games do you like to play? (Select all that apply)
    - Video game consoles
    - Computer games
    - Cell phone games
    - Tabletop games
    - Live action role playing games
    - Party games (e.g., charades, cards against humanity)
    - Other (please specify):___
  - (if (1) NOT f) What types of games do you typically play? (Select all that apply)
    - Action/Adventure
    - Strategy
    - Puzzle
    - Role Playing Games (RPGs)
    - Simulation (e.g., life simulation, city-building)
    - Sports
    - Educational/Learning games
    - Other (please specify): ____________________
  - (if (1) NOT f) Please list the names of some of the games (of any kind) that you like to play: _____________
  - (if (1) NOT f) What features do you enjoy in games that you like? (Select all that apply)
    - Storytelling and narrative
    - Problem-solving challenges
    - Social or multiplayer interaction
    - Exploration and open-world settings
    - Rewards and progression (levels, badges, etc.)
    - Customization (characters, worlds, etc.)
    - Other (please specify): ____________________

- V. Familiarity with Educational Games
  1. Have you ever played any educational games?
     - Yes
     - No
     - Not sure
  2. [If (1) a, b], What are the names of some of those games? Name and/or briefly describe each game (e.g., topics taught, single vs. multi-player, etc.)? (Open-ended) Response: _______________________________________
  3. [If (1) a, b], At what age did you play these educational games? (Select all that apply)
     - During college/university
     - During high school
     - During middle school
     - During elementary school
  4. [If (1) a, b], When do/did you usually play these educational games?
     - At school
     - At home/friend’s house
     - During homework or study sessions
     - Other (please specify): ____________________
  5. [If yes, Not Sure], What topics were these educational games focused on? (Select all that apply)
     - Math problem solving
     - Science concept understanding
     - Language arts (reading, writing, learning a language)
     - History or social studies
     - Problem-solving skills
     - Creativity
     - Coding or computer skills
     - Other (please specify): ____________________
  6. [If (1) a, b] What device(s) did you typically use to play educational games? (Select all that apply)
     - PC/Computer
     - Tablet (e.g., iPad)
     - Mobile phone
     - Console (e.g., PlayStation, Xbox)
     - VR headset
     - Other (please specify): ____________________
  7. If we design a game focused on STEM subjects, which subject(s) would be most useful for you (select all that apply)?
     - Mathematics
     - Literature learning
     - Science (Biology, Chemistry, Physics)
     - Technology (Computer Science, Engineering)
     - Environmental Science
     - Health Science
     - Other (please specify): ____________________

- VI. Games within the GenAI/ChatGPT context
  1. Do you think that generative AI tools have features that can be leveraged to support your learning beyond your current use(s) described previously?
     - Yes
     - Not sure
     - No
  2. [If (1) a, b] What kinds of uses of AI do you think could help your learning? (open ended)
  3. Do you think that generative AI (like ChatGPT) can be used for gaming?
     - Yes
     - Maybe
     - No
  4. [If (1) a] What are some games that could benefit from using GenAI? [open ended]
  5. Would you use generative AI tools (e.g., ChatGPT) to play a game, if that was a feature within them?
     - Yes
     - Maybe
     - No

- VII.

- Text-Based Games

- 1.

- Have you ever played text-based games (text-based games are video games that use written text to convey the story and gameplay, and may or may not have graphics or animations.?

- Yes

- No

- Not sure

- 2.

- [If (1) a], please list some text-based games you can think of that you have played: (Open-ended)Response: _______________________________________

- 3.

- [If (1) a], What do you like about text-based games?(Select all that apply)

- Engaging storytelling

- Rich character development

- Flexibility in choices and outcomes

- Imaginative gameplay

- Challenge of problem-solving

- Other (please specify): ____________________

- Do not enjoy text-based games.

- 4.

- What features would you like to see in a text-based game that is being developed (e.g., type of story, duration, type of task, etc.)? Response: _______________________________________

- VIII.

- Design Recommendations: Game Features with Generative AI:

- 1.

- Please describe the features that would make a GenAI-supported game appealing to you (e.g., game genre, type of story, type of task, type of input from players, or type of response).

- 2.

- How would GenAI enhance your gaming experience? (e.g., AI affecting story, AI affecting visual elements or type of input from players.)

- 3.

- Do you have any additional suggestions or comments about features you would like to see in a STEM educational game that uses generative AI? (Open-ended) (e.g., abilities, type of game, type of character, type of device it is designed for)

- Thank you very much for your participation!

- If you have any additional comments, suggestions, ideas, or experiences you would like to share regarding Generative AI, Games, Games that use Generative AI, or text-based games, please share them below:

- Response: ________________________________________

References

- Alasadi, E. A., & Baiz, C. R. (2023). Generative AI in education and research: Opportunities, concerns, and solutions. Journal of Chemical Education, 100(8), 2965–2971.

- Anjum, A., Li, Y., Law, N., Charity, M., & Togelius, J. (2024). The ink splotch effect: A case study on ChatGPT as a co-creative game designer. arXiv.

- Annetta, L. A. (2010). The “I’s” have it: A framework for serious educational game design. Review of General Psychology, 14(2), 105–113.

- Ayeni, O. O., Al Hamad, N. M., Chisom, O. N., Osawaru, B., & Adewusi, O. E. (2024). AI in education: A review of personalized learning and educational technology. GSC Advanced Research and Reviews, 18(2), 261–271.

- Baidoo-Anu, D., & Ansah, L. O. (2023). Education in the era of generative artificial intelligence (AI): Understanding the potential benefits of ChatGPT in promoting teaching and learning. Journal of AI, 7(1), 52–62.

- Baker, R. S., Boser, U., & Snow, E. L. (2022). Learning engineering: A view on where the field is at, where it’s going, and the research needed. Technology, Mind, and Behavior, 3, 1–23.

- Bardzell, S. (2010). Feminist HCI: Taking stock and outlining an agenda for design. In Proceedings of the SIGCHI conference on human factors in computing systems (pp. 1301–1310). Association for Computing Machinery.

- Belawati, T., & Prasetyo, D. (2025). Generative AI-based tutoring for enhancing learning engagement and achievement. Open Praxis, 17(2), 211–226.

- Borah, A. R., Nischith, T. N., & Gupta, S. (2024). Improved Learning Based on GenAI. In 2024 2nd international conference on Intelligent data communication technologies and internet of things (IDCIoT) (pp. 1527–1532). IEEE.

- Bunt, L., Greeff, J., & Taylor, E. (2024). Enhancing serious game design: Expert-reviewed, stakeholder-centered framework. JMIR Serious Games, 12, e48099.

- Cezarotto, M., Martinez, P., & Chamberlin, B. (2022). Redesigning for accessibility: Design decisions and compromises in educational game design. International Journal of Serious Games, 9(1), 17–33.

- Chiou, E. K., & Lee, J. D. (2023). Trusting automation: Designing for responsivity and resilience. Human Factors, 65(1), 137–165.

- Clark, D. B., Tanner-Smith, E. E., & Killingsworth, S. S. (2016). Digital games, design, and learning: A systematic review and meta-analysis. Review of Educational Research, 86(1), 79–122.

- Côté, M. A., Kádár, A., Yuan, X., Kybartas, B., Barnes, T., Fine, E., Moore, J., Hausknecht, M., El Asri, L., Adada, M., Tay, W., & Trischler, A. (2018). Textworld: A learning environment for text-based games. In Workshop on computer games (pp. 41–75). Springer International Publishing.

- Deák, C., Kumar, B., Szabó, I., Nagy, G., & Szentesi, S. (2021). Evolution of new approaches in pedagogy and STEM with inquiry-based learning and post-pandemic scenarios. Education Sciences, 11(7), 319.

- de Freitas, S. (2006). Learning in immersive worlds: A review of game-based learning. JISC. Available online: https://files01.core.ac.uk/download/pdf/228144799.pdf (accessed on 1 February 2026).

- de Freitas, S., & Jarvis, S. (2006, December 4–7). A framework for developing serious games to meet learner needs. The Interservice/Industry Training, Simulation & Education Conference (I/ITSEC), Orlando, FL, USA.

- Deterding, S., Dixon, D., Khaled, R., & Nacke, L. (2011). From game design elements to gamefulness: Defining “gamification”. In Proceedings of the 15th international academic MindTrek conference (pp. 9–15). Association for Computing Machinery.

- Domínguez, A., Saenz-de-Navarrete, J., de-Marcos, L., Fernández-Sanz, L., Pagés, C., & Martínez-Herráiz, J. J. (2013). Gamifying learning experiences: Practical implications and outcomes. Computers & Education, 63, 380–392.

- Đerić, E., Frank, D., & Milković, M. (2025). Trust in Generative AI tools: A Comparative study of higher education students, teachers, and researchers. Information, 16(7), 622.

- Eukel, H., & Morrell, B. (2021). Ensuring educational escape-room success: The process of designing, piloting, evaluating, redesigning, and re-evaluating educational escape rooms. Simulation & Gaming, 52(1), 18–23.

- Eukel, H. N., Frenzel, J. E., & Cernusca, D. (2017). Educational gaming for pharmacy students–design and evaluation of a diabetes-themed escape room. American Journal of Pharmaceutical Education, 81(7), 6265.

- Eun, S. J., Kim, E. J., & Kim, J. Y. (2022). Development and evaluation of an artificial intelligence–based cognitive exercise game: A pilot study. Journal of Environmental and Public Health, 2022(1), 4403976.

- Favero, L., Pérez-Ortiz, J. A., Käser, T., & Oliver, N. (2024). Enhancing critical thinking in education by means of a Socratic chatbot. In International workshop on AI in education and educational research (pp. 17–32). Springer Nature.

- French, F., Levi, D., Maczo, C., Simonaityte, A., Triantafyllidis, S., & Varda, G. (2023). Creative use of OpenAI in education: Case studies from game development. Multimodal Technologies and Interaction, 7(8), 81.

- Gee, J. P. (2003). What video games have to teach us about learning and literacy. Computers in Entertainment (CIE), 1(1), 20.

- Goldshtein, M., Chiou, E. K., Alhashim, A. G., & Roscoe, R. D. (2022). Trust and explainability as tools for improving automated writing evaluation. In Workshop on trust and reliance in AI-human teams at the 2022 ACM CHI conference on human factors in computing systems (pp. 1–10). Available online: https://chi-trait.github.io/papers/2022/CHI_TRAIT_2022_Paper_33.pdf (accessed on 1 February 2026).

- Goldshtein, M., Schroeder, N. L., & Chiou, E. K. (2025). The role of learner trust in generative artificially intelligent learning environments. Journal of Engineering Education, 114(2), e70000.

- Goodell, J., & Kolodner, J. (Eds.). (2022). Learning engineering toolkit: Evidence-based practices from the learning sciences, instructional design, and beyond. Taylor & Francis.

- Google. (2025). Gemini (Version 1.0.0) [Large language model]. Available online: https://gemini.google.com/ (accessed on 1 February 2026).

- Guest, O., Suarez, M., Müller, B., van Meerkerk, E., Oude Groote Beverborg, A., de Haan, R., Reyes Elizondo, A., Blokpoel, M., Scharfenberg, N., Kleinherenbrink, A., Camerino, I., Woensdregt, M., Monett, D., Brown, J., Avraamidou, L., Alenda-Demoutiez, J., Hermans, F., & van Rooij, I. (2025). Against the uncritical adoption of ‘AI’ technologies in Academia. Zenodo.

- Hamari, J., Koivisto, J., & Sarsa, H. (2014). Does gamification work? A literature review of empirical studies on gamification. In 2014 47th Hawaii international conference on system sciences (pp. 3025–3034). IEEE.

- Hanus, M. D., & Fox, J. (2015). Assessing the effects of gamification in the classroom: A longitudinal study on intrinsic motivation, social comparison, satisfaction, effort, and academic performance. Computers & Education, 80, 152–161.

- Hassan, L. (2024). Accessibility of games and game-based applications: A systematic literature review and mapping of future directions. New Media & Society, 26(4), 2336–2384.

- Hausknecht, M., Ammanabrolu, P., Côté, M. A., & Yuan, X. (2020). Interactive fiction games: A colossal adventure. Proceedings of the AAAI Conference on Artificial Intelligence, 34(5), 7903–7910.

- Hsieh, H. F., & Shannon, S. E. (2005). Three approaches to qualitative content analysis. Qualitative Health Research, 15(9), 1277–1288.

- Hyland, K., & Hyland, F. (2019). Contexts and issues in feedback on L2 writing. In Feedback in second language writing: Contexts and issues (pp. 1–22). Cambridge University Press.

- Jiang, J., & Ziden, A. A. (2025). Development of a teacher competency model in Game-Based learning: A need analysis. International Journal of Evaluation and Research in Education (IJERE), 14(1), 621.

- Kaldaras, L., Akaeze, H. O., & Reckase, M. D. (2024, August). Developing valid assessments in the era of generative artificial intelligence. In Frontiers in education (Vol. 9, p. 1399377). Frontiers Media SA.

- Karpouzis, K., Pantazatos, D., Taouki, J., & Meli, K. (2024, May). Tailoring education with GenAI: A new horizon in lesson planning. In 2024 IEEE global engineering education conference (EDUCON) (pp. 1–10). IEEE.

- Kashfi, P., Nilsson, A., & Feldt, R. (2017). Integrating User eXperience practices into software development processes: Implications of the UX characteristics. PeerJ Computer Science, 3, e130.

- Khan, R., Bhaduri, S., Mackenzie, T., Paul, A., Kj, S., & Sen, I. (2024, August 25–29). Path to personalization: A systematic review of genai in engineering education. KDD AI4Edu Workshop, Barcelona, Spain.

- Kiili, K., de Freitas, S., Arnab, S., & Lainema, T. (2018). The design principles for flow experience in educational games: A review. British Journal of Educational Technology, 49(2), 210–227.

- Kloos, C. D., Alario-Hoyos, C., Estévez-Ayres, I., Callejo-Pinardo, P., Hombrados-Herrera, M. A., Muñoz-Merino, P. J., Moreno-Marcos, P. M., Muñoz-Organero, M., & Ibáñez, M. B. (2024). How can Generative AI support education? In 2024 IEEE global engineering education conference (EDUCON) (pp. 1–7). IEEE.

- Koivisto, J., & Hamari, J. (2019). The rise of motivational information systems: A review of gamification research. International Journal of Information Management, 45, 191–210.

- Lee, J. J., & Hammer, J. (2011). Gamification in education: What, how, why bother? Academic Exchange Quarterly, 15(2), 146.

- Lee, S. S., & Moore, R. L. (2024). Harnessing Generative AI (GenAI) for automated feedback in higher education: A systematic review. Online Learning, 28(3), 82–106.

- Lukava, T., Morgado Ramirez, D. Z., & Barbareschi, G. (2022). Two sides of the same coin: Accessibility practices and neurodivergent users’ experience of extended reality. Journal of Enabling Technologies, 16(2), 75–90.

- Luo, J. (2025). How does GenAI affect trust in teacher-student relationships? Insights from students’ assessment experiences. Teaching in Higher Education, 30(4), 991–1006.

- Madotto, A., Namazifar, M., Huizinga, J., Molino, P., Ecoffet, A., Zheng, H., Papangelis, A., Yu, D., Khatri, C., & Tur, G. (2020). Exploration based language learning for text-based games. arXiv, arXiv:2001.08868.

- Maxim, R. I., & Arnedo-Moreno, J. (2025). Identifying key principles and commonalities in digital serious game design frameworks: Scoping review. JMIR Serious Games, 13, e54075.

- McNamara, D. S. (2024). AIED: From cognitive simulations to learning engineering, with humans in the middle. International Journal of Artificial Intelligence in Education, 34(1), 42–54.

- Michael, D. R., & Chen, S. L. (2005). Serious games: Games that educate, train, and inform. Thomson Course Technology.

- Moon, J., Lee, U., Koh, J., Jeong, Y., Lee, Y., Byun, G., & Lim, J. (2025). Generative artificial intelligence in educational game design: Nuanced challenges, design implications, and future research. Technology, Knowledge and Learning, 30(1), 447–459.

- Moundridou, M., Matzakos, N., & Doukakis, S. (2024). Generative AI tools as educators’ assistants: Designing and implementing inquiry-based lesson plans. Computers and Education: Artificial Intelligence, 7, 100277.