Abstract

This study examines how artificial intelligence (AI) system transparency, cognitive load, response bias, and individual values influence perceived AI decision integrity. Using a quantitative approach, survey data were collected and analyzed with SEM-PLS. The findings show that AI transparency and familiarity significantly affect users’ trust and their perceptions of decision fairness. Cognitive load and decision fatigue were found to increase response bias, which in turn affects decision integrity. The study also identifies mediating effects of sensitivity to error and response bias in AI-driven decision-making. In practical terms, the results suggest that reducing cognitive load and increasing transparency can increase AI acceptance, and that incorporating ethical considerations into AI system design can help minimize bias. This study contributes to AI ethics by emphasizing fairness, explainability, and user-centered trust mechanisms. Future research should explore AI decision-making across industries and cultural contexts. Overall, the findings offer theoretical, managerial, and practical insights into responsible AI deployment.

1. Introduction

AI increasingly influences decision-making across industries such as finance, healthcare, and retail. The rapid adoption of AI-driven systems has prompted debate about their dependability, transparency, and ethical consequences [1]. Acceptance of AI depends largely on trust [2]; therefore, issues of justice, fairness, and decision integrity must be resolved to build confidence in AI-supported systems [3]. This paper investigates how AI decision-influencing factors affect perceived decision integrity, with emphasis on the mediating roles of error sensitivity and response bias.

As AI becomes more common in decision-making, the cognitive and behavioral components of confidence in AI become increasingly important [4]. Perceived decision integrity depends on how customers and stakeholders evaluate AI suggestions, particularly their reliability, objectivity, and perceived accuracy [5,6]. Studies show that users trust and accept AI-driven decisions more when systems are reliable and transparent [7]. However, some AI models function as “black boxes,” which makes their judgments hard for users to comprehend, fostering distrust and lowering adoption rates [8].

User knowledge also shapes AI-based decision integrity. Regular users of AI systems tend to trust them more because familiarity reduces ambiguity and boosts confidence in AI’s advice [9]. People unfamiliar with AI decision-making may be more suspicious, which increases their response bias and sensitivity to error. Cognitive load and decision fatigue also matter for AI acceptance: if evaluating AI-driven suggestions requires too much mental effort, errors and loss of trust may follow [10]. This study additionally examines how judgements about AI depend on task-related costs and benefits. Users evaluate AI systems according to their perceived utility, convenience, and risk, and these evaluations shape their overall confidence and willingness to rely on AI for significant decisions. Finally, individual values and biases shape consumer opinions on AI: personal experience, ethical concerns, and social expectations all lead to different views on the reliability and fairness of AI. Accordingly, this study addresses the following research questions:

- How do AI system reliability and transparency influence perceived AI decision integrity?

- What is the role of consumer familiarity with AI in shaping trust and decision confidence?

- How do task-related costs and benefits impact users’ willingness to rely on AI-driven decisions?

- How do individual values and biases affect perceived AI decision integrity?

The present research addresses a knowledge gap in understanding how AI decision-influencing factors affect perceived AI decision integrity, particularly through sensitivity to error and response bias. The cognitive and psychological processes that shape consumer perceptions of AI decision integrity remain underexplored. To fill this gap, this work draws on self-determination theory (SDT) and cognitive load theory (CLT). SDT explains how the needs for autonomy, competence, and relatedness motivate consumers to trust AI judgements, whereas CLT analyses how cognitive effort and decision fatigue affect users’ capacity to critically assess AI outputs. Together, these theories explain the psychological and cognitive underpinnings of trust in, and perceived integrity of, AI. Using SEM-PLS, this study examines the relationships between AI decision-influencing variables and perceived decision integrity and tests whether error sensitivity and response bias mediate these relationships, in order to identify how AI systems might improve user trust and reduce negative bias. These insights can help governments, organizations, and AI developers create more transparent, ethical, and user-friendly AI systems.

2. Literature Analysis

AI-driven decision-making can influence consumer trust, introduce bias, and raise concerns about cognitive load and decision integrity. Consumers often express concerns about AI’s reliability, decision fatigue, and bias. In this study, we propose that several factors, namely AI system reliability and transparency, consumer familiarity with AI, task-related costs and benefits, individual values and biases, and cognitive load and decision fatigue, affect the perceived decision integrity of AI through the mediating effects of sensitivity to error and response bias.

To provide a solid theoretical foundation, this research draws on SDT [11] and CLT [12]. According to SDT, a user’s trust is affected by their sense of autonomy, competence, and relatedness. We propose that AI system reliability and transparency can enhance users’ sense of competence and autonomy, while familiarity with AI can boost their confidence. Furthermore, users’ trust in and responses to AI depend on how closely AI judgments align with their own values and biases.

Meanwhile, CLT explains how cognitive strain affects decision-making. High cognitive load and decision fatigue can lead to bias and reduced decision integrity, particularly when task-related costs are high. Under such strain, users may be more likely to misuse or reject AI-generated results.

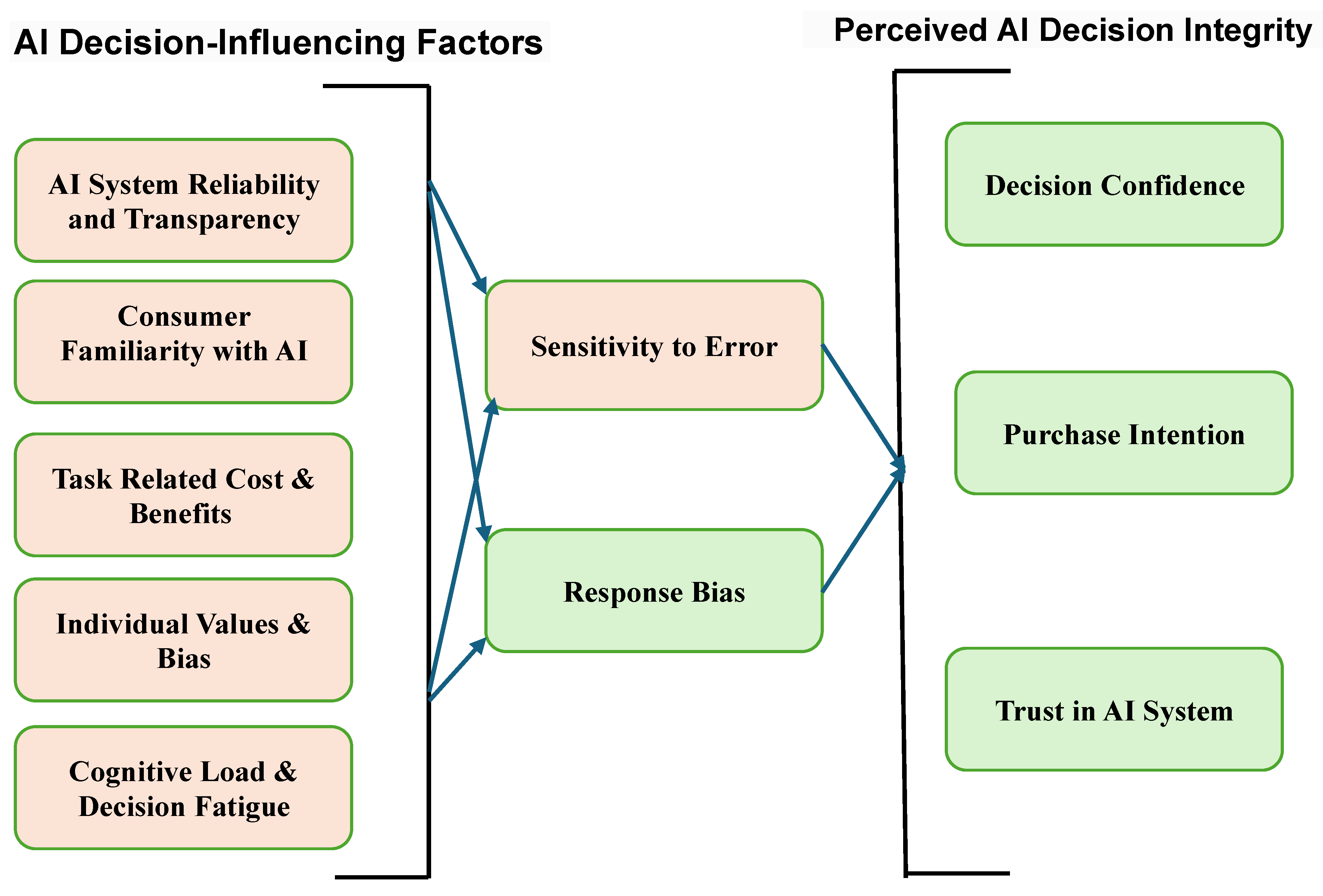

Previous research on AI adoption often uses the technology acceptance model (TAM) or prospect theory (PT). After consideration, both were deemed unsuitable for this study, which purposefully combines SDT and CLT because they better fit its focus on psychology and cognition. The TAM focuses mainly on perceived usefulness and perceived ease of use [13]. These constructs are useful for examining technology acceptance in general, but they are less suited to investigating the specific reasons why people trust AI to make decisions, such as autonomy, ethical congruence, or personal values. Because our study examines perceived decision integrity rather than adoption alone, SDT provides a better foundation for understanding user motivation: it enables a more detailed investigation of how users’ psychological needs for competence, autonomy, and relatedness affect their trust and motivation [11,14]. Prospect theory is useful for modelling decisions under risk of loss [15], but it does not account for the cognitive constraints or mental effort involved in using AI systems. Because we are interested in decision fatigue, response bias, and sensitivity to error, we need a cognitive theory grounded in mental strain and effort allocation, and these are central concerns of CLT [16,17]. Therefore, SDT and CLT are better suited than the TAM or PT to explaining how people judge whether AI-generated decisions are fair, clear, and trustworthy, especially under cognitive strain or motivational conflict. The detailed framework of this study is given in Figure 1.

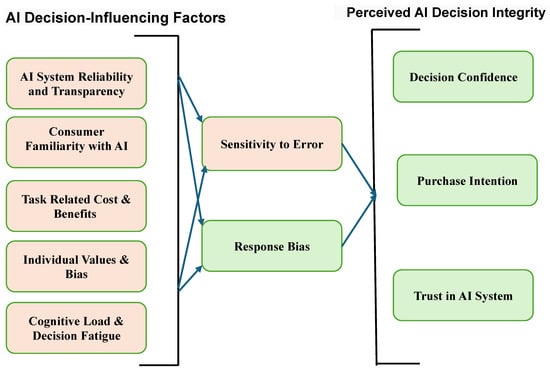

Figure 1.

Conceptual framework of this study.

The conceptual framework for the present research, shown in Figure 1, combines two distinct but related theories, SDT and CLT, and groups the factors that shape perceived AI decision integrity into two primary categories. The first group contains motivational antecedents, grounded in SDT, that reflect users’ psychological needs for autonomy, competence, and relatedness; these include AI transparency, user familiarity, and personal values. The second group contains cognitive factors, grounded in CLT, that relate to the mental effort required to work with AI systems: cognitive load and decision fatigue, together with response bias and sensitivity to error, which the model treats as mediators. These two groups affect how people judge the integrity of AI decisions through two routes: user motivation and trust, and cognitive processing and bias. By including both motivational and cognitive aspects, the framework goes beyond traditional models such as the technology acceptance model (TAM) and gives a more detailed picture of how people judge AI-generated decisions in complex, novel, and ethically sensitive situations.

3. AI System Reliability and Transparency

AI system reliability and transparency are essential for confidence in, and ethical use of, AI, especially in high-risk areas such as healthcare and finance. The reliability of an AI system is its ability to perform consistently, although problems with generalization and ambiguity persist [18,19]. Researchers suggest incorporating knowledge, reasoning, and robust validation systems [20], as well as explainable AI (XAI) approaches, to improve decision-making transparency [21]. AI-powered recommendations influence purchase intentions by delivering personalized, relevant suggestions, with trust and perceived quality shaping their effect; customer involvement and fairness also affect purchase decisions [22]. Equitable and transparent AI recommendation systems produce greater satisfaction and loyalty [23] and foster confidence through clear, intelligible explanations [24]. Building trust requires transparency, but many AI models have a “black-box” quality that makes them difficult to understand [25]. XAI techniques such as SHAP (SHapley Additive exPlanations), LIME (Local Interpretable Model-agnostic Explanations), and EBM (Explainable Boosting Machines) seek to make decision-making procedures interpretable, particularly in sensitive areas [26]. Compliance with regulatory frameworks such as the GDPR supports accountability [27]. Policymakers, developers, and users must collaborate and monitor AI to ensure its reliability and transparency [28].
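To illustrate the idea behind SHAP-style explanations, the sketch below computes exact Shapley values for a single prediction by enumerating every coalition of features. It is a minimal illustration under stated assumptions, not the SHAP library’s algorithm: features outside a coalition are replaced by a single baseline value, the linear `toy_model` is hypothetical, and exhaustive enumeration is feasible only for a handful of features.

```python
from itertools import combinations
from math import factorial
from typing import Callable, Sequence


def shapley_values(predict: Callable[[Sequence[float]], float],
                   x: Sequence[float],
                   baseline: Sequence[float]) -> list[float]:
    """Exact Shapley attributions for one prediction.

    Assumption: features outside a coalition take their baseline value.
    Cost grows as 2**n, so this is only practical for small n.
    """
    n = len(x)

    def coalition_value(members: set[int]) -> float:
        # Real feature value for coalition members, baseline otherwise.
        z = [x[i] if i in members else baseline[i] for i in range(n)]
        return predict(z)

    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            # Shapley weight: |S|! (n - |S| - 1)! / n!
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                phi[i] += weight * (coalition_value(s | {i}) - coalition_value(s))
    return phi


# Hypothetical toy scoring function standing in for an AI system.
def toy_model(z: Sequence[float]) -> float:
    return 2.0 * z[0] + 3.0 * z[1] - 1.0 * z[2]


attributions = shapley_values(toy_model, x=[1.0, 1.0, 1.0], baseline=[0.0, 0.0, 0.0])
print(attributions)
```

For a linear model the attributions recover each coefficient times the feature’s deviation from the baseline, and they always sum to the difference between the explained prediction and the baseline prediction, which is the property that makes such explanations easy to read.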

Reliability and transparency in AI systems are essential for building trust and positively influencing retail purchase behavior [20,29]. Reliable AI supports security and privacy, while transparent operations enhance consumer engagement and purchase intentions. However, if not properly managed, system failures and psychological stress can undermine consumer trust and purchase decisions [30]. Hence, the following hypothesis is proposed:

H1:

AI system reliability and transparency have a significant impact on perceived AI decision integrity.

4. Consumer Familiarity with AI

Consumers’ familiarity with AI is influenced by factors such as the type of AI application, its perceived benefits, and concerns about its risks. AI technologies such as personal assistants, service robots, and retail AI offer convenience, customization, and enhanced user experiences [29,31]. However, concerns about data privacy, trust, and ethics can hamper acceptance [32]. Familiarity with AI, shaped by social influence, hedonic motivation, perceived usefulness, and ease of use, can increase customers’ comfort with and readiness to use these technologies [33,34]. Customer familiarity with AI is a significant factor in decision-making, trust, and purchase intention. Consumers who are familiar with AI often trust AI recommendations more, which increases their confidence in their choices [35,36]. Familiarity also lowers uncertainty, which makes AI-powered suggestions more appealing and raises purchase intentions [22]. AI systems that are transparent and explain their recommendations are perceived as fairer and help establish trust [23]. Consumers trust transparent and competent AI systems, and this confidence drives greater adoption and implementation of AI suggestions [37].

Consumer familiarity with AI, especially with AI that has human-like characteristics and is simple to use, improves attitudes toward AI technologies such as chatbots and recommendation systems and thereby boosts purchase intentions [29]. AI-driven customization and engagement favorably affect retail sales, and Generation Z responds most favorably to AI in online shopping, but privacy concerns remain a major obstacle [38]. Hence, the following hypothesis is proposed:

H2:

Consumer familiarity with AI has a significant impact on perceived AI decision integrity.

5. Task-Related Cost and Benefits

Optimizing performance and decision-making depends on awareness of the costs and benefits associated with tasks. Studies show that prioritizing incentives over effort can increase task performance, although this dynamic could reduce the impact of rewards on decision-making [39,40]. The dorsal anterior cingulate cortex (dACC), which processes task demands and rewards independently, provides the neural basis of these trade-offs. Cost–benefit analysis (CBA) helps assess both direct and indirect benefits in investment projects, although precisely projecting outcomes remains difficult [41]. Task scheduling models in complex systems, such as cloud networks, balance costs against possible benefits to maximize efficiency [42]. Task shifting has proved affordable in health systems, especially in low-income countries [43]. Opportunity costs strongly shape task performance by affecting cognitive resource allocation and engagement [44]. Across many domains, effective decision-making depends on carefully balancing these elements. Task-related costs span pricing, scheduling, resource allocation, and risk control. In task pricing, models such as the reward Witkey business model (RWBM) show that higher prices attract more responses but may compromise work quality [45]. Adjusting task prices according to multiple criteria can increase completion rates without raising costs. Accurate forecasts are vital for cost estimation and scheduling, particularly in software and construction projects, where multi-objective optimization and time–cost trade-offs can improve efficiency [46]. Resource allocation models, such as priority algorithms in product development and the synergy blend technique in software development, help improve efficiency and lower costs [47,48].
In engineering and project management, effective risk management techniques are crucial for preventing budget overruns and delays [49]. Knowledge of psychological costs, such as response time and errors, helps guide task performance, while task scheduling models, including genetic models, aim to maximize the cost–benefit ratio and improve task execution [50]. In retail purchasing, task-related costs comprise pre-transaction (e.g., supplier search), transaction (e.g., product pricing), and post-transaction (e.g., shipping and returns) expenses. Benefits include cost savings from second-hand purchases, improved logistics, and the customer value gained from smart shopping and technologies such as augmented reality (AR), which can shape decisions and shopping experiences [51]. Hence, the following hypothesis is proposed:

H3:

Task-related cost and benefits have a significant impact on perceived AI decision integrity.

6. Individual Values and Bias

An individual’s values are the principles by which that person lives and the standards by which they measure success [52]. Biases such as the self-serving and halo effects can subtly alter judgment, and values determine how sensitive people are to these biases [53,54]. Understanding biases and adopting inclusive strategies to reduce them can improve decision-making, especially in empowering leadership [55] and government [56]. Both individual and group decision-making are heavily shaped by values and biases. Motivational biases, such as wishful thinking and affinity bias, can create preferences for particular alternatives, while cognitive biases, such as overconfidence, anchoring, and framing effects, can skew judgment [57]. At the individual level, personal values shape how people view and respond to information, whereas at the group level, shared biases can create collective blind spots [58]. Strategies such as raising awareness, encouraging alternative points of view, and applying structured decision-making models help reduce these biases and improve decision quality [59,60].

Individual values and biases influence retail purchases, as reflected in factors such as deal proneness, the store environment, and social influences [61]. Store atmospherics and price perceptions influence trust and purchasing decisions [62], while economic circumstances shape buying attitudes. Furthermore, hedonic and utilitarian values drive retailer loyalty, and consumer traits shape cross-format shopping behavior [63]. Hence, the following hypothesis is proposed:

H4:

Individual values and biases have a significant effect on perceived AI decision integrity.

7. Cognitive Load and Decision Fatigue

Cognitive load is the mental effort imposed on working memory, and it affects how efficiently people learn. It has three components: intrinsic load (the complexity of the content), extraneous load (caused by how information is presented) [64], and germane load (the effort devoted to understanding and learning). According to CLT, overloading working memory impairs learning [17], and instructional design can maximize learning by reducing the amount of content, leveraging multimedia, and minimizing unnecessary details [65]. Cognitive load is assessed subjectively through self-reports [66] and objectively through physiological techniques [67]. Because effective learning rests on schema building, teaching strategies should be tailored to control cognitive load. Reference [68] calls for further research on new applications of CLT and of cooperative learning [69]. Practical recommendations include evidence-based teaching practices and technologies aligned with CLT principles. Cognitive load also directly affects decision-making and often results in decision fatigue. Shorter processing intervals and high-cognitive-load (HCL) situations produce more cognitive fatigue (CF) than low-cognitive-load (LCL) settings, resulting in tiredness and reduced attentiveness [70]. Extended cognitive activities, such as a two-hour “Go/NoGo” task, increase mental demand, reduce motivation, and impair mood [71]. Decision fatigue causes unstable preferences and poor decision-making, as people under heavy cognitive load become more risk-averse and impatient [72]. Exposure to cognitive load during training can improve performance in new contexts, such as dynamic scenarios or firefighting simulations [73]. Cognitive fatigue can also be quantified through speech analysis, using features such as speech onset time and speech rate. By regulating cognitive effort and rest intervals, workload management systems, such as those used for train drivers, help preserve performance.

Cognitive load and decision fatigue affect retail purchases when too many options overload customers, lowering their decision quality and encouraging impulse buying [74,75]. Innovations such as AR and product bundling streamline decision-making and thereby improve purchase outcomes, while in-store displays and tailored suggestions help manage customers’ cognitive load [76,77]. Emotional exhaustion also influences store choice and thus customer footfall [78]. Hence, the following hypothesis is proposed:

H5:

Cognitive load and decision fatigue have a significant effect on perceived AI decision integrity.

8. Response Bias

Response bias, the propensity to favor particular answers regardless of the actual evidence, is an important part of decision-making. Response bias differs from stimulus bias, although both can coexist and affect judgment, especially when stimulus timing changes [79]. Individuals may develop biases regarding particular stimuli to maximize choice outcomes, which may be attributed to individual differences in sensory encoding and, consequently, in response bias [80]. Several variables influence response bias, including financial incentives; for example, greater activity in the left inferior frontal gyrus (IFG) has been linked to more liberal responding [81]. Anxiety, a mediator of economic stress, can also amplify response bias [82]. Models such as the hierarchical diffusion model can help distinguish response bias from stimulus bias [83], whereas the leaky competing accumulator model can describe how reward biases shift the starting point of evidence accumulation [84]. Awareness, especially its non-judging aspect, can lessen bias by stabilizing responses under divided attention [85]; anxiety mediates the relationship between hardship and prejudice, whereas cognitive control may not [82]. In healthcare settings, response bias affects decisions and calls for measures to improve patient outcomes [86,87], and in financial matters it strongly influences investment and risk-taking behaviors.
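The paragraph above defines response bias verbally. One standard way to quantify it in yes/no judgments comes from signal detection theory, where the criterion c captures bias separately from sensitivity d′. The sketch below illustrates that index under stated assumptions: the paper measures response bias with survey items and does not say it uses this index, the log-linear correction is one common choice among several, and the counts are hypothetical. Negative c indicates a liberal bias (a tendency to answer “yes”), positive c a conservative one.

```python
from statistics import NormalDist


def sdt_indices(hits: int, misses: int, false_alarms: int, correct_rejections: int):
    """Return (d_prime, criterion_c) from a 2x2 yes/no outcome table.

    A log-linear correction (add 0.5 to each count and 1 to each total)
    keeps rates away from 0 and 1, where the z-transform is undefined.
    """
    z = NormalDist().inv_cdf  # inverse of the standard normal CDF
    hit_rate = (hits + 0.5) / (hits + misses + 1)
    fa_rate = (false_alarms + 0.5) / (false_alarms + correct_rejections + 1)
    d_prime = z(hit_rate) - z(fa_rate)                # sensitivity
    criterion_c = -0.5 * (z(hit_rate) + z(fa_rate))   # response bias
    return d_prime, criterion_c


# Hypothetical counts for illustration only (not study data).
neutral = sdt_indices(hits=80, misses=20, false_alarms=20, correct_rejections=80)
liberal = sdt_indices(hits=90, misses=10, false_alarms=50, correct_rejections=50)
print(f"neutral: d'={neutral[0]:.2f}, c={neutral[1]:.2f}")
print(f"liberal: d'={liberal[0]:.2f}, c={liberal[1]:.2f}")
```

The second respondent says “yes” far more often, including on noise trials, so c moves below zero even though both respondents can still discriminate signal from noise.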

In retail, AI improves consumer satisfaction by making retail experiences easier to use, more personalized, and more engaging, which evokes positive emotions such as awe and thereby increases purchase intentions [88]. However, concerns about security, trust, and system dependability could lower satisfaction and influence purchase behavior [89]. In AI systems, bias can skew suggestions and distort fair or accurate outcomes [90]. To help reduce response bias, retailers could use diverse data and continually improve the fairness and dependability of their AI models [91].

9. Sensitivity to Error

Error sensitivity is an important aspect of decision-making that affects cognitive bias, risk sensitivity, and post-error adjustments. People typically slow down after making a mistake (post-error slowing), which appears to be a natural response rather than a conscious strategy for preventing further errors [92]. How people perceive mistakes also matters: when mistakes have significant repercussions, people become more cautious and show stronger neural responses, such as error-related negativity (ERN), which indicates the relevance of an error [93,94]. Under uncertainty, cognitive biases, including overconfidence and regret avoidance, influence decision-making and occasionally lead to illogical decisions. However, as error management theory (EMT) suggests, some biases can be adaptive because they help prevent costlier mistakes [95]. Particularly in young people, decision-making ability correlates with greater sensitivity to expected value, which enables more logical decisions. In high-risk industries such as healthcare, improving decision reliability and reducing mistakes depends on understanding these cognitive processes. Examining how people react to mistakes helps in designing better decision-making strategies, particularly where mistakes have major repercussions [96].
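Post-error slowing is commonly quantified as the mean reaction time on trials that follow an error minus the mean reaction time on trials that follow a correct response. The sketch below illustrates that traditional measure only; the paper measures sensitivity to error with survey items rather than reaction times, the trial data are hypothetical, and many studies restrict the comparison to correct current trials or prefer a robust pre-error versus post-error contrast.

```python
from statistics import mean
from typing import Sequence


def post_error_slowing(rts_ms: Sequence[float], correct: Sequence[bool]) -> float:
    """Mean RT after an error minus mean RT after a correct response (ms).

    Simplification: every trial after the first is used, regardless of
    whether the current trial itself was correct.
    """
    if len(rts_ms) != len(correct):
        raise ValueError("rts_ms and correct must have the same length")
    after_error = [rts_ms[i] for i in range(1, len(rts_ms)) if not correct[i - 1]]
    after_correct = [rts_ms[i] for i in range(1, len(rts_ms)) if correct[i - 1]]
    if not after_error or not after_correct:
        raise ValueError("need trials following both errors and correct responses")
    return mean(after_error) - mean(after_correct)


# Hypothetical trial sequence: reaction times (ms) and accuracy.
rts = [500, 480, 600, 510, 490, 620, 500]
acc = [True, False, True, True, False, True, True]
print(post_error_slowing(rts, acc))  # 115
```

A positive value means responses slow down after mistakes, which is the behavioral signature of error sensitivity described above.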

AI improves retail experiences by increasing their efficiency, personalization, and ease of use, which results in greater satisfaction and purchase intentions [88]. However, AI mistakes, unlike human blunders, can lead to stress and mistrust, which increases consumers’ sensitivity to error [89]. Inventory mistakes and system breakdowns directly influence satisfaction and loyalty in e-retail. Although it entails financial costs, implementing AI fairness and ensuring error-free transactions can help minimize these negative effects. Retailers must balance AI’s advantages and drawbacks to maintain consumer confidence and satisfaction [97].

10. Perceived Decision Integrity

Perceived decision integrity is the conviction that decisions are made with ethical commitment, honesty, and consistency, reflecting congruence between intentions, words, and deeds. It fosters trust and credibility [98,99] and is therefore important in public service, healthcare, and management. Behavioral integrity (BI) is a fundamental component: when people and organizations consistently align their behavior with their ideals, performance and confidence improve [100]. Trust in AI systems is influenced by factors such as transparency, explainability, and user perceptions. Transparency can increase trust, but under certain conditions it may also overwhelm users [101]. Both self-confidence and confidence in AI affect decision confidence, and personal experience shapes trust in and reliance on AI systems. People’s purchase intentions also depend directly on their confidence in AI systems, as people are more inclined to believe AI recommendations that seem fair and open [102]. Frameworks and technologies such as distributed ledger technology (DLT) help ensure decision integrity, especially in critical industries, and thereby promote greater confidence in and openness of AI decisions [103]. AI improves accuracy and shapes how decisions are presented, thereby influencing decision-making. Trust in AI is shaped mostly by its accuracy and openness, particularly in sectors such as medicine, where pathologists’ reliance on AI increases as algorithms become more interpretable. Such reliance may be managed by providing more information about AI’s certainty, but responsible AI use also requires incorporating ethical considerations, including bias and accountability, into AI system design. AI can also influence psychological factors, for example by increasing overconfidence [104]. The psychological costs and benefits of AI, such as the relief of having one’s mistakes corrected, could also alter human judgments [105].
AI assists decision-making in many fields, including banking, business, and healthcare, but human involvement remains vital. Effective decision-making depends on human–AI cooperation, in which AI excels at data processing and humans provide reasoning and emotional intelligence. A further concern is how AI affects professional identities in organizations, particularly when it takes over decision-making roles [106]. Continuous assessment is required to ensure ethical and balanced cooperation between humans and AI [107]. Hence, the following hypotheses are proposed:

H6:

Response bias mediates the relationship between consumer familiarity and perceived AI decision integrity.

H7:

Sensitivity to error mediates the relationship between individual values and bias and perceived AI decision integrity.

H8:

Response bias mediates the relationship between individual values and bias and perceived AI decision integrity.

H9:

Sensitivity to error mediates the relationship between task-related cost and benefits and perceived AI decision integrity.

H10:

Response bias mediates the relationship between task-related cost and benefits and perceived AI decision integrity.

H11:

Sensitivity to error mediates the relationship between AI system reliability and transparency and perceived AI decision integrity.

H12:

Response bias mediates the relationship between AI system reliability and transparency and perceived AI decision integrity.

H13:

Sensitivity to error mediates the relationship between cognitive load and decision fatigue and perceived AI decision integrity.

H14:

Response bias mediates the relationship between cognitive load and decision fatigue and perceived AI decision integrity.
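Hypotheses H6 to H14 share one structure. As a minimal sketch, assuming linear relations among standardized variables (so intercepts drop out), a single antecedent $X$ (for example, consumer familiarity), a single mediator $M$ (response bias or sensitivity to error), and the outcome $Y$ (perceived AI decision integrity), each mediation hypothesis can be written as follows; the symbols are introduced here for illustration and are not the paper’s own notation.

$$
\begin{aligned}
M &= a\,X + \varepsilon_M, \\
Y &= c'\,X + b\,M + \varepsilon_Y, \\
\text{indirect effect} &= a\,b, \qquad c = c' + a\,b,
\end{aligned}
$$

Here $c$ is the total effect of $X$ on $Y$ and $c'$ the direct effect. Each mediation hypothesis predicts that $ab \neq 0$; the decomposition $c = c' + ab$ holds exactly for ordinary least squares estimates of this single-mediator model.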

11. Methodology

11.1. Research Design

This study uses a quantitative, survey-based approach to examine how AI system transparency, cognitive load, response bias, and personal values affect perceived AI decision integrity. Data were collected with a standardized questionnaire, and SEM-PLS was used to investigate the relationships among variables, as this technique is well suited to analyzing complex relationships between latent constructs. Measurement scales for each construct were adapted from validated earlier research and refined through expert review and pilot testing to ensure content validity. Every item was scored on a Likert scale from 1 (strongly disagree) to 5 (strongly agree).
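The sketch below shows one way 1-to-5 Likert responses could be summarized per construct, including the flipping of reverse-coded items mentioned among the procedural remedies for common method bias. It is an assumption-laden illustration: the item IDs and the choice of which item is reverse-coded are hypothetical, and PLS-SEM works with the individual indicators rather than unweighted means, so this is only a descriptive summary.

```python
from statistics import mean
from typing import AbstractSet, Mapping


def construct_score(responses: Mapping[str, int],
                    reverse_coded: AbstractSet[str] = frozenset(),
                    scale_min: int = 1,
                    scale_max: int = 5) -> float:
    """Unweighted mean item score for one construct on a Likert scale.

    Reverse-coded items are flipped so that higher always means more of
    the construct: on a 1-5 scale, 1 <-> 5, 2 <-> 4, and 3 stays 3.
    """
    scored = []
    for item, value in responses.items():
        if not scale_min <= value <= scale_max:
            raise ValueError(f"{item}: {value} is outside {scale_min}-{scale_max}")
        if item in reverse_coded:
            value = scale_min + scale_max - value
        scored.append(value)
    return mean(scored)


# Hypothetical item IDs; CL3 is assumed, for illustration, to be reverse-coded.
answers = {"CL1": 4, "CL2": 5, "CL3": 2}
print(construct_score(answers, reverse_coded={"CL3"}))
```

Flipping with `scale_min + scale_max - value` keeps the mapping symmetric around the scale midpoint, so it works unchanged for other odd-length scales such as 1 to 7.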

Measuring trust in and reliability of AI systems requires several constructs. This study adapted constructs, items, and measurement scales from earlier research. Three items on the transparency and reliability of AI systems were adapted from [108] to measure how consistent and clear AI judgements appear to consumers. Three items measuring consumer familiarity with the studied AI system were adapted from [109]. Three items on task-related costs and benefits were adapted from [110]. Three items for individual values and bias were adapted from [54]. Three items for cognitive load [111,112] and decision fatigue were derived from [113]. Four items for response bias were taken from [114]. Two items for error sensitivity were adapted from [115]. Finally, nine items for decision integrity were adapted from [116,117]. Cognitive load and decision fatigue capture the mental effort needed to evaluate AI-driven judgments. Consumer familiarity with AI reflects previous experience with AI and the ease of use of AI outputs. Individual values and bias capture how users’ own ethical views influence trust. Perceived AI decision integrity items evaluate dependability, precision, and fairness. Response bias is evaluated with reference to social desirability bias. Sensitivity to error captures how much AI errors affect trust. Task-related costs and benefits weigh the benefits of relying on AI against its drawbacks. Trust in AI systems reflects user confidence in AI decision-making, whereas decision confidence reflects how AI-generated results affect users’ assurance in their own decisions.

11.2. Sampling Strategy and Data Collection

Retail establishments in Saudi Arabia were studied to obtain consumer views of AI-driven decision integrity. The target population consisted of consumers engaging with AI decision-making algorithms in organized retail, where AI-driven decision-making increasingly influences consumer choices. A random sampling method was used to obtain objective and representative responses. To ensure relevant insights, responses were screened for AI familiarity, and only individuals with prior experience or understanding of AI were retained as participants. Based on an SEM sample-size rule and the 30 survey items, a minimum of 600 participants was determined to be necessary. To account for potential non-response, 1000 questionnaires were distributed, yielding 828 completed responses (a response rate of 82.8%). Data were collected in person, and all participants received explicit instructions beforehand. Before the main data collection, a pilot test with 50 respondents was conducted to refine the questionnaire and confirm its reliability.
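The section does not name the SEM rule it applied; a ratio of 20 respondents per item reproduces the stated minimum (30 × 20 = 600), so the arithmetic check below assumes that ratio. The item counts come from the measurement description in Section 11.1.

```python
# Items per construct, as listed in Section 11.1.
items_per_construct = {
    "AI system reliability and transparency": 3,
    "consumer familiarity with AI": 3,
    "task-related cost and benefits": 3,
    "individual values and bias": 3,
    "cognitive load and decision fatigue": 3,
    "response bias": 4,
    "sensitivity to error": 2,
    "perceived AI decision integrity": 9,
}
RESPONDENTS_PER_ITEM = 20  # assumption: the ratio implied by 600 / 30

n_items = sum(items_per_construct.values())
minimum_sample = n_items * RESPONDENTS_PER_ITEM
distributed, completed = 1000, 828
response_rate = completed / distributed

print(n_items, minimum_sample, f"{response_rate:.1%}")  # 30 600 82.8%
print("minimum met:", completed >= minimum_sample)
```

The 828 usable responses exceed the 600-respondent minimum by a comfortable margin, which leaves room for cases dropped during data screening.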

11.3. Data Analysis Techniques

Before analysis, the data were screened: cases with excessive missing values (>10%) were excluded, the Mahalanobis distance was used to detect outliers, and skewness and kurtosis were used to evaluate normality. Validity and reliability tests confirmed the robustness of the measurement model. Internal consistency was assessed using Cronbach’s alpha and composite reliability (CR), and convergent validity was assessed using the average variance extracted (AVE). Discriminant validity was confirmed using the Fornell-Larcker criterion and the heterotrait–monotrait (HTMT) ratio (<0.85). Multicollinearity was examined using variance inflation factors (VIFs); all values remained below 5.0, indicating no collinearity issues [118].
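The reliability and validity criteria named above follow standard formulas. The sketch below implements them with NumPy on simulated data as an illustration under stated assumptions: composite reliability and AVE are computed from standardized outer loadings supplied by the user, HTMT assumes all items are coded in the same direction, and the two simulated constructs are hypothetical. PLS software such as the package used in the study may differ in details (for example, which reliability coefficient it reports).

```python
import numpy as np


def cronbach_alpha(items) -> float:
    """Cronbach's alpha for one construct; items is (respondents, k)."""
    items = np.asarray(items, dtype=float)
    k = items.shape[1]
    sum_item_var = items.var(axis=0, ddof=1).sum()
    total_var = items.sum(axis=1).var(ddof=1)
    return float(k / (k - 1) * (1.0 - sum_item_var / total_var))


def composite_reliability(loadings) -> float:
    """CR from standardized outer loadings."""
    lam = np.asarray(loadings, dtype=float)
    explained = lam.sum() ** 2
    return float(explained / (explained + (1.0 - lam**2).sum()))


def average_variance_extracted(loadings) -> float:
    """AVE: mean squared standardized loading (0.50 is the usual floor)."""
    lam = np.asarray(loadings, dtype=float)
    return float(np.mean(lam**2))


def htmt(items_a, items_b) -> float:
    """Heterotrait-monotrait ratio; each construct needs at least two items."""
    items_a = np.asarray(items_a, dtype=float)
    items_b = np.asarray(items_b, dtype=float)
    ka, kb = items_a.shape[1], items_b.shape[1]
    r = np.corrcoef(np.hstack([items_a, items_b]), rowvar=False)
    hetero = r[:ka, ka:].mean()                              # across constructs
    mono_a = r[:ka, :ka][np.triu_indices(ka, k=1)].mean()    # within construct A
    mono_b = r[ka:, ka:][np.triu_indices(kb, k=1)].mean()    # within construct B
    return float(hetero / np.sqrt(mono_a * mono_b))


def vif(predictors) -> np.ndarray:
    """VIF_j = 1 / (1 - R_j^2), regressing column j on all other columns."""
    X = np.asarray(predictors, dtype=float)
    out = []
    for j in range(X.shape[1]):
        y = X[:, j]
        design = np.column_stack([np.ones(len(y)), np.delete(X, j, axis=1)])
        beta, *_ = np.linalg.lstsq(design, y, rcond=None)
        r2 = 1.0 - np.var(y - design @ beta) / np.var(y)
        out.append(1.0 / (1.0 - r2))
    return np.array(out)


# Two hypothetical, independent constructs with three items each.
rng = np.random.default_rng(42)
n = 828
f1, f2 = rng.normal(size=(2, n))
a = np.column_stack([f1 + 0.5 * rng.normal(size=n) for _ in range(3)])
b = np.column_stack([f2 + 0.5 * rng.normal(size=n) for _ in range(3)])
print(f"alpha={cronbach_alpha(a):.3f}, HTMT={htmt(a, b):.3f}")
print(f"CR={composite_reliability([0.7, 0.8, 0.9]):.3f}, "
      f"AVE={average_variance_extracted([0.7, 0.8, 0.9]):.3f}")
print("VIFs:", vif(np.hstack([a, b])).round(2))
```

Because the two simulated constructs are unrelated, HTMT lands near zero, well under the 0.85 cutoff, while alpha is high because each construct’s items share one factor.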

This study distinguished between formative and reflective constructs. Reflective constructs, such as perceived AI decision integrity and trust in the AI system, assume that the observed indicators reflect an underlying latent variable, and they were assessed with validity and reliability tests. Formative constructs, such as AI system reliability and transparency, assume that the indicators jointly define the construct; they were assessed using VIFs and bootstrapping with 10,000 subsamples. Partial least squares structural equation modelling (PLS-SEM) was used because it handles complex interactions among constructs well. Model fit was evaluated using SRMR (<0.08), d_ULS, d_G, chi-square, and NFI (>0.90). Direct and indirect effects were examined through path analysis, and hypotheses were tested using t-values (>1.96) and p-values (<0.05). The fit indices indicate that the model performs well. The SRMR of the estimated model is 0.08, which is acceptable (SRMR < 0.10) and lies at the conventional cut-off of 0.08 for good fit suggested by [119]. The NFI of 0.903 exceeds the minimum of 0.90, indicating adequate fit. The d_ULS and d_G values are also within acceptable ranges, showing that the empirical and theoretical models converge well. Although there are small differences between the saturated and estimated models, these indices together support the validity of the structural model.
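The t-statistics reported in this study are the original estimate divided by its bootstrap standard deviation (|O/STDEV|, as in Appendix B). The sketch below illustrates this for a single standardized path between two simulated variables. It is not the full PLS-SEM algorithm, the data and seeds are hypothetical, and the p-value uses a standard-normal approximation (software may use a t distribution instead). The default of 10,000 resamples mirrors the text; the usage line uses fewer for speed.

```python
import math

import numpy as np


def standardized_path(x: np.ndarray, y: np.ndarray) -> float:
    """Standardized coefficient of a single-predictor path (equals Pearson's r)."""
    return float(np.corrcoef(x, y)[0, 1])


def bootstrap_path(x: np.ndarray, y: np.ndarray, n_boot: int = 10_000, seed: int = 0):
    """Return (O, STDEV, t = |O/STDEV|, two-sided p) for one path.

    STDEV is the standard deviation of the bootstrap estimates; the p-value
    uses a standard-normal approximation.
    """
    rng = np.random.default_rng(seed)
    n = len(x)
    original = standardized_path(x, y)
    estimates = np.empty(n_boot)
    for b in range(n_boot):
        idx = rng.integers(0, n, size=n)  # resample respondents with replacement
        estimates[b] = standardized_path(x[idx], y[idx])
    stdev = float(estimates.std(ddof=1))
    t_stat = abs(original / stdev)
    p_value = math.erfc(t_stat / math.sqrt(2.0))  # equals 2 * (1 - Phi(t))
    return original, stdev, t_stat, p_value


# Simulated data for illustration only (not the study's data).
rng = np.random.default_rng(42)
x = rng.normal(size=828)
y = 0.3 * x + rng.normal(size=828)
o, sd, t, p = bootstrap_path(x, y, n_boot=2_000)
print(f"O={o:.3f} STDEV={sd:.3f} t={t:.2f} p={p:.4g}")
print("significant at 5%:", t > 1.96 and p < 0.05)
```

The 1.96 cut-off used in the text corresponds to a two-sided p-value of about 0.05 under this normal approximation.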

We used Harman’s single-factor test to check for common method bias (CMB). The first unrotated factor explained only 28.4% of the total variance, below the 50% criterion, indicating that CMB is not a significant concern in this study. Procedural remedies were also applied to reduce CMB, including careful item construction, reverse-coded questions, and guaranteed respondent anonymity to lower social desirability bias and evaluation apprehension.
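Harman’s test is often run as an unrotated principal component analysis of all items: the share of variance captured by the first component is its eigenvalue divided by the number of items. The sketch below follows that common variant (an unrotated exploratory factor analysis is an alternative). The simulated data are for illustration only; 0.284 is the share reported above.

```python
import numpy as np


def harman_single_factor_share(data: np.ndarray) -> float:
    """Share of total variance captured by the first unrotated component.

    `data` is an (n_respondents, n_items) matrix. On the item correlation
    matrix, total variance equals the number of items, so the share is the
    largest eigenvalue divided by the item count.
    """
    corr = np.corrcoef(data, rowvar=False)
    eigenvalues = np.linalg.eigvalsh(corr)  # ascending order for symmetric input
    return float(eigenvalues[-1] / corr.shape[0])


def cmb_flagged(share: float, threshold: float = 0.50) -> bool:
    """Conventional Harman rule: flag CMB if one factor explains > 50%."""
    return share > threshold


# Simulated, mutually independent items (no common factor), for illustration.
rng = np.random.default_rng(7)
independent_items = rng.normal(size=(828, 30))
share = harman_single_factor_share(independent_items)
print(round(share, 3), cmb_flagged(share))
print(cmb_flagged(0.284))  # share reported in this study: False
```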

11.4. Ethical Consideration

This study followed ethical research guidelines to protect participants’ rights and well-being. Informed consent was obtained by clearly explaining the study’s purpose and allowing participants to withdraw at any time. Confidentiality was ensured by anonymizing data and storing them securely. The questionnaire was designed to avoid any discomfort to participants. Additionally, this study received approval from the relevant ethics review boards to ensure compliance with institutional guidelines.

11.5. Data Analysis and Interpretation

Measurement Models

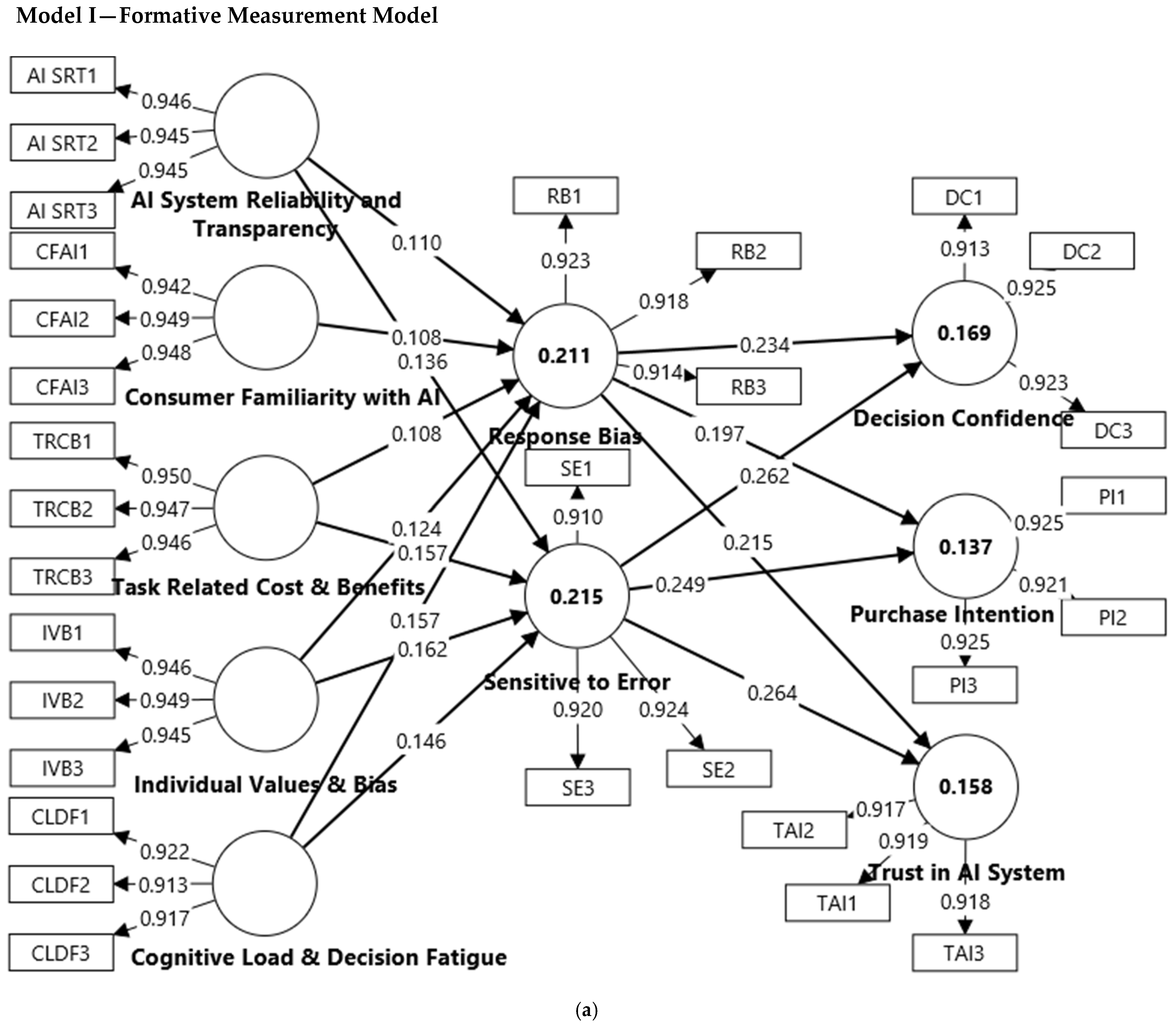

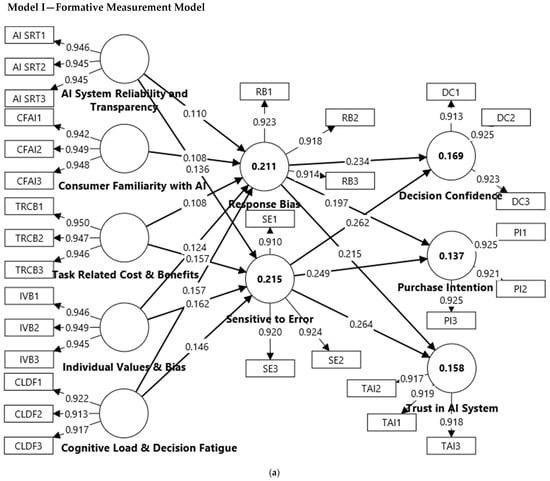

The measurement model distinguishes formative from reflective constructs to ensure a valid assessment of each. Reflective constructs, including perceived AI decision integrity, trust in AI systems, and decision confidence, presume that indicators are manifestations of the latent variable, so a change in the construct affects all of its indicators. Internal consistency and convergent validity for these constructs were confirmed using Cronbach’s alpha, composite reliability, and AVE. Formative constructs (AI system reliability and transparency, cognitive load and decision fatigue, and task-related cost and benefits) assume that the indicators define the construct. They were evaluated using bootstrapping and VIF values to verify that each indicator contributes significantly to its construct. This approach supports accurate and valid measurement of the key factors affecting AI-driven decision integrity and thereby strengthens the research.

All constructs in Table 1 demonstrate robust reliability and validity. Cronbach’s alpha values all exceed 0.9, indicating excellent internal consistency. Composite reliability scores (rho_a and rho_c) are also high, mostly above 0.9, implying strong construct reliability. The Cronbach’s alpha for cognitive load and decision fatigue (α = 0.906) lies just above the 0.9 cut-off for the “excellent” category (Table 1) and is well within the “good” to “excellent” range defined by commonly accepted standards, e.g., [120,121]. The AVE values, ranging from 0.842 to 0.898, exceed the 0.5 threshold, confirming that each construct explains most of the variance in its indicators. Constructs such as AI system reliability, consumer familiarity with AI, and task-related cost and benefits show particularly strong measurement properties, supporting robust construct validity (refer to Figure 2a,b).

Table 1.

Convergent validity—formative.

Figure 2.

(a). Formative measurement model. (b). Reflective measurement model.

Table 2 shows that none of the HTMT (heterotrait–monotrait ratio) values exceed the recommended threshold of 0.85, suggesting that the constructs are sufficiently distinct from one another. For instance, AI system reliability and transparency shows modest HTMT values with consumer familiarity with AI (0.617) and individual values and bias (0.484), but both remain below 0.85, implying that the constructs measure different concepts. Likewise, task-related cost and benefits and sensitivity to error exhibit modest associations with other variables (HTMT = 0.592 and 0.399, respectively), further supporting discriminant validity [118]. These findings support the robustness of the measurement model by showing that the constructs do not overlap excessively and retain their distinct measurement properties.
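HTMT is the mean correlation between the indicators of two different constructs divided by the geometric mean of the average within-construct correlations. The sketch below computes it from an item correlation matrix, using absolute correlations as is common in software implementations. The four-item matrix in the usage lines is hypothetical.

```python
import numpy as np


def htmt(corr: np.ndarray, items_a: list[int], items_b: list[int]) -> float:
    """Heterotrait-monotrait ratio for two constructs.

    corr: item correlation matrix; items_a / items_b: column indices of each
    construct's indicators.
    """
    r = np.abs(corr)
    hetero = r[np.ix_(items_a, items_b)].mean()  # between-construct correlations

    def mono(items: list[int]) -> float:
        block = r[np.ix_(items, items)]
        upper = np.triu_indices(len(items), k=1)  # off-diagonal pairs only
        return float(block[upper].mean())

    return float(hetero / np.sqrt(mono(items_a) * mono(items_b)))


# Hypothetical correlations: items 0-1 measure construct A, items 2-3 construct B.
corr = np.array([
    [1.0, 0.8, 0.3, 0.3],
    [0.8, 1.0, 0.3, 0.3],
    [0.3, 0.3, 1.0, 0.6],
    [0.3, 0.3, 0.6, 1.0],
])
value = htmt(corr, [0, 1], [2, 3])
print(round(value, 3), value < 0.85)  # 0.433 True
```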

Table 2.

Discriminant validity—formative.

Table 3 shows that the R-square and adjusted R-square values indicate moderate explanatory power. The R-square of 0.283 for perceived AI decision integrity means that its predictors explain about 28.3% of its variance. Similarly, the predictors of response bias (R-square = 0.211) and sensitivity to error (R-square = 0.215) explain around 21% of the variance in each of these constructs. The adjusted R-square values are slightly lower because they penalize the number of predictors in the model. Overall, the model has moderate explanatory power, with the highest explained variance for perceived AI decision integrity.
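Adjusted R-square penalizes R-square for the number of predictors. The sketch below applies the standard formula. The choice of k = 7 predictors for perceived AI decision integrity (five antecedents plus the two mediators) is an assumption about the model structure, not a value reported in Table 3; n = 828 is the number of completed responses.

```python
def adjusted_r2(r2: float, n: int, k: int) -> float:
    """Adjusted R-square for n observations and k predictors."""
    if n - k - 1 <= 0:
        raise ValueError("requires n > k + 1")
    return 1.0 - (1.0 - r2) * (n - 1) / (n - k - 1)


# n = 828 completed responses; k = 7 predictors is an assumption.
print(round(adjusted_r2(0.283, n=828, k=7), 3))  # 0.277
```

With a large sample relative to the number of predictors, the penalty is small, which is consistent with the “slightly lower” adjusted values described above.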

Table 3.

Model fit and predictive power table.

The model fit indices compare the saturated and estimated models. The SRMR (standardized root mean square residual) is lower for the saturated model (0.03) than for the estimated model (0.08), indicating a better fit for the saturated model. The d_ULS (unweighted least squares discrepancy) and d_G (geodesic discrepancy) are higher for the estimated model (1.91 and 0.341) than for the saturated model (0.267 and 0.282), indicating a poorer fit for the estimated model. The chi-square value is also higher for the estimated model (6353.978 vs. 5610.708), again suggesting a less optimal fit. Lastly, the NFI (normed fit index) is slightly lower for the estimated model (0.903) than for the saturated model (0.914), indicating somewhat reduced goodness of fit. Nevertheless, the differences between the saturated (SRMR = 0.03, NFI = 0.914) and estimated models (SRMR = 0.08, NFI = 0.903) are small, and both indices remain within commonly accepted limits [120]. An SRMR of 0.08 still indicates acceptable fit, and the small drop in NFI reflects only a minor trade-off for model parsimony. Indicator loadings and cross-loadings were also examined to confirm the model’s robustness; they satisfied the model specification without any signs of misfit. Overall, the saturated model fits the data better than the estimated model, but the estimated model’s fit remains acceptable.

The variance inflation factor (VIF) values indicate moderate multicollinearity for several indicator sets, such as AI system reliability and transparency (VIFs between 4.252 and 4.334) and consumer familiarity with AI (VIFs between 4.199 and 4.574), but all remain below the cut-off of 5.0 adopted in this study (under more lenient conventions, only values above 10 are concerning). The decision confidence, purchase intention, and trust in AI system constructs have lower VIFs (about 1.17 to 1.19), suggesting minimal multicollinearity, which is ideal for model stability (refer to Appendix A).
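Both diagnostics discussed above have simple closed forms. The sketch below computes SRMR as the root mean square of the residuals between observed and model-implied correlation matrices over their unique elements, and each indicator’s VIF as 1/(1 - R²) from regressing it on the other indicators in its block. This is a common textbook definition; PLS software may differ in details such as which implied matrix is used, and the matrices in the usage lines are hypothetical.

```python
import numpy as np


def srmr(observed: np.ndarray, implied: np.ndarray) -> float:
    """SRMR between observed and model-implied correlation matrices.

    Averages squared residuals over the unique elements (lower triangle
    including the diagonal) and takes the square root.
    """
    rows, cols = np.tril_indices(observed.shape[0])
    residuals = observed[rows, cols] - implied[rows, cols]
    return float(np.sqrt(np.mean(residuals ** 2)))


def vif(X: np.ndarray) -> np.ndarray:
    """VIF_j = 1 / (1 - R^2_j), regressing column j on the other columns."""
    n, k = X.shape
    out = np.empty(k)
    for j in range(k):
        y = X[:, j]
        others = np.column_stack([np.ones(n), np.delete(X, j, axis=1)])
        beta, *_ = np.linalg.lstsq(others, y, rcond=None)
        resid = y - others @ beta
        r2 = 1.0 - resid.var() / y.var()
        out[j] = 1.0 / (1.0 - r2)
    return out


# Hypothetical two-item example.
obs = np.array([[1.0, 0.5], [0.5, 1.0]])
imp = np.array([[1.0, 0.3], [0.3, 1.0]])
print(round(srmr(obs, imp), 4))  # 0.1155
```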

Comparing the predictive performance of PLS-SEM with the indicator average (IA) and linear model (LM) benchmarks shows that PLS-SEM outperforms IA, with lower loss values for all variables. For instance, PLS-SEM yields a significantly lower loss than IA for decision confidence and purchase intention (p = 0.000). Against LM, the results are mixed: LM performs marginally better for decision confidence and purchase intention, whereas PLS-SEM achieves lower loss values for most variables. Although LM is simpler and easier to use, PLS-SEM captures complex relationships and nuances in the data and therefore provides a more precise understanding of complex dynamics such as AI trust and error sensitivity, as shown in Table 4.
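The comparison above appears to follow the logic of the cross-validated predictive ability test (CVPAT): for each case, the out-of-sample prediction loss of PLS-SEM is compared with that of a benchmark (IA or LM), and the average loss difference is tested against zero. The sketch below is a simplified version under stated assumptions. It takes per-case losses as given rather than computing them by cross-validation, and it uses a normal approximation for the p-value. The loss values in the usage lines are hypothetical.

```python
import math
import statistics


def paired_loss_test(loss_pls: list[float], loss_benchmark: list[float]):
    """CVPAT-style comparison of per-case prediction losses (simplified sketch).

    Returns (mean difference, t-statistic, two-sided p). The difference is
    benchmark loss minus PLS-SEM loss, so a positive value favors PLS-SEM.
    """
    if len(loss_pls) != len(loss_benchmark) or len(loss_pls) < 2:
        raise ValueError("need at least two paired losses")
    diffs = [bench - pls for pls, bench in zip(loss_pls, loss_benchmark)]
    mean_diff = statistics.fmean(diffs)
    std_err = statistics.stdev(diffs) / math.sqrt(len(diffs))
    if std_err == 0:
        raise ValueError("loss differences have zero variance")
    t_stat = mean_diff / std_err
    p_value = math.erfc(abs(t_stat) / math.sqrt(2.0))  # normal approximation
    return mean_diff, t_stat, p_value


# Hypothetical per-case squared prediction errors (not the study's data).
pls_losses = [0.80, 0.90, 0.70, 1.00, 0.85, 0.75]
ia_losses = [1.00, 1.10, 0.95, 1.20, 1.05, 0.90]
diff, t, p = paired_loss_test(pls_losses, ia_losses)
print(f"mean loss difference = {diff:.3f}, t = {t:.2f}, p = {p:.4f}")
```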

Table 4.

Comparison of model performance: PLS-SEM vs. indicator average (IA) and linear model (LM).

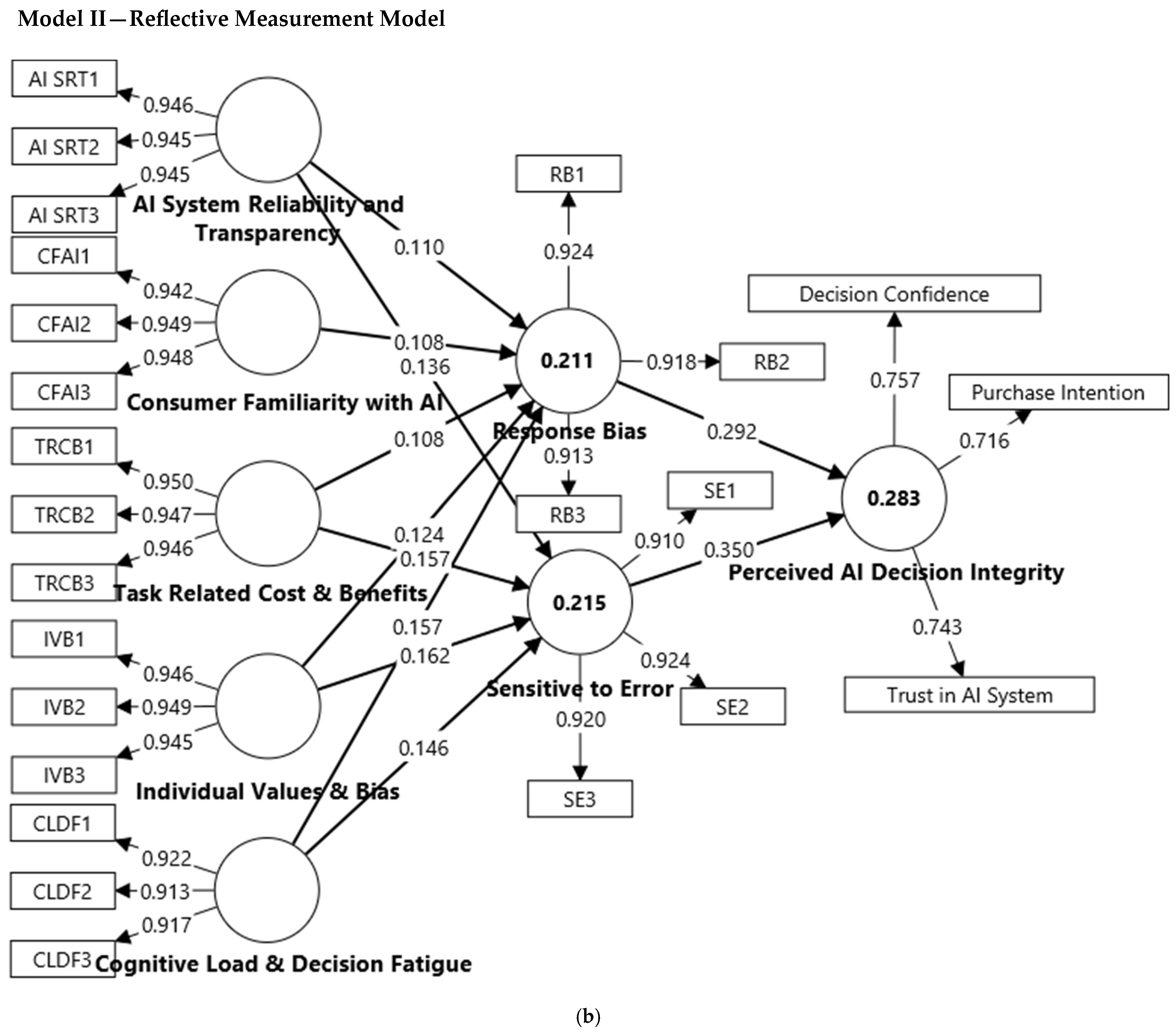

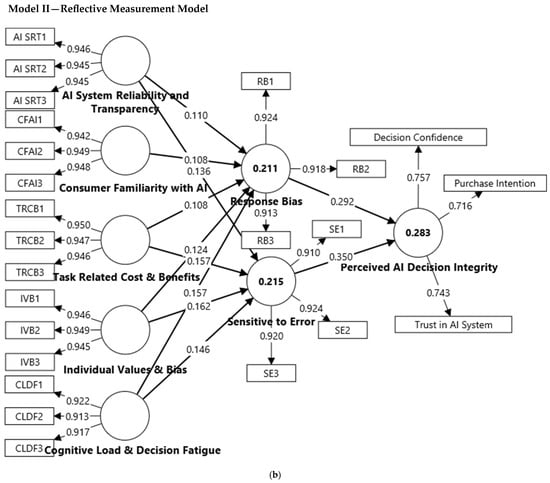

12. Structural Model

In this study, we use partial least squares structural equation modelling (PLS-SEM) to analyze the links between the key factors that affect perceived AI decision integrity. The model assesses the direct, indirect, and mediated effects of factors including AI system reliability and transparency, cognitive load and decision fatigue, task-related cost and benefits, and individual values and bias. It treats response bias and sensitivity to error as mediators and thereby estimates their influence on decision integrity. The hypotheses were tested using bootstrapping to assess statistical significance, as shown in Figure 3a,b.

Figure 3.

(a). A formative structural model. (b). Reflective structural model.

This study finds significant relationships with AI decision integrity, as shown in Table 5. AI system reliability and transparency → perceived AI decision integrity (H1) has a coefficient of 0.08 (t = 6.659, p = 0.000), indicating a significant positive influence. Consumer familiarity with AI → perceived AI decision integrity (H2) has a coefficient of 0.032 (t = 4.049, p = 0.000), indicating that greater familiarity favorably influences perceived integrity. Task-related costs and benefits → perceived AI decision integrity (H3) has a coefficient of 0.086 (t = 7.422, p = 0.000), underlining the association between perceived benefits and decision integrity. Individual values and bias → perceived AI decision integrity (H4) has a coefficient of 0.093 (t = 7.689, p = 0.000), suggesting that personal values and biases help to shape perceptions of integrity. Cognitive load and decision fatigue → perceived AI decision integrity (H5) has a coefficient of 0.097 (t = 8.93, p = 0.000), showing that cognitive load and fatigue influence perceived integrity. Each of these paths is positive and statistically significant.

Table 5.

Hypothesis testing.

The mediation analysis supports the role of response bias and sensitivity to error in shaping perceived AI decision integrity. H6 (consumer familiarity with AI → response bias → perceived AI decision integrity) is significant, with a path coefficient of 0.032 (t = 4.049, p = 0.000), indicating that familiarity with AI indirectly affects decision integrity through response bias. Similarly, H7 (individual values and bias → sensitivity to error → perceived AI decision integrity) is supported (0.057, t = 6.451, p = 0.000), highlighting the influence of personal values on error sensitivity. H9 (task-related cost and benefits → sensitivity to error → perceived AI decision integrity) shows a strong mediation effect (0.055, t = 6.314, p = 0.000), emphasizing that the perceived effort and benefit of AI decisions contribute to error sensitivity. H11 (AI system reliability and transparency → sensitivity to error → perceived AI decision integrity) is also significant (0.048, t = 5.484, p = 0.000), suggesting that system reliability and transparency shape perceived integrity partly through users’ sensitivity to errors. Additionally, H14 (cognitive load and decision fatigue → response bias → perceived AI decision integrity) is supported (0.046, t = 6.837, p = 0.000), indicating that higher cognitive load increases response bias, which in turn shapes perceived AI decision integrity. Overall, the results highlight the importance of managing response bias and error sensitivity to enhance trust in, and the reliability of, AI-driven decision-making.
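A specific indirect effect such as H6 or H14 is the product of the path from the antecedent to the mediator (a) and the path from the mediator to the outcome (b). Its significance is usually judged from a bootstrap confidence interval that excludes zero. The sketch below illustrates this with ordinary least squares on standardized, simulated variables; it is a simplified stand-in for the PLS-SEM estimation, and the data and seeds are hypothetical.

```python
import numpy as np


def _standardize(v: np.ndarray) -> np.ndarray:
    """Center a variable and scale it to unit variance."""
    return (v - v.mean()) / v.std()


def indirect_effect(x: np.ndarray, m: np.ndarray, y: np.ndarray) -> float:
    """Specific indirect effect a*b for X -> M -> Y on standardized data.

    a: OLS slope of M on X; b: OLS slope of Y on M, controlling for X.
    """
    x, m, y = _standardize(x), _standardize(m), _standardize(y)
    ones = np.ones_like(x)
    a = np.linalg.lstsq(np.column_stack([ones, x]), m, rcond=None)[0][1]
    b = np.linalg.lstsq(np.column_stack([ones, m, x]), y, rcond=None)[0][1]
    return float(a * b)


def bootstrap_indirect(x, m, y, n_boot=5_000, seed=0, level=0.95):
    """Point estimate and percentile bootstrap CI for the indirect effect."""
    rng = np.random.default_rng(seed)
    n = len(x)
    draws = np.empty(n_boot)
    for i in range(n_boot):
        idx = rng.integers(0, n, size=n)  # resample cases with replacement
        draws[i] = indirect_effect(x[idx], m[idx], y[idx])
    alpha = 1.0 - level
    lo, hi = np.percentile(draws, [100 * alpha / 2, 100 * (1 - alpha / 2)])
    return indirect_effect(x, m, y), float(lo), float(hi)


# Simulated X -> M -> Y chain for illustration only (not the study's data).
rng = np.random.default_rng(11)
x = rng.normal(size=828)
m = 0.4 * x + rng.normal(size=828)
y = 0.4 * m + rng.normal(size=828)
est, lo, hi = bootstrap_indirect(x, m, y, n_boot=1_000)
print(f"indirect = {est:.3f}, 95% CI [{lo:.3f}, {hi:.3f}], mediated: {lo > 0 or hi < 0}")
```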

Total indirect effects, which combine all mediated paths, show a stronger overall impact on perceived AI decision integrity than any single specific mediation effect. The specific mediation effects, however, reveal the mechanisms through which AI decision integrity is influenced, with sensitivity to error and response bias as the key mediators. According to Table 5, all 14 hypotheses, including the mediation effects (H6–H14), are supported with strong significance (p < 0.001); ordered by path coefficient from smallest to largest, the indirect effects are H10 = 0.031, H6 and H12 = 0.032, H8 = 0.036, H14 = 0.046, H11 = 0.048, H13 = 0.051, H9 = 0.055, and H7 = 0.057.

The f-square values indicate the strength of individual relationships in the model. AI system reliability and transparency has a small effect on both response bias (0.008) and sensitivity to error (0.014). Cognitive load and decision fatigue also show small effects on response bias (0.025) and sensitivity to error (0.022). Consumer familiarity with AI has a minimal impact on response bias (0.008), while individual values and bias show slightly stronger effects on response bias (0.011) and sensitivity to error (0.020). Task-related costs and benefits have small effects on both response bias (0.008) and sensitivity to error (0.018). The largest effects are those of response bias (0.103) and sensitivity to error (0.147) on perceived AI decision integrity, the latter approaching Cohen’s 0.15 threshold for a medium effect. These results suggest that, while most factors have minor impacts, the influence of sensitivity to error and response bias on perceived AI decision integrity is notable, as shown in Table 6.
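Cohen’s f-square measures how much R-square drops when one predictor is omitted, relative to the unexplained variance. The sketch below applies the formula and Cohen’s conventional cut-offs (0.02 small, 0.15 medium, 0.35 large) to several values reported above; the R-square pair in the last line is hypothetical and only demonstrates the formula.

```python
def f_squared(r2_included: float, r2_excluded: float) -> float:
    """Cohen's f-square for omitting one predictor of an endogenous construct."""
    return (r2_included - r2_excluded) / (1.0 - r2_included)


def cohen_label(f2: float) -> str:
    """Cohen's conventional cut-offs: 0.02 small, 0.15 medium, 0.35 large."""
    if f2 >= 0.35:
        return "large"
    if f2 >= 0.15:
        return "medium"
    if f2 >= 0.02:
        return "small"
    return "very small"


# f-square values reported in the text (Table 6).
reported = {
    "Sensitivity to error -> Perceived AI decision integrity": 0.147,
    "Response bias -> Perceived AI decision integrity": 0.103,
    "Cognitive load and decision fatigue -> Response bias": 0.025,
    "Consumer familiarity with AI -> Response bias": 0.008,
}
for path, value in reported.items():
    print(f"{path}: {value} ({cohen_label(value)})")

# Hypothetical R-square values, to show the formula itself.
print(round(f_squared(0.283, 0.200), 3))  # 0.116
```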

Table 6.

F-square effect sizes for key relationships.

Although most of the f-square values fall in the small range of 0.008–0.025, they still help to illustrate how people reason in complex situations such as AI-mediated decision-making. In behavioral and psychological studies, even small effects can reveal how subtle but cumulative changes shape people’s thinking and motivation [122]. Thus, even if the effect sizes are small when considered individually, the results show the importance of weighing both statistical and practical relevance in complex behavioral models. Moreover, the moderate R-square values (between 0.211 and 0.283) show that the model explains a meaningful share of the variance in its endogenous constructs, including perceived AI decision integrity, although adding more predictors might improve its explanatory power. Future research could consider adding constructs such as knowledge of algorithmic fairness, digital literacy, emotional reactions to AI, and perceived surveillance risk to the model.

13. Discussion

In this analysis, we examine the relationships between various factors and decision confidence, purchase intention, response bias, sensitivity to error, and trust in AI systems in retail stores, based on original sample values (O), standard deviations (STDEVs), and t-statistics. The relationships between AI system reliability and transparency and each of these outcomes are significant (all p < 0.001). These significant positive relationships indicate that users who trust an AI system to make reliable and transparent decisions tend to be more confident [123,124]. For example, AI system reliability and transparency has positive effects on sensitivity to error (O = 0.136), response bias (O = 0.11), and trust in the AI system (O = 0.06), with high t-values (above 5), signaling that these effects are both significant and reliable [125]. Hence, users are more likely to notice errors (sensitivity to error), may exhibit biases (response bias), and develop greater trust in the system.

Similarly, cognitive load and decision fatigue show strong relationships with all the dependent variables, with particularly high impacts on response bias (O = 0.157) and sensitivity to error (O = 0.146), as well as decision confidence (O = 0.075). These relationships have high t-statistics, which suggests they are robust and influential in shaping attitudes toward AI systems [126,127]. When users face cognitive overload or decision fatigue, they experience a reduced capacity for critical thinking and decision-making. This reduces their ability to detect errors (sensitivity to error) and can make them more likely to rely on mental shortcuts, biases, or external sources of authority like AI (response bias) [128,129]. Their confidence in decisions is impacted because the mental strain makes it harder to feel secure in their decisions. As a result, they may place more reliance on the AI system of a retail store for reassurance, even if they are unsure of its accuracy [130].

Additionally, individual values and biases appear to have notable effects, especially on response bias (O = 0.124) and sensitivity to error (O = 0.162), which shows that personal biases significantly affect how individuals perceive AI decisions. Personal values influence how people interpret the world around them, including their interactions with AI systems. If someone values transparency, fairness, or trust, they may scrutinize AI decisions more closely to ensure those values are upheld [131]. If they detect what they perceive to be an error or bias, they are more likely to become sensitive to it and react negatively [132].

Bias can lead shoppers to either over-accept or over-reject AI decisions based on their pre-existing views about technology. For instance, someone biased against technology may be more critical of AI decisions, even when they are accurate, which can lead to response bias and increased sensitivity to errors [125,127]. Conversely, someone biased in favor of technology might overlook potential flaws in AI outputs and show reduced sensitivity to errors [130].

An importance-performance map analysis (IPMA; Appendix C) identifies the drivers of decision confidence. Response bias (performance = 58.987; O = 0.234) and sensitivity to error (performance = 58.581; O = 0.262) combine the highest importance with relatively low performance, indicating that these factors strongly affect consumers’ confidence when using AI systems [128]. AI system reliability and transparency (performance = 66.952; O = 0.062) and cognitive load (performance = 58.791; O = 0.075) have much lower importance values; nevertheless, improving these areas could still raise user confidence, echoing [131], who found transparency to be essential for user trust. Personal values and bias (performance = 66.864; O = 0.072) also affect decision confidence. According to [125], addressing response bias, sensitivity to error, transparency, and cognitive burden might boost decision confidence regarding AI systems.
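In an IPMA, performance is a latent score rescaled to a 0-100 range, and importance is the total effect on the target construct. The sketch below shows that rescaling and one common way of reading the map: flagging drivers whose importance is above average while their performance is below average. The mean-split rule and the 1-7 scale in the usage line are assumptions for illustration, not necessarily how Appendix C was produced; the importance and performance values are those reported for decision confidence.

```python
def rescale_to_100(score: float, scale_min: float, scale_max: float) -> float:
    """IPMA-style performance: map a score on [scale_min, scale_max] to 0-100."""
    return 100.0 * (score - scale_min) / (scale_max - scale_min)


def priority_drivers(drivers: dict[str, tuple[float, float]]) -> list[str]:
    """Drivers with above-average importance but below-average performance.

    `drivers` maps a construct name to (importance, performance).
    """
    n = len(drivers)
    mean_imp = sum(imp for imp, _ in drivers.values()) / n
    mean_perf = sum(perf for _, perf in drivers.values()) / n
    flagged = [
        name
        for name, (imp, perf) in drivers.items()
        if imp > mean_imp and perf < mean_perf
    ]
    # Most important driver first.
    return sorted(flagged, key=lambda name: drivers[name][0], reverse=True)


# Importance (O) and performance for decision confidence, as reported above.
decision_confidence = {
    "Response bias": (0.234, 58.987),
    "Sensitivity to error": (0.262, 58.581),
    "AI system reliability and transparency": (0.062, 66.952),
    "Cognitive load and decision fatigue": (0.075, 58.791),
    "Individual values and bias": (0.072, 66.864),
}

print(rescale_to_100(4.0, 1.0, 7.0))  # 50.0 on a hypothetical 1-7 scale
print(priority_drivers(decision_confidence))  # ['Sensitivity to error', 'Response bias']
```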

For purchase intention, sensitivity to error (O = 0.249, performance = 58.581) and response bias (O = 0.197, performance = 58.987) have the greatest effects, suggesting that individuals are less likely to buy when AI’s conclusions are wrong [128]; the accuracy of AI judgments thus substantially affects consumer trust. Cognitive load and decision fatigue (O = 0.067, performance = 58.791) are also important, supporting [126] in that complex or overwhelming AI interactions reduce purchase intention. AI system reliability and transparency (O = 0.056, performance = 66.952) and task-related costs and benefits (O = 0.06, performance = 66.753) have small effects, demonstrating that consumers favor transparent AI systems but are also influenced by other psychological variables [123,130].

Lastly, consumer familiarity with AI (O = 0.021, performance = 66.059) has the least impact, which indicates that mere exposure to AI does not strongly drive purchase behavior, a notion supported by [130]. Overall, reducing AI errors, addressing bias, and simplifying decision processes could significantly enhance consumers’ purchase intention [125].

For trust in AI systems, sensitivity to error (O = 0.264, performance = 58.581) and response bias (O = 0.215, performance = 58.987) have the highest impact, indicating that users’ trust declines significantly when AI systems are prone to errors or bias [128]. Cognitive load and decision fatigue (O = 0.072, performance = 58.791) also affect trust, suggesting that mentally exhausting AI interactions reduce confidence in AI recommendations, in line with the conclusions of [126].

Meanwhile, AI system reliability and transparency (O = 0.06, performance = 66.952) and individual values and bias (O = 0.07, performance = 66.864) have moderate effects, which reinforces the idea that personal beliefs and system transparency shape trust [130]. The values observed for task-related cost and benefits (O = 0.065, performance = 66.753) further indicate that, when AI systems demonstrate clear advantages, trust improves [125]. However, consumer familiarity with AI (O = 0.023, performance = 66.059) has the least influence, which implies that merely knowing about AI does not automatically lead to trust, which aligns with [132]. These insights highlight the importance of minimizing AI bias and errors while ensuring a seamless user experience to enhance trust (refer to Appendix C).

The findings add to the ongoing discussion in AI ethics and trust by showing that people judge AI not only on its usefulness but also on how it affects their cognitive health and moral values. This study extends existing knowledge by drawing on SDT and CLT to combine motivational and cognitive factors that standard models generally omit. The results show that trust in AI depends on more than the transparency and reliability of the system; it also depends on whether the AI supports autonomy, avoids cognitive overload, and aligns with human values. In doing so, this study offers a dual-pathway understanding of AI trust: one pathway accounts for psychological fulfilment and the other for cognitive feasibility. This framework can help developers build ethical AI by prompting them to consider both how decisions are made and how consumers experience them.

However, this study was conducted within a single country, which gave it depth and consistency but means that the results may not generalize to other cultures. This approach was intentional, to control for sociocultural variability that could otherwise confound the results. Since confidence in AI systems depends on culture, a culturally homogeneous sample makes it easier to study the underlying psychological and cognitive processes. Studies have demonstrated that cultural factors, including individualism vs. collectivism, power distance, and uncertainty avoidance, significantly affect how people experience AI systems [132,133]. For example, people from cultures high in uncertainty avoidance may be less likely to trust AI recommendations, whereas people from cultures with high power distance may be more likely to trust algorithmic authority. This also opens avenues for future cross-cultural research to confirm these results in more diverse populations and to examine how cultural values affect cognitive load and motivation in shaping people’s views on the integrity of AI decisions.

14. Limitations and Future Direction of Research

While this study makes several useful contributions, it has limitations. First, the sample includes respondents from only one nation, which may limit the applicability of the results to other cultures or regulatory environments. Cultural variables, including power distance, uncertainty avoidance, and individualism, may affect how much people trust AI systems, and further study is needed to determine how these factors shape trust across cultures. Second, this study relies on self-reported data, which can be affected by social desirability or recall bias even when safeguards against common method bias are in place. Third, although the model includes both cognitive and motivational elements, it does not account for other important factors such as emotional reactions to AI, perceived surveillance risk, or awareness of algorithmic fairness. Lastly, the cross-sectional design makes it difficult to draw causal conclusions; longitudinal research would help clarify how trust and perceived decision integrity change over time with continued exposure to AI. These constraints point to several areas for future study that could improve our understanding of how to adopt ethical and trustworthy AI.

To improve the generalizability of the results, future studies should use cross-industry or cross-cultural designs with multi-group structural equation modelling (SEM) or moderation analysis. For cross-cultural research, researchers may consider stratified sampling across nations that differ on Hofstede’s cultural dimensions (such as uncertainty avoidance, power distance, and individualism). Well-established instruments such as the cultural intelligence scale [134] or Schwartz’s cultural values framework can strengthen construct validity. For cross-industry research, researchers may select sectors with varying levels of AI maturity (such as healthcare, retail, and fintech) to examine how cognitive load and the motivational drivers of trust vary across settings. Researchers should use field-specific survey instruments while keeping the core constructs constant, which allows meaningful comparisons. These methodological refinements can make the present model more reliable and useful across a wider range of situations.

Future studies should also investigate the impact of AI transparency on trust in sectors such as tourism services, healthcare, banking, and retail, including its relationship with consumer involvement and service quality, and comparisons between black-box and explainable AI. Cultural and demographic disparities in AI adoption may shed further light on trust and response bias. The gap between consumer intention and behavior across cultural contexts could also guide future research on the nexus between AI usage and green consumption [135]. Field trials in real-world settings are the best way to track how AI decision-making performs over time. Research on the effects of AI mistakes on trust in decisions is essential, particularly in domains where human lives are at stake. Theories such as the technology acceptance model (TAM) and prospect theory can deepen our understanding of AI bias and decision integrity, and a focus on ethical AI frameworks can reduce the likelihood of AI-driven mistakes and bias.

15. Implications of the Study

This study’s results suggest several specific steps that AI system designers and retailers can take to improve consumer trust and encourage ethical AI usage. First, enhancing explainability is essential: designers should incorporate user-centric transparency features, such as visual cues, plain-language explanations, or step-by-step logic trails, that clarify how AI decisions are made, particularly in sensitive domains like healthcare or finance. Such features give consumers a sense of control and fairness. Second, simplifying user interfaces can reduce cognitive overload; for example, limiting the number of recommendations per screen and organizing information hierarchically can help people think more clearly and decide more quickly. Third, it is important to help people become familiar with AI systems through onboarding tutorials, interactive walkthroughs, or simulated decision settings that enable users, especially less experienced ones, to feel more comfortable and confident. Fourth, it is increasingly critical to ensure that AI suggestions align with users’ values; personalization algorithms that include ethical filters, such as social responsibility or sustainability preferences, help users feel that their beliefs are respected. Finally, designers and retailers need to monitor automation bias and users’ sensitivity to AI mistakes by building in frequent audits, giving users clear ways to report mistakes, and letting them override AI-made judgments when needed. Together, these measures encourage both consumer adoption and the responsible use of AI.

Further, this study provides three significant contributions to the literature on AI trust. First, it brings together SDT and CLT into a dual-theoretical framework. This goes beyond typical acceptance models by examining both the motivational and cognitive aspects of confidence in AI. Second, it makes the concept of “Perceived AI Decision Integrity” more useful by creating a multidimensional construct that connects system transparency, user autonomy, mental effort, and ethical alignment—areas that are generally looked at separately. Third, it turns these theoretical ideas into practical recommendations for AI developers and retailers, encouraging system design that is based on trust. By doing this, this study helps us comprehend ethical AI use in consumer-facing settings in a more complete and psychologically sound way.

16. Conclusions

The present study highlights the intricate relationships among AI system transparency, familiarity with the system, cognitive load, individual bias, and perceived decision integrity. System accuracy alone does not determine trust in AI; psychological and environmental elements, including cognitive bias, decision fatigue, and human values, also play a role.

Explainable AI (XAI) solutions are needed to increase user confidence, since AI system transparency affects trust. Clear, sensible reasoning from AI systems makes outcomes seem fair and consistent to users. Response bias and heightened sensitivity to mistakes may hinder AI adoption, emphasizing the need to address these challenges in AI system design. Consumer awareness of AI positively correlates with trust and decision confidence, which suggests that increased exposure and education might minimize uncertainty. Cognitive load and decision fatigue hinder AI adoption. Overloading customers with data or tough decision-making might generate mental fatigue, reducing their ability to assess AI advice. This research paper highlights how personal biases and preconceptions affect AI perceptions. Technologically inclined consumers may trust AI recommendations more, whereas ethically inclined ones may be more skeptical. To address these differences, AI systems should integrate adaptive learning algorithms that tailor decision-making to user preferences and cognitive tendencies.

Promoting confidence in AI-driven systems requires resolving transparency issues, minimizing cognitive load, and minimizing bias. Companies and policymakers must set moral rules to ensure AI choices meet customer expectations and societal norms. Adaptive AI solutions that address ethical considerations and user-specific decision-making patterns should be studied in the future to enable responsible and trustworthy AI deployment.

Author Contributions

Conceptualization, Y.M.S.; Methodology, Y.M.S. and S.M.F.A.K.; Software, S.M.F.A.K.; Validation, Y.M.S.; Formal analysis, S.M.F.A.K.; Data curation, Y.M.S.; Writing—original draft, S.M.F.A.K.; Writing—review & editing, Y.M.S. and S.M.F.A.K.; Supervision, Y.M.S.; Project administration, Y.M.S.; Funding acquisition, Y.M.S. All authors have read and agreed to the published version of the manuscript.

Funding

This research received no external funding.

Institutional Review Board Statement

Not applicable.

Informed Consent Statement

Not applicable.

Data Availability Statement

The original contributions presented in this study are included in the article. Further inquiries can be directed to the corresponding author.

Acknowledgments

The authors gratefully acknowledge the funding of the Deanship of Graduate Studies and Scientific Research, Jazan University, Saudi Arabia, through Project number: JU-202503293-DGSSR-RP-2025.

Conflicts of Interest

The authors declare that there are no conflicts of interest.

Appendix A. VIF

| Item | VIF |
|---|---|
| AI SRT1 | 4.330 |
| AI SRT2 | 4.252 |
| AI SRT3 | 4.334 |
| CFAI1 | 4.199 |
| CFAI2 | 4.574 |
| CFAI3 | 4.434 |
| CLDF1 | 3.038 |
| CLDF2 | 2.889 |
| CLDF3 | 2.898 |
| Decision Confidence | 1.192 |
| IVB1 | 4.352 |
| IVB2 | 4.551 |
| IVB3 | 4.331 |
| Purchase Intention | 1.174 |
| RB1 | 3.103 |
| RB2 | 3.020 |
| RB3 | 2.827 |
| SE1 | 2.742 |
| SE2 | 3.111 |
| SE3 | 3.082 |
| TRCB1 | 4.682 |
| TRCB2 | 4.456 |
| TRCB3 | 4.367 |
| Trust in AI System | 1.185 |
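As a reading aid for Appendix A, the sketch below shows how a variance inflation factor is conventionally computed: VIF_j = 1/(1 − R_j²), where R_j² comes from regressing item j on all other items. It is a minimal illustration assuming plain NumPy and ordinary least squares, not the software the study used; the function names `variance_inflation_factors` and `flag_collinearity` are hypothetical, and the cutoff of 5 is a commonly cited rule of thumb rather than a value stated in the study.

```python
import numpy as np


def variance_inflation_factors(X) -> np.ndarray:
    """Return VIF_j = 1 / (1 - R_j^2) for every column j of X.

    R_j^2 is the coefficient of determination from an ordinary least-squares
    regression (with intercept) of column j on all remaining columns.
    """
    X = np.asarray(X, dtype=float)
    n, k = X.shape
    vifs = np.empty(k)
    for j in range(k):
        y = X[:, j]
        # Design matrix: an intercept column plus the other k - 1 items.
        A = np.column_stack([np.ones(n), np.delete(X, j, axis=1)])
        coef, *_ = np.linalg.lstsq(A, y, rcond=None)
        residuals = y - A @ coef
        r2 = 1.0 - (residuals @ residuals) / np.sum((y - y.mean()) ** 2)
        vifs[j] = 1.0 / (1.0 - r2)
    return vifs


def flag_collinearity(vifs: dict, threshold: float = 5.0) -> list:
    """Names of items whose VIF reaches or exceeds the (assumed) threshold."""
    return [name for name, v in vifs.items() if v >= threshold]


# A few values copied from Appendix A; the largest reported VIF is TRCB1 = 4.682.
reported = {"AI SRT1": 4.330, "CFAI2": 4.574, "IVB2": 4.551, "TRCB1": 4.682}
print(flag_collinearity(reported))  # -> []
```

Against the cutoff of 5, none of the reported values is flagged; stricter conventions (for example 3.3) would flag several items, so the threshold choice matters when interpreting the table.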

Appendix B. Indirect Effect: Formative

| Paths | Original Sample (O) | Sample Mean (M) | Standard Deviation (STDEV) | t-Statistics (\|O/STDEV\|) | p-Values |
|---|---|---|---|---|---|
| AI System Reliability and Transparency -> Decision Confidence | 0.062 | 0.062 | 0.009 | 6.538 | 0.000 |
| AI System Reliability and Transparency -> Purchase Intention | 0.056 | 0.056 | 0.009 | 6.494 | 0.000 |
| AI System Reliability and Transparency -> Trust in AI System | 0.060 | 0.060 | 0.009 | 6.474 | 0.000 |
| Cognitive Load and Decision Fatigue -> Decision Confidence | 0.075 | 0.075 | 0.009 | 8.518 | 0.000 |
| Cognitive Load and Decision Fatigue -> Purchase Intention | 0.067 | 0.068 | 0.008 | 8.386 | 0.000 |
| Cognitive Load and Decision Fatigue -> Trust in AI System | 0.072 | 0.073 | 0.008 | 8.526 | 0.000 |
| Consumer Familiarity with AI -> Decision Confidence | 0.025 | 0.025 | 0.006 | 3.928 | 0.000 |
| Consumer Familiarity with AI -> Purchase Intention | 0.021 | 0.021 | 0.006 | 3.747 | 0.000 |
| Consumer Familiarity with AI -> Trust in AI System | 0.023 | 0.023 | 0.006 | 3.964 | 0.000 |
| Individual Values and Bias -> Decision Confidence | 0.072 | 0.072 | 0.010 | 7.470 | 0.000 |
| Individual Values and Bias -> Purchase Intention | 0.065 | 0.065 | 0.009 | 7.445 | 0.000 |
| Individual Values and Bias -> Trust in AI System | 0.070 | 0.070 | 0.009 | 7.359 | 0.000 |
| Task Related Cost and Benefits -> Decision Confidence | 0.066 | 0.066 | 0.009 | 7.133 | 0.000 |
| Task Related Cost and Benefits -> Purchase Intention | 0.060 | 0.060 | 0.008 | 7.171 | 0.000 |
| Task Related Cost and Benefits -> Trust in AI System | 0.065 | 0.065 | 0.009 | 7.202 | 0.000 |
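Each row of Appendix B follows the usual bootstrap layout: the original estimate O, the mean M and standard deviation STDEV of the bootstrap estimates, t = |O/STDEV|, and a p-value. The sketch below reproduces that layout for a single mediator, assuming ordinary least-squares paths (indirect effect = a·b) in place of the study's PLS-SEM estimation and a standard-normal approximation for the two-sided p-value; the function names are hypothetical. Note that the t column is computed from unrounded values, so dividing the rounded table entries (e.g., 0.062/0.009 ≈ 6.89) does not exactly reproduce the reported 6.538.

```python
import math

import numpy as np


def ols_slopes(y, *predictors):
    """OLS coefficients (intercept dropped) of y on the given predictors."""
    A = np.column_stack([np.ones(len(y)), *predictors])
    coef, *_ = np.linalg.lstsq(A, y, rcond=None)
    return coef[1:]


def indirect_effect(x, m, y):
    """Indirect effect a*b for the simple mediation x -> m -> y."""
    a = ols_slopes(m, x)[0]     # path x -> m
    b = ols_slopes(y, m, x)[0]  # path m -> y, controlling for x
    return a * b


def bootstrap_indirect(x, m, y, n_boot=2000, seed=0):
    """Return (O, M, STDEV, t, p) in the column order of Appendix B."""
    rng = np.random.default_rng(seed)
    n = len(x)
    original = indirect_effect(x, m, y)
    draws = np.empty(n_boot)
    for i in range(n_boot):
        idx = rng.integers(0, n, size=n)  # resample cases with replacement
        draws[i] = indirect_effect(x[idx], m[idx], y[idx])
    stdev = draws.std(ddof=1)
    t = abs(original / stdev)
    # Two-sided p-value under a standard-normal approximation (an assumption;
    # PLS software may use a t distribution instead).
    p = math.erfc(t / math.sqrt(2))
    return original, draws.mean(), stdev, t, p


# Illustrative synthetic data (not the study's data): true a = 0.5, b = 0.4.
rng = np.random.default_rng(42)
x = rng.normal(size=400)
m = 0.5 * x + rng.normal(size=400)
y = 0.4 * m + rng.normal(size=400)
print(bootstrap_indirect(x, m, y, n_boot=500, seed=1))
```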

Appendix C. IPMA Analysis

| Constructs | Performance | Importance: Decision Confidence | Importance: Purchase Intention | Importance: Trust in AI System |
|---|---|---|---|---|
| AI System Reliability and Transparency | 66.952 | 0.062 | 0.056 | 0.060 |
| Cognitive Load and Decision Fatigue | 58.791 | 0.075 | 0.067 | 0.072 |
| Consumer Familiarity with AI | 66.059 | 0.025 | 0.021 | 0.023 |
| Individual Values and Bias | 66.864 | 0.072 | 0.065 | 0.070 |
| Response Bias | 58.987 | 0.234 | 0.197 | 0.215 |
| Sensitive to Error | 58.581 | 0.262 | 0.249 | 0.264 |
| Task Related Cost and Benefits | 66.753 | 0.066 | 0.060 | 0.065 |
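In an importance-performance map, performance is commonly obtained by rescaling scores to a 0 to 100 range, (x − min)/(max − min) × 100, where min and max are the endpoints of the rating scale, and importance is the total effect on the target construct. The sketch below illustrates both steps using the Trust in AI System column of Appendix C. The rating-scale endpoints passed to `rescale_to_0_100` are assumptions (they are not restated here), and the quadrant labels follow the classic importance-performance grid, split at the mean importance and mean performance; this is an illustrative reading of the table, not the study's own analysis.

```python
from statistics import mean


def rescale_to_0_100(score: float, scale_min: float, scale_max: float) -> float:
    """Map a score on a [scale_min, scale_max] rating scale onto 0..100."""
    return (score - scale_min) / (scale_max - scale_min) * 100.0


# (importance, performance) for the target construct Trust in AI System,
# copied from Appendix C.
TRUST_IPMA = {
    "AI System Reliability and Transparency": (0.060, 66.952),
    "Cognitive Load and Decision Fatigue": (0.072, 58.791),
    "Consumer Familiarity with AI": (0.023, 66.059),
    "Individual Values and Bias": (0.070, 66.864),
    "Response Bias": (0.215, 58.987),
    "Sensitive to Error": (0.264, 58.581),
    "Task Related Cost and Benefits": (0.065, 66.753),
}


def ipma_quadrants(data: dict) -> dict:
    """Label each construct relative to mean importance and mean performance."""
    mean_importance = mean(imp for imp, _ in data.values())
    mean_performance = mean(perf for _, perf in data.values())
    labels = {}
    for name, (importance, performance) in data.items():
        high_imp = importance >= mean_importance
        high_perf = performance >= mean_performance
        if high_imp and not high_perf:
            labels[name] = "concentrate here"
        elif high_imp:
            labels[name] = "keep up the good work"
        elif not high_perf:
            labels[name] = "low priority"
        else:
            labels[name] = "possible overkill"
    return labels


for construct, label in ipma_quadrants(TRUST_IPMA).items():
    print(f"{construct}: {label}")
```

With the reported values (mean importance ≈ 0.110, mean performance ≈ 63.28), Response Bias and Sensitive to Error are the only constructs with above-average importance and below-average performance for Trust in AI System.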

References

- Schmitt, M. Automated machine learning: AI-driven decision making in business analytics. Intell. Syst. Appl. 2023, 18, 200188.

- Chattaraman, V.; Kwon, W.S.; Ross, K.; Sung, J.; Alikhademi, K.; Richardson, B.; Gilbert, J.E. ‘Smart’ Choice? Evaluating AI-Based mobile decision bots for in-store decision-making. J. Bus. Res. 2024, 183, 114801.

- Erman, E.; Furendal, M. The Global Governance of Artificial Intelligence: Some Normative Concerns. Moral Philos. Politics 2022, 9, 267–291.

- Mele, C.; Spena, T.R.; Kaartemo, V.; Marzullo, M.L. Smart nudging: How cognitive technologies enable choice architectures for value co-creation. J. Bus. Res. 2021, 129, 949–960.

- Candrian, C.; Scherer, A. Rise of the machines: Delegating decisions to autonomous AI. Comput. Hum. Behav. 2022, 134, 107308.

- Islam, Q.; Ali Khan, S.M.F. Sustainability-Infused Learning Environments: Investigating the Role of Digital Technology and Motivation for Sustainability in Achieving Quality Education. Int. J. Learn. Teach. Educ. Res. 2024, 23, 519–548.

- Shin, D.; Al-Imamy, S.; Hwang, Y. Cross-cultural differences in information processing of chatbot journalism: Chatbot news service as a cultural artifact. Cross Cult. Strateg. Manag. 2022, 29, 618–638.

- Sung, B.; Phau, I.; Duong, V.C. Opening the ‘black box’ of luxury consumers: An application of psychophysiological method. J. Mark. Commun. 2021, 27, 250–268.

- Nasseef, O.A.; Baabdullah, A.M.; Alalwan, A.A.; Lal, B.; Dwivedi, Y.K. Artificial intelligence-based public healthcare systems: G2G knowledge-based exchange to enhance the decision-making process. Gov. Inf. Q. 2022, 39, 101618.

- Hudon, A.; Demazure, T.; Karran, A.; Léger, P.M.; Sénécal, S. Explainable Artificial Intelligence (XAI): How the Visualization of AI Predictions Affects User Cognitive Load and Confidence. In Information Systems and Neuroscience; Lecture Notes in Information Systems and Organisation; Springer: Cham, Switzerland, 2021.

- Ryan, R.M.; Deci, E.L. Self-determination theory and the facilitation of intrinsic motivation, social development, and well-being. Am. Psychol. 2000, 55, 68–78.

- Orru, G.; Longo, L. The Evolution of Cognitive Load Theory and the Measurement of Its Intrinsic, Extraneous and Germane Loads: A Review. In Human Mental Workload: Models and Applications; Springer: Cham, Switzerland, 2019; pp. 23–48.

- Davis, F.D. Perceived Usefulness, Perceived Ease of Use, and User Acceptance of Information Technology. MIS Q. 1989, 13, 319–340.

- Deci, E.L.; Ryan, R.M. Self-determination theory: A macrotheory of human motivation, development, and health. Can. Psychol. 2008, 49, 182.

- Kahneman, D.; Tversky, A. Prospect Theory: An Analysis of Decision under Risk. Econometrica 1979, 47, 263–291.

- Sweller, J. Cognitive load during problem solving: Effects on learning. Cogn. Sci. 1988, 12, 257–285.

- de Jong, T. Cognitive load theory, educational research, and instructional design: Some food for thought. Instr. Sci. 2010, 38, 105–134.

- Laux, J.; Wachter, S.; Mittelstadt, B. Trustworthy artificial intelligence and the European Union AI act: On the conflation of trustworthiness and acceptability of risk. Regul. Gov. 2024, 18, 3–32.

- Abadie, A.; Roux, M.; Chowdhury, S.; Dey, P. Interlinking organisational resources, AI adoption and omnichannel integration quality in Ghana’s healthcare supply chain. J. Bus. Res. 2023, 162, 113866.

- Robertson, J.; Botha, E.; Oosthuizen, K.; Montecchi, M. Managing change when integrating artificial intelligence (AI) into the retail value chain: The AI implementation compass. J. Bus. Res. 2025, 189, 115198.

- Wani, N.A.; Kumar, R.; Bedi, J.; Rida, I. Explainable AI-driven IoMT fusion: Unravelling techniques, opportunities, and challenges with Explainable AI in healthcare. Inf. Fusion 2024, 110, 102472.

- Ding, L.; Antonucci, G.; Venditti, M. Unveiling user responses to AI-powered personalised recommendations: A qualitative study of consumer engagement dynamics on Douyin. Qual. Mark. Res. 2025, 28, 234–255.

- Angerschmid, A.; Zhou, J.; Theuermann, K.; Chen, F.; Holzinger, A. Fairness and Explanation in AI-Informed Decision Making. Mach. Learn. Knowl. Extr. 2022, 4, 556–579.

- Li, Y.; Wu, B.; Huang, Y.; Liu, J.; Wu, J.; Luan, S. Warmth, Competence, and the Determinants of Trust in Artificial Intelligence: A Cross-Sectional Survey from China. Int. J. Hum. Comput. Interact. 2024, 41, 5024–5038.

- Adadi, A.; Berrada, M. Peeking Inside the Black-Box: A Survey on Explainable Artificial Intelligence (XAI). IEEE Access 2018, 6, 52138–52160.

- Saeed, W.; Omlin, C. Explainable AI (XAI): A systematic meta-survey of current challenges and future opportunities. Knowl. Based Syst. 2023, 263, 110273.

- Hoofnagle, C.J.; van der Sloot, B.; Borgesius, F.Z. The European Union general data protection regulation: What it is and what it means. Inf. Commun. Technol. Law 2019, 28, 65–98.

- Arulprakash, E.; Martin, A. An object-oriented neural representation and its implication towards explainable AI. Int. J. Inf. Technol. 2024, 16, 1303–1318.

- Shehawy, Y.M.; Faisal Ali Khan, S.M.; Ali M Khalufi, N.; Abdullah, R.S. Customer adoption of robot: Synergizing customer acceptance of robot-assisted retail technologies. J. Retail. Consum. Serv. 2025, 82, 104062.

- Li, B.; Chang, Y.; Liu, L.; Liu, H.; Sun, J. How does AI agent (vs. IVR system) service failure impact customer purchase behavior: Mediating effect of customer involvement. Serv. Ind. J. 2024, 45, 702–720.

- Chen, Y.; Wang, H.; Hill, S.R.; Li, B. Consumer attitudes toward AI-generated ads: Appeal types, self-efficacy and AI’s social role. J. Bus. Res. 2024, 185, 114867.

- Gonçalves, A.R.; Pinto, D.C.; Rita, P.; Pires, T. Artificial Intelligence and Its Ethical Implications for Marketing. Emerg. Sci. J. 2023, 7, 313–327.

- Chi, O.H.; Gursoy, D.; Chi, C.G. Tourists’ Attitudes toward the Use of Artificially Intelligent (AI) Devices in Tourism Service Delivery: Moderating Role of Service Value Seeking. J. Travel Res. 2022, 61, 170–185.

- Abidi, S.S.A.; Khan, S.M.F.A. Payment Mode Influencing Consumer Behavior: Cashless Payment Versus Conventional Payment System in India. Manag. Dyn. 2022, 19, 45–56.

- Zhao, T.; Ran, Y.; Wu, B.; Wang, V.L.; Zhou, L.; Wang, C.L. Virtual versus human: Unraveling consumer reactions to service failures through influencer types. J. Bus. Res. 2024, 178, 114657.

- Abdullah, R.S.; Masmali, F.H.; Alhazemi, A.; Onn, C.W.; Ali Khan, S.M.F. Enhancing institutional readiness: A Multi-Stakeholder approach to learning analytics policy with the SHEILA-UTAUT framework using PLS-SEM. Educ. Inf. Technol. 2025.

- Trzebiński, W.; Marciniak, B. Recommender system information trustworthiness: The role of perceived ability to learn, self-extension, and intelligence cues. Comput. Hum. Behav. Rep. 2022, 6, 100193.

- Riegger, A.S.; Klein, J.F.; Merfeld, K.; Henkel, S. Technology-enabled personalization in retail stores: Understanding drivers and barriers. J. Bus. Res. 2021, 123, 140–155.

- Grewal, D.; Roggeveen, A.L.; Benoit, S.; Andrade, M.L.O.; Wetzels, R.; Wetzels, M. A new era of technology-infused retailing. J. Bus. Res. 2025, 188, 115095.

- Shehawy, Y.M.; Khan, S.M.F.A.; Alshammakhi, Q.M. The Knowledgeable Nexus Between Metaverse Startups and SDGs: The Role of Innovations in Community Building and Socio-Cultural Exchange. J. Knowl. Econ. 2025.

- Guo, Y.; Xiang, Y. Cost–benefit analysis of photovoltaic-storage investment in integrated energy systems. Energy Rep. 2022, 8, 66–71.

- Kak, S.M.; Agarwal, P.; Alam, M.A. Task Scheduling Techniques for Energy Efficiency in the Cloud. EAI Endorsed Trans. Energy Web 2022, 9, e6.

- Seidman, G.; Atun, R. Does task shifting yield cost savings and improve efficiency for health systems? A systematic review of evidence from low-income and middle-income countries. Hum. Resour. Health 2017, 15, 29.

- Gleim, M.R.; McCullough, H.; Gabler, C.; Ferrell, L.; Ferrell, O.C. Examining the customer experience in the metaverse retail revolution. J. Bus. Res. 2025, 186, 115045.

- Zheng, H.; Hou, W.; Wang, H. Optimal task pricing in reward witkey business model. J. Inf. Comput. Sci. 2009, 6, 383–390.

- Stylianou, C.; Andreou, A.S. Investigating the impact of developer productivity, task interdependence type and communication overhead in a multi-objective optimization approach for software project planning. Adv. Eng. Softw. 2016, 98, 79–96.

- Deng, Q.; Yang, L. Research on product development resource allocation modeling based on hierarchical colored Petri net. Appl. Mech. Mater. 2010, 44–47, 138–142.

- Chiang, H.Y.; Lin, B.M.T. A Decision Model for Human Resource Allocation in Project Management of Software Development. IEEE Access 2020, 8, 38073–38081.

- Bussmann, N.; Giudici, P.; Marinelli, D.; Papenbrock, J. Explainable AI in Fintech Risk Management. Front. Artif. Intell. 2020, 3, 26.